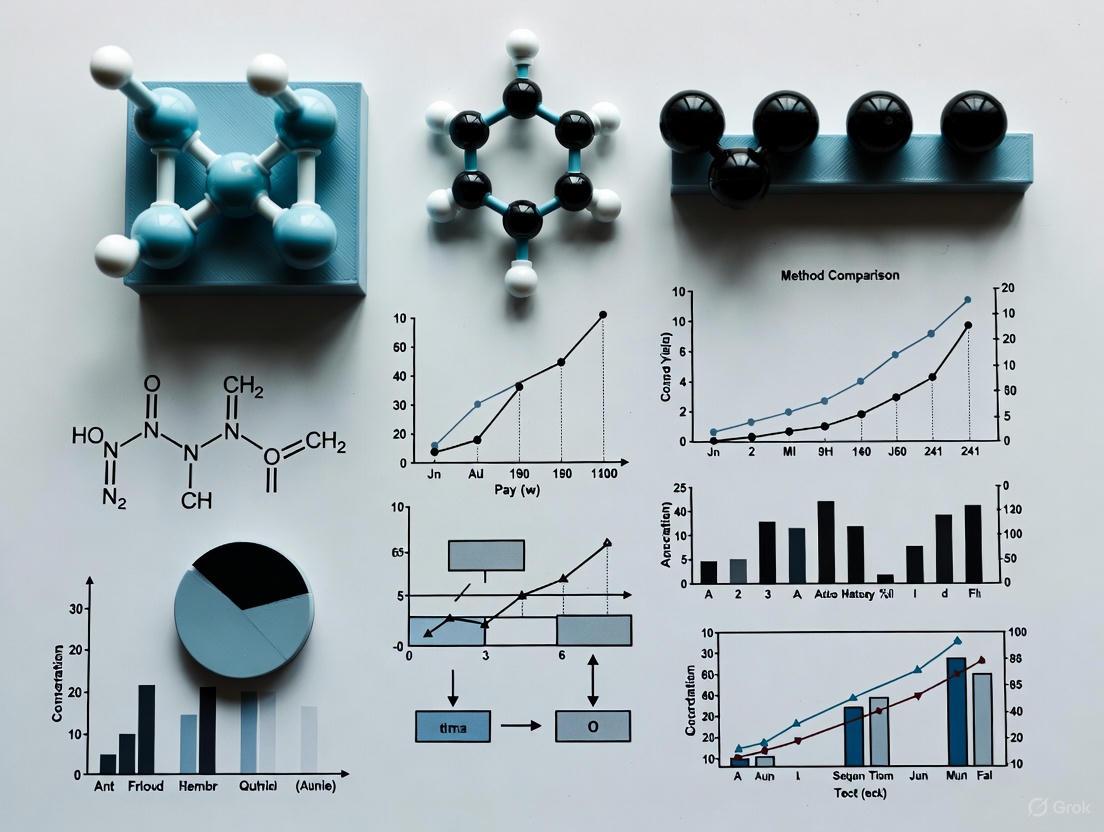

A 2025 Framework for Robust Method Comparison in Biomedical Research

Abstract

This article provides a comprehensive guide to data analysis for method comparison studies, tailored for researchers and drug development professionals. It covers foundational principles, advanced statistical methodologies, troubleshooting for common pitfalls, and validation frameworks to ensure analytical reliability. The guide synthesizes current best practices with emerging trends, including the role of AI and pharmacometric modeling, to help scientists design rigorous experiments, select appropriate analytical techniques, and generate defensible evidence for regulatory and clinical decision-making.

Core Principles and Strategic Planning for Method Comparison

In the rigorous field of method comparison studies, particularly within pharmaceutical development and clinical research, the core objective is to determine if two analytical methods can be used interchangeably. Interchangeability, in this context, means that a new or alternative method can replace a current one without affecting patient results, clinical decisions, or research outcomes [1]. This objective is fundamentally challenged by various forms of bias, or systematic error, which can distort results and lead to incorrect conclusions.

This guide details the process of designing a robust method comparison study, from establishing the objective to executing a statistically sound experimental protocol, all within the framework of ensuring data integrity by mitigating bias.

The Foundation of Interchangeability

At its heart, a method comparison study is an assessment of the agreement between two measurement procedures. The goal is to estimate the bias—the consistent difference—between a new test method and a comparative method (which may be an established reference method) [2]. If the observed bias is small enough to be deemed medically or analytically insignificant across the clinically relevant range, the methods may be considered interchangeable [1].

Crucially, interchangeability is not demonstrated by a mere association between methods. Statistical tools like correlation coefficients (r) only measure the strength of a linear relationship, not agreement. As shown in the example below, two methods can be perfectly correlated yet have a large, unacceptable bias, rendering them non-interchangeable [1].

Table: Example Illustrating that Correlation Does Not Imply Interchangeability

| Sample Number | Glucose by Method 1 (mmol/L) | Glucose by Method 2 (mmol/L) |
|---|---|---|
| 1 | 1 | 5 |
| 2 | 2 | 10 |
| 3 | 3 | 15 |
| 4 | 4 | 20 |
| 5 | 5 | 25 |
| 6 | 6 | 30 |
| 7 | 7 | 35 |
| 8 | 8 | 40 |
| 9 | 9 | 45 |
| 10 | 10 | 50 |

In this dataset, the correlation coefficient (r) is a perfect 1.00, yet every Method 2 result is five times the corresponding Method 1 result. This massive proportional bias shows a clear lack of interchangeability [1].
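The point can be checked numerically. The following is a minimal sketch using only the glucose values from the table; the helper name `pearson_r` is illustrative and not part of any cited method.

```python
from math import sqrt

# Glucose results from the example table (mmol/L).
method_1 = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
method_2 = [5, 10, 15, 20, 25, 30, 35, 40, 45, 50]


def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


r = pearson_r(method_1, method_2)

# Agreement is about the paired differences, not the strength of association.
differences = [b - a for a, b in zip(method_1, method_2)]
mean_difference = sum(differences) / len(differences)
ratios = [b / a for a, b in zip(method_1, method_2)]

print(f"r = {r:.2f}")
print(f"mean difference = {mean_difference:.1f} mmol/L")
print(f"ratio Method 2 / Method 1 = {ratios[0]:.1f} for every sample")
```

The correlation is perfect while the average difference between methods is 22 mmol/L, which no glucose assay could tolerate.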

Critical Biases in Data Analysis and Method Comparison

Bias is a systematic error in thinking, data collection, or analysis that leads to a distortion of reality. In method comparison studies, biases can infiltrate various stages, from experimental design to data interpretation. Understanding and mitigating these biases is paramount.

Table: Common Types of Bias in Method Comparison and Data Analysis

| Type of Bias | Description | Example in Method Comparison | How to Avoid |
|---|---|---|---|
| Selection Bias [3] [4] | An error where the study sample is not representative of the target population. | Using only samples from healthy volunteers when the method will be used to monitor a disease state, failing to cover the entire clinically meaningful range [1]. | Use a deliberate sampling strategy to ensure samples cover the entire analytical measurement range and represent the spectrum of expected conditions [1] [2]. |
| Confirmation Bias [3] [5] | The tendency to search for, interpret, and recall information that confirms one's pre-existing beliefs or hypotheses. | Unconsciously discounting or re-running outlier results that do not fit the expected agreement between methods. | Clearly state the research question and acceptance criteria before starting. Actively seek and investigate evidence that contradicts the hypothesis of interchangeability [3] [5]. |
| Historical Bias [3] [5] | When systematic cultural prejudices or inaccuracies from past data are embedded into current processes or models. | Training a new algorithm on historical data from a method that was later found to have an unacceptably high bias for a specific patient subgroup. | Acknowledge and identify biases in historic data sources. Regularly audit incoming data and establish inclusivity frameworks [3]. |
| Survivorship Bias [3] [5] | An error of focusing only on data that has "survived" a selection process while ignoring data that did not. | Basing performance estimates only on samples that were stable enough to be analyzed, ignoring results from samples that degraded and were discarded. | Actively consider the entire data collection process, including samples or data points that were excluded, and ensure they are not omitted for reasons that could skew results [3]. |

Experimental Protocol for a Method Comparison Study

A well-designed and carefully planned experiment is the key to a successful and conclusive method comparison [1]. The following protocol outlines the critical steps.

Pre-Experimental Planning and Definition

- Define Acceptance Criteria: Before any data is collected, define the acceptable bias based on clinical requirements, biological variation, or state-of-the-art performance [1].

- Select the Comparative Method: Ideally, use a reference method with documented correctness. If using a routine method, understand that any large, unacceptable differences will require further investigation to determine which method is inaccurate [2].

Sample Selection and Preparation

- Sample Number: A minimum of 40 patient specimens is recommended, with 100 or more being preferable to identify unexpected errors due to interferences [1] [2].

- Measurement Range: Specimens must be carefully selected to cover the entire clinically meaningful measurement range, not just a convenient or normal range [1].

- Replication: Perform duplicate measurements for both methods, ideally in different analytical runs, to minimize random variation and identify sample mix-ups or transposition errors [2].

- Time Period: Conduct the study over a minimum of 5 days, and preferably up to 20 days, using multiple runs to mimic real-world conditions and account for day-to-day variability [1] [2].

- Sample Stability: Analyze specimens by both methods within 2 hours of each other to prevent stability issues from being mistaken for analytical bias. Define and systematize specimen handling procedures [2].
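The recommendations in this list can be turned into a simple pre-study checklist. The sketch below is only an illustration: the `StudyPlan` fields and the `review_plan` function are hypothetical names, and the thresholds are the minimums quoted above (40 specimens, 5 days, 2 hours between methods).

```python
from dataclasses import dataclass


@dataclass
class StudyPlan:
    """Hypothetical summary of a planned method comparison study."""
    n_specimens: int
    n_days: int
    lowest_result: float
    highest_result: float
    max_hours_between_methods: float


def review_plan(plan, range_low, range_high):
    """Return a list of design issues; an empty list means none were found."""
    issues = []
    if plan.n_specimens < 40:
        issues.append("fewer than 40 patient specimens")
    if plan.n_days < 5:
        issues.append("study spans fewer than 5 days")
    if plan.lowest_result > range_low or plan.highest_result < range_high:
        issues.append("specimens do not span the clinically meaningful range")
    if plan.max_hours_between_methods > 2:
        issues.append("methods analyzed more than 2 hours apart")
    return issues


# Illustrative plan: too few specimens, too few days, too narrow a range.
weak_plan = StudyPlan(n_specimens=30, n_days=3, lowest_result=4.0,
                      highest_result=9.0, max_hours_between_methods=1.0)
for issue in review_plan(weak_plan, range_low=2.0, range_high=25.0):
    print("Issue:", issue)
```

A check like this does not replace judgment about sample quality or replication, but it makes the minimum requirements explicit before data collection begins.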

Data Analysis and Interpretation

- Graphical Analysis (Visual Inspection): Begin by graphing the data to identify outliers and general patterns of disagreement.

  - Difference Plot (Bland-Altman): Plot the difference between the test and comparative method (y-axis) against the average of the two methods (x-axis). This helps visualize the magnitude of differences across the measurement range [1].

  - Comparison Plot (Scatter Plot): Plot the test method results (y-axis) against the comparative method results (x-axis). A line of equality (y=x) can be drawn to visually assess deviations [1].

- Statistical Analysis:

  - For a Wide Analytical Range: Use linear regression analysis (e.g., Deming or Passing-Bablok) to calculate the slope and y-intercept. The slope indicates a proportional bias, and the y-intercept indicates a constant bias. The systematic error (SE) at a critical decision concentration (Xc) is calculated as SE = (a + b*Xc) - Xc, where a is the intercept and b is the slope [1] [2].

  - For a Narrow Analytical Range: Calculate the average difference (bias) and the standard deviation of the differences between the paired measurements [2].

  - Inappropriate Statistics: Avoid using only a correlation coefficient (r) or a t-test, as they are not adequate for assessing agreement and can be highly misleading [1].
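The wide-range calculation can be sketched as follows. This is a minimal illustration of Deming regression using the standard closed-form slope, followed by the systematic error formula SE = (a + b*Xc) - Xc. The function names, the example data, and the default error variance ratio of 1 (equal measurement error in both methods) are assumptions made for the example.

```python
from math import sqrt


def deming_fit(x, y, error_variance_ratio=1.0):
    """Return (intercept, slope) of a Deming regression of y on x.

    error_variance_ratio is the assumed ratio of the measurement error
    variance of y to that of x; 1.0 treats both methods as equally imprecise.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    d = error_variance_ratio
    slope = (syy - d * sxx + sqrt((syy - d * sxx) ** 2 + 4 * d * sxy ** 2)) / (2 * sxy)
    intercept = mean_y - slope * mean_x
    return intercept, slope


def systematic_error(intercept, slope, xc):
    """Systematic error at decision concentration xc: (a + b*Xc) - Xc."""
    return (intercept + slope * xc) - xc


# Illustrative data: the test method reads 2 units plus 10% higher.
comparative = [2.0, 4.0, 6.0, 8.0, 10.0, 12.0]
test = [4.2, 6.4, 8.6, 10.8, 13.0, 15.2]

a, b = deming_fit(comparative, test)
se_at_10 = systematic_error(a, b, xc=10.0)
print(f"intercept a = {a:.2f} (constant bias), slope b = {b:.2f} (proportional bias)")
print(f"systematic error at Xc = 10: {se_at_10:.2f}")
```

Passing-Bablok regression is a non-parametric alternative and is not shown here. In practice a validated statistical package would normally be preferred over hand-written code.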

The following workflow diagram summarizes the key stages of a method comparison study:

The Scientist's Toolkit: Essential Reagents and Materials

A properly executed method comparison study relies on more than just protocol; it requires high-quality materials and a clear understanding of data structure.

Table: Essential Research Reagents and Materials for Method Comparison

| Item / Concept | Function / Description |
|---|---|
| Patient Samples | The core reagent. Must be fresh, stable, and representative of the entire pathological and physiological spectrum to validate method performance across real-world conditions [1] [2]. |
| Reference Material | A substance with one or more properties that are sufficiently homogeneous and well-established to be used for the calibration of an apparatus or the validation of a measurement method. Serves as a truth-bearer for assessing trueness. |
| Control Materials | Stable materials with known expected values used to monitor the precision and stability of both the test and comparative methods throughout the study duration. |
| Structured Data Table | A well-constructed table with rows representing individual specimens and columns representing variables (e.g., Sample ID, Result Method A, Result Method B). This structure is fundamental for accurate analysis in statistical software [6]. |
| Data Granularity | The level of detail in the data. In a comparison study, the granularity is typically a single measurement (or the mean of replicates) per specimen per method. Understanding this is critical for correct statistical analysis [6]. |

Defining the objective of interchangeability and executing a method comparison study free from critical biases is a disciplined process. It requires moving beyond simplistic statistical associations to a thorough investigation of systematic error. By implementing a robust experimental design, utilizing appropriate graphical and statistical tools, and proactively mitigating cognitive and data biases, researchers and drug development professionals can generate defensible evidence to conclude whether two methods are truly interchangeable, thereby ensuring the reliability of data that underpins critical healthcare and research decisions.

A robust study design is the cornerstone of reliable and interpretable research, particularly in method comparison studies within drug development. It ensures that findings are not only statistically significant but also generalizable and reproducible. Three pillars—sample size justification, selection bias mitigation, and stability assessment—are critical for upholding the integrity of the research process. This guide provides an in-depth technical examination of these components, synthesizing current methodologies and emerging best practices to equip researchers with the tools needed to design defensible and impactful studies.

Sample Size Determination: Beyond Rules of Thumb

Sample size determination is a fundamental step that influences a study's ability to draw valid conclusions. While rules of thumb are commonly used, a more principled approach is necessary for robust design.

The Limitation of Common Practices

A review of recently published feasibility studies reveals that sample size justifications are often inadequate. A survey of 20 studies showed that 40% justified sample size based on rules of thumb, while 15% provided no justification at all [7]. Common rules, such as 12 participants per arm for estimating standard deviation or a flat 50 participants total, can be misleading. For instance, a simulation demonstrates that a sample size of N=24, chosen based on such a rule, leads to a 21% probability that the estimated monthly recruitment rate will differ from the true rate by 5 or more participants. Increasing the sample size to N=50 reduces this probability to 9%, highlighting the risk of underpowered feasibility assessments when relying on oversimplified guidelines [7].

A Framework for Principled Sample Size Justification

A robust justification should be based on the operating characteristics (OCs) of the study, specifically the probability of correctly determining a future trial is feasible when it is, and vice versa [7]. Researchers must:

- Define the Statistical Analysis: Specify the primary endpoints and statistical tests upfront [8].

- Determine Acceptable Precision Levels: Decide on the margin of error for estimates.

- Decide on Study Power: Typically set at 80% or higher for primary outcomes.

- Specify the Confidence Level: Usually 95% [8].

- Determine the Effect Size: Establish the magnitude of a practically significant difference.

Table 1: Key Considerations for Sample Size Calculation

| Consideration | Description | Practical Impact |
|---|---|---|
| Statistical Power | The probability of correctly rejecting a false null hypothesis (detecting an effect if it exists). | Inadequate power increases the risk of Type II errors (false negatives). |
| Precision Level | The acceptable margin of error for an estimate (e.g., ±5%). | A smaller margin of error requires a larger sample size. |
| Effect Size | The magnitude of the difference or relationship the study aims to detect. | Smaller, more subtle effects require larger samples to be detected. |
| Statistical Analysis Plan | The specific statistical methods to be applied (e.g., t-test, regression). | The choice of model influences the sample size formula and requirements. |
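To show how these considerations interact, here is a minimal sketch of two textbook normal-approximation formulas: one for estimating a mean within a chosen margin of error, and one for detecting a mean paired difference with a given power. The function names and example inputs are illustrative assumptions. This is not the operating-characteristics approach described in [7], and exact calculations based on the t-distribution give slightly larger numbers.

```python
from math import ceil
from statistics import NormalDist


def n_for_precision(sd, margin, confidence=0.95):
    """Sample size so that the confidence interval half-width is at most `margin`."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return ceil((z * sd / margin) ** 2)


def n_for_paired_difference(sd_diff, effect, alpha=0.05, power=0.80):
    """Pairs needed to detect a mean paired difference `effect` (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return ceil(((z_alpha + z_beta) * sd_diff / effect) ** 2)


# Illustrative inputs: SD of 10 units, margin of error of 2 units.
print(n_for_precision(sd=10, margin=2))                   # 97
# Illustrative inputs: detect a bias of half an SD of the differences.
print(n_for_paired_difference(sd_diff=1.0, effect=0.5))   # 32
```

Halving the margin of error or the detectable effect roughly quadruples the required sample size, which is why precision and effect size must be fixed before recruitment starts.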

The following workflow outlines the decision process for justifying a sample size, moving from simplistic rules to more principled characteristics.

Mitigating Selection Bias: Strategies for Representative Sampling

Selection bias occurs when the study sample is not representative of the target population, threatening the external validity and generalizability of the results.

Proactive Recruitment and Design Strategies

The COMO study, a nationwide health survey, provides a robust framework for minimizing selection bias. The study employed a two-stage, register-based sampling procedure, randomly selecting 177 municipalities, then 200 addresses per municipality from local population registries [9]. To combat declining response rates, a multi-stage communication and reminder strategy was critical. This included:

- Personalized postal invitations with access codes and QR codes.

- Up to five postal and electronic reminders.

- The use of non-monetary incentives (e.g., branded notepads).

- Social media outreach and a dedicated service hotline [9].

This strategy resulted in a 17.3% participation rate, demonstrating that persistent, multi-channel engagement is necessary for adequate enrollment [9].

Corrective Analytical Techniques

When proactive measures are insufficient, post-hoc statistical adjustments are essential. The COMO study developed design weights and calibration weights to correct for demographic imbalances, as adolescents, boys, and households with lower parental education were underrepresented [9]. For more complex scenarios, such as nonprobability samples of hard-to-reach populations (e.g., sexual minority men), advanced data integration methods are required. The Adjusted Logistic Propensity (ALP) method integrates a nonprobability sample with an external probability-based survey to model and correct for participation probabilities [10]. A novel two-step approach further extends this by first correcting for misclassification bias (e.g., underreporting of minority status in government surveys) before applying the ALP method, thereby addressing multiple sources of bias simultaneously [10].

Table 2: Strategies to Minimize Selection Bias at Different Study Stages

| Study Stage | Strategy | Technical Description |
|---|---|---|
| Recruitment | Probability Sampling | Using a known sampling frame (e.g., population registers) to randomly select participants, giving each eligible individual a known, non-zero probability of selection [9]. |
| Recruitment | Multimodal Engagement | Employing a structured sequence of contact methods (post, email, phone) and reminders, alongside clear communication and trust-building materials [9]. |
| Data Processing | Weighting Procedures | Applying design weights (inverse of selection probability) and calibrating them to known population benchmarks (e.g., from a microcensus) to adjust for nonresponse and covariate imbalances [9]. |
| Data Analysis | Data Integration (ALP) | Integrating nonprobability and probability samples to model participation probabilities (propensity scores) and generate pseudo-weights for bias correction [10]. |
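The weighting row of the table can be illustrated with a small sketch. It computes design weights as the inverse of the selection probability and then applies simple post-stratification, one basic form of calibration. The strata labels, probabilities, and population totals are invented for the example, and this is not the COMO study's actual weighting procedure or the ALP method.

```python
def design_weights(selection_probabilities):
    """Design weight = inverse of each respondent's selection probability."""
    return [1.0 / p for p in selection_probabilities]


def poststratify(weights, strata, population_totals):
    """Rescale weights so each stratum's weighted total matches its benchmark."""
    weighted_totals = {}
    for w, s in zip(weights, strata):
        weighted_totals[s] = weighted_totals.get(s, 0.0) + w
    return [w * population_totals[s] / weighted_totals[s]
            for w, s in zip(weights, strata)]


# Hypothetical respondents: stratum and selection probability.
strata = ["lower_education", "higher_education", "higher_education",
          "higher_education", "lower_education"]
probabilities = [0.01, 0.01, 0.02, 0.02, 0.01]

# Hypothetical population benchmarks (e.g., from a microcensus).
population_totals = {"lower_education": 600, "higher_education": 400}

base = design_weights(probabilities)
calibrated = poststratify(base, strata, population_totals)
for s, w0, w1 in zip(strata, base, calibrated):
    print(f"{s:17s} design weight {w0:6.1f} -> calibrated {w1:6.1f}")
```

In this toy example respondents from the underrepresented stratum end up with larger weights, so they count for more in weighted estimates.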

The following diagram summarizes the comprehensive two-step approach to correct for both selection and misclassification bias.

Stability in Study Design: Ensuring Reliable and Reproducible Results

In method comparison studies, "stability" refers to the consistency and reliability of measurements over time and under varying conditions, which is critical for assessing the shelf-life of pharmaceutical products and the robustness of analytical methods.

Innovative Approaches to Stability Study Design

Traditional stability testing, guided by ICH Q1D, uses bracketing and matrixing to reduce the testing burden. Factorial analysis is an emerging, powerful alternative not yet covered in ICH guidelines. This method uses data from accelerated stability studies to identify critical factors (e.g., batch, container orientation, filling volume, drug substance supplier) and their interactions that influence product stability [11]. For example, a study on three parenteral dosage forms used factorial analysis to identify worst-case scenarios, enabling a reduction of long-term stability testing by at least 50% while maintaining reliability, as confirmed by regression analysis [11].

Predictive Stability Modeling

Predictive computational modeling is a transformative tool for prospectively assessing long-term stability. Advanced Kinetic Modeling (AKM) uses short-term accelerated stability data to build Arrhenius-based kinetic models, allowing for forecasts of product shelf-life under recommended storage conditions [12]. Case studies on biotherapeutics and vaccines have shown excellent agreement between AKM predictions and real-time data for up to three years [12]. Further innovations include a hybrid frequentist-Bayesian approach for modeling degradation kinetics, which offers superior coverage probabilities, and physics-informed AI that uses neural ordinary differential equations (ODEs) to capture complex, non-linear stability influences beyond temperature, such as pH or material variability [12].
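The core idea behind Arrhenius-based extrapolation can be sketched in a few lines. The example below assumes simple first-order degradation and fits ln(k) against 1/T by ordinary least squares. It is a deliberately simplified illustration, not the AKM procedure from [12], and all numerical values are synthetic.

```python
from math import exp, log

R = 8.314  # universal gas constant, J/(mol*K)


def fit_arrhenius(temps_c, rate_constants):
    """Least-squares fit of ln(k) = ln(A) - Ea/(R*T); returns (A, Ea)."""
    xs = [1.0 / (t + 273.15) for t in temps_c]
    ys = [log(k) for k in rate_constants]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return exp(intercept), -slope * R


def rate_at(temp_c, a, ea):
    """Arrhenius rate constant at temperature temp_c (degrees Celsius)."""
    return a * exp(-ea / (R * (temp_c + 273.15)))


def time_to_fraction(k, remaining_fraction):
    """First-order kinetics: time until the remaining fraction is reached."""
    return -log(remaining_fraction) / k


# Synthetic accelerated data generated from assumed "true" parameters.
A_TRUE, EA_TRUE = 1.0e10, 80_000.0   # 1/day and J/mol, illustrative only
accelerated_temps = [40.0, 50.0, 60.0]
observed_k = [rate_at(t, A_TRUE, EA_TRUE) for t in accelerated_temps]

a_fit, ea_fit = fit_arrhenius(accelerated_temps, observed_k)
k_storage = rate_at(5.0, a_fit, ea_fit)          # refrigerated storage
days_to_95 = time_to_fraction(k_storage, 0.95)   # time to 95% remaining
print(f"Ea = {ea_fit / 1000:.1f} kJ/mol, time to 95% at 5 C = {days_to_95:.0f} days")
```

Real products often degrade through several pathways, which is why the approaches cited above go beyond a single Arrhenius line.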

Experimental Protocol: Forced Degradation Study

A key experimental protocol for establishing a stability-indicating method is the forced degradation study. The following workflow details the steps as demonstrated in the development of an RP-HPLC method for Upadacitinib [13].

In the case of Upadacitinib, this protocol revealed significant degradation under acidic (15.75%), alkaline (22.14%), and oxidative (11.79%) conditions, while the drug remained stable under thermal and photolytic stress [13]. This specificity confirms the method's ability to monitor stability accurately.

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key materials used in the experimental protocols cited in this guide, with an explanation of their function.

Table 3: Key Research Reagent Solutions for Stability and Analytical Methods

| Reagent / Material | Function in the Experiment |
|---|---|
| COSMOSIL C18 Column | A reverse-phase high-performance liquid chromatography (RP-HPLC) column used for the separation of a drug (e.g., Upadacitinib) from its degradation products [13]. |
| Acetonitrile (HPLC Grade) | A key organic solvent used in the mobile phase for RP-HPLC to elute analytes from the stationary phase [13]. |
| Formic Acid (0.1%) | A mobile phase additive in RP-HPLC that helps improve peak shape and ionization efficiency in analytical methods [13]. |
| Hydrogen Peroxide (H₂O₂) | An oxidizing agent used in forced degradation studies to simulate oxidative stress on a drug substance and identify potential degradants [13]. |
| Hydrochloric Acid (HCl) & Sodium Hydroxide (NaOH) | Used in forced degradation studies to subject the drug substance to acidic and alkaline hydrolysis, respectively, to assess chemical stability [13]. |
| Type I Glass Vials | The highest quality of pharmaceutical glass with high resistance to chemical attack, used as primary packaging for parenteral drug products in stability studies [11]. |

A robust study design is an integrated system in which sample size, selection methods, and stability assessments are optimized together. Moving beyond simplistic rules of thumb to justify sample sizes, implementing proactive and corrective strategies against selection bias, and adopting innovative, predictive stability models are no longer merely best practices but necessities for generating credible and actionable data. As methodological research advances, the integration of these principles, supported by sophisticated statistical techniques and a commitment to rigorous design, will continue to be the foundation of reliable method comparison studies and successful drug development.

Why Correlation Analysis and T-Tests Are Inadequate for Method Comparison

In scientific research and drug development, the comparison of measurement methods—such as a new automated technique against a manual or established standard—is fundamental. For decades, correlation analysis and the t-test have been widely used as the default statistical tools for such comparisons. However, a deeper examination reveals that these methods are often inadequate and misleading for this specific purpose. This guide explores the statistical pitfalls of misapplying these tools and outlines robust alternative frameworks designed to deliver trustworthy, evidence-based conclusions in method comparison studies.

The Fundamental Pitfalls of Correlation Analysis

The correlation coefficient, particularly Pearson's r, is a statistical measure often used in studies to show an association between variables or to look at the agreement between two methods. Despite its widespread use, it possesses critical limitations that make it invalid for assessing agreement [14].

The Linearity Assumption and Its Consequences

An inherent limitation of the Pearson correlation coefficient is that it only measures the strength of a linear association between two variables [14]. In essence, it indicates how well the data fit a straight line. This becomes problematic when two methods exhibit a consistent bias; even if one method consistently gives values that are 10 units higher than the other, the correlation can still be perfect (r = 1), as the data points lie perfectly on a straight line. The correlation coefficient is completely blind to this systematic error [14]. Furthermore, variables may have a strong non-linear association, which could still yield a low correlation coefficient, creating a false impression of poor relationship or agreement [14].

Sensitivity to the Data Range

The correlation coefficient is profoundly influenced by the range of the observations in the sample [14]. A wider range of values tends to inflate the correlation coefficient, while a narrower range suppresses it. This makes correlation coefficients fundamentally incomparable across different groups or studies that have varying data distributions. Researchers could, either intentionally or unintentionally, inflate the correlation coefficient simply by including additional data points with very low and very high values [14]. This property undermines the objective assessment of a method's performance across its intended operating range.
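The range effect is easy to reproduce. In the sketch below, two hypothetical methods always disagree by exactly 1 unit, alternating in sign, so their agreement is identical everywhere. Only the range of the samples changes. The data are constructed for illustration.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Method A covers 1..20; Method B differs by +1 (odd values) or -1 (even values).
method_a = list(range(1, 21))
method_b = [a + 1 if a % 2 == 1 else a - 1 for a in method_a]

# Same measurement error, two different sample ranges.
r_wide = pearson_r(method_a, method_b)
narrow_a = method_a[8:12]   # values 9..12 only
narrow_b = method_b[8:12]
r_narrow = pearson_r(narrow_a, narrow_b)

print(f"wide range (1-20):   r = {r_wide:.3f}")
print(f"narrow range (9-12): r = {r_narrow:.3f}")
```

The size of the disagreement is the same in both subsets, yet r falls from roughly 0.98 to 0.60 when the range narrows, so the coefficient reflects the sampling range as much as the methods themselves.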

The Illusion of Agreement

Perhaps the most critical flaw is that correlation is not agreement [14]. The correlation coefficient assesses whether two variables are related, not whether they produce identical results. If two methods are to be used interchangeably, we need to know if one method yields the same value as the other for a given sample. A high correlation can exist even when the two methods never produce the same value, rendering it an invalid measure for assessing the practical interchangeability of two methods [14].

The Inadequacy of the T-Test for Method Comparison

The t-test is a staple tool for comparing means, but its application in method comparison is often scientifically inappropriate. Its misuse stems from a fundamental misunderstanding of the research question.

Confounding Group Differences with Individual Disagreement

A t-test, whether paired or two-sample, is designed to answer one question: is there a statistically significant difference between the mean values of two groups? [15] [16]. In method comparison, a non-significant t-test (p > 0.05) is often incorrectly interpreted as evidence that the two methods agree. However, this is a dangerous oversimplification. It is entirely possible for two methods to have identical mean values (thus, a non-significant t-test) while showing massive disagreement on individual sample measurements—where one method consistently overestimates at low values and underestimates at high values [14]. The t-test fails to capture this individual-level disagreement, which is crucial for determining clinical or analytical interchangeability.

The Fallacy of the "Average Performance"

Relying on the average difference alone is insufficient for method comparison. A t-test does not provide any information about the distribution of differences between paired measurements. It offers no insight into the limits of agreement—the range within which most differences between the two methods will lie. Consequently, it cannot inform a researcher or clinician about the potential magnitude of discrepancy they might encounter when using the new method in place of the old one for a single patient or sample.
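A small constructed example shows how the paired t-test can miss this kind of disagreement. The values are invented: the hypothetical Method B reads high at low concentrations and low at high concentrations, so the differences cancel on average.

```python
from math import sqrt
from statistics import mean, stdev

# Invented paired results: same mean, opposite errors at the two ends of the range.
method_a = [2, 4, 6, 8, 10]
method_b = [4, 5, 6, 7, 8]

differences = [b - a for a, b in zip(method_a, method_b)]
mean_diff = mean(differences)
sd_diff = stdev(differences)

# Paired t statistic: mean difference divided by its standard error.
t_statistic = mean_diff / (sd_diff / sqrt(len(differences)))

print(f"mean of A = {mean(method_a)}, mean of B = {mean(method_b)}")
print(f"paired t statistic = {t_statistic:.2f}")
print(f"individual differences = {differences}")
print(f"SD of differences = {sd_diff:.2f}")
```

The t statistic is exactly zero, so the test cannot reject equality of means, yet individual results differ by up to 2 units in either direction. The spread of the differences, not their mean, carries that information.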

A Robust Framework for Method Comparison: Beyond Correlation and T-Tests

To overcome the limitations of correlation and t-tests, a comprehensive framework centered on Bland-Altman analysis is recommended. This approach, now considered the standard for assessing agreement between two measurement methods, shifts the focus from association to individual differences [17].

The Bland-Altman Limits of Agreement

The core of this method is a simple yet powerful visualization and calculation. The workflow for conducting a robust method comparison study is systematic and reveals the true nature of the disagreement between methods.

The Bland-Altman plot provides an intuitive visual assessment of the agreement. The following table outlines the key elements to extract from this analysis for a conclusive report.

Table 1: Key Metrics Derived from a Bland-Altman Analysis

| Metric | Calculation | Interpretation |
|---|---|---|
| Mean Difference (Bias) | d̄ = Σ(Method A - Method B) / N | The systematic, constant bias between methods. A positive value indicates Method A consistently reads higher than Method B. |
| Standard Deviation (SD) of Differences | SD = √[ Σ(dᵢ - d̄)² / (N-1) ] | The random variation or scatter of the differences around the mean bias. |
| 95% Limits of Agreement | d̄ - 1.96×SD to d̄ + 1.96×SD | The range within which 95% of the differences between the two methods are expected to lie. |
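The three quantities in the table can be computed directly. The following is a minimal sketch using Python's standard library; the function name `bland_altman` and the paired values are illustrative. The plot itself is omitted, but the returned means and differences are the x and y coordinates it would use.

```python
from statistics import mean, stdev


def bland_altman(method_a, method_b):
    """Return bias, SD of differences, 95% limits of agreement, and plot data."""
    differences = [a - b for a, b in zip(method_a, method_b)]
    averages = [(a + b) / 2 for a, b in zip(method_a, method_b)]
    bias = mean(differences)
    sd = stdev(differences)          # sample SD, denominator N - 1
    lower = bias - 1.96 * sd
    upper = bias + 1.96 * sd
    return {"bias": bias, "sd": sd, "lower_loa": lower, "upper_loa": upper,
            "averages": averages, "differences": differences}


# Illustrative paired measurements from two hypothetical methods.
method_a = [10.2, 11.5, 9.8, 12.1, 10.9]
method_b = [10.0, 11.0, 10.1, 11.8, 10.5]

result = bland_altman(method_a, method_b)
print(f"bias = {result['bias']:.2f}")
print(f"SD of differences = {result['sd']:.3f}")
print(f"95% limits of agreement: {result['lower_loa']:.2f} to {result['upper_loa']:.2f}")
```

Whether the resulting limits are acceptable is a clinical judgment that should be made against criteria defined before the study, not inferred from the numbers afterward.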

Complementary Metrics for a Comprehensive View

While Bland-Altman analysis is central, a thorough comparison should include additional metrics that capture different aspects of performance.

- Mean Absolute Error (MAE) and Mean Squared Error (MSE): These metrics provide deeper insights into the predictive accuracy of models by capturing the error distribution, which cannot be fully captured by the correlation coefficient alone [18]. They are more direct measures of average error magnitude than a correlation coefficient.

- Intraclass Correlation Coefficient (ICC): Unlike Pearson's r, the ICC assesses consistency or agreement by comparing the variability between different subjects to the total variability, including that introduced by the different methods [14]. It is a more appropriate measure of reliability.

- Baseline Comparisons: A powerful strategy is to compare the performance of the new method against a simple baseline, such as predicting the mean value of the reference method or using a simple linear regression model. This establishes a reference point for evaluating the added value of more complex methods [18].
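As a sketch of the error metrics and the baseline idea above, the example below computes MAE and MSE for a hypothetical new method and for a trivial baseline that always predicts the mean of the reference values. All numbers are invented for illustration.

```python
from statistics import mean


def mean_absolute_error(reference, predicted):
    """Average absolute deviation from the reference values."""
    return mean(abs(p - r) for r, p in zip(reference, predicted))


def mean_squared_error(reference, predicted):
    """Average squared deviation; penalizes large errors more heavily."""
    return mean((p - r) ** 2 for r, p in zip(reference, predicted))


# Invented results: reference method versus a hypothetical new method.
reference = [1.0, 2.0, 3.0, 4.0, 5.0]
new_method = [1.5, 2.5, 2.5, 4.5, 4.5]

# Trivial baseline: always report the mean of the reference values.
baseline = [mean(reference)] * len(reference)

mae_new = mean_absolute_error(reference, new_method)
mse_new = mean_squared_error(reference, new_method)
mae_base = mean_absolute_error(reference, baseline)
mse_base = mean_squared_error(reference, baseline)

print(f"new method: MAE = {mae_new:.2f}, MSE = {mse_new:.2f}")
print(f"baseline:   MAE = {mae_base:.2f}, MSE = {mse_base:.2f}")
```

A new method that cannot clearly beat such a baseline adds little, regardless of how high its correlation with the reference appears.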

Table 2: Comparison of Statistical Methods for Method Comparison

| Method | Primary Question | Strengths | Weaknesses for Method Comparison |
|---|---|---|---|
| Pearson Correlation | How strong is the linear relationship? | Easy to compute, unitless. | Does not measure agreement; insensitive to bias; highly dependent on data range. |
| T-Test | Are the population means different? | Tests for systematic bias. | Does not assess individual disagreement; a non-significant result is not proof of agreement. |
| Bland-Altman Analysis | What are the limits of disagreement for an individual measurement? | Visual and quantitative; estimates both bias and random error; identifies relationship patterns. | Requires multiple samples; clinical acceptability of limits is a subjective judgment. |
| ICC | How reproducible are the measurements? | Directly measures reliability/agreement for repeated measures. | Can be complex to calculate and interpret correctly; several forms exist for different scenarios. |

Case Study in Practice: Automated vs. Manual Measurement

A study in radiology provides a clear example of these principles in action. Researchers compared automated CT volumetry (AV) with manual unidimensional measurements (MD) for assessing treatment response in pulmonary metastases [19].

The study found that while both methods might be correlated with the true tumor burden, agreement between human observers was the critical differentiator. The relative measurement errors were significantly higher for MD than for AV. Most tellingly, there was total intra- and inter-observer agreement on treatment response classification when using AV (kappa=1), whereas agreement using MD was only moderate to good (kappa=0.73-0.84) [19]. This demonstrates that a method can be precise and reliable (AV) even when compared against an imperfect standard, and that metrics of agreement and error are more informative than correlation alone.
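The kappa values quoted above measure agreement on a categorical classification, corrected for the agreement expected by chance. A minimal sketch of unweighted Cohen's kappa follows. The observer ratings are invented and are not data from [19], and the study's exact kappa variant is not stated here.

```python
from collections import Counter


def cohens_kappa(ratings_1, ratings_2):
    """Unweighted Cohen's kappa for two raters classifying the same cases."""
    if len(ratings_1) != len(ratings_2) or not ratings_1:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(ratings_1)
    observed = sum(a == b for a, b in zip(ratings_1, ratings_2)) / n
    counts_1, counts_2 = Counter(ratings_1), Counter(ratings_2)
    categories = set(counts_1) | set(counts_2)
    expected = sum(counts_1[c] * counts_2[c] for c in categories) / n ** 2
    if expected == 1.0:   # both raters used a single identical category
        return 1.0
    return (observed - expected) / (1 - expected)


# Invented response classifications: R = response, N = no response.
observer_1 = ["R", "R", "R", "R", "R", "N", "N", "N", "N", "N"]
observer_2 = ["R", "R", "R", "R", "N", "N", "N", "N", "N", "R"]

kappa_partial = cohens_kappa(observer_1, observer_2)
kappa_perfect = cohens_kappa(observer_1, observer_1)
print(f"kappa with 8 of 10 matching classifications: {kappa_partial:.2f}")
print(f"kappa with identical classifications: {kappa_perfect:.2f}")
```

Here 80% raw agreement corresponds to a kappa of only 0.60 because half of that agreement would be expected by chance alone.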

Essential Research Reagents for Method Comparison Studies

To conduct a rigorous method comparison study, researchers should ensure they have the following "toolkit" of statistical and methodological reagents.

Table 3: Essential Research Reagents for Method Comparison Studies

| Reagent / Tool | Function in Method Comparison |

|---|---|

| Bland-Altman Analysis Script | A pre-validated statistical script (e.g., in R or Python) to calculate bias, limits of agreement, and generate the corresponding plot. |

| Dataset with Paired Measurements | A sufficient number of samples (typically >50) measured by both the new and reference method, covering the entire expected measurement range. |

| Clinical Acceptability Criteria | Pre-defined, clinically justified thresholds for the limits of agreement, determining when a method is "good enough" for its intended use. |

| Intraclass Correlation Coefficient (ICC) | A statistical measure used to supplement Bland-Altman analysis by quantifying reliability and consistency between the two methods. |

| Error Metric Calculators (MAE, MSE) | Tools to compute mean absolute error and mean squared error, providing alternative views of average model performance and error magnitude [18]. |
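As an illustration of the error metric calculators listed in the table, the following is a minimal sketch in plain Python. The function names and the three paired values are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
def mean_absolute_error(reference, test):
    """Average magnitude of the paired differences, in the units of the data."""
    if len(reference) != len(test) or not reference:
        raise ValueError("reference and test must be non-empty and of equal length")
    return sum(abs(t - r) for r, t in zip(reference, test)) / len(reference)


def mean_squared_error(reference, test):
    """Average squared paired difference; penalizes large discrepancies more."""
    if len(reference) != len(test) or not reference:
        raise ValueError("reference and test must be non-empty and of equal length")
    return sum((t - r) ** 2 for r, t in zip(reference, test)) / len(reference)


# Hypothetical paired results (reference method vs. new method)
reference = [10.0, 20.0, 30.0]
test = [12.0, 18.0, 33.0]

mae = mean_absolute_error(reference, test)  # (2 + 2 + 3) / 3
mse = mean_squared_error(reference, test)   # (4 + 4 + 9) / 3
```

Both metrics ignore the sign of the differences, so they describe error magnitude but not the direction of any bias; they complement the Bland-Altman analysis rather than replace it.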

The automatic use of correlation coefficients and t-tests for method comparison is a pervasive but flawed practice in research and drug development. Correlation confuses association with agreement, while the t-test is blind to individual-level discrepancies. The scientific community must move beyond these inadequate tools and adopt a framework designed for the task. The Bland-Altman limits of agreement method, supported by metrics like the ICC and MAE, provides a transparent, comprehensive, and clinically relevant assessment of whether two methods can be used interchangeably. By embracing this robust framework, researchers can generate trustworthy evidence, ensure the reliability of their measurements, and make data-driven decisions with greater confidence.

In method comparison studies, a cornerstone of research and development, validating a new measurement technique against an existing standard is paramount. This process ensures the reliability, accuracy, and transferability of data upon which critical decisions are made. The initial exploratory phase of such studies sets the stage for all subsequent statistical analysis. This whitepaper details the foundational role of two essential graphical tools in this phase: the scatter plot for visualizing correlation and distribution, and the difference plot (specifically the Bland-Altman plot) for quantifying agreement. We provide researchers with a rigorous framework for their application, complete with experimental protocols, data presentation standards, and visualization guidelines tailored for scientific rigor and regulatory scrutiny.

In fields such as pharmaceutical development and clinical diagnostics, the introduction of a new, potentially faster, cheaper, or more precise analytical method must be preceded by a comprehensive comparison against a validated reference method. While advanced statistical models have their place, the initial exploration of the data via visualization offers an irreplaceable, intuitive understanding of the relationship and agreement between two methods. These visualizations help to quickly identify trends, biases, outliers, and other patterns that might be obscured in purely numerical analysis [20].

A well-constructed plot can reveal the story of the data, allowing scientists to form hypotheses and select appropriate confirmatory statistical tests. This guide focuses on the two most critical plots for this purpose, providing a detailed protocol for their execution and interpretation within the context of robust scientific research.

The Scatter Plot: Visualizing Correlation and Distribution

Conceptual Foundation and Applications

A scatter plot is a fundamental data visualization technique that displays the relationship between two continuous variables by plotting individual data points on a Cartesian plane [21] [22]. In a method comparison study, one axis (typically the X-axis) represents the values obtained from the reference method, while the other (the Y-axis) represents the values from the new test method.

The primary strength of the scatter plot lies in its ability to reveal patterns in the data [20]. It is used to:

- Identify Correlations: Visualize whether the two methods move in tandem (positive correlation), in opposite directions (negative correlation), or show no relationship.

- Spot Non-Linear Relationships: Reveal if the agreement between methods changes across the measurement range, which is often missed by correlation coefficients alone.

- Detect Clusters and Outliers: Uncover subgroups within the data or identify anomalous measurements that may require further investigation [22].

Experimental Protocol for Scatter Plot Analysis

The following protocol ensures the consistent and correct generation of scatter plots for analytical studies.

Step 1: Data Collection and Preparation

- Sample Selection: Select a sufficient number of samples (N ≥ 40 is often recommended for reliable estimates) that cover the entire expected measurement range of the clinical or analytical application [22].

- Paired Measurements: Each sample must be measured by both the reference and the test method, ensuring the results are paired for analysis.

- Data Logging: Record results in a structured table with columns for Sample ID, Reference Method Value, and Test Method Value.

Step 2: Plot Construction

- Axis Definition: Plot the reference method values on the X-axis and the test method values on the Y-axis.

- Scale Setting: Ensure both axes are on the same scale. This is critical for a proper visual assessment of agreement.

- Data Point Plotting: Represent each paired measurement as a single point (e.g., a circle) on the graph.

Step 3: Enhanced Visualization

- Reference Line: Add a line of identity (Y=X). If the test method perfectly agrees with the reference, all points would lie on this line.

- Regression Line: Fit and plot a regression line (e.g., linear, Loess) to summarize the observed relationship between the two methods. The equation and R² value should be displayed on the plot.

- Confidence Intervals: Add a confidence band around the regression line to visualize the uncertainty in the relationship.

Step 4: Interpretation and Reporting

- Analyze the deviation of data points from the line of identity.

- Examine the slope and intercept of the regression line for systematic biases.

- Document any outliers or evidence of non-constant variance (heteroscedasticity).
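The numerical ingredients of the protocol above can be sketched as follows. This is an illustrative helper, not a validated script: the function name `scatter_summary` and the sample values are assumptions, and the regression shown is ordinary least squares, used here only as a descriptive summary for annotating the plot.

```python
def scatter_summary(reference, test):
    """Return OLS slope, intercept, R^2 and shared axis limits for a scatter plot."""
    n = len(reference)
    if n != len(test) or n < 3:
        raise ValueError("need at least 3 paired measurements")
    mean_x = sum(reference) / n
    mean_y = sum(test) / n
    sxx = sum((x - mean_x) ** 2 for x in reference)
    syy = sum((y - mean_y) ** 2 for y in test)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(reference, test))
    if sxx == 0 or syy == 0:
        raise ValueError("measurements must not all be identical")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)
    # Step 2: both axes share one scale so the line of identity sits at 45 degrees
    lower = min(min(reference), min(test))
    upper = max(max(reference), max(test))
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": r_squared,
        "axis_limits": (lower, upper),
    }


# Hypothetical data in which the test method reads twice the reference value
summary = scatter_summary([1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0])
```

In this example R² equals 1 although every point lies off the line of identity, which is why the slope and intercept, not R², carry the information about systematic bias.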

Table 1: Scatter Plot Interpretation Guide

| Visual Pattern | Potential Interpretation | Suggested Action |

|---|---|---|

| Points closely follow the line of identity | Strong agreement between methods | Proceed to quantitative agreement analysis (e.g., Bland-Altman). |

| Points are scattered but show a linear trend | Correlation without perfect agreement; constant or proportional bias may be present. | Calculate regression equation; proceed to Bland-Altman analysis to quantify bias. |

| Points form a curved pattern | Non-linear relationship between methods. | Method agreement is range-dependent; standard linear statistics may be invalid. Consider data transformation or segmental analysis. |

| Distinct clusters of points | Subpopulations may be influencing measurements. | Investigate sample sources; consider stratified analysis. |

| Isolated point(s) far from others | Potential outlier(s). | Investigate the measurement process for those samples; consider repeat analysis. |

[Figure: workflow diagram of the key decision points in the scatter plot analysis process]

The Difference Plot (Bland-Altman Plot): Quantifying Agreement

Conceptual Foundation and Applications

While a scatter plot shows correlation, it is not the optimal tool for assessing agreement. The Bland-Altman plot (or Difference Plot) is specifically designed to quantify the agreement between two quantitative measurement methods [21]. It moves beyond "Are they related?" to answer "How well do they agree?"

The plot visually displays the difference between the two methods against their average. This allows for a direct assessment of the bias (systematic difference) and the limits of agreement (random variation around the bias). Its key applications are:

- Estimating Average Bias: Calculating the mean difference to identify any systematic over- or under-estimation by the test method.

- Defining Limits of Agreement: Establishing an interval (typically bias ± 1.96 SD) within which approximately 95% of the differences between the two methods are expected to lie, provided the differences are approximately normally distributed.

- Identifying Heteroscedasticity: Revealing whether the variability of the differences is consistent across the measurement range or if it increases with the magnitude of the measurement.

Experimental Protocol for Bland-Altman Analysis

This protocol guides the creation and interpretation of a Bland-Altman plot using the same paired dataset as the scatter plot.

Step 1: Data Calculation

- For each sample i, calculate:

- Average Value: \( A_i = \frac{\text{Reference}_i + \text{Test}_i}{2} \)

- Difference Value: \( D_i = \text{Test}_i - \text{Reference}_i \)

Step 2: Plot Construction

- Axis Definition: Plot the Average Value (\( A_i \)) on the X-axis and the Difference Value (\( D_i \)) on the Y-axis.

- Data Point Plotting: Plot each calculated (\( A_i, D_i \)) point.

Step 3: Key Reference Line Addition

- Mean Difference Line: Draw a solid horizontal line at the mean of all differences (\( \bar{D} \)). This represents the average bias.

- Limits of Agreement (LoA): Draw dashed horizontal lines at \( \bar{D} + 1.96s \) and \( \bar{D} - 1.96s \), where \( s \) is the standard deviation of the differences.

- Zero Line: Draw a dotted horizontal line at Y=0 for visual reference.

Step 4: Interpretation and Reporting

- Assess the magnitude and clinical/analytical significance of the average bias (\( \bar{D} \)).

- Determine if the 95% LoA are sufficiently narrow for the test method to replace the reference method in practice.

- Check for heteroscedasticity; if present, consider data transformation or reporting range-specific LoA.
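The calculations in Steps 1 to 3 can be sketched in a few lines of standard-library Python. The function name `bland_altman` and the four paired values are hypothetical; a real study would use far more samples, and the 1.96 multiplier assumes approximately normally distributed differences.

```python
from statistics import mean, stdev


def bland_altman(reference, test):
    """Compute the points and reference lines of a Bland-Altman plot."""
    if len(reference) != len(test) or len(reference) < 2:
        raise ValueError("need at least 2 paired measurements")
    averages = [(r + t) / 2 for r, t in zip(reference, test)]   # X-axis values
    differences = [t - r for r, t in zip(reference, test)]      # Y-axis values
    bias = mean(differences)          # mean difference line
    sd = stdev(differences)           # sample SD of the differences
    return {
        "averages": averages,
        "differences": differences,
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
    }


# Hypothetical paired measurements
result = bland_altman([10.0, 20.0, 30.0, 40.0], [11.0, 22.0, 31.0, 44.0])
```

Here the differences are 1, 2, 1 and 4, giving a bias of 2 and limits of agreement of roughly -0.77 to 4.77. Whether that interval is acceptable depends entirely on the pre-defined clinical criteria, not on the statistics themselves.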

Table 2: Bland-Altman Plot Interpretation Guide

| Visual Pattern | Potential Interpretation | Suggested Action |

|---|---|---|

| Differences are normally distributed around the mean bias, within LoA. | Consistent agreement across the measurement range. | The test method may be interchangeable with the reference if bias and LoA are clinically acceptable. |

| The mean bias line is significantly above or below zero. | Significant systematic bias exists. | The test method consistently over- or under-estimates values. A constant adjustment may be needed. |

| The spread of differences widens as the average value increases (funnel shape). | Presence of heteroscedasticity. | Limits of agreement are not constant. Consider logarithmic transformation or report conditional LoA. |

| Data points show a sloping pattern relative to the X-axis. | Proportional bias exists. | The difference between methods changes with the magnitude of measurement. Analysis may require more complex modeling. |
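One simple way to screen for the sloping pattern described in the last row is to regress the differences on the averages and inspect the slope. The sketch below is an assumption-laden illustration (the name `difference_trend` and the data are hypothetical, and the fit is ordinary least squares), not a formal test for proportional bias.

```python
def difference_trend(reference, test):
    """Least-squares slope and intercept of the differences against the averages."""
    n = len(reference)
    if n != len(test) or n < 3:
        raise ValueError("need at least 3 paired measurements")
    averages = [(r + t) / 2 for r, t in zip(reference, test)]
    differences = [t - r for r, t in zip(reference, test)]
    mean_a = sum(averages) / n
    mean_d = sum(differences) / n
    saa = sum((a - mean_a) ** 2 for a in averages)
    if saa == 0:
        raise ValueError("averages must not all be identical")
    sad = sum((a - mean_a) * (d - mean_d) for a, d in zip(averages, differences))
    slope = sad / saa
    intercept = mean_d - slope * mean_a
    return slope, intercept


# Hypothetical test method that reads 10% high across the range
trend_slope, trend_intercept = difference_trend(
    [10.0, 20.0, 30.0, 40.0], [11.0, 22.0, 33.0, 44.0]
)
```

A slope near zero is consistent with a constant bias, whereas a clearly non-zero slope (about 0.095 in this example) suggests that the difference grows with the measured value and that a single pair of limits of agreement would be misleading.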

[Figure: logical flow for creating and acting upon a Bland-Altman plot]

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key components required for executing a robust method comparison study, from data collection to visualization.

Table 3: Research Reagent Solutions for Method Comparison Studies

| Item / Solution | Function / Purpose |

|---|---|

| Reference Standard Material | A well-characterized, high-purity substance used to calibrate the reference method and establish traceability. Serves as the benchmark for accuracy. |

| Test Kits/Reagents | The complete set of reagents, buffers, and consumables specific to the new test method being validated. |

| Calibrators | A series of samples with known analyte concentrations, used to construct the calibration curve for both the reference and test methods. |

| Quality Control (QC) Samples | Materials with known, stable concentrations (low, medium, high) used to monitor the performance and stability of both measurement methods throughout the study. |

| Statistical Analysis Software | Software (e.g., R, Python, SAS, specialized IVD validation packages) essential for calculating descriptive statistics, performing regression analysis, and generating high-quality scatter and Bland-Altman plots. |

| Data Visualization Library | Programming libraries (e.g., ggplot2 for R, Matplotlib/Seaborn for Python) that provide the functions needed to create publication-quality plots with precise control over scales, colors, and annotations. |

The path to adopting a new analytical method is paved with rigorous evidence of its equivalence to an established standard. Initial data exploration using scatter plots and Bland-Altman plots is not a mere preliminary step but a critical phase of analysis. The scatter plot effectively screens for the fundamental relationship and gross anomalies, while the Bland-Altman plot provides a definitive, intuitive assessment of the agreement that is directly relevant to clinical or analytical practice. By adhering to the detailed protocols, visualization standards, and interpretative frameworks outlined in this guide, researchers in drug development and beyond can ensure their method comparison studies are built on a foundation of visual and quantitative clarity, leading to more reliable and defensible scientific conclusions.

Selecting and Applying Advanced Statistical Techniques

In clinical laboratory science and drug development, the comparison of measurement methods is a critical component of method validation. When replacing an existing analytical procedure with a new one, researchers must rigorously demonstrate that both methods produce equivalent results to ensure patient safety and data reliability. Traditional statistical approaches such as Pearson's correlation and ordinary least squares (OLS) regression are often misapplied in method comparison studies, leading to incorrect conclusions about method agreement. This technical guide examines two specialized regression techniques—Deming and Passing-Bablok regression—that properly account for measurement errors in both methods. Within the broader context of analytical method validation, this review provides researchers, scientists, and drug development professionals with a comprehensive framework for selecting and implementing the appropriate regression methodology based on their specific data characteristics and study objectives.

Method comparison studies are fundamental to clinical laboratory science, pharmacology, and biomedical research whenever a new measurement procedure is introduced. These studies assess the agreement between two measurement methods—typically an established method and a new candidate method—to determine whether they can be used interchangeably without affecting clinical interpretations or research conclusions [1]. The core question is whether systematic differences (bias) exist between methods and whether this bias is clinically or analytically significant.

Common scenarios requiring method comparison include: implementing a new automated analyzer alongside an existing one, validating a less expensive alternative method, replacing an invasive with a non-invasive technique, or introducing a point-of-care testing device. In pharmaceutical development, method comparisons are essential when transitioning between different analytical platforms during drug discovery and development phases.

A critical limitation of conventional statistical approaches in this context is their improper application. Pearson's correlation coefficient measures the strength of association between two variables but does not indicate agreement. As demonstrated in Table 1, two methods can show perfect correlation (r = 1.00) while having substantial proportional differences that make them clinically non-interchangeable [1]. Similarly, t-tests only assess differences in means (constant bias) but fail to detect proportional differences and are sensitive to sample size in ways that may either mask clinically relevant differences or highlight statistically significant but clinically irrelevant ones [1].
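The point about correlation can be made concrete with a small, purely hypothetical dataset in which the test method always reads 20% higher than the reference. The helper `pearson_r` below is a plain-Python sketch written for this illustration.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least 2 paired values")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sqrt(sxx * syy)


# Hypothetical results: the test method reads 20% high at every level
reference = [50.0, 100.0, 150.0, 200.0]
test = [60.0, 120.0, 180.0, 240.0]

r = pearson_r(reference, test)                            # perfect linear association
differences = [t - x for x, t in zip(reference, test)]    # 10, 20, 30, 40
```

The correlation is perfect, yet the disagreement grows from 10 units at the low end to 40 units at the high end, so the two methods would not be interchangeable under any reasonable acceptance limit.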

Fundamental Principles of Regression in Method Comparison

Limitations of Ordinary Least Squares (OLS) Regression

Ordinary Least Squares (OLS) regression, the most common form of linear regression, imposes critical assumptions that are frequently violated in method comparison studies:

- OLS assumes that the independent variable (X) is measured without error, which is unrealistic when comparing two measurement methods where both are subject to analytical variation [23]

- OLS is highly sensitive to outliers and non-normal distribution of errors

- OLS slope estimates are biased when both variables contain measurement error

- OLS results depend on which method is assigned as the independent variable

These limitations necessitate specialized regression techniques that properly account for measurement errors in both methods and are robust to departures from ideal statistical distributions.
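The dependence of OLS on the choice of independent variable can be shown with a short sketch. The data are hypothetical and the function `ols_slope` is written here only for illustration. If OLS treated both methods symmetrically, the slope of y on x would equal the reciprocal of the slope of x on y; with noisy data it does not.

```python
def ols_slope(x, y):
    """Ordinary least squares slope of y regressed on x."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need at least 2 paired values")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sxx


# Hypothetical paired results with some scatter around the identity line
method_a = [1.0, 2.0, 3.0, 4.0, 5.0]
method_b = [1.2, 1.9, 3.3, 3.8, 5.1]

slope_b_on_a = ols_slope(method_a, method_b)   # method A treated as error-free
slope_a_on_b = ols_slope(method_b, method_a)   # method B treated as error-free
implied_slope = 1 / slope_a_on_b               # same line expressed as B versus A
```

The two fits imply different lines (a slope of 0.97 versus roughly 0.987 in this example), and the gap widens as measurement noise increases. Errors-in-variables methods remove this arbitrariness.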

Key Statistical Concepts in Method Comparison

Constant bias refers to a systematic difference between methods that remains consistent across the measuring range. It is represented by the intercept in regression equations. Proportional bias indicates that differences between methods change proportionally with the analyte concentration, represented by the slope in regression equations. The identity line (x = y) represents perfect agreement between methods, where the regression line would ideally fall in the absence of any systematic differences [23].

Theoretical Foundation

Deming regression is an errors-in-variables model that accounts for measurement errors in both compared methods. Unlike OLS, which minimizes the sum of squared vertical distances between points and the regression line, Deming regression minimizes the sum of squared distances between points and the line at an angle determined by the ratio of the variances of the measurement errors for both methods [24]. This approach provides unbiased estimates of the regression parameters when both methods contain measurement error.

The fundamental model assumes a linear relationship between the true values measured by both methods: \( Y_i = \alpha + \beta X_i \), where the observed values are \( x_i = X_i + \varepsilon_i \) and \( y_i = Y_i + \eta_i \), with \( \varepsilon_i \) and \( \eta_i \) representing measurement errors for both methods [24].

Types of Deming Regression

Simple Deming regression assumes constant measurement error variances across the concentration range. It requires the user to specify an error ratio (δ), which represents the ratio between the variances of the measurement errors of both methods [24]. When the error ratio is set to 1, Deming regression is equivalent to orthogonal regression.

Weighted Deming regression should be used when the measurement errors are proportional to the analyte concentration rather than constant. This method assumes a constant ratio of coefficients of variation (CV) rather than constant variances across the measuring interval [24]. Weighted Deming regression is more appropriate when working with data spanning a wide concentration range.
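When duplicate measurements are available, the error ratio for simple Deming regression can be estimated from them. The sketch below uses the standard duplicate-based variance estimate (sum of squared within-pair differences divided by 2n) and assumes the ratio is defined as the error variance of the Y method divided by that of the X method. That direction is an assumption made for this illustration, and the function names and data are hypothetical.

```python
def error_variance_from_duplicates(pairs):
    """Estimate measurement error variance from duplicate results of one method."""
    if not pairs:
        raise ValueError("at least one duplicate pair is required")
    # For duplicates, Var(error) is estimated by sum(d^2) / (2n), d = first - second
    return sum((first - second) ** 2 for first, second in pairs) / (2 * len(pairs))


def error_ratio(duplicates_x, duplicates_y):
    """Error ratio delta = Var(errors of Y method) / Var(errors of X method)."""
    var_x = error_variance_from_duplicates(duplicates_x)
    var_y = error_variance_from_duplicates(duplicates_y)
    if var_x == 0:
        raise ValueError("X method shows no measurable error; ratio undefined")
    return var_y / var_x


# Hypothetical duplicate results for three samples on each method
duplicates_x = [(10.0, 12.0), (20.0, 20.0), (30.0, 28.0)]
duplicates_y = [(11.0, 12.0), (21.0, 20.0), (29.0, 30.0)]

delta = error_ratio(duplicates_x, duplicates_y)   # 0.5 / 1.333... = 0.375
```

Three samples are far too few for a stable estimate; the example only shows the arithmetic. Because software packages differ in whether they expect this ratio or its reciprocal, the definition should be confirmed in the relevant documentation.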

Calculation Methods

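For simple Deming regression, the parameters have a closed-form solution; weighted Deming regression is fitted iteratively because the weights depend on the estimated line. With the error ratio defined as \( \delta = \sigma_\eta^2 / \sigma_\varepsilon^2 \) (the error variance of the Y method divided by that of the X method), the slope estimate is:

\( \hat{\beta} = \frac{s_{yy} - \delta s_{xx} + \sqrt{(s_{yy} - \delta s_{xx})^2 + 4 \delta s_{xy}^2}}{2 s_{xy}} \)

where \( s_{xx} \) and \( s_{yy} \) are the sample variances of the results from the two methods and \( s_{xy} \) is their sample covariance. The intercept follows as \( \hat{\alpha} = \bar{y} - \hat{\beta}\bar{x} \). Some software defines the error ratio as the reciprocal of \( \delta \), so the convention should be checked before a value is entered. Confidence intervals for parameter estimates are typically computed using jackknife procedures, which provide more reliable inference than analytical formulas, especially with smaller sample sizes [24].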
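A minimal sketch of simple (unweighted) Deming regression is given below. It uses the widely published closed-form estimator based on sample variances and covariance, with the error ratio `delta` taken as the error variance of the Y method divided by that of the X method. That convention, the function name `deming` and the default `delta=1.0` (orthogonal regression) are assumptions of this illustration; confidence intervals (for example by jackknife) are not included.

```python
from math import sqrt


def deming(x, y, delta=1.0):
    """Simple Deming regression; returns (slope, intercept).

    delta is assumed to be Var(errors in y) / Var(errors in x).
    """
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired measurements")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x) / (n - 1)
    syy = sum((b - mean_y) ** 2 for b in y) / (n - 1)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (n - 1)
    if sxy == 0:
        raise ValueError("covariance is zero; slope is undefined")
    term = syy - delta * sxx
    slope = (term + sqrt(term ** 2 + 4 * delta * sxy ** 2)) / (2 * sxy)
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical noise-free data following y = 2x + 1
slope, intercept = deming([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])
```

With noise-free data the estimator recovers the generating line exactly. With noisy data and `delta=1.0`, the Deming slope falls between the OLS slope of y on x and the reciprocal of the OLS slope of x on y, reflecting that error is attributed to both methods.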

Table 1: Deming Regression Applications and Assumptions

| Aspect | Simple Deming Regression | Weighted Deming Regression |

|---|---|---|

| Error Structure | Constant measurement error variances | Proportional measurement errors (constant CV) |

| Error Ratio Requirement | Must be specified or estimated from replicates | Must be specified or estimated from replicates |

| Optimal Use Case | Narrow concentration range | Wide concentration range |

| Variance Assumption | Constant variance across range | Variance proportional to concentration |

Theoretical Foundation

Passing-Bablok regression is a non-parametric approach to method comparison that makes no assumptions about the distribution of errors or data points [25] [26]. This method is particularly valuable when dealing with non-normal distributions, outliers, or when the relationship between methods deviates from standard parametric assumptions. The procedure is based on Kendall's rank correlation and is robust to extreme values that would disproportionately influence OLS regression [26].

A key advantage of Passing-Bablok regression is that the result does not depend on which method is assigned to the X or Y axis, making it symmetric—a crucial property when comparing two methods without a clear reference [26]. The method requires continuously distributed data covering a broad concentration range and assumes a linear relationship between the two methods [25].

Calculation Procedure

The Passing-Bablok procedure follows these computational steps:

- Slope Calculation: All possible pairwise slopes between the observed data points are calculated as \( S_{ij} = (y_j - y_i)/(x_j - x_i) \) for \( i < j \)

- Slope Adjustment: Slopes that are undefined (0/0) or equal to -1 are excluded. The slope estimate B is then the median of the remaining sorted slopes, shifted upward by K positions, where K is the number of slopes less than -1; this offset serves as the bias correction

- Intercept Calculation: The intercept A is determined as the median of the values \( y_i - B x_i \) once the slope has been estimated

This non-parametric approach makes the method particularly robust against outliers and non-normal error distributions [26].
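The computational steps above can be sketched as follows. This is a simplified illustration of the point estimates only: the function name `passing_bablok` and the data are hypothetical, vertical pairs (equal x, different y) are treated as infinite slopes, and neither confidence intervals nor the Cusum linearity test are included.

```python
from math import inf
from statistics import median


def passing_bablok(x, y):
    """Point estimates (slope B, intercept A) of Passing-Bablok regression."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired measurements")
    slopes = []
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx = x[j] - x[i]
            dy = y[j] - y[i]
            if dx == 0 and dy == 0:
                continue                      # 0/0 is undefined: excluded
            slope = dy / dx if dx != 0 else (inf if dy > 0 else -inf)
            if slope == -1:
                continue                      # slopes of exactly -1: excluded
            slopes.append(slope)
    slopes.sort()
    m = len(slopes)
    k = sum(1 for s in slopes if s < -1)      # offset K for the shifted median
    if m == 0 or m // 2 + k >= m:
        raise ValueError("methods do not appear to be positively related")
    if m % 2 == 1:
        b = slopes[m // 2 + k]
    else:
        b = 0.5 * (slopes[m // 2 - 1 + k] + slopes[m // 2 + k])
    a = median(yi - b * xi for xi, yi in zip(x, y))
    return b, a


# Hypothetical data following y = 2x + 1, with one gross outlier in the last pair
pb_slope, pb_intercept = passing_bablok(
    [1.0, 2.0, 3.0, 4.0, 5.0, 6.0], [3.0, 5.0, 7.0, 9.0, 11.0, 40.0]
)
```

Despite the outlier, the estimates remain at a slope of 2 and an intercept of 1, because medians of pairwise slopes are not pulled toward a single extreme point. For real analyses, a validated implementation that also reports confidence intervals should be preferred.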

Interpretation of Results

The intercept (A) represents the constant systematic difference between methods. If the 95% confidence interval for the intercept includes 0, no significant constant bias exists. The slope (B) represents proportional differences between methods. If the 95% confidence interval for the slope includes 1, no significant proportional bias exists [26] [23].

The Cusum test for linearity assesses whether a linear model adequately describes the relationship between methods. A non-significant result (P ≥ 0.05) indicates no significant deviation from linearity, validating the model assumption [26]. A significant Cusum test suggests nonlinearity, making the regression results unreliable [23].

Table 2: Passing-Bablok Regression Interpretation Guide

| Parameter | Value Indicating No Bias | Statistical Test | Clinical Interpretation |

|---|---|---|---|

| Intercept (A) | 95% CI includes 0 | CI exclusion of 0 suggests constant bias | Consistent difference across all concentrations |

| Slope (B) | 95% CI includes 1 | CI exclusion of 1 suggests proportional bias | Difference increases/decreases with concentration |

| Linearity | Cusum test P ≥ 0.05 | Significant deviation suggests nonlinearity | Relationship may be curved, not straight |

| Residuals | Random scatter around zero | Pattern suggests model inadequacy | Unexplained variability or systematic error |
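The decision rules in the table can be encoded in a small helper, sketched below under the assumption that the confidence intervals and the Cusum P value have already been obtained from statistical software. The function name, argument names and example numbers are hypothetical.

```python
def interpret_passing_bablok(intercept_ci, slope_ci, cusum_p, alpha=0.05):
    """Apply the interpretation rules to 95% CIs and a Cusum linearity P value."""
    intercept_low, intercept_high = intercept_ci
    slope_low, slope_high = slope_ci
    return {
        # Constant bias is indicated when the intercept CI excludes 0
        "constant_bias": not (intercept_low <= 0 <= intercept_high),
        # Proportional bias is indicated when the slope CI excludes 1
        "proportional_bias": not (slope_low <= 1 <= slope_high),
        # Linearity is not rejected when the Cusum P value is at least alpha
        "linearity_ok": cusum_p >= alpha,
    }


# Hypothetical software output: intercept CI spans 0, slope CI lies above 1
findings = interpret_passing_bablok(
    intercept_ci=(-0.5, 0.8), slope_ci=(1.02, 1.10), cusum_p=0.40
)
```

In this example the helper flags proportional bias only. Statistical significance is just the first filter; the size of any detected bias still has to be judged against the pre-defined clinical acceptance criteria.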

Experimental Design and Protocol

Sample Selection and Preparation

Proper experimental design is crucial for obtaining valid method comparison results. Key considerations include:

- Sample Size: A minimum of 40 samples is recommended, though 100 or more provide more reliable estimates, especially for detecting proportional biases [26] [1]. Sample sizes below 40 increase the risk of falsely concluding method agreement due to wide confidence intervals.

- Concentration Range: Samples should cover the entire clinically meaningful range, from low to high values, with even distribution across this range [1]. Gaps in the concentration spectrum can invalidate the comparison.

- Sample Type: Fresh patient samples should be used rather than spiked samples or controls, as they represent the actual matrix and interference potential encountered in practice.

- Stability: Samples should be analyzed within their stability period, preferably within 2 hours of collection if applicable, and always within the same analytical run to minimize pre-analytical variation [1].

Measurement Protocol

- Duplicate Measurements: Whenever possible, perform duplicate measurements with both methods to better estimate random variation and identify outliers [1].

- Randomization: The sample sequence should be randomized to avoid carry-over effects and time-related biases.

- Duration: Measurements should be conducted over multiple days (at least 5) and multiple analytical runs to capture typical routine variability [1].

- Blinding: Operators should be blinded to the results of the comparative method when performing measurements with the new method to prevent observational bias.
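The randomization step can be made reproducible and auditable by generating the run order from a recorded seed. The sketch below is one possible approach; the function name, the sample identifiers and the seed value are hypothetical.

```python
import random


def randomized_run_order(sample_ids, seed):
    """Return a shuffled copy of sample_ids; the same seed gives the same order."""
    rng = random.Random(seed)     # dedicated generator, independent of global state
    order = list(sample_ids)
    rng.shuffle(order)
    return order


# Hypothetical sample identifiers for one analytical run
sample_ids = ["S01", "S02", "S03", "S04", "S05", "S06"]
run_order = randomized_run_order(sample_ids, seed=2025)
```

Recording the seed alongside the study protocol allows the run order to be regenerated later, which supports traceability without revealing comparative-method results to the operator.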

Decision Framework for Regression Selection

Decision Flowchart for Regression Method Selection

Comparative Analysis of Regression Methods

Table 3: Comprehensive Comparison of Regression Methods for Method Comparison

| Characteristic | Deming Regression | Passing-Bablok Regression | Ordinary Least Squares (OLS) |

|---|---|---|---|

| Measurement Error | Accounts for errors in both methods | Accounts for errors in both methods | Assumes no error in X variable |

| Distribution Assumptions | Parametric (requires normal distribution) | Non-parametric (no distribution assumptions) | Parametric (requires normal distribution) |

| Outlier Sensitivity | Moderately sensitive | Robust | Highly sensitive |

| Data Requirements | Known or estimable error ratio | Linear relationship, broad concentration range | Normal distribution, homoscedasticity |

| Symmetry | Symmetric when error ratio=1 | Always symmetric | Not symmetric |

| Sample Size Needs | ≥ 40 samples | ≥ 40 samples (preferably 50-90) | ≥ 40 samples |

| Implementation Complexity | Moderate | Moderate | Simple |

| Best Application | Known error structure, normal data | Non-normal data, outliers, unknown error structure | Reference method with negligible error |

Selection Guidelines

Deming regression is preferable when:

- The error ratio between methods is known or can be reliably estimated from replicate measurements

- Data and errors follow approximately normal distributions

- The research question requires efficient parameter estimates with minimal variance

- Working with a wide concentration range with proportional errors (weighted Deming)

Passing-Bablok regression is preferable when:

- The error structure is unknown and cannot be estimated from replicates

- Data contain outliers or exhibit non-normal distributions

- The relationship between methods is linear but parametric assumptions are violated

- Working with a broad concentration range where linearity is expected

Both methods require:

- A linear relationship between methods across the measurement range

- Adequate sample size (minimum 40, preferably more)

- Continuous data covering the clinically relevant range

- Absence of significant nonlinearity (verified via Cusum test for Passing-Bablok)

Implementation and Analysis Workflow

Method Comparison Implementation Workflow

Statistical Software Implementation

Most modern statistical packages offer implementations of both Deming and Passing-Bablok regression:

- MedCalc includes comprehensive Passing-Bablok implementation with Cusum test for linearity, residual plots, and bootstrap options [26]

- NCSS provides both Deming and Passing-Bablok regression procedures with detailed graphical outputs [27]

- R packages such as 'mcr' (Method Comparison Regression) implement both techniques with various diagnostic tools [28]

- Analyse-it adds Deming regression capabilities to Excel with jackknife confidence intervals [24]

Complementary Analytical Techniques

Regardless of the primary regression method chosen, these additional analyses strengthen method comparison studies:

- Bland-Altman plots (also called difference plots) visualize agreement between methods by plotting differences against averages, helping identify concentration-dependent bias and agreement limits [27] [1]

- Mountain plots (folded CDF plots) provide another visual assessment of distribution differences between methods

- Residual analysis examines patterns in the differences between observed and predicted values, helping identify heteroscedasticity, outliers, and model inadequacy [26] [23]

Advanced Considerations and Recent Developments

Handling Repeated Measurements

In studies with repeated measurements from the same subjects, standard Passing-Bablok assumptions are violated due to correlated data. A modified approach called Block-Passing-Bablok regression has been developed to handle grouped data with repeated measurements by excluding meaningless slopes within the same subject [28]. This prevents distortion of estimates and maintains appropriate statistical power for equivalence testing.

Sample Size Optimization

While a minimum of 40 samples is widely recommended, optimal sample sizes depend on the specific comparison context [26]:

- For detecting small constant biases, 40-60 samples may suffice

- For identifying proportional biases, especially subtle ones, 60-90 samples provide better power

- When developing methods for regulated environments, larger sample sizes (100+) may be warranted

- When high clinical consequences exist for method disagreement, larger sample sizes are prudent

Defining Acceptance Criteria

Before conducting method comparison studies, researchers should define clinically acceptable bias based on:

- Clinical outcomes data linking analytical performance to patient outcomes (ideal but often unavailable)

- Biological variation data, using established quality specifications based on within-subject and between-subject variation

- State-of-the-art performance achievable with current technology

- Regulatory requirements for specific applications or contexts
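For the biological variation option, a widely used convention (not stated in the sources cited here, so treated as an assumption) sets the desirable bias limit at one quarter of the combined within-subject and between-subject variation: \( 0.25\sqrt{CV_I^2 + CV_G^2} \). The sketch below applies it to hypothetical coefficients of variation.

```python
from math import sqrt


def desirable_bias_percent(cv_within, cv_between):
    """Desirable bias limit (%) from within- and between-subject CVs (%)."""
    if cv_within < 0 or cv_between < 0:
        raise ValueError("coefficients of variation must be non-negative")
    return 0.25 * sqrt(cv_within ** 2 + cv_between ** 2)


# Hypothetical analyte with 6% within-subject and 8% between-subject variation
limit = desirable_bias_percent(6.0, 8.0)   # 0.25 * sqrt(36 + 64) = 2.5
```

An observed bias, expressed as a percentage at each decision level, can then be compared against this limit. Actual CV values should come from a curated biological variation database rather than from illustrative numbers such as these.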

Selecting between Deming and Passing-Bablok regression for clinical method comparison requires careful consideration of data characteristics, error structures, and distributional assumptions. Deming regression provides efficient parameter estimation when error structures are known and data are normally distributed, while Passing-Bablok regression offers robustness against outliers and distributional violations. Both methods properly account for measurement errors in both compared methods, overcoming critical limitations of ordinary least squares regression.

A well-designed method comparison study incorporates appropriate sample sizes, covers clinically relevant concentration ranges, utilizes complementary graphical techniques like Bland-Altman plots, and interprets results in the context of clinically meaningful differences. By applying the decision framework presented in this guide, researchers and laboratory professionals can select the optimal statistical approach for demonstrating method equivalence, ultimately ensuring the reliability of clinical measurements and the safety of patient care.

In the field of laboratory medicine, the reliability of data generated from method comparison studies is foundational to clinical decision-making. Systematic error, or bias, represents a constant deviation of measured results from the true value, potentially leading to misdiagnosis, incorrect treatment planning, and increased healthcare costs [29]. Within the context of data analysis for method comparison studies, the precise quantification of this bias at clinically relevant decision levels is not merely a statistical exercise but a critical component of analytical quality management. This guide provides researchers and drug development professionals with in-depth methodologies for quantifying bias, ensuring that laboratory tests are fit for their intended clinical purpose.

Theoretical Foundations of Systematic Error

Defining Bias and Trueness

In metrological terms, bias is defined as the "estimate of a systematic measurement error" [29]. Closely related is the concept of measurement trueness, which refers to the closeness of agreement between the average of an infinite number of replicate measured quantity values and a reference quantity value [29]. Mathematically, bias for an analyte A can be expressed as \( \text{Bias}(A) = O(A) - E(A) \), where O(A) is the observed (measured) value and E(A) is the expected or reference value [29].
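In practice the observed value is the mean of a finite set of replicates, so the computed bias is itself an estimate. The following sketch applies the definition to hypothetical replicate results; the function name and the numbers are illustrative only.

```python
from statistics import mean


def estimate_bias(measurements, reference_value):
    """Return (absolute bias, percent bias) of replicate results vs. a reference."""
    if not measurements:
        raise ValueError("at least one measurement is required")
    if reference_value == 0:
        raise ValueError("percent bias is undefined for a reference value of 0")
    observed = mean(measurements)                 # O(A): mean of the replicates
    bias = observed - reference_value             # Bias(A) = O(A) - E(A)
    percent_bias = 100 * bias / reference_value
    return bias, percent_bias


# Hypothetical replicates of a material with an assigned value of 5.0 units
bias, percent_bias = estimate_bias([5.2, 5.0, 5.1], reference_value=5.0)
```

The replicates average 5.1, giving a bias of about 0.1 units, or 2% of the reference value. More replicates reduce the contribution of random error to this estimate.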

Types of Bias

Bias in laboratory measurements can manifest in two primary forms:

- Constant Bias: The difference between the target and measured values remains constant across the concentration range of the measurand.

- Proportional Bias: The difference between the target and measured values changes in proportion to the concentration of the measurand [29].

The distinction is critical, as a proportional bias indicates that the measurement error is concentration-dependent, requiring a more nuanced correction strategy. These biases can be evaluated analytically using tools such as Bland-Altman plots for assessing agreement and Passing-Bablok regression for detecting the presence and type of bias [29].
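As a rough illustration of how the two bias types appear in a Bland-Altman analysis, the sketch below computes the mean difference and the conventional 95% limits of agreement (mean difference ± 1.96 SD of the differences). The function name `bland_altman` and all data values are hypothetical and chosen only to make the contrast visible.

```python
from statistics import mean, stdev


def bland_altman(test, comparative):
    """Return (mean difference, lower limit, upper limit) for paired results."""
    if len(test) != len(comparative) or len(test) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [t - c for t, c in zip(test, comparative)]
    bias = mean(diffs)
    sd = stdev(diffs)
    # Conventional 95% limits of agreement: bias +/- 1.96 * SD of differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd


# Hypothetical comparative-method results spanning a concentration range
comparative = [50.0, 100.0, 150.0, 200.0, 250.0]

# Constant bias: the test method reads 2 units high everywhere
constant_shift = [c + 2.0 for c in comparative]
# Proportional bias: the test method reads 5% high everywhere
proportional = [c * 1.05 for c in comparative]

ba_constant = bland_altman(constant_shift, comparative)
ba_proportional = bland_altman(proportional, comparative)

print("constant:     bias=%.2f, limits=(%.2f, %.2f)" % ba_constant)
print("proportional: bias=%.2f, limits=(%.2f, %.2f)" % ba_proportional)
```

With a purely constant bias the differences do not vary, so the limits collapse onto the mean difference. With a proportional bias the differences grow with concentration, which widens the limits and shows up as a trend in the plot.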

Methodologies for Quantifying Bias

Establishing Reference Quantity Values

The accurate estimation of bias requires two core components: a reference quantity value and the mean of repeated measurements [29]. The reference value can be established through:

- Certified Reference Materials (CRMs): These provide a traceable and internationally recognized reference point.

- Fresh Patient Samples Measured with Reference Methods: This approach utilizes well-established methods to assign a value to patient samples.

- Assigned Values: When a reference value is unavailable, a consensus value from a higher-order method can be used as the target [29].

Table 1: Sources for Reference Values in Bias Estimation

| Source Type | Description | Key Advantage | Consideration |
|---|---|---|---|
| Certified Reference Materials (CRMs) | Commercially available materials with certified analyte concentrations. | Provides metrological traceability. | Can be expensive; may not fully mimic patient sample matrix. |
| Fresh Patient Samples | Authentic patient samples measured with a reference method. | Matrix effects are representative of routine practice. | Requires access to a higher-order reference method. |
| Commutable Samples | Processed samples that behave like fresh patient samples across methods. | Balances standardization with practical applicability. | Commutability must be verified. |

Experimental Protocols for Bias Measurement

The conditions under which bias is measured significantly impact the results and their interpretation. Three primary measurement conditions are recognized in metrology [29]:

- Repeatability Conditions: Measurements are performed using the same procedure, instrument, operator, and location within a short period (e.g., a single run). This yields the smallest random variation, making it easier to detect a true bias.

- Intermediate Precision Conditions: Measurements are performed in a single laboratory over an extended period (e.g., several months) with deliberate changes in factors like instruments, operators, and reagent lots. This provides a more realistic estimate of routine performance.

- Reproducibility Conditions: Measurements are performed across different laboratories, incorporating the widest possible sources of variation. This is the most stringent condition and reflects the total variation in the measurement system.

The following workflow outlines the core process for a bias estimation experiment, which can be adapted for different measurement conditions.

Diagram 1: Bias Estimation Workflow

Assessing the Significance of Bias

A calculated bias is an estimate, and its statistical and clinical significance must be evaluated. From a statistical perspective, a t-test can be employed. A more visual, practical assessment can be made using the 95% Confidence Interval (CI) of the mean of the repeated measurements [29]:

- If the 95% CI of the mean overlaps the target reference value, the bias is not considered statistically significant.

- If the 95% CI of the mean does not overlap the target reference value, the bias is considered statistically significant.

The imprecision of the method directly impacts the width of the CI; a method with high imprecision (high CV) will have a wider CI, making it less likely to detect a significant bias.
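The confidence-interval check described above can be sketched as follows. This is a minimal illustration, not a validated procedure: the function name `bias_with_ci`, the replicate values, and the reference values are hypothetical, and the small table of two-sided 95% t critical values covers only a few common replicate counts (pass `t_crit` explicitly for other sizes).

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student t critical values, keyed by degrees of freedom (n - 1)
T_CRIT_95 = {4: 2.776, 9: 2.262, 19: 2.093, 29: 2.045}


def bias_with_ci(measurements, reference, t_crit=None):
    """Estimate bias and test it via the 95% CI of the replicate mean."""
    n = len(measurements)
    if n < 2:
        raise ValueError("need at least two replicate measurements")
    if t_crit is None:
        t_crit = T_CRIT_95.get(n - 1)
        if t_crit is None:
            raise ValueError("no tabulated t value for this n; pass t_crit")
    m = mean(measurements)
    half_width = t_crit * stdev(measurements) / sqrt(n)
    lower, upper = m - half_width, m + half_width
    return {
        "bias": m - reference,
        "ci": (lower, upper),
        # Significant when the CI of the mean excludes the reference value
        "significant": not (lower <= reference <= upper),
    }


# Hypothetical replicate results for one reference material
replicates = [101.2, 100.8, 101.5, 100.9, 101.1]

result_a = bias_with_ci(replicates, reference=100.0)
result_b = bias_with_ci(replicates, reference=101.0)
print(result_a)
print(result_b)
```

The same replicates give a significant bias against a reference of 100.0 but not against 101.0, because only the second value falls inside the interval around the mean.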

The Role of Medical Decision Levels

Defining Medical Decision Levels

Medical decision levels are specific concentrations of an analyte at which clinical actions are triggered, such as diagnosis, further testing, or initiation/modification of therapy [30]. Unlike reference intervals, which describe the range of values for a "healthy" population, decision levels are tied to pathological states and critical clinical outcomes. Evaluating bias at these levels is paramount, as even a small, statistically insignificant bias at a non-critical level can become clinically unacceptable at a decision threshold.

Applying Decision Levels to Bias Evaluation

The following table provides examples of medical decision levels for common laboratory tests, illustrating the points where bias assessment is most critical [30].

Table 2: Exemplary Medical Decision Levels for Select Analytes

| Test | Units | Reference Interval | Decision Level 1 | Decision Level 2 | Decision Level 3 | Clinical Context of Decision Levels |
|---|---|---|---|---|---|---|
| Hemoglobin | g/dL | 14-17.8 (M); 12-15.6 (F) | 4.5 | 10.5 | 17 | Transfusion trigger, anemia diagnosis, polycythemia |
| Platelet Count | K/uL | 150-400 | 10 | 50 | 1000 | Risk of spontaneous bleeding, surgical safety, thrombocytosis |
| White Blood Cell Count | K/uL | 4-11 | 0.5 | 3 | 30 | Severe neutropenia, infection, leukemia suspicion |
| Thyroxine (T4) | ug/dL | 5.5-12.5 | 5 | 7 | 14 | Hypothyroidism, hyperthyroidism |
| Theophylline | ug/mL | 10-20 (asthma) | 10 | 20 | 35 | Therapeutic range, toxicity |

When bias is identified, its impact must be judged against the Total Allowable Error (TEa), which is the maximum error that can be tolerated without invalidating the clinical utility of the test result [31]. The relationship between bias, imprecision, and TEa is often synthesized into a Sigma-metric, which provides a powerful tool for evaluating method performance. A Sigma-metric greater than 6 indicates world-class performance, while a metric below 3 is generally considered unacceptable for many clinical applications [31].
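A commonly used form of the Sigma-metric is (TEa - |bias|) / CV, with all three terms expressed as percentages at the same decision level. The sketch below applies that formula and the two thresholds quoted above. The function names, the input values, and the "intermediate" label for results between 3 and 6 are illustrative assumptions.

```python
def sigma_metric(tea_pct, bias_pct, cv_pct):
    """Sigma = (TEa - |bias|) / CV, all inputs in percent."""
    if cv_pct <= 0:
        raise ValueError("CV must be positive")
    return (tea_pct - abs(bias_pct)) / cv_pct


def classify_sigma(sigma):
    """Map a Sigma value onto the thresholds quoted in the text."""
    if sigma > 6:
        return "world-class"
    if sigma < 3:
        return "unacceptable"
    return "intermediate"  # label assumed here for the 3-6 band


# Hypothetical assay with TEa = 10% and bias = 1% at a decision level,
# evaluated at three different levels of imprecision
sigma_low_cv = sigma_metric(10.0, 1.0, 1.0)   # (10 - 1) / 1
sigma_mid_cv = sigma_metric(10.0, 1.0, 2.0)   # (10 - 1) / 2
sigma_high_cv = sigma_metric(10.0, 1.0, 4.0)  # (10 - 1) / 4

for s in (sigma_low_cv, sigma_mid_cv, sigma_high_cv):
    print(f"sigma={s:.2f} -> {classify_sigma(s)}")
```

Holding TEa and bias fixed, the metric falls as imprecision rises, which is why the same bias can be tolerable for a precise method and unacceptable for an imprecise one.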

Advanced Statistical Analysis and Tools

Regression Analysis for Bias Characterization

Method comparison studies often employ regression analysis to characterize bias across a range of concentrations. Passing-Bablok regression is a non-parametric method particularly robust against outliers and not reliant on specific distribution assumptions [29]. The regression equation is:

y = ax + b

where y is the test method, x is the comparative method, a is the slope (indicating proportional bias), and b is the intercept (indicating constant bias) [29].

- No significant bias is concluded if the 95% CI of the slope a includes 1 and the 95% CI of the intercept b includes 0.

- If the 95% CI for the slope does not include 1, a proportional bias is present.

- If the 95% CI for the intercept does not include 0, a constant bias is present.
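The decision rules above can be sketched in code. The following is a simplified, standard-library-only version of the Passing-Bablok procedure (shifted median of pairwise slopes, with rank-based confidence limits). It is meant to illustrate the logic and is not a substitute for validated statistical software. The function names and the data are hypothetical, and for an even number of slopes the arithmetic mean of the two central slopes is taken, which is one of the conventions in use.

```python
import math
from statistics import NormalDist, median


def passing_bablok(x, y, alpha=0.05):
    """Simplified Passing-Bablok fit of y = slope * x + intercept."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least 3 paired observations")
    slopes = []
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx, dy = x[j] - x[i], y[j] - y[i]
            if dx == 0 and dy == 0:
                continue  # identical points carry no slope information
            if dx == 0:
                slopes.append(math.copysign(math.inf, dy))
                continue
            s = dy / dx
            if s != -1:  # slopes of exactly -1 are discarded
                slopes.append(s)
    slopes.sort()
    total = len(slopes)
    k = sum(1 for s in slopes if s < -1)  # offset for the shifted median
    if total % 2:
        slope = slopes[(total + 1) // 2 + k - 1]
    else:
        slope = 0.5 * (slopes[total // 2 + k - 1] + slopes[total // 2 + k])
    intercept = median(yi - slope * xi for xi, yi in zip(x, y))

    # Rank-based confidence limits for the slope
    z = NormalDist().inv_cdf(1 - alpha / 2)
    c = z * math.sqrt(n * (n - 1) * (2 * n + 5) / 18)
    m1 = round((total - c) / 2)
    m2 = total - m1 + 1
    if m1 < 1 or m2 + k > total:
        raise ValueError("too few points for a confidence interval")
    slope_ci = (slopes[m1 + k - 1], slopes[m2 + k - 1])
    # The upper slope limit gives the lower intercept limit, and vice versa
    intercept_ci = (
        median(yi - slope_ci[1] * xi for xi, yi in zip(x, y)),
        median(yi - slope_ci[0] * xi for xi, yi in zip(x, y)),
    )
    return {"slope": slope, "intercept": intercept,
            "slope_ci": slope_ci, "intercept_ci": intercept_ci}


def interpret(fit):
    """Apply the CI rules: slope CI vs 1, intercept CI vs 0."""
    lo_s, hi_s = fit["slope_ci"]
    lo_i, hi_i = fit["intercept_ci"]
    return {"proportional_bias": not (lo_s <= 1 <= hi_s),
            "constant_bias": not (lo_i <= 0 <= hi_i)}


# Hypothetical, noise-free data chosen so the expected answer is obvious
x_vals = [float(v) for v in range(1, 11)]
fit_biased = passing_bablok(x_vals, [2 * v + 1 for v in x_vals])
fit_identity = passing_bablok(x_vals, list(x_vals))
print(fit_biased, interpret(fit_biased))
print(fit_identity, interpret(fit_identity))
```

Real data contain noise, so the confidence intervals will have non-zero width; the noise-free inputs here serve only to confirm that the slope and intercept are recovered and that the rules flag the expected bias types.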

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials required for conducting rigorous bias quantification studies.

Table 3: Essential Reagents and Materials for Bias Studies

| Item | Function/Description | Criticality |
|---|---|---|
| Certified Reference Materials (CRMs) | Provides an unbiased, traceable reference value for target assignment, forming the gold standard for bias estimation. | Essential |
| Commutable Quality Control Materials | Processed human serum-based controls that mimic the behavior of fresh patient samples across different methods; used for long-term precision and bias monitoring. | Highly Recommended |
| Fresh/Frozen Patient Samples | Authentic specimens that represent the true matrix; used in comparison studies to assess method performance under realistic conditions. | Essential |
| Statistical Software (e.g., R, MedCalc) | Performs advanced statistical analyses like Passing-Bablok regression, Bland-Altman plots, and confidence interval calculations. | Essential |
| Data Collection Form (Electronic) | Standardized template for capturing instrument ID, reagent lot, date, operator, and raw results to ensure data integrity and traceability. | Essential |

Visualizing the Integrated Workflow

The complete process of quantifying and interpreting systematic error, from experimental design to clinical decision-making, is summarized in the following comprehensive workflow.

Diagram 2: From Data to Decision Workflow

The rigorous quantification of systematic error at critical medical decision levels is a non-negotiable standard in method comparison studies and drug development research. By employing a structured approach that combines metrological principles with clinical context, researchers can move beyond simple statistical significance to a meaningful assessment of analytical performance. The methodologies outlined—from establishing traceable reference values and executing controlled experiments under defined conditions, to analyzing data with robust statistical tools and interpreting results against clinically relevant thresholds—provide a framework for ensuring data integrity. Ultimately, this process safeguards the translation of laboratory data into reliable clinical decisions, enhancing patient safety and the efficacy of therapeutic interventions.

Leveraging Pharmacometric Models to Drastically Reduce Sample Sizes

In the landscape of modern drug development, increasing complexity and rising costs demand more efficient clinical trial designs. This technical guide explores the paradigm shift from conventional statistical methods to pharmacometric (PMx) model-based approaches for sample size estimation. By integrating prior knowledge and leveraging data from multiple sources and timepoints, PMx methods demonstrate a proven capability to reduce required sample sizes while maintaining, or even increasing, statistical power. A highlighted case study reveals that a PMx approach achieved over 80% power with a sample size allocation of just 26%, a feat unmatched by conventional methods. Framed within the broader context of data analysis for method comparison studies, this whitepaper provides researchers and drug development professionals with a detailed examination of the methodologies, workflows, and practical applications of these transformative quantitative strategies.

A foundational step in clinical trial design is determining the sample size required to reliably detect a clinically relevant treatment effect. Conventional statistical methods, often based on power analysis for a single primary endpoint, can be inefficient. They typically rely on end-of-trial observations from a single dose group, failing to incorporate the rich, longitudinal data on dose-exposure-response (D-E-R) relationships and prior knowledge gathered in earlier development phases [32]. This inefficiency can lead to unnecessarily large, costly, and time-consuming trials, or conversely, underpowered studies that fail to detect true effects.
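To make the conventional calculation concrete, the sketch below approximates the power of a two-sided, two-sample comparison on a single end-of-trial endpoint using the normal approximation. The effect size, standard deviation, and group sizes are hypothetical and are not taken from the case study discussed later. The function name `two_sample_power` is likewise illustrative.

```python
from math import sqrt
from statistics import NormalDist


def two_sample_power(delta, sd, n_per_group, alpha=0.05):
    """Approximate power of a two-sided two-sample z-test (equal groups)."""
    if n_per_group < 1 or sd <= 0:
        raise ValueError("n_per_group must be >= 1 and sd must be positive")
    norm = NormalDist()
    se = sd * sqrt(2 / n_per_group)      # standard error of the difference
    z_crit = norm.inv_cdf(1 - alpha / 2)
    shift = abs(delta) / se
    # Probability of rejecting in either tail under the alternative
    return norm.cdf(shift - z_crit) + norm.cdf(-shift - z_crit)


# Hypothetical standardized effect of 0.5 SD on a single endpoint
power_small = two_sample_power(delta=0.5, sd=1.0, n_per_group=20)
power_large = two_sample_power(delta=0.5, sd=1.0, n_per_group=64)
print(f"n=20 per group: power={power_small:.2f}")
print(f"n=64 per group: power={power_large:.2f}")
```

Because this calculation uses one observation per subject from one comparison, the only way to raise power is to add subjects. Model-based approaches instead draw on repeated measurements and multiple dose groups.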

The pursuit of more efficient drug development has catalyzed the adoption of Model-Informed Drug Development (MIDD). MIDD is a framework that uses quantitative modeling and simulation to integrate nonclinical and clinical data, as well as prior knowledge, to inform decision-making [33]. A critical application of MIDD is the use of pharmacometric models to optimize trial design, with sample size allocation being an area of significant impact. This approach is particularly valuable in multi-regional clinical trials (MRCTs), where developers must balance characterizing the overall D-E-R relationship with assessing potential inter-regional heterogeneity in treatment response [32].

Quantitative Evidence: PMx vs. Conventional Approaches

Direct comparisons between pharmacometric and conventional statistical approaches demonstrate the profound efficiency gains achievable through modeling.

Case Study: Multi-Regional Phase 2 Dose-Ranging Trial

A seminal case study involved a hypothetical multi-regional Phase 2 trial for an anti-psoriatic drug with a total sample size of N = 175. The study aimed to determine the sample size needed for a region of interest (Region X) to achieve over 80% power in detecting a clinically relevant inter-regional difference. The key assumption was that patients in Region X, when administered the highest dose (210 mg), would exhibit a median reduction in Psoriasis Area and Severity Index (PASI) score of 50% at Week 12—representing the minimum clinically meaningful therapeutic improvement and a borderline inter-regional difference [32] [34].

Table 1: Sample Size Allocation Power - PMx vs. Conventional Approach

| Methodological Approach | Data Utilized | Maximum Power with 50% Sample Allocation | Sample Allocation for >80% Power |
|---|---|---|---|
| Conventional Statistical | End-of-trial observations from a single dose group | < 40% | Not Achievable |
| Pharmacometric (PMx) Model-Based | Multiple dose groups across trial duration | - | 26% |

The results were striking. The conventional method, relying on a single endpoint, was profoundly underpowered, unable to reach 80% power even when half the patients were from Region X. In contrast, the PMx approach, which efficiently used data from all dose levels and the entire trial duration, required only 26% of the total sample size (approximately 45 subjects) to achieve the target power [32]. This represents a drastic reduction in the number of subjects needed from a specific region to inform global development decisions.

The Workflow of a Pharmacometric Sample Size Analysis

The implementation of a PMx approach for sample size allocation follows a structured, iterative workflow that integrates modeling, simulation, and evaluation.