A 9-Step Protocol for Robust Method Comparison Experiments in Biomedical Research

Abstract

This article provides a comprehensive, step-by-step framework for planning and executing method comparison experiments, a critical process for validating new analytical methods in clinical and biomedical research. Tailored for researchers, scientists, and drug development professionals, the guide covers foundational principles, a detailed 9-step methodological protocol, strategies for troubleshooting common pitfalls, and advanced techniques for data validation and analysis. By synthesizing current best practices, the content aims to ensure regulatory compliance, data integrity, and the generation of reliable, actionable results for laboratory and clinical applications.

Laying the Groundwork: Core Principles and Planning for Method Comparison

Method comparison is a fundamental process in laboratory medicine and analytical science, serving as a critical component of the broader method validation and verification framework. It involves a systematic experimental investigation to assess whether a new or alternative measurement method produces results comparable to an established method [1]. This process is essential in clinical pathology laboratories and other regulated environments where introducing new instrumentation or procedures requires objective assessment of analytical agreement before implementation for patient testing or product release [1].

Within laboratory quality systems, method comparison occupies a specific role distinct from but complementary to method validation and verification. While method validation is a comprehensive process that proves an analytical method is acceptable for its intended use, typically required when developing new methods, method verification confirms that a previously validated method performs as expected in a specific laboratory setting [2]. Method comparison serves as the practical experimental bridge, often forming the core of both validation and verification activities by providing the empirical data needed to assess analytical agreement between methods [1].

Theoretical Framework: Distinguishing Method Comparison from Validation and Verification

Understanding the relationship between method comparison, validation, and verification is crucial for implementing appropriate quality assurance protocols. These distinct but interconnected processes serve different purposes within the laboratory quality system:

Method Validation: A comprehensive documented process proving that an analytical procedure is suitable for its intended purpose, assessing parameters such as accuracy, precision, specificity, detection limit, quantitation limit, linearity, and robustness [2]. Validation is typically performed during method development and is required by regulatory bodies for new drug submissions, diagnostic test approvals, and environmental monitoring protocols [2] [3].

Method Verification: A process confirming that a previously validated method performs as expected in a specific laboratory, typically employing limited testing focused on critical parameters like accuracy, precision, and detection limits [2]. Verification is commonly used when adopting standard methods in a new laboratory or with different instruments [2].

Method Comparison: The experimental process of comparing paired results from two methods (typically a new method versus an established method) to objectively investigate sources of analytical error and determine comparability [1]. This provides the empirical evidence needed for both validation and verification activities.

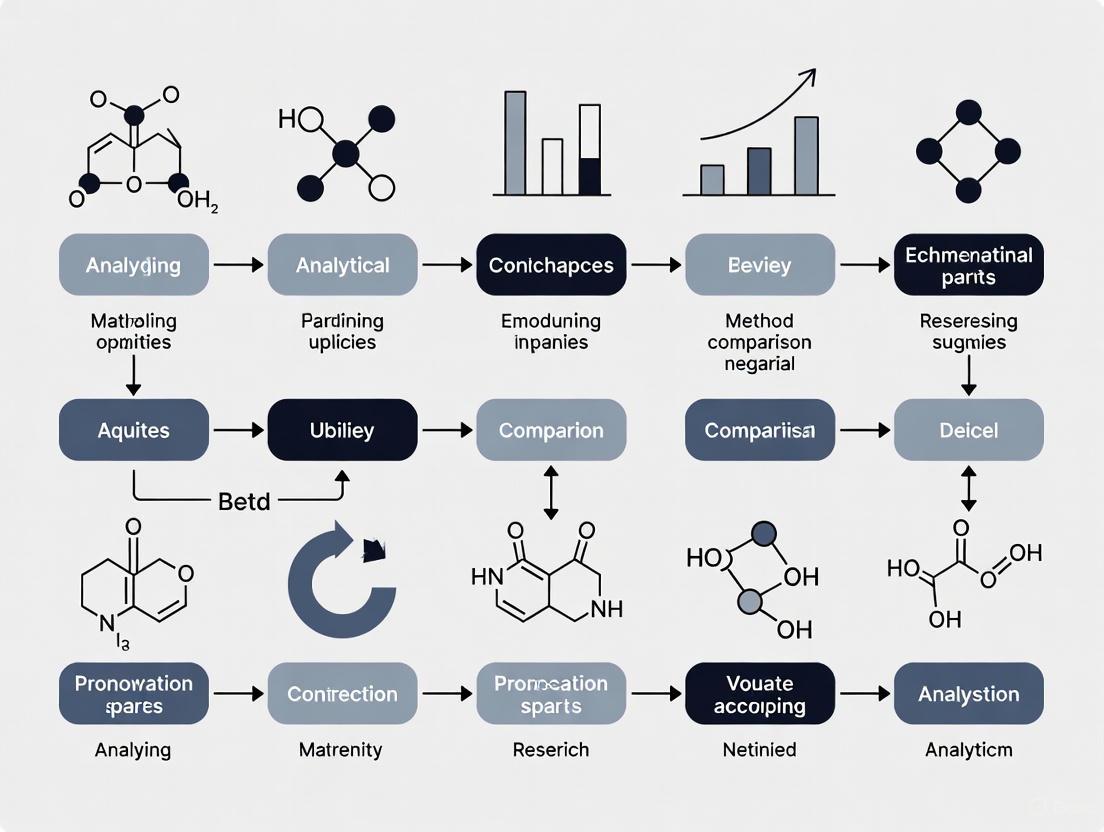

The relationship between these processes can be visualized as follows:

The Purpose and Significance of Method Comparison

Method comparison serves multiple critical purposes in laboratory medicine and analytical science:

Primary Objectives

The fundamental purpose of method comparison is to objectively assess whether a new measurement method produces results that are analytically equivalent to an established method [1]. This assessment involves statistical analysis of paired results to investigate sources of analytical error, including total, random, and systematic error components [1]. By quantifying these error sources, laboratories can make informed decisions about method implementation.

Practical Applications

Method comparison is routinely employed when laboratories introduce new analyzers, replace aging instrumentation, or implement alternative methodologies to improve efficiency, reduce costs, or enhance test performance [1]. In regulated environments, method comparison provides the evidentiary basis for compliance with quality standards and regulatory requirements, forming an essential component of the data package submitted to agencies like the FDA, EMA, and other regulatory bodies [3].

Patient Safety Implications

Ultimately, method comparison serves as a critical safeguard for patient safety by ensuring that clinical decisions based on laboratory results remain consistent regardless of methodological changes [1]. This process helps maintain the longitudinal consistency of patient results, enabling valid comparisons of results over time even when testing methodologies evolve.

Comprehensive Protocol for Method Comparison Experiments

A robust method comparison experiment follows a structured protocol to ensure scientifically valid and defensible results. The following 9-step protocol provides a framework for conducting method comparison studies:

The 9-Step Method Comparison Protocol

Step 1: State the Purpose of the Experiment. Clearly define the objectives of the comparison, including the specific methods being compared, the analytical measurements being assessed, and the clinical or analytical decisions that will be informed by the results [1].

Step 2: Establish a Theoretical Basis. Define the statistical approaches that will be used to assess agreement, including correlation analysis, regression analysis, difference plots (Bland-Altman), and error partitioning [1].

Step 3: Become Familiar with the New Method. Ensure operational competency with the new method through training and preliminary practice runs to minimize operator-induced variability during the formal comparison [1].

Step 4: Obtain Estimates of Random Error for Both Methods. Determine within-run and total precision for both methods to understand the inherent random error of each method [1].

Step 5: Estimate the Number of Samples. Include sufficient samples to ensure adequate statistical power, typically 40-100 patient samples covering the analytical measurement range, with particular attention to medically important decision levels [1].

Step 6: Define Acceptable Difference Between the Two Methods. Establish predefined acceptance criteria based on analytical performance goals, biological variation data, or clinical requirements [1].

Step 7: Measure the Patient Samples. Analyze all selected samples using both methods within a clinically relevant timeframe (typically within 2-4 hours) to minimize sample deterioration effects [1].

Step 8: Analyze the Data. Perform appropriate statistical analyses to assess agreement, including regression analysis, difference plots, and correlation assessments [1].

Step 9: Judge Acceptability. Compare the observed differences against predefined acceptance criteria to determine whether the methods are sufficiently comparable for their intended use [1].

Key Statistical Approaches in Method Comparison

Method comparison employs specific statistical techniques to evaluate analytical agreement:

- Correlation Analysis: Assesses the strength and direction of the relationship between methods but does not necessarily demonstrate agreement [4].

- Regression Analysis: Quantifies the systematic and proportional differences between methods [1].

- Difference Plots (Bland-Altman): Visualizes the agreement between two methods by plotting differences against averages, highlighting systematic bias and trends [1].

- Error Partitioning: Separates total analytical error into random and systematic components to understand the nature and magnitude of method discrepancies [1].

Essential Research Reagents and Materials for Method Comparison

Successful method comparison experiments require careful selection and preparation of materials. The following table details essential research reagent solutions and materials:

Table 1: Essential Research Reagents and Materials for Method Comparison Studies

| Reagent/Material | Function and Purpose | Specification Requirements |
|---|---|---|
| Patient Samples | Primary material for method comparison; should cover entire analytical measurement range | 40-100 individual patient samples; covering low, medium, and high concentrations; stored appropriately to maintain stability [1] |
| Quality Control Materials | Monitor precision and stability of both methods during comparison study | Commercially available control materials at multiple concentrations; preferably with validated target values [3] |
| Calibrators | Establish analytical calibration for both methods | Manufacturer-recommended calibrators; proper reconstitution and handling; traceable to reference materials when available [3] |
| Reagents | Method-specific reagents required for analyte measurement | Lot-matched reagents to minimize variability; sufficient volume to complete entire study; proper storage conditions [3] |
| Internal Standards | Correct for analytical variability in complex methods (e.g., LC-MS/MS) | Stable isotope-labeled analogs for mass spectrometry methods; highly pure and well-characterized [3] |

Quantitative Data Analysis in Method Comparison

Method comparison generates quantitative data that requires appropriate statistical analysis and visualization. The selection of appropriate graphical representations is critical for accurate interpretation of comparison data:

Table 2: Quantitative Data Analysis Methods for Method Comparison

| Analysis Method | Primary Application | Key Parameters | Interpretation Guidelines |
|---|---|---|---|
| Difference Plot (Bland-Altman) | Visualizing agreement between methods; identifying bias and trends | Mean difference (bias); limits of agreement; trend patterns | Consistent scatter around zero indicates good agreement; trends suggest proportional error [1] |
| Linear Regression | Quantifying systematic and proportional differences | Slope (proportional error); Intercept (constant error); r² (strength of relationship) | Slope=1 and intercept=0 indicates perfect agreement; significant deviations indicate systematic differences [1] [4] |
| Correlation Analysis | Assessing strength of relationship between methods | Correlation coefficient (r); coefficient of determination (r²) | High correlation does not guarantee agreement; assesses strength of linear relationship only [4] |
| Error Partitioning | Separating total error into components | Systematic error; random error; total analytical error | Compare total error to allowable total error based on clinical requirements [1] |

Visualization Techniques for Comparison Data

Appropriate graphical representation enhances interpretation of method comparison data:

- Difference Plots (Bland-Altman): The most direct visualization of agreement between methods, displaying differences against averages with bias and limits of agreement [1].

- Scatter Plots with Regression Line: Display the relationship between methods across the measurement range with a line of equality for reference [5].

- Box Plots: Compare distributions of results from both methods, highlighting differences in central tendency and dispersion [6].

- Histograms of Differences: Visualize the distribution of differences between methods to assess normality and identify outliers [5].

Method Comparison in Regulated Environments

Method comparison practices must adhere to regulatory standards and guidelines in pharmaceutical, clinical, and analytical laboratories:

Regulatory Framework

Various regulatory bodies provide guidance on method comparison and validation:

- FDA Analytical Procedures: Focus on risk management and lifecycle validation for United States regulatory submissions [3].

- ICH Q2(R1): Provides international harmonized guidance on analytical method validation characteristics [3].

- EMA Guidelines: Emphasize harmonization across European Union member states [3].

- CLIA Requirements: Govern clinical test methods in the United States, with specific verification requirements [2] [3].

Documentation Requirements

Comprehensive documentation is essential for regulatory compliance and technical defensibility:

- Validation/Verification Protocol: Predefined experimental plan specifying objectives, acceptance criteria, and methodology [3].

- Raw Data: Complete dataset of all measurements from both methods with appropriate metadata [3].

- Statistical Analysis: Detailed analysis including all relevant statistical tests and interpretations [1] [3].

- Final Report: Comprehensive summary comparing results against acceptance criteria with definitive conclusions about method comparability [3].

Advanced Considerations in Method Comparison

Troubleshooting Common Issues

Method comparison studies may encounter specific challenges that require troubleshooting:

- Non-Constant Variance: When variability changes across the measurement range, consider weighted regression or data transformation [1].

- Outliers: Investigate and document outliers thoroughly; determine whether they represent methodological differences or pre-analytical errors [1] [6].

- Limited Measurement Range: When patient samples do not cover the entire reportable range, consider supplemented samples or alternative statistical approaches [1].

- Sample Stability: Ensure sample integrity throughout the testing period; document storage conditions and the time between measurements [1].

Comparison of Semiquantitative Methods

For semiquantitative methods (e.g., ordinal scale measurements), modified comparison approaches are necessary:

- Percentage Agreement: Calculate overall agreement and agreement within adjacent categories [1].

- Weighted Kappa Statistics: Account for the degree of disagreement between ordinal categories [1].

- ROC Analysis: When comparing to a quantitative method, assess optimal categorical thresholds [1].
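
As an illustration of the weighted kappa approach listed above, the following minimal sketch computes a linearly weighted kappa for two ordinal methods. The function name `weighted_kappa`, the linear weighting scheme, and the example ratings are assumptions for illustration; the source does not prescribe a particular weighting, and quadratic weights are an equally common choice. Categories are assumed to be coded as integers from 0 to `n_categories - 1`.

```python
def weighted_kappa(ratings_a, ratings_b, n_categories):
    """Linearly weighted kappa for paired ordinal ratings coded 0..k-1."""
    if len(ratings_a) != len(ratings_b):
        raise ValueError("ratings must be paired")
    if n_categories < 2:
        raise ValueError("at least two categories are required")
    n = len(ratings_a)
    k = n_categories

    # Joint proportions: observed[i][j] is the share of samples rated
    # i by method A and j by method B.
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(ratings_a, ratings_b):
        observed[a][b] += 1.0 / n

    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    p_observed = 0.0
    p_expected = 0.0
    for i in range(k):
        for j in range(k):
            # Linear agreement weight: 1 on the diagonal, decreasing
            # with the distance between categories.
            weight = 1.0 - abs(i - j) / (k - 1)
            p_observed += weight * observed[i][j]
            p_expected += weight * row[i] * col[j]

    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical dipstick-style results on a 4-level scale (0, 1, 2, 3).
method_a = [0, 0, 1, 1, 2, 2, 3, 3]
method_b = [0, 1, 1, 1, 2, 3, 3, 3]
kappa = weighted_kappa(method_a, method_b, n_categories=4)
```

Because disagreements between adjacent categories keep partial credit, this statistic penalizes a one-step disagreement less than a three-step one, which is the property the bullet above describes.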

Method comparison serves as the experimental cornerstone of method validation and verification in laboratory medicine and analytical science. By implementing a structured 9-step protocol, from defining purpose and acceptance criteria through statistical analysis and acceptability judgment, laboratories can generate defensible evidence of methodological comparability [1]. This process ensures that new or modified methods provide equivalent results to established procedures, thereby maintaining analytical quality and supporting valid clinical or product decisions.

The increasing regulatory emphasis on method lifecycle management underscores the continuing importance of robust method comparison practices [2] [3]. By adhering to standardized protocols, employing appropriate statistical techniques, and maintaining comprehensive documentation, laboratories can successfully navigate method transitions while ensuring uninterrupted quality and compliance.

Establishing the Purpose and Scope of Your Experiment

In analytical science and drug development, the introduction of a new measurement method necessitates a rigorous comparison against an established procedure to ensure the generation of reliable, equivalent, and interchangeable data. A method-comparison study is specifically designed to answer a fundamental clinical question: Can one measure an analyte using either Method A or Method B and obtain the same results? [7] The core indication for such a study is the need to determine if two methods for measuring the same variable (e.g., a biomarker concentration, enzymatic activity) do so in an equivalent manner, thereby assessing the potential for substituting one method for the other [7]. This application note, framed within a comprehensive 9-step protocol for method-comparison research, details the critical first phase: establishing a well-defined purpose and scope, which forms the bedrock for all subsequent experimental and analytical steps [1].

Defining the Purpose and Scope

Core Objectives and Terminology

The primary purpose of a method-comparison study is to objectively assess the overall analytical performance of a new method relative to a reference or established method, specifically investigating sources of analytical error [1]. This involves a statistical analysis of paired results to quantify total, random, and systematic error [1]. A crucial initial step is to define the key terminology that will guide the study's goals and interpretation.

Table 1: Key Terminology in Method-Comparison Studies

| Term | Definition | Question it Answers |
|---|---|---|
| Bias | The mean (overall) difference in values obtained with two different methods of measurement [7]. | How much higher or lower are the values from the new method, on average? |
| Precision | The degree to which the same method produces the same results on repeated measurements (repeatability) [7]. | How reproducible are the measurements for each method? |
| Limits of Agreement | A range (bias ± 1.96 SD) within which 95% of the differences between the two methods are expected to fall [7]. | What is the expected spread of differences for most paired measurements? |
| Accuracy | The degree to which an instrument measures the true value of a variable, assessed against a calibrated gold standard [7]. In method-comparison studies, the established method often acts as the reference, and the difference is referred to as "bias." | How close are the measured values to the true value? |
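
The bias and limits of agreement defined in the table can be computed directly from the paired results. The sketch below is a minimal illustration using only the Python standard library; the function name `bland_altman`, the sign convention (new method minus established method), and the glucose values are assumptions chosen for the example, not data from the source.

```python
from statistics import mean, stdev


def bland_altman(established, new_method):
    """Return bias, SD of differences, and 95% limits of agreement."""
    if len(established) != len(new_method):
        raise ValueError("results must be paired")
    # Assumed convention: difference = new method - established method.
    diffs = [b - a for a, b in zip(established, new_method)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return {
        "bias": bias,
        "sd": sd,
        "lower": bias - 1.96 * sd,  # lower limit of agreement
        "upper": bias + 1.96 * sd,  # upper limit of agreement
    }


# Hypothetical paired glucose results in mmol/L (illustrative only).
established = [4.0, 5.5, 7.2, 10.1, 15.0, 20.3]
new_method = [4.2, 5.6, 7.5, 10.2, 15.4, 20.6]
result = bland_altman(established, new_method)
```

A real study would use at least the 40 paired samples recommended elsewhere in this protocol; six pairs are shown only to keep the example readable.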

Establishing the Scope: Defining Acceptable Difference

Before any data collection begins, it is imperative to define what constitutes an acceptable difference between the two methods [1]. This pre-defined criterion, based on clinical or analytical requirements, is the benchmark against which the success or failure of the method-comparison will be judged. The scope of the experiment must be designed to test whether the observed bias is less than this acceptable limit.

Acceptable performance specifications should be defined a priori based on one of the following models from the Milano hierarchy [8]:

- Clinical Outcomes: The effect of analytical performance on clinical outcomes (direct or indirect outcome studies).

- Biological Variation: Based on the components of biological variation of the measurand.

- State-of-the-Art: The best performance achievable by current technology.
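
For the biological variation model, one widely used convention derives "desirable" specifications from the within-subject and between-subject coefficients of variation. The sketch below assumes that convention (imprecision ≤ 0.5·CVw, bias ≤ 0.25·√(CVw² + CVb²), allowable total error = 1.65·imprecision + bias); the source does not state these formulas, and the CV values used are hypothetical.

```python
from math import sqrt


def desirable_specs(cv_within, cv_between):
    """Desirable performance specifications (all values in %).

    cv_within:  within-subject biological variation (CVw)
    cv_between: between-subject biological variation (CVb)
    """
    imprecision = 0.5 * cv_within
    bias = 0.25 * sqrt(cv_within ** 2 + cv_between ** 2)
    # Assumed convention: one-sided 95% coverage factor of 1.65.
    total_error = 1.65 * imprecision + bias
    return {"imprecision": imprecision, "bias": bias, "total_error": total_error}


# Hypothetical CVs for an analyte; real values should come from a
# curated biological variation database.
specs = desirable_specs(cv_within=5.0, cv_between=12.0)
```

The resulting bias limit can then serve as the a priori acceptable difference for the comparison.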

Experimental Protocol for Purpose and Scope Definition

Preliminary Planning and Theoretical Foundation

A method-comparison study requires meticulous planning to ensure its validity. The initial steps focus on conceptual groundwork [1].

Step 1: State the Purpose of the Experiment

- Action: Formally document the primary objective. This is typically to determine if a new method (Method B) is equivalent to an established method (Method A) and can be used interchangeably without affecting patient results or medical decisions [8].

- Example Statement: "The purpose of this experiment is to evaluate the bias and precision of the new point-of-care glucose meter (Method B) against the central laboratory analyzer (Method A) across the clinically relevant range of 3.0-25.0 mmol/L to determine if the methods can be used interchangeably for patient monitoring."

Step 2: Establish a Theoretical Basis

- Action: Ensure that the two methods are designed to measure the same underlying analyte or physiological parameter. Comparing methods that measure different things is a fundamental flaw [7].

- Verification: Confirm that both the established method and the new method target the same measurand (e.g., blood glucose, cardiac output, body temperature).

Step 3: Define Acceptable Difference A Priori

- Action: Based on the chosen model from the Milano hierarchy, define and document the maximum allowable bias that would be considered clinically or analytically insignificant. This value is essential for sample size estimation and final judgement of acceptability [1] [8].

Key Design Considerations for Scope

The scope of the study is operationalized through several critical design elements that ensure the results will be valid and generalizable.

Table 2: Essential Design Considerations for Method-Comparison Studies

| Design Element | Consideration | Protocol Recommendation |
|---|---|---|
| Sample Number | Number of paired measures sufficient to decrease chance findings and validate statistical application. | At least 40, and preferably 100, patient samples should be used [8]. |
| Measurement Range | The physiological or clinical range over which the methods will be used. | Samples should be selected to cover the entire clinically meaningful measurement range [7] [8]. |
| Timing of Measurement | The requirement for simultaneous or near-simultaneous measurement. | The variable of interest must be measured at the same time with the two methods. The definition of "simultaneous" is determined by the rate of change of the variable [7]. |
| Conditions of Measurement | The environmental and physiological conditions during measurement. | The design should allow for paired measurements across the physiological range of values for which the methods will be used. Measurements should be taken over several days (at least 5) and multiple runs [7] [8]. |

Data Presentation and Statistical Workflow

A clear plan for data analysis and presentation is a critical part of the experimental scope. The following workflow outlines the key steps from data collection to final judgement, highlighting the role of the purpose and scope defined at the outset.

Diagram 1: The 9-step method-comparison protocol workflow, with the initial purpose and scope driving subsequent steps.

The Scientist's Toolkit: Essential Reagents and Materials

The following reagents and materials are fundamental for conducting a robust method-comparison study in a biomedical or drug development context.

Table 3: Key Research Reagent Solutions for Method-Comparison Studies

| Item / Solution | Function in the Experiment |
|---|---|
| Patient Samples | A sufficient number (≥40) of fresh, stable, and ethically sourced human samples (e.g., serum, plasma, whole blood) that cover the clinically relevant range of the analyte [8]. |
| Reference Method Reagents | The specific calibrators, controls, and consumables required for the established, reference method to ensure it is performing within specified parameters. |
| New Method Reagents | The specific calibrators, controls, and consumables required for the novel method or instrument under evaluation. |
| Quality Control Materials | Commercially available control materials at multiple levels (low, medium, high) to monitor the precision and stability of both measurement methods throughout the study period [7]. |
| Data Analysis Software | Statistical software capable of performing specialized method-comparison analyses, including Bland-Altman difference plots with bias and limits of agreement, and regression analysis [7] [8]. |

Analytical Considerations and Pitfalls

A properly scoped study also involves knowing which analytical approaches to avoid. Using inappropriate statistical tests is a common pitfall that can lead to incorrect conclusions.

- Avoid Relying Solely on Correlation Analysis: Correlation evaluates the strength of a linear relationship (association) between two methods but cannot detect constant or proportional bias. A perfect correlation (r=1.00) can exist even when two methods give vastly different values, providing false confidence in agreement [8].

- Avoid Using Only the t-test: A paired t-test may fail to detect a clinically significant difference if the sample size is too small. Conversely, with a very large sample, it may detect a statistically significant difference that is clinically meaningless. It is not a sufficient tool for assessing agreement [8].
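
The correlation pitfall described above is easy to reproduce. In the following sketch, a hypothetical Method B reads 20% high with an additional constant offset, yet the correlation with Method A is essentially perfect. The helper `pearson_r` and all data values are illustrative assumptions.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient for paired values."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


method_a = [2.0, 4.0, 6.0, 8.0, 10.0]
# Hypothetical Method B: 20% proportional error plus a constant offset of 1.0.
method_b = [1.2 * a + 1.0 for a in method_a]

r = pearson_r(method_a, method_b)
mean_bias = mean([b - a for a, b in zip(method_a, method_b)])
```

Here `r` is indistinguishable from 1 while the mean difference between the methods is 2.2 units, so the correlation coefficient alone would give false confidence in agreement.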

The subsequent stages of the 9-step protocol, including detailed statistical analysis and data visualization techniques like scatter and difference plots (Bland-Altman), will build upon this firmly established foundation of purpose and scope to deliver a definitive judgement on method acceptability [1] [7] [8].

Within the framework of planning a method comparison experiment, the selection of an appropriate comparative method is a foundational decision that determines the validity and applicability of the entire study. Method comparison studies are conducted to assess the comparability of a new or alternative method against an established one, ultimately determining if they can be used interchangeably without affecting patient results or clinical outcomes [8]. The core question these studies answer is whether a significant bias exists between the methods. If this bias is larger than a pre-defined acceptable limit, the methods are not comparable [8]. This application note provides detailed protocols and guidance for researchers, scientists, and drug development professionals on selecting between reference and routine methods for a robust method comparison, aligning with the 9-step protocol for method validation.

Defining Reference and Routine Methods

The choice of comparator is critical and hinges on the purpose of the experiment [1]. The two primary categories are:

- Reference Method: A reference method is characterized by its high accuracy and specificity. It is often a definitive method, such as one using mass spectrometry detection, which can provide unequivocal peak purity and structural information [9]. These methods are typically used in the research and development phase to establish the true value of an analyte.

- Routine Method: This is the established method currently in use in the laboratory. It is often optimized for high throughput, cost-effectiveness, and robustness in a routine operational environment, such as a quality control (QC) laboratory [8] [9].

The comparison can take two forms, as outlined in Table 1.

Table 1: Types of Method Comparison Studies

| Comparison Type | Purpose | Typical Context |
|---|---|---|
| New Method vs. Reference Method | To establish the trueness and accuracy of the new method. | Method development and initial validation [9]. |
| New Method vs. Established Routine Method | To verify that the new method provides comparable patient results and can be seamlessly integrated into routine use. | Laboratory method verification before implementation [8]. |

A 9-Step Protocol for Method Comparison

The following protocol integrates the selection of the comparative method into a comprehensive 9-step framework for conducting a method comparison experiment [1].

Step 1: State the Purpose of the Experiment

Clearly define whether the goal is to validate a new method's fundamental accuracy against a reference standard or to demonstrate its equivalence to an existing routine method for clinical or QC purposes.

Step 2: Establish a Theoretical Basis

Understand the technical principles of both the new and the comparative method. This knowledge helps anticipate potential sources of error, such as different interference effects or calibration biases [1].

Step 3: Become Familiar with the New Method

Before formal comparison, ensure personnel are thoroughly trained and the new method is operating stably according to the manufacturer's specifications [1].

Step 4: Obtain Estimates of Random Error

Determine the imprecision (random error) for both methods by performing replicate measurements of quality control materials. This is often reported as % Relative Standard Deviation (%RSD) [1] [9].
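
As a minimal illustration of this step, %RSD can be computed from replicate quality control measurements as the sample standard deviation divided by the mean, times 100. The function name `percent_rsd` and the replicate values are hypothetical.

```python
from statistics import mean, stdev


def percent_rsd(replicates):
    """Percent relative standard deviation (coefficient of variation)."""
    if len(replicates) < 2:
        raise ValueError("at least two replicates are required")
    return 100.0 * stdev(replicates) / mean(replicates)


# Hypothetical replicate results for one QC level on each method.
qc_established = [5.0, 5.1, 4.9, 5.0, 5.0]
qc_new = [5.2, 5.0, 4.8, 5.1, 4.9]

rsd_established = percent_rsd(qc_established)
rsd_new = percent_rsd(qc_new)
```

Running the same calculation per QC level and per method gives the random error estimates that later feed into the total error judgment.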

Step 5: Estimate the Number of Samples

A sufficient sample size is critical for reliable results. At least 40, and preferably 100, patient samples should be used to compare two methods and to identify unexpected errors from interferences or sample matrix effects [8].

Step 6: Define Acceptable Difference

Before experimentation, define the allowable total error based on clinical or analytical requirements. This can be derived from models of biological variation, clinical outcomes, or state-of-the-art capabilities [10] [8].

Step 7: Measure the Patient Samples

- Sample Selection: Samples should cover the entire clinically meaningful measurement range [8].

- Experimental Design: Analyze samples over several days (at least 5) and multiple runs to capture real-world variation. Perform measurements in a randomized sequence to avoid carry-over effects, and analyze samples within their stability period [8].
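
One way to implement the multi-day, randomized design is to generate the run schedule in software before the study starts. The sketch below is an assumption-laden illustration: the function `build_schedule`, the sample identifiers, the fixed seed, and the choice to randomize the order independently for each method are not specified by the source.

```python
import random


def build_schedule(sample_ids, n_days=5, seed=2024):
    """Spread samples over n_days and randomize the run order per method."""
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)

    schedule = []
    for day in range(n_days):
        # Deal samples round-robin so each day carries a similar load.
        batch = shuffled[day::n_days]
        order_a = batch[:]
        order_b = batch[:]
        rng.shuffle(order_a)
        rng.shuffle(order_b)
        schedule.append(
            {"day": day + 1, "method_a_order": order_a, "method_b_order": order_b}
        )
    return schedule


# Hypothetical identifiers for 40 patient samples.
sample_ids = [f"S{i:02d}" for i in range(1, 41)]
schedule = build_schedule(sample_ids)
```

Each sample is measured by both methods on the same day, which keeps the pair within the same stability window while varying its position in the run.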

Step 8: Analyze the Data

Initial data analysis should include graphical presentations:

- Scatter Plots: To visualize the relationship and variability between methods across the measurement range [8].

- Difference Plots (e.g., Bland-Altman): To assess the agreement between methods and identify any concentration-dependent bias [8].

- Statistical Methods: Use appropriate regression models like Deming or Passing-Bablok, which are more suitable than correlation analysis or t-tests for method comparison [8].

Step 9: Judge Acceptability

Compare the observed total error (a combination of random and systematic error) with the allowable total error defined in Step 6. If the observed error is less than or equal to the allowable error, the method is considered acceptable for its intended use [1] [10].
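
The acceptability decision can be expressed as a simple comparison. The sketch below assumes a common convention in which observed total error is estimated as |bias| + z·SD with z = 2; other multipliers (for example 1.65 or 1.96) are also used, and the source does not fix one. The function name and the numbers are hypothetical.

```python
def judge_acceptability(bias, sd, allowable_total_error, z=2.0):
    """Return (observed total error, True if within the allowable limit).

    bias: systematic error estimated from the comparison study
    sd:   random error (standard deviation) of the new method
    z:    assumed coverage multiplier for random error
    """
    observed = abs(bias) + z * sd
    return observed, observed <= allowable_total_error


# Hypothetical values in the measurement units of the analyte.
observed_te, acceptable = judge_acceptability(
    bias=0.15, sd=0.10, allowable_total_error=0.40
)
```

All three inputs must be expressed in the same units (or all in percent) and refer to the same decision level for the comparison to be meaningful.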

The following workflow diagram illustrates the decision-making process within this 9-step protocol:

Essential Reagents and Materials

A successful method comparison relies on high-quality, well-characterized materials. Key reagents and their functions are listed in Table 2.

Table 2: Key Research Reagent Solutions for Method Comparison

| Reagent / Material | Function | Key Considerations |
|---|---|---|
| Patient Samples | The primary matrix for comparison, covering the analytical measurement range [8]. | Should be fresh, stable, and reflect the typical sample matrix (e.g., serum, plasma). |
| Reference Material | Provides an accepted reference value to establish accuracy and trueness [9]. | Should be certified and traceable to a national or international standard. |
| Quality Control (QC) Materials | Used to monitor the precision (repeatability) of both methods during the comparison study [1] [9]. | Should include at least two levels (normal and pathological). |
| Calibrators | Used to establish the calibration curve for quantitative methods. | Calibration hierarchy and traceability must be documented. |
| Potential Interferents | Used in specificity studies to demonstrate the method's ability to measure the analyte accurately in the presence of other components [9]. | May include metabolites, degradants, or concomitant medications. |

Statistical Analysis and Data Visualization Workflow

Once data is collected, a systematic approach to analysis is required. The following diagram outlines the key steps from data collection to the final acceptability judgment, highlighting appropriate statistical techniques.

Protocol for Statistical Analysis

- Graphical Presentation (Scatter and Difference Plots): Begin by plotting the data to visualize the relationship between methods and to identify outliers, extreme values, or non-constant variance [8].

- Regression Analysis: Apply robust regression models. Deming regression is suitable when both methods have measurable error, while Passing-Bablok regression is non-parametric and handles outliers well [8].

- Bias Estimation: From the regression equation, quantify both constant (y-intercept) and proportional (slope) systematic error.

- Acceptability Judgment: Combine estimates of random error (from precision studies) and systematic error (from regression) to calculate total error. This total error is compared against the predefined allowable total error from Step 6 [10].
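The regression, bias, and acceptability steps above can be sketched in Python. This is a minimal illustration, not a validated implementation. The names `deming_fit` and `total_error`, the `error_ratio` argument (the ratio of the new method's error variance to the established method's, assumed to be 1 here), and the multiplier `z = 2.0` applied to the SD are assumptions introduced for this example. The text does not prescribe a specific formula for combining random and systematic error, so the additive form used here is one common convention.

```python
import math

def deming_fit(x, y, error_ratio=1.0):
    """Deming regression of y (new method) on x (established method).

    error_ratio is the assumed ratio of error variances (y errors / x errors).
    Returns (slope, intercept): slope reflects proportional error,
    intercept reflects constant error.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - mean_y) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / (n - 1)
    term = syy - error_ratio * sxx
    slope = (term + math.sqrt(term ** 2 + 4 * error_ratio * sxy ** 2)) / (2 * sxy)
    intercept = mean_y - slope * mean_x
    return slope, intercept

def total_error(slope, intercept, decision_level, sd, z=2.0):
    """Combine systematic error at a decision level with random error (SD).

    Assumed convention: total error = |bias| + z * SD.
    """
    bias = (intercept + slope * decision_level) - decision_level
    return abs(bias) + z * sd

# Hypothetical paired results (established method, new method)
established = [2.0, 4.0, 6.0, 8.0, 10.0]
new_method = [2.3, 4.1, 6.4, 8.2, 10.6]
slope, intercept = deming_fit(established, new_method)
te = total_error(slope, intercept, decision_level=6.0, sd=0.2)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, total error={te:.3f}")
```

The resulting total error would then be compared against the allowable total error defined before data collection.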

Common Pitfalls and Inadequate Statistical Methods

A well-designed experiment can still yield misleading conclusions if inappropriate statistical methods are employed. Correlation analysis (r) and t-tests are not adequate for assessing method comparability [8].

- Correlation Coefficient (r): Measures the strength of a linear relationship, not agreement. A high correlation can exist even when a large, consistent bias is present [8].

- Paired t-test: Detects a statistically significant difference in means but does not indicate whether that difference is clinically or analytically meaningful. With a large sample size, a trivial difference may be significant, while with a small sample size, a large, important bias may be deemed non-significant [8].
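A small numerical illustration of the correlation pitfall may help. In the sketch below, the data are hypothetical and constructed so that the new method reads 20% higher plus a constant 5 units; the helper name `pearson_r` is introduced only for this example.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical results: new method has proportional and constant bias
established = [50.0, 100.0, 150.0, 200.0, 250.0]
new_method = [1.2 * value + 5.0 for value in established]

r = pearson_r(established, new_method)
mean_difference = sum(n - e for n, e in zip(new_method, established)) / len(established)
print(f"r = {r:.4f}, mean difference = {mean_difference:.1f}")
```

Here r is essentially 1 even though the new method reads about 35 units higher on average, which is why correlation alone cannot establish agreement.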

Selecting between a reference method and a routine method sets the context for the entire method comparison experiment. Integrating this critical choice into the structured 9-step protocol ensures an objective and defensible assessment of method performance. By adhering to a rigorous experimental design, utilizing appropriate statistical tools for data analysis, and making a final judgment based on pre-defined allowable error, researchers and drug development professionals can ensure the reliability and comparability of analytical data, which is fundamental to patient safety and product quality.

In the context of clinical pathology and drug development, the validation of analytical methods is paramount. The reliability of any measurement procedure is quantitatively assessed through key performance parameters including accuracy, precision, and specificity. These metrics are foundational to a 9-step protocol for method comparison experiments, which objectively investigates sources of analytical error (total, random, and systematic) to determine if a new method's measurements are comparable to an established one [1]. Understanding and controlling these parameters ensures that diagnostic tests and laboratory measurements are fit for purpose, ultimately supporting robust scientific research and clinical decision-making.

Defining the Core Parameters

Accuracy

Accuracy refers to the closeness of agreement between a measured value and its corresponding true value. An accurate test method successfully measures what it is intended to measure. In practical terms, it is the ability of a method to determine the true amount or concentration of a substance in a sample. Visually, this can be pictured as a dart hitting the bull's-eye of a target [11].

Precision

Precision describes the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions. It is a measure of the random variation and reproducibility of a method. A precise method will yield very similar results upon repeated analyses of the same sample. Using the bull's-eye analogy, a precise but inaccurate method would produce darts clustered tightly together, but not necessarily in the centre [11]. Precision is independent of accuracy; a method can be precise without being accurate, and vice versa.

Specificity

Specificity is the ability of an analytical method to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradants, or matrix components. In diagnostic terms, it is a test's ability to correctly exclude individuals who do not have a given disease or disorder. A highly specific test (e.g., 90% specific) will correctly identify a high percentage of healthy individuals as "normal," thereby producing few false-positive results [11] [12]. This is particularly crucial when a positive test result could lead to unnecessary, invasive diagnostic procedures or therapies [11].

Related Parameter: Sensitivity

While not the primary focus, sensitivity is often discussed alongside specificity. Sensitivity is the ability of a test to correctly identify individuals who have a given disease. A test with high sensitivity (e.g., 90%) will correctly detect the disease in a high percentage of truly sick individuals, resulting in few false negatives. This is especially important when the goal is to rule out a dangerous disease [11] [12].

Table 1: Core Parameters of Analytical Performance

| Parameter | Definition | Impact of Low Performance | Ideal Scenario |

|---|---|---|---|

| Accuracy | Measures the true value or concentration [11]. | Systematic error (bias); incorrect results. | Measured value equals the true value. |

| Precision | Closeness of repeated measurements [11]. | Random error; unreliable and non-reproducible data. | Low variation between replicate measurements. |

| Specificity | Correctly identifies true negatives [11] [12]. | False positives; misdiagnosis of healthy individuals. | All healthy individuals test negative. |

| Sensitivity | Correctly identifies true positives [11] [12]. | False negatives; failure to detect the condition. | All individuals with the condition test positive. |

The 9-Step Method Comparison Protocol

The following protocol provides a structured framework for planning and executing a method comparison experiment, which is essential for validating a new analytical method against an established one [1].

Step-by-Step Experimental Protocol

Table 2: 9-Step Method Comparison Protocol

| Step | Protocol Title | Detailed Methodology |

|---|---|---|

| 1 | State the Purpose | Clearly define the experiment's goal: to assess whether a new method's measurements are comparable to an established reference method. |

| 2 | Establish Theoretical Basis | Define the statistical models and acceptance criteria for total, random, and systematic error before data collection. |

| 3 | Familiarization | Conduct preliminary runs with the new method to ensure operational competency and understand its characteristics. |

| 4 | Estimate Random Error | Determine the imprecision (e.g., standard deviation) for both the new and established methods using repeated measurements. |

| 5 | Determine Sample Size | Calculate the number of patient samples required to achieve sufficient statistical power for the comparison. |

| 6 | Define Acceptable Difference | Establish an a priori clinical or analytical allowable difference between the two methods. |

| 7 | Measure Patient Samples | Analyze an appropriate number of patient samples covering the assay's reportable range using both methods. |

| 8 | Analyze the Data | Use statistical analyses (e.g., regression, difference plots) to quantify the agreement and identify error components. |

| 9 | Judge Acceptability | Compare the observed differences and errors against the predefined criteria from Step 6 to decide if the new method is acceptable. |

Workflow Diagram of the Protocol

Diagram 1: Method comparison experiment workflow.

Experimental Protocols for Parameter Assessment

Protocol for Accuracy Assessment

Principle: Quantify the agreement between the measured value from the new method and the reference value. Procedure:

- Obtain certified reference materials (CRMs) or samples with known concentrations (spiked samples).

- Analyze these samples using the new method in replicate (e.g., n=5).

- Calculate the mean measured value for each sample.

- Compute the percent recovery: (Mean Measured Value / Known Value) * 100. The percent bias is the recovery minus 100.

- Compare the observed bias against predefined acceptance limits.
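The recovery calculation in this procedure can be sketched as follows. The function names and the replicate values are hypothetical and serve only to illustrate the arithmetic.

```python
from statistics import mean

def percent_recovery(replicates, known_value):
    """Mean measured value expressed as a percentage of the known value."""
    return mean(replicates) / known_value * 100

def percent_bias(replicates, known_value):
    """Signed deviation of the recovery from 100%."""
    return percent_recovery(replicates, known_value) - 100

# Hypothetical replicate results (n=5) for a reference material certified at 100 units
replicates = [103.0, 105.0, 104.0, 106.0, 102.0]
print(f"recovery = {percent_recovery(replicates, 100.0):.1f}%")
print(f"bias = {percent_bias(replicates, 100.0):+.1f}%")
```

The computed bias is then checked against the acceptance limit fixed before the experiment.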

Protocol for Precision Estimation

Principle: Determine the random error (imprecision) of the method under specified conditions. Procedure:

- Within-Run Precision: Analyze a single sample at least 10 times in one analytical run. Calculate the mean, standard deviation (SD), and coefficient of variation (CV%).

- Between-Run Precision: Analyze the same sample in duplicate once per day for at least 10 days. Calculate the overall mean, SD, and CV%.

- Compare the calculated CV% to the maximum allowable imprecision based on clinical or analytical requirements.
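A minimal sketch of the precision calculation is shown below. The function name, the replicate values, and the 3% allowable CV are hypothetical. The sketch pools all results into a single SD; a formal between-run analysis would typically separate within-run and between-run variance components.

```python
from statistics import mean, stdev

def precision_summary(values):
    """Return (mean, sample SD, CV%) for a set of replicate measurements."""
    m = mean(values)
    sd = stdev(values)  # sample standard deviation (n - 1 denominator)
    cv_percent = sd / m * 100
    return m, sd, cv_percent

# Hypothetical within-run replicates (n=10) of a single sample
within_run = [5.1, 5.0, 4.9, 5.2, 5.0, 5.1, 4.8, 5.0, 5.1, 4.9]
m, sd, cv = precision_summary(within_run)

allowable_cv = 3.0  # hypothetical maximum allowable imprecision (%)
print(f"mean={m:.2f}, SD={sd:.3f}, CV={cv:.2f}%, acceptable={cv <= allowable_cv}")
```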

Protocol for Specificity and Sensitivity Evaluation

Principle: Assess the method's ability to correctly identify true negatives (specificity) and true positives (sensitivity) relative to a gold standard method [12]. Procedure:

- Select a panel of known positive (n=100) and known negative (n=100) samples, as determined by a gold standard test.

- Analyze all samples using the new method.

- Tabulate the results in a 2x2 contingency table.

- Calculate performance metrics:

- Sensitivity = True Positives / (True Positives + False Negatives)

- Specificity = True Negatives / (True Negatives + False Positives)

Table 3: Specificity and Sensitivity Calculation Table

| | Gold Standard Positive | Gold Standard Negative | Total |

|---|---|---|---|

| New Method Positive | True Positive (TP) | False Positive (FP) | TP + FP |

| New Method Negative | False Negative (FN) | True Negative (TN) | FN + TN |

| Total | TP + FN | FP + TN | N |

| Calculation | Sensitivity = TP / (TP + FN) | Specificity = TN / (TN + FP) | |
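The 2x2 calculation can be expressed as a short function. The name `diagnostic_metrics` and the counts used in the example are hypothetical; only the two formulas come from the procedure above.

```python
def diagnostic_metrics(tp, fp, fn, tn):
    """Sensitivity and specificity from the cells of a 2x2 contingency table."""
    sensitivity = tp / (tp + fn)  # proportion of gold-standard positives detected
    specificity = tn / (tn + fp)  # proportion of gold-standard negatives excluded
    return sensitivity, specificity

# Hypothetical panel: 100 known positives and 100 known negatives
sensitivity, specificity = diagnostic_metrics(tp=92, fp=5, fn=8, tn=95)
print(f"sensitivity = {sensitivity:.2f}, specificity = {specificity:.2f}")
```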

Interrelationship of Performance Parameters

Diagram 2: How key parameters contribute to analytical reliability.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Method Validation

| Item | Function in Experiment |

|---|---|

| Certified Reference Materials (CRMs) | Provides a matrix-matched sample with a known concentration of the analyte, essential for assessing accuracy and calibrating instruments. |

| Quality Control (QC) Materials | Used to monitor the stability and precision of the method over time (within-run and between-run). |

| Patient Samples | Covers the clinical range of interest and provides a real-world matrix for the method comparison experiment. |

| Interferent Substances | Used to challenge the method and evaluate specificity by testing for cross-reactivity or interference. |

| Calibrators | A series of samples with known concentrations used to construct the standard curve for quantitative analysis. |

| Sample Matrix (e.g., serum, plasma) | The biological fluid in which the analyte is suspended; used for preparing spiked samples and for dilution studies. |

Understanding Regulatory and Clinical Requirements (FDA, CLSI)

Method comparison studies are a critical component of method verification in clinical and pharmaceutical laboratories, serving to assess the comparability of a new measurement procedure against an established one [8]. The fundamental question these studies answer is whether two methods can be used interchangeably without affecting patient results and clinical outcomes [8]. In the United States, these activities are governed by stringent regulatory frameworks, primarily established by the U.S. Food and Drug Administration (FDA) and informed by standards from the Clinical and Laboratory Standards Institute (CLSI).

The FDA's oversight of in vitro diagnostic devices and laboratory-developed tests has evolved significantly, most notably with the 2024 final rule on LDTs that phases out the previous enforcement discretion policy [13]. Simultaneously, CLSI develops consensus standards that provide the technical methodology for performing method comparison studies, including guidelines like EP09-A3 for method comparison and EP25-A for reagent stability evaluation [8] [14]. The early 2025 recognition of many CLSI breakpoints by the FDA represents a major regulatory advancement, creating a more pragmatic pathway for laboratories to implement updated testing standards [13].

Key Regulatory Standards and Interpretive Criteria

FDA-Recognized Susceptibility Test Interpretive Criteria

The FDA maintains specific Antibacterial Susceptibility Test Interpretive Criteria (STIC), commonly known as breakpoints, which define whether a bacterial isolate is categorized as susceptible, intermediate, or resistant to an antibacterial drug [15]. These breakpoints are essential for ensuring consistent interpretation of antimicrobial susceptibility testing (AST) results across clinical laboratories.

As of 2025, the FDA recognizes numerous standards published by CLSI, including those found in M100 (35th edition), M45 (3rd edition), M24S (2nd edition), and M43-A (1st edition) [15] [13]. This recognition signifies a substantial alignment between FDA requirements and CLSI standards, particularly for microorganisms that represent an unmet clinical need. The current FDA approach lists only exceptions or additions to the recognized CLSI standards, rather than duplicating all recognized breakpoints [13]. This streamlined approach provides clarity for laboratories implementing these standards.

CLSI Guidelines for Method Comparison

CLSI standards provide the technical foundation for designing, conducting, and analyzing method comparison studies. The EP09-A3 standard specifically defines procedures for using patient samples to compare measurement procedures and estimate bias [8]. Key CLSI guidelines relevant to method comparison include:

- EP09-A3: Measurement Procedure Comparison and Bias Estimation Using Patient Samples

- EP15-A2: Verification of Precision and Trueness

- EP25-A: Evaluation of Stability of In Vitro Diagnostic Reagents [14]

These guidelines establish rigorous methodologies for determining whether a new method demonstrates sufficient agreement with an existing method to be considered interchangeable for clinical use.

Experimental Design and Protocol

Critical Design Considerations

A properly designed method comparison study requires careful planning to generate meaningful, actionable results. The essential design elements include:

- Sample Selection and Number: A minimum of 40 patient samples is recommended, with 100 samples being preferable to identify unexpected errors due to interferences or sample matrix effects [8]. Samples should cover the entire clinically meaningful measurement range rather than focusing on a narrow concentration interval [8].

- Timing of Measurements: Simultaneous sampling of the variable of interest is essential, with the definition of "simultaneous" determined by the rate of change of the variable being measured [7]. For stable analytes, measurements within several seconds or minutes may be acceptable, while for rapidly changing parameters, truly simultaneous measurement is required.

- Measurement Replication: Duplicate measurements for both current and new methods are recommended to minimize random variation effects [8]. When duplicates are performed, the mean of two measurements should be used in data plotting and analysis [8].

- Study Duration: Samples should be measured over several days (at least 5) and multiple runs to mimic real-world testing conditions and capture typical laboratory variability [8].

Defining Acceptable Performance

Before conducting a method comparison experiment, laboratories must define acceptable bias based on performance specifications selected according to established models [8]. The Milano hierarchy provides a framework for establishing these specifications:

- Clinical Outcomes: Based on the effect of analytical performance on clinical outcomes

- Biological Variation: Based on components of biological variation of the measurand

- State-of-the-Art: Based on the best performance technically achievable [8]

These predetermined specifications form the basis for determining whether the observed bias between methods is clinically acceptable.
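As an illustration of the biological variation model, the sketch below uses the widely cited "desirable" specifications: allowable imprecision of half the within-subject CV, and allowable bias of one quarter of the combined within- and between-subject CV. These formulas are not given in the text above and are stated here as an assumption about how a laboratory might operationalize the model; the function name and CV values are hypothetical.

```python
import math

def desirable_specs(cv_within, cv_between):
    """Desirable imprecision and bias limits (%) from biological variation.

    cv_within:  within-subject biological variation (CV%)
    cv_between: between-subject biological variation (CV%)
    """
    allowable_imprecision = 0.5 * cv_within
    allowable_bias = 0.25 * math.sqrt(cv_within ** 2 + cv_between ** 2)
    return allowable_imprecision, allowable_bias

# Hypothetical analyte with 5% within-subject and 12% between-subject variation
imprecision_limit, bias_limit = desirable_specs(5.0, 12.0)
print(f"allowable CV = {imprecision_limit:.2f}%, allowable bias = {bias_limit:.2f}%")
```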

Statistical Analysis and Data Interpretation

Inappropriate Statistical Methods

A critical understanding in method comparison is recognizing that some common statistical approaches are inappropriate for assessing method agreement:

- Correlation Analysis: Correlation coefficients (r) measure the strength of a relationship between two variables but cannot detect constant or proportional bias between methods [8]. Two methods can show perfect correlation (r=1.00) while having clinically unacceptable differences [8].

- t-Tests: Neither paired t-tests nor t-tests for independent samples adequately assess method comparability [8]. T-tests may fail to detect clinically meaningful differences with small sample sizes or flag statistically significant but clinically irrelevant differences with large sample sizes [8].

Appropriate Statistical Approaches

Proper statistical analysis for method comparison studies involves both visual and quantitative methods:

- Bland-Altman Plots: Also called difference plots, these graphs plot the differences between methods against the average of the two methods [8] [7]. The plot includes a line for the mean difference (bias) and lines representing the limits of agreement (bias ± 1.96 standard deviations) [7].

- Bias and Precision Statistics: The bias represents the mean difference between methods, while the standard deviation of the differences quantifies variability [7]. The limits of agreement indicate the range within which 95% of differences between the two methods are expected to fall [7].

- Regression Analysis: Deming regression or Passing-Bablok regression accounts for measurement error in both methods and provides more reliable estimates of constant and proportional bias than ordinary least squares regression [8].

The table below summarizes key statistical terms and their interpretation in method comparison studies:

Table 1: Statistical Terms in Method Comparison Analysis

| Term | Definition | Interpretation |

|---|---|---|

| Bias | The mean difference between values obtained with two methods [7] | Quantifies how much higher (positive bias) or lower (negative bias) the new method is compared to the established method |

| Limits of Agreement | Bias ± 1.96 × standard deviation of differences [7] | The range where 95% of differences between the two methods are expected to fall |

| Precision | The degree to which the same method produces the same results on repeated measurements [7] | Necessary but insufficient condition for agreement between methods |
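The bias and limits of agreement defined above can be computed directly from the paired differences. The following is a minimal sketch with hypothetical data; the function name `bland_altman` is introduced for this example, and plotting is omitted.

```python
from statistics import mean, stdev

def bland_altman(new, established):
    """Return (bias, lower limit, upper limit) for paired measurements.

    Differences are taken as new minus established, so a positive bias
    means the new method reads higher.
    """
    differences = [n - e for n, e in zip(new, established)]
    bias = mean(differences)
    sd = stdev(differences)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical paired results
established = [4.8, 6.1, 7.4, 9.0, 11.2, 13.5]
new_method = [5.0, 6.0, 7.9, 9.3, 11.1, 14.0]
bias, lower, upper = bland_altman(new_method, established)
print(f"bias = {bias:.2f}, limits of agreement = ({lower:.2f}, {upper:.2f})")
```

For a difference plot, each difference would be plotted against the mean of the two methods for that sample, with horizontal lines at the three returned values.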

Regulatory Compliance and Documentation

Implementation Timeframes

Regulatory compliance requires adherence to specific timelines for implementing updated standards. The College of American Pathologists requires laboratories to make updates to AST breakpoints within 3 years of publication by the FDA [13]. Similarly, the FDA provides transition periods when standards are updated, such as allowing declarations of conformity to CLSI EP25-A until December 20, 2025, before requiring transition to the newer EP25 (2nd edition) [14].

Documentation Requirements

Comprehensive documentation is essential for demonstrating regulatory compliance. Method comparison studies should include:

- Experimental Design: Sample selection criteria, sample size justification, measurement procedures, and acceptance criteria

- Raw Data: All paired measurements with information about sample handling and storage

- Statistical Analysis: Graphical displays (scatter plots, difference plots), statistical calculations, and interpretation of results

- Conclusion Statement: Clear determination of whether methods are interchangeable based on predefined acceptance criteria

Research Reagent Solutions

Table 2: Essential Research Reagents and Materials

| Reagent/Material | Function | Regulatory Considerations |

|---|---|---|

| Reference Materials | Provide known values for calibration and trueness assessment | Should be traceable to reference measurement procedures |

| Quality Control Materials | Monitor assay performance over time | Should span clinically relevant decision levels |

| Stability Testing Reagents | Establish shelf life and in-use stability claims | CLSI EP25-A provides guidance for stability studies [14] |

| Matrix-matched Samples | Assess commutability of calibrators | Ensure samples behave similarly to patient specimens |

Method Comparison Workflow

The following diagram illustrates the complete method comparison protocol from planning through implementation:

Data Analysis Workflow

The statistical analysis phase follows a systematic approach to ensure proper interpretation:

Successful method comparison studies require integration of regulatory requirements with robust experimental design and appropriate statistical analysis. The recent FDA recognition of CLSI standards represents a significant advancement in creating a unified approach to antimicrobial susceptibility testing and method validation [13]. By following established protocols, using proper statistical methods beyond simple correlation analysis, and documenting studies thoroughly, laboratories can ensure regulatory compliance while implementing method changes that maintain the quality of patient testing and clinical outcomes.

Executing the 9-Step Protocol: A Practical Guide for Laboratory Application

Purpose Statement

The foundational step in any method-comparison study is to clearly articulate its primary purpose. This involves defining the clinical or research question with precision, establishing the context for the investigation, and stating the ultimate goal of the experimental work.

Clinical and Research Context

In clinical practice and research, new measurement technologies and methodologies are continuously emerging. The essential question a method-comparison study answers is whether a new measurement method can be used interchangeably with an established method already in clinical or research use [7]. The core purpose is not merely to observe correlation, but to determine if two methods for measuring the same variable produce equivalent results, thereby informing decisions about substitution in practical applications [7].

For studies conducted within drug development, this purpose must be framed within the regulatory requirements for an Investigational New Drug (IND) application. The IND serves as an exemption to transport an investigational drug across state lines for clinical trials and must contain, among other elements, detailed clinical protocols that demonstrate the compound will not expose humans to unreasonable risks [16].

Defining the Primary Objective

The objective must be specific, measurable, and directly related to the clinical question of substitution. A well-defined objective typically follows this structure: "To determine if [New Method B] provides equivalent measurements of [Analyte/Variable] compared to [Established Method A] in [Specific Population/Matrix]."

Table: Core Components of a Study Purpose Statement

| Component | Description | Example |

|---|---|---|

| New Method | The novel device, assay, or technique under evaluation. | Non-invasive infrared thermometer. |

| Established Method | The current, validated standard of practice or reference method. | Pulmonary artery catheter thermal sensor. |

| Measured Variable | The specific physiological or analytical parameter being measured. | Core body temperature. |

| Population/Matrix | The specific subject population, sample type, or matrix. | Critically ill adult patients. |

| Goal | The ultimate decision the study will inform. | To validate the new thermometer for clinical use. |

Defining the Acceptable Difference

A priori definition of the acceptable difference (also termed the "equivalence margin" or "clinically acceptable bias") is the most critical analytical consideration in a method-comparison study. This pre-defined value represents the maximum amount of bias between the two methods that is considered clinically or analytically insignificant, thus permitting the methods to be used interchangeably.

Establishing the Equivalence Margin

The acceptable difference is not a statistical value to be derived from the collected data, but a consensus value determined from clinical relevance, biological variation, and analytical performance goals [7]. The choice of this margin has direct implications for the study's sample size and the ultimate interpretation of its results.

Table: Considerations for Defining the Acceptable Difference

| Basis for Definition | Description | Application Example |

|---|---|---|

| Clinical Agreement | The difference that would lead to a change in clinical decision-making. | A glucose measurement difference that would alter insulin dosing. |

| Biological Variation | Based on known within-subject and between-subject variability of the analyte. | Defining acceptable bias for cortisol measurement as a fraction of its normal diurnal variation. |

| Regulatory Guidelines | Recommendations from bodies like the FDA, CLSI (Clinical and Laboratory Standards Institute). | Using CLSI EP09c guidelines for laboratory method validation. |

| State of the Art | The performance achievable by current best-in-class technologies. | The typical precision of high-performance liquid chromatography (HPLC) assays for a new drug. |

Statistical and Analytical Framework

The defined acceptable difference is used to set up formal equivalence hypotheses. These are fundamentally different from the standard null hypothesis of no difference.

- Null Hypothesis (H₀): The true bias between the methods is greater than the acceptable difference (i.e., the methods are not equivalent).

- Alternative Hypothesis (H₁): The true bias between the methods is less than or equal to the acceptable difference (i.e., the methods are equivalent) [17].

The subsequent statistical analysis, often involving confidence intervals for the mean difference (bias), will test these hypotheses. If the entire confidence interval for the bias falls within the range of -Δ to +Δ (where Δ is the acceptable difference), equivalence can be claimed.
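The confidence-interval check described above can be sketched as follows. Several choices here are assumptions made for illustration: the function name, the 95% default confidence level, and the use of a normal quantile from the standard library. For small samples a t quantile (for example from `scipy.stats.t.ppf`) gives a wider, more appropriate interval.

```python
import math
from statistics import NormalDist, mean, stdev

def equivalence_check(differences, delta, confidence=0.95):
    """Check whether the CI for the mean difference lies within (-delta, +delta).

    Uses a normal approximation, which assumes a reasonably large sample.
    Returns (lower, upper, equivalent).
    """
    n = len(differences)
    bias = mean(differences)
    standard_error = stdev(differences) / math.sqrt(n)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    lower = bias - z * standard_error
    upper = bias + z * standard_error
    return lower, upper, (-delta < lower and upper < delta)

# Hypothetical paired differences (new minus established), n = 40
differences = [0.1, -0.1, 0.2, -0.2, 0.0] * 8
lower, upper, equivalent = equivalence_check(differences, delta=0.5)
print(f"CI = ({lower:.3f}, {upper:.3f}), equivalent = {equivalent}")
```

Note that Δ is supplied as an input fixed in advance; it is never estimated from the same data.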

Experimental Protocol

This section provides a detailed, step-by-step methodology for the initial phase of planning a method-comparison study.

Materials and Reagents

Table: Research Reagent Solutions for Method-Comparison Studies

| Item Category | Specific Function |

|---|---|

| Established Reference Method | Serves as the benchmark against which the new method is compared. Provides the reference values for all paired measurements. |

| New Method/Technology | The device, instrument, or assay under evaluation for precision, bias, and agreement with the reference. |

| Calibration Standards | Certified reference materials used to ensure both measurement methods are operating within their specified performance ranges. |

| Control Samples | Materials with known or stable characteristics run alongside test samples to monitor the daily performance and stability of both methods. |

| Data Collection Platform | Software or electronic data capture system designed to record paired measurements simultaneously, minimizing transcription errors. |

Step-by-Step Procedure

Draft the Purpose Statement:

- Formulate a single, concise sentence stating the intent to evaluate the agreement between the new method (B) and the established method (A) for measuring a specific variable.

- Justify the clinical or research need for the new method (e.g., faster, cheaper, less invasive).

Conduct a Literature Review:

- Investigate existing data on the biological and analytical variation of the analyte.

- Review published method-comparison studies for similar technologies to inform the expected range of bias and a justifiable equivalence margin.

Convene an Expert Panel:

- Assemble a multidisciplinary team including clinical experts, laboratory scientists, and statisticians.

- Present literature findings and propose a preliminary value for the acceptable difference.

Define the Acceptable Difference (Δ):

- Facilitate a discussion within the expert panel to reach a consensus on the value of Δ.

- Ensure the chosen value is documented with a clear rationale referencing clinical impact, biological variation, or regulatory guidance.

Formalize Hypotheses and Analysis Plan:

Workflow Diagram

The following diagram illustrates the logical sequence and decision points for this first step of the protocol.

Theoretical Basis for Method Comparison

Establishing a robust theoretical basis is a critical step that precedes data collection in a method comparison experiment. This foundation defines the analytical principles of the methods, identifies potential sources of error, and sets objective criteria for evaluating the new method's performance against an established reference [1].

A method comparison study objectively investigates sources of analytical error, which are categorized as total error, random error, and systematic error [1]. The theoretical framework should explicitly state how the new method relates to the established method in terms of these measurement principles.

Key Performance Characteristics for Theoretical Review

The theoretical assessment should focus on several core analytical performance characteristics, summarized in the table below.

Table 1: Key Analytical Performance Characteristics for Theoretical Assessment

| Performance Characteristic | Description | Impact on Comparison |

|---|---|---|

| Measurement Principle | The fundamental chemical, biological, or physical principle used for quantification (e.g., immunoassay, chromatography, mass spectrometry). | Determines the potential for specific and non-specific interference, impacting systematic error. |

| Calibration Model | The mathematical model used to convert instrument signal to analyte concentration (e.g., linear, quadratic). | Influences the accuracy and reportable range of the method. |

| Analytic Specificity | The ability of the method to measure solely the intended analyte in the presence of cross-reactants or interferents. | A primary source of constant or proportional systematic error if different from the reference method. |

| Reportable Range | The span of analyte values that can be reliably measured, from the lower to the upper limit. | Defines the concentration range over which samples must be selected for the comparison. |

Protocol for Familiarization with the New Method

Familiarization is a hands-on process where laboratory personnel gain operational proficiency with the new method or instrument. This phase focuses on assessing the method's practical performance and identifying any procedural nuances not apparent from the theoretical review [1].

Step-by-Step Familiarization Protocol

Objective: To ensure consistent and reliable operation of the new method and obtain preliminary estimates of its random error.

Materials and Reagents:

- New analytical instrument or test system.

- Manufacturer-provided calibration materials and reagents.

- Quality Control (QC) materials at multiple clinically relevant levels.

- Protocol for initial precision estimation (e.g., Clinical and Laboratory Standards Institute (CLSI) EP15 or an internal pilot protocol).

Procedure:

- Training: Ensure all operators complete manufacturer-recommended training sessions.

- Instrument Setup and Calibration: Install the instrument and perform initial setup and calibration according to the manufacturer's specifications.

- Preliminary Precision Testing: a. Perform replicate analyses (e.g., n=20) of at least two QC levels within a single run to estimate within-run precision. b. Perform replicate analyses of the same QC levels over multiple days (e.g., once daily for 10 days) to estimate between-run precision.

- Procedure Refinement: Document any deviations from the manufacturer's instructions and optimize pipetting, mixing, and incubation steps for the local environment.

- Troubleshooting: Create a log to document any operational errors, instrument flags, or unexpected results encountered during the familiarization phase.

Data Analysis and Interpretation

Calculate the mean, standard deviation (SD), and coefficient of variation (CV%) for the replicate measurements at each QC level. Compare these initial precision estimates (random error) with the manufacturer's claims and the laboratory's required performance specifications.

Table 2: Example Data Sheet for Familiarization Phase Precision Estimation

| QC Level | Theoretical Value (mg/dL) | Run Type | Number of Replicates (n) | Mean (mg/dL) | SD (mg/dL) | CV% | Manufacturer's Claim CV% |

|---|---|---|---|---|---|---|---|

| Level 1 (Low) | 50.0 | Within-Run | 20 | 49.8 | 0.95 | 1.91 | 2.0 |

| Level 2 (High) | 300.0 | Within-Run | 20 | 302.1 | 4.21 | 1.39 | 1.5 |

| Level 1 (Low) | 50.0 | Between-Run | 10 | 50.2 | 1.12 | 2.23 | 2.5 |

| Level 2 (High) | 300.0 | Between-Run | 10 | 299.5 | 5.10 | 1.70 | 2.0 |
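
The mean, SD, and CV% calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not part of the original protocol: the function name `precision_summary` and the replicate values are hypothetical. The pass/fail flag is a simple point comparison against the manufacturer's claim, not the formal verification-limit calculation a guideline such as CLSI EP15 would use.

```python
import statistics

def precision_summary(replicates, claimed_cv_pct):
    """Return n, mean, SD, CV% and a simple comparison against a claimed CV%."""
    mean = statistics.mean(replicates)
    sd = statistics.stdev(replicates)  # sample SD (n - 1 denominator)
    cv_pct = 100.0 * sd / mean
    return {
        "n": len(replicates),
        "mean": mean,
        "sd": sd,
        "cv_pct": cv_pct,
        # Point comparison only; assumed acceptance rule for illustration
        "meets_claim": cv_pct <= claimed_cv_pct,
    }

# Hypothetical within-run replicates for a low QC level (mg/dL)
low_qc = [49.1, 50.6, 48.9, 50.2, 49.7, 51.0, 48.5, 50.3, 49.9, 49.8]
summary = precision_summary(low_qc, claimed_cv_pct=2.0)
print(summary)
```

The same function can be applied to each QC level and run type to fill in a data sheet like Table 2.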

Experimental Workflow

The following diagram illustrates the logical sequence and decision points for completing Step 2 of the method comparison protocol.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials required for the successful execution of the theoretical review and familiarization phase.

Table 3: Essential Research Reagent Solutions for Method Familiarization

| Item | Function / Purpose |

|---|---|

| Reference Method Reagents | Provides the benchmark for comparison against the new method. Must be traceable to a higher-order standard. |

| Calibrators | Used to establish the analytical measurement range and calibration curve for the new method. |

| Quality Control (QC) Materials | Used to monitor the stability and precision of the new method during the familiarization phase and beyond. Should include multiple concentration levels. |

| Panel of Patient Samples | A diverse set of remnant patient samples covering the analytical measurement range and various disease states, intended for the main comparison study. |

| Interference Check Samples | Solutions containing potential interferents (e.g., bilirubin, hemoglobin, lipids) to theoretically and practically assess method specificity. |

| Standard Operating Procedure (SOP) Document | A detailed, step-by-step protocol for operating the new method, ensuring consistency across operators and runs. |

| Data Collection and Statistical Software | Tools for calculating basic statistics (mean, SD, CV%), performing regression analysis, and creating difference plots for the main comparison. |

This application note provides detailed protocols for Step 3 of planning a method comparison experiment, focusing on sample size determination, specimen selection, and handling. Proper execution of this step is critical for ensuring that the experimental data will be reliable, clinically relevant, and capable of detecting medically important errors between measurement procedures. The guidance herein is framed within a comprehensive 9-step protocol for designing robust method comparison studies, aligning with standards such as CLSI EP09-A3 [8].

Sample Size Determination

A sufficiently large sample size is essential to achieve reliable estimates of systematic error (bias) and to ensure the experiment has the power to detect clinically significant differences between methods. The recommended sample size depends on the specific goals of the comparison and the required statistical confidence.

Table 1: Sample Size Recommendations for Method Comparison Studies

| Scenario / Guideline | Minimum Recommended Sample Size | Key Rationale and Considerations |

|---|---|---|

| Basic CLSI EP09 Guidance [18] [8] | 40 patient specimens | Provides a baseline for estimating systematic error across the analytical measurement range. |

| Enhanced Reliability & Specificity Assessment [18] [8] | 100 to 200 patient specimens | A larger sample size is preferable to identify unexpected errors due to interferences or sample matrix effects and to better assess method specificity. |

| Cross-Validation of Bioanalytical Methods [19] | 100 incurred matrix samples | Utilizes samples from four concentration quartiles; equivalence is concluded if the 90% confidence interval for the mean percent difference falls within ±30%. |

| Data Distribution Over Time [18] | 2-5 specimens per day over 5-20 days | Distributing sample analysis over multiple days and analytical runs minimizes the impact of systematic errors that might occur in a single run and better mimics real-world conditions. |
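
The cross-validation criterion in the table (90% confidence interval for the mean percent difference within ±30%) can be illustrated as follows. This is a sketch under stated assumptions: percent difference is taken here as the difference divided by the mean of the two results, which may differ from the definition in [19], and the interval uses a normal quantile, which is only a reasonable approximation for large n (such as the 100 samples recommended). The function names and data are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev

def percent_difference_ci(test, comparator, confidence=0.90):
    """Mean percent difference and an approximate two-sided confidence interval."""
    # Assumed definition: difference relative to the mean of the paired results
    pct = [100.0 * (t - c) / ((t + c) / 2.0) for t, c in zip(test, comparator)]
    m = mean(pct)
    se = stdev(pct) / math.sqrt(len(pct))
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)  # normal approximation
    return m, m - z * se, m + z * se

def is_equivalent(ci_low, ci_high, limit_pct=30.0):
    """True when the whole interval lies inside +/- limit_pct."""
    return -limit_pct <= ci_low and ci_high <= limit_pct

# Hypothetical paired results (far fewer than a real study would use)
test_vals = [10.2, 25.1, 49.0, 101.5, 198.0, 405.0]
comp_vals = [10.0, 24.0, 50.0, 100.0, 200.0, 400.0]
m, lo, hi = percent_difference_ci(test_vals, comp_vals)
print(round(m, 2), round(lo, 2), round(hi, 2), is_equivalent(lo, hi))
```

For small sample sizes a t-distribution quantile would be the more appropriate choice than the normal quantile used here.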

Specimen Selection and Coverage

The quality of the patient specimens selected is as important as the quantity. Careful selection ensures that the comparison tests the methods over the full range of conditions they will encounter in routine use.

Core Principles for Specimen Selection

- Cover the Clinically Meaningful Range: Specimens should be selected to cover the entire working range of the method, from low to high medical decision points [18] [8].

- Represent Pathological Diversity: The sample set should represent the spectrum of diseases and conditions expected in routine application, as different sample matrices (e.g., from patients with renal disease, hyperlipidemia) can reveal method-specific interferences [18].

- Avoid Gaps in the Data Range: Specimens should be collected to ensure continuous coverage across the measurement range. Gaps can invalidate regression statistics and lead to unreliable error estimates [8].
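
One simple way to check for gaps in coverage is to divide the measurement range into equal-width bins and count the specimens in each. The sketch below is illustrative only: the bin count, the range limits, and the function names are assumptions, and equal-width bins may not suit analytes whose medical decision points are unevenly spaced.

```python
def coverage_by_bin(values, low, high, n_bins=5):
    """Count how many values fall into each equal-width bin of [low, high]."""
    width = (high - low) / n_bins
    counts = [0] * n_bins
    for v in values:
        if low <= v <= high:
            # Values equal to `high` are placed in the last bin
            idx = min(int((v - low) / width), n_bins - 1)
            counts[idx] += 1
    return counts

def empty_bins(counts, low, high):
    """Return the (start, end) limits of bins that contain no specimens."""
    width = (high - low) / len(counts)
    return [(low + i * width, low + (i + 1) * width)
            for i, c in enumerate(counts) if c == 0]

# Hypothetical comparative-method results (mg/dL) over a 50-300 range
results = [52, 60, 75, 88, 95, 110, 240, 260, 290]
counts = coverage_by_bin(results, low=50, high=300, n_bins=5)
print(counts)                        # [5, 1, 0, 1, 2]
print(empty_bins(counts, 50, 300))   # [(150.0, 200.0)]
```

An empty bin signals a concentration interval for which additional specimens should be sought before the regression analysis.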

Workflow for Specimen Selection and Handling

The following diagram outlines the logical workflow for the specimen selection, handling, and analysis process.

Sample Matrix and Stability

The integrity of comparison data is highly dependent on maintaining specimen stability from collection through analysis. Differences observed due to poor handling are indistinguishable from true analytical bias.

Table 2: Specimen Stability and Handling Guidelines

| Factor | Protocol Requirement | Rationale and Consequences |

|---|---|---|

| General Stability & Simultaneous Analysis [18] [7] | Analyze patient specimens by both methods within 2 hours of each other. | Prevents time-dependent changes in the analyte (e.g., degradation, cellular metabolism) from being misattributed as systematic analytical error. |

| Short-Stability Analytes [18] | Analyze within a shorter, analyte-specific timeframe (e.g., for ammonia, lactate). | For labile analytes, even short delays can cause significant concentration changes. |

| Stabilization Techniques [18] | Employ methods such as serum/plasma separation, refrigeration or freezing, and addition of preservatives. | Defining handling protocols before the study is critical to improve stability for specific tests and prevent pre-analytical errors. |

| Sample Integrity [8] | Analyze samples on the day of blood collection. | Ensures that results reflect the in vivo state of the patient and are not compromised by long-term storage artifacts. |
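
The 2-hour window between paired analyses is easy to audit once analysis timestamps are recorded. The following sketch assumes a hypothetical record layout (`id`, `test_time`, `comp_time`); a shorter, analyte-specific window can be passed for labile analytes.

```python
from datetime import datetime, timedelta

MAX_GAP = timedelta(hours=2)  # general window from the table above

def flag_late_pairs(records, max_gap=MAX_GAP):
    """Return IDs of specimens whose paired analyses are more than max_gap apart."""
    return [
        rec["id"]
        for rec in records
        if abs(rec["test_time"] - rec["comp_time"]) > max_gap
    ]

# Hypothetical analysis timestamps for two specimens
records = [
    {"id": "S001",
     "test_time": datetime(2024, 1, 8, 9, 0),
     "comp_time": datetime(2024, 1, 8, 10, 30)},   # 1 h 30 min apart
    {"id": "S002",
     "test_time": datetime(2024, 1, 8, 9, 15),
     "comp_time": datetime(2024, 1, 8, 12, 0)},    # 2 h 45 min apart
]
late = flag_late_pairs(records)
print(late)  # ['S002']
```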

The Scientist's Toolkit: Essential Research Materials

Table 3: Key Reagents and Materials for Method Comparison Studies

| Item / Solution | Function / Application in Protocol |

|---|---|

| Characterized Patient Pool | Serves as the primary sample source for the comparison. Specimens must be well-characterized and cover the required pathological and concentration range [18] [8]. |

| Appropriate Sample Collection Tubes | Ensures proper specimen integrity at the point of collection (e.g., EDTA plasma, serum separator tubes). The matrix must be compatible with both the test and comparative methods. |

| Aliquoting Tubes/Vials | Allows for the creation of identical sample portions to be analyzed by each method, and for stable storage of reserves. |

| Specimen Preservation Solutions | Stabilizes specific analytes during storage (e.g., protease inhibitors for protein assays, fluoride for glucose). |

| Stable Control Materials | Used to monitor the performance of both the test and comparative methods throughout the data collection period, ensuring both are in a state of control. |

Experimental Protocol: Executing the Comparison Study

This section provides a step-by-step protocol for the specimen analysis phase of the method comparison.

Protocol: Specimen Analysis in a Method Comparison Study

Objective: To generate paired measurement data from the test and comparative methods under conditions that minimize pre-analytical and analytical bias.

Materials:

- Pre-selected and aliquoted patient specimens (see Table 1 for n)

- Test method instrumentation/reagents

- Comparative method instrumentation/reagents

- Quality control materials for both methods

Procedure:

- Schedule Analysis: Plan the analysis of the full specimen set over a minimum of 5 different days, extending up to 20 days as needed to reach the target sample size, and analyze 2-5 specimens per day to incorporate routine between-run variation [18].

- Quality Control: Prior to analyzing patient specimens, run quality control materials on both methods to verify proper performance.

- Randomize Run Order: For each day's analysis, randomize the order of all patient specimens (for both methods) to avoid systematic effects of carryover or instrument drift [8].

- Analyze in Duplicate (Recommended): If possible, analyze each specimen in duplicate by both methods. Ideally, duplicates should be different aliquots analyzed in different runs or in a different order, not simple back-to-back replicates [18].

- Analyze Simultaneously: Analyze the paired aliquots by the two methods within 2 hours of each other to ensure analyte stability [18] [7].

- Immediate Data Review: Graph the comparison data (e.g., using a difference plot) as it is collected to visually identify any discrepant results or outliers [18] [8].

- Repeat Discrepant Analyses: If large differences are observed for any specimen, repeat the analysis for that specimen on both methods while the original sample is still available and stable [18].
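
The scheduling and randomization steps above can be sketched as a small helper that spreads specimens across days and draws an independent random run order for each method. The function name, the round-robin allocation, and the specimen IDs are illustrative assumptions; any allocation that keeps the daily load within 2-5 specimens would serve equally well.

```python
import random

def build_schedule(specimen_ids, n_days, seed=None):
    """Assign specimens to days and randomize the run order for each method."""
    rng = random.Random(seed)  # a fixed seed makes the schedule reproducible
    ids = list(specimen_ids)
    rng.shuffle(ids)
    schedule = []
    for day in range(n_days):
        day_ids = ids[day::n_days]  # round-robin split across days
        test_order = day_ids[:]
        comp_order = day_ids[:]
        rng.shuffle(test_order)     # independent order for the test method
        rng.shuffle(comp_order)     # independent order for the comparative method
        schedule.append({"day": day + 1,
                         "test": test_order,
                         "comparative": comp_order})
    return schedule

# Hypothetical study: 40 specimens over 10 days gives 4 specimens per day
specimens = [f"S{i:03d}" for i in range(1, 41)]
schedule = build_schedule(specimens, n_days=10, seed=1)
for entry in schedule[:2]:
    print(entry)
```

Recording the seed alongside the study data allows the run order to be reconstructed later if a drift or carryover question arises.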

Adherence to the principles and protocols outlined in this document for sample size, selection, and stability is fundamental to the success of any method comparison experiment. A well-designed experiment using an adequate number of appropriately selected and handled patient specimens provides a solid foundation for the subsequent statistical analysis and final decision on the acceptability of the new method. The remaining steps in the 9-step protocol build on this foundation to complete a comprehensive method comparison.

This protocol provides a detailed framework for the fourth step in planning a method-comparison experiment: experimental design, with a specific focus on duplicate measurements, run-to-run variation, and timeframe. A robust design is critical for producing reliable, reproducible data that can accurately characterize the agreement or disagreement between a new measurement method and an established one. This step ensures that the resulting bias and precision statistics truly reflect the performance of the methods under investigation across realistic and varied conditions [7].

Core Concepts and Definitions

Table 1: Key Terminology for Experimental Design

| Term | Definition & Application in Method-Comparison |

|---|---|

| Duplicate Measurements | Repeated measurements of the same sample or subject taken under identical conditions during the same analytical run. These are used to assess repeatability (within-run precision). |

| Run-to-Run Variation | The variability in measurement results observed between different analytical runs, which may be conducted on different days, by different operators, or with different reagent lots. Assessing this is key to understanding reproducibility. |

| Timeframe | The temporal design of the experiment, encompassing the definition of "simultaneous" measurement, the total duration of data collection, and the interval between repeated measurements on the same subject. |

| Repeatability | The degree to which the same method produces the same results on repeated measurements under identical, within-run conditions. This is a necessary precondition for assessing agreement between methods [7]. |

| Bias | In a method-comparison study, this is the mean overall difference in values obtained with the two different methods (new method minus established method). It quantifies how much higher (positive bias) or lower (negative bias) the new method is compared to the established one [7]. |

| Precision | The degree to which the same method produces the same results on repeated measurements (repeatability). It also refers to the degree to which values cluster around the mean of their distribution, which informs the confidence in the results [7]. |
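
The definition of bias above (mean of new minus established) translates directly into code. The sketch below also reports the SD of the differences and 95% limits of agreement computed as bias ± 1.96 SD, a common companion to a difference plot; the limits of agreement are an addition for illustration and are not defined in the table. The function name and data are hypothetical.

```python
from statistics import mean, stdev

def bias_summary(new, established):
    """Bias (mean difference, new minus established) with limits of agreement."""
    diffs = [n - e for n, e in zip(new, established)]
    bias = mean(diffs)
    sd_diff = stdev(diffs)  # sample SD of the paired differences
    return {
        "bias": bias,
        "sd_diff": sd_diff,
        # Approximate 95% limits of agreement (assumes roughly normal differences)
        "loa_low": bias - 1.96 * sd_diff,
        "loa_high": bias + 1.96 * sd_diff,
    }

# Hypothetical paired results from four specimens
new_method = [5.1, 7.3, 9.8, 12.4]
established_method = [5.0, 7.0, 10.0, 12.0]
stats = bias_summary(new_method, established_method)
print(stats)
```

A positive `bias` indicates that the new method reads higher on average than the established method, matching the sign convention in the table.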

Detailed Experimental Protocols

Protocol for Incorporating Duplicate Measurements

The purpose of this protocol is to quantify the inherent short-term variability (repeatability) of each method. This must be established before meaningful comparison between methods can be made, as poor repeatability in either method will obscure the true agreement between them [7].

- Design: For a subset of the samples or subjects (e.g., 10-20%), collect two measurements using the same method within a single analytical run. The second measurement should follow the first immediately and use an identical procedure.

- Execution: The duplicate measurements should be performed by the same operator, using the same instrument and reagents, in a time frame so short that the true value of the analyte is not expected to change.