A Comprehensive Guide to Machine Learning Validation Metrics for Robust Model Comparison in Biomedical Research

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to select, apply, and interpret validation metrics for robust comparison of machine learning models. Covering foundational concepts, methodological application, troubleshooting for common pitfalls, and rigorous statistical validation, it addresses the critical need for unbiased model evaluation in biomedical contexts like disease prediction and genomics. The guide synthesizes current best practices and metrics—from accuracy and AUC-ROC to statistical testing—to ensure reliable, reproducible, and clinically relevant model selection.

Understanding the Core Metrics: A Primer on Evaluation for Machine Learning

The Critical Role of Validation Metrics in Biomedical Machine Learning

In biomedical machine learning (ML), where model predictions can directly influence patient care and therapeutic development, validation metrics are not merely performance indicators but are fundamental to ensuring model reliability, safety, and clinical utility. The selection and interpretation of these metrics form the bedrock of rigorous model comparison and evaluation. While generic metrics like accuracy and Area Under the Receiver Operating Characteristic Curve (AUC-ROC) provide a baseline assessment, their limitations become starkly apparent when faced with the complex realities of biomedical data, such as imbalanced datasets, rare events, and multi-modal inputs [1]. Consequently, a nuanced understanding of validation metrics is essential for researchers and drug development professionals to navigate the transition from a model that is statistically promising to one that is clinically actionable.

This guide objectively compares the performance of various ML approaches, from conventional statistics to advanced deep learning, across different biomedical domains. It synthesizes experimental data to highlight how the choice of validation strategy and metrics directly impacts conclusions about model efficacy. By providing detailed methodologies and standardized comparisons, this article aims to equip scientists with the knowledge to critically appraise ML studies and implement robust validation practices that are commensurate with the high stakes of biomedical research and development.

A Comparative Framework for ML Model Performance

To compare machine learning models against conventional statistical methods objectively, researchers must adopt a standardized framework centered on robust validation metrics. The area under the receiver operating characteristic curve (AUC) and the concordance index (C-index) are the metrics most frequently used to assess a model's discriminative ability, that is, its capacity to distinguish between classes or events [2] [3]. However, a full picture of model performance requires a suite of metrics. Accuracy, precision, recall, and F1-score offer complementary views, particularly for classification tasks [4] [5]. In domains like drug discovery, domain-specific metrics such as Precision-at-K and Rare Event Sensitivity are increasingly critical for evaluating models on tasks like ranking candidate drugs or identifying rare adverse events [1].

A critical, yet often overlooked, aspect of comparison is the statistical rigor applied during validation. Studies have shown that the common practice of using a simple paired t-test on accuracy scores from cross-validation runs can be fundamentally flawed. The statistical significance of the difference between two models can be artificially inflated by the specific cross-validation setup, such as the number of folds (K) and the number of repetitions (M), leading to unreliable conclusions and a risk of p-hacking [6]. Therefore, a rigorous comparison must control for these factors to ensure reported differences are genuine and not artifacts of the validation procedure.

Table 1: Core Performance Metrics for Biomedical ML Model Validation

| Metric Category | Metric Name | Primary Function | Ideal Context of Use |
|---|---|---|---|
| Discrimination | AUC-ROC / C-index | Measures the model's ability to distinguish between classes or rank events. | General model performance; comparison across studies. |
| Classification Accuracy | Accuracy | Measures the overall proportion of correct predictions. | Balanced datasets where all classes are equally important. |
| Classification Accuracy | Precision | Measures the proportion of positive identifications that were actually correct. | Critical when the cost of false positives is high (e.g., drug candidate selection). |
| Classification Accuracy | Recall (Sensitivity) | Measures the proportion of actual positives that were correctly identified. | Critical when the cost of false negatives is high (e.g., disease screening). |
| Classification Accuracy | F1-Score | The harmonic mean of precision and recall. | Balanced view when class distribution is imbalanced. |
| Domain-Specific | Precision-at-K | Measures precision when considering only the top K ranked predictions. | Prioritizing candidates in early-stage drug discovery. |
| Domain-Specific | Rare Event Sensitivity | Specifically measures the model's ability to detect low-frequency events. | Predicting adverse drug reactions or rare disease subtypes. |
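
Precision-at-K is the least familiar metric in the table, so a minimal sketch may help. The function name `precision_at_k` and the compound scores and labels below are hypothetical, chosen only for illustration; the sketch assumes binary labels where 1 marks a confirmed active compound.

```python
def precision_at_k(scores, labels, k):
    """Fraction of true positives among the k highest-scoring items."""
    if not 0 < k <= len(scores):
        raise ValueError("k must be between 1 and the number of items")
    # Rank item indices by descending score; ties keep their input order.
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    top_k = ranked[:k]
    return sum(labels[i] for i in top_k) / k


# Hypothetical ranking of six candidate compounds (1 = confirmed active).
scores = [0.91, 0.85, 0.40, 0.77, 0.12, 0.66]
labels = [1, 0, 0, 1, 0, 1]

print(f"Precision@3 = {precision_at_k(scores, labels, k=3):.3f}")
```

Because only the top K predictions count, the metric ignores how the model ranks the long tail of candidates, which matches a screening budget that can only follow up on K compounds.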

Performance Comparison Across Biomedical Domains

Empirical evidence from systematic reviews and meta-analyses reveals a nuanced landscape when comparing machine learning models to conventional statistical methods like logistic regression (LR). The performance advantage of ML is not universal and is often contingent on the clinical context, data characteristics, and model architecture.

In cardiology, a systematic review of 59 studies on percutaneous coronary intervention (PCI) outcomes found that while ML models showed higher pooled c-statistics for predicting mortality, major adverse cardiac events (MACE), bleeding, and acute kidney injury, these differences were not statistically significant. For instance, for short-term mortality, ML models achieved a c-statistic of 0.91 compared to 0.85 for LR (P=0.149) [3]. By contrast, a meta-analysis comparing ML with conventional risk scores (TIMI, GRACE) for predicting major adverse cardiovascular and cerebrovascular events (MACCE) after PCI did find superior performance for ML models (AUC: 0.88 vs 0.79) [7]. Taken together, these results suggest that while ML can capture complex patterns, its gain over well-established, simpler models depends on the comparator and may be limited in some applications.

Conversely, in other domains, the type of ML model is a significant differentiator. A systematic review of cardiovascular event prediction in dialysis patients found that deep learning models significantly outperformed both conventional statistical models and traditional ML algorithms (P=0.005). However, when considering traditional ML models as a whole (e.g., Random Forest, SVM), they showed no significant advantage over conventional models (P=0.727) [2]. This highlights that the "ML advantage" is often driven by specific, advanced architectures rather than being a universal property of all data-driven algorithms.

Table 2: Experimental Performance Data Across Clinical Domains

| Clinical Domain | Outcome Predicted | Best Performing Model | Performance (AUC/C-statistic) | Conventional Model Performance (AUC/C-statistic) |
|---|---|---|---|---|
| Cardiology | MACCE after PCI | Machine Learning (ensemble) | 0.88 [7] | 0.79 (GRACE/TIMI) [7] |
| Cardiology | Long-term Mortality after PCI | Machine Learning | 0.84 [3] | 0.79 (Logistic Regression) [3] |
| Cardiology | Short-term Mortality after PCI | Machine Learning | 0.91 [3] | 0.85 (Logistic Regression) [3] |
| Cardiology | Coronary Artery Disease | Random Forest (with BESO feature selection) | 0.92 (Accuracy) [8] | 0.71-0.73 (Clinical Risk Scores) [8] |
| Nephrology | Cardiovascular Events in Dialysis | Deep Learning | Significantly higher than conventional statistical models (CSMs) (P=0.005) [2] | 0.772 (Mean AUC) [2] |
| Nephrology | Cardiovascular Events in Dialysis | Traditional Machine Learning | Not significantly different from CSMs (P=0.727) [2] | 0.772 (Mean AUC) [2] |
| Infectious Disease | Early Prediction of Sepsis | Random Forest | 0.818 (Internal); 0.771 (External) [5] | N/A (Compared to other ML models) |
| Medical Text Analysis | Disease Classification from Notes | Logistic Regression | 0.83 (Accuracy) [4] | N/A (Outperformed other ML models) |

Detailed Experimental Protocols for Model Validation

The reliability of the performance data presented in the previous section hinges on the experimental protocols used for model training and validation. The following details two key methodologies commonly employed in rigorous biomedical ML research.

K-Fold Cross-Validation with Statistical Testing

This protocol is widely used for model assessment and comparison, especially with limited data. Its goal is to provide a robust estimate of model performance and statistically compare different algorithms.

Protocol Steps:

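- Dataset Preparation: The available dataset is cleaned and then randomly partitioned into K subsets of approximately equal size (folds). Preprocessing steps that learn parameters from the data (e.g., imputation values, normalization statistics) should be fit on the training folds within each iteration rather than on the full dataset, so that no information from the test fold leaks into training.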

- Iterative Training & Validation: For each iteration i (from 1 to K):
  - Training Set: Folds {1, ..., K} excluding fold i are combined.
  - Test Set: Fold i is used.
  - Model Training: Each competing model (e.g., Model A and Model B) is trained from scratch on the training set.
  - Performance Evaluation: Both models are evaluated on the test set, and their performance metrics (e.g., AUC, accuracy) are recorded as a paired score.

- Performance Aggregation: After K iterations, each model has K performance scores. The mean and standard deviation of these scores are reported as the model's cross-validated performance.

- Statistical Comparison: The K paired scores from the two models are compared using an appropriate statistical test, such as a paired t-test, to determine if the observed performance difference is statistically significant. It is critical to note that this test can be sensitive to the number of folds (K) and repetitions (M), and improper setup can lead to overconfidence in results [6].
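
One widely used remedy for the overconfidence described in the last step is the corrected resampled t-test of Nadeau and Bengio, which inflates the variance term to account for overlapping training sets. The sketch below is an illustration of that technique, not the procedure used in [6]; the function name `paired_t_statistics` and the fold-level AUC values are hypothetical.

```python
import math
from statistics import mean, variance


def paired_t_statistics(scores_a, scores_b, k_folds):
    """Naive and corrected paired t statistics for cross-validation scores.

    scores_a, scores_b: per-fold scores of two models on identical splits
    (length K for one run, or K * M for M repetitions).
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    var = variance(diffs)  # sample variance (n - 1 in the denominator)

    # Naive paired t-test: treats the fold scores as independent samples.
    naive_t = mean(diffs) / math.sqrt(var / n)

    # Corrected version: in K-fold CV each test fold is 1/(K-1) the size of
    # its training set, and this ratio is added to 1/n in the variance term.
    test_train_ratio = 1.0 / (k_folds - 1)
    corrected_t = mean(diffs) / math.sqrt((1.0 / n + test_train_ratio) * var)

    return naive_t, corrected_t, n - 1  # last value: degrees of freedom


# Hypothetical AUC scores from one run of 5-fold cross-validation.
auc_a = [0.84, 0.81, 0.86, 0.83, 0.85]
auc_b = [0.82, 0.80, 0.83, 0.82, 0.82]

naive_t, corrected_t, dof = paired_t_statistics(auc_a, auc_b, k_folds=5)
print(f"naive t = {naive_t:.2f}, corrected t = {corrected_t:.2f}, df = {dof}")
```

The corrected statistic is always smaller in magnitude than the naive one, so a difference that looks significant under the naive test may not survive the correction. Either statistic is compared against a t distribution with the returned degrees of freedom. The sketch does not handle the degenerate case where all fold differences are identical (zero variance).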

Holdout Validation with External Testing

This protocol is considered the gold standard for evaluating a model's generalizability to unseen data from potentially different distributions, simulating real-world deployment.

Protocol Steps:

- Internal Split: The initial dataset is divided into an internal development set (e.g., 70-80%) and a held-out internal test set (e.g., 20-30%) [8]. The internal test set is not used for any aspect of model training or hyperparameter tuning.

- Model Development: The development set is further split (e.g., via cross-validation) into training and validation sets to train and tune the model's hyperparameters.

- Internal Locking: A final model is trained on the entire development set using the optimal hyperparameters. This model is then "locked" – no further changes are allowed.

- Initial Evaluation: The locked model is evaluated on the internal test set to get an initial estimate of its performance on unseen data.

- External Validation: The locked model is applied to a completely separate, external dataset collected from a different institution, time period, or population [5]. This step is the strongest test of a model's robustness and generalizability. The performance metrics obtained from this external validation cohort are the most reliable indicators of how the model will perform in practice.

The Scientist's Toolkit: Key Reagents for Rigorous ML Validation

Building and validating machine learning models in biomedicine requires more than just algorithms and code. It demands a suite of methodological "reagents" and tools to ensure the process is sound, reproducible, and clinically relevant. The following table details essential components of this toolkit.

Table 3: Essential Toolkit for Biomedical ML Model Validation

| Tool Category | Tool Name | Primary Function | Relevance to Validation |
|---|---|---|---|
| Reporting Guidelines | TRIPOD+AI [7] | A checklist for transparent reporting of multivariable prediction models that use AI/ML. | Ensures all critical information about model development and validation is reported, enhancing reproducibility and critical appraisal. |
| Risk of Bias Assessment | PROBAST [7] [2] [3] | A tool to assess the risk of bias and applicability of prediction model studies. | Allows researchers to systematically evaluate the methodological quality of their own or others' studies, identifying potential flaws in the validation process. |
| Data Analysis Framework | CHARMS [7] [3] | A checklist for data extraction in systematic reviews of prediction modeling studies. | Provides a structured framework for designing studies and extracting data, ensuring key methodological elements are considered. |
| Model Explainability | SHAP [5] | A method to explain the output of any ML model by quantifying the contribution of each feature. | Helps validate model plausibility by identifying the most important predictors, allowing clinicians to assess if the model's reasoning aligns with medical knowledge. |
| Feature Selection | BESO [8] | An optimization algorithm used for selecting the most relevant features for model input. | Improves model performance and generalizability by reducing dimensionality and removing redundant variables. |
| Statistical Testing | Paired Statistical Tests | Tests like the paired t-test for comparing performance metrics from cross-validation. | Used to determine if the performance difference between two models is statistically significant. Must be applied with care to avoid inflated significance [6]. |

The rigorous comparison of machine learning models in biomedicine is a multifaceted challenge that extends beyond simply selecting the algorithm with the highest AUC. As the data demonstrate, the performance advantage of ML is not a given and is highly context-dependent. The critical differentiator between a promising model and a clinically useful one often lies in the rigor of its validation. This involves the conscientious application of appropriate metrics, robust experimental protocols like external validation, and transparent reporting guided by tools like PROBAST and TRIPOD+AI.

Future progress in the field must prioritize validation frameworks and clinical implementation over marginal gains in accuracy. This will require a concerted shift towards prospective, multi-center studies with external validation to address current limitations in generalizability [7] [2]. Furthermore, closing the gap between model interpretability and clinical workflow integration is essential. By adhering to the principles of rigorous validation metrics and methodologies outlined in this guide, researchers and drug developers can ensure that biomedical machine learning fulfills its potential to enhance patient outcomes and advance therapeutic discovery.

In machine learning, particularly for high-stakes fields like pharmaceutical research and drug development, the performance of a classification model cannot be captured by a single number. The confusion matrix is a fundamental diagnostic tool that provides a complete picture of a model's performance by breaking down its predictions into four core categories: True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN) [9] [10]. This structured visualization allows researchers to move beyond simplistic accuracy measures and understand the precise nature of a model's errors—a critical insight when the cost of different errors varies dramatically, such as in predicting drug efficacy or patient safety.

For researchers comparing machine learning methods, the confusion matrix serves as the foundational data structure from which a suite of more nuanced evaluation metrics is derived. These metrics, including accuracy, precision, recall, and specificity, each illuminate a different aspect of model behavior [9] [11]. The choice of which metric to prioritize is not merely a statistical decision but is deeply rooted in the specific context and the relative costs of different types of misclassification within a research problem [12] [13]. This guide deconstructs the confusion matrix and its derived metrics, providing a framework for their application in method comparison for drug development and biomedical research.

Core Components of the Confusion Matrix

The confusion matrix is a structured table that allows for detailed analysis of a classification model's performance. For a binary classification problem, it is a 2x2 matrix where the rows represent the actual classes and the columns represent the predicted classes [10]. The four fundamental components are:

- True Positive (TP): The model correctly predicted the positive class. (e.g., a patient with a disease was correctly identified as having the disease) [9].

- True Negative (TN): The model correctly predicted the negative class. (e.g., a healthy patient was correctly identified as not having the disease) [9].

- False Positive (FP): The model incorrectly predicted the positive class when the actual class was negative. This is also known as a Type I error [9]. In a diagnostic test, this would be a false alarm.

- False Negative (FN): The model incorrectly predicted the negative class when the actual class was positive. This is also known as a Type II error [9]. In a medical context, this is often the most dangerous error, as it represents a missed case.

Table 1: Structure of a Binary Confusion Matrix

| | Predicted Positive | Predicted Negative |
|---|---|---|
| Actual Positive | True Positive (TP) | False Negative (FN) |
| Actual Negative | False Positive (FP) | True Negative (TN) |
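
The four cells can be tallied directly from paired lists of actual and predicted labels. The following is a minimal sketch; the function name `confusion_counts` and the example labels are hypothetical, and the positive class is assumed to be encoded as 1.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Return (TP, FN, FP, TN) for binary labels."""
    tp = fn = fp = tn = 0
    for actual, predicted in zip(y_true, y_pred):
        if actual == positive and predicted == positive:
            tp += 1  # positive case correctly flagged
        elif actual == positive:
            fn += 1  # positive case missed (Type II error)
        elif predicted == positive:
            fp += 1  # negative case flagged (Type I error)
        else:
            tn += 1  # negative case correctly cleared
    return tp, fn, fp, tn


# Hypothetical labels for eight patients (1 = disease present).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 0, 1, 0, 1, 0, 0, 1]

tp, fn, fp, tn = confusion_counts(y_true, y_pred)
print("                 Pred +  Pred -")
print(f"Actual Positive  {tp:6d}  {fn:6d}")
print(f"Actual Negative  {fp:6d}  {tn:6d}")
```

The printed layout follows the same orientation as the table: rows are actual classes and columns are predicted classes.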

The following diagram illustrates the logical relationship between a model's predictions and the resulting confusion matrix components, which form the basis for all subsequent metric calculations.

Key Metrics Derived from the Confusion Matrix

From the four counts in the confusion matrix, several key metrics can be calculated, each providing a different perspective on model performance. The following table summarizes the most critical metrics for model evaluation.

Table 2: Core Classification Metrics Derived from the Confusion Matrix

| Metric | Formula | Interpretation | Use Case Focus |
|---|---|---|---|
| Accuracy | (TP + TN) / (TP + TN + FP + FN) [9] | Overall proportion of correct predictions. | A coarse measure for balanced datasets [12]. |
| Precision | TP / (TP + FP) [9] | Proportion of positive predictions that are correct. | When the cost of a False Positive is high (e.g., spam detection) [12] [14]. |
| Recall (Sensitivity) | TP / (TP + FN) [9] | Proportion of actual positives that are correctly identified. | When the cost of a False Negative is high (e.g., disease screening) [12] [14]. |
| Specificity | TN / (TN + FP) [9] | Proportion of actual negatives that are correctly identified. | When correctly identifying negatives is crucial (e.g., confirming health). |
| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) [9] | Harmonic mean of precision and recall. | Single metric to balance precision and recall for imbalanced data [12]. |
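
The formulas in the table translate directly into code. The sketch below is illustrative only: the function name `classification_metrics` and the counts are hypothetical, and returning `nan` when a denominator is zero is one possible convention rather than a standard.

```python
def classification_metrics(tp, fn, fp, tn):
    """Compute the table's metrics from the four confusion-matrix counts."""

    def ratio(numerator, denominator):
        # Assumption: undefined ratios are reported as NaN, not as 0.
        return numerator / denominator if denominator else float("nan")

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": ratio(tn, tn + fp),
        "f1": ratio(2 * precision * recall, precision + recall),
    }


# Hypothetical screening result: 100 diseased and 900 healthy patients.
metrics = classification_metrics(tp=80, fn=20, fp=10, tn=890)
for name, value in metrics.items():
    print(f"{name:12s} {value:.3f}")
```

With these counts accuracy is high while recall is noticeably lower, which shows how a single summary number can hide the 20 missed cases.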

The Precision-Recall Tradeoff

A fundamental concept in classification is the tradeoff between precision and recall. It is often impossible to increase both simultaneously without a fundamental improvement in the model [13] [14]. This tradeoff is controlled by the decision threshold—the probability level above which an instance is classified as positive.

- Increasing the threshold makes the model more "conservative." It requires stronger evidence to predict a positive, which typically increases precision (fewer false positives) but decreases recall (more false negatives) [14].

- Decreasing the threshold makes the model more "liberal." It is more willing to predict a positive, which typically increases recall (fewer false negatives) but decreases precision (more false positives) [14].
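
The effect of moving the threshold can be seen on a small example. In this sketch the probabilities and labels are hypothetical, the helper name `precision_recall_at` is illustrative, and a score equal to the threshold is assumed to count as positive.

```python
def precision_recall_at(threshold, probs, labels):
    """Precision and recall when scores >= threshold are called positive."""
    preds = [1 if p >= threshold else 0 for p in probs]
    tp = sum(1 for pred, y in zip(preds, labels) if pred == 1 and y == 1)
    fp = sum(1 for pred, y in zip(preds, labels) if pred == 1 and y == 0)
    fn = sum(1 for pred, y in zip(preds, labels) if pred == 0 and y == 1)
    precision = tp / (tp + fp) if (tp + fp) else float("nan")
    recall = tp / (tp + fn) if (tp + fn) else float("nan")
    return precision, recall


# Hypothetical validation-set probabilities and true labels (1 = positive).
probs = [0.95, 0.90, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]

for threshold in (0.30, 0.50, 0.75):
    precision, recall = precision_recall_at(threshold, probs, labels)
    print(f"threshold={threshold:.2f}  precision={precision:.3f}  recall={recall:.3f}")
```

On this toy data, raising the threshold from 0.30 to 0.75 moves precision up and recall down at each step, which is the pattern described in the two bullets above. On real data the relationship is usually, but not always, monotonic for precision.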

The correct balance depends entirely on the research or business objective. For instance, in a preliminary screening for a disease, a high recall might be prioritized to ensure no cases are missed. In contrast, when confirming a diagnosis before a costly or invasive treatment, high precision becomes paramount.

Experimental Protocols for Metric Evaluation in Research

To ensure robust and reproducible comparison of machine learning models, a standardized experimental protocol is essential. The following workflow outlines the key steps from data preparation to metric calculation and interpretation.

Detailed Methodological Steps

Data Splitting and Preparation: Partition the dataset into a training set (e.g., 70%), a validation set (e.g., 15%), and a held-out test set (e.g., 15%). The validation set is used for hyperparameter tuning and threshold selection, while the test set is used only once for the final, unbiased evaluation [11]. It is critical that any class imbalance present in the real world is preserved in these splits or explicitly addressed through sampling techniques.

Model Training and Prediction: Train the candidate models on the training set. For each model, obtain not just the final class predictions but also the continuous probability scores or decision function outputs on the validation set [14].

Threshold Selection and Metric Calculation: Using the validation set predictions, construct a confusion matrix across a range of decision thresholds. Calculate the resulting precision, recall, and other metrics for each threshold. Select the optimal threshold based on the primary metric for your research goal (e.g., maximize recall if false negatives are critical) [14].

Final Evaluation and Statistical Comparison: Apply the final, threshold-tuned model to the held-out test set. Calculate the evaluation metrics from the test set's confusion matrix. To compare multiple models, use appropriate statistical tests (e.g., McNemar's test, paired t-test on cross-validated metric scores) to determine if performance differences are statistically significant, rather than relying on point estimates alone [11].
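
For two models evaluated on the same held-out test set, McNemar's test looks only at the cases where the models disagree. The following is a minimal sketch of the exact (binomial) form of the test using only the standard library; the function name `mcnemar_exact` and the 20-case test set are hypothetical.

```python
from math import comb


def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact two-sided McNemar test on paired predictions from two models."""
    # Only discordant pairs (one model right, the other wrong) are informative.
    a_only = sum(1 for y, a, b in zip(y_true, pred_a, pred_b) if a == y and b != y)
    b_only = sum(1 for y, a, b in zip(y_true, pred_a, pred_b) if a != y and b == y)
    n = a_only + b_only
    if n == 0:
        return a_only, b_only, 1.0
    # Under the null hypothesis each discordant pair favors either model with
    # probability 0.5, so each count follows Binomial(n, 0.5). The two-sided
    # p-value doubles the lower tail and is capped at 1.
    tail = sum(comb(n, i) for i in range(min(a_only, b_only) + 1)) / 2 ** n
    return a_only, b_only, min(1.0, 2 * tail)


# Hypothetical test set of 20 cases; each model errs on the listed indices.
y_true = [1] * 10 + [0] * 10
errors_a = {0, 11}
errors_b = {0, 1, 2, 3, 12, 13, 14, 15}
pred_a = [1 - y if i in errors_a else y for i, y in enumerate(y_true)]
pred_b = [1 - y if i in errors_b else y for i, y in enumerate(y_true)]

a_only, b_only, p_value = mcnemar_exact(y_true, pred_a, pred_b)
print(f"A-only correct: {a_only}, B-only correct: {b_only}, p = {p_value:.4f}")
```

Cases that both models get right or both get wrong do not enter the statistic, so the test remains meaningful even when overall accuracy is dominated by an easy majority class.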

Application in Pharmaceutical and Biotech Research

The theoretical concepts of classification metrics find critical application in the pharmaceutical and biotechnology industry, where AI and machine learning are projected to generate up to $410 billion annually by 2025 [15]. The choice of evaluation metric directly impacts decision-making in high-stakes scenarios.

Table 3: Metric Selection for Pharmaceutical Applications

| Research Application | Primary Metric | Rationale | Supporting Experimental Data |
|---|---|---|---|
| Early Disease Screening | High Recall [14] | Minimizing false negatives is critical to avoid missing patients with the disease. | AI models for analyzing X-rays and PET scans are evaluated on their ability to identify all potential pathological findings [11]. |
| Diagnostic Confirmation | High Precision [14] | Ensuring a positive prediction is highly reliable before proceeding with invasive treatments. | In AI-assisted diagnostic platforms, the focus is on the percentage of flagged cases that are true positives. |
| Patient Recruitment for Clinical Trials | High Recall & F1-Score | Maximizing the identification of all eligible patients (recall) while balancing the workload of manual verification (precision). | AI tools like TrialGPT analyze EHRs to match patients to trials, aiming for high recall to avoid missing candidates, with F1 providing a balance [15]. |
| Predictive Toxicology | High Specificity | Correctly identifying compounds that are not toxic is crucial to avoid prematurely discarding viable drug candidates. | Models predicting drug-target interactions are assessed on their low false positive rate in toxicity prediction [15]. |

The Scientist's Toolkit: Essential Reagents for ML Evaluation

For researchers implementing these evaluation protocols, the following tools and conceptual "reagents" are essential.

Table 4: Essential Research Reagents for ML Model Evaluation

| Tool / Concept | Function in Evaluation | Example/Implementation |
|---|---|---|
| Probability Scores | Provides the continuous output from a classifier, required for ROC/AUC analysis and threshold tuning. | Output from model.predict_proba() in scikit-learn [14]. |
| Validation Set | A subset of data used for hyperparameter tuning and selecting the optimal decision threshold. | A holdout set not used for training the model's weights [11]. |
| Statistical Tests | To determine if the difference in performance between two models is statistically significant. | McNemar's test, bootstrapping confidence intervals for AUC [11]. |
| Imbalanced Data Strategies | Techniques to handle datasets where one class is vastly underrepresented, which can make accuracy misleading. | Oversampling (SMOTE), undersampling, or using appropriate metrics like F1 or MCC [12] [10]. |
| Matthews Correlation Coefficient (MCC) | A more reliable metric than F1 for imbalanced datasets, as it considers all four corners of the confusion matrix [10]. | MCC = (TP*TN - FP*FN) / sqrt((TP+FP)*(TP+FN)*(TN+FP)*(TN+FN)) [11]. |
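
The MCC formula in the last row can be evaluated with a few lines of code. This is a sketch under one stated assumption: when any marginal total is zero the denominator vanishes, and the function returns 0.0, a common convention that is not part of the formula itself. The function name `mcc` and the counts are hypothetical.

```python
import math


def mcc(tp, tn, fp, fn):
    """Matthews correlation coefficient from confusion-matrix counts."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        # Assumed convention: report 0.0 when the coefficient is undefined.
        return 0.0
    return (tp * tn - fp * fn) / denominator


# Hypothetical screening result: 100 diseased and 900 healthy patients.
print(f"MCC = {mcc(tp=80, tn=890, fp=10, fn=20):.3f}")

# A perfect classifier scores +1; a fully inverted one scores -1.
print(f"perfect  = {mcc(tp=50, tn=50, fp=0, fn=0):.1f}")
print(f"inverted = {mcc(tp=0, tn=0, fp=50, fn=50):.1f}")
```

Unlike F1, the value changes if the positive and negative classes are handled differently by the model, because true negatives appear in both the numerator and the denominator.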

The confusion matrix and its derived metrics form an indispensable toolkit for the rigorous comparison of machine learning methods in scientific research. Accuracy provides a top-level view but is a dangerously misleading guide for imbalanced datasets common in drug development, such as in rare disease prediction or adverse event detection [12] [16]. A disciplined, context-driven approach is required, where precision is prioritized when false positives are costly, and recall is paramount when false negatives carry the greatest risk.

For researchers in pharmaceuticals and biotechnology, this framework is not just academic. It directly supports the evaluation of AI models that can reduce drug discovery costs by up to 40% and slash development timelines [15]. By systematically applying these evaluation protocols—leveraging validation sets for threshold tuning, using held-out test sets for final evaluation, and employing statistical tests for model comparison—scientists can ensure that the machine learning models they develop and select are robust, reliable, and fit for their intended purpose in improving human health.

In the rigorous landscape of machine learning (ML) for scientific discovery, the selection of an appropriate validation metric is paramount. While accuracy has long served as a default for model evaluation, its efficacy diminishes significantly when applied to imbalanced datasets, a common occurrence in fields like drug development. This guide provides an objective comparison of performance metrics, championing the F1-score as a balanced harmonic mean of precision and recall. Through experimental data and detailed protocols, we demonstrate that the F1-score offers a more reliable and truthful assessment of model performance in scenarios where class distribution is skewed and both false positives and false negatives carry substantial cost.

Evaluation metrics are the compass by which machine learning models are navigated and refined. In scientific research, particularly in drug discovery, the consequences of selecting an inadequate metric are not merely statistical but can translate to missed therapeutic candidates or misallocated resources. The accuracy of a model, defined as (TP + TN) / (TP + TN + FP + FN), where TP is True Positives, TN is True Negatives, FP is False Positives, and FN is False Negatives, measures overall correctness [12]. However, this metric becomes misleading under class imbalance [17]. For instance, a model predicting a disease with a 1% prevalence can achieve 99% accuracy by simply classifying all cases as negative, a useless outcome for identifying unwell patients [18]. This flaw necessitates metrics that are sensitive to the distribution and criticality of different classes.
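
The 1% prevalence example can be checked with simple arithmetic. The cohort size of 10,000 below is a hypothetical number chosen for round figures; the point is only that the trivial classifier's accuracy equals the share of negatives.

```python
# Hypothetical cohort with 1% disease prevalence.
n_patients = 10_000
positives = round(n_patients * 0.01)
negatives = n_patients - positives

# A classifier that labels every patient as negative.
tp, fn = 0, positives
fp, tn = 0, negatives

accuracy = (tp + tn) / (tp + tn + fp + fn)
recall = tp / (tp + fn)

print(f"accuracy = {accuracy:.2%}")  # driven entirely by the majority class
print(f"recall   = {recall:.2%}")    # no diseased patient is ever found
```

Accuracy rewards the model for the 9,900 easy negatives, while recall exposes that none of the 100 diseased patients is identified.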

Deconstructing the F1-Score: A Harmonic Mean

The F1-score emerges as a robust alternative, specifically designed to balance two critical metrics: precision and recall [19].

- Precision (TP / (TP + FP)) is the measure of a model's reliability. It answers the question: "Of all the instances the model predicted as positive, how many are actually positive?" High precision is crucial when the cost of false positives is high, such as in suggesting a compound for costly clinical trials [17].

- Recall (TP / (TP + FN)) is the measure of a model's completeness. It answers the question: "Of all the actual positive instances, how many did the model successfully find?" High recall is vital when missing a positive case is dangerous, such as in early cancer detection [17] [12].

The F1-score is the harmonic mean of these two metrics, calculated as F1 = 2 * (Precision * Recall) / (Precision + Recall) [19]. The harmonic mean, unlike the simpler arithmetic mean, penalizes extreme values. A model with high precision but low recall (or vice-versa) will have a low F1-score, reflecting an undesirable trade-off [17]. This property makes the F1-score a single, stringent metric that only achieves high values when both precision and recall are high.
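
A short numerical comparison makes the penalty on extreme values concrete. The precision and recall pairs below are hypothetical, and the helper name `f1_from` is illustrative; returning 0.0 when both inputs are zero is an assumed convention.

```python
def f1_from(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention when both components are zero
    return 2 * precision * recall / (precision + recall)


# Hypothetical models: one balanced, one with high precision but low recall.
cases = {"balanced": (0.80, 0.80), "lopsided": (0.95, 0.10)}

for name, (precision, recall) in cases.items():
    arithmetic = (precision + recall) / 2
    harmonic = f1_from(precision, recall)
    print(f"{name:9s} arithmetic mean = {arithmetic:.3f}  F1 = {harmonic:.3f}")
```

For the balanced model the two means coincide. For the lopsided model the arithmetic mean still looks moderate, while the F1-score falls close to the weaker component.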

Visualizing the Precision-Recall Trade-Off

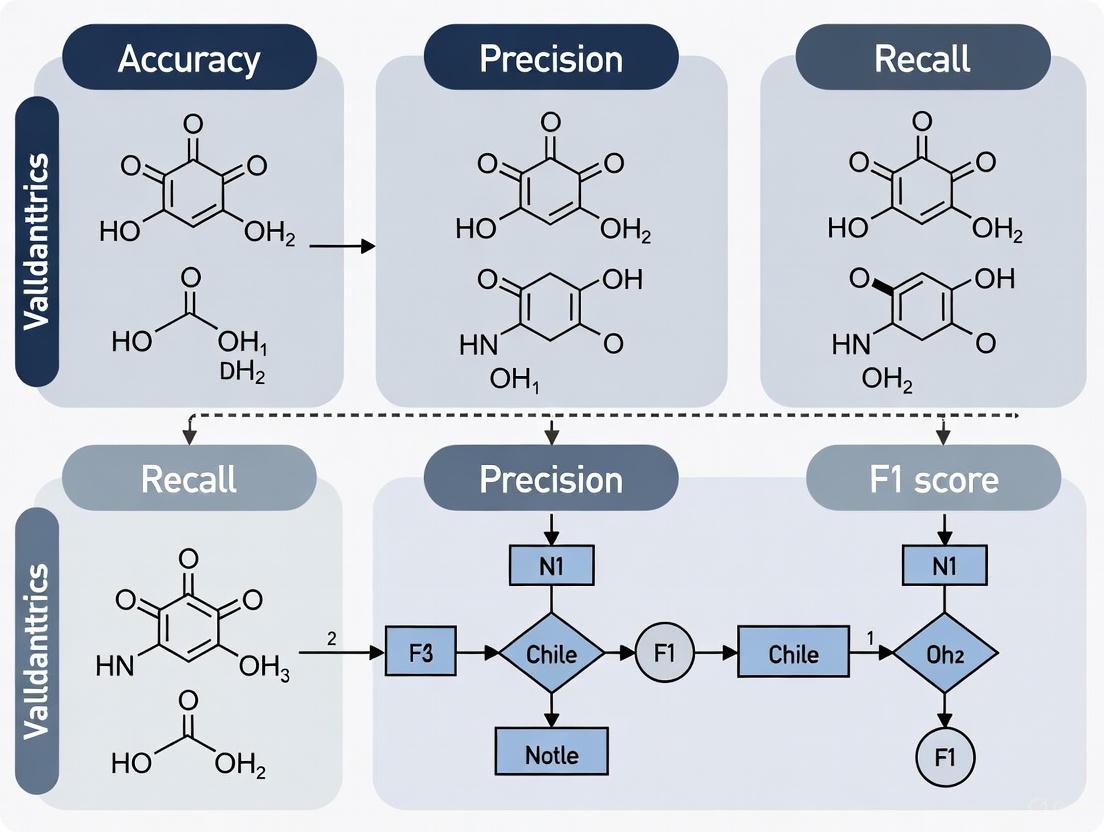

The following diagram illustrates the conceptual relationship between precision, recall, and the F1-score, showing how it balances the two metrics.

Diagram Title: The F1-Score as a Harmonic Mean

Comparative Analysis of Evaluation Metrics

The table below provides a concise comparison of key classification metrics, highlighting their respective use cases and limitations, particularly in the context of imbalanced data.

Table 1: Comparison of Key Classification Metrics for Model Evaluation

| Metric | Formula | Ideal Use Case | Limitations in Imbalanced Context |
|---|---|---|---|
| Accuracy | (TP + TN) / Total [12] | Balanced datasets where the cost of FP and FN is similar [12]. | Highly misleading; can be artificially inflated by predicting the majority class [18] [12]. |
| Precision | TP / (TP + FP) [19] | When the cost of false positives is high (e.g., qualifying a drug candidate for trials) [17]. | Does not account for false negatives; a model can have high precision by identifying few positives correctly while missing many others. |
| Recall | TP / (TP + FN) [19] | When the cost of false negatives is high (e.g., disease screening) [17] [12]. | Does not account for false positives; a model can have high recall by flagging many instances as positive, including many incorrect ones. |
| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) [19] | Imbalanced datasets where a balance between FP and FN is critical (e.g., fraud detection, diagnostic aids) [17] [20]. | Gives equal weight to precision and recall, which may not be optimal for all domains. Less interpretable on its own than its components. |

Experimental Protocol: Validating Metrics in Drug Discovery

To objectively compare these metrics, we can analyze a real-world ML application in drug discovery. The following protocol and resulting data are adapted from a study predicting clinical trial outcomes.

Experimental Workflow for Clinical Trial Prediction

The diagram below outlines the key steps in a typical machine learning workflow for predicting clinical trial success, highlighting where evaluation metrics are applied.

Diagram Title: ML Validation Workflow for Trial Prediction

4.1.1 Dataset Curation and Preprocessing

- Source: The dataset used is from the PrOCTOR study, comprising 828 drugs (757 approved, 71 failed) [21].

- Class Imbalance: The imbalance ratio (majority to minority) is 10.66, making it a quintessential case for metrics beyond accuracy [21].

- Features: 47 features per drug, including 10 molecular properties (e.g., molecular weight, polar surface area), 34 target-based properties (e.g., median gene expression across 30 tissues), and 3 drug-likeness rule outcomes (e.g., Lipinski's Rule of Five) [21].

- Preprocessing: Missing values were imputed with median values [21].

4.1.2 Model Training and Validation

- Model Architectures: The study proposed an Outer Product-based Convolutional Neural Network (OPCNN) to integrate chemical and target-based features effectively. This was compared against other Deep Multimodal Neural Networks (DMNNs) using early, intermediate, and late fusion techniques [21].

- Validation Protocol: A 10-fold cross-validation strategy was employed to ensure robust performance estimation and mitigate overfitting [21].

Quantitative Results and Metric Comparison

The performance of the OPCNN model and the comparative performance of different metrics are summarized in the tables below.

Table 2: Performance of the OPCNN Model in Clinical Trial Prediction (10-Fold CV) [21]

| Metric | Score | Interpretation |

|---|---|---|

| Accuracy | 0.9758 | Superficially excellent, but potentially misleading due to imbalance. |

| Precision | 0.9889 | Extremely high, indicating very few false positives among predicted successes. |

| Recall | 0.9893 | Extremely high, indicating the model found nearly all actual successful drugs. |

| F1-Score | 0.9868 | Reflects the near-perfect balance between high precision and high recall. |

| MCC | 0.8451 | A more reliable statistical rate for biomedicine, confirming strong model performance. |

Table 3: Hypothetical Model Comparison Illustrating Metric Trade-Offs

| Model | Accuracy | Precision | Recall | F1-Score | Suitability for Imbalanced Task |

|---|---|---|---|---|---|

| Dummy Classifier (Always "Pass") | ~91.4% | ~91.4% | 100% | ~95.5% | Poor. F1 is high because recall is perfect and precision merely equals the base rate of approved drugs. The model never identifies a failure. |

| Conservative Model | 95.0% | 0.99 | 0.85 | 0.91 | Good. High precision but lower recall means it misses some true positives. |

| Sensitive Model | 93.0% | 0.85 | 0.99 | 0.91 | Good. High recall but lower precision means it generates more false alarms. |

| Balanced Model (OPCNN) | 97.6% | 0.99 | 0.99 | 0.99 | Excellent. Achieves a near-perfect balance, correctly identifying both classes effectively. |

Note: Table 3 uses the dataset imbalance from [21] for the Dummy Classifier and presents illustrative data for other models to demonstrate conceptual trade-offs.
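The Dummy Classifier row can be checked directly from the class counts in [21]. The following is a minimal sketch, assuming approved drugs are the positive class. The helper name `confusion_metrics` is illustrative, and returning 0.0 for MCC when its denominator is zero is a common convention rather than something stated in the source.

```python
import math

def confusion_metrics(tp, fp, fn, tn):
    """Return accuracy, precision, recall, F1 and MCC from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    # MCC is undefined when a row or column of the confusion matrix is empty;
    # returning 0.0 in that case is an assumed convention.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}

# Always predicting "Pass": 757 approved drugs become true positives,
# 71 failed drugs become false positives, and nothing is predicted negative.
dummy = confusion_metrics(tp=757, fp=71, fn=0, tn=0)
for name, value in dummy.items():
    print(f"{name}: {value:.4f}")
# accuracy: 0.9143, precision: 0.9143, recall: 1.0000, f1: 0.9552, mcc: 0.0000
```

The MCC of zero makes the point that accuracy and F1 hide: a classifier with no ability to separate the classes scores well on both whenever the positive class dominates.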

The Scientist's Toolkit: Essential Reagents for ML Evaluation

The following table details key computational "reagents" and frameworks essential for conducting rigorous ML model evaluation in drug discovery.

Table 4: Key Research Reagent Solutions for ML Evaluation

| Item / Solution | Function in Evaluation | Example in Context |

|---|---|---|

| Structured Biological & Chemical Datasets | Provides the foundational data for training and testing models; requires features relevant to the domain (e.g., molecular properties, target profiles) [21]. | Dataset with 47 chemical and target-based features for 828 drugs from [21]. |

| Cross-Validation Frameworks | A resampling procedure used to evaluate a model on limited data, ensuring that performance estimates are not dependent on a particular train-test split [21]. | 10-fold cross-validation as used in the OPCNN experiment [21]. |

| Multimodal Deep Learning Architectures | Neural networks designed to learn from and integrate multiple types of data (e.g., chemical structures and biological targets) for more powerful predictions [21]. | Outer Product-based CNN (OPCNN) for integrating chemical and target features [21]. |

| Metric Calculation Libraries | Software libraries that provide standardized, optimized functions for computing accuracy, precision, recall, F1-score, and other metrics. | Scikit-learn's metrics module in Python (e.g., sklearn.metrics.f1_score) [22]. |

| Domain-Specific Metrics | Metrics tailored to the specific needs and challenges of a field, which may be more informative than generic metrics [1]. | Precision-at-K for ranking top drug candidates, Rare Event Sensitivity for detecting low-frequency adverse effects [1]. |

The experimental data show that in imbalanced but critical contexts like clinical trial prediction, the F1-score can provide a more informative and actionable assessment of model performance than accuracy, because it forces attention onto the precision-recall trade-off [21] [17]. It is not immune to imbalance, however: when the positive class is the majority, as with the approved drugs here, the always-"Pass" classifier in Table 3 still reaches an F1 of about 95.5%, which is why a class-symmetric measure such as MCC is reported alongside it in Table 2. Read together with its components, the F1-score remains an indispensable metric for researchers and drug development professionals who rely on ML models to make high-stakes decisions.

However, the F1-score is not a panacea. Its assumption of equal weight for precision and recall may not align with all business or research objectives. In such cases, the Fβ-score, a generalized form where β can be adjusted to weight recall higher than precision (or vice-versa), offers a more flexible alternative [18]. Ultimately, the choice of metric must be guided by the specific costs of prediction errors within the research domain. For a broad range of imbalanced classification tasks in science and medicine, the F1-score stands as a robust, balanced, and essential tool for validation and model comparison, truly moving the field beyond the deceptive simplicity of accuracy.
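To make the effect of β concrete, here is a minimal sketch of the Fβ-score applied to the illustrative precision and recall values of the Conservative and Sensitive models from Table 3. The function name `f_beta` is an assumption for illustration; scikit-learn offers `fbeta_score` for label arrays.

```python
def f_beta(precision, recall, beta=1.0):
    """F-beta score: beta > 1 favours recall, beta < 1 favours precision."""
    if precision == 0 and recall == 0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)

# (precision, recall) pairs taken from the illustrative rows of Table 3.
conservative = (0.99, 0.85)
sensitive = (0.85, 0.99)

for beta in (0.5, 1.0, 2.0):
    print(f"beta={beta}: "
          f"conservative={f_beta(*conservative, beta):.3f}, "
          f"sensitive={f_beta(*sensitive, beta):.3f}")
```

With β = 1 the two models tie, which is exactly the ambiguity the F1-score leaves unresolved. Choosing β = 2 ranks the Sensitive model higher, and β = 0.5 ranks the Conservative model higher.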

Logarithmic Loss, commonly known as Log Loss or cross-entropy loss, serves as a crucial evaluation metric for probabilistic classification models. Unlike binary metrics that merely assess classification correctness, Log Loss quantifies the accuracy of predicted probabilities by measuring the divergence between these probabilities and the actual class labels [23] [24]. This capability makes it particularly valuable in contexts where understanding prediction confidence is as important as the prediction itself, such as in medical risk prediction and drug development [25] [26].

Within the broader thesis of validation metrics for machine learning, Log Loss occupies a distinct position. It provides a continuous, differentiable measure of model performance that penalizes both incorrect classifications and overconfident, incorrect predictions [27]. This review situates Log Loss alongside alternative metrics, examining its theoretical foundations, practical applications in scientific domains, and empirical performance through comparative analysis.

Theoretical Foundations of Log Loss

Mathematical Formulation

Log Loss is calculated as the negative average of the logarithms of the predicted probabilities assigned to the correct classes. For binary classification problems, the formula is expressed as:

[ \text{Log Loss} = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \cdot \log(p_i) + (1 - y_i) \cdot \log(1 - p_i) \right] ]

Where:

- ( N ) is the number of observations

- ( y_i ) is the true label (0 or 1) for observation ( i )

- ( p_i ) is the predicted probability that observation ( i ) belongs to class 1

- ( \log ) denotes the natural logarithm [28] [23]

For multi-class classification problems, the formula extends to:

[ \text{Log Loss} = -\frac{1}{N} \sum_{i=1}^{N} \sum_{j=1}^{M} y_{ij} \cdot \log(p_{ij}) ]

Where:

- ( M ) is the number of classes

- ( y_{ij} ) is a binary indicator (1 if observation ( i ) belongs to class ( j ), 0 otherwise)

- ( p_{ij} ) is the predicted probability that observation ( i ) belongs to class ( j ) [29]
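Both formulas translate almost line for line into code. The sketch below is a dependency-free illustration, not a reference implementation: the function names and the clipping constant `EPS` (used to avoid evaluating log(0)) are assumptions. Because ( y_{ij} ) is a one-hot indicator, the inner sum over classes reduces to the log-probability assigned to the true class.

```python
import math

EPS = 1e-15  # assumed clipping bound so that log(0) is never evaluated

def binary_log_loss(y_true, p_pred):
    """Binary Log Loss; p_pred holds the predicted probability of class 1."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, EPS), 1 - EPS)
        total += y * math.log(p) + (1 - y) * math.log(1 - p)
    return -total / len(y_true)

def multiclass_log_loss(y_true, p_pred):
    """Multi-class Log Loss; y_true holds class indices, p_pred one row per observation."""
    total = 0.0
    for label, row in zip(y_true, p_pred):
        # Only the true class contributes, since y_ij is 0 for every other class.
        total += math.log(min(max(row[label], EPS), 1 - EPS))
    return -total / len(y_true)

y = [1, 0, 1]
p = [0.9, 0.2, 0.6]
print(round(binary_log_loss(y, p), 4))  # 0.2798

# The same data written as two-class probability rows gives the same value.
rows = [[1 - pi, pi] for pi in p]
print(round(multiclass_log_loss(y, rows), 4))  # 0.2798
```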

Conceptual Interpretation and Behavior

Conceptually, Log Loss measures how closely the predicted probabilities match the actual outcomes, with lower values indicating better alignment [30]. The metric exhibits several important behavioral characteristics:

- Confidence Penalization: Log Loss heavily penalizes confident but incorrect predictions. For instance, if a model assigns a probability of 0.99 to an event that does not occur, it incurs a much higher penalty (-log(0.01) ≈ 4.6) than if it had assigned 0.7 (-log(0.3) ≈ 1.2) [28] [30].

- Theoretical Basis: Log Loss is fundamentally connected to maximum likelihood estimation and information theory, specifically representing the cross-entropy between the true distribution and the predicted probabilities [31].

The following diagram illustrates how Log Loss varies with predicted probability for both actual positive and actual negative instances:

Log Loss Behavior for Binary Classification

Comparative Analysis of Classification Metrics

Log Loss vs. Alternative Metrics

The following table summarizes key characteristics of Log Loss compared to other common classification metrics:

Table 1: Comparison of Classification Evaluation Metrics

| Metric | Interpretation | Range | Optimal Value | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| Log Loss | Divergence between predicted probabilities and actual labels | 0 to ∞ | 0 | Probabilistic interpretation, penalizes over-confidence, continuous and differentiable | Sensitive to class imbalance, infinite for perfect misclassification |

| Accuracy | Proportion of correct predictions | 0 to 1 | 1 | Simple to interpret, intuitive | Misleading with class imbalance, ignores prediction confidence |

| Brier Score | Mean squared difference between predicted probabilities and actual outcomes | 0 to 1 | 0 | Proper scoring rule, less sensitive to extreme probabilities | Penalizes confident errors less severely than Log Loss |

| AUC-ROC | Model's ability to distinguish between classes | 0 to 1 | 1 | Threshold-independent, insensitive to class prevalence | Does not evaluate calibrated probabilities; can look optimistic under severe class imbalance |

Theoretical and Practical Distinctions

Log Loss vs. Accuracy: While accuracy simply measures the percentage of correct predictions, Log Loss provides more detailed information by considering the confidence of these predictions [24]. Accuracy can be misleading with imbalanced datasets, whereas Log Loss offers a more nuanced evaluation of probabilistic models [24].

Log Loss vs. Brier Score: Both are proper scoring rules that evaluate probabilistic predictions, but they differ significantly in their characteristics. The Brier score is essentially the mean squared error of probabilistic predictions, while Log Loss employs a logarithmic penalty [31]. Log Loss heavily penalizes confident but wrong predictions, whereas the Brier score is more lenient toward extreme probabilities [31]. Theoretically, Log Loss is the only scoring rule that satisfies additivity, locality, and properness conditions for finitely many possible events [31].
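The difference in penalty shape is easiest to see for a single prediction. The sketch below compares the two per-instance penalties as a function of the probability assigned to the class that actually occurred. The helper names are illustrative assumptions.

```python
import math

def log_penalty(p_true_class):
    """Log Loss contribution of one instance: unbounded as the probability nears 0."""
    return -math.log(p_true_class)

def brier_penalty(p_true_class):
    """Binary Brier contribution of one instance: bounded above by 1."""
    return (1.0 - p_true_class) ** 2

# Probability the model gave to the outcome that actually occurred.
for q in (0.5, 0.3, 0.1, 0.01, 0.001):
    print(f"q={q:<6} log={log_penalty(q):6.3f}  brier={brier_penalty(q):.4f}")
```

As the probability given to the true outcome shrinks from 0.1 to 0.001, the Brier penalty barely moves (it saturates near 1), while the logarithmic penalty keeps growing without bound.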

Experimental Protocols and Empirical Comparisons

Methodology for Metric Evaluation

Standard experimental protocols for comparing classification metrics involve:

- Dataset Preparation: Multiple datasets with varying characteristics (balanced/imbalanced, clean/noisy) should be used [25].

- Model Training: Multiple classification algorithms (logistic regression, decision trees, neural networks, etc.) are trained on each dataset [25].

- Probability Calibration: Some models may require calibration (e.g., via Platt scaling) to ensure their probability estimates are meaningful [24].

- Metric Calculation: All metrics are computed using out-of-sample predictions, typically via cross-validation or hold-out testing [25].

- Statistical Analysis: Performance differences should be assessed for statistical significance using appropriate tests [25].

The following diagram illustrates the experimental workflow for metric comparison:

Metric Comparison Experimental Workflow

Case Study: AKI Risk Prediction in Immunotherapy Patients

A recent study developing machine learning models for predicting Acute Kidney Injury (AKI) risk in patients treated with PD-1/PD-L1 inhibitors provides a practical illustration of Log Loss application in medical research [25].

Experimental Protocol:

- Objective: Develop and validate interpretable ML models for early AKI prediction in patients receiving PD-1/PD-L1 inhibitor therapy [25].

- Dataset: 1,663 patients treated at Zhejiang Provincial People's Hospital between January 2018 and January 2024 [25].

- Methods: Nine different machine learning models were evaluated using a retrospective cohort design. The dataset was split into training (80%) and test (20%) sets. Models included Gradient Boosting Machine (GBM), logistic regression, random forests, and others [25].

- Feature Selection: 94 clinical variables were initially considered, with 38 features ultimately selected using LASSO regression after addressing multicollinearity [25].

- Evaluation Metrics: AUC, specificity, sensitivity, accuracy, F1 score, Brier score, and Log Loss were all calculated to assess model performance [25].

Results: The GBM model demonstrated the best predictive performance, achieving an AUC of 0.850 (95% CI: 0.830-0.870) in the validation set and 0.795 (95% CI: 0.747-0.844) in the test set [25]. While the study reported multiple metrics, Log Loss provided crucial information about the quality of the probability estimates, which is essential for clinical decision-making where risk stratification is needed [25].

Quantitative Comparison of Metrics

Table 2: Performance Metrics from AKI Prediction Study (Gradient Boosting Machine Model)

| Metric | Validation Set | Test Set | Interpretation |

|---|---|---|---|

| AUC | 0.850 (0.830-0.870) | 0.795 (0.747-0.844) | Very good discrimination in validation, good in test |

| Sensitivity | Reported | Reported | Proportion of actual positives correctly identified |

| Specificity | Reported | Reported | Proportion of actual negatives correctly identified |

| Brier Score | Reported | Reported | Measure of probability calibration |

| Log Loss | Reported | Reported | Quality of probability estimates |

Table 3: Comparative Performance of Multiple Models in AKI Prediction Study

| Model Type | AUC | Log Loss | Brier Score | Rank Based on Composite Performance |

|---|---|---|---|---|

| Gradient Boosting Machine | 0.850 | Lowest among models | Best calibration | 1 |

| Random Forest | 0.832 | Moderate | Good calibration | 2 |

| Logistic Regression | 0.815 | Moderate to high | Moderate calibration | 3 |

| Support Vector Machine | 0.798 | Higher | Poorer calibration | 4 |

Research Reagent Solutions

Table 4: Essential Tools for Implementing and Evaluating Log Loss in Research Settings

| Tool/Resource | Function | Example Implementations |

|---|---|---|

| scikit-learn | Python library providing log_loss function for metric calculation | from sklearn.metrics import log_loss; loss = log_loss(y_true, y_pred) |

| PyTorch | Deep learning framework with cross-entropy loss functions | torch.nn.CrossEntropyLoss() |

| TensorFlow/Keras | ML frameworks with categorical cross-entropy implementations | tf.keras.losses.CategoricalCrossentropy() |

| Caret R Package | Comprehensive modeling package with log loss calculation | trainControl(classProbs = TRUE, summaryFunction = mnLogLoss) |

| XGBoost/LightGBM | Gradient boosting frameworks with internal log loss optimization | objective="binary:logistic" |

Implementation Considerations

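- Baseline Establishment: Always compare Log Loss values against a baseline model, typically a naive classifier that assigns every instance the observed class proportions [29] [30]. (A classifier that predicts the majority class with full certainty is not a usable baseline, since each minority instance would incur an unbounded penalty.) For a binary classification problem with a class ratio of 40:60, this baseline Log Loss is approximately 0.673 [29].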

- Class Imbalance Adjustment: With significant class imbalance, the majority class may dominate the Log Loss [29]. Consider using class weights or alternative metrics in such scenarios.

- Probability Calibration: For models that produce poorly calibrated probabilities (e.g., SVMs, random forests), apply calibration methods like Platt scaling or isotonic regression before calculating Log Loss [24].
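The baseline figure quoted for a 40:60 class ratio can be reproduced in a few lines. This is a minimal sketch assuming the baseline predicts the class prevalence for every instance. The function name `baseline_log_loss` is illustrative.

```python
import math

def baseline_log_loss(positive_rate):
    """Log Loss of a classifier that always predicts the class prevalence."""
    p = positive_rate
    return -(p * math.log(p) + (1 - p) * math.log(1 - p))

print(round(baseline_log_loss(0.4), 3))  # 0.673
print(round(baseline_log_loss(0.5), 3))  # 0.693

# The more imbalanced the data, the lower the baseline, so a Log Loss that
# looks small in absolute terms may still be no better than this reference.
print(baseline_log_loss(0.05) < baseline_log_loss(0.4))  # True
```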

Log Loss provides a sophisticated approach to evaluating classification models, particularly when assessing prediction confidence is crucial. Its theoretical foundation in information theory, sensitivity to prediction confidence, and compatibility with probability-focused model evaluation make it particularly valuable for scientific applications including drug discovery and development [26].

However, Log Loss should not be used in isolation. A comprehensive evaluation framework for classification models should incorporate multiple metrics, including Log Loss for probabilistic assessment, AUC-ROC for discrimination ability, and accuracy for overall classification performance [25] [24]. The choice of metrics should align with the specific research objectives and application requirements, with Log Loss being particularly valuable when well-calibrated probability estimates are essential for decision-making [31] [24].

For drug development professionals and researchers, Log Loss offers a mathematically rigorous approach to model validation that emphasizes the quality of probability estimates—a critical consideration when models inform high-stakes decisions regarding patient care and therapeutic development [25] [26].

The Area Under the Receiver Operating Characteristic Curve (AUC-ROC) is a fundamental performance measurement for evaluating binary classification models in machine learning and diagnostic research. The ROC curve itself is a graphical plot that illustrates the diagnostic ability of a binary classifier system by plotting the True Positive Rate (TPR) against the False Positive Rate (FPR) at various classification thresholds [32] [33]. This curve was first developed during World War II for analyzing radar signals to detect enemy objects, and was later introduced to psychology and medicine, where it has become an established evaluation tool [34] [33].

The AUC-ROC metric provides a single number that summarizes the classifier's performance across all possible classification thresholds, offering a robust measure of a model's ability to distinguish between positive and negative classes [35] [36]. The value ranges from 0 to 1, where an AUC of 1 represents a perfect classifier, 0.5 corresponds to random guessing, and values below 0.5 indicate performance worse than random chance [37] [33]. This comprehensive metric is particularly valuable in research settings where model selection and performance comparison are critical, such as in drug development and biomedical diagnostics.

Theoretical Foundations of ROC Analysis

Core Components and Terminology

Understanding the AUC-ROC curve requires familiarity with the fundamental concepts derived from the confusion matrix and the relationship between sensitivity and specificity:

- True Positive Rate (TPR/Sensitivity/Recall): Measures the proportion of actual positives that are correctly identified: TPR = TP / (TP + FN) [32] [35]

- False Positive Rate (FPR): Measures the proportion of actual negatives that are incorrectly classified as positive: FPR = FP / (FP + TN) [32] [37]

- Specificity: Measures the proportion of actual negatives correctly identified: Specificity = TN / (TN + FP) = 1 - FPR [32] [34]

- Classification Threshold: The probability cutoff used to assign class labels, which when varied generates the different points on the ROC curve [35] [37]

The following diagram illustrates the conceptual relationship between these components and the ROC curve:

Statistical Interpretation of AUC

The AUC-ROC score has an important probabilistic interpretation: it equals the probability that a randomly chosen positive instance will be ranked higher than a randomly chosen negative instance by the classifier [36] [38]. This interpretation makes AUC particularly valuable for assessing a model's ranking capability independent of any specific classification threshold.

Mathematically, this can be represented as:

AUC = P(score(x⁺) > score(x⁻))

Where x⁺ represents a positive instance and x⁻ represents a negative instance [38]. This statistical property explains why AUC-ROC is considered a measure of discriminatory power rather than mere classification accuracy.
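This interpretation can be computed literally by comparing every positive-negative pair. The following is a small sketch on four toy samples (the data and the name `pairwise_auc` are illustrative assumptions). Counting ties as half a win is the usual convention.

```python
def pairwise_auc(y_true, scores):
    """Fraction of (positive, negative) pairs in which the positive is ranked higher."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for sp in pos:
        for sn in neg:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5  # ties count as half a correct ranking
    return wins / (len(pos) * len(neg))

y_true = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(pairwise_auc(y_true, scores))  # 0.75
```

Three of the four positive-negative pairs are ordered correctly (only the positive scored 0.35 falls below the negative scored 0.4), giving an AUC of 0.75.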

Experimental Protocols for AUC-ROC Evaluation

Standard Experimental Methodology

To ensure reproducible and comparable AUC-ROC evaluations, researchers should follow standardized experimental protocols:

Data Preparation Protocol:

Model Training Protocol:

- Train multiple candidate models (e.g., logistic regression, random forest, SVM) on the training set [32]

- Generate probability estimates rather than binary predictions for all models

- Use consistent random seeds for reproducible results

ROC Curve Generation:

Validation Procedures:

The following workflow diagram illustrates the complete experimental process for AUC-ROC evaluation:

Computational Implementation

The following Python code demonstrates a standardized implementation for AUC-ROC calculation:
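As a minimal, dependency-free sketch of that calculation, the ROC curve can be traced by lowering the threshold through the sorted scores and the area integrated with the trapezoidal rule. The function names `roc_points` and `trapezoid_auc` and the four-sample toy data are illustrative assumptions, not taken from the source. In practice, scikit-learn's `roc_curve` and `roc_auc_score` compute the same quantities.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) points obtained by lowering the threshold step by step."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    # Sort by descending score; each distinct score is a candidate threshold.
    ranked = sorted(zip(scores, y_true), key=lambda pair: -pair[0])
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume all instances tied at this score before emitting a point.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_neg, tp / n_pos))
    return points

def trapezoid_auc(points):
    """Area under a piecewise-linear curve given as (x, y) points sorted by x."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area

y_true = [0, 0, 1, 1]              # toy labels (assumed data)
scores = [0.1, 0.4, 0.35, 0.8]     # predicted probabilities, not hard labels

points = roc_points(y_true, scores)
auc = trapezoid_auc(points)
print(points)  # [(0.0, 0.0), (0.0, 0.5), (0.5, 0.5), (0.5, 1.0), (1.0, 1.0)]
print(auc)     # 0.75
```

The input must be continuous scores or probabilities. Feeding hard 0/1 predictions collapses the curve to a single operating point and understates what the model can do at other thresholds.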

Comparative Analysis of Classification Metrics

Quantitative Comparison of Evaluation Metrics

The table below provides a comprehensive comparison of AUC-ROC against other common classification metrics:

Table 1: Comparative Analysis of Binary Classification Metrics

| Metric | Definition | Range | Optimal Value | Strengths | Limitations |

|---|---|---|---|---|---|

| AUC-ROC | Area under ROC curve | 0-1 | 1.0 | Threshold-independent, measures ranking quality, works well with balanced datasets [36] [39] | Over-optimistic for imbalanced data, doesn't reflect specific business costs [39] |

| Accuracy | (TP + TN) / (P + N) | 0-1 | 1.0 | Simple to interpret, works well with balanced classes [39] [16] | Misleading with class imbalance, depends on threshold [39] [16] |

| F1-Score | Harmonic mean of precision and recall | 0-1 | 1.0 | Balances precision and recall, suitable for imbalanced data [39] [40] | Threshold-dependent, ignores true negatives [39] |

| Precision | TP / (TP + FP) | 0-1 | 1.0 | Measures false positive cost, crucial when FP costs are high [39] [40] | Ignores false negatives, depends on threshold [39] |

| Recall (Sensitivity) | TP / (TP + FN) | 0-1 | 1.0 | Measures false negative cost, crucial when FN costs are high [35] [40] | Ignores false positives, depends on threshold [39] |

Performance Under Different Dataset Conditions

The appropriateness of classification metrics varies significantly depending on dataset characteristics and research objectives:

Table 2: Metric Selection Guide Based on Dataset Characteristics

| Scenario | Recommended Primary Metric | Rationale | AUC-ROC Interpretation |

|---|---|---|---|

| Balanced Classes | AUC-ROC or Accuracy | Both provide reliable performance assessment [39] [16] | Values >0.9 excellent, >0.8 good, >0.7 acceptable [35] |

| Imbalanced Classes | F1-Score or PR-AUC | Focuses on positive class performance [39] | May be overly optimistic; use with caution [39] |

| High FP Cost | Precision or Specificity | Minimizes false positive impact [36] [39] | Use partial AUC focusing on low FPR region [34] |

| High FN Cost | Recall or Sensitivity | Minimizes false negative impact [36] [39] | Choose operating points in the high-TPR (upper) region of the ROC curve [36] |

| Ranking Focus | AUC-ROC | Directly measures ranking quality [36] [38] | Direct interpretation as probability of correct ranking [38] |

Computational Tools and Libraries

Table 3: Essential Software Tools for ROC Analysis in Research

| Tool/Library | Primary Function | Application Context | Key Features |

|---|---|---|---|

| scikit-learn (Python) | ROC curve calculation and visualization [32] | General machine learning | roc_curve(), auc(), RocCurveDisplay [32] |

| pROC (R) | Advanced ROC analysis | Statistical analysis | Confidence intervals, statistical tests, curve comparisons [34] |

| MATLAB | Statistical and ROC analysis | Engineering and signal processing | perfcurve() function with various metrics [34] |

| MedCalc | Diagnostic ROC analysis | Clinical research | Cut-off point analysis, comparison of multiple tests [34] |

| Pandas & NumPy | Data manipulation | Data preprocessing | Data cleaning, transformation before ROC analysis [32] |

| Matplotlib & Seaborn | Visualization | Publication-quality figures | Customizable ROC plots with confidence bands [32] |

Experimental Design Reagents

For researchers conducting AUC-ROC analyses in drug development and biomedical contexts, the following "research reagents" are essential:

- Reference Datasets: Balanced and imbalanced benchmark datasets with known prevalence rates for method validation [34] [39]

- Classification Algorithms: Standardized implementations of logistic regression, random forest, SVM, and neural networks as reference models [32] [39]

- Statistical Validation Tools: Bootstrapping scripts for confidence intervals, DeLong test implementation for curve comparisons [34]

- Visualization Templates: Standardized plotting scripts for publication-ready ROC curves with multiple classifiers [32]
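As one example of such a statistical validation tool, the sketch below computes a percentile bootstrap confidence interval for AUC. It is an illustration under stated assumptions: the toy data, the function names, the 2,000 resamples, and the choice of the simple percentile method are not taken from the source, and resamples that contain only one class are skipped because AUC is undefined for them.

```python
import random

def auc(y_true, scores):
    """AUC as the fraction of correctly ordered positive-negative pairs."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if sp > sn else 0.5 if sp == sn else 0.0
               for sp in pos for sn in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_auc_ci(y_true, scores, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for AUC (resampling cases with replacement)."""
    rng = random.Random(seed)  # fixed seed for reproducibility
    n = len(y_true)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [y_true[i] for i in idx]
        if 0 < sum(ys) < n:  # skip resamples missing one of the classes
            stats.append(auc(ys, [scores[i] for i in idx]))
    stats.sort()
    low = stats[int((alpha / 2) * n_boot)]
    high = stats[int((1 - alpha / 2) * n_boot) - 1]
    return low, high

# Toy data (assumed): 12 cases with predicted probabilities.
y_true = [0, 0, 1, 0, 1, 0, 1, 1, 0, 1, 1, 0]
scores = [0.10, 0.25, 0.30, 0.35, 0.45, 0.50, 0.55, 0.65, 0.70, 0.80, 0.85, 0.20]

ci_low, ci_high = bootstrap_auc_ci(y_true, scores)
print(f"AUC = {auc(y_true, scores):.3f}, 95% CI = ({ci_low:.3f}, {ci_high:.3f})")
```

With so few cases the interval is wide, which is the practical reason to report it: a point estimate alone would overstate how precisely discrimination has been measured.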

The AUC-ROC curve remains a cornerstone metric for evaluating binary classification models in machine learning and diagnostic research. Its threshold-independent nature and probabilistic interpretation make it particularly valuable for assessing a model's fundamental discriminatory power [36] [38]. However, researchers must recognize its limitations, particularly with imbalanced datasets where precision-recall analysis may provide more realistic performance assessment [39].

The comprehensive analysis presented in this guide demonstrates that while AUC-ROC provides an excellent overall measure of model performance, informed metric selection should consider specific research contexts, dataset characteristics, and relative costs of different error types [36] [39]. For drug development professionals and researchers, combining AUC-ROC with complementary metrics and following standardized experimental protocols will ensure robust model evaluation and meaningful performance comparisons across studies.

In the empirical sciences, particularly in data-driven fields such as drug development, the validation of predictive models is paramount. Regression analysis serves as a fundamental tool for modeling continuous outcomes, from biochemical reaction yields to patient response predictions. The selection of appropriate evaluation metrics directly influences model interpretation, deployment decisions, and scientific validity. This guide provides a systematic comparison of four essential regression metrics—Mean Absolute Error (MAE), Mean Squared Error (MSE), Root Mean Squared Error (RMSE), and R-squared (R²)—framed within the broader context of validation metrics for machine learning method comparison research. These metrics quantify the discrepancy between predicted values generated by a model and actual observed values, each offering distinct perspectives on model performance [41] [42].

Understanding the mathematical properties, sensitivities, and interpretability of these metrics enables researchers to select the most appropriate measure for their specific experimental context. For instance, a toxicology study predicting compound lethality may prioritize different error characteristics than a pharmacoeconomic model forecasting drug production costs. This analysis synthesizes quantitative comparisons, experimental protocols, and practical guidelines to assist scientists in making informed decisions when evaluating regression models in research applications [43] [44].

Metric Definitions and Mathematical Foundations

Formal Definitions

Mean Absolute Error (MAE): MAE calculates the average magnitude of absolute differences between predicted and actual values, providing a linear score where all errors contribute equally according to their magnitude [43] [44]. The formula is expressed as:

MAE = (1/n) * Σ|y_i - ŷ_i|

where y_i represents the actual value, ŷ_i represents the predicted value, and n is the number of observations [45].

Mean Squared Error (MSE): MSE computes the average of squared differences between predictions and observations [43] [44]. By squaring the errors, it amplifies the penalty for larger errors. The formula is:

MSE = (1/n) * Σ(y_i - ŷ_i)² [45]

Root Mean Squared Error (RMSE): RMSE is derived as the square root of MSE, returning the error metric to the original unit of the target variable, thereby enhancing interpretability [43] [42]. It is calculated as:

RMSE = √MSE = √[(1/n) * Σ(y_i - ŷ_i)²]

R-squared (R²): Also known as the coefficient of determination, R² measures the proportion of variance in the dependent variable that is predictable from the independent variables [43] [45]. It is defined as:

R² = 1 - (SS_res / SS_tot)

where SS_res represents the sum of squares of residuals and SS_tot represents the total sum of squares [44] [45].
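The four definitions can be computed together in a few lines. The following is a dependency-free sketch on a four-point toy example. The function name `regression_metrics` and the data are illustrative assumptions, and scikit-learn's `mean_absolute_error`, `mean_squared_error`, and `r2_score` would normally be used instead.

```python
import math

def regression_metrics(y_true, y_pred):
    """Compute MAE, MSE, RMSE and R² from paired actual and predicted values."""
    n = len(y_true)
    errors = [y - y_hat for y, y_hat in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    rmse = math.sqrt(mse)
    mean_y = sum(y_true) / n
    ss_res = sum(e * e for e in errors)              # residual sum of squares
    ss_tot = sum((y - mean_y) ** 2 for y in y_true)  # total sum of squares
    r2 = 1 - ss_res / ss_tot
    return {"mae": mae, "mse": mse, "rmse": rmse, "r2": r2}

y_true = [3.0, -0.5, 2.0, 7.0]
y_pred = [2.5, 0.0, 2.0, 8.0]
metrics = regression_metrics(y_true, y_pred)
print(metrics["mae"], metrics["mse"])                   # 0.5 0.375
print(round(metrics["rmse"], 4), round(metrics["r2"], 4))  # 0.6124 0.9486
```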

Conceptual Relationships

The following diagram illustrates the conceptual relationships and computational dependencies between these four core regression metrics:

Comparative Metric Analysis

Key Characteristics and Mathematical Properties

Table 1: Fundamental Characteristics of Regression Metrics

| Metric | Optimal Range | Scale Sensitivity | Outlier Sensitivity | Interpretability | Differentiable |

|---|---|---|---|---|---|

| MAE | [0, ∞), closer to 0 better | Same as target variable | Robust [46] | High (direct error meaning) | No [43] |

| MSE | [0, ∞), closer to 0 better | Squared units | High [44] | Moderate (squared units) | Yes [43] [46] |

| RMSE | [0, ∞), closer to 0 better | Same as target variable | High [44] | High (original units) | Yes [43] |

| R² | (-∞, 1], closer to 1 better | Unit-free | Moderate | High (variance explained) | Yes |
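The outlier-sensitivity column can be illustrated with a single large error. In this sketch (the error values are made up for illustration), ten predictions are each off by 1 unit, and then one of them is replaced by an error of 20 units.

```python
import math

def mae(errors):
    return sum(abs(e) for e in errors) / len(errors)

def rmse(errors):
    return math.sqrt(sum(e * e for e in errors) / len(errors))

clean = [1.0] * 10                 # every prediction is off by 1 unit
with_outlier = [1.0] * 9 + [20.0]  # one prediction is off by 20 units

print(mae(clean), rmse(clean))            # 1.0 1.0
print(round(mae(with_outlier), 2))        # 2.9
print(round(rmse(with_outlier), 2))       # 6.4
```

A single outlier roughly triples MAE but raises RMSE more than sixfold, because squaring lets the one large error dominate the sum.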

Performance Comparison Across Dataset Types

Table 2: Metric Performance Across Different Data Scenarios

| Metric | Clean Data | Outlier-Prone Data | Large-Scale Data | Heteroscedastic Data | Business Context |

|---|---|---|---|---|---|

| MAE | Excellent | Excellent [46] | Good | Good | Moderate |

| MSE | Good | Poor [44] | Good | Poor | Poor |

| RMSE | Good | Moderate | Good | Moderate | Good |

| R² | Excellent | Good | Excellent | Good | Excellent |

Quantitative Comparison on Sample Dataset

The following experimental data demonstrates how these metrics perform when applied to a common regression problem—predicting California housing prices [45]. This dataset contains over 20,000 observations of housing information with eight numeric feature variables and one continuous target variable (median house value).

Table 3: Experimental Results on California Housing Dataset

| Metric | Value | Baseline Comparison | Unit Interpretation | Performance Interpretation |

|---|---|---|---|---|

| MAE | 0.533 | 37% improvement over mean | Units of $100,000 (scikit-learn version of the dataset) | Average prediction error is about $53,300 |

| MSE | 0.556 | 45% improvement over mean | Squared units of $100,000 | Difficult to interpret directly |

| RMSE | 0.746 | 41% improvement over mean | Units of $100,000 (scikit-learn version of the dataset) | Typical error is about $74,600 |

| R² | 0.576 | N/A | Unitless | 57.6% of variance explained |

Experimental Framework for Metric Evaluation

Standardized Experimental Protocol

To ensure consistent evaluation and comparison of regression metrics across research studies, the following experimental protocol is recommended:

Data Partitioning: Employ random train-test splits (typically 70-30 or 80-20) with a fixed random state for reproducibility [45]. Stratification, which is defined for class labels, applies to a continuous target only after binning it. For time-series data, use chronological splits to maintain temporal integrity.

Baseline Establishment: Implement a simple mean predictor as a baseline model to calculate relative performance improvements [47].

Metric Computation: Calculate all metrics on the test set only to avoid overfitting bias. Training metrics should be used exclusively for model development, not final evaluation.

Statistical Validation: Perform multiple runs with different random seeds and report mean ± standard deviation for all metrics to account for variance.

Error Distribution Analysis: Examine residual plots (residuals vs. predicted values), predicted-vs-actual plots, and error histograms to understand the distribution characteristics of prediction errors [47].
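
The protocol steps above can be sketched end to end as follows. This is an illustrative sketch under stated assumptions, not a reference implementation: the data are synthetic, the model is a one-feature least-squares line, and the helper names (`fit_line`, `rmse`, `run_once`) are hypothetical. It covers the seeded split, the mean-predictor baseline, test-only scoring, and reporting of mean ± standard deviation across seeds.

```python
import math
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for a single feature: returns (slope, intercept)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx

def rmse(y_true, y_pred):
    return math.sqrt(statistics.fmean((t - p) ** 2 for t, p in zip(y_true, y_pred)))

def run_once(data, seed, test_fraction=0.2):
    """One seeded split: fit on train, score model and mean baseline on test only."""
    rng = random.Random(seed)          # fixed seed makes the split reproducible
    shuffled = data[:]
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    test, train = shuffled[:n_test], shuffled[n_test:]
    x_tr, y_tr = zip(*train)
    x_te, y_te = zip(*test)
    slope, intercept = fit_line(x_tr, y_tr)
    model_pred = [slope * x + intercept for x in x_te]
    baseline_pred = [statistics.fmean(y_tr)] * len(y_te)  # mean of training targets
    return rmse(y_te, model_pred), rmse(y_te, baseline_pred)

# Synthetic data (assumption for illustration): y = 2x + 1 plus Gaussian noise
gen = random.Random(0)
data = [(x, 2.0 * x + 1.0 + gen.gauss(0.0, 1.0))
        for x in (gen.uniform(0.0, 10.0) for _ in range(200))]

# Repeat over several seeds and report mean ± standard deviation
results = [run_once(data, seed) for seed in range(10)]
model_rmse = [m for m, _ in results]
baseline_rmse = [b for _, b in results]

print(f"model RMSE:    {statistics.fmean(model_rmse):.3f} ± {statistics.stdev(model_rmse):.3f}")
print(f"baseline RMSE: {statistics.fmean(baseline_rmse):.3f} ± {statistics.stdev(baseline_rmse):.3f}")
```

The baseline mean is computed from the training targets only, so no information from the test set leaks into either predictor.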

Research Reagent Solutions

Table 4: Essential Tools for Regression Metric Analysis

| Tool Category | Specific Implementation | Research Function |
|---|---|---|
| Programming Language | Python 3.8+ | Primary implementation language |
| Machine Learning Library | Scikit-learn 1.0+ [45] | Metric calculation and model implementation |
| Numerical Computation | NumPy 1.20+ [45] | Efficient mathematical operations |
| Data Handling | pandas 1.3+ [45] | Dataset manipulation and preprocessing |
| Visualization | Matplotlib 3.5+ [46] | Error distribution and residual plots |
| Statistical Analysis | SciPy 1.7+ | Advanced statistical testing |

Experimental Workflow

The following diagram outlines the standardized experimental workflow for comprehensive regression metric evaluation:

Metric Selection Guidelines for Research Applications

Context-Specific Recommendations

Different research domains and application scenarios warrant specific metric preferences:

Drug Discovery and Biochemical Applications: When predicting continuous biochemical parameters (e.g., IC₅₀ values, binding affinities), where error magnitude directly correlates with experimental significance, RMSE provides the most appropriate balance between interpretability and outlier sensitivity [47]. The unit preservation allows direct comparison with experimental measurement error.

Clinical Outcome Prediction: For patient-specific prognostic models where the clinical cost of an error grows roughly in proportion to its size, so that large errors should not be penalized disproportionately (e.g., risk score miscalibration), MAE offers the most clinically interpretable measure of average prediction error [46].

Pharmacoeconomic Modeling: When evaluating cost prediction models where large overestimates or underestimates have disproportionate business impact, MSE appropriately emphasizes these critical errors through its squaring mechanism [43] [44].

Comparative Algorithm Studies: In methodological research comparing multiple machine learning approaches, R² provides the most standardized measure for comparing model performance across different datasets and domains, as it is scale-independent [43] [47].

Implementation Considerations

Several practical factors influence metric selection in research settings:

Dataset Size: For small datasets (n < 100), MAE is preferred due to its more stable estimation properties. With larger datasets (n > 1000), RMSE and R² become more reliable [45].

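Error Distribution: When residuals are approximately normally distributed, MSE/RMSE are the natural choice, since minimizing squared error corresponds to maximum-likelihood estimation under Gaussian noise. For heavy-tailed distributions, MAE is more appropriate because it is less dominated by a few extreme residuals [47] [48].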

Objective Alignment: If the research goal is explanation rather than prediction, R² provides better insight into model adequacy. For pure prediction tasks, error-based metrics (MAE, RMSE) are more relevant [43].
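
The effect of error distribution on metric choice can be made concrete with a small worked example. The sketch below uses hypothetical residuals and shows how a single large error moves RMSE much more than MAE.

```python
import math

def mae(errors):
    return sum(abs(e) for e in errors) / len(errors)

def rmse(errors):
    return math.sqrt(sum(e * e for e in errors) / len(errors))

# Ten hypothetical residuals of equal magnitude
clean = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0]

# Same residuals, but the last one is replaced by a single large error
with_outlier = clean[:-1] + [-10.0]

for name, errs in (("clean", clean), ("with outlier", with_outlier)):
    print(f"{name:13s} MAE = {mae(errs):.3f}  RMSE = {rmse(errs):.3f}")
```

With equal-magnitude residuals, MAE and RMSE coincide at 1.0. After one residual grows tenfold, MAE rises to 1.9 while RMSE rises to about 3.3, which is why RMSE suits settings where large errors matter most and MAE suits heavy-tailed error distributions.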

The comprehensive analysis of MAE, MSE, RMSE, and R-squared reveals that no single metric universally supersedes others across all research contexts. MAE provides robust, interpretable error measurement particularly valuable in clinical and biochemical applications where all errors have similar importance. MSE and its derivative RMSE offer heightened sensitivity to large errors, making them suitable for applications where outlier predictions carry disproportionate consequences. R-squared remains invaluable for comparing model performance across domains and communicating the proportion of variance explained, though it should not be used in isolation.

For rigorous model evaluation in scientific research, particularly in drug development and biomedical applications, a multi-metric approach is strongly recommended. Reporting MAE or RMSE for absolute error interpretation alongside R² for explanatory context provides the most comprehensive assessment of model performance. This balanced methodology ensures that regression models are evaluated from multiple perspectives, leading to more reliable and interpretable predictive models in scientific research.

From Theory to Practice: Implementing Metrics in Your Biomedical Research Pipeline

In biomedical machine learning, the choice of an evaluation metric is a critical decision that extends beyond technical performance to encompass clinical relevance and ethical implications. The selected metric directly influences how model performance is assessed and must align with the problem's specific objectives and the very real costs of diagnostic or prognostic errors [49]. While accuracy is often an intuitive starting point, it can be profoundly misleading in biomedical contexts where class imbalances are common, such as in disease detection where the prevalence of a condition is low [49] [50]. A model can achieve high accuracy by simply predicting the majority class yet fail catastrophically to identify the critical minority class (e.g., diseased patients) [50]. This accuracy paradox necessitates a more nuanced approach to model evaluation, one that carefully considers the clinical context and the relative consequences of different types of errors—false positives versus false negatives [49]. This guide provides a structured framework for selecting metrics that ensure machine learning models deliver genuine value in biomedical research and clinical applications.

A Taxonomy of Core Evaluation Metrics

Classification Metrics

Table 1: Key Classification Metrics for Biomedical Machine Learning

| Metric | Formula | Clinical Interpretation | Primary Use Case in Biomedicine |
|---|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) [11] | Overall probability that a classification is correct | Initial screening for balanced datasets where all error types are equally important [49] |
| Sensitivity (Recall) | TP/(TP+FN) [11] | Ability to correctly identify patients with the disease | Cancer detection, infectious disease screening; when missing a positive case is catastrophic [49] |
| Specificity | TN/(TN+FP) [11] | Ability to correctly identify patients without the disease | Confirmatory testing; when false alarms lead to harmful, expensive, or invasive follow-ups [49] |
| Precision | TP/(TP+FP) [49] | When the model predicts "disease," how often it is correct | Clinical alert filtering; when false positives are costly or undesirable [49] [51] |
| F1-Score | 2 × (Precision × Recall)/(Precision + Recall) [11] | Harmonic mean of precision and recall | Imbalanced datasets [49]; when a single metric summarizing the balance between FP and FN is needed [51] |
| AUC-ROC | Area under the ROC curve [49] | Model's ability to separate classes across all thresholds | Binary classification [49]; overall ranking performance independent of a specific threshold [51] |
| Log Loss | -Σ [pᵢ log(qᵢ)], where pᵢ is the true label indicator (0 or 1) and qᵢ the predicted probability [11] | How close the predicted probabilities are to the true labels | Probabilistic models [49]; when confidence-calibrated predictions are required for risk stratification [51] |
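
The threshold-based formulas in Table 1 can be computed directly from confusion-matrix counts. The following sketch uses a hypothetical function name and hypothetical counts for a cohort of 1,000 patients with 1% disease prevalence, and it also reproduces the accuracy paradox described earlier: a model that never predicts disease reaches 99% accuracy with zero sensitivity.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute threshold-based metrics from confusion-matrix counts.

    Ratios with a zero denominator are returned as None rather than guessed.
    """
    def ratio(num, den):
        return num / den if den else None

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    if precision is None or recall is None or precision + recall == 0:
        f1 = None
    else:
        f1 = 2 * precision * recall / (precision + recall)
    return {
        "accuracy": ratio(tp + tn, tp + tn + fp + fn),
        "sensitivity": recall,
        "specificity": ratio(tn, tn + fp),
        "precision": precision,
        "f1": f1,
    }

# Hypothetical cohort: 1,000 patients, 10 with the disease
always_negative = classification_metrics(tp=0, tn=990, fp=0, fn=10)
screening_model = classification_metrics(tp=8, tn=950, fp=40, fn=2)

print("always negative:", always_negative)
print("screening model:", screening_model)
```

The second model has lower accuracy (0.958) than the trivial one (0.99) yet detects 8 of the 10 diseased patients, which is the behavior a screening application actually needs.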

Regression and Clustering Metrics

For regression tasks common to biomarker level prediction or drug dosage estimation, Root Mean Squared Error (RMSE) is a standard metric that measures the square root of the average squared differences between predicted and actual values, penalizing larger errors more heavily [49] [51]. Mean Absolute Error (MAE) provides a more robust alternative in the presence of outliers [51].

In unsupervised learning, such as identifying novel disease subtypes from genomic data, the Adjusted Rand Index (ARI) measures the similarity between the algorithm's clusters and a known ground truth, accounting for chance [52]. Without a ground truth, intrinsic measures like the Silhouette Index evaluate clustering quality by measuring intra-cluster similarity against inter-cluster similarity [52].
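
To make the chance correction in the Adjusted Rand Index concrete, the sketch below computes it from the contingency table of two labelings using the standard pair-counting formula. The function is a plain-Python illustration with hypothetical labels, and returning 1.0 in the degenerate case is an assumed convention. In practice, `sklearn.metrics.adjusted_rand_score` computes the same index.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index from pair counts in the contingency table."""
    n = len(labels_true)
    # Pairs of samples that share a cluster in both labelings
    index = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    # Pairs that share a cluster in each labeling separately
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)   # agreement expected by chance
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:
        return 1.0  # degenerate case (assumed convention), e.g. one cluster in both
    return (index - expected) / (max_index - expected)

# Hypothetical subtype labels for six samples
truth = [0, 0, 0, 1, 1, 1]
relabelled = [1, 1, 1, 0, 0, 0]   # same partition, different label names
split = [0, 0, 1, 1, 2, 2]        # partially agreeing partition

print(adjusted_rand_index(truth, relabelled))
print(adjusted_rand_index(truth, split))
```

The index depends only on which samples are grouped together, so renaming the clusters leaves it at 1.0, while the partially agreeing partition scores about 0.24.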

Aligning Metrics with Biomedical Problem Types and Error Costs

The fundamental principle for metric selection is aligning with the clinical objective and the relative cost of errors.

When to Prioritize Sensitivity (Recall)

Prioritize sensitivity in screening scenarios where the cost of missing a positive case (false negative) is unacceptably high [49] [51]. For example, in a model for cancer detection or early-stage disease screening, a false negative could mean a missed opportunity for life-saving early intervention [49]. In such cases, it is clinically preferable to have a higher false positive rate (lower specificity) to ensure that most true cases are captured [51].

When to Prioritize Precision

Prioritize precision when a false positive prediction has severe consequences [49]. For instance, in a model that flags patients for invasive diagnostic procedures (e.g., biopsy) or for initiating treatments with significant side effects, a false positive could lead to unnecessary risk, cost, and patient anxiety [49]. A high-precision model ensures that when a positive prediction is made, there is high confidence that it is correct.
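
The trade-off between sensitivity and precision is usually controlled through the decision threshold applied to a model's predicted scores. The sketch below uses hypothetical labels and scores for ten patients and a hypothetical helper function. It shows how lowering the threshold favors sensitivity and raising it favors precision.

```python
def sensitivity_and_precision(y_true, scores, threshold):
    """Classify as positive when score >= threshold, then compute both metrics."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for y, p in zip(y_true, predicted) if y == 1 and p)
    fp = sum(1 for y, p in zip(y_true, predicted) if y == 0 and p)
    fn = sum(1 for y, p in zip(y_true, predicted) if y == 1 and not p)
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    precision = tp / (tp + fp) if (tp + fp) else None
    return sensitivity, precision

# Hypothetical data: 4 diseased (1) and 6 healthy (0) patients with model scores
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
scores = [0.95, 0.80, 0.55, 0.30, 0.60, 0.40, 0.35, 0.20, 0.10, 0.05]

for threshold in (0.25, 0.50, 0.70):
    sens, prec = sensitivity_and_precision(y_true, scores, threshold)
    print(f"threshold {threshold:.2f}: sensitivity = {sens:.2f}, precision = {prec:.2f}")
```

On these toy values, a threshold of 0.25 captures every diseased patient at the cost of three false alarms, whereas a threshold of 0.70 produces no false alarms but misses half of the true cases.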

When a Balanced Metric is Essential

The F1-Score is ideal when a balance between precision and recall is needed and there is an imbalanced class distribution [49] [40]. It is commonly used in tasks such as detecting health misinformation on social media or retrieving relevant scientific publications, where both false alarms and missed information are problematic [49].

Considering Model Output and Decision Thresholds

The AUC-ROC metric is valuable for evaluating the overall ranking capability of a model that outputs probabilities, especially when the optimal decision threshold for clinical deployment is not yet known [49] [51]. Conversely, Log Loss provides a stricter evaluation of the quality of the probability estimates themselves, which is critical when these probabilities are used for risk assessment, such as predicting patient mortality risk [51].
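
The difference between ranking quality and probability quality can be sketched with two hypothetical sets of predictions that order patients identically. The pairwise AUC and the binary log loss below are minimal plain-Python versions written for illustration. In practice, `sklearn.metrics.roc_auc_score` and `sklearn.metrics.log_loss` compute the same quantities.

```python
import math

def auc_roc(y_true, scores):
    """AUC as the probability that a random positive outranks a random negative.

    Ties between a positive and a negative score count as one half.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def log_loss(y_true, probs, eps=1e-15):
    """Mean binary cross-entropy; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)

# Hypothetical predictions with identical ordering but different calibration
y_true = [1, 1, 0, 0]
well_calibrated = [0.90, 0.70, 0.30, 0.10]
poorly_calibrated = [0.99, 0.98, 0.97, 0.96]

for name, probs in (("well calibrated", well_calibrated),
                    ("poorly calibrated", poorly_calibrated)):
    print(f"{name:18s} AUC = {auc_roc(y_true, probs):.2f}  log loss = {log_loss(y_true, probs):.3f}")
```

Both sets of predictions rank every positive above every negative, so AUC is 1.0 in each case, but the second assigns near-certain disease probabilities to healthy patients and is penalized heavily by log loss.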

Figure 1: A Decision Framework for Selecting Core Classification Metrics in Biomedicine.

Experimental Protocols for Metric Comparison in Biomedical Research

Case Study 1: Predicting Major Adverse Cardiovascular Events

A 2025 systematic review and meta-analysis provides a robust protocol for comparing machine learning models against conventional risk scores in a clinical prediction task [7].

Objective: To compare the performance of ML models and conventional risk scores (GRACE, TIMI) for predicting Major Adverse Cardiovascular and Cerebrovascular Events (MACCEs) in patients with Acute Myocardial Infarction (AMI) undergoing Percutaneous Coronary Intervention (PCI) [7].

Methods:

- Study Design: Systematic review and meta-analysis of 10 retrospective cohort studies (total n=89,702 individuals) [7].

- Model Comparison: The most frequently used ML algorithms (Random Forest, Logistic Regression) were compared against conventional risk scores (GRACE, TIMI) [7].

- Performance Quantification: The primary metric for comparison was the Area Under the Receiver Operating Characteristic Curve (AUC-ROC), with summary estimates calculated via random-effects meta-analysis [7].

Results: The meta-analysis demonstrated that ML-based models (summary AUC: 0.88, 95% CI 0.86–0.90) outperformed conventional risk scores (summary AUC: 0.79, 95% CI 0.75–0.84) in predicting mortality risk [7]. This protocol illustrates the use of AUC-ROC for a high-level comparison of model discrimination in a clinical context with significant class imbalance.
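
For readers unfamiliar with how summary AUC estimates are pooled, the sketch below shows one common random-effects approach, the DerSimonian-Laird estimator. The source does not state which estimator the review used, so this choice is an assumption, as are the study-level AUCs and standard errors, which are invented for illustration. Pooling is done on the raw AUC scale for simplicity.

```python
import math

def random_effects_pool(estimates, std_errors):
    """DerSimonian-Laird random-effects pooling.

    Returns (pooled estimate, 95% CI lower, 95% CI upper, tau squared).
    Requires at least two studies.
    """
    k = len(estimates)
    w = [1.0 / se ** 2 for se in std_errors]               # inverse-variance weights
    fixed = sum(wi * yi for wi, yi in zip(w, estimates)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, estimates))  # Cochran's Q
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)                      # between-study variance
    w_star = [1.0 / (se ** 2 + tau2) for se in std_errors]  # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, estimates)) / sum(w_star)
    se_pooled = math.sqrt(1.0 / sum(w_star))
    return pooled, pooled - 1.96 * se_pooled, pooled + 1.96 * se_pooled, tau2

# Hypothetical study-level AUCs and standard errors (not taken from the review)
aucs = [0.85, 0.90, 0.88]
ses = [0.02, 0.02, 0.02]

pooled, ci_low, ci_high, tau2 = random_effects_pool(aucs, ses)
print(f"summary AUC = {pooled:.3f} (95% CI {ci_low:.3f} to {ci_high:.3f}), tau² = {tau2:.5f}")
```

Because the three hypothetical studies have equal standard errors, the pooled estimate equals their simple mean. With unequal precision, more precise studies receive more weight, and a larger between-study variance pulls the weights closer together.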

Case Study 2: Predicting Student Outcomes in Coding Courses

This study from 2025 illustrates a multi-metric evaluation approach in an educational context, a methodology directly transferable to biomedical classification problems like predicting patient outcomes [53].

Objective: To develop and evaluate a predictive framework that identifies students at risk of underperforming in initial coding courses by leveraging behavioral and academic data [53].

Methods:

- Model Training: A range of ML algorithms, including Long Short-Term Memory (LSTM) networks and Support Vector Machines (SVM), were trained on a hybrid dataset combining academic history and in-class behavioral data [53].

- Data Augmentation: Techniques like SMOTE (Synthetic Minority Over-sampling Technique) were employed to address class imbalance [53].

- Performance Evaluation: Models were evaluated using a suite of metrics: accuracy, precision, recall, and F1-score [53].

Results: The LSTM algorithm achieved the highest performance, with an accuracy of 94% and an F1-score of 0.87 [53]. The reporting of both overall accuracy and the F1-score, which is more robust to imbalance, provides a more complete picture of model efficacy, a practice essential for biomedical applications.

Table 2: Summary of Experimental Findings from Case Studies

| Study Domain | Primary Comparative Metric | Key Performance Result | Supported Thesis on Metric Use |
|---|---|---|---|
| Cardiovascular Event Prediction [7] | AUC-ROC (for model discrimination) | ML Models: AUC 0.88 (0.86-0.90) vs. Conventional Scores: AUC 0.79 (0.75-0.84) | AUC-ROC is effective for summarizing overall performance and comparing models, especially with class imbalance. |
| Educational Outcome Prediction [53] | F1-Score (for balance on imbalanced data) | LSTM model achieved an F1-Score of 0.87 (and Accuracy of 94%) | A single threshold-based metric (F1) is valuable for summarizing performance when both false positives and false negatives are concerning. |
| Educational Outcome Prediction [53] | Accuracy, Precision, Recall (comprehensive view) | LSTM model achieved an F1-Score of 0.87 (and Accuracy of 94%) | A suite of metrics provides a more nuanced understanding of model strengths and weaknesses than any single metric. |

The Scientist's Toolkit: Essential Research Reagents for Metric Evaluation