A Comprehensive Protocol for Method Comparison in the Clinical Laboratory: From Foundational Principles to Advanced Validation

This article provides a detailed, step-by-step framework for conducting a robust method comparison study in the clinical laboratory, a critical process for ensuring the quality and reliability of patient testing.

Abstract

This article provides a detailed, step-by-step framework for conducting a robust method comparison study in the clinical laboratory, a critical process for ensuring the quality and reliability of patient testing. Tailored for researchers, scientists, and drug development professionals, the content spans from foundational concepts and experimental design to advanced statistical analysis, troubleshooting, and final validation. By synthesizing guidelines from authoritative bodies like CLSI and addressing common pitfalls, this guide empowers laboratories to objectively assess new measurement procedures, verify their equivalence to established methods, and confidently implement changes that safeguard patient care and support rigorous biomedical research.

Laying the Groundwork: Core Principles and Planning for a Robust Method Comparison

In the field of clinical laboratory science, method comparison is a fundamental process used to evaluate the systematic errors, or inaccuracy, of a new measurement procedure (the test method) against a comparative method. The primary purpose is to determine whether the test method provides results that are comparable to those from an established method, ensuring that patient results are reliable and clinically usable. This process is a central requirement for the implementation of new test methods and is critical for regulatory approvals, such as those from the FDA [1]. At its core, method comparison is an exercise in error analysis, seeking to understand the types and sizes of errors present and their potential impact on clinical decision-making [2]. The findings from these studies ensure that laboratory results are consistent, reliable, and suitable for their intended medical use, forming a cornerstone of quality in evidence-based laboratory medicine.

Core Terminology and Definitions

Understanding the key terms is essential for interpreting method comparison studies. The following table defines the central concepts.

Table 1: Key Terminology in Method Comparison

| Term | Definition |

|---|---|

| Bias (Systematic Error) | A consistent deviation of the test method results from the comparative method results. It represents the inaccuracy of the method [2]. |

| Precision (Random Error) | The random scatter of measured values around the mean. It describes the reproducibility of the method and is often quantified as a standard deviation or coefficient of variation [3]. |

| Agreement | The overall closeness of results between the test and comparative methods. It is a composite of both bias and precision [4] [2]. |

| Comparative Method | The established method used for comparison. It can be a reference method (with documented correctness) or a routine method whose accuracy is relative [2]. |

| Cutoff Interval | For qualitative tests, the range of analyte concentrations where results transition from consistently negative to consistently positive, describing the uncertainty of the binary result [3]. |

Relationship Between Statistical and Clinical Significance

A crucial concept in method comparison is distinguishing between a difference that is statistically significant and one that is clinically significant. Statistical significance, often indicated by a p-value < 0.05, shows that an observed effect is unlikely to be due to chance alone. However, this is heavily influenced by sample size; a large study can detect a tiny, clinically irrelevant bias as "statistically significant." Clinical significance, in contrast, assesses whether the observed bias is substantial enough to impact medical decisions. It evaluates if the error is large enough to be medically unacceptable, regardless of its statistical properties [4]. Therefore, a method can be statistically different from its comparator but still clinically acceptable, and vice versa.
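The distinction can be made concrete with a short sketch. The function below is illustrative only: the name `assess_bias`, the allowable bias of 0.2 mmol/L, and the simulated differences are all assumptions, and the p-value uses a normal approximation to the paired t-test, which is reasonable only for large sample sizes.

```python
import math
import statistics


def assess_bias(differences, allowable_bias, alpha=0.05):
    """Flag statistical and clinical significance of a mean paired difference.

    differences    : test-minus-comparative result for each specimen
    allowable_bias : pre-defined clinically acceptable bias (same units)
    """
    n = len(differences)
    mean_bias = statistics.mean(differences)
    sd = statistics.stdev(differences)
    t_stat = mean_bias / (sd / math.sqrt(n))
    # Normal approximation to the t distribution (assumption: large n).
    p_value = 2 * (1 - statistics.NormalDist().cdf(abs(t_stat)))
    return {
        "mean_bias": mean_bias,
        "p_value": p_value,
        "statistically_significant": p_value < alpha,
        "clinically_significant": abs(mean_bias) > allowable_bias,
    }


# Hypothetical data: 400 paired differences with a small constant bias.
differences = [0.02 + 0.1 * ((i % 5) - 2) / 2 for i in range(400)]
result = assess_bias(differences, allowable_bias=0.2)
print(result)
```

With these made-up numbers the bias is statistically detectable because the study is large, yet it sits well inside the allowable limit, which is the situation the paragraph above describes.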

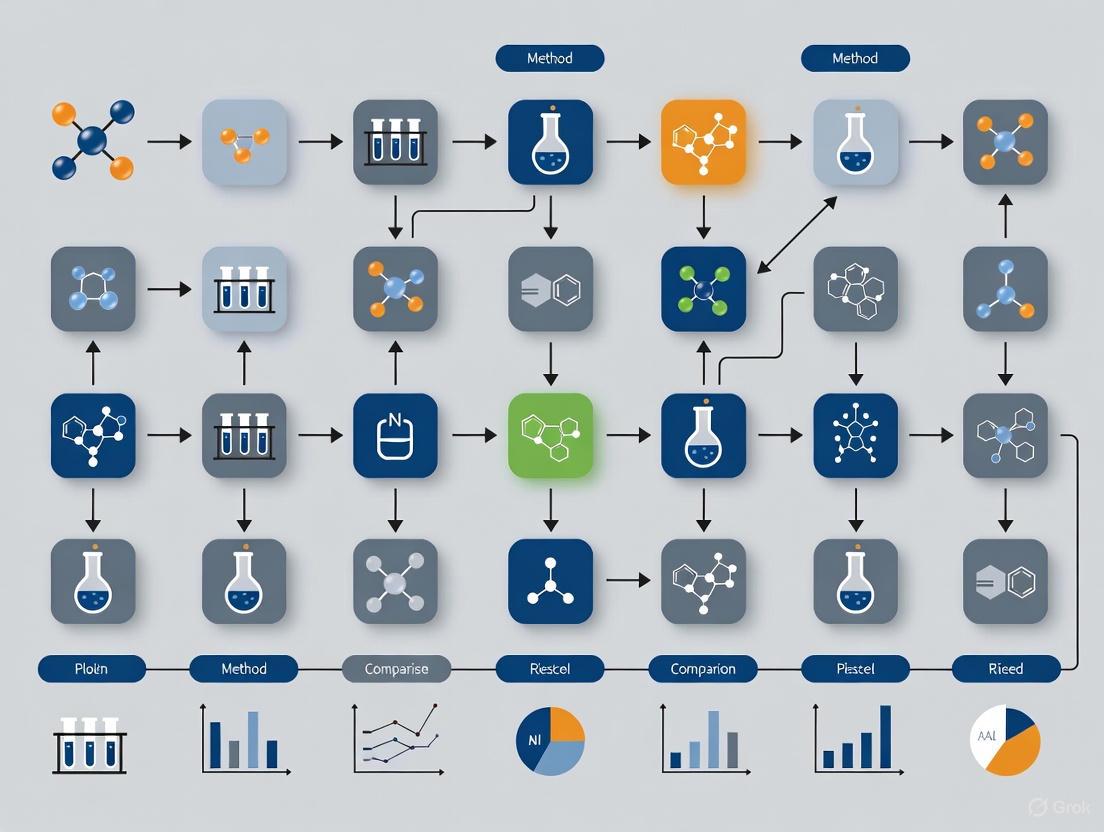

The diagram below illustrates the workflow of a method comparison study and the relationship between its core components.

Diagram 1: Method comparison workflow.

Experimental Protocols for Method Comparison

A robust experimental design is critical for obtaining reliable estimates of systematic error. The following protocols outline standard practices for both quantitative and qualitative method comparisons.

Protocol for Quantitative Methods

For quantitative tests that produce continuous numerical results, the comparison of methods experiment follows a structured approach [2].

- Sample Specifications: A minimum of 40 different patient specimens is recommended. The samples should be carefully selected to cover the entire working range of the method and should represent the spectrum of diseases expected in routine practice. The quality and range of specimens are more critical than the total number, though larger numbers (100-200) help assess method specificity [2].

- Experimental Timeline: The experiment should span a minimum of 5 days, but extending it over a longer period (e.g., 20 days) is preferable. This helps minimize systematic errors that might occur in a single analytical run [2].

- Measurement Process: Each patient specimen is analyzed by both the test method and the comparative method. While single measurements are common, duplicate measurements are advantageous: analyzing two separate aliquots of each specimen in different runs, or at least in a different order, helps identify sample mix-ups or transposition errors [2].

- Specimen Handling: Specimens should be analyzed within two hours of each other by the two methods to prevent stability issues from affecting the observed differences. Handling procedures must be carefully defined and systematized [2].

Protocol for Qualitative Methods

For qualitative tests that provide binary results (e.g., positive/negative), the validation process differs and relies on a Clinical Agreement Study [3]. The experiment involves testing a set of characterized clinical samples (both positive and negative) with the candidate method and a comparative method. The results are then organized into a 2x2 contingency table to calculate performance metrics [1].

Table 2: 2x2 Contingency Table for Qualitative Method Comparison

| | Comparative Method: Positive | Comparative Method: Negative | Total |

|---|---|---|---|

| Candidate Method: Positive | a (True Positive, TP) | b (False Positive, FP) | a + b |

| Candidate Method: Negative | c (False Negative, FN) | d (True Negative, TN) | c + d |

| Total | a + c | b + d | n |

From this table, the following key metrics are calculated [1]:

- Positive Percent Agreement (PPA) = 100 × [a / (a + c)]. Estimates clinical sensitivity, the ability of the test to correctly identify positive samples, to the extent that the comparative method reflects the true sample status.

- Negative Percent Agreement (NPA) = 100 × [d / (b + d)]. Estimates clinical specificity, the ability of the test to correctly identify negative samples, under the same condition.
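The two agreement metrics can be computed directly from the cell counts of the 2x2 table. The sketch below is a minimal illustration; the function name `percent_agreement` and the example counts are hypothetical.

```python
def percent_agreement(a, b, c, d):
    """Return (PPA, NPA) in percent from 2x2 contingency table counts.

    a: candidate positive, comparative positive
    b: candidate positive, comparative negative
    c: candidate negative, comparative positive
    d: candidate negative, comparative negative
    """
    if a + c == 0 or b + d == 0:
        raise ValueError("Need at least one comparative-positive and one "
                         "comparative-negative sample.")
    ppa = 100 * a / (a + c)
    npa = 100 * d / (b + d)
    return ppa, npa


# Hypothetical study: 50 comparative-positive and 50 comparative-negative samples.
ppa, npa = percent_agreement(a=45, b=3, c=5, d=47)
print(f"PPA = {ppa:.1f}%, NPA = {npa:.1f}%")
```

Point estimates from small panels are imprecise, so a laboratory would normally report them with confidence intervals, which this sketch omits.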

Data Analysis and Statistical Evaluation

Graphical Analysis

The first step in data analysis is always to graph the data for visual inspection. This helps identify patterns, the presence of constant or proportional errors, and any outliers [2].

- Difference Plot: For methods expected to show one-to-one agreement, the difference between the test and comparative results (test minus comparative) is plotted on the y-axis against the comparative result value on the x-axis. The data points should scatter randomly around the zero line [2].

- Comparison Plot (Scatter Plot): The test method result is plotted on the y-axis against the comparative method result on the x-axis. A visual line of best fit can be drawn to show the general relationship between the methods [2].

Statistical Calculations for Quantitative Data

Statistical calculations provide numerical estimates of the errors observed graphically.

- Linear Regression Analysis: For data covering a wide analytical range, linear regression is the preferred method. It provides the slope (b), which indicates a proportional error, and the y-intercept (a), which indicates a constant error. The standard error of the estimate (S~y/x~) describes the scatter of the points around the regression line [2]. The systematic error (SE) at any critical medical decision concentration (X~c~) can then be calculated as: Y~c~ = a + bX~c~, then SE = Y~c~ - X~c~ [2].

- Bias and t-test: For comparisons with a narrow analytical range, it is often best to simply calculate the average difference, or bias, between the two methods. This is equivalent to the difference between the averages and is typically derived from a paired t-test calculation [2].

- Correlation Coefficient (r): The correlation coefficient is mainly useful for assessing whether the range of data is wide enough to provide reliable estimates of the slope and intercept. An r value of 0.99 or greater is desirable for simple linear regression to be reliable [2].
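The calculations listed above can be sketched in a few lines. This is an illustration under stated assumptions, not a validated implementation: the function names and the paired results are hypothetical, and ordinary least squares is used exactly as described in this section.

```python
import math
import statistics


def ols_comparison(x, y):
    """Ordinary least-squares regression of test results (y) on comparative results (x).

    Returns slope b, intercept a, standard error of the estimate S(y/x),
    and the correlation coefficient r.
    """
    n = len(x)
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = math.sqrt(residual_ss / (n - 2))
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, s_yx, r


def systematic_error(slope, intercept, xc):
    """SE at decision level Xc: Yc = a + b*Xc, then SE = Yc - Xc."""
    return (intercept + slope * xc) - xc


# Hypothetical paired results (comparative, test) in mg/dL.
comparative = [52, 98, 151, 203, 248, 301, 355, 402]
test = [55, 103, 158, 210, 259, 311, 369, 416]

b, a, s_yx, r = ols_comparison(comparative, test)
mean_bias = statistics.fmean(t - c for c, t in zip(comparative, test))
print(f"slope={b:.3f} intercept={a:.2f} S(y/x)={s_yx:.2f} r={r:.4f}")
print(f"SE at Xc=200: {systematic_error(b, a, 200):.2f}; mean bias: {mean_bias:.2f}")
```

The mean bias is printed alongside the regression estimate because, as noted above, it is the preferred summary when the data cover only a narrow range.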

Statistical Concepts and Their Clinical Interpretation

The following diagram conceptualizes how the statistical findings from a method comparison study are ultimately interpreted through the lens of clinical relevance.

Diagram 2: Interpreting statistical vs. clinical significance.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of a method comparison study relies on a range of specific materials and solutions.

Table 3: Essential Materials for Method Comparison Experiments

| Item | Function in the Experiment |

|---|---|

| Patient Specimens | The core material for the study. They should cover the analytical measurement range and represent the intended patient population and disease states to validate method performance under real-world conditions [2]. |

| Reference Materials | Used for calibration and verifying the correctness of the comparative method, especially if it is designated as a reference method. Their traceability to higher-order standards is key [2]. |

| Internal Quality Control (QC) Materials | Stable, assayed materials run at regular intervals to monitor the stability and precision of both the test and comparative methods throughout the duration of the study [4]. |

| Interference Substances | Substances like hemoglobin (hemolysis), bilirubin (icterus), and lipids (lipemia) used in specific experiments to test the analytical specificity of the test method and identify potential cross-reactivities [5] [3]. |

| Calibrators | Solutions with known analyte concentrations used to calibrate both instruments before the experiment, ensuring that comparisons start from a standardized baseline [2]. |

Establishing the Study's Objective and Pre-defining Acceptable Performance Goals

In clinical laboratory research, the introduction of a new measurement procedure, whether to replace an aging instrument or to bring a novel test in-house, necessitates a rigorous method comparison study. The core objective of such a study is to determine whether the candidate method and the comparative method can be used interchangeably without affecting patient results or clinical outcomes [6] [7]. This determination hinges on estimating the bias between the two methods [8]. Achieving this objective is impossible without first establishing a clear, quantitative goal for what constitutes an acceptable level of performance. Pre-defining these performance goals is not merely a preliminary step; it is the foundational act that ensures the entire evaluation is objective, scientifically valid, and fit for its intended clinical purpose [7]. This guide details the protocols for setting these goals and designing the subsequent comparison within the framework of a comprehensive method comparison protocol.

Defining the Objective and Performance Specifications

The Core Objective: Interchangeability Through Bias Estimation

The primary question a method comparison study seeks to answer is whether the bias between a candidate method and a comparator is sufficiently small to be clinically insignificant [6]. The key determination is the estimate of bias and its uncertainty at various medical decision levels [8]. This process involves the comparison of results from patient samples measured by two different procedures intended to measure the same analyte [8]. If the observed bias is larger than a pre-defined acceptable limit, the two methods cannot be considered interchangeable.

Performance specifications should be defined before the experiment begins, using the models outlined in the Milano hierarchy [6]. The table below summarizes the primary sources for establishing Allowable Total Error (ATE) goals.

Table 1: Models for Defining Performance Specifications (ATE)

| Model Basis | Description | Application Consideration |

|---|---|---|

| Clinical Outcomes [6] [7] | Defines ATE based on the demonstrated effect of analytical performance on clinical decisions or patient outcomes. | Considered the most desirable but often difficult and costly to establish. |

| Biological Variation [9] [6] | Sets goals based on the innate within-subject and between-subject biological variation of the measurand. | A common and scientifically grounded approach; databases of biological variation data are available. |

| State of the Art [6] [7] | Defines ATE based on the highest level of performance (lowest achievable error) currently attainable by leading laboratories or technologies. | Useful when other models are not available, but may not be stringent enough for clinical needs. |

| Regulatory/Proficiency [7] | Uses performance criteria set by regulatory bodies (e.g., CLIA) or observed from proficiency testing (PT) programs. | Provides a practical, legally mandated baseline for performance. |
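For the biological variation model, goals are often derived from the within-subject (CV_I) and between-subject (CV_G) coefficients of variation. The sketch below uses the widely cited "desirable" formulas; these formulas are not stated in this article and are included here as an assumption, and the CV values in the example are hypothetical rather than taken from any database.

```python
import math


def desirable_specifications(cv_i, cv_g):
    """Desirable performance goals (in percent) from biological variation.

    cv_i : within-subject biological CV (%)
    cv_g : between-subject biological CV (%)

    Assumed conventional formulas:
      imprecision <= 0.5 * CV_I
      bias        <= 0.25 * sqrt(CV_I^2 + CV_G^2)
      total error <= 1.65 * imprecision + bias
    """
    imprecision = 0.5 * cv_i
    bias = 0.25 * math.sqrt(cv_i ** 2 + cv_g ** 2)
    total_error = 1.65 * imprecision + bias
    return {"imprecision": imprecision, "bias": bias, "total_error": total_error}


# Hypothetical analyte with CV_I = 6% and CV_G = 8%.
goals = desirable_specifications(cv_i=6.0, cv_g=8.0)
print(goals)
```

Whichever model is chosen, the resulting limits should be written into the study plan before any specimen is measured.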

Experimental Protocol for Method Comparison

A well-designed and carefully planned experiment is the key to a successful method comparison [6]. The following workflow and protocols ensure the collection of high-quality, reliable data.

Sample Selection and Handling

The integrity of a method comparison study depends heavily on the quality of the patient samples used.

- Sample Size: A minimum of 40, and preferably 100, unique patient samples should be used [6] [7]. A larger sample size increases the power to detect unexpected errors from interferences or sample matrix effects [6].

- Measurement Range: Samples must be carefully selected to cover the entire clinically meaningful measurement range [6]. A common flaw is having gaps in the data, which can invalidate the comparison across the analytical measurement range (AMR) [6].

- Replication and Randomization: Where possible, duplicate measurements for both the current and new method should be performed to minimize the effects of random variation [6]. The sample sequence should be randomized to avoid carry-over effects, and samples should be analyzed within their stability period, ideally within 2 hours and on the day of blood collection [6].

- Study Duration: To mimic real-world conditions and capture day-to-day variation, samples should be measured over several days (at least 5) and multiple runs [6].

Key Experiments and Analytical Protocols

The following experiments are central to a comprehensive method evaluation. The table provides an overview of typical verification protocols.

Table 2: Key Experiments in Method Verification/Validation

| Study Type | Protocol Summary | Performance Goals (Examples) |

|---|---|---|

| Precision [9] [7] | Analyze 2-3 QC or patient samples in 10-20 replicates within a run (within-run) and over 5-20 days (day-to-day). | CV < ¼ ATE (common) or CV < ⅙ ATE (stringent) [7]. |

| Accuracy (Method Comparison) [6] [7] | Run 40 patient samples spanning the AMR in a single measurement on both old and new methods over 5-20 days. | Slope 0.9-1.1; Total Analytical Error (TAE) < ATE [7]. |

| Reportable Range [7] | Measure 5 samples across the AMR in triplicate. The lowest and highest samples should be within 10% of the range limits. | Slope 0.9-1.1 for linearity [7]. |

| Analytical Sensitivity [7] | Over 3 days, perform 10-20 replicate measurements of a low-level sample to determine the Limit of Quantitation (LoQ). | At LoQ, CV ≤ 20% [7]. |
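As an illustration of the precision criteria in the table, the following sketch computes a coefficient of variation from replicate results and checks it against a fraction of the ATE. The function names, the replicate values, and the ATE of 10% are hypothetical.

```python
import statistics


def cv_percent(replicates):
    """Coefficient of variation (%) of replicate measurements."""
    return 100 * statistics.stdev(replicates) / statistics.fmean(replicates)


def precision_acceptable(replicates, ate_percent, fraction=0.25):
    """True if the CV is below the chosen fraction of the allowable total error."""
    return cv_percent(replicates) < fraction * ate_percent


# Hypothetical within-run replicates of one QC sample.
replicates = [98, 102, 100, 101, 99, 100, 103, 97, 100, 100]

cv = cv_percent(replicates)
common = precision_acceptable(replicates, ate_percent=10.0)             # CV < 1/4 ATE
stringent = precision_acceptable(replicates, ate_percent=10.0, fraction=1 / 6)
print(f"CV = {cv:.2f}%, common goal met: {common}, stringent goal met: {stringent}")
```

In this made-up case the same data pass the common criterion but not the stringent one, which is why the goal has to be fixed in advance.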

Initial Data and Statistical Analysis

A robust analysis plan moves beyond inadequate statistical tests to proper regression and difference plots.

- Inadequate Statistical Methods: Neither correlation analysis nor the t-test is adequate for assessing method comparability [6]. Correlation measures the strength of a linear relationship, not agreement, while a t-test may miss clinically significant differences in small samples or flag clinically insignificant differences as statistically significant in large ones [6].

- Graphical Presentation: The first step in data analysis should always be graphical. Scatter plots help visualize the relationship and identify gaps or non-linearity across the measurement range [6]. Difference plots (Bland-Altman plots) are then used to visualize the agreement between methods by plotting the differences between the two methods against their averages, making it easy to spot constant or proportional bias [6].

- Regression Analysis: For estimating constant and proportional bias, Deming regression or Passing-Bablok regression are the recommended techniques, as they account for errors in both methods, unlike ordinary least squares regression [6] [7]. The choice between them can be guided by the data distribution and the correlation coefficient (r); an r > 0.975 may permit Deming regression, while an r < 0.975 dictates the use of Passing-Bablok regression [7].
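The difference-plot statistics and a Deming fit described above can be sketched as follows. This is a minimal illustration with assumptions stated plainly: the function names and data are hypothetical, the limits of agreement use the conventional mean ± 1.96 SD, and the Deming fit assumes a known ratio of error variances (defaulting to 1, meaning equal imprecision in both methods). Passing-Bablok regression is not shown.

```python
import math
import statistics


def bland_altman(x, y):
    """Return per-sample means, differences (y - x), bias, and limits of agreement."""
    diffs = [yi - xi for xi, yi in zip(x, y)]
    means = [(xi + yi) / 2 for xi, yi in zip(x, y)]
    bias = statistics.fmean(diffs)
    sd = statistics.stdev(diffs)
    return means, diffs, bias, (bias - 1.96 * sd, bias + 1.96 * sd)


def deming(x, y, error_ratio=1.0):
    """Deming regression of y on x.

    error_ratio is the assumed ratio var(errors in y) / var(errors in x).
    Returns (slope, intercept).
    """
    n = len(x)
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - mean_y) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / (n - 1)
    if sxy == 0:
        raise ValueError("Covariance is zero; slope is undefined.")
    term = syy - error_ratio * sxx
    slope = (term + math.sqrt(term ** 2 + 4 * error_ratio * sxy ** 2)) / (2 * sxy)
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical paired results: current method (x) and new method (y).
current = [4.1, 5.6, 7.2, 9.8, 12.5, 15.1, 18.3, 22.0]
new = [4.3, 5.9, 7.4, 10.3, 12.9, 15.8, 19.0, 22.9]

_, _, bias, limits = bland_altman(current, new)
slope, intercept = deming(current, new)
print(f"bias={bias:.2f}, limits of agreement=({limits[0]:.2f}, {limits[1]:.2f})")
print(f"Deming slope={slope:.3f}, intercept={intercept:.3f}")
```

In practice the error ratio would be estimated from the precision data for each method rather than assumed, and the returned means and differences would be plotted before any statistic is interpreted.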

The Scientist's Toolkit: Essential Research Reagents and Materials

The following reagents and materials are fundamental for executing the experiments described in this guide.

Table 3: Essential Research Reagent Solutions for Method Comparison

| Item | Function / Purpose |

|---|---|

| Patient Samples [6] | The primary matrix for comparison; used to assess bias across the clinical range and to detect matrix-specific interferences. |

| Quality Control (QC) Materials [9] [7] | Stable, characterized materials run repeatedly to verify the precision and stability of both measurement procedures over time. |

| Calibrators [7] | Solutions with known analyte concentrations used to calibrate the instruments, ensuring both methods are traceable to a reference. |

| Linearity/Calibration Verification Materials [7] | A set of materials with known concentrations spanning the assay range, used to verify the reportable range of the method. |

| Interference Testing Kits | Commercial kits containing potential interferents (e.g., hemoglobin, bilirubin, lipids) to evaluate the analytical specificity of the new method. |

Establishing a crystal-clear objective and pre-defining stringent, clinically relevant performance goals are the most critical steps in a method comparison study. They transform the evaluation from a simple data collection exercise into a scientifically defensible decision-making process. By adhering to a rigorous experimental protocol that includes appropriate sample selection, a comprehensive suite of verification experiments, and robust statistical analysis focused on bias estimation, laboratory professionals can ensure that new methods are implemented with confidence, ultimately safeguarding patient care.

In clinical laboratory research, the validity of a new measurement method is fundamentally determined by the quality of the comparison against which it is judged. Selecting an appropriate comparator method is therefore a critical decision that directly impacts the reliability, traceability, and clinical utility of the resulting data. This foundational choice determines whether observed differences are correctly attributed to the test method or represent shared inaccuracies within the measurement system. The process must be framed within the context of metrological traceability—the property of a measurement result whereby it can be related to a stated reference through a documented unbroken chain of calibrations, each contributing to the measurement uncertainty [10]. This technical guide examines the hierarchy of comparator methods, from definitive reference procedures to established routine methods, and provides a structured framework for their implementation within method comparison protocols, ensuring that results are not only statistically sound but also metrologically traceable.

The Hierarchy of Comparator Methods

The selection of a comparator method is not a matter of convenience but should be guided by a defined hierarchy based on the metrological quality and the established accuracy of the available methods. The following table summarizes the core types of comparators and their characteristics.

Table 1: Hierarchy of Comparator Methods in Clinical Laboratory Research

| Comparator Type | Metrological Level | Key Characteristics | When to Use | Interpretation of Differences |

|---|---|---|---|---|

| Reference Method | Highest (Definitive) | Highest metrological order [2]; thoroughly validated specificity and uncertainty [10]; results are traceable to SI or international units [10] | To establish trueness of a new routine method [2]; to assign values to reference materials [10] [11] | Differences are attributed to the test method. |

| Established Routine Method | Intermediate (Routine) | Well-documented performance in clinical practice [2]; good precision and known, acceptable bias; may not have highest-order traceability | When a reference method is unavailable or impractical; to verify that a new method performs equivalently to a current laboratory standard | Differences must be interpreted with caution; it may not be clear which method is inaccurate [2]. |

| Reference Materials | (Used for Calibration) | Certified values with stated uncertainty [10] [12]; must be commutable (behave like patient samples) [10] [11] | To calibrate both test and comparator methods to a common standard; to verify analytical recovery and linearity | Non-commutability can lead to incorrect calibration and increased between-method bias [11]. |

This hierarchy directly enables traceability. For well-defined Type A analytes (e.g., electrolytes, metabolites, steroid hormones), full traceability chains to International System (SI) units are possible [10]. For Type B analytes (e.g., proteins, tumor markers, antibodies), which are often heterogeneous and not traceable to SI units, standardization relies on harmonization to international consensus standards (e.g., WHO International Units) or to a widely accepted master method [10] [13].

A Protocol for Method Comparison Experiments

A robust method comparison experiment is designed to objectively quantify the systematic error (bias) between the test method and the chosen comparator. The following workflow and subsequent detailed protocol ensure a comprehensive assessment.

Diagram 1: A 9-step workflow for a method comparison experiment, adapted from established clinical laboratory practices [14].

Experimental Design and Execution

- Purpose and Comparator Selection: Clearly state whether the goal is to validate trueness against a reference method or to verify equivalent performance against an established routine method. The choice of comparator, as per the hierarchy, directly dictates how resulting differences will be interpreted [14].

- Sample Considerations: A minimum of 40 patient specimens is recommended, with some guidelines suggesting 100-200 samples to adequately assess specificity and identify interferences [2]. Specimens should cover the entire analytical range and be representative of the expected pathologies. They should be analyzed in multiple runs over at least 5 days to capture routine sources of variation [2]. Stability is critical; samples should be analyzed by both methods within two hours unless stability is known to be longer [2].

- Measurement Protocol: Analyze fresh patient samples by both the test and comparator methods. While single measurements are common, duplicate analysis of each specimen provides a valuable check for sample mix-ups or transcription errors [2].

Data Analysis and Interpretation

- Graphical Analysis: Before statistical calculations, data must be visualized.

- Statistical Analysis:

- For data covering a wide analytical range, use linear regression to obtain the slope (proportional error) and y-intercept (constant error). The systematic error (SE) at a critical medical decision concentration (Xc) is calculated as SE = (a + b × Xc) - Xc, where a is the intercept and b is the slope [2].

- For a narrow analytical range, the mean difference (bias) with its standard deviation, derived from a paired t-test, is a sufficient estimate of systematic error [2].

- The correlation coefficient (r) is more useful for verifying a sufficient data range (r ≥ 0.99 is desirable) than for judging method acceptability [2].

The Researcher's Toolkit: Essential Reagents and Materials

The implementation of a traceable method comparison requires specific, high-quality materials. The following table details key research reagent solutions.

Table 2: Essential Research Reagent Solutions for Method Comparison

| Reagent/Material | Function & Purpose | Critical Considerations |

|---|---|---|

| Certified Reference Materials (CRMs) | To provide a metrological anchor for calibration and trueness verification. Values are certified with a defined uncertainty. | Commutability is the most critical property. The CRM must behave like a native patient sample in all methods involved [10] [11]. |

| Commercial Calibrators | To transfer assigned values from the CRM to the routine measurement system, establishing the traceability chain. | Value assignment must be performed using a reference measurement procedure and a commutable CRM [10]. |

| Native Human Serum Panels | To act as a secondary, commutable reference material when primary CRMs are not commutable. Used for direct method comparison and value assignment. | Comprised of fresh or frozen pooled human serum/plasma to mimic the native matrix. The commutability of the panel must be validated [10] [11]. |

| Quality Control Materials | To monitor the precision and stability of both the test and comparator methods throughout the validation period. | While essential for monitoring performance, these materials are often not commutable and should not be used for calibration [10]. |

Standardization, Harmonization, and the Challenge of Commutability

The Goal of Equivalent Results

When different measurement procedures for the same analyte produce equivalent results for a patient sample, they are said to be standardized or harmonized.

- Standardization is the ideal approach, achieved when results are traceable to a higher-order reference, such as SI units, via a validated traceability chain involving reference methods and commutable reference materials [13]. This is the method of choice for well-defined analytes like cholesterol and creatinine.

- Harmonization is the process of achieving agreement among different measurement procedures when standardization is not yet possible (e.g., for many Type B analytes). This is often accomplished by aligning results using a consensus material or a designated comparison method, making the results valid for a particular period but not permanently anchored to a stable reference [13].

The Central Challenge: Commutability

A core challenge in implementing traceability is the commutability of reference and calibrator materials. Commutability is defined as the ability of a reference material to demonstrate inter-assay properties comparable to those of native clinical samples [10]. When a non-commutable material is used for calibration, the numerical relationship observed for the reference material will differ from that of patient samples, breaking the traceability chain and potentially increasing, rather than decreasing, between-method differences [10] [11]. Matrix-based human serum materials are preferred, but their commutability must be experimentally proven for each method pair [10].

Selecting the appropriate comparator is a foundational decision that dictates the validity and metrological value of a method comparison study. A rigorous protocol, beginning with a choice informed by the hierarchy of methods and executed with attention to sample selection, commutability, and appropriate data analysis, is essential. By systematically embedding the principles of traceability and a critical understanding of standardization and harmonization into the validation workflow, researchers and drug development professionals can ensure that the laboratory data generated are reliable, comparable over time and space, and ultimately fit for supporting critical healthcare decisions.

Within the framework of a robust method comparison protocol in clinical laboratory research, the pre-study phase is foundational to ensuring the validity and reliability of subsequent data. A well-executed method comparison assesses the systematic error or bias between a new test method and a comparative method, providing critical evidence on whether methods can be used interchangeably without affecting patient care [2] [6]. The credibility of this assessment hinges on addressing three core analytical factors—sample stability, matrix effects, and interferences—before the first specimen is analyzed. Neglecting these considerations introduces uncontrolled variables, potentially leading to inaccurate bias estimates, flawed statistical analysis, and ultimately, medically misleading conclusions. This guide details the experimental methodologies and strategic planning required to secure the integrity of method comparison studies from the ground up.

Sample Stability

The Impact of Stability on Data Integrity

In a method comparison experiment, patient samples are analyzed by both the test and comparative methods. If the analyte concentration in a sample changes between these two measurements, the observed difference will be incorrectly attributed to the systematic error of the test method [2]. This degradation of sample integrity directly inflates the estimated bias and increases the scatter of data points around the regression line, compromising the assessment of method acceptability. Therefore, establishing stability is not merely a precautionary step but a direct contributor to data quality.

Designing a Stability Validation Experiment

Objective: To determine the maximum time interval and optimal handling conditions under which patient samples maintain analyte stability for both methods involved in the comparison.

Protocol:

- Sample Selection: Select a minimum of 3-5 different patient samples for each analyte, covering the clinically relevant range (low, medium, and high concentrations) [15]. Pooled samples should be avoided unless the assay is validated for their use.

- Storage Conditions: Define and test the conditions relevant to your laboratory workflow. Common conditions include:

- Room temperature (e.g., 20-25°C)

- Refrigerated (e.g., 2-8°C)

- Frozen (e.g., -20°C or -70°C), including freeze-thaw stability.

- Time Points: Analysis should be performed at multiple time points. A typical scheme includes time zero (baseline), 2, 4, 8, 24, and 48 hours. The total duration should exceed the maximum expected storage time in your routine workflow.

- Analysis: Analyze each sample in duplicate at each time point against freshly prepared calibrators. Compare the result at each time point with the baseline (T0) measurement of the same sample.

- Control: The stability of quality control (QC) materials should be monitored in parallel, but the primary focus should be on native patient samples, as their stability may differ [15].

Data Analysis: Stability is confirmed if the mean recovery at each time point is within the pre-defined acceptance limits, typically ±10% of the baseline concentration or within the allowable total error based on biological variation.
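The recovery check described above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the function name `stability_recovery`, the ±10% default, and the example concentrations are all hypothetical, and each list entry is assumed to be the mean of the duplicate measurements for one patient sample.

```python
from statistics import mean

def stability_recovery(baseline, timepoint, limit_pct=10.0):
    """Return (mean recovery in %, within-limit flag) for one storage time point.

    baseline and timepoint are equal-length lists; each entry is the mean of
    the duplicate measurements for one patient sample (assumed layout).
    """
    recoveries = [100.0 * t / b for b, t in zip(baseline, timepoint)]
    mean_recovery = mean(recoveries)
    return mean_recovery, abs(mean_recovery - 100.0) <= limit_pct

# Hypothetical data: low, medium, and high samples at T0 and after 24 h.
t0 = [50.0, 100.0, 200.0]
t24 = [47.0, 96.0, 188.0]
recovery, stable = stability_recovery(t0, t24)
print(f"Mean recovery at 24 h: {recovery:.1f}% (stable: {stable})")
```

The same function can be called once per storage condition and time point; a laboratory using an allowable-total-error criterion instead of ±10% would pass a different `limit_pct`.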

Table 1: Key Experimental Parameters for Sample Stability Testing

| Parameter | Recommended Practice | Rationale |

|---|---|---|

| Number of Samples | 3-5 patient samples per analyte [15] | Captures matrix variability across the measuring range. |

| Replication | Duplicate measurements at each time point | Controls for random analytical error. |

| Key Time Points | Baseline (T=0), 2h, 4h, 8h, 24h, 48h [2] [6] | Covers typical pre-analytical holding times. |

| Acceptance Criterion | Mean recovery within ±10% of baseline | A common benchmark for stability; can be tightened based on clinical requirements. |

Practical Workflow for Stability Assurance

The following workflow integrates stability testing into the pre-study phase and the subsequent method comparison experiment.

Matrix Effects

Understanding Matrix Effects in Separation Techniques

Matrix effects represent a critical challenge in chromatographic-mass spectrometric methods (e.g., LC-MS/MS), where non-analyte components in the sample co-elute with the target analyte and alter its ionization efficiency [15] [16]. This can cause either suppression or enhancement of the signal. In a method comparison, if the new test method (e.g., an LC-MS/MS laboratory-developed test, LDT) is susceptible to matrix effects while the comparative method (e.g., an immunoassay) is not, a sample-dependent proportional bias may be observed, leading to an incorrect conclusion about the new method's performance.

Protocols for Assessing Matrix Effects

The most definitive experiment for evaluating matrix effects is the post-column infusion assay.

Experimental Protocol:

- Setup: Infuse a solution of the analyte of interest directly into the MS source at a constant rate using a syringe pump, post-column.

- Chromatography: Inject a processed "blank" sample extract (from multiple different patient matrices) and run the chromatographic method as usual.

- Monitoring: Monitor the selected reaction monitoring (SRM) trace for the infused analyte. A stable signal indicates no matrix effects. A depression or elevation in the baseline signal during the region where the analyte normally elutes indicates ion suppression or enhancement, respectively.

For a more quantitative assessment, the post-extraction spike method is used.

Experimental Protocol:

- Prepare Samples: Take extracts from at least 10 different sources of native patient matrix [15]. The matrices should be as diverse as possible (e.g., from patients with different diseases, lipidemic/hemolyzed samples if relevant).

- Spike: Divide each extract into two aliquots. Spike one with the analyte at a known concentration (A). The other serves as the unspiked blank (B).

- Compare: Prepare the same analyte concentration in a pure solvent (C).

- Calculate Matrix Factor (MF):

MF = (Peak Area of A - Peak Area of B) / Peak Area of C

- An MF of 1.0 indicates no matrix effects.

- MF < 1.0 indicates ion suppression.

- MF > 1.0 indicates ion enhancement.

The College of American Pathologists (CAP) recommends investigating further if the mean matrix effect exceeds ±25% or the CV of the MF across the 10 matrices is >15% [15].
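As a rough sketch of the calculation above, the snippet below computes the matrix factor for each matrix source and then the mean matrix effect and CV that are compared against the ±25% and 15% thresholds. The function names and peak areas are hypothetical, and the mean matrix effect is assumed here to be the percentage deviation of the mean MF from 1.0.

```python
from statistics import mean, stdev

def matrix_factor(area_spiked, area_blank, area_solvent):
    """MF = (A - B) / C for one matrix source."""
    return (area_spiked - area_blank) / area_solvent

def summarize_matrix_factors(mfs):
    """Return (mean MF, mean matrix effect in %, CV of MF in %)."""
    m = mean(mfs)
    return m, 100.0 * (m - 1.0), 100.0 * stdev(mfs) / m

# Hypothetical peak areas for 10 matrix sources (A), with a shared blank (B)
# and a neat-solvent standard (C).
spiked = [9100, 8900, 9500, 9300, 8700, 9000, 9200, 9400, 8800, 9100]
blank_area, solvent_area = 100, 10000

mfs = [matrix_factor(a, blank_area, solvent_area) for a in spiked]
mean_mf, effect_pct, cv_pct = summarize_matrix_factors(mfs)
needs_investigation = abs(effect_pct) > 25.0 or cv_pct > 15.0
print(f"Mean MF {mean_mf:.2f}, effect {effect_pct:+.1f}%, CV {cv_pct:.1f}%")
print("Investigate further:", needs_investigation)
```

In this made-up data set the assay shows about 10% ion suppression with low between-matrix variability, so neither threshold is exceeded.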

Mitigation Strategies and Experimental Considerations

Table 2: Strategies to Overcome Matrix Effects in Method Development

| Strategy | Description | Experimental Consideration |

|---|---|---|

| Improved Sample Cleanup | Moving from protein precipitation (PPT) to solid-phase extraction (SPE) or phospholipid removal (PLR) plates [16]. | SPE provides superior cleanliness; method development plates can streamline optimization. PLR specifically targets phospholipids, a major cause of ion suppression. |

| Chromatographic Resolution | Modifying the LC method to separate the analyte from interfering matrix components. | Using columns with different selectivity (e.g., biphenyl or phenyl-hexyl instead of C18) can resolve co-eluting interferences [16]. |

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Using a SIL-IS that co-elutes with the analyte and experiences the same matrix effects. | The IS corrects for suppression/enhancement. It is the most effective and widely accepted mitigation strategy in quantitative LC-MS/MS. |

Interferences

Defining Interference and Its Clinical Impact

Interference occurs when a substance other than the target analyte alters the measured result, leading to a falsely elevated or depressed value [17]. In the context of method comparison, a new method with different specificity (e.g., using a different antibody or chemical reaction) may be affected by interferences that the old method was not, or vice versa. A classic example is the enzymatic alcohol dehydrogenase (ADH) method for ethanol, which is susceptible to interference from lactate dehydrogenase (LD) and lactic acid in patients with conditions like diabetic ketoacidosis, potentially causing false-positive results [17]. Identifying such discrepancies is a primary goal of the comparison study.

Protocol for Interference Testing (CLSI EP07)

Objective: To systematically evaluate the effect of potentially interfering substances on the test method.

Protocol:

- Sample Preparation (Basis of Comparison):

- Pooled Patient Sample: Use a pooled patient sample with a clinically relevant concentration of the analyte.

- Prepare Pairs: Create two sets of samples for each potential interferent:

- Test Sample: Pooled sample + potential interferent.

- Control Sample: Pooled sample + same volume of interferent solvent (e.g., water or saline).

- Interferents and Concentrations: Test a wide panel of substances at physiologically and supra-physiologically relevant concentrations. Common interferents include:

- Hemoglobin (from hemolyzed blood)

- Bilirubin (icterus)

- Lipids (lipemia)

- Common Metabolites (e.g., glucose, urea)

- Common Drugs and their metabolites (e.g., acetaminophen)

- Analysis: Analyze the test and control samples in duplicate or triplicate in a single run to minimize the impact of drift.

- Calculation: For each interferent, calculate the difference between the test sample and the control sample:

Bias = (Test Result - Control Result).

Data Interpretation: The interference is considered clinically significant if the observed bias exceeds a pre-defined allowable limit, often based on the allowable total error or biological variation.
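The paired-difference calculation and its comparison with an allowable limit can be illustrated as follows. The function names, replicate values, and the 10 mg/dL limit are hypothetical and serve only to show the arithmetic.

```python
from statistics import mean

def interference_bias(test_replicates, control_replicates):
    """Bias = mean(test sample results) - mean(control sample results)."""
    return mean(test_replicates) - mean(control_replicates)

def is_clinically_significant(bias, allowable_limit):
    """Flag the interferent when the absolute bias exceeds the allowable limit."""
    return abs(bias) > allowable_limit

# Hypothetical ethanol results (mg/dL), triplicates measured in a single run.
control = [80.2, 79.8, 80.0]   # pooled sample + solvent
test = [92.5, 93.1, 92.9]      # pooled sample + interferent

bias = interference_bias(test, control)
significant = is_clinically_significant(bias, allowable_limit=10.0)
print(f"Bias = {bias:+.1f} mg/dL, significant: {significant}")
```

Running the same two functions over every interferent and concentration in the panel gives a simple pass/fail table for the study report.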

Table 3: Example Interference Testing Protocol for an Enzymatic Ethanol Assay

| Potential Interferent | Test Concentration | Interference Mechanism | Acceptance Criterion |

|---|---|---|---|

| Hemoglobin (Hemolysis) | 500 mg/dL | Spectral interference or negative bias [17] | Bias within ± the critical decision level (e.g., 10 mg/dL) |

| Lactic Acid/Lactate | 20 mmol/L | NADH generation from lactate by lactate dehydrogenase (LD) in the sample [17] | Bias within ± the critical decision level (e.g., 10 mg/dL) |

| Triglycerides (Lipemia) | 1000 mg/dL | Spectral scattering or volume displacement | Bias within ± the critical decision level (e.g., 10 mg/dL) |

| Isopropanol | 100 mg/dL | Potential cross-reactivity | Bias within ± the critical decision level (e.g., 10 mg/dL) |

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and solutions critical for executing the experiments described in this guide.

Table 4: Key Research Reagent Solutions for Pre-Study Experiments

| Tool/Reagent | Function | Application Example |

|---|---|---|

| Native Patient Samples | Provides a true biological matrix for testing stability, matrix effects, and interferences. More reliable than pooled samples or commercial quality controls [15]. | Core material for all pre-study validation experiments. |

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Corrects for losses during sample preparation and variability in ionization efficiency due to matrix effects in LC-MS/MS [15]. | Essential for accurate quantification in LC-MS/MS method development and validation. |

| Phospholipid Removal (PLR) Plates | A solid-phase extraction technique designed to remove phospholipids from biological samples, significantly reducing a major cause of ion suppression in LC-MS/MS [16]. | Sample preparation for LC-MS/MS assays to improve robustness and accuracy. |

| Mixed-Mode Solid Phase Extraction (SPE) Sorbents | Polymeric sorbents that retain analytes through multiple mechanisms (e.g., reversed-phase and ion-exchange), providing superior sample cleanup compared to protein precipitation [16]. | Method development for complex drug panels where a high degree of sample cleanliness is required. |

| Specialized LC Columns (e.g., Biphenyl) | Offers complementary selectivity to standard C18 columns, helping to resolve analytes from co-eluting matrix interferences [16]. | Chromatographic method development to mitigate matrix effects and improve specificity. |

| Characterized Interference Stocks | Purified substances (e.g., hemoglobin, bilirubin, triglycerides, common drugs) used to prepare samples for interference studies according to CLSI guidelines. | Systematic investigation of an assay's susceptibility to specific interferents. |

A method comparison study is only as valid as the foundational work that precedes it. Sample stability, matrix effects, and interferences are not peripheral concerns but central pillars of a scientifically sound and defensible protocol. By investing in rigorous, well-designed experiments to characterize these factors, researchers and drug development professionals can ensure that the observed differences between methods are a true reflection of analytical performance and not an artifact of poor pre-study planning. This disciplined approach minimizes the risk of costly errors, safeguards patient safety, and fosters confidence in the adoption of new, advanced laboratory methods.

Executing the Experiment: A Step-by-Step Guide to Data Collection and Analysis

This technical guide provides a comprehensive framework for optimal experimental design within method comparison protocols for clinical laboratory research. Focusing on the critical pillars of sample size, measurement range, and timing, this whitepaper equips researchers and drug development professionals with evidence-based methodologies to ensure rigorous, reproducible, and clinically relevant results. Adherence to these principles is fundamental for validating new measurement procedures against established ones, thereby ensuring the reliability of data that informs patient care and therapeutic development.

In clinical laboratory research, the introduction of a new measurement method necessitates a rigorous comparison against an established procedure to ensure the continuity of reliable patient results [6]. The fundamental question these studies address is one of substitution: can two different methods measure the same analyte interchangeably without affecting clinical outcomes? [18]. A well-designed method-comparison experiment assesses the bias (systematic difference) between methods, which must be understood and shown to be clinically acceptable before a new method is adopted [2] [6]. The quality of the experimental design directly determines the validity of the conclusions, making careful planning paramount [6].

Core Principles of Experimental Design

The foundation of a robust method comparison study rests on sound statistical principles of experimental design. These principles, championed by Fisher, ensure that the experiment is efficient, minimizes the influence of extraneous variables, and yields reliable data [19].

- Replication: Observing a result a single time is insufficient to establish reliability. Replication across multiple experimental runs and days accounts for natural variability and increases the rigor of the findings [19] [2].

- Randomization: Allocating treatments or the order of sample analysis randomly is crucial. Randomization spreads unspecified disturbances evenly across treatment groups, preventing confounding variables from biasing the estimated difference between methods [19] [6].

- Blocking: This technique accounts for known sources of variability. For instance, if samples are known to degrade over time, "blocking" by analysis day ensures this time-based effect impacts both methods equally, thereby increasing the precision of the comparison [19].

- Multifactorial Design: Instead of varying one factor at a time, a simultaneous approach that studies the response at each possible factor-level combination is more efficient and makes it possible to detect interactions between factors [19].

Determining Sample Size and Measurement Range

Determining an appropriate sample size is a critical step that balances statistical reliability with practical constraints. An under-powered study with too few samples may fail to detect a clinically important bias (Type II error), while an over-powered study wastes resources [20] [21].

Sample Size Calculation Parameters

Prior to conducting a power analysis, researchers must define several key parameters [22] [20]:

- Type I Error Rate (Alpha, α): The probability of falsely claiming a significant difference exists when it does not (false positive). This is typically set at 0.05 [20].

- Power (1-β): The probability of correctly detecting a true effect. A target power between 80% and 95% is standard, indicating an 80-95% chance of finding a statistically significant result if a real, biologically relevant effect exists [20] [21].

- Effect Size: The minimum difference between the two methods that is considered clinically or biologically important. This is not the difference one expects to find, but the smallest difference that would matter in practice [20]. For continuous data, this can be a standardized effect size like Cohen's d (e.g., 0.5 for a small effect, 1.0 for medium) or an absolute difference in units [20] [23]. Note that these example values are larger than Cohen's conventional benchmarks of 0.2 (small), 0.5 (medium), and 0.8 (large), so the convention in use should be stated explicitly.

- Variability (Standard Deviation, SD): An estimate of the standard deviation of the measured analyte (or of the paired differences between methods). This can be obtained from prior studies, pilot data, or published literature [20] [21].
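To show how the four parameters above combine, here is a minimal sketch of a sample size calculation for detecting a mean paired difference between two methods. It relies on a two-sided normal approximation, n = ((z for alpha + z for power) × SD / delta)², which is an assumption of this example rather than a formula taken from the cited sources; dedicated power-analysis software based on the t-distribution will typically return a slightly larger n. The function name and input values are hypothetical.

```python
import math
from statistics import NormalDist

def paired_sample_size(delta, sd_diff, alpha=0.05, power=0.90):
    """Approximate number of paired samples needed to detect a mean
    difference `delta`, given the SD of the paired differences `sd_diff`.
    Uses a two-sided normal approximation (assumption of this sketch)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(((z_alpha + z_power) * sd_diff / delta) ** 2)

# Hypothetical inputs: smallest important bias of 2.0 units,
# SD of paired differences of 5.0 units.
n_90 = paired_sample_size(delta=2.0, sd_diff=5.0, power=0.90)
n_80 = paired_sample_size(delta=2.0, sd_diff=5.0, power=0.80)
print(f"n at 90% power: {n_90}, n at 80% power: {n_80}")
```

Halving the smallest important difference roughly quadruples the required number of samples, which is why the effect size should be fixed on clinical grounds before any data are collected.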

Table 1: Recommended Sample Sizes for Method Comparison Studies

| Source & Context | Recommended Minimum Sample Number | Key Rationale |

|---|---|---|

| CLSI EP09-A3 Guideline [6] | 40 patient specimens | Provides a baseline for reliable estimation. |

| CLSI EP09-A3 Guideline (Preferred) [2] [6] | 100 - 200 patient specimens | Larger sample size helps identify unexpected errors due to interferences or sample matrix effects. |

| Westgard (Comparison of Methods) [2] | 40 (carefully selected), ideally 100-200 | Quality of specimens (covering a wide range) is as important as quantity. |

Defining the Measurement Range

The patient samples selected for the study must cover the entire clinically meaningful measurement range [2] [6]. This is critical because the bias between methods may not be constant; it could be proportional, increasing or decreasing with the concentration of the analyte.

- Strategy: Specimens should be intentionally selected to represent low, medium, and high values within the reportable range of the method, rather than relying on randomly received samples which might cluster in a narrow interval [2].

- Importance: A narrow range of data can lead to misleading conclusions about the agreement between methods and fails to characterize the relationship across all clinically relevant levels [6].

Timing of Measurements and Data Collection

The timing and sequence of measurements are vital for controlling pre-analytical variables and ensuring a fair comparison.

- Simultaneity: Measurements by the two methods should be taken as close in time as possible to ensure the underlying biological state has not changed [18] [2]. The definition of "simultaneous" depends on the stability of the analyte; for stable analytes, measurements within a few hours may be acceptable, while for unstable ones (e.g., ammonia), simultaneous sampling is required [18].

- Randomization of Order: The sequence in which samples are analyzed by the two methods should be randomized to avoid carry-over effects or systematic biases related to the order of analysis [6].

- Study Duration: The experiment should be conducted over multiple days (a minimum of 5 days is recommended) and multiple analytical runs. This practice incorporates typical day-to-day variation in laboratory conditions, making the estimates of bias more representative of real-world performance [19] [2].
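The timing rules above (randomized order, spread over at least 5 days) can be turned into a run schedule programmatically. The sketch below is one possible approach under stated assumptions: the function name `build_schedule`, the sample identifiers, and the fixed seed are hypothetical, and samples are simply distributed evenly across days after shuffling.

```python
import random

def build_schedule(sample_ids, n_days=5, seed=2024):
    """Shuffle samples, spread them evenly over n_days, and randomly pick
    which method measures each sample first. A fixed seed keeps the
    schedule reproducible for the study record."""
    rng = random.Random(seed)
    ids = list(sample_ids)
    rng.shuffle(ids)
    schedule = []
    for position, sample_id in enumerate(ids):
        schedule.append({
            "sample": sample_id,
            "day": position % n_days + 1,
            "first_method": rng.choice(["test", "comparative"]),
        })
    # Stable sort: keeps the shuffled order within each day.
    return sorted(schedule, key=lambda row: row["day"])

sample_ids = [f"S{i:02d}" for i in range(1, 41)]  # 40 hypothetical specimens
schedule = build_schedule(sample_ids)
for row in schedule[:3]:
    print(row)
```

For unstable analytes the two measurements of a sample would still need to be made back to back; the schedule only decides the day and which method goes first.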

The following workflow diagram summarizes the key stages of a method-comparison study:

Data Analysis and Interpretation

A robust analysis plan involves both visual and statistical methods. It is crucial to avoid common pitfalls, such as relying solely on correlation coefficients or t-tests, as these are inadequate for assessing agreement [6].

Graphical Analysis

- Scatter Plots: Plotting the results of the new method (y-axis) against the established method (x-axis) provides a visual overview of the data distribution and the relationship between methods. A line of equality (y=x) can be added to help visualize deviations [6].

- Bland-Altman Plots: This is the recommended graphical method for assessing agreement. The plot displays the difference between the two methods (y-axis) against the average of the two methods (x-axis) [18] [6]. It visually reveals the bias (the mean difference) and the limits of agreement (bias ± 1.96 × standard deviation of the differences), which describe the range within which most differences between the two methods are expected to lie [18].
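The quantities displayed on a Bland-Altman plot can be computed directly from the paired results. The following sketch returns the bias, the limits of agreement (bias ± 1.96 × SD of the differences), and the x/y coordinates for plotting; the function name and the five data pairs are hypothetical.

```python
from statistics import mean, stdev

def bland_altman(test, comparative):
    """Return bias, SD of differences, limits of agreement, and plot points."""
    diffs = [t - c for t, c in zip(test, comparative)]
    averages = [(t + c) / 2 for t, c in zip(test, comparative)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return {
        "bias": bias,
        "sd": sd,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
        "points": list(zip(averages, diffs)),  # (x, y) pairs for the plot
    }

# Hypothetical paired results in the same units.
comparative = [10.0, 20.0, 30.0, 40.0, 50.0]
test = [11.0, 22.0, 29.0, 42.0, 51.0]

ba = bland_altman(test, comparative)
print(f"Bias {ba['bias']:.2f}, limits of agreement "
      f"{ba['lower_loa']:.2f} to {ba['upper_loa']:.2f}")
```

The `points` list can be passed to any plotting library; whether the resulting limits are acceptable is a clinical judgment, not a statistical one.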

Statistical Analysis

- Bias and Precision Statistics: The mean difference (bias) and its standard deviation (SD) are calculated directly from the paired differences. The limits of agreement are derived from these values [18].

- Regression Analysis: For data spanning a wide concentration range, linear regression analysis (e.g., Deming or Passing-Bablok regression) is used to model the relationship between the methods. It helps quantify constant error (y-intercept) and proportional error (slope) [2].
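As an illustration of how slope and intercept are obtained when both methods carry measurement error, the sketch below implements the standard closed-form Deming regression estimate. The function name is hypothetical, and `error_ratio` (variance of the test-method errors divided by variance of the comparative-method errors) defaults to 1.0, which is an assumption that should be replaced by a ratio estimated from precision data where available.

```python
import math
from statistics import mean

def deming_regression(x, y, error_ratio=1.0):
    """Deming regression of y (test method) on x (comparative method).

    error_ratio = var(errors in y) / var(errors in x); 1.0 gives orthogonal
    regression. Requires a non-zero covariance between x and y.
    """
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    d = syy - error_ratio * sxx
    slope = (d + math.sqrt(d ** 2 + 4 * error_ratio * sxy ** 2)) / (2 * sxy)
    intercept = my - slope * mx
    return slope, intercept

# Hypothetical error-free data following y = 1.1 * x + 2.
x = [10, 20, 30, 40, 50]
y = [13, 24, 35, 46, 57]
slope, intercept = deming_regression(x, y)
print(f"Proportional error (slope): {slope:.3f}")
print(f"Constant error (intercept): {intercept:.3f}")
```

A slope different from 1 points to proportional error and an intercept different from 0 to constant error; confidence intervals for both (for example by jackknife or bootstrap) are needed before drawing conclusions and are not shown here.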

Table 2: Essential Research Reagent Solutions for Method Comparison

| Item / Concept | Function / Definition | Role in Experimental Design |

|---|---|---|

| Patient Specimens | The primary biological samples used for comparison. | Must be carefully selected to cover the entire clinically meaningful measurement range and be stable for the duration of testing [2] [6]. |

| Control Groups | A group receiving a standard or sham treatment for comparison. | In laboratory studies, this is the established (comparative) method. Its performance is the benchmark against which the new method is evaluated [20]. |

| Comparative Method | The established measurement procedure already in clinical use. | Serves as the benchmark for comparison. Ideally, this is a reference method, but often it is the current routine laboratory method [2]. |

| Power Analysis Software | Tools for calculating required sample size (e.g., G*Power, Russ Lenth's applets). | Used prior to the experiment to determine the minimum number of samples needed to detect a clinically relevant difference with sufficient power [19] [20]. |

A method comparison study that is optimally designed with respect to sample size, measurement range, and timing forms the bedrock of reliable clinical laboratory research. By adhering to the principles of replication, randomization, and blocking, and by employing a sample size justified by a priori power analysis, researchers can ensure their studies are efficient, ethical, and scientifically sound. The subsequent analysis using Bland-Altman plots and appropriate regression techniques provides a clear and interpretable assessment of method agreement. Ultimately, this rigorous approach is indispensable for making valid inferences about the performance of new analytical methods and for safeguarding the quality of patient care and drug development.

In clinical laboratory research, the validity of a method comparison study hinges on the proper selection of patient samples. A fundamental requirement is that these samples must cover the clinically meaningful measurement range—the spectrum of values that trigger different clinical decisions, from diagnosis to therapeutic monitoring. Selecting samples that only represent a narrow, healthy range can lead to biased estimates of a new method's performance and ultimately mislead clinical decision-making. This guide provides researchers, scientists, and drug development professionals with a structured approach to selecting patient samples that ensures a method comparison robustly assesses performance across the entire range of clinical relevance, framed within the broader context of a method comparison protocol.

Defining the Clinically Meaningful Range

The Concept of Clinical Meaningfulness

A clinically meaningful effect or difference is not solely a statistical concept; it is one considered important by key stakeholders, including patients, clinicians, and regulators, to inform decisions about care and treatment [24]. In the context of laboratory measurements, this translates to a difference in measured analyte concentration that would lead to a change in clinical management or a different interpretation of a patient's status. Ignoring this concept can have serious ethical and practical consequences, potentially leading to trials that are too large, costly, and slow to provide useful answers for stakeholders [25].

The clinically meaningful range for an analyte should be derived from a combination of sources:

- Clinical Practice Guidelines: Established medical guidelines often define reference intervals, diagnostic cut-offs, and therapeutic targets.

- Regulatory Standards: Recommendations from bodies like the FDA or standards from organizations like the Clinical and Laboratory Standards Institute (CLSI) can provide targets for acceptable performance.

- Stakeholder Input: Engaging with clinicians provides insight into the decision points where test values trigger action. Patient perspectives ensure that outcomes important to their quality of life are considered [24].

- Published Literature: Existing studies on clinical outcomes can link specific analyte concentrations to changes in patient risk, diagnosis, or treatment response.

Table 1: Common Effect Size Measures and Their Clinical Interpretation

| Measure | Calculation | Clinical Interpretation | Considerations |

|---|---|---|---|

| Cohen's d | Difference between group means divided by common standard deviation [24] | Degree of overlap in responses between two groups [24] | Assumes normal distribution and equal variances [24] |

| Success Rate Difference (SRD) | Probability a random patient from treatment group T1 has a clinically preferable response to a random patient from T2 [24] | Ranges from -1 to +1; +1 indicates every T1 response is preferable to every T2 response [24] | Non-linear correspondence with Cohen's d [24] |

| Number Needed to Treat (NNT) | Reciprocal of the SRD (1/SRD) [24] | Number of patients needing treatment for one to benefit [24] | Highly dependent on the specific outcome and context (e.g., prevention vs. symptom reduction) [24] |

Experimental Protocol for Sample Selection

The following protocol aligns with principles from guidelines such as CLSI EP09, which describes procedures for determining the bias between two measurement procedures using patient samples [8].

Pre-Collection Planning

- Define the Clinical Reportable Range: Establish the minimum and maximum values of the analyte that are critical for clinical decision-making. This range should encompass values from clinically healthy individuals to those with severe disease.

- Identify Critical Medical Decision Points: Pinpoint specific concentrations within the reportable range that are used for diagnosis, risk stratification, or triggering a change in therapy (e.g., HbA1c ≥6.5% for diagnosing diabetes).

- Calculate the Target Sample Size: The number of samples should be sufficient to provide precise estimates of bias across the range. While specific numbers depend on the analyte and required confidence, a minimum of 40 samples is often a starting point, with a target of 100-200 being more robust.

Sample Acquisition and Stratification

- Source Samples from Routine Workflow: Collect leftover patient samples from the clinical laboratory after routine testing is complete. This ensures the samples are representative of the actual patient population.

- Stratify by Concentration: Deliberately select samples to ensure adequate representation across the pre-defined clinical reportable range. A suggested distribution is:

- 20% of samples from below the lower medical decision point.

- 60% of samples covering the central, "clinically ambiguous" range where small errors are most critical.

- 20% of samples from above the upper medical decision point.

- Ensure Sample Integrity: Use samples that are stored appropriately and handled according to standard laboratory procedures to maintain analyte stability. The comparative measurement procedure should ideally have lower uncertainty than the candidate method [8].
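The 20/60/20 stratification plan above lends itself to a simple running check during sample collection. The sketch below classifies each collected sample by its comparator result and reports how many samples are still missing per stratum; the function names, decision points, and values are hypothetical.

```python
def assign_stratum(value, lower_decision, upper_decision):
    """Classify a comparator result relative to the two medical decision points."""
    if value < lower_decision:
        return "below"
    if value > upper_decision:
        return "above"
    return "central"

def stratification_status(values, lower_decision, upper_decision, target_n=100):
    """Compare collected counts per stratum with the 20/60/20 plan."""
    plan_pct = {"below": 20, "central": 60, "above": 20}
    counts = {name: 0 for name in plan_pct}
    for value in values:
        counts[assign_stratum(value, lower_decision, upper_decision)] += 1
    status = {}
    for name, pct in plan_pct.items():
        planned = target_n * pct // 100
        status[name] = {
            "planned": planned,
            "collected": counts[name],
            "shortfall": max(0, planned - counts[name]),
        }
    return status

# Hypothetical comparator results with decision points at 5 and 50 units.
collected = [3.0] * 22 + [20.0] * 58 + [80.0] * 20
status = stratification_status(collected, lower_decision=5.0, upper_decision=50.0)
for name, row in status.items():
    print(name, row)
```

A non-zero shortfall in any stratum signals that more samples should be sourced in that concentration interval before the comparison experiment begins.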

Documentation and Tracking

Maintain meticulous records for each sample, including the source, storage conditions, and the value obtained from the comparator method. This traceability is essential for investigating discrepancies.

Data Presentation and Analysis

Structuring Sample Distribution Data

The planned and final distribution of samples should be clearly documented to demonstrate coverage of the clinically meaningful range.

Table 2: Example Stratification Plan for Sample Selection (Hypothetical Cardiac Troponin Assay)

| Concentration Stratum | Clinical Context | Planned Number of Samples | Planned Percentage | Actual Number Collected |

|---|---|---|---|---|

| < 5 ng/L | Rule-out range for myocardial infarction | 20 | 20% | 22 |

| 5 - 50 ng/L | "Grey zone" for clinical observation | 60 | 60% | 58 |

| > 50 ng/L | Rule-in range for myocardial infarction | 20 | 20% | 20 |

| Total | | 100 | 100% | 100 |

Quantifying Clinically Meaningful Differences

When interpreting method comparison results, it is critical to assess whether the observed differences are clinically meaningful. The following table summarizes general guidance for different contexts.

Table 3: Guidance for Interpreting Meaningful Change Thresholds

| Context | Typical Threshold Range | Key Considerations | Primary Reference |

|---|---|---|---|

| Group-Level Comparisons | 2 to 6 T-score points [26] | A threshold of 3 points is often reasonable; smaller differences can be significant with large sample sizes [26] | PROMIS Guidelines [26] |

| Individual-Level Monitoring | 5 to 7 T-score points [26] | A lower bound of 5 points is often reasonable; requires a larger change to be confident for a single person [26] | PROMIS Guidelines [26] |

| General Definitive Trials | Difference considered important by at least one key stakeholder group [25] | Should be both important and realistic; ignoring importance can lead to unethical or useless research [25] | DELTA-2 Guidance [25] |

Visual Workflows and Toolkit

Workflow for Sample Selection

The following diagram visualizes the end-to-end process for selecting patient samples to cover the clinically meaningful range.

Analytical Validation Pathway

Once samples are selected, they are used in a method comparison experiment. The following diagram outlines the core analytical pathway.

The Scientist's Toolkit for Sample Selection

Table 4: Essential Research Reagent Solutions and Materials

| Item | Function/Description |

|---|---|

| Residual Patient Samples | The core "reagent" for the study; these are leftover clinical specimens that are representative of the real-world patient population [8]. |

| Comparator Measurement Procedure | The established, often higher-standard method against which the new candidate method is compared. It should have traceability to reference materials or procedures where possible [8]. |

| Stable Storage Equipment | Freezers and refrigerators that maintain appropriate temperatures to ensure analyte stability in samples from collection through testing. |

| Data Management System | A secure database or LIMS (Laboratory Information Management System) to track sample identifiers, storage locations, and results from both measurement procedures. |

| Statistical Software | Software capable of performing regression analysis, Bland-Altman plots, and calculating bias estimates with confidence intervals. |

In clinical laboratory research, method comparison studies are essential for estimating the bias of a new candidate measurement procedure relative to an established comparator method [8]. These studies rely on robust data collection practices to produce reliable, actionable results that ensure patient safety and regulatory compliance [27]. The precision of a measurement procedure—encompassing its repeatability (within-run precision) and reproducibility (day-to-day precision)—is a fundamental characteristic that must be thoroughly evaluated before implementing a new method in routine clinical practice [28] [7].

Duplicate measurements and multi-day analysis represent two critical experimental approaches for characterizing this precision. These practices systematically capture different sources of variability inherent to the analytical method, instrument, and operational environment [28]. When properly integrated into a method comparison protocol, they provide the empirical evidence needed to judge whether a new method's performance meets predefined analytical performance specifications required for its intended clinical use [7].

Core Concepts and Definitions

Method Evaluation Framework

Method evaluation in clinical laboratories generally falls into one of two categories, each with distinct requirements for duplicate measurements and multi-day analysis:

Method Validation: A comprehensive process performed for laboratory-developed tests (LDTs) or significantly modified FDA-approved tests that establishes analytical performance characteristics through extensive experimentation across diverse conditions [28]. This requires substantial duplicate measurements and multi-day analysis to capture all sources of variability.

Method Verification: An abbreviated process for FDA-approved tests where the laboratory confirms manufacturer claims using fewer samples and measurements while still employing duplicate measurements and multi-day analysis to verify performance under local operational conditions [28] [7].

Key Precision Metrics

The experimental data collected from duplicate measurements and multi-day analysis are used to calculate specific statistical metrics that quantify method performance:

Standard Deviation (SD): The absolute measure of dispersion or variability in the results [29].

Coefficient of Variation (CV): The relative measure of variability expressed as a percentage of the mean, calculated as (Standard Deviation / Mean) × 100 [7].

Total Analytical Error (TAE): A composite measure that combines random error (imprecision) and systematic error (inaccuracy) to provide a comprehensive assessment of method performance [7].
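A short example may help connect these three metrics. The CV calculation follows the definition given above. The TAE formula, however, is not specified in this guide: the sketch assumes the commonly used linear model TAE = |bias| + z × CV with z = 1.96, and other multipliers (for example 1.65 or 2) are also used in practice. Function names and data are hypothetical.

```python
from statistics import mean, stdev

def cv_percent(results):
    """Coefficient of variation: (SD / mean) x 100."""
    return 100.0 * stdev(results) / mean(results)

def total_analytical_error(bias_pct, cv_pct, z=1.96):
    """Assumed linear model: TAE (%) = |bias %| + z * CV %."""
    return abs(bias_pct) + z * cv_pct

# Hypothetical replicate results for one QC level and a bias of +2%
# taken from a separate method comparison.
replicates = [98.0, 100.0, 102.0, 100.0, 100.0]
cv = cv_percent(replicates)
tae = total_analytical_error(bias_pct=2.0, cv_pct=cv)
print(f"SD-based CV: {cv:.2f}%, estimated TAE: {tae:.2f}%")
```

The estimated TAE would then be compared with the allowable total error defined a priori for the analyte.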

Performance Specification Hierarchies

Analytical performance specifications for precision studies should be defined a priori using a hierarchical approach [28]:

- Clinical Outcomes: Specifications based on demonstrated impact on clinical decision-making

- Biological Variation: Specifications derived from within-subject and between-subject biological variation data

- State-of-the-Art: Specifications based on the best performance currently achievable by available methods

Experimental Design and Protocols

Precision Studies: Within-Run and Day-to-Day

Precision studies evaluate the random error of a measurement procedure and are typically conducted at multiple concentrations to assess performance across the analytical measurement range [7].

Table 1: Precision Study Experimental Protocols

| Study Type | Time Frame | Samples | Replicates | Performance Goals |
|---|---|---|---|---|
| Within-Run Precision | Same day | 2-3 QC or patient samples at multiple concentrations | 10-20 consecutive measurements | CV < 1/4 to 1/6 of allowable total error (ATE) [7] |
| Day-to-Day Precision | 5-20 days | 2-3 QC materials at multiple concentrations | 2 measurements per day across multiple runs | CV < 1/3 to 1/4 of ATE [7] |

Method Comparison Studies

Method comparison experiments estimate the bias between a candidate method and a comparative method (reference method or current laboratory method) using patient samples [8]. These studies should incorporate multi-day analysis to account for routine sources of variation such as different reagent lots, calibrations, and operators [28].

Table 2: Method Comparison Study Design

| Parameter | Minimum Requirement | Optimal Practice |
|---|---|---|
| Sample Size | 40 patient samples [7] | 100+ samples for higher precision estimates |
| Concentration Range | Span the analytical measurement range | Include concentrations at medical decision points |
| Replication | Single measurements on both methods | Duplicate measurements on both methods |
| Testing Duration | 5 days minimum | 10-20 days to capture more variability sources |
| Sample Type | Fresh or frozen patient samples | Native patient samples representing routine practice |

Data Analysis and Interpretation

Statistical Methods for Precision Data

The data collected from duplicate measurements and multi-day analysis require appropriate statistical treatment to yield meaningful performance estimates:

Descriptive Statistics: Calculate mean, standard deviation, and coefficient of variation for each concentration level tested [29].

Analysis of Variance (ANOVA): Use nested ANOVA models to separate different components of variance (within-run, between-run, between-day) when multiple replicates are measured across different runs and days [29].

Total Analytical Error Calculation: Combine estimates of imprecision (random error) and inaccuracy (systematic error) to assess overall method performance against allowable total error specifications [7].
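
The variance-component step can be sketched as follows. This is a simplified illustration, not a full nested model: it assumes a balanced design with one run per day, so only two components (within-run and between-day) are separated using one-way random-effects ANOVA. The function name and the example data are hypothetical.

```python
import math
import statistics

def variance_components(daily_replicates):
    """One-way random-effects ANOVA on a balanced days x replicates design.

    daily_replicates: list of lists, one inner list of replicates per day.
    Returns within-run, between-day, and total (within-laboratory) SDs.
    """
    k = len(daily_replicates)               # number of days
    n = len(daily_replicates[0])            # replicates per day
    if k < 2 or n < 2 or any(len(day) != n for day in daily_replicates):
        raise ValueError("need a balanced design with >= 2 days and >= 2 replicates")

    day_means = [statistics.mean(day) for day in daily_replicates]
    grand_mean = statistics.mean(day_means)

    # Mean squares from the ANOVA table
    ss_within = sum((v - m) ** 2
                    for day, m in zip(daily_replicates, day_means)
                    for v in day)
    ms_within = ss_within / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in day_means) / (k - 1)

    var_within = ms_within
    # Negative estimates are truncated to zero by convention
    var_between = max(0.0, (ms_between - ms_within) / n)

    return {
        "sd_within_run": math.sqrt(var_within),
        "sd_between_day": math.sqrt(var_between),
        "sd_total": math.sqrt(var_within + var_between),
    }

# Hypothetical duplicate results on three days
days = [[10.0, 12.0], [14.0, 16.0], [12.0, 14.0]]
components = variance_components(days)
print(components)
```

With several runs per day, a further nesting level (run within day) would be needed to estimate the between-run component separately.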

Acceptance Criteria for Precision Studies

Precision performance should be evaluated against predefined analytical performance specifications. The following table provides examples of acceptance criteria based on different models for setting analytical performance specifications:

Table 3: Precision Acceptance Criteria Based on Allowable Total Error (ATE)

| Performance Model | Within-Run Precision Criteria | Day-to-Day Precision Criteria |
|---|---|---|
| Biological Variation | CV < 0.25 × (within-subject biological variation) | CV < 0.33 × (within-subject biological variation) |
| Sigma Metrics | CV < ATE/4 when using 6 sigma goals | CV < ATE/4 when using 6 sigma goals [7] |
| University of Wisconsin Model | CV < ATE/6 | CV < ATE/3 [7] |
| Manufacturer's Claims | CV within manufacturer's stated precision claims | CV within manufacturer's stated precision claims |
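
To illustrate how an ATE-based criterion might be applied, the short sketch below checks observed CVs against the ATE/6 (within-run) and ATE/3 (day-to-day) limits listed for the University of Wisconsin model. The ATE of 10% and the observed CVs are hypothetical values chosen for illustration only.

```python
def precision_acceptable(cv_pct, ate_pct, divisor):
    """Return True when the observed CV is below ATE / divisor."""
    return cv_pct < ate_pct / divisor

# Hypothetical inputs
ate_pct = 10.0            # allowable total error, percent
within_run_cv = 1.5       # observed within-run CV, percent
day_to_day_cv = 3.5       # observed day-to-day CV, percent

within_run_ok = precision_acceptable(within_run_cv, ate_pct, divisor=6)
day_to_day_ok = precision_acceptable(day_to_day_cv, ate_pct, divisor=3)

print("within-run acceptable:", within_run_ok)
print("day-to-day acceptable:", day_to_day_ok)
```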

Troubleshooting Unacceptable Precision

When precision studies fail to meet acceptance criteria, systematic investigation should identify potential causes:

- Review outliers: Examine data for potential errors or contamination [7]

- Repeat studies: Confirm findings with additional experimentation [7]

- Check reagent lots: Evaluate consistency across different manufacturing lots [28]

- Verify operator technique: Ensure proper training and consistent technique [28]

- Assess environmental conditions: Consider temperature, humidity, and other potential factors [28]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Method Evaluation Studies

| Item | Function | Specification Considerations |
|---|---|---|
| Quality Control Materials | Monitor precision and accuracy across measurement range | Should mimic patient samples, available at multiple concentrations, stable for study duration |
| Calibrators | Establish analytical measurement relationship to reference | Traceable to reference methods or materials when available [8] |
| Patient Samples | Evaluate method comparison and bias estimation | Should represent intended patient population, span analytical measurement range, free from known interferences [8] |
| Linearity Materials | Verify reportable range of method | Commercially available linearity materials or prepared samples with known concentrations |
| Interference Materials | Assess analytical specificity | Solutions of potential interferents (hemoglobin, bilirubin, lipids, common medications) |
| Sample Collection Devices | Verify matrix compatibility | Match intended clinical use (serum, plasma, specific anticoagulants) [28] |

Advanced Applications and Methodologies

Integration with External Quality Assurance

Method evaluation should include comparison with external quality assurance (EQA) programs, also known as proficiency testing, when available [28]. This provides an external benchmark for assessing method performance against peer laboratories using the same or different methods. EQA data can reveal method-specific biases or trends not apparent from internal studies alone.

Longitudinal Performance Monitoring

The data collected during method evaluation establishes a baseline for ongoing quality monitoring. Statistical quality control practices implemented after method deployment should maintain at least the level of performance demonstrated during the evaluation period [28]. Control rules and frequencies should be established based on the precision and accuracy estimates determined through duplicate measurements and multi-day analysis.

Adaptive Approaches for Specialized Testing

For tests with unique challenges, such as rare analyte measurements or unstable analytes, adaptive approaches to duplicate measurements and multi-day analysis may be necessary:

Stability Studies: For unstable analytes, duplicate measurements at predetermined time intervals establish stability limits and appropriate handling requirements.

Carryover Studies: For automated analyzers, duplicate measurements of high-concentration samples followed by low-concentration samples assess potential carryover effects [7].

Sample Volume Studies: For pediatric or volume-limited testing, duplicate measurements at different sample volumes verify minimal volume requirements.

Duplicate measurements and multi-day analysis represent fundamental practices in method comparison protocols that systematically capture the random error components of measurement procedures. When properly designed and executed using the frameworks outlined in this guide, these approaches provide robust characterizations of method performance essential for ensuring the quality and reliability of clinical laboratory testing. The experimental protocols, statistical analyses, and acceptance criteria detailed herein provide laboratory professionals with evidence-based strategies for implementing these critical evaluation components in both method validation and verification contexts. As laboratory medicine continues to advance with new technologies and methodologies, these foundational practices for assessing and verifying measurement precision will remain essential for maintaining the analytical quality that underpins optimal patient care.

In clinical laboratory research, comparing measurement procedures is a fundamental activity, whether introducing a new diagnostic assay, changing instrument platforms, or validating method performance. Within this context, statistical analysis must go beyond establishing correlation and determine whether two methods agree sufficiently for clinical use. Correlation measures the strength of a relationship between two variables, but it is insufficient for method comparison because it does not quantify agreement or systematic differences. This guide establishes the foundational statistical frameworks, specifically regression analysis and difference plots (Bland-Altman analysis), that are essential for evaluating method agreement, identifying bias, and establishing the clinical acceptability of new measurement procedures within a robust method comparison protocol.

The Null Hypothesis Significance Testing (NHST) paradigm, while common in clinical research, is often inadequate for method comparison as it focuses on detecting any difference, which may be statistically significant but clinically irrelevant [30]. Instead, method evaluation prioritizes agreement and bias assessment, requiring specialized analytical approaches that directly estimate the magnitude and clinical impact of observed differences [30]. This shift in focus—from statistical significance to clinical significance—forms the core principle of effective method comparison in laboratory medicine.

Theoretical Foundations: Statistical Significance vs. Clinical Significance

Key Statistical Concepts in Laboratory Medicine

In quantitative testing, clinical laboratories deal with variables derived from measurements and multiple factors that influence their variability [30]. Understanding the distinction between statistical and clinical significance is paramount:

- Statistical Significance: An observed effect is statistically significant when it is unlikely to have occurred by random chance alone. It depends on sample size, variability (imprecision), and effect size [30].

- Clinical Significance: Measurement results are clinically significant when the average effect is substantial enough to be fit for the intended use, cost-effective, and/or favored by patients [30].

A p-value below 0.05 may indicate a statistically significant difference that is not clinically meaningful, especially with large sample sizes where even tiny, irrelevant differences can be detected [30]. Consequently, method comparison studies prioritize agreement assessment through techniques like Bland-Altman plots and regression analysis rather than relying solely on hypothesis tests [30].
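
The dependence of statistical significance on sample size can be illustrated with a small sketch. It applies a paired test to synthetic between-method differences that have the same small mean bias (0.1 units) at two sample sizes. The data pattern, the allowable difference of 2.0 units, and the use of a large-sample normal approximation for the p-value are all assumptions made for illustration.

```python
import math
import statistics

def paired_z_test(differences):
    """Two-sided p-value for mean difference = 0 (normal approximation)."""
    n = len(differences)
    mean_diff = statistics.mean(differences)
    se = statistics.stdev(differences) / math.sqrt(n)
    z = mean_diff / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return mean_diff, p_value

def synthetic_differences(n, bias=0.1, spread=1.0):
    """Deterministic toy data: differences alternate around a fixed bias."""
    return [bias + (spread if i % 2 == 0 else -spread) for i in range(n)]

# Hypothetical clinically allowable difference between methods
allowable_difference = 2.0

mean_small, p_small = paired_z_test(synthetic_differences(20))
mean_large, p_large = paired_z_test(synthetic_differences(10_000))

for label, mean_diff, p in [("n=20", mean_small, p_small),
                            ("n=10000", mean_large, p_large)]:
    print(f"{label}: mean difference={mean_diff:.3f}, p={p:.3g}, "
          f"statistically significant={p < 0.05}, "
          f"clinically relevant={abs(mean_diff) > allowable_difference}")
```

The bias is identical in both cases. Only the larger sample makes it statistically detectable, and in neither case does it approach the assumed allowable difference.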

Limitations of Correlation Analysis

While correlation coefficients (e.g., Pearson's r) are commonly reported in method comparison studies, they have serious limitations:

- Correlation measures the strength of a linear relationship, not agreement.

- Two methods can be perfectly correlated yet have consistent differences (biases).

- Correlation can be deceptively high when the data range is wide, masking poor agreement at specific clinical decision points.
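
A short numerical sketch makes the second point concrete. The paired values below are hypothetical and constructed so that the new method reads exactly 1.2 times the established method plus 5 units; the correlation is perfect even though the methods clearly disagree.

```python
import math

def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    x_bar = sum(x) / len(x)
    y_bar = sum(y) / len(y)
    sxy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y))
    sxx = sum((a - x_bar) ** 2 for a in x)
    syy = sum((b - y_bar) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical paired results: y = 1.2 * x + 5 (proportional and constant bias)
established = [50.0, 100.0, 150.0, 200.0, 250.0]
new_method = [65.0, 125.0, 185.0, 245.0, 305.0]

r = pearson_r(established, new_method)
differences = [b - a for a, b in zip(established, new_method)]
mean_difference = sum(differences) / len(differences)

print(f"r = {r:.4f}")
print(f"mean difference (new - established) = {mean_difference:.1f}")
print(f"differences range from {min(differences):.1f} to {max(differences):.1f}")
```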

Core Analytical Technique I: Regression Analysis

Purpose and Application in Method Comparison

Regression analysis in method comparison quantifies the systematic relationship between measurements from two methods, typically with the established method on the x-axis and the new method on the y-axis. It goes beyond correlation by modeling the functional relationship between methods, allowing for the detection and quantification of constant and proportional bias.

Methodological Protocols

Passing-Bablok Regression Protocol:

- Purpose: To compare two measurement methods without assuming the reference method is error-free; robust against outliers and non-normal error distributions [30].

- Procedure:

  - Collect paired measurements (x~i~, y~i~) from both methods across an appropriate measurement range.

  - Calculate all pairwise slopes S~ij~ = (y~j~ - y~i~)/(x~j~ - x~i~) for i < j.

  - Sort these slopes and take their median as the slope estimate b, which quantifies proportional bias. In the original Passing-Bablok procedure the median is shifted by the number of slopes below -1, and slopes exactly equal to -1 are excluded.

  - Calculate the intercept estimate a as the median of the differences {y~i~ - b·x~i~}.

- Interpretation: A slope whose confidence interval excludes 1 indicates proportional bias; an intercept whose confidence interval excludes 0 indicates constant bias.

- Sample Size Considerations: A minimum of 40 samples is recommended, with samples distributed across the clinical reporting range.
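
The procedure above can be sketched in a few lines of Python. This is a minimal illustration of the point estimates only: it uses the shifted median of the original Passing-Bablok method (offset by the number of slopes below -1, with slopes equal to -1 excluded) and omits the confidence intervals needed for interpretation. The function name and example data are hypothetical.

```python
import statistics

def passing_bablok(x, y):
    """Point estimates (slope, intercept) for Passing-Bablok regression."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired and contain >= 2 points")

    slopes = []
    n = len(x)
    for i in range(n - 1):
        for j in range(i + 1, n):
            dx = x[j] - x[i]
            dy = y[j] - y[i]
            if dx == 0:
                if dy == 0:
                    continue                    # identical points: undefined
                slopes.append(float("inf") if dy > 0 else float("-inf"))
            else:
                s = dy / dx
                if s == -1:
                    continue                    # excluded by the method
                slopes.append(s)

    slopes.sort()
    m = len(slopes)
    k = sum(1 for s in slopes if s < -1)        # offset for the shifted median
    if m == 0 or m // 2 + k >= m:
        raise ValueError("data not suitable for Passing-Bablok regression")

    if m % 2 == 1:
        slope = slopes[(m - 1) // 2 + k]
    else:
        slope = 0.5 * (slopes[m // 2 - 1 + k] + slopes[m // 2 + k])

    intercept = statistics.median(yi - slope * xi for xi, yi in zip(x, y))
    return slope, intercept

# Hypothetical data: perfect agreement except for one gross outlier
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 100.0]
slope, intercept = passing_bablok(x, y)
print(f"slope={slope:.3f}, intercept={intercept:.3f}")
```

Because the estimates are medians, the single outlier in this toy data set does not pull the fitted line away from the remaining points, which is the robustness property noted above.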

Deming Regression Protocol:

- Purpose: To model the relationship between two methods when both have measurement error; requires an estimate of the ratio of variances (λ) of the errors in both methods.

- Procedure:

  - Collect paired measurements from both methods.

  - Specify the ratio of error variances λ, taken here as the error variance of the new method (y) divided by that of the established method (x); λ is often assumed to be 1 if unknown.

  - Calculate the slope estimate: b = [ (S~yy~ - λS~xx~) + { (S~yy~ - λS~xx~)^2^ + 4λS~xy~^2^ }^0.5^ ] / (2S~xy~), where S~xx~, S~yy~, and S~xy~ are the sums of squares and cross-products about the means.

  - Calculate the intercept: a = ȳ - bx̄.

- Interpretation: Similar to Passing-Bablok; confidence intervals for slope and intercept indicate the presence of significant constant or proportional bias.
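
A minimal sketch of the Deming point estimates follows. It assumes λ is defined as the error variance of the y method divided by that of the x method, and uses the closed-form slope b = [(S_yy - λS_xx) + sqrt((S_yy - λS_xx)² + 4λS_xy²)] / (2S_xy). Confidence intervals, which are usually obtained by jackknife or bootstrap resampling, are not computed here. The function name and data are hypothetical.

```python
import math
import statistics

def deming_regression(x, y, lam=1.0):
    """Return (slope, intercept) for Deming regression.

    lam: assumed ratio of error variances, var(errors in y) / var(errors in x).
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired and contain >= 2 points")

    x_bar = statistics.fmean(x)
    y_bar = statistics.fmean(y)

    # Sums of squares and cross-products about the means
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    if sxy == 0:
        raise ValueError("slope is undefined when S_xy is zero")

    d = syy - lam * sxx
    slope = (d + math.sqrt(d * d + 4 * lam * sxy * sxy)) / (2 * sxy)
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Hypothetical paired results lying exactly on y = 2x + 1
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [3.0, 5.0, 7.0, 9.0, 11.0]
slope, intercept = deming_regression(x, y, lam=1.0)
print(f"slope={slope:.3f}, intercept={intercept:.3f}")
```

When duplicate measurements are available for both methods, λ can be estimated from the within-pair variances rather than assumed to be 1.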

Data Presentation and Interpretation

Table 1: Interpretation of Regression Parameters in Method Comparison

| Parameter | Theoretical Value Indicating Perfect Agreement | Clinical Acceptance Threshold | Interpretation of Deviation |
|---|---|---|---|
| Slope | 1 | Defined based on clinical requirements (e.g., 0.95-1.05) | Proportional bias: The difference between methods changes proportionally with the analyte concentration. |
| Intercept | 0 | Defined based on clinical requirements | Constant bias: A fixed difference exists between methods regardless of concentration. |