A Practical Guide to Choosing Machine Learning Methods for Drug Discovery


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to select and validate machine learning methods across the drug discovery pipeline. It covers foundational concepts of key ML algorithms, from classical models to modern transformers and few-shot learning, and establishes a practical 'Goldilocks paradigm' for method selection based on dataset size and diversity. The guide delves into application-specific best practices for target prediction, ADMET property forecasting, and generative chemistry, while also addressing critical troubleshooting aspects like data bias, model interpretability, and compliance with evolving FDA and EMA regulatory guidance. Through comparative performance analysis and validation frameworks, it equips scientists with strategic insights to accelerate AI-driven drug discovery while ensuring robust, reproducible, and regulatory-compliant outcomes.

Machine Learning Fundamentals: Core Algorithms and Their Evolution in Drug Discovery

The integration of machine learning (ML) into pharmaceutical research marks a shift from labor-intensive, human-driven workflows toward AI-powered discovery engines that can compress timelines and expand the chemical and biological search space [1]. This transition has moved from theoretical promise to tangible impact: dozens of AI-designed drug candidates had entered clinical trials by 2025, up from virtually none in 2020 [1]. Modern ML methods let researchers reduce guesswork by screening millions of compounds in silico within minutes, predicting success or failure from past studies, and building more accurate drug-target interaction models than were previously possible [2]. This technological evolution spans the entire drug development pipeline, from initial target identification to clinical trial optimization and personalized medicine, changing the speed and scale of modern pharmacology [1] [3].

The classical drug discovery process is costly because of long timelines and high failure rates, often taking approximately 15 years from concept to market [3]. With AI-driven approaches, pharmaceutical companies can navigate this landscape more efficiently. Machine learning algorithms can analyze vast databases to identify intricate patterns, supporting the discovery of novel therapeutic targets and the prediction of potential drug candidates with better accuracy and at a faster pace than traditional trial-and-error approaches [3]. This review examines the expanding ML toolkit through the lens of method comparison, providing application notes and experimental protocols to guide rigorous evaluation and implementation of these technologies.

Application Note 1: Target Identification and Validation

Background and Significance

Target identification and validation form the foundational stage of drug discovery, in which disease-modifying targets are identified and their therapeutic potential is assessed. Modern ML approaches support systematic mining of complex, high-dimensional biological data to uncover novel targets with a higher probability of clinical success [4] [3]. In practice, ML models mine genomic, proteomic, and transcriptomic data to discover potential drug targets and simulate how those targets interact with candidate compounds, which allows faster and more accurate validation [4]. This approach has proven particularly valuable for diseases with complex pathophysiology and for drug repurposing, where existing drugs can be matched to new therapeutic applications through analysis of hidden relationships between drugs and diseases [3].

Experimental Protocol: Knowledge-Graph Driven Target Discovery

Purpose: To systematically identify and prioritize novel therapeutic targets for specific disease indications using ML-driven knowledge graphs.

Materials and Software:

- Data Sources: Structured biological databases (e.g., UniProt, KEGG, STRING) and unstructured data from scientific literature [1]

- Analysis Tools: BenevolentAI platform or similar knowledge-graph technology [1]

- Validation Resources: CRISPR screening data, in vitro cellular models, omics datasets [1]

Methodology:

Data Integration and Knowledge Graph Construction

- Assemble heterogeneous datasets including genomic, proteomic, transcriptomic, and clinical data

- Apply natural language processing (NLP) to extract relationships from scientific literature

- Construct a structured knowledge graph representing biological entities and their relationships

Target Hypothesis Generation

- Implement graph traversal algorithms to identify paths connecting disease nodes to potential target nodes

- Apply network centrality measures to prioritize targets based on their topological importance

- Calculate confidence scores for each target hypothesis based on evidence strength

Multi-factor Target Prioritization

- Assess target druggability using structural and chemical feasibility predictors

- Evaluate safety profiles based on genetic perturbation data and known biological pathways

- Analyze disease association strength through genetic and functional evidence

Experimental Validation

- Employ CRISPR-based gene editing to functionally validate target-disease relationships

- Conduct in vitro assays using disease-relevant cellular models

- Correlate target modulation with disease-relevant phenotypic readouts
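
The hypothesis-generation step above (graph traversal plus a centrality measure) can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the method of any platform named in this note. The toy graph, the entity names, and the scoring rule (degree centrality divided by hop distance from the disease node) are all assumptions made for the example.

```python
from collections import deque

# Hypothetical toy knowledge graph; entity names are placeholders, not findings.
EDGES = [
    ("disease_X", "pathway_A"),
    ("disease_X", "gene_1"),
    ("pathway_A", "gene_2"),
    ("pathway_A", "gene_3"),
    ("gene_1", "gene_2"),
    ("gene_3", "compound_Z"),
]

def build_graph(edges):
    """Build an undirected adjacency map from (node, node) pairs."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph

def hop_distances(graph, source):
    """Breadth-first search: hop distance from source to each reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbour in graph[node]:
            if neighbour not in dist:
                dist[neighbour] = dist[node] + 1
                queue.append(neighbour)
    return dist

def degree_centrality(graph):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(graph)
    return {node: len(nbs) / (n - 1) for node, nbs in graph.items()}

def rank_targets(graph, disease, candidates):
    """Score = centrality / distance to disease (an illustrative heuristic only)."""
    dist = hop_distances(graph, disease)
    cent = degree_centrality(graph)
    scores = {c: cent[c] / dist[c] for c in candidates if dist.get(c, 0) > 0}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

graph = build_graph(EDGES)
ranking = rank_targets(graph, "disease_X", ["gene_1", "gene_2", "gene_3"])
print(ranking)
```

In this toy graph `gene_1` ranks first because it is directly linked to the disease node. A production system would replace the heuristic with evidence-weighted edges and calibrated confidence scores.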

Quality Control Considerations:

- Implement cross-validation to assess model generalizability across different disease areas

- Establish benchmarks against known validated targets to calibrate prediction accuracy

- Apply statistical methods to control for false discovery rates in high-throughput validation screens
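
One common way to control the false discovery rate in a validation screen is the Benjamini-Hochberg procedure. The source does not name a specific method, so treating it as the choice here is an assumption, and the p-values below are invented for illustration.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return one boolean per hypothesis: True if rejected at FDR level alpha."""
    m = len(p_values)
    # Indices of the hypotheses, ordered from smallest to largest p-value.
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k such that p_(k) <= (k / m) * alpha.
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank
    # Reject every hypothesis ranked at or below max_k.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_k:
            rejected[idx] = True
    return rejected

# Hypothetical p-values from five target-validation assays.
p_vals = [0.01, 0.045, 0.02, 0.005, 0.5]
print(benjamini_hochberg(p_vals))
```

Note that the assay with p = 0.045 would pass an unadjusted 0.05 threshold but is not rejected after the correction.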

Performance Metrics and Comparison

Table 1: Comparative Performance of Target Identification Methods

| Method Type | Targets/Week | Validation Rate | Key Limitations |
|---|---|---|---|
| Manual Literature Review | 2-5 | ~15% | Subject to human bias, incomplete knowledge |
| Traditional Bioinformatics | 10-20 | ~22% | Limited to structured data, poor with novel biology |
| ML Knowledge Graphs | 50-100 | ~35% | Dependent on data quality, complex interpretation |

Application Note 2: Generative Molecular Design

Background and Significance

Generative molecular design is one of the most transformative applications of ML in pharmaceutical research, enabling the de novo creation of novel chemical entities with optimized properties. Unlike traditional virtual screening, which explores existing chemical space, generative AI models can propose entirely new molecular structures that satisfy precise target product profiles, including potency, selectivity, and ADME (absorption, distribution, metabolism, and excretion) properties [1]. Companies like Exscientia have reported that this approach can substantially compress discovery timelines, with AI-driven design cycles approximately 70% faster and requiring 10x fewer synthesized compounds than industry norms [1]. A further example is Insilico Medicine's generative-AI-designed idiopathic pulmonary fibrosis drug, which progressed from target discovery to Phase I trials in just 18 months, compared with the roughly 5 years typical of conventional approaches [1].

Experimental Protocol: Generative Adversarial Networks for Lead Optimization

Purpose: To generate novel molecular structures with optimized potency, selectivity, and pharmacokinetic properties using Generative Adversarial Networks (GANs).

Materials and Software:

- Chemical Databases: ChEMBL, PubChem, ZINC for training data [3]

- Representation: SMILES strings or molecular graphs [3]

- Platform: Exscientia's Centaur Chemist platform or similar generative chemistry environment [1]

- Validation: In silico ADMET prediction tools, molecular docking simulations [3]

Methodology:

Data Preprocessing and Chemical Space Representation

- Curate high-quality chemical structures with associated bioactivity data

- Convert molecules to appropriate representations (SMILES, graphs, fingerprints)

- Apply chemical standardization and normalization procedures

Generative Adversarial Network Implementation

- Generator Network: Creates novel molecular structures from latent space sampling

- Discriminator Network: Distinguishes generated compounds from real bioactive molecules

- Adversarial Training: Iterative optimization where generator improves its outputs to fool the discriminator

Property-Guided Optimization

- Integrate predictive models for key properties (potency, solubility, metabolic stability)

- Implement reinforcement learning with property prediction as reward function

- Apply transfer learning to adapt models to specific target classes

Multi-Objective Compound Selection

- Balance competing molecular properties using Pareto optimization

- Assess synthetic accessibility using retrosynthesis prediction tools

- Apply diversity metrics to ensure broad exploration of chemical space
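
The Pareto optimization mentioned under multi-objective compound selection can be illustrated with a small non-dominated filter. This is a sketch under stated assumptions: every objective is scaled so that higher is better, and the compound names and scores (potency as pIC50, solubility score, stability score) are invented.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and better on one."""
    at_least_as_good = all(x >= y for x, y in zip(a, b))
    strictly_better = any(x > y for x, y in zip(a, b))
    return at_least_as_good and strictly_better

def pareto_front(compounds):
    """Return the names of compounds that no other compound dominates."""
    front = []
    for name, scores in compounds.items():
        dominated = any(
            dominates(other, scores)
            for other_name, other in compounds.items()
            if other_name != name
        )
        if not dominated:
            front.append(name)
    return front

# Hypothetical candidates: (potency, solubility score, metabolic stability score).
candidates = {
    "cmpd_1": (8.1, 0.30, 0.60),
    "cmpd_2": (7.4, 0.70, 0.80),
    "cmpd_3": (7.0, 0.60, 0.70),  # worse than cmpd_2 on all three objectives
    "cmpd_4": (6.5, 0.90, 0.50),
}
print(pareto_front(candidates))
```

Only `cmpd_3` is filtered out here. The remaining compounds represent different trade-offs, and choosing among them is where synthetic accessibility and diversity criteria come in.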

Quality Control Considerations:

- Validate generated structures for chemical correctness and novelty

- Implement applicability domain assessment to identify extrapolations beyond training data

- Establish synthetic feasibility thresholds to prioritize readily accessible compounds

Performance Metrics and Case Studies

Table 2: Generative AI Performance in Lead Optimization

| Platform/Company | Compounds Synthesized | Timeline Reduction | Clinical Candidates |
|---|---|---|---|
| Traditional Medicinal Chemistry | 2,500-5,000 | Baseline | 1-2 per program |
| Exscientia (CDK7 Inhibitor) | 136 | ~70% faster | 1 [1] |
| Insilico Medicine (IPF Program) | Not specified | 18 months (target to Phase I) | 1 [1] |

Application Note 3: Clinical Trial Optimization

Background and Significance

Clinical trials represent one of the most costly and time-consuming stages of drug development, with traditional approaches often struggling with recruitment challenges, protocol deviations, and inaccurate outcome predictions [4]. ML technologies are transforming this landscape by enabling smarter trial design, optimized patient recruitment, and real-time monitoring [4] [2]. By learning from historical trial data, ML models can forecast potential outcomes, dropout rates, or adverse events for new studies, helping stakeholders make evidence-backed decisions on whether to proceed, modify, or discontinue a trial [4]. This approach allows clinical research institutes to run trials that are smaller, faster, and safer while generating more robust conclusions about therapeutic efficacy [2].

Experimental Protocol: AI-Enhanced Patient Recruitment and Stratification

Purpose: To accelerate clinical trial enrollment and improve patient stratification using machine learning analysis of heterogeneous healthcare data.

Materials and Software:

- Data Sources: Electronic Health Records (EHRs), genomic databases, medical claims data, patient registries [4]

- ML Platform: Cloud-based analytics environment with appropriate data security protocols

- Tools: Natural language processing for clinical note analysis, predictive modeling frameworks

Methodology:

Data Harmonization and Feature Engineering

- Implement HIPAA-compliant data de-identification and privacy protection

- Apply NLP to extract structured information from clinical notes and medical narratives

- Create derived features combining diagnosis codes, medication history, and lab values

Predictive Model Development

- Train supervised learning models (e.g., gradient boosting, neural networks) to identify eligible patients

- Implement survival analysis techniques to forecast patient availability timelines

- Develop similarity metrics to match patient profiles to trial eligibility criteria

Digital Twin Simulations

- Create in silico patient representations using historical control data [4]

- Generate synthetic control arms for rare diseases or difficult-to-recruit conditions

- Simulate trial outcomes across different recruitment and stratification scenarios

Adaptive Recruitment Monitoring

- Implement real-time dashboards to track enrollment rates and demographics

- Apply anomaly detection to identify sites with unexpected recruitment challenges

- Dynamically adjust recruitment strategies based on predictive analytics

Quality Control Considerations:

- Validate prediction accuracy against actual enrollment outcomes in pilot studies

- Ensure algorithmic fairness across demographic groups through bias testing

- Maintain audit trails for regulatory compliance and model explainability

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for AI-Driven Drug Discovery

| Tool Category | Specific Examples | Function | Implementation Considerations |
|---|---|---|---|
| Generative Chemistry Platforms | Exscientia Centaur Chemist, Insilico Medicine Generative Tensorial Reinforcement Learning (GENTRL) | De novo molecular design with multi-parameter optimization | Requires integration with wet-lab validation; platform-specific expertise needed [1] |
| Knowledge Graph Technologies | BenevolentAI Platform, Semantic MEDLINE | Extracts hidden relationships from structured and unstructured data | Dependent on data quality and completeness; complex interpretation required [1] |
| Phenotypic Screening Platforms | Recursion Phenomics Platform, Exscientia Patient-on-a-Chip | High-content screening using cellular models including patient-derived samples | Generates massive image datasets requiring specialized computer vision analysis [1] |
| Clinical Trial Optimization Tools | Unlearn.AI Digital Twins, Predictive recruitment algorithms | Creates synthetic control arms, optimizes patient selection | Regulatory acceptance evolving; requires extensive historical data [4] |
| Protein Structure Prediction | DeepMind AlphaFold, RoseTTAFold | Predicts 3D protein structures from amino acid sequences | Accuracy varies by protein class; experimental validation recommended [4] |

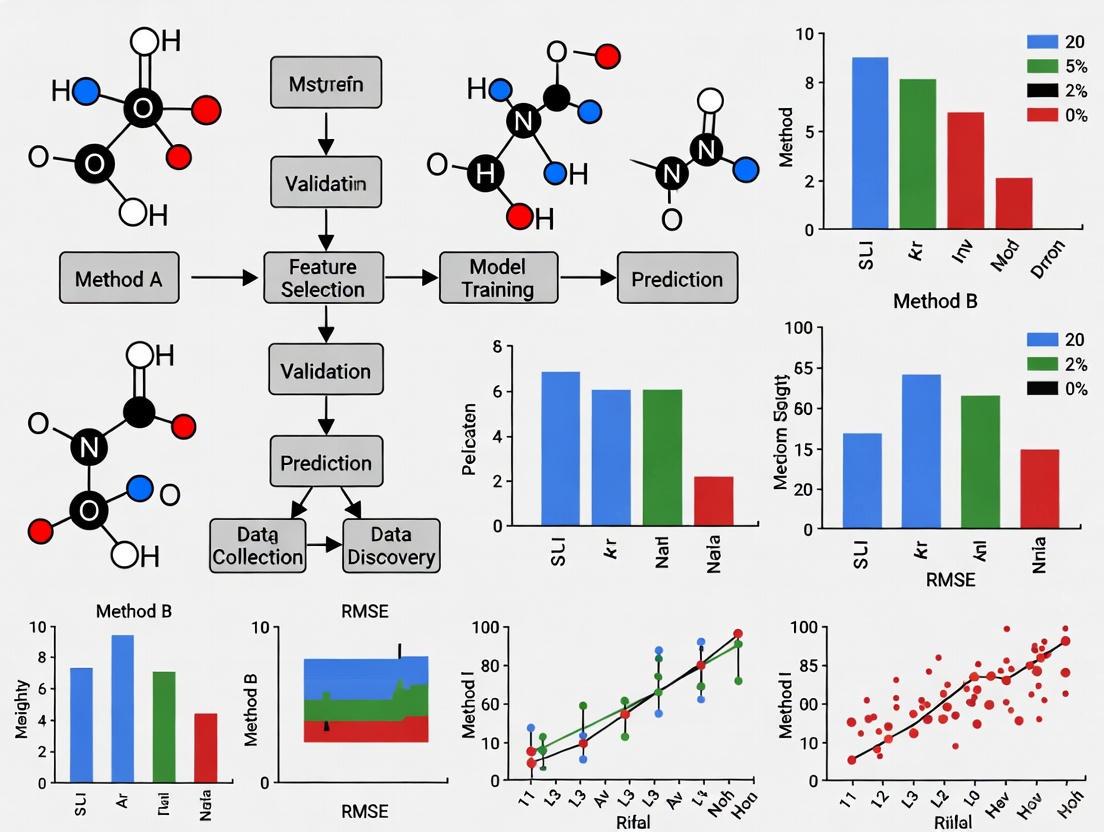

Visualization of Key Workflows

Machine Learning Model Development Workflow

AI-Driven Drug Discovery Pipeline

Method Comparison Framework

Method Comparison Guidelines for ML in Drug Discovery

Robust method comparison is essential for advancing ML applications in pharmaceutical research. The following guidelines provide a framework for rigorous evaluation:

Dataset Selection and Partitioning:

- Utilize chemically diverse and biologically relevant compound collections

- Implement time-split validation to assess temporal generalizability

- Apply scaffold-based splits to evaluate performance on novel chemical classes

- Ensure adequate representation of negative data (inactive compounds) to avoid bias
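
The time-split and scaffold-based partitioning described above can be sketched as follows. The records, the `scaffold` keys, and the dates are hypothetical. In practice the scaffold key would be computed by a cheminformatics toolkit (for example a Bemis-Murcko scaffold); here it is assumed to be precomputed. Filling the training set with the largest scaffold groups first is one common convention, not the only one.

```python
from collections import defaultdict
from datetime import date

def time_split(records, cutoff):
    """Train on compounds measured before cutoff, test on those measured after."""
    train = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return train, test

def scaffold_split(records, train_fraction=0.8):
    """Keep each scaffold group entirely in train or entirely in test."""
    groups = defaultdict(list)
    for r in records:
        groups[r["scaffold"]].append(r)
    # Largest groups are considered first; a group goes to train only if it fits.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train, test = [], []
    target = train_fraction * len(records)
    for group in ordered:
        if len(train) + len(group) <= target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test

# Hypothetical assay records with precomputed scaffold keys.
records = [
    {"id": "c1", "scaffold": "s_A", "date": date(2019, 3, 1)},
    {"id": "c2", "scaffold": "s_A", "date": date(2020, 6, 1)},
    {"id": "c3", "scaffold": "s_A", "date": date(2021, 1, 15)},
    {"id": "c4", "scaffold": "s_B", "date": date(2021, 9, 1)},
    {"id": "c5", "scaffold": "s_B", "date": date(2022, 2, 1)},
    {"id": "c6", "scaffold": "s_C", "date": date(2022, 8, 1)},
]
past, future = time_split(records, date(2021, 6, 1))
train, test = scaffold_split(records)
```

Both splits are deliberately harder than a random split: the model is evaluated on compounds that are newer, or structurally different, than anything it was trained on.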

Performance Metrics and Benchmarking:

- Early Discovery: Prioritize early enrichment metrics (enrichment factor at the top 1% and 10% of the ranked list, EF1% and EF10%) alongside AUC

- Lead Optimization: Include multi-parameter optimization success rates

- Synthetic Accessibility: Incorporate synthetic feasibility scores and medicinal chemistry desirability indices

- Experimental Validation: Report confirmation rates in downstream biological assays
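
As a concrete reference for the early enrichment metrics listed above, the enrichment factor at a fraction x of the ranked list is the hit rate among the top-scored x of compounds divided by the hit rate in the whole library. The sketch below assumes binary activity labels and scores where higher means more likely active; the example screen is invented.

```python
def enrichment_factor(scores, labels, fraction):
    """EF = (actives in top fraction / size of top fraction) / (actives / N)."""
    n = len(scores)
    n_top = max(1, int(round(fraction * n)))
    # Rank compounds from highest to lowest predicted score.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    total_hits = sum(labels)
    if total_hits == 0:
        raise ValueError("enrichment factor is undefined without any actives")
    return (hits_top / n_top) / (total_hits / n)

# Hypothetical screen: 20 compounds, 4 actives at ranks 1, 2, 5 and 12.
scores = [float(s) for s in range(20, 0, -1)]
labels = [0] * 20
for rank in (1, 2, 5, 12):
    labels[rank - 1] = 1

print(enrichment_factor(scores, labels, 0.10))
print(enrichment_factor(scores, labels, 0.50))
```

In this example the top 10% contains only actives, giving a five-fold enrichment over random selection, while the enrichment over the top half of the list is much lower.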

Statistical Significance and Practical Relevance:

- Employ appropriate statistical tests for method comparison (e.g., paired t-tests, bootstrap confidence intervals)

- Differentiate between statistical significance and practical relevance in pharmaceutical contexts

- Report effect sizes with confidence intervals rather than relying solely on p-values

- Consider computational efficiency and resource requirements alongside predictive performance
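
To make the recommendation about effect sizes with confidence intervals concrete, the following sketch computes a percentile bootstrap interval for the mean paired difference in per-compound error between two methods. The error values, the number of resamples, and the fixed seed are assumptions chosen for illustration.

```python
import random

def paired_bootstrap_ci(errors_a, errors_b, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap CI for mean(errors_a - errors_b) over paired compounds."""
    rng = random.Random(seed)
    # Pairing matters: both methods are evaluated on the same compounds.
    diffs = [a - b for a, b in zip(errors_a, errors_b)]
    n = len(diffs)
    boot_means = []
    for _ in range(n_boot):
        # Resample compounds with replacement and recompute the mean difference.
        sample = [diffs[rng.randrange(n)] for _ in range(n)]
        boot_means.append(sum(sample) / n)
    boot_means.sort()
    lower = boot_means[int((1 - level) / 2 * n_boot)]
    upper = boot_means[int((1 + level) / 2 * n_boot) - 1]
    return sum(diffs) / n, lower, upper

# Hypothetical absolute errors of two models on the same five test compounds.
errors_baseline = [0.50, 0.60, 0.70, 0.55, 0.65]
errors_new = [0.40, 0.45, 0.60, 0.50, 0.50]
effect, lower, upper = paired_bootstrap_ci(errors_baseline, errors_new)
print(effect, lower, upper)
```

An interval that excludes zero suggests a consistent difference. Whether a difference of that size matters for a discovery campaign is a separate, practical question.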

The implementation of these method comparison guidelines requires domain-appropriate performance metrics and statistically rigorous protocols to ensure replicability and ultimately the adoption of ML in small molecule drug discovery [5]. As the field continues to evolve, maintaining rigorous standards for methodological comparison will be essential for differentiating genuine advances from incremental improvements and for building trust in AI-driven approaches across the pharmaceutical research community.

Deep learning, a subset of machine learning driven by multilayered neural networks, has emerged as a transformative technology for analyzing complex biological data. These artificial neural networks are inspired by the structure of the human brain and comprise interconnected layers of "neurons" that perform mathematical operations [6]. The "deep" in deep learning refers to the use of multiple layers (typically at least four, though modern architectures often have hundreds or thousands) that progressively transform input data into more abstract and composite representations [7] [6]. This hierarchical learning capability makes deep learning particularly well-suited for biological pattern recognition tasks, where relevant information is often embedded in high-dimensional data with complex, non-linear relationships.

In the context of drug discovery, deep learning models power most state-of-the-art artificial intelligence applications, from target identification and validation to predictive toxicology [8] [9]. The field of computational biology has especially benefited from these advances, with deep learning algorithms achieving performance comparable to or surpassing human expert performance in areas including protein structure prediction, medical image analysis, and bioinformatics [7] [10]. Unlike traditional machine learning that often requires hand-crafted feature engineering, deep learning models automatically discover optimal feature representations directly from raw data, making them exceptionally capable of identifying subtle, complex patterns in biological datasets without explicit programming of domain knowledge [7].

Deep Learning Architectures for Biological Pattern Recognition

Different deep learning architectures offer unique advantages for specific types of biological data and analytical tasks. Understanding these architectures is essential for selecting the appropriate method for a given drug discovery application.

Table 1: Deep Learning Architectures for Biological Data Analysis

| Architecture | Best-Suited Data Types | Key Strengths | Drug Discovery Applications |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Image data, grid-like data | Spatial feature detection, translation invariance | Medical image analysis, histopathology, protein-ligand interaction prediction [8] [11] |
| Recurrent Neural Networks (RNNs) | Sequential data, time series | Temporal dependency modeling, variable-length inputs | Protein sequence analysis, genomic sequences, time-series experimental data [11] [12] |
| Transformers | Sequences, structured data | Long-range dependency capture, parallel processing | Protein structure prediction, molecular property prediction, de novo drug design [10] [9] |
| Graph Convolutional Networks | Graph-structured data | Relationship modeling, topological feature learning | Molecular graph analysis, protein-protein interaction networks, disease propagation models [8] |
| Deep Autoencoder Networks | High-dimensional data | Dimensionality reduction, feature learning | Single-cell RNA sequencing data, biomarker discovery, data compression [8] |

Specialized Architectures for Biological Data

Beyond these foundational architectures, several specialized approaches have been developed specifically for biological applications. Deep belief networks can be trained in an unsupervised manner, which is particularly valuable given the abundance of unlabeled biological data compared to labeled data [7]. Generative adversarial networks (GANs) consist of two networks—one generating content and the other classifying it—and have shown promise in de novo molecular design and generating synthetic biological data for training augmentation [8]. Transformers, originally developed for natural language processing, have been successfully adapted for biological sequences by treating amino acids or nucleotides as "words" and entire proteins or genes as "sentences" to capture long-range dependencies and structural contexts [10] [11].

The training process for these architectures follows a consistent methodology regardless of the specific application. During the forward pass, input data flows through the network, with each layer performing linear transformations (weighted sums of inputs plus biases) followed by non-linear activation functions [12] [6]. The output is then compared to the true value using a loss function that quantifies the prediction error. Through backpropagation, this error is propagated backward through the network, and the gradient descent algorithm adjusts weights and biases to minimize the loss in subsequent iterations [11] [6]. This iterative process allows the network to automatically learn hierarchical feature representations optimal for the specific prediction task.
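
The forward pass, loss, backpropagation, and gradient descent update described above can be traced in a deliberately tiny network written without any framework. The sketch assumes one input, one hidden layer of tanh units, a linear output, and mean squared error. The target function, layer size, and learning rate are arbitrary choices for illustration.

```python
import math
import random

def train_tiny_network(xs, ys, hidden=4, lr=0.05, epochs=500, seed=0):
    """Full-batch gradient descent on a 1-hidden-layer network; returns loss per epoch."""
    rng = random.Random(seed)
    w1 = [rng.uniform(-1, 1) for _ in range(hidden)]  # input -> hidden weights
    b1 = [0.0] * hidden                               # hidden biases
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]  # hidden -> output weights
    b2 = 0.0                                          # output bias
    n = len(xs)
    history = []
    for _ in range(epochs):
        gw1, gb1, gw2, gb2 = [0.0] * hidden, [0.0] * hidden, [0.0] * hidden, 0.0
        loss = 0.0
        for x, y in zip(xs, ys):
            # Forward pass: weighted sum plus bias, then non-linear activation.
            h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
            y_hat = sum(w2[j] * h[j] for j in range(hidden)) + b2
            err = y_hat - y
            loss += err * err / n
            # Backward pass: chain rule from the loss back to each parameter.
            d_out = 2 * err / n
            gb2 += d_out
            for j in range(hidden):
                gw2[j] += d_out * h[j]
                d_hidden = d_out * w2[j] * (1 - h[j] ** 2)  # tanh'(z) = 1 - tanh(z)^2
                gw1[j] += d_hidden * x
                gb1[j] += d_hidden
        history.append(loss)
        # Gradient descent step: move each parameter against its gradient.
        for j in range(hidden):
            w1[j] -= lr * gw1[j]
            b1[j] -= lr * gb1[j]
            w2[j] -= lr * gw2[j]
        b2 -= lr * gb2
    return history

xs = [i / 10 for i in range(-10, 11)]
ys = [x * x for x in xs]  # toy target: y = x^2
losses = train_tiny_network(xs, ys)
print(losses[0], losses[-1])
```

Frameworks such as TensorFlow and PyTorch automate the backward pass, but the quantities they compute are the same ones accumulated by hand here.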

Application Protocols for Protein Structure Prediction

Protein structure prediction represents one of the most significant successes of deep learning in computational biology. Accurate protein structures are crucial for understanding biological processes and designing effective therapeutics, yet traditional experimental methods like X-ray crystallography and cryo-electron microscopy are time-consuming and expensive [10]. Deep learning approaches have dramatically accelerated and improved this process, as exemplified by state-of-the-art tools like AlphaFold.

Data Preprocessing and Feature Engineering

The initial stage in protein structure prediction involves comprehensive data preprocessing and feature extraction from amino acid sequences and related biological data:

Multiple Sequence Alignment (MSA) Generation: Search the target amino acid sequence against databases such as UniProt, TrEMBL, or Pfam to identify homologous sequences and construct MSAs [10]. MSAs capture evolutionary constraints and residue co-evolution patterns that inform structural contacts.

Feature Representation: Convert the raw amino acid sequence and MSA into numerical representations suitable for neural network processing. This includes:

- Sequence embeddings (one-hot encoding, learned embeddings)

- Position-specific scoring matrices (PSSMs)

- Predicted secondary structure features

- Physicochemical property encodings (hydrophobicity, charge, volume)

- Co-evolutionary information from residue covariation

Data Augmentation: Apply random transformations to training examples including sequence cropping, rotation invariance enforcement, and noise injection to improve model robustness and prevent overfitting.
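
Two of the simpler representations listed above, one-hot sequence encoding and a physicochemical encoding, can be sketched directly. The hydrophobicity values are the Kyte-Doolittle hydropathy scale. The window size, the handling of non-standard residues, and the example peptide are assumptions for illustration.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues, one-letter codes

# Kyte-Doolittle hydropathy scale (higher = more hydrophobic).
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def one_hot(sequence):
    """Encode each residue as a 20-dimensional indicator vector.

    Non-standard residues (e.g. 'X') become all-zero vectors; this is a
    simplification chosen for the sketch.
    """
    index = {aa: i for i, aa in enumerate(AMINO_ACIDS)}
    encoded = []
    for residue in sequence:
        vec = [0] * len(AMINO_ACIDS)
        if residue in index:
            vec[index[residue]] = 1
        encoded.append(vec)
    return encoded

def hydropathy_profile(sequence, window=3):
    """Sliding-window mean hydropathy; returns len(sequence) - window + 1 values."""
    values = [KYTE_DOOLITTLE.get(residue, 0.0) for residue in sequence]
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]

peptide = "MKAILV"  # hypothetical example sequence
features = one_hot(peptide)
profile = hydropathy_profile(peptide)
```

Learned embeddings, PSSMs, and co-evolutionary features require trained models or alignments and are not covered by this sketch.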

Table 2: Key Protein Structure Databases for Training and Validation

| Database | Primary Content | Data Scale | Application in Model Development |
|---|---|---|---|
| Protein Data Bank (PDB) | Experimentally determined 3D protein structures | ~200,000 structures | Gold-standard training data and benchmark validation [10] |
| UniProt/TrEMBL | Protein sequences and functional information | >200 million sequences | Multiple sequence alignment generation, evolutionary context [10] |
| CATH/SCOP | Protein structure classification | Manual curation of PDB entries | Structural taxonomy, fold recognition, model evaluation [10] |

Model Architecture and Training Protocol

The following protocol outlines the end-to-end process for developing a deep learning model for protein structure prediction:

Step 1: Model Selection and Configuration

- Select appropriate architecture based on prediction task (typically transformer-based or CNN-based models)

- Configure hyperparameters including number of layers (typically 20-100+), attention heads (for transformers), filter sizes (for CNNs), and hidden unit dimensions

- Implement residual connections and normalization layers to enable training of very deep networks

- Set optimization parameters (learning rate, batch size, gradient clipping thresholds)

Step 2: Model Training

- Initialize model with pretrained weights when available (transfer learning)

- Implement mini-batch training with balanced batch composition

- Apply progressive training strategies: initially train on easier targets (high homology templates), then progressively include more difficult examples

- Employ regularization techniques including dropout, weight decay, and early stopping to prevent overfitting

- Monitor training and validation loss curves, adjusting learning rate schedules accordingly

Step 3: Prediction and Structure Generation

- Feed preprocessed target sequence and MSA through trained network to obtain distance matrices, torsion angles, and/or coordinate predictions

- Convert network outputs to 3D atomic coordinates using gradient-based optimization or geometry-based reconstruction

- Generate multiple candidate structures (typically 5-25) to explore conformational space

Step 4: Model Selection and Refinement

- Rank candidate structures using confidence metrics (predicted confidence scores, consensus metrics)

- Apply energy minimization and molecular dynamics refinement to relieve steric clashes and stereochemical violations

- Validate structures using geometric quality assessment (Ramachandran plots, rotamer distributions, clash scores)

- Compare to existing structures (if available) using metrics like TM-score and RMSD
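
The two comparison metrics named in the last step can be written out for the simplest case. This sketch assumes the model and reference have already been superimposed and that residues correspond one-to-one (C-alpha coordinates in ångströms). Real TM-score tools additionally search for the superposition that maximizes the score, which is omitted here, and the toy coordinates are invented.

```python
import math

def _distance(a, b):
    """Euclidean distance between two 3D points."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def rmsd(model, reference):
    """Root-mean-square deviation over paired, pre-superimposed coordinates."""
    n = len(reference)
    return math.sqrt(sum(_distance(a, b) ** 2 for a, b in zip(model, reference)) / n)

def tm_score_fixed_superposition(model, reference):
    """TM-score formula evaluated for one given superposition (no optimisation)."""
    length = len(reference)
    # d0 scales distances by chain length; floored at 0.5 for short chains.
    if length > 15:
        d0 = max(0.5, 1.24 * (length - 15) ** (1.0 / 3.0) - 1.8)
    else:
        d0 = 0.5
    total = sum(1.0 / (1.0 + (_distance(a, b) / d0) ** 2) for a, b in zip(model, reference))
    return total / length

# Toy 20-residue chain and a copy shifted by 1 Å along x.
reference = [(3.8 * i, 0.0, 0.0) for i in range(20)]
shifted = [(x + 1.0, y, z) for x, y, z in reference]
print(rmsd(shifted, reference), tm_score_fixed_superposition(shifted, reference))
```

RMSD weights every residue equally and is sensitive to a few badly placed regions, whereas the TM-score down-weights large deviations and is normalized by length, which is why the two are usually reported together.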

Experimental Validation and Method Comparison Protocols

Robust validation and method comparison are essential for establishing the practical utility of deep learning approaches in drug discovery research. The following protocols provide guidelines for rigorous evaluation and comparison of deep learning methods in biological data analysis.

Performance Metrics and Benchmarking

Comprehensive evaluation requires multiple complementary metrics that assess different aspects of model performance:

Table 3: Key Performance Metrics for Deep Learning Models in Drug Discovery

| Metric Category | Specific Metrics | Interpretation in Biological Context |
|---|---|---|
| Predictive Accuracy | AUC-ROC, Accuracy, Precision, Recall, F1-score | Classification performance for bioactivity prediction, disease diagnosis |
| Regression Performance | RMSE, MAE, R² | Quantitative structure-activity relationship (QSAR) modeling, binding affinity prediction |
| Structural Quality | TM-score, RMSD, GDT-TS | Protein structure prediction accuracy relative to experimental structures |
| Statistical Significance | p-values, confidence intervals | Reliability of reported performance differences between methods |
| Practical Utility | Early enrichment factor, hit rate | Effectiveness in actual drug discovery campaigns |

When comparing new deep learning methods to established baselines, it is essential to implement statistically rigorous comparison protocols [5] [13]. This includes appropriate train/validation/test splits, cross-validation strategies, and significance testing for performance differences. For small molecule property modeling, domain-appropriate metrics that reflect real-world utility should be prioritized over generic statistical measures [5].
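
For reference, the classification metrics in the table above can be computed from first principles. The sketch below obtains AUC-ROC as the probability that a randomly chosen active is scored above a randomly chosen inactive (ties count one half), and precision, recall, and F1 at a fixed threshold. The labels, scores, and the 0.5 threshold are invented for illustration.

```python
def roc_auc(labels, scores):
    """AUC-ROC via pairwise comparison of active and inactive scores."""
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one active and one inactive")
    wins = 0.0
    for p in pos:
        for q in neg:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos) * len(neg))

def precision_recall_f1(labels, scores, threshold=0.5):
    """Threshold the scores, then count true/false positives and false negatives."""
    tp = sum(1 for l, s in zip(labels, scores) if l == 1 and s >= threshold)
    fp = sum(1 for l, s in zip(labels, scores) if l == 0 and s >= threshold)
    fn = sum(1 for l, s in zip(labels, scores) if l == 1 and s < threshold)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical bioactivity predictions for six compounds.
y_true = [1, 1, 0, 1, 0, 0]
y_score = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
print(roc_auc(y_true, y_score))
print(precision_recall_f1(y_true, y_score))
```

AUC-ROC summarizes ranking quality across all thresholds, while precision, recall, and F1 depend on the chosen cutoff, so the threshold should be reported alongside them.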

Cross-validation Strategy for Limited Biological Data

Biological datasets often face limitations in sample size, particularly for specific protein families or disease contexts. The following cross-validation protocol ensures robust performance estimation:

Stratified Splitting: Partition data into training/validation/test sets (typical ratio: 60/20/20) while preserving distribution of important characteristics (e.g., protein families, activity classes)

Nested Cross-Validation: Implement outer loop for performance estimation (5-10 folds) and inner loop for hyperparameter optimization (3-5 folds)

Temporal Validation: For time-series biological data, enforce temporal splitting where models are trained on past data and validated on future data

Cluster-Based Validation: Ensure that highly similar compounds or proteins (based on chemical similarity or sequence homology) are contained within the same split to prevent information leakage
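
The nested cross-validation structure above is easy to get subtly wrong, so a skeletal version may help. The sketch uses a one-parameter ridge regression as a stand-in model and synthetic data; both are assumptions, as is the simple interleaved fold assignment. With real compounds or proteins, the fold assignment should be cluster-aware as described in the last item.

```python
def k_fold_indices(n, k):
    """Yield (train, test) index lists; fold i holds every k-th sample from i."""
    for i in range(k):
        test = [j for j in range(n) if j % k == i]
        train = [j for j in range(n) if j % k != i]
        yield train, test

def fit_ridge_1d(xs, ys, lam):
    """Closed-form ridge fit for y = w * x (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)

def mse(w, xs, ys):
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def nested_cv(xs, ys, lambdas, outer_k=5, inner_k=3):
    """Outer loop estimates performance; inner loop picks the hyperparameter."""
    outer_scores = []
    for train_idx, test_idx in k_fold_indices(len(xs), outer_k):
        x_tr = [xs[i] for i in train_idx]
        y_tr = [ys[i] for i in train_idx]
        # Inner loop sees only the outer-training data, never the outer test fold.
        best_lam, best_err = None, float("inf")
        for lam in lambdas:
            errs = []
            for in_tr, in_val in k_fold_indices(len(x_tr), inner_k):
                w = fit_ridge_1d([x_tr[i] for i in in_tr], [y_tr[i] for i in in_tr], lam)
                errs.append(mse(w, [x_tr[i] for i in in_val], [y_tr[i] for i in in_val]))
            mean_err = sum(errs) / len(errs)
            if mean_err < best_err:
                best_lam, best_err = lam, mean_err
        # Refit on all outer-training data with the selected hyperparameter.
        w = fit_ridge_1d(x_tr, y_tr, best_lam)
        outer_scores.append(mse(w, [xs[i] for i in test_idx], [ys[i] for i in test_idx]))
    return sum(outer_scores) / len(outer_scores)

# Synthetic data: y = 2x plus small alternating noise.
xs = [i / 10 for i in range(1, 31)]
ys = [2 * x + 0.1 * (-1) ** i for i, x in enumerate(xs, start=1)]
score = nested_cv(xs, ys, lambdas=[0.0, 0.1, 1.0, 10.0])
print(score)
```

The key property is that the outer test fold never influences hyperparameter selection, so the averaged outer score is not biased by the tuning itself.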

Essential Research Reagent Solutions

Implementing deep learning approaches for biological pattern recognition requires both computational tools and experimental resources for validation.

Table 4: Essential Research Reagents and Tools for Deep Learning in Drug Discovery

| Category | Specific Tools/Resources | Function/Purpose |
|---|---|---|
| Deep Learning Frameworks | TensorFlow, PyTorch, Keras | Model development, training, and deployment [8] [6] |
| Specialized Libraries | Scikit-learn, DeepChem, BioPython | Data preprocessing, cheminformatics, bioinformatics utilities [8] |
| Hardware Accelerators | GPUs (NVIDIA), TPUs (Google Cloud) | Parallel processing for training deep neural networks [8] [6] |
| Protein Structure Tools | MODELLER, Swiss-PdbViewer, PyMOL | Template-based modeling, structure visualization, analysis [10] |
| Experimental Validation | X-ray crystallography, Cryo-EM, NMR | Experimental structure determination for model validation [10] |
| Compound Management | ChEMBL, PubChem, ZINC | Small molecule databases for training and testing [8] |

Implementation Workflow for Drug Discovery Applications

The following diagram illustrates the complete workflow for implementing deep learning approaches in drug discovery projects, from data collection to experimental validation:

Deep learning approaches have demonstrated remarkable capabilities for complex pattern recognition in biological data, particularly in protein structure prediction and small molecule property modeling [10] [8]. These methods excel at automatically learning hierarchical feature representations from raw data, eliminating the need for manual feature engineering that traditionally limited computational biology approaches [7]. As deep learning continues to evolve, several emerging trends are likely to shape future applications in drug discovery, including multi-modal learning (integrating diverse data types), explainable AI techniques for model interpretability, and federated learning approaches that enable collaboration while preserving data privacy [8] [9].

The successful implementation of these technologies requires rigorous method comparison protocols and domain-appropriate validation strategies [5] [13]. By adhering to the application notes and protocols outlined in this document, researchers can ensure that deep learning approaches are deployed in a manner that generates biologically meaningful, reproducible, and practically significant results for drug discovery research. As the field advances, the integration of deep learning with experimental validation will continue to accelerate the identification of novel drug targets, the prediction of protein-ligand interactions, and the design of innovative therapeutics for complex diseases.

The application of transformer-based architectures and large language models (LLMs) represents a paradigm shift in computational molecular analysis for drug discovery. These models, originally developed for natural language processing (NLP), are uniquely suited to biological data because they can interpret genomic, chemical, and protein sequences as specialized languages with complex, hierarchical syntax and semantics [14] [15]. By leveraging self-attention mechanisms, these models capture long-range dependencies and intricate patterns within molecular data that traditional computational methods often miss [14] [16]. This capability is now accelerating various stages of the drug discovery pipeline, from target identification and molecular design to property prediction, compressing discovery timelines that traditionally required many years into a matter of months in some notable cases [1] [17].
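
The self-attention mechanism mentioned above can be illustrated on a toy molecular "sentence". This is a bare sketch of scaled dot-product attention that uses identity projections (queries, keys, and values are all the token embeddings themselves), whereas real transformers learn separate projection matrices and use multiple heads. The character-level tokenization of a SMILES string and the two-dimensional embeddings are assumptions for illustration.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product attention with Q = K = V = tokens (no learned weights)."""
    dim = len(tokens[0])
    outputs, weights = [], []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(dimension).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        attn = softmax(scores)
        weights.append(attn)
        # Output is the attention-weighted average of all token vectors.
        outputs.append([
            sum(attn[j] * tokens[j][c] for j in range(len(tokens)))
            for c in range(dim)
        ])
    return outputs, weights

# Hypothetical embeddings for a character-level SMILES vocabulary.
EMBED = {"C": [1.0, 0.0], "O": [0.0, 1.0], "N": [1.0, 1.0]}
smiles = "CCO"  # ethanol
token_vectors = [EMBED[ch] for ch in smiles]
outputs, weights = self_attention(token_vectors)
```

Every token attends to every other token in a single step, regardless of how far apart they are in the sequence, which is the property behind the long-range dependency capture described above.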

This document provides application notes and detailed experimental protocols for employing transformers and LLMs in molecular analysis. The content is framed within the critical context of method comparison guidelines for machine learning in drug discovery, emphasizing the need for robust, reproducible, and statistically rigorous benchmarking [5] [18]. The protocols are designed for use by researchers, scientists, and drug development professionals.

Key Applications and Quantitative Performance

The table below summarizes the primary applications of transformer models and LLMs in molecular analysis, along with documented performance metrics and impacts from both real-world applications and research settings.

Table 1: Performance Metrics of Transformers and LLMs in Drug Discovery Applications

| Application Area | Specific Task | Reported Performance / Impact | Model / Company Example |

|---|---|---|---|

| Target Identification | Disease mechanism understanding & target prioritization | Identified candidate therapeutic targets for cardiomyopathy via in silico deletion [15]. | Geneformer [15] |

| De Novo Molecular Design | Generative design of novel drug-like molecules | Achieved clinical candidate after synthesizing only 136 compounds, far fewer than the thousands typically required [1]. | Exscientia (CDK7 inhibitor program) [1] |

| Molecule Optimization | Accelerating design-make-test-analyze cycles | ~70% faster design cycles and 10x fewer synthesized compounds than industry norms [1]. | Exscientia Platform [1] |

| Property Prediction | Predicting absorption, distribution, metabolism, excretion, and toxicity (ADMET) | Critical for filtering out molecules with undesirable characteristics early in the discovery process [15]. | Specialized LLMs [15] |

| Protein Structure & Function | Predicting protein structure and annotating function from sequence | Successfully predicts protein structures and annotates functions directly from amino acid sequences [15]. | ESM (Evolutionary Scale Modeling) [15] |

| Chemistry Automation | Planning chemical synthesis and predicting reactions | Demonstrates potential in automating chemistry experiments, including retrosynthesis and reaction outcome prediction [15]. | ChemCrow [15] |

Application Notes & Experimental Protocols

Protocol 1: Protein Function Annotation using a Protein Language Model

This protocol details the use of a specialized protein LLM to annotate a protein's function from its amino acid sequence, a crucial step in early target validation.

Research Reagent Solutions

Table 2: Essential Materials for Protein Function Annotation

| Item | Function / Description |

|---|---|

| ESM (Evolutionary Scale Modeling) | A specialized protein LLM pretrained on millions of protein sequences to learn evolutionary patterns and structural constraints [15]. |

| FASTA File of Target Protein | The input data containing the amino acid sequence of the protein of interest in a standard text-based format [15]. |

| Tokenization Vocabulary | A predefined mapping that converts each amino acid character in the sequence into a token ID that the model can process [15]. |

| Computation Cluster (GPU) | High-performance computing resources to handle the intensive computations of the transformer model. |

Step-by-Step Workflow

- Input Preparation: Obtain the amino acid sequence of the protein of interest. Format this sequence into a standard FASTA file.

- Tokenization: Process the sequence through the model's tokenizer. This step splits the sequence into tokens (e.g., individual amino acids or sub-words) and converts them into numerical token IDs using the model's vocabulary [15].

- Masked Language Modeling Inference:

  - Masking: Randomly mask a portion (e.g., 15%) of the amino acid tokens in the input sequence, replacing them with a special `<mask>` token.

  - Prediction: Feed the masked sequence into the ESM model. The model's task is to predict the original amino acids for the masked positions based on the context provided by the entire surrounding sequence.

  - Output: The model outputs a probability distribution over all possible amino acids for each masked position.

- Function Prediction: The model's ability to accurately predict the missing residues is correlated with its understanding of the protein's fold and function. The learned sequence representations (embeddings) can be used as input features for downstream tasks, such as:

  - Direct Function Annotation: Using the embeddings to predict Gene Ontology terms.

  - Structure Prediction: Inferring the 3D structure of the protein from its sequence [15].
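
The input preparation, tokenization, and masking steps above can be illustrated with a minimal, standard-library sketch. It does not load ESM or any real model: the vocabulary, the helper names (`read_fasta_sequence`, `tokenize`, `mask_tokens`), and the example sequence are all assumptions made for illustration, and a real protein language model ships its own tokenizer and special tokens.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
# Hypothetical vocabulary: two special tokens, then the 20 standard residues.
VOCAB = {"<pad>": 0, "<mask>": 1, **{aa: i + 2 for i, aa in enumerate(AMINO_ACIDS)}}

def read_fasta_sequence(fasta_text):
    """Return the first sequence of a FASTA string, without its header line."""
    parts, seen_header = [], False
    for line in fasta_text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if seen_header:
                break  # stop at the second record
            seen_header = True
            continue
        parts.append(line)
    return "".join(parts)

def tokenize(sequence):
    """Map each residue character to its integer token ID."""
    return [VOCAB[aa] for aa in sequence]

def mask_tokens(token_ids, mask_fraction=0.15, seed=0):
    """Replace a random subset of positions with the <mask> token ID."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(token_ids)))
    positions = sorted(rng.sample(range(len(token_ids)), n_mask))
    masked = list(token_ids)
    for pos in positions:
        masked[pos] = VOCAB["<mask>"]
    return masked, positions

# Made-up sequence, used only to exercise the functions.
fasta = ">example_protein\nMKTAYIAKQRQISFVKSHFS\nRQLEERLGLIEVQ\n"
sequence = read_fasta_sequence(fasta)
token_ids = tokenize(sequence)
masked_ids, masked_positions = mask_tokens(token_ids)
```

In an actual run, `masked_ids` would be passed to the model, and the predicted distributions at `masked_positions` would be compared with the original residues in `token_ids`.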

The following diagram illustrates the logical workflow and data flow for this protocol.

Protocol 2: De Novo Small Molecule Design using a Generative Chemical LLM

This protocol describes a generative approach to design novel small molecules with desired properties using a chemical LLM trained on SMILES notation.

Research Reagent Solutions

Table 3: Essential Materials for De Novo Molecular Design

| Item | Function / Description |

|---|---|

| Generative Chemical LLM | A transformer model trained on a vast corpus of known chemical structures represented as SMILES strings, learning the grammatical rules of chemistry. |

| Target Product Profile (TPP) | A predefined set of constraints for the desired molecule (e.g., potency, selectivity, ADMET properties) to guide the generation process. |

| SMILES Notation | A string-based representation system that uses ASCII characters to describe the structure of a molecule using a small set of grammatical rules [15]. |

| Property Prediction Models | Auxiliary models (e.g., for QSAR or binding affinity prediction) used to score, filter, and prioritize the generated molecules. |

Step-by-Step Workflow

- Model Pretraining: A transformer model is first pretrained on a large dataset of known chemical structures (e.g., from PubChem or ZINC) represented as SMILES strings. This teaches the model the fundamental "syntax" and "vocabulary" of chemistry [15].

- Conditional Generation: The pretrained model is then fine-tuned or guided using reinforcement learning to generate molecules that not only are syntactically valid but also optimize for specific properties defined in the TPP.

- Sampling and Decoding: Using techniques like beam search or nucleus sampling, the model generates a large library of novel, valid SMILES strings.

- In Silico Screening: The generated molecules are virtually screened using predictive models for properties like binding affinity, solubility, and metabolic stability (ADMET) [15].

- Iterative Optimization: The results from the screening are used to provide feedback, further refining the generative model in an iterative "design-make-test" cycle, dramatically compressing the lead optimization timeline [1].
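
As a rough illustration of the sampling and decoding step, the sketch below implements nucleus (top-p) sampling over a single next-token distribution. The probabilities and the SMILES-like token set are invented for the example, and `nucleus_sample` is a hypothetical helper rather than part of any specific chemical LLM.

```python
import random

def nucleus_sample(token_probs, top_p=0.9, rng=None):
    """Sample one token from the smallest set whose cumulative probability reaches top_p."""
    rng = rng or random.Random()
    ranked = sorted(token_probs.items(), key=lambda kv: kv[1], reverse=True)
    nucleus, cumulative = [], 0.0
    for token, prob in ranked:
        nucleus.append((token, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    tokens = [t for t, _ in nucleus]
    weights = [p for _, p in nucleus]
    # random.choices renormalizes the weights within the nucleus.
    return rng.choices(tokens, weights=weights, k=1)[0], tokens

# Illustrative next-token distribution over a few SMILES characters.
next_token_probs = {"C": 0.55, "c": 0.25, "N": 0.12, "O": 0.05, "(": 0.02, "#": 0.01}
token, nucleus = nucleus_sample(next_token_probs, top_p=0.9, rng=random.Random(0))
```

Lower `top_p` values restrict generation to the most likely tokens, which tends to raise the share of valid SMILES at the cost of novelty. Generated strings still need a validity check with a cheminformatics toolkit before screening.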

The workflow for this generative and iterative process is shown below.

Method Comparison and Benchmarking Guidelines

The adoption of transformers and LLMs in high-stakes drug discovery decisions necessitates rigorous and statistically sound method comparison. The following guidelines, drawn from emerging best practices, should be adhered to when benchmarking new models [5] [18].

- Use Appropriate Data Splitting: Avoid simple random splits of data, which can lead to over-optimistic performance estimates due to data leakage, especially with structurally similar molecules. Use more rigorous methods like scaffold splitting, which groups molecules by their core chemical structure, ensuring that the model is tested on truly novel scaffolds [18].

- Employ Cross-Validation Correctly: While k-fold cross-validation is common, repeated random splitting is generally not recommended as it creates strong dependencies between splits. If using cross-validation, ensure the splitting strategy is aligned with the problem's domain, such as grouping by protein targets for binding affinity prediction [18].

- Report Domain-Appropriate Metrics: Beyond generic metrics like AUC-ROC or accuracy, report metrics that are meaningful to medicinal chemists. This includes the "hit rate" in virtual screening, the synthetic accessibility score (SAS) of generated molecules, and the false positive/negative rates in toxicity prediction [5].

- Prioritize Interpretability and Transparency: Given the "black box" nature of many complex models, it is critical to use and report explainability techniques (e.g., attention visualization, SHAP plots) to build trust and provide mechanistic insights. Transparent workflows that allow researchers to verify inputs and outputs are essential for adoption [17] [19].

- Validate with Wet-Lab Experiments: The ultimate validation of any in silico model is its correlation with real-world experimental results. A robust benchmarking protocol must include plans for in vitro and/or in vivo validation of top-ranked candidates to confirm predicted efficacy and safety [1] [17].
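
The scaffold-splitting guideline can be sketched as a group-aware split in which every scaffold lands entirely in either the training or the test set. The example assumes scaffold keys have already been computed (for instance with a cheminformatics toolkit); the function name, the greedy largest-first assignment, and the placeholder records are illustrative choices, not a prescribed procedure.

```python
from collections import defaultdict

def scaffold_split(records, test_fraction=0.2):
    """Split (compound_id, scaffold_key) records so that no scaffold spans both sets."""
    groups = defaultdict(list)
    for compound_id, scaffold in records:
        groups[scaffold].append(compound_id)
    # Largest scaffold groups fill the training set first; rarer scaffolds end up in test.
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_train_target = len(records) - round(test_fraction * len(records))
    train, test = [], []
    for members in ordered:
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Placeholder data: ten compounds spread over four scaffolds.
records = (
    [(f"cpd_{i}", "scaffold_A") for i in range(5)]
    + [(f"cpd_{i}", "scaffold_B") for i in range(5, 8)]
    + [("cpd_8", "scaffold_C"), ("cpd_9", "scaffold_D")]
)
train_ids, test_ids = scaffold_split(records, test_fraction=0.2)
```

Because whole groups are assigned at once, the realized test fraction can deviate from the requested one when scaffold groups are large.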

In early-stage drug discovery, the scarcity of high-quality, large-scale data presents a significant bottleneck for traditional machine learning models. Few-shot learning (FSL) has emerged as a transformative paradigm, enabling models to generalize and make accurate predictions from a very limited number of training examples. This capability is particularly vital for predicting drug responses in rare cancers, repurposing existing pharmaceuticals, and accelerating novel therapeutic development where structured biological data is inherently limited. By leveraging prior knowledge and advanced learning strategies, FSL methods are overcoming one of the most persistent challenges in computational drug discovery.

Performance Comparison of Few-Shot Learning Approaches

The table below summarizes the performance characteristics of prominent few-shot learning methods as applied to drug discovery challenges, particularly in predicting drug pair synergy across rare cancer tissues with limited data availability.

Table 1: Performance Comparison of Few-Shot Learning Methods in Drug Discovery

| Method | Architecture | Sample Efficiency | Key Applications | Performance Notes |

|---|---|---|---|---|

| CancerGPT [20] | LLM-based (~124M parameters) | Effective in k-shot (k=0 to 128) scenarios | Drug pair synergy prediction in rare tissues | Achieves significant accuracy even in zero-shot settings; outperforms larger models in out-of-distribution tissues |

| Meta-CNN [21] | Few-shot meta-learning with convolutional networks | Enhanced stability with limited samples | CNS drug discovery, pharmaceutical repurposing | Improved prediction accuracy over traditional ML with limited brain physiology data |

| Fine-tuning with Mahalanobis Loss [22] | Regularized quadratic-probe loss with dedicated optimizer | Highly competitive with minimal samples | Molecular property prediction | Robust to domain shifts; avoids need for episodic pre-training |

| GPT-3 [20] | Large LLM (~175B parameters) | Competitive with increasing shots | Drug pair synergy prediction | Highest accuracy in pancreas tissue in the zero-shot setting; benefits from abundant samples |

| Data-Driven Models (TabTransformer, Collaborative Filtering) [20] | Traditional tabular data models | Requires in-distribution data | Drug synergy when common/rare tissue patterns align | Superior accuracy when external data distribution matches target tissue |

Detailed Experimental Protocols

Protocol: CancerGPT for Drug Pair Synergy Prediction

Application Note: This protocol enables prediction of synergistic drug combinations for rare cancer tissues with minimal training samples by leveraging knowledge encoded in large language models [20].

Materials & Reagents:

- Drug pair synergy data (e.g., from DrugComb database)

- Rare tissue genomic characteristics (optional)

- Pre-trained language model (GPT-2 architecture)

- Computational resources (GPU recommended)

Procedure:

- Task Reformulation: Convert the structured drug pair prediction task into natural language format by creating textual descriptors of drug compounds, target tissues, and molecular attributes.

- Embedding Extraction: Derive prior knowledge embeddings from the pre-trained LLM's weight matrices to initialize the model with biochemical knowledge learned from scientific literature.

- k-Shot Fine-tuning:

  - For each rare tissue, select k training samples (where k typically ranges from 0 to 128)

  - Update model parameters using limited tissue-specific examples

  - Apply full training strategy (updating both LLM parameters and classification head) for optimal accuracy

- Synergy Prediction:

  - Input target drug pair and tissue characteristics

  - Generate synergy classification (synergistic/non-synergistic)

  - Output confidence metrics and supporting literature rationale

- Validation: Assess model performance using area under precision-recall curve (AUPRC) and area under receiver operating characteristic (AUROC) metrics on held-out test samples.
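
The task reformulation and k-shot selection steps might look like the following sketch. The prompt template, function names, and records are assumptions for illustration only; the actual wording used for CancerGPT is not reproduced here, and the drug names and labels are placeholders rather than real data.

```python
import random

def row_to_prompt(drug_a, drug_b, tissue):
    """Render one structured record as a natural-language classification prompt."""
    return (
        f"Question: Is the combination of {drug_a} and {drug_b} synergistic "
        f"in {tissue} tissue? Answer:"
    )

def select_k_shot(examples, k, seed=0):
    """Pick k labeled examples for fine-tuning; k=0 corresponds to the zero-shot setting."""
    rng = random.Random(seed)
    return rng.sample(examples, min(k, len(examples)))

# Placeholder records: (drug_a, drug_b, tissue, label). Names and labels are made up.
records = [
    ("drug_A", "drug_B", "pancreas", 1),
    ("drug_A", "drug_C", "pancreas", 0),
    ("drug_D", "drug_E", "pancreas", 0),
    ("drug_B", "drug_F", "pancreas", 1),
]
shots = select_k_shot(records, k=2)
training_texts = [(row_to_prompt(a, b, t), label) for a, b, t, label in shots]
```

Each `(prompt, label)` pair would then feed a fine-tuning step for the language model and its classification head.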

Troubleshooting:

- For tissues with extremely limited samples (k<5), prioritize zero-shot or minimal fine-tuning to avoid overfitting

- When external data from common tissues is available and in-distribution, consider hybrid approaches combining prior knowledge with data-driven patterns

Protocol: Meta-Learning for CNS Drug Discovery

Application Note: This methodology integrates few-shot meta-learning with brain activity mapping (BAMing) to enhance discovery of central nervous system therapeutics from limited pharmacological data [21].

Materials & Reagents:

- Brain activity mapping data

- Validated CNS drug profiles

- Meta-learning framework (e.g., Meta-CNN)

- High-throughput screening capabilities

Procedure:

- Pattern Learning: Utilize patterns from previously validated CNS drugs to create a prior knowledge base for the meta-learning algorithm.

- Meta-Training Phase: Train the Meta-CNN model on diverse but limited drug profiling datasets to learn generalizable features of pharmacological activity.

- Rapid Adaptation: For novel drug candidates, apply the pre-trained model and adapt with minimal samples (few-shot learning) to predict neuropharmacological properties.

- BAM Integration: Correlate predicted drug activity with whole brain activity mapping data to validate and refine predictions.

- Candidate Prioritization: Rank drug candidates based on predicted efficacy and similarity to known CNS therapeutic patterns.

- Validation: Compare prediction stability and accuracy against traditional machine learning methods using limited sample validation sets.
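
To make the rapid adaptation step more concrete, the sketch below uses a nearest-prototype classifier, a simple few-shot technique that is swapped in here for illustration and is not the Meta-CNN architecture itself. It assumes that a pre-trained encoder has already turned each drug profile into a feature vector; the vectors and class names are invented.

```python
import math

def centroid(vectors):
    """Average a list of equal-length feature vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]

def classify_by_prototype(support, query):
    """Assign the query to the class whose support-set centroid is closest."""
    prototypes = {label: centroid(vecs) for label, vecs in support.items()}
    return min(prototypes, key=lambda label: math.dist(prototypes[label], query))

# Two labeled examples per class (a 2-way, 2-shot episode) with made-up embeddings.
support_set = {
    "cns_active": [[1.0, 1.0], [1.2, 0.8]],
    "cns_inactive": [[-1.0, -1.0], [-0.8, -1.2]],
}
prediction = classify_by_prototype(support_set, [0.9, 1.1])
```

The design point is that adaptation to a new task needs only a handful of labeled support examples and no gradient updates, which is what makes such approaches stable when samples are scarce.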

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Computational Tools for Few-Shot Learning in Drug Discovery

| Item | Function | Example Sources/Platforms |

|---|---|---|

| Drug Knowledge Bases | Provide structured pharmacological information for grounding model predictions | Drugs.com, NHS drug database, PubMed [23] |

| Biomedical Language Models | Encode prior knowledge from scientific literature for few-shot inference | CancerGPT, SciFive, Med-PaLM, DrugGPT [23] [20] |

| Domain Adaptation Frameworks | Enable model transfer between common and rare tissues with limited samples | Multi-objective iterated symbolic regression (MISR) [24] |

| Meta-Learning Algorithms | Learn transferable knowledge across multiple drug discovery tasks | Meta-CNN, optimization-based meta-learners [21] |

| Specialized Fine-tuning Tools | Adapt pre-trained models to specific drug discovery contexts with minimal data | Regularized quadratic-probe loss with Mahalanobis distance [22] |

| Interpretability Frameworks | Validate model predictions and ensure alignment with biological principles | Mechanistic and functional interpretation methods [25] |

The pharmaceutical industry has long been constrained by Eroom's Law (Moore's Law spelled backward), the observation that the cost of developing a new drug has increased exponentially over time, despite technological advancements [26]. The traditional drug discovery pipeline was a linear, sequential process requiring 10-15 years and exceeding $2 billion in costs per approved drug, with a success rate of less than 10% from Phase I trials to market approval [26] [27] [28]. This paradigm has been fundamentally disrupted by the integration of Machine Learning (ML) and Artificial Intelligence (AI), shifting the core of discovery from the wet lab (in vitro) to the computer (in silico) [26]. This document details the quantitative efficiency gains, provides standardized application protocols, and establishes a methodological framework for comparing ML approaches within the context of modern drug discovery.

Historical Benchmarks and ML-Driven Efficiency Gains

The following tables synthesize key performance metrics, contrasting traditional drug discovery with the new, AI-driven paradigm.

Table 1: Comparative Timeline and Cost Efficiency of Traditional vs. AI-Driven Drug Discovery

| Development Stage | Traditional Timeline | AI-Accelerated Timeline | Traditional Cost | AI-Accelerated Cost |

|---|---|---|---|---|

| Target Identification | 2-3 years [27] | 1.5 years (e.g., Insilico Medicine) [1] [28] | N/A | ~$150,000 (target discovery only) [28] |

| Preclinical Candidate | 3-6 years [29] [28] | 18 months (e.g., Exscientia's DSP-1181) [1] [28] | N/A | Substantially reduced [29] |

| Overall Discovery to Market | 10-15 years [26] [28] | Projected reduction to ~1 year for discovery phase [29] | >$2 billion [26] [28] | Up to $110B annual industry value potential [26] |

Table 2: Quantitative Improvements in Discovery Metrics and Clinical Success

| Performance Metric | Traditional Approach | AI/ML-Driven Approach | Citation |

|---|---|---|---|

| Compounds Synthesized | Thousands per candidate | 10x fewer; e.g., 136 for a CDK7 inhibitor | [1] |

| Design Cycle Time | Industry standard months | ~70% faster | [1] |

| Phase I Trial Success Rate | 40-65% | 80-90% | [29] |

| Molecules in Clinical Trials (by end of 2024) | N/A | >75 AI-derived molecules | [1] |

Application Notes & Experimental Protocols

This section provides detailed methodologies for key applications of ML in the drug discovery pipeline, designed for replication and comparison by research scientists.

Protocol: AI-Driven Target Identification and Validation

Application Note: This protocol uses a holistic, systems biology approach to identify novel therapeutic targets, moving beyond the reductionist model of single-protein modulation [30]. It leverages large-scale, multimodal data to prioritize targets with higher translational potential.

Materials & Experimental Setup:

- Data Sources: Omics data (e.g., RNA sequencing, proteomics), patient data, scientific literature, patents, and clinical trial data (≈40 million documents) [30].

- Computational Platform: High-performance computing (HPC) environment or cloud infrastructure (e.g., AWS).

- Key Software/Models: Knowledge graph embedding models, Natural Language Processing (NLP) models (e.g., transformer-based), and feature ranking algorithms.

Step-by-Step Workflow:

- Data Ingestion and Fusion: Integrate multimodal data (genomic, proteomic, textual) into a unified data lake. NLP models extract biological context and entity relationships from text corpora [30].

- Knowledge Graph Construction: Encode biological relationships (e.g., gene-disease, compound-target) into a graph structure. Use embedding models to represent nodes and edges in a vector space [30].

- Target Hypothesis Generation: Apply graph traversal algorithms and attention-based neural architectures to identify and rank subgraphs of biological relevance, generating novel target hypotheses [30].

- Multi-Factor Validation & Prioritization: Score candidate targets against a multi-parameter profile, including:

  - Global Trend Score: Assess scientific and commercial interest from the knowledge graph [30].

  - Druggability: Evaluate based on protein structure and known ligand interactions [30].

  - Genetic Evidence: Prioritize targets with human genetic validation from patient-derived data [30] [28].

  - Competitive Landscape: Analyze patent and clinical trial data for competing programs [1] [30].

- Experimental Validation: Advance top-ranked targets to in vitro and ex vivo validation using patient-derived cell lines or tissues to confirm biological relevance [1] [30].
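
The multi-factor prioritization step can be sketched as a weighted score over normalized criteria. The weights, the 0-to-1 scaling, the target names, and the choice to penalize a crowded competitive landscape are all assumptions made for this example; a real platform would calibrate them against its own data.

```python
# Hypothetical weights; they sum to 1 so the score stays in [0, 1].
WEIGHTS = {"trend": 0.2, "druggability": 0.3, "genetic_evidence": 0.4, "competition": 0.1}

def priority_score(target):
    """Combine normalized criteria (each in [0, 1]) into one ranking score."""
    return (
        WEIGHTS["trend"] * target["trend"]
        + WEIGHTS["druggability"] * target["druggability"]
        + WEIGHTS["genetic_evidence"] * target["genetic_evidence"]
        # Heavy competition lowers priority, so this criterion is inverted.
        + WEIGHTS["competition"] * (1.0 - target["competition"])
    )

# Placeholder targets with invented criterion values.
candidates = {
    "TARGET_A": {"trend": 0.9, "druggability": 0.4, "genetic_evidence": 0.3, "competition": 0.8},
    "TARGET_B": {"trend": 0.5, "druggability": 0.8, "genetic_evidence": 0.9, "competition": 0.2},
    "TARGET_C": {"trend": 0.7, "druggability": 0.6, "genetic_evidence": 0.5, "competition": 0.5},
}
ranking = sorted(candidates, key=lambda name: priority_score(candidates[name]), reverse=True)
```

Here the target with strong genetic evidence and druggability outranks the one that is merely trending, reflecting the heavier weight placed on human genetic validation in this illustrative setup.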

Protocol: Generative AI for De Novo Molecular Design & Optimization

Application Note: This protocol employs generative models for the de novo design of novel, synthetically accessible small molecules optimized for multiple properties simultaneously, drastically reducing the number of compounds that need to be synthesized and tested [1] [30].

Materials & Experimental Setup:

- Chemical Data: Large libraries of chemical structures with associated bioactivity and ADMET properties.

- Computational Resources: GPU-accelerated computing clusters.

- Key Software/Models: Generative models (e.g., Reinforcement Learning (RL), Generative Adversarial Networks (GANs), transformer-based architectures), molecular dynamics simulation software, and automated chemistry planning tools.

Step-by-Step Workflow:

- Define Target Product Profile (TPP): Establish the desired multi-objective optimization criteria, including potency, selectivity, metabolic stability, solubility, and low toxicity [1] [30].

- Generative Molecular Design: Use a generative model (e.g., policy-gradient-based RL) to propose novel molecular structures that satisfy the TPP. The model is constrained by synthetic accessibility via reaction-aware models [30].

- In Silico Evaluation & Prioritization:

  - Property Prediction: Use deep learning models (e.g., Multi-modal Transformers) trained on diverse preclinical datasets to predict ADMET and other clinical properties [30].

  - Structural Analysis: Employ structure prediction models (e.g., multi-scale diffusion models) to predict atom-level protein-ligand complexes and assess target engagement and specificity [30].

- Closed-Loop Learning: A select subset of top-ranking virtual compounds is synthesized and tested in biochemical or phenotypic assays. The experimental results are fed back into the AI models to retrain and refine subsequent design cycles, creating an iterative Design-Make-Test-Analyze (DMTA) loop [1] [30].

Protocol: Phenotypic Drug Discovery using High-Content Screening and AI

Application Note: This protocol bypasses the need for a predefined target by using high-content cellular imaging and ML to identify compounds that induce a desired phenotypic signature, enabling target-agnostic drug discovery [1] [30].

Materials & Experimental Setup:

- Cell Lines: Disease-relevant cell models, preferably patient-derived.

- Instrumentation: High-throughput automated microscopy systems, robotic liquid handlers.

- Computational Resources: High-performance computing for image analysis and model training (e.g., supercomputers like BioHive-2).

- Key Software/Models: Deep learning models for image analysis (e.g., Vision Transformers like Phenom-2), knowledge graphs for target deconvolution.

Step-by-Step Workflow:

- Phenotypic Screening: Treat disease-relevant cells with thousands of chemical compounds in automated, high-throughput assays. Use high-content microscopy to capture millions of cellular images [1] [30].

- Feature Extraction & Phenotypic Profiling: Process the images using a deep learning model (e.g., a Vision Transformer) to convert each image into a high-dimensional feature vector, a "phenotypic fingerprint" that captures the compound's morphological impact [30].

- Hit Identification: Use unsupervised learning or similarity search algorithms to identify compounds whose phenotypic fingerprints closely resemble a desired phenotypic state (e.g., healthy cells) or are distinct from negative controls.

- Target Deconvolution: For promising hit compounds, use an integrated knowledge graph that combines the phenotypic data with biological context (e.g., protein interactions, global trend scores, clinical data) to generate and rank hypotheses about the compound's molecular mechanism of action [30].

- Validation: Test the target hypotheses using genetic (e.g., CRISPR) or biochemical methods in subsequent experiments.
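
The hit identification step can be illustrated with a cosine-similarity search over phenotypic fingerprints. The three-dimensional vectors below are toy values; real fingerprints from an image model have hundreds or thousands of dimensions, and the function names are hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def rank_hits(reference_profile, compound_profiles):
    """Order compounds by similarity of their fingerprint to the desired phenotype."""
    scored = [
        (name, cosine_similarity(reference_profile, profile))
        for name, profile in compound_profiles.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)

# Toy fingerprints: the reference stands for the desired (e.g., healthy) state.
healthy_profile = [1.0, 0.0, 1.0]
compound_profiles = {
    "cpd_1": [0.9, 0.1, 1.1],
    "cpd_2": [-1.0, 0.5, -0.8],
    "cpd_3": [0.5, 0.9, 0.4],
}
hits = rank_hits(healthy_profile, compound_profiles)
```

At screening scale, the exhaustive comparison shown here is usually replaced by an approximate nearest-neighbor index.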

Method Comparison Guidelines for ML in Drug Discovery

Robust method comparison is essential for advancing the field. The following guidelines and table provide a framework for evaluating ML models in small-molecule drug discovery [5] [13].

Core Principles for Comparison:

- Statistically Rigorous Protocols: Implement domain-appropriate performance metrics and ensure replicability. Use significance testing that accounts for multiple comparisons and data variance [5].

- Practically Significant Benchmarks: Focus on metrics that translate to real-world impact (e.g., reduction in compounds synthesized, improvement in clinical success rates) rather than abstract statistical gains [5].

- Holistic Evaluation: Assess a model's ability to integrate multimodal data and represent biology at a systems level, not just its performance on a single, narrow task [30].
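
One way to account for multiple comparisons when several models are tested against a baseline is the Holm-Bonferroni step-down procedure, sketched below. It is offered as one reasonable option rather than the method mandated by the cited guidelines, and the p-values are invented.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return a reject/keep decision per hypothesis using Holm's step-down procedure."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, idx in enumerate(order):
        # The threshold loosens as hypotheses are rejected: alpha/m, alpha/(m-1), ...
        if p_values[idx] <= alpha / (m - rank):
            reject[idx] = True
        else:
            break  # once one test fails, all larger p-values are retained
    return reject

# Illustrative p-values from comparing four candidate models against a baseline.
p_values = [0.001, 0.04, 0.03, 0.20]
decisions = holm_bonferroni(p_values)
```

Without correction, three of these four comparisons would pass the 0.05 threshold; after correction only the first one does, which shows how easily uncorrected benchmarks overstate progress.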

Table 3: Framework for Comparative Analysis of ML Platforms in Drug Discovery

| Evaluation Dimension | Assessment Criteria | Exemplary Platforms / Approaches |

|---|---|---|

| Technological Approach | Generative Chemistry, Phenotypic Screening, Knowledge Graphs, Physics-Based Simulation, Hybrid Models [1] [30] | Exscientia (Generative), Recursion (Phenomics), Insilico (Knowledge Graphs) [1] |

| Data Strategy & Holism | Use of multimodal data (omics, images, text); Focus on biological holism vs. reductionism [30] | Recursion OS (≈65 PB data); Insilico (1.9T data points) [1] [30] |

| Validation & Output | Track record of novel targets/candidates; Clinical pipeline size; Partnership traction [1] [30] | >75 AI-derived molecules in clinic by end-2024 [1] |

| Quantifiable Efficiency | Reported reduction in discovery time; Reduction in synthesized compounds; Clinical success rates [1] [29] | 70% faster design; 10x fewer compounds; 80-90% Phase I success [1] [29] |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Computational Tools and Platforms for AI-Driven Drug Discovery

| Tool / Platform Name | Type | Primary Function | Key Feature |

|---|---|---|---|

| Pharma.AI (Insilico) | Integrated Software Platform | End-to-end drug discovery from target to candidate [30] | Combines PandaOmics (target ID), Chemistry42 (generative chemistry), and inClinico (trial prediction) [30] |

| Recursion OS | Vertical Technology Platform | Maps biological relationships using phenomics and ML [30] | Integrates wet-lab data with "World Model" AI; Powered by BioHive-2 supercomputer [30] |

| Exscientia AI Platform | Generative AI Platform | Automates drug design and prioritization [1] | Closed-loop "Design-Make-Test" cycle integrated with automated robotics [1] |

| Iambic Therapeutics AI | Specialized AI Pipeline | Integrates molecular design, structure prediction, and property inference [30] | Unified pipeline with Magnet (design), NeuralPLexer (structure), and Enchant (properties) [30] |

| CONVERGE (Verge Genomics) | End-to-End ML Platform | Discovers drugs for complex diseases using human data [30] | Leverages human-derived genomic data and closed-loop ML to prioritize targets [30] |

| Cloud HPC (e.g., AWS) | Computational Infrastructure | Provides scalable computing for training and running large models [1] | Enables access to foundation models (e.g., Amazon Bedrock) and scalable storage [1] |

Strategic Method Selection: Matching ML Algorithms to Drug Discovery Tasks

The integration of machine learning (ML) into drug discovery has introduced a critical challenge for researchers: selecting the optimal algorithm from an ever-expanding array of options. Traditional model-centric approaches, which prioritize algorithmic complexity, often yield inconsistent results when applied across diverse drug discovery datasets. This protocol establishes a data-centric framework—the "Goldilocks Paradigm"—that systematically matches algorithm selection to dataset characteristics, particularly size and diversity [31].

Shifting from a model-centric to a data-centric approach represents a fundamental reorientation in machine learning for drug discovery. Where model-centric efforts focus on developing increasingly sophisticated algorithms while treating data as static, the data-centric approach prioritizes data quality and characteristics, using a consistent model while iteratively improving the dataset itself [32] [33] [34]. This paradigm recognizes that in scientific domains like drug discovery, high-quality, well-curated data often contributes more to final model performance than algorithmic sophistication [32] [35].

The Goldilocks Paradigm formalizes this principle for algorithm selection in drug discovery applications, providing clear heuristics for matching model architecture to dataset properties. By categorizing datasets into "zones" based on size and diversity metrics, researchers can identify the "just right" algorithm for their specific context, optimizing predictive performance while conserving computational resources [31].

Quantitative Framework: Dataset Characteristics and Algorithm Performance

The Goldilocks Paradigm establishes quantitative thresholds for dataset categorization and algorithm selection based on rigorous benchmarking across multiple drug discovery datasets. The framework's core insight is that no single algorithm performs optimally across all dataset conditions; instead, performance depends on the interplay between dataset size and structural diversity [31].

Table 1: Goldilocks Zones for Algorithm Selection Based on Dataset Characteristics

| Goldilocks Zone | Dataset Size Range (Compounds) | Diversity Threshold (div metric) | Recommended Algorithm | Performance Advantage |

|---|---|---|---|---|

| Small Data | <50 | Any value | Few-Shot Learning (FSL) | Outperforms both classical ML and transformers on very small datasets [31] |

| Small-to-Medium, Diverse | 50-240 | >0.5 | Transformer (MolBART) | Better handles chemical diversity; transfer learning beneficial [31] |

| Small-to-Medium, Homogeneous | 50-240 | <0.5 | Classical ML (SVC/SVR) | Sufficient for less diverse chemical spaces [31] |

| Large Data | >240 | Any value | Classical ML (SVC/SVR) | Highest predictive power with sufficient data [31] |

The diversity metric (div) referenced in Table 1 is calculated from the area under the Cumulative Scaffold Frequency Plot (CSFP) curve: div = 2(1 - AUC). A perfectly diverse dataset (all unique scaffolds) scores 1, while a completely homogeneous dataset (single scaffold) scores 0 [31].
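
A minimal sketch of the diversity metric follows. It assumes that scaffolds are ordered from most to least frequent and that the area under the CSFP is approximated by a step-wise sum; both are assumptions, since the text does not fix the integration rule. Under this convention a single-scaffold dataset scores exactly 0, while a dataset of n unique scaffolds scores (n - 1)/n, which approaches 1 as n grows.

```python
from collections import Counter

def scaffold_diversity(scaffolds):
    """Compute div = 2 * (1 - AUC) from a list with one scaffold key per molecule."""
    counts = sorted(Counter(scaffolds).values(), reverse=True)
    total = sum(counts)
    cumulative, auc = 0, 0.0
    for count in counts:
        cumulative += count
        # Each scaffold contributes a strip of width 1/len(counts) on the x-axis
        # and height equal to the cumulative fraction of molecules covered so far.
        auc += (cumulative / total) / len(counts)
    return 2 * (1 - auc)

# Placeholder scaffold keys; in practice these would be Murcko scaffold SMILES.
homogeneous = ["scaffold_A"] * 20
all_unique = ["scaffold_A", "scaffold_B", "scaffold_C", "scaffold_D"]
div_homogeneous = scaffold_diversity(homogeneous)
div_unique = scaffold_diversity(all_unique)
```

The resulting `div` value can then be compared against the 0.5 threshold in Table 1.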

Table 2: Performance Comparison of ML Approaches Across Dataset Types

| Algorithm Type | Small Data (<50 compounds) | Medium Data (50-240 compounds) | Large Data (>240 compounds) | Data Diversity Handling |

|---|---|---|---|---|

| Few-Shot Learning | Best performance | Moderate performance | Poor performance | Limited |

| Transformer (MolBART) | Moderate performance | Best with high diversity | Moderate performance | Excellent |

| Classical ML (SVC/SVR) | Poor performance | Best with low diversity | Best performance | Moderate |

Beyond dataset size and diversity, the imbalance ratio between active and inactive compounds significantly impacts model performance, particularly for classification tasks in virtual screening. Research on anti-infective drug discovery demonstrates that adjusting imbalance ratios (e.g., to 1:10) through strategic undersampling can enhance model performance on external validation [36].
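
Random undersampling of the majority class to a fixed ratio could be sketched as follows. The function assumes that actives are the minority class and that a 1:10 active-to-inactive ratio is the goal; the compound identifiers are placeholders, and the cited study may use a more elaborate sampling scheme.

```python
import random

def undersample_to_ratio(actives, inactives, inactives_per_active=10, seed=0):
    """Keep all actives and a random subset of inactives at the requested ratio."""
    rng = random.Random(seed)
    n_keep = min(len(inactives), inactives_per_active * len(actives))
    return list(actives), rng.sample(inactives, n_keep)

# Placeholder dataset: 5 actives and 200 inactives (a 1:40 imbalance).
actives = [f"active_{i}" for i in range(5)]
inactives = [f"inactive_{i}" for i in range(200)]
kept_actives, kept_inactives = undersample_to_ratio(actives, inactives)
```

Undersampling should be applied to the training set only, so that external validation still reflects the imbalance expected in real screening libraries.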

Experimental Protocols

Dataset Characterization and Categorization Protocol

Purpose: To quantitatively characterize chemical datasets and assign them to the appropriate Goldilocks Zone for algorithm selection.

Materials:

- RDKit Cheminformatics package

- Dataset of chemical structures (SMILES notation)

- Murcko scaffold analysis tools

Procedure:

Dataset Size Assessment:

- Calculate total number of unique compounds in dataset

- Confirm each compound has associated experimental data (e.g., IC50, Ki, activity classification)

- Categorize according to size thresholds in Table 1

Structural Diversity Analysis:

- Generate Murcko scaffolds for all compounds using RDKit's `MurckoScaffoldSmilesFromSmiles` function

- Calculate scaffold frequency distribution

- Generate Cumulative Scaffold Frequency Plot (CSFP):

- X-axis: Percentage of unique scaffolds (0-100%)

- Y-axis: Percentage of molecules represented (0-100%)

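- Calculate the area under the CSFP curve (AUC), with both axes expressed as fractions (0-1) rather than percentages so that div falls between 0 and 1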

- Compute diversity metric:

div = 2(1 - AUC)
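The scaffold-frequency step of this analysis can be sketched as follows. This is a minimal illustration rather than a reference implementation: `scaffold_frequencies` and `murcko_scaffold` are hypothetical helper names, and RDKit is imported lazily inside the helper so that the counting logic can also be exercised with any other scaffold function.

```python
from collections import Counter


def murcko_scaffold(smiles):
    """Return the Murcko scaffold SMILES for one molecule using RDKit."""
    # Imported here so the rest of the module loads even without RDKit.
    from rdkit.Chem.Scaffolds import MurckoScaffold

    return MurckoScaffold.MurckoScaffoldSmilesFromSmiles(smiles)


def scaffold_frequencies(smiles_list, scaffold_fn=murcko_scaffold):
    """Return (scaffold, count) pairs, most populated scaffold first."""
    counts = Counter(scaffold_fn(smiles) for smiles in smiles_list)
    return counts.most_common()
```

The counts in the returned pairs are the input needed to build the CSFP: sorting them in descending order and accumulating them gives the percentage of molecules represented at each percentage of scaffolds.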


Imbalance Ratio Calculation (for classification tasks):

- For binary classification, identify active and inactive compounds based on experimental thresholds

- Calculate Imbalance Ratio (IR) = (number of minority class samples) : (number of majority class samples)

- Note: Highly imbalanced datasets (typically more skewed than 1:10, such as 1:25 or 1:50) may require balancing techniques before final modeling [36]
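A small sketch of the imbalance ratio calculation is shown below. The function name `imbalance_ratio` and the 0/1 label encoding are assumptions for illustration. The function returns k, so that the ratio reads as 1:k (minority : majority).

```python
def imbalance_ratio(labels, active=1):
    """Return k such that the class ratio is 1:k (minority : majority)."""
    n_active = sum(1 for label in labels if label == active)
    n_inactive = len(labels) - n_active
    minority, majority = sorted((n_active, n_inactive))
    if minority == 0:
        raise ValueError("both classes must be present")
    return majority / minority


# 5 actives and 100 inactives give a ratio of 1:20.
k = imbalance_ratio([1] * 5 + [0] * 100)
print(f"IR = 1:{k:g}")
```

A dataset like this one, at 1:20, is more skewed than the 1:10 level mentioned above and would be a candidate for balancing before modeling.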

Goldilocks Zone Assignment:

- Use size and diversity metrics to assign dataset to appropriate zone per Table 1

- Proceed with recommended algorithm class for experimental testing
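The assignment rule in Table 1 can be written as a short lookup. The sketch below is one possible reading of the table; `assign_goldilocks_zone` is a hypothetical name, and because Table 1 does not say what happens at exactly div = 0.5, routing that case to classical ML is an assumption made here.

```python
def assign_goldilocks_zone(n_compounds, div):
    """Map dataset size and diversity to the algorithm class in Table 1."""
    if n_compounds < 50:
        return "Few-Shot Learning (FSL)"
    if n_compounds > 240:
        return "Classical ML (SVC/SVR)"
    # 50-240 compounds: diversity decides.
    if div > 0.5:
        return "Transformer (MolBART)"
    # Assumption: div == 0.5 is treated like the homogeneous case.
    return "Classical ML (SVC/SVR)"


print(assign_goldilocks_zone(30, 0.8))    # small dataset
print(assign_goldilocks_zone(120, 0.7))   # medium, diverse
print(assign_goldilocks_zone(120, 0.3))   # medium, homogeneous
print(assign_goldilocks_zone(5000, 0.9))  # large dataset
```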

Data Quality Enhancement Protocol

Purpose: To implement data-centric improvements to enhance dataset quality before model training.

Materials:

- Multi-stage hashing tools (Perceptual Hashing, CityHash)

- Confident learning frameworks

- Data augmentation pipelines

- Domain expert access for annotation

Procedure:

Duplicate Compound Removal:

- Apply perceptual hashing (pHash) to identify duplicate molecular representations

- Use CityHash for rapid processing of large datasets

- Remove duplicates while preserving associated experimental data
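The hash-and-merge idea behind duplicate removal can be sketched as follows. Several things here are assumptions: the standard-library `hashlib.blake2b` is used as a stand-in for CityHash (which requires a third-party package), perceptual hashing is not shown, and the `canonicalize` argument is a placeholder for whatever structure normalization is used in practice (for example, canonical SMILES). The function name `deduplicate` is hypothetical.

```python
import hashlib


def deduplicate(records, canonicalize=str.strip):
    """Merge records that share a canonical structure string.

    records: iterable of (structure_string, measurement) pairs.
    Returns one entry per unique structure, keeping every measurement.
    """
    merged = {}
    for structure, measurement in records:
        canonical = canonicalize(structure)
        # Fixed-size digest used as the lookup key (stand-in for CityHash).
        key = hashlib.blake2b(canonical.encode("utf-8"), digest_size=16).hexdigest()
        entry = merged.setdefault(key, {"structure": canonical, "measurements": []})
        entry["measurements"].append(measurement)
    return list(merged.values())


unique = deduplicate([("CCO", 1.2), (" CCO ", 1.4), ("CCN", 3.0)])
```

Keeping all measurements for a merged structure, rather than discarding all but one, leaves the decision about how to aggregate replicate values (mean, median, or exclusion of discordant values) to a later, explicit step.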

Noisy Label Detection and Correction:

- Implement confident learning to identify potentially mislabeled compounds

- Set probability threshold for noisy label detection (optimize through pilot experiments)

- Refer low-confidence labels to domain experts for verification

- Correct labels based on expert annotation and literature validation
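To make the noisy-label step more concrete, the sketch below implements a simplified version of the thresholding idea used in confident learning. It is not the full method and does not reproduce any particular library's API. It assumes `pred_probs` holds out-of-sample (for example, cross-validated) class probabilities, and `flag_label_issues` is a hypothetical name.

```python
def flag_label_issues(labels, pred_probs):
    """Return indices of samples whose given label looks inconsistent.

    labels: list of integer class labels (0..n_classes-1).
    pred_probs: list of per-class probability lists, one per sample.
    """
    n_classes = len(pred_probs[0])

    # Per-class threshold: mean predicted probability of class k
    # among the samples that are labeled k.
    thresholds = []
    for k in range(n_classes):
        own = [p[k] for y, p in zip(labels, pred_probs) if y == k]
        thresholds.append(sum(own) / len(own) if own else float("inf"))

    flagged = []
    for i, (y, p) in enumerate(zip(labels, pred_probs)):
        # Classes the model is "confident" about for this sample.
        confident = [k for k in range(n_classes) if p[k] >= thresholds[k]]
        if not confident:
            continue
        likely = max(confident, key=lambda k: p[k])
        if likely != y:
            flagged.append(i)
    return flagged


labels = [1, 1, 1, 0, 0, 0]
pred_probs = [
    [0.10, 0.90], [0.20, 0.80], [0.90, 0.10],
    [0.80, 0.20], [0.90, 0.10], [0.85, 0.15],
]
suspects = flag_label_issues(labels, pred_probs)
```

In this toy input the third compound is labeled active but is confidently predicted inactive, so it is the one flagged for expert review. Flagged indices are candidates for verification, not automatic relabeling.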

Data Augmentation (for small datasets):

- Apply SMILES enumeration to generate valid alternative representations

- Use molecular transformation techniques (scaffold hopping, functional group modification)

- Implement rotation-based augmentation for image-based data

Imbalance Adjustment (for classification):

- Test multiple imbalance ratios (1:50, 1:25, 1:10) using K-ratio random undersampling (K-RUS)

- Evaluate impact on model performance metrics

- Select optimal ratio based on balanced accuracy and MCC [36]
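A minimal sketch of ratio-targeted random undersampling is given below. It assumes that actives are the minority class and that all of them are kept, with inactives sampled down to k per active. The function name `k_ratio_undersample` and the fixed seed are illustrative choices, not details taken from [36].

```python
import random


def k_ratio_undersample(actives, inactives, k, seed=0):
    """Keep all actives and randomly keep at most k inactives per active."""
    actives = list(actives)
    inactives = list(inactives)
    target = min(len(inactives), k * len(actives))
    rng = random.Random(seed)  # seeded for reproducibility
    return actives, rng.sample(inactives, target)


actives = [f"active_{i}" for i in range(10)]
inactives = [f"inactive_{i}" for i in range(1000)]

# Build one training set per candidate ratio (1:50, 1:25, 1:10).
subsets = {k: k_ratio_undersample(actives, inactives, k) for k in (50, 25, 10)}
```

Each subset would then be used to train a model that is scored on the same untouched validation data, so that differences in balanced accuracy and MCC reflect the ratio rather than the evaluation set.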

Algorithm Implementation and Validation Protocol

Purpose: To implement and validate algorithms according to Goldilocks Zone assignments.

Materials:

- Machine learning frameworks (scikit-learn, PyTorch, TensorFlow)

- Pre-trained transformer models (MolBART, ChemBERTa)

- Few-shot learning implementations

- Nested cross-validation pipelines

Procedure:

Algorithm Selection and Configuration:

- Based on Goldilocks Zone assignment, implement recommended algorithm class:

- Few-Shot Learning: Use prototypical networks or matching networks

- Transformer Models: Fine-tune pre-trained MolBART with transfer learning

- Classical ML: Implement SVR/SVC with ECFP6 fingerprints and hyperparameter optimization


Model Training:

- Employ nested 5-fold cross-validation strategy

- For classical ML: optimize hyperparameters via grid search

- For transformers: use transfer learning with gradual unfreezing

- For FSL: employ episodic training with support and query sets
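The index bookkeeping behind nested cross-validation can be sketched without any ML library, as below. The outer loop holds out a test fold for the final performance estimate, and the inner loop splits only the remaining data for hyperparameter selection. The function names are hypothetical, and the splits are plain random folds. Stratification by class or scaffold is not shown.

```python
import random


def k_fold_indices(indices, k, seed=0):
    """Yield (train, test) index lists for k shuffled folds."""
    shuffled = list(indices)
    random.Random(seed).shuffle(shuffled)
    folds = [shuffled[i::k] for i in range(k)]
    for i in range(k):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, folds[i]


def nested_cv_splits(n_samples, outer_k=5, inner_k=5, seed=0):
    """Yield (outer_train, outer_test, inner_splits) for nested CV."""
    for outer_train, outer_test in k_fold_indices(range(n_samples), outer_k, seed):
        # Inner folds are drawn from the outer training indices only,
        # so the outer test fold never influences hyperparameter choice.
        inner_splits = list(k_fold_indices(outer_train, inner_k, seed + 1))
        yield outer_train, outer_test, inner_splits


splits = list(nested_cv_splits(50))
```

For each outer fold, hyperparameters would be chosen by averaging validation scores over `inner_splits`, the model refit on `outer_train`, and the reported metric computed on `outer_test`.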

Performance Validation:

- Evaluate models using task-appropriate metrics:

- Regression: R², RMSE

- Classification: Balanced accuracy, F1-score, MCC

- Compare performance against benchmarks from Table 2

- Perform external validation on held-out test sets when available
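For the classification metrics listed above, the following sketch computes balanced accuracy and the Matthews correlation coefficient (MCC) directly from confusion-matrix counts. The helper names and the 0/1 label encoding are assumptions for illustration.

```python
from math import sqrt


def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, tn, fn) for a binary task."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        else:
            if truth == positive:
                fn += 1
            else:
                tn += 1
    return tp, fp, tn, fn


def balanced_accuracy(tp, fp, tn, fn):
    """Mean of sensitivity and specificity."""
    return (tp / (tp + fn) + tn / (tn + fp)) / 2


def mcc(tp, fp, tn, fn):
    """Matthews correlation coefficient; 0.0 when undefined."""
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]
counts = confusion_counts(y_true, y_pred)
print(balanced_accuracy(*counts), mcc(*counts))
```

Both metrics account for performance on the minority class, which is why they are preferred over plain accuracy for the imbalanced datasets common in virtual screening.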


Iterative Refinement:

- If performance falls below expectations, revisit data quality enhancement steps

- Consider alternative algorithms from adjacent Goldilocks Zones

- Document final performance metrics and optimal algorithm selection

Visualization Framework

[Figure: Goldilocks Algorithm Selection Workflow]

[Figure: Data Quality Enhancement Process]

Research Reagent Solutions

Table 3: Essential Research Tools for Implementing the Goldilocks Paradigm

| Tool Category | Specific Solution | Function in Framework | Application Context |
|---|---|---|---|
| Cheminformatics Libraries | RDKit | Murcko scaffold generation, molecular fingerprint calculation, diversity metric calculation | All dataset characterization steps [31] |
| Deep Learning Frameworks | PyTorch, TensorFlow | Implementation of transformer models, few-shot learning architectures | Algorithm implementation across Goldilocks Zones [31] |
| Pre-trained Models | MolBART, ChemBERTa | Transfer learning for small-to-medium datasets, molecular representation learning | Transformer zone implementation [31] [36] |
| Data Versioning Tools | Neptune.ai, Weights & Biases, DVC | Dataset version tracking, experiment reproducibility, performance comparison | Data quality enhancement tracking [33] |
| Molecular Fingerprints | ECFP6, MACCS keys | Structural representation for classical ML algorithms | Classical ML zone implementation [31] |
| Imbalance Handling | K-Ratio Random Undersampling (K-RUS) | Adjusting active:inactive ratios for classification tasks | Data preparation for virtual screening [36] |
| Confident Learning Tools | CleanLab implementations | Noisy label detection, data quality assessment | Data quality enhancement protocol [32] |

Optimal Applications for Classical Models (SVM, Random Forest) in Large, Well-Defined Datasets

In the modern drug discovery pipeline, characterized by an explosion of high-dimensional chemical and biological data, classical machine learning models such as Support Vector Machines (SVM) and Random Forest (RF) remain cornerstone methodologies. Their sustained relevance is attributed to their robust performance, interpretability, and computational efficiency, particularly when applied to large, well-curated datasets. This application note, framed within a broader thesis on method comparison guidelines for machine learning in drug discovery, delineates the optimal use-cases, protocols, and experimental workflows for these models. We provide a structured comparison of their performance in specific, high-value tasks including virtual screening, drug-target interaction prediction, and physicochemical property prediction, supported by quantitative data and detailed implementation protocols.

The selection between SVM and Random Forest is often dictated by the specific nature of the problem, the dataset, and the desired outcome. The following table summarizes their documented performance across various drug discovery applications, providing a benchmark for model selection.

Table 1: Comparative Performance of SVM and Random Forest in Drug Discovery Tasks

| Application Area | Model Used | Reported Performance | Dataset Characteristics | Key Advantage |
|---|---|---|---|---|
| VEGFR-2 Inhibitor Screening [37] | SVM (RBF Kernel) | Accuracy: 81.8% (P-value = 0.008) [37] | 9,271 compounds from BindingDB [37] | High accuracy in classification with feature selection |
| Drug-Target Interaction Prediction [38] | Random Forest | Mean Accuracy: 0.882; ROC AUC: 0.990 [38] | 26,452 ligands from ChEMBL [38] | Superior performance with complex, interaction-rich data |
| LogD & Solubility Prediction [39] | Linear SVM (LIBLINEAR) | Performance on par with non-linear SVM [39] | ~1.2 million compounds from ChEMBL [39] | Dramatically faster training on very large datasets |
| Drug/Nondrug Classification [40] | SVM with Feature Selection | Accuracy: ~97% on training set [40] | 429 compounds (311 drugs/320 nondrugs) [40] | Effective in low-dimensional, curated feature spaces |

Application-Specific Protocols

Protocol 1: Virtual Screening with Support Vector Machines (SVM)

This protocol is designed for classifying potent inhibitors for a specific target, such as Vascular Endothelial Growth Factor Receptor-2 (VEGFR-2), a key anti-angiogenesis target in oncology [37].

1. Objective: To build a robust classification model that separates potent VEGFR-2 inhibitors from inactive compounds.

2. Research Reagent Solutions & Data Sources

- Chemical Compounds: Source from public repositories like BindingDB using target-specific queries (e.g., for VEGFR-2) [37].

- Software for Descriptor Calculation: Use Dragon software to compute a comprehensive set of molecular descriptors [37].

- Pre-processing Tool: Utilize Open Babel for structure optimization and file format conversion [37].

- Modeling Environment: Python with scikit-learn or R for implementing SVM and feature selection algorithms.

3. Experimental Workflow

The following diagram illustrates the multi-stage workflow for virtual screening using an SVM model.

4. Step-by-Step Methodology

Step 1: Data Curation

- Extract compounds from BindingDB with known activity (e.g., IC50, Ki) against the target.

- Apply an activity threshold (e.g., 1 µM) to label compounds as "active" or "inactive" [37].

- Remove duplicate structures and invalid entries to ensure data quality.
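The thresholding step of the curation can be sketched as below. The example assumes potency values are stored in nanomolar (so 1 µM = 1000 nM), that lower values mean higher potency, and that a compound exactly at the threshold counts as active. None of these conventions is specified in the protocol, and the compound identifiers are made up.

```python
def label_by_potency(records, threshold_nm=1000.0):
    """Label compounds as active/inactive from IC50 or Ki values in nM.

    records: iterable of (compound_id, potency_nM) pairs.
    """
    return {
        compound_id: "active" if potency_nm <= threshold_nm else "inactive"
        for compound_id, potency_nm in records
    }


labels = label_by_potency([
    ("cmpd_1", 250.0),    # 0.25 µM
    ("cmpd_2", 1000.0),   # exactly 1 µM
    ("cmpd_3", 5000.0),   # 5 µM
])
```

Converting all measurements to a single unit before thresholding matters in practice, because public bioactivity records mix nM and µM values.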

Step 2: Molecular Featurization

- Calculate molecular descriptors using software like Dragon, which can generate thousands of 0D to 3D descriptors [37].

- Standardize the resulting descriptor matrix (e.g., mean centering, variance scaling).

Step 3: Feature Selection

- Critical Step: Apply a correlation-based feature selection algorithm to reduce dimensionality and mitigate overfitting, which is crucial for model generalizability [37].

- This step removes redundant and non-informative features, leading to a more robust and interpretable model.
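To illustrate how redundant descriptors can be removed, the sketch below applies a simple greedy pairwise-correlation filter. This is a simpler stand-in and should not be read as the correlation-based feature selection algorithm used in [37]. The 0.9 cutoff, the function names, and the descriptor names are all assumptions for illustration.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (0.0 if undefined)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0
    return cov / sqrt(var_a * var_b)


def drop_correlated(columns, threshold=0.9):
    """Greedily keep descriptors that are not highly correlated with kept ones.

    columns: dict mapping descriptor name -> list of values.
    """
    kept = []
    for name, values in columns.items():
        if len(set(values)) <= 1:
            continue  # constant descriptors carry no information
        if all(abs(pearson(values, columns[k])) < threshold for k in kept):
            kept.append(name)
    return kept


descriptors = {
    "mol_weight": [1.0, 2.0, 3.0, 4.0],
    "mol_weight_x2": [2.0, 4.0, 6.0, 8.0],  # perfectly correlated duplicate
    "logp": [1.0, -1.0, 1.0, -1.0],
    "constant": [5.0, 5.0, 5.0, 5.0],
}
selected = drop_correlated(descriptors)
```

The filter is order-dependent: the first descriptor of a correlated group is the one retained. To avoid information leakage, the selection should be fitted on the training portion of the data only.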

Step 4: Model Training & Validation

- Partition the data into training and test sets (e.g., 80/20 split).

- Train an SVM model with a Radial Basis Function (RBF) kernel. The RBF kernel can capture complex, non-linear relationships in the data [37] [39].

- Optimize hyperparameters (e.g., cost C, gamma γ) via grid search and cross-validation.

- Validate the model on the held-out test set, reporting accuracy, P-value, and other relevant metrics.

Step 5: Deployment for Screening

- Apply the trained model to screen large, proprietary compound libraries (e.g., >900,000 molecules) to identify novel potential inhibitors [37].

Protocol 2: Drug-Target Interaction Prediction with Random Forest

This protocol leverages the ensemble strength of Random Forest for predicting interactions between drugs and biological targets, a core task in polypharmacology and drug repurposing.

1. Objective: To predict whether a given drug molecule interacts with a specific protein target.

2. Research Reagent Solutions & Data Sources

- Bioactivity Data: Source from ChEMBL database, a rich source of curated drug-target bioactivities [38].

- 3D Conformer Generation: Use OpenEye Omega or RDKit to generate multiple 3D conformations for each molecule [38].

- 3D Molecular Fingerprint: Utilize E3FP fingerprints to represent the 3D structure of each conformer [38].

3. Experimental Workflow

The following diagram outlines the process for featurizing molecules and building a DTI prediction model using Random Forest.

4. Step-by-Step Methodology

Step 1: Data Preparation & Conformer Generation

- Select a set of targets and their known ligands from ChEMBL.

- Remove duplicate entries and generate a diverse set of 3D conformers for each ligand using tools like RDKit [38].

Step 2: 3D Molecular Representation

- Encode each 3D conformer using the E3FP fingerprint. This captures the radial distribution of atomic features in 3D space, providing richer information than 2D fingerprints [38].

Step 3: Feature Engineering via Similarity and KLD

- Innovative Feature Construction: For each target, compute a Q-Q matrix containing pairwise 3D similarity scores between all its known ligands.

- For a query molecule and a target, compute a Q-L vector of similarity scores between the query and all known ligands of that target.

- Transform the Q-Q matrix and Q-L vector into probability density functions using Kernel Density Estimation.