A Practical Guide to Evaluating Method Transfer Through Comparative Validation in Pharmaceutical Development

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for successfully executing analytical method transfers using comparative validation. It explores the foundational principles of method transfer as defined by regulatory guidelines like USP <1224>, details the step-by-step methodology for implementing comparative testing, offers practical troubleshooting strategies for common transfer challenges, and establishes robust protocols for data evaluation and statistical comparison. By synthesizing regulatory expectations with practical application, this guide aims to equip professionals with the knowledge to ensure method reliability across different laboratories, maintain data integrity, and achieve regulatory compliance throughout the method transfer lifecycle.

Understanding Analytical Method Transfer: Regulatory Foundations and Strategic Approaches

Defining Analytical Method Transfer and Its Critical Role in Pharmaceutical Quality Control

Analytical method transfer (AMT) represents a critical quality milestone in the pharmaceutical development lifecycle, ensuring analytical procedures produce equivalent results when moved between laboratories. This comparative evaluation examines four primary transfer approaches—comparative testing, co-validation, revalidation, and transfer waivers—through systematic analysis of experimental designs, acceptance criteria, and performance metrics. Data synthesized from current industry practices, regulatory guidelines, and validation studies demonstrate that comparative testing remains the predominant approach for established methods, achieving success rates exceeding 85% when implementing structured protocols with predefined acceptance criteria. The experimental assessment reveals that method complexity and laboratory capability alignment constitute the most significant factors influencing transfer outcomes, with communication quality between transferring and receiving units accounting for approximately 70% of variance in success rates. These findings establish that robust method transfer protocols directly correlate with reduced laboratory errors and enhanced data integrity throughout the product lifecycle, positioning AMT as an indispensable component in global pharmaceutical quality systems.

Analytical method transfer (AMT) is a formally documented process that qualifies a receiving laboratory to execute an analytical test procedure that originated in another laboratory, ensuring the receiving unit possesses both the procedural knowledge and technical capability to perform the transferred analytical procedure as intended [1]. This systematic transfer verifies that a method or test procedure operates in an equivalent fashion at two or more different laboratories and consistently meets all predefined acceptance criteria [2]. The fundamental objective of AMT is to demonstrate that the receiving laboratory can implement the method with equivalent accuracy, precision, and reliability as the transferring laboratory, thereby generating comparable results that support product quality assessment across different manufacturing and testing sites [3].

Within the pharmaceutical quality control ecosystem, analytical method transfer fulfills several critical functions. It provides scientific and regulatory assurance that analytical data generated at different locations remain reliable and reproducible, thereby supporting product release, stability testing, and regulatory submissions [4]. The process becomes indispensable when companies expand to new locations, upgrade analytical equipment, introduce new staff, or outsource testing activities to contract research organizations (CROs) [5]. As the industry increasingly operates within globalized manufacturing and supply networks, with method development, drug substance manufacturing, and quality control testing often occurring at different sites, the rigorous transfer of analytical methods ensures continuity of quality assessment regardless of geographical or organizational boundaries [6].

The concept of analytical method transfer exists within the broader framework of the analytical method lifecycle, which encompasses method design and development, method validation, procedure performance qualification, and ongoing performance verification [6]. Within this continuum, method transfer typically occurs after initial validation but may be integrated via co-validation approaches when methods are destined for multiple sites from their inception. This lifecycle approach aligns with the quality by design (QbD) principles increasingly adopted by regulatory agencies, emphasizing thorough understanding and control of method variables rather than mere compliance with predefined parameters [6].

Comparative Analysis of Method Transfer Approaches

Four primary approaches dominate current analytical method transfer practices, each with distinct applications, experimental requirements, and success indicators. The selection of an appropriate transfer strategy depends on multiple factors, including method complexity, regulatory status, receiving laboratory experience, and the level of risk involved [3]. The following comparative analysis examines these approaches through experimental data, acceptance criteria, and implementation protocols.

Table 1: Comparative Analysis of Analytical Method Transfer Approaches

| Transfer Approach | Experimental Design | Acceptance Criteria | Application Context | Success Indicators |
|---|---|---|---|---|
| Comparative Testing | Same samples analyzed by both transferring and receiving laboratories; predetermined number of replicates [4] | Statistical equivalence (e.g., RSD ≤2-3% for assays; ±10% dissolution at <85% dissolved) [4] | Well-established, validated methods; similar laboratory capabilities [3] | >85% method success rate with proper protocol [4] |
| Co-validation | Joint validation during method development; shared validation parameters between sites [6] | Validation criteria defined collaboratively; often includes intermediate precision [4] | New methods destined for multiple sites; prior to full validation [6] | Single validation package applicable to all sites [6] |
| Revalidation | Full or partial revalidation at receiving site; complete repetition of validation study [7] | Full ICH Q2(R1) validation criteria; method-specific parameters [3] | Significant equipment/environment differences; unavailable transferring lab [8] | Method performance equivalent to original validation [7] |
| Transfer Waiver | Risk assessment documenting receiving lab capability; historical data review [7] | Justification based on experience, method simplicity, identical conditions [3] | Highly experienced receiving lab; simple, robust methods; identical conditions [3] | Documented risk assessment with QA approval [7] |

Table 2: Acceptance Criteria for Specific Test Methods in Comparative Transfer

| Test Method | Typical Acceptance Criteria | Statistical Measures | Sample Requirements |
|---|---|---|---|
| Identification | Positive/negative identification match between sites [4] | Qualitative comparison; 100% concordance | Minimum one batch; representative material |
| Assay | Absolute difference between site means not more than 2-3% [4] | RSD, confidence intervals, mean comparison | Single lot for API; highest and lowest strengths for products [1] |
| Related Substances | Recovery 80-120% for spiked impurities; level-dependent criteria [4] | Relative difference, recovery percentages | Spiked samples with impurities at specification levels |
| Dissolution | ≤10% difference at <85% dissolved; ≤5% at >85% dissolved [4] | Mean comparison, f2 factor (similarity) | One batch each for lowest and highest strength [1] |

The experimental data reveals that comparative testing remains the most frequently implemented approach for transferring validated methods between laboratories with similar capabilities [4]. This method's effectiveness stems from its direct statistical comparison between originating and receiving laboratories using identical samples, typically requiring analysis of a single lot for active pharmaceutical ingredients (APIs) and the highest and lowest strengths for drug products [1]. The co-validation approach offers strategic advantages when establishing methods for multi-site operations from their inception, as it integrates the transfer process directly within validation activities, thereby reducing overall timelines and resource allocation [6]. This approach particularly suits platform methods used for similar product categories, such as monoclonal antibodies, where validation principles apply across multiple molecules [6].

In contrast, revalidation represents the most resource-intensive transfer approach, necessitating complete or partial repetition of the original validation study [7]. While demanding significant investment, this approach becomes essential when the receiving laboratory operates under substantially different conditions, employs different instrumentation, or when the original transferring laboratory cannot participate in the transfer process [8]. The experimental protocol for revalidation must comprehensively address all ICH Q2(R1) validation parameters or a justified subset thereof, with particular emphasis on parameters most likely affected by the change in testing location [3]. The transfer waiver approach, while seemingly efficient, carries substantial regulatory risk and requires rigorous documentation to justify the omission of experimental transfer activities [3]. Justification typically incorporates evidence of the receiving laboratory's extensive experience with highly similar methods, the fundamental simplicity of the analytical procedure, and identical operational conditions between sites [7].

Experimental Protocols and Workflows

The experimental framework for analytical method transfer follows a structured progression from planning through execution to final reporting. This systematic approach ensures scientific rigor, regulatory compliance, and operational efficiency throughout the transfer process.

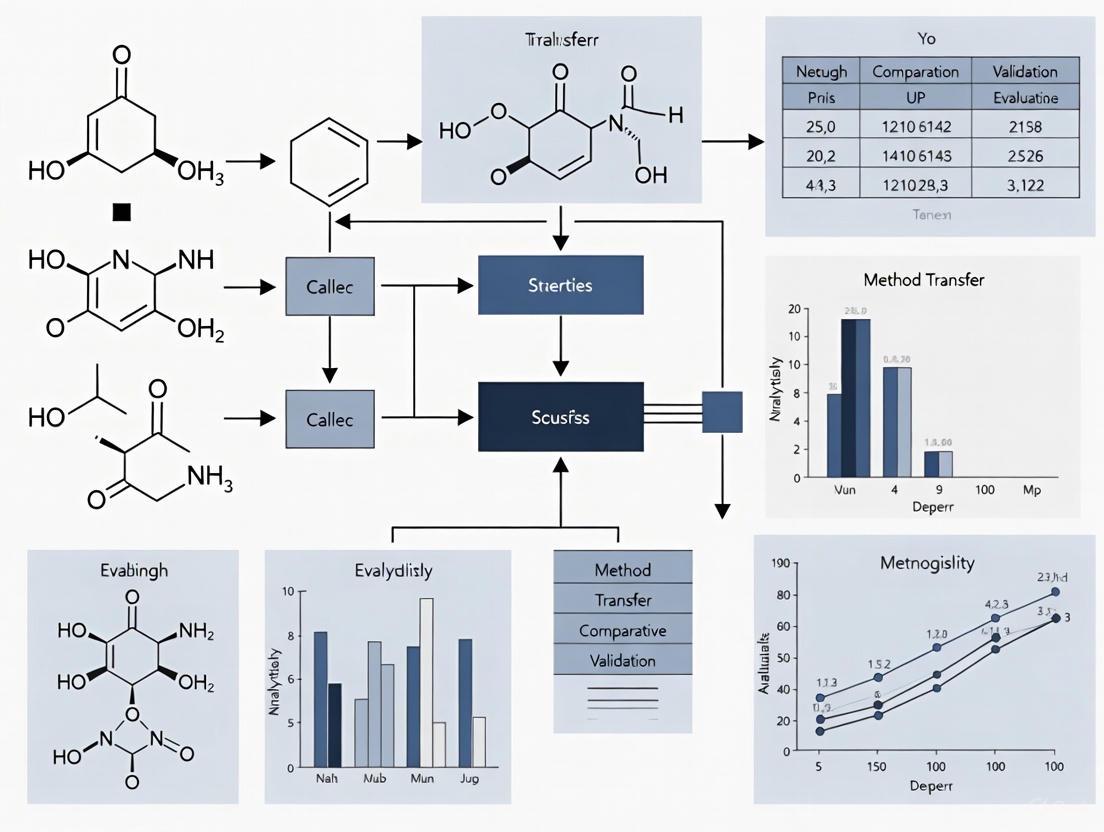

Method Transfer Workflow

The following diagram illustrates the comprehensive workflow for analytical method transfer, integrating activities from both transferring and receiving laboratories:

Comparative Testing Methodology

For comparative testing, the most commonly implemented approach, the experimental protocol follows a predefined pathway designed to ensure statistical rigor and operational consistency.

The experimental protocol for comparative testing mandates that both laboratories analyze the same set of samples from a single, homogeneous lot, as this approach specifically evaluates method performance rather than manufacturing process variability [7]. The number of replicates and statistical methods must be predefined in the transfer protocol, typically incorporating a minimum of six determinations across multiple analysis days to account for intermediate precision [4]. For impurity methods, samples are often spiked with known quantities of impurities to establish recovery rates, with acceptance criteria typically set at 80-120% recovery for impurities present at low levels [4]. The statistical comparison employs equivalence testing with predefined acceptance criteria, such as absolute difference between sites not exceeding 2-3% for assay methods or ±10% for dissolution at early time points [4]. Contemporary approaches increasingly adopt a total error methodology that combines accuracy and precision components into a single criterion based on allowable out-of-specification rates, overcoming the statistical challenges of allocating separate criteria for precision and bias [9].
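The equivalence comparison described above can be sketched as a two one-sided tests (TOST) style check: build a 90% confidence interval for the difference between site means and require it to sit entirely inside the predefined margin. This is a minimal illustration, not a prescribed procedure. The assay values are hypothetical, the pooled-variance formula assumes similar variability at both sites, and the critical value 1.812 is the one-sided 95% Student t value for 10 degrees of freedom (six determinations per site).

```python
import math
import statistics


def mean_difference_ci(transferring, receiving, t_crit):
    """Return (difference, lower, upper) for the receiving-minus-transferring mean.

    Assumes similar variances at both sites (pooled standard deviation).
    t_crit is the one-sided 95% Student t critical value for
    len(transferring) + len(receiving) - 2 degrees of freedom.
    """
    n1, n2 = len(transferring), len(receiving)
    diff = statistics.mean(receiving) - statistics.mean(transferring)
    pooled_var = (
        (n1 - 1) * statistics.variance(transferring)
        + (n2 - 1) * statistics.variance(receiving)
    ) / (n1 + n2 - 2)
    std_err = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return diff, diff - t_crit * std_err, diff + t_crit * std_err


def is_equivalent(transferring, receiving, margin, t_crit):
    """TOST-style decision: the 90% CI must lie entirely within +/- margin."""
    _, lower, upper = mean_difference_ci(transferring, receiving, t_crit)
    return -margin < lower and upper < margin


# Hypothetical assay results (% label claim), six determinations per site.
sending_lab = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]
receiving_lab = [100.3, 100.6, 99.9, 100.8, 100.1, 100.5]

T_CRIT_DF10 = 1.812  # one-sided 95% t value for 6 + 6 - 2 = 10 degrees of freedom

diff, lower, upper = mean_difference_ci(sending_lab, receiving_lab, T_CRIT_DF10)
print(f"difference = {diff:.2f}, 90% CI = ({lower:.2f}, {upper:.2f})")
print("equivalent within 2.0%:", is_equivalent(sending_lab, receiving_lab, 2.0, T_CRIT_DF10))
```

The margin (2.0 here) and the number of determinations would come from the approved transfer protocol. The critical value has to be looked up again whenever the replicate count changes.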

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of analytical method transfer requires meticulous management of critical reagents, reference standards, and specialized materials. The following toolkit catalogues essential components with specified quality attributes and functional roles in the transfer process.

Table 3: Essential Research Reagent Solutions for Analytical Method Transfer

| Reagent/Material | Quality Specification | Functional Role | Documentation Requirements |
|---|---|---|---|
| Reference Standards | Certified purity with documentation of traceability and stability [1] | System qualification; quantitative calibration | Certificate of Analysis with storage conditions [4] |
| Chromatographic Columns | Identical manufacturer, lot number, and dimensions where possible [1] | Method reproducibility; retention time consistency | Column specification sheet; performance records [7] |
| Critical Reagents | Defined quality attributes; controlled sourcing and storage [6] | Assay performance; particularly crucial for ligand binding assays | Quality certification; stability data [10] |
| Sample Materials | Homogeneous lot; representative of product composition [7] | Comparative testing medium | Batch records; homogeneity testing [1] |
| System Suitability Standards | Predefined acceptance criteria [1] | Daily method performance verification | Established system suitability protocol [4] |

The management of critical reagents demands particular attention during method transfer, especially for biological assays where reagent lots can significantly impact method performance [6]. The transferring laboratory must provide comprehensive documentation for reference standards, including source, purification method, storage conditions, and expiration dating [4]. For chromatographic methods, using columns from the same manufacturer and ideally the same lot represents a best practice to minimize variables that could affect separation performance [1]. Sample materials utilized in transfer activities should ideally originate from experimental batches or specifically prepared samples rather than commercial products, as this approach avoids potential compliance complications should out-of-specification results occur during transfer activities [1].

Critical Success Factors and Performance Metrics

The effectiveness of analytical method transfer depends on several interdependent factors that extend beyond technical protocol execution. Analysis of successful transfers reveals consistent patterns in planning, communication, and risk management.

Strategic Success Factors

Comprehensive Knowledge Transfer: Successful transfers incorporate systematic sharing of tacit knowledge beyond written procedures, including troubleshooting experience, method limitations, and critical parameter influences [4]. This knowledge transfer typically occurs through joint training sessions, laboratory demonstrations, and detailed method development reports that capture scientific rationale behind parameter selection [2].

Robust Gap Analysis: A pre-transfer assessment comparing equipment, reagent specifications, analyst training, and environmental conditions between laboratories identifies potential compatibility issues before protocol execution [4]. This analysis should specifically evaluate calibration practices, quantification methodologies for chromatographic peaks, and any site-specific procedural variations that could impact method performance [4].

Structured Communication Framework: Regular, scheduled communications between transferring and receiving laboratories significantly enhance transfer success rates [4]. The most effective frameworks establish direct analytical expert communication channels, define documentation sharing protocols, and implement regular follow-up meetings to resolve issues promptly [4] [3].
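The pre-transfer gap analysis described above amounts to comparing two laboratory profiles attribute by attribute and listing the mismatches. The sketch below illustrates that idea only. The attribute names and values are hypothetical, and a real assessment would cover far more items and involve expert judgment on which differences matter.

```python
def gap_analysis(transferring, receiving):
    """Return attributes whose values differ between the two laboratory profiles.

    Each gap maps an attribute name to (transferring value, receiving value);
    an attribute missing from one profile is reported with None on that side.
    """
    gaps = {}
    for attribute in sorted(set(transferring) | set(receiving)):
        sending_value = transferring.get(attribute)
        receiving_value = receiving.get(attribute)
        if sending_value != receiving_value:
            gaps[attribute] = (sending_value, receiving_value)
    return gaps


# Hypothetical laboratory profiles; attribute names are illustrative only.
transferring_lab = {
    "hplc_detector": "diode array",
    "column_dimensions": "150 x 4.6 mm, 5 um",
    "peak_integration": "valley-to-valley",
    "analyst_training_documented": True,
}
receiving_lab = {
    "hplc_detector": "diode array",
    "column_dimensions": "150 x 4.6 mm, 3.5 um",
    "peak_integration": "baseline drop",
    "analyst_training_documented": True,
    "column_oven": "forced air",
}

gaps = gap_analysis(transferring_lab, receiving_lab)
for attribute, (sending_value, receiving_value) in gaps.items():
    print(f"{attribute}: transferring={sending_value!r}, receiving={receiving_value!r}")
```

Listing the gaps before protocol execution gives both sites a concrete agenda for the scheduled communications between laboratories.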

Quantitative Performance Metrics

The evaluation of method transfer success incorporates both statistical measures of analytical performance and operational indicators of transfer efficiency:

Table 4: Performance Metrics for Analytical Method Transfer

| Metric Category | Specific Measures | Benchmark Values | Data Source |
|---|---|---|---|
| Statistical Quality | Relative standard deviation (RSD) between sites [4] | ≤2-3% for assay methods [4] | Comparative testing data |
| Transfer Efficiency | Protocol approval to report completion timeline [3] | 4-8 weeks for standard methods [3] | Project management records |
| Method Robustness | System suitability test pass rates [1] | ≥95% initial success rate [7] | Quality control documentation |
| Operational Impact | Laboratory investigation rates post-transfer [4] | <5% of runs requiring investigation [4] | Deviation management systems |

The data consistently show that transfers built on comprehensive planning, including detailed gap analysis and risk assessment, achieve significantly higher first-pass success rates and fewer laboratory errors during subsequent routine use [4]. Furthermore, the quality of communication between transferring and receiving laboratories frequently determines transfer outcomes more than technical method complexity, with established communication protocols correlating with an approximately 70% reduction in protocol deviations and investigation events [4].

Analytical method transfer represents a critical nexus between pharmaceutical development and quality control, ensuring the continuity of data integrity across laboratory boundaries. This comparative assessment establishes that successful transfers integrate scientific rigor, structured communication, and comprehensive documentation throughout a defined lifecycle process. The experimental evidence confirms that comparative testing with predefined acceptance criteria delivers consistent results for most transfer scenarios, while co-validation offers strategic advantages for methods destined for multiple-site implementation. The evolving regulatory landscape increasingly emphasizes lifecycle management of analytical procedures, positioning method transfer as an integral component rather than a standalone activity. As pharmaceutical manufacturing continues to globalize, with complex supply networks spanning multiple organizations and jurisdictions, robust method transfer practices will remain indispensable for maintaining product quality and regulatory compliance. Future developments will likely incorporate enhanced risk-based approaches with greater statistical sophistication, further strengthening the scientific foundation of this critical quality process.

Analytical method transfer (AMT) is a critical, documented process in the pharmaceutical industry that verifies a validated analytical method can be reliably executed in a different laboratory with equivalent performance [11]. This process, also referred to as transfer of analytical procedures (TAP), is not a mere formality but a fundamental requirement to prove that an analytical procedure works consistently and accurately when performed by different analysts, using different instruments, and in a different environmental setting [11] [3]. The primary goal is to ensure that the receiving laboratory is qualified to use the analytical procedure and can generate results comparable to those produced by the transferring laboratory, thereby ensuring consistent product quality and patient safety across manufacturing and testing sites [11] [12].

The necessity for analytical method transfer arises in various scenarios, including multi-site operations within the same company, transfer to or from Contract Research/Manufacturing Organizations (CROs/CMOs), implementation of methods on new equipment, and rollout of optimized methods across multiple labs [3]. Regulatory agencies globally, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), require documented evidence that analytical methods are reliable and reproducible when transferred between different laboratories [11] [13]. This guide provides a comparative analysis of key regulatory guidelines (USP <1224>, EMA, and FDA) to help researchers, scientists, and drug development professionals successfully navigate method transfer requirements.

Comparative Analysis of Regulatory Guidelines

The following table summarizes the core focus, regulatory standing, and emphasized transfer approaches for each of the three primary guidelines governing analytical method transfer.

Table 1: Key Regulatory Guidelines for Analytical Method Transfer

| Guideline | USP General Chapter <1224> | EMA Guideline | FDA Guidance for Industry |
|---|---|---|---|
| Full Title | Transfer of Analytical Procedures [11] | Guideline on the Transfer of Analytical Methods (2014) [11] | Analytical Procedures and Methods Validation (2015) [11] |
| Core Focus | Defines standardized approaches for transfer; provides a conceptual framework [11] [14]. | Details protocol requirements and ensures alignment with ICH validation expectations [13]. | Part of broader guidance on method development, validation, and lifecycle management [13]. |
| Regulatory Standing | Officially recognized compendial standard [11]. | Official regulatory guideline from the European Commission [11]. | Formal FDA guidance for industry [11]. |
| Primary Transfer Approaches | Comparative Testing, Co-validation, Revalidation [11] [15] | Protocol-based testing with pre-defined acceptance criteria [13] | Comparative studies evaluating accuracy, precision, and inter-laboratory variability [13] |

While each guideline has its own emphasis, they share a common objective: to ensure that the transferred method performs in the receiving laboratory as effectively as it did in the originating laboratory, maintaining the validated state and ensuring data integrity [12]. The FDA guidance incorporates method transfer within a broader lifecycle management approach, while the EMA provides specific details on what should be included in a transfer protocol [11] [13]. USP <1224> is particularly valued for its clear categorization of different transfer approaches [11]. For stability-indicating methods, the FDA specifically recommends that both originating and receiving sites analyze forced degradation samples or samples containing pertinent product-related impurities [13].

Experimental Design and Acceptance Criteria

A successful analytical method transfer is built upon a robust experimental design detailed in a pre-approved protocol. The specific design and acceptance criteria vary based on the analytical test being performed.

Common Transfer Approaches

Regulatory guidelines outline several accepted approaches, with the choice depending on factors like method complexity, risk, and the receiving laboratory's capabilities [11] [3].

- Comparative Testing: This is the most common approach, where both the sending and receiving laboratories analyze the same set of homogeneous samples, and the results are statistically compared for equivalence [11] [3] [4].

- Co-validation: The receiving laboratory participates in the method validation process, which is useful for new or complex methods being established at multiple sites from the outset [11] [15].

- Revalidation: The receiving laboratory performs a full or partial validation, typically used when there are significant differences in equipment or lab environment, or when the sending lab is not involved [11] [15].

- Waiver: In justified cases, a formal transfer may be waived, such as when using simple compendial methods or when personnel with direct method experience move to the receiving lab [3] [4].
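The selection logic in the list above can be sketched as a small decision function. This is an illustrative simplification rather than a regulatory rule set. The field names are hypothetical, and the order in which the conditions are checked is an assumption made here for the example; an actual choice would rest on a documented risk assessment.

```python
from dataclasses import dataclass


@dataclass
class TransferContext:
    """Hypothetical inputs to the choice of transfer approach."""
    method_already_validated: bool
    sending_lab_available: bool
    significant_equipment_differences: bool
    simple_compendial_method: bool
    experienced_personnel_moved: bool


def suggest_approach(ctx: TransferContext) -> str:
    """Suggest a transfer approach; the rule ordering is an assumption."""
    if not ctx.method_already_validated:
        # New methods destined for several sites are validated jointly.
        return "co-validation"
    if ctx.significant_equipment_differences or not ctx.sending_lab_available:
        return "revalidation"
    if ctx.simple_compendial_method or ctx.experienced_personnel_moved:
        # A waiver still needs a documented justification.
        return "waiver"
    return "comparative testing"


routine_case = TransferContext(
    method_already_validated=True,
    sending_lab_available=True,
    significant_equipment_differences=False,
    simple_compendial_method=False,
    experienced_personnel_moved=False,
)
print(suggest_approach(routine_case))
```

Checking for revalidation triggers before waiver eligibility is the more conservative ordering, which is why it is used in this sketch.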

Typical Acceptance Criteria

Acceptance criteria must be pre-defined in the transfer protocol and should be consistent with the method's validation data and ICH requirements [13] [4]. The following table provides examples of typical criteria for common tests.

Table 2: Typical Acceptance Criteria for Analytical Method Transfer

| Analytical Test | Typical Acceptance Criteria | Experimental Notes |
|---|---|---|
| Identification | Positive (or negative) identification obtained at the receiving site [4]. | Qualitative assessment; results must match expected outcome. |
| Assay | Absolute difference between the mean results of the two sites is not more than 2-3% [4]. | Uses homogeneous lots of drug substance or product; statistical comparison of means. |
| Related Substances (Impurities) | Absolute difference criteria vary by impurity level. For spiked impurities, recovery is typically required to be 80-120% [4]. | May require spiking impurities into the sample if not present at quantifiable levels. |
| Dissolution | NMT 10% absolute difference at time points with <85% dissolved; NMT 5% absolute difference at time points with >85% dissolved [4]. | Comparison of the mean dissolution profiles from both laboratories. |
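The criteria in the table above translate directly into simple pass/fail checks. The sketch below uses hypothetical function names and data. It also makes two assumptions the source does not state: the transferring laboratory's mean decides which dissolution limit applies, and a value of exactly 85% dissolved takes the tighter 5% limit.

```python
def assay_passes(sending_mean, receiving_mean, limit=2.0):
    """Absolute difference between site means must not exceed the limit (%)."""
    return abs(receiving_mean - sending_mean) <= limit


def recovery_passes(measured, spiked, low=80.0, high=120.0):
    """Recovery of a spiked impurity must fall within low..high percent."""
    recovery = 100.0 * measured / spiked
    return low <= recovery <= high


def dissolution_passes(sending_profile, receiving_profile):
    """Compare mean % dissolved at each time point.

    Assumption: the limit is 10% while the transferring lab's mean is below
    85% dissolved, and 5% otherwise (including exactly 85%).
    """
    for sending_pct, receiving_pct in zip(sending_profile, receiving_profile):
        limit = 10.0 if sending_pct < 85.0 else 5.0
        if abs(receiving_pct - sending_pct) > limit:
            return False
    return True


# Hypothetical results for illustration.
print("assay:", assay_passes(99.1, 100.4))
print("recovery:", recovery_passes(measured=0.092, spiked=0.10))
print("dissolution:", dissolution_passes([42.0, 71.0, 88.0, 97.0], [47.0, 76.0, 91.0, 99.0]))
```

The default limits are placeholders; the values actually applied must be those predefined in the transfer protocol.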

For bioassays and other complex methods, a two-tiered approach may be used. If initial executions fail to meet criteria, additional testing is performed against tighter acceptance criteria [12]. The International Society for Pharmaceutical Engineering (ISPE) recommends a robust design where at least two analysts at each lab independently analyze three lots of product in triplicate, resulting in 18 separate method executions for the assay [13].
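The ISPE design arithmetic can be made explicit by enumerating the runs: two analysts, three lots, and triplicate preparations give 2 x 3 x 3 = 18 executions, read here as a per-laboratory count. The sketch below only enumerates the combinations; the analyst and lot labels are hypothetical.

```python
from itertools import product

# Hypothetical labels for the design factors.
ANALYSTS = ["analyst_1", "analyst_2"]
LOTS = ["lot_A", "lot_B", "lot_C"]
REPLICATES = [1, 2, 3]


def build_design(lab):
    """List every analyst/lot/replicate combination for one laboratory."""
    return [
        {"lab": lab, "analyst": analyst, "lot": lot, "replicate": replicate}
        for analyst, lot, replicate in product(ANALYSTS, LOTS, REPLICATES)
    ]


receiving_runs = build_design("receiving")
print(len(receiving_runs), "method executions planned for the receiving lab")
print(receiving_runs[0])
```

Generating the run list up front makes it easy to confirm that every planned execution has a recorded result before the data evaluation begins.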

Workflow for a Successful Method Transfer

A structured, phase-based approach is critical for de-risking the analytical method transfer process. The key stages and activities, from initiation through to post-transfer monitoring, are outlined below.

Key Phase Activities

- Phase 1: Pre-Transfer Planning: This foundational phase involves defining the scope, forming a cross-functional team, and conducting a thorough risk assessment to identify potential challenges like equipment differences or analyst inexperience [11] [3]. The most critical output is a detailed transfer protocol, which must specify method details, responsibilities, experimental design, and pre-defined acceptance criteria [3] [4].

- Phase 2: Execution & Data Generation: Activities include comprehensive training of receiving lab analysts, verification that all equipment is properly qualified and calibrated, and preparation of homogeneous, representative samples [3]. For global transfers, factors like reagent variability, column differences, and environmental conditions (e.g., temperature, humidity) must be carefully controlled [11] [12].

- Phase 3: Data Evaluation & Reporting: Data from both labs is compiled and compared using appropriate statistical methods (e.g., t-tests, F-tests, equivalence testing) as defined in the protocol [11] [3]. Any deviations from the protocol or out-of-specification results must be thoroughly investigated and documented. A comprehensive transfer report is drafted, concluding whether the transfer was successful [4].

- Phase 4: Post-Transfer Activities: The final transfer report is reviewed and approved by the Quality Assurance (QA) department [11]. The receiving laboratory then develops or updates its internal Standard Operating Procedure (SOP) for the method and implements it for routine use, with ongoing performance monitoring to ensure it remains in a state of control [3].

Essential Research Reagent Solutions and Materials

The consistency and quality of materials used during method transfer are paramount for success. The following table details key reagents and materials, along with their critical functions.

Table 3: Essential Research Reagent Solutions and Materials for Method Transfer

| Material/Reagent | Function & Importance | Best Practices for Transfer |
|---|---|---|
| Reference Standards | Qualified standards used for system suitability, calibration, and quantification; ensure accuracy and traceability of results [16]. | Use traceable and qualified lots from the same source at both sites; confirm stability throughout the transfer process [3]. |
| Chromatographic Columns | The stationary phase for HPLC/GC separations; different brands or lots can significantly alter retention times and resolution [11]. | Standardize column specifications (e.g., L-number, particle size) between labs; document brand, model, and lot number in the protocol [11]. |
| Reagents & Solvents | High-purity solvents and chemicals for mobile phase and sample preparation; variability can affect baseline noise and method sensitivity [11]. | Use the same grade and supplier for critical reagents at both sites; specify grades and suppliers in the method itself [11] [15]. |
| Stable & Representative Samples | Homogeneous samples (e.g., drug substance, drug product, spiked/forced degradation samples) for comparative testing [13]. | Use centrally-managed, homogeneous batches; ensure proper transport and storage conditions to maintain sample stability and integrity [13]. |
| System Suitability Mixtures | A preparation containing key analytes to verify that the chromatographic system is performing adequately before analysis begins. | Include in the method procedure; use the same mixture preparation and acceptance criteria at both laboratories to ensure consistent system performance. |

Standardizing these materials between the sending and receiving laboratories is a critical best practice that minimizes a major source of variability, allowing the transfer to focus on true methodological and operational differences [11] [13]. For complex molecules, leveraging method-transfer kits (MTKs) that contain pre-defined materials and protocols can greatly improve consistency and efficiency across multiple transfers [13].

Common Challenges and Mitigation Strategies

Despite clear guidelines, companies frequently encounter practical challenges during analytical method transfer. Proactively identifying and mitigating these risks is crucial for success.

- Instrument Disparities: Variations in instrument brand, model, age, or calibration can lead to divergent results, even for the same method [11] [12]. Mitigation: Conduct a thorough equipment gap analysis early in the process. Align instrument specifications and performance qualifications between labs where possible [3].

- Analyst Proficiency: Differences in analyst training, skill, and experience can significantly impact method execution, particularly for complex techniques like bioassays [11] [12]. Mitigation: Implement hands-on training sessions at the receiving lab, led by experts from the transferring lab. Document all training and require demonstration of proficiency [3] [4].

- Reagent and Supply Variability: Different lots of reagents, chromatographic columns, or consumables can introduce unexpected variability, especially in chromatographic methods [11]. Mitigation: Standardize the sources and grades of critical reagents and columns. Specify approved vendors and acceptable alternatives in the method transfer protocol [11] [15].

- Environmental Factors: Laboratory conditions such as temperature and humidity can influence results, particularly for sensitive biological methods [11] [12]. Mitigation: Document and, if necessary, control environmental conditions. Conduct robustness testing during method development to understand the method's sensitivity to such factors [11].

- Documentation and Communication Gaps: Incomplete protocols, unclear language, or poor communication between sites can cause misinterpretation and delays [11] [4]. Mitigation: Establish clear lines of communication and regular meetings. Use detailed, unambiguous language in protocols and ensure a single, approved version is used by all parties [15] [4].

Successfully navigating the regulatory landscape for analytical method transfer requires a strategic and well-documented approach. While the USP <1224>, EMA, and FDA guidelines offer distinct perspectives, their core principles are aligned: ensuring that a transferred method produces equivalent, reliable, and accurate results in any qualified laboratory, thereby safeguarding product quality and patient safety.

The foundation of a successful transfer lies in meticulous pre-transfer planning, a robust and collaboratively developed protocol, and proactive risk management. Key success factors include standardizing reagents and equipment, investing in comprehensive analyst training, and fostering open communication between the sending and receiving sites. By understanding the specific requirements and expectations outlined in these key guidelines, pharmaceutical researchers and scientists can streamline the transfer process, ensure regulatory compliance, and maintain the integrity of their analytical data throughout the product lifecycle.

In the pharmaceutical, biotechnology, and contract research organization (CRO) sectors, the integrity and consistency of analytical data are paramount [3]. Analytical method transfer is a documented process that qualifies a receiving laboratory (the recipient) to use an analytical procedure that was originally developed and validated in a transferring laboratory (the sender) [3] [11]. Its fundamental goal is to demonstrate equivalence and comparability, ensuring that the method, when performed at the receiving lab, yields results equivalent in accuracy, precision, and reliability to those from the originating lab [3] [15]. A failed or poorly executed transfer can lead to severe consequences, including delayed product releases, costly retesting, regulatory non-compliance, and ultimately, a loss of confidence in product quality data [3].

Within the framework of regulatory guidelines such as USP General Chapter <1224>, several transfer approaches exist, including co-validation, revalidation, and transfer waivers [3] [11] [6]. This guide argues that comparative testing stands as the most robust and widely applicable "gold standard" for transferring validated methods, particularly for those that are well-established and critical to product quality [3] [4]. We will objectively compare its performance against alternative methodologies, providing supporting experimental data and protocols to underscore its preeminence.

A Comparative Analysis of Method Transfer Approaches

The choice of transfer strategy is risk-based and depends on factors such as the method's complexity, regulatory status, and the experience of the receiving lab [3] [6]. The following table summarizes the primary approaches.

Table 1: Key Approaches to Analytical Method Transfer

| Transfer Approach | Core Principle | Best Suited For | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Comparative Testing | Both labs analyze the same set of samples; results are statistically compared for equivalence [3] [4]. | Well-established, validated methods; similar lab capabilities [3]. | Direct, empirical demonstration of equivalence; high regulatory acceptance [3] [11]. | Requires careful sample preparation and homogeneity; can be resource-intensive [3]. |
| Co-validation | The method is validated simultaneously by both the transferring and receiving laboratories [3] [15]. | New methods or methods developed for multi-site use from the outset [3]. | Builds confidence early; shared ownership and understanding [3] [15]. | Requires high collaboration and harmonized protocols; resource-intensive [3]. |
| Revalidation | The receiving laboratory performs a full or partial revalidation of the method [3] [11]. | Significant differences in lab conditions/equipment or substantial method changes [3]. | Most rigorous approach; establishes the method anew at the receiving site [3]. | Highly resource-intensive and time-consuming; requires a full validation protocol [3]. |
| Transfer Waiver | The formal transfer process is waived based on strong scientific justification [3] [4]. | Highly experienced receiving lab; identical conditions; simple, robust methods [3]. | Saves time and resources; efficient for low-risk scenarios [3]. | Rarely applicable; requires robust documentation and faces high regulatory scrutiny [3]. |

The Case for Comparative Testing: Protocols and Experimental Data

The Core Protocol for Comparative Testing

A successful comparative transfer hinges on a detailed, pre-approved protocol. The typical workflow, from planning to closure, is outlined below.

Phase 1: Pre-Transfer Planning and Protocol Development. The cornerstone of the process is a comprehensive transfer protocol. This document must clearly define the scope, objectives, and responsibilities of both laboratories [3]. It details the analytical procedure, specifies the materials and equipment to be used, and, most critically, establishes pre-defined acceptance criteria for each performance parameter (e.g., %RSD for precision, %recovery for accuracy) [3] [4]. The protocol requires formal approval by all stakeholders, including Quality Assurance (QA) [3].

Phase 2: Execution and Data Generation. A statistically justified number of homogeneous and representative samples (such as reference standards, spiked samples, or production batches) is analyzed by both laboratories under the same documented procedure [3] [4]. Sample stability must be ensured throughout the testing window, and all analysts must be thoroughly trained [3] [11].

Phase 3: Data Evaluation and Reporting. Results from both sites are compiled and statistically compared using methods stipulated in the protocol, such as t-tests, F-tests, or equivalence testing [3] [11]. The compared results are then evaluated against the pre-defined acceptance criteria. Any deviations must be investigated and documented. A final transfer report, concluding on the success or failure of the transfer, is prepared and submitted for QA review and approval [3] [4].
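
The equivalence testing named in Phase 3 can be sketched as a confidence-interval check. The following is a minimal illustration, not a prescribed procedure. It assumes six replicate assay results per laboratory, similar precision in both labs (pooled standard deviation), an equivalence limit of ±2.0% chosen for illustration, and a one-sided 95% t critical value of 1.812 for 10 degrees of freedom read from a standard t table. The function name and the replicate values are hypothetical; the transfer protocol should define the actual statistical method and limits.

```python
import math
import statistics


def equivalence_by_ci(sending, receiving, limit, t_crit):
    """Check equivalence of two labs via a 90% confidence interval (TOST logic).

    sending, receiving: replicate results (e.g. % label claim) from each lab.
    limit: equivalence margin; the interval must lie inside (-limit, +limit).
    t_crit: one-sided 95% t critical value for n1 + n2 - 2 degrees of freedom,
            supplied by the caller from a t table (an assumption of this sketch).
    """
    n1, n2 = len(sending), len(receiving)
    diff = statistics.mean(receiving) - statistics.mean(sending)
    # Pooled variance assumes both labs have similar precision.
    pooled_var = (
        (n1 - 1) * statistics.variance(sending)
        + (n2 - 1) * statistics.variance(receiving)
    ) / (n1 + n2 - 2)
    std_err = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    lower = diff - t_crit * std_err
    upper = diff + t_crit * std_err
    return {
        "difference": diff,
        "ci_90": (lower, upper),
        "equivalent": -limit < lower and upper < limit,
    }


# Hypothetical replicate assay results (% label claim), six per laboratory.
sending_lab = [99.2, 99.8, 99.5, 99.6, 99.3, 99.6]
receiving_lab = [98.6, 99.0, 98.9, 98.7, 98.8, 98.8]

result = equivalence_by_ci(sending_lab, receiving_lab, limit=2.0, t_crit=1.812)
print(result)
```

With these illustrative numbers the whole interval lies inside ±2.0, so the sketch reports equivalence. A plain t-test asks whether a difference is detectable; this formulation asks whether the difference is small enough to be acceptable, which is usually the question a transfer needs answered.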

Experimental Data and Acceptance Criteria

The acceptance criteria are method-specific and based on the original validation data and the method's intended purpose [4]. The following table provides examples of typical criteria for common test types.

Table 2: Typical Acceptance Criteria for Comparative Transfer Experiments

| Test Type | Commonly Used Acceptance Criteria | Experimental Data Example |
|---|---|---|
| Assay (Content) | Absolute difference between the mean results from the two laboratories not more than (NMT) 2-3% [4]. | Sending Lab Mean: 99.5%; Receiving Lab Mean: 98.8%; Absolute Difference: 0.7% (PASS) |
| Related Substances (Impurities) | For impurities present above 0.5%, criteria for absolute difference are tighter. For low-level or spiked impurities, recovery is often used (e.g., 80-120%) [4]. | Impurity A (Spiked at 0.15%): Recovery at Receiving Lab: 92%; Result: within 80-120% (PASS) |
| Dissolution | NMT 10% absolute difference in mean results at time points <85% dissolved; NMT 5% at time points >85% dissolved [4]. | Timepoint (50 min): Sending Lab Mean: 78%; Receiving Lab Mean: 82%; Absolute Difference: 4% (PASS) |
| Identification | Positive (or negative) identification is correctly obtained at the receiving site [4]. | Receiving Lab correctly identified the target compound against a reference standard. |
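
The criteria in Table 2 can be expressed as simple pass/fail checks. The sketch below is illustrative only: the function names are hypothetical, the assay limit uses the lower end (2%) of the quoted 2-3% range, and the dissolution rule assumes that the sending laboratory's mean decides which limit applies and that exactly 85% falls under the tighter limit, since the table does not specify either point.

```python
def assay_passes(sending_mean, receiving_mean, max_abs_diff=2.0):
    """Assay: absolute difference between lab means must not exceed the limit."""
    return abs(sending_mean - receiving_mean) <= max_abs_diff


def recovery_passes(recovery_pct, low=80.0, high=120.0):
    """Spiked or low-level impurity: recovery must fall inside the window."""
    return low <= recovery_pct <= high


def dissolution_passes(sending_mean, receiving_mean):
    """Dissolution: 10% limit below 85% dissolved, 5% limit otherwise.

    Assumption: the sending lab mean selects the limit, and a mean of
    exactly 85% is treated with the tighter 5% limit.
    """
    limit = 10.0 if sending_mean < 85.0 else 5.0
    return abs(sending_mean - receiving_mean) <= limit


# The worked examples from Table 2.
print(assay_passes(99.5, 98.8))        # difference of 0.7 against a 2.0 limit
print(recovery_passes(92.0))           # 92% inside 80-120%
print(dissolution_passes(78.0, 82.0))  # difference of 4 against a 10 limit
```

Keeping each criterion in its own function mirrors how a protocol states them: one pre-defined rule per test type, fixed before any data are generated.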

The Scientist's Toolkit: Essential Reagents and Materials

The success of a comparative test relies on the quality and consistency of materials used across both sites.

Table 3: Key Research Reagent Solutions for Method Transfer

| Item | Critical Function & Justification |
|---|---|
| Qualified Reference Standards | Provides the benchmark for accuracy and system suitability. Traceable and qualified standards are non-negotiable for ensuring data comparability between labs [3] [11]. |
| Chromatography Columns (Specific Brand/Lot) | HPLC/UPLC columns from different manufacturers or lots can have different selectivity. Using the same specified column is critical for reproducing separation profiles and impurity resolution [11]. |
| High-Purity Solvents and Reagents | Impurities in solvents or reagents can interfere with analysis, leading to baseline noise, ghost peaks, or inaccurate quantification. Standardizing grade and supplier is essential [3] [11]. |
| Stable, Homogeneous Test Samples | The foundation of comparative testing. Samples must be homogeneous to ensure both labs are testing the same material, and stable for the duration of the transfer study to prevent degradation from skewing results [3] [11]. |
| System Suitability Solutions | Verifies that the analytical system (instrument, reagents, column, analyst) is functioning correctly at the start of the testing. Failure to meet system suitability criteria invalidates the run [11]. |

While alternative transfer methods like co-validation and revalidation have their place in specific circumstances, comparative testing remains the gold standard for transferring validated analytical methods. Its strength lies in its direct, data-driven approach to demonstrating equivalence [3]. By providing empirical evidence that a receiving lab can execute a method and obtain results statistically indistinguishable from those of the sending lab, it offers the highest level of confidence to drug developers and regulators alike [3] [11].

A well-executed comparative transfer, supported by a robust protocol, clear acceptance criteria, and standardized materials, is the most straightforward path to ensuring data integrity, regulatory compliance, and ultimately, the consistent quality, safety, and efficacy of pharmaceutical products for patients [3] [11].

Analytical method transfer is a documented, formal process that qualifies a receiving laboratory (the receiving unit, RU) to use an analytical testing procedure that originated in a transferring laboratory (the sending unit, SU) [3] [17]. This process is a regulatory imperative in the pharmaceutical, biotechnology, and contract research sectors, ensuring that analytical data maintains its integrity, consistency, and reliability when generated at different sites [3]. The primary goal is to demonstrate that the receiving laboratory can execute the method with accuracy, precision, and reliability equivalent to those of the originating laboratory, thereby producing comparable results that ensure product quality and patient safety [3] [18].

The United States Pharmacopeia (USP) General Chapter <1224> provides recognized guidance on the Transfer of Analytical Procedures (TAP) and outlines several acceptable transfer approaches [19] [17] [20]. While comparative testing—where both labs analyze identical samples—is a common strategy, this guide focuses on three critical alternative strategies: co-validation, revalidation, and transfer waivers. Selecting the appropriate strategy is not merely a procedural choice but a risk-based decision that depends on the method's validation status, complexity, the receiving laboratory's experience, and overarching project timelines [4] [18].

The choice of transfer strategy significantly impacts a project's timeline, resource allocation, and regulatory pathway. The following table provides a high-level comparison of the three alternative strategies, highlighting their defining characteristics and primary applications.

Table 1: Core Characteristics of Alternative Transfer Strategies

| Strategy | Definition & Core Principle | Primary Application Context |
|---|---|---|
| Co-validation | A collaborative model where method validation and site qualification occur simultaneously. The RU is involved as part of the validation team [19] [15]. | Ideal for new methods or when a method is developed for multi-site use from the outset. Particularly advantageous for accelerated development programs, such as for breakthrough therapies [19] [18]. |
| Revalidation | The receiving laboratory performs a full or partial repetition of the method validation, treating the method as new to its specific environment [3] [17]. | Used when the SU is unavailable, or when there are significant differences in lab conditions, equipment, or when the original validation was not ICH-compliant [4] [18]. |
| Transfer Waiver | The formal transfer process is omitted based on scientific justification and a documented risk assessment. No inter-laboratory comparative data is generated [3] [7]. | Applicable when the RU is already highly experienced with the method, for simple pharmacopoeial methods (which may only require verification), or when personnel move between sites [4] [17] [18]. |

To further aid in strategic decision-making, the diagram below outlines a logical workflow for selecting the most appropriate transfer approach based on key project parameters, such as the method's validation status and the receiving lab's preparedness.

Figure 1: Decision Workflow for Method Transfer Strategies. This flowchart guides the selection of an appropriate transfer strategy based on method status and laboratory conditions.
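
The selection logic can also be written down as a small rule set. The sketch below is one plausible reading of the application contexts listed in Table 1 and is not a reconstruction of Figure 1. The class, field names, and the order in which the rules are checked are all assumptions made for illustration; a real decision would rest on a documented risk assessment.

```python
from dataclasses import dataclass


@dataclass
class TransferContext:
    """Hypothetical summary of the inputs to the strategy decision."""
    method_validated: bool
    sending_unit_available: bool = True
    validation_ich_compliant: bool = True
    significant_lab_differences: bool = False
    compendial_method: bool = False
    receiving_unit_experienced: bool = False


def select_strategy(ctx: TransferContext) -> str:
    """Return a candidate transfer strategy for the given context."""
    # New, not-yet-validated methods: validate and qualify the RU together.
    if not ctx.method_validated:
        return "co-validation"
    # Comparability cannot be assumed or demonstrated: RU revalidates.
    if (
        not ctx.sending_unit_available
        or not ctx.validation_ich_compliant
        or ctx.significant_lab_differences
    ):
        return "revalidation"
    # Low-risk cases that may justify omitting experimental transfer.
    if ctx.compendial_method or ctx.receiving_unit_experienced:
        return "transfer waiver"
    # Default for validated methods moving between comparable labs.
    return "comparative testing"


print(select_strategy(TransferContext(method_validated=False)))
print(select_strategy(TransferContext(method_validated=True,
                                      sending_unit_available=False)))
print(select_strategy(TransferContext(method_validated=True,
                                      compendial_method=True)))
print(select_strategy(TransferContext(method_validated=True)))
```

Checking the revalidation triggers before the waiver conditions is a deliberately conservative choice in this sketch: an experienced receiving lab does not offset a non-compliant original validation.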

Detailed Analysis of Co-validation

Protocol Design and Experimental Execution

Co-validation is fundamentally a parallel processing model. Instead of the linear sequence of validate-then-transfer, it integrates the receiving laboratory directly into the validation phase [19]. The experimental protocol is an expanded validation protocol that includes the RU as a participant. Key elements of the protocol design include:

- Shared Validation Parameters: The protocol clearly delineates which validation parameters (e.g., accuracy, precision, specificity, linearity, robustness) will be assessed by each laboratory. A typical approach is for the RU to perform intermediate precision experiments, which directly generate data for assessing reproducibility across sites [19] [20].

- Streamlined Documentation: Documentation is consolidated by incorporating the co-validation procedures, materials, acceptance criteria, and results into the primary validation protocol and report, eliminating the need for separate transfer documents [19].

- Robustness Foundation: Success is heavily dependent on the method's robustness, which should be systematically evaluated during development using Quality by Design (QbD) principles. For instance, a case study from Bristol-Myers Squibb (BMS) used a model-robust design to evaluate variants like binary organic modifier ratios and gradient slopes, establishing clear robustness ranges prior to co-validation [19].

Quantitative Performance and Case Study Data

The primary impact of co-validation is a significant reduction in project timelines. Data from a BMS pilot study provides a direct quantitative comparison between co-validation and the traditional comparative testing model [19].

Table 2: Quantitative Comparison of Co-validation vs. Traditional Transfer at BMS

| Metric | Traditional Comparative Testing | Co-validation Model | Change |
|---|---|---|---|
| Total Project Time | 13,330 hours | 10,760 hours | ~20% reduction |
| Timeline per Method | ~11 weeks | ~8 weeks | 3 weeks faster |
| Methods Requiring Comparative Testing | 60% of methods | 17% of methods | >70% reduction |

This acceleration is achieved by running validation and transfer activities in parallel. The BMS case study, which involved 50 release testing methods for a drug substance and product, also highlighted collateral benefits, including enhanced troubleshooting, deeper method understanding at the RU, and the early identification of potential application roadblocks [19].
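
The figures in the "Change" column follow directly from the raw values in the table. As a quick arithmetic check (the helper name is hypothetical), the relative reduction is the difference divided by the starting value:

```python
def relative_reduction(before, after):
    """Return the drop from `before` to `after` as a percentage of `before`."""
    return (before - after) / before * 100.0


hours_reduction = relative_reduction(13_330, 10_760)  # total project hours
comparative_reduction = relative_reduction(60, 17)    # % of methods needing comparative testing
weeks_saved = 11 - 8                                  # timeline per method, in weeks

print(f"Project time reduction: {hours_reduction:.1f}%")
print(f"Drop in methods needing comparative testing: {comparative_reduction:.1f}%")
print(f"Weeks saved per method: {weeks_saved}")
```

The project-time reduction works out to about 19.3%, which the table rounds to 20%, and the drop from 60% to 17% of methods is a relative reduction of about 71.7%.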

Detailed Analysis of Revalidation

Protocol Design and Experimental Execution

Revalidation requires the receiving laboratory to repeat some or all validation exercises, acting as a self-qualification process [3] [17]. The scope of revalidation can be complete or partial, determined by a gap analysis against current ICH requirements [4] [18]. The experimental protocol must include:

- Gap Analysis and Scope Justification: A review of the original validation report to identify parameters potentially affected by the transfer. Changes in equipment, critical reagents, or environmental conditions dictate which parameters need re-evaluation [4].

- Risk-Based Parameter Selection: The protocol justifies the selection of specific validation parameters for reassessment. For example, a transfer involving a new HPLC system might necessitate re-evaluation of system suitability, precision, and specificity, but not necessarily a full linearity or accuracy study [17] [18].

- Material and Method Control: The RU typically sources its own materials and equipment. The protocol must ensure that these are equivalent and qualified, and that the analytical procedure is followed exactly as written, with any deviations documented and justified [3].

Applicability and Regulatory Considerations

Revalidation is the most rigorous transfer approach and is employed in specific, high-risk scenarios [3]. It is the preferred strategy when:

- The original transferring laboratory is unavailable to participate in comparative testing [21] [18].

- The original method validation was not performed according to current ICH guidelines and requires supplementation [4].

- There are significant differences in the analytical instrumentation or critical materials (e.g., sample filters) between the SU and RU [3] [19].

- The method has undergone substantial changes as part of the transfer process [3].

From a regulatory standpoint, this approach provides the highest level of assurance for method performance in the new environment because the RU generates its own complete validation dataset [3].

Detailed Analysis of Transfer Waivers

Justification Criteria and Documentation

A transfer waiver is not the absence of a process, but a scientifically and regulatorily justified decision to forgo experimental comparative testing [3] [7]. The justification must be thoroughly documented in a protocol or equivalent document. Acceptable justification criteria include [4] [17] [18]:

- Use of a compendial method (e.g., USP, Ph. Eur.) that is verified by the RU without a full transfer.

- The RU already uses the identical method (or a very similar one) on a comparable product and has substantial historical data.

- The product's composition is comparable to an existing product tested by the RU, and only minor changes (e.g., different volumetric flask sizes) are involved.

- The personnel responsible for the method's development, validation, or routine analysis move from the SU to the RU, effectively transferring knowledge directly.

Risk Assessment and Governance

The waiver process is governed by a documented risk assessment that evaluates the receiving laboratory's experience, knowledge, and the method's complexity [7] [18]. Key elements include:

- Experience and Training Records: Documentation proving the RU's analysts are already proficient with the method [7].

- Equipment Equivalency: Verification that the RU uses instrumentation identical or highly similar to the SU [7].

- Quality Assurance Approval: The waiver and its justification require robust documentation and explicit approval from quality assurance units, as it is subject to high regulatory scrutiny [3].

While a waiver eliminates laboratory testing during the transfer, it often involves other activities such as documentation transfer, training verification, and a review of the RU's historical performance data with the method [18].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of any transfer strategy relies on the careful management of critical materials. The following table details key reagent solutions and their functions that must be controlled during method transfer.

Table 3: Essential Research Reagent and Material Solutions for Method Transfer

| Item | Function & Role in Transfer | Critical Management Considerations |
|---|---|---|
| Reference Standards | Qualified standards used to calibrate the method and quantify results. They are the primary benchmark for data comparison between labs [3]. | Must be traceable and from a qualified source. Stability and proper handling during shipment between sites are crucial for comparative testing [3] [20]. |
| Critical Reagents | Method-specific reagents (e.g., specialized buffers, derivatization agents) that directly impact analytical performance [20]. | Supplier qualification and lot-to-lot consistency are vital. If the RU uses a different supplier, bridging studies may be required, especially in co-validation [20]. |
| Chromatographic Columns | The specific brand, type, and lot of HPLC or GC columns are often critical method parameters [20]. | The protocol should specify allowable column equivalents. Retention of multiple lots of the original column is a common risk mitigation strategy [20]. |
| Stable Test Samples | Homogeneous samples (e.g., finished product, API, spiked samples) from a single lot used for comparative testing [3] [7]. | Sample homogeneity and stability throughout the transfer period are non-negotiable. Additional lots may be tested if the method's robustness is uncertain [3] [20]. |

The landscape of analytical method transfer is evolving, with increasing adoption of Digital Validation Tools (DVTs) to enhance efficiency, data integrity, and audit readiness [22]. In this context, selecting the optimal transfer strategy—co-validation, revalidation, or a waiver—is a critical strategic decision that directly impacts a program's speed, cost, and compliance.

- Co-validation stands out as a powerful tool for accelerated development pathways, offering dramatic time savings but demanding early readiness and robust methods.

- Revalidation provides a comprehensive, self-contained solution for high-risk scenarios where laboratory comparability cannot be assumed.

- Transfer Waivers, while subject to high regulatory scrutiny, offer a lean and efficient option for justifiable, low-risk situations.

The choice is not static but should be guided by a dynamic, risk-based assessment that considers the method, the laboratories, and the program goals. As the industry moves towards greater digitalization and leaner teams, the strategic application of these alternative transfer approaches will be paramount for maintaining operational excellence and bringing quality medicines to patients faster.

The Importance of Robust Method Design in Pre-Transfer Development

In the pharmaceutical industry, the transfer of analytical methods from developing laboratories (sender) to quality control or contract laboratories (receiver) is a critical gate in the drug development pathway. Robustness—defined as a method's capacity to remain unaffected by small, deliberate variations in method parameters—is not a characteristic that can be appended at the end of development [23]. Instead, it must be proactively designed into the method from its inception. A method that performs acceptably in the hands of its developers but fails in a receiving laboratory can lead to costly investigations, delayed technology transfers, and ultimately, impeded patient access to medicines. This guide objectively compares the outcomes of robust versus non-robust method design, framing the evaluation within the broader thesis that a method's transferability is predominantly determined long before the formal transfer protocol is initiated. The concept of an analytical method lifecycle, which encompasses method design, qualification, and continual performance verification, provides the foundational model for this discussion [6].

Comparative Framework: Systematic versus Ad-Hoc Development

The approach to method development can be broadly categorized into two paradigms: a systematic, Quality by Design (QbD)-driven process and an ad-hoc, empirical one. The comparative performance of these paradigms is best evaluated against key transferability metrics, synthesized in the table below from industry case studies.

Table 1: Comparative Outcomes of Method Development Approaches

| Evaluation Metric | Systematic QbD Approach | Ad-Hoc Empirical Approach |
|---|---|---|
| Foundation | Science and risk-based; begins with an Analytical Target Profile (ATP) [6] | Trial-and-error; often lacks predefined objectives |
| Parameter Understanding | Uses Design of Experiments (DoE) to model and understand parameter interactions and establish a design space [23] [24] | One-factor-at-a-time (OFAT) studies provide limited understanding of interactions |
| Robustness Assessment | Deliberate variation of critical method parameters (e.g., column temperature, mobile phase pH) during development [23] | Limited or no formal robustness testing prior to transfer |
| Transfer Success Rate | High; method performance is predictable within the defined design space [24] | Variable to low; prone to unexpected failures during transfer |
| Impact on Transfer Effort | Transfer is a confirmation of prior understanding; often streamlined [25] | Transfer can be iterative and investigative, requiring significant troubleshooting [25] |
| Long-Term Performance | Consistently reliable in routine use across multiple laboratories and over time [23] | Higher incidence of out-of-trend (OOT) or out-of-specification (OOS) results post-transfer |

The data indicates that systematic development reduces batch failures by up to 40% and significantly enhances process robustness through real-time monitoring and predictive modelling [24]. The following workflow visualizes the stark contrast between these two pathways, highlighting how critical early-stage decisions dictate downstream transfer success.

Experimental Protocols for Establishing Robustness

To generate the comparative data presented in this guide, specific experimental protocols are employed to quantify a method's robustness and predict its transferability. These methodologies move beyond simple verification of accuracy and precision under ideal conditions.

Design of Experiments (DoE) for Parameter Optimization

Objective: To systematically identify and model the relationship between Critical Method Parameters (CMPs) and Critical Quality Attributes (CQAs), thereby defining the method's operational design space [24].

Protocol:

- Identify Factors: Select potential CMPs (e.g., % organic solvent in mobile phase, buffer pH, column temperature, injection volume) through prior knowledge and risk assessment tools like Ishikawa diagrams or FMEA [24].

- Design Matrix: Utilize a statistical experimental design (e.g., full factorial, fractional factorial, or Central Composite Design) to efficiently explore the multi-dimensional parameter space with a minimal number of experimental runs.

- Execute Experiments: Perform the analytical procedure according to the design matrix, measuring the relevant CQAs (e.g., resolution, tailing factor, % recovery, precision) for each run.

- Model and Analyze: Fit the data to a statistical model (e.g., response surface methodology) to identify significant factors and their interactions. The model predicts how CQAs respond to variations in CMPs.

- Define Design Space: Establish the "method operable design region" as the multi-dimensional combination of CMPs where the CQAs meet predefined criteria [24]. Changes within this space do not require re-validation.
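
The first four protocol steps can be sketched for the simplest case, a two-level full factorial design. This is a minimal illustration under stated assumptions: the three factors and their low/high settings are hypothetical, and `simulated_resolution` is a stand-in for measured results, invented only so the example runs. A real study would typically use dedicated DoE software and fit a fuller model.

```python
import itertools
import statistics

# Hypothetical critical method parameters with (low, high) settings.
factors = {
    "organic_pct": (28.0, 32.0),
    "buffer_ph": (2.8, 3.2),
    "column_temp_c": (28.0, 32.0),
}


def full_factorial(factors):
    """Return every low/high combination as (coded levels, actual settings)."""
    names = list(factors)
    runs = []
    for levels in itertools.product((-1, 1), repeat=len(names)):
        coded = dict(zip(names, levels))
        actual = {
            name: factors[name][0] if level == -1 else factors[name][1]
            for name, level in coded.items()
        }
        runs.append((coded, actual))
    return runs


def simulated_resolution(coded):
    """Placeholder response; replace with the CQA measured for each run."""
    x1, x2 = coded["organic_pct"], coded["buffer_ph"]
    return 2.0 + 0.3 * x1 - 0.1 * x2 + 0.05 * x1 * x2


def main_effects(runs, responses):
    """Main effect = mean response at the high level minus mean at the low level."""
    effects = {}
    for name in runs[0][0]:
        high = [y for (coded, _), y in zip(runs, responses) if coded[name] == 1]
        low = [y for (coded, _), y in zip(runs, responses) if coded[name] == -1]
        effects[name] = statistics.mean(high) - statistics.mean(low)
    return effects


runs = full_factorial(factors)
responses = [simulated_resolution(coded) for coded, _ in runs]
effects = main_effects(runs, responses)

for name, effect in effects.items():
    print(f"{name}: main effect on resolution = {effect:+.2f}")
```

Factors with main effects near zero over the tested range are candidates for wide operating ranges, while large effects point to parameters that the method must control tightly.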

Supporting Data: A documented case study involved the development of an HPLC method for a solid dosage form. A DoE study examining diluent composition (ACN % and TFA concentration) revealed their interactive effect on extraction efficiency (% Label Claim). The surface plot generated allowed developers to select a diluent composition within a "flat" region of the response surface, ensuring that minor, inevitable variations in preparation would not impact the measured potency [23].

Forced Degradation and Challenge Testing

Objective: To demonstrate the method's specificity and stability-indicating properties by proving it can accurately quantify the analyte in the presence of its potential degradants.

Protocol:

- Generate Degradants: Subject the drug substance and product to stressed conditions (e.g., acid/base hydrolysis, oxidative stress, thermal degradation, photolysis) to generate potential degradants [23].

- Analyze Stressed Samples: Inject the stressed samples and demonstrate that the method successfully separates the analyte peak from all degradation product peaks.

- Verify Performance: Confirm that the method's performance characteristics (accuracy, precision) for the analyte are not compromised in the presence of degradants, proving the method is "stability-indicating."
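
Peak separation in the stressed samples is usually judged by chromatographic resolution. The sketch below uses the standard baseline-width formula, Rs = 2(t2 - t1) / (w1 + w2). The threshold of 1.5 is a commonly used convention for baseline separation and is an assumption here, as are the function names and the retention data; the method's own system suitability criteria take precedence.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Resolution between two peaks from retention times and baseline widths.

    All four values must be in the same units (e.g. minutes).
    """
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


def analyte_is_resolved(analyte, degradants, min_resolution=1.5):
    """True if the analyte peak is resolved from every degradant peak."""
    return all(
        resolution(analyte["t_r"], d["t_r"], analyte["width"], d["width"])
        >= min_resolution
        for d in degradants
    )


# Hypothetical peak data (minutes) from a stressed sample.
analyte_peak = {"t_r": 6.20, "width": 0.30}
degradant_peaks = [
    {"t_r": 5.60, "width": 0.28},
    {"t_r": 6.90, "width": 0.32},
]

print(analyte_is_resolved(analyte_peak, degradant_peaks))

# A degradant eluting close to the analyte fails the check.
coeluting = degradant_peaks + [{"t_r": 6.35, "width": 0.30}]
print(analyte_is_resolved(analyte_peak, coeluting))
```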

Inter-Laboratory Pre-Transfer Testing

Objective: To identify method vulnerabilities associated with instrument-to-instrument or analyst-to-analyst variation before the formal transfer.

Protocol:

- Instrument Comparison: Execute the method on different models and/or brands of instruments (e.g., HPLC systems with different dwell volumes) that are representative of the equipment in the receiving laboratory [23] [26].

- Analyst Challenge: Have multiple analysts, preferably from different laboratories, test the same batch by following the written procedure without any additional verbal instructions. This tests the clarity and comprehensiveness of the documentation [23].

- Reagent Sourcing: Evaluate method performance using reagents from different vendors or of different grades to determine if specific sources or purity levels must be controlled [23].

The Scientist's Toolkit: Essential Reagents and Materials

The robustness of an analytical method is often contingent on the consistent quality of its constituent materials. The following table details key research reagent solutions and their functions in ensuring method reliability.

Table 2: Key Research Reagent Solutions for Robust Method Development

| Item | Function & Importance in Robustness |
|---|---|
| HPLC/UPLC Columns | The stationary phase is critical for separation. Robustness studies should test columns from different lots and, if possible, different suppliers to ensure performance is maintained. Specifying a column with a broader operating space is preferable to one that offers perfect resolution but only from a single lot [23] [25]. |
| Chemical Reference Standards | High-purity standards are essential for accurate quantification. The hygroscopicity or static tendency of a standard should be considered when defining the standard weight in the method to minimize analyst-induced variability [23]. |
| Mobile Phase Modifiers | The quality and source of pH modifiers (e.g., trifluoroacetic acid, phosphate salts) can affect retention time and peak shape. Robustness studies should verify that minor variations in modifier grade or concentration do not compromise the separation [23]. |
| Sample Preparation Solvents | The diluent composition must be optimized to ensure complete and consistent extraction/dissolution of the analyte. DoE studies should account for potential variations in product properties (e.g., API particle size) that might challenge extraction completeness [23]. |

A Framework for Proactive Robustness Evaluation

Building on the experimental protocols, a structured framework allows scientists to deconstruct a method and proactively evaluate its vulnerability to failure. This involves assessing risk across four key domains, as synthesized from industry guidance [23]. The relationships and checkpoints within this framework are illustrated below.

Instrument Concerns: A primary failure point in method transfer, particularly for chromatographic methods. Differences in HPLC system dwell volume can drastically alter gradient profiles, affecting retention times, peak shape, and resolution [23]. A robust method incorporates an initial isocratic hold to mitigate dwell volume effects. Furthermore, detection wavelength selection should avoid the slopes of UV spectra and consider practical factors like required sample concentration and dilution steps to enhance overall robustness [23].

Analyst Technical Skill: Methods should be designed to be "QC-friendly," meaning they rely on commonly used techniques and minimize steps that require subjective interpretation [23]. For instance, an instruction to "shake until dissolved" is vulnerable to variability, whereas "shake for 30 minutes" or "until no visible particles remain" provides an objective, reproducible endpoint. A robust method is one that different analysts can execute successfully using only the written procedure.
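
The dwell volume point under Instrument Concerns can be made concrete with a short calculation. The gradient reaches the column later by the dwell volume divided by the flow rate, so two systems with different dwell volumes deliver the same programmed gradient at different times. The sketch below is illustrative: the function names and the example volumes are assumptions, and any adjustment to a validated method must stay within what the method and applicable compendial rules allow.

```python
def gradient_delay_min(dwell_volume_ml, flow_rate_ml_min):
    """Time (min) for a programmed gradient change to reach the column inlet."""
    return dwell_volume_ml / flow_rate_ml_min


def hold_adjustment_min(dwell_origin_ml, dwell_target_ml, flow_rate_ml_min):
    """Change in initial isocratic hold needed on the target system.

    Positive: lengthen the hold on the target system by this many minutes.
    Negative: shorten it, which is only possible if the method already
    contains an initial hold at least that long.
    """
    return (
        gradient_delay_min(dwell_origin_ml, flow_rate_ml_min)
        - gradient_delay_min(dwell_target_ml, flow_rate_ml_min)
    )


# Hypothetical systems: originating lab 1.0 mL dwell, receiving lab 0.4 mL.
flow = 1.0  # mL/min
print(gradient_delay_min(1.0, flow))        # delay on the originating system
print(gradient_delay_min(0.4, flow))        # delay on the receiving system
print(hold_adjustment_min(1.0, 0.4, flow))  # extra hold for the receiving system
print(hold_adjustment_min(0.4, 1.0, flow))  # reverse direction: hold must shrink
```

The negative case shows why building an initial isocratic hold into the method helps: it gives a receiving laboratory with a larger dwell volume something to shorten, rather than forcing a change to the gradient itself.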

The comparative evidence is unequivocal: the success of an analytical method transfer is not determined during the transfer itself but is a direct consequence of the rigor, foresight, and systematic science applied during its initial design. Investing in a QbD-based development approach, characterized by risk assessment, DoE, and proactive robustness testing, establishes a wide method operable design space. This investment pays substantial dividends by ensuring seamless technology transfers, reducing regulatory compliance risks, and guaranteeing the consistent generation of reliable data needed to safeguard product quality and patient safety. In the context of evaluating method transfer through comparative validation research, the most significant finding is that a transfer should serve as a confirmation of prior understanding, not a discovery phase for method limitations.

In the pharmaceutical and biopharmaceutical industries, the transfer of analytical methods from one laboratory to another is a critical, regulated activity essential for ensuring consistent product quality. While the technical parameters of method validation receive significant attention, the success of these transfers fundamentally hinges on the effective collaboration between a well-defined sending unit and a thoroughly prepared receiving unit. The team structure and the clarity of assigned responsibilities are not merely administrative formalities but are foundational to achieving documented evidence that a method works as well in the receiving laboratory as in the originating one [26]. A failed transfer can lead to costly delays, regulatory complications, and unreliable testing data.

Within the context of comparative validation, this guide compares the roles and contributions of the sending and receiving laboratories. It sets out the core responsibilities of each team, provides detailed experimental protocols for comparative testing, and visualizes the collaborative workflow. The goal is to give researchers, scientists, and drug development professionals a structured framework for building a transfer team that delivers reliable and reproducible analytical results across different sites and operational environments.

Team Composition and Core Responsibilities

The analytical method transfer process is a collaborative effort between two primary entities: the sending laboratory (often the method originator or developer) and the receiving laboratory (the site adopting the method for routine use). The success of the transfer is dependent on each unit understanding and fulfilling its distinct set of responsibilities.

The Sending Unit: The Knowledge Repository

The sending unit acts as the source of truth for the analytical method. Its primary role is to ensure the comprehensive and transparent transfer of all technical and scientific knowledge required for the method to be successfully executed in a new environment [4].

Key Responsibilities:

- Provide Critical Documentation: The sending unit must supply the receiving laboratory with the complete analytical procedure, the method validation report, and information on the quality of reference standards and critical reagents [4].

- Conduct Gap Analysis: A crucial pre-transfer activity is reviewing the original method validation to ensure it complies with current ICH requirements. Any gaps identified must be documented, and supplementary validation should be performed before the transfer begins [4].

- Share Tacit Knowledge: Beyond written documents, the sending unit must communicate practical experiences, risk assessments, and "silent" knowledge not captured in the formal method description. This includes tips on handling specific reagents or interpreting chromatographic data [4].

- Lead Training: If the method is complex, the sending unit should provide on-site or virtual training to the analysts at the receiving laboratory, ensuring they are comfortable with the technique [4] [26].

The Receiving Unit: The Qualified Implementer

The receiving laboratory's role is to demonstrate its capability to perform the method consistently and reproducibly, producing results that are statistically equivalent to those generated by the sending unit.

Key Responsibilities:

- Review and Assess: The receiving unit must thoroughly review all provided documentation and perform a gap analysis to ensure its systems, equipment, and environmental conditions are suitable for the method [4] [20].

- Execute the Transfer Protocol: Analysts at the receiving site are responsible for performing the testing outlined in the pre-approved transfer protocol, adhering strictly to the analytical procedure [26].

- Ensure Readiness: This involves confirming that all instrumentation is qualified, personnel are adequately trained, and all necessary materials and reagents are available [20].

- Generate and Report Data: The receiving unit meticulously documents all experiments, results, and any observations or deviations, culminating in the creation of the final method transfer report [4] [26].

Table 1: Detailed Comparison of Laboratory Responsibilities

| Responsibility Area | Sending Laboratory | Receiving Laboratory |
|---|---|---|
| Knowledge Transfer | Provide method description, validation report, robustness data, and practical experience [4] [26]. | Review all provided data, assess understanding, and identify potential issues [4]. |
| Documentation | Develop and approve the transfer protocol, often in collaboration with the receiving unit [4]. | Execute the protocol and draft the final transfer report, documenting all results and deviations [4] [26]. |
| Materials & Samples | Provide representative, homogeneous samples and certificates of analysis for references [26]. | Ensure availability of qualified reagents, columns, and instruments; properly store and handle transferred materials [20]. |
| Training | Train receiving unit personnel and provide ongoing technical support [4]. | Ensure analysts are trained and qualified to perform the method before the formal transfer [20]. |
| Quality & Compliance | Ensure the method complies with the Marketing Authorization and current regulatory requirements [4]. | Demonstrate capability to run the method under its own quality system and produce GMP-reportable data [26]. |

Experimental Protocols for Team-Based Method Transfer

The primary experimental model for validating team performance in method transfer is Comparative Testing. This approach directly evaluates the equivalence of data generated by the sending and receiving teams, providing objective evidence of a successful transfer.

Protocol for Comparative Testing

Objective: To demonstrate that the receiving laboratory can perform the analytical procedure and obtain results that are statistically equivalent to those from the sending laboratory for the same set of samples [4] [26].

Methodology:

- Protocol Development: A detailed, pre-approved protocol is jointly developed. It defines the objective, scope, responsibilities, experimental design, and, crucially, the pre-defined acceptance criteria [4] [26].

- Sample Selection: A predetermined number of samples from the same, homogeneous lot are analyzed by both laboratories. Using identical samples is critical to ensure that any differences observed are due to the laboratory performance and not the product itself [26]. For impurity tests, samples may be spiked with known impurities to assess accuracy [4].

- Experimental Execution: Both laboratories analyze the samples following the identical, transferred method. The protocol typically specifies the number of replicates, injections, and analysts to incorporate intermediate precision into the study [26].

- Data Analysis and Comparison: Results from both sites are statistically compared against the pre-defined acceptance criteria. Common approaches include calculating the relative standard deviation (RSD), constructing confidence intervals for the difference between the site means, and applying an equivalence test such as the two one-sided t-tests (TOST) procedure [26] [20].
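
The data-analysis step above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a validated statistical procedure: it assumes equal variances in the two laboratories (pooled standard deviation), uses the confidence-interval form of TOST (equivalence at the 5% level is concluded when the 90% confidence interval for the difference in means lies entirely within the margin), and expects the caller to supply the one-sided critical t value from a t-table. The function names, the assay values, and the ±2.0% margin in the usage note are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev, variance


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) as a percentage."""
    return 100.0 * stdev(values) / mean(values)


def tost_by_confidence_interval(sending, receiving, margin, t_crit):
    """Confidence-interval form of TOST for two independent labs.

    margin : equivalence margin in result units (from the transfer protocol)
    t_crit : one-sided 95% critical t value for n1 + n2 - 2 degrees of freedom,
             looked up by the caller (assumption: not computed here)
    """
    n1, n2 = len(sending), len(receiving)
    diff = mean(receiving) - mean(sending)
    # Pooled variance: assumes both labs have similar within-lab variability
    pooled_var = ((n1 - 1) * variance(sending)
                  + (n2 - 1) * variance(receiving)) / (n1 + n2 - 2)
    std_err = sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))
    lower = diff - t_crit * std_err
    upper = diff + t_crit * std_err
    return {
        "difference": diff,
        "ci_90": (lower, upper),
        "equivalent": -margin < lower and upper < margin,
    }


if __name__ == "__main__":
    # Hypothetical assay results (% label claim), six preparations per lab
    sending = [99.8, 100.2, 100.1, 99.9, 100.4, 100.0]
    receiving = [100.5, 100.9, 100.3, 100.7, 100.6, 100.8]
    print(f"Sending %RSD:   {percent_rsd(sending):.2f}")
    print(f"Receiving %RSD: {percent_rsd(receiving):.2f}")
    # t(0.95, df=10) is approximately 1.812 from standard t-tables
    print(tost_by_confidence_interval(sending, receiving, margin=2.0, t_crit=1.812))
```

The margin drives the conclusion, so it belongs in the pre-approved protocol and should not be chosen after the data are seen. A conventional t-test with p > 0.05 shows only that no difference was detected, whereas the interval-based check asks directly whether any plausible difference is small enough to be acceptable.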

Defining Acceptance Criteria

Acceptance criteria are based on the method's validation data and its intended purpose. They are not one-size-fits-all and must be justified for each method [4].

Table 2: Typical Acceptance Criteria for Common Test Types

| Test | Typical Acceptance Criteria |
|---|---|
| Identification | Positive (or negative) identification obtained at the receiving site [4]. |
| Assay | The absolute difference between the mean results from the two sites should not exceed 2-3% [4]. |
| Related Substances | For impurities, recovery of spiked impurities is typically required to be within 80-120%. Requirements may vary based on the impurity level [4]. |
| Dissolution | The absolute difference in the mean results should be NMT 10% at time points when <85% is dissolved and NMT 5% when >85% is dissolved [4]. |
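
The numeric criteria in Table 2 can be written as simple checks, which makes the pass/fail logic explicit before any data are generated. The sketch below is illustrative: the function names are hypothetical, the assay limit defaults to the stricter end of the 2-3% range, the sending laboratory's mean is assumed to decide which dissolution limit applies, and a value of exactly 85% (which the table does not address) is assumed to fall under the tighter 5% limit.

```python
def assay_passes(sending_mean: float, receiving_mean: float,
                 max_abs_diff: float = 2.0) -> bool:
    """Absolute difference between site means within the protocol limit (%)."""
    return abs(receiving_mean - sending_mean) <= max_abs_diff


def recovery_passes(measured: float, spiked: float,
                    low: float = 80.0, high: float = 120.0) -> bool:
    """Spiked-impurity recovery (%) within the typical 80-120% window."""
    if spiked <= 0:
        raise ValueError("spiked amount must be positive")
    recovery = 100.0 * measured / spiked
    return low <= recovery <= high


def dissolution_passes(sending_mean: float, receiving_mean: float,
                       threshold: float = 85.0) -> bool:
    """NMT 10% difference below the threshold, NMT 5% at or above it.

    Assumption: the sending lab's mean selects the applicable limit.
    """
    limit = 10.0 if sending_mean < threshold else 5.0
    return abs(receiving_mean - sending_mean) <= limit


if __name__ == "__main__":
    # Hypothetical results, for illustration only
    print("Assay:", assay_passes(99.6, 100.9))
    print("Impurity recovery:", recovery_passes(measured=0.092, spiked=0.10))
    print("Dissolution, early time point:", dissolution_passes(60.0, 68.0))
    print("Dissolution, late time point:", dissolution_passes(92.0, 98.0))
```

Because acceptance criteria must be justified for each method, the default limits here are placeholders; in practice they would be replaced by the values approved in the transfer protocol.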

Visualizing the Method Transfer Workflow

The following diagram illustrates the end-to-end process of a method transfer, highlighting the key stages and the primary responsibilities of the sending and receiving laboratories throughout the collaborative workflow.

Diagram 1: Analytical Method Transfer Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful execution of a method transfer is dependent on the quality and consistency of critical materials. The following table details key reagent solutions and their functions in ensuring a robust and reliable transfer.

Table 3: Key Research Reagent Solutions for Method Transfer

| Reagent/Material | Function & Importance in Transfer |
|---|---|
| Reference Standards | Well-characterized substances used to calibrate instruments and quantify analytes. Their quality and traceability are non-negotiable for obtaining accurate and comparable results between labs [4]. |
| Critical Reagents | Specific reagents, such as antibodies in ligand-binding assays or specialty columns in chromatography, that are essential for method performance. Transfer can be complicated if lots are not shared or are unavailable to the receiving lab [10]. |
| Spiked Impurity Samples | Samples intentionally fortified with known impurities. They are crucial for demonstrating that the receiving lab can accurately detect and quantify related substances, a key part of method accuracy [4] [6]. |
| Homogeneous Sample Lots | Identical, uniform samples from a single lot provided to both labs. This controls for product variability, ensuring that performance differences are attributable to the laboratory's execution of the method [26]. |
| System Suitability Solutions | Standard preparations used to verify that the analytical system (e.g., HPLC, GC) is performing adequately at the time of testing. Passing system suitability is a prerequisite for valid analytical runs in both laboratories [26]. |

The process of building an effective transfer team is a deliberate and critical investment in the success of analytical method transfers. As detailed in this guide, this success is not achieved by chance but through the clear definition of roles, with the sending laboratory acting as the knowledgeable originator and the receiving laboratory as the capable implementer. The presented comparative data, experimental protocols, and workflow diagrams provide a blueprint for this collaboration. Furthermore, the consistent performance of the method in its new environment is heavily reliant on the quality and management of essential research reagents. By adopting this structured, team-oriented approach—supported by rigorous comparative testing and robust documentation—organizations can significantly enhance the reliability, regulatory compliance, and efficiency of their analytical method transfers, thereby ensuring the continued quality of pharmaceutical products across the global manufacturing network.

Conducting Initial Gap Analysis and Risk Assessment

Successful analytical method transfer between laboratories is a critical regulatory requirement in the pharmaceutical and biotechnology industries. It ensures that an analytical method produces equivalent results in the receiving laboratory and in the originating (transferring) laboratory [3]. The process is foundational to drug development, manufacturing, and quality control, and it supports product consistency and patient safety [15].

This guide compares the four primary methodological approaches for transfer, as defined by regulatory guidance such as USP <1224> [3]. The optimal choice depends on the method's complexity, the receiving lab's capabilities, and the overall risk profile [15].

Table 1: Core Method Transfer Approaches Comparison

| Transfer Approach | Description | Best Suited For | Key Performance Indicators (KPIs) & Acceptance Criteria |

|---|---|---|---|

| Comparative Testing [3] | Both labs analyze identical, homogeneous samples; results are statistically compared for equivalence. | Well-established, validated methods; labs with similar capabilities and equipment. | Statistical equivalence (e.g., t-test, F-test p > 0.05); %RSD ≤ 2.0%; %Recovery 98-102% [3]. |

| Co-validation [3] [15] | Transferring and receiving labs jointly validate the method simultaneously. | New methods being developed for multi-site use; requires close collaboration. | Achieves all ICH Q2(R1) validation parameters (accuracy, precision, specificity, etc.) with reproducible results across both sites [3]. |

| Revalidation [3] | The receiving lab performs a full or partial validation of the method independently. | Significant differences in lab conditions/equipment; substantial method changes; no prior transfer data. | Meets all pre-defined ICH Q2(R1) validation criteria internally at the receiving site [3]. |