A Practical Guide to Handling Outliers in Method Comparison Studies for Biomedical Research

Abstract

This article provides a comprehensive framework for detecting, handling, and validating outliers in method comparison data, a critical step in biomedical and clinical research. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts, practical application of statistical and machine learning techniques, strategies for troubleshooting complex datasets, and protocols for ensuring analytical validity. The guidance supports robust data integrity, leading to reliable conclusions in drug development and diagnostic method validation.

Understanding Outliers: Sources, Impact, and Investigation in Method Comparison

Defining Outliers in the Context of Analytical Method Comparison

In analytical method comparison, an outlier is a data point that deviates significantly from the overall pattern of the data generated by the methods being compared [1] [2]. These atypical observations do not conform to the general data distribution and can arise from variability in measurement, experimental error, or genuine rare events [1].

The accurate identification and management of outliers is a critical step in robust data analysis. If not properly addressed, outliers can distort statistical results, lead to inappropriate model applications, and ultimately steer research towards misleading conclusions, which is particularly critical in drug development and healthcare decisions [1] [2].

FAQs on Outlier Detection and Management

What defines an outlier in analytical method comparison studies?

An outlier is defined by its significant numerical distance from other observations in a dataset [1]. In the context of method comparison, this typically manifests as a result that differs markedly from the consensus between the two methods being studied. Key characteristics include [1] [2]:

- Greatly differing numerically from other observations

- Potential to alter statistical conclusions (e.g., mean, regression parameters)

- Arising from measurement errors, data processing errors, natural variations, or genuine rare occurrences

Why is detecting outliers crucial in method validation?

Outlier detection is fundamental for several reasons [1] [2]:

- Prevents Distorted Statistics: Outliers can skew measures of central tendency and variability, leading to misinformation.

- Ensures Model Accuracy: They can disproportionately influence model parameters, resulting in poor predictive performance.

- Supports Valid Conclusions: Proper handling prevents inaccurate conclusions that could compromise research validity or patient care.

- Reveals Hidden Insights: In some cases, outliers may indicate novel phenomena, experimental errors, or critical process variations that warrant further investigation.

What are the most reliable techniques for identifying outliers?

Multiple statistical and visual techniques are available for outlier detection. The choice of method depends on your data characteristics and study objectives.

Table 1: Common Outlier Detection Techniques

| Technique | Methodology | Best Use Cases | Considerations |

|---|---|---|---|

| Z-Score | Measures standard deviations from the mean [3] | Large datasets; normally distributed data | Simple but sensitive to extreme values itself [1] |

| IQR Method | Identifies points outside 1.5*IQR from quartiles [3] [1] | Non-normal distributions; robust to extreme values | Uses quartiles, less influenced by extremes [3] |

| Dixon's Q Test | Compares gap/range ratio to critical values [4] | Small sample sizes; single suspected outlier | Designed specifically for small datasets [4] |

| Graphical Methods | Visual identification via boxplots, scatter plots [3] [1] | Initial exploration; communicating findings | Provides intuitive visual assessment [3] |
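As a rough illustration of the gap/range idea behind Dixon's Q test, the sketch below computes Q for the most extreme value in a small set of replicates. The function name `dixon_q`, the example replicates, and the critical value passed as `q_crit` are assumptions made for illustration; the critical value should always be taken from the reference table your laboratory uses for the relevant sample size and confidence level.

```python
def dixon_q(values, q_crit):
    """Return (suspect_value, Q, is_flagged) for the most extreme observation.

    Q = gap / range, where gap is the distance between the suspect value
    and its nearest neighbour. q_crit must come from a Dixon's Q table.
    """
    data = sorted(values)
    if len(data) < 3:
        raise ValueError("Dixon's Q needs at least 3 observations")
    data_range = data[-1] - data[0]
    if data_range == 0:
        return data[0], 0.0, False  # all values identical, nothing to test
    gap_low = data[1] - data[0]
    gap_high = data[-1] - data[-2]
    # Test whichever end of the sorted data sits further from its neighbour.
    if gap_high >= gap_low:
        suspect, gap = data[-1], gap_high
    else:
        suspect, gap = data[0], gap_low
    q = gap / data_range
    return suspect, q, q > q_crit


# Hypothetical replicate measurements; 0.568 is an assumed tabulated
# critical value for n = 7 and must be verified against your own table.
replicates = [10.1, 10.2, 10.0, 10.3, 10.2, 10.1, 12.5]
suspect, q, flagged = dixon_q(replicates, q_crit=0.568)
print(f"suspect={suspect}, Q={q:.2f}, flagged={flagged}")
```

Passing the critical value in explicitly keeps the table lookup, which depends on sample size and confidence level, outside the calculation.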

How should I handle a confirmed outlier in my dataset?

Once an outlier is statistically confirmed, the appropriate handling strategy depends on its determined cause.

Table 2: Outlier Handling Strategies

| Strategy | Procedure | When to Apply |

|---|---|---|

| Investigation | Review experimental notes, recalibrate equipment, check data entry | First step for any suspected outlier; determines root cause |

| Removal | Exclude the data point from analysis | Clear evidence of experimental error; the point is definitively invalid [1] |

| Winsorization | Capping extreme values at a specified percentile [1] | Outlier may contain valid signal but exact value is unreliable [1] |

| Documentation | Flagging without immediate modification | Need for transparency; requires further analysis under different scenarios [1] |

| Comparison | Analyze data with and without the outlier | Assessing the outlier's impact on final conclusions [1] |
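To make the Winsorization row concrete, here is a minimal sketch that caps values at chosen percentiles. The 5th/95th percentile limits, the helper names, and the example differences are assumptions for illustration; the percentile is computed by simple linear interpolation, which may differ slightly from the convention used by your statistical software.

```python
def percentile(sorted_data, p):
    """Linear-interpolation percentile (p in 0..100) of pre-sorted data."""
    k = (len(sorted_data) - 1) * p / 100
    lo = int(k)
    hi = min(lo + 1, len(sorted_data) - 1)
    return sorted_data[lo] + (sorted_data[hi] - sorted_data[lo]) * (k - lo)


def winsorize(values, lower_pct=5, upper_pct=95):
    """Cap values below/above the given percentiles; order is preserved."""
    data = sorted(values)
    low = percentile(data, lower_pct)
    high = percentile(data, upper_pct)
    return [min(max(v, low), high) for v in values]


# Hypothetical between-method differences with one extreme value (4.8).
differences = [0.1, -0.2, 0.3, 0.0, -0.1, 0.2, 4.8, -0.3, 0.1, 0.0]
capped = winsorize(differences)
print(capped)
```

The extreme point is retained but pulled in toward the bulk of the data, so the original values should still be kept and documented alongside the capped ones.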

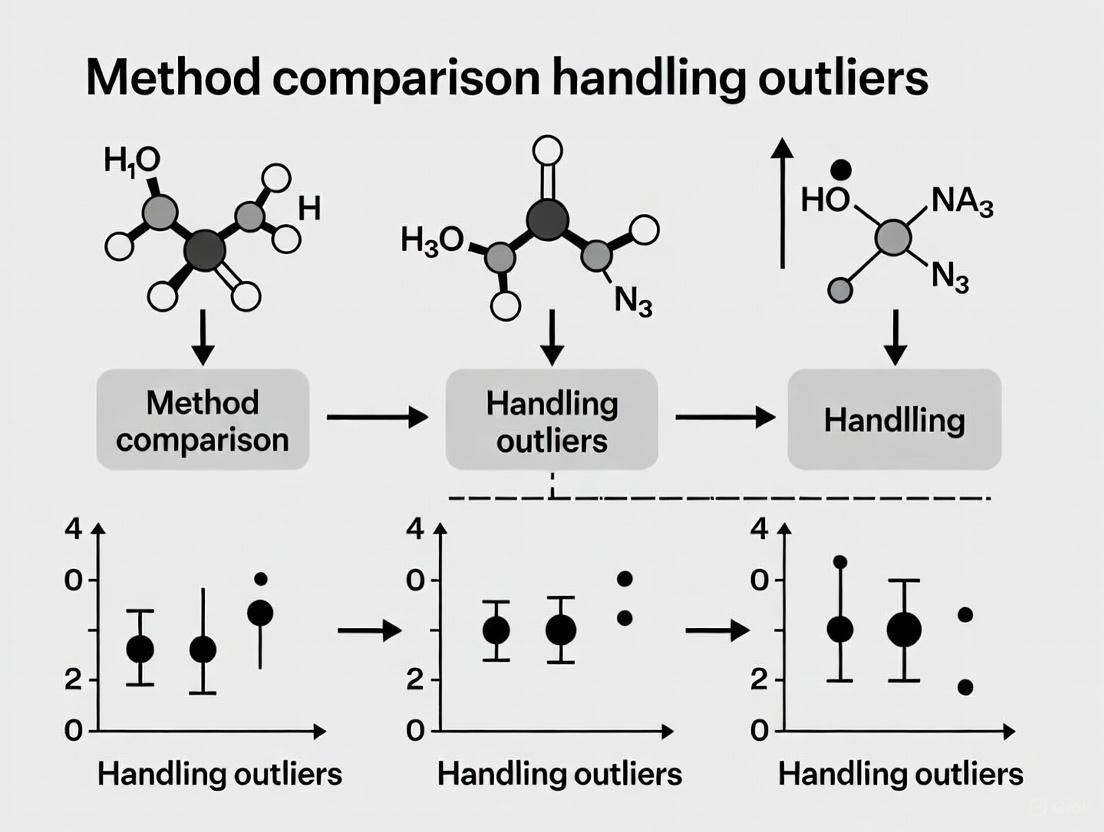

The following workflow provides a systematic approach for handling outliers in method comparison studies:

What common pitfalls should I avoid when working with outliers?

- Automatically Removing All Outliers: Not all outliers are errors; some may represent important biological variability or novel discoveries [1].

- Insufficient Documentation: Always document which points were flagged as outliers, the tests used, and the rationale for their treatment [1].

- Ignoring Context: Statistical tests alone shouldn't dictate outlier handling. Consider your scientific knowledge and experimental context [5].

- Relying on a Single Method: Employ multiple detection techniques to validate findings, as different methods may yield different results [6].

Troubleshooting Guides

Problem: Inconsistent Outlier Identification Between Methods

Symptoms: Different statistical tests (e.g., Z-score vs. IQR) flag different data points as outliers.

Solution:

- Prioritize Robust Methods: For small sample sizes, use Dixon's Q test or Grubbs' test [4]. For non-normal distributions, prefer the IQR method [3].

- Cross-Validate: Use at least two complementary methods to confirm potential outliers [6].

- Apply Domain Knowledge: Investigate whether flagged points have technical explanations (e.g., sample processing error, instrument calibration drift).

- Document the Process: Record all tests performed and their results to ensure transparency [1].

Problem: Outliers Substantially Changing Regression Results

Symptoms: Regression parameters (slope, intercept) or correlation coefficients change significantly based on inclusion/exclusion of questionable points.

Solution:

- Compare Scenarios: Perform and present analyses both with and without the outlier[s] to demonstrate their impact [1].

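- Use Robust Regression: If outliers are suspected to be valid, consider Passing-Bablok regression, which is rank-based and less sensitive to outliers than ordinary linear regression [5]. Deming regression is also common in method comparison because it allows for measurement error in both methods, but it is not inherently outlier-resistant.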

- Assess Clinical Significance: Evaluate whether the outlier affects conclusions at medically relevant decision levels [5].

- Increase Sample Size: If possible, collect additional data points around the contentious concentration to improve reliability.
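The "compare scenarios" step can be sketched as fitting an ordinary least-squares line with and without the questionable point and reporting both. The data and the index of the suspect point below are invented for illustration, and ordinary least squares is used only because it is the simplest fit to show; it is not a recommendation over the regression methods discussed above.

```python
def ols_fit(x, y):
    """Ordinary least-squares slope and intercept for paired data."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical paired results: reference method vs. candidate method.
reference = [1, 2, 3, 4, 5, 6, 7, 8]
candidate = [1.1, 2.0, 3.1, 3.9, 5.0, 6.1, 7.0, 12.0]
suspect_index = 7  # assumed position of the questionable point

slope_with, intercept_with = ols_fit(reference, candidate)

# Refit after dropping the suspect pair.
kept = [i for i in range(len(reference)) if i != suspect_index]
slope_without, intercept_without = ols_fit(
    [reference[i] for i in kept], [candidate[i] for i in kept]
)

print(f"with suspect:    slope={slope_with:.3f}, intercept={intercept_with:.3f}")
print(f"without suspect: slope={slope_without:.3f}, intercept={intercept_without:.3f}")
```

Reporting both fits side by side lets a reader judge whether the conclusion about agreement depends on a single observation.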

The Scientist's Toolkit

Table 3: Essential Reagents and Resources for Outlier Analysis

| Tool/Reagent | Function/Purpose | Application Notes |

|---|---|---|

| Statistical Software | Performing outlier detection tests | R, Python (with scipy, pandas), or specialized tools; enables Z-score, IQR, Dixon's Q calculations [1] [4] |

| Quality Control Samples | Monitoring analytical performance | Use of additional QC samples in validation allows rejection of spurious data while meeting requirements [4] |

| Dixon's Q Critical Tables | Determining statistical significance for Dixon's Q test | Reference tables provide threshold values based on sample size and confidence level [4] |

| Method Validation Protocols | Standardized procedures for handling outliers | Pre-established SOPs ensure consistent treatment of outliers across studies [4] |

| Laboratory Investigation Forms | Documenting root cause analysis | Structured forms to record potential causes (e.g., pipetting error, sample mix-up) for outliers |

Frequently Asked Questions

Q1: What is the fundamental difference between an outlier, a leverage point, and an influential point?

- A: These terms describe different types of "unusual" observations with distinct impacts on a regression model [7].

- Outlier: An observation where the actual outcome (Y-value) is far from the model's predicted value, resulting in a large residual. It may not necessarily be extreme in its predictor (X) values [8] [7].

- Leverage Point: An observation that is unusual in its predictor (X-value) space. It has the potential to influence the model because it sits far from other X-values. A leverage point may still follow the overall trend of the data [9] [7].

- Influential Point: An observation that, if removed, causes a major change in the regression model (e.g., significantly alters the slope or intercept of the line). Influential points are often both outliers (extreme Y) and have high leverage (extreme X) [9] [7].

Q2: Why should I not automatically remove all outliers from my clinical dataset?

- A: Automatically removing outliers is discouraged because they are not necessarily "bad" data [8] [9]. An outlier can be a valuable source of discovery, potentially indicating a previously unknown subpopulation, a novel drug response, or a new disease mechanism [10]. Removing them can lead to overconfidence in a model that does not reflect the full scope of biological reality [8] [9]. Instead, their root cause should be investigated—whether it is a data entry error, a natural deviation, or a novel finding [10].

Q3: Which statistical test is recommended for formally testing regression outliers?

- A: A formal test like the outlierTest function from the car package in R can be used [8]. This test calculates Studentized residuals and applies a Bonferroni correction to the p-values to account for multiple testing, which helps control the false positive rate when checking many observations simultaneously [8].

Q4: In the context of clinical registry benchmarking, what are the challenges in outlier detection?

- A: Benchmarking in clinical registries involves comparing healthcare provider outcomes. Key challenges include [6]:

- Methodological Inconsistency: Studies have found that using different statistical models (e.g., random effects vs. fixed effects regression) can yield vastly different outlier results, and there is no clear consensus on the optimal method [6].

- Data Issues: Registries must handle overdispersion (excessive variation), low outcome prevalence, and missing data, all of which can complicate accurate outlier identification [6].

- High Stakes: Public reporting of underperforming providers based on outlier status has significant reputational and financial consequences, making accurate detection critically important [6].

Q5: What are some robust regression methods that are less sensitive to outliers?

Troubleshooting Guides

This guide provides a systematic approach to diagnosing and addressing outliers in clinical regression analysis.

Step 1: Detect and Visualize Potential Outliers

- Action: Begin with visual and simple statistical checks.

- Protocol:

- Create Diagnostic Plots: Plot Studentized residuals against fitted values. Observations with large absolute residual values are potential outliers [8].

- Use Boxplots: For univariate analysis, boxplots can visually identify data points beyond the whiskers (typically defined as 1.5 * IQR from the quartiles) [3].

- Calculate Statistical Thresholds: Apply the IQR method (outlier if value < Q1 - 1.5 × IQR or > Q3 + 1.5 × IQR) or Z-score method (outlier if |Z-score| > 3) for a preliminary list [3].
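The Z-score threshold can be sketched as follows. The example data are invented. One caveat the sketch illustrates: when the mean and sample standard deviation are computed from the same data, no Z-score can exceed (n - 1)/√n in absolute value, so with 10 observations the |Z| > 3 rule can never fire (the bound is about 2.85), however extreme a point looks.

```python
import statistics


def z_scores(values):
    """Z-scores using the sample mean and sample standard deviation."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)
    return [(v - mean) / sd for v in values]


def flag_by_z(values, threshold=3.0):
    """Return the values whose absolute Z-score exceeds the threshold."""
    return [v for v, z in zip(values, z_scores(values)) if abs(z) > threshold]


# Hypothetical run of 10 results with one clearly extreme value (9.0).
small_run = [5.0, 5.1, 4.9, 5.2, 5.0, 4.8, 5.1, 5.0, 4.9, 9.0]
# With n = 10 the extreme value inflates the SD enough to stay under |Z| = 3.
print(flag_by_z(small_run))

# The same pattern with more typical observations: the rule now has
# enough data for the extreme value to stand out.
larger_run = small_run[:-1] * 3 + [9.0]
print(flag_by_z(larger_run))
```

This is one reason the Z-score is usually reserved for larger datasets and paired with a second method on small ones.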

Step 2: Diagnose the Type and Impact of Unusual Points

- Action: Differentiate between outliers, high-leverage points, and influential points.

- Protocol:

- Measure Leverage: Calculate the hat values (diagonal elements of the hat matrix). A common rule is that a point has high leverage if its hat value exceeds 2p/n, where p is the number of model parameters and n is the sample size [7].

- Measure Influence: Compute Cook's Distance for each observation. A Cook's D greater than 1 or, more commonly, a value that is significantly larger than the others, suggests a highly influential point [7]. DFITs is another metric where an absolute value above 1 (for small/medium datasets) can indicate influence [7].

- Interpretation: The flowchart below outlines the diagnostic process and relationship between these concepts.

Step 3: Investigate the Root Cause

- Action: Before taking any corrective action, investigate why the point is unusual.

- Protocol:

- Check for Data Errors: Verify the observation for data entry or measurement mistakes. This is the simplest and most correctable cause.

- Understand the Context: Use domain expertise. Does the outlier represent a known but rare clinical phenomenon (e.g., an unexpected drug response in a specific patient subgroup)? If so, it may be a "novelty-based" outlier that is key to a new discovery [10].

- Classify the Root Cause: Categorize the outlier's origin to guide handling [10]:

- Error-based: Human or instrument error.

- Fault-based: Underlying system fault (e.g., disease state).

- Natural Deviation: A rare but explainable chance event.

- Novelty-based: A new, previously unaccounted-for mechanism.

Step 4: Apply Appropriate Handling Techniques

- Action: Choose a mitigation strategy based on the findings from Steps 1-3.

- Protocol: Refer to the table below to select an appropriate method.

| Technique | Description | Best Use Case | Clinical Consideration |

|---|---|---|---|

| Sensitivity Analysis [8] | Fit the model with and without the outlier(s) and compare the results. | The gold standard for assessing the outlier's impact on clinical conclusions. | If conclusions don't change, the outlier may not be a major problem. Essential for transparent reporting. |

| Transformation [8] [11] | Apply a mathematical function (e.g., log) to the outcome variable. | When the Y-distribution is very skewed, leading to large residuals. | Can help meet model assumptions but may make interpretation of coefficients less intuitive. |

| Robust Regression [11] [12] | Use statistical methods (e.g., M-estimation) that are less sensitive to outliers. | When outliers are believed to be valid but influential observations. | Provides a model that is not unduly influenced by extreme values. |

| Winsorizing [13] [12] | Replace extreme values with the nearest "non-extreme" value. | When you want to retain the data point but reduce its extreme influence. | Artificially reduces variability; may not be suitable for all clinical analyses. |

| Trimming/Removal [13] [12] | Remove the outlier from the dataset. | Only if the point is conclusively a data error and cannot be corrected. | Risks losing valuable information and should be justified and documented thoroughly [8] [9]. |
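As one simple illustration of the robust-regression row, the sketch below uses the Theil-Sen estimator (the median of all pairwise slopes). This is a technique swapped in for brevity: it is not M-estimation and it is not Passing-Bablok regression, although it shares the rank-based idea. The data are invented.

```python
import statistics
from itertools import combinations


def theil_sen(x, y):
    """Theil-Sen line: median pairwise slope, then median intercept."""
    slopes = [
        (y[j] - y[i]) / (x[j] - x[i])
        for i, j in combinations(range(len(x)), 2)
        if x[j] != x[i]  # skip pairs with identical x (undefined slope)
    ]
    slope = statistics.median(slopes)
    intercept = statistics.median([yi - slope * xi for xi, yi in zip(x, y)])
    return slope, intercept


# Hypothetical paired results where the last point is extreme.
method_x = [1, 2, 3, 4, 5, 6, 7, 8]
method_y = [1.1, 2.0, 3.1, 3.9, 5.0, 6.1, 7.0, 12.0]

robust_slope, robust_intercept = theil_sen(method_x, method_y)
print(f"Theil-Sen slope={robust_slope:.3f}, intercept={robust_intercept:.3f}")
```

Because the estimate is a median, a minority of extreme pairs has little effect on the fitted line, which is the property the table describes.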

The table below summarizes common statistical methods for identifying outliers.

| Method | Calculation | Threshold for Outlier | Notes |

|---|---|---|---|

| Z-Score [3] | \( Z = \frac{X - \mu}{\sigma} \) | \( \lvert Z \rvert > 3 \) | Simple but assumes normality; sensitive to outliers itself. |

| IQR Rule [3] | IQR = Q3 - Q1 | < Q1 - 1.5×IQR or > Q3 + 1.5×IQR | Non-parametric; robust to non-normal distributions. |

| Studentized Residual [8] | \( R_{\text{student}} = \frac{\text{residual}}{SE_{\text{residual}} \sqrt{1 - h_i}} \) | Bonferroni-adjusted p-value < 0.05 | Accounts for the variability of the residual and is preferred in regression. |

| Leverage (h-value / Hat value) [7] | Diagonal of hat matrix | \( > \frac{2p}{n} \) | Identifies points extreme in the X-space. |

| Cook's Distance (Influence) [7] | Combined function of leverage and residual | > 1 (or visually distinct) | A common measure of a point's overall influence on the model. |
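For a straight-line fit, the regression diagnostics in the table can be computed directly. The sketch below is a minimal version for simple linear regression only (p = 2 parameters), using the closed-form leverage h_i = 1/n + (x_i - x̄)² / Sxx. It computes internally Studentized residuals, which match the formula in the table; formal tests such as the one in Q3 typically use the externally Studentized form instead. The data and function name are assumptions for illustration.

```python
import math


def regression_diagnostics(x, y):
    """Leverage, Studentized residual and Cook's D for a straight-line fit."""
    n, p = len(x), 2  # p counts intercept and slope
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y)) / sxx
    intercept = mean_y - slope * mean_x
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    mse = sum(e ** 2 for e in residuals) / (n - p)

    rows = []
    for xi, e in zip(x, residuals):
        h = 1 / n + (xi - mean_x) ** 2 / sxx      # hat value (leverage)
        r = e / math.sqrt(mse * (1 - h))          # internally Studentized
        cooks_d = (r ** 2 / p) * h / (1 - h)      # Cook's distance
        rows.append({
            "leverage": h,
            "studentized": r,
            "cooks_d": cooks_d,
            "high_leverage": h > 2 * p / n,       # 2p/n rule of thumb
        })
    return rows


# Hypothetical data: the last point is far out in X and off the trend.
x_values = [1, 2, 3, 4, 5, 6, 7, 20]
y_values = [1.0, 2.1, 2.9, 4.0, 5.1, 6.0, 7.1, 12.0]

diagnostics = regression_diagnostics(x_values, y_values)
for xi, row in zip(x_values, diagnostics):
    print(xi, {k: round(v, 3) if isinstance(v, float) else v for k, v in row.items()})
```

In this invented example the last point has a moderate residual but very high leverage, which is why its Cook's distance dominates; that is the distinction between an outlier and an influential point drawn earlier.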

The Scientist's Toolkit: Key Reagents for Analysis

This table lists essential "reagents"—statistical measures and methods—for a robust outlier diagnostic workflow.

| Tool | Function | Application in Clinical Research |

|---|---|---|

| Studentized Residual | Flags observations with poorly predicted Y-values (outliers). | Identifying patients whose outcomes are not well explained by the model, potentially indicating comorbidities or unique responses [8]. |

| Hat Value (Leverage) | Identifies patients with unusual combinations of predictor variables (e.g., age, biomarkers). | Detecting if a model's conclusions are overly dependent on a small subgroup with rare baseline characteristics [7]. |

| Cook's Distance | Measures the overall influence of a single observation on the entire regression model. | Quantifying how much a single patient's data impacts the estimated drug effect or risk factor association [7]. |

| Bonferroni Correction | Adjusts significance levels for multiple comparisons to control false positives. | Crucial when testing hundreds or thousands of observations for outliers to avoid flagging too many by chance [8]. |

| Robust Regression | Provides parameter estimates that are less sensitive to outliers. | Generating more reliable and stable clinical models when the data contains valid but extreme values [11] [12]. |

FAQ: Troubleshooting Data Anomalies in Method Comparison Studies

Q1: What are the primary categories of data anomalies a researcher should investigate? When analyzing data from method comparison studies, anomalies generally fall into three categories, each with distinct causes and implications [14]:

- Data Entry and Measurement Errors: Mistakes made during the manual entry of data or by instruments during measurement. These are typically incorrect values that should be corrected or removed [15] [14].

- Sampling Problems: Occurs when data is collected from subjects or under conditions that do not represent the target population. These data points are not relevant to the research question and can be legitimately excluded [14].

- Natural Variation: Extreme values that are a legitimate, though rare, part of the population you are studying. These contain meaningful information and should generally be retained in the dataset to accurately represent the process's inherent variability [16] [17] [14].

Q2: How can I determine if an outlier is due to a sampling problem? An outlier likely stems from a sampling problem if you can identify a specific reason why the data point does not belong to your target population. This requires a thorough investigation of experimental conditions and subject eligibility [14]. For example, in a study on bone density growth in healthy pre-adolescent girls, a subject with a health condition like diabetes—which is known to affect bone health—would not be part of the target population. Her data would constitute a sampling problem and could be excluded [14].

Q3: What should I do if a statistical method in my comparison study fails to produce a result? This is known as method failure (e.g., non-convergence, software crashes) and should not be handled like simple missing data [18] [19]. Avoid the common pitfalls of discarding entire datasets or imputing values, as this can lead to biased comparisons [18] [19]. Instead, the recommended approach is to implement a fallback strategy [18] [19]. This involves:

- Documenting every instance of failure.

- Defining a logical and consistent alternative method to use when the primary method fails.

- Reporting the failure rates and the fallback strategy used, as this information is critical for interpreting the robustness and practical applicability of the methods being compared [18].

Troubleshooting Guides

Guide 1: Identifying the Root Cause of an Outlier

Follow this logical workflow to diagnose the nature of a data anomaly. A text-based summary of the workflow is provided below the diagram.

Text-based workflow summary:

- Investigate Data Entry & Measurement: Begin by checking for typos, instrument calibration errors, or incorrect unit recordings. If an error is found, correct the value if possible; otherwise, remove it [14].

- Examine Sampling Frame: If no measurement error is found, verify if the data point comes from your defined target population. Consider subject eligibility and whether experimental conditions were abnormal (e.g., a power failure during a manufacturing test) [14]. If it is not from the target population, it can be removed.

- Assess Natural Variation: If the previous steps are negative, evaluate if the extreme value, while rare, is a biologically or physically plausible result. If it is a legitimate part of the process's natural variation, you should retain the point to accurately represent the true variability in your study [14].
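The three checks above can be written down as a small decision function, which can help when the same triage has to be applied consistently across many flagged points. The function name, argument names, and the final "investigate further" branch (for a value that is neither an error nor plausible) are assumptions for illustration and are not part of the cited workflow.

```python
def triage_outlier(is_verified_error, correct_value_known,
                   in_target_population, is_plausible):
    """Map the findings of an outlier investigation to a handling action."""
    # Step 1: data entry or measurement error.
    if is_verified_error:
        return "correct" if correct_value_known else "remove"
    # Step 2: sampling frame.
    if not in_target_population:
        return "remove"
    # Step 3: natural variation.
    if is_plausible:
        return "retain"
    # Assumed fallback: nothing explains the value yet.
    return "investigate further"


# A typo whose true value can be recovered from the source record.
print(triage_outlier(True, True, True, True))
# A rare but plausible value from an eligible subject.
print(triage_outlier(False, False, True, True))
```

Whatever branch is taken, the finding that led to it should be recorded next to the data point.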

Guide 2: Handling Method Failure in Comparison Studies

This guide outlines the recommended procedure for when an analytical method fails to produce a result in a comparison study.

Text-based workflow summary:

- Document the Failure: Record the data set, the specific error message (e.g., non-convergence, memory error), and the computational environment [18] [19].

- Avoid Inadequate Handling: Resist the temptation to simply delete the problematic data set for all methods or to impute a performance value (e.g., with a mean or constant). These approaches can severely bias the comparison [18] [19].

- Implement a Fallback Strategy: Use a pre-specified, simpler, or more robust method to obtain a result. This reflects what a practitioner might do if their first-choice method fails and allows for meaningful aggregation across all data sets [18].

- Report Transparently: In your findings, clearly state the frequency of method failures and describe the fallback strategy employed. This is critical information for assessing a method's reliability [18] [19].
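A fallback strategy of this kind might be wired up as in the sketch below. The names `run_with_fallback`, `primary_method`, and `fallback_method` are hypothetical, and the "primary" method here is only a stand-in that fails on very small data sets; in practice both methods would be fixed in the analysis plan before any data are analyzed.

```python
import statistics


def run_with_fallback(primary, fallback, datasets):
    """Apply primary to each data set; on failure, log it and use fallback."""
    results, failures = {}, []
    for name, data in datasets.items():
        try:
            results[name] = ("primary", primary(data))
        except Exception as exc:
            # Document every failure: which data set and what went wrong.
            failures.append({"dataset": name, "error": repr(exc)})
            results[name] = ("fallback", fallback(data))
    failure_rate = len(failures) / len(datasets)
    return results, failures, failure_rate


def primary_method(data):
    # Hypothetical stand-in for a method that can fail to converge.
    if len(data) < 3:
        raise RuntimeError("did not converge")
    return statistics.mean(data)


def fallback_method(data):
    # Hypothetical simpler method used when the primary one fails.
    return statistics.median(data)


datasets = {"A": [1.0, 2.0, 3.0], "B": [4.0, 5.0], "C": [2.0, 2.0, 8.0, 4.0]}
results, failures, failure_rate = run_with_fallback(
    primary_method, fallback_method, datasets
)
print(results)
print(failures)
print(f"failure rate: {failure_rate:.0%}")
```

Keeping the label of which method produced each result makes it straightforward to report the failure rate alongside the aggregated performance.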

Protocol 1: Comparing Data-Checking Methods to Reduce Entry Errors

Objective: To evaluate the accuracy and speed of different methods for identifying and correcting manually introduced data-entry errors [20].

Methodology Summary:

- Materials: Created 20 fictitious data sheets containing six data types: ID codes, sex (M/F), numerical ratings, alphabetical scales, spelled words, and three-digit numbers. Deliberately introduced 32 errors during initial entry [20].

- Participants: 412 undergraduates were randomly assigned to one of four data-checking methods [20].

- Checked Methods:

- Visual Checking: Comparing the original data sheet to the computer screen.

- Solo Read Aloud: The checker reads the data sheet aloud and verifies the screen.

- Partner Read Aloud: One person reads the sheet, another verifies the screen.

- Double Entry: Data is entered a second time by the same person; software flags discrepancies for resolution against the original sheet [20].

- Outcomes Measured: The number of corrected errors and the time taken to complete the checking process [20].

Summary of Quantitative Findings from Data-Checking Study [20]:

| Data-Checking Method | Relative Error Correction Accuracy | Relative Speed |

|---|---|---|

| Double Entry | Most Accurate (Significantly superior) | Slowest |

| Solo Read Aloud | Moderately Accurate | Faster than Double Entry |

| Visual Checking | Less Accurate | Faster |

| Partner Read Aloud | Less Accurate | Faster |
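The discrepancy-flagging part of double entry is easy to sketch: two passes over the same sheets are compared field by field, and any mismatch is listed for resolution against the original sheet. The record layout and function name below are invented for illustration and do not reproduce the software used in the cited study.

```python
def double_entry_discrepancies(first_pass, second_pass):
    """List (row, field, first_value, second_value) for every mismatch."""
    if len(first_pass) != len(second_pass):
        raise ValueError("Both passes must contain the same number of records")
    issues = []
    for row, (a, b) in enumerate(zip(first_pass, second_pass)):
        for field in a:
            if a[field] != b.get(field):
                issues.append((row, field, a[field], b.get(field)))
    return issues


# Hypothetical records entered twice from the same paper sheets.
first_pass = [
    {"id": "S01", "sex": "F", "rating": 4},
    {"id": "S02", "sex": "M", "rating": 2},
]
second_pass = [
    {"id": "S01", "sex": "F", "rating": 4},
    {"id": "S02", "sex": "M", "rating": 3},
]

issues = double_entry_discrepancies(first_pass, second_pass)
print(issues)
```

The comparison only shows where the two passes disagree; deciding which value is right still requires going back to the source document.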

Protocol 2: Identifying Outliers Using the Interquartile Range (IQR) Method

Objective: To detect outliers in a dataset in a way that is robust to non-normal distributions [21].

Methodology Summary:

- Calculation:

- Calculate the First Quartile (Q1): the 25th percentile of your data.

- Calculate the Third Quartile (Q3): the 75th percentile of your data.

- Compute the Interquartile Range (IQR): IQR = Q3 - Q1.

- Determine the Lower Fence: Q1 - 1.5 * IQR.

- Determine the Upper Fence: Q3 + 1.5 * IQR [21].

- Identification: Any data point that falls below the Lower Fence or above the Upper Fence is classified as a potential outlier [21] [15]. This is often visualized using a box plot [21].
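The calculation steps above can be sketched with Python's standard library. The example values are invented, and the quartiles use the "inclusive" linear-interpolation convention of `statistics.quantiles`; other software may use a slightly different quartile definition and therefore slightly different fences.

```python
import statistics


def iqr_fences(values, k=1.5):
    """Return (lower_fence, upper_fence) using the k * IQR rule."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def flag_by_iqr(values, k=1.5):
    """Return the values that fall outside the fences."""
    lower, upper = iqr_fences(values, k)
    return [v for v in values if v < lower or v > upper]


# Hypothetical measurements with one extreme value.
measurements = [5.0, 5.1, 4.9, 5.2, 5.0, 4.8, 5.1, 5.0, 4.9, 9.0]
lower_fence, upper_fence = iqr_fences(measurements)
outliers = flag_by_iqr(measurements)
print(f"fences: [{lower_fence:.4f}, {upper_fence:.4f}], flagged: {outliers}")
```

Because the quartiles barely move when a single extreme value is added, the fences stay tight around the bulk of the data even in small samples.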

The Scientist's Toolkit: Essential Reagents & Solutions for Data Investigation

This table details key analytical "reagents" – statistical methods and tools – used to diagnose and handle data anomalies.

| Research Reagent / Solution | Function / Explanation |

|---|---|

| IQR Method | A non-parametric method for identifying outliers that is not influenced by extreme values, making it suitable for non-normal data [21]. |

| Z-Score Method | Used to identify outliers in normally distributed data by measuring the number of standard deviations a point is from the mean. A common threshold is an absolute Z-score greater than 3 [21]. |

| Bland-Altman Plot | A graphical method used in method comparison studies to plot the differences between two methods against their averages, helping to assess agreement and identify systematic bias [22]. |

| Fallback Strategy | A pre-specified alternative method used to generate a result when the primary method in a comparison study fails, preventing the loss of data and enabling fair aggregation [18] [19]. |

| Nonparametric Tests | A class of statistical hypothesis tests (e.g., Mann-Whitney U test) that are robust to outliers because they do not rely on distributional assumptions like normality [14]. |

| Double Data Entry | A data-checking method where data is entered twice (often by different people), and discrepancies are reconciled against the original source. Considered the "gold standard" for error reduction [20]. |
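The numbers behind a Bland-Altman plot can be computed without any plotting library. The sketch below returns the mean difference (bias) and the conventional limits of agreement, bias ± 1.96 standard deviations of the differences, and lists the pairs that fall outside them. The paired results and function name are invented for illustration.

```python
import statistics


def bland_altman(method_a, method_b):
    """Bias, 95% limits of agreement, and indices of pairs outside them."""
    diffs = [a - b for a, b in zip(method_a, method_b)]
    means = [(a + b) / 2 for a, b in zip(method_a, method_b)]  # x-axis values
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)
    lower, upper = bias - 1.96 * sd, bias + 1.96 * sd
    outside = [i for i, d in enumerate(diffs) if d < lower or d > upper]
    return {"means": means, "diffs": diffs, "bias": bias,
            "lower": lower, "upper": upper, "outside": outside}


# Hypothetical paired results from two methods on the same samples.
method_a = [5.1, 6.0, 7.2, 8.1, 9.0, 10.2, 11.1, 12.0]
method_b = [5.0, 6.1, 7.0, 8.0, 9.1, 10.0, 11.0, 10.0]

summary = bland_altman(method_a, method_b)
print(f"bias={summary['bias']:.4f}, "
      f"limits=({summary['lower']:.3f}, {summary['upper']:.3f}), "
      f"outside={summary['outside']}")
```

Note that an extreme difference also widens the limits it is judged against, so a pair lying just inside the limits is not automatically unremarkable.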

Frequently Asked Questions

1. What is the fundamental difference between justified outlier removal and data manipulation? Justified removal is based on identifiable, documentable causes such as measurement error or the data point not belonging to the target population. Data manipulation occurs when outliers are removed solely to achieve a desired statistical result, such as statistical significance or a better model fit, without a valid, pre-established reason [14].

2. My model fit improves significantly after removing a data point. Is this sufficient justification for removal? No. Improving model fit is a consequence of removal, not a justification for it. Removing a point simply to produce a better-fitting model makes the process appear more predictable than it actually is and is considered bad practice. The justification must come from investigating the underlying cause of the outlier [14].

3. How should I handle outliers that represent a rare but real event? You should generally retain them. These outliers capture valuable information about the natural variability of the process you are studying. In these cases, consider using statistical analyses that are robust to outliers, such as nonparametric tests or data transformations, instead of removal [14].

4. Is it acceptable to remove an entire dataset from an analysis? Yes, but only if you can establish that the entire dataset does not represent your target population. For example, if data was collected under abnormal experimental conditions or from a subject that does not meet the study's inclusion criteria, its removal can be legitimate. You must be able to attribute a specific cause [14].

5. What is the most critical step to take when I decide to remove an outlier? Document everything. You must document the excluded data points and provide a clear, scientific rationale for their removal. Another robust approach is to perform and report your analysis both with and without the outliers, discussing the differences in the results [14].

Troubleshooting Guide: A Framework for Ethical Decision-Making

When you encounter a potential outlier, follow this structured workflow to guide your actions. The diagram below outlines the key decision points.

Step 1: Investigate the Origin of the Outlier

Before any statistical analysis, investigate the root cause.

- Check for Data Entry and Measurement Errors: Typos or instrument malfunctions can produce impossible values. For example, a human height recorded as 10.8135 meters is a clear error [14].

- Action: If verified as an error, correct the value if possible. If the correct value cannot be determined, removal is justified because you know the data point is incorrect [14].

- Review Experimental Conditions: Was the data point collected under abnormal or non-standard conditions? This could include a power failure during a measurement, a machine setting drifting, or a subject having an unrelated health condition that affects the outcome [14].

- Action: If the data point originated from conditions outside your defined experimental protocol, it does not represent your target population. Removal is justified [14].

Step 2: Evaluate the Outlier's Relationship to Your Population

If no error is found, determine if the outlier is a genuine member of the population you are studying.

- Natural Variation: All data distributions have a spread of values, and extreme values can occur by chance, especially in large datasets. These are "real" data [23] [14].

- Action: Do not remove. Retaining these points is crucial to accurately represent the true variability of the subject area. Removing them makes the process seem less variable than it is, which is a form of bias [14].

Step 3: Choose a Statistically Sound Approach

The table below summarizes your options based on the outcome of your investigation.

| Situation | Recommended Action | Ethical Justification |

|---|---|---|

| Verified Error (e.g., typo, instrument fault) | Correct the value or, if not possible, remove it. | The data point is factually incorrect. Its inclusion would harm data integrity [14]. |

| Not from Target Population (e.g., wrong experimental conditions) | Legitimately remove from the primary analysis. | The data point is not relevant to the research question being asked [14]. |

| Natural Variation (a genuine, though extreme, value) | Do not remove. Analyze with robust statistical methods (see below). | Removal to improve fit is data manipulation. It misrepresents the natural variability of the process [14]. |

Alternatives to Removal for Natural Outliers: When you must keep outliers but they distort standard analyses, use these robust methods:

- Use Nonparametric Tests: These tests do not rely on distributional assumptions (like normality) that can be violated by outliers [14].

- Apply Data Transformations: Logarithmic or square root transformations can reduce the influence of extreme values and stabilize variance [24] [25].

- Employ Robust Regression: Some statistical packages offer regression techniques designed to be less sensitive to outliers [14].

- Switch to Robust Summary Statistics: Use the median instead of the mean for central tendency, and the interquartile range (IQR) instead of the standard deviation for variability [23] [25].
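The last option is the easiest to demonstrate. The sketch below summarizes an invented data set with and without one retained extreme value, showing how the mean and standard deviation move while the median and IQR stay close to where they were. The quartile convention is the "inclusive" method of `statistics.quantiles`, which is an assumption; other tools may differ slightly.

```python
import statistics


def summarize(values):
    """Classical and robust summaries of the same data."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    return {
        "mean": statistics.mean(values),
        "sd": statistics.stdev(values),
        "median": statistics.median(values),
        "iqr": q3 - q1,
    }


# Hypothetical results; the extreme value is genuine and is kept.
typical = [5.0, 5.1, 4.9, 5.2, 5.0, 4.8, 5.1, 5.0, 4.9]
with_extreme = typical + [9.0]

summary_typical = summarize(typical)
summary_extreme = summarize(with_extreme)
for label, s in (("typical", summary_typical), ("with extreme", summary_extreme)):
    print(label, {k: round(v, 3) for k, v in s.items()})
```

Reporting the robust summaries lets the extreme value stay in the dataset without letting it dominate the description of a typical result.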

The Scientist's Toolkit: Essential Reagents & Methods

This table lists key methodological "reagents" for handling outliers ethically and effectively in your research.

| Research 'Reagent' | Function & Purpose in Ethical Outlier Handling |

|---|---|

| IQR (Interquartile Range) Method | A robust, non-parametric method for detecting outliers by defining a "fence" beyond which data points are considered extreme. Less sensitive to the outliers themselves than mean/SD methods [26] [24]. |

| Cook's Distance | Measures the influence of each data point on a regression model. Helps identify influential observations that should be investigated, but not automatically removed [27]. |

| Robust Statistical Tests | Nonparametric tests (e.g., Mann-Whitney) or robust regression techniques allow valid analysis without requiring the removal of legitimate extreme values [14]. |

| Data Transformation Functions | Mathematical functions (e.g., log, square root) applied to the entire dataset to reduce skewness and the undue influence of outliers, preserving data points while enabling analysis [24]. |

| Pre-Established Protocol | A documented plan, created before data analysis, that defines the specific and objective criteria for outlier identification and handling. This is a critical defense against data manipulation [14]. |

Detection to Action: A Toolkit of Statistical and Machine Learning Techniques

Frequently Asked Questions

FAQ 1: Why is initial visual screening of method comparison data so important? Initial visual screening using plots provides an intuitive and powerful way to understand your data's underlying structure before formal statistical analysis. It helps you quickly identify patterns, trends, and potential problems like outliers that could drastically bias your results and lead to incorrect conclusions [15] [28]. In the context of method comparison, these plots are the first line of defense for ensuring the reliability of your findings.

FAQ 2: I've found outliers in my data. Can I just remove them? No, removal is not the default or always correct action. Outliers can be either errors (e.g., from data entry) or genuine rare events [29] [28]. The appropriate action depends on the context:

- Investigate First: Always try to find a root cause for the outlier [30].

- If an Error is Found: It may be justified to exclude the value, but you must transparently report the exclusion and the reason [28].

- If No Cause is Found: The outlier may be a valid, extreme value. In this case, you should use robust statistical methods (like non-parametric tests or robust regression) that are less influenced by outliers, or report your results both with and without the outlier [30] [28].

FAQ 3: For assessing agreement between two methods, is a box plot or a scatter plot better? They serve different but complementary purposes [31] [32].

- A scatter plot (e.g., one observer's measurements plotted against another's) is superior for directly visualizing the relationship and agreement between two methods or observers. It allows you to assess correlation and see if one method consistently over- or under-estimates the other [31].

- A box plot is ideal for showing the spread and distribution of a single variable. It efficiently reveals the median, variability, and skewness of the data from each method separately, making it excellent for initial screening of the data's overall structure and for identifying potential outliers within each method's results [29] [32]. A comprehensive analysis often uses both.

Troubleshooting Guides

Problem 1: Suspected Outliers are Skewing the Data Analysis

Question: How can I reliably identify and handle outliers in my method comparison dataset?

Solution: Follow a systematic protocol to detect, investigate, and manage outliers.

Experimental Protocol: A Step-by-Step Guide to Outlier Management

Visual Identification: Use graphical methods for initial screening.

- Box Plots: Plot the data for each method or group. Any data point falling above Q3 + 1.5 * IQR or below Q1 - 1.5 * IQR is a potential outlier, where IQR is the Interquartile Range (Q3 - Q1) [21] [29] [15].

- Scatter Plots: In a scatter plot of Method A vs. Method B, look for points that fall far away from the main cluster of data [32].

Statistical Validation: Use statistical tests to confirm visual suspicions.

- For Normally Distributed Data: The Extreme Studentized Deviate (ESD) test is effective, especially for identifying a single outlier. It is most reliable with larger sample sizes [30].

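- For Small Samples: Dixon-type tests (e.g., the r10 and r11 ratios) are designed for small datasets. Like the ESD test, they assume the underlying data are approximately normally distributed, so check that assumption before relying on them [30].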

Root Cause Analysis: Before altering the dataset, investigate the potential outlier.

Appropriate Handling: Choose a treatment based on your investigation.

- Trimming: Remove the outlier from the analysis only if an assignable cause (like an error) is found, and always report this action [28].

- Winsorization: Replace the outlier's value with the nearest value that is not an outlier. This reduces its impact without removing it entirely [15].

- Use Robust Methods: Employ statistical techniques that are inherently resistant to outliers, such as non-parametric tests (e.g., Mann-Whitney U test) or robust regression [30] [28].
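Winsorization as described above (replacing an outlier with the nearest value that is not an outlier) might be sketched as follows. This is a minimal illustration, assuming NumPy and using the IQR fences to decide what counts as an outlier; the function name `winsorize_to_fences` and the data are hypothetical.

```python
import numpy as np

def winsorize_to_fences(values, k=1.5):
    """Replace points outside the IQR fences with the nearest non-outlying value."""
    x = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    # Values that lie inside the fences define the replacement limits.
    inside = x[(x >= lower) & (x <= upper)]
    return np.clip(x, inside.min(), inside.max())

data = [4.9, 5.1, 5.0, 5.2, 4.8, 5.0, 9.7]  # hypothetical results; 9.7 is suspect
winsorized = winsorize_to_fences(data)
print(winsorized)
```

Because the sample size is preserved, any report should still state which values were modified and why.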

Table 1: Common Statistical Methods for Outlier Detection

| Method | Best Used For | Key Principle | Considerations |

|---|---|---|---|

| IQR (Box Plot) [29] [15] | Initial, visual screening of any data distribution. | Identifies points lying more than 1.5 times the Interquartile Range (IQR) below Q1 or above Q3. | Simple and effective for univariate data. Does not assume a normal distribution. |

| Extreme Studentized Deviate (ESD) [30] | Normally distributed data with more than 10 observations. | Identifies the point with the maximum deviation from the mean, comparing it to a tabled critical value. | Excellent for single outliers; can be generalized for multiple outliers. Requires normality assumption. |

| Dixon's Q-Test [30] | Small sample sizes (roughly 3 to 25 observations). | Uses the ratio of the gap between the suspected outlier and its nearest neighbor to the range of the dataset. | Simple to compute by hand. Assumes approximately normal data. Different ratios (e.g., r10, r11) are used depending on the sample size. |

Diagram: Outlier Investigation Workflow

Problem 2: Choosing the Right Plot for Method Comparison

Question: How do I select the most effective plot to communicate my findings?

Solution: Each plot serves a distinct purpose. Use them in combination for a comprehensive view. The table below summarizes when and how to use each one.

Table 2: Guide to Selecting and Using Key Visualization Plots

| Plot Type | Primary Use Case | How to Interpret | What to Look For |

|---|---|---|---|

| Difference Plot | To visualize the agreement between two methods by plotting the differences against the averages. | The central line represents the mean difference (bias). The upper and lower lines are limits of agreement (mean bias ± 1.96*SD of the differences). | Systematic Bias: If the mean difference line is not at zero. Trends: If differences get larger/smaller as the average increases (indicating proportional error). Outliers: Points outside the limits of agreement. |

| Scatter Plot [31] [32] | To assess the relationship, correlation, and agreement between two methods or observers. | Each point is a pair of measurements. The pattern of points shows the strength and direction of the relationship. | Correlation: How closely the points cluster around a straight line. Agreement: How close the points are to the line of identity (where Method A = Method B). Clusters & Gaps: Suggest subpopulations in the data. |

| Box Plot [29] [32] | To compare the distribution (center, spread, skewness) of a single variable across different groups or methods. | The box shows the middle 50% of data (IQR), and the line inside is the median. The whiskers extend to the most extreme values within 1.5*IQR of the box, and points beyond them are plotted individually as potential outliers. | Central Tendency: Compare medians between groups. Spread/Variability: Compare the size of the boxes (IQR) and length of whiskers. Skewness: If the median is not in the center of the box. Outliers: Individual points beyond the whiskers. |

Diagram: Data Visualization Selection Guide

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Method Comparison Studies

| Item / Solution | Function in Experiment |

|---|---|

| Statistical Software (R, Python, SPSS) | Provides the computational environment to generate advanced plots (difference, scatter, box), calculate descriptive statistics, and perform formal outlier tests (ESD, Dixon's). |

| Defect Kit (for AVI qualification) [33] | In Automated Visual Inspection (AVI) for pharmaceutical products, a set of samples with known defects used to qualify and tune inspection systems, ensuring they can detect anomalies consistently. |

| Robust Statistical Methods [28] | A class of statistical techniques (e.g., Mann-Whitney U test, robust regression) used to analyze data that contains outliers without the results being unduly influenced by them. |

| IQR Outlier Labeling Rule [30] [29] | A simple, non-parametric calculation (Q1 - 1.5*IQR and Q3 + 1.5*IQR) used to define fences for identifying potential outliers in a dataset, central to creating box plots. |

Frequently Asked Questions (FAQs)

1. What is the key difference between the Z-score and IQR methods for outlier detection?

The Z-score method measures how many standard deviations a data point is from the mean, making it highly effective for data that follows a normal distribution. In contrast, the Interquartile Range (IQR) method identifies outliers based on the spread of the middle 50% of the data, making it a robust, non-parametric technique that does not assume a normal distribution and is less influenced by extreme values themselves [34] [35].

2. When should I use Grubbs' Test over the IQR method?

Grubbs' Test is particularly useful when you have a small dataset and theoretically expect no more than a single outlier. It is designed to identify one outlier at a time and is often used iteratively. However, a significant limitation is "masking," where the presence of a second outlier can prevent the detection of the first. For datasets where multiple outliers are possible, the IQR method or the ROUT method is recommended [36] [37].

3. My data does not follow a normal distribution. Which method should I use?

For non-normally distributed data, the IQR method is generally the preferred choice. Because it is based on quartiles (ranks) rather than mean and standard deviation, it is a robust statistic that performs well with skewed data or data with heavy tails, which is common in biological and clinical research [35] [38].

4. I've identified a potential outlier. Should I automatically remove it from my dataset?

No. Identifying a statistical outlier is only the first step. Both the USP and best practices warn against automatic removal without a thorough investigation [27] [37]. You should:

- Investigate the root cause: Determine if the outlier is due to a measurement error, data entry mistake, or a genuine biological variation.

- Consider the impact: Assess how the outlier influences your overall results and conclusions.

- Document your decision: Always document any outlier you remove and provide a clear justification, whether it was based on a statistical rule or an identified experimental error [36] [27].

5. What is the ROUT method, and how does it compare to Grubbs' Test?

The ROUT (Robust regression and Outlier removal) method is a model-based outlier detection technique that can identify multiple outliers simultaneously and is less susceptible to the masking problem that affects Grubbs' Test. While Grubbs' Test is slightly better at detecting a single outlier in a perfect Gaussian dataset, the ROUT method is superior in most real-world scientific situations where the possibility of multiple outliers exists [36] [37].

Troubleshooting Guides

Issue 1: Inconsistent Outlier Detection with Z-Score

Problem: You get different outlier results when re-running the analysis on new data, or the Z-score fails to flag obvious extreme values.

Solution: This often occurs when the data is not normally distributed or when the outliers themselves are inflating the standard deviation.

- Verify Data Distribution: First, test your data for normality (e.g., using a Shapiro-Wilk test or by examining a Q-Q plot). If the data is significantly non-normal, switch to a non-parametric method like the IQR.

- Recalculate with Robust Statistics: If you must use the Z-score, consider using the median and Median Absolute Deviation (MAD) instead of the mean and standard deviation, as these are less influenced by outliers.

- Alternative Approach - Use IQR Method: For a more reliable result, implement the IQR method, which is not dependent on normality [35].
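The median/MAD variant of the Z-score mentioned above can be sketched as follows. The scaling constant 0.6745 and the cutoff of 3.5 are a commonly used convention for the "modified Z-score" and are assumptions here, not values taken from this guide; the data are hypothetical.

```python
import numpy as np

def modified_z_scores(values):
    """Z-like scores based on the median and the Median Absolute Deviation (MAD)."""
    x = np.asarray(values, dtype=float)
    median = np.median(x)
    mad = np.median(np.abs(x - median))
    if mad == 0:
        raise ValueError("MAD is zero; the modified Z-score is undefined for this data.")
    # 0.6745 rescales the MAD so the score is comparable to a classic Z-score
    # when the data are normal (a common convention, assumed here).
    return 0.6745 * (x - median) / mad

data = np.array([10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 14.5])  # hypothetical

classic_z = (data - data.mean()) / data.std(ddof=1)
robust_z = modified_z_scores(data)
flagged = np.abs(robust_z) > 3.5  # assumed conventional cutoff

print("classic |z| of last point:", round(abs(classic_z[-1]), 2))
print("modified |z| of last point:", round(abs(robust_z[-1]), 2))
print("flagged indices:", np.flatnonzero(flagged).tolist())
```

In this small example the extreme value inflates the SD enough that its classic Z-score stays below 3, while the median/MAD version flags it clearly.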

Issue 2: Grubbs' Test Fails to Detect an Obvious Outlier

Problem: A visual inspection of your data clearly shows an extreme value, but Grubbs' Test does not identify it as an outlier.

Solution: This is likely due to "masking," where multiple outliers are present.

- Visual Confirmation: Plot your data using a boxplot or scatter plot to confirm the presence of multiple suspicious data points.

- Iterative Removal: If you are using Grubbs' Test iteratively, ensure you re-run the test after removing the most extreme outlier. The previously masked outlier may then be detected in the subsequent run.

- Switch to a More Robust Method: Apply the IQR method, which can handle multiple outliers effectively. For model-based data (e.g., dose-response curves), consider using the ROUT method available in software like GraphPad Prism [36] [37].

Issue 3: Determining the Correct Threshold for IQR

Problem: You are unsure if the standard multiplier of 1.5 for the IQR fence is appropriate for your specific research data.

Solution: The 1.5 multiplier is a conventional balance between sensitivity and specificity. If the data were normally distributed, approximately 99.3% of data points would fall inside the fences, so only about 0.7% would be flagged [35].

- For Standard Analysis: Use the multiplier of 1.5. This is appropriate for most general purposes.

- For a More Stringent Threshold: If you need to flag only the most extreme outliers, use a multiplier of 3.0. Data points beyond these fences are considered extreme outliers.

- Justify Based on Field Standards: Consult historical data or literature in your specific field. Some disciplines may have established conventions for outlier thresholds [39] [38].
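The coverage implied by a given multiplier can be checked numerically for a normal distribution. The sketch below assumes SciPy is available and uses the standard normal quartiles; the helper name `normal_coverage_within_fences` is hypothetical.

```python
from scipy.stats import norm

def normal_coverage_within_fences(k):
    """Fraction of a normal distribution lying inside Q1 - k*IQR and Q3 + k*IQR."""
    q1, q3 = norm.ppf(0.25), norm.ppf(0.75)
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return norm.cdf(upper) - norm.cdf(lower)

for k in (1.5, 3.0):
    inside = normal_coverage_within_fences(k)
    print(f"k = {k}: {inside:.4%} inside the fences, {1 - inside:.4%} flagged")
```

For real data that are skewed or heavy-tailed, the flagged fraction can be considerably larger than these normal-theory values.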

The table below provides a concise comparison of the three foundational outlier detection methods.

| Method | Key Formula | Detection Threshold | Best Use Case | Key Assumptions & Limitations |

|---|---|---|---|---|

| Z-Score [34] | \( z = \frac{X - \mu}{\sigma} \) | Typically \( \lvert z \rvert > 2 \) or \( \lvert z \rvert > 3 \) | Data with normal distribution; when mean and SD are meaningful. | Assumes normality. Sensitive to outliers themselves (which inflate SD). |

| IQR (Tukey's Fences) [39] [38] | Lower Fence: \( Q1 - 1.5 \times IQR \); Upper Fence: \( Q3 + 1.5 \times IQR \) | Data points outside the fences | Non-normal data; robust, general-purpose use. | Non-parametric; no distributional assumptions. Less sensitive to multiple outliers. |

| Grubbs' Test [36] | \( G = \frac{\max_i \lvert Y_i - \bar{Y} \rvert}{s} \) | G > Critical Value (based on n, α) | Testing for a single outlier in a small, normally distributed dataset. | Assumes normality. Designed for one outlier; prone to masking with multiple outliers. |
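As an illustration of the Grubbs' statistic in the table, the following sketch computes G and a two-sided critical value from the t-distribution. It assumes SciPy is available and uses the standard textbook expression for the critical value; the function name `grubbs_test` and the data are hypothetical.

```python
import numpy as np
from scipy import stats

def grubbs_test(values, alpha=0.05):
    """Two-sided Grubbs' test for a single outlier (assumes approximate normality)."""
    x = np.asarray(values, dtype=float)
    n = x.size
    if n < 3:
        raise ValueError("Grubbs' test needs at least 3 observations.")
    deviations = np.abs(x - x.mean())
    idx = int(np.argmax(deviations))          # most extreme observation
    g = deviations[idx] / x.std(ddof=1)       # G = max|Y_i - mean| / s
    # Critical value derived from the t-distribution with n - 2 degrees of freedom.
    t = stats.t.ppf(1 - alpha / (2 * n), n - 2)
    g_crit = (n - 1) / np.sqrt(n) * np.sqrt(t**2 / (n - 2 + t**2))
    return idx, g, g_crit, bool(g > g_crit)

data = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 14.5]  # hypothetical
idx, g, g_crit, is_outlier = grubbs_test(data)
print(f"index {idx}: G = {g:.3f}, critical = {g_crit:.3f}, outlier = {is_outlier}")
```

A significant result only marks the point for investigation; it does not by itself justify removal.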

Experimental Protocol: Implementing the IQR Method

This is a detailed, step-by-step protocol for detecting outliers using the IQR method, which is highly recommended for its robustness in research data.

Objective: To systematically identify and document outliers in a dataset using the Interquartile Range method.

Procedure:

- Data Preparation: Organize your dataset in a single column within your statistical software or spreadsheet.

- Calculate Quartiles:

- Q1 (First Quartile): Find the median of the lower half of the dataset (the 25th percentile).

- Q3 (Third Quartile): Find the median of the upper half of the dataset (the 75th percentile).

- Compute IQR: Subtract Q1 from Q3. \( IQR = Q3 - Q1 \) [38].

- Establish Outlier Fences:

- Lower Fence: \( Q1 - 1.5 \times IQR \)

- Upper Fence: \( Q3 + 1.5 \times IQR \)

- Identify Outliers: Flag any data point that falls below the Lower Fence or above the Upper Fence.

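- Documentation and Reporting: In your research notes or methodology section, record the method and multiplier used, the calculated fences, the data points flagged, and how each flagged point was handled.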
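The procedure above can be sketched in a few lines of Python. This is a minimal illustration assuming NumPy; note that `np.percentile` uses linear interpolation by default, which can give slightly different quartiles from the "median of each half" approach described in the protocol. The function name `iqr_outliers` and the data are hypothetical.

```python
import numpy as np

def iqr_outliers(values, k=1.5):
    """Return the quartiles, fences, and indices of points outside the IQR fences."""
    x = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    mask = (x < lower) | (x > upper)
    return {
        "q1": q1, "q3": q3, "iqr": iqr,
        "lower_fence": lower, "upper_fence": upper,
        "outlier_indices": np.flatnonzero(mask).tolist(),
    }

data = [4.9, 5.1, 5.0, 5.2, 4.8, 5.0, 9.7]  # hypothetical measurements
report = iqr_outliers(data)
for key, value in report.items():
    print(f"{key}: {value}")
```

The returned dictionary holds the quantities that would typically be written into the study records.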

Method Selection Workflow

The following diagram illustrates the logical process for selecting the appropriate outlier detection method based on your data's characteristics.

Research Reagent Solutions

The table below lists key computational and statistical "reagents" essential for implementing the outlier detection methods discussed.

| Item Name | Function / Purpose | Example/Notes |

|---|---|---|

| Statistical Software | Platform for performing calculations, generating plots, and running statistical tests. | GraphPad Prism (includes ROUT), R, Python (with SciPy, statsmodels libraries), SAS [37]. |

| Z-Score Table (Standard Normal) | Used to determine the probability (p-value) associated with a calculated Z-score. | Found in statistics textbooks or online; specifies the area under the normal curve to the left of a Z-score [34] [41]. |

| Grubbs' Critical Value Table | Provides the threshold value to determine if the calculated G statistic is significant. | Critical values depend on sample size (n) and significance level (α); available in statistical tables or computed by software [36]. |

| Box Plot Visualization | A graphical tool for visualizing the median, quartiles (IQR), and potential outliers in a dataset. | Outliers are typically plotted as individual points beyond the whiskers, providing immediate visual identification [38] [40]. |

Troubleshooting Guide: Algorithm Selection and Application

Q1: How do I choose between Isolation Forest and LOF for my method comparison data? A: The choice depends on your dataset's size, structure, and the nature of outliers you expect. Isolation Forest excels with high-dimensional data and is computationally efficient, making it suitable for large-scale screening. In contrast, LOF is superior for identifying local outliers within clusters of varying density. Consider a hybrid approach for critical applications: use Isolation Forest for initial broad screening and apply LOF for detailed analysis of flagged anomalies [42].

Q2: My Isolation Forest model is not detecting the outliers I expect. What could be wrong?

A: This is often due to an improperly set contamination parameter, which is the expected proportion of outliers in the data [43] [44]. If set incorrectly, the model's threshold for flagging anomalies will be off.

- Troubleshooting Steps:

- Review Parameter: Check the contamination value used when initializing your IsolationForest model [44].

- Domain Knowledge: Use your expertise to estimate the expected rate of outliers in your method comparison data.

- Iterative Testing: Retrain the model with different contamination values (e.g., 0.01, 0.05, 0.1) and evaluate which best captures the known outliers [43].

- Use 'auto': If the outlier proportion is unknown, try the contamination='auto' setting [45].
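The iterative testing step might look like the sketch below, which assumes scikit-learn and NumPy are installed. The data are simulated, and using the pair mean and pair difference as features is an illustrative choice, not something prescribed by the cited sources.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)

# Hypothetical paired results: 200 concordant pairs plus 4 injected discordant pairs.
method_a = rng.normal(100, 10, size=200)
method_b = method_a + rng.normal(0, 2, size=200)
method_a = np.append(method_a, [100.0, 90.0, 120.0, 80.0])
method_b = np.append(method_b, [140.0, 50.0, 80.0, 125.0])

# Features: mean and difference of each pair (an assumed, illustrative choice).
X = np.column_stack([(method_a + method_b) / 2, method_a - method_b])

flagged = {}
for contamination in (0.01, 0.05, 0.1, "auto"):
    model = IsolationForest(contamination=contamination, random_state=42)
    labels = model.fit_predict(X)            # -1 = flagged, 1 = not flagged
    flagged[contamination] = int((labels == -1).sum())
    print(f"contamination={contamination}: {flagged[contamination]} points flagged")
```

With a fixed random_state the underlying scores are identical across runs, so changing contamination only moves the threshold; the number of flagged points follows the chosen proportion rather than any property of the data.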

Q3: LOF labels many points at the edge of my data clusters as outliers. Is this normal? A: Yes, this is a common characteristic of LOF. It identifies points that have a significantly lower density than their neighbors [46]. Points on the periphery of a cluster naturally have fewer nearby neighbors and thus a lower local density.

- Resolution:

- Adjust n_neighbors: Increase the n_neighbors parameter (e.g., from 20 to 50). This makes the density estimate less sensitive to the immediate local area and more representative of the broader cluster [47] [46].

- Evaluate Context: Determine if these edge points are true anomalies (e.g., indicating a borderline failure in a method) or false positives. This may require domain expertise [14].
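A comparison of two n_neighbors settings could be sketched as follows, assuming scikit-learn and NumPy. The data are simulated, and whether a larger n_neighbors actually reduces edge flags depends on the dataset, so the counts printed here should be read as a diagnostic rather than a guarantee.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

rng = np.random.default_rng(1)

# Hypothetical data: one dense cluster plus a single clearly isolated point.
X = rng.normal(0, 1, size=(300, 2))
X = np.vstack([X, [[8.0, 8.0]]])

results = {}
for n_neighbors in (20, 50):
    lof = LocalOutlierFactor(n_neighbors=n_neighbors, contamination="auto")
    labels = lof.fit_predict(X)                   # -1 = flagged, 1 = not flagged
    lof_values = -lof.negative_outlier_factor_    # values near 1 mean typical density
    results[n_neighbors] = (labels, lof_values)
    print(f"n_neighbors={n_neighbors}: {int((labels == -1).sum())} flagged, "
          f"LOF of isolated point = {lof_values[-1]:.2f}")
```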

Q4: Should I remove all outliers detected by these algorithms from my dataset? A: No. Outlier removal requires careful justification. You should only remove a data point if you can identify a specific cause, such as a measurement error, data entry error, or if it originates from a population not relevant to your study (e.g., a faulty instrument run) [14]. Outliers that represent natural variation in your data should be retained, as their removal can make your process appear less variable than it truly is [14].

Frequently Asked Questions (FAQs)

Q1: Are Isolation Forest and LOF considered supervised or unsupervised learning? A: Both are unsupervised anomaly detection algorithms. They do not require pre-labeled data (normal vs. anomaly) for training, which is ideal for method comparison research where outlier labels are typically unavailable [43] [42].

Q2: What are the key hyperparameters I need to tune for each algorithm? A: The primary hyperparameters are summarized in the table below.

| Algorithm | Key Hyperparameters | Description and Impact |

|---|---|---|

| Isolation Forest | contamination | The expected proportion of outliers. Directly affects the classification threshold [43] [44]. |

| Isolation Forest | n_estimators | The number of isolation trees to build. A higher number can improve stability [44]. |

| Isolation Forest | max_samples | The number of samples used to build each tree. Controls the randomness of each tree [45]. |

| Local Outlier Factor (LOF) | n_neighbors | The number of neighbors used to estimate local density. Crucially impacts the "locality" of the analysis [47] [46]. |

| Local Outlier Factor (LOF) | contamination | Similar to Isolation Forest, it specifies the proportion of outliers when making predictions [47]. |

Q3: How do the anomaly scores differ between the two methods? A: The scores have different interpretations and ranges.

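- Isolation Forest: The score convention depends on the implementation. In scikit-learn, decision_function generally returns values between -0.5 and 0.5, where negative values indicate anomalies and positive values indicate inliers [44]. Some implementations instead produce scores between 0 and 1, where values close to 1 indicate anomalies [45].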

- LOF: Produces the Local Outlier Factor, a ratio that can theoretically range from 0 to infinity. An LOF approximately equal to 1 means the point has similar density to its neighbors. An LOF significantly greater than 1 indicates an outlier [46].

Q4: Can these algorithms be applied to real-time, streaming data from analytical instruments? A: Yes, but it requires a specific implementation strategy. For real-time streaming, you can use a sliding window approach: periodically retrain the model (e.g., Isolation Forest for speed) on the most recent data or use online learning algorithms designed for this purpose [42].

Experimental Protocols and Data Presentation

Summary of Algorithm Performance in a Large-Scale Simulation

A comparative experiment on a synthetic dataset of 1 million data points simulating system metrics (e.g., CPU, memory) revealed key performance differences [42]. The following table quantifies the detection results with contamination=0.02 for Isolation Forest.

| Performance Metric | Isolation Forest | Local Outlier Factor (LOF) |

|---|---|---|

| Total Anomalies Detected | 20,000 | 487 |

| Overlap (Anomalies detected by both) | 370 | 370 |

| Unique Anomalies Detected | 19,630 | 117 |

| Primary Use-Case | Large-scale, efficient screening | Precise, local density-based detection |

Detailed Methodology for Implementing Isolation Forest

This protocol is designed for researchers to implement Isolation Forest for outlier detection in method comparison datasets using Python's scikit-learn.

1. Import Libraries: Import the IsolationForest class from scikit-learn's sklearn.ensemble module.

2. Initialize Model: Initialize the model with key parameters. The random_state ensures reproducibility for your research.

3. Train the Model: Fit the model using your feature data (X). This is an unsupervised process, so labels are not needed.

4. Generate Predictions and Scores: Use the trained model to generate anomaly labels and scores for further analysis.
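The four steps of this protocol might be sketched as below. The original code for these steps is not shown in this guide, so this is an illustrative reconstruction under stated assumptions: scikit-learn and NumPy are installed, the feature matrix X is simulated, and the parameter values are examples rather than recommendations.

```python
# Step 1: import libraries.
import numpy as np
from sklearn.ensemble import IsolationForest

# Hypothetical feature data: paired results from two methods, one discordant pair.
rng = np.random.default_rng(0)
method_a = rng.normal(100, 10, size=200)
method_b = method_a + rng.normal(0, 2, size=200)
X = np.column_stack([method_a, method_b])
X = np.vstack([X, [[100.0, 150.0]]])

# Step 2: initialize the model; random_state makes the result reproducible.
model = IsolationForest(n_estimators=100, contamination=0.02, random_state=42)

# Step 3: train the model (unsupervised, so no labels are passed).
model.fit(X)

# Step 4: generate labels (-1 = anomaly, 1 = normal) and anomaly scores.
labels = model.predict(X)
scores = model.decision_function(X)   # negative scores correspond to flagged points

print("flagged row indices:", np.flatnonzero(labels == -1).tolist())
```

Flagged rows are candidates for root cause investigation, not for automatic removal.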

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Experiment |

|---|---|

| Scikit-learn Library | Provides robust, open-source implementations of both Isolation Forest (IsolationForest) and LOF (LocalOutlierFactor) for Python [43] [47]. |

| Iris Dataset | A standard multivariate dataset often used as a benchmark for initial testing and validation of anomaly detection models [43]. |

| Contamination Parameter | A key "reagent" that defines the expected proportion of outliers in the dataset; must be set based on domain knowledge or experimentation [43] [44]. |

| K-means Clustering | Can be used as a pre-processing step to improve the feature selection of Isolation Forest, leading to more stable detection results [48]. |

| Synthetic Data Generator | Tools like sklearn.datasets.make_blobs allow for the creation of custom datasets with known outlier patterns to validate and tune models before applying them to real data [46]. |

Workflow Visualization

Outlier Handling Workflow

Algorithm Decision Guide

Frequently Asked Questions

Q1: How can I quickly check a dataset for potential outliers during exploratory analysis? Create a boxplot or a scatter plot of your data. Visually inspect for data points that fall far outside the whiskers of the boxplot or that lie anomalously far from the main cluster of data points in the scatter plot. For a numerical summary, calculate the interquartile range (IQR) and flag any points below Q1 - 1.5*IQR or above Q3 + 1.5*IQR.

Q2: What is the most appropriate method to statistically confirm an outlier? Use statistical tests designed for outlier detection. Grubbs' Test is suitable for identifying a single outlier in a univariate dataset that follows an approximately normal distribution. For multiple outliers, the Generalized Extreme Studentized Deviate (ESD) Test is more appropriate. Always ensure your data meets the test's assumptions, primarily normality, before application.
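For readers working in Python, the Generalized ESD procedure can be sketched as follows. This follows the standard published form of the test (test statistics compared against t-based critical values, with the number of outliers taken as the largest step at which the statistic exceeds its critical value); the function name `generalized_esd` and the data are hypothetical, and SciPy is assumed to be available.

```python
import numpy as np
from scipy import stats

def generalized_esd(values, max_outliers, alpha=0.05):
    """Return indices of outliers found by the Generalized ESD test."""
    x = np.asarray(values, dtype=float)
    n = x.size
    if max_outliers > n - 2:
        raise ValueError("max_outliers must be at most n - 2.")
    remaining = np.arange(n)
    candidates, n_outliers = [], 0
    for i in range(1, max_outliers + 1):
        subset = x[remaining]
        deviations = np.abs(subset - subset.mean())
        j = int(np.argmax(deviations))
        r_i = deviations[j] / subset.std(ddof=1)      # test statistic at step i
        p = 1 - alpha / (2 * (n - i + 1))
        t = stats.t.ppf(p, n - i - 1)
        lam_i = (n - i) * t / np.sqrt((n - i - 1 + t**2) * (n - i + 1))
        candidates.append(int(remaining[j]))
        if r_i > lam_i:
            n_outliers = i                            # largest step that is significant
        remaining = np.delete(remaining, j)
    return candidates[:n_outliers]

data = [9.8, 10.1, 10.0, 9.9, 10.2, 10.0, 9.7, 10.3,
        10.1, 9.9, 10.0, 10.2, 25.0, 30.0]            # hypothetical; two extreme values
outliers = generalized_esd(data, max_outliers=3)
print("outlier indices:", sorted(outliers))
```

Unlike a single Grubbs' test, the procedure keeps testing after the first candidate, which is what protects it against masking.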

Q3: When should I remove an outlier, and when should I transform or impute it? Removal is justified when an outlier is confirmed to be a result of a data entry error, a measurement error, or a process error. Transformation or imputation is better when the outlier is a genuine but extreme value, especially if its removal would significantly reduce your sample size or if the dataset is small.

Q4: How do I handle outliers in a method comparison study like a Bland-Altman analysis? First, identify outliers on the Bland-Altman plot. Investigate the source data for these points to determine if they stem from an error. If no error is found, perform the analysis both with and without the outliers and report the results of both scenarios, as influential outliers can significantly bias the estimate of agreement between methods.

Q5: What are some robust imputation techniques for outliers? Common techniques include:

- Winsorizing: Capping the outlier to a specified percentile of the data.

- Median Imputation: Replacing the outlier with the median of the dataset, which is not influenced by extreme values.

- Nearest Neighbor Imputation: Replacing the outlier with a value from a similar, non-outlying case in the dataset.

Experimental Protocol: Outlier Handling Workflow

This protocol provides a step-by-step methodology for the systematic handling of outliers in method comparison data.

1. Objective To identify, validate, and appropriately address outliers in a dataset to ensure robust and reliable statistical conclusions in method comparison studies.

2. Materials & Equipment

- Dataset from method comparison study (e.g., paired measurements from two analytical instruments).

- Statistical software (e.g., R, Python with pandas/scipy/statsmodels, GraphPad Prism).

3. Procedure

Step 1: Graphical Identification

- Generate a Bland-Altman plot to visualize the differences between the two methods against their averages. Look for points lying far outside the limits of agreement.

- Generate a boxplot of the differences between methods to identify extreme values.

Step 2: Numerical & Statistical Confirmation

- Calculate the IQR of the differences and flag potential outliers.

- For a formal test, apply Grubbs' Test or the Generalized ESD Test to the residuals or the differences between methods. Set your significance level (α) typically to 0.05.

Step 3: Root Cause Investigation

- For each statistically confirmed outlier, trace back to the original laboratory data.

- Check for transcription errors, instrument malfunction, or deviations from the standard operating procedure during that specific measurement run. Document all findings.

Step 4: Action & Documentation

- If an error is found: Correct the data if possible, or justify its removal. Proceed with the analysis on the corrected dataset.

- If no error is found (a genuine extreme value):

- Perform a sensitivity analysis: run the primary analysis (e.g., Bland-Altman mean difference calculation, regression) twice—once with the outlier included and once with it excluded.

- Report the results of both analyses and discuss the impact of the outlier.

- Consider using a robust statistical method that is less sensitive to outliers for the final analysis.

4. Analysis Compare the key outcomes (e.g., bias, limits of agreement, correlation coefficient) from the sensitivity analysis. A significant change in these parameters upon outlier removal indicates that the outlier is influential, and conclusions should be drawn cautiously.
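The sensitivity analysis described in this protocol can be sketched for the Bland-Altman quantities as follows. The paired values are hypothetical, NumPy is assumed, and the helper name `bland_altman` is illustrative.

```python
import numpy as np

def bland_altman(a, b):
    """Return (bias, lower limit of agreement, upper limit of agreement)."""
    diff = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    bias = diff.mean()
    sd = diff.std(ddof=1)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical paired measurements; the last pair is strongly discordant.
method_a = np.array([5.1, 6.3, 7.2, 8.0, 9.4, 10.1, 11.5, 12.2])
method_b = np.array([5.0, 6.1, 7.4, 7.9, 9.2, 10.3, 11.4, 9.0])

with_all = bland_altman(method_a, method_b)

keep = np.arange(method_a.size) != 7          # exclude the suspect pair
without = bland_altman(method_a[keep], method_b[keep])

for label, (bias, lo, hi) in (("with outlier", with_all), ("without outlier", without)):
    print(f"{label}: bias = {bias:.3f}, limits of agreement = [{lo:.3f}, {hi:.3f}]")
```

Reporting both rows side by side lets readers judge how much the conclusions depend on the single discordant pair.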

Table 1: Common Statistical Tests for Outlier Detection

| Test Name | Data Type | Key Assumption | Primary Use | Software Command Example (R; package in parentheses) |

|---|---|---|---|---|

| Grubbs' Test | Univariate | Normal distribution | Detect a single outlier | grubbs.test(data_vector) (outliers) |

| Dixon's Q Test | Univariate, Small Sample Sizes | Normal distribution | Detect a single outlier in small datasets (N < 25) | dixon.test(data_vector) (outliers) |

| Generalized ESD Test | Univariate | Normal distribution | Detect up to a pre-specified number (k) of outliers | rosnerTest(data_vector, k = 3) (EnvStats) |

| Cook's Distance | Multivariate (Regression) | Linear model assumptions | Identify influential points in a regression analysis | cooks.distance(linear_model) (stats) |
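For Python users, Cook's Distance for a simple linear regression can be computed directly from its definition. The sketch below assumes NumPy only; the function name `cooks_distance` and the data are hypothetical, and the formula used is the standard one based on residuals and leverages.

```python
import numpy as np

def cooks_distance(x, y):
    """Cook's Distance for each point in a simple linear regression of y on x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones_like(x), x])       # design matrix with intercept
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    # Leverage h_ii = x_i^T (X^T X)^{-1} x_i for each observation.
    leverage = np.einsum("ij,jk,ik->i", X, np.linalg.inv(X.T @ X), X)
    mse = resid @ resid / (n - p)
    return resid**2 / (p * mse) * leverage / (1 - leverage) ** 2

# Hypothetical comparison data: Method B equals Method A except for the last sample.
method_a = np.arange(1, 11, dtype=float)
method_b = method_a.copy()
method_b[-1] = 30.0

distances = cooks_distance(method_a, method_b)
print("most influential index:", int(np.argmax(distances)))
print("Cook's distances:", np.round(distances, 3).tolist())
```

Points with a large distance relative to the rest are candidates for investigation rather than automatic exclusion.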

Table 2: Comparison of Outlier Treatment Methods

| Method | Description | Advantages | Disadvantages | Suitability |

|---|---|---|---|---|

| Removal | Excluding the outlier from the dataset. | Simple, eliminates non-representative data. | Can reduce statistical power; may introduce bias. | Data entry/measurement errors. |

| Winsorizing | Capping outliers at a certain percentile (e.g., 5th and 95th). | Retains data point and sample size. | Arbitrary choice of percentile; distorts data distribution. | Genuine extreme values in large datasets. |

| Transformation | Applying a mathematical function (e.g., log, square root). | Can normalize the distribution of data. | Makes interpretation of results more complex. | Skewed data where outliers are on one tail. |

| Robust Regression | Using regression methods less sensitive to outliers (e.g., Huber, Theil-Sen). | Does not require direct modification of data. | More computationally intensive than ordinary regression. | Method comparison studies with influential outliers. |
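The difference between ordinary and robust regression in the presence of one influential point can be illustrated with the Theil-Sen estimator from SciPy. The data are hypothetical, and Theil-Sen is used here only as one example of the robust options named in the table.

```python
import numpy as np
from scipy import stats

# Hypothetical comparison data: perfect agreement except for the last sample.
method_a = np.arange(1, 11, dtype=float)
method_b = method_a.copy()
method_b[-1] = 30.0

# Ordinary least squares: the single extreme pair pulls the slope upward.
ols_slope, ols_intercept = np.polyfit(method_a, method_b, 1)

# Theil-Sen: the slope is the median of all pairwise slopes, so one pair has little effect.
ts_slope, ts_intercept, ts_low, ts_high = stats.theilslopes(method_b, method_a)

print(f"OLS:       slope = {ols_slope:.3f}, intercept = {ols_intercept:.3f}")
print(f"Theil-Sen: slope = {ts_slope:.3f}, intercept = {ts_intercept:.3f}")
```

Here the robust fit stays on the line of identity while the least-squares fit is tilted by the one discordant result.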

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Method Comparison Studies

| Item/Category | Function & Application |

|---|---|

| Certified Reference Materials (CRMs) | Provides a ground truth with known, traceable values to assess the accuracy and identify systematic biases (outliers) in a new method. |

| Quality Control (QC) Samples | Used to monitor the stability and precision of an analytical method over time. Shifts in QC data can help identify systematic errors that may manifest as groups of outliers. |

| Statistical Software (R/Python) | Provides the computational environment for executing statistical tests for outlier detection (Grubbs', ESD), creating diagnostic plots (Bland-Altman, boxplots), and performing robust statistical analyses. |

| Laboratory Information Management System (LIMS) | A software-based system for tracking metadata associated with samples. Crucial for the root cause investigation of outliers by providing access to information on instrument calibration, analyst, and reagent lot numbers for the specific outlier sample. |

Visual Workflows

Below are diagrams illustrating the core concepts and processes.

Outlier Handling Decision Workflow

Diagram Color Legend

Best Practices for Documenting Outlier Handling Procedures in Research Protocols

Frequently Asked Questions

Q1: What is the minimum documentation required for outlier handling in a regulatory submission? A comprehensive outlier handling protocol must pre-specify the statistical methods for detection (e.g., IQR, Cook's Distance), the exact threshold for what constitutes an outlier, and the treatment procedure (e.g., removal, winsorizing). Justification for the chosen method must be provided to ensure the procedure is not seen as data manipulation [27].

Q2: How should we handle a situation where an outlier is a genuine data point, not a measurement error? The procedure for such cases should be defined in your protocol. One best practice is to perform and report the primary analysis with the outlier excluded and a sensitivity analysis with the outlier included. This demonstrates the outlier's specific influence on the results and supports the robustness of your conclusions [27].

Q3: Our automated anomaly detection algorithm flagged what we believe is a false positive. What steps should we take? First, document the instance thoroughly, including the data point, the algorithm's parameters, and the reason for believing it is a false positive (e.g., visual inspection, domain knowledge). Your protocol should have a pre-established review committee or a set of criteria for adjudicating such cases to maintain objectivity and avoid introducing bias [27].

Q4: Why is Winsorizing sometimes preferred over simple deletion of outliers? Winsorizing reduces the extreme influence of outliers without discarding the data point, so the full sample size is retained. This can give a more stable estimate, especially in datasets with small sample sizes. Your protocol should state the percentiles used for Winsorizing (e.g., 10th and 90th) [27].

Q5: How can we ensure our graphical summaries of data, which include outlier treatment workflows, are accessible to all team members, including those with color vision deficiencies? Adhere to WCAG guidelines by ensuring sufficient color contrast (at least 4.5:1 for normal text) and do not rely on color alone to convey information. Use patterns, shapes, and direct labels in diagrams. Tools like the WebAIM Contrast Checker can validate your color choices [49] [50].

Troubleshooting Guides

Problem: Inconsistent Outlier Identification Across Team Members

- Symptoms: Different analysts identify different data points as outliers when using the same dataset, leading to irreproducible results.

- Solution:

- Standardize the Protocol: Ensure the research protocol includes an unambiguous, step-by-step definition of the outlier detection method. For example, instead of "use IQR," specify "outliers are defined as points below (Q1 - 1.5 × IQR) or above (Q3 + 1.5 × IQR)."

- Automate the Process: Use scripted analysis (e.g., in R or Python) to perform the outlier detection. This removes subjective judgment and ensures consistency across the team [27].

- Blinded Review: If manual review is necessary, implement a blinded process where reviewers adjudicate potential outliers without knowledge of the experimental groups to prevent confirmation bias.
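
A scripted version of the IQR rule described above might look like the following sketch. The function name `iqr_outliers`, the example differences, and the choice of the "inclusive" quartile method are assumptions for illustration. Quartile definitions differ between software packages, so the protocol should name the one used.

```python
from statistics import quantiles


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] and the fences used."""
    # The quartile method is fixed explicitly so every analyst gets the same fences.
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    flagged = [v for v in values if v < lower or v > upper]
    return flagged, (lower, upper)


# Hypothetical between-method differences (new method minus reference).
differences = [0.1, -0.2, 0.0, 0.3, -0.1, 0.2, 0.1, -0.3, 0.0, 2.5]

flagged, fences = iqr_outliers(differences)
print("Fences:", fences)
print("Flagged:", flagged)
```

Because the multiplier `k` and the quartile method are explicit arguments rather than analyst choices, two team members running this script on the same data obtain the same flagged points.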

Problem: High Rate of False Positives from AI Anomaly Detection Tools

- Symptoms: The machine learning tool flags a large number of normal data points as anomalous, making the results unusable.

- Solution:

- Re-tune Model Parameters: Adjust the sensitivity of the algorithm. This may involve changing the contamination parameter or the threshold for anomaly scores.

- Feature Engineering: Re-evaluate the input features provided to the model. The model may be using non-predictive or noisy features, leading to poor performance.

- Incorporate Domain Knowledge: Use a hybrid approach where the AI tool generates a list of candidate anomalies, which are then reviewed and confirmed by a subject matter expert based on pre-defined biological or chemical plausibility criteria [27].
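
The effect of a contamination-style setting can be illustrated without any particular machine learning library. In the sketch below, a robust z-score (based on the median and the median absolute deviation) stands in for a model's anomaly score, and `flag_candidates` is a hypothetical helper that flags the top fraction of scores. Both the scoring choice and the data are assumptions for illustration only.

```python
from statistics import median


def robust_scores(values):
    """Anomaly score per point: |x - median| / (1.4826 * MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    return [abs(v - med) / (1.4826 * mad) for v in values]


def flag_candidates(scores, contamination):
    """Flag the top `contamination` fraction of scores as candidate anomalies."""
    n_flag = max(1, round(contamination * len(scores)))
    cutoff = sorted(scores, reverse=True)[n_flag - 1]
    return [i for i, s in enumerate(scores) if s >= cutoff]


# Hypothetical assay results: 19 ordinary values and one discordant value (9.0).
values = [5.0, 5.1, 4.9, 5.2, 4.8, 5.05, 4.95, 5.15, 4.85, 5.3,
          4.7, 5.02, 4.98, 5.12, 4.88, 5.25, 4.75, 5.08, 4.92, 9.0]
scores = robust_scores(values)

strict = flag_candidates(scores, contamination=0.05)  # expects about 1 in 20
loose = flag_candidates(scores, contamination=0.25)   # forces about 5 in 20
print("contamination=0.05 ->", [values[i] for i in strict])
print("contamination=0.25 ->", [values[i] for i in loose])
```

A contamination setting that is too high forces the detector to flag ordinary points alongside the discordant one. The flagged indices are best treated as a candidate list for expert review rather than as a final decision.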

Problem: Statistical Model is Overly Sensitive to Influential Observations

- Symptoms: The inclusion or exclusion of a single data point dramatically changes the model's coefficients or key outcomes.

- Solution:

- Perform Influence Analysis: Calculate Cook's Distance for each data point to quantitatively identify observations that have a disproportionate influence on the model [27].

- Use Robust Statistical Methods: Switch to regression techniques that are less sensitive to outliers, such as robust regression or quantile regression.

- Report Sensitivity Analyses: Always report the model results both with and without the influential observations. This transparently communicates their impact on the findings [27].
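
The influence analysis and sensitivity report described above can be sketched for a simple linear regression as follows. The data and the helper names `fit_line` and `cooks_distance` are illustrative assumptions. The 4/n cutoff is a common rule of thumb, not a formal test.

```python
from statistics import mean


def fit_line(x, y):
    """Least-squares slope and intercept for a single predictor."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    return slope, y_bar - slope * x_bar


def cooks_distance(x, y):
    """Cook's distance for each observation in a simple linear regression."""
    n, p = len(x), 2  # p = number of fitted coefficients (intercept, slope)
    slope, intercept = fit_line(x, y)
    x_bar = mean(x)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    mse = sum(e ** 2 for e in residuals) / (n - p)
    leverage = [1 / n + (xi - x_bar) ** 2 / sxx for xi in x]
    return [
        (e ** 2 / (p * mse)) * h / (1 - h) ** 2
        for e, h in zip(residuals, leverage)
    ]


# Hypothetical paired results: reference method (x) vs. new method (y).
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [1.1, 2.0, 3.1, 3.9, 5.0, 6.1, 7.0, 16.0]

distances = cooks_distance(x, y)
threshold = 4 / len(x)
flagged = [i for i, d in enumerate(distances) if d > threshold]

# Sensitivity analysis: refit with and without the flagged observations.
kept = [i for i in range(len(x)) if i not in flagged]
slope_all, _ = fit_line(x, y)
slope_without, _ = fit_line([x[i] for i in kept], [y[i] for i in kept])
print("Flagged indices:", flagged)
print(f"Slope with all points: {slope_all:.2f}")
print(f"Slope without flagged points: {slope_without:.2f}")
```

Reporting both slopes side by side shows how much the conclusion depends on the flagged observation, without committing to its removal.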

Quantitative Data Standards for Outlier Documentation

The following table summarizes key quantitative metrics and thresholds for common outlier detection methods.

Table 1: Common Outlier Detection Methods and Thresholds

| Method | Formula / Threshold | Typical Application |

|---|---|---|

| Interquartile Range (IQR) | Mild outliers: < Q1 - 1.5 × IQR or > Q3 + 1.5 × IQR; extreme outliers: < Q1 - 3 × IQR or > Q3 + 3 × IQR | Identifying outliers in univariate, non-normal data. |

| Z-Score | Absolute Z-score above 3 (or above 2 for a more sensitive screen) | Detecting outliers in approximately normally distributed data. |

| Cook's Distance | D_i > 4/n (where D_i is Cook's distance for observation i and n is the sample size) | Identifying influential points in regression analysis [27]. |

| Winsorizing | Typically set at 5th and 95th, or 10th and 90th percentiles. | Reducing the impact of outliers without removing them. |
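
As an illustration of the Winsorizing row, the sketch below caps values at the 5th and 95th percentiles. The function name `winsorize`, the linear-interpolation percentile rule, and the data are assumptions. Other percentile definitions give slightly different caps, so the protocol should state which one is used.

```python
def winsorize(values, lower_pct=5, upper_pct=95):
    """Cap values below/above the given percentiles instead of removing them."""
    ordered = sorted(values)
    n = len(ordered)

    def percentile(p):
        # Linear interpolation between the two closest ranks.
        pos = (n - 1) * p / 100
        lo = int(pos)
        hi = min(lo + 1, n - 1)
        return ordered[lo] + (pos - lo) * (ordered[hi] - ordered[lo])

    low_cap, high_cap = percentile(lower_pct), percentile(upper_pct)
    return [min(max(v, low_cap), high_cap) for v in values]


# Hypothetical measurements: 1..19 plus one extreme value.
measurements = [float(v) for v in range(1, 20)] + [100.0]
capped = winsorize(measurements, lower_pct=5, upper_pct=95)

print("Original max:", max(measurements))
print("Winsorized max:", max(capped))
print("Sample size unchanged:", len(capped) == len(measurements))
```

The extreme value is pulled in to the upper cap while every observation stays in the dataset, which is the property that distinguishes Winsorizing from deletion.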

Visual Workflows for Outlier Handling

The diagrams below outline the logical workflow for managing and documenting outliers in research data. Color choices for text and elements adhere to high-contrast guidelines for readability [51] [49] [50].

Outlier Management Process

Documentation Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Outlier Analysis

| Item / Tool | Function | Brief Explanation |

|---|---|---|

| Statistical Software (R/Python) | Analysis Execution | Platforms like R and Python with libraries (e.g., statsmodels, scikit-learn) are essential for performing reproducible and scripted outlier detection and statistical analysis [27]. |

| IQR Method | Outlier Detection | A non-parametric method robust to non-normal data distributions. It identifies outliers based on the spread of the middle 50% of the data [27]. |

| Cook's Distance | Influence Analysis | A metric used in regression analysis to identify data points that significantly influence the model's estimated coefficients. Points with a large Cook's Distance require careful investigation [27]. |

| Winsorizing | Outlier Treatment | A technique to handle outliers by limiting extreme values. The top and bottom percentiles of data are set to a specified value, reducing variance without removing data points [27]. |

| Sensitivity Analysis | Result Validation | The practice of running the primary analysis multiple times under different conditions (e.g., with/without outliers) to demonstrate the robustness of the conclusions [27]. |

Solving Real-World Challenges: False Positives, Masking, and Data Integrity

Mitigating False Positives and Swamping Effects in High-Dimensional Data

FAQs on Core Concepts

What are false positives and swamping effects in the context of high-dimensional data? In high-dimensional data analysis, a false positive occurs when a normal data point is incorrectly flagged as an outlier. Swamping is one mechanism behind such errors: genuine outliers distort the estimates of location and scale so that normal observations appear anomalous. The opposite effect, masking, occurs when genuine outliers go undetected because other outliers hide them. These errors are a particular concern in method comparison studies in drug development, where they can skew the perceived agreement between analytical techniques and lead to invalid conclusions [52].

What are the common sources of batch effects that can induce these errors? Batch effects are technical variations unrelated to the biological or chemical factors of interest. They can be introduced at multiple stages and are a major source of spurious outliers:

- Sample Preparation: Variations in reagent lots, protocol procedures, and storage conditions [53].

- Instrumentation: Using different machines or the same machine over different time periods [53].

- Data Generation: Changes in analysis pipelines or personnel [53].

- Study Design: A confounded study design where batch is highly correlated with a biological outcome of interest is a critical source of irreproducibility [53].

Are some data types more susceptible to these issues? Yes. While batch effects are common across omics data, the challenges are magnified in:

- Single-cell RNA sequencing (scRNA-seq): Suffers from higher technical variations, lower RNA input, and higher dropout rates than bulk RNA-seq, making batch effects more severe [53].

- Multi-omics studies: Involve integrating data from different platforms with different distributions and scales, increasing the complexity of batch effects [53].

- Longitudinal or multi-center studies: Technical variables can be confounded with time or exposure, making it difficult to distinguish true changes from artifacts [53].

Troubleshooting Guides

Problem: Suspected batch effects are causing false outliers. Solution: Diagnose and correct for batch effects.

- Diagnosis:

- Correction:

- Choose an Algorithm: Select a batch effect correction algorithm (BECA) suited to your data type.

- Preserve Biology: For scRNA-seq data, prefer methods designed to align shared cell populations across batches, such as Seurat, which uses "anchors" (mutual nearest neighbors), or Harmony, which iteratively adjusts cells within a shared low-dimensional embedding [55].

- Maintain Data Integrity: Consider methods with order-preserving features to maintain the original relative rankings of gene expression levels, which helps retain biologically meaningful patterns [54].

Problem: High number of false positives during outlier detection. Solution: Implement robust, projection-based detection methods. The KASP (Kurtosis and Skewness Projections) procedure is a modern method designed for high-dimensional data [52].

- Methodology: It finds specific projections that maximize non-normality:

- Direction 1: Maximizes a combination of squared skewness and kurtosis.

- Direction 2: Minimizes the kurtosis coefficient.

- Direction 3: Maximizes the squared skewness coefficient.

- Protocol: Applying KASP involves using these projections to identify observations that are outliers in these maximally non-normal directions, which has been shown to correctly identify outliers for many different contamination structures [52].
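
The building blocks of a projection-based approach can be sketched as follows: project the data onto a candidate direction, then compute the skewness and kurtosis coefficients of the projected values. This is not the KASP procedure itself. The search for the optimal directions is omitted, and the function names and data are illustrative assumptions.

```python
from math import sqrt


def project(rows, direction):
    """Project each observation (row) onto a direction scaled to unit length."""
    norm = sqrt(sum(c * c for c in direction))
    unit = [c / norm for c in direction]
    return [sum(a * b for a, b in zip(row, unit)) for row in rows]


def skewness_and_kurtosis(z):
    """Moment-based skewness and (non-excess) kurtosis coefficients."""
    n = len(z)
    m = sum(z) / n
    m2 = sum((v - m) ** 2 for v in z) / n
    m3 = sum((v - m) ** 3 for v in z) / n
    m4 = sum((v - m) ** 4 for v in z) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2


# Hypothetical two-variable dataset; the last observation is extreme in variable 1.
rows = [[-1, 0.5], [0, -0.5], [1, 0.5], [-1, -0.5],
        [0, 0.5], [1, -0.5], [0, 0.0], [8, 0.0]]

skew_1, kurt_1 = skewness_and_kurtosis(project(rows, [1, 0]))
skew_2, kurt_2 = skewness_and_kurtosis(project(rows, [0, 1]))
print(f"Direction [1, 0]: skewness={skew_1:.2f}, kurtosis={kurt_1:.2f}")
print(f"Direction [0, 1]: skewness={skew_2:.2f}, kurtosis={kurt_2:.2f}")
```

In this toy example the direction that contains the extreme observation shows markedly higher skewness and kurtosis than the other direction, which is the kind of signal a projection-based method looks for when choosing where to test for outliers.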

Problem: Need to validate an outlier detection method's performance. Solution: Use standardized metrics to evaluate clustering accuracy and batch mixing after correction. The table below summarizes key metrics used in benchmarking studies, such as those evaluating batch-effect correction methods for scRNA-seq data [54].

Table 1: Quantitative Metrics for Evaluating Outlier and Batch Effect Correction Methods

| Metric | Full Name | What It Measures | Interpretation |

|---|---|---|---|

| ARI | Adjusted Rand Index | Similarity between two data clusterings (e.g., true vs. predicted cell types). | Higher values (closer to 1) indicate better accuracy in identifying true biological groups. |

| ASW | Average Silhouette Width | How similar an object is to its own cluster compared to other clusters. | Higher values (closer to 1) indicate tighter and more distinct clusters. |

| LISI | Local Inverse Simpson's Index | Diversity of batches in a local neighborhood. | Higher values indicate better mixing of batches (fewer batch-specific outliers). |
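
Two of the metrics in the table can be sketched in a few lines. The Adjusted Rand Index below follows the standard pair-counting formula. The `inverse_simpson` function computes the inverse Simpson's index for a single neighborhood with equal weights, which is a simplification: published LISI implementations weight neighbors by distance. Function names and labels are illustrative assumptions.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_true, labels_pred):
    """Pair-counting Adjusted Rand Index between two clusterings."""
    n = len(labels_true)
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    sum_rows = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_cols = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_rows * sum_cols / comb(n, 2)
    max_index = (sum_rows + sum_cols) / 2
    if max_index == expected:  # degenerate case, e.g. one cluster in both
        return 1.0
    return (sum_cells - expected) / (max_index - expected)


def inverse_simpson(batch_labels):
    """Effective number of batches among the cells in one neighborhood."""
    n = len(batch_labels)
    return 1 / sum((c / n) ** 2 for c in Counter(batch_labels).values())


# Hypothetical annotated cell types vs. cluster assignments.
cell_types = [0, 0, 0, 1, 1, 1]
clusters_good = [1, 1, 1, 0, 0, 0]  # same partition, different label names
clusters_poor = [0, 0, 1, 1, 1, 1]  # one cell assigned to the wrong cluster

ari_good = adjusted_rand_index(cell_types, clusters_good)
ari_poor = adjusted_rand_index(cell_types, clusters_poor)
mixed = inverse_simpson(["A", "A", "B", "B"])    # two batches, evenly mixed
unmixed = inverse_simpson(["A", "A", "A", "A"])  # a single batch only
print(ari_good, ari_poor, mixed, unmixed)
```

The Adjusted Rand Index ignores label names and depends only on the partition, so a relabeled but otherwise identical clustering scores 1. The inverse Simpson's index ranges from 1 (one batch in the neighborhood) up to the number of batches (perfect mixing).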

Experimental Protocols for Method Validation

Protocol: Benchmarking an Outlier Detection Pipeline with scRNA-seq Data

This protocol is adapted from methodologies used in recent papers to evaluate batch-effect correction and outlier detection tools [54].

- Data Acquisition and Preprocessing: Obtain a public scRNA-seq dataset with known batch effects and annotated cell types. Perform standard preprocessing: quality control, normalization, and log-transformation.

- Apply Batch Effect Correction: Process the data using the method under evaluation (e.g., Seurat, Harmony, ComBat) and a baseline "uncorrected" dataset.

- Dimensionality Reduction and Clustering: Generate UMAP/t-SNE embeddings for visualization. Perform clustering on the corrected data.

- Quantitative Evaluation: Calculate the metrics in Table 1 (ARI, ASW, LISI) to assess the trade-off between batch mixing (reducing batch-driven false positives) and biological integrity (avoiding over-correction that hides genuine biological differences).

- Assess Data Integrity:

- Inter-gene Correlation: For key cell types, calculate the Pearson correlation of significantly correlated gene pairs before and after correction. A good method will maintain high correlation (low Root Mean Square Error) [54].

- Order-Preserving Feature: For a given gene, compute the Spearman correlation between its non-zero expression levels across cells before and after correction. A value of 1 indicates perfect order preservation [54].
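
The order-preservation check can be sketched with a small Spearman correlation routine. The sketch assumes that "non-zero" refers to cells where the gene is non-zero before correction. The expression values and function names are hypothetical.

```python
from math import sqrt


def average_ranks(values):
    """Ranks starting at 1, with tied values given their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    a_bar, b_bar = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)


def order_preservation(before, after):
    """Spearman correlation over cells where the gene is non-zero before correction."""
    pairs = [(b, a) for b, a in zip(before, after) if b != 0]
    return pearson(average_ranks([p[0] for p in pairs]),
                   average_ranks([p[1] for p in pairs]))


# Hypothetical expression of one gene across six cells.
before = [0.0, 1.2, 3.4, 0.0, 2.2, 5.0]
after_preserved = [0.1, 0.8, 1.9, 0.0, 1.3, 2.4]  # same ordering of non-zero cells
after_reordered = [0.1, 0.8, 1.3, 0.0, 1.9, 2.4]  # two cells swap order

rho_preserved = order_preservation(before, after_preserved)
rho_reordered = order_preservation(before, after_reordered)
print(rho_preserved, rho_reordered)
```

A correction that changes magnitudes but keeps the ranking of cells yields a correlation of 1, while any reordering lowers it.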

Workflow and Pathway Diagrams

Diagram 1: High-Dimensional Data Analysis Workflow

The Scientist's Toolkit