A Practical Guide to Intermediate Precision Testing: Experimental Design, ICH Q2(R2) Compliance, and Method Validation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on designing and executing robust intermediate precision studies. Covering foundational principles, step-by-step methodologies, troubleshooting strategies, and validation against regulatory standards, it bridges the gap between ICH Q2(R2) guidelines and practical laboratory implementation. Readers will learn to construct effective experimental designs, calculate key metrics, and integrate intermediate precision into a holistic analytical procedure lifecycle for reliable, compliant method validation.

Understanding Intermediate Precision: Definitions, Regulatory Importance, and Distinction from Other Precision Measures

In analytical method validation, precision is the closeness of agreement between a series of measurements obtained from multiple samplings of the same homogeneous sample under specified conditions [1]. It is a critical parameter that ensures the reliability and consistency of analytical results, forming a cornerstone of quality control in pharmaceutical development and other research fields. Precision is typically investigated at three distinct levels: repeatability, intermediate precision, and reproducibility [2] [3]. Understanding this hierarchy is essential for designing robust analytical methods that can withstand the variations encountered in routine laboratory practice.

While repeatability expresses the precision under identical conditions over a short period of time, and reproducibility assesses precision between different laboratories, intermediate precision occupies a crucial middle ground [2]. It measures the within-laboratory variation that occurs when an analytical procedure is performed over an extended period by different analysts using different equipment [4] [5]. This application note explores the conceptual framework, experimental design, and practical implementation of intermediate precision testing within the context of advanced research in analytical method validation.

Theoretical Framework and Definitions

Conceptual Hierarchy of Precision

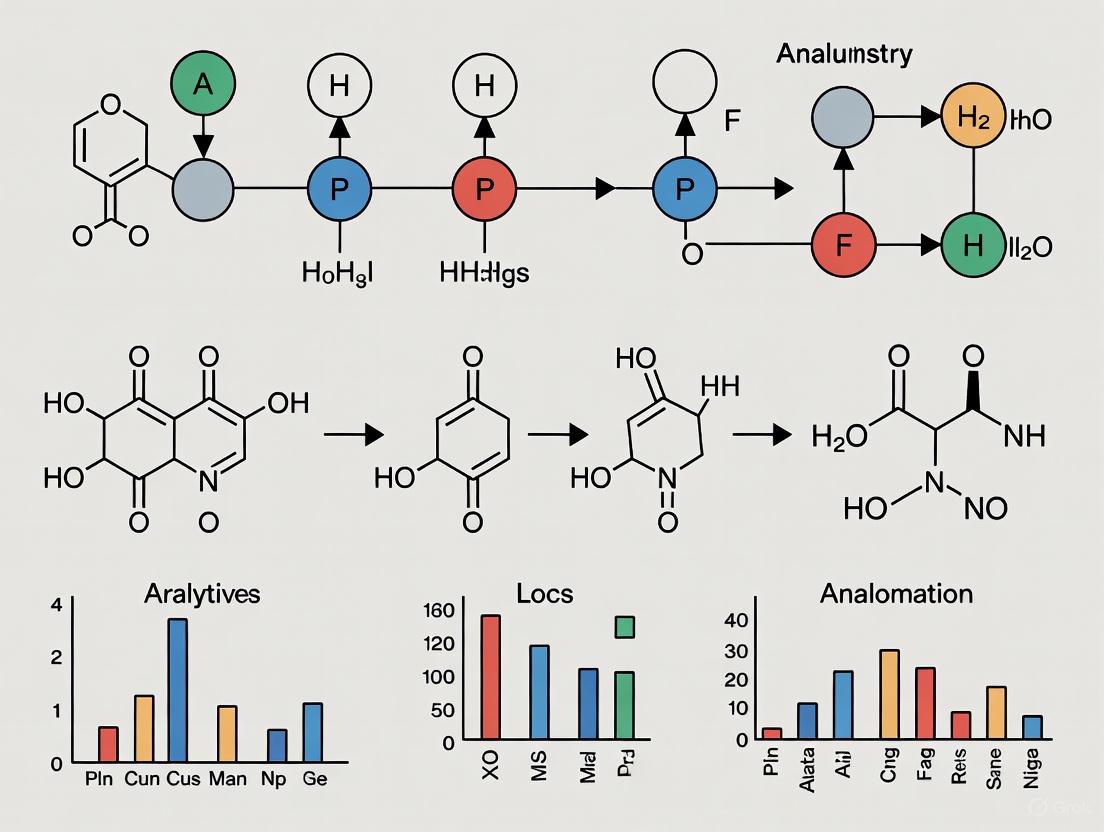

The relationship between different precision measures forms a hierarchical structure where each level incorporates additional sources of variability. Intermediate precision serves as the critical bridge between the optimal conditions of repeatability and the completely independent conditions of reproducibility [4]. This hierarchy can be visualized through the following conceptual diagram:

Repeatability represents the most optimistic precision measure, obtained when measurements are performed under identical conditions: same procedure, same operators, same measuring system, same operating conditions, same location, and over a short period of time [2] [1]. The standard deviation obtained under these conditions ($s_{\text{repeatability}}$, $s_r$) is expected to show the smallest possible variation in results [2].

Intermediate precision ($s_{\text{intermediate precision}}$, $s_{RW}$) incorporates additional variables that naturally occur within a single laboratory over a longer timeframe [2]. These factors, which may include different analysts, equipment, reagent batches, columns, and calibration standards, behave systematically within a day but manifest as random variables over extended periods [2] [4]. Consequently, the standard deviation for intermediate precision is typically larger than that for repeatability.

Reproducibility expresses the precision between measurement results obtained in different laboratories, capturing the maximum expected method variability [2] [4]. This represents the most realistic assessment of how a method will perform across multiple testing sites.

Critical Distinctions Between Precision Measures

The table below summarizes the key operational differences between these precision measures:

Table 1: Comparison of Precision Measures in Analytical Chemistry

| Parameter | Repeatability | Intermediate Precision | Reproducibility |
|---|---|---|---|
| Time Frame | Short period (typically one day or one analytical run) [2] | Extended period (generally at least several months) [2] | Extended period [1] |
| Operators | Same analyst [1] | Different analysts [2] [4] | Different analysts across laboratories [2] |
| Equipment | Same measuring system [1] | Different instruments within same lab [4] | Different instruments across laboratories [1] |
| Location | Same laboratory [1] | Same laboratory [5] | Different laboratories [2] |
| Scope of Variability | Minimal variability [2] | Within-laboratory variability [4] | Between-laboratory variability [2] |
| Standard Deviation | Smallest ($s_{\text{repeatability}}$, $s_r$) [2] | Larger than repeatability ($s_{\text{intermediate precision}}$, $s_{RW}$) [2] | Largest [1] |

Experimental Design for Intermediate Precision Studies

Key Variability Factors in Intermediate Precision

Intermediate precision investigations systematically evaluate the impact of various factors that contribute to methodological variability within a single laboratory. These factors represent the normal variations encountered during routine application of an analytical method [4]. The major sources of variability include:

- Temporal Variations: Measurements conducted over an extended period (several months) to account for potential drifts in instrument performance, environmental fluctuations, and reagent degradation [2] [5]

- Analyst Variations: Different analysts performing the method using their individual techniques and sample preparation styles [4] [3]

- Instrument Variations: Different instruments of the same type (e.g., HPLC systems, balances, spectrophotometers) with their unique performance characteristics [4]

- Consumable Variations: Different batches of reagents, columns, solvents, and other consumables that might exhibit batch-to-batch variability [2] [6]

- Calibration Variations: New calibrations performed at different times with freshly prepared standard solutions [5]

Experimental Design Approaches

Matrix Approach (Experimental Design)

The matrix approach provides a structured framework for efficiently evaluating multiple variables simultaneously through an experimental design [7]. This method "kills all aspects with one stone" by systematically varying conditions across a series of experiments [7]. A typical matrix design for intermediate precision assessment includes the following structure:

Table 2: Matrix Experimental Design for Intermediate Precision Evaluation

| Experiment | Operator | Day | Instrument |
|---|---|---|---|
| 1 | 1 | 1 | 1 |
| 2 | 2 | 1 | 2 |
| 3 | 1 | 2 | 2 |
| 4 | 2 | 2 | 1 |
| 5 | 1 | 3 | 1 |
| 6 | 2 | 3 | 2 |

This design consists of six experiments in which two technicians perform analyses over three days using two different instruments, with the sample analyzed at 100% of the target concentration [7]. Every pairwise combination of factor levels (operator with day, operator with instrument, day with instrument) occurs at least once, yet only 6 of the 12 possible three-factor combinations have to be run, which keeps the number of experimental runs practical.
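The coverage of such a matrix design can be checked mechanically. The following is a minimal sketch, not part of the cited design: it encodes the six rows of Table 2 and lists any pair of factor levels that never occurs together. The function name `missing_pairs` and the tuple layout are illustrative assumptions.

```python
from itertools import combinations, product

# Rows of Table 2 as (operator, day, instrument)
design = [
    (1, 1, 1),
    (2, 1, 2),
    (1, 2, 2),
    (2, 2, 1),
    (1, 3, 1),
    (2, 3, 2),
]
factors = ("operator", "day", "instrument")


def missing_pairs(design, factors):
    """Return level pairs that never occur together, keyed by factor pair.

    Hypothetical helper for illustration; an empty dict means every
    pairwise combination of levels appears in at least one experiment.
    """
    missing = {}
    for i, j in combinations(range(len(factors)), 2):
        levels_i = sorted({row[i] for row in design})
        levels_j = sorted({row[j] for row in design})
        seen = {(row[i], row[j]) for row in design}
        gaps = [pair for pair in product(levels_i, levels_j) if pair not in seen]
        if gaps:
            missing[(factors[i], factors[j])] = gaps
    return missing


gaps = missing_pairs(design, factors)
full_factorial = 2 * 3 * 2  # operators x days x instruments
print(f"runs used: {len(design)} of {full_factorial} possible combinations")
print("missing pairwise combinations:", gaps or "none")
```

The same check can be run on any candidate design before laboratory work starts; a non-empty result points to a factor pairing that the study would leave untested.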

Kojima Design (Japanese NIHS Approach)

A more comprehensive variation known as the Kojima design or Japanese NIHS design extends the matrix approach by incorporating additional factors such as different HPLC column batches [7]. This design spans six independent experiments conducted on different days:

Table 3: Kojima Design for Comprehensive Intermediate Precision Assessment

| Independent Experiment/Day | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| Analyst | 1 | 1 | 1 | 2 | 2 | 2 |
| Equipment | 1 | 2 | 1 | 2 | 1 | 2 |
| Column | 1 | 2 | 2 | 2 | 1 | 1 |

This approach provides a robust framework for evaluating intermediate precision while accounting for multiple potential sources of variability within the laboratory environment [7].

Risk-Based Approaches

Modern quality-by-design (QbD) principles emphasize science- and risk-based approaches to intermediate precision studies [8]. Rather than employing generic designs, these approaches identify factors that present the highest risk of impacting analytical procedure performance through prior knowledge and risk assessment tools [8]. The number of independent analytical runs is then linked to the overall risk and complexity associated with the analytical procedure [8].

Calculation Methodologies and Data Evaluation

Statistical Foundation

The calculation of intermediate precision incorporates variability both within and between experimental conditions. The combined standard deviation for intermediate precision ($\sigma_{IP}$) can be calculated using the formula [4]:

$$\sigma_{IP} = \sqrt{\sigma^2_{\text{within}} + \sigma^2_{\text{between}}}$$

Where:

- $\sigma^2_{\text{within}}$ represents the variance within each set of conditions (e.g., within each analyst's results)

- $\sigma^2_{\text{between}}$ represents the variance between different conditions (e.g., between different analysts' means)

For practical purposes, intermediate precision is typically expressed as the relative standard deviation (RSD%) or coefficient of variation (CV%), which standardizes the variability measure relative to the mean value:

RSD% = (Standard Deviation / Mean) × 100% [4] [6]

This normalized measure allows for meaningful comparisons across different methods and concentration ranges.
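One common way to estimate the two variance components is from the mean squares of a one-way random-effects ANOVA. The sketch below assumes a balanced design with $k$ conditions (for example, runs or analysts) of $n$ replicates each; the symbols $k$, $n$, $MS_{\text{within}}$ and $MS_{\text{between}}$ are introduced here for illustration and are not taken from the cited sources.

$$
\begin{aligned}
\hat{\sigma}^2_{\text{within}} &= MS_{\text{within}} \\
\hat{\sigma}^2_{\text{between}} &= \max\!\left(0,\ \frac{MS_{\text{between}} - MS_{\text{within}}}{n}\right) \\
\hat{\sigma}_{IP} &= \sqrt{\hat{\sigma}^2_{\text{within}} + \hat{\sigma}^2_{\text{between}}}
\end{aligned}
$$

Truncating the between-condition estimate at zero is a convention for the case where $MS_{\text{between}}$ happens to be smaller than $MS_{\text{within}}$. The resulting $\hat{\sigma}_{IP}$ is usually close to, but not identical with, the plain standard deviation of all pooled results.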

Data Evaluation and Interpretation

The evaluation of intermediate precision data focuses on the RSD% value calculated from all measurements across the varying conditions. The following example illustrates a typical data evaluation scenario:

Table 4: Example Intermediate Precision Data for Drug Substance Content Determination

| Analyst | Instrument | Results (%) | Mean (%) | Standard Deviation (%) | RSD% |
|---|---|---|---|---|---|
| 1 | 1 | 98.7, 99.1, 98.9, 99.2, 98.8, 99.0 | 98.95 | 0.19 | 0.19 |
| 2 | 2 | 99.3, 98.8, 99.5, 99.1, 98.7, 99.4 | 99.13 | 0.33 | 0.33 |
| Overall | Combined | All 12 results | 99.04 | 0.27 | 0.27 |

In this example, the intermediate precision RSD% of 0.27% incorporates variability from both analysts and instruments [6]. The RSD% for the combined data is typically larger than the individual RSD% values from repeatability studies, reflecting the additional sources of variability being captured [6]. Here it falls between the two single-analyst values (0.19% and 0.33%) because the combined figure pools both analysts' scatter together with the small difference between their means.
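The summary statistics for this example can be recomputed directly from the twelve raw results. The sketch below uses only the Python standard library and assumes the sample standard deviation (n − 1 denominator); the helper name `summarize` is illustrative.

```python
from statistics import mean, stdev

# Raw assay results (%) from the example table
analyst_1 = [98.7, 99.1, 98.9, 99.2, 98.8, 99.0]
analyst_2 = [99.3, 98.8, 99.5, 99.1, 98.7, 99.4]


def summarize(values):
    """Return (mean, sample SD, RSD%) for a list of results."""
    m = mean(values)
    sd = stdev(values)  # sample standard deviation, n - 1 denominator
    return m, sd, 100 * sd / m


for label, values in [
    ("Analyst 1", analyst_1),
    ("Analyst 2", analyst_2),
    ("Overall", analyst_1 + analyst_2),
]:
    m, sd, rsd = summarize(values)
    print(f"{label:<10} mean={m:.2f}  SD={sd:.2f}  RSD%={rsd:.2f}")
```

Working from the raw values rather than from per-analyst means matters here: averaging first would hide the within-analyst scatter that the combined RSD% is meant to include.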

Acceptance criteria for intermediate precision depend on the method's intended purpose and the analytical context. Generally, lower RSD% values indicate better precision, with typical acceptance criteria ranging from 1-2% for assay methods of drug substances to higher values for impurity determinations or biological assays [4].

Essential Research Reagent Solutions and Materials

Successful intermediate precision studies require careful selection and control of key materials and reagents. The following table outlines essential items and their functions in intermediate precision testing:

Table 5: Essential Research Reagent Solutions for Intermediate Precision Studies

| Material/Reagent | Function in Intermediate Precision | Considerations for Study Design |
|---|---|---|
| Reference Standards | Provides benchmark for method accuracy and calibration | Use different lots to assess standard-to-standard variability [2] |
| HPLC Columns | Stationary phase for chromatographic separation | Include multiple lots/batches to assess column-to-column variability [2] [7] |
| Reagent Batches | Solvents, buffers, and mobile phase components | Use different manufacturing lots to account for reagent variability [2] [4] |
| Sample Types | Representative test samples across validated range | Include different concentrations to assess precision across working range [3] |
| Calibrators | Establish calibration curve for quantitative methods | Prepare fresh calibrations for different experimental runs [5] |

Implementation Protocols and Best Practices

Step-by-Step Protocol for Intermediate Precision Assessment

1. Define Study Scope: Identify which variables will be incorporated (analysts, days, equipment, reagent batches, columns) based on risk assessment and intended method use [4] [8]

2. Design Experiment: Select an appropriate experimental design (matrix approach, Kojima design, or risk-based design) with enough independent runs and replicates to estimate the variability reliably [7]. A minimum of six independent measurements across varying conditions is typically recommended [7] [8]

3. Prepare Materials: Ensure availability of appropriate reference standards, reagents, columns, and samples from different lots/batches as defined in the experimental design [2]

4. Execute Analysis: Conduct analyses according to the predefined experimental design, ensuring that each combination of conditions is properly implemented [7]

5. Collect Data: Record all raw measurement values rather than averaged results to capture the true variability in the system [4]

6. Calculate Statistical Parameters: Determine mean, standard deviation, and RSD% for the combined data set across all varying conditions [4] [6]

7. Evaluate Results: Compare the calculated RSD% against predefined acceptance criteria based on method requirements and industry standards [4]

8. Document Findings: Document the experimental design, raw data, statistical calculations, and interpretation of results [3]

Common Pitfalls and Mitigation Strategies

- Insufficient Data Points: Avoid using too few measurements that may not adequately capture variability. Include a minimum of 6-12 measurements across different days [4]

- Inadequate Training: Ensure all analysts are thoroughly trained on the method protocol to minimize operator-dependent variations [4]

- Poor Environmental Control: Monitor and control laboratory conditions (temperature, humidity) as these can significantly impact results [4]

- Over-reliance on Statistical Tests: Avoid using statistical significance tests (e.g., Student's t-test) for small sample sizes as they may indicate significant differences that are not practically relevant [6]

- Neglecting Column Variability: Include different batches of chromatographic columns as this represents a significant source of variability in separation methods [7]

Intermediate precision represents a critical validation parameter that bridges the gap between ideal repeatability conditions and real-world laboratory variability. Through carefully designed experiments such as matrix approaches or risk-based designs, researchers can quantitatively assess the impact of normal laboratory variations on analytical method performance. The calculated intermediate precision, typically expressed as RSD%, provides a realistic expectation of method performance during routine use within a single laboratory. Proper implementation of intermediate precision studies strengthens method robustness and ensures reliable analytical results throughout the method's lifecycle, ultimately contributing to the overall quality and reliability of scientific data in pharmaceutical development and other research fields.

Within the framework of ICH Q2(R2), the validation of analytical procedures is paramount for ensuring the reliability and quality of pharmaceutical testing. Intermediate precision is a critical validation parameter that demonstrates the reliability of an analytical method under normal, but varied, conditions of use within a single laboratory [9]. It expresses the closeness of agreement between a series of measurements obtained from multiple samplings of the same homogeneous sample under varied prescribed conditions [10]. This parameter is essential for building confidence that an analytical method will perform consistently day-to-day, between different analysts, and across different equipment, forming a bedrock of robust method performance throughout the method's lifecycle.

The ICH Q2(R2) guideline distinguishes precision at three levels: repeatability, intermediate precision, and reproducibility [10]. While repeatability (intra-assay precision) assesses variability under the same operating conditions over a short interval, and reproducibility assesses precision between different laboratories, intermediate precision occupies the crucial middle ground. It evaluates the method's resilience to expected operational variations, making it a more realistic measure of a method's routine performance. A robust demonstration of intermediate precision is, therefore, not merely a regulatory checkbox but a fundamental component of a science- and risk-based validation strategy, ensuring that analytical results remain accurate and precise even when minor, inevitable changes occur in the analytical environment [9].

Regulatory Context and Experimental Design Principles

The Mandate of ICH Q2(R2)

The ICH Q2(R2) guideline provides the global standard for the validation of analytical procedures. It mandates that intermediate precision should be established by evaluating the method's performance under the varying circumstances expected during its routine use [11] [10]. Typical variations incorporated into an intermediate precision study include the effects of different days, analysts, equipment, and critical reagents [10]. The guideline encourages the use of a structured experimental design (also referred to as a "study set-up") to efficiently and effectively determine this parameter, moving away from a univariate approach to a more holistic one that can capture potential interaction effects between factors [10].

A key principle in designing these studies is covering the reportable range. ICH Q2(R2) distinguishes between the reportable range (pertaining to product specifications) and the working range (pertaining to concentration levels of sample preparations) [10]. The intermediate precision must be acceptable across this entire reportable range, meaning that the study should demonstrate that the method delivers acceptable precision at both the lower and upper specification limits [10].

Foundational Design Concepts

A well-designed intermediate precision study is built on several core concepts. The setup must include a sufficient number of independent runs—defined as a complete, independent execution of the analytical procedure—to properly estimate the between-run variability. The guideline suggests "not less than 6 runs" for a proper determination of the standard deviation and RSD% [10]. Each run should incorporate pre-defined variations, such as different analysts, instruments, and HPLC columns, with fresh preparations of reagents and reference solutions to ensure true independence between runs [10].

The total variability observed in the study results from two primary sources: the within-run variance (which corresponds to the method's repeatability) and the between-run variance (which arises from the deliberate changes in conditions) [10]. The statistical sum of these two variance components yields the total variance for intermediate precision. The use of Analysis of Variance (ANOVA) is the recommended statistical tool to deconstruct the overall variability into these meaningful components, providing a clear and quantifiable measure of the method's robustness to within-laboratory variations [10].

Experimental Protocol for Intermediate Precision

Study Setup and Variable Selection

A robust experimental protocol for intermediate precision begins with a risk-based selection of variables to include in the study. The protocol should be documented in a detailed validation protocol that defines the scope, acceptance criteria, and analytical procedure.

Core Experimental Setup: The foundational setup involves a minimum of 6 independent runs, each containing a minimum of 3 replicates [10]. This design allows for the simultaneous determination of both intermediate precision and repeatability. The following table outlines a recommended design for a single batch:

Table 1: Recommended Experimental Design for Intermediate Precision (Single Batch)

| Run Number | Analyst | Instrument | HPLC Column | Day | Number of Replicates |
|---|---|---|---|---|---|
| 1 | A | 1 | 1 | 1 | 3 |
| 2 | A | 2 | 2 | 2 | 3 |
| 3 | B | 1 | 3 | 3 | 3 |
| 4 | B | 2 | 1 | 4 | 3 |
| 5 | A | 1 | 2 | 5 | 3 |
| 6 | B | 2 | 3 | 6 | 3 |

This balanced design ensures that the effects of multiple factors (analyst, instrument, column) are adequately assessed across different days, providing a comprehensive view of the method's performance. For studies involving multiple batches (e.g., a release and a stability batch), the same run structure should be applied to each batch, and the variance component attributable to the "Batch" factor should be excluded from the final intermediate precision calculation [10].

Sample Preparation and Analysis

The sample used for the study should be a homogeneous sample representative of the material tested. For assays, the study should cover the reportable range, typically requiring testing at 100% of the test concentration, and potentially at the lower and upper limits of the specification range (e.g., 70% and 130%) to demonstrate acceptable precision across the entire range [10].

For each run, all samples, standard solutions, and mobile phases must be prepared fresh to ensure that the runs are truly independent. The analytical procedure should be followed exactly as written, and all system suitability criteria must be met before the data from a run can be included in the final evaluation. The following workflow diagram illustrates the entire experimental process.

Data Evaluation and Statistical Analysis

Analysis of Variance (ANOVA) Workflow

The evaluation of intermediate precision data relies heavily on Analysis of Variance (ANOVA). ANOVA is used to partition the total variability in the data into its constituent parts: the within-run variance (repeatability) and the between-run variance [10]. The intermediate precision is then calculated as the sum of these two variance components.

Before performing ANOVA, the data must be checked for two key assumptions: homoscedasticity (equality of variances across different runs and levels) and normality [10]. Homoscedasticity can be confirmed visually or by using statistical tests such as Levene's test or the Bartlett test. If the data exhibits heteroscedasticity (where variability changes with concentration, which is common for impurity methods or bioassays), a data transformation (e.g., log or square root transformation) may be necessary before proceeding with ANOVA [10].
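As an illustration of the homoscedasticity check, the sketch below computes Levene's W statistic by hand with the standard library. It uses the mean-centred form of the test and reuses the twelve assay values from the earlier drug substance content example, grouped by analyst. The function name `levene_w` is illustrative, and the critical value is an assumption taken from standard F tables (upper 5% point for 1 and 10 degrees of freedom, about 4.96) rather than computed.

```python
from statistics import mean

# Assay results (%) grouped by analyst, reused from the earlier example
analyst_1 = [98.7, 99.1, 98.9, 99.2, 98.8, 99.0]
analyst_2 = [99.3, 98.8, 99.5, 99.1, 98.7, 99.4]


def levene_w(groups):
    """Levene's W statistic (mean-centred) for equality of variances."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    # Absolute deviation of each value from its own group mean
    z = []
    for g in groups:
        g_mean = mean(g)
        z.append([abs(v - g_mean) for v in g])
    z_means = [mean(zi) for zi in z]
    z_grand = mean([v for zi in z for v in zi])
    between = sum(len(zi) * (zm - z_grand) ** 2 for zi, zm in zip(z, z_means))
    within = sum((v - zm) ** 2 for zi, zm in zip(z, z_means) for v in zi)
    return (n_total - k) / (k - 1) * between / within


# Assumed tabulated value: upper 5% point of F(1, 10)
F_CRIT = 4.96

w = levene_w([analyst_1, analyst_2])
verdict = "variances look comparable" if w < F_CRIT else "possible heteroscedasticity"
print(f"W = {w:.2f} (critical value {F_CRIT}): {verdict}")
```

With more groups or different group sizes, the degrees of freedom change to (k − 1, N − k) and the critical value has to be looked up again. In practice a statistics package would normally supply both the statistic and its p-value.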

The following logical diagram outlines the statistical evaluation process from raw data to the final intermediate precision value.

Interpretation of Results and Acceptance Criteria

The final output of the ANOVA is the calculation of the intermediate precision, expressed as a standard deviation (SD) and relative standard deviation (%RSD). The formula for the intermediate precision standard deviation is:

Intermediate Precision SD = √(Between-Run Variance + Within-Run Variance)
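The formula above can be sketched in code for a balanced design. The example below is illustrative only: it assumes a one-way random-effects ANOVA with "run" as the single grouping factor, truncates a negative between-run estimate at zero, and reuses the twelve assay values from the earlier drug substance content example as two groups of six. Two groups are far fewer than the six runs recommended above, so the numbers only demonstrate the arithmetic. The function name `variance_components` and the dictionary keys are assumptions.

```python
from statistics import mean

# Assay results (%) reused from the earlier example, one list per run
analyst_1 = [98.7, 99.1, 98.9, 99.2, 98.8, 99.0]
analyst_2 = [99.3, 98.8, 99.5, 99.1, 98.7, 99.4]


def variance_components(runs):
    """Balanced one-way ANOVA: within-run, between-run and IP estimates."""
    k = len(runs)       # number of runs
    n = len(runs[0])    # replicates per run
    if any(len(r) != n for r in runs):
        raise ValueError("this sketch only handles balanced designs")

    grand_mean = mean([v for r in runs for v in r])
    run_means = [mean(r) for r in runs]

    ss_within = sum((v - m) ** 2 for r, m in zip(runs, run_means) for v in r)
    ss_between = n * sum((m - grand_mean) ** 2 for m in run_means)

    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)

    var_within = ms_within                              # repeatability variance
    var_between = max(0.0, (ms_between - ms_within) / n)  # truncated at zero
    sd_ip = (var_within + var_between) ** 0.5

    return {
        "var_within": var_within,
        "var_between": var_between,
        "sd_ip": sd_ip,
        "rsd_ip": 100 * sd_ip / grand_mean,
    }


result = variance_components([analyst_1, analyst_2])
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

Reporting the two components separately, rather than only their sum, shows whether the within-run scatter or the change of conditions dominates the intermediate precision.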

The acceptance criteria for intermediate precision are method-specific and should be defined prospectively in the validation protocol based on the method's intended use and the requirements of the analyte [10] [12]. There is no universal value, as the acceptable level of precision depends on the analytical technique (e.g., HPLC, ELISA) and the nature of the test (e.g., assay, impurity determination). For an assay of an active ingredient, an intermediate precision %RSD of not more than 2.0% is often targeted, but this must be scientifically justified.

Table 2: Key Statistical Outputs from Intermediate Precision Study

| Statistical Parameter | Description | Source |
|---|---|---|
| Within-Run Variance | The variance of measurements within the same run. Represents the method's repeatability. | ANOVA Output (MSwithin) |
| Between-Run Variance | The variance arising from the changes in conditions between different runs (e.g., analyst, day). | ANOVA Output |
| Intermediate Precision SD | The total standard deviation accounting for within-lab variations. Calculated as $\sqrt{\sigma^2_{\text{between}} + \sigma^2_{\text{within}}}$. | Calculated |
| Intermediate Precision %RSD | The relative standard deviation, calculated as (IP SD / Overall Mean) x 100%. | Calculated |

The Scientist's Toolkit: Essential Research Reagent Solutions

The execution of a reliable intermediate precision study depends on the quality and consistency of materials used. The following table details key reagents and materials, along with their critical functions in the context of the study.

Table 3: Essential Research Reagent Solutions for Intermediate Precision Studies

| Item | Function in Intermediate Precision Study |
|---|---|
| Reference Standards | Certified materials with known purity and concentration used to calibrate the analytical procedure and ensure accuracy across all runs [12]. |
| HPLC Columns (Different Lots/Suppliers) | To deliberately vary a critical method parameter and assess the method's robustness to changes in column performance, a known source of variability [10] [12]. |
| Mobile Phase Reagents | High-purity solvents and buffers prepared fresh for each independent run to introduce realistic variation in reagent batches and ensure run independence [10]. |
| System Suitability Test (SST) Solutions | Specific test mixtures used to verify that the chromatographic system is performing adequately at the start of each run, ensuring data validity [12]. |
| Placebo/Matrix Blanks | Samples containing all components except the analyte, used to demonstrate the specificity of the method and confirm the absence of interference across varied conditions [12]. |

Intermediate precision is not a mere regulatory formality but a fundamental pillar of a sound analytical procedure validation strategy under ICH Q2(R2). A well-designed study, incorporating a risk-based selection of variables and a structured experimental design, provides a realistic assessment of a method's performance in the routine laboratory environment. The use of ANOVA for data evaluation allows for a nuanced understanding of the sources of variability, deconstructing it into repeatability and between-run components. By rigorously demonstrating intermediate precision, scientists provide compelling evidence that an analytical method is truly fit-for-purpose, ensuring the generation of reliable, high-quality data that underpins drug product quality and, ultimately, patient safety. This approach aligns perfectly with the modernized, science- and risk-based paradigm championed by the concurrent implementation of ICH Q2(R2) and ICH Q14 [9].

In the scientific method, the principle of reproducibility is a major foundation for establishing valid scientific knowledge [13]. Within analytical chemistry and method validation, precision is a critical parameter that quantifies the random variation in a series of measurements under specified conditions. This application note deconstructs the hierarchical layers of precision—repeatability, intermediate precision, and reproducibility—which are often mistakenly used interchangeably despite representing distinct concepts with different implications for experimental design and data interpretation. Understanding these distinctions is particularly crucial for researchers and drug development professionals designing robust studies and validating analytical methods that will withstand regulatory scrutiny.

The precision hierarchy progresses from the most controlled conditions (repeatability) through realistic within-laboratory variations (intermediate precision) to the broadest consistency assessment across different laboratories (reproducibility). Each level incorporates additional sources of variability, providing progressively more comprehensive assessments of method reliability. Proper differentiation among these terms is essential for designing appropriate validation protocols, setting realistic acceptance criteria, and ensuring the generation of reliable, defensible data in pharmaceutical development and other scientific fields.

Theoretical Framework and Definitions

The Three-Tiered Precision Hierarchy

The precision hierarchy encompasses three formally recognized levels, each defined by the specific conditions under which measurements are obtained. The following structured definitions establish the conceptual framework for understanding their relationships and applications.

Repeatability represents the most fundamental level of precision, defined as the "closeness of agreement between the results of successive measurements of the same measure, when carried out under the same conditions of measurement" [14]. These specific conditions are formally known as repeatability conditions and include: the same measurement procedure, same operators, same measuring system, same operating conditions, and same location over a short period of time [2]. In metrology, it is characterized as a measurement system's ability to produce the same results consistently when the same item is measured multiple times under identical conditions [15]. Repeatability is expected to give the smallest possible variation in results, as it captures only the random error occurring under nearly identical circumstances within a very limited timeframe [2].

Intermediate Precision (occasionally called within-laboratory precision) occupies the middle tier in the precision hierarchy. Unlike repeatability, intermediate precision is "the precision obtained within a single laboratory over a longer period of time (generally at least several months) and takes into account more changes than repeatability" [2]. The Association for Computing Machinery further defines it as "a measure of precision under a defined set of conditions: same measurement procedure, same measuring system, same location, and replicate measurements on the same or similar objects over an extended period of time" [5]. This level systematically introduces realistic variations expected during routine laboratory operations, including different analysts, different calibrants, different reagent batches, different equipment, and different environmental conditions [4]. These factors behave systematically within a day but manifest as random variables over an extended period, so intermediate precision provides a more comprehensive assessment of method robustness under normal operating conditions within a single facility.

Reproducibility represents the broadest level of precision assessment, formally defined as the "precision between the measurement results obtained at different laboratories" [2]. The National Academies of Sciences, Engineering, and Medicine further clarify that "reproducibility refers to the ability of a researcher to duplicate the results of a prior study using the same materials and procedures as were used by the original investigator" [16]. This highest tier incorporates all potential sources of variability, including different personnel, equipment, calibration standards, reagent sources, environmental conditions, and laboratory practices [13]. Reproducibility is not always required for single-lab validation but becomes essential when an analytical method is standardized or transferred between facilities, such as methods developed in R&D departments that will be deployed across multiple quality control laboratories [2].

Conceptual Relationships and Distinctions

The relationship between these three precision levels can be visualized as a hierarchy of increasing variability sources, with each level encompassing all the variability of the preceding level plus additional sources. The following diagram illustrates this conceptual relationship and the key differentiating factors at each tier.

Figure 1: The Precision Hierarchy Pyramid

This conceptual framework shows how each progressive level incorporates additional sources of variability. Repeatability forms the foundation with minimal variability under identical conditions. Intermediate precision builds upon this by introducing realistic within-laboratory variations. Reproducibility represents the most comprehensive assessment by incorporating all potential sources of variability across different laboratories. Understanding this hierarchical relationship is essential for designing appropriate validation protocols and setting realistic acceptance criteria for analytical methods.

Quantitative Comparison and Acceptance Criteria

Statistical Measures and Expressions

Each level of the precision hierarchy is quantified using specific statistical measures that facilitate objective comparison and establish method suitability for intended applications. The most common statistical expressions for precision include standard deviation (SD) and relative standard deviation (RSD%), also known as the coefficient of variation (CV).

Repeatability is typically expressed as the standard deviation under repeatability conditions (s~repeatability~, s~r~) or the repeatability coefficient [2] [14]. The repeatability standard deviation represents the smallest variability achievable with the method, as it incorporates only random error under nearly identical conditions. For practical applications, repeatability is often reported as the %RSD of a minimum of six determinations at 100% of the test concentration or nine determinations covering the specified range (three concentrations with three replicates each) [3].

Intermediate Precision is expressed as the intermediate precision standard deviation (s~intermediate precision~, s~RW~) and is calculated by combining variance components from the varied conditions within the laboratory [2]. The formula is σ~IP~ = √(σ²~within~ + σ²~between~) [4]. This calculation accounts for both random variations within each set of conditions and systematic variations between different conditions (e.g., between different analysts or different days). Intermediate precision results are typically reported as %RSD, and the difference in mean values between analysts' results is compared statistically using methods such as Student's t-test [3].
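For a balanced study with k groups (for example, analyst-day combinations) of n replicates each, the two variance components in the formula above are commonly estimated from the mean squares of a one-way ANOVA. The sketch below keeps the σ notation used here; the symbols n, MS~within~, and MS~between~ are introduced for illustration and do not come from the cited sources.

```latex
\begin{aligned}
\hat{\sigma}^2_{\text{within}}  &= MS_{\text{within}} \\
\hat{\sigma}^2_{\text{between}} &= \max\!\left(0,\ \frac{MS_{\text{between}} - MS_{\text{within}}}{n}\right) \\
\hat{\sigma}_{IP} &= \sqrt{\hat{\sigma}^2_{\text{within}} + \hat{\sigma}^2_{\text{between}}}
\end{aligned}
```

The max(0, ·) truncation reflects the usual convention of setting a negative between-group estimate to zero, which can occur when the group means differ by less than the within-group scatter would predict.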

Reproducibility is quantified as the reproducibility standard deviation (s~reproducibility~, s~R~) when assessing collaborative studies between laboratories [13]. Documentation in support of reproducibility studies should include the standard deviation, relative standard deviation, and confidence interval [3]. In inter-laboratory experiments, reproducibility is defined as the standard deviation for the difference between two measurements from different laboratories [13]. The acceptance criteria for reproducibility depend on the specific application and methodological requirements but generally allow for greater variability than intermediate precision due to the incorporation of additional inter-laboratory variance components.

Comparative Analysis of Precision Parameters

The table below provides a comprehensive comparison of the three precision levels, including their defining conditions, statistical expressions, and typical acceptance criteria for analytical method validation in pharmaceutical applications.

Table 1: Comparative Analysis of Precision Parameters in Analytical Method Validation

| Parameter | Repeatability | Intermediate Precision | Reproducibility |
|---|---|---|---|
| Definition | Closeness of agreement between successive results under identical conditions [2] | Precision within a single laboratory over extended period with varied conditions [2] | Precision between measurement results obtained at different laboratories [2] |
| Conditions | Same procedure, operator, instrument, location, short time period [14] | Different days, analysts, equipment, reagent batches; same location [4] | Different laboratories, personnel, equipment, environments [2] |
| Time Frame | Short period (typically one day or one analytical run) [2] | Extended period (several months) [2] | Extended period (collaborative studies) |
| Variability Sources | Random error only | Random error + within-lab systematic variables | Random error + within-lab + between-lab variables |
| Statistical Expression | Standard deviation (s~r~), %RSD [3] | σ~IP~ = √(σ²~within~ + σ²~between~), %RSD [4] | Standard deviation (s~R~), %RSD [3] |
| Typical Acceptance Criteria (Pharmaceutical Assay) | %RSD ≤ 1.0% for API [3] | %RSD ≤ 2.0-5.0% depending on method complexity [4] | Criteria set based on collaborative study results |
| Minimum Determinations | 6 at 100% or 9 across range [3] | 6 per analyst across multiple conditions [3] | Varies by study design |
| Primary Application | Instrument capability, minimal variability assessment [15] | Routine method performance, robustness under normal use [4] | Method standardization, transfer, regulatory submission [2] |

This comparative analysis demonstrates the progressive nature of precision assessment, with each level building upon the previous one by incorporating additional variability sources. The acceptance criteria similarly progress from most stringent for repeatability to more lenient for reproducibility, reflecting the increasing complexity of maintaining consistency across expanding variability factors.

Experimental Protocols for Precision Assessment

Protocol for Repeatability Determination

Objective: To determine the repeatability of an analytical method by assessing the variability in results obtained under identical conditions over a short time period.

Materials and Equipment:

- Calibrated analytical instrument

- Reference standards and samples

- Appropriate reagents and solvents

- Data collection system

Procedure:

- Prepare a homogeneous sample solution at the target concentration (100% of test concentration).

- Using the same analyst, instrument, and reagents throughout the procedure, perform six replicate injections or measurements of the sample solution.

- Alternatively, prepare samples at three concentration levels (e.g., 80%, 100%, 120% of target) with three replicates at each level, for a total of nine determinations.

- Ensure all measurements are completed within a single analytical run or within one day.

- Record all individual results for subsequent statistical analysis.

Data Analysis:

- Calculate the mean and standard deviation of the results.

- Compute the relative standard deviation (%RSD) using the formula: %RSD = (Standard Deviation / Mean) × 100.

- Compare the calculated %RSD to predefined acceptance criteria (typically ≤ 1.0% for drug substance assay).

Acceptance Criteria:

- The %RSD should be within specified limits based on method type and analyte concentration.

- For assay of drug substances, %RSD is typically acceptable at ≤ 1.0%.

- Individual results should show no significant trends or systematic patterns.
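The data analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated calculation tool: the six replicate values are hypothetical, and the function name `percent_rsd` is an assumption introduced here.

```python
import statistics


def percent_rsd(results):
    """Return the relative standard deviation (%RSD) of a list of results."""
    mean = statistics.mean(results)
    sd = statistics.stdev(results)  # sample SD (n - 1 in the denominator)
    return sd / mean * 100


# Hypothetical assay results (% of label claim) from six replicate determinations
replicates = [99.8, 100.2, 99.9, 100.4, 100.1, 99.7]
limit = 1.0  # acceptance limit from the protocol above, in %RSD

rsd = percent_rsd(replicates)
print(f"mean = {statistics.mean(replicates):.2f}, %RSD = {rsd:.2f}")
print("PASS" if rsd <= limit else "FAIL")
```

The sample standard deviation (n - 1 denominator) is used because the replicates are treated as a sample from the method's repeatability distribution rather than the whole population.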

Comprehensive Protocol for Intermediate Precision Evaluation

Objective: To establish intermediate precision by evaluating method performance under varied conditions within a single laboratory, simulating realistic operational variations.

Experimental Design: A systematically designed study incorporating deliberate variations in key operational parameters:

Table 2: Intermediate Precision Experimental Design Matrix

| Study Component | Variation Factors | Minimum Requirements | Data Analysis |
|---|---|---|---|
| Different Analysts | Two analysts independently performing entire procedure [3] | Each analyst prepares standards and samples independently [3] | Compare mean results using Student's t-test |
| Different Days | Analysis performed on different days (minimum 2 days separated by at least one week) | Complete analytical run on each day | Assess day-to-day variability through ANOVA |
| Different Equipment | Use of different HPLC systems or equivalent instruments | Same model but different serial numbers preferred | Compare system suitability parameters |
| Different Reagent Lots | Use of at least two different lots of critical reagents | Document lot numbers and expiration dates | Evaluate impact on retention time and response |
| Different Column Batches | Use of different batches of chromatographic columns | Same manufacturer and specifications | Assess chromatographic performance |

Procedure:

- Design the study to incorporate the variations outlined in Table 2, ensuring that a minimum of six determinations are performed for each varied condition.

- Two analysts should independently prepare their own standards and sample solutions using different instrument systems where possible.

- Execute the analysis over multiple days (at least two days separated by approximately one week).

- Where applicable, incorporate different lots of critical reagents and different batches of chromatographic columns.

- Maintain comprehensive documentation of all experimental conditions, including instrument identification, reagent lot numbers, analyst identifiers, and dates of analysis.

Data Analysis:

- Calculate the overall mean, standard deviation, and %RSD for all combined results.

- Perform component-of-variance analysis to quantify the contribution of different factors (e.g., between-analyst, between-day) to the total variability.

- Use statistical tests (e.g., Student's t-test, F-test) to compare results between different analysts and different days.

- The formula for intermediate precision is: σ~IP~ = √(σ²~within~ + σ²~between~) [4].

Acceptance Criteria:

- The overall %RSD should meet predefined method suitability criteria (typically ≤ 2-5% depending on method type).

- No statistically significant differences (p > 0.05) should be observed between analysts or between days.

- All system suitability parameters should remain within specified limits throughout the study.
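The analyst-to-analyst comparison named in the data analysis steps can be illustrated with a pooled (equal-variance) two-sample Student's t statistic. The sketch below uses only the Python standard library, so the p-value is not computed; instead the statistic is compared with a tabulated critical value. The assay values are hypothetical, the helper `pooled_t_statistic` is an assumption introduced here, and the critical value applies only to the degrees of freedom stated in the comment.

```python
import math
import statistics


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic assuming equal variances."""
    na, nb = len(a), len(b)
    df = na + nb - 2
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / df
    se = math.sqrt(pooled_var * (1 / na + 1 / nb))
    return (statistics.mean(a) - statistics.mean(b)) / se, df


# Hypothetical assay results (% of label claim), six determinations per analyst
analyst_1 = [99.8, 100.2, 99.9, 100.4, 100.1, 99.7]
analyst_2 = [100.3, 99.9, 100.5, 100.0, 100.6, 100.2]

t_stat, df = pooled_t_statistic(analyst_1, analyst_2)

# Two-sided critical value of Student's t for alpha = 0.05 and df = 10,
# taken from standard t tables; it must be changed if the sample sizes change.
T_CRIT_DF10 = 2.228

significant = abs(t_stat) > T_CRIT_DF10
print(f"t = {t_stat:.3f}, df = {df}, significant at 0.05: {significant}")
```

A non-significant result here only says that the analyst means cannot be distinguished at the chosen level with this sample size; it does not replace the %RSD criterion.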

Protocol for Reproducibility Assessment

Objective: To evaluate method reproducibility through collaborative testing across multiple laboratories, establishing method performance when transferred between sites.

Procedure:

- Select a minimum of three to five participating laboratories with appropriate capabilities.

- Develop a detailed study protocol including complete method documentation, sample preparation instructions, and data reporting requirements.

- Provide all participating laboratories with identical test samples, reference standards, and critical reagents when possible.

- Establish predefined acceptance criteria and data reporting formats before study initiation.

- Each laboratory should perform the analysis following the standardized protocol, with a minimum of six determinations per sample.

- Include a familiarization period allowing laboratories to optimize instrument conditions while maintaining methodological integrity.

Data Analysis:

- Collect all data from participating laboratories and perform statistical analysis using appropriate methods.

- Calculate the reproducibility standard deviation (s~R~) and overall %RSD.

- Perform one-way ANOVA to separate within-laboratory and between-laboratory variance components.

- Assess consistency of results across laboratories using statistical tests for outliers.

Acceptance Criteria:

- The inter-laboratory %RSD should be within acceptable limits for the method type.

- No statistically significant differences between laboratory means should be observed.

- A predetermined percentage of participating laboratories should meet method suitability criteria.
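The one-way ANOVA step in the data analysis above might be sketched as follows for a balanced study. This is an illustration under stated assumptions: the laboratory results are hypothetical, the function name `reproducibility_components` and the symbol `s_L` (between-laboratory standard deviation) are introduced here, and every laboratory is assumed to report the same number of determinations.

```python
import math
import statistics


def reproducibility_components(labs):
    """One-way ANOVA variance components for a balanced inter-laboratory study.

    labs: list of lists, one list of replicate results per laboratory.
    Returns (s_r, s_L, s_R): within-laboratory, between-laboratory, and
    reproducibility standard deviations.
    """
    n = len(labs[0])
    if any(len(lab) != n for lab in labs):
        raise ValueError("this sketch assumes the same number of results per lab")
    lab_means = [statistics.mean(lab) for lab in labs]
    # Balanced design: pooled within-lab variance is the mean of the lab variances
    ms_within = statistics.mean([statistics.variance(lab) for lab in labs])
    ms_between = n * statistics.variance(lab_means)
    var_between = max(0.0, (ms_between - ms_within) / n)
    return (math.sqrt(ms_within), math.sqrt(var_between),
            math.sqrt(ms_within + var_between))


# Hypothetical results (% of label claim): three laboratories, six determinations each
labs = [
    [99.5, 99.8, 100.1, 99.7, 99.9, 100.0],
    [100.4, 100.6, 100.2, 100.5, 100.3, 100.6],
    [99.9, 100.2, 100.0, 100.3, 99.8, 100.1],
]

s_r, s_L, s_R = reproducibility_components(labs)
grand_mean = statistics.mean([x for lab in labs for x in lab])
print(f"s_r = {s_r:.3f}, s_L = {s_L:.3f}, s_R = {s_R:.3f}, "
      f"%RSD_R = {s_R / grand_mean * 100:.2f}")
```

An unbalanced study (different numbers of determinations per laboratory) needs a modified estimator and is deliberately rejected by this sketch.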

Essential Research Reagents and Materials

Successful precision assessment requires careful selection and control of research reagents and materials. The following table details essential items and their functions in precision studies.

Table 3: Essential Research Reagent Solutions for Precision Assessment

| Reagent/Material | Function in Precision Assessment | Critical Quality Attributes | Precision Impact |
|---|---|---|---|
| Reference Standards | Quantification and system calibration | Purity, stability, assigned potency | Direct impact on accuracy and precision of results |
| Chromatographic Columns | Analyte separation | Batch-to-batch consistency, selectivity, efficiency | Major contributor to intermediate precision |
| HPLC-Grade Solvents | Mobile phase preparation | Purity, UV cutoff, volatility | Affects retention time reproducibility |
| Buffer Reagents | Mobile phase modification | pH consistency, lot-to-lot purity | Impacts retention time and peak shape |
| Internal Standards | Normalization of analytical response | Purity, stability, non-interference | Improves precision by correcting for variations |
| Derivatization Reagents | Analyte detection enhancement | Reactivity, purity, stability | Critical for precision in derivatization methods |

The consistency and quality of these reagents directly influence precision outcomes. For intermediate precision studies, intentional variation of critical reagent lots is recommended to assess their impact on method performance. Proper documentation of reagent attributes, including source, lot number, and expiration date, is essential for troubleshooting and method transfer activities.

Methodological Workflow for Comprehensive Precision Validation

A systematic approach to precision validation ensures thorough assessment of all relevant variability components. The following workflow diagram illustrates the strategic progression through repeatability, intermediate precision, and reproducibility assessments, highlighting key decision points and methodological considerations.

Figure 2: Precision Validation Methodology Workflow

This workflow emphasizes the sequential nature of precision validation, beginning with repeatability as the foundation. Only after successful demonstration of acceptable repeatability should intermediate precision assessment proceed. Similarly, reproducibility studies are typically conducted after establishing adequate intermediate precision, unless specific method applications require preliminary assessment of inter-laboratory transferability. At each decision point, failure to meet acceptance criteria should trigger investigation and method refinement before progressing to the next validation stage.

The hierarchical differentiation of repeatability, intermediate precision, and reproducibility provides a critical framework for analytical method validation in pharmaceutical research and development. This structured approach allows researchers to systematically assess method performance under progressively challenging conditions, from controlled ideal circumstances to real-world operational variations. Understanding these distinctions is particularly crucial for designing intermediate precision testing protocols that accurately simulate the variability encountered during routine method application.

For drug development professionals, implementing the protocols and methodologies outlined in this application note will strengthen method validation packages and facilitate regulatory compliance. The experimental designs and statistical approaches presented enable comprehensive characterization of method precision, supporting robust analytical procedures that generate reliable data throughout the product lifecycle. By adhering to this precision hierarchy framework, researchers can develop analytical methods with well-understood limitations and appropriate application boundaries, ultimately contributing to the development of safe and effective pharmaceutical products.

Why Intermediate Precision Reflects Real-World Laboratory Performance

In regulated environments such as pharmaceutical quality control, the reliability of analytical data is paramount. Intermediate precision is a critical component of analytical method validation that quantitatively measures a method's resilience to normal, expected variations within a single laboratory [3]. It provides documented evidence that an analytical procedure will perform as intended not under ideal, static conditions, but under the fluctuating circumstances encountered in day-to-day operation [3]. This characteristic makes intermediate precision a direct reflection of real-world laboratory performance, bridging the gap between the perfect repeatability of a controlled study and the broader reproducibility expected across different laboratories [17]. By evaluating how consistent results remain despite changes in analysts, instruments, and days, intermediate precision delivers a realistic forecast of a method's operational robustness, ensuring data integrity and supporting regulatory compliance throughout the drug development lifecycle [7] [3].

Core Concepts and Definitions

The Precision Hierarchy in Analytical Method Validation

Within method validation, precision is systematically investigated at multiple tiers, with intermediate precision occupying a distinct and crucial role between repeatability and reproducibility.

- Repeatability (Intra-assay Precision): Assesses the variability in results when the analysis is performed under identical conditions over a short time interval—same analyst, same instrument, same day [3]. It represents the best-case scenario for a method's precision.

- Intermediate Precision (Inter-assay Precision): Examines the variability observed when the method is applied within the same laboratory but under changing conditions that are typical in a working lab, such as different days, different analysts, or different equipment [7] [17].

- Reproducibility: Measures the precision of a method across different laboratories, often assessed through collaborative inter-laboratory studies [3] [17]. This is critical for method transfer and global regulatory submission.

The following workflow illustrates the relationship between these concepts and the typical experimental sequence for establishing intermediate precision:

Why Intermediate Precision is a Proxy for Real-World Performance

Intermediate precision is uniquely positioned as the most accurate predictor of a method's day-to-day reliability because it intentionally incorporates the very sources of variation that are unavoidable in practice [7] [17]. A method with high intermediate precision demonstrates that its performance is not fragile or dependent on a specific set of ideal circumstances. Instead, it provides confidence that the method will produce reliable results despite the natural, minor fluctuations that define the operational reality of any laboratory. This is in stark contrast to repeatability, which only confirms performance under idealized, static conditions, and reproducibility, which addresses a broader, inter-laboratory transferability that may not capture the internal variability of a single lab [17]. Essentially, intermediate precision tests the method's built-in robustness to common internal variables, making it a direct indicator of its practical utility and sustainability for routine use.

Experimental Design and Protocols

Establishing intermediate precision requires a structured experimental design that deliberately introduces predefined laboratory variables. The objective is to quantify the collective impact of these variables on the analytical results.

The Matrix Experimental Design

A highly efficient and systematic approach for this is the matrix design [7]. This design "kills all aspects with one stone" by orchestrating a series of experiments that vary multiple factors simultaneously according to a predefined plan, rather than investigating one factor at a time [7]. A classic matrix for evaluating three key factors (Operator, Day, and Instrument) through six independent experiments is detailed below:

Table 1: Matrix Experimental Design for Intermediate Precision Evaluation

| Experiment Number | Operator | Day | Instrument |
|---|---|---|---|
| 1 | 1 | 1 | 1 |
| 2 | 2 | 1 | 2 |
| 3 | 1 | 2 | 1 |
| 4 | 2 | 2 | 2 |
| 5 | 1 | 3 | 2 |
| 6 | 2 | 3 | 1 |

This design is balanced and allows for the assessment of variability contributed by each factor in a resource-efficient manner. A modified version of this approach, known as the Kojima or Japanese NIHS design, extends the principle to include an additional factor, such as different batches of HPLC columns, over six independent experiments [7].

Table 2: Kojima (Japanese NIHS) Design with Additional Factor

| Independent Experiment / Day | Analyst | Equipment | Column |
|---|---|---|---|
| 1 | 1 | 1 | 1 |
| 2 | 1 | 2 | 2 |
| 3 | 1 | 1 | 2 |
| 4 | 2 | 2 | 2 |
| 5 | 2 | 1 | 1 |
| 6 | 2 | 2 | 1 |
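One way to sanity-check a design like the two tabulated above is to encode its rows and count how often each factor level is used. The sketch below does this for both tables; the dictionary layout and the helper names `level_counts` and `is_balanced` are assumptions made for illustration, and "balanced" here means only that each level of a factor appears in the same number of experiments.

```python
from collections import Counter

# Rows copied from the two design tables above: one tuple per experiment.
matrix_design = {
    "factors": ("Operator", "Day", "Instrument"),
    "runs": [(1, 1, 1), (2, 1, 2), (1, 2, 1), (2, 2, 2), (1, 3, 2), (2, 3, 1)],
}
kojima_design = {
    "factors": ("Analyst", "Equipment", "Column"),
    "runs": [(1, 1, 1), (1, 2, 2), (1, 1, 2), (2, 2, 2), (2, 1, 1), (2, 2, 1)],
}


def level_counts(design):
    """Count how many runs use each level of each factor."""
    return {
        factor: dict(Counter(run[i] for run in design["runs"]))
        for i, factor in enumerate(design["factors"])
    }


def is_balanced(design):
    """True if every level of a factor appears in the same number of runs."""
    return all(len(set(c.values())) == 1 for c in level_counts(design).values())


for name, design in (("matrix", matrix_design), ("Kojima", kojima_design)):
    print(name, level_counts(design), "balanced:", is_balanced(design))
```

This check covers marginal balance only. It does not test whether pairs of factors are fully crossed, so partial confounding between two factors (for example, operator and instrument) would need a separate cross-tabulation.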

The logical flow for designing, executing, and evaluating an intermediate precision study is summarized in the following workflow diagram:

Detailed Experimental Protocol

The following protocol provides a step-by-step methodology for conducting an intermediate precision study for an assay method, such as one employing High-Performance Liquid Chromatography (HPLC).

Protocol: Determination of Intermediate Precision for an HPLC Assay Method

1.0 Scope and Applicability

This protocol describes the procedure for establishing the intermediate precision of an analytical method by introducing variations in analyst, day, and instrumentation, following a matrix experimental design.

2.0 Materials and Reagents

- Standard Reference Material: Certified standard of the analyte of known purity and concentration.

- Test Sample: Homogeneous sample (e.g., drug substance or product) prepared at 100% of target concentration.

- Mobile Phase and Solvents: HPLC-grade solvents and buffers prepared as per the method specification.

- HPLC Systems: At least two different instruments (e.g., from different manufacturers or models).

- Chromatographic Columns: At least two different batches of the specified column.

3.0 Experimental Design

- Utilize a matrix design, such as the one shown in Table 1, involving a minimum of two analysts, three days, and two instruments, resulting in six independent sample preparations and analyses [7].

4.0 Procedure

1. Preparation: Two qualified analysts independently prepare all required standards, mobile phases, and sample solutions following the validated method procedure. Each uses their own reagents and volumetric glassware.

2. Analysis: The analysts perform the analysis according to the design matrix. For example, on Day 1, Analyst 1 uses Instrument 1, and Analyst 2 uses Instrument 2 to analyze independently prepared samples.

3. Replication: The process is repeated across three different days to account for day-to-day variability. The instrument and column used by each analyst are varied as per the design.

4. Data Recording: For each of the six experiments, record the analyte's peak response (e.g., area) and calculate the resulting assay value (e.g., % of label claim).

5.0 Data Evaluation

1. Calculate the mean (average) of all assay results from the six experiments.

2. Calculate the standard deviation (SD) and the relative standard deviation (RSD%, also known as the coefficient of variation).

3. Formula: RSD% = (Standard Deviation / Mean) × 100.

4. Compare the calculated RSD% to a predefined acceptance criterion. For an assay method, a typical acceptance criterion might be RSD% ≤ 2.0% [3].
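As a rough illustration of the data evaluation, the sketch below pairs the six experiments of the matrix design with hypothetical assay values, computes the overall RSD%, and also lists the mean result per factor level as a quick look at which factor might be driving any spread. The assay values and the helper `level_means` are assumptions introduced here; the per-level means are an informal diagnostic, not a step required by the protocol.

```python
import statistics

# Design from the matrix table: (operator, day, instrument) per experiment.
design = [(1, 1, 1), (2, 1, 2), (1, 2, 1), (2, 2, 2), (1, 3, 2), (2, 3, 1)]
# Hypothetical assay values (% of label claim), one per experiment.
assay = [99.6, 100.3, 99.9, 100.5, 100.1, 99.8]
RSD_LIMIT = 2.0  # example acceptance criterion from the protocol, in %

overall_mean = statistics.mean(assay)
rsd = statistics.stdev(assay) / overall_mean * 100


def level_means(factor_index):
    """Mean assay value for each level of one design factor."""
    levels = sorted({run[factor_index] for run in design})
    return {
        lvl: statistics.mean(
            [v for run, v in zip(design, assay) if run[factor_index] == lvl]
        )
        for lvl in levels
    }


print(f"mean = {overall_mean:.2f}, RSD% = {rsd:.2f}, "
      f"{'PASS' if rsd <= RSD_LIMIT else 'FAIL'}")
for index, factor in enumerate(("operator", "day", "instrument")):
    print(factor, {k: round(v, 2) for k, v in level_means(index).items()})
```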

Data Analysis and Key Parameters

The evaluation of intermediate precision data is quantitative and centers on statistical measures that express the variability observed across the deliberately varied experimental conditions.

The data from the intermediate precision study is summarized by calculating the mean, standard deviation (SD), and relative standard deviation (RSD) of the results [7] [3]. The RSD is the primary metric for assessment as it expresses the standard deviation as a percentage of the mean, allowing for comparison across different scales and methods. The following table outlines common analytical performance characteristics and example acceptance criteria relevant to method validation, within which intermediate precision sits [3].

Table 3: Key Analytical Performance Characteristics and Example Acceptance Criteria

| Performance Characteristic | Definition | Example Acceptance Criteria |
|---|---|---|
| Accuracy | Closeness of agreement between an accepted reference value and the value found. | Recovery: 98–102% |
| Repeatability | Precision under the same operating conditions over a short time interval (intra-assay). | RSD ≤ 1.0% for n=9 determinations |
| Intermediate Precision | Precision under varying conditions within the same laboratory (inter-assay). | RSD ≤ 2.0% (derived from collaborative data) |
| Linearity | The ability of the method to obtain results directly proportional to analyte concentration. | Coefficient of determination (r²) ≥ 0.998 |
| Range | The interval between the upper and lower concentrations of analyte with suitable precision, accuracy, and linearity. | Typically 80-120% of test concentration for assay |

Interpretation of Results

A low RSD value in an intermediate precision study indicates that the variability introduced by different analysts, days, and instruments is minimal. This is the hallmark of a robust method that is well-suited for routine use in the quality control laboratory. The results are often subjected to statistical testing, such as a Student's t-test, to examine if there is a statistically significant difference in the mean values obtained by different analysts, which provides another layer of insight into the method's susceptibility to specific operational variables [3].

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of a rigorous intermediate precision study relies on the use of well-characterized materials and instruments. The following table lists key items essential for these experiments.

Table 4: Essential Materials for Intermediate Precision Studies

| Item | Function / Role in Intermediate Precision |
|---|---|
| Certified Reference Standard | Serves as the benchmark for accuracy and calibration. Its known purity and concentration are critical for evaluating the method's performance across all varied conditions. |
| HPLC-Grade Solvents and Reagents | Ensure minimal background interference and consistent chromatographic performance (e.g., retention time, baseline stability) across different instrument systems and days. |
| Different Batches of Chromatographic Columns | Evaluating different column batches tests the method's robustness to minor variations in stationary phase chemistry, a common real-world variable. |
| Multiple Calibrated Instruments (HPLC/UPLC) | Core to the study; assesses whether the method produces equivalent results on different hardware platforms available within the laboratory. |
| Traceable Volumetric Glassware and Balances | Ensure that all analysts can perform accurate and precise sample and standard preparations, a fundamental prerequisite for meaningful results. |

Intermediate precision is not merely a regulatory checkbox; it is a fundamental assessment that directly correlates with the practical viability of an analytical method. By deliberately challenging the method with the same sources of variation inherent to laboratory life—different analysts performing the test on different days using different equipment—it provides a realistic forecast of the method's performance [7] [17]. A method demonstrating strong intermediate precision instills confidence that it will deliver reliable, consistent, and accurate data throughout its lifecycle in a quality control environment. This reliability is the bedrock of data integrity in drug development and manufacturing, ensuring that product quality and patient safety are consistently upheld. Therefore, investing in a thorough intermediate precision study using structured experimental designs, such as the matrix approach, is an indispensable practice for developing robust, reproducible, and real-world-ready analytical methods.

Linking Intermediate Precision to Product Quality and Patient Safety

Analytical method validation is a foundational process in the pharmaceutical industry, providing documented evidence that an analytical procedure is suitable for its intended use [18]. Among the various validation parameters, intermediate precision holds critical importance as it quantifies the reliability of analytical results under the normal, expected variations within a single laboratory over time. This application note details the role of intermediate precision in ensuring product quality and patient safety, providing researchers and drug development professionals with structured experimental protocols and data interpretation frameworks. Establishing robust intermediate precision demonstrates that an analytical method can deliver consistent and reliable results, forming a scientific basis for critical decisions in drug development and quality control [4] [12].

The Critical Role of Intermediate Precision in Pharmaceutical Quality

Intermediate precision measures an analytical method's variability under different conditions within the same laboratory, including different days, different analysts, and different equipment [4] [2]. Unlike repeatability, which assesses performance under identical conditions, intermediate precision reflects the real-world variability encountered during routine pharmaceutical analysis. This parameter is essential because it confirms that a method remains reliable despite minor operational changes, thereby ensuring that product quality assessments are consistent and trustworthy over time [3] [12].

The direct linkage between intermediate precision and patient safety operates through a causal chain of quality assurance. A method with poor intermediate precision may produce inconsistent results, potentially leading to incorrect assessments of drug potency, impurity levels, or other critical quality attributes. Such inaccuracies can compromise drug safety and efficacy, directly impacting patient health [18] [19]. Regulatory guidelines from ICH, FDA, and USP explicitly require intermediate precision testing to ensure that analytical methods can consistently verify that pharmaceutical products meet their quality specifications throughout their lifecycle [18] [12] [9].

The Precision Hierarchy in Analytical Method Validation

Figure 1: The Precision Hierarchy in Analytical Method Validation

Experimental Protocol for Intermediate Precision Assessment

Protocol Design and Execution

A comprehensive intermediate precision study should be designed to systematically evaluate the impact of key variables on analytical results. The following protocol provides a detailed methodology suitable for chromatographic assay methods.

Experimental Timeline and Resource Planning:

- Duration: Minimum of 3-6 separate analytical runs conducted over at least 2-3 weeks

- Analysts: At least two qualified analysts performing independent analyses

- Equipment: Two different HPLC systems (or equivalent instruments) of the same model and configuration

- Reagents: At least two different lots of critical reagents and chromatographic columns

Sample Preparation and Analysis:

- Standard Solution Preparation: Each analyst independently prepares standard solutions from separate weighings of reference standard [3] [12]

- Test Sample Preparation: Prepare a homogeneous bulk sample of the drug substance or product at target concentration (100%)

- Sample Analysis: Each analyst performs the analysis using their assigned instrument and reagents following the validated method procedure

- Replication: Analyze a minimum of six determinations at 100% of test concentration per analyst [12]

- Experimental Design: Employ a structured design that allows monitoring of individual variable effects (analyst, day, instrument)

Data Collection Parameters:

- Record all peak responses (area, height)

- Document retention times and system suitability parameters

- Note any deviations from standard procedure

- Maintain complete raw data traceability

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 1: Essential Materials and Reagents for Intermediate Precision Studies

| Item | Function | Critical Considerations |
|---|---|---|
| Reference Standards | Provides analytical benchmark for accuracy determination [3] | Must be certified and of highest available purity; different lots should be used in study |
| Chromatographic Columns | Stationary phase for separation [12] | Multiple lots from same supplier; columns from different suppliers if specified |
| HPLC-Grade Solvents | Mobile phase components [12] | Multiple lots from same manufacturer; different suppliers if method robustness includes this parameter |
| Buffer Components | Mobile phase pH and ionic strength control [12] | Multiple lots; pH verification for each preparation |
| Sample Preparation Solvents | Dissolution and extraction of analytes [12] | Standardized quality; multiple lots |
| System Suitability Standards | Verify chromatographic system performance [12] | Stable, well-characterized mixture of key analytes |

Data Analysis and Interpretation Framework

Statistical Calculation Methodology

The evaluation of intermediate precision requires a structured statistical approach to quantify variability components and determine method reliability.

Step 1: Initial Data Organization. Organize results in a structured format that clearly identifies the varying conditions:

Table 2: Example Data Collection Structure for Intermediate Precision Study

| Day | Analyst | Instrument | Sample Result (%) | Replicate |

|---|---|---|---|---|

| 1 | Analyst A | HPLC System 1 | 98.7 | 1 |

| 1 | Analyst A | HPLC System 1 | 99.1 | 2 |

| 1 | Analyst B | HPLC System 2 | 98.5 | 1 |

| 1 | Analyst B | HPLC System 2 | 98.9 | 2 |

| 2 | Analyst A | HPLC System 2 | 98.5 | 1 |

| 2 | Analyst A | HPLC System 2 | 98.8 | 2 |

| 2 | Analyst B | HPLC System 1 | 99.2 | 1 |

| 2 | Analyst B | HPLC System 1 | 98.6 | 2 |

Step 2: Intermediate Precision Calculation. Calculate intermediate precision using the combined variance approach [4]:

- Compute the mean value for each data set

- Calculate the variance within and between data groups (for example, as variance components from a one-way ANOVA)

- Apply the formula: σIP = √(σ²within + σ²between)

- Express as relative standard deviation (RSD%): RSD% = (σIP / Overall Mean) × 100
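
The calculation can be sketched in a few lines of Python. This is a minimal illustration, not a validated implementation: it assumes a balanced design, treats each day/analyst/instrument combination from Table 2 as one group, and estimates the two variance terms from one-way ANOVA mean squares (within = MS_within; between = (MS_between - MS_within) / n, set to zero if negative). The function name `intermediate_precision` is hypothetical.

```python
from math import sqrt
from statistics import mean


def intermediate_precision(groups):
    """Estimate sigma_IP and RSD% from a balanced set of runs.

    groups: list of lists; each inner list holds the replicate results
    of one run (one day/analyst/instrument combination).
    """
    n = len(groups[0])  # replicates per run
    k = len(groups)     # number of runs
    if k < 2 or n < 2 or any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes a balanced design with k, n >= 2")

    grand_mean = mean(x for g in groups for x in g)

    # One-way ANOVA sums of squares and mean squares.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    ss_between = n * sum((mean(g) - grand_mean) ** 2 for g in groups)
    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)

    # Variance components; a negative between-run estimate is set to zero.
    var_within = ms_within
    var_between = max(0.0, (ms_between - ms_within) / n)

    sigma_ip = sqrt(var_within + var_between)
    return {
        "mean": grand_mean,
        "var_within": var_within,
        "var_between": var_between,
        "sigma_ip": sigma_ip,
        "rsd_percent": 100 * sigma_ip / grand_mean,
    }


# Results from Table 2, grouped by run.
table2_groups = [
    [98.7, 99.1],  # Day 1, Analyst A, HPLC System 1
    [98.5, 98.9],  # Day 1, Analyst B, HPLC System 2
    [98.5, 98.8],  # Day 2, Analyst A, HPLC System 2
    [99.2, 98.6],  # Day 2, Analyst B, HPLC System 1
]

result = intermediate_precision(table2_groups)
print(f"sigma_IP = {result['sigma_ip']:.3f}, RSD% = {result['rsd_percent']:.2f}")
```

For the Table 2 example the between-run mean square is smaller than the within-run mean square, so the between-run component is set to zero and the RSD% is about 0.31%. With only four runs of two replicates, such estimates are rough and serve only to show the arithmetic.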

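Step 3: Acceptance Criteria Evaluation. Compare the calculated RSD% against the acceptance criteria predefined in the validation protocol. The values in Table 3 are commonly used targets; ICH Q2(R2) does not itself prescribe numerical RSD limits, so each criterion should be justified for the method's intended use: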

Table 3: Intermediate Precision Acceptance Criteria Based on Method Type

| Method Type | Target RSD% | Interpretation | Regulatory Reference |

|---|---|---|---|

| Assay of Drug Substance | ≤ 2.0% | Excellent precision | ICH Q2(R2) [18] |

| Assay of Drug Product | ≤ 2.0% | Excellent precision | ICH Q2(R2) [18] |

| Impurity Quantitation | ≤ 5.0% to ≤ 10.0% | Acceptable for trace analysis | ICH Q2(R2) [18] |

| Content Uniformity | ≤ 2.0% | Excellent precision | USP <905> [12] |
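
The comparison itself is simple enough to automate. The sketch below copies the single-valued targets from Table 3 into a lookup; the dictionary keys and the function name `meets_criterion` are hypothetical, and in practice the limit would come from the approved validation protocol rather than from a hard-coded table. The impurity target is left out because Table 3 gives a range, so a specific limit has to be chosen per method.

```python
# Example targets taken from Table 3; treat them as illustrative only.
TARGET_RSD_LIMITS = {
    "assay_drug_substance": 2.0,
    "assay_drug_product": 2.0,
    "content_uniformity": 2.0,
}


def meets_criterion(rsd_percent, limit_percent):
    """Return True when the observed RSD% does not exceed the limit."""
    if rsd_percent < 0 or limit_percent <= 0:
        raise ValueError("RSD% must be >= 0 and the limit must be > 0")
    return rsd_percent <= limit_percent


observed_rsd = 0.31  # hypothetical study result, in percent
limit = TARGET_RSD_LIMITS["assay_drug_product"]
verdict = "pass" if meets_criterion(observed_rsd, limit) else "fail"
print(f"RSD% {observed_rsd} vs limit {limit}: {verdict}")
```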

Advanced Statistical Analysis

For enhanced understanding of variability sources:

- Perform Analysis of Variance (ANOVA) to quantify contribution of individual factors (analyst, day, instrument)

- Establish control charts for ongoing monitoring of method performance

- Calculate confidence intervals for the mean (typically 95% confidence level)
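
As a rough illustration of the ANOVA step, the sketch below computes the main-effect sum of squares for each factor in the Table 2 data. It is a simplified view under stated assumptions: the data are balanced, only main effects are considered, and the helper `main_effect_ss` is a hypothetical name. In the Table 2 layout the instrument assignment coincides with the day-by-analyst combination, so the instrument effect cannot be separated from that interaction.

```python
from statistics import mean

# Table 2 results as (day, analyst, instrument, result) records.
ROWS = [
    (1, "Analyst A", "HPLC System 1", 98.7),
    (1, "Analyst A", "HPLC System 1", 99.1),
    (1, "Analyst B", "HPLC System 2", 98.5),
    (1, "Analyst B", "HPLC System 2", 98.9),
    (2, "Analyst A", "HPLC System 2", 98.5),
    (2, "Analyst A", "HPLC System 2", 98.8),
    (2, "Analyst B", "HPLC System 1", 99.2),
    (2, "Analyst B", "HPLC System 1", 98.6),
]
FACTOR_INDEX = {"day": 0, "analyst": 1, "instrument": 2}


def main_effect_ss(rows, factor):
    """Sum of squares attributable to one factor's main effect."""
    idx = FACTOR_INDEX[factor]
    grand_mean = mean(r[3] for r in rows)

    # Collect the results observed at each level of the factor.
    levels = {}
    for r in rows:
        levels.setdefault(r[idx], []).append(r[3])

    return sum(len(v) * (mean(v) - grand_mean) ** 2 for v in levels.values())


ss_by_factor = {f: main_effect_ss(ROWS, f) for f in FACTOR_INDEX}
for factor, ss in ss_by_factor.items():
    print(f"{factor:<11} SS = {ss:.5f}")
```

For this small data set the instrument term (about 0.101) is much larger than the day and analyst terms (about 0.001 each), but it is still comparable to the within-run mean square, so no factor stands out as a dominant source of variability. A full analysis would use a statistics package to obtain F-tests and variance components.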

Integrating Intermediate Precision into Quality Risk Management

Intermediate precision data should be incorporated into formal quality risk management systems as required by ICH Q9 [19] [9]. The experimental results directly inform the control strategy for analytical procedures.

Figure 2: Intermediate Precision in the Quality Risk Management Workflow

Regulatory Framework and Compliance

Intermediate precision testing is mandated by major regulatory authorities worldwide. The ICH Q2(R2) guideline provides the primary framework for validation studies, including intermediate precision [18] [9]. Recent updates to this guideline emphasize a lifecycle approach to method validation, connecting development with ongoing performance verification [9].

Documentation Requirements:

- Validation protocols with predefined acceptance criteria

- Complete raw data with traceability to analysts and instruments

- Statistical calculations and graphical representations

- Formal validation report with conclusions on method suitability

Inspection Readiness: Regulatory inspectors typically review intermediate precision data to ensure [18] [19]:

- Appropriate experimental design covering relevant variables

- Statistically sound evaluation of results

- Adherence to predefined acceptance criteria

- Investigation of any failures or outliers

Intermediate precision serves as a critical bridge between analytical method capability and consistent product quality. Through rigorous experimental design and comprehensive data analysis, pharmaceutical scientists can demonstrate method reliability under normal operational variations, thereby ensuring the safety and efficacy of pharmaceutical products reaching patients. The protocols and frameworks presented in this application note provide a scientifically sound approach to intermediate precision testing aligned with current regulatory expectations and quality standards.

Designing and Executing Intermediate Precision Studies: A Step-by-Step Protocol

Intermediate precision measures the variability in analytical test results when an analytical procedure is applied repeatedly to multiple samplings of the same homogeneous sample under varied conditions within the same laboratory [4]. This critical method validation characteristic quantifies the effects of random day-to-day, analyst-to-analyst, and equipment-to-equipment variations, providing a more realistic assessment of method performance under normal operating conditions than repeatability alone [4] [20].

Unlike repeatability (which assesses precision under identical conditions) and reproducibility (which evaluates precision between different laboratories), intermediate precision occupies a distinct middle ground, reflecting the expected variability that occurs during routine use of an analytical method in a single laboratory [4]. Establishing robust intermediate precision is essential for demonstrating that an analytical method remains reliable despite the normal, expected variations in a quality control environment.

Critical Variability Factors in Intermediate Precision

The following factors represent the key sources of variability that must be considered during intermediate precision studies. These elements should be deliberately varied in a structured manner to quantify their individual and collective impacts on method performance.

Table 1: Key Variability Factors in Intermediate Precision Studies

| Factor Category | Specific Elements to Vary | Impact on Precision |

|---|---|---|

| Operator | Different analysts with varying skill levels and experience [4] [20] | Introduces variability through differences in technique, sample preparation, and interpretation |

| Instrumentation | Different instruments of the same type/model; different equipment calibrations [4] | Accounts for performance differences between supposedly equivalent equipment |

| Temporal | Different days, different runs within a day, potentially different weeks [4] [20] | Captures environmental fluctuations and time-dependent reagent degradation |

| Reagent Batches | Different lots of critical reagents, solvents, columns, and consumables [4] [21] | Controls for variability in quality and performance between manufacturing lots |

| Environmental | Laboratory temperature, humidity [4] | Addresses potential subtle effects on chemical reactions or instrument performance |

The experimental design for intermediate precision testing should systematically introduce these variations according to a pre-defined plan. A well-executed study will quantify the method's robustness to these normal operational fluctuations and confirm its suitability for routine use [21].

Experimental Design and Protocol

Systematic Approach Using Design of Experiments

A structured approach to intermediate precision testing begins with defining the purpose and scope of the study. The experimental design should incorporate principles of Quality by Design (QbD) and follow a science- and risk-based approach as outlined in ICH Q2(R2) and Q14 guidelines [9].

Key Design Considerations:

- Define the Analytical Target Profile (ATP): Prospectively define the required performance characteristics of the method, establishing clear goals for precision, accuracy, and other validation parameters [9].

- Risk Assessment: Complete a formal risk assessment to identify which factors (operators, instruments, days, reagents) are most likely to influence method performance [21]. This ensures resources are focused on the most critical variables.

- Experimental Matrix: For studies with multiple factors (typically more than three), a D-optimal custom Design of Experiments (DOE) approach is recommended for efficiently exploring the design space [21].

- Sampling Plan: Include sufficient replicates and duplicates to properly quantify variation. Replicates (complete method repeats) provide total method variation, while duplicates (multiple measurements of the same sample preparation) isolate instrument/chemistry precision [21].
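
To see why a reduced design becomes attractive as factors are added, it helps to count the runs in a full factorial. The sketch below simply enumerates every combination; the factor names and levels are hypothetical, and it does not perform D-optimal selection, which would normally be done in dedicated DOE software.

```python
from itertools import product

# Hypothetical factors and levels for an intermediate precision study.
factors = {
    "analyst": ["A", "B"],
    "instrument": ["HPLC 1", "HPLC 2"],
    "day": [1, 2, 3],
    "column_lot": ["C1", "C2"],
    "reagent_lot": ["R1", "R2"],
}


def full_factorial(factors):
    """Return every combination of factor levels as a list of dicts."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


runs = full_factorial(factors)
print("full factorial runs:", len(runs))
print("first run:", runs[0])
```

Five factors at two or three levels already require 48 runs before any replication, which is the motivation for selecting an informative subset of runs.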

Table 2: Intermediate Precision Experimental Protocol

| Protocol Step | Key Activities | Documentation Requirements |

|---|---|---|

| 1. Study Design | Define factors and levels to be tested; establish acceptance criteria (e.g., RSD%); determine sample size and replication scheme | Formal experimental design protocol; statistical power calculations |

| 2. Sample Preparation | Use homogeneous sample material; prepare samples at 100% analyte concentration or across the validated range [20]; use different reagent lots as planned | Sample preparation records; reagent certification and lot numbers |

| 3. Data Collection | Multiple analysts perform the analysis; different instruments are used according to the design; data are collected over different days; environmental conditions are monitored and recorded | Raw data sheets with analyst identification; instrument log files |

| 4. Data Analysis | Calculate overall mean, standard deviation, and RSD%; perform analysis of variance (ANOVA) to partition variability sources | Statistical analysis report; variance component analysis; graphical summaries of data |

Calculation Methodology

Intermediate precision is calculated by combining variance components from different sources using the formula:

σIP = √(σ²within + σ²between) [4]

Where:

- σ²within represents variance from within-run replication error

- σ²between represents variance from different operators, instruments, days, and reagent batches

The result is typically expressed as relative standard deviation (RSD%), which allows for comparison across different methods and concentration levels [4]. Acceptance criteria for RSD% are typically established based on the method's intended use and industry standards, with more stringent requirements for assays with narrow specifications.
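
For a balanced study with k runs of n replicates each, one common way to obtain the two variance terms is from the one-way ANOVA mean squares. The expressions below are a sketch under that assumption; the symbols MS_within, MS_between, n, and x-bar (the overall mean) are introduced here for illustration and do not appear in the cited source.

```latex
\[
\begin{aligned}
\sigma^2_{\text{within}}  &= MS_{\text{within}} \\
\sigma^2_{\text{between}} &= \max\!\left(0,\ \frac{MS_{\text{between}} - MS_{\text{within}}}{n}\right) \\
\sigma_{IP}               &= \sqrt{\sigma^2_{\text{within}} + \sigma^2_{\text{between}}} \\
\mathrm{RSD\%}            &= \frac{\sigma_{IP}}{\bar{x}} \times 100
\end{aligned}
\]
```

Setting a negative between-run estimate to zero is a convention: when the between-run mean square is smaller than the within-run mean square, the data give no evidence of additional run-to-run variability.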

Visualization of Intermediate Precision Testing Workflow

The following diagram illustrates the systematic workflow for designing, executing, and interpreting an intermediate precision study, highlighting the key decision points and processes.

Intermediate Precision Study Workflow: This diagram outlines the sequential process for conducting intermediate precision testing, from initial planning through final assessment, including the iterative improvement cycle when acceptance criteria are not met.

Research Reagent Solutions and Materials

The following table details essential materials and reagents required for intermediate precision studies, with specific attention to items that represent potential sources of variability.

Table 3: Essential Research Reagent Solutions for Intermediate Precision Studies

| Material/Reagent | Function in Study | Variability Considerations |

|---|---|---|

| Reference Standard | Provides known analyte concentration for accuracy and precision determination [20] | Must be well-characterized; stability and proper storage are critical to minimize bias |

| Critical Reagents | Specific antibodies, enzymes, or chemical reagents essential to the analytical method | Multiple lots should be tested; quality and performance between lots may vary significantly |

| Chromatography Columns | Separation medium for chromatographic methods (HPLC, GC) | Different columns of same type should be evaluated; column aging affects performance |

| Solvents & Buffers | Mobile phases, extraction solvents, dilution media | Multiple lots from same and different suppliers should be assessed for purity and composition |

| Sample Matrix | Placebo or blank matrix for spiking studies | Should represent actual test samples; multiple lots may capture natural matrix variability |

| Quality Controls | Samples with known concentrations for system suitability | Used to monitor performance across different conditions; stability must be established |

Regulatory Considerations and Compliance

Intermediate precision is a required validation characteristic for analytical procedures used in pharmaceutical quality control, as defined in ICH Q2(R2) guidelines [9]. The study should demonstrate that the method provides reliable results across the normal variations expected in a quality control laboratory environment.