A Practical Guide to Multivariate Simplex Optimization in Pharmaceutical Development

Abstract

This article provides a comprehensive overview of the Simplex method for multivariate optimization, tailored for researchers and professionals in drug development. It covers the foundational principles of the algorithm, from its basic geometric interpretation to advanced modified versions like the Nelder-Mead Simplex. The scope extends to practical, step-by-step protocols for implementing Simplex optimization in analytical chemistry and bioprocess development, including troubleshooting for common challenges like noise and convergence. Finally, the article offers a comparative analysis against alternative optimization strategies, such as Evolutionary Operation (EVOP) and Response Surface Methodology (RSM), and validates its efficacy through real-world case studies in chromatography and the design of drug-like molecules, empowering scientists to efficiently navigate complex experimental spaces.

Simplex Optimization Demystified: Core Principles for Scientists

Multivariate optimization represents a paradigm shift in experimental methodology, enabling researchers to systematically investigate multiple factors and their interactions simultaneously. This approach stands in stark contrast to the traditional one-variable-at-a-time (OVAT) method, which fails to capture interactive effects between variables and often leads to suboptimal solutions. Within the framework of multivariate optimization, the simplex method emerges as a particularly powerful algorithm for navigating complex experimental landscapes efficiently. This protocol details the application of simplex optimization in pharmaceutical development contexts, providing researchers with structured methodologies for optimizing analytical methods, formulation parameters, and process conditions. The structured tables, visual workflows, and reagent specifications presented herein offer practical implementation guidance for scientists seeking to enhance experimental efficiency and outcome quality in drug development pipelines.

The Limitation of Univariate Approaches

Traditional univariate optimization, while straightforward, presents significant limitations in complex experimental systems. This method involves changing one factor while holding all others constant, fundamentally ignoring potential interactions between variables [1]. In pharmaceutical development, where multiple formulation components, process parameters, and analytical conditions often interact in non-linear ways, this approach can yield misleading results and suboptimal conditions. The failure to account for factor interactions may result in reduced potency, stability issues, or manufacturing inefficiencies that would remain undetected with OVAT methodology.

Fundamental Concepts of Multivariate Optimization

Multivariate optimization may be defined as a non-linear approach where multiple decision variables are optimized simultaneously [2]. The general formulation involves minimizing or maximizing an objective function f(x₁, x₂, ..., xₙ) with respect to decision variables x₁, x₂, ..., xₙ, potentially subject to constraints. These optimization problems are categorized based on their constraint profiles:

- Unconstrained multivariate optimization: No limitations on decision variable values

- Multivariate optimization with equality constraint: Solutions must satisfy exact mathematical relationships

- Multivariate optimization with inequality constraint: Solutions must satisfy limiting conditions [2]

In analytical chemistry and pharmaceutical development, multivariate optimization has demonstrated superior efficiency compared to univariate approaches, enabling significant reductions in experimental numbers, reagent consumption, and time requirements while providing comprehensive understanding of variable interactions [1].

The Simplex Method: Theory and Algorithm

Historical Foundation and Mathematical Principles

The simplex method was originally developed by George Dantzig in 1947 as a mathematical approach for solving linear programming problems in resource allocation [3]. The method transforms optimization problems into geometric representations, where constraints form a polyhedral feasible region in n-dimensional space (where n equals the number of variables), and the optimal solution resides at a vertex of this polyhedron [3]. In experimental multivariate optimization, the term refers to a different procedure that shares the name: the sequential simplex, which moves a geometric figure with k+1 vertices (where k represents the number of variables) through the experimental domain toward optimal conditions [1]. The sequential simplex needs no explicit model of the response; it relies only on the ranking of the responses measured at the vertices.

The fundamental principle involves comparing responses at vertex points and moving the simplex away from the worst-performing point toward more promising regions of the experimental space. This geometric progression continues iteratively until the optimum is located within specified tolerance limits.

Simplex Algorithm Variants

Basic Simplex (Fixed-Size) The original simplex algorithm utilizes a regular geometric figure that maintains constant size throughout the optimization process. The initial simplex size represents a critical parameter that significantly influences optimization efficiency and requires researcher judgment based on system understanding [1].

Modified Simplex (Variable-Size) Nelder and Mead (1965) introduced modifications allowing the simplex to change size through expansion and contraction operations, dramatically improving convergence efficiency [1]. This variable-size approach enables more rapid identification of optimal regions followed by precise localization of the optimum point. The modified simplex incorporates four fundamental operations:

- Reflection: Moving away from the worst vertex

- Expansion: Accelerating toward promising regions

- Contraction: Reducing size for finer search near suspected optima

- Reduction: Shrinking around best vertex when no improvement direction is found

Table 1: Comparison of Simplex Method Variants

| Characteristic | Basic Simplex | Modified Simplex |

|---|---|---|

| Figure Size | Fixed throughout process | Variable through expansion/contraction |

| Convergence Speed | Slower, methodical | Faster, adaptive |

| Initial Size Sensitivity | High sensitivity | Moderate sensitivity |

| Optimum Precision | Limited by initial size | Can achieve higher precision |

| Computational Requirements | Lower | Moderate |

| Application Complexity | Suitable for simpler systems | Preferred for complex interactions |

Experimental Protocols

Protocol 1: Implementing Modified Simplex Optimization for HPLC Method Development

Objective: Optimize high-performance liquid chromatography (HPLC) separation parameters for compound quantification in pharmaceutical formulations.

Principle: The sequential simplex method efficiently navigates the multidimensional factor space to identify optimal chromatographic conditions that maximize resolution while minimizing analysis time.

Materials and Equipment:

- HPLC system with UV/Vis or PDA detector

- Analytical column (C18, 150mm × 4.6mm, 5μm)

- Reference standards and samples

- Mobile phase components (HPLC grade)

- Data acquisition and analysis software

Procedure:

Factor Selection and Range Definition:

- Identify critical factors: mobile phase pH (X₁), organic modifier concentration (X₂), flow rate (X₃), and column temperature (X₄)

- Define feasible ranges based on column specifications and compound stability

- Establish the composite response to maximize: Response = 1.5 × (Peak Resolution) - 0.5 × (Analysis Time)

Initial Simplex Construction:

- Create an initial simplex with k+1 vertices (5 vertices for 4 factors)

- Calculate vertex coordinates using the basic simplex equation: Vᵢ = V₀ + δ×eᵢ

- V₀: initial vertex based on literature or preliminary experiments

- δ: step size (10-20% of factor range)

- eᵢ: unit vector in factor direction
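The construction above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the starting conditions, the factor ranges, and the 15% step fraction are hypothetical values chosen only to show the arithmetic, and the step δ is computed separately for each factor because the factors have different units.

```python
def initial_simplex(v0, ranges, fraction=0.15):
    """Return k+1 vertices: V0 plus one vertex stepped along each factor axis."""
    vertices = [list(v0)]
    for i, (low, high) in enumerate(ranges):
        step = fraction * (high - low)   # delta for factor i (assumed 15% of its range)
        vertex = list(v0)
        vertex[i] += step                # V_i = V0 + delta * e_i
        vertices.append(vertex)
    return vertices

# Hypothetical start: pH, % organic modifier, flow rate (mL/min), temperature (deg C)
v0 = [3.0, 30.0, 1.0, 30.0]
ranges = [(2.5, 7.5), (10.0, 60.0), (0.5, 2.0), (20.0, 50.0)]

simplex = initial_simplex(v0, ranges)
for vertex in simplex:
    print(vertex)
```

Each printed row is one set of chromatographic conditions to run; four factors give five rows.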

Sequential Experimentation:

- Conduct experiments at each vertex in randomized order

- Evaluate the objective-function response for each vertex

- Identify the worst vertex (W) producing the lowest response value

Simplex Transformation:

- Calculate the centroid (C) of all vertices except W

- Reflect W through C to generate new vertex R: R = C + (C - W)

- Evaluate response at R

- Apply modification rules:

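- If R is better than the current best: Expand to E = C + γ×(C - W) where γ > 1, and keep whichever of E and R gives the better response

- If R is worse than the second-worst: Contract to S = C + β×(C - W) where 0 < β < 1 (if R is also worse than W, contract on the W side of the centroid instead: S = C - β×(C - W))

- If S is no better than W: Reduce all vertices toward the best vertex

- Otherwise: Accept R as the replacement for W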
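One way to express a single transformation step in code is sketched below. It assumes a maximization problem, uses the commonly quoted coefficients γ = 2 and β = 0.5, treats a tie with the second-worst vertex as "no improvement", and contracts on the W side of the centroid when R is worse than W. The `measure` callable is a hypothetical stand-in for running one experiment.

```python
def simplex_step(vertices, responses, measure, gamma=2.0, beta=0.5):
    """Perform one modified-simplex move for a maximization problem.

    vertices  -- list of k+1 factor settings (each a list of k numbers)
    responses -- measured response at each vertex (higher is better)
    measure   -- callable that runs (or simulates) one experiment
    """
    order = sorted(range(len(vertices)), key=lambda i: responses[i])
    vertices = [list(vertices[i]) for i in order]      # worst first, best last
    responses = [responses[i] for i in order]
    worst, best = vertices[0], vertices[-1]
    y_worst, y_second_worst, y_best = responses[0], responses[1], responses[-1]

    # Centroid C of every vertex except W
    rest = vertices[1:]
    centroid = [sum(col) / len(rest) for col in zip(*rest)]

    def along(scale):
        # Point C + scale * (C - W)
        return [c + scale * (c - w) for c, w in zip(centroid, worst)]

    reflected = along(1.0)
    y_reflect = measure(reflected)
    new, y_new = reflected, y_reflect

    if y_reflect > y_best:                             # try expansion
        expanded = along(gamma)
        y_expanded = measure(expanded)
        if y_expanded > y_reflect:
            new, y_new = expanded, y_expanded
    elif y_reflect <= y_second_worst:                  # contraction
        scale = beta if y_reflect > y_worst else -beta
        contracted = along(scale)
        y_contracted = measure(contracted)
        if y_contracted > max(y_reflect, y_worst):
            new, y_new = contracted, y_contracted
        else:                                          # reduction toward the best vertex
            shrunk = [[b + 0.5 * (x - b) for x, b in zip(v, best)]
                      for v in vertices[:-1]]
            return shrunk + [best], [measure(v) for v in shrunk] + [y_best]

    vertices[0], responses[0] = new, y_new             # replace W
    return vertices, responses
```

Because only the worst vertex is replaced (or every vertex except the best is shrunk), the best response held in the simplex never decreases from one step to the next.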

Termination Criteria:

- Continue iterations until the standard deviation of responses falls below 5% of mean

- Alternatively, terminate when step size reduces below practical significance level

- Verify optimal conditions with triplicate validation experiments
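The first termination criterion is simple to automate. The sketch below assumes the sample standard deviation is the intended statistic and that the 5% threshold is applied to the absolute value of the mean; both are interpretations rather than details stated in the protocol.

```python
from statistics import mean, stdev

def has_converged(responses, rel_tol=0.05):
    """True when the spread of vertex responses is below rel_tol of their mean."""
    avg = mean(responses)
    if avg == 0:
        # Relative criterion is undefined here; fall back to a step-size check
        return False
    return stdev(responses) < rel_tol * abs(avg)

print(has_converged([10.0, 10.1, 9.9, 10.05, 9.95]))   # tightly clustered vertices
print(has_converged([5.0, 10.0, 15.0]))                # still widely spread
```

With noisy measurements a small spread can occur by chance, so pairing this check with the step-size criterion is a reasonable safeguard.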

Troubleshooting:

- If simplex cycles without improvement: Reduce step size or apply contraction

- If response shows excessive noise: Re-evaluate objective function or increase replication

- If constraints are violated: Implement penalty functions in objective evaluation
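A penalty for constraint violations might be implemented as follows. This sketch assumes simple lower and upper bounds on each factor, a maximization problem, and real responses that are far above the (arbitrary) penalty level; an infeasible vertex is given a strongly unfavorable score without running the experiment. The function name and the penalty value are illustrative only.

```python
def evaluate_vertex(x, bounds, measure, penalty=1000.0):
    """Return the measured response, or a penalty score if x is out of bounds."""
    violation = 0.0
    for value, (low, high) in zip(x, bounds):
        span = high - low
        if value < low:
            violation += (low - value) / span    # range-scaled shortfall
        elif value > high:
            violation += (value - high) / span   # range-scaled excess
    if violation > 0.0:
        # Infeasible: skip the experiment and push the simplex back inside
        return -penalty * (1.0 + violation)
    return measure(x)
```

Scaling each violation by the factor range keeps the penalty comparable across factors with different units, and larger violations score worse, which steers the next reflection back toward the feasible region.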

Protocol 2: Multi-Objective Simplex Optimization for Drug Formulation Development

Objective: Simultaneously optimize multiple formulation properties including dissolution rate, stability, and flow characteristics.

Principle: Multi-objective simplex optimization extends the traditional approach to handle conflicting objectives through weighted summation or Pareto optimization techniques [4].

Materials and Equipment:

- API and excipients

- Powder blending equipment

- Tablet compression machine

- Dissolution apparatus

- Stability chambers

- Powder flow characterization equipment

Procedure:

Objective Function Formulation:

- Define critical quality attributes: Y₁ (dissolution at 30 min), Y₂ (tablet hardness), Y₃ (content uniformity)

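- Establish composite objective function: F = w₁Y₁ + w₂Y₂ + w₃Y₃, with each Yᵢ first scaled to a common 0-1 range so that differing units do not distort the weights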

- Assign weights based on relative importance (Σwᵢ = 1)
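A weighted composite score can be sketched as below. The acceptance limits, weights, and example measurements are hypothetical, and the linear 0-1 scaling is one simple choice among several (desirability functions are a common alternative). Passing the limits as (worst, best) lets the same function handle responses where lower is better, such as content uniformity expressed as RSD.

```python
def scale(value, worst, best):
    """Map a response linearly onto 0-1 (0 at `worst`, 1 at `best`), clipped."""
    return min(1.0, max(0.0, (value - worst) / (best - worst)))

def composite_score(responses, limits, weights):
    """Weighted sum F = sum(w_i * scaled Y_i); weights must sum to 1."""
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(w * scale(y, worst, best)
               for y, (worst, best), w in zip(responses, limits, weights))

# Hypothetical measurements: dissolution at 30 min (%), hardness (N), uniformity RSD (%)
responses = [85.0, 80.0, 2.0]
limits = [(50.0, 100.0), (40.0, 120.0), (6.0, 1.0)]   # (worst, best) per response
weights = [0.5, 0.3, 0.2]

score = composite_score(responses, limits, weights)
print(round(score, 3))
```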

Factor-Response Modeling:

- Identify critical formulation factors: API particle size (X₁), lubricant concentration (X₂), disintegrant percentage (X₃)

- Establish factor ranges based on preliminary compatibility studies

Multi-Objective Simplex Implementation:

- Construct initial simplex with k+1 vertices

- Execute experiments according to simplex vertices

- Evaluate all objective responses at each vertex

- Calculate composite objective function value

- Apply modified simplex rules based on composite score

Pareto Frontier Identification:

- After convergence, analyze trade-offs between objectives

- Identify non-dominated solutions where no objective can be improved without compromising another

- Select final formulation based on balanced performance requirements
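Non-dominated solutions can be picked out of the vertices evaluated during the run with a short filter. The sketch assumes every objective has already been oriented so that larger is better; the candidate scores are made-up values for illustration.

```python
def non_dominated(points):
    """Return the points that no other point dominates (all objectives maximized)."""
    def dominates(a, b):
        # a is at least as good everywhere and strictly better somewhere
        return (all(x >= y for x, y in zip(a, b))
                and any(x > y for x, y in zip(a, b)))
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical (scaled dissolution, scaled hardness) pairs from evaluated vertices
candidates = [(0.9, 0.2), (0.6, 0.6), (0.5, 0.5), (0.2, 0.9)]
front = non_dominated(candidates)
print(front)
```

A weighted-sum simplex run converges toward a single compromise, so the front obtained this way reflects only the formulations actually tested; repeating the run with different weights is one way to populate it further.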

Validation and Robustness Testing:

- Confirm optimal formulation with triplicate manufacturing

- Test robustness through deliberate factor variation

- Establish control strategy based on sensitivity analysis

Research Reagent Solutions

Table 2: Essential Research Reagents for Simplex Optimization in Pharmaceutical Development

| Reagent/Equipment | Function in Optimization | Application Notes |

|---|---|---|

| HPLC Grade Solvents | Mobile phase components for chromatographic method development | Low UV absorbance; minimal particulate matter |

| Reference Standards | System suitability testing and response quantification | High purity (>99%); well-characterized properties |

| Analytical Columns | Stationary phase for separation optimization | Multiple chemistries (C8, C18, phenyl, etc.) |

| pH Adjusters | Mobile phase pH control for ionization manipulation | Buffer salts, acids, bases; maintain consistent ionic strength |

| Pharmaceutical Excipients | Formulation component optimization | Compatibility with API; grade-specific functionality |

| Stability Chambers | Accelerated degradation studies for stability optimization | Controlled temperature/humidity; ICH guideline compliance |

| Dissolution Apparatus | Drug release profile quantification | USP-compliant equipment; calibrated baskets/paddles |

| Particle Size Analyzers | Physical characterization of optimized formulations | Multiple techniques (laser diffraction, dynamic light scattering) |

Workflow Visualization

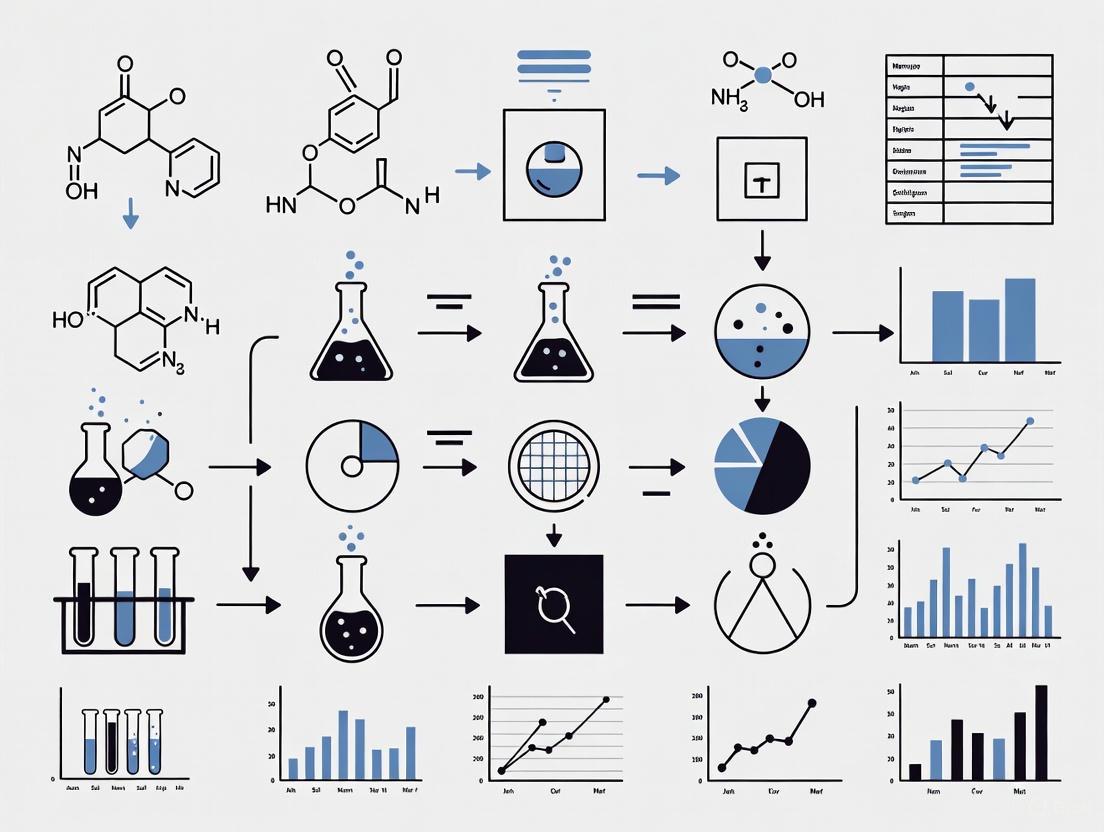

Figure 1: Modified simplex optimization workflow for pharmaceutical development applications. The algorithm iteratively refines experimental conditions until convergence criteria are satisfied.

Figure 2: Geometric transformations in modified simplex optimization. The algorithm reflects the worst vertex (red) away from low-response regions, then expands toward higher-response areas (green).

Applications in Pharmaceutical Development

Analytical Method Optimization

Simplex optimization has demonstrated particular utility in chromatographic method development, where multiple interacting parameters significantly impact separation quality. Applications include:

- HPLC/UPLC method development: Simultaneous optimization of mobile phase composition, gradient profile, temperature, and flow rate [1]

- Capillary electrophoresis: Optimization of buffer pH, concentration, and applied voltage

- Spectroscopic methods: Parameter optimization for atomic absorption and emission techniques

The modified simplex approach typically reduces method development time by 40-60% compared to univariate approaches while producing more robust methods capable of withstanding normal operational variation.

Formulation Development

Pharmaceutical formulation represents an ideal application for simplex optimization due to the complex interactions between multiple components and process parameters. Successful implementations include:

- Solid dosage forms: Optimizing excipient ratios and processing parameters for desired dissolution and stability profiles

- Liquid formulations: Balancing preservative efficacy, viscosity, and stability through component optimization

- Nanoparticle systems: Optimizing multiple characteristics including particle size, polydispersity, and drug loading

Multi-objective simplex approaches enable formulators to balance competing objectives such as maximizing dissolution while minimizing manufacturing cost or stability risks [4].

Process Optimization

Manufacturing process development benefits significantly from simplex methodology through:

- Reaction condition optimization: Simultaneously optimizing yield, purity, and reaction time

- Extraction processes: Maximizing extraction efficiency while minimizing solvent consumption and processing time

- Purification parameters: Optimizing chromatographic separation conditions for biopharmaceutical purification

Table 3: Performance Comparison of Optimization Methods in Pharmaceutical Development

| Optimization Aspect | Univariate (OVAT) | Multivariate Simplex |

|---|---|---|

| Number of Experiments | High (typically 3ⁿ +) | Moderate (typically 10-30) |

| Factor Interactions | Not detectable | Accommodated during the search, though not modeled explicitly |

| Optimal Condition Reliability | Low (may miss true optimum) | High (systematic approach) |

| Resource Consumption | High | Moderate to low |

| Implementation Complexity | Low | Moderate |

| Adaptability to Constraints | Poor | Excellent |

| Multi-Objective Capability | Limited | Strong [4] |

Recent Advances and Future Perspectives

Theoretical understanding of simplex methods has advanced significantly in recent decades. While Dantzig's linear-programming simplex method has always demonstrated practical efficiency, theoretical concerns about exponential worst-case performance persisted for decades [3]. Recent work by Bach and Huiberts (2024) has substantially addressed these concerns, providing mathematical justification for the observed efficiency and establishing polynomial-time bounds for simplex performance [3]. These results concern the linear-programming algorithm rather than the sequential experimental simplex, but they strengthen the theoretical foundation for applying simplex-based optimization in regulated pharmaceutical environments.

Future directions for simplex methodology in pharmaceutical research include:

- Hybrid approaches: Integration of simplex methods with other optimization techniques such as genetic algorithms or artificial neural networks

- High-throughput implementation: Automation of simplex optimization using robotic screening systems

- QbD integration: Formal incorporation into Quality by Design frameworks for regulatory submissions

- Multi-scale optimization: Simultaneous optimization of molecular, formulation, and process parameters

The continued development of multi-objective optimization approaches addresses the complex, competing requirements inherent in pharmaceutical development, enabling more systematic and efficient development of robust, high-quality drug products [4].

The simplex is a fundamental geometric concept in multivariate optimization, representing the simplest possible polytope in any given dimension. In the context of optimization algorithms, the simplex provides the foundational geometry for the simplex method, a cornerstone technique for solving linear programming problems. This method operates by navigating the vertices of a polyhedral feasible region defined by constraints, moving from one vertex to an adjacent one to improve the objective function value with each step [5]. The algorithm's name derives from the geometric structure it effectively utilizes, though it operates on simplicial cones rather than simplices themselves [5].

For researchers in drug development, understanding the simplex geometry is crucial for solving complex optimization problems in areas such as formulation development, process optimization, and experimental design. The simplex method provides a systematic approach to finding optimal solutions when multiple constraints—such as resource limitations, chemical compatibilities, or safety thresholds—must be satisfied simultaneously [6].

Geometric Foundation of the Simplex

Mathematical Definition and Properties

A k-simplex is defined as a k-dimensional polytope that represents the convex hull of its k + 1 affinely independent vertices [7]. Formally, given k + 1 points u₀, ..., uₖ in k-dimensional space, the simplex is defined as:

$$ C = \left\{ \theta_0 u_0 + \dots + \theta_k u_k ~ \Bigg| ~ \sum_{i=0}^k \theta_i = 1 \text{ and } \theta_i \geq 0 \text{ for } i=0,\dots,k \right\} $$

This structure creates the simplest possible convex set in any dimensional space, with the regular simplex exhibiting the highest symmetry properties of any polytope [6]. The simplex method in optimization leverages this geometric structure by traversing the vertices of the constraint polytope, which can be decomposed into simplex elements.

Table: Progression of Regular Simplex Elements Across Dimensions

| Dimension (n) | Simplex Name | Vertices | Edges | 2-Faces (Triangles) | Facets |

|---|---|---|---|---|---|

| 0 | Point | 1 | 0 | 0 | 0 |

| 1 | Line Segment | 2 | 1 | 0 | 2 |

| 2 | Triangle | 3 | 3 | 1 | 3 |

| 3 | Tetrahedron | 4 | 6 | 4 | 4 |

| 4 | 5-cell | 5 | 10 | 10 | 5 |

| 5 | 5-simplex | 6 | 15 | 20 | 6 |

| n | n-simplex | n+1 | n(n+1)/2 | (n+1)n(n-1)/6 | n+1 |

The number of m-dimensional faces in an n-simplex is given by the binomial coefficient $\binom{n+1}{m+1}$, demonstrating the combinatorial complexity that arises in higher-dimensional optimization problems [7].
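The binomial formula is easy to check numerically. The helper name below is illustrative; `math.comb` is part of the Python standard library (3.8 and later).

```python
from math import comb

def face_count(n, m):
    """Number of m-dimensional faces of an n-simplex: C(n+1, m+1)."""
    return comb(n + 1, m + 1)

# Rows: dimension n; entries: counts of vertices (m=0), edges (m=1), triangles (m=2), ...
for n in range(6):
    print(n, [face_count(n, m) for m in range(n + 1)])
```

For the tetrahedron (n = 3) this gives 4 vertices, 6 edges, 4 triangular faces, and the tetrahedron itself.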

The Standard Simplex in Optimization

The standard simplex or probability simplex is particularly relevant in optimization contexts. This k-dimensional simplex is defined in Rᵏ⁺¹ as:

$$ \left\{ \vec{x} \in \mathbf{R}^{k+1} : x_0 + \dots + x_k = 1, \ x_i \geq 0 \text{ for } i=0,\dots,k \right\} $$

This formulation is essential for problems involving probability distributions, resource allocation, and mixture designs—common scenarios in pharmaceutical development where components must sum to a fixed total (e.g., 100% of a formulation) [7].
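To make the mixture interpretation concrete, the sketch below enumerates evenly spaced candidate blends on the standard simplex. This layout is generally known as a simplex-lattice design, a technique not discussed in this article; it is used here only to illustrate points whose coordinates are non-negative and sum to 1.

```python
from itertools import product

def simplex_lattice(q, m):
    """All q-component blends whose proportions are multiples of 1/m and sum to 1."""
    return [tuple(i / m for i in combo)
            for combo in product(range(m + 1), repeat=q)
            if sum(combo) == m]

# Three components, proportions in steps of 1/2
blends = simplex_lattice(3, 2)
for blend in blends:
    print(blend)
```

Three components in steps of one half give six blends: the three pure components and the three 50:50 binary mixtures.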

The Simplex Algorithm: Movement Through Geometry

Algorithmic Mechanics

The simplex algorithm, developed by George Dantzig in 1947, solves linear programming problems by exploiting the geometry of the feasible region [3] [5]. The fundamental principle stems from the observation that if a linear program has an optimal solution, it must occur at one of the extreme points (vertices) of the polytope defined by the constraints [5].

The algorithm operates through pivot operations that move from one vertex to an adjacent vertex along edges of the polytope, improving the objective function with each move [5]. This movement through the geometric structure continues until no improving adjacent vertex exists, indicating an optimal solution has been found.

Table: Simplex Algorithm Operational Components

| Component | Mathematical Representation | Geometric Interpretation | Role in Optimization |

|---|---|---|---|

| Basic Feasible Solution | Vertex of polytope | Extreme point of feasible region | Starting point for algorithm |

| Pivot Operation | Matrix row operations | Movement to adjacent vertex | Iterative improvement mechanism |

| Reduced Cost | $\bar{c}_D^T$ in tableau | Rate of objective improvement | Optimality condition check |

| Canonical Form | $[1 \ -\bar{c}_D^T \ z_B]$ | Standardized representation | Computational efficiency |

Movement Mechanisms

The geometry of simplex movement involves several key operations:

Initialization (Phase I): Finding an initial basic feasible solution corresponding to a vertex of the polytope [5].

Optimality Check: Evaluating whether the current vertex is optimal by examining adjacent vertices [5].

Pivot Selection: Choosing a non-basic variable to enter the basis and determining which basic variable must leave, corresponding to selecting which edge to traverse [5].

Termination: Ending the process when no adjacent vertex offers improvement, or identifying an unbounded solution if an infinite edge is encountered [5].

The geometric interpretation reveals why the algorithm is efficient: although the number of vertices grows combinatorially with problem size, the algorithm typically visits only a small fraction of these vertices before finding the optimum [3].
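The pivoting mechanics can be illustrated with a compact dense-tableau implementation. This is a teaching sketch under restrictive assumptions: all constraints are of the form Ax ≤ b with b ≥ 0 (so the slack variables give the starting vertex and no Phase I is needed), and there is no anti-cycling rule. The example problem is an arbitrary small linear program, not data from this article.

```python
import numpy as np

def simplex_max(c, A, b, max_iter=100):
    """Maximize c @ x subject to A @ x <= b, x >= 0, assuming b >= 0."""
    A, b, c = (np.asarray(v, dtype=float) for v in (A, b, c))
    m, n = A.shape
    # Tableau rows: [A | I | b]; last row holds the reduced costs [-c | 0 | 0]
    T = np.zeros((m + 1, n + m + 1))
    T[:m, :n] = A
    T[:m, n:n + m] = np.eye(m)
    T[:m, -1] = b
    T[-1, :n] = -c
    basis = list(range(n, n + m))            # slack variables: the starting vertex
    for _ in range(max_iter):
        col = int(np.argmin(T[-1, :-1]))     # entering variable: most negative reduced cost
        if T[-1, col] >= -1e-9:
            break                            # no improving edge: current vertex is optimal
        column = T[:m, col]
        positive = column > 1e-9
        if not positive.any():
            raise ValueError("problem is unbounded")
        ratios = np.full(m, np.inf)
        ratios[positive] = T[:m, -1][positive] / column[positive]
        row = int(np.argmin(ratios))         # leaving variable: minimum ratio test
        T[row] /= T[row, col]                # pivot: normalize the pivot row ...
        for r in range(m + 1):
            if r != row:
                T[r] -= T[r, col] * T[row]   # ... and eliminate the column elsewhere
        basis[row] = col
    else:
        raise RuntimeError("iteration limit reached")
    x = np.zeros(n + m)
    x[basis] = T[:m, -1]
    return x[:n], T[-1, -1]

x_opt, z_opt = simplex_max(c=[3, 5], A=[[1, 0], [0, 2], [3, 2]], b=[4, 12, 18])
print(x_opt, z_opt)
```

Each pass through the loop corresponds to one move along an edge of the feasible polytope; this example reaches its optimum after two pivots.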

Experimental Protocols for Simplex Optimization

Protocol 1: Standard Simplex Implementation for Formulation Optimization

Purpose: To optimize drug formulation components using the simplex method.

Materials:

- Experimental variables (excipient concentrations)

- Response measurement equipment (dissolution apparatus, HPLC)

- Linear programming software (MATLAB, Python with SciPy, or specialized optimization tools)

Procedure:

Problem Formulation:

- Define objective function (e.g., maximize dissolution rate)

- Identify constraints (e.g., total concentration = 100%, individual component limits)

- Transform to standard form: Maximize $c^Tx$ subject to $Ax \leq b$, $x \geq 0$ [5]

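Initialization:

- Add slack variables to convert each inequality into an equality and take the resulting basic feasible solution as the starting vertex (use Phase I with artificial variables if none is available) [5]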

Iteration:

- While reduced costs indicate non-optimality:

- Select entering variable (most negative reduced cost)

- Determine leaving variable via minimum ratio test

- Perform pivot operation to update tableau [5]

Termination:

- When all reduced costs are non-negative (maximization problem)

- Extract solution from final tableau

Validation: Confirm optimal solution satisfies all constraints and produces expected improvement in objective function.
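With an off-the-shelf solver the protocol reduces to a few lines. The sketch below uses SciPy's `linprog`; the coefficients, the component limits, and the extra inequality are all hypothetical, and a linear dissolution model is itself an assumption. Two details are worth noting: `linprog` minimizes, so the objective is negated, and `method="highs"` delegates to the HiGHS solvers (which include a dual simplex implementation) rather than a textbook tableau.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical linear model: dissolution contribution per % of three excipients
gain = np.array([0.8, 0.5, 0.3])

A_eq = [[1.0, 1.0, 1.0]]     # mixture constraint: components sum to 100 %
b_eq = [100.0]
A_ub = [[2.0, 1.0, 0.0]]     # hypothetical compatibility limit: 2*x0 + x1 <= 120
b_ub = [120.0]
bounds = [(10, 60), (20, 70), (5, 40)]   # individual component limits (%)

# linprog minimizes, so maximize gain @ x by minimizing -gain @ x
result = linprog(-gain, A_ub=A_ub, b_ub=b_ub, A_eq=A_eq, b_eq=b_eq,
                 bounds=bounds, method="highs")

print(result.status, result.x, -result.fun)
```

Checking `result.status == 0` and re-evaluating the constraints at `result.x` covers the validation step before any confirmatory experiment is run.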

Protocol 2: Multi-objective Simplex for Balanced Therapeutic Profile

Purpose: To optimize multiple therapeutic objectives simultaneously using multi-objective simplex approaches.

Materials:

- Conflicting efficacy/toxicity response measures

- Weighting factors for objective importance

- Multi-objective linear programming (MOLP) implementation [4]

Procedure:

Problem Structuring:

- Identify all objective functions (efficacy, stability, manufacturability)

- Establish priority weights or constraint limits for each objective [4]

Simultaneous Optimization:

- Apply modified simplex technique to optimize all objectives concurrently [4]

- Generate Pareto-optimal solutions representing trade-offs

Solution Selection:

- Evaluate efficient solutions based on decision-maker preferences

- Select optimal compromise solution

Advantages: Reduced computational effort compared to sequential optimization; identifies true optimal trade-offs between competing objectives [4].

Visualization of Simplex Algorithm Workflow

The following diagram illustrates the complete workflow of the simplex algorithm in optimization:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational and Experimental Components for Simplex Optimization

| Research Reagent | Function in Simplex Optimization | Implementation Example |

|---|---|---|

| Linear Programming Solver | Computational engine for simplex algorithm | MATLAB linprog, Python SciPy optimize.linprog, commercial solvers (CPLEX, Gurobi) |

| Sensitivity Analysis Tools | Determines parameter stability and solution robustness | Shadow price calculation, objective coefficient ranging, right-hand-side sensitivity |

| Multi-objective Framework | Extends simplex method to multiple conflicting objectives | Weighted sum method, epsilon-constraint technique, goal programming [4] |

| Tableau Data Structure | Matrix representation of linear program | Two-dimensional array storing coefficients, basic variables, and objective values [5] |

| Constraint Handler | Manages inequality and equality constraints | Slack/surplus variable introduction, artificial variables for Phase I [5] |

| Randomization Module | Improves algorithm performance and avoids worst-case complexity | Random pivot rule implementation, perturbation techniques [3] |

| Visualization Package | Geometric interpretation of algorithm progress | 2D/3D constraint plotting, solution path animation, convergence monitoring |

Advanced Considerations in Simplex Applications

Computational Complexity and Recent Advances

While the simplex method has demonstrated remarkable efficiency in practice since its development by Dantzig, theoretical computer science has revealed concerns about its worst-case complexity. In 1972, mathematicians proved that the time required could grow exponentially with problem size in pathological cases [3].

Recent theoretical breakthroughs have addressed this long-standing issue. Building on landmark work from 2001 by Spielman and Teng that introduced randomness to avoid worst-case scenarios, Bach and Huiberts (2024) have further refined the approach to guarantee significantly lower runtimes [3]. Their work provides stronger mathematical justification for the method's practical efficiency and demonstrates that exponential runtimes do not materialize in practice with appropriate randomization techniques [3].

For drug development researchers, these advances validate reliance on simplex-based optimization in critical development timelines, ensuring predictable computational performance even for large-scale problems involving numerous formulation variables and constraints.

Barycentric Coordinates for Mixture Problems

In pharmaceutical formulation development, barycentric coordinates provide a powerful representation for mixture problems. Any point within a simplex can be expressed as a convex combination of its vertices using barycentric coordinates (v₁, v₂, ..., vₙ) where Σvᵢ = 1 and vᵢ ≥ 0 [6].

This coordinate system naturally represents pharmaceutical formulations where components must sum to 100%, allowing researchers to:

- Systematically explore the entire formulation space

- Identify regions of optimal performance

- Visualize composition-property relationships

- Navigate constraint boundaries effectively

The simplex coordinates provide a pseudo-orthogonal framework that facilitates decomposition of complex mixture relationships, making it particularly valuable for understanding interactions between multiple formulation components [6].
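Barycentric coordinates can be computed by solving a small linear system. The sketch below assumes a non-degenerate k-simplex in k-dimensional space and uses a triangle with arbitrary vertices as the example.

```python
import numpy as np

def barycentric(point, vertices):
    """Solve sum(v_i * vertex_i) = point together with sum(v_i) = 1."""
    V = np.asarray(vertices, dtype=float)          # shape (k+1, k)
    M = np.vstack([V.T, np.ones(len(V))])          # k coordinate rows + one sum-to-1 row
    rhs = np.append(np.asarray(point, dtype=float), 1.0)
    return np.linalg.solve(M, rhs)

triangle = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
inside = barycentric((0.2, 0.3), triangle)
outside = barycentric((1.0, 1.0), triangle)
print(inside, outside)
```

All coordinates are non-negative exactly when the point lies inside the simplex (or on its boundary), so a negative entry flags a composition outside the feasible mixture region.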

In multivariate optimization, simplex-based methods provide a powerful framework for experimental improvement and process optimization, particularly when detailed mechanistic models of the system are unavailable. These methods are classified into two distinct algorithmic philosophies: the fixed-size basic simplex and the adaptive modified simplex. The fundamental difference lies in their operational dynamics—fixed-size simplex maintains a constant step size throughout the optimization process, while adaptive modified simplex dynamically adjusts its step size and search direction based on landscape feedback [8] [9].

For researchers in drug development, these methods offer systematic approaches to navigate complex experimental spaces where multiple factors simultaneously influence critical outcomes. The basic simplex method, originating from the work of Spendley et al., maintains geometric regularity throughout the optimization process, providing stable but potentially slower convergence [8] [10]. In contrast, the modified simplex approach, most famously implemented in the Nelder-Mead algorithm, introduces adaptive mechanisms that allow the simplex to change shape based on response landscape characteristics, potentially accelerating convergence at the cost of increased complexity [10].

Theoretical Foundations and Algorithmic Mechanisms

Fixed-Size Basic Simplex Methodology

The fixed-size basic simplex operates through a series of predetermined movements, maintaining consistent step sizes throughout the optimization process. This approach uses a regular geometric structure (a simplex) with k+1 vertices for k factors, where each vertex represents a specific combination of factor levels [8]. The algorithm proceeds by reflecting the worst-performing vertex through the centroid of the opposing face, generating new experimental points in a structured manner while maintaining a constant simplex size [9].

Key characteristics of the fixed-size approach include:

- Geometric regularity: The simplex maintains its shape throughout the optimization process

- Fixed step size: Perturbation size remains constant, providing consistent experimental increments

- Systematic reflection: The worst vertex is reflected to generate new experimental conditions

- Boundary handling: Specific rules manage constraints and boundary violations

The basic simplex is particularly valued for its stability and predictable behavior, especially in noisy experimental environments where large, adaptive steps might amplify variability issues [8].
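The reflection at the heart of the fixed-size method is a mirror image of the worst vertex through the centroid of the others. The sketch below assumes a maximization problem; the vertex coordinates and responses are illustrative.

```python
def reflect_worst(vertices, responses):
    """Return (index of worst vertex, its reflection through the others' centroid)."""
    w = min(range(len(vertices)), key=lambda i: responses[i])
    others = [v for i, v in enumerate(vertices) if i != w]
    centroid = [sum(col) / len(others) for col in zip(*others)]
    reflected = [2 * c - x for c, x in zip(centroid, vertices[w])]   # R = C + (C - W)
    return w, reflected

# Roughly equilateral triangle in two coded factors, with made-up responses
vertices = [[0.0, 0.0], [1.0, 0.0], [0.5, 0.866]]
responses = [1.0, 3.0, 2.0]
index, new_vertex = reflect_worst(vertices, responses)
print(index, new_vertex)
```

Because a reflection preserves distances, the new simplex has the same size and shape as the old one. A common companion rule, not shown here, is to reflect the second-worst vertex instead when the new vertex turns out to be the worst, which prevents the simplex from flipping back and forth.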

Adaptive Modified Simplex Methodology

The adaptive modified simplex, most prominently implemented in the Nelder-Mead algorithm, introduces flexibility in both step size and direction by allowing the simplex to expand, contract, or reshape itself based on local response characteristics [10]. Unlike its fixed-size counterpart, this approach employs a variable step size mechanism that can accelerate convergence in favorable regions or contract to refine the search in unpromising areas.

The modified simplex incorporates four primary operations:

- Reflection: Projects the worst point through the centroid of the remaining points

- Expansion: Extends further in promising directions when reflection yields significant improvement

- Contraction: Reduces step size when the reflected point fails to improve on the second-worst vertex

- Shrinkage: Globally reduces simplex size when no improvement is found

A key advancement in modern implementations involves the analytical computation of the reflection parameter (α) rather than relying on fixed heuristic values, enhancing convergence properties [10]. This approach allows the algorithm to make larger steps when progressing toward optima and smaller steps when nearing the optimum region, potentially improving efficiency while maintaining robustness.
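For simulated or model-based responses, a ready-made Nelder-Mead implementation is available in SciPy. The sketch below uses a hypothetical smooth response surface in two factors. Note that SciPy minimizes, so the response is negated, and that by default it uses fixed reflection, expansion, contraction, and shrinkage coefficients rather than the analytically computed α described above.

```python
import numpy as np
from scipy.optimize import minimize

def negative_response(x):
    # Hypothetical surface: best response at pH 3.0 and 40 % organic modifier
    ph, organic = x
    return (ph - 3.0) ** 2 + 0.01 * (organic - 40.0) ** 2

start = np.array([5.0, 25.0])
# Explicit initial simplex: start plus one step along each factor
initial = np.array([start, start + [0.5, 0.0], start + [0.0, 5.0]])

result = minimize(negative_response, start, method="Nelder-Mead",
                  options={"initial_simplex": initial, "xatol": 1e-4, "fatol": 1e-6})

print(result.x, result.nfev)
```

`result.nfev` reports how many function evaluations were used, which corresponds to the number of experiments the same search would require at the bench.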

Figure 1: Adaptive Modified Simplex Decision Logic

Comparative Performance Analysis

Algorithmic Characteristics and Operational Parameters

Table 1: Fundamental Characteristics of Fixed-Size vs. Adaptive Simplex Methods

| Characteristic | Fixed-Size Basic Simplex | Adaptive Modified Simplex |

|---|---|---|

| Simplex Structure | Regular geometric shape maintained | Shape evolves based on response surface |

| Step Size | Constant throughout optimization | Variable (expands/contracts based on performance) |

| Parameters to Define | Initial step size, reflection coefficient | Reflection, expansion, contraction, shrinkage coefficients |

| Convergence Behavior | Stable, predictable progression | Potentially faster but may oscillate near optima |

| Noise Sensitivity | More robust to experimental noise | More sensitive to noise due to adaptive nature |

| Boundary Handling | Requires explicit constraint management | Can incorporate boundary constraints in operations |

| Computational Complexity | Lower; simple calculations | Higher; multiple operations per iteration |

| Implementation Complexity | Straightforward to implement | More complex decision logic required |

Quantitative Performance Metrics

Table 2: Performance Comparison Under Different Experimental Conditions

| Experimental Condition | Fixed-Size Basic Simplex | Adaptive Modified Simplex |

|---|---|---|

| Low Noise (SNR > 1000) | Slow but reliable convergence | Fast convergence with minimal oscillations |

| High Noise (SNR < 250) | Maintains direction stability | Prone to misdirection; may require restart |

| Low Dimensions (k < 4) | Efficient with minimal overhead | Very efficient with rapid improvement |

| High Dimensions (k > 6) | Computationally expensive | More efficient per evaluation but may require more iterations |

| Factor Step Size (dx) | Critical parameter; optimal ~1-5% of range | Less critical; algorithm adapts step size |

| Computational Resources | Lower memory and processing requirements | Higher memory for storing complex states |

Research comparing these approaches demonstrates that the optimal selection depends heavily on specific experimental conditions. In simulation studies, the adaptive modified simplex generally outperforms the fixed-size approach in low-noise environments and lower-dimensional spaces, while the fixed-size method maintains advantages in high-noise scenarios or when consistent, small perturbations are required to keep processes within specification limits [8].

Experimental Protocols for Pharmaceutical Applications

Protocol 1: Fixed-Size Basic Simplex for Reaction Optimization

Objective: Optimize yield and purity in a synthetic pathway while maintaining temperature and pressure within safe operating boundaries.

Materials and Equipment:

- Reaction vessel with temperature and pressure control

- Analytical HPLC system for purity assessment

- pH meter for monitoring reaction conditions

- Reagents and catalysts as required for synthesis

Experimental Workflow:

Define Optimization Factors and Ranges:

- Factor A: Reaction temperature (30-80°C)

- Factor B: Catalyst concentration (0.1-2.0 mol%)

- Factor C: Reaction time (1-24 hours)

- Factor D: Solvent ratio (0.2-0.8 v/v)

Initialize Simplex:

- Create initial simplex with 5 vertices (k+1 for k=4 factors)

- Set step size to 10% of factor range for each variable

- Define reflection coefficient = 1.0
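
One simple way to build the five starting vertices is to take a base point and step 10% of the range along each factor in turn. The sketch below assumes that construction. It gives a right-angled rather than a regular simplex, and the mid-range base point and the name `initial_simplex` are illustrative choices, not part of the protocol.

```python
def initial_simplex(lower, upper, start_fraction=0.5, step_fraction=0.10):
    """Base vertex plus one vertex per factor, each offset by a fixed step."""
    ranges = [hi - lo for lo, hi in zip(lower, upper)]
    base = [lo + start_fraction * r for lo, r in zip(lower, ranges)]
    vertices = [base[:]]
    for j, r in enumerate(ranges):
        vertex = base[:]
        vertex[j] += step_fraction * r  # step along factor j only
        vertices.append(vertex)
    return vertices


# Factor ranges from the protocol: temperature, catalyst, time, solvent ratio
lower = [30.0, 0.1, 1.0, 0.2]
upper = [80.0, 2.0, 24.0, 0.8]
simplex = initial_simplex(lower, upper)
for vertex in simplex:
    print(vertex)
```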

Iterative Optimization:

- Execute experiments according to current simplex vertices

- Measure responses (yield, purity)

- Calculate composite objective function: 0.6*yield + 0.4*purity

- Identify worst-performing vertex

- Reflect worst vertex through centroid of remaining vertices

- Verify new vertex stays within constraint boundaries

- If boundary violation occurs, implement projection to feasible region

Termination Criteria:

- Continue until simplex cycles without significant improvement (<2% change in objective function over 3 iterations)

- Maximum of 20 experimental iterations
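
The objective, boundary rule, and stopping rule of this protocol can be sketched as three small helpers. The function names are hypothetical. Reading "<2% change over 3 iterations" as a relative change in the best objective value is an assumption, and clipping to the factor limits is only one possible form of projection.

```python
def composite_objective(yield_pct, purity_pct):
    """Weighted sum used in the protocol: 0.6*yield + 0.4*purity."""
    return 0.6 * yield_pct + 0.4 * purity_pct


def project_to_bounds(vertex, lower, upper):
    """Clip each factor to its allowed range (simplest form of projection)."""
    return [min(max(x, lo), hi) for x, lo, hi in zip(vertex, lower, upper)]


def should_stop(best_history, rel_tol=0.02, window=3, max_iter=20):
    """best_history[i] is the best objective value after iteration i (index 0 = start)."""
    n_iter = len(best_history) - 1
    if n_iter >= max_iter:
        return True
    if n_iter < window:
        return False
    old, new = best_history[-1 - window], best_history[-1]
    return abs(new - old) < rel_tol * abs(old)


lower = [30.0, 0.1, 1.0, 0.2]
upper = [80.0, 2.0, 24.0, 0.8]
print(composite_objective(80.0, 95.0))
print(project_to_bounds([85.0, 1.0, 0.5, 0.9], lower, upper))
print(should_stop([70.0, 80.0, 85.0, 85.5, 86.0, 86.2]))
```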

Data Analysis:

- Plot objective function progression vs. iteration number

- Construct response surfaces based on final simplex vertices

- Identify optimal factor settings from best-performing vertex

Figure 2: Fixed-Size Simplex Experimental Workflow

Protocol 2: Adaptive Modified Simplex for Formulation Development

Objective: Optimize drug formulation composition to maximize dissolution rate while minimizing excipient cost and ensuring stability.

Materials and Equipment:

- Powder blending equipment

- Tablet compression machine

- Dissolution testing apparatus

- Stability chambers (controlled temperature and humidity)

- HPLC system for potency verification

Experimental Workflow:

Define Factors and Objective Function:

- Factor A: API concentration (5-30% w/w)

- Factor B: Binder percentage (1-10% w/w)

- Factor C: Disintegrant percentage (2-15% w/w)

- Factor D: Lubricant percentage (0.5-3% w/w)

- Objective: Maximize Z = 0.5*dissolution_rate + 0.3*(1/cost) + 0.2*stability_index

Initialize Adaptive Simplex:

- Generate k+1 = 5 initial vertices using Latin Hypercube sampling

- Set initial parameters: α(reflection)=1.0, γ(expansion)=2.0, β(contraction)=0.5, δ(shrinkage)=0.5
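
A hand-rolled Latin Hypercube sampler for the five starting formulations might look like the sketch below. It is an assumption-laden illustration: the function name and seed are arbitrary, it uses only the standard library, and it does not check that the sampled points form a non-degenerate simplex or that the component percentages remain compatible with the rest of the formulation.

```python
import random


def latin_hypercube(n_points, lower, upper, seed=0):
    """One sample per stratum in every dimension, strata shuffled independently."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in zip(lower, upper):
        strata = list(range(n_points))
        rng.shuffle(strata)
        # Draw a uniform point inside each stratum, then map to [lo, hi]
        columns.append([lo + (s + rng.random()) / n_points * (hi - lo) for s in strata])
    return [[col[i] for col in columns] for i in range(n_points)]


# Factor ranges from the protocol: API, binder, disintegrant, lubricant (% w/w)
lower = [5.0, 1.0, 2.0, 0.5]
upper = [30.0, 10.0, 15.0, 3.0]
vertices = latin_hypercube(5, lower, upper)
for vertex in vertices:
    print([round(x, 2) for x in vertex])
```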

Iterative Optimization Cycle:

- Prepare formulations according to current simplex vertices

- Characterize formulations (dissolution, cost calculation, stability testing)

- Rank vertices by objective function value

- Calculate centroid of best k vertices

- Apply reflection operation to generate candidate point

- Evaluate candidate point:

- If better than the current best vertex: Apply expansion

- If moderate improvement: Accept reflection

- If slight improvement: Apply contraction

- If no improvement: Apply shrinkage

Convergence Determination:

- Terminate when vertex standard deviation falls below threshold

- Or when simplex volume reduces to predetermined minimum

- Maximum of 15 iterations due to resource constraints
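
The two geometric stopping rules can be checked numerically as sketched below. The thresholds are placeholders to be set per study, and the helper names are assumptions. The volume uses the standard identity that an n-dimensional simplex has volume |det(E)| / n!, where E holds the edge vectors from one vertex.

```python
import math
import statistics


def simplex_volume(vertices):
    """|det(edge matrix)| / n!, with the determinant from Gaussian elimination."""
    n = len(vertices) - 1
    m = [[vertices[i + 1][j] - vertices[0][j] for j in range(n)] for i in range(n)]
    det = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            return 0.0  # degenerate (flat) simplex
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            det = -det
        det *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return abs(det) / math.factorial(n)


def has_converged(vertices, responses, std_tol, vol_tol):
    """True when the vertex responses agree closely or the simplex has collapsed."""
    return statistics.stdev(responses) < std_tol or simplex_volume(vertices) < vol_tol


triangle = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
print(simplex_volume(triangle))
print(has_converged(triangle, [1.00, 1.01, 0.99], std_tol=0.05, vol_tol=1e-6))
```

In practice the factors would be scaled to a common range before computing the volume, otherwise the factor with the largest units dominates.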

Data Analysis:

- Construct perturbation plots showing factor effects on responses

- Perform robustness analysis around optimal formulation

- Validate optimal formulation with triplicate experiments

Implementation Considerations for Drug Development

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents and Solutions for Simplex Optimization Experiments

| Reagent/Solution | Function in Optimization | Application Notes |

|---|---|---|

| pH Buffer Systems | Control and optimize reaction microenvironment | Critical for enzymatic or pH-sensitive synthetic pathways |

| Catalyst Libraries | Screen for optimal reaction acceleration | Vary concentration as factor in synthetic optimization |

| Solvent Mixtures | Modulate polarity and solubility parameters | Adjust ratios as continuous factors in formulation |

| Excipient Blends | Optimize drug delivery characteristics | Varied proportions affect dissolution and stability |

| Stability Indicators | Quantify formulation robustness under stress | Incorporate into objective function for stability |

| Analytical Standards | Quantify yield, purity, and byproducts | Essential for accurate response measurement |

| Mobile Phase Components | HPLC method development and analysis | Can be factors when optimizing analytical methods |

Practical Implementation Guidelines

Successful implementation of simplex methods in pharmaceutical research requires careful consideration of several practical aspects:

Factor Selection and Scaling:

- Select factors with significant expected impact on responses

- Scale factors to similar magnitude (e.g., 0-1 range) to prevent dominance by single variable

- Include both process and composition factors where applicable

Experimental Design Considerations:

- For fixed-size simplex, initial step size should represent practically significant changes

- For adaptive simplex, initial vertices should span feasible region adequately

- Include replication at reference point to estimate experimental error

Response Measurement and Objective Function:

- Incorporate multiple critical quality attributes in objective function

- Apply appropriate weighting to balance competing objectives

- Consider using desirability functions for complex multi-objective optimization

Constraint Management:

- Implement hard constraints for safety-critical parameters

- Use penalty functions for soft constraints in objective function

- Establish boundary violation protocols before beginning experiments
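
Factor scaling and soft-constraint penalties from the guidelines above could be implemented along the following lines. This is a sketch under assumptions: the quadratic penalty form, the weight of 100, and the helper names are illustrative, and the objective is assumed to be maximized.

```python
def to_unit_scale(x, lower, upper):
    """Map each factor from [lower, upper] to the 0-1 range."""
    return [(xi - lo) / (hi - lo) for xi, lo, hi in zip(x, lower, upper)]


def penalized_objective(raw_value, x, soft_lower, soft_upper, weight=100.0):
    """Subtract a quadratic penalty for every soft-limit violation."""
    penalty = sum(
        max(lo - xi, 0.0, xi - hi) ** 2
        for xi, lo, hi in zip(x, soft_lower, soft_upper)
    )
    return raw_value - weight * penalty


scaled = to_unit_scale([55.0, 1.05], [30.0, 0.1], [80.0, 2.0])
print(scaled)
# Second factor exceeds its soft upper limit of 1.0 by 0.1 (scaled units)
print(penalized_objective(86.0, [0.5, 1.1], [0.0, 0.0], [1.0, 1.0]))
```

Hard, safety-critical limits should still be enforced before an experiment is run rather than through a penalty term.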

The selection between fixed-size basic simplex and adaptive modified simplex represents a fundamental strategic decision in experimental optimization for pharmaceutical development. The fixed-size approach offers stability and robustness in high-noise environments or when consistent, small perturbations are required to maintain process control. In contrast, the adaptive modified simplex provides accelerated convergence and greater efficiency in well-characterized experimental spaces with lower noise levels.

For drug development applications, the adaptive modified simplex generally offers advantages in early-stage formulation and synthetic route optimization where rapid iteration is valuable and experimental noise can be controlled. The fixed-size approach maintains relevance in manufacturing process optimization and scale-up activities where consistent, controlled adjustments are essential for maintaining quality and regulatory compliance.

Future directions in simplex methodology development include hybrid approaches that combine the stability of fixed-size methods with the efficiency of adaptive approaches, as well as integration with machine learning techniques for initial guidance and anomaly detection [10] [11]. These advances promise to further enhance the utility of simplex methods as essential tools in the pharmaceutical researcher's toolkit.

In the pursuit of efficient and cost-effective drug development, researchers constantly seek superior methods for optimizing complex processes. Within this context, multivariate optimization presents a significant challenge, particularly in early-stage development where resources are limited and experimental data is sparse. The Simplex method emerges as a powerful, sequential optimization technique that enables researchers to navigate multidimensional experimental spaces with remarkable efficiency. Unlike traditional Design of Experiments (DoE) approaches that require extensive upfront experimentation, Simplex methods begin with a minimal set of experiments and then progressively determine the direction toward improved responses through an iterative process of reflection, expansion, and contraction [12]. This paper delineates the specific pharmaceutical use cases where Simplex protocols offer distinct advantages over conventional optimization approaches, providing detailed application notes and experimental protocols for implementation.

Pharmaceutical Applications of Simplex Optimization

Ideal Use Cases and Advantages

Simplex optimization demonstrates particular strength in specific pharmaceutical development scenarios. The table below summarizes the key use cases and the corresponding advantages over traditional methods.

Table 1: Pharmaceutical Use Cases for Simplex Optimization

| Use Case | Key Advantages | Traditional Method Challenge |

|---|---|---|

| Early Bioprocess Development [13] | Rapid identification of optimal conditions with minimal experiments; handles complex, nonlinear data trends | Extensive experimentation required before establishing viable operating windows |

| High-Throughput Chromatography Optimization [13] | Efficiently optimizes multiple response variables (yield, DNA content, HCP) simultaneously; compatible with gridded experimental data | Graphical optimization becomes complex with multiple responses; requires numerous experimental slices |

| Multi-objective Formulation Development | Avoids deterministic weight specification; delivers solutions belonging to Pareto set (non-dominated solutions) [13] | Weight specification requires extensive expert knowledge; solutions may be dominated in all responses |

| Membrane Protein Proteomics [14] | Superior enrichment of hydrophobic and lipidated proteins compared to acetone precipitation | Conventional one-phase extraction methods inefficient for membrane-rich samples |

Simplex in Bioprocess Chromatography

In high-throughput downstream process development, a grid-compatible Simplex variant has demonstrated exceptional performance in optimizing chromatography steps. This approach efficiently manages three critical responses simultaneously: yield, residual host cell DNA content, and host cell protein (HCP) content [13]. The method employs a desirability function to amalgamate these multiple responses into a single objective function, effectively converting a multi-objective problem into a scalar optimization challenge. The Simplex algorithm then navigates the complex space of both experimental conditions and response weights, delivering operating conditions that offer balanced, superior performance across all outputs. This approach has proven successful even with highly nonlinear response surfaces where high-order DoE models struggle [13].

Simplex in Multi-omics Sample Preparation

The SIMPLEX (Simultaneous Metabolite, Protein, Lipid Extraction) protocol represents a specialized liquid-liquid extraction application in analytical pharmacology. This method significantly enriches membrane proteins, transmembrane proteins, and S-palmitoylated proteins from lipid-rich synaptic junctions compared to conventional acetone precipitation [14]. For drug development research focusing on neuronal targets or membrane-bound receptors, this capability is crucial for comprehensive proteomic and phosphoproteomic characterization. The method achieves a 42% enrichment in membrane proteins, enabling more effective mass spectrometry-based identification of challenging hydrophobic protein targets relevant to neurological disorders [14].

Experimental Protocols

Grid-Compatible Simplex for Bioprocess Optimization

Table 2: Reagent Solutions for Bioprocess Optimization

| Research Reagent | Function in Protocol |

|---|---|

| Desirability Function Framework [13] | Amalgamates multiple responses (yield, impurities) into a single objective function |

| Gridded Experimental Space [13] | Pre-processed search space with monotonically increasing integers assigned to factor levels |

| Response Weight Parameters [13] | Incorporated as optimization inputs to avoid deterministic specification |

| Chromatography Resins & Buffers | Experimental materials for which optimal conditions are determined |

Protocol Steps:

- Pre-processing: Convert the gridded experimental search space by assigning monotonically increasing integers to the levels of each process factor (e.g., pH, conductivity, buffer concentration). Replace any missing data points with highly unfavorable surrogate values to guide the algorithm away from these regions [13].

- Initial Simplex Formation: Define a starting point or initial simplex within the processed experimental space. The number of vertices in this simplex equals n+1, where n is the number of variables being optimized [12] [15].

- Iterative Optimization:

- Evaluation: Conduct experiments at the conditions defined by the simplex vertices and measure all relevant responses (yield, DNA, HCP).

- Desirability Calculation: Apply the desirability approach to merge multiple responses into a total desirability value (D). Use Equations 1 and 2 for maximizing (e.g., yield) and minimizing (e.g., impurities) responses respectively, and Equation 3 to calculate the composite D [13].

- Simplex Transformation: Based on the response values, apply Simplex rules (reflection, expansion, contraction) to determine the coordinates of the next experimental point, moving away from unfavorable conditions and toward promising regions [12] [13].

- Termination: Continue iterations until the method identifies an optimum (no further improvement in D is observed) or meets predefined convergence criteria [13].
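
The desirability calculation in the steps above can be illustrated with a common linear (Derringer-type) form. Equations 1 to 3 of the cited work are not reproduced in this article, so the functions below are an assumed stand-in rather than the published equations, and the response limits and values are invented for the example.

```python
import math


def d_maximize(y, low, high):
    """0 at or below `low`, 1 at or above `high`, linear in between."""
    return min(max((y - low) / (high - low), 0.0), 1.0)


def d_minimize(y, low, high):
    """1 at or below `low`, 0 at or above `high`, linear in between."""
    return min(max((high - y) / (high - low), 0.0), 1.0)


def total_desirability(ds, weights):
    """Weighted geometric mean; any fully undesirable response gives D = 0."""
    if any(d == 0.0 for d in ds):
        return 0.0
    return math.exp(sum(w * math.log(d) for d, w in zip(ds, weights)) / sum(weights))


# Hypothetical responses: yield (%), residual DNA and HCP in arbitrary units
d_yield = d_maximize(85.0, 50.0, 100.0)
d_dna = d_minimize(20.0, 0.0, 100.0)
d_hcp = d_minimize(300.0, 0.0, 1000.0)
D = total_desirability([d_yield, d_dna, d_hcp], [1.0, 1.0, 1.0])
print(round(D, 4))
```

Missing grid points can be handled in the same framework by assigning them D = 0, which is the kind of unfavorable surrogate value the pre-processing step calls for.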

SIMPLEX Extraction for Membrane Proteomics

Table 3: Reagent Solutions for Membrane Proteomics

| Research Reagent | Function in Protocol |

|---|---|

| Methyl-tert-butylether (MTBE) [14] | Organic solvent for lipid extraction and membrane solubilization |

| Methanol [14] | Homogenization agent and protein precipitant |

| Triethylammonium bicarbonate (TEAB) [14] | Buffering agent for maintaining pH during protein digestion |

| Trypsin (Mass Spectrometry Grade) [14] | Proteolytic enzyme for protein digestion into analyzable peptides |

| Tris(2-carboxyethyl)phosphine (TCEP) [14] | Reducing agent for breaking protein disulfide bonds |

| Iodoacetamide (IAA) [14] | Alkylating agent for cysteine side chain modification |

Protocol Steps:

- Homogenization: Resuspend the membrane-enriched sample (e.g., synaptic junctions) in 225 µL of methanol. Perform three freeze-thaw cycles with intermediate ultrasonication to thoroughly homogenize the sample [14].

- Lipid Extraction: Add 750 µL of MTBE to the homogenate and incubate for 1 hour at 950 rpm and 4°C to solubilize membranes and extract lipids [14].

- Phase Separation: Add 188 µL of dd water to induce phase separation. Centrifuge at 10,000 × g for 10 minutes at 4°C. Remove and discard the upper organic phase containing lipids [14].

- Protein Precipitation: To the remaining lower phase, add 527 µL of methanol and incubate at -20°C for 2 hours to precipitate proteins. Pellet proteins by centrifuging at 13,500 × g for 30 minutes [14].

- Protein Digestion: Resuspend the protein pellet in 8 M urea, 0.1% rapigest in 50 mM TEAB buffer. Reduce proteins with 10 mM TCEP (1 hour, 22°C) and alkylate with 40 mM IAA (30 minutes, room temperature in the dark). Dilute with TEAB to reduce urea concentration below 1 M, then digest with trypsin (enzyme-to-substrate ratio 1:40 w/w) for 16 hours at 37°C [14].

- Analysis: Terminate digestion with formic acid, centrifuge, and desalt the peptides using C18 solid-phase extraction before LC-MS/MS analysis [14].

Workflow Visualization

The following diagram illustrates the logical workflow and decision process for implementing the grid-compatible Simplex method in pharmaceutical development:

Diagram 1: Simplex Optimization Workflow

The strategic implementation of Simplex methods addresses critical inefficiencies in pharmaceutical development, particularly for early-stage process optimization, multi-objective formulation challenges, and specialized analytical preparations. The grid-compatible Simplex algorithm provides a robust framework for navigating complex experimental spaces with minimal experimental runs, while the SIMPLEX extraction protocol offers a superior technical approach for enriching challenging membrane protein targets. By integrating these protocols into their multivariate optimization strategies, researchers and drug development professionals can accelerate development timelines, improve resource utilization, and gain deeper insights into complex biological systems relevant to therapeutic development.

Implementing the Simplex Protocol: A Step-by-Step Guide for Drug Development

The Simplex Method is a foundational algorithm in linear programming and a critical component in multivariate optimization protocol research. It operates by systematically moving from one corner point (extreme point) of the feasible solution space, defined by the problem's constraints, to an adjacent one, improving the objective function value with each step until the optimal solution is found [5]. This method is particularly valued for solving complex resource allocation problems prevalent in pharmaceutical development, such as optimizing reaction conditions, resource scheduling, and raw material blending under multiple constraints.

In the context of modern optimization research, the Simplex Method maintains its relevance even with the development of alternative approaches like Interior Point Methods (IPMs). While IPMs offer polynomial-time complexity and can be exceptionally powerful for very large-scale problems, the Simplex Method often demonstrates superior performance for many practical problems and remains heavily utilized in operational research contexts, including decomposition techniques and column generation schemes [16]. Its geometrical intuition and iterative improvement process make it particularly accessible for researchers modeling complex multivariate systems.

Theoretical Foundation: From LP Formulation to Initial Simplex

Standard Form Conversion

The algorithm requires the linear programming model to be in standard equation form with non-negative right-hand sides and variables [17]. The conversion process involves:

- Slack Variables: Convert ≤ inequalities into equations by adding non-negative slack variables. For example: ( x_2 + 2x_3 \leq 3 ) becomes ( x_2 + 2x_3 + s_1 = 3 ) with ( s_1 \geq 0 ) [5].

- Surplus Variables: Convert ≥ inequalities into equations by subtracting non-negative surplus variables.

- Unrestricted Variables: Replace free variables with the difference of two non-negative variables.

The resulting system forms ( A\mathbf{x} = \mathbf{b} ) with ( \mathbf{x} \geq \mathbf{0} ), where ( A ) is an ( m \times n ) matrix with full row rank [5].
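
The slack and surplus conversion can be sketched as follows. The helper name `to_standard_form` is an assumption. The first constraint is the example from the text, the second is a hypothetical ≥ constraint added for illustration, and free variables are not handled.

```python
def to_standard_form(A, b, senses):
    """Append one slack (+1) or surplus (-1) column per inequality row."""
    n_extra = sum(1 for s in senses if s in ("<=", ">="))
    rows, k = [], 0
    for row, sense in zip(A, senses):
        extra = [0.0] * n_extra
        if sense in ("<=", ">="):
            extra[k] = 1.0 if sense == "<=" else -1.0
            k += 1
        rows.append([float(a) for a in row] + extra)
    return rows, [float(v) for v in b]


# Variables x1, x2, x3:
#   x2 + 2*x3 <= 3   (from the text)
#   x1 + x2   >= 1   (hypothetical)
A_std, b_std = to_standard_form([[0, 1, 2], [1, 1, 0]], [3, 1], ["<=", ">="])
for row, rhs in zip(A_std, b_std):
    print(row, "=", rhs)
```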

Mathematical Representation

The algorithm utilizes a simplex tableau to organize computations [5]. The initial tableau structure is:

[ \begin{bmatrix} 1 & -\mathbf{c}^T & 0 \\ 0 & \mathbf{A} & \mathbf{b} \end{bmatrix} ]

Where ( \mathbf{c} ) represents the objective function coefficients, ( \mathbf{A} ) is the coefficient matrix of constraints, and ( \mathbf{b} ) is the right-hand side vector. Through pivot operations, the tableau is transformed into canonical form, revealing basic feasible solutions and their corresponding objective values.

Table 1: Key Components of the Initial Simplex Tableau

| Component | Symbol | Description | Role in Optimization |

|---|---|---|---|

| Decision Variables | ( x_j ) | Variables representing quantities to be determined | Fundamental units of the solution space |

| Objective Coefficients | ( c_j ) | Coefficients of variables in the objective function | Determine direction of optimization improvement |

| Constraint Matrix | ( A ) | Coefficients of constraints in equation form | Defines the feasible region geometry |

| Right-Hand Side | ( b ) | Constants in constraint equations | Sets capacity limits for resources |

| Slack/Surplus Variables | ( s_i ) | Added variables to convert inequalities to equations | Transform constraint representation |

Experimental Protocol: Constructing the Initial Simplex

Phase I: Initialization and Basic Feasible Solution

The process of finding an initial basic feasible solution constitutes Phase I of the simplex algorithm [5]:

- Problem Formulation: Clearly define the objective function and all constraints based on the optimization problem.

- Standard Form Conversion: Introduce slack, surplus, and artificial variables as needed to transform all constraints into equations.

- Initial Tableau Construction: Set up the initial simplex tableau with the objective function last.

- Artificial Objective Function: For problems requiring artificial variables, create a Phase I objective function that minimizes the sum of artificial variables.

- Feasibility Check: Apply pivot operations until all artificial variables are driven from the basis (or their sum is minimized to zero), indicating a feasible solution has been found.

The outcome of Phase I is either a basic feasible solution to begin Phase II optimization or the determination that the feasible region is empty (infeasible problem) [5].

Workflow Visualization

The following diagram illustrates the complete experimental workflow from problem formulation to optimal solution:

Research Reagent Solutions: Computational Tools for Simplex Implementation

Successful implementation of the simplex protocol requires specific computational tools and analytical approaches:

Table 2: Essential Research Reagents for Simplex Protocol Implementation

| Reagent Category | Specific Tools | Function in Protocol |

|---|---|---|

| Computational Environment | MATLAB, Python with NumPy/SciPy, R | Matrix manipulation for tableau operations and pivot selection |

| Optimization Libraries | Google OR-Tools, IBM CPLEX, SciPy Optimize | Provide pre-implemented simplex variants for validation |

| Visualization Tools | Graphviz DOT language, matplotlib, plotly | Create workflow diagrams and solution space representations |

| Linear Algebra Systems | LU decomposition routines, matrix inversion algorithms | Efficiently handle pivot operations and basis updates |

| Constraint Processors | Symbolic math toolkits, automatic differentiation | Convert inequality constraints to standard form equations |

Advanced Applications: Modified Simplex for Complex Optimization Scenarios

Multi-Criteria Optimization in Fuzzy Environments

Recent research has extended the simplex method to handle multi-criteria optimization problems under uncertainty, particularly valuable for pharmaceutical development where criteria may be contradictory or immeasurable [18]. The modified approach integrates fuzzy set theory with the simplex framework:

- Fuzzy Criteria Evaluation: Decision makers provide fuzzy assessments of immeasurable criteria using linguistic variables.

- Simplex Modification: The traditional simplex method is adapted to process these fuzzy evaluations alongside measurable criteria.

- Pareto Optimal Solutions: The algorithm identifies compromise solutions that balance multiple conflicting objectives.

- Convergence Assurance: A theorem guarantees the solution sequence converges to the minimum criteria values [18].

This hybrid approach has demonstrated practical utility in real-world applications such as optimizing benzene production processes [18].

Computational Mechanics of Pivot Operations

The geometrical movement between corner points is implemented computationally through pivot operations:

Table 3: Pivot Operation Decision Parameters

| Decision Point | Calculation Method | Stopping Condition |

|---|---|---|

| Entering Variable | Largest-magnitude negative reduced cost: ( \max_j \{ \lvert \bar{c}_j \rvert : \bar{c}_j < 0 \} ) | All reduced costs ≥ 0 |

| Leaving Variable | Minimum ratio test: ( \min_i \{ b_i / a_{ij} : a_{ij} > 0 \} ) | No ( a_{ij} > 0 ) in the entering column (unbounded) |

| Pivot Element | Intersection of entering column and leaving row | Matrix singularity check |

| Optimality Check | All reduced costs non-negative | Optimal solution found |
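
The decision rules in the table above can be assembled into a small tableau solver. The sketch below is deliberately restricted to problems of the form maximize c·x subject to A x ≤ b with b ≥ 0, so the slack variables give an immediate basic feasible solution and no Phase I is needed. The function name is an assumption, the constant z column of the tableau is omitted, no anti-cycling rule is implemented, and exact fractions are used to avoid rounding issues.

```python
from fractions import Fraction


def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Row 0: objective row [-c | 0 | 0]; rows 1..m: [A | I | b]
    T = [[Fraction(-cj) for cj in c] + [Fraction(0)] * (m + 1)]
    for i in range(m):
        slack = [Fraction(int(i == k)) for k in range(m)]
        T.append([Fraction(a) for a in A[i]] + slack + [Fraction(b[i])])
    basis = [n + i for i in range(m)]
    while True:
        # Entering variable: most negative reduced cost
        col = min(range(n + m), key=lambda j: T[0][j])
        if T[0][col] >= 0:
            break  # all reduced costs non-negative: optimal
        # Leaving variable: minimum ratio test over positive column entries
        ratios = [(T[i][-1] / T[i][col], i) for i in range(1, m + 1) if T[i][col] > 0]
        if not ratios:
            raise ValueError("problem is unbounded")
        _, row = min(ratios)
        # Pivot: normalize the pivot row, then eliminate the column elsewhere
        p = T[row][col]
        T[row] = [v / p for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                f = T[i][col]
                T[i] = [v - f * w for v, w in zip(T[i], T[row])]
        basis[row - 1] = col
    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = T[i + 1][-1]
    return x, T[0][-1]


# Textbook-style example: maximize 3x + 5y with three resource limits
x, z = simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print([str(v) for v in x], z)
```

For production work, a maintained solver such as those listed among the optimization libraries above is the safer choice. A hand-written tableau is mainly useful for checking understanding of the pivot rules.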

Application Notes for Pharmaceutical Research

Protocol Implementation Guidelines

When applying the simplex method to drug development optimization:

- Constraint Modeling: Accurately model production constraints as linear inequalities, considering reaction times, resource availability, and purity requirements.

- Objective Specification: Clearly define the optimization target (cost minimization, yield maximization) with appropriate coefficients.

- Sensitivity Analysis: Conduct post-optimality analysis to determine how changes in constraint parameters affect the optimal solution.

- Validation: Verify results through multiple simplex implementations or alternative optimization approaches where feasible.

Integration with Broader Optimization Framework

The simplex method serves as a fundamental component within a comprehensive multivariate optimization protocol. Its strengths in providing exact solutions to linear problems complement other optimization approaches:

- Decomposition Schemes: The simplex method can be effectively combined with column generation techniques for discrete optimization problems like optimal transport [16].

- Hybrid Approaches: For non-linear or fuzzy optimization scenarios, modified simplex procedures can be integrated with fuzzy mathematics and other optimality principles [18].

- Benchmarking: Use simplex solutions as benchmarks for evaluating heuristic or metaheuristic approaches to complex scheduling problems in pharmaceutical manufacturing [19].

This primer establishes the foundational framework for constructing initial simplex configurations within multivariate optimization research, providing researchers with practical protocols for implementation across diverse pharmaceutical development scenarios.

Core Mathematical Operations of the Simplex Protocol

The Nelder-Mead simplex method is a cornerstone of derivative-free multivariate optimization, relying on a geometric structure called a simplex—an n-dimensional polytope defined by n+1 vertices [20]. The algorithm iteratively improves this simplex through a series of geometric operations, navigating the parameter space without requiring gradient information [21]. These operations form the fundamental "iterative engine" that enables the protocol to converge toward optimal solutions, making it particularly valuable for complex optimization landscapes in scientific and engineering disciplines.

Reflection Operation Protocol

The reflection operation generates a new trial point by moving away from the worst-performing vertex of the simplex, under the assumption that a better point lies in the opposite direction.

Mathematical Formulation: Let ( X_h ) be the vertex with the highest (worst) objective function value, and let ( M ) be the centroid of the remaining vertices (excluding ( X_h )). The reflected point ( X_r ) is calculated as [22]: [ X_r = M + \alpha(M - X_h) ] where ( \alpha ) is the reflection coefficient, typically set to 1 [20]. This operation preserves the volume of the simplex while exploring promising directions away from poor regions of the parameter space.

Experimental Protocol:

- Vertex Evaluation: Calculate objective function values ( f(X_i) ) for all vertices ( X_1, X_2, \ldots, X_{n+1} )

- Identify Worst Vertex: Determine ( X_h ) where ( f(X_h) = \max\{f(X_1), f(X_2), \ldots, f(X_{n+1})\} )

- Compute Centroid: Calculate ( M = \frac{1}{n} \sum_{i \neq h} X_i )

- Generate Reflection: Compute ( X_r = M + \alpha(M - X_h) )

- Evaluate Reflection: Calculate ( f(X_r) ) for comparison

Expansion Operation Protocol

The expansion operation extends the reflection further when the reflected point shows significant improvement, enabling more aggressive exploration of promising regions.

Mathematical Formulation: If the reflected point ( X_r ) represents sufficient improvement (( f(X_r) < f(X_l) ) where ( X_l ) is the best vertex), an expansion point ( X_e ) is generated [20] [22]: [ X_e = M + \gamma(X_r - M) ] where ( \gamma ) is the expansion coefficient, typically set to 2 [20]. This operation increases the simplex volume to accelerate progress toward optima.

Decision Protocol:

- Evaluate Reflection: Compare ( f(X_r) ) with current best value ( f(X_l) )

- Expansion Condition: If ( f(X_r) < f(X_l) ), proceed with expansion

- Generate Expansion: Compute ( X_e = M + \gamma(X_r - M) )

- Evaluate Expansion: Calculate ( f(X_e) )

- Selection: Replace ( X_h ) with the better of ( X_r ) and ( X_e )

Contraction Operation Protocol

Contraction operations reduce the simplex size when reflection fails to produce improvement, enabling finer search resolution and adaptation to complex response surfaces.

Mathematical Formulation: Two contraction variants exist based on reflection performance [20]:

Outside Contraction (when ( f(X_r) ) is better than ( f(X_h) ) but not the best): [ X_c = M + \beta(X_r - M) ] where ( \beta ) is the contraction coefficient, typically 0.5 [20]

Inside Contraction (when ( f(X_r) ) is worse than ( f(X_h) )): [ X_c = M - \beta(M - X_h) ]

Experimental Protocol:

- Performance Assessment: Compare ( f(X_r) ) with all vertex values

- Contraction Type Selection: Choose outside or inside contraction based on relative performance

- Generate Contraction Point: Compute ( X_c ) using appropriate formula

- Evaluate Contraction: Calculate ( f(X_c) )

- Replacement Decision: Replace ( X_h ) with ( X_c ) if improvement occurs; otherwise, proceed to shrinkage
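
The reflection, expansion, contraction, and shrinkage rules can be combined into a single iteration as sketched below, for minimization and with the typical coefficients quoted above. Several details are assumptions rather than statements from the cited sources: the function name, the use of the second-worst vertex as the threshold for accepting a plain reflection (the usual Nelder-Mead convention), and the repeated calls to `f`, which are acceptable for a cheap test function but would be replaced by stored responses when each evaluation is a laboratory experiment.

```python
def nelder_mead_step(simplex, f, alpha=1.0, gamma=2.0, beta=0.5, delta=0.5):
    """Perform one iteration (minimization) and return the updated simplex."""
    simplex = sorted(simplex, key=f)  # best first, worst last
    best, second_worst, worst = simplex[0], simplex[-2], simplex[-1]
    n = len(simplex) - 1
    M = [sum(v[j] for v in simplex[:-1]) / n for j in range(n)]  # centroid without worst

    def along(t, target):
        """Return the point M + t * (target - M)."""
        return [m + t * (x - m) for m, x in zip(M, target)]

    xr = along(-alpha, worst)  # reflection: M + alpha * (M - X_h)
    if f(xr) < f(best):
        xe = along(gamma, xr)  # expansion: M + gamma * (X_r - M)
        new = xe if f(xe) < f(xr) else xr
    elif f(xr) < f(second_worst):
        new = xr  # plain reflection accepted
    else:
        if f(xr) < f(worst):
            xc = along(beta, xr)  # outside contraction: M + beta * (X_r - M)
        else:
            xc = along(beta, worst)  # inside contraction: M - beta * (M - X_h)
        if f(xc) < min(f(xr), f(worst)):
            new = xc
        else:
            # Shrinkage: pull every other vertex toward the best one
            return [best] + [
                [b + delta * (x - b) for b, x in zip(best, v)] for v in simplex[1:]
            ]
    return simplex[:-1] + [new]


def f(x):
    """Test function with its minimum at (1, 2)."""
    return (x[0] - 1.0) ** 2 + (x[1] - 2.0) ** 2


simplex = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
first = nelder_mead_step(simplex, f)
for _ in range(200):
    simplex = nelder_mead_step(simplex, f)
best = min(simplex, key=f)
print(first)
print([round(v, 4) for v in best])
```

In the first iteration of this example the reflected point (1, 1) beats the best vertex, so the expansion point (1.5, 1.5) is tried and accepted.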

Quantitative Performance Analysis

Operational Efficiency Across Problem Domains

Table 1: Simplex Operation Efficiency in Noisy Optimization Problems [21]

| Operation Type | Success Rate (%) | Average Improvement per Step | Distortion in Simplex Size (DSS) | Application Context |
|---|---|---|---|---|
| Reflection | 68.4 | 24.7% | 1.02 | Standard landscape exploration |
| Expansion | 71.9 | 31.2% | 1.87 | Aggressive progression toward optima |
| Contraction | 63.1 | 18.5% | 0.53 | Resolution refinement |
| Shrinkage | 42.7 | -5.3% | 0.38 | Recovery from stagnation |

The adaptive Nelder-Mead algorithm (ANMA) demonstrates superior performance in noisy optimization landscapes compared to the standard implementation (SNMA). In complex nonlinear least-squares problems with experimental noise, ANMA achieved approximately 35% higher convergence probability and 28% faster parameter resolution by adaptively adjusting simplex operations based on landscape characteristics [21].

Parameter Configuration Guidelines

Table 2: Optimal Parameter Settings for Pharmaceutical Applications

| Parameter | Standard Value | Adaptive Range | Problem Sensitivity | Effect on Convergence |
|---|---|---|---|---|
| Reflection (α) | 1.0 | 0.8-1.2 | Low | Governs exploration breadth |
| Expansion (γ) | 2.0 | 1.5-3.0 | High | Controls aggressive progression |
| Contraction (β) | 0.5 | 0.3-0.7 | Medium | Determines refinement resolution |
| Shrinkage (δ) | 0.5 | 0.4-0.6 | Low | Recovery from complex landscapes |
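One way to keep these settings together in code is a small validated configuration object. The sketch below uses the standard values from Table 2 as defaults and treats the adaptive ranges as hard validation bounds, which is an assumption made for illustration; the class name `SimplexCoefficients` is hypothetical.

```python
from dataclasses import dataclass

# Adaptive ranges from Table 2, used here as validation bounds (assumption)
_RANGES = {
    "alpha": (0.8, 1.2),  # reflection
    "gamma": (1.5, 3.0),  # expansion
    "beta": (0.3, 0.7),   # contraction
    "delta": (0.4, 0.6),  # shrinkage
}


@dataclass(frozen=True)
class SimplexCoefficients:
    alpha: float = 1.0
    gamma: float = 2.0
    beta: float = 0.5
    delta: float = 0.5

    def __post_init__(self):
        # Reject any coefficient outside its tabulated range
        for name, (low, high) in _RANGES.items():
            value = getattr(self, name)
            if not low <= value <= high:
                raise ValueError(f"{name}={value} is outside {low}-{high}")


defaults = SimplexCoefficients()
cautious = SimplexCoefficients(gamma=1.5)  # less aggressive expansion
print(defaults, cautious)
```

Because expansion is listed as the most problem-sensitive coefficient, it is the natural first candidate to adjust when a run overshoots.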

Pharmaceutical Application Protocol

Drug Formulation Optimization Workflow

The simplex protocol enables efficient optimization of pharmaceutical formulations where multiple conflicting objectives must be balanced, such as bioavailability, stability, and production cost [23].

Experimental Protocol:

- Parameter Space Definition:

  - Identify critical formulation parameters (excipient ratios, processing variables)

  - Establish feasible ranges based on physicochemical constraints

  - Define objective function incorporating multiple performance metrics

- Initial Simplex Design:

  - Generate n+1 formulations using systematic experimental design

  - Ensure non-degenerate simplex geometry

  - Incorporate formulation expertise in initial vertex selection

- Iterative Optimization Cycle:

  - Prepare and characterize formulations corresponding to simplex vertices

  - Evaluate objective function (e.g., dissolution profile, stability metrics)

  - Apply reflection, expansion, or contraction operations based on performance

  - Continue until convergence criteria are satisfied
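The non-degeneracy requirement in the initial simplex design can be checked numerically before any formulation is prepared. A simplex with n+1 vertices in n factors is degenerate when its edge vectors are linearly dependent, which corresponds to a zero determinant of the edge matrix. The following is a minimal sketch using plain Gaussian elimination; the function names and tolerance are hypothetical, and factors are assumed to be scaled to comparable ranges so that a single tolerance is meaningful.

```python
def edge_determinant(simplex):
    """Determinant of the n x n matrix with rows X_i - X_0 for i = 1..n."""
    base = simplex[0]
    rows = [[x - b for x, b in zip(p, base)] for p in simplex[1:]]
    n = len(rows)
    det = 1.0
    for col in range(n):
        # Partial pivoting: pick the row with the largest entry in this column
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        if abs(rows[pivot][col]) < 1e-12:
            return 0.0
        if pivot != col:
            rows[col], rows[pivot] = rows[pivot], rows[col]
            det = -det
        det *= rows[col][col]
        for r in range(col + 1, n):
            factor = rows[r][col] / rows[col][col]
            for c in range(col, n):
                rows[r][c] -= factor * rows[col][c]
    return det


def is_non_degenerate(simplex, tol=1e-9):
    """True when the vertices span the full n-dimensional factor space."""
    return abs(edge_determinant(simplex)) > tol


print(is_non_degenerate([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]))  # proper triangle
print(is_non_degenerate([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]))  # collinear points
```

A collinear set of three formulations in two factors fails the check because the simplex could never move off that line.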

Multi-Objective Optimization in Drug Development

Pharmaceutical development inherently involves multiple competing objectives, requiring specialized approaches to balance efficacy, safety, and manufacturability [18] [23].

Pareto Optimization Protocol:

- Objective Function Definition:

  - Transform each critical quality attribute to normalized deviation from target

  - Apply weighting factors based on therapeutic priority

  - Construct aggregate objective function: [ \text{cost} = \frac{\sum_i w_i \cdot |f_i - f_{i,\text{target}}| / f_{i,\text{target}}}{\sum_i w_i} ]

- Fuzzy Optimization Framework:

  - Incorporate expert knowledge through fuzzy evaluation of immeasurable criteria

  - Apply hybrid maximin and Pareto optimality principles [18]

  - Handle uncertainty in biological response measurements

- Decision Support Implementation:

  - Present Pareto-optimal solutions to development team

  - Enable interactive exploration of formulation trade-offs

  - Incorporate regulatory constraints throughout optimization process
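The aggregate objective function defined in the first step of this protocol is a weighted mean of relative deviations from target. A minimal sketch follows; the function name, the attribute values, and the weights are hypothetical, and targets are assumed to be nonzero so that the normalisation is defined.

```python
def aggregate_cost(values, targets, weights):
    """Weighted mean of |f_i - target_i| / target_i over all attributes."""
    if not (len(values) == len(targets) == len(weights)):
        raise ValueError("values, targets and weights must have equal length")
    if any(t == 0 for t in targets):
        raise ValueError("targets must be nonzero for relative deviations")
    weighted = sum(
        w * abs(f - t) / t for f, t, w in zip(values, targets, weights)
    )
    return weighted / sum(weights)


# Hypothetical attributes: dissolution (%) and tablet hardness (N)
measured = [80.0, 55.0]
targets = [100.0, 50.0]
weights = [2.0, 1.0]  # dissolution given twice the priority
cost = aggregate_cost(measured, targets, weights)
print(round(cost, 4))
```

A cost of zero means every attribute is exactly on target, so the simplex search minimises this value.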

Research Reagent Solutions

Table 3: Essential Computational Tools for Simplex Optimization Research

| Research Tool | Function | Application Context | Implementation Example |
|---|---|---|---|
| Adaptive NMA (ANMA) | Dynamic parameter adjustment | Noisy experimental data | Pharmaceutical formulation optimization [21] |
| Multi-Objective NBI | Pareto front generation | Conflicting objectives | Drug property balancing [24] |
| Fuzzy Evaluation | Immeasurable criteria handling | Expert knowledge integration | Biological response optimization [18] |
| Factor Analysis | Response correlation modeling | Multivariate optimization | Quality by Design (QbD) implementation [24] |
| Robust Cost Function | Weighted objective combination | Priority-based optimization | Formulation parameter tuning [25] |

Convergence and Termination Criteria

Effective implementation requires precise convergence monitoring to balance computational efficiency with solution quality.

Termination Protocol:

- Standard Deviation Criterion: Terminate when the standard deviation of the response values across the simplex vertices falls below a threshold (e.g., 1×10⁻⁴) [20]

- Simplex Size Monitoring: Track distortion in simplex size (DSS) throughout iterative process [21]

- Performance Stagnation: Monitor improvement rate across iterations

- Iteration Limit: Implement maximum iteration count as fallback (e.g., 1000 iterations) [20]
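The standard deviation criterion and the iteration limit can be combined into one check that runs at the end of every cycle. In this sketch the standard deviation is taken over the objective values at the vertices, using the population form; both choices are assumptions, as is the function name. The thresholds are the example values given in the list above.

```python
from statistics import pstdev


def should_terminate(vertex_values, iteration, tol=1e-4, max_iter=1000):
    """Return (stop, reason) for the current simplex and iteration count."""
    if iteration >= max_iter:
        return True, "iteration limit"
    # Spread of the responses across the n+1 vertices
    if pstdev(vertex_values) < tol:
        return True, "converged"
    return False, ""


print(should_terminate([1.0, 1.00001, 0.99999], iteration=10))
print(should_terminate([1.0, 2.0, 3.0], iteration=10))
print(should_terminate([1.0, 2.0, 3.0], iteration=1000))
```

For noisy experimental responses the threshold should not be set below the measurement repeatability, otherwise the criterion may never trigger.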

Conformance Verification:

- Validate solution robustness through perturbation analysis

- Confirm physiological relevance of optimized parameters

- Verify manufacturability within design space boundaries

The iterative engine of reflection, expansion, and contraction operations provides a robust foundation for multivariate optimization in pharmaceutical development. By adapting these core operations to specific research contexts and implementing rigorous experimental protocols, researchers can efficiently navigate complex design spaces to identify optimal formulations balancing multiple critical quality attributes.

In drug discovery and analytical chemistry, developers routinely face the challenge of optimizing multiple, often conflicting, objectives simultaneously. A formulation scientist might need to maximize product purity while minimizing production cost and processing time. Such multi-objective optimization problems are characterized by vast, complex solution spaces where improving one objective often leads to the deterioration of another [26]. Traditional single-variable (univariate) optimization approaches are inadequate for these scenarios as they optimize conditions one-by-one while holding others constant, failing to capture critical interaction effects between variables and potentially missing the true optimal conditions [27].

The integration of the desirability function with robust optimization algorithms provides a powerful framework for confronting these challenges. This approach allows researchers to transform multiple responses into a single, dimensionless metric that can be systematically optimized. Within this framework, the simplex method serves as a particularly effective optimization engine, especially when dealing with complex experimental landscapes where mathematical derivatives are unobtainable or when processes are characterized by uncertainty and fuzzy criteria [27] [18]. This protocol details the application of this combined approach, providing a structured methodology for researchers in drug development and related fields.

Theoretical Foundation

The Desirability Function

The desirability function, introduced by Derringer and Suich, is a mathematical tool for converting multiple response variables into a single, comprehensive metric. Its core principle involves transforming each individual response ( y_i ) into a partial desirability function ( d_i ), which ranges from 0 (completely undesirable) to 1 (fully desirable). The form of ( d_i ) depends on the optimization goal for that particular response:

For "Higher is Better" (Maximization): ( d_i = \begin{cases} 0 & \text{if } y_i < L \\ \left( \frac{y_i - L}{T - L} \right)^s & \text{if } L \leq y_i \leq T \\ 1 & \text{if } y_i > T \end{cases} ) where ( L ) is the lower specification limit, ( T ) is the target value (often the maximum practical value), and ( s ) is a user-defined weight.

For "Lower is Better" (Minimization): ( d_i = \begin{cases} 1 & \text{if } y_i < T \\ \left( \frac{U - y_i}{U - T} \right)^s & \text{if } T \leq y_i \leq U \\ 0 & \text{if } y_i > U \end{cases} ) where ( U ) is the upper specification limit and ( T ) is the target.

For "Target is Best": ( d_i = \begin{cases} \left( \frac{y_i - L}{T - L} \right)^s & \text{if } L \leq y_i \leq T \\ \left( \frac{U - y_i}{U - T} \right)^t & \text{if } T \leq y_i \leq U \\ 0 & \text{otherwise} \end{cases} ) where ( s ) and ( t ) are weights shaping the function around the target ( T ).

These individual desirabilities are then combined into an overall desirability index, ( D ), using the geometric mean: ( D = (d_1 \times d_2 \times \cdots \times d_n)^{1/n} ). This overall desirability ( D ) becomes the single objective function for the optimization algorithm. A value of ( D=1 ) represents the ideal case where all responses are on target, while ( D=0 ) indicates that at least one response is outside its acceptable limits [27].
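The three partial desirability forms and the geometric mean translate directly into code. The sketch below assumes ( L < T < U ) for every response; the function names and the numerical limits in the example are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import math


def d_maximize(y, low, target, s=1.0):
    """Higher is better: 0 below L, 1 above T, power ramp in between."""
    if y < low:
        return 0.0
    if y > target:
        return 1.0
    return ((y - low) / (target - low)) ** s


def d_minimize(y, target, high, s=1.0):
    """Lower is better: 1 below T, 0 above U, power ramp in between."""
    if y < target:
        return 1.0
    if y > high:
        return 0.0
    return ((high - y) / (high - target)) ** s


def d_target(y, low, target, high, s=1.0, t=1.0):
    """Target is best: rises from L to T, falls from T to U, 0 elsewhere."""
    if low <= y <= target:
        return ((y - low) / (target - low)) ** s
    if target < y <= high:
        return ((high - y) / (high - target)) ** t
    return 0.0


def overall_desirability(partials):
    """Geometric mean of the partial desirabilities."""
    return math.prod(partials) ** (1.0 / len(partials))


# Hypothetical responses and limits
partials = [
    d_maximize(1.75, low=1.5, target=2.0),         # 0.5
    d_minimize(12.0, target=10.0, high=14.0),      # 0.5
    d_target(1.0, low=0.8, target=1.0, high=1.2),  # 1.0 (exactly on target)
]
D = overall_desirability(partials)
print(partials, round(D, 4))
```

Because the geometric mean multiplies the partial values, a single response outside its limits drives ( D ) to zero regardless of how good the others are.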

The Simplex Method of Optimization

The simplex method used in experimental optimization (distinct from the Dantzig simplex method for linear programming) is a sequential search technique that does not require the calculation of derivatives. It is therefore classified as a direct search method and is particularly valuable when the functional relationship between variables and the response is complex or unknown [27].

The method operates using a geometric figure called a simplex. For ( n ) factors to optimize, the simplex is defined by ( n+1 ) points in the factor space. For example, with two factors, the simplex is a triangle. The core algorithm, the Nelder-Mead simplex, proceeds by iteratively reflecting, expanding, or contracting the simplex away from the point with the worst performance, thus "rolling" itself towards an optimum [27]. This makes it highly effective for navigating response surfaces with potential interactions between variables, a task where univariate methods fail.
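To make the reflect, expand, and contract cycle concrete, the following is a compact sketch of a Nelder-Mead style loop in pure Python. It is an illustration under stated assumptions, not the implementation used in the cited studies: it minimises the objective, accepts a plain reflection when it beats the second-worst vertex (a common textbook rule), shrinks toward the best vertex when contraction fails, and stops when the spread of vertex responses falls below a tolerance. The function name, tolerance, and quadratic test surface are hypothetical.

```python
def nelder_mead(f, simplex, alpha=1.0, gamma=2.0, beta=0.5, delta=0.5,
                tol=1e-10, max_iter=500):
    """Minimise f from n+1 starting vertices; return (best_point, best_value)."""
    pts = [list(p) for p in simplex]
    vals = [f(p) for p in pts]
    n = len(pts) - 1
    for _ in range(max_iter):
        # Order vertices from best (lowest) to worst (highest)
        order = sorted(range(n + 1), key=lambda i: vals[i])
        pts = [pts[i] for i in order]
        vals = [vals[i] for i in order]
        if vals[-1] - vals[0] < tol:
            break
        x_h, f_h = pts[-1], vals[-1]
        m = [sum(p[j] for p in pts[:-1]) / n for j in range(n)]
        x_r = [m[j] + alpha * (m[j] - x_h[j]) for j in range(n)]
        f_r = f(x_r)
        if f_r < vals[0]:
            # Reflection beats the best vertex: try expanding further
            x_e = [m[j] + gamma * (x_r[j] - m[j]) for j in range(n)]
            f_e = f(x_e)
            pts[-1], vals[-1] = (x_e, f_e) if f_e < f_r else (x_r, f_r)
        elif f_r < vals[-2]:
            # Reflection beats the second-worst vertex: accept it
            pts[-1], vals[-1] = x_r, f_r
        else:
            if f_r < f_h:   # outside contraction
                x_c = [m[j] + beta * (x_r[j] - m[j]) for j in range(n)]
                ref = f_r
            else:           # inside contraction
                x_c = [m[j] - beta * (m[j] - x_h[j]) for j in range(n)]
                ref = f_h
            f_c = f(x_c)
            if f_c < ref:
                pts[-1], vals[-1] = x_c, f_c
            else:
                # Shrink every vertex toward the best one
                best = pts[0]
                pts = [best] + [
                    [best[j] + delta * (p[j] - best[j]) for j in range(n)]
                    for p in pts[1:]
                ]
                vals = [vals[0]] + [f(p) for p in pts[1:]]
    i_best = min(range(n + 1), key=lambda i: vals[i])
    return pts[i_best], vals[i_best]


# Hypothetical smooth response with its minimum at (3, -1)
def bowl(x):
    return (x[0] - 3.0) ** 2 + (x[1] + 1.0) ** 2


best_point, best_value = nelder_mead(bowl, [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(best_point, best_value)
```

In laboratory use each call to `f` is an experiment, so the loop would normally be driven one step at a time rather than run to completion.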

Synergistic Integration in a Multi-Objective Context

The power of this approach lies in the synergy between its components. The desirability function translates a complex, multi-criteria problem into a single, quantifiable objective: maximize ( D ). The simplex algorithm then efficiently solves this problem by navigating the factor space, dynamically adjusting the experimental conditions based on the observed ( D ) values without needing to know the underlying mathematical model of each response. This hybrid approach is highly effective for solving Multi-objective Linear Programming (MOLP) problems and has demonstrated reduced computational effort compared to other techniques like preemptive goal programming [4] [18]. Furthermore, the framework can be extended to handle fuzzy environments, where criteria or constraints are not crisp but are described by linguistic variables, allowing the incorporation of expert knowledge and experience from decision-makers [18].

Application Notes & Protocols

Protocol: Multi-Objective Optimization of an HPLC Method for Drug Analysis

This protocol outlines the steps for optimizing a High-Performance Liquid Chromatography (HPLC) method for quantifying an active pharmaceutical ingredient (API), such as Losartan Potassium, using a desirability-simplex approach [28]. The goal is to simultaneously achieve optimal resolution, analysis time, and peak symmetry.

1. Problem Definition and Goal Setting

- Objective: Develop a robust HPLC method to quantify Losartan Potassium in capsules.

- Critical Responses (Objectives):

- ( y_1 ): Resolution (Rs) from closest impurity. Goal: Maximize (Target ≥ 2.0).

- ( y_2 ): Analysis time (t). Goal: Minimize (Target ≤ 10 min).

- ( y_3 ): Peak Asymmetry Factor (As). Goal: Target is 1.0.

- Critical Factors (Variables):

- ( x_1 ): pH of the mobile phase buffer.

- ( x_2 ): Concentration of the organic modifier (e.g., Acetonitrile %).

- ( x_3 ): Flow rate (mL/min).

- ( x_4 ): Column temperature (°C).

2. Experimental Design and Initial Simplex

- Define the feasible range for each factor based on preliminary experiments or literature.

- Construct an initial simplex in the 4-dimensional factor space. This requires 5 initial experimental runs (n+1, where n=4). These initial points can be selected using a preliminary fractional factorial design to efficiently scout the design space [28].
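As an illustration of how the five starting runs might be laid out, the sketch below builds a simple "corner" simplex: one baseline run plus one run per factor, each offset along a single axis. This is a swapped-in construction chosen for brevity, not the fractional factorial scouting design mentioned above. The baseline, step sizes, and feasible ranges are hypothetical placeholders and are not taken from the Losartan study.

```python
def corner_simplex(start, steps, bounds):
    """Baseline vertex plus one vertex per factor, offset along a single axis."""
    vertices = [list(start)]
    for j, step in enumerate(steps):
        vertex = list(start)
        vertex[j] += step
        vertices.append(vertex)
    # Reject any run that falls outside the feasible range of a factor
    for vertex in vertices:
        for value, (low, high) in zip(vertex, bounds):
            if not low <= value <= high:
                raise ValueError(f"value {value} is outside {low}-{high}")
    return vertices


# Hypothetical settings: pH, acetonitrile %, flow rate (mL/min), temperature (°C)
start = [3.0, 30.0, 1.0, 30.0]
steps = [0.5, 5.0, 0.2, 5.0]
bounds = [(2.5, 7.0), (20.0, 60.0), (0.5, 1.5), (25.0, 45.0)]

simplex = corner_simplex(start, steps, bounds)
for run, vertex in enumerate(simplex, start=1):
    print(run, vertex)
```

Because every added vertex moves along a different axis, this construction is non-degenerate whenever all step sizes are nonzero.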

3. Defining the Desirability Functions

For each experimental run, the responses are measured and converted into partial desirabilities.

Table 1: Specification of Partial Desirability Functions for HPLC Optimization

| Response Variable | Goal | Lower Limit (L) | Target (T) | Upper Limit (U) | Weights (s, t) |

|---|---|---|---|---|---|