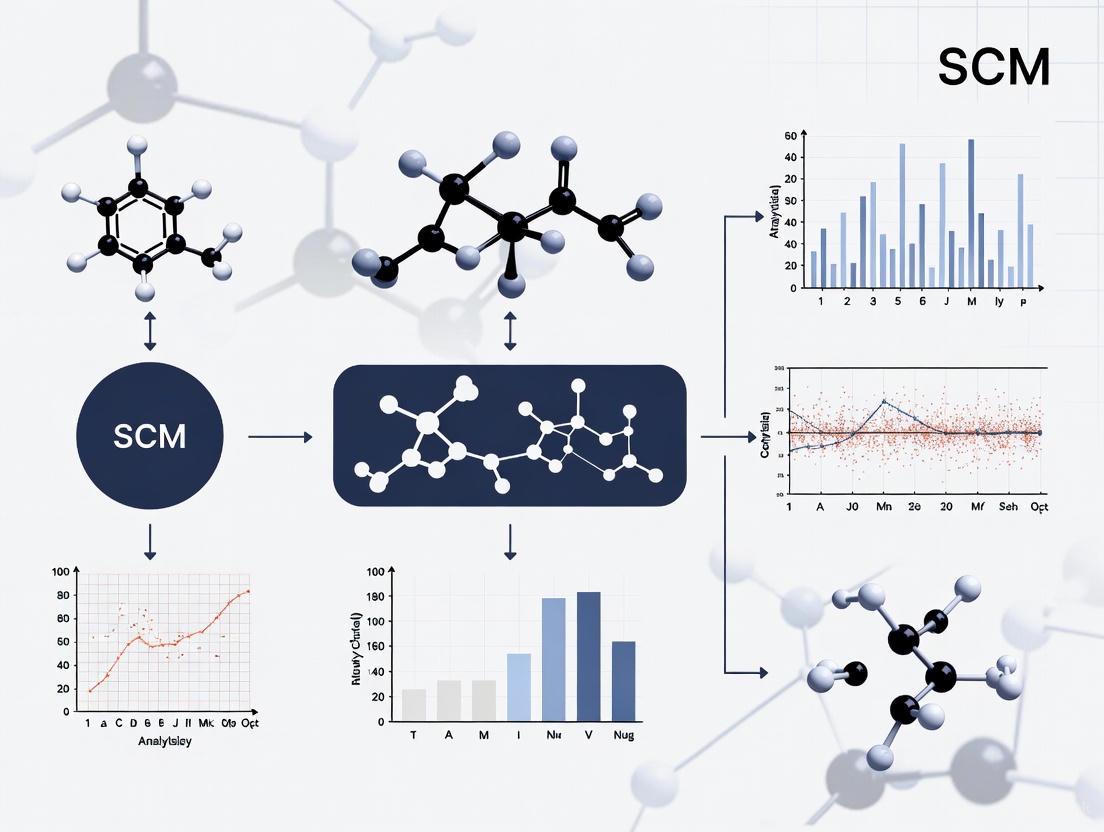

A Practical Guide to Synthetic Control Method (SCM): Step-by-Step Application for Biomedical Research

Abstract

This guide provides a comprehensive framework for applying the Synthetic Control Method (SCM) in biomedical and clinical research settings. It details a complete workflow from foundational concepts and methodological implementation to troubleshooting common pitfalls and validating results. Designed for researchers and drug development professionals, the content addresses specific challenges in health research, including rigorous donor pool construction, statistical inference for single-case studies, and integration with modern causal inference approaches for robust impact evaluation of interventions, policies, and external events when randomized controlled trials are not feasible.

Understanding Synthetic Control Method: Core Principles and When to Use It in Health Research

The Synthetic Control Method (SCM) is a powerful quasi-experimental technique for estimating causal effects when a policy, intervention, or event affects a single unit—such as a country, state, or city—and traditional randomized controlled trials are not feasible [1]. First introduced by Abadie and Gardeazabal (2003) and formalized by Abadie, Diamond, and Hainmueller (2010), SCM constructs a data-driven counterfactual by creating a weighted combination of untreated donor units that closely mirrors the pre-intervention characteristics and outcomes of the treated unit [1] [2]. This "synthetic control" serves as the best approximation of what would have happened to the treated unit in the absence of the intervention, enabling researchers to estimate the causal effect by comparing post-intervention outcomes between the treated unit and its synthetic counterpart.

SCM has been successfully applied across numerous fields, including public policy, marketing, epidemiology, and economics. Recent applications range from assessing the economic impact of Brexit on the UK's real GDP [3] to evaluating the effect of wildfires on housing prices [4] and measuring the effectiveness of marketing campaigns [2]. The method is particularly valuable in situations where a perfect untreated comparison group does not exist, when treatment is applied to a single unit or a small number of units, or when interventions affect entire populations simultaneously [1] [5].

Theoretical Foundation

Formal Framework and Key Assumptions

SCM operates within the potential outcomes framework of causal inference. Consider a panel of J+1 units observed over T time periods, where unit i = 1 receives treatment starting at time T₀ + 1, while units j = 2, ..., J+1 constitute an untreated donor pool [1] [2]. For each unit i and period t, we observe outcome Y_{it}. The fundamental problem of causal inference is that we can only observe one potential outcome for each unit at each time: for the treated unit in the post-treatment period (t > T₀), we observe Y_{1t}(1) but cannot observe the counterfactual Y_{1t}(0).

The treatment effect for the treated unit at time t is defined as:

τ_{1t} = Y_{1t}(1) - Y_{1t}(0) for t > T₀

SCM estimates the unobserved counterfactual Y_{1t}(0) by constructing a synthetic control as a weighted combination of donor units:

Ŷ_{1t}(0) = ∑_{j=2}^{J+1} w_{j} Y_{jt}

where the weights w_{j} are constrained to be non-negative and sum to one, ensuring the synthetic control is a convex combination of donor units [1] [2].

The validity of SCM rests on several key assumptions [1]:

- No Contamination: Only the treated unit experiences the intervention; control units in the donor pool remain untreated.

- No Other Major Changes: The treatment is the only significant event affecting the treated unit during the study period.

- Linearity: The counterfactual outcome of the treated unit can be constructed as a linear combination of control unit outcomes.

- Good Pre-treatment Fit: The synthetic control should closely resemble the treated unit in pre-treatment periods across both characteristics and outcome trajectories.

Outcome Model and Optimization

The underlying outcome model for SCM is often represented as a factor model [1]:

Y_{it}^N = θ_t Z_i + λ_t μ_i + ε_{it}

where:

- Z_i represents observed characteristics

- μ_i represents unobserved factors

- ε_{it} represents transitory shocks

The optimal weights W^* = (w₂^*, ..., w_{J+1}^*) are determined by solving an optimization problem that minimizes the discrepancy between the pre-treatment characteristics and outcomes of the treated unit and the synthetic control [1] [2]:

W^* = argmin_W ||X₁ - X₀W||

where:

- X₁ is a vector of pre-treatment characteristics for the treated unit

- X₀ is a matrix of pre-treatment characteristics for the donor units

- The minimization is subject to w_{j} ≥ 0 and ∑_{j} w_{j} = 1
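
The constrained problem above can be sketched numerically. The example below is a minimal illustration, not the algorithm used by any particular SCM package: it assumes an unweighted Euclidean norm and solves for the weights by projected gradient descent onto the simplex. The function names (`project_to_simplex`, `scm_weights`) and the toy data are hypothetical.

```python
import numpy as np

def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1}."""
    u = np.sort(v)[::-1]                      # sort descending
    css = np.cumsum(u) - 1.0
    idx = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / idx > 0)[0][-1]
    theta = css[rho] / (rho + 1)
    return np.maximum(v - theta, 0.0)

def scm_weights(X1, X0, n_iter=5000):
    """Minimise ||X1 - X0 @ w||^2 subject to w >= 0 and sum(w) = 1."""
    J = X0.shape[1]
    w = np.full(J, 1.0 / J)                   # start from equal weights
    # Step size 1/L, where L = 2 * sigma_max(X0)^2 bounds the gradient's curvature
    step = 1.0 / (2.0 * np.linalg.norm(X0, 2) ** 2)
    for _ in range(n_iter):
        grad = 2.0 * X0.T @ (X0 @ w - X1)
        w = project_to_simplex(w - step * grad)
    return w

# Toy data (assumed): 12 pre-treatment features, 4 donors, and a treated
# unit built as an exact convex combination of the donors.
rng = np.random.default_rng(0)
X0 = rng.normal(size=(12, 4))
true_w = np.array([0.6, 0.3, 0.1, 0.0])
X1 = X0 @ true_w
w_hat = scm_weights(X1, X0)
```

Because the toy treated unit lies inside the convex hull of the donors, the recovered weights should approach `true_w`; with real data the fit is generally imperfect and the residual pre-treatment gap should be inspected.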

Table 1: Core Components of the SCM Theoretical Framework

| Component | Description | Mathematical Representation | Interpretation |
|---|---|---|---|
| Treated Unit | Unit experiencing the intervention | i = 1 | Target of causal inference |
| Donor Pool | Collection of untreated units | j = 2, ..., J+1 | Potential control units |
| Weights | Contribution of each donor to synthetic control | w₂, ..., w_{J+1} | Non-negative, sum to 1 |
| Pre-treatment Period | Time before intervention | t = 1, ..., T₀ | Model fitting period |
| Post-treatment Period | Time after intervention | t = T₀+1, ..., T | Treatment effect estimation period |
| Treatment Effect | Causal effect of intervention | τ_{1t} = Y_{1t} - Ŷ_{1t}(0) | Difference between observed and synthetic outcome |

Application Protocols

End-to-End Implementation Workflow

Implementing SCM requires a rigorous, multi-stage process to ensure valid causal inference. Based on practitioner guidance and recent applications, the following workflow represents best practices for SCM implementation [2]:

Stage 1: Design and Pre-Analysis Planning

- Define treatment units, outcome metrics, and intervention timing

- Assemble comprehensive candidate donor pool with complete panel data

- Pre-register donor exclusion criteria and analytical specifications

- Ensure measurement consistency across units and time periods

- Conduct power analysis to determine minimum detectable effect sizes

Stage 2: Donor Pool Construction and Screening

- Apply correlation filtering (typically excluding donors with pre-period outcome correlation < 0.3)

- Verify seasonal pattern alignment using spectral analysis

- Test for structural breaks using Chow tests or similar procedures

- Assess contamination risk by removing units with direct or indirect treatment exposure

- Account for geographic considerations and spatial spillovers
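
The correlation filter in Stage 2 can be sketched as follows. This is a minimal illustration under assumed inputs: `screen_donors` is a hypothetical helper, the 0.3 threshold is the one quoted above, and the toy series are invented for the example.

```python
import numpy as np

def screen_donors(y_treated, Y_donors, min_corr=0.3):
    """Return column indices of donors whose pre-period outcome
    correlation with the treated unit is at least min_corr."""
    keep = []
    for j in range(Y_donors.shape[1]):
        r = np.corrcoef(y_treated, Y_donors[:, j])[0, 1]
        if r >= min_corr:
            keep.append(j)
    return keep

# Toy pre-period data (assumed): 24 months, three candidate donors
t = np.arange(24, dtype=float)
y_treated = t                                   # upward trend
Y_donors = np.column_stack([
    2.0 * t + 1.0,                              # donor 0: same direction of trend
    -t,                                         # donor 1: opposite trend
    np.where(t % 2 == 0, 1.0, -1.0),            # donor 2: pure oscillation
])
kept = screen_donors(y_treated, Y_donors)
```

Only the first donor survives the filter here. Correlation screening is a coarse first pass; the seasonal, structural-break, and contamination checks listed above still need to be applied separately.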

Stage 3: Feature Engineering and Scaling

- Select multiple lags of outcome variable spanning complete seasonal cycles

- Include auxiliary covariates only when measurement quality is high

- Apply z-score normalization using pre-period statistics only: (X - μ_{pre}) / σ_{pre}

- Consider moving averages to smooth high-frequency noise
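
A short sketch of the pre-period scaling rule, assuming a time-by-feature array and a hypothetical helper `zscore_pre`. The point it illustrates is that post-intervention rows are transformed with pre-period statistics, so the treatment cannot leak into the scaling.

```python
import numpy as np

def zscore_pre(X, T0):
    """Standardise each column of X (time x features) using the mean and
    standard deviation of the first T0 rows only."""
    mu_pre = X[:T0].mean(axis=0)
    sigma_pre = X[:T0].std(axis=0)
    return (X - mu_pre) / sigma_pre

# Toy data (assumed): three pre-period rows followed by one post-period row
X = np.array([[1.0, 10.0],
              [2.0, 20.0],
              [3.0, 30.0],
              [10.0, 100.0]])
Z = zscore_pre(X, T0=3)
```

A feature that is constant in the pre-period has zero standard deviation and would need to be dropped or handled separately before applying this transformation.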

Stage 4: Constrained Optimization with Regularization

- Solve the optimization problem: min_W ||X₁ - X₀W||_{V}^2 + λR(W)

- Apply entropy penalty: R(W) = ∑_{j} w_{j} log w_{j} to promote weight dispersion

- Implement weight caps: w_{j} ≤ w_{max} to prevent over-concentration

- Use cross-validation to select optimal regularization parameter λ
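
The effect of the entropy penalty can be illustrated with a small sketch. The assumptions here are significant: V is taken as the identity, weight caps and cross-validation of λ are omitted, and the solver is an exponentiated-gradient (mirror descent) update chosen for brevity rather than taken from the source. `entropy_scm_weights` is a hypothetical name.

```python
import numpy as np

def entropy_scm_weights(X1, X0, lam, n_iter=5000):
    """Minimise ||X1 - X0 @ w||^2 + lam * sum(w * log(w)) over the simplex
    using exponentiated-gradient updates (weights stay positive)."""
    J = X0.shape[1]
    w = np.full(J, 1.0 / J)
    # Conservative step size from a bound on the objective's curvature
    eta = 1.0 / (2.0 * np.abs(X0.T @ X0).max() + lam)
    for _ in range(n_iter):
        log_w = np.log(np.clip(w, 1e-300, None))   # guard against log(0)
        grad = 2.0 * X0.T @ (X0 @ w - X1) + lam * (log_w + 1.0)
        w = w * np.exp(-eta * grad)                # multiplicative update
        w = w / w.sum()                            # renormalise to sum to 1
    return w

# Toy data (assumed): treated unit is a concentrated mix of the donors
rng = np.random.default_rng(0)
X0 = rng.normal(size=(12, 4))
X1 = X0 @ np.array([0.6, 0.3, 0.1, 0.0])

w_plain = entropy_scm_weights(X1, X0, lam=0.0)     # no penalty
w_reg = entropy_scm_weights(X1, X0, lam=10.0)      # entropy penalty active
```

Comparing `w_plain` with `w_reg` shows the trade-off: the penalised weights are spread more evenly across donors at the cost of a looser pre-treatment fit, which is why λ is tuned rather than fixed.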

Stage 5: Holdout Validation

- Reserve final 20-25% of pre-intervention period as holdout

- Train synthetic control on early pre-period data only

- Evaluate prediction accuracy on holdout using MAPE, RMSE, and R-squared

- Apply quality gates (e.g., MAPE < 15% for monthly data) before proceeding to effect estimation
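
The holdout metrics in Stage 5 are simple to compute. The sketch below uses only the standard library; the function names, the 20% holdout fraction, and the toy values are assumptions for illustration.

```python
import math

def holdout_split(T0, holdout_frac=0.2):
    """Index separating training periods [0, cut) from holdout [cut, T0)."""
    return T0 - int(round(T0 * holdout_frac))

def mape(actual, predicted):
    """Mean absolute percentage error, in percent."""
    return 100.0 * sum(abs((a - p) / a) for a, p in zip(actual, predicted)) / len(actual)

def rmse(actual, predicted):
    """Root mean squared error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

def r_squared(actual, predicted):
    """Coefficient of determination on the holdout window."""
    mean_a = sum(actual) / len(actual)
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    ss_tot = sum((a - mean_a) ** 2 for a in actual)
    return 1.0 - ss_res / ss_tot

# Toy holdout window (assumed values): observed vs synthetic outcome
actual = [100.0, 110.0, 120.0, 130.0]
synthetic = [98.0, 112.0, 118.0, 133.0]

cut = holdout_split(T0=60)                 # 60 pre-period months
passes_gate = mape(actual, synthetic) < 15.0
```

The weights used to produce `synthetic` must be fitted on periods before `cut` only; otherwise the holdout error understates the out-of-sample error.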

Stage 6: Effect Estimation and Business Metrics

- Calculate treatment effects: τ̂_{t} = Y_{1t} - ∑_{j=2}^{J+1} w_{j}^* Y_{jt} for t > T₀

- Derive business metrics including lift percentage and incremental Return on Ad Spend (iROAS)
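
A minimal sketch of the effect calculation, given fitted weights. The lift definition used here (cumulative gap divided by cumulative synthetic outcome) is one common convention and is an assumption, as are the function name and toy numbers.

```python
import numpy as np

def treatment_effects(y1_post, Y0_post, w):
    """Per-period gaps between the treated unit and its synthetic control,
    plus cumulative lift as a percentage of the synthetic outcome."""
    synthetic = Y0_post @ w                  # counterfactual path
    gaps = y1_post - synthetic               # tau_hat_t for each post period
    lift_pct = 100.0 * gaps.sum() / synthetic.sum()
    return gaps, lift_pct

# Toy post-period data (assumed): 3 periods, 2 donors with equal weights
Y0_post = np.array([[10.0, 20.0],
                    [12.0, 22.0],
                    [14.0, 24.0]])
w = np.array([0.5, 0.5])
y1_post = np.array([18.0, 20.0, 22.0])

gaps, lift_pct = treatment_effects(y1_post, Y0_post, w)
```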

Stage 7: Statistical Inference and Uncertainty Quantification

- Conduct placebo tests (in-space and in-time)

- Generate permutation-based p-values

- Construct confidence intervals via bootstrap methods

Stage 8: Diagnostic Assessment and Sensitivity Analysis

- Monitor weight concentration using effective number of donors: EN = 1/∑_{j} w_{j}^2

- Verify treated unit lies within convex hull of donors

- Perform leave-one-out analysis for influential donors

- Test robustness to alternative specifications
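
The effective number of donors is a one-line diagnostic. The sketch below uses a hypothetical helper, `effective_donors`, and also bounds the statistic for the weights reported in Table 2. Only the seven named weights are taken from that table; how the residual 5.49% is split across the remaining cities is not reported, so the code brackets the value instead of computing it exactly.

```python
def effective_donors(weights):
    """Effective number of donors: 1 / sum of squared weights."""
    return 1.0 / sum(w * w for w in weights)

# Reference cases: equal weights vs a single dominant donor
en_equal = effective_donors([0.25, 0.25, 0.25, 0.25])
en_single = effective_donors([1.0, 0.0, 0.0])

# Named weights from Table 2 (as fractions); the residual 0.0549 is spread
# over the remaining cities in an unreported way.
named = [0.3553, 0.1866, 0.1069, 0.1047, 0.0761, 0.0605, 0.0550]
residual = 0.0549
sq_named = sum(w * w for w in named)
en_upper = 1.0 / sq_named                        # residual spread very thinly
en_lower = 1.0 / (sq_named + residual ** 2)      # residual on a single city
```

A low effective number relative to the donor pool size signals that a few donors drive the counterfactual, which is the situation the leave-one-out analysis is meant to probe.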

Case Study Protocol: Wildfire Impact on Housing Prices

A 2025 study exemplifies the rigorous application of SCM to estimate the causal impact of the January 2025 wildfire on housing prices in Altadena, California [4]. The following protocol details the methodology, which can be adapted to other intervention studies:

Research Question: What is the causal effect of a January 2025 wildfire on housing prices in Altadena, California?

Data Collection Protocol:

- Outcome Variable: Zillow Home Value Index (ZHVI) for All Homes, Smoothed, Seasonally Adjusted

- Data Source: Zillow's public data repository

- Time Frame: January 2000 to July 2025

- Treated Unit: Altadena, California

- Intervention Date: January 31, 2025

- Pre-intervention Period: 5 years (January 2020 - December 2024)

- Post-intervention Period: 6 months (February 2025 - July 2025)

- Initial Donor Pool: 60 California cities with similar population size and housing market characteristics

- Final Donor Pool: 58 cities after filtering for data availability and pre-treatment correlation

Optimization Protocol:

- Objective Function: Time-weighted loss function with exponential decay

- Weight Formula: ω_t = exp(α(t - T_{end})) with decay parameter α = 0.005

- Optimization: Minimize pre-treatment MSPE between actual and synthetic Altadena

- Validation: Sensitivity analysis across α ∈ [0.003, 0.01] to verify robustness
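
The exponential time weighting can be sketched as below. The weight formula and α = 0.005 come from the protocol above; indexing months 1 to 60 with T_end = 60, and normalising the loss by the sum of the weights, are assumptions made for the illustration.

```python
import math

def time_weights(n_periods, alpha=0.005):
    """omega_t = exp(alpha * (t - T_end)) for t = 1..n_periods, with
    T_end = n_periods, so the most recent period has weight 1."""
    return [math.exp(alpha * (t - n_periods)) for t in range(1, n_periods + 1)]

def weighted_mspe(actual, synthetic, weights):
    """Time-weighted mean squared prediction error over the pre-period."""
    num = sum(w * (a - s) ** 2 for w, a, s in zip(weights, actual, synthetic))
    return num / sum(weights)

omega = time_weights(60)            # 60 monthly pre-treatment periods
oldest_to_newest_ratio = omega[0] / omega[-1]
```

With a small decay parameter the oldest months are down-weighted only moderately, so the fit still draws on the whole pre-period while favouring recent months.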

Inference Protocol:

- Method: Placebo-in-space test

- Procedure: Iteratively apply SCM to each donor city as if it experienced the wildfire

- p-value Calculation: p = (k + 1)/(J + 1) where k = number of placebo effects as large as Altadena's

- Metrics: Average post-treatment gap and post-to-pre-treatment RMSPE ratio
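
The placebo p-value formula can be checked with a few lines of code. The helper names and the placebo statistics below are invented for illustration. With J = 58 donors, k = 2 more extreme placebos gives a p-value that rounds to 0.0508 and k = 18 gives one that rounds to 0.3220, which matches the values reported for this case study; the source does not state k, so those counts are an inference.

```python
import math

def rmspe(gaps):
    """Root mean squared prediction error of a sequence of gaps."""
    return math.sqrt(sum(g * g for g in gaps) / len(gaps))

def rmspe_ratio(pre_gaps, post_gaps):
    """Post- to pre-treatment RMSPE ratio for one unit."""
    return rmspe(post_gaps) / rmspe(pre_gaps)

def placebo_p_value(treated_stat, placebo_stats):
    """p = (k + 1) / (J + 1), where k counts placebo statistics at least
    as large as the treated unit's and J is the number of placebos."""
    k = sum(1 for s in placebo_stats if s >= treated_stat)
    return (k + 1) / (len(placebo_stats) + 1)

# Hypothetical statistics: 58 placebo runs, two of them exceed the treated unit
placebo_stats = [5.0, 4.5] + [1.0] * 56
p = placebo_p_value(treated_stat=4.0, placebo_stats=placebo_stats)
```

Because the smallest attainable p-value is 1/(J + 1), the size of the donor pool limits how strong the evidence from a placebo-in-space test can be.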

Table 2: Synthetic Control Weights from Altadena Case Study

| City Name | Weight (%) | Cumulative Weight (%) |
|---|---|---|
| Burbank | 35.53 | 35.53 |
| Whittier | 18.66 | 54.19 |
| South Pasadena | 10.69 | 64.88 |
| Temecula | 10.47 | 75.35 |
| Rolling Hills Estates | 7.61 | 82.96 |
| La Canada Flintridge | 6.05 | 89.01 |
| Sierra Madre | 5.50 | 94.51 |
| Other 41 cities | 5.49 | 100.00 |

Results Interpretation:

- Pre-treatment Fit: Excellent with RMSPE of 0.61% relative to average pre-treatment price

- Treatment Effect: Sustained and growing negative impact over six months post-wildfire

- Statistical Significance: Significant at 10% level based on RMSPE ratio (p = 0.0508) but not based on average gap (p = 0.3220)

- Economic Magnitude: Average monthly loss of $32,125 over six months post-wildfire

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for SCM Implementation

| Tool Name | Implementation Language | Key Features | Use Case |
|---|---|---|---|
| Synth | R | Original SCM algorithm | Canonical SCM applications |
| augsynth | R | Augmented SCM with bias correction | Cases with imperfect pre-treatment fit [6] |
| scpi | Python, R, Stata | Uncertainty quantification with prediction intervals | Robust inference and uncertainty quantification [7] |
| CausalImpact | R, Python | Bayesian structural time series | Alternative counterfactual estimation |
| gsynth | R | Generalized synthetic control | Multiple treated units and staggered adoption |
| Synthetic Difference-in-Differences | R, Python | Combines SCM and DiD advantages | When parallel trends assumption is questionable |

Advanced Methodological Extensions

Augmented and Regularized SCM

Recent methodological advances have addressed key limitations of the standard SCM approach. The Augmented Synthetic Control Method (ASCM) introduced by Ben-Michael, Feller, and Rothstein (2021) extends SCM to cases where perfect pre-treatment fit is infeasible [1] [6]. ASCM combines SCM weighting with bias correction through an outcome model, improving estimates when SCM alone fails to match pre-treatment outcomes precisely. The method is particularly valuable when the treated unit lies outside the convex hull of donor units, a scenario where traditional SCM may produce biased estimates.

Another important advancement is the Penalized Synthetic Control Method proposed by Abadie and L'Hour (2021), which modifies the optimization problem to reduce interpolation bias [1]:

min_W ||X₁ - ∑_{j=2}^{J+1} W_{j} X_{j}||² + λ ∑_{j=2}^{J+1} W_{j} ||X₁ - X_{j}||²

where:

- λ > 0 controls the trade-off between fit and regularization

- λ → 0 yields standard synthetic control

- λ → ∞ approaches nearest-neighbor matching

This method ensures sparse and unique solutions for weights while excluding dissimilar control units, thereby reducing interpolation bias.
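
The two limiting cases of the penalized objective can be seen on a tiny example. This sketch is illustrative only: it uses projected gradient descent, which is a solver choice made here and not taken from the cited paper, and the three two-feature donors and function names are hypothetical.

```python
import numpy as np

def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - 1.0
    idx = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / idx > 0)[0][-1]
    return np.maximum(v - css[rho] / (rho + 1), 0.0)

def penalized_scm_weights(X1, X0, lam, n_iter=5000):
    """Minimise ||X1 - X0 @ w||^2 + lam * sum_j w_j * ||X1 - X_j||^2
    over the simplex by projected gradient descent."""
    d = ((X0 - X1[:, None]) ** 2).sum(axis=0)   # pairwise discrepancies
    w = np.full(X0.shape[1], 1.0 / X0.shape[1])
    step = 1.0 / (2.0 * np.linalg.norm(X0, 2) ** 2)
    for _ in range(n_iter):
        grad = 2.0 * X0.T @ (X0 @ w - X1) + lam * d   # penalty is linear in w
        w = project_to_simplex(w - step * grad)
    return w, d

# Toy data (assumed): donors at (0,0), (1,0), (0,1); treated unit at (0.2, 0.1)
X0 = np.array([[0.0, 1.0, 0.0],
               [0.0, 0.0, 1.0]])
X1 = np.array([0.2, 0.1])

w_small, d = penalized_scm_weights(X1, X0, lam=1e-6)   # near standard SCM
w_large, _ = penalized_scm_weights(X1, X0, lam=1e3)    # near nearest neighbour
```

With a very small λ the weights reproduce the treated unit from all three donors, while a very large λ puts essentially all weight on the donor closest to the treated unit, mirroring the limits listed above.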

SCM with Multiple Outcomes

Traditional SCM applications typically use a single outcome variable, but recent work has explored incorporating multiple outcomes to improve counterfactual estimation [8]. When multiple relevant variables are available, analysts can employ a stacked approach that concatenates multiple outcomes into the optimization:

Stacked SCM Approach:

- Vertically concatenate pre-treatment outcomes: Y₀' = [Y₀^{(1),pre}; Y₀^{(2),pre}; ...; Y₀^{(K),pre}]

- Apply standard SCM optimization to stacked data: W^* = argmin_W ||y₁' - Y₀'W||_{F}^2

- Generate out-of-sample predictions using the same weights

An alternative approach incorporates an intercept term for each outcome to control for differences in levels across outcomes [8]:

Intercept-Adjusted SCM: W^* = argmin_{W,β} ||y₁' - Y₀'W - β||_{F}^2

where β represents an unconstrained intercept term that adjusts for systematic differences between outcomes.

Recent Algorithmic Innovations

An August 2025 paper introduces a Relaxation Approach to Synthetic Control that addresses settings where the donor pool contains more control units than time periods [3]. This machine learning algorithm minimizes an information-theoretic measure of the weights subject to relaxed linear inequality constraints in addition to the simplex constraint. When the donor pool exhibits a group structure, SCM-relaxation approximates equal weights within each group to diversify prediction risk. The method achieves oracle performance in terms of out-of-sample prediction accuracy and has been applied to assess the economic impact of Brexit on the UK's real GDP.

The Synthetic Control Method represents a rigorous, data-driven approach to counterfactual estimation in settings where traditional experimental designs are not feasible. Through its structured methodology of constructing weighted combinations of control units to approximate the pre-intervention trajectory of treated units, SCM enables credible causal inference across diverse application domains. The continued methodological innovation in areas such as augmentation, regularization, multiple outcomes, and relaxed constraints has further expanded the method's applicability and robustness.

For researchers implementing SCM, adherence to comprehensive protocols encompassing design, donor screening, validation, inference, and sensitivity analysis is essential for producing valid results. The growing ecosystem of software tools has made SCM more accessible while providing sophisticated approaches to uncertainty quantification. As SCM continues to evolve, it remains a powerful tool in the causal inference arsenal, particularly for evaluating interventions affecting single units or small groups in observational settings.

In clinical research and drug development, establishing causal evidence for the effect of a treatment, policy, or intervention is paramount. While randomized controlled trials (RCTs) represent the gold standard for causal inference, they are often ethically problematic, impractical, or prohibitively expensive in many real-world clinical scenarios [9]. In these contexts, observational causal inference methods provide indispensable tools for generating evidence. Among these, Difference-in-Differences (DiD) and various regression approaches constitute foundational methodologies. DiD estimates causal effects by comparing the change in outcomes over time between a treatment group and a control group, relying on a parallel trends assumption [10]. Regression methods, particularly logistic regression, remain a cornerstone for modeling relationships between variables and predicting binary clinical outcomes, valued for their interpretability and robust framework [11]. This article delineates the key advantages of these methods over alternatives, provides structured protocols for their application, and situates them within the evolving landscape of causal inference, including the emerging role of synthetic control methods (SCM).

Key Advantages and Comparative Analysis

Advantages of Difference-in-Differences (DiD)

DiD is a quasi-experimental design that leverages longitudinal data to construct an appropriate counterfactual, making it highly suitable for evaluating policy changes, new treatment protocols, and large-scale interventions in healthcare [10].

Table 1: Key Advantages of the Difference-in-Differences (DiD) Method

| Advantage | Description | Clinical Context Example |
|---|---|---|
| Intuitive Interpretation | The causal effect is derived from a simple comparison of pre-post changes between groups, making results accessible to a broad clinical audience. | Presenting the effect of a new hospital readmission reduction program to administrators and clinicians [10]. |
| Use of Observational Data | Can obtain causal estimates from non-randomized, observational data when core assumptions are met, circumventing ethical or practical barriers to RCTs. | Studying the effect of Medicaid expansion on cardiovascular mortality using administrative claims data [12]. |
| Controls for Baseline Confounding | Accounts for permanent, unobserved differences between treatment and control groups by using each group as its own control over time. | Comparing patient outcomes between two hospital systems with different baseline mortality rates after one implements a new surgical technique [10]. |
| Accounts for Temporal Trends | Adjusts for trends over time that are common to both groups, isolating the effect of the intervention from other secular changes. | Evaluating a smoking ban's effect on hospitalization rates while accounting for pre-existing, improving trends in public health [5]. |
| Flexible Data Requirements | Can be applied to individual-level panel data, repeated cross-sectional data, or group-level aggregate data. | Using national survey data collected from different individuals each year to assess a public health campaign's impact [10]. |

The most critical assumption for a valid DiD analysis is the parallel trends assumption: in the absence of the treatment, the outcome trends for the treatment and control groups would have continued in parallel [10] [12]. Recent methodological advancements have focused on strengthening DiD applications, including covariate adjustment to relax causal assumptions, robust inference techniques, and methods to account for staggered treatment timing, a common feature in the roll-out of new therapies or policies [12].

Advantages of Regression in Clinical Contexts

Regression, particularly logistic regression for binary outcomes, is a workhorse of clinical modeling. Its enduring relevance is attributed to several key strengths over more complex modeling techniques.

Table 2: Key Advantages of Logistic Regression in Clinical Research

| Advantage | Description | Clinical Context Example |
|---|---|---|
| High Interpretability | Model coefficients are directly interpretable as log-odds or odds ratios, providing clinically meaningful effect measures. | Conveying how a one-unit increase in a biomarker level changes the odds of a disease, facilitating risk communication [11]. |
| Handles Mixed Predictor Types | Seamlessly incorporates continuous (e.g., biomarker levels) and categorical (e.g., genotype) predictor variables in the same model. | Developing a diagnostic model for acute coronary syndrome using troponin levels (continuous), ECG findings (categorical), and patient sex (categorical) [11]. |
| Outputs Probabilities | Provides a direct estimate of the probability of an event (e.g., disease presence, treatment success) for individual patients. | Generating a patient-specific probability of post-operative infection to guide prophylactic antibiotic use [11]. |
| Robustness with Small Samples | Generally requires smaller sample sizes than machine learning (ML) models for stable performance, a crucial feature in rare disease research [13]. | Developing a prognostic model for a rare oncological condition with a limited patient cohort [9] [13]. |
| Statistical Inference Framework | Naturally incorporates confidence intervals and p-values for coefficients, aligning with the reporting standards of clinical literature [11]. | Justifying the inclusion of a novel risk factor in a clinical prediction rule based on its statistically significant odds ratio. |

While machine learning models can capture complex non-linear relationships, their performance gains on structured, tabular clinical data are inconsistent and highly context-dependent [13]. A 2019 meta-regression found no performance benefit of ML over statistical logistic regression for binary classification on tabular clinical data, highlighting that data quality and characteristics often outweigh model complexity [13]. Logistic regression's "white-box" nature offers transparency that is paramount for clinical decision-making, where understanding the rationale behind a prediction is as important as the prediction itself [13] [11].

Experimental Protocols and Workflows

Protocol for a Difference-in-Differences Analysis

The following protocol provides a step-by-step guide for implementing a DiD analysis to evaluate a clinical intervention or health policy.

Protocol 1: DiD for Health Policy Evaluation

- Objective: To estimate the causal effect of a new state-wide health policy (e.g., a bundled payment program) on hospital readmission rates.

- Primary Endpoint: Change in 30-day risk-adjusted readmission rates.

- Materials & Data:
  - Treatment Group: Hospitals in the state implementing the policy.
  - Control Group: Hospitals in comparable states without the policy.
  - Data Source: Administrative claims data for a period spanning at least 2-3 years pre-policy and 1-2 years post-policy.
  - Variables: Hospital identifier, time period (quarter/year), readmission rate, and relevant covariates (e.g., patient case-mix index).

- Procedure:
  - Pre-Analysis Planning:
    - Define the intervention start date (`T0`).
    - Pre-specify the treatment and control groups based on policy jurisdiction. Ensure the control group is not exposed to similar policies.
    - Justify the pre- and post-intervention periods, ensuring they are long enough to establish trends and capture seasonal effects.
  - Assess Parallel Trends:
    - Visually inspect the trends of the outcome (readmission rate) for both groups in the pre-intervention period. The trends should be approximately parallel [10] [12].
    - Formally test for differential pre-trends by regressing the outcome on a linear time trend interacted with the treatment group indicator in the pre-period.
  - Model Specification:
    - Estimate the following linear regression model using data from both pre- and post-periods: `Y = β0 + β1*[Post] + β2*[Treatment] + β3*[Post*Treatment] + β4*[Covariates] + ε`
    - Where: `Y` is the outcome (readmission rate). `Post` is a dummy variable (0=pre, 1=post). `Treatment` is a dummy variable (0=control, 1=treatment). `Post*Treatment` is the interaction term. `β3` is the DiD estimator, representing the causal effect of the policy.
  - Statistical Inference:
  - Sensitivity & Robustness Checks:
    - Conduct a placebo test by artificially setting `T0` to a time before the actual policy; `β3` should be statistically insignificant [12].
    - Test different control groups or model specifications to ensure the result is not fragile.
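
The model specification in this protocol can be illustrated with a minimal two-group, two-period sketch. It omits covariates and any standard-error calculation, fits the regression by ordinary least squares with NumPy, and uses invented readmission rates; `did_estimate` is a hypothetical helper.

```python
import numpy as np

def did_estimate(y, post, treat):
    """OLS fit of Y = b0 + b1*Post + b2*Treatment + b3*Post*Treatment.
    Returns the coefficient vector; b3 is the DiD estimate."""
    X = np.column_stack([np.ones_like(y), post, treat, post * treat])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

# Toy hospital-period data (assumed). Columns: Post, Treatment, readmission rate (%)
data = np.array([
    [0, 0, 19.5], [0, 0, 20.5],   # control, pre   (mean 20)
    [1, 0, 18.5], [1, 0, 19.5],   # control, post  (mean 19)
    [0, 1, 21.5], [0, 1, 22.5],   # treated, pre   (mean 22)
    [1, 1, 17.5], [1, 1, 18.5],   # treated, post  (mean 18)
])
post, treat, y = data.T
beta = did_estimate(y, post, treat)
did_effect = beta[3]
```

In this saturated design the interaction coefficient equals the difference of the two pre-post changes, (18 - 22) - (19 - 20). A real analysis would add covariates and an appropriate variance estimator for repeated observations on the same hospitals.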

The logical workflow and key checks for this protocol are summarized in the diagram below.

Protocol for Clinical Risk Prediction with Logistic Regression

This protocol outlines the development and validation of a clinical risk prediction model using logistic regression.

Protocol 2: Logistic Regression for Risk Prediction

- Objective: To develop a model predicting the probability of post-operative infection based on pre- and intra-operative patient factors.

- Primary Endpoint: Binary outcome of post-operative surgical site infection within 30 days (Yes/No).

- Materials & Data:
  - Patient Cohort: Retrospective cohort of patients undergoing the target procedure.
  - Candidate Predictors: Pre-operative albumin levels, BMI, diabetes status, and operative duration.
  - Data Source: Electronic health records and surgical registry data.

- Procedure:
  - Data Preparation:
    - Handle missing data through appropriate methods (e.g., multiple imputation).
    - Split the dataset randomly into a training set (e.g., 70%) for model development and a testing set (30%) for validation [11].
  - Variable Selection & Assumption Checking:
    - Assess the linearity in the log-odds for continuous predictors (e.g., albumin) using restricted cubic splines or residual plots. Violations may require variable transformation [11].
    - Check for multicollinearity among predictors using variance inflation factors (VIF).
  - Model Fitting:
    - Fit the logistic regression model on the training dataset. The model form is: `ln(p/(1-p)) = β0 + β1*Albumin + β2*BMI + β3*Diabetes + β4*OperativeDuration`
    - where `p` is the probability of infection, and `ln(p/(1-p))` is the log-odds.
  - Model Performance Validation (on Test Set):
    - Discrimination: Calculate the Area Under the Receiver Operating Characteristic Curve (AUROC) to assess the model's ability to distinguish between patients who do and do not get an infection [13] [11].
    - Calibration: Assess calibration (agreement between predicted probabilities and observed frequencies) using a calibration plot or Hosmer-Lemeshow test. A well-calibrated model should have predictions close to the 45-degree line on the plot [13].
    - Clinical Utility: Perform decision curve analysis to evaluate the net benefit of using the model for clinical decisions across different probability thresholds [13].
  - Model Interpretation & Deployment:
    - Exponentiate coefficients to obtain odds ratios (OR) for each predictor. For example, an OR for diabetes of 1.8 would indicate 80% higher odds of infection among diabetic patients.
    - Present the final model as a nomogram or score chart for ease of use in clinical settings.
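
The fitting and interpretation steps can be sketched with a single binary predictor. This is a simplified illustration, not the full protocol: it fits the model by Newton-Raphson in NumPy, skips the train/test split and the other predictors, and uses an invented cohort of 40 patients. `fit_logistic` is a hypothetical helper.

```python
import numpy as np

def fit_logistic(X, y, n_iter=25):
    """Maximum-likelihood logistic regression via Newton-Raphson.
    X must already contain an intercept column."""
    beta = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ beta))     # predicted probabilities
        grad = X.T @ (y - p)                     # score vector
        hess = X.T @ (X * (p * (1.0 - p))[:, None])
        beta = beta + np.linalg.solve(hess, grad)
    return beta

# Toy cohort (assumed): 20 non-diabetic patients with 4 infections,
# 20 diabetic patients with 8 infections
diabetes = np.array([0] * 20 + [1] * 20, dtype=float)
infection = np.array([1] * 4 + [0] * 16 + [1] * 8 + [0] * 12, dtype=float)
X = np.column_stack([np.ones(40), diabetes])

beta = fit_logistic(X, infection)
odds_ratio = np.exp(beta[1])                     # OR for diabetes
```

With one binary predictor the fitted odds ratio coincides with the cross-product ratio of the 2x2 table, (8 × 16) / (12 × 4), which is a convenient sanity check before moving to the multivariable model.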

The development and validation cycle for the risk prediction model is illustrated below.

The Scientist's Toolkit: Essential Reagents and Materials

Successful implementation of DiD and regression analyses requires both data and software resources. The following table details key "research reagents" for the clinical data scientist.

Table 3: Essential Research Reagents for Causal Analysis

| Item Name | Function / Definition | Application Notes |
|---|---|---|
| Longitudinal Panel Dataset | A dataset containing repeated observations of the same units (e.g., patients, hospitals) over time. | Function: The fundamental input for DiD analysis. Must include data from both pre- and post-intervention periods for treatment and control units. |
| Pre-Intervention Outcome Trajectory | The historical path of the outcome variable for all units before the treatment is introduced. | Function: Critical for verifying the parallel trends assumption in DiD and for constructing the synthetic control in SCM [1] [10]. |
| Stable Unit Treatment Value Assumption (SUTVA) | The assumption that one unit's treatment assignment does not affect another unit's outcome. | Function: A core causal assumption. Violations (e.g., treatment spillover) can bias results. Must be evaluated based on study context [10]. |
| Odds Ratio (OR) | The exponentiated coefficient from a logistic regression model, representing the multiplicative change in odds of the outcome per unit change in the predictor. | Function: The primary interpretable output of logistic regression. Provides a clinically intuitive measure of association but should not be conflated with risk ratios [11]. |
| R/Python Synth & CausalImpact Libraries | Software packages implementing advanced causal methods, including Synthetic Control and Bayesian Structural Time Series. | Function: Enable the implementation of SCM as a robust alternative when a control group for DiD is not readily available [2] [5]. |
| Placebo Test Distribution | A null distribution of treatment effects generated by applying the analysis to untreated units or pre-period dates. | Function: A key inferential tool for SCM and a robustness check for DiD. The true effect should be extreme relative to this distribution [1] [2]. |

Integration with Synthetic Control Methods

In clinical contexts where a single unit (e.g., one country, one hospital system) receives a treatment and no single control unit provides a good match, the Synthetic Control Method (SCM) offers a powerful alternative. SCM constructs a data-driven counterfactual as a weighted combination of multiple control units from a "donor pool," forcing this synthetic unit to closely match the treated unit's pre-intervention outcome trajectory and characteristics [1] [2]. This approach has been used to evaluate the impact of laws like Massachusetts' payment disclosure law on physician prescribing behavior [1].

The key advantage of SCM in a clinical setting is its utility in rare disease trials or studies where a placebo arm is not feasible. Regulatory agencies like the FDA support its use on a case-by-case basis, particularly for severe diseases with inadequate standard of care [9]. A hybrid design, which combines a small randomized control arm with a synthetically augmented control group, is gaining interest as it helps mitigate concerns about unmeasured confounding, a common criticism of purely external control arms [9].

The workflow for creating a synthetic control arm, as applied in clinical trials, is shown below.

DiD and logistic regression remain powerful and essential tools in the clinical researcher's arsenal. DiD provides a credible framework for causal inference from observational data when interventions are applied at a group level and the parallel trends assumption holds. Logistic regression offers an interpretable and robust method for clinical prediction and risk stratification, often matching or surpassing the performance of more complex machine learning models on structured clinical data. The choice between methods is not one of inherent superiority but of aligning the tool with the research question, data structure, and underlying assumptions. As the field evolves, these traditional methods are being complemented and extended by approaches like the Synthetic Control Method, which offers a novel solution to the challenge of constructing valid counterfactuals in increasingly complex and personalized clinical environments.

Application Notes: SCM in Biomedical Research

The Synthetic Control Method (SCM) is a powerful causal inference tool designed for evaluating the impact of interventions when randomized controlled trials (RCTs) are impractical, unethical, or prohibitively expensive [14]. Originally developed in the social sciences, SCM constructs a data-driven, weighted combination of untreated control units—a "synthetic control"—that closely mirrors the pre-intervention trajectory and characteristics of a single treated unit (e.g., a state, country, or patient population) [1] [15]. This method is particularly valuable in biomedical and public health research for assessing the effect of population-level interventions such as new laws, policies, or health system reforms [15].

Core Principles and Advantages for Biomedicine

SCM operates within a potential outcomes framework, estimating the counterfactual—what would have happened to the treated unit without the intervention—by creating a synthetic version from a pool of untreated donor units [2]. The weights for these units are determined via an optimization algorithm that minimizes the discrepancy between the treated unit and the synthetic control during the pre-intervention period across key predictors and outcome trends [1].

Its principal advantages for biomedical research include:

- Transparent and Data-Driven Control Selection: It reduces researcher bias by using a formal algorithm to select and weight control units, rather than relying on subjective choice [15].

- Robustness to Confounding: By closely matching pre-intervention outcome trends and characteristics, SCM strengthens the plausibility of the critical "parallel trends" assumption required for causal inference, making it more robust than simpler before-after or difference-in-differences (DiD) comparisons in many settings [1] [15].

- Ability to Handle Complex Temporal Patterns: SCM can absorb seasonal patterns, long-term trends, and the influence of unobserved confounders, provided these are reflected in the pre-intervention data [2].

- Ideal for Single-Unit Interventions: It is uniquely suited for evaluating interventions applied to a single aggregate unit, a common scenario in policy and legislative health research [1] [5].

Illustrative Use Cases in Biomedicine and Public Health

The following table summarizes key areas where SCM has been, or can be, effectively applied.

Table 1: Ideal Use Cases for SCM in Biomedicine and Public Health

| Use Case Category | Specific Example | Treated Unit | Outcome Metric | Donor Pool | Key Rationale for SCM |

|---|---|---|---|---|---|

| Health Policy & Legislation | Evaluation of Florida's "Stand Your Ground" law on homicide rates [15]. | State of Florida | Annual homicide rate | Other US states without similar laws | No single state is a perfect match; a weighted combination provides a better counterfactual. |

| Health Policy & Legislation | Impact of Massachusetts' Payment Disclosure Law on physician prescribing behavior [1]. | State of Massachusetts | Rate of prescriptions for branded drugs | Other US states | Isolating the effect of a single state's law requires a robust, data-driven control. |

| Public Health Interventions | Assessing the effect of early face-mask regulations on COVID-19 outbreak severity [15]. | A specific city or region (e.g., Jena, Germany) | COVID-19 incidence or mortality | Similar cities/regions without early mask mandates | Intervention was implemented in one location; RCT was not feasible. |

| Public Health Interventions | Evaluating the population-level impact of smoking bans or vaccination programs [5]. | A specific country or state | Rates of smoking-related admissions or disease incidence | Comparable untreated regions | Interventions are applied at a population level, preventing individual-level randomization. |

| Drug & Therapeutic Policy | Analyzing the effect of state-specific regulation changes for opioids [15]. | A state that enacted a new policy | Opioid overdose mortality rates | States with stable opioid policies | Policy change is a single-unit event; SCM controls for underlying state-specific trends. |

| Marketing & Access in Pharma | Measuring the impact of a direct-to-consumer advertising campaign for a new drug [1]. | A specific television market (DMA) | New prescription requests or sales | Similar, unexposed media markets | Campaigns are often rolled out in specific geographies where a control market is hard to find. |

Experimental Protocols

This section provides a detailed, step-by-step protocol for implementing an SCM analysis, framed within the context of a public health policy evaluation.

Protocol: Evaluating a State-Level Public Health Intervention

A. Pre-Analysis Planning and Design

Define the Intervention and Units:

- Treated Unit: Clearly define the single unit exposed to the intervention (e.g., "State of Florida").

- Intervention Date: Precisely specify the time period, T_0, when the intervention begins.

- Donor Pool: Identify a set of potential control units (e.g., "All other US states that did not enact a similar policy within the study period") [2] [15].

Outcome Variable and Data Collection:

- Primary Outcome: Define the key metric for evaluation (e.g., "Annual homicide rate per 100,000 population").

- Data Source: Secure access to a longitudinal (panel) dataset containing the outcome for all units across multiple time periods.

- Pre-Intervention Period: Ensure a sufficiently long pre-intervention timeline (T_0 periods) to capture seasonal cycles and long-term trends. A short pre-period is a common failure point [2].

B. Donor Pool Construction and Screening

Apply Screening Criteria to refine the donor pool [2]:

- Correlation Filtering: Exclude donor units with a pre-period outcome correlation below a threshold (e.g., r < 0.3).

- Seasonality Alignment: Verify similar cyclical patterns using time-series decomposition.

- Contamination Assessment: Remove any units that were indirectly exposed to the intervention or a similar one.
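The correlation filter above can be sketched in a few lines. This is a minimal illustration, assuming a wide panel (one row per period, one column per unit); the function name, the column names, and the toy data are hypothetical, and the 0.3 cutoff is simply the example threshold quoted above.

```python
import numpy as np
import pandas as pd

def filter_donors_by_correlation(panel, treated, t0, threshold=0.3):
    """Keep donors whose pre-period outcome correlation with the treated unit
    is at least `threshold`.

    panel: DataFrame indexed by period, one column per unit (assumed layout).
    t0: first treated period; rows with index < t0 form the pre-period.
    """
    pre = panel.loc[panel.index < t0]
    corr = pre.drop(columns=[treated]).corrwith(pre[treated])
    return corr[corr >= threshold].index.tolist()

# Toy panel: donor_a tracks the treated unit, donor_b moves in the opposite direction.
rng = np.random.default_rng(0)
trend = np.linspace(10.0, 20.0, 20)
panel = pd.DataFrame(
    {
        "treated": trend + rng.normal(0, 0.2, 20),
        "donor_a": 0.9 * trend + rng.normal(0, 0.2, 20),
        "donor_b": -trend + rng.normal(0, 0.2, 20),
    },
    index=np.arange(20),
)
kept = filter_donors_by_correlation(panel, "treated", t0=15)
print(kept)
```

Only pre-period rows enter the correlation, so post-intervention outcomes cannot influence which donors are retained.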

Feature Engineering:

- Primary Predictors: Include multiple lags of the outcome variable to ensure the synthetic control matches the dynamic trajectory [2].

- Auxiliary Covariates: Include a limited set of well-measured demographic or economic variables known to predict the outcome (e.g., poverty rate, population density) [15].

- Standardization: Z-score normalize all features using pre-period statistics only [2].

C. Model Fitting and Optimization

Define the Optimization Problem: Find the weight vector \(W^* = (w_2, \ldots, w_{J+1})\) that solves [1]:

\[ \min_{W} \lVert X_1 - X_0 W \rVert \quad \text{subject to} \quad w_j \geq 0, \; \sum_{j=2}^{J+1} w_j = 1 \]

where \(X_1\) is the vector of pre-treatment characteristics for the treated unit and \(X_0\) is the matrix of characteristics for the donor pool.

Implementation: Use established statistical packages such as the Synth package in R, or similar libraries in Python, to perform this constrained optimization [14] [15].

Holdout Validation: Reserve the final 20-25% of the pre-intervention period as a holdout set. Train the model on the early pre-period and validate its predictive accuracy on the holdout set using metrics like Mean Absolute Percentage Error (MAPE) [2].
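For readers who want to see the weight optimization in this step spelled out, the following is a minimal sketch using SciPy's general-purpose SLSQP solver. It is not the Synth package's algorithm: it assumes equal importance for every predictor (no nested optimization of predictor weights), and the function name and toy data are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

def fit_scm_weights(x1, x0):
    """Find non-negative weights summing to one that minimize ||x1 - x0 @ w||^2.

    x1: (k,) pre-treatment characteristics of the treated unit.
    x0: (k, J) matrix of the same characteristics, one column per donor.
    """
    x1 = np.asarray(x1, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    n_donors = x0.shape[1]

    def loss(w):
        diff = x1 - x0 @ w
        return diff @ diff

    result = minimize(
        loss,
        np.full(n_donors, 1.0 / n_donors),   # start from equal weights
        method="SLSQP",
        bounds=[(0.0, 1.0)] * n_donors,      # w_j >= 0
        constraints=[{"type": "eq", "fun": lambda w: np.sum(w) - 1.0}],  # sum w_j = 1
        options={"ftol": 1e-12, "maxiter": 500},
    )
    return result.x

# Toy check: the treated unit is an exact convex combination of two donors.
rng = np.random.default_rng(1)
x0 = rng.normal(size=(12, 4))
true_w = np.array([0.6, 0.4, 0.0, 0.0])
x1 = x0 @ true_w
w_hat = fit_scm_weights(x1, x0)
print(np.round(w_hat, 3))
```

In a real analysis the rows of `x0` and `x1` would be the standardized outcome lags and covariates chosen during feature engineering.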

D. Effect Estimation, Inference, and Diagnostics

Calculate Treatment Effects: The treatment effect at post-intervention time \(t\) is [1]:

\[ \hat{\tau}_t = Y_{1t} - \sum_{j=2}^{J+1} w_j^* Y_{jt} \]

Statistical Inference via Placebo Tests: [1] [2]

- In-Space Placebos: Iteratively reassign the "treatment" to each unit in the donor pool and re-run the entire SCM analysis.

- This generates a distribution of placebo effects under the null hypothesis of no effect.

- Calculate a p-value as the proportion of placebo effects that are as large or larger than the observed effect.
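The in-space placebo procedure can be sketched as follows. This is an illustrative implementation under stated assumptions: weights are fitted on pre-period outcomes only, the test statistic is the mean post-period gap, the truly treated unit is removed from the donor pool of every placebo run, and the p-value counts the treated unit itself in the permutation set (a common convention, adopted here as an assumption). All names and the simulated data are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

def fit_weights(y1_pre, y0_pre):
    """Simplex-constrained least squares on pre-period outcomes."""
    n_donors = y0_pre.shape[1]
    result = minimize(
        lambda w: np.sum((y1_pre - y0_pre @ w) ** 2),
        np.full(n_donors, 1.0 / n_donors),
        method="SLSQP",
        bounds=[(0.0, 1.0)] * n_donors,
        constraints=[{"type": "eq", "fun": lambda w: np.sum(w) - 1.0}],
        options={"ftol": 1e-10, "maxiter": 500},
    )
    return result.x

def mean_post_gap(outcomes, unit, n_pre):
    """Treat column `unit` as treated, all other columns as donors."""
    donors = np.delete(outcomes, unit, axis=1)
    w = fit_weights(outcomes[:n_pre, unit], donors[:n_pre])
    return float(np.mean(outcomes[n_pre:, unit] - donors[n_pre:] @ w))

def in_space_placebo(outcomes, treated, n_pre):
    observed = mean_post_gap(outcomes, treated, n_pre)
    donors_only = np.delete(outcomes, treated, axis=1)  # drop the treated unit
    placebo = np.array(
        [mean_post_gap(donors_only, j, n_pre) for j in range(donors_only.shape[1])]
    )
    # Share of units (placebos plus the treated unit) with a gap at least as large.
    p_value = (np.sum(np.abs(placebo) >= abs(observed)) + 1) / (len(placebo) + 1)
    return observed, placebo, p_value

# Simulated panel: 30 periods, 11 units, one common factor, effect of +5 on unit 0.
rng = np.random.default_rng(2)
n_periods, n_units, n_pre = 30, 11, 20
factor = np.sin(np.arange(n_periods) / 3.0)
loadings = rng.uniform(0.5, 1.5, size=n_units)
outcomes = np.outer(factor, loadings) + rng.normal(0, 0.1, size=(n_periods, n_units))
outcomes[n_pre:, 0] += 5.0
observed, placebo, p_value = in_space_placebo(outcomes, treated=0, n_pre=n_pre)
print(round(observed, 2), round(p_value, 3))
```

With J donors the smallest attainable p-value under this convention is 1/(J+1), which is why small donor pools limit the strength of placebo-based inference.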

Run Diagnostics: [2]

- Pre-Intervention Fit: Visually and quantitatively assess how well the synthetic control tracks the treated unit before the intervention.

- Weight Concentration: Check the effective number of donors (\(1/\sum_j w_j^2\)). A very low number may indicate over-reliance on a single control unit.

- Sensitivity Analysis: Test the robustness of results to changes in the donor pool, model specification, and regularization.
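As a quick illustration of the weight-concentration diagnostic, the effective number of donors is the inverse of the sum of squared weights. The helper name and the example weight vectors below are made up for demonstration.

```python
import numpy as np

def effective_number_of_donors(weights):
    """EN = 1 / sum_j w_j^2; equals J when all J donors carry equal weight."""
    w = np.asarray(weights, dtype=float)
    return 1.0 / np.sum(w ** 2)

en_equal = effective_number_of_donors([0.25, 0.25, 0.25, 0.25])
en_concentrated = effective_number_of_donors([0.9, 0.05, 0.05])
print(en_equal, round(en_concentrated, 2))
```

Four equally weighted donors give an effective number of exactly 4, while a synthetic control dominated by one donor falls close to 1.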

Workflow Visualization

The following diagram illustrates the end-to-end SCM analytical workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Packages for SCM Implementation

| Item / Resource | Function / Purpose | Key Features & Considerations |

|---|---|---|

| R Synth Package | The canonical implementation of the original SCM algorithm [14] [15]. | Provides a straightforward interface for weight optimization and effect estimation. Well-documented but limited to the standard method. |

| Augmented SCM (ASCM) | An extension that combines SCM with an outcome model for bias correction when pre-treatment fit is imperfect [1] [2]. | Improves robustness. Implemented in newer R packages (e.g., augsynth). Recommended when the treated unit lies outside the convex hull of donors. |

| Bayesian Structural Time Series (BSTS) | An alternative Bayesian approach for counterfactual forecasting, often used as a comparator to SCM [2]. | Provides probabilistic intervals (credible intervals). Available in R (the bsts and CausalImpact packages); Python ports of CausalImpact also exist. |

| Python Causal Inference Libraries (e.g., causalinference) | Provides a Python-based ecosystem for implementing SCM and related causal methods. | Offers flexibility for integration into larger Python-based data science workflows. |

| Placebo Test Scripts | Custom code for conducting permutation-based inference [1] [2]. | Essential for establishing statistical significance. Must be tailored to the specific study design to iteratively re-assign treatment. |

| Data Panel | A longitudinal dataset containing the outcome and covariates for the treated unit and all potential donors over time [15]. | The fundamental "reagent." Must be complete, consistent, and cover a sufficiently long pre-intervention period to ensure a valid synthetic control can be constructed. |

The validity of the Synthetic Control Method (SCM) hinges on several core assumptions that enable credible estimation of causal effects when a randomized controlled trial is not feasible. SCM constructs a counterfactual for a treated unit as a weighted combination of untreated donor units, replicating the treated unit's pre-intervention trajectory [2]. This data-driven approach for constructing a comparable control group is a natural alternative to Difference-in-Differences when no perfect untreated comparison group exists or when treatment is applied to a single unit [1]. The accuracy of this counterfactual depends critically on three foundational assumptions: no contamination, linearity, and the absence of other major changes. These assumptions ensure that the synthetic control provides a valid representation of what would have happened to the treated unit in the absence of the intervention.

The No Contamination Assumption

Definition and Theoretical Basis

The no contamination assumption stipulates that only the treated unit experiences the intervention, and control units in the donor pool remain entirely unaffected by the treatment [1]. This assumption is crucial for maintaining the integrity of the counterfactual, as it ensures that the donor pool's post-intervention outcomes genuinely reflect what the treated unit would have experienced without treatment.

In practical terms, contamination can occur through various channels:

- Direct exposure: Control units inadvertently receive the treatment.

- Spillover effects: Outcomes in control units are influenced by the treatment's implementation in the treated unit.

- Competitive responses: Control units modify their behavior in reaction to the treated unit's treatment.

- Measurement interference: The treatment affects how outcomes are measured across units.

Validation Protocols and Diagnostic Procedures

Researchers must implement rigorous diagnostic procedures to test the no contamination assumption:

Table 1: Diagnostic Tests for Contamination Detection

| Diagnostic Test | Methodology | Interpretation | Threshold Criteria |

|---|---|---|---|

| Pre-treatment Trend Analysis | Compare trends between treated and donor units during pre-intervention period | Parallel trends suggest no contamination | p > 0.05 for differential trends [2] |

| Post-treatment Donor Monitoring | Monitor donor unit outcomes for anomalous patterns post-treatment | Stable patterns suggest no contamination | Flag significant deviations (e.g., >2σ from mean) [2] |

| Cross-correlation Tests | Calculate cross-correlation between treated and donor regions | Low correlation suggests independence | r < 0.3 indicates minimal spillover [2] |

| Geographic Buffer Analysis | Analyze units at varying distances from treated unit | Distance gradient suggests spillovers | Effect decline with distance indicates contamination [2] |

| Placebo Spatial Tests | Apply synthetic control to units farther from treatment | No effect in distant units validates assumption | p > 0.05 for placebo effects [2] |

Experimental Protocol for Contamination Assessment:

- Define contamination pathways: Map potential mechanisms through which treatment effects could spread to control units.

- Implement geographic buffering: Exclude control units within a specified radius of the treated unit to prevent spatial spillovers.

- Conduct interference detection:

- Monitor donor unit outcomes for anomalous patterns post-treatment

- Perform geographic buffer analysis for spillover effects

- Run cross-correlation tests between treated and donor regions [2]

- Execute sensitivity analysis: Re-estimate synthetic control after removing potentially contaminated units and compare results.
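The post-treatment donor monitoring step can be sketched as a simple screening rule. This is only one possible reading of the ">2σ from mean" criterion in the table above: it compares each donor's post-period mean with its pre-period mean in units of the pre-period standard deviation, and it assumes the outcome is roughly stationary (a trending series would need detrending first). Function and column names are hypothetical.

```python
import numpy as np
import pandas as pd

def flag_anomalous_donors(panel, donors, t0, n_sigma=2.0):
    """Return donors whose post-period mean shifts by more than n_sigma
    pre-period standard deviations."""
    pre = panel.loc[panel.index < t0, donors]
    post = panel.loc[panel.index >= t0, donors]
    z = (post.mean() - pre.mean()) / pre.std(ddof=1)
    return z[z.abs() > n_sigma].index.tolist()

# Toy data: donor_b jumps by 5 units after the intervention date (possible spillover).
rng = np.random.default_rng(3)
panel = pd.DataFrame(
    {"donor_a": rng.normal(50, 1, 30), "donor_b": rng.normal(50, 1, 30)},
    index=np.arange(30),
)
panel.loc[panel.index >= 20, "donor_b"] += 5.0
flagged = flag_anomalous_donors(panel, ["donor_a", "donor_b"], t0=20)
print(flagged)
```

Flagged donors are candidates for the sensitivity analysis in the last step: re-estimate without them and compare the results.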

Remediation Strategies for Contamination Violations

When contamination is detected or suspected:

- Expand donor pool geographically: Include units more distant from the treated unit.

- Temporal exclusion: Remove time periods potentially affected by contamination.

- Structural break testing: Implement Chow tests or similar procedures to identify contamination-induced breaks [2].

- Alternative method consideration: Shift to difference-in-differences or interrupted time series approaches.

The Linearity Assumption

Definition and Theoretical Foundation

The linearity assumption posits that the counterfactual outcome of the treated unit can be expressed as a linear combination of control units in the donor pool [1]. Formally, SCM assumes the counterfactual outcome follows a factor model [1]:

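\[ Y_{it}^N = \boldsymbol{\theta}_t \mathbf{Z}_i + \boldsymbol{\lambda}_t \boldsymbol{\mu}_i + \epsilon_{it} \]

where:

- \(\mathbf{Z}_i\) = Observed characteristics

- \(\boldsymbol{\theta}_t\) = Unknown time-varying coefficients on the observed characteristics

- \(\boldsymbol{\mu}_i\) = Unobserved factors

- \(\boldsymbol{\lambda}_t\) = Unobserved time-specific effects (common factors)

- \(\epsilon_{it}\) = Transitory shocks (random noise)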

This assumption enables the construction of the synthetic control as a convex combination (\(w_j \geq 0\), \(\sum_j w_j = 1\)) of donor units [1] [2]. The convexity constraint prevents extrapolation beyond the support of the donor pool, enhancing the credibility of the counterfactual.

Validation Protocols and Diagnostic Procedures

Diagnostic Framework for Linearity Assessment:

Table 2: Linearity Assumption Diagnostics

| Diagnostic Approach | Implementation | Positive Evidence | Risk Indicators |

|---|---|---|---|

| Convex Hull Test | Check if treated unit lies within convex hull of donors | Treated unit inside convex hull | Mahalanobis distance > critical value [2] |

| Pre-treatment Fit | Examine MSE/RMSE during pre-treatment period | Low prediction error (MAPE < 10%) [2] | Poor fit despite donor optimization |

| Weight Distribution | Analyze concentration of weights across donors | Effective number of donors > 3 [2] | Single donor dominates (weight > 0.8) |

| Non-linearity Test | Add quadratic terms to predictor set | No improvement in fit | Significant improvement with non-linear terms |

| Cross-validation | Holdout validation within pre-treatment period | Consistent performance across periods | High variance in holdout performance [2] |

Experimental Protocol for Linearity Validation:

- Convex hull assessment:

- Calculate Mahalanobis distance between treated unit and donor pool

- Verify treated unit lies within convex hull of donors [2]

- Pre-treatment fit evaluation:

- Reserve final 20-25% of pre-intervention period as holdout

- Train synthetic control on early pre-period data

- Evaluate prediction accuracy on holdout using MAPE, RMSE, R-squared [2]

- Weight distribution analysis:

- Calculate effective number of donors: \( \text{EN} = 1/\sum_j w_j^2 \) [2]

- Flag high concentration (EN < 3) as potential overfitting

- Regularization assessment:

- Implement penalized synthetic control: \( \min_{\mathbf{W}} \lVert \mathbf{X}_1 - \sum_{j=2}^{J+1} w_j \mathbf{X}_j \rVert^2 + \lambda \sum_{j=2}^{J+1} w_j \lVert \mathbf{X}_1 - \mathbf{X}_j \rVert^2 \) [1]

- Test sensitivity to different regularization parameters

Remediation Strategies for Linearity Violations

When the linearity assumption is violated:

- Augmented SCM: Incorporate regression adjustment for bias correction (Ben-Michael et al., 2021) [1] [6]

- Regularized SCM: Apply penalty terms to exclude dissimilar control units [1]

- Relaxation approaches: Implement machine learning algorithms that minimize information-theoretic measures of weights with relaxed linear constraints [3]

- Alternative methods: Consider Generalized SCM (Xu, 2017) or Bayesian Structural Time Series [2]

The No Other Major Changes Assumption

Definition and Theoretical Basis

The no other major changes assumption requires that the treatment is the only significant event affecting the treated unit during the study period [1]. This assumption isolates the treatment effect from confounding by contemporaneous interventions or external shocks that might differentially impact the treated unit versus the synthetic control.

Potential violations include:

- Policy changes: Implementation of other interventions affecting the outcome

- Economic shocks: Recessions, inflation, or market disruptions

- Natural events: Disasters, pandemics, or environmental changes

- Technological disruptions: Innovations altering production functions

- Social changes: Demographic shifts or behavioral trends

Validation Protocols and Diagnostic Procedures

Diagnostic Framework for Confounding Changes:

Table 3: Diagnostic Tests for Confounding Changes

| Diagnostic Method | Procedure | Evidence Supporting Assumption | Confounding Indicators |

|---|---|---|---|

| Placebo Time Tests | Pretend intervention happened earlier | No effect in pre-period placebo tests | Significant placebo effects [2] [5] |

| Media Analysis | Review news and policy announcements | No major events coinciding with treatment | Documented contemporaneous changes |

| Multiple Specifications | Vary pre-treatment period length | Stable treatment effect estimates | Highly sensitive effect magnitudes |

| Donor Response Analysis | Examine outcomes across all donors | Parallel trends post-treatment | Divergent patterns in donor units |

| Covariate Balance Tracking | Monitor predictors unaffected by treatment | Stable relationships | Shifts in covariate-outcome relationships |

Experimental Protocol for Change Detection:

- Systematic event documentation:

- Catalog all potential confounding events during study period

- Code events by type, magnitude, and anticipated impact

- Map temporal alignment with treatment implementation

- Placebo time testing:

- Simulate treatment at various pre-intervention dates [2]

- Assess whether observed effect magnitude is historically unusual

- Calculate p-values from placebo distribution

- Sensitivity analysis:

- Re-estimate models with varying pre-treatment periods

- Test robustness to donor pool composition changes

- Examine effect stability across specifications

- Triangulation with alternative methods:

- Compare SCM results with difference-in-differences estimates

- Implement Bayesian approaches for validation [2]

Remediation Strategies for Assumption Violations

When other major changes are identified:

- Stratified analysis: Segment study period to isolate confounding events

- Covariate adjustment: Incorporate measures of confounding events in augmented SCM

- Interaction terms: Model effect modification from external factors

- Donor pool restriction: Limit to units experiencing similar external environments

- Exclusion periods: Remove time periods affected by confounding events

Integrated Validation Workflow

Comprehensive Diagnostic Framework

Implementing an integrated validation protocol ensures all core assumptions are simultaneously assessed:

Sequential Testing Protocol:

- Pre-analysis design phase:

- Define exclusion criteria for donor pool

- Pre-register analytical specifications [2]

- Establish quality gates for pre-treatment fit

- Assumption verification phase:

- Conduct contamination diagnostics (Section 2.2)

- Perform linearity assessments (Section 3.2)

- Implement change detection protocols (Section 4.2)

- Robustness confirmation phase:

Quality Thresholds and Decision Rules

Table 4: Integrated Quality Assessment Framework

| Quality Dimension | Optimal Threshold | Warning Zone | Unacceptable Range |

|---|---|---|---|

| Pre-treatment Fit (MAPE) | < 5% | 5-10% | > 10% [2] |

| Effective Donors | > 5 | 3-5 | < 3 [2] |

| Placebo Test p-value | < 0.05 | 0.05-0.10 | > 0.10 [1] |

| Mahalanobis Distance | < 1σ | 1-2σ | > 2σ [2] |

| Holdout R-squared | > 0.90 | 0.80-0.90 | < 0.80 [2] |

Research Reagent Solutions

Table 5: Essential Analytical Tools for SCM Implementation

| Research Reagent | Function | Implementation Example | Key References |

|---|---|---|---|

| Synth Package (R) | Canonical SCM implementation | Original algorithm for weight optimization | Abadie et al. (2010) [1] |

| augsynth R Package | Augmented SCM with bias correction | De-biases SCM estimate using outcome model | Ben-Michael et al. (2021) [1] [6] |

| Penalized SCM Estimator | Reduces interpolation bias | Modifies optimization with similarity penalty | Abadie & L'Hour (2021) [1] |

| SCM-relaxation Algorithm | Machine learning approach for counterfactual prediction | Minimizes information-theoretic measure of weights | Liao et al. (2025) [3] |

| Placebo Test Framework | Statistical inference via permutation | Generates null distribution of pseudo-effects | Abadie et al. (2010) [1] |

| BSTS (Bayesian) | Probabilistic counterfactual forecasting | Full posterior distributions over causal paths | Brodersen et al. (2015) [2] |

| Generalized SCM | Extends to multiple treated units | Interactive fixed effects for causal estimation | Xu (2017) [2] |

| Synthetic DiD | Combines SCM and DiD advantages | Balances unobserved time-varying confounders | Arkhangelsky et al. (2021) [2] |

The Synthetic Control Method (SCM) is a rigorous causal inference tool designed for evaluating the impact of interventions—such as a new drug policy, a marketing campaign, or a public health program—when only a single unit (e.g., a country, state, or specific patient group) is exposed to the treatment [15]. Introduced by Abadie and Gardeazabal in 2003 and later formalized by Abadie, Diamond, and Hainmueller in 2010, SCM provides a data-driven approach to construct a credible counterfactual by combining a weighted average of untreated control units [1] [2]. This synthetic control unit is constructed to mimic the pre-intervention characteristics and outcome trajectory of the treated unit as closely as possible. The core causal question SCM addresses is: What would have happened to the treated unit in the absence of the intervention? [15]. Within the potential outcomes framework, the causal effect for the treated unit at post-treatment time t is defined as τ_{1t} = Y_{1t}^I - Y_{1t}^N, where Y_{1t}^I is the observed outcome under intervention and Y_{1t}^N is the unobserved counterfactual outcome [1]. SCM estimates this counterfactual, Y_{1t}^N, by reweighting the outcomes of control units from a donor pool.

Theoretical Foundation: The Factor Model

The statistical credibility of the Synthetic Control Method is anchored in a linear factor model [1]. This model provides a flexible way to account for unobserved confounders that vary over time. The counterfactual outcome for any unit i in the absence of treatment at time t is given by:

\[ Y_{it}^N = \theta_t Z_i + \lambda_t \mu_i + \epsilon_{it} \]

Table: Components of the Factor Model of SCM

| Component | Description | Role in Causal Inference |

|---|---|---|

| Z_i | A vector of observed covariates for unit i (e.g., demographic or baseline clinical factors). | Controls for observed confounders. |

| μ_i | A vector of unobserved unit-specific factor loadings (latent confounders). | Together with λ_t, accounts for unobserved confounders whose influence varies over time. |

| λ_t | A vector of unobserved time-specific effects (common factors). | Captures common shocks or trends affecting all units. |

| θ_t | A vector of unknown parameters | Models the effect of observed covariates over time. |

| ε_{it} | Transitory shocks (idiosyncratic noise) with a mean of zero. | Represents random, unmodeled variation. |

This model posits that outcomes are influenced by both observed covariates (Z_i) and a small number of unobserved common factors (λ_t) with unit-specific loadings (μ_i) [1]. The key assumption for a valid SCM is that synthetic control weights \(W^*\) can be found such that the synthetic control unit matches the treated unit in both observed pre-treatment covariates and the unobserved factor loadings. This is achieved by matching the pre-treatment outcome path over a sufficiently long period [1]. Formally, the weights must satisfy \(\sum_{j=2}^{J+1} w_j^* Z_j = Z_1\) and \(\sum_{j=2}^{J+1} w_j^* Y_{jt} = Y_{1t}\) for all pre-treatment periods \(t = 1, \ldots, T_0\) [1].
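A short derivation may help show why these matching conditions matter. It is a sketch under the factor model stated above, using only the fact that donor units are untreated (so their observed outcomes equal their no-intervention outcomes). Substituting the factor model into the gap between the treated unit's counterfactual and the synthetic control gives:

\[
Y_{1t}^N - \sum_{j=2}^{J+1} w_j^* Y_{jt}
= \theta_t \Big( Z_1 - \sum_{j=2}^{J+1} w_j^* Z_j \Big)
+ \lambda_t \Big( \mu_1 - \sum_{j=2}^{J+1} w_j^* \mu_j \Big)
+ \Big( \epsilon_{1t} - \sum_{j=2}^{J+1} w_j^* \epsilon_{jt} \Big)
\]

If the weights reproduce both the observed covariates and the unobserved loadings of the treated unit, the first two terms vanish and only a weighted combination of mean-zero transitory shocks remains. Because the loadings \(\mu_i\) are not observed, they cannot be matched directly; matching a long pre-treatment outcome path is the indirect route to making the second term small.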

Diagram 1: Structural Factor Model for Counterfactual Outcomes. This graph depicts the causal structure of the factor model underlying SCM, showing how observed covariates, unobserved factors, and transient shocks jointly determine the potential outcome.

Core Assumptions and Data Requirements

For a synthetic control estimate to be valid, several critical assumptions must hold.

Key Assumptions

- No Contamination of Control Group: The intervention must be implemented only in the treated unit. The control units in the donor pool must not be affected by the treatment or a similar policy [1].

- No Other Major Changes: The treatment should be the only significant event occurring at the implementation time that could affect the outcome of interest. This helps isolate the treatment effect [1].

- Linearity: The counterfactual outcome of the treated unit is assumed to be constructed as a linear combination of the control units' outcomes [1].

- Close Pre-treatment Fit: The synthetic control should closely resemble the treated unit in pre-intervention periods. A small pre-treatment gap is crucial for a low-bias estimate [1]. The bias of the SCM estimate depends on the scale of the transitory shocks relative to the number of pre-treatment periods, so a long pre-intervention history is needed for a good fit to be informative [1].

Data Requirements

- Donor Pool: A set of control units that were not exposed to the intervention. These units should be similar to the treated unit and not affected by the treatment [1] [15].

- Pre-treatment Period: A sufficiently long time series of data before the intervention. This allows the model to capture underlying trends and seasonal patterns, ensuring a good fit [1] [2].

- Outcome Variable: A consistent and reliably measured outcome of interest, observed for both treated and control units across all time periods [2].

- Predictors: Pre-treatment characteristics that predict the outcome variable. These can include lagged values of the outcome itself and other auxiliary covariates [1].

Application Protocols and Workflow

Implementing SCM involves a structured, multi-stage process to ensure a credible causal estimate.

End-to-End SCM Workflow

Diagram 2: SCM Implementation Workflow. This chart outlines the sequential and iterative stages for implementing the Synthetic Control Method, from initial design to final diagnostics.

Protocol Specifications

Stage 1: Design and Pre-Analysis Planning

- Core Activities: Define the treated unit, outcome metric, and intervention timing definitively. Assemble a comprehensive panel dataset for the donor pool. Pre-register donor exclusion criteria and analytical plans to minimize researcher bias [2].

- Critical Consideration: Ensure the treatment assignment is exogenous (as if random) relative to the potential outcomes. Verify that outcome measurement is consistent across all units and time periods [2].

Stage 2: Donor Pool Construction and Screening

- Primary Screening Criteria:

- Correlation Filtering: Exclude donors with a pre-period outcome correlation below a threshold (e.g., r < 0.3) [2].

- Seasonality Alignment: Use spectral analysis to verify similar cyclical patterns between potential donors and the treated unit [2].

- Contamination Assessment: Remove any units with direct or indirect exposure to the treatment to avoid spillover effects [2].

- Advanced Screening: Conduct structural stability tests (e.g., Chow tests) to check for breaks in the pre-treatment trends of potential donors [2].

Stage 3: Feature Engineering and Scaling

- Feature Selection Strategy: Use multiple lags of the outcome variable to span complete seasonal cycles. Include auxiliary covariates (e.g., demographic/economic variables) only when their measurement quality is high [2].

- Standardization Protocol: Scale all features using pre-period statistics only to avoid look-ahead bias. Apply z-score normalization: (X - μ_pre) / σ_pre [2].
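The standardization protocol can be illustrated with a small helper. This is a minimal sketch assuming features are stored as an array with one row per period and the first `n_pre` rows forming the pre-intervention window; the function name and sample values are hypothetical.

```python
import numpy as np

def standardize_with_pre_period(features, n_pre):
    """Z-score every column using the mean and standard deviation of the
    pre-intervention rows only, so post-period data cannot leak into scaling."""
    features = np.asarray(features, dtype=float)
    mu_pre = features[:n_pre].mean(axis=0)
    sigma_pre = features[:n_pre].std(axis=0, ddof=1)
    sigma_pre = np.where(sigma_pre == 0, 1.0, sigma_pre)  # guard constant columns
    return (features - mu_pre) / sigma_pre

# Three pre-period observations (mean 2, SD 1) and one post-period observation.
x = np.array([[1.0], [2.0], [3.0], [10.0]])
z = standardize_with_pre_period(x, n_pre=3)
print(z.ravel())
```

The post-period value is scaled with the pre-period mean and standard deviation, so it maps to 8 rather than being pulled toward zero by its own influence on the statistics.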

Stage 4: Constrained Optimization with Regularization

The goal is to find the optimal weight vector \(W\) that minimizes the difference between the treated unit and the synthetic control in the pre-treatment period. The objective function is [1]:

\[ \min_{W} \lVert X_1 - X_0 W \rVert \quad \text{subject to} \quad w_j \geq 0, \; \sum_{j=2}^{J+1} w_j = 1 \]

To reduce interpolation bias, a penalized synthetic control method can be used [1]:

\[ \min_{W} \Big\lVert X_1 - \sum_{j=2}^{J+1} w_j X_j \Big\rVert^2 + \lambda \sum_{j=2}^{J+1} w_j \lVert X_1 - X_j \rVert^2 \]

Here, \(\lambda\) is a regularization parameter; as \(\lambda \to 0\), it becomes the standard SCM, and as \(\lambda \to \infty\), it approximates nearest-neighbor matching [1].
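The two limiting behaviors of the penalized objective can be checked numerically. The sketch below is an illustration with SciPy's SLSQP solver, not the reference implementation of the penalized estimator; the function name and the three-donor toy example are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

def fit_penalized_scm(x1, x0, lam):
    """Minimize ||x1 - x0 @ w||^2 + lam * sum_j w_j * ||x1 - x0[:, j]||^2
    over non-negative weights that sum to one."""
    x1 = np.asarray(x1, dtype=float)
    x0 = np.asarray(x0, dtype=float)
    n_donors = x0.shape[1]
    pairwise = np.sum((x1[:, None] - x0) ** 2, axis=0)  # ||X_1 - X_j||^2 per donor

    def loss(w):
        diff = x1 - x0 @ w
        return diff @ diff + lam * (w @ pairwise)

    result = minimize(
        loss,
        np.full(n_donors, 1.0 / n_donors),
        method="SLSQP",
        bounds=[(0.0, 1.0)] * n_donors,
        constraints=[{"type": "eq", "fun": lambda w: np.sum(w) - 1.0}],
        options={"ftol": 1e-12, "maxiter": 500},
    )
    return result.x

# Donors 1 and 2 are far away but average exactly to the treated unit;
# donor 3 is the single nearest neighbor.
x1 = np.array([0.0, 0.0])
x0 = np.array([[1.0, -1.0, 0.5],
               [0.0, 0.0, 0.5]])
w_standard = fit_penalized_scm(x1, x0, lam=0.0)
w_heavy = fit_penalized_scm(x1, x0, lam=1000.0)
print(np.round(w_standard, 3), np.round(w_heavy, 3))
```

With no penalty the solver interpolates between the two distant donors; with a heavy penalty nearly all weight moves to the closest donor, which is the nearest-neighbor limit described above.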

Stage 5: Holdout Validation Framework

- Validation Protocol: Reserve the final 20-25% of the pre-intervention period as a holdout set. Train the synthetic control on the early pre-period data and evaluate its prediction accuracy on the holdout set [2].

- Quality Gates: Use metrics like Mean Absolute Percentage Error (MAPE) and Root Mean Square Error (RMSE). For instance, a pre-intervention MAPE of <5% is often a good benchmark for weekly business data [2].

- Remediation: If validation fails (poor fit), practitioners should expand the donor pool, extend the pre-intervention period, or adjust regularization parameters [2].
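The holdout split and the two quality-gate metrics can be sketched as follows. The helper names are hypothetical, and MAPE as written assumes the actual outcome is never zero.

```python
import numpy as np

def split_pre_period(n_pre, holdout_frac=0.2):
    """Return (train, holdout) slices; the holdout is the final share of the pre-period."""
    n_holdout = max(1, int(round(n_pre * holdout_frac)))
    n_train = n_pre - n_holdout
    return slice(0, n_train), slice(n_train, n_pre)

def holdout_metrics(actual, predicted):
    """MAPE (in percent) and RMSE of synthetic-control predictions on the holdout."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    err = actual - predicted
    mape = float(np.mean(np.abs(err / actual)) * 100.0)  # undefined if actual == 0
    rmse = float(np.sqrt(np.mean(err ** 2)))
    return {"mape": mape, "rmse": rmse}

train, holdout = split_pre_period(n_pre=40, holdout_frac=0.25)
metrics = holdout_metrics(actual=[100.0, 200.0], predicted=[110.0, 190.0])
print(train, holdout, metrics)
```

Weights are fitted on the `train` slice only and then applied unchanged to the `holdout` slice, so the metrics reflect out-of-sample tracking before any post-intervention data are examined.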

Stage 6: Effect Estimation and Business Metrics

- Treatment Effect Calculation: The treatment effect path is estimated as [1] [2]: \(\hat{\tau}_t = Y_{1t} - \sum_{j=2}^{J+1} w_j^* Y_{jt}\) for each post-treatment period \(t\).

- Business Metric Derivation:

Inference and Uncertainty Quantification

Unlike traditional statistical methods, SCM with a single treated unit does not support standard asymptotic inference because the sampling mechanism is undefined [1]. Instead, inference relies on permutation-based methods.

Permutation (Placebo) Inference

This is the most common approach for SCM inference [1].

- Procedure: Iteratively reassign the treatment to each unit in the donor pool and calculate a placebo treatment effect for each one. This generates a null distribution of effect sizes under the assumption of no true effect.

- Significance Testing: The statistical significance of the actual treatment effect is assessed by comparing it to this placebo distribution. A one-sided p-value can be calculated as the proportion of placebo effects that are as extreme as, or more extreme than, the observed effect [1]. The effect is considered statistically significant if it is extreme relative to the placebo distribution.

Alternative Inference Methods

- Bootstrap Methods: Useful in settings with multiple treated units or staggered adoption, accounting for both sampling and optimization uncertainty [2].

- Bayesian Approaches: Methods like Bayesian Structural Time Series (BSTS) provide full posterior distributions over counterfactual paths, offering a natural way to quantify uncertainty [2].

Diagnostic Assessment and Sensitivity Analysis

A rigorous diagnostic phase is critical for validating the credibility of the synthetic control.

Core Diagnostics

- Weight Concentration: Calculate the effective number of donors: EN = 1/∑_j w_j^2. Flag high concentration (e.g., EN < 3) as a sign of potential overfitting, where the synthetic control relies too heavily on one or two units [2].

- Overlap Assessment: Verify that the treated unit lies within the convex hull of the donors. The Mahalanobis distance can be used to quantify the similarity between the treated unit and the donor pool [2]. Significant extrapolation occurs if the treated unit is outside the convex hull.

- Pre-treatment Fit: Visually and quantitatively assess how well the synthetic control tracks the outcome of the treated unit before the intervention. A close pre-treatment fit is necessary for the synthetic control to serve as a credible counterfactual in the post-period [15].
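The weight concentration diagnostic is simple enough to compute by hand; a minimal sketch follows. The two weight vectors are hypothetical, and the EN < 3 cutoff is the illustrative flag from the list above.

```python
def effective_number_of_donors(weights):
    """EN = 1 / sum(w_j^2); equals the donor count when weights are uniform."""
    return 1.0 / sum(w * w for w in weights)

concentrated = [0.9, 0.1, 0.0, 0.0]     # hypothetical: one donor dominates
spread = [0.25, 0.25, 0.25, 0.25]       # hypothetical: uniform weights

en_concentrated = effective_number_of_donors(concentrated)
en_spread = effective_number_of_donors(spread)

flag_concentrated = en_concentrated < 3  # would be flagged for review
flag_spread = en_spread < 3              # would not be flagged
print(round(en_concentrated, 2), en_spread, flag_concentrated, flag_spread)
```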

Sensitivity Testing

- Leave-One-Out Analysis: Systematically exclude each donor unit to check if the results are driven by a single influential control [2].

- Robustness Checks: Test the sensitivity of the results to different choices of regularization parameters, the composition of the donor pool, and the set of predictors used [2].

- Interference Detection: Monitor donor unit outcomes for anomalous patterns post-treatment, which might indicate spillover effects or other forms of contamination [2].
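A leave-one-out check can be sketched as a loop that refits the weights with each donor removed and compares the resulting average effect to the full-pool estimate. Everything below is illustrative: the data are invented, the function names are hypothetical, and the small projected-gradient fitter is only a stand-in for whichever optimizer a study actually uses.

```python
import numpy as np

def project_to_simplex(v):
    """Euclidean projection of v onto {w : w >= 0, sum(w) = 1}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - 1.0
    k = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / k > 0)[0][-1]
    return np.maximum(v - css[rho] / (rho + 1), 0.0)

def fit_weights(y1_pre, Y0_pre, n_iter=3000):
    """Simplex-constrained least squares on pre-period outcomes."""
    # Because the weights sum to one, Y0 @ w - y1 equals (Y0 - y1) @ w
    D = Y0_pre - y1_pre[:, None]
    step = 1.0 / (2.0 * np.linalg.norm(D, 2) ** 2 + 1e-12)
    w = np.full(D.shape[1], 1.0 / D.shape[1])
    for _ in range(n_iter):
        w = project_to_simplex(w - step * 2.0 * (D.T @ (D @ w)))
    return w

def leave_one_out_effects(y1, Y0, T0):
    """Average post-period effect for the full pool and with each donor dropped."""
    def avg_effect(Y):
        w = fit_weights(y1[:T0], Y[:T0])
        return float(np.mean(y1[T0:] - Y[T0:] @ w))
    full = avg_effect(Y0)
    loo = {j: avg_effect(np.delete(Y0, j, axis=1)) for j in range(Y0.shape[1])}
    return full, loo

# Toy data (hypothetical): 6 pre-periods, 2 post-periods, 3 donors, true effect of +3
Y0 = np.column_stack([
    [10, 12, 10, 12, 10, 12, 10, 12],
    [10, 10, 12, 12, 10, 10, 12, 12],
    [14, 13, 12, 11, 10, 9, 8, 7],
]).astype(float)
y1 = np.array([10, 11, 11, 12, 10, 11, 14, 15], dtype=float)

full_effect, loo_effects = leave_one_out_effects(y1, Y0, T0=6)
max_shift = max(abs(e - full_effect) for e in loo_effects.values())
print(round(full_effect, 3), {j: round(e, 3) for j, e in loo_effects.items()})
```

A large `max_shift` relative to the full-pool estimate would suggest that the result depends heavily on one donor and deserves closer scrutiny.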

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Methodological Components for SCM Implementation

| Tool / Method | Function | Key Considerations |
|---|---|---|
| Donor Pool | Serves as the source of control units for constructing the counterfactual. | Must be free of treatment contamination; should contain units similar to the treated case [15]. |
| Pre-treatment Outcome Lags | Primary features used to match the trajectory of the treated unit. | Should span multiple seasonal cycles to capture underlying trends [2]. |
| Constrained Optimization | Algorithm that finds the optimal weights for the synthetic control. | Weights are constrained to be non-negative and sum to one to avoid extrapolation [1] [2]. |
| Placebo Test | A permutation method used for statistical inference. | Generates an empirical distribution of effects under the null hypothesis [1]. |
| Augmented SCM (ASCM) | An extension that combines SCM with an outcome model for bias correction. | Used when a perfect pre-treatment fit is not feasible [1]. |
| Holdout Validation | A method to evaluate the predictive power of the synthetic control. | Uses a portion of the pre-treatment data not used in model fitting to test accuracy [2]. |
| Bayesian Structural Time Series (BSTS) | An alternative probabilistic approach for counterfactual forecasting. | Provides built-in uncertainty quantification but can be sensitive to prior specification [2]. |

Advanced Extensions: Augmented and Generalized SCM

When the standard SCM fails to achieve a good pre-treatment fit, advanced extensions can be employed.

- Augmented Synthetic Control Method (ASCM): Introduced by Ben-Michael, Feller, and Rothstein (2021), ASCM extends SCM by incorporating an outcome model for bias correction. It improves estimates when the synthetic control alone fails to match pre-treatment outcomes precisely, making the method doubly robust [1] [2].

- Generalized SCM: Proposed by Xu (2017), this method uses interactive fixed effects regression and is particularly suited for settings with multiple treated units. It is more flexible than the canonical SCM and allows for the use of bootstrap methods for inference [2].

Implementing SCM: An End-to-End Workflow for Clinical and Pharmaceutical Studies

Pre-analysis planning represents a critical foundation for rigorous causal inference using the Synthetic Control Method (SCM). This initial stage establishes the formal framework for evaluating interventions when randomized controlled trials are impractical or impossible to conduct [16]. SCM is particularly valuable in settings with single or limited treated units, such as policy changes in specific regions or drug development interventions targeting particular populations [15] [1]. Proper planning ensures the synthetic control—a data-driven weighted combination of untreated donor units—provides a valid counterfactual for estimating causal effects [16] [2].

The core objective of SCM is to estimate the treatment effect (τ) for a treated unit by comparing its post-intervention outcomes to those of a synthetic control unit constructed from untreated donors [2]. This is formalized as:

$$\tau_t = Y_{1t}(1) - Y_{1t}(0) \quad \text{for } t > T_0$$

Where $Y_{1t}(1)$ is the observed outcome for the treated unit post-intervention, and $Y_{1t}(0)$ is the counterfactual outcome estimated using the synthetic control: $\hat{Y}_{1t}(0) = \sum_{j=2}^{J+1} w_j Y_{jt}$ [2].
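To make the estimand concrete, the sketch below computes the synthetic series and the post-period gaps for a toy example. The data, the weights, and the function name are hypothetical; the weights are simply assumed to come from an earlier fitting step, and array index T0 is taken to be the first post-intervention period.

```python
import numpy as np

def effect_path(y1, Y0, w, T0):
    """Return the synthetic series and the post-period gaps tau_t for t > T0."""
    synthetic = Y0 @ w                 # weighted combination of donor outcomes
    tau = y1[T0:] - synthetic[T0:]     # observed minus estimated counterfactual
    return synthetic, tau

# Toy data (hypothetical): 8 periods, 3 donors, intervention after period 6
Y0 = np.column_stack([
    [10, 12, 10, 12, 10, 12, 10, 12],
    [10, 10, 12, 12, 10, 10, 12, 12],
    [14, 13, 12, 11, 10, 9, 8, 7],
]).astype(float)
y1 = np.array([10, 11, 11, 12, 10, 11, 14, 15], dtype=float)
w = np.array([0.5, 0.5, 0.0])          # assumed output of the weight optimization

synthetic, tau = effect_path(y1, Y0, w, T0=6)
average_effect = float(tau.mean())
cumulative_effect = float(tau.sum())
print(tau, average_effect, cumulative_effect)
```

Reporting the full path alongside the average and cumulative summaries helps show whether the effect builds, fades, or stays constant over the post-intervention window.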

Defining the Treated Unit and Intervention Context

Conceptual Definition and Characteristics

The treated unit constitutes the primary entity receiving the intervention whose causal effect researchers aim to estimate. In pharmaceutical and public health contexts, this typically represents a specific population group, geographical region, or patient cohort exposed to a drug, policy, or health program [15].

A well-defined treated unit exhibits three essential characteristics:

- Discrete Identity: The unit must represent a distinct, identifiable entity such as a specific country, state, or defined population group that received the intervention [15].

- Clear Intervention Timing: The unit must have a precise intervention start date ($T_0 + 1$) that clearly demarcates the pre- and post-intervention periods [2] [15].

- Data Availability: The unit must have sufficient pre-intervention data covering multiple time periods to enable accurate synthetic control construction [16].

Causal Relationship and Exchangeability Framework

Establishing a plausible causal relationship requires demonstrating that the treated unit's outcomes would have followed a trajectory similar to the synthetic control in the absence of the intervention. This exchangeability assumption is formalized through a factor model [1]:

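$$Y_{it}^{N} = \theta_t Z_i + \lambda_t \mu_i + \varepsilon_{it}$$

Where $Z_i$ represents observed characteristics, $\mu_i$ represents unobserved factors, and $\varepsilon_{it}$ represents transitory shocks. Valid inference requires that the synthetic control weights satisfy:

$$\sum_{j=2}^{J+1} w_j^{*} Z_j = Z_1 \quad \text{and} \quad \sum_{j=2}^{J+1} w_j^{*} Y_{jt} = Y_{1t} \quad \text{for all } t \le T_0$$ [1]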

Practical Operationalization in Research Protocols

Table 1: Treated Unit Definition Protocol for Research Documentation

| Documentation Element | Protocol Specification | Data Source Verification |
|---|---|---|
| Unit Identity | Clearly specify the geographical boundaries, population inclusion criteria, or organizational definition | Administrative records; Patient registry data; Policy implementation documents |
| Intervention Timing | Document the exact implementation date (T0 + 1) and any phase-in periods | Policy effective dates; Drug approval records; Program implementation timelines |
| Theoretical Justification | Articulate the causal pathway and biological/behavioral mechanism | Literature review; Theoretical framework; Preliminary evidence |
| Contamination Assessment | Define and monitor for potential spillover effects to control units | Geographic buffers; Network analysis; Implementation fidelity measures |
| Contextual Factors | Document unique circumstances that might affect outcomes | Historical events; Concurrent interventions; System changes |

Study Design Parameters and Temporal Considerations

Temporal Architecture Requirements

The temporal structure of SCM studies requires careful planning to ensure sufficient pre-intervention data for constructing a valid synthetic control and adequate post-intervention observation for effect estimation [16].

Table 2: Quantitative Requirements for Pre-Analysis Planning

| Planning Parameter | Minimum Recommended Threshold | Empirical Justification |
|---|---|---|
| Pre-Intervention Period (T0) | 20-30 time points (e.g., months, quarters) | Captures complete seasonal cycles and long-term trends [2] |
| Post-Intervention Period | Sufficient to observe anticipated effect pattern | Based on pharmacological mechanism and outcome kinetics |
| Holdout Validation Period | 20-25% of pre-intervention data | Provides robust out-of-sample testing [2] |
| Outcome Measurement Frequency | Consistent across all units and time periods | Ensures comparability; Monthly or quarterly recommended |
| Power Considerations | Minimum Detectable Effect (MDE) of 5% achievable | Based on simulation studies of 200+ campaigns [2] |

Outcome Metric Selection and Specification

Selecting appropriate outcome metrics requires balancing theoretical relevance with measurement practicality:

- Primary Outcome: Should directly capture the intervention's hypothesized effect with high measurement reliability [2].

- Secondary Outcomes: Provide mechanistic insights or validate primary findings through convergent evidence.

- Measurement Properties: Must demonstrate consistency across all units and time periods without definitional changes during the study window [2].

Implementation Workflow for Pre-Analysis Planning

The following workflow diagram illustrates the sequential stages of pre-analysis planning for SCM applications:

Experimental Protocols and Validation Framework

Donor Pool Construction Protocol

The donor pool comprises potential control units that could contribute to constructing the synthetic counterfactual. Selection requires systematic screening:

- Correlation Filtering: Exclude donors whose pre-period outcome correlation with the treated unit falls below a chosen threshold (typically r < 0.3) [2]

- Seasonality Alignment: Verify similar cyclical patterns using spectral analysis [2]

- Structural Stability: Test for structural breaks using Chow tests or similar procedures [2]

- Contamination Assessment: Remove units with direct or indirect treatment exposure [2]

- Geographic Considerations: Account for spatial spillovers and market overlap in pharmaceutical contexts [2]
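The correlation filtering step in the list above can be sketched in a few lines. The donor names, the toy series, and the function name are hypothetical, and the 0.3 cutoff is the illustrative threshold from the list. The remaining screens (seasonality, structural breaks, contamination, geography) need study-specific information and are not covered here.

```python
import numpy as np

def screen_donors_by_correlation(y1_pre, Y0_pre, names, min_corr=0.3):
    """Keep donors whose pre-period outcome correlates with the treated unit at or above min_corr."""
    kept = []
    for j, name in enumerate(names):
        r = float(np.corrcoef(y1_pre, Y0_pre[:, j])[0, 1])
        if r >= min_corr:
            kept.append((name, r))
    return kept

# Toy pre-period outcomes (hypothetical): 6 periods, 3 candidate donors
y1_pre = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
Y0_pre = np.column_stack([
    [2.0, 4.0, 6.0, 8.0, 10.0, 12.5],   # donor_A: tracks the treated unit closely
    [6.0, 5.0, 4.0, 3.0, 2.0, 1.0],     # donor_B: moves in the opposite direction
    [1.0, 3.0, 2.0, 5.0, 4.0, 6.0],     # donor_C: noisy but positively related
])
names = ["donor_A", "donor_B", "donor_C"]

kept = screen_donors_by_correlation(y1_pre, Y0_pre, names)
kept_names = [name for name, _ in kept]
print(kept_names)
```

A donor with a constant pre-period series produces an undefined correlation (NaN) and is dropped by the comparison, which is usually the desired behavior but is worth logging explicitly in a real screening pipeline.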

Pre-Registration and Sensitivity Analysis Protocol

To minimize researcher bias and ensure analytical robustness, implement the following protocol:

- Pre-Registration Document: Specify donor exclusion criteria, analytical specifications, and primary outcomes before analysis [2].

- Holdout Validation: Reserve the final 20-25% of the pre-intervention period as a holdout set for model validation [2].

- Quality Gates: Establish prediction accuracy thresholds (e.g., MAPE < 15%, RMSE < 0.2) based on data frequency [2].

- Sensitivity Framework: Plan robustness checks for control unit selection, regularization parameters, and time periods [16].

Research Reagent Solutions for SCM Implementation

Table 3: Essential Methodological Tools for SCM Application

| Research Tool | Function/Purpose | Implementation Examples |
|---|---|---|
| Statistical Software (R/Python/Stata) | Implementation of SCM algorithms and diagnostics | Synth package in R; scm implementation in Python [16] |
| Optimization Algorithms | Constrained weight estimation with regularization | Quadratic programming for weight optimization with entropy penalty [2] |
| Placebo Test Framework | Statistical inference via permutation tests | Iterative reassignment of treatment to donor units [1] |
| Balance Diagnostics | Assessment of pre-intervention similarity | Mahalanobis distance; Pre-treatment fit statistics (R², MAPE) [2] |
| Sensitivity Analysis Tools | Robustness assessment of causal conclusions | Leave-one-out analysis; Alternative specification testing [16] |

Integration with Broader Causal Inference Framework

Stage 1 planning establishes the foundation for subsequent SCM stages, including donor pool construction, weight optimization, and effect estimation. Proper execution of pre-analysis planning ensures the synthetic control method delivers on its promise as "the most important innovation in the policy evaluation literature in the last 15 years" [15]. By rigorously defining the treated unit, establishing temporal parameters, and pre-specifying analytical protocols, researchers can produce credible causal estimates that withstand methodological scrutiny and inform evidence-based decision-making in drug development and public health policy.