Accuracy and Precision in HPLC Method Validation: A Comprehensive Guide for Reliable Pharmaceutical Analysis

Abstract

This article provides a complete guide to accuracy and precision in High-Performance Liquid Chromatography (HPLC) method validation, tailored for researchers, scientists, and drug development professionals. It covers the fundamental definitions and distinctions between these two critical parameters, explores methodologies for their determination and application in routine analysis, addresses common troubleshooting and optimization strategies to enhance data reliability, and outlines their formal role within a complete validation protocol as per ICH guidelines. By synthesizing foundational concepts with practical applications, this guide aims to empower analysts in developing robust, compliant, and high-quality HPLC methods for pharmaceutical development and quality control.

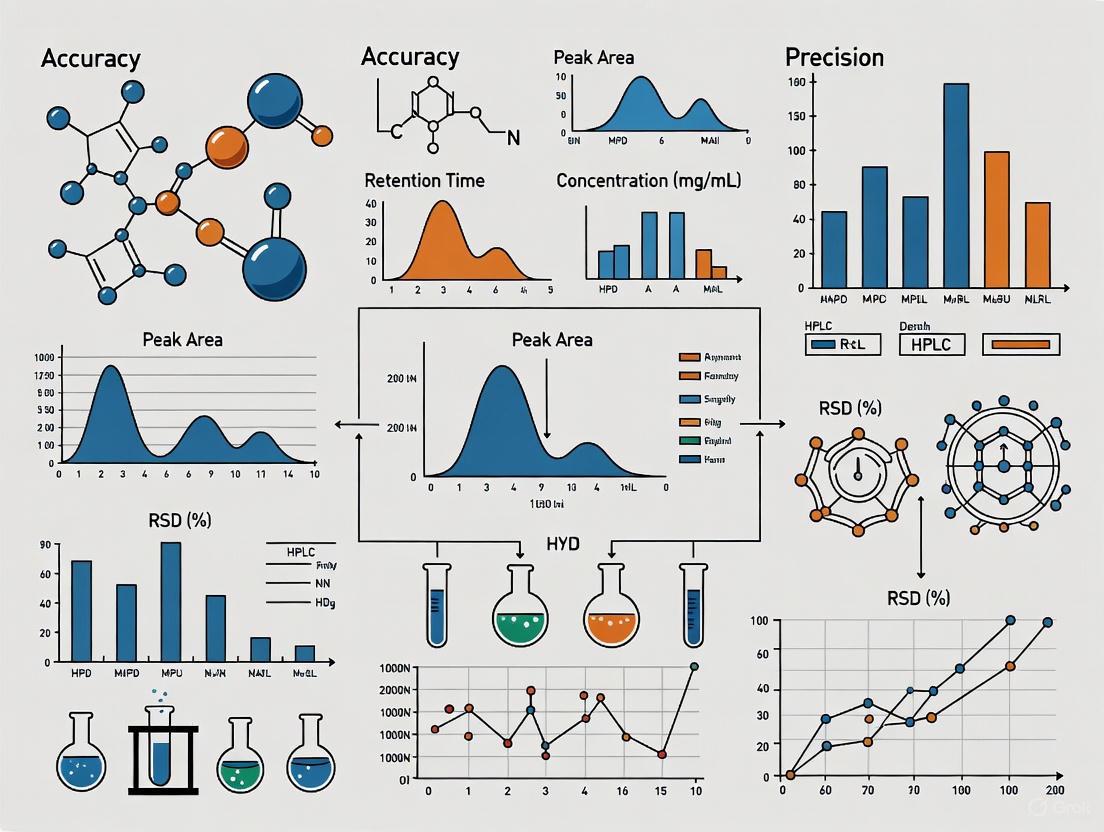

Accuracy vs. Precision: Mastering the Fundamental Pillars of HPLC Data Quality

In the rigorous world of high-performance liquid chromatography (HPLC) method validation, accuracy stands as a fundamental pillar, ensuring that analytical results are not just precise but also correct and truthful. It is formally defined as the "closeness of agreement between the value which is accepted either as a conventional true value or an accepted reference value and the value found" [1] [2]. This parameter, sometimes referred to as "trueness," provides the critical assurance that a method reliably measures what it is intended to measure, forming the bedrock of data integrity in pharmaceutical development and quality control [2]. For researchers and scientists, establishing accuracy is not merely a regulatory checkbox; it is a core scientific demonstration that an analytical method is fit for its purpose, providing confidence that decisions based on its results—from drug release to stability studies—are scientifically sound and defensible.

This article explores the role of accuracy within the broader context of HPLC method validation, framing it as an essential component of a holistic validation strategy that also includes precision, specificity, and robustness.

Accuracy is one of several interrelated performance characteristics that constitute a fully validated analytical method. It is intimately connected to other validation parameters:

- Precision: While precision measures the scatter or reproducibility of a series of measurements from multiple samplings of a homogeneous sample, accuracy confirms that these measurements are centered on the true value [1] [3]. A method can be precise but inaccurate (consistent but biased), accurate on average but imprecise (unbiased but scattered), or both inaccurate and imprecise (biased and unreliable). An ideal method is both accurate and precise.

- Specificity: The ability of a method to measure the analyte accurately in the presence of other components is a prerequisite for achieving accuracy [1] [4]. Without specificity, interference from impurities, degradants, or the sample matrix can lead to biased results.

- Linearity and Range: Accuracy is established across the specified range of the method, demonstrating that the method provides truthful results at both the upper and lower ends of the analytical measurement scale [1] [5].

The following workflow illustrates how accuracy is integrated within the broader HPLC method validation process:

Establishing Accuracy: Detailed Experimental Protocols

The validation of accuracy is typically performed using a recovery study, where the results from the method under validation are compared to a known reference value [2] [5]. The general methodology is consistent across different application types, though specific details vary.

General Principles and Experimental Design

The ICH Q2(R1) guideline recommends that accuracy be established across the specified range of the method using a minimum of nine determinations over a minimum of three concentration levels (for example, three concentrations and three replicates each) [1] [4]. The data can be reported as the percentage recovery of the known, added amount or as the difference between the mean and the true value with confidence intervals [1].

A typical experimental approach involves:

- Spiking known amounts of the analyte into a blank matrix (e.g., placebo for a drug product or a diluent for a drug substance).

- Analyzing the spiked samples using the validated HPLC method.

- Calculating the percentage recovery for each sample by comparing the measured value to the theoretical (known) value.

- Statistical evaluation of the recovery data (mean, standard deviation, confidence interval) against pre-defined acceptance criteria [4].
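The steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the spiked amounts and results are hypothetical, the 98.0 to 102.0% window is the typical assay criterion rather than a universal limit, and the names `spiked`, `summarize_level`, and `T_95` are invented for this example. The confidence interval uses tabulated two-sided 95% Student's t values.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student's t critical values, keyed by degrees of freedom (n - 1).
T_95 = {2: 4.303, 5: 2.571, 8: 2.306}

# Hypothetical spiked-placebo data: spike level (% of test concentration)
# mapped to (amount added in mg, amount found in mg) for each replicate.
spiked = {
    80: [(40.0, 39.8), (40.0, 40.1), (40.0, 39.9)],
    100: [(50.0, 50.2), (50.0, 49.9), (50.0, 50.1)],
    120: [(60.0, 59.7), (60.0, 60.2), (60.0, 59.9)],
}

def summarize_level(pairs, low=98.0, high=102.0):
    """Return mean recovery, sample SD, 95% CI and pass/fail for one spike level."""
    recoveries = [found / added * 100 for added, found in pairs]
    n = len(recoveries)
    m, sd = mean(recoveries), stdev(recoveries)  # stdev uses the n - 1 denominator
    half_width = T_95[n - 1] * sd / sqrt(n)
    return {
        "mean": m,
        "sd": sd,
        "ci95": (m - half_width, m + half_width),
        "passes": low <= m <= high,  # assumed acceptance window for an assay
    }

summary = {level: summarize_level(pairs) for level, pairs in spiked.items()}
for level, s in summary.items():
    lo, hi = s["ci95"]
    print(f"{level}%: mean {s['mean']:.1f}%, SD {s['sd']:.2f}, "
          f"95% CI {lo:.1f} to {hi:.1f}, pass={s['passes']}")
```

With only three replicates per level the t multiplier is large (4.303), so the per-level intervals are wide; pooling all nine determinations is one common alternative.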

Application-Specific Methodologies

The specific protocol for accuracy determination depends on the analytical application.

Table 1: Experimental Protocols for Determining Accuracy in Different Applications

| Application | Experimental Methodology | Recommended Concentration Levels | Key Considerations |
|---|---|---|---|
| Assay of Drug Substance | Comparison of results to the analysis of a standard reference material or a second, well-characterized method [1] [2]. | 80%, 100%, 120% of the test concentration [2]. | The purity of the reference standard must be well-established. |
| Assay of Drug Product | Analysis of synthetic mixtures spiked with known quantities of the API into the placebo [1] [2]. | 80%, 100%, 120% of the test concentration [2]. | A placebo mixture must be available that mimics the formulation without the active ingredient. |
| Quantification of Impurities | Analysis of samples (drug substance or product) spiked with known amounts of impurities [1] [2]. | From the reporting level to 120% of the specification limit, with a minimum of three levels (e.g., LOQ, 100%, 120%) [2]. | Requires authentic impurity standards. If unavailable, comparison to a second, validated method is an alternative [1]. |

Sample Calculation for Accuracy (Recovery)

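Percentage recovery expresses the amount found as a percentage of the theoretical amount added. The following example, based on an assay of a drug product, illustrates the calculation. It is simplified: the amount found is taken directly from the ratio of sample to standard peak areas, which presumes both solutions were prepared at the same nominal concentration, and the assay value and product weight listed among the given values do not enter the arithmetic.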

Given:

- Average assay value of the drug product (from precision study): 99.5%

- Weight of drug product used in the 100% accuracy spike: 50.10 mg

- Amount of API (working standard) added: 49.95 mg

- Potency of working standard: 99.8%

- Measured peak area of the accuracy sample: 490,490

- Average peak area of the standard: 500,500

Calculation:

- Theoretical Amount Added = (Weight of API added × Potency of Standard) = 49.95 mg × 0.998 = 49.85 mg

- Amount Found = (Measured Area / Avg. Standard Area) × (Theoretical Amount Added) = (490,490 / 500,500) × 49.85 mg = 48.85 mg

- % Recovery = (Amount Found / Theoretical Amount Added) × 100 = (48.85 / 49.85) × 100 = 98.0% [2]
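The same arithmetic can be reproduced in a short Python sketch. The function name `percent_recovery` and its parameter names are invented for illustration; the inputs are the values given in the example above, and the calculation keeps the example's simplification of deriving the amount found from the peak-area ratio.

```python
def percent_recovery(weight_added_mg, potency, sample_area, standard_area):
    """Return (theoretical amount, amount found, % recovery) for one spiked sample."""
    theoretical = weight_added_mg * potency               # potency-corrected amount added
    found = sample_area / standard_area * theoretical     # scaled by the peak-area ratio
    return theoretical, found, found / theoretical * 100

theoretical, found, recovery = percent_recovery(
    weight_added_mg=49.95, potency=0.998,
    sample_area=490_490, standard_area=500_500,
)
print(f"{theoretical:.2f} mg added, {found:.2f} mg found, {recovery:.1f}% recovery")
# prints: 49.85 mg added, 48.85 mg found, 98.0% recovery
```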

Data Presentation and Acceptance Criteria

The results from accuracy studies must be systematically evaluated against pre-defined acceptance criteria, which are often based on regulatory guidelines and internal company procedures.

Table 2: Typical Acceptance Criteria for Accuracy (Recovery) Studies

| Application | Typical Acceptance Criteria | Reference |
|---|---|---|
| Drug Substance/Drug Product Assay | Recovery between 98.0% and 102.0% | [2] |
| Dissolution Testing (Immediate Release) | Recovery between 95.0% and 105.0% | [2] |
| Impurity Quantification (at specified levels) | A sliding scale is often applied, allowing for wider acceptance criteria at lower concentrations (e.g., ±10% at the reporting level, tightening to ±5% at the specification limit) [4]. | [4] |

The following table provides an example of how accuracy and precision data can be summarized for a drug product assay method, demonstrating acceptable performance across the specified range:

Table 3: Example Summary of Accuracy and Precision Data for a Drug Product Assay

| Spike Level (%) | Theoretical Amount (mg) | Mean Recovery (%) | Standard Deviation (SD) | Relative Standard Deviation (RSD%) | Confidence Interval (95%) |
|---|---|---|---|---|---|
| 80 | 40.00 | 99.5 | 0.45 | 0.45 | 99.1 – 99.9 |
| 100 | 50.00 | 100.2 | 0.51 | 0.51 | 99.6 – 100.8 |
| 120 | 60.00 | 99.8 | 0.39 | 0.39 | 99.5 – 100.1 |

The Scientist's Toolkit: Essential Materials for Validation

Conducting a rigorous accuracy study requires high-quality materials and reagents. The following table lists key items and their functions in the experimental process.

Table 4: Key Research Reagent Solutions and Materials for Accuracy Studies

| Item | Function / Purpose | Critical Quality Attributes |
|---|---|---|
| High-Purity Analytical Standard | Serves as the reference for the "true value" of the analyte [5]. | Certified purity and identity, stored under appropriate conditions to ensure stability. |
| Placebo Formulation | Mimics the composition of the drug product without the active ingredient, used to assess interference and matrix effects [4]. | Must be representative of the final product; should not contain any interfering components. |
| Authentic Impurity Standards | Used for spiking studies to determine the accuracy of impurity quantification methods [4]. | Certified purity and identity. |
| HPLC-Grade Solvents and Reagents | Used for preparation of mobile phases, standard solutions, and sample solutions [6] [7]. | Low UV absorbance, high purity to prevent baseline noise and ghost peaks. |

Accuracy is the definitive benchmark for trueness in HPLC method validation. It moves the assessment of a method's performance beyond simple repeatability, demanding a demonstration of correctness and freedom from bias. Through a carefully designed and executed experimental protocol, involving spiking studies at multiple concentration levels and comparison against a known reference, researchers can provide documented evidence of their method's capability to produce truthful results. In the highly regulated pharmaceutical environment, where patient safety and product efficacy depend on reliable data, a thoroughly validated, accurate analytical method is not just a technical achievement but a fundamental ethical and professional responsibility.

In High-Performance Liquid Chromatography (HPLC) method validation, precision demonstrates the reliability and consistency of an analytical method by quantifying the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [4] [8]. It is a fundamental parameter that regulatory authorities require to ensure that analytical procedures can generate reproducible and trustworthy data for quality control of pharmaceuticals [4].

Precision is considered at three levels: repeatability (intra-assay precision), intermediate precision, and reproducibility [8]. The precision of an analytical procedure is typically expressed as the standard deviation or the relative standard deviation (RSD%) of a series of measurements [9].

Levels of Precision and Evaluation Methodologies

The evaluation of precision must be appropriate to the type of procedure and the intended use of the method. It is typically assessed at both the assay level for the active pharmaceutical ingredient (API) and at the impurities level [4].

Repeatability

Repeatability expresses the precision under the same operating conditions over a short interval of time [8]. It is evaluated in two ways:

- System Repeatability: Determined by multiple injections (e.g., n≥5) of a single, homogeneous reference solution. This is a mandatory requirement for system suitability testing, with a typical acceptance criterion of RSD < 2.0% for peak area precision [4].

- Method/Multiple Preparations Repeatability: Assessed by making multiple determinations (e.g., n=6) at 100% of the test concentration, or by preparing a minimum of three determinations at three different concentrations (e.g., covering the specified range) in triplicate [4] [8].

Intermediate Precision

Intermediate precision demonstrates the reliability of the method within a single laboratory under normal operational variations, such as different days, different analysts, or different equipment [4]. In one reported design, several analyte concentration levels are prepared in triplicate at three different times within a day to assess intra-day variation, and the same procedure is repeated on three different days to assess inter-day variation [9]. The percent RSD of the concentrations back-calculated from the regression equation is taken as the measure of precision [9].
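As a rough sketch of that calculation, the Python below back-calculates concentrations from a calibration line and reports RSD within each day and across all days. Everything here is hypothetical: the slope, intercept, peak areas, and the names `back_calculate` and `rsd_percent` are invented for illustration, and only one nominal level (10 µg/mL) is shown where a real study would cover several.

```python
from statistics import mean, stdev

# Hypothetical calibration line: peak_area = SLOPE * concentration + INTERCEPT
SLOPE, INTERCEPT = 1000.0, 50.0

def back_calculate(area):
    """Concentration (µg/mL) predicted from a peak area via the regression equation."""
    return (area - INTERCEPT) / SLOPE

def rsd_percent(values):
    """Relative standard deviation in percent, using the sample SD."""
    return stdev(values) / mean(values) * 100

# Hypothetical peak areas at one nominal level, three preparations per day.
areas_by_day = {
    "day 1": [10040, 10060, 10050],
    "day 2": [10110, 10090, 10100],
    "day 3": [9990, 10010, 10000],
}

conc_by_day = {day: [back_calculate(a) for a in areas]
               for day, areas in areas_by_day.items()}

intra_day_rsd = {day: rsd_percent(c) for day, c in conc_by_day.items()}
inter_day_rsd = rsd_percent([c for concs in conc_by_day.values() for c in concs])

for day, rsd in intra_day_rsd.items():
    print(f"{day}: intra-day RSD {rsd:.2f}%")
print(f"all days pooled: inter-day RSD {inter_day_rsd:.2f}%")
```

Pooling all results into one RSD is the simplest summary; a one-way analysis of variance would separate the within-day and between-day components more rigorously.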

Reproducibility

Reproducibility expresses the precision of measurement between different laboratories, such as during method transfer studies. Inter-instrument variation can also be studied by reanalyzing one set of concentration levels on another HPLC system [9]; when both systems are in the same laboratory, this is strictly a component of intermediate precision rather than reproducibility.

The following workflow outlines a standard protocol for assessing the different levels of precision in an HPLC method validation study:

Quantitative Data and Acceptance Criteria

Precision is quantitatively expressed as the Relative Standard Deviation (RSD%), which allows for comparison across different concentration levels. The acceptance criteria become stricter as the analyte concentration increases.

Table 1: Typical Acceptance Criteria for Precision in HPLC Validation [10] [4]

| Level of Precision | Experimental Methodology | Typical Acceptance Criterion (RSD%) |
|---|---|---|
| System Repeatability | Multiple injections (n=5-6) of a single preparation. | < 2.0% for assay; may be higher for low-level impurities. |
| Method Repeatability | Multiple sample preparations (n=6) at 100% test concentration. | < 2.0% for assay of drug substance/product. |
| Intermediate Precision | Multiple preparations analyzed over different days, by different analysts, or on different instruments. | Overall RSD < 2.0-2.5% for assay; no significant statistical difference between sets of data. |

Table 2: Example of Precision (Repeatability) Data from a Drug Product Assay Validation [4]

| Sample No. | Concentration Level | Measured Assay (%) | Mean (%) | Standard Deviation (SD) | RSD (%) |
|---|---|---|---|---|---|
| 1 | 100% | 99.8 | | | |
| 2 | 100% | 100.2 | | | |
| 3 | 100% | 100.5 | | | |
| 4 | 100% | 99.9 | | | |
| 5 | 100% | 100.1 | | | |
| 6 | 100% | 100.5 | | | |
| Overall (n = 6) | 100% | | 100.2 | 0.29 | 0.29 |
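The summary statistics for a repeatability set can be computed directly from the individual results. The sketch below uses the six assay values listed in the table and Python's standard `statistics` module; `stdev` is the sample standard deviation (n − 1 denominator), which is the usual choice for a small validation data set. The 2.0% limit in the last line is the typical assay criterion quoted earlier, not a fixed requirement.

```python
from statistics import mean, stdev

# Six assay results (%) at 100% of the test concentration, from the table above.
assay_results = [99.8, 100.2, 100.5, 99.9, 100.1, 100.5]

m = mean(assay_results)
sd = stdev(assay_results)      # sample SD, n - 1 denominator
rsd = sd / m * 100             # relative standard deviation in percent

print(f"mean={m:.1f}%  SD={sd:.2f}  RSD={rsd:.2f}%")
# prints: mean=100.2%  SD=0.29  RSD=0.29%

meets_criterion = rsd < 2.0    # typical assay acceptance limit (assumed here)
```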

For the quantitation of impurities, where concentrations are much lower, a sliding scale for acceptance criteria is often applied, allowing for a higher RSD at lower concentration levels [4].

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials essential for conducting precision studies in HPLC method validation.

Table 3: Key Research Reagent Solutions for HPLC Precision Studies

| Item | Function in Precision Assessment |
|---|---|
| HPLC-Grade Solvents (e.g., Acetonitrile, Methanol) [11] [12] | Used for preparing mobile phases and sample solutions. High purity is critical to minimize baseline noise and variability, which directly impact peak area and retention time precision. |
| High-Purity Reference Standards (e.g., API, impurities) [11] [4] | Well-characterized substances of known purity used to prepare calibration and test solutions. Their quality is paramount for generating accurate and precise quantitative data. |
| Internal Standards (e.g., p-terphenyl) [13] | A compound added in a constant amount to all standards and samples to correct for variations in injection volume, detector response, and sample preparation losses, thereby improving precision. |
| Buffer Salts (e.g., Potassium Dihydrogen Phosphate) [11] [14] | Used to control the pH of the mobile phase. Consistent buffer preparation is vital for maintaining stable retention times and achieving reproducible separations, a key aspect of precision. |
| Characterized Chromatographic Column [15] [14] | The stationary phase (e.g., C18, CN) where separation occurs. Column performance, monitored by parameters like plate count and tailing factor, is foundational for precise and consistent results. |

Internal Standard Method for Enhanced Precision

The internal standard (IS) method is a powerful technique to improve precision, especially when volume errors are difficult to predict and control during sample preparation [13]. This method involves adding a carefully chosen compound, different from the analyte, uniformly to every standard and sample.

The calibration curve plots the ratio of the analyte response to the internal standard response against the ratio of the analyte amount to the internal standard amount [13]. Because the analyte and the internal standard pass through the same preparation steps and the same injection, proportional volume errors affect both signals alike and largely cancel in the ratio. One systematic comparison reported that the internal standard method outperformed the external standard method in every case examined, particularly in minimizing errors caused by solvent evaporation, injection inaccuracies, and complex sample preparation involving transfers, extractions, and dilutions [13].
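A small Python sketch can make the ratio calibration concrete. All numbers are hypothetical, and the helper names `fit_line` and `quantify` are invented for this illustration; the fit is an ordinary least-squares line through response ratio versus amount ratio. The last two lines simulate an injection that delivers 10% less volume, which scales both peak areas equally and leaves the computed amount unchanged.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

IS_AMOUNT = 10.0  # hypothetical µg of internal standard added to every solution

# Hypothetical calibration standards: (analyte amount in µg, analyte area, IS area)
standards = [
    (5.0, 2510, 5020),
    (10.0, 4980, 4980),
    (20.0, 10100, 5050),
]

amount_ratio = [amount / IS_AMOUNT for amount, _, _ in standards]
response_ratio = [a_area / is_area for _, a_area, is_area in standards]
slope, intercept = fit_line(amount_ratio, response_ratio)

def quantify(analyte_area, is_area):
    """Analyte amount (µg) from the analyte/IS area ratio and the calibration line."""
    ratio = analyte_area / is_area
    return (ratio - intercept) / slope * IS_AMOUNT

full_injection = quantify(7500, 5000)
short_injection = quantify(7500 * 0.9, 5000 * 0.9)  # both areas drop by 10%
print(f"{full_injection:.2f} µg vs {short_injection:.2f} µg")
```

An external standard calculation on the same short injection would report roughly 10% less analyte, which is the kind of error the ratio removes.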

Precision in the Broader Validation Context

Precision should not be viewed in isolation. It is intrinsically linked to other validation parameters. For instance, precision must be demonstrated across the specified range of the method, and its evaluation is often conducted concurrently with accuracy studies [4] [8]. Accuracy cannot be demonstrated convincingly without adequate precision, because high variability widens the uncertainty around the mean and makes it impossible to determine the true value with confidence.

Regulatory bodies like the ICH and FDA require precision data as part of method validation for marketing authorizations [12] [4]. The demonstration of a precise, robust, and reliable HPLC method is therefore not just a scientific best practice but a regulatory necessity for ensuring the consistent quality, safety, and efficacy of pharmaceutical products.

In the realm of high-performance liquid chromatography (HPLC) method validation, the concepts of accuracy and precision form the cornerstone of reliable analytical measurement. These parameters are not merely statistical abstractions but practical necessities for ensuring that pharmaceutical products meet stringent quality, safety, and efficacy standards mandated by regulatory authorities worldwide [8] [4]. The fundamental distinction between these two concepts can be elegantly visualized through the bullseye analogy, which provides an intuitive framework for understanding measurement performance characteristics essential for HPLC method validation [16].

Within the pharmaceutical industry, analytical method validation is a formal, systematic process required to demonstrate that an analytical procedure is suitable for its intended purpose, providing assurance that the technique yields satisfactory and consistent results throughout its scope of application [8]. This validation process requires cooperative efforts across multiple departments including regulatory affairs, quality control, quality assurance, and analytical development, with accuracy and precision representing two of the most critical validation parameters that must be rigorously established [8] [1].

The Bullseye Analogy: A Conceptual Framework

The bullseye analogy serves as a powerful visual metaphor for illustrating the relationship between accuracy and precision in analytical measurements. In this analogy, the target's bullseye represents the true value of the analyte being measured, while the shot patterns represent individual measurement results obtained through the analytical procedure [16].

The following visualization depicts the four fundamental scenarios that arise when combining accuracy and precision:

Visual Guide to Bullseye Scenarios - This diagram represents the four possible combinations of accuracy and precision using target patterns, where the bullseye center represents the true value and shot patterns represent measurement results.

Defining the Core Concepts

Accuracy: Defined as the closeness of agreement between a measured value and the true value or an accepted reference value [16] [17]. In the bullseye analogy, high accuracy is represented by shot patterns centered on or near the bullseye, indicating that the average of measurements closely approximates the true value.

Precision: Defined as the closeness of agreement between independent measurement results obtained under specified conditions [16] [1]. In the analogy, high precision is represented by tightly clustered shot patterns, regardless of their position relative to the bullseye, indicating minimal scatter or variability between repeated measurements.

The relationship between these concepts is critical: it is possible for results to be precise without being accurate, and vice versa [16]. For instance, a method can produce tightly clustered results (high precision) that are consistently offset from the true value (low accuracy) due to systematic error. Conversely, a method might produce results that are scattered (low precision) but whose average approximates the true value (high accuracy) [18].
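The four combinations can be expressed numerically as bias (mean minus true value) and scatter (standard deviation). The Python sketch below uses invented data sets, one per scenario, and an assumed true value of 100.0% of label claim; the name `bias_and_scatter` is illustrative only.

```python
from statistics import mean, stdev

TRUE_VALUE = 100.0  # accepted reference value, % label claim (hypothetical)

# Hypothetical replicate results for each bullseye scenario.
scenarios = {
    "accurate and precise": [99.9, 100.1, 100.0, 100.2, 99.8],
    "precise but inaccurate": [96.9, 97.1, 97.0, 97.2, 96.8],
    "accurate but imprecise": [97.0, 103.0, 99.0, 101.5, 99.5],
    "inaccurate and imprecise": [93.0, 99.0, 95.0, 98.5, 94.5],
}

def bias_and_scatter(results, true_value=TRUE_VALUE):
    """Return (bias, sample SD): bias reflects accuracy, SD reflects precision."""
    return mean(results) - true_value, stdev(results)

report = {name: bias_and_scatter(values) for name, values in scenarios.items()}
for name, (bias, sd) in report.items():
    print(f"{name:26s} bias {bias:+.2f}  SD {sd:.2f}")
```

A bias near zero with a large SD corresponds to the scattered-but-centered target, while a large bias with a small SD corresponds to the tight cluster away from the bullseye.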

Accuracy and Precision in HPLC Method Validation

In the specific context of HPLC method validation, accuracy and precision take on well-defined technical meanings and experimental protocols as established by regulatory guidelines such as those from the International Council for Harmonisation (ICH) and the United States Pharmacopeia (USP) [4] [1].

Accuracy in HPLC Validation

For HPLC methods, accuracy represents the closeness of agreement between the value found and the value accepted as a true or conventional value of the analyte [1]. Accuracy studies are typically evaluated by determining the recovery of spiked analytes into the sample matrix [4].

Experimental Protocol for Determining Accuracy:

Sample Preparation: For drug substance analysis, accuracy is measured by comparison with a standard reference material. For drug product analysis, synthetic mixtures spiked with known quantities of components are used [1]. For impurity quantification, accuracy is determined by analyzing samples spiked with known amounts of impurities [1].

Study Design: Accuracy should be established across the specified range of the method using a minimum of nine determinations over a minimum of three concentration levels (e.g., 80%, 100%, and 120% of the target concentration) with three replicates at each level [4] [1].

Data Analysis: Results are typically reported as percent recovery of the known, added amount, calculated using the formula:

Recovery (%) = (Measured Concentration / Theoretical Concentration) × 100

The mean recovery value at each concentration level should fall within accepted ranges, typically 98-102% for the assay of active pharmaceutical ingredients [4].

Precision in HPLC Validation

The precision of an HPLC method measures the degree of agreement among individual test results from repeated analyses of a homogeneous sample [1]. Precision is evaluated at three levels in HPLC method validation:

Repeatability (Intra-assay precision): Expresses the precision under the same operating conditions over a short interval of time [1]. This is typically determined by making six sample determinations at 100% concentration or by preparing three samples at three different concentrations in triplicates covering the specified range [8].

Intermediate Precision: Expresses within-laboratory variations, such as different days, different analysts, or different equipment [1]. An experimental design is used so that the effects of individual variables can be monitored, typically involving two analysts who prepare and analyze replicate sample preparations using different HPLC systems [1].

Reproducibility: Expresses the precision between laboratories, typically assessed through collaborative studies [1]. This is especially important when transferring methods between laboratories or sites.

Precision in HPLC is typically expressed as the relative standard deviation (RSD) or coefficient of variation (CV) of a series of measurements [1]. For assay determination of active pharmaceutical ingredients, the RSD for repeatability is generally expected to be less than 2.0% [4].

Quantitative Assessment: Protocols and Acceptance Criteria

The experimental determination of accuracy and precision in HPLC method validation follows specific protocols with predetermined acceptance criteria. The following table summarizes the standard experimental designs for evaluating these parameters:

Table 1: Experimental Protocols for Accuracy and Precision Determination in HPLC Validation

| Parameter | Experimental Design | Minimum Requirements | Data Presentation | Typical Acceptance Criteria |
|---|---|---|---|---|
| Accuracy | Recovery studies using spiked samples | 9 determinations over 3 concentration levels | Percent recovery at each level | 98-102% for API assay [4] |
| Precision (Repeatability) | Multiple injections of homogeneous sample | 6 replicates at 100% or 3 concentrations × 3 replicates | Relative Standard Deviation (RSD) | RSD < 2.0% for API assay [4] |
| Precision (Intermediate Precision) | Multiple analyses under varied conditions | 2 analysts using different instruments | RSD comparison and statistical testing (e.g., t-test) | RSD < 2.0% with no significant difference between analysts [1] |
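One way to carry out the analyst comparison mentioned in the table is a pooled two-sample t-test. The Python sketch below is an illustration under stated assumptions: both data sets are hypothetical, the two analysts are assumed to have similar variances (a requirement of the pooled test), and the critical value 2.228 is the tabulated two-sided 95% value for 10 degrees of freedom, valid only for two groups of six.

```python
from math import sqrt
from statistics import mean, stdev, variance

# Two-sided 95% critical t for 10 degrees of freedom (6 + 6 - 2).
T_CRIT_DF10 = 2.228

# Hypothetical assay results (%) from six preparations per analyst.
analyst_a = [99.8, 100.2, 100.5, 99.9, 100.1, 100.5]
analyst_b = [100.3, 99.7, 100.0, 100.4, 99.9, 100.2]

def pooled_t(a, b):
    """Student's t statistic for two independent samples with a pooled variance."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))

t_stat = pooled_t(analyst_a, analyst_b)
no_significant_difference = abs(t_stat) < T_CRIT_DF10

combined = analyst_a + analyst_b
overall_rsd = stdev(combined) / mean(combined) * 100

print(f"t = {t_stat:.2f}, overall RSD = {overall_rsd:.2f}%, "
      f"no significant difference: {no_significant_difference}")
```

If the variances differ markedly, Welch's unequal-variance test is a safer choice than the pooled form shown here.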

The following workflow illustrates the integrated experimental approach for simultaneously validating accuracy, precision, and range in HPLC methods:

HPLC Validation Workflow - This diagram outlines the systematic experimental approach for simultaneously determining accuracy and precision in HPLC method validation studies.

Published case studies show these protocols applied in practice. In a study validating an HPLC method for the determination of active compounds in a Thai herbal formulation, the method exhibited acceptable precision, with RSD values below 2%, and recoveries of 90.12-105.39%, which the authors considered acceptable [19]. Similarly, in a study of an HPLC method for simultaneous quantification of dimethylcurcumin and resveratrol in nano-micelles, within-run precision (%RSD) was 0.073-0.444% for dimethylcurcumin and 0.159-0.917% for resveratrol, while between-run precision was 0.344-1.47% and 0.458-1.651%, respectively [20].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of HPLC method validation studies requires specific reagents, materials, and instrumentation designed to ensure accuracy, precision, and reliability of results. The following table details key research reagent solutions essential for conducting proper accuracy and precision studies:

Table 2: Essential Research Reagent Solutions for HPLC Method Validation

| Reagent/Material | Function in Validation | Application Notes |
|---|---|---|
| Certified Reference Standards | Provides conventional true value for accuracy determination | Must have certified purity and identity; used for calibration curves and spike recovery studies [17] |
| Chromatography Columns | Stationary phase for analyte separation | C18 bonded phases most common; column dimensions (10-15 cm) with 3 or 5 μm particles recommended [12] |
| HPLC-Grade Solvents | Mobile phase components | Low UV absorbance; minimal particulate matter; acetonitrile/water or methanol/water systems most common [12] |
| Placebo Formulations | Specificity and accuracy assessment | Mock drug product without API; demonstrates absence of interference from excipients [4] |
| System Suitability Standards | Verification of system performance | Used to establish precision requirements before sample analysis; typically includes resolution, tailing factor, and precision standards [21] |

Understanding the sources and types of error in HPLC measurements is essential for improving both accuracy and precision. Errors in analytical chemistry are classified as systematic (determinate) or random (indeterminate) [18].

Systematic Errors Affecting Accuracy

Systematic errors cause measurements to consistently deviate from the true value in one direction and include:

- Instrument Calibration Errors: Improperly calibrated instruments consistently bias results [18].

- Reference Standard Issues: Chemical standards with assigned values different from true values systematically bias measurements [17].

- Sample Preparation Errors: Incomplete extraction, analyte loss during filtration, or a recurring dilution error biases every result in the same direction [12].

- Matrix Effects: Interference from sample components that differentially affect analyte response [17].

Random Errors Affecting Precision

Random errors are unavoidable fluctuations that cause scatter in measurements and include:

- Instrument Noise: Detector noise, pump pulsations, and temperature fluctuations [18].

- Sample Introduction Variability: Injection volume inconsistencies in auto-samplers [21].

- Environmental Factors: Temperature and humidity variations affecting separation [21].

- Operator Technique: Minor variations in sample handling and preparation [16].

Improvement Strategies

Strategies to improve accuracy and precision in HPLC methods include:

- Regular Instrument Calibration: Ensuring all instruments are properly calibrated and maintained [16].

- Analyst Training: Standardizing techniques across personnel to minimize human factor variations [16].

- Robust Method Development: Systematically optimizing chromatographic parameters to establish resilient methods [12].

- Quality Control Samples: Implementing routine QC samples to monitor method performance over time [18].

- Proper System Suitability Testing: Establishing and monitoring critical performance parameters before each analytical run [21].
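Routine QC monitoring is often implemented as a simple control chart. The Python sketch below is one possible approach rather than a prescribed one: it sets limits at the baseline mean ± 3 standard deviations (a conventional Shewhart-style rule assumed here) and flags new results outside them. The QC values and the function name `flag_out_of_control` are hypothetical.

```python
from statistics import mean, stdev

# Hypothetical QC-sample assay results (% label claim) from earlier in-control runs.
baseline = [100.1, 99.8, 100.3, 99.9, 100.0, 100.2, 99.7, 100.0]

center = mean(baseline)
sigma = stdev(baseline)
lower, upper = center - 3 * sigma, center + 3 * sigma  # assumed 3-sigma control limits

def flag_out_of_control(results):
    """Return (run index, value) for every result outside the control limits."""
    return [(i, r) for i, r in enumerate(results) if not lower <= r <= upper]

new_runs = [100.1, 99.9, 101.2, 100.0]
flags = flag_out_of_control(new_runs)

print(f"limits: {lower:.1f} to {upper:.1f}")
print("flagged runs:", flags)
```

A sustained drift of the QC mean suggests a systematic error affecting accuracy, whereas a widening spread points to a loss of precision.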

The bullseye analogy provides more than just a visual representation of accuracy and precision—it offers a fundamental framework for understanding measurement performance in HPLC method validation. For researchers, scientists, and drug development professionals, mastering these concepts is not merely an academic exercise but a practical necessity for ensuring the reliability and regulatory compliance of analytical methods.

In the highly regulated pharmaceutical environment, the demonstrated accuracy and precision of HPLC methods directly impact decisions regarding product quality, safety, and efficacy. By rigorously applying the experimental protocols and acceptance criteria outlined in this guide, analytical scientists can provide the documented evidence required to prove that their methods are fit for purpose, ultimately contributing to the development of safe and effective pharmaceutical products for patients worldwide.

In the highly regulated pharmaceutical industry, the reliability of analytical data is the cornerstone of product quality, patient safety, and regulatory approval. High-Performance Liquid Chromatography (HPLC) method validation provides documented evidence that an analytical procedure is suitable for its intended purpose, ensuring that measurements of identity, potency, purity, and quality are accurate, precise, and reproducible. This process is governed by a harmonized yet complex framework of guidelines established by major international and national regulatory bodies.

The International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and the U.S. Pharmacopeia (USP) provide the primary foundational guidelines that define the requirements for analytical method validation [22] [23]. While these organizations have distinct roles and jurisdictions, their collaborative efforts have created a largely harmonized set of expectations for the pharmaceutical industry. The ICH develops global harmonized guidelines, which are then adopted by regulatory members like the FDA, ensuring that a method validated in one region is recognized and trusted worldwide [22]. Concurrently, the USP provides legally recognized compendial standards in the United States, including general chapters that detail validation practices [24] [25].

Understanding the specific and overlapping roles of these organizations is crucial for navigating regulatory submissions and ensuring compliance during inspections. This technical guide explores the individual and integrated roles of ICH, FDA, and USP guidelines in defining the core concepts of accuracy and precision within HPLC method validation, providing researchers and drug development professionals with a clear roadmap for implementation.

The Roles of ICH, FDA, and USP

International Council for Harmonisation (ICH)

The ICH's mission is to achieve greater harmonization worldwide to ensure that safe, effective, and high-quality medicines are developed and registered in the most resource-efficient manner. For analytical method validation, the two most critical ICH guidelines are:

- ICH Q2(R2): Validation of Analytical Procedures: This revised guideline serves as the global reference for what constitutes a valid analytical procedure [22]. It outlines the core validation parameters (e.g., accuracy, precision, specificity) and provides a framework for a science- and risk-based approach to validation. The revision, adopted by ICH in November 2023, modernizes the principles to include contemporary technologies and emphasizes an analytical procedure lifecycle concept [26] [22].

- ICH Q14: Analytical Procedure Development: This complementary guideline provides a systematic framework for analytical procedure development and introduces the Analytical Target Profile (ATP) as a prospective summary of the method's intended purpose and desired performance criteria [22]. This shift from a prescriptive, "check-the-box" approach to a more scientific, lifecycle-based model represents a significant modernization of analytical method guidelines [22].

US Food and Drug Administration (FDA)

The FDA, as a key regulatory authority and member of the ICH, adopts and implements the harmonized ICH guidelines. For U.S. markets, complying with ICH Q2(R2) and ICH Q14 is a direct path to meeting FDA requirements for submissions such as New Drug Applications (NDAs) and Abbreviated New Drug Applications (ANDAs) [22]. The FDA also provides its own specific guidance documents, such as "Analytical Procedures and Methods Validation for Drugs and Biologics," which align with ICH principles [25]. FDA inspections have recently placed greater emphasis on product-specific reports demonstrating that methods, including compendial USP methods, have been appropriately validated or verified [25].

US Pharmacopeia (USP)

The USP is a non-governmental, scientific organization that develops and publishes public compendial quality standards for medicines and their ingredients. These standards are recognized in U.S. law and are enforceable by the FDA. Key general chapters relevant to HPLC method validation include:

- USP General Chapter 〈1225〉 "Validation of Compendial Procedures": This chapter categorizes analytical tests and defines which validation parameters are required for each category [23].

- USP General Chapter 〈1226〉 "Verification of Compendial Procedures": This chapter outlines the process for demonstrating that a compendial method works as intended under specific conditions of use, which is a regulatory requirement for USP methods [25].

The following diagram illustrates the interconnected relationship between these organizations in setting the standards for analytical method validation.

Diagram: Regulatory Guidelines Relationship

Core Principles: Accuracy and Precision in HPLC

Defining Accuracy and Precision

In the context of HPLC method validation, accuracy and precision are two fundamental performance characteristics that form the bedrock of method reliability. They are distinct yet complementary concepts, both of which are mandatory for regulatory compliance.

Accuracy is defined as the closeness of agreement between a measured test result and the true value (accepted reference value) [22] [23]. It answers the question: "Is my method measuring the correct concentration?" Accuracy is typically expressed as percent recovery by assaying a sample of known concentration (e.g., a reference standard) and calculating the percentage of the found amount relative to the theoretical amount. For an impurity method, accuracy might be validated by spiking the drug substance with a known amount of impurity and demonstrating that the method can recover it [24] [22].

Precision refers to the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [22] [23]. It measures the random error and reproducibility of the method, answering the question: "Can my method produce the same result consistently?" Precision is further subdivided into three tiers:

- Repeatability (intra-assay precision): Expresses the precision under the same operating conditions over a short interval of time (e.g., replicate preparations of one homogeneous sample analyzed by one analyst on one instrument on the same day).

- Intermediate precision: Expresses within-laboratories variations, such as different days, different analysts, or different equipment.

- Reproducibility: Expresses the precision between different laboratories, which is assessed during method transfer studies [22].

Regulatory Definitions and Expectations

The table below summarizes how the key regulatory bodies define and emphasize accuracy and precision.

Table 1: Regulatory Requirements for Accuracy and Precision

| Regulatory Body | Accuracy Definition & Focus | Precision Definition & Focus | Typical Acceptance Criteria |
|---|---|---|---|
| ICH Q2(R2) [22] | Closeness of agreement between test result and true value. Focus on science-based, risk-managed approach. | Closeness of agreement between a series of measurements. Includes repeatability, intermediate precision, and reproducibility. | Varies by method type; e.g., for a drug substance assay, recovery of 98-102% and precision (RSD) <2%. |
| FDA [22] [25] | Adopts ICH definitions. Emphasizes lifecycle validation and risk management. Requires product-specific proof. | Adopts ICH definitions. Emphasizes verification of compendial methods and intermediate precision for tech transfer. | Similar to ICH. Strict enforcement of predefined acceptance criteria in validation protocols. |
| USP 〈1225〉 [23] | The agreement between the measured value and the true value. Required for all quantitative tests. | The degree of agreement among individual test results. Required for all quantitative tests. | Specific to the article (monograph). For assay of drug substance, similar to ICH (e.g., RSD ≤1% for repeatability). |

Experimental Protocols for Accuracy and Precision

Protocol for Determining Accuracy

A well-defined protocol is critical for generating defensible accuracy data. The following methodology is aligned with ICH, FDA, and USP expectations [22] [27] [28].

- Sample Preparation: Prepare a minimum of nine determinations across a minimum of three concentration levels covering the specified range (e.g., 80%, 100%, and 120% of the target concentration). For each level, prepare three separate samples (e.g., by spiking a placebo with known amounts of the analyte).

- Chromatographic Analysis: Analyze the prepared samples using the finalized HPLC method. The method should be executed with all critical parameters (e.g., column temperature, mobile phase composition, flow rate) strictly controlled as per the method specification.

- Data Analysis and Calculation: For each concentration level, calculate the mean recovery (%) and the relative standard deviation (RSD).

- Calculation: % Recovery = (Mean Measured Concentration / Theoretical Concentration) × 100.

- Acceptance Criteria: The method is considered accurate if the mean recovery at each level is within the predefined range (e.g., 98.0-102.0% for a drug substance assay) and the RSD is within the limits for precision (e.g., ≤2%) [22] [28].
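The calculation and acceptance steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the function names (`recovery_summary`, `level_passes`), the concentrations, and the measured values are all hypothetical, and the limits are the example figures quoted in the protocol (98.0-102.0% recovery, RSD ≤2%).

```python
from statistics import mean, stdev

def recovery_summary(theoretical, measured):
    """Return (mean % recovery, % RSD) for the replicates at one concentration level."""
    recoveries = [100.0 * m / theoretical for m in measured]
    avg = mean(recoveries)
    rsd = 100.0 * stdev(recoveries) / avg  # sample standard deviation (n - 1 denominator)
    return avg, rsd

def level_passes(avg, rsd, low=98.0, high=102.0, max_rsd=2.0):
    """Compare one level against example limits; real limits come from the validation protocol."""
    return low <= avg <= high and rsd <= max_rsd

# Hypothetical data: theoretical concentration (ug/mL) -> three measured concentrations,
# i.e. nine determinations over the 80%, 100%, and 120% levels.
spiked = {
    80.0: [79.6, 80.3, 79.9],
    100.0: [99.5, 100.4, 100.1],
    120.0: [119.2, 120.6, 120.3],
}

results = {}
for theoretical, measured in spiked.items():
    avg, rsd = recovery_summary(theoretical, measured)
    results[theoretical] = (avg, rsd, level_passes(avg, rsd))
    print(f"{theoretical:6.1f} ug/mL: recovery {avg:6.2f}%, RSD {rsd:4.2f}%, "
          f"pass={results[theoretical][2]}")
```

Because all replicates at a level share one theoretical concentration, averaging the individual recoveries gives the same number as dividing the mean measured concentration by the theoretical concentration.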

Protocol for Determining Precision

The precision of an HPLC method should be evaluated at the repeatability and intermediate precision levels as part of initial validation [22].

Repeatability (Intra-assay Precision):

- Sample Preparation: Prepare a minimum of six independent sample preparations at 100% of the test concentration. These should be prepared from a single, homogeneous batch of material.

- Chromatographic Analysis: Analyze all six preparations using the same HPLC system, by the same analyst, on the same day.

- Data Analysis: Calculate the % RSD of the six results (e.g., % assay).

Intermediate Precision:

- Experimental Design: Incorporate variations to estimate the impact of random events within the same laboratory. Key variables include different analysts, different days, and different HPLC instruments.

- Sample Preparation and Analysis: A second analyst repeats the repeatability study (six preparations at 100%) on a different day, using a different, but qualified, HPLC system.

- Data Analysis and Calculation: The results from both analysts (total of 12 determinations) are combined and statistically evaluated. The overall % RSD is calculated. A statistical test (e.g., F-test, t-test) may be applied to compare the data sets from the two analysts.

Acceptance Criteria: For the assay of a drug substance, typical acceptance criteria are an RSD of ≤1% for repeatability and an RSD of ≤2% for intermediate precision. The results from the two analysts in the intermediate precision study should not be statistically significantly different [22].
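As an illustration of the precision calculations described above, the following Python sketch computes the repeatability RSD for each analyst, the combined RSD over all 12 determinations, and the F and t statistics for comparing the two data sets. The assay values are invented for the example, the pooled-variance t-test is one possible choice of comparison, and the critical values (two-sided, 5% significance, six results per analyst) are taken from standard statistical tables; they would need to be replaced if the design changes.

```python
from math import sqrt
from statistics import mean, stdev, variance

def percent_rsd(values):
    """Relative standard deviation in percent (sample SD / mean x 100)."""
    return 100.0 * stdev(values) / mean(values)

def f_ratio(a, b):
    """Variance ratio with the larger variance in the numerator."""
    va, vb = variance(a), variance(b)
    return max(va, vb) / min(va, vb)

def pooled_t(a, b):
    """Absolute two-sample t statistic using a pooled variance."""
    na, nb = len(a), len(b)
    sp2 = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return abs(mean(a) - mean(b)) / sqrt(sp2 * (1 / na + 1 / nb))

# Hypothetical % assay results: six preparations per analyst
analyst_1 = [99.8, 100.2, 99.6, 100.4, 100.1, 99.9]
analyst_2 = [100.3, 99.7, 100.6, 100.0, 99.5, 100.5]

# Table values for this design only (assumption: alpha = 0.05, two-sided)
F_CRIT = 7.15   # F(0.975; 5, 5)
T_CRIT = 2.228  # t(0.975; 10)

repeatability = percent_rsd(analyst_1)
intermediate = percent_rsd(analyst_1 + analyst_2)
f_stat = f_ratio(analyst_1, analyst_2)
t_stat = pooled_t(analyst_1, analyst_2)

print(f"Repeatability RSD (analyst 1): {repeatability:.2f}% (example limit 1%)")
print(f"Intermediate precision RSD:    {intermediate:.2f}% (example limit 2%)")
print(f"F = {f_stat:.2f} (critical {F_CRIT}), t = {t_stat:.2f} (critical {T_CRIT})")
```

A statistic below its critical value means the example data give no evidence of a difference in variance (F) or mean (t) between the two analysts at the chosen significance level.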

The workflow for establishing and documenting the entire validation process, from planning to reporting, is captured in the diagram below.

Diagram: Method Validation Workflow

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key research reagent solutions and materials essential for conducting robust accuracy and precision experiments in HPLC method validation.

Table 2: Essential Materials for HPLC Method Validation Experiments

| Item | Function / Purpose | Critical Quality Attribute |
|---|---|---|
| Reference Standard | Serves as the benchmark for determining accuracy and assigning quantitative value. Its purity is the "true value" in calculations. | Certified purity and identity, high stability, and traceability to a primary standard. |
| High-Purity Solvents | Used for mobile phase preparation and sample dissolution. Impurities can cause baseline noise, ghost peaks, and altered retention times. | HPLC-grade or better, low UV absorbance, minimal particulate matter. |
| Weighed Sample of Drug Substance/Product | The test article used to demonstrate the method's performance on actual product matrix. | Representative, homogeneous, and well-characterized sample. |
| Placebo Matrix (for accuracy) | Used in recovery studies for formulated products. It is spiked with known amounts of analyte to prove specificity and accuracy without interference. | Must be identical to the product formulation, excluding the active ingredient. |
| Qualified HPLC Instrument | The platform for performing the separation and detection. System suitability tests are based on its performance. | Properly qualified (DQ/IQ/OQ/PQ), calibrated pumps, detector, autosampler, and column oven. |

The regulatory foundations provided by ICH, FDA, and USP guidelines create a comprehensive, interdependent system that ensures HPLC methods are scientifically sound and fit-for-purpose. The concepts of accuracy and precision are central to this framework, serving as non-negotiable pillars of data integrity and product quality. The evolving landscape, marked by the recent introduction of ICH Q2(R2) and ICH Q14, underscores a strategic shift towards a more holistic, risk-based analytical procedure lifecycle management. For researchers and drug development professionals, a deep understanding of these guidelines is not merely about regulatory compliance—it is a fundamental scientific discipline that guarantees the reliability of every data point underpinning the safety and efficacy of pharmaceutical products.

In the highly regulated pharmaceutical industry, the development and validation of High-Performance Liquid Chromatography (HPLC) methods serve as the foundation for ensuring drug safety, efficacy, and quality. Analytical method validation provides the documented evidence that a laboratory procedure consistently produces reliable, accurate, and reproducible results suitable for its intended application [24] [29]. This process is not merely a regulatory formality but a critical quality assurance tool that safeguards pharmaceutical integrity and ultimately, patient safety [24].

The core premise of effective method validation lies in establishing an unbreakable link between validation parameters and analytical purpose. Without this crucial connection, even the most sophisticated HPLC methods risk generating misleading data that can compromise product quality and regulatory submissions. As regulatory frameworks like ICH Q2(R2) emphasize a science-based, risk-informed approach, understanding why both parameters and purpose are non-negotiable becomes essential for researchers, scientists, and drug development professionals working in method development, quality control, and regulatory affairs [24] [30].

The Foundation: Understanding Accuracy and Precision in HPLC

In HPLC method validation, accuracy and precision represent two fundamental but distinct performance characteristics that form the bedrock of method reliability.

Accuracy refers to the closeness of agreement between the value obtained by the analytical method and the accepted true value or reference standard. It is often assessed through recovery studies where known amounts of analyte are added to the sample matrix, with results typically expressed as percentage recovery [29] [30]. For instance, in a validated method for carvedilol analysis, accuracy assessments revealed recovery rates ranging from 96.5% to 101% [31]. That span sits inside the 90-110% window commonly applied to impurity determinations, although its lower end falls below the 98-102% range usually expected of a drug substance assay.

Precision, conversely, measures the degree of agreement among individual test results when the method is applied repeatedly to multiple samplings of the same homogeneous specimen. Precision is further categorized into:

- Repeatability (intra-assay precision): Precision under the same operating conditions over a short time interval

- Intermediate precision: Variation within laboratories (different days, different analysts, different equipment)

- Reproducibility: Precision between different laboratories [29]

Precision is typically expressed as relative standard deviation (RSD%), with acceptable values generally below 2.0% for pharmaceutical applications [31] [32]. The relationship between accuracy and precision creates the foundation for method reliability, where ideal methods demonstrate both high accuracy and high precision, generating results that are both correct and consistent.

Table 1: Accuracy and Precision Requirements Across Different HPLC Applications

| Analytical Purpose | Typical Accuracy Range (Recovery %) | Typical Precision (RSD%) | Example from Literature |
|---|---|---|---|
| Assay of Drug Substance | 98-102% | ≤1% | Upadacitinib method showed R²=0.9996 with RSD <2% [33] |
| Impurity Quantification | 90-110% | ≤5% | Carvedilol impurity method demonstrated RSD <2% [31] |
| Bioanalytical Methods | 85-115% | ≤15% | NAM-amidase activity detection showed RSD <2% [34] |
| Herbal Product Analysis | 95-105% | ≤2% | Zerumbone quantification in Zingiber ottensii [32] |

Validation Parameters and Their Purpose-Driven Application

The validation parameters required for an HPLC method are dictated entirely by its intended purpose. Regulatory guidelines like ICH Q2(R2) classify analytical procedures based on their application, with each category demanding specific validation characteristics [29].

Specificity and Selectivity

Specificity is the ability of the method to measure the analyte accurately and specifically in the presence of other components that may be expected to be present in the sample matrix, such as impurities, degradants, or excipients [29]. For stability-indicating methods, this parameter is particularly crucial as it must distinguish the active ingredient from its degradation products. The method for upadacitinib successfully demonstrated specificity by separating the drug from its forced degradation products formed under acidic, alkaline, and oxidative conditions [33].

Linearity and Range

Linearity refers to the method's ability to produce test results that are directly proportional to analyte concentration within a given range, while range defines the interval between the upper and lower concentrations for which the method has suitable linearity, accuracy, and precision [29]. The validated HPLC method for zerumbone quantification exhibited excellent linearity (R² > 0.999) across a range of 10-1000 μg/mL, demonstrating its capability for both trace-level and major component analysis [32].
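To make the linearity check concrete, here is a minimal Python sketch of an ordinary least-squares fit of peak area against concentration, with the coefficient of determination (R²) computed from the residuals. The calibration points are hypothetical and merely span a 10-1000 μg/mL range like the one mentioned above; the function name `fit_line` and the 0.999 threshold are illustrative assumptions.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = intercept + slope * x; returns (slope, intercept, r_squared)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical calibration data: concentration (ug/mL) vs. peak area (arbitrary units)
concentration = [10, 50, 100, 250, 500, 1000]
peak_area = [152, 748, 1510, 3745, 7520, 14980]

slope, intercept, r_squared = fit_line(concentration, peak_area)
print(f"slope={slope:.3f}, intercept={intercept:.2f}, R^2={r_squared:.5f}")
print("Meets example criterion (R^2 > 0.999):", r_squared > 0.999)
```

A high R² alone does not prove linearity; inspecting the residuals for curvature or a trend is a common complementary check.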

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

LOD represents the lowest amount of analyte in a sample that can be detected but not necessarily quantified, while LOQ is the lowest amount that can be quantitatively determined with suitable precision and accuracy [29]. These parameters are particularly critical for impurity testing and bioanalytical applications. The HPLC method for NAM-amidase activity detection demonstrated remarkably low LOD (0.033 μM) and wide linear range (0.1-100 μM), making it suitable for detecting even trace enzyme activity [34].
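One approach described in ICH Q2 estimates these limits from the standard deviation of the response (σ) and the slope of the calibration curve (S), as LOD = 3.3σ/S and LOQ = 10σ/S. The Python sketch below applies those two formulas. The numerical inputs are hypothetical and are not taken from the cited studies, and how σ is obtained (for example, from blank responses or from the residual standard deviation of the regression) is left as an assumption for the analyst to justify.

```python
def detection_limits(sigma, slope):
    """Return (LOD, LOQ) using the ICH Q2 formulas 3.3*sigma/S and 10*sigma/S.

    sigma: standard deviation of the response (same units as the detector signal)
    slope: calibration slope (signal units per concentration unit)
    """
    lod = 3.3 * sigma / slope
    loq = 10.0 * sigma / slope
    return lod, loq

# Hypothetical inputs: sigma = 2.0 area units, slope = 50.0 area units per ug/mL
lod, loq = detection_limits(sigma=2.0, slope=50.0)
print(f"LOD = {lod:.3f} ug/mL, LOQ = {loq:.3f} ug/mL")
```

Limits estimated this way are normally confirmed experimentally by analyzing samples prepared at or near the calculated concentrations.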

Robustness and Ruggedness

Robustness measures the method's capacity to remain unaffected by small, deliberate variations in method parameters, such as changes in flow rate, column temperature, or mobile phase pH [30]. The carvedilol analysis method was intentionally tested under varying conditions, including changes in flow rate, initial column temperature, and mobile phase pH, confirming its reliability under normal operational variations [31].

Table 2: Purpose-Driven Validation Parameters Based on ICH Guidelines

| Method Type | Primary Validation Focus | Critical Parameters | Application Example |
|---|---|---|---|
| Identification Tests | Selectivity/Specificity | Specificity | Herbal material authentication [32] |
| Quantitative Impurity Tests | Specificity, detection and quantification limits | Specificity, LOD, LOQ, Accuracy, Linearity | Upadacitinib forced degradation studies [33] |
| Assay of Drug Substance/Product | Accurate quantification | Specificity, Accuracy, Precision, Linearity, Range | Carvedilol content determination [31] |
| Bioanalytical Methods | Sensitivity in complex matrices | LOD, LOQ, Accuracy, Precision, Specificity | NAM-amidase activity detection [34] |

Experimental Protocols: From Theory to Laboratory Practice

Protocol for Accuracy Assessment

The following protocol outlines a standard approach for determining method accuracy through recovery studies:

- Sample Preparation: Prepare a placebo mixture containing all excipients without the active ingredient.

- Spiking Protocol: Spike the placebo with known quantities of the analyte at three concentration levels (typically 50%, 100%, and 150% of target concentration) in triplicate.

- Analysis and Calculation: Analyze both spiked samples and standard solutions using the developed HPLC method. Calculate recovery percentage using the formula:

Recovery % = (Found Concentration / Added Concentration) × 100

- Acceptance Criteria: For drug substance assays, recovery should be 98-102%; for impurity tests, 90-110% is generally acceptable [29].

In the upadacitinib validation study, this approach confirmed the method's accuracy with recovery percentages within the acceptable range, though specific values were not provided in the summary [33].

Protocol for Precision Evaluation

A comprehensive precision study encompasses multiple levels:

Repeatability (Intra-assay Precision):

- Prepare six independent test preparations of a single homogeneous sample at 100% of test concentration.

- Analyze using the same analyst, same instrument, on the same day.

- Calculate %RSD of the results: %RSD = (Standard Deviation / Mean) × 100

Intermediate Precision:

- Repeat the above study using different analysts, different instruments, or different days.

- The combined %RSD from both studies should meet acceptance criteria.

The method for paracetamol, phenylephrine hydrochloride, and pheniramine maleate quantification demonstrated excellent precision with %RSD values below the acceptable limit of 2%, though the exact values were not included in the available excerpt [35].

Diagram 1: Precision Evaluation Workflow - This workflow illustrates the comprehensive process for assessing method precision, encompassing both intra-assay and intermediate precision studies.

Case Study: The Carvedilol Analysis Method

The optimized HPLC method for carvedilol and impurities exemplifies the critical link between parameters and purpose [31]:

Method Purpose: Accurate determination of carvedilol content while minimizing interference from impurity C and N-formyl carvedilol, allowing precise impurity analysis.

Experimental Approach:

- The method was tested under varying conditions, including changes in flow rate, initial column temperature, and mobile phase pH to establish robustness.

- Precision was validated through RSD% values below 2.0%.

- Accuracy was confirmed through recovery rates ranging from 96.5% to 101%.

- Linearity was demonstrated with R² values consistently above 0.999 for all analytes.

Significance: This comprehensive validation approach ensured the method's reliability for pharmaceutical analysis, with stability studies indicating minimal variation in peak areas and impurity content over extended time periods.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful HPLC method development and validation requires specific reagents, standards, and materials that ensure reliability and reproducibility.

Table 3: Essential Research Reagent Solutions for HPLC Method Validation

| Reagent/Material | Function/Purpose | Example from Literature |
|---|---|---|
| Reference Standards | Provides known purity material for accuracy determination and calibration | USP/EDQM standards used in paracetamol combination product analysis [35] |
| HPLC-Grade Solvents | Ensure minimal UV absorbance and interference in mobile phase | Methanol and acetonitrile of HPLC grade used in upadacitinib method [33] |
| Buffering Agents | Maintain consistent mobile phase pH for reproducible retention | Sodium octanesulfonate solution (pH 3.2) in paracetamol combination analysis [35] |
| Column Phases | Stationary phase selection critical for separation efficiency | Zorbax SB-Aq, COSMOSIL C18 columns used in various studies [35] [33] |
| Internal Standards | Correct for variability in sample preparation and injection | Used in LC-MS/MS methods for biological sample analysis (implied) [33] |

Implementing the Parameter-Purpose Framework: A Practical Guide

Implementing a purpose-driven validation strategy requires systematic planning and execution. The following workflow ensures parameters are appropriately selected based on analytical goals:

Diagram 2: Parameter-Purpose Implementation Workflow - This diagram outlines the systematic process for linking validation parameters to analytical purpose throughout the method lifecycle.

Risk Management in Method Validation

Common pitfalls in analytical method validation often stem from disconnects between parameters and purpose [24]:

- Insufficient specificity testing: Failing to test across all relevant matrices can lead to unexpected interferences during routine use.

- Inadequate sample size: Too few data points increase statistical uncertainty and reduce confidence in results.

- Improper statistical application: Misapplication of statistical methods can distort conclusions and hide method weaknesses.

- Documentation gaps: Missing data or protocol deviations create compliance risks during audits.

The inextricable link between validation parameters and analytical purpose forms the foundation of reliable HPLC methods in pharmaceutical development. As demonstrated through multiple case studies, successful method validation requires carefully selecting parameters based on the method's intended use and rigorously demonstrating that acceptance criteria are met. Accuracy and precision serve as fundamental pillars in this framework, providing measures of both correctness and consistency that build confidence in analytical results.

The parameter-purpose connection remains non-negotiable because it transforms HPLC from a mere analytical technique into a decision-making tool trusted by regulators, manufacturers, and ultimately, healthcare providers and patients. By adopting the purpose-driven validation strategies outlined in this guide, scientists and researchers can develop robust, reliable HPLC methods that stand up to technical and regulatory scrutiny while advancing pharmaceutical quality and patient safety.

From Theory to Practice: How to Determine and Apply Accuracy and Precision in Your HPLC Lab

In High-Performance Liquid Chromatography (HPLC) method validation, accuracy represents the closeness of agreement between a measured value and a value accepted as a true or reference value. It demonstrates that an analytical method provides results that are genuinely representative of the sample composition, ensuring that quality control and research conclusions are based on reliable data [8] [21]. Within a comprehensive validation framework, accuracy works in tandem with precision (the closeness of agreement between a series of measurements) to establish the overall reliability of an analytical procedure [10]. For the analysis of active pharmaceutical ingredients (APIs) in drug products, recovery studies using spiked samples provide the most direct and accepted approach for accuracy determination [36] [4].

This guide details the standardized protocols for conducting accuracy recovery studies, providing researchers and drug development professionals with the experimental methodologies and acceptance criteria necessary to demonstrate that their HPLC methods are fit for purpose.

Theoretical Foundation of Recovery Studies

Defining Accuracy Through Recovery

In the context of a spiked recovery study, accuracy is quantitatively expressed as the percentage recovery of the analyte. This metric compares the measured concentration of the analyte to the known, spiked concentration added to the sample matrix. The calculation is defined as follows [37]:

Recovery (%) = (Measured Concentration / Spiked Concentration) × 100

A recovery of 100% indicates perfect accuracy, where the measured value is identical to the true value. In practice, a predefined range of acceptable recovery is established, typically 98%–102% for drug substance and product assays at the target concentration level [36] [4].

Spiked Sample Design: Overcoming Matrix Effects

Spiked recovery studies are essential for drug product analysis because the sample matrix (e.g., excipients, fillers, binders) can interfere with the analyte's detection or quantification. This interference, known as the matrix effect, may cause suppression or enhancement of the analyte signal, leading to inaccurate results [10]. By spiking a known amount of the pure analyte into a placebo mixture (a blend of all formulation components except the API) or a pre-analyzed sample, scientists can isolate and quantify the method's effectiveness at extracting and measuring the analyte in the presence of these potential interferents [36] [8].

Experimental Protocols for Spiked Recovery Studies

Core Study Design and Sample Preparation

The following protocol outlines the standard procedure for conducting a spiked recovery study to assess the accuracy of an HPLC method for a drug product.

Materials and Reagents:

- Analyte Reference Standard: Pure, well-characterized substance of known purity.

- Placebo: A mixture of all excipients present in the final drug formulation, excluding the Active Pharmaceutical Ingredient (API).

- Appropriate Solvents: HPLC-grade solvents and mobile phase components.

- Volumetric Glassware: Class A pipettes and flasks.

Procedure:

- Prepare a Placebo Solution: Process the placebo mixture using the same sample preparation procedure (e.g., dissolution, dilution, sonication, filtration) specified in the analytical method [4].

- Spike the Placebo: Accurately weigh and spike the placebo solution with the analyte reference standard at a minimum of three concentration levels, with nine determinations in total (e.g., three concentrations, each in triplicate) [4]. The recommended levels are:

  - 80% of the target test concentration

  - 100% of the target test concentration

  - 120% of the target test concentration [36]

- Analyze the Samples: Inject each spiked sample into the HPLC system following the validated method conditions.

- Prepare and Analyze Standard Solutions: In parallel, prepare standard solutions of the analyte at equivalent concentrations in pure solvent (without the placebo matrix). Analyze these to establish the reference response.

- Calculate Recovery: For each spiked sample, calculate the recovery percentage using the formula given above, Recovery (%) = (Measured Concentration / Spiked Concentration) × 100. The measured concentration is determined by comparing the response (e.g., peak area) to a calibration curve or by direct comparison to the standard solution analyzed the same day [36].
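The final step, direct comparison to a standard solution, can be sketched as follows. This Python example assumes single-point external standardization (a linear response passing through the origin over the working range) and corrects the standard concentration for the purity of the reference material. All function names, weights, volumes, and peak areas are hypothetical.

```python
def standard_concentration(weighed_mg, purity_percent, volume_ml):
    """Purity-corrected concentration of the standard solution in mg/mL."""
    return weighed_mg * (purity_percent / 100.0) / volume_ml

def measured_concentration(sample_area, standard_area, standard_conc):
    """Single-point external standard quantification (assumes response is proportional to concentration)."""
    return sample_area / standard_area * standard_conc

def percent_recovery(measured, spiked):
    """Recovery (%) = (Measured Concentration / Spiked Concentration) x 100."""
    return 100.0 * measured / spiked

# Hypothetical standard: 25.0 mg of 99.5% pure reference material in 50.0 mL
std_conc = standard_concentration(weighed_mg=25.0, purity_percent=99.5, volume_ml=50.0)

# Hypothetical 100% level: placebo spiked to the same nominal concentration as the standard
spiked_conc = std_conc
found = measured_concentration(sample_area=12440.0, standard_area=12500.0,
                               standard_conc=std_conc)
recovery = percent_recovery(found, spiked_conc)

print(f"Standard: {std_conc:.4f} mg/mL, found: {found:.4f} mg/mL, recovery: {recovery:.2f}%")
```

When a multi-point calibration curve is used instead, `measured_concentration` would be replaced by interpolation on the fitted line.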

Key Validation Parameters and Acceptance Criteria

For a method to be considered accurate, the recovery results must meet predefined acceptance criteria. The table below summarizes the typical criteria for the assay of a drug product.

Table 1: Acceptance Criteria for Accuracy (Recovery) Studies in HPLC Method Validation

| Parameter | Study Design | Typical Acceptance Criteria | Source |
|---|---|---|---|
| Accuracy (Recovery) | Minimum of 9 determinations over 3 concentration levels (e.g., 80%, 100%, 120%) | Recovery range: 98%-102%; RSD (precision): < 2% | [36] [4] |
| Linearity | 5-7 points across the range (LOQ to 200%) | Correlation Coefficient (r): > 0.999 | [36] [8] |

Table Note: For the quantification of impurities, a wider acceptance criterion for recovery (e.g., 90-107%) is often applied, especially at lower concentrations near the limit of quantitation. The range should be justified based on the intended use of the method [4].

Experimental Workflow Visualization

The following diagram illustrates the logical workflow for conducting a spiked recovery study, from experimental design to final interpretation of the accuracy data.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of a recovery study requires carefully selected, high-quality materials. The following table details key reagents and their critical functions in the experiment.

Table 2: Essential Research Reagents for Spiked Recovery Studies

| Reagent / Material | Function & Importance | Technical Notes |
|---|---|---|
| Analyte Reference Standard | Serves as the known "truth" for spiking; its purity directly impacts accuracy. | Must be of high and documented purity (e.g., ≥98%). Purity should be accounted for in calculations [38] [6]. |
| Placebo Mixture | Recreates the sample matrix to evaluate extraction efficiency and detect interference. | Composition must match the final drug product formulation exactly, minus the API [4]. |
| HPLC-Grade Solvents | Used for preparing mobile phases, standard solutions, and sample dilutions. | High purity minimizes UV absorbance background noise and prevents system contamination [38] [39]. |
| Internal Standard (Optional) | A compound added in a constant amount to all samples and standards to normalize analytical response. | Corrects for variability in injection volume, extraction efficiency, and instrument response drift [10]. |
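To illustrate why an internal standard normalizes the response, the short Python sketch below quantifies on the ratio of analyte peak area to internal standard peak area rather than on the analyte area alone. The peak areas are hypothetical; the second injection is assumed to deliver about 5% less volume, which lowers both areas proportionally and leaves the ratio unchanged.

```python
def response_ratio(analyte_area, istd_area):
    """Analyte peak area divided by internal standard peak area."""
    return analyte_area / istd_area

def concentration_from_ratio(sample_ratio, standard_ratio, standard_conc):
    """Quantify by comparing response ratios (assumes the same internal standard amount everywhere)."""
    return sample_ratio / standard_ratio * standard_conc

# Hypothetical duplicate injections of one sample; the second injects ~5% less volume
ratio_full = response_ratio(analyte_area=10000.0, istd_area=5000.0)
ratio_short = response_ratio(analyte_area=9500.0, istd_area=4750.0)

# Hypothetical standard at 102.0 ug/mL with its own response ratio
standard_ratio = response_ratio(analyte_area=10200.0, istd_area=5000.0)
sample_conc = concentration_from_ratio(ratio_full, standard_ratio, standard_conc=102.0)

print(f"Ratios: {ratio_full:.3f} vs {ratio_short:.3f} (injection error cancels)")
print(f"Sample concentration: {sample_conc:.1f} ug/mL")
```

Quantifying on raw analyte area would have shown a 5% difference between the two injections, whereas the ratio-based result is identical for both.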

Data Analysis and Interpretation

Upon completion of the recovery study, data analysis involves calculating the mean recovery and the relative standard deviation (RSD) for each concentration level, as well as across all levels. The RSD, a measure of precision, must also meet acceptance criteria (typically <2%) to confirm that the method is not only accurate but also reliable [36] [37].
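The calculation described above can be sketched in a few lines. The data below are invented for illustration (three levels, three replicates each), and the limits of 98-102% recovery and RSD below 2% are the typical assay criteria quoted in this guide; the function names are assumptions, not part of any standard library for validation.

```python
from statistics import mean, stdev


def percent_recovery(found: float, added: float) -> float:
    """Recovery (%) = amount found / amount added x 100."""
    return found / added * 100.0


def describe(recoveries, low, high, max_rsd):
    """Mean recovery, RSD (sample standard deviation), and pass/fail flag."""
    mean_recovery = mean(recoveries)
    rsd = stdev(recoveries) / mean_recovery * 100.0
    passes = low <= mean_recovery <= high and rsd < max_rsd
    return {"mean_recovery": mean_recovery, "rsd": rsd, "passes": passes}


def summarize_recovery(study, low=98.0, high=102.0, max_rsd=2.0):
    """Summarize each concentration level, then all levels pooled together."""
    summary, pooled = {}, []
    for level, pairs in study.items():
        recoveries = [percent_recovery(found, added) for added, found in pairs]
        pooled.extend(recoveries)
        summary[level] = describe(recoveries, low, high, max_rsd)
    summary["overall"] = describe(pooled, low, high, max_rsd)
    return summary


# Hypothetical spiked-placebo results: (amount added, amount found) in mg.
study = {
    "80%": [(8.0, 7.92), (8.0, 8.04), (8.0, 7.96)],
    "100%": [(10.0, 9.95), (10.0, 10.05), (10.0, 10.00)],
    "120%": [(12.0, 11.94), (12.0, 12.06), (12.0, 12.00)],
}

summary = summarize_recovery(study)
for level, result in summary.items():
    print(f"{level}: mean recovery {result['mean_recovery']:.2f}%, "
          f"RSD {result['rsd']:.2f}%, pass={result['passes']}")
```

Reporting each level separately as well as pooled makes a concentration-dependent bias visible that an overall mean could hide.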

It is critical to ensure that the highest concentration used in the recovery study falls within the demonstrated linear range of the method. Quantification of an analyte at a concentration outside the linear range will not be reliable, even if a recovery value appears acceptable [36].
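A simple way to make this check explicit is to confirm, before interpreting recoveries, that the calibration data meet the linearity criterion and that the highest spiked level lies inside the calibrated range. The sketch below assumes a hypothetical five-point calibration expressed as percent of nominal concentration; the r > 0.999 threshold is the typical criterion quoted in this guide.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient of paired calibration data."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def within_linear_range(concentration, calibration_concentrations):
    """True if the concentration lies between the lowest and highest calibrators."""
    return min(calibration_concentrations) <= concentration <= max(calibration_concentrations)


# Hypothetical calibration: concentration (% of nominal) vs. peak area.
cal_conc = [50.0, 80.0, 100.0, 120.0, 150.0]
cal_area = [5010.0, 7995.0, 10020.0, 11980.0, 15030.0]

r = pearson_r(cal_conc, cal_area)
highest_recovery_level = 120.0  # top level of the recovery study
recovery_range_ok = r > 0.999 and within_linear_range(highest_recovery_level, cal_conc)

print(f"r = {r:.5f}, recovery study within linear range: {recovery_range_ok}")
```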

Spiked recovery studies are a cornerstone of HPLC method validation, providing direct, empirical evidence of a method's accuracy. By adhering to the rigorous protocols outlined in this guide—employing a systematic approach to sample design, utilizing high-quality reagents, and applying strict statistical criteria—researchers and pharmaceutical scientists can generate defensible data that proves their analytical methods are capable of producing true and reliable results. This, in turn, ensures the quality, safety, and efficacy of pharmaceutical products.

In the realm of High-Performance Liquid Chromatography (HPLC) method validation, precision quantifies the degree of scatter in a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions [1]. It provides assurance that an analytical method will yield consistent and reproducible results whenever applied [4]. Within the framework of analytical method validation, precision serves as a fundamental pillar supporting data integrity and regulatory compliance, without which analytical results may be unreliable [40]. For researchers and drug development professionals, understanding and rigorously assessing precision is not merely a regulatory formality but a scientific necessity to ensure that quality decisions are based on trustworthy data.

Precision is typically evaluated at three distinct levels: repeatability (intra-assay precision), intermediate precision (inter-day, inter-analyst, inter-equipment variations), and reproducibility (inter-laboratory precision) [1] [4]. This hierarchical approach systematically examines variability under different conditions, providing a comprehensive understanding of a method's reliability throughout its lifecycle. The International Council for Harmonisation (ICH) Q2(R1) guideline establishes the global standard for assessing these parameters, with other regulatory bodies like the United States Pharmacopeia (USP) largely aligning with its principles [41].

Theoretical Framework: The Three Tiers of Precision

Repeatability

Repeatability, also referred to as intra-assay precision, expresses the precision under the same operating conditions over a short interval of time [1]. It represents the best-case scenario for a method's performance, demonstrating the fundamental variability inherent in the analytical procedure when executed by a single analyst using the same instrument and reagents on the same day [4]. Regulatory guidelines suggest that repeatability should be assessed using a minimum of nine determinations covering the specified range of the procedure (e.g., three concentrations and three repetitions each) or a minimum of six determinations at 100% of the test concentration [1]. Results are typically reported as the relative standard deviation (RSD) of the measured values [40].

Intermediate Precision

Intermediate precision investigates the impact of normal, expected variations within a laboratory environment on the analytical results [1]. This tier of precision assessment deliberately introduces variables such as different days, different analysts, and different equipment to ensure the method remains reliable despite these common fluctuations [4]. The purpose is to demonstrate that the method will perform consistently during routine use in a single laboratory. A well-designed intermediate precision study uses an experimental design that allows the effects of individual variables to be monitored and understood [1]. Historically, this parameter was referred to as "ruggedness" in USP guidelines, though this term is being superseded by the ICH terminology of "intermediate precision" [1] [41].

Reproducibility

Reproducibility represents the highest level of precision assessment, demonstrating the precision of a method across different laboratories [1]. This is typically assessed during collaborative studies between laboratories and provides critical data for method standardization and transfer [4]. Reproducibility studies are particularly important when establishing compendial methods or when transferring methods between development and quality control laboratories, or between manufacturers and contract research organizations [1]. Documentation in support of reproducibility should include the standard deviation, relative standard deviation, and confidence interval of the results obtained across participating laboratories [1].

Table 1: Key Characteristics of Precision Tiers

| Precision Tier | Experimental Conditions | Typical Assessment Approach | Primary Application |
|---|---|---|---|
| Repeatability | Same analyst, same instrument, same day, short time interval | Minimum of 9 determinations over specified range (3 concentrations × 3 replicates) or 6 determinations at 100% [1] | Establishing baseline method variability |
| Intermediate Precision | Different days, different analysts, different instruments within same laboratory | Experimental design to monitor effects of individual variables; typically two analysts preparing and analyzing replicates independently [1] [4] | Verifying method reliability for routine use within one laboratory |
| Reproducibility | Different laboratories, typically collaborative studies | Multiple laboratories analyze same samples using same protocol; statistical comparison of results [1] | Method standardization and transfer between sites |

Experimental Protocols and Methodologies

Protocol for Assessing Repeatability

System Precision (Injector Precision): This evaluates the performance of the HPLC instrument itself. The protocol involves making five to six replicate injections of a single standard solution preparation and calculating the relative standard deviation (RSD) of the peak areas or retention times [40]. Acceptance criteria typically require an RSD of no more than 1% to 2% for most quantitative pharmaceutical analyses, demonstrating that the instrument system produces consistent responses [4].

Method Repeatability (Analysis Precision): This assesses the complete analytical procedure under unchanged conditions. The protocol requires a single analyst to prepare and analyze multiple samples (at least six) from the same homogeneous sample batch [4] [40]. Each sample is prepared independently and taken through the full preparation procedure, so that variability from the entire sample preparation process is captured. The RSD of the measured analyte concentrations is calculated, with acceptance criteria generally set at ≤2.0% for drug products [4].
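Both repeatability checks reduce to the same calculation applied to different data: peak areas from replicate injections for system precision, and assay results from independent preparations for method repeatability. The sketch below uses invented values and a hypothetical helper, `check_precision`; the 2.0% limit and the minimum replicate counts follow the typical criteria described above.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    return stdev(values) / mean(values) * 100.0


def check_precision(values, max_rsd, min_n):
    """Return (RSD, passed) after confirming enough replicates were run."""
    if len(values) < min_n:
        raise ValueError(f"need at least {min_n} determinations, got {len(values)}")
    rsd = rsd_percent(values)
    return rsd, rsd <= max_rsd


# Hypothetical system precision data: six injections of one standard preparation.
peak_areas = [10012.0, 10034.0, 9987.0, 10021.0, 10005.0, 9996.0]
system_rsd, system_ok = check_precision(peak_areas, max_rsd=2.0, min_n=5)

# Hypothetical method repeatability data: six independent sample preparations,
# reported as assay (% of label claim).
assay_results = [99.6, 100.3, 99.9, 100.8, 99.4, 100.1]
method_rsd, method_ok = check_precision(assay_results, max_rsd=2.0, min_n=6)

print(f"System precision RSD: {system_rsd:.2f}% (pass={system_ok})")
print(f"Method repeatability RSD: {method_rsd:.2f}% (pass={method_ok})")
```

Method repeatability is normally the larger of the two figures, because it includes sample preparation variability on top of injection variability.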

Protocol for Assessing Intermediate Precision

A comprehensive intermediate precision study incorporates variations in critical factors that might reasonably fluctuate during routine method use. The protocol typically involves two analysts who independently prepare replicate sample preparations using their own standards and solutions, potentially using different HPLC systems [1]. The study should be conducted over different days to capture day-to-day variability.

Experimental Design:

- Analyst Variation: Two different qualified analysts perform the entire analytical procedure independently.

- Instrument Variation: Different HPLC systems with similar specifications are used.

- Temporal Variation: Analyses are performed on different days.

- Sample Preparation: Each analyst prepares independent standard solutions and mobile phases.

Statistical Evaluation: The results from both analysts are subjected to statistical comparison. The percentage difference in the mean values between the two analysts' results should be within predetermined specifications [1]. Additionally, statistical tests such as Student's t-test may be applied to determine if there is a significant difference between the means obtained by different analysts [1]. The RSD for the combined data sets from both analysts should also meet acceptance criteria, typically ≤2.0% for drug product assays [4].
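The three comparisons described above can be sketched as follows. Several choices here are assumptions made for illustration: the percentage difference is expressed relative to the average of the two means, the t-test is the pooled (equal-variance) two-sample form, and the critical value of 2.228 is the tabulated two-sided value at the 5% level for 10 degrees of freedom, which matches six results per analyst. The data are invented.

```python
from math import sqrt
from statistics import mean, stdev, variance


def compare_analysts(a, b, t_critical, max_rsd=2.0):
    """Compare two analysts' results: % difference, pooled t-test, combined RSD."""
    mean_a, mean_b = mean(a), mean(b)
    pct_difference = abs(mean_a - mean_b) / ((mean_a + mean_b) / 2) * 100.0

    # Pooled two-sample t statistic (assumes similar variances for both analysts).
    n_a, n_b = len(a), len(b)
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / (n_a + n_b - 2)
    t_stat = (mean_a - mean_b) / sqrt(pooled_var * (1 / n_a + 1 / n_b))

    combined = list(a) + list(b)
    combined_rsd = stdev(combined) / mean(combined) * 100.0

    return {
        "pct_difference": pct_difference,
        "t_stat": t_stat,
        "significant": abs(t_stat) > t_critical,
        "combined_rsd": combined_rsd,
        "rsd_ok": combined_rsd <= max_rsd,
    }


# Hypothetical assay results (% of label claim), six preparations per analyst.
analyst_1 = [99.6, 100.3, 99.9, 100.8, 99.4, 100.1]
analyst_2 = [100.2, 99.8, 100.5, 100.9, 99.7, 100.4]

result = compare_analysts(analyst_1, analyst_2, t_critical=2.228)
print(f"Difference of means: {result['pct_difference']:.2f}%")
print(f"t = {result['t_stat']:.2f}, significant: {result['significant']}")
print(f"Combined RSD: {result['combined_rsd']:.2f}% (pass={result['rsd_ok']})")
```

The critical value is passed in explicitly so the sketch stays free of external dependencies; with other replicate counts it must be looked up again for the new degrees of freedom.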

Protocol for Assessing Reproducibility

Reproducibility is typically evaluated during formal method transfer between laboratories or in collaborative validation studies. The protocol requires multiple laboratories (at least two) to analyze the same set of samples using the identical analytical method and predetermined acceptance criteria [1].

Study Design:

- Sample Homogeneity: All participating laboratories receive aliquots from the same homogeneous sample batch to eliminate sample variability.

- Method Documentation: All laboratories follow the same validated method procedure.

- Independent Execution: Each laboratory performs the analysis independently with their own analysts, equipment, and reagents.

- Data Collection: All raw data and results are compiled for statistical analysis.

Statistical Analysis: The reproducibility is assessed by comparing the results between laboratories using statistical measures including the standard deviation, relative standard deviation, and confidence intervals [1]. Analysis of Variance (ANOVA) may be employed to distinguish between variability within laboratories and variability between laboratories [42]. The acceptance criteria for reproducibility are typically established prior to the study based on the method's intended purpose and the analytical requirements.
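As an illustration of how ANOVA separates the two sources of variability, the sketch below computes a one-way ANOVA by hand for a hypothetical collaborative study with three laboratories and three results each. The lab names and data are invented; in practice the resulting F value is compared against a tabulated critical value for the stated degrees of freedom.

```python
from statistics import mean


def one_way_anova(groups):
    """One-way ANOVA: between-group and within-group mean squares and F ratio."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variability of laboratory means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variability of individual results around their own laboratory mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    return {
        "ms_between": ms_between,
        "ms_within": ms_within,
        "f": ms_between / ms_within,
        "df": (k - 1, n_total - k),
    }


# Hypothetical assay results (% of label claim) from three laboratories.
labs = {
    "Lab A": [99.0, 100.0, 101.0],
    "Lab B": [100.0, 101.0, 102.0],
    "Lab C": [98.0, 99.0, 100.0],
}

anova = one_way_anova(list(labs.values()))
print(f"MS between = {anova['ms_between']:.2f}, MS within = {anova['ms_within']:.2f}")
print(f"F = {anova['f']:.2f} with df = {anova['df']}")
```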

Table 2: Experimental Protocols for Precision Assessment

| Precision Tier | Key Experimental Variables | Minimum Sample Requirements | Statistical Measures | Typical Acceptance Criteria |
|---|---|---|---|---|
| Repeatability | Single analyst, instrument, and day | 6 determinations at 100% or 9 determinations over range [1] | %RSD [40] | %RSD ≤ 2.0% for assay [4] |
| Intermediate Precision | Different analysts, days, instruments | Two analysts performing replicate analyses [1] | %RSD, Student's t-test, % difference of means [1] | Combined %RSD ≤ 2.0%; No significant difference between means [4] |
| Reproducibility | Different laboratories | Collaborative study with multiple labs analyzing same samples [1] | %RSD, ANOVA, confidence intervals [1] [42] | Study-specific criteria; %RSD comparable to intermediate precision |

Data Analysis and Statistical Methods

Calculation of Relative Standard Deviation (RSD)

The Relative Standard Deviation, also known as the coefficient of variation, is the primary statistical measure for quantifying precision across all three tiers [42]. It is calculated as:

RSD (%) = (Standard Deviation / Mean) × 100

This normalized measure allows for comparison of variability across different concentration levels and between different methods [40]. For HPLC methods in pharmaceutical analysis, acceptance criteria for RSD are typically set at ≤2.0% for drug product assays, though tighter criteria (≤1.0%) may be applied for drug substance analyses [4].
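Written out in full, and assuming the sample standard deviation (the n − 1 denominator, which is the usual convention for small replicate sets but is not stated explicitly above), the calculation is:

```latex
\[
\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i, \qquad
s = \sqrt{\frac{1}{n-1}\sum_{i=1}^{n}\left(x_i - \bar{x}\right)^{2}}, \qquad
\mathrm{RSD}\,(\%) = \frac{s}{\bar{x}} \times 100
\]

% Worked example with three hypothetical assay results: 99.0, 100.0, 101.0
\[
\bar{x} = 100.0, \qquad
s = \sqrt{\frac{(-1.0)^2 + 0^2 + (1.0)^2}{3 - 1}} = 1.0, \qquad
\mathrm{RSD} = \frac{1.0}{100.0} \times 100 = 1.0\%
\]
```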

Advanced Statistical Approaches

Beyond basic RSD calculations, more sophisticated statistical methods are increasingly employed for precision assessment:

Analysis of Variance (ANOVA): This statistical technique is particularly valuable for intermediate precision and reproducibility studies as it can distinguish between different sources of variability (e.g., between-analyst vs. between-day variation) [42]. ANOVA helps determine whether observed differences are statistically significant or merely result from random variation.

Control Charts: For ongoing precision monitoring, control charts such as Shewhart charts, CUSUM (Cumulative Sum) charts, and EWMA (Exponentially Weighted Moving Average) charts enable continuous monitoring of system performance and early detection of analytical drift [42]. These tools help distinguish between random variations and systematic errors that may develop over time.
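A minimal sketch of two of these charts is shown below. It makes several simplifying assumptions: Shewhart limits are set at the baseline mean ± 3 sample standard deviations, the EWMA smoothing constant is 0.2 (a common but arbitrary choice), and the baseline and routine values are invented system suitability results. Production charts often estimate sigma differently, for example from moving ranges.

```python
from statistics import mean, stdev


def shewhart_limits(baseline):
    """Lower limit, center line, and upper limit at mean +/- 3 standard deviations."""
    center = mean(baseline)
    spread = 3 * stdev(baseline)
    return center - spread, center, center + spread


def out_of_control(values, lower, upper):
    """Indices of points falling outside the control limits."""
    return [i for i, x in enumerate(values) if not lower <= x <= upper]


def ewma(values, start, lam=0.2):
    """Exponentially weighted moving average: z_t = lam * x_t + (1 - lam) * z_(t-1)."""
    smoothed, z = [], start
    for x in values:
        z = lam * x + (1 - lam) * z
        smoothed.append(z)
    return smoothed


# Hypothetical baseline results (% recovery of a control sample) from validation.
baseline = [100.0, 99.0, 101.0, 100.0, 99.0, 101.0, 100.0, 100.0]
lower, center, upper = shewhart_limits(baseline)

# Hypothetical routine results; the last one has drifted high.
routine = [100.2, 99.8, 100.5, 103.0]
flagged = out_of_control(routine, lower, upper)
smoothed = ewma(routine, start=center)

print(f"Control limits: {lower:.2f} to {upper:.2f} (center {center:.2f})")
print(f"Out-of-control points at indices: {flagged}")
print("EWMA:", [round(z, 3) for z in smoothed])
```

The Shewhart chart reacts to single large excursions, whereas the EWMA accumulates small shifts, which is why the two are often used together.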

Variance Component Analysis: This approach quantifies the contribution of each source of variation (e.g., analyst, instrument, day) to the total variability, providing insights for method improvement [42]. Understanding which factors contribute most to variability allows for targeted refinements to enhance method robustness.
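For a balanced design with a single grouping factor, the variance components follow directly from the ANOVA mean squares: the within-group component equals the within-group mean square, and the between-group component is the difference of the mean squares divided by the number of replicates per group. The sketch below assumes such a design with "day" as the factor; the data and function name are hypothetical, and negative estimates are truncated to zero, which is a common convention rather than a requirement.

```python
from statistics import mean


def variance_components(groups):
    """Within- and between-group variance components for a balanced one-way design."""
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch requires the same number of replicates per group")
    k = len(groups)

    group_means = [mean(g) for g in groups]
    grand_mean = mean(group_means)  # valid because the design is balanced

    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means) / (k - 1)
    ms_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    ) / (k * (n - 1))

    var_within = ms_within
    var_between = max(0.0, (ms_between - ms_within) / n)
    total = var_within + var_between
    return {
        "var_within": var_within,
        "var_between": var_between,
        "pct_between": var_between / total * 100.0,
    }


# Hypothetical assay results (% of label claim): three days, three replicates each.
days = [
    [99.0, 100.0, 101.0],
    [101.0, 102.0, 103.0],
    [97.0, 98.0, 99.0],
]

components = variance_components(days)
print(f"Within-day variance:  {components['var_within']:.2f}")
print(f"Between-day variance: {components['var_between']:.2f}")
print(f"Share of total from between-day variation: {components['pct_between']:.1f}%")
```

In this invented data set most of the variability comes from day-to-day differences, which would point improvement efforts toward factors that change between days rather than toward sample preparation.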

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials and Reagents for Precision Studies

| Item Category | Specific Examples | Function in Precision Assessment | Critical Considerations |

|---|---|---|---|

| Reference Standards | Drug substance CRS (Certified Reference Standard), impurity standards [4] | Provides known concentration for accuracy and precision determination; essential for system suitability testing | Purity certification, proper storage conditions, stability over time [4] |

| Chromatography Columns | C18 columns (e.g., 150 mm × 4.6 mm, 5 μm) [7] | Stationary phase for separation; critical for retention time reproducibility | Column lot-to-lot variability, lifetime studies, manufacturer consistency [4] |

| Mobile Phase Components | HPLC-grade methanol, acetonitrile, water; buffer salts (e.g., potassium phosphate) [12] [7] | Liquid phase for eluting analytes; impacts selectivity and retention | pH control, filtration, degassing, preparation consistency [12] |