Analytical Method Validation: A Comprehensive Guide for Researchers and Scientists

Abstract

This article provides a comprehensive overview of analytical method validation, a critical process for ensuring the reliability, accuracy, and regulatory compliance of data in pharmaceutical research and drug development. Tailored for researchers, scientists, and development professionals, it covers the foundational principles of major guidelines like ICH Q2(R2) and FDA requirements. The scope extends from core validation parameters and methodological applications to advanced troubleshooting, lifecycle management, and comparative analysis of techniques. By synthesizing current regulatory trends with practical implementation strategies, this guide serves as an essential resource for developing robust, fit-for-purpose analytical methods that uphold data integrity and facilitate successful regulatory submissions.

The Pillars of Validation: Understanding Guidelines and Core Parameters

The Role of ICH, FDA, and EMA in Setting Global Standards

In the landscape of drug development and analytical method validation, three organizations form the cornerstone of global regulatory standards: the International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and the European Medicines Agency (EMA). For researchers and drug development professionals, understanding the distinct yet interconnected roles of these bodies is crucial for designing robust analytical methods and navigating the complex pathway to drug approval. The ICH provides the foundational scientific and technical guidelines through international consensus, while the FDA and EMA translate these guidelines into enforceable regulations within their respective jurisdictions—the United States and the European Union. This framework ensures that the data generated from analytical procedures, such as those used in release and stability testing of drug substances and products, are accurate, reliable, and acceptable to multiple regulatory authorities, thereby streamlining global drug development [1].

This section provides an in-depth technical analysis of how the ICH, FDA, and EMA collaboratively and individually establish the standards that govern pharmaceutical research and quality control. It places specific emphasis on the context of analytical method validation, detailing the experimental protocols, documentation requirements, and compliance strategies essential for success in a regulated environment.

The International Council for Harmonisation (ICH)

Mission and Structure

The International Council for Harmonisation (ICH) is a unique global initiative that brings together regulatory authorities and the pharmaceutical industry to discuss the scientific and technical aspects of drug registration. Its primary mission is to achieve greater harmonization worldwide to ensure that safe, effective, and high-quality medicines are developed and registered in the most resource-efficient manner. Harmonization reduces the need for redundant testing, accelerates the availability of new medicines, and protects public health. The ICH operates through a series of topic-specific Expert Working Groups (EWGs) where members from regulatory bodies and industry associations collaborate to develop consensus-based guidelines.

Key Guidelines for Analytical Science

ICH guidelines are categorized into four primary areas: Quality (Q series), Safety (S series), Efficacy (E series), and Multidisciplinary (M series). For analytical researchers, the Quality guidelines are of paramount importance.

- ICH Q2(R2): Validation of Analytical Procedures: This recently revised guideline provides a comprehensive framework for the validation of analytical procedures. It describes the validation tests that should be considered for various types of procedures, including assays, impurity tests, and identification tests. The guideline defines key validation characteristics such as accuracy, precision, specificity, detection limit, quantitation limit, linearity, and range. It applies to new or revised analytical procedures used for the release and stability testing of commercial drug substances and products, both chemical and biological [1].

- ICH Q1A(R2): Stability Testing of New Drug Substances and Products: This guideline defines the stability data package required for drug registration in the three original ICH regions (the European Union, Japan, and the United States). It is fundamental for designing stability studies and establishing shelf lives.

- The Common Technical Document (CTD): While not a guideline per se, the CTD (ICH M4) is a critical ICH output. It provides a harmonized format for the organization of registration application dossiers, ensuring that data is presented consistently to regulators, which streamlines the review process [2].

The ICH process ensures that once a guideline is adopted, it is implemented by its regulatory members, such as the FDA and EMA, into their own regulatory frameworks, creating a unified scientific standard.

The U.S. Food and Drug Administration (FDA)

Regulatory Authority and Approach

The FDA is the United States' federal agency responsible for protecting public health by ensuring the safety, efficacy, and security of human drugs, biological products, and medical devices, among other products [3] [4]. The FDA's authority is derived from U.S. law, and it issues legally enforceable regulations. These regulations are published in the Federal Register and codified in Title 21 of the Code of Federal Regulations (21 CFR) [4]. The FDA's approach to regulation is centralized, meaning it oversees the entire drug development and approval process for a single, large market.

A cornerstone of the FDA's quality mandate is the enforcement of Current Good Manufacturing Practice (CGMP) regulations. The CGMPs for drugs contain the minimum requirements for the methods, facilities, and controls used in manufacturing, processing, and packing. Their purpose is to ensure that a product is safe for use and that it has the ingredients and strength it claims to have [5].

Key Regulations and Guidance for Analytical Methods

The FDA translates ICH guidelines into its regulatory structure, making compliance with them a de facto requirement for market approval.

- 21 CFR Part 211 - Current Good Manufacturing Practice for Finished Pharmaceuticals: This is the principal regulation detailing the CGMP requirements for the preparation of drug products. It mandates that all laboratory controls, including the calibration of instruments and apparatus, shall be established and followed. It requires that analytical methods be sound and scientifically valid [5].

- 21 CFR Part 314 - Applications for FDA Approval to Market a New Drug: This part governs the content and format of New Drug Applications (NDAs) and Abbreviated New Drug Applications (ANDAs), which must include comprehensive analytical data generated using validated methods, as per ICH Q2(R2) [5].

- FDA-Specific Guidance Documents: Beyond implementing ICH guidelines, the FDA issues its own more detailed guidance documents that provide the agency's current thinking on a topic. For analytical scientists, these may include product-specific guidances and recommendations on advanced analytical techniques.

The FDA's rulemaking process is a formal "notice and comment" procedure, allowing for public input on proposed rules and guidance, which adds a layer of transparency and scientific input to its regulatory development [4].

The European Medicines Agency (EMA)

Regulatory Authority and Approach

The European Medicines Agency (EMA) is a decentralized agency of the European Union (EU) responsible for the scientific evaluation, supervision, and safety monitoring of medicines. Unlike the centralized FDA, the EMA operates in a network that coordinates the national competent authorities of the EU Member States [3]. While the EMA runs a "centralized procedure" for the authorization of innovative medicines, national procedures also exist, and the EMA's guidelines are developed in collaboration with its member states.

The EMA strongly encourages applicants to follow its scientific guidelines, and any deviation must be fully justified in the marketing authorization application. Applicants are advised to seek scientific advice to discuss any proposed deviations during medicine development [2].

Key Guidelines and Frameworks for Analytical Methods

The EU regulatory framework is compiled in a set of rules known as EudraLex. Volume 3 of EudraLex contains guidelines on the quality, safety, and efficacy of medicinal products for human use, which is where the EU's adoption of ICH guidelines is published [2].

- Adoption of ICH Guidelines: The EMA is a founding regulatory member of the ICH and integrates ICH guidelines directly into the EU regulatory framework. For example, the ICH Q2(R2) guideline on analytical procedure validation is published as an EMA scientific guideline, making it applicable to all marketing authorization applications in the EU [1] [2].

- Quality Guidelines: The EMA's compilation of guidelines includes a specific "Quality" section that addresses all aspects of the manufacture, characterization, and control of the active substance and finished product. These guidelines are structured to follow the Common Technical Document (CTD) format, ensuring consistency in dossier submission [2].

- Good Pharmacovigilance Practices (GVP): While focused on post-authorization safety, the GVP framework underscores the lifecycle approach to a medicine's benefit-risk profile, which begins with the quality and consistency of the product ensured by validated analytical methods [6] [7].

Table 1: Comparison of Regulatory Frameworks for Analytical Standards

| Aspect | ICH | FDA (U.S.) | EMA (E.U.) |
|---|---|---|---|
| Primary Role | Develop harmonized scientific & technical guidelines through consensus [2] | Translate guidelines into federal law & enforce regulations [5] [4] | Coordinate network for scientific evaluation & implement guidelines across member states [3] [2] |
| Legal Status of Guidelines | Non-binding, but adopted as standards by member regulators | Regulations in 21 CFR are legally binding; guidance documents represent the FDA's current thinking [4] | Scientific guidelines published in EudraLex are expected to be followed; any deviation must be fully justified in the application [2] |
| Key Document for Method Validation | ICH Q2(R2) Validation of Analytical Procedures [1] | 21 CFR Part 211 (CGMP) & ICH Q2(R2) implemented via guidance [5] | ICH Q2(R2) adopted as part of EudraLex, Volume 3 [1] [2] |
| Application Format | Common Technical Document (CTD - ICH M4) [2] | Common Technical Document (CTD) format required in NDAs/ANDAs | Common Technical Document (CTD) format required in MAAs |

Analytical Method Validation: A Converged Standard

Core Validation Parameters (ICH Q2(R2))

Analytical method validation provides documented evidence that a procedure is fit for its intended purpose. The ICH Q2(R2) guideline establishes a common set of validation characteristics and methodologies that are recognized by the FDA, EMA, and other global regulators. The core parameters are as follows:

- Accuracy: The closeness of agreement between the value which is accepted as a conventional true value or an accepted reference value and the value found. This is typically established by spiking the analyte into a placebo and measuring recovery, or by comparison to a reference method.

- Precision: The closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions. Precision is considered at three levels: repeatability (intra-assay), intermediate precision (inter-day, inter-analyst, inter-equipment), and reproducibility (between different laboratories).

- Specificity: The ability to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradants, and matrix components. For chromatographic methods, this is demonstrated by the resolution of peaks.

- Detection Limit (LOD) & Quantitation Limit (LOQ): The LOD is the lowest amount of analyte in a sample that can be detected but not necessarily quantitated. The LOQ is the lowest amount of analyte in a sample that can be quantitatively determined with suitable precision and accuracy. Both can be estimated from the signal-to-noise ratio, or from the standard deviation of the response together with the slope of the calibration curve.

- Linearity and Range: Linearity is the ability of the method to obtain test results that are directly proportional to the concentration of the analyte. The range is the interval between the upper and lower concentrations of analyte for which it has been demonstrated that the analytical procedure has a suitable level of precision, accuracy, and linearity.
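
The detection and quantitation limits described above can be illustrated with the calibration-curve approach from ICH Q2, where LOD = 3.3σ/S and LOQ = 10σ/S (σ is the standard deviation of the response, S the slope of the calibration curve). The following is a minimal sketch; the function name and the numerical inputs are hypothetical and chosen only for illustration.

```python
def lod_loq(sigma, slope):
    """Return (LOD, LOQ) from the response standard deviation and the calibration slope.

    Uses the ICH Q2 calibration-curve formulas: LOD = 3.3*sigma/S, LOQ = 10*sigma/S.
    """
    if slope <= 0:
        raise ValueError("slope must be positive")
    lod = 3.3 * sigma / slope
    loq = 10.0 * sigma / slope
    return lod, loq


# Hypothetical inputs: sigma in peak-area units, slope in peak area per (ug/mL).
lod, loq = lod_loq(sigma=0.5, slope=50.0)
print(f"LOD = {lod:.3f} ug/mL, LOQ = {loq:.3f} ug/mL")
```

Whatever the estimate, the quantitation limit still has to be confirmed experimentally by showing acceptable accuracy and precision at that concentration.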

Experimental Protocol for Validating an HPLC Assay

The following is a detailed methodology for validating a High-Performance Liquid Chromatography (HPLC) assay for a drug substance, based on the principles of ICH Q2(R2).

1. Objective: To validate an HPLC assay for the quantification of active pharmaceutical ingredient (API) in a tablet formulation, demonstrating that the method is accurate, precise, specific, linear, and robust over the specified range.

2. Materials and Reagents:

- Reference Standard: Certified API standard with known purity.

- Test Sample: Drug product (tablets).

- Placebo: All excipients of the formulation without the API.

- Mobile Phase: HPLC-grade solvents and buffers as per the method.

- Diluent: Appropriate solvent to dissolve the API and prepare samples.

3. Experimental Procedure:

Specificity/Selectivity:

- Procedure: Separately inject the diluent (blank), placebo solution, API standard solution, and a sample solution spiked with known impurities/degradants (generated by stress conditions: acid, base, oxidation, heat, and light).

- Acceptance Criteria: The analyte peak from the API and test sample should be pure and free from interference from the blank, placebo, or any degradation peaks. Peak purity tools (e.g., diode array detector) should be used.

Linearity and Range:

- Procedure: Prepare a minimum of 5 concentrations of API standard solutions, typically from 50% to 150% of the target assay concentration (e.g., 50%, 75%, 100%, 125%, 150%). Inject each solution in triplicate and plot the average peak area versus concentration.

- Acceptance Criteria: The correlation coefficient (r) should be not less than 0.999. The y-intercept should not be significantly different from zero.
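
The linearity evaluation above can be sketched as an ordinary least-squares fit. This is an illustration only: the peak areas are invented, the helper name `linear_fit` is hypothetical, and expressing the y-intercept as a percentage of the 100% response is one common convention rather than a requirement stated in this protocol.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares fit y = slope*x + intercept; also returns Pearson r."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical data: level as % of target concentration vs. mean peak area
# of the triplicate injections at that level.
levels = [50, 75, 100, 125, 150]
mean_areas = [1002.0, 1498.0, 2003.0, 2499.0, 3001.0]

slope, intercept, r = linear_fit(levels, mean_areas)

# Assumed convention: report the intercept relative to the fitted 100% response.
intercept_pct = 100.0 * intercept / (slope * 100 + intercept)

linearity_ok = r >= 0.999
print(f"slope={slope:.3f}, intercept={intercept:.3f}, r={r:.5f}, "
      f"intercept={intercept_pct:.2f}% of 100% response, pass={linearity_ok}")
```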

Accuracy:

- Procedure: Prepare placebo samples and spike with known quantities of API at three levels (e.g., 80%, 100%, and 120% of the target concentration). Analyze each level in triplicate. Calculate the percentage recovery of the API.

- Acceptance Criteria: The mean recovery at each level should be between 98.0% and 102.0%.
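
As a rough illustration of the recovery calculation above, the sketch below computes the mean percentage recovery at each spiking level and compares it with the 98.0% to 102.0% window. All amounts are invented and the function names are hypothetical.

```python
from statistics import mean


def percent_recovery(found, added):
    """Recovery of the spiked analyte, in percent."""
    return 100.0 * found / added


# Hypothetical spiked-placebo results:
# level (% of target) -> list of (amount added, amount found), both in mg.
spiked = {
    80: [(8.00, 7.95), (8.00, 8.02), (8.00, 7.98)],
    100: [(10.00, 10.05), (10.00, 9.96), (10.00, 10.01)],
    120: [(12.00, 11.90), (12.00, 12.08), (12.00, 12.03)],
}


def mean_recovery_by_level(data):
    """Mean percent recovery for each spiking level."""
    return {
        level: mean([percent_recovery(found, added) for added, found in reps])
        for level, reps in data.items()
    }


recoveries = mean_recovery_by_level(spiked)
within_limits = {lvl: 98.0 <= rec <= 102.0 for lvl, rec in recoveries.items()}

for lvl, rec in recoveries.items():
    print(f"{lvl}% level: mean recovery {rec:.2f}% (pass={within_limits[lvl]})")
```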

Precision:

- Repeatability: Analyze six independent sample preparations from a homogeneous batch at 100% of the test concentration. Calculate the % Relative Standard Deviation (%RSD) of the assay results.

- Acceptance Criteria: %RSD should be not more than (NMT) 2.0%.

- Intermediate Precision: On a different day, using a different analyst and a different HPLC system, repeat the repeatability study. The combined data from both days should have a %RSD NMT 2.5%.
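
The precision calculations above reduce to a relative standard deviation on one set of six results and on the pooled set of twelve. The sketch below assumes invented assay values and uses the sample standard deviation (n − 1 denominator); the variable names are hypothetical.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (sample SD divided by the mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical assay results (% label claim), six independent preparations each.
day1_analyst_a = [99.8, 100.2, 99.5, 100.4, 99.9, 100.1]
day2_analyst_b = [100.6, 99.7, 100.9, 100.3, 99.4, 100.5]

repeatability_rsd = percent_rsd(day1_analyst_a)
combined_rsd = percent_rsd(day1_analyst_a + day2_analyst_b)

repeatability_ok = repeatability_rsd <= 2.0   # NMT 2.0%
intermediate_ok = combined_rsd <= 2.5         # NMT 2.5% on the combined data

print(f"Repeatability %RSD = {repeatability_rsd:.2f} (pass={repeatability_ok})")
print(f"Combined %RSD      = {combined_rsd:.2f} (pass={intermediate_ok})")
```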

Robustness:

- Procedure: Introduce small, deliberate variations in method parameters (e.g., mobile phase pH ±0.2 units, flow rate ±10%, column temperature ±5°C). Evaluate the system suitability (e.g., resolution, tailing factor) under each condition.

- Acceptance Criteria: The system suitability criteria should be met in all variations.
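
One way to organize the robustness evaluation above is to record the system suitability results for each varied condition and flag any condition that misses a criterion. The limits (resolution at least 2.0, tailing factor at most 2.0) and the results below are assumptions for illustration; the real limits come from the method's own system suitability section.

```python
# Assumed system suitability limits, for illustration only.
SST_LIMITS = {"min_resolution": 2.0, "max_tailing": 2.0}

# Hypothetical results measured under each deliberately varied condition.
runs = [
    {"condition": "nominal", "resolution": 3.1, "tailing": 1.2},
    {"condition": "pH -0.2", "resolution": 2.8, "tailing": 1.3},
    {"condition": "pH +0.2", "resolution": 3.0, "tailing": 1.2},
    {"condition": "flow -10%", "resolution": 3.3, "tailing": 1.1},
    {"condition": "flow +10%", "resolution": 2.7, "tailing": 1.3},
    {"condition": "temperature -5 C", "resolution": 3.2, "tailing": 1.2},
    {"condition": "temperature +5 C", "resolution": 2.6, "tailing": 1.4},
]


def sst_failures(results, limits):
    """Return the names of conditions that miss any system suitability criterion."""
    failed = []
    for run in results:
        meets = (run["resolution"] >= limits["min_resolution"]
                 and run["tailing"] <= limits["max_tailing"])
        if not meets:
            failed.append(run["condition"])
    return failed


failures = sst_failures(runs, SST_LIMITS)
robust = not failures
print("Robust across all variations" if robust else f"Failed under: {failures}")
```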

Table 2: The Scientist's Toolkit for HPLC Method Validation

| Reagent/Material | Function in Validation |
|---|---|
| Certified Reference Standard | Serves as the benchmark for identity, purity, and potency; essential for preparing calibration standards for linearity, accuracy, and precision studies. |
| Placebo Formulation | A mixture of all excipients without the API; critical for demonstrating the specificity of the method by proving no interference with the analyte peak. |
| HPLC-Grade Solvents | Used for mobile phase and sample preparation; high purity is essential to minimize baseline noise and ghost peaks, ensuring accurate and precise detection. |
| System Suitability Standard | A reference preparation used to verify that the chromatographic system is performing adequately at the time of testing (e.g., for resolution, tailing factor, and repeatability). |

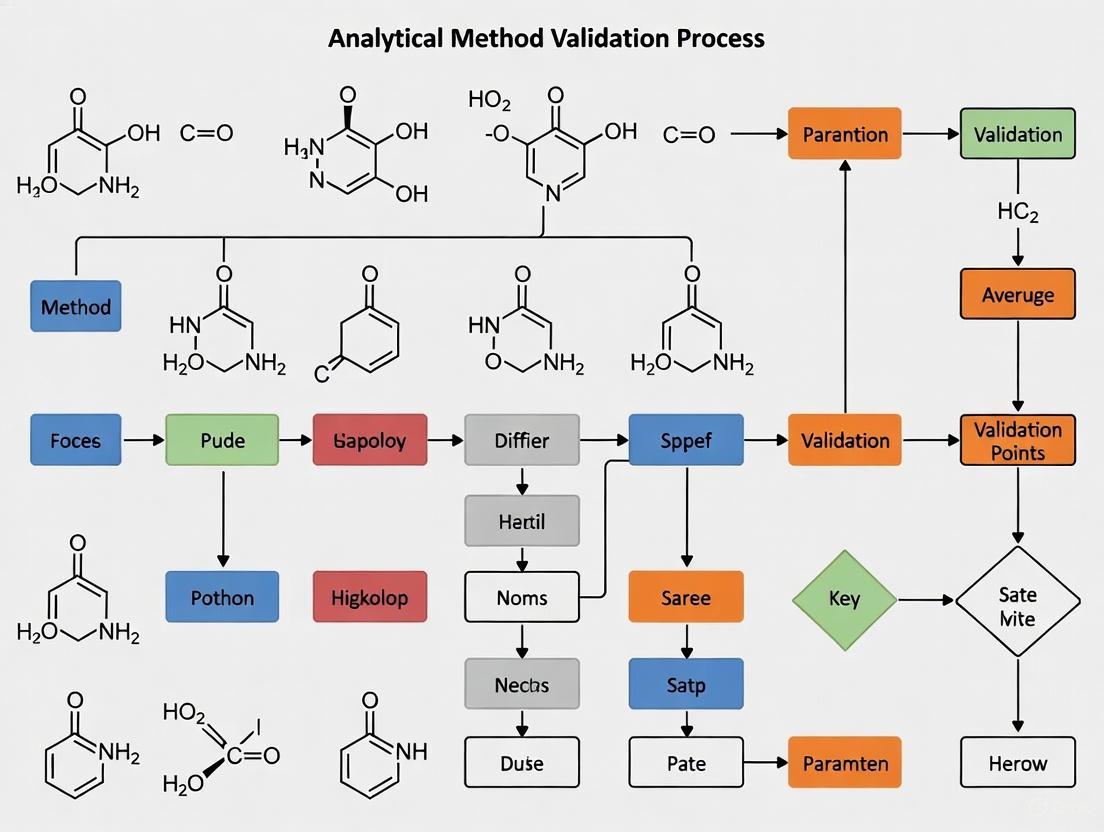

Visualization of the Standard Development and Validation Pathway

The following diagrams illustrate the logical relationships in global standard development and the experimental workflow for analytical method validation.

Global Standard Development Process

(Global Standard Development Process)

Analytical Method Validation Workflow

(Analytical Method Validation Workflow)

The synergistic relationship between the ICH, FDA, and EMA has created a robust, predictable, and science-driven framework for global drug development. For researchers and scientists, a deep understanding of the ICH Q2(R2) guideline and its implementation by the FDA and EMA is non-negotiable for successful analytical method validation. This harmonized system not only facilitates regulatory approval across major markets but also upholds the highest standards of product quality, safety, and efficacy. As regulatory science evolves, continued engagement with these bodies through scientific advice and commentary on draft guidance is essential for the advancement of analytical techniques and public health.

Demystifying ICH Q2(R2) and its Modernized Lifecycle Approach

The International Council for Harmonisation (ICH) guideline Q2(R2) on Validation of Analytical Procedures represents a significant evolution in the standards for ensuring drug quality. Moving beyond the prescriptive, one-time validation approach of its predecessor Q2(R1), Q2(R2) introduces a modernized, lifecycle model that is applied in conjunction with the new ICH Q14 guideline on Analytical Procedure Development [8] [9]. This shift, which regulatory bodies like the U.S. FDA and China's NMPA are adopting, emphasizes a science-based, risk-informed framework for developing and maintaining analytical methods, ensuring they are robust and fit-for-purpose throughout their entire lifespan [9]. This guide provides researchers and drug development professionals with a detailed overview of these foundational changes, their technical requirements, and their practical implementation.

The simultaneous development and release of ICH Q2(R2) and ICH Q14 marks a pivotal change in pharmaceutical analytical science. The core objective is to harmonize and modernize the approach to analytical procedures used in the registration of drug substances and products [8].

In the past, analytical method validation was often treated as a discrete, checklist-based activity conducted after method development. The new framework integrates development and validation into a continuous lifecycle process [9]. This is designed to foster a deeper scientific understanding of the method, which in turn leads to more robust quality oversight for drug manufacturers and can streamline regulatory submissions by reducing questions from agencies [8].

Globally, regulatory authorities are in the process of implementing these guidelines. In China, the National Medical Products Administration (NMPA) has taken significant steps, hosting official training sessions and initiating the process of incorporating these principles, with some aspects being reflected in the upcoming 2025 edition of the Chinese Pharmacopoeia [10] [11]. This global adoption underscores the importance for researchers to understand and apply these new principles.

Core Principles of the Lifecycle Approach

The modernized approach introduced by Q2(R2) and Q14 is built on several foundational concepts that differentiate it from the previous paradigm.

The Lifecycle Model: An Integrated Journey

The analytical procedure lifecycle is a continuous process that begins with initial development and extends through validation, routine use, and eventual retirement or continual improvement. ICH Q14 provides the structure for systematic development, while Q2(R2) provides the criteria for establishing and maintaining validation [8]. This model acknowledges that a method must be managed and monitored throughout its application, with a defined strategy for handling post-approval changes based on scientific understanding [9].

The following diagram illustrates the key stages and their relationships within this integrated lifecycle.

The Analytical Target Profile (ATP)

A cornerstone of the new approach is the Analytical Target Profile (ATP), introduced in ICH Q14 [9]. The ATP is a prospective summary that defines the intended purpose of the analytical procedure and its required performance characteristics (e.g., target precision, accuracy) [9]. It is a pre-defined objective that specifies what the method needs to achieve, rather than how it should be achieved. By defining the ATP at the outset, development and validation activities are strategically aligned to ensure the final method is truly fit-for-purpose.
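
Because the ATP states what a procedure must achieve rather than how, it can be thought of as a small, technique-independent record of performance requirements. The sketch below is one hypothetical way to capture that idea in code; the class name, its fields, and the numerical targets are assumptions for illustration and are not defined by ICH Q14.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical ATP record: required performance, independent of technique."""
    intended_purpose: str
    recovery_range_pct: tuple          # (low, high) acceptable mean recovery
    max_repeatability_rsd_pct: float   # upper limit for repeatability %RSD
    reportable_range_pct: tuple        # (low, high) as % of target concentration

    def is_met_by(self, mean_recovery_pct, repeatability_rsd_pct):
        """Check validation results against the predefined targets."""
        low, high = self.recovery_range_pct
        return (low <= mean_recovery_pct <= high
                and repeatability_rsd_pct <= self.max_repeatability_rsd_pct)


# Assumed targets for an assay method, for illustration only.
atp = AnalyticalTargetProfile(
    intended_purpose="Assay of API in tablets for release and stability testing",
    recovery_range_pct=(98.0, 102.0),
    max_repeatability_rsd_pct=2.0,
    reportable_range_pct=(80.0, 120.0),
)

print(atp.is_met_by(mean_recovery_pct=100.1, repeatability_rsd_pct=0.8))
print(atp.is_met_by(mean_recovery_pct=97.0, repeatability_rsd_pct=0.8))
```

Keeping the targets separate from any particular technique means the same record could be used to judge an HPLC method or an alternative method developed later.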

Enhanced vs. Minimal Development Approaches

ICH Q14 formally describes two pathways for method development:

- The Minimal Approach: This is a traditional, direct approach to development, which may provide less data and understanding.

- The Enhanced Approach: This is a systematic, risk-based approach that seeks a deeper understanding of the method and its controlling factors. While more resource-intensive initially, the enhanced approach facilitates a more flexible control strategy and can simplify post-approval changes [9].

ICH Q2(R2) has been revised to align with the lifecycle model and to incorporate modern analytical technologies. The following table summarizes the major updates and new sections in the guideline [8].

Table 1: Key Updates and New Elements in ICH Q2(R2)

| Section | Category | Core Description |
|---|---|---|
| Validation during the lifecycle | New Section | Provides validation approaches for different stages of the analytical procedure lifecycle. |
| Considerations for multivariate procedures | New Section | Describes factors for calibrating and validating complex multivariate analytical methods. |
| Demonstration of stability-indicating properties | New Section | Guides how to demonstrate the specificity/selectivity of stability-indicating tests. |
| Reportable Range | Updated | Offers expected reportable ranges for common uses of analytical procedures. |
| Introduction & Scope | Updated | Describes the objective of the guideline and aligns it with ICH Q14. |
| Annex 1 & 2 | New | Provide guidance on selecting validation tests and illustrative examples for common techniques. |

Traditional and Lifecycle Validation Parameters

While ICH Q2(R2) maintains the core validation parameters from Q2(R1), their application and evaluation are now contextualized within the lifecycle framework. The guideline provides detailed recommendations on how to derive and evaluate these parameters for different types of analytical procedures [8] [9].

Table 2: Analytical Method Validation Parameters and Acceptance Considerations

| Performance Characteristic | Definition | Typical Acceptance Criteria & Methodology |
|---|---|---|
| Accuracy | Closeness of test results to the true value [9]. | Assessed by analyzing a standard of known concentration or by spiking a placebo. Recovery rates typically 98-102% for assay. |
| Precision (Repeatability, Intermediate Precision) | Degree of agreement among individual test results from multiple samplings [9]. | Expressed as relative standard deviation (%RSD). Repeatability (intra-assay); Intermediate precision (inter-day, inter-analyst). |
| Specificity/Selectivity | Ability to assess the analyte unequivocally in the presence of other components [9]. | Demonstrated by proving no interference from blank, placebo, impurities, or degradation products. |
| Linearity | Ability to obtain results proportional to analyte concentration [9]. | Established across a specified range, with a minimum of 5 concentration levels. Correlation coefficient (r) ≥ 0.999 is often expected for assay. |
| Range | The interval between upper and lower analyte concentrations with suitable linearity, accuracy, and precision [9]. | Derived from the linearity, accuracy, and precision studies. Must be specified. |
| Limit of Detection (LOD) | The lowest amount of analyte that can be detected [9]. | Based on signal-to-noise ratio (3:1) or standard deviation of the response. |
| Limit of Quantitation (LOQ) | The lowest amount of analyte that can be quantified with acceptable accuracy and precision [9]. | Based on signal-to-noise ratio (10:1) or standard deviation of the response. Must demonstrate acceptable accuracy and precision at the LOQ. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [9]. | Evaluated by testing the impact of small changes (e.g., pH, temperature, flow rate). Now a more formalized part of development. |

Application of Orthogonal Methods for Accuracy

A significant update reflected in Q2(R2) and corresponding regional pharmacopoeias, including the 2025 Chinese Pharmacopoeia, is the formal recognition of orthogonal methods for verifying accuracy when traditional spike-recovery studies are not scientifically sound [11]. This is particularly relevant for complex products like biologics, certain complex formulations, and products where creating a blank matrix is impossible.

- Scenario: A biological drug where the active protein is integral to the matrix; removing it destroys the matrix properties.

- Solution: Use a well-characterized, independent method (e.g., Capillary Electrophoresis) based on a different physicochemical principle (e.g., separation mechanism) than the primary method (e.g., HPLC) to cross-validate the results [11].

- Procedure:

  - Orthogonal Method Validation: The secondary method (e.g., CE-SDS) must first be fully validated itself, demonstrating, for instance, a recovery rate of 98.5–101.2% [11].

  - Sample Testing: A minimum of three representative sample batches are analyzed independently by both the primary and orthogonal methods [11].

  - Data Comparison: Results from both methods are statistically compared. If the average deviation between methods is within a pre-defined acceptable range (e.g., <1.5%), it confirms the accuracy of the primary method [11].
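
The data comparison step above can be sketched as a paired, batch-by-batch calculation. The source does not define how the "average deviation" is computed, so the sketch assumes the mean absolute difference expressed as a percentage of the orthogonal result. The batch values and function name are hypothetical.

```python
from statistics import mean


def mean_relative_deviation(primary, orthogonal):
    """Mean absolute paired difference, as a percentage of the orthogonal result.

    Assumed definition of "average deviation"; the source does not specify one.
    """
    if len(primary) != len(orthogonal):
        raise ValueError("results must be paired batch by batch")
    if len(primary) < 3:
        raise ValueError("at least three representative batches are expected")
    return mean([abs(p - o) / o * 100.0 for p, o in zip(primary, orthogonal)])


# Hypothetical purity results (%) for three batches.
hplc_results = [98.6, 99.1, 98.9]   # primary method
ce_results = [98.2, 99.4, 98.5]     # validated orthogonal method

deviation = mean_relative_deviation(hplc_results, ce_results)
methods_agree = deviation < 1.5     # example limit taken from the text

print(f"Average deviation = {deviation:.2f}% (agree={methods_agree})")
```

A formal equivalence test or a paired statistical comparison may be more appropriate in practice; the simple average is shown only because it mirrors the criterion quoted above.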

Implementation Strategy: A Roadmap for Researchers

Successfully implementing the Q2(R2) and Q14 guidelines requires a strategic shift in laboratory practice. The following workflow and subsequent toolkit provide a practical roadmap.

The Scientist's Toolkit: Essential Components for Implementation

Table 3: Key Research Reagent Solutions and Method Components

| Item / Component | Function in Development & Validation |
|---|---|
| Well-Characterized Reference Standards | Serve as the benchmark for all quantitative measurements, critical for establishing accuracy, linearity, and range. |
| Representative Placebo/Matrix | Used in specificity and accuracy studies to demonstrate no interference and appropriate recovery in the sample matrix. |
| Forced Degradation Samples | Stressed samples (acid, base, oxidation, heat, light) are essential for demonstrating the stability-indicating properties of a method (specificity). |
| System Suitability Test (SST) Parameters | A set of reference materials and criteria used to verify that the analytical system is performing adequately at the time of the test. |
| Orthogonal Method Reagents | Independent analytical techniques and their associated reagents are crucial for accuracy verification in complex matrices [11]. |

The modernized lifecycle approach of ICH Q2(R2) and ICH Q14 represents a significant evolution in pharmaceutical analysis, moving the industry toward a more scientific, robust, and flexible paradigm. For researchers and drug development professionals, embracing these guidelines is not merely about regulatory compliance. It is an opportunity to build a deeper process understanding, develop more reliable analytical methods, and ultimately, enhance the overall quality control strategy for pharmaceutical products. As global regulatory authorities, including the NMPA and FDA, continue to implement these guidelines, their principles will become the foundational standard for all analytical work supporting drug registration.

In the pharmaceutical and life sciences industries, the integrity and reliability of analytical data are the bedrock of quality control, regulatory submissions, and ultimately, patient safety [9]. Analytical method validation is the process of providing documented evidence that an analytical procedure consistently produces results that are fit for their intended purpose, establishing that its performance characteristics meet the requirements for the intended analytical application [12]. For researchers and drug development professionals, this process is not merely a regulatory formality but a fundamental scientific activity that ensures the consistency, reliability, and accuracy of data used to make critical decisions about drug safety, efficacy, and quality [13].

The International Council for Harmonisation (ICH) provides a harmonized framework for validation that, once adopted by member regulatory bodies like the U.S. Food and Drug Administration (FDA), becomes the global standard [9]. The recent simultaneous release of ICH Q2(R2) on "Validation of Analytical Procedures" and ICH Q14 on "Analytical Procedure Development" represents a significant modernization of analytical guidelines [9]. This evolution marks a shift from a prescriptive, "check-the-box" approach to a more scientific, risk-based, and lifecycle-based model that begins with a clear definition of the Analytical Target Profile (ATP) – a prospective summary of a method's intended purpose and desired performance characteristics [9] [14]. Within this framework, accuracy, precision, specificity, and linearity stand as core validation parameters that researchers must thoroughly understand and demonstrate.

Defining the Core Parameters

Accuracy

Accuracy expresses the closeness of agreement between a measured value and a value accepted as either a conventional true value or an accepted reference value [12] [15]. It is a measure of the exactness of an analytical method, often referred to as "trueness" [16]. In practice, accuracy is established across the method's range and is measured as the percentage of analyte recovered by the assay [12]. For drug substances, accuracy is typically assessed by comparing results to the analysis of a standard reference material. For drug products, it is evaluated through the analysis of synthetic mixtures spiked with known quantities of components [12]. The ICH guidelines recommend that accuracy be documented by collecting data from a minimum of nine determinations over at least three concentration levels covering the specified range (e.g., three concentrations, three replicates each) [12].
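
The minimum design recommended above (at least nine determinations over at least three concentration levels) is easy to check before any samples are prepared. The helper below is a hypothetical sketch of such a check, not part of any guideline.

```python
def meets_minimum_accuracy_design(replicates_per_level):
    """True if the plan has >= 3 concentration levels and >= 9 determinations in total.

    `replicates_per_level` maps each level (e.g., % of target) to its replicate count.
    """
    levels_used = [lvl for lvl, n in replicates_per_level.items() if n > 0]
    total_determinations = sum(replicates_per_level.values())
    return len(levels_used) >= 3 and total_determinations >= 9


three_by_three = meets_minimum_accuracy_design({80: 3, 100: 3, 120: 3})
single_level = meets_minimum_accuracy_design({100: 9})

print(three_by_three)  # three levels, three replicates each
print(single_level)    # nine determinations, but only one level
```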

Precision

Precision describes the closeness of agreement among individual test results when the analytical procedure is applied repeatedly to multiple samplings of a homogeneous sample [9] [12]. It is an expression of random error and does not relate to the true value. Precision is generally investigated at three levels, as outlined in Table 1 [12]:

Table 1: Levels of Precision Measurement

| Precision Level | Conditions | Measures |
|---|---|---|
| Repeatability (Intra-assay precision) | Results over a short time interval under identical conditions (same analyst, same equipment) [12]. | The degree of scatter in results under normal operating conditions [16]. |
| Intermediate Precision | Results from within-laboratory variations (different days, different analysts, different equipment) [12]. | The method's robustness to normal laboratory variations [9]. |
| Reproducibility | Results from collaborative studies between different laboratories [12]. | The method's performance across multiple laboratories, often assessed during method transfer [12]. |

Precision results are typically reported as the standard deviation or the relative standard deviation (RSD, also known as the coefficient of variation) [12]. A common industry acceptance criterion for repeatability is an RSD of ≤ 2%, though this can vary based on the method and analyte [14].
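As a minimal sketch of how the relative standard deviation is computed, the following Python snippet uses only the standard library. The six replicate values are hypothetical and chosen purely for illustration; the 2% threshold is the typical criterion mentioned above, not a universal limit.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (coefficient of variation), in percent."""
    if len(values) < 2:
        raise ValueError("at least two results are needed")
    return stdev(values) / mean(values) * 100.0


# Hypothetical assay results (% label claim) from six replicate preparations.
replicates = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]

rsd = percent_rsd(replicates)
print(f"mean = {mean(replicates):.2f}, SD = {stdev(replicates):.3f}, %RSD = {rsd:.2f}")
print("meets the <= 2% criterion:", rsd <= 2.0)
```

The sample standard deviation (n - 1 in the denominator) is used here, which is the usual choice for small replicate sets.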

Specificity

Specificity is the ability of an analytical method to assess unequivocally the analyte of interest in the presence of other components that may be expected to be present in the sample matrix, such as impurities, degradants, or excipients [9] [16] [15]. A specific method generates a response primarily, if not exclusively, from the target analyte, thereby avoiding false positives or negatives [16]. For identification tests, specificity is demonstrated by the ability to discriminate between compounds or by comparison to known reference materials. For assay and impurity tests, it is typically shown by the resolution of the two most closely eluted compounds, often the active ingredient and a closely eluting impurity [12]. Modern guidance recommends the use of peak-purity tests based on photodiode-array (PDA) detection or mass spectrometry (MS) to provide unequivocal evidence of specificity in chromatographic analyses [12].

Linearity

Linearity of an analytical procedure is its ability to elicit test results that are directly proportional to the concentration (amount) of analyte in the sample within a given range [9] [12]. It is typically demonstrated by preparing and analyzing a series of solutions containing the analyte at different concentrations across the method's specified range. The data—usually the detector response versus the analyte concentration—is then evaluated statistically, often by calculating a regression line using the method of least squares [12]. The correlation coefficient, y-intercept, slope of the regression line, and residual sum of squares are commonly used to judge the linearity [12]. The range of the method is the interval between the upper and lower concentrations of analyte for which suitable levels of linearity, accuracy, and precision have been demonstrated [9] [16].

Experimental Protocols and Methodologies

Standard Experimental Design for Core Parameters

A well-designed validation protocol is essential for generating reliable and defensible data. The following provides a general experimental approach for evaluating the four core parameters.

General Experimental Setup:

- Instrumentation: A qualified and calibrated analytical system (e.g., HPLC, LC-MS) [12].

- Materials: High-purity reference standards of the analyte, appropriate solvents, and placebo or blank matrix materials [12].

- System Suitability: Before initiating validation experiments, perform system suitability tests to ensure the analytical system is functioning correctly and is adequate for the intended analysis [15].

Protocol for Accuracy [12]:

- Prepare a minimum of nine samples over at least three concentration levels (e.g., 50%, 100%, 150% of the target concentration) within the method's range, with three replicates per level.

- For drug products, this is often done by spiking known amounts of the analyte into a placebo blend.

- Analyze the samples using the method.

- Calculate the recovery (%) for each sample using the formula:

(Measured Concentration / Theoretical Concentration) * 100.

- Report the mean recovery and a measure of its variability (e.g., standard deviation or confidence interval) for each level.
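The recovery calculation in the accuracy protocol can be sketched in Python as follows. The spiked-sample values, the units, and the `spiked` data layout are assumptions made for illustration only; they do not come from any real study.

```python
from statistics import mean, stdev


def recovery_percent(measured, theoretical):
    """Recovery (%) = measured concentration / theoretical concentration * 100."""
    return measured / theoretical * 100.0


# Hypothetical data: level (% of target) -> (measured, theoretical) pairs in µg/mL.
# Three levels with three replicates each gives the nine determinations.
spiked = {
    50: [(49.6, 50.0), (50.3, 50.0), (49.9, 50.0)],
    100: [(99.1, 100.0), (100.4, 100.0), (100.8, 100.0)],
    150: [(148.8, 150.0), (150.9, 150.0), (149.7, 150.0)],
}

summary = {}
for level, pairs in spiked.items():
    recoveries = [recovery_percent(m, t) for m, t in pairs]
    summary[level] = (mean(recoveries), stdev(recoveries))
    print(f"{level}% level: mean recovery = {summary[level][0]:.2f}%, "
          f"SD = {summary[level][1]:.2f}")
```

Reporting the mean and standard deviation per level keeps any concentration-dependent bias visible rather than averaging it away across the range.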

Protocol for Precision [12]:

- Repeatability: A single analyst analyzes a homogeneous sample at 100% of the test concentration a minimum of six times, or a minimum of nine determinations covering the specified range (three concentrations/three replicates), in a single session under identical conditions. Calculate the %RSD of the results.

- Intermediate Precision: Two different analysts in the same laboratory perform the analysis on different days, using different instruments and/or different reagent lots, following the same scheme as for repeatability. The results from both analysts are compared using statistical tests (e.g., Student's t-test) to check for significant differences.
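The comparison of two analysts can be sketched with a pooled two-sample Student's t statistic. This is an illustration under stated assumptions: the results are hypothetical, equal variances are assumed, and the critical value 2.228 is the tabulated two-sided value for α = 0.05 with 10 degrees of freedom. A statistics package would normally be used to obtain an exact p-value.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic assuming equal variances.

    Returns the statistic and its degrees of freedom.
    """
    na, nb = len(a), len(b)
    df = na + nb - 2
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / df
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, df


# Hypothetical assay results (% label claim), six determinations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]
analyst_2 = [100.3, 99.7, 100.5, 100.0, 100.6, 99.9]

T_CRIT_DF10 = 2.228  # tabulated two-sided critical value, alpha = 0.05, df = 10

t_stat, df = pooled_t_statistic(analyst_1, analyst_2)
significant = abs(t_stat) > T_CRIT_DF10
print(f"t = {t_stat:.3f} (df = {df}); significant difference: {significant}")
```

If the number of determinations changes, the critical value must be looked up again for the new degrees of freedom.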

Protocol for Specificity [12]:

- Inject a blank or placebo sample to demonstrate the absence of interfering peaks at the retention time of the analyte.

- Inject a standard solution of the analyte to confirm its retention time and detector response.

- Inject samples spiked with likely interferents (e.g., impurities, degradation products, excipients) to demonstrate that the analyte peak is resolved from all other peaks, typically with a resolution factor (Rs) greater than 1.5-2.0.

- For chromatographic methods, use a peak purity test (PDA or MS) to demonstrate that the analyte peak is homogeneous and not co-eluting with any other component.
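The resolution check in the protocol above can be illustrated with the commonly used baseline-width formula Rs = 2(tR2 - tR1) / (w1 + w2). The retention times and peak widths below are hypothetical, and the threshold of 1.5 is the lower bound mentioned in the protocol rather than a fixed requirement.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Chromatographic resolution from retention times and baseline peak widths.

    Rs = 2 * (tR2 - tR1) / (w1 + w2), with all values in the same time unit.
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


# Hypothetical values (minutes): a closely eluting impurity and the analyte.
rs = resolution(t_r1=6.20, t_r2=6.95, w1=0.30, w2=0.34)
print(f"Rs = {rs:.2f}; meets Rs > 1.5: {rs > 1.5}")
```

Data systems often report resolution from half-height widths instead, which uses a different constant, so the formula in use should be stated alongside the result.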

Protocol for Linearity and Range [12]:

- Prepare a minimum of five standard solutions covering the entire specified range of the method (e.g., 50%, 75%, 100%, 125%, 150%).

- Analyze each solution, preferably in triplicate.

- Plot the mean detector response against the concentration of the analyte.

- Perform linear regression analysis to obtain the correlation coefficient (r), coefficient of determination (r²), slope, and y-intercept.

- The range is validated by demonstrating that the method provides acceptable linearity, accuracy, and precision at the extreme ends of the interval.
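The regression step of the linearity protocol can be sketched with an ordinary least-squares fit written out explicitly. The concentrations and detector responses are hypothetical; the function name `linear_fit` and the returned dictionary layout are illustrative choices, not part of any guideline.

```python
from math import sqrt
from statistics import mean


def linear_fit(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r = sxy / sqrt(sxx * syy)
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    return {
        "slope": slope,
        "intercept": intercept,
        "r": r,
        "r2": r * r,
        "residuals": residuals,
        "rss": sum(e * e for e in residuals),  # residual sum of squares
    }


# Hypothetical five-level study: concentration (% of target) vs. mean peak area.
conc = [50, 75, 100, 125, 150]
response = [5020, 7490, 10050, 12480, 15010]

fit = linear_fit(conc, response)
print(f"slope = {fit['slope']:.2f}, intercept = {fit['intercept']:.1f}")
print(f"r = {fit['r']:.5f}, r2 = {fit['r2']:.5f}, RSS = {fit['rss']:.0f}")
print("residuals:", [round(e, 1) for e in fit["residuals"]])
```

Printing the residuals alongside r supports the visual check for random scatter, since a high correlation coefficient alone can hide curvature.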

Quantitative Data and Acceptance Criteria

While acceptance criteria should be predefined and justified based on the method's intended use, Table 2 summarizes typical examples derived from industry guidelines and practices [9] [12] [14].

Table 2: Typical Acceptance Criteria for Core Validation Parameters

| Parameter | Typical Acceptance Criteria |
|---|---|
| Accuracy | Mean recovery of 98–102% with a low %RSD (e.g., ≤ 2%) [14]. |
| Precision | Repeatability: %RSD ≤ 1–2% for assay of drug substance/product [14]. Intermediate Precision: No statistically significant difference between analysts/labs (e.g., p > 0.05 in t-test). |
| Specificity | No interference from blank/placebo. Resolution (Rs) > 1.5-2.0 between the analyte and the closest eluting potential interferent. Peak purity test (PDA/MS) confirms a homogeneous peak [12]. |
| Linearity | Correlation coefficient (r) > 0.998 [12]. Coefficient of determination (r²) > 0.998. Visual inspection of the residual plot shows random scatter [12]. |

Logical Workflow and Relationships

The process of validating the core parameters is interconnected and follows a logical sequence. The following diagram illustrates the typical workflow and the critical relationships between these parameters, from defining the method's purpose to establishing its overall reliability.

Diagram 1: Core Parameter Validation Workflow. This flowchart visualizes the logical progression and interdependence of key validation activities, beginning with the Analytical Target Profile (ATP).

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of validation protocols relies on a suite of high-quality materials and reagents. Table 3 details key items essential for experiments validating accuracy, precision, specificity, and linearity.

Table 3: Essential Research Reagents and Materials for Validation Studies

| Item | Function in Validation |
|---|---|
| Analytical Reference Standard | A substance of established purity and quality used as the benchmark for preparing calibration standards and spiked samples for accuracy, linearity, and precision studies [12]. |
| Placebo/Blank Matrix | The sample matrix without the active analyte. Critical for demonstrating specificity by proving the absence of interfering signals and for preparing spiked samples for accuracy and linearity [12]. |
| High-Purity Solvents & Reagents | Used for preparing mobile phases, standard solutions, and sample dilutions. Their purity is vital to prevent introducing artifacts, background noise, or contamination that could compromise specificity, accuracy, and detection limits [17]. |
| Chromatographic Column | The stationary phase for separation (e.g., HPLC, UPLC). Its performance is key to achieving specificity (resolution of peaks) and is a common variable in robustness testing [12]. |
| Available Impurities/Degradants | Chemically characterized impurity and degradation product standards. Used to intentionally challenge the method and conclusively demonstrate specificity by proving resolution from the main analyte [12]. |

Accuracy, precision, specificity, and linearity are not isolated checkboxes but interconnected pillars supporting the validity of an analytical method. As outlined in modern ICH Q2(R2) and Q14 guidelines, a thorough, science-based understanding and demonstration of these parameters is fundamental to building quality into analytical procedures from the very beginning of development [9]. For researchers in drug development, mastering these concepts and their practical application ensures the generation of reliable, high-integrity data. This, in turn, safeguards product quality, facilitates regulatory compliance, and underpins the safety and efficacy of medicines reaching patients. The experimental protocols and frameworks provided here offer a foundational guide for conducting rigorous, defensible method validation in a regulated research environment.

Defining Range, LOD, LOQ, and Robustness for Your Method

In the pharmaceutical industry and analytical research, the reliability of data is paramount. A method that produces inconsistent, inaccurate, or non-reproducible results can compromise product quality, patient safety, and regulatory submissions. Method validation provides the evidence that an analytical procedure is fit for its intended purpose, ensuring that every data point can be defended with scientific rigor. Among the key validation parameters, Range, Limit of Detection (LOD), Limit of Quantification (LOQ), and Robustness are critical for establishing the boundaries of a method's capability and its reliability under normal operating conditions. This guide provides an in-depth examination of these parameters, offering researchers and drug development professionals detailed protocols and frameworks for their determination and application.

Core Definitions and Their Significance in Method Validation

Range

The Range of an analytical method is the interval between the upper and lower concentrations of analyte for which it has been demonstrated that the procedure has a suitable level of precision, accuracy, and linearity. It defines the concentrations over which the method can be reliably applied without modification. The range is typically derived from the linearity study and is confirmed by assessing accuracy and precision at the lower and upper limits.

Limit of Detection (LOD)

The Limit of Detection (LOD) is the lowest amount of analyte in a sample that can be detected—but not necessarily quantified as an exact value—under the stated experimental conditions [18] [19]. It represents a threshold at which a signal can be reliably distinguished from the background noise. The ICH Q2(R1) guideline defines it as the point of detection with certainty, but not for precise quantification [18] [19].

Limit of Quantitation (LOQ)

The Limit of Quantitation (LOQ), also called the Quantification Limit, is the lowest amount of analyte in a sample that can be quantitatively determined with acceptable precision and accuracy [18] [20]. At the LOQ, the method must demonstrate not only that the analyte is present, but also that its concentration can be measured with a defined degree of reliability. For bioanalytical methods, the Lower LOQ (LLOQ) typically requires precision within 20% CV and accuracy within 20% of the nominal concentration [20].

Robustness

Robustness is a measure of a method's capacity to remain unaffected by small, deliberate variations in its procedural parameters. It serves as an indicator of the method's reliability during normal usage and helps establish a set of system suitability parameters to guard against routine operational fluctuations [21]. Robustness testing examines the influence of factors such as mobile phase pH, flow rate, column temperature, and variations in reagent batches.

Table 1: Summary of Key Validation Parameters

| Parameter | Definition | Primary Significance | Typical Acceptance Criteria |
|---|---|---|---|
| Range | The interval between upper and lower analyte concentrations for which the method is suitable. | Defines the operational scope of the method. | Linearity, precision, and accuracy are demonstrated across the interval. |
| LOD | Lowest analyte concentration that can be detected. | Establishes the detection sensitivity. | Signal is distinguishable from blank with a defined confidence level (e.g., S/N ≥ 2-3 or LOD = 3.3σ/S). |
| LOQ | Lowest analyte concentration that can be quantified with accuracy and precision. | Establishes the quantification sensitivity. | Predefined precision and accuracy are met (e.g., S/N ≥ 10 or LOQ = 10σ/S; for bioanalysis, precision and accuracy ≤20%). |
| Robustness | Resistance to deliberate, small changes in method parameters. | Evaluates method reliability and identifies critical parameters. | Key results (e.g., retention time, peak area) remain within specified acceptance criteria. |

Establishing the Limits: LOD and LOQ

Key Concepts and Statistical Basis

The determination of LOD and LOQ is fundamentally about distinguishing an analyte's signal from the background noise of the measurement system and ensuring that the signal at the LOQ is strong enough for a precise and accurate measurement. The Limit of Blank (LoB) is a related concept, defined as the highest apparent analyte concentration expected to be found when replicates of a blank sample are tested [22] [19]. Statistically, the relationships are often expressed as:

- LoB = Mean~blank~ + 1.645 * SD~blank~ (assuming a one-sided 95% confidence level for a normal distribution) [22]

- LOD = LoB + 1.645 * SD~low concentration sample~ [22]

Alternatively, methods based on the calibration curve use the formulas:

- LOD = 3.3 * σ / S [18] [23]

- LOQ = 10 * σ / S [18] [20]

Where 'σ' is the standard deviation of the response and 'S' is the slope of the calibration curve.
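The relationships above translate directly into a few lines of Python. This is a sketch only: the blank and low-concentration replicate values, the σ and slope figures, and the function names are hypothetical, and the 1.645 multiplier assumes normally distributed results as stated for the LoB formula.

```python
from statistics import mean, stdev


def limit_of_blank(blank_results):
    """LoB = mean(blank) + 1.645 * SD(blank)."""
    return mean(blank_results) + 1.645 * stdev(blank_results)


def lod_from_lob(lob, low_sample_results):
    """LOD = LoB + 1.645 * SD(low-concentration sample)."""
    return lob + 1.645 * stdev(low_sample_results)


def lod_loq_from_curve(sigma, slope):
    """Calibration-curve approach: LOD = 3.3 * sigma / S, LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical replicate results, in concentration units.
blanks = [0.02, 0.05, 0.03, 0.04, 0.01]
low_sample = [0.10, 0.14, 0.12, 0.11, 0.13]

lob = limit_of_blank(blanks)
lod = lod_from_lob(lob, low_sample)
print(f"LoB = {lob:.4f}, LOD = {lod:.4f}")

# Hypothetical sigma (response units) and slope (response per concentration unit).
lod_curve, loq_curve = lod_loq_from_curve(sigma=150.0, slope=5000.0)
print(f"curve-based LOD = {lod_curve:.3f}, LOQ = {loq_curve:.3f}")
```

The two approaches answer the same question from different data, so the values they give for one method will generally differ.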

Experimental Protocols for Determination

There are several accepted approaches for determining LOD and LOQ, and the choice depends on the nature of the analytical method.

Signal-to-Noise Ratio (S/N)

This approach is applicable primarily to instrumental methods that exhibit a baseline noise, such as chromatography [18].

- Procedure: Prepare and analyze a sample with a low concentration of the analyte and compare the analyte signal to the baseline noise. The noise is measured from a blank injection.

- Acceptance Criteria: A signal-to-noise ratio of 2:1 or 3:1 is generally accepted for LOD, while a ratio of 10:1 is required for LOQ [18] [20].
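A signal-to-noise check can be sketched as below. The snippet assumes one widely used pharmacopoeial convention, S/N = 2H/h, where H is the peak height and h is the peak-to-peak range of the baseline noise from a blank injection. Other noise definitions exist, so the convention should match the data system in use. All numbers are hypothetical.

```python
def signal_to_noise(peak_height, baseline_trace):
    """S/N = 2H / h, with h taken as the peak-to-peak range of the blank baseline."""
    noise_range = max(baseline_trace) - min(baseline_trace)
    if noise_range <= 0:
        raise ValueError("baseline trace shows no noise; cannot compute S/N")
    return 2.0 * peak_height / noise_range


def classify(sn, lod_ratio=3.0, loq_ratio=10.0):
    """Compare S/N against the usual 3:1 (LOD) and 10:1 (LOQ) thresholds."""
    if sn >= loq_ratio:
        return "quantifiable"
    if sn >= lod_ratio:
        return "detectable"
    return "below detection"


# Hypothetical blank-injection baseline readings and analyte peak height (mAU).
baseline = [0.12, -0.08, 0.05, -0.11, 0.09, -0.04]
sn = signal_to_noise(peak_height=1.3, baseline_trace=baseline)
print(f"S/N = {sn:.1f} -> {classify(sn)}")
```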

Standard Deviation of the Response and Slope

This is a widely used method, particularly for techniques that employ a calibration curve.

- Procedure:

- Prepare a calibration curve using samples with analyte concentrations in the range of the suspected LOD/LOQ. Using the normal working range curve is not recommended, as it can overestimate the limits [23].

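- The standard deviation (σ) can be estimated in different ways, for example from the standard deviation of blank responses, the residual standard deviation of the regression line, or the standard deviation of the y-intercepts of regression lines (the approach used in Table 2).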

- Calculation: Apply the formulas LOD = 3.3 * σ / S and LOQ = 10 * σ / S [18].

A practical example for LOD calculation using multiple calibration curves is shown below [23]:

Table 2: Example LOD Calculation from Calibration Curves

| Experiment | Slope (S) | SD of Y-Intercept (σ) | Calculated LOD (µg/mL) = 3.3 * σ / S |
|---|---|---|---|
| 1 | 15878 | 2943 | 0.61 |
| 2 | 15814 | 2849 | 0.59 |
| 3 | 16562 | 1429 | 0.28 |
| 4 | 15844 | 2937 | 0.61 |
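The LOD values in Table 2 can be reproduced from the tabulated slopes and y-intercept standard deviations with a short Python check. The matching LOQ values (10σ/S) are not part of the table; they are computed here only to show how the same inputs would be used.

```python
# (experiment, slope S, SD of y-intercept sigma) as listed in Table 2.
experiments = [
    (1, 15878, 2943),
    (2, 15814, 2849),
    (3, 16562, 1429),
    (4, 15844, 2937),
]

lods = [round(3.3 * sigma / slope, 2) for _, slope, sigma in experiments]
loqs = [round(10.0 * sigma / slope, 2) for _, slope, sigma in experiments]

for (number, _, _), lod_value, loq_value in zip(experiments, lods, loqs):
    print(f"experiment {number}: LOD = {lod_value} µg/mL, LOQ = {loq_value} µg/mL")
```

Experiment 3 gives a noticeably lower limit because its intercept standard deviation is roughly half that of the other runs, which shows how sensitive the estimate is to σ.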

Visual Evaluation

This non-instrumental approach is suitable for methods like dissolution testing or titrations.

- Procedure: Analyze samples with known concentrations of analyte and establish the minimum level at which the analyte can be reliably detected (for LOD) or quantified (for LOQ) [18] [19]. This may involve determining a color change in a titration or the minimum concentration to inhibit microbial growth.

- Data Analysis: Logistic regression can be used to model the probability of detection or quantification versus concentration, with LOD often set at 99% detection probability [19].

Demonstrating Method Robustness

Purpose and Design of Robustness Studies

Robustness testing is an intra-laboratory study conducted during method development to identify critical parameters that could affect method performance. It involves the deliberate, systematic introduction of small changes to method parameters to assess their impact [21]. The goal is to "stress-test" the method before it is transferred or used in a regulated environment. A well-designed robustness study can:

- Identify parameters that require tight control in the method procedure.

- Establish system suitability criteria to ensure the method is performing as validated.

- Prevent out-of-specification (OOS) results due to minor, inevitable variations in a laboratory.

Experimental Protocol and Analysis

A standard approach involves the use of factorial experimental designs, which allow for the efficient testing of multiple factors and their interactions with a minimal number of experiments [21].

Step 1: Identify Key Parameters. Select the method variables most likely to influence the results. For an HPLC method, this could include:

- Mobile phase pH (± 0.1-0.2 units)

- Flow rate (± 0.1 mL/min)

- Column temperature (± 2-5°C)

- Mobile phase composition (± 1-2% absolute)

- Different columns (e.g., from different batches or manufacturers) [21]

Step 2: Design the Experiment. A full or fractional factorial design is employed. For example, a 2³ design would test two levels (high and low) of three different factors in all possible combinations [21].

Step 3: Execute the Study. Run the analytical method for each combination of parameters in the design. Monitor critical outcomes such as retention time, peak area, resolution, tailing factor, and theoretical plates.

Step 4: Analyze the Data. Statistically analyze the results (e.g., using ANOVA) to determine which parameters have a significant effect on the responses. The effect of each factor is calculated, and factors whose variation leads to a significant change in the results are deemed critical.

Step 5: Define Control Limits. Based on the results, set acceptable ranges for the critical parameters in the method's standard operating procedure (SOP). These ranges should be narrower than those tested in the robustness study to ensure consistent performance.
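Steps 2 and 4 can be sketched for a 2³ full factorial design as follows. The factor names, their low and high settings, and the resolution responses are all hypothetical. The snippet estimates main effects only (mean response at the high level minus mean response at the low level); judging statistical significance, for example by ANOVA, would be a separate step.

```python
from itertools import product

# Hypothetical HPLC factors with (low, high) settings around nominal values.
factors = {
    "pH": (2.9, 3.1),
    "flow_mL_min": (0.9, 1.1),
    "temp_C": (28, 32),
}


def full_factorial(factor_levels):
    """Return factor names and all runs as (coded levels, actual settings)."""
    names = list(factor_levels)
    runs = []
    for coded in product((-1, 1), repeat=len(names)):
        settings = {
            name: factor_levels[name][0 if level < 0 else 1]
            for name, level in zip(names, coded)
        }
        runs.append((coded, settings))
    return names, runs


def main_effects(names, runs, responses):
    """Main effect = mean response at high level - mean response at low level."""
    effects = {}
    for i, name in enumerate(names):
        high = [r for (coded, _), r in zip(runs, responses) if coded[i] > 0]
        low = [r for (coded, _), r in zip(runs, responses) if coded[i] < 0]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects


names, runs = full_factorial(factors)

# Hypothetical resolution (Rs) measured for each of the eight runs, in run order.
responses = [2.10, 2.05, 1.95, 1.90, 2.30, 2.25, 2.15, 2.10]

effects = main_effects(names, runs, responses)
for (coded, settings), rs_value in zip(runs, responses):
    print(coded, settings, rs_value)
for name, effect in effects.items():
    print(f"main effect of {name}: {effect:+.3f}")
```

A fractional design would use a subset of these runs when the number of factors makes the full set impractical.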

The Scientist's Toolkit: Essential Reagents and Materials

Successful method validation relies on high-quality, well-characterized materials. The following table outlines key solutions and reagents required for experiments determining range, LOD, LOQ, and robustness.

Table 3: Key Research Reagent Solutions for Method Validation

| Reagent/Material | Function and Importance in Validation |
|---|---|
| High-Purity Analytical Reference Standard | Serves as the benchmark for accuracy, linearity, and recovery studies. Its certified purity and concentration are foundational for all quantitative measurements. |
| Appropriate Blank Matrix | A sample matrix free of the analyte, critical for determining background signal, LoB, LOD, and for preparing calibration standards and QC samples. |
| Calibration Standards | A series of solutions of known concentration, used to construct the calibration curve and define the working range, linearity, sensitivity (slope), and LOD/LOQ. |
| Quality Control (QC) Samples | Independently prepared samples at low, medium, and high concentrations within the range. Used to assess accuracy, precision, and to verify the calibration curve. |
| Chromatographic Columns (Different Batches/Lots) | Used in robustness testing to evaluate the method's performance consistency when a critical component is changed, ensuring ruggedness. |
| HPLC-Grade Solvents and Reagents | Ensure minimal background interference and consistent baseline noise, which is crucial for accurate LOD/LOQ determination based on S/N. |

Logical Workflow for Parameter Determination

The following diagram illustrates the logical sequence and relationships between the different activities involved in defining the range, LOD, LOQ, and robustness of an analytical method.

Method Validation Workflow

A rigorous and science-based approach to defining the Range, LOD, LOQ, and Robustness of an analytical method is non-negotiable in pharmaceutical research and development. These parameters collectively establish the boundaries of a method's capability and its susceptibility to variation, forming the bedrock of data integrity. By adhering to the detailed protocols and frameworks outlined in this guide—from the statistical determination of detection limits to the systematic design of robustness studies—researchers and scientists can ensure their methods are not only compliant with regulatory guidelines like ICH Q2(R2) but are also fundamentally reliable, reproducible, and fit for their intended purpose. This diligence ultimately safeguards product quality and reinforces the foundation of trust in scientific data.

Data integrity serves as the foundational pillar for credible scientific research, ensuring that data remains complete, consistent, and accurate throughout its entire lifecycle [24]. In regulated industries such as pharmaceuticals, biotechnology, and clinical research, robust data integrity practices are not merely optional but constitute a regulatory requirement for compliance with Good Practices (GxP) regulations [25]. The global regulatory landscape, including authorities like the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), mandates that organizations implement comprehensive frameworks to guarantee data reliability, traceability, and security [26].

The ALCOA framework, originally articulated by the FDA in the 1990s, provides a structured approach to achieving data integrity by defining core attributes that data must possess [25] [26]. This acronym represents the five fundamental principles of Attributable, Legible, Contemporaneous, Original, and Accurate data management [24]. As data management practices evolved with technological advancements, the original ALCOA concept was expanded to ALCOA+ (or ALCOA Plus) to address the complexities of modern electronic systems and the complete data lifecycle [27]. This enhanced framework incorporates four additional principles: Complete, Consistent, Enduring, and Available [27] [28]. More recent developments have further extended these concepts to ALCOA++, which includes a tenth principle—Traceable—emphasizing comprehensive audit trails and data reconstruction capabilities [25].

For researchers and professionals engaged in analytical method validation, understanding and implementing ALCOA+ principles is critical for regulatory compliance and scientific validity. These principles ensure that analytical data generated during method development, validation, and routine application maintains its integrity, thereby supporting the reliability of research outcomes and subsequent regulatory decisions [25] [29]. The following sections provide a detailed examination of each ALCOA+ principle, their practical implementation in research settings, and their specific applications within analytical method validation workflows.

The Core ALCOA+ Principles Explained

The ALCOA+ framework comprises nine fundamental principles that collectively ensure data integrity throughout its lifecycle. The table below summarizes these principles and their core requirements for researchers.

Table 1: The Core ALCOA+ Principles and Requirements for Researchers

| Principle | Core Requirement | Key Questions for Researchers |
|---|---|---|
| Attributable | Data must be traceable to the person or system that created or modified it [25] [28]. | Who generated the data and when? Which instrument was used? |
| Legible | Data must be readable and permanently recorded [25] [30]. | Can the data be understood now and in the future? |
| Contemporaneous | Data must be recorded at the time the work is performed [25] [27]. | Was the data recorded in real-time? |
| Original | The primary source of data or a certified copy must be preserved [25] [28]. | Is this the first capture of the data or a true copy? |
| Accurate | Data must be error-free, truthful, and reflect actual observations [25] [24]. | Does the data correctly represent what happened? |
| Complete | All data must be present, including repeats, metadata, and audit trails [25] [27]. | Is all data included, with nothing omitted? |
| Consistent | Data must be chronologically ordered with sequential timestamps [25] [28]. | Is the sequence of events logical and traceable? |
| Enduring | Data must be preserved on durable media for the required retention period [25] [30]. | Is the data stored securely for the long term? |
| Available | Data must be accessible for review, audit, or inspection throughout its lifetime [25] [27]. | Can the data be retrieved when needed? |

The Original ALCOA Components

The original five ALCOA principles form the foundation of data integrity, focusing on the initial creation and capture of data.

Attributable: This principle establishes data ownership and provenance. Each data point must be linked to the individual who recorded it, through secure login credentials for electronic systems or signatures and initials on paper records [25]. Furthermore, the specific equipment or system used to generate the data must also be recorded. In practice, this requires using unique user IDs with appropriate access controls and prohibiting shared accounts to ensure clear accountability [25] [28].

Legible: Data must be permanently readable and understandable by anyone who needs to review it, both now and in the future [25] [30]. This requires using permanent, non-fading ink for paper records and ensuring that electronic data formats remain decodable and independent of specific hardware or software. For electronic data, any encoding, compression, or encryption must be reversible so that information is not lost over time [25].

Contemporaneous: Data must be recorded at the time the activity is performed [27]. This real-time documentation is crucial for preventing errors, omissions, or potential data manipulation that can occur with delayed recording. For electronic systems, this requires automatically capturing the date and time from a synchronized network time source, rather than relying on manual entry or device clocks that can be inaccurate or manipulated [25] [28].

Original: The first capture of data—the source record—must be preserved, or if applicable, a certified copy created under controlled procedures [25] [28]. This original record serves as the definitive source of truth for all subsequent analyses and reports. In dynamic systems, the original data in its dynamic form should remain available. The concept of a "certified copy" is critical here, requiring a verified and documented process to ensure the copy is an exact replica of the original [25].

Accurate: Data must be error-free and truthfully represent the actual observations or measurements obtained during the experiment or study [24] [30]. This requires that devices used for data capture are properly calibrated and fit for purpose. Furthermore, if data requires amendment, the original record must remain visible, and any changes must be documented with a clear rationale, creating a transparent audit trail [25] [28].

The "Plus" Components

The four "plus" principles address the broader data lifecycle, ensuring data remains reliable and usable beyond its initial creation.

Complete: This principle requires that all data is included—from initial entries to final results. There should be no omissions or deletions of any data, including repeats, outliers, or failed runs [27]. The complete dataset must also include all relevant metadata and a secure audit trail that logs all additions, deletions, or alterations without obscuring the original record [25]. This ensures the entire story of the data can be reconstructed.

Consistent: The data record should demonstrate a chronologically sound sequence [28]. Date and time stamps should be consistent and align across all systems and records, following a logical order that reflects the actual sequence of events. This consistency is vital for reconstructing processes and detecting potential anomalies or inconsistencies in the data timeline [25].

Enduring: Data must be recorded and stored on durable, authorized media to ensure it survives the required retention period, which can span decades in the life sciences [27] [30]. This involves using high-quality, long-lasting materials for paper records and robust, controlled electronic media for digital data. It also necessitates a sound backup and disaster recovery strategy to protect against data loss [25].

Available: Data must be readily retrievable for review, monitoring, audits, and inspections throughout its entire retention period [25] [27]. This requires that storage locations—whether physical archives or electronic repositories—are well-organized, indexed, and searchable. Contracts with cloud service providers must also guarantee continuous access and address data retrieval in case of contract termination [28].

Diagram 1: ALCOA+ Data Integrity Framework

Implementing ALCOA+ in Analytical Method Validation

The integration of ALCOA+ principles into analytical method validation is essential for generating reliable, defensible, and regulatory-compliant data. This section provides detailed methodologies for embedding these principles into the validation workflow, which typically progresses from method development and qualification to full validation and routine use.

Experimental Design and Protocol

A robust experimental design is the first critical step in ensuring data integrity. The validation protocol must be detailed and unambiguous, explicitly referencing how ALCOA+ principles will be upheld throughout the process.

- Pre-Defined Acceptance Criteria: Before initiating experimental work, clearly define and document acceptance criteria for all validation parameters (e.g., accuracy, precision, specificity). This practice aligns with the Consistent principle by ensuring standardized evaluation, and it prevents post-hoc justification of results [31].

- Instrument Qualification and Calibration: Ensure all analytical instruments (e.g., HPLC, mass spectrometers) are properly qualified and calibrated according to schedule. Maintain calibration certificates and logs that are Attributable (signed by the analyst), Legible, Original, and Accurate. This directly supports the generation of reliable data [28].

- Sample Tracking and Chain of Custody: Implement a rigorous system for tracking samples from receipt through disposal. This system must log all sample movements, handling, and storage conditions, ensuring the data is Attributable, Contemporaneous, and Complete [25].

Data Acquisition and Recording

The phase of active data generation is where several core ALCOA principles are put into practice.

- Electronic Data Capture (EDC): Whenever possible, use validated computerized systems configured with secure, unique logins to ensure data is Attributable [25] [29]. Systems should automatically capture date/time stamps from a synchronized network source to enforce Contemporaneous recording [25].

- Use of Controlled Worksheets: For any manual data entry, use controlled, sequentially numbered worksheets. These forms act as the Original record. All entries must be made in permanent ink, and errors must be corrected with a single strike-through that is initialed and dated, preserving the Original and Accurate record of what was done [24] [28].

- Metadata and Audit Trails: For electronic systems, ensure the audit trail functionality is enabled and validated. The audit trail provides a Complete and Consistent record of all data changes, including the "who, what, when, and why," which is essential for the Traceable element of ALCOA++ [25].
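
The "who, what, when, and why" of an audit trail can be illustrated with a small append-only structure. This is a sketch under stated assumptions rather than a description of any particular chromatography data system or LIMS: the class names `AuditEntry` and `AuditTrail` and their fields are hypothetical, and the timestamp is taken from the local system clock, which is assumed to be synchronized to a network time source.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: an entry cannot be altered once written
class AuditEntry:
    user: str            # who made the change
    field: str           # what was changed
    old_value: str
    new_value: str
    reason: str          # why it was changed
    timestamp: datetime  # when (UTC)


class AuditTrail:
    """Append-only list of changes; entries are never edited or removed."""

    def __init__(self):
        self._entries = []

    def record_change(self, user, field, old_value, new_value, reason):
        # Refuse changes that cannot be attributed or justified.
        if not user.strip() or not reason.strip():
            raise ValueError("user and reason are required for every change")
        entry = AuditEntry(
            user, field, str(old_value), str(new_value), reason,
            datetime.now(timezone.utc),
        )
        self._entries.append(entry)
        return entry

    @property
    def entries(self):
        # Hand out a tuple so callers cannot modify the underlying list.
        return tuple(self._entries)


trail = AuditTrail()
entry = trail.record_change(
    "jdoe", "peak_area", 1523.4, 1530.1, "Reintegration per SOP"
)
```

A real system would also protect the stored trail itself (for example, through database permissions); this sketch only shows the shape of the record.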

Data Processing and Calculation

The transformation of raw data into final results must be transparent and verifiable.

- Version-Controlled Procedures: All calculation methods, algorithms, and software scripts used for data processing must be managed under version control. This ensures that the processing steps are Attributable and Consistent, and that the Original method can be retrieved if needed [25].

- Raw Data Preservation: The Original raw data (e.g., chromatograms, spectral data) must never be overwritten or deleted. Any data processing or integration must be performed on a copy or within a system that preserves the raw data, in line with the Complete and Enduring principles [25] [30].

- Calculation Verification: Manually or independently verify a subset of calculations to ensure Accuracy. This is a key quality check, especially for custom calculations or spreadsheet-based analyses [24].
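
One common way to demonstrate that a raw data file has not been overwritten is to record a cryptographic checksum at acquisition and compare it later. The sketch below uses Python's standard `hashlib`; the helper names `sha256_of_file` and `is_unchanged` and the file name are hypothetical, and the source does not prescribe checksums specifically, so this is only one possible control.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of_file(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_unchanged(path, recorded_digest):
    """True if the file still matches the digest recorded at acquisition."""
    return sha256_of_file(path) == recorded_digest


# Demonstration with a throwaway file standing in for a raw data export.
with tempfile.TemporaryDirectory() as workdir:
    raw_file = Path(workdir) / "injection_001.raw"  # hypothetical file name
    raw_file.write_bytes(b"time,signal\n0.00,0.1\n0.01,0.4\n")
    recorded = sha256_of_file(raw_file)  # stored with the record at acquisition

    intact_before_edit = is_unchanged(raw_file, recorded)
    raw_file.write_bytes(b"time,signal\n0.00,0.1\n0.01,0.5\n")  # simulated overwrite
    intact_after_edit = is_unchanged(raw_file, recorded)

print(intact_before_edit, intact_after_edit)
```

A checksum detects that a file has changed; it does not prevent the change, so it complements rather than replaces write-protected storage and system-level controls.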

Data Review and Reporting

The final stage involves a critical review of all data and the compilation of the final report.

- Audit Trail Review: As per regulatory expectations, perform a risk-based, ongoing review of audit trails for critical data [25]. This review checks for anomalous activities (e.g., data deleted or modified after acquisition) and ensures the data story is Complete and Traceable.

- Second-Person Verification: A qualified individual who was not involved in the original analysis must review all data, calculations, and metadata. This verification confirms adherence to the protocol, checks for Completeness, and validates Accuracy [28].

- Final Report Preparation: The final validation report must accurately and completely reflect the raw data. It must be Traceable back to the Original records, allowing a reviewer to reconstruct the entire process from the source data [25].
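
A risk-based audit trail review often starts by filtering the trail down to the events that deserve a reviewer's attention. The following sketch assumes the audit trail has been exported as a list of dictionaries with `user`, `action`, `timestamp`, and `reason` keys; that layout, the action names, and the function `flag_for_review` are illustrative assumptions, not the export format of any specific system.

```python
from datetime import datetime


def flag_for_review(events, acquired_at):
    """Return modify/delete events logged after acquisition, oldest first."""
    change_actions = {"modify", "delete"}
    flagged = [
        event for event in events
        if event["action"] in change_actions and event["timestamp"] > acquired_at
    ]
    return sorted(flagged, key=lambda event: event["timestamp"])


acquired_at = datetime(2024, 3, 1, 10, 0)
events = [
    {"user": "analyst_a", "action": "create",
     "timestamp": datetime(2024, 3, 1, 10, 0), "reason": ""},
    {"user": "analyst_a", "action": "modify",
     "timestamp": datetime(2024, 3, 1, 14, 30), "reason": "Manual reintegration"},
    {"user": "analyst_b", "action": "delete",
     "timestamp": datetime(2024, 3, 2, 9, 15), "reason": ""},
]

flagged = flag_for_review(events, acquired_at)
# Changes with no documented reason are the first candidates for follow-up.
missing_reason = [event for event in flagged if not event["reason"].strip()]
```

The filter only narrows the list; deciding whether a flagged change was justified remains a judgment for the qualified reviewer.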

Diagram 2: ALCOA+ Method Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of ALCOA+ in analytical method validation relies on the use of specific, controlled materials and reagents. The following table details key items and their functions in upholding data integrity.

Table 2: Essential Research Materials for ALCOA+-Compliant Analytical Validation

| Item / Reagent | Function in Validation | ALCOA+ Integrity Link |
|---|---|---|
| Certified Reference Standards | Provides a known, pure substance with certified properties to calibrate instruments and validate method Accuracy and precision [32]. | Accurate, Attributable, Original (as the primary calibrator). |
| System Suitability Test (SST) Mixtures | A prepared mixture used to verify that the chromatographic system (or other analytical system) is performing adequately at the time of the test [31]. | Consistent, Accurate (ensures system performance is consistent and data is reliable). |
| Quality Control (QC) Samples | Samples with known concentrations analyzed alongside test samples to monitor the ongoing reliability and Accuracy of the analytical method [32]. | Accurate, Complete (QC data must be included in the record). |
| Controlled, Sequentially Numbered Worksheets | Pre-approved forms for manual data entry that prevent use of unofficial paper and provide a structured format for Original recordings [24]. | Original, Legible, Complete, Attributable (when signed). |
| Stable Isotope-Labeled Internal Standards | Added to samples to correct for analyte loss during preparation or instrument variability, improving data Accuracy and precision [32]. | Accurate, Consistent (improves reproducibility). |
| Documented Reagents with Certificates of Analysis (CoA) | Reagents and solvents purchased from qualified suppliers with accompanying CoAs that confirm identity, purity, and grade. | Attributable, Accurate (traceable to supplier quality). |
| Electronic Lab Notebook (ELN) / LIMS | A validated software system for managing samples, data, and workflows, often including integrated audit trails and electronic signatures [25] [27]. | All ALCOA+ principles by design (Attributable, Contemporaneous, Complete, etc.). |
| Secure, Long-Term Archival System | A dedicated system (electronic or physical) for preserving Original data and metadata for the required retention period, ensuring it remains Enduring and Available [30]. | Enduring, Available, Original. |

Regulatory Landscape and Future Directions

The enforcement of data integrity principles by global regulatory agencies has intensified significantly over the past decade. Analyses of FDA enforcement indicate that a substantial majority of warning letters issued are related to data integrity issues, highlighting this area as a primary focus for inspections [25] [26]. Regulatory bodies like the FDA, EMA, and WHO explicitly reference or implicitly expect compliance with ALCOA+ principles in their guidance documents [24] [26]. The recent draft revision of EU GMP Chapter 4, for instance, moves to formally codify all ten principles of ALCOA++ into binding regulation, underscoring the evolving and tightening nature of these requirements [26].

The emergence of Artificial Intelligence (AI) and Machine Learning (ML) in research and development presents both new opportunities and challenges for data integrity [25] [29]. AI systems can enhance integrity by minimizing human error in data processing; however, they also introduce complexities in ensuring the Attributability, Consistency, and Completeness of data used to train and operate these models [29]. The foundational "garbage in, garbage out" principle is critically relevant—the integrity of AI-driven decisions is entirely dependent on the integrity of the underlying data, necessitating rigorous application of ALCOA+ throughout the AI lifecycle [29].

Furthermore, the shift towards complex electronic data sources, such as wearables in clinical trials, electronic clinical outcome assessments (eCOA), and sophisticated laboratory instruments, demands robust governance. These systems generate vast amounts of data that must be Contemporaneous, Original, and Complete, with metadata and audit trails configured to capture the full context of data generation [25]. As the digital landscape evolves, the principles of ALCOA+ will continue to serve as the foundation upon which reliable, trustworthy scientific research and drug development are built.

From Theory to Practice: Implementing and Applying Validated Methods

Quality by Design (QbD) and the Analytical Target Profile (ATP) in Method Development

Analytical Quality by Design (AQbD) is a systematic, risk-based approach to analytical method development that emphasizes building quality into the method from the outset, rather than relying solely on traditional quality-by-testing (QbT). ICH Q8(R2) defines QbD as "a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding and process control, based on sound science and quality risk management" [33] [34]. This methodology represents a paradigm shift from the conventional one-factor-at-a-time (OFAT) approach, which is often time-consuming and resource-intensive and can yield methods with poor reproducibility [33] [35].

The pharmaceutical industry's adoption of AQbD has been steadily increasing, supported by regulatory bodies including the US Food and Drug Administration (FDA) and outlined in various guidelines such as ICH Q8(R2), Q9, Q10, Q12, and the more recent ICH Q14 on analytical procedure development [34] [9] [36]. The AQbD framework ensures method robustness throughout the entire analytical procedure lifecycle, reducing out-of-specification (OOS) and out-of-trend (OOT) results by systematically understanding and controlling critical method parameters [33] [35]. This approach provides significant advantages over traditional methods, including enhanced regulatory flexibility, continuous improvement opportunities, minimized deviations, and reduced variability in analytical attributes [35].

Core Concepts of AQbD and the Analytical Target Profile (ATP)

The Analytical Target Profile (ATP) as the Foundation

The Analytical Target Profile (ATP) serves as the cornerstone of the AQbD approach, comparable to the Quality Target Product Profile (QTPP) in product development [35]. The ATP is defined as "a prospective description of the desired performance of an analytical procedure that is used to measure a quality attribute, and it defines the required quality of the reportable value produced by the procedure" [33]. Essentially, the ATP outlines what the method must achieve, without initially prescribing how to achieve it.

The ATP establishes the method's performance requirements before development begins, connecting analytical outcomes to product critical quality attributes (CQAs) [36]. According to regulatory guidelines, creating an effective ATP involves:

- Selection of target analytes (API and impurities) [37]

- Choice of appropriate analytical technique category (HPLC, GC, HPTLC, etc.) [37]

- Definition of method requirements including testing profile, impurity profiling, and solvent residue analysis [37]

- Establishment of performance criteria for accuracy, precision, and other relevant characteristics based on the method's intended purpose [38] [9]

The ATP functions as the focal point for all stages of the analytical lifecycle, ensuring the method remains fit-for-purpose from development through routine use [38] [36]. With the publication of USP general chapter <1220> on the Analytical Procedure Lifecycle and ICH Q14, the ATP has become formally recognized as an essential component of regulatory submissions [33] [36].

Interrelationship of AQbD Elements

The AQbD methodology comprises several interconnected elements that systematically transform method requirements into a controlled, robust analytical procedure. The relationship between these components follows a logical progression from defining what to measure to establishing how to control the method effectively.

Figure 1: AQbD Workflow - The systematic progression from ATP definition to continuous lifecycle management

Implementation of AQbD: A Step-by-Step Methodology

Defining Critical Quality Attributes (CQAs)

Following ATP establishment, Critical Quality Attributes (CQAs) are identified as the next crucial step. CQAs are defined as "physical, chemical, biological, or microbiological properties or characteristics that must be within the appropriate limits, ranges, or distributions to ensure the desired product quality" [37] [34]. For analytical methods, CQAs represent method attributes and parameters that measure method performance in accordance with the ATP [34].

The specific CQAs vary depending on the analytical technique employed:

- For HPLC methods: CQAs typically include mobile phase buffer composition, pH, diluent properties, column selection, organic modifier concentration, and elution method [37]

- For GC methods: Critical attributes encompass gas flow rates, temperature parameters (injection port, oven), diluent selection, and sample concentration [37]

- For HPTLC methods: CQAs involve TLC plate type, mobile phase composition, injection parameters, plate development time, and detection methodology [37]

These CQAs are directly linked to the performance characteristics defined in regulatory guidelines such as ICH Q2(R2), including accuracy, precision, specificity, linearity, range, detection limit, quantitation limit, and robustness [37] [9].

Risk Assessment and Management

Risk assessment represents a fundamental component of AQbD, enabling the systematic evaluation of potential variability sources in CQAs [35]. The ICH Q9 guideline provides the framework for quality risk management, which pharmaceutical analysts apply to evaluate risks associated with method parameters, instrument configuration, material attributes, sample preparation, and environmental conditions [33] [35].

Several structured tools facilitate effective risk assessment in analytical method development:

- Fishbone (Ishikawa) Diagrams: Visual tools that categorize potential risk factors into groups such as instrumentation, materials, methods, chemicals/reagents, operators, and environment [33] [34] [35]

- Failure Mode and Effects Analysis (FMEA): A systematic approach that identifies and ranks potential failure modes based on severity, occurrence, and detectability using a numerical scoring system (typically 1-10) [33] [35]

- Risk Estimation Matrix (REM): A semiquantitative tool that categorizes risks into levels (low, medium, high) based on severity and probability [35]
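
The FMEA scoring described above is commonly combined into a single risk priority number (RPN), the product of the three scores, which is then used to rank failure modes. The sketch below assumes that convention, along with a 1 to 10 scale on which a higher detectability score means the failure is harder to detect; the failure modes and their scores are invented for illustration.

```python
def risk_priority_number(severity, occurrence, detectability):
    """RPN = severity x occurrence x detectability, each scored 1 to 10."""
    for score in (severity, occurrence, detectability):
        if not 1 <= score <= 10:
            raise ValueError("scores must be between 1 and 10")
    return severity * occurrence * detectability


# Hypothetical failure modes for an HPLC method:
# (severity, occurrence, detectability)
failure_modes = {
    "mobile phase pH drift": (7, 5, 4),
    "column temperature fluctuation": (5, 3, 2),
    "incomplete sample extraction": (8, 4, 6),
}

# Rank failure modes from highest to lowest RPN.
ranked = sorted(
    ((name, risk_priority_number(*scores)) for name, scores in failure_modes.items()),
    key=lambda item: item[1],
    reverse=True,
)

for name, rpn in ranked:
    print(f"{name}: RPN = {rpn}")
```

The highest-ranked failure modes are natural candidates for closer study during method development, while low-RPN items may be controlled through routine procedures.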