Analytical Method Validation vs. Verification: A Strategic Guide for Pharmaceutical Development

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on navigating the critical processes of analytical method validation and verification. It clarifies the fundamental distinction between validating a new method and verifying an established one, outlining a phase-appropriate, risk-based framework aligned with ICH and FDA guidelines. The content covers key methodological parameters, common challenges in development and transfer, and strategic approaches for comparative studies and post-approval changes. By synthesizing foundational principles with practical applications and troubleshooting, this guide aims to equip professionals with the knowledge to ensure regulatory compliance, data integrity, and robust quality control throughout the drug product lifecycle.

Laying the Groundwork: Understanding Method Validation vs. Verification

Analytical method validation is a foundational pillar in pharmaceutical development and quality control. It is defined as the process of establishing documented evidence that provides a high degree of assurance that a specific analytical procedure will consistently produce results meeting its predetermined specifications and quality attributes [1]. In the context of research comparing new versus established analytical methods, validation provides the critical data necessary to objectively demonstrate that a novel method is fit-for-purpose, ensuring the reliability, accuracy, and reproducibility of analytical data that forms the basis for decisions on product quality, safety, and efficacy [2] [3].

Current guidance from the International Council for Harmonisation (ICH), particularly the updated ICH Q2(R2) and the new ICH Q14 guidelines, emphasizes a shift from one-time validation to lifecycle management of the analytical procedure [4]. This framework encourages researchers to define method performance requirements at the outset, so that development and validation activities stay aligned with the method's intended application [4].

Core Principles and Regulatory Foundation

The "Why": Importance in Pharmaceutical Development

For researchers and drug development professionals, analytical method validation is not merely a regulatory hurdle; it is a critical scientific exercise. Its importance is multi-faceted [3]:

- Ensures Accuracy and Reliability: Validation confirms that test results truly represent the sample's quality, protecting the integrity of data used for critical decisions.

- Regulatory Compliance: Agencies like the FDA, EMA, and WHO require validated analytical methods for product approvals. Following ICH guidelines provides a harmonized path to meeting global regulatory requirements [4] [3].

- Patient Safety: Accurate testing ensures that medicines are safe, effective, and free from harmful levels of impurities.

- Facilitates Technology Transfer: A robustly validated method can be reliably transferred between different laboratories and manufacturing sites without compromising data quality.

The "What": Key Validation Parameters

Validation involves testing a series of performance characteristics to demonstrate the method's capability. The table below summarizes the core parameters as defined by ICH and other regulatory bodies [1] [4] [3].

Table 1: Key Analytical Method Validation Parameters and Definitions

| Parameter | Definition |

|---|---|

| Accuracy | The closeness of agreement between a test result and an accepted reference value (the "true" value) [3] [5]. |

| Precision | The closeness of agreement among a series of measurements from multiple sampling of the same homogeneous sample. It is measured at three levels: repeatability, intermediate precision, and reproducibility [3] [5]. |

| Specificity | The ability to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradants, or matrix components [4] [3]. |

| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte in a given range [1] [3]. |

| Range | The interval between the upper and lower concentrations of analyte for which the method has demonstrated suitable linearity, accuracy, and precision [4] [3]. |

| Limit of Detection (LOD) | The lowest amount of analyte in a sample that can be detected, but not necessarily quantitated, under the stated experimental conditions [4] [5]. |

| Limit of Quantitation (LOQ) | The lowest amount of analyte in a sample that can be quantitatively determined with acceptable precision and accuracy [4] [5]. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters (e.g., pH, temperature, mobile phase composition) [4] [3]. |

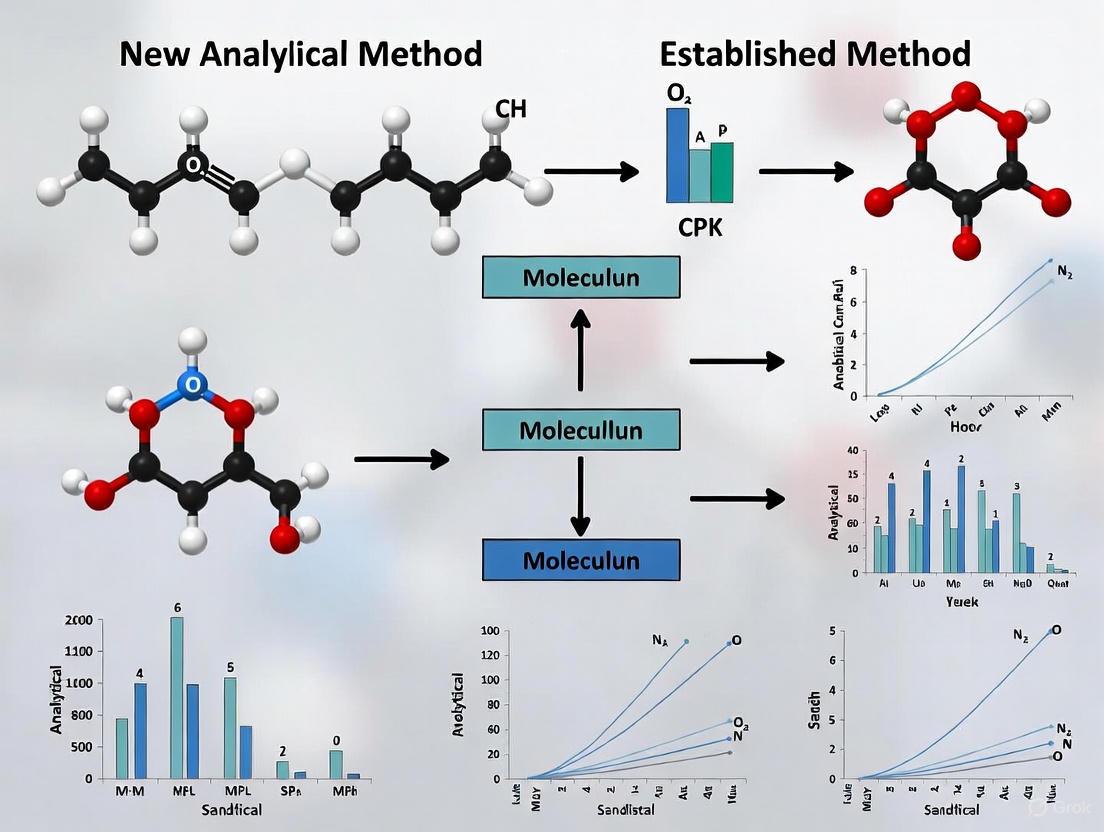

The following workflow illustrates the logical relationship and sequence for evaluating these core parameters during a validation study.

Diagram 1: Analytical Method Validation Workflow

Experimental Protocols for Key Validation Parameters

This section provides detailed methodologies for core experiments, serving as a practical guide for researchers.

Protocol for Determining Accuracy

Accuracy demonstrates the exactness of the analytical method and is typically established across the specified range [5].

- Methodology: Analyze a sample of known concentration (a reference standard) and compare the result to the true value. For drug products, this is often done by spiking a placebo matrix with known quantities of the analyte across a range (e.g., 50% to 150% of the target concentration) [1] [3].

- Procedure:

- Prepare a minimum of nine determinations over at least three concentration levels (e.g., three levels with three replicates each) [5].

- Analyze each sample using the method under validation.

- Calculate the percent recovery for each sample.

- Calculation:

% Recovery = 100 × Experimental Amount / Theoretical Amount

The data can also be expressed as the bias of the method, % Bias = 100 × (Experimental Amount – Theoretical Amount) / Theoretical Amount (e.g., -1.2% bias) [1].

- Acceptance Criteria: Varies with the sample type. For a drug substance assay, recovery is often required to be within 98.0–102.0% [3].
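
The accuracy calculation can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the function names, the spiked-placebo amounts, and the 98.0–102.0% default limits are assumptions chosen to mirror the nine-determination design described above (three levels, three replicates each).

```python
from statistics import mean


def percent_recovery(measured: float, theoretical: float) -> float:
    """Recovery as a percentage of the theoretical (spiked) amount."""
    return 100.0 * measured / theoretical


def percent_bias(measured: float, theoretical: float) -> float:
    """Signed relative error; equals percent recovery minus 100."""
    return 100.0 * (measured - theoretical) / theoretical


# Hypothetical data: (theoretical mg, measured mg) per spiked level.
spiked = {
    "50%": [(5.00, 4.96), (5.00, 5.03), (5.00, 4.99)],
    "100%": [(10.00, 9.95), (10.00, 10.04), (10.00, 10.01)],
    "150%": [(15.00, 14.91), (15.00, 15.06), (15.00, 14.97)],
}


def summarize(data, low=98.0, high=102.0):
    """Mean recovery, mean bias, and pass/fail for each concentration level."""
    summary = {}
    for level, pairs in data.items():
        recoveries = [percent_recovery(m, t) for t, m in pairs]
        mean_rec = mean(recoveries)
        summary[level] = {
            "mean_recovery": mean_rec,
            "mean_bias": mean_rec - 100.0,
            "passes": all(low <= r <= high for r in recoveries),
        }
    return summary


for level, stats in summarize(spiked).items():
    print(level, f"{stats['mean_recovery']:.2f}%", stats["passes"])
```

Here every individual recovery is checked against the limits; a protocol could instead apply the limits to the mean recovery per level, so the rule should be fixed in the validation protocol before data are collected.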

Protocol for Determining Precision

Precision, the measure of method scatter, is evaluated at three tiers: repeatability, intermediate precision, and reproducibility [3] [5].

- Methodology:

- Repeatability (Intra-assay): Have a single analyst perform multiple injections (e.g., six at 100% of test concentration or nine across the specified range) of a homogeneous sample in a single session [1] [5].

- Intermediate Precision: Demonstrate the impact of random events within the same laboratory. A common approach involves two different analysts on different days, using different instruments and columns, to prepare and analyze replicate sample preparations [1] [5].

- Reproducibility (Inter-laboratory): Assess precision between laboratories, typically required for method standardization (e.g., collaborative studies between different company sites) [3].

- Procedure:

- For intermediate precision, two analysts each prepare and analyze a minimum of six sample preparations at 100% of the test concentration.

- Calculate the mean, standard deviation (SD), and relative standard deviation (%RSD) for each set of results.

- Compare the means from the two analysts using statistical tests (e.g., Student's t-test) to check for significant differences [5].

- Calculation:

%RSD = (Standard Deviation / Mean) × 100%

- Acceptance Criteria: For chromatographic assay of drug products, the repeatability RSD is often expected to be < 1.0% [1]. The difference in means between analysts in intermediate precision should be within pre-defined limits.
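
The precision statistics can be illustrated with a short Python sketch. The assay values for the two analysts are invented, and the comparison uses an equal-variance (pooled) Student's t statistic judged against the tabulated two-sided critical value for α = 0.05 and 10 degrees of freedom (2.228). Both the choice of test and the significance level are assumptions that a real protocol would need to justify.

```python
from math import sqrt
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean), in percent."""
    return 100.0 * stdev(values) / mean(values)


def pooled_t_statistic(a, b):
    """Two-sample Student's t statistic assuming equal variances."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * stdev(a) ** 2 + (nb - 1) * stdev(b) ** 2) / (na + nb - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))


# Hypothetical assay results (% label claim), six preparations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9]
analyst_2 = [100.3, 99.9, 100.5, 100.0, 100.6, 100.2]

T_CRITICAL = 2.228  # two-sided, alpha = 0.05, df = 6 + 6 - 2 = 10

t_stat = pooled_t_statistic(analyst_1, analyst_2)
print(f"Analyst 1 %RSD: {percent_rsd(analyst_1):.2f}")
print(f"Analyst 2 %RSD: {percent_rsd(analyst_2):.2f}")
print(f"t = {t_stat:.2f}; means differ significantly: {abs(t_stat) > T_CRITICAL}")
```

A non-significant t-test does not by itself show that the two analysts are equivalent, which is why the difference in means is also compared with pre-defined limits.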

Protocol for Determining Specificity

Specificity proves that the method can measure the analyte free from interference [3].

- Methodology: For chromatographic assays, demonstrate that the peak response is due to a single component. This is achieved by analyzing and comparing chromatograms of [5]:

- The analyte (active pharmaceutical ingredient, API).

- Placebo or blank (all components except the analyte).

- Sample spiked with potential interferents (impurities, degradants, excipients).

- Procedure:

- Inject the blank/placebo and confirm no peaks co-elute with the analyte.

- Inject a standard of the analyte to record its retention time.

- Inject a sample solution that has been stressed (e.g., by heat, light, acid/base) to generate degradants.

- Demonstrate resolution between the analyte peak and the closest eluting potential interferent.

- Use peak purity techniques (e.g., Photodiode Array (PDA) detection or Mass Spectrometry (MS)) to confirm the analyte peak is homogeneous and not a co-elution of multiple compounds [5].

- Acceptance Criteria: The method should demonstrate that impurities, degradants, and excipients do not interfere with the analyte. For stability-indicating methods, the analyte peak should be pure, and resolution from the nearest eluting peak should be > 1.5 [5].
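
The resolution criterion can be checked numerically. The sketch below uses the common baseline-width formula Rs = 2(tR2 - tR1) / (W1 + W2); the retention times and peak widths are hypothetical, and the helper names are illustrative only. Chromatography data systems may report resolution from half-height widths instead, which gives slightly different values.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Rs = 2 * |tR2 - tR1| / (W1 + W2), using baseline peak widths."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


def critical_resolution(analyte, neighbours):
    """Smallest resolution between the analyte and any other peak."""
    t_a, w_a = analyte
    return min(resolution(t_a, t, w_a, w) for t, w in neighbours)


# Hypothetical peaks as (retention time in min, baseline width in min).
analyte_peak = (6.20, 0.30)
other_peaks = [(5.60, 0.28), (7.45, 0.34)]  # e.g., a degradant and an impurity

rs = critical_resolution(analyte_peak, other_peaks)
print(f"Critical resolution: {rs:.2f} (criterion: > 1.5)")
```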

Table 2: Acceptance Criteria Examples for Key Validation Parameters

| Parameter | Typical Acceptance Criteria (for Assay of Drug Substance) | Reference |

|---|---|---|

| Accuracy | Recovery: 98.0 - 102.0% | [3] |

| Precision (Repeatability) | Relative Standard Deviation (RSD) < 1.0% | [1] |

| Linearity | Correlation coefficient (r) ≥ 0.99 (R² ≥ 0.9999 for higher expectations) | [1] [6] |

| LOD | Signal-to-Noise ratio ≥ 3:1 | [5] |

| LOQ | Signal-to-Noise ratio ≥ 10:1, with acceptable accuracy and precision at that level | [5] |
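
One way to apply criteria like those in Table 2 consistently is to encode them once and evaluate every result set against the same rules. The following is a sketch only: the dictionary keys and the example results are hypothetical, and the limits simply restate the table's example values rather than any universal requirement.

```python
# Example acceptance criteria restated from the table above (drug substance assay).
CRITERIA = {
    "accuracy_recovery_pct": lambda v: 98.0 <= v <= 102.0,
    "repeatability_rsd_pct": lambda v: v < 1.0,
    "linearity_r": lambda v: v >= 0.99,
    "lod_signal_to_noise": lambda v: v >= 3.0,
    "loq_signal_to_noise": lambda v: v >= 10.0,
}


def evaluate(results):
    """Return a pass/fail flag for each reported parameter."""
    return {name: CRITERIA[name](value) for name, value in results.items()}


# Hypothetical study results.
study = {
    "accuracy_recovery_pct": 99.6,
    "repeatability_rsd_pct": 0.45,
    "linearity_r": 0.9995,
    "lod_signal_to_noise": 3.4,
    "loq_signal_to_noise": 9.1,
}

for name, passed in evaluate(study).items():
    print(f"{name}: {'pass' if passed else 'FAIL'}")
```

In this invented data set the LOQ signal-to-noise ratio falls below 10:1, so the check flags it while the other parameters pass.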

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful method validation relies on the use of high-quality, well-characterized materials. The following table details key reagents and their critical functions.

Table 3: Essential Materials for Analytical Method Validation

| Material / Solution | Function in Validation |

|---|---|

| Qualified Reference Standards | Certified materials with known purity and identity used to calibrate the method and determine accuracy. Their reliability and stability are a fundamental prerequisite [1]. |

| Placebo Matrix | A mixture of all inert components (excipients) of a formulation without the active ingredient. Used to prepare spiked samples for accuracy and specificity studies in drug product testing [3]. |

| System Suitability Solutions | A reference standard preparation used to verify that the chromatographic system (or other instrument) is performing adequately at the time of the test. It typically checks for parameters like plate count, tailing factor, and repeatability [3] [5]. |

| Stressed/Sample Solutions | Samples (drug substance or product) that have been subjected to forced degradation (e.g., heat, light, acid, base, oxidation) to generate impurities and degradants. Critical for demonstrating the specificity of stability-indicating methods [5]. |

| High-Purity Mobile Phase Solvents & Reagents | Essential for achieving the required sensitivity, baseline stability, and reproducible retention times in chromatographic methods. Variations in quality can directly impact robustness [3]. |

The Analytical Procedure Lifecycle: A Modern Framework

The introduction of ICH Q14 and the updated ICH Q2(R2) formalizes a modern, holistic view of analytical procedures. This lifecycle approach, illustrated below, is highly relevant for research on new methods as it integrates development, validation, and ongoing performance monitoring [4].

Diagram 2: The Analytical Procedure Lifecycle per ICH Q14/Q2(R2)

The cycle begins with defining an Analytical Target Profile (ATP) – a prospective summary of the method's required performance characteristics [4]. This ATP guides the development and validation phases, ensuring the procedure is designed to be fit-for-purpose from the start. Once in routine use, the method's performance is continuously monitored, and any proposed changes are managed through a structured, science-based process, ensuring continued validity throughout the method's lifetime.

Analytical method validation is a rigorous, scientifically-driven process that moves beyond a mere regulatory requirement to become the foundation of data integrity in pharmaceutical research and development. For scientists engaged in the critical task of validating a new analytical method against an established one, a deep understanding of the core parameters, experimental protocols, and the modern lifecycle framework is indispensable. By systematically applying these principles and adhering to the structured workflows and acceptance criteria outlined, researchers can generate defensible data that not only satisfies global regulatory standards but, more importantly, ensures the safety and quality of medicines for patients.

When is Verification the Right Choice?

In the pharmaceutical laboratory, the choice between method validation and method verification is a fundamental strategic decision. While validation establishes that a new analytical procedure is suitable for its intended purpose, verification confirms that a previously validated method performs as expected in a new laboratory environment [7] [8]. This distinction is crucial for regulatory compliance and operational efficiency, particularly when working with established methods.

Verification serves as a bridge between method development and routine use, providing documented evidence that a specific process will consistently produce results meeting predetermined specifications when implemented under different conditions [9]. This process is less extensive than full validation but equally critical for ensuring data integrity and reliability when transferring methods between sites, adopting compendial procedures, or implementing methods with minor modifications [7] [8].

This application note delineates the specific circumstances warranting verification, outlines core performance characteristics requiring assessment, and provides detailed experimental protocols for implementation within regulated laboratories.

Key Scenarios for Method Verification

Decision Framework for Verification

The choice to verify rather than validate hinges on both regulatory requirements and practical considerations. The following table outlines common scenarios and the corresponding justification for verification.

Table 1: Scenarios Warranting Method Verification

| Scenario | Description | Regulatory Basis |

|---|---|---|

| Adoption of Compendial Methods | Using established pharmacopeial methods (e.g., USP, Ph. Eur.) in a laboratory for the first time [7] [9]. | Regulatory authorities require verification rather than full validation, because the compendial body has already established the method's suitability [8] [9]. |

| Method Transfer Between Laboratories | Moving a validated method from a transferring lab (e.g., R&D) to a receiving lab (e.g., QC or a CRO) [9]. | Documentation must qualify the receiving laboratory to use the method, ensuring equivalent performance [9]. |

| Use of Established Methods with Minor Changes | Implementing a validated method with slight modifications (new analyst, equipment, or reagent batch) that do not constitute a major change [9]. | A risk-based approach justifies verification over revalidation for minor changes [9]. |

| Routine Analysis Using Standard Methods | Applying well-established, standardized methods in quality control workflows [8]. | Verification offers a quicker, more efficient path for routine analysis while maintaining compliance [8]. |

Verification Versus Validation: A Comparative Analysis

Understanding the fundamental differences between verification and validation prevents regulatory missteps. The following workflow diagram illustrates the decision-making process for selecting the correct approach.

Figure 1: Decision Workflow for Method Verification vs. Validation
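
The decision logic behind this workflow can be approximated in code. The sketch below is one reading of the scenarios in Table 1, not a regulatory rule set: the function, its parameters, and the three-way classification of changes are assumptions. In particular, it assumes that a major change sends a method back to validation, which the source implies but does not state outright.

```python
from enum import Enum


class Approach(Enum):
    VALIDATION = "full validation"
    VERIFICATION = "verification"


def choose_approach(*, previously_validated: bool, compendial: bool,
                    change: str = "none") -> Approach:
    """Pick validation or verification for a method entering a laboratory.

    change: "none", "minor" (e.g., new analyst, equipment, or reagent batch),
    or "major" (assumed here to require revalidation).
    """
    if change not in {"none", "minor", "major"}:
        raise ValueError(f"unknown change category: {change!r}")
    if change == "major":
        return Approach.VALIDATION
    if compendial or previously_validated:
        return Approach.VERIFICATION
    return Approach.VALIDATION  # new, not yet validated method


# A compendial method adopted for the first time:
print(choose_approach(previously_validated=True, compendial=True).value)
# A newly developed in-house method:
print(choose_approach(previously_validated=False, compendial=False).value)
```

Classifying a change as minor or major is itself a risk-based judgment, so in practice the classification and its justification would be documented alongside the decision.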

Core Performance Characteristics for Verification

Essential Parameters and Acceptance Criteria

Verification involves a targeted assessment of critical method parameters to confirm performance in the new setting. The extent of testing is guided by the method's complexity and the degree of change from original conditions [7] [9]. The following table summarizes the typical parameters assessed during verification alongside common acceptance criteria.

Table 2: Key Parameters and Typical Acceptance Criteria for Method Verification

| Parameter | Experimental Goal | Typical Acceptance Criteria | Reference to Full Validation |

|---|---|---|---|

| Accuracy | Establish agreement between found value and accepted reference value [9]. | Percent recovery within predefined limits (e.g., 98-102%) [10]. | Comprehensive assessment across the range [7]. |

| Precision | Demonstrate variability under normal assay conditions (repeatability) [9]. | %RSD (Relative Standard Deviation) ≤ 2% for assay, may vary by method [10]. | Includes repeatability, intermediate precision, and reproducibility [7]. |

| Specificity | Ability to assess analyte unequivocally in the presence of potential interferents [9]. | No interference from blank; resolution of peaks in chromatography [11]. | Rigorously tested with all potential impurities and excipients [10]. |

| Linearity & Range | Confirm direct proportionality between analyte concentration and signal [9]. | Correlation coefficient (r) ≥ 0.990 [11]. | Established across the entire specified range [7]. |

| Detection Limit (LOD) / Quantitation Limit (LOQ) | Verify the lowest detectable/quantifiable analyte level [9]. | Signal-to-noise ratio ≥ 3 for LOD, ≥ 10 for LOQ [10]. | Determined through rigorous statistical methods [7]. |

Detailed Experimental Protocols for Verification

Protocol 1: Verification of Accuracy and Precision

Principle: Accuracy (closeness to the true value) and precision (agreement among a series of measurements) are foundational to method reliability [9]. This protocol uses replicate analysis of quality control samples at multiple concentrations.

Materials & Reagents:

- Certified Reference Material (CRM) or sample of known concentration [9]

- Appropriate quality control materials at low, mid, and high concentrations within the range

- All method-specific reagents and solvents

Procedure:

- Sample Preparation: Prepare a minimum of five samples at each of the three concentration levels (low, mid, high) using the CRM or spiked samples [12].

- Replicate Analysis: Analyze each sample in triplicate (n=3) in a single sequence for repeatability (within-day precision) [12].

- Intermediate Precision: Repeat the entire experiment on a different day, using a different analyst and/or instrument if applicable, to assess intermediate precision [10].

- Data Analysis:

- Accuracy: Calculate percent recovery for each level: (Mean Observed Concentration / Known Concentration) * 100.

- Precision: Calculate the %RSD for the replicates at each concentration level: (Standard Deviation / Mean) * 100.

Acceptance Criteria: Percent recovery and %RSD should meet pre-defined criteria justified by the method's intended use, such as recovery of 98-102% and %RSD ≤ 2.0% for an assay method [10].

Protocol 2: Verification of Specificity

Principle: Specificity demonstrates the method's ability to measure the analyte accurately in the presence of other components like impurities, degradants, or matrix elements [10].

Materials & Reagents:

- Pure analyte standard

- Placebo or blank matrix (without analyte)

- Potentially interfering substances (e.g., known impurities, excipients)

Procedure:

- Blank Analysis: Inject/analyze the placebo or blank matrix. The chromatogram or signal should show no interference at the retention time or location of the analyte [11].

- Analyte Standard Analysis: Inject/analyze the pure analyte standard to establish the primary response.

- Forced Degradation/Interference Test: Inject/analyze the analyte standard spiked with potential interferents. For identity tests like immunophenotyping, techniques like the Fluorescence Minus One (FMO) method can be used to confirm antibody specificity [11].

- Data Analysis: Assess chromatograms or signals for baseline resolution, peak purity, or lack of signal suppression/enhancement.

Acceptance Criteria: The blank shows no peak/interference at the analyte's retention time. The analyte peak is pure and resolved from all other peaks, with resolution (Rs) > 1.5 for chromatographic methods [10].

Protocol 3: Verification of Linearity and Range

Principle: This protocol confirms that the analytical procedure produces results directly proportional to analyte concentration within a specified range [9].

Materials & Reagents:

- Stock standard solution of the analyte

- Diluents for preparing standard curve

Procedure:

- Standard Preparation: Prepare a minimum of five standard solutions at concentrations spanning the claimed range (e.g., 50%, 75%, 100%, 125%, 150% of target) [12].

- Analysis: Analyze each standard solution. It is recommended to analyze each concentration in duplicate [12].

- Data Analysis: Plot the mean response against the concentration. Perform linear regression analysis to obtain the correlation coefficient (r), slope, and y-intercept.

Acceptance Criteria: The correlation coefficient (r) is typically expected to be ≥ 0.990, or ≥ 0.998 for higher-precision assays [10] [11]. The y-intercept should not be significantly different from zero.
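
The regression step can be sketched with ordinary least squares written out explicitly. The concentrations follow the five-level example above (50% to 150% of target); the detector responses are invented, and the function name is illustrative.

```python
from math import sqrt
from statistics import mean


def linear_fit(x, y):
    """Ordinary least-squares slope, intercept, and Pearson correlation r."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    r = sxy / sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical mean peak areas at each concentration level (% of target).
concentration = [50, 75, 100, 125, 150]
response = [1002, 1498, 2005, 2496, 3001]

slope, intercept, r = linear_fit(concentration, response)
print(f"slope = {slope:.3f}, intercept = {intercept:.2f}, r = {r:.5f}")

# Intercept expressed relative to the response at the 100% level.
print(f"intercept as % of 100% response: {100 * intercept / response[2]:.2f}%")
```

Whether the intercept differs significantly from zero is a statistical question (for example, a confidence interval on the intercept); the relative figure printed here is only a quick descriptive check.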

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful method verification relies on high-quality, well-characterized materials. The following table lists key reagents and their critical functions in the verification process.

Table 3: Essential Research Reagent Solutions for Method Verification

| Reagent/Material | Function & Importance in Verification |

|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable standard with known purity and concentration, essential for accurate determination of accuracy and linearity [9]. |

| Quality Control (QC) Materials | Stable, well-characterized samples at known concentrations used to demonstrate precision and ongoing method performance [12]. |

| Compendial Reagents (USP, Ph. Eur.) | Ensures that reagents meet the specifications outlined in the official method, which is critical when verifying compendial procedures [7]. |

| System Suitability Standards | A specific mixture used to confirm that the total analytical system (instrument, reagents, columns) is performing adequately at the start of the experiment [10]. |

Method verification is not merely a technical exercise but a regulatory requirement under various frameworks. The ICH Q2(R2) guideline provides the foundational framework for validation activities, which directly informs the scope of verification [10]. For laboratories operating under ISO/IEC 17025, verification is generally required to demonstrate that standardized methods function correctly under local conditions [8]. Furthermore, the USP General Chapter <1225> states that compendial methods do not require full validation but must undergo "suitability testing" upon implementation, which is synonymous with verification [9].

In conclusion, verification is the right and necessary choice when implementing an established method in a new context. By applying this targeted, risk-based approach—assessing critical parameters like accuracy, precision, and specificity through structured protocols—laboratories can ensure regulatory compliance, maintain data integrity, and optimize resource utilization. This enables efficient and reliable quality control, ultimately supporting the delivery of safe and effective pharmaceuticals to patients.

Analytical method validation provides documented evidence that a laboratory test reliably performs its intended purpose, forming the foundation for regulatory approvals across pharmaceutical development and manufacturing. For researchers and drug development professionals, understanding the nuanced relationships between ICH Q2(R1), FDA, and EMA guidelines is critical for designing compliant validation protocols. These frameworks require evidence that analytical methods consistently produce accurate, precise, and reproducible results in support of product quality assessments. For research comparing new and established methods, this guidance dictates the evidence needed to demonstrate method suitability, which in turn shapes development strategy and regulatory submission planning.

The International Council for Harmonisation (ICH) Q2(R1) guideline, "Validation of Analytical Procedures," serves as the primary global foundation, defining core validation parameters and their evaluation methodologies [13]. The U.S. Food and Drug Administration (FDA) and European Medicines Agency (EMA) largely adopt ICH principles while implementing them through region-specific guidance documents and enforcement expectations [14] [13]. For instance, the FDA may reference additional compendial standards like USP 〈1225〉 and emphasize system suitability and method robustness more explicitly in some contexts [14] [13]. A comparative analysis of these frameworks reveals strategic considerations for global drug development, particularly when validating innovative analytical technologies or applying established methods to novel products.

Comparative Analysis of Key Regulatory Guidelines

ICH Q2(R1): The International Benchmark

ICH Q2(R1), "Validation of Analytical Procedures," establishes the internationally harmonized framework for validating analytical methods used in pharmaceutical quality control [13] [15]. Its primary scope encompasses procedures for testing drug substances and finished products, including assays, purity tests, identity tests, and impurity tests. The guideline provides standardized definitions and methodologies for assessing a comprehensive set of validation characteristics, ensuring consistency in application and evaluation across regulatory jurisdictions [15].

The key validation parameters defined in ICH Q2(R1) and their regulatory significance are detailed in Table 1.

Table 1: Core Validation Parameters as Defined by ICH Q2(R1)

| Validation Parameter | Definition and Regulatory Significance | Typical Methodology |

|---|---|---|

| Accuracy | The closeness of agreement between the conventional true value and the value found. Demonstrates method reliability for measuring the target analyte. [7] [16] | Comparison with reference standard; Spiked recovery studies for impurities. |

| Precision | The closeness of agreement between a series of measurements. Includes repeatability (same conditions) and intermediate precision (different days, analysts, equipment). [7] [16] | Multiple measurements of homogeneous samples; Statistical analysis of variance. |

| Specificity | The ability to assess unequivocally the analyte in the presence of components that may be expected to be present. Critical for method selectivity. [16] [15] | Chromatographic resolution; Forced degradation studies; Placebo interference analysis. |

| Linearity | The ability of the method to obtain test results proportional to the analyte concentration. [16] | Analyte response across a defined concentration range. |

| Range | The interval between the upper and lower concentration of analyte for which suitable precision, accuracy, and linearity are demonstrated. [16] | Validated from linearity studies, must encompass specified test concentrations. |

| Detection Limit (LOD) | The lowest amount of analyte that can be detected, but not necessarily quantified. [16] | Signal-to-noise ratio; Visual evaluation; Standard deviation of response. |

| Quantitation Limit (LOQ) | The lowest amount of analyte that can be quantitatively determined with suitable precision and accuracy. [16] | Signal-to-noise ratio; Standard deviation of the response and slope. |

| Robustness | A measure of method capacity to remain unaffected by small, deliberate variations in method parameters. [13] [16] | Variation of factors like pH, temperature, flow rate, mobile phase composition. |
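
For the "standard deviation of the response and slope" approach listed in the table, ICH Q2(R1) gives LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve. A minimal sketch follows; the numeric inputs are hypothetical, and how σ is estimated (blank responses, residual standard deviation, or y-intercept standard deviation) is left to the protocol.

```python
def detection_limit(sigma: float, slope: float) -> float:
    """LOD = 3.3 * sigma / S (ICH Q2(R1), standard deviation and slope approach)."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return 3.3 * sigma / slope


def quantitation_limit(sigma: float, slope: float) -> float:
    """LOQ = 10 * sigma / S (ICH Q2(R1), standard deviation and slope approach)."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return 10.0 * sigma / slope


# Hypothetical inputs: sigma in response units, slope in response units per (ug/mL).
sigma = 0.6
slope = 20.0

print(f"LOD = {detection_limit(sigma, slope):.3f} ug/mL")
print(f"LOQ = {quantitation_limit(sigma, slope):.3f} ug/mL")
```

Limits estimated this way are usually confirmed experimentally by analyzing samples prepared at or near the calculated concentrations.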

FDA-Specific Implementation and Expectations

The FDA incorporates ICH Q2(R1) principles through its guidance, "Analytical Procedures and Methods Validation for Drugs and Biologics," while layering on specific U.S. regulatory expectations [13] [16]. The FDA's approach is characterized by a strong emphasis on method robustness and comprehensive lifecycle management [13]. The agency explicitly requires system suitability testing as an integral part of method validation and routine use, ensuring the analytical system is functioning correctly at the time of testing [14] [13]. Furthermore, FDA submissions require thorough documentation of all validation activities, including raw data, protocols, and any deviations encountered, to support regulatory reviews and inspections [7].

Beyond traditional pharmaceuticals, the FDA issues product-specific guidance, such as the recent "Validation and Verification of Analytical Testing Methods Used for Tobacco Products," which adapts core validation principles to unique product categories [17] [18]. This demonstrates the FDA's application of fundamental validation tenets across diverse regulatory portfolios. For bioanalytical methods, the FDA has adopted the ICH M10 guideline, which provides unified standards for validating methods used to measure drug and metabolite concentrations in biological matrices, replacing previous agency-specific recommendations [19] [20] [21]. This move enhances global harmonization for nonclinical and clinical study support.

EMA Adaptation and Regional Nuances

The European Medicines Agency (EMA) aligns closely with ICH Q2(R1) but differs from the FDA in its implementation style and emphasis on certain elements [14]. While the EMA acknowledges the importance of robustness, its guidance may not always mandate its formal inclusion in validation reports with the same strictness as the FDA, sometimes accepting evaluation during method development [14]. The EMA typically does not explicitly incorporate compendial standards like the Ph. Eur. into its method validation guideline in the same way the FDA references USP 〈1225〉, focusing instead on the core ICH principles [14] [13].

For bioanalytical method validation, the EMA has transitioned to the ICH M10 guideline, superseding its previous internal document (EMEA/CHMP/EWP/192217/2009 Rev. 1 Corr. 2) [19] [20]. This shift underscores a significant step toward global regulatory convergence, reducing the need for region-specific validation protocols for studies submitted in the EU. The EMA's overall framework is considered scientifically rigorous but may offer slightly more flexibility in the documentation of certain parameters like robustness, provided the scientific rationale is sound [14].

Navigating the regulatory landscape requires a clear understanding of the practical differences between major agencies. Table 2 provides a side-by-side comparison of key aspects.

Table 2: Key Comparative Aspects of FDA and EMA Method Validation Guidance

| Aspect | FDA Approach | EMA Approach |
|---|---|---|
| Primary Guideline | ICH Q2(R1) + Referenced Standards (e.g., USP 〈1225〉) [14] [13] | ICH Q2(R1) [14] |
| System Suitability | Clearly mandated and required as part of method validation and routine use [14] [13] | Expected but may be less explicitly emphasized in validation guidance [14] |
| Robustness | Should be formally studied and described in the validation report [14] [13] | Evaluated, but not always strictly required for the validation report; may be part of development [14] |
| Bioanalytical Methods | ICH M10 (Adopted) [21] | ICH M10 (Adopted) [19] [20] |
| Documentation Focus | Extensive documentation of all validation data and lifecycle management [7] [13] | Comprehensive documentation with a focus on scientific justification [14] |

Experimental Protocols for Method Validation

Protocol for Validating a New HPLC Method for Drug Assay

This protocol outlines the experimental procedure for validating a new High-Performance Liquid Chromatography (HPLC) method for the assay of a drug substance, according to ICH Q2(R1) and associated FDA/EMA expectations.

1.0 Objective: To establish and document that the HPLC assay method is suitable for its intended purpose of determining the potency of [Drug Substance Name] in accordance with regulatory standards.

2.0 Materials and Reagents:

- Drug Substance Standard: Certified Reference Material of [Drug Substance Name] with known purity.

- Test Samples: Representative batches of [Drug Substance Name].

- Mobile Phase: Precisely prepared mixture of [e.g., Buffer pH X.X] and [Organic Solvent, e.g., Acetonitrile] in a ratio of [X:Y].

- Diluent: A solvent system [e.g., Water:Acetonitrile Y:Z] capable of dissolving the analyte.

- HPLC System: Equipped with [e.g., UV/VIS or DAD] detector and [specify column type, e.g., C18, 150 x 4.6 mm, 5 µm].

3.0 Experimental Procedure and Acceptance Criteria:

Table 3: Validation Experiments for a New HPLC Assay Method

| Validation Parameter | Experimental Protocol | Acceptance Criteria |
|---|---|---|
| System Suitability | Inject six replicates of standard solution. | RSD of peak area ≤ 2.0%; Theoretical plates > [e.g., 2000]; Tailing factor ≤ [e.g., 2.0] [13] [16] |
| Specificity | Inject blank (diluent), placebo, standard, and sample. Stress sample (e.g., acid, base, oxidative, thermal, photolytic). | Analyte peak should be pure and resolved from any blank or degradant peaks. No interference at the retention time of the analyte. [16] [15] |
| Linearity & Range | Prepare and inject standard solutions at a minimum of 5 concentrations from 50% to 150% of target assay concentration. | Correlation coefficient (r) > 0.998. [16] |
| Accuracy (Recovery) | Spike placebo with analyte at 80%, 100%, and 120% levels (n=3 per level). Calculate % recovery. | Mean recovery 98.0–102.0%; RSD ≤ 2.0%. [7] [16] |
| Precision | a) Repeatability: Analyze six independent samples at 100% concentration. b) Intermediate Precision: Repeat the repeatability test on a different day, with a different analyst and a different instrument. | RSD for assay ≤ 2.0% (for both repeatability and intermediate precision). [7] [16] |
| Robustness | Deliberately vary method parameters (e.g., flow rate ±0.1 mL/min, temperature ±2°C, mobile phase pH ±0.1). Evaluate system suitability and assay results. | Method meets all system suitability criteria under all varied conditions. [13] [16] |

4.0 Documentation: All raw data, chromatograms, calculations, and a final validation report summarizing conclusions against all pre-defined acceptance criteria must be maintained.
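The acceptance criteria in Table 3 reduce to a few summary statistics. The following is a minimal sketch in Python of how they might be checked. All data values are hypothetical and chosen only for illustration, and the function names are not taken from any guideline or library.

```python
import math
import statistics


def percent_rsd(values):
    """Relative standard deviation (%), based on the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def percent_recovery(measured, spiked):
    """Recovery (%) of a known spiked amount."""
    return 100.0 * measured / spiked


def pearson_r(x, y):
    """Pearson correlation coefficient between concentration and response."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data, for illustration only.
peak_areas = [10021, 10050, 9987, 10034, 10012, 9995]  # six replicate injections
recoveries = [percent_recovery(m, 100.0) for m in (99.2, 100.4, 100.9)]
levels = [50, 75, 100, 125, 150]  # % of target assay concentration
responses = [5010, 7480, 10020, 12490, 15030]

# Criteria as listed in Table 3.
system_suitability_ok = percent_rsd(peak_areas) <= 2.0
accuracy_ok = 98.0 <= statistics.mean(recoveries) <= 102.0
linearity_ok = pearson_r(levels, responses) > 0.998
```

In practice these calculations are usually performed in validated chromatography data systems or statistical packages. The sketch only makes the arithmetic behind each criterion explicit.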

Protocol for Verification of an Established Compendial Method

This protocol is applied when a compendial method (e.g., from USP, Ph. Eur.) is adopted for use in a new laboratory setting, focusing on confirming key performance attributes without full re-validation [7].

1.0 Objective: To verify that the compendial method for [Test, e.g., Assay of Drug Product Y] performs as expected in the receiving laboratory's environment.

2.0 Materials and Reagents: As specified in the compendial monograph. All compendial reference standards and materials must be sourced.

3.0 Experimental Procedure and Acceptance Criteria:

- System Suitability: Perform as per compendial instructions. It must meet all monograph criteria.

- Specificity: Demonstrate absence of interference from placebo or matrix components.

- Accuracy: Perform a spike recovery experiment at 100% level (n=3) or analyze a certified reference material. Recovery should be within 98.0-102.0%.

- Precision (Repeatability): Analyze six independent preparations of a single homogeneous sample. The RSD of the results must meet compendial expectations or a pre-defined criterion (e.g., ≤ 2.0%).

4.0 Documentation: A verification report is generated, documenting the successful completion of the limited tests and confirming the method's suitability for routine use.

Decision Framework and Workflows

The choice between validation, verification, and qualification is critical and depends on the method's origin and stage of application. The distinctions are as follows [7]:

- Validation: A formal, comprehensive process demonstrating a method's suitability for its intended use. It is required for new methods used in routine quality control and regulatory decision-making [7].

- Verification: A confirmation that a previously validated method (e.g., a compendial method) works as expected in a new laboratory environment with its specific analysts, equipment, and reagents [7].

- Qualification: An early-stage evaluation, often in development, to show a method is likely reliable before committing to full validation. It guides optimization [7].

Diagram 1: Decision workflow for selecting the appropriate analytical methodology approach, based on method origin and intended use [7].
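The three definitions above can be condensed into a simple decision rule. The sketch below is a deliberate simplification: the function and parameter names are hypothetical, and a real decision would also weigh product risk, regulatory commitments, and the scope of any method changes.

```python
def select_approach(previously_validated: bool, early_development: bool) -> str:
    """Pick validation, verification, or qualification from method origin and stage.

    previously_validated: the method is compendial or was validated elsewhere.
    early_development: the method supports early-stage work, not routine QC.
    """
    if previously_validated:
        # An established method only needs confirmation in the new laboratory.
        return "verification"
    if early_development:
        # A new method that is still being optimized is qualified first.
        return "qualification"
    # A new method intended for routine QC or regulatory decisions.
    return "validation"


# Example: a compendial assay adopted by a receiving laboratory.
approach = select_approach(previously_validated=True, early_development=False)
```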

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful method validation relies on high-quality, well-characterized materials. The following table details essential reagent solutions and their critical functions in the process.

Table 4: Key Research Reagent Solutions for Analytical Method Validation

| Reagent / Material | Function and Role in Validation |
|---|---|
| Certified Reference Standard | Serves as the benchmark for accuracy, linearity, and precision assessments. Its certified purity and identity are fundamental for all quantitative measurements. |
| High-Purity Mobile Phase Solvents & Buffers | Constitute the elution environment in chromatographic methods. Their purity and precise preparation are vital for baseline stability, retention time reproducibility, and specificity. |
| System Suitability Test Mix | A specific mixture of analytes and/or related compounds used to verify chromatographic system performance (e.g., efficiency, resolution, tailing) before and during validation experiments. |
| Placebo/Matrix Blanks | Used in specificity experiments to demonstrate the absence of interfering signals from non-active components (excipients, biological matrix) at the retention time of the analyte. |
| Stressed/Sample Solutions (Forced Degradation) | Samples subjected to stress conditions (acid, base, oxidation, heat, light) are used in validation to prove the method's stability-indicating properties and specificity. |
| Calibration/Linearity Standards | A series of solutions at known concentrations across the claimed range, used to establish the relationship between analyte response and concentration (linearity and range). |

Navigating the regulatory landscapes of ICH, FDA, and EMA requires a strategic and nuanced understanding of both harmonized principles and regional emphases. ICH Q2(R1) provides the foundational framework, while the FDA and EMA enforce these principles with distinct emphases on elements such as robustness documentation and compendial alignment. For researchers engaged in the validation of new methods versus the verification of established ones, a risk-based approach is paramount. The provided protocols and decision framework offer a practical roadmap for developing compliant, scientifically sound validation data packages. As regulatory science evolves, staying abreast of updates—such as the transition to ICH M10 for bioanalysis and the emergence of ICH Q14 for analytical procedure development—will be essential for maintaining regulatory compliance and ensuring the quality, safety, and efficacy of pharmaceutical products across global markets.

In the dynamic environment of pharmaceutical development and quality control, analytical methods routinely undergo changes driven by technological advancements, process improvements, or evolving regulatory requirements. The implementation of these changes presents a significant challenge: how to ensure continued method reliability and regulatory compliance while avoiding unnecessary re-validation efforts. A risk-based approach provides a systematic framework for addressing this challenge, enabling scientists to prioritize resources toward the most critical aspects of method changes [22].

The International Council for Harmonisation (ICH) defines risk as "the combination of the probability of occurrence of harm and the severity of that harm" [23]. When applied to analytical method changes, this concept shifts the focus from blanket validation requirements to a targeted strategy that evaluates the potential impact on method performance and product quality. This paradigm aligns with regulatory expectations from major agencies including the FDA, EMA, and ICH, which increasingly emphasize risk-based quality management systems [22] [24].

This application note details a structured protocol for implementing risk-based assessment for analytical method changes, providing researchers and drug development professionals with practical tools to enhance decision-making, maintain regulatory compliance, and optimize resource allocation throughout the method lifecycle.

Theoretical Foundation: Risk Assessment Principles

Qualitative Risk Analysis in Method Changes

Qualitative risk analysis serves as the cornerstone of evaluating analytical method changes, particularly when historical data is limited or when assessing novel modifications. This systematic approach involves evaluating threats based on expert judgment, probability, and potential impact using descriptive scales rather than numerical values [25]. For method changes, qualitative analysis answers three fundamental questions:

- What risks are introduced by this method change?

- How likely is it that these risks will occur (probability)?

- How damaging would they be to method performance and product quality if they occurred (impact)? [25]

The output of this analysis is typically a risk ranking that enables prioritization of mitigation efforts toward changes with the greatest potential impact on method performance and product quality.

Regulatory Framework and Guidelines

Major regulatory authorities globally recognize and encourage risk-based approaches to analytical procedures. The ICH Q9 guideline on quality risk management establishes the fundamental framework, while region-specific guidance from EMA, WHO, and ASEAN provides additional implementation details [24] [23]. A comparative analysis of these guidelines reveals that while specific requirements may vary, all emphasize product quality, safety, and efficacy as the ultimate goals of risk management activities [24].

The FDA's initiative "Pharmaceutical cGMPs for the 21st Century - A Risk-Based Approach" further underscores the importance of risk management strategies to ensure quality in pharmaceutical processes, including analytical methods [23]. For method changes specifically, a well-documented risk assessment provides evidence of due diligence and creates clear protocols for responding to potential method failures [22].

Experimental Protocol: Implementing Risk-Based Assessment for Method Changes

Risk Identification and Categorization

Objective: Systematically identify and categorize potential risks associated with a proposed analytical method change.

Materials and Equipment:

- Cross-functional team (QA, analytical, manufacturing, regulatory)

- Historical method performance data

- Change control documentation

- Risk assessment software (e.g., Lumivero's Predict!) or structured templates

Procedure:

- Constitute Assessment Team: Assemble a cross-functional team representing quality assurance, analytical development, manufacturing, and regulatory affairs to ensure comprehensive perspective [22].

- Define Change Scope: Clearly document the specific parameters being modified, including current and proposed conditions, and the scientific rationale for the change.

- Conduct Brainstorming Session: Using facilitated discussion or structured techniques like the Delphi method, identify potential failure modes associated with the change [25].

- Categorize Risks: Group identified risks based on the area of impact:

- Accuracy/Precision: Changes affecting quantitative performance

- Specificity/Selectivity: Modifications impacting interference detection

- Robustness/Ruggedness: Changes to method conditions affecting reliability

- System Suitability: Alterations to acceptance criteria

- Regulatory Compliance: Impacts on approved method status [8] [23]

Deliverable: Comprehensive risk register documenting all potential failure modes associated with the method change.

Risk Analysis and Prioritization

Objective: Evaluate and prioritize identified risks based on probability and impact.

Materials and Equipment:

- Risk assessment matrix (5x5 recommended)

- Historical method performance data

- Validation data from original method

- Statistical analysis software

Procedure:

- Define Probability Scales: Establish qualitative definitions for probability of occurrence:

- Very High: >80% likelihood of occurrence

- High: 61-80% likelihood

- Medium: 41-60% likelihood

- Low: 21-40% likelihood

- Very Low: ≤20% likelihood [25]

- Define Impact Scales: Establish qualitative definitions for impact on method performance:

- Critical: Method fails to meet its intended purpose, potentially affecting product quality or patient safety

- Major: Significant degradation in method performance requiring major mitigation

- Moderate: Noticeable effect on performance requiring additional controls

- Minor: Minimal effect easily addressed through normal processes

- Negligible: No detectable impact on method performance [25]

- Risk Ranking: Plot each identified risk on a 5x5 risk matrix combining probability and impact.

- Prioritization: Categorize risks as:

- High Priority: Requiring immediate mitigation and extensive verification

- Medium Priority: Requiring controlled mitigation and targeted verification

- Low Priority: Managed through routine controls with limited verification [22]

Table 1: Risk Prioritization Matrix for Analytical Method Changes

| Probability → Impact ↓ | Very Low (1) | Low (2) | Medium (3) | High (4) | Very High (5) |
|---|---|---|---|---|---|
| Critical (5) | Medium (5) | Medium (10) | High (15) | High (20) | High (25) |
| Major (4) | Low (4) | Medium (8) | Medium (12) | High (16) | High (20) |
| Moderate (3) | Low (3) | Low (6) | Medium (9) | Medium (12) | High (15) |
| Minor (2) | Low (2) | Low (4) | Low (6) | Medium (8) | Medium (10) |
| Negligible (1) | Low (1) | Low (2) | Low (3) | Low (4) | Medium (5) |

Deliverable: Prioritized risk register with color-coded risk levels (high=red, medium=yellow, low=green).
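The probability scale and the prioritization matrix can be expressed as a small lookup. The sketch below transcribes the cells of Table 1 directly. The function names are hypothetical, and treating the percentage bands as continuous (for example, 60.5% falls in "High") is an assumption, since the scale lists whole-number ranges.

```python
PROBABILITY_LEVELS = ["Very Low", "Low", "Medium", "High", "Very High"]
IMPACT_LEVELS = ["Negligible", "Minor", "Moderate", "Major", "Critical"]

# Priority cells transcribed from Table 1.
# Rows: impact 1..5 (Negligible..Critical); columns: probability 1..5.
PRIORITY = [
    ["Low", "Low", "Low", "Low", "Medium"],        # Negligible
    ["Low", "Low", "Low", "Medium", "Medium"],     # Minor
    ["Low", "Low", "Medium", "Medium", "High"],    # Moderate
    ["Low", "Medium", "Medium", "High", "High"],   # Major
    ["Medium", "Medium", "High", "High", "High"],  # Critical
]


def probability_level(percent_likelihood):
    """Map a likelihood in percent to the five-level probability scale."""
    if percent_likelihood > 80:
        return "Very High"
    if percent_likelihood > 60:
        return "High"
    if percent_likelihood > 40:
        return "Medium"
    if percent_likelihood > 20:
        return "Low"
    return "Very Low"


def rank_risk(probability, impact):
    """Return (score, priority) for one risk, using the Table 1 cells."""
    p = PROBABILITY_LEVELS.index(probability)
    i = IMPACT_LEVELS.index(impact)
    return (p + 1) * (i + 1), PRIORITY[i][p]


# Hypothetical entry: a change judged 65% likely to cause a major effect.
score, priority = rank_risk(probability_level(65), "Major")
```

A lookup table is used instead of score thresholds because the matrix is not monotonic in the score: a score of 5 (for example, Critical impact with Very Low probability) is rated Medium, while a score of 6 is rated Low.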

Experimental Verification Based on Risk Priority

Objective: Design and execute a targeted verification protocol based on risk priority.

Materials and Equipment:

- Qualified instrumentation

- Reference standards

- Test samples (placebo, API, finished product)

- Statistical analysis software

Procedure:

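- Define Verification Scope: Match the extent of testing to the priority assigned to each risk, using the verification levels in Table 2.

- Execute Tiered Verification Protocol: Run the tests recommended in Table 2 for the assigned verification level.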

Table 2: Risk-Based Verification Strategy for Method Changes

| Risk Priority | Verification Level | Recommended Tests | Acceptance Criteria |
|---|---|---|---|
| High | Comprehensive | Accuracy, Precision, Specificity, LOD/LOQ, Linearity, Robustness, System Suitability | Comparable to original validation criteria (±15% for chromatography) |
| Medium | Targeted | Accuracy, Precision, Specificity for affected components only | Method performance verified against established criteria for changed parameters only |
| Low | Limited | System Suitability only, or documentary assessment | Meet existing system suitability criteria |

- Documentation and Reporting:

- Document all verification results against pre-defined acceptance criteria

- Justify any deviations from the protocol

- Summarize conclusions regarding method performance post-change

- Update method documentation and lifecycle records

Deliverable: Comprehensive verification report supporting the method change implementation.
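The tiered strategy in Table 2 amounts to a mapping from risk priority to a verification level and test list. The sketch below shows one way to derive a test plan from a prioritized risk register. The register entries and the function name are hypothetical.

```python
# Verification levels and recommended tests transcribed from Table 2.
# For "Medium", the tests apply to the affected components only.
VERIFICATION_PLAN = {
    "High": ("Comprehensive", ["Accuracy", "Precision", "Specificity", "LOD/LOQ",
                               "Linearity", "Robustness", "System Suitability"]),
    "Medium": ("Targeted", ["Accuracy", "Precision", "Specificity"]),
    "Low": ("Limited", ["System Suitability"]),
}


def plan_verification(risk_register):
    """Map each (risk, priority) entry to its verification level and tests."""
    return {risk: VERIFICATION_PLAN[priority] for risk, priority in risk_register}


# Hypothetical prioritized risk register for a column change.
register = [("Peak co-elution", "High"), ("Retention time shift", "Low")]
plan = plan_verification(register)
```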

The Scientist's Toolkit: Essential Materials for Risk Assessment

Table 3: Research Reagent Solutions and Essential Materials for Risk-Based Method Changes

| Item | Function/Application | Examples/Specifications |
|---|---|---|
| Risk Assessment Software | Facilitates systematic risk identification, analysis, and documentation | Lumivero's Predict! Risk Controller, FMEA modules, bow-tie analysis tools [25] |
| Statistical Analysis Package | Enables data trend analysis, capability assessment, and experimental design for verification studies | JMP, Minitab, R with appropriate packages, SAS |
| Qualified Instrumentation | Ensures reliable data generation during verification studies | HPLC/UPLC with validated software, qualified detectors, calibrated instruments |
| Reference Standards | Provides benchmark for method performance assessment | USP/EP/BP certified reference standards, characterized impurities |
| Document Management System | Maintains audit trail for risk assessment decisions and change control | Electronic document management systems (EDMS) with version control |
| Design of Experiments (DoE) Software | Supports efficient investigation of multiple parameters and their interactions during verification | MODDE, Design-Expert, Stat-Ease |

Workflow Visualization: Risk-Based Approach to Method Changes

The following diagram illustrates the complete workflow for implementing a risk-based approach to analytical method changes:

Risk Assessment Workflow for Method Changes

Case Study: Successful Implementation and Outcomes

Background: A pharmaceutical company needed to transfer an HPLC method for drug product assay from an older instrument to a new UPLC platform, representing a significant methodological change with potential impact on separation efficiency and quantitative results.

Risk Assessment Application:

- Risk Identification: Cross-functional team identified potential failure modes including peak co-elution, sensitivity variation, and retention time shifts.

- Risk Prioritization: Using the 5x5 matrix, specificity changes due to altered separation efficiency were rated "High" priority, while minor retention time shifts were rated "Medium."

- Verification Strategy: Implementation followed the tiered approach with comprehensive testing for specificity (forced degradation studies, resolution measurements) and targeted testing for precision and accuracy.

- Outcome: The risk-based approach reduced verification efforts by approximately 40% compared to full revalidation, while maintaining focus on critical quality attributes. The change was successfully implemented with regulatory notification only, avoiding the need for prior approval [22] [23].

Organizations implementing such risk-based validation typically reduce unnecessary testing by 30-45% while maintaining or improving quality outcomes [22]. This efficiency gain translates directly to cost savings and faster implementation of improved methodologies.

Regulatory Considerations and Compliance Strategy

When implementing method changes using a risk-based approach, regulatory strategy must align with regional expectations. The ICH Q12 guideline provides a structured framework for post-approval changes, classifying them based on potential impact on product quality [23]. Higher-risk changes need prior approval, while moderate- or low-risk changes may only require notification.

A key advantage of systematic risk assessment is the potential for regulatory flexibility. When methods are developed using Analytical Quality by Design (AQbD) principles with established Method Operable Design Regions (MODR), changes within these proven ranges are considered adjustments rather than fundamental changes [23]. This approach facilitates continual improvement while maintaining compliance, as changes within the MODR typically require only notification rather than full regulatory submission.

Proper documentation of risk assessment provides evidence of due diligence during regulatory inspections and creates a defensible rationale for the verification strategy employed [22]. This documentation should clearly trace the decision-making process from risk identification through verification scope determination, demonstrating a science-based approach to method lifecycle management.

The application of a risk-based approach to analytical method changes represents a paradigm shift from standardized re-validation protocols to a more scientific, targeted strategy. This framework enables pharmaceutical scientists to focus resources on critical changes while maintaining regulatory compliance and ensuring uninterrupted method performance. By implementing the protocols and workflows detailed in this application note, researchers and drug development professionals can optimize their method change processes, reduce unnecessary verification efforts, and build a more robust analytical lifecycle management system.

The integration of risk assessment early in the change evaluation process provides the critical first step toward efficient, scientifically-defensible method modifications that align with both business objectives and regulatory expectations across global markets.

Integrating Quality by Design (QbD) Principles into Method Development

Quality by Design (QbD) is a systematic, proactive approach to development that begins with predefined objectives and emphasizes product and process understanding and control based on sound science and quality risk management [26]. In the context of analytical method development, QbD principles ensure that methods are designed to be robust, reproducible, and fit for their intended purpose throughout their lifecycle. The paradigm has shifted from a traditional, empirical "one-factor-at-a-time" approach to a modern, systematic framework that builds quality into the method from the outset [27] [28].

The International Council for Harmonisation (ICH) guidelines Q8-Q11 provide the foundation for QbD in pharmaceutical development, with the recent ICH Q14 (Analytical Procedure Development) and updated ICH Q2(R2) (Validation of Analytical Procedures) offering specific guidance for implementing QbD principles in analytical methods [29] [4]. These guidelines, effective from June 2024, harmonize scientific approaches and facilitate better communication between industry and regulators [29]. The enhanced QbD approach to analytical development contrasts sharply with traditional methods, as it incorporates prior knowledge, risk assessment, and systematic studies to establish a method's design space and control strategy [30] [10].

Core Principles and Workflow of AQbD

Foundational Concepts

Analytical Quality by Design (AQbD) extends pharmaceutical QbD principles to the development of analytical methods. Several key concepts form the foundation of the AQbD approach:

Analytical Target Profile (ATP): A prospective summary of the analytical procedure's requirements that defines the intended purpose and desired performance criteria [30] [4]. The ATP describes what the method is intended to measure (e.g., identity, assay, impurity content) and establishes performance standards for accuracy, precision, specificity, and other validation parameters.

Critical Quality Attributes (CQAs): For analytical methods, CQAs are the performance characteristics that must be controlled to ensure the method meets its ATP [27]. These typically include parameters such as resolution, tailing factor, retention time, and peak capacity.

Method Operable Design Region (MODR): The multidimensional combination of critical method parameters (CMPs) within which the method performs reliably and meets ATP criteria [30]. Operating within the MODR provides flexibility, typically without requiring a full regulatory submission.

Control Strategy: A planned set of controls derived from current product and process understanding that ensures method performance and reproducibility [26] [30]. This includes system suitability tests, reference standards, and defined operational ranges.

AQbD Workflow Implementation

The implementation of AQbD follows a systematic workflow that transforms method development from an empirical exercise to a science-based, risk-managed process. The workflow progresses through defined stages from conceptualization to lifecycle management, creating a comprehensive framework for robust analytical methods.

Diagram 1: AQbD Workflow illustrates the systematic approach to Analytical Quality by Design, beginning with defining requirements and progressing through risk assessment, experimental design, and lifecycle management.

Experimental Protocols for AQbD Implementation

Protocol 1: ATP Definition and Risk Assessment

Objective: To define the analytical method requirements and identify potential critical method parameters through systematic risk assessment.

Materials and Equipment:

- Regulatory guidance documents (ICH Q2(R2), ICH Q14)

- Risk assessment tools (e.g., FMEA matrix, Ishikawa diagrams)

- Prior knowledge databases and literature references

Procedure:

ATP Development

- Define the method's purpose (e.g., release testing, stability testing)

- Establish target performance criteria based on intended use

- Document required validation parameters with acceptance criteria

- Specify the measurement uncertainty requirements

Initial Risk Assessment

- Form a multidisciplinary team including analytical chemists, quality specialists, and project stakeholders

- Identify all potential method parameters using brainstorming sessions and prior knowledge

- Construct Ishikawa diagrams to visualize relationships between parameters and method CQAs

- Conduct preliminary risk ranking based on severity, occurrence, and detectability

Risk Filtering and Parameter Prioritization

- Use Failure Mode Effects Analysis (FMEA) to score potential risks

- Classify parameters as critical, non-critical, or uncertain based on risk scores

- Document rationale for parameter classification

- Establish the experimental plan for DoE studies focusing on high-risk parameters

Deliverables: ATP document, risk assessment report, parameter classification table, experimental plan for DoE.
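The FMEA scoring step is commonly implemented as a risk priority number (RPN), the product of severity, occurrence, and detectability scores. The sketch below assumes 1 to 10 scales and uses hypothetical cut-offs (100 and 40) to sort parameters into critical, uncertain, and non-critical. Both the scales and the cut-offs would need to be defined and justified by the assessment team.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    parameter: str
    severity: int       # 1 (negligible effect) .. 10 (method fails its purpose)
    occurrence: int     # 1 (rare) .. 10 (almost certain)
    detectability: int  # 1 (always detected) .. 10 (cannot be detected)

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detectability."""
        return self.severity * self.occurrence * self.detectability


def classify(mode, critical_at=100, uncertain_at=40):
    """Classify a parameter by RPN; the thresholds are illustrative assumptions."""
    if mode.rpn >= critical_at:
        return "critical"
    if mode.rpn >= uncertain_at:
        return "uncertain"
    return "non-critical"


# Hypothetical scores for three HPLC method parameters.
modes = [
    FailureMode("Mobile phase pH", severity=8, occurrence=5, detectability=4),
    FailureMode("Column temperature", severity=5, occurrence=3, detectability=3),
    FailureMode("Injection volume", severity=3, occurrence=2, detectability=2),
]
classification = {m.parameter: (m.rpn, classify(m)) for m in modes}
```

Parameters classified as critical or uncertain would then be carried forward into the DoE studies.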

Protocol 2: Design of Experiments (DoE) for Method Optimization

Objective: To systematically evaluate the effects of critical method parameters and their interactions on method CQAs, and to define the MODR.

Materials and Equipment:

- HPLC system with compatible columns and detectors

- Reference standards and representative test samples

- DoE software (e.g., JMP, Design-Expert, Minitab)

- Chemical reagents and mobile phase components

Procedure:

Experimental Design

- Select critical method parameters identified from risk assessment

- Choose appropriate experimental design (e.g., Box-Behnken, Central Composite Design)

- Define factor ranges based on preliminary experiments and scientific judgment

- Randomize run order to minimize bias

Execution and Data Collection

- Prepare mobile phases, standards, and samples according to experimental design

- Perform chromatographic runs in randomized order

- Record response variables (CQAs) such as resolution, tailing factor, retention time, and peak area

- Monitor system suitability parameters throughout the study

Data Analysis and Model Building

- Perform regression analysis to develop mathematical models

- Evaluate model significance and lack-of-fit

- Create response surface plots to visualize parameter effects

- Identify significant factors and interaction effects

MODR Establishment

- Use contour plots and overlay plots to define regions meeting all ATP criteria

- Verify MODR boundaries with confirmatory experiments

- Document the MODR with appropriate control limits

Deliverables: Experimental data set, statistical models, response surface plots, MODR definition, confirmation study report.
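The experimental design step can be illustrated with a small generator for a three-factor Box-Behnken design in coded units (-1, 0, +1), followed by decoding to real settings and randomization of the run order. This is a sketch for the three-factor case only. The function names and factor settings are hypothetical, and dedicated DoE software would normally be used.

```python
import itertools
import random


def box_behnken(n_factors=3, n_center=3):
    """Coded Box-Behnken runs: each factor pair at +/-1, other factors at 0."""
    runs = []
    for i, j in itertools.combinations(range(n_factors), 2):
        for a, b in itertools.product((-1, 1), repeat=2):
            run = [0] * n_factors
            run[i], run[j] = a, b
            runs.append(tuple(run))
    # Replicated center points provide an estimate of pure error.
    runs.extend([(0,) * n_factors] * n_center)
    return runs


def decode(run, centers, half_ranges):
    """Convert one coded run to real factor settings."""
    return tuple(c + x * h for x, c, h in zip(run, centers, half_ranges))


def randomized(runs, seed=None):
    """Return the runs in random order to minimize time-related bias."""
    order = list(runs)
    random.Random(seed).shuffle(order)
    return order


# Hypothetical factors: flow rate (mL/min), pH, organic phase (%).
centers = (0.8, 3.0, 90.0)
half_ranges = (0.1, 0.5, 5.0)
design = [decode(r, centers, half_ranges) for r in randomized(box_behnken(), seed=1)]
```

For three factors this yields 12 edge runs plus 3 center points, 15 runs in total.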

Protocol 3: Method Validation Following QbD Principles

Objective: To demonstrate that the analytical procedure meets the ATP criteria following ICH Q2(R2) guidelines, incorporating knowledge from AQbD development studies.

Materials and Equipment:

- Validated instrumentation and qualified reference standards

- Representative drug substance and product samples

- Documentation system for recording validation data

Procedure:

Validation Planning

- Prepare validation protocol referencing ATP requirements

- Define acceptance criteria based on ATP and MODR knowledge

- Incorporate robustness validation within MODR boundaries

Enhanced Validation Execution

- Perform accuracy studies across the analytical range using recovery experiments

- Conduct precision studies including repeatability and intermediate precision

- Establish specificity through forced degradation studies and resolution from impurities

- Determine linearity and range using appropriate statistical methods

- Quantify LOD and LOQ using signal-to-noise or statistical approaches

- Verify robustness by challenging method parameters within MODR

Validation Reporting

- Compare results against predefined acceptance criteria

- Document any deviations and investigations

- Summarize method capabilities and limitations

- Establish system suitability tests based on validation outcomes

Deliverables: Validation protocol, complete validation report, system suitability specification, finalized analytical procedure.
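For the statistical approach to LOD and LOQ, ICH Q2 describes estimates based on the standard deviation of the response (σ) and the slope of the calibration curve (S): LOD = 3.3σ/S and LOQ = 10σ/S. The sketch below takes σ as the residual standard deviation of a least-squares line, which is one of the options the guideline allows. The calibration data are hypothetical.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares line; returns (slope, intercept, residual SD)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    intercept = my - slope * mx
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    # n - 2 degrees of freedom: two parameters were estimated.
    return slope, intercept, math.sqrt(ss_res / (n - 2))


def lod_loq(slope, sigma):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical low-level calibration data (concentration, response).
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
resp = [10.2, 19.9, 30.1, 39.8, 50.0]

slope, intercept, sigma = fit_line(conc, resp)
lod, loq = lod_loq(slope, sigma)
```

Estimates obtained this way are normally confirmed by analyzing samples prepared at or near the calculated limits.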

Case Study: QbD-Based LC-MS/MS Method for Fluoxetine Quantification

Application to Bioanalytical Method Development

A practical implementation of AQbD principles was demonstrated in the development and validation of an LC-MS/MS method for quantification of fluoxetine in human plasma [31]. This case study illustrates the systematic approach to managing variability in complex bioanalytical methods.

ATP Definition: The ATP required a selective and sensitive method for quantifying fluoxetine in human plasma over the concentration range of 2–30 ng/mL, with precision ≤15% RSD and accuracy within ±15% of nominal values, for application in pharmacokinetic and bioequivalence studies.

Risk Assessment and DoE Implementation: Critical method parameters were identified as mobile phase flow rate (X1), pH (X2), and mobile phase composition (X3). A Box-Behnken design was employed to systematically optimize these parameters, with retention time (Y1) and peak area (Y2) as the critical responses [31].

Table 1: Experimental Design and Results for Fluoxetine Method Optimization

| Run Order | Flow Rate (mL/min) | pH | Organic Phase (%) | Retention Time (min) | Peak Area |
|---|---|---|---|---|---|
| 1 | 0.7 | 2.5 | 90 | 4.2 | 12540 |
| 2 | 0.9 | 2.5 | 90 | 3.1 | 11850 |
| 3 | 0.7 | 3.5 | 90 | 4.5 | 13210 |
| 4 | 0.9 | 3.5 | 90 | 3.3 | 12180 |
| 5 | 0.7 | 3.0 | 85 | 5.1 | 14250 |
| 6 | 0.9 | 3.0 | 85 | 3.8 | 13520 |
| 7 | 0.7 | 3.0 | 95 | 3.9 | 12870 |
| 8 | 0.9 | 3.0 | 95 | 2.9 | 11940 |
| 9 | 0.8 | 2.5 | 85 | 4.8 | 13890 |
| 10 | 0.8 | 3.5 | 85 | 5.2 | 14560 |
| 11 | 0.8 | 2.5 | 95 | 3.7 | 12480 |
| 12 | 0.8 | 3.5 | 95 | 4.1 | 13120 |
| 13 | 0.8 | 3.0 | 90 | 4.3 | 12980 |
| 14 | 0.8 | 3.0 | 90 | 4.2 | 12890 |
| 15 | 0.8 | 3.0 | 90 | 4.3 | 13010 |
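As a first look at the data in Table 1, the main effect of each factor can be estimated as the mean response at its high level minus the mean response at its low level. The sketch below applies this simple contrast to the tabulated runs. It is not the regression model used in the cited study, and the function and constant names are hypothetical.

```python
from statistics import mean

# Column indices for the rows below.
FLOW, PH, ORGANIC, RT, AREA = range(5)

# Runs transcribed from Table 1:
# (flow rate mL/min, pH, organic %, retention time min, peak area).
RUNS = [
    (0.7, 2.5, 90, 4.2, 12540), (0.9, 2.5, 90, 3.1, 11850),
    (0.7, 3.5, 90, 4.5, 13210), (0.9, 3.5, 90, 3.3, 12180),
    (0.7, 3.0, 85, 5.1, 14250), (0.9, 3.0, 85, 3.8, 13520),
    (0.7, 3.0, 95, 3.9, 12870), (0.9, 3.0, 95, 2.9, 11940),
    (0.8, 2.5, 85, 4.8, 13890), (0.8, 3.5, 85, 5.2, 14560),
    (0.8, 2.5, 95, 3.7, 12480), (0.8, 3.5, 95, 4.1, 13120),
    (0.8, 3.0, 90, 4.3, 12980), (0.8, 3.0, 90, 4.2, 12890),
    (0.8, 3.0, 90, 4.3, 13010),
]


def main_effect(runs, factor, response):
    """Mean response at the factor's high level minus mean at its low level."""
    levels = [r[factor] for r in runs]
    low, high = min(levels), max(levels)
    at_low = [r[response] for r in runs if r[factor] == low]
    at_high = [r[response] for r in runs if r[factor] == high]
    return mean(at_high) - mean(at_low)


rt_effects = {
    "flow rate": main_effect(RUNS, FLOW, RT),
    "pH": main_effect(RUNS, PH, RT),
    "organic phase": main_effect(RUNS, ORGANIC, RT),
}
```

On these data, raising the flow rate or the organic content across its studied range shortens retention by roughly 1.1 min, while raising the pH lengthens it by about 0.3 min. Interaction and curvature terms require the full quadratic model described in Protocol 2.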

MODR Establishment and Control Strategy: The optimized chromatographic conditions employed an Ascentis Express C18 analytical column (75 × 4.6 mm, 2.7 µm) with a mobile phase of ammonium formate and acetonitrile (5:95 ratio) at a flow rate of 0.8 mL/min [31]. The MODR was established as flow rate: 0.75–0.85 mL/min, pH: 2.8–3.2, and organic composition: 88–92%, within which the method consistently met ATP criteria.
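Once an MODR is defined, checking whether a set of operating conditions falls inside it is a simple range test. The sketch below uses the MODR limits stated above. The dictionary keys and function name are hypothetical, and the example settings are the design center point from Table 1.

```python
# MODR limits as stated in the case study (inclusive bounds).
MODR = {
    "flow_rate_ml_min": (0.75, 0.85),
    "ph": (2.8, 3.2),
    "organic_percent": (88.0, 92.0),
}


def outside_modr(settings):
    """Return the names of parameters that fall outside the MODR."""
    return [
        name
        for name, (low, high) in MODR.items()
        if not low <= settings[name] <= high
    ]


# Design center point: all parameters inside the MODR.
center_point = {"flow_rate_ml_min": 0.8, "ph": 3.0, "organic_percent": 90.0}
violations = outside_modr(center_point)
```

An empty list means the settings lie within the MODR. A non-empty list identifies which parameters would need to be brought back into range or assessed as a method change.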

Validation Results: The method demonstrated linearity (r² > 0.999), precision (RSD < 5%), and accuracy (95–105% recovery) across the concentration range. The QbD approach enhanced method robustness, with the MODR providing operational flexibility while maintaining reliability [31].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of AQbD requires specific materials and reagents that ensure method robustness and reproducibility. The following table details key research reagent solutions for HPLC method development within a QbD framework.

Table 2: Essential Research Reagent Solutions for AQbD Implementation

| Reagent/Material | Function in AQbD | Critical Quality Attributes | Selection Considerations |
|---|---|---|---|
| Chromatographic Columns | Stationary phase for analyte separation | Particle size, pore size, surface chemistry, ligand density, batch-to-batch reproducibility | Select based on analyte properties; consider multiple vendors for robustness studies |
| Buffer Components | Mobile phase modifier for pH control | Purity, pH range, volatility, UV transparency, biocompatibility for LC-MS | Assess buffer capacity within method operable range; include in robustness testing |
| HPLC-Grade Solvents | Mobile phase components | UV cutoff, purity, water content, acidity/alkalinity, residue after evaporation | Establish vendor specifications; monitor lot-to-lot variability |
| Reference Standards | Method calibration and qualification | Purity, stability, identity, certification | Source from certified suppliers; establish proper storage and handling procedures |
| Derivatization Reagents | Analyte modification for detection | Reactivity, purity, stability, by-product formation | Evaluate multiple reagents if needed; optimize reaction conditions through DoE |
| SPE Cartridges | Sample cleanup and pre-concentration | Sorbent chemistry, bed mass, retention capacity, lot consistency | Include in method screening phase; test multiple sorbent chemistries |

Regulatory Framework and Lifecycle Management

ICH Guidelines Integration

The regulatory landscape for analytical method development has evolved significantly with the issuance of ICH Q14 and the revised ICH Q2(R2), effective from June 2024 [29] [4]. Together, these guidelines provide a modernized framework that encourages a science- and risk-based approach to analytical development.

ICH Q14 introduces the concept of an enhanced approach to analytical procedure development, which aligns with QbD principles [4]. This enhanced approach includes:

- Definition of an Analytical Target Profile (ATP)

- Systematic assessment of critical method parameters

- Establishment of a method operable design region

- Development of a control strategy

- Lifecycle management of analytical procedures

The traditional approach remains acceptable, but the enhanced approach provides regulatory flexibility, particularly for post-approval changes [4]. When an enhanced approach is used, changes within the established MODR can be managed through the pharmaceutical quality system without regulatory submission [30].

Lifecycle Management and Continuous Improvement

A fundamental principle of AQbD is the ongoing monitoring and improvement of analytical methods throughout their lifecycle. The lifecycle approach encompasses method development, validation, routine use, and eventual retirement or replacement [30] [10].

Continuous Monitoring: Method performance should be regularly assessed through system suitability tests, quality control samples, and trend analysis of historical data. Statistical process control (SPC) charts can be employed to monitor method performance over time and detect trends or shifts.
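As one illustration of the SPC monitoring described above, the sketch below computes limits for an individuals (Shewhart) control chart from baseline quality control results and flags later points that fall outside them. It uses the standard moving-range estimate, in which the limits are the mean ± 2.66 times the average moving range. The recovery values and function names are hypothetical.

```python
from statistics import mean

def individuals_chart_limits(baseline):
    """Return (LCL, centre line, UCL) for an individuals control chart.

    Limits use the average moving range: centre +/- 2.66 * MR-bar,
    where 2.66 = 3 / 1.128 for moving ranges of two consecutive points.
    """
    centre = mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    mr_bar = mean(moving_ranges)
    return centre - 2.66 * mr_bar, centre, centre + 2.66 * mr_bar

def out_of_control(values, lcl, ucl):
    """Return the indices of points lying outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]

# Hypothetical QC sample recoveries (%) from routine use of a method
baseline = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9, 100.0, 100.3]
lcl, centre, ucl = individuals_chart_limits(baseline)
print(f"LCL = {lcl:.2f}, centre = {centre:.2f}, UCL = {ucl:.2f}")

# Later results checked against the established limits
new_results = [100.1, 99.6, 101.8]
print("Out-of-control points:", out_of_control(new_results, lcl, ucl))
```

A point outside the limits is a prompt for investigation rather than proof of method failure; run rules for detecting trends or shifts could be layered on top of this basic check.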

Change Management: AQbD facilitates science-based change management through the established MODR. Changes within the MODR can be implemented with reduced regulatory oversight, while changes outside the MODR require more substantial assessment and potentially regulatory notification [30].

Knowledge Management: The extensive data generated during AQbD implementation should be captured in a knowledge management system. This knowledge forms the basis for future method improvements and can be applied to related analytical procedures.

The relationship between the MODR and the analytical control strategy creates a framework for maintaining method robustness throughout the method lifecycle, as illustrated below.

Diagram 2: MODR and Control Strategy. The diagram shows the relationship between the knowledge space, method operable design region, normal operating conditions, and the control strategy that ensures ongoing method performance.

Integrating QbD principles into analytical method development represents a paradigm shift from empirical approaches to systematic, science-based methodologies. The AQbD framework, supported by ICH Q14 and Q2(R2) guidelines, enables development of robust methods that consistently meet performance requirements throughout their lifecycle. The case study of fluoxetine method development demonstrates practical implementation of AQbD principles, while the experimental protocols provide actionable guidance for researchers. By adopting AQbD, pharmaceutical scientists can enhance method reliability, reduce operational failures, and maintain regulatory compliance in an evolving landscape. The structured approach outlined in this article provides researchers with a comprehensive framework for implementing QbD principles in analytical method development within the context of method validation research.

Building a Robust Method: Key Parameters and Practical Protocols

For researchers and scientists in drug development, validating analytical methods is a critical step in ensuring that data are reliable and acceptable for regulatory submissions. Validation demonstrates that an analytical procedure is suitable for its intended purpose, such as determining the identity, purity, potency, or stability of a drug substance or product [32] [33]. Whether a novel analytical method is being developed or an established one adopted, the assessment of core validation parameters forms the foundation of this demonstration.

The International Council for Harmonisation (ICH) guideline Q2(R2) provides the primary framework for this validation, a standard adopted by regulatory bodies worldwide, including the FDA and EMA [4] [10]. The four parameters of Accuracy, Precision, Specificity, and Linearity are among the fundamental "performance characteristics" that must be evaluated to prove a method is "fit for purpose" [4] [34]. This application note provides detailed protocols and experimental designs for assessing these core parameters, framed within the context of comparing a new analytical method against an established one.

Core Parameters and Acceptance Criteria

The table below summarizes the definitions and typical acceptance criteria for the four core validation parameters, based on ICH Q2(R2) and associated regulatory guidelines [32] [4] [10].

Table 1: Core Validation Parameters and Acceptance Criteria

| Parameter | Definition | Typical Acceptance Criteria |
|---|---|---|
| Accuracy | The closeness of agreement between the measured value and a reference value accepted as the true value [4] [33]. | Recovery of 95–105% for drug substance assay [35]. |
| Precision | The closeness of agreement between a series of measurements from multiple sampling of the same homogeneous sample [4] [33]. | RSD ≤ 2% for repeatability of drug substance assay [35]. |
| Specificity | The ability to assess the analyte unequivocally in the presence of components that may be expected to be present [32] [4]. | The method should be able to discriminate the analyte from impurities, degradants, and matrix components [32]. |
| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte [4] [10]. | A correlation coefficient (r) of ≥ 0.99 [35]. |

Experimental Protocols

Accuracy

The accuracy of an analytical method is expressed as the percentage of recovery of the analyte known to be present in the sample [33].

Protocol for Drug Substance Assay (using a Reference Standard):

- Preparation: Prepare a minimum of nine determinations across a minimum of three concentration levels (e.g., 80%, 100%, 120% of the target concentration), with three replicates per level [4] [10].

- Analysis: Analyze each sample according to the method procedure.

- Calculation: For each concentration level, calculate the percent recovery using the formula: % Recovery = (Measured Concentration / Known Concentration) × 100

- Data Interpretation: Report the recovery and the relative standard deviation (RSD) of the recoveries at each level. The mean recovery should meet predefined acceptance criteria, such as 95–105% [35].
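The accuracy protocol above can be sketched in a few lines. The example below applies the percent-recovery formula to three replicates at each of three levels and reports the mean recovery and RSD per level. The concentrations are hypothetical, and the use of the sample standard deviation (n − 1 denominator) is an assumption.

```python
from statistics import mean, stdev

def percent_recovery(measured, known):
    """% Recovery = (Measured Concentration / Known Concentration) x 100."""
    return measured / known * 100.0

def summarize_accuracy(results):
    """Mean recovery and RSD per level.

    results maps a level label to (known concentration, list of measured values).
    """
    summary = {}
    for level, (known, measured) in results.items():
        recoveries = [percent_recovery(m, known) for m in measured]
        avg = mean(recoveries)
        summary[level] = {
            "mean_recovery": avg,
            "rsd_percent": stdev(recoveries) / avg * 100.0,  # sample SD (n - 1)
        }
    return summary

# Hypothetical data: three levels, three replicates each (nine determinations)
results = {
    "80%": (80.0, [79.6, 80.3, 79.9]),
    "100%": (100.0, [99.5, 100.4, 100.1]),
    "120%": (120.0, [119.2, 120.5, 120.1]),
}

summary = summarize_accuracy(results)
for level, stats in summary.items():
    within = 95.0 <= stats["mean_recovery"] <= 105.0  # example criterion from the text
    print(f"{level}: mean recovery {stats['mean_recovery']:.2f}%, "
          f"RSD {stats['rsd_percent']:.2f}%, within 95-105%: {within}")
```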

Precision

Precision is typically considered at three levels: repeatability, intermediate precision, and reproducibility [4] [33].

Protocol for Repeatability (Intra-assay Precision):

- Preparation: Prepare a minimum of six determinations at 100% of the test concentration from a single, homogeneous sample solution [4] [10].

- Analysis: A single analyst performs all analyses in one session using the same equipment.

- Calculation: Calculate the Relative Standard Deviation (RSD or %RSD) of the six results: %RSD = (Standard Deviation / Mean) × 100

- Data Interpretation: The %RSD should be within acceptance criteria, for example, ≤ 2% for an assay [35].
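As a short worked example of the repeatability calculation, the sketch below computes %RSD for six hypothetical assay results and compares it with the ≤ 2% example criterion. The sample standard deviation (n − 1 denominator) is assumed.

```python
from statistics import mean, stdev

def percent_rsd(values):
    """%RSD = (Standard Deviation / Mean) x 100, using the sample SD (n - 1)."""
    return stdev(values) / mean(values) * 100.0

# Hypothetical assay results (% of label claim) from six determinations
repeatability = [99.8, 100.3, 100.1, 99.6, 100.2, 100.0]

rsd = percent_rsd(repeatability)
print(f"%RSD = {rsd:.2f}")
print("Meets <= 2% criterion:", rsd <= 2.0)
```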

Protocol for Intermediate Precision: Intermediate precision demonstrates the impact of random variations within the same laboratory, such as different days, different analysts, or different instruments [4]. The experimental design should incorporate these variables, for example by having a second analyst repeat the repeatability experiment on a different day. The results from the two sets are then compared using an appropriate statistical test, such as an F-test for equality of variances.
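The F-test mentioned above compares the variances of two result sets. The sketch below computes the variance ratio with the larger variance in the numerator and compares it with a critical value supplied by the user. The data are hypothetical, and the critical value of 7.15 is the tabulated upper 2.5% point of the F distribution for (5, 5) degrees of freedom, which corresponds to a two-sided test at α = 0.05 for two sets of six results. Other designs would need a different table value.

```python
from statistics import variance

def variance_ratio(set_a, set_b):
    """Return (F, numerator df, denominator df) with the larger variance on top."""
    var_a, var_b = variance(set_a), variance(set_b)  # sample variances (n - 1)
    if var_a >= var_b:
        return var_a / var_b, len(set_a) - 1, len(set_b) - 1
    return var_b / var_a, len(set_b) - 1, len(set_a) - 1

# Hypothetical assay results (% of label claim), six determinations each
analyst_1_day_1 = [99.8, 100.3, 100.1, 99.6, 100.2, 100.0]
analyst_2_day_2 = [100.4, 99.5, 100.6, 99.9, 100.8, 99.7]

# Tabulated F(0.975; 5, 5); assumption: two-sided test at alpha = 0.05
F_CRITICAL = 7.15

f_value, df_num, df_den = variance_ratio(analyst_1_day_1, analyst_2_day_2)
print(f"F = {f_value:.2f} with ({df_num}, {df_den}) degrees of freedom")
print("Variances differ significantly:", f_value > F_CRITICAL)
```

A non-significant F ratio supports pooling the two sets to report an overall intermediate-precision RSD. More elaborate designs are often analysed with variance-component (ANOVA) methods instead.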

Specificity

For identity tests, specificity ensures the method can discriminate between compounds of similar structure. For assays and impurity tests, it requires the resolution of the analyte from other components like impurities, degradants, or excipients [32].

Protocol for Specificity in a Stability-Indicating Assay:

- Sample Preparation:

  - Analyte Standard: Prepare a sample of the pure analyte reference standard.

  - Placebo/Blank: Prepare a sample of the formulation placebo (all excipients, minus the active ingredient).

  - Stressed Sample: Subject the drug product to stress conditions (e.g., heat, light, acid/base hydrolysis, oxidation) to generate degradants [32].

  - Spiked Sample: Spike the placebo with the analyte and potential impurities.

- Analysis: Inject all samples and compare the chromatograms (for HPLC methods) or profiles.

- Data Interpretation: The method is specific if:

  - The analyte peak is pure and unaffected by the placebo.

  - There is baseline resolution between the analyte peak and the nearest degradant or impurity peak.

  - The blank/placebo shows no interfering peaks at the retention time of the analyte [32].
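Baseline resolution between two peaks is usually quantified with the resolution factor, Rs = 2(t2 − t1) / (w1 + w2), where t is retention time and w is peak width at the baseline. The sketch below applies that formula to hypothetical peak data. The threshold of Rs ≥ 1.5 is a commonly used convention for baseline separation and is an assumption here, not a criterion stated in the protocol above.

```python
def resolution(t1, w1, t2, w2):
    """Resolution factor Rs = 2 * (t2 - t1) / (w1 + w2).

    t1, t2: retention times of the earlier and later peak (same units).
    w1, w2: baseline peak widths (same units as the retention times).
    """
    return 2.0 * (t2 - t1) / (w1 + w2)

# Hypothetical peaks: analyte and its nearest degradant (times and widths in min)
analyte_rt, analyte_width = 4.3, 0.30
degradant_rt, degradant_width = 5.0, 0.34

rs = resolution(analyte_rt, analyte_width, degradant_rt, degradant_width)
print(f"Rs = {rs:.2f}")
print("Baseline resolved (Rs >= 1.5):", rs >= 1.5)
```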

Linearity

Linearity is determined by constructing a calibration curve of response versus analyte concentration.

Protocol for Linearity:

- Preparation: Prepare a minimum of five concentration levels spanning a defined range (e.g., from 50% to 150% of the target concentration) [4] [10].

- Analysis: Analyze each level in duplicate or triplicate.

- Calculation: Plot the mean response against the concentration. Perform a linear regression analysis on the data to obtain the slope, y-intercept, and correlation coefficient (r).

- Data Interpretation: The correlation coefficient (r) is typically required to be ≥ 0.99 [35]. A plot of the residuals (the differences between the measured and predicted responses) should show random scatter around zero with no systematic trend, which supports the appropriateness of the linear model.
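The linearity calculation can be sketched with an ordinary least-squares fit written out by hand, so that the slope, y-intercept, correlation coefficient, and residuals are all visible. The five concentration levels and mean responses below are hypothetical.

```python
from math import sqrt

def linear_fit(x, y):
    """Least-squares line through (x, y).

    Returns (slope, intercept, r, residuals), where residuals are
    measured minus predicted responses.
    """
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    return slope, intercept, r, residuals

# Hypothetical data: five levels from 50% to 150% of target, mean peak response
concentration = [50, 75, 100, 125, 150]
response = [5020, 7480, 10050, 12490, 15010]

slope, intercept, r, residuals = linear_fit(concentration, response)
print(f"slope = {slope:.2f}, intercept = {intercept:.2f}, r = {r:.5f}")
print("Meets r >= 0.99:", r >= 0.99)
print("Residuals:", [round(e, 1) for e in residuals])
```

Inspecting the sign pattern of the residuals across the range gives a quick check for curvature that a high r value alone can hide.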

The Validation Workflow and Experimental Design

The following diagram illustrates the logical workflow for designing a validation study for a new analytical method, incorporating the core parameters and their relationships.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for Method Validation

| Item | Function in Validation |
|---|---|
| Analytical Reference Standard | A highly characterized material of known purity and identity used to prepare solutions for Accuracy, Linearity, and Precision studies [33]. |
| Placebo Formulation | A mixture of all excipients without the active ingredient, critical for demonstrating Specificity and the absence of matrix interference [32]. |
| Forced Degradation Samples | Samples of the drug substance or product subjected to stress conditions (heat, light, acid/base, oxidation) to generate degradants for Specificity testing [32]. |
| Certified Impurity Standards | Isolated and characterized impurities to confirm the method's ability to resolve and quantify specific known impurities. |