Assay vs. Impurity Method Validation: A Guide to ICH Q2(R2) Compliance and Lifecycle Management

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the distinct validation requirements for assay and impurity methods. Aligned with the latest ICH Q2(R2) and FDA guidelines, it covers foundational principles, methodological applications, and troubleshooting strategies. Readers will gain a clear understanding of how to apply a science- and risk-based approach to method validation, from setting an Analytical Target Profile (ATP) to managing the entire method lifecycle, ensuring regulatory compliance, product quality, and patient safety.

Understanding the Core Principles and Regulatory Landscape of Method Validation

Defining Analytical Method Validation (AMV) and Its Role in GMP and Product Quality

Analytical Method Validation (AMV) is the process of proving that an analytical method is acceptable for its intended purpose, providing documented evidence that the method consistently produces reliable and accurate results during routine use [1] [2]. In the context of Good Manufacturing Practice (GMP), AMV is not merely a scientific exercise but a federal requirement essential for ensuring the identity, potency, quality, and purity of drug substances and products [3]. A well-validated method provides the assurance that every future measurement in routine analysis will be close enough to the unknown true value for the content of the analyte in the sample, thereby directly safeguarding product quality and patient safety [1].

The validity of an analytical method is deeply intertwined with the specific guideline and performance criteria selected, as numerous international standards exist with differences in terminology, experimental procedure, and acceptance criteria [1]. This guide will objectively compare the validation requirements for two critical analytical applications: assay methods and impurity methods, highlighting the distinct performance benchmarks for each.

Key Performance Characteristics in Method Validation

The performance characteristics, or validation parameters, collectively provide a comprehensive picture of a method's reliability. The requirements for these parameters, however, differ significantly based on the method's intended use.

The table below summarizes the core performance characteristics and their fundamental definitions [4] [2].

Table 1: Core Performance Characteristics of Analytical Method Validation

| Performance Characteristic | Definition |
|---|---|
| Accuracy | The closeness of agreement between a measured value and an accepted reference or true value [4] [2]. |
| Precision | The closeness of agreement (degree of scatter) between a series of measurements from multiple sampling of the same homogeneous sample [4]. This is evaluated at three levels: repeatability, intermediate precision, and reproducibility [4] [2]. |
| Specificity | The ability to measure the analyte accurately and specifically in the presence of other components that may be expected to be present in the sample (e.g., impurities, degradants, excipients) [2]. |
| Linearity | The ability of the method to obtain test results that are directly proportional to the analyte concentration within a given range [2]. |
| Range | The interval between the upper and lower concentrations of analyte that have been demonstrated to be determined with suitable precision, accuracy, and linearity [4] [2]. |
| Detection Limit (LOD) | The lowest amount of analyte in a sample that can be detected, but not necessarily quantified, under the stated experimental conditions [4] [2]. |
| Quantitation Limit (LOQ) | The lowest amount of analyte in a sample that can be quantitatively determined with acceptable precision and accuracy [4] [2]. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters (e.g., flow rate, temperature, mobile phase pH) [2]. |

Comparative Validation: Assay vs. Impurity Methods

The extent and acceptance criteria for validating performance characteristics are driven by the method's purpose. Assay methods are designed to measure the main active component, while impurity methods must detect and quantify minor components, often at very low levels, making their validation more stringent in specific areas.

The table below provides a detailed comparison of experimental protocols and acceptance criteria for assay and impurity methods, based on ICH and other regulatory guidelines [4] [3].

Table 2: Validation Protocol Comparison: Assay vs. Impurity Methods

| Validation Parameter | Assay Method Protocol & Acceptance | Impurity Method Protocol & Acceptance |
|---|---|---|
| Accuracy | Protocol: Compare results to a standard reference material, or spike drug product placebo with known amounts of analyte [4] [2]. Minimum of 9 determinations over 3 concentration levels [2]. Acceptance: Typically 98-102% recovery for drug substance [4]. | Protocol: Assess by spiking drug substance/product with known amounts of impurities. If impurity standards are unavailable, compare to a second well-characterized procedure [2] [3]. Acceptance: 90-110% for impurities at 0.5-1.0%; wider ranges (e.g., 80-120%) may be acceptable for lower levels [3]. |
| Precision (Repeatability) | Protocol: Minimum of 6 determinations at 100% of test concentration or 9 determinations over the specified range [4] [2]. Acceptance: Low %RSD (e.g., <1%) is expected [4]. | Protocol: Analyze six samples of drug substance/product containing impurities [3]. Acceptance: %RSD is highly dependent on impurity level; higher %RSD (e.g., 10-20%) may be acceptable at very low levels near the LOQ [3]. |
| Specificity | Protocol: Demonstrate that the assay is unaffected by the presence of excipients or impurities. For chromatography, use peak purity tests (e.g., DAD or MS) [2]. Acceptance: No interference from blank; resolution of critical pairs [2]. | Protocol: Requires rigorous forced degradation studies (acid, base, oxidation, thermal, photolytic) to demonstrate separation of all potential degradation products from each other and the main peak [3]. Acceptance: Resolution between all peaks, typically not less than (NLT) 1.0-1.5; successful peak purity assessment [3]. |
| Linearity & Range | Protocol: Minimum of 5 concentration levels [4] [2]. Range: Typically 80-120% of the test concentration [4]. Acceptance: Coefficient of determination (R²) typically >0.95-0.99 [4] [3]. | Protocol: Minimum of 5 concentration levels for each impurity [3]. Range: From LOQ to 120-150% of the specification limit for the impurity [4] [3]. Acceptance: R² >0.95 may be acceptable for one-point calibration, but higher linearity is preferred [3]. |
| LOD/LOQ | Protocol & Acceptance: Often less critical for the main assay. Can be determined via signal-to-noise (S/N: 3:1 for LOD, 10:1 for LOQ) or based on standard deviation of the response and slope of the calibration curve [4] [2]. | Protocol & Acceptance: Critical parameter. Must be sufficiently low to detect and quantify impurities at reporting thresholds. LOQ should be at or below the reporting threshold (e.g., 0.05-0.1%) [3]. S/N of 10:1 is standard for LOQ [4] [2]. |
| Robustness | Protocol: Deliberate, small changes in operational parameters (e.g., flow rate ±0.02 mL/min, mobile phase composition ±2%, temperature ±2°C) [3]. Acceptance: Method remains unaffected with comparable results to the original conditions [3]. | Protocol & Acceptance: More critical due to the potential for co-elution. Test parameters that could affect the separation of impurities (e.g., pH of mobile phase, column temperature, different columns). Acceptance is tied to maintaining system suitability and resolution [3]. |
| System Suitability | Protocol: A set of checks (e.g., precision, tailing factor, theoretical plates) to ensure the system is functioning correctly at the time of the test [2]. | Protocol & Acceptance: Extremely critical. Often includes testing with a stressed sample to demonstrate that the method can still separate critical impurity pairs before every run, preventing out-of-specification (OOS) results due to system variability [3]. |
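
The acceptance windows quoted in Table 2 can be turned into simple numerical checks. The following is a minimal sketch, assuming made-up results and hypothetical helper names (`percent_rsd`, `percent_recovery`, `recovery_window`); the windows are the typical examples from the table, not fixed regulatory limits, and treating every impurity level of 0.5% or more as 90-110% is a simplification.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (%), using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def percent_recovery(measured, spiked):
    """Recovery (%) of a known spiked amount."""
    return 100.0 * measured / spiked


def recovery_window(method, level_percent=None):
    """Return the (low, high) recovery window quoted in Table 2.

    method is "assay" or "impurity"; level_percent is the impurity level
    as a percentage of the drug substance. Typical examples only.
    """
    if method == "assay":
        return (98.0, 102.0)
    if level_percent is not None and level_percent >= 0.5:
        return (90.0, 110.0)
    return (80.0, 120.0)


def within(value, window):
    """True if value lies inside the inclusive (low, high) window."""
    low, high = window
    return low <= value <= high


# Illustrative (made-up) data: six assay preparations at 100% of test concentration
assay_results = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]
assay_rsd = percent_rsd(assay_results)

# Illustrative impurity spike: 0.092% found for 0.100% added
impurity_recovery = percent_recovery(0.092, 0.100)
impurity_ok = within(impurity_recovery, recovery_window("impurity", 0.1))

print(f"Assay repeatability: {assay_rsd:.2f}% RSD")
print(f"Impurity recovery: {impurity_recovery:.1f}% (acceptable: {impurity_ok})")
```

The same recovery that passes the impurity window at the 0.1% level would fail the assay window, which is the practical meaning of tailoring acceptance criteria to the method's purpose.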

Experimental Workflow for Analytical Method Validation

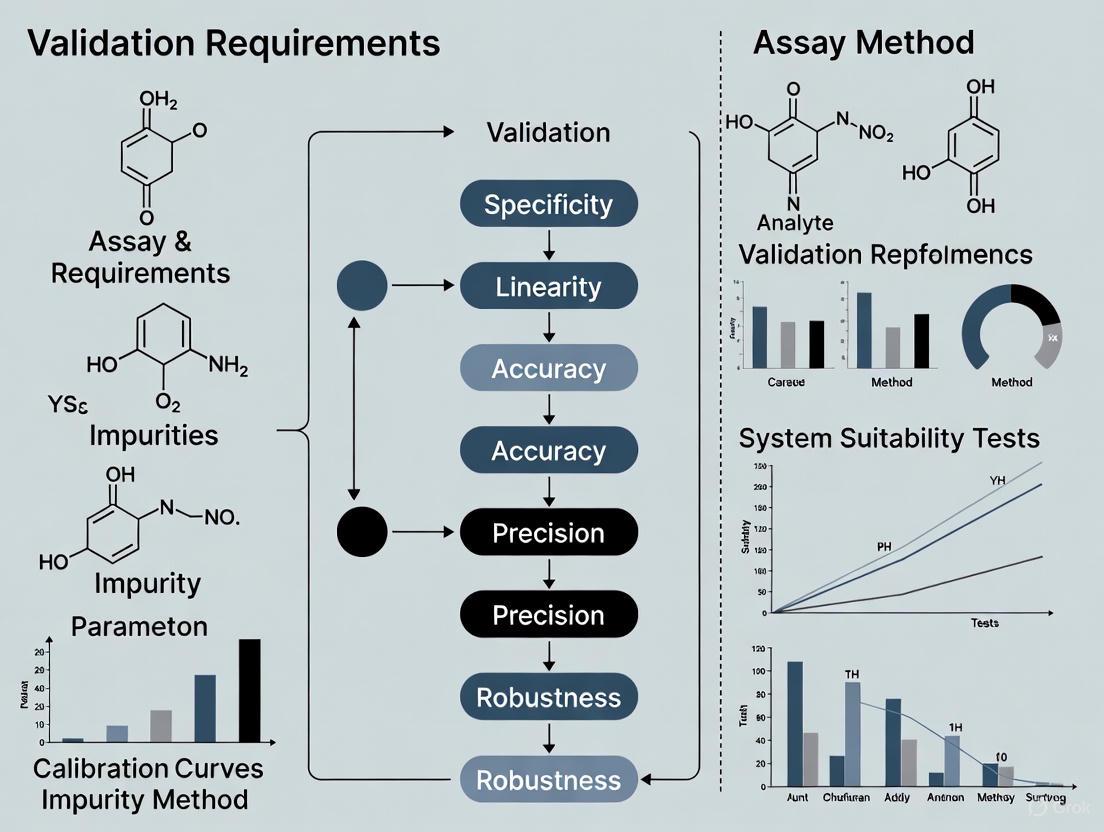

The following diagram illustrates the logical progression of a comprehensive method validation study, from initial planning to final assessment of acceptability, integrating the key performance characteristics.

The Scientist's Toolkit: Essential Reagents and Materials

Successful method validation relies on high-quality, well-characterized materials. The table below lists key research reagent solutions and their critical functions in the validation process, particularly for chromatographic methods.

Table 3: Essential Research Reagent Solutions for Method Validation

| Reagent / Material | Function in Validation |
|---|---|
| Drug Substance (Reference Standard) | Serves as the primary benchmark of known purity and identity for establishing accuracy, linearity, and precision for assay methods. It may also be used as a surrogate for impurities when they are unavailable [4] [3]. |
| Known Impurity Standards | Pure, well-characterized impurities are essential for validating impurity methods. They are used to spike samples for accuracy, precision, specificity, and to establish LOD/LOQ [2] [3]. |
| Placebo/Excipient Mixture | A synthetic mixture containing all components of the drug product except the active analyte. It is used in specificity testing to demonstrate no interference and in accuracy studies for drug products by spiking with the analyte [4]. |
| Stressed Samples (Forced Degradation) | Samples of the drug substance or product that have been intentionally degraded under various stress conditions (e.g., acid, base, oxidation, heat, light). These are critical for demonstrating the specificity and stability-indicating properties of a method [3]. |
| High-Purity Solvents & Mobile Phases | Essential for preparing samples and mobile phases. Their purity is critical to avoid introducing artifacts, elevated baselines, or noise that can interfere with the analysis, particularly for LOD/LOQ determination [4] [2]. |
| Characterized Chromatographic Columns | Columns from different lots or manufacturers are used during robustness and intermediate precision testing to ensure the method's performance is not overly sensitive to the specific column used [3]. |

Within the framework of GMP, Analytical Method Validation is a foundational element that directly underpins product quality. As demonstrated, a one-size-fits-all approach is not applicable. The validation strategy must be meticulously tailored to the method's purpose. Assay methods focus on accurately and precisely quantifying the major active component over a relatively narrow range around the target concentration. In contrast, impurity methods demand a more rigorous approach, with an emphasis on specificity through forced degradation, superior detection sensitivity (LOD/LOQ), and a wider linear range to ensure the reliable quantification of trace-level components that could impact drug safety and efficacy. Understanding these distinctions is paramount for researchers and drug development professionals to design validation protocols that are both compliant and scientifically sound, ensuring the delivery of high-quality medicines to patients.

The International Council for Harmonisation (ICH) provides globally recognized guidelines to ensure the quality, safety, and efficacy of pharmaceuticals. For researchers and drug development professionals, understanding the interplay between ICH Q2(R2) on analytical procedure validation, ICH Q14 on analytical procedure development, and subsequent U.S. Food and Drug Administration (FDA) adoption is crucial for successful regulatory submissions [5]. Published by the FDA as final guidance in March 2024, following ICH adoption in November 2023, these documents represent a significant modernization from previous versions, moving from a prescriptive "check-the-box" approach to a more scientific, risk-based, and lifecycle-oriented model [5]. This framework is designed to ensure that a method validated in one region is recognized and trusted worldwide, thereby streamlining the path from development to market [5].

This guide objectively compares the application of these guidelines, focusing specifically on validation requirements for two critical analytical procedures: assay methods and impurity methods. The core thesis is that while the fundamental validation principles apply to both, the specific performance criteria, experimental protocols, and control strategies differ substantially based on the analytical procedure's intended purpose and the associated risk to product quality and patient safety.

Comparative Analysis of ICH Q2(R2), ICH Q14, and FDA Guidance

Scope and Focus

The following table summarizes the distinct yet complementary roles of these key guidelines.

| Guideline | Primary Focus | Key Introductions/Emphases | Regulatory Status |
|---|---|---|---|
| ICH Q2(R2) | Validation of Analytical Procedures [6] | Provides a general framework for validation principles, including modern techniques like spectroscopy and multivariate analysis [6] [5]. | Final guideline, adopted by the FDA (March 2024) [6]. |
| ICH Q14 | Analytical Procedure Development [6] | Introduces Analytical Target Profile (ATP), enhanced development approach, and lifecycle management [5] [7]. | Final guideline, adopted by the FDA (March 2024) [6]. |
| FDA Guidance | Implementation in the US | Adopts and implements ICH Q2(R2) and Q14, making them critical for NDAs and ANDAs [5]. | The FDA considers these guidances the current standard for regulatory evaluations [6]. |

Core Validation Parameters from ICH Q2(R2)

ICH Q2(R2) outlines the fundamental performance characteristics required to demonstrate an analytical procedure is fit for purpose [5]. The application and acceptance criteria for these parameters vary significantly between assay and impurity methods, as detailed in the table below.

| Validation Parameter | Definition | Application in Assay/Potency Methods | Application in Impurity Methods |
|---|---|---|---|
| Accuracy | Closeness of test results to the true value [5]. | Demonstrated across the specification range, typically using a placebo spike or reference standard [5]. | Critical for quantifying specific identified impurities at or near the specification threshold (e.g., reporting threshold, qualification threshold) [5]. |
| Precision | Degree of agreement among individual test results [5]. | Repeatability: Required with multiple sample preparations of a homogeneous sample. Intermediate Precision: Essential to demonstrate lab/analyst robustness [5]. | Repeatability: Crucial at low impurity levels. Intermediate Precision: Required, as variability can significantly impact quantification near limits [5]. |
| Specificity | Ability to assess the analyte unequivocally in the presence of other components [5]. | Must demonstrate separation from known and potential impurities, excipients, or matrix components [5]. | Highest Priority: Must demonstrate baseline separation of all potential impurities from each other and from the main analyte peak [5]. |
| Linearity & Range | Linearity: Ability to obtain results proportional to analyte concentration. Range: The interval where linearity, accuracy, and precision are demonstrated [5]. | Range typically from 80-120% of the test concentration for drug substance/product assay [5]. | Range should cover from the reporting threshold (e.g., 0.05%) to at least 120% of the specification limit for the impurity [5]. |
| Limit of Detection (LOD) / Quantitation (LOQ) | LOD: Lowest detectable amount. LOQ: Lowest quantifiable amount with accuracy and precision [5]. | Generally not required for the main analyte in assay methods. | Fundamental Requirement: LOQ must be established and validated to be at or below the reporting threshold [5]. |
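
A short calculation can show how an LOQ is estimated from a calibration curve and compared with the reporting threshold. This is a minimal sketch, assuming the commonly used relationships LOD = 3.3σ/S and LOQ = 10σ/S, with σ taken as the residual standard deviation of a linear fit and S as its slope. The calibration data, the 0.05% threshold, and the `fit_line` helper are illustrative assumptions, not values from a real method.

```python
from math import sqrt


def fit_line(x, y):
    """Ordinary least-squares fit; returns slope, intercept, residual SD, R^2."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    residual_sd = sqrt(ss_res / (n - 2))  # n - 2 degrees of freedom for a line
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, residual_sd, r_squared


# Illustrative (made-up) impurity calibration:
# level in % of drug substance vs. peak area (arbitrary units)
levels = [0.05, 0.10, 0.15, 0.20, 0.25]
areas = [5.1, 9.9, 15.2, 19.8, 25.0]

slope, intercept, sigma, r2 = fit_line(levels, areas)
lod = 3.3 * sigma / slope
loq = 10.0 * sigma / slope

reporting_threshold = 0.05  # example threshold, % of drug substance
loq_acceptable = loq <= reporting_threshold

print(f"slope={slope:.1f}, R^2={r2:.4f}")
print(f"LOD={lod:.4f}%, LOQ={loq:.4f}%, LOQ acceptable: {loq_acceptable}")
```

An LOQ estimated this way is normally confirmed experimentally by analyzing samples prepared at that level and checking their accuracy and precision.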

Experimental Protocols for Method Validation

A robust validation study begins with a protocol derived from the ATP and a thorough risk assessment [5]. The workflow below illustrates the complete lifecycle of an analytical procedure, integrating both development and validation stages.

Defining the Analytical Target Profile (ATP) and Risk Assessment

The ATP is a prospective summary of the analytical procedure's required performance characteristics, defining what the method must achieve [5] [7]. For an impurity method, the ATP would explicitly define the LOQ (e.g., ≤ 0.05%) and required specificity to separate all known potential degradants. For an assay method, the ATP would focus on accuracy (e.g., 98-102%) and precision (e.g., RSD ≤ 1.0%) at the 100% concentration level.
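
One way to make an ATP concrete is to record its targets in a small data structure and test validation results against it. The sketch below is an illustration only: the `AnalyticalTargetProfile` class and its fields are hypothetical, the assay targets and the 0.05% LOQ come from the examples above, and the impurity recovery window and RSD limit are assumed values.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for the performance targets stated in an ATP."""
    purpose: str
    recovery_low: float
    recovery_high: float
    max_rsd: float
    max_loq: Optional[float] = None  # % of drug substance; None if LOQ is not required

    def unmet(self, recovery, rsd, loq=None):
        """Return the names of the targets that the observed results fail to meet."""
        failures = []
        if not (self.recovery_low <= recovery <= self.recovery_high):
            failures.append("accuracy")
        if rsd > self.max_rsd:
            failures.append("precision")
        if self.max_loq is not None and (loq is None or loq > self.max_loq):
            failures.append("LOQ")
        return failures


# Assay targets follow the examples in the text
assay_atp = AnalyticalTargetProfile("assay", 98.0, 102.0, max_rsd=1.0)

# Impurity recovery window and RSD limit are assumed for illustration
impurity_atp = AnalyticalTargetProfile(
    "impurity", 80.0, 120.0, max_rsd=10.0, max_loq=0.05
)

assay_failures = assay_atp.unmet(recovery=99.6, rsd=0.4)
impurity_failures = impurity_atp.unmet(recovery=95.0, rsd=6.0, loq=0.08)

print(assay_failures)     # []
print(impurity_failures)  # ['LOQ']
```

Writing the targets down before validation begins keeps the acceptance criteria prospective rather than fitted to the data.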

A subsequent risk assessment using tools like Failure Mode and Effects Analysis (FMEA) systematically identifies and ranks factors that could impact the ATP [8]. This assessment directly informs the design of both the method development and validation studies. High-risk factors, such as chromatographic parameters (e.g., pH, gradient) in an HPLC impurity method, become the focus of robustness testing during development and are tightly controlled in the final procedure.
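
The ranking step of an FMEA is often done with a risk priority number (RPN), the product of severity, occurrence, and detection scores. The sketch below assumes that convention with 1-10 scales. The factors, their scores, and the cut-off of 100 are hypothetical and serve only to show how high-risk parameters are selected for robustness testing.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    factor: str
    severity: int    # impact on the ATP if the failure occurs (1-10)
    occurrence: int  # likelihood of the failure (1-10)
    detection: int   # 1 = readily detected, 10 = hard to detect

    @property
    def rpn(self):
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical scores for an HPLC impurity method
failure_modes = [
    FailureMode("column temperature fluctuation", 5, 3, 3),
    FailureMode("mobile phase pH drift", 8, 5, 6),
    FailureMode("injection volume error", 4, 2, 2),
    FailureMode("gradient slope variation", 7, 4, 5),
]

RPN_THRESHOLD = 100  # hypothetical cut-off for "high risk"

ranked = sorted(failure_modes, key=lambda fm: fm.rpn, reverse=True)
high_risk = [fm.factor for fm in ranked if fm.rpn >= RPN_THRESHOLD]

for fm in ranked:
    print(f"{fm.factor}: RPN = {fm.rpn}")
print("Carry into robustness testing:", high_risk)
```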

Protocol for a Comparative Accuracy Study

This experiment is fundamental for both assay and impurity methods but is executed differently.

- Objective: To demonstrate the closeness of agreement between the measured value and an accepted reference value [5].

- Materials: Drug substance/product, known impurities, placebo (if applicable), reference standards, and all relevant reagents and solvents.

- Methodology for Assay: Analyze a minimum of 9 determinations over a minimum of 3 concentration levels covering the specified range (e.g., 80%, 100%, 120%). The sample can be a synthetic mixture of the analyte with placebo components spiked with a known quantity of reference standard [5].

- Methodology for Impurities: Spike the drug substance/product with known impurities at various levels, especially at the specification limit (e.g., 0.1%, 0.2%) and the LOQ. The recovery of each impurity is calculated.

- Data Analysis: Report the percent recovery for each level or the difference between the mean and the accepted true value along with confidence intervals [5].
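
The data analysis step for the assay design above (3 levels, 3 determinations each) can be sketched as follows. The recoveries are made up, the 98-102% window is the typical assay example, and pooling all nine values into one confidence interval assumes that recovery does not depend on level. The t value is the standard two-sided 95% critical value for 8 degrees of freedom, hard-coded to keep the example free of external dependencies.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student's t critical value for 8 degrees of freedom (n = 9)
T_CRIT_95_DF8 = 2.306

# Illustrative (made-up) recoveries (%) at 80%, 100%, and 120% of test concentration
recoveries = {
    80: [99.4, 100.1, 99.8],
    100: [100.3, 99.9, 100.2],
    120: [100.6, 99.7, 100.0],
}

# Mean recovery reported per level
level_means = {level: mean(values) for level, values in recoveries.items()}

# Pooled mean recovery with a 95% confidence interval
all_values = [v for values in recoveries.values() for v in values]
overall_mean = mean(all_values)
half_width = T_CRIT_95_DF8 * stdev(all_values) / sqrt(len(all_values))
ci = (overall_mean - half_width, overall_mean + half_width)

# Example acceptance rule: the whole interval must sit inside 98-102%
accuracy_ok = 98.0 <= ci[0] and ci[1] <= 102.0

for level, value in level_means.items():
    print(f"{level}% level: mean recovery {value:.2f}%")
print(f"Overall: {overall_mean:.2f}% (95% CI {ci[0]:.2f} to {ci[1]:.2f}), ok={accuracy_ok}")
```

For impurities the same calculation applies per impurity and per spike level, with the wider acceptance window appropriate to that level.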

Protocol for a Specificity Study (Forced Degradation)

This study is critical, especially for stability-indicating methods, to demonstrate the method's ability to measure the analyte amidst degradation products.

- Objective: To prove that the method can unequivocally quantify the analyte (for assay) or detect impurities in the presence of other components [5].

- Materials: Stressed samples (acid, base, oxidative, thermal, photolytic) of the drug substance and product.

- Methodology: Subject samples to various forced degradation conditions to generate ~5-20% degradation. Analyze the stressed samples and demonstrate that the method can:

  - For Assay: Resolve all degradation products from the main peak and provide an unbiased assay of the active ingredient.

  - For Impurities: Resolve all degradation products from each other and from the main peak (confirmed by peak purity), and quantify them.

- Data Analysis: Provide chromatograms demonstrating separation, and use peak purity tools (e.g., from a PDA detector) to demonstrate homogeneity of the analyte peak.
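
Two numbers from this protocol lend themselves to a quick check: the extent of degradation and the resolution between adjacent peaks. The sketch below assumes the common resolution formula based on retention times and baseline peak widths, Rs = 2(t2 - t1)/(w1 + w2). The peak table, the control and stressed assay values, and the 1.5 resolution limit are illustrative assumptions.

```python
def resolution(t1, w1, t2, w2):
    """Resolution from retention times and baseline peak widths (same units)."""
    return 2.0 * (t2 - t1) / (w1 + w2)


def percent_degradation(control_assay, stressed_assay):
    """Loss of active ingredient in the stressed sample relative to the control (%)."""
    return 100.0 * (control_assay - stressed_assay) / control_assay


# Illustrative (made-up) peaks: (name, retention time in min, baseline width in min)
peaks = [
    ("degradant A", 4.20, 0.30),
    ("API", 5.10, 0.40),
    ("degradant B", 5.60, 0.35),
    ("degradant C", 7.00, 0.40),
]

MIN_RESOLUTION = 1.5  # assumed limit; criteria of NLT 1.0 to 1.5 are quoted in practice

# Evaluate every pair of adjacent peaks in elution order
peaks_sorted = sorted(peaks, key=lambda p: p[1])
pair_resolution = {}
for (name1, t1, w1), (name2, t2, w2) in zip(peaks_sorted, peaks_sorted[1:]):
    pair_resolution[(name1, name2)] = resolution(t1, w1, t2, w2)

critical_pairs = [pair for pair, rs in pair_resolution.items() if rs < MIN_RESOLUTION]

degradation = percent_degradation(control_assay=100.0, stressed_assay=88.0)
in_target_window = 5.0 <= degradation <= 20.0

for pair, rs in pair_resolution.items():
    print(f"{pair[0]} / {pair[1]}: Rs = {rs:.2f}")
print("Pairs below limit:", critical_pairs)
print(f"Degradation: {degradation:.1f}% (within 5-20%: {in_target_window})")
```

In this made-up example the API and degradant B are not adequately separated, so the method would need further development before it could be called stability-indicating.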

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table lists key materials and solutions required for developing and validating analytical methods, particularly for chromatographic analyses of small molecules and biologics.

| Item / Reagent | Function / Role in Experimentation |
|---|---|
| Reference Standards | Highly characterized substance used as a benchmark for quantitative analysis (e.g., calculating assay content or impurity amount) [8]. |
| System Suitability Solutions | A mixture of key analytes used to verify that the chromatographic system is performing adequately before and during the analysis (e.g., resolution, tailing factor) [8]. |
| Known Impurity Standards | Isolated and characterized impurities used to confirm specificity, establish relative retention times, and calibrate the method for quantitative impurity determination. |
| Placebo/Matrix Formulation | The formulation without the active ingredient. Used in specificity studies to demonstrate no interference and in accuracy studies for spiking experiments [5]. |
| Stability Samples | Samples stored under long-term and accelerated conditions. Used to validate the method's ability to monitor stability and generate degradation profiles [7]. |
| High-Quality Solvents & Reagents | Essential for achieving the required sensitivity (low UV absorbance), specificity, and robust performance. Variations can significantly impact robustness, especially for impurity methods [8]. |

The integrated ICH Q2(R2) and Q14 guidelines, as adopted by the FDA, provide a modern, flexible framework for analytical procedures. The key differentiator in validation requirements for assay versus impurity methods lies in the risk-based application of core validation parameters. Assay methods prioritize accuracy and precision at the 100% level within a narrow range, while impurity methods demand extreme sensitivity (LOQ) and high specificity over a wider dynamic range to control low-level components that impact patient safety.

Success in regulatory compliance hinges on a deep, scientifically justified understanding of the method's purpose, captured prospectively in the Analytical Target Profile, and verified through tailored experimental protocols. This science- and risk-based lifecycle approach not only meets regulatory requirements but also builds more efficient, reliable, and trustworthy analytical procedures, ultimately ensuring product quality and patient safety.

In pharmaceutical development, assay methods for potency and impurity methods for safety represent two distinct analytical pillars that serve fundamentally different purposes in ensuring drug quality. Potency assays are quantitative methods designed to measure the biological activity of an active pharmaceutical ingredient (API) and its ability to elicit a specific therapeutic effect [9]. These functional analyses confirm that a drug possesses the intended pharmacological activity at the declared concentration. In contrast, impurity methods are qualitative and quantitative procedures that identify and measure unwanted components in drug substances or products, serving primarily to ensure patient safety by controlling potentially harmful contaminants [10] [3].

The regulatory framework mandates both types of methods throughout the drug development lifecycle, with potency testing fulfilling requirements for demonstrating effectiveness under 21 CFR 211.165(a) and 21 CFR 600.3(kk), while impurity control addresses safety requirements outlined in various FDA guidances, including those specifically addressing nitrosamine impurities [11] [12] [13]. This article provides a comprehensive comparison of these critical analytical approaches, examining their distinct purposes, methodological requirements, and validation parameters within the context of pharmaceutical quality control.

Purpose and Regulatory Significance

Potency Methods: Demonstrating Therapeutic Efficacy

The primary purpose of potency assays is to provide quantitative measurement of a drug's biological activity and functional integrity, directly confirming its therapeutic capability [13]. Potency methods must be mechanism-reflective, meaning they should measure biological responses that mirror the drug's known mechanism of action (MoA) in vivo [13]. For biopharmaceuticals particularly, potency assays serve as critical quality attributes (CQAs) that ensure batch-to-batch consistency throughout the product lifecycle, from development through commercial manufacturing [13].

Regulatory agencies require potency testing for all licensed biological products under Section 351 of the Public Health Service Act, mandating that manufacturers demonstrate safety, purity, and potency for BLA approval [13]. The FDA requires quantitative functional assays for product release, whereas the EMA may accept surrogate assays for complex modalities in certain cases [13]. These requirements underscore the critical role of potency assays in confirming that each drug batch contains the specified strength of active ingredient to deliver the intended therapeutic effect.

Impurity Methods: Ensuring Patient Safety

Impurity methods serve the fundamentally different purpose of identifying and quantifying unwanted components that may pose safety risks to patients [11] [3]. These methods focus on detecting and measuring various impurity categories, including organic impurities (such as nitrosamines), inorganic impurities, and residual solvents [9]. The safety focus is particularly evident in FDA's detailed guidance on nitrosamine impurities, which establishes strict Acceptable Intake (AI) limits based on carcinogenic potency categorization to ensure patient safety [11].

The regulatory framework for impurity control establishes permissible limits based on toxicological data and maximum daily dose considerations [9]. Impurity methods must be capable of detecting contaminants at thresholds specified in ICH guidelines (Q3A, Q3B, Q3C), with particularly stringent requirements for mutagenic impurities following the ICH M7 framework [9]. The primary objective is risk mitigation through rigorous monitoring and control of potentially harmful substances that may form during synthesis, emerge from degradation, or originate from raw materials or packaging components [11] [3].

Table 1: Comparative Analysis of Primary Purposes

| Aspect | Potency Methods | Impurity Methods |
|---|---|---|
| Primary Purpose | Measure biological activity and therapeutic strength [9] [13] | Identify and quantify safety-critical contaminants [11] [3] |
| Regulatory Focus | Confirming efficacy and batch consistency [13] | Ensuring patient safety through impurity control [11] |
| Key Guidance | 21 CFR 600.3(kk); FDA Guidance on Potency Tests [13] | ICH Q3A-Q3C; FDA Nitrosamine Guidance [11] [9] |
| Critical Outcome | Quantitative potency value for release testing [13] | Verification against Acceptable Intake limits [11] |

Methodological Approaches and Techniques

Analytical Techniques for Potency Assessment

Potency determination employs biofunctional assays that measure the drug's specific biological activity through mechanism-relevant systems. For GLP-1 therapeutics and similar biologics, this typically involves cell-based assays measuring downstream signaling events such as cyclic AMP (cAMP) accumulation in cells expressing human target receptors [9]. These complex biological systems account for factors like serum binding effects and provide clinically predictive potency measurements [9].

The industry employs a progressive implementation approach where simpler techniques like ELISA or ligand-binding assays may suffice during early development, advancing to more complex, MoA-reflective cell-based or kinetic assays for later stages and commercial release [13]. Chromatographic techniques such as reversed-phase HPLC/UPLC may support potency assessment when they provide stability-indicating data, but they serve merely as complementary analyses unless they demonstrate direct correlation with biological activity [9].

Diagram 1: Potency assay workflow showing parallel approaches for cell-based and receptor binding assays

Analytical Techniques for Impurity Profiling

Impurity analysis relies primarily on separation techniques coupled with sensitive detection methods. High-performance liquid chromatography (HPLC) is the cornerstone technique for impurity assessment, particularly using reversed-phase methodology for separating drug substances from related impurities [10] [3]. These chromatographic methods are valued for their specificity and selectivity in distinguishing the primary active compound from structurally similar impurities [10].

For comprehensive impurity characterization, HPLC is typically coupled with mass spectrometry (MS) to provide structural identification of unknown impurities and degradants [9]. The technical requirements for impurity methods emphasize sensitivity with limits of detection often at the 0.05-0.1% level relative to the drug substance, and specificity to resolve multiple impurities from each other and from the main API peak [3]. Forced degradation studies are integral to impurity method development, intentionally exposing drugs to acid, base, oxidation, light, and heat to generate potential degradants and verify the method's stability-indicating capability [3].

Table 2: Core Analytical Techniques Comparison

| Technique | Potency Applications | Impurity Applications |
|---|---|---|
| HPLC/UPLC | Supporting technique when correlated with activity [9] | Primary technique for separation and quantification [10] [3] |
| Mass Spectrometry | Limited utility for potency assessment | Structural identification of impurities [9] |
| Cell-Based Assays | Primary method for biofunctional assessment [9] [13] | Generally not applicable |
| Ligand Binding | Alternative method for binding assays [13] | Limited utility |
| Forced Degradation | Not required for potency methods | Required for validation [3] |

Validation Parameters and Requirements

Validation of Potency Assays

Potency assay validation follows ICH Q2(R1) (since superseded by ICH Q2(R2)) and the biological assay guidance in USP general chapters 〈1033〉 and 〈1034〉, with specific adaptations for functional bioassays [13]. The validation parameters reflect the biological nature of these methods and their use in quantifying relative potency rather than absolute concentration:

- Accuracy and Precision: Must demonstrate reliable measurement with acceptable variability through replicate measurements and control samples. Biological systems inherently show higher variability, requiring statistical rigor in validation [13].

- Linearity and Range: Defined differently than for chemical assays; demonstrates linear relationship between predicted and measured relative potencies within a verified reportable range (typically 50% to 150%) [13].

- Specificity: Confirms the assay exclusively measures the intended biological activity without interference from matrix components, impurities, or degradants [13].

- Robustness: Establishes reliability under varied conditions involving different analysts, equipment, or environmental factors [9] [13].

System suitability criteria are particularly critical for potency assays, employing control charts and predefined thresholds to monitor assay performance throughout testing [9] [13].

Validation of Impurity Methods

Impurity method validation follows ICH Q2(R1) requirements (since superseded by ICH Q2(R2)), with specific acceptance criteria tailored to impurity quantification [3]. The validation parameters address the need for sensitive detection and accurate quantification of minor components:

- Specificity/Forced Degradation: Must demonstrate separation of all potential impurities from each other and the main peak, typically with resolution NLT 1.0. Forced degradation studies under acid, base, oxidation, and photolytic conditions verify the method can detect degradants [3].

- Accuracy: Determined through spike recovery studies (90-110% for 0.5-1.0% impurities; 80-120% for <0.5% impurities). At identification threshold levels (LOQ), recovery of 50-150% may be acceptable [3].

- Precision: Evaluated through repeatability (intra-day) and intermediate precision (inter-day, different analysts) with %RSD acceptance criteria varying based on impurity level [3].

- LOD/LOQ: LOQ should be at or below the reporting threshold (typically 0.1%), with signal-to-noise ratio of 10:1 for LOQ and 3:1 for LOD [3].

Diagram 2: Key validation parameters for potency versus impurity methods

Experimental Protocols and Research Reagents

Detailed Methodologies for Core Applications

Protocol 1: Cell-Based Potency Assay for GLP-1 Therapeutics

This protocol outlines a mechanism-reflective potency assay for GLP-1 receptor agonists using cAMP response measurement [9].

- Cell Preparation: Culture HEK-293 cells stably expressing human GLP-1 receptor in appropriate growth medium. Harvest cells during logarithmic growth phase and prepare suspension at 1×10^6 cells/mL in assay buffer.

- Reference Standard and Sample Preparation: Prepare serial dilutions of reference standard and test samples in assay buffer across the range of 50-150% of expected potency. Include system suitability controls.

- Stimulation and Incubation: Aliquot cell suspension into 96-well plates. Add reference and test sample dilutions to designated wells. Incubate for 60 minutes at 37°C, 5% CO₂ to allow cAMP accumulation.

- cAMP Detection: Lyse cells and quantify intracellular cAMP using competitive immunoassay detection systems according to manufacturer specifications.

- Data Analysis: Generate dose-response curves for reference and test samples. Calculate relative potency using parallel line analysis or four-parameter logistic fit in validated software (e.g., SoftMax Pro). Apply outlier analysis (e.g., Rosner Extreme Studentized Deviate Test) and assess parallelism [13].
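
The relative potency calculation in the data analysis step can be sketched with a parallel-line model applied to the linear portion of the dose-response curves. This is a minimal illustration under stated assumptions, not a validated implementation: the function name `relative_potency_parallel_line` and all doses and responses are hypothetical, and the model assumes both preparations share a common slope on a log-dose scale (parallelism still has to be assessed separately).

```python
import math

def _fit_sums(log_doses, responses):
    """Return mean x, mean y, Sxx and Sxy for one preparation."""
    n = len(log_doses)
    mx = sum(log_doses) / n
    my = sum(responses) / n
    sxx = sum((x - mx) ** 2 for x in log_doses)
    sxy = sum((x - mx) * (y - my) for x, y in zip(log_doses, responses))
    return mx, my, sxx, sxy

def relative_potency_parallel_line(std_doses, std_resp, test_doses, test_resp):
    """Estimate relative potency (test vs. standard) with a common-slope model.

    Model: response = intercept_k + slope * ln(dose), with one intercept per
    preparation and a single slope pooled across both preparations.
    """
    xs = [math.log(d) for d in std_doses]
    xt = [math.log(d) for d in test_doses]
    mxs, mys, sxx_s, sxy_s = _fit_sums(xs, std_resp)
    mxt, myt, sxx_t, sxy_t = _fit_sums(xt, test_resp)
    slope = (sxy_s + sxy_t) / (sxx_s + sxx_t)  # pooled common slope
    intercept_std = mys - slope * mxs
    intercept_test = myt - slope * mxt
    # Horizontal shift between the two parallel lines on the log-dose axis
    log_rp = (intercept_test - intercept_std) / slope
    return math.exp(log_rp)

# Hypothetical noise-free data: the test sample behaves like the standard
# diluted to 80% of its potency.
doses = [1.0, 2.0, 4.0, 8.0]
std = [10.0 + 5.0 * math.log(d) for d in doses]
test = [10.0 + 5.0 * math.log(0.8 * d) for d in doses]
rp = relative_potency_parallel_line(doses, std, doses, test)
print(f"Relative potency: {rp:.1%}")
```

A four-parameter logistic fit would replace the straight-line model when the full sigmoidal curve is used; the horizontal-shift interpretation of relative potency stays the same.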

Protocol 2: HPLC Impurity Method Validation for Nitrosamine Detection

This protocol describes the validation of an impurity method for specific nitrosamine detection per FDA guidance requirements [11] [3].

Specificity/Forced Degradation:

- Prepare samples under stress conditions: 0.1N HCl (acid), 0.1N NaOH (base), 0.3% H₂O₂ (oxidation), and light exposure (photolytic).

- Inject stressed samples and demonstrate resolution NLT 1.0 between all degradant peaks and the main API peak.

- Perform peak purity assessment using PDA detection to ensure main peak homogeneity.
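
The resolution check in the specificity steps above can be sketched as follows. The sketch assumes the common pharmacopoeial formula based on baseline peak widths, Rs = 2(t2 - t1)/(w1 + w2); the function names and the peak table are hypothetical, and the default threshold of 1.0 simply mirrors the criterion stated in this protocol.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Resolution from retention times and baseline peak widths (same time units)."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)

def check_resolution(peaks, min_rs=1.0):
    """Return (name_a, name_b, Rs, passes) for each pair of adjacent peaks.

    `peaks` is a list of (name, retention_time_min, baseline_width_min).
    """
    ordered = sorted(peaks, key=lambda p: p[1])  # sort by retention time
    results = []
    for (n1, t1, w1), (n2, t2, w2) in zip(ordered, ordered[1:]):
        rs = resolution(t1, t2, w1, w2)
        results.append((n1, n2, round(rs, 2), rs >= min_rs))
    return results

# Hypothetical peak table from a stressed sample
peaks = [
    ("Degradant A", 4.20, 0.30),
    ("API", 5.00, 0.40),
    ("Degradant B", 5.30, 0.35),
]
for row in check_resolution(peaks):
    print(row)
```

In this made-up example the second pair fails the criterion, which would prompt further method optimization before validation continues.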

Accuracy and Precision:

- Spike placebo with known impurities at LOQ, 100%, and 150% of specification level (based on AI limits, e.g., 26.5-1500 ng/day depending on nitrosamine potency category) [11].

- Prepare six samples at each level and analyze against calibrated standards.

- Calculate % recovery (80-120% acceptable for <0.5% impurities) and %RSD.
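
The recovery and %RSD calculation for one spike level can be sketched as below. The measured values, the spiked amount, and the helper names are hypothetical; the 80-120% window is the one quoted in this protocol for low-level impurities.

```python
import statistics

def percent_recovery(measured, spiked):
    """Recovery of a single preparation, in percent of the spiked amount."""
    return 100.0 * measured / spiked

def percent_rsd(values):
    """Relative standard deviation in percent (sample standard deviation)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def evaluate_spike_level(measured, spiked, recovery_window=(80.0, 120.0)):
    """Summarize replicate preparations at one spike level."""
    recoveries = [percent_recovery(m, spiked) for m in measured]
    mean_rec = statistics.mean(recoveries)
    low, high = recovery_window
    return {
        "mean_recovery": mean_rec,
        "rsd": percent_rsd(recoveries),
        "recovery_ok": low <= mean_rec <= high,
    }

# Six hypothetical preparations spiked at 0.100% w/w
measured = [0.098, 0.102, 0.095, 0.105, 0.100, 0.100]
result = evaluate_spike_level(measured, spiked=0.100)
print(result)
```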

LOD/LOQ Determination:

- Prepare serial dilutions of impurity standards from 0.05% to 0.15% relative to API concentration.

- Inject and determine signal-to-noise ratios of 3:1 for LOD and 10:1 for LOQ.

- Verify LOQ precision with %RSD ≤20% and accuracy 80-120%.
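
The signal-to-noise evaluation in the LOD/LOQ steps can be sketched as follows. It assumes the pharmacopoeial convention S/N = 2H/h, where H is the peak height and h is the peak-to-peak baseline noise; the dilution levels, peak heights, and function names are hypothetical.

```python
def signal_to_noise(peak_height, noise_peak_to_peak):
    """S/N = 2H/h (H: peak height, h: peak-to-peak baseline noise)."""
    if noise_peak_to_peak <= 0:
        raise ValueError("noise must be positive")
    return 2.0 * peak_height / noise_peak_to_peak

def lowest_level_meeting(levels, min_sn):
    """Return the lowest concentration whose S/N is at least `min_sn`, or None.

    `levels` is a list of (concentration_percent, peak_height, noise).
    """
    passing = [conc for conc, height, noise in levels
               if signal_to_noise(height, noise) >= min_sn]
    return min(passing) if passing else None

# Hypothetical dilution series (% relative to API, peak height, baseline noise)
levels = [
    (0.05, 1.25, 0.5),
    (0.075, 1.875, 0.5),
    (0.10, 2.75, 0.5),
    (0.15, 3.75, 0.5),
]
lod = lowest_level_meeting(levels, 3.0)   # S/N >= 3
loq = lowest_level_meeting(levels, 10.0)  # S/N >= 10
print(f"LOD candidate: {lod}%  LOQ candidate: {loq}%")
```

The candidate LOQ would still need to be confirmed with replicate injections against the precision and accuracy criteria listed above.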

Table 3: Essential Research Reagent Solutions

| Reagent/Material | Function in Potency Assays | Function in Impurity Methods |

|---|---|---|

| Reference Standard | Biological activity calibration [13] | Retention time and response factor determination [3] |

| Cell Lines | GLP-1 receptor expressing for mechanism reflection [9] | Generally not applicable |

| cAMP Detection Kit | Quantifying functional response [9] | Not applicable |

| Impurity Standards | Limited utility | Identification and quantification calibration [3] |

| HPLC Columns | Limited use in potency | Primary separation mechanism [10] [3] |

| Mass Spectrometer | Not typically used | Structural identification of unknown impurities [9] |

Regulatory Frameworks and Compliance Requirements

Distinct Regulatory Expectations

The regulatory frameworks governing potency and impurity methods reflect their different purposes in drug quality assessment. Potency methods for biological products are anchored in 21 CFR 600.3(s), which defines potency as "the specific ability or capacity of the product... to effect a given result" [13]. Release testing must provide quantitative data that meets pre-defined acceptance criteria as specified in 21 CFR 211.165(d) and 21 CFR 610.1 [13].

Impurity methods operate under a different regulatory framework, primarily following ICH Q3A-Q3C guidelines for qualification thresholds, with additional specific guidance for carcinogenic impurities like nitrosamines [11] [9]. The FDA's nitrosamine guidance establishes Acceptable Intake (AI) limits based on carcinogenic potency categorization, with strict limits such as 26.5 ng/day for high-potency category 1 nitrosamines like N-nitroso-benzathine, and 1500 ng/day for lower-potency category 4-5 nitrosamines [11].
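
To connect an AI limit to a method's required sensitivity, the AI is usually converted into a concentration limit for the drug product by dividing it by the maximum daily dose (ng/day divided by mg/day gives ng/mg, which is ppm). The sketch below illustrates that arithmetic; the 500 mg/day dose and the function name are hypothetical.

```python
def nitrosamine_limit_ppm(ai_ng_per_day, max_daily_dose_mg):
    """Concentration limit in ppm (ng/mg) = AI (ng/day) / maximum daily dose (mg/day)."""
    if max_daily_dose_mg <= 0:
        raise ValueError("maximum daily dose must be positive")
    return ai_ng_per_day / max_daily_dose_mg

# AI values quoted above, combined with a hypothetical 500 mg/day maximum dose
high_potency_limit = nitrosamine_limit_ppm(26.5, 500.0)
low_potency_limit = nitrosamine_limit_ppm(1500.0, 500.0)
print(f"26.5 ng/day -> {high_potency_limit:.3f} ppm")
print(f"1500 ng/day -> {low_potency_limit:.1f} ppm")
```

The resulting limit sets the target for the method's LOQ, which is why high-potency nitrosamines generally call for mass spectrometric detection.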

Implementation Timelines and Compliance

Regulatory agencies provide updated implementation timelines for impurity control strategies, with recent FDA documents updating recommended timelines through June 2025 [11]. Manufacturers must adhere to these timelines while developing and validating appropriate methods. For both potency and impurity methods, the fundamental requirement is that they must be suitable for their intended use and provide reliable, meaningful data to ensure drugs are safe and effective throughout their shelf life.

Assay methods for potency and impurity analysis serve fundamentally different yet complementary roles in pharmaceutical quality assurance. Potency methods focus on confirming therapeutic activity through mechanism-reflective bioassays, while impurity methods prioritize patient safety through sensitive detection and control of harmful contaminants. The methodological approaches, validation parameters, and regulatory frameworks for each reflect their distinct purposes, with potency assays emphasizing biological relevance and functional assessment, and impurity methods prioritizing separation power and sensitive detection.

Both methodologies are essential components of a comprehensive quality system, providing the critical data needed to ensure that pharmaceutical products are both efficacious and safe for patient use. As regulatory expectations evolve, particularly for challenging impurities like nitrosamines, the continued development and refinement of both potency and impurity methods remains essential for advancing drug quality and patient safety.

The Evolving Regulatory and Scientific Landscape

The development and validation of analytical procedures are undergoing a significant transformation, shifting from a discrete, linear process to an integrated, holistic lifecycle approach. This evolution is driven by the need for more robust, reliable, and fit-for-purpose methods in pharmaceutical analysis, especially when comparing the distinct validation requirements for assay methods versus impurity methods [14].

The traditional view of analytical procedures emphasized a rapid development phase followed by validation and operational use. Changes were difficult to implement, often requiring revalidation or redevelopment. In contrast, the Analytical Procedure Lifecycle Management (APLM) framework introduces a more dynamic, science-based paradigm. This modern approach is championed by regulatory and pharmacopeial bodies, including the U.S. Pharmacopeia (USP), which has drafted a new general chapter, <1220>, to formalize this methodology [14]. The core principle of APLM is that a procedure should be maintained in a state of control throughout its entire lifespan—from initial design through routine use—facilitating continuous improvement and adaptation based on accumulated data [15].

This lifecycle model is particularly useful when comparing the validation requirements for assay versus impurity methods. Assay methods, which measure the main active component, typically operate over a range bracketing the nominal concentration (e.g., 80-120% of the test concentration, or 70-130% for content uniformity) and prioritize accuracy and precision. Impurity methods, designed to quantify or qualify trace-level components, demand far greater sensitivity and selectivity, with validation heavily focused on limits of detection (LOD) and quantification (LOQ). The lifecycle approach provides a structured framework for understanding and applying these distinct validation criteria from the outset [14].

The Three Stages of the Analytical Procedure Lifecycle

The APLM framework, as proposed in the draft USP <1220>, consists of three iterative stages that ensure a procedure remains fit for its intended purpose over its entire use. The model incorporates feedback loops for continuous improvement, a critical aspect for maintaining method robustness for both assay and impurity determinations [14]. The three stages and their key objectives are described below.

Stage 1: Procedure Design and Development

The foundation of the lifecycle is the Analytical Target Profile (ATP), a predefined objective that articulates the procedure's requirements for its intended use. The ATP is essentially a "performance specification" that defines the Critical Quality Attributes (CQAs) the method must measure, the required level of accuracy and precision, and the range over which it must operate [14]. For an assay method, the ATP might specify an accuracy of 98-102% and precision of ≤2% RSD. For an impurity method, the ATP would define the required LOQ, often as a low percentage of the drug substance (e.g., 0.05%), and the necessary selectivity to resolve impurities from the main peak and from each other.
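
One way to make an ATP concrete is to record it as a small data structure that validation results can be checked against. The sketch below is illustrative only: the class and field names are hypothetical, the assay criteria and the 0.05% LOQ come from the examples in the paragraph above, and the impurity recovery and RSD limits are assumed placeholder values.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    name: str
    recovery_low: float              # lower accuracy bound, % recovery
    recovery_high: float             # upper accuracy bound, % recovery
    max_rsd: float                   # precision limit, % RSD
    max_loq: Optional[float] = None  # required LOQ, % of drug substance

    def is_met(self, mean_recovery, rsd, loq=None):
        """Return True when the observed performance satisfies every criterion."""
        ok = (self.recovery_low <= mean_recovery <= self.recovery_high
              and rsd <= self.max_rsd)
        if self.max_loq is not None:
            # An LOQ requirement exists, so an observed LOQ must be supplied
            ok = ok and loq is not None and loq <= self.max_loq
        return ok

assay_atp = AnalyticalTargetProfile("assay", 98.0, 102.0, 2.0)
# Recovery window and RSD limit below are assumed values for illustration
impurity_atp = AnalyticalTargetProfile("impurity", 80.0, 120.0, 10.0, max_loq=0.05)

print(assay_atp.is_met(mean_recovery=99.5, rsd=0.8))
print(impurity_atp.is_met(mean_recovery=95.0, rsd=4.0, loq=0.10))
```

Writing the ATP down this explicitly before development starts keeps the later validation acceptance criteria traceable to the intended use.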

Method development then proceeds using Quality-by-Design (QbD) principles. This involves:

- Risk Assessment: Identifying factors (e.g., chromatographic conditions, sample preparation) that could impact method performance.

- Design of Experiments (DoE): Systematically evaluating these factors to understand their interactive effects and establish a Method Operable Design Region (MODR), the multidimensional space within which the method delivers consistent, reliable results [15].

This structured development is far more likely to yield a robust method suitable for its intended use, whether for assay or impurity profiling.

Stage 2: Procedure Performance Qualification

This stage aligns with the traditional concept of method validation but is conducted with a deeper, data-informed understanding from Stage 1. The goal is to demonstrate that the procedure, as developed, is capable of consistently meeting the criteria defined in the ATP [14]. The validation parameters assessed will differ in emphasis for assay and impurity methods, as detailed in the table below.

Table 1: Key Validation Parameters for Assay vs. Impurity Methods

| Validation Parameter | Assay Method Focus | Impurity Method Focus |

|---|---|---|

| Accuracy (Trueness) | High priority; recovery expected near 100% | Critical for quantification; may be assessed at specific low levels |

| Precision (Repeatability) | High priority; very low RSD expected | Crucial at the LOQ level and reporting threshold |

| Specificity/Selectivity | Must demonstrate no interference from excipients | Must demonstrate baseline separation from all known and potential impurities |

| Linearity & Range | Over a range bracketing the target concentration (e.g., 80-120% of the test concentration) | From the reporting threshold to at least 120% of the specification |

| Limit of Detection (LOD) | Often not critical for main component | Extremely critical; must be sufficient to detect impurities at or below the reporting threshold |

| Limit of Quantification (LOQ) | Often not critical for main component | Extremely critical; must be sufficient to quantify impurities at the reporting threshold with acceptable precision and accuracy |

| Robustness | Should be evaluated against deliberate variations in method parameters | Highly critical; small variations must not impact the separation and quantification of impurities |

Stage 3: Continued Procedure Performance Verification

The lifecycle does not end with validation. Stage 3 involves the ongoing monitoring of the procedure's performance during routine use to ensure it remains in a state of control. This is achieved through strategies like system suitability tests (SST) and tracking of control charts with pre-defined alert and action limits [14]. If data trends indicate a drift in performance, this triggers a feedback loop, prompting investigation and potential method improvement (a return to Stage 1 or 2), thus closing the lifecycle loop.
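
The alert and action limits mentioned above can be sketched with a simple Shewhart-style control chart. The sketch assumes the common convention of mean ± 2 standard deviations for alert limits and mean ± 3 standard deviations for action limits; a laboratory may predefine different limits, and the baseline data and function names are hypothetical.

```python
import statistics

def control_limits(baseline):
    """Derive alert (±2 SD) and action (±3 SD) limits from historical results."""
    mean = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return {
        "mean": mean,
        "alert": (mean - 2 * sd, mean + 2 * sd),
        "action": (mean - 3 * sd, mean + 3 * sd),
    }

def classify(value, limits):
    """Label a new result as 'in control', 'alert', or 'action'."""
    low_action, high_action = limits["action"]
    low_alert, high_alert = limits["alert"]
    if value < low_action or value > high_action:
        return "action"
    if value < low_alert or value > high_alert:
        return "alert"
    return "in control"

# Hypothetical control-sample recoveries (%) collected during routine use
baseline = [100.1, 99.8, 100.3, 99.9, 100.0, 100.2, 99.7, 100.0]
limits = control_limits(baseline)
for new_result in (100.1, 100.5, 100.9):
    print(new_result, classify(new_result, limits))
```

An "action" result would trigger the investigation and feedback loop described above, while repeated "alert" results may indicate drift worth trending.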

Modern Tools for Lifecycle Management: RAPI and BAGI

The industry is developing advanced tools to support the quantitative assessment of methods within the APLM framework. A significant recent advancement is the Red Analytical Performance Index (RAPI), a tool designed to standardize the evaluation of the "red" dimension—analytical performance [16] [17].

RAPI provides a structured, semi-quantitative scoring system based on ten key analytical parameters derived from ICH and other regulatory guidelines, including repeatability, intermediate precision, trueness, LOQ, working range, linearity, robustness, and selectivity [17]. Each parameter is scored from 0 to 10, resulting in a final composite score between 0 and 100. This score is visually represented in a radial pictogram, offering an immediate, transparent overview of a method's strengths and weaknesses [16]. RAPI is complemented by its "sister" tool, the Blue Applicability Grade Index (BAGI), which assesses practical and economic aspects ("blue" dimension). Together, they form a comprehensive evaluation system under the White Analytical Chemistry (WAC) model, which integrates performance (red), sustainability (green), and practicality (blue) [17].

Table 2: The Scientist's Toolkit for Analytical Procedure Lifecycle Management

| Tool / Solution | Category | Primary Function in APLM |

|---|---|---|

| Analytical Target Profile (ATP) | Strategic Document | Defines the objective and performance standards for the analytical procedure [14]. |

| Design of Experiments (DoE) | Statistical Framework | Optimizes method conditions efficiently and defines the Method Operable Design Region (MODR) [15]. |

| Red Analytical Performance Index (RAPI) | Assessment Software | Quantifies and visualizes analytical performance for objective comparison and lifecycle monitoring [16] [17]. |

| High-Resolution Mass Spectrometry (HRMS) | Instrumentation | Provides unmatched sensitivity and selectivity for characterizing complex molecules and impurities [15]. |

| Process Analytical Technology (PAT) | Monitoring System | Enables real-time in-process testing and control, supporting Real-Time Release Testing (RTRT) [15]. |

| Cloud-Based LIMS (Laboratory Information Management System) | Data Management | Enables real-time data sharing and collaboration across global sites, underpinning data integrity (ALCOA+) [15]. |

Experimental Protocol for a Comparative Lifecycle Study

To illustrate the application of the APLM concept in a research context, the following is a generalized experimental protocol for a study comparing two analytical procedures—for example, a traditional HPLC method versus a modern UHPLC method for drug assay and impurity profiling.

1. Define the ATP: The study begins by defining a precise ATP. For example: "The procedure must quantify the active pharmaceutical ingredient (API) with an accuracy of 98.0-102.0% and a precision of ≤1.5% RSD, and must simultaneously identify and quantify specified impurities at a level of 0.10% with an accuracy of 90-110% and a precision of ≤5.0% RSD."

2. Method Development via DoE: Both methods are developed using a DoE approach. Critical factors (e.g., column temperature, mobile phase gradient, and pH) are varied within a predefined range. Responses such as peak resolution, tailing factor, and runtime are measured to establish the MODR for each method [15].

3. Procedure Performance Qualification: A full validation is conducted for both methods against the parameters in Table 1, with specific emphasis as dictated by the ATP (e.g., LOQ for impurities).

4. Holistic Assessment with RAPI and BAGI: The validation data from both methods are input into the RAPI software to generate a quantitative performance score and visual pictogram [17]. The methods are also assessed using the BAGI tool to compare practicality (e.g., cost, time, safety).

5. Data Analysis and Lifecycle Selection: The results are synthesized. A hypothetical outcome is summarized below, demonstrating how the lifecycle approach facilitates an objective, multi-faceted comparison.

Table 3: Hypothetical Comparative Data for Two Chromatographic Methods

| Assessment Criteria | Traditional HPLC Method | Modern UHPLC Method | Inference for Lifecycle Management |

|---|---|---|---|

| Analytical Performance (RAPI Score) | 75 / 100 | 88 / 100 | UHPLC demonstrates superior overall performance and robustness. |

| Accuracy (API Assay) | 99.5% | 100.2% | Both methods meet ATP criteria for the main assay. |

| LOQ for Key Impurity | 0.15% | 0.05% | Only the UHPLC method can quantify at the 0.10% level required by the ATP; the HPLC LOQ is too high. |

| Run Time per Sample | 20 minutes | 5 minutes | UHPLC offers significant throughput advantages for routine use. |

| Organic Solvent Consumption | 12 mL/sample | 3 mL/sample | UHPLC is more environmentally sustainable ("green"). |

| Practicality (BAGI Score) | 65 / 100 | 82 / 100 | UHPLC is more practical and cost-effective over the procedure's lifecycle. |

Conclusion: Based on the holistic data, the UHPLC method, while potentially having a higher initial investment, is more fit-for-purpose according to the ATP, more sustainable, and more practical for long-term lifecycle management. This structured, data-driven comparison supports a sound scientific and business case for its selection and adoption.

When is Validation Required? New Methods, Transfers, and Significant Changes

In pharmaceutical research and development, the reliability of analytical data is the cornerstone of correct scientific interpretation and decision-making. Unreliable results can lead to the over- or underestimation of effects, false interpretations, and unwarranted conclusions, which, in a regulatory context, can compromise patient safety and drug efficacy [18]. Validation is the formal process of establishing, through laboratory studies, that the performance characteristics of an analytical method are suitable for its intended analytical purpose [19]. This guide objectively compares validation requirements across three critical scenarios: the introduction of new methods, the transfer of existing methods, and the management of significant changes to validated methods, framed within the specific contexts of assay and impurity methods research.

Part 1: Validation for New Analytical Methods

Core Principles and Regulatory Definitions

Before a new analytical method is used in a regulatory-decision context, its relevance, reliability, and fitness for purpose must be established. According to the Organisation for Economic Co-operation and Development (OECD), validation is “the process by which the reliability and relevance of a particular approach, method, process or assessment is established for a defined purpose” [19]. In plain language, this process assures developers and users that an assay is ready and acceptable for its intended use [19].

- Reliability refers to the reproducibility of the method within and between laboratories over time when performed using the same protocol.

- Relevance ensures the scientific underpinning of the test and that the outcome it measures is meaningful and useful for a particular purpose [19].

This process is supported by agencies like the Interagency Coordinating Committee on the Validation of Alternative Methods (ICCVAM), which evaluates and recommends alternative test methods for regulatory use [19].

Key Validation Parameters: Assay vs. Impurity Methods

The core parameters required for validating a new method are well-established, but the acceptance criteria and relative importance of each parameter can differ significantly between assay and impurity methods. The following table summarizes these parameters and their typical emphasis.

Table 1: Key Validation Parameters for New Assay and Impurity Methods

| Validation Parameter | Brief Description & Purpose | Relative Emphasis for Assay Methods (Quantitative) | Relative Emphasis for Impurity Methods (Quantitative) |

|---|---|---|---|

| Accuracy | Closeness of measured value to true value | High. Critical to demonstrate the method correctly measures the main analyte. | High. Critical for quantifying impurity levels against a reference standard. |

| Precision (Repeatability, Intermediate Precision) | Closeness of agreement between a series of measurements | High. Essential for demonstrating consistency of the main component result. | Very High. Impurities are often at low levels, making precision challenging and critical. |

| Specificity | Ability to assess the analyte unequivocally in the presence of other components | High. Must prove excipients, degradants, or other impurities do not interfere. | Highest. Must separate and quantify multiple impurities from each other and the main analyte. |

| Linearity & Range | The ability to obtain results proportional to analyte concentration, within a specified range | High. A linear response across the expected product strength is required. | High. Must be linear at the low end, covering from reporting threshold to specification limit. |

| Limit of Detection (LOD) | Lowest amount of analyte that can be detected | Low. Not a primary concern for the main component present at high levels. | High. Must be established to know when an impurity is detectable. |

| Limit of Quantification (LOQ) | Lowest amount of analyte that can be quantified with acceptable accuracy and precision | Low. Not a primary concern for the main component. | Very High. Must be established to know when an impurity can be reliably quantified. |

| Robustness | Capacity to remain unaffected by small, deliberate variations in method parameters | Medium-High. Important for method reliability during routine use. | Very High. Small variations can significantly impact the separation and quantification of impurities. |

Experimental Protocol for a New Method Validation Study

A typical validation protocol for a new HPLC method for an impurity procedure would involve the following detailed steps [18]:

Solution Preparation: Prepare a stock solution of the drug substance and its known impurities. From this, prepare a series of solutions for the validation study:

- Accuracy/Recovery Solutions: Spiked samples at multiple concentration levels (e.g., 50%, 100%, 150% of the specification level) in the presence of the drug product matrix.

- Precision Solutions: A minimum of six independent preparations at the 100% level of the specification.

- Linearity Solutions: A minimum of five concentrations spanning from the LOQ to 150-200% of the specification limit.

- Specificity Solutions: Individual solutions of the main analyte, each impurity, and placebos of the sample matrix to demonstrate separation and lack of interference.

Instrumentation and Data Acquisition: Analyses are performed using a qualified HPLC system with a diode array detector (DAD). The chromatographic conditions (column, mobile phase, gradient, temperature, flow rate) are set as per the method. Data on peak area, retention time, and resolution are recorded.

Data Analysis and Calculation:

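- Accuracy: Calculate the mean percentage recovery for the analyte at each level. Because this example is an impurity procedure, compare the mean recovery with a level-appropriate window (e.g., 80-120% at low impurity levels); the tighter 98-102% window is typical of assay methods.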

- Precision: Calculate the %Relative Standard Deviation (%RSD) of the peak areas for the six preparations. An %RSD of not more than 5.0% is typically acceptable for impurities.

- Linearity: Plot the peak area against the concentration and perform linear regression analysis. The correlation coefficient (r) should be greater than 0.999.

- LOD/LOQ: Determine based on a signal-to-noise ratio of 3:1 for LOD and 10:1 for LOQ, confirmed by injecting standards at those levels with acceptable accuracy and precision.
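
The linearity evaluation in the list above can be sketched with an ordinary least-squares fit. The concentrations and peak areas are hypothetical, the function name is illustrative, and the r > 0.999 criterion is the one quoted in this protocol.

```python
import math

def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r)."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / math.sqrt(sxx * syy)  # Pearson correlation coefficient
    return slope, intercept, r

# Five hypothetical levels from the LOQ up to 200% of a 0.15% specification
concentration = [0.05, 0.10, 0.15, 0.20, 0.30]  # % relative to API
peak_area = [1020.0, 1985.0, 3010.0, 4005.0, 5990.0]

slope, intercept, r = linear_fit(concentration, peak_area)
print(f"slope={slope:.1f}, intercept={intercept:.1f}, r={r:.5f}")
print("Linearity criterion met:", r > 0.999)
```

Inspecting the residuals and the size of the intercept relative to the response at the specification level is a useful complement, since a high r alone can mask curvature at the low end.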

Part 2: Validation During Method Transfer

The Purpose of Transfer Validation

Method transfer is the process of qualifying a receiving laboratory (RL) to execute a validated analytical method that was developed and validated in a transferring laboratory (TL). The goal is to ensure the method performs in the RL as reliably as it does in the TL, ensuring data consistency across sites.

Comparative Study Designs for Method Transfer

The core of a method transfer is a comparison study. The design of this study depends on the goal and the nature of the methods being compared [20].

Table 2: Quantitative Comparison Approaches in Method Transfer

| Comparison Approach | Description & Formula | Best Suited For |

|---|---|---|

| Mean Difference (Constant Bias) | Calculates the average difference between results from the RL and TL. Formula: \( \text{Mean Difference} = \frac{\sum_{i=1}^{n} (R_i - T_i)}{n} \), where \( R_i \) = Receiving Lab result, \( T_i \) = Transferring Lab result, and \( n \) = number of paired samples. | Comparing parallel instruments or labs running the exact same method. Assumes any difference is constant across the concentration range [20]. |

| Bias as a Function of Concentration (Regression) | Uses linear regression (e.g., Passing-Bablok) to model the relationship and estimate bias across the measuring range. | Situations where the RL uses different instrumentation or a slightly modified method, and bias is expected to vary with concentration [20]. |

| Sample-Specific Differences | Examines the difference for each sample individually. The overview report shows the smallest and largest difference. | Small-scale transfers with a limited number of samples (e.g., <10), or when ensuring every sample result is within pre-set bias goals [20]. |
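
The mean-difference and sample-specific approaches in the table above can be computed together from paired results. This is a minimal sketch; the assay values and the function name are hypothetical.

```python
def paired_differences(receiving, transferring):
    """Return (mean difference, smallest difference, largest difference).

    Differences are computed as receiving-lab minus transferring-lab result
    for each paired sample.
    """
    if len(receiving) != len(transferring) or not receiving:
        raise ValueError("need two non-empty result lists of equal length")
    diffs = [r - t for r, t in zip(receiving, transferring)]
    return sum(diffs) / len(diffs), min(diffs), max(diffs)

# Hypothetical assay results (% label claim) for three lots
rl_results = [99.8, 100.4, 101.0]
tl_results = [99.5, 100.0, 100.9]

mean_diff, smallest, largest = paired_differences(rl_results, tl_results)
print(f"mean difference = {mean_diff:.2f}")
print(f"range of differences = {smallest:.2f} to {largest:.2f}")
```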

Experimental Protocol for a Method Transfer Study

A standard protocol for an inter-laboratory method transfer is as follows [20]:

Pre-Transfer Agreement: The TL and RL agree on the transfer protocol, which includes the number of samples (typically a minimum of 3 lots analyzed in triplicate each), acceptance criteria (e.g., %RSD <2.0%, mean difference ±2.0%), and the responsibilities of each lab.

Sample Selection: The TL provides the RL with homogeneous samples of known concentration, including drug substance and finished product batches, which cover the expected range.

Execution: Analysts at the RL, who have been trained on the method, perform the analysis independently following the written method procedure.

Data Analysis: Both labs perform statistical analysis on the collected data. The RL's results are compared against the TL's results or the known reference values. A statistical test such as the t-test is often used to compare the means of the two data sets; a p-value greater than 0.05 (at the 5% significance level) indicates no statistically significant difference.
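
The comparison of means described above can be sketched with a pooled two-sample t statistic. The sketch assumes independent samples with similar variances and compares the statistic with a tabulated critical value instead of computing a p-value; 2.228 is the two-sided 5% critical value for 10 degrees of freedom. The results and the function name are hypothetical.

```python
import math
import statistics

def pooled_t_statistic(sample_a, sample_b):
    """Two-sample t statistic with pooled variance; returns (t, degrees of freedom)."""
    n_a, n_b = len(sample_a), len(sample_b)
    mean_a, mean_b = statistics.mean(sample_a), statistics.mean(sample_b)
    var_a, var_b = statistics.variance(sample_a), statistics.variance(sample_b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / df
    t = (mean_a - mean_b) / math.sqrt(pooled_var * (1.0 / n_a + 1.0 / n_b))
    return t, df

# Hypothetical assay results (% label claim), six preparations per laboratory
tl = [99.8, 100.1, 100.3, 99.9, 100.0, 100.2]
rl = [100.0, 100.4, 100.2, 99.9, 100.3, 100.1]

t_stat, df = pooled_t_statistic(rl, tl)
T_CRITICAL_DF10 = 2.228  # two-sided, alpha = 0.05, 10 degrees of freedom
print(f"t = {t_stat:.3f}, df = {df}")
print("No significant difference:", abs(t_stat) < T_CRITICAL_DF10)
```

A non-significant result does not by itself demonstrate equivalence, so the predefined acceptance criteria for mean difference and %RSD in the transfer protocol still apply.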

Part 3: Validation for Significant Changes

Defining a "Significant Change"

In the context of maintaining validated systems, a significant change is any modification that could reasonably be expected to affect the safety or effectiveness of a product or the performance of an analytical method [21]. This is a crucial concept in regulated manufacturing and laboratory environments. The guiding principle is that implementing change in a validated system is a critical time for ensuring it remains controlled [22].

Categorizing Changes and Required Re-validation

Not all changes are equal. They can be categorized to determine the appropriate level of re-validation effort [22].

Table 3: Categories of Change and Corresponding Re-validation Actions

| Change Category | Description & Examples | Typical Re-validation Action |

|---|---|---|

| Minor | A change with minimal impact and low risk. Examples: Changing to a different supplier of an equivalent solvent. Software update for a bug fix with no functionality change. | Minimal testing. Limited re-validation focused only on the specific element changed. Testing is confined to the directly affected component [22]. |

| Major | A change with a notable, direct impact on the method or system. Examples: Changing a column from one manufacturer to another (same chemistry claimed). Changing the wavelength of a detector. Modifying a mobile phase pH by ±0.1 units. | Wide-ranging testing. Requires re-validation of areas both directly and indirectly impacted. A full suite of validation parameters (e.g., Specificity, Precision, Accuracy) may need to be partially or fully re-executed to prove the change did not adversely affect the method [22]. |

| Critical | A change with a substantial, system-wide impact and high risk. Examples: Changing the core analytical technique (e.g., from HPLC to UPLC). Extending the method to a new matrix. Changing the active pharmaceutical ingredient (API) synthesis route. | Full re-validation. Typically requires re-validating the entire method as if it were new. All key parameters must be re-evaluated to establish that the method remains suitable for its intended use [22]. |

The Change Management and Re-validation Workflow

A structured change management process is essential for handling modifications to validated systems [22].

Experimental Protocol for Assessing a Significant Change

The evaluation of a significant change, such as a major modification to an HPLC method, follows a rigorous process [22] [21]:

Change Request and Impact Assessment: A formal change request is submitted, detailing the proposed change (e.g., "Replace Column A with Column B"). The impact on the method's performance, the product's quality attributes, and the patient is assessed.

Risk Assessment: A risk assessment is conducted to identify potential new hazards or shifts in existing risks. It estimates the probability and severity of harm and determines the acceptability of the residual risk. This assessment is crucial for justifying the extent of re-validation [21].

Re-validation Testing Protocol: Based on the categorization (e.g., Major), a targeted re-validation protocol is written. For a column change, this would typically require re-evaluating:

- System Suitability: Ensuring it still meets criteria with the new column.

- Specificity: Demonstrating that resolution between the main peak and impurities is maintained.

- Precision: Performing a minimum of six injections of a standard to confirm repeatability.

The tests are performed in a controlled, non-production "sandbox" environment if possible [22].

Documentation and Implementation: The results are documented, and if successful, the change is implemented in the live environment. The validation documentation is updated to reflect the new method conditions, ensuring the system remains in a validated state [22].

The Scientist's Toolkit: Essential Reagents and Materials for Validation

The following table details key materials used in a typical bioanalytical method validation study, such as for quantifying a drug and its metabolites in plasma [18].

Table 4: Key Research Reagent Solutions for Bioanalytical Validation

| Item | Function in Validation |

|---|---|

| Analyte of Interest (Drug Substance) | The primary target molecule for quantification. Serves as the reference standard for preparing calibration curves and quality control samples. |

| Stable Isotope-Labeled Internal Standard (IS) | A chemically identical version of the analyte with atoms replaced by stable isotopes (e.g., ²H, ¹³C). Added to all samples to correct for variability in sample preparation and instrument response [18]. |

| Control Blank Matrix | The biological fluid (e.g., human plasma) free of the analyte. Used to prepare calibration standards and quality control (QC) samples and to demonstrate specificity by showing no interfering peaks. |

| Certified Reference Standards for Metabolites/Impurities | Highly purified and well-characterized materials used to identify and quantify degradation products or metabolites. Critical for validating impurity methods. |

| Quality Control (QC) Samples | Samples spiked with known concentrations of the analyte (Low, Mid, High) in the control matrix. These are treated as unknown during analysis to assess the accuracy and precision of the run. |

| Matrix Effect Evaluation Solutions | Solutions used to investigate ion suppression or enhancement in mass spectrometry. Often involve post-column infusion of the analyte while injecting a blank matrix extract [18]. |
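
As a rough illustration of how the internal standard in Table 4 corrects for variability, the sketch below computes analyte-to-IS peak-area ratios. The function name and the peak areas are hypothetical, invented for illustration; they are not taken from the cited study.

```python
def response_ratio(analyte_area: float, is_area: float) -> float:
    """Return the analyte peak area divided by the internal-standard (IS) peak area."""
    if is_area <= 0:
        raise ValueError("IS peak area must be positive")
    return analyte_area / is_area


# Hypothetical peak areas (analyte, IS). The second injection lost about 10%
# of its signal (e.g., incomplete extraction), affecting analyte and IS alike.
injections = [(50_000.0, 100_000.0), (45_000.0, 90_000.0)]

raw_areas = [analyte for analyte, _ in injections]
ratios = [response_ratio(analyte, is_area) for analyte, is_area in injections]

print("Raw analyte areas:", raw_areas)   # differ between injections
print("Analyte/IS ratios:", ratios)      # the shared loss cancels out
```

Because the loss affects both compounds proportionally, the ratio stays constant even though the raw areas differ, which is why calibration in such methods is usually built on the ratio rather than the raw analyte response.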

Validation is not a one-time event but an ongoing commitment to data quality and integrity throughout the lifecycle of an analytical method. The rigor and scope of validation are dictated by the specific trigger: new method development demands a comprehensive, parameter-based approach; method transfer relies on comparative statistical studies to ensure consistency; and the management of significant changes requires a risk-based assessment to determine the appropriate level of re-validation. For researchers in drug development, a deep understanding of these requirements, particularly the nuanced differences between assay and impurity methods, is not merely a regulatory hurdle but a fundamental scientific practice that ensures the safety and efficacy of medicinal products.

Applying Validation Parameters: A Practical Guide for Assay and Impurity Methods

In the highly regulated world of pharmaceutical development, demonstrating that an analytical method is "fit for purpose" is a fundamental requirement. The Analytical Target Profile (ATP) has emerged as the foundational concept to formally define this fitness, providing a prospective summary of the performance requirements an analytical procedure must meet to reliably report on a product's critical quality attributes (CQAs) [23]. This guide compares how the ATP is applied to two critical analytical procedures: assay methods and impurity methods, highlighting their distinct performance requirements and validation strategies.

The Analytical Target Profile: A Strategic Foundation

The ATP is a strategic tool that defines the quality of the reportable value needed from an analytical procedure, ensuring it is suitable for its intended use and capable of supporting key decisions about product quality and compliance [24]. It is the analytical equivalent of the Quality Target Product Profile (QTPP) for a drug product [23].

The lifecycle of an analytical procedure, guided by the ATP, is a continuous process from definition through post-approval change management. The following diagram illustrates this workflow and the role of the ATP within it.

Comparative Analysis: Assay vs. Impurity Methods

While the ATP framework is consistent, the specific performance criteria it defines vary significantly depending on the procedure's purpose. The table below summarizes the key distinctions in how ATP requirements are applied to assay methods versus impurity methods.

Table 1: ATP and Validation Requirements Comparison: Assay vs. Impurity Methods

| Characteristic | Assay/Potency Methods | Impurity Methods |

|---|---|---|

| Primary ATP Focus | Accuracy and precision of the main component measurement [8]. | Specificity, sensitivity, and ability to separate and quantify minor components [3]. |

| Key Validation Parameters | Accuracy, Precision, Linearity, Range [25]. | Specificity/Forced Degradation, LOD/LOQ, Range [3] [25]. |

| Accuracy & Precision Acceptable Ranges | Typically tight recovery limits (e.g., 98-102%) for the main analyte [8]. | Wider, level-dependent recovery limits (e.g., 90-110% for impurities at 0.5-1.0%; 80-120% for impurities below 0.5%) [3]. |

| Specificity & Forced Degradation | Must demonstrate no interference from excipients or impurities [25]. | Critical to demonstrate separation of all potential impurities from each other and the main peak. Requires stress studies to predict future impurities [3]. |

| Linearity & Range | Typically 80-120% of the test concentration [25]. | From LOQ (e.g., 0.05%) to at least 1.5x the specification limit [3]. |

| System Suitability | Ensures system performance is adequate for the intended assay measurement. | Often includes a degraded sample to demonstrate ongoing separation capability for critical impurity pairs [3]. |
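
The level-dependent accuracy limits in Table 1 can be expressed as a small lookup. The sketch below is only an illustration: the function and constant names are hypothetical, the thresholds simply mirror the example values quoted in the table, and levels above 1.0% return `None` because the table gives no example for them.

```python
from typing import Optional, Tuple

# Example assay recovery window from Table 1 (98-102%).
ASSAY_WINDOW: Tuple[float, float] = (98.0, 102.0)


def impurity_recovery_window(level_pct: float) -> Optional[Tuple[float, float]]:
    """Return the example recovery window (low, high) in % for an impurity level.

    Thresholds mirror the examples in Table 1. Levels above 1.0% are not
    covered there, so None is returned rather than guessing a limit.
    """
    if level_pct <= 0:
        raise ValueError("impurity level must be positive")
    if level_pct < 0.5:
        return (80.0, 120.0)
    if level_pct <= 1.0:
        return (90.0, 110.0)
    return None


def recovery_passes(recovery_pct: float, window: Tuple[float, float]) -> bool:
    """Check whether a measured recovery falls inside the window (inclusive)."""
    low, high = window
    return low <= recovery_pct <= high


# A 95% recovery fails the assay window but passes for a 0.7% impurity.
print(recovery_passes(95.0, ASSAY_WINDOW))
print(recovery_passes(95.0, impurity_recovery_window(0.7)))
```

The same recovery value can therefore be acceptable for one method type and unacceptable for the other, which is the practical consequence of defining the criteria in the ATP rather than applying one universal limit.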

Experimental Protocols for Method Validation

The validation experiments conducted are direct implementations of the criteria established in the ATP. The protocols below are typical for demonstrating key ATP requirements.

Protocol 1: Establishing Specificity for an Impurity Method via Forced Degradation

For impurity methods, specificity is paramount and is rigorously demonstrated through forced degradation studies [3].

- Objective: To demonstrate the method can separate and quantify known and potential degradation products from the active pharmaceutical ingredient (API) and from each other.

- Sample Preparation: The drug substance or product is stressed under various conditions including acid, base, oxidation, thermal, and photolytic stress. The conditions should be "realistic and practical" [3]. For example, for a drug product stable at pH 3-5, acid degradation should be studied in more detail than base degradation.

- Data Analysis:

  - Peak Purity: Use a diode array detector (DAD) to ensure the main peak is pure and not hiding any co-eluting impurities. Note that peak purity can be misleading if an impurity has a similar spectrum, so the method should be challenged with different chromatographic parameters (e.g., a new column lot) [3].

  - Mass Balance: An attempt is made to account for all degradation products and the remaining API. While a mass balance of 100% is ideal, values as low as 80% can be acceptable with proper justification (e.g., non-UV absorbing degradants, difference in response factors) [3].
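
The mass balance check in Protocol 1 reduces to simple arithmetic. The sketch below assumes that the remaining API and every degradant are expressed on the same basis (percent of the initial API amount) and that response factors are equal, which real studies often cannot take for granted. The function names and the stressed-sample values are hypothetical.

```python
from typing import Sequence


def mass_balance(initial_api_pct: float,
                 remaining_api_pct: float,
                 degradant_pcts: Sequence[float]) -> float:
    """Mass balance (%) = (remaining API + total degradants) / initial API x 100."""
    if initial_api_pct <= 0:
        raise ValueError("initial API amount must be positive")
    accounted_for = remaining_api_pct + sum(degradant_pcts)
    return 100.0 * accounted_for / initial_api_pct


def needs_justification(balance_pct: float, floor_pct: float = 80.0) -> str:
    """Classify a result using the 80% example floor quoted in the text."""
    if balance_pct < floor_pct:
        return "below example floor: investigate"
    if balance_pct < 100.0:
        return "acceptable only with documented justification"
    return "fully accounted for"


# Hypothetical acid-stressed sample: 88.0% API remains, three degradants found.
balance = mass_balance(100.0, 88.0, [4.0, 2.5, 1.5])
print(f"Mass balance: {balance:.1f}% -> {needs_justification(balance)}")
```

A result between the floor and 100% is not a pass by itself; the gap still has to be explained, for example by degradants that do not absorb at the detection wavelength.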

Protocol 2: Determining Accuracy and Precision for an Assay Method

For an assay method, the ATP requires a high degree of confidence in the reportable value for the main component [24] [8].

- Objective: To prove the method can recover the API from the sample matrix with high accuracy and precision.

- Sample Preparation: A placebo blend representative of the drug product formulation is spiked with known quantities of the API at multiple concentration levels, typically 80%, 100%, and 120% of the target concentration. For drug substance, the API is simply dissolved at the target concentration.

- Data Analysis:

  - Accuracy: Calculated as the percentage recovery of the known, added amount of API. Results should typically be within 98-102% [8].

  - Precision (Repeatability): Expressed as the relative standard deviation (%RSD) of multiple (e.g., six) injections of a single homogeneous sample. The %RSD should be low (e.g., no more than 1-2%). The total measurement error, which incorporates both accuracy and precision, should also be evaluated against the product specification to confirm that the method is fit for purpose [24] [8].
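
The two calculations in Protocol 2 can be sketched as follows. The spiked amounts and peak areas are hypothetical values chosen for illustration, and the function names are not from any cited source. The %RSD uses the sample standard deviation (n - 1 denominator), which is the usual convention for repeatability data.

```python
from statistics import mean, stdev
from typing import Sequence


def percent_recovery(found: float, added: float) -> float:
    """Accuracy as percent recovery of the known, added amount."""
    if added <= 0:
        raise ValueError("added amount must be positive")
    return 100.0 * found / added


def percent_rsd(values: Sequence[float]) -> float:
    """Relative standard deviation (%) using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical spiked-placebo results: (amount added, amount found) in mg,
# corresponding to the 80%, 100%, and 120% levels.
spiked = [(80.0, 79.6), (100.0, 100.4), (120.0, 119.4)]
recoveries = [percent_recovery(found, added) for added, found in spiked]

# Hypothetical peak areas from six injections of one homogeneous sample.
areas = [1002.0, 998.0, 1005.0, 995.0, 1001.0, 999.0]
rsd = percent_rsd(areas)

for (added, _), rec in zip(spiked, recoveries):
    print(f"{added:.0f} mg level: recovery {rec:.1f}%")
print(f"Repeatability: %RSD = {rsd:.2f}%")
```

Both results would then be compared with the limits set in the ATP, such as the 98-102% recovery window quoted above.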

Essential Research Reagent Solutions

The following table details key materials and instruments required to develop and validate methods based on a predefined ATP.

Table 2: Essential Research Reagents and Tools for ATP-Driven Analytical Development

| Item | Function/Purpose |

|---|---|

| Chemical Reference Standards | Highly purified substances used to confirm the identity, potency, and purity of the API and known impurities. Essential for specificity, accuracy, and linearity experiments. |

| Forced Degradation Reagents | Acids (e.g., HCl), bases (e.g., NaOH), oxidizers (e.g., H₂O₂) used in stress studies to challenge method specificity and robustness [3]. |

| HPLC/UPLC System with DAD | The core instrumentation for chromatographic separation and detection. A Diode Array Detector (DAD) is critical for assessing peak purity during forced degradation [3]. |

| Chromatography Data System (CDS) | Software for instrument control, data acquisition, and analysis. Modern systems may integrate AI for autonomous method optimization [26]. |

| Validated Method Protocol | A detailed, step-by-step documented procedure that has been verified to meet all ATP criteria, ensuring consistency and compliance during transfer and routine use [27] [8]. |

Defining fitness-for-purpose through a well-constructed ATP is not merely a regulatory checkbox but a strategic imperative for efficient and compliant analytical practices. As shown, the application of the ATP is highly specific: assay methods demand high accuracy and precision for the main component, while impurity methods prioritize extreme specificity, sensitivity, and separation power. Adopting this ATP-focused, lifecycle approach, as championed by ICH Q14 and Q2(R2), ensures analytical methods remain scientifically sound and suitable for their intended purpose, ultimately safeguarding product quality and patient safety from development through commercial production.

In pharmaceutical development, analytical method validation is a critical, documented process that proves a testing procedure is reliable and suitable for its intended purpose [28]. It confirms that an analytical method consistently produces accurate, precise, and reproducible results, thereby underpinning the credibility of scientific data and ensuring drug product quality, safety, and efficacy [4] [29]. Guidelines from the International Council for Harmonisation (ICH), particularly ICH Q2(R1) and its updated revision ICH Q2(R2), provide the globally recognized framework for validating these procedures [30] [31]. The core parameters—Specificity, Accuracy, Precision, Linearity, and Range—form the foundation for assessing any analytical method's performance [4] [32]. Understanding these parameters is essential for researchers, scientists, and drug development professionals, especially when comparing validation requirements for different method types, such as assay methods versus impurity methods, as the context of use dictates the stringency and approach to validation [33].

Core Validation Parameters and Regulatory Definitions

The validation of analytical procedures for pharmaceutical substances and products is guided by harmonized principles outlined in ICH guidelines. The following core parameters are essential for demonstrating that a method is fit-for-purpose.

Specificity

Specificity is the ability of a method to assess unequivocally the analyte of interest in the presence of other components that may be expected to be present in the sample matrix [31] [29]. These components include impurities, degradation products, excipients, and other matrix constituents. A specific method yields results for the target analyte, and the target analyte only, free from any interference [32]. For assay methods, this typically means demonstrating that the excipients in a drug product do not interfere with the measurement of the Active Pharmaceutical Ingredient (API). For impurity methods, specificity is even more critical: it must be demonstrated that the method can resolve each impurity from the others and from the main API peak, and quantify each one accurately [4].

Accuracy

The accuracy of an analytical procedure expresses the closeness of agreement between the value found and a value accepted as either a conventional true value or an accepted reference value [4] [32]. It is a measure of the trueness of the method, often demonstrated through recovery experiments where a known amount of the analyte is added to the sample matrix, and the measured value is compared to the theoretical value [29]. Accuracy is usually reported as a percentage recovery.

Precision

Precision expresses the closeness of agreement (degree of scatter) between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [4]. It is generally considered at three levels:

- Repeatability: Precision under the same operating conditions over a short interval of time (e.g., same analyst, same equipment).

- Intermediate Precision: Variations within the same laboratory, such as different days, different analysts, or different equipment.

- Reproducibility: Precision between different laboratories, which is often assessed during collaborative studies [4] [29].

Precision is typically expressed as standard deviation, relative standard deviation (RSD), or confidence interval [4].

Linearity

Linearity of an analytical procedure is its ability (within a given range) to obtain test results that are directly proportional to the concentration (amount) of analyte in the sample [4] [32]. It is demonstrated by preparing and analyzing a series of samples with analyte concentrations across the expected range. The data are usually evaluated by plotting the signal response against the concentration and calculating a regression line, often by the least-squares method [4]. The coefficient of determination (R²) is a common metric, with a value of ≥ 0.999 often expected for assay methods [29].
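
A least-squares evaluation of linearity data might look like the sketch below. It uses only the standard library, fits an ordinary (unweighted) regression line, and reports R². The five concentration levels and detector responses are hypothetical, and the 0.999 threshold is the example value quoted above rather than a universal limit.

```python
from typing import Sequence, Tuple


def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Ordinary least-squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("concentrations must not all be identical")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2 = 1 - (residual sum of squares / total sum of squares)
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, r_squared


# Hypothetical assay data: concentration as % of target vs. peak area.
conc = [80.0, 90.0, 100.0, 110.0, 120.0]
area = [801.0, 899.0, 1002.0, 1098.0, 1201.0]

slope, intercept, r2 = linear_fit(conc, area)
print(f"slope={slope:.3f}, intercept={intercept:.3f}, R^2={r2:.5f}")
print("Meets example criterion (R^2 >= 0.999):", r2 >= 0.999)
```

A high R² alone does not prove linearity, so the intercept and a residual plot are normally reviewed alongside it.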

Range

The range of an analytical procedure is the interval between the upper and lower concentrations (amounts) of analyte in the sample for which it has been demonstrated that the procedure has a suitable level of precision, accuracy, and linearity [4] [32]. The specific range is derived from linearity studies and depends entirely on the intended application of the method. For instance, the range for an assay of a drug substance or product is typically 80% to 120% of the test concentration, whereas for content uniformity, it is 70% to 130% [4].
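
A quick way to confirm that the concentration levels studied actually span the required range is sketched below. The dictionary keys and function name are hypothetical; the limits are the two examples given in the paragraph above.

```python
from typing import Dict, Sequence, Tuple

# Minimum ranges (% of test concentration) quoted in the text.
REQUIRED_RANGE: Dict[str, Tuple[float, float]] = {
    "assay": (80.0, 120.0),
    "content_uniformity": (70.0, 130.0),
}


def range_is_covered(method_type: str, validated_levels_pct: Sequence[float]) -> bool:
    """True if the validated levels reach both ends of the required range."""
    low, high = REQUIRED_RANGE[method_type]
    return min(validated_levels_pct) <= low and max(validated_levels_pct) >= high


levels = [80.0, 100.0, 120.0]  # hypothetical levels used in a linearity study
print(range_is_covered("assay", levels))               # spans 80-120%
print(range_is_covered("content_uniformity", levels))  # does not reach 70% or 130%
```

Levels that are adequate for an assay would therefore need to be extended before the same procedure could be claimed for content uniformity.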

Comparative Analysis: Assay vs. Impurity Methods

The application and acceptance criteria for validation parameters differ significantly between assay methods (intended to measure the main active component) and impurity methods (intended to identify and quantify minor components). The table below provides a detailed, parameter-by-parameter comparison of validation requirements.

Table 1: Comparison of Validation Parameters for Assay vs. Impurity Methods

| Validation Parameter | Typical Requirement for Assay Methods | Typical Requirement for Impurity Methods |

|---|---|---|