Beyond Accuracy: Developing Overall Efficiency (OE) Metrics for Optimization Methods in Drug Discovery

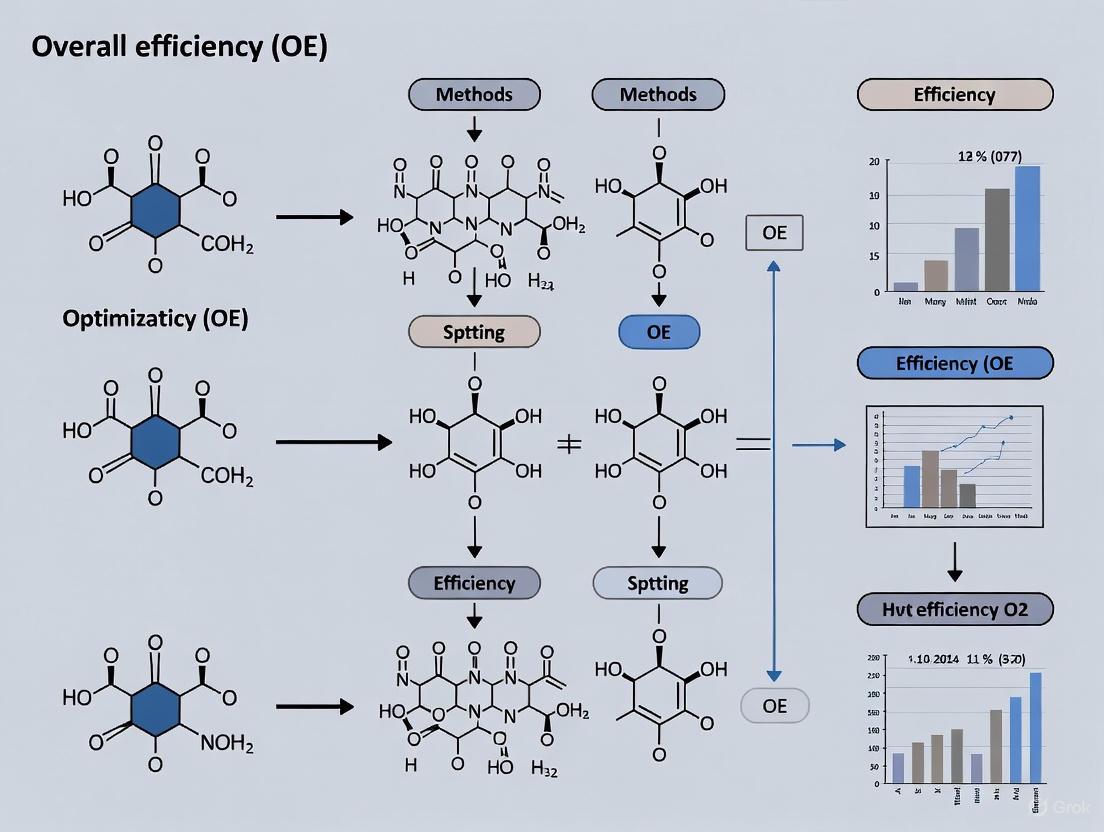

Abstract

This article addresses the critical need for Overall Efficiency (OE) metrics to evaluate optimization methods in drug discovery and development. Tailored for researchers, scientists, and development professionals, it moves beyond single metrics like accuracy to propose a holistic framework. The content explores the limitations of current evaluation standards, outlines the components of a robust OE metric, provides actionable strategies for implementation and troubleshooting, and establishes methods for validation and comparative analysis. By integrating computational speed, resource use, and predictive robustness, this framework aims to enhance decision-making, reduce attrition rates, and accelerate the translation of preclinical research into clinical success.

Why Single Metrics Fail: The Foundational Need for Overall Efficiency in Drug Discovery

Technical Support Center: Troubleshooting Clinical Trial Efficiency

This support center provides evidence-based guidance to help researchers and drug development professionals diagnose and resolve common inefficiencies in clinical trials, framed within the context of Overall Efficiency (OE) metrics for optimization methods research.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common operational metrics for diagnosing clinical trial inefficiency? The top operational metrics for diagnosing inefficiency focus on study startup, enrollment, and financial performance [1]:

- Study Startup: Time from Institutional Review Board (IRB) submission to approval; Time from notice of grant award to study opening.

- Enrollment: Accrual-to-date versus target amount; Days since last participant was enrolled.

- Resource Allocation: Staff time spent on protocol per task; Individual staff time spent on activation tasks per protocol.

FAQ 2: A significant number of screened participants are failing to qualify. What is the primary cause and solution? Screen failures, occurring in 20-30% of trials [2], are primarily caused by abnormal laboratory values (59% of cases) [2]. This indicates a mismatch between initial pre-screening and formal protocol criteria.

- Troubleshooting Protocol:

- Verify Pre-Screening: Audit your pre-screening checklists against the full protocol eligibility criteria.

- Analyze Lab Data: Review the specific laboratory values causing failures to see if pre-screening can be improved.

- Implement AI Tools: Consider AI-driven platforms that can interpret patient charts and match them to trials with high precision, reducing manual burden and improving pre-screening accuracy [3].

FAQ 3: How can we reduce patient dropout rates, which are harming our data integrity and timelines? Dropout estimates vary by source: around 30% of participants drop out of trials [4], and about 18% of randomized patients leave before study completion [5]. The main reasons are logistical (e.g., travel burden, schedule conflicts) and a lack of ongoing engagement [4] [5].

- Troubleshooting Protocol:

- Diagnose the Cause: Survey withdrawn patients. Those who drop out are 2x more likely to have found the Informed Consent Form difficult to understand and 2.4x more likely to have found site visits stressful [5].

- Implement Retention Strategies:

FAQ 4: Our trial sites are struggling with staff shortages and burnout. How can we improve operational efficiency? Over 80% of US research sites face staffing shortages, driven by unsustainable job expectations and inadequate compensation [3]. The global number of clinical trial investigators fell by almost 10% from 2017-2024 [3].

- Troubleshooting Protocol:

- Measure Staff Effort: Track metrics like "Staff time spent on protocol per task" to objectively demonstrate workload and negotiate better budgets [1].

- Reduce Data Burden: Invest in technology and infrastructure that automates data collection and reduces manual tasks, especially for staff in community settings [3].

- Expand Site Networks: Build capacity and provide support to community and rural healthcare systems to broaden the pool of available research staff [3].

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Poor Patient Enrollment

- Problem: Studies are failing to meet accrual goals.

- OE Metric Impact: Directly reduces operational efficiency and return on investment.

- Diagnostic Steps:

- Monitor Accrual: Track "accrual-to-date versus target amount" and "days since last participant enrolled" [1].

- Assess Feasibility: Determine if the issue is site-specific or related to overly narrow trial criteria.

- Evaluate Accessibility: Check if your trial sites are concentrated only in academic medical centers, effectively shutting out the 80% of patients treated in community settings [3].

- Solutions:

- Expand Geographic Access: Use a decentralized trial model and technology to enable participation from community clinics and rural hospitals [3].

- Leverage AI: Deploy AI-powered platforms to rapidly screen massive volumes of patient records and identify eligible patients across a wider network [3].

- Simplify Protocols: Redesign protocols to reduce the number of complex procedures and frequent site visits that deter participation [3].

Guide 2: Addressing Delays in Study Activation and Startup

- Problem: The time from grant award to study opening is too long, delaying research.

- OE Metric Impact: Increases overhead costs and shortens the period for data collection.

- Diagnostic Steps:

- Pinpoint Bottlenecks: Track metrics for "IRB submission to approval" and "contract receipt to execution turnaround" [1].

- Identify Causes: Analyze whether delays are due to contractual negotiations, regulatory reviews, or internal resource constraints.

- Solutions:

Quantitative Data on Clinical Trial Inefficiency

Table 1: Screen Failure and Dropout Analysis [2]

| Predictor | Impact on Screen Failures (Crude Odds Ratio) | Impact on Dropouts (Crude Odds Ratio) |
|---|---|---|
| High-Risk Studies | 39.4x higher odds | 2.6x higher odds |
| Industry-Funded Studies | 27.3x higher odds | No significant association |
| Interventional Studies | 237.6x higher odds | 2.5x higher odds |
| Healthy Participants | 19.5x higher odds | No significant association |

Table 2: The Financial and Operational Cost of Inefficiency [3]

| Cost Factor | Estimated Impact |
|---|---|
| Median Cost of Drug Development | $879.3 million [6] to $2.3 billion [3] |
| Average Phase 3 Oncology Trial Cost | Nearly $60 million (can exceed $100 million) |
| Cost of Trial Delay (per day) | $40,000 in direct costs + $500,000 in lost revenue (foregone drug sales) |
| Patient Recruitment Cost | Over $6,500 per patient [4] |

Experimental Protocols for Efficiency Analysis

Protocol 1: "Leaky Pipe" Analysis for Patient Recruitment and Retention

- Objective: To systematically identify and quantify points of participant attrition from initial identification through trial completion [5].

- OE Metric Connection: This protocol provides the foundational data for calculating participant-related efficiency metrics.

- Methodology:

- Define Funnel Stages: Clearly delineate each stage of the participant journey (e.g., Identified → Pre-screened → Consented → Screened → Randomized → Completed).

- Track Volume: Record the number of participants at each stage for a given study or set of studies.

- Calculate Attrition: Determine the percentage of participants lost between each consecutive stage.

- Industry Benchmarking: Compare your attrition rates to industry benchmarks, which suggest that approximately 10 patients need to be identified to randomize 1, and about 18% of randomized patients will drop out [5].

- Required Materials:

- Patient tracking database or Clinical Trial Management System (CTMS).

- Screening and enrollment logs.

- Visualization: The workflow for this analysis can be modeled as a funnel, as shown in the diagram below.

Patient Journey and Attrition Funnel
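The attrition calculation in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `funnel_attrition` and all stage counts are hypothetical, chosen so that the totals match the cited benchmarks of roughly 10 identified patients per randomized patient and 18% dropout after randomization [5].

```python
def funnel_attrition(stage_counts):
    """Return (transition, participants lost, percent lost) for each consecutive stage pair."""
    rows = []
    for (prev_name, prev_n), (name, n) in zip(stage_counts, stage_counts[1:]):
        lost = prev_n - n
        pct = 100.0 * lost / prev_n if prev_n else 0.0
        rows.append((f"{prev_name} -> {name}", lost, round(pct, 1)))
    return rows


# Hypothetical counts for one study (illustrative only, not real trial data).
stages = [
    ("Identified", 1000),
    ("Pre-screened", 600),
    ("Consented", 300),
    ("Screened", 250),
    ("Randomized", 100),
    ("Completed", 82),
]

attrition = funnel_attrition(stages)
for transition, lost, pct in attrition:
    print(f"{transition}: lost {lost} ({pct}%)")

# Ratio to compare against the "identify ~10 to randomize 1" benchmark.
counts = dict(stages)
identified_per_randomized = counts["Identified"] / counts["Randomized"]
print("Identified per randomized:", identified_per_randomized)
```

The stage with the largest percentage loss is usually the first place to investigate, since it marks the widest "leak" in the funnel.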

Protocol 2: Operational Efficiency Benchmarking for Study Startup

- Objective: To measure and compare the duration of critical study startup activities against internal historical data and external benchmarks to identify areas for process improvement.

- OE Metric Connection: This directly measures the efficiency of core operational processes that delay trial initiation.

- Methodology:

- Select Key Metrics: Focus on "Time from IRB submission to approval" and "Time from notice of grant award to study opening" [7] [1].

- Data Collection: Extract dates for each milestone from your regulatory and project management systems.

- Calculate Durations: Compute the time elapsed (in business days) for each interval.

- Stratify and Analyze: Break down the data by study type (e.g., phase, therapeutic area, risk level) to understand variability.

- Benchmark: Compare your median and quartile times to industry standards or consortium data (e.g., from the CTSA program) [7].

- Required Materials:

- Regulatory document tracking system.

- Grant management and activation timelines from the CTMS.

Study Startup Efficiency Workflow
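The duration and benchmarking steps of Protocol 2 could look like the following sketch. It assumes milestone dates have already been exported from the regulatory tracking system; the record layout, study identifiers, and dates are hypothetical, and the business-day count ignores public holidays.

```python
from datetime import date, timedelta
from statistics import median, quantiles


def business_days(start, end):
    """Count weekdays after `start` up to and including `end` (holidays ignored)."""
    count = 0
    current = start
    while current < end:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            count += 1
    return count


# Hypothetical records: (study id, phase, IRB submission date, IRB approval date).
records = [
    ("A", "Phase 2", date(2024, 1, 1), date(2024, 1, 15)),
    ("B", "Phase 2", date(2024, 1, 1), date(2024, 1, 29)),
    ("C", "Phase 3", date(2024, 1, 1), date(2024, 2, 12)),
    ("D", "Phase 3", date(2024, 1, 1), date(2024, 1, 22)),
]

durations = [business_days(sub, app) for _, _, sub, app in records]
overall_median = median(durations)
q1, q2, q3 = quantiles(durations, n=4)  # quartiles for benchmarking

# Stratify by study phase to expose variability between study types.
by_phase = {}
for _, phase, sub, app in records:
    by_phase.setdefault(phase, []).append(business_days(sub, app))
median_by_phase = {phase: median(vals) for phase, vals in by_phase.items()}

print("Durations (business days):", durations)
print("Median:", overall_median, "Quartiles:", (q1, q2, q3))
print("Median by phase:", median_by_phase)
```

The same pattern applies to the "notice of grant award to study opening" interval by swapping in the corresponding milestone dates.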

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Improving Clinical Trial Efficiency

| Tool / Solution | Function | Application in OE Research |
|---|---|---|
| AI-Driven Patient Matching Platform [3] | Interprets entire patient charts using AI to quickly and precisely identify eligible patients for specific trials. | Reduces screen failure rates and manual pre-screening burden, accelerating enrollment. |
| Clinical Trial Management System (CTMS) | Centralized software for managing all operational aspects of a clinical trial, from startup to closeout. | Provides the data source for tracking key OE metrics like activation timelines and accrual. |
| Electronic Data Capture (EDC) System | A computerized system designed for the collection of clinical data in electronic format for clinical trials. | Improves data quality and reduces time from data collection to database lock. |
| Remote Data Capture & eConsent Tools | Enables remote participant monitoring and electronic informed consent processes. | Reduces logistical barriers for patients, improving retention and supporting diverse recruitment [6]. |
| Business Intelligence (BI) Platforms [8] | Software (e.g., Microsoft Power BI, Tableau) that analyzes and visualizes operational data. | Creates dashboards for real-time monitoring of OE metrics, enabling data-driven decisions. |

Troubleshooting Guide: Common Metric Misapplications

| Error | Cause | Solution |
|---|---|---|
| High performance metrics (Accuracy/F1) on imbalanced data | Applying Accuracy to a dataset where one class dominates (e.g., 95% healthy patients, 5% diseased) creates an illusion of high performance by correctly classifying the majority class [9]. | Use metrics that are robust to class imbalance, such as Precision-Recall (PR) curves and Area Under the PR Curve, or calculate metrics separately for each class [9]. |
| Model fails in real-world clinical deployment despite high F1-Score | The F1-Score, a harmonic mean of Precision and Recall, may not align with the clinical or economic cost of different error types (e.g., a false negative can be more costly than a false positive) [9]. | Conduct a clinical utility analysis that incorporates the real-world consequences of different error types into the evaluation framework, moving beyond a single summary metric [10]. |
| Statistical results and conclusions are not supported by the data | Using statistical tests without verifying their underlying assumptions (e.g., using a parametric test on non-normally distributed data) or misapplying tests for multiple comparisons can invalidate results [9]. | Create a detailed statistical analysis plan a priori that specifies tests, handles outliers, and corrects for multiple comparisons. Ensure all statistical assumptions are met and disclosed [9]. |
| Inability to compare models or reproduce published results | Lack of transparency in reporting, such as omitting details on data preprocessing, exclusion of outliers, or the decision-making process for choosing certain metrics, makes validation impossible [9]. | Adopt comprehensive reporting checklists. Disclose all analytical decisions, including data transformations and outlier handling. Provide full methodological details for reproducibility [10] [9]. |
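The first row of the table can be made concrete with a small worked example. The sketch below uses the 95% healthy / 5% diseased split mentioned above and a deliberately useless classifier that labels every patient as healthy; the data and function name are illustrative assumptions.

```python
def summarize(y_true, y_pred, positive=1):
    """Compute accuracy, precision, recall, specificity and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    precision = tp / (tp + fp) if (tp + fp) else 0.0  # defined as 0 when nothing is predicted positive
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    specificity = tn / (tn + fp) if (tn + fp) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "f1": f1,
    }


# 95 healthy patients (0) and 5 diseased patients (1).
y_true = [0] * 95 + [1] * 5
# A trivial model that always predicts "healthy".
y_pred = [0] * 100

summary = summarize(y_true, y_pred)
print(summary)
```

Accuracy comes out at 0.95 even though the model detects none of the diseased patients (recall of 0.0), which is exactly the illusion the table warns about.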

Frequently Asked Questions (FAQ)

What is the most critical first step to avoid being misled by Accuracy?

The most critical step is to analyze your dataset's class distribution before selecting your metrics. If you are working with a naturally imbalanced problem, such as screening for a rare disease where the positive cases are a small minority, Accuracy is a misleading metric and should be avoided in favor of metrics like Sensitivity, Specificity, and the Precision-Recall curve [9].

My dataset is highly imbalanced. When should I use F1-Score versus a Precision-Recall Curve?

The F1-Score provides a single summary number, which is useful for quick model comparison when false positives and false negatives carry similar cost. However, it reflects only one classification threshold. The Precision-Recall (PR) Curve is often more informative for imbalanced datasets because it shows the trade-off between precision and recall across all classification thresholds. Neither precision nor recall uses true negatives, so the PR curve is not flattered by the large true-negative count that keeps the ROC curve's false-positive rate low [9].

How do I statistically validate that my chosen metric is appropriate for my biomedical model?

Incorporating a rigorous statistical validation plan is essential. This should include:

- A Priori Planning: Define your primary evaluation metric and statistical tests in a pre-analysis plan before conducting the experiment [9].

- Resampling Methods: Use techniques like bootstrapping or cross-validation to estimate the confidence intervals of your performance metrics (e.g., the mean and variance of your Accuracy or F1-Score), ensuring their stability [9].

- Comparison Testing: Use statistical tests like McNemar's test or DeLong's test to determine if the performance difference between two models is statistically significant, rather than relying on point estimates alone [9].
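As a rough illustration of the resampling and comparison-testing steps, the sketch below implements a percentile bootstrap confidence interval and an exact (binomial) McNemar test using only the Python standard library. The function names, the toy labels, and the discordant-pair counts are assumptions made for the example; a production analysis would normally rely on a vetted statistics package.

```python
import random
from math import comb


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def bootstrap_ci(y_true, y_pred, metric, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for a metric."""
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample with replacement
        stats.append(metric([y_true[i] for i in idx], [y_pred[i] for i in idx]))
    stats.sort()
    lower = stats[int(round((alpha / 2) * n_boot))]
    upper = stats[int(round((1 - alpha / 2) * n_boot)) - 1]
    return lower, upper


def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value.

    b: cases model A classified correctly and model B incorrectly.
    c: cases model A classified incorrectly and model B correctly.
    """
    n = b + c
    if n == 0:
        return 1.0
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Toy labels and predictions (illustrative only): 17 of 20 predictions are correct.
y_true = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0] * 2
y_pred = [1, 0, 0, 0, 0, 0, 0, 1, 0, 1] * 2
y_pred[3] = 1

ci_low, ci_high = bootstrap_ci(y_true, y_pred, accuracy)
print("Accuracy:", accuracy(y_true, y_pred), "95% CI:", (ci_low, ci_high))

# Hypothetical discordant counts between two models on the same test set.
p_value = mcnemar_exact(b=8, c=0)
print("McNemar exact p-value:", p_value)
```

The same `bootstrap_ci` function accepts any metric with the signature `metric(y_true, y_pred)`, so F1 or sensitivity can be substituted for accuracy.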

What is "Biomedical Health Efficiency" and how does it relate to model metrics?

Biomedical Health Efficiency is a proposed systems-thinking approach to ensure that biomedical innovations, including AI models, deliver their full potential value in real-world healthcare settings [11]. It relates directly to model metrics because a model with high analytical accuracy is of little value if system barriers (like policy constraints, workflow bottlenecks, or lack of equipment) prevent it from reaching the right patients at the right time. Therefore, evaluating a model must eventually extend beyond technical metrics to include its impact on overall health system efficiency and patient outcomes [11].

Can ensemble methods solve the problems of misleading metrics?

No, ensemble methods alone cannot solve this problem. While ensemble learning frameworks (e.g., combining Random Forest, SVM, and CNN) can achieve high classification accuracy by leveraging the strengths of multiple models, they do not change the fundamental nature of the evaluation metrics [12]. A highly accurate ensemble model trained and evaluated on a biased dataset will still produce misleadingly high metric values that may not reflect real-world clinical utility. The solution lies in the proper application of metrics and rigorous evaluation design, not solely in the choice of modeling technique [12].

Experimental Protocols & Methodologies

Protocol 1: Designing a Robust Evaluation Framework for Imbalanced Data

Objective: To reliably evaluate a diagnostic classification model when the dataset has a severe class imbalance.

Materials: Imbalanced biomedical dataset, computing environment (e.g., Python with scikit-learn).

Methodology:

- Data Splitting: Split the dataset using a stratified train/test split or stratified k-fold cross-validation, so that every training and test partition preserves the original class distribution.

- Metric Selection: Calculate the following metrics on the held-out test set:

- Standard Accuracy

- Confusion Matrix

- Per-class Sensitivity and Specificity

- F1-Score, Precision, and Recall for the minority class(es)

- Area Under the Receiver Operating Characteristic (ROC-AUC) Curve

- Area Under the Precision-Recall (PR-AUC) Curve

- Benchmarking: Compare the PR-AUC to the ROC-AUC. In high-imbalance scenarios, a depressed PR-AUC with a high ROC-AUC indicates that the model's performance on the class of interest is poor, a fact that ROC-AUC obscures.

- Reporting: Report all metrics from the Metric Selection step, with primary focus given to PR-AUC and per-class Sensitivity/Specificity for the clinical decision context.
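The benchmarking step can be illustrated with a small, dependency-free sketch. It computes ROC-AUC as the fraction of correctly ordered positive/negative pairs and uses average precision as a common summary of the area under the PR curve. The scores are synthetic and the helper names are assumptions; in practice, scikit-learn's `roc_auc_score` and `average_precision_score` would typically be used instead.

```python
def roc_auc(y_true, scores):
    """ROC-AUC as the probability that a random positive outranks a random negative."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half
    return wins / (len(pos) * len(neg))


def average_precision(y_true, scores):
    """Average of the precision values at each positive, ranked by descending score.

    Assumes scores are distinct (no tie handling).
    """
    ranked = sorted(zip(scores, y_true), reverse=True)
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


# Synthetic imbalanced example: 48 negatives and 2 positives.
neg_scores = [i / 100 for i in range(1, 49)]   # 0.01 ... 0.48
pos_scores = [0.455, 0.435]
y_true = [0] * len(neg_scores) + [1] * len(pos_scores)
scores = neg_scores + pos_scores

auc = roc_auc(y_true, scores)
ap = average_precision(y_true, scores)
print(f"ROC-AUC = {auc:.3f}, average precision = {ap:.3f}")
```

Here the ROC-AUC is about 0.92 while the average precision is about 0.27: the positives outrank most negatives, yet the handful of negatives scored above them is enough to make most top-ranked predictions wrong.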

Protocol 2: Implementing an Ensemble Learning Framework for Signal Classification

Objective: To classify spectrogram images from biomedical signals (e.g., percussion, palpation) into anatomical regions with high accuracy [12].

Materials: Percussion and palpation signal data, computing environment for machine learning (e.g., Python, TensorFlow/PyTorch, scikit-learn).

Methodology:

- Signal Preprocessing: Normalize raw signal data to ensure consistency.

- Feature Extraction: Apply Short-Time Fourier Transform (STFT) to convert 1D signals into 2D spectrogram images, capturing temporal and spectral information [12].

- Model Architecture:

- CNN Branch: For extracting spatial features from the spectrograms.

- Random Forest Branch: For handling tabular data and mitigating overfitting.

- SVM Branch: For managing high-dimensional feature spaces.

- Ensemble Training: Train each model component independently. Combine their predictions through a weighted averaging or meta-classifier to make the final classification.

- Validation: Evaluate the ensemble framework on a test set of annotated spectrograms. The cited study achieved a classification accuracy of 95.4% across eight anatomical regions using this methodology [12].
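The weighted-averaging option in the Ensemble Training step can be sketched as follows. The per-model class probabilities and the weights are invented for illustration and are not taken from the cited study [12]; a meta-classifier would replace the fixed weights with a learned combiner.

```python
def weighted_average_ensemble(prob_lists, weights):
    """Combine per-model class probabilities with a weighted average.

    prob_lists: one probability vector per model, all over the same classes.
    weights: one non-negative weight per model.
    Returns (predicted class index, combined probability vector).
    """
    total_weight = sum(weights)
    n_classes = len(prob_lists[0])
    combined = [
        sum(w * probs[c] for w, probs in zip(weights, prob_lists)) / total_weight
        for c in range(n_classes)
    ]
    predicted = max(range(n_classes), key=lambda c: combined[c])
    return predicted, combined


# Hypothetical outputs for one spectrogram over three classes.
cnn_probs = [0.6, 0.3, 0.1]
rf_probs = [0.2, 0.5, 0.3]
svm_probs = [0.3, 0.4, 0.3]

# Hypothetical weights, e.g. proportional to each model's validation performance.
weights = [0.5, 0.25, 0.25]

predicted, combined = weighted_average_ensemble([cnn_probs, rf_probs, svm_probs], weights)
print("Combined probabilities:", combined)
print("Predicted class index:", predicted)
```

In this example the CNN's higher weight tips the decision to class 0, even though the other two models individually favor class 1.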

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |
|---|---|
| Short-Time Fourier Transform (STFT) | A signal processing technique that converts time-series signals (e.g., percussion, palpation) into time-frequency representations (spectrograms), enabling the extraction of both spectral and temporal features for analysis [12]. |
| Ensemble Learning Framework | A machine learning approach that combines multiple models (e.g., CNN, Random Forest, SVM) to improve overall predictive performance and robustness by leveraging the complementary strengths of each constituent model [12]. |
| Statistical Analysis Plan (SAP) | A pre-defined, formal document that outlines all planned statistical methods, handling of outliers, and choice of evaluation metrics before data analysis begins. It is critical for ensuring transparency and validity and reducing selective reporting [9]. |
| Precision-Recall (PR) Curve | A plot that illustrates the trade-off between Precision (positive predictive value) and Recall (sensitivity) for a model at different classification thresholds. It is the recommended tool for evaluating performance on imbalanced datasets [9]. |
| Stratified Cross-Validation | A resampling technique that ensures each fold of the data retains the same percentage of samples for each class as the complete dataset. This is vital for obtaining reliable performance estimates on imbalanced data [9]. |

Conceptual Diagrams

Diagram 1: Pathway to Metric Selection

Diagram 2: Ensemble Framework for Signal Classification

Frequently Asked Questions

This section addresses common challenges researchers face when defining and measuring Overall Efficiency (OE) in their optimization experiments.

How should I handle conflicting objectives when calculating a unified OE score?

Conflicting objectives, such as minimizing cost while maximizing accuracy, are a central challenge. A multi-objective optimization (MOO) framework is designed for this scenario. Instead of forcing a single score, identify the Pareto frontier—the set of solutions where one objective cannot be improved without worsening another. You can then apply a weighted sum approach or use algorithms like NSGA-III to navigate these trade-offs based on your research priorities [13].
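To make the Pareto frontier idea concrete, the sketch below filters a set of candidate solutions down to the non-dominated ones and then applies a weighted sum to pick one. Both objectives (cost and prediction error) are minimized; the candidate values and weights are hypothetical, and this brute-force filter is a teaching aid rather than a substitute for NSGA-III.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` on every objective and strictly better on one (minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical candidates as (compute cost, prediction error).
candidates = [
    (1.0, 0.30),
    (2.0, 0.20),
    (3.0, 0.25),   # dominated by (2.0, 0.20)
    (4.0, 0.10),
    (2.5, 0.20),   # dominated by (2.0, 0.20)
]

front = pareto_front(candidates)
print("Pareto front:", front)

# Weighted-sum selection among the non-dominated solutions (weights are assumptions).
w_cost, w_error = 0.08, 1.0
best = min(front, key=lambda p: w_cost * p[0] + w_error * p[1])
print("Weighted-sum choice:", best)
```

Changing the weights moves the selection along the frontier, which is why reporting the full frontier is usually more informative than reporting a single weighted score.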

My OE results are unstable across repeated experiments. How can I improve reliability?

Unstable results often stem from an algorithm's sensitivity to its initial parameters or a tendency to converge on local optima. To enhance robustness, consider employing a Hybrid Grasshopper Optimization Algorithm (HGOA). This approach integrates mechanisms like elite preservation and opposition-based learning to improve the stability and repeatability of outcomes, providing more reliable performance predictions for complex systems like fuel cells [14].

What is the most critical mistake to avoid when tracking efficiency metrics?

The most critical mistake is an overemphasis on a single, overall score. A top-level metric can mask underlying issues in specific components of your system. To avoid this, ensure you break down the OE to analyze the performance of each core dimension—such as availability, performance, and quality—individually. This granular analysis is essential for diagnosing the root causes of inefficiency [15] [16].

How can I validate that my OE framework generalizes beyond my specific experimental setup?

To ensure generalizability, test your framework under a wide range of operating conditions. For example, one study on Proton Exchange Membrane Fuel Cells (PEMFCs) validated their model across seven different test cases (FC1–FC7). This process confirms that the framework and its chosen algorithms are not overfitted to a single dataset and can be reliably scaled and adapted [14].

Troubleshooting Guides

Poor Convergence in Multi-Objective Optimization

Symptoms: Optimization process stalls at a suboptimal solution, fails to explore the full Pareto frontier, or exhibits high variance in results between runs.

Diagnosis and Resolution:

- Problem: The optimization algorithm is trapped in a local optimum.

- Solution: Utilize a hybrid metaheuristic algorithm. The Hybrid Grasshopper Optimization Algorithm (HGOA), for instance, combines standard GOA with elite preservation and opposition-based learning to enhance global search capability and escape local optima [14].

- Problem: Poor balance between exploration (searching new areas) and exploitation (refining good solutions).

- Problem: The algorithm's parameters are not tuned for your specific problem.

- Solution: Conduct a sensitivity analysis. Systematically vary key parameters (e.g., population size, mutation rate) and observe the impact on convergence stability and solution quality to identify the optimal configuration [14].
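The two mechanisms named above, opposition-based learning and elite preservation, can be illustrated in isolation. The sketch below is a generic textbook-style rendering and is not the HGOA implementation from [14]: the function names, bounds, and sphere test objective are all assumptions.

```python
def sphere(x):
    """Simple test objective to minimize; optimum is 0 at the origin."""
    return sum(v * v for v in x)


def opposite(x, lower, upper):
    """Opposition-based learning: reflect a candidate within its bounds."""
    return [lo + hi - v for v, lo, hi in zip(x, lower, upper)]


def opposition_step(population, lower, upper, objective):
    """Keep the better of each candidate and its opposite (minimization)."""
    return [min(x, opposite(x, lower, upper), key=objective) for x in population]


def keep_elite(old_population, new_population, objective):
    """Elite preservation: the best old candidate replaces the worst new one if it is better."""
    elite = min(old_population, key=objective)
    result = list(new_population)
    worst = max(range(len(result)), key=lambda i: objective(result[i]))
    if objective(elite) < objective(result[worst]):
        result[worst] = elite
    return result


lower, upper = [-5.0, -5.0], [10.0, 10.0]
population = [[8.0, 9.0], [1.0, -1.0]]

after_obl = opposition_step(population, lower, upper, sphere)
print("After opposition step:", after_obl)

# Pretend a later search step produced worse candidates; the elite survives.
degraded = [[9.0, 9.0], [7.0, 7.0]]
preserved = keep_elite(after_obl, degraded, sphere)
print("After elite preservation:", preserved)
```

Opposition gives the search a cheap second look at the far side of the search space, while elite preservation guarantees that the best solution found so far is never lost between iterations.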

Inaccurate Performance Prediction Model

Symptoms: Significant discrepancy between the model's predicted efficiency and actual experimental results, or the model fails under different operating conditions.

Diagnosis and Resolution:

- Problem: The model does not account for real-time, dynamic parameters.

- Solution: Integrate digital twin technology. Construct a virtual mirror of your physical system that ingests real-time data from IoT sensors on equipment status and environmental parameters, allowing for dynamic and accurate prediction [17].

- Problem: Use of oversimplified linear models for a nonlinear system.

- Solution: Employ Deep Reinforcement Learning (DRL). For example, an improved Deep Deterministic Policy Gradient (DDPG) algorithm can handle the nonlinear, time-series data of a complex system, leading to more accurate dynamic adjustments and performance forecasts [17].

- Problem: Model is trained on an insufficient or non-representative dataset.

- Solution: Use Long Short-Term Memory (LSTM) networks to process and learn from extensive historical time-series data, which improves the model's ability to predict future states and efficiency metrics [17].

Inconsistent OE Measurement Across Experimental Setups

Symptoms: Inability to compare OE results meaningfully between different experiments, algorithms, or lab setups.

Diagnosis and Resolution:

- Problem: The OE metric is calculated using inconsistent benchmarks or cycle times.

- Solution: Always calibrate against a theoretical maximum or ideal cycle time. Using average or arbitrary benchmarks artificially inflates scores and hides inefficiencies. The benchmark must be consistent across all experiments [15].

- Problem: The framework fails to measure all types of losses.

- Solution: Adopt a structured loss categorization. The "Six Big Losses" framework is a proven model that ensures all potential sources of inefficiency—such as unplanned stops, slow cycles, and quality defects—are accounted for in the OE calculation [16].

- Problem: The measurement point is not at the system's constraint.

- Solution: Measure OE at the bottleneck of your process. The performance of this constraint dictates the system's overall throughput. Measuring elsewhere provides a misleading picture of true efficiency [18].
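The availability, performance, and quality dimensions mentioned in this guide follow the conventional OEE-style decomposition, which can be written out as below. This sketch assumes that your OE metric adopts the same three-factor product and that performance is benchmarked against an ideal cycle time; the run figures (for example, minutes of instrument time and number of assay runs) are hypothetical.

```python
def oe_components(planned_time, run_time, ideal_cycle_time, total_count, good_count):
    """Break an overall efficiency score into availability, performance and quality.

    planned_time: time the system was scheduled to operate.
    run_time: time it actually operated (planned time minus stops).
    ideal_cycle_time: theoretical minimum time per unit of work.
    total_count: units processed; good_count: units meeting quality criteria.
    """
    availability = run_time / planned_time
    performance = (ideal_cycle_time * total_count) / run_time
    quality = good_count / total_count
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oe": availability * performance * quality,
    }


# Hypothetical figures for one experimental campaign.
result = oe_components(
    planned_time=480,      # minutes scheduled
    run_time=400,          # minutes actually running
    ideal_cycle_time=1.0,  # minutes per run at the theoretical maximum rate
    total_count=320,       # runs completed
    good_count=304,        # runs passing quality control
)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

Reporting the three factors alongside the product shows which dimension is responsible for a low score, which a single top-level number cannot do.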

Experimental Protocols & Data

Protocol 1: Benchmarking Optimization Algorithms for OE

This protocol provides a standardized method for comparing the performance of different optimization algorithms within your OE framework.

Objective: To quantitatively evaluate and compare the accuracy, speed, and stability of candidate optimization algorithms.

Materials:

- Computational environment (e.g., MATLAB, Python).

- Standardized dataset representing the system under study.

- Candidate optimization algorithms (e.g., HGOA, PSO, NSGA-III).

Methodology:

- Setup: Configure each algorithm with its recommended initial parameters. Define a clear set of objectives and constraints for the optimization problem.

- Execution: Run each algorithm for a predetermined number of iterations or until a convergence criterion is met. Repeat the experiment multiple times (e.g., 30 independent runs with different random seeds) so that run-to-run variability can be estimated and the algorithms compared statistically.

- Data Collection: Record the following metrics for each run:

- Final objective function value(s).

- Number of iterations/Time to convergence.

- Standard deviation of results across multiple runs.

Key Metrics for Comparison [14]:

| Metric | Description | Ideal Outcome |
|---|---|---|
| Absolute Error (AE) | Difference between found solution and known optimum. | Closer to 0. |
| Relative Error (%) | Absolute error expressed as a percentage. | Closer to 0%. |
| Mean Bias Error (MBE) | Indicates systematic bias in the solution. | Approaching 0. |
| Computational Time | Time or iterations to reach convergence. | Lower, with acceptable accuracy. |
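The error metrics in the table can be computed from repeated runs with a short helper like the one below. The definitions used here are the common ones (mean absolute error, relative error as a percentage of the known optimum, and mean bias error as the signed mean deviation); the exact formulas in [14] may differ, and the run results are invented for illustration.

```python
from statistics import mean, stdev


def benchmark_summary(results, known_optimum):
    """Summarize final objective values from repeated runs of one algorithm.

    Assumes `known_optimum` is non-zero so the relative error is defined.
    """
    abs_errors = [abs(r - known_optimum) for r in results]
    return {
        "mean_absolute_error": mean(abs_errors),
        "mean_relative_error_pct": 100.0 * mean(abs_errors) / abs(known_optimum),
        "mean_bias_error": mean(r - known_optimum for r in results),
        "std_across_runs": stdev(results),
    }


# Hypothetical final objective values from four runs of two algorithms.
runs = {
    "algorithm_a": [10.2, 9.9, 10.1, 10.0],
    "algorithm_b": [10.6, 10.5, 10.4, 10.5],
}
known_optimum = 10.0

summaries = {name: benchmark_summary(vals, known_optimum) for name, vals in runs.items()}
for name, summary in summaries.items():
    print(name, {k: round(v, 4) for k, v in summary.items()})
```

In this toy comparison, `algorithm_b` is more repeatable (lower standard deviation) but carries a consistent positive bias, a pattern that a single accuracy figure would hide.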

Protocol 2: Validating OE in a Simulated Supply Chain

This protocol outlines an experiment to validate an OE framework using a digital twin of an e-commerce product supply chain, integrating multiple efficiency dimensions.

Objective: To demonstrate a measurable improvement in Overall Efficiency by applying a hybrid AI framework to a complex, multi-objective system.

Materials:

- Digital twin platform.

- Historical datasets (order flow, inventory, equipment power, transportation routes).

- LSTM network for demand forecasting.

- Improved DDPG algorithm for dynamic control.

Methodology:

- Baseline Establishment: Run the digital twin simulation using traditional management rules to establish baseline performance for energy consumption, cost, and order fulfillment rate.

- Intervention: Deploy the integrated AI framework. The LSTM network predicts dynamic loads, and the improved DDPG algorithm dynamically adjusts equipment states and logistics planning.

- Validation: Compare key performance indicators (KPIs) between the baseline and the AI-optimized scenario over a simulated operational period.

Expected Quantitative Outcomes [17]:

| Performance Indicator | Baseline | OE-Optimized (with AI) | Improvement |
|---|---|---|---|
| Comprehensive Energy Efficiency | - | - | +19.7% |
| Carbon Emission Intensity | - | - | -14.3% |
| Peak Electricity Load (Warehousing) | - | - | -23% |
| Transportation Network Efficiency | - | - | +17.6% |
| Inventory Turnover Efficiency | - | - | +12% |

The Scientist's Toolkit

Research Reagent Solutions for Optimization Experiments

| Item | Function in Research |
|---|---|
| Digital Twin Platform | Creates a virtual, real-time replica of a physical system (e.g., a supply chain or fuel cell) for safe, high-fidelity simulation and testing of optimization strategies [17]. |
| Hybrid Metaheuristic Algorithms (e.g., HGOA) | Advanced computational procedures that combine multiple optimization strategies to effectively solve complex, non-linear problems and avoid suboptimal solutions [14]. |
| Deep Reinforcement Learning (DRL) Framework | Enables the development of AI agents that learn optimal decisions through trial and error in a dynamic environment, suitable for adaptive control tasks [17]. |
| LSTM (Long Short-Term Memory) Network | A type of recurrent neural network ideal for processing and predicting time-series data, such as dynamic load forecasts in energy systems [17]. |
| Pareto Frontier Analysis Tool | Software or algorithms used to identify and visualize the set of non-dominated optimal solutions in a multi-objective optimization problem [13]. |

Workflow Diagrams

Overall Efficiency Optimization Workflow

This diagram illustrates the iterative process for developing and refining an Overall Efficiency framework, from initial system modeling to final validation.

OE Calculation and Loss Breakdown

This diagram deconstructs a core OE component, showing how a high-level metric is built from underlying factors and how the "Six Big Losses" framework helps diagnose root causes [16].

A Technical Support Guide for Researchers

This technical support center addresses common challenges and questions researchers face when utilizing ex vivo human organ models in their work. The guidance is framed within the context of enhancing the Overall Efficiency (OE) of your research pipeline, focusing on metrics such as model reproducibility, data reliability, and the speed of translational decision-making.

Frequently Asked Questions & Troubleshooting

Q1: Our organoids suffer from high batch-to-batch variability, compromising our OE metrics for screening. How can we improve reproducibility?

- A: Variability often stems from manual, non-standardized culture processes. To enhance OE:

- Implement Automation: Utilize automated production systems and bioreactors to standardize organoid generation and culture conditions, reducing human error [19] [20].

- Use Validated Models: Whenever possible, source assay-ready, pre-validated organoids that have undergone rigorous characterization to ensure consistency [20].

- Adopt AI-Driven Monitoring: Integrate artificial intelligence (AI) tools to objectively monitor and adjust culture parameters, removing bias from decision-making [20].

Q2: We are unable to maintain our ex vivo perfused organs for the duration needed for our drug metabolism studies. What are the key factors for viability?

- A: Sustaining an organ ex vivo requires replicating its physiological environment. Adherence to the following acceptance criteria is critical for OE, as it prevents wasted resources on non-viable organs [21] [22].

- Perfusion Solution: Use a solution that provides electrolytes, nutrients, buffers, and antibiotics. Common choices include Krebs-Henseleit or STEEN solution, sometimes supplemented with oxygen carriers [22].

- Oxygenation: Maintain adequate oxygen delivery using carbogen gas, hemoglobin-based oxygen carriers (HBOCs), or leukocyte-filtered whole blood perfusate [22].

- Physiological Parameters: Continuously monitor and adjust perfusion pressure, flow rates, and temperature (typically 37°C) to meet the organ's metabolic demands [21] [22].

Q3: Our organoids develop a necrotic core, which skews our toxicity readouts. What is the cause and how can we fix it?

- A: Necrotic cores are a common limitation that directly impacts the OE of organoid models by reducing their physiological relevance.

- Root Cause: This is primarily due to the lack of a vascular system, which limits the diffusion of nutrients and oxygen to the core of the organoid as it grows in size [19] [20].

- Solutions to Improve OE:

- Co-culture with Endothelial Cells: Investigate co-culturing organoids with endothelial cells to encourage the formation of vascular networks [20].

- Integrate with Organ-on-Chip Technology: Use microfluidic organ-on-chip devices that provide dynamic fluid flow, enhancing nutrient delivery and mimicking blood perfusion [20].

Q4: How can we use patient-derived organoids (PDOs) to improve the efficiency of our personalized medicine pipeline?

- A: PDOs are a powerful tool for boosting OE in drug development by incorporating human diversity early in the process.

- Application: Generate organoids from individual patients to create a biobank. These PDOs can be used to screen a panel of drug candidates to identify which therapy is most likely to be effective for that specific patient's disease, enabling personalized treatment plans [20].

- OE Benefit: This approach acts as an early predictor of a drug's success or failure in a specific genetic context, saving significant time and costs by filtering out ineffective candidates before human trials [20].

Experimental Protocol: Setting Up an Ex Vivo Organ Perfusion (EVOP) System

The following table outlines a generalized protocol for establishing a benchtop EVOP system for a rodent organ, based on common methodologies [22]. This standardized workflow is designed to maximize data quality and OE.

| Protocol Step | Detailed Methodology | Key Parameters & OE Considerations |

|---|---|---|

| 1. System Setup | Assemble a perfusion circuit with a peristaltic pump, oxygenator, organ chamber, and tubing. Place the system in a temperature-controlled incubator or on a benchtop with a heated water jacket. | Flow rate, temperature (37°C), oxygenation (95% O₂/5% CO₂). Consistent setup reduces experimental variability [22]. |

| 2. Organ Harvest & Cannulation | Following ethical guidelines, harvest the target organ (e.g., liver, lung, intestine) ensuring minimal trauma. Cannulate the main artery/vein (e.g., pulmonary artery for lung, portal vein for liver). | Speed of harvest, minimal ischemic time. Proper cannulation is critical for uniform perfusion and organ survival [21] [22]. |

| 3. Organ Acceptance | Begin perfusion and monitor until the organ meets pre-defined viability criteria before introducing any test compounds. | Stable perfusion pressure, flow rates, absence of significant edema. This step ensures data is collected from a physiologically stable organ, protecting OE [21]. |

| 4. Dosing & Sampling | Introduce the drug candidate through a physiologically relevant route (e.g., into the gut lumen for intestine, into the blood for liver). Collect samples at timed intervals. | Sample types: Blood/plasma, bile (liver), gut contents (intestine), airway lavage (lung), tissue biopsies [21]. |

| 5. Data Analysis | Use the organ as its own control. Compare the effect of a test compound against positive and negative standards administered in the same organ. | Analyze samples for drug concentration, metabolites, and biomarkers of efficacy or toxicity. This internal control design enhances data reliability and OE [21]. |

The Scientist's Toolkit: Key Research Reagent Solutions

The table below details essential materials and their functions for working with ex vivo organ models.

| Reagent/Material | Function in the Experiment |

|---|---|

| Induced Pluripotent Stem Cells (iPSCs) | The starting material for generating most human organoids; can be programmed to develop into any cell type, including patient-specific lines [19] [20]. |

| Krebs-Henseleit Solution | A common physiological salt solution used in EVOP; provides electrolytes, buffers, and glucose to maintain ionic balance and cellular function [22]. |

| STEEN Solution | A perfusion solution commonly used for lungs and kidneys; contains human serum albumin and dextran to maintain oncotic pressure and inhibit leukocyte adhesion [22]. |

| Hemoglobin-Based Oxygen Carriers (HBOCs) | Cell-free synthetic solutions used in perfusate to carry and deliver oxygen to the organ, overcoming challenges associated with using red blood cells [22]. |

| Extracellular Matrix (ECM) Hydrogels | A scaffold (e.g., Matrigel) in which stem cells are embedded to provide a 3D environment that supports organoid growth and self-organization [19]. |

| Physician-Compounded Foam (PCF) | In vascular research, a sclerosing foam used in ex vivo vein models to study endothelial damage and therapeutic efficacy [23]. |

Workflow Visualization: From Model to Decision

The following diagram illustrates the logical workflow for utilizing ex vivo models in a drug development pipeline, highlighting key decision points that impact Overall Efficiency.

This diagram shows how organoid and EVOP models can be integrated to provide human-relevant data early in the drug development process. The "Early Go/No-Go" decisions informed by these models directly enhance OE metrics like Decision Speed by filtering out failing compounds before they reach costly and time-consuming animal studies and clinical trials.

Component Visualization: Ex Vivo Organ Perfusion (EVOP) System

The diagram below details the core components of a standard benchtop EVOP system and their interconnections.

This schematic outlines a typical recirculating EVOP setup. The perfusate is pumped from the Reservoir through an Oxygenator to maintain physiological oxygen and carbon dioxide levels before entering the Organ Chamber. The entire system is maintained at 37°C by a Temperature Controller. Real-time Data Collection on parameters like pressure and flow is essential for monitoring organ viability and ensuring experimental consistency, which directly contributes to reliable OE metrics [21] [22].

Drug Development Tools (DDTs) are methods, materials, or measures that have the potential to facilitate drug development and regulatory review. The U.S. Food and Drug Administration (FDA) has established formal qualification programs to support DDT development, creating a pathway for their acceptance in regulatory decision-making. The program was formally structured through the 21st Century Cures Act of 2016, which defined a three-stage qualification process allowing use of a qualified DDT across multiple drug development programs [24].

Qualification represents a conclusion that within a stated context of use, the DDT can be relied upon to have a specific interpretation and application in drug development and regulatory review. Once qualified, DDTs become publicly available for any drug development program for the qualified context of use and can generally be included in Investigational New Drug (IND), New Drug Application (NDA), or Biologics License Application (BLA) submissions without needing FDA to reconsider their suitability for each application [24].

DDT Categories and Program Metrics

DDT Categories

The FDA's DDT Qualification Programs focus on three primary categories of tools, with an additional program for innovative approaches:

- Biomarkers: A defined characteristic that is measured as an indicator of normal biological processes, pathogenic processes, or responses to an exposure or intervention [24]. A biomarker can be a single concept or a panel of multiple concepts.

- Clinical Outcome Assessments (COAs): Measures that describe or reflect how a patient feels, functions, or survives [25]. These include Patient-Reported Outcomes (PROs), Clinician-Reported Outcomes (ClinROs), Observer-Reported Outcomes (ObsROs), and Performance Outcome (PerfO) assessments.

- Animal Models: Specifically for use in efficacy testing of medical countermeasures under the regulations commonly referred to as the Animal Rule [24].

- ISTAND Program: Covers innovative DDT types that fall outside the scope of the other three categories, including novel digital health technologies, tissue chips, and artificial intelligence-based algorithms [26].

Program Metrics and Current Status

The table below summarizes the metrics for the DDT Qualification Programs as of June 30, 2025, providing insight into program utilization and efficiency [27]:

Table 1: DDT Qualification Program Metrics (as of June 30, 2025)

| Program Area | Total Projects in Development | LOIs Accepted | QPs Accepted | Newly Qualified DDTs (Past 12 Months) | Total Qualified DDTs to Date |

|---|---|---|---|---|---|

| All DDT Programs | 141 | 121 | 20 | 1 | 17 |

| Biomarker Qualification | 59 | 49 | 10 | 0 | 8 |

| Clinical Outcome Assessment | 67 | 58 | 9 | 1 | 8 |

| Animal Model | 5 | 5 | 0 | 0 | 1 |

| ISTAND | 10 | 9 | 1 | 0 | 0 |

These metrics demonstrate that while many tools enter the qualification pipeline, the progression to full qualification remains challenging, with only 17 tools qualified to date across all categories.
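
As a quick consistency check on Table 1, the sketch below recomputes the "All DDT Programs" row from the four program rows and derives the share of in-development projects that have an accepted Qualification Plan. The figures are copied from the table; the dictionary layout and variable names are illustrative assumptions, not part of the FDA's reporting.

```python
# Figures copied from Table 1 (as of June 30, 2025).
# Tuple order: projects in development, LOIs accepted, QPs accepted,
# newly qualified (past 12 months), total qualified to date.
programs = {
    "Biomarker Qualification": (59, 49, 10, 0, 8),
    "Clinical Outcome Assessment": (67, 58, 9, 1, 8),
    "Animal Model": (5, 5, 0, 0, 1),
    "ISTAND": (10, 9, 1, 0, 0),
}

# Column-wise totals should reproduce the "All DDT Programs" row.
totals = tuple(sum(column) for column in zip(*programs.values()))

# Share of in-development projects that have an accepted Qualification Plan.
qp_share = {name: row[2] / row[0] for name, row in programs.items()}

print(totals)
for name, share in qp_share.items():
    print(f"{name}: {share:.1%} of projects at QP stage")
```

The column totals match the summary row, which suggests the table is internally consistent.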

The DDT Qualification Process: Workflow and Procedures

The FDA's DDT qualification process follows a structured three-stage pathway with established review timelines. The following diagram illustrates this workflow:

DDT Qualification Process Workflow

Stage 1: Letter of Intent (LOI)

The qualification process begins with submission of a Letter of Intent that includes [24] [25]:

- Description of the DDT and its proposed context of use

- Rationale for the DDT and its potential benefits to drug development

- Preliminary data supporting the DDT's utility

- Review: FDA conducts a 60-day completeness assessment followed by a 3-month substantive review

- Outcome: LOI accepted or not accepted into the qualification program

Stage 2: Qualification Plan (QP)

If the LOI is accepted, the requester submits a detailed Qualification Plan containing [24]:

- Detailed description of the DDT and its context of use

- Complete development plan for qualifying the DDT

- Summary of existing data and gaps

- Proposed studies to address qualification

- Review: FDA conducts a 60-day completeness assessment followed by a 6-month substantive review

- Outcome: QP accepted or not accepted

Stage 3: Full Qualification Package (FQP)

After QP acceptance, the requester submits a Full Qualification Package with [24]:

- Complete data and analyses from studies outlined in the QP

- Final evidence demonstrating the DDT's performance within the context of use

- Review: FDA conducts a 60-day completeness assessment followed by a 10-month substantive review

- Outcome: DDT qualified or qualification not granted

Efficiency Analysis: Timelines and Program Impact

Qualification Timelines

An analysis of the Clinical Outcome Assessment (COA) Qualification Program reveals significant challenges in qualification efficiency [25]:

Table 2: COA Qualification Program Performance Analysis

| Metric | Finding | Implications for Overall Efficiency |

|---|---|---|

| Average Qualification Time | ~6 years from start to qualification | Extended timelines delay tool availability and impact drug development planning |

| Review Timeline Adherence | 46.7% of submissions exceeded published review targets | Unpredictable reviews complicate resource allocation and project management |

| Qualification Rate | Only 8.1% (7 of 86) of COAs achieved qualification | High attrition suggests potential process inefficiencies or unclear expectations |

| Tool Utilization | Only 3 of 7 qualified COAs used to support benefit-risk assessment of medicines | Limited adoption may indicate misalignment between qualified tools and development needs |
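
To put the roughly 6-year average in perspective, the following sketch adds up the published FDA review targets for the three stages and compares the sum with the observed average. It is arithmetic under stated assumptions only: the 60-day completeness assessment is counted as 2 months, the stages are treated as strictly sequential, and the variable names are illustrative.

```python
# Published FDA review targets per stage, in months (LOI, QP, FQP).
# Assumption for illustration: the 60-day completeness assessment counts as 2 months.
COMPLETENESS_MONTHS = 2
substantive_review_months = {"LOI": 3, "QP": 6, "FQP": 10}

nominal_fda_months = sum(
    COMPLETENESS_MONTHS + months for months in substantive_review_months.values()
)

observed_average_months = 6 * 12  # "~6 years from start to qualification" [25]
time_outside_review = observed_average_months - nominal_fda_months

qualification_rate = 7 / 86  # qualified COAs / COAs in the analysis [25]

print(f"Nominal FDA review time: {nominal_fda_months} months")
print(f"Time not covered by review targets: {time_outside_review} months")
print(f"Qualification rate: {qualification_rate:.1%}")
```

Under these assumptions, the review targets account for about 25 of the 72 months. The remainder would reflect requester-side development work between stages as well as reviews that overran their targets.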

Impact on Drug Development

The limited uptake of qualified DDTs in actual drug development programs suggests efficiency challenges. Analysis shows that qualified COAs have been used to support benefit-risk assessment for only 11 medicines, primarily as secondary or exploratory endpoints rather than primary endpoints [25]. This limited integration into regulatory decision-making indicates potential gaps between the qualification program outputs and the practical needs of drug developers.

Technical Support: FAQs and Troubleshooting Guides

Frequently Asked Questions

Q: What is the difference between DDT qualification and use of a tool in a specific drug application? A: Qualification creates a publicly available tool that can be used across multiple drug development programs without needing re-evaluation for each application. Using a tool in a specific drug application involves demonstrating its suitability for that specific product and context, which must be re-established for each new application [24].

Q: Can the context of use be modified after initial qualification? A: Yes. As additional data are obtained over time, requesters may submit a new project with additional data to expand upon a qualified context of use [24].

Q: What types of innovative tools does the ISTAND program consider? A: The ISTAND program accepts submissions for DDTs that are out of scope for existing qualification programs, including tools for remote/decentralized trials, tissue chips (microphysiological systems), novel nonclinical assays, AI-based algorithms, and digital health technologies like wearables [26].

Q: How does the FDA define "context of use"? A: Context of use is a statement that fully describes the manner and purpose of use for a DDT. The qualified context of use defines the boundaries within which available data adequately justify use of the DDT [24].

Common Submission Challenges and Solutions

Challenge: Incomplete submission packages causing review delays

- Solution: Use the FDA's revised Qualification Plan Content Element Outline (updated July 2025) as a comprehensive guide for preparing submissions [24]. Ensure all required elements are addressed completely before submission.

Challenge: Extended and unpredictable review timelines

- Solution: Plan for potential review timeline extensions based on historical data [25]. Build buffer periods into development timelines and maintain open communication with FDA throughout the process.

Challenge: Limited adoption of qualified tools in drug development

- Solution: Engage early with potential end-users during tool development to ensure alignment with practical drug development needs. Consider forming collaborative consortia to increase tool awareness and adoption [24].

Challenge: Determining the appropriate evidence for qualification

- Solution: Engage with FDA through mechanisms like Critical Path Innovation Meetings (CPIMs) for early feedback on development strategies before formal qualification submission [28].

Research Reagents and Resource Solutions

Table 3: Essential Research Resources for DDT Development

| Resource Category | Specific Tools/Frameworks | Function in DDT Development |

|---|---|---|

| Regulatory Guidance | Qualification Process for Drug Development Tools - Draft Guidance [24] | Provides FDA's current thinking on qualification process requirements and expectations |

| Biomarker Resources | BEST (Biomarkers, EndpointS, and other Tools) Glossary [28] | Standardized terminology and definitions for biomarker categories and applications |

| Data Standards | CDER Data Standards Program [28] | Ensures consistency in data collection, formatting, and submission across development programs |

| Database Tools | CDER & CBER's DDT Qualification Project Search Database [24] [27] | Allows identification of existing qualified DDTs and projects in development to avoid duplication |

| Collaborative Frameworks | Public-Private Partnerships (PPPs) [24] | Enables resource pooling and risk-sharing for DDT development beyond individual organizational capabilities |

Biomarker Integration in Drug Development: A Case Study

Biomarkers represent one of the largest categories of DDTs in development, with 59 projects currently in the qualification pipeline, second only to the 67 Clinical Outcome Assessment projects [27]. The strategic integration of biomarkers in drug development, particularly in oncology, demonstrates their value in overall efficiency optimization.

Biomarker Categories and Applications

The table below outlines key biomarker categories with specific applications in drug development, particularly for dose optimization strategies [29]:

Table 4: Biomarker Categories for Drug Development Applications

| Biomarker Category | Purpose in Development | Example Application |

|---|---|---|

| Pharmacodynamic | Assess biological activity of intervention without necessarily confirming efficacy | Phosphorylation of proteins downstream of drug target [29] |

| Predictive | Identify patients more or less likely to respond to treatment | BRCA1/2 mutations predicting sensitivity to PARP inhibitors [29] |

| Surrogate Endpoint | Serve as substitute for direct measures of patient experience or survival | Overall response rate as surrogate for survival endpoints [29] |

| Safety | Indicate likelihood, presence, or degree of treatment-related toxicity | Neutrophil count monitoring during cytotoxic chemotherapy [29] |

| Integral | Required for trial design (eligibility, stratification, endpoints) | BRCA1/2 mutations for inclusion in PARP inhibitor trials [29] |

Biomarker Application in Dose Optimization

Modern oncology drug development illustrates the critical role of biomarkers in improving development efficiency. Traditional dose-finding approaches focused on maximum tolerated dose (MTD) have proven suboptimal for targeted therapies, with over half of novel oncology drugs approved between 2012 and 2022 receiving post-marketing requirements for additional dose exploration [30].

Biomarkers enable identification of the biologically effective dose (BED) range, potentially lower than MTD, optimizing the therapeutic window. Circulating tumor DNA (ctDNA) exemplifies this application, serving as [29]:

- Predictive biomarker for patient selection in targeted trials

- Pharmacodynamic biomarker for assessing biological activity

- Potential surrogate endpoint through correlation with radiographic response and survival outcomes

The following diagram illustrates how biomarkers integrate into comprehensive dose optimization strategies:

Biomarker Integration in Dose Optimization

The FDA's DDT Qualification Program represents a significant advancement in regulatory science, creating a structured pathway for developing standardized tools to facilitate drug development. However, current metrics reveal substantial opportunities for efficiency improvements:

Accelerated Qualification Timelines: The average 6-year qualification timeframe for COAs limits the program's impact on evolving drug development needs [25]. Streamlining this process could significantly enhance overall efficiency in drug development.

Enhanced Predictability: With nearly half of submissions exceeding target review times, improved timeline predictability would enable better resource planning and integration of DDT development into broader drug development programs [25].

Strategic Tool Selection: The limited use of qualified COAs in regulatory decision-making suggests a need for better alignment between tool development and practical application needs [25].

Collaborative Development: FDA encourages formation of collaborative groups and public-private partnerships to pool resources and data, decreasing individual costs and expediting development [24].

For researchers and drug developers, strategic engagement with the DDT Qualification Program—particularly through early FDA interactions, careful context of use definition, and collaborative development models—offers the potential to enhance overall efficiency in drug development while contributing to the growing ecosystem of qualified tools available to the broader development community.

Building a Better Metric: Core Components and Methodologies for OE

Troubleshooting Guides

Computational Cost

Issue: Model training or inference is prohibitively slow, hindering research iteration. Question: How can I diagnose and reduce the high computational cost of my optimization metric?

Diagnosis and Solutions:

| Step | Action | Purpose & Technical Details |

|---|---|---|

| 1. Profile Code | Use profilers (e.g., cProfile in Python, line_profiler) to identify bottlenecks. | Isolates specific functions or lines of code consuming the most CPU time and memory. For model training, profile data loading, forward/backward passes, and model saving. |

| 2. Simplify Model | Reduce model complexity (e.g., number of layers/parameters in a neural network, depth of a tree-based model). | Lowers computational load for both training and inference. The goal is to find the simplest model that meets predictive performance requirements [31]. |

| 3. Use Hardware Acceleration | Leverage GPUs/TPUs for parallelizable operations and optimize data pipelines. | Provides hardware-level speedups for mathematical computations common in model training and evaluation. |

| 4. Implement Early Stopping | Halt training when performance on a validation set stops improving. | Prevents unnecessary computational expenditure on iterations that no longer yield benefits, directly reducing training cost [32]. |

| 5. Adopt Efficient Data Types | Use reduced precision (e.g., 16-bit floating point instead of 32-bit) for calculations. | Decreases memory footprint and can accelerate computation on supported hardware. |
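
Step 1 can be illustrated with Python's built-in cProfile and pstats modules. This is a minimal sketch: `load_data`, `train_step`, and `run_pipeline` are hypothetical stand-ins for a real data-loading and training pipeline.

```python
import cProfile
import io
import pstats


def load_data(n):
    """Stand-in for a data-loading step (hypothetical)."""
    return [float(i) for i in range(n)]


def train_step(data):
    """Stand-in for an expensive training step (hypothetical)."""
    return sum(x * x for x in data) / len(data)


def run_pipeline():
    data = load_data(200_000)
    return train_step(data)


profiler = cProfile.Profile()
profiler.enable()
result = run_pipeline()
profiler.disable()

# Sort by cumulative time and keep the ten most expensive entries.
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer).sort_stats("cumulative")
stats.print_stats(10)
report = buffer.getvalue()
print(report)
```

Sorting by cumulative time shows which top-level stage dominates the run. Sorting by `"tottime"` instead highlights the individual functions where the time is actually spent.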

Predictive Performance

Issue: The model's predictions are inaccurate or unreliable. Question: How can I systematically evaluate and improve the predictive performance of my model?

Diagnosis and Solutions:

| Step | Action | Purpose & Technical Details |

|---|---|---|

| 1. Select Correct Metrics | Choose metrics aligned with your problem type (regression vs. classification) and business goal [32] [33]. | Regression: Use RMSE, R-squared. Classification: Use Accuracy, Precision, Recall, F1-Score. Avoid accuracy for imbalanced datasets [32]. |

| 2. Analyze Residuals/Errors | Plot residuals (for regression) or analyze the confusion matrix (for classification). | Reveals patterns in errors; for example, if the model consistently under-performs on certain data subsets, indicating potential bias or missing features [32]. |

| 3. Perform Feature Engineering | Create new input features, remove irrelevant ones, or address missing values. | Improves the model's ability to discern underlying patterns in the data, directly boosting predictive power. |

| 4. Tune Hyperparameters | Systematically search for optimal model configuration (e.g., Grid Search, Random Search). | Finds the model parameters that maximize predictive performance on your specific dataset. |

| 5. Use Cross-Validation | Assess model performance by training and validating on different data splits (e.g., k-fold cross-validation). | Provides a more robust estimate of how the model will generalize to unseen data, reducing overfitting [32]. |
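
The k-fold procedure in step 5 can be sketched without any external library. The example below assumes a toy "model" that always predicts the training mean and uses synthetic targets; in practice a library routine such as scikit-learn's cross-validation utilities would normally replace the hand-written splitter.

```python
import random
from statistics import mean, stdev


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, test_idx) pairs for shuffled k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, test_idx in enumerate(folds):
        train_idx = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train_idx, test_idx


def fit_mean_model(y_train):
    """Toy 'model': always predicts the training mean (hypothetical baseline)."""
    return mean(y_train)


def rmse(y_true, y_pred):
    return (sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)) ** 0.5


# Synthetic targets, for illustration only.
rng = random.Random(42)
y = [rng.gauss(5.0, 1.0) for _ in range(100)]

fold_scores = []
for train_idx, test_idx in k_fold_indices(len(y), k=5):
    prediction = fit_mean_model([y[i] for i in train_idx])
    fold_scores.append(rmse([y[i] for i in test_idx], [prediction] * len(test_idx)))

print(f"RMSE per fold: {[round(s, 3) for s in fold_scores]}")
print(f"Mean RMSE: {mean(fold_scores):.3f} +/- {stdev(fold_scores):.3f}")
```

Reporting the spread across folds alongside the mean gives a sense of how sensitive the estimate is to the particular data split.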

Robustness

Issue: The model performs well on training data but fails with slight data variations or adversarial inputs. Question: How can I test and improve the robustness of my model to ensure reliable performance in real-world conditions?

Diagnosis and Solutions:

| Step | Action | Purpose & Technical Details |

|---|---|---|

| 1. Define Threat Scenarios | Identify potential sources of data variation or adversarial attacks relevant to your domain [34]. | Focuses testing efforts on realistic scenarios (e.g., sensor noise, new experimental conditions, or data poisoning attempts). |

| 2. Introduce Data Perturbations | Artificially add noise, occlusions, or transformations to your test data. | Quantifies performance degradation under controlled variations. A robust model should maintain stable predictions [34]. |

| 3. Adversarial Robustness Testing | Use techniques like Projected Gradient Descent (PGD) or Fast Gradient Sign Method (FGSM) to generate adversarial examples. | Stress-tests the model by finding small, worst-case perturbations to inputs that cause prediction errors [34]. |

| 4. Analyze Failure Modes | Closely examine inputs where the model's performance drops significantly. | Informs targeted improvements, such as collecting more diverse data for those specific scenarios or adding regularization. |

| 5. Regularization & Robust Training | Apply techniques like dropout, weight decay, or adversarial training. | These methods prevent the model from over-relying on fragile, non-robust features in the data, encouraging simpler and more stable decision boundaries [31]. |
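
To make step 3 concrete, here is a minimal sketch of the Fast Gradient Sign Method applied to a logistic regression classifier, where the input gradient of the cross-entropy loss has a closed form. The weights, bias, input, and epsilon are made-up values. For deep models, a framework with automatic differentiation or a toolkit such as ART would normally be used instead.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def fgsm_perturb(weights, bias, x, y, epsilon):
    """One-step FGSM for logistic regression with cross-entropy loss.

    The loss gradient w.r.t. the input is (p - y) * w, so the attack moves
    each feature by epsilon in the direction of that gradient's sign.
    """
    p = predict_proba(weights, bias, x)
    grad = [(p - y) * w for w in weights]
    sign = [(g > 0) - (g < 0) for g in grad]
    return [xi + epsilon * s for xi, s in zip(x, sign)]


# Hypothetical trained parameters and a correctly classified positive example.
weights, bias = [2.0, -1.0], 0.0
x, y = [0.3, 0.1], 1

x_adv = fgsm_perturb(weights, bias, x, y, epsilon=0.2)
clean_p = predict_proba(weights, bias, x)
adv_p = predict_proba(weights, bias, x_adv)
print(f"clean p(y=1) = {clean_p:.3f}, adversarial p(y=1) = {adv_p:.3f}")
```

In this toy case a perturbation of at most 0.2 per feature is enough to push the predicted probability below 0.5, flipping the classification.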

Frequently Asked Questions (FAQs)

FAQ 1: How do I balance the trade-off between high predictive performance (accuracy) and model interpretability in my OE metric?

This is a fundamental challenge. Highly complex models (e.g., deep neural networks) often achieve top accuracy but act as "black boxes," while simpler models (e.g., linear regression) are more interpretable but may be less accurate [31]. To navigate this:

- Define Requirements: First, determine the level of interpretability required for your research or regulatory context [31].

- Consider Gray-Box Models: Explore models like Belief Rule Base (BRB) systems, which offer a balance between flexibility and interpretability by incorporating expert knowledge [31].

- Use Post-Hoc Explanations: For complex models, employ techniques like SHAP (SHapley Additive exPlanations) to provide insights into specific predictions [31].

FAQ 2: My model has a high R-squared value but makes poor predictions on new data. What is happening?

A high R-squared indicates that your model explains a large portion of the variance in the training data. Poor performance on new data suggests overfitting [32] [33]. Your model has likely learned the noise and specific details of the training set rather than the generalizable underlying patterns. Solutions include:

- Increase Training Data: More data helps the model learn general patterns.

- Apply Regularization: Techniques like L1 (Lasso) or L2 (Ridge) regression penalize model complexity.

- Simplify the Model: Reduce the number of features or model parameters.

- Use Cross-Validation: As highlighted in the troubleshooting guide, this is essential for a realistic performance estimate [32].

FAQ 3: What is the difference between robustness and predictive performance? Aren't they the same?

No, they are distinct but complementary pillars of a good OE metric.

- Predictive Performance measures the model's accuracy on a static, clean dataset that resembles the training data (e.g., 95% accuracy on a standard test set).

- Robustness measures how consistent that performance remains when the input data is slightly altered, noisy, or deliberately manipulated [34]. A model can have high performance but low robustness if its accuracy plummets with minor data changes.

FAQ 4: How can I quantify the robustness of my model for reporting in my research?

Robustness can be quantified by measuring the change in your predictive performance metrics under various stresses:

- Performance Drop: Report the difference in accuracy/F1-score/RMSE between your clean test set and a perturbed/adversarial test set. A smaller drop indicates greater robustness.

- Adversarial Accuracy: Calculate the model's accuracy on a set of generated adversarial examples [34].

- Stability Metrics: For regression, you might calculate the average variance in predictions for small input perturbations.
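
Two of these quantities, the performance drop and a simple stability metric, can be computed as in the sketch below. It assumes a one-feature threshold classifier, Gaussian input noise, and synthetic data; all function names are illustrative.

```python
import random
from statistics import mean, pvariance


def accuracy(model, inputs, labels):
    return mean(1.0 if model(x) == y else 0.0 for x, y in zip(inputs, labels))


def add_noise(inputs, sigma, rng):
    return [x + rng.gauss(0.0, sigma) for x in inputs]


def prediction_variance(score_fn, x, sigma, rng, n_draws=200):
    """Variance of a continuous model output under small input perturbations."""
    return pvariance([score_fn(x + rng.gauss(0.0, sigma)) for _ in range(n_draws)])


# Hypothetical one-feature classifier: predicts class 1 when x exceeds 0.5.
def threshold_model(x):
    return int(x > 0.5)


rng = random.Random(0)
# Synthetic test set labelled by the same rule, so clean accuracy is 1.0.
inputs = [rng.random() for _ in range(1000)]
labels = [int(x > 0.5) for x in inputs]

clean_acc = accuracy(threshold_model, inputs, labels)
noisy_acc = accuracy(threshold_model, add_noise(inputs, sigma=0.1, rng=rng), labels)
performance_drop = clean_acc - noisy_acc
stability = prediction_variance(lambda v: 2.0 * v, 0.4, sigma=0.1, rng=rng)

print(f"clean={clean_acc:.3f} noisy={noisy_acc:.3f} drop={performance_drop:.3f}")
print(f"prediction variance under noise: {stability:.4f}")
```

Reporting the drop at several noise levels, rather than a single one, usually gives a clearer picture of how gracefully performance degrades.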

Experimental Protocol for OE Metric Evaluation

The following workflow provides a standardized methodology for comprehensively evaluating an Overall Efficiency (OE) metric, integrating the three key pillars.
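
One possible way to fold the three pillars into a single number is sketched below. This is a hypothetical composite, not a formula defined by this article or its sources: the normalization of each pillar to [0, 1], the weighted geometric mean, and the weights are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class PillarScores:
    """Each pillar is normalized to [0, 1], where 1 is best (an assumption)."""
    predictive_performance: float  # e.g., MCC or F1 on a held-out test set
    robustness: float              # e.g., 1 - relative performance drop under perturbation
    cost_efficiency: float         # e.g., baseline_runtime / (baseline_runtime + runtime)


def overall_efficiency(scores, weights=(0.5, 0.3, 0.2)):
    """Hypothetical composite: weighted geometric mean of the three pillars.

    A geometric mean is used so that a near-zero pillar cannot be fully
    offset by the other two; the weights are placeholders.
    """
    values = (scores.predictive_performance, scores.robustness, scores.cost_efficiency)
    if not all(0.0 <= v <= 1.0 for v in values):
        raise ValueError("pillar scores must lie in [0, 1]")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    result = 1.0
    for value, weight in zip(values, weights):
        result *= value ** weight
    return result


method_a = PillarScores(0.90, 0.40, 0.80)  # accurate but fragile (made-up values)
method_b = PillarScores(0.85, 0.80, 0.70)  # slightly less accurate, more robust
print(f"OE(A) = {overall_efficiency(method_a):.3f}")
print(f"OE(B) = {overall_efficiency(method_b):.3f}")
```

With these placeholder weights, the more robust method B scores higher despite its lower accuracy, which is the kind of trade-off a single accuracy figure would hide.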

The Scientist's Toolkit: Key Research Reagents & Solutions

The following tools and conceptual frameworks are essential for developing and evaluating robust OE metrics.

| Tool / Solution Category | Specific Examples | Function & Application in OE Metric Development |

|---|---|---|

| Performance Evaluation Libraries | Scikit-learn (metrics), TensorFlow Model Analysis, MLflow | Provide standardized, reproducible implementations of key metrics (Precision, Recall, RMSE, etc.) for model validation and comparison [32]. |

| Profiling & Computational Tools | cProfile, py-spy, line_profiler, memory_profiler, GPU monitoring (e.g., nvidia-smi) | Precisely measure computational cost, identify code bottlenecks, and monitor hardware resource utilization during model training and inference. |

| Robustness Testing Frameworks | ART (Adversarial Robustness Toolbox), Foolbox, TextAttack (for NLP) | Implement state-of-the-art adversarial attacks and defense strategies to systematically stress-test model robustness [34]. |

| Interpretability & Explainability Tools | SHAP, LIME, Captum | Provide post-hoc explanations for model predictions, helping to build trust, debug performance issues, and validate that the model uses biologically/physically plausible features [31]. |

| Model & Data Versioning | DVC (Data Version Control), Weights & Biases, Neptune.ai | Track experiments, manage dataset versions, and log model parameters and metrics to ensure full reproducibility of all results. |

Frequently Asked Questions

Q1: My grid search is recommending extreme hyperparameter values (e.g., a very large C for an SVM). Should I trust these results, or will they cause overfitting?

You are right to be cautious. It is common for the absolute best performance on a validation set to be found at extreme parameter values, but this can indeed be a sign of overfitting to that specific data split [35]. To ensure your model generalizes better:

- Use a different metric: Avoid using simple "Accuracy." Instead, optimize for metrics that are more robust to class imbalance and can better capture model performance, such as ROC AUC, Brier score, or the Matthews Correlation Coefficient (MCC) [36] [35].

- Apply the "1 Standard Error" rule: A good hedging strategy is to select the simplest model (e.g., a larger regularization parameter, which corresponds to a smaller C) whose performance is within one standard error of the best-performing model [35].

- Consider standard ranges: While not sacrosanct, established ranges for parameters can serve as a useful sanity check. For instance, a typical range for the SVM parameter `C` is from \(2^{-5}\) to \(2^{15}\), and for `gamma`, it is from \(2^{-15}\) to \(2^{3}\) [35].
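
The "1 Standard Error" rule can be sketched as follows. The cross-validation scores are invented for illustration, and the helper name `one_standard_error_choice` is hypothetical. For an SVM, a smaller `C` is treated as the simpler model because it corresponds to stronger regularization.

```python
from statistics import mean, stdev

# Hypothetical cross-validation scores (higher is better) per value of C.
# Smaller C means stronger regularization, i.e., a simpler SVM.
cv_scores = {
    0.1:    [0.80, 0.82, 0.79, 0.81, 0.80],
    1.0:    [0.86, 0.88, 0.85, 0.87, 0.86],
    10.0:   [0.87, 0.89, 0.85, 0.88, 0.87],
    1000.0: [0.88, 0.90, 0.84, 0.89, 0.88],
}


def one_standard_error_choice(scores_by_c):
    """Return the smallest C whose mean score is within 1 SE of the best mean."""
    summary = {
        c: (mean(s), stdev(s) / len(s) ** 0.5) for c, s in scores_by_c.items()
    }
    best_c = max(summary, key=lambda c: summary[c][0])
    best_mean, best_se = summary[best_c]
    threshold = best_mean - best_se
    candidates = [c for c, (m, _) in summary.items() if m >= threshold]
    return min(candidates), best_c


chosen_c, best_c = one_standard_error_choice(cv_scores)
print(f"best mean score at C={best_c}, 1-SE rule selects C={chosen_c}")
```

Here the highest mean score occurs at the extreme value C=1000, but C=10 is statistically indistinguishable from it and would be the more conservative choice.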

Q2: When should I choose Bayesian optimization over the simpler grid or random search?

The choice often involves a trade-off between computational cost, search space size, and the need for intelligent exploration.

- Choose Grid Search when you have a small hyperparameter space (few parameters with limited values) and computational cost is not a primary concern. It is a comprehensive, brute-force method suitable for spotting well-known, high-performing combinations [37] [38].

- Choose Random Search when you have a larger search space and need to balance exploration with efficiency. It is faster than grid search and can discover good hyperparameters without exploring the entire space, making it suitable for projects with tight deadlines [37] [39].

- Choose Bayesian Optimization when you are working with complex models, large datasets, or a high-dimensional hyperparameter space, and each model evaluation is computationally expensive (e.g., training a deep neural network) [37] [40]. It is the best fit when you need to obtain optimal hyperparameters with fewer trials and are willing to accept a longer run time for each individual iteration to achieve that [39].
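
The difference between the first two strategies can be seen in a small sketch. The objective `validation_score` is a toy stand-in for an expensive train-and-validate run, and the search ranges reuse the commonly cited SVM exponent ranges. Bayesian optimization is not shown, since it normally relies on a dedicated library.

```python
import itertools
import random


def validation_score(log_c, log_gamma):
    """Toy stand-in for an expensive train-and-validate run (hypothetical).

    Peaks at log2(C) = 3 and log2(gamma) = -7.
    """
    return -((log_c - 3) ** 2) - 0.5 * (log_gamma + 7) ** 2


# Grid search: every combination of a coarse grid over the exponent ranges.
grid_c = range(-5, 16, 4)        # log2(C) in {-5, -1, 3, 7, 11, 15}
grid_gamma = range(-15, 4, 4)    # log2(gamma) in {-15, -11, -7, -3, 1}
grid_trials = list(itertools.product(grid_c, grid_gamma))
grid_best = max(grid_trials, key=lambda p: validation_score(*p))

# Random search: a fixed budget of samples drawn uniformly in log2 space.
rng = random.Random(0)
budget = 15
random_trials = [(rng.uniform(-5, 15), rng.uniform(-15, 3)) for _ in range(budget)]
random_best = max(random_trials, key=lambda p: validation_score(*p))

print(f"grid:   {len(grid_trials)} evaluations, best exponents {grid_best}")
print(f"random: {len(random_trials)} evaluations, best exponents "
      f"({random_best[0]:.2f}, {random_best[1]:.2f})")
```

The grid needs one evaluation per combination, whereas the random search budget is set independently of the number of hyperparameters. That is why random search scales better to larger search spaces.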

Q3: How does the choice of evaluation metric impact the hyperparameter optimization process?

The evaluation metric is a critical, non-neutral decision. Optimizing for different metrics can lead to models with vastly different performance characteristics, especially on imbalanced datasets commonly found in real-world applications like fraud detection or medical diagnosis [36].

- Traditional metrics like Accuracy can be misleading on imbalanced data, as a model might achieve high accuracy by simply always predicting the majority class, thereby failing to identify the critical minority class (e.g., attacks in a smart home network) [36].

- Imbalance-aware metrics like MCC, G-Mean, or AUC-PR are often better suited for guiding the hyperparameter search. Research has shown that models optimized for MCC, for example, can achieve more robust and generalizable performance across multiple criteria compared to those optimized for Accuracy or AUC-ROC [36].
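To make the contrast between Accuracy and MCC concrete, the sketch below scores two sets of predictions on the same imbalanced labels. The labels and predictions are invented for illustration, and the helper names are hypothetical. MCC is set to 0 when its denominator is zero, which is a common convention.

```python
import math


def confusion_counts(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels coded as 0/1."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return tp, tn, fp, fn


def accuracy(y_true, y_pred):
    tp, tn, _, _ = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)


def mcc(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Convention: MCC is 0 when any marginal count is zero.
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


# Hypothetical data: 95 negatives followed by 5 positives.
y_true = [0] * 95 + [1] * 5
# Model A always predicts the majority class.
majority_pred = [0] * 100
# Model B finds 4 of the 5 positives at the cost of 5 false alarms.
useful_pred = [0] * 90 + [1] * 5 + [1] * 4 + [0] * 1

for name, pred in [("majority", majority_pred), ("useful", useful_pred)]:
    print(name, accuracy(y_true, pred), round(mcc(y_true, pred), 3))
```

Model A wins on Accuracy even though it never detects a positive, while MCC ranks Model B clearly higher. A hyperparameter search guided by Accuracy would therefore favor the wrong model here.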

Troubleshooting Guides

Problem: Grid Search is Taking Too Long to Complete

Grid search runtime grows exponentially with the number of hyperparameters, a phenomenon known as the "curse of dimensionality."

- Solution 1: Switch to Random or Bayesian Search. For a large search space, random search can often find a good-enough solution in a fraction of the time [39] [41]. Bayesian optimization is even more efficient, converging to the best parameters in fewer iterations [37] [39].

- Solution 2: Reduce the Search Space. Use domain knowledge to narrow down the values for each hyperparameter. Instead of testing a wide range of values linearly, consider using a logarithmic scale (e.g., for the learning rate or regularization strength) to explore orders of magnitude more efficiently [38].

- Solution 3: Use Parallel Computing. Frameworks like Scikit-learn's `GridSearchCV` and `RandomizedSearchCV` have an `n_jobs` parameter that allows you to use multiple CPU cores to parallelize the search process, significantly reducing wall-clock time [38].
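The growth in grid size, and the fixed budget of random search, can be illustrated with a little arithmetic. The sketch below is illustrative only: the hyperparameter names and values are hypothetical and not tied to any particular model.

```python
import math
import random


def grid_size(param_grid):
    """Number of configurations an exhaustive grid search must evaluate."""
    return math.prod(len(values) for values in param_grid.values())


def log_spaced(low_exp, high_exp, base=10.0):
    """One value per integer exponent, from base**low_exp to base**high_exp."""
    return [base ** e for e in range(low_exp, high_exp + 1)]


# Hypothetical search space; log-spaced values cover orders of magnitude cheaply.
param_grid = {
    "learning_rate": log_spaced(-4, 0),  # 1e-4 ... 1 (5 values)
    "l2_penalty": log_spaced(-2, 2),     # 0.01 ... 100 (5 values)
    "max_depth": [3, 5, 7],
}
n_grid = grid_size(param_grid)

# Adding one more hyperparameter with 5 values multiplies the cost by 5.
bigger_grid = dict(param_grid, subsample=[0.5, 0.6, 0.7, 0.8, 0.9])
n_bigger = grid_size(bigger_grid)

# Random search evaluates a fixed budget, however large the grid becomes.
rng = random.Random(0)
budget = 20
samples = [
    {name: rng.choice(values) for name, values in bigger_grid.items()}
    for _ in range(budget)
]
print(n_grid, n_bigger, len(samples))
```

Each grid configuration is also multiplied by the number of cross-validation folds, so trimming either the grid or the fold count reduces run time proportionally.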

Problem: My Optimized Model is Overfitting

If your model performs well on the validation set but poorly on new, unseen data, the hyperparameter tuning process itself might be the cause.

- Solution 1: Re-evaluate Your Cross-Validation Strategy. Ensure you are using a robust method like Repeated Stratified K-Fold for classification. This provides a more reliable estimate of model performance and reduces the variance of the estimated score [38].

- Solution 2: Simplify the Model. The extreme hyperparameters found by the search might be creating an overly complex model. As mentioned in the FAQ, applying the "1 Standard Error Rule" to choose a simpler model can improve generalization [35].

- Solution 3: Incorporate Regularization. If your model supports it, ensure that regularization hyperparameters (like `C` in SVMs or Logistic Regression, or dropout in neural networks) are included in the search space. Proper tuning of these parameters explicitly controls overfitting [42].

Quantitative Comparison of Methods

The following table summarizes a typical comparative study of the three hyperparameter tuning methods on a shared task, highlighting their performance in the context of an Overall Efficiency (OE) metric that balances computational cost against achieved model performance [37] [39].

| Method | Total Trials | Trials to Best | Best F1-Score | Total Run Time | Key Characteristic |
|---|---|---|---|---|---|
| Grid Search | 810 | 680 | 0.914 | ~45 min | Exhaustive, uninformed search [39] |
| Random Search | 100 | 36 | 0.902 | ~6 min | Random, uninformed search [39] |
| Bayesian Optimization | 100 | 67 | 0.914 | ~9 min | Informed, adaptive search [37] [39] |

Note: The data in this table are synthesized from the cited comparative experiments [37] [39]. The exact values will vary based on the specific dataset, model, and search space.
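The trade-off in the table can be read off with simple arithmetic. The sketch below only re-expresses the table's values relative to grid search. It does not define the OE metric itself, and the derived column names are hypothetical.

```python
# Values copied from the comparison table above (run times are approximate).
results = {
    "Grid Search": {"trials": 810, "f1": 0.914, "minutes": 45},
    "Random Search": {"trials": 100, "f1": 0.902, "minutes": 6},
    "Bayesian Optimization": {"trials": 100, "f1": 0.914, "minutes": 9},
}

baseline = results["Grid Search"]
summary = {}
for name, r in results.items():
    summary[name] = {
        # How many times faster than grid search the whole run was.
        "speedup_vs_grid": baseline["minutes"] / r["minutes"],
        # Change in best F1-score relative to grid search.
        "f1_gap_vs_grid": round(r["f1"] - baseline["f1"], 3),
        # Average cost of a single trial.
        "minutes_per_trial": r["minutes"] / r["trials"],
    }

for name, row in summary.items():
    print(name, row)
```

On these figures, Bayesian optimization matches the grid-search F1-score in one fifth of the run time, while random search is 7.5 times faster at a cost of 0.012 in F1. Bayesian optimization also spends more time per trial than random search, consistent with the overhead of its informed sampling.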

Experimental Protocols

Protocol 1: Implementing Hyperparameter Search with Scikit-Learn

This protocol outlines the steps for performing hyperparameter optimization using Scikit-learn's GridSearchCV and RandomizedSearchCV [38].

- Define the Model: Instantiate the base estimator (e.g., a `RandomForestClassifier`).
- Define the Search Space:
  - For Grid Search, the space is a dictionary where keys are parameter names and values are lists of settings to try. Example: `param_grid = {'C': [0.1, 1, 10], 'gamma': [0.001, 0.01, 0.1]}` [41].
  - For Random Search, the space can include distributions. Example: `param_dist = {'max_depth': [3, None], 'min_samples_leaf': randint(1, 9)}` [41].
- Define the Evaluation Procedure: It is recommended to define a cross-validation object explicitly. For classification, use `RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)` [38].
- Configure and Execute the Search: Create the search object, specifying the model, parameter grid, cross-validation strategy, scoring metric, and enabling parallelization (`n_jobs=-1`).
- Analyze Results: After fitting, you can access the best score (`grid_result.best_score_`) and the best parameters (`grid_result.best_params_`).
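The steps of Protocol 1 might be assembled as follows. This is a minimal sketch under stated assumptions: the synthetic dataset, the `roc_auc` scoring choice, the small forest size, and the `N_JOBS` constant are illustrative choices not specified by the protocol. The `C`/`gamma` grid is paired with an `SVC` because those are SVM parameters, and the `max_depth`/`min_samples_leaf` distribution is paired with a `RandomForestClassifier`.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import (
    GridSearchCV,
    RandomizedSearchCV,
    RepeatedStratifiedKFold,
)
from sklearn.svm import SVC

# Small synthetic, imbalanced dataset standing in for real data (assumption).
X, y = make_classification(
    n_samples=200, n_features=10, weights=[0.8, 0.2], random_state=1
)

# Explicit cross-validation object, as recommended in the protocol.
cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=3, random_state=1)
N_JOBS = 1  # set to -1 to use all CPU cores, as the protocol suggests

# Grid search: every combination of the listed values is evaluated.
param_grid = {"C": [0.1, 1, 10], "gamma": [0.001, 0.01, 0.1]}
grid = GridSearchCV(SVC(), param_grid, scoring="roc_auc", cv=cv, n_jobs=N_JOBS)
grid_result = grid.fit(X, y)
print(grid_result.best_score_, grid_result.best_params_)

# Random search: n_iter configurations are sampled from the distributions.
param_dist = {"max_depth": [3, None], "min_samples_leaf": randint(1, 9)}
rand = RandomizedSearchCV(
    RandomForestClassifier(n_estimators=20, random_state=1),
    param_dist,
    n_iter=5,
    scoring="roc_auc",
    cv=cv,
    n_jobs=N_JOBS,
    random_state=1,
)
rand_result = rand.fit(X, y)
print(rand_result.best_score_, rand_result.best_params_)
```

Note that `randint(1, 9)` samples integers from 1 to 8 inclusive, because the upper bound of `scipy.stats.randint` is exclusive.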

Protocol 2: Bayesian Optimization using Optuna

This protocol describes the process for using the Optuna framework for Bayesian optimization [37] [39].

- Define the Objective Function: Create a function that takes an Optuna `trial` object and returns the validation score to maximize (or minimize).
  - Inside the function, use `trial.suggest_*` methods (e.g., `suggest_float`, `suggest_categorical`) to define the hyperparameter search space.
  - Within the function, train your model using the suggested hyperparameters and evaluate it on a validation set.
- Create a Study Object: Instantiate a study that directs the optimization, specifying the direction ('maximize' or 'minimize').
- Run the Optimization: Invoke the `optimize` method on the study, passing the objective function and the number of trials.
- Retrieve the Best Trial: After optimization, you can access the best parameters and the best value from the study object: `best_params = study.best_trial.params`.

Workflow Visualization

The following diagram illustrates the logical flow and key differences between the three hyperparameter optimization methods, from setup to the selection of the final model configuration.

Hyperparameter Optimization Methods Workflow

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key software tools and their functions for conducting hyperparameter optimization research.

| Tool / Framework | Primary Function | Key Features / Use Case |
|---|---|---|
| Scikit-learn [38] [41] | Provides GridSearchCV and RandomizedSearchCV | The standard library for traditional grid and random search with integrated cross-validation. Ideal for getting started and for models with small to medium search spaces. |
| Optuna [37] [39] | A dedicated framework for Bayesian optimization | Defines search spaces and objective functions intuitively. Uses TPE (Tree-structured Parzen Estimator) by default. Excellent for complex, high-dimensional searches. |
| Ray Tune [42] | A scalable library for distributed hyperparameter tuning | Designed for distributed computing environments. Supports all major search algorithms (Grid, Random, Bayesian, PBT) and can scale experiments across clusters. |
| OpenVINO Toolkit [42] | A toolkit for model optimization and deployment | Includes model optimization techniques like quantization and pruning that can be applied after hyperparameter tuning to reduce model size for deployment. |

Frequently Asked Questions

1. What makes traditional metrics like accuracy unsuitable for drug discovery? In drug discovery, datasets are typically highly imbalanced, with far more inactive compounds than active ones. A model can achieve high accuracy by simply predicting the majority class (inactive compounds) while failing to identify the rare, active candidates that are the primary target. This can render traditional metrics misleading and unfit for purpose [43] [44].

2. When should I prioritize Recall over Precision in a screening pipeline? Prioritize Recall when the cost of missing a true positive (a promising drug candidate or a serious adverse event) is unacceptably high. Conversely, prioritize Precision when the cost of false positives is high, such as when experimental validation resources are limited and must be allocated only to the most promising leads [45] [43] [44].

3. How does Rare Event Sensitivity differ from standard Recall? While both measure the model's ability to find all relevant items, Rare Event Sensitivity is specifically designed and optimized for scenarios where the positive class is extremely rare. It focuses the evaluation on the model's performance in detecting these critically important low-frequency events, which might be obscured in the broader calculation of standard Recall [43].

4. Can I use Precision-at-K if my recommendation list is shorter than K? Yes. If your list length is shorter than your chosen K, the number of items in the list is used as the denominator for that specific case. The metric is then averaged across all users or queries to get the final system performance assessment [45].
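The short-list rule in the answer above can be written down directly. The following is a minimal sketch with hypothetical function names and compound IDs. It uses the returned list length as the denominator whenever fewer than K items are available, then averages across queries.

```python
def precision_at_k(ranked_items, relevant_items, k):
    """Fraction of the top-k ranked items that are relevant.

    If fewer than k items were returned, the list length is the denominator.
    """
    if k <= 0:
        raise ValueError("k must be a positive integer")
    top = ranked_items[:k]
    if not top:
        return 0.0
    relevant = set(relevant_items)
    hits = sum(1 for item in top if item in relevant)
    return hits / len(top)


def mean_precision_at_k(rankings, relevants, k):
    """Average Precision-at-K over several queries (or screens)."""
    scores = [precision_at_k(r, rel, k) for r, rel in zip(rankings, relevants)]
    return sum(scores) / len(scores)


# Hypothetical ranked compound IDs for two queries; the second list is short.
rankings = [["c1", "c2", "c3", "c4", "c5"], ["c9", "c7"]]
relevants = [{"c1", "c4"}, {"c7"}]

p_full = precision_at_k(rankings[0], relevants[0], 5)   # 2 hits out of 5
p_short = precision_at_k(rankings[1], relevants[1], 5)  # 1 hit out of 2
mean_p = mean_precision_at_k(rankings, relevants, 5)
print(p_full, p_short, mean_p)
```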

Troubleshooting Guides

Problem: Model with High Accuracy Fails to Identify Active Compounds

This is a classic symptom of evaluating a model on an imbalanced dataset using inappropriate metrics.

Step 1: Diagnosis Confirm the issue by calculating the baseline accuracy, that is, the accuracy obtained by always predicting the majority class, which equals that class's share of the dataset. If your model's accuracy is only slightly better than this baseline, it is likely not performing useful work [44].

Step 2: Apply Domain-Specific Metrics Implement a suite of metrics designed for imbalance and ranking:

- Calculate Precision-at-K (P@K) to ensure your top recommendations are relevant [45] [43].

- Calculate Recall or Rare Event Sensitivity to verify you are capturing a sufficient share of all active compounds [45] [43].

- Use the F-score (F1 or Fβ) to find a balance between Precision and Recall that suits your project's goals [45].

Step 3: Implement a Technical Solution During model training, employ techniques like cost-sensitive learning to assign more weight to the minor class (active compounds), helping the model learn from these rare examples [46].
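Steps 1 and 2 can be checked with a few lines of arithmetic. The sketch below uses an invented screen of 1,000 compounds with 20 actives, and all helper names are hypothetical. It compares model accuracy with the majority-class baseline and then reports Precision, Recall, and the F-score.

```python
def baseline_accuracy(y_true):
    """Accuracy of always predicting the majority class (labels are 0/1)."""
    positives = sum(y_true)
    return max(positives, len(y_true) - positives) / len(y_true)


def precision_recall(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def f_beta(precision, recall, beta=1.0):
    """F-beta score; beta > 1 weights Recall more heavily than Precision."""
    if precision == 0.0 and recall == 0.0:
        return 0.0
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypothetical screen: 20 actives followed by 980 inactives.
y_true = [1] * 20 + [0] * 980
# The model flags 6 actives and 5 inactives as "active".
y_pred = [1] * 6 + [0] * 14 + [1] * 5 + [0] * 975

model_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
baseline = baseline_accuracy(y_true)
precision, recall = precision_recall(y_true, y_pred)
f1 = f_beta(precision, recall, beta=1.0)
f2 = f_beta(precision, recall, beta=2.0)
print(model_accuracy, baseline, round(precision, 3), recall, round(f1, 3), round(f2, 3))
```

The model beats the 98% baseline by only 0.1 percentage points while recovering less than a third of the actives, which is exactly the symptom described in Step 1.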

Problem: High Rate of False Positives in High-Throughput Screening

This leads to wasted resources as expensive wet-lab experiments are spent validating inactive compounds.

Step 1: Adjust the Prediction Threshold The default classification threshold is often 0.5. For rare events, this can be too low. Increase the classification threshold (e.g., to 0.7 or 0.8) to only classify the most confident predictions as "active," thereby increasing precision and reducing false positives [44].

Step 2: Optimize for Precision-at-K If your goal is to select a fixed number of candidates for the next stage, explicitly optimize your model and evaluation for Precision-at-K, where K is your batch size. This directly measures the quantity that matters in practice: how many of the K compounds sent for validation are truly active [45] [43].

Step 3: Analyze Feature Importance Use model interpretation tools like SHAP (SHapley Additive exPlanations) to identify the features driving the false positive predictions. This can reveal issues in the input data or model logic that can be corrected [46].
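The effect of the threshold adjustment in Step 1 can be seen on a toy example. The scores and labels below are invented, and the function name is hypothetical. The point of the sketch is that raising the threshold raises Precision but lowers Recall, so the two should be inspected together.

```python
def precision_recall_at_threshold(y_true, scores, threshold):
    """Precision and Recall when scores >= threshold are called 'active'."""
    y_pred = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical labels (1 = active) and predicted probabilities.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
scores = [0.95, 0.85, 0.72, 0.78, 0.65, 0.55, 0.52, 0.30, 0.10, 0.60]

by_threshold = {
    t: precision_recall_at_threshold(y_true, scores, t) for t in (0.5, 0.7, 0.8)
}
for threshold, (precision, recall) in by_threshold.items():
    print(threshold, precision, recall)
```

On this toy data, moving the threshold from 0.5 to 0.8 eliminates every false positive but also halves the number of actives recovered.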

Problem: Inconsistent Yield in Bioprocess Optimization

Unexpected variations in bioprocess yield indicate that a model trained on historical data may not be generalizing well to new production runs.

Step 1: Review Batch Data for Patterns Manually review batch records and analytics to pinpoint patterns or anomalies. Look for correlations between input materials, process parameters (like pH or temperature), and yield outcomes [47].

Step 2: Employ Statistical Process Control (SPC) Implement SPC charts to visually detect deviations, trends, or outliers in yield and other Critical Process Parameters (CPPs) that fall outside acceptable control limits [47].

Step 3: Conduct Root Cause Analysis using DoE Use Design of Experiments (DoE) to systematically investigate and optimize critical process variables. DoE helps efficiently identify which factors and their interactions significantly impact yield, moving from a reactive to a proactive optimization strategy [48] [47].
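The control-limit check in Step 2 can be sketched as follows. This is a simplified illustration with invented yield values and hypothetical function names. It places limits at the baseline mean plus or minus three sample standard deviations, whereas production individuals charts usually estimate sigma from moving ranges.

```python
import statistics


def control_limits(baseline, n_sigma=3.0):
    """Return (lower, center, upper) limits from in-control baseline runs."""
    center = statistics.mean(baseline)
    sigma = statistics.stdev(baseline)
    return center - n_sigma * sigma, center, center + n_sigma * sigma


def out_of_control(values, lower, upper):
    """Indices of runs whose value falls outside the control limits."""
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical yields (g/L) from historical, in-control batches.
baseline_yields = [4.9, 5.1, 5.0, 5.2, 4.8, 5.0, 5.1, 4.9, 5.0, 5.0]
# Hypothetical yields from new production runs.
new_yields = [5.0, 4.9, 4.2, 5.1, 5.7]

lower, center, upper = control_limits(baseline_yields)
flagged = out_of_control(new_yields, lower, upper)
print(round(lower, 3), round(center, 3), round(upper, 3), flagged)
```

Flagged runs are candidates for the batch-record review in Step 1 and for the DoE investigation in Step 3.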

Quantitative Data and Benchmarking

Table 1: Benchmarking Overall Equipment Effectiveness (OEE) in Pharmaceutical Manufacturing

| Performance Tier | OEE Score | Availability | Performance | Quality | Key Characteristics |
|---|---|---|---|---|---|
| World-Class (Pharma) | ~70% | High | ~100% | ~100% | Top 10% quartile; minimal performance losses and near-zero scrap [49]. |
| Digitized (Pharma 4.0) | >60% | 67% | 93% | 98% | Leverages AI and real-time monitoring for efficiency gains [49]. |
| Industry Average | ~35-37% | <50% | ~80% | ~94% | Significant planned losses (e.g., cleaning, changeovers) and micro-stops [49]. |
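The OEE scores in Table 1 follow from the standard definition, OEE = Availability × Performance × Quality. The sketch below recomputes two rows as a consistency check. The function name is hypothetical, and the 50% availability used for the industry-average row is the table's upper bound, not a measured value.

```python
def oee(availability, performance, quality):
    """Overall Equipment Effectiveness as the product of its three factors."""
    for name, value in (
        ("availability", availability),
        ("performance", performance),
        ("quality", quality),
    ):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be a fraction between 0 and 1")
    return availability * performance * quality


# Digitized (Pharma 4.0) row: 67% x 93% x 98%.
digitized = oee(0.67, 0.93, 0.98)
# Industry-average row, using the 50% availability upper bound.
industry_upper = oee(0.50, 0.80, 0.94)
print(round(digitized, 3), round(industry_upper, 3))
```

The digitized row works out to about 61%, matching the ">60%" entry, and the industry-average upper bound of about 37.6% is consistent with the reported 35-37% range.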

Table 2: Comparison of Evaluation Metrics for Drug Discovery Models