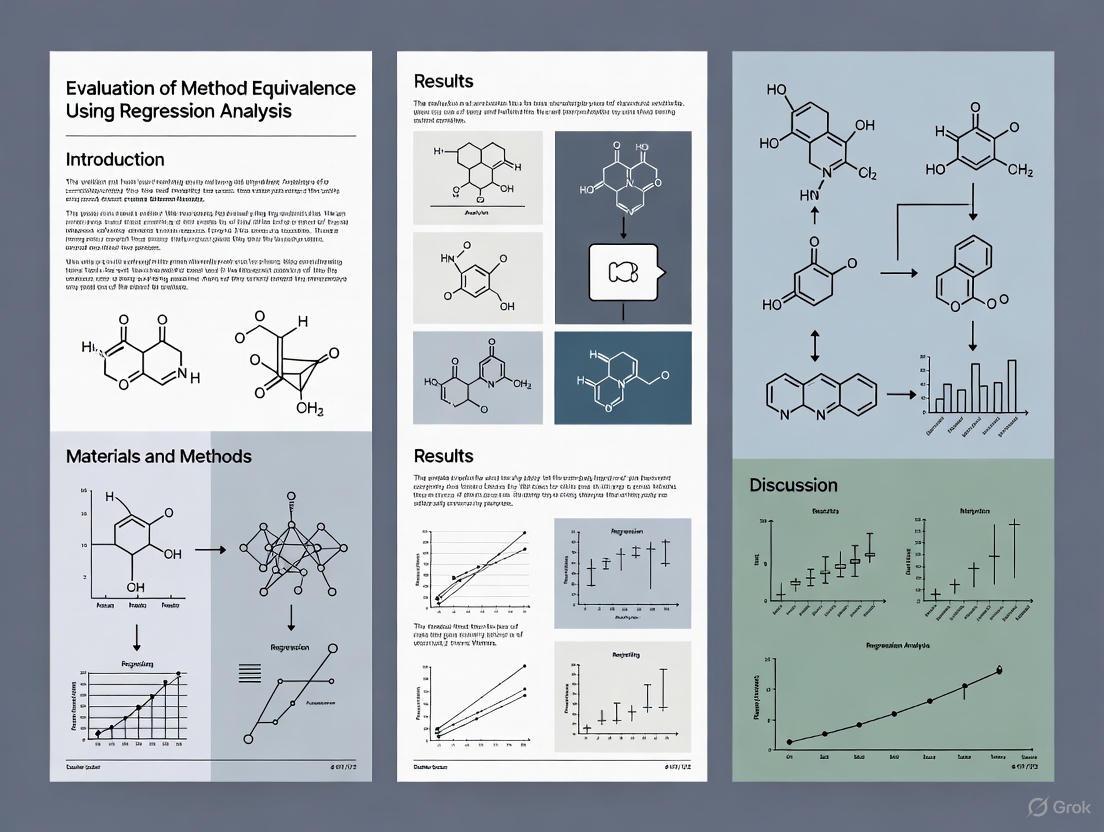

Beyond Difference Testing: A Practical Guide to Evaluating Method Equivalence Using Regression Analysis

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on using regression analysis to demonstrate method equivalence—a critical task in measurement validation, bioequivalence studies, and instrument calibration. Moving beyond traditional difference tests that are ill-suited for proving similarity, we detail the foundational principles of equivalence testing, including the Two-One-Sided Tests (TOST) procedure and proper setting of equivalence bounds. The content covers practical methodologies for applying these tests to regression coefficients and mean responses, strategies for troubleshooting common issues like model uncertainty, and advanced techniques for comparing full regression curves. By synthesizing modern statistical approaches, this guide empowers scientists to build robust, defensible evidence of equivalence in biomedical and clinical research.

Why Traditional Statistics Fail for Equivalence and What to Do Instead

In scientific research and drug development, the failure to reject a null hypothesis is frequently misinterpreted as evidence for the absence of an effect or difference. This article delineates the critical distinction between a non-significant result and the demonstration of equivalence, highlighting the statistical perils of this common misconception. We explore the roles of statistical power, beta error, and formal equivalence testing, with a specific focus on methodologies for evaluating method equivalence using regression and correlation analysis. Supported by experimental data and clear visual guides, this guide provides researchers and developers with the tools to correctly interpret and validate apparent similarities.

The Fundamental Problem: ‘No Difference’ Is Not ‘The Same’

A statistically non-significant result, typically indicated by a P-value greater than 0.05, is often erroneously interpreted as proof that no meaningful difference exists. This logical error stems from a misunderstanding of frequentist statistics [1]. A hypothesis test answers the question: "How likely are results at least this extreme if the samples came from the same population?" A high P-value indicates that the observed data are quite plausible under the assumption of no true effect (the null hypothesis). It does not, however, prove that the null hypothesis is true [1] [2].

This misinterpretation is most dangerous when the stakes of concluding "no difference" are high, such as when asserting that a new drug is no more toxic than a placebo. The claim "there is no evidence that X is toxic" is not synonymous with "X is not toxic." The sceptic, and the statistician, must ask: "How toxic, and how much evidence was there to detect it?" [1]. The inability to detect a signal can simply be due to excessive background noise or an inadequate receiver, not the absence of a signal itself [1].

Statistical Pitfalls: Power, Beta Error, and Overlapping Confidence Intervals

The Critical Role of Statistical Power

The power of a statistical test is the probability that it will correctly reject a false null hypothesis; that is, find a defined difference when one truly exists. Power is defined as (1 - β), where β is the beta error (Type II error) [1].

The Beta Error: This is the probability of classifying a result as showing "no effect" when a true difference exists. An underpowered study, often the result of a small sample size or large population variability, has a high beta error, making it prone to missing real effects [1]. As depicted in Figure 1, small samples drawn from two populations with a true difference can easily fail to reject the null hypothesis.

Figure 1: The Problem of Low Power. A study with low power may fail to detect a true difference between populations.
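To make the relationship between sample size and power concrete, here is a minimal sketch that approximates the power of a two-sided, two-sample comparison. It is an illustration rather than part of the cited sources: the function name, the effect size, the standard deviation, and the sample sizes are all assumptions, and it uses a normal (z) approximation, so a noncentral t calculation would be more accurate for very small samples.

```python
from statistics import NormalDist

def approx_power_two_sample(true_diff, sd, n_per_group, alpha=0.05):
    """Approximate power of a two-sided two-sample z-test (equal n, equal SD)."""
    z = NormalDist()
    se = sd * (2.0 / n_per_group) ** 0.5      # standard error of the difference in means
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)     # two-sided critical value
    shift = abs(true_diff) / se               # expected value of the test statistic
    # Probability that the statistic lands in either rejection region
    return (1.0 - z.cdf(z_crit - shift)) + z.cdf(-z_crit - shift)

# Illustrative assumption: a true difference of half a standard deviation
for n in (5, 20, 64, 100):
    power = approx_power_two_sample(true_diff=0.5, sd=1.0, n_per_group=n)
    print(f"n per group = {n:3d}  power ~ {power:.2f}  beta ~ {1 - power:.2f}")
```

With only a handful of samples per group, most studies of this hypothetical effect would return a non-significant result even though the difference is real, which is exactly why "not significant" cannot be read as "equivalent".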

The Misleading Nature of Visual Comparisons

Many scientists judge differences "by eye" using plots with error bars, which can be highly misleading. Table 1 shows what different error bars typically represent.

Table 1: Common Error Bars and Their Interpretation

| Error Bar Type | Represents | Key Characteristic |
|---|---|---|
| Standard Deviation (SD) | The spread of the raw data around the mean. | A simple measure of data variability. Does not directly indicate statistical significance. |
| Standard Error of the Mean (SEM) | The precision of the estimated mean; how the mean would vary across repeated samples. | Shrinks with larger sample size. Closely related to the t-statistic. |
| Confidence Interval (CI) | A range computed so that, across repeated samples, it captures the true population parameter at the stated rate (e.g., 95%). | A 95% CI roughly spans ±2 standard errors. Provides a range for the true effect. |

A common error is to assume that if two 95% confidence intervals overlap, there is no statistically significant difference. This is an overly conservative test; overlapping confidence intervals can still belong to groups with a statistically significant difference (P < 0.05) [2]. Conversely, non-overlapping standard error bars do not necessarily signify a significant difference. Relying on the "eyeball test" is not a substitute for a formal hypothesis test [2].
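A short numerical sketch shows why the overlap rule is too strict. The two group means and standard errors below are hypothetical values chosen for illustration, and the test uses a normal approximation.

```python
from statistics import NormalDist

z = NormalDist()
z95 = z.inv_cdf(0.975)                 # ~1.96, multiplier for a 95% interval

# Hypothetical group summaries: mean and standard error of the mean
mean_a, se_a = 0.0, 1.0
mean_b, se_b = 3.0, 1.0

ci_a = (mean_a - z95 * se_a, mean_a + z95 * se_a)
ci_b = (mean_b - z95 * se_b, mean_b + z95 * se_b)
intervals_overlap = ci_a[1] > ci_b[0] and ci_b[1] > ci_a[0]

# Formal test on the difference: its standard error is sqrt(se_a^2 + se_b^2),
# which is smaller than se_a + se_b, so the overlap rule is too strict.
se_diff = (se_a ** 2 + se_b ** 2) ** 0.5
z_stat = (mean_b - mean_a) / se_diff
p_value = 2.0 * (1.0 - z.cdf(abs(z_stat)))

print(f"95% CI A: ({ci_a[0]:.2f}, {ci_a[1]:.2f})")
print(f"95% CI B: ({ci_b[0]:.2f}, {ci_b[1]:.2f})")
print(f"intervals overlap: {intervals_overlap}, two-sided p = {p_value:.3f}")
```

In this example the two intervals overlap, yet the formal test on the difference is significant at the 0.05 level.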

The Solution: Demonstrating Equivalence with Formal Testing

Equivalence Testing as an Alternative Framework

To validly claim that two methods or products are equivalent, the statistical question must be reframed. Instead of testing for any difference, we test whether the difference is smaller than a pre-defined, clinically or scientifically irrelevant margin [3]. This is the foundation of equivalence testing.

Two prominent methods for this are:

- The Two One-Sided Tests (TOST) Method: This procedure tests whether the true effect is both greater than the negative equivalence margin and less than the positive equivalence margin. If both one-sided tests are significant, equivalence can be concluded [3].

- The Anderson-Hauck Test: A single test designed specifically for evaluating equivalence. Simulation studies suggest it may be preferable to TOST for comparing correlation or regression coefficients, though it requires large sample sizes for adequate power [3].

These tests are a direct replacement for the common, yet inappropriate, practice of using a non-significant difference-based test (P > 0.05) to claim equivalence [3].

Application to Regression and Correlation Analysis

The principles of equivalence testing can be extended to compare the key parameters from regression models, which is directly relevant for method-comparison studies in research and development. This allows for a formal assessment of whether two regression or correlation coefficients are equivalent within a specified margin [3]. The workflow for such an analysis is outlined in Figure 2.

Figure 2: Workflow for Equivalence Testing of Regression/Coefficients.

Experimental Protocol: A Sample Equivalence Study

This protocol outlines a generic experiment to demonstrate equivalence between two analytical methods (Method A and Method B).

1. Objective: To demonstrate that the measurement outputs of Method A and Method B are equivalent for quantifying a target analyte.

2. Experimental Design:

- Samples: A panel of N samples covering the analytical measurement range of interest should be analyzed. The sample size (N) must be determined by a power calculation based on a pre-specified equivalence margin to ensure the study is sufficiently powered [1] [3].

- Procedure: Each sample is measured in triplicate (or more) by both Method A and Method B in a randomized order to avoid bias.

3. Data Analysis:

- Perform a correlation analysis between the mean results from Method A and Method B.

- Fit a linear regression model with Method B results as the dependent variable and Method A results as the independent variable.

- A priori, define an equivalence margin for the slope (Δ_slope) and a separate one for the intercept (Δ_intercept). The two parameters are on different scales (the slope is unitless, the intercept is in measurement units), so each needs its own margin, representing the maximum acceptable deviation from a slope of 1 and an intercept of 0 for the two methods to be considered equivalent.

- Use an equivalence test (e.g., TOST or Anderson-Hauck) on each regression coefficient to determine whether the slope lies within [1 - Δ_slope, 1 + Δ_slope] and the intercept within [-Δ_intercept, Δ_intercept] [3].
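The data-analysis steps above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure of the cited references: the paired values, the slope margin of 0.05, and the intercept margin of 2.0 units are hypothetical, the helper names `ols_fit` and `tost_p` are invented for this example, and the p-values use a normal approximation (with small N, a t quantile with N - 2 degrees of freedom would be the more careful choice).

```python
from statistics import NormalDist

def ols_fit(x, y):
    """Ordinary least squares for y = b0 + b1*x; returns estimates and standard errors."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    sigma2 = residual_ss / (n - 2)                     # residual variance
    se_slope = (sigma2 / sxx) ** 0.5
    se_intercept = (sigma2 * (1.0 / n + mean_x ** 2 / sxx)) ** 0.5
    return slope, intercept, se_slope, se_intercept

def tost_p(estimate, se, target, margin):
    """TOST p-value for H0: |parameter - target| >= margin (normal approximation)."""
    z = NormalDist()
    p_lower = 1.0 - z.cdf((estimate - (target - margin)) / se)   # H01: parameter <= target - margin
    p_upper = z.cdf((estimate - (target + margin)) / se)         # H02: parameter >= target + margin
    return max(p_lower, p_upper)

# Hypothetical per-sample means from Method A (x) and Method B (y)
method_a = [5.0, 10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0, 80.0, 90.0]
method_b = [5.3, 9.8, 20.4, 29.7, 40.5, 49.6, 60.2, 70.6, 79.5, 90.4]

slope, intercept, se_slope, se_intercept = ols_fit(method_a, method_b)
p_slope = tost_p(slope, se_slope, target=1.0, margin=0.05)             # assumed slope margin
p_intercept = tost_p(intercept, se_intercept, target=0.0, margin=2.0)  # assumed intercept margin

print(f"slope = {slope:.4f} (SE {se_slope:.4f}), TOST p = {p_slope:.3g}")
print(f"intercept = {intercept:.3f} (SE {se_intercept:.3f}), TOST p = {p_intercept:.3g}")
print("equivalent" if max(p_slope, p_intercept) < 0.05 else "equivalence not demonstrated")
```

Equivalence is claimed only when both coefficients pass, so the sketch reports the larger of the two p-values as the basis for the decision.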

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Method Equivalence Studies

| Item / Reagent | Function in Experiment |
|---|---|
| Certified Reference Materials | Provide a ground-truth standard with known analyte concentration to calibrate instruments and validate the accuracy of both methods under comparison. |
| Quality Control Samples | Used to monitor the precision and stability of analytical methods throughout the experiment, ensuring data integrity. |
| Sample Panel Spanning Dynamic Range | A critical set of samples with concentrations covering the low, medium, and high end of the expected measurement range to comprehensively assess method performance. |
| Statistical Software (R, Python, SAS) | Essential for performing complex statistical analyses, including regression, calculation of confidence intervals, and formal equivalence testing (TOST). |

The assertion that "no significant difference" implies equivalence is a profound statistical flaw that can lead to incorrect and potentially harmful conclusions in research and drug development. Moving beyond this fallacy requires a shift in both mindset and methodology. Researchers must prioritize study power, understand the limitations of visual data summaries, and, most importantly, adopt formal equivalence testing frameworks like TOST when the goal is to demonstrate similarity. By applying these rigorous standards, particularly in regression-based method comparisons, scientists can generate reliable and defensible evidence of true equivalence.

In scientific research, particularly in fields like drug development and measurement validation, researchers often need to demonstrate that two methods, processes, or treatments are sufficiently similar rather than different. This fundamental need has led to the development of equivalence testing, a statistical approach that flips the conventional logic of hypothesis testing. Unlike traditional tests that default to assuming no difference, equivalence testing is designed specifically to provide evidence of similarity [4] [5].

The core distinction lies in the null hypothesis. Traditional difference testing (e.g., t-tests, ANOVA) uses a null hypothesis of no difference (H₀: δ = 0) and seeks evidence to reject it in favor of finding a difference. Equivalence testing reverses this framework by setting a null hypothesis of non-equivalence (H₀: |δ| ≥ Δ), where Δ is a pre-specified equivalence margin. The alternative hypothesis (H₁: |δ| < Δ) represents the claim that the differences are within acceptable bounds of similarity [4] [6]. This reversal shifts the burden of proof, forcing the data to demonstrate equivalence rather than defaulting to it when no difference is detected [5].

This approach is particularly valuable in method validation, clinical trials, and process comparisons where demonstrating similarity has practical importance. For instance, equivalence testing is routinely used in bioequivalence studies to compare generic and branded drugs, in laboratory settings to validate modified testing processes, and in measurement research to evaluate new assessment tools against established criteria [7] [6].

Core Principles and Statistical Foundations

The Equivalence Margin and Zone of Indifference

The foundation of any equivalence test is the equivalence margin (Δ), also referred to as the "zone of scientific or clinical indifference" [8]. This pre-specified boundary represents the maximum difference between two methods that is considered scientifically or clinically trivial [4] [7]. Determining this margin requires subject-matter expertise and should be established prior to conducting the study based on clinical, practical, or regulatory considerations [8] [7].

The equivalence margin may be defined in absolute terms (e.g., within 5 units) or relative terms (e.g., within 10% of the reference mean) [4]. For example, in physical activity research, equivalence might be defined as a mean difference within ±15% of the reference method, while in analytical chemistry, regulatory guidelines might specify acceptable percentage differences between testing processes [4] [7].

The Two One-Sided Tests (TOST) Procedure

The most common statistical approach for equivalence testing is the Two One-Sided Tests (TOST) procedure [4] [8]. This method decomposes the overall null hypothesis of non-equivalence (H₀: δ ≤ -Δ or δ ≥ Δ) into two separate one-sided hypotheses:

- H₀₁: δ ≤ -Δ (Test method is substantially lower)

- H₀₂: δ ≥ Δ (Test method is substantially higher)

Both null hypotheses are tested simultaneously using one-sided statistical tests at a significance level α (typically 0.05). If both tests are rejected, the overall null hypothesis of non-equivalence is rejected, providing evidence that the true difference lies within the equivalence region (-Δ < δ < Δ) [4]. The overall p-value for the equivalence test equals the larger of the two one-sided p-values [4].
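The procedure can be written in a few lines. The sketch below is illustrative only: it works from a summary difference and its standard error, uses a normal approximation instead of the t-distribution, and the numbers (an observed difference of 0.8, a margin of 3.0, and two standard errors) are hypothetical.

```python
from statistics import NormalDist

def tost(diff, se, margin, alpha=0.05):
    """Two one-sided z-tests for H0: |delta| >= margin (normal approximation)."""
    z = NormalDist()
    # H01: delta <= -margin; rejected when diff sits far enough above -margin
    p_lower = 1.0 - z.cdf((diff + margin) / se)
    # H02: delta >= +margin; rejected when diff sits far enough below +margin
    p_upper = z.cdf((diff - margin) / se)
    p_overall = max(p_lower, p_upper)        # the larger one-sided p-value decides
    return p_lower, p_upper, p_overall, p_overall < alpha

# Hypothetical summaries: same observed difference, two levels of precision
for label, se in (("precise study", 0.9), ("noisy study", 1.6)):
    p_lo, p_up, p, equivalent = tost(diff=0.8, se=se, margin=3.0)
    print(f"{label}: p_lower = {p_lo:.4f}, p_upper = {p_up:.4f}, "
          f"TOST p = {p:.4f}, equivalent = {equivalent}")
```

Both hypothetical studies would give a non-significant result in a conventional difference test, but only the precise one supplies enough evidence to reject non-equivalence.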

Table 1: Key Components of the TOST Procedure

| Component | Description | Role in Equivalence Testing |
|---|---|---|
| Equivalence Margin (Δ) | Pre-specified boundary of clinically/scientifically trivial differences | Defines the range of differences considered equivalent |
| Null Hypothesis (H₀) | δ ≤ -Δ or δ ≥ Δ (difference lies outside the equivalence margin) | Assumption that methods are not equivalent |
| Alternative Hypothesis (H₁) | -Δ < δ < Δ (difference lies within the equivalence margin) | Claim that methods are equivalent |
| Test Statistics | Two one-sided test statistics (t-tests commonly used) | Assess whether observed difference is significantly within bounds |
| Decision Rule | Reject H₀ if both one-sided tests are significant | Conclude equivalence when data provides sufficient evidence |

The Confidence Interval Approach

A mathematically equivalent and often more intuitive approach to equivalence testing uses confidence intervals [8]. For a significance level α = 0.05, a 90% confidence interval for the difference is constructed (not the conventional 95%), because each of the two one-sided tests is run at level α and the matching two-sided interval therefore has level 1 - 2α. If this confidence interval falls entirely within the equivalence region (-Δ, Δ), the null hypothesis of non-equivalence is rejected, and equivalence is concluded at the 5% significance level [4] [8].

This approach provides visual clarity—when the entire confidence interval lies within the equivalence bounds, equivalence is demonstrated. If the interval spans outside the bounds, equivalence cannot be claimed, regardless of whether it includes zero [8]. The confidence interval approach also offers more information about the precision of the estimate and the magnitude of the potential difference.
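The following sketch illustrates the interval rule and why the confidence level matters. It assumes a summary difference with a known standard error and a normal approximation; the values (difference 1.0, standard error 1.1, margin 3.0) are hypothetical.

```python
from statistics import NormalDist

def equivalence_by_ci(diff, se, margin, alpha=0.05):
    """Check whether the (1 - 2*alpha) interval lies entirely inside (-margin, margin)."""
    level = 1.0 - 2.0 * alpha
    z_mult = NormalDist().inv_cdf(1.0 - alpha)     # one-sided quantile, ~1.645 for alpha = 0.05
    lower, upper = diff - z_mult * se, diff + z_mult * se
    inside = (-margin < lower) and (upper < margin)
    return level, (lower, upper), inside

# alpha = 0.05 gives the 90% interval used for TOST;
# alpha = 0.025 gives a 95% interval, i.e. a stricter test.
for alpha in (0.05, 0.025):
    level, (lo, hi), inside = equivalence_by_ci(diff=1.0, se=1.1, margin=3.0, alpha=alpha)
    print(f"{level:.0%} CI = ({lo:.2f}, {hi:.2f}), inside (-3, 3): {inside}")
```

In this example the 90% interval sits inside the bounds while the 95% interval does not, so insisting on the conventional 95% interval amounts to testing at α = 0.025 and costs power.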

Figure 1: The Two One-Sided Tests (TOST) Decision Logic

Experimental Protocols for Equivalence Testing

Means Equivalence Testing Protocol

Means equivalence testing evaluates whether the average results from two methods differ by more than a negligible amount. This approach is commonly used to detect systematic bias between methods [7].

Experimental Protocol:

- Define Equivalence Margin: Establish Δ based on clinical/practical significance before data collection [7]

- Experimental Design: Obtain paired or parallel measurements from both methods on the same set of test materials [7]

- Data Collection: Ensure measurements cover the expected range of values and are collected under appropriate conditions

- Statistical Analysis:

  - Calculate mean difference between methods and standard error

  - Perform TOST procedure or construct 90% confidence interval for mean difference

  - Compare results to equivalence margin

Interpretation: Reject non-equivalence if the 90% CI falls entirely within (-Δ, Δ) [8]
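As a worked illustration of this protocol for paired data, the sketch below computes the mean difference, its standard error, and a 90% interval, then compares the interval to a relative margin. The measurements are invented, the 10% margin is an assumed pre-specified value, and the interval uses a normal quantile where a t quantile with n - 1 degrees of freedom would be more appropriate for a sample this small.

```python
from statistics import NormalDist, mean, stdev

# Hypothetical paired measurements on the same 12 test materials
reference = [98.0, 102.5, 110.2, 95.4, 120.1, 105.3, 99.8, 115.6, 108.9, 101.2, 97.5, 112.4]
candidate = [99.1, 101.8, 111.5, 96.0, 118.9, 106.4, 100.5, 114.8, 110.0, 100.6, 98.3, 113.1]

# Assumed margin: +/- 10% of the reference mean (the percentage is fixed before data collection)
margin = 0.10 * mean(reference)

diffs = [c - r for c, r in zip(candidate, reference)]
mean_diff = mean(diffs)
se = stdev(diffs) / len(diffs) ** 0.5          # standard error of the mean paired difference

z90 = NormalDist().inv_cdf(0.95)               # multiplier for a 90% two-sided interval
ci = (mean_diff - z90 * se, mean_diff + z90 * se)
equivalent = (-margin < ci[0]) and (ci[1] < margin)

print(f"margin = +/-{margin:.2f}")
print(f"mean difference = {mean_diff:.3f}, 90% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
print("equivalent" if equivalent else "equivalence not demonstrated")
```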

Table 2: Example Scenarios for Means Equivalence Testing

| Application Field | Typical Equivalence Margin | Key Considerations | Reference Method |
|---|---|---|---|
| Bioequivalence Studies | 80-125% for AUC and Cmax | Regulatory guidelines specify margins | Branded drug formulation |
| Method Validation | ± allowable error from regulatory guidance | Cover clinically relevant range | Reference standard method |
| Process Improvement | Based on quality requirements | Risk assessment for wrong decisions | Current established process |

Regression-Based Equivalence Testing Protocol

When comparing methods across a range of values, regression-based equivalence testing provides a more comprehensive assessment than means testing alone [5]. This approach evaluates whether the relationship between two methods demonstrates equivalence in both intercept and slope.

Experimental Protocol:

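- Define Equivalence Region: Establish acceptable ranges for both the intercept (β₀, typically around 0) and the slope (β₁, typically around 1). The subscripted symbols are used here to avoid confusion with the significance level α and the Type II error β.

- Experimental Design: Collect paired measurements across the entire measurement range

- Statistical Analysis:

  - Fit regression model: y = β₀ + β₁x + ε

  - Estimate 90% confidence intervals for intercept and slope (for testing at α = 0.05)

  - Test whether both parameter CIs fall entirely within their equivalence regions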

Interpretation: Methods are equivalent if both intercept and slope demonstrate equivalence [5]. This approach is more rigorous than means testing alone as it assesses equivalence across the entire measurement range rather than at a single point.
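Stated formally, and assuming that equivalence means a slope near 1 and an intercept near 0, the protocol above amounts to two TOST problems. In the block below the margins Δ₀ and Δ₁ are placeholders that must be fixed in advance, and the intercept is written β₀ and the slope β₁ so that α can denote the significance level. The standard errors are the usual ordinary least squares expressions, with s² the residual variance on n - 2 degrees of freedom and S_xx the sum of squared deviations of x from its mean.

```latex
\begin{aligned}
\text{Intercept:}\quad & H_0: |\beta_0| \ge \Delta_0
    \quad\text{vs.}\quad H_1: |\beta_0| < \Delta_0 \\
\text{Slope:}\quad & H_0: |\beta_1 - 1| \ge \Delta_1
    \quad\text{vs.}\quad H_1: |\beta_1 - 1| < \Delta_1 \\[4pt]
\text{Decision:}\quad & \hat\beta_j \pm t_{1-\alpha,\,n-2}\,\mathrm{SE}(\hat\beta_j)
    \ \text{must lie inside its region for } j = 0, 1 \\[4pt]
& \mathrm{SE}(\hat\beta_1) = \frac{s}{\sqrt{S_{xx}}}, \qquad
  \mathrm{SE}(\hat\beta_0) = s\,\sqrt{\frac{1}{n} + \frac{\bar{x}^{2}}{S_{xx}}}
\end{aligned}
```

Because equivalence is declared only when both intervals pass, the combined decision is an intersection-union test and its type I error rate does not exceed α.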

Model Averaging in Equivalence Testing

Traditional regression-based equivalence tests assume the correct model form is known, which is rarely true in practice. Model averaging addresses this uncertainty by incorporating multiple plausible models into the equivalence testing framework [9].

Experimental Protocol:

- Specify Candidate Models: Identify a set of plausible regression models (e.g., linear, quadratic, Emax, sigmoidal)

- Calculate Model Weights: Assign weights to each model based on information criteria (e.g., AIC, BIC)

- Estimate Parameters: Compute weighted averages of parameters across all models

- Conduct Equivalence Test: Perform equivalence testing on the model-averaged estimates

This approach is particularly valuable in dose-response studies, time-response analyses, and other scenarios where the underlying functional form is uncertain [9]. By accounting for model uncertainty, it provides more robust equivalence conclusions and reduces the risk of misspecification errors.
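The weighting step can be illustrated with Akaike weights, one common way of turning information criteria into model weights. The sketch is a simplified illustration and not the specific algorithm of [9]: the candidate models, their AIC values, and the per-model estimates are hypothetical, and a complete analysis would also need a standard error for the averaged estimate that reflects between-model variation, which this sketch does not compute.

```python
from math import exp

def akaike_weights(aic_values):
    """Convert AIC values into normalized model weights (smaller AIC means larger weight)."""
    best = min(aic_values)
    raw = [exp(-0.5 * (aic - best)) for aic in aic_values]   # relative likelihood of each model
    total = sum(raw)
    return [r / total for r in raw]

# Hypothetical candidates: model name -> (AIC, estimated difference between the two curves)
candidates = {
    "linear":    (212.4, 1.80),
    "quadratic": (210.1, 2.10),
    "emax":      (209.3, 2.25),
}

weights = akaike_weights([aic for aic, _ in candidates.values()])
averaged = sum(w * est for w, (_, est) in zip(weights, candidates.values()))

for (name, (aic, est)), w in zip(candidates.items(), weights):
    print(f"{name:10s} AIC = {aic:6.1f}  weight = {w:.3f}  estimate = {est:.2f}")
print(f"model-averaged estimate = {averaged:.3f}")
```

The averaged estimate, not the estimate from a single selected model, is then what gets compared against the equivalence margin.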

Essential Research Reagents and Tools

Table 3: Essential Research Reagents and Statistical Tools for Equivalence Testing

| Tool/Reagent | Function/Purpose | Application Context |
|---|---|---|
| Two One-Sided Tests (TOST) | Primary statistical method for equivalence testing | Testing mean equivalence between two methods |
| 90% Confidence Intervals | Visual and mathematical approach to assess equivalence | Complement or alternative to TOST |
| Equivalence Margin (Δ) | Pre-specified boundary of trivial differences | Defining the threshold for equivalence claims |
| Model Averaging Algorithms | Account for model uncertainty in regression equivalence | Dose-response and time-response studies |
| Sensitivity Analysis | Assess robustness of equivalence conclusions | Varying equivalence margins or statistical models |
| Statistical Software | Implement equivalence testing procedures | R, SAS, Python, or specialized equivalence packages |

Comparative Analysis and Data Presentation

Equivalence Testing Versus Traditional Difference Testing

The fundamental differences between equivalence testing and traditional difference testing extend beyond their opposing null hypotheses to their practical implications for research conclusions.

Table 4: Comparison of Equivalence Testing vs. Traditional Difference Testing

| Aspect | Equivalence Testing | Traditional Difference Testing |
|---|---|---|
| Null Hypothesis | Methods are not equivalent (δ ≤ -Δ or δ ≥ Δ) | Methods are not different (δ = 0) |
| Alternative Hypothesis | Methods are equivalent (-Δ < δ < Δ) | Methods are different (δ ≠ 0) |
| Burden of Proof | Data must demonstrate similarity | Data must demonstrate difference |
| Effect of Sample Size | Larger samples make it easier to demonstrate equivalence when the true difference lies within the margin | Larger samples make it easier to detect differences, including trivially small ones |
| Proper Conclusion when p > 0.05 | Cannot claim equivalence (inconclusive) | Cannot claim difference (inconclusive) |
| Appropriate Use Case | Demonstrating similarity or non-inferiority | Detecting statistically significant effects |

Applications Across Research Domains

Equivalence testing has been successfully applied across diverse scientific fields, each with domain-specific considerations for implementation.

Pharmaceutical Development: In bioequivalence studies, generic drugs must demonstrate equivalent pharmacokinetic profiles (AUC and Cmax) to branded counterparts, typically within 80-125% equivalence margins [6]. The TOST procedure is the standard statistical approach accepted by regulatory agencies worldwide.

Method Validation and Transfer: When modifying testing processes (e.g., new instrumentation, reagents, or locations), equivalence testing demonstrates that results remain comparable to the established method [7]. This application includes assessing means equivalence, slope equivalence, and range equivalence depending on the modification type.

Measurement Research: In exercise science and health research, equivalence testing validates new assessment tools (e.g., activity monitors, fitness tests) against criterion measures [4]. This approach is statistically more appropriate than correlation coefficients or difference tests for demonstrating measurement agreement.

Model Validation: Equivalence testing provides a formal framework for comparing model predictions to observed data, shifting the burden of proof to the model to demonstrate its predictive accuracy [5]. This approach is superior to traditional goodness-of-fit tests that become overpowered with large sample sizes.

Equivalence testing with its reversed null hypothesis provides a statistically rigorous framework for demonstrating similarity between methods, processes, or treatments. The core principles—defining a clinically meaningful equivalence margin, employing the TOST procedure or confidence interval approach, and accounting for model uncertainty—establish a foundation for appropriate equivalence assessments across research domains.

As methodological research advances, developments in model averaging, multiple quantile equivalence testing, and adaptive equivalence designs continue to enhance the applicability and robustness of these methods [9] [10]. For researchers seeking to demonstrate methodological equivalence rather than difference, these statistical approaches offer the proper tools to support scientifically valid conclusions of similarity.

In pharmaceutical development, demonstrating that a new method is equivalent to an established one is a common and critical challenge. Whether for bioanalytical methods, manufacturing processes, or clinical trial designs, proving equivalence ensures that new, potentially superior approaches can be reliably adopted without compromising data integrity or patient safety. This guide objectively compares the performance of traditional regression analysis against modern Model-Informed Drug Development (MIDD) approaches for evaluating method equivalence, providing the experimental protocols and data interpretation frameworks essential for researchers and scientists.

The Statistical Foundation of Equivalence

Core Principles and Regulatory Context

Equivalence testing is a statistical framework used to demonstrate that two methods, processes, or products do not differ in their outcomes by a clinically or scientifically meaningful amount. Unlike traditional significance testing, which seeks to prove a difference, equivalence testing aims to confirm the absence of a practical difference within a pre-specified margin known as the equivalence region (or equivalence margin) [11].

This region represents the largest difference that is considered scientifically or clinically unimportant. Properly defining this margin is the most critical step in designing a valid equivalence study, as it aligns statistical proof with practical relevance. Within drug development, the International Council for Harmonisation (ICH) has expanded its guidance to include MIDD approaches, promising improved consistency in applying these quantitative methods globally [12].

Traditional Regression Analysis vs. Modern MIDD Approaches

The evaluation of method equivalence has evolved from relying solely on traditional regression to incorporating more robust MIDD tools. The table below compares their core characteristics:

Table 1: Comparison of Equivalence Testing Methodologies

| Feature | Traditional Regression Analysis | Modern MIDD Approaches |
|---|---|---|
| Primary Focus | Establishing a functional relationship (e.g., y = mx + c) between two methods [11]. | A quantitative framework for prediction and data-driven insights across the entire drug development lifecycle [12]. |
| Key Question | "What is the mathematical relationship between Method A and Method B?" | "Are methods A and B equivalent for a specific Context of Use (COU), and what is the associated risk?" [12] |
| Equivalence Region | Often implied by the confidence intervals around the slope and intercept. | Explicitly defined as part of the "Fit-for-Purpose" strategy, closely aligned with the Question of Interest (QOI) and COU [12]. |
| Data Output | A regression line with confidence intervals and R² value [11]. | A model that provides quantitative prediction and assesses potential drug candidates more efficiently, reducing costly late-stage failures [12]. |
| Limitations | Correlation does not imply causation; sensitive to outliers and structured noise [11]. | Requires experienced teams with multidisciplinary expertise for proper implementation [12]. |

Experimental Protocols for Establishing Equivalence

Protocol 1: Standard Method Comparison Using Regression Analysis

This protocol outlines the steps for a traditional bioanalytical method comparison, suitable for demonstrating equivalence between a new method and a reference method.

1. Objective: To demonstrate that the new analytical method is equivalent to the validated reference method for quantifying Drug Substance X in human plasma.

2. Experimental Design:

- Sample Preparation: A set of calibration standards and quality control (QC) samples of Drug Substance X in human plasma are prepared across the validated concentration range (e.g., 1–100 ng/mL).

- Sample Analysis: All samples are analyzed in a single batch by both the New Method and the Reference Method. The analysis order should be randomized to avoid systematic bias.

- Replication: Each concentration level should be analyzed with a minimum of n=5 replicates to provide a robust estimate of variability.

3. Key Research Reagent Solutions:

Table 2: Essential Materials for Method Comparison

| Item | Function |
|---|---|
| Drug Substance X Reference Standard | Provides the known analyte for preparing calibration curves and QC samples, ensuring accuracy. |
| Stable Isotope-Labeled Internal Standard | Corrects for variability in sample preparation and ionization efficiency in mass spectrometry. |
| Blank Human Plasma | Serves as the biological matrix for preparing standards and QCs, matching the composition of study samples. |
| Protein Precipitation Solvent | Deproteinizes plasma samples to extract the analyte and reduce matrix effects. |

4. Data Analysis:

- Scatterplot and Regression: Plot the results from the New Method (y-axis) against the Reference Method (x-axis). Perform simple linear regression (y = mx + c) to obtain the slope (m), intercept (c), and coefficient of determination (R²) [11].

- Bland-Altman Analysis: Calculate the difference between paired measurements and plot these differences against their average. This visualizes bias across the concentration range.

- Equivalence Evaluation: The 95% confidence interval for the slope must lie entirely within the pre-defined equivalence region around 1.0, and the interval for the intercept must lie entirely within the pre-defined region around 0.0. Merely containing 1.0 or 0.0 is not sufficient, because a wide interval from a noisy study would contain them too. A 95% interval is a more conservative choice than the 90% interval that corresponds to TOST at α = 0.05.
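The Bland-Altman step can be sketched as follows. The paired concentrations are hypothetical, the function name is invented for this example, and the limits of agreement use the conventional bias ± 1.96 SD of the differences; plotting is left out so that the sketch needs only the standard library.

```python
from statistics import mean, stdev

def bland_altman(new, ref):
    """Return plot coordinates, mean bias, and 95% limits of agreement for paired data."""
    diffs = [n - r for n, r in zip(new, ref)]          # y-axis: new minus reference
    avgs = [(n + r) / 2.0 for n, r in zip(new, ref)]   # x-axis: average of the pair
    bias = mean(diffs)
    sd = stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    return avgs, diffs, bias, limits

# Hypothetical paired results in ng/mL across the 1-100 ng/mL range
ref_method = [1.0, 5.0, 10.0, 25.0, 50.0, 75.0, 100.0]
new_method = [1.1, 4.8, 10.3, 25.4, 49.5, 75.9, 100.6]

avgs, diffs, bias, limits = bland_altman(new_method, ref_method)
print(f"bias = {bias:.3f} ng/mL")
print(f"95% limits of agreement = ({limits[0]:.3f}, {limits[1]:.3f}) ng/mL")
```

Plotting `diffs` against `avgs` gives the Bland-Altman plot; a trend in the differences across the range would point to proportional bias that a single mean difference would hide.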

The workflow for this protocol is systematic and linear, as shown below:

Protocol 2: MIDD "Fit-for-Purpose" Equivalence for Clinical Endpoints

This protocol describes a model-based approach for demonstrating equivalence between a new clinical trial design and a standard one, a common scenario in submissions under the 505(b)(2) pathway [12].

1. Objective: To demonstrate, via a Model-Informed Drug Development (MIDD) approach, that a new optimized clinical trial design yields equivalent conclusions about drug efficacy compared to the standard design.

2. Experimental Design:

- Data Source: Use existing Phase III clinical trial data for Drug Y, which utilized the Standard Design.

- Virtual Population Simulation: Create a realistic virtual cohort that matches the demographic and baseline disease characteristics of the original trial population [12].

- Clinical Trial Simulation: Use the new, optimized trial design (e.g., with an adaptive element or different endpoint) to "re-run" the trial on the virtual population. This process is repeated thousands of times using techniques like Monte Carlo simulation to account for variability [13].

3. Data Analysis:

- Primary Comparison: The primary outcome (e.g., change from baseline in a disease score) from the simulated new trials is compared to the outcome from the original standard trial.

- Define Equivalence Region: The equivalence region for the trial outcome is defined a priori based on clinical input (e.g., a difference of less than 0.5 points on the disease scale is not clinically meaningful).

- Equivalence Evaluation: The difference in outcomes between the simulated and original trials is calculated. If the 90% confidence interval of this difference falls entirely within the pre-specified equivalence region, equivalence of the trial designs is concluded.
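The simulation logic of this protocol can be shown with a deliberately simplified toy model. Everything in it is an assumption made for illustration: patient outcomes are drawn from a normal distribution instead of a fitted disease-progression model, the original trial result (-4.0 points), the simulated true change, the standard deviation, and the trial size are invented, and the interval is a simulation-based 90% percentile interval.

```python
import random

def simulated_trial_mean(rng, n_patients, true_change, sd):
    """Mean change from baseline in one simulated trial (toy normal outcome model)."""
    return sum(rng.gauss(true_change, sd) for _ in range(n_patients)) / n_patients

rng = random.Random(2024)            # fixed seed so the sketch is reproducible
original_change = -4.0               # hypothetical result of the standard-design trial
margin = 0.5                         # equivalence region from the text: +/- 0.5 points
n_sims = 2000

# Difference between each simulated new-design trial and the original result
diffs = sorted(
    simulated_trial_mean(rng, n_patients=300, true_change=-4.05, sd=3.0) - original_change
    for _ in range(n_sims)
)
lower = diffs[n_sims * 5 // 100]         # approximately the 5th percentile
upper = diffs[n_sims * 95 // 100 - 1]    # approximately the 95th percentile
equivalent = (-margin < lower) and (upper < margin)

print(f"simulation-based 90% interval for the difference: ({lower:.3f}, {upper:.3f})")
print("designs equivalent" if equivalent else "equivalence not demonstrated")
```

In a real MIDD analysis the outcome generator would be replaced by the validated model and virtual population described above; only the final comparison against the pre-specified region carries over unchanged.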

The following diagram illustrates the iterative, simulation-heavy nature of this MIDD protocol:

Data Presentation and Performance Comparison

Quantitative Results from Simulated Case Study

A simulated case study was conducted to compare the performance of a new LC-MS/MS method (Method B) against a reference HPLC-UV method (Method A) for quantifying a small molecule drug. The pre-defined equivalence region for the slope was 0.95–1.05 and for the intercept was -5.0 to +5.0 ng/mL.

Table 3: Method Comparison Regression Results (n=40 paired samples)

| Parameter | Reference Method A | New Method B | Regression Outcome | Within Equivalence Region? |
|---|---|---|---|---|
| Slope (95% CI) | - | - | 1.02 (0.98, 1.06) | No (upper limit 1.06 exceeds 1.05) |
| Intercept (95% CI), ng/mL | - | - | -1.5 (-3.8, +0.8) | Yes |
| Mean Cmax (ng/mL) | 78.5 | 79.2 | - | - |
| Mean AUC0-t (ng*h/mL) | 645.1 | 652.8 | - | - |
| Key Conclusion | - | - | Intercept equivalent; slope equivalence not demonstrated | - |

The 95% confidence interval for the intercept falls entirely within the pre-specified equivalence region, but the interval for the slope (0.98, 1.06) extends slightly beyond the upper bound of 1.05. Under the confidence-interval inclusion rule, equivalence is therefore demonstrated for the intercept but not for the slope, even though the slope's point estimate (1.02) sits well inside the region. Before the new LC-MS/MS method can be declared equivalent to the reference method for use in pharmacokinetic studies, the slope would need to be estimated more precisely, for example with additional paired samples.

The choice between traditional regression and a modern MIDD approach hinges on the complexity of the question and the context of use. For straightforward analytical method comparisons, traditional regression, supplemented with Bland-Altman plots, provides a clear and defensible path to proving equivalence. However, for complex questions involving clinical trial simulations, dose optimization, or population pharmacokinetics, a "Fit-for-Purpose" MIDD approach is indispensable [12]. It forces an explicit, scientifically justified definition of the equivalence region upfront, directly linking statistical outcomes to the key questions of interest in drug development, thereby reducing costly late-stage failures and accelerating the delivery of new therapies to patients [12].

The Two-One-Sided Tests (TOST) Method and Confidence Interval Approach

In scientific and industrial research, particularly in fields such as pharmaceutical development and measurement validation, there is often a need to demonstrate that two methods, processes, or products are functionally equivalent rather than statistically different. Traditional difference testing, with its null hypothesis of no difference, is fundamentally unsuited for this purpose as failure to reject the null does not provide positive evidence of equivalence [4] [14]. Equivalence testing addresses this need by reversing the conventional hypothesis testing framework, placing the burden of proof on demonstrating that differences between compared items are small enough to be practically insignificant [14].

Two primary statistical methodologies have emerged for assessing equivalence: the Two-One-Sided Tests (TOST) method and the confidence interval (CI) approach. These methods are operationally linked and provide researchers with robust tools for demonstrating similarity within pre-specified tolerance limits [15] [8]. Within regression analysis research, these approaches extend beyond simple mean comparisons to evaluating the equivalence of slope coefficients, mean responses, and treatment-covariate interactions, enabling more nuanced methodological comparisons [16] [17].

Theoretical Foundations

The TOST Procedure

The Two-One-Sided Tests procedure, formally developed by Schuirmann in 1987, decomposes the equivalence testing problem into two separate one-sided hypotheses [18] [14]. For a comparison between two population means, μ₁ and μ₂, with a pre-specified equivalence margin Δ, the hypotheses are structured as:

- Null Hypothesis (H₀): Non-equivalence, where the true difference is outside the equivalence bounds (μ₁ - μ₂ ≤ -Δ OR μ₁ - μ₂ ≥ Δ)

- Alternative Hypothesis (H₁): Equivalence, where the true difference lies within the equivalence bounds (-Δ < μ₁ - μ₂ < Δ) [4] [18]

The TOST procedure tests two simultaneous one-sided hypotheses:

- H₀₁: μ₁ - μ₂ ≤ -Δ versus Hₐ₁: μ₁ - μ₂ > -Δ

- H₀₂: μ₁ - μ₂ ≥ Δ versus Hₐ₂: μ₁ - μ₂ < Δ

Equivalence is concluded at significance level α if both null hypotheses are rejected [15] [18]. This is equivalent to requiring that the p-values for both tests be less than α [19].

The Confidence Interval Approach

The confidence interval approach provides an intuitive visual and analytical method for assessing equivalence. Using this method, equivalence is established if the entire (1 - 2α) × 100% confidence interval for the difference in means lies completely within the equivalence interval (-Δ, Δ) [15] [8].

For example, when using a significance level of α = 0.05, researchers would calculate a 90% confidence interval for the difference between means. If this entire interval falls within the pre-specified equivalence bounds, equivalence can be concluded at the 5% significance level, that is, with the risk of falsely declaring equivalence held to at most 5% [8]. This approach is operationally equivalent to the TOST procedure, though conceptually simpler for many researchers to implement and interpret [15] [8].

Operational Equivalence Between Methods

The fundamental connection between TOST and confidence interval approaches lies in their operational equivalence. When using a significance level α, the TOST procedure produces the same conclusions as checking whether the 100(1 - 2α)% confidence interval falls entirely within the equivalence bounds [15] [8]. This relationship, however, has caused some confusion in practical applications, particularly regarding whether to use 1-α or 1-2α confidence levels when applying the CI approach [15].
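The operational equivalence described above can be seen numerically. The following is a minimal sketch, assuming two independent samples with equal variances (a pooled-variance t-test); the function name `tost_two_means` and the example data are hypothetical. It runs both one-sided tests and, separately, checks whether the 100(1 - 2α)% confidence interval lies inside (-Δ, Δ); the two decisions should always agree.

```python
import numpy as np
from scipy import stats


def tost_two_means(x, y, delta, alpha=0.05):
    """Pooled-variance TOST for H1: -delta < mean(x) - mean(y) < delta."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    n1, n2 = len(x), len(y)
    diff = x.mean() - y.mean()
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * x.var(ddof=1) + (n2 - 1) * y.var(ddof=1)) / df
    se = np.sqrt(pooled_var * (1 / n1 + 1 / n2))

    # One-sided p-values for H01: diff <= -delta and H02: diff >= +delta
    p_lower = stats.t.sf((diff + delta) / se, df)
    p_upper = stats.t.cdf((diff - delta) / se, df)

    # 100(1 - 2*alpha)% confidence interval, e.g. 90% for alpha = 0.05
    tcrit = stats.t.ppf(1 - alpha, df)
    ci = (diff - tcrit * se, diff + tcrit * se)

    return {
        "diff": float(diff),
        "p_lower": float(p_lower),
        "p_upper": float(p_upper),
        "ci": (float(ci[0]), float(ci[1])),
        "tost_equivalent": bool(max(p_lower, p_upper) < alpha),
        "ci_equivalent": bool(-delta < ci[0] and ci[1] < delta),
    }


# Hypothetical measurements from two methods
x = [10.1, 9.8, 10.0, 10.2, 9.9]
y = [10.0, 10.1, 9.9, 10.0, 10.2]
result = tost_two_means(x, y, delta=0.5)
print(result)
```

Using a 95% interval instead of the 90% interval would make the procedure more conservative than the nominal α = 0.05 TOST.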

Table 1: Comparison of TOST and Confidence Interval Approaches

| Feature | TOST Approach | Confidence Interval Approach |
|---|---|---|
| Hypothesis Structure | Two one-sided tests | Single interval evaluation |
| Decision Rule | Reject H₀ if both one-sided p-values < α | Conclude equivalence if 100(1-2α)% CI within (-Δ, Δ) |
| Visual Interpretation | Less immediate | Highly intuitive |
| Computational Complexity | Moderate | Simple |
| Implementation in Software | Requires specialized routines | Can use standard output with adjusted confidence levels |

Equivalence Testing in Regression Analysis

Testing Slope Coefficients

In regression analysis, equivalence testing extends to assessing whether slope coefficients are practically negligible or equivalent between models. For a simple linear regression model Y = β₀ + Xβ₁ + ε, the equivalence test for a slope coefficient evaluates:

H₀: β₁ ≤ Δₗ or β₁ ≥ Δᵤ versus H₁: Δₗ < β₁ < Δᵤ

where Δₗ and Δᵤ are pre-specified lower and upper equivalence bounds, often set as symmetric values around zero (Δₗ = -Δ, Δᵤ = Δ) for assessing negligible trend [17]. The TOST procedure for slope equivalence uses the test statistics:

Tₛₗ = (β̂₁ - Δₗ)/(σ̂²/SSX)¹ᐟ² and Tₛᵤ = (β̂₁ - Δᵤ)/(σ̂²/SSX)¹ᐟ²

where β̂₁ is the least squares estimator of β₁, σ̂² is the error variance estimator, and SSX is the sum of squares for the predictor variable. The null hypothesis is rejected if both Tₛₗ > t_(ν, α) and Tₛᵤ < -t_(ν, α), where t_(ν, α) is the upper 100α-th percentile of the t-distribution with ν = N - 2 degrees of freedom and N is the number of observations [17].
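The slope statistics above translate directly into a few lines of code. This is a minimal sketch for simple linear regression with ν = N - 2 degrees of freedom; the function name `slope_tost` and the deterministic example data are hypothetical, chosen only so the calculation can be followed.

```python
import numpy as np
from scipy import stats


def slope_tost(x, y, delta_l, delta_u, alpha=0.05):
    """TOST for H1: delta_l < beta1 < delta_u in simple linear regression."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    n = len(x)
    ssx = np.sum((x - x.mean()) ** 2)                 # SSX
    b1 = np.sum((x - x.mean()) * (y - y.mean())) / ssx
    b0 = y.mean() - b1 * x.mean()
    df = n - 2
    sigma2 = np.sum((y - (b0 + b1 * x)) ** 2) / df    # error variance estimate
    se = np.sqrt(sigma2 / ssx)

    t_sl = (b1 - delta_l) / se                        # test against lower bound
    t_su = (b1 - delta_u) / se                        # test against upper bound
    tcrit = stats.t.ppf(1 - alpha, df)
    return {
        "slope": float(b1),
        "t_sl": float(t_sl),
        "t_su": float(t_su),
        "equivalent": bool(t_sl > tcrit and t_su < -tcrit),
    }


# Hypothetical data: a shallow trend plus a small deterministic disturbance
x = np.arange(20.0)
y = 5.0 + 0.01 * x + 0.1 * np.sin(x)
res = slope_tost(x, y, delta_l=-0.1, delta_u=0.1)
print(res)
```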

Testing Treatment-Covariate Interactions

In models with multiple groups or treatment conditions, equivalence testing can assess whether treatment-covariate interactions are negligible, supporting the assumption of parallel regression slopes. The Welch-type TOST procedure has been adapted for testing slope equivalence under variance heterogeneity, which is particularly valuable when comparing regression lines across different populations or experimental conditions [16].

The test statistic for comparing two slope coefficients β₁₁ and β₁₂ takes the form:

Wₛ = (β̂₁₁ - β̂₁₂)/Ĥₛ¹ᐟ²

where β̂₁₁ and β̂₁₂ are the sample slope estimators, and Ĥₛ is the estimator of the variance of the slope difference [16]. This approach accommodates the distributional properties of normal covariates and provides a robust method for assessing interaction equivalence in practical applications.
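A rough sketch of a Welch-type comparison of two slopes is given below. The source does not spell out the estimator Ĥₛ, so the code makes two explicit assumptions: Ĥₛ is taken as the sum of the two squared slope standard errors, and the degrees of freedom follow a Satterthwaite approximation. The procedure in [16] additionally accounts for random normal covariates and may differ. The function name `welch_slope_tost` and the data are hypothetical.

```python
import numpy as np
from scipy import stats


def welch_slope_tost(x1, y1, x2, y2, delta, alpha=0.05):
    """Approximate TOST for H1: |beta11 - beta12| < delta with unequal error variances."""
    fit1, fit2 = stats.linregress(x1, y1), stats.linregress(x2, y2)
    diff = fit1.slope - fit2.slope
    v1, v2 = fit1.stderr ** 2, fit2.stderr ** 2

    # ASSUMPTION: variance of the slope difference = sum of the two slope variances
    h_s = v1 + v2
    # ASSUMPTION: Satterthwaite-type approximate degrees of freedom
    df = h_s ** 2 / (v1 ** 2 / (len(x1) - 2) + v2 ** 2 / (len(x2) - 2))

    tcrit = stats.t.ppf(1 - alpha, df)
    w_lower = (diff + delta) / np.sqrt(h_s)
    w_upper = (diff - delta) / np.sqrt(h_s)
    return float(diff), bool(w_lower > tcrit and w_upper < -tcrit)


# Hypothetical groups with similar slopes but different residual spread
x1 = np.linspace(0, 10, 25)
y1 = 2.0 + 1.00 * x1 + 0.2 * np.sin(3 * x1)
x2 = np.linspace(0, 10, 30)
y2 = 1.0 + 1.02 * x2 + 0.5 * np.cos(2 * x2)

slope_diff, slopes_equivalent = welch_slope_tost(x1, y1, x2, y2, delta=0.2)
print(slope_diff, slopes_equivalent)
```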

Testing Mean Responses at Specific Covariate Values

Equivalence testing can also evaluate mean responses at specific values of covariates, which is methodologically related to the Johnson-Neyman technique for identifying regions of significance [17]. For a mean response μ = β₀ + Xβ₁ at a selected value X = X_F, the hypotheses are:

H₀: μ ≤ Δₗ or μ ≥ Δᵤ versus H₁: Δₗ < μ < Δᵤ

The TOST procedure uses the test statistics:

T_ML = (μ̂ - Δₗ)/(σ̂² H_M)¹ᐟ² and T_MU = (μ̂ - Δᵤ)/(σ̂² H_M)¹ᐟ²

where μ̂ is the response estimator at X_F, and H_M = 1/N + (X_F - X̄)²/SSX [17]. This approach enables researchers to identify ranges of predictor values where mean responses between compared groups are practically equivalent.
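The mean-response test can be sketched in the same way as the slope test. The code below computes the fitted response at a chosen covariate value, the leverage-type term 1/N + (X_F - X̄)²/SSX, and the two one-sided statistics. The function name `mean_response_tost`, the bounds, and the data are hypothetical.

```python
import numpy as np
from scipy import stats


def mean_response_tost(x, y, x_f, delta_l, delta_u, alpha=0.05):
    """TOST for H1: delta_l < beta0 + beta1 * x_f < delta_u."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    n = len(x)
    b1, b0 = np.polyfit(x, y, 1)                      # slope first, then intercept
    ssx = np.sum((x - x.mean()) ** 2)
    sigma2 = np.sum((y - (b0 + b1 * x)) ** 2) / (n - 2)

    h_m = 1.0 / n + (x_f - x.mean()) ** 2 / ssx       # H_M in the text
    mu_hat = b0 + b1 * x_f
    se = np.sqrt(sigma2 * h_m)

    t_ml = (mu_hat - delta_l) / se
    t_mu = (mu_hat - delta_u) / se
    tcrit = stats.t.ppf(1 - alpha, n - 2)
    return {
        "mu_hat": float(mu_hat),
        "t_ml": float(t_ml),
        "t_mu": float(t_mu),
        "equivalent": bool(t_ml > tcrit and t_mu < -tcrit),
    }


# Hypothetical data; test whether the mean response at X = 10 lies in (4.5, 5.5)
x = np.arange(20.0)
y = 5.0 + 0.01 * x + 0.1 * np.sin(x)
resp = mean_response_tost(x, y, x_f=10.0, delta_l=4.5, delta_u=5.5)
print(resp)
```

Because H_M grows with the distance of X_F from X̄, the same bounds are harder to meet at the edges of the observed covariate range than near its centre.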

Figure 1: Logical workflow for implementing TOST and confidence interval approaches in regression equivalence testing.

Experimental Protocols and Applications

Pharmaceutical Bioequivalence Studies

Bioequivalence assessment represents the most established application of equivalence testing, required by regulatory agencies for approving generic drugs. These studies typically evaluate whether pharmacokinetic parameters (AUC, Cₘₐₓ) between generic and brand-name drugs fall within equivalence margins, often set at ±20% of the reference mean [20]. The multivariate extension of TOST allows simultaneous assessment of equivalence for multiple parameters, though this presents statistical challenges due to power loss with increasing outcomes [20].

Table 2: Example Bioequivalence Study Results for Ticlopidine Hydrochloride

| Pharmacokinetic Parameter | Mean Ratio (Test/Reference) | 90% Confidence Interval | Equivalence Conclusion |
|---|---|---|---|
| AUC | 0.98 | (0.92, 1.05) | Equivalent |
| Cₘₐₓ | 1.02 | (0.95, 1.09) | Equivalent |
| tₘₐₓ | 1.05 | (0.91, 1.19) | Equivalent |

Measurement Method Comparison

Equivalence testing is valuable for validating new measurement instruments against reference methods. In a physical activity monitor validation study [4], researchers assessed equivalence by determining if the mean difference between devices was within ±15% of the reference mean. The TOST procedure applied to the mean difference (0.18 METs) yielded a 90% confidence interval of [-0.15, 0.52], which fell entirely within the equivalence region of [-0.65, 0.65], supporting equivalence.

Regression-Based Equivalence Protocols

For regression applications, a comprehensive equivalence testing protocol includes:

1. Define Equivalence Bounds: Establish Δₗ and Δᵤ based on subject-matter knowledge, considering the practical significance of slope coefficients or mean differences in the specific research context [8] [17].

2. Sample Size Determination: Calculate required sample sizes using power functions that accommodate the random nature of predictor variables in regression settings [16] [17].

3. Model Estimation: Fit the regression model and obtain parameter estimates with their standard errors.

4. Equivalence Testing: Apply the TOST procedure to the relevant parameters (slopes, mean responses) or compute the corresponding confidence intervals.

5. Interpretation: Conclude equivalence if the testing criteria are met, providing both statistical and practical interpretations.

Practical Implementation Considerations

Determining Equivalence Margins

Defending appropriate equivalence bounds represents the most challenging aspect of implementation. These margins should be established based on clinical, practical, or scientific considerations rather than statistical criteria [4] [8]. In bioequivalence studies, regulatory guidelines often specify standard margins (e.g., ±20% for pharmacokinetic parameters), while in novel applications, researchers must justify their chosen bounds based on previous literature, expert opinion, or assessment of practical significance.

Power and Sample Size Calculations

Proper power analysis is essential for designing informative equivalence studies. Power functions for TOST procedures in regression contexts must account for the distributional properties of both response and predictor variables [16] [17]. Compared with traditional difference testing, equivalence studies often require larger sample sizes to demonstrate similarity with adequate power, particularly when the true difference lies near the equivalence boundaries.

For slope equivalence testing, the power function depends on the noncentrality parameter λ = β₁/(σ²/SSX)¹ᐟ² and accommodates the stochastic nature of predictor variables through their distributional properties [17]. Numerical methods are often required for power calculation in multivariate equivalence testing scenarios [20].
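When an analytic power function is not at hand, power for the slope TOST can be approximated by simulation. The sketch below is a Monte Carlo approximation, not the exact power function of [17]; it assumes a standard normal predictor and normal errors, and the function name `slope_tost_power` and all parameter values are hypothetical. Redrawing the predictor in every replicate is what reflects its random nature.

```python
import numpy as np
from scipy import stats


def slope_tost_power(n, beta1, sigma, delta, alpha=0.05, n_sim=2000, seed=0):
    """Monte Carlo estimate of P(conclude |beta1| < delta) for a random normal predictor."""
    rng = np.random.default_rng(seed)
    tcrit = stats.t.ppf(1 - alpha, n - 2)

    # Each row is one simulated study of size n
    x = rng.normal(size=(n_sim, n))
    y = beta1 * x + rng.normal(scale=sigma, size=(n_sim, n))

    xc = x - x.mean(axis=1, keepdims=True)
    yc = y - y.mean(axis=1, keepdims=True)
    ssx = np.sum(xc ** 2, axis=1)
    b1 = np.sum(xc * yc, axis=1) / ssx
    resid = yc - b1[:, None] * xc
    se = np.sqrt(np.sum(resid ** 2, axis=1) / (n - 2) / ssx)

    # Both one-sided tests must reject to conclude equivalence
    reject = ((b1 + delta) / se > tcrit) & ((b1 - delta) / se < -tcrit)
    return float(reject.mean())


power_centre = slope_tost_power(n=50, beta1=0.0, sigma=1.0, delta=0.5)
power_edge = slope_tost_power(n=50, beta1=0.5, sigma=1.0, delta=0.5)
print("power at beta1 = 0:        ", power_centre)
print("rejection rate at boundary:", power_edge)
```

With the true slope on the boundary of the equivalence region, the rejection rate should sit near or below α, which is a useful sanity check on any power routine.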

Multivariate Extensions

Many practical applications require assessing equivalence across multiple endpoints simultaneously. The conventional multivariate TOST procedure declares equivalence only if all univariate tests meet their equivalence criteria, but this approach becomes increasingly conservative as the number of outcomes grows [20]. Recent developments, such as the multivariate α*-TOST procedure, apply finite-sample adjustments that correct the significance level to account for dependence between outcomes, providing improved power while maintaining the prescribed Type I error rate [20].

Research Reagent Solutions

Table 3: Essential Statistical Tools for Equivalence Testing in Regression Analysis

| Tool/Resource | Function | Implementation Considerations |
|---|---|---|
| Welch-Type TOST Procedure | Tests slope equivalence under variance heterogeneity | Accommodates distributional properties of normal covariates [16] |
| Power Analysis Software | Calculates sample size requirements for equivalence studies | Must account for random nature of predictor variables in regression [17] |
| Multivariate α*-TOST Adjustment | Corrects significance level for multiple endpoints | Maintains test size while improving power in multivariate settings [20] |
| Confidence Interval Methods | Provides visual equivalence assessment | Requires 100(1-2α)% confidence intervals for equivalence testing [15] [8] |
| Noncentral t-Distribution | Models sampling distribution under alternatives | Essential for power calculations in TOST procedures [17] |

Figure 2: Methodological framework for implementing equivalence testing in regression analysis research.

The TOST method and confidence interval approach provide statistically sound and practically implementable frameworks for establishing equivalence in regression analysis and broader scientific applications. While operationally equivalent, these approaches offer complementary advantages: TOST provides formal hypothesis testing machinery, while the confidence interval method enables intuitive visual assessment of equivalence.

In regression contexts, equivalence testing extends beyond simple mean comparisons to evaluate slope coefficients, treatment-covariate interactions, and mean responses at specific covariate values. Recent methodological advances address complex application scenarios, including multivariate equivalence testing and power calculations accommodating random predictor distributions.

Successful implementation requires careful attention to key elements: scientifically justified equivalence margins, appropriate sample size planning, and proper interpretation of results within the research context. When properly applied, equivalence testing offers a powerful approach for demonstrating similarity and comparability across methodological, clinical, and industrial research domains.

Choosing Between Average and Whole-Curve Equivalence for Your Research Question

In scientific research, particularly in pharmaceutical development and analytical method comparison, establishing equivalence is fundamental for demonstrating that two products, processes, or methods are sufficiently similar in their effects or outputs. Two dominant statistical paradigms have emerged for this purpose: Average Equivalence and Whole-Curve Equivalence. Average Equivalence, a well-established approach, tests whether single summary metrics (e.g., means, AUC) between two groups or treatments differ by more than a pre-specified equivalence threshold [9] [21]. In contrast, Whole-Curve Equivalence represents a more modern, comprehensive framework that assesses whether entire functional relationships (e.g., regression curves describing dose-response or time-response profiles) are equivalent across their entire domain using a suitable distance measure [9]. The choice between these methodologies carries significant implications for study design, statistical power, and the robustness of conclusions, making it a critical consideration in research planning.

Theoretical Foundations and Key Concepts

Average Equivalence

Average Bioequivalence (ABE) is the standard regulatory requirement for approving generic drugs. It focuses on comparing population averages for key pharmacokinetic parameters [21] [22]. The core principle is that two formulations are considered bioequivalent if the difference in their average responses is sufficiently small. The standard statistical procedure for ABE is the Two One-Sided Tests (TOST) procedure, which establishes that the true difference between products lies entirely within a pre-defined equivalence range [23] [21]. This approach relies on calculating a 90% confidence interval for the ratio of the averages of the test and reference products. For pharmacokinetic parameters like AUC (area under the curve) and Cmax (peak concentration), the accepted bioequivalence limits are 80%-125% [23] [22]. This means the 90% confidence interval for the ratio of the geometric means must fall entirely within these limits to claim equivalence.

Whole-Curve Equivalence

Whole-Curve Equivalence moves beyond single summary measures to compare entire functional relationships. Instead of testing single quantities like the mean or AUC, this method assesses the equivalence of whole regression curves over an entire covariate range, such as a time window or dose range [9]. Tests are typically based on a suitable distance measure between two curves, with the maximum absolute distance between them being a common choice [9]. This approach is particularly valuable when differences depend on a particular covariate, where average-based methods may lack accuracy. A significant challenge in Whole-Curve Equivalence is model uncertainty—the fact that the true underlying regression model is rarely known in practice. Model misspecification can lead to inflated Type I errors (falsely claiming equivalence) or conservative test procedures (reduced power to detect true equivalence) [9].

Comparative Analysis: Average vs. Whole-Curve Equivalence

Table 1: Direct comparison of Average and Whole-Curve Equivalence methodologies

| Feature | Average Equivalence | Whole-Curve Equivalence |
|---|---|---|
| Comparison Focus | Single summary metrics (e.g., mean, AUC) [9] | Entire functional relationships/curves across their domain [9] |
| Typical Application | Bioequivalence for generic drugs [22]; comparing group means | Dose-response studies; time-response analysis; comparing curve shapes [9] |
| Data Requirements | Aggregate summary measures for each group | Raw data across the entire covariate range (e.g., all dose levels or time points) |
| Key Assumptions | Data normally distributed (often after log transformation) [22] | Correct regression model specification (mitigated by model averaging) [9] |
| Statistical Procedure | Two One-Sided Tests (TOST); 90% CI within 80-125% limits [23] [21] | Distance-based tests (e.g., maximum absolute distance) with confidence intervals [9] |
| Primary Advantage | Simplicity; well-established regulatory acceptance [22] | Comprehensive profile comparison; detects covariate-dependent differences [9] |
| Primary Limitation | May miss important profile differences if averages are similar [9] | Model uncertainty; more complex implementation and interpretation [9] |

Decision Framework for Method Selection

The choice between Average and Whole-Curve Equivalence depends on your research question, data structure, and regulatory context. The following diagram outlines a logical pathway for selecting the appropriate methodology.

Experimental Protocols and Implementation

Protocol for Average Equivalence Study

The following workflow details the standard experimental protocol for establishing Average Bioequivalence, the most common application of average equivalence testing.

Implementation Details:

- Study Design: A two-period, two-sequence, two-treatment, single-dose crossover design is most common, where subjects are randomly assigned to sequence groups (e.g., TR or RT, where T=Test, R=Reference) [22].

- Statistical Analysis: After log-transformation of pharmacokinetic parameters (AUC and Cmax) to achieve normal distribution, the TOST procedure is applied. The 90% confidence interval for the ratio of geometric means is calculated [22]. The formulations are considered bioequivalent if this confidence interval lies entirely within the acceptance range of 80% to 125% [23] [22].

- Sample Size: For highly variable drugs (%CV ≥ 30), sample size requirements increase dramatically. A two-way crossover study for a drug with 30% CV and a GMR of 1.05 may require 38 subjects to achieve 80% power, while a four-way replicate design could reduce this to 20 subjects [23].
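The statistical analysis step above can be illustrated with a deliberately simplified calculation. The sketch treats the data as paired within-subject observations on the log scale and ignores period and sequence effects, which a full crossover analysis (typically a mixed model or ANOVA) would include; that simplification is an assumption of this example. The function name `abe_paired` and the AUC values are hypothetical.

```python
import numpy as np
from scipy import stats


def abe_paired(test, ref, alpha=0.05, limits=(0.80, 1.25)):
    """90% CI (for alpha = 0.05) of the geometric mean ratio from paired log differences."""
    d = np.log(np.asarray(test, float)) - np.log(np.asarray(ref, float))
    n = len(d)
    se = d.std(ddof=1) / np.sqrt(n)
    tcrit = stats.t.ppf(1 - alpha, n - 1)

    gmr = float(np.exp(d.mean()))                     # geometric mean ratio T/R
    lo = float(np.exp(d.mean() - tcrit * se))
    hi = float(np.exp(d.mean() + tcrit * se))
    return gmr, (lo, hi), bool(limits[0] < lo and hi < limits[1])


# Hypothetical AUC values (same subjects under Reference and Test)
ref_auc = np.array([100.0, 120.0, 90.0, 110.0, 105.0, 95.0, 130.0, 115.0])
test_auc = ref_auc * np.array([1.02, 0.97, 1.05, 0.99, 1.01, 0.96, 1.03, 1.00])

gmr, gmr_ci, bioequivalent = abe_paired(test_auc, ref_auc)
print(gmr, gmr_ci, bioequivalent)
```

Working on the log scale and back-transforming is what turns a symmetric interval for the mean difference into the asymmetric 80%-125% limits on the ratio.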

Protocol for Whole-Curve Equivalence Study

Implementation Details:

- Model Specification: Define a set of candidate models (e.g., linear, quadratic, Emax, exponential, sigmoid Emax) that could potentially describe the relationship between the covariate (dose, time) and response [9].

- Model Averaging: To address model uncertainty, implement a model averaging approach using smooth Bayesian Information Criterion (BIC) weights rather than relying on a single selected model [9]. This creates a weighted composite model that incorporates uncertainty from all candidate models.

- Distance Calculation: Compute a suitable distance measure (e.g., maximum absolute distance, integrated squared distance) between the averaged curves for the two groups being compared [9].

- Inference: Derive a confidence interval for the distance measure using bootstrap methods. Compare this interval to the pre-specified equivalence threshold Δ. If the entire confidence interval lies below Δ, whole-curve equivalence can be concluded [9].
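The model-averaging and distance steps above can be sketched as follows. For brevity the candidate set is restricted to linear and quadratic polynomials rather than the Emax-type models listed in the protocol, BIC is computed under a Gaussian error assumption, and the bootstrap inference step is omitted, so the code returns only a point estimate of the maximum absolute distance. The function names `bic_weighted_curve` and `max_abs_distance` and the data are hypothetical.

```python
import numpy as np


def bic_weighted_curve(x, y, degrees=(1, 2)):
    """Fit polynomial candidates and return their smooth-BIC-weighted average curve."""
    x, y = np.asarray(x, float), np.asarray(y, float)
    n = len(x)
    fits, bics = [], []
    for deg in degrees:
        coef = np.polyfit(x, y, deg)
        rss = np.sum((y - np.polyval(coef, x)) ** 2)
        k = deg + 2                                   # coefficients plus error variance
        bics.append(n * np.log(rss / n) + k * np.log(n))
        fits.append(coef)

    bics = np.array(bics)
    weights = np.exp(-0.5 * (bics - bics.min()))      # smooth BIC weights
    weights /= weights.sum()

    def curve(grid):
        return sum(w * np.polyval(c, grid) for w, c in zip(weights, fits))

    return curve, weights


def max_abs_distance(curve_a, curve_b, lo, hi, n_grid=201):
    """Maximum absolute distance between two curves on a grid over [lo, hi]."""
    grid = np.linspace(lo, hi, n_grid)
    return float(np.max(np.abs(curve_a(grid) - curve_b(grid))))


# Hypothetical dose-response data; group B is group A shifted upward by 0.2
dose = np.linspace(0, 10, 30)
resp_a = 1.0 + 0.5 * dose + 0.05 * np.sin(5 * dose)
resp_b = resp_a + 0.2

curve_a, w_a = bic_weighted_curve(dose, resp_a)
curve_b, w_b = bic_weighted_curve(dose, resp_b)
distance = max_abs_distance(curve_a, curve_b, 0.0, 10.0)
print(w_a, distance)
```

In a full analysis this distance would be compared with Δ through a bootstrap confidence bound, as described in the inference step.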

Table 2: Common regression models for dose-response and time-response curves in whole-curve equivalence testing [9]

| Model Name | Equation | Key Characteristics |
|---|---|---|
| Linear | \( m(x, \theta) = \beta_0 + \beta_1 x \) | Constant rate of change; simplest form |
| Quadratic | \( m(x, \theta) = \beta_0 + \beta_1 x + \beta_2 x^2 \) | Parabolic relationship; can capture turning points |
| Emax | \( m(x, \theta) = \beta_0 + \frac{\beta_1 x}{\beta_2 + x} \) | Saturating relationship; common in pharmacology |
| Exponential | \( m(x, \theta) = \beta_0 + \beta_1 \left( \exp\left(\frac{x}{\beta_2}\right) - 1 \right) \) | Monotonic increasing or decreasing |
| Sigmoid Emax | \( m(x, \theta) = \beta_0 + \frac{\beta_1 x^{\beta_3}}{\beta_2^{\beta_3} + x^{\beta_3}} \) | S-shaped curve; flexible for dose-response |

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key research reagents and computational tools for equivalence studies

| Tool/Reagent | Function/Role | Application Context |
|---|---|---|
| LC-MS/MS Systems | Highly sensitive bioanalytical instrumentation for quantifying drug concentrations in biological matrices | Essential for measuring PK parameters (AUC, Cmax) in average equivalence studies [22] |
| Validated Bioanalytical Methods | FDA/EMA-compliant protocols for sample preparation, extraction, and analysis | Required for generating reliable concentration data in bioequivalence studies [22] |
| Statistical Software (R, Phoenix, SAS) | Implementation of TOST, bootstrap procedures, and model averaging algorithms | Critical for both average and whole-curve equivalence statistical analysis [9] [21] |
| Model Averaging Algorithms | Computational methods (e.g., smooth BIC weights) to combine multiple candidate models | Reduces model uncertainty in whole-curve equivalence testing [9] |
| Bootstrap Resampling Code | Computer-intensive method for deriving confidence intervals without distributional assumptions | Used in both average and whole-curve equivalence for interval estimation [9] |

The choice between Average and Whole-Curve Equivalence is fundamentally determined by the research question and the nature of the data. Average Equivalence remains the gold standard for regulatory bioequivalence assessment of generic drugs, offering a straightforward, widely accepted framework for comparing summary metrics. In contrast, Whole-Curve Equivalence provides a more comprehensive approach for situations where the entire functional relationship between a covariate and response needs comparison, especially when differences may be localized to specific covariate ranges. The incorporation of model averaging techniques significantly strengthens Whole-Curve Equivalence by addressing the critical issue of model uncertainty. Researchers should carefully consider their specific objectives, regulatory requirements, and the depth of comparison needed when selecting between these powerful methodological frameworks.

Implementing Equivalence Tests in Regression: From Simple Linear to Complex Models

Equivalence Testing for Slope Coefficients in Simple Linear Regression

In traditional regression analysis, statistical tests are designed to detect significant relationships between variables, typically employing null hypotheses that assert no effect (e.g., a slope coefficient of zero). However, a growing awareness of methodological limitations has highlighted that failing to reject a null hypothesis does not constitute evidence for the null. This fundamental statistical principle creates a substantial challenge for researchers aiming to demonstrate the absence of meaningful effects, particularly in method comparison, assay validation, and process change evaluation in pharmaceutical development. Equivalence testing emerges as a statistically sound solution to this problem by essentially reversing the conventional hypothesis testing framework.

The conceptual foundation of equivalence testing lies in specifying an equivalence margin (Δ) – a region around zero within which differences are considered practically insignificant. Rather than testing for difference, equivalence tests evaluate whether an estimated effect size (such as a regression slope) falls within these pre-specified boundaries of practical equivalence. This approach aligns perfectly with regulatory requirements in drug development, where demonstrating comparability after process changes often holds greater importance than detecting differences. As highlighted in pharmacological research, equivalence testing was developed to address precisely these needs, with the two-one-sided tests (TOST) procedure now recognized as a standard methodology for bioequivalence assessment [24] [25].

When applied to linear regression, equivalence testing for slope coefficients provides researchers with a rigorous statistical framework for confirming the lack of a meaningful association between continuous variables. This application is particularly valuable for validating that a predictor variable has a negligible practical impact on a response variable, supporting claims of practical non-association rather than merely statistical non-significance [17] [26].

Theoretical Framework and Statistical Foundations

The Limitations of Traditional Hypothesis Tests for Equivalence

Traditional hypothesis tests in linear regression evaluate whether slope coefficients significantly differ from zero or another specified value. The standard approach formulates a null hypothesis (H₀: β₁ = 0) against an alternative hypothesis (H₁: β₁ ≠ 0). When statistical tests fail to reject the null hypothesis, researchers often mistakenly interpret this result as evidence of no meaningful relationship. However, this interpretation is methodologically flawed because failure to reject could simply result from insufficient statistical power, small sample sizes, or large measurement variability [4] [27].

This limitation becomes particularly problematic in pharmaceutical applications where demonstrating similarity is paramount. As noted in biopharmaceutical process development, "the null hypothesis of the TOST states that the two means are not equivalent. The impact of the null hypothesis is that in case of small sample sizes and/or poor precision (large variance) in one or both groups, equivalence is rather rejected resulting in low numbers of false positive test results" [24]. This property makes equivalence testing particularly suitable for quality control and method validation, where incorrectly claiming similarity could have serious consequences.

Formulating Equivalence Hypotheses for Slope Coefficients

Equivalence testing reverses the conventional hypothesis structure. For a slope coefficient in simple linear regression, the equivalence test can be formulated as:

- Null Hypothesis (H₀): |β₁| ≥ Δ (the slope is outside the equivalence bounds)

- Alternative Hypothesis (H₁): |β₁| < Δ (the slope is within the equivalence bounds)

Here, Δ represents the equivalence margin, which defines the minimum practically significant slope value. This margin must be defined a priori based on subject-matter expertise, regulatory requirements, or clinical relevance [17] [4]. The equivalence margin can be symmetric (e.g., -Δ to Δ) or asymmetric around zero, depending on the research context.

In practice, the hypothesis test is often structured as two one-sided tests:

- H₀₁: β₁ ≤ -Δ versus Hₐ₁: β₁ > -Δ

- H₀₂: β₁ ≥ Δ versus Hₐ₂: β₁ < Δ

Both null hypotheses must be rejected at the chosen significance level (typically α = 0.05) to conclude equivalence [17] [25].

The Two-One-Sided Tests (TOST) Procedure

The TOST procedure provides a straightforward method for implementing equivalence testing for regression parameters. For a slope coefficient β₁ in simple linear regression, the test statistics are calculated as:

- T_L = (β̂₁ - (-Δ)) / SE(β̂₁) = (β̂₁ + Δ) / SE(β̂₁)

- T_U = (β̂₁ - Δ) / SE(β̂₁)

where β̂₁ is the estimated slope coefficient from the regression model and SE(β̂₁) is its standard error [17].

The null hypothesis of non-equivalence is rejected if both T_L > t_(ν, α) and T_U < -t_(ν, α), where t_(ν, α) is the critical value from the t-distribution with ν degrees of freedom (typically n-2 for simple linear regression) at significance level α. This procedure is operationally equivalent to examining whether the 100(1-2α)% confidence interval for β₁ falls completely within the equivalence bounds (-Δ, Δ) [17] [4].

Table 1: Comparison of Traditional and Equivalence Testing Approaches for Regression Slopes

| Aspect | Traditional Significance Test | Equivalence Test |
|---|---|---|
| Null Hypothesis | Slope equals zero (H₀: β₁ = 0) | Slope exceeds equivalence margin (H₀: \|β₁\| ≥ Δ) |
| Alternative Hypothesis | Slope differs from zero (H₁: β₁ ≠ 0) | Slope within equivalence margin (H₁: \|β₁\| < Δ) |
| Interpretation when Rejecting H₀ | Statistically significant relationship | Practically insignificant relationship |
| Interpretation when Failing to Reject H₀ | Inconclusive (cannot claim no relationship) | Inconclusive (cannot claim equivalence) |
| Primary Concern | Type I error (falsely claiming an effect) | Type I error (falsely claiming equivalence) |

Implementation Protocols for Regression Slope Equivalence

Determining the Equivalence Margin

The most critical step in implementing equivalence testing is establishing a justified equivalence margin (Δ). This margin represents the largest absolute slope value that would be considered practically insignificant in the specific research context. The equivalence margin should be determined based on:

- Clinical or practical significance: What magnitude of change in the response variable per unit change in the predictor would be considered meaningful?

- Regulatory guidelines: Established standards for specific applications (e.g., bioequivalence testing often uses 20% boundaries).

- Historical data: Variability and effect sizes observed in previous similar studies.

- Expert consensus: Input from domain specialists regarding meaningful differences.

As emphasized in laboratory medicine research, "the equivalence region may be specified in absolute terms, e.g., two methods are equivalent when the mean for a test method is within 5 units of the mean for a reference method, or in relative terms, e.g., two methods are equivalent when the mean for a test method is within 10% of the reference mean" [4]. For regression slopes, these margins can be defined in terms of the expected change in the outcome variable or using standardized effect sizes.

Experimental Design Considerations

Proper experimental design is essential for informative equivalence testing. Key considerations include:

- Sample size planning: Equivalence tests typically require larger sample sizes than traditional tests to achieve adequate power for detecting equivalence.

- Power analysis: Statistical power should be sufficient (typically 80% or higher) to correctly conclude equivalence when the true slope is indeed within the equivalence bounds.

- Measurement precision: Reducing measurement error increases the precision of slope estimates and enhances the ability to detect equivalence.

- Predictor range: Ensuring sufficient variability in the predictor variable improves the precision of slope estimation.

As the passage from [24] quoted earlier makes clear, small sample sizes or poor precision lead the TOST to withhold a conclusion of equivalence rather than produce a false positive. This conservative property makes adequate sample size planning particularly important for equivalence studies: an underpowered study is likely to end inconclusively even when the true slope is negligible.

Analytical Workflow

The analytical procedure for conducting equivalence testing on a regression slope follows the systematic workflow summarized in Figure 1.

Figure 1: Analytical workflow for equivalence testing of regression slope coefficients

Applications in Pharmaceutical Research and Development

Method Comparison Studies

Equivalence testing for regression slopes finds valuable application in method comparison studies, which are frequently conducted during analytical method validation in pharmaceutical development. When comparing two measurement methods, researchers often collect paired measurements across a range of concentrations and fit a linear regression model. The slope coefficient provides information about proportional differences between methods, and equivalence testing can formally demonstrate that this slope is sufficiently close to 1 (often by testing whether β₁ - 1 falls within pre-specified equivalence bounds) [4] [24].
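A minimal sketch of such a test is shown below. It applies the TOST procedure to an ordinary least squares slope, with a target of 1 and a symmetric margin. The function name `tost_slope`, the margin of 0.1, and the simulated paired measurements are assumptions made for illustration. Ordinary least squares also treats the reference method as error-free, which may not hold in practice.

```python
import numpy as np
from scipy import stats


def tost_slope(x, y, target=1.0, margin=0.1, alpha=0.05):
    """TOST for H1: target - margin < slope < target + margin."""
    fit = stats.linregress(x, y)
    df = len(x) - 2
    # Test 1: H0 slope <= target - margin, rejected for large positive t
    t_lower = (fit.slope - (target - margin)) / fit.stderr
    p_lower = stats.t.sf(t_lower, df)
    # Test 2: H0 slope >= target + margin, rejected for large negative t
    t_upper = (fit.slope - (target + margin)) / fit.stderr
    p_upper = stats.t.cdf(t_upper, df)
    # Equivalence is concluded only if both one-sided tests reject
    p_tost = max(p_lower, p_upper)
    return {
        "slope": fit.slope,
        "stderr": fit.stderr,
        "p_lower": p_lower,
        "p_upper": p_upper,
        "p_tost": p_tost,
        "equivalent": bool(p_tost < alpha),
    }


# Simulated paired measurements (hypothetical data, not from a real study)
rng = np.random.default_rng(42)
reference = np.linspace(5, 100, 30)
test_method = reference + rng.normal(0.0, 2.0, size=reference.size)

result = tost_slope(reference, test_method, target=1.0, margin=0.1)
print(result)
```

Reporting the larger of the two one-sided p-values keeps the decision rule explicit: both null hypotheses must be rejected before equivalence is claimed.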

For example, in physical activity measurement research, "equivalence testing is more appropriate than conventional tests of difference to assess the validity of physical activity measures" [4]. This principle extends directly to pharmaceutical analytical methods, where demonstrating equivalence between a new method and a reference method is often required for method validation.

Process Change Evaluation

The biopharmaceutical industry frequently employs equivalence testing to demonstrate comparability following manufacturing process changes. As noted in downstream process development, "for post-approval variations which may have an impact on quality, safety or efficacy of a biopharmaceutical such as changes in e.g., the manufacturing process, the analytical methods, the manufacturing equipment, the manufacturing location or the facility, comparability of the pre-change product to the post-change product has to be confirmed" [24].

In this context, researchers might model critical quality attributes as a function of process parameters and test whether slope coefficients have remained equivalent before and after process modifications. This application ensures that process changes do not alter fundamental relationships between process parameters and product quality.
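One way to sketch this comparison is to fit separate regressions to the pre-change and post-change data and apply TOST to the difference in slopes. The example below is a simplified illustration under stated assumptions: the two data sets are independent, the standard error of the difference is taken as the root sum of squares of the two slope standard errors, and the degrees of freedom are approximated as n_pre + n_post − 4. The function name `tost_slope_difference`, the margin, and the simulated data are hypothetical.

```python
import numpy as np
from scipy import stats


def tost_slope_difference(x_pre, y_pre, x_post, y_post, margin, alpha=0.05):
    """TOST for H1: -margin < (slope_post - slope_pre) < margin."""
    fit_pre = stats.linregress(x_pre, y_pre)
    fit_post = stats.linregress(x_post, y_post)
    diff = fit_post.slope - fit_pre.slope
    # Assumes independent groups; df is a simple approximation
    se_diff = np.sqrt(fit_pre.stderr ** 2 + fit_post.stderr ** 2)
    df = len(x_pre) + len(x_post) - 4
    p_lower = stats.t.sf((diff + margin) / se_diff, df)   # H0: diff <= -margin
    p_upper = stats.t.cdf((diff - margin) / se_diff, df)  # H0: diff >= +margin
    p_tost = max(p_lower, p_upper)
    return {"diff": diff, "se_diff": se_diff, "p_tost": p_tost,
            "equivalent": bool(p_tost < alpha)}


# Simulated quality attribute versus a process parameter (hypothetical data)
rng = np.random.default_rng(123)
param = np.linspace(1, 10, 25)
attr_pre = 2.0 + 0.5 * param + rng.normal(0.0, 0.2, size=param.size)
attr_post = 2.1 + 0.5 * param + rng.normal(0.0, 0.2, size=param.size)

comparison = tost_slope_difference(param, attr_pre, param, attr_post, margin=0.1)
print(comparison)
```

A single model with an interaction term and a pooled error variance would be a common alternative; the separate-fit version is used here only because it keeps the calculation easy to follow.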

Dose-Response Relationship Assessment

Equivalence testing can be applied to evaluate the similarity of dose-response relationships between different drug formulations or manufacturing batches. By testing the equivalence of slope coefficients in linear regression models relating drug concentration to pharmacological effect, researchers can demonstrate that different formulations exhibit sufficiently similar potency profiles.

Comparison of Statistical Software Implementation

Various statistical software packages offer capabilities for conducting equivalence tests on regression parameters, though implementation approaches may differ:

Table 2: Software Implementation of Equivalence Testing for Regression

| Software/Package | Implementation Approach | Key Functions/Features |
|---|---|---|
| R | Manual calculation using model summary output | lm(), emmeans, car, multcomp, lavaan |
| SAS | PROC REG with additional calculations | Parameter estimates with DATA step processing |
| SPSS | Custom syntax or MANOVA procedures | Regression command with additional syntax |
| Minitab | Specialized equivalence testing procedures | Built-in equivalence test options |
| JMP | Custom calculator from fit model platform | Parameter estimates with calculator functions |

As demonstrated in statistical programming resources, "these 6 simple methods have wide applications to GL(M)M's, SEM, and more" [28]. The R statistical programming environment, in particular, offers multiple approaches through various packages, including the emmeans package for post-hoc comparisons, the car package for linear hypothesis testing, and the multcomp package for general linear hypotheses.

Research Reagent Solutions for Robust Equivalence Testing

Table 3: Essential Methodological Components for Reliable Equivalence Testing

| Component | Function | Implementation Considerations |
|---|---|---|
| Sample Size Planning Tools | Determine required sample size to achieve target power | Power analysis based on expected effect size, variability, and equivalence margin |
| Equivalence Margin Justification | Define practically insignificant effect size | Based on regulatory guidance, historical data, or clinical expertise |
| Statistical Software | Implement TOST procedure and visualization | R, SAS, Python, or specialized commercial software |
| Sensitivity Analysis Framework | Assess robustness of equivalence conclusion | Vary equivalence margins or analyze subgroups |
| Data Quality Assessment Tools | Evaluate regression assumptions | Residual analysis, influence diagnostics, normality tests |
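The sensitivity analysis component can be sketched as a re-evaluation of the TOST conclusion over a range of candidate margins. The example below starts from a slope estimate, its standard error, and the residual degrees of freedom; all three values, the list of margins, and the function names `tost_p_value` and `margin_sensitivity` are hypothetical. It is meant to show how robust a conclusion is, not to replace a pre-specified margin.

```python
from scipy import stats


def tost_p_value(estimate, se, df, target, margin):
    """Overall TOST p-value for target - margin < parameter < target + margin."""
    p_lower = stats.t.sf((estimate - (target - margin)) / se, df)
    p_upper = stats.t.cdf((estimate - (target + margin)) / se, df)
    return max(p_lower, p_upper)


def margin_sensitivity(estimate, se, df, target, margins, alpha=0.05):
    """Return (margin, p_value, equivalent) for each candidate margin."""
    rows = []
    for margin in margins:
        p = tost_p_value(estimate, se, df, target, margin)
        rows.append((margin, p, bool(p < alpha)))
    return rows


# Hypothetical slope estimate of 1.02 with standard error 0.03 and 28 df
table = margin_sensitivity(estimate=1.02, se=0.03, df=28, target=1.0,
                           margins=[0.05, 0.10, 0.15, 0.20])
for margin, p, equivalent in table:
    print(f"margin = {margin:.2f}  p = {p:.4f}  equivalent = {equivalent}")
```

If the conclusion changes only at margins far narrower than the pre-specified one, the equivalence claim can be regarded as robust to the exact choice of margin.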

Equivalence testing for slope coefficients in simple linear regression gives pharmaceutical researchers and drug development professionals a methodologically sound framework for demonstrating that a slope lies within a pre-specified margin of a target value: 0 when the goal is to show the absence of a meaningful relationship between variables, or 1 when the goal is to show agreement between two methods. By reversing the traditional hypothesis testing paradigm and incorporating pre-specified equivalence margins based on practical significance, this approach addresses a critical limitation of conventional statistical methods.

The TOST procedure offers a straightforward implementation method that aligns well with regulatory requirements for demonstrating comparability in pharmaceutical applications. As the field continues to emphasize method robustness and product quality, equivalence testing represents an essential tool in the statistical toolkit for method validation, process change evaluation, and analytical procedure comparison.

When properly implemented with appropriate equivalence margins, adequate sample sizes, and rigorous analytical protocols, equivalence testing for regression slopes strengthens scientific conclusions regarding method equivalence and process comparability in drug development.

Assessing Equivalence of Mean Responses at Critical Decision Points

In the field of medical device development, demonstrating the equivalence of a new product to a legally marketed predicate device is a fundamental regulatory requirement. The premarket notification process under section 510(k) of the Federal Food, Drug, and Cosmetic Act requires manufacturers to submit evidence demonstrating that their new device is "substantially equivalent" to a predicate device already on the market [29]. Establishing equivalence is not unique to medical devices; it represents a broader statistical challenge in method comparison studies across scientific disciplines.

Traditional statistical tests of difference, such as t-tests and ANOVA, are fundamentally flawed for validation studies because failure to reject the null hypothesis of "no difference" does not provide positive evidence of equivalence [4]. Equivalence testing reverses the conventional statistical hypothesis framework, making the null hypothesis that two methods are not equivalent, while the alternative hypothesis is that they are equivalent within a predefined margin [4]. This approach provides a more appropriate statistical framework for demonstrating that a new measurement method, diagnostic tool, or therapeutic product performs comparably to an established reference.

The FDA's Five Critical Decision Points for Substantial Equivalence

The U.S. Food and Drug Administration (FDA) has established a structured framework for evaluating substantial equivalence in 510(k) submissions. This framework revolves around five critical decision points that determine whether a new device will be cleared for market [29] [30]. Understanding and successfully addressing each of these decision points is essential for navigating the regulatory pathway efficiently.

Decision Point 1: Legally Marketed Predicate Device

The first critical decision point involves establishing that the predicate device selected for comparison is legally marketed in the United States [29]. A legally marketed predicate is a device that has previously been cleared by FDA through the 510(k) process or that was on the market before the Medical Device Amendments of 1976. Selecting a predicate that is not legally marketed has severe consequences: it will result in a "Not Substantially Equivalent" (NSE) determination, potentially requiring the manufacturer to pursue a lengthier and more costly Premarket Approval (PMA) pathway [29]. Manufacturers must thoroughly review the predicate device's regulatory history and ensure its marketing status is current and valid.

Decision Point 2: Identical Intended Use

The second decision point requires demonstrating that the new device has the same intended use as the predicate device [29]. Intended use refers to the general purpose or function of the device as described in its Indications for Use (IFU) statement. If the new device's intended use differs from the predicate—even if the technological characteristics are similar—the device cannot be considered substantially equivalent and will receive an NSE determination [29]. Manufacturers should carefully compare the wording in their IFU statements with those of the predicate device and review fundamental design characteristics, materials, and energy sources to ensure alignment in intended use.

Decision Point 3: Technological Characteristics

The third decision point evaluates whether the devices have the same technological characteristics [29]. Technological characteristics encompass the key components, materials, design principles, and energy sources that enable the device to achieve its intended use. When the new device and predicate share identical technological characteristics, this alone may be sufficient to demonstrate substantial equivalence. However, when differences exist in technological characteristics, manufacturers must thoroughly identify these differences and assess their potential impact on device safety and effectiveness [29]. Even seemingly minor changes in materials or design can significantly alter performance and risk profiles.

Decision Point 4: Safety and Effectiveness Questions

The fourth decision point addresses whether any differences in technological characteristics raise new questions regarding safety and effectiveness [29]. If the technological differences introduce new safety concerns or effectiveness considerations not applicable to the predicate device, an NSE determination may result. To avoid this outcome, manufacturers must propose appropriate scientific methods—such as bench testing, laboratory studies, animal models, or simulated use testing—to thoroughly evaluate the impact of these differences [29]. The acceptability of these proposed methods to FDA reviewers is crucial for successful navigation of this decision point.

Decision Point 5: Performance Data Evaluation

The fifth and final decision point involves a comprehensive assessment of performance data to demonstrate substantial equivalence [29]. This evaluation has two components: first, determining whether the methods used to generate performance data are scientifically sound and appropriate for evaluating the safety and effectiveness questions raised by any technological differences; and second, whether the data themselves demonstrate that the new device is as safe and effective as the predicate [29]. Performance testing should show comparable outcomes to the predicate across specifications, mechanical testing, simulated use, and other relevant metrics. If performance data reveal significant safety, efficacy, or performance differences from the predicate, an NSE determination will result [29].

Table 1: FDA's Five Critical Decision Points for Substantial Equivalence

| Decision Point | Key Question | Consequence of Unfavorable Finding |
|---|---|---|
| 1. Predicate Device | Is the predicate device legally marketed? | Not Substantially Equivalent (NSE) determination |
| 2. Intended Use | Do the devices have the same intended use? | NSE determination |
| 3. Technological Characteristics | Do the devices have the same technological characteristics? | Proceed to Decision Point 4 |
| 4. Safety & Effectiveness | Do different technological characteristics raise different questions of safety and effectiveness? | NSE determination |
| 5. Performance Data | Does the performance data demonstrate substantial equivalence? | NSE determination |

Statistical Frameworks for Assessing Equivalence

Principles of Equivalence Testing