Beyond Trial and Error: A Systematic Approach to Optimization with Simplex and DoE vs. OFAT in Pharmaceutical Development

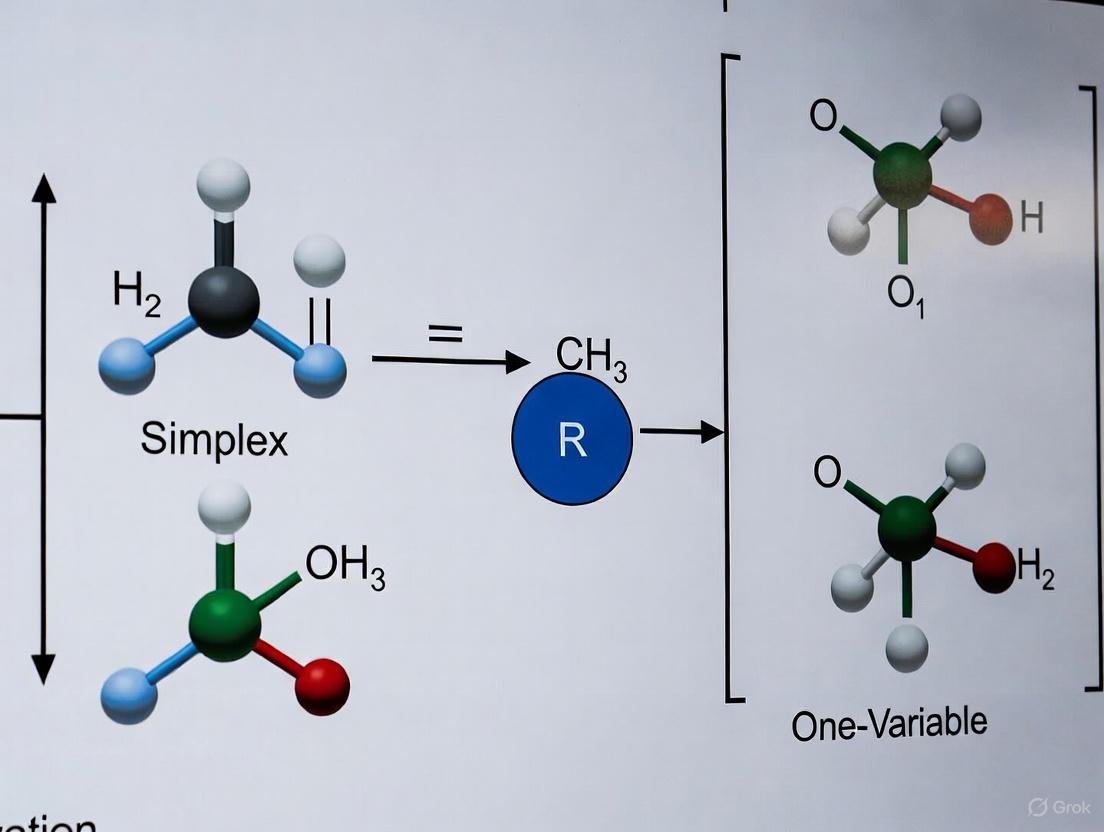

This article provides a comprehensive comparison of systematic optimization methods, such as the Simplex method and Design of Experiments (DoE), against the traditional One-Factor-at-a-Time (OFAT) approach.


Abstract

This article provides a comprehensive comparison of systematic optimization methods, such as the Simplex method and Design of Experiments (DoE), against the traditional One-Factor-at-a-Time (OFAT) approach. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, practical applications, and comparative advantages of these methodologies. Readers will gain insights into how modern systematic approaches can efficiently identify critical factors, capture interaction effects, and lead to more robust, optimized processes in pharmaceutical development and biomedical research, ultimately saving time and resources while improving product quality.

From Traditional Guesswork to Systematic Search: Understanding OFAT and Modern Optimization Paradigms

In the rigorous fields of pharmaceutical development and scientific research, the path to process optimization is paved with structured experimentation. Two foundational methodologies dominate this landscape: the traditional One-Factor-at-a-Time (OFAT) approach and the systematic Design of Experiments (DOE). The core distinction between them lies in their fundamental philosophy for probing complex systems. OFAT, a classical and widely taught method, investigates a process by varying a single factor while holding all others constant [1] [2]. In stark contrast, systematic DOE is a structured, statistically driven methodology that deliberately varies multiple factors simultaneously according to a predefined experimental plan to efficiently uncover not just main effects, but also critical interaction effects between factors [1] [3]. This in-depth technical guide explores the core principles of these methodologies, framed within the context of simplex versus one-variable-at-a-time optimization research, providing researchers and drug development professionals with the knowledge to select the most powerful and efficient path to innovation.

Deconstructing the One-Factor-at-a-Time (OFAT) Approach

Core Principles and Historical Context

The OFAT approach, also known as the classical or hold-one-factor-constant method, is rooted in a straightforward, sequential process of inquiry [2]. Its procedure involves selecting a baseline set of conditions for all input factors, then systematically varying the level of one single factor across its range of interest while all other factors are kept rigidly static [2]. After the effect of that factor is observed and recorded, it is returned to its baseline level before the next factor is varied in the same manner. This cycle continues until all factors of interest have been tested independently [2].
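The procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the function name `ofat_runs` and the factor names and levels are hypothetical and chosen only to show the shape of an OFAT run plan.

```python
def ofat_runs(baseline, levels):
    """Return the list of runs for one OFAT pass.

    baseline: dict mapping factor name -> baseline level
    levels:   dict mapping factor name -> list of levels to test
    """
    runs = [dict(baseline)]  # the baseline run itself
    for factor, values in levels.items():
        for value in values:
            if value == baseline[factor]:
                continue  # already covered by the baseline run
            run = dict(baseline)  # every other factor stays at baseline
            run[factor] = value   # only this one factor moves
            runs.append(run)
    return runs


# Hypothetical factors and levels, for illustration only.
baseline = {"temperature_C": 37, "pH": 7.0, "mixing_min": 10}
levels = {
    "temperature_C": [30, 37, 44],
    "pH": [6.0, 7.0, 8.0],
    "mixing_min": [5, 10, 15],
}

plan = ofat_runs(baseline, levels)
for run in plan:
    print(run)
```

Every run after the first differs from the baseline in exactly one factor, so no run in the plan ever changes two factors together.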

Historically, OFAT gained popularity due to its intuitive simplicity and ease of implementation, requiring no complex experimental designs or advanced statistical analysis [2]. It was a practical choice in the early stages of scientific exploration, particularly when experiments were conducted manually and resource constraints were a primary concern. This method allowed researchers to isolate the effect of individual variables without the computational burden of multivariate analysis.

Inherent Limitations and Drawbacks

Despite its historical prevalence, the OFAT method carries severe limitations that render it unsuitable for optimizing complex processes, especially in pharmaceutical development where factor interactions are the rule, not the exception.

- Failure to Capture Interaction Effects: OFAT's most critical flaw is its inherent assumption that factors do not interact. It is incapable of detecting or quantifying synergistic or antagonistic effects between factors, which can lead to profoundly misleading conclusions and a failure to identify true optimal conditions [2]. For instance, the ideal level of an excipient in a formulation might depend entirely on the specific level of a binder used, a relationship completely invisible to an OFAT study.

- Inefficient Resource Utilization: Although seemingly simple, OFAT needs more experimental runs than a factorial design to estimate effects with comparable precision, and those runs still say nothing about interactions, leading to an inefficient use of time, materials, and financial resources [1] [2].

- Lack of Optimization Capabilities: The method is primarily focused on understanding individual effects and is not a systematic tool for response optimization. It cannot reliably identify a robust optimum or a design space, as required by modern quality frameworks like Quality by Design (QbD) [2].

- Increased Risk of Misleading Results: By failing to account for interactions and exploring the experimental space along a single path, OFAT increases the risk of both Type I and Type II errors, potentially guiding development down an incorrect or suboptimal path [2].

The Systematic Approach: Design of Experiments (DOE)

Foundational Principles and Philosophical Shift

Design of Experiments (DOE) represents a paradigm shift from OFAT, moving from a sequential, isolated approach to a holistic, systems-based one. DOE is a structured and statistical methodology for simultaneously investigating the effects of multiple input factors on one or more output responses [3]. Its power lies in its ability to efficiently map a process's behavior across the entire experimental region. The methodology is built upon three bedrock statistical principles that ensure the validity and reliability of its findings:

- Randomization: The order of experimental runs is randomized to minimize the impact of lurking variables and systematic biases, thereby enhancing the generalizability of the results [2].

- Replication: Repeating experimental runs under identical conditions allows for the estimation of experimental error, which is essential for assessing the statistical significance of the observed effects [2].

- Blocking: This technique accounts for known sources of nuisance variability (e.g., different batches of raw material, different operators) by grouping experiments into homogenous blocks, thus improving the precision of effect estimation [2].
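The three principles above can be combined in a single run plan. The sketch below builds a randomized complete block layout for a small factorial; the function name `blocked_randomized_plan`, the coded levels, and the choice of three blocks are assumptions made for illustration.

```python
import itertools
import random


def blocked_randomized_plan(factor_levels, n_blocks, seed=0):
    """Run every treatment once per block, in random order within each block."""
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    names = list(factor_levels)
    treatments = [
        dict(zip(names, combo))
        for combo in itertools.product(*factor_levels.values())
    ]
    plan = []
    for block in range(1, n_blocks + 1):
        order = treatments[:]  # replication: each treatment appears in every block
        rng.shuffle(order)     # randomization: run order shuffled within the block
        for treatment in order:
            plan.append({"block": block, **treatment})  # blocking: label recorded
    return plan


# Hypothetical two-factor study in coded units; a block could be a raw-material batch.
factor_levels = {"A": [-1, 1], "B": [-1, 1]}
plan = blocked_randomized_plan(factor_levels, n_blocks=3)
for row in plan:
    print(row)
```

Because each block contains the complete set of treatments, block-to-block differences can be separated from the factor effects during analysis.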

Key Methodologies in Systematic DOE

Systematic DOE encompasses a family of powerful design strategies, each tailored to specific experimental objectives.

Factorial Designs

Factorial designs form the cornerstone of DOE. In a full factorial design, all possible combinations of the levels of all factors are investigated [2]. For example, a 2-level, 3-factor design (2³) requires 8 experimental runs. This comprehensiveness allows for the unbiased estimation of all main effects and all interaction effects. The analysis is typically performed using Analysis of Variance (ANOVA), a statistical technique that partitions the total variability in the data into components attributable to each main effect, interaction effect, and experimental error, allowing for formal hypothesis testing [2].
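As a rough illustration of the 2³ case, the sketch below enumerates the eight runs in coded units and estimates each effect as the difference between the mean response at the high and low settings of the corresponding contrast. The responses are generated from an assumed noiseless model, not from real data, so the recovered effects can be checked by eye.

```python
import itertools

import numpy as np

# Coded levels: -1 = low, +1 = high, for factors A, B, C (8 runs in total).
design = np.array(list(itertools.product([-1, 1], repeat=3)))
A, B, C = design.T

# Hypothetical noiseless responses from an assumed model with an A*B interaction.
y = 50 + 2 * A + 5 * B + 1 * C + 1.5 * A * B


def effect(contrast, response):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    return response[contrast == 1].mean() - response[contrast == -1].mean()


effects = {
    "A": effect(A, y),
    "B": effect(B, y),
    "C": effect(C, y),
    "AB": effect(A * B, y),
    "AC": effect(A * C, y),
    "BC": effect(B * C, y),
    "ABC": effect(A * B * C, y),
}
for name, value in effects.items():
    print(f"{name}: {value:+.2f}")
```

Each effect equals twice the matching model coefficient, because moving a coded factor from -1 to +1 spans two coded units. All eight runs contribute to every estimate.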

Response Surface Methodology (RSM)

When the objective is to optimize a process or product formulation, Response Surface Methodology (RSM) is the tool of choice. RSM uses designed experiments to fit a polynomial model (often a quadratic model) to the experimental data, which can then be used to navigate the design space and locate optimal factor settings [2]. Two common RSM designs are:

- Central Composite Designs (CCD): These consist of a factorial or fractional factorial core, augmented with axial points to estimate curvature and center points to estimate pure error [2].

- Box-Behnken Designs: An alternative to CCDs that often needs fewer runs. These three-level designs combine two-level factorial designs with incomplete block designs and avoid the extreme corner points of the factor space [4] [2].
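The two designs listed above can be generated in coded units as follows. This is a sketch under stated assumptions: the CCD uses the rotatable axial distance (the fourth root of the number of factorial points), three center points are chosen arbitrarily, and the Box-Behnken construction shown (a 2² factorial on each pair of factors with the remaining factors at their center) is used here only for three factors.

```python
import itertools


def central_composite(k, n_center=3):
    """Full-factorial core, 2k axial points, and replicated center points."""
    alpha = (2 ** k) ** 0.25  # rotatable axial distance (an assumed choice)
    factorial = [list(p) for p in itertools.product([-1.0, 1.0], repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center


def box_behnken(k, n_center=3):
    """Pairwise 2x2 factorials with all other factors held at the center."""
    points = []
    for i, j in itertools.combinations(range(k), 2):
        for a, b in itertools.product([-1.0, 1.0], repeat=2):
            point = [0.0] * k
            point[i], point[j] = a, b
            points.append(point)
    return points + [[0.0] * k for _ in range(n_center)]


print(len(central_composite(3)), "runs in a three-factor CCD")
print(len(box_behnken(3)), "runs in a three-factor Box-Behnken design")
```

With the same number of center points, the three-factor Box-Behnken design needs two fewer runs than the CCD and never sets all three factors to an extreme at once.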

Comparative Analysis: OFAT vs. Systematic DOE

The following tables provide a structured, quantitative and qualitative comparison of the OFAT and systematic DOE approaches, summarizing their core characteristics and performance.

Table 1: Direct Comparison of OFAT and Systematic DOE Characteristics

| Characteristic | OFAT Approach | Systematic DOE Approach |
|---|---|---|
| Experimental Structure | Sequential, one variable varied per experiment | Simultaneous, multiple variables varied per experiment |
| Coverage of Experimental Space | Limited, explores along a single path [1] | Systematic and thorough [1] |
| Ability to Detect Interactions | Fails to identify interactions [1] [2] | Explicitly identifies and quantifies all interactions [2] |
| Resource Efficiency | Inefficient use of resources [1] | Establishes solution with minimal resource [1] |
| Statistical Foundation | Weak, no estimation of experimental error | Strong, built on randomization, replication, and blocking [2] |
| Optimization Capability | May miss the optimal solution [1] | Powerful optimization via RSM [2] |

Table 2: Pros and Cons of OFAT and Systematic DOE

| Methodology | Advantages | Disadvantages |
|---|---|---|
| OFAT | Widely taught and straightforward [1] | Fails to identify interactions [1]; Inefficient [1]; May miss optimum [1] |
| Systematic DOE | Systematic and thorough coverage; Highly efficient [1] | Higher initial learning curve; Requires a minimum entry of ~10 experiments [1]; May involve running anticipated "failed" experiments [1] |

Experimental Protocols and Applications in Drug Development

Protocol for a Factorial Design Study: Excipient Compatibility

Objective: To systematically investigate the main and interaction effects of two critical formulation factors—Disintegrant Concentration (Factor A: 2% and 5%) and Lubricant Mixing Time (Factor B: 5 and 15 minutes)—on the Tablet Dissolution at 30 minutes (Q30) and Tablet Hardness (Response Variables).

Methodology:

- Experimental Design: A full 2² factorial design with 3 center point replicates (total of 7 experimental runs). Center points (Disintegrant 3.5%, Mixing Time 10 min) are included to estimate curvature and pure error.

- Randomization: The run order for the 7 formulations is fully randomized to mitigate the effects of lurking variables.

- Execution:

  - Prepare powder blends according to the randomized design matrix.

  - Compress tablets under fixed compression force and speed.

  - For each formulation, test 6 tablets for hardness and perform dissolution testing on a full vessel (n=6 or 12 per regulatory standards).

- Data Analysis:

  - Perform ANOVA for both Q30 and Hardness.

  - Construct Main Effects Plots and an Interaction Plot for each response.

  - If a significant interaction is found between Disintegrant Concentration and Lubricant Mixing Time for dissolution, it indicates that the effect of the disintegrant depends on how long the lubricant was mixed, a critical insight completely invisible to OFAT.
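The design and the first-pass analysis in this protocol can be sketched as follows. The Q30 values are hypothetical numbers invented for illustration, and the helper names are assumptions; a real study would follow this with a full ANOVA in a statistics package.

```python
import random
import statistics

# Coded design: four factorial corners plus three center points (7 runs).
coded = [(-1, -1), (1, -1), (-1, 1), (1, 1), (0, 0), (0, 0), (0, 0)]


def to_actual(a, b):
    """Map coded levels to disintegrant % (2 / 3.5 / 5) and mixing time (5 / 10 / 15 min)."""
    return 3.5 + 1.5 * a, 10 + 5 * b


runs = [to_actual(a, b) for a, b in coded]
run_order = list(range(len(runs)))
random.Random(42).shuffle(run_order)  # randomized execution order

# Hypothetical Q30 results (% dissolved), listed in the same order as `coded`.
q30 = [70.0, 88.0, 62.0, 68.0, 73.0, 74.0, 72.0]
corner_points, corner_y = coded[:4], q30[:4]
center_y = q30[4:]


def contrast(sign):
    """Effect estimate: signed sum over the four corners divided by 2."""
    return sum(sign(a, b) * y for (a, b), y in zip(corner_points, corner_y)) / 2


effect_A = contrast(lambda a, b: a)       # disintegrant concentration
effect_B = contrast(lambda a, b: b)       # lubricant mixing time
effect_AB = contrast(lambda a, b: a * b)  # interaction
curvature = statistics.mean(corner_y) - statistics.mean(center_y)
pure_error_sd = statistics.stdev(center_y)  # from the replicated center points

print("run order:", [runs[i] for i in run_order])
print(effect_A, effect_B, effect_AB, curvature, pure_error_sd)
```

In this made-up data set, raising the disintegrant level adds 18 points of dissolution at the short mixing time but only 6 at the long one, which is what the nonzero interaction estimate reflects.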

Protocol for an RSM Study: Crystal Size Distribution Optimization

Objective: To model and optimize an API crystallization process to maximize Crystal Size Uniformity (measured by Span) and Yield.

Methodology:

- Experimental Design: A Central Composite Design (CCD) is selected for two Critical Process Parameters (CPPs): Cooling Rate (Factor X1) and Agitation Rate (Factor X2).

- Execution:

  - Execute the crystallization runs as per the CCD matrix, which includes factorial points, axial points, and center points.

  - For each run, isolate the crystals and characterize the particle size distribution via laser diffraction to calculate the Span and determine the Yield.

- Data Analysis:

  - Fit a second-order polynomial (quadratic) model to the Span and Yield data using multiple regression.

  - Use ANOVA to confirm the significance of the model and its terms.

  - Generate 2D contour plots and 3D response surface plots for each response.

  - Apply a numerical optimization algorithm (e.g., Desirability Function) to identify the combination of Cooling Rate and Agitation Rate that simultaneously minimizes Span (improves uniformity) and maximizes Yield.
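The model-fitting and optimization steps can be sketched with NumPy. Everything numeric here is an assumption: the responses are generated from made-up quadratic models rather than measured, the desirability limits are arbitrary, and the optimizer is a plain grid search over the coded region with a linear one-sided desirability for each response combined as a geometric mean.

```python
import numpy as np

# Two-factor CCD in coded units: X1 = cooling rate, X2 = agitation rate.
a = np.sqrt(2.0)
X = np.array([[-1, -1], [1, -1], [-1, 1], [1, 1],
              [-a, 0], [a, 0], [0, -a], [0, a],
              [0, 0], [0, 0], [0, 0]])


def quad_terms(points):
    """Model matrix for: intercept, x1, x2, x1*x2, x1^2, x2^2."""
    x1, x2 = points[:, 0], points[:, 1]
    return np.column_stack([np.ones(len(points)), x1, x2, x1 * x2, x1**2, x2**2])


# Hypothetical "true" coefficients used only to generate illustrative data.
span_true = np.array([1.2, 0.3, -0.1, 0.05, 0.2, 0.1])
yield_true = np.array([85.0, -2.0, 3.0, 1.0, -4.0, -3.0])
span_obs = quad_terms(X) @ span_true
yield_obs = quad_terms(X) @ yield_true

# Least-squares fit of the second-order model to each response.
span_coef, *_ = np.linalg.lstsq(quad_terms(X), span_obs, rcond=None)
yield_coef, *_ = np.linalg.lstsq(quad_terms(X), yield_obs, rcond=None)


def d_smaller_is_better(y, low, high):
    return np.clip((high - y) / (high - low), 0.0, 1.0)


def d_larger_is_better(y, low, high):
    return np.clip((y - low) / (high - low), 0.0, 1.0)


# Grid search over the coded region for the best overall desirability.
axis = np.linspace(-1, 1, 41)
grid = np.array([[u, v] for u in axis for v in axis])
terms = quad_terms(grid)
D = np.sqrt(d_smaller_is_better(terms @ span_coef, 0.8, 2.0)
            * d_larger_is_better(terms @ yield_coef, 70.0, 90.0))
best = grid[np.argmax(D)]
print("best coded settings:", best, "overall desirability:", D.max())
```

The coded optimum would then be converted back to physical cooling and agitation rates and confirmed with a verification run.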

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Formulation and Process Optimization Studies

| Item | Function in Experimentation |
|---|---|
| Active Pharmaceutical Ingredient (API) | The therapeutically active component whose physical and chemical properties are central to the optimization study. |
| Excipients (e.g., Disintegrants, Binders, Lubricants) | Inert substances formulated alongside the API to confer specific functionalities to the drug product (e.g., stability, dissolution, manufacturability). |
| Solvents (for Crystallization) | The medium in which crystallization occurs; solvent choice and properties critically impact crystal nucleation, growth, and final particle characteristics. |
| Process Analytical Technology (PAT) Tools | Instruments (e.g., in-line particle size analyzers, NIR spectrometers) for real-time monitoring of Critical Quality Attributes (CQAs), enabling Quality by Design (QbD). |
| Cell-Based Assay Kits (for Target Validation) | Used in early-stage discovery to validate that a target is "druggable" and its modulation elicits a desired biological response [5]. |
| Monoclonal Antibodies (as Validation Tools) | Used for target validation due to their exquisite specificity, helping to establish a causal link between target modulation and therapeutic effect [5]. |

Visualizing Methodological Workflows and Interactions

[Diagrams not reproduced: OFAT Sequential Workflow; DOE Systematic Workflow; Concept of Interaction Effects. They illustrate the core procedural and conceptual differences between OFAT and DOE.]

The contention between OFAT and systematic DOE is decisively settled by the demands of modern scientific and regulatory environments. While OFAT offers an intuitive starting point, its inability to account for factor interactions and its inherent inefficiency make it a high-risk strategy for optimizing complex processes, particularly in pharmaceutical development where the Quality by Design (QbD) paradigm is now prevalent [4]. Systematic DOE, with its robust statistical foundation, provides a powerful framework for efficiently building a deep understanding of a process, accurately modeling its behavior, and reliably navigating its design space. For researchers and drug development professionals committed to rigorous, efficient, and successful innovation, the adoption of systematic DOE is not merely an option—it is an imperative.

The Historical Context and Traditional Use of the OFAT Approach

The One-Factor-at-a-Time (OFAT) approach represents one of the earliest and most intuitive strategies in experimental science. For generations, this methodology served as the cornerstone of empirical investigation across diverse fields, including chemistry, biology, engineering, and manufacturing [2]. Its fundamental principle—varying a single variable while holding all others constant—provided a straightforward framework for isolating cause-and-effect relationships. Historically, OFAT gained widespread adoption due to its conceptual simplicity and ease of implementation, requiring no complex experimental designs or advanced statistical analysis [2]. Researchers could manually control and adjust factor levels sequentially, making it a practical choice during the early stages of scientific exploration or when working with limited resources and physical setups that were difficult to modify [2].

Historical Background and Traditional Use

The OFAT method has a long and established history in scientific experimentation. It was one of the primary strategies employed by researchers studying complex systems with multiple variables before the development of more sophisticated statistical design techniques [2]. Its popularity stemmed from the direct logic of its procedure: an experimenter would select a baseline set of conditions, then systematically vary one input factor of interest across a range of levels while keeping all other factors rigidly fixed at their initial values [2]. After observing the outcome, the adjusted factor would be returned to its starting level before proceeding to investigate the next variable [2]. This cyclical process continued until all factors of interest had been tested individually [2].

In traditional practice, OFAT experiments were often conducted manually. This approach was particularly instrumental in situations where experiments were time-consuming, expensive, or involved physical apparatus that required significant effort to reconfigure [2]. The method provided a clear, step-wise path to building basic comprehension of a system, which was especially valuable when dealing with systems with limited variables or well-understood behaviors [6].

Traditional OFAT Experimental Protocol

The classical OFAT method is sequential and cyclical: set a baseline, vary one factor across its levels, record the response, return that factor to its baseline level, and repeat for the next factor.

A Concrete Example: OFAT in Lactic Acid Fermentation

A 2020 study on lactic acid production provides a clear, real-world illustration of a traditional OFAT application. The research aimed to optimize fermentation factors to maximize lactic acid yield from beet molasses using the bacterium Enterococcus hirae ds10 [7].

Detailed Experimental Protocol

The researchers employed a sequential OFAT methodology to investigate four key factors, following the protocol below [7]:

- Initial Baseline Setup: The fermentation was first established under standard conditions: molasses sugar concentration at 2% (w/v), inoculum size at 5% (v/v), pH at 7.0, and temperature at 37°C [7].

- Sequential Factor Variation:

  - Step 1 - Sugar Concentration: While holding inoculum size (5%), pH (7.0), and temperature (37°C) constant, the molasses sugar concentration was varied (e.g., 2%, 4%, 6% w/v). The optimal level for yield (4% w/v) was identified and fixed for subsequent steps [7].

  - Step 2 - Inoculum Size: With sugar concentration now fixed at the new optimum (4%), pH (7.0), and temperature (37°C) constant, the inoculum size was varied (e.g., 5%, 10%, 15% v/v). The best level (10% v/v) was identified and fixed [7].

  - Step 3 - pH: With sugar (4%) and inoculum (10%) fixed at their optimal levels, and temperature constant (37°C), the pH was varied (e.g., 6.0, 7.0, 8.0). The optimal pH (8.0) was identified and fixed [7].

  - Step 4 - Temperature: Finally, with the first three factors fixed at their optimized levels, temperature was varied (e.g., 35°C, 40°C, 45°C) to find its optimal value (40°C) [7].

- Outcome: This OFAT approach successfully increased lactic acid production to a maximum of 25.4 ± 0.42 g L⁻¹, a significant improvement over the initial yield [7].
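The "vary, pick the best, fix, move on" logic of this protocol can be sketched as a short loop. The response table below is synthetic and deliberately contains an interaction; it is not data from the study [7], and the factor names `x1` and `x2` are placeholders.

```python
import itertools

# Synthetic response table (NOT data from the study): response[(x1, x2)].
response = {
    (0, 0): 10, (1, 0): 12, (2, 0): 11,
    (0, 1): 11, (1, 1): 13, (2, 1): 14,
    (0, 2): 9,  (1, 2): 12, (2, 2): 20,
}
levels = {"x1": [0, 1, 2], "x2": [0, 1, 2]}


def measure(point):
    """Look up the (synthetic) response for a factor setting."""
    return response[(point["x1"], point["x2"])]


def sequential_ofat(levels, start):
    """Optimize one factor at a time, fixing each at its best level in turn."""
    current = dict(start)
    for factor, candidates in levels.items():
        # Try every level of this factor with all other factors held fixed.
        best = max(candidates, key=lambda lv: measure({**current, factor: lv}))
        current[factor] = best
    return current, measure(current)


ofat_point, ofat_value = sequential_ofat(levels, start={"x1": 0, "x2": 0})
grid_point = max(itertools.product(levels["x1"], levels["x2"]),
                 key=lambda p: response[p])

print("sequential OFAT:", ofat_point, ofat_value)
print("best over all combinations:", grid_point, response[grid_point])
```

On this table the sequential search settles on a response of 13, while the best combination (20) lies off the path it explored. Whether a real system behaves this way depends on how strongly its factors interact.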

Research Reagent Solutions

The following table details key materials and reagents used in this OFAT fermentation experiment and their functions [7].

| Reagent/Material | Function in the Experiment |
|---|---|
| Beet Molasses | Served as the low-cost, primary carbon source (sucrose, glucose, fructose) for bacterial growth and acid production. |
| Enterococcus hirae ds10 | The selected thermotolerant lactic acid bacterium strain responsible for fermenting sugars into lactic acid. |
| MRS Broth Medium | A complex nutrient medium providing essential nitrogen (yeast extract, peptone), vitamins, and minerals for bacterial growth. |
| Ammonium Chloride | An inorganic salt supplemented as an alternative, low-cost nitrogen source. |
| Yeast Extract | A vital source of amino acids, peptides, and vitamins (B-complex) required by fastidious lactic acid bacteria. |
| Sodium Hydroxide (NaOH) / Hydrochloric Acid (HCl) | Used to adjust and maintain the pH of the fermentation medium at the required levels for different experimental runs. |

Limitations of the OFAT Approach

While OFAT provided valuable initial insights in the lactic acid study and countless others, its methodological constraints become apparent in complex systems. The core limitations are summarized in the table below.

| Limitation | Impact on Experimental Outcomes |
|---|---|
| Failure to Capture Interaction Effects [2] [8] | The approach cannot detect instances where the effect of one factor depends on the level of another (synergistic or antagonistic effects), potentially leading to misleading conclusions and suboptimal conditions. |
| Inefficient Resource Use [2] [1] | OFAT requires a large number of experimental runs to explore multiple factors, making it time-consuming, costly, and inefficient compared to modern methods. |
| Lack of Optimization Capabilities [2] | The method is suited for understanding individual effects but provides no systematic framework for finding a true optimum combination of factor levels. |
| Limited Scope of Exploration [2] | OFAT only investigates the experimental space along a single, narrow path, potentially missing better regions of the factor space that exist outside the tested sequence. |

OFAT explores the factor space along a single path, whereas a factorial design covers the combinations of factor levels across the whole region, which is why OFAT can miss optimal conditions and interaction effects.

The Modern Alternative: Design of Experiments (DOE)

The limitations of OFAT prompted the development of Design of Experiments (DOE), a structured and statistically sound framework for experimental investigation. Unlike OFAT, DOE systematically varies multiple factors simultaneously according to a predefined mathematical plan [2]. This allows for the efficient estimation of both main effects and critical interaction effects between factors [2] [8].

Key principles underpinning DOE include [2]:

- Randomization: Running trials in a random order to minimize the impact of lurking variables and biases.

- Replication: Repeating experimental runs to estimate experimental error and improve precision.

- Blocking: A technique to account for known sources of variability (e.g., different equipment or operators).

In the lactic acid study, when researchers transitioned from OFAT to a Response Surface Methodology (a type of DOE), they achieved a 60% increase in yield, producing 40.69 g L⁻¹ of lactic acid compared to the 25.4 g L⁻¹ found via OFAT [7]. This starkly demonstrates the potential payoff of modern experimental design.
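As a quick check, the reported improvement follows directly from the two yields quoted above:

$$
\frac{40.69 - 25.4}{25.4} \times 100\% \approx 60.2\%
$$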

The One-Factor-at-a-Time approach holds a significant place in the history of science as a foundational and intuitive experimental method. Its traditional use provided a critical pathway to initial process understanding, especially in systems with limited variable interactions and resource constraints. However, the inherent limitations of OFAT—particularly its inability to detect factor interactions and its inefficiency—render it unsuitable for optimizing complex, modern processes. The evolution toward sophisticated methodologies like Design of Experiments marks a paradigm shift from sequential, isolated testing to an integrated, systems-based approach capable of uncovering deeper insights and achieving superior optimization. This progression from OFAT to factorial and response surface designs forms the core of the ongoing "simplex vs. one variable at a time" optimization research, underscoring the critical importance of experimental design choice in scientific and industrial advancement.

The One-Factor-at-a-Time (OFAT) experimental method represents one of the most traditional approaches to scientific investigation, characterized by varying a single factor while maintaining all other factors constant. Despite its historical prevalence across chemical, biological, and engineering disciplines, OFAT contains fundamental limitations that render it increasingly inadequate for modern complex systems, particularly in drug discovery and process optimization where factor interactions dominate system behavior [2]. This methodological approach, while intuitively simple and easily implementable, fails to capture the multidimensional relationships that define most contemporary scientific challenges, from fermentation medium optimization to lead compound identification [9].

The persistence of OFAT often stems from its conceptual simplicity and lower mental effort requirements during experimental design phases [10]. Researchers frequently default to this approach without fully considering the statistical and practical consequences, particularly the method's inherent inability to detect factor interactions and its profound inefficiency in resource utilization. As research questions grow more complex and resource constraints intensify, understanding these limitations becomes paramount for scientists seeking to maximize information gain while minimizing experimental expenditure. This paper examines the core deficiencies of the OFAT paradigm, specifically focusing on its inefficiency and neglect of interaction effects, while providing practical guidance for implementing superior methodological approaches that capture system complexity more faithfully.

The Inefficiency Problem: Experimental Resource Limitations

Quantitative Analysis of OFAT's Experimental Burden

The inefficiency of OFAT is not simply a matter of run count. A single unreplicated OFAT pass over k factors at L levels needs only 1 + k(L - 1) runs, which grows linearly with the number of factors, whereas a full factorial design needs L^k runs, which grows exponentially. What matters is the information each run delivers: OFAT spends its runs along a single path through the factor space and learns nothing about interactions. This becomes particularly problematic in resource-intensive fields like drug discovery where assays, reagents, and researcher time represent significant costs [11].

Table 1: Comparison of Experimental Runs Required: OFAT vs. Factorial Design

| Number of Factors | Levels per Factor | OFAT Runs (single unreplicated pass) | Full Factorial Runs | Share of Combinations Tested by OFAT |
|---|---|---|---|---|
| 2 | 2 | 3 | 4 | 75% |
| 3 | 2 | 4 | 8 | 50% |
| 4 | 2 | 5 | 16 | 31% |
| 5 | 2 | 6 | 32 | 19% |
| 3 | 3 | 7 | 27 | 26% |
| 4 | 3 | 9 | 81 | 11% |
| 5 | 3 | 11 | 243 | 5% |

Table 1 shows that a single OFAT pass uses fewer runs than a full factorial, but the comparison is misleading. An unreplicated OFAT study provides no estimate of experimental error, so replication is needed before any effect can be judged significant, and this dramatically increases its true resource requirements [2]. Factorial designs also provide substantially more information per experimental run, because every run contributes to the estimate of every effect, and screening designs such as Plackett-Burman can offer greater precision than OFAT for an equivalent number of runs [10].
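One way to make the information-per-run argument concrete is to compare how many runs each approach needs to reach the same variance for a main-effect estimate. The sketch below assumes two-level factors, independent errors with a common variance, and an OFAT study consisting of a baseline condition plus one changed-factor condition per factor; the function names are hypothetical.

```python
def factorial_effect_variance(k, sigma2=1.0):
    """Variance of a main-effect estimate from an unreplicated 2^k factorial.

    The effect is the mean of the n/2 high runs minus the mean of the n/2 low runs.
    """
    n = 2 ** k
    return sigma2 / (n / 2) + sigma2 / (n / 2)


def ofat_runs_for_same_variance(k):
    """Total OFAT runs needed to match the factorial's effect variance."""
    n = 2 ** k
    replicates = n // 2  # each condition must be averaged over n/2 runs
    conditions = k + 1   # baseline plus one changed-factor condition per factor
    return conditions * replicates


for k in (2, 3, 4, 5):
    factorial_runs = 2 ** k
    ofat_runs = ofat_runs_for_same_variance(k)
    print(k, factorial_runs, ofat_runs, ofat_runs / factorial_runs)
```

Under these assumptions the ratio of OFAT runs to factorial runs is (k + 1) / 2, so the factorial design's advantage grows with the number of factors, and the replicated OFAT study still cannot estimate interactions.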

Case Study: Fermentation Medium Optimization

A concrete example of the efficiency of designed experiments emerges from fermentation medium optimization, where researchers must balance numerous nutritional components to maximize metabolite yield. A typical scenario investigating five factors (carbon source, nitrogen source, pH, temperature, and agitation rate) at three levels each spans 3⁵ = 243 factor combinations, so testing the space exhaustively would require 243 experimental runs [9]. In contrast, a fractional factorial design could extract similar information about main effects with as few as 16-32 runs, roughly an 87-93% reduction in experimental burden [9]. This efficiency gain translates directly to reduced resource consumption, shorter development timelines, and ultimately lower research costs, all critical considerations in competitive fields like pharmaceutical development.
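The arithmetic behind the reduction quoted above is easy to reproduce. The sketch assumes the comparison is against all 3⁵ = 243 level combinations of five three-level factors; the variable names are illustrative.

```python
n_factors, n_levels = 5, 3
all_combinations = n_levels ** n_factors  # 3^5 = 243

reductions = {}
for screening_runs in (16, 32):
    # Fraction of the exhaustive run count that the smaller design avoids.
    reductions[screening_runs] = 1 - screening_runs / all_combinations

for runs, reduction in reductions.items():
    print(f"{runs} runs: {reduction:.1%} fewer than {all_combinations}")
```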

The inefficiency problem extends beyond mere numbers of experiments to include the risk of experimental error accumulation. With OFAT's extensive sequence of runs, the potential for uncontrolled variability, instrumental drift, and operator fatigue introduces systematic errors that can compromise result validity [2]. Designed experiments incorporating randomization principles mitigate these risks by distributing potential confounding effects evenly across factor combinations.

Figure 1: Experimental Efficiency Comparison Between OFAT and Factorial Designs

The Interaction Effect Blind Spot

Defining Interaction Effects in Experimental Systems

Interaction effects occur when the effect of one factor on a response variable depends on the level of another factor. These interdependent relationships represent a fundamental characteristic of complex biological, chemical, and pharmacological systems, yet remain completely invisible to OFAT methodology [10] [2]. In drug discovery, for example, interactions between pH and temperature can dramatically influence binding affinity, membrane permeability, and metabolic stability—relationships that OFAT systematically fails to detect [11].

Statistically, an interaction is a departure from additivity: the combined effect of two factors differs from the sum of their individual effects. In practical terms, this means that knowing the individual effects of factors A and B in isolation provides insufficient information to predict their combined effect. OFAT's foundational assumption of effect additivity represents a critical oversimplification that routinely leads researchers to miss optimal factor combinations or misidentify factor significance [10]. This methodological blind spot becomes increasingly problematic as system complexity grows, with higher-order interactions creating emergent behaviors that cannot be predicted from individual factor effects.
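The additivity point can be illustrated with four numbers from a two-factor, two-level experiment. The responses below are hypothetical; the sketch compares the additive prediction for the high-high combination with the observed value and computes the interaction effect as half the difference between the effect of A at high B and at low B.

```python
def additive_prediction(y_low_low, y_high_low, y_low_high):
    """Predicted response at (A high, B high) if the two effects simply add."""
    return y_low_low + (y_high_low - y_low_low) + (y_low_high - y_low_low)


def interaction_effect(y_low_low, y_high_low, y_low_high, y_high_high):
    """Half the difference between the effect of A at high B and at low B."""
    effect_a_at_low_b = y_high_low - y_low_low
    effect_a_at_high_b = y_high_high - y_low_high
    return (effect_a_at_high_b - effect_a_at_low_b) / 2


# Hypothetical responses; argument order is (A low, B low), (A high, B low),
# (A low, B high), (A high, B high).
y_ll, y_hl, y_lh, y_hh = 50.0, 60.0, 55.0, 80.0

predicted = additive_prediction(y_ll, y_hl, y_lh)
interaction = interaction_effect(y_ll, y_hl, y_lh, y_hh)
print("additive prediction:", predicted, "observed:", y_hh)
print("interaction effect:", interaction)
```

An OFAT study would measure only the first three conditions, so the gap between the additive prediction and the observed high-high response would never be seen.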

Documented Consequences of Ignoring Interactions

The practical consequences of ignoring interaction effects are well-documented across multiple domains. In fermentation optimization, carbon and nitrogen sources frequently interact in their influence on metabolite production [9]. For instance, the effect of a specific carbon source on antibiotic yield may reverse completely depending on the nitrogen source present—a phenomenon OFAT cannot detect and would typically misinterpret as random variability or attribute incorrectly to a single factor [9].

In pharmaceutical development, the failure to detect interactions carries even graver consequences. Drug-target engagement assays like CETSA (Cellular Thermal Shift Assay) have revealed that buffer composition, temperature, and cellular context interact significantly in modulating observed drug-target interactions [11]. OFAT approaches risk mischaracterizing compound potency and mechanism of action by overlooking these critical interdependencies, potentially contributing to the high attrition rates in drug development.

Table 2: Documented Interaction Effects in Pharmaceutical and Bioprocessing Contexts

| System | Interacting Factors | Nature of Interaction | Impact |
|---|---|---|---|
| Antibiotic Production [9] | Carbon source & nitrogen source | Non-additive effect on yield | OFAT misses optimal nutrient combination |
| Penicillin Fermentation [9] | Glucose & lactose | Carbon catabolite repression | OFAT misidentifies glucose as universally inhibitory |
| Drug-Target Engagement (CETSA) [11] | pH & temperature | Cooperative stabilization | OFAT underestimates binding affinity |
| Reaction Optimization [12] | Catalyst & solvent | Synergistic rate enhancement | OFAT fails to identify optimal combination |
| Software Testing [13] | Multiple input parameters | Failure-inducing combinations | OFAT misses corner-case bugs |

Figure 2: Interaction Effects and Their Implications for OFAT Experimental Designs

Methodological Alternatives: Designed Experiments

Fundamental Principles of Design of Experiments (DOE)

Design of Experiments (DOE) provides a statistically rigorous framework that directly addresses OFAT's limitations through three core principles: randomization, replication, and blocking [2]. Randomization ensures that experimental runs are conducted in random order to minimize the impact of lurking variables and systematic biases. Replication involves repeating experimental runs under identical conditions to estimate experimental error and improve effect estimation precision. Blocking accounts for known sources of variability by grouping homogeneous experimental runs, thus isolating nuisance factors from experimental error [2].

These foundational principles enable DOE to efficiently explore multifactor spaces while providing estimates of both main effects and interaction effects. The methodological shift from OFAT to DOE represents a transition from isolated factor examination to system-level understanding, acknowledging the inherent complexity of biological, chemical, and pharmacological systems. This paradigm shift has become increasingly essential as research questions grow more multidimensional and resource constraints intensify.

Factorial Designs: Capturing Interactions Systematically

Full and fractional factorial designs represent the most direct alternative to OFAT, specifically engineered to estimate interaction effects while keeping the number of experimental runs manageable [2]. In a two-factor factorial design, researchers simultaneously vary both factors across their levels, creating a matrix of experimental conditions that enables estimation of both individual factor effects (main effects) and their interactive combination (interaction effect) [10].

The mathematical foundation for factorial designs relies on analysis of variance (ANOVA), which partitions total variability in response data into components attributable to main effects, interaction effects, and experimental error [2]. This partitioning enables statistical testing of each effect's significance, providing researchers with objective criteria for factor importance rather than relying on visual interpretation of OFAT data, which frequently misses subtle but important interactions.
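The partitioning described above can be illustrated with a minimal sketch for a balanced, replicated 2×2 design. The data values and the function name `anova_2x2` are hypothetical and chosen only for illustration; a real analysis would normally use the statistical software listed in the protocols.

```python
from statistics import mean

# Hypothetical replicated 2x2 factorial data: (A level, B level) -> responses.
# Levels are coded -1 (low) and +1 (high).
data = {
    (-1, -1): [20.0, 22.0],
    (+1, -1): [30.0, 32.0],
    (-1, +1): [24.0, 26.0],
    (+1, +1): [50.0, 52.0],
}

def anova_2x2(data):
    """Partition the total sum of squares for a balanced, replicated 2x2 design."""
    all_y = [y for ys in data.values() for y in ys]
    n_total = len(all_y)
    grand_mean = mean(all_y)

    def contrast_ss(sign):
        # For a +/-1 contrast in a balanced two-level design:
        # SS = (sum of sign * y)^2 / N
        total = sum(sign(a, b) * y for (a, b), ys in data.items() for y in ys)
        return total ** 2 / n_total

    ss = {
        "A": contrast_ss(lambda a, b: a),
        "B": contrast_ss(lambda a, b: b),
        "AxB": contrast_ss(lambda a, b: a * b),
        # Within-cell (pure error) variation from the replicates.
        "error": sum((y - mean(ys)) ** 2 for ys in data.values() for y in ys),
        "total": sum((y - grand_mean) ** 2 for y in all_y),
    }
    # Each effect has 1 degree of freedom; error has N - 4.
    ms_error = ss["error"] / (n_total - 4)
    f_ratio = {k: ss[k] / ms_error for k in ("A", "B", "AxB")}
    return ss, f_ratio

ss, f_ratio = anova_2x2(data)
print(ss)       # sums of squares for A, B, AxB, error, total
print(f_ratio)  # compare each F ratio with the F(1, 4) critical value
```

The sums of squares for A, B, A×B, and error add up to the total, which is the partition ANOVA relies on. Each F ratio is then compared against the F distribution with 1 and N − 4 degrees of freedom to judge significance.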

Advanced Techniques: Response Surface Methodology and Beyond

For optimization problems where the goal extends beyond understanding to actual performance maximization or minimization, Response Surface Methodology (RSM) provides powerful extensions to basic factorial designs [2]. RSM employs specialized experimental designs like Central Composite Designs (CCD) and Box-Behnken Designs to fit quadratic models that capture curvature in response surfaces—another phenomenon invisible to OFAT [2].

These advanced approaches enable researchers to not only detect interactions but to actually map the complete response landscape, identifying optimal regions and understanding trade-offs between multiple objectives. In drug discovery, this capability proves particularly valuable when balancing potency, selectivity, and pharmacokinetic properties, where multiple competing objectives must be optimized simultaneously [11]. The emergence of machine learning and AI-driven experimental design further extends these capabilities, enabling even more efficient navigation of complex experimental spaces [11] [12].
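As a rough illustration of how a quadratic response surface is fitted and then used to locate an optimum, the sketch below fits a second-order model to a face-centered design in two coded factors. The response function `hypothetical_response` stands in for real measurements and is an assumption, as are all helper names; the fit uses plain normal equations rather than any particular statistics package.

```python
from itertools import product

def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def quad_terms(x1, x2):
    # Model: y = b0 + b1*x1 + b2*x2 + b12*x1*x2 + b11*x1^2 + b22*x2^2
    return [1.0, x1, x2, x1 * x2, x1 ** 2, x2 ** 2]

def hypothetical_response(x1, x2):
    # Assumed noise-free surface with curvature and an interaction term.
    return 80 + 4 * x1 + 6 * x2 - 2 * x1 * x2 - 5 * x1 ** 2 - 8 * x2 ** 2

# Face-centered design in coded units: factorial, axial, and center points.
design = list(product([-1.0, 0.0, 1.0], repeat=2))
X = [quad_terms(*pt) for pt in design]
y = [hypothetical_response(*pt) for pt in design]

# Least squares via the normal equations (X^T X) beta = X^T y.
p = len(X[0])
xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
b0, b1, b2, b12, b11, b22 = solve(xtx, xty)

# Stationary point: set both partial derivatives of the fitted model to zero.
x1_opt, x2_opt = solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])
print(round(x1_opt, 4), round(x2_opt, 4))
```

With real data the fitted coefficients carry experimental error, so the stationary point should be confirmed with verification runs and its nature (maximum, minimum, or saddle) checked from the signs of the quadratic terms.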

Experimental Protocols for Interaction Detection

Two-Factor Factorial Design Protocol

Objective: To detect and quantify interaction effects between two factors while estimating their main effects on the response variable.

Materials and Equipment:

- Standard laboratory equipment for response measurement

- Controlled environmental conditions for experimental factors

- Statistical software for data analysis (R, JMP, Minitab, etc.)

Procedure:

- Select two factors of interest (Factor A and Factor B) and define their experimental ranges (low and high levels) based on preliminary knowledge.

- Construct a 2×2 factorial design comprising all possible combinations of factor levels: (A_low, B_low), (A_low, B_high), (A_high, B_low), (A_high, B_high).

- Randomize the run order to protect against confounding time-based trends.

- Execute experiments according to the randomized sequence, measuring response variables for each combination.

- Include center points (A_mid, B_mid) if curvature detection is desired.

- Replicate the entire design to estimate experimental error (minimum of 2-3 replicates recommended).

Statistical Analysis:

- Perform Two-Way ANOVA with interaction term:

- Response ~ Factor A + Factor B + Factor A × Factor B

- Examine significance of interaction term (p < 0.05 typically indicates statistically significant interaction).

- Create interaction plot by plotting response means for each factor combination.

- If interaction is significant, avoid interpreting main effects in isolation.

Interpretation: Parallel lines in the interaction plot indicate no interaction; non-parallel lines suggest presence of interaction. The significance of the interaction term in ANOVA provides statistical evidence for interaction effect.
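The protocol above can be sketched in a few lines: build the replicated 2×2 design, randomize the run order, and estimate the main and interaction effects from the results. The function `hypothetical_assay` is an assumed stand-in for the real measurement, and the fixed seed is used only so the example is reproducible.

```python
import random
from statistics import mean

def build_design(replicates=2, seed=0):
    """Replicated 2x2 factorial in coded units (-1 = low, +1 = high), in random run order."""
    runs = [(a, b) for a in (-1, 1) for b in (-1, 1)] * replicates
    random.Random(seed).shuffle(runs)  # randomization guards against time trends
    return runs

def effects(results):
    """Estimate effects as mean(response at +1) - mean(response at -1) for each contrast."""
    def contrast(sign):
        hi = [y for (a, b), y in results if sign(a, b) > 0]
        lo = [y for (a, b), y in results if sign(a, b) < 0]
        return mean(hi) - mean(lo)
    return {
        "A": contrast(lambda a, b: a),
        "B": contrast(lambda a, b: b),
        "AxB": contrast(lambda a, b: a * b),
    }

def hypothetical_assay(a, b):
    # Assumed response with a built-in interaction between A and B.
    return 30.0 + 5.0 * a + 3.0 * b + 4.0 * a * b

runs = build_design()
results = [((a, b), hypothetical_assay(a, b)) for a, b in runs]
eff = effects(results)
print(eff)

# Simple effect of A at each level of B: unequal values mean non-parallel
# lines in the interaction plot.
for b_level in (-1, 1):
    hi = mean(y for (a, b), y in results if a == 1 and b == b_level)
    lo = mean(y for (a, b), y in results if a == -1 and b == b_level)
    print(f"Effect of A at B={b_level:+d}: {hi - lo}")
```

In this assumed example the effect of A is much larger at the high level of B than at the low level, which is the pattern an interaction plot would show as non-parallel lines.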

Fractional Factorial Screening Protocol

Objective: To efficiently screen multiple factors for important main effects and two-factor interactions when full factorial designs are prohibitively expensive.

Materials and Equipment: Same as for the Two-Factor Factorial Design Protocol, plus an understanding of design resolution concepts.

Procedure:

- Identify 5-8 potentially important factors for screening.

- Select appropriate fractional factorial design (Resolution IV or higher to avoid confounding main effects with two-factor interactions).

- Generate experimental design using statistical software, maintaining principles of randomization.

- Execute experiments according to generated design.

- Measure response variables with appropriate precision.

Statistical Analysis:

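- Fit linear model with all main effects and the two-factor interaction terms the design can estimate (in a Resolution IV design, two-factor interactions are aliased with one another, so only one term per alias group can be fitted).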

- Use half-normal probability plots or Pareto charts to identify significant effects.

- Apply model reduction techniques to eliminate nonsignificant terms.

- Validate model assumptions through residual analysis.

Interpretation: Significant two-factor interactions indicate where factor effects depend on other factor levels. Follow-up experiments may be required to de-alias confounded interactions.
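To make the idea of a fractional factorial and its alias structure concrete, here is a minimal sketch that generates a half fraction for five factors using the generator E = ABCD. This particular generator is an illustrative choice (it gives a Resolution V design); statistical software would normally propose the design, and the helper names are hypothetical.

```python
import random
from itertools import product
from math import prod

def half_fraction(n_factors=5):
    """2^(k-1) design in coded units: the last factor is the product of the others."""
    base = product((-1, 1), repeat=n_factors - 1)
    return [row + (prod(row),) for row in base]

def is_orthogonal(design):
    """True if every pair of factor columns has zero dot product."""
    cols = list(zip(*design))
    k = len(cols)
    return all(
        sum(x * y for x, y in zip(cols[i], cols[j])) == 0
        for i in range(k) for j in range(i + 1, k)
    )

def alias(effect, defining_word="ABCDE"):
    """Alias of an effect under the defining relation I = ABCDE."""
    return "".join(sorted(set(effect) ^ set(defining_word)))

design = half_fraction(5)           # 16 runs instead of 32
run_order = design[:]
random.Random(42).shuffle(run_order)  # randomized execution order

print(len(design), is_orthogonal(design))
print("A is aliased with", alias("A"))
print("AB is aliased with", alias("AB"))
```

Because main effects are aliased only with four-factor interactions and two-factor interactions only with three-factor interactions, this fraction lets both be estimated cleanly if higher-order interactions are negligible. A Resolution IV design would use fewer runs for more factors at the cost of aliasing two-factor interactions with each other.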

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Advanced Experimental Design

| Reagent/Material | Function | Application Context |

|---|---|---|

| CETSA (Cellular Thermal Shift Assay) Kits | Quantitative measurement of drug-target engagement in intact cells | Confirming target engagement in physiologically relevant systems [11] |

| Statistical Software (JMP, R, Minitab) | Experimental design generation and statistical analysis | Creating and analyzing factorial, fractional factorial, and response surface designs [13] |

| High-Throughput Screening Plates (1536-well) | Miniaturized reaction vessels for efficient experimentation | Enabling rapid testing of multiple factor combinations [12] |

| Artificial Intelligence/Machine Learning Platforms | Predictive modeling for factor optimization | Guiding experimental designs based on existing data [11] |

| Automated Reactor Systems | Self-optimization through iterative experimentation | Implementing design-make-test-analyze cycles without manual intervention [12] |

The fundamental limitations of OFAT—specifically its profound inefficiency and systematic failure to detect interaction effects—render it inadequate for addressing complex research questions in contemporary science. While the method retains value in preliminary investigations or truly additive systems, its application in multidimensional optimization problems consistently leads to suboptimal solutions, missed opportunities, and misleading conclusions [10] [2].

The alternative framework of Designed Experiments, particularly factorial designs and response surface methodology, provides statistically rigorous approaches that directly address OFAT's deficiencies. By varying multiple factors simultaneously, these methods capture interaction effects while dramatically improving experimental efficiency [2]. The incorporation of fundamental principles like randomization, replication, and blocking further enhances result reliability and validity.

For researchers in drug discovery and development, where system complexity and resource constraints continue to intensify, embracing these advanced methodological approaches represents not merely a statistical preference but a practical necessity. As the field moves toward increasingly integrated, cross-disciplinary pipelines, the ability to efficiently detect and quantify factor interactions will separate successful optimization efforts from costly, inconclusive experimentation [11]. The transition beyond OFAT represents an essential evolution in scientific methodology, enabling researchers to properly address the multidimensional challenges that define modern science.

This technical guide provides researchers, scientists, and drug development professionals with a comprehensive analysis of two systematic optimization frameworks: Design of Experiments (DoE) and the Simplex Method. Within the broader context of simplex versus one-variable-at-a-time (OVAT) optimization research, we examine the mathematical foundations, application methodologies, and comparative advantages of these approaches for complex experimental optimization in pharmaceutical development. The guide includes structured data presentation, detailed experimental protocols, and visualization tools to facilitate implementation in research settings.

In chemical process development and pharmaceutical research, optimization strategies aim to improve system performance while minimizing experimental effort [14]. Traditional one-variable-at-a-time (OVAT) approaches, while simple to implement, suffer from critical limitations: they cannot detect interactions between variables, require more experiments, and may miss optimal conditions [14]. Systematic frameworks address these shortcomings through multivariate approaches that simultaneously optimize all parameters.

The comparative efficiency of multidimensional optimization is particularly valuable in pharmaceutical development, where limited resources and time constraints demand maximum information from minimal experiments. This guide examines two powerful systematic approaches: Design of Experiments (DoE), which characterizes experimental space through response surface modeling, and the Simplex Method, an algorithmic approach that iteratively moves toward optimal conditions [14].

Mathematical Foundations

The Simplex Method for Linear Programming

The Simplex Method, developed by George Dantzig in 1947, is an algorithm for solving linear programming problems involving optimization of a linear objective function subject to linear constraints [15] [16]. The method operates on the fundamental principle that the optimal solution to a linear programming problem lies at a vertex of the feasible region, which forms a convex polytope in multidimensional space [15] [17].

The algorithm begins with identification of a basic feasible solution (a corner point of the feasible region) and iteratively moves along the edges of the feasible region to adjacent vertices, improving the objective function at each step until no further improvements can be made [15]. For a problem with n variables and m constraints, the Simplex Method transforms inequality constraints into equalities by introducing slack variables, creating what is known as a "dictionary" or "tableau" representation [18]. In the usual textbook notation for a maximization problem, the linear program is:

\[ \max\; z = c^{T}x \quad \text{subject to} \quad Ax \le b,\; x \ge 0 \]

After introducing slack variables s, the constraints become:

\[ Ax + s = b, \quad x \ge 0,\; s \ge 0 \]

The initial dictionary takes the form:

\[ s = b - Ax, \qquad z = c^{T}x \]

The method proceeds through pivot operations that systematically swap basic and non-basic variables, moving from one vertex to another while continuously improving the objective function [18].

Design of Experiments (DoE) Fundamentals

Design of Experiments constitutes a statistical approach that characterizes a chemical reaction's experimental space through a response surface model [14]. The model is described by a mathematical function with one optimum, derived from multivariate screening of reaction parameters according to a systematic experimental plan [14].

The key advantage of DoE over OVAT approaches is its ability to quantify interaction effects between variables and identify true optimal conditions through structured variation of multiple parameters simultaneously. This methodology aligns with Quality by Design (QbD) principles, providing a systematic approach to drug development that enhances product and process understanding [19].

Comparative Analysis: Capabilities and Applications

Table 1: Comparison of Systematic Optimization Frameworks

| Characteristic | Simplex Method | Design of Experiments (DoE) |

|---|---|---|

| Foundation | Algorithmic optimization [15] | Statistical modeling [14] |

| Approach | Iterative movement toward optimum [17] | Response surface characterization [14] |

| Experimental Requirements | Sequential experiments | Pre-defined experimental matrix |

| Interaction Detection | Limited | Comprehensive [14] |

| Optimum Identification | Converges to local optimum | Maps entire experimental space |

| Implementation in Pharma | Continuous flow processes [14] | Batch and continuous processes [14] |

| Analysis Method | Primarily online analysis [14] | Offline and online analysis [14] |

Table 2: Pharmaceutical Application Domains

| Application Area | Simplex Method | DoE |

|---|---|---|

| Lipid Formulation Development | Limited application | Extensive use in SMEDDS optimization [19] |

| Oral Drug Delivery Systems | Secondary approach | Primary approach for bioavailability enhancement [19] |

| Reaction Optimization | Modified Nelder-Mead implementation [14] | Response surface methodology [14] |

| Process Analytical Technology | Compatible with real-time monitoring [14] | Generally offline analysis [14] |

Experimental Protocols and Methodologies

Protocol for Simplex Method Implementation

The following protocol outlines the implementation of the modified Nelder-Mead Simplex algorithm for chemical reaction optimization, as demonstrated in imine synthesis [14]:

- System Setup: Configure a fully automated microreactor system with real-time monitoring capability (e.g., inline FT-IR spectroscopy)

- Initial Simplex Formation: Generate initial simplex based on the number of optimization parameters (n+1 vertices for n parameters)

- Objective Function Calculation: For each vertex, calculate the objective function (e.g., yield, concentration, or cost function)

- Simplex Transformation:

- Reflection: Replace the worst vertex with its reflection across the centroid of the remaining vertices

- Expansion: If reflected vertex shows improvement, expand further in that direction

- Contraction: If no improvement, contract the simplex toward better vertices

- Termination Check: Continue iterations until the simplex converges to an optimum or reaches maximum iterations
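The reflection, expansion, and contraction moves listed above can be sketched as follows. This is the textbook Nelder-Mead variant written as a minimizer, not the modified algorithm of [14]. The yield surface `hypothetical_yield`, its optimum, and the starting simplex are assumptions for illustration. In a self-optimizing reactor, each call to the objective would correspond to one experiment.

```python
def nelder_mead(f, simplex, max_iter=500, tol=1e-10):
    """Minimize f starting from a list of n+1 vertices (textbook Nelder-Mead moves)."""
    pts = [list(p) for p in simplex]
    n = len(pts[0])
    for _ in range(max_iter):
        pts.sort(key=f)
        best, second_worst, worst = pts[0], pts[-2], pts[-1]
        if abs(f(worst) - f(best)) < tol:
            break  # vertices agree to within tolerance
        # Centroid of all vertices except the worst one.
        centroid = [sum(p[i] for p in pts[:-1]) / n for i in range(n)]

        def along(t):
            # Point on the line from the worst vertex through the centroid.
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        reflected = along(1.0)
        if f(reflected) < f(best):
            expanded = along(2.0)  # reflection helped: try going further
            pts[-1] = expanded if f(expanded) < f(reflected) else reflected
        elif f(reflected) < f(second_worst):
            pts[-1] = reflected
        else:
            # Outside contraction if the reflection beat the worst vertex, else inside.
            contracted = along(0.5) if f(reflected) < f(worst) else along(-0.5)
            if f(contracted) < min(f(reflected), f(worst)):
                pts[-1] = contracted
            else:
                # Shrink every vertex halfway toward the best one.
                pts = [best] + [
                    [b + 0.5 * (x - b) for b, x in zip(best, p)] for p in pts[1:]
                ]
    pts.sort(key=f)
    return pts[0]

def hypothetical_yield(temp, time):
    # Assumed smooth yield surface with its maximum (90 %) at 70 degrees, 12 minutes.
    dt, dm = temp - 70.0, time - 12.0
    return 90.0 - 0.05 * dt ** 2 - 0.2 * dm ** 2 - 0.05 * dt * dm

# Maximize yield by minimizing its negative; the initial simplex has n + 1 = 3 vertices.
start = [(50.0, 5.0), (60.0, 5.0), (50.0, 8.0)]
temp_opt, time_opt = nelder_mead(lambda p: -hypothetical_yield(*p), start)
print(round(temp_opt, 2), round(time_opt, 2))
```

The method needs no model of the response surface, only a ranking of vertices, which is why it pairs naturally with online analysis. On a surface with several optima it would settle on whichever local optimum the starting simplex leads to.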

For self-optimizing systems in pharmaceutical applications, the algorithm can be modified to respond in real-time to process disturbances, maintaining optimal performance despite fluctuations in starting materials or environmental conditions [14].

Protocol for DoE Implementation

The implementation of Design of Experiments for pharmaceutical formulation development follows this systematic protocol [19]:

- Factor Selection: Identify critical process parameters (e.g., excipient ratios, processing conditions)

- Experimental Design: Select appropriate design (e.g., factorial, central composite, Box-Behnken) based on factors and desired resolution

- Response Definition: Define critical quality attributes as responses (e.g., droplet size, solubility, bioavailability)

- Experimental Execution: Conduct experiments according to the designed matrix

- Model Development: Fit response surface models to experimental data

- Optimization: Identify optimal factor settings that simultaneously satisfy all response constraints and objectives

- Verification: Conduct confirmation experiments at predicted optimal conditions

In lipid-based formulation development, DoE has been particularly valuable for optimizing self-microemulsifying drug delivery systems (SMEDDS) by balancing ratios of oils, surfactants, and co-surfactants to enhance drug solubility and absorption [19].

Visualization of Methodologies

Simplex Method Workflow

DoE Methodology Flowchart

Comparison of Optimization Approaches

The Scientist's Toolkit: Essential Research Materials

Table 3: Key Reagent Solutions for Optimization Experiments

| Material/Reagent | Function/Purpose | Example Applications |

|---|---|---|

| Medium-Chain Triglycerides (MCT) | Lipid phase component for enhancing drug solubility [19] | SMEDDS formulations [19] |

| Nonionic Surfactants | Stabilize emulsions, reduce interfacial tension [19] | Microemulsion systems [19] |

| Lauroyl Polyoxylglycerides | Hydrophilic surfactant for self-emulsifying systems [19] | Lipid-based drug delivery [19] |

| Inline FT-IR Spectrometer | Real-time reaction monitoring [14] | Continuous flow optimization [14] |

| Microreactor Systems | Enable precise parameter control and automation [14] | Self-optimizing chemical processes [14] |

Recent Advances and Future Directions

Recent theoretical work has addressed long-standing questions about the Simplex Method's efficiency. While worst-case complexity analyses suggested exponential runtimes, Bach and Huiberts (2023) have demonstrated that with appropriate randomization and practical implementation tricks, the method operates efficiently in polynomial time [16] [20]. These theoretical advances complement the method's established practical success.

In pharmaceutical applications, the integration of both Simplex and DoE methodologies with automated experimental platforms and real-time analytics represents the cutting edge of optimization research [14]. Such systems enable fully autonomous experimental optimization while simultaneously collecting kinetic data for enhanced process understanding.

The continued development of these systematic frameworks supports the transition from empirical to mechanistic approaches in pharmaceutical development, aligning with regulatory initiatives promoting Quality by Design principles [19]. Future advancements will likely focus on hybrid approaches that leverage the strengths of both methodologies while integrating machine learning and artificial intelligence for enhanced predictive capability.

In the realm of optimization, particularly within research and development, the choice of methodology can significantly influence the efficiency, cost, and success of projects such as drug development. This guide explores four foundational concepts—Main Effects, Interaction Effects, Feasible Region, and Objective Function—framed within a critical comparison of two optimization approaches: the traditional One-Variable-At-a-Time (OVAT) method and the more systematic Simplex Method, considered here alongside Design of Experiments (DoE). Understanding these terminologies and the underlying principles of these methodologies is paramount for researchers and scientists seeking to optimize processes in a resource-conscious and effective manner. The persistent use of OVAT in academic settings, despite its documented limitations, stands in contrast to the powerful, simultaneous factor evaluation enabled by the Simplex Method and linear programming, highlighting a significant opportunity for methodological advancement in fields like synthetic chemistry and pharmaceutical development [2] [21].

Defining the Core Terminology

Objective Function

The Objective Function is a mathematical expression that defines the goal of an optimization problem. It represents the quantity that needs to be maximized (e.g., profit, yield, efficacy) or minimized (e.g., cost, waste, side effects). In the context of this guide, the objective function is what the researcher is ultimately trying to optimize.

- In Linear Programming: It is expressed as \( Z = c_1x_1 + c_2x_2 + \dots + c_nx_n \), where \( Z \) is the objective to be optimized, \( c_i \) are the coefficients (e.g., unit profit), and \( x_i \) are the decision variables (e.g., amount of a drug component to use) [22] [23].

- Example: In a drug development scenario, the objective could be to maximize therapeutic yield while minimizing the cost of raw materials.

Feasible Region

The Feasible Region is the set of all possible points (combinations of decision variables) that satisfy the problem's constraints, including inequalities, equalities, and integer requirements. It represents the "solution space" within which an optimal solution must be found [24].

- Characteristics: The feasible region in a linear programming problem forms a convex polytope, a geometric shape whose boundaries are defined by the constraints [24] [22].

- Optimal Solution: In linear programming, if an optimal solution exists, it can be found at one of the extreme points (vertices) of this feasible region [22].

- Visualization: The diagram below illustrates a feasible region defined by multiple constraints.

Main Effects

A Main Effect describes the isolated, individual impact of a single independent variable (factor) on the response (dependent) variable, disregarding the influence of any other factors in the system [2] [21].

- OVAT Focus: The OVAT method is exclusively designed to identify main effects by varying one factor while holding all others constant [2] [10].

- Interpretation: It represents the average change in the response when a factor is moved from its low to high level.

Interaction Effects

Interaction Effects occur when the effect of one independent variable on the response depends on the level of one or more other variables. They represent the combined, non-additive effect of factors [2] [25].

- Critical Limitation of OVAT: A fundamental weakness of the OVAT approach is its inability to detect interaction effects because it does not vary factors simultaneously [2] [10] [21].

- Modeling: In a statistical model, interaction effects are represented by product terms (e.g., \( \beta_{1,2}x_1x_2 \)) [25] [21].

- Example: In a chemical reaction, a higher temperature might increase yield only when a specific catalyst is present at a high concentration. Varying temperature alone (OVAT) would miss this crucial dependency.

Experimental Optimization: OVAT vs. Simplex Methodology

One-Variable-At-a-Time (OVAT) Protocol

Principle: This classical method involves sequentially varying a single factor while maintaining all other factors at fixed, constant levels. After testing a range for one factor, the process is repeated for the next factor, using the presumed optimal level of the previous one [2] [10] [21].

- Step-by-Step Workflow:

- Select Baseline: Establish a set of baseline conditions for all variables.

- Vary One Factor: Systematically change one factor across a predefined range.

- Observe Response: Measure the outcome (e.g., yield, purity) for each level of the varied factor.

- Identify "Optimum": Select the factor level that produces the best response.

- Iterate: Fix the first factor at its new "optimal" level and repeat steps 2-4 for the next factor.

- Finalize: The combination of the individually optimal levels is declared the overall optimum.

The following diagram visualizes this sequential, constrained process.
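The sequential workflow above, and the way an interaction can mislead it, can be sketched with a small simulation. The response function `hypothetical_yield` is an assumed ridge-shaped surface in which raising one factor only helps when the other is raised as well. The factor names and coded levels are illustrative.

```python
from itertools import product

LEVELS = range(5)  # coded levels 0..4 for each factor

def hypothetical_yield(temp, catalyst):
    # Assumed surface: yield rises along the diagonal (temp == catalyst)
    # and drops sharply when the two factors are mismatched.
    return 80 - 10 * (temp - catalyst) ** 2 + 5 * (temp + catalyst)

def ovat(f, baseline, levels):
    """Optimize one factor at a time, fixing each at its best level before moving on."""
    current = list(baseline)
    for i in range(len(current)):
        def response_at(level, i=i):
            trial = current[:]
            trial[i] = level
            return f(*trial)
        current[i] = max(levels, key=response_at)
    return tuple(current), f(*current)

def full_grid(f, levels, n_factors):
    """Evaluate every combination of levels and return the best one."""
    best = max(product(levels, repeat=n_factors), key=lambda p: f(*p))
    return best, f(*best)

ovat_point, ovat_yield = ovat(hypothetical_yield, baseline=(0, 0), levels=LEVELS)
grid_point, grid_yield = full_grid(hypothetical_yield, LEVELS, n_factors=2)
print("OVAT:", ovat_point, ovat_yield)
print("Grid:", grid_point, grid_yield)
```

Starting from the baseline, changing either factor alone lowers the yield, so OVAT declares the baseline optimal even though a much better combination exists. The exhaustive grid is used here only as a reference; a factorial or response surface design would reveal the same interaction with far fewer runs.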

Key Reagents & Solutions: The requirements for OVAT are conceptually simple, as it mirrors traditional controlled experimentation.

Table: Research Reagent Solutions for OVAT

| Item | Function in Protocol |

|---|---|

| Baseline Condition Setup | Serves as the constant experimental environment against which changes to a single variable are measured. |

| High/Mid/Low Levels per Factor | Defines the range of investigation for each variable, typically tested in sequence. |

| Fixed Resource Constraints | Represents the static limitations (e.g., budget, time, materials) under which the sequential tests are run. |

Simplex Method & Design of Experiments (DoE) Protocol

Principle: The Simplex Method is an algorithm for solving linear programming problems. It operates by moving intelligently along the edges of the feasible region polytope from one vertex (extreme point) to an adjacent one, improving the objective function with each step until the optimum is found [22] [16] [26]. In the broader context of optimization, it represents a paradigm shift from OVAT by simultaneously evaluating multiple factors, a core principle of DoE.

Step-by-Step Workflow (Simplex Algorithm):

- Formulate Problem: Define the objective function and constraints in standard form.

- Initialize: Convert inequalities to equations using slack variables to define an initial basic feasible solution (a vertex of the polytope) [22] [26].

- Construct Tableau: Set up the initial simplex tableau, a matrix representation of the linear program [22] [26].

- Optimality Check: If all coefficients in the objective row are non-negative, the current solution is optimal; STOP. Otherwise, continue.

- Pivot Operation:

- a. Select Pivot Column: Choose the column with the most negative objective row coefficient.

- b. Select Pivot Row: Divide each RHS value by the corresponding pivot column entry, considering only rows where that entry is positive; choose the row with the smallest quotient.

- c. Pivot: Use Gaussian elimination to make the pivot element 1 and all other elements in the pivot column 0, creating a new tableau and a new basic feasible solution [22].

- Iterate: Return to Step 4.
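The tableau steps above can be sketched compactly as follows. The sketch assumes a maximization problem with ≤ constraints and non-negative right-hand sides (so the origin is a feasible starting vertex), uses exact fractions, and omits anti-cycling safeguards. The function name `simplex_max` and the small test problem are hypothetical.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Constraint rows: [A | I | b]; the slack columns form the identity matrix.
    tab = [
        [Fraction(v) for v in A[i]]
        + [Fraction(int(i == j)) for j in range(m)]
        + [Fraction(b[i])]
        for i in range(m)
    ]
    # Objective row holds -c, so negative entries signal possible improvement.
    tab.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = list(range(n, n + m))  # slack variables start in the basis

    while True:
        obj = tab[-1][:-1]
        col = min(range(n + m), key=lambda j: obj[j])  # most negative coefficient
        if obj[col] >= 0:
            break  # optimality check passed
        rows = [i for i in range(m) if tab[i][col] > 0]
        if not rows:
            raise ValueError("problem is unbounded")
        # Minimum ratio test over rows with a positive pivot-column entry.
        row = min(rows, key=lambda i: tab[i][-1] / tab[i][col])
        pivot = tab[row][col]
        tab[row] = [v / pivot for v in tab[row]]
        for i in range(m + 1):
            if i != row and tab[i][col] != 0:
                factor = tab[i][col]
                tab[i] = [v - factor * p for v, p in zip(tab[i], tab[row])]
        basis[row] = col

    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = tab[i][-1]
    return x, tab[-1][-1]

# Hypothetical problem: maximize 3x + 5y with x <= 4, 2y <= 12, 3x + 2y <= 18.
solution, value = simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print(solution, value)
```

Production solvers add features this sketch leaves out, such as handling ≥ and equality constraints, degenerate pivots, and numerical scaling, so it is meant for following the algorithm by hand rather than for real workloads.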

Key Reagents & Solutions (DoE Context): The Simplex Method and DoE require a more structured setup than OVAT.

Table: Research Reagent Solutions for DoE & Simplex

| Item | Function in Protocol |

|---|---|

| Defined Factor Space Ranges | Establishes the upper and lower bounds (the feasible region) for all variables to be explored simultaneously. |

| Experimental Design Matrix | The core "reagent" that specifies the exact combination of factor levels for each experimental run. |

| Slack/Surplus Variables | Mathematical tools used to convert inequality constraints into equalities, defining the boundaries of the feasible polytope [22] [23]. |

| Statistical Software | Essential for generating designs, analyzing results, fitting models, and identifying significant main and interaction effects. |

The theoretical and practical differences between OVAT and the Simplex/DoE approach are substantial. The table below summarizes the key distinctions based on the gathered research.

Table: Comparison of OVAT and Simplex/DoE Optimization Methods

| Feature | OVAT (One-Variable-At-a-Time) | Simplex / DoE (Design of Experiments) |

|---|---|---|

| Basic Principle | Sequential, isolated factor testing [2] [10] | Simultaneous factor variation & structured experimentation [2] [21] |

| Interaction Effects | Cannot be detected or estimated [2] [10] [21] | Can be detected, estimated, and quantified [2] [25] [21] |

| Experimental Efficiency | Low; requires many runs for multi-factor problems, leading to resource inefficiency [2] [10] | High; extracts maximum information from minimal runs through structured designs [2] [21] |

| Risk of Misleading Optima | High, as it may miss true optima due to factor interactions [2] [21] | Low, as it explores the response surface more comprehensively [2] [21] |

| Foundational Concept | Based on a series of controlled experiments [10] | Based on statistical principles (randomization, replication, blocking) and linear algebra [2] [22] |

| Problem Scope | Limited to main effects only | Can handle main effects, interactions, and non-linear responses (via RSM) [2] [21] |

| Handling of Constraints | Informal and sequential | Formal and direct via the feasible region and constraint equations [24] [22] |

For researchers, scientists, and drug development professionals, the choice between OVAT and the Simplex/DoE framework is critical. While OVAT offers simplicity and intuitive appeal, its inability to account for interaction effects and its inefficiency in exploring the feasible region pose significant risks of arriving at suboptimal solutions. The Simplex Method, and the DoE philosophy it embodies, provides a robust, mathematically sound approach to optimization. By formally defining the objective function and constraints to map the feasible region, and by systematically probing this space to understand both main effects and crucial interactions, it ensures a more efficient path to a true and reliable optimum. Embracing these advanced methodologies is not merely a technical choice but a strategic imperative for accelerating and de-risking research and development in complex, multi-factorial environments like pharmaceutical science.

Putting Theory into Practice: A Guide to Implementing Simplex and DoE in the Lab

In the realms of operations research, drug development, and chemical formulation, professionals continually face the challenge of making optimal decisions within constraints—whether maximizing therapeutic efficacy, minimizing production costs, or achieving perfect drug release profiles. For decades, two philosophical approaches have dominated this optimization landscape: the sophisticated sequential simplex method that navigates multiple variables simultaneously, and the intuitive but limited one-variable-at-a-time (OVAT) approach. Where OVAT methodologies adjust a single factor while holding others constant, the simplex method operates as a systematic navigation algorithm that traverses the edges of a multidimensional feasible region, constantly moving toward improved solutions by considering the entire variable space concurrently [26] [18].

The mathematical foundation of the simplex method reveals why it outperforms simpler approaches for complex problems. Developed by George Dantzig in 1947, this algorithm solves linear programming problems by moving along the edges of a polytope (the multidimensional feasible region defined by constraints) from one vertex to the next, ensuring each step improves the objective function [22]. In contrast to OVAT which often becomes trapped in local optima or misses crucial variable interactions, the simplex method guarantees finding the global optimum for linear problems—a critical advantage when optimizing pharmaceutical formulations where component interactions significantly impact performance [27]. The method's efficiency stems from its systematic approach: it evaluates only corner-point feasible solutions rather than searching the entire feasible region, making it exceptionally efficient for problems with thousands of variables and constraints [26].

Theoretical Foundation: How the Simplex Method Works

Standard Form and Problem Setup

The simplex algorithm requires linear programs to be expressed in standard form to create a consistent framework for computation. The standard form for a maximization problem consists of:

- An objective function to maximize: \( z = c_1x_1 + c_2x_2 + \dots + c_nx_n \)

- Constraint equations: \( a_{11}x_1 + a_{12}x_2 + \dots + a_{1n}x_n = b_1 \)

- Non-negativity restrictions: \( x_1, x_2, \dots, x_n \geq 0 \)

For minimization problems, conversion to maximization is straightforward by multiplying the objective function by -1 [28]. The transformation of inequality constraints into equations occurs through the introduction of slack variables (for ≤ constraints) and surplus variables (for ≥ constraints). Each slack variable represents the unused portion of a resource, while surplus variables represent the excess beyond a minimum requirement [22]. This conversion is crucial as it transforms the problem into a system of linear equations that can be manipulated using matrix operations.

The initial basic feasible solution is found by setting the original decision variables to zero and solving for the slack variables. This solution corresponds to the origin of the feasible region, providing a starting point for the algorithm [26]. The simplex method then proceeds through a series of iterations, each moving to an adjacent vertex with an improved objective value, until no further improvement is possible—signaling that the optimal solution has been found [22].

Mathematical Principles and Algorithmic Steps

The simplex method operates through systematic pivot operations that algebraically move from one basic feasible solution to another. Each iteration involves:

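Optimality Check: Examining the objective row to determine whether bringing any non-basic variable into the basis would improve the objective value. For maximization problems, a variable whose objective coefficient is still positive indicates potential improvement; in a tableau whose objective row is written as \( P - c_1x_1 - \dots - c_nx_n = 0 \), as in the worked example below, these candidates appear as negative entries [26].

Entering Variable Selection: Choosing the non-basic variable with the largest such coefficient (the most negative objective-row entry in that tableau form) to enter the basis. This selection strategy, known as Dantzig's rule or the largest-coefficient rule, gives the greatest objective improvement per unit increase of the entering variable, though not necessarily the greatest improvement per iteration [18].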

Leaving Variable Determination: Applying the minimum ratio test to determine which basic variable must leave the basis to maintain feasibility. This test identifies the constraint that would first be violated as the entering variable increases [26].

Pivot Operation: Performing Gaussian elimination to create a new canonical form with the entering variable replacing the leaving variable in the basis [28].

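The algorithm terminates when no improving coefficient remains in the objective row (for maximization, no negative entry in the tableau form used below), indicating that no adjacent vertex provides improvement. At this point, the current basic feasible solution is guaranteed to be optimal [22]. Because a linear program has finitely many vertices, the method stops after a finite number of pivots, provided an anti-cycling rule such as Bland's rule is applied to degenerate problems. Pathological examples that require exponentially many pivots exist, but in practice the algorithm typically reaches the optimum in a number of steps roughly proportional to the problem dimensions [22].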
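The four steps above can be put together in a compact tableau implementation. The sketch below is illustrative rather than production code. It assumes every constraint is a ≤ inequality with a non-negative right-hand side, writes the objective row as \( P - c \cdot x = 0 \) (so negative entries mark improving variables), and includes no anti-cycling rule. The function name `simplex_max` and the example data are hypothetical.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Constraint rows: [A | I | b]; objective row: [-c | 0 | 0].
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == j)) for j in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([-Fraction(v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]  # slack variables start in the basis

    while True:
        # Steps 1 and 2: optimality check and entering variable
        # (most negative objective-row entry).
        col = min(range(n + m), key=lambda j: T[-1][j])
        if T[-1][col] >= 0:
            break
        # Step 3: minimum ratio test over rows with a positive column entry.
        candidates = [(T[i][-1] / T[i][col], i) for i in range(m) if T[i][col] > 0]
        if not candidates:
            raise ValueError("problem is unbounded")
        _, row = min(candidates)
        # Step 4: pivot. Scale the pivot row, then clear the column elsewhere.
        pivot = T[row][col]
        T[row] = [v / pivot for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                factor = T[i][col]
                T[i] = [v - factor * w for v, w in zip(T[i], T[row])]
        basis[row] = col

    x = [Fraction(0)] * (n + m)
    for i, j in enumerate(basis):
        x[j] = T[i][-1]
    return x[:n], T[-1][-1]

# Illustrative problem: maximize 3*x1 + 5*x2
# subject to x1 <= 4, 2*x2 <= 12, 3*x1 + 2*x2 <= 18.
solution, best = simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print([str(v) for v in solution], best)  # ['2', '6'] 36
```

Exact `Fraction` arithmetic is used so the tableau entries match hand calculations; a numerical solver would normally use floating point with tolerances instead.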

Step-by-Step Implementation Guide

Problem Formulation and Initial Setup

Consider a pharmaceutical production optimization problem where a company needs to allocate limited resources to maximize profit from two drug formulations. The problem can be formulated as:

Maximize: \( P = 3x + 2y \) (Profit function)

Subject to:

- \( x - y \leq 2 \) (Raw material constraint)

- \( 3x + y \leq 5 \) (Production capacity)

- \( 4x + 3y \leq 7 \) (Quality control capacity)

- \( x, y \geq 0 \) (Non-negativity)

To convert this to standard form, we introduce slack variables \( s_1 \), \( s_2 \), and \( s_3 \), one for each inequality constraint:

Maximize: \( P = 3x + 2y + 0s_1 + 0s_2 + 0s_3 \)

Subject to:

- \( x - y + s_1 = 2 \)

- \( 3x + y + s_2 = 5 \)

- \( 4x + 3y + s_3 = 7 \)

- \( x, y, s_1, s_2, s_3 \geq 0 \)

The initial basic feasible solution is \( x = 0 \), \( y = 0 \), \( s_1 = 2 \), \( s_2 = 5 \), \( s_3 = 7 \), with an initial profit of \( P = 0 \). The slack variables form the initial basis as they provide the simplest identity matrix [26] [28].

Constructing the Initial Simplex Tableau

The simplex tableau organizes all necessary information into a tabular format that tracks the objective function values, basic variables, and constraint coefficients throughout the iterations. The initial tableau for our example is constructed as:

Table: Initial Simplex Tableau

| Basic Variable | Value | x | y | s₁ | s₂ | s₃ | Ratio |
|---|---|---|---|---|---|---|---|
| s₁ | 2 | 1 | -1 | 1 | 0 | 0 | 2/1 = 2 |
| s₂ | 5 | 3 | 1 | 0 | 1 | 0 | 5/3 ≈ 1.67 |
| s₃ | 7 | 4 | 3 | 0 | 0 | 1 | 7/4 = 1.75 |
| P | 0 | -3 | -2 | 0 | 0 | 0 | - |

The tableau's bottom row (objective row) shows the reduced costs of the non-basic variables. The negative coefficients (-3 for x and -2 for y) indicate that introducing either variable into the basis will improve the objective value [26] [28]. The "Value" column represents the current values of the basic variables, while the remaining columns contain the coefficients of each variable in the constraints.

Iteration and Pivot Operations

First Iteration:

Select Pivot Column: The most negative coefficient in the objective row is -3 in column x, so x becomes the entering variable [26].

Select Pivot Row: Calculate ratios of the "Value" column to the positive coefficients in the x-column:

- s₁ row: 2/1 = 2

- s₂ row: 5/3 ≈ 1.67

- s₃ row: 7/4 = 1.75

The minimum ratio is 1.67 from the s₂ row, so s₂ becomes the leaving variable [26].
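The pivot selection for this first iteration can be reproduced directly from the initial tableau. The snippet below is a small sketch using exact fractions; the variable names (`rows`, `objective`, `ratios`) are chosen for illustration only.

```python
from fractions import Fraction as F

# Initial tableau from the example. Columns: x, y, s1, s2, s3, value.
rows = {
    "s1": [F(1), F(-1), F(1), F(0), F(0), F(2)],
    "s2": [F(3), F(1), F(0), F(1), F(0), F(5)],
    "s3": [F(4), F(3), F(0), F(0), F(1), F(7)],
}
objective = [F(-3), F(-2), F(0), F(0), F(0), F(0)]
columns = ["x", "y", "s1", "s2", "s3"]

# Entering variable: the most negative objective-row entry.
col = min(range(len(columns)), key=lambda j: objective[j])
entering = columns[col]

# Leaving variable: smallest value/coefficient ratio, positive coefficients only.
ratios = {name: r[-1] / r[col] for name, r in rows.items() if r[col] > 0}
leaving = min(ratios, key=ratios.get)

print(entering, leaving, {k: str(v) for k, v in ratios.items()})
# x s2 {'s1': '2', 's2': '5/3', 's3': '7/4'}
```

Restricting the ratio test to positive coefficients matters: a row with a zero or negative entry in the pivot column never limits how far the entering variable can increase.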

Pivot Operation: The pivot element is 3 at the intersection of the s₂ row and x column. Perform row operations to convert the x-column to [0, 1, 0, 0]ᵀ:

- Divide pivot row by 3: R₂ → R₂/3

- Update the other rows using the rescaled R₂: R₁ → R₁ - R₂, R₃ → R₃ - 4R₂, R₄ → R₄ + 3R₂

Table: Tableau After First Iteration

| Basic Variable | Value | x | y | s₁ | s₂ | s₃ | Ratio |
|---|---|---|---|---|---|---|---|
| s₁ | 1/3 | 0 | -4/3 | 1 | -1/3 | 0 | - |
| x | 5/3 | 1 | 1/3 | 0 | 1/3 | 0 | 5 |
| s₃ | 1/3 | 0 | 5/3 | 0 | -4/3 | 1 | 1/5 = 0.2 |
| P | 5 | 0 | -1 | 0 | 1 | 0 | - |
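The row operations of the first iteration can be checked mechanically. The sketch below applies a generic pivot to the initial tableau using exact fractions; the helper name `pivot` and the column ordering (value first) are choices made for this illustration.

```python
from fractions import Fraction as F

def pivot(tableau, row, col):
    """Return a new tableau whose column `col` is 1 in `row` and 0 elsewhere."""
    p = tableau[row][col]
    scaled = [v / p for v in tableau[row]]
    out = []
    for i, r in enumerate(tableau):
        if i == row:
            out.append(scaled)
        else:
            # Subtract the multiple of the scaled pivot row that clears column `col`.
            out.append([v - r[col] * s for v, s in zip(r, scaled)])
    return out

# Columns: value, x, y, s1, s2, s3. Rows: s1, s2, s3, P (the initial tableau).
initial = [
    [F(2), F(1), F(-1), F(1), F(0), F(0)],
    [F(5), F(3), F(1), F(0), F(1), F(0)],
    [F(7), F(4), F(3), F(0), F(0), F(1)],
    [F(0), F(-3), F(-2), F(0), F(0), F(0)],
]
after_first = pivot(initial, row=1, col=1)  # pivot on the 3 in the s2 row, x column
for label, r in zip(["s1", "x", "s3", "P"], after_first):
    print(label, [str(v) for v in r])
```

Under these operations the s₁ row value works out to 2 - 5/3 = 1/3, and the objective row becomes 5, 0, -1, 0, 1, 0, so y is the only remaining candidate to enter the basis.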

Second Iteration:

Select Pivot Column: The most negative coefficient in the objective row is -1 in column y.

Select Pivot Row: Calculate ratios for the y-column:

- s₁ row: negative coefficient, skip

- x row: (5/3)/(1/3) = 5

- s₃ row: (1/3)/(5/3) = 1/5 = 0.2

The minimum ratio is 0.2 from the s₃ row, so s₃ becomes the leaving variable.

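Pivot Operation: The pivot element is 5/3 at the intersection of the s₃ row and y column. Perform row operations to convert the y-column to [0, 0, 1, 0]ᵀ:

- Divide pivot row by 5/3: R₃ → 3R₃/5

- Update the other rows using the rescaled R₃: R₁ → R₁ + (4/3)R₃, R₂ → R₂ - (1/3)R₃, R₄ → R₄ + R₃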

Table: Final Optimal Tableau

| Basic Variable | Value | x | y | s₁ | s₂ | s₃ |
|---|---|---|---|---|---|---|
| s₁ | 0.6 | 0 | 0 | 1 | -1.4 | 0.8 |
| x | 1.6 | 1 | 0 | 0 | 0.6 | -0.2 |
| y | 0.2 | 0 | 1 | 0 | -0.8 | 0.6 |
| P | 5.2 | 0 | 0 | 0 | 0.2 | 0.6 |

The final tableau shows no negative coefficients in the objective row, indicating optimality. The optimal solution is \( x = 1.6 \), \( y = 0.2 \), with maximum profit \( P = 5.2 \). The slack values show that the first constraint is not binding (s₁ = 0.6 > 0, since 1.6 - 0.2 + 0.6 = 2), while the production and quality control capacities are fully utilized (s₂ = s₃ = 0) [26] [28].
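As an independent cross-check of the optimum, the corner points of this small feasible region can simply be enumerated. The sketch below is a brute-force check, not the simplex method itself: it intersects every pair of constraint boundaries, keeps the feasible intersections, and evaluates the profit at each. It is only practical for tiny problems like this one.

```python
from fractions import Fraction as F
from itertools import combinations

# Constraints a*x + b*y <= c from the example; x >= 0 and y >= 0 are
# written as -x <= 0 and -y <= 0.
constraints = [
    (F(1), F(-1), F(2)),   # raw material
    (F(3), F(1), F(5)),    # production capacity
    (F(4), F(3), F(7)),    # quality control capacity
    (F(-1), F(0), F(0)),   # x >= 0
    (F(0), F(-1), F(0)),   # y >= 0
]

def feasible(x, y):
    return all(a * x + b * y <= c for a, b, c in constraints)

vertices = set()
for (a1, b1, c1), (a2, b2, c2) in combinations(constraints, 2):
    det = a1 * b2 - a2 * b1
    if det == 0:
        continue  # parallel boundaries never intersect
    x = (c1 * b2 - c2 * b1) / det  # Cramer's rule
    y = (a1 * c2 - a2 * c1) / det
    if feasible(x, y):
        vertices.add((x, y))

best = max(vertices, key=lambda v: 3 * v[0] + 2 * v[1])
profit = 3 * best[0] + 2 * best[1]
# Slack of the three resource constraints at the best vertex.
slacks = [c - (a * best[0] + b * best[1]) for a, b, c in constraints[:3]]
print(best, profit, slacks)
```

The enumeration finds four feasible vertices, (0, 0), (5/3, 0), (8/5, 1/5), and (0, 7/3), with the best at x = 8/5, y = 1/5 and profit 26/5 = 5.2. The slacks there are 3/5, 0, and 0, so only the raw material constraint has unused capacity.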

Visualization of the Simplex Method Workflow

The following diagram illustrates the complete simplex algorithm workflow, from problem formulation through optimal solution, including the key decision points and operations:

Figure: Simplex Algorithm Workflow

Application in Drug Development: A Case Study

Formulation Optimization Using the Simplex Method

In pharmaceutical development, the simplex method provides a powerful framework for optimizing complex drug formulations where multiple components interact to influence critical quality attributes. A recent study on glipizide sustained-release tablets demonstrates this application effectively [27]. Researchers aimed to optimize five excipient components—HPMC K4M (X1), HPMC K100LV (X2), MgO (X3), lactose (X4), and anhydrous CaHPO4 (X5)—to achieve target drug release rates at 2 hours (Y2: 15-25%), 8 hours (Y8: 55-65%), and 24 hours (Y24: 80-110%) [27].

The optimization challenge involved balancing multiple response variables simultaneously while accounting for interactions among components, a task poorly suited to OVAT approaches, which cannot capture such interactions. Through systematic variation of components based on simplex-type design principles and subsequent modeling, the researchers identified an optimal formulation: HPMC K4M (38.42%), HPMC K100LV (13.51%), MgO (6.28%), lactose (17.07%), and anhydrous CaHPO4 (7.52%) [27]. This optimized formulation achieved cumulative release rates of 22.75%, 64.98%, and 100.23% at 2, 8, and 24 hours respectively, meeting all target specifications [27].
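The reported results can be checked against the stated target windows with a few lines of arithmetic. The snippet below uses only the figures quoted in this section; the dictionary names are illustrative.

```python
# Target windows (% cumulative release) and reported results, as quoted above.
targets = {"Y2": (15.0, 25.0), "Y8": (55.0, 65.0), "Y24": (80.0, 110.0)}
reported = {"Y2": 22.75, "Y8": 64.98, "Y24": 100.23}

within_spec = {k: lo <= reported[k] <= hi for k, (lo, hi) in targets.items()}

# Reported excipient levels (%) for the optimized formulation.
excipients = {"HPMC K4M": 38.42, "HPMC K100LV": 13.51, "MgO": 6.28,
              "lactose": 17.07, "anhydrous CaHPO4": 7.52}
excipient_total = round(sum(excipients.values()), 2)

print(within_spec, excipient_total)
```

All three responses fall inside their windows, although the 8-hour value of 64.98% sits only 0.02 percentage points below the 65% upper limit. The five excipient levels sum to 82.80%, so they do not account for the whole tablet; this section does not state what makes up the balance.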

Comparative Analysis: Simplex vs. OVAT in Pharmaceutical Context

Table: Comparison of Optimization Approaches in Drug Formulation

| Aspect | One-Variable-at-a-Time (OVAT) | Sequential Simplex Method |
|---|---|---|
| Variable Interactions | Cannot detect interactions; may miss optimal regions | Explicitly models interactions through systematic variation |
| Experimental Efficiency | Inefficient; requires many experiments to explore space | Highly efficient; moves directly toward optimum |
| Solution Quality | Often finds local optima | Guaranteed global optimum for linear problems |
| Implementation Complexity | Simple conceptually but tedious mathematically | Requires mathematical sophistication but automated in software |
| Pharmaceutical Applications | Limited to simple formulations with minimal interactions | Ideal for complex formulations with multiple interacting components |

The case study demonstrates the superiority of the simplex approach for pharmaceutical optimization. Where OVAT might have required hundreds of experiments to map the five-component space, the model-informed simplex approach efficiently navigated the design space using mathematical guidance [27]. This efficiency translates directly to reduced development time and cost—critical factors in drug development where patent clocks are constantly ticking.

Computational Tools and Software

Successful implementation of the simplex method in research settings requires appropriate computational tools. For initial learning and small-scale problems, spreadsheet software with linear programming solvers (Excel's Solver add-in) provides an accessible platform. For advanced pharmaceutical applications and larger problems, specialized software offers robust implementation:

- Python with SciPy: The `scipy.optimize.linprog` function solves linear programs through an accessible programming interface; recent SciPy releases default to the HiGHS solvers, which include a dual simplex implementation (`method="highs-ds"`) [18]

- MATLAB: Provides built-in functions for linear programming using simplex algorithms

- R: Packages such as `boot` and `linprog` offer simplex implementations for statistical computing environments

- Commercial Optimization Suites: Gurobi, CPLEX, and Xpress provide industrial-strength implementations for large-scale problems

These tools handle the computational complexity of the simplex algorithm, allowing researchers to focus on problem formulation and interpretation of results rather than implementation details [18].
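As a brief illustration of the software route, the worked example from this guide can be passed to SciPy. The sketch assumes a SciPy version that provides the HiGHS-based `method="highs"` option. Because `linprog` minimizes, the profit coefficients are negated.

```python
from scipy.optimize import linprog

# linprog minimizes, so negate the coefficients to maximize P = 3x + 2y.
c = [-3, -2]
A_ub = [[1, -1], [3, 1], [4, 3]]   # left-hand sides of the <= constraints
b_ub = [2, 5, 7]                   # right-hand sides

result = linprog(c, A_ub=A_ub, b_ub=b_ub,
                 bounds=[(0, None), (0, None)], method="highs")

x_opt, y_opt = result.x
max_profit = -result.fun           # undo the sign flip
print(result.status, round(x_opt, 4), round(y_opt, 4), round(max_profit, 4))
```

A status of 0 indicates that the solver terminated successfully. With `method="highs"` SciPy lets HiGHS choose between its simplex and interior-point solvers, so `method="highs-ds"` is the option to use when a simplex-type algorithm is specifically wanted.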

Mathematical Prerequisites and Conceptual Framework

Effective application of the simplex method requires understanding of several key mathematical concepts:

- Linear Algebra: Matrix operations, Gaussian elimination, and basis transformations form the computational core of the pivot operations [22]

- Multivariable Calculus: Understanding of gradients and contour surfaces aids in visualizing the optimization process

- Convex Geometry: Comprehension of polytopes, vertices, and edges provides geometric intuition for the algorithm's movement through feasible space [22]

For researchers without extensive mathematical backgrounds, modern software implementations abstract these complexities while still providing access to the algorithm's power. The critical thinking skill lies in proper problem formulation—defining appropriate decision variables, constructing meaningful objective functions, and specifying correct constraints [26].

Comparative Analysis: Simplex vs. One-Variable-at-a-Time Optimization

The fundamental distinction between simplex and OVAT methodologies lies in their approach to multidimensional optimization. OVAT operates under the assumption that variables contribute independently to the objective function—an assumption rarely valid in complex biological and chemical systems. In contrast, the simplex method explicitly acknowledges and exploits variable interactions to navigate directly toward optimal regions [27].

In pharmaceutical formulation development, this distinction has profound practical implications. Excipient components frequently exhibit synergistic or antagonistic interactions that OVAT approaches cannot detect. For example, in the glipizide sustained-release formulation, the ratio of HPMC K4M to HPMC K100LV critically influenced drug release kinetics—an interaction that would likely be missed by sequential adjustment of individual components [27]. The simplex method, through its systematic exploration of variable combinations, successfully identified these interactions and their optimal balance.
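A toy calculation shows how an interaction can hide the best setting from a one-variable-at-a-time search. The response function below is invented purely for illustration and is not data from the glipizide study. The joint search is a plain two-level full factorial, standing in for any method that varies factors together.

```python
from itertools import product

def response(a, b):
    """Hypothetical response with a strong a*b interaction (illustrative only)."""
    return 50 - 5 * a - 5 * b + 30 * a * b

levels = (0, 1)

# OVAT: start at (0, 0), change one factor at a time, keep a change only if it helps.
current = [0, 0]
ovat_runs = 1  # the baseline run
for factor in range(2):
    for level in levels:
        if level == current[factor]:
            continue
        trial = list(current)
        trial[factor] = level
        ovat_runs += 1
        if response(*trial) > response(*current):
            current = trial
ovat_best = (tuple(current), response(*current))

# Joint variation: evaluate all four combinations of the two levels.
grid = {combo: response(*combo) for combo in product(levels, repeat=2)}
joint_best = max(grid.items(), key=lambda kv: kv[1])

print(ovat_best, ovat_runs, joint_best)  # ((0, 0), 50) 3 ((1, 1), 70)
```

Raising either factor alone lowers the response from 50 to 45, so OVAT stays at the baseline after three runs. Only the combination of both high levels, found with a single additional run, reveals the response of 70.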

From a resource perspective, the simplex method typically requires fewer experimental iterations than comprehensive OVAT approaches, particularly as the number of variables increases. This efficiency stems from the algorithm's directed search strategy, which continuously moves toward improved solutions rather than exhaustively mapping the entire experimental space [26] [27]. For resource-intensive pharmaceutical studies where experimental materials are costly or time-consuming to prepare, this efficiency translates directly to development cost savings.

The simplex method represents a paradigm shift from traditional one-variable-at-a-time optimization, offering a systematic, mathematically rigorous framework for navigating complex decision spaces. Its sequential optimization approach—moving from one vertex solution to adjacent improved solutions—provides both computational efficiency and theoretical guarantees of optimality for linear problems. In pharmaceutical and chemical development contexts, where multiple interacting components influence critical quality attributes, the simplex method's ability to explicitly account for these interactions makes it uniquely valuable.

As demonstrated in the sustained-release formulation case study, implementation of the simplex method can lead to quantitatively superior solutions compared to traditional approaches. The resulting optimized formulations not only meet target specifications more precisely but also achieve this with greater development efficiency. For researchers and development professionals, mastery of this methodology provides a powerful tool for addressing the complex optimization challenges inherent in modern scientific problems.

While the mathematical foundations of the simplex method are decades old, its relevance continues to grow as computational power becomes more accessible and optimization problems become increasingly complex. The integration of simplex methodologies with emerging machine learning approaches represents a promising frontier for further enhancing optimization efficiency in scientific and industrial applications.