Chemometrics in Multicomponent Analysis: Advanced Strategies for Pharmaceutical and Biomedical Research

Abstract

This article provides a comprehensive overview of chemometric techniques for the analysis of multicomponent mixtures, a common challenge in pharmaceutical and biomedical research. It covers foundational principles, key methodological approaches including Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS), Partial Least Squares (PLS), and Artificial Neural Networks (ANN), and their application in resolving complex spectral data from drugs and biologics. The content details optimization strategies and constraint implementation to enhance model performance, alongside rigorous validation protocols and comparative assessments against traditional methods like HPLC. Furthermore, it emphasizes the role of chemometrics in promoting sustainable analytical practices through greenness assessment tools, offering researchers a validated framework for efficient, accurate, and environmentally conscious mixture analysis.

Core Concepts and Data Exploration in Chemometric Analysis

Defining Chemometrics and Its Role in Resolving Multicomponent Systems

Chemometrics is a chemical discipline that employs mathematics, statistics, and computer science to design optimal measurement procedures and experiments and to extract maximum chemical information from complex analytical data [1] [2]. In the context of modern spectroscopy and analytical chemistry, chemometrics transforms spectroscopic techniques from mere data providers into direct participants in solving complex chemical problems, particularly in the analysis of multicomponent mixtures [3].

For researchers and drug development professionals, chemometrics provides powerful tools for qualitative and quantitative analysis of complex mixtures without prior physical separation of components. This capability is particularly valuable in pharmaceutical applications where traditional separation methods such as High-Performance Liquid Chromatography (HPLC) can be costly and time-consuming and can generate hazardous solvent waste [2]. The core strength of chemometrics lies in its ability to resolve severe spectral overlap, reduce signal interference, and minimize noise through multivariate calibration techniques [2].

Foundational Chemometric Methods

Core Algorithms and Their Applications

Modern chemometrics encompasses a diverse toolkit of algorithms, each suited to specific analytical challenges in multicomponent analysis:

Multivariate Calibration Methods form the backbone of quantitative analysis. Principal Component Regression (PCR) and Partial Least Squares (PLS) regression are the most widely applied techniques for resolving overlapped spectra and establishing predictive models between spectral data and component concentrations [2] [3]. These methods are particularly valuable when analyzing complex pharmaceutical formulations with overlapping spectral signatures [2].

Pattern Recognition Techniques enable qualitative analysis. Principal Component Analysis (PCA) simplifies complex datasets by identifying underlying patterns and is frequently used for exploratory data analysis and classification [4] [5]. Linear Discriminant Analysis (LDA) and Partial Least Squares-Discriminant Analysis (PLS-DA) are supervised methods for classifying samples based on their chemical composition [5] [6].

Advanced Modeling Approaches address more complex analytical challenges. Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) resolves concentration profiles and spectral signatures of individual components in evolving mixtures [2]. Artificial Neural Networks (ANN), loosely inspired by biological neurons, can model both linear and nonlinear relationships in spectral data and often outperform traditional multivariate models for complex systems [2]. Support Vector Machine (SVM) models offer flexible, local modeling approaches suitable for quantitative predictions in dynamic systems such as bioprocesses [4].
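The multivariate calibration idea described above can be illustrated with a minimal PLS1 sketch in Python with NumPy. It uses the NIPALS algorithm on simulated four-component UV spectra with overlapping Gaussian bands; the band positions, widths, concentration ranges, and noise level are hypothetical, and this is not the toolbox implementation used in [2].

```python
import numpy as np

def pls1_fit(X, y, n_components):
    """Fit a PLS1 model (single response) with the NIPALS algorithm."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean          # mean-centered residual matrices
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w /= np.linalg.norm(w)                # weight vector
        t = Xr @ w                            # score vector
        tt = t @ t
        p = Xr.T @ t / tt                     # X loading
        qa = (yr @ t) / tt                    # y loading
        Xr = Xr - np.outer(t, p)              # deflate X
        yr = yr - qa * t                      # deflate y
        W.append(w); P.append(p); q.append(qa)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    b = W @ np.linalg.solve(P.T @ W, q)       # regression vector b = W (P^T W)^-1 q
    return x_mean, y_mean, b

def pls1_predict(model, X):
    x_mean, y_mean, b = model
    return (X - x_mean) @ b + y_mean

# Simulated spectra of four components with overlapping Gaussian bands (hypothetical)
rng = np.random.default_rng(0)
wl = np.arange(200)
S = np.exp(-0.5 * ((wl[:, None] - np.array([40, 80, 120, 160])) / 25.0) ** 2)
C_cal = rng.uniform(0.1, 1.0, size=(25, 4))
X_cal = C_cal @ S.T + rng.normal(scale=1e-4, size=(25, 200))
C_test = rng.uniform(0.1, 1.0, size=(5, 4))
X_test = C_test @ S.T

# Calibrate for component 1 with four latent variables and predict new mixtures
model = pls1_fit(X_cal, C_cal[:, 0], n_components=4)
pred = pls1_predict(model, X_test)
print(np.round(pred, 3), np.round(C_test[:, 0], 3))
```

One model is built per analyte in PLS1; PLS2 variants model all concentrations jointly.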

Method Selection Guidelines

The selection of appropriate chemometric methods depends on the specific analytical problem. PLS and PCR are ideal for quantitative analysis of mixtures with known components, while PCA and PLS-DA are preferred for classification and quality control applications. ANN models excel with highly complex, nonlinear systems, and MCR-ALS is valuable for resolving unknown mixture components. For real-time process monitoring, SVM models based on PCA scores offer robust performance in dynamic environments [4].

Advanced Chemometric Approaches

Complex-Valued Chemometrics

Traditional chemometric techniques typically rely on real-valued input data, most often absorbance or transmission spectra, which are limited by their reliance on intensity-based measurements subject to systematic errors from reflection losses, interfacial effects, and other factors [7]. Complex-valued chemometrics represents a paradigm shift by incorporating both the real and imaginary parts of the complex refractive index, thereby preserving phase information that is discarded in conventional intensity-only approaches [7].

This advanced approach offers significant advantages for multicomponent analysis. By capturing the full electromagnetic response of materials, complex-valued chemometrics improves linearity with respect to analyte concentration—a fundamental assumption of linear chemometric models like CLS, ILS, PCA, and PLS [7]. The inclusion of the real part (dispersion) alongside the imaginary part (absorption) often reveals inconsistencies in conventional models and improves robustness in multivariate regression, especially for complex systems with strong solvent and analyte interactions [7].

Complex-valued spectra can be acquired through modern techniques like spectroscopic ellipsometry or generated from conventional intensity spectra using Kramers-Kronig transformations or iterative wave-optics models based on Fresnel equations [7].
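As a minimal sketch of the idea, the example below applies classical least squares (CLS) to complex-valued spectra. It assumes, hypothetically, that each pure component follows a single Lorentz oscillator and that the complex susceptibility of the mixture is a concentration-weighted sum of the pure-component susceptibilities; it does not reproduce the ellipsometry, Kramers-Kronig, or wave-optics workflows of [7].

```python
import numpy as np

def lorentz_susceptibility(wn, wn0, strength, gamma):
    """Complex susceptibility of one Lorentz oscillator (hypothetical parameters)."""
    return strength / (wn0**2 - wn**2 - 1j * gamma * wn)

def complex_cls(K, m):
    """CLS on complex spectra: stacking the real (dispersion) and imaginary
    (absorption) parts keeps the estimated concentrations real-valued."""
    A = np.vstack([K.real, K.imag])
    b = np.concatenate([m.real, m.imag])
    c, *_ = np.linalg.lstsq(A, b, rcond=None)
    return c

wn = np.linspace(900.0, 1200.0, 301)                  # wavenumber axis, cm^-1
K = np.column_stack([
    lorentz_susceptibility(wn, 1000.0, 5e4, 12.0),    # pure component 1
    lorentz_susceptibility(wn, 1060.0, 3e4, 15.0),    # pure component 2
])
c_true = np.array([0.3, 0.7])
rng = np.random.default_rng(1)
noise = rng.normal(scale=1e-3, size=(2, wn.size))
m = K @ c_true + noise[0] + 1j * noise[1]             # mixture, assumed linear in concentration

c_est = complex_cls(K, m)
print(np.round(c_est, 3))
```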

Multi-Block Data Analysis

As analytical measurements become increasingly multi-modal, traditional chemometric methods may be inadequate for integrated data analysis. Multi-block methods have emerged to analyze data from multiple sources or techniques simultaneously, enabling more comprehensive characterization of complex samples [8]. These methods are available for data visualization, regression, and classification, with advanced applications including preprocessing fusion and calibration transfer between instruments [8].
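One simple and widely used form of multi-block preprocessing is block scaling before fusion: each block is centered and scaled so that blocks with many variables or large intensities do not dominate the fused model. The sketch below assumes two hypothetical blocks (UV-vis and Raman) measured on the same 20 samples and is not intended to represent the specific methods reviewed in [8].

```python
import numpy as np

def block_scale(blocks):
    """Center each block column-wise and scale it to unit Frobenius norm,
    so every block contributes the same total variance to the fused matrix."""
    scaled = []
    for B in blocks:
        Bc = B - B.mean(axis=0)
        scaled.append(Bc / np.linalg.norm(Bc))
    return np.hstack(scaled), scaled

rng = np.random.default_rng(2)
X_uv = rng.normal(size=(20, 100))           # hypothetical UV-vis block
X_raman = 50 * rng.normal(size=(20, 500))   # hypothetical Raman block on a larger scale

X_fused, scaled_blocks = block_scale([X_uv, X_raman])
U, s, Vt = np.linalg.svd(X_fused, full_matrices=False)
scores = U[:, :2] * s[:2]                   # PCA scores of the fused, block-scaled data
print(X_fused.shape, [round(float(np.linalg.norm(b)), 6) for b in scaled_blocks])
```

Without block scaling, the Raman block here would dominate the decomposition simply because of its size and intensity scale.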

QAMS Method for Economical Quality Control

The Quantitative Analysis of Multi-components by Single Marker (QAMS) method addresses the challenge of limited reference standards in quality control, particularly for traditional Chinese medicines and natural products [6] [9]. This innovative approach uses one readily available standard to determine multiple components with similar structures, significantly reducing analytical costs while maintaining comprehensive quality assessment [9].

In practice, QAMS selects an easily available active constituent as an internal reference standard (IRS), then calculates the contents of multiple structurally similar constituents using relative calibration factors [9]. When combined with chromatographic fingerprinting, this approach enables simultaneous determination of multiple target constituents and comprehensive quality evaluation, offering a practical solution for quality control in resource-limited settings [9].
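The relative correction factor arithmetic can be sketched as follows, assuming a linear detector response with zero intercept for each constituent. The factor $f_{s/k}$ is the ratio of the response factors (peak area per unit concentration) of the internal reference standard $s$ and analyte $k$; once it is known, $C_k = f_{s/k} \, A_k \, C_s / A_s$. All peak areas and concentrations below are hypothetical.

```python
def relative_correction_factor(area_s, conc_s, area_k, conc_k):
    """f_{s/k}: response factor (area / concentration) of the reference
    standard s divided by that of analyte k, measured on standard solutions."""
    return (area_s / conc_s) / (area_k / conc_k)

def qams_concentration(area_k, area_s, conc_s, f_sk):
    """Concentration of analyte k in a sample, given the concentration of the
    reference compound s in the same sample (from its own calibration)."""
    return f_sk * area_k * conc_s / area_s

# Method development: both standards are available once to establish f_{s/k}
f_sk = relative_correction_factor(area_s=200.0, conc_s=100.0, area_k=120.0, conc_k=30.0)
# Routine analysis: only the reference standard s is needed
c_k = qams_concentration(area_k=48.0, area_s=150.0, conc_s=75.0, f_sk=f_sk)
print(f_sk, c_k)
```

Here $f_{s/k} = 0.5$ and the analyte concentration works out to 12.0, in the same units as $C_s$.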

Experimental Protocols

Protocol 1: Development of Multivariate Calibration Models for Pharmaceutical Formulations

This protocol outlines the development of chemometric models for analyzing multicomponent pharmaceutical mixtures, adapted from validated methods for quantifying paracetamol, chlorpheniramine maleate, caffeine, and ascorbic acid in combined dosage forms [2].

Materials and Equipment

- Spectrophotometer: UV-vis spectrophotometer (e.g., Shimadzu 1605) with 1.00 cm quartz cells

- Software: MATLAB with PLS Toolbox, MCR-ALS Toolbox, and Neural Network Toolbox

- Reference Standards: High-purity analytical standards of target compounds

- Solvents: HPLC-grade methanol or other appropriate solvents

- Samples: Pharmaceutical formulations or synthetic mixtures

Procedure

Solution Preparation: Prepare stock solutions (1 mg/mL) of each compound by dissolving the reference standards in an appropriate solvent. Prepare working solutions by serial dilution.

Spectral Collection: Measure the absorption spectra of calibration standards and samples over an appropriate wavelength range (e.g., 200-400 nm) at 1 nm intervals.

Experimental Design: Implement a multi-level, multi-factor calibration design (e.g., a five-level, four-factor design for four components) to construct a calibration set of 25-30 mixtures covering the concentration ranges expected in the samples.
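A five-level, four-factor calibration set of 25 mixtures can be generated, for example, from a strength-2 orthogonal array over the integers modulo 5, in which every pair of components appears at all 25 level combinations exactly once. This is one possible construction and may differ from the design used in [2]; the concentration ranges are hypothetical.

```python
import numpy as np

def orthogonal_array_5level(n_factors=4):
    """25-run, five-level design (strength-2 orthogonal array over GF(5)).

    Every pair of factors sees all 25 level combinations exactly once."""
    if not 1 <= n_factors <= 6:
        raise ValueError("this construction supports 1 to 6 factors")
    rows = []
    for i in range(5):
        for j in range(5):
            # columns: i, j, i+j, i+2j, i+3j, i+4j (all mod 5)
            cols = [i, j] + [(i + a * j) % 5 for a in range(1, 5)]
            rows.append(cols[:n_factors])
    return np.array(rows)

levels = orthogonal_array_5level(4)            # coded levels 0..4
low = np.array([2.0, 1.0, 0.5, 5.0])           # hypothetical lower limits, ug/mL
high = np.array([10.0, 5.0, 2.5, 25.0])        # hypothetical upper limits, ug/mL
conc = low + levels * (high - low) / 4         # 25 calibration mixtures x 4 components
print(conc.shape)
```

Balanced pairwise coverage keeps the component concentrations uncorrelated across the calibration set, which helps the models separate overlapping contributions.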

Data Preprocessing: Mean-center spectral data and apply appropriate preprocessing techniques (baseline correction, normalization, derivative transformations) to remove irrelevant variance.

Model Development:

- For PLS and PCR models, optimize number of latent variables using leave-one-out cross-validation

- For MCR-ALS, apply non-negativity constraints and other appropriate constraints

- For ANN models, optimize the network architecture (hidden nodes, learning rate, epochs) by trial and error, using linear (purelin) transfer functions in both the hidden and output layers
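Selecting the number of latent variables by leave-one-out cross-validation can be sketched with principal component regression (PCR) in NumPy. Each sample is left out in turn, the model is refitted on the remaining samples, and the root mean square error of cross-validation (RMSECV) is compared across model sizes. The simulated four-component data are hypothetical; the same loop applies to PLS by swapping the fitting function.

```python
import numpy as np

def pcr_fit_predict(X_train, y_train, X_new, n_components):
    """Principal component regression: regress y on the first PC scores of X."""
    x_mean, y_mean = X_train.mean(axis=0), y_train.mean()
    U, s, Vt = np.linalg.svd(X_train - x_mean, full_matrices=False)
    V = Vt[:n_components].T                      # loadings of the retained PCs
    T = (X_train - x_mean) @ V                   # calibration scores
    coef, *_ = np.linalg.lstsq(T, y_train - y_mean, rcond=None)
    return (X_new - x_mean) @ V @ coef + y_mean

def loo_rmsecv(X, y, max_components):
    """Leave-one-out RMSECV for models with 1..max_components components."""
    n = X.shape[0]
    rmsecv = []
    for k in range(1, max_components + 1):
        errors = []
        for i in range(n):
            mask = np.arange(n) != i             # leave sample i out
            y_hat = pcr_fit_predict(X[mask], y[mask], X[i:i + 1], k)
            errors.append(y_hat[0] - y[i])
        rmsecv.append(np.sqrt(np.mean(np.square(errors))))
    return np.array(rmsecv)

rng = np.random.default_rng(3)
wl = np.arange(200)
S = np.exp(-0.5 * ((wl[:, None] - np.array([40, 80, 120, 160])) / 15.0) ** 2)
C = rng.uniform(0.1, 1.0, size=(25, 4))          # hypothetical calibration mixtures
X = C @ S.T + rng.normal(scale=1e-3, size=(25, 200))

rmsecv = loo_rmsecv(X, C[:, 0], max_components=6)
best_k = int(np.argmin(rmsecv)) + 1
print(np.round(rmsecv, 4), best_k)
```

For this simulated four-component system, RMSECV should drop sharply once four components are included. In practice the smallest model whose RMSECV is close to the minimum is often preferred over the strict minimum, to avoid fitting noise.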

Model Validation: Validate the models using an independent validation set not included in calibration. Assess prediction performance through recovery percentages and the root mean square error of prediction (RMSEP).
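The two figures of merit named in this step are simple to compute. The values below are hypothetical and only illustrate the arithmetic: recovery is found/added × 100 for each sample, and RMSEP is the square root of the mean squared prediction error over the validation set (the same formula applied to calibration samples gives RMSEC).

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean square error: RMSEC on calibration data, RMSEP on an independent set."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_pred - y_true) ** 2)))

def recovery_percent(y_true, y_pred):
    """Percentage recovery of each sample: found / added x 100."""
    return 100.0 * np.asarray(y_pred, float) / np.asarray(y_true, float)

added = [10.0, 20.0, 30.0]   # hypothetical validation concentrations, ug/mL
found = [9.9, 20.3, 29.8]    # hypothetical model predictions, ug/mL
rec = recovery_percent(added, found)
print(np.round(rec, 2), round(float(rec.mean()), 2), round(rmse(added, found), 4))
```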

Table 1: Performance Comparison of Chemometric Models for Pharmaceutical Analysis

| Model Type | Latent Variables / Hidden Neurons | Average Recovery (%) | RMSEP | Optimal Application |
|---|---|---|---|---|
| PLS | 4 | 98-102 | 0.15-0.25 | Linear systems with known components |
| PCR | 4 | 97-101 | 0.18-0.28 | Multicollinear spectral data |
| MCR-ALS | N/A | 96-103 | 0.20-0.30 | Resolving unknown mixtures |
| ANN | 4 hidden neurons | 99-102 | 0.10-0.20 | Nonlinear complex systems |

Protocol 2: Real-Time Bioprocess Monitoring Using Raman Spectroscopy and Chemometrics

This protocol describes the implementation of Raman spectroscopy combined with chemometrics for real-time monitoring of multicomponent bioprocesses, adapted from successful applications in E. coli fermentation processes [4].

Materials and Equipment

- Raman Spectrometer: Portable Raman spectrometer with 785 nm laser excitation (e.g., Wasatch Photonics)

- Sampling Interface: Fiber-optic Raman probe with immersion tip for in-situ or offline measurements

- Reference Method: HPLC system for reference measurements

- Software: Chemometric software package (e.g., RamanMetrix) with preprocessing and modeling capabilities

Procedure

Sample Collection: Collect samples hourly from the bioreactor throughout the process, following a consistent sampling protocol.

Reference Analysis: Analyze the samples with the reference method (HPLC) to determine the actual concentrations of feedstock, products, and byproducts.

Spectral Acquisition: Acquire Raman spectra for each sample using the following parameters:

- Spectral range: 270-2000 cm⁻¹ (fingerprint region)

- Resolution: 7-8 cm⁻¹

- Laser power: 450 mW

- Acquisition time: 1500 ms per spectrum

- Number of accumulations: 20 spectra averaged per sample

Spectral Preprocessing:

- Apply baseline correction to remove fluorescence background

- Implement normalization to account for instrumental variations

- Use derivative transformations to enhance spectral features

- Apply spike removal if necessary
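Spike removal is often done by looking for abrupt jumps between neighboring points. The sketch below is in the spirit of the Whitaker-Hayes approach: it computes a modified z-score on the first differences of a spectrum, flags the points around large jumps, and replaces them with the mean of nearby unflagged points. The threshold of 3.5 and the window of 3 points are assumptions to be tuned, and the spectrum is simulated.

```python
import numpy as np

def remove_spikes(y, threshold=3.5, window=3):
    """Despike one spectrum using a modified z-score of its first differences."""
    y = np.asarray(y, dtype=float)
    dy = np.diff(y)
    med = np.median(dy)
    mad = np.median(np.abs(dy - med))
    if mad == 0:                               # degenerate case: nothing to scale against
        return y.copy(), np.zeros(y.size, dtype=bool)
    z = 0.6745 * (dy - med) / mad
    jump = np.abs(z) > threshold
    spikes = np.zeros(y.size, dtype=bool)
    spikes[1:] |= jump                         # point after a large jump
    spikes[:-1] |= jump                        # point before a large jump
    cleaned = y.copy()
    for i in np.flatnonzero(spikes):
        lo, hi = max(0, i - window), min(y.size, i + window + 1)
        neighbors = np.arange(lo, hi)
        good = neighbors[~spikes[neighbors]]
        if good.size:                          # replace with mean of nearby non-spike points
            cleaned[i] = y[good].mean()
    return cleaned, spikes

rng = np.random.default_rng(4)
shift = np.linspace(270, 2000, 600)            # Raman shift axis, cm^-1
smooth = np.exp(-0.5 * ((shift - 1000) / 60) ** 2)
spectrum = smooth + rng.normal(scale=0.02, size=shift.size)
spectrum[450] += 5.0                           # simulated cosmic-ray spike
cleaned, flagged = remove_spikes(spectrum)
print(bool(flagged[450]), round(float(cleaned[450]), 3))
```

Because cosmic-ray spikes are much narrower than Raman bands, they produce far larger point-to-point jumps, which is what the difference-based score exploits.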

Chemometric Modeling:

- Associate preprocessed spectra with reference concentration data

- Develop PCA model to explore spectral patterns and identify outliers

- Build SVM regression model based on PCA scores for concentration prediction

- Optimize model parameters using cross-validation

Model Implementation: Deploy the validated model for real-time prediction of concentrations during fermentation, using the in-situ Raman probe.

Table 2: Essential Research Reagents and Software for Chemometric Analysis

| Reagent/Software | Function/Role | Application Context |
|---|---|---|
| MATLAB with PLS Toolbox | Multivariate model development | Pharmaceutical analysis, general spectral modeling |
| MCR-ALS Toolbox | Resolution of component spectra | Evolving mixture analysis, unknown identification |
| RamanMetrix Software | Raman-specific chemometric analysis | Real-time bioprocess monitoring |
| HPLC-grade Methanol | Solvent for standard and sample preparation | UV-vis spectroscopic analysis of pharmaceuticals |
| Chlorogenic Acid | Internal standard for QAMS | Quality control of natural products |
| Notopterol | Internal standard for coumarin analysis | Traditional medicine quality assessment |

Data Analysis and Interpretation

Spectral Preprocessing Strategies

Effective preprocessing is essential for extracting meaningful chemical information from spectral data. Common techniques include:

- Baseline Correction: Removes offset and drift effects caused by scattering or fluorescence [4] [3]

- Standard Normal Variate (SNV): Corrects for multiplicative scattering effects and path length variations [3]

- Derivative Transformations: Enhance resolution of overlapping peaks and eliminate baseline effects [3]

- Normalization: Minimizes impacts of experimental variations in laser power, integration time, and other factors [4]
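The following NumPy sketch implements three of these transformations on simulated spectra and checks two of their defining properties: SNV removes a multiplicative gain and an additive offset, and a first derivative removes a constant baseline offset. Baseline correction for curved backgrounds such as fluorescence usually needs a dedicated method (for example polynomial or asymmetric least-squares fitting) and is not shown.

```python
import numpy as np

def snv(X):
    """Standard normal variate: center and scale each spectrum (row) individually."""
    X = np.asarray(X, dtype=float)
    return (X - X.mean(axis=1, keepdims=True)) / X.std(axis=1, ddof=1, keepdims=True)

def first_derivative(X, spacing=1.0):
    """First derivative along the wavelength axis (central differences)."""
    return np.gradient(np.asarray(X, dtype=float), spacing, axis=1)

def vector_normalize(X):
    """Scale each spectrum to unit Euclidean norm."""
    X = np.asarray(X, dtype=float)
    return X / np.linalg.norm(X, axis=1, keepdims=True)

wl = np.linspace(200, 400, 201)
x = np.exp(-0.5 * ((wl - 280) / 15) ** 2)        # hypothetical pure spectrum
X_scatter = np.vstack([x, 1.8 * x + 0.3])        # same sample with a gain and an offset
X_offset = np.vstack([x, x + 0.3])               # same sample with a constant baseline offset

print(np.allclose(snv(X_scatter)[0], snv(X_scatter)[1]))
print(np.allclose(first_derivative(X_offset)[0], first_derivative(X_offset)[1]))
```

In practice, derivatives are usually computed with Savitzky-Golay smoothing to limit noise amplification; plain central differences are used here only to keep the sketch short.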

The selection of preprocessing methods should be guided by the specific characteristics of the analytical problem and spectral data. For Raman spectroscopy of biological samples, baseline correction is particularly important for removing fluorescence background from complex organic matrices [4].

Model Validation and Quality Assessment

Rigorous validation is critical for ensuring reliable chemometric models. Key validation parameters include:

- Cross-Validation: Assess model robustness using leave-one-out or k-fold cross-validation [2]

- External Validation: Evaluate prediction performance using independent validation sets [2]

- Figures of Merit: Calculate root mean square error of calibration (RMSEC), root mean square error of prediction (RMSEP), and correlation coefficients [2]

- Greenness Assessment: Apply green analytical chemistry metrics (e.g., AGREE, Analytical Eco-Scale) to evaluate the environmental impact of analytical methods [2]

For QAMS methods, additional validation should include evaluation of relative correction factors under different chromatographic conditions to ensure method robustness [9].

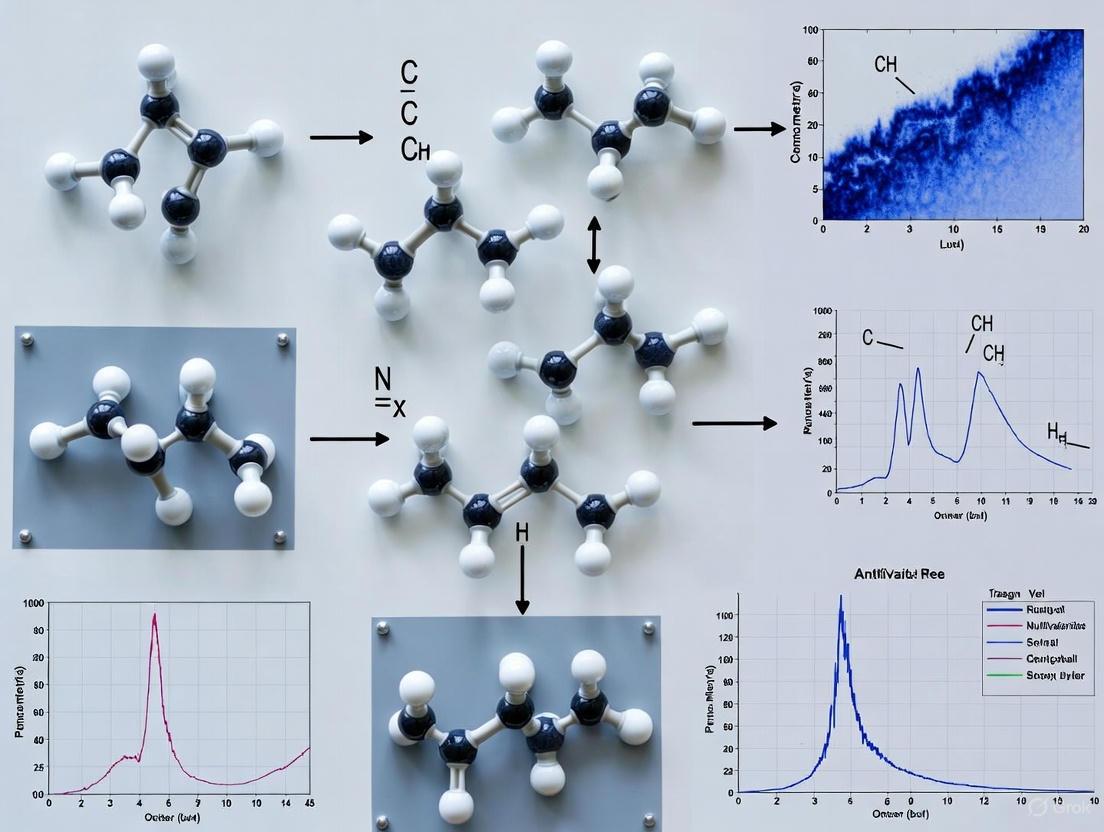

Visualizations

Chemometric Analysis Workflow for Multicomponent Systems

The following diagram illustrates the comprehensive workflow for chemometric analysis of multicomponent systems, integrating both theoretical and practical aspects:

Diagram 1: Chemometric analysis workflow for multicomponent systems

QAMS Method Implementation Logic

The following diagram illustrates the systematic approach for implementing the Quantitative Analysis of Multi-components by Single Marker method:

Diagram 2: QAMS method implementation workflow

Chemometrics has substantially changed the analysis of multicomponent systems by transforming spectral data into actionable chemical information. Through mathematical and statistical modeling, it enables researchers to resolve complex mixtures, quantify components without physical separation, and implement real-time monitoring strategies across pharmaceutical, biotechnological, and quality control applications.

Continued advances in chemometric methods, including complex-valued approaches, multi-block data analysis, and economical quality control strategies such as QAMS, are likely to keep the discipline central to analytical science. For drug development professionals and researchers, mastery of these tools provides practical capabilities for addressing increasingly complex analytical challenges in science and industry.

In the analysis of complex chemical systems, researchers are frequently confronted with the mixture analysis problem, where the measured signal from an instrument represents the combined response of multiple underlying components. The bilinear model provides a powerful mathematical framework to address this challenge by decomposing a data matrix into the meaningful, pure profiles of its constituent parts [10]. The model's core principle is that a data matrix $\mathbf{D}$ can be expressed as the product of two smaller matrices, $\mathbf{C}$ and $\mathbf{S}^T$, plus an error matrix $\mathbf{E}$ that contains the residual variance unexplained by the model:

$$\mathbf{D} = \mathbf{C}\mathbf{S}^T + \mathbf{E}$$ [10]

In this decomposition, the matrix $\mathbf{S}^T$ contains the qualitative profiles (e.g., pure spectra) of the individual sources of variation, while the matrix $\mathbf{C}$ contains their related apportionment profiles (e.g., concentration profiles) [10]. A paradigmatic example is found in chromatographic data analysis with UV detection: the data matrix $\mathbf{D}$ comprises all the UV spectra collected over the elution time, $\mathbf{S}^T$ contains the pure spectra of the eluted compounds, and $\mathbf{C}$ contains their corresponding concentration profiles (chromatographic elution peaks) [10]. The bilinear model is the foundational concept underlying Multivariate Curve Resolution (MCR), a family of chemometric methods that has been dynamically evolving for over five decades to adapt to a wide array of demanding scientific scenarios [10] [11].

Theoretical Foundation and Key Concepts

The Mathematics of Bilinear Decomposition

The fundamental equation, $\mathbf{D} = \mathbf{C}\mathbf{S}^T + \mathbf{E}$, implies that the data matrix $\mathbf{D}$ (dimensions $m \times n$) is described as a sum of $k$ linearly independent contributions, where $k$ is the number of pure contributors to the system. Each contribution is the outer product of two pure profiles: a column $\mathbf{c}_i$ of $\mathbf{C}$ (dimensions $m \times k$) and the corresponding row $\mathbf{s}_i^T$ of $\mathbf{S}^T$ (dimensions $k \times n$), so that $\mathbf{D} = \sum_{i=1}^{k} \mathbf{c}_i \mathbf{s}_i^T + \mathbf{E}$. The matrix $\mathbf{E}$ (dimensions $m \times n$) holds the residuals. The power of this model lies in its ability to recover the pure underlying profiles $\mathbf{C}$ and $\mathbf{S}$ from the observed mixture $\mathbf{D}$ without prior knowledge of their identities, a process often referred to as self-modeling curve resolution [10].
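The bilinear model and its sum-of-outer-products form can be checked numerically. The sketch below simulates HPLC-DAD-like data with three Gaussian elution profiles and three Gaussian spectra (all hypothetical) and shows that the singular values of $\mathbf{D}$ fall to a noise floor after $k = 3$, which is the basis of PCA-based estimates of the number of components.

```python
import numpy as np

rng = np.random.default_rng(5)
time = np.arange(60)[:, None]                 # m = 60 elution times
wl = np.arange(40)[:, None]                   # n = 40 wavelengths
C = np.exp(-0.5 * ((time - np.array([15, 30, 45])) / 5.0) ** 2)   # m x k elution profiles
S = np.exp(-0.5 * ((wl - np.array([10, 20, 30])) / 6.0) ** 2)     # n x k pure spectra
E = rng.normal(scale=1e-3, size=(60, 40))     # residual noise

D = C @ S.T + E                               # D = C S^T + E

# The same signal written as a sum of k rank-one (outer-product) contributions
D_sum = sum(np.outer(C[:, i], S[:, i]) for i in range(C.shape[1])) + E
print(np.allclose(D, D_sum))

# Singular values: k large values followed by a noise floor
sv = np.linalg.svd(D, compute_uv=False)
n_significant = int(np.sum(sv > 0.1))         # threshold chosen by inspection for this example
print(np.round(sv[:5], 3), n_significant)
```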

The Challenge of Rotational Ambiguity

A central challenge in implementing the bilinear model is rotational ambiguity (RA). This phenomenon occurs because, for a given data set, there may exist a range of different sets of profiles in C and S that, when multiplied, fit the original data matrix D equally well within the bounds of experimental error [12]. In other words, even with the correct number of components, multiple bilinear decompositions can exist that reproduce the data with an optimal fit. All these equivalent decompositions constitute the range of feasible solutions, all valid under the constraints applied [10]. The extent of RA depends on the level of overlap between component profiles and the nature and strength of the constraints applied during the decomposition. For systems with more than two components, estimating the full range of feasible profiles becomes computationally demanding, though methods like sensor-wise N-BANDS have been developed to provide the upper and lower boundaries of feasible profiles for multi-component systems in a reasonable time [12].
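The origin of rotational ambiguity can be shown directly: for any invertible $k \times k$ matrix $\mathbf{T}$, $\mathbf{C}\mathbf{S}^T = (\mathbf{C}\mathbf{T})(\mathbf{T}^{-1}\mathbf{S}^T)$, so the transformed profiles fit $\mathbf{D}$ exactly as well as the originals. The sketch uses two overlapping Gaussian components and a hypothetical $\mathbf{T}$.

```python
import numpy as np

time = np.arange(50)[:, None]
wl = np.arange(30)[:, None]
C = np.exp(-0.5 * ((time - np.array([20, 28])) / 5.0) ** 2)    # overlapping elution peaks
S = np.exp(-0.5 * ((wl - np.array([12, 17])) / 5.0) ** 2)      # overlapping spectra
D = C @ S.T

T = np.array([[1.0, 0.2],
              [0.1, 1.0]])                    # hypothetical invertible transformation
C_alt = C @ T
St_alt = np.linalg.inv(T) @ S.T

print(np.allclose(D, C_alt @ St_alt))         # identical fit to D
print(np.allclose(C, C_alt))                  # but different profiles
print(C_alt.min() >= 0, St_alt.min() >= 0)    # does non-negativity still hold?
```

With this particular $\mathbf{T}$ one transformed spectrum becomes slightly negative, so a non-negativity constraint would exclude it. Smaller transformations can remain feasible, which is why constraints narrow, but do not always remove, the range of feasible solutions.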

Experimental Protocols for Multivariate Curve Resolution

Protocol 1: MCR with Alternating Least Squares (MCR-ALS)

The MCR-ALS algorithm is a widely used iterative method for resolving the bilinear model. The following protocol provides a detailed methodology for its application.

- Aim: To decompose a spectral data matrix $\mathbf{D}$ (e.g., from HPLC-DAD) into the concentration profiles $\mathbf{C}$ and spectral profiles $\mathbf{S}^T$ of its pure components.

- Primary Materials:

- A data matrix D (samples × wavelengths).

- Software with MCR-ALS implementation (e.g., MATLAB toolboxes).

- Procedure:

- Data Pre-processing: Perform necessary pre-processing on matrix D, such as baseline correction, scaling, or noise filtering.

- Estimate Number of Components (k): Use principal component analysis (PCA) or other factor analysis methods on D to determine the number of significant components, k.

- Initial Estimate: Provide initial estimates for either C or ST. This can be done using Evolving Factor Analysis (EFA), pure variable detection methods (e.g., SIMPLISMA), or from prior knowledge.

- Apply Constraints: Define the constraints to be applied during the optimization. Common choices include:

- Non-negativity: For concentrations and spectra.

- Unimodality: For concentration profiles in chromatography.

- Closure: For systems where the sum of concentrations is constant.

- Hard-modeling: When a physicochemical model governs the concentration profiles.

- ALS Optimization: Iterate the following steps until convergence:

  a. C-step: With $\mathbf{S}^T$ fixed, calculate $\mathbf{C}$ by least squares, $\mathbf{C} = \mathbf{D}\mathbf{S}(\mathbf{S}^T\mathbf{S})^{-1}$, then apply the constraints to $\mathbf{C}$.

  b. S-step: With $\mathbf{C}$ fixed, calculate $\mathbf{S}^T$ by least squares, $\mathbf{S}^T = (\mathbf{C}^T\mathbf{C})^{-1}\mathbf{C}^T\mathbf{D}$, then apply the constraints to $\mathbf{S}^T$.

  c. Check convergence: Stop when the change in the residual fit between consecutive iterations falls below a preset threshold (e.g., 0.1%).

- Validation: Validate the resolved profiles using available prior knowledge, cross-validation, or by analyzing the residuals E.
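The ALS loop in this protocol can be sketched in NumPy as follows. This is a minimal illustration, not the MCR-ALS toolbox: non-negativity is imposed by clipping negative values after each unconstrained least-squares step (a crude substitute for non-negative least squares), spectra are normalized to unit length to fix the scale ambiguity, and convergence is declared when the relative change in lack of fit drops below 0.1%. The two-component data and the initial estimates (measured spectra at the two elution maxima, standing in for SIMPLISMA or EFA) are hypothetical.

```python
import numpy as np

def mcr_als(D, St_init, max_iter=200, tol=1e-3):
    """Minimal MCR-ALS with non-negativity on C and S^T (by clipping)."""
    St = np.clip(St_init, 0, None)
    lof_prev = np.inf
    for _ in range(max_iter):
        # C-step: least squares for C with S^T fixed, then constrain
        C = np.linalg.lstsq(St.T, D.T, rcond=None)[0].T
        C = np.clip(C, 0, None)
        # S-step: least squares for S^T with C fixed, then constrain
        St = np.linalg.lstsq(C, D, rcond=None)[0]
        St = np.clip(St, 0, None)
        # Normalize spectra to unit norm; rescale C so that C S^T is unchanged
        norms = np.linalg.norm(St, axis=1)
        norms[norms == 0] = 1.0
        St, C = St / norms[:, None], C * norms[None, :]
        E = D - C @ St
        lof = 100 * np.sqrt(np.sum(E**2) / np.sum(D**2))   # lack of fit, %
        if abs(lof_prev - lof) / max(lof, 1e-12) < tol:    # relative change below 0.1%
            break
        lof_prev = lof
    return C, St, lof

rng = np.random.default_rng(6)
time = np.arange(100)[:, None]
wl = np.arange(80)[:, None]
C_true = np.exp(-0.5 * ((time - np.array([30, 50])) / 6.0) ** 2)
S_true = np.exp(-0.5 * ((wl - np.array([30, 60])) / 10.0) ** 2)
D = C_true @ S_true.T + rng.normal(scale=1e-3, size=(100, 80))

St0 = D[[30, 50], :]          # hypothetical initial estimates: spectra at the peak maxima
C, St, lof = mcr_als(D, St0)
print(round(float(lof), 3))
```

A small lack of fit alone does not prove the profiles are the true ones; as discussed above, rotational ambiguity can leave several solutions with equally good fits.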

Protocol 2: Assessing Rotational Ambiguity with Sensor-wise N-BANDS

This protocol estimates the boundaries of feasible profiles in multi-component systems, which is critical for evaluating the uncertainty of the MCR solution.

- Aim: To compute the upper and lower boundaries of the set of feasible concentration and spectral profiles satisfying the bilinear decomposition under applied constraints.

- Primary Materials:

- A resolved MCR-ALS model (matrices C, S, and the data matrix D).

- MATLAB software with the N-BANDS algorithm code (available from public repositories).

- Procedure:

- Input Preparation: Load the data matrix D and the MCR-ALS solution as a starting point for the optimization.

- Define Objective Function: The sensor-wise N-BANDS method modifies the standard N-BANDS algorithm. The objective function is no longer a global norm but the value of the profile element at a specific sensor. The algorithm is run through the entire sensor range in both data modes.

- Set Optimization Constraints: The optimization is subject to a single scalar constraint based on the sum of squared residuals (SSR). The SSR of the solution is allowed to vary only up to a limit defined from a reference model (e.g., the initial MCR-ALS model) and the estimated noise level [12].

- Run Optimization for Boundaries: For each sensor in the concentration and spectral modes, perform a non-linear optimization to find the maximum and minimum feasible values of the profile at that sensor. This generates two envelopes (upper and lower) of profiles for each component.

- Output Analysis: The output is the set of upper and lower boundaries for the concentration and spectral profiles of each component. These boundaries provide a visual and quantitative measure of the extent of rotational ambiguity present in the system [12].

Table 1: Key Chemometric Algorithms for Bilinear Decomposition

| Algorithm Name | Key Principle | Typical Applications | Advantages | Limitations |
|---|---|---|---|---|
| MCR-ALS [10] | Iterative least-squares optimization with constraints | Process monitoring, HPLC-DAD, environmental analysis | Highly flexible; can incorporate diverse constraints | Solutions may be affected by rotational ambiguity |
| N-BANDS [12] | Non-linear optimization of component-wise functions | Estimation of feasible solution boundaries | Assesses uncertainty and extent of rotational ambiguity | Computationally intensive for high-component systems |
| Sensor-wise N-BANDS [12] | Optimization of profile values at individual sensors | Estimating boundaries for multi-component systems | Provides boundaries in real space for any component number | Requires a reference model and noise estimate |

Applications in Pharmaceutical and Bioanalytical Research

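The bilinear model has found wide use in drug discovery and development, enabling researchers to extract pure component information from complex biological and chemical mixtures. A related bilinear formulation, the bilinear attention network, also appears in deep learning models for drug discovery, where it captures pairwise interactions between two sets of features rather than decomposing a data matrix into pure profiles.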

Predicting Anti-Cancer Drug Response

In oncology research, predicting individual patient responses to anti-cancer drugs is a major goal of precision medicine. The BANDRP framework is a deep bilinear attention network that integrates multi-omics data of cancer cell lines (gene expression, genomic mutation, DNA methylation) and multiple molecular fingerprints of drugs to predict anti-cancer drug responses (IC50 values) [13]. The model uses gene expression data to calculate pathway enrichment scores, enriching the features of cancer cell lines. It then uses a bilinear attention network to automatically learn the interactive information between cancer cell lines and drugs. Benchmarking tests have demonstrated that BANDRP surpasses baseline models and exhibits robust generalization performance, providing a reliable computational framework for predicting anti-cancer drug response [13].

Drug-Target Interaction Prediction

Predicting the interaction between drugs and their protein targets is a critical step in drug discovery. DrugBAN, a deep bilinear attention network framework with domain adaptation, explicitly learns pairwise local interactions between drugs (represented as molecular graphs) and targets (represented as protein sequences) [14]. The model's use of a bilinear attention map not only improves prediction accuracy but also provides interpretable insights by highlighting which parts of a drug molecule and which regions of a protein sequence contribute most to the interaction. Experiments under both in-domain and cross-domain settings showed that DrugBAN achieved the best overall performance against several state-of-the-art baseline models [14].
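To illustrate what a bilinear attention map computes, the sketch below scores every drug-atom and protein-residue pair with a low-rank bilinear form and normalizes the scores with a softmax. It is a generic formulation in the spirit of bilinear attention networks, not DrugBAN's exact architecture; the feature dimensions, rank, and weights are hypothetical and untrained.

```python
import numpy as np

def bilinear_attention_map(H_drug, H_prot, U, V, p):
    """Generic low-rank bilinear attention between two sets of features.

    logits[i, j] = p . (relu(U^T h_i) * relu(V^T g_j)), normalized with a
    softmax over all (atom, residue) pairs."""
    A = np.maximum(H_drug @ U, 0)            # n_atoms x r
    B = np.maximum(H_prot @ V, 0)            # n_residues x r
    logits = (A * p) @ B.T                   # n_atoms x n_residues
    w = np.exp(logits - logits.max())        # numerically stable softmax
    return w / w.sum()

rng = np.random.default_rng(7)
H_drug = rng.normal(size=(12, 16))   # hypothetical per-atom features of a drug graph
H_prot = rng.normal(size=(50, 32))   # hypothetical per-residue features of a protein
U = rng.normal(size=(16, 8)) / 4     # hypothetical projection weights (untrained)
V = rng.normal(size=(32, 8)) / 4
p = rng.normal(size=8)

attn = bilinear_attention_map(H_drug, H_prot, U, V, p)
i, j = np.unravel_index(np.argmax(attn), attn.shape)
print(attn.shape, int(i), int(j))    # most attended atom-residue pair
```

In a trained model, large entries of this map are what highlight the drug substructures and protein regions that contribute most to the predicted interaction.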

Table 2: Essential Research Reagents and Materials for MCR Studies

| Item Name | Function/Purpose | Example from Literature |
|---|---|---|
| Hyperspectral Image Data | Provides a 3D data cube (x, y, λ) for spatial-spectral analysis of samples. | Used in MCR for analyzing pharmaceutical samples and biological tissues [10]. |
| Chromatographic Data (HPLC-DAD, GC-MS) | Provides a 2D data matrix (time × wavelength/mass) for analysis of complex mixtures. | A paradigmatic example for MCR; resolves pure spectra and elution profiles [10]. |
| Cell Line Multi-omics Data | Includes gene expression, mutation, and methylation data from resources like CCLE. | Used as input for the BANDRP model to represent cancer cell lines [13]. |
| Drug Molecular Fingerprints | Numerical representation of drug chemical structure (e.g., ECFP, PubchemFP). | Used as input for drug response (BANDRP) and drug-target interaction (DrugBAN) models [13] [14]. |

The Scientist's Toolkit

MCR-ALS Workflow

The bilinear model, operationalized through Multivariate Curve Resolution, provides an indispensable toolkit for decomposing complex, multi-component data into pure chemical profiles. While the challenge of rotational ambiguity remains an active area of research, the strategic application of constraints and the development of advanced algorithms like N-BANDS allow scientists to obtain meaningful, quantifiable results. The continued evolution of MCR, together with related bilinear formulations in deep learning such as bilinear attention networks, is extending bilinear modeling into new frontiers such as personalized cancer therapy and intelligent drug discovery. By enabling the extraction of pure component information from complex mixtures, the bilinear model helps researchers and drug development professionals gain deeper insight into the composition and behavior of the systems they study.

Principal Component Analysis (PCA) stands as a cornerstone multivariate analysis technique in chemometrics, particularly for the exploratory analysis of complex multicomponent mixtures. By reducing data dimensionality, PCA facilitates the visualization of underlying patterns, the identification of sample clusters, and the detection of anomalous measurements that could signify experimental error, unique sample properties, or novel chemical phenomena. This protocol details the application of PCA for pattern recognition and outlier detection within pharmaceutical and chemical research, providing a structured workflow from data pre-processing to the interpretation of results, complete with robust statistical methods for identifying outliers.

In the analysis of multicomponent mixtures via techniques like optical spectroscopy, datasets are often high-dimensional, comprising numerous wavelengths, time points, or chemical features. Principal Component Analysis (PCA) is a linear dimensionality reduction technique that transforms correlated variables into a smaller set of uncorrelated principal components (PCs) that capture the greatest variance in the data [15]. This transformation is pivotal for exploratory data analysis, allowing researchers to discern patterns, classify samples, and pinpoint outliers that deviate from established chemical profiles [16]. Within chemometrics, these capabilities are essential for calibrating instruments, validating methods, and ensuring the quality and consistency of chemical products and pharmaceutical compounds [17].

Theoretical Foundations

PCA operates on the principle of identifying new, orthogonal axes—the principal components—in the data space. The first PC captures the direction of maximum variance, with each subsequent component capturing the next highest variance while remaining orthogonal to all preceding components [15] [18]. The mathematical procedure involves:

- Covariance Matrix Computation: PCA begins with the calculation of the covariance matrix, which encapsulates the variances and covariances of the original features [19] [18]. For a data matrix $X$ whose features are centered to zero mean, the covariance matrix is $\Sigma = \frac{1}{n-1} X^T X$, where $n$ is the number of observations [15].

- Eigenvalue Decomposition: The principal components are derived from the eigenvectors of this covariance matrix. The corresponding eigenvalues represent the amount of variance captured by each PC [19] [18]. The eigenvector with the largest eigenvalue defines the first PC, and so on.

This process results in a transformed dataset where the new features (PCs) are linear combinations of the original variables, are uncorrelated, and are ranked by their importance in describing the data structure [15] [20].
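The two equivalent routes to the principal components can be compared in NumPy: eigen-decomposition of the covariance matrix $\Sigma = \frac{1}{n-1} X^T X$, and singular value decomposition (SVD) of the centered data, whose squared singular values divided by $n-1$ equal the eigenvalues. The data are random and hypothetical; SVD is usually preferred in practice because it avoids forming $X^T X$ explicitly.

```python
import numpy as np

rng = np.random.default_rng(8)
X = rng.normal(size=(30, 5)) @ rng.normal(size=(5, 5))   # correlated hypothetical data
Xc = X - X.mean(axis=0)                                  # center each feature
n = Xc.shape[0]

# Route 1: eigen-decomposition of the covariance matrix
cov = Xc.T @ Xc / (n - 1)
eigvals, eigvecs = np.linalg.eigh(cov)                   # eigh returns ascending order
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Route 2: SVD of the centered data matrix
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

print(np.allclose(eigvals, s**2 / (n - 1)))              # same variances per PC
scores_eig = Xc @ eigvecs
scores_svd = U * s
# Scores agree up to an arbitrary sign per component
print(np.allclose(np.abs(scores_eig), np.abs(scores_svd)))
```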

Application Notes: Protocol for PCA-Based Analysis

This section provides a detailed, step-by-step protocol for applying PCA to a typical chemometric dataset, such as spectral data from a multicomponent mixture.

Data Pre-processing

- Objective: To ensure all variables are on a comparable scale, preventing features with larger inherent variances (e.g., absorbance intensity) from disproportionately influencing the model.

- Procedure: Standardize the dataset by centering each variable on zero mean and scaling it to unit variance [19]. For each feature $x$, the standardized value is $z = \frac{x - \mu}{\sigma}$, where $\mu$ is the feature mean and $\sigma$ is its standard deviation. For spectral data, mean-centering alone is often preferred, because scaling noise-only variables to unit variance inflates their influence on the model.

PCA Implementation and Pattern Recognition

- Objective: To project the data onto its principal components and identify clusters or trends indicative of chemical similarities or differences.

- Procedure:

- Decomposition: Perform PCA on the standardized data matrix. Most software packages will return the principal component scores (the transformed coordinates of the data in the new PC space) and the loadings (the weights of the original variables in each PC) [15] [19].

- Variance Assessment: Examine the explained variance ratio for each PC. This indicates the proportion of the dataset's total variance captured by each component. The first few PCs often contain the majority of the chemically relevant information, while later components may be dominated by noise [16].

- Visualization and Interpretation:

- Generate a scores plot (e.g., PC1 vs. PC2) to visualize the spatial distribution of samples. Samples clustering together share similar chemical profiles, while separated clusters represent distinct compositions [20] [16].

- Consult the loadings plot to interpret the chemical meaning of the PCs. Loadings indicate which original variables (e.g., specific wavelengths) contribute most strongly to a given PC, linking the observed sample patterns back to the original analytical measurements [16].

Outlier Detection Methodologies

- Objective: To identify samples that are statistically unusual within the context of the PCA model.

- Procedure: Employ one or more of the following robust methods on the PC scores:

- Extreme Value Analysis on PCs: For each principal component, flag samples where the absolute robust Z-score exceeds a threshold (e.g., 6). The score $t_i$ of sample $i$ on a given PC is transformed into a robust Z-score using $z_i = \frac{|t_i - \operatorname{median}(t)|}{\operatorname{MAD}(t)}$, where MAD is the Median Absolute Deviation [21].

- Robust Mahalanobis Distance (MD): Calculate the MD for each sample based on the robust estimate of the covariance matrix of the PC scores. This multivariate distance measures how far a sample is from the center of the data distribution, accounting for the structure of the data. Samples with a significantly large MD are potential outliers [21].

- Reconstruction Error: Project the data onto the first $k$ PCs and then reconstruct it back in the original space. The reconstruction error of a sample is the sum of squared differences between its original and reconstructed values. Outliers often exhibit large reconstruction errors because they are not well represented by the primary patterns in the data [22].

- Local Outlier Factor (LOF): Apply the LOF algorithm to the PC scores. LOF is a density-based method that identifies samples that are isolated relative to their local neighbors, making it effective for detecting outliers that may not be extreme in any single PC [21].
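The first three screening rules can be sketched with NumPy alone. This is an illustrative sketch with hypothetical helper names: `mahalanobis_sq` uses the classical covariance of the scores and is therefore not robust; for the robust variant, one option is to fit scikit-learn's `MinCovDet` to the scores and call its `mahalanobis` method, and `sklearn.neighbors.LocalOutlierFactor` provides LOF. A common cut-off for squared Mahalanobis distances of k approximately normal scores is a chi-squared quantile with k degrees of freedom (for example `scipy.stats.chi2.ppf(0.975, k)`).

```python
import numpy as np


def pca_model(X, k):
    """Fit a k-component PCA by SVD; return the mean, loadings P (features x k) and scores."""
    X = np.asarray(X, dtype=float)
    mu = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mu, full_matrices=False)
    P = Vt[:k].T
    return mu, P, (X - mu) @ P


def robust_z(t):
    """|t_i - median(t)| / MAD(t) for one column of scores (undefined if MAD is 0)."""
    t = np.asarray(t, dtype=float)
    med = np.median(t)
    mad = np.median(np.abs(t - med))
    return np.abs(t - med) / mad


def mahalanobis_sq(T):
    """Squared Mahalanobis distance of each row of T (classical, non-robust estimate)."""
    T = np.asarray(T, dtype=float)
    diff = T - T.mean(axis=0)
    cov_inv = np.linalg.inv(np.atleast_2d(np.cov(T, rowvar=False)))
    return np.einsum("ij,jk,ik->i", diff, cov_inv, diff)


def reconstruction_error(X, mu, P):
    """Sum of squared residuals per sample after projecting onto, and back from, the k PCs."""
    Xc = np.asarray(X, dtype=float) - mu
    return np.sum((Xc - Xc @ P @ P.T) ** 2, axis=1)


# Example use on a hypothetical data matrix X:
# mu, P, T = pca_model(X, k=2)
# flagged_pc1 = np.flatnonzero(robust_z(T[:, 0]) > 6)
# d2 = mahalanobis_sq(T)
# q = reconstruction_error(X, mu, P)
```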

Experimental Workflows

The following diagrams illustrate the logical workflow for implementing the protocols described above.

Diagram 1: Comprehensive PCA Analysis Workflow. This diagram outlines the complete process from raw data to the interpretation of patterns and outliers.

Diagram 2: Outlier Detection Methodologies. This diagram compares the four primary statistical methods for identifying outliers within the PCA-transformed data.

Data Presentation and Interpretation

Example PCA Variance Explanation

Table 1: Typical explained variance for a spectral dataset. The first two components capture the majority of the structured information.

| Principal Component | Eigenvalue | Explained Variance (%) | Cumulative Explained Variance (%) |
|---|---|---|---|
| PC1 | 4.52 | 75.3 | 75.3 |
| PC2 | 0.89 | 14.8 | 90.2 |
| PC3 | 0.31 | 5.2 | 95.3 |
| PC4 | 0.15 | 2.5 | 97.8 |
| PC5 | 0.08 | 1.3 | 99.2 |
| PC6 | 0.05 | 0.8 | 100.0 |
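The explained-variance columns of Table 1 follow directly from the eigenvalues: each eigenvalue is divided by their sum. A short NumPy check of that arithmetic is shown below; the 90 % cut-off used to choose the number of PCs is only an illustrative assumption, not a rule from the source.

```python
import numpy as np

# Eigenvalues copied from Table 1
eigenvalues = np.array([4.52, 0.89, 0.31, 0.15, 0.08, 0.05])

explained = 100 * eigenvalues / eigenvalues.sum()   # % of total variance per PC
cumulative = np.cumsum(explained)                   # running total

for i, (e, c) in enumerate(zip(explained, cumulative), start=1):
    print(f"PC{i}: {e:.1f}% (cumulative {c:.1f}%)")

# Smallest number of PCs whose cumulative explained variance reaches 90 %
n_keep = int(np.searchsorted(cumulative, 90.0)) + 1
print("PCs needed for 90%:", n_keep)
```

It prints 75.3, 14.8, 5.2, 2.5, 1.3 and 0.8 % for PC1 to PC6, reaches 90.2 % cumulative variance at PC2, and reports that two PCs meet the 90 % target. Cumulative values computed from unrounded percentages can differ in the last digit from sums of rounded percentages.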

Interpreting PCA Loadings for Chemical Insight

Table 2: Example loadings for PC1 and PC2 from a spectroscopic analysis. High absolute loadings indicate variables (wavelengths) that strongly influence a component.

| Wavelength (nm) | PC1 Loading | PC2 Loading | Interpretation |
|---|---|---|---|
| 450 nm | -0.01 | +0.95 | Minor influence on PC1, very strong positive influence on PC2. |
| 550 nm | +0.85 | -0.05 | Strong positive influence on PC1, minor influence on PC2. |
| 650 nm | +0.52 | +0.25 | Moderate positive influence on both PC1 and PC2. |
| 750 nm | -0.08 | -0.18 | Minor negative influence on both components. |

The Scientist's Toolkit

Table 3: Essential computational tools and resources for implementing PCA in chemometric research.

| Item Name | Function / Application | Example Use in Protocol |
|---|---|---|
| StandardScaler | Standardizes features by removing the mean and scaling to unit variance. | Data Pre-processing. |
| PCA Decomposition Algorithm | Performs the core linear algebra computation to derive principal components and scores. | PCA Implementation and Pattern Recognition. |
| Robust Covariance Estimator (e.g., Minimum Covariance Determinant) | Calculates a covariance matrix resistant to the influence of outliers. | Robust Mahalanobis Distance calculation (Outlier Detection Methodologies). |
| Local Outlier Factor (LOF) Algorithm | Computes the local density deviation of a sample relative to its neighbors. | Density-based outlier detection (Outlier Detection Methodologies). |
| Statistical Software (Python/R) | Provides the integrated environment and libraries to execute the entire analytical workflow. | Used throughout the protocol. |

Concluding Remarks

PCA is an indispensable tool in the chemometrician's arsenal, providing a powerful and intuitive framework for unraveling the complex, multivariate data inherent in the analysis of multicomponent mixtures. By adhering to the standardized protocols outlined herein—from rigorous data pre-processing to the application of robust outlier detection methods—researchers and drug development professionals can consistently extract meaningful chemical patterns and identify critical anomalies, thereby enhancing the reliability and depth of their analytical conclusions.

The analysis of multicomponent mixtures using spectroscopic techniques is fundamental to pharmaceutical development, environmental monitoring, and food safety. However, two persistent challenges significantly complicate accurate quantification: spectral overlap and matrix effects. Spectral overlap occurs when the absorption or scattering profiles of multiple components in a mixture coincide, making it difficult to resolve individual analyte signals [2]. Matrix effects refer to the influence of all other sample components on the measurement of the target analyte, which can cause signal suppression or enhancement and lead to inaccurate results [23].

Advances in chemometric methods provide powerful mathematical tools to address these challenges, enabling researchers to extract meaningful information from complex analytical data without extensive physical separation steps. This Application Note details the core principles of these challenges and presents validated experimental protocols for their mitigation using modern multivariate calibration techniques, providing a practical framework for researchers and drug development professionals engaged in complex mixture analysis.

Understanding Spectral Overlap

The Fundamental Problem

Spectral overlap arises in the analysis of multi-component mixtures when two or more substances have similar spectroscopic properties. In such cases, their individual absorption spectra coincide or partially merge, creating a single, convoluted signal. This makes it impossible to quantify individual components using traditional univariate calibration methods that rely on measuring signals at specific, unique wavelengths [24] [2]. This challenge is particularly prevalent in UV-Vis spectrophotometry but also affects other spectroscopic techniques, including Raman and NMR.

In quantitative terms, the measured signal at any given wavelength, $A_\lambda$, in an n-component mixture is the sum of the individual contributions: $A_\lambda = \varepsilon_{1,\lambda} b C_1 + \varepsilon_{2,\lambda} b C_2 + \dots + \varepsilon_{n,\lambda} b C_n$, where $\varepsilon_{n,\lambda}$ is the molar absorptivity of component $n$ at wavelength $\lambda$, $b$ is the path length, and $C_n$ is the concentration of component $n$ [24]. When the $\varepsilon$ values of several components are significant at the same wavelength, overlap occurs.
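To make the additive model concrete, the sketch below builds a synthetic three-component mixture from Gaussian bands (all absorptivities, wavelengths and concentrations are hypothetical), shows how a single-wavelength estimate is biased by the overlapping components, and recovers all three concentrations at once by least squares over the whole spectrum. This is classical least squares (CLS), which assumes every pure-component spectrum is known and the data are noise-free; it illustrates the equation only and is not one of the inverse calibration methods discussed later.

```python
import numpy as np

# Hypothetical wavelength grid: 220-300 nm at 1 nm steps
wavelengths = np.arange(220.0, 301.0)


def band(center, width):
    """Unit-height Gaussian absorption band (illustrative shape only)."""
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)


# Columns: assumed molar absorptivities (L mol^-1 cm^-1) of three strongly overlapping components
E = np.column_stack([
    1.2e4 * band(250, 15),
    0.9e4 * band(260, 15),
    1.5e4 * band(270, 15),
])
b = 1.0                                   # path length, cm
C_true = np.array([2e-5, 3e-5, 1e-5])     # mol/L

# Additive Beer-Lambert law: A_lambda = sum_k eps_{k,lambda} * b * C_k at every wavelength
A = b * E @ C_true

# Univariate estimate of component 1 at its band maximum ignores the other two contributions
i250 = int(np.argmin(np.abs(wavelengths - 250)))
C1_univariate = A[i250] / (b * E[i250, 0])

# Using all wavelengths at once, the overlapped system is solved by least squares
C_est, *_ = np.linalg.lstsq(b * E, A, rcond=None)
```

In this example the univariate estimate for component 1 comes out roughly twice the true value, while the least-squares estimate matches `C_true` to numerical precision.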

Practical Impact on Analysis

The primary consequence of spectral overlap is the inability to selectively monitor a target analyte without interference from other mixture components. For example, in pharmaceutical analysis, a study resolving a ternary mixture of Telmisartan, Chlorthalidone, and Amlodipine found substantial overlap in their UV spectra, preventing accurate quantification using conventional methods [24]. Similarly, research on a quaternary mixture of Paracetamol, Chlorpheniramine maleate, Caffeine, and Ascorbic acid demonstrated "highly overlapping spectra" that necessitated advanced chemometric resolution [2]. This overlap leads to inflated detection limits, reduced method sensitivity, and potentially inaccurate quantification, ultimately compromising the reliability of analytical results in quality control and research settings.

Addressing Matrix Effects

According to the International Union of Pure and Applied Chemistry (IUPAC), the matrix effect is the "combined effect of all components of the sample other than the analyte on the measurement of the quantity" [23]. These effects manifest through two primary mechanisms:

- Chemical and Physical Interactions: Matrix components such as solvents, proteins, or salts can interact with the analyte, altering its spectroscopic properties, stability, or apparent concentration. These interactions include solvation processes and physical effects like light scattering or pathlength variations [23].

- Instrumental and Environmental Effects: Variations in instrumental conditions (temperature fluctuations, humidity, source drift) or sample presentation can create artifacts (e.g., baseline shifts, noise) that distort the analytical signal [23].

Consequences for Analytical Accuracy

Matrix effects can cause either signal suppression or enhancement, leading to systematic errors in quantification. The core issue is that these effects create a discrepancy between the calibration standard's environment (often a pure solution in a simple solvent) and the sample's actual environment (a complex mixture). Consequently, a model built on a calibration set that does not adequately represent the unknown sample's matrix will produce inaccurate predictions, a problem particularly acute in complex samples like biological fluids, food products, and environmental samples [23].

Advanced Chemometric Solutions

Multivariate Calibration Models

Chemometrics applies mathematical and statistical methods to extract chemical information from complex data. Multivariate calibration models are essential for handling spectral overlap as they utilize the entire spectral profile rather than relying on a few discrete wavelengths.

Table 1: Key Multivariate Calibration Models for Resolving Spectral Overlap

| Model | Acronym | Primary Principle | Best Used For |
|---|---|---|---|
| Partial Least Squares | PLS | Finds latent variables that maximize covariance between spectral data and concentration | Linear relationships; general quantitative analysis [2] |
| Principal Component Regression | PCR | Uses principal components (max variance in spectral data) as predictors | Dimensionality reduction; initial exploratory modeling [2] |
| Multivariate Curve Resolution-Alternating Least Squares | MCR-ALS | Decomposes data matrix into concentration and spectral profiles using constraints | Resolving complex mixtures; identifying pure component profiles [2] [23] |
| Artificial Neural Networks | ANN | Non-linear model that learns relationships through interconnected nodes | Modeling complex, non-linear relationships in data [2] |

Protocols for Implementing Chemometric Models

The following protocol is adapted from validated methodologies for analyzing multi-component pharmaceutical formulations [2].

Protocol: Developing a Multivariate Calibration Model for a Quaternary Mixture

1. Equipment and Software

- UV-Vis spectrophotometer with 1.0 cm quartz cells

- MATLAB software with PLS Toolbox and MCR-ALS Toolbox

2. Reagent Preparation

- Standard Solutions: Prepare individual stock solutions (e.g., 1 mg/mL) of each analyte (e.g., PARA, CPM, CAF, ASC) in a suitable solvent (e.g., methanol).

- Working Solutions: Dilute stock solutions to prepare working standards (e.g., 100 µg/mL).

- Calibration Mixtures: Use a five-level, four-factor partial factorial design to prepare 25 mixtures covering the expected concentration ranges of all analytes.

3. Spectral Acquisition

- Scan absorption spectra of all calibration mixtures and validation samples across a relevant wavelength range (e.g., 200-400 nm).

- Transfer the spectral data (e.g., from 220-300 nm at 1 nm intervals) to MATLAB for analysis.

4. Model Construction and Validation

- Data Preprocessing: Mean-center the spectral data.

- Model Optimization:

- For PLS/PCR, use cross-validation (e.g., leave-one-out) to determine the optimal number of Latent Variables (LVs).

- For MCR-ALS, apply appropriate constraints (e.g., non-negativity for concentrations and spectra).

- For ANN, optimize network architecture (e.g., number of hidden neurons, learning rate, epochs).

- Validation: Assess model performance using an independent validation set. Calculate Root Mean Square Error of Prediction (RMSEP) and Percent Recovery to evaluate accuracy.
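Step 2 asks for 25 calibration mixtures from a five-level, four-factor design. A full 5^4 factorial would require 625 mixtures, so a 25-run design is necessarily fractional. The sketch below shows one standard way to obtain such a design, an orthogonal array built with arithmetic modulo 5, in which every pair of factors covers all 25 level combinations exactly once. It is not necessarily the design used in [2], and the concentration ranges are placeholders to be replaced by the method's validated ranges.

```python
import numpy as np


def oa25_five_levels(n_factors=4):
    """25-run orthogonal array with five coded levels (0-4) for up to six factors.

    Run (i, j) has columns i, j, (i + j) % 5, (i + 2j) % 5, (i + 3j) % 5, (i + 4j) % 5;
    because 5 is prime, every pair of columns contains all 25 level pairs exactly once.
    """
    if not 1 <= n_factors <= 6:
        raise ValueError("this construction supports 1 to 6 factors")
    runs = [[i, j] + [(i + k * j) % 5 for k in range(1, 5)]
            for i in range(5) for j in range(5)]
    return np.array(runs)[:, :n_factors]


def coded_to_concentration(levels, low, high):
    """Map coded levels 0..4 linearly onto [low, high] for each factor (column)."""
    low, high = np.asarray(low, dtype=float), np.asarray(high, dtype=float)
    return low + levels * (high - low) / 4.0


levels = oa25_five_levels(4)
# Hypothetical ranges in µg/mL for PARA, CPM, CAF and ASC (placeholders, not from the source)
low = [4.0, 1.0, 2.0, 4.0]
high = [20.0, 5.0, 10.0, 20.0]
design = coded_to_concentration(levels, low, high)   # 25 mixtures x 4 analytes
```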

Strategies for Mitigating Matrix Effects

Several advanced calibration strategies have been developed to improve model robustness against matrix effects.

Table 2: Advanced Strategies to Counteract Matrix Effects

| Strategy | Methodology | Advantages | Limitations |
|---|---|---|---|
| Matrix Matching | Matching the composition of calibration standards to the sample matrix [23] | Proactively minimizes matrix variability; improves accuracy | Requires prior knowledge of matrix composition |
| Standard Addition | Adding known quantities of analyte to the sample itself [23] | Calibrates within the sample matrix; good for simple matrices | Impractical for complex mixtures with multiple analytes |
| Local Modeling | Selecting a subset of calibration samples most similar to the unknown [23] | Reduces prediction error by focusing on relevant samples | Requires a large, diverse calibration set |

Protocol: MCR-ALS-Based Matrix Matching Strategy

This protocol utilizes Multivariate Curve Resolution to identify the best-matched calibration set for an unknown sample, thereby minimizing matrix effects [23].

1. Preparation of Multiple Calibration Sets

- Prepare several calibration sets with varying, known matrix compositions that span the expected variability in real samples.

2. MCR-ALS Modeling of Calibration Sets

- For each calibration set $i$, apply MCR-ALS to decompose the data matrix $D_i$ into concentration profiles $C_i$ and spectral profiles $S_i$: $D_i = C_i S_i^T + E_i$.

- Use appropriate constraints (non-negativity, closure, etc.).

3. Analyzing the Unknown Sample

- Obtain the spectrum of the unknown sample, $d_u$.

- Use the MCR-BANDS algorithm to estimate the extent of rotational ambiguity and check for the presence of unexpected components not included in the calibration model.

4. Matrix Matching and Prediction

- Spectral Matching: Regress $d_u$ against the spectral profiles $S_i$ of each calibration set to estimate a concentration vector $c_u$.

- Concentration Matching: Regress $c_u$ against the concentration profiles $C_i$ to assess similarity.

- Evaluate the fitting error between the unknown sample and each calibration model. The calibration set with the lowest fitting error is identified as the best matrix-matched set.

- Use this selected model to predict the property (e.g., concentration) of the unknown sample.
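Steps 2 and 4 (spectral matching only) can be sketched as follows. This is a simplified illustration, not the MCR-ALS Toolbox: non-negativity is enforced by clipping negative values to zero after each unconstrained least-squares step, the convergence test on the relative lack of fit is an assumption, and the concentration-matching and MCR-BANDS checks are omitted. Function names are hypothetical.

```python
import numpy as np


def mcr_als(D, S_init, max_iter=500, tol=1e-12):
    """Minimal MCR-ALS for D ≈ C @ S.T (D: samples x wavelengths, S: wavelengths x components).

    Non-negativity is imposed by clipping after each least-squares step, a
    simplification of the non-negative least-squares solvers used in full toolboxes.
    """
    D = np.asarray(D, dtype=float)
    S = np.clip(np.asarray(S_init, dtype=float), 0.0, None)
    lof_prev = np.inf
    for _ in range(max_iter):
        C = np.clip(D @ np.linalg.pinv(S.T), 0.0, None)      # update concentration profiles
        S = np.clip((np.linalg.pinv(C) @ D).T, 0.0, None)    # update spectral profiles
        lof = np.sum((D - C @ S.T) ** 2) / np.sum(D ** 2)    # relative lack of fit
        if abs(lof_prev - lof) < tol:
            break
        lof_prev = lof
    return C, S, lof


def spectral_match(d_u, spectra_sets):
    """Regress d_u on each S_i (d_u ≈ S_i c_u); return the best index and relative residuals."""
    d_u = np.asarray(d_u, dtype=float)
    residuals = []
    for S in spectra_sets:
        c_u, *_ = np.linalg.lstsq(S, d_u, rcond=None)
        residuals.append(np.sum((d_u - S @ c_u) ** 2) / np.sum(d_u ** 2))
    return int(np.argmin(residuals)), residuals
```

Passing pure-component spectra of the standards as `S_init` is one common way to start the alternation; the calibration set whose resolved spectra leave the smallest relative residual for `d_u` is then taken as the best-matched matrix.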

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for Chemometric Analysis

| Item | Specification / Function | Application Notes |
|---|---|---|
| Analytical Standards | High-purity certified reference materials (≥98%) | Essential for building accurate calibration models; purity must be verified [2]. |
| UV-Vis Spectrophotometer | Double-beam with 1 nm bandwidth; quartz cuvettes | For acquiring high-resolution spectral data [24] [2]. |
| Chemometrics Software | MATLAB with PLS Toolbox, MCR-ALS Toolbox | Industry-standard platforms for developing and validating multivariate models [2] [23]. |
| Green Solvents | Ethanol, methanol (HPLC grade) | Used for preparing standard and sample solutions; ethanol is preferred for greenness [24]. |
| Volumetric Glassware | Class A volumetric flasks and pipettes | Critical for precise and accurate dilution and sample preparation [2]. |

Spectral overlap and matrix effects represent significant, yet surmountable, challenges in the analysis of multicomponent mixtures. The integration of advanced chemometric models—such as PLS, MCR-ALS, and ANN—into analytical protocols provides a powerful framework for overcoming these obstacles. The detailed methodologies outlined in this Application Note, from multivariate calibration development to sophisticated matrix-matching strategies, offer researchers a clear pathway to achieving accurate, reliable, and robust quantification in complex matrices. By adopting these practices, scientists can enhance the quality of analytical data, thereby supporting more confident decision-making in drug development, quality control, and broader scientific research.

Key Chemometric Methods and Real-World Pharmaceutical Applications

Multivariate calibration is an indispensable chemometric tool that enables the extraction of quantitative chemical information from complex, non-specific instrumental responses. In analytical chemistry, it serves as a powerful solution for rapid analysis of complex mixtures where physical separation of components is difficult, expensive, or time-consuming. Unlike traditional univariate methods that utilize only a single measured variable (e.g., absorbance at one wavelength), multivariate calibration leverages multiple variables simultaneously (e.g., entire spectral regions) to build predictive models for chemical or physical properties of interest.

The fundamental advantage of multivariate approaches lies in their ability to compensate for interferents mathematically and utilize virtually all relevant information contained in analytical signals. This is particularly valuable when analyzing samples with overlapping spectral features or varying matrix effects. As noted in analytical literature, "Multivariate methods are generally better than univariate methods. They increase the amount of possible information that can be obtained without loss; multivariate models can always be simplified to a univariate model" [25]. These methods have found widespread application across diverse fields including pharmaceutical analysis, food chemistry, clinical diagnostics, environmental monitoring, and industrial process control [26] [27].

The core mathematical framework of multivariate calibration encompasses both inverse and direct calibration approaches. Inverse calibration methods, which form the basis of most modern applications, establish a relationship between multivariate measurements and analyte concentrations without requiring explicit knowledge of all interfering components [27]. This tutorial focuses on two foundational inverse calibration techniques: Principal Component Regression (PCR) and Partial Least Squares (PLS) regression, detailing their theoretical foundations, practical implementation, and applications in analytical chemistry.

Theoretical Foundations

Principal Component Regression (PCR)

Principal Component Regression is a two-step multivariate calibration method that combines Principal Component Analysis (PCA) with conventional least squares regression. The first step involves PCA, an unsupervised dimensionality reduction technique that transforms the original correlated variables into a new set of uncorrelated variables called principal components (PCs). These PCs are linear combinations of the original variables and are calculated to successively capture the maximum variance present in the data matrix X (e.g., spectral measurements) [25] [27].

In mathematical terms, PCA decomposes the mean-centered data matrix X as $X = TP^T + E$, where $T$ contains the scores (projections of samples onto the PCs), $P$ contains the loadings (directions of maximum variance), and $E$ represents the residual matrix. The scores represent the coordinates of the samples in the new PC space, while the loadings indicate the contribution of each original variable to the principal components.

The second step of PCR employs a subset of the calculated PCs as independent variables in a multiple linear regression model to predict the dependent variable y (analyte concentration or property): $y = Tb + e$, where $b$ contains the regression coefficients and $e$ represents the error term. A key consideration in PCR is determining the optimal number of PCs to retain in the model: enough to capture important variance patterns, but not so many as to incorporate noise or irrelevant variance [25].
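The two PCR steps map directly onto a few lines of NumPy. A minimal sketch with hypothetical function names, assuming a single response `y` and computing the PCA through a singular value decomposition of the centered X (equivalent to the eigendecomposition of its covariance matrix):

```python
import numpy as np


def pcr_fit(X, y, n_components):
    """Principal component regression: PCA on centered X, then least squares of y on the scores."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    P = Vt[:n_components].T                               # loadings of the retained PCs
    T = Xc @ P                                            # scores: X = T P^T + E
    b, *_ = np.linalg.lstsq(T, y - y_mean, rcond=None)    # y = T b + e
    coef = P @ b                                          # same model on the original variables
    return coef, x_mean, y_mean


def pcr_predict(model, X):
    """Predict y for new rows of X with a model returned by pcr_fit."""
    coef, x_mean, y_mean = model
    return (np.asarray(X, dtype=float) - x_mean) @ coef + y_mean
```

Folding the loadings into `coef = P @ b` lets new spectra be predicted directly; with as many components as (linearly independent) variables, PCR reduces to ordinary least squares.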

Partial Least Squares (PLS) Regression

Partial Least Squares regression is a supervised multivariate calibration method that, unlike PCR, considers the relationship between the X-block (instrumental measurements) and y-block (concentrations or properties) during the dimensionality reduction process. While PCR focuses solely on capturing maximum variance in X, PLS seeks directions in the X-space that simultaneously explain variance in X and correlate with y [28] [29].

The PLS algorithm decomposes both the X and y matrices, $X = TP^T + E$ and $y = UQ^T + F$, with the additional constraint that the relationship between the X-scores ($T$) and the y-scores ($U$) is maximized. This is achieved through an inner relation, $U = TD + H$, where $D$ is a diagonal matrix of weights and $H$ is the residual matrix.

The supervised nature of PLS often makes it more efficient than PCR for prediction, particularly when the predictive components do not coincide with directions of high variance in X. Because the PLS transformation is supervised, it does not share PCR's risk of discarding low-variance directions that are nevertheless predictive of y [28]. As a result, PLS frequently achieves comparable or better predictive performance with fewer latent variables than PCR.
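For a single response (PLS1), the inner relation reduces to one scalar regression of y on each X-score, and the model can be computed with the NIPALS algorithm. The sketch below is a minimal NIPALS PLS1 under that assumption (function names hypothetical); it does not handle multiple responses (PLS2) or variable scaling.

```python
import numpy as np


def pls1_fit(X, y, n_components):
    """PLS1 regression (single y) with the NIPALS algorithm and deflation of X and y."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr                  # weight vector: direction of maximum covariance with y
        w /= np.linalg.norm(w)
        t = Xr @ w                     # X-scores
        tt = t @ t
        p = Xr.T @ t / tt              # X-loadings
        qa = (yr @ t) / tt             # y-loading (inner regression of y on t)
        Xr = Xr - np.outer(t, p)       # deflate X
        yr = yr - qa * t               # deflate y
        W.append(w)
        P.append(p)
        q.append(qa)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)   # b = W (P^T W)^-1 q
    return coef, x_mean, y_mean


def pls1_predict(model, X):
    """Predict y for new rows of X with a model returned by pls1_fit."""
    coef, x_mean, y_mean = model
    return (np.asarray(X, dtype=float) - x_mean) @ coef + y_mean
```

The regression vector `coef` expresses the fitted model in terms of the original variables, so predictions need only centering and a dot product.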

Comparative Analysis: PCR vs. PLS

The theoretical relationship between PCR and PLS has been extensively discussed in the chemometrics literature. Both methods employ latent variable-based decomposition approaches but differ fundamentally in their objective functions. While PCR focuses exclusively on explaining variance in the X-block, PLS specifically targets covariance between X and y [29].

Table 1: Theoretical Comparison of PCR and PLS Regression

| Characteristic | PCR | PLS |
|---|---|---|
| Objective | Maximize variance in X | Maximize covariance between X and y |
| Model approach | Unsupervised | Supervised |
| Decomposition | X only | X and y simultaneously |
| Latent variables | Principal components | Latent components |
| Efficiency | May require more components for equivalent prediction | Often achieves good prediction with fewer components |
| Noise sensitivity | More sensitive to structured noise in X | Less sensitive to irrelevant variance in X |

Historically, PLS has gained wider adoption in chemometrics, though literature surveys reveal that performance differences are often application-dependent. "While there were a few cases which indicated that PLS gave better results than PCR, a greater number of studies indicated no real difference in performance" [29]. The choice between methods should consider specific data characteristics, including the presence of interfering components, noise structure, and the relationship between predictive components and variance structure in X.

Experimental Protocols

Sample Preparation and Experimental Design

Proper experimental design and sample preparation are critical for developing robust multivariate calibration models. The calibration set should adequately represent the expected variability in future samples, including variations in analyte concentration, matrix composition, and potential interferents. For pharmaceutical applications, this may include deliberate variations in excipient ratios, particle size distributions, and manufacturing parameters that might affect spectral measurements [30].

When designing calibration experiments, include a blank sample (zero analyte concentration) to better characterize the low concentration region and detection capabilities. The concentration range should cover the expected analytical scope with sufficient levels to properly model possible non-linearities. "The set of concentrations designed for calibration should include the blank. The sample with zero analyte concentration allows one to gain better insight into the region of low analyte concentrations and detection capabilities" [31].

Appropriate reference method validation is essential, as the accuracy of multivariate calibration models cannot exceed the accuracy of the reference method used for calibration development. For pharmaceutical applications, this typically involves validated HPLC or UV-Vis methods with demonstrated specificity, accuracy, and precision for the target analytes [30].

Data Collection and Preprocessing

Spectroscopic data collection for multivariate calibration should be performed using well-characterized and properly calibrated instrumentation. For NIR applications, spectra are typically collected in the 1100-2500 nm range, capturing relevant overtone and combination bands [30]. Consistent sample presentation is crucial, particularly for diffuse reflectance measurements, where variations in particle size, packing density, or physical form can introduce significant light scattering effects.

Data preprocessing is an essential step to minimize non-chemical sources of variance and enhance the signal-to-noise ratio. Common preprocessing techniques include:

- Standard Normal Variate (SNV): Corrects for multiplicative scatter effects by centering and scaling each individual spectrum [32].

- Multiplicative Scatter Correction (MSC): Compensates for additive and multiplicative scattering effects by regressing each spectrum against a reference spectrum [32].

- Savitzky-Golay Smoothing and Derivatives: Reduces high-frequency noise while preserving spectral features; derivatives also help remove baseline offsets [30].

- Detrending: Removes non-linear baseline variations by subtracting polynomial fits from spectra [32].

The selection of optimal preprocessing methods should be guided by the specific data characteristics and validated through model performance metrics [30].
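SNV and MSC are short enough to write directly in NumPy; the sketch below follows their usual definitions, with hypothetical function names. For Savitzky-Golay smoothing and derivatives, SciPy provides `scipy.signal.savgol_filter(x, window_length, polyorder, deriv=...)`.

```python
import numpy as np


def snv(X):
    """Standard normal variate: center and scale each spectrum (row) by its own mean and SD."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=1, keepdims=True)
    sd = X.std(axis=1, ddof=1, keepdims=True)
    return (X - mean) / sd


def msc(X, reference=None):
    """Multiplicative scatter correction against a reference spectrum (default: mean spectrum)."""
    X = np.asarray(X, dtype=float)
    ref = X.mean(axis=0) if reference is None else np.asarray(reference, dtype=float)
    corrected = np.empty_like(X)
    for i, x in enumerate(X):
        slope, intercept = np.polyfit(ref, x, deg=1)   # fit x ≈ intercept + slope * ref
        corrected[i] = (x - intercept) / slope          # remove additive and multiplicative parts
    return corrected
```

In MSC the reference defaults to the mean spectrum of the set being corrected; when new samples are corrected later, the calibration-set reference should be passed explicitly so that both sets share the same reference.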

Model Development Workflow

The following workflow outlines the systematic development of PCR and PLS calibration models:

Diagram 1: Multivariate Calibration Model Development Workflow

A critical step in model development is the proper division of samples into calibration and validation sets. The Kennard-Stone algorithm is commonly employed for this purpose, as it selects a representative subset of samples that uniformly span the experimental space [30]. For the calibration set, "a number N of well selected samples, sufficient to explore the variability of the chemical systems on which the regression model has to be applied; N must be sufficient also to evaluate the accuracy of the model" [27].
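The Kennard-Stone selection can be sketched as follows: start with the two samples farthest apart, then repeatedly add the sample whose distance to its nearest already-selected sample is largest. This minimal illustration uses Euclidean distances in the original variable space (some implementations work on PCA scores or Mahalanobis distances instead); the function name is hypothetical, and the full distance matrix makes it suitable only for modest sample counts.

```python
import numpy as np


def kennard_stone(X, n_select):
    """Return indices of n_select samples chosen by the Kennard-Stone max-min rule."""
    X = np.asarray(X, dtype=float)
    # Full Euclidean distance matrix between samples
    D = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1))
    i, j = np.unravel_index(np.argmax(D), D.shape)   # two most distant samples
    selected = [int(i), int(j)]
    remaining = [k for k in range(len(X)) if k not in selected]
    while len(selected) < n_select and remaining:
        # distance of each remaining sample to its closest already-selected sample
        nearest = D[np.ix_(remaining, selected)].min(axis=1)
        pick = remaining[int(np.argmax(nearest))]
        selected.append(pick)
        remaining.remove(pick)
    return selected[:n_select]


# Example with a hypothetical spectral matrix X:
# cal_idx = kennard_stone(X, n_select=20)    # calibration set
# val_idx = sorted(set(range(len(X))) - set(cal_idx))   # remaining samples for validation
```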

Model Optimization and Validation

Optimizing the number of latent variables (principal components in PCR or latent vectors in PLS) is crucial for building robust models. Insufficient components result in underfitting and poor predictive ability, while too many components lead to overfitting and reduced model robustness. Cross-validation techniques, such as leave-one-out or venetian blinds, are commonly used for this purpose [30].
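Leave-one-out selection of the number of components can be sketched as below. PCR is used for brevity because it needs only an SVD; the same loop applies to PLS with a PLS fit in place of the PCR step. Function and variable names are hypothetical.

```python
import numpy as np


def loo_rmsecv(X, y, max_components):
    """Leave-one-out RMSECV of PCR models with 1..max_components components."""
    X, y = np.asarray(X, dtype=float), np.asarray(y, dtype=float)
    n = len(y)
    press = np.zeros(max_components)          # predicted residual sum of squares per model size
    for i in range(n):
        train = np.arange(n) != i             # leave sample i out
        Xt, yt = X[train], y[train]
        x_mean, y_mean = Xt.mean(axis=0), yt.mean()
        Xc = Xt - x_mean
        _, _, Vt = np.linalg.svd(Xc, full_matrices=False)   # PCA of the training fold
        for k in range(1, max_components + 1):
            P = Vt[:k].T
            b, *_ = np.linalg.lstsq(Xc @ P, yt - y_mean, rcond=None)
            y_hat = (X[i] - x_mean) @ (P @ b) + y_mean        # predict the left-out sample
            press[k - 1] += (y_hat - y[i]) ** 2
    return np.sqrt(press / n)


# Example with hypothetical calibration data X_cal, y_cal:
# rmsecv = loo_rmsecv(X_cal, y_cal, max_components=8)
# n_opt = int(np.argmin(rmsecv)) + 1
```

Taking the global minimum of RMSECV is the simplest rule; many practitioners instead choose the smallest number of components whose RMSECV is not meaningfully larger than the minimum, to reduce the risk of overfitting.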

Multiple performance metrics should be considered during model optimization and validation:

- Root Mean Square Error of Calibration (RMSEC): Measures fit to the calibration data.

- Root Mean Square Error of Cross-Validation (RMSECV): Assesses internal prediction performance.

- Root Mean Square Error of Prediction (RMSEP): Evaluates performance on an independent validation set.

- Coefficient of Determination (R²): Quantifies the proportion of variance explained by the model.

- Ratio of Performance to Deviation (RPD): Compares the standard deviation of the reference data to the standard error of prediction [30].

Table 2: Model Performance Metrics and Interpretation Guidelines

| Metric | Calculation | Interpretation |
|---|---|---|
| RMSEC | $\sqrt{\frac{\sum_{i=1}^{n}(\hat{y}_i-y_i)^2}{n}}$ | Should not be significantly lower than RMSEP |
| RMSEP | $\sqrt{\frac{\sum_{i=1}^{m}(\hat{y}_i-y_i)^2}{m}}$ | Primary indicator of prediction accuracy |
| R² | $1-\frac{\sum_{i=1}^{n}(y_i-\hat{y}_i)^2}{\sum_{i=1}^{n}(y_i-\bar{y})^2}$ | Closer to 1.0 indicates better model fit |
| RPD | $\frac{SD}{RMSEP}$ | >2.0: Fair; >3.0: Good; >4.0: Excellent |
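The formulas in Table 2 translate directly into NumPy, and percent recovery (used in the quaternary-mixture protocol) is the predicted value expressed as a percentage of the reference value. A minimal sketch with hypothetical function names and toy numbers: the RMSE helper divides by the number of samples as in Table 2, whereas some software applies a degrees-of-freedom correction to RMSEC, and the standard deviation in the RPD uses `ddof=1` as an assumption.

```python
import numpy as np


def rmse(y_ref, y_pred):
    """Root mean square error; RMSEC on calibration data, RMSEP on an independent validation set."""
    y_ref, y_pred = np.asarray(y_ref, dtype=float), np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_pred - y_ref) ** 2)))


def r_squared(y_ref, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    y_ref, y_pred = np.asarray(y_ref, dtype=float), np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_ref - y_pred) ** 2)
    ss_tot = np.sum((y_ref - y_ref.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def rpd(y_ref, y_pred):
    """Standard deviation of the reference values divided by RMSEP."""
    return float(np.std(np.asarray(y_ref, dtype=float), ddof=1) / rmse(y_ref, y_pred))


def percent_recovery(y_ref, y_pred):
    """Predicted value as a percentage of the reference value, per sample."""
    return 100.0 * np.asarray(y_pred, dtype=float) / np.asarray(y_ref, dtype=float)


y_ref = np.array([1.0, 2.0, 3.0, 4.0])    # toy reference concentrations
y_hat = np.array([1.1, 1.9, 3.2, 3.8])    # toy predictions
print(rmse(y_ref, y_hat), r_squared(y_ref, y_hat), rpd(y_ref, y_hat))   # about 0.158, 0.98, 8.2
print(percent_recovery(y_ref, y_hat))                                   # about [110, 95, 106.7, 95]
```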

Recent research emphasizes the importance of considering parameter interactions during optimization. Rather than optimizing preprocessing, variable selection, and latent factors sequentially, a more effective approach evaluates these parameters in combination to identify optimal modeling pathways [30].

Applications in Pharmaceutical Analysis

Multivariate calibration methods have found extensive application in pharmaceutical analysis, where they support quality-by-design (QbD) principles and process analytical technology (PAT) initiatives. Specific applications include:

Active Pharmaceutical Ingredient (API) Quantification

PLS and PCR models are widely employed for the quantification of APIs in various pharmaceutical dosage forms, including tablets, capsules, and liquids. For example, NIR spectroscopy combined with PLS has been successfully implemented for determining meloxicam in tablets, with models demonstrating sufficient accuracy and precision for quality control applications [30]. These methods enable rapid, non-destructive analysis without extensive sample preparation, making them ideal for high-throughput manufacturing environments.

Content Uniformity Testing

Content uniformity is a critical quality attribute for solid dosage forms that can be efficiently monitored using multivariate calibration approaches. Studies have demonstrated the transferability of NIR calibration models across multiple instruments from different vendors, including both dispersive and Fourier transform spectrometers [32]. This capability facilitates implementation across multiple manufacturing sites and quality control laboratories.

Polycomponent Mixture Analysis

Pharmaceutical formulations often contain multiple active components, excipients, and impurities that can be simultaneously determined using multivariate calibration methods. The ability to mathematically resolve overlapping spectral features enables quantification of individual components without physical separation. This is particularly valuable for fixed-dose combination products and complex natural product formulations, such as the determination of baicalin in Yinhuang granules using NIR spectroscopy [30].

Advanced Considerations

Calibration Transfer

A significant challenge in practical implementation of multivariate calibration models is their transferability across instruments, measurement conditions, or time. Calibration transfer techniques address this challenge by mathematically standardizing spectra between different platforms. Common approaches include:

- Piecewise Direct Standardization (PDS): Maps spectral responses from a secondary instrument to match those of a primary instrument using a transfer set measured on both instruments [32].

- Spectral Regression: Uses PLS regression to compute the relationship between spectra of transfer samples measured on different instruments [32].

- Wavelet Transform Methods: Employ wavelet compression, denoising, and multiscale analysis to improve transfer precision [32].

The selection of appropriate transfer standards is critical for successful calibration transfer, with ideal standards exhibiting chemical and physical stability with spectral features representative of the sample matrix.
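A minimal sketch of piecewise direct standardization in Python with NumPy follows; the article does not specify an implementation, so this is an assumption. For each wavelength of the primary instrument, a small window of secondary-instrument channels is regressed onto it using the transfer set, and the regression vectors are assembled into a banded transformation matrix. The window half-width (`half_window`), the singular-value cut-off (`rcond`) and the simulated "instruments" (a 0.7-channel shift, a gain of 1.1 and an offset of 0.02) are hypothetical, illustrative choices.

```python
import numpy as np

def pds_fit(X_primary, X_secondary, half_window=2, rcond=1e-3):
    """Fit a piecewise direct standardization (PDS) transform.

    X_primary, X_secondary: transfer-set spectra (n_samples x n_wavelengths)
    of the same samples measured on the primary and secondary instruments.
    Returns (F, b) such that X_secondary @ F + b approximates X_primary.
    """
    n_wl = X_primary.shape[1]
    mu_p, mu_s = X_primary.mean(axis=0), X_secondary.mean(axis=0)
    Xp, Xs = X_primary - mu_p, X_secondary - mu_s
    F = np.zeros((n_wl, n_wl))  # banded transformation matrix
    for j in range(n_wl):
        lo, hi = max(0, j - half_window), min(n_wl, j + half_window + 1)
        # Regress primary channel j on a local window of secondary channels;
        # pinv drops singular values below rcond * largest (a PCR-like step).
        F[lo:hi, j] = np.linalg.pinv(Xs[:, lo:hi], rcond=rcond) @ Xp[:, j]
    b = mu_p - mu_s @ F  # additive background correction
    return F, b

def pds_apply(X_secondary_new, F, b):
    """Map new secondary-instrument spectra into the primary instrument's space."""
    return X_secondary_new @ F + b

# Hypothetical simulation: two Gaussian pure spectra on 100 channels.
rng = np.random.default_rng(0)
wl = np.arange(100.0)
pure = np.vstack([np.exp(-((wl - 35) / 8) ** 2), np.exp(-((wl - 60) / 10) ** 2)])

def measure(C, shift=0.0, gain=1.0, offset=0.0):
    """Simulated instrument response: shifted, scaled and offset mixture spectra."""
    shifted = np.vstack([np.interp(wl + shift, wl, p) for p in pure])
    return gain * (C @ shifted) + offset

C_transfer = rng.uniform(0.2, 1.0, size=(10, 2))  # transfer set, measured on both
C_new = rng.uniform(0.2, 1.0, size=(20, 2))       # future samples, secondary only
F, b = pds_fit(measure(C_transfer), measure(C_transfer, 0.7, 1.1, 0.02))

X_sec_new = measure(C_new, 0.7, 1.1, 0.02)
err_raw = np.abs(X_sec_new - measure(C_new)).max()
err_pds = np.abs(pds_apply(X_sec_new, F, b) - measure(C_new)).max()
print(f"max error before PDS: {err_raw:.4f}, after PDS: {err_pds:.4f}")
```

The windowed regression is what distinguishes PDS from full direct standardization: each primary channel depends only on nearby secondary channels, which keeps the number of coefficients per regression small enough for a modest transfer set.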

Handling Heteroscedastic Data

Traditional multivariate calibration methods assume homoscedastic measurement errors (constant variance across the analytical range). However, real-world analytical data often exhibit heteroscedasticity (varying error variance), which can adversely affect model performance. Modified approaches, such as Heteroscedastic PCR (H-PCR), explicitly account for variations in the measurement error covariance matrix across different experimental conditions [33].

"For this reason, the present work describes a new numerical procedure for analyses of heteroscedastic systems (heteroscedastic principal component regression or H-PCR) that takes into consideration the variations of the covariance matrix of measurement fluctuations" [33]. These advanced methods are particularly relevant for process analytical applications where measurement conditions may vary systematically.

Integration with Artificial Intelligence

Recent advances in artificial intelligence (AI) and machine learning are expanding the capabilities of traditional multivariate calibration approaches. AI techniques complement PCR and PLS by enabling automated feature extraction, non-linear calibration, and enhanced pattern recognition [34].

Key AI concepts relevant to multivariate calibration include:

- Machine Learning (ML): Develops models capable of learning from data without explicit programming, including supervised, unsupervised, and reinforcement learning paradigms.

- Deep Learning (DL): Employs multi-layered neural networks for hierarchical feature extraction, particularly valuable for processing unstructured spectral data.

- Generative AI (GenAI): Creates synthetic spectral data to balance datasets, enhance calibration robustness, or simulate missing measurements [34].

The convergence of traditional chemometrics and AI is extending both the degree of automation and the predictive power available for multivariate calibration in spectroscopic analysis.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for Multivariate Calibration Studies

| Item | Function | Application Notes |
|---|---|---|
| Standard Reference Materials | Calibration model development and validation | Certified purity, representative of sample matrix |
| Chemical Standards | Preparation of calibration mixtures | High purity, well-characterized spectral properties |
| Sample Cells/Cuvettes | Containment during spectral measurement | Consistent pathlength, appropriate window material |
| Spectrophotometer | Spectral data acquisition | Proper calibration and performance verification |
| Chemometrics Software | Model development and validation | MATLAB, SIMCA, Unscrambler, PLS_Toolbox, etc. |
| Validation Samples | Independent model assessment | Not used in calibration, representative of future samples |

Principal Component Regression and Partial Least Squares regression represent powerful chemometric tools for extracting quantitative information from complex analytical data. While both methods employ latent variable approaches, their fundamental differences in objective function (variance explanation vs. covariance maximization) lead to distinct performance characteristics across different application scenarios.
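To make the difference in objective functions concrete, the following NumPy sketch (an illustration under stated assumptions, not a reference implementation) fits one-component PCR and PLS1 models to a simulated two-component mixture in which an interferent varies far more than the analyte. PCR picks its component from the variance of X alone, whereas the textbook NIPALS PLS1 weight vector is proportional to X^T y, the covariance of each wavelength with the analyte concentration. The spectra, concentration ranges and 5 x 5 factorial calibration design are hypothetical.

```python
import numpy as np

def pcr_fit(X, y, n_components):
    """PCR: regress y on scores of the highest-variance principal components of X."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    _, _, Vt = np.linalg.svd(X - x_mean, full_matrices=False)
    P = Vt[:n_components].T  # loadings chosen without looking at y
    q = np.linalg.lstsq((X - x_mean) @ P, y - y_mean, rcond=None)[0]
    return x_mean, y_mean, P @ q

def pls1_fit(X, y, n_components):
    """PLS1 via NIPALS: each weight vector maximizes covariance of X scores with y."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    E, f = X - x_mean, y - y_mean
    W, P, Q = [], [], []
    for _ in range(n_components):
        w = E.T @ f
        w = w / np.linalg.norm(w)  # direction of maximal covariance with y
        t = E @ w                  # scores
        tt = t @ t
        p = E.T @ t / tt           # X loadings
        q = (f @ t) / tt           # y loading
        E = E - np.outer(t, p)     # deflate X
        f = f - q * t              # deflate y
        W.append(w); P.append(p); Q.append(q)
    W, P = np.array(W).T, np.array(P).T
    beta = W @ np.linalg.solve(P.T @ W, np.array(Q))
    return x_mean, y_mean, beta

def predict(model, X):
    x_mean, y_mean, beta = model
    return (X - x_mean) @ beta + y_mean

rng = np.random.default_rng(3)
wl = np.arange(100.0)
S = np.vstack([np.exp(-((wl - 40) / 8) ** 2),   # analyte
               np.exp(-((wl - 60) / 8) ** 2)])  # interferent

def spectra(C):
    return C @ S + rng.normal(scale=1e-3, size=(C.shape[0], wl.size))

levels = np.linspace(0, 1, 5)
ca, ci = np.meshgrid(levels, 5 * levels)
C_cal = np.column_stack([ca.ravel(), ci.ravel()])  # 5 x 5 factorial, 25 mixtures
C_val = np.column_stack([rng.uniform(0, 1, 30), rng.uniform(0, 5, 30)])
X_cal, X_val = spectra(C_cal), spectra(C_val)

rmsep_1lv = {}
for name, fit in (("PCR", pcr_fit), ("PLS", pls1_fit)):
    pred = predict(fit(X_cal, C_cal[:, 0], 1), X_val)
    rmsep_1lv[name] = float(np.sqrt(np.mean((pred - C_val[:, 0]) ** 2)))
print(rmsep_1lv)
```

With one latent variable, PCR models the interferent and predicts the analyte poorly, while PLS1 already captures most of the analyte signal; with two latent variables both methods would span the same two-component space.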

The successful implementation of multivariate calibration models requires careful attention to experimental design, data preprocessing, model optimization, and validation. Performance should be assessed using multiple metrics, including RMSEP, R², and RPD, with independent validation being essential for demonstrating real-world predictive capability.
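The three figures of merit named above can be computed as in the following sketch, which uses hypothetical validation values. RPD is defined here as the standard deviation of the reference values divided by RMSEP; some authors divide by the bias-corrected standard error of prediction (SEP) instead.

```python
import numpy as np

def rmsep(y_ref, y_pred):
    """Root mean square error of prediction, in the units of y."""
    y_ref, y_pred = np.asarray(y_ref, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_pred - y_ref) ** 2)))

def r_squared(y_ref, y_pred):
    """Coefficient of determination of predictions against reference values."""
    y_ref, y_pred = np.asarray(y_ref, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_ref - y_pred) ** 2)
    ss_tot = np.sum((y_ref - y_ref.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

def rpd(y_ref, y_pred):
    """Ratio of performance to deviation: SD of reference values / RMSEP."""
    return float(np.std(np.asarray(y_ref, float), ddof=1) / rmsep(y_ref, y_pred))

# Hypothetical validation results (reference vs predicted, ug/mL)
y_ref = [4.0, 8.0, 12.0, 16.0, 20.0]
y_pred = [4.1, 7.9, 12.2, 15.8, 20.0]
print(round(rmsep(y_ref, y_pred), 4), round(r_squared(y_ref, y_pred), 6), round(rpd(y_ref, y_pred), 2))
# 0.1414 0.999375 44.72
```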

Emerging trends, including advanced calibration transfer methods, heteroscedastic data handling approaches, and AI integration, continue to expand the application scope and robustness of multivariate calibration in pharmaceutical analysis and other fields. These developments support the continued adoption of multivariate approaches as standard analytical tools in research, development, and quality control environments.

Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) is a powerful chemometric method designed to solve the mixture analysis problem, where measured responses originate from multiple underlying sources or components. The methodology describes the total signal of a multicomponent dataset as the sum of the signal contributions from each of the individual constituents present [10]. MCR-ALS operates under a bilinear model based on the Beer-Lambert law, making it particularly suitable for analyzing spectroscopic data from chemical and biological systems [35].

The fundamental model can be expressed in matrix form as:

D = CS^T + E

Where D is the original data matrix of mixed measurements, C is the matrix of concentration profiles, S^T is the matrix of pure response profiles (such as spectra), and E contains the variation unexplained by the model [35] [10]. This formulation allows MCR-ALS to extract chemically meaningful information about pure components from measurements of their mixtures without prior knowledge of their identities or concentrations [36].
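The bilinear model can be illustrated by simulating a small data set. In this sketch (hypothetical Gaussian pure spectra for three components, 25 mixtures, 81 wavelengths from 220 to 300 nm) the singular values of D fall to the noise level after the third one, which is one common way of choosing the number of components before running MCR-ALS. The 0.1 threshold is an assumption suited only to this simulated noise level.

```python
import numpy as np

rng = np.random.default_rng(2)
wl = np.arange(220, 301)  # 81 wavelengths, 220-300 nm
# Hypothetical pure spectra: columns of S, one Gaussian band per component
S = np.column_stack([np.exp(-((wl - c) / 12.0) ** 2) for c in (240, 260, 280)])
C = rng.uniform(0, 1, size=(25, 3))             # concentration profiles, 25 mixtures
E = rng.normal(scale=1e-3, size=(25, wl.size))  # unexplained variation
D = C @ S.T + E                                 # D = C S^T + E

sv = np.linalg.svd(D, compute_uv=False)
# Singular values well above the noise floor indicate chemical components;
# the 0.1 cut-off is an assumption for this simulated noise level.
n_components = int(np.sum(sv > 0.1))
print(D.shape, np.round(sv[:5], 4), n_components)
```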

Theoretical Foundation and Algorithm

Core Algorithm and Optimization

The MCR-ALS algorithm solves the bilinear model through an iterative optimization process that alternates between estimating concentration profiles and pure spectra while applying relevant constraints [35]. The algorithm begins with initial estimates of either spectral or concentration profiles, then proceeds with alternating least squares steps:

- Initialize: Provide initial estimates for either C or S^T

- Solve for S^T: Given the current estimate of C, solve for S^T using least squares

- Apply constraints to S^T (non-negativity, normalization, etc.)

- Solve for C: Given constrained S^T, solve for C using least squares

- Apply constraints to C (non-negativity, closure, selectivity, etc.)

- Check convergence: Evaluate the fit and repeat the solve-and-constrain steps for S^T and C until convergence [35]

The general minimization in the iterative optimization can be expressed as:

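min ‖D - CS^T‖ over C and S^T, subject to the applied constraints, where ‖·‖ is the Frobenius norm (the square root of the sum of squared residuals)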

This process continues until the model satisfactorily reproduces the original data, typically determined by reaching a stable value of explained variance [35].
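The alternating steps listed above can be sketched in NumPy. This is a minimal illustration, not the MCR-ALS Toolbox algorithm: non-negativity is imposed by clipping unconstrained least-squares solutions (dedicated software typically uses non-negative least-squares solvers), spectra are normalized to unit length to remove the scale ambiguity, and iteration stops when the residual standard deviation changes by less than 0.1% between iterations. The simulated data and the initial estimate (true spectra plus a random baseline, available only in a simulation; purest-variable or evolving factor analysis estimates are common in practice) are assumptions.

```python
import numpy as np

def mcr_als(D, St_init, max_iter=500, tol=1e-3):
    """Minimal MCR-ALS sketch with non-negativity on C and S^T.

    D: data matrix (n_samples x n_wavelengths).
    St_init: initial estimate of S^T (n_components x n_wavelengths).
    Returns C, S^T, the final residual SD and the number of iterations.
    """
    St = np.clip(St_init, 0, None)
    sd_old = np.inf
    for it in range(1, max_iter + 1):
        C = np.clip(D @ np.linalg.pinv(St), 0, None)  # solve for C, then constrain
        St = np.clip(np.linalg.pinv(C) @ D, 0, None)  # solve for S^T, then constrain
        # Unit-length spectra; rescale C so that C @ St is unchanged
        norms = np.maximum(np.linalg.norm(St, axis=1, keepdims=True), 1e-12)
        St, C = St / norms, C * norms.T
        sd = np.sqrt(np.mean((D - C @ St) ** 2))  # residual standard deviation
        if abs(sd_old - sd) / sd < tol:           # relative change below 0.1 %
            break
        sd_old = sd
    return C, St, sd, it

# Hypothetical three-component data set, 25 mixtures x 81 wavelengths
rng = np.random.default_rng(4)
wl = np.arange(220, 301)
S_true = np.vstack([np.exp(-((wl - c) / 12.0) ** 2) for c in (240, 260, 280)])  # S^T
C_true = rng.uniform(0, 1, size=(25, 3))
D = C_true @ S_true + rng.normal(scale=1e-3, size=(25, wl.size))

St0 = S_true + 0.05 * rng.uniform(size=S_true.shape)  # perturbed initial estimate
C, St, sd, n_iter = mcr_als(D, St0)
corr = [np.corrcoef(St[k], S_true[k])[0, 1] for k in range(3)]
print(f"iterations: {n_iter}, residual SD: {sd:.2e}, spectral correlations: {np.round(corr, 4)}")
```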

Addressing Ambiguity Through Constraints

A fundamental challenge in MCR is rotational ambiguity, where different sets of profiles can reproduce the original data with similar fit quality [10]. MCR-ALS addresses this through the strategic application of constraints based on known chemical or mathematical properties of the system:

Table: Common Constraints in MCR-ALS Analysis

| Constraint Type | Mathematical Expression | Chemical Property Enforced |
|---|---|---|
| Non-negativity | C ≥ 0, S^T ≥ 0 | Concentrations and spectral intensities cannot be negative |
| Unimodality | Single maximum in concentration profiles | Chromatographic elution profiles |
| Closure | Sum of concentrations constant | Mass balance in closed systems |
| Selectivity | Known pure spectra or concentrations | Specific components identified in certain regions |
| Hard-modeling | Profiles follow kinetic models | Concentration profiles obey known rate laws |

Proper application of constraints not only decreases ambiguity but also provides chemical meaning to the resolved profiles [10]. The choice of constraints depends on the specific analytical context and available prior knowledge about the system.
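Several constraints in the table can be written as small projection functions, and the rotational ambiguity they address can be checked numerically. In this sketch (function names and matrices are hypothetical) an invertible matrix T yields profiles C T and T^-1 S^T that reproduce the data exactly as well as the originals, yet the rotated concentrations contain a negative value, so the non-negativity constraint rules that solution out. The unimodality function is one simple variant among several used in practice.

```python
import numpy as np

def non_negativity(M):
    """Set negative concentrations or intensities to zero."""
    return np.clip(M, 0, None)

def closure(C, total=1.0):
    """Rescale each row (sample) of C so its concentrations sum to `total`."""
    return C * (total / C.sum(axis=1, keepdims=True))

def unimodality(profile):
    """Force a single maximum: values may not rise again moving away from the peak."""
    p = np.array(profile, dtype=float)
    k = int(np.argmax(p))
    for i in range(k - 1, -1, -1):  # walk left from the peak
        p[i] = min(p[i], p[i + 1])
    for i in range(k + 1, p.size):  # walk right from the peak
        p[i] = min(p[i], p[i - 1])
    return p

# Rotational ambiguity: for any invertible T, (C T)(T^-1 S^T) equals C S^T.
C = np.array([[1.0, 0.2], [0.5, 1.0], [0.0, 0.8]])
St = np.array([[0.1, 0.6, 1.0, 0.4, 0.0], [0.0, 0.2, 0.5, 0.9, 0.3]])
T = np.array([[1.0, -0.5], [0.0, 1.0]])
C_rot, St_rot = C @ T, np.linalg.inv(T) @ St
same_fit = np.allclose(C @ St, C_rot @ St_rot)
violates_nonneg = bool((C_rot < 0).any() or (St_rot < 0).any())
print(same_fit, violates_nonneg)           # True True
print(unimodality([0, 2, 1, 3, 1, 2, 0]))  # [0. 1. 1. 3. 1. 1. 0.]
print(closure(np.array([[1.0, 3.0], [2.0, 2.0]])))
```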

Experimental Protocols and Applications

Protocol 1: Pharmaceutical Formulation Analysis

Recent research demonstrates the application of MCR-ALS for analyzing complex pharmaceutical formulations, offering a green alternative to chromatographic methods [2].

Materials and Equipment

Table: Essential Research Reagents and Equipment

| Item | Specification | Function/Purpose |
|---|---|---|
| UV-Vis Spectrophotometer | Shimadzu 1605 or equivalent | Spectral data acquisition |
| Quartz Cells | 1.00 cm path length | Hold samples for measurement |
| MATLAB Software | Version R2014a or newer | Data processing and algorithm implementation |
| MCR-ALS Toolbox | Available at www.mcrals.info | Core algorithm execution |
| Paracetamol Standard | Pharmaceutical grade | Target analyte quantification |
| Methanol | HPLC grade | Solvent for standard and sample preparation |

Step-by-Step Procedure

Standard Solution Preparation: Prepare individual stock solutions (1 mg/mL) of each analyte in methanol. For Paracetamol, Chlorpheniramine maleate, Caffeine, and Ascorbic acid, weigh 100 mg of each drug into separate 100 mL volumetric flasks, dissolve in methanol, and dilute to volume [2].

Calibration Set Design: Construct a multilevel, multifactor calibration design. For a four-component system, prepare 25 mixtures containing varying concentrations of each analyte within their linear ranges (e.g., 4-20 μg/mL for Paracetamol) [2].
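A 25-mixture, five-level design of this kind can be generated from an orthogonal array. The sketch below builds one standard construction over the integers modulo 5; it is not necessarily the specific design used in [2]. The mapping from coded levels to concentrations assumes equally spaced levels within the concentration ranges reported later in this article for the same four analytes.

```python
import numpy as np

def orthogonal_design_25(n_factors):
    """25-run, five-level orthogonal array over GF(5), levels coded 0-4.

    Columns are i, j, i+j, i+2j, i+3j, i+4j (mod 5): every pair of columns
    contains each combination of levels exactly once, for up to 6 factors.
    """
    if not 1 <= n_factors <= 6:
        raise ValueError("this construction supports 1 to 6 factors")
    i, j = np.divmod(np.arange(25), 5)
    columns = [i, j] + [(i + k * j) % 5 for k in range(1, 5)]
    return np.column_stack(columns[:n_factors])

# Concentration ranges in μg/mL for the four-component example (assumed equally spaced levels)
ranges = {"Paracetamol": (4.0, 20.0), "Chlorpheniramine": (1.0, 9.0),
          "Caffeine": (2.5, 7.5), "Ascorbic acid": (3.0, 15.0)}
levels = orthogonal_design_25(len(ranges))
low = np.array([r[0] for r in ranges.values()])
high = np.array([r[1] for r in ranges.values()])
concentrations = low + levels / 4.0 * (high - low)  # 25 mixtures x 4 analytes
print(concentrations[:3])
```

Because every pair of factors is balanced, no two analyte concentrations are correlated across the calibration set, which helps the model separate their contributions.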

Spectral Acquisition: Measure absorption spectra from 200-400 nm at 1 nm intervals. Export the spectral data between 220-300 nm (81 data points) to MATLAB for analysis [2].

Data Preprocessing: Mean-center the spectral data before MCR-ALS model construction to enhance the performance of the algorithm [2].

Model Development: Apply non-negativity constraints to both concentration and spectral profiles. Set appropriate convergence criteria (typically 0.1% change in residual standard deviation) and maximum iteration count [2].

Model Validation: Use an independent validation set with known concentrations to assess prediction accuracy through recovery percentages and root mean square error of prediction [2].

This protocol has been successfully applied to analyze Grippostad C capsules, demonstrating its practical utility for pharmaceutical quality control [2].

Protocol 2: Beta-Blocker Determination in Formulated Products

MCR-ALS has been effectively implemented for the simultaneous determination of multiple beta-blockers in pharmaceutical products, addressing the need for environmentally friendly analytical methods [37].

Materials and Equipment

- UV-1800 Shimadzu double-beam spectrophotometer

- MCR-ALS GUI 2.0 software with MATLAB 2015a

- Beta-blocker standards: Metoprolol, Atenolol, Bisoprolol, Sotalol HCl

- 0.1N HCl in water as solvent

Step-by-Step Procedure

Stock Solution Preparation: Prepare individual stock solutions (1 mg/mL) of each beta-blocker in methanol. Store solutions at 4°C when not in use [37].

Experimental Design: Implement a five-factor, five-level orthogonal design for the calibration set (25 samples). Concentration ranges should cover the expected sample concentrations, for example 4-14 μg/mL for Metoprolol, 2.5-10.5 μg/mL for Atenolol, and 0.5-4.5 μg/mL for Bisoprolol [37].

Spectra Collection: Acquire UV spectra from 200-400 nm at a scanning speed of 2800 nm/min with 1 nm bandwidth. Use 0.1N HCl as solvent for all measurements [37].

MCR-ALS Implementation: Execute the MCR-ALS algorithm with non-negativity constraints applied to both concentration and spectral profiles. Allow the algorithm to iterate until convergence criteria are met [37].

Quantitative Analysis: Use the resolved concentration profiles for quantification. Compare results with PLSR models to validate method performance [37].
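Because MCR-ALS resolves concentration profiles only up to a scale factor, quantification usually requires relating the resolved values for the calibration samples to their known concentrations. The following is a minimal sketch, assuming a straight-line calibration fitted with `np.polyfit`; the scale factor 0.37 and all concentrations are hypothetical. Recovery is computed as found/nominal x 100.

```python
import numpy as np

def calibrate(resolved_cal, known_cal):
    """Least-squares line from resolved MCR values (arbitrary scale) to concentration."""
    slope, intercept = np.polyfit(resolved_cal, known_cal, 1)
    return slope, intercept

def quantify(resolved, slope, intercept):
    return slope * np.asarray(resolved, dtype=float) + intercept

def recovery_percent(found, nominal):
    return 100.0 * np.asarray(found, dtype=float) / np.asarray(nominal, dtype=float)

# Hypothetical resolved Metoprolol values: the resolved profile equals the true
# concentration times an arbitrary scale factor (0.37 here).
known_cal = np.array([4.0, 6.5, 9.0, 11.5, 14.0])  # μg/mL
resolved_cal = 0.37 * known_cal
slope, intercept = calibrate(resolved_cal, known_cal)
found = quantify(0.37 * np.array([5.0, 10.0]), slope, intercept)
print(found, recovery_percent(found, [5.0, 10.0]))
```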

This green methodology reduces solvent consumption and analysis time compared to traditional HPLC methods, making it suitable for routine quality control applications [37].


Performance Assessment and Greenness Evaluation

Quantitative Performance in Pharmaceutical Analysis

MCR-ALS has demonstrated excellent performance in quantitative pharmaceutical analysis, as evidenced by recent studies:

Table: Performance Metrics of MCR-ALS in Pharmaceutical Applications

| Application | Analytes | Concentration Range (μg/mL) | Recovery (%) | RMSEP (μg/mL) | Reference |
|---|---|---|---|---|---|
| Cold medication formulation | Paracetamol | 4.00-20.00 | 98.5-101.2 | <0.45 | [2] |
| Cold medication formulation | Chlorpheniramine | 1.00-9.00 | 99.1-100.8 | <0.35 | [2] |
| Cold medication formulation | Caffeine | 2.50-7.50 | 99.3-101.5 | <0.25 | [2] |
| Cold medication formulation | Ascorbic acid | 3.00-15.00 | 98.8-100.9 | <0.50 | [2] |
| Beta-blockers | Metoprolol | 4-14 | 99.7-101.1 | 0.198 | [37] |
| Beta-blockers | Atenolol | 2.5-10.5 | 99.2-100.7 | 0.215 | [37] |
| Beta-blockers | Bisoprolol | 0.5-4.5 | 99.5-101.2 | 0.103 | [37] |

The method's accuracy and precision are comparable to official pharmacopeial methods while offering advantages in terms of greenness and efficiency [2] [37].

Environmental Impact Assessment

Greenness assessment tools provide quantitative evaluation of the environmental friendliness of MCR-ALS methods:

Table: Greenness Evaluation of MCR-ALS Methods

| Assessment Tool | Score/Result | Interpretation | Reference |
|---|---|---|---|
| AGREE | 0.77 (out of 1.0) | High greenness | [2] |
| Analytical Eco-Scale | 85 (out of 100) | Excellent greenness | [2] |
| GAPI | Intermediate greenness | Lower impact than HPLC | [37] |

MCR-ALS methods demonstrate superior environmental performance compared to traditional chromatography due to reduced solvent consumption, minimal waste generation, and lower energy requirements [2] [37].
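The Analytical Eco-Scale score in the table is obtained by subtracting penalty points (for reagent amount and hazard, energy use, occupational hazard and waste) from 100; scores above 75 are conventionally rated excellent and scores above 50 acceptable. The sketch below uses hypothetical penalty points for illustration only, not those assigned in [2].

```python
def eco_scale(penalty_points):
    """Analytical Eco-Scale: 100 minus total penalty points, with the usual rating bands."""
    score = 100 - sum(penalty_points.values())
    if score > 75:
        rating = "excellent green analysis"
    elif score > 50:
        rating = "acceptable green analysis"
    else:
        rating = "inadequate green analysis"
    return score, rating

# Hypothetical penalty points, for illustration only
example = {"reagents": 6, "energy": 1, "occupational hazard": 0, "waste": 8}
print(eco_scale(example))  # (85, 'excellent green analysis')
```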

Advanced Applications and Future Perspectives