Design of Experiments for Mass Spectrometry Optimization: A Complete Guide to Robust LC-MS/MS Methods

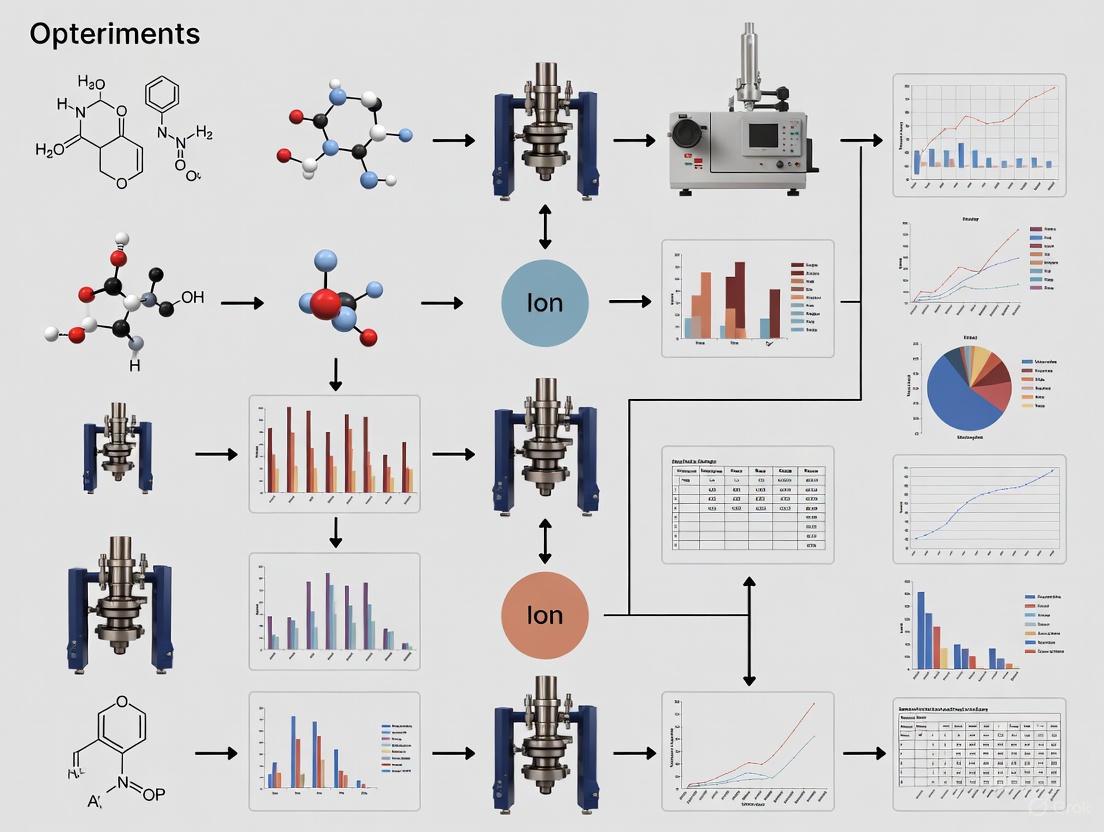

This article provides a comprehensive guide to applying Design of Experiments (DoE) principles for optimizing mass spectrometry methods, with a focus on liquid chromatography-tandem mass spectrometry (LC-MS/MS).


Abstract

This article provides a comprehensive guide to applying Design of Experiments (DoE) principles for optimizing mass spectrometry methods, with a focus on liquid chromatography-tandem mass spectrometry (LC-MS/MS). It covers foundational concepts of DoE and MS, practical methodologies for method development and application across pharmaceutical and clinical research, advanced techniques for troubleshooting and data-driven optimization, and robust strategies for analytical validation and comparative analysis. Tailored for researchers, scientists, and drug development professionals, this guide synthesizes established best practices with cutting-edge trends to empower the development of sensitive, reproducible, and efficient MS-based assays.

Core Principles: Building Your DoE and Mass Spectrometry Foundation

Key Concepts of Design of Experiments

Design of Experiments (DoE) is a branch of applied statistics dealing with planning, conducting, analyzing, and interpreting controlled tests to evaluate factors that control the value of a parameter or group of parameters [1]. It is a systematic approach that allows researchers to efficiently investigate the effects of multiple input factors on a process output (response) simultaneously [2] [3].

Fundamental Components and Terminology

The foundational elements of any DoE study are summarized in the table below.

Table 1: Key Components of a Designed Experiment [2] [4]

| Component | Definition | Example in MS Context |

|---|---|---|

| Factors | Input variables that may influence the outcome of an experiment. | Temperature, pH, enzyme-to-protein ratio. |

| Levels | The specific values or settings at which a factor is tested. | Temperature: 25°C (low), 37°C (high). |

| Response | The measurable output or outcome of the experiment. | Protein yield, signal intensity, quantification accuracy. |

| Experimental Run | A single execution of the experiment with a specific combination of factor levels. | One sample preparation and MS analysis. |

| Replication | Repetition of an entire experimental run to estimate variability and enhance reliability. | Preparing and analyzing three samples with identical settings. |

| Randomization | The random sequencing of experimental runs to avoid bias from lurking variables. | Randomizing the order in which samples are analyzed by the MS. |

| Interaction | When the effect of one factor on the response depends on the level of another factor. | The effect of temperature on protein yield may depend on the pH level. |

Core Principles: Blocking, Randomization, and Replication

A well-designed experiment is built on three key principles [1]:

- Blocking: Groups runs into homogeneous blocks to account for known sources of variability that cannot be randomized away (e.g., when runs must be split across two mass spectrometers or two days, each instrument or day is treated as a block).

- Randomization: The random order of experimental runs helps eliminate the effects of unknown or uncontrolled variables.

- Replication: Repeating experimental runs provides an estimate of experimental error and enhances the reliability of the results.
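The three principles can be combined in a single run plan. The following is a minimal sketch, assuming two hypothetical factors, two replicates, and one replicate per analysis day as the blocking variable; the factor names, levels, and seed are illustrative and not taken from any cited study.

```python
import itertools
import random

# Hypothetical factors and levels (illustrative values only).
factors = {
    "temperature_C": [25, 37],
    "pH": [6.0, 8.0],
}
n_replicates = 2               # replication: each combination is prepared twice
blocks = ["day_1", "day_2"]    # blocking: one full replicate per analysis day

rng = random.Random(42)        # fixed seed so the plan is reproducible

# Every combination of factor levels (a full factorial).
combinations = [dict(zip(factors, levels))
                for levels in itertools.product(*factors.values())]

run_plan = []
for block, replicate in zip(blocks, range(1, n_replicates + 1)):
    block_runs = [dict(combo, block=block, replicate=replicate)
                  for combo in combinations]
    rng.shuffle(block_runs)    # randomization: shuffle run order within each block
    run_plan.extend(block_runs)

for run_number, run in enumerate(run_plan, start=1):
    print(run_number, run)
```

Shuffling inside each block, rather than across the whole plan, keeps the day-to-day difference separable from the factor effects while still protecting against drift within a day.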

Advantages Over the One-Factor-at-a-Time (OFAT) Approach

The traditional OFAT method, which involves changing one factor while holding others constant, is inefficient and can lead to misleading conclusions [3]. Because DoE varies multiple factors at once, it offers three main advantages [3]:

- Detects Interactions: Reveals how factors interact, which OFAT cannot do.

- Identifies True Optima: Finds optimal factor settings that OFAT may miss.

- Improves Efficiency: Requires far fewer experimental runs to obtain the same, or better, information, especially as the number of factors increases.
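To make the interaction argument concrete, here is a small sketch comparing an OFAT search with a full factorial on an invented two-factor response. The response values are hypothetical and chosen only to show how a strong interaction can hide the best setting from OFAT.

```python
# Hypothetical responses (arbitrary signal units) for two coded factors A and B.
# The values are invented to illustrate an interaction; they are not measured data.
response = {
    (-1, -1): 50,  # baseline
    (+1, -1): 45,  # raise A only
    (-1, +1): 45,  # raise B only
    (+1, +1): 80,  # raise A and B together
}

def ofat_search(baseline):
    """Change one factor at a time, keeping a change only if the response improves."""
    current = baseline
    for index in range(len(baseline)):
        candidate = list(current)
        candidate[index] = -candidate[index]  # flip this factor to its other level
        candidate = tuple(candidate)
        if response[candidate] > response[current]:
            current = candidate
    return current

ofat_best = ofat_search((-1, -1))
factorial_best = max(response, key=response.get)

print("OFAT settles on:", ofat_best, "response =", response[ofat_best])
print("Full factorial finds:", factorial_best, "response =", response[factorial_best])
```

In this toy case each single change lowers the response, so OFAT stays at the baseline, while the factorial design tests the combined setting and finds the higher response.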

Relevance of DoE to Mass Spectrometry Optimization

DoE is a powerful multipurpose tool for process improvement and method development, with specific uses highly relevant to mass spectrometry (MS) research [5].

Table 2: Key Uses of DoE and Their Application in Mass Spectrometry

| Use of DoE | General Application [5] | Relevance to MS Optimization |

|---|---|---|

| Comparing Alternatives | Supplier A vs. Supplier B; Catalyst X vs. existing catalyst. | Comparing different digestion enzymes, sample preparation kits, or LC columns. |

| Screening | Selecting the few critical factors from many possible factors. | Identifying which sample prep parameters (time, temp, ratio) most affect protein quantification. |

| Response Surface Modeling | Modeling a process to hit a target, maximize/minimize a response, or reduce variation. | Modeling the relationship between MS parameters and signal-to-noise to maximize sensitivity. |

| Hitting a Target | Fine-tuning a process to consistently hit a target. | Calibrating instrument methods to achieve a specific lower limit of quantification (LLOQ). |

| Maximizing/Minimizing | Optimizing a process output for highest yield or lowest cost. | Maximizing protein identification counts or minimizing ion suppression effects. |

| Reducing Variation | Finding factor settings that make a process more consistent. | Improving the reproducibility of peptide peak areas across multiple runs. |

| Making a Process Robust | Designing a product/process to be less sensitive to external noise. | Developing a sample prep protocol that delivers consistent results across different operators or labs. |

A recent study in bottom-up proteomics exemplifies the power of DoE for MS optimization. Researchers used a DoE approach to simultaneously optimize four critical factors in protein digestion—digestion time, temperature, enzyme-to-protein ratio, and denaturing agent concentration [6]. This systematic method enabled them to successfully reduce the digestion time from 18 hours (overnight) to just 4 hours while maintaining digestion efficiency. Furthermore, the optimized workflow improved the sensitivity of their UPLC-MRM-MS assay, allowing for the absolute quantification of 257 proteins in human plasma, including proteins that previously fell below the limit of quantification [6].

Experimental Protocols for DoE in MS

Generic DoE Workflow Protocol

The following workflow outlines the general steps for conducting a DoE, which can be adapted for various MS optimization projects [2] [4].

Protocol Steps:

- Define Clear Objectives: Formulate a precise goal. Example: "Reduce variability in peptide signal intensity by 20%," or "Maximize the number of proteins quantified in a plasma sample" [2] [4].

- Select Process Variables:

  - Factors: Identify all potential input variables (e.g., ionization voltage, collision energy, solvent composition, incubation time). Use brainstorming sessions or Fishbone (Cause & Effect) diagrams [4].

  - Responses: Define the critical measurable outputs (e.g., peak area, signal-to-noise ratio, number of protein identifications, quantification accuracy).

- Select Experimental Design: Choose a design that fits your objective and number of factors. For beginners, a 2-level full or fractional factorial design is a common starting point to screen for important factors [2] [1].

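- Execute Design: Run the experiments in the randomized order given by the design matrix and record the response for every run.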

- Analyze and Interpret Results:

  - Use statistical software (e.g., JMP, Minitab, R) to perform an Analysis of Variance (ANOVA) to identify which factors and interactions are statistically significant [7].

  - Create Pareto charts to visualize the magnitude of factor effects and interaction plots to understand how factors influence each other [1].

- Validate Optimal Settings: Run a confirmation experiment using the predicted optimal factor settings from your model to verify that the results match the prediction [3].
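The design-selection and execution steps above can be sketched in a few lines. This is an illustrative example, assuming three hypothetical MS factors coded as -1 (low) and +1 (high); dedicated DoE software would normally generate the matrix, and the factor names here are placeholders.

```python
import itertools
import random

def full_factorial(factor_names):
    """Return every combination of coded low (-1) and high (+1) levels."""
    return [dict(zip(factor_names, levels))
            for levels in itertools.product([-1, +1], repeat=len(factor_names))]

# Hypothetical MS factors chosen for illustration.
factor_names = ["ionization_voltage", "collision_energy", "solvent_composition"]
design = full_factorial(factor_names)

# Randomize the execution order; the seed is fixed only for reproducibility.
run_order = list(range(1, len(design) + 1))
random.Random(0).shuffle(run_order)

# Columns: standard order, randomized run position, coded settings.
for standard_order, (run, position) in enumerate(zip(design, run_order), start=1):
    print(standard_order, position, run)
```

A 2-level full factorial with k factors needs 2^k runs (8 here), which is why fractional designs become attractive as the factor list grows.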

Protocol for a Specific Screening Experiment: 2-Factor Full Factorial

This protocol outlines a basic DoE to investigate the effects of two factors on a single response, a common scenario in MS method development.

Table 3: Design Matrix for a 2-Factor Full Factorial Experiment

| Standard Order | Run Order (Randomized) | Factor A: Temperature (°C) | Factor B: pH | Response: Protein Yield (%) |

|---|---|---|---|---|

| 1 | 3 | -1 (25°C) | -1 (6.0) | 21.0 |

| 2 | 1 | -1 (25°C) | +1 (8.0) | 42.0 |

| 3 | 4 | +1 (37°C) | -1 (6.0) | 51.0 |

| 4 | 2 | +1 (37°C) | +1 (8.0) | 57.0 |

Steps:

- Define Objective: To understand the individual and combined effects of Temperature and pH on Protein Yield during enzymatic digestion.

- Select Variables:

  - Factors: Temperature (A), pH (B).

  - Response: Protein Yield (%).

  - Levels: For each factor, select a realistic low (-1) and high (+1) level.

- Select Design: A 2-factor full factorial design requires 2^2 = 4 experimental runs [1].

- Create Design Matrix: The matrix shows all possible combinations of the factor levels. The run order must be randomized.

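- Calculate Main Effects: The main effect of a factor is the average response at its high (+1) level minus the average response at its low (-1) level, computed across all runs.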

- Calculate Interaction Effect (AB): An interaction exists if the effect of one factor is different at various levels of the other factor. This is calculated by multiplying the coded levels for A and B for each run to create a new column (AB: +1, -1, -1, +1) and then calculating its effect similarly to the main effects [1].

- Analyze and Interpret: Plot the results. A large interaction effect often manifests as non-parallel lines in an interaction plot, indicating that the optimal setting for one factor depends on the level of the other.
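As a worked example, the effects for the data in Table 3 can be computed directly from the coded levels. The sketch below uses the standard contrast definition (mean response at +1 minus mean response at -1); the helper name `effect` is introduced here for illustration.

```python
# Coded levels and responses copied from Table 3 (standard order).
runs = [
    {"A": -1, "B": -1, "y": 21.0},
    {"A": -1, "B": +1, "y": 42.0},
    {"A": +1, "B": -1, "y": 51.0},
    {"A": +1, "B": +1, "y": 57.0},
]

def effect(runs, contrast):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [r["y"] for r in runs if contrast(r) == +1]
    low = [r["y"] for r in runs if contrast(r) == -1]
    return sum(high) / len(high) - sum(low) / len(low)

effect_A = effect(runs, lambda r: r["A"])             # temperature
effect_B = effect(runs, lambda r: r["B"])             # pH
effect_AB = effect(runs, lambda r: r["A"] * r["B"])   # interaction column A*B

print(effect_A, effect_B, effect_AB)  # 22.5 13.5 -7.5
```

The negative AB effect reflects that raising the temperature adds 30 percentage points of yield at pH 6.0 but only 15 at pH 8.0, which is the kind of dependence an interaction plot shows as non-parallel lines.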

The Scientist's Toolkit for DoE in MS Research

Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for Proteomic Sample Preparation

| Item | Function in Experiment |

|---|---|

| Trypsin (Sequencing Grade) | The primary protease enzyme used in bottom-up proteomics to digest proteins into peptides for MS analysis. The enzyme-to-protein ratio is a key factor in DoE optimization [6]. |

| Urea / Guanidine HCl | Denaturing agents used to unfold proteins, making them more accessible to enzymatic digestion. Their concentration is a critical factor for efficient digestion [6]. |

| Trialkylammonium Buffer (e.g., TEAB) | A buffering agent to maintain a stable pH during digestion. The pH level is a common factor studied in digestion optimization DoEs [6]. |

| Reducing Agent (e.g., DTT) | Breaks disulfide bonds within proteins, aiding denaturation. |

| Alkylating Agent (e.g., IAA) | Modifies cysteine residues to prevent reformation of disulfide bonds. |

| Synthetic Peptide Standards | Isotopically labeled peptides used as internal standards for absolute quantification of proteins via MRM-MS; the quantified peptide signal serves as a key response variable [6]. |

Logical Relationship of DoE Types

The selection of a DoE type is not arbitrary; it follows a logical sequence based on the experimental goal, moving from broad screening to precise optimization.

Diagram Explanation: The process typically begins with Screening Designs (e.g., fractional factorial, Taguchi), which efficiently identify the "vital few" significant factors from a long list of potential variables [2] [5]. Once the key factors are known, Response Surface Methodology (RSM) designs (e.g., Central Composite, Box-Behnken) are employed to model the curvature of the response and accurately locate the optimal factor settings [2] [5]. This leads to the final stage of process optimization and robustness testing, where the goal is to find settings that not only produce the best output but also ensure the process is insensitive to hard-to-control noise factors [5] [4].
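For the RSM stage, the coded points of a central composite design can be generated as follows. This is a sketch assuming a rotatable design with a full factorial core, where the axial distance is the fourth root of the number of factorial points; the function name and the number of center points are illustrative choices.

```python
import itertools

def central_composite(n_factors, n_center=1):
    """Coded points of a rotatable central composite design."""
    alpha = (2 ** n_factors) ** 0.25  # axial distance for rotatability
    # Corner points of the 2-level full factorial core.
    factorial = [list(p) for p in itertools.product([-1.0, 1.0], repeat=n_factors)]
    # Axial (star) points: one factor at +/- alpha, all others at the center.
    axial = []
    for i in range(n_factors):
        for value in (-alpha, alpha):
            point = [0.0] * n_factors
            point[i] = value
            axial.append(point)
    # Replicated center points, used to estimate pure error and curvature.
    center = [[0.0] * n_factors for _ in range(n_center)]
    return factorial + axial + center

design = central_composite(2, n_center=3)
for point in design:
    print([round(x, 3) for x in point])
```

The axial and center points are what allow a quadratic term to be estimated; a 2-level screening design alone cannot detect curvature.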

Mass spectrometry (MS) is a powerful analytical technique that identifies and quantifies compounds by measuring the mass-to-charge ratio (m/z) of ions. Its foundational principle involves converting sample molecules into gas-phase ions, separating these ions based on their m/z, and detecting them to generate a mass spectrum. The performance and application suitability of any mass spectrometer are determined by the integrated operation of three core components: the ion source, which ionizes sample molecules; the mass analyzer, which separates the ions based on their m/z; and the detector, which captures and quantifies the separated ions [8]. This refresher details these critical components and frames their optimization within the modern context of Design of Experiments (DOE), a systematic statistical approach that efficiently evaluates multiple factors simultaneously to enhance method robustness, sensitivity, and throughput in research and drug development [9].

The ionization source is the entry point for analysis, responsible for converting neutral sample molecules into gas-phase ions. The choice of ionization technique is crucial and depends heavily on the properties of the analyte and the chromatographic interface.

Electrospray Ionization (ESI): Ideal for thermally labile and high molecular weight compounds such as proteins, peptides, and oligonucleotides. It works well with liquid chromatography (LC) interfaces and is highly effective for polar molecules. ESI operates at atmospheric pressure, where a high voltage is applied to a liquid sample, creating a fine aerosol of charged droplets that desolvate to yield gas-phase ions. Advanced versions like the Jet Stream ESI enhance sensitivity by improving desolvation and ion generation efficiency [10] [8]. The OptaMax Plus Ion Source is another innovation designed to improve ionization efficiency for a wider range of compounds at higher LC flow rates by delivering higher vaporizer temperatures [11].

Atmospheric Pressure Chemical Ionization (APCI): A complementary technique often available on the same platform as ESI. APCI is more suitable for less polar, thermally stable small molecules. In APCI, the solvent is vaporized, and reactant ions are created by a corona discharge needle. These reactant ions then ionize the sample molecules through chemical ion-molecule reactions [8].

Matrix-Assisted Laser Desorption/Ionization (MALDI): Commonly used with time-of-flight (ToF) mass analyzers, as both operate in pulsed mode [12]. MALDI involves embedding the sample in a light-absorbing matrix. A pulsed laser irradiates the mixture, causing desorption and ionization of the sample molecules with minimal fragmentation. This makes it exceptionally well-suited for analyzing large biomolecules like proteins and polymers.

Mass Analyzers: The Heart of Mass Separation

The mass analyzer is the core of the spectrometer, separating ions based on their mass-to-charge ratio (m/z). Different analyzers offer distinct trade-offs in resolution, mass accuracy, speed, and cost, making each type suitable for specific applications [12] [8].

[Figure: Mass analyzer selection workflow]

The following table provides a detailed comparison of the most common mass analyzer types.

Table 1: Comparative Analysis of Mass Analyzer Technologies

| Analyzer Type | Key Operating Principle | Key Strengths | Common Limitations | Ideal Application Examples |

|---|---|---|---|---|

| Quadrupole [12] [8] | Ions are separated by stability of their trajectories in oscillating electric fields created by four parallel rods. | Rugged, cost-effective, fast scan speeds, high sensitivity for targeted analysis. | Lower resolution compared to other techniques; typically unit mass resolution. | High-throughput targeted quantification (e.g., clinical assays, environmental monitoring) [10] [8]. |

| Time-of-Flight (ToF) [12] | Ions are accelerated by an electric field and their flight time over a fixed distance is measured; lighter ions arrive first. | Virtually unlimited mass range, fast acquisition rates, high sensitivity in full-spectrum mode. | Requires pulsed ion source; resolution can be affected by kinetic energy spread (corrected by reflectrons). | Untargeted screening, metabolomics, polymer analysis, and imaging when coupled with MALDI [12] [13]. |

| Ion Trap [12] | Ions are trapped and stored in a dynamic electric field; they are sequentially ejected to the detector by scanning the field. | Compact size, good sensitivity, capable of MSⁿ fragmentation for structural elucidation. | Limited dynamic range, lower resolution compared to Orbitrap/ToF. | Structural studies of molecules, forensics, and analytical applications where MSⁿ is beneficial. |

| Orbitrap [12] [11] | Ions orbit around a central spindle; their oscillation frequencies are measured via image current and converted to m/z via Fourier Transform. | Very high resolution and mass accuracy, compact size relative to performance. | Requires ultra-high vacuum; slower acquisition speed than some ToF instruments. | Proteomics, metabolomics, biopharmaceutical characterization, and any application requiring definitive compound identification [10] [8]. |

| Magnetic Sector [12] | Ions are deflected by a magnetic field, with the radius of curvature depending on their m/z. | Very high resolution and accuracy, high sensitivity. | Large, expensive, requires skilled operation and ultra-high vacuum, not ideal for LC coupling. | Isotope ratio measurement, high-precision elemental analysis. |
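The time-of-flight principle in the table ("lighter ions arrive first") follows from equating the energy gained in the acceleration field with kinetic energy. The sketch below assumes an idealized linear instrument with no reflectron; the 20 kV acceleration voltage and 1 m drift length are illustrative values, not specifications of any particular instrument.

```python
import math

ELEMENTARY_CHARGE = 1.602176634e-19   # coulombs
DALTON = 1.66053906660e-27            # kilograms

def flight_time_us(mz, accel_voltage=20_000.0, drift_length=1.0):
    """Idealized flight time in microseconds for an ion of a given m/z.

    Assumes all ions start at rest, gain kinetic energy z*e*V, and then
    drift field-free over drift_length metres (no reflectron).
    """
    # z*e*V = 0.5*m*v^2  ->  v = sqrt(2*e*V / (m/z)), with m/z in kg per charge
    mass_per_charge = mz * DALTON
    velocity = math.sqrt(2 * ELEMENTARY_CHARGE * accel_voltage / mass_per_charge)
    return drift_length / velocity * 1e6

for mz in (100, 500, 1000, 5000):
    print(mz, round(flight_time_us(mz), 2))
```

Because flight time scales with the square root of m/z, a tenfold increase in m/z lengthens the flight time by only about 3.16 times, which is one reason ToF analyzers cover a very wide mass range.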

The Rise of Hybrid and Tandem Systems

To overcome the limitations of individual analyzers, hybrid mass spectrometers combine different technologies, offering enhanced capabilities. Tandem mass spectrometry (MS/MS) typically involves multiple stages of mass analysis, often separated by a collision cell where ions are fragmented [12]. Prominent examples include:

- Triple Quadrupole (QqQ): Consists of two mass-resolving quadrupoles (Q1 and Q3) separated by a collision cell (q2). It is the gold standard for sensitive and selective Multiple Reaction Monitoring (MRM) for quantification [10] [8].

- Quadrupole-Time-of-Flight (Q-TOF): Combines the front-end mass filtering of a quadrupole with the high resolution, accurate mass, and fast acquisition of a ToF analyzer. Excellent for both qualitative and quantitative analysis [8].

- Quadrupole-Orbitrap: Hybrid systems like the Q Exactive series combine a quadrupole mass filter with the high-resolution Orbitrap analyzer, making them powerful for discovery proteomics and metabolomics [8].

- Tribrid Systems: Instruments like the Orbitrap Fusion Lumos incorporate a quadrupole, an Orbitrap, and a linear ion trap, providing exceptional versatility and multiple fragmentation modes for the most challenging analytical problems [8].

Detectors: From Ions to Measurable Signals

The final core component is the detector, which counts the ions emerging from the mass analyzer. The two most common types are:

- Electron Multipliers (Discrete Dynode and Continuous Dynode): These are the most prevalent detectors. An ion strikes a conversion dynode, releasing electrons. These electrons are then accelerated through a series of dynodes, each causing the release of more electrons in a cascade, resulting in a measurable electrical current that can be amplified by factors of up to 10⁸ [8] [11].

- Photomultiplier Tubes (PMT): In this setup, ions strike a conversion dynode, and the resulting electrons are accelerated onto a phosphor. The light emitted from the phosphor is then detected and amplified by a photomultiplier, offering high sensitivity. The Thermo Scientific Stellar Mass Spectrometer, for instance, uses a dual conversion dynode configuration coupled to a photomultiplier tube to achieve single-ion detection sensitivity [11].

A more advanced detection method is used in FTMS analyzers like the Orbitrap:

- Image Current Detection: Instead of striking a detector, ions pass between two electrodes, inducing an image current. This current, which oscillates with the frequencies of the orbiting ions, is recorded over time. A Fourier Transform algorithm then decomposes this complex signal into its individual frequency components, which are directly related to the m/z values of the ions, producing the mass spectrum [12].
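The idea of recovering ion frequencies from a recorded transient can be illustrated with a synthetic signal. The sketch below is a toy example: the two frequencies, amplitudes, and sampling settings are hypothetical, and a naive discrete Fourier transform stands in for the optimized FFT processing used in real instruments.

```python
import cmath
import math

N = 256                      # number of samples in the synthetic transient
SAMPLE_RATE = 256.0          # samples per unit time (arbitrary units)
components = [(20.0, 1.0), (45.0, 0.5)]  # hypothetical (frequency, amplitude) pairs

# Synthetic image-current transient: a sum of cosines, one per ion population.
transient = [sum(a * math.cos(2 * math.pi * f * n / SAMPLE_RATE) for f, a in components)
             for n in range(N)]

def dft_magnitudes(signal):
    """Naive discrete Fourier transform; returns magnitudes for bins 0..N/2."""
    n_samples = len(signal)
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * n / n_samples)
                    for n, x in enumerate(signal)))
            for k in range(n_samples // 2 + 1)]

magnitudes = dft_magnitudes(transient)
bin_width = SAMPLE_RATE / N
# The two largest spectral peaks correspond to the two ion populations.
top_bins = sorted(range(len(magnitudes)), key=magnitudes.__getitem__, reverse=True)[:2]
detected = sorted(k * bin_width for k in top_bins)
print("Detected frequencies:", detected)

# In an Orbitrap the axial frequency scales as 1/sqrt(m/z), so a frequency
# ratio converts to an m/z ratio by squaring and inverting.
mz_ratio = (detected[1] / detected[0]) ** 2
print("m/z of slower ion relative to faster ion:", round(mz_ratio, 4))
```

Longer transients give narrower frequency bins, which is why higher resolution settings on FTMS instruments come at the cost of slower acquisition.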

Application Note: DOE for Optimizing a Bottom-Up Proteomics Workflow

Background and Objective

Liquid chromatography-tandem mass spectrometry (LC-MS/MS) is a cornerstone of modern bioanalysis, particularly for protein quantification. However, the sample preparation for bottom-up proteomics—which involves denaturation, reduction, alkylation, and enzymatic digestion—is a multi-step, time-consuming process with many interacting variables that can impact final sensitivity and reproducibility. This application note demonstrates the use of a Design of Experiments (DOE) approach to systematically optimize this complex workflow, significantly improving efficiency and performance for the absolute quantification of proteins in human plasma [6] [9].

Research Reagent Solutions

Table 2: Key Reagents and Materials for Bottom-Up Proteomics

| Item | Function in the Protocol |

|---|---|

| Human IgG1 Monoclonal Antibody | Model analyte spiked into rat plasma for method development and optimization [9]. |

| Rat Plasma | Complex biological matrix used to mimic real-world sample conditions [9]. |

| Urea & Guanidine HCl | Denaturing agents that unfold proteins to make cleavage sites accessible to the enzyme. DOE revealed urea significantly improved peptide response, while guanidine suppressed it [9]. |

| Trypsin | Proteolytic enzyme that cleaves proteins at the C-terminal side of lysine and arginine residues, generating peptides for LC-MS/MS analysis [9]. |

| Dithiothreitol (DTT) | Reducing agent that breaks disulfide bonds within and between protein chains [9]. |

| Iodoacetamide (IAA) | Alkylating agent that caps the reduced cysteine residues, preventing reformation of disulfide bonds [9]. |

Detailed Experimental Protocol

Instrumentation: The optimized workflow utilized a Waters Xevo TQ-XS UPLC-MRM-MS system, a triple quadrupole mass spectrometer known for its high sensitivity in targeted quantitative analyses [6].

DOE-Optimized Sample Preparation Workflow:

[Figure: DOE optimization workflow]

DOE Screening Phase: A screening design (e.g., a Plackett-Burman or fractional factorial design) was first employed to evaluate the main effects of multiple factors, including:

- Denaturation: Type and concentration of denaturant (e.g., Urea vs. Guanidine).

- Reduction: Concentration of DTT, time, and temperature.

- Alkylation: Concentration of IAA and time.

- Digestion: Trypsin-to-protein ratio, digestion time, and temperature [9].

This phase identified that urea was a critical positive factor, while guanidine significantly suppressed surrogate peptide responses [9].

DOE Optimization Phase: A response surface methodology (RSM) design, such as a Central Composite Design (CCD), was then applied to the most influential factors identified in the screening phase. This model established the mathematical relationship between the factors and the responses (e.g., peak area of surrogate peptides) to find the optimal operating conditions [9].
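The "mathematical relationship" in an RSM study is usually a second-order polynomial fitted by least squares. The following sketch fits such a model for two coded factors on a central composite design and then locates the stationary point. The peak-area data are hypothetical, generated from a known surface purely so the fit can be checked; they are not results from the cited study, and the helper names are illustrative.

```python
import math

def model_terms(x1, x2):
    """Terms of a full second-order model in two coded factors."""
    return [1.0, x1, x2, x1 * x2, x1 ** 2, x2 ** 2]

def solve(matrix, rhs):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[row][k] -= factor * aug[col][k]
    solution = [0.0] * n
    for row in reversed(range(n)):
        tail = sum(aug[row][k] * solution[k] for k in range(row + 1, n))
        solution[row] = (aug[row][n] - tail) / aug[row][row]
    return solution

def fit_quadratic(points, responses):
    """Least-squares coefficients via the normal equations (X'X) b = X'y."""
    X = [model_terms(*p) for p in points]
    n_terms = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(n_terms)] for i in range(n_terms)]
    xty = [sum(r[i] * y for r, y in zip(X, responses)) for i in range(n_terms)]
    return solve(xtx, xty)

# Coded central composite design for two factors (alpha = sqrt(2)) plus a center point.
a = math.sqrt(2)
points = [(-1, -1), (1, -1), (-1, 1), (1, 1), (-a, 0), (a, 0), (0, -a), (0, a), (0, 0)]

# Hypothetical peak areas generated from a known surface, used only to check the fit.
true_coefficients = [100.0, 8.0, 5.0, -3.0, -6.0, -4.0]
responses = [sum(c * t for c, t in zip(true_coefficients, model_terms(*p))) for p in points]

coefficients = fit_quadratic(points, responses)
b0, b1, b2, b12, b11, b22 = coefficients

# Stationary point: set both partial derivatives of the fitted surface to zero.
optimum = solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])
print([round(c, 3) for c in coefficients])
print([round(x, 3) for x in optimum])
```

With real data the responses contain noise, so the fitted coefficients would carry uncertainty, and the predicted optimum should be confirmed experimentally before it is adopted.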

Method Execution: Following the optimized conditions derived from the DOE model:

- The model analyte (IgG1 mAb) was spiked into rat plasma.

- Denaturation, reduction, alkylation, and digestion were performed using the optimized parameters.

- The digested peptide mixture was analyzed by UPLC-MRM-MS [9].

The systematic DOE approach yielded dramatic improvements:

- Efficiency: Protein digestion time was successfully reduced from a legacy method requiring 18 hours (overnight) to just 4 hours [6].

- Sensitivity: Peptide response saw a dramatic increase—up to 50-fold for one surrogate peptide (VVSV)—when compared to the legacy 2-day preparation method [9].

- Robustness: The optimized workflow, combined with the sensitivity of the TQ-XS system, enabled the absolute quantification of 257 proteins in human plasma, demonstrating the practical power of this optimized approach for clinical research [6].

This application note conclusively shows that DOE is an efficient and powerful tool for optimizing complex MS sample preparation workflows. It moves beyond one-factor-at-a-time (OFAT) experimentation, saving time and resources while unlocking superior analytical performance, which is essential for high-impact fields like biomarker discovery and personalized medicine [9].

Emerging Trends and Future Outlook

The field of mass spectrometry continues to evolve rapidly, driven by technological innovation and expanding application needs.

Artificial Intelligence and Automation: AI and machine learning are revolutionizing data processing. For instance, SCIEX's AI Quantitation software automatically identifies optimal MS and MS/MS signals based on compound structure and peak quality, simplifying the complex data from high-resolution mass spectrometers and enabling more precise and efficient quantitative analysis [14]. Furthermore, the integration of automated sample preparation is key to improving reproducibility and throughput in clinical research settings [6].

Miniaturization and Portability: There is a growing trend toward developing smaller, portable mass spectrometers using Micro-Electro-Mechanical Systems (MEMS). These devices enable on-site analysis in fields like environmental monitoring, food safety, and forensic science, moving analysis away from the central laboratory [15].

Market and Application Expansion: The global next-generation mass spectrometer market is projected to grow significantly, from USD 2.37 billion in 2025 to approximately USD 4.43 billion by 2034 [15]. This growth is fueled by technological advancements, rising demand in pharmaceuticals and healthcare for precision medicine, and increased government investment in life sciences research. North America currently leads the market, but the Asia-Pacific region is anticipated to witness the fastest growth [15] [13].

Advanced System Capabilities: New flagship systems like the Thermo Scientific Stellar Mass Spectrometer, a 2025 R&D 100 Award winner, incorporate features like multi-notch isolation for complex samples, adaptive retention time routines to maximize data completeness, and environmentally friendly dry pumps, setting new benchmarks for quantitative performance and laboratory productivity [11].

In mass spectrometry (MS) method development, the one-factor-at-a-time (OFAT) approach has been a traditional mainstay. This method involves changing a single parameter—such as collision energy or source temperature—while holding all others constant. While intuitively simple, OFAT possesses a critical flaw: it is fundamentally incapable of detecting interactions between parameters [16]. In reality, MS instrumentation operates as a complex, interconnected system where the optimal value of one parameter often depends on the settings of several others. For instance, the effect of changing a source temperature on signal intensity may be dramatically different at various desolvation gas flow rates. These factor interactions are invisible to OFAT, leading to methods that are fragile, difficult to transfer, and prone to failure with minor instrumental variations [16].

Design of Experiments (DoE) provides a powerful, systematic alternative. DoE is a structured statistics-based approach for planning, conducting, and analyzing controlled tests to evaluate the factors that control the value of a parameter or group of parameters [4]. Its core strength in MS optimization lies in its ability to efficiently and simultaneously investigate multiple factors and, most importantly, their complex interactions [17] [16] [18]. By moving beyond trial-and-error, DoE enables researchers to build robust, high-performing MS methods with a deeper understanding of the instrumental landscape. This application note details how a simplified DoE (sDOE) framework can be applied to optimize key MS parameters, using top-down electron transfer dissociation (ETD) as a case study [17].

DoE Methodology: A Tailored Approach for MS

Core Principles and Terminology

To effectively utilize DoE, a clear understanding of its basic components is essential [4] [16]:

- Factors: These are the independent, controllable variables of the MS method. In an ETD optimization, factors could include reagent accumulation time, collision energy, and reaction time.

- Levels: These are the specific values or settings chosen for each factor. For a two-level design, a factor like reaction time might be tested at a "low" (e.g., 50 ms) and a "high" (e.g., 200 ms) level.

- Response: This is the measurable output or result of the experiment that indicates performance. The primary response in ETD optimization is often the peptide or protein sequence coverage achieved through fragmentation [17].

- Interactions: This occurs when the effect of one factor on the response depends on the level of another factor. DoE is uniquely capable of quantifying these critical relationships.

The sDOE Workflow for Mass Spectrometry

For the MS researcher, a full-factorial DoE can seem daunting. The sDOE (Simple Design-of-Experiment) approach simplifies the toolkit, making it accessible for everyday use while retaining statistical rigor [17]. The workflow is a disciplined, iterative process, outlined in the steps below.

- Define the Problem and Goals: The first step is to clearly state the objective. For an MS experiment, this could be "maximize sequence coverage for intact proteins in a top-down ETD experiment" [17].

- Select Factors and Levels: Identify key parameters that could influence the response based on literature and operational knowledge. Carefully selected high and low levels should span a realistic range of operation [17] [16].

- Choose Experimental Design: A screening design like a fractional factorial or Plackett-Burman is ideal for initially scoping many factors. Once key drivers are identified, a Response Surface Methodology (RSM) design like Central Composite can be used for precise optimization [16].

- Conduct Randomized Experiments: Execute the experimental runs in a randomized order as specified by the DoE software. Randomization is critical to minimize the impact of uncontrolled, "lurking" variables that could bias the results [4] [16].

- Analyze Data and Build Model: Use statistical software to analyze the results. The analysis will generate main effects plots, interaction plots, and mathematical models that show how factors influence the response [16].

- Validate Optimal Conditions: Run confirmation experiments using the predicted optimal settings to verify that the model accurately forecasts the response. This step ensures the robustness of the final method [16].

Case Study: Optimizing Top-Down ETD Fragmentation

Experimental Protocol for sDOE in ETD

Objective: To maximize the sequence coverage of proteins fragmented via Electron Transfer Dissociation (ETD) on a UHR-QTOF mass spectrometer [17].

Step-by-Step Procedure:

- Factor Selection: Based on prior knowledge and the chemistry of ETD, select the critical parameters. For this study, the factors were reagent accumulation time, collision energy, and reaction time [17].

- Level Assignment: Define a practical range for each factor. For example:

  - Reagent Accumulation Time: Low = 5 ms, High = 50 ms

  - Collision Energy: Low = 5 V, High = 15 V

  - Reaction Time: Low = 50 ms, High = 200 ms

- DoE Matrix Generation: Using statistical software, generate a fractional factorial experimental design. This creates a table specifying the unique combination of factor levels for each experimental run.

- Sample Preparation & Data Acquisition: Prepare a standard protein sample (e.g., cytochrome c) at a consistent concentration. Using the DoE matrix, inject the sample repeatedly, adjusting the three parameters for each run as per the randomized order.

- Response Measurement: For each experimental run, process the resulting MS/MS spectrum and calculate the primary response: percentage sequence coverage.

- Data Analysis: Input the response data (sequence coverage) into the DoE software. Perform analysis of variance (ANOVA) to identify significant factors and interactions.

- Model Validation: The software will predict the parameter settings for maximum coverage. Perform a final experiment using these recommended settings to confirm the result.
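The design-generation and randomization steps above can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in the cited study: the factor ranges are the ones listed under Level Assignment, while the generator C = A*B, the dictionary keys, and the fixed random seed are assumptions made for the example.

```python
import itertools
import random

# Factor ranges (low, high) taken from the level assignment above.
FACTORS = {
    "reagent_accumulation_ms": (5, 50),
    "collision_energy_V": (5, 15),
    "reaction_time_ms": (50, 200),
}

def half_fraction(factors):
    """Build a 2^(3-1) design: the third factor follows the generator C = A*B."""
    names = list(factors)
    runs = []
    for a, b in itertools.product((-1, 1), repeat=2):
        coded = (a, b, a * b)
        # Map coded levels (-1/+1) back to instrument settings.
        runs.append({name: factors[name][0] if level < 0 else factors[name][1]
                     for name, level in zip(names, coded)})
    return runs

def randomized(runs, seed=1):
    """Return the runs in a reproducible random order (seed is an arbitrary choice)."""
    shuffled = list(runs)
    random.Random(seed).shuffle(shuffled)
    return shuffled

design = randomized(half_fraction(FACTORS))
for order, run in enumerate(design, start=1):
    print(order, run)
```

A three-factor half fraction is a resolution III design, so each main effect is aliased with a two-factor interaction. If interactions between the ETD parameters are of interest, the eight-run full factorial may be the safer choice.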

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential materials and reagents for the ETD optimization protocol.

| Item Name | Function/Description | Example/Note |

|---|---|---|

| UHR-QTOF Mass Spectrometer | High-resolution instrument for accurate mass measurement of intact proteins and their fragments. | Instrument capable of ETD/ECD fragmentation [17]. |

| ETD Reagent | Source of electrons for the electron transfer dissociation reaction. | Fluoranthene is a common reagent gas [17]. |

| Standard Protein | A well-characterized protein used to standardize and optimize the method. | Cytochrome c or myoglobin [17]. |

| LC System | For sample introduction and, if needed, desalting or separation prior to MS analysis. | Nano-flow or capillary LC system. |

| Statistical Software | For generating the DoE matrix and performing statistical analysis of the results. | Tools like EngineRoom, JMP, or built-in DoE packages [4] [16]. |

| Volatile Buffers | For sample preparation to prevent ion suppression and salt accumulation in the ion source. | Ammonium bicarbonate or ammonium acetate. |

Data Analysis and Visualization of Interdependence

Quantitative Results and Parameter Interactions

Applying the sDOE protocol to top-down ETD reveals the profound interdependence of MS parameters. The quantitative data from the experimental runs can be analyzed to produce the following results.

Table 2: Example results from an sDOE study on ETD parameters, showing how different combinations affect sequence coverage.

| Run Order | Reagent Accumulation (ms) | Collision Energy (V) | Reaction Time (ms) | Sequence Coverage (%) |

|---|---|---|---|---|

| 1 | 5 (Low) | 15 (High) | 50 (Low) | 42 |

| 2 | 50 (High) | 5 (Low) | 200 (High) | 38 |

| 3 | 50 (High) | 15 (High) | 50 (Low) | 45 |

| 4 | 5 (Low) | 5 (Low) | 200 (High) | 35 |

| 5 | 27.5 (Center) | 10 (Center) | 125 (Center) | 55 |

| 6* | 40 (Optimal) | 12 (Optimal) | 80 (Optimal) | 68 |

*Predicted optimal run for validation.

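Statistical analysis of a balanced dataset of this kind might show that, while increasing reaction time generally improves coverage, its effect is drastically reduced when reagent accumulation time is too low. This is a classic interaction effect. The four factorial runs in the illustrative table above cannot resolve it on their own, because collision energy and reaction time change together in every run (high energy is always paired with short reaction time), so their effects are confounded. With a balanced design, the data can be modeled to create a response surface, visually mapping the relationship between two factors and the outcome.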
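As a rough sketch of how such data are screened, the snippet below computes main effects from the four factorial runs of Table 2, checks whether two factor columns are aliased, and uses the center point as a curvature check. The helper names are illustrative, and the calculation is the textbook "mean at high minus mean at low" contrast rather than the output of any particular DoE package.

```python
# Coded factor levels and responses for the four factorial runs of Table 2.
# Columns: reagent accumulation (A), collision energy (B), reaction time (C).
runs = [
    ((-1, +1, -1), 42),
    ((+1, -1, +1), 38),
    ((+1, +1, -1), 45),
    ((-1, -1, +1), 35),
]
center_response = 55  # run 5, all factors at their midpoint

def main_effect(runs, index):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for x, y in runs if x[index] > 0]
    low = [y for x, y in runs if x[index] < 0]
    return sum(high) / len(high) - sum(low) / len(low)

effects = {name: main_effect(runs, i) for i, name in enumerate("ABC")}

# Two columns are aliased when they are identical or exact mirror images.
b_col = [x[1] for x, _ in runs]
c_col = [x[2] for x, _ in runs]
aliased_bc = all(b == -c for b, c in zip(b_col, c_col))

# Curvature check: center-point response minus the mean of the factorial runs.
factorial_mean = sum(y for _, y in runs) / len(runs)
curvature = center_response - factorial_mean

print(effects, aliased_bc, curvature)
```

For these example numbers the B and C contrasts are equal and opposite because the two columns mirror each other, and the center point sits well above the factorial mean, which is the usual signal that a quadratic (response surface) model is worth fitting.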

Visualizing the Optimization Logic

The following diagram illustrates the logical decision process for identifying and optimizing critical MS parameters using the sDOE approach, moving from a broad screening focus to targeted optimization.

The systematic application of DoE, specifically the sDOE framework, provides an efficient strategy for navigating the complex parameter space of mass spectrometry. By moving beyond the limitations of OFAT, researchers can efficiently uncover the critical links and interdependencies between instrument parameters. This leads to the development of more robust, reproducible, and higher-performing MS methods, whether for top-down proteomics, small molecule quantification, or imaging mass spectrometry [17] [19] [18]. The resulting comprehensive datasets are also well suited to informing machine learning (ML) algorithms, which may pave the way for automated, AI-driven MS optimization in the future [20] [21]. Adopting DoE is not merely a change in technique but a shift towards deeper process understanding.

In mass spectrometry (MS) research and development, achieving optimal performance requires a delicate balance between often-competing objectives: sensitivity, resolution, and speed. Traditional one-factor-at-a-time (OFAT) approaches to method optimization are inefficient and risk missing optimal conditions due to their inability to account for parameter interactions [22]. In contrast, Design of Experiments (DOE) provides a statistical framework for simultaneously investigating multiple factors and their complex interrelationships, enabling researchers to systematically navigate these trade-offs and define clear success criteria for method development [22].

This application note details how DOE methodologies can be strategically deployed to set and achieve well-defined objectives for sensitivity, resolution, and speed in mass spectrometry. We provide structured protocols and data to guide researchers and drug development professionals in implementing these approaches for robust, optimized analytical methods.

Defining Optimization Objectives and Key Parameters

Core Performance Metrics

The primary triumvirate of MS performance metrics must be defined with precise, measurable objectives before commencing experimental design.

- Sensitivity: Often expressed as the signal-to-noise ratio for a target analyte at a specific concentration. A clear objective might be "maximize S/N for compound X to achieve a lower limit of quantification (LLOQ) of 1 pg on-column" [23].

- Resolution: Defined as the ability to distinguish between closely spaced spectral peaks (e.g., in mass or chromatographic space). An objective could be "ensure baseline separation (resolution > 1.5) for critical pair Y and Z."

- Speed/Throughput: Refers to the number of samples analyzed per unit time. An objective may be "reduce chromatographic run time to under 5 minutes without compromising data quality for a 50-analyte panel."
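To make such objectives checkable, each metric needs an explicit formula. The sketch below uses common conventions that are assumptions here, not definitions from the cited work: S/N as peak height above the mean baseline divided by the baseline standard deviation, resolution as 2(t2 - t1)/(w1 + w2) with baseline peak widths, and throughput as injections per hour. All numeric readings are hypothetical.

```python
import statistics

def signal_to_noise(peak_height, baseline):
    """Peak height above the mean baseline, divided by the baseline standard deviation."""
    noise = statistics.stdev(baseline)
    return (peak_height - statistics.mean(baseline)) / noise

def resolution(t1, t2, w1, w2):
    """Chromatographic resolution from retention times and baseline peak widths."""
    return 2 * (t2 - t1) / (w1 + w2)

def samples_per_hour(run_time_min, reequilibration_min=0.0):
    """Injections per hour for a given run time plus re-equilibration time."""
    return 60 / (run_time_min + reequilibration_min)

# Hypothetical readings, for illustration only.
baseline = [10, 12, 11, 9, 8, 10]
sn = signal_to_noise(peak_height=110, baseline=baseline)
rs = resolution(t1=2.00, t2=2.20, w1=0.12, w2=0.12)
rate = samples_per_hour(run_time_min=4.0, reequilibration_min=1.0)

# Example acceptance check; the S/N >= 10 threshold is an assumed convention.
meets_objectives = sn >= 10 and rs > 1.5
print(round(sn, 1), round(rs, 2), rate, meets_objectives)
```

Writing the objectives as functions like these also fixes the response definitions before any runs are performed, which keeps the later statistical analysis consistent.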

Instrument and Method Parameters for Optimization

The following parameters are frequently targeted in DOE studies for LC-MS systems, as they directly govern the core performance metrics.

Table 1: Key Mass Spectrometry Parameters for Optimization

| Parameter Category | Specific Factors | Primary Impacted Metric(s) |

|---|---|---|

| Ion Source & Desolvation | Nebulizing Gas Flow Rate, Drying Gas Flow Rate, Interface Temperature, Capillary Voltage [24] | Sensitivity |

| Mass Analyzer | Collision-Induced Dissociation (CID) Gas Pressure, Entrance/Exit Potentials, Collision Energies [23] | Sensitivity, Resolution |

| Chromatography | Gradient Time, Flow Rate, Column Temperature [25] | Speed, Resolution |

| Data Acquisition | Dwell Time, Scan Rate, Isolation Windows [25] | Speed, Sensitivity |

Experimental Protocols for DOE-Based Optimization

Protocol 1: Screening Significant Factors with a Fractional Factorial Design

Purpose: To efficiently identify the few critical parameters from a large set that have significant effects on your responses (sensitivity, resolution, speed), thereby reducing the number of factors for more detailed optimization.

Step-by-Step Procedure:

- Define Factors and Ranges: Select 5-8 parameters you suspect influence your response. Define a realistic low (-1) and high (+1) level for each based on preliminary knowledge or instrument constraints [24]. For example, in optimizing an ESI source, factors may include Capillary Voltage (2000-4000 V), Drying Gas Flow Rate (8-12 L/min), and Nebulizer Gas Pressure (20-40 psi).

- Select Design Template: Choose a resolution IV or V fractional factorial design to avoid confounding main effects with two-factor interactions [22].

- Randomize and Execute: Randomize the run order provided by the design to protect against unknown biases and perform the experiments [22].

- Analyze and Model: Fit the data to a linear model with interaction terms. Use Pareto charts and half-normal plots of effects to identify statistically significant parameters.

- Interpret Results: The factors showing strong statistical significance (low p-value, e.g., < 0.05) are carried forward to the optimization protocol.
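The aliasing property behind the design-selection step can be verified directly. The sketch below builds a 16-run half fraction for five hypothetical factors (A to E) using the generator E = A*B*C*D, a standard choice that yields resolution V, and then checks that no main-effect column coincides with a two-factor interaction column. The function names are illustrative.

```python
import itertools

def fractional_factorial_2_5_1():
    """16-run half fraction of a 2^5 design, generator E = A*B*C*D."""
    return [(a, b, c, d, a * b * c * d)
            for a, b, c, d in itertools.product((-1, 1), repeat=4)]

def column(design, idx):
    """Coded levels of one factor across all runs."""
    return tuple(run[idx] for run in design)

def interaction(design, i, j):
    """Element-wise product of two factor columns (two-factor interaction contrast)."""
    return tuple(run[i] * run[j] for run in design)

def aliased(u, v):
    """Two effect columns are aliased if they are equal or exact opposites."""
    return u == v or u == tuple(-x for x in v)

design = fractional_factorial_2_5_1()
mains = [column(design, i) for i in range(5)]
pairs = [interaction(design, i, j)
         for i, j in itertools.combinations(range(5), 2)]

main_vs_pair = any(aliased(m, p) for m in mains for p in pairs)
pair_vs_pair = any(aliased(p, q) for p, q in itertools.combinations(pairs, 2))
print(len(design), main_vs_pair, pair_vs_pair)
```

Both checks come out negative for this generator, so main effects and two-factor interactions can all be estimated separately in half the runs of the full 32-run factorial.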

Protocol 2: Response Surface Modeling with a Central Composite Design

Purpose: To model curvature and locate the precise optimum settings for the critical factors identified in the screening design.

Step-by-Step Procedure:

- Select Factors: Use the 2-4 most significant factors from Protocol 1.

- Design Construction: Employ a Central Composite Design (CCD), which augments a factorial core with axial (star) points and center points [24]. This allows for fitting a quadratic model.

- Execute Experiments: Perform the CCD runs in a randomized order. Replication at the center point is crucial for estimating pure error.

- Model Fitting and Analysis: Fit the data to a second-order polynomial model. Analyze the model using Analysis of Variance (ANOVA) to check for significance and lack-of-fit.

- Locate Optimum: Use contour plots and 3D response surface plots to visualize the relationship between factors and responses. The mathematical model can then be used to find the factor settings that produce the desired optimum for a single response or a compromise for multiple responses [22] [24].
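The structure of a CCD is easy to see when the coded points are generated explicitly. The following sketch assumes a full factorial core, the rotatable axial distance alpha = (2^k)^(1/4), and six center replicates; the number of center points is a choice made for this example, and DoE software may default to a different value.

```python
import itertools

def central_composite(k, n_center=6):
    """Coded points of a rotatable CCD for k factors with a full factorial core."""
    alpha = (2 ** k) ** 0.25  # rotatability condition for a full factorial core
    factorial = [tuple(float(v) for v in p)
                 for p in itertools.product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # star point on the axis of factor i
            axial.append(tuple(point))
    center = [tuple([0.0] * k)] * n_center  # replicates for pure-error estimation
    return factorial + axial + center, alpha

points, alpha = central_composite(3)
print(len(points), round(alpha, 3))
```

For three factors this gives 8 factorial, 6 axial, and 6 center runs. The axial points lie outside the -1/+1 range, so it is worth confirming that the corresponding instrument settings are physically attainable before committing to the design.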

Protocol 3: Multi-Objective Optimization for Balanced Performance

Purpose: To find a set of instrument parameters that delivers the best possible compromise when sensitivity, resolution, and speed objectives are in conflict.

Step-by-Step Procedure:

- Run a CCD: Follow Protocol 2, but collect data for all relevant responses (e.g., S/N for sensitivity, peak width for resolution, and cycle time for speed).

- Build Models for Each Response: Create a separate mathematical model for each performance metric.

- Apply Desirability Functions: Use a multi-objective optimization function that converts each predicted response into an individual desirability value (d_i), ranging from 0 (undesirable) to 1 (fully desirable).

- Maximize Overall Desirability: The software algorithm searches for factor settings that maximize the overall desirability (D), which is the geometric mean of the individual d_i values. This provides a statistically derived "sweet spot" that balances all objectives [22].
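The desirability calculation in the last two steps can be sketched as follows. This assumes simple linear (weight 1) one-sided desirability functions of the Derringer-Suich type; the limits, targets, and predicted responses are hypothetical, and commercial packages typically add shape weights and importance factors.

```python
import math

def d_maximize(y, low, target):
    """Larger-is-better desirability: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return (y - low) / (target - low)

def d_minimize(y, target, high):
    """Smaller-is-better desirability: 1 at or below `target`, 0 at or above `high`."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return (high - y) / (high - target)

def overall_desirability(values):
    """Geometric mean of the individual desirabilities; zero if any one is zero."""
    if any(v == 0.0 for v in values):
        return 0.0
    return math.exp(sum(math.log(v) for v in values) / len(values))

# Hypothetical model predictions at one candidate instrument setting.
d_sn = d_maximize(y=60.0, low=10.0, target=110.0)   # sensitivity (S/N)
d_width = d_minimize(y=4.0, target=3.0, high=7.0)   # peak width in seconds
d_cycle = d_minimize(y=1.0, target=1.0, high=2.0)   # cycle time in seconds
D = overall_desirability([d_sn, d_width, d_cycle])
print(d_sn, d_width, d_cycle, round(D, 3))
```

Because D is a geometric mean, a setting that completely fails one objective scores zero overall, no matter how well it performs on the others. That property is what makes the method suitable for balancing conflicting requirements.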

Case Study & Data Presentation

Optimizing Oxylipin Analysis via DOE

A recent study on UHPLC-ESI-MS/MS analysis of oxylipins effectively demonstrates the DOE workflow. The goal was to improve sensitivity across diverse oxylipin classes [23].

- Screening: A fractional factorial design first identified interface temperature and CID gas pressure as the most significant factors.

- Optimization: A subsequent Central Composite Design mapped the response surfaces. The study revealed a key finding: optimal sensitivity for polar prostaglandins and lipoxins was achieved at lower temperatures and higher CID gas pressure, while more lipophilic HETEs and HODEs performed better under different conditions [23].

- Outcome: This DOE-guided approach led to a tailored method that increased signal-to-noise ratios by two-fold for lipoxins/resolvins and three- to four-fold for leukotrienes and HETEs, significantly enhancing trace-level detection [23].

Table 2: Quantitative Results from Oxylipin Optimization Study

| Oxylipin Class | Key Optimal Parameter Range | Improvement in S/N Ratio |

|---|---|---|

| Prostaglandins & Lipoxins | Lower Interface Temperature, Higher CID Gas Pressure | 2-fold increase |

| Leukotrienes & HETEs | Analyte-specific settings | 3 to 4-fold increase |

| HODEs & HoTrEs | Higher Interface Temperature, Moderate CID Gas Pressure | Significantly improved |

Logical Workflow for MS Optimization

The following diagram illustrates the strategic decision-making process for applying DOE to mass spectrometry optimization.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for LC-MS Method Development

| Reagent/Material | Function & Application in Optimization |

|---|---|

| Model Compound Mixture | A representative set of target analytes with varying polarities and chemical properties used to gauge overall method performance [24]. |

| MS-Grade Solvents & Additives | High-purity solvents (e.g., methanol, acetonitrile) and volatile additives (e.g., formic acid, ammonium hydroxide) to minimize background noise and optimize ionization efficiency in both positive and negative modes [24]. |

| Stable Isotope-Labeled Internal Standards | Compounds used to correct for instrumental variability and matrix effects during quantitative optimization, ensuring precision and accuracy [23]. |

| Characterized Complex Matrix | A well-defined, relevant biological matrix (e.g., plasma, tissue homogenate) used to test and optimize the method under realistic conditions, assessing factors like ion suppression [25]. |

Practical DoE Strategies for LC-MS/MS Method Development and Real-World Applications

In the field of mass spectrometry optimization research, Response Surface Methodology (RSM) and D-optimal designs represent powerful statistical approaches for modeling complex multivariate systems and efficiently identifying optimal parameter settings. Traditional one-factor-at-a-time (OFAT) approaches, where parameters are optimized iteratively, are time-consuming and risk missing true global optima due to their inability to account for parameter interactions [26] [27]. In contrast, RSM employs mathematical and statistical techniques to build empirical models that describe the relationship between multiple input variables and one or more response outcomes, enabling researchers to navigate multi-dimensional experimental spaces systematically [28].

D-optimal designs constitute a specific class of computer-generated experimental designs that maximize the information obtained while minimizing the number of experimental runs required. This is particularly valuable in mass spectrometry research, where instrument time and reagents can be costly. By selecting design points to maximize the determinant |X'X| of the information matrix (where X is the model matrix of the design), D-optimal designs provide precise parameter estimates for the model while significantly reducing experimental burden compared to full factorial approaches [29]. When integrated within the RSM framework, these designs enable mass spectrometry researchers to develop robust, optimized methods with greater efficiency and statistical rigor.

Table 1: Comparison of Experimental Design Approaches in Mass Spectrometry

| Design Approach | Key Characteristics | Advantages | Limitations | Typical Applications |

|---|---|---|---|---|

| One-Factor-at-a-Time (OFAT) | Sequential parameter optimization | Simple to implement and interpret | Ignores parameter interactions; risks suboptimal conditions | Preliminary parameter screening |

| Full Factorial | Tests all possible factor combinations | Captures all interactions; comprehensive | Experimentally prohibitive with many factors | Small factor sets (2-4 factors) |

| Response Surface Methodology (RSM) | Models relationship between factors and responses | Identifies optimal regions; models interactions | Requires pre-defined experimental region | Process optimization; method development |

| D-Optimal Design | Selects most informative experimental subsets | Maximizes information with minimal runs | Model-dependent; computer-generated | Constrained experimental scenarios |

Theoretical Foundations and Design Selection

Core Principles of Response Surface Methodology

Response Surface Methodology operates on several fundamental statistical concepts that make it particularly suitable for mass spectrometry optimization. The methodology utilizes factorial designs to efficiently explore factor interactions and polynomial regression to model curvature in response surfaces [28]. First-order models (linear relationships) are typically employed during initial screening phases, while second-order quadratic models capture curvature and interaction effects necessary for locating optima [28]. To avoid computational issues with multicollinearity and improve model stability, RSM often employs factor coding schemes that transform natural variables to dimensionless coded variables, usually with symmetric scaling around zero [28].
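The coding transformation mentioned above is a simple linear rescaling. As a minimal sketch, the helpers below convert between natural and coded units; the function names are illustrative, and the example ranges are arbitrary instrument settings chosen for demonstration.

```python
def to_coded(value, low, high):
    """Map a natural-unit setting onto the coded scale where low = -1 and high = +1."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def to_natural(coded, low, high):
    """Inverse transformation: coded level back to natural units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

# Example: a capillary voltage range of 2000-4000 V.
print(to_coded(3500, 2000, 4000))    # three quarters of the way up the range
print(to_natural(0.0, 2000, 4000))   # the center point in volts
```

Working in coded units also makes regression coefficients directly comparable across factors that have very different physical scales, such as volts and litres per minute.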

A critical aspect of implementing RSM successfully is model validation through techniques such as Analysis of Variance (ANOVA), lack-of-fit testing, R-squared values, and residual analysis [28]. These statistical assessments ensure the fitted model adequately represents the true underlying relationship between mass spectrometry parameters and analytical responses. For optimization, RSM then employs techniques such as steepest ascent to sequentially move toward optimal regions of the experimental space, followed by canonical analysis to characterize the nature of the identified stationary points [28].
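For a fitted two-factor quadratic model, y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2, the stationary point and its character follow from a small linear-algebra calculation. The sketch below sets the gradient to zero and classifies the point from the eigenvalues of the quadratic-coefficient matrix; the coefficient values are hypothetical and not taken from any cited study.

```python
import math

def stationary_point(b1, b2, b11, b22, b12):
    """Solve grad(y) = 0: [2*b11 b12; b12 2*b22] x = -[b1; b2] by Cramer's rule."""
    det = 4 * b11 * b22 - b12 ** 2
    if det == 0:
        raise ValueError("no unique stationary point")
    x1 = (-2 * b1 * b22 + b12 * b2) / det
    x2 = (-2 * b11 * b2 + b12 * b1) / det
    return x1, x2

def canonical_eigenvalues(b11, b22, b12):
    """Eigenvalues of the symmetric matrix [[b11, b12/2], [b12/2, b22]]."""
    mean = (b11 + b22) / 2
    radius = math.hypot((b11 - b22) / 2, b12 / 2)
    return mean - radius, mean + radius

def classify(eigenvalues):
    """Nature of the stationary point from the signs of the eigenvalues."""
    if any(e == 0 for e in eigenvalues):
        return "stationary ridge"
    if all(e < 0 for e in eigenvalues):
        return "maximum"
    if all(e > 0 for e in eigenvalues):
        return "minimum"
    return "saddle point"

# Hypothetical fitted coefficients in coded units.
coeffs = dict(b1=4.0, b2=-2.0, b11=-5.0, b22=-3.0, b12=2.0)
x_s = stationary_point(**coeffs)
eigs = canonical_eigenvalues(coeffs["b11"], coeffs["b22"], coeffs["b12"])
kind = classify(eigs)
print(x_s, eigs, kind)
```

If the stationary point falls outside the coded region that was actually explored, the model is extrapolating and the predicted optimum should be treated with caution until confirmed experimentally.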

D-Optimal Designs in Research Context

D-optimal designs belong to the broader class of "optimal designs" that are generated algorithmically rather than from classical geometric templates like Central Composite Designs (CCD) or Box-Behnken Designs (BBD). The "D" in D-optimal refers to the determinant criterion used to evaluate design efficiency - these designs maximize the determinant of the information matrix (X'X), which minimizes the volume of the confidence ellipsoid for the regression coefficients [29]. This statistical property makes D-optimal designs particularly advantageous for situations with non-standard design regions or when classical designs would require prohibitively large numbers of experimental runs.

In practical research settings, D-optimal designs demonstrate particular strength when dealing with constrained experimental spaces (where not all factor combinations are feasible), categorical factors mixed with continuous variables, and situations requiring model-specific designs where the experimenter knows certain interaction effects can be safely ignored [29]. For mass spectrometry researchers, this translates to significant resource savings while maintaining statistical precision in parameter estimation.
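The determinant criterion can be demonstrated on a toy problem. The sketch below selects four runs from a 3x3 candidate grid for a first-order model in two factors by exhaustively scoring det(X'X), then repeats the search with one corner declared infeasible. Exhaustive search is used only because the problem is tiny; real D-optimal software relies on exchange algorithms, and the infeasible corner is a hypothetical constraint.

```python
import itertools

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def information_matrix(points):
    """X'X for the first-order model y = b0 + b1*x1 + b2*x2."""
    rows = [(1, x1, x2) for x1, x2 in points]
    return [[sum(r[i] * r[j] for r in rows) for j in range(3)]
            for i in range(3)]

def d_criterion(points):
    """D-optimality score: determinant of the information matrix."""
    return det3(information_matrix(points))

# Candidate set: every combination of three coded levels for two factors.
candidates = list(itertools.product((-1, 0, 1), repeat=2))
best = max(itertools.combinations(candidates, 4), key=d_criterion)

# Constrained case: suppose the (+1, +1) corner cannot be run on the instrument.
feasible = [p for p in candidates if p != (1, 1)]
best_constrained = max(itertools.combinations(feasible, 4), key=d_criterion)

print(best, d_criterion(best))
print(best_constrained, d_criterion(best_constrained))
```

In the unconstrained case the search recovers the classical 2^2 factorial, which shows that D-optimal designs agree with standard designs when those are available. The constrained case shows how the criterion still returns a usable design when a standard template no longer fits.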

Table 2: Comparison of RSM Design Types for Mass Spectrometry Applications

| Design Type | Factor Levels | Number of Runs (3 factors) | Efficiency | Best Use Cases |

|---|---|---|---|---|

| Central Composite Design (CCD) | 5 (-α, -1, 0, +1, +α) | 15-20 | Excellent for quadratic models | General RSM applications with continuous factors |

| Box-Behnken Design (BBD) | 3 (-1, 0, +1) | 15 | Good for quadratic models; no extreme conditions | When extreme factor levels should be avoided |

| D-Optimal Design | Flexible | User-defined (typically 12-16 for 3 factors) | Maximizes information per run | Constrained spaces; mixture variables; specific models |

Experimental Protocols

Implementing D-Optimal Designs: A Case Study in Solid Phase Extraction

The following protocol outlines the application of a D-optimal design for optimizing an automated solid-phase extraction (SPE) procedure for polycyclic aromatic hydrocarbons (PAHs) from coffee samples, as referenced in the literature [29]. This approach demonstrates how to handle multiple factors at different levels while managing analytical complexity.

Initial Experimental Setup

- Define Critical Quality Attributes (CQAs): Identify the response variables that define analytical success. In the PAH study, responses were the differences in sample loadings between spiked and blank coffee samples for nine target PAHs, all requiring maximization [29].

- Select Control Method Parameters (CMPs): Identify factors significantly influencing CQAs. For the SPE optimization, four factors were identified: elution volume (2 levels), dry time (3 levels), wash volume (3 levels), and organic solvent type (4 levels) [29].

- Establish Design Constraints: Define any practical limitations on factor combinations. The SPE study faced a discrete experimental domain where the system performed differently for each analyte, making simultaneous optimization challenging.

Design Execution and Analysis

- Design Generation: Using statistical software, generate a D-optimal design from the candidate set defined by the full factorial. In the PAH study, the 72-run full factorial was reduced to 19 experimental runs while maintaining estimation precision [29].

- Randomization and Blocking: Randomize run order to protect against unknown confounding factors. Implement blocking if experiments must be conducted across multiple days or instrument batches.

- Data Collection: Execute experiments according to the generated design matrix, measuring all specified CQAs for each experimental run.

- Model Fitting: Develop mathematical relationships between CMPs and CQAs using regression techniques. For multiple responses, create separate models for each CQA.
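The candidate set from which a D-optimal design is drawn is simply the full factorial over all factor levels. The sketch below enumerates it for the four SPE factors with the level counts given above; the level labels are placeholders, since the actual volumes, times, and solvents are not listed here.

```python
import itertools

# Level counts follow the SPE study; the labels themselves are placeholders.
levels = {
    "elution_volume": ("low", "high"),
    "dry_time": ("short", "medium", "long"),
    "wash_volume": ("small", "medium", "large"),
    "solvent": ("solvent_1", "solvent_2", "solvent_3", "solvent_4"),
}

# Every combination of levels: the full factorial candidate set.
candidates = [dict(zip(levels, combo))
              for combo in itertools.product(*levels.values())]

n_full = len(candidates)
n_selected = 19  # size of the D-optimal subset reported in the study
print(n_full, f"{n_selected / n_full:.0%} of the full factorial")
```

The 19-run design therefore uses roughly a quarter of the candidate runs. Which 19 are chosen is decided by the D-optimality criterion for the specified model, not by this enumeration.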

Optimization and Validation

- Multi-Response Optimization: When CQAs show conflicting responses to factor changes (as in the SPE study where different PAHs had different optimal conditions), identify compromise conditions using the Pareto front of non-dominated CQA values [29].

- Design Space Development: Apply Analytical Quality by Design (AQbD) principles to construct a design space of CMPs that ensures satisfactory CQA performance [29].

- Confirmation Experiments: Conduct additional experimental runs at the predicted optimum conditions to validate model accuracy and system performance.
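The Pareto-front idea used in the multi-response step can be illustrated with a short filter. The sketch keeps only those conditions that are not dominated by any other when every response is to be maximized; the response values are hypothetical and limited to two analytes for readability.

```python
def dominates(a, b):
    """True if `a` is at least as good as `b` on every response and better on one."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))

def pareto_front(points):
    """Return the points not dominated by any other point (maximization)."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical responses for two analytes under five candidate conditions.
responses = [(0.9, 0.2), (0.7, 0.6), (0.4, 0.8), (0.5, 0.5), (0.3, 0.3)]
front = pareto_front(responses)
print(front)
```

Every point on the front is a defensible compromise. Choosing among them is a judgment call that depends on which analytes matter most for the application.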

Implementing RSM for MALDI Matrix Spraying Optimization

This protocol details the application of RSM for optimizing matrix application parameters in Matrix-Assisted Laser Desorption/Ionization Mass Spectrometry Imaging (MALDI-MSI), based on published research [26] [30] [31].

Problem Definition and Factor Selection

- Define Response Variables: Identify critical analytical outcomes. In the MALDI-MSI study, two key responses were defined: (1) minimization of analyte delocalization (quantified through colocalization with glomeruli correlation values), and (2) maximization of lipid annotations (number of distinct lipid species detected) [26].

- Select Factors and Ranges: Based on mechanistic understanding and preliminary experiments, identify factors to include in the optimization. The MALDI study selected five factors with practical ranges: temperature (42.5-67.5°C), flow rate (0.0575-0.1525 mL/min), spraying velocity (775-1125 mm/min), number of cycles (8-22), and methanol concentration (42.5-67.5%) [26].

Experimental Design and Execution

- Design Selection: Choose an appropriate experimental design based on the number of factors and desired model complexity. The MALDI study did not name its design, but it tested 32 combinations of spraying conditions [26], which suggests a fractional factorial or D-optimal approach.

- Experimental Execution: Prepare samples according to the experimental design. In the MALDI study, human kidney biopsy sections (10 μm thickness) were thaw-mounted onto ITO-coated glass slides before matrix application using a robotic sprayer with parameters set according to the design matrix [26].

- Response Measurement: Collect data for all response variables. In the MALDI study, this included FTICR mass spectrometry analysis followed by colocalization quantification and lipid annotation using the METASPACE platform [26].

Model Development and Optimization

- Response Surface Modeling: Fit mathematical models describing the relationship between spraying parameters and each response. The MALDI study used RSM to model both delocalization and detection sensitivity responses across the five-dimensional parameter space [26] [30].

- Model Adequacy Checking: Evaluate model quality using statistical measures including R² values, ANOVA, and residual analysis to ensure predictive capability.

- Simultaneous Optimization: Identify parameter settings that balance multiple, potentially competing responses. The MALDI study successfully identified optimal automated spraying parameters that minimized delocalization while maintaining high detection sensitivity for lipids [26].

Experimental Visualization and Workflow

Experimental Optimization Workflow

Research Reagent Solutions and Materials

Table 3: Essential Research Materials for Experimental Design Implementation

| Category | Specific Items | Function/Purpose | Example Applications |

|---|---|---|---|

| Statistical Software | JMP, Minitab, Design-Expert, R | Generate experimental designs; analyze results; create models | All DOE implementations |

| MS Instrumentation | MALDI-FTICR Mass Spectrometer; HPLC-FLD | Analytical measurement of response variables | MALDI-MSI; PAH analysis |

| Sample Preparation | Robotic sprayer (HTX Technologies TM-Sprayer); automated SPE system | Precise, reproducible parameter control | MALDI matrix application; SPE optimization |

| Chemical Reagents | DHB matrix; methanol; PAH standards; polydopamine nanoparticles | Experimental-specific materials | MALDI-MSI; photoporation; SPE studies |

| Validation Tools | ANOVA tables; lack-of-fit tests; confirmation experiments | Statistical validation of model adequacy | All RSM implementations |

Applications in Mass Spectrometry and Biotechnology

The practical implementation of RSM and D-optimal designs has demonstrated significant value across diverse mass spectrometry and biotechnology applications. In MALDI mass spectrometry imaging, researchers successfully employed RSM to optimize five key matrix application parameters simultaneously—temperature, flow rate, spraying velocity, number of cycles, and solvent composition—achieving optimal conditions that minimized analyte delocalization while maximizing detection sensitivity for lipids in human kidney biopsies [26] [30]. This approach replaced traditional OFAT methods that risked suboptimal conditions and required substantially more experimental effort.

In analytical chemistry applications, D-optimal designs have proven particularly valuable for optimizing complex, multi-residue extraction procedures. One notable implementation involved the development of an automated solid-phase extraction method for nine polycyclic aromatic hydrocarbons (PAHs) in complex coffee matrices [29]. The D-optimal design efficiently reduced a 72-experiment full factorial to just 19 experimental runs while maintaining statistical precision, successfully handling the challenge of conflicting optimal conditions for different analytes through Pareto front optimization.

Beyond mass spectrometry, these methodologies have found application in emerging biotechnology areas such as photoporation—a physical membrane-disruption technique for intracellular delivery. Researchers comparing RSM approaches (Central Composite and Box-Behnken designs) for optimizing polydopamine nanoparticle parameters achieved five- to eight-fold greater efficiency compared to traditional OFAT methodology while revealing critical insights about nanoparticle size dependencies within the design space [27]. This demonstrates how structured experimental approaches can simultaneously optimize processes while generating fundamental mechanistic understanding.

These case studies collectively highlight how RSM and D-optimal designs enable researchers to efficiently navigate complex experimental landscapes, balance competing objectives, and develop robust, optimized methods with significantly reduced experimental burden compared to conventional approaches.

Within mass spectrometry (MS) research, the transition from one-factor-at-a-time (OFAT) experimentation to systematic Design of Experiments (DoE) represents a paradigm shift for method development and optimization. This application note details the strategic selection and optimization of three foundational pillars—ionization settings, liquid chromatography (LC) gradients, and mass analyzer parameters—within a structured DoE framework. When optimized in concert through statistical designs, these parameters significantly enhance method sensitivity, robustness, and throughput, which is critical for researchers and drug development professionals aiming to characterize complex biological samples, such as proteomes or metabolomes [32] [33]. The following sections provide detailed protocols and data-driven insights to guide this optimization.

Theoretical Foundation: The DoE Advantage

Traditional OFAT optimization varies a single parameter while holding others constant. This approach is inefficient, often fails to identify true optimal conditions due to ignored parameter interactions, and risks locating local optima rather than a global maximum response [33]. In contrast, DoE is a statistically-based methodology that involves performing multivariate experiments to evaluate the impact of multiple factors (parameters) and their interactions on predefined responses (e.g., signal intensity, number of identifications) simultaneously [34] [33].
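A small numerical example shows how an interaction can mislead OFAT. In the sketch below, the responses on a coded 2x2 grid are hypothetical and were chosen so that changing either factor alone lowers the response while changing both together raises it.

```python
# Hypothetical responses on a coded 2x2 grid with a strong A*B interaction.
response = {(-1, -1): 50, (1, -1): 40, (-1, 1): 40, (1, 1): 70}

def ofat(response, start=(-1, -1)):
    """Vary one factor at a time, keeping a change only if the response improves."""
    current = start
    for index in range(len(start)):
        trial = list(current)
        trial[index] = -trial[index]
        trial = tuple(trial)
        if response[trial] > response[current]:
            current = trial
    return current

def factorial_best(response):
    """Best setting when every combination has been measured."""
    return max(response, key=response.get)

def interaction_effect(response):
    """Half the difference between the effect of A at high B and at low B."""
    effect_a_high_b = response[(1, 1)] - response[(-1, 1)]
    effect_a_low_b = response[(1, -1)] - response[(-1, -1)]
    return (effect_a_high_b - effect_a_low_b) / 2

print(ofat(response), factorial_best(response), interaction_effect(response))
```

Starting from the low-low corner, OFAT rejects both single-factor changes and stops where it began, whereas the four-run factorial finds the high-high corner and quantifies the interaction that explains why.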

The power of DoE lies in its ability to:

- Systematically Explore Factor Interactions: Identify synergistic or antagonistic effects between parameters that would be missed by OFAT.

- Maximize Information Gain per Experiment: Reduce the total number of experiments required to model a complex system accurately.

- Build Predictive Models: Use response surface methodology (RSM) to mathematically model the relationship between factors and responses, enabling the prediction of optimal parameter settings [34] [35].

A generalized DoE workflow for MS optimization is depicted below.

Experimental Design and Factor Selection

A successful DoE strategy follows a tiered approach to efficiently navigate the multi-parameter space of an LC-MS system.

Defining Factors and Responses

The first step is to select the factors (adjustable parameters) and responses (performance metrics) for the study. Key factors are categorized in Table 1.

Table 1: Critical Factor Categories for LC-MS Optimization

| Category | Key Factors | Influence on Performance |

|---|---|---|

| Ionization Source | Drying/Sheath Gas Temperature & Flow, Nebulizer Pressure, Nozzle, Capillary, and Fragmentor Voltages [35] | Governs ionization efficiency and desolvation, directly impacting signal intensity and background noise [36] [37]. |

| LC Separation | Gradient Time (t_g), Initial/Final %B, Flow Rate, Column Temperature [36] [32] | Determines peak capacity, resolution of co-eluting analytes, and analysis time. Affects ionization by modulating ion suppression [36]. |

| Mass Analyzer | Collision Energy (CE), Entrance/Exit Potentials, MS1 Injection Time, AGC Target, FAIMS CV [34] [38] [39] | Controls ion transmission, fragmentation efficiency, mass accuracy, and detection sensitivity [34] [38]. |

Critical responses include signal-to-noise (S/N) ratio, number of identified compounds (e.g., proteins, oxylipins), total ion chromatogram (TIC) quality, and mass accuracy [34] [32] [38].

The Three-Stage DoE Workflow

A recommended workflow for comprehensive optimization is shown below, illustrating the progression from screening to final verification.

Protocol: Three-Stage DoE for LC-MS Optimization

Objective: To systematically identify and optimize critical LC-MS parameters for maximum analyte detection.

Materials:

- Pure analyte standard(s) of interest [39].

- LC-MS system (e.g., UHPLC coupled to QqQ or Orbitrap MS).

- DoE software (e.g., MODDE Pro, JMP, or comparable packages).

Procedure:

Stage 1: Screening with Fractional Factorial Design (FFD)

- Prepare a standard solution of your analyte at a concentration suitable for detector response (e.g., 50 ppb - 2 ppm) in a solvent compatible with your prospective mobile phase [39].

- Select a wide range for all initial factors of interest (Table 1). An FFD with Resolution IV or higher is used to efficiently screen 6-8 factors with a minimal number of experiments [34] [33].

- Randomize the run order to minimize bias from instrument drift.

- Analysis: Evaluate the statistical significance (via ANOVA, p < 0.05) of each factor's effect on your chosen responses. The goal is to narrow the focus to the 3-4 most influential factors for the next stage.

Stage 2: Optimization with Response Surface Methodology (RSM)

- Using the shortlisted factors from Stage 1, define narrower ranges around the suspected optimum.

- Employ a Central Composite or Box-Behnken design to model quadratic (curved) responses and factor interactions [34] [33].

- Analysis: The software builds a mathematical model and generates a response surface. Use this model to precisely calculate the parameter setpoints that maximize your response (e.g., S/N ratio).
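
As a rough illustration of what a Stage 2 design looks like, the sketch below generates a rotatable central composite design in coded units and converts one factor back to real units. The number of center points and the collision energy range (20 to 40 eV) are hypothetical values chosen for the example, not settings taken from the cited studies.

```python
import itertools

def central_composite(k, n_center=3):
    """Return (runs, alpha) for a rotatable CCD with k factors, coded units."""
    alpha = (2 ** k) ** 0.25  # axial distance that makes the design rotatable
    # Factorial (corner) points at -1/+1.
    factorial = [tuple(float(v) for v in pt)
                 for pt in itertools.product((-1, 1), repeat=k)]
    # Axial (star) points: one factor at +/- alpha, the others at 0.
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    # Replicated center points give an estimate of pure error.
    center = [tuple([0.0] * k) for _ in range(n_center)]
    return factorial + axial + center, alpha

def to_actual(coded, low, high):
    """Map a coded level to real units (-1 -> low, +1 -> high)."""
    midpoint = (low + high) / 2
    half_range = (high - low) / 2
    return midpoint + coded * half_range

runs, alpha = central_composite(k=3)
print(len(runs), "runs, alpha =", round(alpha, 3))

# Hypothetical factor: collision energy studied between 20 and 40 eV.
ce_levels = sorted({round(to_actual(run[0], 20.0, 40.0), 1) for run in runs})
print("collision energy levels (eV):", ce_levels)
```

The axial points lie outside the low/high range used for the corner points, so it is worth confirming that those settings are physically achievable on the instrument. If they are not, a face-centered variant (alpha = 1) or a Box-Behnken design keeps every run inside the original range.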

Stage 3: Robustness Verification

Protocol Implementation: Case Studies

Case Study 1: DoE-Guided Optimization of Oxylipin Analysis

A 2025 study on oxylipin analysis exemplifies the power of DoE. Oxylipins are diverse, low-abundance signaling molecules, making their analysis challenging [34].

- Experimental: A FFD screened factors including interface temperature, CID gas pressure, and various voltage potentials. This was followed by a central composite design for optimization. The response was signal intensity in MRM mode [34].

- Key Findings: The RSM model revealed distinct optimal conditions for different oxylipin classes. Polar prostaglandins and lipoxins benefited from higher CID gas pressure and lower interface temperatures, while more lipophilic HETEs and HODEs showed different preferences [34].

- Result: This analyte-specific optimization, which would be difficult to discover via OFAT, resulted in a 2- to 4-fold increase in S/N ratio for key oxylipin classes, significantly enhancing trace-level detection [34].

Table 2: Quantitative Improvements in Oxylipin Analysis via DoE [34]

| Oxylipin Class | Improvement in Signal-to-Noise (S/N) | Key Optimized Parameter |

|---|---|---|

| Leukotrienes & HETEs | 3 to 4-fold increase | Collision-Induced Dissociation (CID) Gas Pressure |

| Lipoxins & Resolvins | 2-fold increase | Interface Temperature |

| All Classes | Lower Limits of Quantification (LLOQ) | Individual Collision Energy (CE) |

Case Study 2: Ionization Source Optimization for SFC-ESI-MS

Another study demonstrated the systematic optimization of eight ESI source parameters for Supercritical Fluid Chromatography-MS coupling [35].

- Experimental: A geometric Rechtschaffner design was used to evaluate factors like gas temperatures/flows and key voltages. The response was the signal height for 32 diverse compounds [35].

- Key Findings: The initial screening identified the fragmentor voltage as the most influential parameter, accounting for 78.6% of the variation in signal response. This finding allowed the researchers to focus subsequent optimization efforts effectively [35].

- Result: The DoE approach led to a robust setpoint that provided sufficient ionization for all 32 compounds, demonstrating the utility of a systematic approach for multi-analyte methods [35].
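
A statement such as "one factor accounts for most of the variation" is typically based on each factor's share of the total sum of squares. The sketch below shows that calculation for a simple two-level full factorial with a synthetic response. It is a simplified illustration only: the cited study used a Rechtschaffner design, and the design, response values, and function name here are assumptions made for the example.

```python
import itertools

def percent_contribution(design, response):
    """Percent of total variation explained by each main effect.

    Assumes a balanced two-level design in coded units (-1/+1), where
    effect = contrast / (N / 2) and SS_effect = N * effect**2 / 4.
    """
    n = len(design)
    mean_y = sum(response) / n
    ss_total = sum((y - mean_y) ** 2 for y in response)
    shares = []
    for j in range(len(design[0])):
        contrast = sum(row[j] * y for row, y in zip(design, response))
        effect = contrast / (n / 2)
        ss_effect = n * effect ** 2 / 4
        shares.append(100 * ss_effect / ss_total)
    return shares

# 2^3 full factorial with a synthetic response dominated by the first factor.
design = list(itertools.product((-1, 1), repeat=3))
response = [10 + 4 * x0 + 1 * x1 + 0 * x2 for x0, x1, x2 in design]

contributions = percent_contribution(design, response)
for label, share in zip(("factor_1", "factor_2", "factor_3"), contributions):
    print(f"{label}: {share:.1f}% of total variation")
```

With real data the main-effect shares will not sum to 100%, because interactions and experimental noise take up the remainder.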

The Scientist's Toolkit: Research Reagent Solutions

The following reagents and materials are essential for executing the protocols described in this application note.

Table 3: Essential Reagents and Materials for LC-MS Method Optimization

| Item | Function / Application | Example / Specification |

|---|---|---|

| Ammonium Formate/Acetate | LC-MS compatible volatile buffer for mobile phase pH control and ion-pairing. | Typically used at 2-20 mM concentration; prepared in LC-MS grade water [36] [35]. |

| Acetonitrile & Methanol | LC-MS grade organic modifiers for reversed-phase chromatography. | Low UV absorbance and minimal background ions; essential for high-sensitivity detection [34] [32]. |

| Acetic Acid / Formic Acid | Mobile phase additives to improve protonation and peak shape for acidic/basic analytes. | Commonly used at 0.1% concentration [34] [32]. |

| Pure Chemical Standards | Required for parameter optimization free from matrix interference. | Diluted to 50 ppb - 2 ppm in mobile phase for infusion or flow injection analysis [39]. |

| UHPLC Columns | High-efficiency separation core. | C18 columns (e.g., 2.1 x 100 mm, 1.7 µm) are common; selection depends on analyte properties [34] [32]. |

| Argon / Nitrogen Gas | High-purity collision gas (Argon) and source desolvation/drying gas (Nitrogen). | Essential for consistent fragmentation and ion source operation [34] [39]. |

The strategic selection and optimization of ionization settings, LC gradients, and mass analyzer parameters are no longer tasks suited for sequential, OFAT experimentation. By adopting a structured DoE approach, as outlined in the protocols and case studies above, researchers can efficiently develop more sensitive, robust, and reproducible LC-MS methods. This is particularly critical in drug development, where reliable quantification of trace-level analytes in complex matrices is paramount. The initial investment in designing a DoE study pays substantial dividends in accelerated method development and superior analytical performance.

The development of robust bioanalytical methods is a critical component in the drug development pipeline, ensuring accurate quantification of therapeutics in biological matrices. For complex molecules such as antibody-drug conjugates (ADCs) and proteins, method development presents unique challenges due to their heterogeneous composition and the complex sample preparation required. Design of Experiments (DoE), a systematic statistical approach for evaluating multiple experimental factors simultaneously, has emerged as a powerful tool to overcome these challenges. This case study details the application of a DoE methodology to optimize a bottom-up proteomic sample preparation workflow for the absolute quantification of proteins in human plasma, utilizing UPLC-Multiple Reaction Monitoring-Mass Spectrometry (UPLC-MRM-MS) [6]. By implementing DoE, we successfully streamlined a traditionally time-consuming process, enhancing both efficiency and analytical performance.

Experimental Design and Workflow

The core challenge addressed was the optimization of protein digestion, a critical and often rate-limiting step in bottom-up proteomics. Traditional one-factor-at-a-time (OFAT) approaches are not only inefficient but also fail to capture potential factor interactions. A DoE approach was employed to systematically investigate the impact of key digestion parameters and identify optimal conditions.

Critical Factors and DoE Setup

Four factors were identified as having a significant impact on digestion efficiency. These factors and their investigated ranges are summarized in the table below.

Table 1: Experimental Factors and Levels for Digestion Optimization

| Factor | Low Level | High Level | Role |

|---|---|---|---|

| Digestion Time | 4 hours | 18 hours | Continuous |

| Digestion Temperature | 30°C | 45°C | Continuous |

| Enzyme-to-Protein Ratio | 1:50 | 1:20 | Continuous |

| Denaturing Agent Concentration | 0.1% | 1.0% | Continuous |

A statistical model was built to explore the main effects of these factors as well as their two-way interactions, enabling the prediction of digestion efficiency across the experimental space [6].
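
To make the model structure concrete, the sketch below codes the four factors to the -1/+1 scale, lists the terms of a main-effects-plus-two-way-interactions model, and evaluates a prediction. The factor ranges come from the table above; the coefficient values, variable names, and helper functions are hypothetical and are not results from the study.

```python
import itertools

# (low, high) ranges from the factor table. The enzyme-to-protein ratio is
# written as a fraction, so 1:50 (0.02) is the numerically smaller setting.
FACTORS = {
    "time_h": (4.0, 18.0),
    "temp_C": (30.0, 45.0),
    "enzyme_ratio": (1 / 50, 1 / 20),
    "denaturant_pct": (0.1, 1.0),
}

def to_coded(name, value):
    """Scale a real setting so that low -> -1 and high -> +1."""
    low, high = FACTORS[name]
    return (value - (low + high) / 2) / ((high - low) / 2)

def model_terms():
    """Intercept, 4 main effects, and 6 two-way interactions (11 terms)."""
    names = list(FACTORS)
    terms = [("intercept",)]
    terms += [(name,) for name in names]
    terms += list(itertools.combinations(names, 2))
    return terms

def predict(coefficients, settings):
    """Evaluate the model; terms missing from `coefficients` count as zero."""
    coded = {name: to_coded(name, value) for name, value in settings.items()}
    total = 0.0
    for term, beta in coefficients.items():
        x = 1.0
        if term != ("intercept",):
            for name in term:
                x *= coded[name]
        total += beta * x
    return total

# Hypothetical coefficients, for illustration only.
coefficients = {
    ("intercept",): 50.0,
    ("time_h",): 5.0,
    ("time_h", "temp_C"): -2.0,
}
settings = {"time_h": 18.0, "temp_C": 45.0,
            "enzyme_ratio": 1 / 20, "denaturant_pct": 1.0}

print(len(model_terms()), "model terms")
print("predicted response:", predict(coefficients, settings))
```

A negative time-by-temperature coefficient, as in this made-up example, would mean that the benefit of a longer digestion shrinks at higher temperature. This is the kind of interaction that OFAT experiments cannot estimate.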

Analytical Platform and Quantification Strategy

The optimized digestion protocol was integrated with a sensitive UPLC-MRM-MS platform for absolute quantification.

- Platform: Waters Xevo TQ-XS UPLC-MRM-MS [6].

- Objective: Absolute quantification of 257 proteins in human plasma [6].

- Sample Preparation: The workflow was automated to improve reproducibility and throughput, making it suitable for a clinical research setting [6].

The following workflow diagram illustrates the complete experimental process, from sample preparation to data analysis.

Workflow for Automated Protein Quantification

DoE Optimization Protocol

This section provides a detailed, step-by-step protocol for implementing the DoE strategy to optimize protein digestion.

Protocol: DoE for Digestion Efficiency

Objective: To systematically optimize protein digestion conditions (time, temperature, enzyme-to-protein ratio, and denaturant concentration) for maximum efficiency and peptide yield.

Materials:

- Research Reagent Solutions: Essential materials for the experiment are listed in the table below.

Table 2: Research Reagent Solutions for DoE Digestion Optimization

| Item | Function / Description |

|---|---|

| Tryptic Protease | Enzyme for proteolytic digestion of proteins into peptides for MS analysis [6]. |

| Denaturing Agent (e.g., RapiGest) | Disrupts protein tertiary structure to increase enzyme accessibility [6]. |

| Human Plasma Samples | The biological matrix for method development and validation. |

| Waters Xevo TQ-XS | Triple quadrupole mass spectrometer for high-sensitivity MRM analysis [6]. |

Procedure:

- Experimental Design: Utilize statistical software to generate a DoE matrix (e.g., a Response Surface Methodology design) that encompasses the factor ranges listed in Table 1.

- Sample Preparation: Aliquot human plasma samples into a 96-well plate suitable for automated processing.

- Automated Denaturation and Reduction: Using a liquid handler, add the specified concentration of denaturing agent to each sample according to the DoE layout. Incubate to denature proteins.

- Automated Digestion: Program the liquid handler to add trypsin to each sample at the designated enzyme-to-protein ratio. Subsequently, incubate the plate at the specified temperatures and for the durations defined by the DoE matrix.

- Reaction Quenching: After digestion, acidify the samples to stop the enzymatic reaction.

- LC-MRM-MS Analysis: Inject the digested samples onto the UPLC-MRM-MS system for peptide separation and quantification.

Data Analysis:

- Process the raw MRM data to determine the peak areas of target peptides, which serve as the measure of digestion efficiency (the response in the DoE model).

- Input the response data into the statistical software to build a predictive model.

- Analyze the model to identify significant factors and interactions, and to locate the optimal set of conditions that maximize digestion efficiency within the defined experimental space.
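
Locating the optimum from a fitted model can be illustrated with a two-factor quadratic. The sketch below solves for the stationary point analytically, checks that it is a maximum, and confirms that it lies inside the coded experimental space. The coefficients are invented for the example (x1 and x2 could stand for coded digestion time and temperature); real values would come from the statistical software's fit.

```python
def stationary_point(b1, b2, b11, b22, b12):
    """Stationary point of y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2.

    Setting both partial derivatives to zero gives a 2x2 linear system:
        2*b11*x1 +   b12*x2 = -b1
          b12*x1 + 2*b22*x2 = -b2
    """
    det = 4 * b11 * b22 - b12 ** 2  # determinant of the Hessian
    if det == 0:
        raise ValueError("Hessian is singular; no unique stationary point")
    x1 = (-2 * b22 * b1 + b12 * b2) / det
    x2 = (b12 * b1 - 2 * b11 * b2) / det
    # The point is a maximum when the Hessian is negative definite.
    is_maximum = b11 < 0 and det > 0
    return x1, x2, is_maximum

def inside_design_space(point, bound=1.0):
    """True if every coded coordinate lies within [-bound, +bound]."""
    return all(abs(x) <= bound for x in point)

# Hypothetical fitted coefficients in coded units.
coef = dict(b1=4.0, b2=-2.0, b11=-5.0, b22=-4.0, b12=1.0)
x1, x2, is_max = stationary_point(**coef)

print(f"stationary point: x1 = {x1:.3f}, x2 = {x2:.3f}")
print("maximum:", is_max, "| inside design space:", inside_design_space((x1, x2)))
```

If the stationary point falls outside the studied range or turns out to be a saddle point, the model should not be extrapolated. The usual options are to pick the best predicted point on the boundary or to run a follow-up design centered on the more promising region.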

Results and Discussion

The implementation of the DoE strategy yielded significant improvements in both the efficiency and performance of the bioanalytical assay.

Key Outcomes of DoE Optimization

The quantitative results of the optimization are summarized in the table below.

Table 3: Summary of DoE Optimization Outcomes

| Parameter | Pre-Optimization (OFAT) | Post-Optimization (DoE) | Impact |

|---|---|---|---|

| Digestion Time | 18 hours (overnight) [6] | 4 hours [6] | >75% reduction in sample prep time |

| Assay Sensitivity | Lower Limit of Quantification (LLOQ) constrained for some proteins [6] | Improved LLOQ, enabling quantification of previously undetectable proteins [6] | Expanded dynamic range and coverage |

| Throughput | Manual or semi-automated processing | Full workflow automation [6] | Enhanced reproducibility and scalability |

| Number of Quantifiable Proteins | Not specified for prior method | 257 proteins in human plasma [6] | Robust platform for comprehensive analysis |

The model captures the interplay between the four factors. For instance, an interaction model of this kind can show whether a higher digestion temperature compensates for a shorter digestion time, or whether the optimal enzyme-to-protein ratio depends on the concentration of the denaturing agent. Interaction effects like these cannot be estimated from OFAT experimentation.

The following cause-and-effect diagram illustrates the relationships between the critical factors and the experimental outcomes, as revealed by the DoE model.

Factor-Effect Relationships in Digestion Optimization

Application in Regulated Bioanalysis

The principles demonstrated in this case study are directly applicable to the bioanalysis of complex therapeutics like Antibody-Drug Conjugates (ADCs). ADCs present unique challenges due to their heterogeneous nature, containing a monoclonal antibody, cytotoxic payload, and linker [40]. Accurate pharmacokinetic assessment requires quantifying different analyte forms (e.g., conjugated antibody, total antibody, unconjugated payload), often using a combination of Ligand Binding Assays (LBAs) and LC-MS/MS [40]. The DoE approach is equally critical here for optimizing the sample preparation and analysis conditions for these diverse analytes, ensuring the resulting methods are robust, sensitive, and fit-for-purpose in a GxP environment [41].

This case study demonstrates that a systematic DoE approach is not merely an improvement but a fundamental paradigm shift in bioanalytical method development. By simultaneously evaluating critical factors, we successfully transformed a lengthy, overnight protein digestion into a rapid 4-hour process without compromising efficiency. Furthermore, the optimized workflow enhanced the sensitivity of the UPLC-MRM-MS platform, allowing for the absolute quantification of 257 proteins in human plasma. The integration of automation ensures the reproducibility and scalability of this method, making it ideally suited for the high-demand environment of clinical research. The success of this model underscores the broad applicability of DoE in overcoming complex bioanalytical challenges, from targeted proteomics to the characterization of next-generation biotherapeutics.

This application note details the experimental design and protocols for optimizing Single-Cell ProtEomics by Mass Spectrometry (SCoPE-MS), a transformative method for quantifying protein abundance in individual cells. We focus on the data-driven optimization of mass spectrometry parameters to enhance sensitivity, coverage, and quantitative accuracy, providing a framework for researchers in drug development and basic science.