Essential Software Skills for Analytical Chemists in 2025: A Guide from Data Acquisition to AI-Driven Analysis

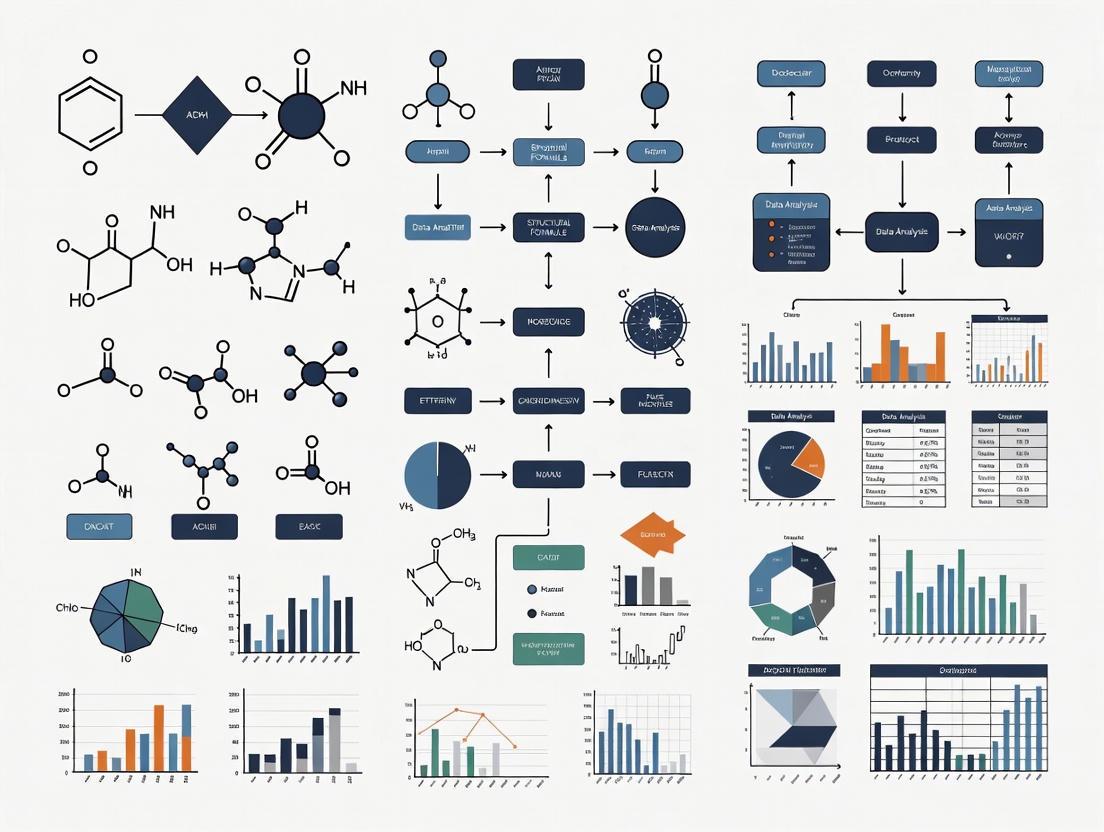

Abstract

This article provides a comprehensive guide to the essential software skills required for modern analytical chemists, particularly those in drug development and biomedical research. It covers the foundational knowledge of Chromatography Data Systems (CDS) and Laboratory Information Management Systems (LIMS), explores the application of software in method development and data analysis, addresses troubleshooting and data integrity for compliance, and offers a comparative look at emerging AI and cloud-based tools. The content is designed to help researchers and scientists enhance their technical proficiency, streamline workflows, and maintain a competitive edge in a rapidly evolving, data-driven field.

The Digital Backbone: Core Software Platforms Every Analytical Chemist Must Master

In contemporary analytical laboratories, the Chromatography Data System (CDS) has evolved from a simple data collection tool into the central operational hub, seamlessly integrating instrument control, data processing, and enterprise data management [1]. Chromatographic analysis—including high-performance liquid chromatography (HPLC), gas chromatography (GC), and ion chromatography (IC)—constitutes a major portion of testing in analytical laboratories, and all these techniques require a CDS [1]. For researchers and drug development professionals, mastery of the CDS is not merely an operational skill but a critical competency that directly impacts data integrity, analytical throughput, and regulatory compliance. Modern CDS platforms represent sophisticated informatics solutions that connect people, instruments, and data within a secure, compliant architecture, thereby serving as the cornerstone of analytical efficiency in research and quality control environments [2] [3]. This technical guide explores the architecture, functionality, and strategic implementation of CDS within the context of essential software skills for analytical chemists.

CDS Architecture and Historical Evolution

The architecture of a CDS determines its scalability, performance, and compliance capabilities. Understanding this evolution provides context for current system capabilities and limitations.

From Standalone to Enterprise Systems

Chromatography data handling has progressed through several distinct phases of technological advancement:

- Strip Chart Recorders (1960s-1970s): These devices plotted analog signals from detectors onto moving chart paper. Quantitation required manual measurements of peak heights or areas via "cut-and-weigh" or triangulation methods, which were time-consuming and prone to error [1].

- Electronic Integrators (1970s-1980s): Devices like the Hewlett-Packard HP-3380A introduced automated peak integration, calibration, and rudimentary reporting capabilities. They featured built-in A/D converters, internal memory, and firmware for data processing, representing a significant step toward automation [1].

- PC Workstations (1980s): The advent of the personal computer enabled more flexible data handling and instrument control. Early systems like TurboChrom dominated the market, leveraging the Windows operating system to provide more versatile chromatography data management [1].

- Network and Client-Server CDS (1990s-Present): Modern CDS operates primarily on a client-server model, which centralizes data storage and management while providing distributed access to multiple users. This architecture supports compliance requirements through enhanced security, audit trails, and data integrity protections [1].

Modern CDS Deployment Options

Modern CDS platforms typically offer multiple deployment configurations to suit different laboratory needs and scales:

- Workstation: A single-computer system providing comprehensive instrument control, data analysis, and reporting for individual instruments or small setups [2].

- Workstation Connect: Connects multiple workstations to form a small network without a dedicated server, enabling improved data sharing and security [2].

- Enterprise: A full client-server architecture supporting global, multi-site deployments with centralized data management, often integrated with other informatics solutions like LIMS and ELN [2].

The following diagram illustrates the architecture of a modern enterprise CDS:

Figure 1: Modern Enterprise CDS Architecture. The central server manages data integrity, security, and compliance while supporting multiple instrument types and user roles across the organization.

Core CDS Functions: From Acquisition to Reporting

A modern CDS encompasses a comprehensive workflow that spans the entire analytical process. The core functionality can be divided into three interconnected domains: instrument control, data processing, and reporting.

Instrument Control and Data Acquisition

The CDS serves as the primary interface for controlling chromatographic instrumentation and acquiring raw data. This function extends beyond simple command execution to encompass:

- Unified Method Management: A single instrumental method within the CDS controls all parameters for each module of a chromatographic system (for example, pump, autosampler, column compartment, and detectors in an HPLC system) [1].

- Real-Time Monitoring: Modern CDS provides live monitoring of ongoing analyses, instrument status, and data quality, enabling proactive intervention and decision-making [4].

- Multi-Vendor Instrument Support: Enterprise CDS solutions can typically control instruments from various manufacturers through native drivers or standardized communication protocols, reducing the need for multiple control software platforms [5].

- Sequential Analysis Management: Through sequence files, the CDS automates batch analyses by defining sample lists, injection orders, and method sequences, significantly enhancing throughput and reproducibility [6].
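A sequence is essentially structured data: an ordered list of injections, each tied to a vial and a method. The minimal Python sketch below builds one under an assumed layout (one blank, replicate standard injections for system suitability, then the samples with a bracketing check standard after every N samples and at the end). The `Injection` fields, vial names, and bracketing rule are hypothetical illustrations; real CDS sequence formats are vendor-specific and are not reproduced here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Injection:
    position: int  # injection order within the sequence
    vial: str      # autosampler vial label (hypothetical naming scheme)
    kind: str      # "blank", "standard", or "sample"
    method: str    # instrument method applied to this injection

def build_sequence(samples, method, sst_replicates=5, bracket_every=10):
    """Blank first, replicate standard injections for SST, then the samples,
    with a check standard after every `bracket_every` samples and at the end."""
    rows = [("BLANK", "blank")]
    rows += [("STD-1", "standard")] * sst_replicates
    for i, sample in enumerate(samples, start=1):
        rows.append((sample, "sample"))
        # Bracketing standard: after every N samples, and after the last one
        if i % bracket_every == 0 or i == len(samples):
            rows.append(("STD-1", "standard"))
    return [Injection(pos, vial, kind, method)
            for pos, (vial, kind) in enumerate(rows, start=1)]
```

Generating the list programmatically, rather than typing it, is what makes batch runs reproducible: the same rule always yields the same injection order.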

Data Processing and Integration

The transformation of raw detector signals into meaningful analytical information represents a core CDS capability:

- Peak Integration: Mathematical algorithms detect and integrate chromatographic peaks, typically using either slope thresholds (the first derivative of the detector signal) or the second derivative of the raw data [1].

- Component Identification: Peaks are identified by comparing retention times or spectral data with reference standards.

- Calibration and Quantitation: The CDS constructs calibration curves from standard analyses and uses these curves to quantify analytes in unknown samples [6].

- System Suitability Testing (SST): Automated calculations of SST parameters (such as resolution, tailing factor, and precision) ensure the chromatographic system is performing adequately before sample analysis [1].
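The slope-threshold approach to peak detection can be illustrated in a few lines. This is a minimal sketch, not any vendor's integrator: a peak starts when the first derivative rises above a threshold, the descending side is recognized when the slope falls below the negative threshold, and the peak ends when the slope flattens again. Area is then computed by the trapezoidal rule above a straight baseline drawn between the start and end points. The function names and the threshold value are assumptions; commercial integrators add noise filtering, peak-width parameters, tangent skims, and other integration events not shown here.

```python
def detect_peaks(times, signal, slope_threshold):
    """Return (start, end) index pairs using first-derivative (slope) thresholds.
    Assumes strictly increasing time values."""
    peaks, start, descending = [], None, False
    for i in range(1, len(signal)):
        slope = (signal[i] - signal[i - 1]) / (times[i] - times[i - 1])
        if start is None:
            if slope > slope_threshold:      # signal starts rising: peak start
                start = i - 1
        elif not descending:
            if slope < -slope_threshold:     # past the apex, now falling
                descending = True
        elif slope > -slope_threshold:       # descent has flattened: peak end
            peaks.append((start, i - 1))
            descending = False
            # If the signal immediately rises again, a new peak starts at the valley
            start = i - 1 if slope > slope_threshold else None
    return peaks

def peak_area(times, signal, start, end):
    """Trapezoidal area above a straight baseline from `start` to `end`."""
    t0, t1 = times[start], times[end]
    y0, y1 = signal[start], signal[end]

    def corrected(i):
        baseline = y0 + (y1 - y0) * (times[i] - t0) / (t1 - t0)
        return signal[i] - baseline

    return sum((times[i + 1] - times[i]) * (corrected(i) + corrected(i + 1)) / 2
               for i in range(start, end))
```

For a simple triangular test peak, the detected boundaries and the integrated area can be checked by hand, which is a useful way to build intuition for how threshold settings move peak start and end points.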

Reporting and Data Management

The final stage involves presenting processed results in accessible, actionable formats:

- Customizable Report Generation: Flexible reporting engines allow creation of everything from simple result summaries to comprehensive certificates of analysis (CoA) containing chromatograms, spectra, and compliance data [2] [1].

- Centralized Data Repository: Enterprise CDS solutions store all raw data, processed results, methods, and metadata in a secure, relational database, facilitating data retrieval, audit, and review [2].

- Regulatory Compliance Features: Automated audit trails, electronic signatures, and version control ensure data integrity and regulatory compliance [5].

The following workflow diagram illustrates the complete analytical process managed by a CDS:

Figure 2: End-to-End CDS Workflow. The CDS manages the complete analytical process from method setup through data archiving, ensuring data integrity at each stage.

Quantitative Comparison of CDS Features and Capabilities

When evaluating CDS solutions for research or drug development applications, specific technical specifications and compliance features must be considered. The tables below summarize key quantitative and qualitative factors for CDS selection.

Table 1: CDS Deployment Configuration Comparison

| Configuration | Maximum Users/Instruments | IT Infrastructure | Typical Use Case | Compliance Features |
|---|---|---|---|---|
| Workstation | 1-2 users/instruments | Single PC | Small research labs, method development | Basic security, limited audit trail |
| Workstation Connect | 5-10 users/instruments | Multiple PCs without dedicated server | Small network, departmental use | Improved data sharing, compliance tools |
| Enterprise | 1000+ users/instruments [2] | Centralized server (on-premise or cloud) | Multi-site, regulated environments | Full 21 CFR Part 11 compliance, electronic signatures |

Table 2: CDS Technical Specifications and Performance Metrics

| Feature | Standard Capability | Advanced Capability | Impact on Laboratory Operations |
|---|---|---|---|
| Instrument Control | Single vendor, chromatography only | Multi-vendor, chromatography + CE + MS [2] | Reduced training, unified workflow |
| Data Processing Speed | Standard integration algorithms | MS-optimized (up to 10x faster processing) [2] | Higher throughput, faster results |
| Peak Integration | Threshold-based, first derivative | Second derivative, deconvolution | Better resolution of complex peaks |
| Calibration Models | Linear, quadratic | Weighted, non-linear models | Improved accuracy across wide concentration ranges |
| System Suitability Testing | Manual calculation templates | Automated, real-time SST evaluation | Reduced manual review time |
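Weighted calibration, listed above as an advanced capability, changes how much each standard level influences the fitted line. The sketch below fits y = a + b·x by weighted least squares, defaulting to 1/x² weights (a common choice for wide concentration ranges, assumed here rather than taken from any specific CDS), and back-calculates an unknown's concentration from its peak response. The function names are illustrative.

```python
def weighted_linear_fit(x, y, weights=None):
    """Weighted least-squares fit of y = intercept + slope * x.
    Default weights are 1/x**2, so every standard level must be > 0."""
    if weights is None:
        weights = [1.0 / xi ** 2 for xi in x]
    sw = sum(weights)
    swx = sum(w * xi for w, xi in zip(weights, x))
    swy = sum(w * yi for w, yi in zip(weights, y))
    swxx = sum(w * xi * xi for w, xi in zip(weights, x))
    swxy = sum(w * xi * yi for w, xi, yi in zip(weights, x, y))
    # Closed-form solution of the weighted normal equations
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return intercept, slope

def back_calculate(response, intercept, slope):
    """Concentration of an unknown from its measured peak response."""
    return (response - intercept) / slope
```

With unweighted regression, the highest standards dominate the fit and low-level results can carry large relative errors; 1/x² weighting balances relative error across the range. Passing `weights=[1.0] * len(x)` recovers ordinary least squares.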

Compliance and Data Integrity: The Regulatory Framework

In regulated pharmaceutical and biotechnology environments, CDS must adhere to stringent regulatory requirements. Understanding this framework is essential for analytical chemists involved in drug development.

Regulatory Requirements and CDS Features

Modern CDS incorporates specific features designed to maintain data integrity and regulatory compliance:

- 21 CFR Part 11 Compliance: The CDS must provide features that ensure electronic records and signatures are trustworthy, reliable, and equivalent to paper records [1] [5].

- Comprehensive Audit Trails: The system automatically records every action, modification, and deletion applied to data files, methods, or user accounts, detailing who performed the action, when, and why [5].

- Electronic Signatures: Enforceable electronic approvals and reviews of methods, samples, and results, linking unique signatures to the data they confirm [5].

- User Access Control: Granular security settings that assign specific permissions (e.g., data acquisition only, processing and reporting, system administration) to different user roles, preventing unauthorized changes [5].
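The audit trail requirement (who, what, when, why, with no silent edits) can be illustrated with a small append-only log. In this sketch, which is an illustration and not how any particular CDS stores its audit trail, each entry carries the SHA-256 hash of the previous entry, so altering any past entry breaks the chain and is detected by `verify()`. The class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

class AuditTrail:
    """Append-only, hash-chained log: editing any stored entry breaks the chain."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, user, action, target, reason):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        entry = {
            "user": user,        # who
            "action": action,    # what was done
            "target": target,    # which record, method, or account
            "reason": reason,    # why
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self):
        """True only if every entry is unmodified and correctly chained."""
        prev = self.GENESIS
        for entry in self._entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```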

Table 3: Essential CDS Compliance Features for Regulated Laboratories

| Compliance Feature | Regulatory Requirement | CDS Implementation | Data Integrity Principle |
|---|---|---|---|
| Audit Trail | 21 CFR Part 11, EU GMP Annex 11 | Comprehensive, uneditable log of all data-related actions | Attributable, Contemporaneous |
| Electronic Signatures | 21 CFR Part 11 | Unique user credentials with non-repudiation | Attributable, Legible |
| User Access Control | ALCOA+ Framework | Role-based permissions, least privilege access | Original, Accurate |
| Data Encryption & Security | Data integrity regulations | Encryption in transit and at rest | Enduring, Available |
| Version Control | GMP/GLP requirements | Method and document versioning with change rationale | Original, Accurate |
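Role-based access with least privilege amounts to a deny-by-default permission check. The sketch below uses hypothetical role names and permissions modeled on the examples in the compliance list (acquisition only, processing and reporting, system administration); a real CDS defines these in its own security configuration.

```python
from enum import Enum, auto

class Permission(Enum):
    ACQUIRE = auto()
    PROCESS = auto()
    REPORT = auto()
    APPROVE = auto()
    ADMINISTER = auto()

# Hypothetical roles: each grants only what that job requires
ROLES = {
    "operator": {Permission.ACQUIRE},
    "analyst": {Permission.ACQUIRE, Permission.PROCESS, Permission.REPORT},
    "reviewer": {Permission.APPROVE},
    "admin": {Permission.ADMINISTER},
}

def is_allowed(user_roles, permission):
    """Deny by default: allowed only if an assigned, known role grants it."""
    return any(permission in ROLES.get(role, set()) for role in user_roles)
```

Keeping system administration separate from data acquisition and processing reflects the separation-of-duties expectation common in regulated laboratories: the person who can change security settings should not also be able to reprocess the data.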

CDS Implementation: Methodologies and Best Practices

Successful implementation of a CDS requires careful planning, execution, and validation. The following section outlines proven methodologies for CDS deployment and operation.

CDS Implementation Protocol

A structured approach to CDS implementation ensures system validity and operational efficiency:

Requirements Definition

- Document analytical workflows, data management needs, and compliance requirements

- Identify integration points with existing systems (LIMS, ELN)

- Establish performance metrics and acceptance criteria

System Design and Configuration

- Design system architecture based on laboratory scale and throughput requirements

- Configure user roles, permissions, and electronic signature workflows

- Customize data fields, report templates, and audit trail settings

Installation and Validation

- Execute Installation Qualification (IQ) to verify that the system is installed correctly

- Perform Operational Qualification (OQ) to confirm that the system operates according to its specifications

- Conduct Performance Qualification (PQ) to demonstrate that the system performs as intended in the actual operational environment [5]

User Training and Proficiency Assessment

CDS Administrator Maintenance Procedures

Ongoing system administration follows standardized procedures:

Daily Maintenance Tasks

- Verify successful completion of automated backups

- Monitor system performance and storage capacity

- Review error logs and system alerts

Weekly Administrative Procedures

- Audit user access and security settings

- Review and archive completed studies

- Validate system performance against established benchmarks

Monthly Maintenance Activities

- Apply security patches and software updates

- Perform comprehensive system diagnostics

- Review and purge temporary files
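Two of the daily tasks, confirming backups and watching storage capacity, are easy to script. This sketch assumes backups are file-level copies that preserve the source's relative folder layout; enterprise CDS databases are often backed up with database tools, in which case the vendor's own verification applies instead. It compares SHA-256 checksums of every source file against its backup copy and reports the free-space fraction of a volume.

```python
import hashlib
import shutil
from pathlib import Path

def sha256_of(path, chunk_size=1 << 20):
    """Checksum a file in chunks so large raw-data files do not fill memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_backup(source_dir, backup_dir):
    """Return relative paths that are missing from, or differ in, the backup."""
    source_dir, backup_dir = Path(source_dir), Path(backup_dir)
    problems = []
    for src in source_dir.rglob("*"):
        if src.is_file():
            rel = src.relative_to(source_dir)
            dst = backup_dir / rel
            if not dst.is_file() or sha256_of(src) != sha256_of(dst):
                problems.append(str(rel))
    return sorted(problems)

def free_space_fraction(path):
    """Fraction of the volume holding `path` that is still free (0.0 to 1.0)."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total
```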

The Scientist's Toolkit: Essential CDS Research Reagents and Solutions

Beyond the software itself, effective CDS utilization requires specific "research reagents" in the form of standardized procedures, templates, and integration tools. The table below details these essential components.

Table 4: Essential CDS Research Reagents and Solutions

| Tool/Component | Function/Purpose | Implementation Example |
|---|---|---|
| eWorkflow Procedures | Standardize complex analytical protocols with guided steps | Chromeleon eWorkflow enables moving from injection to final results in three clicks [2] |
| Method Templates | Ensure consistency in method development and transfer | Ready-to-run method templates for specific applications (e.g., environmental, pharmaceutical) [2] |
| Custom Report Templates | Standardize result reporting across studies and analysts | Spreadsheet-based custom reporting engines [2] |
| System Suitability Test (SST) Protocols | Automate calculation of critical chromatographic parameters | Built-in SST calculations for resolution, tailing factor, and precision [1] |
| Data Extraction Tools | Overcome proprietary format limitations for data sharing | ACD/Labs Spectrus Platform extracts full chromatographic data for use in other applications [3] |
| Integration Connectors | Facilitate data exchange with other informatics systems | Pre-built connectors for LIMS, ELN, and ERP systems [5] |
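The built-in SST calculations for resolution, tailing factor, and precision reduce to short formulas. The sketch below uses USP-style definitions: resolution from retention times and tangent baseline peak widths, tailing factor from the peak width at 5% height divided by twice the front half-width at 5% height, and precision as %RSD of replicate injections. The acceptance limits in `check_sst` are placeholder assumptions; actual limits come from the validated method or the applicable monograph.

```python
from statistics import mean, stdev

def resolution(t1, t2, w1, w2):
    """Rs = 2(t2 - t1) / (w1 + w2), with baseline (tangent) peak widths."""
    return 2 * (t2 - t1) / (w1 + w2)

def tailing_factor(w_005, f):
    """T = W0.05 / (2f): width at 5% height over twice the front half-width."""
    return w_005 / (2 * f)

def percent_rsd(values):
    """Relative standard deviation (%) of replicate injections."""
    return 100 * stdev(values) / mean(values)

def check_sst(rs, tf, rsd, min_rs=2.0, max_tf=2.0, max_rsd=2.0):
    """Placeholder limits for illustration only."""
    return {
        "resolution": rs >= min_rs,
        "tailing": tf <= max_tf,
        "precision": rsd <= max_rsd,
    }
```

Running such checks automatically before sample injections is what allows a CDS to stop a sequence when the system is not performing adequately.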

Future Trends and Directions in CDS Technology

Chromatography Data Systems continue to evolve, incorporating emerging technologies that enhance their capabilities and extend their role in the analytical laboratory.

- Cloud-Based Deployments: Increasing adoption of cloud infrastructure (IaaS, PaaS, SaaS) for CDS deployment offers enhanced scalability, reduced IT overhead, and improved accessibility for multi-site organizations [1].

- Enhanced MS Data Handling: Specialized processing capabilities for mass spectrometry data, including server-side processing for faster MS/MS data handling speeds, are becoming standard in advanced CDS platforms [2].

- Remote Monitoring and Mobility: Modern CDS supports remote monitoring via tablets and mobile devices, enabling real-time tracking of analyses and instrument status from anywhere [4].

- AI and Machine Learning Integration: Automated data processing, peak integration, and method optimization increasingly leverage artificial intelligence to improve accuracy and efficiency [3].

- Sustainability Features: Some CDS platforms now include gas-saving functions for GC systems, optimization of instrument parameters, and support for hydrogen as a more sustainable carrier gas, aligning with laboratory sustainability initiatives [4].

The modern Chromatography Data System has firmly established itself as the indispensable central hub for instrument control and data processing in analytical chemistry. For researchers and drug development professionals, proficiency with these systems represents a fundamental software competency that directly impacts research quality, efficiency, and compliance. As CDS technology continues to evolve—incorporating cloud computing, enhanced mobility, and artificial intelligence—its role as the integrative platform for laboratory operations will only expand. The analytical chemists who master these systems and their capabilities will be best positioned to leverage the full potential of their chromatographic instrumentation and drive innovation in research and development.

In the evolving landscape of analytical chemistry and drug development, the ability to manage vast amounts of data while ensuring its integrity and traceability is paramount. A Laboratory Information Management System (LIMS) serves as the digital backbone of the modern laboratory, centralizing data and standardizing processes to meet stringent regulatory requirements. This technical guide explores the core functionalities of a LIMS, detailing its role in orchestrating complex workflows and providing complete data traceability from sample accession to final reporting. For the analytical chemist, proficiency in leveraging a LIMS is no longer a secondary skill but an essential component of rigorous, efficient, and compliant research.

A Laboratory Information Management System (LIMS) is a specialized software platform built around a centralized database designed to manage laboratory samples, associated data, and standardized workflows [8]. It digitally records and tracks metadata, results, and instruments, transforming the laboratory from a collection of manual, error-prone processes into an integrated, efficient, and data-driven operation [9]. For analytical chemists and drug development professionals, a LIMS is foundational for maintaining data integrity, supporting regulatory compliance, and facilitating collaboration across research teams.

The primary function of a LIMS is to provide a precise, auditable trail for a sample through its entire lifecycle—from creation and usage to disposal [8]. This is achieved by automating data capture, enforcing standard operating procedures (SOPs), and integrating with laboratory instrumentation. By replacing manual record-keeping with digital tracking, LIMS significantly reduces human error, optimizes resource utilization, and frees up scientists to focus on analytical interpretation rather than administrative tasks [10] [11].

Core LIMS Functionalities for Workflow Orchestration

Workflow orchestration refers to the automated coordination, execution, and management of complex laboratory processes. A LIMS achieves this by providing a structured digital environment that guides each step, ensures task completion, and maintains process consistency.

Sample Management and Tracking

Sample management is the cornerstone of LIMS functionality. The system provides a centralized platform for registering, tracking, and managing sample inventory [9]. Upon receipt, each sample is assigned a unique identifier, often coupled with a barcode, which is used to track its location, status, and chain of custody in real-time throughout all testing stages [12] [11]. This eliminates the risks of misplacement or misidentification inherent in manual systems and provides immediate visibility into sample status for all authorized personnel.
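The registration-and-scan pattern described above can be sketched as an in-memory registry. Assumptions: sequential IDs built from a prefix (matching the style of the example ID API-X123-001 used later in this article), custody events stored as (timestamp, user, location) tuples, and unknown scanned IDs rejected rather than silently created. The class names are hypothetical; a real LIMS persists this in its database and prints the ID as a barcode.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from itertools import count

@dataclass
class Sample:
    sample_id: str
    description: str
    location: str
    custody: list = field(default_factory=list)  # (timestamp, user, location)

class SampleRegistry:
    """Hypothetical in-memory registry illustrating IDs and chain of custody."""

    def __init__(self, prefix):
        self.prefix = prefix
        self._counter = count(1)
        self._samples = {}

    def register(self, description, location, user):
        sample_id = f"{self.prefix}-{next(self._counter):03d}"  # unique, sequential
        sample = Sample(sample_id, description, location)
        sample.custody.append((datetime.now(timezone.utc), user, location))
        self._samples[sample_id] = sample
        return sample

    def scan(self, scanned_id, user, new_location):
        """Log a barcode scan; an unrecognized ID is an error, not a new sample."""
        if scanned_id not in self._samples:
            raise KeyError(f"Unknown sample ID: {scanned_id}")
        sample = self._samples[scanned_id]
        sample.location = new_location
        sample.custody.append((datetime.now(timezone.utc), user, new_location))
        return sample
```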

Configurable Workflows and Protocol Management

LIMS allows laboratories to design and implement customized, yet standardized, digital workflows that reflect their specific testing protocols and SOPs [13]. These configurable workflows can automatically assign tasks to specific analysts or instruments, track progress, and enforce the correct sequence of operations [9]. For instance, a stability-testing workflow can be configured to automatically schedule future tests and alert analysts when a sample is due for analysis, ensuring adherence to the study's complex timeline [8].
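The stability-testing example can be made concrete with a small scheduler. This sketch assumes the default long-term testing frequency described in ICH Q1A(R2) (every 3 months in the first year, every 6 months in the second, annually thereafter) and no reduced designs such as bracketing or matrixing. Pull dates use calendar-month arithmetic, clamped to the last day of shorter months, and `due_soon` stands in for the analyst alert. All function names are hypothetical.

```python
import calendar
from datetime import date

def add_months(start, months):
    """Calendar-month addition, clamping the day for shorter months."""
    month_index = start.month - 1 + months
    year, month = start.year + month_index // 12, month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)

def long_term_time_points(shelf_life_months):
    """0, 3, 6, 9, 12, 18, 24, then every 12 months up to the shelf life."""
    points = [0, 3, 6, 9, 12, 18, 24] + list(range(36, shelf_life_months + 1, 12))
    return [m for m in points if m <= shelf_life_months]

def pull_schedule(start, shelf_life_months):
    return [(m, add_months(start, m)) for m in long_term_time_points(shelf_life_months)]

def due_soon(schedule, today, window_days=14):
    """Time points whose pull date falls within the next `window_days` days."""
    return [(m, d) for m, d in schedule if 0 <= (d - today).days <= window_days]
```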

Instrument Integration and Data Capture

Direct integration of laboratory instruments with the LIMS is a critical feature for workflow orchestration and data integrity. This integration automates the capture of test results and associated metadata, completely bypassing error-prone manual transcription [12] [9]. This not only improves efficiency—with some labs reporting 40-60% gains—but also ensures that data is directly linked to its source, complete with instrument-specific metadata [12]. Furthermore, LIMS can track instrument calibration and maintenance schedules, ensuring that data is only generated from properly qualified equipment [14].
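A simplified picture of automated result capture: parse an instrument export, attach each row to a registered sample, and refuse data from instruments whose calibration has lapsed. The CSV column names, instrument IDs, and the calibration-due lookup are assumptions for illustration; real integrations use vendor- or LIMS-specific interfaces and formats.

```python
import csv
import io
from datetime import date

def import_results(csv_text, known_samples, calibration_due, today):
    """Parse a (hypothetical) instrument CSV export into accepted and rejected rows.
    Expected columns: sample_id, instrument_id, analyte, result, unit."""
    accepted, rejected = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        due = calibration_due.get(row["instrument_id"])
        if row["sample_id"] not in known_samples:
            rejected.append((row["sample_id"], "unknown sample"))
        elif due is None or due < today:
            rejected.append((row["sample_id"], "instrument not qualified"))
        else:
            accepted.append({
                "sample_id": row["sample_id"],
                "instrument_id": row["instrument_id"],  # keeps the data linked to its source
                "analyte": row["analyte"],
                "result": float(row["result"]),
                "unit": row["unit"],
            })
    return accepted, rejected
```

Because no value is retyped by hand, transcription errors are removed at the source, and each accepted result keeps a reference to the instrument that produced it.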

Inventory and Resource Management

Efficient management of reagents, standards, and consumables is vital for uninterrupted laboratory operations. LIMS provides real-time visibility into inventory levels, tracking the usage of reagents and supplies [9]. The system can be configured to generate automatic alerts when stock levels fall below a predefined threshold, enabling proactive procurement and preventing costly delays in testing and experiments [9].
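The reorder-threshold logic is simple enough to show directly. In this sketch, with hypothetical item names and quantities, each recorded usage decrements the stock and reports whether the item has reached its reorder level; over-consumption is refused so the recorded count stays trustworthy.

```python
from dataclasses import dataclass

@dataclass
class StockItem:
    name: str
    quantity: float
    reorder_level: float
    unit: str

def consume(item, amount):
    """Record usage; returns True when the item should trigger a reorder alert."""
    if amount > item.quantity:
        raise ValueError(f"Insufficient {item.name}: {item.quantity} {item.unit} left")
    item.quantity -= amount
    return item.quantity <= item.reorder_level

def reorder_alerts(items):
    """Names of all items at or below their reorder level."""
    return [i.name for i in items if i.quantity <= i.reorder_level]
```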

Table 1: Quantitative Benefits of LIMS Implementation in Key Areas

| Functional Area | Reported Benefit | Impact on Laboratory Operations |
|---|---|---|
| Data Entry & Workflow Efficiency | 40-60% efficiency improvement reported from instrument integration [12] | Frees analyst time for higher-value tasks; faster turnaround times |
| Data Accuracy | 80% reduction in data entry errors, reported by a cannabis testing lab [15] | Higher quality data, reduced need for rework, increased confidence in results |
| Sample Throughput | 50% improvement in Certificate of Analysis (CoA) turnaround time [15]; some labs report up to a 100x increase in processing capacity [16] | Increased operational capacity and scalability without a proportional increase in staff |
| Compliance & Auditing | Estimated 50% reduction in compliance audit time [17] | Significant time and cost savings during regulatory inspections |

Ensuring End-to-End Data Traceability

Data traceability is the ability to track and document the origin, transformation, and flow of data throughout its entire lifecycle within the laboratory. It is a non-negotiable requirement for demonstrating data integrity and meeting regulatory standards.

The Audit Trail

A comprehensive, immutable audit trail is a fundamental feature of a compliant LIMS. The system automatically logs every action taken within it, recording what was changed, when, by whom, and for what reason [12] [14]. This provides a transparent and defensible record of all data-related activities, which is indispensable during regulatory audits and internal quality reviews. The audit trail ensures that the history of any data point is fully reconstructable.

Barcode Technology for Sample Identification

Barcoding is a powerful tool for enhancing traceability and minimizing errors. LIMS automates the generation of unique barcodes for each sample, which are then used for precise identification and tracking [12] [10]. Scanning a barcode instantly logs a sample's movement or a processing step, creating a precise, timestamped record without manual data entry. This prevents sample misassociation and guarantees that every piece of data is accurately linked to the correct sample [12] [11].

Electronic Signatures and Role-Based Access Control

To comply with regulations like FDA 21 CFR Part 11, LIMS implements electronic signatures that are legally equivalent to handwritten signatures, providing non-repudiation for critical actions such as result approval or report authorization [14]. Coupled with role-based access controls (RBAC), which restrict system functions and data visibility based on a user's role, the LIMS ensures that only authorized personnel can perform specific tasks or access sensitive data, thereby safeguarding data integrity [14].
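21 CFR Part 11 requires signed electronic records to show the signer's printed name, the date and time of signing, and the meaning of the signature (such as review, approval, responsibility, or authorship), and requires signatures to be linked to their records. The sketch below captures those elements and, as one illustrative linking technique, stores a SHA-256 digest of the record so a signature stops validating if the record changes. User authentication (credentials or biometrics) is deliberately not shown, and restricting meanings to a fixed set is a design assumption.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone

ALLOWED_MEANINGS = {"review", "approval", "responsibility", "authorship"}

@dataclass(frozen=True)
class Signature:
    printed_name: str
    user_id: str
    meaning: str
    signed_at: str       # ISO 8601 timestamp (UTC)
    record_digest: str   # ties the signature to the exact record content

def record_digest(record):
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()

def sign(record, printed_name, user_id, meaning):
    if meaning not in ALLOWED_MEANINGS:
        raise ValueError(f"Unsupported signature meaning: {meaning}")
    return Signature(printed_name, user_id, meaning,
                     datetime.now(timezone.utc).isoformat(), record_digest(record))

def signature_valid(record, signature):
    """A signature no longer applies if the record changed after signing."""
    return signature.record_digest == record_digest(record)
```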

Automated Specification Checks and Quality Flags

LIMS enhances quality assurance by allowing labs to predefine acceptance criteria or specifications for test results. The system can then automatically compare results against these limits and flag any out-of-specification (OOS) or atypical results [10]. This triggers immediate investigation, ensures timely resolution, and prevents the progression of non-conforming samples through the workflow, thereby embedding quality control directly into the operational process.
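Specification checking compares each result against predefined limits and flags anything outside them. In this sketch the tests and limits are hypothetical, either limit may be absent (for example, an impurity with only an upper limit), and a required test with no result is flagged as missing rather than passed. Compendial rounding rules, which some labs apply before comparison, are not modeled.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Spec:
    test: str
    lower: Optional[float] = None  # None means no lower limit
    upper: Optional[float] = None  # None means no upper limit

def evaluate(results, specs):
    """Return {test: "PASS" | "OOS" | "MISSING"} for every specified test."""
    status = {}
    for spec in specs:
        value = results.get(spec.test)
        if value is None:
            status[spec.test] = "MISSING"
        elif (spec.lower is not None and value < spec.lower) or \
             (spec.upper is not None and value > spec.upper):
            status[spec.test] = "OOS"
        else:
            status[spec.test] = "PASS"
    return status
```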

Experimental Protocol: A LIMS-Driven Workflow for Pharmaceutical Quality Control

The following section outlines a detailed methodology for a typical analytical chemistry workflow in a pharmaceutical quality control (QC) laboratory, illustrating how a LIMS orchestrates the process and ensures traceability.

Method

Objective: To analyze an incoming batch of Active Pharmaceutical Ingredient (API) against predefined specifications for identity and purity.

Materials and Reagents:

- Sample: API Batch #X-123

- Reference Standards: USP-grade reference standard for the API

- Mobile Phase: HPLC-grade solvents, prepared as per SOP M-101

- Chromatographic Column: C18, 5 µm, 250 × 4.6 mm

Table 2: Research Reagent Solutions and Essential Materials

| Item | Function in the Experiment |
|---|---|
| USP Reference Standard | Serves as the benchmark for comparing the sample's chromatographic retention time and peak response to confirm identity and quantify purity. |
| HPLC-Grade Mobile Phase | The solvent system that carries the dissolved sample through the chromatographic column; differences in how each component distributes between the mobile and stationary phases produce the separation. |
| C18 Chromatographic Column | The stationary phase where the actual separation of the API from its potential impurities and degradation products occurs. |

Experimental Procedure

- Sample Registration: Upon receipt in the lab, the API batch is logged into the LIMS. The system assigns a unique sample ID (e.g., API-X123-001) and generates a barcode label, which is affixed to the sample container. Metadata, including supplier, date of receipt, and required tests, is recorded [8].

- Test Assignment and Workflow Triggering: Based on the sample type, the LIMS automatically triggers the "API QC Release" workflow. It creates the required analytical tasks (e.g., "HPLC Identity and Assay") and assigns them to the appropriate instrument and analyst based on pre-configured rules and availability [9].

- Reagent and Standard Preparation: The analyst retrieves the USP reference standard from inventory. The LIMS records its usage, updating the inventory count. The analyst prepares the mobile phase according to SOP M-101, and the LIMS electronic notebook is used to document the preparation steps and weights.

- Instrument Integration and Data Acquisition: The analyst dissolves and prepares the sample according to the method. The sample vial, along with the necessary standards and blanks, is placed in the HPLC autosampler. The analyst scans the sample barcode at the instrument; the LIMS recognizes the sample and passes the sample information and assigned method to the CDS that controls the HPLC. Upon completion, the results (chromatograms and processed data) are transferred automatically to the LIMS and securely linked to the sample ID [12].

- Automated Review and Specification Checking: The LIMS automatically compares the sample's HPLC results (e.g., retention time, peak area, impurity profile) against the pre-loaded product specifications. If all results are within limits, the data is flagged for analyst review. If an OOS result is detected, the system flags it and automatically initiates an investigation workflow, preventing final approval [10].

- Electronic Review and Approval: The qualified analyst reviews the data and the system's audit trail for the analysis within the LIMS. Upon verification, the analyst applies an electronic signature to approve the results. A second reviewer, typically the lab manager, performs a final electronic sign-off, making the results official [14].

- Report Generation and Archival: The LIMS automatically generates a Certificate of Analysis (CoA) containing all relevant data, results, and a statement of compliance. The final report is stored in the centralized database, permanently linked to the complete raw data, audit trail, and sample history, creating an immutable and easily retrievable record [9].

The following diagram visualizes this integrated, LIMS-orchestrated workflow.

Diagram 1: LIMS QC Workflow. This diagram illustrates the orchestrated flow of a quality control sample, including automated checks and an out-of-specification investigation loop.
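The QC procedure above behaves like a state machine: each sample may only move along permitted transitions, so an OOS result cannot skip straight to approval. The state names and transitions below are hypothetical, modeled on the steps described (testing, OOS investigation, analyst review, second sign-off); a real LIMS configures these in its workflow engine.

```python
# Hypothetical states and permitted transitions for the QC release workflow
ALLOWED = {
    "registered": {"in_testing"},
    "in_testing": {"awaiting_review", "oos_investigation"},
    "oos_investigation": {"in_testing", "rejected"},
    "awaiting_review": {"analyst_approved", "in_testing"},
    "analyst_approved": {"released"},  # second reviewer's sign-off
    "released": set(),
    "rejected": set(),
}

class SampleWorkflow:
    def __init__(self, sample_id):
        self.sample_id = sample_id
        self.state = "registered"
        self.history = ["registered"]  # every state visited, in order

    def advance(self, new_state):
        """Move to `new_state` only if the current state permits it."""
        if new_state not in ALLOWED[self.state]:
            raise ValueError(f"{self.sample_id}: {self.state} -> {new_state} not allowed")
        self.state = new_state
        self.history.append(new_state)
```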

The Data Traceability Chain

The power of a LIMS in ensuring data integrity lies in its ability to create an unbreakable chain of traceability that links every piece of information. The following diagram maps the logical relationships between sample, data, and metadata, demonstrating how a LIMS creates a complete digital thread.

Diagram 2: Data Traceability Network. This entity-relationship diagram shows how a LIMS creates an interconnected network of all data and metadata, forming a complete and auditable history.
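The traceability network can be treated as a directed graph in which each entity points to what it was derived from or depended on. Reconstructing the full history of a report is then a graph traversal. This is a minimal sketch with hypothetical entity identifiers; a LIMS would store these relationships in its relational database.

```python
from collections import defaultdict

class TraceabilityGraph:
    """Hypothetical lineage store: link(child, parent) means child derives from parent."""

    def __init__(self):
        self._parents = defaultdict(set)

    def link(self, child, parent):
        self._parents[child].add(parent)

    def lineage(self, node):
        """All upstream entities (results, samples, standards, instruments, SOPs)."""
        seen, stack = set(), [node]
        while stack:
            for parent in self._parents[stack.pop()]:
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen
```

Starting from a CoA, the traversal returns every result, injection, sample, standard lot, instrument, and procedure behind it, which is exactly the question an auditor asks.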

For the contemporary analytical chemist, a Laboratory Information Management System is far more than a simple database; it is an essential platform that orchestrates complex laboratory workflows and guarantees the traceability and integrity of scientific data. Mastery of this software is a critical skill, enabling researchers to navigate the complexities of modern drug development and analytical science. By centralizing data, automating processes, and embedding compliance into every step, a LIMS empowers scientists to generate reliable, defensible data, thereby accelerating research and ensuring that the highest standards of quality and safety are met.

Electronic Laboratory Notebooks (ELN) and Their Integration with CDS and LIMS

In modern analytical chemistry, the digital ecosystem of a laboratory is built upon three core software pillars: the Electronic Laboratory Notebook (ELN), the Laboratory Information Management System (LIMS), and the Chromatography Data System (CDS). An ELN serves as a digital replacement for the paper notebook, enabling researchers to document experiments, procedures, and observations in a structured, searchable, and secure format [18]. Its function extends beyond simple record-keeping; a modern ELN is a dynamic platform for capturing intellectual property and experimental context.

When integrated with a LIMS—which manages samples, associated data, and laboratory workflows—and a CDS, which specifically handles data from chromatographic instruments, these systems transform from isolated record-keeping tools into a unified informatics backbone [19]. This integration is a critical software skill for analytical chemists, as it creates a seamless data flow from instrument output to final analysis and reporting, thereby enhancing data integrity, operational efficiency, and regulatory compliance [20].

Core Concepts and Definitions

The Role of ELN, LIMS, and CDS in Analytical Chemistry

- Electronic Laboratory Notebook (ELN): A digital platform for recording research experiments. It captures methodological details, unstructured observations, and analytical results, facilitating data sharing and collaboration while ensuring data integrity and traceability [18].

- Laboratory Information Management System (LIMS): Specialized software designed to automate lab operations. Its core functions include sample management (tracking from receipt to disposal), workflow automation, data collection, and ensuring regulatory compliance (e.g., with FDA 21 CFR Part 11 and ISO/IEC 17025) [21]. It acts as the central database for sample-related data.

- Chromatography Data System (CDS): An application specialized in controlling chromatographic instruments (e.g., HPLC, GC), acquiring raw data, processing peaks, and generating analytical reports [19].

The Imperative for Integration

The drive for integration is fueled by the limitations of isolated systems. Without integration, laboratories struggle with data silos, manual data transcription errors, and inefficient workflows [20]. Integrating ELN, LIMS, and CDS establishes a single, authoritative source of truth for all experimental data. This is a foundational concept of Lab 4.0, where digital technologies are leveraged to create end-to-end automated laboratory operations [22]. The benefits are multifold:

- Elimination of Manual Transcription: Data flows automatically from the CDS to the ELN and LIMS, drastically reducing errors [23].

- Enhanced Traceability: The complete journey of a sample and its associated analytical data is linked and easily audited [20].

- Accelerated Workflows: Automated data transfer and reporting free up scientists to focus on data analysis and interpretation rather than administrative tasks [23].
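The traceability benefit is easier to picture with a concrete data structure. Below is a minimal sketch of a sample record that carries an append-only audit trail (who, what, when, old and new value) as it moves from receipt to disposal. The status names, allowed transitions, and class names are hypothetical and do not reflect any LIMS vendor's data model. A real 21 CFR Part 11 system also needs secure storage, authentication, and electronic signatures, none of which are modeled here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical sample lifecycle: which status may follow which (illustrative only).
ALLOWED = {
    "received": {"in_analysis"},
    "in_analysis": {"reviewed"},
    "reviewed": {"approved", "in_analysis"},  # reviewer may send a sample back
    "approved": {"disposed"},
    "disposed": set(),
}

@dataclass(frozen=True)
class AuditEntry:
    """One immutable audit-trail record: who changed what, and when."""
    user: str
    action: str
    old_value: str
    new_value: str
    timestamp: str

@dataclass
class Sample:
    sample_id: str
    status: str = "received"
    audit_trail: list = field(default_factory=list)

    def change_status(self, new_status: str, user: str) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"{self.status} -> {new_status} is not an allowed transition")
        # AuditEntry is frozen, and this class only ever appends to the trail.
        self.audit_trail.append(AuditEntry(
            user=user,
            action="status_change",
            old_value=self.status,
            new_value=new_status,
            timestamp=datetime.now(timezone.utc).isoformat(),
        ))
        self.status = new_status
```

Rejecting disallowed transitions before anything is written means the trail only ever records changes that actually took effect.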

Quantitative Analysis of the Integrated Informatics Landscape

The adoption of integrated laboratory informatics platforms is accelerating, driven by tangible needs for efficiency and compliance. The tables below summarize key market data and integration benefits.

Table 1: Laboratory Informatics Market Overview and Growth Drivers

| Aspect | Quantitative Data & Trends | Source/Reference |
|---|---|---|
| LIMS Market Size | Expected to reach USD 3.56–5.19 billion by 2030, with a CAGR of 6.22–12.5%. | [24] |
| ELN Market Drivers | Rising R&D expenditure in pharma/biotech; need for data integrity and regulatory compliance. | [18] |
| Cloud Deployment | Over 75% of new lab informatics contracts in 2024 were cloud-based SaaS deployments. | [25] |
| AI Adoption | AI-driven anomaly detection reduced QC investigation time by 50% in pharma labs. | [25] |

Table 2: Measured Benefits of System Integration in the Laboratory

| Benefit Category | Impact of Integration | Source/Reference |
|---|---|---|
| Operational Efficiency | Reduces manual errors, improves turnaround time, and provides a clear view of work-in-progress to eliminate bottlenecks. | [23] |
| Data Management | Enables real-time data access and sharing across departments, breaking down information silos. | [23] |
| Compliance & Security | Ensures adherence to FDA 21 CFR Part 11, GxP, and ISO 17025 via automated audit trails and role-based access. | [20] |
| Workflow Automation | Mobile-enabled Laboratory Execution Systems (LES) cut field-to-report time by 65% in environmental monitoring. | [25] |

Protocols for System Integration and Implementation

Successfully integrating ELN, LIMS, and CDS requires a methodical approach. The following protocols provide a roadmap for analytical chemists and lab managers.

Pre-Integration Assessment and Vendor Selection

- Needs Assessment: Define the laboratory's specific requirements, including lab size, workflow complexity, data volume, and compliance needs (e.g., GxP, 21 CFR Part 11) [23]. Identify all instruments and software to be integrated.

- Vendor Evaluation: Select a LIMS/ELN platform that supports seamless integration. Key criteria should include:

  - Ease of Use: An intuitive interface is critical for user adoption and consistent data entry [26].

  - Integration Capabilities: Look for built-in connectors for your CDS (e.g., Waters Empower, Agilent OpenLab) and a robust, well-documented API for custom integrations [20] [26].

  - Compliance Features: Ensure the system supports electronic signatures, full audit trails, and role-based access control out-of-the-box [21] [26].

- Collaboration with Vendors: Engage with your LIMS/ELN provider, CDS vendor, and instrument manufacturers early to ensure compatibility and resolve technical challenges before they become roadblocks [20].

Technical Integration Methodology

- Define Data Transfer Protocols: Establish structured workflows for data synchronization. This includes defining data formats, synchronization rules, and error-checking mechanisms to maintain data integrity [20].

- Leverage Interoperability Standards: Utilize existing standards and well-documented interfaces to simplify integration:

  - API-Led Integration: Use the platform's Application Programming Interface (API) to build custom connectors. This allows for the creation of automated workflows that, for example, pull sample lists from the LIMS, send them to the CDS, and return the results to the correct experiment in the ELN [26].
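One common form of the "error-checking mechanisms" mentioned above is a checksum plus a schema check on every transferred record. The sketch below assumes results travel between systems as JSON-like records. The sender attaches a SHA-256 checksum of a canonical serialization, and the receiver recomputes it and checks required fields before accepting the record. The field names and message layout are hypothetical; real CDS and LIMS interfaces define their own formats.

```python
import hashlib
import json

# Hypothetical minimum set of fields (and types) a receiving LIMS insists on.
REQUIRED_FIELDS = {"sample_id": str, "analyte": str, "result": float, "unit": str}

def canonical_bytes(payload: dict) -> bytes:
    # Sorted keys and fixed separators make the serialization deterministic,
    # so sender and receiver compute the checksum over identical bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")

def package(payload: dict) -> dict:
    """Sender side: wrap the payload together with its SHA-256 checksum."""
    return {"payload": payload, "sha256": hashlib.sha256(canonical_bytes(payload)).hexdigest()}

def validate(message: dict) -> list:
    """Receiver side: return a list of problems (an empty list means accept)."""
    problems = []
    payload = message.get("payload", {})
    if hashlib.sha256(canonical_bytes(payload)).hexdigest() != message.get("sha256"):
        problems.append("checksum mismatch: payload altered in transit")
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(f"wrong type for {name}: expected {expected_type.__name__}")
    return problems
```

Returning a list of problems, rather than stopping at the first one, lets the receiving system log every defect in a rejected record at once.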

Validation and Change Management

- Test and Validate: Before full deployment, conduct thorough integration testing. This should include automated data verification, stress testing under high data loads, and validation against regulatory compliance checklists [20].

- Train Lab Personnel: A well-integrated system is only as effective as its users. Invest in training staff on new data entry protocols, system navigation, and troubleshooting to ensure high adoption rates and prevent operational disruptions [20].

- Monitor and Optimize: After implementation, continuously monitor the system. AI-powered analytics can help detect inefficiencies, suggest workflow optimizations, and maintain compliance [20].

The Scientist's Toolkit: Essential Research Reagent Solutions

For an analytical chemist working in an integrated digital lab, the "reagents" are both chemical and digital. The following table details key solutions and their functions.

Table 3: Essential Digital and Physical Tools for Integrated Chromatography Workflows

| Tool Category | Example Solutions | Function in Integrated Workflows |
|---|---|---|
| Informatics Platforms | LabWare LIMS/ELN, LabVantage LIMS, Revvity Signals Notebook, CDD Vault | Provide the core software environment for managing samples (LIMS), experiments (ELN), and chemical/biological data (CDD Vault). [21] [19] [18] |
| Chromatography Data Systems (CDS) | Waters Empower, Agilent OpenLab, Thermo Fisher Chromeleon | Control HPLC/GC/UPLC instruments, acquire raw chromatographic data, perform peak integration and analysis, and generate results for export to LIMS/ELN. [20] [19] |
| Instrument Integration & Control | SiLA 2 Standard, Thermo Fisher TSX Series (freezers), various barcode readers | Standardizes communication with instruments and automated equipment, enabling seamless data capture and status monitoring (e.g., calibration, maintenance). [24] [23] |
| Analytical Standards & Reagents | Certified Reference Materials, HPLC-grade solvents, stable isotope-labeled internal standards | Ensure analytical accuracy and precision. The lot number and expiration date of these materials are tracked in the LIMS to maintain data validity and compliance. |
| Collaboration & Data Sharing | Benchling, Scispot, Dassault Systèmes BIOVIA | Cloud-native platforms that centralize research documentation and facilitate collaboration across multidisciplinary teams and with CROs. [22] [18] |

Architectural Workflow of an Integrated System

The following diagram illustrates the logical relationship and data flow between a scientist, the core software systems (ELN, LIMS, CDS), and laboratory instruments in an integrated environment.

The diagram represents a typical automated workflow in an integrated lab, which proceeds as follows:

- The Scientist uses the ELN to design an experiment and document the initial hypothesis and procedure.

- The ELN communicates with the LIMS to formally request an analysis, creating a sample tracking record.

- The LIMS sends a worklist or sequence to the CDS.

- The CDS controls the analytical Instruments (e.g., HPLC) to execute the method.

- Instruments return the acquired raw data to the CDS for processing.

- The CDS transfers the finalized results (e.g., peak areas, concentrations) back to the LIMS.

- The LIMS updates the sample status and pushes the results to the corresponding experiment in the ELN.

- Both the ELN and LIMS contribute to the final, structured, and auditable record in the central Data Repository.
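The steps above can be sketched as a small orchestration routine. The three classes are in-memory stand-ins invented for illustration; no vendor API is implied. In practice each call would go through a vendor API or connector, and the `instrument` callable would be a real acquisition and processing run.

```python
from dataclasses import dataclass, field

@dataclass
class ELN:
    experiments: dict = field(default_factory=dict)

    def create_experiment(self, exp_id, sample_ids):
        self.experiments[exp_id] = {"samples": list(sample_ids), "results": {}}

    def attach_results(self, exp_id, results):
        self.experiments[exp_id]["results"].update(results)

@dataclass
class LIMS:
    status: dict = field(default_factory=dict)
    results: dict = field(default_factory=dict)

    def register(self, sample_ids):
        # Create a tracking record per sample and return the worklist for the CDS.
        for sid in sample_ids:
            self.status[sid] = "registered"
        return list(sample_ids)

    def store(self, results):
        for sid, value in results.items():
            self.results[sid] = value
            self.status[sid] = "complete"

class CDS:
    def __init__(self, instrument):
        self.instrument = instrument  # hypothetical callable standing in for an HPLC run

    def run_sequence(self, worklist):
        # Acquire and process each injection, returning one result per sample.
        return {sid: self.instrument(sid) for sid in worklist}

def run_workflow(eln, lims, cds, exp_id, sample_ids, repository):
    eln.create_experiment(exp_id, sample_ids)   # step 1: experiment designed in the ELN
    worklist = lims.register(sample_ids)        # steps 2-3: LIMS tracks samples, builds worklist
    results = cds.run_sequence(worklist)        # steps 4-5: CDS runs the instrument, processes data
    lims.store(results)                         # step 6: results returned to the LIMS
    eln.attach_results(exp_id, results)         # step 7: results pushed to the ELN experiment
    repository[exp_id] = {                      # step 8: consolidated record in the repository
        "eln": eln.experiments[exp_id],
        "lims_status": {sid: lims.status[sid] for sid in sample_ids},
    }
    return repository[exp_id]
```

Each hand-off is a single function call, so the same structure would accommodate a real connector by swapping one class at a time.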

Emerging Technologies

The integrated lab of the future is evolving towards greater autonomy and intelligence. Key trends include:

- AI and Intelligent Assistants: AI co-pilots are being integrated to parse SOPs, detect QC anomalies, and suggest experiment designs based on historical data, further reducing human error and accelerating discovery [24] [25] [27].

- The Self-Driving Lab: The concept of multi-agentic labs is emerging, where software agents orchestrate experiments by triggering instrument runs, analyzing results, and proposing next steps with minimal human intervention [24].

- Validated Cloud (SaaS) Ecosystems: Cloud-based platforms are maturing to offer pre-validated environments that comply with GAMP 5 principles, making compliance a continuous process rather than a one-off project and simplifying updates [24].

For the modern analytical chemist, proficiency with ELN, LIMS, and CDS is no longer a niche skill but a core competency. Understanding how these systems integrate is crucial for operating effectively within a Lab 4.0 environment. This integration creates a powerful, seamless data backbone that enhances data integrity, operational efficiency, and regulatory compliance. As the field moves toward AI-powered and self-driving labs, the ability to work within and leverage these connected digital ecosystems will become increasingly central to successful research and drug development.

For analytical chemists and research scientists, the ability to accurately represent, analyze, and communicate molecular information is a fundamental skill that directly impacts research quality and reproducibility. Within the modern chemical sciences, ChemDraw has established itself as an indispensable software tool, bridging the gap between theoretical molecular concepts and tangible, publishable data. Since its debut in 1985, ChemDraw has evolved from a simple drawing utility into a comprehensive suite for chemical intelligence, fundamentally transforming how chemists interact with and present structural data [28] [29]. This guide provides an in-depth technical overview of ChemDraw, detailing its core functionalities, advanced features, and practical applications specifically within the context of analytical chemistry and drug development research. Mastering ChemDraw is not merely about creating aesthetically pleasing structures; it is about leveraging a connected platform that integrates drawing, prediction, database access, and collaboration to accelerate the research workflow.

ChemDraw Ecosystem: Product Variants and Specifications

The ChemDraw ecosystem is tailored to meet diverse user needs, from students to industrial research teams. Understanding the capabilities of each offering is crucial for selecting the appropriate tool.

Product Portfolio and Feature Comparison

The software is available in three primary tiers, each with a distinct feature set designed for different levels of research complexity [30] [29].

Table 1: Comparison of ChemDraw Product Offerings

| Feature Category | ChemDraw Prime | ChemDraw Professional | Signals ChemDraw |
|---|---|---|---|
| Core Drawing | Efficient drawing with hot-keys, structure/reaction clean-up, publisher styling [29] | Includes all Prime features plus advanced drawing tools [29] | Includes all Professional features plus modern, cloud-native applications [31] |
| Property Prediction | pKa, logP, logS, etc. [29] | Advanced predictions including 1H and 13C NMR spectrum prediction [30] [29] | All Professional predictions with cloud-enhanced analysis [31] |
| Database Integration | Limited | Name-to-Structure, integration with ChemACX, CAS SciFinder, Reaxys [30] [29] | Advanced integrations with cloud-based search and data management [31] [30] |
| Biopolymers | Basic support | HELM standard for peptides and oligonucleotides [29] | Enhanced HELM editor with find/replace, FASTA support [31] |
| Deployment & Collaboration | Desktop application [29] | Desktop application [29] | Cloud-based SaaS with real-time collaboration, centralized license management [31] [29] |

The Modern Cloud Platform: Signals ChemDraw

Signals ChemDraw represents the latest evolution of the software, combining the advanced capabilities of the desktop application with a cloud-native platform [31]. This hybrid suite transforms drawings into actionable knowledge by enabling streamlined management, sharing, and reporting of chemical data. Key advantages for enterprise research environments include:

- Cloud-Based Collaboration: Securely create, share, and collaborate on chemical drawings in real time from any location [28].

- Unified Data Management: Organize chemical data into collections, perform structure searches across documents, and generate reports directly within the platform [30].

- Continuous Updates: As a Software-as-a-Service (SaaS) solution, users automatically gain access to the latest features and improvements [29].

Technical Capabilities and Experimental Methodologies

For the analytical chemist, ChemDraw is more than a drawing tool; it is an integrated platform for structural analysis and validation.

Spectral Prediction and Validation Protocols

Predicting NMR spectra is a critical step in the analytical workflow for verifying proposed molecular structures. The following methodology outlines a standard protocol for using ChemDraw Professional or Signals ChemDraw for this purpose.

Experimental Protocol: NMR Spectrum Prediction

- Structure Preparation: Draw the candidate chemical structure meticulously in ChemDraw, ensuring all atoms, bonds, and stereochemistry are correctly represented. Use the Structure Cleanup function to standardize bond lengths and angles to industry standards.

- Spectrum Calculation: Select the entire structure. Navigate to the Structure menu and choose Predict 1H NMR or Predict 13C NMR. The software will calculate the chemical shifts based on its internal algorithm.

- Data Interpretation: The predicted spectrum will be displayed as a graph. Analyze the chemical shifts (δ in ppm), signal multiplicity (singlet, doublet, etc.), and integration values.

- Comparative Analysis: Overlay or compare the predicted spectrum with the experimentally obtained data. Significant discrepancies between predicted and observed signals may indicate an error in the proposed structure.

This predictive capability allows researchers to rapidly screen and validate structural hypotheses before, during, or after synthesis and isolation [30] [28].
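The comparative-analysis step can be partly automated once the predicted shifts are exported. The sketch below assumes the predicted and observed ¹H shifts have already been assigned to the same atom labels. The labels and values used in it are placeholders, not ChemDraw output. It flags any assignment whose deviation exceeds a user-chosen tolerance, and it also flags signals that appear in only one of the two lists. The 0.3 ppm default is an arbitrary illustrative threshold, not a validated acceptance criterion.

```python
def compare_shifts(predicted, observed, tolerance_ppm=0.3):
    """Compare predicted and observed chemical shifts (ppm) keyed by atom label.

    Returns (mean absolute error over matched labels, list of flagged labels).
    A label present in only one dictionary is flagged, because an unmatched
    signal is itself a warning sign for the proposed structure.
    """
    flagged = []
    deviations = []
    for label in sorted(set(predicted) | set(observed)):
        if label not in predicted or label not in observed:
            flagged.append(label)
            continue
        deviation = abs(predicted[label] - observed[label])
        deviations.append(deviation)
        if deviation > tolerance_ppm:
            flagged.append(label)
    mae = sum(deviations) / len(deviations) if deviations else float("nan")
    return mae, flagged
```

A flagged label does not prove the structure wrong. It marks where the assignment, the prediction, or the structure deserves a second look.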

Physicochemical Property Calculations

Beyond spectral prediction, ChemDraw can instantly calculate a suite of key physicochemical properties essential for drug discovery and analytical method development.

Table 2: Key Physicochemical Properties Predictable in ChemDraw

| Property | Symbol | Unit | Research Application |
|---|---|---|---|
| Acid Dissociation Constant | pKa | dimensionless | Predicting ionization state and solubility at physiological pH [30] |
| Partition Coefficient | logP | dimensionless | Estimating lipophilicity and membrane permeability [30] |
| Aqueous Solubility | logS | log units (S in mol/L) | Forecasting solubility for bioavailability and formulation [30] |
| Melting Point | mp | °C | Assisting in compound identification and characterization [30] |
| Molecular Weight | MW | g/mol | Essential for stoichiometry and MS data interpretation [31] |
| Exact Mass | - | Da | Accurate mass calculation for high-resolution mass spectrometry [31] |

These properties are context-sensitive: they are calculated in real time from the current selection on the canvas and displayed in the Analysis Panel. Users can then select which properties to add directly to their drawing for reporting purposes [31] [32].
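Molecular weight and exact mass are easy to confuse, and a few lines of code make the difference concrete. Molecular weight sums standard, abundance-weighted atomic weights. Exact (monoisotopic) mass sums the masses of each element's most abundant isotope, which is the value compared against high-resolution MS data. The sketch below handles only simple formulas built from C, H, N, and O, with no brackets, charges, or isotope labels, and it uses standard reference values. It illustrates the arithmetic and does not describe ChemDraw's implementation.

```python
import re

# Standard atomic weights (g/mol) and monoisotopic masses (Da) for a few elements.
ATOMIC_WEIGHT = {"C": 12.011, "H": 1.008, "N": 14.007, "O": 15.999}
MONOISOTOPIC = {"C": 12.000000, "H": 1.00782503, "N": 14.00307400, "O": 15.99491462}

def parse_formula(formula):
    """Parse a simple formula such as 'C8H10N4O2' into {'C': 8, 'H': 10, ...}."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts

def mass(formula, table):
    """Sum element masses from the given table, weighted by atom counts."""
    return sum(table[element] * n for element, n in parse_formula(formula).items())

caffeine = "C8H10N4O2"
print(f"MW         = {mass(caffeine, ATOMIC_WEIGHT):.2f} g/mol")  # 194.19
print(f"Exact mass = {mass(caffeine, MONOISOTOPIC):.4f} Da")      # 194.0804
```

For caffeine, the two values differ by about 0.11. That gap is large compared with typical high-resolution MS mass tolerances, so using the wrong value would lead to a wrong identification.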

Digital Workflow Integration

ChemDraw's true power is realized through its integration into the broader digital research ecosystem, creating a seamless workflow from concept to analysis. The following diagram visualizes this integrated process.

Figure: Research Workflow Integration

This digital workflow enables researchers to efficiently manage the entire lifecycle of chemical information. The process begins with drawing a structure, which can then be used to query integrated scientific databases like CAS SciFinder and Elsevier Reaxys for existing literature and data [30] [29]. The structure undergoes in-silico analysis within ChemDraw for property prediction. Finally, the structured data and publication-ready drawings can be directly documented in electronic lab notebooks (ELNs) such as Signals Notebook for reporting and knowledge sharing, breaking down information silos and enhancing productivity [30] [28].

Advanced Applications for Complex Molecular Representation

Modern chemical research increasingly involves complex biomolecules, which ChemDraw handles through specialized functionalities.

Biopolymer Representation with HELM

The Hierarchical Editing Language for Macromolecules (HELM) is integrated into ChemDraw to accurately represent complex biomolecules—including peptides, oligonucleotides, and antibody-drug conjugates—that are difficult to depict with standard notation [33] [30]. The HELM editor within ChemDraw allows researchers to:

- Assemble Monomers: Build complex sequences from libraries of standard and custom monomers.

- Edit Efficiently: Use find and replace capabilities to make large-scale modifications to sequences rapidly. For example, converting a natural sequence from a FASTA string into a complex modified sequence is streamlined [31].

- Copy as FASTA: Directly export natural analog sequences in the standard FASTA format, replacing the previous 'Copy as HELM (natural analog)' function [31].
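To show what a natural-analog FASTA export involves, here is a minimal sketch that converts a single linear peptide written in HELM notation, such as `PEPTIDE1{A.C.[dK].G}$$$$`, into a FASTA record. This is not ChemDraw's implementation. It handles only one unbranched PEPTIDE polymer and ignores the connection and annotation sections. The table mapping modified monomers to natural analogs is a hypothetical stand-in for the natural-analog information that a real HELM monomer library provides.

```python
import re

# Hypothetical natural-analog lookup for a few modified monomers; a real monomer
# library would supply this. Unknown modified monomers are written as 'X'.
NATURAL_ANALOG = {"dK": "K", "meA": "A", "dF": "F"}

def helm_peptide_to_fasta(helm, name="peptide1", width=60):
    """Convert one linear HELM PEPTIDE polymer into a natural-analog FASTA record."""
    simple_polymers = helm.split("$", 1)[0]  # first HELM section: the polymer list
    match = re.fullmatch(r"PEPTIDE\d+\{([^{}]*)\}", simple_polymers)
    if match is None:
        raise ValueError("expected exactly one linear PEPTIDE polymer")
    residues = []
    for monomer in match.group(1).split("."):
        if monomer.startswith("[") and monomer.endswith("]"):
            # Multi-character monomer IDs are enclosed in square brackets.
            residues.append(NATURAL_ANALOG.get(monomer[1:-1], "X"))
        else:
            residues.append(monomer)
    sequence = "".join(residues)
    lines = [sequence[i:i + width] for i in range(0, len(sequence), width)]
    return "\n".join([">" + name] + lines)

print(helm_peptide_to_fasta("PEPTIDE1{A.C.[dK].G.[meA]}$$$$"))
# >peptide1
# ACKGA
```

Going in the other direction, from FASTA to a modified HELM sequence, requires knowing which monomers to substitute. That is the job of the find-and-replace capability described above.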

Visualization and Presentation Tools

Creating clear, professional visuals is paramount for communication in publications and presentations.

- 3D Modeling and Presentation: ChemDraw can generate realistic 3D conformations of molecules. The enhanced 3D Clean-up function produces accurate models, even for complexes with metals. A key feature is the ability to copy the model as a 3MF file and paste it directly into Microsoft PowerPoint, allowing for interactive 3D manipulation and animation during presentations [32].

- Alignment and Coloring Tools: New alignment tools enable users to center, align horizontally or vertically, and distribute objects evenly on the canvas to produce clean, consistent figures [31]. Ring Fill coloring and customizable object colors allow users to direct audience focus to specific parts of a molecule, with precise control via hex codes for publication-ready drawings [31].

Essential Digital Research Reagents

In the context of digital chemistry, the following tools and resources within the ChemDraw ecosystem function as essential "research reagents" for a productive workflow.

Table 3: Key Digital "Research Reagent" Solutions in ChemDraw

| Item Name | Function in the Research Workflow |
|---|---|
| ChemACX Database | A unified database of tens of millions of substances; enables conversion of trade names and synonyms to structures and facilitates chemical sourcing [31] [30]. |
| HELM Monomer Library | The standardized set of building blocks used for constructing and representing complex biopolymers like peptides and oligonucleotides [31] [30]. |
| Periodic Table Tool | An interactive tool within the toolbar for quick element selection and the creation of atom lists for generating generic structures [31]. |
| Mass Fragmentation Tool | Mimics mass spectrometry fragmentation patterns to generate fragment structures with calculated molecular formulas and masses, aiding in spectral interpretation [31]. |
| ChemDraw/Signals Notebook Integration | Acts as a conduit for embedding chemical structures directly into electronic lab notebook entries, linking drawings to experimental data [28]. |

ChemDraw has progressed far beyond its origins as a simple drawing program to become a central hub for chemical intelligence. For the modern analytical chemist or drug development professional, proficiency with its advanced features—from predictive analytics and database integration to the representation of complex biomolecules via HELM—is no longer optional but a core component of effective research. The shift towards cloud-based platforms like Signals ChemDraw further underscores the importance of connected, collaborative, and efficient digital workflows. By mastering the technical capabilities and methodologies outlined in this guide, scientists can leverage ChemDraw not just for communication, but as a powerful tool to validate hypotheses, manage data, and accelerate the pace of discovery.

From Data to Discovery: Applying Software in Method Development and Advanced Analysis

Leveraging CDS for Efficient Chromatographic Method Development and Optimization

Chromatography Data Systems (CDS) are integral software platforms that manage data from chromatography instruments, automating instrument control, data acquisition, data processing, and data storage across various chromatography systems including gas chromatography (GC), high performance liquid chromatography (HPLC), and supercritical fluid chromatography (SFC) [34]. In the context of essential software skills for analytical chemists, proficiency with CDS has become fundamental for research and drug development professionals seeking to accelerate analytical workflows while maintaining data integrity and regulatory compliance. The global CDS market, valued at USD 480.2 million in 2023 and projected to reach USD 976.04 million by 2032, reflects the growing importance of these systems in modern laboratories [34].

For analytical chemists, a CDS is more than data collection software: it provides a structured informatics environment for chromatography-based analysis, enabling faster and more accurate interpretation of complex chemical data [34]. This technical guide explores the strategic application of CDS capabilities to streamline chromatographic method development and optimization, with particular emphasis on pharmaceutical applications and quality control environments where method robustness, transferability, and regulatory compliance are paramount.

CDS Fundamentals for Method Development

Core CDS Components and Architecture

Modern CDS platforms consist of several integrated components that collectively support the method development lifecycle. The core architecture typically includes instrument control modules, data acquisition servers, processing methods, repository databases, and reporting modules. These systems are categorized as either standalone CDS, which are all-in-one systems that simplify chromatography-based analysis, or integrated CDS, which facilitate workflow integration and effective communication between multiple instruments or laboratories [34]. The integrated segment currently dominates the market revenue share due to increasing demand for workflow integration that enhances coordination and delivers more accurate, rapid results [34].

From a deployment perspective, CDS solutions are available as on-premise installations, which offer greater data security control and privacy, or cloud-based solutions, which provide higher flexibility, quick accessibility, easy data backup, and lower handling costs [34]. The cloud-based segment has gained significant traction due to capabilities for real-time tracking and archiving of data, substantial storage space for massive datasets, and remote access from any location and device [34].

Foundational Chemistry Principles in CDS

Effective method development begins with foundational chemistry principles that underlie every chromatographic separation. CDS platforms increasingly incorporate predictive tools for physicochemical properties including pKa, logD, and solubility, enabling scientists to select better starting conditions for method development [35]. By leveraging these predictive capabilities, researchers can identify optimal solvents and pH ranges to screen, along with appropriate stationary phases using databases of Tanaka parameters or other column characterization systems [35]. This principled approach reduces the experimental work required to reach optimal separation conditions.
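As an illustration of how predicted pKa and logP values can inform which pH values to screen, consider the standard relationship for a monoprotic acid, logD = logP - log10(1 + 10^(pH - pKa)). For a monoprotic base, the exponent becomes pKa - pH. Reversed-phase retention of ionizable analytes tends to follow logD, so screening pH values below, near, and above the pKa can change retention substantially. The sketch assumes that only the neutral species partitions, which is the usual simplification. The input values are made-up examples, not predictions from any particular software.

```python
import math

def _exponent(pka, ph, kind):
    """Henderson-Hasselbalch exponent for a monoprotic acid or base."""
    if kind == "acid":
        return ph - pka
    if kind == "base":
        return pka - ph
    raise ValueError("kind must be 'acid' or 'base'")

def log_d(log_p, pka, ph, kind="acid"):
    """Distribution coefficient, assuming only the neutral form partitions."""
    return log_p - math.log10(1 + 10 ** _exponent(pka, ph, kind))

def fraction_neutral(pka, ph, kind="acid"):
    """Fraction of the analyte present in its neutral (un-ionized) form."""
    return 1 / (1 + 10 ** _exponent(pka, ph, kind))

# Hypothetical carboxylic acid (logP 3.0, pKa 4.2) at three candidate mobile-phase pH values.
for ph in (2.5, 4.5, 7.0):
    print(f"pH {ph}: logD = {log_d(3.0, 4.2, ph):.2f}, "
          f"neutral fraction = {fraction_neutral(4.2, ph):.3f}")
```

Because the neutral fraction changes most steeply within about one pH unit of the pKa, a method run at a pH in that window is more sensitive to small pH errors. This is one reason the robustness protocol below varies pH deliberately.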

The integration of scientific reasoning with software capabilities represents a critical skill for modern analytical chemists. Rather than employing trial-and-error approaches, researchers can use CDS tools to plan strategic experiments that efficiently characterize the separation space. The software facilitates building models with experimental data, enabling understanding of separation behavior in multiple dimensions through simulated chromatograms that provide intuitive understanding of method robustness [35].

Strategic Method Development Framework

Systematic Workflow for Method Development

A structured approach to method development ensures efficient utilization of resources while achieving robust analytical methods. The following workflow visualization outlines the key stages in the CDS-supported method development process:

Figure: CDS-Supported Method Development Workflow

This systematic approach ensures that method development proceeds logically from fundamental characterization to final validation, with CDS tools supporting each stage. By planning each experiment to "paint a better picture of your separation space," researchers can minimize the number of experiments while maximizing information gain [35]. The software assists by suggesting experimental conditions and building models with collected data, enabling multidimensional understanding of the separation space.

Experimental Design and Scouting

Initial method scouting employs strategic experimental designs to efficiently explore separation parameters. CDS platforms facilitate this process through automated screening protocols that systematically vary critical parameters including:

- Stationary phase chemistry (C18, phenyl, HILIC, etc.)

- Mobile phase pH (typically 2-3 values across the stability range)

- Organic modifier (acetonitrile vs. methanol)

- Temperature (e.g., 30°C, 40°C, 50°C)

- Gradient slope (shallow, medium, steep)

This multidimensional screening is enhanced through CDS capabilities that manage complex experimental sequences and automatically collate results for comparative analysis. For example, Agilent's Infinity III application-specific HPLC systems include configurations designed specifically for method development that can automate this screening process [36]. The output from these scouting experiments provides the foundational data for subsequent optimization phases.
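Enumerating a full-factorial scouting grid over the five parameters listed above shows why automated sequence management matters. The levels in the sketch are illustrative choices based on the examples in the list. The columns are limited to reversed-phase chemistries so that a single gradient direction applies. Each run dictionary stands in for whatever sequence format a given CDS imports.

```python
from itertools import product

# Illustrative levels for each screening factor (based on the examples above).
FACTORS = {
    "column": ["C18", "phenyl", "polar-embedded"],
    "pH": [2.5, 4.5, 7.0],
    "modifier": ["acetonitrile", "methanol"],
    "temperature_C": [30, 40, 50],
    "gradient": ["shallow", "medium", "steep"],
}

def full_factorial(factors):
    """Return one run dictionary per combination of factor levels."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]

runs = full_factorial(FACTORS)
print(len(runs))  # 162 = 3 * 3 * 2 * 3 * 3, before blanks, standards, or replicates
print(runs[0])
```

At 162 injections, a complete grid is rarely practical. This is why the gradient optimization protocol screens factors sequentially on the most promising conditions, and why fractional designs are used for robustness testing.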

Advanced CDS Tools for Method Optimization

Peak Integration and Detection Optimization

Advanced integration algorithms within CDS platforms provide critical capabilities for accurately quantifying chromatographic results. Tools such as Agilent's OpenLab CDS Integration Optimizer guide analysts to the optimal settings for their specific analysis and allow those settings to be deployed across the laboratory for operational consistency [37]. This approach allows less experienced analysts to achieve correct integration while expert analysts can work more efficiently.

Special integration regions allow method developers to tune subsections of the chromatogram with parameters independent of the general integration settings, which is particularly valuable for complex separations with varying peak characteristics across the chromatogram [37]. Baseline annotations and timed events can be displayed directly on the chromatogram for quick visual reference, facilitating method troubleshooting and refinement. Such tools support the outcome reported by users of software-assisted method development, in which the "reliability, robustness, and lifetimes of methods have improved, while simultaneously reducing the loss of interpretation information and expensive retesting of samples" [35].

Wavelength Selection and Spectral Analysis

Diode array detector (DAD) data presents both opportunities and challenges for method development, with CDS platforms offering specialized visualization tools to optimize detection parameters. The Isoabsorbance Plot in OpenLab CDS simplifies the task of selecting optimal wavelengths by evaluating all possible wavelengths and presenting a visual heat map that displays the highest signal for peaks of interest [37]. This heat map approach, with red signals indicating high response and blue indicating low response, enables rapid identification of the best wavelength for subsequent analyses.
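The logic behind an isoabsorbance-style wavelength choice can be sketched in a few lines. The sketch assumes the DAD data have already been reduced to the apex absorbance of each peak of interest at each recorded wavelength; the numbers are invented. It reports each peak's own best wavelength, plus one compromise wavelength that maximizes the response of the weakest peak. The max-min criterion is an illustrative choice. OpenLab's Isoabsorbance Plot is an interactive visualization, not this function.

```python
# apex_abs[wavelength_nm][peak_name] = absorbance (mAU) at the peak apex (invented data)
apex_abs = {
    210: {"API": 950.0, "impurity_A": 40.0, "impurity_B": 12.0},
    230: {"API": 620.0, "impurity_A": 55.0, "impurity_B": 30.0},
    254: {"API": 410.0, "impurity_A": 18.0, "impurity_B": 35.0},
    280: {"API": 150.0, "impurity_A": 5.0, "impurity_B": 22.0},
}

def best_per_peak(data):
    """Wavelength giving the highest apex response for each peak."""
    peaks = next(iter(data.values())).keys()
    return {p: max(data, key=lambda wl: data[wl][p]) for p in peaks}

def best_common(data):
    """Single wavelength that maximizes the smallest response among all peaks."""
    return max(data, key=lambda wl: min(data[wl].values()))

print(best_per_peak(apex_abs))  # {'API': 210, 'impurity_A': 230, 'impurity_B': 254}
print(best_common(apex_abs))    # 230
```

A practical selection would also weigh baseline noise, mobile-phase UV cutoff, and selectivity against interferences, none of which this sketch considers.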

Table 1: CDS Integration Optimization Parameters

| Parameter | Function | Impact on Method Quality |
|---|---|---|
| Peak Width | Defines the expected width of chromatographic peaks | Affects ability to distinguish closely eluting peaks and accurate integration |
| Threshold | Sets sensitivity for peak detection | Influences detection of minor impurities and baseline noise rejection |
| Integration Events | Allows customized integration for specific regions | Enables handling of complex baselines and co-elution patterns |
| Baseline Tracking | Adjusts how baseline is drawn between peaks | Critical for accurate quantitation, especially in noisy chromatograms |
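To make the peak-width and threshold parameters in Table 1 concrete, here is a deliberately simplified detector. It treats any run of points above a threshold that lasts at least a minimum width as a peak. It then integrates each peak with the trapezoidal rule above a straight baseline drawn from peak start to peak end. Commercial CDS integrators use more elaborate slope- and noise-based logic, so this sketch shows what the parameters do, not how any vendor's algorithm works.

```python
def detect_peaks(times, signal, threshold, min_width):
    """Return (start, apex, end, area) for each detected peak (indices into the data).

    A peak is a contiguous run of points with signal > threshold whose duration
    (in time units) is at least min_width; shorter runs are rejected as spikes.
    Area is integrated above a linear baseline joining the start and end points.
    """
    peaks = []
    i, n = 0, len(signal)
    while i < n:
        if signal[i] <= threshold:
            i += 1
            continue
        start = i
        while i < n and signal[i] > threshold:
            i += 1
        end = i - 1
        if times[end] - times[start] < min_width:
            continue  # too narrow: treat as noise
        apex = max(range(start, end + 1), key=lambda k: signal[k])
        t0, y0, t1, y1 = times[start], signal[start], times[end], signal[end]
        baseline = [y0 + (y1 - y0) * (times[k] - t0) / (t1 - t0) if t1 > t0 else y0
                    for k in range(start, end + 1)]
        corrected = [signal[k] - baseline[k - start] for k in range(start, end + 1)]
        # Trapezoidal rule over the baseline-corrected points.
        area = sum((corrected[j] + corrected[j + 1]) / 2 * (times[start + j + 1] - times[start + j])
                   for j in range(end - start))
        peaks.append((start, apex, end, area))
    return peaks

signal = [0, 0, 1, 3, 5, 3, 1, 0, 0, 2, 0]  # one real peak and one single-point spike
print(detect_peaks(list(range(11)), signal, threshold=0.5, min_width=2))  # [(2, 4, 6, 8.0)]
```

Raising `threshold` hides small impurity peaks, and lowering `min_width` lets noise spikes through. The same trade-off, in more refined form, governs the real Peak Width and Threshold settings.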

Extracted chromatograms and spectra provide method developers with full insight on analytical results at a given wavelength and contributing components at a given retention time, enabling informed decisions about specificity and potential interference [37]. This comprehensive spectral analysis is particularly valuable for method development where detection parameters must balance sensitivity, specificity, and robustness across the analytical lifecycle.

Method Modeling and Simulation

CDS platforms increasingly incorporate modeling capabilities that predict separation outcomes based on experimental data, allowing virtual method optimization without continuous instrument time. These tools build mathematical models of the separation space that enable researchers to simulate chromatographic outcomes under different conditions, providing an intuitive understanding of method robustness [35]. The accuracy of these models depends on both the underlying algorithms and the quality of input data, with advanced software allowing customization of equations to reflect specific parameter relationships [35].

The modeling approach is particularly valuable for understanding temperature effects, where protein and small-molecule separations demonstrate different behaviors [35]. By building models that reflect these differences, method developers can optimize temperature parameters with fewer experiments. Simulation capabilities also facilitate training and knowledge transfer, allowing less experienced analysts to develop intuition about chromatographic behavior without consuming reagents or instrument time.
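One widely used model of this kind is the linear solvent strength (LSS) relationship for reversed-phase retention. It is named here only as an example; commercial modeling packages may use other or customized equations. The model is log k = log k_w - S·φ, where k is the retention factor and φ is the organic-modifier volume fraction. Two isocratic scouting runs are enough to fit log k_w and S for each analyte. Retention and selectivity can then be predicted at other φ values. The retention factors below are hypothetical.

```python
import math

def fit_lss(phi1, k1, phi2, k2):
    """Fit log10(k) = log_kw - S * phi through two isocratic measurements."""
    S = (math.log10(k1) - math.log10(k2)) / (phi2 - phi1)
    log_kw = math.log10(k1) + S * phi1
    return log_kw, S

def predict_k(log_kw, S, phi):
    """Predicted retention factor at organic-modifier fraction phi."""
    return 10 ** (log_kw - S * phi)

# Hypothetical critical pair measured at 30% and 50% organic modifier.
models = {
    "peak_1": fit_lss(0.30, 10.0, 0.50, 1.0),
    "peak_2": fit_lss(0.30, 12.0, 0.50, 1.0),
}
for phi in (0.35, 0.40, 0.45):
    k1, k2 = (predict_k(*models[p], phi) for p in ("peak_1", "peak_2"))
    print(f"phi={phi:.2f}: k1={k1:.2f}, k2={k2:.2f}, alpha={k2 / k1:.3f}")
```

In this example, the two analytes have different S values, so their selectivity α changes with φ. This kind of behavior is what lets a model locate conditions that separate a critical pair without running every condition on the instrument.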

Experimental Protocols for Method Optimization

Gradient Optimization Protocol

Systematic gradient optimization represents a fundamental application of CDS capabilities in method development. The following protocol outlines a structured approach:

Materials and Equipment:

- HPLC or UHPLC system with binary or quaternary pump

- CDS software with method development capabilities

- Diode array detector or equivalent

- Columns for screening (typically 3-5 different chemistries)

- Standard mixture containing all analytes of interest

- Mobile phase components (buffers, organic modifiers)

Procedure:

- Initial Scouting Gradient: Program a broad gradient (e.g., 5-95% organic modifier over 30 minutes) using a medium pH buffer (e.g., pH 4.5) and a reference column (e.g., C18).

- Column Screening: Execute the scouting gradient on 3-5 different column chemistries, maintaining consistent flow rate and temperature.

- pH Screening: Select the most promising column chemistry and perform scouting gradients at 2-3 different pH values (e.g., 2.5, 4.5, 7.0).

- Temperature Optimization: Using the optimal column and pH, perform gradient runs at 3 different temperatures (e.g., 30°C, 40°C, 50°C).

- Gradient Steepness Optimization: Refine the gradient slope using the established conditions, testing 2-3 different gradient times.

- Fine-Tuning: Make minor adjustments to gradient shape (isocratic hold periods, curvature) to resolve critical pairs.
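One way to reason about the scouting and gradient-steepness steps is Snyder's gradient retention factor, k* = tG·F / (1.15·Vm·Δφ·S). The sketch below assumes this model. It also assumes S ≈ 4, a value often used for small molecules, and a dead volume estimated as Vm = t0·F from a hypothetical t0 of 1.5 min. Under those assumptions it compares candidate gradient times and inverts the equation for a target k*. A target of roughly 2 to 10 is often cited, but treat that as guidance to check rather than a rule.

```python
def gradient_k_star(t_g_min, flow_ml_min, vm_ml, delta_phi, s=4.0):
    """Gradient retention factor k* = tG * F / (1.15 * Vm * delta_phi * S)."""
    return (t_g_min * flow_ml_min) / (1.15 * vm_ml * delta_phi * s)


def gradient_time_for_k_star(target_k_star, flow_ml_min, vm_ml, delta_phi, s=4.0):
    """Invert the k* equation to find the gradient time that gives a target k*."""
    return target_k_star * 1.15 * vm_ml * delta_phi * s / flow_ml_min


# Scouting gradient from the protocol: 5-95 % organic, so delta_phi = 0.90.
flow = 1.0            # mL/min
vm = 1.5 * flow       # Vm = t0 * F, assuming a measured t0 of 1.5 min (hypothetical)
for t_g in (10.0, 20.0, 30.0):
    print(f"tG = {t_g:4.0f} min -> k* = {gradient_k_star(t_g, flow, vm, 0.90):.1f}")
print(f"tG for k* = 5: {gradient_time_for_k_star(5.0, flow, vm, 0.90):.1f} min")
```

Holding k* constant is also what keeps selectivity roughly unchanged when the gradient range (Δφ) is narrowed during fine-tuning. Narrowing Δφ without shortening tG makes the gradient shallower.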

Data Analysis:

- Use CDS peak tracking capabilities to follow individual compounds across experiments

- Apply resolution calculations between critical peak pairs

- Employ modeling software to predict optimal conditions

- Validate predictions with confirmation experiments
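The resolution step can be sketched with the half-height form Rs = 1.18·(t2 − t1)/(w0.5,1 + w0.5,2), which assumes near-Gaussian peaks. The code sorts peaks by retention time and reports the adjacent pair with the lowest resolution, which is the critical pair. The peak table and class names are hypothetical. The code also assumes peak tracking has already assigned consistent names across runs.

```python
from dataclasses import dataclass


@dataclass
class Peak:
    name: str
    rt_min: float       # retention time (min)
    w_half_min: float   # peak width at half height (min)


def resolution_half_height(p1: Peak, p2: Peak) -> float:
    """Rs = 1.18 * (t2 - t1) / (w0.5,1 + w0.5,2), assuming near-Gaussian peaks."""
    return 1.18 * (p2.rt_min - p1.rt_min) / (p1.w_half_min + p2.w_half_min)


def adjacent_resolutions(peaks: list[Peak]) -> list[tuple[str, str, float]]:
    """Resolution of each adjacent pair, in elution order."""
    ordered = sorted(peaks, key=lambda p: p.rt_min)
    return [(a.name, b.name, resolution_half_height(a, b)) for a, b in zip(ordered, ordered[1:])]


def critical_pair(peaks: list[Peak]) -> tuple[str, str, float]:
    """The adjacent pair with the lowest resolution."""
    return min(adjacent_resolutions(peaks), key=lambda pair: pair[2])


# Hypothetical peak table exported from one scouting run.
run = [Peak("impurity B", 3.45, 0.11), Peak("API", 4.10, 0.12), Peak("impurity A", 3.20, 0.10)]
name_1, name_2, rs = critical_pair(run)
print(f"Critical pair: {name_1} / {name_2}, Rs = {rs:.2f}")
```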

This systematic approach, supported by CDS automation, enables efficient exploration of the multidimensional parameter space while maintaining documentation for regulatory compliance.

Robustness Testing Protocol

Robustness testing establishes method tolerance to small, deliberate variations in parameters, providing critical information for method validation and transfer.

Experimental Design:

- Identify critical method parameters (e.g., mobile phase pH, column temperature, flow rate, gradient time)

- Define ranges for variation (e.g., ±0.1 pH units, ±2 °C, ±0.1 mL/min, ±1 min in gradient time)

- Employ fractional factorial or Plackett-Burman designs to minimize experiments

Execution:

- Prepare mobile phases and standards according to method specifications

- Program the experimental sequence in CDS, incorporating system suitability tests

- Execute the sequence with randomized run order to minimize bias

- Monitor system performance throughout the sequence
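A minimal sketch of the design and randomization steps follows. It uses a 2^(4−1) fractional factorial with the generator D = ABC, one of the design types named above. The four factors are taken from the example ranges, but the nominal set points are hypothetical. A fixed, recorded seed keeps the randomized run order reproducible for the audit trail. Building the actual CDS sequence from this list is vendor-specific and not shown.

```python
import itertools
import random

# Hypothetical nominal set points and robustness ranges: (nominal, +/- delta).
FACTORS = {
    "pH": (3.0, 0.1),
    "temp_C": (40.0, 2.0),
    "flow_mL_min": (1.0, 0.1),
    "tG_min": (20.0, 1.0),
}


def fractional_factorial_2_4_1() -> list[tuple[int, int, int, int]]:
    """2^(4-1) design in coded units; the fourth factor is generated as D = A*B*C."""
    return [(a, b, c, a * b * c) for a, b, c in itertools.product((-1, 1), repeat=3)]


def to_actual(coded_run: tuple[int, ...]) -> dict[str, float]:
    """Convert coded levels (-1/+1) into instrument set points."""
    return {
        name: nominal + level * delta
        for (name, (nominal, delta)), level in zip(FACTORS.items(), coded_run)
    }


def randomized_sequence(seed: int = 2025) -> list[tuple[int, tuple[int, ...], dict[str, float]]]:
    """Shuffle the runs with a recorded seed so the order is reproducible and auditable."""
    runs = fractional_factorial_2_4_1()
    random.Random(seed).shuffle(runs)
    return [(i, run, to_actual(run)) for i, run in enumerate(runs, start=1)]


for injection, coded, settings in randomized_sequence():
    print(injection, coded, {k: round(v, 2) for k, v in settings.items()})
```

Eight runs cover four factors here, against sixteen for the full factorial. The trade-off is that each main effect is aliased with a three-factor interaction, which is usually acceptable for robustness screening.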

Data Analysis:

- Calculate resolution between critical peak pairs for each experiment

- Determine retention time reproducibility across conditions

- Evaluate peak asymmetry and efficiency variations

- Establish acceptance criteria for each critical quality attribute

- Identify parameters requiring tight control during method implementation
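The final analysis step can be sketched by estimating main effects from the robustness runs. Each main effect is the mean response at the high level minus the mean at the low level. The code then ranks factors by absolute effect. The resolution values below are synthetic. The 0.15 threshold is an arbitrary illustration, not a regulatory criterion. The design layout repeats the D = ABC fractional factorial so the block is self-contained.

```python
import itertools


def coded_design() -> list[tuple[int, int, int, int]]:
    """2^(4-1) layout (D = A*B*C) in standard order, in coded units."""
    return [(a, b, c, a * b * c) for a, b, c in itertools.product((-1, 1), repeat=3)]


def main_effects(runs, responses, names):
    """Main effect = mean response at +1 minus mean response at -1, per factor."""
    effects = {}
    for j, name in enumerate(names):
        high = [y for run, y in zip(runs, responses) if run[j] == 1]
        low = [y for run, y in zip(runs, responses) if run[j] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects


def parameters_needing_control(effects, threshold):
    """Factors whose absolute effect meets the chosen threshold, largest first."""
    ranked = sorted(effects.items(), key=lambda item: abs(item[1]), reverse=True)
    return [name for name, effect in ranked if abs(effect) >= threshold]


names = ["pH", "temp_C", "flow_mL_min", "tG_min"]
runs = coded_design()
# Synthetic critical-pair resolutions, listed in the same (standard) order as `runs`.
rs = [1.65, 1.85, 1.75, 1.55, 2.45, 2.25, 2.15, 2.35]
effects = main_effects(runs, rs, names)
print({k: round(v, 3) for k, v in effects.items()})
print("Tight control suggested for:", parameters_needing_control(effects, threshold=0.15))
```

In this synthetic case pH dominates, so pH would be the first candidate for a tight specification on buffer preparation.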

The automated execution and data collection capabilities of CDS significantly reduce the labor burden of robustness testing while ensuring consistent data quality and complete documentation.

CDS-Enabled Analytical Quality by Design (AQbD)

The Analytical Quality by Design (AQbD) approach applies a systematic methodology to method development. It focuses on understanding the method's critical quality attributes and controlling the critical method parameters that affect them. CDS platforms provide essential tools for implementing AQbD principles through:

Method Operable Design Region (MODR) Definition: CDS facilitates the establishment of MODR—the multidimensional combination and interaction of input variables demonstrated to provide assurance of quality—through structured experimentation and data analysis. The modeling capabilities within advanced CDS platforms enable visualization of the design space, showing parameter combinations that will produce acceptable results [35].
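To make the MODR idea concrete, the sketch below evaluates a quadratic response-surface model for critical-pair resolution over a grid of gradient time and column temperature. It keeps only the points predicted to meet an acceptance limit of Rs ≥ 1.5, which is an assumption. The model coefficients are hypothetical, standing in for a model fitted from designed experiments. A real MODR is usually established with prediction intervals or probability of success, not point predictions alone.

```python
def predicted_rs(t_g_min: float, temp_c: float) -> float:
    """Hypothetical quadratic response-surface model for critical-pair resolution."""
    x1 = (t_g_min - 20.0) / 5.0    # coded gradient time (15-25 min -> -1..+1)
    x2 = (temp_c - 40.0) / 10.0    # coded temperature (30-50 C -> -1..+1)
    return 1.8 + 0.4 * x1 - 0.2 * x2 + 0.05 * x1 * x2 - 0.3 * x1 ** 2 - 0.15 * x2 ** 2


def modr_grid(min_rs: float = 1.5) -> list[tuple[int, int]]:
    """Grid points (tG, T) whose predicted resolution meets the acceptance criterion."""
    return [
        (t_g, temp)
        for t_g in range(15, 26)          # 15-25 min in 1-min steps
        for temp in range(30, 51, 2)      # 30-50 C in 2-C steps
        if predicted_rs(t_g, temp) >= min_rs
    ]


region = modr_grid()
for temp in sorted({t for _, t in region}):
    t_gs = [t_g for t_g, t in region if t == temp]
    print(f"{temp} C: tG {min(t_gs)}-{max(t_gs)} min meets Rs >= 1.5")
```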

Control Strategy Implementation: Once the MODR is established, CDS supports implementation of control strategies through method parameters that ensure operation within the design space. System suitability tests can be programmed within the CDS to verify method performance before each sequence, with automated data flagging algorithms alerting analysts to potential issues [37].
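A minimal sketch of the kind of system suitability logic a CDS applies before releasing a sequence follows. It checks critical-pair resolution, the USP tailing factor T = W0.05/(2f), and the %RSD of replicate peak areas. The limit values, class name and function names are hypothetical. Real limits come from the validated method and the applicable pharmacopeia.

```python
import statistics
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SstLimits:
    """Hypothetical acceptance limits; take real values from the validated method."""
    min_resolution: float = 2.0
    max_tailing: float = 2.0
    max_area_rsd_pct: float = 2.0


def tailing_factor(w_005: float, f_005: float) -> float:
    """USP tailing factor T = W0.05 / (2 f), both measured at 5 % of peak height."""
    return w_005 / (2.0 * f_005)


def percent_rsd(values: list[float]) -> float:
    """Relative standard deviation (%) based on the sample standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def evaluate_sst(resolution: float, tailing: float, replicate_areas: list[float],
                 limits: Optional[SstLimits] = None) -> list[str]:
    """Return failure messages; an empty list means the sequence may proceed."""
    limits = limits or SstLimits()
    failures = []
    if resolution < limits.min_resolution:
        failures.append(f"resolution {resolution:.2f} < {limits.min_resolution}")
    if tailing > limits.max_tailing:
        failures.append(f"tailing {tailing:.2f} > {limits.max_tailing}")
    rsd = percent_rsd(replicate_areas)
    if rsd > limits.max_area_rsd_pct:
        failures.append(f"area RSD {rsd:.2f} % > {limits.max_area_rsd_pct} %")
    return failures


areas = [1000.0, 1010.0, 990.0, 1005.0, 995.0]   # hypothetical replicate injections
problems = evaluate_sst(resolution=2.4, tailing=tailing_factor(0.30, 0.10), replicate_areas=areas)
print("SST passed" if not problems else "SST failed: " + "; ".join(problems))
```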

Knowledge Management: AQbD emphasizes scientific understanding and knowledge retention, which CDS supports through searchable databases of project data [35]. This allows organizations to share project information, using past attempts as starting points for future projects. With search capabilities by structure, substructure, method parameters, retention time, and other attributes, institutional knowledge becomes accessible rather than languishing unused [35].

The AQbD approach to method development, aided by CDS, delivers a return on investment through improved method robustness and reduced method failure rates. One organization reported that "reliability, robustness, and lifetimes of methods have improved, while at the same time we have reduced the loss of interpretation information and expensive retesting of samples" [35].

CDS Integration with Complementary Techniques

Mass Spectrometry Detection Integration

The integration of chromatographic separation with mass spectrometric detection represents a powerful combination for method development, particularly for impurity identification and complex matrix analysis. Modern CDS platforms seamlessly control hyphenated systems, synchronizing data acquisition across multiple detectors. New MS systems introduced in 2024-2025, such as the Sciex 7500+ MS/MS and ZenoTOF 7600+, offer enhanced capabilities for method development with features including increased resilience across sample types, improved user serviceability, and advanced fragmentation techniques like Electron Activated Dissociation (EAD) [36].

The timsTOF Ultra 2 from Bruker, a trapped ion mobility-TOF MS designed for advanced proteomics and multiomics, enables deep, high-fidelity 4D proteomics from minimal sample amounts and is capable of measuring over 1000 proteins from a 25-pg sample [36]. When integrated with CDS control, these detection capabilities provide deeper insight into separation performance and support compound identity confirmation during method development.

Automated Method Translation and Transfer

Method transfer between different instrument platforms represents a common challenge in analytical development. CDS platforms address this through method translation capabilities that adjust method parameters to maintain separation performance when transferring methods between different systems (e.g., HPLC to UHPLC) or between instruments from different vendors. The following visualization illustrates the method transfer workflow:

CDS-Mediated Method Transfer Process

Advanced CDS platforms include system suitability tests and automated comparison tools that facilitate objective assessment of method performance across different instruments and locations, supporting successful technology transfer to quality control laboratories or contract research organizations.
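The core of HPLC-to-UHPLC translation can be sketched with the standard geometric scaling relations. Flow rate scales with column cross-section and inversely with particle size. Gradient time keeps the same number of column volumes per gradient. Injection volume scales with column volume. The function names and the example columns are hypothetical. The sketch ignores dwell-volume and extra-column-volume differences, which commercial translation tools may correct for. Whether a translated method falls within permitted pharmacopeial adjustments has to be checked separately.

```python
def column_volume_ratio(l1_mm, dc1_mm, l2_mm, dc2_mm):
    """Ratio of empty-column volumes (L * dc^2), new column over original."""
    return (l2_mm * dc2_mm ** 2) / (l1_mm * dc1_mm ** 2)


def translate_method(flow1, t_g1, v_inj1, l1, dc1, dp1, l2, dc2, dp2):
    """Geometric scaling of flow rate, gradient time and injection volume.

    Lengths and diameters in mm, particle sizes in um. Dwell volume is ignored.
    """
    flow2 = flow1 * (dc2 / dc1) ** 2 * (dp1 / dp2)      # constant reduced linear velocity
    vol_ratio = column_volume_ratio(l1, dc1, l2, dc2)
    t_g2 = t_g1 * (flow1 / flow2) * vol_ratio            # same column volumes per gradient
    v_inj2 = v_inj1 * vol_ratio                          # same load relative to column volume
    return flow2, t_g2, v_inj2


# Hypothetical transfer: 150 x 4.6 mm, 5 um HPLC column -> 50 x 2.1 mm, 1.7 um UHPLC column.
flow2, t_g2, v_inj2 = translate_method(1.0, 30.0, 20.0, 150, 4.6, 5.0, 50, 2.1, 1.7)
print(f"Flow {flow2:.3f} mL/min, gradient {t_g2:.2f} min, injection {v_inj2:.2f} uL")
print(f"L/dp: {150e3 / 5.0:.0f} -> {50e3 / 1.7:.0f}")
```

Keeping L/dp nearly constant, as in this example, is what lets the shorter column deliver similar resolving power in a fraction of the run time.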

Essential Research Reagent Solutions

Successful chromatographic method development requires not only software expertise but also appropriate selection of reagents and materials. The following table details essential research reagent solutions for chromatographic method development:

Table 2: Essential Research Reagents for Chromatographic Method Development

| Reagent/Material | Function in Method Development | Selection Considerations |
|---|---|---|
| HPLC Grade Solvents | Mobile phase components providing separation medium | Low UV absorbance, high purity, batch-to-batch consistency |
| Buffer Salts | Mobile phase additives controlling pH and ionic strength | Purity, solubility, UV transparency, volatility for MS compatibility |
| Stationary Phases | Chromatographic columns with varied selectivity | Multiple chemistries (C18, phenyl, HILIC, etc.), particle sizes, and dimensions |
| Reference Standards | Method development and system suitability testing | High purity, well-characterized, stability under method conditions |
| Derivatization Reagents | Enhance detection of poorly responding compounds | Reaction efficiency, stability of derivatives, compatibility with detection |
| Ion Pairing Reagents | Modify retention of ionic compounds | Concentration optimization, MS compatibility, batch-to-batch consistency |

The selection of appropriate reagents is a critical aspect of method development that complements CDS proficiency. As one source notes, "Starting from a better place, you'll reduce the work needed to reach the optimal point" [35]. This underlines the importance of foundational choices in reagents and columns before systematic optimization begins.

Current Trends and Future Directions

The CDS landscape continues to evolve with several trends shaping future capabilities for method development:

Cloud-Based Deployments: Cloud-based CDS solutions are being adopted rapidly because of their advantages in flexibility, accessibility, data backup, and cost [34]. These platforms enable real-time tracking and archiving of data and provide substantial storage capacity and remote access from any location. This facilitates collaboration across research sites and with external partners.

Artificial Intelligence Integration: Although the sources cited here do not describe specific implementations, the trend toward increased automation and predictive modeling suggests growing use of AI and machine learning algorithms to optimize method development. These capabilities would build on existing modeling features to provide more intelligent method recommendations and troubleshooting guidance.

Enhanced Data Integrity and Compliance: Regulatory requirements continue to drive CDS enhancements in audit trail completeness, electronic signatures, and data protection. These features support the application of method development in regulated environments such as pharmaceutical quality control, where compliance with Good Manufacturing Practice (GMP) is essential [38].

Integration with Laboratory Informatics: CDS platforms increasingly function as components within broader laboratory informatics ecosystems, integrating with Laboratory Information Management Systems (LIMS), Electronic Laboratory Notebooks (ELN), and Scientific Data Management Systems (SDMS). This integration creates seamless data flow from method development through routine analysis, enhancing knowledge management and operational efficiency.

Chromatography Data Systems have evolved from simple data collection tools to sophisticated platforms that actively support and enhance the method development process. By leveraging CDS capabilities for systematic experimentation, data modeling, and knowledge management, analytical chemists can develop more robust methods in less time while reducing solvent consumption and instrument usage. The integration of foundational chemistry principles with advanced software tools represents the future of chromatographic method development, enabling researchers to efficiently navigate complex separation challenges while maintaining regulatory compliance.

As the field advances, capabilities for predictive modeling, automated optimization, and knowledge-based recommendations will further transform method development from an empirical art to a systematic science. For today's analytical chemists, proficiency with CDS represents not merely a technical skill but a fundamental component of the methodological toolkit essential for research excellence in pharmaceutical development and quality control environments.

Automating Data Processing and Quantitative Analysis to Accelerate Reporting

In the data-intensive landscape of modern analytical chemistry, the ability to rapidly process information and generate accurate reports has become a critical competency. Automating data processing and quantitative analysis represents a paradigm shift, moving scientists from manual, time-consuming tasks toward efficient, high-throughput workflows. This transformation is particularly vital in regulated industries like pharmaceutical development, where the speed of reporting can directly impact the timeline for bringing new therapies to patients. The integration of artificial intelligence (AI) and machine learning (ML) algorithms is revolutionizing this space, offering unprecedented capabilities in interpreting large volumes of complex analytical data while significantly enhancing both efficiency and accuracy [39].

For the contemporary analytical chemist, proficiency with these automated systems is no longer optional but essential. The modern laboratory generates data at a scale that far exceeds human capacity for manual interpretation, particularly with techniques like high-resolution mass spectrometry, multidimensional chromatography, and real-time sensor networks. Within pharmaceutical research, automated clinical trial reporting systems are demonstrating dramatic improvements, reducing some reporting timelines from weeks to mere days or hours while simultaneously improving consistency and regulatory compliance [40]. This technical guide explores the core principles, methodologies, and practical implementations of automated data processing frameworks that are becoming indispensable tools in the analytical chemist's software arsenal.

AI and Machine Learning Foundations

At the heart of modern automation strategies lie sophisticated AI and machine learning technologies that serve as the cognitive engine for data interpretation. These systems are distinguished from traditional software by their ability to learn from data patterns and improve their analytical performance over time. Within the analytical chemistry domain, several key AI subtypes each play distinct roles:

- Machine Learning (ML): ML algorithms excel at identifying patterns within complex datasets, enabling applications such as sample classification, property prediction, and anomaly detection in analytical data streams [39]. For quantitative analysis, supervised ML algorithms construct robust calibration models that can predict analyte concentrations in unknown samples with minimal human intervention.