Foundations of Method Validation Studies: A 2025 Guide for Robust and Compliant Bioanalysis



Abstract

This article provides a comprehensive guide to method validation studies for researchers and drug development professionals. It covers foundational regulatory principles, modern application methodologies, advanced troubleshooting strategies, and comparative validation frameworks. Grounded in the latest 2025 guidelines from the FDA and ICH, as well as emerging industry trends like AI and lifecycle management, this resource equips scientists to develop, optimize, and validate robust analytical methods that ensure data integrity, regulatory compliance, and patient safety.

Core Principles and Regulatory Landscape of Method Validation in 2025

Method validation is the formally documented process of proving that an analytical procedure employed for a specific test is suitable for its intended use [1]. It provides objective evidence that the method consistently produces reliable, accurate, and reproducible results that meet predetermined specifications and quality attributes [2]. In regulated industries like pharmaceuticals, this process offers a high degree of assurance that a specific analytical method will consistently yield assay results supporting accurate decisions about product quality [2]. Essentially, validation confirms that a method is scientifically sound and robust enough to deliver trustworthy data, forming the foundation for product safety, efficacy, and quality.

The terms "analytical method validation" and "test method validation" are often used interchangeably, as both refer to establishing the performance characteristics of a method—such as precision, accuracy, and specificity—to ensure they meet requirements for the intended application [2]. This process is not a one-time event but an integral part of good analytical practice that must be performed before a method's introduction into routine use, whenever validation conditions change, or whenever a method is modified outside its original scope [1].

The Critical Role and Regulatory Imperative of Validation

Method validation is a cornerstone of quality assurance in highly regulated environments. For manufacturers of medicinal products and medical devices, Good Manufacturing Practice (GMP) regulations require documented evidence that the analytical methods used to test products are validated [2]. In practice, the methods must generate accurate and precise results every time they are used [2].

The primary goal is to guarantee that analytical data is trustworthy, thereby protecting patient safety and product integrity. A well-validated method ensures that a product's quality attributes are accurately measured, providing confidence that the product meets all required specifications for identity, strength, quality, and purity [3]. Without proper validation, there is no guarantee that test results reflect reality, potentially allowing unsafe or ineffective products to reach consumers.

Regulatory bodies globally recognize method validation as essential. The FDA's Process Validation Guidelines define it as "the process of establishing documented evidence which provides a high degree of assurance that a specific process such as analytical test method, will consistently produce a product supported by assay results meeting its predetermined specifications and quality attributes" [2]. While different regulatory agencies (FDA, ICH, EMA, USP) may emphasize different aspects, all require rigorous validation to ensure data integrity and product quality [3].

When is Method Validation Required?

Analytical methods require validation in several key circumstances:

- Before their introduction into routine use [1]

- Whenever the conditions change for which the method was originally validated (e.g., different instrument characteristics or sample matrices) [1]

- Whenever the method is changed and the modification falls outside the original scope of the validation [1]

- For test methods determining critical quality attributes that impact product quality and process efficacy [2]

Core Performance Parameters of Method Validation

The validation process systematically evaluates key analytical performance characteristics to ensure the method is fit for purpose. These parameters, often called "the eight steps of analytical method validation," provide a comprehensive assessment of method capability [4].

Accuracy

Accuracy measures the exactness of an analytical method or the closeness of agreement between an accepted reference value and the value found in a sample [4]. It represents how close measured results are to the true value and is typically expressed as the percentage of analyte recovered by the assay or as the bias of the method [2]. To document accuracy, guidelines recommend collecting data from a minimum of nine determinations across at least three concentration levels covering the specified range [4].

Precision

Precision is defined as the closeness of agreement among individual test results from repeated analyses of a homogeneous sample [4]. It is measured through three approaches:

- Repeatability (intra-assay precision): Ability to generate consistent results over a short time interval under identical conditions [4]

- Intermediate precision: Agreement between results considering within-laboratory variations (different days, analysts, or equipment) [4]

- Reproducibility: Results of collaborative studies among different laboratories, measuring precision under expected normal variation [4]

Precision is typically reported as the percent Relative Standard Deviation (%RSD), with repeatability of chromatographic methods ideally <1.0% [2].
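
The %RSD figure above is the sample standard deviation divided by the mean, times 100. A minimal sketch of that arithmetic follows; the replicate values are hypothetical and the function name `percent_rsd` is an illustrative choice, not something taken from the cited guidelines.

```python
from statistics import mean, stdev


def percent_rsd(results):
    """Percent relative standard deviation: sample SD / mean x 100."""
    if len(results) < 2:
        raise ValueError("at least two replicate results are required")
    return 100.0 * stdev(results) / mean(results)


# Hypothetical assay results (% of label claim) from six preparations.
replicates = [99.8, 100.2, 100.1, 99.9, 100.3, 99.7]

rsd = percent_rsd(replicates)
print(f"%RSD = {rsd:.2f}")
print("within the <1.0% target" if rsd < 1.0 else "outside the <1.0% target")
```

The sample (n - 1) standard deviation is used here because the replicates are treated as a sample of the method's behaviour, not the whole population.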

Specificity

Specificity is the method's ability to measure accurately and specifically the analyte of interest in the presence of other components that may be expected in the sample [4]. It ensures that a peak's response is due to a single component with no peak coelutions [4]. For chromatographic methods, specificity is demonstrated by the resolution of the two most closely eluted compounds, typically the major component and a closely eluted impurity [4]. Modern specificity verification often employs peak-purity tests using photodiode-array detection or mass spectrometry [4].
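
The text does not give a formula for resolution. The sketch below assumes the commonly used baseline-width definition, Rs = 2(t2 - t1)/(w1 + w2), and compares the result with the 1.5 threshold that appears in Table 1. The retention times and peak widths are hypothetical.

```python
def resolution(t1, t2, w1, w2):
    """Chromatographic resolution from retention times and baseline peak widths.

    Assumes the baseline-width formula Rs = 2 * (t2 - t1) / (w1 + w2),
    with all four values in the same time units and t2 > t1.
    """
    return 2.0 * (t2 - t1) / (w1 + w2)


# Hypothetical critical pair: main component and a closely eluting impurity.
rs = resolution(t1=6.20, t2=6.85, w1=0.30, w2=0.34)
print(f"Rs = {rs:.2f}")
print("baseline resolved" if rs > 1.5 else "insufficient resolution")
```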

Linearity and Range

Linearity is the ability of the method to provide test results directly proportional to analyte concentration within a given range [4]. The range is the interval between upper and lower analyte concentrations that have been demonstrated to be determined with acceptable precision, accuracy, and linearity [4]. Guidelines specify testing a minimum of five concentration levels to determine linearity and range, with the range typically expressed in the same units as test results [4]. The correlation coefficient (r) should be >0.99 for the selected range [2].

Limit of Detection (LOD) and Limit of Quantitation (LOQ)

The Limit of Detection is the lowest concentration of an analyte that can be detected but not necessarily quantified, while the Limit of Quantitation is the lowest concentration that can be quantified with acceptable precision and accuracy [4]. The most common determination method uses signal-to-noise ratios, typically 3:1 for LOD and 10:1 for LOQ [4]. An alternative calculation uses the formula LOD (or LOQ) = K × (SD/S), where K is a constant (3 for LOD, 10 for LOQ), SD is the standard deviation of the response, and S is the slope of the calibration curve [4]. ICH Q2 specifies K = 3.3 for the detection limit, so the constant used should be stated in the validation protocol.

Robustness

Robustness is a measure of the method's capacity to obtain comparable and acceptable results when perturbed by small but deliberate variations in method parameters [4]. It provides an indication of the method's reliability during normal usage and is influenced by variations such as the stability of analytical solutions, extraction time, or mobile-phase pH and composition [2].

Table 1: Key Performance Parameters in Method Validation

| Parameter | Definition | Typical Acceptance Criteria | Methodology |
|---|---|---|---|
| Accuracy | Closeness to true value | % Recovery 98-102% (varies by sample type) | Compare to reference standard or spike recovery [4] [2] |
| Precision | Closeness between results | %RSD <1-2% (depending on sample type) | Multiple preparations of homogeneous sample [4] [2] |
| Specificity | Ability to measure analyte specifically | No interference from other components; resolution >1.5 | Spike with potentially interfering compounds [4] [2] |
| Linearity | Proportionality of response to concentration | Correlation coefficient r > 0.99 | Minimum of 5 concentration levels [4] [2] |
| Range | Interval where method performs acceptably | Meets accuracy, precision, linearity requirements | Established from linearity study [4] |
| LOD | Lowest detectable concentration | Signal-to-noise ratio ≥ 3:1 | Based on signal-to-noise or statistical calculation [4] |
| LOQ | Lowest quantifiable concentration | Signal-to-noise ratio ≥ 10:1 | Based on signal-to-noise or statistical calculation [4] |
| Robustness | Resistance to small parameter changes | Consistent results under varied conditions | Deliberate variation of method parameters [4] [2] |

Table 2: Example Precision Acceptance Limits Based on Sample Type

| Analytical Sample Type | Suggested Acceptance Limit (%RSD) |
|---|---|
| Assay of active ingredient | 1.0% |
| Impurity determination | 5-10% (depending on level) |
| Dissolution testing | 2-3% |
| Content uniformity | 2% |

Experimental Protocol: A Step-by-Step Validation Workflow

A properly structured validation follows a systematic protocol to ensure all parameters are adequately assessed. The process begins with careful planning and proceeds through experimental verification of each performance characteristic.

Pre-Validation Prerequisites

Before initiating validation, several prerequisites must be satisfied:

- Laboratory instruments must be properly qualified and calibrated [2] [3]

- A well-developed documentation process must be established [2]

- Reference standards must be reliable, stable, and properly characterized [2]

- Analysts must be trained and qualified [2]

- A well-documented method validation protocol must be prepared [2]

Validation Protocol Development

The validation protocol serves as the blueprint for all validation activities and should include:

- Statement of protocol scope and objectives [2]

- Responsibilities for approval, execution, and final review [2]

- List of required materials and instruments [2]

- The final draft of the test method [2]

- Detailed experimental design for verifying each performance parameter [2]

- Documents and forms for recording validation results [2]

- Acceptance criteria for each performance parameter [2]

Parameter-Specific Experimental Methodologies

Precision Determination

- Repeatability: Make a minimum of 6 preparations of a homogeneous sample, analyze, and compare results [2]. Calculate %RSD, which should ideally be <1.0% for chromatographic methods [2].

- Intermediate precision: Have two analysts prepare and analyze replicate sample preparations on different days using different equipment [2]. Each analyst should prepare their own standards and solutions. Compare results using statistical testing (e.g., Student's t-test) [4].

- Reproducibility: Conduct collaborative studies between different laboratories using the same method [5]. A minimum of eight sets of acceptable results are needed after outlier removal [5].
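
The intermediate-precision comparison can be sketched as a two-sample Student's t-test on the two analysts' results. This is a minimal illustration under stated assumptions: equal variances (pooled test), six hypothetical results per analyst, and a hard-coded two-sided critical value of 2.228 for α = 0.05 with 10 degrees of freedom. A validation protocol may prescribe a different test or significance level.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(a, b):
    """Student's t statistic for two independent samples, pooled variance."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1 / n1 + 1 / n2))


# Hypothetical assay results (% of label claim), six preparations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.9, 100.3, 99.7]
analyst_2 = [100.1, 100.4, 99.9, 100.2, 100.5, 100.1]

# Two-sided critical value for alpha = 0.05 and df = 6 + 6 - 2 = 10.
T_CRITICAL = 2.228

t_stat = pooled_t_statistic(analyst_1, analyst_2)
print(f"t = {t_stat:.3f}")
if abs(t_stat) < T_CRITICAL:
    print("no significant difference between analysts at the 5% level")
else:
    print("analyst means differ significantly; investigate")
```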

Accuracy Determination

Accuracy measurements are obtained as follows:

- For drug substances, compare results to the analysis of a standard reference material [4]

- Alternatively, compare results to those from a second, well-characterized method [4]

- For drug products, spike the active into a placebo matrix at amounts ranging from 25% to 150% of dose strength [2]

- Calculate accuracy as the relative error: % Error = 100 × [(Experimental amount - Theoretical amount)/Theoretical amount] [2]. The equivalent percent recovery is 100 × (Experimental amount/Theoretical amount).
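
As an illustration of the calculation in the last step, the sketch below computes both the relative-difference form given above and the corresponding percent recovery for nine spiked-placebo determinations (three levels, three replicates). All amounts are hypothetical, and the 98-102% limits are taken from Table 1 as an example criterion only.

```python
def percent_recovery(measured, theoretical):
    """Amount found as a percentage of the amount spiked."""
    return 100.0 * measured / theoretical


def percent_error(measured, theoretical):
    """Relative difference from the theoretical amount, in percent."""
    return 100.0 * (measured - theoretical) / theoretical


# Hypothetical spiked-placebo data: level -> (theoretical mg, measured mg).
spiked = {
    "50%": (5.0, [4.96, 5.03, 4.99]),
    "100%": (10.0, [10.05, 9.93, 10.02]),
    "150%": (15.0, [14.91, 15.12, 15.03]),
}
LOW, HIGH = 98.0, 102.0  # example recovery limits, in percent

recoveries = {
    level: [percent_recovery(m, theoretical) for m in measured]
    for level, (theoretical, measured) in spiked.items()
}
all_pass = all(LOW <= r <= HIGH for values in recoveries.values() for r in values)

for level, values in recoveries.items():
    print(level, [f"{r:.1f}" for r in values])
print("all determinations within limits:", all_pass)
```

Recovery and relative error carry the same information: a recovery of 99.2% corresponds to an error of -0.8%.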

Specificity Verification

For HPLC methods, specificity is verified by:

- Spiking the analyte with each potentially interfering compound [2]

- Ensuring that no peaks are present in the mobile-phase or diluent chromatograms, or that any such peaks elute with the solvent front [2]

- Confirming all excipient peaks elute at relative retention times different from API, internal standard, or impurity peaks [2]

- Using photodiode-array detection or mass spectrometry for peak purity confirmation [4]

Linearity and Range Establishment

- Prepare at least 5 samples of differing concentrations in triplicate [2]

- Concentrations should cover 50-125% of the target concentration [2]

- Use accurate weighing and dilution techniques [2]

- Apply linear regression analysis to results [2]

- Verify correlation coefficient r > 0.99 for the selected range [2]
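
The final check in this list can be sketched as a Pearson correlation over all individual injections. The example assumes five levels between 50% and 125% of target, each prepared in triplicate, with hypothetical peak areas; `pearson_r` is an illustrative helper written out by hand so that the arithmetic is visible.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical peak areas: level (% of target) -> triplicate responses.
responses = {
    50: [498, 503, 501],
    75: [752, 748, 755],
    100: [1001, 997, 1004],
    110: [1098, 1103, 1101],
    125: [1252, 1247, 1249],
}

# Flatten to one (concentration, response) pair per injection.
conc = [level for level, areas in responses.items() for _ in areas]
area = [a for areas in responses.values() for a in areas]

r = pearson_r(conc, area)
print(f"r = {r:.5f}")
print("meets r > 0.99" if r > 0.99 else "fails r > 0.99")
```

A high r alone does not prove linearity, so a residual plot is usually inspected as well.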

LOD and LOQ Determination

- Signal-to-noise method: Prepare samples at progressively lower concentrations until signal-to-noise ratio reaches 3:1 for LOD and 10:1 for LOQ [4]

- Statistical method: Use the formula LOD (or LOQ) = K × (SD/S), where K is 3 for LOD and 10 for LOQ, SD is the standard deviation of the response, and S is the slope of the calibration curve [4]

- Validate the determined limits by analyzing an appropriate number of samples at those concentrations [4]
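
The statistical method reduces to one line of arithmetic. In the sketch below the constant K is left as a parameter, because the text uses 3 for LOD while ICH Q2 uses 3.3. The standard deviation and slope are hypothetical values, and the function name is illustrative.

```python
def limit_from_calibration(sd_response, slope, k):
    """Return K * (SD / S): a detection or quantitation limit in concentration units."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return k * sd_response / slope


# Hypothetical inputs: SD of the response and calibration slope
# (response units per microgram/mL).
sd_response = 0.12
slope = 2.4

lod = limit_from_calibration(sd_response, slope, k=3)
loq = limit_from_calibration(sd_response, slope, k=10)
print(f"LOD = {lod:.3f} ug/mL, LOQ = {loq:.3f} ug/mL")
```

The computed limits are estimates only. As the last step above notes, they still need to be confirmed by analyzing samples prepared at those concentrations.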

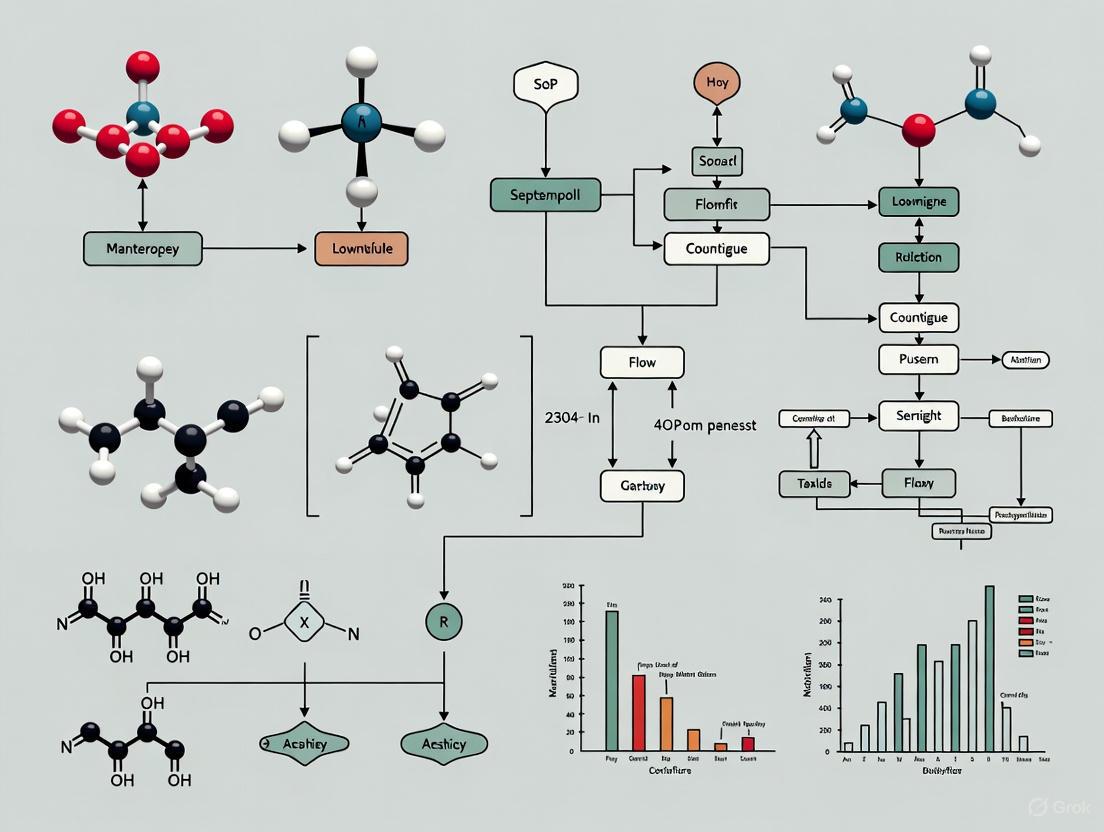

Diagram 1: Method Validation Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful method validation relies on high-quality materials and reagents with well-characterized properties. The following table details essential items used in validation experiments.

Table 3: Essential Research Reagents and Materials for Method Validation

| Item | Function in Validation | Critical Quality Attributes |
|---|---|---|
| Reference Standards | Serve as materials of known concentration for accuracy, linearity, and precision studies [4] [2] | High purity, well-characterized, stable, traceable to primary standards [2] |
| Placebo Matrix | Used in accuracy studies by spiking with analyte to assess recovery without interference [2] | Represents final product composition without active ingredient [2] |
| System Suitability Standards | Verify instrument performance before analytical runs [4] | Stable, produce consistent retention times and responses [4] |
| Mobile Phase Components | Create the chromatographic environment for separation [3] | HPLC-grade purity, specified pH, filtered and degassed [3] |
| Internal Standards | Normalize variation in sample preparation and injection (especially for LC-MS/MS) [3] | Stable, non-interfering, consistent recovery [3] |

Common Pitfalls and Risk Mitigation in Method Validation

Despite established guidelines, laboratories frequently encounter challenges during method validation. Awareness of these common pitfalls enables proactive risk management.

Insufficient Experimental Design

- Inadequate sample size: Too few data points increase statistical uncertainty [3]. Regulatory bodies expect robust sample sizes for each parameter.

- Solution: Follow guideline recommendations—minimum nine determinations for accuracy, six for repeatability, and five concentrations for linearity [4] [2].

Matrix Effects

- Failing to test across relevant matrices: Different sample matrices can cause unexpected reactions during real-world use [3].

- Solution: Validate using the same matrix as actual samples whenever possible [5]. For capillary whole blood tests, consider EDTA whole blood as a substitute when necessary [5].

Unrealistic Test Conditions

- Conditions not reflecting routine operations: May conceal equipment faults or method limitations [3].

- Solution: System suitability tests must mimic actual use cases [3]. Robustness testing should explore method behavior at parameter extremes [4].

Statistical Misapplication

- Improper statistical methods: Can distort conclusions and hide method weaknesses [3].

- Solution: Ensure statistical tools match dataset type and validation objectives [3]. Use appropriate calculations for %RSD, linear regression, and confidence intervals [4].

Documentation Gaps

- Missing data or protocol deviations: Create red flags during audits and compromise regulatory submissions [3].

- Solution: Maintain complete, organized documentation with clear audit trails [3]. Document all deviations with justifications [2].

Diagram 2: Common Risks and Mitigation Strategies

Method validation remains the foundation of data integrity and product quality in regulated industries. By systematically establishing that analytical procedures are suitable for their intended use through rigorous assessment of accuracy, precision, specificity, and other critical parameters, organizations ensure the reliability of the data driving quality decisions. As regulatory landscapes evolve and analytical technologies advance, the fundamental principles of validation continue to provide the framework for generating scientifically sound, defensible data. A thoroughly validated method not only satisfies regulatory requirements but also builds confidence in product quality and protects patient safety, the ultimate objective of all analytical testing in the health sciences.

The regulatory framework for pharmaceutical analysis is dynamic, with 2025 marking a significant period of implementation for harmonized guidelines critical for global drug development. Method validation rests upon two pivotal International Council for Harmonisation (ICH) guidelines: ICH Q2(R2) on analytical procedure validation and ICH M10 on bioanalytical method validation. These documents provide the scientific and regulatory standards for demonstrating that analytical methods are fit for their intended purpose, ensuring the reliability, accuracy, and consistency of data submitted to regulatory authorities. As of late 2023 and 2024, these guidelines have been adopted by major regulatory bodies, including the European Commission, the U.S. Food and Drug Administration (FDA), and others, making their understanding and application essential for researchers, scientists, and drug development professionals [6].

This whitepaper provides an in-depth technical guide to navigating these core documents within the 2025 framework. It details the core principles of ICH Q2(R2) and ICH M10, summarizes key changes and requirements in structured tables, outlines detailed experimental protocols for critical validation experiments, and visualizes core workflows. Adherence to these harmonized guidelines is paramount for generating robust data that supports regulatory decisions on the safety, efficacy, and quality of new drug substances and products.

ICH Q2(R2) - Validation of Analytical Procedures

Scope and Key Definitions

ICH Q2(R2), "Validation of Analytical Procedures," provides guidance and recommendations for the validation of analytical procedures used in the testing of chemical and biological/biotechnological drug substances and products [7]. Its scope encompasses procedures for release and stability testing, including assay/potency, purity, impurities, identity, and other quantitative or qualitative measurements [7]. The guideline serves as a collection of terms and their definitions, forming a common language for analytical scientists and regulators. A fundamental principle reinforced in the 2023 revision is that the validation effort should be commensurate with the risk and the intended use of the procedure, allowing for a science- and risk-based justification of the approach taken [6].

Core Validation Parameters and Methodologies

The validation process involves experimentally demonstrating that an analytical procedure meets predefined acceptance criteria for a set of core parameters. The following table summarizes these parameters and their definitions as per ICH Q2(R2).

Table 1: Core Analytical Procedure Validation Parameters per ICH Q2(R2)

| Validation Parameter | Definition |
|---|---|
| Accuracy | The closeness of agreement between the value which is accepted as a conventional true value or an accepted reference value and the value found. |
| Precision | The closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions. |
| Specificity | The ability to assess unequivocally the analyte in the presence of components which may be expected to be present. |
| Detection Limit (LOD) | The lowest amount of analyte in a sample which can be detected but not necessarily quantitated as an exact value. |
| Quantitation Limit (LOQ) | The lowest amount of analyte in a sample which can be quantitatively determined with suitable precision and accuracy. |
| Linearity | The ability of the procedure (within a given range) to obtain test results directly proportional to the concentration (amount) of analyte in the sample. |
| Range | The interval between the upper and lower concentration (amounts) of analyte in the sample for which it has been demonstrated that the analytical procedure has a suitable level of precision, accuracy, and linearity. |
| Robustness | A measure of the procedure's capacity to remain unaffected by small, deliberate variations in method parameters and provides an indication of its reliability during normal usage. |

Experimental Protocol: Accuracy and Precision (Combined Approach)

ICH Q2(R2) introduces the possibility of using a combined approach for accuracy and precision, which can be a more efficient and holistic way to demonstrate method performance [6].

- Objective: To simultaneously demonstrate the trueness (accuracy) and precision of an analytical procedure over the specified range.

- Methodology:

  - Prepare a minimum of three concentration levels (e.g., low, medium, high) covering the validation range, with each level analyzed in a minimum of three replicates.

  - The analysis should be performed over different days, by different analysts, or using different equipment to incorporate intermediate precision.

  - For each concentration level, calculate the mean (as a measure of accuracy) and the standard deviation or confidence interval (as a measure of precision).

  - A combined assessment can be performed by calculating a Target Measurement Uncertainty interval or ensuring that the confidence interval for the result is compatible with pre-defined acceptance criteria that integrate both accuracy and precision [6].

- Data Analysis: Report the mean, standard deviation, relative standard deviation (RSD), and an appropriate 100(1-α)% confidence interval for each concentration level. The observed confidence intervals should be compatible with the corresponding acceptance criteria.
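
The reporting step can be sketched as follows: for each level, compute the mean, standard deviation, %RSD, and a two-sided 95% confidence interval for the mean, then check that the interval lies inside the acceptance limits. The recovery data, the 97-103% limits, and the small table of t critical values are assumptions made for illustration; the guideline does not prescribe these numbers.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% t critical values, keyed by degrees of freedom (n - 1).
T_975 = {2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571}


def summarize(recoveries):
    """Mean, SD, %RSD and 95% confidence interval for one concentration level."""
    n = len(recoveries)
    avg, sd = mean(recoveries), stdev(recoveries)
    half_width = T_975[n - 1] * sd / sqrt(n)
    return {
        "mean": avg,
        "sd": sd,
        "rsd": 100.0 * sd / avg,
        "ci": (avg - half_width, avg + half_width),
    }


# Hypothetical % recovery results, three replicates per level.
levels = {
    "low": [99.2, 100.6, 99.8],
    "mid": [100.5, 99.3, 100.2],
    "high": [99.4, 100.8, 100.2],
}
LIMITS = (97.0, 103.0)  # hypothetical combined acceptance limits, in percent

summary = {name: summarize(values) for name, values in levels.items()}
for name, s in summary.items():
    lo, hi = s["ci"]
    ok = LIMITS[0] <= lo and hi <= LIMITS[1]
    print(f"{name}: mean={s['mean']:.2f} RSD={s['rsd']:.2f}% "
          f"CI=({lo:.2f}, {hi:.2f}) {'pass' if ok else 'fail'}")
```

With only three replicates the critical value is 4.303, so the intervals are wide even when the RSD is small, which is one reason confidence intervals are hard to satisfy with limited replicates.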

Experimental Protocol: Linearity

- Objective: To establish a linear relationship between the analytical response and the concentration of the analyte.

- Methodology:

  - Prepare a minimum of five concentration levels spanning the expected range (e.g., from 50% to 150% of the target concentration).

  - Analyze each concentration level. ICH Q2(R2) allows for single measurements if justified, though some regulatory expectations (e.g., ANVISA) may require replicates [8].

  - Plot the analytical response versus the analyte concentration.

  - Perform linear regression analysis to calculate the slope, y-intercept, and coefficient of determination (r²).

- Data Analysis: The correlation coefficient, y-intercept, slope of the regression line, and residual sum of squares should be reported. Visual inspection of the plot and of the residuals is crucial to confirm linearity.
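
The regression and residual check can be sketched with an ordinary least-squares fit written out by hand. The five concentration levels and responses are hypothetical single measurements, and `fit_line` is an illustrative helper name.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x.

    Returns slope, intercept, r squared, residual sum of squares, residuals.
    """
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [b - (intercept + slope * a) for a, b in zip(x, y)]
    rss = sum(e * e for e in residuals)
    syy = sum((b - mean_y) ** 2 for b in y)
    return slope, intercept, 1.0 - rss / syy, rss, residuals


# Hypothetical data: concentration (% of target) and detector response.
conc = [50, 75, 100, 125, 150]
resp = [502, 749, 1003, 1248, 1501]

slope, intercept, r2, rss, residuals = fit_line(conc, resp)
print(f"slope={slope:.3f} intercept={intercept:.2f} r2={r2:.5f} RSS={rss:.1f}")
print("residuals:", [f"{e:+.1f}" for e in residuals])
```

Residuals that alternate in sign with no trend, as in this made-up data set, are what a linear response looks like. A curved pattern in the residuals would suggest non-linearity even if r² is close to 1.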

Industry Implementation and Survey Insights

A 2024 survey conducted by the ISPE-PQLI team provides valuable insights into industry readiness and challenges for implementing ICH Q2(R2) [6]. The key findings are summarized below.

Table 2: Industry Readiness for ICH Q2(R2) Implementation (ISPE Survey 2024)

| Survey Aspect | Key Finding | Percentage of Respondents |
|---|---|---|
| Confidence Intervals (CI) | Expressed concerns about CI requirements due to limited replicates and lack of expertise. | 76% |
| Combined Accuracy & Precision | Already using or planning to use combined approaches. | 58% |
| Platform Analytical Procedures (Clinical) | Have utilized platform procedures during clinical development. | >50% |
| Platform Analytical Procedures (Commercial) | Have successfully secured approval for commercial use with abbreviated validation. | ~10% |
| Platform Analytical Procedures (Future) | Willing to implement for future commercial registrations. | 45% |

The survey highlighted several perceived risks, including uncertainty in setting acceptance criteria for confidence intervals and combined approaches, regulatory acceptance of platform analytical procedures, and application of the new concepts to legacy products [6]. Conversely, significant opportunities were identified, such as leveraging prior knowledge and development data, applying enhanced science- and risk-based justifications, and the clear documentation of platform analytical procedures for increased efficiency [6].

ICH M10 - Bioanalytical Method Validation

Scope and Regulatory Context

ICH M10, "Bioanalytical Method Validation and Study Sample Analysis," provides harmonized regulatory recommendations for the validation of bioanalytical assays used to measure concentrations of chemical and biological drugs and their metabolites in biological matrices [9]. The data generated from these methods are critical for supporting regulatory decisions on safety and efficacy, making it imperative that the methods are well-characterized, appropriately validated, and thoroughly documented [9]. The guideline applies to both nonclinical and clinical studies and covers the two most common bioanalytical techniques: chromatographic methods and ligand-binding assays [10].

Core Validation Parameters and Requirements for Bioanalytical Assays

Bioanalytical method validation shares some common parameters with analytical validation but places specific emphasis on factors unique to complex biological samples.

Table 3: Key Bioanalytical Method Validation Parameters per ICH M10

| Validation Parameter | Specific Requirements in Bioanalysis |
|---|---|
| Selectivity and Specificity | Demonstration that the measured analyte response is unaffected by the presence of endogenous matrix components, metabolites, or concomitant medications. |
| Accuracy and Precision | Required at multiple QC levels (LLOQ, low, medium, high). For chromatographic assays, acceptance criteria are typically within ±15% of nominal (±20% at LLOQ) for accuracy, with RSD not exceeding 15% (20% at LLOQ); wider limits apply to ligand-binding assays. |
| Matrix Effect | Must be evaluated for mass spectrometry-based methods to ensure that the matrix does not suppress or enhance the analyte signal. |
| Stability | A comprehensive assessment of analyte stability under various conditions (e.g., benchtop, frozen, freeze-thaw, in-process) in the specific biological matrix. |
| Incurred Sample Reanalysis (ISR) | Reanalysis of a portion of study samples to demonstrate the reproducibility of the method in the actual study matrix. |
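
The accuracy and precision limits in the table can be turned into a simple per-level check. The sketch below applies the ±15% (±20% at LLOQ) figures quoted above to hypothetical QC replicates. The function name, the data, and the choice of five replicates per level are assumptions for illustration.

```python
from statistics import mean, stdev


def qc_level_summary(nominal, measured, is_lloq=False):
    """Bias and CV for one QC level, checked against 15% (20% at LLOQ)."""
    limit = 20.0 if is_lloq else 15.0
    avg = mean(measured)
    bias = 100.0 * (avg - nominal) / nominal  # accuracy, % of nominal
    cv = 100.0 * stdev(measured) / avg        # precision, %RSD
    return {"bias": bias, "cv": cv, "passes": abs(bias) <= limit and cv <= limit}


# Hypothetical QC results in ng/mL, five replicates per level.
lloq = qc_level_summary(1.0, [0.88, 1.10, 1.05, 0.93, 1.12], is_lloq=True)
low = qc_level_summary(3.0, [2.9, 3.1, 3.2, 2.8, 3.0])
high = qc_level_summary(80.0, [60.0, 62.0, 61.0, 63.0, 59.0])

for name, result in (("LLOQ", lloq), ("low", low), ("high", high)):
    print(f"{name}: bias={result['bias']:+.1f}% CV={result['cv']:.1f}% "
          f"{'pass' if result['passes'] else 'fail'}")
```

The high QC in this made-up data set is very precise but biased by more than 15%, which shows why accuracy and precision have to be evaluated separately.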

Experimental Protocol: Incurred Sample Reanalysis (ISR)

- Objective: To verify the reproducibility and reliability of the validated bioanalytical method when applied to actual study samples from dosed subjects.

- Methodology:

  - Select a portion of study samples (as per M10 recommendations) for reanalysis. Samples should be selected around Cmax and in the elimination phase for nonclinical studies, and from a sufficient number of subjects in clinical studies.

  - The reanalysis should be performed in a different run than the original analysis, ideally by a different analyst.

  - Calculate the percentage difference between the original and reanalyzed concentrations for each sample, expressed relative to the mean of the two values.

- Data Analysis: The results are acceptable if, for at least two-thirds of the repeated samples, the percentage difference between the original and repeat results is within a pre-defined limit (e.g., 20% of their mean for chromatographic methods). Failure to meet ISR criteria may trigger an investigation and potential re-assay of study samples.
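
The ISR evaluation can be sketched as below. It assumes the percentage difference is calculated relative to the mean of the original and repeat results, as ICH M10 describes, with a 20% limit and the two-thirds rule. The concentration pairs are hypothetical and the function names are illustrative.

```python
def isr_percent_difference(original, repeat):
    """Difference between repeat and original, as a percentage of their mean."""
    return 100.0 * (repeat - original) / ((original + repeat) / 2.0)


def isr_passes(pairs, limit=20.0):
    """True if at least two-thirds of the pairs differ by no more than `limit` percent."""
    within = sum(abs(isr_percent_difference(o, r)) <= limit for o, r in pairs)
    # Integer comparison avoids floating-point rounding at exactly two-thirds.
    return 3 * within >= 2 * len(pairs)


# Hypothetical (original, repeat) concentrations in ng/mL.
pairs = [
    (10.0, 10.8),
    (52.0, 49.5),
    (5.0, 6.5),    # differs by more than 20% of the mean
    (120.0, 118.0),
    (33.0, 36.0),
    (8.0, 8.3),
]

for original, repeat in pairs:
    diff = isr_percent_difference(original, repeat)
    print(f"{original:>6.1f} -> {repeat:>6.1f}: {diff:+.1f}%")
print("ISR acceptable:", isr_passes(pairs))
```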

Essential Tools and Workflows for Compliance

The Scientist's Toolkit: Key Reagent and Material Solutions

Successful method validation and application require carefully selected reagents and materials. The following table details essential items for a bioanalytical laboratory.

Table 4: Essential Research Reagent Solutions for Bioanalytical Method Development

| Item / Reagent | Function and Importance |
|---|---|
| Stable-Labeled Internal Standards (IS) | Correct for variability in sample preparation and ionization efficiency in LC-MS/MS, improving accuracy and precision. |
| Control Biofluid Matrix | Blank matrix (e.g., blank plasma) sourced from the appropriate species and with the appropriate anticoagulant; essential for preparing calibration standards and quality control samples and for assessing selectivity. |
| Analyte Reference Standard | A well-characterized substance of known purity and identity used to prepare calibration standards; the cornerstone of quantitative analysis. |
| Critical Assay Reagents | Reagents such as antibodies, enzymes, and solid-phase extraction sorbents, whose quality and specificity are paramount for the performance of ligand-binding assays and sample cleanup procedures. |

Visualizing the Analytical Procedure Lifecycle

The effective implementation of ICH Q2(R2) and ICH Q14 promotes a holistic lifecycle management approach for analytical procedures, from development through routine use and eventual retirement. The following diagram illustrates this integrated workflow.

Bioanalytical Method Validation Workflow

The validation of a bioanalytical method per ICH M10 is a sequential process where the failure of a key parameter may necessitate a return to the development stage. The workflow below outlines the critical path.

The 2025 regulatory framework, shaped by the implementation of ICH Q2(R2) and ICH M10, underscores a global commitment to harmonized, science-based, and risk-informed analytical practices. For drug development professionals, a deep understanding of these guidelines is non-negotiable. Success hinges on proactively addressing implementation challenges, such as the statistical evaluation of confidence intervals and the strategic use of platform approaches, while leveraging the opportunities for enhanced flexibility and efficiency presented by the new paradigms. As regulatory agencies worldwide continue to adopt and provide training on these guidelines [11] [6], a commitment to continuous learning and robust scientific practice will ensure that analytical and bioanalytical methods generate data of the highest quality, ultimately supporting the development of safe and effective medicines for patients.

In pharmaceutical development and quality control, the reliability of analytical data is non-negotiable. Analytical method validation provides documented evidence that a procedure is fit for its intended purpose, ensuring the identity, potency, quality, and purity of drug substances and products [12]. This process is a cornerstone of regulatory submissions worldwide, required by agencies following International Council for Harmonisation (ICH) and U.S. Food and Drug Administration (FDA) guidelines [12] [13].

Among the various validation parameters, four stand as critical pillars: Accuracy, Precision, Specificity, and Linearity. These characteristics form the foundation for demonstrating that an analytical method produces trustworthy results, safeguarding public health and ensuring compliance with global regulatory standards. This guide explores the technical definitions, experimental protocols, and significance of these core parameters within the broader context of method validation studies.

Defining the Core Parameters

Accuracy

Accuracy expresses the closeness of agreement between a measured value and a value accepted as either a conventional true value or an accepted reference value [14] [4]. It is a measure of correctness, sometimes referred to as "trueness." An inaccurate method yields results that are not close to the true value, which could lead to incorrect decisions about drug quality, potency, or safety [14].

Precision

Precision expresses the closeness of agreement (degree of scatter) between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions [14] [4]. It is a measure of reproducibility and repeatability, without necessarily implying anything about the result's accuracy. A method can be precise without being accurate, and vice versa.

Specificity

Specificity is the ability to assess unequivocally the analyte of interest in the presence of other components that may be expected to be present in the sample matrix, such as impurities, degradants, or excipients [14] [4] [12]. It ensures the method is free from interference and that the measured signal is due solely to the target analyte, minimizing false positives or negatives [14] [15].

Linearity

Linearity is the ability of a method to produce test results that are directly, or through a well-defined mathematical transformation, proportional to the concentration of the analyte in samples within a given range [4] [12]. It demonstrates that the analytical instrument response shifts in a predictable and consistent manner as the analyte concentration changes.

Table 1: Core Validation Parameter Definitions and Importance

| Parameter | Technical Definition | Importance in Method Validation |
|---|---|---|
| Accuracy | Closeness of agreement between measured and true value [14] | Ensures results are correct, preventing incorrect quality control decisions [16] |
| Precision | Closeness of agreement between repeated measurements [14] | Ensures method consistency and reliability under normal operating conditions [13] |
| Specificity | Ability to measure analyte unequivocally amid interferents [12] | Confirms the measured signal is from the target only, avoiding false results [15] |
| Linearity | Ability to obtain results proportional to analyte concentration [4] | Establishes the method's quantitative capability across a designated range [15] |

Experimental Protocols for Determination

Validating an analytical method requires carefully designed experiments to generate robust data for each parameter. The following protocols are aligned with ICH and FDA guidelines.

Protocol for Determining Accuracy

Accuracy is typically assessed by analyzing samples of known concentration and comparing the measured value to the true value [14]. Two common approaches are:

- Comparison to a Reference Material: The results from the method under validation are compared to those from the analysis of a standard reference material [4].

- Spike Recovery Studies: For drug products, accuracy is evaluated by analyzing synthetic mixtures spiked with known quantities of components. A known amount of the pure analyte is added to a placebo or sample matrix, and the percentage of the analyte recovered is calculated [4].

Detailed Methodology:

- Prepare a minimum of nine determinations over a minimum of three concentration levels covering the specified range (e.g., three concentrations, three replicates each) [14] [4].

- The data should be reported as the percent recovery of the known, added amount. For example, an accuracy of 98-102% recovery is often considered acceptable for an Active Pharmaceutical Ingredient (API) assay [13].

- The difference between the mean and the true value should also be reported, together with a measure of its uncertainty, such as a confidence interval or the standard deviation [4].
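The recovery calculation described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a prescribed procedure: the function names, the example data, and the use of a 95% Student's t interval are all hypothetical choices made for the example, and the hard-coded critical value only applies to nine determinations.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student's t critical value for 8 degrees of freedom (n = 9).
# Assumption: replace this if the number of determinations differs.
T_CRIT_95_DF8 = 2.306


def percent_recovery(measured, nominal):
    """Recovery of a single determination, as a percentage of the known amount."""
    return 100.0 * measured / nominal


def summarize_accuracy(results, t_crit=T_CRIT_95_DF8):
    """Summarize recovery over all levels.

    results maps each nominal (spiked) concentration to its measured values.
    """
    recoveries = [
        percent_recovery(m, nominal)
        for nominal, measurements in results.items()
        for m in measurements
    ]
    n = len(recoveries)
    avg = mean(recoveries)
    sd = stdev(recoveries)
    half_width = t_crit * sd / sqrt(n)  # half-width of the confidence interval
    return {
        "n": n,
        "mean_recovery": avg,
        "sd": sd,
        "ci95": (avg - half_width, avg + half_width),
    }


# Hypothetical 3 x 3 design: three levels, three replicates each.
example = {
    80.0: [79.2, 80.4, 79.9],
    100.0: [99.5, 100.8, 100.1],
    120.0: [119.0, 121.1, 120.3],
}
summary = summarize_accuracy(example)
meets_api_assay_limit = 98.0 <= summary["mean_recovery"] <= 102.0
```

Pooling all nine recoveries is only one way to summarize the data; reporting the mean recovery per concentration level is equally common and may reveal level-dependent bias that a pooled figure hides.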

Protocol for Determining Precision

Precision is measured at three levels: repeatability, intermediate precision, and reproducibility [4].

- Repeatability (Intra-assay Precision): Precision under the same operating conditions over a short time interval [4] [13].

  - Methodology: Analyze a minimum of nine determinations covering the specified range (three levels, three repetitions) or a minimum of six determinations at 100% of the test concentration [4].

  - Reporting: Results are typically reported as the Relative Standard Deviation (RSD) or % RSD (coefficient of variation) of the replicate measurements [4].

- Intermediate Precision: Precision within the same laboratory, capturing variations from different days, different analysts, or different equipment [4].

  - Methodology: An experimental design is used where two analysts prepare and analyze replicate sample preparations using their own standards and potentially different HPLC systems [4].

  - Reporting: The %-difference in the mean values between the two analysts' results is calculated and can be subjected to statistical tests (e.g., Student's t-test) [4].

- Reproducibility: Precision between different laboratories, typically assessed during collaborative studies for method standardization [4].
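The two reporting metrics named above, % RSD for repeatability and the percent difference in means for intermediate precision, can be computed as in the following sketch. The function names and data are hypothetical, and expressing the difference relative to the average of the two means is an assumption; some laboratories divide by one analyst's mean instead.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Relative standard deviation (coefficient of variation) in percent."""
    return 100.0 * stdev(values) / mean(values)


def percent_difference_in_means(results_a, results_b):
    """Absolute difference between two analysts' means, in percent.

    Assumption: the difference is expressed relative to the average of both means.
    """
    mean_a, mean_b = mean(results_a), mean(results_b)
    return 100.0 * abs(mean_a - mean_b) / ((mean_a + mean_b) / 2.0)


# Hypothetical assay results (% of label claim), six preparations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.6, 100.4, 99.9]
analyst_2 = [100.5, 100.9, 100.3, 100.7, 100.2, 100.8]

repeatability_rsd = percent_rsd(analyst_1)
analyst_difference = percent_difference_in_means(analyst_1, analyst_2)
```

A formal comparison such as a t-test would normally follow; it is omitted here to keep the example dependency-free.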

Table 2: Experimental Design for Precision Studies

| Precision Level | Conditions Varied | Minimum Experimental Design | Reporting Metric |
|---|---|---|---|
| Repeatability | None (same analyst, same system, short time) | 9 determinations over 3 concentration levels (3x3) or 6 at 100% [4] | % RSD [4] |
| Intermediate Precision | Different days, analysts, or equipment [13] | Two analysts preparing and analyzing replicates independently [4] | % difference in means, statistical comparison [4] |
| Reproducibility | Different laboratories | Collaborative study across multiple labs | % RSD, confidence intervals [4] |

Protocol for Determining Specificity

Specificity ensures the method can distinguish the analyte from everything else that might be in the sample.

- For Identification Tests: Specificity is demonstrated by the ability to discriminate between other compounds in the sample or by comparison to known reference materials [4].

- For Assay and Impurity Tests: Specificity is shown by resolving the two most closely eluted compounds (e.g., the API and a closely eluting impurity). This can be done by:

  - Spiking with Interferents: If impurities or degradants are available, it is demonstrated that the assay is unaffected by the presence of these spiked materials [4].

  - Peak Purity Tests: Using techniques like photodiode-array (PDA) detection or mass spectrometry (MS) to demonstrate that the analyte peak is pure and not co-eluting with any other compound [4]. Modern PDA detectors collect spectra across a peak to evaluate purity, while MS provides unequivocal structural information [4].

Protocol for Determining Linearity and Range

Linearity establishes that the method's response is proportional to analyte concentration, while the Range is the interval between the upper and lower concentrations for which linearity, accuracy, and precision have been demonstrated [14] [4].

- Methodology:

- Reporting:
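As an illustration of how linearity data are commonly evaluated, the sketch below fits an ordinary least-squares line to calibration data and returns the slope, intercept, coefficient of determination, and residual sum of squares. The choice of an unweighted fit, the five-level design, and all names and values are assumptions made for the example; they are not taken from the protocol above.

```python
def linear_fit(concentrations, responses):
    """Unweighted least-squares fit of response against concentration."""
    n = len(concentrations)
    if n != len(responses) or n < 3:
        raise ValueError("need at least three paired points")
    mean_x = sum(concentrations) / n
    mean_y = sum(responses) / n
    sxx = sum((x - mean_x) ** 2 for x in concentrations)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concentrations, responses))
    syy = sum((y - mean_y) ** 2 for y in responses)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [y - (intercept + slope * x) for x, y in zip(concentrations, responses)]
    rss = sum(r ** 2 for r in residuals)  # residual sum of squares
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": 1.0 - rss / syy,
        "residual_sum_of_squares": rss,
        "residuals": residuals,
    }


# Hypothetical calibration: five levels from 50% to 150% of the target concentration.
levels = [50.0, 75.0, 100.0, 125.0, 150.0]
peak_areas = [1010.0, 1495.0, 2020.0, 2490.0, 3015.0]
fit = linear_fit(levels, peak_areas)
```

Inspecting the residuals for a trend is usually more informative than the correlation coefficient alone, since a curved response can still produce a value very close to 1.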

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are fundamental for conducting the experiments described in this guide, particularly for chromatographic methods like HPLC.

Table 3: Key Research Reagents and Materials for Method Validation

| Item | Function in Validation |
|---|---|
| Reference Standard (High Purity) | Serves as the known, true value for accuracy studies and for constructing calibration curves in linearity testing [4]. |
| Placebo Formulation | A mixture of all sample components except the analyte, used in specificity testing to check for interference and in accuracy recovery studies [4]. |
| Available Impurities/Degradants | Pure characterized impurities are used in specificity testing to demonstrate resolution from the main analyte [4]. |
| Appropriate Chromatographic Column | The stationary phase (e.g., C18 for HPLC) is critical for achieving the separation needed to demonstrate specificity and generate precise data [13]. |
| High Purity Solvents and Reagents | Essential for preparing mobile phase and sample solutions; impurities can contribute to noise, affecting precision, sensitivity, and linearity [13]. |

Visualizing the Method Validation Workflow and Relationships

The following diagrams illustrate the logical relationships between the core parameters and a general workflow for a validation study.

Core Parameter Relationships

Validation Study Workflow

The parameters of Accuracy, Precision, Specificity, and Linearity are formally defined in global regulatory guidelines, primarily ICH Q2(R2): Validation of Analytical Procedures and its complementary guideline ICH Q14: Analytical Procedure Development [12]. The FDA adopts these ICH guidelines, making them critical for submissions like New Drug Applications (NDAs) [12]. A modern, lifecycle approach to validation, as encouraged by these latest guidelines, involves defining an Analytical Target Profile (ATP) upfront, which prospectively outlines the required performance characteristics of the method, including these four core parameters [12].

In conclusion, a rigorous understanding and evaluation of Accuracy, Precision, Specificity, and Linearity is fundamental to any method validation study in pharmaceutical research and development. These parameters are not merely checkboxes for regulatory compliance but are scientifically sound measures that collectively ensure an analytical method is fit-for-purpose, providing a foundation of reliable data for decision-making throughout the drug development lifecycle.

In the context of method validation studies, the integrity of analytical data is the cornerstone of credible scientific research and regulatory compliance. Data integrity refers to the completeness, consistency, and accuracy of data throughout its entire lifecycle [17]. The ALCOA+ framework provides a structured set of principles to ensure that all generated data are reliable, trustworthy, and reproducible. Originally articulated by the U.S. Food and Drug Administration (FDA) in the 1990s, ALCOA has evolved into ALCOA+ (and in some discussions, ALCOA++) to address the complexities of modern, data-intensive analytical workflows, including those leveraging artificial intelligence [18] [19] [20]. For researchers and drug development professionals, adhering to these principles is not merely a regulatory formality but a fundamental aspect of producing defensible data that can withstand scientific and regulatory scrutiny.

The following diagram illustrates the logical relationships between the core and extended ALCOA+ principles and their collective role in supporting data integrity and method validation.

Diagram 1: ALCOA+ Framework for Data Integrity

Core ALCOA+ Principles and Their Technical Definitions

The ALCOA+ framework is built upon a foundational set of five principles, extended by four additional criteria to form ALCOA+, with traceability often discussed as a further enhancement (ALCOA++) [18] [21] [22]. These principles provide a comprehensive blueprint for data management in analytical workflows. The table below summarizes the core principles and their critical functions in method validation.

Table 1: The Core ALCOA+ Principles Explained

| Principle | Technical Definition | Role in Analytical Workflows & Method Validation |
|---|---|---|
| Attributable | Unambiguously identifies the source of the data (person or system) and any subsequent modifications [18] [23]. | Ensures that all data generated during method development, including instrument readings and manual observations, can be traced to the responsible scientist or automated system, creating a chain of accountability. |
| Legible | Data must be readable, understandable, and permanent for the entire required retention period [18] [24]. | Prevents misinterpretation of analytical results, such as chromatogram peaks or spectral data, ensuring that records remain decipherable for the duration of the method's lifecycle. |
| Contemporaneous | Data is recorded at the time the activity is performed, with a secure and accurate timestamp [18] [19]. | Documents the exact sequence of the analytical procedure, which is critical for investigating anomalies and ensuring that method validation steps are followed in real-time. |
| Original | The first capture of data or a "certified copy" created under controlled procedures [18] [25]. | Preserves the raw, unprocessed data from analytical instruments (e.g., HPLC, mass spectrometers) as the source of truth, from which all subsequent analyses and reports are derived. |
| Accurate | Data is error-free, truthful, and represents the actual observation or measurement [18] [23]. | Forms the basis for calculating method validation parameters (e.g., accuracy, precision, linearity); any inaccuracy directly compromises the validity of the method itself. |
| Complete | All data is present, including repeat or reanalysis performed, with no omissions [18] [22]. | Ensures that the entire dataset from the method validation study is available for review, including any outliers or failed runs, providing a true picture of the method's performance. |
| Consistent | Data is sequenced chronologically and created using standardized processes [18] [26]. | Demonstrates that the analytical method is executed under a stable, controlled system, which is a prerequisite for proving the robustness and reliability of the method. |
| Enduring | Data is recorded on durable media and preserved for the length of the retention period [18] [25]. | Guarantees that validation data remains intact and usable for the lifespan of the analytical method, supporting method transfers, verifications, and regulatory inspections. |
| Available | Data is readily accessible for review, audit, or inspection throughout its retention period [18] [23]. | Allows for timely monitoring of method performance and immediate retrieval of validation data to support regulatory submissions or during audits. |
| Traceable | (ALCOA++) Data changes are logged, and the history of the data can be reconstructed from source to result [18] [21]. | Provides a complete audit trail for the data generated during method validation, documenting the "who, what, when, and why" of any changes to ensure full transparency. |

Implementing ALCOA+ in Analytical Workflows: Protocols and Controls

Experimental Protocol for a Validated Analytical Method

Implementing ALCOA+ requires embedding its principles into the very fabric of experimental procedures. The following protocol outlines a generalized workflow for executing a validated analytical method, such as a chromatographic assay for drug substance quantification, with integrated ALCOA+ controls.

Table 2: ALCOA+ Compliant Experimental Protocol Workflow

| Step | Procedure | Integrated ALCOA+ Controls & Documentation |
|---|---|---|
| 1. Sample Preparation | Weigh the analyte and prepare sample solutions according to the validated method. | Attributable: Analyst logs into the LMS/LIMS. Original/Accurate: Use calibrated balances; print weight tickets or capture data electronically. Contemporaneous: Record preparation time in lab notebook or electronic system immediately. |
| 2. Instrument Analysis | Inject prepared samples into the analytical instrument (e.g., HPLC). | Attributable: System uses unique user login. Original: Instrument data system acquires and stores raw data file. Contemporaneous: Run sequence starts with automated timestamp. Accurate: Instrument qualification and calibration status is verified before use. |
| 3. Data Processing | Integrate chromatograms, generate calibration curve, and calculate results. | Consistent: Apply predefined and validated integration parameters. Traceable: The data processing method is version-controlled. Any manual reprocessing is recorded in the audit trail with a reason. |
| 4. Result Review & Approval | Senior scientist or QA reviews the data packet for compliance and accuracy. | Complete: Reviewer checks for presence of all raw data, processed data, and metadata (e.g., instrument logs, audit trails). Legible: Ensure all electronic and paper records are clear and understandable. Available: Data is stored in a searchable archive for retrieval during the review. |
| 5. Archival | Transfer the complete data package to a secure, long-term storage repository. | Enduring: Data is archived in a format that ensures readability for the mandated retention period. Available: Archival system is indexed to allow for authorized retrieval for future reference or inspection. |

The workflow for this protocol, highlighting key decision points and data integrity checkpoints, is visualized below.

Diagram 2: ALCOA+ Compliant Analytical Workflow

Technical and Procedural Controls for ALCOA+ Compliance

Achieving ALCOA+ compliance requires a combination of technical system controls and robust procedural governance. The following table details essential controls for a modern laboratory environment.

Table 3: Technical and Procedural Controls for ALCOA+

| Control Category | Specific Mechanisms | Supported ALCOA+ Principles |
|---|---|---|
| Access Security | Unique user IDs, role-based access control, strong password policies, and electronic signatures [18] [23]. | Attributable, Original, Accurate |
| Audit Trails | Secure, computer-generated, time-stamped electronic records that track creation, modification, or deletion of data [18] [26]. | Attributable, Contemporaneous, Complete, Traceable |
| Data Capture & Storage | Automated data capture from instruments, use of validated systems, secure storage on durable media with regular backups, and disaster recovery plans [23] [24]. | Original, Accurate, Legible, Enduring, Available |
| Procedural Governance | Comprehensive training on data integrity and Good Documentation Practice (GDP), standardized SOPs for data handling, routine internal audits, and a culture of quality and transparency [18] [17]. | Consistent, Complete, Accurate |
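To make the audit-trail control more concrete, the following sketch shows one possible way to keep an append-only, tamper-evident log in which every entry records who did what, when, and why. Chaining entries with SHA-256 hashes is an illustrative technique chosen for this example, not a requirement stated in the guidance cited here, and the class, field names, and identifiers are hypothetical. A production system would rely on a validated, access-controlled data system rather than an in-memory list.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash preceding the first entry


class AuditTrail:
    """Append-only log in which each entry is linked to the previous one by hash."""

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def record(self, user_id, action, record_id, reason=""):
        """Append an entry: who (user_id), what (action), when (UTC), why (reason)."""
        previous = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id,
            "action": action,
            "record_id": record_id,
            "reason": reason,
            "prev_hash": previous,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry has been altered, removed, or reordered."""
        previous = GENESIS_HASH
        for entry in self._entries:
            if entry["prev_hash"] != previous or entry["hash"] != self._digest(entry):
                return False
            previous = entry["hash"]
        return True


# Hypothetical usage: a manual reintegration is logged with its justification.
trail = AuditTrail()
trail.record("analyst_01", "create", "HPLC-RUN-0001")
trail.record("analyst_01", "reintegrate_peak", "HPLC-RUN-0001", reason="baseline drift")
trail_is_intact = trail.verify()
```

Because each hash covers the previous entry's hash, editing or deleting an earlier entry invalidates every later one, which supports the Attributable, Contemporaneous, and Traceable principles listed in the table.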

The Scientist's Toolkit: Essential Research Reagent Solutions

The integrity of an analytical method is also dependent on the quality and consistency of the materials used. The following table details key reagent solutions and their critical functions in ensuring reliable and ALCOA+ compliant results.

Table 4: Essential Research Reagents for Analytical Workflows

| Reagent/Material | Function in Analytical Workflows | Data Integrity Consideration |
|---|---|---|
| Certified Reference Standards | Provides the absolute benchmark for calibrating instruments, qualifying methods, and determining the accuracy and traceability of measurements. | Using uncertified or improperly characterized standards fundamentally undermines Accuracy and Traceability. Their source, purity, and certification must be documented. |
| HPLC-Grade Solvents | Serve as the mobile phase and sample diluent in chromatographic systems. Purity and lot-to-lot consistency are critical for maintaining stable baselines and reproducible retention times. | Inconsistent solvent quality leads to variable results, violating Consistency and Accuracy. Supplier Certificates of Analysis (CoA) should be retained as part of the Complete record. |
| System Suitability Test (SST) Kits | A pre-defined mixture of analytes used to verify that the total analytical system (instrument, reagents, column, and analyst) is performing adequately at the start of a sequence. | SST failure invalidates all subsequent sample data, acting as a critical control for Accuracy. SST results are Original records that must be included to demonstrate data validity. |
| Stable Isotope-Labeled Internal Standards | Added to samples to correct for analyte loss during preparation and for matrix effects during instrumental analysis, improving the precision and accuracy of quantification. | Proper use supports Accurate and Consistent results. The identity and concentration of the internal standard must be Attributable and traceable. |

Advanced Applications: ALCOA+ in AI-Driven and Automated Systems

The principles of ALCOA+ are increasingly critical with the adoption of advanced technologies like Artificial Intelligence (AI) and Machine Learning (ML) in analytical development. These systems handle vast volumes of data, making traditional manual checks insufficient. Applying ALCOA+ helps ensure that the data feeding AI models is reliable, which is a prerequisite for trustworthy model output [20].

For AI-driven analytical methods, specific considerations include:

- Attributable & Traceable: The AI model itself, including its version, training dataset, and hyperparameters, must be documented and preserved as part of the Original record. The rationale for any model-based decision or anomaly detection should be loggable [20].

- Consistent & Complete: The data pipeline feeding the AI must be standardized and controlled to prevent introducing bias or drift. The entire dataset used for training and validation must be Complete and representative [21] [20].

- Accurate: Robust model validation protocols are necessary to ensure the AI's predictions are Accurate and reliable within the defined scope of the method. This includes challenging the model with known standards and monitoring its performance over time [20].

In automated laboratories, ALCOA+ is operationalized through validated system interfaces and seamless data transfer between instruments and the central Laboratory Information Management System (LIMS). This minimizes manual transcription errors, enforces Contemporaneous recording, and ensures that Original data is automatically captured and made Available for review [23] [24].

The ALCOA+ framework is an indispensable component of the foundation of method validation studies. By rigorously applying its principles—Attributable, Legible, Contemporaneous, Original, Accurate, Complete, Consistent, Enduring, Available, and Traceable—research scientists and drug developers can establish a controlled environment where data is generated, processed, and maintained with the highest degree of integrity. This not only ensures regulatory compliance but, more importantly, builds a bedrock of trust in the analytical results that underpin critical decisions in the drug development process. As analytical technologies evolve towards greater automation and intelligence, the disciplined application of ALCOA+ will remain the key to ensuring that innovation is built upon a foundation of reliable and defensible data.

Validation is a critical cornerstone of pharmaceutical development and clinical research, ensuring that processes, methods, and computer systems consistently produce reliable results that safeguard patient safety and product quality. Traditionally, validation followed a one-size-fits-all approach, often characterized by comprehensive, uniform testing regardless of the actual risk involved. This prescriptive methodology, while thorough, frequently led to inefficient resource allocation, where trivial aspects received the same scrutiny as critical ones.

The modern risk-based approach to validation represents a fundamental paradigm shift, moving from this compliance-centric model to a science-based, targeted framework. This methodology systematically focuses efforts on areas with the greatest potential impact on patient safety, product quality, and data integrity [27] [28]. By aligning validation activities with patient risk, organizations can optimize resource deployment, enhance operational efficiency, and maintain rigorous regulatory compliance, all while strengthening the ultimate goal of protecting public health.

This guide provides an in-depth technical exploration of risk-based validation, detailing its core principles, implementation frameworks, and practical experimental protocols tailored for researchers, scientists, and drug development professionals.

Core Principles of a Risk-Based Validation Framework

A risk-based validation framework is governed by several interconnected principles that differentiate it from traditional approaches. Understanding these principles is essential for effective implementation.

- Proportionality: The depth and extent of validation activities are proportionate to the level of risk identified. High-risk elements demand comprehensive validation, while low-risk aspects may require only verification or simple checks [27] [28].

- Science-Driven Rationale: Decisions are grounded in scientific evidence and process understanding, replacing arbitrary rules. For instance, the old "golden rule" of testing in triplicate is superseded by a justified test plan based on risk assessment [28].

- Lifecycle Management: Validation is not a one-time event but a continuous process spanning the entire lifecycle of a process, method, or system. This includes stages from initial design and qualification through to ongoing process verification during routine production [28].

- Focus on Critical Aspects: The framework deliberately directs attention and resources toward Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs) that directly impact patient safety and product efficacy [27].

- Ongoing Risk Management: Risk management is an iterative activity. Risks are regularly monitored, reviewed, and re-assessed in light of performance data and process changes, ensuring the validation state remains current [27].

The following diagram illustrates the logical flow and cyclical nature of the risk-based validation lifecycle.

Implementing the Risk-Based Approach: A Step-by-Step Methodology

Successful implementation of a risk-based approach requires a structured, multi-stage process. The following section outlines this methodology, from initial assessment through to continuous verification.

Foundational Requirements and Risk Assessment

The journey begins with a clear understanding of requirements and a systematic risk assessment.

- User Requirement Specifications (URS): The foundation is a well-defined URS, which outlines all necessary functions and performance criteria for the equipment, process, or software being validated. This provides the traceable inputs for the subsequent risk assessment [28].

- Functional Requirement Specifications (FRS): For software validation, an FRS logically follows the URS, detailing how the configured system will meet the user requirements [28].

- Risk Identification and Analysis: A systematic assessment identifies potential hazards, failure modes, and sources of variability. Tools such as Failure Mode and Effects Analysis (FMEA) or Hazard Analysis and Critical Control Points (HACCP) are commonly employed for this purpose [27]. The process involves asking fundamental questions: "What could go wrong?", "How likely is it to occur?", and "What are the consequences?" [29].

Risk Prioritization and Control

Once risks are identified, they must be evaluated and prioritized to direct resources effectively.

- Risk Scoring Matrix: Risks are prioritized based on their severity, probability of occurrence, and detectability [27] [30]. A standard risk matrix categorizes risks as High, Medium, or Low. For example, in clinical trials, impact on patient well-being and safety is assigned the highest severity score [30].

- Defining Risk Levels:

- Risk Control Measures: Mitigation strategies are developed and implemented to reduce identified risks to an acceptable level. These can include process design improvements, enhanced quality control systems, robust standard operating procedures (SOPs), and additional personnel training [27].
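The scoring step can be illustrated with the risk priority number (RPN) used in FMEA, which multiplies the severity, occurrence, and detectability scores. The sketch below assumes the conventional 1 to 10 scale for each score, and the High/Medium/Low thresholds are hypothetical placeholders; each organization defines and justifies its own cut-offs.

```python
def risk_priority_number(severity, occurrence, detectability):
    """FMEA risk priority number: the product of the three scores.

    Assumption: each score is an integer from 1 to 10, where a higher
    detectability score means the failure is harder to detect.
    """
    for name, score in (
        ("severity", severity),
        ("occurrence", occurrence),
        ("detectability", detectability),
    ):
        if not 1 <= score <= 10:
            raise ValueError(f"{name} must be between 1 and 10, got {score}")
    return severity * occurrence * detectability


def classify_risk(rpn, high_threshold=200, medium_threshold=80):
    """Map an RPN to a risk level. The thresholds are illustrative only."""
    if rpn >= high_threshold:
        return "High"
    if rpn >= medium_threshold:
        return "Medium"
    return "Low"


# Hypothetical failure modes for a chromatographic assay.
failure_modes = {
    "wrong integration parameters applied": (9, 5, 6),
    "mobile phase prepared with expired buffer": (6, 3, 5),
    "label printer misalignment": (2, 2, 2),
}
ranked = sorted(
    ((name, risk_priority_number(*scores)) for name, scores in failure_modes.items()),
    key=lambda item: item[1],
    reverse=True,
)
levels = {name: classify_risk(rpn) for name, rpn in ranked}
```

Many teams also treat any failure mode with a top severity score as high risk regardless of its RPN, so that a rare but serious hazard is not averaged away by low occurrence.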

Validation Strategy, Execution, and Ongoing Monitoring

The prioritized risks directly inform the validation strategy and its execution.

- Validation Strategy and Test Plan: Based on the risk assessment, a validation strategy is developed that defines the scope, approach, and level of validation required [27]. The test plan is tailored to the risk level:

  - High Risk: Complete, comprehensive testing is required, similar to the classic validation approach [28].

  - Medium Risk: Testing of functional requirements per the URS and FRS is required to ensure proper characterization [28].

  - Low Risk: No formal testing may be needed, but the presence or detectability of the function should be confirmed [28].

- Validation Execution (The Three Stages): For processes, validation execution is split into three stages as per FDA guidance [28]:

  - Process Design: The commercial process is defined based on knowledge from development and scale-up.

  - Process Qualification: The designed process is confirmed to be reproducible at commercial scale.

  - Continued Process Verification: Ongoing assurance is obtained that the process remains in a state of control during routine production.

- Ongoing Risk Management: The final, continuous stage involves regular monitoring of process performance, data analysis, periodic revalidation, and rigorous change management to ensure the validated state is maintained throughout the lifecycle [27].

The workflow below details the experimental and decision-making process for determining the extent of validation required based on risk level.

Experimental Protocols for Key Validation Activities

This section provides detailed methodologies for critical experiments in method validation, illustrating how a risk-based approach is applied in practice.

Precision (Imprecision) Testing

Precision is defined as "the closeness of agreement between independent test results obtained under stipulated conditions" [31]. The level of precision required for a method is directly related to its intended use and the magnitude of the biological change it aims to detect.

- Procedure:

- Sample Selection: Select a minimum of two samples (e.g., one normal and one pathological level) for analysis. Using a spiked sample and a naturally contaminated or authentic sample is recommended [5].

- Experimental Replication: Analyze each sample a minimum of 10 times to estimate repeatability (within-run precision). To assess intermediate precision (between-run precision), analyze the samples in duplicate over a period of at least five days, using two different reagent lots and, if possible, two analysts [31].

- Data Analysis: Calculate the mean (average), standard deviation (SD), and coefficient of variation (CV%) for the measured concentrations at each level. The CV% is calculated as (SD/Mean) × 100.

- Acceptance Criteria: The acceptable level of imprecision (CV%) should be defined a priori based on the biological variation of the analyte or the clinical requirements. A method intended to detect small changes demands a lower, more stringent CV%.
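One common way to separate within-run from between-run variation in a duplicates-over-several-days design is a one-way analysis of variance. The sketch below is an assumption-laden illustration rather than the procedure prescribed above: it requires the same number of replicates on every day, and the function name and data are hypothetical.

```python
from math import sqrt
from statistics import mean


def precision_components(runs):
    """Estimate within-run and intermediate CV% from replicate results per day.

    runs is a list with one inner list of replicate results per day.
    Assumption: every day has the same number of replicates (balanced design).
    """
    k = len(runs)
    n = len(runs[0]) if runs else 0
    if k < 2 or n < 2 or any(len(run) != n for run in runs):
        raise ValueError("need at least 2 days with the same number (>= 2) of replicates")
    run_means = [mean(run) for run in runs]
    grand_mean = mean(run_means)
    # Mean squares from one-way ANOVA with day as the grouping factor.
    ms_within = sum(
        (x - m) ** 2 for run, m in zip(runs, run_means) for x in run
    ) / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in run_means) / (k - 1)
    # A negative between-day variance estimate is truncated to zero.
    var_between = max(0.0, (ms_between - ms_within) / n)
    sd_within = sqrt(ms_within)
    sd_intermediate = sqrt(ms_within + var_between)
    return {
        "mean": grand_mean,
        "cv_within_pct": 100.0 * sd_within / grand_mean,
        "cv_intermediate_pct": 100.0 * sd_intermediate / grand_mean,
    }


# Hypothetical duplicate measurements on five days (arbitrary concentration units).
daily_duplicates = [[5.1, 5.0], [5.3, 5.2], [4.9, 5.0], [5.2, 5.2], [5.0, 5.1]]
components = precision_components(daily_duplicates)
```

The resulting CV values would then be compared with the a priori acceptance limit for the analyte.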

Determination of Limits of Quantification

The Limits of Quantification (LOQ) define the highest and lowest concentrations of an analyte that can be measured with acceptable precision and accuracy (trueness) [31]. This is critical for determining the reliable working range of an assay.

- Procedure:

- Sample Preparation: Prepare a series of samples with analyte concentrations spanning the expected lower and upper limits. This can be done by spiking the analyte into the relevant biological matrix [5].

- Measurement and Analysis: Analyze each sample multiple times (e.g., 10 replicates) across different runs. For the Lower LOQ (LLOQ), the measured concentration should be within ±20% of the theoretical concentration, and the CV% should not exceed 20% [31]. Similar principles apply to the Upper LOQ (ULOQ).

- Dilutional Linearity: To validate the ULOQ, a sample with a concentration above the ULOQ can be diluted to fall within the working range. The measured concentration after dilution, when multiplied by the dilution factor, should match the original expected concentration with acceptable precision and accuracy [31].
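The LLOQ acceptance check and the dilution back-calculation can be expressed as a short sketch. Applying the ±20% criterion to the mean of the replicates is an interpretation made for this example, and the function names and data are hypothetical.

```python
from statistics import mean, stdev


def evaluate_lloq(measured, nominal, max_bias_pct=20.0, max_cv_pct=20.0):
    """Check replicate results at the candidate LLOQ against bias and CV limits.

    Assumption: bias is judged on the mean of the replicates.
    """
    average = mean(measured)
    bias_pct = 100.0 * (average - nominal) / nominal
    cv_pct = 100.0 * stdev(measured) / average
    passed = abs(bias_pct) <= max_bias_pct and cv_pct <= max_cv_pct
    return {"bias_pct": bias_pct, "cv_pct": cv_pct, "passed": passed}


def back_calculated_concentration(measured_after_dilution, dilution_factor):
    """Concentration of the undiluted sample implied by the diluted measurement."""
    return measured_after_dilution * dilution_factor


# Hypothetical replicates at a nominal LLOQ of 1.0 (arbitrary units).
lloq_result = evaluate_lloq([0.92, 1.05, 1.10, 0.88, 0.97], nominal=1.0)

# Hypothetical over-range sample: expected 500, measured 48.5 after a 10-fold dilution.
recovered = back_calculated_concentration(48.5, 10)
dilution_bias_pct = 100.0 * (recovered - 500.0) / 500.0
```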

Selectivity and Specificity Testing

Selectivity is "the ability of the bioanalytical method to measure and differentiate the analytes in the presence of components that may be expected to be present" [31]. This ensures that the method is measuring the intended analyte without interference.

- Procedure:

- Interference Testing: Test potential interfering substances that are likely to be encountered. Common examples include bilirubin, hemoglobin, and lipids. Test these substances at clinically relevant high concentrations.

- Sample Preparation: Prepare a baseline sample (analyte in clean matrix) and test samples by spiking the potential interferent into the baseline sample.

- Comparison and Calculation: Measure the concentration in the baseline sample and the test samples. Calculate the difference in measured concentration and express it as a percentage of the baseline concentration. A change within a pre-defined limit (e.g., ±10% to ±15%) indicates no significant interference.

- Parallelism: This is a test of selectivity against the matrix itself. It involves performing recovery tests on the biological matrix (or diluted matrix) and comparing the results against the calibrators in a substitute matrix to ensure the method performs consistently in the intended sample type [31].
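The interference calculation from the procedure above can be sketched as follows. This is an illustrative example only: the 10% limit is one of the example thresholds mentioned in the text, and the function names and measured values are hypothetical.

```python
def interference_pct(baseline, with_interferent):
    """Change in measured concentration, as a percentage of the baseline result."""
    return (with_interferent - baseline) / baseline * 100.0

def screen_interferents(baseline, results, limit_pct=10.0):
    """Map each interferent to (percent change, within-limit flag)."""
    report = {}
    for name, measured in results.items():
        change = interference_pct(baseline, measured)
        report[name] = (round(change, 1), abs(change) <= limit_pct)
    return report

# Hypothetical measurements: baseline sample vs. the same sample spiked with each interferent
baseline_conc = 100.0
spiked = {"hemoglobin": 104.0, "bilirubin": 97.5, "lipids": 118.0}

report = screen_interferents(baseline_conc, spiked)
for name, (change, ok) in report.items():
    print(f"{name}: {change:+.1f}% -> {'no significant interference' if ok else 'interference'}")
```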

Robustness Testing

Robustness is "the ability of a method to remain unaffected by small variations in method parameters" [31]. This is typically investigated during method development for in-house assays.

- Procedure:

- Parameter Identification: Identify critical procedural parameters that could vary in a real-world setting (e.g., incubation times ±1 minute, temperatures ±2°C, reagent volumes ±5%, different analysts).

- Systematic Variation: Perform the assay with deliberate, small variations in these parameters, one at a time, while using the same set of test samples.

- Protocol Refinement: If the measured concentrations are unaffected by the variations, the protocol is adjusted to include these acceptable tolerances (e.g., "incubate for 30 ± 3 minutes"). If a parameter systematically alters results, its acceptable variation range must be narrowed until no effect is observed, and this refined specification is incorporated into the final method protocol [31].
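A one-parameter-at-a-time robustness screen of this kind might be summarized as in the sketch below. The source does not define numerically what "unaffected" means, so the 5% shift limit is an assumption, as are the function name, the condition labels, and the data.

```python
from statistics import mean

def robustness_screen(nominal_results, varied_results, max_shift_pct=5.0):
    """Compare the mean result under each varied condition with the nominal mean.

    max_shift_pct is an assumed acceptance limit, not a value from any guideline.
    """
    nominal_mean = mean(nominal_results)
    outcome = {}
    for condition, values in varied_results.items():
        shift = (mean(values) - nominal_mean) / nominal_mean * 100.0
        outcome[condition] = {
            "shift_pct": round(shift, 2),
            "acceptable": abs(shift) <= max_shift_pct,
        }
    return outcome

# Hypothetical data: the same test samples measured under nominal and varied conditions
nominal = [100.0, 102.0, 98.0]
varied = {
    "incubation +1 min": [101.0, 103.0, 99.0],
    "temperature +2 C": [108.0, 110.0, 106.0],
}

outcome = robustness_screen(nominal, varied)
for condition, summary in outcome.items():
    print(condition, summary)
```

In this made-up data set the temperature variation shifts results by 8%, so its tolerance would need to be narrowed before being written into the protocol.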

The table below summarizes the core performance characteristics that should be evaluated during a method validation, along with their definitions and key procedural points.

Table 1: Core Method Validation Parameters and Protocols

| Validation Parameter | Definition | Key Experimental Procedure |
|---|---|---|
| Precision | The closeness of agreement between independent test results [31]. | Analyze samples in replicates (≥10) within a run and over multiple days (≥5) with different analysts/reagent lots [31]. |
| Trueness/Accuracy | The closeness of agreement between the average value and an accepted reference value [31]. | Analyze samples with known concentrations (spiked or reference materials) and calculate recovery as (Measured/Expected) × 100. |
| Limits of Quantification | The highest and lowest concentrations measurable with acceptable precision and accuracy [31]. | Analyze serially spiked samples near the limits. LLOQ/ULOQ typically require ±20% accuracy and ≤20% CV [31]. |
| Selectivity | The ability to measure the analyte unequivocally in the presence of interfering components [31]. | Spike potential interferents and measure bias. Test for "parallelism" by analyzing serially diluted authentic samples [31]. |
| Robustness | The ability of a method to remain unaffected by small, deliberate variations in method parameters [32] [31]. | Vary one parameter at a time (e.g., incubation time, temperature) and observe the impact on results [31]. |

The Scientist's Toolkit: Essential Reagents and Materials

The successful execution of validation protocols relies on a set of well-characterized reagents and materials. The selection and quality of these materials are often governed by risk-based decisions.

Table 2: Essential Research Reagent Solutions for Validation Studies

| Reagent/Material | Function in Validation | Risk-Based Selection Consideration |
|---|---|---|
| Certified Reference Material (CRM) | Serves as an accepted reference value to establish the trueness (accuracy) of a method [31]. | Use is critical for high-risk methods where accurate quantification is directly linked to patient diagnosis or dosing. |
| Spiked and Authentic Samples | Used to establish precision, LOQ, and recovery. Spiked samples are created by adding analyte to a matrix; authentic samples naturally contain the analyte [5]. | Using the intended sample matrix (e.g., capillary blood) is preferred to detect matrix effects, a high-risk failure mode [5]. |
| Interference Stocks | Solutions of potential interfering substances (e.g., hemoglobin, bilirubin, lipids) used to test method selectivity [31]. | Essential for methods used in patient populations where specific interferents are common, mitigating the risk of false results. |
| Control Samples | Stable materials with known concentrations used for precision monitoring and as system suitability checks [5]. | High-risk processes require more frequent use of controls and stricter acceptance criteria to ensure ongoing assay performance. |
| Calibrators | A series of standards used to construct the calibration curve, which is fundamental to all quantitative measurements. | The traceability and stability of calibrators are high-risk factors. Using multiple lots during validation assesses this risk [31]. |

The adoption of a risk-based approach to validation is more than a regulatory expectation; it is a strategic imperative for modern, efficient, and scientifically sound drug development and clinical research. By systematically identifying, assessing, and prioritizing risks based on their potential impact on patient safety and product quality, organizations can ensure that validation efforts are both effective and efficient. This targeted focus not only optimizes resource allocation but also fosters a deeper, more fundamental understanding of processes and methods. As the industry continues to evolve with advancements in AI, personalized medicines, and complex therapies, the principles of risk-based validation will remain a foundational element in ensuring that innovation continues to be matched with the highest standards of quality, reliability, and patient safety.

Implementing Modern Methodologies: From QbD to Advanced Instrumentation

Adopting a Lifecycle Approach to Method Validation and Management

Method validation is undergoing a significant paradigm shift, moving from a static, one-time validation event to a dynamic, holistic Analytical Procedure Lifecycle Management (APLM) approach. This evolution is driven by regulatory agencies worldwide, including the FDA and EMA, which have updated guidance documents to incorporate quality by design (QbD) principles into the analytical environment, giving rise to the term Analytical Quality by Design (AQbD) [33]. Traditional method validation, as governed by ICH Q2(R1), often resulted in "problematic" methods that, while validated, exhibited issues during routine use such as variable chromatography, frequent system suitability test failures, and duplication problems requiring extensive investigation [34] [33]. The lifecycle approach addresses these shortcomings by providing a systematic framework for developing more robust and reliable analytical procedures that remain suitable for their intended use throughout the drug development process and commercial lifecycle.

The Analytical Procedure Lifecycle Framework

The Analytical Procedure Lifecycle consists of three interconnected stages that create a continuous improvement model, as visualized in Figure 1. This framework emphasizes greater attention to earlier phases and incorporates feedback mechanisms for continual enhancement [34].

Stage 1: Procedure Design and Development

The initial stage begins with defining an Analytical Target Profile (ATP) which serves as the foundational specification for the procedure [34]. The ATP is a predefined objective that outlines the requirements for the reportable value produced by an analytical procedure, ensuring it is fit for its intended use [34]. Method development then proceeds based on this ATP, employing systematic studies to understand critical method parameters and their impact on performance attributes [35]. During this stage, design of experiments (DoE) and risk-based tools such as Ishikawa diagrams or control, noise, and experimental (CNX) methods are recommended for identifying critical factors, followed by failure mode effect analysis (FMEA) for prioritization [35]. This systematic approach to development provides a scientific rationale for the method and establishes the knowledge space that informs subsequent validation activities.
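As an illustration of the FMEA prioritization step, the sketch below ranks candidate method parameters by risk priority number (RPN), the conventional FMEA product of severity, occurrence, and detection scores. The parameter names and the 1 to 10 scores are hypothetical; a real assessment would use scores agreed by the project team.

```python
from dataclasses import dataclass

@dataclass
class FailureMode:
    name: str
    severity: int     # impact on the reportable value (1 = negligible, 10 = severe)
    occurrence: int   # likelihood of the failure (1 = rare, 10 = frequent)
    detection: int    # difficulty of detecting it (1 = always caught, 10 = never caught)

    @property
    def rpn(self) -> int:
        """Risk priority number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection

def prioritize(modes):
    """Return failure modes ordered from highest to lowest RPN."""
    return sorted(modes, key=lambda m: m.rpn, reverse=True)

# Hypothetical scores for three chromatographic method parameters
modes = [
    FailureMode("mobile phase pH drift", severity=8, occurrence=5, detection=4),
    FailureMode("column temperature drift", severity=5, occurrence=3, detection=2),
    FailureMode("sample diluent mismatch", severity=6, occurrence=4, detection=6),
]

ranked = prioritize(modes)
for mode in ranked:
    print(f"{mode.name}: RPN = {mode.rpn}")
```

The highest-ranked factors would then be natural candidates for inclusion in a design of experiments study.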

Stage 2: Procedure Performance Qualification

This stage corresponds to traditional method validation but is conducted with enhanced understanding gained from Stage 1 [34]. The Procedure Performance Qualification demonstrates that the analytical procedure is capable of providing reliable data for its intended use [34]. According to ICH Q2(R2), a risk-based approach should be used, and validation should be performed based on intended use, allowing for different validation strategies at different phases of the development lifecycle [33]. The validation exercise should ideally occur before pivotal studies and after clinical proof-of-concept is established for the candidate [35]. A key deliverable from method qualification is the establishment of appropriate acceptance criteria for validation based on process capability and product profile [35].

Stage 3: Procedure Performance Verification

The final stage involves ongoing monitoring of the analytical procedure during routine use to ensure it remains in a state of control [34]. Procedure Performance Verification includes continuous assessment of procedure performance through trending of system suitability data, quality control sample results, and method performance indicators [34]. This stage represents a significant advancement over traditional approaches where method performance was often assumed to remain acceptable after initial validation. The new guidance welcomes continuous improvement provided that documented evidence shows how the analytical procedure has evolved to improve data quality and results [33]. Regulatory authorities may consider an analytical procedure that has not changed in 5-10 years to be a red flag and may want to understand how robust the analytical procedure is on a daily basis [33].
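One simple way to trend quality control results is a control chart with limits derived from a baseline period. The sketch below assumes mean ± 3 standard deviation limits, a common Shewhart-style convention that is not prescribed by the sources cited here; the function names and QC values are hypothetical.

```python
from statistics import mean, stdev

def control_limits(baseline, k=3.0):
    """Lower and upper control limits as mean +/- k sample standard deviations."""
    centre = mean(baseline)
    spread = stdev(baseline)
    return centre - k * spread, centre + k * spread

def out_of_control(values, limits):
    """Indices of results that fall outside the control limits."""
    low, high = limits
    return [i for i, v in enumerate(values) if v < low or v > high]

# Hypothetical QC sample results (% of nominal) from the qualification period
baseline_qc = [100.2, 99.8, 100.5, 99.5, 100.0, 100.3, 99.7, 100.1, 99.9, 100.0]
limits = control_limits(baseline_qc)

# Hypothetical results from routine use
routine_qc = [100.1, 99.6, 101.2, 100.4, 98.9]
flagged = out_of_control(routine_qc, limits)
print(f"limits: {limits[0]:.2f} to {limits[1]:.2f}; flagged runs: {flagged}")
```

Flagged runs would prompt investigation, and a sustained drift toward either limit would feed back into the earlier lifecycle stages.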

Figure 1: The Three-Stage Analytical Procedure Lifecycle Model with Feedback Mechanisms. This model emphasizes knowledge-driven development and continuous improvement throughout the method lifecycle [34].

Phase-Appropriate Implementation Across Drug Development

The analytical method lifecycle must be appropriately staged in accordance with regulatory requirements while considering financial and time constraints incurred by each project [35]. Table 1 outlines how analytical procedures evolve across the drug development lifecycle, with validation activities expanding as knowledge increases and materials become more characterized.

Table 1: Phase-Appropriate Analytical Method Lifecycle Activities in Drug Development [35] [33]

| Development Phase | Method Status | Key Lifecycle Activities | Typical Validation Parameters Assessed |
|---|---|---|---|
| Discovery & Phase I | Basic procedure with limited knowledge | Initial performance assessment; Confirm method is scientifically sound | Precision, Specificity, Linearity, Limited Robustness, Limited Stability |
| Phase II | Enhanced understanding of product and impurities | Method refinement; Robustness assessment; Setting ICH-compliant acceptance criteria | Accuracy, Detection Limit (DL), Further Robustness, Further Stability |
| Phase III | Optimized for commercial use | Complete validation; Validation readiness assessment; Formal transfer to QC | Intermediate Precision, Quantitation Limit (QL), Detailed Robustness, Detailed Stability |
| Commercial | Controlled state with continuous monitoring | Ongoing procedure performance verification; Trending; Continuous improvement | System suitability monitoring, QC sample tracking, Method performance indicators |

Early Phase Development (Phase I)

During early development, analytical procedures are typically simple with limited knowledge of the drug product manufacturing process and impurity profile [33]. The method may be limited to a basic chemical purity test, with characterized reference standards often unavailable [33]. At this stage, the focus is on confirming that the method is "scientifically sound, suitable, and reliable for its intended purpose" with initial assessment of fundamental validation parameters [35]. For Phase I clinical trials, EMA guidelines state that "the suitability of the analytical methods used should be confirmed" with acceptance limits and validation parameters presented in tabulated form [35].

Mid-Phase Development (Phase II)

As knowledge increases during Phase II, analytical procedures develop with better understanding of the drug product and impurity profile [33]. Method refinement typically occurs at this stage, potentially involving different column technologies or gradient profiles to improve peak shape and separation of known impurities [33]. Characterized reference materials for the drug product and known impurities should become available, allowing more accurate quantitation [33]. This phase presents the opportunity to apply QbD principles through risk-based tools and design of experiments to rationalize laboratory work, better understand method performance, and ensure optimal project spend [35].

Late Phase Development (Phase III) and Commercial