From Pixels to Chemistry: A Comprehensive Guide to Hyperspectral Imaging for Material Mapping

Abstract

This article provides a comprehensive exploration of hyperspectral imaging (HSI) as a powerful, non-destructive tool for chemical mapping of materials. Tailored for researchers and drug development professionals, it covers the foundational principles of HSI technology, from data cube structure and spectral 'fingerprints' to advanced methodologies like spectral unmixing and deep learning. The scope extends to practical applications in pharmaceutical quality control and biomedical diagnostics, while also addressing key challenges in data processing and model validation. By synthesizing traditional chemometric approaches with cutting-edge AI techniques, this guide serves as a vital resource for implementing and optimizing HSI for precise, spatially-resolved chemical analysis.

Hyperspectral Imaging Unveiled: Core Principles and the Science of Spectral Fingerprints

Hyperspectral imaging (HSI) is an advanced optical sensing technique that integrates spectroscopy and digital photography into a single system, enabling the simultaneous acquisition of spatial and spectral information from a target scene or object [1]. This process results in a unique three-dimensional (3D) dataset known as a hyperspectral data cube [1]. The cube combines two spatial dimensions (x, y) with one spectral dimension (λ), effectively bridging the gap between conventional imaging and spectroscopy [1]. Each pixel in the spatial domain contains a continuous spectrum, often referred to as a spectral "fingerprint," which encodes the chemical, physical, and biological properties of the materials within that pixel [1] [2].

This data structure fundamentally differs from traditional imaging modalities. While panchromatic imaging records a single broad spectral band and standard RGB cameras capture only three broad bands (red, green, blue), hyperspectral systems routinely capture hundreds of contiguous spectral channels at high spectral resolution (commonly 5-10 nm) [1]. This extensive spectral coverage, typically spanning wavelengths from 380 to 2500 nm (encompassing the visible, near-infrared (NIR), and shortwave infrared (SWIR) regions), enables the identification of subtle features invisible to conventional cameras, such as molecular absorption bands and pigment-related transitions [1].

Deconstructing the Hyperspectral Data Cube

Core Dimensional Components

The hyperspectral data cube is architecturally defined by three orthogonal dimensions:

- Spatial Dimension (x-axis): Represents the horizontal pixel coordinate of the scene.

- Spatial Dimension (y-axis): Represents the vertical pixel coordinate of the scene.

- Spectral Dimension (λ-axis): Represents the wavelength or band number, providing a continuous spectrum for each spatial pixel.

The integration of these dimensions means that for every spatial location (x, y), a complete spectrum across the λ-dimension is recorded. Conversely, for any specific wavelength (λ), a full two-dimensional spatial image can be rendered [1]. This structure is often visualized as a stack of images, each representing a specific narrow wavelength band, forming the 3D cube.
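The two views of the cube described above (a spectrum per pixel, an image per band) can be sketched with a small NumPy array. This is a minimal illustration only: the cube contents, the `(row, column, band)` axis order, and the wavelength grid are assumptions made for the example, not properties of any particular instrument or file format.

```python
import numpy as np

# Hypothetical cube: 4 rows (y) x 5 columns (x) x 6 bands (lambda).
# The (y, x, band) axis order is an assumption; some formats store bands first.
rows, cols, bands = 4, 5, 6
cube = np.arange(rows * cols * bands, dtype=float).reshape(rows, cols, bands)
wavelengths_nm = np.linspace(400.0, 900.0, bands)  # illustrative band centres

# Spectrum ("fingerprint") of the pixel at row 2, column 3: one value per band.
pixel_spectrum = cube[2, 3, :]

# Single-band image at the band closest to 700 nm: one value per pixel.
band_index = int(np.argmin(np.abs(wavelengths_nm - 700.0)))
band_image = cube[:, :, band_index]

print(pixel_spectrum.shape, band_image.shape, band_index)
```

Slicing along the last axis yields a spectrum, while fixing the last axis yields an image. Both are views of the same array, so no data are copied.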

Quantitative System Parameters

The specifications of a hyperspectral imaging system directly determine the characteristics and information content of the acquired data cube. Key parameters are summarized in the table below.

Table 1: Key Parameters of Hyperspectral Imaging Systems

| Parameter | Typical Range/Description | Impact on Data Cube |

|---|---|---|

| Spectral Range | 380–2500 nm (Visible, NIR, SWIR) [1] | Determines the types of chemical bonds and materials that can be detected. |

| Spectral Resolution | 5–10 nm [1] | Finer resolution allows discrimination of narrower spectral features. |

| Number of Bands | >100 to thousands [1] [2] | Increases spectral detail but also data volume and complexity. |

| Spatial Resolution | Varies with sensor and optics | Determines the smallest object distinguishable in the x, y dimensions. |

| Data Dimensionality | High-dimensional (x × y × λ) [1] | Poses challenges for processing, storage, and analysis. |
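To give a feel for the storage challenge noted in the last row of the table, the following sketch estimates the raw size of a single cube. The frame size, band count, and 2 bytes per sample (as for 16-bit storage) are illustrative assumptions rather than values from the cited sources.

```python
def cube_size_bytes(n_rows, n_cols, n_bands, bytes_per_sample=2):
    """Raw (uncompressed) size of one data cube in bytes."""
    return n_rows * n_cols * n_bands * bytes_per_sample

# Assumed example: 1024 x 1024 pixels, 200 bands, 2 bytes per sample.
hsi_bytes = cube_size_bytes(1024, 1024, 200)
# Same scene as an 8-bit RGB image for comparison (3 bands, 1 byte each).
rgb_bytes = cube_size_bytes(1024, 1024, 3, bytes_per_sample=1)

hsi_mib = hsi_bytes / 2**20
ratio = hsi_bytes / rgb_bytes
print(f"HSI cube: {hsi_mib:.0f} MiB, about {ratio:.0f}x the RGB image")
```

Under these assumptions a single cube occupies 400 MiB, which is why dimensionality reduction and efficient storage matter in routine work.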

Experimental Protocols for Chemical Mapping

The application of HSI for chemical mapping involves a structured workflow from data acquisition to analysis. The following protocols are adapted from recent research applications.

Protocol 1: Chemical Identification via Nonlinear Spectral Unmixing

This protocol is designed for identifying thin layers of organic materials on environmental surfaces, where the measured spectrum is a nonlinear mixture of the target and background materials [3].

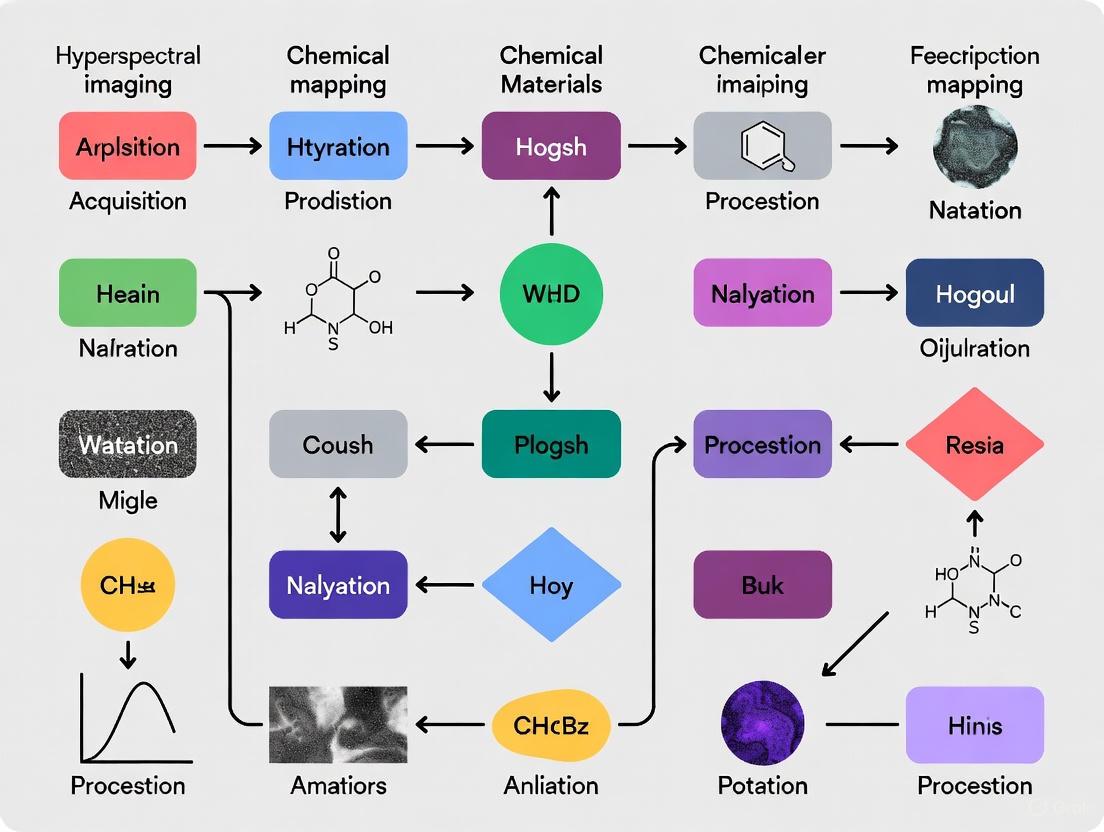

Workflow Diagram: Chemical Identification via Machine Education

Step-by-Step Methodology:

Data Acquisition:

Machine Education Inputs:

- Define the Nonlinear Mixing Model: Input the physical model that describes the interaction of light with the target and background. For a thin layer, this is often an element-wise (multiplicative) mixing model [3]:

\( I_i(\lambda) = I_i^0(\lambda) \odot [R_b(\lambda) \odot \alpha_i \cdot R_m(\lambda) + (1 - \alpha_i) \cdot R_b(\lambda)] \)

where \( I_i(\lambda) \) is the measured radiance, \( I_i^0(\lambda) \) is the incident light, \( R_b(\lambda) \) and \( R_m(\lambda) \) are the background and target material reflectances, \( \alpha_i \) is the target abundance, and \( \odot \) denotes element-wise multiplication [3].

- Input Pure Spectral Libraries: Provide the known reflectance spectra \( R_m(\lambda) \) of the pure target materials. These are considered problem-invariant [3].

- Input Unlabeled Data: Feed the acquired, unlabeled HSI data into the model [3].
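The thin-layer mixing model can be explored numerically with a short forward simulation. The sketch below is not the machine education method of [3]. It assumes, for illustration only, that the incident light and the background reflectance are known, in which case the model is linear in the abundance and can be inverted by ordinary least squares. All spectra and the function names `thin_layer_radiance` and `estimate_alpha` are hypothetical.

```python
import numpy as np

def thin_layer_radiance(i0, r_b, r_m, alpha):
    """Forward model: I = I0 * [alpha * R_b * R_m + (1 - alpha) * R_b] (element-wise)."""
    return i0 * (alpha * r_b * r_m + (1.0 - alpha) * r_b)

def estimate_alpha(measured, i0, r_b, r_m):
    """Least-squares abundance, ASSUMING I0 and R_b are known.

    Dividing by I0 * R_b gives  measured / (I0 * R_b) - 1 = alpha * (R_m - 1),
    which is a one-parameter linear fit.
    """
    y = measured / (i0 * r_b) - 1.0
    x = r_m - 1.0
    alpha = float(x @ y / (x @ x))
    return min(max(alpha, 0.0), 1.0)  # clip to the physical range [0, 1]

# Synthetic, purely illustrative spectra.
wavelengths_nm = np.linspace(1000.0, 2500.0, 50)
i0 = np.ones_like(wavelengths_nm)                      # flat illumination
r_b = 0.4 + 0.1 * np.sin(wavelengths_nm / 300.0)       # background reflectance
r_m = 1.0 - 0.5 * np.exp(-0.5 * ((wavelengths_nm - 1700.0) / 50.0) ** 2)  # target

measured = thin_layer_radiance(i0, r_b, r_m, alpha=0.3)
alpha_hat = estimate_alpha(measured, i0, r_b, r_m)
print(round(alpha_hat, 6))
```

In practice the background is usually unknown and varies from pixel to pixel, which is the difficulty the approach in [3] is designed to handle. The sketch only shows how the target signature enters the measurement multiplicatively.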

Analysis and Output:

Protocol 2: Rapid Screening of Microplastic-Degrading Bacteria

This protocol uses HSI to rapidly screen environmental samples for bacteria capable of degrading microplastics (e.g., Polybutylene Adipate Terephthalate, PBAT) on a co-metabolic solid medium [4].

Workflow Diagram: Screening of Biodegrading Bacteria

Step-by-Step Methodology:

Sample Preparation:

HSI Data Acquisition:

- Acquire near-infrared (NIR) hyperspectral images of the solid media cultures after a set incubation period. The NIR spectrum captures chemical bond vibrations (e.g., C-H, O-H) [4].

Deep Learning Model Development:

- Extract spectral data from the HSI cubes corresponding to areas with known chemical concentrations.

- Train a deep learning model (e.g., a convolutional neural network) to establish the relationship between the spectral features and the PBAT concentration in the solid medium [4].

Screening and Validation:

- Apply the trained model to predict the PBAT concentration across the entire HSI data cube, comparing pre- and post-incubation states [4].

- Identify bacterial colonies that induce a significant decrease in local PBAT concentration, indicating biodegradation capability [4].

- Validate the HSI-based findings using traditional analytical methods like High-Performance Liquid Chromatography (HPLC) [4].
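The screening step compares predicted concentration maps before and after incubation. A minimal sketch of that comparison follows. It assumes the two maps are already spatially registered, and the `min_drop` threshold is a hypothetical parameter that would need to be set from replicate measurements. Neither assumption comes from [4].

```python
import numpy as np

def flag_degradation(conc_before, conc_after, min_drop):
    """Return the per-pixel concentration drop and a mask of pixels whose
    drop is at least `min_drop` (a hypothetical, user-chosen threshold)."""
    drop = conc_before - conc_after
    return drop, drop >= min_drop

# Synthetic 6 x 6 maps in arbitrary concentration units.
before = np.full((6, 6), 1.0)
after = before.copy()
after[2:4, 2:4] = 0.6          # simulated colony that lowered the local concentration

drop_map, degraded = flag_degradation(before, after, min_drop=0.2)
print(int(degraded.sum()), "pixels flagged")
```

Flagged regions would then be candidates for confirmation by a reference method such as HPLC, as described in the protocol.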

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for HSI-based Material Research

| Item | Function in HSI Experiments | Example Application |

|---|---|---|

| Hyperspectral Imager | Core sensor for capturing the spatial (x, y) and spectral (λ) data cube. Types include pushbroom, snapshot, and tunable filter-based systems [1]. | All HSI applications. |

| Standard Calibration Panels | Used for radiometric calibration to convert raw sensor data to reflectance/radiance, correcting for illumination and sensor artifacts [1] [5]. | All HSI applications. |

| Pure Chemical Standards | Provide known spectral signatures (R_m(λ)) for target materials; essential for building spectral libraries and training models [3]. | Chemical identification and spectral unmixing [3]. |

| Co-metabolic Solid Media | Culture medium containing both the target polymer and auxiliary carbon sources to support growth of a wider range of biodegrading microorganisms [4]. | Screening of microplastic-degrading bacteria [4]. |

| Specific Polymer Emulsions | Target analytes for degradation studies (e.g., PBAT emulsion). Their chemical breakdown is monitored via spectral changes [4]. | Screening of microplastic-degrading bacteria [4]. |

| Data Processing Software | Tools for HSI cube visualization, preprocessing (e.g., normalization), dimensionality reduction, and analysis (e.g., classification, spectral unmixing) [6] [5]. | All HSI applications. |

Comparative Analysis of HSI Applications

The power of the hyperspectral data cube for chemical mapping is demonstrated across diverse fields. The quantitative performance of various applications is summarized below.

Table 3: Performance Metrics of HSI in Selected Applications

| Application Field | Target Analysis | Key Performance Metric | Result |

|---|---|---|---|

| Chemical Identification | Thin organic layers on surfaces [3] | Probability of Detection | 96% (Educated Machine) vs. 90% (Classical Machine) [3] |

| Environmental Bioprospecting | PBAT-degrading bacteria [4] | Screening Outcome | Successfully identified a validated PBAT-degrading bacterium [4] |

| Agriculture & Food Safety | Crop disease detection [2] | Accuracy | 98.09% (Detection) [2] |

| Medical Diagnostics | Colorectal cancer detection [2] | Sensitivity / Specificity | 86% / 95% [2] |

| Pharmaceutical Security | Counterfeit tablet identification [2] | Authentication Capability | Accurately identified fake anti-malarial tablets [2] |

Hyperspectral Imaging (HSI) is a powerful analytical technique that merges spatial and spectroscopic data, creating a detailed three-dimensional data cube often referred to as a hyperspectral image [7] [8]. Unlike traditional RGB imaging, which captures only three broad spectral bands (red, green, and blue), HSI acquires data across numerous contiguous spectral bands, generating a full spectrum for each pixel in the image [7]. This detailed spectral "fingerprint" enables the identification and spatial mapping of materials based on their chemical composition [3] [8]. In materials research and drug development, this capability is transformative, allowing researchers to visualize component distribution, detect impurities, and monitor processes with unprecedented chemical specificity. The instrumentation pipeline that enables these analyses is a sophisticated integration of optical, electronic, and computational components, each critical for transforming light into chemically meaningful data.

System Components and Technical Specifications

The HSI instrumentation pipeline can be conceptually divided into several key subsystems: the illumination and optical assembly, the spectral dispersion device, the detector array, and the data acquisition system. Table 1 summarizes the core components and their functions within the pipeline.

Table 1: Core Components of a Hyperspectral Imaging Instrumentation Pipeline

| System Stage | Key Components | Primary Function | Technical Considerations |

|---|---|---|---|

| Optical Assembly | Illumination Source, Lenses, Mirrors, Beam Splitters | Delivers light to the sample and collects the reflected/transmitted signal | Wavelength range, intensity stability, light throughput, geometric optics |

| Spectral Dispersion | Prisms, Gratings, Tunable Filters | Splits the collected light into its constituent wavelengths | Spectral resolution, light efficiency, scanning speed |

| Detector Array | CCD, CMOS, or InGaAs Focal Plane Array | Converts photons (light) into electrons (an electrical signal) | Quantum efficiency, readout noise, dark current, dynamic range, pixel resolution |

| Data Acquisition | Analog-to-Digital Converter, FPGA, Control Software, High-Speed Storage | Digitizes, processes, and saves the raw spectral data | Frame rate, bit depth, data transfer throughput, storage capacity |

The performance of an HSI system is quantified by several key parameters. Spectral Resolution defines the ability to distinguish between adjacent wavelengths and is crucial for identifying fine spectral features of chemicals. Spatial Resolution determines the smallest object detail that can be resolved in the image. The Signal-to-Noise Ratio (SNR) is paramount for detecting weak signals, such as those from minor chemical components or low-abundance analytes. Maximizing light throughput from the sample to the detector is a primary goal of the optical design, as it directly impacts sensitivity and acquisition speed [9].

Experimental Protocols for HSI-Based Chemical Mapping

Protocol: Mapping Acrylamide in Potato Chips Using NIR-HSI

This protocol is adapted from a study that successfully predicted and visualized acrylamide content in potato chips using Near-Infrared Hyperspectral Imaging (NIR-HSI) and chemometrics [10].

1. Sample Preparation:

- Materials: Potato tubers (e.g., Agria and Jaerla varieties), industrial frying equipment, laboratory crusher.

- Procedure: Process 300 potato tubers under controlled frying conditions to generate chips with a natural variation in acrylamide content. Allow chips to cool to room temperature. For spectral acquisition, present chips on a non-reflective, black background to minimize scattering.

2. Hyperspectral Image Acquisition:

- Instrument Setup: Utilize a line-scanning NIR hyperspectral imaging system in reflectance mode.

- Spectral Range: Ensure the system covers the relevant NIR range (e.g., 900-1700 nm).

- Calibration: Prior to sample measurement, perform a white reference calibration using a standard reflectance tile (e.g., ~99% reflectance) and a dark reference with the lens covered (0% reflectance). This corrects for instrumental and illumination irregularities.

- Acquisition Parameters: Set the scanning speed and exposure time to avoid pixel saturation. Acquire hyperspectral images of all potato chips.

3. Data Preprocessing and Model Development:

- Extraction of Spectra: Extract the mean spectrum from each chip's hyperspectral image.

- Reference Analysis: Quantify the actual acrylamide content in each chip using a standard analytical method (e.g., liquid chromatography-mass spectrometry).

- Preprocessing: Apply spectral preprocessing techniques to enhance the signal. The cited study found Standard Normal Variate (SNV) transformation to be most effective for removing scatter effects [10].

- Chemometric Modeling: Develop a predictive model using Partial Least Squares Regression (PLSR). The model relates the preprocessed spectral data (X-matrix) to the reference acrylamide values (Y-matrix).

- Validation: Split the data into calibration and validation sets. The optimal model should achieve high predictive performance, for example, with a coefficient of determination for prediction (R²p) of 0.85 and a Root Mean Square Error of Prediction (RMSEP) of 201 μg/kg [10].
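The preprocessing, modeling, and validation steps above can be sketched end to end on synthetic data. This is an illustration under stated assumptions, not a reproduction of the cited study. The spectra are simulated, the concentration units are arbitrary, and the PLS1 routine is a compact NIPALS implementation written out for transparency. In practice a maintained library implementation would normally be used. The function names `snv`, `pls1_fit`, and `pls1_predict` are hypothetical.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum (row) individually."""
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def pls1_fit(X, y, n_components):
    """PLS regression for a single response via NIPALS with deflation."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xr, yr = X - x_mean, y - y_mean
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w /= np.linalg.norm(w)          # weight vector
        t = Xr @ w                      # scores
        tt = t @ t
        p = Xr.T @ t / tt               # X loadings
        c = yr @ t / tt                 # y loading
        Xr = Xr - np.outer(t, p)        # deflate X
        yr = yr - c * t                 # deflate y
        W.append(w)
        P.append(p)
        q.append(c)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)   # regression vector in X space
    return coef, x_mean, y_mean

def pls1_predict(X, coef, x_mean, y_mean):
    return (X - x_mean) @ coef + y_mean

# Synthetic spectra: one absorption band whose depth tracks concentration,
# plus multiplicative and additive scatter and a little noise.
rng = np.random.default_rng(0)
wl = np.linspace(900.0, 1700.0, 50)
peak = np.exp(-0.5 * ((wl - 1450.0) / 40.0) ** 2)
baseline = 0.5 + 0.3 * (wl - 900.0) / 800.0
conc = rng.uniform(0.0, 1.0, 120)
scale = rng.uniform(0.8, 1.2, 120)[:, None]
offset = rng.uniform(-0.05, 0.05, 120)[:, None]
spectra = scale * (baseline - 0.2 * conc[:, None] * peak) + offset
spectra += rng.normal(0.0, 0.002, spectra.shape)

X = snv(spectra)
X_cal, y_cal, X_val, y_val = X[:80], conc[:80], X[80:], conc[80:]
coef, x_mean, y_mean = pls1_fit(X_cal, y_cal, n_components=3)
y_pred = pls1_predict(X_val, coef, x_mean, y_mean)

rmsep = float(np.sqrt(np.mean((y_val - y_pred) ** 2)))
r2p = float(1.0 - np.sum((y_val - y_pred) ** 2) / np.sum((y_val - y_val.mean()) ** 2))
print(f"R2p = {r2p:.3f}, RMSEP = {rmsep:.3f}")
```

The number of latent variables (three here) is an arbitrary choice for the example. With real data it is usually selected by cross-validation on the calibration set.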

4. Visualization (Chemical Mapping):

- Pixel-Wise Prediction: Apply the validated PLSR model to the spectrum of every pixel in the hyperspectral image of a new chip.

- Generate Concentration Map: Refold the predicted concentration values for each pixel back into a 2D spatial map. Use a color scale to visualize the spatial distribution of acrylamide across the chip surface [10] [8].
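The unfold, predict, and refold steps can be sketched as follows, assuming the calibrated model reduces to a linear regression vector plus an intercept (as a PLSR model does). The cube, the coefficients, and the function name `predict_map` are placeholders. Any preprocessing used during calibration, such as SNV, would need to be applied to the pixel spectra first.

```python
import numpy as np

def predict_map(cube, coef, intercept):
    """Apply a linear model to every pixel of a (rows, cols, bands) cube."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(rows * cols, bands)   # unfold: one spectrum per row
    predicted = pixels @ coef + intercept       # same model for every pixel
    return predicted.reshape(rows, cols)        # refold into a 2D map

# Placeholder data: random cube and an arbitrary regression vector.
rng = np.random.default_rng(1)
cube = rng.random((3, 4, 5))
coef = np.array([0.5, -1.0, 2.0, 0.0, 1.5])
intercept = 10.0

conc_map = predict_map(cube, coef, intercept)
print(conc_map.shape)
```

The resulting 2D array can be passed directly to a plotting routine with a color scale to produce the chemical map.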

Protocol: Handling Nonlinear Spectral Mixing in Thin Organic Films

This protocol addresses a common challenge in HSI of materials: nonlinear mixing, where the measured spectrum contains an element-wise product of the spectral signatures of multiple materials rather than only a simple linear combination of them [3].

1. Problem Identification:

- Recognize scenarios prone to nonlinear effects, such as thin layers of organic materials deposited on environmental surfaces where multipath scattering occurs.

- The measured signal \( I_i(\lambda) \) for a pixel \( i \) can be described by the model: \( I_i(\lambda) = I_i^0(\lambda) \odot [R_b(\lambda) \odot \alpha_i \cdot R_m(\lambda) + (1 - \alpha_i) \cdot R_b(\lambda)] \), where \( \odot \) is element-wise multiplication, \( I_i^0(\lambda) \) is the incident light, \( R_m \) and \( R_b \) are the reflectances of the target and background materials, and \( \alpha_i \) is the abundance [3].

2. Machine Education Approach:

- Instead of standard machine learning, employ a "machine education" paradigm. Equip the analysis algorithm with the physical model of nonlinear mixing (as above) and the known spectral signatures of the pure target materials.

- The machine uses this invariant physical information to resolve the nonlinear mixing and identify the target material's signature from unlabeled HSI data, leading to superior generalization and reduced false identifications compared to classical methods [3].

3. Validation:

- Validate the identification accuracy against a ground-truthed subset of the data. The educated machine approach has been shown to reduce the number of falsely identified samples by a factor of approximately 100 compared to a classical machine learning classifier [3].

Workflow Visualization

The following diagram illustrates the complete HSI instrumentation and data analysis pipeline for chemical mapping.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of HSI for chemical mapping requires both hardware and analytical tools. Table 2 lists key solutions and materials central to this field.

Table 2: Essential Toolkit for HSI-based Chemical Mapping Research

| Tool/Reagent | Function/Description | Application Example |

|---|---|---|

| Standard Reflectance Tiles | Ceramic tiles with known, stable reflectance properties (e.g., ~99% white, ~2% dark). | Critical for calibrating the HSI instrument before every measurement session to correct for dark current and non-uniform illumination [10]. |

| Chemometric Software | Software packages (e.g., Python with Scikit-learn, MATLAB, PLS Toolbox, ENVI) for multivariate data analysis. | Used to develop and apply PLSR or SVM models for quantitative prediction and spectral unmixing [10] [8]. |

| Spectral Preprocessing Algorithms | Mathematical algorithms including Standard Normal Variate (SNV), Detrending, and Derivatives. | Applied to raw spectra to remove light scattering effects and baseline shifts, improving the robustness of chemometric models [10]. |

| Reference Analytical Method | A primary, validated method (e.g., LC-MS, GC-MS) for quantifying the target chemical. | Provides the ground-truth data (Y-variables) required to build the initial calibration model for the HSI system [10]. |

| Line-Scanning HSI System | An imaging system that acquires data one line of pixels at a time, synchronized with a conveyor belt. | Enables real-time, on-line monitoring of chemical properties in moving streams, such as monitoring composition in pharmaceutical powder blends [8]. |

A spectral signature is the unique pattern of electromagnetic radiation that a material absorbs, reflects, or emits across a range of wavelengths. This fingerprint arises from the fundamental interactions between light and matter, driven by the electronic, vibrational, and rotational energy states of atoms and molecules. When incident photons match the energy required for a transition between these quantum states, they are absorbed; the remaining wavelengths are reflected or transmitted, creating a characteristic pattern that reveals the material's chemical composition. Hyperspectral Imaging (HSI) exploits this principle by capturing spatially resolved spectral data, generating a three-dimensional data cube (x, y spatial dimensions, and λ spectral dimension) that enables non-destructive chemical mapping of samples [1] [11].

The near-infrared (NIR, 800–2500 nm) region is particularly informative for chemical analysis, as it contains overtone and combination bands of fundamental molecular vibrations. Key functional groups, such as O-H, N-H, and C-H bonds, exhibit characteristic absorption features in this region, allowing for precise material identification and quantification [12]. This Application Note details the protocols and methodologies for utilizing HSI to decode these spectral signatures for advanced materials research.

Key Principles of Light-Matter Interaction

The following diagram illustrates the core principle of how light interacts with a material's molecular structure to generate a measurable spectral signature.

The interaction mechanisms captured in the workflow are:

- Electronic Transitions: Occur in the ultraviolet and visible regions when photons promote electrons to higher energy levels [1].

- Vibrational Modes: Molecular bonds vibrate with characteristic frequencies, absorbing energy in the infrared region, including fundamental vibrations (mid-IR) and overtones/combinations (NIR) [12] [1].

- Rotational Modes: Molecules rotate with discrete energies, primarily affecting the far-infrared and microwave regions [1].

These interactions collectively generate a spectral signature that is unique to a material's specific chemical composition and physical state.
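To connect vibrational modes to the wavelength axis of an NIR instrument, it helps to convert between wavenumber (cm⁻¹) and wavelength (nm). The sketch below uses an approximate C-H stretching fundamental near 2900 cm⁻¹ as an illustrative value and places the first overtone at exactly twice that wavenumber. This harmonic approximation is an assumption of the example. Real overtones are shifted to somewhat longer wavelengths by anharmonicity.

```python
def wavenumber_to_wavelength_nm(wavenumber_cm1):
    """Convert a wavenumber in cm^-1 to a wavelength in nm (lambda = 1e7 / nu)."""
    return 1.0e7 / wavenumber_cm1

fundamental_cm1 = 2900.0  # approximate C-H stretch, illustrative value

fundamental_nm = wavenumber_to_wavelength_nm(fundamental_cm1)
# Harmonic approximation: first overtone at twice the fundamental wavenumber.
first_overtone_nm = wavenumber_to_wavelength_nm(2.0 * fundamental_cm1)

print(f"fundamental ~ {fundamental_nm:.0f} nm, first overtone ~ {first_overtone_nm:.0f} nm")
```

Under these assumptions the fundamental falls beyond 2500 nm, in the mid-IR, while the first overtone falls inside the NIR window. This is consistent with the statement that NIR spectra are dominated by overtone and combination bands.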

Experimental Protocols for HSI Analysis

Protocol 1: Reflectance-based Chemical Mapping of Solid Materials

This protocol is designed for the non-destructive identification and mapping of chemical components in solid samples, such as polymers, pharmaceuticals, or composite materials.

A. Research Reagent Solutions & Essential Materials

Table 1: Essential Materials and Equipment for Reflectance-based HSI.

| Item Name | Function/Description | Key Specifications |

|---|---|---|

| Hyperspectral Imager | Captures spatial and spectral data to form a hypercube. | Pushbroom or snapshot camera; Spectral range covering NIR (900-1700 nm) is often ideal for organics [12] [13]. |

| Stabilized Light Source | Provides consistent, uniform illumination. | Tungsten-halogen lamp (360-2600 nm) with integrated collimating optics [14]. |

| Spectralon Reference Panel | Used for white reference calibration. | >99% diffuse reflectance standard. |

| Liquid Crystal Variable Retarder (LCVR) | Enables tunable, wavelength-dependent filtering for rapid phasor-based HSI [12]. | Adjustable retardance to cover 900-1600 nm. |

| Motorized Sample Stage | Allows precise spatial scanning for pushbroom systems. | High-precision (e.g., 0.5 µm step size) [14]. |

| Data Processing Software | For data visualization, analysis, and classification. | e.g., Spectronon, ENVI, or Python with specialized libraries (Spectral, PySptools) [15] [11]. |

B. Step-by-Step Procedure

System Setup and Calibration:

- Mount the HSI camera in a fixed position relative to the sample stage. For a pushbroom system, align the camera's line of sight perpendicular to the direction of stage movement [1] [14].

- Position the light source at a consistent angle (e.g., 45°) to minimize specular reflection and maximize diffuse reflectance.

- Power on the light source and allow it to stabilize for at least 30 minutes to ensure consistent output.

- Perform a white reference scan by capturing an image of the Spectralon panel under the same illumination and camera settings used for samples. This corrects for the system's inherent response.

- Perform a dark reference scan by covering the camera lens with its cap. This corrects for dark current and electronic offset.

Data Acquisition:

- Place the sample securely on the motorized stage.

- Set the HSI system parameters: exposure time, gain, and scanning velocity to achieve optimal signal-to-noise ratio without saturating the sensor.

- For pushbroom scanning, initiate the acquisition sequence. The system will capture a line of spatial data across all spectral bands simultaneously as the stage moves, building the hypercube line-by-line [11] [14].

- For snapshot or tunable filter-based systems (e.g., using an LCVR [12]), capture the entire scene or a set of wavelength-filtered images according to the manufacturer's protocol.

Data Preprocessing:

- Convert raw digital numbers (DN) to reflectance or absorbance values using the calibration images. The standard formula is:

Reflectance = (Sample_Image - Dark_Reference) / (White_Reference - Dark_Reference)

- Apply any necessary noise reduction or spatial/spectral binning to improve data quality.
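The reflectance conversion can be sketched in a few lines of NumPy. The array shapes and values are illustrative, and the function name `to_reflectance` is hypothetical. Marking pixels where the white and dark references coincide as NaN is a design choice of this example, not part of the protocol.

```python
import numpy as np

def to_reflectance(raw, white, dark, eps=1e-12):
    """Reflectance = (raw - dark) / (white - dark), element-wise.

    Elements where white and dark are (almost) equal carry no usable signal
    and are returned as NaN instead of triggering a division by zero.
    """
    raw, white, dark = (np.asarray(a, dtype=float) for a in (raw, white, dark))
    denom = white - dark
    denom = np.where(np.abs(denom) < eps, np.nan, denom)
    return (raw - dark) / denom

# Illustrative digital numbers for a 2 x 2 pixel, 3-band cube.
dark = np.full((2, 2, 3), 100.0)
white = np.full((2, 2, 3), 4100.0)
raw = np.full((2, 2, 3), 2100.0)

reflectance = to_reflectance(raw, white, dark)
print(reflectance[0, 0])
```

For a pushbroom system the references are often stored as a single averaged line. NumPy broadcasting handles that case as long as the band axis lines up with that of the sample cube.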

Protocol 2: Spectral Unmixing for Complex Mixtures

Many samples consist of multiple materials within a single pixel. This protocol uses spectral unmixing to identify and quantify individual components.

A. Research Reagent Solutions & Essential Materials

Table 2: Essential Materials for Spectral Unmixing Analysis.

| Item Name | Function/Description |

|---|---|

| Pure Material Standards (Endmembers) | Samples of each pure component for building a spectral library. |

| Software with Unmixing Algorithms | Tools containing algorithms like Pixel Purity Index (PPI), Sequential Maximum Angle Convex Cone (SMACC), and Fully Constrained Least Squares (FCLS) [11]. |

B. Step-by-Step Procedure

Endmember Extraction:

- Option A (Library from Pure Standards): Use Protocol 1 to collect the spectral signatures of each pure component expected in the mixture (e.g., pure cellulose, lignin, and polypropylene [11]).

- Option B (Direct from Image): If pure components are present within the hyperspectral image itself, use automated algorithms like PPI or SMACC to identify the "purest" pixel spectra [11]. PPI iteratively projects data onto random vectors to find extreme pixels, while SMACC uses an orthogonal subspace projection to find a set of endmembers that form a convex cone containing all data points.

Spectral Unmixing Analysis:

- Model each pixel's spectrum in the mixed sample as a linear combination of the endmember spectra: \( R_x = \sum_i a_i E_i + \varepsilon \), where \( R_x \) is the pixel's reflectance, \( a_i \) is the abundance fraction of endmember \( E_i \), and \( \varepsilon \) is an error term.

- Use the Fully Constrained Least Squares (FCLS) algorithm to estimate the abundance fractions \( a_i \) for each pixel, with the constraints that all abundances are non-negative and sum to one [11].

- Generate abundance maps for each endmember, visually representing the spatial distribution and concentration of each chemical component.
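The constrained unmixing problem described above can be sketched with a generic solver. The example below is not the FCLS algorithm referenced in [11]. It swaps in projected gradient descent onto the probability simplex, which targets the same least-squares objective under the same non-negativity and sum-to-one constraints. The endmember spectra are synthetic, and the function names `project_simplex` and `unmix_simplex` are hypothetical.

```python
import numpy as np

def project_simplex(v):
    """Euclidean projection of v onto {a : a >= 0, sum(a) = 1}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u)
    k = np.arange(1, len(v) + 1)
    rho = np.nonzero(u * k > (css - 1.0))[0][-1]
    theta = (css[rho] - 1.0) / (rho + 1)
    return np.maximum(v - theta, 0.0)

def unmix_simplex(endmembers, spectrum, n_iter=500):
    """Minimise ||E a - r||^2 subject to a >= 0 and sum(a) = 1.

    endmembers: (n_bands, n_endmembers) matrix E; spectrum: (n_bands,) vector r.
    """
    E = endmembers
    step = 1.0 / np.linalg.norm(E, 2) ** 2      # 1 / largest eigenvalue of E^T E
    a = np.full(E.shape[1], 1.0 / E.shape[1])   # start from equal abundances
    for _ in range(n_iter):
        grad = E.T @ (E @ a - spectrum)
        a = project_simplex(a - step * grad)
    return a

# Synthetic endmembers: three well-separated Gaussian bands.
wl = np.linspace(900.0, 1700.0, 80)
centres = [1000.0, 1300.0, 1600.0]
E = np.stack([np.exp(-0.5 * ((wl - c) / 60.0) ** 2) for c in centres], axis=1)

true_abundance = np.array([0.6, 0.3, 0.1])
pixel = E @ true_abundance                      # noise-free mixed pixel

estimated = unmix_simplex(E, pixel)
print(np.round(estimated, 4))
```

Applying `unmix_simplex` to every pixel and reshaping the results gives one abundance map per endmember. For large images a vectorized or library solver would be preferable to this per-pixel loop.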

The following workflow summarizes the two primary experimental pathways from data acquisition to chemical insight.

Data Analysis and Dimensionality Reduction

Hyperspectral datacubes are high-dimensional, often containing hundreds of spectral bands. Dimensionality reduction is critical for efficient processing and analysis.

Table 3: Common Dimensionality Reduction and Analysis Methods in HSI.

| Method Category | Example Algorithms | Principle | Application Context |

|---|---|---|---|

| Band Selection | Standard Deviation (STD), Mutual Information (MI) | Selects a subset of original bands with the highest information content (e.g., variance or class relevance). Simple and preserves physical meaning [14]. | Rapid preprocessing; resource-constrained environments (e.g., reduced data size by 97.3% while maintaining 97.2% accuracy [14]). |

| Feature Extraction | Principal Component Analysis (PCA), Linear Discriminant Analysis (LDA) | Transforms data into a new, lower-dimensional feature space using linear combinations of original bands. | General-purpose noise reduction and visualization; PCA is unsupervised, LDA is supervised [16] [14]. |

| Non-Linear Feature Extraction | Convolutional Autoencoders (CAE), Deep Margin Cosine Autoencoder (DMCA) | Uses neural networks to learn compact, non-linear representations of the spectral data in a latent space. | Capturing complex, non-linear spectral patterns; can achieve very high accuracy (>99% in some studies [14]). |

| Classification | Spectral Angle Mapper (SAM), Support Vector Machine (SVM), Random Forest | Compares unknown pixel spectra to reference libraries or trained models to assign a class label. | Material identification and mapping (e.g., distinguishing plastic polymers [12] [11]). |

| Quantitative Regression | Partial Least Squares Regression (PLSR) | Models the relationship between spectral data and a continuous property of interest (e.g., concentration, moisture). | Predicting analyte concentration or physical properties in pharmaceutical or food samples [16]. |
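Two of the approaches in the table, band selection by standard deviation and PCA, can be sketched compactly with NumPy. The cube is synthetic, the number of retained bands and components is arbitrary, and the function names `select_bands_by_std` and `pca_scores` are hypothetical. This shows the general techniques, not the specific pipelines of the cited studies.

```python
import numpy as np

def select_bands_by_std(cube, n_keep):
    """Keep the n_keep bands with the largest standard deviation over all pixels."""
    pixels = cube.reshape(-1, cube.shape[-1])
    order = np.argsort(pixels.std(axis=0))[::-1]   # most variable band first
    keep = np.sort(order[:n_keep])                 # restore wavelength order
    return keep, cube[:, :, keep]

def pca_scores(cube, n_components):
    """Project mean-centred pixel spectra onto the leading principal components."""
    rows, cols, bands = cube.shape
    pixels = cube.reshape(-1, bands)
    centred = pixels - pixels.mean(axis=0)
    _, s, vt = np.linalg.svd(centred, full_matrices=False)
    scores = centred @ vt[:n_components].T
    explained = s**2 / np.sum(s**2)                # variance fraction per component
    return scores.reshape(rows, cols, n_components), explained[:n_components]

# Synthetic 8 x 8 x 10 cube: low-level noise, with two informative bands.
rng = np.random.default_rng(2)
cube = rng.normal(0.0, 0.01, (8, 8, 10))
cube[:, :, 2] += rng.normal(0.0, 1.0, (8, 8))
cube[:, :, 7] += rng.normal(0.0, 2.0, (8, 8))

kept, reduced = select_bands_by_std(cube, n_keep=2)
score_images, explained = pca_scores(cube, n_components=2)
print(kept, reduced.shape, score_images.shape)
```

Band selection keeps physically interpretable wavelengths, whereas PCA scores are linear combinations of all bands. That trade-off is the main practical difference between the first two rows of the table.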

Application Examples in Materials Research

The utility of spectral signature analysis is demonstrated across diverse fields:

- Polymer and Plastic Identification: HSI in the short-wave infrared (SWIR) can distinguish between plastic polymers like polypropylene and polyethylene, which may appear visually identical. Spectral unmixing can further quantify their abundance in complex objects like disposable coffee cups, with demonstrated area estimation errors of less than 1% [11].

- Pharmaceutical Analysis: HSI enables non-destructive quality control of drug formulations, detecting active pharmaceutical ingredients (APIs), excipients, and potential contaminants or adulterants based on their unique NIR spectral fingerprints [2] [16].

- Biomedical and Life Sciences: As a label-free technique, HSI can monitor live cell cultures, classify healthy and diseased tissues (e.g., achieving sensitivity of 87% and specificity of 88% for skin cancer [2]), and study disease pathogenesis by tracking biochemical changes [1] [14].

- Environmental Monitoring: HSI can identify and map specific minerals in geological samples, monitor plant health and water stress in agriculture, and detect pollutants like microplastics in the environment [12] [2] [17].

Troubleshooting and Best Practices

- Low Signal-to-Noise Ratio: Optimize exposure time and illumination intensity. Apply spatial or spectral binning during or after acquisition, and ensure proper dark current subtraction.

- Spectral Library Mismatch: Ensure reference spectra are collected using the same instrument, illumination geometry, and processing steps as the sample data. Standardized protocols are essential [16] [18].

- Computational Challenges with Large Datasets: Employ dimensionality reduction techniques early in the processing chain. For real-time applications, band selection methods like STD offer a strong balance of performance and speed [14].

- Validation: Always validate HSI results with a complementary analytical technique, such as gas chromatography-mass spectrometry (GC-MS) or Raman spectroscopy, especially when developing new models.

Hyperspectral imaging (HSI) has emerged as a powerful analytical technique for the non-destructive, label-free chemical mapping of materials, directly supporting advanced research in drug development and material sciences [1]. This technology integrates spectroscopy and digital imaging to simultaneously capture spatial and spectral information, generating a three-dimensional data cube comprised of two spatial dimensions (x, y) and one spectral dimension (λ) [1] [19]. Each pixel within this cube contains a continuous spectrum, often described as a spectral "fingerprint," that enables the identification and characterization of materials based on their unique chemical composition [1] [3].

For researchers focused on chemical mapping, the critical system specifications—spectral range, spectral resolution, and radiometric accuracy—determine the efficacy and reliability of their analyses. These parameters govern the system's ability to detect specific molecular absorption bands, distinguish between similar compounds, and provide quantitative chemical information [20] [19]. This application note details these key specifications, provides standardized protocols for their validation, and establishes a framework for selecting and operating HSI systems to optimize performance in materials research applications.

Core System Specifications

Spectral Range

Spectral range defines the breadth of the electromagnetic spectrum that a hyperspectral camera can capture, typically measured in nanometers (nm) [20]. It determines the types of chemical bonds and molecular vibrations that can be detected, as different materials exhibit characteristic absorption and reflection features across specific spectral regions [21] [19].

Table: Common Spectral Ranges in Hyperspectral Imaging and Their Research Applications

| Spectral Range | Wavelength (nm) | Common Detector Materials | Primary Applications in Chemical Mapping |

|---|---|---|---|

| VNIR | 400 – 1000 [21] | Silicon CCD, CMOS [21] | Pigment identification, organic compound detection, quality assessment of herbal medicines [21] [22]. |

| SWIR | 900 – 1700 [21] | InGaAs [21] | Analysis of moisture content, hydrogen-bonded phases, polymers, and certain pharmaceutical compounds [21] [23]. |

| Extended SWIR | 1000 – 2500 [21] | MCT, InSb [21] | Detailed hydrocarbon characterization, mineral identification, and complex organic molecular vibrations [21]. |

| MWIR | 3000 – 5000 [21] | InSb, PbSe [21] | Black plastic sorting, analysis of fundamental molecular vibrations [21] [23]. |

Spectral Resolution

Spectral resolution defines a system's ability to distinguish between two closely spaced wavelengths [20]. It is a critical parameter for identifying materials with subtle, overlapping spectral features [20]. High spectral resolution, characterized by a larger number of narrow spectral bands, allows for the precise resolution of sharp absorption peaks, which is essential for differentiating between chemically similar compounds [20].

Spectral resolution is quantified by two interrelated parameters: the number of spectral bands and the width of each band (in nm) [20]. It is important to note that bandwidth is not always constant across the entire spectral range of a camera; it may be narrower in some regions and broader in others [20]. For instance, a visible/near-infrared (VNIR) camera might have a resolution of 5 nm between 450-700 nm and 10 nm between 700-900 nm [20].

The selection of an appropriate spectral resolution involves balancing analytical detail with practical constraints. Higher spectral resolution increases data volume and can reduce the signal-to-noise ratio (SNR) by distributing incoming light across more channels [20]. For exploratory research where the target spectral signatures are unknown, higher resolution is advantageous. However, for a well-defined application targeting specific known features, a moderate resolution that still resolves those features may be sufficient, allowing resources to be allocated to other performance parameters like SNR or frame rate [20].

Radiometric Accuracy and Signal-to-Noise Ratio (SNR)

Radiometric accuracy refers to how faithfully a sensor measures the intensity of incoming radiation [24]. In practice, it is often discussed in terms of the Signal-to-Noise Ratio (SNR), which describes how strong the collected light signal is relative to system noise [24]. A high SNR is fundamental for reliable chemical identification and quantification, as noise can obscure subtle spectral features critical for distinguishing materials [24].

Radiometry is particularly important for HSI because the incoming light signal is divided into many narrow spectral channels, which can result in low signal levels per channel [24]. Noisy, "light-starved" data diminish the value of the rich spectral information HSI provides [24]. It is crucial to note that while datasheets often report a single SNR value, the SNR typically varies across the camera's wavelength range [24]. Therefore, researchers should consult full SNR plots provided by manufacturers for an informed decision.

Table: Trade-offs Between Key HSI Specifications

| Specification | Performance Benefit | Associated Trade-off |

|---|---|---|

| Wider Spectral Range | Detects a broader array of chemical bonds and materials. | Increased system cost and complexity; often requires specialized, expensive detector materials (e.g., InGaAs, MCT) [21]. |

| Higher Spectral Resolution | Enables discrimination of materials with finely spaced or overlapping spectral features. | Larger data volumes, lower signal-to-noise ratio, potential for slower data acquisition speeds [20]. |

| Higher Radiometric Accuracy (SNR) | Improves detection of subtle spectral features and quantitative analysis reliability. | Requires longer exposure times (slower scanning) or more intense illumination, which may not be feasible in all applications (e.g., airborne, real-time) [24]. |

Experimental Protocols for System Characterization

Protocol 1: Spectral Calibration and Resolution Verification

Objective: To verify the accurate wavelength assignment and determine the practical spectral resolution of the HSI system.

Materials:

- HSI system with integrated light source

- Spectral calibration lamp (e.g., mercury-argon or neon)

- Certified diffuse reflectance standards (e.g., Labsphere)

- Data acquisition and analysis software

Methodology:

- System Setup: Warm up the HSI system and illumination source for the manufacturer-specified duration to ensure stable operation.

- Wavelength Calibration:

- Place the spectral calibration lamp in the system's field of view.

- Acquire a hyperspectral image of the lamp's emission.

- Extract the spectrum and identify the observed emission peaks.

- Create a calibration model by fitting the known peak wavelengths to the observed pixel positions, generating a wavelength-pixel mapping function [22].

- Resolution Verification:

- Image a material with known, sharp spectral emission or absorption lines.

- Measure the Full Width at Half Maximum (FWHM) of an isolated, narrow line in the acquired spectrum. The FWHM provides a direct measure of the system's instantaneous spectral resolution [20].
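
The wavelength calibration and resolution steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: the emission wavelengths are standard mercury-argon lines, but the peak pixel positions are synthetic (generated from a hypothetical dispersion of 2.5 nm per pixel starting at 400 nm), the test line is a synthetic Gaussian, and the function names `fit_wavelength_map` and `fwhm` are placeholders rather than part of any vendor software.

```python
import numpy as np

# Reference emission lines of a mercury-argon lamp (nm).
KNOWN_LINES_NM = np.array([435.833, 546.074, 696.543, 763.511, 811.531])


def fit_wavelength_map(peak_pixels, known_nm, degree=2):
    """Fit a polynomial that maps detector pixel index to wavelength (nm)."""
    coeffs = np.polyfit(peak_pixels, known_nm, degree)
    return np.poly1d(coeffs)


def fwhm(x, y):
    """Full width at half maximum of one isolated peak.

    The two half-maximum crossings are located by linear interpolation.
    """
    half = y.max() / 2.0
    above = np.where(y >= half)[0]
    i0, i1 = above[0], above[-1]
    left = np.interp(half, [y[i0 - 1], y[i0]], [x[i0 - 1], x[i0]])
    right = np.interp(half, [y[i1 + 1], y[i1]], [x[i1 + 1], x[i1]])
    return right - left


# Assumption: observed peak positions, simulated with a linear dispersion.
peak_pixels = (KNOWN_LINES_NM - 400.0) / 2.5
pixel_to_nm = fit_wavelength_map(peak_pixels, KNOWN_LINES_NM)

# Assumption: a synthetic Gaussian line with sigma = 2 nm stands in for a
# measured narrow emission line.
wl = np.arange(680.0, 715.0, 0.5)
line = np.exp(-0.5 * ((wl - 696.543) / 2.0) ** 2)
resolution_nm = fwhm(wl, line)
```

With real data, `peak_pixels` would come from peak finding on the lamp spectrum, and `resolution_nm` would be compared against the manufacturer's stated spectral resolution.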

Protocol 2: Radiometric Calibration and SNR Assessment

Objective: To establish a quantitative relationship between the sensor's digital number (DN) output and sample reflectance, and to measure the system's Signal-to-Noise Ratio.

Materials:

- HSI system

- Certified white reference panel with near-Lambertian reflectance properties (e.g., Spectralon)

- Light source with stable, known spectral output

- Dark current reference (e.g., a lens cap or closed shutter that blocks all light from the sensor)

Methodology:

- Radiometric Calibration:

- Acquire an image of the calibrated white reference panel under the system's operational illumination to obtain a white reference (W).

- Acquire an image with the lens capped or under complete darkness to obtain a dark reference (D).

- For any subsequent raw target image (\( I_{\text{raw}} \)), compute the reflectance (\( R \)) using the formula: \( R = (I_{\text{raw}} - D) / (W - D) \) [22].

- This process corrects for dark current and non-uniform illumination.

- SNR Assessment:

- Acquire multiple successive hyperspectral images of a uniform, stable target under consistent illumination.

- For each pixel and spectral band, calculate the mean signal across the image sequence.

- Calculate the standard deviation of the signal for each pixel and band across the sequence.

- The SNR is computed as: \( \text{SNR} = \frac{\text{Mean Signal}}{\text{Standard Deviation of Signal}} \) [24].

- This should be reported as a function of wavelength.
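
The two calculations in this protocol can be sketched with NumPy as follows. This is an illustrative example only: the array shapes, the digital-number levels, and the function names `to_reflectance` and `snr_per_band` are assumptions, and the frames are simulated rather than measured.

```python
import numpy as np


def to_reflectance(raw, white, dark, eps=1e-12):
    """Flat-field correction R = (I_raw - D) / (W - D), element-wise."""
    denom = np.maximum(white - dark, eps)  # guard against dead pixels
    return (raw - dark) / denom


def snr_per_band(frames):
    """Per-band SNR from repeated frames of a uniform target.

    frames has shape (n_frames, rows, cols, bands). The temporal mean and
    standard deviation are computed per pixel and band, and the resulting
    SNR is averaged over all pixels.
    """
    mean = frames.mean(axis=0)
    std = frames.std(axis=0, ddof=1)
    return (mean / std).mean(axis=(0, 1))


rng = np.random.default_rng(0)
rows, cols, bands = 4, 5, 6

# Simulated references and a target that reflects 50 % of the light.
dark = np.full((rows, cols, bands), 100.0)
white = np.full((rows, cols, bands), 4100.0)
raw = dark + 0.5 * (white - dark)
reflectance = to_reflectance(raw, white, dark)

# Simulated sequence of 50 frames: signal level 2000 DN, noise 20 DN.
frames = 2000.0 + rng.normal(0.0, 20.0, size=(50, rows, cols, bands))
snr = snr_per_band(frames)  # one value per band, i.e. SNR versus wavelength
```

Plotting `snr` against the band-center wavelengths gives the wavelength-dependent SNR curve that the protocol asks to report.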

Workflow for Chemical Mapping

[Workflow diagram: key steps from system setup to chemical identification for material mapping.]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Materials for Hyperspectral Imaging-Based Chemical Mapping

| Item | Function | Application Notes |

|---|---|---|

| Certified White Reference | Provides a known, near-perfect diffuse reflector for converting raw sensor data to reflectance values. Critical for radiometric calibration [22]. | Must be kept clean and undamaged. Re-certification is recommended periodically. |

| Spectral Calibration Source | Emits light at known, discrete wavelengths (e.g., Hg/Ar lamp). Used for accurate wavelength assignment and resolution verification [22]. | Essential for validating manufacturer's spectral specifications and for research requiring precise wavelength accuracy. |

| Dark Reference | Captures the system's electronic and thermal noise (dark current) when no light reaches the sensor. | Should be acquired at the same integration time and sensor temperature as the target images. |

| Stable Illumination System | Provides consistent, uniform illumination across the target. Halogen lights are common due to their broad spectral output [21]. | Illumination stability is paramount for achieving high radiometric accuracy and reproducible results. |

| Analysis Software | For data preprocessing (e.g., normalization, smoothing), dimensionality reduction, spectral unmixing, and classification [24] [22]. | Software ease of use is a critical but often overlooked attribute that impacts research efficiency [24]. |

The successful application of hyperspectral imaging for chemical mapping in materials research hinges on a deep understanding of the core specifications of spectral range, resolution, and radiometric accuracy. These parameters are deeply interconnected, and their optimal configuration is invariably a balance dictated by the specific research question, whether it involves mapping active pharmaceutical ingredients, identifying mineral phases, or detecting contaminants. By adhering to the standardized characterization and operational protocols outlined in this document, researchers can ensure the collection of high-fidelity, quantitative data, thereby unlocking the full potential of HSI as a powerful, non-destructive tool for advanced chemical analysis.

From Data to Chemical Maps: Methodologies and Real-World Applications in Biomedicine

In the field of materials research, hyperspectral imaging (HSI) has emerged as a powerful non-destructive technique that integrates spatial and spectral information to comprehensively evaluate the chemical properties of a sample [25]. Each pixel in a hyperspectral image contains a full spectrum, creating a three-dimensional data hypercube (x, y, λ) that is rich in chemical information [26]. The extraction of meaningful chemical maps from this vast and complex data relies on a robust chemometrics workflow encompassing preprocessing, dimensionality reduction, and feature extraction. This pipeline is essential for transforming raw spectral data into actionable knowledge about material composition, distribution, and identity, which is particularly valuable in applications ranging from nuclear forensics to food quality assessment [25] [27]. The following sections detail the protocols and application notes for each stage of this workflow, framed within the context of chemical mapping for materials research.

Preprocessing of Hyperspectral Data

Raw hyperspectral data are often contaminated by various noise sources and instrumental effects. Preprocessing is a critical first step to enhance the signal-to-noise ratio and prepare the data for subsequent analysis.

Key Preprocessing Techniques

The objective of preprocessing is to remove unwanted spectral variations not related to the chemical composition of the sample. The table below summarizes the primary functions and applications of common preprocessing techniques.

Table 1: Common Preprocessing Techniques for Hyperspectral Data

| Technique | Primary Function | Typical Application Context |

|---|---|---|

| Standard Normal Variate (SNV) | Scatter correction and normalization of each individual spectrum. | Correcting for light scattering effects in powdered or uneven surfaces [25]. |

| Savitzky-Golay Smoothing (SGS) | Noise reduction by fitting a polynomial to a moving spectral window. | Denoising spectra while preserving the shape and width of spectral peaks [25]. |

| Multiplicative Scatter Correction (MSC) | Compensation for additive and multiplicative scattering effects. | Similar to SNV, used for normalizing spectra against a reference spectrum [25]. |

| Derivative Spectra | Resolution of overlapping peaks and removal of baseline drift. | Highlighting subtle spectral features for improved chemical identification [25]. |
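
As an illustration of the scatter-correction idea in the table, the following sketch implements Multiplicative Scatter Correction with NumPy. It assumes the common formulation in which each spectrum is regressed on a reference spectrum (the mean spectrum by default) and then corrected by the fitted offset and slope; the function name `msc` and the synthetic spectra are placeholders.

```python
import numpy as np


def msc(spectra, reference=None):
    """Multiplicative scatter correction.

    spectra has shape (n_spectra, n_bands). Each spectrum x is modelled as
    x = a + b * reference and corrected to (x - a) / b.
    """
    ref = spectra.mean(axis=0) if reference is None else reference
    corrected = np.empty_like(spectra, dtype=float)
    for i, x in enumerate(spectra):
        b, a = np.polyfit(ref, x, 1)  # slope b, intercept a
        corrected[i] = (x - a) / b
    return corrected


# Synthetic check: one underlying spectrum distorted by additive offsets
# and multiplicative gains, as scattering would do.
bands = np.linspace(0.0, 1.0, 50)
base = 0.4 + 0.3 * np.sin(2 * np.pi * bands)
offsets = np.array([[0.00], [0.10], [-0.05]])
gains = np.array([[1.0], [1.5], [0.7]])
spectra = offsets + gains * base
corrected = msc(spectra, reference=base)  # all rows collapse onto base
```

SNV differs in that it needs no reference spectrum: each spectrum is standardized using its own mean and standard deviation.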

Experimental Protocol: Data Preprocessing

Application Context: This protocol is designed for preprocessing HSI data of nut samples for quality assessment, as reviewed by [25], but is broadly applicable to other solid materials.

Materials and Reagents:

- Hyperspectral Image Data Cube: Raw data in a structured format (e.g., `[x_pixels, y_pixels, λ_wavelengths]`).

- Computing Environment: Software with chemometric capabilities (e.g., MATLAB, Python with scikit-learn, or open-source R apps like the "dimensionality reduction app" [28]).

- Reference Standards: White (e.g., Teflon) and dark reference images for calibration.

Procedure:

- Calibration: Convert raw intensity values (`I_raw`) to reflectance (`R`) using the formula `R = (I_raw - I_dark) / (I_white - I_dark)`, where `I_dark` is the dark reference image and `I_white` is the white reference image.

- Smoothing: Apply a Savitzky-Golay filter (e.g., 2nd-order polynomial, 11-point window) to each spectrum to reduce high-frequency noise [25].

- Scatter Correction: Process each spectrum using Standard Normal Variate (SNV). This centers the spectrum by subtracting its mean and then scales it by its standard deviation.

- Baseline Correction (Optional): If significant baseline drift is present, apply a derivative filter (e.g., 1st or 2nd derivative using Savitzky-Golay) to enhance spectral features [25].

- Validation: Visually inspect the preprocessed spectra to ensure noise and scattering artifacts have been effectively reduced without distorting the genuine spectral features.
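
The smoothing, scatter-correction, and derivative steps of this procedure can be sketched as below. The example is deliberately NumPy-only and derives the Savitzky-Golay weights from a least-squares polynomial fit; in practice `scipy.signal.savgol_filter` is the usual choice. The cube is random placeholder data, the edge handling (repeating the edge value) is a simplification, and the function names are assumptions.

```python
import numpy as np
from math import factorial


def savgol_kernel(window=11, polyorder=2, deriv=0):
    """Least-squares (Savitzky-Golay) weights evaluated at the window centre."""
    half = window // 2
    x = np.arange(-half, half + 1, dtype=float)
    A = np.vander(x, polyorder + 1, increasing=True)  # columns 1, x, x^2, ...
    # Row k of the pseudo-inverse yields the fitted coefficient of x^k;
    # the k-th derivative at the centre is k! times that coefficient.
    return np.linalg.pinv(A)[deriv] * factorial(deriv)


def savgol(spectra, window=11, polyorder=2, deriv=0):
    """Filter along the last (spectral) axis, padding edges by repetition."""
    kernel = savgol_kernel(window, polyorder, deriv)
    half = window // 2
    pad = [(0, 0)] * (spectra.ndim - 1) + [(half, half)]
    padded = np.pad(spectra, pad, mode="edge")
    # np.convolve flips its kernel, so the weights are passed reversed.
    return np.apply_along_axis(
        lambda s: np.convolve(s, kernel[::-1], mode="valid"), -1, padded
    )


def snv(spectra):
    """Standard Normal Variate: centre and scale each spectrum individually."""
    mean = spectra.mean(axis=-1, keepdims=True)
    std = spectra.std(axis=-1, ddof=1, keepdims=True)
    return (spectra - mean) / std


rng = np.random.default_rng(1)
cube = rng.random((3, 4, 60))         # placeholder reflectance cube (x, y, bands)
smoothed = savgol(cube)               # smoothing step
scatter_corrected = snv(smoothed)     # scatter correction step
first_deriv = savgol(cube, deriv=1)   # optional derivative, per band index
```

The derivative is expressed per band index; dividing by the band spacing in nm converts it to a per-nanometer derivative when the spacing is uniform.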

Dimensionality Reduction and Feature Extraction

The high dimensionality of HSI data presents computational challenges and risks of overfitting. Dimensionality reduction techniques are employed to compress the data while preserving the most chemically relevant information.

Comparative Analysis of Dimensionality Reduction Methods

Dimensionality reduction can be achieved through variable selection or variable extraction. The latter, which creates new, smaller sets of composite variables, is widely used.

Table 2: Comparison of Variable Extraction Methods for Dimensionality Reduction

| Method | Type | Key Principle | Advantage in HSI |

|---|---|---|---|

| Principal Component Analysis (PCA) | Unsupervised | Finds orthogonal directions of maximum variance in the data. | Excellent for exploratory data analysis and revealing clustering or outliers [29]. |

| Partial Least Squares (PLS) | Supervised | Finds directions that maximize covariance between spectral data and a response variable (e.g., concentration). | Superior performance for predictive tasks like classification or regression [29]. |

| Deep Feature Extraction | Non-linear | Uses pre-trained neural networks to extract multi-scale spatial features from images [30]. | Captures complex texture and morphological patterns beyond spectral data alone. |
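
To make the PCA row of the table concrete, here is a small sketch that unfolds a hypercube, mean-centers it, and computes principal components through a singular value decomposition. The data are synthetic (two hypothetical source spectra mixed in varying proportions), and `pca_scores` is a placeholder name.

```python
import numpy as np


def pca_scores(cube, n_components=3):
    """PCA of a hypercube via SVD.

    Returns score images, loadings, and explained-variance ratios.
    """
    rows, cols, bands = cube.shape
    X = cube.reshape(-1, bands)            # unfold: pixels x bands
    Xc = X - X.mean(axis=0)                # mean-centre each band
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = S**2 / np.sum(S**2)
    scores = U[:, :n_components] * S[:n_components]
    score_images = scores.reshape(rows, cols, n_components)
    return score_images, Vt[:n_components], explained[:n_components]


rng = np.random.default_rng(2)
fraction = rng.random((20, 20, 1))         # per-pixel fraction of source 1
s1 = np.linspace(0.2, 0.8, 40)             # hypothetical source spectrum 1
s2 = np.linspace(0.9, 0.1, 40) ** 2        # hypothetical source spectrum 2
cube = fraction * s1 + (1 - fraction) * s2 + rng.normal(0, 0.001, (20, 20, 40))

score_images, loadings, explained = pca_scores(cube)
```

Because only one mixing fraction varies in this synthetic cube, nearly all variance falls on the first component, and its score image reproduces the spatial pattern of `fraction` up to sign and scale.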

Experimental Protocol: Dimensionality Reduction with PLS

Application Context: This protocol uses a supervised approach to reduce data dimensionality for a classification task, such as identifying the botanical origin of honey from GC-IMS data [29], a concept directly transferable to HSI.

Materials and Reagents:

- Preprocessed HSI Data: The output of the preprocessing protocol above.

- Reference Data: A known class label for each sample or pixel (e.g., "pure material A," "contaminated," "background").

- Chemometrics Software: Tools capable of PLS modeling (e.g., the RShiny app from [28] or MATLAB PLS Toolbox).

Procedure:

- Data Arrangement: Unfold the hyperspectral hypercube into a 2D matrix where each row is a pixel's spectrum and each column is a wavelength.

- Data Splitting: Split the dataset into a training set (e.g., 70%) and a test set (e.g., 30%).

- Model Training: On the training set, fit a PLS-Discriminant Analysis (PLS-DA) model. The algorithm will project the original spectral data onto Latent Variables (LVs) that best separate the defined classes.

- Variable Importance: Calculate Variable Importance in Projection (VIP) scores for each wavelength. VIP scores quantify the contribution of each original variable to the PLS model.

- Feature Selection: Select wavelengths with a VIP score > 1.0 as these are considered the most relevant for the classification task [29].

- Data Transformation: Create a new, reduced dataset by extracting the spectral intensities only at the selected key wavelengths, or by using the scores from the first few LVs.
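
The procedure above can be sketched for a two-class case as follows. This is a simplified illustration, not the cited authors' implementation: it uses a NumPy NIPALS implementation of PLS1 regressed on a 0/1 class label (the basic PLS-DA idea), computes VIP scores with the commonly used formula, and runs on synthetic data in which only bands 10 to 14 differ between classes. For real work, a maintained implementation such as scikit-learn's `PLSRegression` would normally be preferred.

```python
import numpy as np


def pls1_vip(X, y, n_components=2):
    """PLS1 (NIPALS) on centred data; returns weights, scores, VIP scores."""
    Xr = X - X.mean(axis=0)
    yr = y - y.mean()
    n_vars = X.shape[1]
    weights, scores, explained_ss = [], [], []
    for _ in range(n_components):
        w = Xr.T @ yr
        w /= np.linalg.norm(w)           # unit-norm weight vector
        t = Xr @ w                       # latent variable scores
        tt = t @ t
        p = Xr.T @ t / tt                # X loadings
        q = yr @ t / tt                  # y loading
        Xr = Xr - np.outer(t, p)         # deflate X
        yr = yr - q * t                  # deflate y
        weights.append(w)
        scores.append(t)
        explained_ss.append(q**2 * tt)   # y sum of squares explained
    W = np.array(weights).T
    T = np.array(scores).T
    ss = np.array(explained_ss)
    vip = np.sqrt(n_vars * (W**2 @ ss) / ss.sum())
    return W, T, vip


rng = np.random.default_rng(3)
cube = rng.normal(0.5, 0.02, size=(10, 10, 30))   # synthetic hypercube
labels = np.zeros((10, 10), dtype=int)
labels[:, 5:] = 1
cube[labels == 1, 10:15] += 0.2                   # class 1 differs in bands 10-14

X = cube.reshape(-1, cube.shape[-1])              # data arrangement (unfolding)
y = labels.reshape(-1).astype(float)

order = rng.permutation(len(y))                   # 70 / 30 data splitting
n_train = int(0.7 * len(y))
train, test = order[:n_train], order[n_train:]

W, T, vip = pls1_vip(X[train], y[train])          # model training
selected_bands = np.flatnonzero(vip > 1.0)        # feature selection
X_reduced = X[:, selected_bands]                  # data transformation
```

The held-out `test` indices are reserved for evaluating whichever classifier is subsequently built on `X_reduced`; they play no role in choosing the bands.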

Advanced Spatial-Spectral Feature Fusion

For HSI, spectral information alone may be insufficient to distinguish materials with similar compositions but different morphologies. Advanced workflows fuse spatial and spectral features.

Experimental Protocol: Spatial-Spectral Fusion

Application Context: This protocol is adapted from a generic framework for jointly processing spatial and spectral information from HSI, as demonstrated in the early detection of apple scab on leaves [26].

Materials and Reagents:

- Preprocessed HSI Hypercube.

- Image Processing & Chemometrics Software: e.g., MATLAB with Image Processing Toolbox.

Procedure:

- Define Regions of Interest (ROIs): Manually or automatically segment the HSI into sub-images containing the objects or regions to be characterized.

- Spectral Feature Extraction: For each ROI, extract the mean spectrum or perform a singular value decomposition (SVD) on the spectral data to obtain a dominant spectral signature [26].

- Spatial Feature Extraction: For the same ROI, calculate Gray-Level Co-occurrence Matrices (GLCMs) to quantify texture features such as contrast, correlation, and entropy [26].

- Data Fusion: Combine the extracted spectral and spatial feature vectors for each ROI. This creates a unified block of data per ROI.

- Multiblock Modeling: Analyze the fused data using a multiblock method like Multiblock PLS-DA (MB-PLS-DA). This model will provide a comprehensive classification based on both chemical (spectral) and physical (spatial) properties [26].
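
A minimal sketch of the feature extraction and fusion steps is given below. It assumes a single horizontal pixel offset and 8 gray levels for the co-occurrence matrix, uses the band-averaged image as the texture source, and works on synthetic ROIs; all function names are placeholders. Libraries such as scikit-image offer fuller GLCM implementations, and the multiblock modeling step is outside the scope of this sketch.

```python
import numpy as np


def glcm_features(gray, levels=8):
    """Contrast and entropy from a symmetric, horizontal-offset GLCM."""
    lo, hi = gray.min(), gray.max()
    q = np.floor((gray - lo) / (hi - lo + 1e-12) * levels).astype(int)
    q = np.clip(q, 0, levels - 1)                   # quantised gray levels
    left, right = q[:, :-1].ravel(), q[:, 1:].ravel()
    glcm = np.zeros((levels, levels))
    np.add.at(glcm, (left, right), 1)               # count neighbour pairs
    glcm = glcm + glcm.T                            # make it symmetric
    p = glcm / glcm.sum()
    i, j = np.indices(p.shape)
    contrast = np.sum(p * (i - j) ** 2)
    nonzero = p[p > 0]
    entropy = -np.sum(nonzero * np.log2(nonzero))
    return np.array([contrast, entropy])


def fuse_roi(roi_cube):
    """Concatenate the ROI mean spectrum with its texture features."""
    spectral = roi_cube.reshape(-1, roi_cube.shape[-1]).mean(axis=0)
    spatial = glcm_features(roi_cube.mean(axis=-1))
    return np.concatenate([spectral, spatial])


rng = np.random.default_rng(4)
# Two synthetic ROIs: a smooth intensity gradient and a noisy texture.
smooth_roi = np.ones((16, 16, 20)) * np.linspace(0, 1, 16)[None, :, None]
rough_roi = rng.random((16, 16, 20))

features_smooth = fuse_roi(smooth_roi)   # 20 spectral + 2 spatial values
features_rough = fuse_roi(rough_roi)
```

Because spectral and spatial features live on different scales, each block is usually scaled separately before modeling, which is one reason multiblock methods treat them as distinct blocks.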

Workflow Visualization

[Diagram: complete chemometrics workflow for hyperspectral imaging, from raw data to chemical knowledge.]

The Scientist's Toolkit

The following table details key research reagents, software, and hardware solutions essential for implementing the described chemometrics workflow.

Table 3: Essential Research Reagents and Solutions for the HSI Workflow

| Item | Function/Application |

|---|---|

| Portable VNIR HSI Camera (e.g., 400-1000 nm range) | Captures the hyperspectral data cube in field or lab settings. Essential for non-destructive, in-situ material analysis [31]. |

| Transition-Edge Sensor (TES) Microcalorimeter Detector | Provides superior spectral energy resolution (e.g., 7 eV FWHM) in HSI systems, enabling finer discrimination of chemical states, particularly valuable in nuclear forensics [27]. |

| Standard Reference Materials (e.g., White Teflon, Spectralon) | Used for calibration and conversion of raw data to reflectance, ensuring data consistency and accuracy across measurements. |

| RShiny 'Dimensionality Reduction App' | An open-source web application that allows researchers to perform PCA, PLS, and other analyses without deep programming knowledge, facilitating accessible chemometrics [28]. |

| Pre-trained Deep Learning Models (e.g., ResNet, VGG) | Used for automated extraction of complex spatial features from hyperspectral images, complementing traditional spectral analysis [30]. |

| Multiblock Analysis Software (e.g., MATLAB toolboxes) | Enables the fusion of disparate data blocks (spatial and spectral features) into a unified model for enhanced material characterization [26]. |

Hyperspectral imaging (HSI) has emerged as a powerful analytical technique that transcends traditional spectroscopy by simultaneously capturing spatial and spectral information from material surfaces [32]. In materials research and drug development, this capability is paramount for visualizing the spatial distribution of chemical components within a sample, a process known as chemical mapping [32]. A fundamental challenge, however, arises from the presence of mixed pixels. These occur when the spatial resolution of the sensor is coarser than the scale of spatial heterogeneity in the sample, causing a single pixel to contain a mixture of disparate substances [33]. Spectral unmixing is the computational process designed to resolve these mixed pixels, decomposing them into their constituent pure materials, known as endmembers, and their corresponding abundances, which represent the fractional proportion of each endmember within the pixel [33] [34].

The drive for accurate unmixing is particularly strong in chemical mapping for materials research. Traditional methods for generating chemical maps, such as Partial Least Squares (PLS) regression, often rely on pixel-wise predictions that ignore spatial context. This can result in noisy maps that lack spatial coherence and in which predictions fall outside the physically possible range (e.g., below 0% or above 100% concentration) [32]. Furthermore, in many research scenarios, acquiring pixel-level reference values for training models is infeasible; reference data are often only available as averaged measurements for an entire sample [32]. This review focuses on demystifying two foundational algorithms for endmember extraction, Pixel Purity Index (PPI) and Sequential Maximum Angle Convex Cone (SMACC), and provides detailed protocols for their application within a research context focused on chemical mapping.

Theoretical Foundations of Spectral Unmixing

The Linear Mixture Model

The most widely used model for spectral unmixing is the Linear Mixture Model (LMM). It operates on the assumption that the spectral signature of a mixed pixel is a linear combination of the endmember spectra, weighted by their fractional abundances [33]. Mathematically, this is represented as:

y = Ea + ε

Where:

- y is the measured spectral vector of a mixed pixel (ℓ × 1, where ℓ is the number of spectral bands).

- E is the endmember matrix (ℓ × m, where m is the number of endmembers), with each column containing the spectrum of a pure material.

- a is the abundance vector (m × 1) containing the fractional coverage of each endmember in the pixel.

- ε is the residual error term (ℓ × 1).

The LMM is subject to two physical constraints:

- Abundance Non-negativity Constraint (ANC): All abundance values must be non-negative (aᵢ ≥ 0).

- Abundance Sum-to-one Constraint (ASC): The abundances for a pixel must sum to one (∑aᵢ = 1) [35].
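
The model and its two constraints can be illustrated with a small numerical sketch. The example below estimates the abundance vector a for one synthetic pixel by projected gradient descent, projecting onto the set defined by the ANC and ASC after every step. The endmember matrix is random placeholder data and the function names are assumptions; this is one of several possible solvers, not a reference implementation.

```python
import numpy as np


def project_to_simplex(v):
    """Euclidean projection of v onto {a : a_i >= 0, sum(a) = 1}."""
    u = np.sort(v)[::-1]
    css = np.cumsum(u) - 1.0
    k = np.arange(1, len(v) + 1)
    rho = np.nonzero(u - css / k > 0)[0][-1]
    theta = css[rho] / (rho + 1)
    return np.maximum(v - theta, 0.0)


def unmix_constrained(y, E, n_iter=2000):
    """Minimise ||y - E a||^2 subject to ANC and ASC by projected gradient."""
    m = E.shape[1]
    a = np.full(m, 1.0 / m)                       # start from equal abundances
    step = 1.0 / np.linalg.norm(E, 2) ** 2        # 1 / largest eigenvalue of E^T E
    for _ in range(n_iter):
        grad = E.T @ (E @ a - y)
        a = project_to_simplex(a - step * grad)
    return a


rng = np.random.default_rng(5)
E = rng.random((50, 3))                           # 50 bands, 3 endmembers
a_true = np.array([0.6, 0.3, 0.1])
y = E @ a_true + rng.normal(0.0, 0.001, size=50)  # y = Ea + noise
a_hat = unmix_constrained(y, E)
```

Applying `unmix_constrained` to every pixel of a cube yields one abundance map per endmember, which is the chemical map in the linear-mixing sense.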

Endmember Extraction and the Role of Spatial Information

The process of identifying the pure spectral signatures (the matrix E) is called endmember extraction. PPI and SMACC are two algorithms designed for this critical first step. For years, spectral unmixing methods treated each pixel as independent of its neighbors, using only spectral information [33]. However, a growing body of research has found that incorporating spatial information significantly improves unmixing results. Spatial-spectral unmixing leverages the inherent spatial arrangement of pixels, acknowledging that materials often form contiguous regions rather than being randomly distributed [33] [35]. While PPI and SMACC are primarily spectral-based methods, modern deep learning approaches, such as U-Net and fully convolutional networks, now explicitly model joint spatial-spectral information to generate more accurate and spatially coherent chemical maps [32] [35].

The Pixel Purity Index (PPI) Algorithm

Principle and Workflow

The Pixel Purity Index (PPI) is a geometrically-based algorithm that identifies the purest pixels in a hyperspectral dataset by projecting data onto a series of random unit vectors. Its fundamental principle relies on the concept of the convex geometry of linear mixtures, where endmembers reside at the vertices of a simplex enclosing the data cloud. PPI operates under the assumption that the purest pixels will be projected onto the extreme ends of these random vectors more frequently than mixed pixels.

Table 1: Key Characteristics of the PPI Algorithm

| Aspect | Description |

|---|---|

| Underlying Principle | Convex Geometry & Random Projections |

| Primary Output | A "purity score" for each pixel, indicating how often it was an extreme projection |

| Key Parameters | Number of random vectors (skewers), PPI threshold value |

| Advantages | Conceptually intuitive; effective at finding spectral extremes |

| Limitations | Computationally intensive; results can be sensitive to the number of skewers; requires manual selection of endmembers from candidate list |

Figure 1: The PPI algorithm workflow for endmember candidate identification.

Experimental Protocol for PPI

Protocol: Implementing Pixel Purity Index for Endmember Extraction

1. Preprocessing of Hyperspectral Data:

- Radiometric Correction: Convert raw digital numbers to apparent reflectance or radiance using calibration coefficients.

- Noise Reduction: Apply a noise-reduction filter (e.g., a spatial or spectral median filter) to improve signal-to-noise ratio.

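- Dimensionality Reduction: Transform the data using Minimum Noise Fraction (MNF) or Principal Component Analysis (PCA). The goal is to reduce computational load and isolate the signal-dominated components. For MNF, retain only the components with eigenvalues significantly greater than 1, since eigenvalues near 1 indicate noise-dominated components; for PCA, retain the leading components that capture most of the variance.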

2. Algorithm Execution:

- Skewer Generation: Generate a large number (e.g., 10,000) of random unit vectors, known as "skewers," in the dimensionality-reduced data space.

- Projection and Extreme Finding: For each skewer, project all pixels onto it and record the pixels corresponding to the maximum and minimum projections (the "extremes").

- Purity Scoring: For each pixel, count the number of times it was recorded as an extreme. This count is its Pixel Purity Index score.

3. Post-processing and Endmember Selection:

- Thresholding: Apply a threshold to the PPI scores to create a list of candidate endmember pixels. This threshold can be absolute (e.g., pixels with scores > N) or relative (e.g., the top P% of scores).

- Visual Inspection and Clustering: Visually inspect the spectral profiles of the candidate pixels. Group spectrally similar candidates (e.g., with k-means clustering or a spectral-angle similarity measure) and select the final endmembers from the cluster centers.

Validation: The final endmember set can be validated by examining the model's reconstruction error or by comparing the abundance maps they generate with known spatial features in the sample.
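
The core of the algorithm execution and thresholding steps can be sketched as follows. The example skips the MNF or PCA reduction for brevity and runs on noise-free synthetic mixtures in which pixels 0 to 2 are deliberately pure; the skewer count, the threshold, and the function name `pixel_purity_index` are illustrative assumptions.

```python
import numpy as np


def pixel_purity_index(X, n_skewers=1000, seed=0):
    """Count how often each pixel is an extreme projection.

    X has shape (n_pixels, n_features).
    """
    rng = np.random.default_rng(seed)
    skewers = rng.normal(size=(X.shape[1], n_skewers))
    skewers /= np.linalg.norm(skewers, axis=0)     # random unit vectors
    proj = (X - X.mean(axis=0)) @ skewers          # (n_pixels, n_skewers)
    scores = np.zeros(X.shape[0], dtype=int)
    np.add.at(scores, proj.argmax(axis=0), 1)      # one hit per maximum
    np.add.at(scores, proj.argmin(axis=0), 1)      # one hit per minimum
    return scores


rng = np.random.default_rng(6)
E = rng.random((3, 30))                            # three placeholder endmembers
abund = rng.dirichlet(np.ones(3), size=500)
abund[:3] = np.eye(3)                              # pixels 0, 1, 2 are pure
X = abund @ E                                      # linear mixtures, no noise

n_skewers = 1000
scores = pixel_purity_index(X, n_skewers=n_skewers)
candidates = np.flatnonzero(scores > 0.01 * n_skewers)   # absolute threshold
```

In this idealized case only the pure pixels collect hits. With real, noisy data the scores are spread over many near-pure pixels, which is why the protocol follows up with inspection and clustering of the candidates.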

The SMACC Algorithm

Principle and Workflow

Sequential Maximum Angle Convex Cone (SMACC) is an automated endmember extraction algorithm that progressively builds a set of endmembers, one at a time. Unlike PPI, which is a stochastic method, SMACC follows a deterministic, sequential procedure. It uses a projection-based approach to find the pixel spectrum that is most distinct from the current set of endmembers and adds it to the library. It then projects the data orthogonally to this new endmember, and the process repeats until a stopping criterion is met.

Table 2: Key Characteristics of the SMACC Algorithm

| Aspect | Description |

|---|---|

| Underlying Principle | Projection & Orthogonal Subspace |

| Primary Output | A full endmember library and corresponding abundance maps |

| Key Parameters | Number of endmembers, threshold for stopping criteria |

| Advantages | Fully automated; simultaneously produces endmembers and abundances; fast and efficient |

| Limitations | Can be sensitive to initial conditions; may extract implausible or noisy endmembers if not constrained |

Figure 2: The sequential, recursive workflow of the SMACC algorithm.

Experimental Protocol for SMACC

Protocol: Implementing SMACC for Automated Endmember and Abundance Extraction

1. Preprocessing of Hyperspectral Data:

- Perform radiometric correction and noise reduction as described in the PPI protocol.

- While SMACC is less sensitive to high dimensionality than PPI, applying MNF transformation can still stabilize the solution and speed up computation.

2. Algorithm Execution and Parameterization:

- Initialization: The algorithm typically starts by selecting the brightest pixel (e.g., the pixel with the largest vector norm) in the dataset as the first endmember.

- Abundance Estimation: After selecting an endmember, SMACC estimates the abundance of that endmember in every pixel using a non-negative least squares algorithm, enforcing the ANC.

- Residual Calculation: The contribution of the estimated endmember is subtracted from the original image, creating a residual data cube.

- Next Endmember Selection: The algorithm searches the residual cube for the pixel with the largest residual magnitude (i.e., the pixel worst explained by the current endmember set) and adds it as the next endmember.

- Iteration: The process of abundance estimation, residual calculation, and new endmember selection repeats iteratively.

3. Stopping Criteria and Output:

- The iteration stops when a predefined stopping criterion is met. Common criteria include:

- A user-specified maximum number of endmembers has been extracted.

- The magnitude of the largest residual falls below a set threshold.

- The reconstruction error for the entire scene is sufficiently low.

- Output: SMACC directly outputs the final set of endmember spectra and their corresponding fractional abundance maps for the entire scene.

Validation: As with PPI, inspect the plausibility of the extracted endmember spectra and the spatial coherence of the abundance maps. Cross-validate with known sample composition if possible.
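
The sequential logic of this protocol (brightest pixel first, then repeatedly the pixel with the largest residual) can be illustrated with the simplified sketch below. It is not the full SMACC algorithm: it replaces the constrained abundance estimation with ordinary unconstrained least squares, which makes it closer to an orthogonal-projection scheme of the ATGP type. The data are noise-free synthetic mixtures with pure pixels at indices 0 to 2, and the function name is a placeholder.

```python
import numpy as np


def sequential_endmembers(X, n_endmembers, tol=1e-8):
    """Greedy, residual-driven endmember search.

    X has shape (n_pixels, n_bands). Returns the selected pixel indices,
    the endmember matrix E (bands x k), and unconstrained abundances.
    """
    indices = [int(np.argmax(np.linalg.norm(X, axis=1)))]   # brightest pixel
    for _ in range(n_endmembers - 1):
        E = X[indices].T                                     # (bands, k)
        coeffs = np.linalg.lstsq(E, X.T, rcond=None)[0]      # (k, n_pixels)
        residual = X.T - E @ coeffs                          # unexplained part
        res_norm = np.linalg.norm(residual, axis=0)
        worst = int(np.argmax(res_norm))                     # worst-explained pixel
        if res_norm[worst] < tol:                            # stopping criterion
            break
        indices.append(worst)
    E = X[indices].T
    abundances = np.linalg.lstsq(E, X.T, rcond=None)[0].T    # no ANC or ASC here
    return indices, E, abundances


rng = np.random.default_rng(7)
E_true = rng.random((3, 30))                  # placeholder endmember spectra
abund = rng.dirichlet(np.ones(3), size=500)
abund[:3] = np.eye(3)                         # pixels 0, 1, 2 are pure
X = abund @ E_true

indices, E_found, abundances = sequential_endmembers(X, n_endmembers=3)
```

Enforcing the ANC, for example with a non-negative least squares solver, would replace the two `lstsq` calls and brings the sketch closer to the protocol as written.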

Comparative Analysis and Application Guidance

Algorithm Comparison and Selection

Choosing between PPI and SMACC depends on the specific research goals, computational resources, and level of desired user intervention.

Table 3: Comparative Analysis of PPI and SMACC Algorithms

| Feature | Pixel Purity Index (PPI) | SMACC |

|---|---|---|

| Automation Level | Low (requires manual candidate selection) | High (fully automated from start to finish) |

| Computational Speed | Slower (depends on number of skewers) | Faster (deterministic and sequential) |

| Primary Output | List of candidate endmember pixels | Final endmember library & abundance maps |

| User Control | High control over final endmember selection | Lower control; driven by internal parameters |

| Best Use Case | Exploratory analysis where expert knowledge is key | High-throughput analysis of many samples |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Computational Tools for Spectral Unmixing

| Item / Tool Name | Function / Application in Protocol |

|---|---|

| Hyperspectral Image Analysis Software (e.g., ENVI, HypraPy, Python with scikit-learn/specutils) | Provides the computational environment for processing HSI data cubes. ENVI includes PPI and SMACC implementations; general-purpose Python libraries may require custom code for these algorithms. |

| Spectral Library | A collection of known pure material spectra. Used for validation or as a reference for supervised unmixing methods. |

| Calibration Panels (e.g., White Reference, Dark Current) | Essential for the radiometric correction step to convert raw sensor data to physically meaningful reflectance values. |

| Minimum Noise Fraction (MNF) Transform | A critical pre-processing step for PPI to reduce data dimensionality and noise before running the endmember extraction. |

| Non-Negative Least Squares (NNLS) Solver | The computational core for abundance estimation in SMACC and other unmixing methods, enforcing the ANC. |

Advanced Perspectives: From Traditional Algorithms to Deep Learning

While PPI and SMACC are foundational tools, the field of spectral unmixing is rapidly evolving. The limitations of these traditional methods—particularly their neglect of spatial context and the manual intervention they often require—are being addressed by new paradigms.

The integration of spatial and spectral information is now a major trend. As highlighted in the review by [33], spatial-spectral unmixing methods can significantly improve the performance of endmember extraction, selection, and abundance estimation. Modern deep learning approaches are at the forefront of this integration. For instance:

- U-Net Architectures: Modified U-Net models can be trained to directly generate chemical maps from hyperspectral images. These models jointly use spatial and spectral information, producing results with superior spatial coherence and physical plausibility (e.g., predictions stay within the 0-100% range) compared to traditional pixel-wise methods like PLS regression [32].

- Fully Convolutional Networks (FCN) with Attention: Patch-wise frameworks based on FCNs avoid the computational redundancy and potential information leakage of older pixel-wise methods. The incorporation of spatial-spectral attention modules further enhances performance by activating the most informative spatial areas and spectral features [35].

These advanced methods represent the future of creating accurate, detailed chemical maps for materials research and drug development, moving beyond the capabilities of traditional algorithms like PPI and SMACC to provide a more robust and automated analysis workflow.

The transition from traditional spectral analysis to the generation of precise, spatially-coherent chemical maps represents a significant advancement in materials research. Hyperspectral imaging (HSI) captures detailed spectral information for each pixel in an image, creating a data-rich "hyperspectral cube" that contains both spatial and extensive spectral information [36] [23]. Unlike conventional RGB imaging with three color channels, hyperspectral imaging can encompass dozens to hundreds of narrow spectral bands, ranging from ultraviolet to short-wave infrared [23]. This detailed spectral data enables the identification of materials based on their unique spectral signatures or "fingerprints" [37].

However, transforming these complex datasets into accurate chemical maps has traditionally relied on methods like Partial Least Squares (PLS) regression, which generate pixel-wise predictions that often ignore spatial context and suffer from significant noise [38]. The advent of U-Net-based deep learning architectures has revolutionized this process by incorporating spatial relationships during analysis, thereby producing chemical maps with dramatically improved spatial correlation and biological or chemical relevance [38]. These advancements are particularly valuable in pharmaceutical development and materials science, where precise spatial distribution of components is critical for understanding product performance and stability.

Performance Comparison: Traditional vs. U-Net Approaches

Recent research demonstrates the superior performance of U-Net architectures compared to traditional methods for chemical map generation. The table below summarizes quantitative comparisons between these approaches:

Table 1: Performance comparison between traditional PLS and U-Net approaches for chemical mapping

| Metric | PLS Regression | U-Net Architecture | Improvement |
|---|---|---|---|
| Root Mean Squared Error | Baseline | 7% lower [38] | Significant |
| Spatially Correlated Variance | 2.37% [38] | 99.91% [38] | Dramatic |
| Prediction Range Adherence | Predictions beyond 0-100% range [38] | Stays within physically possible range [38] | Critical |
| Classification Accuracy | Not applicable | 92% (e-waste) [39] | High |
| Intersection over Union (IoU) | Not applicable | 0.39 (e-waste) [39] | Moderate |

The exceptional spatial correlation achieved by U-Net models (99.91% compared to 2.37% for PLS) indicates that the model successfully incorporates spatial context into its predictions, rather than treating each pixel as an independent measurement [38]. This capability is crucial for generating chemically plausible maps that accurately represent the continuous distribution of components in real-world materials.
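Two of the tabulated metrics are simple to compute for any predicted chemical map. The sketch below shows a conventional RMSE and a check for predictions outside the 0-100% range. The function names and the tiny example arrays are illustrative assumptions. The spatially correlated variance metric is not defined in this text, so it is not reproduced here.

```python
import numpy as np

def rmse(pred, ref):
    """Root mean squared error between predicted and reference values."""
    pred, ref = np.asarray(pred, dtype=float), np.asarray(ref, dtype=float)
    return float(np.sqrt(np.mean((pred - ref) ** 2)))

def fraction_out_of_range(pred_map, low=0.0, high=100.0):
    """Share of pixels whose prediction is physically impossible."""
    pred_map = np.asarray(pred_map, dtype=float)
    return float(np.mean((pred_map < low) | (pred_map > high)))

# Hypothetical 2x2 maps of percent content (reference vs. prediction).
ref_map = np.array([[10.0, 20.0], [30.0, 0.0]])
pred_map = np.array([[12.0, 18.0], [30.0, -4.0]])   # one pixel below 0%

error = rmse(pred_map, ref_map)
bad_fraction = fraction_out_of_range(pred_map)
```

A pixel-wise linear model has no built-in bound on its output, which is why a range check of this kind is a useful sanity test.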

Advanced U-Net Architectures for Chemical Mapping

Modified U-Net for Chemical Map Generation

A study focused on generating chemical maps of fat distribution in pork belly utilized a modified U-Net that maintained the core encoder-decoder structure with skip connections but incorporated a custom loss function optimized for chemical prediction tasks [38]. This approach skipped all intermediate steps required for traditional pixel-wise analysis, enabling an end-to-end workflow from hyperspectral image to chemical map. The model learned to produce predictions that respected physical constraints (0-100% fat content) without explicit programming, demonstrating its ability to incorporate domain knowledge directly from the data [38].

Hybrid Multi-Dimensional Attention U-Net

For hyperspectral image reconstruction, a critical prerequisite for chemical mapping, researchers have developed a Hybrid Multi-Dimensional Attention U-Net (HMDAU-Net) that integrates 3D and 2D convolutions [40]. This architecture addresses the unique challenge of processing spatial-spectral data cubes (x, y, λ) by:

- Employing 3D convolutions in initial layers to capture spectral correlations between adjacent wavelength bands [40]

- Transitioning to 2D convolutions in deeper layers to reduce computational cost while maintaining spatial feature extraction [40]

- Incorporating attention gates to highlight salient features and suppress noise carried through skip connections [40]

This hybrid approach balances the need for spectral fidelity with computational efficiency, making it practical for large-scale hyperspectral datasets [40].

U-Net for Electronic Waste Classification

In the domain of sustainable materials management, a modified U-Net has been applied to hyperspectral e-waste classification using only three spectral bands [39]. This architecture incorporated several enhancements:

- Group normalization to stabilize training with small batch sizes

- PReLU activation functions to introduce non-linearity while avoiding vanishing gradients

- Band-wise spectral attention in skip connections to enhance spectral-spatial feature fusion [39]

The system achieved 92% classification accuracy and a 0.39 Intersection over Union (IoU) score on the Tecnalia WEEE dataset, outperforming standard U-Net (90.15% accuracy, 0.357 IoU) and demonstrating a 23% improvement over traditional RGB-based approaches [39]. This is particularly valuable for identifying visually similar non-ferrous metals in recycling applications.
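For readers unfamiliar with the two reported metrics, the sketch below computes pixel accuracy and a class-averaged IoU from integer label maps. The source does not state whether the cited IoU is averaged over classes, so `mean_iou` is one common convention rather than the study's exact definition. The label arrays are made up.

```python
import numpy as np

def pixel_accuracy(pred, target):
    """Fraction of pixels whose predicted class matches the label."""
    return float(np.mean(pred == target))

def mean_iou(pred, target, n_classes):
    """Average intersection-over-union across classes present in either map."""
    ious = []
    for c in range(n_classes):
        p, t = (pred == c), (target == c)
        union = np.logical_or(p, t).sum()
        if union == 0:            # class absent from both maps: skip it
            continue
        ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))

# Hypothetical 2x3 label maps with three material classes.
target = np.array([[0, 0, 1],
                   [1, 2, 2]])
pred = np.array([[0, 1, 1],
                 [1, 2, 0]])

acc = pixel_accuracy(pred, target)
miou = mean_iou(pred, target, n_classes=3)
```

In this toy case four of six pixels are correct, yet the mean IoU is only 0.5. This shows how IoU penalizes boundary and class-confusion errors more heavily than accuracy does.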

Experimental Protocol: U-Net for Chemical Mapping

Sample Preparation and Data Acquisition

Table 2: Research reagents and materials for hyperspectral chemical mapping

| Item | Function | Example Specifications |
|---|---|---|
| Hyperspectral Camera | Capture spatial-spectral data cube | 400-1000 nm range, 25+ spectral bands [37] |
| Reference Standards | Model calibration and validation | Certified chemical standards with known concentrations |
| Sample Mounting | Precise positioning | Motorized stages with temperature control (optional) |
| Data Storage System | Handle large hyperspectral datasets | High-speed solid-state drives, >1TB capacity |
| Computing Hardware | Model training and inference | GPU with >8GB VRAM, CUDA compatibility |

The protocol for implementing U-Net-based chemical mapping begins with hyperspectral data acquisition. For the pork belly fat mapping study, samples were systematically imaged using a hyperspectral camera covering relevant wavelength ranges (typically 400-1000 nm for organic compounds) [38]. Each hyperspectral image captured the full spatial-spectral data cube in a single snapshot, with careful attention to consistent illumination and distance to prevent artifacts [38].

Data Preprocessing and Annotation

The acquired hyperspectral data undergoes several preprocessing steps:

- Radiometric calibration using white and dark references to convert raw intensity values to reflectance

- Spatial registration to correct for any optical distortions across wavelengths

- Noise reduction through spectral smoothing or spatial filtering algorithms
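The calibration and smoothing steps above can be sketched as follows. The reflectance formula (raw minus dark, divided by white minus dark) is the standard flat-field correction. The moving-average filter is a simple stand-in for whatever smoothing a given study uses. The function names, array shapes, and count values are assumptions.

```python
import numpy as np

def to_reflectance(raw, white, dark, eps=1e-8):
    """Flat-field correction: (raw - dark) / (white - dark), per band.

    raw:   (rows, cols, bands) raw sensor counts.
    white: (bands,) mean signal from the white reference panel.
    dark:  (bands,) mean dark-current signal (shutter closed).
    """
    return (raw - dark) / np.maximum(white - dark, eps)

def smooth_spectra(cube, window=5):
    """Moving-average smoothing along the spectral axis (odd window)."""
    kernel = np.ones(window) / window
    pad = window // 2
    # Edge-pad the spectral axis so the output keeps the same band count.
    padded = np.pad(cube, ((0, 0), (0, 0), (pad, pad)), mode="edge")
    return np.apply_along_axis(
        lambda s: np.convolve(s, kernel, mode="valid"), 2, padded
    )

# Hypothetical 4x4 image with 6 bands and uniform raw counts.
raw = np.full((4, 4, 6), 600.0)
dark = np.full(6, 100.0)
white = np.full(6, 1100.0)

reflectance = to_reflectance(raw, white, dark)   # 0.5 everywhere
smoothed = smooth_spectra(reflectance, window=5)
```

Spatial registration is omitted here because it depends on the optics of the specific instrument.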

For supervised learning approaches, reference values for chemical composition must be obtained through reference analytical methods (e.g., chemical extraction and quantification) for a subset of samples or regions [38]. These reference measurements serve as ground truth for model training.

Model Training and Implementation

The U-Net model is trained using the following protocol:

- Data partitioning: Split the hyperspectral dataset at random, by physical sample, into training (70%), validation (15%), and test (15%) sets, so that all images of a given sample fall in exactly one set

- Loss function selection: Use mean squared error for continuous chemical values or cross-entropy for categorical classifications

- Hyperparameter tuning: Optimize learning rate, batch size, and network depth using validation set performance

- Regularization: Apply dropout, weight decay, or early stopping to prevent overfitting

- Evaluation: Assess model performance on held-out test set using metrics appropriate for the specific application
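The data-partitioning step is the easiest place to introduce leakage, so a minimal sketch of a sample-level split may help. It assumes every image carries an identifier for the physical sample it came from. The helper `split_by_sample` and the 20-sample example are hypothetical.

```python
import numpy as np

def split_by_sample(sample_ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole physical samples to train/val/test, never single images.

    sample_ids: one identifier per image (images of a sample share an id).
    Returns a dict mapping split name to image indices.
    """
    sample_ids = np.asarray(sample_ids)
    rng = np.random.default_rng(seed)
    shuffled = rng.permutation(np.unique(sample_ids))
    n_train = int(round(fractions[0] * len(shuffled)))
    n_val = int(round(fractions[1] * len(shuffled)))
    groups = {
        "train": shuffled[:n_train],
        "val": shuffled[n_train:n_train + n_val],
        "test": shuffled[n_train + n_val:],   # remainder goes to test
    }
    return {name: np.flatnonzero(np.isin(sample_ids, ids))
            for name, ids in groups.items()}

# Hypothetical dataset: 20 physical samples, 3 images of each.
sample_ids = np.repeat(np.arange(20), 3)
splits = split_by_sample(sample_ids)

train_samples = set(sample_ids[splits["train"]].tolist())
test_samples = set(sample_ids[splits["test"]].tolist())
```

Splitting on sample identifiers rather than on images or patches keeps near-duplicate views of the same material out of the test set.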

For the chemical mapping U-Net, training typically requires 50-100 epochs with a batch size of 8-16, depending on available GPU memory [38] [39].

Implementation Workflow

The following workflow diagram illustrates the complete process for U-Net-based chemical mapping from hyperspectral images:

Figure 1: Workflow for U-Net-Based Chemical Mapping

Technical Considerations and Future Directions

Data Compression and Computational Efficiency

Recent advances in hyperspectral snapshot compressive imaging (SCI) have addressed the challenges of handling massive hyperspectral datasets [40]. These systems compressively capture 3D spatial-spectral data-cubes in single-shot 2D measurements, significantly reducing storage and bandwidth requirements [40]. The reconstruction of full hyperspectral cubes from these compressed measurements represents an ill-posed problem that U-Net architectures are particularly well-suited to solve.
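To show why the reconstruction is ill-posed, the sketch below simulates a simplified forward model in which many bands collapse into one 2D measurement. It assumes a coded-aperture design with a single binary mask and a one-pixel dispersive shift per band. The source does not specify the optics, so the function `sci_forward`, the mask, and the shift step are all illustrative assumptions.

```python
import numpy as np

def sci_forward(cube, mask, step=1):
    """Simulate a coded, dispersed snapshot measurement.

    cube: (rows, cols, bands) hyperspectral scene.
    mask: (rows, cols) binary coded aperture applied to every band.
    step: horizontal shift in pixels between adjacent bands (dispersion).
    Returns a 2-D measurement of shape (rows, cols + step * (bands - 1)).
    """
    rows, cols, bands = cube.shape
    measurement = np.zeros((rows, cols + step * (bands - 1)))
    for k in range(bands):
        coded = cube[:, :, k] * mask                  # modulate band k
        start = k * step                              # shift band k
        measurement[:, start:start + cols] += coded   # sum on the detector
    return measurement

# Hypothetical 8x8 scene with 4 bands and a random binary mask.
rng = np.random.default_rng(0)
cube = rng.random((8, 8, 4))
mask = (rng.random((8, 8)) > 0.5).astype(float)

y = sci_forward(cube, mask)
```

Here 256 unknown cube values are summarized by 88 measured values, so recovering the cube requires prior knowledge, which is the role a learned reconstruction network plays.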

The computational demands of processing hyperspectral data cubes remain significant, especially for 3D convolutional operations. Future developments will likely focus on optimized architectures that balance spectral accuracy with inference speed, potentially through:

- Adaptive spectral sampling that focuses on diagnostically relevant wavelengths

- Knowledge distillation from larger to smaller models

- Hardware-software co-design for specialized processing platforms

Interpretation and Validation

As with many deep learning applications, model interpretability remains challenging. Techniques such as attention visualization and gradient-weighted class activation mapping (Grad-CAM) can help identify which spectral and spatial features most influence predictions. Additionally, uncertainty quantification through methods like Monte Carlo dropout provides valuable confidence estimates for chemical predictions [41].
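The idea behind Monte Carlo dropout can be sketched without a deep learning framework: keep dropout active at inference, repeat the forward pass, and report the spread of the predictions. The two-layer network, its random weights, and the function `mc_dropout_predict` below are toy assumptions, not the models of the cited studies.

```python
import numpy as np

def mc_dropout_predict(x, w1, w2, p_drop=0.2, n_passes=100, seed=0):
    """Repeat stochastic forward passes; return mean and std per input.

    x:  (n_pixels, n_bands) spectra.
    w1: (n_bands, n_hidden) and w2: (n_hidden, 1) fixed toy weights.
    """
    rng = np.random.default_rng(seed)
    preds = []
    for _ in range(n_passes):
        hidden = np.maximum(x @ w1, 0.0)              # ReLU layer
        keep = rng.random(hidden.shape) >= p_drop     # fresh dropout mask
        hidden = hidden * keep / (1.0 - p_drop)       # inverted dropout
        preds.append(hidden @ w2)
    preds = np.stack(preds)                           # (n_passes, n_pixels, 1)
    return preds.mean(axis=0), preds.std(axis=0)

# Hypothetical data: 5 pixel spectra with 30 bands, random untrained weights.
rng = np.random.default_rng(1)
spectra = rng.random((5, 30))
w1 = rng.normal(size=(30, 16))
w2 = rng.normal(size=(16, 1))

mean_pred, std_pred = mc_dropout_predict(spectra, w1, w2)
_, std_no_dropout = mc_dropout_predict(spectra, w1, w2, p_drop=0.0)
```

The per-pixel standard deviation serves as the confidence estimate. With dropout disabled it collapses to zero, which confirms that the spread comes only from the stochastic masks.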

Robust validation against multiple analytical techniques is essential, particularly when deploying these models in regulated environments like pharmaceutical development. Correlating U-Net-generated chemical maps with established methods such as chromatography or mass spectrometry imaging builds confidence in the approach.

U-Net architectures have demonstrated remarkable capabilities in transforming hyperspectral images into spatially-correlated chemical maps, significantly outperforming traditional methods like PLS regression. Through specialized modifications—including hybrid 2D/3D convolutions, attention mechanisms, and custom loss functions—these models effectively leverage both spatial context and spectral information to generate chemically plausible distribution maps. The implementation protocols outlined provide a foundation for researchers seeking to apply these powerful techniques to diverse materials characterization challenges, from pharmaceutical development to environmental sustainability. As hyperspectral imaging technology continues to advance toward higher speeds, better resolution, and reduced costs, U-Net-based chemical mapping will play an increasingly vital role in materials research and quality control applications.

Hyperspectral imaging (HSI) is a powerful analytical technique that combines imaging and spectroscopy to generate a three-dimensional dataset known as a hypercube, containing two spatial dimensions and one spectral dimension [42]. This enables the direct correlation of spatial information with spectral fingerprints for each pixel in a sample, providing both morphological and biochemical information non-destructively [43]. This application note details specific, actionable protocols for two critical use cases in materials research: pharmaceutical heterogeneity analysis and medical tissue diagnostics, framed within a broader thesis on hyperspectral chemical mapping.

Use Case 1: Pharmaceutical Heterogeneity Analysis

Background and Principle