How to Perform a Method Comparison Study: A Step-by-Step Guide for Researchers

Abstract

This article provides a comprehensive, step-by-step framework for designing, executing, and interpreting a robust method comparison study, tailored for researchers, scientists, and drug development professionals. It covers the entire research lifecycle—from foundational concepts and methodological selection to troubleshooting common pitfalls and validating findings. By integrating established guidelines with advanced strategies for handling real-world challenges like method failure, this guide empowers professionals to generate reliable, actionable evidence for critical decision-making in biomedical and clinical research.

Laying the Groundwork: Core Principles and Study Design

Defining the Research Question and Objectives for Your Comparison

A method-comparison study is fundamentally conducted to determine whether a new measurement method (test method) can be used interchangeably with an established one [1]. The core research question addresses a clinical or research need for substitution: can we measure a specific variable using either Method A or Method B and obtain equivalent results? [1] A well-defined research question and precise objectives are therefore the critical foundation for a valid and conclusive study. This section outlines how to establish that foundation for a method-comparison study.

Defining the Core Research Question

The overarching research question in a method-comparison study is one of agreement and substitution. The question must be specific, measurable, and structured to guide the entire experimental design.

Primary Research Question Format: "Is the measurement agreement between the new method [Test Method] and the established method [Comparative Method] for measuring [Analyte/Variable] within clinically acceptable limits for drug development purposes?"

This primary question should be broken down into more specific sub-questions, which directly inform the study's objectives:

- What is the bias (mean difference) between the two methods? [1]

- What are the limits of agreement for the differences between the two methods? [1] [2]

- Is the precision (repeatability) of the test method acceptable? [1]

- Does the observed bias have a constant or proportional component? [3]

Establishing SMART Objectives

The research objectives must be Specific, Measurable, Achievable, Relevant, and Time-bound (SMART). They translate the research question into an actionable plan.

Table 1: Example SMART Objectives for a Method-Comparison Study

| Objective Component | Description | Application Example |

|---|---|---|

| Specific | Clearly defines the methods, variable, and population. | To compare the measurement of blood glucose concentration between the new point-of-care glucometer (Test Method) and the central laboratory analyzer (Comparative Method) in venous whole blood from diabetic patients. |

| Measurable | Identifies the key metrics for comparison. | To quantify the bias and the 95% limits of agreement (using Bland-Altman analysis) between the two methods. |

| Achievable | Ensures the design is feasible with available resources. | To collect 100 paired measurements from 40 unique patient specimens over a 20-day period, covering the clinically relevant range (3.0-25.0 mmol/L). |

| Relevant | Links directly to the goal of method substitution. | To determine if the new glucometer's agreement is within pre-defined acceptable limits (±0.5 mmol/L bias) for clinical decision-making. |

| Time-bound | Sets a timeframe for completion. | To complete all data collection and primary statistical analysis within a 3-month period. |

Experimental Design and Protocol

A robust design is essential to ensure the results are valid and the objectives are met [1] [3].

Selection of Measurement Methods

- Fundamental Requirement: Both methods must measure the same underlying variable or analyte [1].

- Comparative Method: Ideally, an established "reference method" with documented correctness should be used. If a routine method is used, differences must be interpreted with caution, as inaccuracies could originate from either method [3].

- Test Method: The new, less-established method under investigation.

Sample and Measurement Protocol

The following protocol details the key steps for executing the comparison experiment.

Protocol 1: Sample Analysis and Data Collection Workflow

Sample Selection and Preparation:

- Number: A minimum of 40 different patient specimens is recommended, though 100-200 may be needed to assess specificity [3].

- Range: Specimens should be carefully selected to cover the entire working range of the method [3].

- Matrix: Specimens should represent the spectrum of matrices and disease states expected in routine use [3].

- Stability: Analyze specimens by both methods within a short time frame (e.g., 2 hours) to prevent degradation from causing differences [3].

Paired Measurement:

- Timing: Measurements should be taken simultaneously or in rapid succession, with randomized order if sequential, to ensure the same underlying value is being compared [1].

- Replication: Common practice is to analyze each specimen once by each method. Duplicate measurements are nevertheless advantageous, because they help identify errors and confirm discrepant results [3].

Data Collection and Initial Inspection:

- Record paired results (Test Method value vs. Comparative Method value) for each specimen.

- Graphical Inspection: Plot the data as a difference plot (test minus comparative vs. comparative value) or a scatter plot (test vs. comparative) during collection to immediately identify discrepant results for re-analysis [3].

Study Duration:

- The experiment should extend over multiple days (minimum of 5 days, ideally longer, e.g., 20 days) to incorporate routine analytical variation and minimize the impact of systematic errors from a single run [3].
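The scheduling points above (spreading specimens over several days and randomizing the order of sequential measurements) can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function name `build_run_schedule`, the specimen IDs, and the fixed seed are all assumptions made for the example.

```python
import random

def build_run_schedule(specimen_ids, n_days, seed=0):
    """Spread specimens across days and randomize which method is run first.

    Hypothetical helper for illustration; the seed is fixed only so the
    schedule can be reproduced and audited.
    """
    rng = random.Random(seed)
    ids = list(specimen_ids)
    rng.shuffle(ids)                      # random specimen order
    schedule = []
    for i, sid in enumerate(ids):
        day = i % n_days + 1              # round-robin assignment to days 1..n_days
        order = ["test", "comparative"]
        rng.shuffle(order)                # random measurement order within the pair
        schedule.append(
            {"specimen": sid, "day": day, "first": order[0], "second": order[1]}
        )
    return sorted(schedule, key=lambda row: row["day"])

# 40 hypothetical specimens measured over 5 days
schedule = build_run_schedule([f"S{n:03d}" for n in range(1, 41)], n_days=5)
for row in schedule[:3]:
    print(row)
```

In practice the day assignment would also need to respect specimen stability and availability, which this sketch ignores.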

Data Analysis and Interpretation

The analysis quantifies the agreement and checks the assumptions of the statistical methods.

Statistical Analysis Plan

Table 2: Key Statistical Analyses for Method Comparison

| Analysis Method | Purpose | Interpretation | Protocol |

|---|---|---|---|

| Bland-Altman Plot [1] [2] | To visualize agreement and estimate bias and limits of agreement. | The bias (mean difference) indicates how much higher/lower the new method is. The limits of agreement (bias ± 1.96 × SD) show the range where 95% of differences between methods are expected to lie. | 1. Calculate the difference (Test - Comparative) for each pair. 2. Calculate the average of each pair. 3. Plot differences (Y-axis) against averages (X-axis). 4. Plot the mean difference (bias) and limits of agreement. |

| Linear Regression [3] | To model the relationship between methods and identify constant/proportional error. | The y-intercept indicates constant systematic error. The slope indicates proportional systematic error. | For a wide concentration range, fit a least-squares regression line (Y = a + bX), where Y is the test method and X is the comparative method. |

| Correlation Analysis [3] | To assess the strength of the linear relationship, not agreement. | An r-value ≥ 0.99 suggests the data range is wide enough for reliable regression estimates. A high correlation does not imply good agreement. | Calculate Pearson's correlation coefficient (r). |

| Precision Estimation [1] | To verify that the test method's repeatability is acceptable before assessing agreement. | If a method has poor repeatability, assessment of agreement is meaningless. | Perform a separate replication study to determine the standard deviation and coefficient of variation of the test method. |
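The Bland-Altman steps in the table above can be sketched with the Python standard library. This is a minimal example under stated assumptions: the function name `bland_altman` and the glucose values are hypothetical, and the limits of agreement use the sample standard deviation of the differences.

```python
from statistics import mean, stdev

def bland_altman(test, comparative):
    """Return bias, SD of differences, 95% limits of agreement, and plot points."""
    if len(test) != len(comparative) or len(test) < 2:
        raise ValueError("need at least two paired measurements of equal length")
    diffs = [t - c for t, c in zip(test, comparative)]        # Test - Comparative
    avgs = [(t + c) / 2 for t, c in zip(test, comparative)]   # average of each pair
    bias = mean(diffs)
    sd = stdev(diffs)                                         # sample SD (n - 1)
    return {
        "bias": bias,
        "sd": sd,
        "loa_lower": bias - 1.96 * sd,
        "loa_upper": bias + 1.96 * sd,
        "points": list(zip(avgs, diffs)),                     # (x, y) pairs for the plot
    }

# Hypothetical paired glucose results in mmol/L
test_vals = [5.2, 7.9, 11.4, 3.8, 15.1, 9.0]
comp_vals = [5.0, 8.1, 11.0, 3.9, 14.6, 8.8]
result = bland_altman(test_vals, comp_vals)
print(f"bias = {result['bias']:.3f}, "
      f"LoA = [{result['loa_lower']:.3f}, {result['loa_upper']:.3f}]")
```

The `points` list can be passed to any plotting tool to draw the differences against the averages, with horizontal lines at the bias and at each limit of agreement.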

Defining Acceptable Limits

A critical step, often omitted, is to define acceptable limits of agreement a priori based on clinical or analytical requirements [2]. This involves:

- Identifying critical medical decision concentrations for the analyte.

- Determining the maximum allowable error (total allowable error) at these concentrations that would not impact clinical decisions.

- Comparing the estimated bias and limits of agreement from the study against these pre-defined acceptable limits.
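One way to carry out this comparison is to estimate the systematic error at each medical decision concentration from the regression line (Y = a + bX) and check it against the allowable error. The sketch below assumes ordinary least squares, hypothetical decision levels of 3.9 and 7.0 mmol/L, and an allowable error of 0.5 mmol/L borrowed from the glucose example; none of these values is a recommendation.

```python
from statistics import mean

def ols_fit(x, y):
    """Least-squares intercept and slope for y = a + b*x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return intercept, slope

def systematic_error_at(decision_level, intercept, slope):
    """Predicted test-method result minus the comparative value at that level."""
    return (intercept + slope * decision_level) - decision_level

# Hypothetical paired results in mmol/L (X = comparative, Y = test)
comparative = [3.0, 6.0, 10.0, 15.0, 25.0]
test = [3.2, 6.1, 10.3, 15.2, 25.6]
a, b = ols_fit(comparative, test)

allowable_error = 0.5                      # assumed, defined a priori
for level in (3.9, 7.0):                   # assumed decision concentrations
    se = systematic_error_at(level, a, b)
    verdict = "acceptable" if abs(se) <= allowable_error else "not acceptable"
    print(f"decision level {level}: systematic error {se:+.3f} ({verdict})")
```

The intercept `a` reflects constant error and the slope `b` proportional error, so the same fit also answers the constant-versus-proportional sub-question.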

The Scientist's Toolkit

Table 3: Essential Reagents and Materials for a Method-Comparison Study

| Item | Function & Specification |

|---|---|

| Patient Specimens | The core sample for analysis. Should be a sufficient number (N=40-200) and cover the full analytical measurement range [3]. |

| Test Method Reagents/Kits | All consumables, calibrators, and controls required to operate the new method under investigation. Must be from a single lot number. |

| Comparative Method Reagents/Kits | All consumables, calibrators, and controls required to operate the established comparative method. Must be from a single lot number. |

| Statistical Software | Software capable of generating Bland-Altman plots and performing linear regression and paired t-tests (e.g., MedCalc, R, Python, specialized packages) [1] [2]. |

| Data Collection Template | A standardized spreadsheet or electronic data capture system for recording paired results, sample IDs, and timestamps to prevent transcription errors. |

| Standard Operating Procedures (SOPs) | Detailed SOPs for both the test and comparative methods to ensure consistent operation and minimize performance bias. |

In method comparison research, the selection of an appropriate study design is foundational to generating valid and reliable evidence. Study designs provide the structured framework for planning, conducting, and analyzing studies that evaluate the agreement between a new measurement method and an established standard. Research designs are first divided into descriptive studies, which aim to accurately depict the characteristics of a method's performance without quantifying relationships, and analytical studies, which seek to quantify the relationship between the method and its outcomes, often by testing specific hypotheses [4]. Analytical studies are further classified by researcher involvement: in observational studies, the researcher passively measures exposures and outcomes as they occur naturally, whereas in experimental studies, the researcher actively manipulates the intervention or exposure [4] [5]. For researchers and drug development professionals, a precise understanding of descriptive, analytical, and case-control designs is critical for designing robust experiments that can accurately characterize method performance, identify potential biases, and ultimately support regulatory submissions or process improvements.

Core Study Design Types and Definitions

Descriptive Studies

Descriptive studies serve as the initial exploration of a measurement method's behavior. They use a variety of methods to observe existing natural or man-made phenomena without influencing them, thereby gathering, organizing, and analyzing data to depict and describe "what is" [5]. In the context of method comparison, this involves detailing the basic performance characteristics of a new analytical technique without formally quantifying its relationship to a reference standard. These studies are essential for generating hypotheses, identifying potential sources of variation, and providing an in-depth look at processes and patterns that can inform subsequent analytical investigations.

Key Characteristics:

- Objective: To describe the distribution of outcomes or variables without establishing causal or correlational relationships.

- Researcher Role: Passive observer; no intervention is assigned.

- Data Collection: Often at a single point in time (cross-sectional) or as a detailed report of a unique case.

- Outputs: Prevalence, incidence, case reports, case series, and qualitative descriptions [6] [4].

Analytical Observational Studies

Analytical observational studies attempt to quantify the relationship between two factors—specifically, the effect of an exposure (e.g., using a new measurement method) on an outcome (e.g., a measured result) [4]. In these studies, the researcher measures the exposure or treatments of the groups but does not assign them [4]. The direction of enquiry is a key differentiator. Cohort studies are forward-directional, following groups from exposure to outcome, while case-control studies are backward-directional, starting with the outcome and looking back for exposures [6]. These designs are particularly valuable in method comparison research when it is unethical or impractical to randomly assign participants to different measurement methods, such as when evaluating diagnostic methods for a rare disease.

Key Characteristics:

- Objective: To quantify associations between exposures (e.g., a new method) and outcomes (e.g., accuracy).

- Researcher Role: Passive measurer of pre-existing exposures and outcomes.

- Data Collection: Longitudinal for cohort studies; retrospective for case-control studies.

- Outputs: Measures of association, such as odds ratios and relative risks [6].

Case-Control Studies

A case-control study is a specific type of analytical observational study that involves identifying patients who have the outcome of interest (cases) and matching them with individuals who have similar characteristics but do not have the outcome (controls) [5]. The investigation then looks back in time to see if these two groups differed with regard to the exposure of interest [5]. In method comparison research, "cases" could be samples where a gold-standard method identifies an abnormality, while "controls" are samples where the result is normal. The study would then determine how frequently the new test method correctly classified these pre-defined groups.

Key Characteristics:

- Direction: Backward-direction (from outcome to exposure) and always retrospective [6].

- Efficiency: Ideal for studying rare outcomes or those with a long lag between exposure and outcome, as they require fewer subjects than cohort studies [6] [4].

- Challenges: Susceptible to recall and selection biases, and the selection of appropriate controls is critical [6] [4].

Table 1: Comparison of Key Study Design Characteristics

| Feature | Descriptive Studies | Analytical Observational Studies | Case-Control Studies |

|---|---|---|---|

| Primary Goal | Describe "what is"; generate hypotheses [5] | Quantify relationships between variables [4] | Identify risk factors or exposures for a specific outcome [5] |

| Typical Outputs | Prevalence, case reports, case series [5] | Relative risk, hazard ratios [6] | Odds ratios [6] |

| Temporality | Not established | Established in cohort studies [6] | Difficult to establish [6] |

| Best For | Detailing new methods or uncommon results | Studying the effect of predictive risk factors [4] | Studying rare diseases or outcomes [6] |

| Key Limitations | Cannot determine causality | Potential for confounding [6] | Susceptible to recall and selection bias [6] |

Experimental Protocols for Study Implementation

Protocol for a Descriptive Method Characterization Study

A descriptive study protocol for method comparison must meticulously document the standard operating procedures to ensure the data collected is reliable and reproducible. This protocol focuses on characterizing the basic performance of a new analytical method.

1. Objective: To comprehensively describe the precision, linearity, and range of a new high-performance liquid chromatography (HPLC) method for quantifying a novel drug compound in plasma.

2. Materials and Reagents:

- Reference Standard: High-purity drug compound for calibration.

- Quality Controls (QCs): Prepared in pooled plasma at low, medium, and high concentrations.

- Internal Standard: A structurally analogous compound for normalization.

- Sample Preparation Kit: Including solvents for protein precipitation and solid-phase extraction plates.

3. Experimental Procedure:

- Step 1 - Calibration Curve: Prepare and analyze a minimum of six non-zero calibration standards covering the expected range of concentrations (e.g., 1-1000 ng/mL). Each standard should be analyzed in duplicate.

- Step 2 - Precision and Accuracy: Analyze QC samples (n=5 per concentration) over three separate analytical runs. Calculate within-run and between-run precision (coefficient of variation, CV%) and accuracy (percentage of nominal concentration).

- Step 3 - Specificity: Analyze samples from at least six individual sources of blank plasma to confirm the absence of interfering peaks at the retention times of the analyte and internal standard.

- Step 4 - Data Recording: Record all peak areas, retention times, and calculated concentrations. The data should be summarized using descriptive statistics (mean, standard deviation, CV%).

4. Analysis Plan: The study is considered successful if the calibration curve has a coefficient of determination (R²) of ≥0.99, precision (CV%) does not exceed 15% (20% at the lower limit of quantification), and accuracy is within ±15% of the nominal concentration (±20% at the lower limit of quantification).
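The precision and accuracy calculations in this protocol can be sketched as follows. The QC values are hypothetical, the helper names are illustrative, and between-run precision is approximated here as the CV of the run means, which is a simplification of the variance-component (ANOVA) approach often used in validation reports.

```python
from statistics import mean, stdev

def cv_percent(values):
    """Coefficient of variation as a percentage."""
    return 100.0 * stdev(values) / mean(values)

def accuracy_percent(values, nominal):
    """Mean measured concentration as a percentage of nominal."""
    return 100.0 * mean(values) / nominal

def evaluate_qc(runs, nominal, limit=15.0):
    """runs: one list of replicate results per analytical run."""
    within_run_cv = [cv_percent(r) for r in runs]
    between_run_cv = cv_percent([mean(r) for r in runs])  # simplified estimate
    pooled = [v for r in runs for v in r]
    accuracy = accuracy_percent(pooled, nominal)
    passed = (
        max(within_run_cv) <= limit
        and between_run_cv <= limit
        and abs(accuracy - 100.0) <= limit
    )
    return {
        "within_run_cv": within_run_cv,
        "between_run_cv": between_run_cv,
        "accuracy": accuracy,
        "passed": passed,
    }

# Hypothetical mid-level QC (nominal 100 ng/mL), n=5 per run, three runs
qc_runs = [
    [98.2, 101.5, 99.7, 102.3, 97.9],
    [103.1, 100.4, 104.8, 99.2, 101.7],
    [96.5, 99.1, 98.4, 100.9, 97.3],
]
qc_result = evaluate_qc(qc_runs, nominal=100.0)
print(qc_result)
```

For a QC at the lower limit of quantification, the same function could be called with `limit=20.0`.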

Protocol for an Analytical Observational (Cohort) Method Comparison Study

This protocol outlines a prospective cohort study to compare the diagnostic accuracy of a new point-of-care (POC) device against a central laboratory standard.

1. Objective: To determine the agreement and diagnostic performance of the "POC-Glu" meter compared to the standard laboratory glucose oxidase method in a cohort of diabetic patients.

2. Study Population & Recruitment:

- Population: Adult patients with Type 2 diabetes scheduled for routine follow-up at a clinic.

- Inclusion Criteria: Diagnosis of Type 2 diabetes, age 18-75 years.

- Exclusion Criteria: Critical illness, severe anemia, or on medications known to interfere with glucose meters.

- Sample Size: A minimum of 100 participants will be enrolled to provide adequate power for agreement statistics.

3. Data Collection Workflow:

- Enrollment (Baseline): Obtain informed consent and record demographic and clinical data.

- Exposure & Outcome Measurement: For each participant, collect a capillary blood sample for immediate analysis on the POC-Glu meter (exposure). Simultaneously, collect a venous blood sample, which will be processed and analyzed using the central laboratory method (outcome). The personnel performing the laboratory analysis will be blinded to the POC device results.

- Follow-up: The comparison is immediate; no longitudinal follow-up is required for this specific objective.

4. Statistical Analysis Plan:

- Primary Analysis: Bland-Altman analysis to assess the mean bias and limits of agreement between the two methods.

- Secondary Analysis: Calculation of Pearson's correlation coefficient and error grid analysis to determine clinical acceptability.

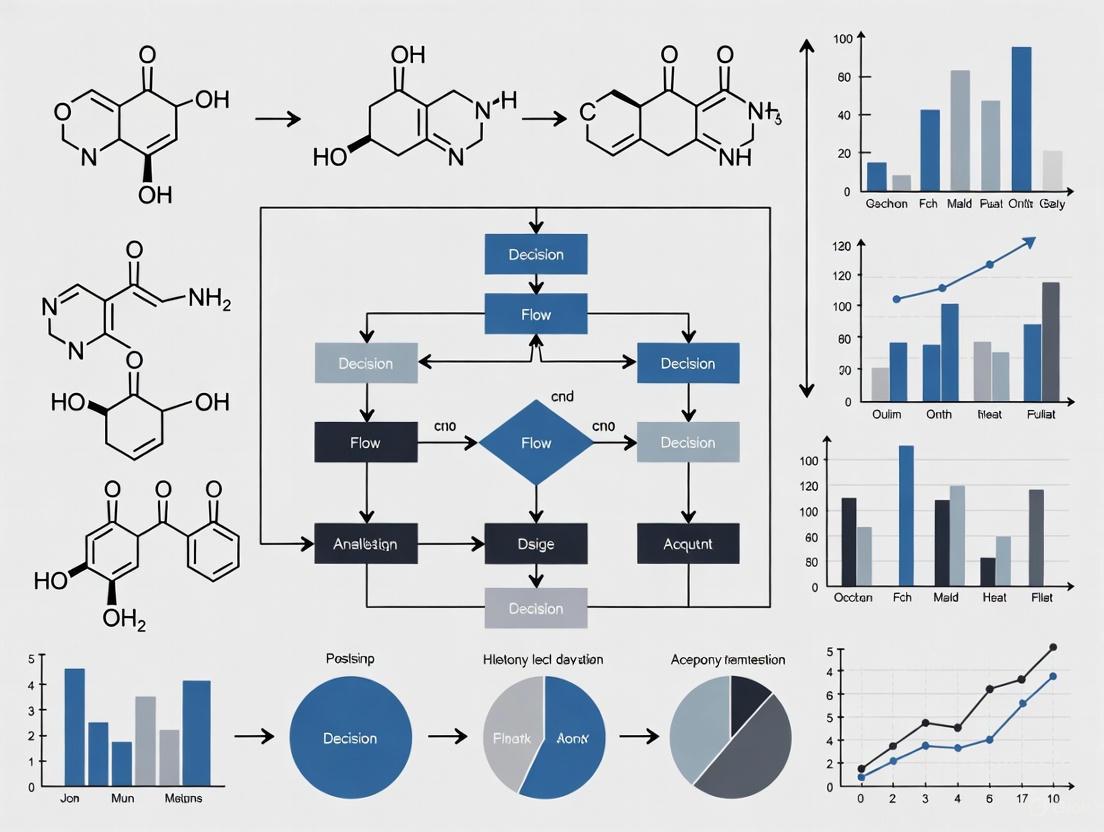

The following workflow diagram illustrates the protocol for the analytical observational cohort study:

Protocol for a Case-Control Method Validation Study

This protocol describes a case-control study designed to validate a new biomarker assay for detecting early-stage ovarian cancer.

1. Objective: To evaluate the sensitivity and specificity of a novel serum protein panel (the "OvaMark" assay) for distinguishing patients with early-stage ovarian cancer from healthy controls.

2. Case and Control Definition:

- Cases: Women with histologically confirmed, newly diagnosed Stage I or II epithelial ovarian cancer (n=50). Cases will be identified from a prospective surgical registry.

- Controls: Healthy women with no personal history of cancer, matched to cases by age (±5 years) and menopausal status (n=50). Controls will be recruited from a community wellness screening program.

- Biospecimens: Archived serum samples, collected prior to any treatment for cases and at enrollment for controls, will be used.

3. Laboratory Analysis:

- Blinding: All serum samples will be de-identified and coded before analysis. The laboratory personnel performing the OvaMark assay will be blinded to the case-control status of the samples.

- Assay Run: Cases and controls will be analyzed in a random order within the same assay batch to minimize batch effects.

- Data Output: The assay will generate a continuous score for each sample. A pre-specified cut-off value will be used to classify samples as positive or negative.

4. Statistical Analysis Plan:

- The association between the OvaMark assay result (exposure) and case-control status (outcome) will be summarized using an odds ratio (OR) from a logistic regression model.

- Sensitivity, specificity, and the area under the receiver operating characteristic (ROC) curve will be calculated to assess diagnostic performance.
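The diagnostic-performance measures in this plan can be computed directly from the continuous assay scores. The sketch below assumes that higher scores indicate a higher likelihood of being a case and that a score at or above the cut-off counts as positive; the scores and cut-off are hypothetical. The AUC is computed as the proportion of case-control pairs in which the case scores higher (ties counted as one half), which is equivalent to the area under the empirical ROC curve.

```python
def sensitivity_specificity(case_scores, control_scores, cutoff):
    """Classify score >= cutoff as positive and return (sensitivity, specificity)."""
    tp = sum(s >= cutoff for s in case_scores)
    fn = len(case_scores) - tp
    tn = sum(s < cutoff for s in control_scores)
    fp = len(control_scores) - tn
    return tp / (tp + fn), tn / (tn + fp)

def roc_auc(case_scores, control_scores):
    """Probability that a random case outscores a random control (ties = 0.5)."""
    wins = 0.0
    for c in case_scores:
        for k in control_scores:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))

# Hypothetical assay scores
cases = [0.9, 0.8, 0.7, 0.4]
controls = [0.6, 0.3, 0.2, 0.1]

sens, spec = sensitivity_specificity(cases, controls, cutoff=0.5)
auc = roc_auc(cases, controls)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}, AUC={auc:.4f}")
```

The pairwise AUC loop is quadratic in the sample size, which is fine for a study of 50 cases and 50 controls but would be replaced by a rank-based calculation for large datasets.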

The following workflow diagram illustrates the protocol for the case-control study:

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials essential for conducting robust method comparison studies in a bioanalytical or clinical chemistry context.

Table 2: Essential Research Reagents and Materials for Method Comparison Studies

| Item | Function & Application | Key Considerations |

|---|---|---|

| Certified Reference Standards | Provides the highest quality analyte for method calibration and validation. Serves as the foundation for establishing accuracy. | Purity and traceability to a primary standard are critical. Supplier certification is essential [7]. |

| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry to correct for analyte loss during sample preparation and for matrix effects. Improves precision and accuracy. | The isotope label should be non-exchangeable and should not co-elute with any endogenous compounds. |

| Matrix-Matched Quality Controls (QCs) | Prepared in the same biological matrix as study samples (e.g., human plasma). Monitors assay performance and stability during the analytical run. | Should be prepared independently from calibration standards and cover the low, medium, and high concentration ranges. |

| Immunoassay Kits (ELISA) | Allows for the specific and high-throughput quantification of proteins, hormones, or antibodies. Often used in case-control studies for biomarker measurement. | Lot-to-lot variability must be assessed. The kit's stated dynamic range and specificity should be verified for the study context. |

| Point-of-Care (POC) Test Strips/Cartridges | The consumable component of POC devices that facilitates rapid, decentralized testing. The target of comparison in many device evaluation studies. | Strict lot control and storage conditions are necessary. The principle of detection (e.g., electrochemical, optical) should be understood. |

Data Presentation and Visualization Guidelines

Effective data presentation is paramount for communicating the results of method comparison studies clearly and accurately. The choice between tables and charts depends on the message and the audience's needs. Tables are superior for presenting detailed, exact numerical values where precision is key, allowing readers to probe deeper into specific results [8]. Charts, on the other hand, are better for showing trends, patterns, and visual insights, making them ideal for summarizing data and delivering a quick understanding of relationships [8].

Table 3: Comparison of Data Presentation Formats: Tables vs. Charts

| Aspect | Tables | Charts (e.g., Bar, Line, Scatter) |

|---|---|---|

| Visual Form | Text and numbers in rows and columns [8] | Graphical representation of data [8] |

| Primary Strength | Precise, detailed analysis and comparisons; provides specific numerical values [8] | Identifying patterns, trends, and relationships at a glance [8] |

| Best Use Case | Presenting raw data for technical audiences; summarizing participant characteristics; displaying exact values for statistical results [8] [7] | Showing trends over time (line charts); comparing quantities between groups (bar charts); displaying agreement (Bland-Altman plots) [8] |

| Interpretation | Requires more cognitive effort for side-by-side comparison and trend spotting [8] | Quick to interpret for an overview and general trends; visual cues make comparisons straightforward [8] |

| Audience | Best suited for users familiar with the subject who need granular detail [8] | More engaging and easier for a general audience, including stakeholders [8] |

Best Practices for Visualizations:

- Clarity is Key: Avoid "chartjunk" – extraneous elements like heavy 3D effects that distract from the data. Use clear labels for titles, axes, and legends [8].

- Choose the Right Chart: Use bar charts for comparing quantities, line charts for trends over time, and scatter plots for relationships between two continuous variables [8] [7].

- Limit Categories: In pie or bar charts, try to limit the number of categories to 5-7 to prevent clutter and aid comprehension [8].

- Standardized Flowcharts: For reporting study participant progress, especially in clinical trials, use a standardized flowchart (like the CONSORT flowchart) to clearly show enrollment, allocation, follow-up, and analysis numbers [9].

Choosing Between Longitudinal and Cross-Sectional Comparison Approaches

Selecting the appropriate research design is a critical first step in method comparison studies, particularly in drug development. The choice between longitudinal and cross-sectional approaches fundamentally shapes the research questions you can answer, the quality of evidence you generate, and the resources required. Longitudinal studies involve repeated observations of the same variables or participants over sustained periods—from weeks to decades—to detect changes and establish sequences of events [10] [11]. In contrast, cross-sectional studies examine a population at a single point in time, providing a snapshot of conditions, behaviors, or attitudes without a time component [12] [13]. This framework provides researchers, scientists, and drug development professionals with structured protocols for selecting, implementing, and analyzing data from these distinct methodological approaches.

Comparative Analysis of Research Designs

Fundamental Design Characteristics

Table 1: Core Structural Differences Between Longitudinal and Cross-Sectional Designs

| Aspect | Longitudinal Study | Cross-Sectional Study |

|---|---|---|

| Data Collection | Over multiple time points [12] | At a single point in time [12] |

| Participants | Same group followed over time [12] [10] | Different participants (a "cross-section") in each sample [12] [10] |

| Temporal Focus | Change, development, or trends over time [12] [11] | Differences or associations at one specific time [12] |

| Primary Purpose | Study changes or trends over time; can suggest cause-and-effect relationships [12] | Examine differences, associations, or prevalence at one time; shows correlation, not causation [12] [13] |

| Typical Duration | Months to decades [12] [10] | Usually short-term [12] |

| Resource Requirements | Expensive and time-consuming [12] [10] | Quick and cost-effective [12] |

Applications and Evidentiary Strength

Table 2: Research Applications and Methodological Considerations

| Consideration | Longitudinal Study | Cross-Sectional Study |

|---|---|---|

| Optimal Research Context | Tracking change, growth, or decline; predicting long-term outcomes; studying rare conditions [12] | Comparing groups or populations; exploring relationships at one time point [12] |

| Causal Inference | Can suggest cause-and-effect relationships by establishing sequence of events [12] [11] | Shows correlation, not causation [12] [13] |

| Key Strengths | Tracks individual-level change; establishes temporal sequence; reduces recall bias; controls for individual differences [12] [10] [14] | Fast and economical; good for large samples; helps identify correlations; easy replication [12] |

| Primary Limitations | Attrition; time-intensive; high cost; requires long-term management [12] [10] [11] | No time dimension; snapshot bias; cannot measure change or causality; confounding variables [12] [13] |

| Common Statistical Methods | Mixed-effect regression models (MRM); generalized estimating equations (GEE); growth curve modeling [11] | Prevalence calculation; odds ratios; descriptive statistics [13] |

Experimental Protocols

Protocol 1: Implementing a Longitudinal Study Design

Study Planning and Design Phase

- Define Research Question and Timeline: Precisely specify the outcome measures, time intervals, and what constitutes meaningful change. Example (drug development): "Does [Drug X] improve glycemic control in Type 2 diabetes patients over 24 weeks?" Timeline: baseline (week 0), midpoint (week 12), endpoint (week 24). Metrics: HbA1c levels, fasting plasma glucose, patient-reported outcomes [15] [16].

- Select Longitudinal Design Type:

  - Panel Study: Follow the same specific individuals over time (gold standard for tracking individual change) [16] [17].

  - Cohort Study: Follow a group defined by shared characteristics (e.g., patients diagnosed in the same year) but not necessarily the exact same individuals at each time point [17].

  - Retrospective Study: Analyze historical data already collected (e.g., existing electronic health records) [10] [17].

- Establish Participant Tracking System: Implement unique participant identifiers from the first interaction. This prevents duplicate records and ensures automatic linkage of responses across all time points without manual matching, which is a common failure point in longitudinal research [16].
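A participant tracking system of this kind can be sketched as an ID registry plus a linking step that groups records by participant and time point. Everything named here is hypothetical (`enroll`, `link_waves`, the contact key, the HbA1c values); a real study would use its electronic data capture system rather than in-memory dictionaries.

```python
import uuid
from collections import defaultdict

def enroll(registry, contact_key):
    """Return the participant's existing ID, or issue a new one on first contact."""
    if contact_key not in registry:
        registry[contact_key] = uuid.uuid4().hex
    return registry[contact_key]

def link_waves(records):
    """Group records as {participant_id: {wave: value}}, rejecting duplicates."""
    linked = defaultdict(dict)
    for rec in records:
        pid, wave = rec["participant_id"], rec["wave"]
        if wave in linked[pid]:
            raise ValueError(f"duplicate record for {pid} at wave {wave}")
        linked[pid][wave] = rec["value"]
    return dict(linked)

registry = {}
pid_a = enroll(registry, "participant-a@example.org")
pid_b = enroll(registry, "participant-b@example.org")

# Hypothetical HbA1c (%) at weeks 0, 12, and 24
records = [
    {"participant_id": pid_a, "wave": 0, "value": 8.1},
    {"participant_id": pid_b, "wave": 0, "value": 7.6},
    {"participant_id": pid_a, "wave": 12, "value": 7.7},
    {"participant_id": pid_a, "wave": 24, "value": 7.2},
]
linked = link_waves(records)
print(linked[pid_a])
```

Because the ID is issued once and reused, repeated contacts from the same participant never create a second record set, and the duplicate check surfaces double entries at a given time point immediately.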

Data Collection and Management Phase

- Design Balanced Survey Instruments: Create instruments with:

- Standardize Data Collection Procedures: Maintain identical methods of data collection and recording across all study sites and time points to uphold validity. Use recognized classification systems for individual inputs [11].

- Implement Retention Strategies: Minimize attrition through regular participant engagement, updated contact information, and potentially incentives. Conduct exit interviews for participants who drop out to understand reasons for departure [11] [17].

Data Analysis and Interpretation Phase

- Address Missing Data: Employ techniques like maximum likelihood estimation or multiple imputation to handle attrition, which is preferable to listwise deletion that reduces power and may introduce bias [17].

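- Select Appropriate Statistical Models: Use analytical approaches that account for within-subject correlation, such as mixed-effect regression models (MRM), generalized estimating equations (GEE), or growth curve modeling [11].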

- Test for Measurement Invariance: In multi-wave studies, confirm that the same construct is measured consistently across time using confirmatory factor analysis [17].

Protocol 2: Implementing a Cross-Sectional Study Design

Study Planning and Design Phase

- Define Research Objective: Clearly state the snapshot goal: prevalence estimation, group comparison, or hypothesis generation. Example: "What is the prevalence of antibiotic resistance in Propionibacterium acnes isolates from acne vulgaris patients presenting at tertiary care centers in 2024?" [13].

- Determine Sampling Strategy: Identify the target population and establish inclusion/exclusion criteria. Ensure the sample is representative of the broader population to which you wish to generalize [13]. Recruit participants based solely on these criteria, not based on exposure or outcome status [13].

- Calculate Sample Size: Power the study appropriately to detect meaningful effects or provide precise prevalence estimates, often requiring larger samples for subgroup analyses [17].
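For a prevalence estimate, one commonly used approximation for the required sample size is n = z² · p(1 − p) / d², where p is the anticipated prevalence, d the desired absolute margin of error, and z the normal quantile for the chosen confidence level. This formula is not taken from the cited sources and is shown here as an illustrative assumption; it ignores finite-population correction, design effects, and non-response.

```python
import math
from statistics import NormalDist

def sample_size_for_prevalence(expected_prevalence, margin, confidence=0.95):
    """Approximate n for estimating a proportion to within +/- margin."""
    if not 0 < expected_prevalence < 1:
        raise ValueError("expected_prevalence must be between 0 and 1")
    if margin <= 0:
        raise ValueError("margin must be positive")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)   # about 1.96 for 95%
    n = z ** 2 * expected_prevalence * (1 - expected_prevalence) / margin ** 2
    return math.ceil(n)                                  # round up to whole participants

# Worst-case prevalence of 50% and a margin of +/- 5 percentage points
print(sample_size_for_prevalence(0.5, 0.05))
```

Using p = 0.5 gives the most conservative (largest) sample size when the prevalence is unknown; planned subgroup analyses would need this calculation repeated per subgroup.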

Data Collection Phase

- Single Time Point Assessment: Collect all data (exposure and outcome) at one point in time from each participant [13].

- Standardize Measurement Procedures: Administer all measures consistently across participants to avoid introducing measurement bias.

- Minimize Snapshot Bias: Be aware that findings might be affected by temporary factors (e.g., seasonal variations, recent economic events) and document contextual factors that might influence results [12].

Data Analysis and Interpretation Phase

- Calculate Prevalence: Determine the proportion of the population with the characteristic or outcome of interest. Formula: Prevalence = (Number with condition) / (Total sample size) [13].

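- Analyze Associations: Use odds ratios to study relationships between exposures and outcomes. For example, in a 2x2 table of exposure vs. outcome with cells A (exposed, outcome present), B (exposed, outcome absent), C (unexposed, outcome present), and D (unexposed, outcome absent), the odds ratio = (AD)/(BC) [13].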

- Control for Confounding: Use multivariate regression techniques to adjust for potential confounding variables that might distort the relationship between exposure and outcome.

- Interpret Causality Conservatively: Clearly acknowledge the limitation that cross-sectional analyses cannot establish causal order or directionality between variables [12] [13].
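The prevalence and odds ratio calculations above can be sketched in a few lines. The cell labels assume the usual 2x2 layout (a = exposed with outcome, b = exposed without outcome, c = unexposed with outcome, d = unexposed without outcome), and the counts are invented for illustration only.

```python
def prevalence(n_with_condition, n_total):
    """Proportion of the sample with the condition."""
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    return n_with_condition / n_total


def odds_ratio(a, b, c, d):
    """Odds ratio (a*d)/(b*c) for a 2x2 exposure-by-outcome table.

    a: exposed, outcome present     b: exposed, outcome absent
    c: unexposed, outcome present   d: unexposed, outcome absent
    """
    if b == 0 or c == 0:
        raise ValueError("odds ratio is undefined when b or c is zero")
    return (a * d) / (b * c)


# Hypothetical counts for illustration only.
a, b, c, d = 30, 70, 15, 85
print(prevalence(a + c, a + b + c + d))
print(odds_ratio(a, b, c, d))
```

An odds ratio above 1 indicates that the outcome is more common among the exposed, but as noted above it does not establish the direction of the relationship.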

Visualizing Research Design Workflows

Research Design Selection Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagent Solutions for Method Comparison Studies

| Reagent/Material | Function/Application | Considerations |

|---|---|---|

| Unique Participant Identifier System | Tracks same participants across multiple time points in longitudinal studies; prevents data fragmentation and duplicate records [14] [16]. | Critical for maintaining data integrity; should be established before first data collection. |

| Standardized Data Collection Protocols | Ensures consistent measurement across time points (longitudinal) or sites (cross-sectional); maintains methodological rigor [11]. | Requires training and monitoring; documented in study manual. |

| Pharmacometric Models | Mathematical frameworks for analyzing longitudinal drug response data; can streamline proof-of-concept trials by using all available data [15]. | Allows mechanistic interpretation; can reduce required sample size in clinical trials. |

| Validated Bioanalytical Assays | Quantifies drug concentrations, biomarkers, or biochemical endpoints in biological samples [18]. | Requires validation for precision, accuracy, stability; supports GCP/GLP studies. |

| Data Linkage Systems | Connects multiple data sources (e.g., clinical, laboratory, administrative) for comprehensive analysis [19]. | Must address privacy and ethical considerations; requires secure infrastructure. |

| Retention Strategy Toolkit | Maintains participant engagement in longitudinal studies to minimize attrition bias [11] [17]. | Includes contact management, engagement materials, and potentially incentives. |

| Statistical Software for Repeated Measures | Analyzes correlated data from longitudinal designs (e.g., mixed-effects models, GEE) [11]. | Requires appropriate modeling of within-subject correlation. |

Identifying Independent, Dependent, and Control Variables

In quantitative research, variables are the fundamental building blocks that allow scientists to test hypotheses and draw meaningful conclusions from their data. A variable is any characteristic, number, or quantity that can be measured or quantified, and that can vary across observations, time, or conditions [20]. In experimental design, researchers systematically manipulate and measure these variables to establish cause-and-effect relationships and understand the mechanisms underlying biological processes, drug responses, and disease pathways.

Proper identification and operational definition of variables are particularly crucial in method comparison studies, where researchers aim to determine whether a new measurement method can effectively replace an established one without affecting patient results or clinical decisions [21] [1]. This article provides a comprehensive framework for identifying and classifying variables within the context of method comparison research, complete with practical protocols, visualization tools, and applications for drug development professionals.

Core Variable Types: Definitions and Roles

Independent Variables

The independent variable (IV) is the condition, characteristic, or intervention that the researcher manipulates, selects, or categorizes to examine its effects on an outcome. In experimental settings, it is the variable that is deliberately changed or controlled by the investigator [20] [22]. In method comparison studies specifically, the independent variable is typically the measurement method itself—researchers select which method (established vs. new) is used to perform the measurement [3] [21].

Independent variables are also referred to as:

- Explanatory variables (they explain an event or outcome)

- Predictor variables (they predict the value of a dependent variable)

- Right-hand-side variables (in regression equations) [22]

Key Characteristics:

- The IV is determined or set by the researcher before data collection

- It precedes the dependent variable in time

- It is not influenced by other variables in the study

- In method comparison studies, it defines the comparison groups (method A vs. method B) [20]

Dependent Variables

The dependent variable (DV) is the outcome that researchers measure to assess the effect of the independent variable. It represents the data collected as the study's results, and its value depends on changes in the independent variable [20] [22]. In method comparison studies, the dependent variable is the quantitative result obtained from measuring each sample using the different methods [21] [1].

Dependent variables are also called:

- Response variables (they respond to changes in another variable)

- Outcome variables (they represent the outcome being measured)

- Left-hand-side variables (in regression equations) [22]

Key Characteristics:

- The DV is always measured or observed, never manipulated

- It depends on or is influenced by the independent variable

- It is measured after the independent variable has been applied

- It should map cleanly to the construct of interest and have adequate reliability [20]

Control Variables

Control variables are factors that researchers hold constant or statistically adjust to minimize their potential impact on the relationship between independent and dependent variables. These are not the primary focus of the research hypothesis but are included because prior evidence suggests they may influence the outcome [20]. In method comparison studies, control variables might include sample handling procedures, operator experience, or environmental conditions [3] [1].

Key Characteristics:

- They are measured and accounted for to reduce alternative explanations

- They can be controlled through experimental design (matching, blocking) or statistical adjustment

- Proper control variables strengthen the validity of the study

- Over-controlling for mediators can hide true effects, while under-controlling can bias estimates [20]

Table 1: Summary of Variable Types in Research

| Variable Type | Role in Research | Method Comparison Example | Temporal Order |

|---|---|---|---|

| Independent Variable | Explains or predicts changes in the outcome; manipulated or selected by researcher | The measurement method being used (e.g., established method vs. new method) | Set first |

| Dependent Variable | The measured outcome; responds to changes in independent variable | The quantitative result obtained from measuring each sample | Measured after |

| Control Variable | Factor held constant to reduce bias; not the primary focus | Sample stability, operator training, reagent lot, environmental conditions | Measured and accounted for throughout |

Variable Identification in Method Comparison Studies

Method comparison studies represent a specific application where proper variable identification is essential for valid conclusions. These studies aim to assess the systematic errors (bias) that occur when measuring patient specimens with different methods [3]. The fundamental question is whether two methods can be used interchangeably without affecting patient results and clinical decisions [21].

Variable Framework for Method Comparisons

In a typical method comparison study:

- Independent Variable: The measurement method (test method vs. comparative method)

- Dependent Variable: The quantitative measurement result for each sample

- Control Variables: Sample characteristics, measurement conditions, operator factors, timing of analysis [3] [21] [1]

The comparative method should be carefully selected because the interpretation of results depends on assumptions about its correctness. When possible, a reference method with documented accuracy should be chosen [3].

Practical Protocol: Designing a Method Comparison Study

Objective: To determine whether a new measurement method (test method) provides results equivalent to an established method (comparative method) already in clinical use.

Experimental Design Considerations:

Sample Selection and Preparation

- Select a minimum of 40 patient specimens, preferably 100 or more [3] [21]

- Ensure samples cover the entire clinically meaningful measurement range [21] [1]

- Include samples representing the spectrum of diseases expected in routine application [3]

- Analyze specimens within their stability period, ideally within 2 hours of each other by both methods [3]

- Extend the experiment over multiple days (minimum 5 days) to mimic real-world conditions [3] [21]

Measurement Protocol

Data Collection and Management

- Record results from both methods for each sample

- Note any deviations from protocol immediately

- Document control variables (sample handling, timing, environmental conditions)

- Store data in structured format for analysis
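One possible way to store paired results in a structured format, and to check the design against the minimums listed under Sample Selection and Preparation (at least 40 specimens, at least 5 days), is sketched below. The record fields and function names are hypothetical and would be adapted to the laboratory's own data system.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class PairedResult:
    """One specimen measured by both methods (illustrative field names)."""
    sample_id: str
    run_date: date
    comparative: float  # result from the established method
    test: float         # result from the new method
    note: str = ""      # protocol deviations, handling remarks


def check_design(results, min_specimens=40, min_days=5):
    """Return a list of design problems; an empty list means the minimums are met."""
    specimens = {r.sample_id for r in results}
    days = {r.run_date for r in results}
    problems = []
    if len(specimens) < min_specimens:
        problems.append(f"only {len(specimens)} specimens (need >= {min_specimens})")
    if len(days) < min_days:
        problems.append(f"only {len(days)} analysis days (need >= {min_days})")
    return problems


# Two hypothetical records; a real study would load these from its data store.
results = [
    PairedResult("S001", date(2024, 3, 4), comparative=5.2, test=5.4),
    PairedResult("S002", date(2024, 3, 5), comparative=7.9, test=7.8, note="re-run"),
]
print(check_design(results))
```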

Diagram 1: Method Comparison Study Workflow

Data Analysis and Visualization Approaches

Graphical Methods for Variable Relationships

Visualization of data plays a crucial role in understanding the relationship between variables in method comparison studies. Appropriate graphs help researchers detect patterns, identify outliers, and assess agreement between methods [23] [21].

Scatter Plots: Display paired measurements throughout the range of values, with the comparative method on the x-axis and test method on the y-axis. These show variability and help identify gaps in the measurement range that need additional samples [21].

Difference Plots (Bland-Altman Plots): Graph the differences between methods (y-axis) against the average of the methods (x-axis). These plots visually represent bias and agreement limits, helping researchers assess whether differences are consistent across the measurement range [21] [1].

Box Plots: Display distribution summaries for each method side-by-side, showing medians, quartiles, and potential outliers. These are excellent for comparing the central tendency and variability of results from different methods [23].
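Gaps in the measurement range, which a scatter plot reveals visually, can also be flagged numerically. The sketch below counts specimens per equal-width bin of the comparative-method result; the bin count, the range limits, and the data are arbitrary illustrative choices.

```python
def range_coverage(comparative_values, low, high, n_bins=5):
    """Count specimens in each equal-width bin across [low, high].

    Values outside [low, high] are ignored; the top edge belongs to the last bin.
    """
    if high <= low or n_bins < 1:
        raise ValueError("need high > low and n_bins >= 1")
    width = (high - low) / n_bins
    counts = [0] * n_bins
    for x in comparative_values:
        if low <= x <= high:
            index = min(int((x - low) / width), n_bins - 1)
            counts[index] += 1
    return counts


# Hypothetical comparative-method results clustered at both ends of the range.
counts = range_coverage([2.1, 2.5, 3.0, 3.2, 9.5, 9.8], low=2.0, high=10.0, n_bins=4)
empty_bins = [i for i, n in enumerate(counts) if n == 0]
print(counts, empty_bins)
```

Empty or sparsely filled bins suggest where additional specimens should be recruited before the regression or difference statistics are interpreted.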

Table 2: Statistical Measures in Method Comparison Studies

| Statistical Measure | Purpose | Interpretation | Calculation Method |

|---|---|---|---|

| Bias | Estimate systematic difference between methods | Mean difference between test and comparative method | Mean of (test method - comparative method) |

| Correlation Coefficient (r) | Assess linear relationship between methods | Strength of association (not agreement) | Pearson or Spearman correlation |

| Linear Regression | Quantify constant and proportional error | Slope indicates proportional error, intercept indicates constant error | Y = a + bX |

| Limits of Agreement | Range within which most differences between methods lie | About 95% of differences are expected to fall between these limits, assuming the differences are approximately normally distributed | Bias ± 1.96 × SD of differences |
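The four measures in Table 2 can be computed from paired results with the standard library alone. This is a minimal sketch: it uses ordinary least squares for the regression line (an assumption made here, since the table does not name a fitting technique) and Pearson's r; the data values are invented.

```python
import math
from statistics import mean, stdev


def comparison_statistics(comparative, test):
    """Bias, limits of agreement, OLS slope/intercept, and Pearson r for paired data."""
    n = len(comparative)
    if n != len(test) or n < 3:
        raise ValueError("need at least 3 paired results of equal length")
    diffs = [t - c for c, t in zip(comparative, test)]
    bias = mean(diffs)          # mean of (test - comparative)
    sd_diff = stdev(diffs)      # sample SD of the differences
    mx, my = mean(comparative), mean(test)
    sxx = sum((x - mx) ** 2 for x in comparative)
    syy = sum((y - my) ** 2 for y in test)
    sxy = sum((x - mx) * (y - my) for x, y in zip(comparative, test))
    slope = sxy / sxx               # proportional error if different from 1
    intercept = my - slope * mx     # constant error if different from 0
    r = sxy / math.sqrt(sxx * syy)  # association, not agreement
    return {
        "bias": bias,
        "sd_diff": sd_diff,
        "limits_of_agreement": (bias - 1.96 * sd_diff, bias + 1.96 * sd_diff),
        "slope": slope,
        "intercept": intercept,
        "r": r,
    }


# Hypothetical paired results (comparative method first, test method second).
comparative = [4.1, 5.0, 6.2, 7.4, 8.8, 10.1]
test = [4.3, 5.1, 6.5, 7.5, 9.1, 10.4]
for name, value in comparison_statistics(comparative, test).items():
    print(name, value)
```

Ordinary least squares treats the comparative method as error-free; when both methods carry measurable imprecision, techniques such as Deming or Passing-Bablok regression are often considered instead.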

Statistical Analysis Protocol

Step 1: Visual Data Inspection

- Create scatter plots and difference plots for initial data assessment

- Identify outliers and extreme values that may need verification

- Check for uniform distribution across the measurement range [21]

Step 2: Calculate Descriptive Statistics

- Compute mean, median, and standard deviation for each method

- Calculate correlation coefficient to assess linear relationship

- Note: Correlation shows association, not agreement [21]

Step 3: Assess Agreement

- Calculate bias (mean difference between methods)

- Compute standard deviation of the differences

- Determine limits of agreement (bias ± 1.96 × SD) [1]

Step 4: Evaluate Clinical Significance

- Compare observed bias to predefined clinically acceptable limits

- Assess whether agreement limits are narrow enough for methods to be used interchangeably

- Consider proportional error if present across measurement range [21] [1]
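Step 4 can be made explicit by comparing the observed bias and limits of agreement against limits fixed before the study. In the sketch below the acceptance thresholds are placeholders; real values must come from the clinical requirements of the analyte, and the function name is hypothetical.

```python
def evaluate_clinical_acceptance(bias, sd_diff, max_bias, max_abs_difference):
    """Compare bias and 95% limits of agreement with predefined limits.

    max_bias: largest acceptable absolute mean difference.
    max_abs_difference: largest acceptable absolute individual difference,
    applied to both limits of agreement.
    """
    lower = bias - 1.96 * sd_diff
    upper = bias + 1.96 * sd_diff
    bias_ok = abs(bias) <= max_bias
    limits_ok = lower >= -max_abs_difference and upper <= max_abs_difference
    return {
        "limits_of_agreement": (lower, upper),
        "bias_acceptable": bias_ok,
        "limits_acceptable": limits_ok,
        "interchangeable": bias_ok and limits_ok,
    }


# Hypothetical study result judged against hypothetical predefined limits.
decision = evaluate_clinical_acceptance(
    bias=0.2, sd_diff=0.5, max_bias=0.5, max_abs_difference=1.0
)
print(decision)
```

In this made-up case the bias is acceptable but the upper limit of agreement exceeds the allowed difference, so the methods would not be judged interchangeable.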

Diagram 2: Data Analysis Pathway for Method Comparison

Essential Materials and Research Reagents

Table 3: Essential Research Reagents and Materials for Method Comparison Studies

| Item Category | Specific Examples | Function in Study | Key Considerations |

|---|---|---|---|

| Patient Samples | Serum, plasma, whole blood, urine | Provide biological matrix for method comparison | Cover clinical range; ensure stability; represent disease spectrum [3] [21] |

| Calibrators & Standards | Manufacturer calibrators, reference materials | Establish measurement traceability and accuracy | Use same lot for both methods; verify calibration status [3] |

| Quality Control Materials | Commercial controls, pooled patient samples | Monitor assay performance during study | Include multiple concentration levels; use same QC for both methods [3] |

| Reagents | Test-specific reagents, buffers, substrates | Enable analyte detection and measurement | Document lot numbers; ensure proper storage conditions [3] |

| Consumables | Pipette tips, cuvettes, reaction vessels | Facilitate sample processing and analysis | Use consistent supplies throughout study; avoid lot changes [21] |

In pharmaceutical research and development, proper identification and control of variables in method comparison studies is essential for generating reliable data that supports regulatory submissions. When developing new biomarker assays, pharmacokinetic tests, or diagnostic methods, researchers must demonstrate that new methods provide equivalent results to established approaches [24].

The framework presented in this article provides drug development professionals with a structured approach to designing, executing, and interpreting method comparison studies. By clearly identifying independent, dependent, and control variables, researchers can generate robust evidence regarding method comparability, ultimately supporting critical decisions in drug development and patient care.

Understanding these variable relationships also facilitates proper statistical analysis and interpretation, ensuring that conclusions about method equivalence are valid and scientifically defensible. This systematic approach to variable identification strengthens the overall quality of research and supports the development of reliable measurement methods essential for advancing pharmaceutical science.

Formulating a Hypothesis and Establishing Success Criteria

For researchers, scientists, and drug development professionals, the validity of a new analytical method is not assumed but must be empirically demonstrated against an existing standard. A method comparison study is the critical experimental process that provides this validation, forming the cornerstone of reliable quantitative research, diagnostic development, and regulatory submission [24]. At the heart of a robust method comparison study lie two foundational elements: a precisely formulated hypothesis and clearly defined success criteria. These elements transform a simple technical exercise into a scientifically rigorous investigation capable of generating definitive evidence about a method's performance. This protocol details the systematic process of constructing a testable hypothesis and establishing statistically sound success criteria, ensuring that the resulting data meets the exacting standards required for internal decision-making and external regulatory approval.

The Conceptual Framework of a Method Comparison Study

A method comparison study is a structured experiment designed to evaluate the performance of a new candidate method against a comparator method [24]. The objective is to generate quantitative evidence that the candidate method is fit for its intended purpose, which often means demonstrating that its results are sufficiently equivalent or superior to those produced by the established method. The comparator can be an approved in-vitro diagnostic device, a reference method considered a gold standard, or, in some cases, a clinical diagnosis endpoint [24].

The entire study is built upon a core conceptual framework, illustrated below. This framework begins with the initial development of the candidate method and proceeds through the cyclical process of hypothesis and criteria formulation, experimental execution, and statistical analysis, ultimately leading to a conclusive determination of the method's performance.

Core Components of the Study

The following table outlines the essential components that must be defined prior to initiating a method comparison study. These definitions provide the necessary clarity and focus for the entire investigation.

Table 1: Core Definitions for a Method Comparison Study

| Component | Description | Consideration for Hypothesis & Criteria |

|---|---|---|

| Candidate Method | The new test method under evaluation [24]. | The hypothesis is a statement about this method's performance. |

| Comparator Method | The established, approved method used as a benchmark [24]. | Determines whether to calculate sensitivity/specificity (high confidence in comparator) or PPA/NPA (lower confidence) [24]. |

| Intended Use | The specific clinical or analytical purpose the candidate method is designed for [24]. | Dictates whether high sensitivity (e.g., for ruling out disease) or high specificity (e.g., for confirming disease) is prioritized [24]. |

| Sample Set | The collection of positive and negative samples with known results from the comparator method [24]. | A larger, well-characterized set leads to tighter confidence intervals and greater confidence in the results [24]. |

Formulating the Research Hypothesis

A research hypothesis in a method comparison study is a declarative statement that predicts the relationship between the performance of the candidate method and the comparator method. It must be specific, testable, and directly informed by the method's intended use.

Structure of the Hypothesis

The hypothesis typically follows a standard structure: "The candidate method demonstrates non-inferiority [or superiority, or equivalence] to the comparator method in detecting [analyte] as measured by [primary statistical metrics, e.g., sensitivity and specificity]."

Hypothesis Types

The specific nature of the claim defines the type of hypothesis, which in turn guides the statistical analysis plan.

Table 2: Types of Research Hypotheses for Method Comparison

| Hypothesis Type | Core Question | Example Scenario |

|---|---|---|

| Non-Inferiority | Is the new method at least as good as the old one? | The candidate method is cheaper or faster, and the primary goal is to ensure its diagnostic performance is not unacceptably worse than the established standard. |

| Superiority | Is the new method better than the old one? | The candidate method uses a more sensitive technology and is expected to have a lower missed-diagnosis rate (higher sensitivity) [25]. |

| Equivalence | Are the results from both methods effectively the same? | The goal is to replace an old instrument with a new one within a lab, requiring that both methods produce statistically interchangeable results. |
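The three hypothesis types in Table 2 map onto simple decision rules once a confidence interval for the difference between methods is available. The sketch below assumes the interval is for (candidate − comparator) on a metric where higher is better, and that a margin has been prespecified; the numbers are illustrative.

```python
def supported_claims(ci_lower, ci_upper, margin):
    """Which claims a confidence interval for (candidate - comparator) supports.

    margin: prespecified largest difference considered unimportant (must be > 0).
    """
    if margin <= 0:
        raise ValueError("margin must be positive")
    if ci_lower > ci_upper:
        raise ValueError("ci_lower must not exceed ci_upper")
    return {
        # Candidate is not worse than the comparator by more than the margin.
        "non_inferiority": ci_lower > -margin,
        # Candidate is better than the comparator.
        "superiority": ci_lower > 0,
        # The whole interval lies inside (-margin, +margin).
        "equivalence": ci_lower > -margin and ci_upper < margin,
    }


# Hypothetical 95% CI for a difference in sensitivity, with a margin of 0.05.
print(supported_claims(-0.02, 0.03, margin=0.05))
```

The margin drives the conclusion, which is why it belongs in the protocol before any data are collected.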

Defining Statistical Success Criteria

Success criteria are the pre-defined, quantitative benchmarks against which the study results are judged. Setting these criteria a priori is essential to avoid bias and ensure the study's integrity. The most common framework for a qualitative method (with positive/negative results) is the 2x2 contingency table [24].

Table 3: The 2x2 Contingency Table for Qualitative Methods

| | Comparator Method: Positive | Comparator Method: Negative | Total |

|---|---|---|---|

| Candidate Method: Positive | a (True Positive, TP) | b (False Positive, FP) | a + b |

| Candidate Method: Negative | c (False Negative, FN) | d (True Negative, TN) | c + d |

| Total | a + c | b + d | n |

Key Performance Metrics

Based on the 2x2 table, the following key metrics are calculated to define success criteria.

Table 4: Key Statistical Metrics for Success Criteria

| Metric | Calculation | Interpretation | Application Example |

|---|---|---|---|

| Positive Percent Agreement (PPA) or Sensitivity | 100 × [a / (a + c)] | The candidate method's ability to correctly identify positive samples [24]. | A study of a COVID-19 antibody test reported a PPA of 80.0% (95% CI: 56.6–88.5%), indicating it detected 8 out of 10 true positives [24]. |

| Negative Percent Agreement (NPA) or Specificity | 100 × [d / (b + d)] | The candidate method's ability to correctly identify negative samples [24]. | The same COVID-19 test had an NPA of 100.00% (95% CI: 95.2–100%), meaning it correctly identified all negative samples [24]. |

| Area Under the ROC Curve (AUC) | Area under the Receiver Operating Characteristic curve | An overall measure of diagnostic ability. An AUC of 1.0 represents a perfect test, 0.5 represents a worthless test [26] [25]. | A meta-analysis found that a contrast-enhanced ultrasound method for sentinel lymph node metastasis had an AUC of 0.94, indicating excellent diagnostic performance [25]. |
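The PPA and NPA formulas in Table 4 can be computed directly from the 2x2 cell counts. The sketch below also attaches a 95% confidence interval using the Wilson score method; that choice is an assumption made here for illustration, since the cited study's interval method is not stated, and the counts are invented.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a proportion, returned as (lower, upper)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def agreement_metrics(a, b, c, d):
    """PPA and NPA in percent, each with a 95% Wilson interval.

    a: both positive        b: candidate positive, comparator negative
    c: candidate negative, comparator positive        d: both negative
    """
    ppa_ci = wilson_interval(a, a + c)
    npa_ci = wilson_interval(d, b + d)
    return {
        "ppa": 100 * a / (a + c),
        "ppa_ci": (100 * ppa_ci[0], 100 * ppa_ci[1]),
        "npa": 100 * d / (b + d),
        "npa_ci": (100 * npa_ci[0], 100 * npa_ci[1]),
    }


# Hypothetical counts: 50 comparator-positive and 100 comparator-negative samples.
metrics = agreement_metrics(a=45, b=2, c=5, d=98)
print(metrics)
```

The width of each interval shrinks as the number of comparator-positive or comparator-negative samples grows, which is the practical reason a larger sample set gives more confidence in the estimates.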

Establishing the Success Benchmarks

The final step is to set the specific numerical values for the key metrics that will define success. These benchmarks must be justified based on clinical need, analytical requirements, and regulatory guidance. The workflow below outlines the logical process for moving from the raw experimental data to a final, validated conclusion, using the predefined success criteria as the decision point.

Example Benchmark Setting: For a new qualitative diagnostic test, success criteria might be defined as:

- Lower bound of the 95% confidence interval for PPA must be ≥ 85%. (This ensures a high level of confidence in the test's sensitivity).

- Point estimate for NPA must be ≥ 95%. (This ensures a very high specificity).

- The AUC must be ≥ 0.90. (This ensures overall high diagnostic accuracy) [25].
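Writing the benchmarks down as data makes the pass/fail decision reproducible. The sketch below encodes the three example criteria above as defaults; the class and function names are hypothetical, and the observed values passed in are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Predefined benchmarks (defaults mirror the example criteria above)."""
    min_ppa_ci_lower: float = 85.0  # percent, lower bound of 95% CI for PPA
    min_npa_point: float = 95.0     # percent, point estimate for NPA
    min_auc: float = 0.90


def evaluate(ppa_ci_lower, npa_point, auc, criteria=SuccessCriteria()):
    """Return per-criterion results and the overall verdict."""
    checks = {
        "ppa_ci_lower": ppa_ci_lower >= criteria.min_ppa_ci_lower,
        "npa_point": npa_point >= criteria.min_npa_point,
        "auc": auc >= criteria.min_auc,
    }
    return checks, all(checks.values())


# Hypothetical study results.
checks, passed = evaluate(ppa_ci_lower=86.4, npa_point=97.5, auc=0.93)
print(checks, passed)
```

Because the criteria object is frozen, the benchmarks cannot be adjusted silently after the results are known.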

Experimental Protocol for a Qualitative Method Comparison

This section provides a detailed, step-by-step protocol for conducting a method comparison study for a qualitative test (positive/negative result).

Research Reagent Solutions and Essential Materials

Table 6: Essential Materials for Method Comparison Experiments

| Item | Function & Specification |

|---|---|

| Candidate Test System | The complete test system under validation, including device, reagents, and software. |

| Comparator Test System | The approved, established test system used for benchmarking [24]. |

| Characterized Sample Panel | A panel of well-characterized clinical samples with known status. The panel should adequately represent the analytical and clinical range of the intended use population, including weak positives near the detection limit to robustly challenge the assay. |

| Standard Operating Procedures (SOPs) | Detailed, validated instructions for operating both the candidate and comparator methods to ensure consistency. |

| Data Collection Form | A standardized form (e.g., for a 2x2 contingency table) for accurate and consistent data recording [24]. |

Step-by-Step Workflow

The entire experimental process, from preparation to data analysis, is summarized in the following workflow. Adhering to this structured protocol ensures the generation of high-quality, reliable data for validating the method's performance.

Protocol Steps:

- Assemble Sample Panel: Obtain a sufficient number of positive and negative samples. The sample size should be justified by a statistical power calculation to ensure the study can reliably detect a meaningful difference if one exists. The panel should reflect the intended use population [24].

- Run Samples with Comparator Method: Test all samples using the established comparator method according to its approved SOP. Record all results.

- Run Samples with Candidate Method: Test all samples using the candidate method, following its detailed SOP. The testing should be performed by operators who are blinded to the results of the comparator method to prevent bias.

- Record Results: Tabulate the results into a 2x2 contingency table, classifying each sample as a True Positive (a), False Positive (b), False Negative (c), or True Negative (d) [24].

- Calculate Metrics: Using the formulas in Table 4, calculate the point estimates for PPA, NPA, and any other relevant metrics. Calculate the 95% confidence intervals for these estimates to understand the precision of the measurements [24].

- Compare to Success Criteria: Formally compare the calculated metrics and their confidence intervals against the pre-defined success benchmarks established in the study protocol. This comparison leads to the final decision on whether the candidate method has met the validation requirements.
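The Record Results step can be sketched as a small tabulation routine that turns paired qualitative results into the a, b, c, d counts of the 2x2 table. The input format (one pair of booleans per sample, candidate result first) is an assumption made for this example.

```python
from collections import Counter


def tabulate_2x2(pairs):
    """Count a, b, c, d from (candidate_positive, comparator_positive) pairs."""
    counts = Counter((bool(cand), bool(comp)) for cand, comp in pairs)
    return {
        "a": counts[(True, True)],    # both positive
        "b": counts[(True, False)],   # candidate positive, comparator negative
        "c": counts[(False, True)],   # candidate negative, comparator positive
        "d": counts[(False, False)],  # both negative
    }


# Hypothetical results for six samples.
pairs = [
    (True, True), (True, True), (False, True),
    (False, False), (False, False), (True, False),
]
table = tabulate_2x2(pairs)
print(table)
```

Keeping the raw per-sample pairs, rather than only the four totals, makes it possible to trace discordant samples (cells b and c) back to the specimens involved.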

Execution and Analysis: A Practical Roadmap for Your Study

In method comparison studies, the selection of an appropriate comparative method is the cornerstone for obtaining valid and reliable data. The fundamental purpose of this experiment is to estimate the inaccuracy or systematic error of a new test method by comparing it against an established comparative method [3]. The choice between a reference method and a routine method fundamentally influences the interpretation of observed differences and the subsequent conclusions regarding the test method's performance.

This selection dictates whether observed discrepancies can be directly attributed to the test method or require more complex investigation. Within regulated environments like drug development, this choice is a central requirement for the approval of new test methods [24]. A well-executed comparison not only validates a new method but can also reveal insights into the constant or proportional nature of systematic errors, guiding potential improvements [3].

Defining Reference and Routine Methods

Reference Methods

A reference method is a benchmark of quality. The term has a specific meaning: it implies a high-quality method whose results are known to be correct. This correctness is established through comparative studies with an accurate "definitive method" and/or through traceability to standard reference materials [3]. In practice, these methods have themselves been rigorously evaluated and are considered gold standards, though they can be hard to obtain and are often difficult to use [24].

- Primary Role: To provide results of the highest attainable accuracy.

- Key Characteristic: Well-documented correctness through traceability chains.

- Impact on Data Interpretation: Any differences between a test method and a reference method are assigned to the test method [3].

Routine Methods

The term "comparative method" is a more general term that does not imply documented correctness. Most routine laboratory methods fall into this category [3]. These are typically established, commercially available methods already in use within a laboratory. They may be perfectly adequate for clinical or research purposes but lack the extensive validation and traceability of a reference method.

- Primary Role: To provide results that are precise and consistent for routine use.

- Key Characteristic: May have relative accuracy but lacks definitive traceability.

- Impact on Data Interpretation: Observed differences must be carefully interpreted. If differences are large and medically unacceptable, it becomes necessary to identify which method is inaccurate [3].

Table 1: Core Characteristics of Reference and Routine Comparative Methods

| Feature | Reference Method | Routine Method |

|---|---|---|

| Fundamental Definition | High-quality method with documented correctness through traceability [3] | General term for a method whose correctness is not necessarily documented [3] |

| Theoretical Basis | Established through comparison with definitive methods or reference materials [3] | Validated for routine use but may lack highest-order traceability [3] |

| Primary Application | Definitive method comparison and bias assignment [3] | Routine laboratory testing and relative accuracy assessment [3] |

| Data Interpretation | Differences are attributed to the test method [3] | Differences require investigation to identify the source of inaccuracy [3] |

| Availability & Cost | Less available, often difficult to use, and expensive [24] | Readily available, integrated into laboratory workflows, cost-effective [3] |

Experimental Protocols for Method Comparison

Protocol 1: Comparison Against a Reference Method

This protocol is ideal for definitively establishing the systematic error of a new test method.

Step 1: Experimental Design and Sample Selection

- Sample Number: A minimum of 40 different patient specimens is recommended [3].

- Sample Quality: Select specimens to cover the entire working range of the method and represent the spectrum of diseases expected in routine application. Twenty carefully selected specimens covering a wide range are better than hundreds of random specimens [3].

- Measurement Replication: A common practice is to analyze each specimen once (in singlicate) by both the test and reference methods. However, duplicate measurements, ideally on separate aliquots analyzed in different runs or at least in a different order, are advantageous for identifying sample mix-ups or transposition errors [3].

- Time Period: Conduct the study over several different analytical runs on different days (minimum of 5 days) to minimize systematic errors from a single run [3].

Step 2: Specimen Handling and Analysis

- Specimen Stability: Analyze specimens by both methods within two hours of each other, unless shorter stability is known. Define and systematize specimen handling procedures prior to the study to prevent differences caused by handling variables [3].

- Analysis Order: Analyze test and reference methods side-by-side on the same sample split to isolate the analytical difference [27].

Step 3: Data Analysis and Interpretation

- Graphical Analysis: Begin with a difference plot (test result minus reference result on the y-axis versus the reference result on the x-axis). Visually inspect for patterns and outliers [3].

- Statistical Analysis: For data covering a wide analytical range, use linear regression statistics (slope, y-intercept, standard deviation about the regression line s_y/x) to estimate systematic error (SE) at critical medical decision concentrations (X_c). Calculate Y_c = a + bX_c, then SE = Y_c - X_c [3].

- Outcome: Any statistically significant difference is attributed to the test method, providing a direct estimate of its inaccuracy [3].
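The systematic error calculation in the Statistical Analysis step reduces to two lines of arithmetic once the regression intercept (a) and slope (b) are known. The regression coefficients and decision concentrations below are illustrative values, not results from any cited study.

```python
def systematic_error(intercept, slope, decision_level):
    """SE at a medical decision concentration X_c.

    Y_c = a + b * X_c is the test-method value predicted at X_c,
    and SE = Y_c - X_c is the estimated systematic error there.
    """
    y_c = intercept + slope * decision_level
    return y_c - decision_level


# Hypothetical regression line Y = 2.0 + 1.03 X, evaluated at three decision levels.
a, b = 2.0, 1.03
for x_c in (100.0, 200.0, 300.0):
    print(x_c, systematic_error(a, b, x_c))
```

Evaluating SE at more than one decision level shows how a proportional error (slope different from 1) makes the bias grow with concentration, while a constant error (nonzero intercept) shifts every level by the same amount.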

Protocol 2: Comparison Against a Routine Method

This protocol is used when a reference method is unavailable, requiring careful interpretation to identify the source of any observed discrepancies.

Step 1: Experimental Design

- Follow the same guidelines for sample number, quality, and replication as in Protocol 1 [3].

- The study can be extended over a longer period, such as 20 days, with only 2-5 patient specimens per day [3].

Step 2: Procedure Comparison Considerations

- If comparing a point-of-care (POC) device to a central laboratory analyzer, a two-step approach is critical [27]:

  - Method Comparison: Place analyzers side-by-side and test the same split sample. This isolates the analytical difference.

  - Procedure Comparison: Place the POC analyzer in its intended location and compare results obtained through routine procedures. This reflects the total error, including preanalytical variables.

Step 3: Data Analysis and Interpretation

- Graphical Analysis: Use difference plots or comparison plots (test result vs. comparative result) [3].

- Statistical Analysis: For wide concentration ranges, use linear regression. For narrow ranges, calculate the average difference (bias) and standard deviation of the differences, often available from a paired t-test analysis [3].

- Outcome Interpretation:

Protocol 3: Procedure Comparison Study

This protocol specifically evaluates the entire testing process, including preanalytical variables, and is often confused with a pure method comparison [27].

Step 1: Experimental Design

- Do not use split samples. Instead, use samples obtained through the routine, intended procedures for each method [27].

- For a POC vs. lab comparison, the POC device uses a capillary whole blood sample while the lab analyzer uses a venous plasma/serum sample processed per standard operating procedures [27].

Step 2: Control for Variables

- Document all preanalytical variables, including [27]:

- Sample type (capillary, venous, arterial)

- Anticoagulants used

- Sample storage time and temperature

- Sample transport conditions

- Sampling devices

Step 3: Data Analysis

- The observed differences will reflect the sum of the analytical difference (between the methods) and the differences induced by the procedures [27].

- This type of study is beneficial for highlighting the importance of correct handling techniques and for training staff [27].

[Diagram: key decision points and protocols for selecting and executing a comparative method study]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Materials for Method Comparison Studies

| Item | Function & Importance |
|---|---|
| Well-Characterized Patient Samples | A minimum of 40 specimens covering the entire analytical range and expected pathological conditions. The quality and range of samples are more critical than the total number [3]. |
| Reference Method Materials | Includes reagents, calibrators, and controls for the reference method. Their traceability to higher-order standards is crucial for definitive bias assignment [3]. |
| Test Method Materials | Reagents, calibrators, and controls for the candidate method being evaluated. Must be used according to the manufacturer's specifications. |
| Sample Splitting Device | Ensures that the same sample is analyzed by both methods, critical for isolating analytical bias from preanalytical variation [27]. |
| Appropriate Collection Tubes | Different methods may require specific sample matrices (e.g., serum, plasma, whole blood) or anticoagulants. Using the correct type is vital for a valid comparison [27]. |
| Stable Quality Control Materials | Used to monitor the stability and performance of both methods throughout the duration of the study, ensuring data integrity. |
| Statistical Analysis Software | Essential for performing linear regression, paired t-tests, and generating difference plots for objective data interpretation [3]. |

Data Presentation and Statistical Analysis

Effective data presentation is critical for interpreting method comparison studies. The initial analysis should always include graphical methods to visualize the relationship between methods and identify potential outliers or patterns.

Graphical Techniques:

- Difference Plot: Plots the difference between the test and comparative method results (test - comparative) against the comparative method's result. This is ideal when methods are expected to show one-to-one agreement and helps visualize constant or proportional error [3].

- Comparison Plot: Plots the test method result directly against the comparative method result. This is better for methods not expected to show perfect agreement and provides a visual line of best fit [3].
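The coordinates of a difference plot are simple to compute before any plotting library is involved. The sketch below builds the (comparative, test - comparative) pairs and adds two rough numeric summaries: the mean difference and the least-squares trend of the differences against the comparative result. The function names and data are hypothetical, and the summaries are only a supplement to visual inspection, not a substitute for it.

```python
def difference_plot_points(test, comparative):
    """Return (x, y) pairs for a difference plot.

    x = comparative method result, y = test result minus comparative result.
    """
    if len(test) != len(comparative):
        raise ValueError("results must be paired")
    return [(c, t - c) for t, c in zip(test, comparative)]


def summarize_differences(points):
    """Return (mean difference, slope of difference versus comparative result)."""
    n = len(points)
    xs = [x for x, _ in points]
    ds = [d for _, d in points]
    mean_x = sum(xs) / n
    mean_d = sum(ds) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    trend = sum((x - mean_x) * (d - mean_d) for x, d in points) / sxx
    return mean_d, trend


# Illustrative (made-up) results showing a purely proportional difference
comparative = [50, 100, 150, 200]
test = [55, 110, 165, 220]
points = difference_plot_points(test, comparative)
mean_d, trend = summarize_differences(points)
print(points)
print(round(mean_d, 3), round(trend, 3))
```

A mean difference away from zero with a flat trend points toward constant error, while differences that grow with concentration (a non-zero trend) point toward proportional error.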

Table 3: Statistical Methods for Analyzing Comparison Data

| Statistical Method | Application Context | Key Outputs | Interpretation |
|---|---|---|---|
| Linear Regression | Data cover a wide analytical range (e.g., glucose, cholesterol) [3] | Slope (b), Y-intercept (a), Standard Deviation About the Regression Line (s_y/x) | Slope indicates proportional error. Y-intercept indicates constant error. Systematic error (SE) is calculated at medical decision levels [3]. |
| Paired t-test / Average Difference (Bias) | Data cover a narrow analytical range (e.g., sodium, calcium) [3] | Mean Difference (Bias), Standard Deviation of Differences, t-value | The average difference (bias) estimates systematic error. The standard deviation describes the spread of the differences [3]. |
| Correlation Coefficient (r) | Assessing the adequacy of the data range for regression [3] | Correlation Coefficient (r) | An r ≥ 0.99 suggests a wide enough range for reliable regression estimates. A lower r indicates a need for data over a wider range or for alternative statistics [3]. |
| 2x2 Contingency Table | Comparing qualitative methods (positive/negative results) [24] | Positive/Negative Percent Agreement (PPA/NPA) or Sensitivity/Specificity | Used to calculate agreement metrics between qualitative tests. The metrics are labeled based on confidence in the comparator [24]. |
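Two of the rows in Table 3 reduce to short calculations. The sketch below shows the paired-difference statistics for narrow-range data and the percent agreement figures from a 2x2 table. The function names, cell labels, and numbers are hypothetical; the t-value is reported without a p-value because that would require a t-distribution routine outside the standard library.

```python
import math
from statistics import mean, stdev


def paired_bias(test, comparative):
    """Return (bias, SD of differences, t-value) for paired results."""
    if len(test) != len(comparative) or len(test) < 2:
        raise ValueError("need at least 2 paired results")
    diffs = [t - c for t, c in zip(test, comparative)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("all differences are identical; t-value is undefined")
    t_value = bias / (sd / math.sqrt(len(diffs)))
    return bias, sd, t_value


def percent_agreement(both_pos, test_pos_only, comp_pos_only, both_neg):
    """Return (PPA, NPA) in percent from the four cells of a 2x2 table.

    both_pos      : test positive, comparator positive
    test_pos_only : test positive, comparator negative
    comp_pos_only : test negative, comparator positive
    both_neg      : test negative, comparator negative
    """
    ppa = 100 * both_pos / (both_pos + comp_pos_only)
    npa = 100 * both_neg / (both_neg + test_pos_only)
    return ppa, npa


# Illustrative (made-up) values
print(paired_bias([141, 139, 143, 140], [140, 138, 141, 139]))
print(percent_agreement(45, 2, 5, 48))
```

The same two ratios are reported as sensitivity and specificity only when the comparator is trusted as a reference standard, which is the labeling distinction noted in the table.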

Determining Sample Size and Ensuring Specimen Quality and Stability

Method comparison studies are fundamental for assessing the agreement between a new measurement procedure and an established comparative method in biomedical research and drug development. The validity of such studies hinges on two critical pillars: a sample size sufficient to ensure statistical reliability and rigorous protocols to maintain specimen quality and stability. Inadequate attention to either component can compromise data integrity, leading to erroneous conclusions about a method's performance. This document provides detailed application notes and protocols, framed within the broader context of executing a robust method comparison study, to guide researchers, scientists, and drug development professionals in these essential practices.

Determining Sample Size for Method Comparison Studies

Selecting an appropriate sample size is a critical step that balances statistical power with practical feasibility. An undersized study may fail to detect clinically significant biases, while an excessively large one wastes resources.

Key Principles and Recommendations

General Guidelines: For quantitative method comparisons, a minimum of 40 different patient specimens is widely recommended, with a preferable target of 100 specimens or more [3] [21]. A larger sample size is particularly crucial for identifying unexpected errors due to interferences or sample matrix effects, and for evaluating the specificity of a new method that employs a different chemical reaction or measurement principle [3] [21]. The quality of the specimens, specifically ensuring they cover the entire clinically meaningful measurement range, is as important as the quantity [28] [3].

Sample Size Based on Statistical Precision: For studies utilizing Bland-Altman Limits of Agreement (LoA) with single measurements per method, sample size can be determined based on the precision of the confidence intervals for the limits. Jan and Shieh proposed methods to calculate the sample size so that the expected width of an exact 95% confidence interval for the LoA does not exceed a predefined benchmark value, Δ [28]. A more conservative approach ensures the observed width will not exceed Δ with a specified assurance probability (e.g., 90%), which results in larger sample sizes [28].

Sample Size for Studies with Repeated Measurements: When the study design includes k repeated measurements from each subject (k ≥ 2), an equivalence test for agreement can be employed [28] [24]. This tests the hypothesis that the within-subject variance is less than a predefined unacceptable variance. The sample size is derived iteratively from the degrees of freedom required to achieve the desired statistical power (1-β) and significance level (α) [28]. As a rough, general recommendation, 50 subjects with three repeated measurements each have been suggested to produce stable variance estimates [28].

Sample Size for Observer Variability Studies: In inter-rater reliability studies involving multiple observers, sample size considerations differ. Research indicates that higher precision for confidence intervals is achieved primarily by increasing the number of observers, as increasing the number of subjects alone is not sufficient [28].

Table 1: Sample Size Recommendations for Different Study Types

| Study Type | Key Factor | Recommended Starting Point | Primary Reference |
|---|---|---|---|
| General Method Comparison | Coverage of clinical range | 40 specimens (minimum), 100+ preferred | [3] [21] |
| Bland-Altman LoA (Precision of CI) | Predefined benchmark (Δ) for CI width | Based on expected or assured width calculations | [28] |
| Studies with Replicates | Number of repeated measurements (k) per subject | ~50 subjects with 3 replicates each | [28] |
| Observer Variability | Number of observers (raters) | Increase number of observers for precision | [28] |

Experimental Protocol for Sample Size Calculation using Precision of Limits of Agreement

This protocol outlines the steps to determine sample size for a method comparison study based on the expected width of the confidence interval for the Limits of Agreement [28].

1. Define the Clinical Acceptability Benchmark (Δ):

- Establish the maximum allowable width for the 95% confidence interval of the LoA. This benchmark should be based on clinical requirements or analytical performance specifications derived from biological variation or clinical outcome studies [21].

2. Estimate Population Parameters:

- From pilot data or previous literature, obtain estimates for the mean (µ) and standard deviation (σ) of the differences between the two methods.

3. Select Assurance Probability:

- Choose whether to base the calculation on the expected width (similar to 50% assurance) or a higher assurance probability (e.g., 90%) that the observed width will not exceed Δ [28].

4. Perform Iterative Sample Size Calculation:

- Use statistical software (e.g., R, SAS) with specialized scripts to solve for the sample size (N) [28]. The calculation finds the smallest N for which the expected width of the confidence interval for the LoA, or the width attained with the chosen assurance probability, is less than or equal to Δ.

- R-scripts for these calculations are readily available in the scientific literature, such as in the supplemental materials of Jan and Shieh (2018) [28].

5. Document and Justify:

- Clearly document the chosen Δ, the estimated parameters, the assurance probability, and the final calculated sample size in the study protocol.
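For a first planning estimate before running the exact scripts, the steps above can be approximated in closed form. The sketch below does not implement the exact method of Jan and Shieh [28]. It swaps in the large-sample approximation from Bland and Altman, in which the standard error of a limit of agreement is about sqrt(3·s²/n), together with a normal quantile. It corresponds to the expected-width style of calculation, has no assurance probability, and does not need the mean difference µ. The function name is hypothetical.

```python
import math
from statistics import NormalDist


def approx_n_for_loa_ci_width(sd_diff, delta, confidence=0.95):
    """Smallest n whose approximate CI width for one limit of agreement is <= delta.

    Assumption: SE(LoA) ~ sqrt(3 * sd_diff**2 / n), so
    width = 2 * z * sqrt(3 / n) * sd_diff, solved for n.
    This is a planning approximation, not the exact interval.
    """
    if sd_diff <= 0 or delta <= 0:
        raise ValueError("sd_diff and delta must be positive")
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n = 3 * (2 * z * sd_diff / delta) ** 2
    return max(2, math.ceil(n))


# Illustrative inputs: SD of differences from pilot data, benchmark width delta
print(approx_n_for_loa_ci_width(sd_diff=1.0, delta=1.0))
print(approx_n_for_loa_ci_width(sd_diff=1.0, delta=0.5))
```

Because the width shrinks with the square root of n, halving Δ roughly quadruples the required sample size. The exact and assurance-based calculations in [28] will generally give larger values than this approximation.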

Ensuring Specimen Quality and Stability

The reliability of method comparison data is profoundly affected by the quality and stability of the specimens used. Mismanagement in pre-analytical phases can introduce significant bias and variability.

Specimen Collection and Handling Protocol

A standardized protocol for specimen collection and handling is essential to minimize pre-analytical errors.

Specimen Selection: Patient specimens should be carefully selected to cover the entire clinically meaningful measurement range [28] [21]. They should represent the spectrum of diseases and conditions expected in the routine application of the method [3]. The sampling procedure should aim to include subjects whose measurements span this full range [28].

Sample Size and Volume: Collect at least 40 patient specimens, and preferably 100 or more [21] [3]. The exact number should be guided by the sample size calculation. Ensure sufficient sample volume is collected for all planned analyses, including duplicates.

Tube Type and Order: For blood samples, use the appropriate collection tubes (e.g., serum, plasma with specific anticoagulants) as required by the methods. If comparing new and existing tubes, collect blood randomly into the different tube types to avoid order bias [29]. Gently invert tubes according to the manufacturer's instructions to ensure proper mixing of additives [29].

Time and Stability:

- Analyze each specimen by both methods within a short time frame, ideally within two hours of each other, unless the analyte is known to have shorter stability [3].

- Analyze samples on the day of collection, or establish and adhere to validated stability conditions if storage is necessary [21] [29].

- Define and systematize specimen handling procedures prior to the study to prevent differences arising from handling variables rather than analytical errors [3].
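The two-hour window is easy to audit once analysis timestamps are recorded for each specimen. The following sketch flags specimens whose two analyses were further apart than the allowed gap. The record layout (a dictionary keyed by specimen ID) and the function name are assumptions made for illustration, and the window should be shortened for analytes with shorter stability.

```python
from datetime import datetime, timedelta


def specimens_outside_window(analysis_times, max_gap=timedelta(hours=2)):
    """Return IDs of specimens whose two analyses were more than max_gap apart.

    analysis_times maps specimen ID -> (time on test method, time on comparative method).
    """
    return [
        specimen_id
        for specimen_id, (t_test, t_comp) in analysis_times.items()
        if abs(t_test - t_comp) > max_gap
    ]


# Illustrative (made-up) timestamps
times = {
    "S001": (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 45)),
    "S002": (datetime(2024, 1, 8, 9, 5), datetime(2024, 1, 8, 12, 30)),
}
print(specimens_outside_window(times))
```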

Centrifugation: Centrifuge samples according to the manufacturer's recommendations for the specific tube type and analyte [29]. For example, BD Barricor tubes may require centrifugation at 4000 × g for 3 minutes, while serum tubes might need 2000 × g for 10 minutes [29].