Interference in Selectivity Testing: A Comprehensive Guide for Robust Bioanalytical Methods

Abstract

This article provides researchers, scientists, and drug development professionals with a complete framework for understanding, identifying, and mitigating interference in selectivity testing. Covering foundational concepts, practical methodologies, advanced troubleshooting techniques, and validation protocols, it offers actionable strategies to enhance the reliability and robustness of bioanalytical methods, particularly in High-Content Screening (HCS) and LC-MS/MS assays, ensuring data integrity from development to regulatory submission.

Understanding Interference: Foundational Concepts and Sources in Bioanalysis

Defining Selectivity and Distinguishing It from Specificity

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between antibody specificity and selectivity?

- Specificity refers to an antibody's ability to recognize and bind to a particular epitope—a unique structural part of an antigen. A highly specific antibody binds to a single, defined epitope. However, this epitope might be found on multiple different proteins [1] [2].

- Selectivity describes how well an antibody binds to its intended target molecule within a complex mixture, without binding to other proteins that may share a similar or identical epitope. A highly selective antibody will bind exclusively to its designated target protein in the context of your experiment [1] [2].

2. Can a monoclonal antibody be specific but not selective?

Yes. A monoclonal antibody is inherently specific because it binds to a single epitope. However, if that specific epitope is present on multiple different proteins (e.g., isoforms or homologous proteins), the antibody will cross-react and is therefore not selective for your target of interest [2].

3. What are common sources of interference that affect selectivity in assays?

Interference can arise from multiple sources, leading to false positives or negatives:

- Compound-mediated effects: Test compounds can be autofluorescent, quench fluorescence, or cause cellular injury/cytotoxicity, which obscures the true biological signal [3].

- Biological matrix effects: Components in culture media (e.g., riboflavins), cells, or tissues can elevate fluorescent background or quench signals [3].

- Exogenous contaminants: Lint, dust, plastic fragments, or microorganisms can cause image aberrations [3].

- Excipient interactions: In biologics and vaccines, formulation components can unfavorably interact with active ingredients or alter the assay environment [4].

4. How can I troubleshoot poor selectivity or interference in my experiments?

- Conduct a thorough risk assessment: Review your formulation and analytical method for potential interactions [4].

- Employ robust controls: Use control samples that mimic the product formulation but lack the target to identify cross-reactivity [2] [4].

- Utilize orthogonal assays: Confirm findings using a second assay based on a fundamentally different detection technology [3].

- Optimize analytical methods: Adjust conditions such as antibody dilution, sample dilution, or chromatography columns to mitigate interference [3] [5] [4].

5. How is selectivity quantified in pharmacology?

In pharmacology, selectivity is often quantified as a selectivity ratio. This is calculated by dividing the half-maximal inhibitory concentration (IC50) or inhibition constant (Ki) for a secondary target by the value for the primary target. For example, a drug with a Ki of 1 nM for target A and 100 nM for target B has a 100-fold selectivity for target A [6].
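The ratio arithmetic can be illustrated with a minimal Python sketch. The function name `selectivity_ratio` and its parameter names are illustrative only; both values are assumed to be in the same units and measured under comparable assay conditions.

```python
def selectivity_ratio(secondary_nM: float, primary_nM: float) -> float:
    """Fold-selectivity for the primary target: secondary value / primary value.

    Both inputs are Ki or IC50 values in the same units (nM here).
    """
    if primary_nM <= 0 or secondary_nM <= 0:
        raise ValueError("Ki/IC50 values must be positive")
    return secondary_nM / primary_nM


# Worked example from the text: Ki = 1 nM (target A), Ki = 100 nM (target B)
ratio = selectivity_ratio(secondary_nM=100.0, primary_nM=1.0)
print(f"{ratio:.0f}-fold selective for target A")
```

A ratio near 1 would indicate little or no preference between the two targets.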

Troubleshooting Guides

Guide 1: Addressing Antibody Cross-Reactivity

Problem: An antibody produces a signal in samples that lack the target protein, suggesting cross-reactivity and poor selectivity.

Investigation and Resolution Steps:

| Step | Action | Expected Outcome & Notes |
|---|---|---|
| 1 | Confirm Specificity | Verify the antibody binds only to its intended epitope using epitope mapping or competitive binding assays [1]. |
| 2 | Test for Selectivity | Run the assay using biological material with high expression, low expression, and a complete absence of the target protein. The signal should correspond proportionally to the target level [2]. |
| 3 | Check Related Proteins | Test the antibody against a panel of closely related proteins (e.g., different receptor isoforms). A selective antibody will not cross-react [2]. |
| 4 | Optimize Conditions | Titrate the antibody to find the optimal dilution. High concentrations can cause non-selective binding. Also, consider changing the assay buffer or blocking agents [2]. |
| 5 | Validate Integrity | Check the antibody's molecular integrity via SDS-PAGE. Exposure to high temperatures, repeated freeze-thaw cycles, or detergents can compromise selectivity [2]. |

Guide 2: Mitigating Compound Interference in High-Content Screening (HCS)

Problem: In cell-based HCS assays, test compounds produce artifactual signals not related to the intended biological target or phenotype.

Investigation and Resolution Steps:

| Step | Action | Expected Outcome & Notes |
|---|---|---|
| 1 | Statistical Flagging | Perform statistical analysis of fluorescence intensity data. Compounds causing interference often appear as outliers compared to control wells [3]. |
| 2 | Image Review | Manually review the images for signs of compound-mediated cytotoxicity (e.g., cell rounding, loss of adhesion) or unexpected fluorescence patterns [3]. |
| 3 | Orthogonal Assay | Use a counter-screen or an orthogonal assay with a different detection technology (e.g., luminescence instead of fluorescence) to confirm the compound's activity [3]. |
| 4 | Control for Autofocus | Be aware that fluorescent compounds or dead cells can interfere with image-based autofocus systems. Using laser-based autofocus (LAF) or adaptive image acquisition may help [3]. |
| 5 | Assay Re-design | If interference is common, consider re-developing the assay to use a different fluorescent probe or detection method less susceptible to the observed interference [3]. |

Experimental Protocols

Protocol 1: Validating Antibody Selectivity via Western Blot

This protocol outlines a method to test an antibody's selectivity by assessing its cross-reactivity with related proteins [2].

Methodology:

- Prepare Samples: Use cell lysates or tissues with confirmed:

  - High expression of the target protein.

  - Low or no expression of the target protein (e.g., knockout cell line).

  - Expression of closely related protein family members.

- Perform Western Blot: Run standard SDS-PAGE followed by western transfer.

- Antibody Incubation: Incubate the membrane with the primary antibody of interest at its optimal dilution.

- Detection: Use an appropriate detection system.

- Analysis: A selective antibody will produce a band only in the lane containing the target protein. Any bands in the related protein lanes indicate cross-reactivity and poor selectivity.

Protocol 2: LC-MS/MS Method for Specificity Troubleshooting

This protocol is adapted from an investigation into noroxycodone interference in urine drug testing [5].

Methodology:

- Sample Preparation: Hydrolyze 100 µL of urine with 200 µL of β-glucuronidase enzyme solution. Terminate the reaction with 300 µL of cold methanol. After centrifugation, mix the supernatant with mobile phase.

- LC Conditions:

  - Column: Waters Acquity UPLC BEH C18 (1.7 µm, 2.1 x 100 mm)

  - Mobile Phase A: 0.1% formic acid in water

  - Mobile Phase B: 0.1% formic acid in acetonitrile

  - Gradient: 4.5-minute program, starting at 98:2 (A:B)

  - Flow Rate: 0.6 mL/min

  - Column Oven: 40°C

  - Injection Volume: 7.5 µL

- MS/MS Detection: Quantitative MRM acquisition.

- Troubleshooting Specificity: To enhance specificity, evaluate additional qualifier ion transitions. An interfering compound may co-elute and match one transition but not others unique to the true analyte.
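One common way to act on the qualifier-transition idea above is to compare the qualifier/quantifier peak-area ratio in each sample against the ratio seen in clean calibrators. The sketch below is illustrative only: the function names, the peak areas, and the ±20% relative tolerance are assumptions, not values from the cited method, and the acceptance window should follow your own laboratory's criteria.

```python
def ion_ratio(qualifier_area: float, quantifier_area: float) -> float:
    """Qualifier-to-quantifier peak-area ratio for one injection."""
    if quantifier_area <= 0:
        raise ValueError("quantifier peak area must be positive")
    return qualifier_area / quantifier_area


def ratio_within_tolerance(sample_ratio: float, reference_ratio: float,
                           rel_tol: float = 0.20) -> bool:
    """True if the sample ratio lies within +/- rel_tol of the reference ratio."""
    return abs(sample_ratio - reference_ratio) <= rel_tol * reference_ratio


# Hypothetical peak areas (arbitrary units)
reference = ion_ratio(4200.0, 10000.0)       # mean ratio from calibrators
clean_sample = ion_ratio(4100.0, 10000.0)    # ratio close to the reference
suspect_sample = ion_ratio(4150.0, 16000.0)  # quantifier inflated by a co-eluting species

for name, r in [("clean", clean_sample), ("suspect", suspect_sample)]:
    status = "pass" if ratio_within_tolerance(r, reference) else "possible interference"
    print(f"{name}: ratio={r:.3f} -> {status}")
```

A failed ratio does not identify the interferent; it only signals that one transition carries signal the other does not, which is the cue to revisit chromatography or add transitions.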

Data Presentation

Quantitative Data on Selectivity and Specificity

The table below summarizes key quantitative and conceptual differentiators.

| Parameter | Specificity | Selectivity |
|---|---|---|
| Definition | Binding to a single, defined epitope [1] [2]. | Binding only to the intended target within a complex mixture [1] [2]. |
| Primary Concern | "To which molecular structure does the binder attach?" | "Does the binder attach to anything else in my sample?" [1] |
| Quantification (Pharmacology) | Not typically quantified as a ratio; considered an ideal state [6]. | Expressed as a selectivity ratio (e.g., IC50 secondary target / IC50 primary target) [6]. |
| Impact of Cross-reactivity | A specific binder can still be cross-reactive if its epitope is shared [2]. | Cross-reactivity directly defines poor selectivity [1]. |
| Ideal Agent | Binds to one epitope. | Binds only to the intended target protein in the experimental context [2]. |

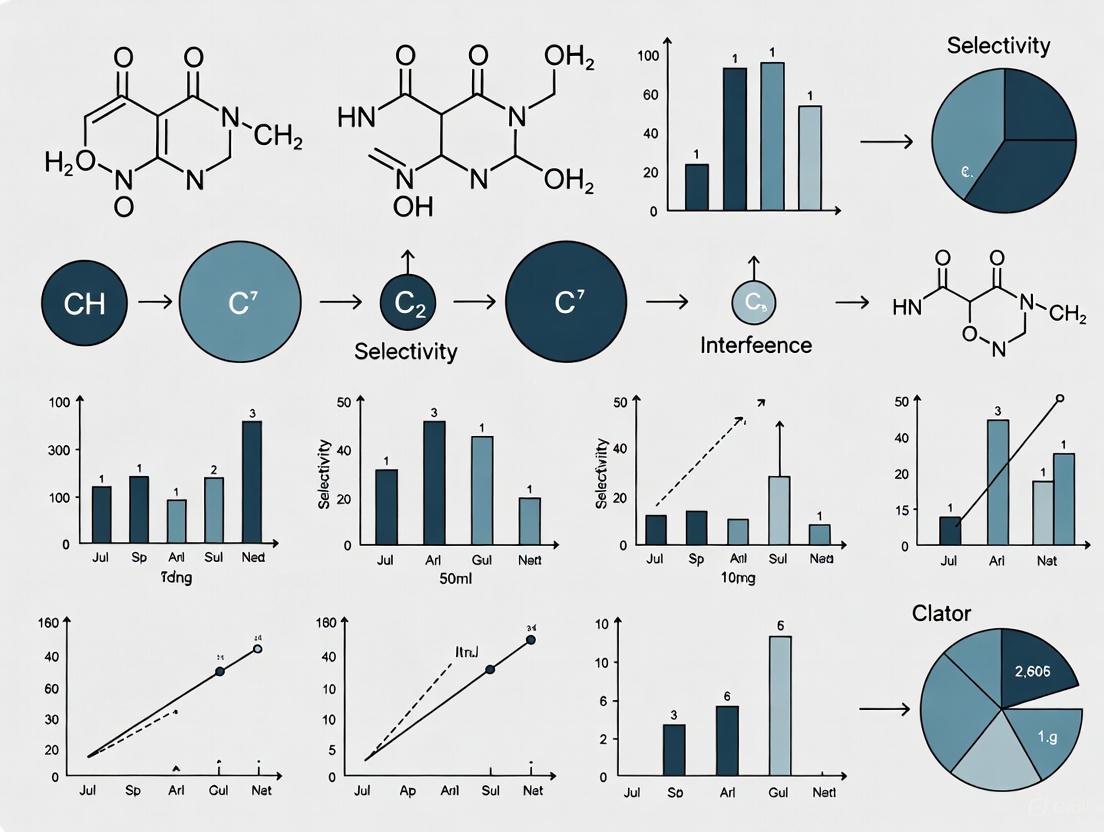

Visualizations

Selectivity vs Specificity Concept

Troubleshooting Interference Workflow

The Scientist's Toolkit

Research Reagent Solutions for Selectivity Testing

| Item | Function in Experiment |
|---|---|
| Knockout Cell Lysates | Biological material lacking the target protein; essential for confirming that an observed signal is specific to the target and not due to cross-reactivity [2]. |
| Related Protein Panel | A set of purified proteins closely related to the target (e.g., same protein family); used to test and validate antibody or drug selectivity [2]. |
| Isotype Control Antibody | An antibody with irrelevant specificity but of the same class; helps distinguish non-specific background binding from specific signal in immunoassays. |
| Orthogonal Assay Kits | A second assay based on a different detection principle (e.g., luminescence vs. fluorescence); used to confirm that a compound's effect is biological and not an artifact [3]. |
| Affinity-Purified Antibodies | Polyclonal antibodies purified against the specific immunogen; this process removes non-specific antibodies, improving specificity and selectivity [2]. |
| Stable Isotope-Labeled Internal Standards | Used in mass spectrometry; corrects for sample loss during preparation and matrix effects, improving assay accuracy and helping to identify interference [5]. |

FAQs: Identifying and Troubleshooting Experimental Interference

What are the most common types of compound-mediated interference in biochemical assays?

Compound-mediated interference occurs when test compounds themselves artificially affect the assay readout, rather than modulating the intended biological target. The most prevalent types are:

- Aggregation: Small molecules can form colloids (aggregates) that nonspecifically inhibit enzymes by adsorbing to them and causing partial unfolding. In high-throughput screening (HTS), over 90% of primary actives can sometimes be attributed to this phenomenon, wasting significant resources if not identified early [7].

- Spectroscopic Interference: This includes autofluorescence (compounds that emit light) and fluorescence quenching (compounds that absorb light), which directly interfere with optical detection methods used in assays like FRET, TR-FRET, and AlphaScreen [8] [3].

- Chemical Reactivity: Compounds may act as nonspecific reactive chemicals, redox-cyclers, or chelators, perturbing the biological system through undesirable mechanisms of action [3].

Troubleshooting Guide: If you suspect compound aggregation, include non-ionic detergents like Triton X-100 (e.g., 0.01% v/v) in your assay buffer, as this can disrupt colloid formation and reverse nonspecific inhibition [7]. For spectroscopic interference, statistical analysis of fluorescence intensity data can flag outliers; these compounds should be evaluated using orthogonal assays that employ a fundamentally different detection technology [3].

How can biological components of my assay system cause interference?

Endogenous substances within your biological reagents can elevate background signals or quench your readout.

- Media Components: Elements like riboflavins can autofluoresce, particularly in the ultraviolet to green fluorescent protein (GFP) spectral ranges, increasing background noise in live-cell imaging applications [3].

- Cellular Constituents: Molecules such as flavin adenine dinucleotide (FAD) and reduced nicotinamide adenine dinucleotide (NADH) are intrinsically fluorescent and can interfere with fluorescent signal detection [3].

Troubleshooting Guide: During assay development, test for background fluorescence from your media and cells in the absence of any probes or test compounds. For live-cell assays, consider using phenol red-free media or media specifically formulated for reduced autofluorescence. Always include appropriate control wells (e.g., no-compound, no-probe) to establish a baseline [3].

What environmental factors in the lab can interfere with my experiments and how do I control them?

Environmental factors can directly affect the performance of sensitive equipment, the stability of reagents, and the integrity of your biological models. The table below summarizes key factors and control measures.

Table: Key Environmental Factors and Control Measures

| Factor | Potential Impact on Experiments | Recommended Control & Monitoring |
|---|---|---|
| Temperature [9] | Alters reaction rates, protein stability, and physical properties of materials. | Use calibrated thermometers; record temperature during procedures; utilize environmental chambers or ovens. |
| Humidity [9] | Can cause hygroscopic materials to absorb water, altering weight and composition; promotes condensation. | Use dehumidifiers or humidifiers; maintain records with hygrometers. |
| Ambient Light [9] | Causes photobleaching of fluorophores; can generate unwanted reflections in optical measurements. | Minimize exposure to direct sunlight; use specific light wavelengths (e.g., red light for sensitive samples); control light intensity. |
| Vibration [9] | Introduces noise in sensitive measurements (e.g., balances, spectrophotometers); can disrupt cell layers. | Use anti-vibration platforms; locate sensitive equipment away from vibration sources (e.g., centrifuges, heavy traffic). |
| Electromagnetic Interference (EMI) [9] [10] | Can cause noise or distortion in electronic measurements and equipment. | Use electromagnetic shielding; ensure proper grounding of all equipment. |
| Air Quality [9] | Airborne particles, chemical vapors, or spores can contaminate samples or assays. | Use adequate ventilation or laminar flow hoods; keep vials capped as much as possible. |

My homogeneous assay is giving inconsistent results. What could be wrong?

Homogeneous "mix-and-read" assays (e.g., AlphaScreen, FRET, TR-FRET) are highly susceptible to interference because test compounds are not removed prior to signal acquisition [8]. The lack of a wash step means that any compound with spectroscopic properties that overlap with your assay's detection wavelengths can cause trouble.

Troubleshooting Guide:

- Signal Attenuation: If your signal is lower than expected, test compounds might be quenching the signal or scattering light. Check for colored or turbid compounds [8].

- False Positives: If you have unexpected activation, test compounds might be autofluorescent. Time-resolved detection methods like TR-FRET can help mitigate this by introducing a delay before measurement, allowing short-lived compound autofluorescence to decay [8].

- General Strategy: Implement counter-screens that can identify these interference mechanisms. For example, run compounds in the absence of the biological target to detect autofluorescence, or add detergents to test for aggregation [8] [7].
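To see why a measurement delay suppresses compound autofluorescence in time-resolved formats, it helps to compare how much of each signal survives the delay. The sketch below assumes simple single-exponential decay and uses illustrative lifetimes (about 10 ns for a typical organic fluorophore, about 1 ms for a lanthanide donor) and a 50 µs delay; none of these numbers come from the cited sources, and real instruments and probes will differ.

```python
import math


def fraction_remaining(delay_s: float, lifetime_s: float) -> float:
    """Fraction of emission still present after a delay, for single-exponential decay."""
    return math.exp(-delay_s / lifetime_s)


# Illustrative (assumed) values, in seconds
DELAY_S = 50e-6                # delay between excitation flash and read window
COMPOUND_LIFETIME_S = 10e-9    # short-lived compound autofluorescence
LANTHANIDE_LIFETIME_S = 1e-3   # long-lived lanthanide donor emission

compound_left = fraction_remaining(DELAY_S, COMPOUND_LIFETIME_S)
lanthanide_left = fraction_remaining(DELAY_S, LANTHANIDE_LIFETIME_S)

print(f"compound signal remaining:   {compound_left:.3e}")
print(f"lanthanide signal remaining: {lanthanide_left:.3f}")
```

Under these assumptions the compound emission is effectively gone before the read window opens, while most of the lanthanide signal remains. Quenching and light scattering are not addressed by the delay and still need their own controls.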

How does interference manifest in High-Content Screening (HCS) and how can I detect it?

In HCS, interference can affect both the imaging detection technology and the biological integrity of the cellular model [3].

- Technology-Related Interference: Compound autofluorescence can produce artifactual signals that are mistaken for a true biological phenotype. Fluorescence quenching can mask real signals, leading to false negatives [3].

- Non-Technology-Related Interference: Compound-induced cytotoxicity or dramatic changes in cell morphology (e.g., cells rounding up or detaching) can be misinterpreted as a specific phenotypic effect. This can lead to false positives or negatives depending on the assay design [3].

Troubleshooting Guide:

- Statistical Flagging: Analyze parameters like nuclear counts and fluorescence intensity. Compounds that cause substantial cell loss or extreme fluorescence will appear as statistical outliers [3].

- Image Review: Always manually review images for wells containing potential "hit" compounds. Look for signs of cell death, altered morphology, or unusual fluorescence patterns [3].

- Orthogonal Assays: Confirm HCS hits using an alternative, non-image-based assay to ensure the phenotype is genuine and not an artifact of the detection method [3].
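The statistical flagging step can be sketched with a robust z-score, which uses the median and the median absolute deviation (MAD) of control wells so that a few extreme wells do not distort the baseline. This is one possible approach, not the method prescribed by the cited source; the well names, nuclear counts, and the |z| > 3 cutoff are hypothetical.

```python
from statistics import median


def robust_z_scores(values, reference):
    """Robust z-scores of `values` relative to the median/MAD of `reference` wells."""
    med = median(reference)
    mad = median([abs(v - med) for v in reference])
    scale = 1.4826 * mad  # makes MAD comparable to a standard deviation for normal data
    if scale == 0:
        raise ValueError("reference wells have zero spread; cannot scale")
    return [(v - med) / scale for v in values]


# Hypothetical nuclear counts per well
control_counts = [980, 1010, 995, 1005, 990, 1000]          # DMSO control wells
compound_counts = {"cmpd_1": 1002, "cmpd_2": 310, "cmpd_3": 985}

scores = robust_z_scores(list(compound_counts.values()), control_counts)
flagged = [name for name, z in zip(compound_counts, scores) if abs(z) > 3]
print("flagged for review:", flagged)
```

The same function can be applied to per-well fluorescence intensity. Flagged wells are candidates for manual image review rather than automatic exclusion.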

Experimental Protocols for Identifying Interference

Protocol 1: Detecting Compound Aggregation

Principle: This protocol uses non-ionic detergents to disrupt compound aggregates, thereby reversing nonspecific inhibition of an enzyme [7].

Materials:

- Test compound(s) in concentration-response (e.g., 3-fold serial dilution, typically from 100 μM to nM range)

- Target enzyme and substrate

- Assay buffer with and without 0.01% (v/v) Triton X-100 (or another suitable non-ionic detergent like Tween-20)

- Equipment for measuring enzyme activity (e.g., plate reader)
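The concentration series in the materials list can be generated programmatically. The following is a minimal sketch; the helper name `serial_dilution` and the choice of 10 points are assumptions made for illustration.

```python
def serial_dilution(top_conc_uM: float, fold: float, n_points: int) -> list[float]:
    """Concentrations (µM) for an n-point serial dilution starting at top_conc_uM."""
    if fold <= 1 or n_points < 1 or top_conc_uM <= 0:
        raise ValueError("need fold > 1, n_points >= 1, and a positive top concentration")
    return [top_conc_uM / fold**i for i in range(n_points)]


# 3-fold dilution from 100 µM; 10 points reaches the low-nM range
series = serial_dilution(top_conc_uM=100.0, fold=3.0, n_points=10)
for conc in series:
    print(f"{conc:.4g} µM")
```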

Method:

- Prepare two identical sets of concentration-response curves for the test compound.

- Perform the enzyme activity assay in parallel: one set with standard assay buffer and the other with buffer containing 0.01% Triton X-100.

- Fit the dose-response curves and determine the IC50 value under both conditions.

Interpretation: A significant right-shift (e.g., >3-fold increase) in the IC50 value in the presence of detergent is a strong indicator that the observed bioactivity is due to aggregation. True, specific inhibitors are typically unaffected by the presence of low concentrations of detergent [7].
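The interpretation rule above reduces to a fold-shift comparison. The sketch below assumes IC50 values have already been obtained by curve fitting; the function names and example IC50s are hypothetical, and the 3-fold cutoff simply mirrors the example threshold in the text.

```python
def ic50_fold_shift(ic50_no_detergent: float, ic50_with_detergent: float) -> float:
    """Fold change in IC50 on adding detergent (>1 means a right-shift, i.e. weaker inhibition)."""
    if ic50_no_detergent <= 0 or ic50_with_detergent <= 0:
        raise ValueError("IC50 values must be positive")
    return ic50_with_detergent / ic50_no_detergent


def likely_aggregator(ic50_no_detergent: float, ic50_with_detergent: float,
                      threshold: float = 3.0) -> bool:
    """Flag a compound whose IC50 right-shifts by more than `threshold`-fold with detergent."""
    return ic50_fold_shift(ic50_no_detergent, ic50_with_detergent) > threshold


# Hypothetical IC50 values in µM: (without detergent, with 0.01% Triton X-100)
compounds = {"cmpd_A": (2.0, 45.0), "cmpd_B": (0.5, 0.6)}
for name, (without, with_det) in compounds.items():
    shift = ic50_fold_shift(without, with_det)
    print(f"{name}: {shift:.1f}-fold shift, aggregator suspected: "
          f"{likely_aggregator(without, with_det)}")
```

Compounds that lose all measurable inhibition with detergent have no fitted IC50 in that condition; those need to be handled separately, for example by treating the top tested concentration as a lower bound.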

Protocol 2: Counter-Screen for Fluorescent Interference

Principle: This protocol tests if compounds directly interfere with the fluorescent detection system of an assay, independent of the biology [8] [3].

Materials:

- Test compounds at the concentration used in the primary assay

- Assay plates and readout buffer

- All detection reagents (e.g., donor and acceptor beads for AlphaScreen, fluorescent antibodies for TR-FRET) except the biological components (e.g., enzyme, cell lysate)

- Plate reader compatible with your detection method

Method:

- Add readout buffer and detection reagents to the assay plate.

- Add test compounds at the desired concentration. Include negative controls (e.g., DMSO only) and, where available, a positive control known to interfere with the readout.

- Incubate the plate under the same conditions as your primary assay (time, temperature).

- Read the signal using the same instrument settings as your primary assay.

Interpretation: A signal significantly different from the negative control (DMSO) indicates the compound is interfering with the detection system. An increased signal suggests autofluorescence; a decreased signal suggests quenching [8] [3].
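A simple way to operationalize "significantly different from the negative control" is a window of the DMSO mean plus or minus a multiple of its standard deviation. The sketch below is one such approach under stated assumptions: the 3-SD window, the function name, and the signal values are illustrative, and a plate with few control wells may call for a more robust statistic.

```python
from statistics import mean, stdev


def classify_interference(signal: float, dmso_signals: list[float],
                          n_sd: float = 3.0) -> str:
    """Classify a compound well against the DMSO-only control distribution."""
    mu = mean(dmso_signals)
    sd = stdev(dmso_signals)  # sample standard deviation; needs at least 2 control wells
    if signal > mu + n_sd * sd:
        return "possible autofluorescence"
    if signal < mu - n_sd * sd:
        return "possible quenching"
    return "no interference detected"


# Hypothetical raw signals (arbitrary units)
dmso_wells = [1000.0, 1020.0, 980.0, 1010.0, 990.0]
compound_wells = {"cmpd_X": 1500.0, "cmpd_Y": 600.0, "cmpd_Z": 1015.0}

results = {name: classify_interference(s, dmso_wells) for name, s in compound_wells.items()}
for name, call in results.items():
    print(f"{name}: {call}")
```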

Research Reagent Solutions

Table: Key Reagents for Mitigating and Identifying Interference

| Reagent / Tool | Function / Purpose |
|---|---|
| Triton X-100 [7] | A non-ionic detergent used to disrupt compound aggregates in biochemical assays. |
| Bovine Serum Albumin (BSA) [7] | A "decoy" protein that can be added to assay buffers to saturate aggregators before they interact with the target enzyme. |
| Time-Resolved FRET (TR-FRET) [8] | A technology that uses lanthanide donors with long emission times to reduce short-lived compound autofluorescence. |
| Lipid Nanoparticles (LNPs) [11] [12] | A delivery system used for nucleic acid drugs (e.g., siRNA) to improve stability and cellular targeting, reducing off-target effects. |
| RF Sensors & Spectrum Monitoring Software [10] | Tools for detecting and geolocating Radio Frequency Interference (RFI) that can disrupt sensitive laboratory equipment. |

Workflow Diagrams

Diagram 1: Systematic Workflow for Investigating Experimental Interference

Diagram 2: Mechanisms of Compound-Mediated Assay Interference

The Impact of Autofluorescence and Fluorescence Quenching in HCS

Frequently Asked Questions (FAQs)

What are autofluorescence and fluorescence quenching, and why are they problematic in HCS?

Autofluorescence is the background fluorescence emitted naturally by components in biological samples, not from the specific fluorescent probes used in your assay. Fluorescence quenching is a process that decreases the intensity of fluorescence emitted by a probe [13].

In High-Content Screening (HCS), these phenomena are major sources of interference because they can mask the specific signal from your target of interest. This leads to a poor signal-to-noise ratio, complicating image analysis and potentially causing both false-positive and false-negative results in drug discovery campaigns [3] [14]. Compound-dependent interference, through autofluorescence or quenching, is a predominant source of such artifacts [3].

Autofluorescence can originate from multiple endogenous substances and external factors:

- Culture Media: Components like riboflavins can fluoresce in the ultraviolet through green fluorescent protein (GFP) variant spectral ranges [3].

- Endogenous Pigments: Flavins, porphyrins, collagen, elastin, red blood cells, and lipofuscin are common culprits. Lipofuscin, which accumulates with age, fluoresces strongly across a broad spectrum (500-695 nm) [14] [15].

- Fixatives: Aldehyde-based fixatives like formalin and glutaraldehyde create Schiff bases that produce autofluorescence with broad emission across blue, green, and red channels [15].

- Plant-Derived Scaffolds: In tissue engineering, lignin, chlorophyll, and polyphenolic molecules in decellularized plant scaffolds exhibit strong autofluorescence that overlaps with common dyes like Hoechst and FITC [16].

How can I quickly check if my experiment is affected by autofluorescence?

The most straightforward method is to prepare control samples that are identical to your test samples but are not incubated with your primary or fluorescently-labeled antibodies or probes. Image these control samples using the same acquisition settings as your experimental samples. If you detect fluorescence signal in these unstained controls, your assay is affected by autofluorescence [15].

Troubleshooting Guides

Guide 1: Strategies for Minimizing and Quenching Autofluorescence

Preventive Measures

- Optimize Fixation: Use paraformaldehyde instead of glutaraldehyde, and fix samples for the minimum time required to preserve structure. Alternatively, consider using chilled ethanol as a non-cross-linking fixative [15].

- Perfuse Tissues: Perfusing tissue with PBS prior to fixation can help remove red blood cells, a significant source of autofluorescence [15].

- Choose Fluorophores Wisely: Select fluorescent dyes that emit in spectral ranges far from the autofluorescence of your sample. For example, if your tissue has high background in the green channel (e.g., from collagen or NADH), use red or far-red fluorophores like Alexa Fluor 594 or CoraLite 647 [15].

Chemical Quenching Protocols

If autofluorescence is already present, the following chemical treatments can be effective.

Protocol A: Using TrueVIEW Autofluorescence Quenching Kit

TrueVIEW is a commercially available solution designed to quench autofluorescence from collagen, elastin, and red blood cells in formalin-fixed tissues [14].

- Procedure: After completing your immunofluorescence staining protocol, incubate the tissue sections with the aqueous TrueVIEW reagent solution for 2 minutes [14].

- Considerations: This treatment is straightforward and requires only a short incubation step. It is compatible with common fluorophores and GFP. Note that it may cause a modest loss in specific signal brightness, which can be compensated for by increasing primary antibody concentration or camera exposure time [14].

Protocol B: Using Sudan Black B

Sudan Black B is particularly effective against lipofuscin autofluorescence but also helps reduce background from other sources [13] [15].

- Reagent Preparation: Prepare a 0.3% solution of Sudan Black B powder in 70% ethanol. Stir the solution overnight on a shaker, protected from light. Filter the solution before use [13].

- Procedure: After immunolabeling, incubate the samples in the Sudan Black B solution for 10-15 minutes. Rinse gently with PBS. Do not use detergents in washes following treatment, as they can remove the dye [13].

- Considerations: Sudan Black B is a lipophilic dye and can fluoresce in the far-red channel, which must be considered when designing multiplex panels [15].

Protocol C: Using Copper Sulfate (Post-Fixation)

Copper sulfate (CuSO₄) is a highly effective agent for quenching autofluorescence, particularly in fixed tissues and plant-derived scaffolds [16].

- Reagent Preparation: Prepare an aqueous solution of CuSO₄. Effective concentrations used in research range from 0.01 M to 0.1 M [16].

- Procedure: Incubate fixed samples (e.g., tissue sections or decellularized scaffolds) in the CuSO₄ solution for 10-20 minutes at room temperature. Wash thoroughly with PBS afterwards [16].

- Considerations: While highly effective for imaging fixed samples, the biocompatibility of CuSO₄ varies. It has been shown to reduce cell viability in some live-cell applications, so its use may be limited to post-fixation imaging [16].
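The reagent preparation steps in Protocols B and C involve simple mass calculations, sketched below. Two assumptions are made that the source does not state: the 0.3% Sudan Black B solution is taken as weight/volume, and the copper sulfate is weighed as the pentahydrate (CuSO₄·5H₂O, about 249.69 g/mol; the anhydrous salt is about 159.61 g/mol). Check the form listed on your reagent bottle before using these numbers.

```python
# Molar masses in g/mol; which form you weigh out is an assumption to verify
CUSO4_ANHYDROUS = 159.61
CUSO4_PENTAHYDRATE = 249.69


def grams_for_percent_wv(percent: float, volume_ml: float) -> float:
    """Mass (g) of solute for a % w/v solution (percent grams per 100 mL)."""
    return percent / 100.0 * volume_ml


def grams_for_molarity(molar: float, volume_ml: float, g_per_mol: float) -> float:
    """Mass (g) of solute for a solution of given molarity and volume."""
    return molar * (volume_ml / 1000.0) * g_per_mol


# 100 mL batches (batch size chosen for illustration)
sbb_g = grams_for_percent_wv(0.3, 100.0)                             # Sudan Black B in 70% ethanol
cuso4_low_g = grams_for_molarity(0.01, 100.0, CUSO4_PENTAHYDRATE)    # 0.01 M
cuso4_high_g = grams_for_molarity(0.1, 100.0, CUSO4_PENTAHYDRATE)    # 0.1 M

print(f"Sudan Black B: {sbb_g:.2f} g per 100 mL")
print(f"CuSO4 pentahydrate: {cuso4_low_g:.3f} g to {cuso4_high_g:.3f} g per 100 mL")
```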

Comparison of Common Autofluorescence Quenching Reagents

| Reagent | Best For Targeting | Typical Incubation Time | Key Advantages | Key Limitations |
|---|---|---|---|---|
| TrueVIEW Kit [14] | Collagen, Elastin, RBCs | 2 minutes | Simple, fast protocol; compatible with many fluorophores | May slightly diminish specific signal |
| Sudan Black B [13] [15] | Lipofuscin, general background | 10-15 minutes | Effective on many tissue types; low cost | Can fluoresce in far-red channel; avoid detergent washes |
| Copper Sulfate [16] | Broad-spectrum, plant scaffolds | 10-20 minutes | Highly effective reduction; stable quenching effect | Can be toxic to live cells; for post-fixation use |
| Sodium Borohydride [15] | Aldehyde-induced fluorescence | Variable | Reduces formalin-induced background | Variable results; can be unstable in solution |

Guide 2: Identifying and Mitigating Compound-Mediated Interference

In HCS, the test compounds themselves are a major source of artifacts, either by being inherently fluorescent (autofluorescence) or by quenching the fluorescence of your detection probe [3].

Identification Strategies

- Statistical Outlier Analysis: Compound interference due to autofluorescence or quenching often produces fluorescence intensity values that are statistical outliers compared to the distribution of measurements in control wells [3].

- Image Review: Manually review images from wells containing hit compounds. Look for uniformly bright signals (suggesting compound autofluorescence) or unusually dim signals across all channels (suggesting quenching) that do not correlate with the expected biological phenotype [3].

- Nuclear Count Outliers: Substantial cell loss from cytotoxicity or disrupted adhesion can be identified by statistical analysis of nuclear counts and nuclear stain fluorescence intensity; affected wells will appear as outliers [3].

Mitigation Strategies

- Use Orthogonal Assays: Confirm hits with an assay that uses a fundamentally different detection technology (e.g., luminescence, radiometric, or label-free methods) that is not susceptible to the same optical interferences [3].

- Implement Counter-Screens: Run a separate interference counter-screen to identify compounds that are inherently fluorescent or act as quenchers under your assay conditions [3].

- Test in Broad Dose Response: Test compounds across a broad concentration range. Phenotypes caused by specific biological activity will often show a dose-dependent response, whereas some interference effects may not [17].

Experimental Protocols

Protocol for a Counter-Screen to Identify Fluorescent Compounds

Objective: To identify test compounds that are inherently fluorescent and could cause false positives in your HCS assay.

Materials:

- Assay plates (e.g., 384-well microplates)

- Test compound library

- Dimethyl sulfoxide (DMSO)

- Assay buffer (without cells, probes, or reagents)

- HCS imaging system

Method:

- Plate Preparation: Dispense assay buffer into all wells of the microplate.

- Compound Addition: Add the test compounds to the plate at the same concentration and in the same solvent (typically DMSO) used in your primary HCS assay. Include control wells with solvent alone.

- Image Acquisition: Using your HCS instrument, image the plates with the same excitation and emission settings used for each channel in your primary assay.

- Data Analysis: Quantify the fluorescence intensity in each well. Compounds that show fluorescence intensity significantly higher than the solvent control wells are flagged as potentially autofluorescent. These compounds should be treated with caution or eliminated from consideration for the specific channel in which they fluoresce [3].

Key Research Reagent Solutions

| Reagent / Kit Name | Primary Function | Brief Description |
|---|---|---|
| TrueVIEW Autofluorescence Quenching Kit [14] | Chemical Quenching | A ready-to-use aqueous solution that quenches autofluorescence from collagen, elastin, and RBCs via electrostatic binding. |
| Sudan Black B [13] | Chemical Quenching | A lipophilic dye that masks autofluorescence, particularly from lipofuscin. It is prepared in an ethanol solution. |
| CELLESTIAL Probes [17] | Fluorescent Staining | A comprehensive portfolio of fluorescent probes and reporter assays for monitoring autophagy, cell signaling, and cytotoxicity in HCS. |
| SCREEN-WELL Libraries [17] | Compound Screening | Compound libraries designed for biological screening, useful for counter-screens and orthogonal assays. |

Diagrams of Concepts and Workflows

HCS Interference Identification Workflow

Autofluorescence Quenching Decision Guide

How Matrix Effects and Isobaric Compounds Compromise LC-MS/MS Results

Liquid chromatography-tandem mass spectrometry (LC-MS/MS) is renowned for its high sensitivity and selectivity in bioanalysis. Despite its power, the technique is susceptible to interferences that can compromise data quality and lead to inaccurate results. Two of the most significant challenges are matrix effects and interference from isobaric compounds. Matrix effects cause ion suppression or enhancement, altering the ionization efficiency of your target analyte due to co-eluting matrix components [18] [19]. Isobaric interference occurs when compounds with the same nominal mass as your analyte, or those that generate identical precursor/product ion combinations, are not separated chromatographically and thus contribute to the measured signal [18] [20]. Understanding, identifying, and mitigating these issues is fundamental to developing robust and reliable LC-MS/MS methods.

FAQs: Core Concepts and Troubleshooting

Q1: What exactly is a "matrix effect" in LC-MS/MS?

A matrix effect is an alteration in the ionization efficiency of the target analyte caused by co-eluting compounds from the sample matrix. This results in either ion suppression (a loss of signal) or, less commonly, ion enhancement (an increase in signal) [19] [21]. These effects arise because co-eluting substances compete for charge or droplet space during the ionization process (e.g., in electrospray ionization), physically blocking the analyte from being efficiently ionized [18] [22]. The consequences include reduced assay sensitivity, inaccurate quantification, and poor precision.
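A widely used way to put a number on a matrix effect, which the text above does not itself describe, is the post-extraction spike comparison: the analyte response in blank matrix extract spiked after extraction is divided by the response of the same amount in neat solution, giving a matrix factor (MF). MF below 1 indicates suppression and above 1 indicates enhancement. The sketch below uses hypothetical peak areas and illustrative function names.

```python
def matrix_factor(area_in_matrix: float, area_in_neat: float) -> float:
    """Matrix factor: response in post-extraction spiked matrix / response in neat solution."""
    if area_in_neat <= 0:
        raise ValueError("neat-solution peak area must be positive")
    return area_in_matrix / area_in_neat


def matrix_effect_percent(mf: float) -> float:
    """Signed matrix effect in percent (negative = suppression, positive = enhancement)."""
    return (mf - 1.0) * 100.0


# Hypothetical peak areas at the same nominal concentration
analyte_mf = matrix_factor(area_in_matrix=62_000.0, area_in_neat=100_000.0)
internal_std_mf = matrix_factor(area_in_matrix=50_400.0, area_in_neat=80_000.0)

# An IS-normalized MF close to 1 suggests the internal standard tracks the analyte
is_normalized_mf = analyte_mf / internal_std_mf

print(f"analyte MF = {analyte_mf:.2f} ({matrix_effect_percent(analyte_mf):+.0f}%)")
print(f"IS-normalized MF = {is_normalized_mf:.3f}")
```

In this made-up example the analyte loses roughly 38% of its signal, but the internal standard is suppressed to nearly the same degree, so the normalized value stays close to 1.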

Q2: How do isobaric compounds interfere with my analysis?

Isobaric compounds possess the same nominal mass-to-charge ratio (m/z) as your target analyte. In LC-MS/MS, this becomes problematic when the chromatography fails to separate them. Even with the high selectivity of Multiple Reaction Monitoring (MRM), if an isobaric compound fragments to produce a product ion identical to one of your monitored transitions, it will contribute to the signal [18] [20]. This specific type of isobaric interference is a key challenge. Additionally, cross-signal contribution can occur from stable isotope-labeled internal standards (SIL-IS) if they are not pure, as the unlabeled form or other impurities can produce a signal in the channel of the native analyte [20].

Q3: My internal standard isn't correcting for matrix effects. Why?

A stable isotope-labeled internal standard (SIL-IS) is the gold standard for compensating for matrix effects, but it is not infallible. Problems arise if:

- The SIL-IS does not co-elute perfectly with the analyte. If the analyte elutes in a region of ion suppression but the SIL-IS elutes just outside of it, they will experience different degrees of suppression, leading to inaccurate quantification [18].

- The SIL-IS itself is impure. Contamination of the SIL-IS with the unlabeled analyte can cause a direct positive bias in the measured concentration of the native compound [20].

- The suppression is so severe that it drastically reduces the signal-to-noise ratio for both analyte and SIL-IS, compromising assay performance, especially at the lower limit of quantitation (LLOQ) [18].

Q4: I see unexpected peaks in my MRM channels. What should I do?

Unexpected peaks indicate a potential interference. Follow a systematic investigation to narrow down the cause [20]:

- Check for carryover by injecting a blank solvent sample after a high-concentration standard.

- Investigate cross-signal contribution by injecting individual standards and internal standards to see if they produce a signal in other MRM channels.

- Assess standard purity, as impurities in your stock or working solutions can be a direct source of interference [20].

- Evaluate chromatographic separation. Modify the LC method (column, mobile phase, gradient) to see if the unexpected peak shifts or separates from the analyte peak.

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Matrix Effects

Matrix effects are a major cause of unreliable quantification. The workflow below outlines a systematic approach for diagnosing and mitigating them.

Experimental Protocol: Assessing Matrix Effect

You can quantitatively evaluate the matrix effect using the post-extraction spiking method [19] [21]:

Prepare Three Sample Sets:

- Set A (Neat Solution): Spike the analyte into the mobile phase or a solvent.

- Set B (Post-Extraction Spike): Spike the analyte into a blank matrix sample after it has been extracted.

- Set C (Pre-Extraction Spike): Spike the analyte into a blank matrix sample before extraction.

Analysis and Calculation:

- Analyze all sets and record the peak areas (A, B, and C).

- Matrix Effect (ME): %ME = (B / A) × 100%. A value <100% indicates ion suppression; >100% indicates enhancement.

- Recovery (RE): %RE = (C / B) × 100%.

- Process Efficiency (PE): %PE = (C / A) × 100%.
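The three calculations above can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the function name `matrix_effect_metrics` and the peak areas are hypothetical, and the inputs are assumed to be mean peak areas for Sets A, B, and C at one concentration level.

```python
def matrix_effect_metrics(area_neat, area_post_spike, area_pre_spike):
    """Return %ME, %RE and %PE from mean peak areas of Sets A, B and C."""
    if area_neat <= 0 or area_post_spike <= 0:
        raise ValueError("Peak areas for Sets A and B must be positive")
    matrix_effect = area_post_spike / area_neat * 100.0        # %ME = (B / A) x 100
    recovery = area_pre_spike / area_post_spike * 100.0         # %RE = (C / B) x 100
    process_efficiency = area_pre_spike / area_neat * 100.0     # %PE = (C / A) x 100
    return {"ME": matrix_effect, "RE": recovery, "PE": process_efficiency}


# Hypothetical mean peak areas, for illustration only
result = matrix_effect_metrics(
    area_neat=100_000, area_post_spike=80_000, area_pre_spike=60_000
)
print(result)
```

With these illustrative areas, %ME is 80 (suppression), %RE is 75, and %PE is 60. Because %PE equals %ME × %RE / 100, a mismatch among the three values usually points to a transcription error.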

Mitigation Strategies:

- Sample Preparation: Use selective techniques like Solid-Phase Extraction (SPE) or Liquid-Liquid Extraction (LLE) to remove phospholipids and other interfering matrix components. Protein precipitation (PPT) is simple but often leaves behind significant interferents [21].

- Chromatographic Optimization: Adjust the LC method so that your analyte elutes in a "quiet" region where few matrix components elute, thereby avoiding suppression zones [18].

- Internal Standard: Always use a stable isotope-labeled internal standard (SIL-IS) that co-elutes perfectly with the analyte to best compensate for any remaining matrix effects [18] [22].

Guide 2: Identifying and Managing Isobaric Interference

Isobaric compounds and cross-signal contributions can be subtle, yet they seriously undermine method selectivity.

Experimental Protocol: Testing for Cross-Signal Contribution

This test is crucial during method development to uncover hidden interferences, especially from your internal standard [20].

- Prepare Individual Solutions: Prepare pure solutions of your analyte (A) and your stable isotope-labeled internal standard (SIL-IS, B).

- Analyze in All Channels: Inject solution A and monitor the MRM channels for both A and B. Then, inject solution B and monitor the MRM channels for both B and A.

- Interpret Results: The presence of a peak in the channel for B after injecting pure A (or vice versa) indicates cross-signal contribution. This could be due to:

- Impurity in the standard (e.g., unlabeled analyte in the SIL-IS stock) [20].

- In-source fragmentation of the injected compound generating a product ion identical to the monitored transition of the other compound.

- Insufficient chromatographic resolution between two different compounds sharing the same MRM transition.
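To judge whether a cross-signal peak matters, it helps to express it relative to a reference response. The sketch below does that. The function name, the peak areas, and the two limits are assumptions for illustration: the 20% (relative to the analyte LLOQ response) and 5% (relative to the internal standard response) limits are commonly used acceptance criteria in bioanalytical validation, not values taken from this article.

```python
def cross_signal_percent(interfering_area, reference_area):
    """Express a cross-channel peak as a percentage of a reference response."""
    if reference_area <= 0:
        raise ValueError("reference_area must be positive")
    return interfering_area / reference_area * 100.0


# Assumed limits (not from this article); adjust to your validation plan
LIMIT_ANALYTE_CHANNEL_PCT = 20.0  # relative to analyte response at the LLOQ
LIMIT_IS_CHANNEL_PCT = 5.0        # relative to the internal standard response

# Hypothetical peak areas
# SIL-IS-only injection, peak seen in the analyte channel vs. LLOQ response
is_into_analyte = cross_signal_percent(interfering_area=150, reference_area=1_000)
# Analyte-only injection, peak seen in the SIL-IS channel vs. SIL-IS response
analyte_into_is = cross_signal_percent(interfering_area=900, reference_area=50_000)

print(f"SIL-IS into analyte channel: {is_into_analyte:.1f}% "
      f"(pass={is_into_analyte <= LIMIT_ANALYTE_CHANNEL_PCT})")
print(f"Analyte into SIL-IS channel: {analyte_into_is:.1f}% "
      f"(pass={analyte_into_is <= LIMIT_IS_CHANNEL_PCT})")
```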

Mitigation Strategies:

- Chromatographic Separation: This is the most effective approach. Develop a method that achieves baseline separation between the analyte and all potential isobaric interferents [18].

- Verify Standard Purity: Source high-purity standards and SIL-IS. Assess certificates of analysis and perform your own purity checks if necessary [20].

- Monitor Quality Metrics: Use data quality metrics like ion ratios (the ratio between multiple product ions for a single analyte) and retention time consistency. A significant deviation in the ion ratio of a real sample compared to a pure standard indicates interference [18] [23].
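The ion-ratio check described above can be sketched as follows. The function names are hypothetical, and the 20% relative tolerance is an assumed default that should be replaced with the limit set in your own method.

```python
def ion_ratio_deviation(quant_area, qual_area, reference_ratio):
    """Relative deviation (%) of a sample's qualifier/quantifier ratio
    from the ratio measured in a pure standard."""
    if quant_area <= 0 or reference_ratio <= 0:
        raise ValueError("quant_area and reference_ratio must be positive")
    sample_ratio = qual_area / quant_area
    return (sample_ratio - reference_ratio) / reference_ratio * 100.0


def is_suspect(quant_area, qual_area, reference_ratio, tolerance_pct=20.0):
    """Flag a sample whose ion ratio deviates beyond the assumed tolerance."""
    deviation = ion_ratio_deviation(quant_area, qual_area, reference_ratio)
    return abs(deviation) > tolerance_pct


# Hypothetical values: the pure standard gives qualifier/quantifier = 0.50
clean_sample = is_suspect(quant_area=10_000, qual_area=5_200, reference_ratio=0.50)
odd_sample = is_suspect(quant_area=10_000, qual_area=7_000, reference_ratio=0.50)
print(clean_sample, odd_sample)
```

A flagged sample is not proof of interference, only a prompt to inspect the chromatogram and retention time.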

Essential Experimental Protocols

Protocol 1: Post-Column Infusion for Visualizing Matrix Effects

This qualitative method helps you "see" ion suppression/enhancement zones throughout your chromatographic run [18] [21].

- Setup: Connect a syringe pump containing a solution of your analyte to a T-union between the LC column outlet and the MS ion source.

- Infusion: Start a constant infusion of the analyte at a low flow rate (e.g., 5-10 µL/min) to produce a steady background signal.

- Injection: Inject a blank, extracted matrix sample into the LC system and start the chromatographic method as usual.

- Visualization: Monitor the MRM channel for the infused analyte. A steady signal indicates no matrix effects. Ion suppression appears as a dip or valley in the signal; ion enhancement appears as a peak or hill [18]. This map shows you which retention times to avoid during method optimization.
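Once the infusion trace is exported as time and intensity pairs, suppression zones can be located programmatically. The sketch below is one simple way to do it and rests on two assumptions: the median of the trace is a fair estimate of the unsuppressed baseline (reasonable only if suppression covers less than half the run), and a drop below 80% of that baseline counts as suppression. The function name and the trace values are hypothetical.

```python
from statistics import median


def find_suppression_zones(times, signal, drop_fraction=0.8):
    """Return (start, end) retention-time windows where the infused-analyte
    signal falls below drop_fraction x the median signal."""
    threshold = drop_fraction * median(signal)
    zones = []
    start = None
    previous_time = None
    for t, s in zip(times, signal):
        if s < threshold:
            if start is None:
                start = t  # entering a suppressed region
        elif start is not None:
            zones.append((start, previous_time))  # leaving the region
            start = None
        previous_time = t
    if start is not None:  # trace ended while still suppressed
        zones.append((start, previous_time))
    return zones


# Hypothetical infusion trace (retention time in minutes, arbitrary intensity)
times = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5]
signal = [1000, 990, 400, 350, 980, 1010, 995, 1005]
zones = find_suppression_zones(times, signal)
print(zones)
```

The same loop with the comparison reversed (signal above a multiple of the baseline) would locate enhancement zones.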

Protocol 2: Comprehensive Assessment of ME, Recovery, and Process Efficiency

For a rigorous validation, integrate the assessment of matrix effect, recovery, and process efficiency into a single experiment as summarized in the table below [19].

Table: Integrated Experiment for Assessing Key Method Performance Parameters

| Parameter | Sample Set | Description | Calculation |

|---|---|---|---|

| Matrix Effect (ME) | Set B (Post-extraction spike) vs. Set A (Neat solution) | Measures ion suppression/enhancement | %ME = (Peak Area B / Peak Area A) × 100% |

| Recovery (RE) | Set C (Pre-extraction spike) vs. Set B (Post-extraction spike) | Measures extraction efficiency | %RE = (Peak Area C / Peak Area B) × 100% |

| Process Efficiency (PE) | Set C (Pre-extraction spike) vs. Set A (Neat solution) | Overall efficiency of the entire process | %PE = (Peak Area C / Peak Area A) × 100% |

This integrated approach, often performed across multiple lots of matrix (e.g., six lots from different sources), provides a complete picture of how your sample matrix and preparation procedure impact quantification [19].
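When several matrix lots are tested, the spread of %ME across lots is as informative as its mean. The following sketch computes both; the function name and peak areas are hypothetical, and no acceptance limit is implied.

```python
from statistics import mean, stdev


def matrix_effect_by_lot(neat_area, post_spike_areas):
    """Return per-lot %ME, the mean %ME, and the %CV across lots."""
    if neat_area <= 0:
        raise ValueError("neat_area must be positive")
    me_values = [area / neat_area * 100.0 for area in post_spike_areas]
    average = mean(me_values)
    cv_percent = stdev(me_values) / average * 100.0  # sample standard deviation
    return me_values, average, cv_percent


# Hypothetical Set B peak areas from six matrix lots, one neat (Set A) area
lot_areas = [82_000, 78_000, 80_000, 85_000, 75_000, 80_000]
me_values, average, cv_percent = matrix_effect_by_lot(100_000, lot_areas)
print(f"mean %ME = {average:.1f}, CV = {cv_percent:.1f}%")
```

A low mean with a small CV suggests consistent suppression that an internal standard can compensate for, whereas a large CV indicates lot-dependent effects that deserve closer investigation.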

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents and Materials for Interference Mitigation

| Tool / Reagent | Function / Purpose | Key Consideration |

|---|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | Compensates for variability in ionization and sample prep. Gold standard for correcting matrix effects. | Use 13C, 15N labels over deuterium when possible, as they are less likely to alter chromatographic retention [18]. Always check purity. |

| Selective SPE Sorbents | Removes specific matrix interferents like phospholipids. | Mixed-mode cation-exchange polymers are highly effective for cleaning up plasma samples [21]. |

| U/HPLC Columns | Provides chromatographic resolution to separate analytes from isobaric interferents. | Core-shell (e.g., Kinetex) columns offer high efficiency for fast separations [24] [25]. |

| High-Purity Standards | Ensures the accuracy of calibration and avoids introducing interference via impurities. | Request and review certificates of analysis. Test for cross-signal contribution [20]. |

| Post-Column Infusion Kit | Allows for qualitative mapping of ion suppression zones in the chromatogram. | A simple syringe pump and PEEK T-union are the core components [18]. |

Advanced Topics and Future Directions

Innovative approaches are continuously being developed to tackle the persistent challenge of interference. In non-targeted metabolomics, workflows like the IROA TruQuant use a library of stable isotope-labeled internal standards (IROA-IS) with a specific 95% 13C labeling pattern. This allows for the measurement and correction of ion suppression for a wide range of metabolites simultaneously, a significant advancement over traditional targeted methods [22]. Furthermore, the field is moving towards greater automation and intelligence. Artificial Intelligence (AI) is being explored to automatically flag suspicious data, such as abnormal ion ratios, and to manage routine quality control checks, potentially reducing human error and increasing throughput [26].

The Clinical and Research Consequences of Unmitigated Interference

Troubleshooting Guides

Guide 1: Troubleshooting Protocol Non-Compliance in Clinical Trials

Issue: Failure to conduct the clinical investigation according to the approved investigational plan.

Root Causes:

- Staff insufficiently familiar with complex protocol requirements.

- Eagerness to provide patients with investigational drug access, leading to enrollment of non-qualifying subjects.

- Failure to prioritize protocol-required assessments perceived as non-critical to immediate patient care [27].

Diagnostic Steps:

- Conduct a pre-trial protocol training session and assessment for all site staff.

- Implement a pre-enrollment checklist that verifies each inclusion and exclusion criterion for every candidate.

- Perform periodic internal audits of case report forms against source documents for key protocol-specified procedures [27].

Corrective and Preventive Actions (CAPA):

- Immediate Correction: Document any protocol deviations immediately. Report critical deviations to the IRB and sponsor as required.

- Root Cause Analysis: Investigate if deviations are due to a complex protocol, lack of training, or workload issues.

- Preventive Action: Advocate for simplified protocol designs with sponsors. Implement a robust training program and ensure adequate staffing. Use risk-based monitoring strategies to focus on critical data and processes [27].

Guide 2: Troubleshooting Signal Interference in RNAi Therapeutic Development

Issue: Inefficient gene silencing due to poor delivery and off-target effects of RNAi therapeutics.

Root Causes:

- Instability of "naked" siRNA/shRNA in the bloodstream.

- Inefficient uptake by target cells and tissues beyond the liver.

- Activation of the innate immune system [11] [28].

Diagnostic Steps:

- Biodistribution Analysis: Use in vivo imaging or single-cell assays to track the distribution of the RNAi therapeutic.

- qPCR/Western Blot: Quantify target mRNA and protein levels in the target tissue to confirm silencing efficacy.

- Cytokine Profiling: Assess levels of interferons and other cytokines to detect immune activation [11].

Corrective and Preventive Actions (CAPA):

- Optimize Delivery System: Formulate RNAi molecules with advanced lipid nanoparticles (LNPs) or GalNAc conjugates for improved stability and hepatocyte-specific targeting [11].

- Chemical Modification: Incorporate chemical modifications (e.g., 2'-O-methyl sugar modifications) into the oligonucleotide to enhance nuclease resistance and reduce immunogenicity [11].

- Explore Novel Platforms: For extra-hepatic targeting, investigate emerging delivery platforms such as polymeric nanoparticles or cell-penetrating peptides [11] [28].

Guide 3: Troubleshooting Patient Interference in Clinical Trial Enrollment and Retention

Issue: Failure to recruit and retain a diverse and representative patient population, leading to delayed trials and limited data generalizability.

Root Causes:

- Lack of patient awareness about clinical trial opportunities.

- Significant patient burden (travel, time, cost).

- Historical mistrust of the research enterprise and health misinformation [29].

Diagnostic Steps:

- Feasibility Assessment: Use data analytics to map disease prevalence and demographic data against proposed trial site locations.

- Patient Survey/Advisory Board: Elicit direct feedback from patient communities on protocol design and perceived barriers.

- Track Screening & Withdrawal Data: Monitor screening failure reasons and dropout rates in real-time to identify patterns [30] [29].

Corrective and Preventive Actions (CAPA):

- Community Engagement: Partner with community health workers, faith-based groups, and historically Black colleges and universities (HBCUs) to build trust and awareness [29].

- Reduce Patient Burden: Implement decentralized clinical trial (DCT) elements, such as home health visits, local lab draws, and eConsent. Simplify protocols where possible [31] [29].

- Flexible Payment Structures: Implement timely and flexible payment processes that accommodate complex, multi-country trials to improve participant retention [29].

Frequently Asked Questions (FAQs)

Q1: What are the most common regulatory compliance issues for clinical research sites? The most frequent issue cited in FDA Warning Letters is protocol non-compliance (21 C.F.R. § 312.60). This includes enrolling subjects who do not meet eligibility criteria and failing to perform protocol-required assessments. Another common issue, especially for sponsor-investigators, is failing to submit an Investigational New Drug (IND) application before commencing a study that meets the definition of a clinical investigation [27].

Q2: How can we mitigate interference from off-target effects in RNAi therapy development? The primary strategy is the use of chemically modified oligonucleotides. Incorporating modifications like 2'-O-methyl or 2'-fluoro nucleotides into the siRNA structure enhances binding specificity and reduces the potential for innate immune activation. Furthermore, rigorous bioinformatic analysis during the design phase is essential to minimize sequence homology with non-target mRNAs [11].

Q3: Our clinical trials are suffering from high screen failure rates. Can AI help? Yes, AI failure-prediction models can forecast screen failure months before the first patient is enrolled. These models analyze features such as site-specific randomization velocity, historical screen-to-randomization ratios, and the alignment of local patient population demographics with inclusion/exclusion criteria. This allows sponsors to select better-performing sites or adapt recruitment strategies proactively [30].

Q4: What are the key delivery systems for overcoming the biological interference barrier in RNAi therapeutics? The two dominant delivery systems are:

- Lipid Nanoparticles (LNPs): The leading platform, particularly for systemic administration, offering protection and efficient cellular uptake. They hold about 60% of the market share in RNAi delivery [11].

- GalNAc Conjugates: A targeted delivery system for hepatocytes. These conjugates are highly effective for liver-specific diseases and allow for subcutaneous administration with a wide therapeutic index [11] [28].

Q5: What is the clinical consequence of unmitigated interference from a non-diverse trial population? The primary consequence is limited generalizability of the trial results. If a trial population does not reflect the real-world demographic that will use the drug, critical differences in safety and efficacy across sub-populations may be missed. This can lead to unexpected adverse reactions or suboptimal dosing in certain patient groups once the drug is on the market. Regulatory agencies now require Diversity Action Plans to address this [27] [29].

Experimental Protocols

Protocol 1: In Vivo Efficacy Testing of an RNAi Therapeutic in a Murine Model

Objective: To evaluate the efficacy and specificity of a novel siRNA formulation in silencing a target gene in the mouse liver.

Materials:

- Test Article: siRNA formulated in LNP or as a GalNAc-conjugate.

- Control: Scrambled siRNA in the same formulation (negative control).

- Animals: C57BL/6 mice (n=8 per group).

- Reagents: qPCR kit, tissue protein extraction reagent, Western blot supplies, primers/probes for target gene and housekeeping gene.

Methodology:

- Dosing: Administer a single intravenous (LNP) or subcutaneous (GalNAc) dose of the test or control article to mice.

- Monitoring: Monitor animals for signs of toxicity (weight loss, behavior) for the duration of the study.

- Tissue Collection: At predetermined endpoints (e.g., 7, 14, 28 days post-dose), euthanize animals and harvest liver tissue.

- Analysis:

- mRNA Analysis: Homogenize liver tissue. Extract total RNA and perform qPCR to quantify the relative expression level of the target mRNA compared to the control group.

- Protein Analysis: Extract protein from liver tissue. Perform Western blot analysis to confirm reduction of the target protein.

- Data Analysis: Use statistical tests (e.g., unpaired t-test) to compare the target gene expression levels between the test and control groups. A significant reduction (e.g., >50%) indicates successful silencing [11].
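The relative expression in the mRNA analysis step is often calculated with the comparative Ct (2^-ΔΔCt) method. The protocol above does not name a calculation method, so treat this as an assumed choice; it also assumes roughly 100% amplification efficiency for both the target and the housekeeping assay. The function name and Ct values are hypothetical.

```python
from statistics import mean


def percent_knockdown(ct_target_test, ct_ref_test, ct_target_ctrl, ct_ref_ctrl):
    """Percent knockdown from group Ct values using the 2^-ddCt method."""
    d_ct_test = mean(ct_target_test) - mean(ct_ref_test)   # normalize test group
    d_ct_ctrl = mean(ct_target_ctrl) - mean(ct_ref_ctrl)   # normalize control group
    dd_ct = d_ct_test - d_ct_ctrl
    relative_expression = 2.0 ** (-dd_ct)                   # test relative to control
    return (1.0 - relative_expression) * 100.0


# Hypothetical Ct values (target gene and housekeeping gene, three animals each)
knockdown = percent_knockdown(
    ct_target_test=[26.0, 26.2, 25.8],
    ct_ref_test=[18.0, 18.1, 17.9],
    ct_target_ctrl=[24.0, 24.1, 23.9],
    ct_ref_ctrl=[18.0, 18.0, 18.0],
)
print(f"Knockdown: {knockdown:.1f}%")
```

Here the test group's normalized Ct is two cycles higher than the control's, which corresponds to 25% residual expression, or 75% knockdown. For the statistical comparison, per-animal ΔCt values are normally carried into the test rather than the group means used in this sketch.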

Protocol 2: AI-Driven Predictive Analysis for Clinical Trial Site Selection

Objective: To use historical and operational data to predict and mitigate site-level interference in patient enrollment.

Materials:

- Data Sources: Historical site performance data (screening, randomization, and completion rates), country-level start-up timeline (SLA) data, public disease prevalence databases, and census demographic data.

- Software: AI/ML platform capable of gradient boosting or similar ensemble methods [30].

Methodology:

- Feature Engineering: Create predictive features from the data sources, including:

- predicted_randomization_velocity

- protocol_complexity_score

- demographic_fit_score

- competing_trial_density

- Model Training: Train a predictive model on completed study data to identify sites likely to meet or exceed enrollment targets.

- Prediction & Validation: Apply the model to a new pool of potential sites for a forthcoming trial. Rank sites based on their predicted performance score.

- Decision Point: Select the top-performing sites for feasibility questionnaires and initiation. The model's output can also inform whether backup sites need to be pre-identified [30].

Data Presentation

Table 1: Clinical Outcomes from BaxHTN Trial on Uncontrolled Hypertension

This table summarizes the efficacy and safety data of baxdrostat from the BaxHTN trial, demonstrating the impact of a targeted therapeutic in a resistant patient population [32].

| Trial Phase / Measure | Placebo Group | Baxdrostat 1 mg | Baxdrostat 2 mg |

|---|---|---|---|

| Part 1 (12-week, randomized) | | | |

| Placebo-adjusted Reduction in Seated SBP (Primary Endpoint) | Baseline | -8.7 mmHg | -9.8 mmHg |

| Proportion with Controlled SBP | 18.7% | 39.4% | 40.0% |

| Part 3 (8-week, withdrawal) | | | |

| Change in SBP (After withdrawal) | +1.4 mmHg | Not Applicable | -3.7 mmHg |

| Safety (First 12 weeks) | | | |

| Serious Adverse Events | 2.7% | 1.9% | 3.4% |

| Discontinuation due to Hyperkalemia | 0% | 0.8% | 1.5% |

Table 2: RNA Interference Drug Delivery Market Forecast and Segmentation (2025-2034)

This table provides a quantitative overview of the growing RNAi therapeutics market, highlighting key growth segments and technologies [11].

| Category | Segment | Market Share (2024) or Key Metric | Projected CAGR (2025-2034) |

|---|---|---|---|

| Overall Market | Global Market Size (2025) | USD 118.18 Billion [11] | 18.11% [11] |

| By Technology | siRNA | 65% [11] | Dominant |

| | shRNA | Not Specified | 23.6% [11] |

| By Delivery System | Lipid Nanoparticles (LNPs) | 60% [11] | Dominant |

| | Polymeric Nanoparticles | Not Specified | 20.70% [11] |

| By Target Disease | Cancer | 40% [11] | Not Specified |

| | Genetic Disorders | Not Specified | 23.40% [11] |

| By Region | North America | 45% [11] | Not Specified |

| | Asia-Pacific | Not Specified | ~30% [11] |

Signaling Pathways and Workflows

RNAi Therapeutic Experimental Workflow

Clinical Trial Interference Mitigation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Field: RNA Interference (RNAi) Therapeutic Development

| Item / Reagent | Function | Key Consideration |

|---|---|---|

| Chemically Modified siRNA | The active pharmaceutical ingredient; designed to bind and cleave complementary target mRNA. | Modifications (2'-O-Me, 2'-F) are crucial for stability, potency, and reducing immunogenicity [11]. |

| Lipid Nanoparticles (LNPs) | A delivery vehicle that encapsulates and protects siRNA, enabling efficient cellular uptake and endosomal escape. | The composition of ionizable lipids, PEG-lipids, and helper lipids critically determines efficacy and toxicity profiles [11]. |

| GalNAc Conjugates | A targeted delivery ligand that binds specifically to the asialoglycoprotein receptor (ASGPR) on hepatocytes. | Enables subcutaneous administration and highly efficient liver-specific delivery with a wide therapeutic index [11] [28]. |

| In Vivo Transfection Agent | A reagent used in preclinical research to facilitate the delivery of RNAi molecules into cells in animal models. | Used for proof-of-concept studies before investing in advanced formulations like LNPs. |

| qPCR Assays | To quantitatively measure the knockdown of target mRNA levels in vitro and in vivo. | Requires validated primers and probes specific to the target sequence; essential for demonstrating efficacy [11]. |

Methodological Approaches: Experimental Design and Interference Testing Protocols

Experimental Design for Robust Selectivity Assessment

Welcome to the Technical Support Center

This resource provides troubleshooting guides and frequently asked questions (FAQs) to help researchers address common challenges in selectivity assessment, particularly within the context of drug discovery and high-content screening (HCS). The guidance is framed within the broader thesis of identifying and mitigating interference in selectivity testing.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common sources of interference in selectivity assays? Interference can be broadly divided into two categories:

- Technology-Related Interference: This includes compound autofluorescence (where compounds naturally fluoresce), fluorescence quenching (where compounds diminish a fluorescent signal), and the presence of colored or pigmented compounds that alter light transmission [3].

- Biological Interference: This includes compound-mediated cytotoxicity (cell death), dramatic changes in cell morphology, and disruption of cell adhesion to the assay plate surface. These effects can obscure the true activity of a compound at the intended target [3].

FAQ 2: How can I determine if a loss of signal in my assay is due to true biological activity or simple cytotoxicity? A significant, compound-mediated reduction in cell count is a key indicator of cytotoxicity. This can be identified through statistical analysis of nuclear counts and nuclear stain fluorescence intensity, where cytotoxic compounds will appear as outliers. Furthermore, manually reviewing the acquired images for signs of dead or rounded-up cells is a crucial verification step [3].

FAQ 3: My positive controls are working, but I am getting high false-positive rates. What should I investigate? High false-positive rates often point to compound-based interference. You should:

- Statistically analyze fluorescence intensity data to identify outlier compounds that may be autofluorescent [3].

- Review the chemical structures of the false-positive compounds for known undesirable functionalities like electrophiles or chelators [3].

- Implement an orthogonal assay that uses a fundamentally different detection technology (e.g., luminescence instead of fluorescence) to confirm the activity [3].

FAQ 4: What is the role of orthogonal assays in confirming selectivity? Orthogonal assays are essential for confirming that a compound's activity is due to modulation of the intended biological target and not an artifact of the primary assay's detection system. By using a different technology (e.g., bioluminescence, TR-FRET, or enzyme activity assays), you can validate hits and eliminate those that act through interfering mechanisms [3].

Troubleshooting Guides

Guide 1: Addressing Compound-Mediated Interference

Problem: Inconclusive results due to compound autofluorescence, quenching, or cytotoxicity.

Investigation and Resolution:

- Step 1: Statistical Flagging: Analyze the raw fluorescence intensity and nuclear count data from your primary screen. Compounds that are statistical outliers (e.g., very high or very low values) should be flagged for further investigation [3].

- Step 2: Image Review: Manually inspect the images corresponding to the flagged compounds. Look for signs of cytotoxicity (cell loss, rounded-up cells), abnormal morphology, or unusually bright/dark wells that are not consistent with the cellular staining [3].

- Step 3: Orthogonal Confirmation: Subject the flagged compounds to a counter-screen or orthogonal assay. The table below summarizes common interference mechanisms and proposed orthogonal assay strategies.

Table 1: Troubleshooting Compound Interference

| Interference Mechanism | Key Indicators | Recommended Orthogonal Assay or Counter-Screen |

|---|---|---|

| Autofluorescence | High fluorescence signal across multiple channels; signal persists in cell-free wells. | Luminescence-based assay; Fluorescence counter-screen in the absence of the biological target [3]. |

| Fluorescence Quenching | Unusually low signal in all fluorescent channels. | Luminescence-based assay; Radioligand binding assay [3]. |

| Cytotoxicity | Significant reduction in cell count; abnormal nuclear morphology. | Viability assay (e.g., ATP-based); Cell membrane integrity assay [3]. |

| Colloidal Aggregation | Non-specific inhibition; loss of activity with the addition of detergent. | Dynamic light scattering (DLS); Assay with non-ionic detergent (e.g., Triton X-100) [3]. |
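The statistical flagging in Step 1 can be sketched with a robust z-score, which uses the median and the median absolute deviation (MAD) so that the outliers themselves do not distort the reference. This is one common choice rather than the method prescribed by the source; the 0.6745 scaling constant and the 3.5 cutoff follow the widely used modified z-score convention, and the well IDs and counts are hypothetical.

```python
from statistics import median


def robust_z_scores(values):
    """Modified z-scores based on the median and the MAD."""
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; values are too uniform to score")
    return [0.6745 * (v - center) / mad for v in values]


def flag_outliers(well_values, cutoff=3.5):
    """Return {well_id: score} for wells whose |score| exceeds the cutoff."""
    well_ids = list(well_values)
    scores = robust_z_scores([well_values[w] for w in well_ids])
    return {w: round(z, 2) for w, z in zip(well_ids, scores) if abs(z) > cutoff}


# Hypothetical nuclear counts per well
nuclear_counts = {
    "A1": 980, "A2": 1010, "A3": 1000, "A4": 995,
    "A5": 1005, "A6": 210, "A7": 990,
}
flagged = flag_outliers(nuclear_counts)
print(flagged)
```

The same function can be run on per-well fluorescence intensity: strongly positive scores suggest autofluorescence, and strongly negative nuclear-count scores suggest cytotoxicity. Flagged wells still need the image review in Step 2.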

Guide 2: Mitigating Artifacts from Cells and Media

Problem: High fluorescent background or contamination artifacts obscuring the assay signal.

Investigation and Resolution:

- Source: Media Components. Some tissue culture media components, such as riboflavins, are autofluorescent. This can elevate the background, especially in live-cell imaging applications within the UV to green spectral ranges [3].

- Solution: Use phenol red-free media or media specifically formulated for fluorescence imaging. Test and compare different media during assay development.

- Source: Environmental Contaminants. Lint, dust, plastic fragments, and microorganisms can cause image aberrations, focus blur, and image saturation [3].

- Solution: Maintain a clean cell culture environment. Use plates with black walls and clear bottoms to minimize background and cross-talk. Centrifuge compound plates to precipitate insoluble materials before use.

Experimental Protocols for Robust Selectivity Assessment

Protocol 1: Counter-Screen for Autofluorescence and Quenching

Objective: To identify compounds that interfere with fluorescence detection independently of biological activity.

Methodology:

- Plate Preparation: Use the same assay microplates as your primary HCS assay.

- Reagent Addition: Omit the cellular component. Instead, add a solution of a reference fluorophore (e.g., one used in your primary assay) in assay buffer to the wells.

- Compound Addition: Add the test compounds at the same concentration used in the primary screen.

- Data Acquisition: Read the plates using the same imaging parameters and channels as your primary HCS assay.

- Data Analysis: Compounds that significantly increase (autofluorescence) or decrease (quenching) the fluorescence signal of the reference fluorophore compared to DMSO controls are identified as interferers.
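The data analysis step can be sketched as a comparison against the spread of the DMSO control wells. The threshold of three standard deviations is an assumed cutoff, not one specified by the protocol, and the function name and signal values are hypothetical.

```python
from statistics import mean, stdev


def classify_interference(compound_signal, dmso_signals, k=3.0):
    """Classify a cell-free well relative to the DMSO control distribution."""
    control_mean = mean(dmso_signals)
    control_sd = stdev(dmso_signals)
    if compound_signal > control_mean + k * control_sd:
        return "autofluorescent"
    if compound_signal < control_mean - k * control_sd:
        return "quencher"
    return "no interference detected"


# Hypothetical reference-fluorophore signals (arbitrary units)
dmso_wells = [1000, 1020, 980, 1010, 990]
calls = {
    "cmpd_1": classify_interference(1500, dmso_wells),
    "cmpd_2": classify_interference(600, dmso_wells),
    "cmpd_3": classify_interference(1010, dmso_wells),
}
print(calls)
```

Running the classification separately for each imaging channel shows whether the interference is limited to one spectral window.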

Protocol 2: Cytotoxicity Counter-Screen

Objective: To determine if a compound's activity in the primary assay is conflated with or caused by cell death.

Methodology:

- Cell Seeding: Seed the same cell line used in your primary assay in a separate plate.

- Compound Treatment: Treat cells with test compounds using the same concentration and time course as the primary screen.

- Viability Staining: At the endpoint, add a cell-permeable DNA stain (e.g., Hoechst 33342) to label all nuclei and a viability dye (e.g., propidium iodide) that only enters cells with compromised membranes.

- Image Acquisition and Analysis: Acquire images and use an image analysis algorithm to count the total number of nuclei (Hoechst-positive) and the number of dead cells (propidium iodide-positive). A compound causing a significant increase in the ratio of dead to total cells is cytotoxic [3].
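The dead-to-total ratio from the image analysis step can be compared with the vehicle control as in the sketch below. The 0.10 minimum increase in dead-cell fraction is an arbitrary illustrative cutoff, and the function names and counts are hypothetical.

```python
def dead_fraction(total_nuclei, dead_cells):
    """Fraction of nuclei (Hoechst-positive) that are also PI-positive."""
    if total_nuclei <= 0:
        raise ValueError("total_nuclei must be positive")
    return dead_cells / total_nuclei


def is_cytotoxic(total_nuclei, dead_cells, control_fraction, min_increase=0.10):
    """Flag a well whose dead fraction exceeds the control by min_increase."""
    return dead_fraction(total_nuclei, dead_cells) - control_fraction > min_increase


# Hypothetical counts from the image analysis
vehicle_fraction = dead_fraction(total_nuclei=1000, dead_cells=30)
toxic_call = is_cytotoxic(800, 320, vehicle_fraction)
benign_call = is_cytotoxic(950, 38, vehicle_fraction)
print(vehicle_fraction, toxic_call, benign_call)
```

A marked drop in total nuclei relative to the vehicle wells is worth checking as well, because cells that have detached before imaging never appear in the dead-cell count.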

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Selectivity Assessment

| Item | Function in Selectivity Assessment |

|---|---|

| Phenol Red-free Media | Reduces background autofluorescence from media components during live-cell imaging [3]. |

| Reference Fluorophores | Used in counter-screens to quantify compound-mediated autofluorescence or quenching (e.g., GFP, RFP) [3]. |

| Viability Dyes | Distinguish live from dead cells in cytotoxicity counter-screens (e.g., propidium iodide) [3]. |

| Non-ionic Detergent | Used to test for colloidal aggregation; reverses non-specific inhibition caused by compound aggregates [3]. |

| Orthogonal Assay Kits | Kits based on a different detection technology (e.g., luminescence, AlphaLISA, TR-FRET) to confirm HCS hits [3]. |

Workflow and Pathway Visualizations

The following diagrams illustrate key workflows and logical relationships for robust selectivity assessment.

Primary HCS Hit Triage Workflow

Taxonomy of Assay Interference Types

Fundamental Concepts and Definitions

What is the official definition of "interference" in a clinical chemistry context? Within clinical laboratory science, analytical interference is formally defined as "a cause of medically significant difference in the measurand test result due to another component or property of the sample" [33]. This effect causes the measured concentration of an analyte to differ from its true value [18]. It is distinct from preexamination effects (e.g., physiological drug effects, specimen evaporation, or in vivo chemical alterations), which occur before the analysis phase [33].

How does "selectivity" differ from "specificity"? The term selectivity describes the ability of an analytical method to determine a given analyte without interferences from other components in the sample matrix. It is a gradable parameter—a method can be highly selective, moderately selective, etc. In contrast, specificity is often considered an absolute term, implying that a method is 100% free from interferences. Given the practical difficulty in proving absolute freedom from interference, selectivity is the preferred and recommended term in analytical chemistry [34]. A selective method is less susceptible to interference.

What are the common sources of interferents I should consider? Interferents can originate from a wide variety of endogenous and exogenous sources [18] [33]:

- Endogenous Substances: Metabolites produced in pathological conditions, such as bilirubin (icterus), lipids (lipemia), or hemoglobin from hemolyzed red blood cells (hemolysis).

- Exogenous Substances:

  - Compounds from patient treatment: prescription drugs, over-the-counter medications, plasma expanders, anticoagulants.

  - Substances ingested: alcohol, nutritional supplements, food components, drugs of abuse.

  - Substances added during sample handling: anticoagulants, preservatives, stabilizers.

  - Contaminants: residues from hand cream, glove powder, tube stoppers, or leachables from plastic consumables.

Core Experimental Protocols

The Paired-Difference Experiment for Specific Interference Testing

The CLSI EP07-A2 guideline provides a core experimental design for interference testing: the paired-difference experiment [33].

Detailed Methodology:

- Sample Pool Preparation: Prepare a base pool of the sample matrix (e.g., serum, plasma) with a known concentration of the analyte of interest.

- Test and Control Sample Preparation: The base pool is split into two portions:

  - Test Sample: The potential interferent is added to this portion.

  - Control Sample: An equal volume of the interferent's solvent (e.g., water, saline) is added to this portion. This controls for any dilution effects caused by adding the interferent solution.

- Analysis: Both the test and control samples are analyzed in the same analytical run, with adequate replication (typically duplicate or triplicate) so that a real difference can be distinguished from measurement imprecision.

- Calculation of Interference: Interference is calculated as the difference between the mean measured value of the test sample and the mean measured value of the control sample.

> Interference = Mean Test - Mean Control
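The paired-difference calculation can be sketched in a few lines of Python. This is an illustrative sketch only, not part of the CLSI guideline: the function name, the replicate values, and the added standard-error estimate (included to show why replication matters) are all assumptions made for the example.

```python
from math import sqrt
from statistics import mean, stdev


def paired_difference(test_replicates, control_replicates):
    """Return (interference, standard error of the difference).

    interference = mean(test) - mean(control), as in the formula above.
    The standard error is an illustrative addition that treats the two
    replicate sets as independent samples.
    """
    interference = mean(test_replicates) - mean(control_replicates)
    se = sqrt(
        stdev(test_replicates) ** 2 / len(test_replicates)
        + stdev(control_replicates) ** 2 / len(control_replicates)
    )
    return interference, se


# Hypothetical triplicate results (mg/dL), invented for illustration
test = [104.0, 106.0, 105.0]      # base pool + interferent
control = [100.0, 101.0, 99.0]    # base pool + solvent only

interference, se = paired_difference(test, control)
print(f"Interference = {interference:+.2f} mg/dL (SE of difference = {se:.2f})")
```

An interference that is small relative to its standard error cannot be separated from run-to-run imprecision, which is one reason to increase the number of replicates.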

How do I select appropriate interferents and their test concentrations? CLSI EP07-A2, Section 5.4, offers recommendations for selecting potential interferents [18]. You should prioritize:

- Common sample abnormalities (hemolysis, icterus, lipemia).

- Common prescription and over-the-counter drugs.

- Medications prescribed for the conditions your test is intended to diagnose or monitor.

- Dietary supplements.

- Compounds that are structurally related to, or isobaric with (same nominal mass as), your analyte, as these pose a high risk of chromatographic or mass spectrometric interference [18].

The guideline also provides tables of recommended test concentrations for many analytes and interferents, which serve as a solid starting point [35].

Advanced Protocol: Assessing Matrix Effects in LC-MS/MS

Liquid chromatography-tandem mass spectrometry (LC-MS/MS) methods, while highly selective, are susceptible to a phenomenon known as matrix effects, where co-eluting substances alter the ionization efficiency of the analyte [18].

Detailed Methodology: Quantitative Matrix Effect Evaluation

- Prepare Two Sets of Samples:

  - Set A (Extracted Matrix): Take several blank matrix samples (e.g., from different donors), extract them using your standard sample preparation protocol, and then spike a known amount of your analyte into the cleaned-up extract.

  - Set B (Neat Solution): Spike the same amount of analyte into a pure solvent.

- Analysis and Calculation: Analyze all samples and compare the analyte response (peak area) between the two sets. The matrix effect (ME) is calculated as:

> ME (%) = (Mean Peak Area of Set A / Mean Peak Area of Set B) × 100%

- An ME < 100% indicates ion suppression.

- An ME > 100% indicates ion enhancement.

This experiment should be performed at a minimum of two analyte concentrations and with several different lots of matrix to account for biological variability [18].
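As a minimal sketch of the ME calculation, the snippet below evaluates each matrix lot separately against the neat-solution set. The function names, donor labels, and peak areas are hypothetical and serve only to illustrate the formula.

```python
from statistics import mean


def matrix_effect_percent(set_a_areas, set_b_areas):
    """ME (%) = mean peak area in extracted matrix / mean peak area in neat solution x 100."""
    return 100.0 * mean(set_a_areas) / mean(set_b_areas)


def classify(me_percent):
    """Label the direction of the matrix effect."""
    if me_percent < 100.0:
        return "ion suppression"
    if me_percent > 100.0:
        return "ion enhancement"
    return "no matrix effect"


# Hypothetical peak areas, invented for illustration
set_b = [10000, 10200, 9800]                  # Set B: analyte in neat solvent
set_a_by_lot = {                              # Set A: post-extraction spiked blanks
    "donor_1": [8000, 8200, 7800],
    "donor_2": [11000, 11000, 11000],
}

me_by_lot = {
    lot: matrix_effect_percent(areas, set_b) for lot, areas in set_a_by_lot.items()
}
for lot, me in me_by_lot.items():
    print(f"{lot}: ME = {me:.1f}% ({classify(me)})")
```

Reporting ME per lot, rather than pooling all lots into a single mean, keeps lot-to-lot variability visible.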

Detailed Methodology: Qualitative Post-Column Infusion Study

This method helps visualize where ion suppression/enhancement occurs during the chromatographic run [18].

- Infusion Setup: A solution of the analyte (or its stable isotope-labeled internal standard) is continuously infused into the LC column effluent via a T-connector, creating a steady signal at the mass spectrometer detector.

- Blank Injection: A blank matrix sample is injected and analyzed using the standard LC gradient.

- Visualization: As the blank matrix elutes from the column, any co-eluting matrix components that cause ion suppression will create a negative "dip" in the otherwise steady signal trace. Ion enhancement would create a positive peak. This helps identify regions of the chromatogram to avoid for your analyte's elution time.
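If the infusion trace is exported as time and intensity values, the suppression zones can also be located programmatically. The sketch below is one possible approach under stated assumptions: the median of the trace is taken as the steady baseline and any point below 80% of it is flagged. Both choices are illustrative and not prescribed by the source, and the trace values are invented.

```python
from statistics import median


def find_suppression_zones(times, signal, drop_fraction=0.8):
    """Return (start, end) retention-time windows where the infused signal
    falls below drop_fraction x baseline (baseline = median of the trace)."""
    threshold = drop_fraction * median(signal)
    zones = []
    start = None
    prev_t = None
    for t, s in zip(times, signal):
        if s < threshold and start is None:
            start = t                       # entering a suppressed region
        elif s >= threshold and start is not None:
            zones.append((start, prev_t))   # leaving it; close the window
            start = None
        prev_t = t
    if start is not None:                   # trace ended while still suppressed
        zones.append((start, prev_t))
    return zones


# Hypothetical infusion trace: retention time (min) and detector counts
times = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
signal = [1000, 1010, 400, 350, 990, 1005, 1000, 995, 1002]

zones = find_suppression_zones(times, signal)
print(zones)
```

An analyte retention time that falls inside any returned window would be a candidate for chromatographic re-optimization.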

The workflow for designing a comprehensive interference investigation is summarized below.

Troubleshooting Guide & FAQs

We added a known interferent, but see no significant effect. What could be wrong?

- Insufficient Concentration: The concentration of the interferent you tested may be below the threshold for observable interference. Re-test at a higher, but still clinically relevant, concentration.

- Analyte Concentration Too High: If the analyte concentration in your test pool is very high, the relative effect of the interferent may be too small to reach statistical significance. Consider testing at a lower, clinically critical analyte concentration.

- Internal Standard Compensation: In LC-MS/MS, a well-matched stable isotope-labeled internal standard can perfectly compensate for a matrix effect, masking its presence. This is why performing non-normalized (peak area) matrix effect experiments is crucial during method development [18].
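The masking effect of a co-eluting internal standard can be illustrated numerically. The sketch below assumes post-extraction spiked peak areas for both the analyte and its internal standard (IS). The matrix factor helper and all numbers are hypothetical.

```python
def matrix_factor(area_in_matrix, area_in_neat):
    """Ratio of peak area in post-extraction spiked matrix to peak area in neat solution."""
    return area_in_matrix / area_in_neat


# Hypothetical peak areas: analyte and IS are both suppressed by 40%
analyte_mf = matrix_factor(6000.0, 10000.0)
is_mf = matrix_factor(12000.0, 20000.0)

# The IS-normalized value looks ideal even though the raw signal lost 40%
is_normalized_mf = analyte_mf / is_mf

print(f"Analyte matrix factor:       {analyte_mf:.2f}")
print(f"Internal standard factor:    {is_mf:.2f}")
print(f"IS-normalized matrix factor: {is_normalized_mf:.2f}")
```

In this example the normalized value of 1.00 hides a 40% loss of raw signal, which still costs sensitivity. This is why the non-normalized peak areas need to be inspected as well.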

Our LC-MS/MS method shows a huge matrix effect. How can we mitigate it? Matrix effects are a common challenge. Mitigation strategies involve enhancing selectivity at various stages of the analysis [18]:

- Sample Preparation: Implement more selective clean-up procedures such as liquid-liquid extraction or solid-phase extraction instead of a simple "dilute-and-shoot" approach.

- Chromatography: Optimize the LC separation to shift the analyte's retention time away from the suppression zone identified by the post-column infusion experiment. This can involve changing the gradient profile, the column type, or the mobile phase.

- Internal Standard: Use a stable isotope-labeled internal standard (with labels like ¹³C or ¹⁵N that do not alter chromatography) that co-elutes perfectly with the analyte, ensuring it experiences the same degree of suppression/enhancement and can accurately correct for it.

A drug known to interfere in other assays did not interfere in ours. Can we claim our method is "specific"? You should state that "no interference was observed" for that particular drug at the concentrations tested. It is more scientifically accurate to describe your method as "highly selective" against that interferent rather than using the absolute term "specific." Claiming absolute specificity is generally discouraged because it is practically impossible to test against all possible compounds [34].

The Scientist's Toolkit: Key Research Reagent Solutions

Table 1: Essential Materials for Interference Testing

| Item | Function & Rationale |
|---|---|
| Pure Analyte Standard | Used to prepare sample pools with known baseline concentrations and for spiking experiments in matrix effect studies. |
| Potential Interferents | A curated list of drugs, metabolites (e.g., bilirubin, hemoglobin), and supplements to test based on CLSI recommendations and clinical relevance [35] [18]. |
| Stable Isotope-Labeled Internal Standard (for LC-MS/MS) | Crucial for compensating for matrix effects and variability in sample preparation; ideally labeled with ¹³C or ¹⁵N to ensure co-elution with the native analyte [18]. |
| Blank Matrix | Matrix from multiple individual donors (e.g., serum, plasma) that is devoid of the analyte. Essential for preparing calibrators and for matrix effect experiments. |
| Derivatization Reagents (e.g., Ninhydrin, OPA) | Used in post-column derivatization to enhance the detectability (sensitivity and selectivity) of analytes like amines, amino acids, and thiols in HPLC methods [36]. |

Data Presentation and Interpretation

Structuring Interference Data

When reporting interference results, a clear table is essential for interpretation. The following table provides a template.

Table 2: Example Format for Reporting Interference Test Results

| Potential Interferent | Concentration Tested | Analyte Concentration | Bias (%) | Clinically Significant? (Y/N) | Notes |
|---|---|---|---|---|---|
| Hemolysate (Hb) | 500 mg/dL | 100 mg/dL | +5.2% | N | Slight positive bias, within acceptable limits. |
| Icteric (Bilirubin) | 20 mg/dL | 100 mg/dL | -15.8% | Y | Negative bias exceeds 10%; method is susceptible. |
| Drug A | 50 µg/mL | 10 mg/dL | +45.0% | Y | Severe positive interference; issue patient advisories. |
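The Bias (%) and significance columns of such a table can be filled in programmatically. The sketch below assumes that bias is expressed relative to the control mean and that the acceptance limit is ±10%, as the notes in the example table imply. The mean values are hypothetical, chosen to reproduce the example rows, and the actual limit should come from your own clinical requirements.

```python
def percent_bias(mean_test, mean_control):
    """Bias (%) of the test sample relative to the control sample."""
    return 100.0 * (mean_test - mean_control) / mean_control


def is_significant(bias_percent, limit_percent=10.0):
    """Flag a bias whose magnitude exceeds the (assumed) acceptance limit."""
    return abs(bias_percent) > limit_percent


# (interferent, mean test result, mean control result), hypothetical values in mg/dL
results = [
    ("Hemolysate (Hb)", 105.2, 100.0),
    ("Icteric (Bilirubin)", 84.2, 100.0),
    ("Drug A", 14.5, 10.0),
]

flags = []
for name, mean_test, mean_control in results:
    bias = percent_bias(mean_test, mean_control)
    flag = "Y" if is_significant(bias) else "N"
    flags.append(flag)
    print(f"| {name} | {bias:+.1f}% | {flag} |")
```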

Strategies for Identifying Unidentified Interference and Matrix Effects

Core Concepts and Problem Definition

What are matrix effects and how do they impact analytical data?

Matrix effects occur when compounds co-eluting with your analyte interfere with the ionization process in a mass spectrometer, leading to ion suppression or enhancement. This detrimentally affects the accuracy, reproducibility, and sensitivity of your quantitative LC-MS analysis. The interfering compounds, often phospholipids from biological matrices, can neutralize analyte ions or affect charged droplet formation, ultimately changing the detector's response to your target compound [37].

Common sources include:

- Phospholipids: Major components of cell membranes that are notorious for fouling the MS source and causing ionization suppression. They often co-extract with analytes and co-elute during chromatography [38].

- Excess fats, proteins, and pigments: These components, particularly in complex samples like food or biological fluids, can coat instrumentation and obscure target analytes [26].

- Sample processing reagents: Additives, solvents, or impurities introduced during sample preparation.

- Mobile phase additives: Certain additives used to improve chromatographic separation can themselves suppress the electrospray signal [37].

- Endogenous compounds: Metabolites or other naturally occurring substances in biological samples like plasma, serum, or urine [37].

Detection and Diagnosis Strategies

How can I quickly detect the presence of matrix effects in my method?

A simple recovery-based method can be used for rapid detection. Compare the signal response of your analyte dissolved in neat mobile phase to the signal response of an equivalent amount spiked into a blank matrix sample post-extraction. A significant difference in response indicates the extent of the matrix effect. This method is fast, reliable, and can be applied to any analyte or matrix without requiring additional hardware [37].

Table 1: Methods for Detecting Matrix Effects

| Method Name | Principle | Advantages | Limitations |
|---|---|---|---|
| Post-Extraction Spike [37] | Compares analyte response in neat solution vs. spiked blank matrix. | Simple, quantitative, applicable to endogenous analytes. | Requires a blank matrix. |
| Post-Column Infusion [37] | Infuses analyte continuously while injecting blank extract; signal dips indicate suppression. | Qualitative; identifies regions of ionization suppression/enhancement in chromatogram. | Time-consuming; requires extra hardware; not ideal for multi-analyte methods. |

What are the observable symptoms of matrix interference during an LC-MS run?

Several symptoms can indicate interference:

- Reduced sensitivity: Lower than expected signal for your target analytes.

- Poor reproducibility: High variability in replicate analyses.

- Irreproducible analyte response: Inconsistent peak areas for the same concentration [38].

- Increased baseline noise or unexplained peaks in the chromatogram.

- Frequent instrument contamination requiring more maintenance and source cleaning [26].
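Poor reproducibility is easiest to judge when it is quantified. A simple way to do this is the coefficient of variation (CV) of replicate peak areas, compared between neat standards and matrix samples at the same nominal concentration. The peak areas below are hypothetical, and any acceptance limit applied to the result is method-specific.

```python
from statistics import mean, stdev


def percent_cv(values):
    """Coefficient of variation (%) = sample standard deviation / mean x 100."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical replicate peak areas at the same nominal concentration
neat_areas = [10000, 10100, 9900]     # analyte in neat solvent
matrix_areas = [8000, 6500, 9500]     # analyte in extracted matrix

cv_neat = percent_cv(neat_areas)
cv_matrix = percent_cv(matrix_areas)
print(f"CV neat:   {cv_neat:.2f}%")
print(f"CV matrix: {cv_matrix:.2f}%")
```

A markedly higher CV in matrix than in neat solution, together with a lower mean area, is consistent with variable ion suppression, although it does not prove it on its own.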

Experimental Protocols for Identification and Mitigation

Protocol 1: Assessing Matrix Effects via the Post-Extraction Spike Method

Objective: To quantitatively determine the magnitude of matrix effects for a given analyte and matrix.

Materials:

- LC-MS/MS system

- Pure analyte standard

- Blank matrix (e.g., drug-free plasma, urine)

- Solvents for mobile phase and sample preparation