Limit of Detection (LOD) Determination: A Comprehensive Guide for Researchers and Scientists

Abstract

This article provides a complete guide to Limit of Detection (LOD) determination for researchers, scientists, and drug development professionals. It covers fundamental concepts, key statistical definitions of LOD, Limit of Blank (LoB), and Limit of Quantitation (LoQ), and explores established and emerging methodological approaches. The content delivers practical troubleshooting strategies for improving sensitivity in techniques like HPLC, alongside modern validation frameworks and comparative analyses of different determination methods to ensure regulatory compliance and methodological rigor in biomedical and clinical research.

Understanding LOD Fundamentals: From Blank to Quantitation

In the realm of analytical and bioanalytical science, accurately defining the lowest levels of detection and quantification is paramount for method validation. The terms Limit of Blank (LoB), Limit of Detection (LoD), and Limit of Quantitation (LoQ) represent a critical hierarchy defining the sensitivity and utility of an analytical procedure [1] [2]. Despite their importance, the absence of a universal protocol for their determination has led to varied approaches, making objective comparisons essential for researchers and drug development professionals [3]. This guide provides a structured comparison of these parameters and the experimental methods used to define them.

Core Definitions and Relationships

The terms LoB, LoD, and LoQ describe the smallest concentration of an analyte that can be reliably measured, each with a distinct role in method validation [1]. The relationship between them is sequential, with each building upon the previous.

The diagram above illustrates the foundational relationship: the LoB defines the background noise level, the LoD is the level at which detection becomes feasible above this noise, and the LoQ is the level at which precise and accurate quantification begins. Their formal definitions are:

- Limit of Blank (LoB): The highest apparent analyte concentration expected to be found when replicates of a blank sample containing no analyte are tested [1] [4]. It characterizes the signal produced by the matrix in the absence of the target analyte.

- Limit of Detection (LoD): The lowest analyte concentration likely to be reliably distinguished from the LoB and at which detection is feasible [1] [5]. It confirms the presence of the analyte but does not guarantee accurate quantification.

- Limit of Quantitation (LoQ): The lowest concentration at which the analyte can not only be reliably detected but at which some predefined goals for bias and imprecision are met [1] [4]. Results at or above the LoQ are considered quantitatively reliable.

The practical interpretation of results in relation to these limits is summarized in the table below.

| Reported Result | Interpretation |

|---|---|

| Below LoB | No analyte detected. Concentration is effectively zero [2]. |

| Between LoB and LoD | Analyte may be present, but cannot be reliably distinguished from background noise. |

| Between LoD and LoQ | Analyte is detected with confidence, but cannot be quantified with acceptable precision and accuracy [2]. |

| At or Above LoQ | Analyte is both detected and quantified with acceptable reliability [2]. |
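As a minimal sketch of how the table above might be applied in software, the hypothetical helper below maps a reported value onto the four zones. The function name, the returned labels, and the treatment of a value exactly equal to a limit (assigned to the higher zone) are illustrative assumptions, not part of any guideline.

```python
def interpret_result(value: float, lob: float, lod: float, loq: float) -> str:
    """Map a reported concentration onto the four reporting zones.

    Assumption: a value exactly equal to a limit belongs to the higher zone.
    """
    if not (lob <= lod <= loq):
        raise ValueError("expected LoB <= LoD <= LoQ")
    if value < lob:
        return "not detected"
    if value < lod:
        return "possibly present, not distinguishable from background"
    if value < loq:
        return "detected, not quantifiable"
    return "detected and quantifiable"


# Hypothetical limits in arbitrary concentration units
for reported in (0.5, 1.5, 3.0, 6.0):
    print(reported, "->", interpret_result(reported, lob=1.0, lod=2.0, loq=5.0))
```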

Methodological Approaches and Comparative Data

Multiple approaches exist for determining LoB, LoD, and LoQ, each with specific applications, advantages, and limitations. The choice of method depends on the nature of the analytical procedure (e.g., whether it has significant background noise) and the stage of development or validation [6].

Comparative Analysis of Determination Methods

The following table summarizes the core methodologies, their typical use cases, and associated strengths and weaknesses.

| Method | Typical Use Case | Key Strengths | Key Weaknesses |

|---|---|---|---|

| Standard Deviation of the Blank [6] | Quantitative assays; common initial approach. | Simple, quick calculations. | Does not use data from samples containing analyte; may not reflect true detection capability [1]. |

| Standard Deviation of Response & Slope [6] | Quantitative assays without significant background noise. | Uses calibration curve data; accounts for method sensitivity via slope. | Relies on linearity of the calibration curve at low concentrations. |

| Signal-to-Noise Ratio [6] [7] | Quantitative assays and identification assays with background noise. | Intuitive; directly measures assay performance against its own inherent noise. | Requires experimental determination of noise; specific to the instrument and conditions used. |

| Visual Evaluation [6] [7] | Visual or instrumental detection methods (e.g., color changes, particle presence). | Direct empirical assessment; useful for non-instrumental methods. | Subjective element; requires multiple determinations and logistic regression analysis. |

| Uncertainty Profile [3] | Advanced bioanalytical method validation (e.g., HPLC in plasma). | Provides a realistic and precise estimate of measurement uncertainty; graphical decision tool. | Computationally complex; requires balanced data and advanced statistical knowledge. |

Quantitative Comparison of Calculation Formulas

Different methodological approaches lead to different calculation formulas. The table below provides a direct comparison of the standard formulas for the most common determination methods.

| Method | LoB Formula | LoD Formula | LoQ Formula |

|---|---|---|---|

| Standard Deviation of the Blank [1] [6] | Mean_blank + 1.645(SD_blank) | LoB + 1.645(SD_low concentration) or Mean_blank + 3.3(SD_blank) [6] | Mean_blank + 10(SD_blank) [6] |

| Standard Deviation of Response & Slope [6] | Not Applicable | 3.3 σ / Slope | 10 σ / Slope |

| Signal-to-Noise Ratio [6] | Not Applicable | Signal-to-Noise of 2:1 or 3:1 | Signal-to-Noise of 10:1 |

| Visual Evaluation [6] | Not Applicable | Concentration with 99% detection probability | Concentration with 99.95% detection probability |

Detailed Experimental Protocols

A robust determination of LoB, LoD, and LoQ requires careful experimental design. The following workflow and protocols outline the steps based on established guidelines like CLSI EP17 [1] [5].

Protocol 1: Determination via Blank and Low-Concentration Samples

This method, aligned with CLSI EP17, is a comprehensive and empirically grounded approach [1] [4].

Determine the Limit of Blank (LoB):

- Sample: Use a blank sample containing no analyte (e.g., a zero calibrator or appropriate matrix).

- Replication: Test a minimum of 20 replicate samples (manufacturers establishing claims may use 60) [1].

- Analysis: Calculate the mean and standard deviation (SD) of the results.

- Calculation: LoB = Mean_blank + 1.645(SD_blank). This one-sided calculation defines the concentration value below which 95% of blank measurements are expected to fall (assuming a Gaussian distribution); the remaining 5% of blank results exceed the LoB and represent false positives [1].

Determine the Limit of Detection (LoD):

- Sample: Use a sample containing a low but known concentration of the analyte.

- Replication: Test a minimum of 20 replicate samples [1].

- Analysis: Calculate the mean and standard deviation (SD) of the results.

- Calculation: LoD = LoB + 1.645(SD_low concentration sample). This ensures that 95% of measurements at the LoD will exceed the LoB, limiting false negatives to 5% [1].

- Verification: Confirm that no more than 5% of the measured values from the low-concentration sample fall below the LoB. If this criterion is not met, the LoD must be re-estimated using a sample with a higher concentration [1].

Determine the Limit of Quantitation (LoQ):

- Sample: Test samples with concentrations at or slightly above the provisional LoD.

- Replication: Analyze multiple replicates (e.g., across different days, operators, or instrument lots) to capture total imprecision.

- Analysis: Calculate the bias and imprecision (e.g., %CV) at each concentration level.

- Establishment: The LoQ is the lowest concentration at which predefined performance goals for total error (combining bias and imprecision) are met [1] [5]. A common target for immunoassays is a CV of 20% or less [5].
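The LoB and LoD steps of Protocol 1 can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a validated implementation: the function names are hypothetical, the replicate values are invented for demonstration, and the data are assumed to be approximately Gaussian.

```python
from statistics import mean, stdev

Z_95 = 1.645  # one-sided 95th percentile of the standard normal distribution


def limit_of_blank(blank_results):
    """LoB = Mean_blank + 1.645 * SD_blank (parametric form)."""
    return mean(blank_results) + Z_95 * stdev(blank_results)


def limit_of_detection(lob, low_results):
    """LoD = LoB + 1.645 * SD_low concentration sample."""
    return lob + Z_95 * stdev(low_results)


def fraction_below(threshold, results):
    """Share of results that fall below a threshold (used for verification)."""
    return sum(r < threshold for r in results) / len(results)


# Invented replicate results (arbitrary concentration units), 20 per sample
blank = [0.02, -0.01, 0.05, 0.00, 0.03, -0.02, 0.04, 0.01, 0.06, -0.03,
         0.02, 0.00, 0.03, 0.01, -0.01, 0.04, 0.02, 0.05, 0.00, 0.01]
low = [0.21, 0.18, 0.25, 0.19, 0.23, 0.17, 0.22, 0.20, 0.26, 0.16,
       0.21, 0.19, 0.24, 0.20, 0.18, 0.23, 0.21, 0.25, 0.19, 0.20]

lob = limit_of_blank(blank)
lod = limit_of_detection(lob, low)
print(f"LoB = {lob:.3f}, LoD = {lod:.3f}")

# Verification step: no more than 5% of low-sample results may fall below the LoB
print("verification passed:", fraction_below(lob, low) <= 0.05)
```

Note that `stdev` is the sample standard deviation (n - 1 in the denominator), which is the usual choice when the SD is estimated from replicates.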

Protocol 2: Determination via Calibration Curve

For methods without significant background noise, the ICH Q2 guideline describes an approach based on the calibration curve [6].

- Experiment: Prepare and analyze a minimum of five concentration levels in the low range of the method. Use six or more independent determinations at each level [6].

- Analysis: Perform linear regression on the calibration data. The standard deviation (σ) of the response can be estimated as the residual standard deviation of the regression line (root mean squared error) [6].

- Calculation:

- LOD = 3.3 σ / Slope

- LOQ = 10 σ / Slope

The slope is used to convert the variability in the instrument response (y-axis) back to the concentration scale (x-axis) [6].
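The calibration-curve calculation above can be illustrated with a short, dependency-free sketch. It assumes an ordinary (unweighted) least-squares fit and takes σ as the residual standard deviation with n - 2 degrees of freedom; the function names and the example data are hypothetical.

```python
from math import sqrt


def linear_fit(conc, response):
    """Ordinary least-squares fit: response = slope * conc + intercept."""
    n = len(conc)
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def lod_loq_from_calibration(conc, response):
    """Return (LOD, LOQ) as 3.3*sigma/slope and 10*sigma/slope."""
    slope, intercept = linear_fit(conc, response)
    residuals = [y - (slope * x + intercept) for x, y in zip(conc, response)]
    # Residual standard deviation; two parameters were estimated, hence n - 2
    sigma = sqrt(sum(r ** 2 for r in residuals) / (len(conc) - 2))
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Invented low-range calibration data (concentration, detector response)
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
resp = [10.4, 19.7, 41.2, 79.5, 160.6]
lod, loq = lod_loq_from_calibration(conc, resp)
print(f"LOD = {lod:.3f}, LOQ = {loq:.3f} (same units as conc)")
```

Because both limits share the same σ and slope, the LOQ from this approach is always 10/3.3, or roughly three times, the LOD.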

Research Reagent Solutions and Materials

The following table details essential materials and reagents required for the experimental determination of LoB, LoD, and LoQ, particularly following the protocol for blank and low-concentration sample analysis.

| Reagent / Material | Critical Function & Specification |

|---|---|

| Blank Matrix | A sample containing no analyte, used to establish the LoB. Must be commutable with real patient specimens (e.g., drug-free plasma, buffer) [1]. |

| Low-Concentration QC Material | Samples with known, low analyte concentrations, used for LoD and LoQ determination. Should be in the appropriate matrix and ideally a dilution of the lowest non-negative calibrator [1]. |

| Calibrators | A series of standards with known concentrations for constructing the calibration curve, essential for the "Standard Deviation of Response & Slope" method [6]. |

| Internal Standard (for HPLC/MS) | A known compound added to samples to correct for variability in sample preparation and instrument response, improving the precision of methods like HPLC [3]. |

Selecting the appropriate method for defining LoB, LoD, and LoQ is critical for generating trustworthy analytical data. While classical statistical methods offer simplicity, graphical and empirical approaches like the uncertainty profile and the CLSI EP17 protocol generally provide more realistic and reliable assessments, especially for sophisticated bioanalytical methods [3] [7]. Researchers must align their chosen protocol with the intended use of the method, the nature of the matrix, and regulatory requirements to ensure the resulting detection and quantitation limits are truly "fit for purpose."

In the rigorous world of analytical chemistry and drug development, the concepts of false positives and false negatives are not merely statistical abstractions. They are critical performance parameters that define the reliability and capability of any analytical method, particularly when determining fundamental figures of merit like the Limit of Detection (LOD). The LOD represents the lowest concentration of an analyte that can be reliably distinguished from a blank sample, but not necessarily quantified with precision. The selection and application of a specific LOD determination method directly influence a test's susceptibility to these errors, creating a fundamental trade-off that researchers must navigate. This guide provides an objective comparison of the most common LOD determination methods, evaluating their statistical basis, experimental protocols, and their inherent balance between false positive and false negative rates, to inform method validation in pharmaceutical and bioanalytical sciences.

Statistical Foundations of Error

Defining False Positives and False Negatives

In statistical hypothesis testing for analytical detection, the null hypothesis (H₀) is typically that the analyte is not present. The two types of errors are defined within this framework [8] [9]:

- False Positive (Type I Error): This occurs when an analytical method incorrectly indicates the presence of an analyte in a blank sample. The false positive rate (α) is the probability of this error. In detection decisions, it is the probability that the measured signal from a blank sample will exceed a predefined critical level (LC) [9].

- False Negative (Type II Error): This occurs when an analytical method fails to detect an analyte that is actually present at or above the LOD. The false negative rate (β) is the probability of this error. It is the probability that the signal from a sample containing the analyte at the LOD will fall below the critical level [9].

The Trade-off in Detection Limit Determination

The relationship between these two errors is a core consideration in defining a method's LOD. Raising the critical level (LC) to minimize false positives increases the risk of false negatives for low-concentration samples. Conversely, lowering the LC to avoid false negatives raises the likelihood of false positives [9]. Modern international standards, such as those from ISO, define the LOD as the true net concentration that will lead to a correct detection with a high probability (1-β), formally incorporating the risk of a false negative into the LOD's definition [9].
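The trade-off can be made concrete with a small numerical sketch. It assumes that both the blank signal and the signal at the LOD are Gaussian with known mean and standard deviation; the function name and all numbers are illustrative assumptions.

```python
from statistics import NormalDist


def error_rates(critical_level, blank_mean, blank_sd, lod_mean, lod_sd):
    """Return (alpha, beta) for a given critical level LC.

    alpha: probability that a blank signal exceeds LC (false positive)
    beta:  probability that a signal at the LOD falls below LC (false negative)
    """
    alpha = 1.0 - NormalDist(blank_mean, blank_sd).cdf(critical_level)
    beta = NormalDist(lod_mean, lod_sd).cdf(critical_level)
    return alpha, beta


# Blank centred at 0, sample at the LOD centred at 3.29, both with SD = 1
for lc in (1.0, 1.645, 2.5):
    alpha, beta = error_rates(lc, 0.0, 1.0, 3.29, 1.0)
    print(f"LC = {lc:5.3f}  alpha = {alpha:.3f}  beta = {beta:.3f}")
```

With these assumed distributions, a critical level of 1.645 SD gives roughly 5% for both error rates, which is the logic behind the factor 3.3 (approximately 2 × 1.645) in the classical formulas. Moving LC in either direction lowers one error rate and raises the other.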

Comparison of Major LOD Determination Methods

International guidelines, such as ICH Q2(R1), describe several accepted approaches for determining the LOD, each with a different statistical foundation and performance profile [6] [10].

Visual Evaluation

The LOD is determined by analyzing samples with known concentrations and establishing the minimum level at which the analyte can be reliably detected by an instrument or analyst. This method is simple but subjective [6].

Signal-to-Noise (S/N)

This chromatographic technique establishes the LOD as the concentration that yields a signal typically 2 to 3 times the height or amplitude of the background noise. The ICH and various pharmacopoeias endorse this method [6] [9].

Standard Deviation of the Response and Slope

This method uses the calibration curve's characteristics to compute the LOD statistically. The formulas are [10]:

LOD = 3.3σ / S

LOQ = 10σ / S

Where σ is the standard deviation of the response (often the standard error of the regression or the standard deviation of the blank) and S is the slope of the calibration curve.

Table 1: Comparison of Key LOD Determination Methods

| Method | Statistical Basis | Typical Experimental Protocol | Advantages | Disadvantages |

|---|---|---|---|---|

| Visual Evaluation [6] | Analyst or instrument discretion. | Prepare 5-7 concentration levels; 6-10 replicates per level; record detection events. | Simple, intuitive, no complex calculations. | Highly subjective, poor reproducibility, difficult to validate. |

| Signal-to-Noise [6] [9] | Direct measurement of instrumental response. | Measure signal of low-concentration sample and background noise from a blank; calculate S/N ratio. | Directly measures instrumental performance, widely accepted in chromatography. | Highly instrument-dependent, may not account for all sources of analytical error. |

| Standard Deviation & Slope [10] | Interpolated from calibration curve precision and sensitivity. | Run a calibration curve with low-concentration standards; perform linear regression to obtain S and σ (standard error). | Objective, utilizes data from the entire calibration, accounts for method sensitivity. | Relies on a linear and stable calibration curve at low concentrations. |

Performance Data from Comparative Studies

Independent research has demonstrated that these different methodologies do not produce equivalent results, which has direct implications for error rates.

A study comparing LOD calculation methods for carbamazepine and phenytoin analysis via HPLC-UV found significant variation. The signal-to-noise method provided the lowest LOD values, while the standard deviation of the response and slope method resulted in the highest values [11]. This suggests that the S/N method might be more prone to false positives (by claiming detection at very low levels), whereas the standard deviation/slope method is more conservative, potentially reducing false positives at the cost of a higher false negative rate near its LOD.

Another study comparing classical statistical methods with graphical tools like the uncertainty profile concluded that the classical strategy often provides underestimated LOD and LOQ values [3]. An underestimated LOD increases the risk of reporting false positives for samples with concentrations near that limit.

Detailed Experimental Protocols

Protocol for the Standard Deviation/Slope Method

This is a widely used and statistically robust approach for quantitative assays [10].

- Preparation: Prepare a calibration curve with a minimum of 5 concentration levels in the expected low range of the assay.

- Analysis: Analyze each calibration level following the complete analytical procedure.

- Data Processing: Perform a linear regression analysis on the calibration data (concentration vs. response).

- Parameter Extraction: From the regression output, record the slope (S) of the curve and the standard error (σ) of the regression, which serves as the standard deviation of the response.

- Calculation: Apply the formulas LOD = 3.3σ / S and LOQ = 10σ / S.

- Validation: The calculated LOD and LOQ must be validated by analyzing a suitable number of samples (e.g., n=6) prepared at these concentrations. The method is confirmed if the analyte is detected at the LOD in most replicates and can be quantified at the LOQ with acceptable precision (e.g., CV ≤ 15%) [10].
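The validation step at the end of this protocol might be checked along the following lines. The sketch is illustrative only: the function names are hypothetical, "most replicates" is interpreted here as more than half (an assumption), the 15% CV ceiling simply mirrors the example figure in the text, and the replicate data are invented.

```python
from statistics import mean, stdev


def percent_cv(results):
    """Coefficient of variation in percent (sample SD divided by mean)."""
    return 100.0 * stdev(results) / mean(results)


def confirm_limits(lod_detected, loq_results, min_hit_fraction=0.5, max_cv=15.0):
    """Summarise replicate checks at the calculated LOD and LOQ.

    lod_detected: list of booleans, one per replicate prepared at the LOD
    loq_results:  measured concentrations of replicates prepared at the LOQ
    """
    hit_fraction = sum(lod_detected) / len(lod_detected)
    cv = percent_cv(loq_results)
    return {
        "lod_hit_fraction": hit_fraction,
        "loq_cv_percent": cv,
        "lod_confirmed": hit_fraction > min_hit_fraction,
        "loq_confirmed": cv <= max_cv,
    }


# Invented data for n = 6 replicates at each level
report = confirm_limits(
    lod_detected=[True, True, True, False, True, True],
    loq_results=[0.98, 1.05, 0.91, 1.10, 1.02, 0.95],
)
print(report)
```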

Protocol for the Signal-to-Noise Method

This method is commonly applied in chromatographic systems with measurable background noise [6] [9].

- Preparation: Prepare a blank sample (without analyte) and a reference sample with a low concentration of the analyte.

- Chromatographic Analysis: Inject the blank and the low-concentration sample.

- Noise Measurement: In the chromatogram of the blank, measure the maximum amplitude of the background noise (h) over a region where the analyte peak is expected.

- Signal Measurement: In the chromatogram of the low-concentration sample, measure the height of the analyte peak (H).

- Calculation: Compute the signal-to-noise ratio as S/N = 2H / h (per European Pharmacopoeia) or a similar defined formula [9].

- LOD Determination: The LOD is the concentration that yields an S/N ratio of 2:1 or 3:1. This may require testing multiple concentrations to find the level that meets this criterion.
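The S/N calculation in the steps above can be sketched as follows. The code assumes that h is taken as the peak-to-peak range of the blank trace within the chosen window and that the peak height H has already been measured from the baseline; the function names, the target ratio, and the data are hypothetical.

```python
def signal_to_noise(peak_height, blank_trace):
    """S/N = 2H / h, with h taken as the peak-to-peak range of the blank trace."""
    h = max(blank_trace) - min(blank_trace)
    if h <= 0:
        raise ValueError("blank trace shows no measurable noise")
    return 2.0 * peak_height / h


def lowest_level_meeting(levels, blank_trace, target=3.0):
    """Return the lowest tested concentration whose S/N reaches the target.

    levels: iterable of (concentration, peak_height) pairs
    Returns None if no tested level meets the criterion.
    """
    passing = [c for c, height in levels
               if signal_to_noise(height, blank_trace) >= target]
    return min(passing) if passing else None


# Invented blank baseline segment (detector units) and tested levels
blank_trace = [0.10, -0.05, 0.08, -0.12, 0.03, 0.11, -0.09]
levels = [(0.5, 0.20), (1.0, 0.38), (2.0, 0.80)]
for conc, height in levels:
    print(conc, round(signal_to_noise(height, blank_trace), 2))
print("lowest level with S/N >= 3:", lowest_level_meeting(levels, blank_trace))
```

Because the result depends on how the noise window is chosen and how h is defined, the same chromatogram can yield different S/N values under different conventions, so the convention used should be recorded with the result.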

Visualizing Method Relationships and Workflows

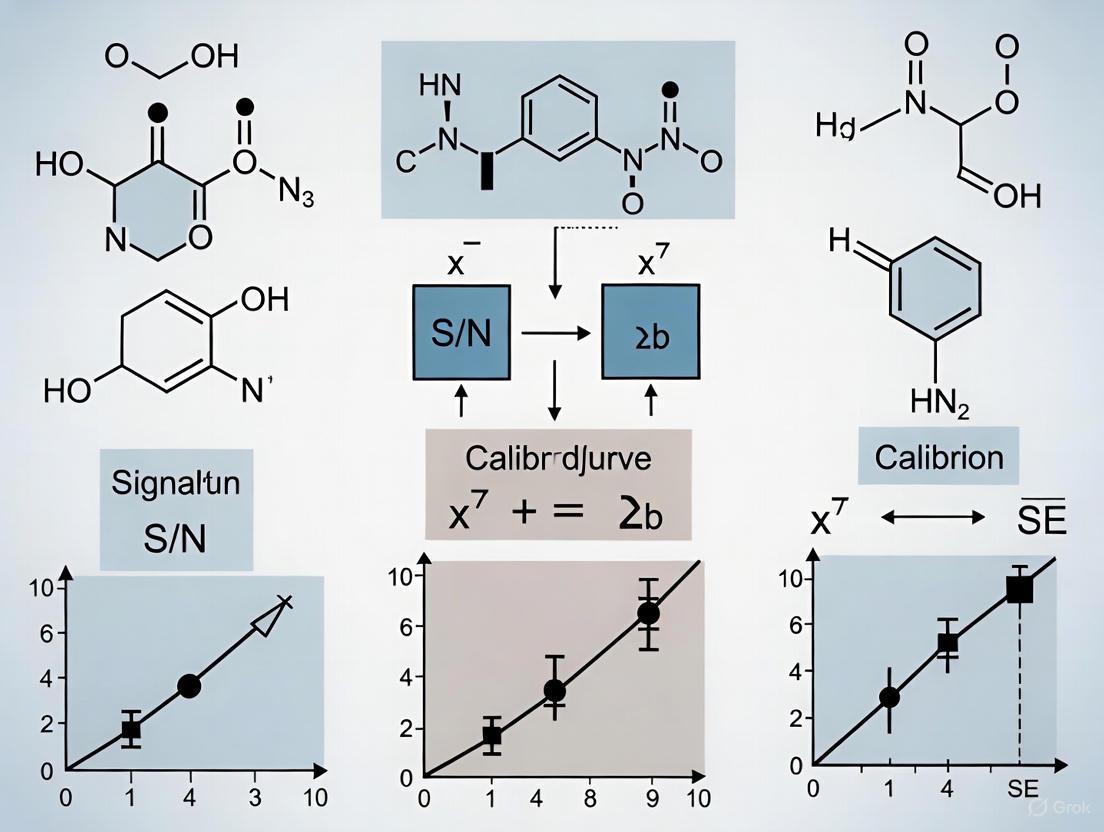

The following diagram illustrates the logical relationship between key statistical concepts and the decision-making process in analyte detection, highlighting where false positives and false negatives occur.

Figure 1: Statistical Decision Model for Analyte Detection

This workflow outlines the general process for determining the Limit of Detection using two common methodologies, culminating in an essential validation step.

Figure 2: LOD Determination and Validation Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Reagents and Materials for LOD Determination Experiments

| Item | Function in LOD Studies |

|---|---|

| Certified Reference Standard | Provides the analyte of known purity and concentration for preparing accurate calibration curves and spiked samples. |

| Appropriate Blank Matrix | The substance without the analyte (e.g., drug-free plasma, pure solvent). Critical for establishing the baseline signal, noise, and for calculating LOB and LOD [6] [5]. |

| Chromatographic Columns & Phases | For HPLC-based methods, these are critical for separating the analyte from interference, which improves the signal-to-noise ratio and lowers the detectable limit. |

| High-Purity Solvents & Reagents | Used for preparing mobile phases, standards, and samples. Impurities can contribute to background noise and elevate the LOD. |

| Stable Internal Standard | Especially for bioanalytical methods, an internal standard corrects for variability in sample preparation and injection, improving the precision of measurements at low concentrations. |

In analytical chemistry and clinical laboratory science, accurately determining the lowest concentrations of an analyte that a method can detect and quantify is fundamental to method validation. The Limit of Detection (LOD) and Limit of Quantitation (LOQ) are critical parameters that define the operational boundaries of analytical procedures [12]. The International Council for Harmonisation (ICH), Clinical and Laboratory Standards Institute (CLSI), and International Organization for Standardization (ISO) have established standardized approaches for defining and determining these limits, though their guidelines reflect different disciplinary perspectives and applications [13] [1] [12]. For researchers, scientists, and drug development professionals, understanding the distinctions, applications, and methodological requirements of these frameworks is essential for developing robust, compliant analytical methods across pharmaceutical, clinical diagnostic, and biomedical research contexts. This guide provides a comprehensive comparison of these key regulatory frameworks, supported by experimental data and procedural protocols.

Core Definitions and Conceptual Foundations

Foundational Terminology Across Guidelines

All three regulatory frameworks address the fundamental concepts of detection and quantitation limits but employ nuanced definitions and introduce distinct terminology reflective of their application domains.

ICH Q2(R2) Definitions: The ICH guideline defines the Limit of Detection (LOD) as "the lowest amount of analyte in a sample which can be detected but not necessarily quantitated as an exact value." The Limit of Quantitation (LOQ) is defined as "the lowest amount of analyte in a sample which can be quantitatively determined with suitable precision and accuracy" [14] [12] [6]. ICH primarily focuses on chemical assays for pharmaceutical analysis.

CLSI EP17 Definitions: The CLSI guideline introduces a three-tiered model for evaluating detection capability. It defines the Limit of Blank (LoB) as "the highest apparent analyte concentration expected to be found when replicates of a sample containing no analyte are tested." The Limit of Detection (LoD) is "the lowest analyte concentration likely to be reliably distinguished from the LoB and at which detection is feasible." The Limit of Quantitation (LoQ) is "the lowest concentration at which the analyte can not only be reliably detected but at which some predefined goals for bias and imprecision are met" [13] [1] [5]. This framework is particularly crucial for clinical laboratory measurement procedures where measurand levels approach zero [13].

ISO Framework: ISO standards, such as the ISO 16140 series for microbiological methods, often treat LOD as a probabilistic measure, particularly in contexts like food pathogen testing. The LOD may be expressed as the probability of detecting a single colony-forming unit (CFU) and is frequently assessed using methods like the Most Probable Number (MPN) technique [12].

The following diagram illustrates the conceptual relationship between LoB, LoD, and LoQ as defined by CLSI, which provides the most granular model.

Conceptual Relationship of LoB, LoD, and LoQ

Table 1: High-Level Comparison of ICH, CLSI, and ISO Guidelines

| Feature | ICH Q2(R2) | CLSI EP17 | ISO 16140 |

|---|---|---|---|

| Primary Scope | Analytical methods for pharmaceutical quality control | Clinical laboratory measurement procedures | Microbiological methods (e.g., food safety) |

| Core Model | LOD and LOQ | Three-tiered: LoB, LoD, LoQ | Probabilistic LOD and method equivalence |

| Key Terminology | LOD, LOQ | LoB, LoD, LoQ | LOD, Probability of Detection |

| Typical Applications | HPLC, Chromatography, Spectroscopy | Immunoassays, Clinical Chemistry Analyzers | Pathogen detection, Sterility testing |

| Defining Formulas | LOD = 3.3σ/S; LOQ = 10σ/S | LoB = Mean_blank + 1.645(SD_blank); LoD = LoB + 1.645(SD_low) | MPN, Fraction of Positive Replicates |

Methodological Approaches and Experimental Protocols

ICH Q2(R2) Endorsed Methods

The ICH guideline describes several acceptable approaches for determining LOD and LOQ, each with specific experimental protocols [14] [6].

Visual Evaluation: This method involves analyzing samples with known concentrations of the analyte and establishing the minimum level at which the analyte can be reliably detected by an analyst or instrument. While simple, it is considered somewhat subjective [14] [6]. Typically, five to seven concentrations are tested with 6-10 replicates each, and results are analyzed using logistic regression to determine the concentration corresponding to a high probability of detection (e.g., 99% for LOD) [6].
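The logistic-regression step for visual evaluation can be sketched without external libraries. The example below is only an illustration: it fits a two-parameter logistic model to grouped detect/non-detect counts by Newton-Raphson, uses log10(concentration) as the predictor (an assumption), and relies on invented data and hypothetical function names.

```python
from math import exp, log, log10


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + exp(-z))
    ez = exp(z)
    return ez / (1.0 + ez)


def fit_logistic(x, hits, trials, iterations=50, tol=1e-10):
    """Fit P(detect) = sigmoid(b0 + b1 * x) to grouped binomial data."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        p = [sigmoid(b0 + b1 * xi) for xi in x]
        # Gradient of the binomial log-likelihood
        g0 = sum(k - n * pi for k, n, pi in zip(hits, trials, p))
        g1 = sum(xi * (k - n * pi) for xi, k, n, pi in zip(x, hits, trials, p))
        # Entries of the negated Hessian (information matrix)
        w = [n * pi * (1.0 - pi) for n, pi in zip(trials, p)]
        h00 = sum(w)
        h01 = sum(wi * xi for wi, xi in zip(w, x))
        h11 = sum(wi * xi * xi for wi, xi in zip(w, x))
        det = h00 * h11 - h01 * h01
        # Newton step: solve the 2x2 system explicitly
        d0 = (h11 * g0 - h01 * g1) / det
        d1 = (h00 * g1 - h01 * g0) / det
        b0, b1 = b0 + d0, b1 + d1
        if abs(d0) + abs(d1) < tol:
            break
    return b0, b1


def concentration_at_probability(b0, b1, prob):
    """Invert the fitted curve; the predictor is log10(concentration)."""
    logit = log(prob / (1.0 - prob))
    return 10 ** ((logit - b0) / b1)


# Invented data: six levels, 10 replicates each, number of detections per level
conc = [0.5, 1.0, 2.0, 4.0, 8.0, 16.0]
trials = [10] * 6
hits = [1, 3, 6, 9, 10, 10]

b0, b1 = fit_logistic([log10(c) for c in conc], hits, trials)
print("LOD estimate (99% detection):", concentration_at_probability(b0, b1, 0.99))
```

Data in which every replicate is detected above some level and none below it (perfect separation) cannot be fitted this way, which is one reason the tested levels should bracket the transition from non-detection to detection.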

Signal-to-Noise Ratio (S/N): This approach is applicable only to analytical procedures that exhibit baseline noise. The LOD is generally defined as an S/N of 2:1 or 3:1, and the LOQ as an S/N of 10:1 [14] [6]. The protocol requires analysis of five to seven concentration levels with six or more replicates each. The signal is the measurement at each concentration, and the noise is typically the blank control. Non-linear modeling (e.g., 4-parameter logistic) is often used to interpolate the LOD and LOQ values from the S/N versus concentration curve [6]. A key challenge is the lack of a universally defined method for calculating S/N, which can lead to variability [14].

Standard Deviation of the Response and Slope: This is a standard curve-based method suitable for techniques without significant background noise. It uses the calibration curve to estimate the standard deviation of the response and the slope to translate this variation into a concentration value [14] [6]. The formulas are:

- LOD = 3.3 σ / S

- LOQ = 10 σ / S

Where σ is the standard deviation of the response (often estimated as the residual standard deviation of the regression line) and S is the slope of the calibration curve [3] [6]. The experiment involves making at least six determinations at each of five concentration levels in the expected low range. The slope is estimated from the calibration curve of the analyte [6].

CLSI EP17 Protocol for LoB and LoD

The CLSI EP17 protocol provides a rigorous, statistically grounded experimental design that explicitly accounts for the distribution of results from both blank and low-concentration samples [1].

Experimental Design: The guideline recommends testing a substantial number of replicates to capture expected performance variability.

Calculation Procedure:

- Step 1: Determine LoB. Measure replicates of a blank sample (containing no analyte) and calculate the mean and standard deviation (SD). The LoB is calculated as: LoB = Mean_blank + 1.645 * SD_blank (the one-sided 95th percentile of the blank results, assuming a Gaussian distribution) [1] [6].

- Step 2: Determine LoD. Measure replicates of a sample containing a low concentration of analyte. Calculate the mean and SD of this low-concentration sample. The LoD is then: LoD = LoB + 1.645 * SD_low concentration sample [1]. This ensures that a sample at the LoD concentration will be distinguished from the LoB 95% of the time [5]. The guideline also includes methods for non-parametric analysis if the data is not normally distributed [1].
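When blank results are clearly non-Gaussian, the LoB can instead be estimated as an empirical 95th percentile. The sketch below uses one common rank-based convention (rank position = 0.5 + 0.95 × N with linear interpolation between neighbouring ordered results); this convention, the function name, and the data are assumptions made for illustration, and the guideline itself should be consulted for the exact procedure.

```python
def nonparametric_lob(blank_results, proportion=0.95):
    """Estimate the LoB as an empirical percentile of ordered blank results.

    Assumption: the 1-based rank position is 0.5 + proportion * N, with linear
    interpolation when the position falls between two ordered results.
    """
    ordered = sorted(blank_results)
    n = len(ordered)
    position = 0.5 + proportion * n
    lower = int(position)          # 1-based rank just below the position
    fraction = position - lower
    if lower < 1:
        return ordered[0]
    if lower >= n:
        return ordered[-1]
    below, above = ordered[lower - 1], ordered[lower]
    return below + fraction * (above - below)


# Invented, right-skewed blank results (arbitrary concentration units)
blank = [0.00, 0.00, 0.01, 0.00, 0.02, 0.00, 0.01, 0.05, 0.00, 0.01,
         0.00, 0.03, 0.00, 0.01, 0.00, 0.12, 0.00, 0.02, 0.01, 0.00]
print("non-parametric LoB:", nonparametric_lob(blank))
```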

Advanced Graphical and Statistical Methods

Recent scientific research has introduced more advanced graphical strategies for determining LOD and LOQ, which offer a more realistic assessment of method capability.

Uncertainty Profile: This is a decision-making graphical tool based on the β-content tolerance interval and measurement uncertainty [3]. A method is considered valid when the uncertainty limits calculated from tolerance intervals are fully included within the pre-defined acceptability limits (λ). The LOQ is determined as the lowest concentration where this condition is met, found by calculating the intersection point of the upper uncertainty line and the acceptability limit [3]. Studies have shown that this method provides a precise estimate of measurement uncertainty and avoids the underestimation common in classical statistical approaches [3].

Accuracy Profile: This approach uses the total error (bias + imprecision) and tolerance intervals to determine the quantitation limit. The LOQ is the lowest concentration where the tolerance interval for total error falls within acceptable limits [3].

The workflow for applying these advanced methods is summarized below.

Workflow for Uncertainty Profile Validation

Comparative Experimental Data and Performance

Case Study: cIEF for Monoclonal Antibody

A case study evaluating a capillary isoelectric focusing (cIEF) method for a monoclonal antibody applied five different ICH-suggested techniques to assess LOD and LOQ [15]. The results demonstrated that while different techniques produced varying raw results, they could be converted to common units using instrument sensitivity. Validation experiments confirmed that all techniques provided meaningful values, with no significant discrepancies in the final calculated LOD and LOQ concentrations, supporting the use of any single technique for purity methods [15].

Case Study: HPLC for Sotalol in Plasma

A comparative study of different approaches for an HPLC method analyzing sotalol in plasma revealed important performance differences [3].

- Classical Strategy: Methods based purely on statistical concepts (e.g., standard deviation of the blank and slope of the calibration curve) provided underestimated values for LOD and LOQ [3].

- Graphical Strategies: The uncertainty profile and accuracy profile methods, both based on tolerance intervals, provided a relevant and realistic assessment. The values obtained from these two graphical methods were of the same order of magnitude, with the uncertainty profile method providing a more precise estimate of measurement uncertainty [3].

Table 2: Comparison of LOD/LOQ Values from Different Assessment Methods for an HPLC Method [3]

| Assessment Method | LOD / LOQ Result | Assessment of Result |

|---|---|---|

| Classical Strategy (Standard Deviation & Slope) | Underestimated values | Not realistic for method capability |

| Accuracy Profile (Graphical) | Relevant and realistic values | Reliable assessment |

| Uncertainty Profile (Graphical) | Relevant and realistic values | Reliable assessment with precise uncertainty estimate |

Instrument Comparison: GC-MS vs. GC-IMS

A 2024 study comparing the quantification performance of a thermal desorption gas chromatography system coupled with both mass spectrometry (MS) and ion mobility spectrometry (IMS) highlighted how LOD and linear range can vary significantly with detection technology [16].

- Sensitivity: IMS was found to be approximately ten times more sensitive than MS, achieving limits of detection in the picogram per tube range [16].

- Linear Range: MS exhibited a broader linear range, maintaining linearity over three orders of magnitude (up to 1000 ng/tube). In contrast, IMS retained linearity for only one order of magnitude before transitioning into a logarithmic response, though a linearization strategy could extend this to two orders of magnitude [16].

Table 3: Performance Comparison of MS and IMS Detectors in TD-GC System [16]

| Parameter | MS Detector | IMS Detector |

|---|---|---|

| Relative Sensitivity | Baseline | ~10x more sensitive than MS |

| Limit of Detection | Higher | Picogram/tube range |

| Linear Range | 3 orders of magnitude (up to 1000 ng/tube) | 1 order of magnitude (extendable to 2) |

The Scientist's Toolkit: Essential Research Reagent Solutions

The following reagents and materials are critical for executing the experimental protocols for LOD/LOQ determination across different guidelines.

Table 4: Essential Materials for LOD/LOQ Determination Experiments

| Reagent/Material | Function in Experiment | Application Guidelines |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides traceable, known analyte concentrations to establish calibration curves and determine accuracy. | ICH, CLSI, ISO |

| Blank Matrix | A sample containing all components except the analyte, used to determine baseline noise and LoB. | CLSI EP17 (Critical), ICH |

| Low-Concentration QC Material | A sample with analyte concentration near the expected LoD, used to empirically distinguish analyte signal from blank. | CLSI EP17 (Critical), ICH |

| Internal Standard (e.g., Atenolol for HPLC) | Used to correct for variability in sample preparation and injection volume, improving precision. | ICH (Commonly) |

| Pharmalytes / pI Markers | For charge-based separation methods like cIEF, used to create and calibrate the pH gradient. | ICH (Method Specific) |

| Selective Enrichment Media | Used in microbiological assays to recover and amplify low numbers of target organisms from large sample volumes. | ISO 16140 |

The choice between ICH, CLSI, and ISO guidelines for determining detection and quantitation limits is dictated by the intended use of the analytical method and the regulatory context.

- For Pharmaceutical Quality Control (Drug Substance/Product): ICH Q2(R2) is the definitive standard. Its methods based on visual evaluation, signal-to-noise, and the standard deviation of the response and slope are designed for the chemical assays prevalent in pharmaceutical analysis [12] [6].

- For Clinical Laboratory Diagnostics: CLSI EP17 is the most rigorous and appropriate framework. Its three-tiered model (LoB, LoD, LoQ) is specifically designed for clinical measurement procedures, especially those where medical decision levels are very low, and it properly accounts for the statistical overlap between blank and low-concentration samples [13] [1] [5].

- For Food Safety and Microbiological Testing: The ISO 16140 series provides the relevant guidance. Its probabilistic approach to LOD is tailored to the biological variability inherent in microbiological assays and is widely accepted for food and environmental testing [12].

Emerging graphical strategies like the uncertainty profile offer a powerful, modern alternative for research and bioanalytical methods, providing a more realistic and comprehensive assessment of a method's capabilities at low concentrations, including an explicit estimate of measurement uncertainty [3]. Regardless of the guideline, a well-designed validation study using appropriate materials and sufficient replication is fundamental to generating reliable and defensible LOD and LOQ values.

Why LOD is Crucial for Method Validation and 'Fitness for Purpose'

In analytical chemistry and bioanalysis, the Limit of Detection (LOD) is a fundamental parameter that defines the lowest concentration of an analyte that can be reliably distinguished from its absence. Its accurate determination is not merely a regulatory formality but a cornerstone of method validation, ensuring that an analytical procedure is "fit for purpose" [3] [1]. This guide objectively compares the performance of established and emerging LOD determination strategies, supported by experimental data, to empower researchers in selecting the most appropriate methodology for their specific application.

The Fundamental Role of LOD in Analytical Science

The Limit of Detection (LOD) is formally defined as the lowest quantity or concentration of a component that can be reliably detected with a given analytical method but not necessarily quantified as an exact value [9] [17]. Its significance stems from its role in defining the lower boundary of a method's capability, directly impacting decision-making in drug development, environmental monitoring, and clinical diagnostics.

The concept of "fitness for purpose" dictates that an analytical method must possess the requisite sensitivity and reliability for its intended application [3] [18]. A method with an inappropriately high LOD may fail to detect critical impurities or low-abundance biomarkers, leading to false conclusions and potential safety risks. Conversely, an overly conservative LOD can impose unnecessary analytical burdens and costs. The LOD is therefore not an isolated statistical exercise but a critical performance characteristic that connects methodological capability to real-world analytical requirements [1].

The statistical foundation of LOD revolves around managing Type I (false positive) and Type II (false negative) errors [9]. The critical level (LC) is the signal threshold above which an observation is considered detected; it controls the false positive rate (α). The detection limit (LD) is the true concentration at which the false negative rate (β) is held at a specified value. Both α and β are typically set at 5% [9] [1]. This relationship ensures that a method can not only identify the presence of an analyte but do so with a known and acceptable level of confidence.
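The relationship between the critical level and the detection limit can be illustrated with a short Python sketch. It assumes normally distributed noise with a known, constant standard deviation; the function name `critical_level_and_detection_limit` and the example value of 1.0 for the blank SD are hypothetical and chosen only for illustration.

```python
from statistics import NormalDist


def critical_level_and_detection_limit(sd_blank, alpha=0.05, beta=0.05, mean_blank=0.0):
    """Return (L_C, L_D) for normal noise with a known, constant SD (assumption)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha)  # one-sided quantile for false positives
    z_beta = NormalDist().inv_cdf(1 - beta)    # one-sided quantile for false negatives
    l_c = mean_blank + z_alpha * sd_blank      # decision threshold on the signal
    l_d = l_c + z_beta * sd_blank              # level detected with probability 1 - beta
    return l_c, l_d


l_c, l_d = critical_level_and_detection_limit(sd_blank=1.0)
print(round(l_c, 3), round(l_d, 3))
```

With α = β = 0.05 the two quantiles are both about 1.645, so the detection limit lies roughly 3.3 standard deviations above the mean blank.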

Comparative Analysis of LOD Determination Methodologies

Multiple approaches exist for determining LOD, each with distinct theoretical foundations, computational requirements, and performance characteristics. The choice of methodology significantly influences the resulting LOD value and its practical relevance.

Established and Emerging Calculation Strategies

The following table summarizes the core principles, formulae, and key characteristics of prevalent LOD determination methods.

Table 1: Comparison of Primary LOD Determination Methodologies

| Methodology | Fundamental Principle | Typical Formula | Key Characteristics |

|---|---|---|---|

| Uncertainty Profile [3] | A graphical tool based on β-content tolerance intervals and measurement uncertainty, comparing uncertainty intervals to acceptability limits. | ( \text{LOQ is the intersection of uncertainty profile and acceptability limits} ) | Provides precise uncertainty estimates; relevant and realistic assessment; requires balanced data and Satterthwaite approximation. |

| Accuracy Profile [3] | A graphical approach using tolerance intervals for total error (bias + precision). | Derived from tolerance intervals around the regression line. | A reliable graphical alternative to classical methods; directly links to method validity over a concentration range. |

| Signal-to-Noise (S/N) [9] [19] | Empirical measurement of the ratio of the analyte signal to the background noise. | ( \text{LOD = Concentration at S/N ≈ 3} ) | Simple and rapid; accepted in guidelines such as ICH and USP; unsuitable for multi-signal techniques like MS/MS. |

| Standard Deviation of Blank/Low-Level Sample [9] [1] [20] | Statistical approach based on the standard deviation (SD) of replicate measurements of a blank or a low-concentration sample. | ( \text{LoD} = \text{LoB} + 1.645 \times \text{SD}_{low\ sample} ) or ( \text{LOD} = 3.3 \times \text{SD} / \text{slope} ) | Different versions exist (blank vs. low-level sample); IUPAC/ACS recommends k=3 (LOD = 3 × SD / slope); CLSI defines LoB and LoD. |

| Calibration Curve [18] | Utilizes the standard error of the regression and the slope of the calibration function. | ( \text{LOD = 3.3 × σ / S} ) (where σ is residual SD, S is slope) | Common in regulatory guidelines (e.g., ICH); integrates method sensitivity and variability; assumes homoscedasticity. |

Performance Comparison with Experimental Data

Different LOD calculation methods applied to the same dataset can yield significantly divergent results, underscoring the importance of methodological selection.

Table 2: Experimental LOD/LOQ Values for Sotalol in Plasma Using HPLC (n=5) [3]

| Validation Methodology | LOD (ng/mL) | LOQ (ng/mL) |

|---|---|---|

| Classical Strategy (Calibration Curve) | 12.5 | 37.9 |

| Accuracy Profile | 31.6 | 35.5 |

| Uncertainty Profile | 33.1 | 35.0 |

A study on an HPLC method for sotalol in plasma reported that classical calibration curve approaches can yield underestimated values for LOD and LOQ compared to more advanced graphical strategies [3]. In Table 2 the effect is clearest for the LOD: the classical value (12.5 ng/mL) is less than half of the Accuracy Profile (31.6 ng/mL) and Uncertainty Profile (33.1 ng/mL) estimates. The two profiles gave concordant, realistic assessments of the method's capabilities, as they incorporate total error and measurement uncertainty more comprehensively.

Similarly, a study comparing LOD for carbamazepine and phenytoin via HPLC-UV found that the Signal-to-Noise (S/N) method provided the lowest LOD and LOQ values, while the standard deviation of the response and slope (SDR) method resulted in the highest values [11]. This highlights a high degree of variability dependent on the chosen calculation method.

For modern, complex techniques, traditional methods can be inadequate. Research on myclobutanil detection by GC-MS/MS showed that while S/N and blank standard deviation methods calculated impressively low LODs (e.g., 0.066 pg), the actual "Limit of Identification"—the lowest concentration reliably meeting ion ratio criteria—was a more pragmatic 1 pg [19]. This demonstrates that for confirmatory multi-signal mass spectrometry, identification-based criteria are more fit-for-purpose than detection-centric calculations.

Experimental Protocols for LOD Determination

Protocol for Uncertainty Profile Strategy

The uncertainty profile is an innovative validation approach based on the tolerance interval and measurement uncertainty [3].

- Experimental Design: Analyze a statistically significant number of series (a) and independent replicates per series (n) across validation standards at various concentration levels, including the expected low limit.

- Data Collection: For each level, record the predicted concentrations of all validation standards.

- Tolerance Interval Calculation: For each concentration level, compute the two-sided β-content γ-confidence tolerance interval. This interval estimates the range that contains a specified proportion β of the population with a confidence level γ.

- Calculate the reproducibility variance (( \hat{\sigma}_m^2 )) as the sum of between-condition variance (( \hat{\sigma}_b^2 )) and within-condition variance (( \hat{\sigma}_e^2 )).

- Compute the tolerance factor (( k_{tol} )) using the Satterthwaite approximation for degrees of freedom.

- The tolerance interval is given by: ( \bar{Y} \pm k_{tol} \hat{\sigma}_m ), where ( \bar{Y} ) is the mean result.

- Measurement Uncertainty Assessment: Derive the standard measurement uncertainty ( u(Y) ) for each level using the formula: ( u(Y) = \frac{U - L}{2\,t(\nu)} ), where U and L are the upper and lower tolerance limits, and ( t(\nu) ) is the quantile of the Student's t-distribution with ν degrees of freedom.

- Construct Uncertainty Profile: Plot the uncertainty intervals (( \bar{Y} \pm k u(Y) ), with k=2 for 95% confidence) against concentration. Superimpose the pre-defined acceptability limits (λ).

- Determine LOD and LOQ: The LOQ is the lowest concentration where the entire uncertainty interval falls within the acceptability limits. The LOD can be identified as a lower point where the uncertainty profile begins to diverge or can be calculated as the intersection point of the upper uncertainty line and the acceptability limit using linear algebra [3].
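The intersection described in the last step can be located by linear interpolation between the two adjacent validation levels that bracket the acceptability limit. The Python sketch below illustrates this under stated assumptions: the function name `crossing_concentration`, the example concentrations, the interval bounds, and the 30% acceptability limit are all hypothetical and are not taken from [3].

```python
def crossing_concentration(conc, worst_bound, acceptability):
    """Interpolate the concentration where the uncertainty bound meets the limit.

    conc          -- validation concentrations in increasing order
    worst_bound   -- larger absolute bound of the relative uncertainty
                     interval (%) at each concentration (hypothetical input)
    acceptability -- acceptability limit lambda (%), same units as worst_bound
    Returns None when no crossing is found between adjacent levels.
    """
    for i in range(len(conc) - 1):
        c0, c1 = conc[i], conc[i + 1]
        u0, u1 = worst_bound[i], worst_bound[i + 1]
        # Crossing: outside the limit at the lower level, inside at the higher one.
        if u0 > acceptability >= u1:
            return c0 + (u0 - acceptability) * (c1 - c0) / (u0 - u1)
    return None


# Hypothetical profile: the bound shrinks as concentration increases.
levels = [10.0, 25.0, 50.0, 100.0]
bounds = [45.0, 32.0, 18.0, 12.0]
loq_estimate = crossing_concentration(levels, bounds, acceptability=30.0)
print(round(loq_estimate, 2))
```

Interpolating on the larger of the two absolute bounds is a simplification; a fuller treatment would track the upper and lower uncertainty lines separately and take the higher of the two crossing concentrations.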

Protocol for Standard Deviation of the Blank and Calibration Slope

This classical method is widely recommended by IUPAC, ACS, and CLSI [9] [1] [20].

- Blank Preparation: Prepare a test sample (ideally a real matrix) that contains no analyte or a very low concentration close to the expected detection limit.

- Replicate Analysis: Analyze a minimum of 10-20 portions of this blank sample following the complete analytical procedure under specified precision conditions (e.g., repeatability or intermediate conditions) [9] [20]. CLSI EP17 recommends up to 60 replicates for a robust establishment [1].

- Response Conversion: Convert the instrument responses (e.g., peak areas) to concentration units using the calibration curve (subtracting blank signal and dividing by the slope).

- Standard Deviation Calculation: Calculate the standard deviation (SD) of these blank concentrations.

- LOD Calculation:

- Option 1 (CLSI): First, calculate the Limit of Blank (LoB): ( \text{LoB} = \text{mean}_{blank} + 1.645 \times \text{SD}_{blank} ) (assuming a 5% false-positive rate for a one-sided test). Then, analyze a low-concentration sample, calculate its SD, and compute ( \text{LoD} = \text{LoB} + 1.645 \times \text{SD}_{low\ concentration\ sample} ) [1].

- Option 2 (IUPAC/ACS): ( \text{LOD} = 3 \times \text{SD}_{blank} / \text{slope} ), where the slope is that of the calibration curve. If a low-concentration sample and the standard deviation of the response are used instead of a blank, a factor of 3.3 is often used instead of 3. This factor corresponds to 2 × 1.645, i.e. 5% false-positive and 5% false-negative rates under a normal distribution: ( \text{LOD} = 3.3 \times \text{SD} / \text{slope} ) [9] [20] [18].
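Option 2 can be sketched in a few lines of Python. The function name `lod_from_blank`, the ten blank signals, and the slope of 50 signal units per ng/mL are hypothetical illustration values; the sketch assumes the blank responses are in raw signal units and that the calibration slope has already been determined.

```python
from statistics import stdev


def lod_from_blank(blank_signals, slope, k=3.0):
    """LOD in concentration units as k * SD(blank signal) / slope."""
    if slope == 0:
        raise ValueError("calibration slope must be non-zero")
    # stdev() is the sample standard deviation (n - 1 in the denominator).
    return k * stdev(blank_signals) / abs(slope)


# Hypothetical replicate blank responses (arbitrary signal units).
blank_signals = [103, 97, 102, 98, 102, 98, 101, 99, 100, 100]
slope = 50.0  # hypothetical: signal units per ng/mL

lod_k3 = lod_from_blank(blank_signals, slope, k=3.0)
lod_k33 = lod_from_blank(blank_signals, slope, k=3.3)
print(round(lod_k3, 3), round(lod_k33, 3))
```

Keeping `k` as a parameter makes the choice between the factor 3 and the factor 3.3 explicit in the validation record.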

Workflow and Decision Pathways for LOD Determination

Selecting and executing the appropriate strategy for LOD determination is a multi-step process. The following workflow diagrams provide a visual guide for researchers.

LOD Method Selection Workflow

Figure 1: A decision workflow to guide the selection of an appropriate LOD determination methodology based on the analytical technique's characteristics and regulatory context.

General LOD/LOQ Calculation Workflow

Figure 2: A generalized experimental workflow for the determination and verification of LOD and LOQ, as proposed in tutorial literature [18].

Essential Research Reagent Solutions for LOD Studies

The experimental determination of LOD requires specific high-quality materials and reagents to ensure accuracy and reproducibility.

Table 3: Key Reagents and Materials for LOD Determination Experiments

| Reagent / Material | Function in LOD Studies | Critical Considerations |

|---|---|---|

| Analyte-Free Matrix | Serves as the "blank" sample for establishing the baseline signal and calculating LoB/LOD. | Must be commutable with real patient or sample specimens; can be challenging for complex or biological matrices [1] [18]. |

| Certified Reference Material (CRM) | Provides a known, traceable quantity of the analyte for preparing accurate calibration standards and low-concentration samples. | Purity and stability are critical for preparing precise serial dilutions for calibration and spiking [18]. |

| Internal Standard (e.g., Atenolol) | Used in bioanalytical methods (e.g., HPLC) to correct for variability in sample preparation and instrument response. | Should be a stable, non-interfering compound that behaves similarly to the analyte [3]. |

| High-Purity Solvents & Reagents | Used for sample preparation, dilution, and mobile phase preparation in chromatographic methods. | High purity is essential to minimize background noise and interfering signals that can elevate the LOD [20]. |

| Matrix-Matched Standards | Calibration standards prepared in the same matrix as the sample (e.g., plasma, urine, soil extract). | Crucial for accurate quantification as they correct for matrix effects that can alter the analytical signal [19] [18]. |

The determination of the Limit of Detection is a critical, non-negotiable component of analytical method validation. As demonstrated, the choice of methodology—from classical statistical methods to modern graphical profiles and identification-based limits—profoundly influences the reported LOD value and, consequently, the perceived "fitness for purpose" of the method.

Researchers must move beyond simply selecting a mandated formula. The evidence shows that graphical strategies like Uncertainty and Accuracy Profiles offer more realistic and relevant assessments of a method's capabilities at low concentrations than classical strategies, which can underestimate these limits [3]. Furthermore, for advanced multi-signal techniques like MS/MS, a paradigm shift towards a "Limit of Identification" is necessary to ensure detection is synonymous with reliable identification [19].

Therefore, the most crucial practice is to align the LOD determination strategy with the technical demands of the analytical method and the overarching requirement that the method be truly fit for its intended purpose, providing reliable data for scientific and regulatory decision-making.

LOD in Practice: Standard and Advanced Determination Methods

The Standard Deviation of the Blank and the Signal-to-Noise Ratio

In analytical chemistry, accurately determining the lowest concentration of an analyte that a method can reliably detect is fundamental to method validation and ensuring data quality. Two predominant methodologies have emerged for establishing the Limit of Detection (LOD): the Standard Deviation of the Blank method and the Signal-to-Noise Ratio method. The former is a statistically rigorous approach grounded in hypothesis testing and error propagation, while the latter provides a practical, instrument-based estimation commonly used in chromatographic and spectroscopic techniques. This guide objectively compares these two core methodologies by examining their underlying principles, experimental protocols, and performance outcomes, providing researchers and drug development professionals with the data necessary to select the appropriate technique for their analytical applications.

Fundamental Principles and Definitions

Limit of Detection (LOD) and Limit of Quantification (LOQ)

The Limit of Detection (LOD) is the lowest concentration of an analyte that can be reliably distinguished from a blank sample (containing no analyte) with a stated confidence level, but not necessarily quantified as an exact value [9] [17]. Closely related is the Limit of Quantitation (LOQ), defined as the lowest concentration at which an analyte can not only be detected but also quantified with acceptable precision and accuracy [6] [1]. These parameters are critical for defining the lower limits of an analytical method's dynamic range and are directly related to its fitness for purpose, particularly in trace analysis for pharmaceutical impurities, environmental contaminants, and clinical diagnostics [21] [1].

Core Concepts: Standard Deviation of the Blank and Signal-to-Noise Ratio

The Standard Deviation of the Blank method treats LOD determination as a statistical problem. It acknowledges that measurements of both blank and low-concentration samples exhibit random variations, leading to potential false positives (Type I error, α) and false negatives (Type II error, β) [9]. This method uses the distribution of blank measurements to establish a critical level (LC) and then ensures a low probability of false negatives at the LOD [9] [1].

The Signal-to-Noise Ratio (SNR) method is more empirical and instrumental. SNR is a measure that compares the level of a desired signal to the level of background noise, often expressed in decibels but simplified to a ratio in many analytical contexts [22]. In chromatography, for instance, the LOD is frequently defined as the concentration at which the analyte peak height is three times the baseline noise level (S/N = 3), while the LOQ is set at a ratio of 10:1 [21] [9] [23]. This approach is intuitive but can be more dependent on specific instrument conditions and settings.

Table 1: Core Definitions and Foundational Concepts

| Concept | Description | Primary Context |

|---|---|---|

| Limit of Detection (LOD) | Lowest analyte concentration reliably distinguished from a blank [9] [17]. | Universal analytical chemistry |

| Limit of Quantification (LOQ) | Lowest concentration quantifiable with stated precision and accuracy [6] [1]. | Universal analytical chemistry |

| Standard Deviation of the Blank | Statistical measure of the variability in measurements of a blank sample [9] [1]. | Statistical LOD determination |

| Signal-to-Noise Ratio (SNR) | Ratio of the amplitude of a desired signal to the amplitude of background noise [22] [21]. | Instrumental/chromatographic LOD determination |

| False Positive (Type I Error) | Probability of concluding an analyte is present when it is not (α) [9]. | Statistical LOD determination |

| False Negative (Type II Error) | Probability of failing to detect an analyte that is present (β) [9]. | Statistical LOD determination |

Methodological Comparison: Experimental Protocols

Standard Deviation of the Blank Method

This protocol is based on guidelines from international standards and clinical laboratory practices [9] [1].

1. Experimental Procedure:

- Blank Sample Preparation: Obtain or prepare a test sample with a matrix identical to the real samples but containing no analyte.

- Low-Concentration Sample Preparation: Prepare a sample known to contain the analyte at a concentration near the expected LOD. This can be a spiked blank or a dilution of the lowest calibrator.

- Replicate Analysis: Analyze a minimum of 10, but preferably 20 (for verification) or 60 (for establishment), replicates of both the blank and the low-concentration sample following the complete analytical procedure [9] [1]. The replicates should be measured over different days to capture intermediate precision.

- Data Collection: Record the concentration values (or raw signals) for all replicates.

2. Data Analysis and Calculation:

- Step 1: Calculate the mean (( \text{mean}_{\text{blank}} )) and standard deviation (( \text{SD}_{\text{blank}} )) of the results from the blank sample.

- Step 2: Calculate the Limit of Blank (LoB).

( \text{LoB} = \text{mean}_{\text{blank}} + 1.645 \times \text{SD}_{\text{blank}} ) This establishes the critical level where the probability of a false positive is limited to 5% (for a one-sided test) [1].

- Step 3: Calculate the mean and standard deviation (( \text{SD}_{\text{low}} )) of the results from the low-concentration sample.

- Step 4: Calculate the Limit of Detection (LOD). ( \text{LOD} = \text{LoB} + 1.645 \times \text{SD}_{\text{low}} ) This formula ensures that the probability of a false negative is also limited to 5% at the LOD concentration, assuming normal distributions and constant variance [1]. If ( \text{SD}_{\text{blank}} ) and ( \text{SD}_{\text{low}} ) are similar and α = β = 0.05, this simplifies to LOD ≈ ( \text{mean}_{\text{blank}} ) + 3.3 × ( \text{SD}_{\text{blank}} ), or 3.3 × ( \text{SD}_{\text{blank}} ) when the mean blank result is zero [9].
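Steps 1 to 4 can be expressed as a short Python sketch. It follows the parametric formulas given above and assumes normally distributed results; the function name `lob_lod` and the replicate values are hypothetical, and only ten replicates per sample are shown for brevity even though the protocol calls for 20 to 60.

```python
from statistics import mean, stdev

Z_95 = 1.645  # one-sided 95% normal quantile, as used in the formulas above


def lob_lod(blank_results, low_results):
    """Return (LoB, LoD) from replicate blank and low-concentration results."""
    lob = mean(blank_results) + Z_95 * stdev(blank_results)  # Steps 1 and 2
    lod = lob + Z_95 * stdev(low_results)                    # Steps 3 and 4
    return lob, lod


# Hypothetical replicate results in concentration units.
blank_results = [1.3, 0.7, 1.2, 0.8, 1.2, 0.8, 1.1, 0.9, 1.0, 1.0]
low_results = [2.3, 1.7, 2.2, 1.8, 2.2, 1.8, 2.1, 1.9, 2.0, 2.0]

lob, lod = lob_lod(blank_results, low_results)
print(round(lob, 3), round(lod, 3))
```

In this example both samples have the same standard deviation, so the LoD sits about 3.3 standard deviations above the mean blank result, in line with the simplification in Step 4.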

The following workflow illustrates the step-by-step process for determining LOD using the Standard Deviation of the Blank method:

Signal-to-Noise Ratio Method

This protocol is commonly described in chromatographic applications and in regulatory and pharmacopoeial texts such as the ICH guidelines and the European Pharmacopoeia [21] [9].

1. Experimental Procedure:

- Blank Analysis: Inject a blank sample (matrix without analyte) and record the chromatogram. The baseline noise is observed over a distance equal to at least 20 times the width at half-height of the analyte peak [9].

- Standard Analysis: Inject a standard solution with a low concentration of the analyte. The concentration should be such that the peak is clearly visible but still relatively small.

2. Data Analysis and Calculation:

- Step 1: Measure the Noise (N). The noise is typically measured as the peak-to-peak amplitude of the baseline variation in a chromatogram section free from peaks, usually near the retention time of the analyte. Alternatively, the European Pharmacopoeia defines the range (maximum amplitude) of the background noise as h [9].

- Step 2: Measure the Signal (S). For the analyte in the low-concentration standard, measure the signal. This is usually the peak height (H) from the baseline to the peak maximum [9].

- Step 3: Calculate Signal-to-Noise Ratio (S/N).

( S/N = \frac{H}{h} ) [9] where H is the peak height of the analyte and h is the range of the background noise.
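The calculation in Step 3 can be sketched in Python as follows. The first function applies the H/h ratio exactly as written above; some pharmacopoeial texts define the ratio as 2H/h instead, so the convention in force should be checked before comparing values. The second function is an added assumption that is not part of the protocol: it scales the standard's concentration to a target S/N on the premise that peak height is proportional to concentration near the detection limit. All names and numbers are hypothetical.

```python
def signal_to_noise(peak_height, noise_range):
    """S/N as H / h, following the formula given in the text."""
    if noise_range <= 0:
        raise ValueError("noise range must be positive")
    return peak_height / noise_range


def concentration_at_target_sn(std_concentration, sn, target_sn=3.0):
    """Scale a standard's concentration to a target S/N.

    Assumes peak height is proportional to concentration (an assumption
    that should be confirmed experimentally near the limit).
    """
    return std_concentration * target_sn / sn


# Hypothetical measurements from a low-concentration standard at 0.5 ug/mL.
sn = signal_to_noise(peak_height=12.0, noise_range=2.0)
lod_estimate = concentration_at_target_sn(0.5, sn, target_sn=3.0)
loq_estimate = concentration_at_target_sn(0.5, sn, target_sn=10.0)
print(sn, lod_estimate, round(loq_estimate, 3))
```

Any concentration estimated this way is only a starting point; it is usually confirmed by injecting a standard at that level and checking the observed S/N.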

Table 2: Direct Comparison of LOD Determination Methodologies

| Aspect | Standard Deviation of the Blank | Signal-to-Noise Ratio (S/N) |

|---|---|---|

| Theoretical Basis | Statistical (Hypothesis testing, Type I/II errors) [9] [1] | Empirical (Instrumental performance) [21] [9] |

| Primary Application | General analytical chemistry, clinical labs, method validation [6] [1] | Chromatography (HPLC, UHPLC), spectroscopy [21] [9] |

| Key Input Parameters | Mean and Standard Deviation of blank and low-conc. samples [1] | Peak Height (H) and Noise Amplitude (h) [9] |

| Standard LOD Threshold | LoB + 1.645×SD (Typically ~3.3×SD_blank) [9] [1] | S/N = 3 : 1 [21] [9] |

| Standard LOQ Threshold | Typically 10×SD_blank [6] | S/N = 10 : 1 [21] [9] |

| Regulatory Recognition | ISO, CLSI EP17 [1] | ICH Q2(R1), USP, European Pharmacopoeia [21] [9] |

| Key Advantage | Statistically robust, defines error probabilities [9] [1] | Simple, fast, intuitive, no complex statistics [21] |

| Key Limitation | Requires many replicates, more complex calculations [9] | Can be subjective (noise measurement), instrument-dependent [21] |

Essential Research Reagents and Materials

The experimental determination of LOD, regardless of the method, requires specific materials to ensure accuracy and reproducibility. The following table details key solutions and reagents.

Table 3: Key Research Reagent Solutions for LOD Experiments

| Reagent/Solution | Function and Description | Critical Parameters |

|---|---|---|

| Blank Matrix | A sample with the same matrix as the unknown but containing no analyte. Serves as the baseline for measurement [24]. | Commutability with patient/real samples; purity from interfering substances [1]. |

| Standard Reference Material | A sample with a known and certified concentration of the analyte, used for spiking and calibration [24]. | Purity, stability, and traceability to a primary standard. |

| Spiked Low-Concentration Sample | A sample prepared by adding a known, small quantity of the analyte to the blank matrix. Used in the SD method to estimate performance at the detection limit [1] [24]. | Concentration near the expected LOD; accurate and precise preparation. |

| Mobile Phase & Buffers | Solvents and buffers used in chromatographic separations to carry the sample through the column. | Purity (HPLC/LC-MS grade), pH, ionic strength, and freedom from particulates. |

| Calibration Standards | A series of samples with known analyte concentrations used to construct the calibration curve. | Linear range that includes the expected LOD and LOQ; appropriate matrix-matching [23]. |

Comparative Analysis and Research Data

Performance and Practical Considerations

The choice between these two methods significantly impacts the reported LOD value and its reliability. The Standard Deviation of the Blank method is considered more statistically sound because it explicitly controls for both false positives and false negatives, providing a comprehensive view of method performance at its detection limits [9] [1]. However, its requirement for a large number of replicate analyses (n=20 to 60) makes it more resource-intensive [1].

In contrast, the Signal-to-Noise Ratio method is highly practical and efficient for routine use in laboratories using chromatographic systems, as it can be performed with minimal injections [21]. A significant drawback is its susceptibility to subjective interpretation; for example, the perceived noise level can vary depending on the chromatographic section selected for measurement and instrument settings like the time constant, which can smooth out noise and potentially obscure smaller peaks if over-applied [21].

Data Interpretation and Regulatory Context

Interpreting results requires understanding what each LOD value represents. An LOD derived from the standard deviation method (e.g., 3.3×SD) with a 5% error rate for both false positives and false negatives means that, at that concentration, there is a 5% chance that an analyte that is truly present will be reported as absent [9]. The ICH guideline, which includes the S/N method among its accepted approaches, is implemented by major regulatory bodies worldwide, including the FDA (USA), EMA (Europe), and PMDA (Japan) [21].

For contexts requiring the utmost statistical rigor, such as clinical diagnostics or forensic testing, the Standard Deviation of the Blank method is often preferred or required [1] [24]. In pharmaceutical quality control for impurity testing, the Signal-to-Noise method is deeply entrenched and accepted due to its simplicity and alignment with ICH guidelines [21].

The selection between the Standard Deviation of the Blank and the Signal-to-Noise Ratio for LOD determination is not merely a technical choice but a strategic one, dictated by the analytical context, regulatory environment, and required rigor. The Standard Deviation of the Blank method offers a robust statistical foundation, making it suitable for clinical, forensic, and research applications where understanding and controlling error probabilities is paramount. The Signal-to-Noise Ratio method provides a rapid, practical tool perfectly adequate for routine chromatographic analysis in regulated industries like pharmaceuticals, where it is the established standard.

Ultimately, the best practice is to understand the principles, advantages, and limitations of both methods. For method validation, especially in a GLP or GMP environment, verifying a manufacturer's LOD claim using a statistically sound approach like the standard deviation method, even if the stated LOD was originally derived from an S/N ratio, can provide greater confidence in the analytical capabilities of the method [21] [24].

In the field of analytical chemistry and drug development, accurately determining the limit of detection (LOD) is crucial for method validation and ensuring data reliability. Among the various techniques available, the method based on the standard deviation of the response and the slope of the calibration curve stands out for its statistical rigor. This approach, endorsed by the International Council for Harmonisation (ICH) guideline Q2(R1), provides a mathematically sound framework for estimating the lowest analyte concentration that can be reliably detected. This guide objectively compares this method with alternative LOD determination techniques, providing supporting experimental data and detailed protocols to help researchers select the most appropriate methodology for their specific applications.

Comparative Analysis of LOD Determination Methods

The following table summarizes the key characteristics of the three primary approaches for determining the Limit of Detection, allowing researchers to compare their relative advantages and limitations.

| Method | Principle | Calculation | Best Use Cases | Key Limitations |

|---|---|---|---|---|

| Standard Deviation of Response & Slope | Statistical relationship between calibration curve parameters and detection capability [10] | LOD = 3.3 × σ / S; LOQ = 10 × σ / S, where σ = standard deviation of response and S = slope of calibration curve [10] | Regulatory compliance (ICH Q2(R1)), methods requiring statistical rigor, quantitative comparisons [10] | Requires linear calibration model; assumes normal error distribution; slope variability affects results [10] |

| Signal-to-Noise Ratio | Visual or instrumental comparison of analyte signal to background noise [9] | LOD: S/N ≈ 3:1; LOQ: S/N ≈ 10:1 [9] | Quick estimates, chromatographic methods, quality control checks | Subjective measurement; instrument-dependent; unsuitable for multi-signal techniques like MS/MS [19] |

| Limit of Blank (LoB) & Empirical Testing | Statistical distinction between blank samples and low-concentration samples [1] | LoB = mean_blank + 1.645 × SD_blank; LOD = LoB + 1.645 × SD_low concentration sample [1] | Clinical diagnostics, methods with significant background interference, when blank matrix is available | Requires large number of replicates (n=20-60); more resource-intensive [1] |

Experimental Protocols

Protocol 1: LOD Determination via Standard Deviation and Slope Method

Materials and Equipment

- Analytical Instrument (e.g., HPLC-MS, UV-Vis spectrophotometer) with data acquisition capability [25]

- Reference Standard of known purity and concentration [25]

- Appropriate Solvent for preparing standard solutions [25]

- Volumetric Glassware (flasks, pipettes) for accurate dilution series [25]

- Data Analysis Software with linear regression capability (e.g., Excel, specialized analytical software) [10]

Procedure

Prepare Calibration Standards: Create a minimum of 5-6 standard solutions covering the expected concentration range, including concentrations near the expected LOD. Use serial dilution techniques to ensure accuracy [25].

Analyze Standards: Process each calibration standard through the complete analytical method, using a minimum of three replicate injections or measurements for each concentration level [25].

Generate Calibration Curve: Plot instrument response (y-axis) against concentration (x-axis). Perform linear regression to obtain the equation y = mx + b, where m represents the slope (S) of the calibration curve [26] [10].

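Determine Standard Deviation (σ): Calculate the standard deviation of the response using one of the approaches described in ICH Q2(R1):

- Standard deviation of the blank: analyze an appropriate number of blank samples and calculate the standard deviation of their responses.

- Calibration curve: use the residual standard deviation of the regression line, or the standard deviation of the y-intercepts of several regression lines.

Calculate LOD and LOQ: Apply LOD = 3.3 × σ / S and LOQ = 10 × σ / S, using the slope (S) from the regression and the σ determined in the previous step [10].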

Experimental Verification: Prepare and analyze multiple samples (n ≥ 6) at the calculated LOD and LOQ concentrations to confirm they meet detection and quantification reliability criteria [10].
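The regression and calculation steps of this protocol can be sketched as follows. The example assumes σ is taken as the residual standard deviation of the regression line; the calibration data and the function names `fit_line` and `lod_loq` are hypothetical and serve only to illustrate the arithmetic.

```python
import math

def fit_line(x, y):
    """Ordinary least-squares fit y = intercept + slope * x.

    Returns (slope, intercept, residual_sd), where residual_sd is the
    residual standard deviation with n - 2 degrees of freedom.
    """
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, math.sqrt(residual_ss / (n - 2))

def lod_loq(sigma, slope):
    """LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10 * sigma / slope

# Hypothetical calibration data: concentration (ng/mL) vs. mean response.
conc = [0.5, 1.0, 2.0, 5.0, 10.0]
response = [5.3, 9.8, 20.5, 49.6, 100.9]

slope, intercept, sigma = fit_line(conc, response)
lod, loq = lod_loq(sigma, slope)
print(f"slope = {slope:.3f}, sigma = {sigma:.3f}")
print(f"LOD = {lod:.3f} ng/mL, LOQ = {loq:.3f} ng/mL")
```

If σ is instead taken from blank replicates or from the y-intercepts of several curves, only the `sigma` passed to `lod_loq` changes.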

Protocol 2: Comparative Study Design for Method Validation

Objective

To empirically compare the LOD values obtained through the standard deviation/slope method against signal-to-noise and Limit of Blank approaches using a representative analyte.

Experimental Design

Sample Preparation: Prepare a blank matrix and a series of standard solutions at concentrations spanning from below the expected LOD to the upper limit of quantification.

Parallel Analysis: Analyze all samples using each LOD determination method simultaneously under identical instrument conditions.

Data Collection:

- For standard deviation/slope method: Full calibration curve with replicate measurements

- For S/N method: Multiple injections at low concentrations with noise measurements

- For LoB method: Multiple blank replicates and low-concentration samples

Statistical Comparison: Calculate LOD values using each method and compare results for consistency and precision.
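For the signal-to-noise arm of the comparison, one possible calculation is sketched below. It assumes the pharmacopoeial-style convention S/N = 2H/h, where H is the peak height above the mean baseline and h is the peak-to-peak baseline noise; other conventions (for example RMS noise) give different numbers. The trace, the index windows, and the function name are hypothetical.

```python
def signal_to_noise(trace, peak_window, baseline_window):
    """Estimate S/N as 2H/h from a digitized detector trace.

    trace           -- list of detector readings
    peak_window     -- (start, stop) indices bracketing the analyte peak
    baseline_window -- (start, stop) indices of a peak-free baseline region
    """
    b_start, b_stop = baseline_window
    p_start, p_stop = peak_window
    baseline = trace[b_start:b_stop]
    baseline_mean = sum(baseline) / len(baseline)
    noise_pp = max(baseline) - min(baseline)             # h: peak-to-peak noise
    height = max(trace[p_start:p_stop]) - baseline_mean  # H: height above baseline
    return 2 * height / noise_pp

# Hypothetical trace: six baseline points followed by a small peak.
trace = [0, 1, 0, 1, 0, 1, 0, 5, 0]
sn = signal_to_noise(trace, peak_window=(6, 9), baseline_window=(0, 6))
print(f"S/N = {sn:.1f}")
```

A concentration whose S/N is close to 3 would then be a candidate LOD under this approach, and one close to 10 a candidate LOQ.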

Essential Research Reagent Solutions

The following table outlines key materials and equipment required for implementing the standard deviation and slope method for LOD determination.

| Item | Function/Purpose | Critical Specifications |

|---|---|---|

| Primary Reference Standard | Provides known analyte for calibration curve preparation [25] | Certified purity (>95%); appropriate for matrix; stable under storage conditions |

| Matrix-Matched Solvent/Blank | Dissolves standards and mimics sample matrix [25] | Free of target analyte; chemically compatible with instrument |

| Volumetric Flasks & Pipettes | Precise preparation of standard solutions and dilutions [25] | Class A tolerance; calibrated regularly; appropriate volume range |

| HPLC-MS or UV-Vis Instrument | Measures analytical response for standards and samples [25] | Sufficient sensitivity for target LOD; stable baseline; linear dynamic range |

| Data Analysis Software | Performs linear regression and statistical calculations [10] [25] | Linear regression capability; standard error calculation; ICH-compliant reporting |

Visualizing the LOD Determination Workflow

The following diagram illustrates the logical relationship and workflow between the calibration curve components and LOD calculation using the standard deviation and slope method.

[Figure: LOD Calculation Workflow]

The standard deviation of response and slope method provides a statistically robust approach for LOD determination that is particularly valuable in regulated environments and when comparing method performance across laboratories. While the signal-to-noise method offers simplicity and speed for routine applications, and the Limit of Blank approach provides fundamental statistical distinction between blank and analyte-containing samples, the standard deviation/slope method strikes an optimal balance between statistical rigor and practical implementation. Researchers should select the appropriate method based on their specific application, regulatory requirements, and available resources, with the understanding that experimental verification remains an essential final step in any LOD determination protocol.

Visual Evaluation and Logistic Regression for Qualitative Methods

The accurate determination of the Limit of Detection (LOD), the lowest concentration of an analyte that can be reliably detected, is fundamental to developing and validating qualitative diagnostic methods across clinical, pharmaceutical, and biotechnology sectors. This guide objectively compares two established approaches: the traditional method of Visual Evaluation and the statistical technique of Logistic Regression analysis. Visual evaluation relies on direct observation (by an analyst or instrument) of an analytical signal at decreasing concentrations, whereas logistic regression fits a statistical model to binary detection outcomes (detected/not detected) across a concentration gradient and uses it to estimate the concentration at which a specified detection probability is reached [6]. The choice between these methods significantly affects the reported performance characteristics of diagnostic tests, such as those used in nucleic acid amplification (e.g., LAMP for cytomegalovirus DNA) or chemical contaminant analysis [27] [19]. This guide provides a comparative analysis of their experimental protocols, performance, and applicability to help researchers and drug development professionals make informed methodological choices.

Experimental Protocols and Workflows

Visual Evaluation Protocol

The visual evaluation method determines the LOD through direct assessment of detection events at a series of known concentrations [6].

- Step 1 – Sample Preparation: A dilution series of the analyte is prepared, typically encompassing five to seven concentration levels expected to bracket the visual detection threshold. A minimum of six replicate samples should be prepared at each concentration level to account for biological and technical variability [6].

- Step 2 – Analysis and Observation: Each sample is analyzed using the qualitative method (e.g., colorimetric LAMP, lateral flow immunoassay). For each replicate, an analyst or an automated instrument records a binary outcome: the analyte is either "detected" or "not detected" based on a predefined, observable signal (e.g., color change, turbidity, band presence) [27] [6].

- Step 3 – Data Analysis and LOD Determination: The data is analyzed by plotting the percentage of replicates detected at each concentration level. The LOD is empirically defined as the lowest concentration at which a high percentage (e.g., ≥95%) of replicates are positively detected. This does not typically involve complex statistical curve-fitting but relies on direct observation of the data trend [6].
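The hit-rate rule in Step 3 can be sketched as a short function. The sketch adds one assumption that the text does not state: the LOD is only accepted if every higher tested concentration also meets the threshold. The data and the name `visual_lod` are hypothetical.

```python
def visual_lod(hits, threshold=0.95):
    """Return the lowest tested concentration meeting the hit-rate threshold.

    hits maps concentration -> (number detected, number of replicates).
    Concentrations are scanned from highest to lowest and the scan stops at
    the first level that falls below the threshold. Returns None if even
    the highest level fails.
    """
    lod = None
    for conc in sorted(hits, reverse=True):
        detected, total = hits[conc]
        if detected / total < threshold:
            break
        lod = conc
    return lod

# Hypothetical results with six replicates per level.
results = {100: (6, 6), 50: (6, 6), 25: (6, 6), 12.5: (4, 6), 6.25: (1, 6)}
print(visual_lod(results))
```

With only six replicates, a 95% threshold can be met only by 6 of 6 detections, which illustrates why the replicate count limits the resolution of this approach.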

The following workflow diagram illustrates the key steps in this protocol:

Logistic Regression Protocol

Logistic regression models the relationship between analyte concentration and the probability of detection, providing a statistical basis for LOD determination [28] [29] [6].

- Step 1 – Sample Preparation: Similar to the visual method, a dilution series is prepared. However, for logistic regression, it is critical that the concentration range adequately covers the full transition from 0% to 100% detection probability to ensure a robust model fit. A larger number of replicates per concentration (e.g., 10-20) enhances the statistical power of the model [6].

- Step 2 – Analysis and Binary Recording: Identical to the visual protocol, each replicate is analyzed and scored as a binary outcome (1 for detected, 0 for not detected) [6].

- Step 3 – Statistical Modeling and LOD Calculation: The binary data is analyzed using logistic regression, where the log-odds (logit) of detection is modeled as a linear function of the logarithm of concentration. The model is represented by the equation:

log(p / (1 − p)) = β₀ + β₁ × log(concentration)

where p is the probability of detection [29] [30]. The LOD is statistically defined as the concentration corresponding to a specified detection probability (e.g., 0.95 or 95%), which is calculated from the fitted model parameters [6].
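Solving the model equation for concentration makes the final calculation explicit. The derivation below assumes natural logarithms on both sides and writes C for concentration; if the model is fitted on log10(concentration), the exponential is replaced by a power of 10.

```latex
\ln\frac{p}{1-p} = \beta_0 + \beta_1 \ln C
\quad\Longrightarrow\quad
C_p = \exp\!\left(\frac{\ln\frac{p}{1-p} - \beta_0}{\beta_1}\right),
\qquad
\mathrm{LOD}_{95} = \exp\!\left(\frac{\ln 19 - \beta_0}{\beta_1}\right)
\approx \exp\!\left(\frac{2.944 - \beta_0}{\beta_1}\right)
```

The constant ln 19 arises because p / (1 − p) = 0.95 / 0.05 = 19 at a 95% detection probability.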

The following workflow diagram illustrates the key steps in this protocol:

Performance Comparison and Experimental Data

Comparative Performance Metrics

Direct comparisons in the literature indicate that logistic regression can offer superior statistical performance in certain contexts. A comparative study on diagnostic tests found that while c-statistics (a ROC curve-based method) showed no significant difference between a new test and a standard test (p=0.08), logistic regression analysis of the same data demonstrated that the new test was a significantly better predictor of disease (p=0.04) [31] [32]. This suggests logistic regression may provide greater sensitivity in discriminating test performance.

Worked Example and Data Analysis

The following table summarizes hypothetical binary detection data for an analyte, simulating the type of data collected in a LOD experiment. This example will be used to illustrate the key difference in how the LOD is derived from the same dataset using the two different methods.

Table 1: Example Binary Detection Data at Various Concentrations

| Concentration (cp/rxn) | Number of Replicates | Number of "Detected" | Percentage Detected |

|---|---|---|---|

| 100 | 20 | 20 | 100% |

| 50 | 20 | 20 | 100% |

| 25 | 20 | 18 | 90% |

| 12.5 | 20 | 12 | 60% |

| 6.25 | 20 | 5 | 25% |

| 3.125 | 20 | 1 | 5% |

| 0 (Blank) | 20 | 0 | 0% |

- Visual Evaluation Analysis: Based on the data in Table 1, a researcher applying a ≥95% criterion would identify 50 cp/rxn as the LOD, because it is the lowest tested concentration at which at least 95% of replicates are detected (20 of 20); at 25 cp/rxn only 18 of 20 replicates (90%) are positive. Under a looser criterion such as ≥90%, the same data would give 25 cp/rxn. Because protocols differ in the hit rate they require (e.g., 50% or 95%), the reported LOD depends on the chosen threshold, which introduces subjectivity [6].

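- Logistic Regression Analysis: The same data is fitted with a logistic regression model. Suppose, for illustration, that the model places the 95% detection probability at 20.5 cp/rxn with a confidence interval of 18.2 to 23.1 cp/rxn. These figures are illustrative rather than fitted to Table 1: since only 90% of replicates were detected at 25 cp/rxn, an actual fit to these data would be expected to place the 95% point between 25 and 50 cp/rxn. The value read from the model is the statistically derived LOD; it is not restricted to the discrete concentration levels tested, and its confidence interval quantifies the uncertainty of the estimate [28] [29].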
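To show how a model-based LOD could be obtained from Table 1, the sketch below fits the logistic model by Newton-Raphson using only the standard library. It assumes natural-log concentration as the predictor and omits the blank (log of zero is undefined). The function names are hypothetical and no confidence interval is computed. In practice a statistics package would normally be used instead.

```python
import math

def fit_logistic(log_conc, detected, total, iterations=50, tol=1e-10):
    """Maximum-likelihood fit of logit(p) = b0 + b1 * x on grouped binary data."""
    b0, b1 = 0.0, 0.0
    for _ in range(iterations):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for x, d, n in zip(log_conc, detected, total):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
            r = d - n * p          # observed minus expected detections
            w = n * p * (1.0 - p)  # binomial variance weight
            g0 += r
            g1 += r * x
            h00 += w
            h01 += w * x
            h11 += w * x * x
        det = h00 * h11 - h01 * h01
        step0 = (h11 * g0 - h01 * g1) / det  # Newton step: inverse(H) @ gradient
        step1 = (h00 * g1 - h01 * g0) / det
        b0 += step0
        b1 += step1
        if abs(step0) < tol and abs(step1) < tol:
            break
    return b0, b1

def concentration_at(p, b0, b1):
    """Invert the fitted model: concentration giving detection probability p."""
    return math.exp((math.log(p / (1.0 - p)) - b0) / b1)

# Table 1 data without the blank row.
conc = [100, 50, 25, 12.5, 6.25, 3.125]
detected = [20, 20, 18, 12, 5, 1]
total = [20] * len(conc)

b0, b1 = fit_logistic([math.log(c) for c in conc], detected, total)
lod95 = concentration_at(0.95, b0, b1)
lod50 = concentration_at(0.50, b0, b1)
print(f"b0 = {b0:.3f}, b1 = {b1:.3f}")
print(f"95% LOD = {lod95:.1f} cp/rxn, 50% point = {lod50:.1f} cp/rxn")
```

For these data the fitted 95% point falls between the 25 and 50 cp/rxn levels, which is the kind of interpolated value that the discrete hit-rate rule cannot produce.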

The following diagram conceptualizes how the detection probability curve from logistic regression provides a more interpolated LOD value compared to the discrete, step-wise interpretation of visual evaluation.

Objective Comparison of Method Characteristics

Table 2: Direct Comparison of Visual Evaluation and Logistic Regression for LOD

| Feature | Visual Evaluation | Logistic Regression |

|---|---|---|

| Underlying Principle | Empirical, based on direct observation of detection events [6]. | Statistical, models the probability of detection as a function of concentration [28] [29]. |

| Data Input | Binary (Detected/Not Detected) at each concentration. | Binary (Detected/Not Detected) at each concentration. |

| LOD Definition | The lowest tested concentration where a high percentage (e.g., ≥95%) of replicates are positive [6]. | The concentration corresponding to a specified detection probability (e.g., 95%), derived from a fitted model [6]. |

| Precision & Uncertainty | Does not provide a statistical confidence interval for the LOD. The estimate is constrained to the tested concentrations. | Provides a confidence interval for the LOD estimate, quantifying measurement uncertainty [28]. |

| Handling of Variability | Relies on a sufficient number of replicates to observe the detection trend. | Quantitatively accounts for variability in response data through the model. |

| Resource Requirements | Lower statistical expertise required; can be less computationally intensive. | Requires statistical software and knowledge for model fitting and validation. |

| Regulatory Standing | Accepted by ICH Q2 guidelines [6]. | Accepted by ICH Q2 guidelines and can be more powerful for test comparison [31] [6]. |

| Best Application Context | Rapid, initial assessments; methods where detection is truly visual and binary. | High-stakes validation, comparative studies, and when a precise LOD with a confidence measure is required. |

Essential Research Reagent Solutions

The following table details key reagents and materials essential for implementing the experimental protocols described above, particularly in the context of molecular diagnostics like LAMP or qPCR.

Table 3: Essential Research Reagents and Materials

| Item | Function/Brief Explanation |

|---|---|

| Target Analyte Standard | A purified form of the molecule to be detected (e.g., hCMV DNA) used to prepare the exact concentration dilution series for the LOD experiment [27]. |

| Molecular Grade Water | Used as a solvent and for preparing dilution blanks to ensure no enzymatic inhibitors or contaminants are present that could affect the analysis. |

| Nucleic Acid Amplification Master Mix | For methods like LAMP or PCR, this contains the necessary enzymes (e.g., Bst polymerase), buffers, and salts for the isothermal amplification reaction [27]. |