Linear Regression vs. Correlation: A Strategic Guide for Biomedical Researchers

Abstract

This article provides a comprehensive comparison of linear regression and correlation analysis, tailored for researchers, scientists, and professionals in drug development. It covers the foundational principles of both methods, their proper application in biomedical contexts—from analyzing assay data to predicting drug response—and essential troubleshooting for common pitfalls like non-linearity and confounding. A dedicated validation section offers a strategic framework for selecting the appropriate method, empowering readers to draw accurate, reliable, and actionable conclusions from their data.

Core Concepts: Understanding the 'What' and 'Why' of Correlation and Regression

In the realm of statistical analysis, particularly within data-intensive fields like drug development and biomedical research, understanding the distinction between association and prediction is not merely an academic exercise; it is a fundamental requirement for drawing valid conclusions and building useful models. While both concepts explore relationships between variables, they serve distinct purposes and are validated using different metrics. Association identifies the strength and direction of relationships between variables, answering the question, "Are these variables related?" [1] [2]. In contrast, prediction uses these relationships to forecast specific outcomes, answering the question, "What will happen given certain conditions?" [1] [2].

The confusion between these concepts is a pervasive issue in scientific literature. A systematic review in the field of diabetes epidemiology found that 61% of articles using "prediction" in their titles reported only association statistics, failing to provide proper predictive metrics [3]. A similar review in allergy research confirmed this trend, with only 39% of such studies reporting genuine prediction metrics [4]. This conflation can lead to misallocated resources in drug development and flawed clinical decisions, ultimately impacting patient care. This guide provides a clear, objective comparison to empower researchers in selecting and evaluating the appropriate analytical approach.

Conceptual Frameworks: Core Definitions and Differences

What is Association?

Association analysis quantifies the relationship between two or more variables without implying a cause-and-effect dynamic or designating dependent and independent variables [1] [5]. It is primarily concerned with measuring co-movement, answering whether variables change together in a systematic way. The most common measure is the correlation coefficient (r), which ranges from -1 to +1 [2] [6]. A value of +1 indicates a perfect positive relationship, -1 a perfect negative relationship, and 0 indicates no linear relationship [5]. It is a symmetric measure, meaning the correlation between X and Y is the same as between Y and X [5].

What is Prediction?

Prediction, often operationalized through regression analysis, models the relationship between a dependent (outcome) variable and one or more independent (predictor) variables to forecast future values or outcomes [1] [5]. Unlike association, it is inherently asymmetric; the model is built to predict the dependent variable from the independent variables, and reversing this relationship yields a different model [5]. The output is a predictive equation (e.g., \( Y = \beta_0 + \beta_1 X \)) that can be used to estimate the value of the dependent variable for new observations [5] [7]. The model's success is often evaluated using metrics like R-squared (R²), which indicates the proportion of variance in the dependent variable explained by the model [8] [9].
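As a minimal illustration of the symmetry contrast, the pure-Python sketch below uses made-up paired values; the helper names `centered_sums`, `pearson_r`, and `ols_slope` are illustrative, not from any library. The correlation is identical whichever variable is listed first, while the least-squares slope for predicting Y from X is not the reciprocal of the slope for predicting X from Y.

```python
from math import sqrt


def centered_sums(x, y):
    """Return S_xy, S_xx and S_yy (sums of centered cross-products and squares)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy, sxx, syy


def pearson_r(x, y):
    """Sample Pearson correlation: symmetric in x and y."""
    sxy, sxx, syy = centered_sums(x, y)
    return sxy / sqrt(sxx * syy)


def ols_slope(x, y):
    """Least-squares slope when y is regressed on x: asymmetric."""
    sxy, sxx, _ = centered_sums(x, y)
    return sxy / sxx


# Hypothetical paired measurements
x = [1, 2, 3, 4, 5]
y = [2, 4, 3, 6, 5]

print(f"r(x, y) = {pearson_r(x, y):.2f}, r(y, x) = {pearson_r(y, x):.2f}")  # 0.80, 0.80
b_yx = ols_slope(x, y)   # model for predicting Y from X
b_xy = ols_slope(y, x)   # model for predicting X from Y
print(f"slope of Y on X = {b_yx:.2f}; inverted slope of X on Y = {1 / b_xy:.2f}")  # 0.80; 1.25
```

The two slopes are linked by \( b_{YX} \, b_{XY} = r^2 \), so the two regression lines coincide only when \( |r| = 1 \).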

Key Differences Summarized

The table below synthesizes the fundamental distinctions between association and prediction.

Table 1: Fundamental Differences Between Association and Prediction

| Aspect | Association (e.g., Correlation) | Prediction (e.g., Regression) |
|---|---|---|
| Primary Purpose | Measures strength and direction of a relationship [2] [6] | Models relationships to forecast outcomes [2] [6] |
| Variable Roles | Variables are treated equally; no designation of dependence [5] [2] | Clear distinction between independent (predictor) and dependent (outcome) variables [1] [5] |
| Nature of Output | A single statistic (e.g., correlation coefficient, r) [5] [2] | An equation and goodness-of-fit measures (e.g., R²) [8] [5] |
| Implication of Causality | Does not imply causation [1] [5] | Can suggest causation if supported by a well-designed model and theory [5] |
| Directionality | Symmetric (corr(X,Y) = corr(Y,X)) [5] | Asymmetric (Y = f(X) is not the same as X = f(Y)) [5] |

Quantitative Comparison: Performance and Data Presentation

The performance of association and prediction models is judged by different criteria. The following tables summarize the key quantitative metrics and data requirements for each.

Table 2: Key Performance Metrics for Association and Prediction

| Metric | Applies To | Interpretation | Limitations |
|---|---|---|---|
| Correlation Coefficient (r) | Association | Strength/Direction: -1 (perfect negative) to +1 (perfect positive) [1] [6] | Only measures linear relationships [1] |
| Coefficient of Determination (R²) | Prediction | Proportion of variance in the dependent variable explained by the model; 0-100% [8] [9] | Can be artificially inflated by adding more variables [8] [9] |
| Sensitivity & Specificity | Prediction (Classification) | Sensitivity: ability to correctly identify positives. Specificity: ability to correctly identify negatives [4] | Requires a defined classification threshold [4] |
| ROC AUC | Prediction (Classification) | Overall discriminative ability of a model; 0.5 (no skill) to 1.0 (perfect separation) [4] | Does not provide the actual classification rule |
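The R² limitation in the table can be seen in a small NumPy simulation; the sample size, seed, and effect size below are arbitrary assumptions. Because each model contains the previous one, in-sample R² cannot decrease when a predictor is added, even a column of pure noise. Adjusted R², one common correction, penalizes the extra predictors.

```python
import numpy as np


def r_squared(X, y):
    """R² of an ordinary least-squares fit that includes an intercept."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ coef
    return 1 - (resid @ resid) / np.sum((y - y.mean()) ** 2)


def adjusted_r_squared(r2, n, p):
    """Penalizes R² for the number of predictors p (excluding the intercept)."""
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)


rng = np.random.default_rng(0)
n = 40
x = rng.normal(size=n)
y = 2.0 * x + rng.normal(size=n)        # one genuine predictor plus noise

X = x.reshape(-1, 1)
r2_history = []
for k in range(11):                      # k = number of pure-noise columns added so far
    if k > 0:
        X = np.column_stack([X, rng.normal(size=n)])
    r2 = r_squared(X, y)
    r2_history.append(r2)
    print(f"{k:2d} noise predictors: R² = {r2:.3f}, "
          f"adjusted R² = {adjusted_r_squared(r2, n, X.shape[1]):.3f}")
```

Out-of-sample evaluation on held-out data, as in the prediction protocol below, is the more direct guard against this inflation.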

Table 3: Typical Data Structure and Software Implementation

| Aspect | Association Analysis | Prediction Analysis |
|---|---|---|
| Data Structure | Two or more continuous or ordinal variables, treated equally. | A designated dependent variable and one or more independent variables. |
| Example R Code | `cor(data$height, data$weight, method="pearson")` [5] | `model <- lm(weight ~ height, data=data); summary(model)` [5] |
| Example Python Code | `df.corr()` [7] | `from sklearn.linear_model import LinearRegression; model = LinearRegression().fit(X, y)` [7] |

Experimental Protocols: Methodologies for Validation

Protocol for an Association Study

Aim: To determine if a linear relationship exists between the expression level of a specific biomarker (Protein X) and tumor size in a pre-clinical model.

- Sample Preparation: Collect tumor tissue samples from a cohort of animal models (e.g., n=50). Homogenize the tissue and extract protein.

- Independent Variable Measurement: Quantify the concentration of Protein X in each sample using a standardized enzyme-linked immunosorbent assay (ELISA). Perform all measurements in duplicate.

- Dependent Variable Measurement: Measure the diameter of each tumor using calipers or medical imaging (e.g., MRI) at the time of tissue collection.

- Statistical Analysis: Calculate the Pearson correlation coefficient (r) and its corresponding p-value between the concentration of Protein X and tumor size. A p-value < 0.05 is typically considered statistically significant.

- Interpretation: A significant positive correlation (r > 0) would suggest that higher levels of Protein X are associated with larger tumors. This finding indicates a relationship worthy of further investigation but does not prove that Protein X causes tumor growth.
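A dependency-free sketch of the statistical-analysis step, using hypothetical values for 12 animals rather than the protocol's 50. The usual p-value for Pearson's r comes from a t-test (for example, `scipy.stats.pearsonr`); to keep the example self-contained, it swaps in a permutation test, which shuffles one variable to build the distribution of r expected under no association. The function names and units are illustrative assumptions.

```python
import random
from math import sqrt


def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def permutation_p_value(x, y, n_perm=10_000, seed=1):
    """Two-sided permutation p-value for the null hypothesis of no association."""
    rng = random.Random(seed)
    observed = abs(pearson_r(x, y))
    shuffled = list(y)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)                    # breaks any pairing between x and y
        if abs(pearson_r(x, shuffled)) >= observed:
            extreme += 1
    return (extreme + 1) / (n_perm + 1)          # +1 counts the observed arrangement


# Hypothetical measurements for 12 animals
protein_x = [1.2, 2.5, 3.1, 3.8, 4.4, 5.0, 5.9, 6.3, 7.1, 7.8, 8.5, 9.2]   # e.g. ng/mL
tumor_mm = [4.1, 5.0, 4.8, 6.2, 6.0, 7.4, 7.1, 8.3, 8.0, 9.4, 9.1, 10.5]   # diameter, mm

r = pearson_r(protein_x, tumor_mm)
p = permutation_p_value(protein_x, tumor_mm)
print(f"r = {r:.2f}, permutation p = {p:.4f}")
```

However small the p-value, the result still only indicates association; it says nothing about whether Protein X drives tumor growth.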

Protocol for a Prediction Study

Aim: To build and validate a model that predicts patient response (Responder vs. Non-Responder) to a new drug candidate based on a panel of three biomarkers.

- Cohort Definition & Splitting: Enroll a defined patient cohort (e.g., n=200). Randomly split the data into a training set (70%, n=140) to develop the model and a hold-out test set (30%, n=60) to validate it.

- Predictor & Outcome Measurement: For all patients, measure the baseline levels of Biomarker A, B, and C (independent variables). The dependent variable is the clinically assessed treatment response after one cycle (e.g., Responder=1, Non-Responder=0).

- Model Building: Using the training set, fit a logistic regression model with response status as the outcome and the three biomarkers as predictors.

- Model Validation & Performance Metrics: Apply the fitted model to the untouched test set to generate predicted probabilities of response.

  - Use a pre-defined threshold (e.g., 0.5) to classify patients as predicted responders or non-responders.

  - Compare these predictions to the actual outcomes in the test set to calculate sensitivity and specificity, and generate an ROC curve to report the AUC [4].

- Interpretation: A model with an AUC of 0.8 on the test set demonstrates good predictive performance and the potential to stratify patients before treatment.
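The model-building and validation steps can be sketched end to end with NumPy. Everything here is an assumption for illustration: the cohort is simulated from made-up coefficients, the logistic regression is fitted from scratch by Newton-Raphson (iteratively reweighted least squares), and AUC is computed from its rank interpretation (the probability that a randomly chosen responder receives a higher predicted probability than a randomly chosen non-responder). In practice a library such as scikit-learn (`LogisticRegression`, `roc_auc_score`) would usually be used; its `LogisticRegression` applies L2 regularization by default, so its coefficients would differ slightly from this unpenalized fit.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical cohort: 200 patients, baseline levels of biomarkers A, B, C
n = 200
X = rng.normal(size=(n, 3))
true_logit = -0.5 + 1.2 * X[:, 0] - 0.8 * X[:, 1] + 0.3 * X[:, 2]
y = (rng.random(n) < 1 / (1 + np.exp(-true_logit))).astype(int)  # 1 = responder

# Random 70/30 split: 140 training patients, 60 held-out test patients
idx = rng.permutation(n)
train, test = idx[:140], idx[140:]


def add_intercept(M):
    return np.column_stack([np.ones(len(M)), M])


def fit_logistic(X, y, n_iter=25):
    """Unpenalized logistic regression fitted by Newton-Raphson (IRLS)."""
    Xd = add_intercept(X)
    beta = np.zeros(Xd.shape[1])
    for _ in range(n_iter):
        p = 1 / (1 + np.exp(-Xd @ beta))
        grad = Xd.T @ (y - p)                          # score vector
        hess = Xd.T @ (Xd * (p * (1 - p))[:, None])    # observed information
        beta = beta + np.linalg.solve(hess, grad)
    return beta


def predict_proba(beta, X):
    return 1 / (1 + np.exp(-add_intercept(X) @ beta))


def roc_auc(y_true, score):
    """AUC as the probability that a random responder outscores a random non-responder."""
    pos, neg = score[y_true == 1], score[y_true == 0]
    wins = (pos[:, None] > neg[None, :]).sum() + 0.5 * (pos[:, None] == neg[None, :]).sum()
    return wins / (len(pos) * len(neg))


beta = fit_logistic(X[train], y[train])   # model built on training data only
prob = predict_proba(beta, X[test])       # applied to the untouched test set
pred = (prob >= 0.5).astype(int)          # pre-defined 0.5 threshold

y_test = y[test]
tp = np.sum((pred == 1) & (y_test == 1))
tn = np.sum((pred == 0) & (y_test == 0))
fp = np.sum((pred == 1) & (y_test == 0))
fn = np.sum((pred == 0) & (y_test == 1))
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
auc = roc_auc(y_test, prob)
print(f"sensitivity={sensitivity:.2f}, specificity={specificity:.2f}, AUC={auc:.2f}")
```

Keeping the test indices out of `fit_logistic` is the point of the split: the reported metrics describe performance on patients the model has never seen.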

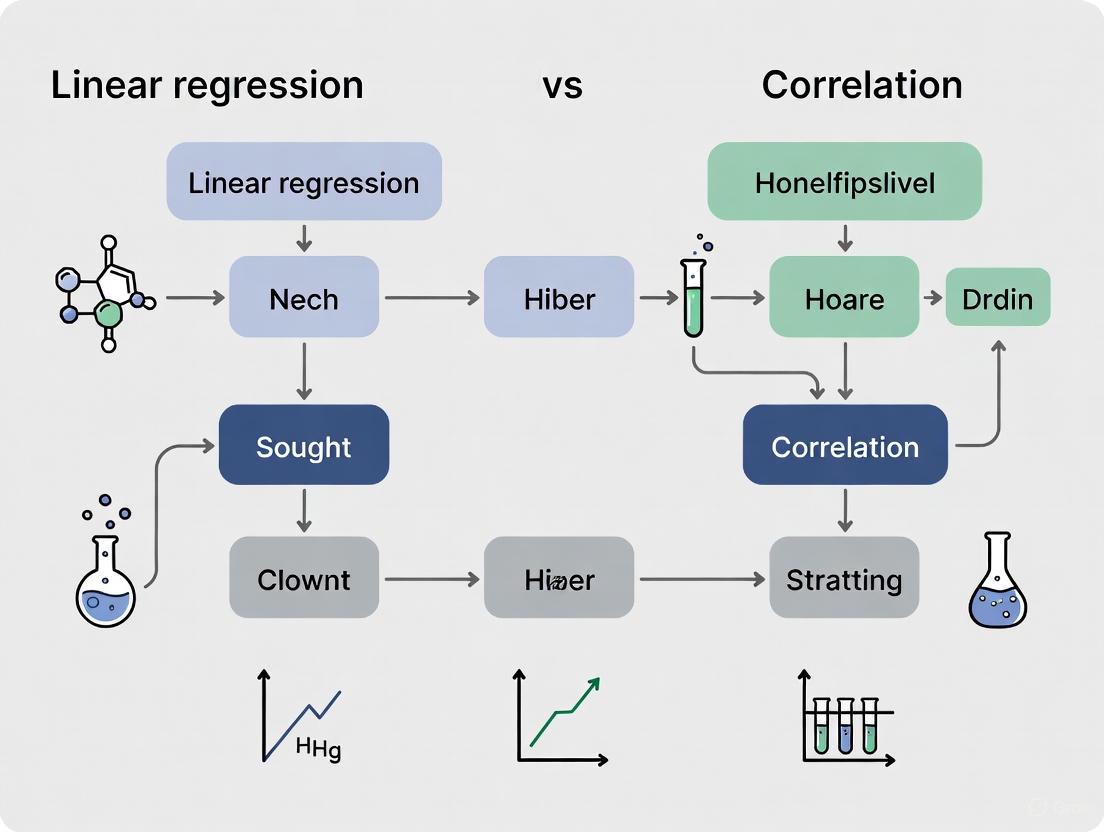

Visualization of Analytical Pathways

The following diagram illustrates the logical workflow and key decision points in choosing between association and prediction analyses.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and tools essential for conducting robust association and prediction studies in a biomedical context.

Table 4: Essential Research Reagents and Computational Tools

| Item / Solution | Function in Analysis | Example in Protocol |
|---|---|---|
| ELISA Kits | Precisely quantify specific protein biomarker levels from tissue or serum samples. | Measuring the concentration of Protein X in tumor samples for the association study [10]. |
| Clinical Data Management System (CDMS) | Securely collect, store, and manage structured patient data, including clinical outcomes and biomarker readings. | Housing patient baseline characteristics, biomarker levels (A, B, C), and treatment response data for the prediction study. |
| Statistical Software (R/Python) | Perform statistical calculations, compute correlation coefficients, fit regression models, and generate performance metrics. | Running `cor()` in R for association or scikit-learn in Python for building the logistic regression model [5] [7]. |
| Biomarker Panel | A set of multiple biomarkers measured concurrently to improve the robustness and accuracy of a predictive model. | Using Biomarkers A, B, and C together in the logistic regression model to predict drug response, rather than relying on a single marker. |
| ROC Analysis Software | Evaluate and visualize the discriminative performance of a classification model by plotting the ROC curve and calculating AUC. | Assessing the predictive power of the logistic regression model on the test set in the prediction study [4]. |

Association and prediction are complementary but distinct concepts in statistical analysis. Association, measured by tools like correlation, is ideal for initial data exploration and identifying potential relationships between variables [1]. Prediction, implemented through regression and other modeling techniques, is the necessary framework for forecasting individual outcomes and building diagnostic tools, with performance measured by metrics like ROC AUC and sensitivity/specificity [4] [3].

For researchers and drug development professionals, the critical takeaway is that a statistically significant association does not guarantee accurate prediction [4] [3]. Conflating the two can lead to overoptimistic conclusions about a biomarker's or model's clinical utility. Therefore, the choice of analysis must be driven by the research question: use association to explore relationships and generate hypotheses, and use prediction to build and validate models for forecasting outcomes in new subjects. Adherence to this distinction, along with the use of transparent reporting guidelines like TRIPOD for prediction models, is essential for advancing robust and replicable science [4] [3].

In quantitative method comparison studies, particularly in drug development and clinical research, statistical tools like linear regression and correlation are paramount for assessing associations between variables. While linear regression aims to determine the best linear relationship for prediction, correlation coefficients quantify the strength and direction of association between two methods or variables [11]. The choice between Pearson's and Spearman's correlation coefficients is a critical methodological decision that directly impacts the validity and interpretation of research findings. This guide provides an objective comparison of these two fundamental statistical measures, supporting researchers in selecting the appropriate coefficient based on their data characteristics and research objectives.

Theoretical Foundations and Definitions

Pearson's Correlation Coefficient (r)

The Pearson product-moment correlation coefficient (denoted as r for a sample and ϱ for a population) evaluates the linear relationship between two continuous variables [12] [13]. It measures the extent to which a change in one variable is associated with a proportional change in another variable, assuming the relationship can be represented by a straight line. The coefficient is calculated as the covariance of the two variables divided by the product of their standard deviations [14].

The mathematical formula for calculating the sample Pearson's correlation coefficient is:

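$$ r = \frac{\sum_i (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_i (x_i - \bar{x})^2 \, \sum_i (y_i - \bar{y})^2}} $$

Where \( x_i \) and \( y_i \) are the values of x and y for the \( i \)th individual, and \( \bar{x} \) and \( \bar{y} \) are the sample means [13].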
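The formula translates directly into code. In this minimal sketch (hypothetical readings from two assay methods; helper names are illustrative), `pearson_r` follows the summations term by term, and `pearson_via_cov` checks the equivalent description given earlier: the sample covariance divided by the product of the sample standard deviations, where the \( n - 1 \) factors cancel.

```python
from math import sqrt
from statistics import mean, stdev


def pearson_r(x, y):
    """Sample Pearson r computed term by term from the summation formula."""
    x_bar, y_bar = mean(x), mean(y)
    numerator = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    denominator = sqrt(sum((xi - x_bar) ** 2 for xi in x) *
                       sum((yi - y_bar) ** 2 for yi in y))
    return numerator / denominator


def pearson_via_cov(x, y):
    """Equivalent form: sample covariance divided by the product of sample SDs."""
    x_bar, y_bar = mean(x), mean(y)
    cov = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (len(x) - 1)
    return cov / (stdev(x) * stdev(y))


# Hypothetical paired readings from two assay methods
method_a = [10.2, 12.5, 15.1, 18.3, 20.0, 23.7]
method_b = [11.0, 12.1, 16.0, 17.5, 21.2, 23.0]
print(f"{pearson_r(method_a, method_b):.4f}")
print(f"{pearson_via_cov(method_a, method_b):.4f}")  # same value
```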

Spearman's Rank Correlation Coefficient (ρ)

Spearman's rank-order correlation coefficient (denoted as \( r_s \) for a sample and \( \rho_s \) for a population) is a non-parametric measure that evaluates the monotonic relationship between two continuous or ordinal variables [12] [15]. Unlike Pearson's r, Spearman's ρ assesses how well an arbitrary monotonic function can describe the relationship between two variables, without making assumptions about the frequency distribution of the variables [16].

The formula for calculating Spearman's coefficient when there are no tied ranks is:

$$ r_s = 1 - \frac{6 \sum_i d_i^2}{n(n^2 - 1)} $$

Where \( d_i \) is the difference in paired ranks and \( n \) is the number of cases [15].
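A minimal sketch of the no-ties formula on hypothetical data, with illustrative helper names. It also checks a standard identity: without ties, the shortcut gives exactly Pearson's r computed on the ranks. When ties are present the shortcut is no longer exact, and the usual approach is Pearson's r on average ranks, so the sketch refuses tied input.

```python
from math import sqrt


def ranks(values):
    """1-based ranks; valid only when there are no tied values."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


def spearman_shortcut(x, y):
    """r_s = 1 - 6 * sum(d_i^2) / (n(n^2 - 1)); exact only without ties."""
    if len(set(x)) < len(x) or len(set(y)) < len(y):
        raise ValueError("tied values: compute Pearson's r on average ranks instead")
    n = len(x)
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Hypothetical data: y mostly rises with x, but far from linearly
x = [3.1, 1.2, 5.6, 4.4, 2.0, 6.3]
y = [2.0, 0.5, 4.1, 9.8, 1.7, 30.0]

rho = spearman_shortcut(x, y)
print(f"{rho:.4f}")                              # 0.9429
print(f"{pearson_r(ranks(x), ranks(y)):.4f}")    # 0.9429: Pearson's r on the ranks
```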

Figure 1: Decision Workflow for Selecting Between Pearson's r and Spearman's ρ

Comparative Analysis: Key Differences

Relationship Types and Data Assumptions

The fundamental distinction between Pearson's and Spearman's coefficients lies in the types of relationships they measure and their underlying assumptions:

Pearson's r measures linear relationships between two continuous variables. Its standard significance test and confidence interval assume that the variables are approximately (bivariate) normally distributed [13] [17]. It also assumes homoscedasticity and that the relationship between variables can be represented by a straight line [12].

Spearman's ρ measures monotonic relationships (where variables tend to change together, but not necessarily at a constant rate) and can be applied to ordinal data or continuous data that violate normality assumptions [12] [15]. A monotonic relationship is one where, as the value of one variable increases, the other variable either consistently increases or decreases, though not necessarily linearly [15].

Sensitivity to Data Characteristics

Each correlation coefficient responds differently to specific data characteristics:

Sensitivity to outliers: Pearson's r is highly sensitive to outliers, which can disproportionately influence the correlation coefficient [18] [13]. Spearman's ρ is more robust to outliers because it operates on rank-ordered data rather than raw values [13].

Handling of non-normal distributions: Pearson's r relies on approximately normally distributed data for valid inference (p-values and confidence intervals), while Spearman's ρ makes no distributional assumptions, making it appropriate for skewed data [13].

Data requirements: Pearson's r requires both variables to be continuous and measured on an interval or ratio scale, while Spearman's ρ can be applied to ordinal, interval, or ratio data [15] [17].
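The outlier point can be checked with a short pure-Python sketch on hypothetical, nearly linear data. Spearman's ρ is computed here as Pearson's r on average ranks (tied values share the mean of their positions); the helper names are illustrative. A single extreme value that keeps its rank order leaves ρ unchanged, while it pulls Pearson's r down from about 1.00 to about 0.64.

```python
from math import sqrt


def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared = (start + end) / 2 + 1   # mean of 0-based positions, shifted to 1-based
        for k in range(start, end + 1):
            ranks[order[k]] = shared
        start = end + 1
    return ranks


def spearman_rho(x, y):
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical, nearly linear data
x = list(range(1, 11))
y = [1.2, 1.9, 3.2, 3.8, 5.1, 6.3, 6.8, 8.1, 9.2, 9.9]
print(f"clean:        Pearson {pearson_r(x, y):.2f}, Spearman {spearman_rho(x, y):.2f}")

# Same data plus one extreme value (e.g., a data-entry error) that keeps the rank order
x_out, y_out = x + [11], y + [60.0]
print(f"with outlier: Pearson {pearson_r(x_out, y_out):.2f}, "
      f"Spearman {spearman_rho(x_out, y_out):.2f}")
```

An outlier that also breaks the rank order would lower ρ as well, so robustness here means reduced, not zero, sensitivity.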

Table 1: Comparative Characteristics of Pearson's r and Spearman's ρ

| Characteristic | Pearson's r | Spearman's ρ |
|---|---|---|
| Relationship Type | Linear | Monotonic |
| Data Types | Continuous, interval/ratio | Ordinal, interval, ratio |
| Distribution Assumptions | Normality assumed for inference | Distribution-free |
| Sensitivity to Outliers | High sensitivity | Robust |
| Calculation Basis | Raw data values | Rank-ordered data |
| Interpretation | Strength of linear relationship | Strength of monotonic relationship |
| Appropriate for Skewed Data | No | Yes |

Experimental Protocols and Methodological Applications

Protocol for Correlation Analysis in Method Comparison Studies

The following experimental protocol outlines a systematic approach for conducting correlation analysis in method comparison studies, particularly relevant for drug development research:

Data Collection and Preparation: Collect paired measurements from the two methods being compared. Ensure adequate sample size (typically n≥30 for reliable estimates) and representativeness of the measurement range [13].

Preliminary Data Exploration: Generate scatterplots to visually assess the relationship between variables. Examine distributions for normality using statistical tests (e.g., Shapiro-Wilk) or graphical methods (e.g., Q-Q plots) [12] [13].

Appropriate Test Selection: Based on data characteristics, select Pearson's r for linear relationships with normally distributed continuous data, or Spearman's ρ for monotonic relationships with ordinal or non-normally distributed data [13] [17].

Calculation and Statistical Testing: Compute the selected correlation coefficient and perform significance testing using appropriate methods (t-test for Pearson's r, permutation test or special tables for Spearman's ρ) [15].

Interpretation and Reporting: Interpret the correlation coefficient in context, considering both statistical significance and practical significance. Report confidence intervals where possible [13].
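For the reporting step, one widely used way to attach a confidence interval to Pearson's r is Fisher's z-transformation. The sketch below is a minimal version under that approach, assuming approximate bivariate normality; the values of r and n are hypothetical and the function name is illustrative. For Spearman's ρ, similar approximations exist with different standard errors, and bootstrapping is a common alternative.

```python
from math import atanh, sqrt, tanh
from statistics import NormalDist


def pearson_ci(r, n, level=0.95):
    """Approximate confidence interval for Pearson's r via Fisher's z-transformation."""
    if n <= 3 or not -1 < r < 1:
        raise ValueError("requires n > 3 and -1 < r < 1")
    z = atanh(r)                                    # z = 0.5 * ln((1 + r) / (1 - r))
    se = 1 / sqrt(n - 3)                            # approximate standard error of z
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    return tanh(z - z_crit * se), tanh(z + z_crit * se)


# Hypothetical result from a method comparison study
r, n = 0.62, 40
lower, upper = pearson_ci(r, n)
print(f"r = {r:.2f}, 95% CI {lower:.2f} to {upper:.2f}")
```

The interval is not symmetric around r because it is built on the z scale and transformed back; it also narrows as n grows, which is why the sample-size recommendation matters.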

Case Study: Maternal Age and Parity Analysis

A practical example from clinical research illustrates the application of both coefficients. In a study of 780 women attending their first antenatal clinic visit, researchers examined the relationship between maternal age and parity [13].

- Data Characteristics: Maternal age is continuous but typically skewed, while parity is ordinal and skewed.

- Appropriate Method Selection: Spearman's ρ was identified as most appropriate due to the ordinal nature of parity and skewed distributions.

- Results: Spearman's coefficient was 0.84, indicating a strong positive correlation, while Pearson's r was 0.80.

- Interpretation: Both coefficients led to similar conclusions in this case, though Spearman's ρ was more appropriate given the data characteristics.

When seven patients with higher parity values were excluded from analysis, Pearson's correlation changed substantially (from 0.2 to 0.3) while Spearman's correlation remained stable at 0.3, demonstrating the greater robustness of Spearman's ρ to outliers [13].

Table 2: Interpretation Guidelines for Correlation Coefficients

| Coefficient Size | Interpretation |
|---|---|
| 0.90 to 1.00 (-0.90 to -1.00) | Very high positive (negative) correlation |
| 0.70 to 0.90 (-0.70 to -0.90) | High positive (negative) correlation |
| 0.50 to 0.70 (-0.50 to -0.70) | Moderate positive (negative) correlation |
| 0.30 to 0.50 (-0.30 to -0.50) | Low positive (negative) correlation |
| 0.00 to 0.30 (0.00 to -0.30) | Negligible correlation |

Limitations and Methodological Considerations

Common Pitfalls in Correlation Analysis

Researchers must be aware of several critical limitations when interpreting correlation coefficients:

Correlation does not imply causation: A high correlation between two variables does not mean that changes in one variable cause changes in the other. The apparent correlation can be purely coincidental (spurious correlation) or influenced by hidden confounding variables [18].

Sensitivity to range restriction: Both correlation coefficients can be attenuated when the range of either variable is artificially restricted [18].

Impact of outliers: A single outlier can substantially influence Pearson's r; in one case study of life expectancy versus health expenditure, it changed the coefficient from 0.71 to 0.54 [18].

Nonlinear relationships: Neither coefficient adequately captures non-monotonic relationships. For example, a perfect quadratic relationship may yield a correlation coefficient near zero [12] [18].
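The quadratic case can be verified exactly with a few lines of pure Python. In this sketch the data are a hypothetical, perfectly deterministic \( y = x^2 \) on values symmetric around zero, and Spearman's ρ is computed as Pearson's r on average ranks; helper names are illustrative. Both coefficients come out as zero even though y is completely determined by x.

```python
from math import sqrt


def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for k in range(start, end + 1):
            ranks[order[k]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


x = [-3, -2, -1, 0, 1, 2, 3]
y = [v ** 2 for v in x]      # y is fully determined by x, but not monotonically

print(f"Pearson r:    {pearson_r(x, y):.2f}")                                  # 0.00
print(f"Spearman rho: {pearson_r(average_ranks(x), average_ranks(y)):.2f}")    # 0.00
```

A scatterplot of these seven points would reveal the relationship immediately, which is why visual inspection precedes the choice of coefficient.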

Appropriate Interpretation in Research Context

In neuroscience and psychology research, the Pearson correlation coefficient is widely used for feature selection and model performance evaluation, but it has notable limitations in capturing complex, nonlinear relationships between brain connectivity and psychological behavior [14]. The Spearman coefficient can partially address these limitations in some cases, but may not fully capture all aspects of nonlinear relationships [14].

Figure 2: Key Limitations in Interpreting Correlation Coefficients

Advanced Applications in Drug Development Research

Machine Learning and Feature Importance Correlation

In drug discovery research, correlation analysis has evolved beyond traditional applications. Feature importance correlation from machine learning models represents an advanced application that uses model-internal information to uncover relationships between target proteins [19].

In a large-scale analysis generating and comparing machine learning models for more than 200 proteins, both Pearson and Spearman correlation coefficients were used to detect similar compound binding characteristics [19]. The analysis revealed that:

- High feature importance correlation served as an indicator of similar compound binding characteristics of proteins

- The methodology detected functional relationships between proteins independent of active compounds

- Spearman correlation helped identify protein families with similar binding characteristics

This approach demonstrates how both correlation coefficients can be integrated into advanced analytical frameworks in pharmaceutical research.

Connectome-Based Predictive Modeling in Neuroscience

In connectome-based predictive modeling (CPM), which examines relationships between brain imaging data and behavioral or psychological metrics, the Pearson correlation coefficient is widely used but has significant limitations [14]:

- It struggles to capture complex, nonlinear relationships between brain functional connectivity and psychological behavior

- It inadequately reflects model errors, especially in the presence of systematic biases or nonlinear error

- It lacks comparability across datasets, with high sensitivity to data variability and outliers

These limitations have prompted researchers to combine multiple evaluation metrics, including Spearman correlation, mean absolute error (MAE), and root mean square error (RMSE), for more comprehensive model assessment [14].
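The point about systematic bias is easy to demonstrate. In this minimal sketch, hypothetical predictions are a fixed linear distortion of the observed scores (\( 2y + 5 \), an arbitrary assumption), so they are perfectly correlated with the truth yet wrong for every subject. Pearson's r reports a perfect 1.00, while MAE and RMSE expose the error.

```python
from math import sqrt


def pearson_r(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Hypothetical observed behavioral scores and systematically biased predictions
observed = [12.0, 15.0, 9.0, 20.0, 17.0, 11.0, 14.0, 18.0]
predicted = [2 * v + 5 for v in observed]   # perfectly ordered, wrong in scale and offset

errors = [p - o for p, o in zip(predicted, observed)]
mae = sum(abs(e) for e in errors) / len(errors)
rmse = sqrt(sum(e ** 2 for e in errors) / len(errors))

print(f"r = {pearson_r(observed, predicted):.2f}")   # 1.00
print(f"MAE = {mae:.2f}, RMSE = {rmse:.2f}")         # MAE = 19.50
```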

Essential Research Reagent Solutions

Table 3: Essential Analytical Tools for Correlation Analysis

| Research Tool | Function | Application Context |
|---|---|---|
| Statistical Software (SPSS, R, Python) | Calculate correlation coefficients and perform significance tests | General research applications |
| Normality Tests (Shapiro-Wilk, Kolmogorov-Smirnov) | Assess distributional assumptions for selecting appropriate correlation method | Preliminary data analysis |
| Scatterplot Visualization | Graphical assessment of relationship type (linear vs. monotonic) | Data exploration and assumption checking |
| Machine Learning Libraries (scikit-learn, TensorFlow) | Advanced correlation analysis including feature importance correlation | Drug discovery and predictive modeling |
| Bland-Altman Plot | Assess agreement between methods (distinct from correlation) | Method comparison studies |

The choice between Pearson's r and Spearman's ρ represents a critical methodological decision in quantitative research, particularly in method comparison studies and drug development. Pearson's r is appropriate for assessing linear relationships between continuous, normally distributed variables, while Spearman's ρ is more suitable for monotonic relationships with ordinal data or when data violate normality assumptions. Researchers must consider their data characteristics, research questions, and the fundamental limitations of correlation analysis, particularly the principle that correlation does not imply causation. As analytical methods evolve, both coefficients continue to find applications in advanced research domains, including machine learning and neuroinformatics, where they contribute to comprehensive model evaluation frameworks.

In statistical analysis, distinguishing between correlation and regression is fundamental for researchers, scientists, and drug development professionals. While both techniques explore relationships between variables, they serve distinct purposes. Correlation quantifies the strength and direction of a linear relationship between two variables, while regression models the relationship to predict and explain the behavior of a dependent variable based on one or more independent variables [1] [20]. The linear regression equation, \( Y = a + bX \), is a cornerstone of this predictive modeling, where:

- \( Y \) is the dependent variable (the outcome to be predicted).

- \( X \) is the independent variable (the predictor).

- \( b \) is the slope (the change in Y for a one-unit change in X).

- \( a \) is the intercept (the value of Y when X is zero) [1] [21].

This guide provides an objective comparison of these methods, supported by experimental data and detailed protocols.

Conceptual Comparison: Correlation vs. Regression

Correlation is often the first step in analysis, used to identify potential relationships. It produces a correlation coefficient (r) ranging from -1 to +1, indicating the relationship's strength and direction [1] [22] [23]. However, it does not imply causation and cannot predict values [1] [2].

Regression analysis, particularly linear regression, goes a step further by defining the precise mathematical relationship between variables. This allows for forecasting and understanding the impact of predictors [1] [24]. The following table summarizes the core differences.

| Feature | Correlation | Regression |
|---|---|---|
| Purpose | Measures strength and direction of association [1] [2] | Predicts values and models relationships [1] [2] |
| Variable Role | No designation of dependent or independent variables [1] [2] | Clear designation of dependent (Y) and independent (X) variables [1] [24] |
| Output | Single coefficient (r) [2] | Equation (e.g., \( Y = a + bX \)) [1] [2] |
| Causality | Does not imply causation [1] [25] | Can suggest causation if derived from controlled experiments [2] |
| Application | Initial exploratory analysis [1] | Predictive modeling, trend analysis, and forecasting [1] [24] |

Experimental Performance and Quantitative Data

Unlike a correlation coefficient, a fitted regression model produces quantitative predictions whose accuracy can be measured directly. The following table summarizes findings from one study that compared the predictive performance of several model types [26].

Table 1: Model Performance in Predicting Building Usable Area

Data sourced from a study comparing models for predicting the usable floor area of houses with multi-pitched roofs [26].

| Model Type | Data Source | Key Predictor Variables | Accuracy | Average Absolute Error |
|---|---|---|---|---|
| Linear Regression Model | Architectural Design Data | Covered Area, Building Height, Number of Storeys | 88% | 8.7 m² |
| Non-linear Model | Architectural Design Data | Covered Area, Building Height, Number of Storeys | 89% | 8.7 m² |
| Machine Learning Model | Architectural Design Data | Covered Area, Building Height, Number of Storeys | 93% | 8.7 m² |
| Best Model (for existing buildings) | Existing Building Data (LiDAR) | Covered Area, Building Height | 90% | 9.9 m² |

Key Insights from Experimental Data

- Regression Enables Quantifiable Prediction: The primary advantage of regression is its ability to provide specific, quantifiable predictions (e.g., the usable area in square meters) with a known average error, which correlation cannot offer [26].

- Data Quality is Critical: The lower average absolute error achieved with architectural design data (8.7 m²) than with data from existing buildings (9.9 m²) suggests that regression model performance depends heavily on the quality and completeness of the input data [26].

- Limitations of Correlation in Modeling: Research in neuroscience confirms that correlation coefficients struggle to capture complex, non-linear relationships and are inadequate for reflecting model prediction errors, potentially leading to skewed evaluations [14].

Experimental Protocols and Methodologies

To ensure the validity and reliability of linear regression models, specific experimental protocols and assumptions must be adhered to.

- Linearity: The relationship between the dependent and independent variable(s) must be linear.

- Independence: Observations must be independent of each other.

- Homoscedasticity: The variance of the residuals (errors) should be constant across all levels of the independent variable.

- Normality: The residuals of the model should be approximately normally distributed.

Detailed Workflow for Regression Modeling

Diagram 1: Statistical Modeling Workflow. This diagram illustrates the typical data analysis pipeline, showing how correlation analysis often serves as an exploratory step within a broader regression modeling process.

- Data Collection and Preparation: Gather data for the dependent and independent variables. Ensure variables are quantitative; recode categorical variables as necessary.

- Exploratory Data Analysis (EDA):

  - Generate a scatterplot of X and Y to visually assess linearity.

  - Calculate the correlation coefficient (r) to preliminarily gauge the relationship's strength and direction [22].

- Model Fitting: Use the least squares method to compute the regression coefficients (intercept \( a \) and slope \( b \)) for the equation \( Y = a + bX \).

- Assumption Validation:

  - Check homoscedasticity by plotting residuals vs. fitted values (the spread should show no systematic pattern).

  - Check normality of residuals using a histogram or Q-Q plot.

- Prediction and Interpretation: Use the fitted model to predict new values. Interpret the slope \( b \) as the change in Y for a unit change in X.
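The fitting and prediction steps can be sketched with the closed-form least-squares estimates, \( b = S_{xy} / S_{xx} \) and \( a = \bar{y} - b\bar{x} \). The dose-response values and the function name `fit_line` are hypothetical. The sketch only prints residuals; the residual-vs-fitted plot and Q-Q plot from the assumption-validation step would still be needed.

```python
def fit_line(x, y):
    """Closed-form least-squares estimates (a, b) for Y = a + bX."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    b = s_xy / s_xx              # slope: change in Y per one-unit change in X
    a = y_bar - b * x_bar        # intercept: the line passes through (x_bar, y_bar)
    return a, b


# Hypothetical dose (mg) and response data
dose = [10, 20, 30, 40, 50, 60]
response = [12.1, 19.8, 28.4, 35.9, 44.2, 51.7]

a, b = fit_line(dose, response)
fitted = [a + b * xi for xi in dose]
residuals = [yi - fi for yi, fi in zip(response, fitted)]

print(f"Y = {a:.3f} + {b:.4f}X")
print("residuals:", [round(e, 2) for e in residuals])   # inspect or plot vs. fitted values
print(f"predicted response at 45 mg: {a + b * 45:.2f}")
```

With an intercept in the model, least-squares residuals always sum to zero, so that sum is a useful sanity check rather than evidence that the assumptions hold.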

Protocol 2: Connectome-Based Predictive Modeling (CPM) in Neuroscience

A case study highlighting the limitations of correlation in complex modeling [14].

- Feature Selection: Identify brain functional connections relevant to a psychological process. Many studies use Pearson correlation (p < 0.01) to select features, though this is limited to linear associations.

- Feature Summarization: Integrate the selected connectivity features.

- Model Building: Construct a predictive model (e.g., Support Vector Machine) using the summarized features.

- Model Evaluation: Critically, this step should not rely solely on correlation between predicted and observed scores. Instead, use:

  - Difference Metrics: Mean Absolute Error (MAE) and Mean Squared Error (MSE) to quantify prediction error.

  - Baseline Comparisons: Compare the model's performance against a simple baseline, such as predicting the mean value.
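The evaluation step can be sketched as follows, with hypothetical scores standing in for a CPM model's test-set output. The baseline predicts the training-set mean for every test subject. The final "skill" line, one minus the ratio of model MSE to baseline MSE, is one common way to summarize the comparison, not a metric prescribed by the source.

```python
def mae(observed, predicted):
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


def mse(observed, predicted):
    return sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed)


# Hypothetical behavioral scores
train_scores = [48, 52, 55, 45, 50, 60, 40, 50]
test_observed = [42, 58, 51, 63, 46]
test_predicted = [45, 55, 50, 59, 48]   # stand-in for the fitted model's output

# Baseline: predict the training-set mean for every test subject
baseline_value = sum(train_scores) / len(train_scores)
baseline_predicted = [baseline_value] * len(test_observed)

for name, pred in [("model", test_predicted), ("mean baseline", baseline_predicted)]:
    print(f"{name:>13}: MAE = {mae(test_observed, pred):.2f}, MSE = {mse(test_observed, pred):.2f}")

# Proportional reduction in MSE relative to the baseline (1 = perfect, 0 = no better)
skill = 1 - mse(test_observed, test_predicted) / mse(test_observed, baseline_predicted)
print(f"skill vs. baseline: {skill:.2f}")
```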

For researchers implementing these statistical methods, the following tools are essential.

Table 2: Key Research Reagent Solutions for Statistical Analysis

| Tool / Resource | Function | Application Example |

|---|---|---|

| Statistical Software (e.g., IBM SPSS, R, Python with scikit-learn) | Performs complex calculations for correlation and regression analysis [24] [21]. | Automating the calculation of the regression equation Y = a + bX and associated p-values. |

| LiDAR Data (LoD1/LoD2) | Provides high-resolution topographic and building data for predictor variables [26]. | Sourcing independent variables (e.g., building height, covered area) for real estate valuation models. |

| Pearson Correlation Coefficient (r) | Provides an initial measure of the strength and direction of a linear relationship between two variables [22] [23]. | Initial exploratory analysis to determine if further regression analysis is justified. |

| Evaluation Metrics (MAE, MSE, R-squared) | Quantifies model performance and prediction error beyond what correlation can show [14] [21]. | Determining the real-world predictive accuracy of a regression model (e.g., an average error of 9.9 m²). |

| fMRI Data | Measures brain activity for use as features in predictive models of psychological processes [14]. | Serving as independent variables in connectome-based modeling to predict behavioral indices. |

The regression equation Y = a + bX is more than a formula; it is the foundation of a powerful predictive framework. While correlation is a useful tool for initial data exploration, regression analysis provides a robust methodology for quantification, prediction, and informed decision-making. Experimental data confirms that regression models, when properly validated, offer precise and actionable insights essential for scientific research and drug development. By understanding their distinct roles and rigorously applying regression protocols, professionals can move beyond describing relationships to truly modeling and forecasting outcomes.

In quantitative method comparison studies, particularly in scientific and drug development research, the initial analytical step is often the most critical. The scatter plot serves as this foundational tool, providing an intuitive visual representation of the relationship between two continuous variables before any complex statistical models are applied. This simple yet powerful graph places the independent variable on the x-axis and the dependent variable on the y-axis, allowing researchers to immediately observe patterns, trends, and potential outliers in their data [27] [28] [29]. For scientists validating analytical methods or comparing measurement techniques, the scatter plot offers the first evidence of association, guiding subsequent statistical analysis and informing decisions about which advanced techniques—whether linear regression for prediction or correlation for assessing relationship strength—are most appropriate for their specific data structure [11].

The value of the scatter plot extends beyond mere pattern recognition. In pharmaceutical research and method validation, it provides a transparent, easily interpretable visualization that can reveal the presence of linear relationships, non-linear patterns, clustering, or anomalous observations that might compromise analytical results [28]. By serving as the initial diagnostic tool in any analytical workflow, the scatter plot helps researchers avoid misinterpretations that can occur when relying solely on summary statistics, ensuring that subsequent analyses are built upon an accurate understanding of the fundamental variable relationships [27] [11].
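Anscombe's quartet is the classic demonstration of how summary statistics can mislead. The four small datasets below share almost the same means, Pearson r and least-squares line, yet their scatter plots look completely different. This sketch only computes the statistics; plotting each pair (for example with Matplotlib) shows the difference.

```python
import numpy as np

# Anscombe's quartet: four x/y datasets with near-identical summary statistics
x_123 = np.array([10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5], dtype=float)
x_4 = np.array([8, 8, 8, 8, 8, 8, 8, 19, 8, 8, 8], dtype=float)
quartet = {
    "I": (x_123, np.array([8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68])),
    "II": (x_123, np.array([9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74])),
    "III": (x_123, np.array([7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73])),
    "IV": (x_4, np.array([6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89])),
}

summary = {}
for name, (x, y) in quartet.items():
    slope, intercept = np.polyfit(x, y, deg=1)   # least-squares line
    r = np.corrcoef(x, y)[0, 1]                  # Pearson r
    summary[name] = (x.mean(), y.mean(), r, slope, intercept)
    print(f"{name:>3}: mean x = {x.mean():.2f}, mean y = {y.mean():.2f}, "
          f"r = {r:.3f}, fit y = {intercept:.2f} + {slope:.3f}x")

# Near-identical numbers, yet the scatter plots show a linear trend, a curve,
# a line distorted by one outlier, and a vertical cluster with one leverage point.
```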

Scatter Plots in Method Comparison: An Analytical Workflow

The following diagram illustrates the essential role of scatter plots within the broader context of statistical method comparison analysis:

Comparative Analysis of Visual Relationship Patterns

The table below categorizes common relationship patterns observable in scatter plots, with their characteristics and interpretations in method comparison studies:

| Pattern Type | Visual Characteristics | Data Relationship | Interpretation in Method Comparison |

|---|---|---|---|

| Strong Positive | Dots closely follow an upward diagonal line | As variable X increases, variable Y consistently increases | Strong association between methods, but not proof of agreement; slope and intercept must still be checked for proportional and constant bias [28] |

| Strong Negative | Dots closely follow a downward diagonal line | As variable X increases, variable Y consistently decreases | Inverse relationship between methods; not typical in validation studies [28] |

| Weak/No Relationship | Dots form a shapeless cloud with no discernible direction | Changes in X show no consistent pattern with changes in Y | Poor agreement between methods; unacceptable for analytical purposes [28] |

| Non-Linear | Dots follow a curved pattern (U-shape or S-shape) | Relationship between X and Y changes direction or rate across the measurement range | Systematic bias that may be concentration-dependent; requires transformation or non-linear modeling [28] |

| Clustered | Multiple distinct groups of points with gaps between | Data naturally falls into separate categories | May indicate different patient populations or sample types that should be analyzed separately [27] |

Statistical Applications: Regression vs. Correlation in Method Comparison

Linear Regression Analysis in Method Comparison

Linear regression analysis determines the best linear relationship between data points, providing a mathematical model that can predict one variable from another [11]. In method comparison studies, this technique is particularly valuable for assessing both constant and proportional bias between two measurement methods.

Experimental Protocol for Linear Regression in Method Comparison:

- Data Collection: Obtain paired measurements from both methods across the clinically relevant range

- Scatter Plot Creation: Graph the reference method on x-axis versus the new method on y-axis

- Regression Line Fitting: Calculate the line of best fit using ordinary least squares or Deming regression

- Parameter Estimation: Determine slope (indicating proportional bias) and intercept (indicating constant bias)

- Residual Analysis: Examine differences between observed and predicted values for pattern violations

- Validation: Assess whether the 95% confidence interval for slope contains 1 and for intercept contains 0 [11]

The regression equation takes the form: y = mx + c, where m represents the slope and c the y-intercept. The coefficient of determination (R²) indicates the proportion of variance in the new method explained by the reference method [11].
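The parameter-estimation and validation steps of this protocol can be sketched with ordinary least squares. Assumptions: the paired results are invented, the reference method is on x and the new method on y, and the 95% confidence intervals use the standard OLS standard-error formulas with a t distribution on n − 2 degrees of freedom. OLS treats the reference method as essentially error-free; when both methods carry comparable error, Deming or Passing-Bablok regression is the usual alternative.

```python
import numpy as np
from scipy import stats

def ols_with_ci(x, y, level=0.95):
    """Fit y = c + m*x by OLS and return estimates with confidence intervals."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    x_mean, y_mean = x.mean(), y.mean()
    sxx = np.sum((x - x_mean) ** 2)
    slope = np.sum((x - x_mean) * (y - y_mean)) / sxx
    intercept = y_mean - slope * x_mean

    residuals = y - (intercept + slope * x)
    s_res = np.sqrt(np.sum(residuals ** 2) / (n - 2))  # residual standard error
    se_slope = s_res / np.sqrt(sxx)
    se_intercept = s_res * np.sqrt(1.0 / n + x_mean ** 2 / sxx)

    t_crit = stats.t.ppf(0.5 + level / 2, df=n - 2)
    r_squared = 1 - np.sum(residuals ** 2) / np.sum((y - y_mean) ** 2)
    return {
        "slope": slope,
        "slope_ci": (slope - t_crit * se_slope, slope + t_crit * se_slope),
        "intercept": intercept,
        "intercept_ci": (intercept - t_crit * se_intercept, intercept + t_crit * se_intercept),
        "r_squared": r_squared,
    }

# Hypothetical paired results (reference method x, new method y)
reference = [2.0, 4.1, 6.0, 8.2, 10.1, 12.0, 14.2, 16.0, 18.1, 20.0]
new_method = [2.3, 4.2, 6.4, 8.5, 10.3, 12.6, 14.5, 16.7, 18.6, 20.8]

res = ols_with_ci(reference, new_method)
slope_ok = res["slope_ci"][0] <= 1.0 <= res["slope_ci"][1]              # no proportional bias?
intercept_ok = res["intercept_ci"][0] <= 0.0 <= res["intercept_ci"][1]  # no constant bias?
print(f"slope = {res['slope']:.3f}, 95% CI {res['slope_ci'][0]:.3f} to {res['slope_ci'][1]:.3f}")
print(f"intercept = {res['intercept']:.3f}, 95% CI {res['intercept_ci'][0]:.3f} to {res['intercept_ci'][1]:.3f}")
print(f"R^2 = {res['r_squared']:.4f}; slope CI contains 1: {slope_ok}; intercept CI contains 0: {intercept_ok}")
```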

Correlation Analysis in Method Comparison

Correlation coefficients quantify the strength and direction of the association between two variables without implying causation [11]. While useful for establishing that two methods are related, correlation alone is insufficient for method agreement assessment.

Experimental Protocol for Correlation Analysis:

- Data Requirements: Collect paired measurements covering the analytical measurement range

- Correlation Coefficient Calculation: Compute Pearson's r for linear relationships or Spearman's rho for monotonic relationships

- Significance Testing: Determine if the observed correlation is statistically different from zero

- Interpretation: Evaluate the clinical relevance of the correlation strength [11]

Pearson's correlation coefficient (r) ranges from -1 to +1, with values closer to ±1 indicating stronger linear relationships. However, high correlation does not necessarily imply good agreement between methods, as it measures association rather than equivalence [11].
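The gap between association and agreement can be checked numerically. In this hypothetical sketch the new method reads exactly twice the reference value: Pearson's r is 1, yet no result agrees. A second, monotonic but non-linear case shows where Spearman's rho (rank-based) and Pearson's r (linear) part ways. All values are invented for illustration.

```python
import numpy as np
from scipy import stats

reference = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])

# Case 1: perfect linear relationship but a 100% proportional bias
new_method = 2.0 * reference
pearson_r, p_value = stats.pearsonr(reference, new_method)
mean_difference = np.mean(new_method - reference)

# Case 2: monotonic but non-linear (exponential) relationship
curved = np.exp(reference)
pearson_curved = stats.pearsonr(reference, curved)[0]
spearman_curved = stats.spearmanr(reference, curved)[0]

print(f"Linear, biased: r = {pearson_r:.3f}, mean difference = {mean_difference:.2f}")
print(f"Monotonic curve: Pearson r = {pearson_curved:.3f}, Spearman rho = {spearman_curved:.3f}")
```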

Advanced Visualization Techniques for Enhanced Data Interpretation

Incorporating Additional Variables through Visual Encodings

The basic scatter plot can be enhanced to incorporate additional variables through various visual encodings, creating more informative visualizations for complex datasets:

Addressing Common Visualization Challenges

Overplotting Solutions:

- Transparency: Apply alpha blending to distinguish dense areas [27]

- Subsampling: Use random sampling for large datasets while preserving patterns [27]

- Binning: Implement 2D histograms or heatmaps for extremely dense data [27]

- Jittering: Add minimal random noise to separate overlapping points [28]
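The four overplotting remedies above can be compared side by side in a short Matplotlib sketch. The data are simulated, and the alpha value, subsample fraction, jitter width and hexbin grid size are arbitrary illustrative choices rather than recommendations.

```python
import io

import numpy as np
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch runs headless
import matplotlib.pyplot as plt

rng = np.random.default_rng(0)
n = 20_000
x = rng.normal(size=n)
y = 0.8 * x + rng.normal(scale=0.6, size=n)
# Discretised variable (e.g. an integer score) that stacks points on top of each other
score = rng.integers(0, 5, size=n)

fig, axes = plt.subplots(1, 4, figsize=(16, 4))

# 1. Transparency: dense regions appear darker
axes[0].scatter(x, y, s=4, alpha=0.05)
axes[0].set_title("Transparency (alpha=0.05)")

# 2. Subsampling: plot a random 10% of points
idx = rng.choice(n, size=n // 10, replace=False)
axes[1].scatter(x[idx], y[idx], s=4)
axes[1].set_title("Random 10% subsample")

# 3. Binning: hexagonal 2D histogram of point counts
hb = axes[2].hexbin(x, y, gridsize=40, cmap="viridis")
fig.colorbar(hb, ax=axes[2], label="count")
axes[2].set_title("Hexbin (2D histogram)")

# 4. Jittering: small horizontal noise separates stacked integer values
jitter = rng.uniform(-0.15, 0.15, size=n)
axes[3].scatter(score + jitter, y, s=4, alpha=0.1)
axes[3].set_title("Jittered integer X")

fig.tight_layout()
buffer = io.BytesIO()
fig.savefig(buffer, format="png", dpi=100)  # write to memory instead of disk
```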

Interpretation Pitfalls:

- Correlation ≠ Causation: Observed relationships may be influenced by unmeasured confounding variables [27]

- Outlier Impact: Extreme values can disproportionately influence trend lines and correlation coefficients [28]

- Range Restriction: Limited measurement ranges can artificially deflate correlation estimates [11]

- Subgroup Masking: Aggregated data may hide important patterns visible only in subgroups [28]
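Two of these pitfalls, range restriction and outlier impact, are easy to demonstrate by simulation. The sketch below uses simulated data with an assumed population correlation of 0.8: keeping only samples within a narrow band of X markedly lowers r, and a single extreme point can manufacture a strong correlation in otherwise unrelated data. The numbers are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 5_000
x = rng.normal(size=n)
y = 0.8 * x + rng.normal(scale=0.6, size=n)  # population r = 0.8 / sqrt(0.64 + 0.36) = 0.8

def pearson(a, b):
    """Pearson correlation coefficient of two 1-D arrays."""
    return float(np.corrcoef(a, b)[0, 1])

r_full = pearson(x, y)

# Range restriction: keep only samples whose X lies within +/- 0.5 SD of the mean
band = np.abs(x) < 0.5
r_restricted = pearson(x[band], y[band])

# Outlier impact: 20 unrelated points, then one extreme point added
x_small = rng.normal(size=20)
y_small = rng.normal(size=20)
r_small = pearson(x_small, y_small)
r_with_outlier = pearson(np.append(x_small, 10.0), np.append(y_small, 10.0))

print(f"Full range r = {r_full:.2f}; restricted range r = {r_restricted:.2f}")
print(f"Unrelated data r = {r_small:.2f}; with one outlier r = {r_with_outlier:.2f}")
```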

Essential Research Reagent Solutions for Analytical Studies

The table below details key computational tools and statistical approaches essential for conducting rigorous scatter plot analysis in method comparison studies:

| Tool Category | Specific Solutions | Primary Function | Application in Analysis |

|---|---|---|---|

| Statistical Software | JMP, Analyze-it, R, Python with Matplotlib | Automated calculation of regression parameters and correlation coefficients | Efficient implementation of complex statistical analyses with visualization capabilities [11] |

| Regression Methods | Ordinary Least Squares, Deming Regression, Passing-Bablok | Model fitting for relationship quantification | Accounting for different error structures in comparative measurements [11] |

| Color Palettes | Qualitative, Sequential, Diverging schemes [30] | Visual encoding of categorical and numerical variables | Enhancing plot interpretability through strategic color application [31] |

| Validation Frameworks | Bland-Altman with Regression, Mountain Plots | Comprehensive method comparison beyond correlation | Assessing both statistical and clinical significance of observed relationships [11] |

The scatter plot remains an indispensable first step in any analytical workflow, particularly in method comparison studies essential to pharmaceutical research and drug development. Its ability to provide immediate visual insight into data relationships guides researchers in selecting appropriate statistical approaches, whether regression for predictive modeling or correlation for association assessment. While advanced statistical techniques have their place, the insight gained from a well-constructed scatter plot ensures that subsequent analyses are grounded in an accurate understanding of the underlying data structure. For scientists validating analytical methods or comparing measurement techniques, this simple visualization provides the foundation upon which reliable conclusions are built, making it the essential first step in any analysis.

Core Conceptual Differences

This section details the fundamental distinctions between correlation and linear regression, covering their basic definitions, purposes, and the nature of their outputs.

Table 1: Fundamental Concepts and Goals

| Feature | Correlation | Linear Regression |

|---|---|---|

| Core Purpose | Measures the strength and direction of a linear association between two numeric variables. [32] [33] | Describes the linear relationship between a response variable and an explanatory variable; used for prediction. [32] [34] |

| Variable Roles | No designation of dependent or independent variables; the relationship is symmetric. [33] [34] | Clear designation of a dependent (response) variable and an independent (explanatory) variable. [32] [1] |

| Output | A single coefficient (r) between -1 and +1. [33] [1] | An equation (Y = a + bX) defining a line, including a slope and intercept. [32] [34] |

| Causality | Does not imply causation. [33] [1] | Can suggest causation if supported by a properly designed experiment, but the model itself does not prove it. [1] |

| Primary Question | "Are these two variables related, and how strong is that relationship?" [1] | "Can we predict the dependent variable (Y) based on the independent variable (X), and by how much does Y change with X?" [1] |

Quantitative Metrics and Units

This section compares the specific metrics, calculations, and units of measurement for both methods, highlighting how they handle the data differently.

Table 2: Formulas, Units, and Metric Interpretation

| Aspect | Correlation | Linear Regression |

|---|---|---|

| Key Metric | Pearson Correlation Coefficient (r). [32] [33] | Regression Coefficient (b), also known as the slope. [32] [34] |

| Calculation | \( r = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n} (x_i - \bar{x})^2 \sum_{i=1}^{n} (y_i - \bar{y})^2}} \) [32] | Slope (b) is estimated via least squares to minimize the sum of squared residuals. [32] [34] |

| Metric Range | -1 to +1. [32] [33] | -∞ to +∞. |

| Interpretation | +1: Perfect positive linear relationship; 0: No linear relationship; -1: Perfect negative linear relationship. [32] [33] | The average change in the dependent variable (Y) for every one-unit change in the independent variable (X). [32] [33] |

| Units | Dimensionless; a pure number without units. [34] | The slope (b) has units: (Units of Y) / (Units of X). [34] |
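The difference in units can be verified directly. In this sketch a hypothetical dose is converted from milligrams to grams: r is unchanged, while the slope, expressed in units of Y per unit of X, is multiplied by 1000. The sketch also checks the identity b = r · s_y / s_x that links the two metrics. The dose and concentration values are invented.

```python
import numpy as np

# Hypothetical data: dose in mg and plasma concentration in ng/mL
dose_mg = np.array([50.0, 100.0, 150.0, 200.0, 250.0, 300.0])
conc = np.array([12.0, 22.5, 35.1, 44.8, 57.2, 66.0])
dose_g = dose_mg / 1000.0  # same doses expressed in grams

def pearson_r(x, y):
    """Pearson r from centred sums of products."""
    dx, dy = x - x.mean(), y - y.mean()
    return np.sum(dx * dy) / np.sqrt(np.sum(dx ** 2) * np.sum(dy ** 2))

def ols_slope(x, y):
    """Least-squares slope of y on x."""
    dx = x - x.mean()
    return np.sum(dx * (y - y.mean())) / np.sum(dx ** 2)

r_mg, r_g = pearson_r(dose_mg, conc), pearson_r(dose_g, conc)
b_mg, b_g = ols_slope(dose_mg, conc), ols_slope(dose_g, conc)

# Slope recovered from r and the standard deviations
b_from_r = r_mg * conc.std(ddof=1) / dose_mg.std(ddof=1)

print(f"r (mg) = {r_mg:.4f}, r (g) = {r_g:.4f}")  # unit change leaves r unchanged
print(f"slope = {b_mg:.4f} (ng/mL per mg) = {b_g:.1f} (ng/mL per g)")
print(f"slope from r * s_y / s_x = {b_from_r:.4f}")
```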

Experimental Protocols and Methodologies

Applying correlation and regression analyses requires a structured approach to ensure valid and reliable results. The following workflow outlines the key steps, from data preparation to interpretation.

Detailed Experimental Protocol

The workflow diagram above provides a high-level overview. The following sections elaborate on the critical steps for conducting robust analyses.

Step 1: Data Collection and Preparation

Step 2: Check Statistical Assumptions

- Shared Assumptions:

- Additional Assumptions for Regression Inference:

- Normality: For any fixed value of the independent variable (X), the dependent variable (Y) is normally distributed. This is checked by confirming the residuals (errors) are normally distributed. [32] [33]

- Constant Variance (Homoscedasticity): The variability of the residuals is constant across all values of X. This is assessed using a residual plot (residuals vs. fitted values), which should show no funneling or patterned shape. [32] [34]
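These residual checks are usually done visually, but simple formal tests can complement the plots. The sketch below is an assumption of this guide rather than a procedure prescribed by the cited sources: it uses SciPy's Shapiro-Wilk test for residual normality and a hand-coded Breusch-Pagan-type test in Koenker's n·R² form (squared residuals regressed on X, compared to a chi-square distribution with 1 degree of freedom) for constant variance. The data are simulated, and neither test replaces inspecting the plots.

```python
import numpy as np
from scipy import stats

def residual_diagnostics(x, y):
    """Fit OLS, then test residual normality and constant variance."""
    fit = stats.linregress(x, y)
    residuals = y - (fit.intercept + fit.slope * x)

    # Normality of residuals (Shapiro-Wilk); small p suggests non-normality
    shapiro_p = stats.shapiro(residuals)[1]

    # Breusch-Pagan, Koenker form: regress squared residuals on x;
    # LM = n * R^2 ~ chi-square(1) under homoscedasticity
    aux = stats.linregress(x, residuals ** 2)
    lm_stat = x.size * aux.rvalue ** 2
    bp_p = stats.chi2.sf(lm_stat, df=1)
    return shapiro_p, bp_p

rng = np.random.default_rng(1)
x = np.linspace(1, 10, 200)
y_const = 2 + 0.5 * x + rng.normal(scale=1.0, size=x.size)       # constant variance
y_funnel = 2 + 0.5 * x + rng.normal(scale=0.3 * x, size=x.size)  # variance grows with x

for label, y in [("constant variance", y_const), ("funnel-shaped", y_funnel)]:
    shapiro_p, bp_p = residual_diagnostics(x, y)
    print(f"{label}: Shapiro-Wilk p = {shapiro_p:.3f}, Breusch-Pagan p = {bp_p:.4f}")
```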

Step 3: Perform Correlation Analysis

Step 4: Perform Regression Analysis

- Use the method of least squares to fit a regression line (Y = a + bX) to the data, which minimizes the sum of squared differences between the observed and predicted values. [32] [34]

- Obtain estimates for the slope (b) and intercept (a), along with their standard errors and confidence intervals.

- Test the null hypothesis that the slope is equal to zero (H₀: b = 0) using a t-test. [33] [34] Note that for simple linear regression, the p-value for this test is identical to the p-value for the correlation coefficient. [33]
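The equivalence between the slope test and the correlation test follows from t = r·√(n − 2)/√(1 − r²), which is the same t statistic as b / SE(b). A sketch on simulated data, using SciPy's `linregress` and `pearsonr` (both two-sided by default):

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(7)
x = rng.uniform(0, 10, size=30)
y = 1.5 + 0.4 * x + rng.normal(scale=1.5, size=30)

reg = stats.linregress(x, y)       # t-test for H0: b = 0
r, p_corr = stats.pearsonr(x, y)   # test for H0: rho = 0

# The t statistic computed both ways
t_from_slope = reg.slope / reg.stderr
t_from_r = r * np.sqrt(x.size - 2) / np.sqrt(1 - r ** 2)

print(f"regression slope p = {reg.pvalue:.6g}, correlation p = {p_corr:.6g}")
print(f"t from slope = {t_from_slope:.4f}, t from r = {t_from_r:.4f}")
```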

Step 5: Validate the Regression Model

Step 6: Interpret and Report Results

- For correlation, report the r value and its p-value, commenting on the strength and direction of the linear relationship. [33]

- For regression, report the regression equation, the estimated slope with its confidence interval and p-value, and the coefficient of determination (R²), which indicates the proportion of variance in Y explained by X. [32] [34]

In the context of statistical analysis for drug development, the "reagents" are the methodologies, software, and regulatory frameworks that ensure robust and credible results.

Table 3: Essential Tools for Statistical Analysis in Drug Development

| Tool / Methodology | Function in Analysis |

|---|---|

| Statistical Software (e.g., Genstat, R) | Used to calculate correlation coefficients, fit regression models, generate diagnostic plots, and perform hypothesis tests, ensuring accuracy and efficiency. [32] [33] |

| Design of Experiments (DOE) | A systematic method to determine the relationship between factors affecting a process and the output of that process. It allows for studying multiple factors simultaneously to maximize information with minimum experimental runs. [36] |

| Bayesian Statistical Methods | An approach that incorporates prior knowledge or beliefs with new data to provide updated probabilities. This can make clinical trials more efficient by allowing for adaptations and potentially requiring fewer participants. [37] |

| Real-World Evidence (RWE) | Data collected from outside traditional clinical trials (e.g., from electronic health records). RWE can be used to inform trial design and provide supplementary evidence of a drug's effectiveness and safety. [38] |

| ICH Guidelines (e.g., Q2(R1), Q8, Q9, Q10) | Provide a regulatory framework for analytical method validation (Q2(R1)) and implementing Quality by Design (QbD) in drug development (Q8, Q9, Q10), ensuring scientific rigor and regulatory compliance. [36] |

Application in Drug Development and Research

Correlation and regression are not just academic exercises; they are fundamental to various stages of drug development.

- Preclinical Studies: These initial studies rely on proper statistical design and analysis, including regression, to add rigor and quality. Decisions to advance to human clinical trials hinge on the results of these analyses. [39]

- Pharmacogenomics and Biomarker Discovery: Correlation analysis is used to identify relationships between genetic markers and patient responses to treatment. This helps in stratifying patients into subgroups to determine the right dosage or identify those most likely to benefit from a therapy. [38]

- Stability Studies and Shelf-Life Estimation: Regression models are applied to stability data to estimate the shelf life of a drug product by modeling the degradation of the active ingredient over time. [36]

- Clinical Trial Efficiency: Adaptive trial designs, which often use Bayesian methods (incorporating regression concepts), allow for modifications during the trial. This can lead to more efficient studies with fewer patients and a greater chance of detecting a true drug effect. [38] [37]
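As an illustration of the stability application, the sketch below fits a straight line to hypothetical assay results (% of label claim over months) and estimates shelf life as the earliest time at which the one-sided 95% lower confidence bound for the mean regression line falls below an assumed 95% specification limit. This follows the general approach described in ICH Q1E for a decreasing attribute, but the data, the limit, and the single-batch linear-degradation model are assumptions; real evaluations also address batch poolability and limits on extrapolation.

```python
import numpy as np
from scipy import stats

# Hypothetical single-batch stability data
months = np.array([0, 3, 6, 9, 12, 18, 24], dtype=float)
assay = np.array([100.2, 99.6, 99.1, 98.7, 98.0, 97.1, 96.3])  # % of label claim
spec_limit = 95.0  # assumed lower acceptance criterion

n = months.size
fit = stats.linregress(months, assay)
residuals = assay - (fit.intercept + fit.slope * months)
s = np.sqrt(np.sum(residuals ** 2) / (n - 2))  # residual standard error
sxx = np.sum((months - months.mean()) ** 2)
t_one_sided = stats.t.ppf(0.95, df=n - 2)

def lower_bound(t):
    """One-sided 95% lower confidence bound for the mean assay at time t."""
    mean_pred = fit.intercept + fit.slope * t
    se_mean = s * np.sqrt(1.0 / n + (t - months.mean()) ** 2 / sxx)
    return mean_pred - t_one_sided * se_mean

# Scan a fine time grid for the first point where the bound drops below the limit
grid = np.arange(0.0, 60.0, 0.1)
below = grid[lower_bound(grid) < spec_limit]
shelf_life = below[0] if below.size else None

print(f"Degradation rate: {fit.slope:.3f} % of label claim per month")
print(f"Estimated shelf life: {shelf_life:.1f} months" if shelf_life is not None
      else "Limit not reached within 60 months")
```

Using the confidence bound rather than the fitted line itself makes the estimate conservative: the shelf life is always shorter than the time at which the mean line alone reaches the limit.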

From Theory to Practice: Implementing Regression and Correlation in Biomedical Research

Table of Contents

- Introduction to Research Designs

- Defining the Core Concepts

- Key Differences: A Comparative Overview

- Statistical Analysis: Correlation and Regression in Context

- Experimental Protocols for Method Comparison

- Visualizing Study Design and Analysis

- The Researcher's Toolkit for Method Comparison

In scientific research, particularly in fields like drug development and clinical science, the choice of study design is foundational to the validity and interpretability of the results. Two primary approaches, statistically designed experiments and observational studies, offer distinct pathways for investigating relationships between variables [40] [41]. Statistically designed experiments, of which randomized controlled trials (RCTs) are the best-known example in clinical research, actively intervene to test a hypothesis. In contrast, observational studies meticulously record data without intervening in the processes being studied [41]. The selection between these designs directly influences the analytical methods used, such as linear regression and correlation, and fundamentally determines the strength of the conclusions that can be drawn, especially regarding causality [40] [42]. This guide provides an objective comparison of these two paradigms, framing them within the context of method comparison and statistical analysis.

Defining the Core Concepts

Statistically Designed Experiments

A statistically designed experiment is a controlled investigation where researchers actively manipulate one or more independent variables (or factors) to observe the effect on a dependent variable (outcome) [40]. The key feature of this design is the direct control researchers exert over the experimental conditions. The most robust form of this design is the Randomized Controlled Trial (RCT), where subjects are randomly assigned to either an intervention group (e.g., receiving a new drug) or a control group (e.g., receiving a placebo or standard treatment) [41] [43]. Randomization serves to equalize the experimental groups at the start of the study, minimizing the influence of confounding variables—other factors that could otherwise explain the observed results [40].

Observational Studies

Observational studies involve measuring variables of interest without any attempt to change the conditions the subjects experience [40]. Researchers observe and collect data on individuals, groups, or phenomena as they naturally occur. Common types of observational studies include [41] [43]:

- Cohort Studies: A group of people (the cohort) are followed over time to see how their exposures affect outcomes.

- Case-Control Studies: Researchers identify individuals with an existing health problem ("cases") and a similar group without the problem ("controls"), then compare their exposure histories.

- Cross-Sectional Studies: Data are collected from a population at a single point in time, providing a "snapshot" [43].

The following table summarizes the fundamental differences between these two research approaches, highlighting their respective strengths and weaknesses.

Table 1: Core Differences Between Observational Studies and Experiments

| Aspect | Observational Study | Statistically Designed Experiment |

|---|---|---|

| Control & Manipulation | No intervention; researchers observe and measure variables without manipulating them [40]. | Researchers actively manipulate independent variables and control the study environment [40]. |

| Randomization | Not used; subjects are not randomly assigned to exposure groups [40]. | Random assignment of subjects is a standard practice to create comparable groups [40] [41]. |

| Establishing Causality | It is difficult to establish causality due to the potential for confounding biases [40] [41]. | Considered the gold standard for establishing cause-and-effect relationships [40] [41]. |

| Real-World Insight | High external validity; reflects real-world scenarios as they naturally occur [40]. | Can have limited real-world insight due to controlled, often artificial, settings [40]. |

| Susceptibility to Confounding | Highly susceptible to the effects of confounding variables [40]. | Low susceptibility due to randomization and controlled conditions [40]. |

| Cost & Time Efficiency | Generally less expensive and time-consuming [40]. | Often expensive and time-intensive [40] [41]. |

| Ethical Considerations | Essential when it is unethical to assign exposures (e.g., studying smoking effects) [40]. | Not feasible when the exposure is harmful or unethical to assign [40]. |

Statistical Analysis: Correlation and Regression in Context

The choice of study design directly influences the statistical tools used for analysis. In both observational and experimental studies, researchers often investigate relationships between variables, commonly using correlation and regression analysis.

Correlation Analysis

Correlation quantifies the degree, strength, and direction of a linear relationship between two numeric variables [44] [33]. The Pearson correlation coefficient (r) ranges from -1 (perfect negative relationship) to +1 (perfect positive relationship), with 0 indicating no linear relationship [44] [45].

- Purpose: To measure the association between two variables without assuming a cause-and-effect direction [44] [46].

- Interpretation: A strong correlation indicates that two variables change together in a predictable pattern. However, correlation does not imply causation [45] [46]. Two variables may be correlated due to a third, unmeasured confounding variable [40].

- Use in Study Designs: While correlation can be used in any design, it is most common in observational studies. It is crucial to remember that a high correlation coefficient alone does not mean two methods are comparable, as it only assesses the strength of a linear relationship, not the agreement between methods [47].

Linear Regression Analysis

Linear regression is used to model the relationship between a dependent (outcome) variable and one or more independent (predictor) variables [44] [33]. In simple linear regression, the model is represented by the equation Y = β₀ + β₁X, where Y is the outcome, X is the predictor, β₀ is the intercept, and β₁ is the slope [44].

- Purpose: To predict or estimate the value of an outcome based on the value of a predictor. The slope (β₁) gives the average change in Y for a one-unit increase in X [44] [33].

- Interpretation: In method comparison studies, linear regression is used to quantify systematic error. The intercept (β₀) estimates constant bias, and the slope (β₁) estimates proportional bias between two measurement methods [48].

- Use in Study Designs: Regression is widely used in both observational and experimental studies. It is particularly valuable for controlling for multiple variables simultaneously in observational studies and for analyzing results in experiments [44].

Comparing Correlation and Regression

Table 2: Correlation vs. Simple Linear Regression at a Glance

| Feature | Correlation | Simple Linear Regression |

|---|---|---|

| Primary Goal | Measure the strength and direction of a linear association [44] [46]. | Model the relationship to predict the outcome from the predictor [44] [46]. |

| Variables | Variables are symmetric (interchangeable); the correlation of X with Y is the same as Y with X [46]. | Variables are asymmetric; designating the outcome (Y) and predictor (X) is critical [44] [46]. |

| Output | A single coefficient (r) [44]. | An equation (slope and intercept) for making predictions [33] [46]. |

| Causality | Does not address causation [45] [46]. | Does not alone prove causation, but models a predictive relationship [46]. |

| Standardized Coefficient | The correlation coefficient (r) is standardized. | The standardized regression coefficient is equal to Pearson's r [46]. |
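The symmetry and standardization rows of Table 2 can be checked numerically on simulated data. Swapping X and Y leaves r unchanged but changes the fitted slope, and regressing z-scored Y on z-scored X returns a slope equal to r. The product of the two raw slopes equals r², which is another way of seeing that they coincide only when |r| = 1 or both variables have equal variance.

```python
import numpy as np

rng = np.random.default_rng(3)
x = rng.normal(50, 10, size=100)
y = 0.6 * x + rng.normal(0, 5, size=100)

def slope(pred, outcome):
    """OLS slope for outcome regressed on pred."""
    d = pred - pred.mean()
    return np.sum(d * (outcome - outcome.mean())) / np.sum(d ** 2)

def zscore(v):
    """Standardise to mean 0, SD 1."""
    return (v - v.mean()) / v.std(ddof=1)

r_xy = np.corrcoef(x, y)[0, 1]
r_yx = np.corrcoef(y, x)[0, 1]
b_y_on_x = slope(x, y)
b_x_on_y = slope(y, x)
b_standardized = slope(zscore(x), zscore(y))

print(f"r(X,Y) = {r_xy:.4f}, r(Y,X) = {r_yx:.4f}")
print(f"slope Y on X = {b_y_on_x:.4f}, slope X on Y = {b_x_on_y:.4f}")
print(f"standardized slope = {b_standardized:.4f}")
# b_yx * b_xy = r^2
```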

Experimental Protocols for Method Comparison

A common application of these principles in laboratory science is the method comparison study, which assesses the agreement between a new measurement procedure and an existing one [47] [48]. The following protocol outlines the key steps for a robust comparison.

Study Design and Sample Preparation

- Sample Size: A minimum of 40, and preferably 100 or more, patient specimens should be tested [47] [48]. Larger sample sizes help identify unexpected errors.

- Sample Selection: Specimens should be carefully selected to cover the entire clinically meaningful measurement range [47] [48].

- Replication: Duplicate measurements for both the current and new method are advisable to minimize random variation [47].

- Timeframe: Measurements should be performed over several days (at least 5) and multiple analytical runs to mimic real-world conditions and account for day-to-day variability [47] [48].

Data Collection and Analysis

- Randomization: The sample analysis sequence should be randomized to avoid carry-over effects and systematic bias [47].

- Graphical Analysis (Visual Inspection): Before statistical tests, data should be plotted.

- Scatter Plot: Plot the results from the new method (Y-axis) against the current method (X-axis). This helps visualize the relationship and identify outliers or non-linear patterns [47] [48].

- Difference Plot (Bland-Altman Plot): Plot the differences between the two methods (Y-axis) against the average of the two methods (X-axis). This is critical for assessing agreement and identifying any relationship between the difference and the magnitude of measurement [47].

- Statistical Analysis:

- Inappropriate Tests: Correlation analysis and t-tests are not adequate for assessing method comparability. Correlation measures association, not agreement, and t-tests may miss clinically meaningful differences or be misled by small sample sizes [47].

- Appropriate Tests: Use linear regression analysis to obtain the slope and intercept, which quantify proportional and constant bias, respectively [48]. The systematic error at a critical medical decision concentration (Xc) can be calculated as SE = (a + b*Xc) - Xc, where 'a' is the intercept and 'b' is the slope [48].
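The difference-plot statistics and the systematic-error formula can be sketched together. Assumptions: the paired results are invented, the limits of agreement use the common mean difference ± 1.96 SD convention, the decision concentration Xc = 126 is hypothetical, and the regression is ordinary least squares, which treats the comparative method as error-free.

```python
import numpy as np

# Hypothetical paired results (same units) for 12 patient specimens
current = np.array([70, 85, 96, 110, 124, 131, 150, 172, 195, 220, 250, 290], dtype=float)
new = np.array([73, 86, 100, 115, 128, 137, 154, 179, 201, 228, 258, 300], dtype=float)

# Bland-Altman statistics: differences plotted against averages
diff = new - current          # y-axis of the difference plot
avg = (new + current) / 2     # x-axis of the difference plot
bias = diff.mean()
sd_diff = diff.std(ddof=1)
loa_low, loa_high = bias - 1.96 * sd_diff, bias + 1.96 * sd_diff

# OLS regression of new (Y) on current (X): intercept a, slope b
b, a = np.polyfit(current, new, deg=1)

# Systematic error at a medical decision concentration Xc: SE = (a + b*Xc) - Xc
xc = 126.0  # hypothetical decision level
yc = a + b * xc
systematic_error = yc - xc

print(f"Mean difference (bias) = {bias:.2f}; 95% limits of agreement {loa_low:.2f} to {loa_high:.2f}")
print(f"Regression: Y = {a:.2f} + {b:.4f}X")
print(f"At Xc = {xc:.0f}: Yc = {yc:.2f}, systematic error = {systematic_error:.2f}")
```

The differences in this invented dataset grow with concentration, which the difference plot would show as an upward trend and the regression would show as a slope above 1.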

Visualizing Study Design and Analysis

The following diagram illustrates the key decision points and analytical pathways in choosing and executing a study design for method comparison.

Diagram 1: Pathway for Designing and Analyzing a Method Comparison Study.

The Researcher's Toolkit for Method Comparison

Successful execution of a method comparison study relies on careful planning and the use of appropriate materials and statistical tools. The following table details key components.

Table 3: Essential Reagents and Tools for Method Comparison Studies

| Item | Function & Importance |

|---|---|

| Patient Specimens (n ≥ 40, preferably ≥ 100) | The fundamental reagent. Must cover the entire clinical reporting range to properly evaluate method performance across all potential values [47] [48]. |

| Reference Method / Comparative Method | The benchmark against which the new method is tested. An ideal reference method has documented correctness. For routine methods, differences must be carefully interpreted to identify which method is inaccurate [48]. |

| Statistical Software (R, SAS, etc.) | Essential for performing regression analysis, calculating correlation coefficients, and generating high-quality scatter and difference plots for visual data inspection [33]. |

| Scatter Plots | A graphical tool used as a first step in data analysis to visualize the relationship between two methods and identify outliers, linearity, and the range of data [47] [48]. |

| Bland-Altman Plots (Difference Plots) | A critical graphical method for assessing agreement between two measurement techniques. It plots the differences between methods against their averages, helping to identify bias and its relation to the magnitude of measurement [47]. |

| Linear Regression Analysis | The primary statistical procedure for quantifying the constant (intercept) and proportional (slope) bias between two methods, allowing for the estimation of systematic error at medically important decision concentrations [48]. |

In statistical analysis, particularly in fields such as drug development and scientific research, understanding the relationship between variables is fundamental. While both correlation and linear regression explore linear relationships between two quantitative variables, they serve distinct purposes and are often confused. Correlation quantifies the strength and direction of the linear relationship between two variables, producing a correlation coefficient (r) that ranges from -1 to +1 [49] [50]. In contrast, linear regression is a predictive modeling technique that finds the best-fit line to predict a dependent variable (Y) from an independent variable (X) [49] [51]. This distinction is crucial: correlation assesses association, while regression enables prediction and explanation of variable relationships [50] [46].

The method of Least Squares is the most common technique for fitting a linear regression line, determining the line that minimizes the sum of the squared vertical distances (residuals) between the observed data points and the line itself [52] [53]. This method is foundational to ordinary least squares (OLS) regression, providing the best linear unbiased estimates under certain assumptions [53]. For researchers comparing analytical methods, understanding both the theoretical foundation and practical application of least squares regression is essential for appropriate implementation and interpretation.

Conceptual Framework: Least Squares Regression and Correlation

The Least Squares Method

The core objective of the least squares method in simple linear regression is to find the line that minimizes the sum of squared residuals [53]. The regression model takes the form $y_i = \beta_0 + \beta_1 x_i + \varepsilon_i$, where $\varepsilon_i$ is the unobservable random error [51] [54]. A residual, $e_i = y_i - \hat{y}_i$, is the observed counterpart of that error: the difference between the observed value $y_i$ and the value $\hat{y}_i$ predicted by the fitted line [51] [53]. Least squares chooses the estimates $\hat{\beta}_0$ and $\hat{\beta}_1$ that minimize $\sum_i (y_i - \hat{y}_i)^2$.

The formulas for calculating the slope (β1) and intercept (β0) of the regression line are derived through calculus by setting the derivatives of the sum of squared residuals with respect to each parameter to zero [54]. This process yields the following parameter estimates [55] [54]:

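- Slope: $\hat{\beta}_1 = \dfrac{\sum_i (x_i - \bar{x})(y_i - \bar{y})}{\sum_i (x_i - \bar{x})^2} = \dfrac{s_{xy}}{s_x^2}$

- Intercept: $\hat{\beta}_0 = \bar{y} - \hat{\beta}_1 \bar{x}$

where $\bar{x}$ and $\bar{y}$ are the sample means, $s_{xy}$ is the sample covariance of x and y, and $s_x^2$ is the sample variance of x [54].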
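The two estimator formulas translate almost line for line into code. The sketch below is a minimal illustration, not a production routine; the helper name `least_squares_fit` is hypothetical, and it is applied to the sunshine and ice-cream data that appear in Table 4 later in this section.

```python
from statistics import mean

def least_squares_fit(x, y):
    """Return (intercept, slope) of the OLS line y = b0 + b1 * x (hypothetical helper)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    x_bar, y_bar = mean(x), mean(y)
    # Sum of cross-products of deviations, i.e. (n - 1) * s_xy
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    # Sum of squared x deviations, i.e. (n - 1) * s_x^2
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x has zero variance; the slope is undefined")
    b1 = sxy / sxx           # the (n - 1) factors cancel, so this equals s_xy / s_x^2
    b0 = y_bar - b1 * x_bar  # the fitted line passes through (x_bar, y_bar)
    return b0, b1

x = [2, 3, 5, 7, 9]      # sunshine hours (Table 4)
y = [4, 5, 7, 10, 15]    # ice creams sold (Table 4)
b0, b1 = least_squares_fit(x, y)
print(f"slope = {b1:.3f}, intercept = {b0:.3f}")  # slope = 1.518, intercept = 0.305
```

Working with deviations from the means, rather than raw sums, is the numerically safer of the two algebraically equivalent forms when values are large.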

Correlation Coefficient

The Pearson correlation coefficient (r) measures the strength and direction of a linear relationship between two variables [49]. It is calculated as $r = s_{xy} / (s_x s_y)$, where $s_x$ and $s_y$ are the standard deviations of x and y, respectively [49]. The value of r always falls between -1 and +1, with values closer to these extremes indicating stronger linear relationships [49].

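A key relationship links the correlation coefficient and the regression slope: since $\hat{\beta}_1 = s_{xy}/s_x^2$ and $r = s_{xy}/(s_x s_y)$, it follows that $\hat{\beta}_1 = r \, s_y / s_x$. Consequently, in simple linear regression the standardized regression coefficient (the slope obtained after converting both variables to z-scores) equals Pearson's correlation coefficient [46]. Furthermore, the square of the correlation coefficient (r²) equals the coefficient of determination (R²) in simple linear regression, which measures the proportion of variance in the dependent variable explained by the independent variable [46].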
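These identities can be checked numerically. The sketch below, which assumes nothing beyond the Python standard library and reuses the Table 4 data, computes r from the covariance and standard deviations, then verifies that the slope equals r·s_y/s_x, that the slope on z-scores equals r, and that R² equals r².

```python
from statistics import stdev

x = [2, 3, 5, 7, 9]      # Table 4 sunshine hours
y = [4, 5, 7, 10, 15]    # Table 4 ice creams sold
n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n

# Sample covariance and sample standard deviations (all with n - 1 denominators)
s_xy = sum((a - x_bar) * (b - y_bar) for a, b in zip(x, y)) / (n - 1)
s_x, s_y = stdev(x), stdev(y)

r = s_xy / (s_x * s_y)           # Pearson correlation
b1 = s_xy / s_x ** 2             # OLS slope
b0 = y_bar - b1 * x_bar          # OLS intercept

# The slope is the correlation rescaled by the ratio of standard deviations
assert abs(b1 - r * s_y / s_x) < 1e-9

# Regressing z-scores on z-scores gives a slope equal to r
z_x = [(a - x_bar) / s_x for a in x]
z_y = [(b - y_bar) / s_y for b in y]
beta_std = sum(a * b for a, b in zip(z_x, z_y)) / sum(a * a for a in z_x)
assert abs(beta_std - r) < 1e-9

# R^2 = 1 - SSE/SST equals r^2 in simple linear regression
sse = sum((b - (b0 + b1 * a)) ** 2 for a, b in zip(x, y))
sst = sum((b - y_bar) ** 2 for b in y)
r_squared = 1 - sse / sst
assert abs(r_squared - r ** 2) < 1e-9
print(f"r = {r:.3f}, R^2 = {r_squared:.3f}")  # r = 0.980, R^2 = 0.960
```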

Key Conceptual Differences

The following table summarizes the fundamental differences between correlation and linear regression:

Table 1: Comparison between Correlation and Linear Regression

| Aspect | Correlation | Simple Linear Regression |
|---|---|---|
| Primary Goal | Quantify relationship strength [50] | Predict Y from X; model relationships [50] [51] |
| Variable Roles | Symmetric (no distinction) [50] | Asymmetric (X predicts Y) [50] |
| Output | Correlation coefficient (r) [49] | Regression equation (y = β₀ + β₁x) [55] |
| Interpretation | Strength and direction of linear relationship [50] | Change in Y per unit change in X [56] |
| Coefficient Values | -1 ≤ r ≤ 1 [49] | β₀, β₁ can be any real number [55] |

Methodological Comparison: Experimental Protocols

Experimental Protocol for Least Squares Regression

Executing simple linear regression using the least squares method involves a systematic process:

Data Collection: Gather measurements for both the independent (X) and dependent (Y) variables. The X variable is typically something manipulated or controlled, while Y is measured [50].

Scatter Plot Visualization: Create a scatter diagram with X on the horizontal axis and Y on the vertical axis to visually assess the potential linear relationship [49] [52].

Calculate Summary Statistics: Compute the following for both variables: means (x̄, ȳ), sums of squares (Σx², Σy²), and sum of cross-products (Σxy) [55] [52].

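Parameter Estimation: Compute the slope (β₁) and the intercept (β₀) from these summary statistics using the least squares formulas given earlier in this section.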

Model Validation: Assess the goodness of fit using R² and analyze residuals to verify assumptions [56].
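The summary-statistics, parameter-estimation, and model-validation steps above can be sketched in a few lines. This is an illustrative outline under the assumption that the data are the Table 4 values; it uses the computational (raw-sum) form of the least squares formulas and then inspects the residuals and R².

```python
x = [2, 3, 5, 7, 9]      # Table 4 sunshine hours
y = [4, 5, 7, 10, 15]    # Table 4 ice creams sold
n = len(x)

# Calculate summary statistics
sum_x, sum_y = sum(x), sum(y)
sum_x2 = sum(a * a for a in x)
sum_xy = sum(a * b for a, b in zip(x, y))

# Parameter estimation (computational form of the least squares formulas)
b1 = (n * sum_xy - sum_x * sum_y) / (n * sum_x2 - sum_x ** 2)
b0 = (sum_y - b1 * sum_x) / n

# Model validation: residuals and goodness of fit
fitted = [b0 + b1 * a for a in x]
residuals = [b - f for b, f in zip(y, fitted)]
y_bar = sum_y / n
r_squared = 1 - sum(e * e for e in residuals) / sum((b - y_bar) ** 2 for b in y)

for a, e in zip(x, residuals):
    print(f"x = {a}: residual = {e:+.3f}")
print(f"R^2 = {r_squared:.3f}")
```

With an intercept in the model the residuals always sum to zero, so their pattern, not their total, is what matters. Here they run positive, negative, then positive again across the range of x; with only five points this is weak evidence, but it is the kind of curvature a residual plot is meant to reveal.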

The following diagram illustrates this methodological workflow:

Experimental Protocol for Correlation Analysis

The protocol for correlation analysis shares initial steps with regression but diverges in interpretation:

Data Collection: Gather paired measurements for both variables (X and Y) without designating one as independent or dependent [50].

Scatter Plot Visualization: Create a scatter diagram to visually assess the linear relationship and identify potential outliers [49].

Calculate Correlation Coefficient:

- Compute using r = [n(Σxy) - (Σx)(Σy)] / √{[n(Σx²) - (Σx)²] × [n(Σy²) - (Σy)²]} [49]

Hypothesis Testing: Test the null hypothesis that the population correlation coefficient equals zero using a t-test [49].

Calculate Confidence Interval: Use Fisher's z-transformation to compute the confidence interval for the population correlation coefficient [49].
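The calculation, test, and interval steps can be combined in one function. In this sketch (standard library only; the function name `correlation_inference` is hypothetical) the test statistic is t = r√(n − 2)/√(1 − r²), which is referred to a t distribution with n − 2 degrees of freedom, and the interval uses Fisher's z = atanh(r) with standard error 1/√(n − 3), back-transformed with tanh. A library such as SciPy (`scipy.stats.pearsonr`) can supply the p-value directly.

```python
import math
from statistics import NormalDist

def correlation_inference(x, y, conf=0.95):
    """Pearson r, t statistic for H0: rho = 0, and a Fisher-z confidence interval."""
    n = len(x)
    if n != len(y) or n < 4:
        raise ValueError("need at least four paired observations")
    sum_x, sum_y = sum(x), sum(y)
    sum_x2 = sum(a * a for a in x)
    sum_y2 = sum(b * b for b in y)
    sum_xy = sum(a * b for a, b in zip(x, y))
    # Computational formula for Pearson's r
    r = (n * sum_xy - sum_x * sum_y) / math.sqrt(
        (n * sum_x2 - sum_x ** 2) * (n * sum_y2 - sum_y ** 2))
    if abs(r) >= 1:
        raise ValueError("|r| = 1: the t statistic and Fisher z are undefined")
    # t statistic, to be compared with a t distribution on n - 2 degrees of freedom
    t = r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)
    # Fisher's z-transformation: atanh(r) is approximately normal with SE 1/sqrt(n - 3)
    z = math.atanh(r)
    se = 1 / math.sqrt(n - 3)
    z_crit = NormalDist().inv_cdf(0.5 + conf / 2)   # about 1.96 for conf = 0.95
    ci = (math.tanh(z - z_crit * se), math.tanh(z + z_crit * se))
    return r, t, ci

x = [2, 3, 5, 7, 9]      # Table 4 sunshine hours
y = [4, 5, 7, 10, 15]    # Table 4 ice creams sold
r, t, ci = correlation_inference(x, y)
print(f"r = {r:.3f}, t = {t:.2f} on {len(x) - 2} df, 95% CI = ({ci[0]:.2f}, {ci[1]:.2f})")
```

For these five points t is about 8.4 on 3 degrees of freedom, well above the two-sided 5% critical value of about 3.18, yet the 95% interval still runs from roughly 0.72 to nearly 1. The width of that interval is a reminder of how little a very small sample pins down ρ.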

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Components for Linear Regression Analysis

| Component | Function/Purpose | Implementation Considerations |
|---|---|---|
| Statistical Software | Computes parameter estimates and diagnostics [56] | R, Python, SPSS, SAS; must handle matrix calculations |
| Dataset with X, Y Pairs | Provides input for model fitting [51] | Sample size adequate for the desired precision (n ≥ 30 is a common rule of thumb, not a strict requirement) |
| Numerical Variables | Enable quantitative relationship analysis [50] | Both variables should be interval or ratio scale |
| Residual Analysis Tools | Assess model assumptions and fit [53] | Residual plots, Q-Q plots, influence statistics |
| Variance-Covariance Matrix | Quantifies precision of parameter estimates [54] | Used to compute standard errors and confidence intervals |
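To illustrate the last row of the table: under the usual OLS assumptions (uncorrelated, constant-variance errors), the estimated variance-covariance matrix of the coefficients is σ̂²(XᵀX)⁻¹, where X is the design matrix and σ̂² is the residual mean square. The sketch below assumes NumPy is available and uses the Table 4 data; the square roots of the diagonal give the standard errors.

```python
import numpy as np

x = np.array([2, 3, 5, 7, 9], dtype=float)     # Table 4 sunshine hours
y = np.array([4, 5, 7, 10, 15], dtype=float)   # Table 4 ice creams sold
n, p = len(x), 2                               # p = number of estimated parameters

X = np.column_stack([np.ones(n), x])           # design matrix: intercept column + x
beta, *_ = np.linalg.lstsq(X, y, rcond=None)   # [intercept, slope]
residuals = y - X @ beta
sigma2 = residuals @ residuals / (n - p)       # residual mean square (MSE)

# Estimated variance-covariance matrix of the coefficients: sigma^2 (X'X)^{-1}
cov_beta = sigma2 * np.linalg.inv(X.T @ X)
se = np.sqrt(np.diag(cov_beta))
print("estimates:      ", beta.round(3))
print("standard errors:", se.round(3))
```

In simple regression the slope's standard error reduces to √(σ̂²/Σ(xᵢ − x̄)²); the matrix form is shown because it carries over unchanged to several predictors.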

Comparative Analysis: Key Differences in Application

Analytical Outputs and Interpretation

While correlation and regression are mathematically related, their outputs serve different analytical purposes:

Regression Coefficients vs. Correlation: The regression slope (β_1) represents the expected change in Y for a one-unit change in X, while the correlation coefficient (r) represents the strength of the linear relationship [50] [56]. For example, in analyzing the relationship between age and logarithmic urea levels, researchers found a regression equation of ln urea = 0.72 + (0.017 × age) with a correlation coefficient of 0.62 [49]. The slope (0.017) indicates that for each additional year of age, ln urea increases by 0.017, while the correlation (0.62) indicates a moderate positive relationship.
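Because the outcome in this example is on the natural-log scale, the slope is easiest to read after back-transformation. The sketch below takes the reported coefficients as given; the ages 50 and 60 are purely illustrative choices (the age range of the original data is not stated here, and predictions should stay within it).

```python
import math

intercept, slope = 0.72, 0.017   # ln(urea) = 0.72 + 0.017 * age, as reported above

def predicted_ln_urea(age):
    return intercept + slope * age

# Two illustrative ages 10 years apart: the ln-scale difference is 10 * slope
diff = predicted_ln_urea(60) - predicted_ln_urea(50)
# exp() converts an ln-scale difference into a ratio of predicted (geometric-mean) levels
ratio = math.exp(diff)
print(f"ln-scale difference: {diff:.3f}, ratio of predicted urea: {ratio:.3f}")
```

A difference of 0.17 on the log scale corresponds to a multiplicative factor of about 1.19, so the model implies urea levels roughly 19% higher per decade of age, or about 1.7% per year.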

Prediction Capability: A key advantage of regression is its ability to make predictions. Once the regression equation is established, it can predict Y values for new X values [55] [52]. For instance, with the equation y = 1.518x + 0.305 derived from sunshine hours and ice cream sales, one can predict that 8 hours of sunshine would yield approximately 12.45 ice cream sales [52]. Correlation offers no comparable predictive capability.
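A prediction is just the fitted equation evaluated at a new x. The sketch below uses the rounded coefficients from the sunshine example; refusing inputs outside the observed range of 2 to 9 hours is one possible design choice (an assumption, not something the source prescribes), since the linear relationship has only been checked inside that range.

```python
b0, b1 = 0.305, 1.518   # rounded coefficients from the sunshine example
x_min, x_max = 2, 9     # range of sunshine hours observed in Table 4

def predict_sales(hours):
    """Predicted ice-cream sales; refuses to extrapolate beyond the observed data."""
    if not x_min <= hours <= x_max:
        # Outside the observed range the linear relationship has not been verified
        raise ValueError(f"{hours} h is outside the observed range {x_min}-{x_max} h")
    return b0 + b1 * hours

print(round(predict_sales(8), 2))  # 12.45
```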

Variable Interchangeability: Correlation is symmetric—the correlation between X and Y equals that between Y and X [50] [46]. Regression is asymmetric—the regression of Y on X differs from the regression of X on Y, unless the data points lie perfectly on a line [50] [46].

Statistical Assumptions and Limitations

Both techniques rely on specific statistical assumptions that researchers must verify:

Table 3: Statistical Assumptions and Limitations

| Aspect | Least Squares Regression | Correlation Analysis |
|---|---|---|
| Linearity | Assumes linear relationship between X and Y [56] | Assumes linear relationship [49] |
| Independence | Observations are independent [56] | Observations are independent [49] |
| Homoscedasticity | Constant variance of errors [51] [56] | Not a direct requirement |
| Normality | Errors normally distributed [56] | Both variables normally distributed (bivariate normal) [50] |
| Variables | X can be fixed or measured; Y is random [51] | Both variables are measured (not manipulated) [50] |
| Key Limitations | Sensitive to outliers [52]; assumes no measurement error in X [53] | Only captures linear relationships; correlation ≠ causation [49] |
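Because least squares is sensitive to outliers, influence statistics are a common complement to residual plots. The sketch below (NumPy assumed; the helper name `influence_diagnostics` is hypothetical) computes three standard quantities for a simple OLS fit: leverage hᵢ from the diagonal of the hat matrix, internally studentized residuals eᵢ/√(MSE·(1 − hᵢ)), and Cook's distance Dᵢ = eᵢ²/(p·MSE) · hᵢ/(1 − hᵢ)².

```python
import numpy as np

def influence_diagnostics(x, y):
    """Leverage, internally studentized residuals and Cook's distance for simple OLS."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n, p = len(x), 2                                # p = intercept + slope
    X = np.column_stack([np.ones(n), x])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    e = y - X @ beta                                # raw residuals
    mse = e @ e / (n - p)                           # residual variance estimate
    h = np.diag(X @ np.linalg.inv(X.T @ X) @ X.T)   # leverage: diagonal of hat matrix
    r_std = e / np.sqrt(mse * (1 - h))              # internally studentized residuals
    cooks = e ** 2 / (p * mse) * h / (1 - h) ** 2   # Cook's distance
    return h, r_std, cooks

h, r_std, cooks = influence_diagnostics([2, 3, 5, 7, 9], [4, 5, 7, 10, 15])
for day, (lev, res, dist) in enumerate(zip(h, r_std, cooks), start=1):
    print(f"day {day}: leverage = {lev:.2f}, studentized residual = {res:+.2f}, Cook's D = {dist:.2f}")
```

Leverages always sum to the number of parameters (here 2), and points at the extremes of x, such as day 5 with 9 hours of sunshine, carry the most leverage, which is why a single unusual value there can move the fitted line noticeably.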

Quantitative Comparison Using Experimental Data

Consider the following dataset comparing hours of sunshine (X) to ice creams sold (Y) [52]:

Table 4: Example Data Analysis - Sunshine Hours vs. Ice Cream Sales

| Day | X (sunshine hours) | Y (ice creams sold) | X² | Y² | XY |
|---|---|---|---|---|---|
| 1 | 2 | 4 | 4 | 16 | 8 |
| 2 | 3 | 5 | 9 | 25 | 15 |
| 3 | 5 | 7 | 25 | 49 | 35 |
| 4 | 7 | 10 | 49 | 100 | 70 |
| 5 | 9 | 15 | 81 | 225 | 135 |
| Sums | Σx = 26 | Σy = 41 | Σx² = 168 | Σy² = 415 | Σxy = 263 |

Using both approaches:

Correlation Analysis:

- r = [n(Σxy) - (Σx)(Σy)] / √{[n(Σx²) - (Σx)²] × [n(Σy²) - (Σy)²]}

- r = [5×263 - 26×41] / √{[5×168 - 26²] × [5×415 - 41²]} = 249 / √(164 × 394) = 249/√64616 ≈ 249/254.2 ≈ 0.980

- This indicates a strong positive correlation.

Regression Analysis:

- Slope (β₁) = [n(Σxy) - (Σx)(Σy)] / [n(Σx²) - (Σx)²] = (1315 - 1066) / (840 - 676) = 249/164 ≈ 1.518

- Intercept (β₀) = (Σy - β₁Σx) / n = (41 - 1.5183×26) / 5 = (41 - 39.476)/5 = 1.524/5 ≈ 0.305

- Regression Equation: y = 1.518x + 0.305

- R² = r² ≈ (0.980)² ≈ 0.960 (about 96% of the variance in ice cream sales is explained by sunshine hours)
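Hand calculations like these are easy to get wrong, so it is worth cross-checking them with a library. The sketch below assumes NumPy is available: `np.polyfit` with `deg=1` returns the least squares slope and intercept (highest-degree coefficient first), and `np.corrcoef` returns the correlation matrix, whose off-diagonal entry is r. The printed values can be compared against the worked figures above.

```python
import numpy as np

x = np.array([2, 3, 5, 7, 9], dtype=float)     # sunshine hours
y = np.array([4, 5, 7, 10, 15], dtype=float)   # ice creams sold

slope, intercept = np.polyfit(x, y, deg=1)     # least squares straight line
r = np.corrcoef(x, y)[0, 1]                    # off-diagonal entry of the 2x2 matrix
print(f"y = {slope:.3f}x + {intercept:.3f}")   # y = 1.518x + 0.305
print(f"r = {r:.3f}, R^2 = {r ** 2:.3f}")      # r = 0.980, R^2 = 0.960
```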

The following diagram illustrates the conceptual relationship between these two analyses:

The choice between correlation and least squares regression depends primarily on the research question. Correlation is appropriate when the goal is simply to quantify the strength and direction of the linear relationship between two variables without distinguishing between dependent and independent variables [50] [46]. Least squares regression is essential when the research goal involves predicting values of a dependent variable, explaining the relationship between variables, or controlling for confounding factors [51] [56].

For researchers in drug development and other scientific fields, understanding these distinctions ensures that statistical methods are applied properly. When predictions are needed, or when an effect must be estimated while adjusting for other variables, regression provides the necessary framework, while correlation serves well for an initial assessment of a relationship. Neither method establishes causation on its own; causal conclusions also depend on study design, such as randomization or adequate control of confounders. Both methods require careful attention to their underlying assumptions and limitations if valid conclusions are to be drawn from experimental data.

In the pursuit of scientific truth, researchers often struggle to isolate the true relationship between variables amid a complex web of interconnections. While simple linear regression and correlation coefficients are fundamental tools for establishing initial associations, they frequently prove inadequate for causal inference in the presence of confounding variables: extraneous factors that correlate with both the independent and dependent variables and can distort their observed relationship [57]. Extending the analysis to multiple linear regression addresses this fundamental limitation, allowing scientists to adjust statistically for measured confounders and obtain effect estimates closer to unbiased ones.

The limitations of simpler statistical approaches are particularly evident in fields like neuroscience, where the Pearson correlation coefficient, despite its widespread use, struggles to capture the complexity of brain network connections and inadequately reflects model errors, especially in the presence of systematic biases or nonlinear relationships [14]. Similarly, in epidemiological research, failure to account for confounders can lead to Simpson's paradox, where trends observed in separate groups disappear or reverse when these groups are combined [57]. Multiple linear regression provides a robust framework for navigating these analytical challenges, making it an indispensable tool in the modern researcher's statistical arsenal.
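Simpson's paradox can be reproduced with a handful of points. The data below are hypothetical, constructed only for illustration: within each of two groups y falls by exactly one unit per unit of x, yet because the second group sits at higher x and higher y, the pooled slope is positive. Adding a group indicator to the model (NumPy's `lstsq` is used here as the OLS solver) recovers the within-group slope.

```python
import numpy as np

# Hypothetical data: two groups, each with a within-group slope of exactly -1
x = np.array([1, 2, 3, 6, 7, 8], dtype=float)
y = np.array([10, 9, 8, 15, 14, 13], dtype=float)
group = np.array([0, 0, 0, 1, 1, 1], dtype=float)   # 0/1 indicator for group membership

def ols(X, y):
    """OLS with an intercept; returns [intercept, slopes...]."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

pooled_slope = ols(x, y)[1]                                   # groups ignored
within_slopes = [ols(x[group == g], y[group == g])[1] for g in (0, 1)]
adjusted_slope = ols(np.column_stack([x, group]), y)[1]       # group held constant

print(f"pooled slope:   {pooled_slope:+.3f}")
print(f"within-group:   {within_slopes[0]:+.3f}, {within_slopes[1]:+.3f}")
print(f"adjusted slope: {adjusted_slope:+.3f}")
```

Here the group indicator plays the role of a confounder: it is associated with both x and y, and ignoring it reverses the sign of the estimated relationship.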

Theoretical Foundation: From Simple Associations to Adjusted Relationships

Understanding Confounding Variables

A confounding variable is defined as an extraneous factor that correlates with both the dependent variable and the independent variable, potentially creating a spurious association or obscuring a true relationship [57]. In a hypothetical study examining the relationship between coffee drinking and lung cancer, for instance, smoking status could act as a confounder if coffee drinkers are also more likely to be cigarette smokers [57]. Without measuring and adjusting for this confounding effect, researchers might erroneously conclude that coffee drinking increases lung cancer risk.

The mathematical consequence of confounding can be expressed through the omission of relevant variables from a regression model. Suppose the true model is Y = α + β₁X + β₂Z + ε. When the confounder Z is omitted and Y is regressed on X alone, the estimated coefficient for X becomes biased because it partially captures the effect of Z on Y: its expectation equals β₁ + β₂δ, where δ is the slope obtained by regressing Z on X. The bias term β₂δ vanishes only if Z has no effect on Y (β₂ = 0) or is uncorrelated with X (δ = 0), and by definition neither holds for a confounder.
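A small simulation makes the omitted-variable bias concrete. Everything in the sketch is hypothetical: the true effects (1.0 for X, 2.0 for Z), the strength of the X-Z link, and the seed are arbitrary choices, and NumPy is assumed. Regressing Y on X alone gives a slope near 1 + 2 × 0.8/1.64 ≈ 1.98 rather than 1.0; including Z recovers a value close to 1.0; and in any sample the naive slope equals the adjusted X coefficient plus the adjusted Z coefficient times δ, the slope of Z on X.

```python
import numpy as np

rng = np.random.default_rng(seed=1)
n = 100_000
beta_x, beta_z = 1.0, 2.0                   # hypothetical true effects of X and Z on Y
z = rng.normal(size=n)                      # confounder
x = 0.8 * z + rng.normal(size=n)            # exposure, correlated with the confounder
y = beta_x * x + beta_z * z + rng.normal(size=n)

def ols_slopes(X, y):
    """OLS with an intercept; returns only the slope coefficients."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef[1:]

b_naive = ols_slopes(x, y)[0]                                  # Z omitted
b_x_adj, b_z_adj = ols_slopes(np.column_stack([x, z]), y)      # Z included
delta = ols_slopes(x, z)[0]                                    # slope of Z on X

print(f"naive slope for X:    {b_naive:.3f}")
print(f"adjusted slope for X: {b_x_adj:.3f}")
print(f"b_x_adj + b_z_adj * delta = {b_x_adj + b_z_adj * delta:.3f}")
```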

The Transition from Simple to Multiple Linear Regression

Simple linear regression models the relationship between two variables using the equation: Y = α + βX + ε

This approach measures the gross association between X and Y but cannot distinguish between direct effects and associations attributable to common causes [57].

Multiple linear regression extends this framework to accommodate several explanatory variables simultaneously: Y = α + β₁X₁ + β₂X₂ + ... + βₖXₖ + ε [58]

In this model, each coefficient (βᵢ) represents the expected change in Y per unit change in Xᵢ, holding all other variables in the model constant [58]. This "holding constant" is the mathematical basis for adjustment that enables researchers to isolate the independent effect of each predictor.
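One way to see what "holding all other variables constant" means is the Frisch-Waugh-Lovell result: the coefficient of X₁ in a multiple regression equals the slope obtained by first removing the linear influence of the other predictors from both Y and X₁, then regressing the Y residuals on the X₁ residuals. The sketch below demonstrates this on simulated data; the coefficients (3.0, 1.5, -2.0), the correlation between predictors, and the seed are hypothetical, and NumPy is assumed.

```python
import numpy as np

rng = np.random.default_rng(seed=7)
n = 500
x2 = rng.normal(size=n)                        # second predictor (e.g. a covariate)
x1 = 0.5 * x2 + rng.normal(size=n)             # first predictor, correlated with x2
y = 3.0 + 1.5 * x1 - 2.0 * x2 + rng.normal(size=n)

def fit(X, y):
    """OLS with an intercept; returns (coefficients, residuals)."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef, y - design @ coef

# Coefficient of x1 from the full multiple regression
coef_full, _ = fit(np.column_stack([x1, x2]), y)

# Partial x2 out of both y and x1, then regress residual on residual
_, y_resid = fit(x2, y)
_, x1_resid = fit(x2, x1)
b1_partial = (x1_resid @ y_resid) / (x1_resid @ x1_resid)

print(f"multiple regression b1  = {coef_full[1]:.4f}")
print(f"residual-on-residual b1 = {b1_partial:.4f}")
```

The two numbers agree to floating-point precision: the multiple-regression coefficient uses only the part of X₁ that is not linearly explained by the other predictors.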

Table 1: Comparison of Regression Approaches

| Feature | Simple Linear Regression | Multiple Linear Regression |
|---|---|---|
| Variables | One independent variable | Multiple independent variables |
| Confounding Control | No statistical adjustment | Adjusts for confounders |