Machine Learning in Organic Synthesis: Accelerating Reaction Optimization and Drug Discovery

Abstract

This article explores the transformative impact of machine learning (ML) on optimizing organic synthesis conditions, a critical process in pharmaceutical and materials science. It covers the foundational shift from traditional one-variable-at-a-time approaches to data-driven strategies powered by artificial intelligence. The scope includes a detailed examination of core ML methodologies like Bayesian optimization and generative models, their integration with high-throughput experimentation (HTE) in automated platforms, and practical troubleshooting for real-world implementation. Through validation case studies and comparative analysis of performance against traditional methods, this review demonstrates how ML accelerates process development, reduces costs, and unlocks novel chemical discoveries, ultimately shaping the future of efficient and sustainable chemical research.

The New Paradigm: How AI is Reshaping the Foundations of Organic Synthesis

The Limitations of Traditional One-Factor-at-a-Time (OFAT) Optimization

In organic synthesis, and particularly in pharmaceutical development, optimizing reaction conditions is a critical but resource-intensive process. For decades, the One-Factor-at-a-Time (OFAT) approach, also called one-variable-at-a-time (OVAT), has been a cornerstone methodology in which chemists systematically alter a single factor while keeping all others constant [1]. This intuitive, sequential method is deeply embedded in chemical training and practice, allowing researchers to observe the individual effect of each parameter on the reaction outcome [2]. However, with the increasing complexity of synthetic targets and the emergence of machine learning-driven optimization, the fundamental limitations of OFAT have become increasingly apparent [1] [3]. This Application Note examines these limitations through a quantitative lens, provides experimental protocols for modern alternatives, and places these findings within the broader thesis of machine-learning-guided reaction optimization.

Core Limitations of the OFAT Approach

The traditional OFAT method suffers from several critical drawbacks that hinder its efficiency and effectiveness in complex reaction optimization.

Inability to Detect Factor Interactions

The most significant limitation of OFAT is its fundamental assumption that variables act independently on the reaction outcome. In reality, chemical reactions often exhibit synergistic or antagonistic interactions between parameters such as temperature, catalyst loading, solvent polarity, and concentration [4]. OFAT methodology is blind to these interactions because it only tests variables in isolation. For instance, the optimal temperature for a reaction may depend heavily on catalyst concentration—a relationship that OFAT cannot systematically uncover. This often leads to the identification of local optima rather than the global optimum for the reaction system [4]. Statistical multivariate approaches, in contrast, are specifically designed to quantify these interactions.

Resource and Time Inefficiency

The OFAT approach uses time and materials inefficiently. Because each variable is investigated sequentially, the number of experiments grows linearly with the number of factors [5], yet each experiment informs only one factor at one fixed setting of all the others. This becomes particularly problematic for reaction systems with multiple categorical and continuous variables. For example, a full factorial design for five variables at three levels each requires 3⁵ = 243 experiments, whereas OFAT requires only about 5×3 = 15 (fewer if the shared baseline run is reused) but would likely miss the true optimum [4]. OFAT therefore appears more efficient on paper, but its failure to locate the true optimum often necessitates additional optimization cycles, which can ultimately consume more resources than a well-chosen multivariate design.
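
The run counts in this example can be checked with a few lines of arithmetic. The sketch below is illustrative only; the variable names are made up here, and the "shared baseline" count assumes that every sweep starts from the same reference run.

```python
# Run counts for five factors at three levels each (illustrative arithmetic only).
n_factors = 5
n_levels = 3

# Full factorial: every combination of levels is run once.
full_factorial_runs = n_levels ** n_factors

# OFAT, counted naively: each factor is swept over all of its levels.
ofat_runs_naive = n_factors * n_levels

# OFAT with a shared baseline: one reference run plus the remaining levels per factor.
ofat_runs_shared_baseline = 1 + n_factors * (n_levels - 1)

# Fraction of the full grid that the naive OFAT sweep can visit at most.
coverage = ofat_runs_naive / full_factorial_runs

print(full_factorial_runs, ofat_runs_naive, ofat_runs_shared_baseline)
print(f"OFAT visits at most {coverage:.1%} of the grid")
```

The small coverage fraction is the other side of OFAT's low run count: most factor combinations are never observed, which is why interactions go undetected.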

Suboptimal Final Conditions

Due to the inability to detect factor interactions, OFAT campaigns typically converge on suboptimal reaction conditions [2] [4]. The final combination of variable set points identified through OFAT is often substantially inferior to what could be achieved with multivariate optimization. The degree of suboptimality depends on the order in which variables were perturbed, introducing an arbitrary element into the optimization process [4]. In pharmaceutical development, where yield, purity, and cost are critical, this suboptimal performance has significant economic implications.
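
The order dependence described above can be reproduced on a toy response surface. The following is a minimal sketch with invented yields on a 3×3 grid (temperature level × catalyst-loading level); the numbers are hypothetical and chosen only so that the two factors interact.

```python
from itertools import product

# Hypothetical yields (%): rows = temperature level, columns = catalyst-loading level.
YIELD = [
    [40, 45, 48],
    [50, 60, 55],
    [30, 50, 90],
]

def run(temp, cat):
    """Stand-in for running one experiment at the given factor levels."""
    return YIELD[temp][cat]

def ofat(order, start=(0, 0)):
    """Sweep one factor at a time in the given order, freezing each at its best level."""
    point = list(start)
    for factor in order:
        best_level = max(
            range(3),
            key=lambda lvl: run(*(point[:factor] + [lvl] + point[factor + 1:])),
        )
        point[factor] = best_level
    return tuple(point), run(*point)

temp_first = ofat([0, 1])   # optimize temperature, then catalyst loading
cat_first = ofat([1, 0])    # optimize catalyst loading, then temperature
global_best = max(product(range(3), repeat=2), key=lambda p: run(*p))

print(temp_first)   # ((1, 1), 60)
print(cat_first)    # ((2, 2), 90)
print(global_best)  # (2, 2)
```

With these invented numbers, sweeping temperature first stalls at a 60% local optimum, while sweeping catalyst loading first happens to reach the 90% global optimum. The outcome depends on an arbitrary choice of order, not on chemistry.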

Table 1: Quantitative Comparison of OFAT versus Modern Optimization Approaches

| Characteristic | OFAT | Design of Experiments (DoE) | Machine Learning-Guided Optimization |
|---|---|---|---|
| Factor Interactions | Not detected | Quantified and modeled | Modeled with complex algorithms |
| Typical Experimental Load | Linear with factors | Fractional factorial (reduced) | Adaptive, often minimal |
| Optimal Solution | Local optimum (often suboptimal) | Global or near-global optimum | Global optimum with uncertainty quantification |
| Required Expertise | Chemical intuition | Statistical literacy | Data science and chemistry |
| Resource Efficiency | Low | Medium to High | High |
| Handling of Categorical Variables | Straightforward | Designed for both categorical and continuous | Requires specialized encoding |

Experimental Protocols for Modern Optimization

Protocol: Design of Experiments (DoE) for Reaction Screening

DoE represents a fundamental shift from OFAT, using statistical principles to systematically vary multiple factors simultaneously [1].

Materials:

- Chemical reactants and solvents

- Automated liquid handling system (e.g., Chemspeed SWING) or manual setup with controlled variance

- Analytical instrumentation (e.g., GC-MS, HPLC)

- DoE software (e.g., JMP, Design-Expert, or open-source alternatives)

Procedure:

- Factor Selection: Identify critical continuous (temperature, concentration, time) and categorical (catalyst type, solvent class) factors [4].

- Experimental Design: Select an appropriate screening design (e.g., Plackett-Burman) to identify significant factors with minimal experiments [4].

- Randomized Execution: Perform experiments in randomized order to minimize systematic bias.

- Response Measurement: Quantify key outcomes (yield, selectivity, purity).

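- Statistical Modeling: Fit a linear model to identify the significant factors. Low-resolution screening designs such as Plackett-Burman confound main effects with two-factor interactions, so interactions are properly estimated in the subsequent response-surface stage.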

- Optimization Design: For significant factors, implement a Response Surface Methodology (RSM) design such as Central Composite Design (CCD) to model curvature and locate the optimum [4].

- Model Validation: Confirm the predicted optimum with confirmatory experiments.
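
To make the statistical-modeling step concrete, the sketch below estimates main effects and the interaction from a two-level full factorial in two factors, using the classical contrast method (mean response at the high level minus mean response at the low level). The yields are invented for illustration, and a real campaign would normally use dedicated DoE software as listed under Materials.

```python
from statistics import mean

# Hypothetical yields (%) for a 2x2 full factorial in coded units:
# key = (temperature, catalyst loading), -1 = low level, +1 = high level.
yields = {
    (-1, -1): 40.0,
    (+1, -1): 50.0,
    (-1, +1): 45.0,
    (+1, +1): 85.0,
}

def effect(contrast):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [y for levels, y in yields.items() if contrast(levels) > 0]
    low = [y for levels, y in yields.items() if contrast(levels) < 0]
    return mean(high) - mean(low)

temperature_effect = effect(lambda lv: lv[0])
catalyst_effect = effect(lambda lv: lv[1])
# The interaction contrast is the product of the two coded columns.
interaction_effect = effect(lambda lv: lv[0] * lv[1])

print(temperature_effect, catalyst_effect, interaction_effect)  # 25.0 20.0 15.0
```

A nonzero interaction effect, as here, means the benefit of raising the temperature depends on the catalyst loading, which is exactly the information an OFAT sweep cannot provide.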

Protocol: Machine Learning-Guided Closed-Loop Optimization

This advanced protocol integrates high-throughput experimentation with adaptive algorithms for autonomous optimization [3] [5].

Materials:

- Automated reactor platform (e.g., batch HTE system or flow chemistry modules)

- In-line or at-line analytical tools (e.g., ReactIR, GC, HPLC)

- Central control software with optimization algorithm (e.g., Bayesian optimization)

- Access to chemical reaction databases (e.g., Reaxys, Open Reaction Database) [2]

Procedure:

- Define Search Space: Establish parameter bounds for all variables (e.g., temperature: 25-100°C, catalyst loading: 0.5-5 mol%).

- Initial Design: Execute a small set of initial experiments (e.g., Latin Hypercube Sample) to seed the model.

- Model Training: Train a machine learning model (e.g., Gaussian Process Regression) on collected data to predict reaction outcomes.

- Acquisition Function: Use an acquisition function (e.g., Expected Improvement) to suggest the most informative subsequent experiment.

- Automated Execution: The platform automatically prepares, runs, and analyzes the suggested reaction.

- Iterative Learning: Repeat the Model Training, Acquisition Function, and Automated Execution steps (steps 3-5 of this procedure) in a closed loop until the optimum converges or the experimental budget is exhausted.

- Human Validation: Chemists interpret the final results and validate the optimal conditions manually.
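
The closed loop above can be sketched in a dependency-free form. Everything in this example is an assumption made for illustration: `run_reaction` is a made-up yield curve standing in for the automated platform, the kernel hyperparameters and noise level are fixed by hand rather than fitted, and a fixed three-point seed stands in for the Latin hypercube sample. In practice a library implementation of Gaussian process regression would replace the hand-written linear algebra.

```python
import math

def run_reaction(temp_c):
    """Hypothetical stand-in for the platform: yield (%) peaks near 78 °C."""
    return 85.0 * math.exp(-((temp_c - 78.0) / 15.0) ** 2)

def kernel(a, b, length=15.0, variance=900.0):
    """Squared-exponential covariance (hand-picked, assumed hyperparameters)."""
    return variance * math.exp(-0.5 * ((a - b) / length) ** 2)

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def gp_posterior(X, y, x_new, noise=1.0):
    """Posterior mean and standard deviation of a GP with constant prior mean."""
    prior_mean = sum(y) / len(y)
    K = [[kernel(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    k_star = [kernel(a, x_new) for a in X]
    alpha = solve(K, [yi - prior_mean for yi in y])
    mu = prior_mean + sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var = kernel(x_new, x_new) - sum(k * vi for k, vi in zip(k_star, v))
    return mu, math.sqrt(max(var, 1e-12))

def expected_improvement(mu, sigma, best):
    """Expected Improvement for maximization."""
    z = (mu - best) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best) * cdf + sigma * pdf

def propose(X, y, candidates):
    """Pick the untested candidate with the highest Expected Improvement."""
    best = max(y)
    untested = [x for x in candidates if x not in X]
    return max(untested, key=lambda x: expected_improvement(*gp_posterior(X, y, x), best))

candidates = [25.0 + 0.5 * i for i in range(151)]  # 25-100 °C in 0.5 °C steps
temps = [25.0, 62.5, 100.0]                        # small seed design (assumed)
yields = [run_reaction(t) for t in temps]
for _ in range(10):                                # experimental budget
    t_next = propose(temps, yields, candidates)
    temps.append(t_next)
    yields.append(run_reaction(t_next))

best_temp = temps[yields.index(max(yields))]
print(f"best yield {max(yields):.1f}% at {best_temp:.1f} °C")
```

The loop mirrors steps 3-5: refit the surrogate on all data so far, score untested conditions with the acquisition function, run the top suggestion, and repeat until the budget is spent.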

Table 2: Research Reagent Solutions for High-Throughput Optimization

| Reagent/Material | Function in Optimization | Example Application |
|---|---|---|
| Microtiter Plates (96/384-well) | Miniaturized parallel reaction vessels | High-throughput screening of reaction conditions [5] |
| Catalyst Kit Libraries | Pre-formulated catalyst sets for rapid screening | Identifying optimal catalysts for cross-coupling reactions [6] |
| Solvent Screening Sets | Diverse polarity and functional group compatibility | Evaluating solvent effects on yield and selectivity [6] |
| Automated Liquid Handling Systems | Precise reagent dispensing and serial dilution | Ensuring reproducibility and enabling assay miniaturization [5] |
| In-line Spectroscopic Flow Cells | Real-time reaction monitoring | Kinetic data acquisition for model-based optimization [7] |

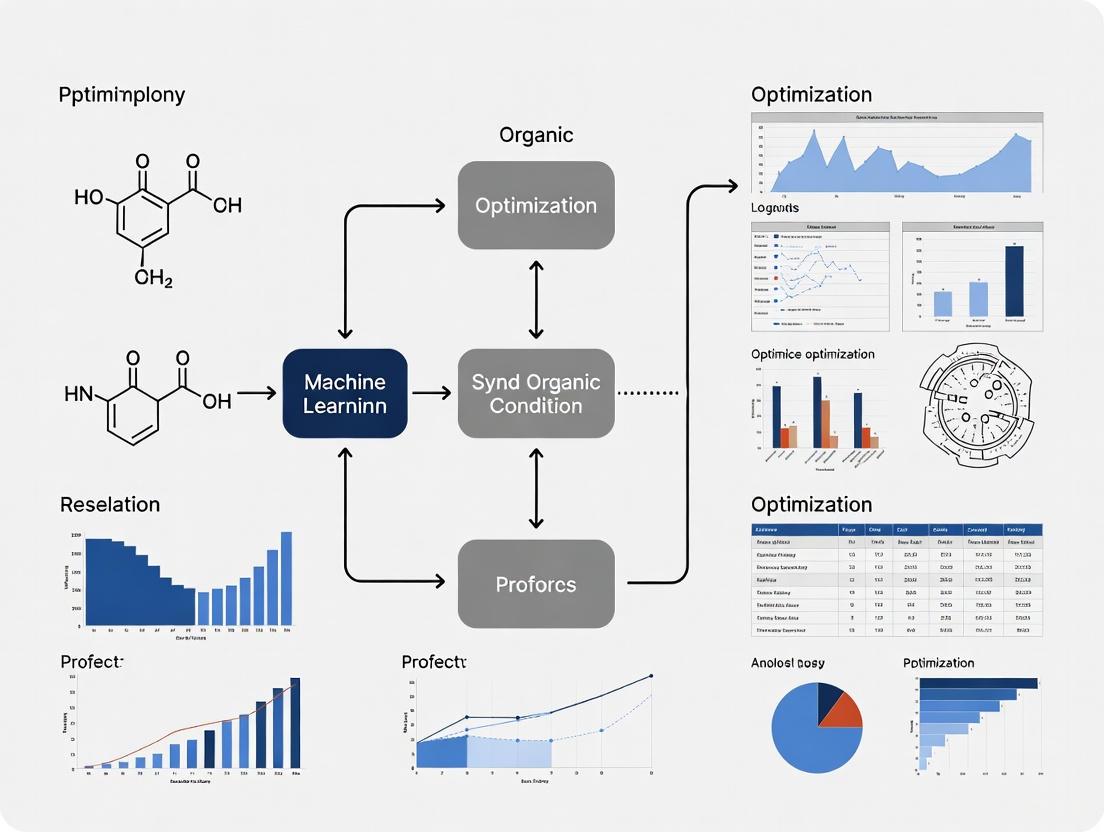

Visualization of Methodologies

The following diagram illustrates the fundamental procedural differences between OFAT, DoE, and ML-guided optimization, highlighting their efficiency in navigating a complex parameter space.

Integration with Machine Learning Optimization

The limitations of OFAT directly inform the value proposition of machine learning (ML) in reaction optimization. ML approaches fundamentally address OFAT's shortcomings:

Data Requirements and Model Training

ML models thrive on the high-dimensional, interaction-rich data that OFAT fails to produce [2]. The transition from OFAT to multivariate data collection enables the development of both global models (trained on large, diverse reaction datasets from databases like Reaxys and Open Reaction Database) and local models (fine-tuned for specific reaction families using High-Throughput Experimentation data) [2]. These models learn the complex relationships between reaction parameters and outcomes, allowing them to predict optimal conditions for new reactions.

The Human-AI Synergy in Optimization

Modern optimization does not seek to fully replace chemist intuition but to augment it [3]. The most successful implementations occur within a human-AI collaboration framework, where chemists define the chemical problem and constraints, and ML algorithms rapidly explore the experimental space [3] [7]. This synergy combines the deep chemical knowledge and pattern recognition of experienced scientists with the tireless, quantitative exploration capabilities of adaptive algorithms.

The OFAT approach, while intuitive and historically valuable, presents significant limitations in efficiency, effectiveness, and its capacity to uncover optimal conditions in complex chemical systems. Its inability to detect factor interactions, tendency to converge on local optima, and inherent resource inefficiency render it increasingly inadequate for modern organic synthesis challenges, particularly under drug-development timelines. Machine-learning-guided optimization directly addresses these limitations through parallel experimentation, statistical modeling of complex parameter spaces, and adaptive learning algorithms. The future of reaction optimization lies not in abandoning traditional chemical intuition, but in strategically integrating it with multivariate statistical approaches and machine learning to accelerate the discovery and development of synthetic methodologies.

Core AI and Machine Learning Techniques Revolutionizing Chemistry

The optimization of organic synthesis has traditionally been a labor-intensive process, relying on manual experimentation guided by chemist intuition and the inefficient one-factor-at-a-time (OFAT) approach, also known as one-variable-at-a-time (OVAT) [8]. This paradigm is undergoing a fundamental shift, driven by the convergence of artificial intelligence (AI) and machine learning (ML) with chemistry. These technologies are changing how researchers discover reactions, predict molecular properties, and design novel compounds, thereby accelerating the entire research and development pipeline [9] [10].

At the heart of this transformation is the ability to synchronously optimize multiple reaction variables across a high-dimensional parametric space. This data-driven approach, powered by lab automation and sophisticated algorithms, requires shorter experimentation time and minimal human intervention [8]. This article details the core AI and ML techniques at the forefront of this revolution, providing application notes and detailed protocols to equip researchers with the knowledge to implement these advanced methods in their work on optimizing organic synthesis conditions.

Core AI/ML Techniques and Their Applications in Chemistry

Molecular Representation and Property Prediction

A critical first step in applying AI to chemistry is representing molecular structures in a format that algorithms can process. The choice of representation significantly influences the performance of predictive models [11].

Table 1: Common Molecular Representations in AI-Driven Chemistry

| Representation Type | Description | Common Use Cases | Examples/Formats |
|---|---|---|---|
| SMILES | 1D string of characters representing the molecular structure [12]. | Retrosynthesis prediction, generative molecule design [12]. | CCO for ethanol |
| Molecular Graph | 2D graph with atoms as nodes and bonds as edges [13]. | Directly captures molecular topology; property prediction [13]. | Adjacency matrices, graph networks |
| Molecular Fingerprints | Binary bit strings indicating the presence of specific substructures [11]. | Similarity searching, QSAR models [11]. | ECFP, Morgan fingerprints, MACCS keys |
| Quantum Mechanical Descriptors | Numerical representations of electronic or geometric properties [11]. | Accurate prediction of reactivity and spectroscopic properties. | Partial charges, orbital energies |
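
As a small illustration of how fingerprints support similarity searching, the sketch below computes the Tanimoto coefficient between fingerprints stored as sets of "on" bit positions. The bit sets are invented placeholders, not real ECFP or MACCS output; a cheminformatics toolkit such as RDKit would normally generate them from structures.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient: shared on-bits divided by the union of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    return len(fp_a & fp_b) / len(fp_a | fp_b)

# Hypothetical on-bit positions for three molecules (placeholders, not real fingerprints).
fingerprints = {
    "mol_A": {1, 5, 9, 12},
    "mol_B": {1, 5, 9, 12, 20},
    "mol_C": {3, 7, 20},
}

sim_ab = tanimoto(fingerprints["mol_A"], fingerprints["mol_B"])
sim_ac = tanimoto(fingerprints["mol_A"], fingerprints["mol_C"])

print(sim_ab)  # 0.8
print(sim_ac)  # 0.0
```

Values range from 0 (no shared substructure bits) to 1 (identical fingerprints), which is what makes the measure convenient for ranking database hits against a query molecule.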

Recent advancements have introduced powerful models that leverage these representations. MolE is a foundation model that uses a transformer-based architecture on molecular graphs. It was pretrained on over 842 million molecular graphs using a self-supervised approach, learning to understand atom environments and their relationships without requiring experimental data [13]. This approach allows it to generalize effectively, achieving state-of-the-art performance on critical ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) prediction tasks. For instance, it ranked first in 10 out of 22 tasks on the Therapeutics Data Commons (TDC) benchmark, including predicting CYP inhibition, which is crucial for anticipating drug-drug interactions [13].

For researchers without deep programming expertise, tools like ChemXploreML provide a user-friendly desktop application for predicting key molecular properties such as boiling point, melting point, and critical temperature, with reported accuracy scores of up to 93% for critical temperature [14]. This tool automates the complex process of translating structures into numerical vectors using built-in "molecular embedders" [14].

Generative AI for Molecular Design

Generative AI models tackle the inverse design problem: they start with a set of desired properties and generate molecular structures that fulfill those criteria [12]. These models are pivotal for de novo molecular design, scaffold hopping, and lead optimization.

REINVENT 4 is a modern, open-source generative AI framework that utilizes Recurrent Neural Networks (RNNs) and Transformers to generate molecules, typically represented as SMILES strings [12]. The software is embedded within powerful ML optimization algorithms, including reinforcement learning (RL), transfer learning, and curriculum learning. In reinforcement learning, the generative agent (the "Actor") is guided by a scoring function that rewards the generation of molecules with desired properties, allowing the model to iteratively learn and improve its output [12].

The workflow for a typical generative design experiment using REINVENT 4 involves:

- Defining the Objective: A scoring function is configured to quantify the desirability of a generated molecule (e.g., high binding affinity, specific logP, low toxicity).

- Selecting a Prior Model: A foundation model, pre-trained on a large dataset of known molecules (e.g., ChEMBL), provides an initial understanding of chemical space and valid chemical structures.

- Running the Optimization: The reinforcement learning cycle begins. The agent generates a batch of molecules, which are scored. The agent's parameters are then updated to increase the likelihood of generating high-scoring molecules while retaining the chemical knowledge from the prior.
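
The "Defining the Objective" step can be illustrated with a toy multi-component scoring function. This is a generic sketch, not REINVENT 4's configuration format or API: the function names, the property keys (`logp`, `tox_probability`), the target window, and the weights are all hypothetical, and the property values are assumed to come from some external predictor.

```python
import math

def desirability_window(value, low, high, softness=1.0):
    """Return 1.0 inside [low, high], decaying smoothly toward 0 outside it."""
    if low <= value <= high:
        return 1.0
    distance = low - value if value < low else value - high
    return math.exp(-((distance / softness) ** 2))

def aggregate(components):
    """Weighted geometric mean of (score, weight) pairs; a zero score vetoes the molecule."""
    if any(score <= 0.0 for score, _ in components):
        return 0.0
    total_weight = sum(weight for _, weight in components)
    log_sum = sum(weight * math.log(score) for score, weight in components)
    return math.exp(log_sum / total_weight)

def score(mol_props):
    """Combine a logP window and a toxicity penalty into one score in [0, 1]."""
    components = [
        (desirability_window(mol_props["logp"], low=1.0, high=3.0), 1.0),
        (1.0 - mol_props["tox_probability"], 2.0),
    ]
    return aggregate(components)

good = score({"logp": 2.0, "tox_probability": 0.1})
toxic = score({"logp": 2.0, "tox_probability": 1.0})
off_target = score({"logp": 5.0, "tox_probability": 0.1})

print(round(good, 3), toxic, round(off_target, 3))
```

A geometric mean is used here so that a molecule cannot compensate for a failing component with a strong one; during reinforcement learning, the agent would be rewarded in proportion to a score of this kind.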

Machine Learning in Reaction Optimization and Synthesis Planning

AI extends beyond molecular design into the optimization of the synthetic processes themselves. Machine learning models can predict reaction outcomes, recommend optimal conditions (catalyst, solvent, temperature), and plan multi-step synthetic routes [8] [10].

High-Throughput Experimentation (HTE) plays a crucial role here by generating the large, high-quality datasets required to train robust ML models [6]. In an HTE workflow, hundreds or thousands of miniature reactions are run in parallel under varying conditions. The outcomes (e.g., yield, selectivity) are analyzed, creating a dataset that maps reaction parameters to results. ML algorithms, such as Bayesian optimization or random forests, can then analyze this data to identify optimal conditions or even discover new reactivity [6].

Tools like IBM RXN and AiZynthFinder use AI to perform retrosynthetic analysis, deconstructing a target molecule into simpler precursors and proposing viable synthetic pathways with unprecedented speed [10]. These platforms are increasingly integrated with experimental data, allowing them to not only propose routes but also predict the likelihood of success for each reaction step.

Table 2: Key AI-Driven Platforms for Synthesis and Analysis

| Platform / Tool | Primary Function | Underlying AI/ML Technology | Application in Synthesis Optimization |
|---|---|---|---|
| REINVENT 4 [12] | Generative molecular design | RNNs, Transformers, Reinforcement Learning | De novo design, molecule optimization, scaffold hopping. |
| ChemXploreML [14] | Molecular property prediction | Automated molecular embedders, ML classifiers | Rapid in-silico screening of compound properties to prioritize synthesis targets. |
| IBM RXN [10] | Retrosynthesis & reaction prediction | Transformer-based models | Automated planning of synthetic routes for target molecules. |
| AiZynthFinder [10] | Retrosynthesis planning | Monte Carlo tree search | Finding commercially feasible synthetic pathways. |
| MolE [13] | Molecular property prediction | Graph-based Transformers | Predicting ADMET properties to guide the design of synthesizable compounds with favorable profiles. |

Detailed Experimental Protocols

Protocol 1: Predicting Molecular Properties with ChemXploreML

This protocol outlines the steps for using the ChemXploreML desktop application to predict molecular properties for a series of novel organic compounds, aiding in the prioritization of synthesis targets.

I. Research Reagent Solutions & Materials

- Hardware: Standard desktop computer (Windows/macOS/Linux).

- Software: ChemXploreML application, downloaded and installed from the McGuire Research Group at MIT [14].

- Data Input: A list of candidate molecules in SMILES string format.

II. Step-by-Step Procedure

Input Preparation:

- Prepare a .csv file containing a column of SMILES strings representing the molecules to be evaluated. Ensure the SMILES are valid using a tool like RDKit (if available).

Software Setup:

- Launch the ChemXploreML application. The offline capability ensures data privacy [14].

Model Configuration:

- Load the prepared .csv file.

- Select the target properties for prediction from the available options (e.g., boiling point, melting point, critical temperature, critical pressure, vapor pressure).

- The application will automatically handle the featurization process, using its built-in molecular embedders to convert SMILES strings into numerical vectors [14].

Execution and Analysis:

- Initiate the prediction process. The software will run the built-in state-of-the-art algorithms to generate predictions.

- Once complete, the results will be displayed in the interactive graphical interface and can be exported for further analysis. The achieved accuracy for properties like critical temperature can be as high as 93% [14].

Protocol 2: Optimizing a Catalytic Reaction using HTE and Machine Learning

This protocol describes a combined experimental-computational workflow for optimizing a palladium-catalyzed cross-coupling reaction using High-Throughput Experimentation and Bayesian Optimization.

I. Research Reagent Solutions & Materials

- Automation & HTE: Liquid handling robot, 96-well or 384-well microtiter plates, automated plate sealer.

- Analysis: UHPLC-MS system with an autosampler.

- Chemicals: Substrates, diverse set of palladium catalysts, ligands, bases, and solvents for screening.

- Software: Data analysis software (e.g., Python with scikit-learn, Dragonfly for Bayesian optimization).

II. Step-by-Step Procedure

Experimental Design:

- Define the parametric space for optimization: catalyst (e.g., 4 types), ligand (e.g., 8 types), base (e.g., 4 types), solvent (e.g., 6 types). This creates a 4x8x4x6 = 768-condition grid.

- Use a strategic design (e.g., a D-optimal design or random selection) to select a subset of ~150-200 conditions for the initial HTE run to efficiently explore the space [6].
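
The size of the condition grid and the selection of an initial subset can be sketched as follows. The component labels are placeholders, the batch size of 160 is one arbitrary choice within the ~150-200 range, and only the random-selection option is shown; a D-optimal design would need dedicated DoE tooling.

```python
import random
from itertools import product

# Placeholder labels for the screening components (hypothetical names).
catalysts = [f"catalyst_{i}" for i in range(1, 5)]   # 4 types
ligands = [f"ligand_{i}" for i in range(1, 9)]       # 8 types
bases = [f"base_{i}" for i in range(1, 5)]           # 4 types
solvents = [f"solvent_{i}" for i in range(1, 7)]     # 6 types

# Full grid of categorical conditions: 4 x 8 x 4 x 6 combinations.
grid = list(product(catalysts, ligands, bases, solvents))

# Random selection of the initial HTE batch; the seed is fixed for reproducibility.
rng = random.Random(42)
initial_batch = rng.sample(grid, k=160)

print(len(grid))           # 768
print(len(initial_batch))  # 160
```

Sampling without replacement guarantees that no condition is duplicated in the first plate, so every well contributes new information to the initial model.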

HTE Execution:

- Use automated liquid handlers to dispense reagents and solvents into the wells of the microtiter plate in an inert atmosphere to handle air-sensitive chemistry [6].

- Seal the plates and allow reactions to proceed at the designated temperature.

Data Acquisition & Processing:

- After a set time, use UHPLC-MS with an autosampler to analyze reaction mixtures directly from the plate.

- Convert chromatographic data to reaction yield (the objective function for optimization).

ML-Guided Optimization:

- Train a machine learning model (e.g., Gaussian Process regression) on the initial HTE dataset. The model learns the complex relationship between reaction components and yield.

- Use a Bayesian optimization algorithm to propose the next set of ~20-30 promising reaction conditions that balance exploration (trying uncertain areas) and exploitation (improving on high-yield conditions) [8] [6].

- Execute the proposed experiments in the next HTE cycle.
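
Because catalyst, ligand, base, and solvent are categorical, they have to be turned into numbers before a Gaussian Process or similar model can be trained on them. One common and simple choice, shown here as an assumption rather than the method of the cited studies, is one-hot encoding; the component labels are placeholders.

```python
# Hypothetical search space: each factor maps to its list of allowed choices.
space = {
    "catalyst": [f"catalyst_{i}" for i in range(1, 5)],
    "ligand": [f"ligand_{i}" for i in range(1, 9)],
    "base": [f"base_{i}" for i in range(1, 5)],
    "solvent": [f"solvent_{i}" for i in range(1, 7)],
}

def one_hot(value, choices):
    """Vector with a single 1.0 at the position of the chosen option."""
    if value not in choices:
        raise ValueError(f"unknown option: {value}")
    return [1.0 if value == choice else 0.0 for choice in choices]

def encode(condition, space):
    """Concatenate the one-hot vectors of all factors into one feature vector."""
    features = []
    for factor, choices in space.items():
        features.extend(one_hot(condition[factor], choices))
    return features

condition = {
    "catalyst": "catalyst_2",
    "ligand": "ligand_7",
    "base": "base_1",
    "solvent": "solvent_4",
}
features = encode(condition, space)

print(len(features))  # 22
print(sum(features))  # 4.0
```

One-hot vectors treat all options as equally dissimilar; descriptor-based encodings (for example, computed ligand or solvent properties) can give the model more chemical information when such data are available.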

Iteration and Validation:

- Repeat the Data Acquisition & Processing and ML-Guided Optimization stages (stages 3-4 above) for 2-3 iterations or until the yield converges to a satisfactory maximum.

- Manually validate the top-predicted conditions in a traditional round-bottom flask to confirm the ML model's predictions.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Research Reagent Solutions for AI-Driven Chemistry

| Category | Item / Software | Function / Application |
|---|---|---|
| Generative AI Software | REINVENT 4 [12] | Open-source framework for de novo molecular design and optimization using RL. |
| Property Prediction | ChemXploreML [14] | User-friendly desktop app for predicting molecular properties without coding. |
| Property Prediction | MolE [13] | Foundation model for accurate ADMET property prediction from molecular graphs. |
| Synthesis Planning | IBM RXN [10] | AI-powered platform for predicting retrosynthetic pathways and reaction outcomes. |
| Synthesis Planning | AiZynthFinder [10] | Open-source tool for retrosynthetic planning using a publicly available compound library. |
| Cheminformatics Toolkits | RDKit [10] | Open-source toolkit for cheminformatics, molecular descriptor calculation, and fingerprinting. |
| Cheminformatics Toolkits | DeepChem [10] | Deep learning library for drug discovery and quantum chemistry. |
| HTE & Automation | Automated Liquid Handlers | Enables precise, high-throughput dispensing of reagents for parallel reaction setup. |
| HTE & Automation | Microtiter Plates (96/384-well) | Miniaturized reaction vessels for running hundreds of experiments in parallel [6]. |

The field of organic synthesis is undergoing a profound transformation driven by artificial intelligence (AI) and machine learning (ML). These technologies are reshaping the traditional approach to molecular design and reaction optimization by seamlessly integrating data-driven algorithms with chemical intuition [9]. This document, framed within broader research on ML optimization of organic synthesis conditions, details specific applications and protocols that leverage AI to accelerate discovery. The revolution spans from accurately predicting reaction outcomes to controlling chemical selectivity, simplifying synthesis planning, and accelerating catalyst discovery [9]. This shift addresses critical limitations of conventional methods, which often rely on labor-intensive, time-consuming experimentation guided by human intuition and one-variable-at-a-time optimization [8]. For researchers and drug development professionals, these tools offer a powerful new toolkit to enhance precision, efficiency, and sustainability while addressing pressing global challenges in medicine, materials, and energy [15].

Application Note 1: Predictive Modeling for Reaction Outcomes

Core Concepts and Quantitative Performance

Predicting the results of a chemical reaction before stepping into the laboratory is a cornerstone of accelerated synthesis research. ML models achieve this by learning from vast repositories of reaction data to forecast products, yields, and selectivity. Graph-convolutional neural networks demonstrate high accuracy in reaction outcome prediction with interpretable mechanisms, while neural-symbolic frameworks and Monte Carlo Tree Search (MCTS) have transformed retrosynthetic planning, generating expert-quality routes at unprecedented speeds [15]. Another powerful approach uses a machine learning model based on molecular orbital reaction theory, which delivers remarkable accuracy and generalizability for organic reaction outcome prediction [15].

Table 1: Performance Metrics of Select Reaction Outcome Prediction Models

| Model / Approach | Reported Accuracy / Performance | Key Application Context | Data Source |
|---|---|---|---|
| Uni-Mol Framework [16] | Identified catalysts achieving 94% yield and 99% enantiomeric excess | Asymmetric aldol reactions & catalyst screening | High-throughput experimentation (HTE) data |
| Graph-Convolutional Networks [15] | "High accuracy" with interpretable mechanisms | General reaction outcome prediction | Not Specified |
| Machine Learning Model (Molecular Orbital Theory) [15] | "Remarkable accuracy and generalizability" | Organic reaction outcome prediction | Not Specified |
| Machine Learning Model (for catalytic reactions on gold) [17] | Up to 93% prediction accuracy | Reactions on oxygen-covered and bare gold surfaces | Experimental data (~200 reactions) |

Experimental Protocol: Implementing the Uni-Mol Framework for Reaction Prediction

This protocol outlines the steps for employing the Uni-Mol framework to predict reaction outcomes and screen catalysts, as validated on asymmetric aldol reaction datasets [16].

- Objective: Rapidly predict reaction yields and enantioselectivity to identify optimal catalyst candidates from a tetrapeptide library for an asymmetric aldol reaction.

- Materials and Inputs:

  - A library of candidate catalyst molecules (e.g., a self-synthesized tetrapeptide library).

  - Reaction SMILES (Simplified Molecular-Input Line-Entry System) strings defining the reactants and the general reaction type.

  - High-throughput experimentation (HTE) equipment for empirical validation.

- Software and Computational Setup:

  - Implement the Uni-Mol framework, which leverages a model pre-trained on a large corpus of molecular conformations.

  - Ensure access to adequate computational resources (GPU recommended) for model inference.

- Procedure:

  - Step 1: Molecular Representation. Feed the SMILES strings of all candidate catalysts in the library into the pre-trained Uni-Mol model. The framework automatically generates a numerical representation (embedding) for each molecule that captures its structural and conformational features.

  - Step 2: Model Training. Train a classification or regression model (as described in the source study) using the generated molecular representations as input features. The model's target variable is the reaction outcome (e.g., yield and enantiomeric excess), labeled from a subset of pre-existing HTE data.

  - Step 3: Prediction and Screening. Use the trained model to predict the performance of all catalysts in the library, including those not yet experimentally tested.

  - Step 4: Experimental Validation. Synthesize the top-ranked catalyst candidates identified by the model (e.g., those predicted to have high yield and enantioselectivity). Conduct the asymmetric aldol reaction under specified HTE conditions to measure the actual yield and enantiomeric excess.

- Expected Output: Successful identification of one or more tetrapeptide catalysts that deliver high performance (e.g., 94% yield and 99% enantiomeric excess) as predicted by the model [16].
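
Steps 2 and 3 of this protocol (train on labeled embeddings, then rank untested catalysts) can be sketched without the Uni-Mol software itself. Everything below is a stand-in: the two-dimensional vectors are invented placeholders for Uni-Mol embeddings, the yields are made up, only yield is modeled (not enantiomeric excess), and a k-nearest-neighbour average replaces whatever regression model the source study used.

```python
import math

# Hypothetical (embedding, measured yield %) pairs from a labeled HTE subset.
labeled = [
    ((0.0, 0.0), 20.0),
    ((1.0, 0.0), 40.0),
    ((0.0, 1.0), 60.0),
    ((1.0, 1.0), 90.0),
]

# Hypothetical embeddings of catalysts that have not been tested yet.
untested = {
    "peptide_A": (0.9, 1.0),
    "peptide_B": (0.1, 0.0),
    "peptide_C": (0.0, 0.8),
}

def knn_predict(query, labeled, k=2):
    """Average the yields of the k labeled catalysts closest in embedding space."""
    nearest = sorted(labeled, key=lambda item: math.dist(query, item[0]))[:k]
    return sum(outcome for _, outcome in nearest) / len(nearest)

# Step 3: predict every untested catalyst and rank by predicted yield.
predictions = {name: knn_predict(emb, labeled) for name, emb in untested.items()}
ranking = sorted(predictions, key=predictions.get, reverse=True)

print(predictions)  # {'peptide_A': 75.0, 'peptide_B': 30.0, 'peptide_C': 40.0}
print(ranking)      # ['peptide_A', 'peptide_C', 'peptide_B']
```

The top of the ranking is what would be passed to Step 4 for synthesis and experimental validation; the quality of the ranking depends entirely on how well the embeddings capture the features that drive catalyst performance.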

Figure 1: Uni-Mol Reaction Prediction Workflow. A workflow for using the pre-trained Uni-Mol framework to predict reaction outcomes and screen potential catalysts.

Application Note 2: Machine Learning-Guided Catalyst Discovery

Core Concepts and Generative Models

Catalyst discovery is being revolutionized by ML-driven generative models, which move beyond simple prediction to the inverse design of novel catalyst structures. These models explore the vast chemical space to propose new candidates that meet specific performance criteria for a given reaction. The CatDRX framework is a prime example—a reaction-conditioned variational autoencoder (VAE) that generates potential catalyst structures and predicts their performance based on learned relationships between catalyst structure, reaction components, and outcomes [18]. This approach is pre-trained on a broad reaction database (e.g., the Open Reaction Database) and fine-tuned for specific downstream tasks, enabling it to handle a wide range of reaction classes [18].

Table 2: Key Generative and Predictive Models for Catalyst Discovery

| Model / Framework | Type | Key Capability | Conditioning |
|---|---|---|---|
| CatDRX [18] | Reaction-conditioned VAE | Generates catalysts and predicts yield/activity | Reactants, reagents, products, reaction time |
| Uni-Mol Framework [16] | Pre-trained Molecular Representation | Screens and predicts catalyst performance for asymmetric reactions | Molecular structure of catalyst and reactants |
| Algorithm with Latent Variables [19] | Machine Learning with Latent Variables | Predicts synthetic conditions and unobservable reactions for organic materials | Substitution patterns of target molecules |

Experimental Protocol: Catalyst Generation and Optimization with CatDRX

This protocol describes the process of using the CatDRX framework for the de novo design and optimization of catalysts for a target reaction [18].

- Objective: Generate novel, high-performance catalyst candidates for a specific catalytic reaction (e.g., a cross-coupling reaction) and predict their expected performance.

- Materials and Inputs:

- Reaction Components: SMILES strings or molecular graphs of the core reactants, reagents, and products of the target reaction.

- Reaction Conditions: Information such as reaction temperature or time, if available.

- Performance Metric: The desired property to optimize (e.g., reaction yield, enantioselectivity ΔΔG‡).

- Software and Computational Setup:

- Access to the CatDRX model architecture and its pre-trained weights on a broad reaction database (e.g., ORD).

- A downstream fine-tuning dataset specific to the reaction class of interest (if available) to enhance prediction accuracy.

- Computational chemistry software (e.g., for DFT calculations) for in silico validation of top candidates.

- Procedure:

- Step 1: Model Setup. Load the pre-trained CatDRX model. If a specialized fine-tuning dataset is available, fine-tune the model on this data to adapt it to the specific reaction domain.

- Step 2: Condition Embedding. Encode the reaction components (reactants, reagents, products) and any available conditions, such as temperature or reaction time, into a numerical "condition embedding" using the model's condition embedding module.

- Step 3: Catalyst Generation. Use the decoder component of the VAE to generate novel catalyst structures. This can be done by sampling from the latent space and guiding the generation with the condition embedding. Sampling strategies can be adjusted to balance exploration and exploitation.

- Step 4: Performance Prediction. Simultaneously, use the model's predictor module to estimate the catalytic performance (e.g., predicted yield) for each generated catalyst candidate.

- Step 5: Candidate Filtering. Filter the generated catalysts based on:

- Predicted performance scores (prioritize high-yield candidates).

- Chemical feasibility and synthesizability checks (using background chemical knowledge or rules).

- Optional computational validation: Perform rapid DFT calculations on a shortlist of candidates to assess reaction barriers or other key properties.

- Step 6: Experimental Validation. Synthesize or procure the top-ranked, filtered catalyst candidates and test them experimentally in the target reaction.

- Expected Output: A list of novel, generated catalyst structures with high predicted performance for the target reaction, validated computationally and/or experimentally [18].
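The filtering logic of Step 5 can be sketched in a few lines. This is a minimal illustration and not part of CatDRX: the `Candidate` class, the `shortlist` function, the 70% threshold, and the predicted yields are all hypothetical, and the SMILES strings are ordinary phosphines used only as placeholders for generated structures.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    smiles: str             # generated structure (placeholder strings here)
    predicted_yield: float  # predictor output in percent (made-up values)
    feasible: bool          # outcome of a synthesizability check


def shortlist(candidates, min_yield=70.0, top_k=3):
    """Keep feasible candidates above a yield threshold, best first."""
    kept = [c for c in candidates
            if c.feasible and c.predicted_yield >= min_yield]
    kept.sort(key=lambda c: c.predicted_yield, reverse=True)
    return kept[:top_k]


# Hypothetical pool of generated candidates (illustrative values only).
pool = [
    Candidate("CC(C)P(C(C)C)C(C)C", 82.5, True),
    Candidate("c1ccc(P(c2ccccc2)c2ccccc2)cc1", 91.0, True),
    Candidate("C1CCC(P(C2CCCCC2)C2CCCCC2)CC1", 95.0, False),
    Candidate("CP(C)C", 40.0, True),
]

best = shortlist(pool)
for c in best:
    print(f"{c.predicted_yield:5.1f}  {c.smiles}")
```

The highest-scoring entry is dropped because it fails the feasibility flag, which mirrors the protocol's point that predicted performance alone should not decide what goes forward to DFT or the bench.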

Figure 2: CatDRX Catalyst Generation Workflow. An overview of the catalyst inverse design process using the CatDRX generative model.

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental workflows cited in these application notes rely on a combination of physical reagents, computational tools, and data resources.

Table 3: Key Research Reagent Solutions and Essential Materials

| Item / Resource | Function / Application | Example in Context |

|---|---|---|

| Tetrapeptide Catalyst Library [16] | Provides a diverse set of asymmetric organocatalysts for screening and model training. | Used in the Uni-Mol framework to discover catalysts for asymmetric aldol reactions. |

| High-Throughput Experimentation (HTE) Robotic Platform [8] [20] | Automates the parallel execution of thousands of reactions, generating consistent, high-quality data for model training and validation. | Essential for generating the Buchwald-Hartwig cross-coupling dataset used to train and validate GraphRXN and other models. |

| Pre-trained Molecular Models (e.g., Uni-Mol) [16] | Provides a foundational understanding of molecular structure and conformations, enabling rapid feature extraction for downstream tasks with limited data. | Used as the base model for building a classifier that predicts enantioselectivity in catalytic reactions. |

| Open Reaction Database (ORD) [18] | A large, publicly available repository of reaction data used to pre-train broad-scale ML models on diverse chemical transformations. | Serves as the pre-training dataset for the CatDRX framework, giving it a general understanding of chemistry. |

| Graph Neural Network (GNN) Frameworks [20] | The computational engine for learning directly from molecular graph structures (atoms as nodes, bonds as edges) to build powerful reaction predictors. | The foundation of the GraphRXN model, which takes 2D reaction structures as input for yield prediction. |

The Synergy of Data-Driven Algorithms and Chemical Intuition

The field of organic synthesis is undergoing a profound transformation, driven by the integration of artificial intelligence (AI) and data-driven algorithms with deep-rooted chemical intuition. This synergy is reshaping the landscape of molecular design, moving research beyond traditional trial-and-error approaches toward more predictive, efficient, and sustainable practices [9]. AI now plays pivotal roles in accurately predicting reaction outcomes, controlling chemical selectivity, simplifying synthesis planning, and accelerating catalyst discovery [9]. This convergence marks a pivotal moment where algorithms and data combine with human expertise to revolutionize the world of molecules, promising accelerated research cycles and innovative solutions to pressing chemical challenges [9]. This document provides detailed application notes and experimental protocols for implementing these synergistic approaches, framed within broader thesis research on machine learning optimization of organic synthesis conditions.

Quantitative Performance Analysis of Data-Driven Chemistry Tools

The effectiveness of the synergy between data-driven algorithms and chemical intuition is quantitatively demonstrated through the performance of various computational platforms. The table below summarizes key metrics and capabilities of leading cheminformatics tools used in modern organic synthesis research.

Table 1: Performance Metrics of Cheminformatics Tools in Organic Synthesis

| Tool Name | Primary Function | Key Performance Metrics | Optimal Application Context | Validation Status |

|---|---|---|---|---|

| IBM RXN | Reaction prediction & retrosynthesis | >90% accuracy for common reaction types; rapid pathway generation [10] | Retrosynthetic planning for pharmaceutical intermediates | Experimentally validated for multiple drug candidates |

| AiZynthFinder | Synthetic route design | 85% success rate for known targets; 70% for novel structures [10] | Automated synthesis planning for complex natural products | Cross-validated against published synthetic routes |

| Chemprop | Molecular property prediction | RMSE <0.3 for solubility; >0.8 AUC for toxicity classification [10] | Pre-screening of candidate compounds for desired properties | Benchmark performance on diverse chemical datasets |

| ASKCOS | Reaction condition optimization | >40% improvement in yield prediction versus human intuition alone [8] | Optimization of catalyst, solvent, and temperature parameters | Validated through high-throughput experimentation |

| Synthia | Retrosynthetic analysis | Reduces synthesis planning time from weeks to hours [10] | Disconnection strategy for complex polymers & materials | Intellectual property generation for novel compounds |

The data reveals that AI-driven tools consistently enhance research efficiency, with particular strength in retrosynthetic planning and reaction outcome prediction. These platforms demonstrate that the synergy between algorithmic processing of large datasets and chemists' interpretive skills can reduce optimization cycles by up to 40% compared to traditional approaches [8].

Experimental Protocols for Machine Learning-Optimized Organic Synthesis

Protocol: High-Throughput Reaction Optimization with Machine Learning Guidance

Purpose: To efficiently optimize chemical reaction conditions by integrating automated experimentation with machine learning algorithms to navigate high-dimensional parameter spaces.

Background: Traditional reaction optimization involves modifying variables one at a time, a labor-intensive process that often misses optimal conditions due to parameter interactions [8]. This protocol synchronously optimizes multiple reaction variables using machine learning-driven experimental design.

Table 2: Essential Research Reagents and Equipment for ML-Optimized Synthesis

| Item Name | Specification | Function in Protocol | Critical Notes |

|---|---|---|---|

| Automated Liquid Handling System | Multi-channel, nanoliter precision | Enables high-throughput reagent dispensing | Regular calibration essential for volume accuracy |

| Reaction Block | 96-well or 384-well format with temperature control | Parallel reaction execution | Chemical compatibility with reactants/solvents required |

| Machine Learning Software | Bayesian optimization implementation | Designs experimental iterations based on previous results | Customizable acquisition function for specific objectives |

| Analytical Integration Platform | UPLC-MS with automated sampling | Rapid reaction outcome quantification | Direct data feed to ML model reduces processing delays |

| Chemical Variable Library | Substrates, catalysts, solvents, ligands | Provides chemical search space for optimization | Pre-formatted stock solutions enable automated handling |

Procedure:

Parameter Space Definition: Identify 4-6 critical reaction variables to optimize (e.g., catalyst loading, solvent ratio, temperature, concentration, ligand identity). Define realistic ranges for each parameter based on chemical feasibility [8].

Initial Design of Experiments: Generate an initial set of 24-48 reaction conditions using Latin Hypercube Sampling or similar space-filling algorithms to ensure broad coverage of the parameter space.

Automated Reaction Execution:

- Utilize robotic liquid handling systems to prepare reaction mixtures in designated well plates according to the initial experimental design.

- Implement temperature control and stirring conditions as specified for each experimental condition.

- Quench reactions at predetermined timepoints using automated sampling systems.

High-Throughput Analysis:

- Employ UPLC-MS systems with automated sample injection for rapid analysis.

- Quantify reaction outcomes (yield, conversion, selectivity) using calibrated standard curves or internal standards.

- Format results into machine-readable data structures for model input.

Machine Learning Iteration Cycle:

- Input reaction outcomes and conditions into Bayesian optimization algorithm.

- Generate next set of 12-24 proposed experiments focusing on promising regions of parameter space.

- Execute proposed experiments following the Automated Reaction Execution and High-Throughput Analysis steps above.

- Repeat for 3-5 optimization cycles or until convergence on optimal conditions.

Validation and Scale-up: Confirm optimal conditions in triplicate at micro-scale, then translate to traditional laboratory equipment for millimole-scale validation.
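The initial design step relies on Latin Hypercube Sampling. A minimal standard-library sketch of the idea follows: each variable's range is divided into as many equal strata as there are experiments, and every stratum is used exactly once. The function name `latin_hypercube` and the concentration range are assumptions for illustration; the loading and temperature ranges are borrowed from the Suzuki-Miyaura example later in this article.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Return n_samples points with one point per stratum in every dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)                  # decouple strata across variables
        width = (high - low) / n_samples
        # Draw one uniform point inside each stratum.
        columns.append([low + (s + rng.random()) * width for s in strata])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# (low, high) per continuous variable; the concentration range is hypothetical.
bounds = [
    (0.5, 5.0),     # catalyst loading, mol%
    (25.0, 110.0),  # temperature, deg C
    (0.05, 0.5),    # concentration, M
]
design = latin_hypercube(24, bounds)
for loading, temp, conc in design[:3]:
    print(f"{loading:.2f} mol%  {temp:.0f} C  {conc:.2f} M")
```

Categorical variables such as ligand identity are not handled here; one common approach is to assign them by cycling or random draw alongside the continuous design.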

Troubleshooting:

- Poor Model Convergence: Expand parameter ranges or increase initial experiment diversity.

- Analytical Bottlenecks: Implement parallel analytical techniques or reduce analysis depth during optimization phase.

- Reproducibility Issues: Verify robotic calibration and solution stability throughout experimental timeline.

Protocol: AI-Assisted Retrosynthetic Planning for Complex Molecules

Purpose: To accelerate the design of synthetic routes for target molecules by combining AI-powered disconnection strategies with chemical intuition-based evaluation.

Background: AI has revolutionized retrosynthetic analysis, allowing chemists to devise synthetic routes with unprecedented speed and precision [10]. This protocol integrates computational suggestions with expert evaluation to develop optimal synthetic pathways.

Materials:

- Retrosynthesis software (IBM RXN, AiZynthFinder, or Synthia)

- Chemical database access (Reaxys, SciFinder)

- Electronic laboratory notebook for pathway documentation

Procedure:

Target Molecule Specification:

- Input target structure using standardized chemical representation (SMILES, InChI, or molecular drawing).

- Define strategic constraints if applicable (avoided functional groups, preferred starting materials, safety considerations).

AI-Powered Disconnection Analysis:

- Execute multiple retrosynthetic analysis algorithms to generate diverse synthetic routes.

- Apply filter parameters to focus on most promising pathways (commercial precursor availability, step count, predicted yields).

Pathway Evaluation and Selection:

- Assess generated routes using multi-criteria scoring: step count, atom economy, safety profile, and green chemistry principles.

- Prioritize 2-3 routes for detailed computational and experimental validation.

Critical Intermediate Validation:

- Subject proposed routes to reaction prediction tools (IBM RXN) to estimate feasibility of key transformations.

- Screen proposed reactions against known literature examples for precedent.

Route Refinement and Optimization:

- Apply the reaction condition optimization protocol (see the High-Throughput Reaction Optimization with Machine Learning Guidance protocol above) to challenging steps within selected routes.

- Iterate between synthetic design and experimental validation to refine the pathway.

Documentation and Knowledge Capture:

- Record both successful and failed disconnection strategies to enhance future AI training.

- Annotate decisions with chemical rationale to build institutional knowledge.
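The multi-criteria scoring in the Pathway Evaluation and Selection step can be expressed as a weighted sum. The sketch below is one simple way to do this, not a method prescribed by any of the named tools: the weights, the three example routes, and the 0-to-1 atom economy and safety scores are hypothetical.

```python
def score_route(route, weights):
    """Weighted sum of criteria scaled to roughly 0-1; higher is better."""
    # Fewer steps is better, so map the step count onto (0, 1].
    step_score = 1.0 / route["steps"]
    return (weights["steps"] * step_score
            + weights["atom_economy"] * route["atom_economy"]
            + weights["safety"] * route["safety"])


# Hypothetical weights and candidate routes (illustrative values only).
weights = {"steps": 0.4, "atom_economy": 0.3, "safety": 0.3}
routes = [
    {"name": "A", "steps": 4, "atom_economy": 0.62, "safety": 0.9},
    {"name": "B", "steps": 7, "atom_economy": 0.80, "safety": 0.7},
    {"name": "C", "steps": 5, "atom_economy": 0.55, "safety": 0.4},
]

ranked = sorted(routes, key=lambda r: score_route(r, weights), reverse=True)
top_routes = [r["name"] for r in ranked[:2]]
print(top_routes)
```

A weighted sum hides trade-offs between criteria, so the ranking is best treated as a starting point for the expert review described in the protocol rather than a final decision.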

Troubleshooting:

- Overly Complex Route Generation: Adjust algorithm parameters to prioritize simpler transformations or commercially available building blocks.

- Unrealistic Transformation Suggestions: Implement manual curation step to filter chemically implausible suggestions before experimental investment.

- Limited Precedent for Key Steps: Utilize computational reaction modeling tools (Gaussian, ORCA) to predict activation energies and mechanism feasibility [10].

Workflow Visualization of Synergistic Research Approaches

The following diagrams, created using Graphviz DOT language, illustrate key workflows and logical relationships in the synergy between data-driven algorithms and chemical intuition.

Diagram 1: Integrated AI-Chemist Workflow for Synthesis Optimization

Diagram 2: AI-Assisted Retrosynthetic Planning with Expert Validation

The synergy between data-driven algorithms and chemical intuition represents a fundamental shift in organic synthesis methodology. By implementing the protocols and utilizing the tools described in these application notes, researchers can significantly accelerate the optimization of synthetic conditions and the design of novel synthetic routes. This integrated approach, which combines the pattern recognition capabilities of machine learning with the contextual understanding and creative problem-solving of experienced chemists, is poised to profoundly shape the future of organic chemistry [9]. As these technologies continue to evolve, their integration will become increasingly essential for maintaining competitiveness in both academic and industrial research settings, particularly in pharmaceutical development and materials science where rapid innovation is paramount [10].

AI in Action: Machine Learning Workflows and High-Throughput Experimentation Platforms

The optimization of organic synthesis conditions represents a critical challenge in chemical research and development, influencing fields from drug discovery to materials science. Traditional optimization, relying on manual experimentation and one-variable-at-a-time (OVAT) approaches, is inherently limited. It is a labor-intensive, time-consuming task that requires exploring a high-dimensional parametric space, often failing to capture complex variable interactions [8].

A paradigm change has been enabled by the convergence of machine learning (ML) and laboratory automation [5]. This new approach leverages data-driven algorithms to synchronously optimize multiple reaction variables, significantly reducing the number of experiments required and minimizing human intervention [8] [21]. This document outlines the standard ML optimization workflow, providing detailed application notes and experimental protocols tailored for researchers and scientists engaged in optimizing organic reactions.

The Machine Learning Workflow in Context

The machine learning lifecycle is a structured, iterative process distinct from traditional software engineering. Whereas traditional development often follows a linear, deterministic path from requirements to implementation, ML development is fundamentally empirical and data-centric, proceeding through iterative cycles of experimentation and validation [22]. This "scientific method in ML development" involves forming hypotheses (model architecture choices), running experiments (training and validation), analyzing results, and iterating based on findings [22].

In the context of organic synthesis, this iterative workflow is encapsulated in a continuous loop connecting experiment design, execution, data analysis, and model-based decision making [5]. This framework transforms chemical intuition into a quantitative, scalable engineering discipline, enabling the efficient navigation of complex reaction parameter spaces that would be intractable via manual methods.

Standard Workflow for ML-Guided Reaction Optimization

The standard workflow for ML-guided optimization integrates experimental and computational components into a cohesive, self-improving system. The following diagram illustrates the core iterative cycle and the key stages involved.

Stage 1: Design of Experiments (DoE)

The initial stage involves strategically planning the first set of experiments to efficiently explore the reaction parameter space.

- Objective Definition: Clearly define the optimization target(s), which may be a single objective (e.g., maximize yield) or multiple objectives (e.g., maximize yield while minimizing cost and environmental impact) [5].

- Parameter Selection: Identify key continuous variables (e.g., temperature, concentration, time) and categorical variables (e.g., catalyst, solvent, ligand) that influence the reaction outcome [5].

- Initial DoE: Employ statistical design of experiments (DoE) methods to select an initial set of reaction conditions that provide maximal information about the system with a minimal number of experiments. Common approaches include full factorial designs, Plackett-Burman designs, or space-filling designs for more complex spaces [5].
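To make the cost of a full factorial design concrete, the sketch below enumerates every combination of three categorical variables. The levels are taken from the Suzuki-Miyaura example later in this article; the three temperature levels are an added assumption used only to show how quickly the design grows.

```python
from itertools import product

solvents = ["Dioxane", "DMF", "Toluene", "Water"]
bases = ["K2CO3", "Cs2CO3", "NaOH"]
catalysts = ["Pd(PPh3)4", "Pd(dppf)Cl2", "Pd(OAc)2"]

# Every solvent/base/catalyst combination: 4 * 3 * 3 runs.
full_factorial = list(product(solvents, bases, catalysts))
print(len(full_factorial))

# Adding one more factor at three levels (hypothetical) triples the design.
temperatures_C = [25, 70, 110]
with_temperature = list(product(solvents, bases, catalysts, temperatures_C))
print(len(with_temperature))
```

This multiplicative growth is the reason screening designs or space-filling designs are usually preferred once more than a handful of factors are involved.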

Table 1: Common Experimental Variables in Organic Synthesis Optimization

| Variable Type | Examples | Considerations |

|---|---|---|

| Continuous | Temperature, Time, Concentration, Stoichiometry | Defines a range (e.g., 25°C - 150°C); crucial for modeling continuous relationships. |

| Categorical | Solvent, Catalyst, Ligand, Reagent | Represented numerically for ML models via one-hot or ordinal encoding [23]. |

| Process-Related | Stirring Speed, Pressure, Addition Rate | May require specialized equipment for control and monitoring. |

Stage 2: Reaction Execution with High-Throughput Tools

The planned experiments are executed using high-throughput experimentation (HTE) platforms to generate data rapidly and reliably.

- Platform Selection: HTE platforms leverage a combination of automation, parallelization, and advanced analytics [5].

- Batch Systems: Platforms like Chemspeed SWING use 96-well plate reactor blocks to perform many reactions in parallel, ideal for screening catalysts, ligands, and solvents [5].

- Flow Systems: Continuous flow platforms offer advantages for precise control of reaction time and efficient heat/mass transfer, often used for self-optimization [5].

- Custom Robotic Systems: Advanced labs may employ fully integrated robotic systems, such as the mobile robot developed by Burger et al. for photocatalysis, which links multiple experimental stations [5].

- Automation: Liquid handling systems automate reagent dispensing with high precision, ensuring reproducibility and freeing researcher time [5]. Platforms can perform tasks like heating, cooling, mixing, and even in-line analysis with minimal human intervention.

Stage 3: Data Collection and Processing

The quality of the ML model is directly dependent on the quality of the data. This stage transforms raw experimental results into a structured dataset.

- Analytical Data Collection: Products are characterized using in-line or offline analytical tools. Techniques like UPLC/HPLC, GC, GC-MS, and NMR are common. The output (e.g., yield, conversion, selectivity) is quantified for each reaction [5].

- Data Curation and Feature Engineering: Reaction conditions are recorded in a structured format. Categorical variables are encoded (e.g., one-hot encoding), and molecular structures may be converted into numerical descriptors (e.g., using RDKit) [10]. This creates a feature vector for each experiment.

- Data Storage: All data is stored in a centralized database, often adhering to the FAIR (Findable, Accessible, Interoperable, Reusable) principles to ensure it is usable for current and future modeling efforts.
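The feature-engineering step above can be illustrated with a small standard-library sketch that turns one reaction record into a numeric feature vector. The record fields and the helper names `one_hot` and `featurize` are hypothetical; in practice a library encoder or RDKit-derived descriptors would typically replace or extend this.

```python
def one_hot(value, categories):
    """Return a 0/1 vector with a single 1 at the position of `value`."""
    if value not in categories:
        raise ValueError(f"unknown category: {value!r}")
    return [1.0 if value == c else 0.0 for c in categories]


def featurize(record, solvents, bases):
    """Concatenate continuous values with one-hot encoded categoricals."""
    return ([record["temperature_C"], record["time_h"]]
            + one_hot(record["solvent"], solvents)
            + one_hot(record["base"], bases))


solvents = ["Dioxane", "DMF", "Toluene", "Water"]
bases = ["K2CO3", "Cs2CO3", "NaOH"]

# Hypothetical reaction record as it might come from a results table.
record = {"temperature_C": 80.0, "time_h": 12.0,
          "solvent": "DMF", "base": "K2CO3"}
features = featurize(record, solvents, bases)
print(features)
```

The category lists must stay in a fixed order across the whole campaign so that each column keeps the same meaning when the model is retrained.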

Stage 4: ML Modeling and Prediction

With a curated dataset, machine learning models are trained to map reaction conditions to outcomes and suggest new experiments.

- Model Selection: The choice of model depends on data size and problem complexity.

- Gaussian Process Regression (GPR): A popular choice for Bayesian optimization due to its ability to provide uncertainty estimates alongside predictions.

- Random Forests / Decision Trees: Effective for non-linear relationships and providing feature importance.

- Neural Networks: Powerful for large, complex datasets and when molecular structures are input as graphs [10].

- Optimization Algorithm: An optimization strategy uses the model's predictions to select the next best experiment(s).

- Bayesian Optimization (BO): A powerful framework that balances exploration (testing in uncertain regions of parameter space) and exploitation (testing conditions predicted to be high-performing) to find the global optimum efficiently [5]. It is particularly effective when experimental runs are expensive or time-consuming.

- Suggestion: The BO algorithm proposes one or a set of reaction conditions predicted to most improve the optimization objective.
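The exploration/exploitation balance can be made concrete with Expected Improvement, one commonly used acquisition function. The sketch below implements the standard closed form for a Gaussian posterior when maximizing; the posterior means and standard deviations are made-up numbers standing in for GPR predictions, and the `xi` margin is a conventional tuning parameter chosen here for illustration.

```python
import math


def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization, given posterior mean and std at one candidate."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)       # no uncertainty: plain gain
    z = (mu - best - xi) / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mu - best - xi) * cdf + sigma * pdf


best_yield = 80.0  # best yield observed so far (hypothetical)
# Hypothetical (mean, std) yield predictions for three candidate conditions.
posterior = {"A": (78.0, 2.0), "B": (74.0, 12.0), "C": (70.0, 3.0)}

scores = {name: expected_improvement(mu, sd, best_yield)
          for name, (mu, sd) in posterior.items()}
next_experiment = max(scores, key=scores.get)
print(next_experiment)
```

Candidate B is selected even though A has the higher predicted mean, because B's larger uncertainty leaves more room for exceeding the current best; this is the exploration term at work.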

Stage 5: Experimental Validation and Iteration

The conditions suggested by the ML model are tested in the lab, closing the loop.

- Validation Run: The proposed experiment is executed, and its outcome is measured analytically.

- Model Update and Iteration: The result from this new experiment is added to the growing dataset. The ML model is retrained on this expanded dataset, improving its accuracy and leading to a new, refined suggestion for the next experiment [5].

- Stopping Criteria: The loop continues until a predefined stopping criterion is met. This could be achieving a performance target, the convergence of suggestions, depletion of resources, or minimal improvement over several iterations.
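Three of the stopping criteria listed above (performance target, resource budget, and minimal improvement) can be checked with a short function. This is a sketch under assumed thresholds: the function name `should_stop`, the 95% target, the 96-experiment budget, and the patience window are all hypothetical, and convergence of the suggested conditions is not covered.

```python
def should_stop(best_history, experiments_run, target=95.0, budget=96,
                patience=3, min_gain=1.0):
    """Return a reason string if the campaign should stop, else None.

    best_history holds the best yield seen after each completed iteration.
    """
    if best_history and best_history[-1] >= target:
        return "target reached"
    if experiments_run >= budget:
        return "budget exhausted"
    if len(best_history) > patience:
        # Gain in best yield over the last `patience` iterations.
        gain = best_history[-1] - best_history[-1 - patience]
        if gain < min_gain:
            return "improvement stalled"
    return None


print(should_stop([60.0, 72.0, 81.0, 81.2, 81.4, 81.5], experiments_run=70))
```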

Essential Tools and Reagents

A successful ML-driven optimization campaign relies on a suite of computational and experimental tools.

Table 2: The Scientist's Toolkit for ML-Guided Optimization

| Category | Tool/Reagent | Function and Application Notes |

|---|---|---|

| ML & Cheminformatics | scikit-learn [23] | Open-source library for classic ML models (Random Forests, SVMs). Protocol: Use RandomForestRegressor for initial yield prediction. |

| | RDKit [10] | Open-source toolkit for cheminformatics; calculates molecular descriptors from structures. |

| | Chemprop [10] | Message-passing neural network specialized for molecular property prediction. |

| HTE Platforms | Commercial (Chemspeed) [5] | Integrated robotic platform for automated synthesis in well plates. Protocol: Configure a 96-well plate for catalyst/solvent screening. |

| | Custom Robotic Systems [5] | Mobile robots or custom rigs for specialized tasks (e.g., photocatalysis). |

| Analytical Tools | UPLC/HPLC with UV/ELSD | Standard for high-throughput reaction analysis. Protocol: Use a 5-minute gradient method for rapid throughput. |

| Reagent Solutions | Diverse Solvent Library | Covering a range of polarities (hexane to DMSO). Protocol: Use pre-prepared solvent stocks in HTE dispensers. |

| | Catalyst/Ligand Libraries | Broad sets of Pd catalysts, phosphine ligands, etc., for reaction discovery. |

| | Internal Standard | e.g., dimethyl fumarate [24]. Protocol: Add a known mass to reaction aliquots for quantitative NMR (qNMR) yield determination [25]. |
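The internal-standard entry rests on a simple proportionality: in qNMR, the integral per proton is proportional to the molar amount. A minimal sketch of the resulting yield calculation is given below. The function name and the numerical values are hypothetical, and the calculation assumes that the standard amount and the theoretical product amount refer to the same sample portion and that the chosen signals are well resolved.

```python
def qnmr_yield(integral_product, protons_product,
               integral_std, protons_std,
               mmol_std, mmol_theoretical):
    """Percent yield from integrals normalized by proton count."""
    per_proton_product = integral_product / protons_product
    per_proton_std = integral_std / protons_std
    mmol_product = per_proton_product / per_proton_std * mmol_std
    return 100.0 * mmol_product / mmol_theoretical


# Hypothetical spectrum: a 2H standard signal set to 2.00 and a 1H product
# signal integrating to 0.85, with 0.100 mmol standard and 0.100 mmol
# theoretical product in the sample.
yield_percent = qnmr_yield(0.85, 1, 2.00, 2, 0.100, 0.100)
print(f"{yield_percent:.1f}%")
```

When the standard is added by mass, as the table entry describes, its molar amount is obtained first by dividing the mass by the molar mass of the standard.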

A Representative Protocol: ML-Optimized Suzuki-Miyaura Coupling

The following detailed protocol exemplifies the application of the standard workflow to a specific reaction.

Objective: Maximize the yield of a biaryl product from a Suzuki-Miyaura coupling reaction.

Initial Setup and DoE:

- Define Search Space:

- Continuous Variables: Temperature (25-110°C), Time (1-24 h), Catalyst Loading (0.5-5 mol%).

- Categorical Variables: Solvent (Dioxane, DMF, Toluene, Water), Base (K₂CO₃, Cs₂CO₃, NaOH), Pd Catalyst (Pd(PPh₃)₄, Pd(dppf)Cl₂, Pd(OAc)₂).

- Select Initial Design: A space-filling design (e.g., Latin Hypercube Sampling) is used to select 30 initial reaction conditions that broadly cover the defined parameter space.

High-Throughput Execution:

- Automated Setup: A Chemspeed SWING robot is programmed to weigh solids and dispense liquids into a 48-well reaction block [5].

- Reaction Conditions: The block is sealed to limit solvent evaporation and heated with stirring. Each well is kept under nitrogen to exclude oxygen, which can deactivate the palladium catalyst.

- Parallel Analysis: After the reaction time, an aliquot from each well is quenched and diluted into a 96-well analysis plate.

- UPLC Analysis: The plate is analyzed via UPLC-UV to determine conversion and yield using a calibrated method.

Data Processing & Modeling:

- Data Curation: Results are compiled into a table with columns for all input variables and the output yield.

- Model Training: A Gaussian Process Regression (GPR) model is trained on the initial dataset. Categorical variables are one-hot encoded.

- Bayesian Optimization: The GPR model and an acquisition function (e.g., Expected Improvement) are used to select the next set of 8 reaction conditions predicted to give the highest yield.

Validation and Iteration:

- Iterative Loops: The suggested conditions are executed on the HTE platform, analyzed, and the data is used to update the GPR model.

- Outcome: Typically, within 5-8 iterative loops (70-94 experiments in total: the 30 initial runs plus 8 per loop), the algorithm converges on the optimal set of conditions, often discovering non-intuitive solvent/base combinations that maximize yield.

The standard ML optimization workflow represents a fundamental shift in how organic chemists approach reaction development. By integrating systematic experiment design, high-throughput automation, and iterative machine learning, this methodology enables the rapid and efficient discovery of optimal reaction conditions in a high-dimensional space. This structured, data-driven approach moves beyond traditional one-variable-at-a-time experimentation, accelerating research and development in organic synthesis. As the tools and platforms for this workflow become more accessible and robust, their adoption is poised to become a cornerstone of modern chemical research, particularly in demanding fields like pharmaceutical development.

High-Throughput Experimentation (HTE) has emerged as a transformative approach in modern organic synthesis, enabling the rapid exploration of chemical reaction spaces by conducting numerous parallel experiments under varying conditions. These automated platforms provide the solid technical foundation required for the deep fusion of artificial intelligence with chemistry, allowing researchers to efficiently optimize reaction parameters, screen catalysts, and explore substrate scopes [26]. The integration of HTE with machine learning represents a paradigm shift in chemical research, creating a synergistic relationship where high-quality, extensive datasets generated through HTE train predictive models that subsequently guide more intelligent experimental design.

Within the context of machine learning optimization of organic synthesis, HTE systems serve as the critical data generation engine. The unique advantages of these systems—including low consumption, low risk, high efficiency, high reproducibility, high flexibility, and good versatility—make them indispensable for constructing comprehensive datasets that capture the complex relationships between reaction parameters and outcomes [26]. Intelligent automated platforms for high-throughput chemical synthesis are reshaping traditional scientific approaches, promoting innovation, redefining the rate of chemical synthesis, and revolutionizing material manufacturing methodologies.

HTE Reactor System Architectures

Batch Reactor Systems

Batch reactor systems represent a fundamental architecture within HTE platforms, characterized by their ability to perform multiple reactions simultaneously in discrete, sealed vessels. These systems are particularly valuable for reactions requiring extended reaction times, heterogeneous conditions, or specialized atmospheres. The parallelized batch reactor systems offered by the company hte are highly automated, lab-scale systems designed for testing various chemical processes, including polymerization and the precipitation of materials such as battery materials [27]. The modular and flexible design of these systems allows for efficient integration into existing laboratory infrastructures while meeting the highest safety standards.

The design principles of modern HTE batch reactors emphasize modularity and flexibility. As described by hte, these systems are "robust, modular, and easily expandable," offering valuable support during scale-up operations, process development and optimization, and extended catalyst testing [27]. This modularity enables researchers to adapt the systems to challenging process conditions, including high temperatures or demanding feeds and products such as corrosive media. The flexibility extends to the systems' configurability for specific research tasks, particularly in emerging fields like decarbonization, where the ability to quickly adapt experimental setups accelerates innovation.

Flow Reactor Systems

Flow reactor systems represent an alternative HTE architecture where reactions occur in continuously flowing streams rather than in discrete batches. These systems offer distinct advantages for certain reaction classes, including improved heat and mass transfer, enhanced safety profiles for hazardous reactions, and potentially easier scalability from laboratory to production environments. Although the sources cited here focus primarily on batch systems, the underlying principles of high-throughput experimentation (parallelization, automation, and integrated analytics) apply equally to flow chemistry platforms.

The integration of flow reactor systems into HTE workflows enables the rapid optimization of continuous processes and the exploration of reaction parameters that are specifically relevant to flow chemistry, such as residence time, mixing efficiency, and pressure effects. When combined with machine learning approaches, both batch and flow HTE systems generate the multidimensional datasets necessary to build accurate predictive models of chemical reactivity and process optimization.

HTE System Components and Capabilities

Modern HTE platforms incorporate several integrated components that work in concert to enable efficient, reproducible, and informative experimentation. These systems typically include reactor blocks, automated liquid handling systems, integrated analytical capabilities, and sophisticated software for experimental control and data management.

Reactor Systems: HTE platforms feature specialized reactor designs tailored to different chemical transformations. For catalyst screening and optimization, high throughput systems are designed for parallel testing of multiple catalysts simultaneously, significantly increasing productivity when evaluating heterogeneous catalysts while maintaining high data quality [27]. These systems can screen up to 16 reactions in parallel, with some specialized configurations for electrochemical applications facilitating parallel screening of up to 16 electrochemical cells equipped with specific electrochemical analytics, as well as automatic electrolyte mixing and metering capabilities [27].

Integrated Analytics: A critical feature of modern HTE systems is the integration of analytical capabilities directly within the experimental workflow. The vendor hte emphasizes that its laboratory systems are "tailor-made turnkey solutions with integrated analytics and a software package for unit control and data evaluation" [27]. This integration enables rapid analysis of reaction outcomes without manual sample handling, reducing analysis time and minimizing potential errors. The specific analytical techniques vary with the application but often include chromatography (GC, HPLC), spectroscopy (FTIR, NMR), and mass spectrometry.

Software and Data Management: HTE systems incorporate specialized software packages that manage both experimental control and data evaluation. These digital components are essential for handling the large volumes of data generated by high-throughput platforms and ensuring that information is structured appropriately for subsequent machine learning analysis. The software enables researchers to design experimental arrays, control reaction parameters precisely, monitor experiments in real-time, and correlate reaction outcomes with input conditions.
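As an illustration of what "structured appropriately for subsequent machine learning analysis" can mean in practice, the following minimal sketch stores each experiment as one flat record and exports the records as CSV. The class name `ReactionRecord`, its fields, and the example values are hypothetical and are not taken from any vendor software.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass(frozen=True)
class ReactionRecord:
    """One HTE experiment: input conditions plus the measured outcome."""
    well: str             # position in the reactor block (hypothetical naming)
    catalyst: str
    ligand: str
    base: str
    solvent: str
    temperature_c: float
    time_h: float
    yield_pct: float


def to_csv(records):
    """Serialize records to CSV text, one row per experiment."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ReactionRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Illustrative values only, not experimental data
records = [
    ReactionRecord("A1", "Pd(OAc)2", "SPhos", "K2CO3", "dioxane/water", 80.0, 4.0, 72.5),
    ReactionRecord("A2", "Pd(OAc)2", "XPhos", "K3PO4", "toluene/water", 100.0, 4.0, 88.1),
]
csv_text = to_csv(records)
print(csv_text)
```

Keeping inputs and outcomes in the same row means each record can be used directly as a training example, with the condition columns as features and the outcome column as the label.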

Table 1: Key Characteristics of HTE Reactor Systems

| Characteristic | Batch Reactor Systems | Flow Reactor Systems |
|---|---|---|
| Reaction Volume | Typically 1-50 mL per reactor | Continuous flow with defined residence time |
| Parallelization Capacity | Up to 16-96 parallel reactions [27] | Multiple parallel flow channels |
| Temperature Range | -80°C to 300°C+ | -100°C to 500°C+ |
| Pressure Range | Vacuum to 200 bar | Ambient to 400 bar |
| Mixing Method | Magnetic stirring | Static mixers, segmented flow |
| Residence Time Control | Fixed by reaction duration | Precisely controlled via flow rate |
| Automation Level | High for liquid handling, sampling | High for pumping, parameter control |
| Reaction Phases | Solid, liquid, gas compatible | Primarily homogeneous or slurry |

Applications in Organic Synthesis and Drug Development

HTE systems are particularly valuable in pharmaceutical research and development, where faster synthetic route development and optimization directly shortens drug discovery timelines. In antibody discovery and optimization, for example, the integration of high-throughput experimentation and machine learning is transforming how antibody therapeutics are discovered and optimized [28]. These approaches use extensive datasets of antibody sequences, structures, and functional properties to train predictive models that enable rational design.

The application of HTE extends throughout the drug development pipeline, from early-stage hit identification to late-stage process optimization. Key applications include:

- Reaction Condition Optimization: Systematically varying parameters such as temperature, concentration, stoichiometry, and solvent composition to identify optimal conditions for key synthetic transformations.

- Catalyst Screening: Rapidly evaluating libraries of homogeneous or heterogeneous catalysts to identify the most selective and efficient catalysts for specific bond-forming reactions.

- Substrate Scope Exploration: Testing a particular synthetic methodology across diverse substrate structures to define the limitations and generality of the transformation.

- Process Impurity Identification: Deliberately varying process parameters to generate and identify potential impurities, supporting regulatory filings and quality control strategies.

The generation of high-quality, comprehensive datasets through these applications provides the foundation for machine learning approaches in organic synthesis. By capturing intricate relationships between reaction parameters and outcomes, HTE enables the development of predictive models that can extrapolate beyond the experimentally tested conditions, accelerating the design of optimal synthetic routes.

Table 2: Quantitative Data Output from HTE Systems in Pharmaceutical Applications

| Application Area | Throughput (Experiments/Week) | Measured Outputs | Key Parameters |
|---|---|---|---|
| Catalyst Screening | 100-1,000 | Conversion, selectivity, yield | Temperature, pressure, catalyst loading |
| Reaction Optimization | 50-500 | Yield, impurity profile, kinetics | Solvent composition, stoichiometry, addition rate |
| Enzyme Engineering | 1,000-10,000+ | Activity, specificity, stability | pH, cofactors, substrate concentration |
| Formulation Screening | 200-2,000 | Solubility, stability, dissolution | Excipient ratios, processing parameters |
| Pharmacokinetic Profiling | 100-500 | Clearance, bioavailability, metabolism | Concentration-time data, metabolic stability |

Experimental Protocols for HTE

Protocol 1: High-Throughput Screening of Cross-Coupling Reactions in Batch Reactors

Objective: To systematically optimize a palladium-catalyzed Suzuki-Miyaura cross-coupling reaction using HTE batch reactor systems.

Materials and Equipment:

- HTE batch reactor system with 24-position reactor block

- Automated liquid handling system

- Argon or nitrogen atmosphere glovebox

- HPLC or UPLC system with autosampler

- Aryl halide substrate (1.0 M stock solution in DMF)

- Boronic acid coupling partner (1.2 M stock solution in DMF)

- Palladium catalyst library (0.02 M stock solutions in DMF)

- Ligand library (0.04 M stock solutions in DMF)

- Base solutions (2.0 M aqueous potassium carbonate, potassium phosphate, cesium carbonate)

- Solvents (DMF, toluene, dioxane, water)

Procedure:

- Experimental Design: Use a statistical experimental design approach (e.g., D-optimal, factorial design) to define the reaction matrix, varying catalyst (0.5-5 mol%), ligand (1-10 mol%), base (1.5-3.0 equiv), solvent composition (binary mixtures), and temperature (50-120°C).

- Reactor Preparation: In an inert atmosphere glovebox, distribute the designated reaction vessels within the HTE reactor block.

- Reagent Dispensing: Using the automated liquid handling system, dispense the appropriate volumes of catalyst, ligand, and solvent to each reaction vessel according to the experimental design.

- Substrate Addition: Add the aryl halide substrate (0.1 mmol scale) and boronic acid coupling partner (0.12 mmol) to each reaction vessel.

- Base Addition: Add the designated base solution (1.5-3.0 equiv) to each reaction vessel.

- Reaction Execution: Seal the reactor block and heat to the designated temperatures with continuous agitation (750 rpm) for the prescribed reaction time (typically 2-24 hours).

- Quenching and Sampling: After the reaction time, cool the reactor block to room temperature and automatically withdraw aliquots from each reaction vessel.

- Analysis: Dilute aliquots with appropriate solvent and analyze by HPLC/UPLC against calibrated standards to determine conversion, yield, and selectivity.

- Data Processing: Compile results into a structured database linking reaction parameters to outcomes for subsequent machine learning analysis.
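The design and dispensing steps above can be sketched in Python. This is a minimal sketch, assuming a full factorial design (the protocol equally allows D-optimal designs) over a few hypothetical levels picked from the stated ranges, and using the stock concentrations from the Materials list. The function names and level choices are illustrative, and solvent make-up volumes are left out for brevity.

```python
from itertools import product

SCALE_MMOL = 0.1  # aryl halide per vessel, as stated in the protocol

# Stock concentrations in mol/L, taken from the Materials list
STOCK_M = {
    "aryl_halide": 1.0,
    "boronic_acid": 1.2,
    "catalyst": 0.02,
    "ligand": 0.04,
    "base": 2.0,
}


def volume_ul(amount_mmol, stock_molar):
    """Stock volume in microliters: mmol / (mol/L) gives mL, times 1000 gives uL."""
    return amount_mmol / stock_molar * 1000.0


def dispense_plan(cat_mol_pct, lig_mol_pct, base_equiv):
    """Per-vessel stock volumes (uL) for one set of conditions."""
    amounts_mmol = {
        "aryl_halide": SCALE_MMOL,
        "boronic_acid": 1.2 * SCALE_MMOL,  # 0.12 mmol coupling partner
        "catalyst": SCALE_MMOL * cat_mol_pct / 100.0,
        "ligand": SCALE_MMOL * lig_mol_pct / 100.0,
        "base": SCALE_MMOL * base_equiv,
    }
    return {name: round(volume_ul(mmol, STOCK_M[name]), 1)
            for name, mmol in amounts_mmol.items()}


# Hypothetical factor levels within the ranges given in the protocol
CATALYST_MOL_PCT = [0.5, 2.0, 5.0]
LIGAND_MOL_PCT = [1.0, 10.0]
BASE_EQUIV = [1.5, 3.0]
TEMPERATURE_C = [50, 120]

# Full factorial design: every combination of the levels above
design = [
    {"cat_mol_pct": c, "lig_mol_pct": l, "base_equiv": b, "temp_c": t,
     "volumes_ul": dispense_plan(c, l, b)}
    for c, l, b, t in product(CATALYST_MOL_PCT, LIGAND_MOL_PCT, BASE_EQUIV, TEMPERATURE_C)
]

print(len(design), "runs; first run:", design[0])
```

With these levels the design has 3 x 2 x 2 x 2 = 24 runs, which happens to fill one 24-position block. In practice, temperature is often set per block or per zone rather than per vessel, so a real design would group runs by temperature.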

Validation and Quality Control:

- Include control reactions with known outcomes in each experimental array to validate system performance.

- Implement internal standards in analytical methods to ensure quantification accuracy.

- Perform replicate experiments (minimum n=3) for selected conditions to assess reproducibility.
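The replicate check can be expressed as a short calculation. The sketch below summarizes replicate yields by mean, sample standard deviation, and relative standard deviation (RSD). The 5% RSD acceptance threshold is an assumption chosen for illustration, not a value from the protocol.

```python
from statistics import mean, stdev


def replicate_summary(yields_pct, max_rsd_pct=5.0):
    """Summarize replicate yields; max_rsd_pct is a hypothetical acceptance limit."""
    if len(yields_pct) < 3:
        raise ValueError("the protocol calls for at least n=3 replicates")
    average = mean(yields_pct)
    sd = stdev(yields_pct)  # sample standard deviation (n - 1 denominator)
    rsd_pct = sd / average * 100.0 if average else float("inf")
    return {
        "mean": average,
        "sd": sd,
        "rsd_pct": rsd_pct,
        "acceptable": rsd_pct <= max_rsd_pct,
    }


# Illustrative replicate yields (percent), not experimental data
summary = replicate_summary([78.0, 80.0, 82.0])
print(summary)
```

Conditions that fail the check are candidates for re-running before their results enter the training set, since noisy labels degrade downstream models.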

Protocol 2: Optimization of Flow Reaction Parameters Using HTE Approaches

Objective: To optimize residence time, temperature, and stoichiometry for a continuous flow transformation using a high-throughput flow reactor system.

Materials and Equipment:

- HTE flow reactor system with multiple parallel microreactors

- Precision syringe or piston pumps

- Back-pressure regulators

- In-line analytical capability (FTIR, UV-Vis)

- Automated collection system

- Substrate solutions (varying concentrations)

- Reagent solutions (varying stoichiometries)

- Appropriate solvents

Procedure:

- System Configuration: Prime the flow reactor system with solvent and establish stable flow conditions at the desired back-pressure.

- Experimental Design: Define a parameter space exploring residence time (0.5-30 minutes), temperature (25-150°C), substrate concentration (0.1-1.0 M), and reagent stoichiometry (1.0-3.0 equiv).

- Solution Preparation: Prepare stock solutions of substrates and reagents at concentrations appropriate for the desired stoichiometries at different flow rates.

- Parameter Implementation: Program the system to automatically vary flow rates (controlling residence time) and reactor temperatures according to the experimental design.

- Equilibration: For each condition, allow the system to stabilize for at least three residence times before sample collection to ensure steady-state operation.

- Sample Collection: Automatically collect output streams for each condition in designated vessels, containing an appropriate quenching agent if necessary.

- In-line Monitoring: Record data from in-line analytical instruments throughout the experiment to monitor reaction progression and stability.

- Off-line Analysis: Analyze collected samples by HPLC, GC, or NMR to determine conversion, yield, and selectivity.

- Data Compilation: Structure the results to correlate reaction outcomes with flow parameters, including residence time, temperature, concentration, and stoichiometry.

Validation and Quality Control:

- Verify flow rate accuracy through periodic gravimetric measurements.

- Calibrate temperature sensors against reference standards.

- Include replicate conditions at the beginning, middle, and end of the experimental sequence to assess system stability over time.
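The relationship between flow rate, residence time, and stoichiometry in this protocol can be made concrete with a short calculation. This is a minimal sketch, assuming a single reactor of known internal volume fed by two pumps (one substrate stream, one reagent stream) that combine before the reactor. The 1.0 mL reactor volume and the function names are hypothetical.

```python
def total_flow_ml_min(reactor_volume_ml, residence_time_min):
    """Residence time = reactor volume / total flow rate, solved for flow rate."""
    if residence_time_min <= 0:
        raise ValueError("residence time must be positive")
    return reactor_volume_ml / residence_time_min


def pump_flows_ml_min(total_flow, substrate_molar, reagent_molar, reagent_equiv):
    """Split the total flow so that reagent/substrate molar delivery equals reagent_equiv.

    Solves q_s + q_r = total_flow and (q_r * C_r) / (q_s * C_s) = reagent_equiv.
    """
    q_reagent = total_flow * reagent_equiv * substrate_molar / (
        reagent_molar + reagent_equiv * substrate_molar
    )
    return total_flow - q_reagent, q_reagent


def equilibration_time_min(residence_time_min, n_residence_times=3):
    """Minimum wait before sampling: three residence times, as in the protocol."""
    return n_residence_times * residence_time_min


REACTOR_VOLUME_ML = 1.0  # hypothetical microreactor volume

flow = total_flow_ml_min(REACTOR_VOLUME_ML, residence_time_min=5.0)
q_substrate, q_reagent = pump_flows_ml_min(flow, substrate_molar=0.5,
                                           reagent_molar=1.0, reagent_equiv=2.0)
wait = equilibration_time_min(5.0)
print(f"total {flow:.3f} mL/min, substrate {q_substrate:.3f}, "
      f"reagent {q_reagent:.3f}, wait {wait:.1f} min")
```

Because each condition consumes at least three reactor volumes of material before any sample is taken, long residence times dominate both the run time and the reagent budget of a flow screening campaign.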

HTE Experimental Workflow

The following diagram illustrates the integrated workflow of High-Throughput Experimentation combined with Machine Learning for organic synthesis optimization:

[Figure: HTE-ML Integration Workflow]

This workflow demonstrates the iterative cycle between high-throughput experimentation and machine learning. The process begins with clearly defined optimization objectives, followed by ML-guided experimental design that identifies the most informative reactions to execute. After automated execution in HTE systems and integrated analytics, the structured data feeds into ML model training, which generates predictions that guide subsequent validation experiments. This creates a virtuous cycle where each iteration enhances the predictive capability of the models while efficiently exploring the chemical reaction space.
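The iterative cycle can be sketched as a small closed loop. The example below is a deliberately simplified illustration, not a Bayesian optimizer: it uses a k-nearest-neighbour average as the surrogate model and a purely greedy selection rule, and it replaces the HTE run with a hypothetical `simulated_yield` function. A production workflow would typically use a surrogate with uncertainty estimates so that selection can balance exploration and exploitation.

```python
import random
from itertools import product


def simulated_yield(temp_c, cat_mol_pct):
    """Hypothetical stand-in for an HTE experiment; peaks at 90 C and 3 mol%."""
    return max(0.0, 95.0 - 0.02 * (temp_c - 90) ** 2 - 6.0 * (cat_mol_pct - 3.0) ** 2)


def predict(candidate, observed, k=3):
    """Surrogate model: mean yield of the k nearest tested conditions."""
    def distance(a, b):
        # Scale each axis by its range so both factors contribute comparably
        return ((a[0] - b[0]) / 70.0) ** 2 + ((a[1] - b[1]) / 4.5) ** 2
    nearest = sorted(observed, key=lambda rec: distance(rec[0], candidate))[:k]
    return sum(y for _, y in nearest) / len(nearest)


def run_campaign(n_rounds=5, batch_size=4, seed=0):
    """Alternate between 'running' a batch and refitting the surrogate."""
    rng = random.Random(seed)
    candidates = list(product(range(50, 121, 10), [0.5, 1.0, 2.0, 3.0, 4.0, 5.0]))
    untested = set(candidates)
    batch = rng.sample(sorted(untested), batch_size)  # random initial design
    observed = []
    for _ in range(n_rounds):
        for condition in batch:  # "execute" the batch and record outcomes
            observed.append((condition, simulated_yield(*condition)))
            untested.discard(condition)
        # Greedy selection: propose the untested conditions with the best predictions
        ranked = sorted(sorted(untested), key=lambda c: predict(c, observed), reverse=True)
        batch = ranked[:batch_size]
    best = max(observed, key=lambda rec: rec[1])
    return best, len(observed)


best, n_tested = run_campaign()
print(f"best condition {best[0]} with simulated yield {best[1]:.1f}% after {n_tested} runs")
```

The loop tests 20 of the 48 candidate conditions. The point of the sketch is the structure (design, execute, record, refit, propose), which stays the same when the surrogate and selection rule are replaced with more capable methods.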

Research Reagent Solutions for HTE

The successful implementation of HTE methodologies requires specialized reagents and materials that enable parallel experimentation while maintaining consistency and reliability across multiple simultaneous reactions.

Table 3: Essential Research Reagent Solutions for HTE Applications

| Reagent/Material | Function in HTE | Application Examples | Technical Specifications |
|---|---|---|---|
| Catalyst Libraries | Enable rapid screening of catalytic activity | Cross-coupling, oxidation, reduction | Pre-weighed in individual vials, 0.1-1.0 mg samples |
| Ligand Collections | Modify catalyst selectivity and reactivity | Asymmetric synthesis, polymerization | 96-well format, 0.05 M stock solutions in appropriate solvents |
| Solvent Systems | Create diverse reaction environments | Solvent optimization studies | HPLC grade, stored over molecular sieves, oxygen-free |
| Substrate Arrays | Explore reaction scope and limitations | Structure-activity relationship studies | 0.5-1.0 M stock solutions in DMSO or DMF |
| Activated Bases | Facilitate reactions requiring strong bases | Deprotonation, elimination reactions | Packaged in single-use capsules to minimize moisture exposure |
| Quenching Reagents | Terminate reactions at precise timepoints | Kinetic studies, reaction profiling | 96-well quench plates with integrated internal standards |
| Internal Standards | Enable accurate quantitative analysis | HPLC, GC calibration | Deuterated or structural analogs at precise concentrations |

High-Throughput Experimentation systems, encompassing both batch and flow reactor architectures, have established themselves as indispensable tools in modern organic synthesis research, particularly within the framework of machine learning optimization. These automated platforms provide the foundational infrastructure for generating the comprehensive, high-quality datasets required to train accurate predictive models of chemical reactivity. The synergy between HTE and machine learning creates a powerful paradigm where data-driven insights guide experimental design, dramatically accelerating the optimization of synthetic methodologies and process development.