Mastering Interrupted Time Series (ITS) Design: A Comprehensive Guide for Robust Drug Utilization and Healthcare Policy Evaluation


Abstract

This article provides a comprehensive guide to Interrupted Time Series (ITS) design, a powerful quasi-experimental method for evaluating the impact of interventions in healthcare and drug development. Tailored for researchers and professionals, it covers foundational concepts, advanced methodological approaches including segmented regression and ARIMA models, and common analytical pitfalls. Drawing on current literature and empirical findings, the guide offers practical solutions for troubleshooting issues like autocorrelation and model specification, compares the performance of different analytical techniques, and outlines best practices for validation and reporting to ensure rigorous and reliable study outcomes.

What is ITS Design? Foundational Principles and When to Use It in Healthcare Research

In health services and policy research, the Interrupted Time Series (ITS) design has emerged as a powerful quasi-experimental method for evaluating the effects of interventions when randomized controlled trials (RCTs) are infeasible, unethical, or impractical [1]. ITS analyses data collected at multiple time points before and after a well-defined interruption—such as the implementation of a new policy, drug approval, or clinical guideline—to assess whether the intervention caused a significant change in level or trend of the outcome of interest [2] [3]. This design is particularly valuable for evaluating the real-world effectiveness of large-scale health interventions and is increasingly employed in pharmacoepidemiology and health services research.

The fundamental strength of ITS lies in its ability to establish a pre-intervention trend and compare it with post-intervention data, creating a counterfactual framework that strengthens causal inference beyond simpler pre-post designs [1]. By accounting for underlying secular trends, ITS can distinguish between changes that would have occurred naturally and those truly attributable to the intervention. This methodological rigor, combined with its practical applicability, makes ITS an indispensable tool for researchers and drug development professionals seeking robust evidence for regulatory and policy decisions.

Theoretical Foundations and Key Principles

Core Statistical Model

The standard ITS model can be represented mathematically as [3]:

Yₜ = β₀ + β₁T + β₂Xₜ + β₃TXₜ + εₜ

Where:

- Yₜ is the outcome measured at time t

- T is the time since the start of the study

- Xₜ is a dummy variable representing the intervention (0 = pre-intervention, 1 = post-intervention)

- TXₜ is an interaction term between time and intervention

- β₀ represents the baseline level of the outcome

- β₁ is the pre-intervention trend

- β₂ is the immediate level change following the intervention

- β₃ is the change in trend after the intervention

- εₜ represents error terms
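
One consequence of this parameterization is worth spelling out. As a worked note, assume the interruption occurs at a time T_I (a symbol introduced here for illustration; it does not appear in the equation above). Writing out the fitted lines on each side of the interruption gives:

```latex
\begin{align*}
\text{pre-intervention } (X_t = 0):\quad  & E[Y_t] = \beta_0 + \beta_1 T \\
\text{post-intervention } (X_t = 1):\quad & E[Y_t] = (\beta_0 + \beta_2) + (\beta_1 + \beta_3)\,T \\
\text{difference at } T = T_I:\quad       & \beta_2 + \beta_3\,T_I
\end{align*}
```

Under this reading, β₂ on its own equals the level change at the interruption only when time is centred so that T_I = 0, or equivalently when the interaction term is coded as (T − T_I)Xₜ rather than TXₜ.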

Comparison with Other Quasi-Experimental Methods

Table 1: Comparison of Quasi-Experimental Methods in Health Research

| Method | Data Requirements | Key Assumptions | Strengths | Limitations |

|---|---|---|---|---|

| Interrupted Time Series | Multiple time points before & after intervention | Underlying trend would continue without intervention; no concurrent interventions | Controls for secular trends; no control group needed | Requires sufficient data points; vulnerable to autocorrelation |

| Difference-in-Differences | Pre/post data for treatment and control groups | Parallel trends assumption | Controls for time-invariant confounders | Violation of parallel trends can bias estimates |

| Synthetic Control Method | Time-series data for treated unit & multiple control units | Combination of control units approximates treatment unit | Flexible approach for single-unit interventions | Complex implementation; limited statistical inference |

| Pre-Post Design | Single point before & after intervention | No other factors changed between measurements | Simple implementation and analysis | Highly vulnerable to confounding and secular trends |

Among these approaches, ITS performs particularly well when data for a sufficiently long pre-intervention period are available and the underlying model is correctly specified [1]. When all included units have been exposed to treatment (single-group designs), ITS provides a robust framework for impact evaluation without requiring identification of control groups [1].

ITS Application Protocols and Methodologies

Standard Implementation Protocol

Table 2: Step-by-Step ITS Implementation Protocol for Drug Development Research

| Phase | Key Activities | Methodological Considerations | Quality Checks |

|---|---|---|---|

| Protocol Development | Define research question; identify intervention point; select outcome measures; determine sample size | Ensure clinical relevance of outcomes; specify primary and secondary analyses | Protocol registration; ethical approvals; statistical review |

| Data Collection | Extract time-series data; ensure consistent measurement; document data sources | Adequate pre- and post-intervention periods; consistent frequency; manage missing data | Data quality audit; verification of intervention timing; outlier assessment |

| Model Specification | Select statistical model; account for autocorrelation; control for covariates | Check stationarity; identify seasonal patterns; select appropriate correlation structure | Residual diagnostics; goodness-of-fit tests; variance inflation factors |

| Analysis Execution | Parameter estimation; hypothesis testing; effect size calculation | Adjust for autocorrelation; consider segmented regression; model level and slope changes | Sensitivity analyses; validation of model assumptions; robustness checks |

| Interpretation & Reporting | Estimate intervention effects; contextualize findings; discuss limitations | Differentiate statistical vs. clinical significance; consider confounding factors | Comparison with prior evidence; assessment of publication bias |

Advanced Analytical Approaches

For complex interventions, researchers may employ two-stage ITS designs to evaluate multiple intervention components simultaneously [4]. The statistical code accompanying that work illustrates how to model two sequential interventions while controlling for covariates, using SAS PROC MIXED for continuous outcomes and Poisson regression for count outcomes [4]. This approach is particularly relevant in drug development when evaluating phased implementation or combination therapies.
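
To make the idea of two sequential interventions concrete, the following Python sketch builds a segmented regression design matrix with two interruptions and fits it by ordinary least squares. It is not the SAS code from [4]; the function name, the interruption indices `t1` and `t2`, and the simulated coefficients are all assumptions chosen for illustration, and covariates and count outcomes are left out.

```python
import numpy as np

def two_interruption_design(n_points, t1, t2):
    """Design matrix for a segmented regression with two interruptions.

    Columns: intercept, time, step 1, time since step 1, step 2, time since step 2.
    t1 and t2 are the 0-based indices of the first observation after each
    intervention (hypothetical names used only in this sketch).
    """
    t = np.arange(n_points, dtype=float)
    d1 = (t >= t1).astype(float)
    d2 = (t >= t2).astype(float)
    return np.column_stack(
        [np.ones(n_points), t, d1, (t - t1) * d1, d2, (t - t2) * d2]
    )

# Noise-free simulated series, so the fit recovers the coefficients exactly.
true_beta = np.array([50.0, 0.5, -4.0, -0.3, -2.0, 0.1])
X = two_interruption_design(n_points=36, t1=12, t2=24)
y = X @ true_beta

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
print(np.round(beta_hat, 3))
```

Each intervention contributes its own level-change and slope-change term, so the second pair of coefficients is interpreted relative to the trend already altered by the first intervention.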

When implementing ITS analyses, researchers must address autocorrelation (correlation between successive observations), which violates the independence assumption of standard regression models [2]. Appropriate techniques include using autoregressive integrated moving average (ARIMA) models or including correlation structures in generalized estimating equations [2].
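
As a rough illustration of what autocorrelation looks like numerically, the sketch below computes the Durbin-Watson statistic and the lag-1 sample autocorrelation by hand for a simulated AR(1) series and for white noise. The series length, the AR(1) coefficient, and the function names are assumptions made for this example; in practice these diagnostics would be applied to model residuals.

```python
import numpy as np

def durbin_watson(resid):
    """Durbin-Watson statistic: near 2 with no lag-1 autocorrelation,
    below 2 with positive and above 2 with negative autocorrelation."""
    resid = np.asarray(resid, dtype=float)
    return np.sum(np.diff(resid) ** 2) / np.sum(resid ** 2)

def lag1_autocorr(resid):
    """Sample lag-1 autocorrelation of a series."""
    r = np.asarray(resid, dtype=float) - np.mean(resid)
    return np.sum(r[1:] * r[:-1]) / np.sum(r ** 2)

rng = np.random.default_rng(1)
n, rho = 200, 0.6                       # assumed length and AR(1) coefficient
shocks = rng.normal(size=n)
e = np.zeros(n)
for i in range(1, n):
    e[i] = rho * e[i - 1] + shocks[i]   # positively autocorrelated errors

white = rng.normal(size=n)              # independent errors for comparison

print("AR(1):      ", durbin_watson(e), lag1_autocorr(e))
print("white noise:", durbin_watson(white), lag1_autocorr(white))
```

The two quantities are linked: the Durbin-Watson statistic is approximately 2(1 − r₁), where r₁ is the lag-1 autocorrelation, which is why values well below 2 signal positive serial correlation.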

Data Visualization and Presentation Standards

Effective visualization is crucial for interpreting and communicating ITS findings. The following standards ensure accurate representation of time series data and intervention effects:

Core Graphing Principles

- Plot all raw data points used in the analysis to allow examination of variation and facilitate data extraction [2]

- Clearly indicate the interruption time with a vertical line or shaded region, labeled with the intervention type [2]

- Display fitted pre- and post-intervention trend lines using bold, solid lines to distinguish from raw data [2]

- Include counterfactual trend lines showing what would have occurred without the intervention, using different line patterns [2]

- Ensure axes are clearly labeled with variables and units of measurement, aligning tick marks with data points [2]

Recent assessments of ITS graphs in published literature found that only 33% allowed accurate data extraction, highlighting the need for greater adherence to visualization standards [2]. Common deficiencies included unclear data points, missing trend lines, and poorly defined interruption points.

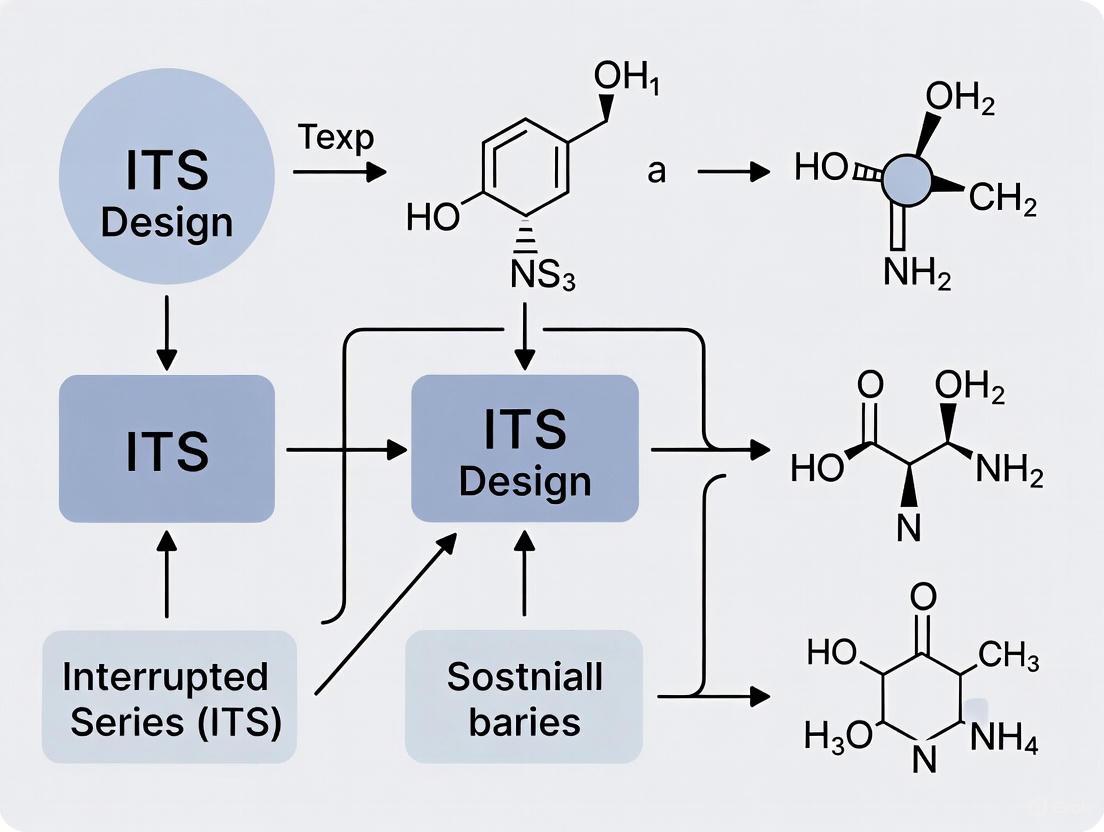

Visual Workflow for ITS Analysis

ITS Analytical Workflow

Comparative Performance and Validation

Evidence from methodological comparisons demonstrates that ITS provides reliable effect estimates when its assumptions are met. A simulation study comparing quasi-experimental methods found that ITS performs very well when all included units have been exposed to treatment and data for a sufficiently long pre-intervention period are available [1]. The key advantage of ITS over simpler pre-post designs is its ability to account for and separate underlying secular trends from intervention effects.

In an empirical comparison of methods evaluating the introduction of activity-based funding in Irish hospitals, ITS produced statistically significant results that differed in interpretation from control-group methods like Difference-in-Differences and Synthetic Control [3]. This highlights the importance of method selection based on the specific research context and available data structure.

Essential Research Reagents and Tools

Table 3: Essential Analytical Tools for ITS Implementation

| Tool Category | Specific Software/Solutions | Primary Application in ITS | Key Functions |

|---|---|---|---|

| Statistical Software | SAS (PROC AUTOREG, PROC MIXED) | Model estimation and inference | Time series analysis; autocorrelation correction; parameter estimation |

| Statistical Packages | R (its.analysis, forecast packages) | Flexible model specification | Segmented regression; ARIMA modeling; visualization |

| Data Visualization | Stata, ggplot2, specialized ITS graphing tools | Creating standard-compliant graphs | Raw data plotting; trend line fitting; counterfactual display |

| Quality Assurance | Statistical diagnostic tools | Validation of model assumptions | Residual analysis; autocorrelation tests; goodness-of-fit assessment |

Specialized statistical software is essential for proper ITS implementation, as standard analytical packages may not adequately address autocorrelation or enable appropriate counterfactual modeling [4]. The SAS code provided in [4] illustrates a comprehensive approach to ITS analysis, including model specification, estimation of key parameters, and generation of appropriate visualizations [4].

Interrupted Time Series design represents a methodologically rigorous approach for evaluating intervention effects when RCTs are not feasible. By properly implementing ITS protocols—including appropriate model specification, accounting for autocorrelation, and adhering to visualization standards—researchers can generate robust evidence to inform drug development, health policy, and clinical practice. The structured protocols and analytical frameworks presented in this document provide researchers with practical guidance for applying ITS methods across diverse healthcare contexts.

The Interrupted Time Series (ITS) design is a robust quasi-experimental methodology used to evaluate the impact of interventions or exposures when randomized controlled trials (RCTs) are impractical due to high costs, ethical concerns, or the population-level nature of the intervention [5]. This design is characterized by the collection of data at multiple time points both before and after a clearly defined interruption. By modeling the pre-interruption trend, researchers can establish a counterfactual—what would have likely occurred without the intervention—and compare it to the observed post-interruption data [5] [6]. This allows for the estimation of both immediate and long-term intervention effects, accounting for underlying secular trends. ITS designs are particularly valuable in implementation science and public health for assessing the effects of policy changes, health system interventions, and large-scale quality improvement initiatives [5] [7].

Core Analytical Components of ITS

The analysis of ITS data typically employs segmented regression models to quantify intervention effects. These effects are conceptualized through two primary components: level changes and slope changes. The standard segmented regression model for a single interruption can be parameterized as follows [6]:

Y_t = β₀ + β₁*t + β₂*D_t + β₃*(t - T_I)*D_t + ε_t

Where:

- Y_t is the outcome measured at time t.

- t is a continuous variable indicating time elapsed since the start of the observation period.

- D_t is a dummy variable representing the post-interruption period (0 before the interruption, 1 after).

- T_I is the time at which the interruption occurs.

- ε_t represents the error term.

The core components derived from this model are summarized in the table below.

Table 1: Core Components of the Interrupted Time Series Model

| Component | Statistical Parameter | Interpretation | Visual Representation |

|---|---|---|---|

| Level Change | β₂ | Represents the immediate effect of the intervention. It is the change in the outcome's level that occurs immediately following the interruption, measured as the difference between the observed value just after the intervention and the value predicted by the pre-interruption trend. | A vertical shift in the time series line at the point of interruption. |

| Slope Change | β₃ | Represents the long-term effect of the intervention. It quantifies the change in the trajectory (steepness) of the time series after the interruption compared to the pre-interruption trend. | A change in the angle of the time series line after the interruption. |

| Pre-Interruption Slope | β₁ | Describes the underlying secular trend of the outcome before the intervention was implemented. | The direction and steepness of the line in the pre-interruption segment. |

| Baseline Level | β₀ | Represents the starting level of the outcome at time zero. | The Y-intercept of the time series. |
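
A minimal sketch of how these four components could be estimated is shown below. It simulates a monthly series under the model above and fits it by ordinary least squares with NumPy. The series length, the "true" parameter values, and the noise level are assumptions for illustration only, and the standard errors are valid only if the errors are independent.

```python
import numpy as np

rng = np.random.default_rng(0)

n_pre, n_post = 24, 24            # assumed number of points per segment
T_I = n_pre                       # index of the first post-interruption point
t = np.arange(n_pre + n_post, dtype=float)
D = (t >= T_I).astype(float)

# Assumed "true" parameters, used only to simulate data.
b0, b1, b2, b3 = 100.0, 0.8, -10.0, -0.5
y = b0 + b1 * t + b2 * D + b3 * (t - T_I) * D + rng.normal(0.0, 1.0, t.size)

# Design matrix: intercept, time, post-interruption dummy, time since interruption.
X = np.column_stack([np.ones_like(t), t, D, (t - T_I) * D])
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

# Naive OLS standard errors (these ignore any autocorrelation).
resid = y - X @ beta_hat
sigma2 = resid @ resid / (t.size - X.shape[1])
se = np.sqrt(np.diag(sigma2 * np.linalg.inv(X.T @ X)))

labels = ["baseline level", "pre slope", "level change", "slope change"]
for name, est, s in zip(labels, beta_hat, se):
    print(f"{name:15s} {est:9.3f}  (SE {s:.3f})")
```

Because the interaction is coded as time since the interruption, the third coefficient can be read directly as the level change at T_I.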

The Critical Assumption of ITS

The primary assumption underpinning a causal interpretation of ITS results is that the pre-interruption trend would have persisted unchanged into the post-interruption period in the absence of the intervention [5]. This assumption cannot be empirically proven and relies on the researcher's contextual knowledge and methodological rigor to ensure that no other concurrent events or changes (confounders) could plausibly explain the observed deviation in the time series. Violations of this assumption threaten the validity of the study's conclusions.
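
In code, this assumption amounts to extending the pre-interruption line into the post-interruption period and treating it as the counterfactual. The sketch below does this for a set of hypothetical coefficients; the function name and the numbers are illustrative and do not come from any cited study.

```python
import numpy as np

def its_effect(beta, t, T_I):
    """Return fitted values, counterfactual values, and their difference.

    beta = (b0, b1, b2, b3) from Y_t = b0 + b1*t + b2*D_t + b3*(t - T_I)*D_t.
    The counterfactual is the pre-interruption line b0 + b1*t, projected forward.
    """
    b0, b1, b2, b3 = beta
    t = np.asarray(t, dtype=float)
    D = (t >= T_I).astype(float)
    counterfactual = b0 + b1 * t
    fitted = counterfactual + b2 * D + b3 * (t - T_I) * D
    return fitted, counterfactual, fitted - counterfactual

# Hypothetical coefficients: baseline 100, +0.8 per month,
# an immediate drop of 10, and a slope change of -0.5 per month.
fitted, cf, effect = its_effect((100.0, 0.8, -10.0, -0.5), t=[23, 24, 30, 36], T_I=24)
print(effect)   # effects at the four time points: 0, -10, -13, -16
```

The estimated effect at any post-interruption time is only as credible as the projected line, which is why concurrent events that could bend the trend need to be ruled out on substantive grounds.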

Quantitative Data and Statistical Protocols

Comparison of Statistical Methods for ITS Analysis

A critical consideration in ITS analysis is accounting for autocorrelation (serial correlation), where data points close in time are more similar than those further apart. Failure to account for positive autocorrelation can lead to underestimated standard errors, inflated test statistics, and an increased risk of Type I errors [6]. Several statistical methods are available, each handling autocorrelation differently. An empirical evaluation of 190 published ITS found that the choice of method can lead to substantially different conclusions, with statistical significance (at the 5% level) differing in 4% to 25% of pairwise comparisons between methods [6].

Table 2: Comparison of Statistical Methods for Analyzing Interrupted Time Series Data

| Statistical Method | Description | Handling of Autocorrelation | Key Considerations |

|---|---|---|---|

| Ordinary Least Squares (OLS) | Most basic method; fits model via standard linear regression. | Does not account for autocorrelation. Standard errors are likely biased if autocorrelation is present. | Simple to implement but not recommended for ITS due to high risk of biased inference [6]. |

| OLS with Newey-West Standard Errors (NW) | Uses OLS for parameter estimates but adjusts the standard errors to account for autocorrelation and heteroscedasticity. | Corrects the standard errors post-estimation, providing more robust confidence intervals and p-values. | A pragmatic improvement over OLS; provides some protection against autocorrelation [6]. |

| Prais-Winsten (PW) | A generalized least squares (GLS) method that transforms the data to account for first-order autocorrelation. | Directly models the autocorrelation in the error term (AR1 process) and uses this to improve estimation. | Often more statistically efficient than OLS/NW when autocorrelation is correctly specified [6]. |

| Restricted Maximum Likelihood (REML) | A likelihood-based method that reduces bias in the estimation of variance components. | Can model autocorrelation directly. The Satterthwaite approximation (Satt) can be used for small samples. | Provides less biased variance estimates, which is beneficial for shorter time series [6]. |

| Autoregressive Integrated Moving Average (ARIMA) | A flexible class of models that can capture complex patterns, including autocorrelation, trends, and seasonality. | Explicitly models the dependency structure using lagged values of the series and the error terms. | Highly flexible but requires more expertise to specify the correct model order [5] [6]. |
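
Of the methods in the table, the Newey-West correction is simple enough to write out directly. The sketch below is a hand-rolled version using a Bartlett kernel with no small-sample adjustment, shown only to make the mechanics visible; the function name, the lag choice of 4, and the simulated series are assumptions, and an established statistics package would normally be used instead.

```python
import numpy as np

def ols_with_newey_west(X, y, max_lag):
    """OLS estimates with naive and Newey-West (Bartlett kernel) standard errors."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    n, k = X.shape
    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ X.T @ y
    u = y - X @ beta

    # Naive covariance: assumes independent, homoscedastic errors.
    naive_cov = (u @ u / (n - k)) * XtX_inv

    # HAC "meat": lag-0 term plus Bartlett-weighted autocovariance terms.
    Xu = X * u[:, None]
    S = Xu.T @ Xu
    for lag in range(1, max_lag + 1):
        w = 1.0 - lag / (max_lag + 1.0)
        G = Xu[lag:].T @ Xu[:-lag]
        S += w * (G + G.T)
    hac_cov = XtX_inv @ S @ XtX_inv

    return beta, np.sqrt(np.diag(naive_cov)), np.sqrt(np.diag(hac_cov))

# Simulated ITS series with AR(1) errors (all values are illustrative).
rng = np.random.default_rng(3)
n, T_I, rho = 120, 60, 0.7
t = np.arange(n, dtype=float)
D = (t >= T_I).astype(float)
shocks = rng.normal(size=n)
e = np.zeros(n)
for i in range(1, n):
    e[i] = rho * e[i - 1] + shocks[i]
X = np.column_stack([np.ones(n), t, D, (t - T_I) * D])
y = 20.0 + 0.2 * t - 3.0 * D - 0.1 * (t - T_I) * D + e

beta, se_ols, se_nw = ols_with_newey_west(X, y, max_lag=4)
print("level change:", beta[2], "OLS SE:", se_ols[2], "Newey-West SE:", se_nw[2])
```

The point estimates are identical under both approaches; only the standard errors change, which is what "corrects the standard errors post-estimation" means in the table.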

Protocol for Conducting an ITS Analysis

The following protocol provides a step-by-step methodology for designing, conducting, and analyzing an ITS study.

Protocol 1: ITS Design and Analysis Workflow

- Define the Intervention and Interruption Point: Clearly specify the intervention and the exact time point (T_I) at which it is implemented.

- Ensure Sufficient Data Points: Collect data for a sufficient number of time points before and after the interruption. A minimum of 3 points per segment is often cited, but more are strongly recommended for robust trend estimation and autocorrelation modeling [5] [6].

- Check and Control for Confounders: Systematically identify and document any other events or changes that occurred around the time of the intervention that could affect the outcome. If possible, collect data on these potential confounders for inclusion in the statistical model.

- Conduct Descriptive Analysis: Plot the raw data to visually inspect for trends, seasonality, outliers, and any obvious level or slope changes at the interruption point.

- Specify the Statistical Model Pre-Analysis: Pre-specify the segmented regression model (the single-interruption model given under Core Analytical Components) and the primary statistical method for estimation (e.g., Prais-Winsten) in a study protocol to avoid data-driven choices [6].

- Estimate Model Parameters: Fit the segmented regression model using the chosen statistical method.

- Test for and Model Autocorrelation: Examine the residuals of the model for significant autocorrelation (e.g., using Durbin-Watson or Ljung-Box tests). If autocorrelation is present, consider using a method that accounts for it (PW, REML, ARIMA) or confirm that the chosen method (NW) provides adequate correction.

- Interpret the Core Components: Interpret the estimated coefficients (β₂ for level change, β₃ for slope change) along with their confidence intervals and p-values. The level change indicates the immediate effect, while the slope change indicates the sustained, long-term effect on the trend.

- Perform Sensitivity Analyses: Assess the robustness of the findings by re-analyzing the data using alternative statistical methods (e.g., comparing PW, NW, and ARIMA results) to ensure conclusions are not dependent on a single methodological choice [5] [6].
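
The estimation and autocorrelation steps of this protocol can be sketched with an iterated Prais-Winsten procedure. The version below is a bare-bones illustration under an assumed AR(1) error structure: it returns point estimates and the estimated autocorrelation only, with no standard errors or convergence check, and the function name and simulated values are hypothetical.

```python
import numpy as np

def prais_winsten(X, y, n_iter=10):
    """Iterated Prais-Winsten (feasible GLS) for AR(1) errors.

    Returns (beta, rho). A sketch only: no standard errors are computed.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)   # start from OLS
    rho = 0.0
    for _ in range(n_iter):
        u = y - X @ beta
        # Lag-1 autoregression coefficient of the current residuals.
        rho = (u[1:] @ u[:-1]) / (u[:-1] @ u[:-1])
        rho = float(np.clip(rho, -0.99, 0.99))
        # Quasi-difference observations 2..n and rescale the first one so it
        # is retained (dropping it instead would give Cochrane-Orcutt).
        scale = np.sqrt(1.0 - rho ** 2)
        Xs = np.vstack([scale * X[:1], X[1:] - rho * X[:-1]])
        ys = np.concatenate([scale * y[:1], y[1:] - rho * y[:-1]])
        beta, *_ = np.linalg.lstsq(Xs, ys, rcond=None)
    return beta, rho

# Simulated series with AR(1) errors (illustrative values only).
rng = np.random.default_rng(4)
n, T_I, rho_true = 200, 100, 0.6
t = np.arange(n, dtype=float)
D = (t >= T_I).astype(float)
shocks = rng.normal(size=n)
e = np.zeros(n)
for i in range(1, n):
    e[i] = rho_true * e[i - 1] + shocks[i]
X = np.column_stack([np.ones(n), t, D, (t - T_I) * D])
y = 100.0 + 0.8 * t - 10.0 * D - 0.5 * (t - T_I) * D + e

beta_pw, rho_hat = prais_winsten(X, y)
print("level change:", beta_pw[2], "slope change:", beta_pw[3], "rho:", rho_hat)
```

For a sensitivity analysis, the same design matrix can be passed to alternative estimators and the level and slope changes compared side by side.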

Visualization of ITS Concepts and Workflow

The following diagrams, generated using Graphviz, illustrate the core model of an ITS and the recommended analytical workflow.

The Scientist's Toolkit: Essential Reagents for ITS Research

Table 3: Key Research Reagent Solutions for Interrupted Time Series Analysis

| Tool / Reagent | Function / Application | Example / Note |

|---|---|---|

| Statistical Software (R/Stata/SAS) | Platform for executing segmented regression and advanced time series analyses. | R with packages like nlme, forecast, lmtest, and sandwich is widely used for its flexibility and comprehensive time series capabilities. |

| Segmented Regression Code | The script specifying the statistical model to estimate level and slope changes. | Pre-written code templates for methods like Prais-Winsten or Newey-West prevent errors and ensure reproducibility. |

| Autocorrelation Diagnostic Tests | Statistical tests to detect the presence and structure of autocorrelation in model residuals. | The Durbin-Watson test for first-order autocorrelation and the Ljung-Box test for higher-order autocorrelation are essential diagnostics. |

| WebPlotDigitizer | A tool for digitally extracting aggregated data points from published graphs in systematic reviews or meta-analyses. | Critical for including data from published ITS studies when raw data are not otherwise available [6]. |

| Implementation Science Frameworks (e.g., CFIR) | Conceptual tools to guide the understanding of context and determinants influencing the intervention being evaluated. | The Consolidated Framework for Implementation Research (CFIR) helps systematically identify potential confounders and facilitators affecting the outcome [8]. |

| Pre-Analysis Plan | A formal document pre-specifying the research question, model, primary analysis method, and outcomes. | Registering a pre-analysis plan reduces bias and enhances the credibility of reported ITS findings [6]. |

Application Notes: The Role of ITS in Health Research

Interrupted Time Series (ITS) design is a powerful quasi-experimental method for evaluating the impact of interventions when randomized controlled trials (RCTs) are not feasible, ethical, or practical [9] [10]. ITS analyses involve collecting data at multiple, equally spaced time points before and after a defined intervention to determine whether the intervention has caused a significant change in the level or trend of the outcome of interest [9]. This design is particularly valuable in health services research, where policymakers and researchers need to understand the real-world effects of interventions implemented at the population or health system level.

Within the traditional translational research pipeline, ITS designs occupy a crucial space in dissemination and implementation research [11]. They help answer how evidence-based clinical and preventive interventions can be successfully adopted, scaled up, and sustained within community or service delivery systems after efficacy and effectiveness have been established [11]. The strength of ITS lies in its ability to control for underlying trends and secular changes, providing a robust counterfactual for what would have happened without the intervention.

Table 1: Key Characteristics of Interrupted Time Series Design

| Characteristic | Description | Importance in Health Evaluation |

|---|---|---|

| Pre-intervention Data Points | Multiple measurements before intervention | Establishes baseline trend and pattern |

| Post-intervention Data Points | Multiple measurements after intervention | Captures intervention effects over time |

| Known Intervention Time | Exact timing of intervention is specified | Allows precise modeling of intervention effects |

| Autocorrelation Consideration | Accounting for correlation between consecutive measurements | Ensures proper statistical inference |

| Seasonality Adjustment | Controlling for periodic fluctuations | Isolates intervention effects from seasonal patterns |

Experimental Protocols for ITS Analysis

Protocol 1: Segmented Regression Analysis for ITS

Purpose: To quantify intervention effects using segmented regression, the most commonly applied method in healthcare ITS studies [10].

Methodology:

- Data Collection: Collect equally spaced time series data (e.g., monthly, quarterly) with a minimum of 2 pre-intervention and 1 post-intervention points, though substantially more are recommended for adequate power [9].

- Model Specification: Fit a segmented regression model with terms for:

- Baseline trend

- Immediate level change post-intervention

- Trend change post-intervention

- Autocorrelation Testing: Use Durbin-Watson or other statistical tests to detect autocorrelation, which is present in 55% of healthcare ITS studies but formally tested in only 63% of those [10].

- Model Adjustment: If autocorrelation is detected, use autoregressive integrated moving average (ARIMA) models or include correlation structures in segmented regression.

- Effect Estimation: Calculate both absolute and relative effects with confidence intervals for:

- Immediate level change (reported in 70% of studies)

- Slope change (reported in 84% of studies)

- Long-term level change (reported in 21% of studies) [10]

Interpretation: The coefficients for level and slope changes represent the intervention's impact, adjusted for pre-existing trends.
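
The long-term level change is a linear combination of the level and slope coefficients, so its standard error follows from the coefficient covariance matrix. The sketch below assumes the interaction term is coded as time since the interruption and uses a normal approximation for the interval; the estimates and covariance matrix are hypothetical, and a confidence interval for the relative effect (which would need the delta method or a bootstrap) is not attempted.

```python
import numpy as np

def effect_at(beta, cov, T_I, k, z=1.96):
    """Absolute and relative effect k periods after the interruption.

    beta = (b0, b1, b2, b3) for Y_t = b0 + b1*t + b2*D_t + b3*(t - T_I)*D_t,
    and cov is the 4x4 covariance matrix of those estimates.
    """
    beta = np.asarray(beta, dtype=float)
    cov = np.asarray(cov, dtype=float)
    c = np.array([0.0, 0.0, 1.0, float(k)])       # picks out b2 + k*b3
    absolute = float(c @ beta)
    se = float(np.sqrt(c @ cov @ c))
    counterfactual = beta[0] + beta[1] * (T_I + k)  # projected pre-trend
    return {
        "absolute": absolute,
        "ci": (absolute - z * se, absolute + z * se),
        "relative": float(absolute / counterfactual),
    }

# Hypothetical estimates and covariance matrix (not from any cited study).
beta = [100.0, 0.8, -10.0, -0.5]
cov = np.diag([1.0, 0.01, 0.25, 0.0016])

immediate = effect_at(beta, cov, T_I=24, k=0)    # immediate level change
one_year = effect_at(beta, cov, T_I=24, k=12)    # level change 12 periods later
print(immediate)
print(one_year)
```

With k = 0 the function returns the immediate level change; larger k gives the long-term level change at that horizon, expressed both in outcome units and as a proportion of the projected counterfactual.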

Protocol 2: ARIMA Modeling for Complex Time Series

Purpose: To model ITS data with complex autocorrelation patterns, seasonality, or non-stationarity [9].

Methodology:

- Stationarity Assessment: Check for constant mean and variance over time using differencing if needed.

- Model Identification: Determine appropriate ARIMA(p,d,q) parameters where:

- p = autoregressive order

- d = degree of differencing

- q = moving average order

- Intervention Components: Include step or pulse functions to model intervention effects.

- Model Validation: Check residuals for white noise properties using ACF/PACF plots and Ljung-Box test.

- Comparison with Alternative Models: Consider Generalized Additive Models (GAMs) when the form of non-linear relationships is unknown [9].

Application Notes: ARIMA demonstrates consistent performance across different policy effect sizes and seasonal patterns, while GAMs show greater robustness to model misspecification [9].
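
Several building blocks of this protocol can be illustrated without a full ARIMA fit. The sketch below shows first differencing, step and pulse intervention regressors, sample autocorrelations, and the Ljung-Box Q statistic, all written by hand in NumPy. The function names and the simulated random walk are assumptions; model identification and estimation themselves would be done in a dedicated time series package.

```python
import numpy as np

def step_and_pulse(n, T_I):
    """Step (0, then 1 from T_I onward) and pulse (1 only at T_I) regressors."""
    t = np.arange(n)
    return (t >= T_I).astype(float), (t == T_I).astype(float)

def acf(x, max_lag):
    """Sample autocorrelations at lags 1..max_lag."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    denom = x @ x
    return np.array([(x[k:] @ x[:-k]) / denom for k in range(1, max_lag + 1)])

def ljung_box_q(x, max_lag):
    """Ljung-Box Q statistic. Compare with a chi-square distribution on
    max_lag degrees of freedom (less the number of fitted ARMA parameters
    when applied to model residuals)."""
    n = len(x)
    r = acf(x, max_lag)
    lags = np.arange(1, max_lag + 1)
    return n * (n + 2) * np.sum(r ** 2 / (n - lags))

rng = np.random.default_rng(5)
walk = np.cumsum(rng.normal(size=300))   # simulated non-stationary random walk
diffed = np.diff(walk)                   # first difference (d = 1)

q_levels = ljung_box_q(walk, max_lag=10)
q_diffed = ljung_box_q(diffed, max_lag=10)
print("Q on levels:", q_levels, " Q after differencing:", q_diffed)

step, pulse = step_and_pulse(n=300, T_I=150)
print("differenced step equals pulse:", np.array_equal(np.diff(step), pulse[1:]))
```

The last line highlights a practical detail: differencing a step function yields a pulse, so when the outcome series is differenced the intervention regressor has to be differenced in the same way.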

ITS Analysis with ARIMA Modeling

Ideal Use Cases in Healthcare

Health Policy Evaluation

ITS designs are exceptionally well-suited for evaluating population-level health policies because they can detect both immediate and gradual effects of policy implementation [9]. The strength of ITS in policy analysis lies in its ability to account for pre-existing trends, which is crucial when policies are implemented in dynamic healthcare environments.

Exemplar Applications:

- Taxation Policies: Evaluating the impact of alcohol or sugar-sweetened beverage taxes on consumption patterns and health outcomes [9]. These analyses often reveal anticipatory effects (declines before implementation) and lagged effects (full impact realized over years).

- Regulatory Changes: Assessing the effect of prescription drug monitoring programs on opioid prescribing patterns and overdose rates.

- Coverage Decisions: Measuring the impact of insurance coverage expansion on healthcare utilization and outcomes.

Table 2: Health Policy Interventions Evaluated with ITS Designs

| Policy Type | Exemplar Study Focus | Typical Outcomes Measured | Data Collection Frequency |

|---|---|---|---|

| Legislative Policies | Bans on alcohol marketing [9] | Consumption rates, Mortality | Monthly/Quarterly |

| Fiscal Policies | Taxation changes [9] | Sales data, Hospitalizations | Monthly |

| Regulatory Policies | Prescription restrictions [11] | Prescribing rates, Adverse events | Monthly |

| Coverage Policies | Insurance expansion [11] | Utilization rates, Health outcomes | Quarterly/Annual |

Drug Utilization Review and Evaluation

Drug Utilization Review (DUR) represents a prime application for ITS designs in pharmaceutical research and regulation [12]. ITS methods can evaluate the impact of prospective, concurrent, and retrospective DUR programs on prescribing patterns, medication safety, and healthcare utilization.

Implementation Framework:

- Prospective DUR: ITS can evaluate screening interventions applied before medication dispensing to assess their impact on inappropriate prescribing, drug-disease contraindications, and therapeutic duplication [12].

- Concurrent DUR: Analyze ongoing monitoring interventions during treatment to assess effects on medication adherence, appropriate duration, and early problem detection.

- Retrospective DUR: Evaluate systematic reviews of medication use after treatment to identify patterns of overuse, underuse, or misuse and assess corrective interventions [12].

Sample Protocol for Drug Policy Evaluation:

- Objective: Evaluate the impact of a prior authorization policy for a new high-cost medication.

- Data: Monthly prescription claims data for 24 months pre-implementation and 24 months post-implementation.

- Outcomes: Primary - appropriate use rate; Secondary - alternative medication use, costs.

- Analysis: Segmented regression with adjustment for seasonality and autocorrelation.
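
A minimal sketch of the seasonality adjustment in this sample protocol is given below, using the stated structure of 24 monthly points before and after implementation. Seasonality is represented by a single pair of annual harmonic terms, which is an assumed and deliberately simple choice (month indicator variables are a common alternative). The outcome values are simulated, and autocorrelation is not addressed here.

```python
import numpy as np

n_pre, n_post, period = 24, 24, 12     # monthly data, 24 + 24 months
T_I = n_pre
t = np.arange(n_pre + n_post, dtype=float)
D = (t >= T_I).astype(float)

# One sine/cosine pair for an annual cycle (assumed form of seasonality).
season = np.column_stack([
    np.sin(2 * np.pi * t / period),
    np.cos(2 * np.pi * t / period),
])

# Columns: intercept, time, level change, slope change, sin, cos.
X = np.column_stack([np.ones_like(t), t, D, (t - T_I) * D, season])

# Simulated appropriate-use rate in percent (illustrative values only).
rng = np.random.default_rng(2)
true_beta = np.array([60.0, 0.1, 8.0, 0.3, 3.0, -1.5])
y = X @ true_beta + rng.normal(0.0, 0.5, t.size)

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
print("level change:", beta_hat[2], "slope change:", beta_hat[3])
print("seasonal terms:", beta_hat[4:])
```

Including the seasonal terms in the same model keeps a regular annual swing from being mistaken for a level or slope change when the policy happens to start at a seasonal peak or trough.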

Drug Utilization Review ITS Framework

Healthcare Program Implementation

ITS designs are widely used to evaluate health programs at the hospital, health system, or population level [10]. Programs represent the most common intervention type evaluated using ITS (35% of healthcare ITS studies), followed by policies (28%) [10].

Key Considerations for Program Evaluation:

- Intervention Specification: Clearly define when the program was implemented and whether there was a transition or ramp-up period (considered in only 17% of studies) [10].

- Fidelity Assessment: Monitor program implementation fidelity across sites and over time.

- Contextual Factors: Document concurrent events or policies that might confound program effects.

Methodological Considerations and Reporting Standards

Sample Size and Power Considerations

Determining adequate sample size (number of time points) in ITS designs remains challenging, with only 6% of healthcare ITS studies reporting any sample size calculation [10]. While traditional rules of thumb suggest a minimum of 50 observations, requirements depend on multiple factors:

- Data Variability: More variable data require more time points

- Effect Size: Smaller detectable effects require more observations

- Seasonal Patterns: Seasonal models require additional degrees of freedom (m-1 for seasonal period m)

- Model Complexity: ARIMA models with multiple parameters require more data than simple segmented regression

Simulation approaches are recommended for power analysis, particularly for complex models like GAM where effective degrees of freedom vary by smooth term [9].
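
A simulation-based power calculation for a simple segmented regression could be sketched as follows. The function simulates many series with a known level change, fits the model by OLS, and counts how often the level change is detected. Everything here is an assumption for illustration: the function name, the use of naive OLS standard errors with a normal critical value of 1.96, the AR(1) error process started at its first shock, and the effect sizes.

```python
import numpy as np

def simulate_power(n_pre, n_post, level_change, rho=0.0, sigma=1.0,
                   n_sims=500, seed=0):
    """Share of simulated series in which the level change is 'detected'
    (|estimate / OLS standard error| > 1.96)."""
    rng = np.random.default_rng(seed)
    n = n_pre + n_post
    t = np.arange(n, dtype=float)
    D = (t >= n_pre).astype(float)
    X = np.column_stack([np.ones(n), t, D, (t - n_pre) * D])
    XtX_inv = np.linalg.inv(X.T @ X)
    hits = 0
    for _ in range(n_sims):
        shocks = rng.normal(0.0, sigma, n)
        e = np.empty(n)
        e[0] = shocks[0]
        for i in range(1, n):
            e[i] = rho * e[i - 1] + shocks[i]     # AR(1) errors; rho=0 is white noise
        y = level_change * D + e                  # baseline and trends set to zero
        beta = XtX_inv @ X.T @ y
        resid = y - X @ beta
        se = np.sqrt(resid @ resid / (n - 4) * XtX_inv[2, 2])
        hits += int(abs(beta[2] / se) > 1.96)
    return hits / n_sims

# Power for a level change of 1.5 error standard deviations at three series lengths.
for n_each in (12, 24, 48):
    print(n_each, simulate_power(n_each, n_each, level_change=1.5))
```

Setting `level_change=0` turns the same function into a check of the false-positive rate; running it with a positive `rho` shows how naive OLS standard errors can reject too often when autocorrelation is ignored.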

Addressing Common Methodological Challenges

Table 3: Methodological Challenges in ITS Analysis

| Challenge | Description | Recommended Approaches |

|---|---|---|

| Autocorrelation | Correlation between sequential observations | Use Durbin-Watson test; Employ ARIMA or correlated error models [9] [10] |

| Seasonality | Regular periodic fluctuations | Include seasonal terms; Use seasonal ARIMA; Apply seasonal adjustment [9] |

| Non-stationarity | Changing mean or variance over time | Apply differencing; Use integrated (I) component in ARIMA [9] |

| Multiple Interventions | Concurrent or sequential interventions | Include multiple intervention terms; Model complex intervention patterns [10] |

| Missing Data | Gaps in time series | Use appropriate imputation; Model missing data mechanism [10] |

Current Reporting Practices and Gaps

Recent methodological reviews reveal significant reporting gaps in healthcare ITS studies [10]:

- Only 57% report analytical methods in abstracts

- Just 29% specify number of pre-intervention points in abstracts

- Only 28% specify number of post-intervention points in abstracts

- Seasonality is considered in only 24% of studies

- Non-stationarity is addressed in only 8% of studies

- Sensitivity analyses are conducted in only 17% of studies

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Methodological Tools for ITS Analysis

| Tool Category | Specific Solutions | Function/Application |

|---|---|---|

| Statistical Software | R (package: forecast), SAS PROC ARIMA, Stata itsa | Model estimation, hypothesis testing, forecasting |

| Primary Analysis Methods | Segmented regression, ARIMA models, Generalized Additive Models (GAM) | Quantifying intervention effects, handling autocorrelation [9] |

| Autocorrelation Diagnostics | Durbin-Watson test, ACF/PACF plots, Ljung-Box test | Detecting and quantifying autocorrelation in residuals [10] |

| Data Visualization | Time series plots with intervention points, ACF plots, residual plots | Visual assessment of trends, intervention effects, model adequacy |

| Sample Size Planning | Simulation-based power analysis, heuristic approaches | Determining adequate number of time points [9] |

ITS Analytical Method Selection

Interrupted Time Series (ITS) design is a powerful quasi-experimental method for evaluating the impact of large-scale health interventions when randomized controlled trials are not feasible or ethical [13]. The analysis of data from such designs hinges on a clear understanding of core time series concepts. Autocorrelation, seasonality, stationarity, and trend are not merely statistical properties; they are fundamental characteristics that, if unaccounted for, can severely bias the estimation of an intervention's effect [14] [13]. This document provides application notes and protocols for researchers and drug development professionals, framing these concepts within the practical context of ITS implementation research, such as evaluating a new drug's rollout or a policy change affecting prescribing practices.

Core Terminology and Implications for ITS

Conceptual Definitions

Trend: A trend represents a long-term increase or decrease in the data, which does not necessarily have to be linear [15]. In a pharmaceutical context, a gradual, nationwide increase in the use of a particular drug class preceding an intervention would constitute a trend. Failing to control for this underlying trend can lead to misattributing the pre-existing growth to the intervention effect.

Seasonality: Seasonality refers to patterns that repeat themselves over a fixed and known period (e.g., time of year or day of the week) [15]. This is common in health data due to factors like weather patterns (e.g., higher antibiotic prescriptions in winter) or administrative processes (e.g., increased medicine dispensings at the end of a financial year) [13]. In ITS, unmodeled seasonality can create the illusion of an intervention effect when the observed change is merely part of a predictable, recurring cycle.

Autocorrelation (Serial Correlation): Autocorrelation describes the phenomenon where values in a time series are correlated with their own earlier (lagged) values [16]. In simpler terms, an observation at one time point (e.g., drug sales this month) is often a good predictor of the observation at the next time point (drug sales next month). This violates the standard statistical assumption of independent errors. The presence of autocorrelation can severely bias inferences, leading to underestimation of standard errors and overconfidence in the significance of the intervention effect [14].

Stationarity: A time series is stationary if its statistical properties—such as mean, variance, and covariance—are constant over time [13]. Many time series models, including those based on Autoregressive Integrated Moving Average (ARIMA), require the data to be stationary. An interruption itself, like a policy change, is a structural break that induces non-stationarity. Therefore, the goal is often to achieve stationarity in the data before the intervention to build a reliable model, which is then used to assess the impact of the interruption [17] [18].

Interrelationships and Impact on ITS Analysis

These four concepts are deeply intertwined. A time series can exhibit a trend, upon which seasonal patterns are superimposed, and the deviations from these patterns (residuals) may be autocorrelated. In ITS analysis, the primary risk is that these inherent patterns can be confounded with the intervention effect. For instance, a sharp change following an intervention might be part of a seasonal cycle, or a pre-existing downward trend might make an intervention appear more effective than it truly is. Proper ITS modeling requires isolating the intervention effect from these other components.

Table 1: Core Terminology and ITS Implications

| Term | Core Definition | Primary Risk in ITS Analysis | Common Remedial Actions |

|---|---|---|---|

| Trend | Long-term, non-random, directional movement in the data [15]. | Confounding the intervention effect with a pre-existing slope. | Detrending via differencing; including a time variable in segmented regression. |

| Seasonality | Fixed-frequency, recurring patterns (e.g., yearly, quarterly) [15]. | Misinterpreting a predictable, recurrent change as an intervention effect. | Seasonal differencing; including seasonal dummy variables; using Seasonal ARIMA (SARIMA). |

| Autocorrelation | Correlation of a time series with its own lagged values [16]. | Biased standard errors, leading to overestimation of the intervention's significance [14]. | Using models that explicitly model the error structure (e.g., ARIMA, GLS). |

| Stationarity | Constant statistical properties (mean, variance) over time [13]. | Invalid model parameters and spurious regression results. | Differencing (regular and seasonal); transformations (e.g., log); explicit trend modeling. |

Experimental Protocols for Analysis

This section outlines a standard workflow for preparing and analyzing an ITS dataset, focusing on diagnosing and managing trend, seasonality, and autocorrelation.

Data Preparation and Visualization Protocol

Objective: To visually inspect the raw time series data for initial patterns and prepare it for formal analysis.

Data Loading and Formatting: Load the data (e.g., a CSV file with monthly counts of a drug's dispensings) into a statistical software environment (e.g., R, Python, SAS). Ensure the time variable is correctly parsed as a date-time object and set as the series index. Python Code Snippet:
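A minimal sketch of this step with pandas. The column names `month` and `dispensings` and the inline CSV text are hypothetical stand-ins; in practice the data would come from a file path passed to `pd.read_csv`.

```python
import io
import pandas as pd

# Hypothetical CSV content standing in for a real file of monthly dispensing counts
csv_text = """month,dispensings
2020-01-01,120
2020-02-01,115
2020-03-01,130
2020-04-01,128
"""

# Parse the time column as dates and use it as the index
df = pd.read_csv(io.StringIO(csv_text), parse_dates=["month"], index_col="month")

# Sort chronologically and declare a month-start frequency; any missing months become NaN
df = df.sort_index().asfreq("MS")
series = df["dispensings"]
```

Setting an explicit frequency makes gaps in the series visible early, before they silently distort lag-based calculations.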

Initial Visualization: Plot the raw time series data against time. This is the first and most crucial step for identifying obvious trends, seasonal cycles, and potential structural breaks at the intervention point. Python Code Snippet:
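A minimal plotting sketch with matplotlib, using synthetic data. The intervention date, the series values, and the labels are assumptions made for illustration only.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the script also runs headless
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

rng = np.random.default_rng(1)
idx = pd.date_range("2018-01-01", periods=48, freq="MS")
intervention = pd.Timestamp("2020-01-01")  # hypothetical intervention date

# Synthetic series: upward trend with a drop of 10 units at the intervention
y = 100 + 0.5 * np.arange(48) - 10 * (idx >= intervention) + rng.normal(0, 2, 48)
series = pd.Series(y, index=idx, name="dispensings")

fig, ax = plt.subplots(figsize=(9, 4))
ax.plot(series.index, series.values, marker="o", label="Monthly dispensings")
ax.axvline(intervention, color="red", linestyle="--", label="Intervention")
ax.set_xlabel("Month")
ax.set_ylabel("Dispensings")
ax.legend()
fig.tight_layout()
# fig.savefig("its_raw_series.png")  # uncomment to write the figure to disk
```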

Protocol for Decomposition and Stationarity Testing

Objective: To formally decompose the time series into its constituent parts and test for stationarity.

Time Series Decomposition: Decompose the series into trend, seasonal, and residual components. This helps visualize the contribution of each. Python Code Snippet (using statsmodels):

Test for Stationarity: Apply statistical tests to assess stationarity. The two tests below have opposite null hypotheses, so they are best read together.

- Augmented Dickey-Fuller (ADF) Test: Null hypothesis: the series has a unit root (non-stationary).

- KPSS Test: Null hypothesis: the series is trend-stationary.

Python Code Snippet:

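Interpretation: A low p-value (e.g., <0.05) in the ADF test allows rejection of the unit-root null, suggesting stationarity. For the KPSS test the logic is reversed: a low p-value rejects the null of stationarity, so a high p-value is consistent with a stationary series.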

Addressing Non-Stationarity: If the series is non-stationary, apply differencing.

- First Differencing: Subtract the previous value from the current value (Y_t - Y_{t-1}) to remove a linear trend.

- Seasonal Differencing: For seasonal data, subtract the value from the same point in the previous season (e.g., Y_t - Y_{t-12} for monthly data) [19].

Python Code Snippet:
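A minimal sketch of both kinds of differencing with pandas. The series is synthetic and noise-free (a linear trend of 2 units per month plus a yearly sine cycle), which makes the effect of each operation easy to verify by eye.

```python
import numpy as np
import pandas as pd

idx = pd.date_range("2017-01-01", periods=48, freq="MS")
t = np.arange(48)
series = pd.Series(50 + 2.0 * t + 10 * np.sin(2 * np.pi * t / 12), index=idx)

first_diff = series.diff().dropna()          # Y_t - Y_{t-1}
seasonal_diff = series.diff(12).dropna()     # Y_t - Y_{t-12}
both = series.diff(12).diff().dropna()       # seasonal, then first differencing
```

Here the seasonal difference removes the yearly cycle and leaves the constant 12-month trend increment (24), and the additional first difference reduces that to zero.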

Re-run the stationarity tests on the differenced data.

Protocol for Autocorrelation Analysis and Model Fitting

Objective: To quantify and model the autocorrelation structure and fit an appropriate ITS model.

Plot Autocorrelation Functions:

- Autocorrelation Function (ACF): Plots the correlation between the series and its lags.

- Partial Autocorrelation Function (PACF): Plots the partial correlation between the series and its lags, controlling for correlations at shorter lags.

Python Code Snippet:

Model Selection and Fitting:

- Segmented Regression: Can be used if the autocorrelation is minimal or modeled explicitly. It directly includes terms for time, intervention, and time after intervention.

- ARIMA/SARIMA Models: A robust alternative when autocorrelation and seasonality are present. The model is defined by (p,d,q) for non-seasonal and (P,D,Q,m) for seasonal components [13].

Python Code Snippet for SARIMA:

Model Diagnostics: Check the residuals of the final model. They should resemble white noise (i.e., no significant autocorrelations). Plot the ACF/PACF of the residuals and perform a Ljung-Box test.

The following workflow diagram illustrates the logical relationship and sequence of these analytical steps.

For researchers implementing ITS analyses, the following "research reagents" are essential computational tools and statistical constructs.

Table 2: Key Research Reagents for ITS Analysis

| Tool/Reagent | Type | Function in ITS Analysis |

|---|---|---|

| Statistical Software (R/Python/SAS) | Software Platform | Provides the computational environment for data manipulation, modeling, and visualization. |

| Augmented Dickey-Fuller (ADF) Test | Statistical Test | Formally tests the null hypothesis that a time series has a unit root (is non-stationary) [19]. |

| Autocorrelation Function (ACF) Plot | Diagnostic Plot | Visualizes the correlation between a time series and its lagged values, helping to identify AR/MA processes and seasonality [13]. |

| Partial Autocorrelation Function (PACF) Plot | Diagnostic Plot | Displays the partial correlation of a time series with its own lagged values, controlling for intermediate lags; crucial for identifying the order of autoregressive (AR) processes. |

| Seasonal Decomposition (e.g., STL) | Analytical Method | Separates a time series into trend, seasonal, and residual components, allowing for a clear inspection of each [20] [19]. |

| SARIMA Model | Statistical Model | Extends ARIMA to explicitly model seasonal autocorrelation patterns, defined by parameters (p,d,q)(P,D,Q,m) [13]. |

| Differencing (Y_t - Y_{t-1}) | Data Transformation | A method to remove trend and achieve stationarity by computing the changes between consecutive observations [13]. |

Application in Drug Development Trends

The rigorous application of ITS design is particularly relevant in the context of contemporary drug development. The industry is increasingly characterized by the use of artificial intelligence for drug discovery, a growth in personalized and precision medicine, and the adoption of virtual clinical trials and digital health technologies [21] [22]. These trends generate complex, longitudinal data perfect for ITS evaluation. For instance, an ITS could be used to assess the impact of an AI-driven diagnostic tool on time-to-patient-identification for a rare disease trial, or to evaluate how a new policy on personalized medicine reimbursement affected the uptake of a targeted therapy. In all these scenarios, controlling for autocorrelation, seasonality, and underlying trends is essential for deriving valid, actionable insights that can inform regulatory and commercial decisions.

Implementing ITS Analysis: Methodological Choices from Segmented Regression to ARIMA and GAM

The interrupted time series (ITS) design is a powerful quasi-experimental methodology used to evaluate the effects of interventions when randomized controlled trials are not feasible, ethical, or practical [23] [24]. Within this framework, segmented regression has emerged as the most prevalent and recommended statistical technique for analyzing time series data before and after a well-defined intervention [24] [25]. Also known as piecewise or broken-stick regression, this method allows researchers to quantify whether an intervention causes a significant change in the level or trend of an outcome of interest, beyond any pre-existing secular trends [23] [26].

The fundamental strength of segmented regression in ITS analysis lies in its ability to distinguish intervention effects from underlying trends that would have occurred regardless of the intervention [24]. This addresses a critical limitation of simple before-and-after comparisons, which may wrongly attribute secular trends to the intervention itself [23]. In implementation research across healthcare, public policy, and pharmaceutical development, segmented regression provides a robust analytical foundation for causal inference about real-world interventions [23] [27].

Theoretical Framework and Key Concepts

Core Model Specifications

Segmented regression models for ITS partition a series of observations into pre- and post-intervention segments, fitting separate regression lines to each interval [26]. The most common parameterization for a continuous outcome variable involves four key parameters [23] [28]:

Table 1: Core Coefficients in Segmented Regression for ITS Analysis

| Parameter | Interpretation | Causal Inference Question |

|---|---|---|

| β₀ | Baseline level of outcome at time zero | What was the starting level? |

| β₁ | Pre-intervention slope (secular trend) | What was the underlying trend before intervention? |

| β₂ | Immediate level change following intervention | Did the intervention cause an immediate shift? |

| β₃ | Change in slope from pre- to post-intervention | Did the intervention alter the ongoing trend? |

The basic segmented regression model is expressed as:

Yt = β₀ + β₁ × time + β₂ × intervention + β₃ × post-time + εt

Where Yt is the outcome at time t, "time" indicates elapsed time since study start, "intervention" is a dummy variable (0 pre-intervention, 1 post-intervention), and "post-time" indicates time since intervention started (0 before intervention, 1,2,3... after) [25].
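The three regressors and the model fit can be sketched with NumPy alone. The series length, intervention position, and coefficient values below are hypothetical, and the outcome is generated without noise so that ordinary least squares recovers the coefficients exactly.

```python
import numpy as np

n, t_int = 24, 12   # hypothetical: 24 points, intervention starts at index 12

time = np.arange(1, n + 1, dtype=float)                         # elapsed time since study start
intervention = (np.arange(n) >= t_int).astype(float)            # 0 pre, 1 post
post_time = np.where(intervention == 1,
                     np.arange(n) - t_int + 1, 0.0)             # 0 pre, then 1, 2, 3...

# Design matrix columns: intercept, time, intervention, post-time
X = np.column_stack([np.ones(n), time, intervention, post_time])

beta_true = np.array([100.0, 1.0, -15.0, -0.5])   # illustrative beta0..beta3
y = X @ beta_true                                  # noise-free outcome

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
```

With real data the same design matrix would be passed to a regression routine that also reports standard errors and allows for autocorrelated errors.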

Critical Parameterization Distinction

Research has identified two common parameterizations of segmented regression with important interpretive differences [28] [29]. The approach by Wagner et al. uses (T-Ti) in the interaction term, where Ti is the intervention start time, making β₂ directly interpretable as the immediate level change [28]. In contrast, the approach by Bernal et al. uses simple time (T) in the interaction term, where β₂ represents the difference in intercepts at time zero rather than the immediate effect at intervention time [28] [29].

This distinction is crucial because using the incorrect interpretation can lead to erroneous conclusions about intervention effects [29]. In the Bernal parameterization, the immediate level change at intervention time Ti must be calculated as β₂ + β₃ × Ti, whereas in the Wagner parameterization it is estimated directly as β₂ [28].
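A small numeric check of the two parameterizations, using noise-free synthetic data with a known jump of -6 at Ti = 15 (both values are assumptions for this illustration). The labels "Wagner" and "Bernal" follow the usage in the text above.

```python
import numpy as np

n, Ti = 30, 15.0
T = np.arange(n, dtype=float)
X = (T >= Ti).astype(float)                       # intervention indicator

# Noise-free outcome: level change of -6 at Ti, slope change of 0.3 afterwards
y = 20 + 0.5 * T - 6 * X + 0.3 * X * (T - Ti)

def ols(design, outcome):
    """Ordinary least squares coefficients."""
    return np.linalg.lstsq(design, outcome, rcond=None)[0]

ones = np.ones(n)
b_wagner = ols(np.column_stack([ones, T, X, X * (T - Ti)]), y)   # interaction uses T - Ti
b_bernal = ols(np.column_stack([ones, T, X, X * T]), y)          # interaction uses T

jump_wagner = b_wagner[2]                       # beta2 is the level change at Ti
jump_bernal = b_bernal[2] + b_bernal[3] * Ti    # beta2 + beta3 * Ti
```

Both routes give the same level change at Ti, while the raw β₂ from the second fit (-10.5 here) is the intercept difference at time zero and would be misleading if reported as the immediate effect.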

Fundamental Assumptions of Segmented Regression

For valid causal inference using segmented regression in ITS designs, several key assumptions must be met:

- Linearity: The relationship between time and outcome within each segment is linear [23] [24].

- Independent Errors: Residuals are independent of each other (often violated in time series data) [24].

- Homoscedasticity: Constant variance of errors over time [23].

- Correct Model Specification: The intervention time point is correctly identified, and the functional form is appropriate [23] [25].

- No Omitted Confounders: No unmeasured variables simultaneously affect the intervention assignment and outcome trends [23].

The assumption of independent errors is frequently violated in time series data due to autocorrelation, where consecutive measurements are correlated [24]. When autocorrelation exists but is ignored, standard errors may be underestimated, increasing Type I error rates [24]. Appropriate statistical techniques, such as including autoregressive terms or using generalized estimating equations (GEE), should be employed to address this issue [27].

Application Protocols for Segmented Regression Analysis

Standard Two-Segment Protocol

For evaluating an intervention implemented at a single, well-defined time point:

Data Preparation

- Collect equally spaced observations before and after intervention (minimum 8-12 points per segment recommended)

- Create three key variables:

- Time: continuous variable indicating time from study start

- Intervention: dummy variable (0=pre, 1=post)

- Post-time: time since intervention start (0 before, 1,2,3... after)

Model Specification

- Select appropriate regression type based on outcome variable:

- Linear regression for continuous outcomes

- Logistic regression for binary outcomes

- Poisson regression for count data [23]

- Specify model: Outcome = β₀ + β₁ × time + β₂ × intervention + β₃ × post-time

- Check residual autocorrelation using Durbin-Watson or related tests

- If autocorrelation present, use appropriate correction (e.g., autoregressive terms, Newey-West standard errors)

Interpretation

- Test significance of β₂ (immediate level change) and β₃ (slope change)

- Apply multiplicity adjustment if testing both level and slope changes [23]

- Calculate confidence intervals for intervention effects

Advanced Modeling Protocols

Three-Segment Model for Transition Periods: When interventions are gradually implemented or effects manifest over a transition period [25]:

- Identify transition period start (T₀) and end (T₂) points

- Create a continuous function F(t) representing cumulative distribution of intervention effect during transition

- Specify optimized segmented regression model: Yt = β₀ + β₁ × time + β₂ × F(t) × intervention + β₃ × F(t) × post-time + εt

- Common distribution patterns for F(t) include uniform, normal, log-normal, or log-normal flip distributions [25]
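As one illustration of F(t), the uniform pattern corresponds to a linear rise from 0 to 1 across the transition period. The helper name `uniform_transition` and the start and end points below are hypothetical; other distribution patterns would replace the body of the function with the matching CDF.

```python
import numpy as np

def uniform_transition(t, start, end):
    """Uniform-CDF weight: 0 before `start`, rising linearly to 1 at `end`."""
    t = np.asarray(t, dtype=float)
    return np.clip((t - start) / (end - start), 0.0, 1.0)

t = np.arange(0, 12)
F = uniform_transition(t, start=4, end=8)     # hypothetical transition from t=4 to t=8

intervention = (t >= 4).astype(float)
weighted_level = F * intervention             # F(t) x intervention term of the model
```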

Plateau Model with Estimated Breakpoint: When the intervention timing or breakpoint is unknown and must be estimated from data [30]:

- Specify nonlinear model with continuity and smoothness constraints at breakpoint

- Use iterative procedures (e.g., PROC NLIN in SAS) to estimate breakpoint location

- For quadratic-plateau model: pre-breakpoint (quadratic), post-breakpoint (constant)

- Apply constraints: f(x₀) = g(x₀) and f'(x₀) = g'(x₀) at breakpoint x₀

Quantitative Data Synthesis

Table 2: Comparison of Segmented Regression Approaches for ITS Designs

| Model Type | Key Features | Indications | Statistical Considerations |

|---|---|---|---|

| Classic Two-Segment | Single breakpoint at known intervention time; estimates level and slope changes | Sharp, immediate interventions with known implementation timing | Autocorrelation adjustment; sufficient data points per segment (≥8) |

| Three-Segment with Transition | Models gradual implementation; accounts for transition period using CDFs | Interventions phased over time; training periods; gradual effect manifestation | Selection of appropriate distribution pattern for transition; sensitivity analysis for transition length |

| Plateau with Estimated Breakpoint | Estimates breakpoint from data; continuity constraints | Unknown intervention timing; natural thresholds; effect saturation | Nonlinear estimation; initial parameter guesses; more complex implementation |

| Multivariate Segmented | Multiple independent variables with potential breakpoints | Complex interventions; multiple simultaneous components | Increased model complexity; potential for overfitting |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Methodological Tools for Segmented Regression Analysis

| Tool/Technique | Function/Purpose | Implementation Examples |

|---|---|---|

| Segmented Package (R) | Fitting segmented regression models with estimated breakpoints | segmented() function for breakpoint detection and piecewise terms |

| PROC NLIN (SAS) | Nonlinear regression for complex segmented models with constraints | Plateau models with smoothness constraints at estimated breakpoints |

| Generalized Estimating Equations (GEE) | Accounting for autocorrelation in correlated time series data | proc genmod in SAS; geeglm in R for panel ITS data |

| Cumulative Distribution Functions (CDFs) | Modeling transition periods in optimized segmented regression | Uniform, normal, log-normal distributions for gradual effects |

| Durbin-Watson Test | Detecting autocorrelation in regression residuals | Statistical testing for serial correlation in time series errors |

Advanced Considerations and Methodological Refinements

Addressing Common Analytical Errors

A frequent error in segmented regression parameterization involves incorrect specification of the interaction term [29]. Researchers must use the product between the intervention variable and time elapsed since intervention start (T-Ti), rather than time since study beginning (T), to obtain valid estimates of the immediate level change [29]. Simulation studies demonstrate that using the incorrect parameterization can produce substantially biased estimates of the intervention's immediate effect [29].

Handling Complex Intervention Scenarios

Multiple Intervention Components: For complex interventions with several components introduced at different times:

- Add multiple interruption points to the time series

- Ensure sufficient data points between interventions for independent effect estimation

- Consider structured interrupted time series to isolate component effects [24]

Multi-site ITS Designs: When data come from multiple implementation sites:

- Perform segmented regression separately for each site, then meta-analyze results

- Use hierarchical models with random effects for sites

- Account for potential heterogeneity in intervention effects across sites [24] [27]

Segmented regression remains the gold standard analytical method for interrupted time series designs in implementation research, providing robust causal inference about intervention effects while accounting for underlying secular trends [23] [24]. When properly specified with attention to key assumptions—particularly regarding parameterization, autocorrelation, and intervention timing—it offers researchers across scientific domains a powerful tool for evaluating real-world interventions [28] [29]. The continued development of optimized segmented regression approaches, particularly for handling transition periods and complex intervention scenarios, further enhances its applicability to contemporary implementation research challenges [25] [27].

Interrupted Time Series (ITS) analysis is a powerful quasi-experimental design for evaluating the population-level impact of health policy interventions, pharmaceutical regulations, and public health initiatives when randomized controlled trials are not feasible [13] [31]. Within this framework, Autoregressive Integrated Moving Average (ARIMA) and Seasonal ARIMA (SARIMA) models provide sophisticated analytical approaches that account for complex temporal structures, including autocorrelation, trends, and seasonal patterns, which simpler segmented regression models may inadequately capture [13] [32]. For researchers and drug development professionals, these models offer a robust methodology for determining whether an intervention—such as a new drug policy, vaccination campaign, or market approval—creates a significant deviation from pre-existing trends in outcomes like prescribing rates, disease incidence, or product demand [13] [32] [33].

The core strength of ARIMA/SARIMA models lies in their ability to model the outcome variable based on its own past values and previous forecast errors, while explicitly accounting for temporal dependencies that violate the independence assumption of standard statistical tests [13] [34]. This is particularly valuable in pharmaceutical and public health research, where data often exhibit seasonal fluctuations (e.g., annual influenza patterns, quarterly reporting cycles) and serial correlation that must be controlled to accurately isolate intervention effects [13] [32]. By properly addressing these temporal structures, ARIMA/SARIMA models reduce biased estimation of intervention impacts and provide more valid causal inference in observational settings [35].

Theoretical Foundations of ARIMA and SARIMA Models

Components of ARIMA Models

ARIMA models combine three primary components to describe and forecast time series data. The model is characterized by three parameters: (p, d, q), where:

AR (Autoregressive component - p): This component models the current value of the time series as a linear combination of its previous values [13] [34]. An autoregressive model of order p (AR(p)) can be expressed as:

\( Y_t = c + \phi_1 Y_{t-1} + \phi_2 Y_{t-2} + \dots + \phi_p Y_{t-p} + \varepsilon_t \)

where \(Y_t\) is the value at time t, \(c\) is a constant, \(\phi_1, \dots, \phi_p\) are the autoregressive parameters, and \(\varepsilon_t\) represents the error term [13].

I (Integrated component - d): This component involves differencing the time series to make it stationary—removing trends and achieving constant statistical properties over time [13] [34] [36]. The order of differencing (d) indicates how many times differencing is applied. First-order differencing is expressed as:

\( Y_t' = Y_t - Y_{t-1} \)

Stationarity is crucial for ARIMA modeling as it ensures stable parameters over time, typically verified using tests like the Augmented Dickey-Fuller (ADF) test [37] [36].

MA (Moving Average component - q): This component models the current value based on the residual errors from previous time points [13] [34]. A moving average model of order q (MA(q)) is expressed as:

\( Y_t = c + \varepsilon_t + \theta_1 \varepsilon_{t-1} + \theta_2 \varepsilon_{t-2} + \dots + \theta_q \varepsilon_{t-q} \)

where \(\theta_1, \dots, \theta_q\) are the moving average parameters [13].

The complete ARIMA(p,d,q) model combines these elements to regress the time series on its own lagged values and lagged forecast errors [34] [36].
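The three components can be illustrated by simulating them directly with NumPy. The parameter values (φ₁ = 0.6, θ₁ = 0.5, c = 0) and the helper `lag1_autocorr` are assumptions introduced for this sketch.

```python
import numpy as np

rng = np.random.default_rng(8)
n = 2000
eps = rng.normal(size=n)            # white-noise error terms

# AR(1): Y_t = phi_1 * Y_{t-1} + eps_t
phi1 = 0.6
ar = np.zeros(n)
for t in range(1, n):
    ar[t] = phi1 * ar[t - 1] + eps[t]

# MA(1): Y_t = eps_t + theta_1 * eps_{t-1}
theta1 = 0.5
ma = eps.copy()
ma[1:] += theta1 * eps[:-1]

# I (d = 1): first-differencing a random walk recovers its stationary increments
walk = np.cumsum(eps)
diffed = np.diff(walk)

def lag1_autocorr(x):
    """Sample autocorrelation at lag 1."""
    x = x - x.mean()
    return float(x[1:] @ x[:-1] / (x @ x))

ar_r1 = lag1_autocorr(ar)   # theoretical value: phi_1
ma_r1 = lag1_autocorr(ma)   # theoretical value: theta_1 / (1 + theta_1**2)
```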

Extension to Seasonal ARIMA (SARIMA) Models

For time series with seasonal patterns, the SARIMA model extends ARIMA by incorporating seasonal components. A SARIMA model is denoted as (p, d, q)(P, D, Q)_m, where [38] [34]:

- (p, d, q): Non-seasonal orders (as in ARIMA)

- (P, D, Q): Seasonal orders for the autoregressive, differencing, and moving average components, respectively

- m: Number of periods in each seasonal cycle (e.g., 12 for monthly data with yearly seasonality, 4 for quarterly data)

The seasonal component models patterns that repeat at fixed intervals, addressing regular fluctuations such as increased prescribing of certain medications in winter months or quarterly reporting cycles in pharmaceutical sales [32] [38]. Seasonal differencing (D) removes seasonal trends, for instance, by computing \( Y_t - Y_{t-m} \) [34].

Model Implementation Protocol for ITS Analysis

Pre-Modeling Data Preparation and Stationarity Assessment

Step 1: Data Preprocessing

- Address missing values through appropriate imputation methods to maintain series continuity [37].

- Detect and normalize anomalies or outliers that may skew model estimation [37].

- Ensure consistent sampling frequency and regular time intervals between observations [37].

Step 2: Stationarity Testing and Differencing

- Test for stationarity using the Augmented Dickey-Fuller (ADF) test, where the null hypothesis assumes non-stationarity [37] [36]. A p-value below 0.05 typically indicates stationarity.

- Apply differencing (d) if non-stationary: Start with first-order differencing (\(Y_t - Y_{t-1}\)), and proceed to higher orders if necessary [34] [36].

- Avoid over-differencing: An over-differenced series may still be stationary but can lead to unnecessary complexity and large standard errors [36].

- For seasonal data, apply seasonal differencing (D) with period m (e.g., \(Y_t - Y_{t-m}\) for monthly data with m=12) [34].

Table 1: Stationarity Assessment and Differencing Guidelines

| Scenario | ADF Test Result | Recommended Action | Target d/D |

|---|---|---|---|

| No trend, constant variance | p < 0.05 | No differencing needed | d = 0 |

| Linear trend, constant variance | p > 0.05 | First-order differencing | d = 1 |

| Nonlinear trend, changing variance | p > 0.05 | Second-order differencing or transformation | d = 2 |

| Seasonal pattern present | p > 0.05 at seasonal lags | Seasonal differencing | D = 1 with appropriate m |

Model Identification and Parameter Selection

Step 3: Determine AR and MA Orders Using ACF and PACF

- Plot and analyze the Autocorrelation Function (ACF) and Partial Autocorrelation Function (PACF) of the differenced series [13] [38] [36].

- ACF helps identify the order of MA(q): Significant spikes up to lag q followed by a sharp cut-off suggest MA terms of order q [37] [36].

- PACF helps identify the order of AR(p): Significant spikes up to lag p followed by a sharp cut-off suggest AR terms of order p [37] [36].

- For SARIMA, examine ACF/PACF at seasonal lags (multiples of m) to identify P and Q [38].

Step 4: Model Selection and Validation

- Fit multiple candidate models with different (p,d,q)(P,D,Q)_m parameters.

- Compare models using information criteria such as Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC), where lower values indicate better fit [34].

- Perform residual analysis: Ensure residuals resemble white noise (no significant autocorrelations) and are normally distributed [13] [36].

- Validate model stability using out-of-sample forecasting or cross-validation techniques [38].

Table 2: Interpretation of ACF and PACF Patterns for Model Identification

| Pattern | ACF Behavior | PACF Behavior | Suggested Model |

|---|---|---|---|

| AR(p) | Decays exponentially or sinusoidally | Significant spikes through lag p, then cuts off | AR(p) with order p |

| MA(q) | Significant spikes through lag q, then cuts off | Decays exponentially or sinusoidally | MA(q) with order q |

| ARMA(p,q) | Decays after lag q | Decays after lag p | ARMA(p,q) |

| Seasonal AR | Decays at seasonal lags | Significant spikes at seasonal lags | Seasonal AR(P) |

| Seasonal MA | Significant spikes at seasonal lags | Decays at seasonal lags | Seasonal MA(Q) |

Intervention Effect Quantification in ITS

Step 5: Modeling Intervention Effects

- Incorporate intervention variables into the selected ARIMA/SARIMA model to test hypotheses about level and trend changes [13] [31].

- Common intervention specifications include [31]:

- Step change (abrupt permanent): Binary variable (0 pre-intervention, 1 post-intervention)

- Pulse change (abrupt temporary): Binary variable for immediate, temporary effect

- Ramp change (gradual): Continuous variable counting time since intervention

- Estimate model parameters and test significance of intervention terms [13] [31].

- Compute effect sizes with confidence intervals to quantify intervention magnitude [35].
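The three intervention specifications listed in Step 5 can be built as simple regressors. The series length and the intervention index `t0` below are hypothetical.

```python
import numpy as np

n, t0 = 10, 6                  # hypothetical: 10 time points, intervention at index 6
t = np.arange(n)

step = (t >= t0).astype(int)                 # abrupt permanent: 0 pre, 1 post
pulse = (t == t0).astype(int)                # abrupt temporary: 1 only at the intervention point
ramp = np.where(t >= t0, t - t0 + 1, 0)      # gradual: counts time since intervention
```

These columns can be passed as exogenous regressors to an ARIMA/SARIMA model, alone or in combination (for example, step plus ramp for a level and slope change).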

Application in Pharmaceutical and Public Health Research

ARIMA/SARIMA models have demonstrated substantial utility across various pharmaceutical and public health research contexts:

Policy Impact Evaluation

In a study evaluating Australia's policy change restricting quetiapine prescriptions, ARIMA modeling quantified a significant reduction in inappropriate prescribing following the intervention, demonstrating its value for pharmaceutical policy analysis [13]. Similarly, research on COVID-19's impact on routine immunization in Kenya employed SARIMA to account for seasonal patterns in vaccine coverage, revealing immediate decreases in pentavalent and measles/rubella vaccine doses following pandemic onset, with recovery within approximately four months [32].

Pharmaceutical Sales and Demand Forecasting

ARIMA/SARIMA models provide critical forecasting capabilities for pharmaceutical supply chain management. Studies comparing forecasting approaches found that time series models effectively predict drug demand, enabling optimized production, inventory management, and market responsiveness [33]. Accurate forecasting is particularly valuable for pharmaceutical companies where prediction errors can significantly impact operational efficiency and resource allocation [39] [33].

Infectious Disease Surveillance and Intervention Assessment

In infectious disease research, SARIMA models help quantify the impact of public health interventions by accounting for both seasonal patterns and underlying trends [32] [31]. For example, studies have evaluated antibiotic stewardship programs, vaccination campaigns, and pandemic control measures while controlling for autocorrelation and seasonal variation in disease incidence [32] [31].

Research Reagent Solutions

Table 3: Essential Computational Tools for ARIMA/SARIMA Implementation

| Tool/Software | Primary Function | Application Context |
|---|---|---|
| R statistical software (forecast, stats packages) | Model fitting and diagnostics | Comprehensive time series analysis [13] [38] |
| Python (statsmodels, pmdarima) | Automated parameter selection and forecasting | Flexible implementation with machine learning integration [37] [36] |
| Augmented Dickey-Fuller test | Stationarity testing | Determining differencing order (d) [37] [36] |
| ACF/PACF plots | Model order identification | Visual guidance for p, q, P, Q selection [13] [36] |
| AIC/BIC criteria | Model comparison | Selecting optimal parameter combinations [34] |

Workflow Visualization

Figure: ARIMA/SARIMA Model Implementation Workflow for ITS Studies

Statistical Power Considerations

When designing ITS studies using ARIMA/SARIMA models, statistical power depends on several factors. Simulation studies indicate that [35]:

- Power increases with the number of pre- and post-intervention time points

- Power decreases as autocorrelation increases

- Power increases with larger effect sizes

- At least 24 time points pre- and post-intervention are typically needed to detect effect sizes of 1.0 with 80% power, depending on autocorrelation structure [35]

Smaller effect sizes (<0.5) or fewer time points may yield inadequate power, potentially leading to false conclusions about intervention effectiveness [35].
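One rough way to see why autocorrelation erodes power is the large-sample effective sample size for a mean under AR(1) errors, n_eff ≈ n(1 − ρ)/(1 + ρ). This approximation is a standard textbook result rather than a finding of the cited simulation study [35], and it applies strictly to estimating a mean, so the sketch below should be read only as an intuition aid. The function name is hypothetical.

```python
def effective_sample_size(n, rho):
    """Approximate effective sample size for a mean under AR(1) errors.

    Large-sample approximation: n_eff = n * (1 - rho) / (1 + rho).
    """
    if not -1 < rho < 1:
        raise ValueError("rho must lie strictly between -1 and 1")
    return n * (1 - rho) / (1 + rho)


# 48 time points (e.g. 24 pre- and 24 post-intervention) under rising autocorrelation.
for rho in (0.0, 0.2, 0.4, 0.6, 0.8):
    print(f"rho={rho:.1f}  n_eff={effective_sample_size(48, rho):5.1f}")
```

With positive autocorrelation, each additional observation carries less independent information, which is consistent with the pattern that power falls as autocorrelation rises. A formal power calculation for a specific ITS model would still require simulation under the assumed model.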

ARIMA and SARIMA models provide robust analytical frameworks for evaluating interventions in interrupted time series designs, particularly when data exhibit complex temporal structures including trends, autocorrelation, and seasonal patterns. By properly accounting for these features, researchers in pharmaceutical development and public health can obtain more valid estimates of intervention effects, leading to better-informed policy decisions and resource allocation. The structured protocol outlined in this document offers a systematic approach to model identification, estimation, and interpretation, supporting rigorous evaluation of health interventions in observational settings where randomized trials are not feasible.

Generalized Additive Models (GAMs) represent a powerful extension of Generalized Linear Models (GLMs) that replace the linear relationship between predictors and outcome with flexible smooth functions, enabling the modeling of complex, non-linear patterns without requiring prior specification of the relationship's form [40] [41]. In the context of interrupted time series (ITS) design implementation research, this flexibility is particularly valuable for evaluating policy interventions or treatment effects where the underlying trends may follow non-linear patterns that traditional segmented regression cannot adequately capture [9] [42].

The fundamental equation for a GAM can be expressed as:

\[ g(\mu) = \beta_0 + f_1(x_1) + f_2(x_2) + \cdots + f_p(x_p) \]

where \(g(\mu)\) is the link function, \(\beta_0\) is the intercept, and \(f_j(x_j)\) are smooth functions of the covariates [40] [43]. This structure maintains the additivity of GLMs while allowing for non-linear relationships through the smooth functions, striking a balance between interpretability and flexibility [44] [40].
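As an illustration, the general form might be specialised to a monthly count outcome in an ITS study as follows. The symbols \(D_t\), \(T_0\), and \(m_t\) are assumptions introduced for this sketch and do not come from the cited sources.

```latex
\[
\log(\mu_t) = \beta_0 + \beta_1 D_t + f_1(t) + f_2(m_t),
\qquad
D_t =
\begin{cases}
0, & t < T_0 \\
1, & t \ge T_0
\end{cases}
\]
```

Here the link \(g\) is the logarithm, \(\mu_t\) is the expected count at time \(t\), \(f_1(t)\) is a smooth long-term trend, \(f_2(m_t)\) is a smooth (typically cyclic) function of calendar month \(m_t\), and \(T_0\) is the intervention time. Under this specification \(\beta_1\) is the level change on the log scale, so \(\exp(\beta_1)\) can be read as a rate ratio.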

Compared to traditional linear models, GAMs offer distinct advantages for ITS research. While linear models assume a straight-line relationship between predictors and outcome, GAMs can capture complex nonlinear trends common in real-world time series data [45] [42]. Unlike high-degree polynomial regression, which can oscillate wildly near the ends of the data range (Runge's phenomenon), GAMs use penalized smoothing splines that behave more stably at data boundaries [46]. Additionally, compared to complex machine learning approaches, GAMs maintain interpretability through their additive structure, allowing researchers to understand and communicate the effect of individual variables [44] [40].

Comparative Analysis of Modeling Approaches for ITS

Table 1: Comparison of Statistical Approaches for Interrupted Time Series Analysis

| Model Type | Key Characteristics | Assumptions | Strengths | Limitations |
|---|---|---|---|---|
| Segmented Linear Regression | Assumes linear trends before and after intervention | Linear relationships, independent errors | Simple interpretation, widely understood [42] | Poor performance with nonlinear trends [42] |
| ARIMA Models | Accounts for autocorrelation, trend, and seasonality | Stationarity after differencing | Handles complex autocorrelation structures [9] | Complex specification, less intuitive [9] |
| Generalized Additive Models (GAMs) | Flexible smooth functions capture nonlinear patterns | Additivity, smooth relationships | Captures nonlinear trends automatically, robust to model misspecification [9] [42] | Computational intensity, smoothing parameter selection [45] |

Table 2: Performance Comparison of GAMs vs. Alternative Methods in Simulation Studies

| Study Context | Comparison Model | Key Finding | Performance Metric |
|---|---|---|---|
| Policy Intervention Evaluation [42] | Segmented Linear Regression | GAMs showed better performance with nonlinear trends, similar performance with linear trends | Lower MSE and MPE for nonlinear data |
| Health Policy Analysis [9] | ARIMA | GAMs more robust to model misspecification; ARIMA more consistent with different effect sizes | Model accuracy under varying conditions |
| Clinical Prediction Rules [43] | Traditional Categorization | GAM-based categorization performed similarly to continuous predictors | No significant differences in AUC values |

GAM Implementation Protocol for ITS Research

Data Preparation and Preprocessing

- Temporal Alignment: Ensure time series data are equally spaced with consistent intervals between observations [42].

- Missing Data Handling: Address missing values through appropriate imputation techniques or modeling strategies, as GAMs can accommodate missing data points [45].

- Covariate Encoding: Encode categorical predictors (e.g., seasonality indicators) into numeric format using techniques like one-hot encoding [45].

- Outcome Transformation: Transform the outcome variable if necessary to meet distributional assumptions (e.g., log transformation for count data), or model it directly with a suitable distribution family and link function [47] [48].
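The temporal alignment step can be checked programmatically before any model is fitted. The sketch below assumes monthly data and uses hypothetical observation dates; the helper names `month_index` and `find_missing_months` are illustrative only.

```python
from datetime import date


def month_index(d):
    """Map a date to a running month number so that gaps are easy to detect."""
    return d.year * 12 + (d.month - 1)


def find_missing_months(dates):
    """Return (year, month) pairs absent between the first and last observation."""
    observed = {month_index(d) for d in dates}
    first, last = min(observed), max(observed)
    return [(i // 12, i % 12 + 1) for i in range(first, last + 1) if i not in observed]


# Hypothetical monthly series with one missing observation (January 2021).
observed_dates = [date(2020, 11, 1), date(2020, 12, 1), date(2021, 2, 1), date(2021, 3, 1)]
missing = find_missing_months(observed_dates)
print(missing)
```

Any months reported as missing would then need to be handled explicitly, whether by imputation or by a modeling strategy that tolerates gaps.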

Model Specification and Fitting

- Select Smoothing Functions: Choose appropriate basis functions (e.g., thin plate regression splines, cubic regression splines) for the smooth terms [40] [42].

- Define Intervention Effects: Specify appropriate terms to model intervention effects:

  - Immediate level changes: Indicator variable for pre/post intervention

  - Changes in trends: Interaction between time and intervention indicator

  - Lagged effects: Appropriately lagged terms based on subject-matter knowledge [9]

- Account for Autocorrelation: Incorporate correlation structures or autoregressive terms when residuals show temporal dependence [9] [42].

- Control for Seasonality: Include seasonal components using cyclic smooths or Fourier terms [9] [42].

- Set Basis Complexity: Choose appropriate basis dimensions (knots) for smooth functions, balancing flexibility and overfitting [40].
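Of the seasonality options listed above, Fourier terms are the simplest to construct by hand. The following sketch builds sine and cosine pairs for a monthly series; the function name, the 12-month period, and the choice of two harmonics are assumptions made for illustration.

```python
import math


def fourier_terms(n_points, period=12, harmonics=2):
    """Return one row per time point with a sin/cos pair for each harmonic."""
    rows = []
    for t in range(n_points):
        row = []
        for k in range(1, harmonics + 1):
            angle = 2 * math.pi * k * t / period
            row.extend([math.sin(angle), math.cos(angle)])
        rows.append(row)
    return rows


# Three years of monthly data, two harmonics -> four seasonal columns.
design = fourier_terms(n_points=36, period=12, harmonics=2)
print(len(design), "rows x", len(design[0]), "columns")
```

These columns could enter the model as ordinary linear terms alongside a smooth of time. More harmonics allow a more flexible seasonal shape at the cost of extra parameters, so the number is usually chosen by comparing model fit.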

Model Diagnostics and Validation

- Residual Analysis: Check residual plots for patterns, heteroscedasticity, and autocorrelation [40] [48].

- Basis Dimension Check: Verify that basis dimensions (k) for smooth terms are sufficient using gam.check() or similar functionality [40].

- Model Comparison: Compare competing models using AIC, BIC, or cross-validation techniques [45] [43].

- Temporal Validation: Validate model performance on holdout time periods to assess forecasting accuracy [42].
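For the residual autocorrelation check, one common summary is the Ljung-Box Q statistic, Q = n(n + 2) Σ r_k² / (n − k), where r_k is the lag-k sample autocorrelation. The sketch below computes it from scratch on a deliberately artificial residual series; the function names and data are hypothetical, and the Ljung-Box test is offered here as one option rather than the method prescribed by the cited sources.

```python
def autocorrelation(x, lag):
    """Sample autocorrelation of x at the given lag."""
    n = len(x)
    mean = sum(x) / n
    denom = sum((v - mean) ** 2 for v in x)
    num = sum((x[t] - mean) * (x[t - lag] - mean) for t in range(lag, n))
    return num / denom


def ljung_box_q(x, max_lag):
    """Ljung-Box Q statistic over lags 1..max_lag."""
    n = len(x)
    return n * (n + 2) * sum(
        autocorrelation(x, k) ** 2 / (n - k) for k in range(1, max_lag + 1)
    )


# Artificial residuals that alternate in sign, i.e. strong negative autocorrelation.
residuals = [1.0, -1.0] * 10
print("lag-1 autocorrelation:", round(autocorrelation(residuals, 1), 3))
print("Ljung-Box Q (1 lag):", round(ljung_box_q(residuals, 1), 2))
```

Under the null hypothesis of no autocorrelation, Q is approximately chi-square distributed with degrees of freedom equal to the number of lags (fewer if ARMA parameters were estimated), so a value well above the 5% critical value of 3.841 for one lag suggests the model has not accounted for the temporal dependence.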

Effect Estimation and Interpretation

- Intervention Effect Quantification: Calculate immediate and cumulative effects of the intervention by comparing predictions with and without the intervention [42].

- Visualization: Generate partial dependence plots to visualize the relationship between predictors and outcome [45] [48].

- Uncertainty Estimation: Compute confidence intervals for smooth functions and intervention effects using Bayesian posterior simulation or bootstrap methods [48].
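The comparison of predictions with and without the intervention can be sketched as follows. For brevity the `predict` function below stands in for predictions from any fitted model and uses a simple log-linear form with made-up coefficients instead of a fitted GAM; all names and values are hypothetical, and uncertainty intervals are omitted.

```python
import math

# Hypothetical coefficients on the log scale; in practice they come from a fitted model.
INTERCEPT, SLOPE, LEVEL_CHANGE = math.log(100.0), 0.01, -0.2


def predict(t, post):
    """Expected outcome at time t, with (post=1) or without (post=0) the intervention."""
    return math.exp(INTERCEPT + SLOPE * t + LEVEL_CHANGE * post)


post_period = range(24, 36)  # 12 post-intervention time points
with_intervention = [predict(t, 1) for t in post_period]
counterfactual = [predict(t, 0) for t in post_period]

# Pointwise differences between the fitted and counterfactual series.
differences = [w - c for w, c in zip(with_intervention, counterfactual)]
immediate_effect = differences[0]      # effect at the first post-intervention point
cumulative_effect = sum(differences)   # total effect over the post-intervention period
relative_change = sum(with_intervention) / sum(counterfactual) - 1

print(f"immediate effect:  {immediate_effect:.1f}")
print(f"cumulative effect: {cumulative_effect:.1f}")
print(f"relative change:   {relative_change:.1%}")
```

The counterfactual series is obtained by switching off the intervention term while leaving every other input unchanged, which is what makes the difference attributable to the intervention under the model's assumptions.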

Figure: GAM Implementation Workflow for ITS Studies

GAM Components and Analytical Framework

Figure: GAM Mathematical Components and Structure

Research Reagent Solutions: Software and Computational Tools

Table 3: Essential Software Tools for Implementing GAMs in ITS Research

| Tool Name | Type | Primary Function | Key Features for ITS |
|---|---|---|---|
| mgcv (R Package) [48] [42] | Software Library | GAM estimation and inference | Automatic smoothing parameter selection, various basis functions, AR1 error structures |
| pyGAM (Python Package) [46] | Software Library | GAM implementation in Python | Multiple regression types, grid search for optimization, custom loss functions |
| marginaleffects (R Package) [48] | Analysis Tool | Post-estimation interpretation | Conditional and marginal effects, predictions, hypothesis testing for GAMs |
| gam.check (mgcv) [40] | Diagnostic Tool | Model validation | Basis dimension checks, residual diagnostics, QQ-plots |
| Thin Plate Regression Splines [42] | Smoothing Method | Default smoother in mgcv | Optimal smoothing given basis dimension, no knot placement required |

Application in Drug Development and Healthcare Research

GAMs offer particular utility in pharmaceutical and healthcare research where ITS designs are commonly employed to evaluate the impact of policy changes, treatment guidelines, or safety interventions. The non-linear modeling capability of GAMs allows researchers to detect and quantify intervention effects that may follow complex temporal patterns not captured by traditional methods [47] [42].

In a study evaluating the impact of Spain's 2012 cost-sharing reform on pharmaceutical prescriptions, GAMs revealed non-linear trends that would have been missed by segmented linear regression, providing more accurate estimates of the policy's cumulative effect [42]. Similarly, in clinical prediction research, GAMs have been used to develop optimal categorization schemes for continuous clinical variables, preserving critical prognostic information while creating clinically practical decision rules [43].

For drug safety monitoring, GAMs can model complex seasonal patterns in adverse event reports while evaluating the impact of safety warnings. In clinical biomarker studies, GAMs have elucidated non-linear relationships between alcohol consumption and inflammatory markers such as IL-6, revealing risk patterns that would be obscured by dichotomization or linear assumptions [47].

The flexibility of GAMs to accommodate non-normal distributions through appropriate link functions makes them particularly suitable for healthcare outcomes, which often include counts (e.g., hospitalizations), binary outcomes (e.g., mortality), or skewed continuous measures (e.g., healthcare costs) [41]. This distributional flexibility, combined with the ability to capture non-linear temporal patterns, positions GAMs as a powerful analytical tool for the complex longitudinal data common in pharmaceutical and health services research.

The Interrupted Time Series (ITS) design is a powerful quasi-experimental method for evaluating the longitudinal effects of interventions implemented at a population level, such as new health policies, system changes, or drug utilization interventions [49] [50]. Within implementation research, ITS analysis enables researchers to determine whether an intervention has produced a significant effect beyond underlying trends by analyzing data points collected at regular intervals before and after an intervention point [51]. Despite its growing popularity in drug development and health services research, methodological challenges in its application persist, particularly regarding sample size determination, data aggregation strategies, and pre-specification of intervention effects [49] [51].

Recent evidence indicates substantial methodological gaps in current ITS practice. A cross-sectional survey of 153 drug utilization studies using ITS design found that only 28.1% clearly explained the rationale for using ITS, 13.7% clarified the rationale for their chosen model structure, and 20.8% of the studies using aggregated data justified the number of time points selected [51]. These shortcomings highlight the need for standardized protocols in ITS design and analysis.