Mastering Systematic Error Control in Narrow Concentration Ranges for Biomedical Research


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to understand, identify, and correct systematic errors in analytical methods operating within narrow concentration ranges. It covers foundational concepts differentiating systematic from random error, explores methodological detection and correction strategies like Youden calibration and standard additions, offers troubleshooting and optimization techniques for laboratory workflows, and discusses validation protocols for assessing method accuracy and comparability. The guidance is essential for ensuring data integrity and regulatory compliance in critical applications such as bioanalysis, therapeutic drug monitoring, and clinical diagnostics.

Understanding Systematic Error Fundamentals in Quantitative Bioanalysis

For researchers in drug development working with narrow concentration ranges, understanding and controlling systematic error is not just good practice—it is critical to data integrity. Systematic error, or bias, causes measurements to consistently deviate from the true value in a specific direction, directly compromising the accuracy of your results [1]. Unlike random error, which affects precision and can be reduced by averaging repeated trials, systematic error will not average out and can lead to false conclusions about the relationship between variables, such as a drug's dose-response curve [1] [2]. This guide provides practical troubleshooting and FAQs to help you identify, quantify, and minimize systematic error in your analytical workflows.

Understanding Systematic vs. Random Error

Core Definitions

- Systematic Error (Bias): A consistent, repeatable error that skews measurements away from the true value in a predictable direction. It affects the accuracy of your results [1] [3].

- Random Error (Noise): Unpredictable fluctuations in measurements that vary irregularly around the true value. It affects the precision (or repeatability) of your results but can be reduced by taking multiple measurements [1] [4].

The following table summarizes the key differences:

| Aspect | Systematic Error | Random Error |

|---|---|---|

| Definition | Consistent, repeatable error in a specific direction [1]. | Unpredictable fluctuations causing scatter in data [4]. |

| Impact on Data | Consistently biases results away from the true value, affecting accuracy [1]. | Causes variation around the true value, affecting precision [1]. |

| Detection | Challenging; requires comparison to a known standard or control experiment [5]. | Easier to identify through repeated measurements and statistical analysis [4]. |

| Reduction Methods | Calibration, standardized procedures, control groups, blinding [1] [5]. | Increasing sample size, performing multiple trials, improving measurement techniques [1]. |

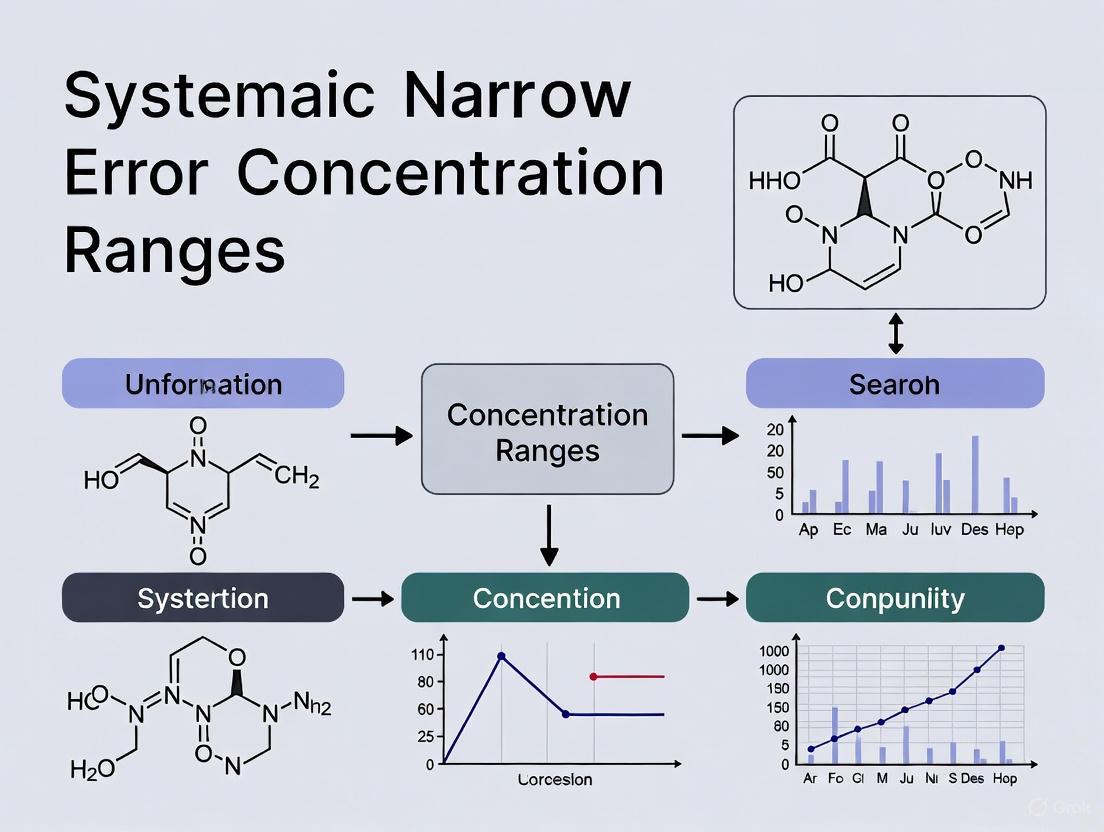

Diagram: Decision flow for identifying error types in measurements.

Troubleshooting Guide: Identifying and Resolving Systematic Error

How do I identify the source of a systematic error in my assay?

Follow a structured troubleshooting approach to isolate the root cause. A key principle is to change only one variable at a time and observe the effect before proceeding; changing several factors at once obscures which one caused the problem and makes the fix harder to reproduce later [6].

Diagram: A sequential workflow for troubleshooting systematic error sources.

What are the most effective methods to reduce systematic error?

Reducing systematic error requires a proactive approach focused on your experimental design and procedures. The table below outlines key strategies.

| Method | Description | Example in Drug Development |

|---|---|---|

| Regular Calibration [1] [5] | Comparing instrument readings to a known, traceable standard and adjusting accordingly. | Calibrating an HPLC UV detector with a standard of known concentration and absorbance before analyzing experimental samples. |

| Method Triangulation [1] | Using multiple, independent techniques to measure the same quantity. | Confirming protein concentration assay results using both UV absorbance and a colorimetric (Bradford) method. |

| Standardized Procedures [3] [4] | Developing and strictly adhering to detailed, written protocols for all steps. | Using the same vortexing time and temperature for all sample extractions to ensure consistent analyte recovery. |

| Blinding (Masking) [1] | Hiding the identity of treatment groups from analysts and/or participants to prevent subconscious bias. | Having a colleague prepare and code samples so the analyst measuring the response is unaware of which are controls and which are experimental. |

| Use of Control Groups [4] | Including groups with known or no treatment to identify baseline shifts or instrument drift. | Running a placebo control alongside drug-treated samples in a cell-based efficacy assay. |

Experimental Protocols for Error Management

Protocol 1: Calibrating an Analytical Instrument

This protocol is essential for identifying and correcting instrumental systematic error, a common source of bias in quantitative analysis [5].

- Gather Materials: The instrument to be calibrated, certified reference standards that bracket your expected sample concentration range, and all necessary solvents and consumables. Use plastic containers for mobile phases and samples if measuring analytes susceptible to interference from alkali metal ions leaching from glass [6].

- Zero-Point Adjustment: With no analyte present, run a blank sample and adjust the instrument's reading to the zero point [5].

- Establish Calibration Points: Measure at least two certified reference standards—one near the lower end and one near the upper end of your measurement range. For a wider range or suspected non-linearity, include additional standards [5].

- Create Calibration Curve: Plot the instrument's measured response against the known concentration of the standards. Determine the best-fit line (e.g., via linear regression).

- Apply Correction: Use the equation of the calibration curve to convert future sample measurements into corrected values. The calibration factor accounts for the systematic error [5].
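
The calibration steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming a linear detector response; the standard concentrations, signals, and function names are hypothetical and not taken from any cited method.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def to_concentration(signal, slope, intercept):
    """Invert the calibration line to convert a signal into a concentration."""
    return (signal - intercept) / slope

# Hypothetical standards (ng/mL) and their blank-corrected detector responses.
std_conc = [10.0, 20.0, 30.0, 40.0, 50.0]
std_signal = [0.105, 0.198, 0.304, 0.401, 0.497]

slope, intercept = fit_line(std_conc, std_signal)
sample_conc = to_concentration(0.250, slope, intercept)
print(f"slope={slope:.5f}  intercept={intercept:.5f}  sample={sample_conc:.2f} ng/mL")
```

A non-zero intercept after blanking, or a slope that shifts between runs, is worth investigating before the curve is used to correct unknowns.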

Protocol 2: Quantifying Error in a Sample Preparation Workflow

This procedure helps quantify the total error introduced by your sample preparation process.

- Prepare QC Samples: Spike a blank matrix with a known concentration of your analyte to create Quality Control (QC) samples at low, medium, and high concentrations within your range of interest.

- Process Samples: Subject the QC samples and a set of certified reference materials (CRMs) through your entire sample preparation workflow (e.g., extraction, dilution, derivatization).

- Analyze and Calculate: Analyze all samples and calculate the measured concentration for each.

- Determine Error: For each QC sample and CRM, calculate the percent error:

- Percent Error (%) = [(Measured Value - True Value) / True Value] x 100 [7].

- A consistent positive or negative percent error across replicates indicates a systematic error in the workflow.
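
As a rough sketch of the calculation in this protocol, the snippet below computes the percent error for replicate QC results and checks whether every replicate errs in the same direction. The QC values and helper names are hypothetical.

```python
from statistics import mean

def percent_error(measured, true_value):
    """Percent Error (%) = (measured - true) / true x 100."""
    return (measured - true_value) / true_value * 100.0

def summarize_qc(replicates, true_value):
    """Mean percent error and whether all replicates err in one direction."""
    errors = [percent_error(m, true_value) for m in replicates]
    same_sign = all(e > 0 for e in errors) or all(e < 0 for e in errors)
    return {"mean_pct_error": mean(errors), "same_sign": same_sign}

# Hypothetical QC levels: nominal concentration -> replicate results (ng/mL).
qc_results = {
    5.0: [4.6, 4.7, 4.5, 4.6, 4.7],
    25.0: [23.4, 23.1, 23.6, 23.2, 23.5],
    45.0: [45.8, 44.1, 45.3, 44.6, 45.2],
}

for nominal, reps in qc_results.items():
    summary = summarize_qc(reps, nominal)
    flag = "possible systematic error" if summary["same_sign"] else "mixed signs"
    print(f"{nominal:5.1f} ng/mL  mean error {summary['mean_pct_error']:+.1f}%  ({flag})")
```

A same-sign result across only a handful of replicates is a prompt for further checks, not proof of bias.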

Essential Research Reagent Solutions

The following table details key reagents and materials used in experiments designed to control for systematic error.

| Reagent/Material | Function in Error Control |

|---|---|

| Certified Reference Materials (CRMs) | Provides a known, traceable value for calibration and accuracy verification, directly addressing instrumental and methodological systematic error [5]. |

| MS-Grade Solvents & Additives | Reduces spectral interferences and adduct formation in mass spectrometry, a specific source of systematic measurement error [6]. |

| Internal Standards (e.g., Isotope-Labeled) | Corrects for variability in sample preparation and instrument response, mitigating both systematic and random errors [2]. |

| Quality Control (QC) Pooled Samples | Monitors assay performance over time, helping to detect the introduction of systematic error due to reagent lot changes or instrument drift. |

Frequently Asked Questions (FAQs)

Why is systematic error considered more problematic than random error?

Systematic error is generally more serious because it consistently skews your data in one direction, leading to biased conclusions about the relationship between variables [1]. For example, it can cause you to incorrectly conclude a drug is effective when it is not (a false positive, or Type I error), or that it is ineffective when it actually works (a false negative, or Type II error) [1]. Random error, while reducing precision, averages out toward the true value with a large enough sample size and does not cause this type of directional bias [1].

Can good precision guarantee that my data is accurate?

No, good precision does not guarantee accuracy [8]. It is possible to have measurements that are very close to each other (high precision) but that are all consistently offset from the true value due to an unaccounted-for systematic error [8]. High precision indicates low random error but says nothing about the presence of systematic error.

How does sample size affect systematic and random error?

Increasing your sample size is an effective way to reduce the impact of random error because the fluctuations will average out, giving you a more precise estimate of the mean [1] [3]. However, a larger sample size will not reduce systematic error [5]. If your measurement method is biased, that bias will be present and may even be more precisely estimated in a larger sample.
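
A small simulation can make this concrete. The sketch below assumes Gaussian noise and a fixed additive bias, both chosen purely for illustration, and shows the mean settling near the true value plus the bias as the number of readings grows.

```python
import random
from statistics import mean

def simulated_mean(true_value, bias, noise_sd, n, seed=0):
    """Mean of n simulated readings with a fixed bias and Gaussian noise."""
    rng = random.Random(seed)  # seeded so the sketch is repeatable
    readings = [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]
    return mean(readings)

# Hypothetical assay: true value 100, bias +2, noise SD 1 (arbitrary units).
for n in (5, 50, 5000):
    m = simulated_mean(100.0, 2.0, 1.0, n)
    print(f"n={n:5d}  mean={m:.3f}  distance from true value={m - 100.0:+.3f}")
```

The scatter of the mean shrinks with larger n, while its distance from the true value stays close to the bias.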

What is the difference between a systematic error and a blunder?

A systematic error is a consistent, inherent flaw in the method, instrument, or setup [1]. A blunder (or gross error) is a one-time, unintentional mistake, such as a transcription error, misreading an instrument, or spilling a sample [8] [7]. Blunders are not part of the systematic or random error categories and should be identified and removed from the data set.

Conceptual Foundation: Understanding Error Types

In quantitative research, particularly when working within narrow concentration ranges, a clear understanding of measurement error is not just beneficial—it is fundamental to producing valid and reliable data.

What is the fundamental difference between systematic and random error?

- Systematic Error (Bias): These are reproducible inaccuracies that consistently push measurements in the same direction, making them either consistently too high or too low. They affect the accuracy of your results [9] [10] [11].

- Random Error: These are unpredictable statistical fluctuations in the measured data due to the precision limitations of the measurement device or environment. They affect the precision of your results [9] [12] [11].

The following diagram illustrates how these errors are classified and their primary sources:

Frequently Asked Questions (FAQs)

1. Why are systematic errors considered more dangerous than random errors in narrow-range assays?

Systematic errors are particularly perilous in narrow-range research because they do not cancel out with repeated measurements and can lead to a consistent over- or under-estimation of the true value [13] [12]. In a narrow concentration range, even a small, consistent bias can be significant enough to cause a result to fall on the wrong side of a critical threshold (e.g., a pharmacokinetic cutoff or a legal limit), leading to incorrect conclusions. Unlike random error, increasing your sample size does not reduce systematic bias; it only makes the incorrect result more precisely wrong [12].

2. How can I determine if my measurements are suffering from systematic error?

Identifying systematic error can be challenging as it is not revealed by statistical analysis of the data alone [5]. Key strategies include:

- Calibration: Perform your experimental procedure on a known reference quantity. A significant difference between your measured value and the known value indicates systematic error [5] [11].

- Method Comparison: Compare your results with those obtained from a different, well-established method or instrument [5].

- Blank Controls: Running blank samples can help identify a consistent offset or baseline drift in your instrumentation [14].

3. What are the most common sources of error I should control for in sensitive measurements?

The following table summarizes the primary sources and their nature, which is critical for planning mitigation strategies.

| Source Category | Common Examples | Typical Error Type |

|---|---|---|

| Measurement Instruments | Improper calibration, instrument drift, faulty equipment, slow response time (lag) [15] [9] [11]. | Systematic |

| Experimental Procedure | Unclear instructions, miscalibrated equipment, non-randomized task order, improper sample preparation [15] [13]. | Systematic |

| Environmental Factors | Temperature fluctuations, air drafts, vibrations, humidity changes, radio frequency interference (RFI) [15] [13] [16]. | Systematic or Random |

| Operator/Personal | Misreading instruments, parallax error, poor technique, inconsistent observation, fatigue [15] [16] [11]. | Random or Systematic |

| Sample Characteristics | Intrinsic biological variability, moisture content, deformation under pressure, degradation over time [15] [10]. | Random |

Troubleshooting Guide: Resolving Common Measurement Issues

This guide addresses specific problems you might encounter during experiments requiring high precision at narrow concentration ranges.

| Problem | Possible Causes | Recommended Solutions |

|---|---|---|

| Unstable/Drifting Readings | Instrument not warmed up [14]; environmental vibrations/drafts [15]; sample too concentrated [14]; air bubbles in sample [14]. | Allow instrument to warm up for 15-30 minutes [14]; place equipment on a stable, level surface [15]; dilute sample; gently tap cuvette to dislodge bubbles [14]. |

| Consistent Offset from Reference | Systematic error from improper instrument calibration or zero offset [9] [11]; faulty measurement equipment [10]. | Check and adjust zero reading; calibrate instrument against a known traceable standard before use [5] [16]; verify equipment is not worn or damaged. |

| High Variation Between Replicates | Random error from environmental fluctuations [9]; inconsistent technique or sample placement [15]; operator fatigue [10]; small sample size [12]. | Control environmental conditions (temperature, humidity) [15]; use documented procedures for consistency [15]; increase number of measurements or sample size to reduce the impact of variability [10] [12]. |

| Inability to Zero Instrument | Sample compartment not closed [14]; faulty blank preparation [14]; instrument hardware malfunction. | Ensure blank uses correct solvent and a clean, matched cuvette [14]; check that compartment lid is secure; consult technical service for hardware checks [14]. |

| Negative Absorbance Readings | The blank solution is "dirtier" (more absorbing) than the sample [14]; using different cuvettes for blank and sample [14]; very dilute sample. | Use the exact same cuvette for both blank and sample measurements; ensure cuvette is clean; for dilute samples, consider a more sensitive method or concentration step [14]. |

Experimental Protocols for Error Minimization

Protocol 1: Calibration to Identify and Correct Systematic Error

Calibration is the most reliable method for uncovering and correcting systematic errors [5].

Methodology:

- Select Reference Standards: Obtain reference quantities with known values that span the expected range of your measurements, ideally including the upper and lower limits [5].

- Perform Measurements: Execute your full experimental procedure on these known references.

- Analyze Results: Plot the values measured by your instrument against the known reference values.

- Establish Correction: If the data forms a straight line, the systematic error can be characterized by a zero offset and/or a scale (multiplier) error [5] [9]. A correction factor or calibration curve can then be applied to future unknown measurements.

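Example: To calibrate a scale, first adjust it to read zero with nothing on it. Then, place a known weight (e.g., 160 lbs) on it. If the scale reads 150 lbs, it reads 10 lbs low at that load [5]. Because the zero point is already correct, this points to a scale (multiplier) error rather than a fixed offset, so readings are corrected by the factor 160/150; checking a second known weight confirms whether the error really scales with load.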
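
A minimal sketch of a two-point correction, assuming the instrument responds linearly between the reference points. It separates the zero offset from the scale (multiplier) error using the zero check and the 160 lb reference from the example; the function name is hypothetical.

```python
def two_point_correction(ref_low, read_low, ref_high, read_high):
    """Build a correction from two reference points on a linear instrument."""
    gain = (read_high - read_low) / (ref_high - ref_low)  # scale (multiplier) error
    offset = read_low - gain * ref_low                    # zero offset

    def correct(reading):
        """Map a raw reading back onto the reference scale."""
        return (reading - offset) / gain

    return correct, gain, offset

# Zero check reads 0 lbs; the 160 lb reference reads 150 lbs.
correct, gain, offset = two_point_correction(0.0, 0.0, 160.0, 150.0)
print(f"gain={gain:.4f}  offset={offset:.2f}")
print(f"raw 150.0 -> corrected {correct(150.0):.1f}")
print(f"raw  75.0 -> corrected {correct(75.0):.1f}")
```

With more than two reference points, a least-squares line is the usual generalization of this correction.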

Protocol 2: Chromatographic Integration for Narrowly Spaced Peaks

In chromatography, integration method choice is critical for accuracy, especially with poorly resolved peaks in a narrow concentration range [17].

Methodology:

- Evaluate Peak Pairs: For closely eluting peaks, test different integration algorithms (e.g., drop, valley, Gaussian skim) on a standard with a known ratio of components [17].

- Quantify Error: Calculate the percent error between the observed and expected peak areas or heights for each method [17].

- Select Optimal Method: Choose the integration algorithm that produces the least error for your specific peak resolution and size ratio. Studies suggest that for peaks of approximately equal size, the drop method and Gaussian skim method often produce the least error, and peak height can be more accurate than peak area for poorly resolved peaks [17].
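
The evaluation can be prototyped on simulated data before it is run on real chromatograms. The sketch below is an illustration only, not the procedure of [17]: it assumes two ideal Gaussian peaks of equal width separated by 4 standard deviations (resolution of about 1.0), applies a perpendicular drop at the valley, and reports the percent error in each recovered area. All names and parameters are hypothetical.

```python
import math

def gaussian(t, area, center, sigma=1.0):
    """Gaussian peak with the given total area."""
    norm = area / (sigma * math.sqrt(2 * math.pi))
    return norm * math.exp(-0.5 * ((t - center) / sigma) ** 2)

def drop_areas(times, signal, apex1, apex2):
    """Perpendicular drop: split the total area at the valley between two apexes."""
    i1 = min(range(len(times)), key=lambda i: abs(times[i] - apex1))
    i2 = min(range(len(times)), key=lambda i: abs(times[i] - apex2))
    valley = min(range(i1, i2 + 1), key=lambda i: signal[i])

    def trapezoid(lo, hi):
        return sum((signal[i] + signal[i + 1]) / 2 * (times[i + 1] - times[i])
                   for i in range(lo, hi))

    return trapezoid(0, valley), trapezoid(valley, len(times) - 1)

def drop_percent_errors(area1, area2, separation=4.0, dt=0.01):
    """Percent error in each peak area after drop integration of simulated peaks."""
    c1, c2 = 10.0, 10.0 + separation
    n = int(round((c2 + 10.0) / dt)) + 1
    times = [i * dt for i in range(n)]
    signal = [gaussian(t, area1, c1) + gaussian(t, area2, c2) for t in times]
    found1, found2 = drop_areas(times, signal, c1, c2)
    return (found1 - area1) / area1 * 100.0, (found2 - area2) / area2 * 100.0

for ratio in (1.0, 10.0):
    e1, e2 = drop_percent_errors(ratio, 1.0)
    print(f"area ratio {ratio:4.1f}:1  peak 1 error {e1:+.2f}%  peak 2 error {e2:+.2f}%")
```

Real peaks tail and vary in width, so the same comparison should be repeated on a standard with a known component ratio before an integration method is chosen.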

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Measurement | Considerations for Narrow Range Research |

|---|---|---|

| Certified Reference Materials | To calibrate instruments and validate methods, providing a known value to correct for systematic error [5]. | Ensure the reference material's matrix and concentration range closely match your samples. |

| Matched Cuvettes | To hold blank and sample solutions in spectrophotometry, ensuring identical light path properties [14]. | Using the same cuvette for blank and sample eliminates error from minor optical differences between cuvettes [14]. |

| Stable, High-Purity Solvents | To prepare blanks and dilute samples without introducing interfering substances. | Impurities can cause a consistent offset (bias) in absorbance or other readouts, skewing results in sensitive assays. |

| Instrument Calibration Standards | Traceable standards (e.g., weights, pH buffers, conductivity standards) specific to the instrument. | Regular calibration against standards traceable to national or international references, ideally performed under an ISO/IEC 17025-accredited quality system, is non-negotiable for minimizing systematic error [16]. |

| Environmental Monitors | To log temperature, humidity, and vibration in the lab space. | Allows for correlation of environmental fluctuations with measurement drift, helping to identify sources of random and systematic error [15]. |

In biomedical laboratories, a systematic error (often called bias) is a consistent, reproducible inaccuracy that skews results in the same direction across measurements [18] [19]. Unlike random errors, which vary unpredictably, systematic errors cannot be eliminated by simply repeating the experiment. They reduce the trueness of your measurements, meaning the average of your results deviates from the true value [19]. For research involving narrow concentration ranges, even small, undetected biases can lead to incorrect conclusions, making their identification and control a critical aspect of quality science.

FAQs on Systematic Error

Q1: What is the fundamental difference between a systematic error and a random error?

The table below summarizes the key differences:

| Feature | Systematic Error (Bias) | Random Error |

|---|---|---|

| Definition | A consistent, reproducible deviation from the true value [18] | An unpredictable fluctuation around the true value [18] |

| Direction | Always skews results in the same direction [19] | Varies in direction (positive or negative) |

| Cause | Flaws in method, equipment calibration, or operator technique [20] [21] | Uncontrollable environmental noise, electronic instability, or sampling variability [20] |

| Impact on Data | Affects accuracy (trueness) [18] [19] | Affects precision (reproducibility) [18] |

| Reduction Method | Corrected through calibration, improved methods, or operator training [18] [21] | Reduced by increasing the number of measurements or replicates [19] |

Q2: Why is systematic error particularly problematic for research on narrow concentration ranges?

In narrow concentration range studies, the effect size you are trying to measure is often small. A systematic error, even if minor in absolute terms, can represent a large percentage of the range you are investigating. This bias can obscure true dose-response relationships, lead to incorrect potency estimates (e.g., IC50 or EC50 values), and ultimately invalidate the research findings.

Q3: How can I detect a systematic error in my assay?

Several established methods can be used:

- Method Comparison: Analyze certified reference materials (CRMs) or samples with a known concentration using your method and a reference method. A consistent difference indicates bias [18] [19].

- Quality Control (QC) Charts: Use Levey-Jennings plots to track the values of control samples over time. Trends or shifts, as identified by Westgard rules (e.g., the 10x rule where 10 consecutive controls fall on one side of the mean), signal systematic error [19].

- Recovery Experiments: Spike a sample with a known amount of analyte and measure the recovery. Significantly low or high recovery indicates proportional bias [19].

- Patient Averages: Statistical methods like "Average of Normals" can be used to detect drift in patient population data [19].
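
As an illustration of the QC-chart check, the sketch below flags a run of consecutive control results on one side of the target mean, which is the pattern the 10x rule looks for. The control values are hypothetical, and a real implementation would normally sit alongside the other Westgard rules.

```python
def violates_10x(values, target_mean, run_length=10):
    """True if `run_length` consecutive results fall on the same side of the mean."""
    run, last_side = 0, 0
    for v in values:
        side = (v > target_mean) - (v < target_mean)  # +1 above, -1 below, 0 on the mean
        if side != 0 and side == last_side:
            run += 1
        else:
            run = 1 if side != 0 else 0
        last_side = side
        if run >= run_length:
            return True
    return False

# Hypothetical control results with a target mean of 100.
controls = [99.4, 100.3, 100.6, 100.2, 100.9, 100.4, 100.7,
            100.1, 100.5, 100.8, 100.3, 100.6]
print("10x rule violated:", violates_10x(controls, 100.0))
```

Results that land exactly on the mean reset the run in this sketch; a laboratory may choose a different convention.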

Q4: Our lab just implemented a new reagent lot. What is the most common type of systematic error we might encounter?

A common error when changing reagent lots is proportional bias. This occurs when the new reagent has a slightly different sensitivity, causing the measured values to be a consistent percentage higher or lower across the entire concentration range, rather than a fixed amount [19]. This is distinct from a constant bias, which would add or subtract the same value regardless of concentration.

Troubleshooting Guides

Guide: Troubleshooting a Consistent Positive or Negative Bias

Problem: All measured values are consistently higher (positive bias) or lower (negative bias) than the expected or reference values.

| Possible Source | Diagnostic Experiments | Corrective Action |

|---|---|---|

| Improper Calibration [21] | Re-calibrate using fresh, certified standards. Run a calibration verification sample. | Establish and adhere to a strict calibration schedule. Verify calibration with every run. |

| Deteriorated Reagents [20] | Test a new lot of reagents or a freshly prepared standard. Perform a recovery experiment. | Implement proper inventory management (First-In, First-Out). Adhere to expiration dates and storage conditions. |

| Instrument Drift [21] | Monitor QC values over time on a Levey-Jennings chart for a gradual trend. | Allow sufficient instrument warm-up time. Perform regular preventive maintenance. |

| Matrix Interference | Perform a spike-and-recovery experiment with the sample matrix. Dilute the sample and check for non-linearity. | Change the sample preparation method (e.g., dilution, deproteinization). Use a method with higher specificity. |

Guide: Troubleshooting a Proportional Bias

Problem: The difference between your measured values and the true value increases as the analyte concentration increases.

| Possible Source | Diagnostic Experiments | Corrective Action |

|---|---|---|

| Faulty Standard Curve | Prepare the standard curve from fresh, independent stock solutions. Use a different lot of standard material. | Use certified reference materials for standard preparation. Ensure accurate serial dilution techniques. |

| Reagent Lot Variation [19] | Compare the performance of the new and old reagent lots side-by-side using patient samples or controls. | Work with the manufacturer to understand lot-specific performance. Re-calibrate specifically for the new lot. |

| Insufficient Method Specificity | Analyze the sample using a reference method and compare the results across the concentration range. | Validate the method's specificity for the analyte in your specific sample matrix. |

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential materials and their functions for managing systematic error.

| Item | Primary Function in Error Control |

|---|---|

| Certified Reference Materials (CRMs) | Provide an unbiased, traceable reference point for instrument calibration and method validation to detect and correct systematic error [18]. |

| Internal Standards (IS) | Correct for variability in sample preparation, injection volume, and matrix effects in techniques like LC-MS, thereby reducing proportional bias. |

| Quality Control (QC) Materials | Monitor the stability and accuracy of an assay over time through statistical process control (e.g., Levey-Jennings charts) to detect systematic drift [19]. |

| Calibrators | Create a standard curve that defines the relationship between the instrument's signal and the analyte's concentration, which is fundamental to avoiding proportional bias. |

Experimental Protocols for Error Detection

Protocol: Method Comparison for Bias Detection

Purpose: To quantify the systematic error (bias) between a new test method and a reference method.

Procedure:

- Sample Selection: Select 40-100 patient samples that span the clinically relevant concentration range (including the narrow range of interest).

- Analysis: Analyze each sample using both the test method and the reference method in a randomized order to avoid carry-over bias.

- Data Analysis:

- Plot the results of the test method (y-axis) against the reference method (x-axis).

- Perform a linear regression analysis (y = mx + c).

- Interpret the results: A slope (m) significantly different from 1.0 indicates proportional bias. An intercept (c) significantly different from 0 indicates constant bias [19].
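
A minimal sketch of the regression step, written with the standard library only. It fits y = mx + c by ordinary least squares and reports standard errors for the slope and intercept so that each can be judged against 1.0 and 0. Ordinary least squares assumes the reference method is essentially error-free; Deming or Passing-Bablok regression is often preferred when that does not hold. The paired results are hypothetical.

```python
import math
from statistics import mean

def compare_methods(reference, test):
    """OLS fit of test = m * reference + c, with standard errors."""
    n = len(reference)
    x_bar, y_bar = mean(reference), mean(test)
    sxx = sum((x - x_bar) ** 2 for x in reference)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(reference, test))
    m = sxy / sxx
    c = y_bar - m * x_bar
    residual_ss = sum((y - (c + m * x)) ** 2 for x, y in zip(reference, test))
    s_res = math.sqrt(residual_ss / (n - 2))  # residual standard deviation
    return {
        "slope": m,
        "intercept": c,
        "se_slope": s_res / math.sqrt(sxx),
        "se_intercept": s_res * math.sqrt(1.0 / n + x_bar ** 2 / sxx),
    }

# Hypothetical paired results (reference method, test method), same units.
reference = [10.2, 12.5, 14.1, 15.8, 17.3, 19.0, 20.6, 22.4, 24.1, 25.9]
test = [11.0, 13.2, 15.1, 16.6, 18.4, 20.1, 21.5, 23.8, 25.2, 27.3]

fit = compare_methods(reference, test)
print(f"slope     = {fit['slope']:.3f} (SE {fit['se_slope']:.3f})")
print(f"intercept = {fit['intercept']:.3f} (SE {fit['se_intercept']:.3f})")
```

As a rough guide, an estimate more than about two standard errors from its ideal value (1.0 for the slope, 0 for the intercept) deserves a formal significance test.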

Protocol: Recovery Experiment

Purpose: To assess the accuracy of an assay and identify matrix effects that cause proportional bias.

Procedure:

- Prepare Samples:

- Base Sample: Aliquot a patient sample with a known low endogenous level of the analyte.

- Spiked Sample: Add a known, precise volume of a high-concentration standard solution to another aliquot of the base sample.

- Standard Sample: Prepare a standard in plain solvent (e.g., water or buffer) at the same concentration that the spike adds to the sample; it serves as the expected added amount in the recovery calculation.

- Analysis: Measure the concentration of the analyte in all three samples using the test method.

- Calculation:

- Calculate the recovery percentage:

- % Recovery = ([Spiked] - [Base]) / [Standard] x 100

- A recovery consistently different from 100% indicates a systematic error, often a proportional bias due to matrix interference [19].
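
The recovery calculation can be written as a one-line helper. The values below are hypothetical and only illustrate the arithmetic.

```python
def percent_recovery(spiked, base, standard):
    """% Recovery = (spiked - base) / standard x 100."""
    return (spiked - base) / standard * 100.0

# Hypothetical results (ng/mL): base sample, spiked sample, standard in solvent.
base, spiked, standard = 2.0, 11.2, 10.0
print(f"Recovery: {percent_recovery(spiked, base, standard):.1f}%")
```

Running the calculation on several replicates and spike levels helps distinguish a consistent recovery deficit from ordinary scatter.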

Workflow and Relationship Diagrams

Diagram 1: A logical workflow for the detection and correction of systematic error in the laboratory.

Diagram 2: A hierarchical breakdown of the components that contribute to total measurement error, categorizing common sources of systematic and random error [20] [18] [19].

The Critical Impact of Proportional and Constant Errors on Narrow Concentration Results

Understanding Systematic Errors in Analytical Measurements

In laboratory research, systematic errors are consistent, reproducible biases that push all measurements in one direction, either too high or too low [22]. Unlike random errors, which affect precision, systematic errors affect the accuracy of your results, creating a consistent deviation from the true value [10] [18]. For researchers working with narrow concentration ranges, such as in drug development or clinical chemistry, identifying and correcting these errors is critical, as they can distort every measurement and lead to incorrect conclusions or misdiagnoses [22].

The two main types of systematic errors that significantly impact narrow concentration results are:

Constant Error: A fixed error that is the same size regardless of the analyte concentration. It adds or subtracts a constant value to every measurement [22]. Example: A pipette consistently delivers 0.1 mL less than intended, causing all results to be equally underestimated [22].

Proportional Error: An error that scales with the analyte concentration. The higher the true value, the larger the absolute error becomes [22]. Example: A spectrophotometer with a calibration slope error that reads 5% too high. A true value of 50 mg/dL would be reported as 52.5 mg/dL, while a 200 mg/dL value would be reported as 210 mg/dL [22].

The following table summarizes the core characteristics of these errors:

| Error Type | Definition | Impact on Results | Common Causes |

|---|---|---|---|

| Constant Error | A fixed bias that is the same absolute value across all concentrations [22]. | Shifts all results by the same amount; more impactful at lower concentrations [22]. | Instrument offset, improper zeroing/taring, consistent pipetting inaccuracy [21] [23]. |

| Proportional Error | A bias that changes as a proportion of the analyte concentration [22]. | The absolute error increases with concentration; more pronounced at higher concentrations [22]. | Incorrect calibration slope, worn instrument components, faulty standard solutions [21] [23]. |
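
The two error models can be expressed directly in code. The sketch below reuses the 5% proportional example from the text and adds a hypothetical constant offset of -5 mg/dL for contrast; the function names are illustrative.

```python
def apply_constant_error(true_value, offset):
    """Constant error: the same absolute shift at every concentration."""
    return true_value + offset

def apply_proportional_error(true_value, fraction):
    """Proportional error: the shift scales with the concentration."""
    return true_value * (1.0 + fraction)

for true_value in (50.0, 200.0):  # mg/dL
    constant = apply_constant_error(true_value, -5.0)
    proportional = apply_proportional_error(true_value, 0.05)
    print(f"true {true_value:6.1f}  constant -> {constant:6.1f} "
          f"({(constant - true_value) / true_value:+.1%})  "
          f"proportional -> {proportional:6.1f} "
          f"({(proportional - true_value) / true_value:+.1%})")
```

The constant offset dominates in relative terms at the low end, while the proportional error keeps the same percentage and grows in absolute size.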

Troubleshooting Guides & FAQs

FAQ 1: How can I determine if the error in my narrow concentration range data is constant or proportional?

Answer: The most effective method is to perform a comparison of methods experiment using your test method and a well-characterized reference method [24]. Analyze at least 40 patient specimens that cover the entire working range of your method [24]. Graph the data and perform statistical analysis to identify the error pattern.

- Graphical Analysis: Plot your test method results (y-axis) against the reference method results (x-axis) [24].

- If the data cloud is parallel to but offset from the line of identity, a constant error is likely.

- If the data cloud diverges from or converges toward the line of identity, a proportional error is present.

- Statistical Analysis: Perform linear regression analysis (y = a + bx) on the comparison data [24].

- The y-intercept (a) provides an estimate of the constant error.

- The deviation of the slope (b) from 1.00 provides an estimate of the proportional error.

Immediate Action:

- Graph your data as you collect it to visually identify discrepant results early [24].

- For a narrow analytical range, calculate the average difference (bias) between your method and the comparative method. A consistent bias suggests a constant error [24].
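
For the narrow-range case, the average difference can be computed from paired results as sketched below. The paired t statistic is included as one common way to judge whether the bias stands out from the scatter of the differences; the data and function name are hypothetical.

```python
import math
from statistics import mean, stdev

def mean_bias(test, comparative):
    """Average difference (bias), SD of differences, and paired t statistic."""
    diffs = [t - c for t, c in zip(test, comparative)]
    bias = mean(diffs)
    sd = stdev(diffs)
    t_stat = bias / (sd / math.sqrt(len(diffs)))
    return bias, sd, t_stat

# Hypothetical paired results from a narrow analytical range (mmol/L).
comparative = [4.8, 5.1, 5.3, 5.0, 4.9, 5.2, 5.4, 5.1]
test = [5.0, 5.4, 5.5, 5.3, 5.1, 5.5, 5.6, 5.4]

bias, sd, t_stat = mean_bias(test, comparative)
print(f"bias={bias:+.3f}  SD of differences={sd:.3f}  t={t_stat:.1f}")
```

The t statistic would be compared with the critical value for n - 1 degrees of freedom at the chosen confidence level.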

FAQ 2: Why are constant errors particularly dangerous for measurements at the low end of a narrow concentration range?

Answer: Constant errors are especially dangerous at low concentrations because the fixed bias represents a larger relative percentage of the measured value [22]. This can lead to serious misinterpretation of clinically or experimentally critical thresholds.

Consider this scenario in glucose testing:

- True Value: 50 mg/dL (a critical low indicating hypoglycemia)

- Constant Error: -5 mg/dL (e.g., from a pipette consistently under-delivering)

- Reported Value: 45 mg/dL

In this case, the -5 mg/dL error is a 10% relative error at this low concentration, enough to distort interpretation near a critical decision threshold: here the reported value overstates the severity of hypoglycemia, and a positive bias of the same size could mask it [22]. The same -5 mg/dL error at a true value of 200 mg/dL is only a 2.5% relative error. The table below illustrates this critical impact.

| True Concentration | Constant Error | Reported Result | Absolute Error | Relative Error | Potential Clinical Impact |

|---|---|---|---|---|---|

| 50 mg/dL | -5 mg/dL | 45 mg/dL | 5 mg/dL | 10% | High risk of misclassification around the hypoglycemia decision threshold [22] |

| 200 mg/dL | -5 mg/dL | 195 mg/dL | 5 mg/dL | 2.5% | Lower risk of misinterpretation at this level |
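The arithmetic behind the table can be reproduced with a short sketch. The function name `relative_error_percent` is a hypothetical helper introduced only for illustration.

```python
def relative_error_percent(true_value, constant_error):
    """Size of a fixed bias expressed as a percentage of the true value."""
    return abs(constant_error) / true_value * 100.0

constant_error = -5.0  # mg/dL, the fixed bias from the glucose scenario
for true_value in (50.0, 200.0):
    reported = true_value + constant_error
    pct = relative_error_percent(true_value, constant_error)
    print(f"true {true_value:.0f} mg/dL, reported {reported:.0f} mg/dL, "
          f"relative error {pct:.1f}%")
```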

FAQ 3: What are the common sources of proportional error, and how do I troubleshoot them?

Answer: Proportional errors often stem from issues that affect the analytical system's response factor or calibration across the concentration range [22].

Common Sources and Troubleshooting Steps:

| Source | Description | Corrective Action |

|---|---|---|

| Calibration Errors | Incorrect slope of the calibration curve due to improper preparation of standard solutions or a miscalibrated instrument [21]. | Use fresh, accurately prepared standards; perform regular, full calibration using a multi-point curve; verify calibration with independent quality control materials. |

| Instrument Drift | Gradual changes in instrument sensitivity over time (e.g., due to aging components, temperature fluctuations) [21]. | Implement a rigorous instrument maintenance schedule; allow sufficient warm-up time before analysis; include quality control checks at frequent intervals within a run. |

| Matrix Effects | The sample matrix (e.g., plasma, serum) can enhance or suppress the analytical signal in a concentration-dependent manner. | Use matrix-matched calibration standards where possible; employ a stable isotope-labeled internal standard to correct for variable recovery. |

FAQ 4: What experimental protocol should I follow to quantify systematic error in my assay?

Answer: Follow a standardized comparison of methods protocol [24].

Detailed Methodology:

Experimental Design:

- Comparative Method: Select a reference method or a well-characterized routine method. The correctness of the comparative method dictates how you interpret differences [24].

- Specimens: Use a minimum of 40 different patient specimens. Select them to cover the entire working range of your method. Avoid using spiked samples alone, as real patient specimens represent the full spectrum of potential interferences [24].

- Replication: At minimum, analyze each specimen once by both the test and comparative methods. Duplicate measurements are preferable because they help identify sample mix-ups or transposition errors [24].

- Timeframe: Conduct the experiment over a minimum of 5 different days to minimize systematic errors that might occur in a single run. Integrating this with a long-term replication study over 20 days is preferable [24].

Sample Analysis:

- Analyze test and comparative methods on the same specimen within two hours of each other to minimize stability issues. Define and systematize specimen handling procedures beforehand [24].

- Analyze specimens in a randomized order to avoid bias.

Data Analysis:

- Graphing: Create a scatter plot (comparison plot) with the test method results on the y-axis and the comparative method results on the x-axis. Visually inspect for patterns and outliers [24].

- Statistics:

- For a wide concentration range, use linear regression (y = a + bx). Calculate the systematic error (SE) at a critical medical decision concentration (Xc) as: Yc = a + b*Xc, then SE = Yc - Xc [24].

- For a narrow concentration range, a paired t-test is often more appropriate. Calculate the average difference (bias) and the standard deviation of the differences [24].
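Both calculations from the Data Analysis step can be sketched as follows. The regression coefficients, the decision level, and the sodium-like results are hypothetical values chosen only to show the arithmetic, and the function names are illustrative.

```python
import math
import statistics

def systematic_error_at(xc, intercept, slope):
    """SE = Yc - Xc, with Yc = a + b*Xc taken from the comparison regression."""
    return (intercept + slope * xc) - xc

def paired_bias(test, comparative):
    """Mean difference (bias), SD of the differences, and paired t statistic."""
    diffs = [t - c for t, c in zip(test, comparative)]
    bias = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)
    t_stat = bias / (sd_diff / math.sqrt(len(diffs)))
    return bias, sd_diff, t_stat

# Wide range: assumed regression a = 2.0, b = 1.03, decision level Xc = 126
se_at_decision = systematic_error_at(126.0, intercept=2.0, slope=1.03)
print(f"SE at Xc = 126: {se_at_decision:.2f}")

# Narrow range: assumed paired results (mmol/L)
comparative = [138.0, 140.0, 142.0, 139.0, 141.0, 143.0]
test = [139.0, 141.5, 143.0, 140.5, 142.0, 144.0]
bias, sd_diff, t_stat = paired_bias(test, comparative)
print(f"bias = {bias:.2f}, SD of differences = {sd_diff:.2f}, t = {t_stat:.2f}")
```

The t statistic still has to be compared with the critical value for n - 1 degrees of freedom, and a statistically significant bias is not necessarily an analytically important one.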

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Error Investigation |

|---|---|

| Certified Reference Materials (CRMs) | Provides a sample with a known, certified analyte concentration. Used to detect and quantify systematic bias by comparing your method's result to the certified value [9]. |

| Stable Isotope-Labeled Internal Standards | Added to samples at a known concentration before processing. Corrects for proportional errors caused by variable and inefficient sample preparation, matrix effects, or instrument response drift. |

| Quality Control (QC) Materials | (e.g., commercial QC pools at multiple levels) Used to monitor both constant shifts (changes at all QC levels) and proportional trends (increasing deviation with higher concentration) over time [22]. |

| Multi-point Calibrators | A set of standards spanning the analytical range. Essential for establishing the correct calibration curve slope and intercept, thereby minimizing both proportional and constant errors [24]. |

Troubleshooting Guide: Identifying and Resolving Systematic Errors

This guide helps researchers identify, troubleshoot, and prevent systematic errors that compromise drug concentration data in clinical trials.

Problem 1: Unexplained Consistency in Inaccurate Data

The Issue: Your drug concentration measurements are consistently skewed in one direction from the known standard, even after repeating experiments.

Underlying Cause: This pattern indicates a systematic error or bias, often stemming from faulty equipment calibration, incorrect methodology, or flawed reagent preparation [25]. Unlike random errors, which vary unpredictably, systematic errors are consistent and reproducible inaccuracies that shift all measurements in the same direction [26].

Troubleshooting Steps:

- Calibrate Equipment: Regularly calibrate all instruments (e.g., pipettes, analytical balances, HPLC systems) using traceable standards [27].

- Use Controls: Incorporate known controls or standards in every assay run. A significant deviation from the expected control value signals a systematic issue.

- Method Comparison: Analyze the same samples using a different, well-validated method. Consistent discrepancies point to systematic error in your primary method.

- Blinded Re-testing: Have a second analyst, blinded to the initial results, re-prepare and re-analyze a subset of samples.

Problem 2: High Precision but Poor Accuracy

The Issue: Replicate measurements show very little variation (high precision) but consistently differ from the true value (poor accuracy).

Underlying Cause: This classic signature of systematic error suggests that your measurement process is stable but fundamentally flawed [25]. Causes include incorrect standard concentration calculations, using expired reagents, or a miscalibrated instrument.

Troubleshooting Steps:

- Audit Reagents and Standards:

- Verify the certificates of analysis for all reference standards.

- Check expiration dates for all reagents, calibrators, and quality controls.

- Ensure proper storage conditions have been maintained.

- Verify Calculations: Manually re-check all calculations used for preparing standard curves and stock solutions. Have a colleague independently verify them.

- Instrument Log Review: Check maintenance and calibration logs for the analytical equipment to ensure they are up-to-date.

Problem 3: Unexplained Patient/Subject Variability

The Issue: Drug concentration data shows unexpected, systematic deviations from the anticipated dose in a clinical trial, but the analytical method itself has been validated.

Underlying Cause: The error may originate in the clinical operations phase, not the lab. A real-world study on intravenous acetylcysteine infusions found that only 37% of infusion bags were within 10% of the anticipated dose, and about 5% of cases involved systematic calculation errors [28]. These errors can occur during dose calculation, solution preparation, or administration [28] [29].

Troubleshooting Steps:

- Review Source Documents: Scrutinize case report forms for inconsistencies in patient weight recording, dose calculation, and preparation instructions.

- Analyze Dosing Patterns: Look for systematic errors linked to specific clinical sites, study personnel, or shifts, which can indicate a localized training or process issue [30].

- Implement Quality Checks: Introduce a second-person verification step for all critical calculations and solution preparations in the trial protocol [31].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a systematic error and a random error in my concentration data?

A: The core difference lies in consistency and origin.

- Systematic Error (Bias): A consistent, reproducible inaccuracy that shifts measurements in the same direction, either by a fixed amount (constant error) or by an amount that scales with concentration (proportional error). It is caused by flaws in the system, method, or equipment. Examples include a miscalibrated scale that always adds 0.5 g or a faulty calculation formula [25] [26]. It affects accuracy.

- Random Error: Fluctuating variations caused by unpredictable factors in the measurement environment. Examples include electronic noise in an instrument or slight variations in manual pipetting. These errors affect precision and can be reduced by averaging multiple measurements [27].

Q2: My clinical trial data shows major protocol deviations. How can I determine if these are systematic errors?

A: Look for patterns that are not random. Systematic errors will often cluster around specific procedures, sites, or personnel. In one case, a "Study Health Check" revealed that 41% of subjects were missing a primary endpoint assessment because sites systematically failed to collect the key data, and these critical errors were not caught by traditional monitoring [30]. Conduct a root cause analysis focused on processes and systems, not individual blame, to identify the source of the systematic failure [29].

Q3: What are the most common sources of systematic error in drug concentration assays?

A: Common sources include:

- Faulty Calibration: Using outdated or incorrect standard curves.

- Reagent Issues: Expired reagents, improperly prepared solutions, or contaminated solvents.

- Instrument Error: Miscalibrated pipettes, balances, or detectors.

- Methodological Flaws: Incorrect sample preparation, inadequate extraction recovery, or unaccounted-for matrix effects.

- Operator Bias: Consistently misreading an instrument or incorrectly following a protocol.

Q4: How can we prevent systematic errors during the administration of an investigational drug in a trial?

A: Prevention requires a systemic approach:

- Simplify Protocols: Complex dosing schedules are a major source of error. Simplify them where possible [28] [31].

- Standardize and Train: Use standardized formulas, dosing charts, and preparation procedures. Ensure consistent training for all staff [29].

- Technology Solutions: Implement barcode scanning for drug and patient identification [32].

- Independent Checks: Incorporate a second-person verification for dose calculations and solution preparations [28].

- Proactive Oversight: Use risk-based monitoring and protocol-specific analytics to identify systematic errors early, rather than relying solely on retrospective reporting [30].

Experimental Protocol: Case Study on Intravenous Infusion Errors

The following detailed methodology is based on a prospective study that quantified errors in administering intravenous acetylcysteine [28].

Objective

To prospectively measure the concentration of an investigational drug in intravenous infusion bags and compare it to the theoretically anticipated dose, thereby identifying and quantifying random and systematic errors in a routine clinical setting.

Materials and Reagents

| Material/Reagent | Function in the Experiment |

|---|---|

| Investigational Drug (e.g., Acetylcysteine) | The active pharmaceutical ingredient whose concentration is being verified. |

| Infusion Bags (Glucose 5% Solution) | The diluent and vehicle for the intravenous drug administration. |

| Sterile Syringes and Sample Containers | For aseptically drawing pre- and post-infusion samples from the infusion bag. |

| Freezer (-20°C) | For stable storage of collected samples prior to batch analysis. |

| HPLC System with UV/Vis Detector | Analytical equipment for quantifying the drug concentration in the samples. |

| Drug Reference Standard | Used to create a calibration curve for accurate concentration determination. |

| Quality Control Samples (Low/High) | To ensure the accuracy and precision of each analytical batch. |

Step-by-Step Procedure

- Study Design: A multi-center, prospective study is conducted. Patients, prescribers, and staff are anonymized to focus on process errors.

- Infusion Preparation: Healthcare staff prepare infusions according to the complex standard protocol, which involves different doses and volumes for sequential bags based on patient weight.

- Sample Collection:

- Pre-infusion Sample: At the start of administration, a 5 ml sample is drawn from the infusion bag into a labeled container.

- Post-infusion Sample (Where possible): A second 5 ml sample is drawn from the bag at the end of the infusion to check for inadequate mixing.

- Sample Storage and Handling: Samples are immediately frozen at -20°C and transported in batches to a central laboratory for analysis.

- Sample Analysis:

- Thaw samples and prepare them for analysis.

- Use a validated analytical method (e.g., HPLC). For acetylcysteine, an established method was used with a five-point calibration curve (e.g., 20-100 mg/ml) [28].

- Include quality control samples in each analytical batch. The cited study reported inter-assay coefficients of variation of 6.8% and 3.9% at different concentrations [28].

- Data Analysis:

- Convert raw concentration data (mg/L) into a percentage of the anticipated concentration for that specific bag and patient.

- Analyze the distribution of errors to distinguish between random variation and systematic errors. A systematic error is suspected if all bags for a patient are wrong by a similar margin.
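The data analysis step can be sketched as below. The bag data, the function names, and the thresholds used to flag a suspected systematic error (every bag for a patient off by more than 20% in the same direction, with deviations agreeing within 10 percentage points) are assumptions made for illustration; they are not taken from the cited study [28].

```python
from collections import defaultdict

def percent_of_anticipated(measured, anticipated):
    """Measured concentration as a percentage of the anticipated value."""
    return measured / anticipated * 100.0

def classify(pct):
    """Bin a bag by its absolute deviation from 100% of the anticipated dose."""
    deviation = abs(pct - 100.0)
    if deviation <= 10.0:
        return "within 10%"
    if deviation <= 20.0:
        return "10-20%"
    if deviation <= 50.0:
        return "20-50%"
    return ">50%"

def suspected_systematic(pcts, min_deviation=20.0, max_spread=10.0):
    """True if all bags miss in the same direction by a similar, large margin."""
    devs = [p - 100.0 for p in pcts]
    if len(devs) < 2:
        return False
    same_direction = all(d > 0 for d in devs) or all(d < 0 for d in devs)
    all_off = all(abs(d) > min_deviation for d in devs)
    similar = max(devs) - min(devs) <= max_spread
    return same_direction and all_off and similar

# Hypothetical (patient, measured, anticipated) values in the same units
bags = [("P1", 98, 100), ("P1", 52, 50), ("P1", 24, 25),
        ("P2", 150, 100), ("P2", 76, 50), ("P2", 38, 25),
        ("P3", 40, 100), ("P3", 55, 50)]

by_patient = defaultdict(list)
for patient, measured, anticipated in bags:
    by_patient[patient].append(percent_of_anticipated(measured, anticipated))

flags = {patient: suspected_systematic(pcts) for patient, pcts in by_patient.items()}
for patient, pcts in by_patient.items():
    print(patient, [classify(p) for p in pcts], "systematic suspected:", flags[patient])
```

In this made-up data set only the second patient, whose bags are all roughly 50% over the anticipated concentration, would be flagged; the third patient's errors are large but inconsistent, which points toward random preparation error.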

Key Quantitative Findings from a Real-World Study

| Error Metric | Result |

|---|---|

| Bags within 10% of anticipated dose | 37% (68 of 184 bags) |

| Bags within 20% of anticipated dose | 61% (112 of 184 bags) |

| Bags with major error (>50% deviation) | 9% (17 of 184 bags) |

| Cases with systematic calculation errors | 5% (95% CI: 2%, 8%) |

| Major errors in "drawing up" the drug | 3% (95% CI: 1%, 7%) |

| Bags with inadequate mixing | 9% (95% CI: 4%, 14%) |

Source: Adapted from a study on acetylcysteine infusion errors [28].

Visualizing Error Concepts and Workflows

[Figure: Systematic vs Random Error]

[Figure: Experimental Quality Control Workflow]

Advanced Detection and Correction Methods for Systematic Bias

Implementing Standard Calibration to Identify Proportional Errors

Troubleshooting Guide: Identifying and Correcting Proportional Error

FAQ: Understanding Proportional Error

What is a proportional error and how does it differ from a constant error? A proportional error is a type of systematic error where the magnitude of the error increases in proportion to the concentration of the analyte being measured [24]. Unlike a constant error, which shifts all measurements by the same fixed amount regardless of concentration, a proportional error creates a percentage-based discrepancy. In statistical terms, this manifests as a slope different from 1.00 in a comparison of methods experiment, whereas a constant error appears as a non-zero y-intercept [24].

What are the common symptoms of proportional error in my data? The most telling symptom is that the difference between your test method and the reference method increases as the concentration increases [24]. When you plot your test results against reference values, you'll observe that the data points deviate progressively further from the line of identity at higher concentrations. In a difference plot, where (test result - reference value) is plotted against the reference value, you'll see a clear slope or trend rather than random scatter around zero [33].

Why does proportional error particularly affect research in narrow concentration ranges? In narrow concentration ranges, the distinction between proportional and constant error becomes blurred and more difficult to detect statistically [24]. A small proportional error across a narrow range can easily be mistaken for a constant error, leading to incorrect correction strategies. Furthermore, the clinical or analytical significance of a proportional error may be magnified in narrow ranges where decision points are critical, making accurate identification and correction essential.

What are the primary sources of proportional error in analytical methods? Proportional errors often arise from issues with calibration, specifically incorrect assignment of the calibration factor or multiplier [33]. Other common sources include instrument detector non-linearity, incomplete chemical reactions (where the percentage completion varies with concentration), matrix effects that become concentration-dependent, and analyte degradation that follows first-order kinetics. In immunoassays, hook effects at high concentrations can also manifest as proportional errors.

Experimental Protocol: Calibration Verification for Proportional Error Detection

Purpose: To verify the accuracy of instrument calibration across the reportable range and identify the presence of proportional systematic error [33].

Materials and Equipment:

- Calibration verification materials with assigned values covering the entire reportable range (low, mid, and high concentrations): at least 3 levels, preferably 5

- Reference materials with traceable values

- Test instrument and comparative method equipment

- Data collection and analysis software

Procedure:

- Select calibration verification materials with assigned values that cover the entire reportable range. CLIA requires a minimum of 3 levels, but 5 levels are recommended for better characterization of error patterns [33].

- Analyze each material in replicate (preferably triplicate) following standard operating procedures.

- Plot the measurement results (y-axis) against the assigned values (x-axis).

- Draw a line of identity (45-degree line representing perfect agreement).

- Calculate linear regression statistics (slope, y-intercept, and standard error of the estimate) for the measured values against the assigned values.

- For methods with a narrow analytical range (e.g., sodium, calcium), calculate the average difference between methods (bias) instead of regression statistics [24].

Interpretation:

- A slope significantly different from 1.00 indicates proportional error.

- The systematic error at any medical decision concentration (Xc) can be calculated as: SE = (a + bXc) - Xc, where a is the y-intercept and b is the slope [24].

- Compare the observed errors to your predefined quality requirements based on clinical needs.

[Figure: Troubleshooting Workflow for Proportional Error]

Quantitative Acceptance Criteria for Calibration Verification

Table 1: Criteria for assessing calibration verification performance and identifying proportional error

| Assessment Method | Calculation | Acceptance Criteria | Indication of Proportional Error |

|---|---|---|---|

| Slope Analysis | Linear regression slope (b) | Ideally 1.00 ± acceptable variance | Slope significantly ≠ 1.00 |

| Clinical Specification | For glucose: 1.00 ± 0.10; for sodium: 1.00 ± 0.03 [33] | Slope within specified range | Slope outside clinical limits |

| Systematic Error at Decision Level | SE = (a + bXc) - Xc [24] | SE < Total Allowable Error (TEa) | SE exceeds TEa and increases with concentration |

| Bias Budget Approach | Allowable bias = 0.33 × TEa [33] | Observed bias < 0.33 × TEa | Pattern of increasing bias with concentration |
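The criteria in Table 1 can be applied programmatically once a slope and intercept are available. The sketch below assumes TEa is expressed as a fraction of the decision concentration (10% here, an illustrative value), uses the glucose slope limit of ±0.10 from the table, and takes hypothetical regression results; the function name and report layout are likewise illustrative.

```python
def assess_calibration(slope, intercept, decision_levels, tea_fraction, slope_tolerance):
    """Check slope limits, SE against TEa, and SE against the 0.33 x TEa bias budget."""
    report = {"slope_ok": abs(slope - 1.0) <= slope_tolerance, "levels": []}
    for xc in decision_levels:
        se = (intercept + slope * xc) - xc   # SE = (a + b*Xc) - Xc
        tea = tea_fraction * xc              # allowable error in concentration units
        report["levels"].append({
            "xc": xc,
            "se": se,
            "within_tea": abs(se) < tea,
            "within_bias_budget": abs(se) < 0.33 * tea,
        })
    return report

# Hypothetical glucose verification: slope 1.04, intercept -1.0 mg/dL
report = assess_calibration(slope=1.04, intercept=-1.0,
                            decision_levels=[50.0, 126.0, 200.0],
                            tea_fraction=0.10, slope_tolerance=0.10)
print("slope acceptable:", report["slope_ok"])
for level in report["levels"]:
    print(level)
```

In this example the slope is inside the clinical limit, yet the error at the highest level exceeds the bias budget while staying inside TEa, which is the pattern of increasing bias with concentration that the table associates with proportional error.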

Research Reagent Solutions for Calibration Experiments

Table 2: Essential materials and reagents for calibration verification studies

| Reagent/Material | Specifications | Function in Experiment |

|---|---|---|

| Calibration Verification Materials | Materials with known assigned values, control solutions, or proficiency testing samples [33] | Provide reference points with known expected values to test instrument response across concentrations |

| Matrix-Matched Materials | Materials with similar properties to patient samples | Ensure calibration verification under conditions representative of actual sample analysis |

| Reference Method Materials | Materials for definitive comparative method [24] | Establish reference values for comparison studies when a true gold standard is unavailable |

| Linearity Materials | Special series with assigned values across reportable range [33] | Characterize instrument response across entire measurement range to identify proportional effects |

| Quality Control Materials | Stable materials with known characteristics | Monitor system performance before, during, and after calibration verification experiments |

Statistical Analysis Protocol for Proportional Error Identification

Linear Regression Methodology:

- Collect a minimum of 40 patient specimens covering the entire working range of the method [24].

- Analyze specimens by both test and comparative methods within 2 hours of each other to maintain specimen stability [24].

- Plot test method results (y-axis) against comparative method results (x-axis).

- Calculate slope (b), y-intercept (a), standard deviation about the regression line (s_y/x), and correlation coefficient (r) using standard linear regression formulas [24].

- Determine if the slope is significantly different from 1.00 using appropriate statistical tests (t-test for slope).

- Calculate systematic error at critical medical decision concentrations using the formula: Yc = a + bXc, then SE = Yc - Xc [24].

Important Considerations:

- A correlation coefficient (r) of 0.99 or larger indicates that the data cover a range wide enough for ordinary linear regression to give reliable estimates of slope and intercept [24].

- For narrow concentration ranges, paired t-test calculations may be more appropriate than regression analysis [24].

- Always perform graphical analysis alongside statistical calculations to identify nonlinear patterns and outliers [33].
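The regression statistics and the slope test listed above can be computed without special software. The following sketch uses ordinary least squares on a small hypothetical data set (far fewer than the 40 specimens recommended, purely to keep the example short); the function name and dictionary keys are illustrative.

```python
import math

def regression_stats(x, y):
    """OLS slope, intercept, s_y/x, r, and t statistic for H0: slope = 1."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    residual_ss = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    s_yx = math.sqrt(residual_ss / (n - 2))   # SD about the regression line
    se_b = s_yx / math.sqrt(sxx)              # standard error of the slope
    r = sxy / math.sqrt(sxx * syy)
    t_slope = (b - 1.0) / se_b                # compare with critical t, n - 2 df
    return {"a": a, "b": b, "s_yx": s_yx, "r": r, "t_slope": t_slope}

# Hypothetical comparison data: true slope 1.05, intercept 1.0, small scatter
x = [20.0, 40.0, 60.0, 80.0, 100.0, 120.0, 140.0, 160.0]
noise = [0.8, -0.6, 0.5, -0.9, 0.7, -0.4, 0.6, -0.7]
y = [1.05 * xi + 1.0 + e for xi, e in zip(x, noise)]

stats = regression_stats(x, y)
for name, value in stats.items():
    print(f"{name}: {value:.4f}")
```

If the absolute t statistic exceeds the two-sided critical value for n - 2 degrees of freedom, the slope differs significantly from 1.00, which is the statistical signature of proportional error.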

In analytical chemistry, particularly in research involving narrow concentration ranges, systematic errors can significantly compromise data integrity and lead to incorrect conclusions. Unlike random errors, these biases are reproducible inaccuracies that consistently skew results in one direction. Youden calibration is a powerful, yet sometimes overlooked, methodological approach specifically designed to detect and correct for a specific class of these errors: constant systematic errors. This guide provides troubleshooting support to help researchers, scientists, and drug development professionals effectively implement Youden calibration in their experimental workflows.

FAQ: Core Concepts and Troubleshooting

Q1: What is a constant systematic error, and how does it differ from a proportional error? A constant systematic error, often referred to as bias, is an inaccuracy that remains the same regardless of the analyte's concentration. For example, if every measurement is consistently 0.5 units too high due to an unaccounted blank contribution, that is a constant error. In contrast, a proportional error increases in magnitude as the analyte concentration increases [19]. Youden calibration is specifically designed to detect and correct for this constant type of error [34].

Q2: When should I consider using Youden calibration in my assay development? Youden calibration is particularly valuable in the following scenarios:

- When you suspect a constant bias in your analytical method, such as from an improperly corrected blank or a consistent matrix effect [34].

- When you are working within a narrow concentration range where constant errors can have a disproportionately large impact on accuracy [35].

- As part of initial method validation to test the underlying assumptions of your calibration model before committing to a full collaborative trial [36].

Q3: My Youden plot shows significant scatter, and the data points do not align well with the calibration line. What could be the cause? Significant scatter around the Youden plot's calibration line indicates substantial random error or imprecision in your measurements. Before you can reliably identify a constant systematic error, you must improve your method's precision. Investigate the following:

- Instrumental Noise: Ensure your instrumentation is stable and properly maintained.

- Sample Preparation: Standardize and control sample handling, extraction, and dilution steps rigorously.

- Reagent Quality: Use high-purity reagents and standards to minimize contamination-related variability [37].

Q4: According to my Youden calibration, a constant error is present. What corrective actions can I take? Once a constant systematic error is confirmed and quantified by the Youden plot, you can:

- Apply a Correction Factor: The calculated intercept (β₀) from the Youden calibration equation provides an estimate of the constant bias. This value can be subtracted from your future analytical results obtained via standard calibration to correct them [34].

- Investigate the Source: The most robust solution is to identify and eliminate the root cause. Re-examine your blank correction procedure, check for contaminated reagents or standards, and verify that your sample preparation steps are not introducing a consistent contaminant [19].

Q5: How does Youden calibration integrate with other calibration techniques? Youden calibration is part of a comprehensive strategy to ensure accuracy. It is often used in conjunction with:

- Standard Calibration: Used for routine quantification once systematic errors have been accounted for.

- Standard Additions Method: Particularly effective for detecting and correcting matrix-induced proportional errors that Youden calibration does not address [34] [38]. Using these methods together provides a more complete picture of your method's accuracy profile.

Experimental Protocol: Implementing Youden Calibration

This section provides a step-by-step methodology for performing a Youden calibration to detect constant systematic errors.

Principle The Youden calibration is performed by analyzing two different amounts of the same sample. The resulting plot of the signal from the larger portion against the signal from the smaller portion allows for the detection of constant errors, which manifest as a non-zero intercept [34].

Materials and Reagents

- Analyte Standard: High-purity reference material of known concentration.

- Sample: The test material containing the analyte at an unknown concentration.

- Solvent: A suitable solvent for preparing standard and sample solutions.

- Instrumentation: The calibrated analytical instrument (e.g., HPLC, spectrophotometer, ICP-MS) for signal measurement.

Procedure

- Prepare Sample Aliquots: Precisely weigh or pipette two different amounts of the same homogeneous sample. A typical ratio is 1:2 (e.g., 1.0 g and 2.0 g).

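- Dilute to Equal Volume: Dilute both aliquots to the same final volume with an appropriate solvent. With a common final volume, the analyte signal should scale with the amount of sample taken, whereas a constant contribution (for example, an uncorrected blank) stays the same in both solutions, which is what makes it detectable.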

- Analyze the Solutions: Analyze each solution using your standard analytical procedure and record the instrumental signal (e.g., peak area, absorbance).

- Repeat for Calibration: Repeat steps 1-3 for a series of at least 5-6 standard solutions of the pure analyte, spanning the expected concentration range in the samples.

- Construct the Youden Plot: Create a scatter plot with the signal from the smaller sample/standard amount on the x-axis and the signal from the larger amount on the y-axis.

Data Analysis and Interpretation

- Perform a linear regression on the data from the pure standard solutions. The resulting plot should ideally be a straight line with a slope close to the ratio of the two amounts (e.g., ~2.0) and an intercept (β₀) close to zero.

- A statistically significant non-zero intercept indicates the presence of a constant systematic error.

- Plot the data point for your unknown sample on the same graph. Its position can be used to calculate the true analyte concentration in the sample, correcting for the identified constant error using the derived calibration function [34].
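The regression step can be illustrated with simulated standards. The sketch assumes a simple response model, signal = k × amount + c, where c is the constant error; this model, the sensitivity, and the offset are assumptions for illustration and are not taken from [34]. Under that model, plotting the larger-amount signal against the smaller-amount signal gives a slope equal to the amount ratio r and an intercept of c × (1 - r), so the constant offset can be recovered from the fit.

```python
def fit_line(x, y):
    """Ordinary least squares fit of y = a + b*x; returns (a, b)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    return mean_y - b * mean_x, b

ratio = 2.0          # larger amount / smaller amount (1:2 design)
sensitivity = 0.10   # assumed signal per unit amount
offset = 0.05        # assumed constant error added to every signal

small_amounts = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
signal_small = [sensitivity * w + offset for w in small_amounts]
signal_large = [sensitivity * ratio * w + offset for w in small_amounts]

beta0, slope = fit_line(signal_small, signal_large)
estimated_offset = beta0 / (1.0 - slope)   # from intercept = c * (1 - r)

print(f"slope = {slope:.3f}, intercept = {beta0:.3f}, "
      f"estimated constant error = {estimated_offset:.3f}")
```

Note the sign: for a 1:2 design the intercept has the same magnitude as the constant error but the opposite sign under this model, so the direction of any correction should be checked before it is applied.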

[Figure: Youden Calibration Workflow]

Research Reagent Solutions

The following table lists key materials required for the successful implementation of Youden calibration and related quality control procedures.

| Reagent/Material | Function in Youden Calibration | Critical Notes |

|---|---|---|

| Certified Reference Material (CRM) | To establish the primary calibration curve with known trueness. | Purity must be verified; essential for calculating the correction factor [37]. |

| Control Samples | A stable sample with a known concentration range used to monitor precision over time. | Used to set up Range (R) control charts for ongoing verification [35]. |

| High-Purity Solvents | For dissolving standards and samples and diluting to volume. | Impurities can contribute to constant error via biased blanks [34] [19]. |

| Blank Solutions | To measure and correct for the baseline signal of the matrix and reagents. | An inaccurate blank is a common source of the constant error detected by Youden calibration [34]. |

Quantitative Data for Quality Control

When performing duplicate analyses for Youden calibration or ongoing quality control, the following table summarizes key statistical limits used to interpret the range (absolute difference) between duplicate measurements. These limits help determine if the analytical process is in a state of statistical control [35].

| Control Limit | Value (Multiple of Mean Range, R̄) | Interpretation |

|---|---|---|

| 50% Limit | 0.845 R̄ | 50% of duplicate ranges should be greater than this value. |

| 95% Limit (Warning) | 2.456 R̄ | Only about 5% of points should exceed this limit. |

| 99% Limit (Action) | 3.27 R̄ | Points exceeding this limit indicate a likely out-of-control process and require corrective action. |

| Standard Deviation | s = R̄ / 1.128 | Formula to estimate the standard deviation from the mean range (R̄) of duplicate measurements; 1.128 is the d₂ constant for subgroups of two. |
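The range limits in the table can be applied to a set of duplicate results as follows. The duplicate values and the function name are hypothetical; in routine use the mean range would normally be established from historical in-control data, not from the batch being judged.

```python
def range_limits(duplicates):
    """Ranges of duplicate pairs, their mean, and the control limits from the table."""
    ranges = [abs(first - second) for first, second in duplicates]
    mean_range = sum(ranges) / len(ranges)
    limits = {
        "median_50": 0.845 * mean_range,    # about half of ranges fall above this
        "warning_95": 2.456 * mean_range,   # warning limit
        "action_99": 3.27 * mean_range,     # action limit
    }
    return ranges, mean_range, limits

# Hypothetical duplicate measurements of a control sample
duplicates = [(10.1, 10.3), (9.8, 9.9), (10.4, 10.0), (10.2, 10.1), (9.9, 10.2)]

ranges, mean_range, limits = range_limits(duplicates)
out_of_control = [pair for pair, r in zip(duplicates, ranges)
                  if r > limits["action_99"]]

print(f"mean range = {mean_range:.3f}")
print({name: round(value, 3) for name, value in limits.items()})
print("pairs beyond the action limit:", out_of_control)
```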

Experimental Protocols: Key Methodologies

Core Standard Addition Protocol for Biological Fluids

The standard addition method is a quantitative analysis technique used to determine the concentration of an analyte in complex sample matrices by adding known amounts of the analyte directly to the sample. This approach compensates for matrix effects that can alter the instrument's response, providing more accurate results than external calibration when analyzing biological samples such as serum, plasma, or urine [39] [40].

Step-by-Step Procedure:

- Preparation of Test Solutions: Aliquot equal volumes (Vx) of the biological sample with unknown analyte concentration (Cx) into a series of containers. To each container, except one blank, add increasing volumes (Vs) of a standard solution with known concentration (Cs). The blank contains only the sample and solvent [39].

- Measurement of Instrument Response: Process all solutions (including the blank) through the chosen analytical method (e.g., LC-MS, immunoassay) and measure the instrument's response (S) for each. The response could be absorbance, peak area, or other measurable signals [39] [41].

- Data Plotting and Analysis: Plot the measured instrument responses (S) on the y-axis against the concentration or volume of the added standard on the x-axis. Perform a linear regression analysis on the data points [39].

- Calculation of Unknown Concentration: The unknown concentration \( C_x \) in the original sample is determined by extrapolation. The regression line is extended to intersect the x-axis. When the x-axis is the added analyte concentration (expressed per volume of original sample), the absolute value of the x-intercept corresponds to \( C_x \). When the x-axis is the added standard volume \( V_s \), the x-intercept equals \( -b/m \), and \( C_x \) is the magnitude of that intercept scaled by \( C_s / V_x \) [39]:

\( C_x = \frac{b \cdot C_s}{m \cdot V_x} \)

where:

- \( b \) is the y-intercept of the calibration curve.

- \( m \) is the slope of the calibration curve.

- \( C_s \) is the concentration of the standard solution.

- \( V_x \) is the volume of the sample aliquot.
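
The extrapolation step can be sketched in a few lines of Python. This is an illustrative example under stated assumptions, not a validated implementation: the responses are plotted against added standard volume, all solutions share one final volume, and the function names and data are hypothetical. The unknown is taken as the magnitude of the x-intercept scaled by Cs/Vx.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = m*x + b."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    m = sxy / sxx
    return m, mean_y - m * mean_x


def standard_addition_cx(v_added, responses, c_std, v_sample):
    """Estimate the sample concentration from added-standard volumes."""
    m, b = fit_line(v_added, responses)
    x_intercept = -b / m  # where the fitted line crosses the x-axis
    return abs(x_intercept) * c_std / v_sample


# Hypothetical, noise-free data: 5 mL sample aliquots, 10 ng/mL standard
v_added = [0.0, 1.0, 2.0, 3.0, 4.0]    # mL of standard added
responses = [0.4, 0.6, 0.8, 1.0, 1.2]  # instrument signal (arbitrary units)

cx = standard_addition_cx(v_added, responses, c_std=10.0, v_sample=5.0)
print(round(cx, 3))  # 4.0 (ng/mL)
```

With real data the residuals should be inspected before trusting the intercept, because any curvature in the spiked range is amplified by the extrapolation.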

Standard Additions in Immunoassays for Endogenous Analytes

For non-linear responses, such as in sigmoidal immunoassays, a modified standard addition method can be used. This involves spiking the sample with known standards and leveraging the linear portion of the log-log plot of the response. An initial estimate of the unknown concentration (U) is made, and the logarithm of the total concentration is calculated. The value of U is iteratively refined until the relationship between the log response and log total concentration is most linear. This can be implemented using standard software like Microsoft Excel Solver. This approach is valid for both sandwich and competitive immunoassays and has been demonstrated for detecting cortisol in serum and amyloid beta peptides in plasma with as few as four spiked concentrations [42].
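
The iterative refinement described above can be illustrated with a simple search. This sketch is not the published procedure from [42]: it replaces Excel Solver with a grid search, scores linearity by the squared Pearson correlation of log response against log total concentration, and uses hypothetical function names and synthetic data that follow an exact power law.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def linearity(u, spikes, responses):
    """Squared correlation of log(response) against log(u + spike)."""
    log_total = [math.log10(u + s) for s in spikes]
    log_resp = [math.log10(r) for r in responses]
    return pearson_r(log_total, log_resp) ** 2


def estimate_unknown(spikes, responses, u_min, u_max, steps=2000):
    """Return the candidate U on a log-spaced grid giving the most linear plot."""
    ratio = (u_max / u_min) ** (1.0 / (steps - 1))
    candidates = [u_min * ratio ** i for i in range(steps)]
    return max(candidates, key=lambda u: linearity(u, spikes, responses))


# Synthetic example: endogenous level of 5 units, four spike levels
true_u = 5.0
spikes = [0.0, 5.0, 10.0, 20.0]
responses = [2.0 * (true_u + s) ** 0.8 for s in spikes]

estimate = estimate_unknown(spikes, responses, u_min=0.1, u_max=100.0)
```

Squaring the correlation lets the same score serve for sandwich assays (rising signal) and competitive assays (falling signal). With noisy data the optimum is shallower, so the search bounds and replicate precision matter more than in this idealized case.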

Troubleshooting Guides

Common Issues and Solutions

| Problem | Possible Cause | Recommended Solution |

|---|---|---|

| Poor linearity in standard addition curve | Matrix effect is non-linear (e.g., translational effect); analyte concentration too high/low; instrumental drift [43] [41] | Verify sample dilution is within the method's linear dynamic range; use weighted regression for heteroscedastic data; ensure instrument stability [42] |

| High variability in replicate measurements | Inconsistent pipetting; insufficient sample mixing; instrument noise [39] | Use calibrated pipettes and maintain consistent technique; ensure thorough vortexing after each standard addition; check instrument performance metrics |

| Overestimation of recovered concentration | Incomplete compensation for matrix suppression; non-specific binding in immunoassays [42] [41] | Optimize sample preparation to remove interferents; use a more specific antibody or detector setting; validate with a certified reference material if available |

| Unrealistically high or low extrapolated value | Incorrect blank subtraction; error in standard solution concentration; extrapolation over too long a distance [39] | Verify blank measurement and subtraction; prepare fresh standard solutions from certified stock; ensure spike levels are appropriate to minimize extrapolation error |

Comparison of Standard Addition Evaluation Methods

A 2025 statistical analysis compared four approaches for estimating the unknown concentration (C₀) from standard addition data, assuming normally distributed and homoscedastic errors [43].

| Method | Principle | Key Findings (Trueness & Precision) |

|---|---|---|

| Extrapolation | Extrapolating the linear calibration curve to the x-intercept. | The most recommendable method with respect to low bias and variability, provided all underlying assumptions are met [43]. |

| Interpolation | Estimates C₀ within the range of the spiked concentrations. | Was developed in an attempt to reduce the variability of the estimator compared to the extrapolation method [43]. |

| Inverse Regression | Treats concentration as a function of the signal. | Performance compared to extrapolation method was detailed in the analysis [43]. |

| Normalization | A variation intended to improve robustness. | Might be of interest in cases with increased problems with outliers [43]. |

Frequently Asked Questions (FAQs)

Q: When is the standard addition method absolutely necessary? A: Standard addition is crucial when analyzing samples with complex, unknown, or variable matrices (e.g., blood, urine, soil extracts) where interfering substances alter the instrument's response—a phenomenon known as the "matrix effect." It is particularly important when a blank matrix free of the analyte is unavailable for preparing matched calibration standards [39] [40] [41].

Q: What is the main disadvantage of the standard addition method? A: The primary disadvantage is that it requires multiple measurements per sample, which increases experimental time, reagent consumption, and cost compared to a single-point calibration or external standard curve. It also demands careful and accurate pipetting to minimize errors in the standard additions [39].

Q: Can standard addition be used with techniques like LC-MS and immunoassays? A: Yes. While historically rooted in polarography and atomic spectroscopy, standard addition is now applied in a wide range of techniques, including LC-MS for contaminant analysis and immunoassays for endogenous biomarkers like cortisol. The fundamental principle remains the same, though the implementation may be adapted for non-linear response curves [42] [41] [44].

Q: How many standard additions are needed for a reliable result? A: While multiple additions (e.g., 4-6) are typical for constructing a robust linear regression, research has shown that with high precision, reliable estimations for some immunoassays can be achieved with as few as four distinct spike concentrations, including the zero spike [42].

Q: What is the difference between the standard addition method and using an internal standard? A: Standard addition involves adding known quantities of the same analyte to the sample. An internal standard involves adding a different, but similar, compound that is absent from the original sample to correct for variations in sample processing and instrument response. They are related but distinct concepts for overcoming different types of error [44].

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Standard Addition |

|---|---|

| Certified Reference Material (CRM) | A standard solution with a known, certified concentration of the analyte. Serves as the primary spike material to ensure accuracy and traceability [42] [45]. |

| Stable Isotope-Labeled Internal Standard (SIL-IS) | A structurally identical analog of the analyte labeled with heavy isotopes (e.g., ¹³C, ²H). Added to correct for sample loss during preparation and ionization suppression/enhancement in MS, complementing standard addition [41]. |

| Matrix-Matched Calibrant | A calibrant prepared in a solution that mimics the sample's matrix. Used when a full standard addition is not feasible, though it can be difficult to perfectly match unknown sample matrices [41]. |

| Charcoal-Stripped Serum/Plasma | A biological fluid processed to remove endogenous hormones and other molecules. Used as a "blank" matrix for constructing conventional calibration curves in method development, to be compared with standard addition results [42]. |

Workflow and Conceptual Diagrams

[Figure: Standard additions experimental workflow]

[Figure: Concept of matrix effect on calibration]

Troubleshooting Guides

Guide 1: Poor Accuracy at Lower Concentrations

Problem: Your calibration curve shows good fit at medium and high concentrations, but demonstrates significant inaccuracy (bias) at the lowest end of the narrow concentration range.

Explanation: In narrow concentration ranges, relative error at the lower end is easily inflated. Ordinary (unweighted) linear regression minimizes absolute residuals, so calibrators at higher concentrations, which usually carry larger absolute variance, dominate the curve fit at the expense of the lowest levels [46].

Solution: Implement weighted least squares regression.

- Collect sufficient data: During method validation, run a sufficiently large data set to calculate standard deviations at each calibrator concentration [46].

- Test weighting factors: Use your data analysis software to apply different weighting schemes. The most common are 1/x, 1/x², and 1/x^0.5 [47] [46].

- Select the optimal weight: For each weighting factor, calculate the sum of the absolute values of the relative error (%RE) for all calibration points. The weighting factor that gives the smallest sum is the best choice. Use the simplest model (e.g., 1/x before 1/x²) that minimizes error adequately [46].

Prevention: Always evaluate curve weighting during the method development and validation phases, especially when working within narrow ranges where relative error is expected to be constant across the levels [46].
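
The weighting comparison in Guide 1 can be sketched as follows. This is a minimal illustration, assuming a straight-line calibration model and closed-form weighted least squares; the function names and calibrator data are hypothetical, and real validation software may compute %RE from replicate calibrators rather than single points.

```python
def weighted_fit(x, y, w):
    """Weighted least-squares line; w multiplies the squared residuals."""
    sw = sum(w)
    swx = sum(wi * xi for wi, xi in zip(w, x))
    swy = sum(wi * yi for wi, yi in zip(w, y))
    swxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    swxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return slope, intercept


def sum_abs_relative_error(x, y, w):
    """Sum of |%RE| of back-calculated concentrations for one weighting."""
    slope, intercept = weighted_fit(x, y, w)
    back_calc = [(yi - intercept) / slope for yi in y]
    return sum(abs(100.0 * (bc - xi) / xi) for bc, xi in zip(back_calc, x))


def compare_weightings(x, y):
    """Return {scheme name: sum of |%RE|} for the common weighting factors."""
    schemes = {
        "unweighted": [1.0 for _ in x],
        "1/x^0.5": [xi ** -0.5 for xi in x],
        "1/x": [1.0 / xi for xi in x],
        "1/x^2": [xi ** -2 for xi in x],
    }
    return {name: sum_abs_relative_error(x, y, w) for name, w in schemes.items()}


# Hypothetical calibrator concentrations and instrument responses
conc = [1.0, 2.0, 5.0, 10.0, 20.0, 50.0]
resp = [2.3, 4.1, 10.4, 19.5, 41.0, 98.0]

scores = compare_weightings(conc, resp)
best = min(scores, key=scores.get)  # scheme with the smallest summed |%RE|
```

When two schemes give similar sums, the guide's advice to prefer the simpler one (for example 1/x over 1/x²) still applies; the minimum alone should not decide.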

Guide 2: Establishing Traceability for Narrow-Range Standards

Problem: Difficulty ensuring that in-house prepared calibration standards for a narrow concentration range are traceable to a national standard.

Explanation: Traceability requires an unbroken, documented chain of calibration comparisons leading back to a recognized national or international standard, such as those from the National Institute of Standards and Technology (NIST) [48]. This chain must account for measurement uncertainty at each step.

Solution: Implement a documented standard preparation workflow.

- Source Certified Reference Materials (CRMs): Begin with a CRM that provides the highest accuracy and direct traceability, with an unbroken chain of comparisons to the International System of Units (SI) [47].

- Prepare stock solution gravimetrically: Use calibrated balances and pipettes to minimize volumetric and weighing uncertainties. Pipettes should be professionally calibrated regularly [48] [49].

- Create a color-coded workflow: Use a printed, color-coded spreadsheet and matching vial labels to visually track the preparation of serial dilutions, minimizing the risk of cross-contamination or using the wrong standard [49].

- Document the entire process: Maintain records that include equipment identification, calibration dates, the individual performing the preparation, and the date the next calibration is due, as required by standards like 21 CFR 820.72 [48].

Verification: The simplest way to confirm that a third-party vendor or internal process is performing calibrations properly is to check for ISO/IEC 17025 accreditation [48].

Guide 3: Managing Increased Uncertainty in Narrow Range Calibration

Problem: The relative measurement uncertainty becomes unacceptably high when the calibration range is constrained.

Explanation: All measurement processes have inherent random error. In a narrow concentration range, this error is large relative to the differences between calibration levels, so the fitted curve and the results derived from it carry a higher relative uncertainty, which can obscure the systematic error you are trying to control [1] [50].

Solution: Control variables and increase effective sample size.

- Control environmental variables: In controlled experiments, carefully manage any extraneous variables (e.g., temperature, humidity, solvent batch) that could impact measurements for all samples and standards [1].

- Take repeated measurements: Increase precision by taking multiple measurements of the same standard and using their average. This helps cancel out random noise [1].

- Use a large sample size: In the context of calibration, this means using a sufficient number of replicate measurements at each calibration level. Large samples have less random error than small samples because errors in different directions cancel each other out more efficiently [1].

- Use stable equipment: Ensure instruments are properly maintained and housed in a stable environment to minimize electronic noise and drift, which add variability to measurements [51].
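
The benefit of replicate measurements can be quantified with the standard error of the mean, which shrinks with the square root of the number of replicates. The sketch below assumes independent replicates with purely random error; the function name and readings are hypothetical, and averaging does nothing to remove a systematic bias shared by all replicates.

```python
from math import sqrt
from statistics import mean, stdev


def mean_and_sem(replicates):
    """Return the mean and the standard error of the mean (s / sqrt(n))."""
    n = len(replicates)
    return mean(replicates), stdev(replicates) / sqrt(n)


# Hypothetical replicate readings of one calibration standard
readings = [1.0, 2.0, 3.0, 4.0, 5.0]
avg, sem = mean_and_sem(readings)
```

Because the standard error scales as 1/sqrt(n), halving it requires roughly four times as many replicates, which is worth weighing against reagent cost and run time.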

Frequently Asked Questions (FAQs)

Q1: Why is method validation necessary even when using a manufacturer's calibrated instrument?

It is crucial to demonstrate that the method performs well under your specific laboratory conditions. Many factors can affect performance, including different lots of reagents and calibrators, local climate control, water quality, and the skills of your analysts. Method validation studies are often required by regulations (e.g., CLIA in the US) to ensure reliable patient results [51].

Q2: What is the difference between random and systematic error, and which is a bigger concern in narrow-range analysis?

- Random Error: Affects the precision of your measurements, causing unpredictable variability around the true value. It can be reduced by taking repeated measurements and using larger sample sizes [1] [50].

- Systematic Error: Affects the accuracy of your measurements, causing a consistent or proportional difference from the true value. It skews your data in a specific direction [1] [50].

For narrow-range calibration, systematic error is generally a bigger problem because it can lead to false conclusions (Type I or II errors) about the relationship between variables. While random error can often be averaged out, systematic error biases all measurements and can be magnified in a constrained range [1] [50].

Q3: How many calibration points (levels) are sufficient for a narrow concentration range?

While a well-constructed calibration curve typically includes at least six concentration levels to ensure reliable results [47], the exact number for a narrow range depends on the required accuracy. A 3-point calibration might be sufficient to cover a narrow range, but custom point calibrations can be used for special applications [48]. The key is that the points adequately define the curve's behavior within your specific range of interest.

Q4: What statistical methods should I use to validate my calibration curve's linearity?

The coefficient of determination (R²) alone is insufficient for validating linearity. A comprehensive approach includes [47] [46]:

- Assess homoscedasticity: Use F-tests or visually inspect residual plots to see if the variance is constant across the range.