Mastering the Simplex Method for Function Minimization: A Comprehensive Guide for Scientists and Researchers

Abstract

This article provides a comprehensive exploration of the Simplex Method for solving linear programming minimization problems, tailored for researchers, scientists, and drug development professionals. It covers foundational mathematical principles, including problem formulation, slack/surplus variables, and the relationship between minimization problems and their dual maximization counterparts. The guide presents detailed methodological applications with step-by-step procedures and practical examples relevant to scientific domains like chemical synthesis and process optimization. It further addresses advanced troubleshooting techniques for common pitfalls, performance optimization strategies, and a comparative analysis with modern optimization approaches like Bayesian methods. The content synthesizes traditional operational research techniques with contemporary applications in biomedical and clinical research contexts.

Linear Programming Foundations: Understanding Minimization Principles and Problem Setup

Defining Standard Minimization Problems in Linear Programming

Within research on the simplex method for function minimization, standard minimization problems form a fundamental class of linear programming (LP) problems with distinct mathematical characteristics. Whereas maximization problems typically allocate limited resources to maximize output or profit, minimization problems arise in cost reduction, resource optimization, and efficiency improvement in pharmaceutical development and industrial processes.

A linear programming problem is classified as a standard minimization problem when it exhibits the following characteristics [1] [2] [3]:

- The objective function requires minimization

- All decision variables are constrained to be non-negative

- All functional constraints are of the "greater than or equal to" (≥) form, a₁x₁ + a₂x₂ + ... + aₙxₙ ≥ b

- The right-hand-side constants b of the constraints are non-negative

The general mathematical form of a standard minimization problem can be expressed as:

\[
\begin{aligned}
\text{Minimize: } & Z = c_1x_1 + c_2x_2 + \cdots + c_nx_n \\
\text{Subject to: } & a_{11}x_1 + a_{12}x_2 + \cdots + a_{1n}x_n \geq b_1 \\
& a_{21}x_1 + a_{22}x_2 + \cdots + a_{2n}x_n \geq b_2 \\
& \;\;\vdots \\
& a_{m1}x_1 + a_{m2}x_2 + \cdots + a_{mn}x_n \geq b_m \\
& x_1, x_2, \ldots, x_n \geq 0 \\
& b_1, b_2, \ldots, b_m \geq 0
\end{aligned}
\]

The feasible region of such a problem lies on the far side of each constraint boundary from the origin and is typically unbounded; with two variables it extends indefinitely into the first quadrant. Because the objective coefficients are usually non-negative, minimizing the objective pulls the solution toward the origin, so the optimum typically lies at a vertex on the side of the feasible region facing the origin [1].

Relationship to Duality Theory

The Dual Problem Transformation

A fundamental tool for solving minimization problems comes from John von Neumann's duality theory, which establishes that every linear minimization (primal) problem has a corresponding dual maximization problem [3]. The relationship between primal and dual problems is a cornerstone of linear programming theory with significant implications for the simplex method.

The transformation process from primal minimization to dual maximization follows these principles [3]:

- Each constraint in the primal problem corresponds to a variable in the dual problem

- The coefficients of the primal objective function become the right-hand side constants in the dual constraints

- The right-hand side constants of the primal constraints become the coefficients of the dual objective function

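- The constraint coefficient matrix is transposed between primal and dual problems

- The "≥" constraints of the primal become "≤" constraints in the dual, and all dual variables are non-negative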

The following diagram illustrates the logical relationship between primal minimization and dual maximization problems:

Primal-Dual Transformation Example

Consider the following primal minimization problem [3]:

\[
\begin{array}{ll}
\text{Minimize} & Z = 12x_1 + 16x_2 \\
\text{Subject to:} & x_1 + 2x_2 \geq 40 \\
& x_1 + x_2 \geq 30 \\
& x_1 \geq 0,\; x_2 \geq 0
\end{array}
\]

This primal problem can be represented in matrix form as:

\[
\begin{array}{cc|c}
1 & 2 & 40 \\
1 & 1 & 30 \\
\hline
12 & 16 & 0
\end{array}
\]

The corresponding dual maximization problem is obtained by transposing the matrix and reversing the optimization objective [3]:

\[
\begin{array}{ll}
\text{Maximize} & Z = 40y_1 + 30y_2 \\
\text{Subject to:} & y_1 + y_2 \leq 12 \\
& 2y_1 + y_2 \leq 16 \\
& y_1 \geq 0,\; y_2 \geq 0
\end{array}
\]

Both problems attain the same optimal objective value, 400: the primal at (x₁, x₂) = (20, 10) and the dual at (y₁, y₂) = (4, 8), as the strong duality theorem predicts [3].
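As a quick numerical check of the duality claim, the sketch below solves both problems with SciPy's `linprog`. It assumes SciPy 1.6 or later (for the HiGHS-based `method="highs"`). Because `linprog` only minimizes and accepts constraints of the form A_ub x ≤ b_ub, the primal's ≥ rows are multiplied by -1 and the dual's objective is negated.

```python
import numpy as np
from scipy.optimize import linprog

# Primal: minimize 12x1 + 16x2  s.t.  x1 + 2x2 >= 40,  x1 + x2 >= 30,  x >= 0
A = np.array([[1.0, 2.0], [1.0, 1.0]])
b = np.array([40.0, 30.0])
c = np.array([12.0, 16.0])

# linprog expects A_ub @ x <= b_ub, so the ">=" rows are flipped by multiplying by -1
primal = linprog(c, A_ub=-A, b_ub=-b, bounds=[(0, None)] * 2, method="highs")

# Dual: maximize 40y1 + 30y2  s.t.  A^T y <= c,  y >= 0
# linprog minimizes, so the negated dual objective is minimized instead
dual = linprog(-b, A_ub=A.T, b_ub=c, bounds=[(0, None)] * 2, method="highs")

print(primal.x, primal.fun)  # x close to (20, 10), objective 400
print(dual.x, -dual.fun)     # y close to (4, 8), objective 400
```

Equal optimal values from two independent solves are exactly what strong duality guarantees for a feasible, bounded pair.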

Comparative Analysis of Linear Problem Types

The table below summarizes the key differences between standard minimization and maximization problems in linear programming:

| Characteristic | Standard Minimization Problem | Standard Maximization Problem |
|---|---|---|
| Objective | Minimize cost function | Maximize profit or utility function |
| Constraint Form | Greater than or equal to (≥) | Less than or equal to (≤) |
| Feasible Region | Unbounded away from origin | Contains the origin (often bounded) |
| Graphical Solution | Vertex closest to origin | Vertex farthest from origin |
| Typical Context | Cost reduction, resource minimization | Profit maximization, output maximization |
| Slack/Surplus Variables | Surplus variables subtracted from constraints | Slack variables added to constraints |
| Simplex Application | Solved via dual problem transformation, or directly with artificial variables (Big-M or two-phase) | Solved directly via simplex method |

Methodology and Experimental Protocols

Standardization Protocol for Minimization Problems

To apply the simplex method to minimization problems, a standardization protocol must be followed to transform the problem into a solvable format [2]:

Objective Function Conversion

- Multiply the entire minimization objective function by -1

- Transform Minimize Z = c₁x₁ + c₂x₂ + ... + cₙxₙ to Maximize Z' = -c₁x₁ - c₂x₂ - ... - cₙxₙ; both problems have the same optimal points, and min Z = -(max Z')

Constraint Standardization

- Convert all "≥" constraints to equality using surplus variables

- For each constraint a₁x₁ + a₂x₂ + ... + aₙxₙ ≥ b, subtract surplus variable s: a₁x₁ + a₂x₂ + ... + aₙxₙ - s = b

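- Add an artificial variable to each "≥" (or "=") constraint, because a surplus variable entering with coefficient -1 cannot serve as an initial basic variable for the simplex method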

Variable Restrictions

- Ensure all decision variables maintain non-negativity constraints (xᵢ ≥ 0)

- If unrestricted variables exist, replace with difference of two non-negative variables: x = x⁺ - x⁻
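The objective-negation and variable-splitting steps can be sketched in plain Python. The function name `standardize` and its list-based inputs are illustrative assumptions, not part of any library, and surplus and artificial columns are left out to keep the sketch short.

```python
def standardize(c, A, free):
    """Rewrite "minimize c.x subject to rows of A" for a maximizing, x >= 0 simplex.

    c    -- objective coefficients of the minimization problem
    A    -- constraint rows (list of lists), one entry per original variable
    free -- booleans; True marks a variable with no sign restriction
    Returns (c_max, A_new), where every column of A_new is a non-negative variable.
    """
    c_max = []
    A_new = [[] for _ in A]
    for j, cj in enumerate(c):
        # Minimize Z  <=>  maximize Z' = -Z, so every coefficient is negated
        c_max.append(-cj)
        for i, row in enumerate(A):
            A_new[i].append(row[j])
        if free[j]:
            # x_j = x_plus - x_minus: the x_minus column carries the negated
            # objective and constraint coefficients of the x_plus column
            c_max.append(cj)
            for i, row in enumerate(A):
                A_new[i].append(-row[j])
    return c_max, A_new

# Minimize 3x1 - 2x2 with x2 unrestricted in sign
c_max, A_new = standardize([3, -2], [[1, 1], [2, -1]], free=[False, True])
print(c_max)  # [-3, 2, -2]
print(A_new)  # [[1, 1, -1], [2, -1, 1]]
```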

The following workflow illustrates the complete methodological approach to solving standard minimization problems:

Computational Implementation

The simplex method implementation for minimization problems follows a systematic computational procedure [4]:

Initialization Phase

- Convert minimization problem to dual maximization problem

- Set up initial simplex tableau with slack/surplus variables

- Identify initial basic feasible solution

Iteration Phase

- Identify the entering variable (most negative coefficient in objective row)

- Determine the leaving variable (minimum ratio test)

- Perform pivot operation to update tableau

- Continue until no negative coefficients remain in objective row

Solution Extraction

- Extract optimal solution from final tableau

- Translate dual solution back to primal variables

- Verify feasibility and optimality conditions

For the example minimization problem [3]:

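- Solved through its dual, the initial tableau needs only two slack variables; solving the primal directly would instead require surplus and artificial variables

- Two pivot operations (entering y₁, then y₂) reach the optimal dual tableau

- The final tableau reports the dual optimum (y₁, y₂) = (4, 8) in its right-hand-side column and the primal optimum (x₁, x₂) = (20, 10) in the objective row beneath the slack columns, with Z = 400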
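Reading the primal solution off the dual can also be done with a library solver. This sketch assumes SciPy 1.7 or later, where the HiGHS methods of `linprog` report constraint marginals in `res.ineqlin.marginals`. Each dual constraint corresponds to one primal variable, and because `linprog` minimizes the negated dual objective, the marginals come out as the negatives of the primal values.

```python
import numpy as np
from scipy.optimize import linprog

A = np.array([[1.0, 2.0], [1.0, 1.0]])  # primal constraint rows (>=)
b = np.array([40.0, 30.0])              # primal right-hand sides
c = np.array([12.0, 16.0])              # primal cost coefficients

# Solve only the dual: maximize b.y s.t. A^T y <= c, y >= 0 (negated, since linprog minimizes)
res = linprog(-b, A_ub=A.T, b_ub=c, method="highs")

# Marginals are d(objective)/d(b_ub); the objective here is -Z, so negate them to get x
x_primal = -res.ineqlin.marginals
print(res.x, x_primal)  # y close to (4, 8), x close to (20, 10)
```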

Research Reagent Solutions: Computational Tools

The following table details essential computational tools and their functions for implementing simplex-based minimization solutions in research environments:

| Research Tool | Function | Implementation Example |
|---|---|---|
| Simplex Algorithm Software | Implements tableau-based pivot operations | C++ implementation with arrays for constraint coefficients [5] |
| Matrix Transposition Library | Converts primal constraints to dual form | Python NumPy for efficient matrix operations |
| Linear Programming Solver | Applies simplex method to standardized problems | SciPy's linprog; its method='simplex' option [4] was deprecated and later removed, and current releases use the HiGHS solvers (e.g. method='highs-ds' for dual simplex) |
| Graphical Analysis Tool | Visualizes feasible region and solution vertices | Matplotlib for 2D problem representation [4] |
| Sensitivity Analysis Module | Examines solution stability to parameter changes | Post-optimality analysis with shadow prices |

Advanced Theoretical Considerations

Computational Complexity

The simplex method, despite its exponential worst-case complexity, performs efficiently in practice for most minimization problems [6]. Smoothed analysis, introduced by Spielman and Teng and sharpened more recently by Bach and Huiberts, shows that when the input data are subjected to small random perturbations, the simplex method runs in expected polynomial time, addressing long-standing concerns about its theoretical efficiency [6].

For minimization problems specifically, the dual simplex method often proves more efficient: it maintains optimality (dual feasibility) while working toward primal feasibility. When all cost coefficients are non-negative, the basis of surplus variables is already dual feasible, so no artificial variables are needed; the method is also particularly useful when new constraints are added to an already solved problem.

Alternative Methodologies

While the simplex method remains dominant for linear programming minimization problems, interior point methods (IPMs) have emerged as powerful alternatives, especially for large-scale problems [7]. Developed after Karmarkar's 1984 breakthrough, IPMs offer polynomial-time complexity and have gained traction in applications where traditional simplex approaches struggle [7].

The comparative analysis reveals:

- Simplex methods excel for problems with sparse constraint matrices

- Interior point methods demonstrate advantages for large, dense problems

- Hybrid approaches leverage strengths of both methodologies

- Problem structure and size dictate optimal algorithm selection

Applications in Pharmaceutical Research

In pharmaceutical development, minimization problems frequently arise in resource allocation, cost optimization, and process efficiency contexts [8]. A related but distinct algorithm, the modified simplex method introduced by Nelder and Mead in 1965, has been applied particularly to pharmaceutical formulation optimization [8]. Despite the shared name, it is not Dantzig's linear programming method: it is a derivative-free search that moves a geometric simplex of trial points, adjusting its shape and size according to the measured response surface, to locate optimum values [8].

Specific applications include:

- Formulation optimization with multiple independent variables

- Process parameter minimization for manufacturing efficiency

- Cost reduction in raw material allocation

- Resource minimization under regulatory constraints

The methodology enables researchers to systematically navigate complex experimental spaces while minimizing resource consumption and experimental iterations, accelerating development timelines while maintaining quality standards.
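For contrast with the tableau-based LP method, the sketch below runs SciPy's Nelder-Mead implementation on a made-up two-factor response surface. The quadratic `response` function is a hypothetical stand-in for measured formulation data, chosen so its minimum at (0.3, 0.5) is known; a real study would evaluate experiments rather than a formula.

```python
import numpy as np
from scipy.optimize import minimize

def response(x):
    """Hypothetical response surface for two formulation factors; minimum at (0.3, 0.5)."""
    return (x[0] - 0.3) ** 2 + 2.0 * (x[1] - 0.5) ** 2 + 1.0

# Nelder-Mead uses only function values: it reflects, expands, contracts and shrinks
# a simplex of n + 1 trial points instead of pivoting on a tableau.
result = minimize(
    response,
    x0=np.array([0.0, 0.0]),
    method="Nelder-Mead",
    options={"xatol": 1e-6, "fatol": 1e-9, "maxiter": 2000},
)
print(result.x, result.fun)  # x near (0.3, 0.5), value near 1.0
```

Because it needs no gradients or linear structure, this kind of search suits noisy experimental responses, but it offers none of the optimality certificates that the LP simplex method provides.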

In the domain of mathematical optimization, particularly within linear programming, the simplex method stands as a cornerstone algorithm for solving complex resource allocation problems. Developed by George Dantzig during the late 1940s, this method provides a systematic approach for traversing the vertices of a feasible region to find the optimal solution to a linear programming problem [6] [9]. The efficacy of the simplex method is evidenced by its continued widespread use nearly eight decades later, despite the theoretical development of alternative algorithms [6] [7]. Within the context of function minimization research, understanding the three fundamental components—objective function, decision variables, and constraints—is paramount for proper implementation of this powerful optimization technique. This technical guide examines these core components in detail, providing researchers and drug development professionals with the foundational knowledge necessary to apply the simplex method effectively to complex optimization challenges in scientific domains.

Theoretical Foundations of the Simplex Method

The simplex method emerged from George Dantzig's work with the U.S. Air Force following World War II, where he sought to mechanize the planning process for allocating limited resources [6] [9]. The algorithm operates on the fundamental principle that for a linear program in standard form, if the objective function has a maximum value on the feasible region, then it has this value on at least one of the extreme points [9]. Furthermore, if an extreme point is not a maximum point, there exists an edge containing the point along which the objective function increases [9].

The geometrical interpretation of the simplex method involves visualizing constraints as boundaries of a polyhedron in n-dimensional space. The algorithm navigates from one vertex of this polyhedron to an adjacent vertex, improving the objective function value at each step until the optimum is reached [6] [9]. This systematic traversal of vertices, implemented through pivot operations, distinguishes the simplex method from other optimization approaches and provides both computational efficiency and intuitive appeal.
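The extreme-point property can be illustrated by brute force on the two-variable minimization example used earlier (minimize 12x₁ + 16x₂ subject to x₁ + 2x₂ ≥ 40 and x₁ + x₂ ≥ 30). The sketch intersects every pair of constraint boundaries, keeps the feasible corner points, and evaluates the objective at each. This is not the simplex method: enumeration grows combinatorially with problem size, which is exactly the cost that walking between adjacent vertices avoids.

```python
from fractions import Fraction
from itertools import combinations

# Each constraint is (a1, a2, rhs), meaning a1*x1 + a2*x2 >= rhs.
# The last two rows encode x1 >= 0 and x2 >= 0.
constraints = [(1, 2, 40), (1, 1, 30), (1, 0, 0), (0, 1, 0)]
cost = (12, 16)

def intersect(p, q):
    """Solve the 2x2 system where constraints p and q hold with equality (Cramer's rule)."""
    det = p[0] * q[1] - p[1] * q[0]
    if det == 0:
        return None  # parallel boundary lines: no unique corner point
    x1 = Fraction(p[2] * q[1] - p[1] * q[2], det)
    x2 = Fraction(p[0] * q[2] - p[2] * q[0], det)
    return (x1, x2)

vertices = []
for p, q in combinations(constraints, 2):
    pt = intersect(p, q)
    # Keep only corner points that satisfy every constraint
    if pt is not None and all(a1 * pt[0] + a2 * pt[1] >= r for a1, a2, r in constraints):
        vertices.append(pt)

best = min(vertices, key=lambda v: cost[0] * v[0] + cost[1] * v[1])
print(sorted(vertices), best)  # vertices (0, 30), (20, 10), (40, 0); best is (20, 10)
```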

Standard Form and Problem Transformation

To apply the simplex method, linear programming problems must first be converted into standard form. This transformation involves:

- Converting inequalities to equations through the introduction of slack variables, one for each ≤ constraint (≥ constraints receive surplus variables instead) [10] [9]. For example, the constraint \(2x_1 + x_2 + x_3 \leq 14\) becomes \(2x_1 + x_2 + x_3 + x_4 = 14\) with \(x_4 \geq 0\) [11].

- Ensuring all variables are non-negative by replacing unrestricted variables with the difference of two non-negative variables [9].

- Representing the objective function as a maximization problem, with minimization problems converted by negating the objective coefficients [9].

This standardized representation facilitates the algebraic manipulations central to the simplex method and enables systematic identification of basic feasible solutions.

Core Components of Linear Programming

Decision Variables

Decision variables represent the quantifiable entities over which the decision-maker has control. These variables form the foundation of any optimization model, as their values determine the quality of the solution according to the objective function.

Characteristics of decision variables:

- Typically denoted as \(x_1, x_2, \ldots, x_n\) [10] [11]

- Must be continuous and non-negative in standard linear programming [9]

- Their number, together with the number of constraints, determines the size and difficulty of the optimization problem [6]

In pharmaceutical applications, decision variables might represent quantities of active ingredients [12], production levels of different drug formulations, or resource allocation to various research projects. The careful definition of these variables is critical, as they must fully capture the decision space while maintaining mathematical tractability.

Objective Function

The objective function quantifies the goal of the optimization problem, providing a metric for evaluating potential solutions. In the context of the simplex method, this function is typically linear and expressed as:

\[ \text{Maximize } z = c_1x_1 + c_2x_2 + \cdots + c_nx_n \]

where \(c_i\) represents the coefficient associated with each decision variable [10] [9].

Properties of objective functions:

- Coefficients indicate the contribution of each variable to the overall objective [13]

- Direction can be maximization or minimization [9]

- Serves as the guiding criterion for the simplex method's pivot operations [11]

For drug development problems, objective functions might maximize therapeutic efficacy, minimize production costs, or optimize dissolution rates [12]. The careful specification of this function is essential, as it directly influences the optimal solution identified by the simplex method.

Constraints

Constraints represent the limitations or requirements that feasible solutions must satisfy. These restrictions define the feasible region within which the optimal solution must reside.

Types of constraints:

- Resource constraints: Limit the consumption of available resources [13]

- Technical constraints: Impose technological or scientific limitations [12]

- Policy constraints: Enforce organizational rules or requirements

Mathematically, constraints are expressed as linear inequalities or equations:

\[ a_{i1}x_1 + a_{i2}x_2 + \cdots + a_{in}x_n \leq b_i \]

where \(a_{ij}\) represents the technological coefficient and \(b_i\) the right-hand-side value [10] [9].

In pharmaceutical research, constraints might represent budget limitations, raw material availability, safety thresholds, or regulatory requirements [12]. The feasible region formed by the intersection of all constraints is a convex polyhedron, whose vertices represent potential candidates for the optimal solution in linear programming.

Table 1: Mathematical Representation of Core LP Components

| Component | Mathematical Notation | Role in Optimization | Example from Literature |
|---|---|---|---|
| Decision Variables | \(x_1, x_2, \ldots, x_n\) | Define the solution space | Quantity of HPMC, DCP, cornstarch in tablet formulation [12] |
| Objective Function | \(z = \sum_i c_i x_i\) | Measures solution quality | Drug release rate at specific time points [12] |
| Constraints | \(Ax \leq b,\ x \geq 0\) | Delineate feasible solutions | Resource limits in furniture production problem [13] |

The Simplex Method Algorithm

Initialization and Slack Variables

The simplex method begins by converting inequality constraints into equations through the introduction of slack variables [10] [11]. Each slack variable represents the unused portion of a corresponding resource and is added to the left-hand side of ≤ constraints:

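\[ 2x_1 + x_2 + x_3 \leq 14 \quad \Rightarrow \quad 2x_1 + x_2 + x_3 + x_4 = 14 \]

where \(x_4 \geq 0\) is the slack variable [11]. When every right-hand side is non-negative, this transformation yields an initial dictionary or tableau whose basic feasible solution sets all original variables to zero and each slack variable equal to its right-hand side [11].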

Iteration and Pivoting

The core of the simplex method involves iterative improvement through pivot operations. Each iteration consists of:

- Selecting the entering variable: Identify a non-basic variable with a positive coefficient in the objective function (for maximization problems), typically choosing the variable with the largest positive coefficient [11].

- Selecting the leaving variable: Apply the minimum ratio test to determine which basic variable becomes zero first as the entering variable increases, ensuring feasibility is maintained [11].

- Performing the pivot: Exchange the roles of the entering and leaving variables through Gaussian elimination to create a new canonical form [9] [11].

This process continues until no positive coefficients remain in the objective function row, indicating optimality [11].
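The iteration above can be sketched as a small tableau implementation. This is a teaching sketch under stated assumptions: constraints of the form Ax ≤ b with b ≥ 0 (so the slacks give the starting basis), exact `Fraction` arithmetic instead of floating point, and Dantzig's largest-coefficient entering rule with no anti-cycling safeguard. The objective row stores -c, so the dictionary view's "largest positive coefficient" appears here as the most negative entry; the name `simplex_max` is illustrative.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Tableau simplex for: maximize c.x subject to A x <= b, x >= 0, with b >= 0.

    Returns (x, z, tableau, pivots); raises ValueError if the objective is unbounded.
    """
    m, n = len(A), len(c)
    # Constraint rows [A | I | b]; the last row is the objective row [-c | 0 | 0]
    T = [[Fraction(v) for v in A[i]] + [Fraction(int(i == k)) for k in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]  # slack variables form the first basis
    pivots = 0
    while True:
        obj = T[-1]
        col = min(range(n + m), key=lambda j: obj[j])  # entering column
        if obj[col] >= 0:
            break  # no negative entry left: the current basis is optimal
        # Minimum ratio test over rows with a positive entry in the pivot column
        ratios = [(T[i][-1] / T[i][col], i) for i in range(m) if T[i][col] > 0]
        if not ratios:
            raise ValueError("objective is unbounded")
        _, row = min(ratios)
        piv = T[row][col]
        T[row] = [v / piv for v in T[row]]
        # Gaussian elimination clears the pivot column in every other row
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                f = T[i][col]
                T[i] = [vi - f * vr for vi, vr in zip(T[i], T[row])]
        basis[row] = col
        pivots += 1
    x = [Fraction(0)] * (n + m)
    for i, j in enumerate(basis):
        x[j] = T[i][-1]
    return x[:n], T[-1][-1], T, pivots

# Dual of the earlier example: maximize 40y1 + 30y2 s.t. y1 + y2 <= 12, 2y1 + y2 <= 16
y, z, T, pivots = simplex_max([40, 30], [[1, 1], [2, 1]], [12, 16])
print([int(v) for v in y], int(z), pivots)  # [4, 8] 400 2
print([int(v) for v in T[-1][2:4]])         # [20, 10], the primal x under the slack columns
```

Exact fractions keep the pivots free of rounding, which makes the sketch easy to check by hand; practical solvers work in floating point with the scaling and tolerances that production codes add.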

Termination and Solution Interpretation

The simplex method terminates when one of three conditions is met:

- Optimal solution found: All coefficients in the objective function row are non-positive [11]

- Unbounded problem: No leaving variable can be identified, indicating the objective function can increase indefinitely [9]

- Infeasible problem: Discovered during Phase I of the two-phase simplex method [9]

The final tableau provides the optimal values for all variables, with basic variables having values in the right-hand-side column and non-basic variables equal to zero [11].

Implementation in Scientific Research

Experimental Design and Optimization

Simplex-based ideas also find significant application in experimental design within pharmaceutical research, although here "simplex" refers to the simplex-shaped region of mixture proportions rather than to Dantzig's algorithm. For instance, simplex lattice design has been successfully employed to develop extended-release tablets of diclofenac sodium [12]. This approach enables researchers to systematically explore the effects of multiple formulation variables on drug release characteristics.

Key aspects of simplex-based experimental design:

- Allows efficient exploration of multi-component systems [12]

- Constructs polynomial equations to predict system behavior [12]

- Optimizes multiple formulation variables simultaneously [12]

In the diclofenac sodium study, researchers varied the proportions of hydroxypropyl methylcellulose (HPMC), dicalcium phosphate, and cornstarch using a three-component simplex lattice design. The resulting polynomial equations successfully predicted drug release patterns, demonstrating the method's utility in pharmaceutical formulation development [12].
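A {q, m} simplex lattice design consists of every mixture whose q component proportions are multiples of 1/m and sum to 1. The text does not state which lattice degree the diclofenac study used, so the sketch below assumes a {3, 2} lattice purely for illustration; the function name `simplex_lattice` is hypothetical.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """Return all {q, m} lattice points: q proportions in {0, 1/m, ..., 1} summing to 1."""
    return [
        tuple(Fraction(k, m) for k in ks)
        for ks in product(range(m + 1), repeat=q)
        if sum(ks) == m
    ]

# Three components (for example HPMC, dicalcium phosphate, cornstarch), assumed degree 2
design = simplex_lattice(3, 2)
for point in design:
    print([str(p) for p in point])
print(len(design))  # 6 runs: three pure components and three 50:50 binary blends
```

A polynomial model (for a degree-2 lattice, typically a quadratic mixture model) is then fitted to the responses measured at these points.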

Multi-Objective Optimization

Many real-world optimization problems in science and medicine involve multiple, often conflicting, objectives. Recent research has extended the simplex method to address multi-objective linear programming problems (MOLP), where all objectives are optimized simultaneously [14]. This approach offers advantages over traditional goal programming methods through reduced computational effort and increased practicality [14].

Applications in pharmaceutical research:

- Balancing drug efficacy with minimal side effects

- Optimizing production cost against quality metrics

- Balancing stability with bioavailability in formulation development

The multi-objective simplex technique demonstrates particular strength in handling real-world situations with competing criteria, such as food processing optimization problems [14].

Table 2: Research Reagent Solutions for Formulation Optimization

| Reagent/Material | Function in Experiment | Application Context |
|---|---|---|
| Hydroxypropyl Methylcellulose (HPMC) | Controlled release polymer | Dictates primary drug release rate in extended-release formulations [12] |
| Dicalcium Phosphate | Excipient | Influences drug release based on proportion in formulation [12] |
| Cornstarch | Disintegrant/Binder | Affects dissolution characteristics in tablet formulation [12] |
| Diclofenac Sodium | Model drug | Active pharmaceutical ingredient whose release is being optimized [12] |

Advanced Considerations and Modern Developments

Computational Efficiency and Complexity

Despite its proven practical efficiency, the simplex method has intriguing theoretical complexity characteristics. In 1972, Klee and Minty constructed problems on which the algorithm, using Dantzig's pivoting rule, needs a number of steps exponential in the problem size, so its worst-case time complexity is exponential [6]. In practice, however, the number of pivots typically grows roughly linearly with the number of constraints, creating a puzzling discrepancy between theory and observation [6] [15].
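The exponential worst case comes from Klee and Minty's deformed cube. One common textbook form for n variables is shown below; started at the origin and using the largest-coefficient entering rule, the tableau simplex method visits all 2^n vertices, taking 2^n - 1 pivots. For n = 3 the constraints are x₁ ≤ 5, 4x₁ + x₂ ≤ 25 and 8x₁ + 4x₂ + x₃ ≤ 125 with objective 4x₁ + 2x₂ + x₃, and the method makes seven pivots before stopping at x = (0, 0, 125).

```latex
\[
\begin{aligned}
\text{Maximize } & \sum_{j=1}^{n} 2^{\,n-j} x_j \\
\text{subject to } & \sum_{j=1}^{i-1} 2^{\,i-j+1} x_j + x_i \leq 5^{i}, \qquad i = 1, \ldots, n \\
& x_j \geq 0, \qquad j = 1, \ldots, n
\end{aligned}
\]
```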

Recent theoretical work by Bach and Huiberts has helped resolve this paradox through smoothed analysis, which studies the algorithm when the input data are subjected to small random perturbations. Their results show that under such randomization the simplex method's expected running time obeys bounds significantly lower than those previously established, providing mathematical justification for its observed efficiency [6].

Implementation in Modern Software

Contemporary linear programming software implements several optimizations that deviate from textbook descriptions of the simplex method [15]:

- Scaling: Variables and constraints are scaled so all non-zero input numbers are of order 1 [15]

- Tolerances: Solvers incorporate feasibility and optimality tolerances (typically around \(10^{-6}\)) to accommodate floating-point arithmetic limitations [15]

- Perturbations: Small random numbers are added to right-hand-side values to avoid degeneracy and cycling [15]

These implementation details are critical for the robust performance of the simplex method in solving large-scale real-world problems.
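The scaling and tolerance ideas can be illustrated with a deliberately simple sketch. The row-scaling rule (divide each row by its largest absolute coefficient) and the helper names `scale_rows` and `is_feasible` are assumptions for illustration; production solvers use more elaborate, iterative scaling and their own tolerance logic.

```python
import numpy as np

def scale_rows(A, b):
    """Divide each row of A (and its right-hand side) by the row's largest |a_ij|."""
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    r = np.abs(A).max(axis=1)
    r[r == 0] = 1.0  # leave all-zero rows unchanged
    return A / r[:, None], b / r

def is_feasible(A, b, x, tol=1e-6):
    """Accept A x <= b when every row is violated by at most tol."""
    return bool(np.all(np.asarray(A) @ np.asarray(x) - np.asarray(b) <= tol))

# Rows with coefficients of very different magnitude become order 1 after scaling
A_s, b_s = scale_rows([[2000.0, 1000.0], [0.001, 0.003]], [14000.0, 0.009])
print(A_s, b_s)

# In floating point 0.1 + 0.2 exceeds 0.3 slightly; a tolerance absorbs the error
print(0.1 + 0.2 <= 0.3, is_feasible([[1.0, 1.0]], [0.3], [0.1, 0.2]))  # False True
```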

Comparison with Interior Point Methods

While the simplex method remains widely used, interior point methods (IPMs) have emerged as competitive alternatives since Karmarkar's seminal 1984 paper [7]. IPMs offer polynomial-time complexity and particular advantages for certain problem classes:

- Advantages of IPMs: Better theoretical complexity, superior performance on very large-scale problems [7]

- Advantages of simplex: Efficient warm-starting for related problems, better performance on degenerate problems [7]

Modern optimization practice often involves selecting the appropriate algorithm based on problem characteristics, with both simplex and interior point methods representing valuable tools in the optimization toolkit.
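Both algorithm families are available behind one interface in SciPy. The sketch assumes SciPy 1.6 or later, where `method="highs-ds"` selects the HiGHS dual simplex solver and `method="highs-ipm"` its interior point solver; on a two-variable problem both finish instantly, so this shows interchangeability, not relative performance.

```python
import numpy as np
from scipy.optimize import linprog

# Primal example: minimize 12x1 + 16x2 with two ">=" rows, negated into linprog's "<=" form
c = np.array([12.0, 16.0])
A_ub = -np.array([[1.0, 2.0], [1.0, 1.0]])
b_ub = -np.array([40.0, 30.0])

results = {}
for method in ("highs-ds", "highs-ipm"):
    res = linprog(c, A_ub=A_ub, b_ub=b_ub, method=method)
    results[method] = res
    print(method, res.x, res.fun, res.nit)  # both should report x near (20, 10), value near 400
```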

The simplex method continues to be a fundamental algorithm in linear programming, with its core components—decision variables, objective function, and constraints—forming the essential building blocks of optimization models across scientific disciplines. In pharmaceutical research and drug development, this method provides a systematic approach to formulation optimization, experimental design, and resource allocation. Recent theoretical advances have enhanced our understanding of the algorithm's efficiency, while modern implementations continue to evolve through sophisticated computational techniques. As optimization challenges in science grow increasingly complex, the simplex method's unique combination of intuitive geometric interpretation and proven practical performance ensures its ongoing relevance to researchers and practitioners.

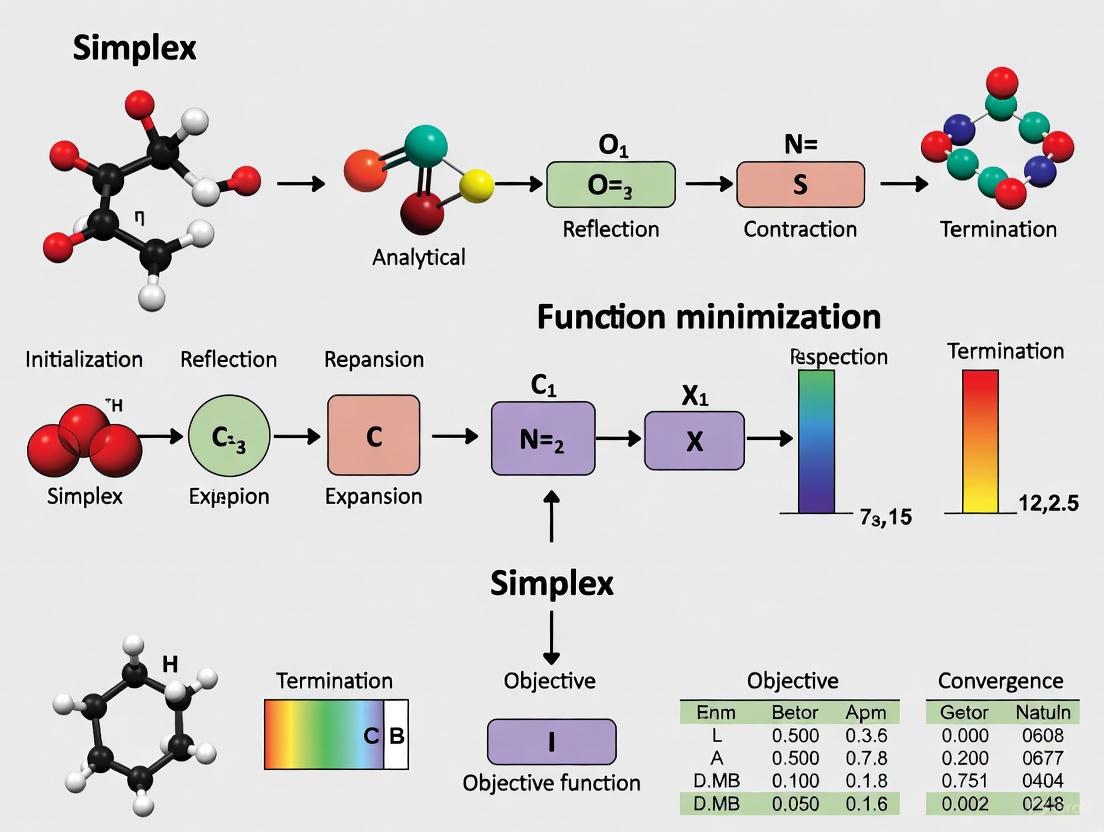

Visualizations

Simplex Method Workflow

Simplex Method Algorithm Workflow

Simplex Lattice Experimental Design

Pharmaceutical Formulation Optimization Workflow

The Role of Slack and Surplus Variables in Converting Inequalities

In the context of linear programming, the simplex method stands as a cornerstone algorithmic approach for solving optimization problems. Central to its application is the transformation of real-world constraints into a mathematically tractable standard form. This conversion process relies critically on the introduction of specialized variables that bridge the gap between inequality constraints and the equality requirements of the simplex method. Within this framework, slack and surplus variables serve as fundamental computational tools that enable the systematic processing of constraint inequalities. These variables play a pivotal role in converting a linear programming problem into its standard form, thereby facilitating the implementation of the simplex algorithm for function minimization and maximization problems across diverse fields, including pharmaceutical research and development where optimization problems frequently arise in resource allocation, process optimization, and experimental design.

The significance of these variables extends beyond mere mathematical formalism; they provide critical insights into resource utilization and constraint bindingness within optimization models. For researchers and scientists engaged in complex optimization tasks, understanding the properties and applications of slack and surplus variables is essential for proper model formulation and interpretation of computational results. This technical guide examines the theoretical foundations and practical applications of these variables within the broader context of simplex-based optimization, with particular emphasis on their role in constraint transformation and solution analysis.

Theoretical Foundations

Standard Form Requirements for the Simplex Method

The simplex method operates exclusively on linear programming problems expressed in standard form, which imposes specific structural requirements. According to the foundational principles of linear programming, the standard form requires all constraints to be expressed as equations rather than inequalities, with all decision variables satisfying non-negativity conditions [10] [9]. This formulation creates a mathematically consistent framework in which the system of constraints defines a convex polyhedron whose extreme points are the candidate optimal solutions.

The transformation to standard form represents a crucial preprocessing step that enables the application of the simplex algorithm's iterative logic. Without this transformation, the fundamental operations of the simplex method – moving from one basic feasible solution to an adjacent one while monotonically improving the objective function value – would not be computationally feasible [9]. The standard form creates the necessary conditions for identifying basic feasible solutions, which serve as the starting points for the simplex algorithm's traversal of the solution space.

Inequality Constraints in Practical Optimization Problems

In real-world optimization scenarios, particularly in scientific research and drug development, constraints naturally emerge as inequalities representing physical limitations, resource availability thresholds, or regulatory requirements. These inequalities typically fall into two fundamental categories: "less than or equal to" (≤) constraints that often represent resource capacity limits, and "greater than or equal to" (≥) constraints that frequently model minimum requirement specifications [16] [17].

The prevalence of inequality constraints in practical optimization problems necessitates a robust methodological approach for their incorporation into the simplex framework. For drug development professionals, these constraints might represent budgetary limitations (≤ constraints) or minimum efficacy thresholds (≥ constraints). The ability to systematically transform these practical constraints into a mathematically consistent form is therefore not merely an academic exercise but an essential step in applying optimization techniques to real-world research problems.

Variable Definitions and Core Concepts

Slack Variables: Definition and Purpose

Slack variables represent the unused resources in a linear programming model and are added to "less than or equal to" (≤) constraints to transform them into equations [16] [18]. Mathematically, for a constraint of the form \(a_{i1}x_1 + a_{i2}x_2 + \dots + a_{in}x_n \leq b_i\), the corresponding equation becomes \(a_{i1}x_1 + a_{i2}x_2 + \dots + a_{in}x_n + s_i = b_i\), where \(s_i\) is the slack variable with \(s_i \geq 0\) [16].

The conceptual interpretation of slack variables extends beyond their mathematical definition. In resource allocation problems, these variables quantify the amount of unused or idle resources within an optimal solution. A zero value for a slack variable indicates that the corresponding constraint is "binding" or "active," meaning that the resource is fully utilized [16]. Conversely, a positive value signals that the resource is not fully utilized in the current solution. This interpretation provides valuable insights for researchers analyzing optimization results, particularly in scenarios where resource utilization efficiency is a critical performance metric.

Surplus Variables: Definition and Purpose

Surplus variables (sometimes called negative slack variables) represent the extent to which a solution exceeds a minimum requirement and are subtracted from "greater than or equal to" (≥) constraints to transform them into equations [16] [17]. For a constraint of the form \(a_{j1}x_1 + a_{j2}x_2 + \dots + a_{jn}x_n \geq b_j\), the corresponding equation becomes \(a_{j1}x_1 + a_{j2}x_2 + \dots + a_{jn}x_n - t_j = b_j\), where \(t_j\) is the surplus variable with \(t_j \geq 0\) [16].

Surplus variables quantify the excess amount by which a solution satisfies a minimum requirement constraint. In pharmaceutical research contexts, these might represent how much a particular drug formulation exceeds minimum efficacy thresholds or safety standards. Similar to slack variables, a zero value for a surplus variable indicates that the constraint is exactly satisfied (binding), while a positive value indicates the solution exceeds the minimum requirement. This information is particularly valuable for sensitivity analysis and understanding the marginal impact of constraint modifications on the optimal solution.
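The interpretation above can be made concrete by evaluating each constraint's slack or surplus at a candidate point and flagging the binding ones. The helper `slack_surplus` is hypothetical; it is applied to the primal example from the duality discussion (x₁ + 2x₂ ≥ 40, x₁ + x₂ ≥ 30).

```python
def slack_surplus(rows, x, tol=1e-9):
    """Return (kind, value, binding) for each constraint evaluated at point x.

    rows -- list of (coefficients, sense, rhs) with sense "<=" or ">="
    Slack   = rhs - a.x for "<=" rows (unused resource).
    Surplus = a.x - rhs for ">=" rows (excess over the requirement).
    """
    report = []
    for a, sense, rhs in rows:
        lhs = sum(ai * xi for ai, xi in zip(a, x))
        if sense == "<=":
            kind, value = "slack", rhs - lhs
        elif sense == ">=":
            kind, value = "surplus", lhs - rhs
        else:
            raise ValueError(f"unsupported sense {sense!r}")
        report.append((kind, value, abs(value) <= tol))
    return report

rows = [([1, 2], ">=", 40), ([1, 1], ">=", 30)]
print(slack_surplus(rows, [20, 10]))  # optimum: both surpluses are 0, both rows binding
print(slack_surplus(rows, [40, 0]))   # the second row now has surplus 10 and is not binding
```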

Comparative Analysis of Variable Types

Table 1: Comparative Characteristics of Slack, Surplus, and Artificial Variables

| Characteristic | Slack Variable | Surplus Variable | Artificial Variable |
|---|---|---|---|
| Constraint Association | ≤ constraints | ≥ constraints | = and ≥ constraints |
| Introduction Sign in Constraint | Added (+) | Subtracted (-) | Added (+) |
| Coefficient in Objective Function | 0 | 0 | -M (maximization) or +M (minimization) |
| Physical/Economic Interpretation | Unused resources | Excess over requirement | No physical meaning |
| Role in Initial Solution | Can serve as initial basic variables | Cannot serve as initial basic variables | Used to form initial identity matrix |
| Presence in Optimal Solution | May be positive or zero | May be positive or zero | Must be zero in final solution |

The distinction between these variable types extends beyond their mathematical formulation to their conceptual interpretation and practical implementation. While slack and surplus variables have clear economic or physical interpretations in applied contexts, artificial variables serve primarily as computational devices without real-world analogs [17]. This distinction is crucial for researchers interpreting optimization results, as the values of slack and surplus variables in the final solution provide actionable insights into constraint bindingness and resource utilization.

Mathematical Formalization

Conversion Methodology for Inequality Constraints

The transformation of inequality constraints into equality form follows a systematic methodology that varies according to the direction of the inequality. For a "less than or equal to" constraint expressed as \( \sum_j a_{ij}x_j \leq b_i \), the conversion involves adding a slack variable \( s_i \), resulting in \( \sum_j a_{ij}x_j + s_i = b_i \) with \( s_i \geq 0 \) [16] [18]. Conversely, for a "greater than or equal to" constraint of the form \( \sum_j a_{ij}x_j \geq b_i \), the conversion requires subtracting a surplus variable \( t_i \), yielding \( \sum_j a_{ij}x_j - t_i = b_i \) with \( t_i \geq 0 \) [16] [17].

This transformation process must be applied consistently to all inequality constraints within the linear programming model. The resulting system of equations defines the feasible region in a form amenable to the simplex algorithm. Equality (=) and ≥ constraints lack an obvious initial basic variable, so artificial variables are introduced for them and then driven out of the basis, either by giving them a large penalty coefficient M in the objective function (the Big-M method) or by minimizing their sum in Phase I of the two-phase simplex method [17] [19]. Together, these steps allow any linear programming problem, regardless of its original constraint structure, to be transformed into the standard form required by the simplex algorithm.

Impact on Objective Function Formulation

The introduction of slack and surplus variables necessitates specific considerations for the objective function formulation. Since these variables represent unused resources or excess amounts beyond requirements, they typically do not directly contribute to the objective function value. Consequently, slack and surplus variables are assigned zero coefficients in the objective function [16] [17] [18]. For a standard maximization problem with objective function \( Z = \sum_j c_j x_j \), the modified objective function becomes \( Z = \sum_j c_j x_j + 0\sum_i s_i + 0\sum_i t_i \), where \( s_i \) and \( t_i \) represent slack and surplus variables respectively.

This treatment contrasts sharply with that of artificial variables, which receive large penalty coefficients (commonly denoted as +M for minimization problems and -M for maximization problems) to ensure their removal from the basis during the optimization process [17] [19]. The differential treatment of these variable types in the objective function reflects their distinct roles in the solution process: slack and surplus variables are legitimate components of the solution that may appear in the final basis, while artificial variables are computational artifacts that must be eliminated to obtain a genuine feasible solution.
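For illustration, consider a minimization problem with two ≥ constraints, where the objective coefficients 12 and 16 are assumed values. After subtracting surplus variables and adding artificial variables, a Big-M formulation could read:

```latex
\begin{aligned}
\min\; Z &= 12x_1 + 16x_2 + 0t_1 + 0t_2 + M a_1 + M a_2 \\
\text{s.t.}\quad x_1 + 2x_2 - t_1 + a_1 &= 40 \\
x_1 + x_2 - t_2 + a_2 &= 30 \\
x_1, x_2, t_1, t_2, a_1, a_2 &\ge 0
\end{aligned}
```

Because M is chosen much larger than the other cost coefficients, any solution that keeps \(a_1\) or \(a_2\) positive is heavily penalized, so the minimization pushes both artificial variables to zero whenever the original constraints are feasible.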

Experimental Protocols and Implementation

Step-by-Step Conversion Protocol

The implementation of constraint conversion follows a systematic experimental protocol that ensures mathematical consistency and prepares the optimization problem for simplex method application:

- Constraint Classification: Identify and categorize all constraints based on inequality direction (≤, ≥) or equality (=) [9].

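- Variable Introduction Strategy: add a slack variable to each ≤ constraint; for each ≥ constraint, subtract a surplus variable and add an artificial variable; add an artificial variable to each = constraint.

- Objective Function Modification: assign zero objective coefficients to slack and surplus variables, and give each artificial variable a penalty coefficient (+M for minimization, -M for maximization) or remove it through Phase I of the two-phase method.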

- Non-negativity Enforcement: Ensure all decision variables, slack variables, surplus variables, and artificial variables maintain non-negativity constraints [9].

This protocol provides a standardized approach to problem transformation that can be applied systematically across diverse optimization scenarios. Provided every right-hand side is first made non-negative (multiplying a constraint by -1 and reversing its inequality where necessary), the resulting formulation guarantees that the initial simplex tableau contains a complete identity matrix, formed by the slack and artificial columns, which identifies an initial basic feasible solution.
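The protocol can be sketched as a small Python function. The name `to_standard_form`, the column labels (`s`, `t`, `a`), and the use of exact `Fraction` arithmetic are illustrative choices, not part of any established library; objective coefficients are deliberately left out.

```python
from fractions import Fraction

def to_standard_form(rows, senses, rhs):
    """Hypothetical helper: rewrite each constraint a_i.x (<=, >=, =) b_i as an equation.

    <= rows get a slack column (+1); >= rows get a surplus column (-1) plus an
    artificial column (+1); = rows get an artificial column (+1).
    Returns (augmented_rows, rhs, column_labels, initial_basis).
    """
    flip = {"<=": ">=", ">=": "<=", "=": "="}
    rows = [[Fraction(a) for a in r] for r in rows]
    rhs = [Fraction(b) for b in rhs]
    senses = list(senses)
    m, n = len(rows), len(rows[0])
    labels = [f"x{j + 1}" for j in range(n)]
    extra, basis = [], []          # extra: (label, column) pairs added after the x columns
    for i in range(m):
        if rhs[i] < 0:             # make b_i >= 0 by multiplying the row by -1
            rows[i] = [-a for a in rows[i]]
            rhs[i] = -rhs[i]
            senses[i] = flip[senses[i]]
        unit = [Fraction(1 if k == i else 0) for k in range(m)]
        if senses[i] == "<=":
            extra.append((f"s{i + 1}", unit))                      # slack
            basis.append(f"s{i + 1}")
        else:
            if senses[i] == ">=":
                extra.append((f"t{i + 1}", [-u for u in unit]))    # surplus
            extra.append((f"a{i + 1}", unit))                      # artificial
            basis.append(f"a{i + 1}")
    augmented = [rows[i] + [col[i] for _, col in extra] for i in range(m)]
    labels += [label for label, _ in extra]
    return augmented, rhs, labels, basis

aug, b, labels, basis = to_standard_form([[1, 2], [1, 1]], [">=", ">="], [40, 30])
print(labels)  # ['x1', 'x2', 't1', 'a1', 't2', 'a2']
print(basis)   # ['a1', 'a2']
```

Per the protocol, the objective would then give 0 to the slack and surplus columns and ±M (or a Phase I objective) to the artificial columns; the artificial and slack columns together form the identity matrix of the initial basis.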

Workflow Visualization for Inequality Conversion

The logical relationship between different constraint types and their corresponding conversion methodologies can be visualized through the following workflow:

Figure 1: Workflow for Converting Inequalities to Standard Form

Table 2: Variable Introduction Patterns in Standard Form Conversion

| Constraint Type | Slack Variables | Surplus Variables | Artificial Variables | Objective Coefficients |
|---|---|---|---|---|
| ≤ (Less than or equal) | 1 per constraint | 0 | 0 | 0 for slack variables |
| ≥ (Greater than or equal) | 0 | 1 per constraint | 1 per constraint | 0 for surplus variables, ±M for artificial variables |
| = (Equality) | 0 | 0 | 1 per constraint | ±M for artificial variables |

The relationships outlined in Table 2 let researchers predict how problem size grows during standard form conversion: a problem with \(n\) decision variables, \(p\) ≤ constraints, \(q\) ≥ constraints, and \(r\) equality constraints has \(n + p + 2q + r\) variables in standard form. The additional variables necessarily expand the dimensionality of the solution space, although the simplex method navigates this expanded space efficiently by restricting attention to basic feasible solutions. For large-scale problems encountered in pharmaceutical research and development, these patterns help anticipate computational requirements and inform model formulations that avoid unnecessary complexity.

The Scientist's Toolkit: Research Reagent Solutions

Essential Computational Materials

Table 3: Essential Variables in Linear Programming Formulation

| Reagent/Variable Type | Primary Function | Implementation Role | Interpretation Context |
|---|---|---|---|
| Slack Variable | Converts ≤ constraints to equations | Added to left-hand side of constraint | Quantifies unused resources in optimal solution |
| Surplus Variable | Converts ≥ constraints to equations | Subtracted from left-hand side of constraint | Measures excess over minimum requirements |
| Artificial Variable | Enables initial basic feasible solution for ≥ and = constraints | Added to left-hand side with penalty coefficient in objective | Computational artifact with no physical interpretation |
| Decision Variable | Represents fundamental choices in optimization problem | Maintains original problem structure | Primary optimization targets with real-world significance |
| Big-M Coefficient | Penalizes artificial variables in objective function | Large numerical value forcing artificial variables from basis | Computational device to enforce constraint satisfaction |

This toolkit framework provides researchers with a standardized vocabulary and conceptual framework for implementing simplex-based optimization. The tabular representation facilitates quick reference during model formulation and solution interpretation phases of research projects. By understanding the distinct roles and properties of each variable type, research scientists can more effectively formulate optimization models that accurately represent their experimental constraints and objective criteria.

Interpretation and Analysis of Results

Diagnostic Interpretation of Variable Values

The values of slack and surplus variables in the final simplex solution provide critical diagnostic information about the optimization results. For slack variables, positive values indicate that the corresponding resources are not fully utilized in the optimal solution, while zero values signify that the resources are constraining the optimization [16]. Similarly, for surplus variables, positive values indicate the degree to which the solution exceeds the minimum requirement specified in the constraint, while zero values indicate exact satisfaction of the requirement.

This interpretive framework enables researchers to distinguish between binding constraints (those that exactly satisfy the inequality, with zero slack/surplus) and non-binding constraints (those with positive slack/surplus values) [16]. This distinction is crucial for sensitivity analysis and resource allocation decisions, as binding constraints represent the actual limitations governing the optimal solution, while non-binding constraints have "slack" that could be reallocated without affecting the current solution's optimality. For drug development professionals, this analysis can identify which resource limitations or regulatory requirements fundamentally govern the optimization outcome and which represent flexible boundaries.
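The binding/non-binding classification can be sketched as follows. The helper name `classify_constraints`, the tolerance, and the example constraints (including the hypothetical capacity limit x1 ≤ 35) are assumptions for illustration.

```python
def classify_constraints(rows, senses, rhs, x, tol=1e-9):
    """Hypothetical helper: slack/surplus value and status for each constraint at point x."""
    report = []
    for a, sense, b in zip(rows, senses, rhs):
        lhs = sum(ai * xi for ai, xi in zip(a, x))
        if sense == "=":
            value = lhs - b
            status = "binding" if abs(value) <= tol else "violated"
        else:
            # slack s = b - a.x for <= rows, surplus t = a.x - b for >= rows
            value = b - lhs if sense == "<=" else lhs - b
            if value < -tol:
                status = "violated"
            elif value <= tol:
                status = "binding"
            else:
                status = "non-binding"
        report.append((value, status))
    return report

# Illustrative constraints: two minimum requirements and one capacity limit (x1 <= 35).
rows = [[1, 2], [1, 1], [1, 0]]
senses = [">=", ">=", "<="]
rhs = [40, 30, 35]
for value, status in classify_constraints(rows, senses, rhs, x=[20, 10]):
    print(value, status)
# 0 binding
# 0 binding
# 15 non-binding
```

With floating-point solver output, the tolerance keeps values such as 1e-12 from being misread as non-binding.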

Application in Pharmaceutical Research Context

In pharmaceutical research and development, the interpretation of slack and surplus variables extends beyond mathematical abstraction to practical decision support. For example, in drug formulation optimization:

- Slack variables in budget constraints indicate unused financial resources that could be reallocated to other research initiatives.

- Slack variables in raw material constraints reveal excess inventory capacity that might be reduced for cost savings.

- Surplus variables in efficacy constraints show how much a formulation exceeds minimum therapeutic thresholds, potentially indicating opportunities for cost reduction while maintaining efficacy.

- Zero slack in production capacity constraints highlights potential bottlenecks that merit investment for expansion.

This analytical approach transforms the mathematical solution into actionable business intelligence, enabling research managers to make informed decisions about resource allocation, process improvement, and capacity planning. The systematic interpretation of these variable values bridges the gap between computational optimization and practical research management.

Advanced Applications and Methodological Extensions

Integration with Two-Phase Simplex Method

The conversion of inequality constraints using slack and surplus variables forms the foundation for more advanced simplex implementations, particularly the two-phase simplex method [9]. In Phase I of this approach, the primary objective is to eliminate artificial variables from the basis by minimizing their sum, effectively driving the solution toward feasibility. The successful completion of Phase I yields a basic feasible solution that serves as the starting point for Phase II, where the original objective function is optimized.
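For two illustrative ≥ constraints, \(x_1 + 2x_2 \ge 40\) and \(x_1 + x_2 \ge 30\), the Phase I problem and its objective rewritten in terms of the non-basic variables would be:

```latex
\begin{aligned}
\text{Phase I:}\quad \min\; w &= a_1 + a_2 \\
\text{s.t.}\quad x_1 + 2x_2 - t_1 + a_1 &= 40 \\
x_1 + x_2 - t_2 + a_2 &= 30 \\
x_1, x_2, t_1, t_2, a_1, a_2 &\ge 0 \\[4pt]
\text{eliminating the basic } a_1, a_2:\quad w &= 70 - 2x_1 - 3x_2 + t_1 + t_2
\end{aligned}
```

The negative coefficients on \(x_1\) and \(x_2\) show that bringing either variable into the basis reduces \(w\). Phase I ends with \(w = 0\) exactly when the original constraints are feasible; a positive optimal \(w\) proves infeasibility.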

This methodological extension is particularly valuable for problems with complex constraint structures that necessitate numerous artificial variables. The two-phase approach provides a systematic procedure for navigating to the feasible region before commencing optimization proper. For research scientists working with challenging optimization problems, particularly those featuring numerous ≥ and = constraints, this approach ensures robust convergence to optimal solutions while maintaining mathematical integrity throughout the computational process.

Sensitivity Analysis and Parametric Programming

The values of slack and surplus variables in the optimal solution provide critical inputs for sensitivity analysis, which examines how changes in constraint parameters (the b_i values) affect the optimal solution [16]. Constraints with positive slack or surplus values have a range of flexibility within which their right-hand side values can be modified without altering the optimal basis. Conversely, binding constraints with zero slack/surplus typically have narrower ranges of permissible variation before the basis changes.
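For a non-binding constraint the range can be stated exactly, assuming its slack or surplus variable is basic in the optimal tableau. In the notation introduced here, \(a_i^{\mathsf T}\) is the i-th row of the constraint matrix, \(s_i^*\) and \(t_j^*\) are the optimal slack and surplus values, and \(b_i'\) is the modified right-hand side:

```latex
\begin{aligned}
a_i^{\mathsf T}x \le b_i,\; s_i^* > 0 \;&\Longrightarrow\; b_i - s_i^* \le b_i' < \infty \\
a_j^{\mathsf T}x \ge b_j,\; t_j^* > 0 \;&\Longrightarrow\; -\infty < b_j' \le b_j + t_j^*
\end{aligned}
```

Within these ranges only the value of that slack or surplus variable changes; the optimal decision variables and the objective value stay the same.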

This analytical capability is particularly valuable in pharmaceutical research environments characterized by uncertainty and changing parameters. By understanding how their optimal solutions respond to parameter variations, research managers can build robust optimization models that accommodate fluctuations in resource availability, regulatory requirements, and market conditions. The systematic analysis of slack and surplus values thus extends beyond single-point optimization to support dynamic decision-making in evolving research contexts.

Linear programming minimization problems represent a fundamental class of optimization challenges with widespread applications across logistics, finance, and resource allocation. The simplex method, developed by George Dantzig in 1947, provides the mathematical foundation for solving these problems, and a standard approach for minimization problems is to transform the minimization objective into a dual maximization problem that the simplex method then solves [6] [9]. This approach enables researchers and practitioners to determine optimal solutions under multiple constraints, making it particularly valuable for complex decision-making in fields including pharmaceutical development and supply chain management.

The core insight driving this methodology is that every minimization problem corresponds to a dual maximization problem, and the solution to this dual problem directly yields the solution to the original minimization problem [3]. The simplex method computes that solution geometrically, systematically moving between extreme points of the polyhedral feasible region until it reaches the optimum [9].

Theoretical Foundations of Duality

The Mathematical Framework of Duality

The transformation from minimization to dual maximization follows a precise mathematical procedure. For a primal minimization problem with objective function Z = cᵀx subject to constraints Ax ≥ b and x ≥ 0, the corresponding dual maximization problem has objective function W = bᵀy subject to constraints Aᵀy ≤ c and y ≥ 0 [3].

This relationship is governed by the strong duality theorem of linear programming, which establishes that if both the primal and dual problems have feasible solutions, then both have optimal solutions and their optimal objective values are equal [3]. The dual variables (y) have significant economic interpretations, often representing shadow prices that indicate the marginal value of additional resources in constraint-limited environments.

Consider the following primal minimization problem:

The corresponding dual maximization problem becomes:

This transformation demonstrates how the constraint right-hand sides of the primal become the objective function coefficients of the dual, and vice versa, while the coefficient matrix is transposed [3].
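As an illustration, take the constraints used in the graphical example later in this article and assume, purely for illustration, the objective coefficients 12 and 16. The primal, its dual, and their optimal values are:

```latex
\begin{aligned}
&\text{Primal:} && \min\; Z = 12x_1 + 16x_2 && \text{s.t. } x_1 + 2x_2 \ge 40,\; x_1 + x_2 \ge 30,\; x_1, x_2 \ge 0 \\
&\text{Dual:}   && \max\; W = 40y_1 + 30y_2 && \text{s.t. } y_1 + y_2 \le 12,\; 2y_1 + y_2 \le 16,\; y_1, y_2 \ge 0 \\
&\text{Optima:} && Z^* = 12(20) + 16(10) = 400 && W^* = 40(4) + 30(8) = 400
\end{aligned}
```

The primal optimum is attained at \((x_1, x_2) = (20, 10)\) and the dual optimum at \((y_1, y_2) = (4, 8)\); the two optimal values coincide, as strong duality requires.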

Geometrical Interpretation

Geometrically, the simplex method operates by navigating the edges of a polyhedron defined by the problem constraints [6] [9]. Each vertex of this polyhedron represents a basic feasible solution, and the algorithm systematically moves from one vertex to an adjacent vertex with improved objective function value until reaching the optimum.

The dual problem provides a complementary perspective on this geometrical structure. While the primal problem searches for the optimal vertex within the constraint polyhedron, the dual problem can be interpreted as searching for the optimal supporting hyperplane to that same polyhedron [9]. This geometrical duality ensures that when the primal minimization problem reaches its minimum, the dual maximization problem simultaneously reaches its maximum at the same objective value.

Table 1: Primal-Dual Relationship Components

| Primal Component (Minimization) | Dual Component (Maximization) |
|---|---|
| Objective function coefficients | Constraint right-hand sides |
| Constraint right-hand sides | Objective function coefficients |
| Coefficient matrix A | Transposed matrix Aᵀ |
| "Greater than or equal to" constraints | "Less than or equal to" constraints |
| Non-negative variables | Non-negative variables |

The Simplex Method Algorithm

Standard Form Transformation

The simplex method requires linear programs to be in standard form before optimization can begin. This transformation involves three key operations [9]:

Handling Lower Bounds: For variables with lower bounds other than zero, new variables are introduced representing the difference between the original variable and its bound. The original variable is then eliminated through substitution.

Inequality Conversion: For the remaining inequality constraints, slack variables (or, for ≥ constraints, surplus variables) are introduced to convert the constraints to equalities. These variables represent the difference between the two sides of the inequality and must be non-negative.

Unrestricted Variable Management: Unrestricted variables are replaced with the difference of two restricted non-negative variables.

After these transformations, the feasible region assumes the form Ax = b with all variables non-negative, creating the canonical representation required for simplex operations [9].
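The three operations can be summarized as substitution rules. In the notation introduced here, \(a_i^{\mathsf T}\) is the i-th row of the constraint matrix and \(\ell_j\) a lower bound on \(x_j\):

```latex
\begin{aligned}
x_j \ge \ell_j &\;\Longrightarrow\; x_j = x_j' + \ell_j,\quad x_j' \ge 0 \\
a_i^{\mathsf T}x \le b_i &\;\Longrightarrow\; a_i^{\mathsf T}x + s_i = b_i,\quad s_i \ge 0 \\
a_i^{\mathsf T}x \ge b_i &\;\Longrightarrow\; a_i^{\mathsf T}x - t_i = b_i,\quad t_i \ge 0 \\
x_k \text{ unrestricted} &\;\Longrightarrow\; x_k = x_k^{+} - x_k^{-},\quad x_k^{+}, x_k^{-} \ge 0
\end{aligned}
```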

Tableau Representation and Pivot Operations

The simplex method utilizes a tableau representation to organize computational steps efficiently. The initial tableau takes the form:

The first row defines the objective function, while subsequent rows specify the constraints [9].

Pivot operations constitute the core mechanical process of the simplex algorithm, implementing the geometrical movement between adjacent vertices of the feasible polyhedron [9]. Each pivot operation involves:

- Selecting a non-basic variable to enter the basis (typically one with negative reduced cost for minimization)

- Identifying the basic variable to leave the basis (via the minimum ratio test)

- Performing elementary row operations to update the tableau

This process continues until no further improvements can be made, indicating an optimal solution has been reached.
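One pivot, including the entering-variable choice, the ratio test, and the row operations, might be sketched in Python as follows. The tableau layout, the function name `pivot_step`, and the small example problem are assumptions made for illustration; the sketch uses Dantzig's most-negative-reduced-cost rule and has no anti-cycling safeguard.

```python
from fractions import Fraction

def pivot_step(tableau):
    """Perform one simplex pivot on a minimization tableau (illustrative sketch).

    Assumed layout: tableau[0] is the cost row (reduced costs, then -z),
    tableau[1:] are constraint rows [coefficients..., right-hand side].
    Returns "optimal", "unbounded", or the (row, column) that was pivoted on.
    """
    cost = tableau[0]
    # Entering variable: the most negative reduced cost (Dantzig's rule).
    col = min(range(len(cost) - 1), key=lambda j: cost[j])
    if cost[col] >= 0:
        return "optimal"
    # Leaving variable: minimum ratio rhs / a_ij over rows with a_ij > 0.
    ratios = [(row[-1] / row[col], i)
              for i, row in enumerate(tableau) if i > 0 and row[col] > 0]
    if not ratios:
        return "unbounded"
    _, r = min(ratios)
    # Elementary row operations: scale the pivot row, then clear the column elsewhere.
    p = tableau[r][col]
    tableau[r] = [v / p for v in tableau[r]]
    for i, row in enumerate(tableau):
        if i != r and row[col] != 0:
            factor = row[col]
            tableau[i] = [v - factor * w for v, w in zip(row, tableau[r])]
    return (r, col)

# Minimize -40 y1 - 30 y2  s.t.  y1 + y2 <= 12,  2 y1 + y2 <= 16  (slacks s1, s2 start basic)
T = [[Fraction(v) for v in row] for row in (
    [-40, -30, 0, 0, 0],
    [1, 1, 1, 0, 12],
    [2, 1, 0, 1, 16],
)]
print(pivot_step(T))  # (2, 0): y1 enters, the slack of row 2 leaves
```

Calling `pivot_step` again until it returns `"optimal"` completes the method; for this small problem one more pivot suffices. Exact `Fraction` arithmetic sidesteps the round-off that a floating-point tableau would need tolerances for.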

Table 2: Simplex Method Computational Steps

| Step | Operation | Purpose | Mathematical Implementation |
|---|---|---|---|
| 1 | Standard Form Conversion | Prepare problem for algorithm | Introduce slack/surplus variables |
| 2 | Initial Basic Feasible Solution | Establish starting point | Identity matrix in constraint columns |
| 3 | Optimality Test | Check if solution is optimal | All reduced costs non-negative? |
| 4 | Pivot Column Selection | Choose entering variable | Most negative reduced cost |
| 5 | Pivot Row Selection | Choose leaving variable | Minimum ratio test (bᵢ/aᵢⱼ over rows with aᵢⱼ > 0) |
| 6 | Pivot Operation | Update basis | Gaussian elimination on tableau |
| 7 | Iteration | Repeat process | Return to step 3 |

Recent Advances in Simplex Methodology

Theoretical Efficiency Breakthroughs

Despite its long history, the simplex method continues to evolve theoretically. A significant breakthrough emerged from the work of Spielman and Teng in 2001, who showed that when the problem inputs are subjected to tiny random perturbations, the simplex method's expected running time is polynomial rather than exponential [6]. This "smoothed analysis" showed that such perturbations prevent the pathological cases that cause exponential runtimes.

Building on this foundation, recent research by Huiberts and Bach has further optimized the simplex method's theoretical guarantees [6] [20]. Their work establishes that long-feared exponential runtimes do not materialize in practice and provides stronger mathematical support for the algorithm's observed efficiency. By incorporating additional randomness into the algorithm, they have demonstrated significantly lower guaranteed runtimes than previously established [20].

Complexity Analysis

The theoretical complexity of the simplex method has been a subject of intensive study since its inception. While the algorithm performs efficiently in practical applications, its worst-case complexity was proven to be exponential in 1972 [6]. This apparent contradiction between practical performance and theoretical limitations persisted for decades until the smoothed analysis framework provided a resolution.

The recent work of Bach and Huiberts has further refined our understanding of this complexity gap, demonstrating that runtimes scale significantly better than traditional worst-case analyses suggested [6]. Their approach establishes that no algorithm based on Spielman and Teng's framework can run faster than the bound they derived, essentially completing our understanding of this particular model of the simplex method [6].

Experimental Protocols and Implementation

Methodology for Minimization-to-Dual Transformation

The experimental protocol for converting minimization problems to dual maximization problems follows a systematic procedure:

Problem Formulation: Precisely define the primal minimization problem with its objective function and constraints. Ensure all constraints follow the form \(a_{i1}x_1 + \dots + a_{in}x_n \geq b_i\) and all variables are non-negative [3].

Matrix Representation: Construct the matrix representation of the primal problem in the form:

\[ \begin{bmatrix} A & b \\ c^{T} & 0 \end{bmatrix} \]

where A represents the constraint coefficients, b the right-hand side values, and c the objective function coefficients [3].

Dual Construction: Create the dual problem by transposing the matrix and swapping the roles of b and c. The resulting dual problem becomes a maximization problem with constraints of the form Aᵀy ≤ c and y ≥ 0 [3].

Validation: Verify the duality relationship by ensuring the number of dual variables equals the number of primal constraints and the number of dual constraints equals the number of primal variables.

This methodology ensures the preservation of the fundamental duality relationship, guaranteeing that the optimal solution to the dual problem corresponds to the optimal solution of the primal minimization problem.
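The transpose-based construction and the dimension check can be sketched as follows. `build_dual` is a hypothetical helper, and the primal data in the usage line (objective coefficients 12 and 16 in particular) are illustrative assumptions.

```python
def build_dual(c, A, b):
    """Hypothetical helper: dual of  min c.x  s.t. A x >= b, x >= 0.

    Returns (b, A^T, c), read as  max b.y  s.t. A^T y <= c, y >= 0.
    """
    m, n = len(A), len(c)
    if len(b) != m or any(len(row) != n for row in A):
        raise ValueError("inconsistent primal dimensions")
    # Augmented primal matrix [[A, b], [c^T, 0]], then transpose it.
    M = [list(row) + [bi] for row, bi in zip(A, b)] + [list(c) + [0]]
    MT = [list(col) for col in zip(*M)]
    dual_A = [row[:-1] for row in MT[:-1]]   # A^T: one row per primal variable
    dual_rhs = [row[-1] for row in MT[:-1]]  # c becomes the right-hand side
    dual_obj = MT[-1][:-1]                   # b becomes the objective
    # Validation: #dual variables == #primal constraints, #dual constraints == #primal variables.
    assert len(dual_obj) == m and len(dual_A) == n
    return dual_obj, dual_A, dual_rhs

obj, A_T, rhs = build_dual([12, 16], [[1, 2], [1, 1]], [40, 30])
print(obj, A_T, rhs)   # [40, 30] [[1, 1], [2, 1]] [12, 16]
```

The result reads as: maximize 40y₁ + 30y₂ subject to y₁ + y₂ ≤ 12, 2y₁ + y₂ ≤ 16, y ≥ 0.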

Computational Implementation Framework

Implementing the simplex method for solving dual maximization problems requires careful attention to numerical stability and computational efficiency:

Tableau Initialization: Construct the initial simplex tableau with the dual problem in standard form, incorporating slack variables as needed to convert inequalities to equalities [9].

Phase I Implementation: For problems without an obvious initial basic feasible solution, implement Phase I of the simplex method to establish feasibility before optimizing [9].

Pivot Strategy: Employ a structured pivot selection strategy, such as the steepest-edge rule or randomized selection, to minimize iterations while maintaining numerical stability [6].

Termination Conditions: Implement precise termination criteria detecting optimality (all reduced costs non-negative) or unboundedness (no positive elements in pivot column).

Solution Extraction: Recover the optimal solution to both the dual maximization problem and the original minimization problem from the final tableau.

This implementation framework ensures robust performance across diverse problem instances while maintaining computational efficiency.
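The framework can be sketched end to end for problems of the form minimize cᵀx subject to Ax ≤ b, x ≥ 0 with b ≥ 0, where the slack variables give an immediate starting basis, so no Phase I is needed. The function `simplex_min` is hypothetical, uses Dantzig's rule without anti-cycling protection, and is meant for small exact-arithmetic experiments rather than production use. For a problem in this form, the reduced costs left under the slack columns are the negated simplex multipliers; when the tableau solves a dual maximization problem written as a minimization, those entries give the optimal solution of the original primal minimization problem.

```python
from fractions import Fraction

def simplex_min(c, A, b):
    """Minimize c.x subject to A x <= b, x >= 0, assuming every b_i >= 0.

    Illustrative sketch: exact arithmetic, Dantzig's rule, no anti-cycling.
    Returns (x, z, slack_costs), where slack_costs are the final reduced
    costs of the slack columns (the negated simplex multipliers).
    """
    m, n = len(A), len(c)
    # Tableau initialization: cost row [c | 0 | 0], constraint rows [A_i | e_i | b_i].
    T = [[Fraction(v) for v in list(c) + [0] * (m + 1)]]
    for i in range(m):
        unit = [1 if k == i else 0 for k in range(m)]
        T.append([Fraction(v) for v in list(A[i]) + unit + [b[i]]])
    basis = [n + i for i in range(m)]          # the slack variables start basic
    while True:
        col = min(range(n + m), key=lambda j: T[0][j])
        if T[0][col] >= 0:                     # optimality: no negative reduced cost
            break
        ratios = [(T[i][-1] / T[i][col], i) for i in range(1, m + 1) if T[i][col] > 0]
        if not ratios:                         # unboundedness: no positive pivot-column entry
            raise ValueError("objective is unbounded below")
        _, r = min(ratios)
        p = T[r][col]
        T[r] = [v / p for v in T[r]]
        for i in range(m + 1):
            if i != r and T[i][col] != 0:
                f = T[i][col]
                T[i] = [v - f * w for v, w in zip(T[i], T[r])]
        basis[r - 1] = col
    # Solution extraction: basic variables take the right-hand-side values.
    x = [Fraction(0)] * n
    for row, var in enumerate(basis, start=1):
        if var < n:
            x[var] = T[row][-1]
    return x, -T[0][-1], T[0][n:n + m]

# Illustrative dual problem: maximize 40 y1 + 30 y2, written as minimize -40 y1 - 30 y2.
y, z, slack_costs = simplex_min([-40, -30], [[1, 1], [2, 1]], [12, 16])
print([int(v) for v in y], -z)          # [4, 8] 400
print([int(v) for v in slack_costs])    # [20, 10]
```

In this illustrative run, the dual optimum W* = 400 is reached at y = (4, 8), and the slack-column entries (20, 10) recover the minimizer x = (20, 10) of the corresponding primal, minimize 12x₁ + 16x₂ subject to x₁ + 2x₂ ≥ 40 and x₁ + x₂ ≥ 30, whose optimal cost is also 400.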

Visualization of Methodological Relationships

Simplex Method Operational Workflow

Duality Relationship Mapping

Research Reagent Solutions

Table 3: Essential Computational Tools for Simplex Method Implementation

| Tool Category | Specific Implementation | Research Function | Application Context |
|---|---|---|---|
| Linear Programming Solvers | Commercial (CPLEX, Gurobi) | Large-scale problem optimization | Pharmaceutical supply chain logistics |
| Open-source Alternatives | GNU Linear Programming Kit (GLPK) | Algorithm prototyping and validation | Academic research and method development |
| Numerical Computation | MATLAB, Python with NumPy/SciPy | Matrix operations and tableau implementation | Experimental algorithm modification |
| Visualization Tools | Graphviz, MATLAB plotting | Geometrical representation of feasible regions | Educational illustration and analysis |
| Randomization Libraries | Standard template libraries | Implementation of randomized pivot rules | Smoothed analysis experimentation |

The mathematical principles governing the transformation from minimization to dual maximization problems represent a cornerstone of modern optimization theory. Through the simplex method and its recent theoretical advancements, researchers can efficiently solve complex resource allocation problems fundamental to drug development, supply chain management, and financial planning.

The enduring relevance of these principles is evidenced by continued theoretical breakthroughs, particularly in understanding the algorithm's complexity and performance characteristics. As optimization challenges grow in scale and complexity, the fundamental relationship between primal minimization and dual maximization will continue to provide the foundation for efficient computational solutions across scientific and industrial domains.

Graphical Interpretation of Minimization Solutions and Feasible Regions

Linear programming serves as a fundamental mathematical technique for determining optimal solutions in problems characterized by linear relationships, with minimization problems being particularly crucial in contexts such as cost reduction, resource conservation, and efficiency improvement. The graphical interpretation of minimization solutions and feasible regions provides an intuitive foundation for understanding the optimization landscape before proceeding to algorithmic implementations. Within the broader context of simplex method research, graphical analysis offers invaluable insights into problem structure, constraint interaction, and solution geometry that inform computational approaches. For researchers, scientists, and drug development professionals, mastering these graphical interpretations enables more effective problem formulation, solution validation, and algorithmic selection when addressing complex optimization challenges in fields ranging from pharmaceutical manufacturing to resource allocation in clinical trials.

The graphical method remains an essential pedagogical and analytical tool despite its dimensional limitations. As noted in foundational resources, "The graphical method is limited in solving LP problems having one or two decision variables. However, it provides a clear illustration of where the feasible and non-feasible regions are, as well as, vertices. Having a visual understanding of the problem helps with a more rational thought process" [21]. This visual understanding directly supports simplex method research by illuminating the geometric properties of feasible regions and the path of algorithmic traversal between vertices.

Theoretical Foundations: Feasible Regions and Optimal Solutions

Fundamental Definitions and Concepts

The graphical interpretation of linear programming minimization problems relies on several key concepts that form the vocabulary of optimization analysis:

Objective Function: The linear function Z = ax + by that requires minimization, where a and b are constants, and x and y are decision variables [22]. In minimization contexts, this typically represents cost, resource usage, or other metrics requiring reduction.

Constraints: The restrictions expressed as linear inequalities that define the conditions which must be satisfied for a solution to be valid [22]. These include both non-negative constraints (x ≥ 0, y ≥ 0) and general constraints representing resource limitations, requirements, or other boundaries.

Feasible Region: "The collection of all feasible solutions" [23] that satisfy all constraints simultaneously. Geometrically, this represents the intersection of multiple half-planes defined by the constraint inequalities [24].

Feasible Solutions: "Points within or on the boundary region [that] represent feasible solutions to the problem" [22]. Any solution outside this region violates at least one constraint and is therefore unacceptable.

Optimal Solution: The feasible solution that provides the minimum value of the objective function while satisfying all constraints. When the feasible region is non-empty and bounded, "the optimal solution is always one [of] the vertices of its feasible region (a corner point)" [21].

Mathematical Principles of Minimization

The mathematical foundation for graphical minimization rests on the properties of linear systems and convex geometry. The feasible region formed by the intersection of linear constraints creates a convex polygon (in two dimensions) or polyhedron (in higher dimensions). This convexity property guarantees that any local minimum will also be a global minimum, significantly simplifying the optimization process [22]. For minimization problems, the optimal solution occurs at a vertex point where constraint boundaries intersect, or in special cases, along an entire edge or face when multiple equivalent solutions exist [3].

The fundamental theorem underlying graphical and simplex approaches states: "If a linear program has a non-empty, bounded feasible region, then the optimal solution is always one [of] the vertices of its feasible region (a corner point)" [21]. This principle provides the theoretical justification for both graphical examination of corner points and the simplex method's vertex-hopping approach.

Graphical Methodology for Minimization Problems

Step-by-Step Graphical Solution Procedure

The graphical method for solving minimization problems follows a systematic procedure that transforms mathematical constraints into visual representations:

Step 1: Problem Formulation and Constraint Plotting. Begin by plotting each constraint inequality as a linear equation on the Cartesian plane. For example, the constraint 2x + 3y ≥ 30 would be plotted as the line 2x + 3y = 30. The inequality then determines which side of the line satisfies the constraint [22]. The intersection of all these half-planes (including non-negativity restrictions) forms the feasible region.

Step 2: Feasible Region Identification. Determine the feasible region by identifying the area that satisfies all constraints simultaneously. As noted in instructional resources, "The feasible region is the collection of all feasible solutions" [23]. This region may be bounded (forming a polygon) or unbounded (extending infinitely in some directions).

Step 3: Corner Point Determination. Identify all corner points (vertices) of the feasible region. These occur at the intersections of constraint boundaries. For example, in a problem with constraints x + 2y ≥ 40, x + y ≥ 30, and non-negativity restrictions, the corner points are (0,30), (40,0), and the intersection of the two constraint lines, (20,10) [3].

Step 4: Objective Function Evaluation. Evaluate the objective function at each corner point. Equivalently, the objective function's line can be shifted parallel to itself: "Our goal is to shift the objective function's line parallel to itself until it reaches the farthest point on the feasible region, minimizing the objective while still satisfying all constraints" [23]. For minimization, we seek the point that gives the smallest objective value.

Step 5: Optimal Solution Identification. Select the corner point that yields the minimum value of the objective function as the optimal solution. If the feasible region is unbounded, additional verification is needed to ensure an optimal solution exists [22].
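The five steps can be sketched as a corner-point enumeration for two decision variables. The function `graphical_minimize` is hypothetical, the constraints are those of the Step 3 example, and the objective Z = 12x + 16y is an assumed choice for illustration. For an unbounded feasible region the sketch only compares corner points and does not perform the extra check that Step 5 calls for.

```python
from fractions import Fraction
from itertools import combinations

def graphical_minimize(c, constraints):
    """Corner-point method for two decision variables (illustrative sketch).

    constraints: list of (a1, a2, sense, rhs) with sense "<=" or ">=",
    using integer coefficients; x >= 0 and y >= 0 are added automatically.
    Assumes the minimum exists; an unbounded region is not checked.
    """
    def feasible(x, y):
        if x < 0 or y < 0:
            return False
        for a1, a2, sense, rhs in constraints:
            lhs = a1 * x + a2 * y
            if (sense == "<=" and lhs > rhs) or (sense == ">=" and lhs < rhs):
                return False
        return True

    # Step 1: boundary lines p*x + q*y = r, including the two axes.
    lines = [(a1, a2, rhs) for a1, a2, _, rhs in constraints] + [(1, 0, 0), (0, 1, 0)]
    # Steps 2-3: intersect every pair of lines and keep the feasible points.
    corners = set()
    for (p1, q1, r1), (p2, q2, r2) in combinations(lines, 2):
        det = p1 * q2 - p2 * q1
        if det == 0:                           # parallel lines: no single intersection
            continue
        x = Fraction(r1 * q2 - r2 * q1, det)   # Cramer's rule
        y = Fraction(p1 * r2 - p2 * r1, det)
        if feasible(x, y):
            corners.add((x, y))
    # Steps 4-5: evaluate the objective at each corner and keep the smallest.
    values = {pt: c[0] * pt[0] + c[1] * pt[1] for pt in corners}
    best = min(values, key=values.get)
    return best, values[best], sorted(corners)

best, z, corners = graphical_minimize((12, 16), [(1, 2, ">=", 40), (1, 1, ">=", 30)])
print(tuple(int(v) for v in best), z)   # (20, 10) 400
```

Exact `Fraction` arithmetic avoids spurious infeasibility from round-off when a corner lies exactly on a constraint line.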

Workflow for Graphical Minimization

The following diagram illustrates the logical workflow for applying the graphical method to minimization problems:

Connecting Graphical Interpretation to the Simplex Method

Geometric Foundation of Algebraic Methods

The graphical interpretation provides essential geometric intuition for the simplex method's algebraic operations. While the graphical approach is dimensionally limited, "the algebraic method is designed to extend the graphical method results to multi-dimensional LP problem" [21]. Each vertex (corner point) in the graphical representation corresponds to a Basic Feasible Solution in simplex terminology, and movement along edges between vertices mirrors the simplex method's pivot operations [21].

The connection between graphical and algebraic approaches becomes evident when examining how both methods navigate the feasible region. In graphical terms, "If a linear program has a non-empty, bounded feasible region, then the optimal solution is always one [of] the vertices of its feasible region (a corner point)" [21]. The simplex method operationalizes this principle through an iterative process that moves from one basic feasible solution to an adjacent one, improving (or at least not worsening) the objective function with each pivot until optimality is reached [3].
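The correspondence between vertices and basic feasible solutions can be checked directly on the Step 3 example. The sketch below is an illustration, not a simplex implementation. It adds surplus variables s1 and s2 to x1 + 2x2 ≥ 40 and x1 + x2 ≥ 30, tries every choice of two basic variables (the other two are set to zero), and keeps the basic solutions with no negative component. The names `NAMES`, `solve_2x2`, and `basic_feasible_solutions` are illustrative, and the solver handles only two constraints.

```python
from fractions import Fraction
from itertools import combinations

# Example constraints in equality form, with surplus variables s1, s2 >= 0:
#   x1 + 2*x2 - s1      = 40
#   x1 +   x2      - s2 = 30
NAMES = ["x1", "x2", "s1", "s2"]
A = [[1, 2, -1, 0],
     [1, 1, 0, -1]]
b = [40, 30]


def solve_2x2(m, rhs):
    """Solve p*u + q*v = rhs[0], r*u + s*v = rhs[1] exactly; None if singular."""
    (p, q), (r, s) = m
    det = p * s - q * r
    if det == 0:
        return None
    return (Fraction(rhs[0] * s - q * rhs[1], det),
            Fraction(p * rhs[1] - r * rhs[0], det))


def basic_feasible_solutions(A, b):
    """Yield (basic variable names, x1, x2) for every feasible basis."""
    n = len(A[0])
    for basis in combinations(range(n), len(A)):
        sub = [[row[j] for j in basis] for row in A]  # columns of the basis
        sol = solve_2x2(sub, b)
        if sol is None or min(sol) < 0:
            continue  # singular basis, or violates nonnegativity
        full = [Fraction(0)] * n  # nonbasic variables are zero
        for j, v in zip(basis, sol):
            full[j] = v
        yield [NAMES[j] for j in basis], full[0], full[1]


for names, x1, x2 in basic_feasible_solutions(A, b):
    print(names, (int(x1), int(x2)))
# ['x1', 'x2'] (20, 10)
# ['x1', 's2'] (40, 0)
# ['x2', 's1'] (0, 30)
```

Three of the six candidate bases are feasible, and they reproduce exactly the three corner points. A simplex pivot moves between two such bases that differ in a single variable, for example from {x2, s1} at (0, 30) to {x1, x2} at (20, 10).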

The Dual Problem Framework

Minimization problems frequently employ the concept of duality: every minimization (primal) problem has a corresponding maximization (dual) problem. In the words of [3], "To every minimization problem there corresponds a dual problem. The solution of the dual problem is used to find the solution of the original problem." This duality principle connects graphical interpretations of minimization problems to their dual maximization counterparts.

The following table illustrates the correspondence between primal minimization and dual maximization problems:

Table 1: Primal-Dual Problem Correspondence

| Component | Primal (Minimization) Problem | Dual (Maximization) Problem |
|---|---|---|
| Objective | Minimize Z = cᵀx | Maximize W = bᵀy |
| Constraints | Ax ≥ b | Aᵀy ≤ c |
| Variables | x ≥ 0 | y ≥ 0 |

The relationship between the graphical solutions of primal and dual problems illustrates the principles underlying optimization theory. In the sample problem from [3], "We now graph the inequalities... The corner point (20, 10) gives the lowest value for the objective function" of the primal minimization problem, and its dual maximization problem attains the same optimal value, Z = 400 [3].
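Duality also gives a cheap optimality check. The sample problem from [3] minimizes 12x1 + 16x2 subject to x1 + 2x2 ≥ 40 and x1 + x2 ≥ 30. Its dual optimum is y = (4, 8), the point where the dual constraints y1 + y2 ≤ 12 and 2y1 + y2 ≤ 16 intersect. The sketch below checks that x = (20, 10) is primal-feasible, that y = (4, 8) is dual-feasible, and that both objective values agree. By weak duality, this is enough to certify that both points are optimal. The function name `certify_optimal` is hypothetical.

```python
# Sample primal: minimize c.x subject to A x >= b, x >= 0.
# Its dual:      maximize b.y subject to A^T y <= c, y >= 0.
A = [[1, 2], [1, 1]]
b = [40, 30]
c = [12, 16]


def certify_optimal(x, y):
    """Return (certified, c.x, b.y). If x is primal-feasible, y is
    dual-feasible and c.x == b.y, weak duality implies both are optimal."""
    m, n = len(A), len(A[0])
    primal_ok = all(v >= 0 for v in x) and all(
        sum(A[i][j] * x[j] for j in range(n)) >= b[i] for i in range(m))
    dual_ok = all(v >= 0 for v in y) and all(
        sum(A[i][j] * y[i] for i in range(m)) <= c[j] for j in range(n))
    cx = sum(cj * xj for cj, xj in zip(c, x))
    by = sum(bi * yi for bi, yi in zip(b, y))
    return primal_ok and dual_ok and cx == by, cx, by


print(certify_optimal([20, 10], [4, 8]))   # (True, 400, 400)
print(certify_optimal([0, 30], [4, 8]))    # (False, 480, 400)
```

The second call shows a feasible but non-optimal corner. Its cost of 480 exceeds the dual bound of 400, so the certificate fails.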

Current Research and Algorithmic Advances

Theoretical and Practical Advances in Simplex Methodology

Recent research has addressed long-standing questions about the simplex method's performance. While the graphical method illustrates the conceptual framework, the simplex method's worst-case running time has long been a theoretical concern. As one recent account notes, "In 1972, mathematicians proved that the time it takes to complete a task could rise exponentially with the number of constraints" [6]. This exponential worst case, established by the Klee–Minty construction, has motivated ongoing research into why the method is nonetheless efficient in practice.

Work by Huiberts and Bach has provided new theoretical explanations for the simplex method's observed performance: "They've made the algorithm faster, and also provided theoretical reasons why the exponential runtimes that have long been feared do not materialize in practice" [6]. Their research builds on the landmark 2001 smoothed-analysis result of Spielman and Teng, which showed that "the tiniest bit of randomness can help prevent such an outcome", namely exponential running time [6]. This progress helps explain why simplex-based optimization moves efficiently between the vertices of feasible regions in practice.

Computational Considerations in Modern Applications

In contemporary optimization practice, particularly in demanding fields like drug development and pharmaceutical research, the graphical interpretation informs computational implementations. While the simplex method remains dominant for many applications, alternative approaches have emerged: "Interior point methods (IPMs) have hugely influenced the field of optimization" since Karmarkar's 1984 breakthrough, offering polynomial-time algorithms for linear programming [7]. The geometric interpretation of interior point methods—navigating through the interior rather than along the edges of the feasible region—provides a contrasting approach to the vertex-focused simplex method.

Recent research continues to refine these algorithmic approaches. In specialized domains including microwave design optimization, researchers have developed "simplex-based regressors" that "permit regularizing the objective function, which facilitates and speeds up the identification of the optimum design" [25]. These specialized implementations demonstrate how the fundamental geometric principles of linear programming continue to inform advanced optimization techniques across diverse scientific fields.

Implementation Framework: Research Tools and Experimental Protocols

Research Reagent Solutions for Optimization Studies

The experimental analysis of optimization algorithms requires specific computational tools and methodological approaches. The following table outlines essential components for implementing and testing graphical and simplex-based minimization methodologies:

Table 2: Essential Research Components for Minimization Studies

| Component | Function | Implementation Example |
|---|---|---|
| Constraint Visualizer | Plots constraint boundaries and identifies feasible regions | Graphical plotting tools with inequality support |
| Vertex Calculator | Computes corner points of feasible regions | Linear equation solver systems |
| Objective Evaluator | Tests objective function values at candidate solutions | Function optimization frameworks |
| Sensitivity Analyzer | Determines parameter impact on optimal solutions | Shadow price and reduced cost calculators |
| Simplex Implementations | Executes iterative optimization algorithm | LP solvers with tableau manipulation |

Experimental Protocol for Minimization Analysis

A systematic experimental approach enables comprehensive investigation of minimization problems:

Phase 1: Problem Formulation

- Define decision variables and their domains

- Formulate objective function for minimization

- Identify all relevant constraints

- Convert all constraints to standard form

Phase 2: Graphical Analysis

- Plot all constraint boundaries on coordinate system

- Identify feasible region and its properties (bounded/unbounded)

- Determine all corner points algebraically

- Evaluate objective function at each vertex

- Identify optimal solution and objective value

Phase 3: Simplex Verification

- Convert problem to standard simplex form

- Construct initial simplex tableau

- Execute simplex iterations until optimality

- Verify graphical and algebraic solutions match

- Perform sensitivity analysis on key parameters

Phase 4: Interpretation and Reporting

- Document optimal solution and implementation recommendations

- Analyze shadow prices for constraint sensitivity

- Identify binding and non-binding constraints

- Assess solution robustness to parameter variations
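The binding-constraint and shadow-price checks of Phase 4 can be sketched for the sample problem used in this article (minimize 12x1 + 16x2 subject to x1 + 2x2 ≥ 40 and x1 + x2 ≥ 30, with optimum (20, 10)). This is a minimal sketch. It assumes a two-constraint problem whose optimal basis does not change under a one-unit increase in a right-hand side, and it raises an error when that assumption fails. The function names are hypothetical. The resulting prices, 4 and 8, match the optimal dual solution, as LP duality predicts.

```python
from fractions import Fraction

# Sample problem: minimize 12*x1 + 16*x2 s.t. x1 + 2*x2 >= 40, x1 + x2 >= 30.
A = [[1, 2], [1, 1]]
b = [40, 30]
c = [12, 16]
x_opt = [20, 10]  # optimum found graphically


def binding(x):
    """True for each constraint that holds with equality at x."""
    return [sum(a * v for a, v in zip(row, x)) == rhs for row, rhs in zip(A, b)]


def solve_binding(rhs):
    """Re-solve the 2x2 system of binding constraints for new right-hand sides.
    Assumes both constraints stay binding (the optimal basis is unchanged)."""
    (p, q), (r, s) = A
    det = p * s - q * r
    x = [Fraction(rhs[0] * s - q * rhs[1], det), Fraction(p * rhs[1] - r * rhs[0], det)]
    if min(x) < 0:
        raise ValueError("basis changed; re-solve the full LP instead")
    return x


def objective(x):
    return sum(ci * xi for ci, xi in zip(c, x))


def shadow_prices(delta=1):
    """Change in optimal cost per unit increase in each right-hand side."""
    base = objective(solve_binding(b))
    prices = []
    for i in range(len(b)):
        bumped = list(b)
        bumped[i] += delta
        prices.append((objective(solve_binding(bumped)) - base) / delta)
    return prices


print(binding(x_opt))                      # [True, True]
print([int(p) for p in shadow_prices()])   # [4, 8]
```

Both constraints are binding at the optimum. Raising the first requirement from 40 to 41 moves the optimum to (19, 11) and raises the cost by 4, and raising the second from 30 to 31 moves it to (22, 9) and raises the cost by 8.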

The graphical interpretation of minimization solutions and feasible regions provides an essential conceptual bridge between theoretical optimization principles and computational implementations. For researchers, scientists, and drug development professionals, this understanding enables more effective problem formulation, algorithm selection, and result interpretation across diverse application domains. The continuing evolution of simplex-based methods—with recent theoretical advances explaining their practical efficiency—ensures these foundational concepts remain relevant in contemporary optimization research.

The geometric intuition developed through graphical analysis directly informs more advanced optimization techniques, including recent innovations in interior point methods, decomposition strategies, and specialized simplex variants. As optimization challenges grow increasingly complex in pharmaceutical research and drug development, the fundamental principles of feasible region analysis and solution geometry continue to provide essential guidance for developing efficient, reliable optimization approaches to support scientific discovery and resource allocation decisions.

Step-by-Step Simplex Implementation: From Theory to Practical Application

Constructing the Initial Simplex Tableau for Minimization

Linear programming provides a powerful framework for making optimal decisions under constraints, a common scenario in many scientific and industrial domains. The simplex method, developed by George Dantzig in 1947, remains a fundamental algorithm for solving these optimization problems [6]. While its first applications were in military logistics planning in the years following World War II, the method has since become indispensable in fields ranging from pharmaceutical manufacturing to supply chain management [6] [20]. This technical guide focuses on constructing the initial simplex tableau for minimization problems, a crucial first step in implementing the algorithm efficiently.

Within research contexts, minimization problems often arise in cost reduction, error minimization, and efficient resource allocation. For drug development professionals, this might involve minimizing raw material costs while maintaining production standards or optimizing laboratory testing schedules to reduce downtime [20]. The construction of the initial tableau establishes the foundation for applying the simplex algorithm, transforming a real-world problem into a mathematical structure amenable to systematic optimization. Recent theoretical advances by Huiberts and Bach have further refined our understanding of the simplex method's performance characteristics, providing stronger mathematical support for its efficiency in practical applications [6] [20].

Theoretical Foundation: Primal and Dual Formulations

Standard Minimization Problem Form

A linear programming minimization problem in standard form must meet specific criteria to be suitable for the simplex method. All constraints must be of the form $ax + by \ge c$, where $a$, $b$, and $c$ are constants and $x$ and $y$ are decision variables [3]. The objective function, representing the quantity to be minimized (such as cost or resource usage), must be a linear function of these decision variables. Mathematically, this can be expressed as:

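$$\text{Minimize } Z = c_1x_1 + c_2x_2 + \cdots + c_nx_n$$

subject to

$$\begin{aligned}
a_{11}x_1 + a_{12}x_2 + \cdots + a_{1n}x_n &\ge b_1\\
a_{21}x_1 + a_{22}x_2 + \cdots + a_{2n}x_n &\ge b_2\\
&\;\;\vdots\\
a_{m1}x_1 + a_{m2}x_2 + \cdots + a_{mn}x_n &\ge b_m\\
x_1, x_2, \ldots, x_n &\ge 0
\end{aligned}$$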

Writing every constraint as a "≥" inequality over nonnegative variables gives the problem the uniform structure needed to form its dual maximization problem, which the simplex method can then solve.

Duality Theory and the Dual Maximization Problem

The procedure for solving minimization problems described here was developed by John von Neumann and relies on solving an associated problem called the dual problem [3]. For every minimization (primal) problem, there corresponds a dual maximization problem. The two problems share the same optimal objective value, and the primal optimum can be read from the final simplex tableau of the dual. This relationship forms the basis of the simplex approach to minimization.

The transformation from primal minimization to dual maximization follows a systematic process. Consider the following primal minimization problem:

$$\begin{aligned}
\text{Minimize } & Z = 12x_1 + 16x_2\\
\text{subject to } & x_1 + 2x_2 \ge 40\\
& x_1 + x_2 \ge 30\\
& x_1 \ge 0,\; x_2 \ge 0
\end{aligned}$$

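This problem can be represented as an augmented matrix. Its first rows hold the constraint coefficients, each followed by its right-hand side, and its final row holds the objective function coefficients:

$$\begin{bmatrix} 1 & 2 & 40\\ 1 & 1 & 30\\ 12 & 16 & 0 \end{bmatrix}$$

The dual maximization problem is created by taking the transpose of this matrix (swapping rows and columns) and reformulating the problem [3]:

$$\begin{bmatrix} 1 & 1 & 12\\ 2 & 1 & 16\\ 40 & 30 & 0 \end{bmatrix}$$

The first two rows of the transposed matrix become the dual constraints, and its last row supplies the dual objective coefficients: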

$$\begin{aligned}
\text{Maximize } & Z = 40y_1 + 30y_2\\
\text{subject to } & y_1 + y_2 \le 12\\
& 2y_1 + y_2 \le 16\\
& y_1 \ge 0,\; y_2 \ge 0
\end{aligned}$$

This transformation converts a minimization problem with "≥" constraints into a maximization problem with "≤" constraints, which can be solved directly using the standard simplex method.
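The whole procedure (transpose, solve the dual with the simplex method, read off the primal solution) can be sketched in a few dozen lines. This is a minimal teaching implementation, not a production solver, and the function names are hypothetical. It assumes the dual has only "≤" constraints with nonnegative right-hand sides, which holds whenever the primal costs are nonnegative. It uses the most-negative-entry pivoting rule with no anti-cycling safeguard. Following the textbook convention for this method, the primal optimum is read from the bottom row of the final tableau, under the slack-variable columns.

```python
from fractions import Fraction


def dual_from_primal(A, b, c):
    """Dual of  min c.x s.t. A x >= b, x >= 0  via the transposed augmented
    matrix; returns (A_d, b_d, c_d) for  max c_d.y s.t. A_d y <= b_d, y >= 0."""
    aug = [row + [rhs] for row, rhs in zip(A, b)] + [c + [0]]
    t = [list(col) for col in zip(*aug)]  # swap rows and columns
    return [row[:-1] for row in t[:-1]], [row[-1] for row in t[:-1]], t[-1][:-1]


def simplex_max(A, b, c):
    """Tableau simplex for  max c.y s.t. A y <= b, y >= 0, assuming b >= 0.
    Returns (y, optimal value, final bottom row)."""
    m, n = len(A), len(A[0])
    # Constraint rows [A | I | b]; bottom (objective) row [-c | 0 | 0].
    T = [[Fraction(v) for v in A[i]] + [Fraction(int(i == k)) for k in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]  # slack variables start in the basis
    while True:
        col = min(range(n + m), key=lambda j: T[-1][j])  # most negative entry
        if T[-1][col] >= 0:
            break  # no negative entry left in the bottom row: optimal
        rows = [i for i in range(m) if T[i][col] > 0]
        if not rows:
            raise ValueError("objective is unbounded")
        row = min(rows, key=lambda i: T[i][-1] / T[i][col])  # ratio test
        piv = T[row][col]
        T[row] = [v / piv for v in T[row]]
        for i in range(m + 1):  # eliminate the pivot column from other rows
            if i != row and T[i][col] != 0:
                f = T[i][col]
                T[i] = [vi - f * vr for vi, vr in zip(T[i], T[row])]
        basis[row] = col
    y = [Fraction(0)] * n
    for i, j in enumerate(basis):
        if j < n:
            y[j] = T[i][-1]
    return y, T[-1][-1], T[-1]


A, b, c = [[1, 2], [1, 1]], [40, 30], [12, 16]  # the primal problem above
A_d, b_d, c_d = dual_from_primal(A, b, c)
y, z, bottom = simplex_max(A_d, b_d, c_d)
n = len(A_d[0])
x = bottom[n:n + len(A_d)]  # primal x_j sits under the slack of dual row j
print(A_d, b_d, c_d)                                      # [[1, 1], [2, 1]] [12, 16] [40, 30]
print([int(v) for v in y], int(z), [int(v) for v in x])   # [4, 8] 400 [20, 10]
```

Two pivots reach the optimum. The dual solution is y = (4, 8) with Z = 400, and the slack columns of the final bottom row give the primal solution x1 = 20, x2 = 10, which agrees with the graphical result.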

Table 1: Primal-Dual Relationship in Linear Programming

| Primal (Minimization) | Dual (Maximization) |
|---|---|
| Objective: Minimize Z = cᵀx | Objective: Maximize Z = bᵀy |
| Constraints: Ax ≥ b | Constraints: Aᵀy ≤ c |
| Variables: n decision variables, x ≥ 0 | Variables: m decision variables, y ≥ 0 |
| Number of constraints: m | Number of constraints: n |
| Right-hand side: b | Objective coefficients: b |
| Objective coefficients: c | Right-hand side: c |

Methodology: Constructing the Initial Tableau

Transformation to Dual Maximization Problem

The first critical step in solving minimization problems using the simplex method is to convert the primal minimization problem into its dual maximization counterpart. This process begins by representing the primal problem as a matrix, where the constraint coefficients form the main body, the right-hand side values are placed in an additional column, and the objective function coefficients are positioned in a separate row [3].

The methodology for this transformation follows these precise steps:

Matrix Representation: Write the primal minimization problem as an augmented matrix including constraint coefficients, right-hand side values, and objective function coefficients.

Transposition: Generate the transpose of this matrix by swapping rows and columns. The first row becomes the first column, the second row becomes the second column, and so on.