Multi-Objective Optimization in Analytical Chemistry: Accelerating Drug Discovery and Materials Development

Abstract

This article provides a comprehensive overview of multi-objective optimization (MOO) methodologies and their transformative impact on analytical chemistry, with a focus on drug discovery and materials development. It explores the foundational principles of MOO, including Pareto optimality and the challenges of navigating complex chemical spaces. The review details advanced algorithms—from evolutionary strategies like NSGA-II and MoGA-TA to Bayesian optimization—and their specific applications in molecular design and process engineering. It further addresses critical troubleshooting aspects for handling constraints and mixed-variable systems, and offers a comparative analysis of solver performance using established metrics. Aimed at researchers and drug development professionals, this guide serves as a roadmap for implementing MOO to efficiently balance conflicting objectives such as efficacy, toxicity, and synthesizability.

Pareto Frontiers and Chemical Space: The Foundations of Multi-Objective Optimization

FAQs: Core Concepts and Applications

Q1: What is Multi-Objective Optimization (MOO), and why is it particularly important in chemical research?

Multi-Objective Optimization (MOO) is an area of multiple-criteria decision-making concerned with mathematical optimization problems involving more than one objective function to be optimized simultaneously [1]. In practical chemical problems, these objectives often conflict, meaning that improving one degrades another [2]. For example, you might aim to maximize reaction yield while minimizing environmental impact, or to minimize production cost while maximizing product purity [1] [2]. Unlike single-objective optimization, which yields a single "best" solution, MOO identifies a set of optimal trade-off solutions, known as the Pareto front [1] [3]. This is crucial in chemistry and drug development because it gives researchers a comprehensive view of the available compromises, enabling more informed and sustainable decision-making that balances economic, environmental, and performance criteria [2].

Q2: What is the "Pareto Front" and how should I interpret it?

The Pareto front (or Pareto optimal set) is the collection of solutions where none of the objectives can be improved without worsening at least one other objective [1]. For a chemist, each point on the Pareto front represents a viable set of reaction conditions (e.g., temperature, catalyst, solvent) that defines a specific trade-off between your goals.

- Interpreting the Plot: In a plot showing two objectives—for instance, Reaction Yield vs. Environmental Impact—every solution on the front is equally "optimal" from a mathematical standpoint [1]. The choice of a final solution from this set depends on the specific priorities of your project, such as whether cost or speed is more critical [2].
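
To make the definition concrete, the following minimal sketch filters a handful of made-up reaction results down to their Pareto front. The data values and the names `dominates` and `pareto_front` are illustrative assumptions, not taken from the cited work; yield is treated as maximized and environmental impact as minimized.

```python
from typing import List, Tuple

# Hypothetical screening results: (reaction yield in %, environmental impact score).
# Yield is to be maximized, impact is to be minimized.
Condition = Tuple[float, float]


def dominates(a: Condition, b: Condition) -> bool:
    """True if a is at least as good as b in both objectives and strictly better in one."""
    at_least_as_good = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return at_least_as_good and strictly_better


def pareto_front(points: List[Condition]) -> List[Condition]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


results = [(92.0, 8.5), (85.0, 4.0), (70.0, 2.0), (80.0, 6.0), (60.0, 3.0)]
front = pareto_front(results)
print(sorted(front))
```

In this toy data set, (80.0, 6.0) is dropped because (85.0, 4.0) has both a higher yield and a lower impact. The three surviving points are mutually non-dominated, so choosing among them is a matter of project priorities rather than mathematics.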

Q3: My optimization problem involves both continuous variables (like temperature) and categorical variables (like catalyst type). Is MOO applicable?

Yes. This is known as a Mixed-Variable Optimization problem, and it is a common challenge in reaction optimization. Recent advances have led to the development of algorithms specifically designed to handle both continuous variables (e.g., temperature, concentration) and discrete variables (e.g., catalyst, ligand, solvent selection) concurrently [4] [5]. For example, the Mixed Variable Multi-Objective Optimization (MVMOO) algorithm utilizes a Bayesian methodology to efficiently explore the complex parameter space and reveal key interactions between variable types, providing greater process understanding [4].

Q4: What are the main categories of MOO solution methods, and how do I choose?

MOO methods can be broadly categorized as follows [6]:

- A Priori Methods: Require you to specify your preferences (e.g., weightings for each objective) before the optimization run. An example is the weighted sum method.

- A Posteriori Methods: Generate a set of Pareto-optimal solutions first, allowing you to choose from the trade-offs afterwards. Evolutionary algorithms like NSGA-II fall into this category.

- Interactive Methods: Allow for human decision-maker input during the optimization process.

For chemical reaction optimization, a posteriori methods are often favored because they map the entire trade-off space without requiring prior bias, thus revealing unexpected optimal conditions [5].
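
As a small illustration of the a priori route, the sketch below applies the weighted sum method to three hypothetical candidates whose yield and cost have already been normalized to [0, 1]. The candidate values and the function name `weighted_sum_choice` are assumptions made for this example only.

```python
from typing import Dict, Tuple

# Hypothetical candidates: name -> (normalized yield, normalized cost), both in [0, 1].
candidates: Dict[str, Tuple[float, float]] = {
    "A": (0.95, 0.90),
    "B": (0.85, 0.35),
    "C": (0.55, 0.10),
}


def weighted_sum_choice(w_yield: float, w_cost: float) -> str:
    """Return the candidate that maximizes w_yield * yield - w_cost * cost."""
    def score(item: Tuple[str, Tuple[float, float]]) -> float:
        y, c = item[1]
        return w_yield * y - w_cost * c

    return max(candidates.items(), key=score)[0]


yield_first = weighted_sum_choice(0.9, 0.1)  # preferences fixed before optimizing
cost_first = weighted_sum_choice(0.1, 0.9)
balanced = weighted_sum_choice(0.5, 0.5)
print(yield_first, cost_first, balanced)
```

Each weight vector returns exactly one candidate, which is why the weights must encode the decision-maker's preferences up front. An a posteriori method would instead return all three candidates, since none of them dominates another.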

Troubleshooting Common Experimental and Computational Issues

Problem 1: The optimization algorithm fails to converge, or the results are inconsistent.

- Potential Cause 1: Poorly Defined Objectives and Constraints. The mathematical formulation of the problem may be incorrect.

- Solution: Ensure your objective functions and constraints are correctly implemented and computationally stable. Test the simulation model with various inputs to verify it behaves as expected before starting optimization [2].

- Potential Cause 2: Unsuitable Algorithm or Poor Hyperparameter Tuning.

- Solution: Select an algorithm suited to your problem's nature (e.g., MVMOO for mixed variables [4], NSGA-II for continuous variables [6]). Adjust hyperparameters like population size and number of generations carefully, as they significantly impact performance [6].

Problem 2: The Pareto front has poor diversity—all the solutions are clustered in one small region.

- Potential Cause: The optimization algorithm is overly exploiting one region of the search space and lacks exploratory capability.

- Solution: Implement algorithms with mechanisms to maintain diversity. Many modern Multi-Objective Evolutionary Algorithms (MOEAs), like NSGA-II, use a crowding distance operator to ensure solutions are spread out across the Pareto front [6]. You can also adjust the algorithm parameters to favor more exploration.
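
The crowding distance used by NSGA-II can be sketched as follows. This is a simplified stand-alone version written for illustration: for each objective the front is sorted, the two boundary points receive an infinite distance, and every interior point accumulates the normalized gap between its two neighbours. The example front is made up.

```python
import math
from typing import List, Sequence


def crowding_distance(front: Sequence[Sequence[float]]) -> List[float]:
    """Crowding distance of each point in a front (larger means less crowded)."""
    n = len(front)
    if n == 0:
        return []
    distance = [0.0] * n
    for m in range(len(front[0])):
        # Indices of the points sorted by objective m.
        order = sorted(range(n), key=lambda i: front[i][m])
        f_min, f_max = front[order[0]][m], front[order[-1]][m]
        # Boundary points are always preserved.
        distance[order[0]] = distance[order[-1]] = math.inf
        if f_max == f_min:
            continue
        for k in range(1, n - 1):
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            distance[order[k]] += gap / (f_max - f_min)
    return distance


# Hypothetical front with two points clustered near one end.
example_front = [(0.0, 1.0), (0.1, 0.9), (0.15, 0.85), (1.0, 0.0)]
distances = crowding_distance(example_front)
print(distances)
```

When two solutions have the same non-domination rank, selection prefers the one with the larger crowding distance, which is what pushes the population away from clusters such as the first three points here.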

Problem 3: Handling many (more than three) objectives leads to confusing results and poor algorithm performance.

- Potential Cause: This is a "Many-Objective Optimization" problem, which presents unique challenges. As the number of objectives increases, the computational cost grows exponentially, and visualizing the Pareto front becomes difficult [3]. Furthermore, almost all solutions in a population can become non-dominated, reducing the selection pressure for improvement.

- Solution: Consider using algorithms specifically designed for many objectives, such as NSGA-III [3]. You could also investigate if some objectives are correlated and can be consolidated, or if some can be reformulated as constraints to simplify the problem.
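
The loss of selection pressure can be checked numerically. The sketch below draws uniformly random points (an assumption chosen purely for illustration, not a model of any chemical system) and reports the fraction that is non-dominated as the number of objectives grows.

```python
import random
from typing import Sequence


def non_dominated_fraction(n_points: int, n_obj: int, seed: int = 0) -> float:
    """Fraction of uniformly random points that no other point dominates (minimization)."""
    rng = random.Random(seed)
    pts = [[rng.random() for _ in range(n_obj)] for _ in range(n_points)]

    def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
        return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

    survivors = sum(1 for p in pts if not any(dominates(q, p) for q in pts))
    return survivors / n_points


for m in (2, 3, 5, 10):
    print(m, non_dominated_fraction(200, m))
```

With only two objectives a small share of the points is non-dominated, while with ten objectives most of them are, so dominance alone no longer distinguishes good candidates from poor ones.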

Key Reagents and Computational Tools for MOO in Chemistry

Table 1: Essential "Reagent Solutions" for a Multi-Objective Optimization Experiment

| Tool Category | Example(s) | Primary Function |
|---|---|---|
| MOO Solvers & Algorithms | MVMOO [4], NSGA-II [6], MOEA/D [7], Dragonfly, TSEMO [5] | Core computational engines for finding Pareto-optimal solutions. MVMOO is specialized for mixed-variable problems. |
| Automated Flow Reactors | Self-optimizing flow platforms [4] | Automated experimental systems that physically execute reactions and measure outcomes based on algorithm-set parameters. |
| Process Modeling Software | Aspen Plus, Hysys [2] | Software for building detailed process models, which can be used as the "objective function" simulators for optimization. |
| Performance Metrics | Hypervolume (HV), Generational Distance (GD), Spacing [6] | Quantitative metrics to evaluate and compare the quality (convergence and diversity) of different Pareto fronts. |
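
Two of the performance metrics listed in Table 1 are simple enough to sketch directly. The versions below are common textbook forms and are assumptions for this example: generational distance is taken as the mean Euclidean distance to the nearest point of a reference front, and spacing follows Schott's definition based on nearest-neighbour Manhattan distances. The fronts are made up.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def generational_distance(front: List[Point], reference: List[Point]) -> float:
    """Mean Euclidean distance from each obtained point to its nearest reference point."""
    return sum(min(math.dist(p, r) for r in reference) for p in front) / len(front)


def spacing(front: List[Point]) -> float:
    """Schott's spacing: spread of nearest-neighbour Manhattan distances (0 = evenly spaced)."""
    nearest = [
        min(sum(abs(a - b) for a, b in zip(p, q)) for j, q in enumerate(front) if j != i)
        for i, p in enumerate(front)
    ]
    mean = sum(nearest) / len(nearest)
    return math.sqrt(sum((mean - d) ** 2 for d in nearest) / (len(nearest) - 1))


# Hypothetical reference front and two obtained fronts.
reference = [(0.0, 1.0), (0.5, 0.5), (1.0, 0.0)]
shifted = [(0.1, 1.0), (0.5, 0.6), (1.0, 0.1)]
clustered = [(0.0, 1.0), (0.05, 0.95), (1.0, 0.0)]

print(generational_distance(shifted, reference))
print(spacing(reference), spacing(clustered))
```

A lower generational distance indicates better convergence toward the reference front, and a lower spacing value indicates a more even spread. Neither metric alone captures both aspects, which is one reason hypervolume is often reported alongside them.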

Detailed Experimental Protocol: A MOO Case Study

Title: Multi-Objective Optimization of a Sonogashira Reaction using a Mixed-Variable Algorithm and an Automated Flow Reactor [4].

Background: The Sonogashira coupling is a crucial reaction for forming C-C bonds. Optimizing it involves balancing multiple outcomes, such as yield, selectivity, and productivity, which are influenced by both continuous (e.g., temperature, residence time) and discrete (e.g., ligand, solvent) variables.

Objective: To simultaneously identify the trade-offs between reaction yield and productivity by optimizing continuous and discrete variables concurrently.

Materials:

- Chemical Reagents: Palladium catalyst, various ligands, solvents, aryl halide, and alkyne substrates.

- Equipment: Automated continuous flow reactor system with in-line analytics (e.g., HPLC or UV-Vis).

- Software: Mixed Variable Multi-Objective Optimization (MVMOO) algorithm code [4].

Methodology:

- Problem Formulation:

  - Variables: Define continuous variables (e.g., Temperature, Residence Time) and discrete variables (e.g., Ligand from a set {L1, L2, L3}, Solvent from a set {S1, S2}).

  - Objectives: Define the objectives to be optimized, e.g., Maximize Reaction Yield and Maximize Space-Time Yield (Productivity).

  - Constraints: Define any operational limits (e.g., Temperature < 150 °C, Pressure < 10 bar).

- Experimental Workflow Setup: Couple the MVMOO algorithm with the control system of the automated flow reactor. The algorithm will propose new experimental conditions, the reactor will execute the experiment, and in-line analytics will feed the result back to the algorithm.

- Algorithm Execution:

- Initialization: The algorithm typically starts with an initial set of experiments, often generated via a space-filling design like Latin Hypercube Sampling.

- Iterative Optimization: The algorithm runs iteratively:

- Surrogate Modeling: A machine learning model (e.g., Gaussian Process) is built based on all data collected so far to predict reaction outcomes.

- Acquisition Function: An acquisition function (e.g., Expected Hypervolume Improvement) uses the model to suggest the next most informative experiment, balancing exploration and exploitation.

- Evaluation: The suggested experiment (combination of discrete and continuous variables) is performed automatically by the flow platform.

- Update: The new data point is added to the dataset, and the process repeats until a stopping criterion is met (e.g., a set number of experiments or convergence is achieved).
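
The closed loop described above can be outlined in code. The sketch below is not the MVMOO implementation: the variable bounds, the `toy_reactor` response surface, and all function names are hypothetical, and the proposal step is a random placeholder where a real workflow would fit a Gaussian Process and maximize an acquisition function. It only shows how a mixed-variable search space, a Latin Hypercube initial design, and the propose-evaluate-update cycle fit together.

```python
import random
from typing import Callable, Dict, List, Tuple

# Hypothetical search space; the upper temperature bound encodes the 150 °C limit.
CONTINUOUS = {"temperature_C": (60.0, 150.0), "residence_time_min": (1.0, 10.0)}
CATEGORICAL = {"ligand": ["L1", "L2", "L3"], "solvent": ["S1", "S2"]}

Experiment = Dict[str, object]
Outcome = Tuple[float, float]  # (yield, space-time yield)


def latin_hypercube(n: int, rng: random.Random) -> List[Experiment]:
    """One sample per stratum for each continuous variable; categoricals drawn uniformly."""
    columns = {}
    for name, (lo, hi) in CONTINUOUS.items():
        strata = [(i + rng.random()) / n for i in range(n)]
        rng.shuffle(strata)
        columns[name] = [lo + s * (hi - lo) for s in strata]
    design = []
    for i in range(n):
        exp: Experiment = {name: col[i] for name, col in columns.items()}
        for name, levels in CATEGORICAL.items():
            exp[name] = rng.choice(levels)
        design.append(exp)
    return design


def propose_next(history: List[Tuple[Experiment, Outcome]], rng: random.Random) -> Experiment:
    """Placeholder: random proposal. A surrogate model and acquisition function go here."""
    return latin_hypercube(1, rng)[0]


def run_campaign(evaluate: Callable[[Experiment], Outcome],
                 n_init: int = 6, budget: int = 12, seed: int = 0):
    rng = random.Random(seed)
    history = [(x, evaluate(x)) for x in latin_hypercube(n_init, rng)]  # initialization
    while len(history) < budget:                                        # stopping criterion
        x = propose_next(history, rng)                                  # suggestion
        history.append((x, evaluate(x)))                                # evaluation + update
    return history


def toy_reactor(x: Experiment) -> Outcome:
    """Made-up response standing in for the flow reactor and in-line analytics."""
    bonus = {"L1": 0.0, "L2": 0.1, "L3": 0.05}[x["ligand"]]
    y = min(1.0, 0.3 + 0.004 * (x["temperature_C"] - 60.0)
            + 0.03 * x["residence_time_min"] + bonus)
    return (y, y / x["residence_time_min"])


history = run_campaign(toy_reactor)
print(len(history), history[0])
```

In a real campaign `toy_reactor` would be replaced by a call to the reactor control system, and `propose_next` by the optimizer's own suggestion routine.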

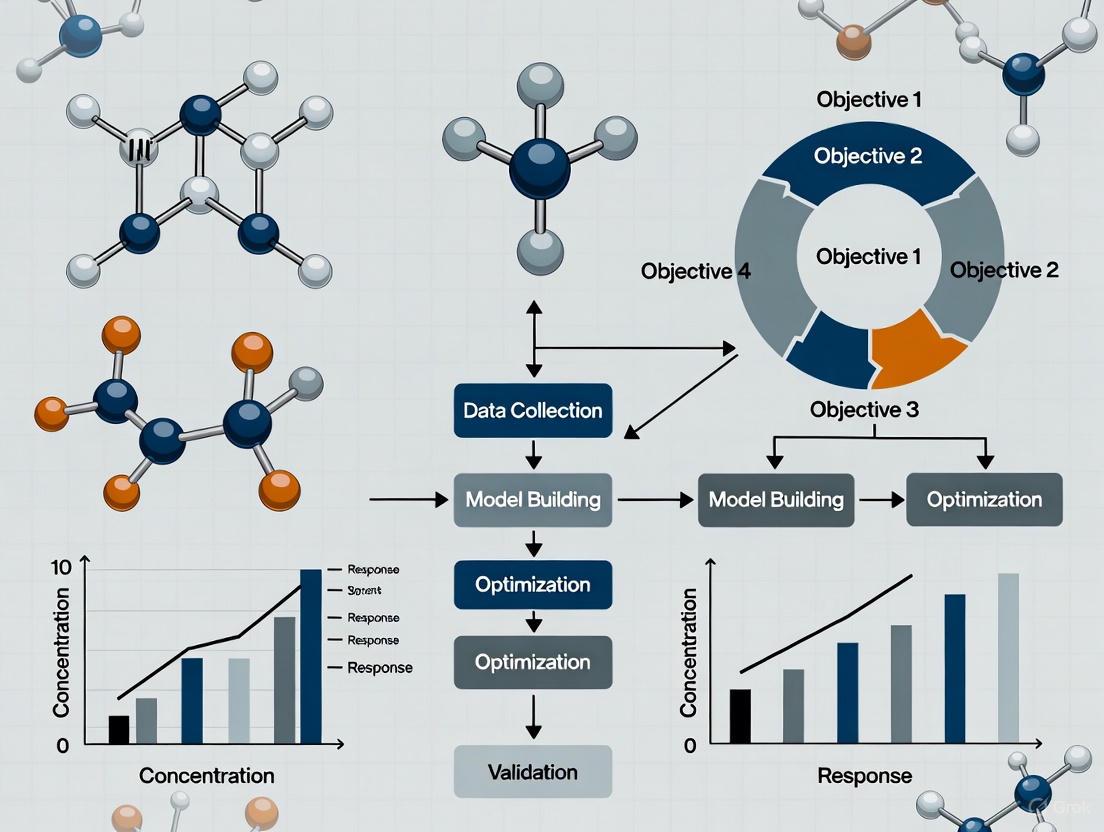

The following diagram illustrates this iterative, automated workflow:

Expected Outcome: After a predetermined number of experiments, the algorithm will output a set of non-dominated solutions, forming the Pareto front. This front will clearly visualize the trade-off between yield and productivity and identify the specific combinations of ligand, solvent, temperature, and residence time that achieve each optimal compromise.

Solver Selection Guide for Chemical Applications

Table 2: Comparison of Multi-Objective Optimization Solvers for Chemical Reaction Optimization

| Solver Name | Key Features | Best Suited For | Considerations |
|---|---|---|---|
| MVMOO [4] [5] | Handles mixed variables (continuous & categorical); Bayesian methodology. | Problems where solvent, catalyst, or ligand choice is a key variable. | High optimization efficiency; requires no prior knowledge. |
| NSGA-II [6] | A well-established, dominance-based genetic algorithm; uses crowding distance for diversity. | General-purpose MOO with continuous variables. | A robust, widely used choice; may struggle with many objectives or mixed variables. |
| TSEMO [5] | Bayesian global optimization; often very sample-efficient. | Problems where each experimental evaluation is very expensive or time-consuming. | Can find good solutions with fewer experiments. |
| Dragonfly [5] | Not detailed here; refer to the software documentation for features. | - | - |
| EDBO+ [5] | Designed for experimental design and batch optimization. | High-throughput experimentation where parallel evaluation of experiments is possible. | Can optimize multiple conditions simultaneously. |

Frequently Asked Questions

Q1: What is Pareto optimality in the context of molecular optimization? Pareto optimality describes a state in multi-objective optimization where no single molecular property can be improved without worsening at least one other property. In drug discovery, a molecule is considered Pareto optimal if it represents the best possible compromise between conflicting objectives, such as potency versus metabolic stability [8]. Such molecules lie on the Pareto front, a concept that helps identify the set of non-dominated solutions from which researchers can select the most suitable candidate [9].

Q2: Why is Pareto optimality important for designing multi-target therapeutics? Designing compounds that engage multiple targets often requires balancing different, and sometimes competing, chemical features [8]. Pareto optimality provides a framework for identifying these optimal trade-offs. Without computational methodologies like multi-objective optimization, it is particularly challenging to design compounds with a well-balanced profile of these conflicting features [8].

Q3: How can I identify the "vital few" molecular properties to focus on during optimization? The Pareto Principle, often called the 80/20 rule, suggests that a small proportion of inputs (the "vital few") generates a disproportionately large proportion of outputs [10]. This heuristic is distinct from Pareto optimality, although both are named after Vilfredo Pareto. To identify these critical properties:

- Gather data on how different molecular properties contribute to your overall goal (e.g., therapeutic efficacy).

- Rank properties by their contribution and calculate cumulative percentages.

- Visualize with a Pareto chart to clearly see which few properties (e.g., potency, solubility) have the largest impact on your outcome, and focus optimization efforts there [10].
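
The ranking and cumulative-percentage steps above can be sketched as follows. The contribution scores are invented for illustration, and the 80% threshold is only a conventional starting point, not a fixed rule.

```python
from typing import Dict, List, Tuple

# Hypothetical contribution scores, e.g. the share of outcome variance attributed to each property.
contributions: Dict[str, float] = {
    "potency": 45.0,
    "solubility": 25.0,
    "logP": 12.0,
    "TPSA": 8.0,
    "molecular_weight": 6.0,
    "rotatable_bonds": 4.0,
}


def pareto_table(scores: Dict[str, float]) -> List[Tuple[str, float, float]]:
    """Rows of (property, score, cumulative percentage), ranked by descending score."""
    total = sum(scores.values())
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    table, running = [], 0.0
    for name, value in ranked:
        running += value
        table.append((name, value, 100.0 * running / total))
    return table


def vital_few(scores: Dict[str, float], threshold_pct: float = 80.0) -> List[str]:
    """Smallest top-ranked group whose cumulative share reaches the threshold."""
    chosen = []
    for name, _, cumulative in pareto_table(scores):
        chosen.append(name)
        if cumulative >= threshold_pct:
            break
    return chosen


for row in pareto_table(contributions):
    print(row)
print(vital_few(contributions))
```

The rows of `pareto_table` are exactly what a Pareto chart plots: bars for the individual scores and a line for the cumulative percentage.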

Q4: What are common pitfalls when applying Pareto analysis to experimental data?

- Obsessing over exact ratios: The principle identifies uneven distributions, not rigid 80/20 splits. Context matters enormously [10].

- Neglecting the "trivial many": While focus should be on high-impact properties, smaller issues can accumulate into significant problems if completely ignored [10].

- Assuming static relationships: The importance of molecular properties can shift as research progresses. Regular re-analysis is necessary to keep focus areas current [10].

Troubleshooting Guides

Problem: Difficulty converging on a Pareto front during in-silico compound design.

- Potential Cause 1: The algorithm is exploring a parameter space that is too large or poorly constrained.

- Solution:

- Re-evaluate the bounds of your parameter space using known physicochemical constraints.

- Incorporate prior knowledge to define a lower-dimensional manifold for more efficient searching, an approach inspired by how biological systems reduce complex parameter spaces to simpler subspaces [9].

- Potential Cause 2: Conflicting objectives are too equally weighted, causing the search to oscillate without finding clear trade-offs.

- Solution:

- Revisit the performance space and ensure your objective functions accurately reflect the biological tasks. The Pareto front forms part of the boundary between plausible and implausible regions in this space [9].

- Consider if some objectives can be reformulated as constraints to simplify the optimization landscape.

Problem: A lead compound is optimal in one key area (e.g., potency) but underperforms in another (e.g., metabolic stability).

- Explanation: This compound may be close to a performance archetype—a phenotype optimal for a single task [9]. In a multi-task environment, evolution (or a design algorithm) selects for compromises.

- Solution:

- Map your candidate compounds in a performance space defined by your key objectives.

- Identify the Pareto front: the set of compounds that no other candidate dominates, i.e., for which no other compound is at least as good in every property and strictly better in at least one.

- Select a compound from this front that offers the best balance for your specific requirements, accepting that peak performance in one area must be sacrificed for adequacy in others [9] [11].

Problem: Experimental results for optimized compounds do not match in-silico predictions.

- Potential Cause: The computational model's performance functions do not fully capture the complexity of the in-vivo environment.

- Solution:

- Iterate and Validate: Use the experimentally observed discrepancies to refine your computational model's objective functions.

- Refine the Pareto Front: Treat the initial model as a starting point. The Pareto front is not static; it should evolve with new biological data, much like biological systems refill morphospace after extinction events [11].

- Ensure your model accounts for real-world variability and unanticipated interactions not present in the simulated environment.

Key Research Reagent Solutions

The following reagents and computational resources are essential for implementing Pareto-based multi-objective optimization in drug discovery.

| Reagent / Resource | Function in Pareto Optimization |
|---|---|
| Generative Molecular Models | Used to design de novo compounds by exploring chemical space and generating candidates predicted to have a good balance between desired, conflicting properties [8]. |
| Public Bioactivity Datasets | Provide the training data for generative models, allowing for the identification of structure-property relationships even when data is limited [8]. |
| Multi-Objective Optimization Algorithms | Computational engines that identify the set of Pareto optimal solutions by balancing different, competing chemical features during the design process [8]. |
| Performance Space Mapping Tools | Software that visualizes candidate compounds based on multiple objectives, helping researchers identify the Pareto front and select the best compromises [9]. |

Experimental Protocol: Identifying a Pareto Front for Molecular Properties

1. Define Objectives and Constraints:

- Clearly specify the molecular properties to be optimized (e.g., binding affinity (pIC50), solubility (LogS), metabolic stability (CLint)).

- Define acceptable ranges for each property and other chemical constraints (e.g., molecular weight < 500, no reactive functional groups).

2. Generate Candidate Population:

- Use a generative chemical model to produce a large and diverse set of candidate molecules [8].

- Alternatively, curate a dataset of known actives from public or proprietary databases.

3. Compute Property Predictions:

- Employ quantitative structure-activity relationship (QSAR) models or other predictive tools to estimate the key properties for every candidate in the population.

4. Perform Pareto Sorting:

- For the set of molecules and properties, apply a non-dominated sorting algorithm:

- A molecule (A) is said to dominate another molecule (B) if A is better than or equal to B in all properties and strictly better in at least one property.

- Identify all molecules that are not dominated by any other molecule in the population. This is the Pareto front [9].

- Iteratively remove these front molecules and repeat the process to rank the entire population by their level of optimality.

5. Analyze and Select:

- Visualize the results, typically using 2D or 3D scatter plots where each axis represents an optimization objective. The Pareto front will form one boundary of the data cloud.

- Select final candidate(s) from the Pareto front based on the desired balance of properties for the specific therapeutic context.
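
The Pareto sorting step of this protocol can be sketched as below. The property values are invented, the function names are illustrative, and the simple repeated-filter approach is chosen for readability rather than speed; production code would typically use a dedicated library routine.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float], maximize: Sequence[bool]) -> bool:
    """a dominates b: no worse in every property and strictly better in at least one."""
    no_worse = all((x >= y) if mx else (x <= y) for x, y, mx in zip(a, b, maximize))
    better = any((x > y) if mx else (x < y) for x, y, mx in zip(a, b, maximize))
    return no_worse and better


def non_dominated_sort(props: List[Sequence[float]], maximize: Sequence[bool]) -> List[List[int]]:
    """Return fronts as lists of molecule indices; fronts[0] is the Pareto front."""
    remaining = set(range(len(props)))
    fronts = []
    while remaining:
        front = sorted(
            i for i in remaining
            if not any(dominates(props[j], props[i], maximize) for j in remaining if j != i)
        )
        fronts.append(front)
        remaining -= set(front)  # peel off this front and rank what is left
    return fronts


# Hypothetical predictions per molecule: (pIC50, LogS, CLint).
# Potency and solubility are maximized, intrinsic clearance is minimized.
molecules = [
    (8.2, -5.5, 40.0),
    (7.5, -3.8, 25.0),
    (7.0, -4.5, 30.0),
    (6.1, -2.9, 12.0),
    (6.0, -4.9, 35.0),
]
fronts = non_dominated_sort(molecules, maximize=(True, True, False))
print(fronts)
```

Molecules 0, 1, and 3 form the first front in this toy set: one is the most potent, one is the most soluble and stable, and one sits between them. Molecule 2 only becomes non-dominated once that first front is removed.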

Logical Workflow for Multi-Objective Molecular Optimization

The diagram below outlines the core process for applying Pareto optimality in molecular design.

Navigating the Vast Chemical Search Space (∼10^60 Molecules)

FAQs: Understanding the Chemical Search Space and Multi-Objective Optimization

What is the "chemical space" and why is its size (∼10^60 molecules) a challenge for research? The term "chemical space" (CS) or "chemical universe" refers to the set of all chemical compounds that could theoretically exist. This space is vast because organic molecules can form stable chains and rings, which gives rise to an enormous variety of complex structures [12]. The estimated ∼10^60 possible molecules represent both an opportunity and a challenge: exhaustively searching this space for novel molecules with promising properties, for applications like drug discovery or materials science, is prohibitively expensive and time-consuming [13] [14].
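
A back-of-the-envelope calculation shows why exhaustive search is out of reach. The throughput of one billion molecules per second used below is an arbitrary, deliberately optimistic assumption.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_enumerate(n_molecules: float, rate_per_second: float) -> float:
    """Years needed to visit every molecule once at a fixed evaluation rate."""
    return n_molecules / rate_per_second / SECONDS_PER_YEAR


# Assumed throughput: 1e9 molecules evaluated per second.
years = years_to_enumerate(1e60, 1e9)
print(f"{years:.2e} years")
```

Even at this rate the enumeration would take on the order of 10^43 years, which is why guided search strategies such as multi-objective optimization are used instead of brute force.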

What is multi-objective optimization (MOO) and how does it apply to chemical research? Multi-objective optimization (also known as Pareto optimization) is an area of multiple-criteria decision-making concerned with mathematical optimization problems involving more than one objective function to be optimized simultaneously [1]. In chemistry, this is essential because researchers often need to balance conflicting goals, such as maximizing a reaction's yield while minimizing cost, waste, or energy consumption [5]. For a multi-objective problem, there is rarely a single "best" solution; instead, the goal is to find a set of optimal trade-off solutions, known as the Pareto front [1].

Which multi-objective optimization solvers are available for chemical reaction optimization? Several solvers have been developed and applied in real chemical scenarios. The choice of solver depends on the specific problem, including the types of variables (continuous or categorical) and required features like constraint handling. The table below summarizes key solvers identified in a 2024 comparative study [5].

Table 1: Multi-Objective Optimization Solvers for Chemical Applications

| Solver Name | Key Features/Notes |
|---|---|
| MVMOO | Verified in real chemical reaction scenarios [5]. |
| EDBO+ | Verified in real chemical reaction scenarios [5]. |
| Dragonfly | Verified in real chemical reaction scenarios [5]. |
| TSEMO | Verified in real chemical reaction scenarios [5]. |
| EIM-EGO | Verified in real chemical reaction scenarios [5]. |

What is the Biologically Relevant Chemical Space (BioReCS)? The Biologically Relevant Chemical Space (BioReCS) is a chemical subspace comprising molecules with biological activity—both beneficial and detrimental. It spans areas like drug discovery, agrochemistry, and natural product research. It includes not only therapeutic compounds but also toxic and allergenic molecules [14]. Public databases such as ChEMBL and PubChem are major sources for exploring this space [14].

What are some underexplored regions of the chemical space? Certain chemical structure types remain underexplored due to modeling challenges [14]:

- Metal-containing molecules and metallodrugs are often filtered out of standard analyses.

- Macrocycles (compounds with large rings), PROTACs, and mid-sized peptides.

- Protein-protein interaction (PPI) inhibitors.

- Dark chemical matter: Compounds that have repeatedly failed to show activity in high-throughput screens [14].

Troubleshooting Guides for Computational Experiments

Guide: Poor Performance in Multi-Objective Optimization

Problem: The optimization algorithm is not efficiently finding good candidate molecules or reaction conditions on the Pareto front.

Possible Causes and Solutions:

- Cause 1: Inadequate fitness function formulation.

- Solution: Ensure your fitness function accurately reflects the real-world material properties. For molecular materials, relying solely on molecular properties and ignoring solid-state crystal packing can lead to poor performance. Incorporate Crystal Structure Prediction (CSP) into the fitness evaluation to account for the significant effects of the crystal structure [13].

- Cause 2: The solver is not suited for the specific problem type (e.g., mixed variable types, constraints).

- Solution: Re-evaluate your choice of solver. Refer to Table 1 and select a solver that handles your specific variable types (continuous/categorical) and can manage any process constraints [5].

- Cause 3: Algorithm is trapped in a local optimum.

- Solution: For evolutionary algorithms like CSP-EA, ensure the parameters for mutation and crossover are tuned to maintain population diversity. Using a representative set of initial structures in the CSP step can improve the robustness of the property prediction [13].

Guide: Handling Ionizable Compounds in Chemical Space Analysis

Problem: Computational predictions for ionizable compounds (weak acids, bases, ampholytes) are inaccurate under physiological conditions.

Explanation and Solution:

- Root Cause: Most chemical space analyses assume molecular structures are neutrally charged. However, an estimated 80% of contemporary drugs are ionizable. Their ionization state profoundly impacts solubility, permeability, binding, and toxicity [14].

- Solution: Do not rely solely on molecular descriptors calculated for the neutral species. Account for the pH-dependent ionization state of the compound in your environment (e.g., physiological pH). Use tools that can calculate molecular properties like lipophilicity (log P) for the correct charged species to improve the accuracy of your models [14].
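
The effect of ionization on lipophilicity can be estimated with the Henderson-Hasselbalch relationship. The sketch below handles monoprotic acids and bases only and assumes that just the neutral species partitions into the organic phase, which is a common simplification; the example compound (log P 3.5, pKa 4.4) is hypothetical.

```python
import math


def fraction_ionized(pka: float, ph: float, is_acid: bool) -> float:
    """Fraction of a monoprotic compound present in its charged form at the given pH."""
    exponent = (ph - pka) if is_acid else (pka - ph)
    return 1.0 / (1.0 + 10.0 ** (-exponent))


def log_d(log_p: float, pka: float, ph: float, is_acid: bool) -> float:
    """Distribution coefficient, assuming only the neutral species enters the organic phase."""
    exponent = (ph - pka) if is_acid else (pka - ph)
    return log_p - math.log10(1.0 + 10.0 ** exponent)


# Hypothetical weak acid evaluated at physiological pH.
PHYSIOLOGICAL_PH = 7.4
ionized = fraction_ionized(pka=4.4, ph=PHYSIOLOGICAL_PH, is_acid=True)
effective_lipophilicity = log_d(log_p=3.5, pka=4.4, ph=PHYSIOLOGICAL_PH, is_acid=True)
print(ionized, effective_lipophilicity)
```

For this example the compound is almost entirely ionized at pH 7.4, and its effective lipophilicity is roughly three log units below the neutral-species log P, a difference large enough to change how the compound would be ranked in an optimization.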

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential computational and data resources for exploring the chemical space.

Table 2: Essential Resources for Navigating the Chemical Space

| Resource Name | Type | Function |
|---|---|---|
| ChEMBL | Public Database | A major source of biologically active small molecules with extensive bioactivity annotations, crucial for defining the BioReCS [14]. |
| PubChem | Public Database | A large collection of chemical substances and their biological activities, essential for chemoinformatics and CS analysis [14]. |
| InertDB | Public Database | A curated collection of experimentally confirmed and AI-generated inactive compounds. Vital for defining the non-biologically relevant regions of chemical space [14]. |
| MAP4 Fingerprint | Molecular Descriptor | A general-purpose molecular fingerprint designed to work across diverse chemical entities, from small molecules to peptides, aiding in the comparison of different chemical spaces [14]. |
| CSP-EA | Computational Algorithm | An evolutionary algorithm that integrates crystal structure prediction to evaluate candidate molecules based on solid-state material properties, not just molecular properties [13]. |

Experimental Protocol: CSP-Informed Evolutionary Algorithm (CSP-EA)

Methodology for Crystal Structure Prediction-Informed Evolutionary Optimization

The following workflow outlines the protocol for using an evolutionary algorithm guided by crystal structure prediction to navigate the vast chemical search space for materials discovery, as demonstrated in the search for organic molecular semiconductors [13].

Detailed Procedure:

- Initialize Population: Generate a diverse starting population of molecules from the target chemical search space [13].

- Loop (for each generation):

  a. Select Parents: Choose molecules from the current population to act as parents for the next generation. Selection is based on a fitness score derived from predicted material properties [13].

  b. Create Offspring: Apply evolutionary operators (e.g., crossover to combine molecular features, mutation to introduce random changes) to the parent molecules to create a new set of offspring molecules [13].

  c. Crystal Structure Prediction (CSP): For each new offspring molecule, perform crystal structure prediction to generate a set of low-energy, plausible crystal packing arrangements [13].

  d. Calculate Fitness: Evaluate the fitness of each offspring molecule based on the properties calculated from its predicted crystal structures (e.g., electron mobility for semiconductors). This is the key step that differentiates CSP-EA from methods that use molecular properties alone [13].

- Check Stopping Criteria: Repeat the loop until a predefined condition is met, such as a maximum number of generations or convergence of the fitness score.

- Output Result: Return the set of non-dominated, Pareto-optimal molecules that represent the best trade-offs between the multiple objectives [13] [1].
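
The control flow of the procedure can be outlined with a generic evolutionary loop. This is not the CSP-EA code: molecules are encoded as lists of fragment indices, `toy_fitness` is a made-up stand-in for the crystal structure prediction and property calculation, a single scalar fitness is used instead of multiple objectives, and the tournament selection and elitist survivor selection are assumptions made to keep the example short.

```python
import random
from typing import Callable, List, Tuple

Genome = List[int]  # hypothetical encoding: indices into a fragment library


def evolve(fitness: Callable[[Genome], float], n_genes: int = 6, n_fragments: int = 8,
           pop_size: int = 20, generations: int = 15, mutation_rate: float = 0.2,
           seed: int = 0) -> Tuple[Genome, List[float]]:
    rng = random.Random(seed)
    # Initialize population.
    population = [[rng.randrange(n_fragments) for _ in range(n_genes)] for _ in range(pop_size)]
    scores = [fitness(g) for g in population]
    best_per_generation = [max(scores)]

    def tournament() -> Genome:
        # Select a parent: the fitter of two randomly drawn individuals.
        i, j = rng.randrange(pop_size), rng.randrange(pop_size)
        return population[i] if scores[i] >= scores[j] else population[j]

    for _ in range(generations):
        offspring = []
        while len(offspring) < pop_size:
            mum, dad = tournament(), tournament()
            cut = rng.randrange(1, n_genes)            # one-point crossover
            child = mum[:cut] + dad[cut:]
            child = [rng.randrange(n_fragments) if rng.random() < mutation_rate else g
                     for g in child]                   # per-gene mutation
            offspring.append(child)
        # In CSP-EA this is where crystal structure prediction and property evaluation run.
        child_scores = [fitness(c) for c in offspring]
        # Assumed survivor selection: keep the best pop_size of parents plus offspring.
        merged = sorted(zip(scores + child_scores, population + offspring),
                        key=lambda t: t[0], reverse=True)[:pop_size]
        scores = [s for s, _ in merged]
        population = [g for _, g in merged]
        best_per_generation.append(scores[0])
    return population[0], best_per_generation


def toy_fitness(genome: Genome) -> float:
    """Made-up score that peaks when every gene equals 3; replaces the expensive CSP step."""
    return -float(sum((g - 3) ** 2 for g in genome))


best, trace = evolve(toy_fitness)
print(best, trace[0], trace[-1])
```

Because the fitness call dominates the cost when it involves crystal structure prediction, the number of evaluations per generation (here `pop_size`) is usually the parameter that determines the overall budget.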

Frequently Asked Questions (FAQs)

Q1: What makes multi-objective optimization (MOO) necessary in modern drug discovery? Traditional drug discovery often follows a "one-drug, one-target" paradigm, which is insufficient for complex diseases like cancer and neurodegenerative disorders, where multiple pathways are involved. MOO is necessary to balance several, often competing, molecular properties simultaneously. This includes enhancing efficacy against one or multiple targets while reducing toxicity, improving solubility, and maintaining selectivity to avoid off-target effects. The goal is to identify a set of optimal compromise solutions, known as the Pareto front, rather than a single "perfect" molecule [15].

Q2: What are the most common conflicting objectives in molecular optimization? The most common conflicts arise between:

- Efficacy vs. Toxicity: A molecule highly potent against a primary target may have strong off-target interactions, leading to adverse effects [15].

- Potency vs. Solubility/Absorption: Structural features that increase binding affinity (e.g., large aromatic rings, high molecular weight) can negatively impact aqueous solubility and oral bioavailability, governed by rules like Lipinski's Rule of Five [16] [17].

- Similarity vs. Novelty/Diversity: Optimizing a molecule for high structural similarity to a known active drug can limit the exploration of chemical space and reduce the potential for discovering novel scaffolds with improved properties [16] [17].

- Multi-Target Activity vs. Selectivity: Designing a drug to act on multiple disease-relevant targets (polypharmacology) can conflict with the objective of minimizing activity on unrelated targets to avoid toxicity [15].

Q3: How do computational methods like evolutionary algorithms handle these conflicts? Multi-objective evolutionary algorithms (MOEAs), such as NSGA-II, manage conflicts by evaluating populations of candidate molecules across all objectives at once. They use techniques like non-dominated sorting to rank molecules and crowding distance to maintain population diversity. This allows the algorithm to evolve a population towards the Pareto front, providing researchers with a diverse set of candidate molecules representing different trade-offs between the objectives, such as a molecule with slightly lower potency but much better solubility [16] [17].

Q4: What are the key metrics for evaluating a successful multi-objective optimization run? Success is not measured by a single metric but by a combination that assesses the quality of the entire set of candidate molecules:

- Success Rate (SR): The percentage of generated molecules that satisfy all predefined property thresholds [17].

- Hypervolume (HV): Measures the volume in objective space covered by the non-dominated solutions, indicating both convergence and diversity. A larger hypervolume is better [16] [17].

- Geometric Mean: Calculates the central tendency of performance across multiple objectives for a comprehensive view [16] [17].

- Internal Similarity: Assesses the structural diversity within the generated population of molecules [16] [17].
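
Three of these metrics can be computed in a few lines. The sketch below is restricted to two maximized objectives for the hypervolume, uses scores assumed to be normalized to [0, 1], and works on invented data; the function names are illustrative.

```python
import math
from typing import List, Sequence, Tuple


def hypervolume_2d(front: List[Tuple[float, float]],
                   reference: Tuple[float, float] = (0.0, 0.0)) -> float:
    """Area dominated by a two-objective maximization front, measured from the reference point."""
    pts = sorted((p for p in front if p[0] > reference[0] and p[1] > reference[1]),
                 key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, reference[1]
    for x, y in pts:
        if y > best_y:  # dominated points add no new area
            area += (x - reference[0]) * (y - best_y)
            best_y = y
    return area


def success_rate(scores: List[Sequence[float]], thresholds: Sequence[float]) -> float:
    """Percentage of molecules whose every score meets its threshold."""
    hits = sum(all(s >= t for s, t in zip(row, thresholds)) for row in scores)
    return 100.0 * hits / len(scores)


def geometric_mean(values: Sequence[float]) -> float:
    """Geometric mean of positive scores; a single poor objective pulls it down sharply."""
    return math.prod(values) ** (1.0 / len(values))


# Hypothetical normalized scores for three molecules: (similarity, QED).
population = [(0.9, 0.2), (0.6, 0.6), (0.3, 0.8)]
hv = hypervolume_2d(population)
sr = success_rate(population, thresholds=(0.5, 0.5))
gm = [geometric_mean(m) for m in population]
print(hv, sr, gm)
```

Here the balanced molecule (0.6, 0.6) is the only one that passes both thresholds and also has the highest geometric mean, while the hypervolume credits all three molecules for the trade-off region they cover together.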

Troubleshooting Guides

Issue 1: Algorithm Prematurely Converges to a Single Region of Chemical Space

Problem: The optimization algorithm produces molecules that are too structurally similar, lacking diversity and potentially missing better compromise solutions.

Possible Causes and Solutions:

- Cause: Inadequate diversity preservation mechanism.

- Cause: Excessive selection pressure towards a single objective early in the run.

- Cause: Population size is too small.

- Solution: Increase the population size to allow for a broader sampling of the chemical space.

Issue 2: Generated Molecules Fail to Meet Key Physicochemical Property Thresholds

Problem: The final candidate molecules have poor drug-like properties, such as low solubility or incorrect lipophilicity (logP).

Possible Causes and Solutions:

- Cause: Objective function weights or thresholds are improperly set.

- Cause: The chemical space defined by the initial population or allowed mutations is biased or limited.

- Solution: Curate the initial molecule set and ensure the mutation operators (e.g., atom/bond changes, fragment replacements) can generate a wide range of valid, drug-like structures.

- Cause: Inaccurate property prediction models.

Issue 3: Inability to Balance Multi-Target Efficacy with Selectivity

Problem: Designed molecules either fail to hit the desired multiple targets or show excessive promiscuity, leading to predicted toxicity.

Possible Causes and Solutions:

- Cause: The objective function for "efficacy" is too simplistic.

- Cause: Lack of high-quality bioactivity data for off-targets.

- Solution: Leverage public bioactivity databases like ChEMBL, BindingDB, and DrugBank to build more comprehensive predictive models for both on-target and off-target interactions [15].

- Cause: The model is overfitting to a narrow set of training data.

Experimental Protocols & Data

Detailed Methodology: MoGA-TA Multi-Objective Optimization

The following protocol is adapted from the MoGA-TA algorithm for multi-objective drug molecule optimization [16] [17].

1. Problem Formulation:

- Define Objectives: Clearly specify the 2-5 molecular properties to be optimized. Examples from benchmarks include Tanimoto similarity (using ECFP4, FCFP4, or AP fingerprints), QED (drug-likeness), logP, TPSA, molecular weight, and specific biological activities [16] [17].

- Define Modifier Functions: For each objective, apply a modifier function to map the raw property value to a normalized score between 0 and 1. Common modifiers include `Gaussian(mean, sigma)` for targeting a specific value, `MaxGaussian(max, sigma)` for maximizing a property, and `Thresholded(value)` for setting a minimum acceptable level [16] [17].
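
The modifier functions above can be sketched as plain Python functions. The exact functional forms are assumptions: the text names the modifiers but does not give their formulas, so the thresholded variant is assumed here to rise linearly and saturate at the threshold, and all target values are hypothetical.

```python
import math

def gaussian(x: float, mean: float, sigma: float) -> float:
    """Score 1.0 at `mean`, decaying symmetrically on both sides."""
    return math.exp(-0.5 * ((x - mean) / sigma) ** 2)

def max_gaussian(x: float, target: float, sigma: float) -> float:
    """Full score once x reaches `target`; Gaussian penalty below it."""
    return 1.0 if x >= target else gaussian(x, target, sigma)

def thresholded(x: float, threshold: float) -> float:
    """Assumed form: linear in x (for x >= 0), saturating at 1.0 at `threshold`."""
    return min(x, threshold) / threshold

# Hypothetical targets for illustration only.
logp_score = gaussian(3.0, mean=3.0, sigma=1.0)            # on target
tpsa_score = max_gaussian(80.0, target=90.0, sigma=10.0)   # one sigma below target
sim_score = thresholded(0.4, threshold=0.8)                # halfway to the threshold
```

Because every modifier returns a value in [0, 1], objectives measured in different units become directly comparable during non-dominated sorting.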

2. Algorithm Initialization:

- Initialize Population: Generate a starting population of molecules, typically from a database like ChEMBL or by sampling around a lead compound.

- Set Parameters: Define population size (e.g., 100-1000), maximum number of generations, crossover rate, and mutation rate.

3. Evolutionary Loop:

- Evaluation: For each molecule in the population, calculate its scores for all defined objectives using the modifier functions.

- Non-dominated Sorting: Rank the population into fronts (Pareto fronts) based on Pareto dominance.

- Crowding Distance Calculation (Tanimoto-based): Calculate the crowding distance for molecules in the same front using Tanimoto similarity on molecular fingerprints. This promotes structural diversity.

- Selection: Select parent molecules for the next generation using tournament selection, favoring individuals from better fronts and those with larger crowding distances.

- Variation (Crossover & Mutation): Create offspring molecules by applying a decoupled crossover and mutation strategy within the chemical space. This involves SMILES-based or graph-based operations.

- Population Update (Dynamic Acceptance): Use a dynamic acceptance probability strategy to decide whether new offspring replace existing individuals, balancing exploration and exploitation.
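
The population update step can be sketched as follows. The text does not give the MoGA-TA acceptance formula, so the linear schedule, the default probabilities, and the function names below are purely hypothetical; the sketch only illustrates the idea of accepting non-improving offspring more readily in early generations than in late ones.

```python
import random

def acceptance_probability(generation: int, max_generations: int,
                           p_start: float = 0.5, p_end: float = 0.05) -> float:
    """Hypothetical schedule: probability falls linearly from p_start to p_end."""
    frac = min(1.0, generation / max(1, max_generations - 1))
    return p_start + (p_end - p_start) * frac

def accept_offspring(offspring_is_better: bool, generation: int,
                     max_generations: int, rng: random.Random) -> bool:
    """Always keep improving offspring; keep others with a decaying probability."""
    if offspring_is_better:
        return True
    return rng.random() < acceptance_probability(generation, max_generations)

rng = random.Random(42)
early = acceptance_probability(0, 100)    # exploration phase
late = acceptance_probability(99, 100)    # exploitation phase
decision = accept_offspring(False, generation=10, max_generations=100, rng=rng)
```

Other decay shapes (for example exponential) would serve the same purpose; the choice is a tuning decision rather than something fixed by the protocol.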

4. Termination and Analysis:

- The loop continues until a stopping condition is met (e.g., max generations).

- The final output is the non-dominated set of molecules from the last generation, representing the Pareto-optimal solutions.

The table below summarizes six benchmark tasks used to evaluate the MoGA-TA algorithm, detailing the objectives and key results [16] [17].

Table 1: Multi-Objective Molecular Optimization Benchmark Tasks

| Task Name (Reference Drug) | Optimization Objectives | Key Experimental Findings |

|---|---|---|

| Fexofenadine [16] [17] | Tanimoto similarity (AP), TPSA, logP | MoGA-TA showed improved success rate and hypervolume compared to NSGA-II and GB-EPI. |

| Pioglitazone [16] [17] | Tanimoto similarity (ECFP4), Molecular weight, Number of rotatable bonds | The algorithm effectively balanced similarity constraints with physicochemical goals. |

| Osimertinib [16] [17] | Tanimoto similarity (FCFP4), Tanimoto similarity (FCFP6), TPSA, logP | Successfully handled four competing objectives, generating a diverse Pareto front. |

| Ranolazine [16] [17] | Tanimoto similarity (AP), TPSA, logP, Number of fluorine atoms | Demonstrated capability to optimize for a specific structural feature (fluorine count) alongside other properties. |

| Cobimetinib [16] [17] | Tanimoto similarity (FCFP4), Tanimoto similarity (ECFP6), Number of rotatable bonds, Number of aromatic rings, CNS | Effectively managed a complex five-objective task, including a central nervous system (CNS) activity score. |

| DAP kinases [16] [17] | DAPk1 activity, DRP1 activity, ZIPk activity, QED, logP | Showcased application in multi-target optimization (polypharmacology) while maintaining drug-likeness. |

Evaluation Metrics for Multi-Objective Optimization

Table 2: Key Performance Metrics for Algorithm Evaluation

| Metric | Description | Interpretation |

|---|---|---|

| Success Rate (SR) [17] | The percentage of generated molecules that meet all target property thresholds. | Higher is better. Directly measures the ability to produce viable candidates. |

| Hypervolume (HV) [16] [17] | The volume in objective space covered by the non-dominated solutions relative to a reference point. | A larger HV indicates a better combination of convergence and diversity. |

| Geometric Mean [16] [17] | The nth root of the product of scores for n objectives. | Provides a single measure of overall performance across all objectives. |

| Internal Similarity [16] [17] | The average pairwise structural similarity (e.g., Tanimoto) within the population. | A very high value may indicate lack of diversity; a moderate value is often desirable. |
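
The three simpler metrics in Table 2 can be computed directly from the definitions given there. The sketch below assumes that scores are already normalized to [0, 1] and that fingerprints are represented as sets of on-bit indices (in practice these would come from a toolkit such as RDKit); all data values are made up for illustration.

```python
import math
from itertools import combinations
from typing import List, Sequence, Set

def success_rate(scores: List[Sequence[float]], thresholds: Sequence[float]) -> float:
    """Fraction of molecules whose score meets the threshold on every objective."""
    ok = sum(all(s >= t for s, t in zip(row, thresholds)) for row in scores)
    return ok / len(scores)

def geometric_mean(row: Sequence[float]) -> float:
    """nth root of the product of n objective scores."""
    return math.prod(row) ** (1.0 / len(row))

def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Shared on-bits divided by all on-bits; two empty sets count as identical here."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def internal_similarity(fps: List[Set[int]]) -> float:
    """Average pairwise Tanimoto similarity within a population."""
    pairs = list(combinations(fps, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

scores = [(0.9, 0.8), (0.4, 0.9), (0.7, 0.7)]
sr = success_rate(scores, thresholds=(0.5, 0.5))
gm = geometric_mean((0.25, 1.0))
internal = internal_similarity([{1, 2, 3}, {2, 3, 4}, {1, 2, 3}])
```

Note that the geometric mean drops to zero as soon as any single objective score is zero, which is why it penalizes candidates that fail one objective completely.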

Workflow and Algorithm Diagrams

Multi-Objective Molecular Optimization Workflow

MoGA-TA's Enhanced Selection and Update Strategy

Table 3: Essential Computational Tools and Data Resources

| Resource Name | Type | Function in Multi-Objective Optimization |

|---|---|---|

| RDKit [16] [17] | Software Library | Calculates molecular descriptors (e.g., logP, TPSA), generates fingerprints (ECFP, FCFP), and handles molecular I/O and operations. |

| ChEMBL [16] [15] | Bioactivity Database | Provides curated bioactivity data for building initial populations, training predictive models, and defining target activity objectives. |

| GuacaMol [16] [17] | Benchmarking Platform | Offers standardized molecular optimization tasks to fairly evaluate and compare the performance of different algorithms. |

| DrugBank [15] | Drug/Target Database | Supplies information on known drug-target interactions, useful for defining selectivity constraints and polypharmacology objectives. |

| Tanimoto Similarity [16] [17] | Metric | Quantifies structural similarity between molecules using fingerprints; used in crowding distance and similarity objectives. |

| NSGA-II Framework [16] [17] | Algorithm | Provides the core multi-objective evolutionary optimization logic (non-dominated sorting and selection). |

The Role of Tanimoto Similarity and Other Molecular Metrics in Quantifying Objectives

Frequently Asked Questions

1. Why is the Tanimoto coefficient the most recommended metric for comparing molecular fingerprints?

The Tanimoto coefficient (also known as Jaccard-Tanimoto) is consistently identified in large-scale studies as one of the best-performing metrics for fingerprint-based similarity calculations [19]. Its performance is often equivalent to other top metrics like the Dice index and Cosine coefficient, producing rankings closest to a composite average ranking of multiple metrics [19]. It is considered a robust and versatile choice for routine similarity searching in cheminformatics.

2. My similarity search results seem biased towards smaller molecules. Is this related to my choice of metric?

Yes, this can be a known limitation of certain metrics. The Tanimoto index has been reported to have a tendency to select smaller compounds during dissimilarity selection [19]. If this is affecting your results, you might consider testing alternative metrics like the Dice or Cosine coefficients, which were identified alongside Tanimoto as top performers but may exhibit different behavioral biases [19].

3. For interaction fingerprints (IFPs), is Tanimoto still the best similarity metric to use?

While Tanimoto is the most commonly used metric for Interaction Fingerprints (IFPs), research suggests that other similarity measures can be viable or even better alternatives depending on the specific virtual screening scenario [20]. It is recommended to evaluate multiple metrics for your specific IFP configuration and target protein. The Baroni-Urbani-Buser (BUB) and Hawkins-Dotson (HD) coefficients have shown promise in related fields [20].

4. I need a true mathematical metric for my analysis. Does the Tanimoto distance satisfy the triangle inequality?

The standard Tanimoto distance, defined as 1 - Tanimoto similarity, is a proper metric only when using binary fingerprints [21]. For continuous or general non-binary vector representations, this simple transformation may not satisfy the triangle inequality. In such cases, a modified form of the Tanimoto distance must be used to ensure it is a true metric [21].
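
For binary fingerprints the triangle inequality can be checked by brute force on a small example. The sketch below enumerates every subset of a four-bit universe and looks for violations; it is a numerical sanity check under the stated convention for empty fingerprints, not a proof.

```python
from itertools import combinations, product

def tanimoto_distance(a: frozenset, b: frozenset) -> float:
    """1 - Tanimoto similarity for binary fingerprints stored as sets of on-bits."""
    union = len(a | b)
    # Convention assumed here: two empty fingerprints have distance 0.
    return 1.0 - len(a & b) / union if union else 0.0

universe = range(4)
subsets = [frozenset(c) for r in range(len(universe) + 1)
           for c in combinations(universe, r)]

# Collect every triple (x, y, z) where d(x, z) > d(x, y) + d(y, z).
violations = [
    (x, y, z) for x, y, z in product(subsets, repeat=3)
    if tanimoto_distance(x, z) > tanimoto_distance(x, y) + tanimoto_distance(y, z) + 1e-12
]
```

The list of violations comes out empty for this universe, which is consistent with the statement above for binary fingerprints.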

5. How do I convert a distance or dissimilarity measure into a similarity score?

Conversion depends on the range of the distance metric [22]:

- For distances with a range of 0 to 1 (e.g., Soergel distance), similarity is simply: Similarity = 1 - Distance.

- For distances with a larger upper bound (e.g., Euclidean or Manhattan distance), you can use: Similarity = 1 / (1 + Distance). This ensures that identical molecules (distance = 0) have a similarity of 1, and highly dissimilar molecules have a similarity approaching 0.
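
Both conversions are one-liners; the sketch below adds input checks so that a distance is not passed to the wrong formula by accident. The function names are illustrative.

```python
def similarity_from_bounded(distance: float) -> float:
    """For distances in [0, 1], such as Soergel: similarity = 1 - distance."""
    if not 0.0 <= distance <= 1.0:
        raise ValueError("expected a distance in [0, 1]")
    return 1.0 - distance

def similarity_from_unbounded(distance: float) -> float:
    """For non-negative distances without a fixed upper bound: 1 / (1 + distance)."""
    if distance < 0.0:
        raise ValueError("distance must be non-negative")
    return 1.0 / (1.0 + distance)

soergel_sim = similarity_from_bounded(0.25)
identical_sim = similarity_from_unbounded(0.0)
manhattan_sim = similarity_from_unbounded(3.0)
```

The two formulas give different values for the same input, so similarities produced by different conversions should not be mixed on a common scale.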

6. What is an appropriate similarity threshold to consider two molecules "similar"?

A Tanimoto coefficient of 0.85 is historically used as a general threshold for molecular similarity, particularly with Daylight fingerprints [23]. However, this should not be universally applied as a guarantee of similar bioactivity [23]. The optimal threshold can vary significantly depending on the type of molecular fingerprint used (e.g., ECFP vs. MACCS keys) and the specific application [22]. Always validate thresholds within the context of your own data and objectives.

Troubleshooting Guides

Problem: Poor Performance in Virtual Screening or Multi-Objective Optimization

Your similarity metric may not be capturing the correct structural relationships for your specific task.

- Checklist:

- Verify Fingerprint Compatibility: Ensure your chosen similarity metric is appropriate for your fingerprint type (e.g., binary vs. continuous).

- Benchmark Multiple Metrics: Do not rely solely on Tanimoto. Test other high-performing metrics like Dice, Cosine, and Soergel, as their rankings can differ and may be more suitable for your chemical space [19].

- Inspect for Size Bias: If your results are skewed towards smaller molecules, it could be a known bias of the Tanimoto coefficient. Experiment with the Dice coefficient as an alternative [19].

- Consider Data Fusion: If no single metric performs outstandingly, use data fusion techniques (similarity fusion or group fusion) to combine the results from several different similarity metrics, which can enhance overall performance [19].

Problem: Inconsistent Similarity Rankings Between Different Software or Toolkits

Differences can arise from the implementation of the fingerprint or the metric itself.

- Checklist:

- Confirm Fingerprint Parameters: For common fingerprints like ECFP or FCFP, ensure the parameters (e.g., radius, length) are identical across toolkits (e.g., RDKit vs. Schrödinger).

- Validate Metric Implementation: Double-check the formula used by your software. The Tanimoto coefficient for binary fingerprints should be defined as T = a / (a + b + c), where a is the number of bits set in both molecules, and b and c are the numbers of bits set in only the first and only the second molecule, respectively [20].

- Use Standardized Tools: For critical comparisons, use well-documented and widely used cheminformatics toolkits like RDKit or CDK, and document the exact version and function used.

Comparison of Key Molecular Similarity and Distance Metrics

The table below summarizes commonly used metrics in cheminformatics, their mathematical formulas for binary fingerprints, and their key characteristics [19] [22].

| Metric Name | Type | Formula (Binary Features) | Value Range | Key Characteristics |

|---|---|---|---|---|

| Tanimoto Coefficient | Similarity | \( T = \frac{a}{a+b+c} \) | 0 to 1 | Gold standard; best overall performer; potential bias for small molecules [19]. |

| Dice Coefficient | Similarity | \( D = \frac{2a}{2a+b+c} \) | 0 to 1 | Top performer; very similar behavior to Tanimoto [19]. |

| Cosine Coefficient | Similarity | \( C = \frac{a}{\sqrt{(a+b)(a+c)}} \) | 0 to 1 | Top performer; often used in text and data mining [19]. |

| Soergel Distance | Distance | \( S_d = \frac{b+c}{a+b+c} \) | 0 to 1 | Complement of Tanimoto; identified as a top distance metric [19]. |

| Manhattan Distance | Distance | \( M_d = b + c \) | 0 to N* | Not recommended alone; can add diversity in data fusion [19]. |

| Euclidean Distance | Distance | \( E_d = \sqrt{b + c} \) | 0 to √N* | Not recommended alone; can add diversity in data fusion [19]. |

*Note: N is the length of the molecular fingerprint [22]. Here a is the number of bits set in both fingerprints, and b and c are the numbers of bits set in only the first and only the second fingerprint.
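
The formulas in the table translate directly into code once a, b, and c are counted. The sketch below assumes binary fingerprints stored as sets of on-bit indices; the function name `binary_metrics` and the example fingerprints are illustrative.

```python
import math
from typing import Dict, Set

def binary_metrics(x: Set[int], y: Set[int]) -> Dict[str, float]:
    """Evaluate the six tabulated metrics for two binary fingerprints."""
    a = len(x & y)   # bits set in both fingerprints
    b = len(x - y)   # bits set only in the first
    c = len(y - x)   # bits set only in the second
    if a + b + c == 0:
        raise ValueError("both fingerprints are empty")
    # Cosine is undefined if either fingerprint is empty; 0.0 is used here by convention.
    cosine = a / math.sqrt((a + b) * (a + c)) if (a + b) and (a + c) else 0.0
    return {
        "tanimoto": a / (a + b + c),
        "dice": 2 * a / (2 * a + b + c),
        "cosine": cosine,
        "soergel": (b + c) / (a + b + c),
        "manhattan": float(b + c),
        "euclidean": math.sqrt(b + c),
    }

metrics = binary_metrics({1, 2, 3, 4}, {3, 4, 5})   # a = 2, b = 2, c = 1
```

For this pair the Tanimoto coefficient is 0.4 and the Soergel distance is 0.6, which illustrates that the two always sum to one for binary fingerprints.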

Experimental Protocol: Benchmarking Similarity Metrics for a Virtual Screening Task

This protocol outlines how to evaluate different similarity metrics to identify the best one for a specific virtual screening campaign, based on methodologies from the literature [19] [20].

1. Objective

To compare the performance of multiple similarity metrics (Tanimoto, Dice, Cosine, etc.) in enriching known active compounds from a decoy database for a given target protein.

2. Materials and Reagents

| Item | Function in Experiment |

|---|---|

| Active Compounds | A set of known active molecules for the target (from ChEMBL or other databases). Serves as reference queries. |

| Decoy Database | A large set of inactive or presumed inactive molecules (e.g., from DUD or ZINC). The background to search. |

| Cheminformatics Toolkit | Software like RDKit or KNIME to generate molecular fingerprints and calculate similarities. |

| Molecular Fingerprints | Structural representation of molecules (e.g., ECFP4, FCFP6, MACCS keys). The basis for comparison. |

3. Procedure

- Step 1: Data Preparation

- Select a reference set of active compounds for your target.

- Prepare a screening database by combining these actives with a large number of decoy molecules.

- Step 2: Molecular Representation

- Generate a consistent type of molecular fingerprint (e.g., ECFP4 with 1024 bits) for all molecules in the dataset.

- Step 3: Similarity Calculation

- For each active compound as a query, calculate its similarity to every molecule in the screening database using each metric under investigation (Tanimoto, Dice, Cosine, etc.).

- Step 4: Performance Evaluation

- For each query and each metric, rank the database molecules by descending similarity.

- Calculate enrichment metrics, such as the Area Under the Accumulative Recall Curve (AUC), the hit rate in the top 1% of the sorted list, or use the Sum of Ranking Differences (SRD) to compare the metrics against a consensus ranking [19] [20].

- Step 5: Analysis

- Aggregate the results across all query molecules.

- Identify the similarity metric(s) that provide the highest average enrichment for your specific target and fingerprint.
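
Step 4 of the procedure can be sketched for a single query and a single metric as follows. The sketch assumes the similarities have already been computed; the function names, the 20% cut-off, and the ten-molecule example are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
from typing import List

def hit_rate_at_fraction(similarities: List[float], is_active: List[bool],
                         fraction: float = 0.01) -> float:
    """Share of actives among the top `fraction` of molecules ranked by similarity."""
    ranked = sorted(zip(similarities, is_active), key=lambda t: t[0], reverse=True)
    k = max(1, int(len(ranked) * fraction))   # always inspect at least one molecule
    return sum(active for _, active in ranked[:k]) / k

def enrichment_factor(similarities: List[float], is_active: List[bool],
                      fraction: float = 0.01) -> float:
    """Hit rate in the top fraction divided by the hit rate of the whole database."""
    base_rate = sum(is_active) / len(is_active)
    return hit_rate_at_fraction(similarities, is_active, fraction) / base_rate

sims = [0.9, 0.8, 0.3, 0.2, 0.1, 0.5, 0.4, 0.6, 0.7, 0.05]
actives = [True, False, False, False, False, False, False, False, True, False]
hit_rate = hit_rate_at_fraction(sims, actives, fraction=0.2)
ef = enrichment_factor(sims, actives, fraction=0.2)
```

Repeating this for every query and every metric, then averaging, gives the per-metric comparison described in Step 5.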

4. Workflow Diagram

The following diagram illustrates the key steps in this benchmarking protocol.

The Scientist's Toolkit: Essential Research Reagents & Materials

This table lists key computational "reagents" used in molecular similarity analysis and multi-objective optimization experiments.

| Item | Function / Explanation |

|---|---|

| ECFP/FCFP Fingerprints | Binary vectors (e.g., ECFP4) that capture circular substructures of a molecule, forming a standard representation for similarity search [16]. |

| RDKit | An open-source cheminformatics toolkit used for generating fingerprints, calculating similarity, and property prediction [16]. |

| Tanimoto/Dice Coefficients | Similarity functions used to quantify the structural overlap between two molecular fingerprints in a multi-objective task [19] [16]. |

| NSGA-II Algorithm | A multi-objective evolutionary algorithm used to find Pareto-optimal solutions balancing multiple properties [16]. |

| GuacaMol Benchmark | A framework and set of benchmark tasks for evaluating generative chemistry and molecular optimization models [16]. |

Algorithmic Toolkit: From Evolutionary Algorithms to Bayesian Optimization for Chemistry

Troubleshooting Guide for NSGA-II and MoGA-TA Experiments

FAQ 1: Why is my algorithm converging prematurely to a local optimum, lacking diversity in the final Pareto front?

Answer: Premature convergence often stems from a loss of population diversity, which can be addressed by refining the crowding distance calculation and population update strategies.

- Problem Analysis: In standard NSGA-II, the crowding distance estimates diversity based on objective space, which may not translate well to structural diversity in chemical problems. This can cause the population to cluster around a few similar solutions [16] [24].

- Recommended Solution: Implement the MoGA-TA adaptation. It replaces the standard crowding distance with a Tanimoto similarity-based crowding distance. This method more effectively captures structural differences between molecules, helping to maintain a diverse population and prevent premature convergence [16] [24].

- Additional Step: Incorporate a dynamic acceptance probability population update strategy. This strategy allows for broader exploration of the chemical space in early generations and progressively focuses on exploiting high-quality solutions in later stages, effectively balancing exploration and exploitation [16] [24].
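
A structure-based crowding score can be sketched as below. The exact MoGA-TA formula is not reproduced in this guide, so the nearest-neighbour form used here is an assumption made for illustration, and fingerprints are represented as sets of on-bit indices rather than toolkit objects.

```python
from typing import List, Set

def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto similarity of two binary fingerprints given as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def tanimoto_crowding(fps: List[Set[int]]) -> List[float]:
    """Assumed form: 1 - similarity to the most similar other molecule in the front."""
    if len(fps) < 2:
        # A lone molecule has no neighbours and is treated as maximally isolated.
        return [float("inf")] * len(fps)
    scores = []
    for i, fp in enumerate(fps):
        nearest = max(tanimoto(fp, other) for j, other in enumerate(fps) if j != i)
        scores.append(1.0 - nearest)
    return scores

# Two close analogues and one structurally distinct molecule (hypothetical bits).
front_fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {7, 8, 9}]
crowding = tanimoto_crowding(front_fps)
```

The structurally distinct molecule receives the largest score, so a selection step that favors large crowding values would preferentially retain it, whereas an objective-space crowding distance could not tell the three apart if their scores were similar.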

FAQ 2: My molecular optimization experiments are computationally expensive. How can I improve efficiency?

Answer: High computational costs can be managed by leveraging specialized evolutionary algorithms and verifying your experimental setup.

- Problem Analysis: Traditional methods and deep generative models can have high data dependency and computational demands. Evolutionary algorithms like NSGA-II and MoGA-TA are designed to efficiently explore vast chemical spaces without the need for extensive prior training data [16].

- Recommended Solution:

- Algorithm Selection: For drug molecule optimization, use the MoGA-TA algorithm, which is specifically designed for this task and has been shown to significantly improve efficiency and success rate [16] [24].

- Tool Verification: Ensure you are using optimized libraries. The MOEA Framework provides fast, reliable implementations of NSGA-II and other algorithms, which can help avoid performance issues stemming from suboptimal code [25].

FAQ 3: How do I handle multiple, competing objectives in my chemical process design?

Answer: The core strength of NSGA-II and related algorithms is to handle multiple competing objectives without needing to combine them into a single goal.

- Problem Analysis: In multi-objective optimization, a single solution that optimizes all objectives simultaneously often does not exist. Instead, the goal is to find a set of "trade-off" solutions [26].

- Recommended Solution: NSGA-II uses a Pareto dominance ranking and crowding distance to construct a front of non-dominated solutions, known as the Pareto front. Each solution on this front represents a different compromise between the objectives. You can then use a decision-making method, like PROMETHEE II, to select the final best compromise solution from the Pareto front based on your specific preferences [26].
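
The final selection step can be sketched with a compact PROMETHEE II ranking. This version assumes that all criteria are maximized and uses the simplest ("usual") preference function, under which an alternative is preferred on a criterion whenever it is strictly better; the weights and the example front are hypothetical.

```python
from typing import List, Sequence

def promethee_ii(alternatives: List[Sequence[float]],
                 weights: Sequence[float]) -> List[float]:
    """Net outranking flow for each alternative (higher is better)."""
    n = len(alternatives)
    total_w = sum(weights)

    def pref(a: Sequence[float], b: Sequence[float]) -> float:
        # Weighted share of criteria on which a is strictly better than b.
        return sum(w for x, y, w in zip(a, b, weights) if x > y) / total_w

    flows = []
    for i, a in enumerate(alternatives):
        others = [b for j, b in enumerate(alternatives) if j != i]
        positive = sum(pref(a, b) for b in others) / (n - 1)   # how much a outranks others
        negative = sum(pref(b, a) for b in others) / (n - 1)   # how much others outrank a
        flows.append(positive - negative)
    return flows

# Pareto front of (objective 1, objective 2) scores; objective 1 weighted more heavily.
front = [(0.9, 0.2), (0.7, 0.7), (0.3, 0.9)]
flows = promethee_ii(front, weights=(0.7, 0.3))
best = max(range(len(front)), key=lambda i: flows[i])
```

With these weights the first solution has the highest net flow; changing the weights changes the ranking, which is how the decision-maker's preferences enter after the optimization has finished.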

FAQ 4: Why is the performance of my NSGA-II algorithm low during iterations?

Answer: Low search efficiency can be improved by integrating more sophisticated search strategies.

- Problem Analysis: Standard evolutionary operators may not efficiently guide the search in complex, high-dimensional spaces.

- Recommended Solution: Recent research suggests that new search strategies, such as the neighbor strategy and guidance strategy, can significantly enhance search performance. These strategies, when integrated into algorithms like NSGA-III and MOEA/D, have been shown to improve convergence speed by over 12% and the accuracy of the solution set by nearly 4% [27]. Consider exploring and implementing such advanced strategies tailored to your problem's characteristics.

Experimental Protocols for Key Applications

Protocol 1: Optimizing a Triplex-Tube Phase Change Thermal Energy Storage Unit

This protocol details the use of NSGA-II for the multi-objective design of a thermal energy storage system, balancing heat transfer efficiency, storage rate, and mass [28].

- 1. Problem Formulation:

- Decision Variables: Inner tube radius (`r1`), casing tube radius (`r2`), and outer tube radius (`r3`) [28].

- Objective Functions: Maximize heat transfer efficiency (`ε`), maximize heat storage rate (`Pt`), and minimize mass (`M`) [28].

- Constraints: Define feasible ranges for the tube radii based on physical and manufacturing limitations [28].

- 2. Algorithm Configuration (NSGA-II):

- Population Size: Use a population size suitable for the problem complexity (e.g., 100-500 individuals).

- Termination Condition: Run until a predefined number of generations or convergence criteria are met.

- Genetic Operators: Use simulated binary crossover (SBX) and polynomial mutation with typical distribution indices (e.g., ηc = 20, ηm = 20) [28].

- 3. Execution and Analysis:

- Run the NSGA-II algorithm to generate a Pareto-optimal set of design configurations.

- Analyze the Pareto front to select a final design. For example, an optimized configuration might be `r1=0.014 m`, `r2=0.041 m`, and `r3=0.052 m`, which achieved a 2.12% improvement in heat transfer efficiency and a 73.23% increase in heat storage rate in one study [28].

- Validate the selected design through experimental testing or high-fidelity simulation, investigating parameters like heat transfer fluid (HTF) temperature and flow rate [28].
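
The genetic operators named in the configuration step can be sketched for a single real-valued decision variable such as a tube radius. This is the basic textbook form of simulated binary crossover and polynomial mutation, not code from [28]; the parent values and bounds are hypothetical, and a full implementation would also apply crossover and mutation probabilities per variable.

```python
import random
from typing import Tuple

def sbx_pair(p1: float, p2: float, eta_c: float, rng: random.Random) -> Tuple[float, float]:
    """Simulated binary crossover: children are spread around the parents' midpoint."""
    u = rng.random()
    if u <= 0.5:
        beta = (2.0 * u) ** (1.0 / (eta_c + 1.0))
    else:
        beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta_c + 1.0))
    c1 = 0.5 * ((1.0 + beta) * p1 + (1.0 - beta) * p2)
    c2 = 0.5 * ((1.0 - beta) * p1 + (1.0 + beta) * p2)
    return c1, c2   # not clipped here; clip to the variable bounds before evaluation

def polynomial_mutation(x: float, low: float, high: float,
                        eta_m: float, rng: random.Random) -> float:
    """Polynomial mutation: small perturbations are much more likely than large ones."""
    u = rng.random()
    if u < 0.5:
        delta = (2.0 * u) ** (1.0 / (eta_m + 1.0)) - 1.0
    else:
        delta = 1.0 - (2.0 * (1.0 - u)) ** (1.0 / (eta_m + 1.0))
    return min(high, max(low, x + delta * (high - low)))

rng = random.Random(1)
# Hypothetical inner-radius parents (metres) and bounds, with eta_c = eta_m = 20.
child1, child2 = sbx_pair(0.010, 0.018, eta_c=20.0, rng=rng)
mutated = polynomial_mutation(0.014, low=0.005, high=0.020, eta_m=20.0, rng=rng)
```

Larger distribution indices keep offspring closer to their parents, so the value of 20 mentioned above corresponds to fairly conservative variation.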

Protocol 2: Multi-Objective Drug Molecule Optimization with MoGA-TA

This protocol outlines the steps for optimizing lead compounds using the MoGA-TA algorithm, which enhances NSGA-II for chemical space [16] [24].

- 1. Problem Formulation:

- Starting Point: Begin with a known lead compound (e.g., Fexofenadine, Osimertinib) [16].

- Objective Functions: Define 2-5 objectives. These typically include:

- Tanimoto Similarity: Maximize structural similarity to the lead compound (calculated using ECFP, FCFP, or Atom Pair fingerprints) [16].

- Physicochemical Properties: Optimize properties like polar surface area (TPSA), lipophilicity (logP), molecular weight, number of rotatable bonds, etc., using Gaussian or threshold-based scoring functions to map values to a [0, 1] interval [16].

- Biological Activity: Maximize predicted activity against specific targets (e.g., DAPk1, DRP1, ZIPk) [16].

- 2. Algorithm Configuration (MoGA-TA):

- Representation: Encode molecules using their SMILES strings or molecular graphs.

- Genetic Operators: Use a decoupled crossover and mutation strategy specifically designed for chemical structures.

- Selection: Implement non-dominated sorting.

- Diversity Preservation: Use Tanimoto similarity-based crowding distance instead of the standard crowding distance.

- Population Update: Apply the dynamic acceptance probability strategy for population updates [16] [24].

- 3. Execution and Analysis:

- Run MoGA-TA until a stopping condition is met (e.g., number of generations or stability of the Pareto front).

- Evaluate the performance using metrics like success rate, dominating hypervolume, geometric mean, and internal similarity [16] [24].

- Select promising candidate molecules from the Pareto front for synthesis and experimental validation.

Workflow and Algorithm Comparison Diagrams

Diagram 1: NSGA-II vs MoGA-TA for Drug Optimization

Research Reagent Solutions: Essential Materials and Tools

The following table lists key computational "reagents" and resources used in multi-objective optimization for chemical research.

| Research Reagent / Tool | Function / Purpose | Key Features / Notes |

|---|---|---|

| NSGA-II [28] [29] [30] | A multi-objective evolutionary algorithm for finding a Pareto-optimal set of solutions. | Uses non-dominated sorting and crowding distance; well-suited for problems with 2-3 objectives [16]. |

| MoGA-TA [16] [24] | An NSGA-II adaptation for drug molecule optimization. | Employs Tanimoto crowding distance and dynamic acceptance probability to enhance diversity and efficiency. |

| MOEA Framework [25] | A Java library for multiobjective optimization. | Provides open-source implementations of NSGA-II, MOEA/D, and other algorithms; includes diagnostic tools. |

| RDKit [16] | An open-source cheminformatics toolkit. | Used for calculating molecular descriptors (e.g., TPSA, LogP), fingerprints, and Tanimoto similarity. |

| Tanimoto Similarity [16] [24] | A metric for quantifying the structural similarity between two molecules. | Core to MoGA-TA's diversity preservation; based on molecular fingerprints like ECFP4 and FCFP4. |

| PROMETHEE II [26] | A multi-criteria decision-making method. | Used to select a single best-compromise solution from the Pareto front generated by NSGA-II. |

Multi-Objective Bayesian Optimization (MOBO) and Expected Hypervolume Improvement (EHVI)

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary purpose of using EHVI in Multi-Objective Bayesian Optimization?

EHVI is an acquisition function that quantifies the expected increase in the hypervolume indicator, which measures the volume of objective space dominated by a set of solutions relative to a reference point. It efficiently guides the selection of new evaluation points to maximize the expansion of the Pareto front, thereby identifying optimal trade-offs between competing objectives in expensive black-box function optimization [31].
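
The idea behind EHVI can be illustrated with a library-free Monte Carlo sketch for two maximized objectives. It assumes the surrogate's prediction for a candidate is summarized by independent Gaussian means and standard deviations, which is a simplification; production code would use an acquisition function from a framework such as BoTorch instead. All names and numbers are illustrative.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]

def hypervolume_2d(points: List[Point], ref: Point) -> float:
    """Area dominated by `points` and bounded by `ref` (both objectives maximized)."""
    pts = sorted((p for p in points if p[0] > ref[0] and p[1] > ref[1]),
                 key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

def mc_ehvi(front: List[Point], ref: Point, mean: Point, std: Point,
            n_samples: int = 2000, seed: int = 0) -> float:
    """Average hypervolume gain over samples drawn from the candidate's predicted outcome."""
    rng = random.Random(seed)
    base = hypervolume_2d(front, ref)
    total = 0.0
    for _ in range(n_samples):
        sample = (rng.gauss(mean[0], std[0]), rng.gauss(mean[1], std[1]))
        total += hypervolume_2d(front + [sample], ref) - base
    return total / n_samples

front = [(0.9, 0.2), (0.7, 0.7), (0.3, 0.9)]
ref = (0.0, 0.0)
ehvi_dominated = mc_ehvi(front, ref, mean=(0.2, 0.2), std=(0.01, 0.01))
ehvi_promising = mc_ehvi(front, ref, mean=(0.9, 0.9), std=(0.01, 0.01))
```

A candidate predicted to land deep inside the dominated region scores essentially zero, while one predicted to extend the front scores close to the area it would add, so maximizing this quantity steers sampling towards points that expand the Pareto front.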

FAQ 2: When should I use qNEHVI over qEHVI for my experiment?

For batch optimization or in noisy experimental settings, qNEHVI is strongly recommended over qEHVI. In the noiseless setting the two are mathematically equivalent, but qNEHVI is far more efficient for batches (q > 1). In noisy settings it is also more robust, because it integrates over the posterior distribution of the function values at previously evaluated points instead of treating the noisy observations as exact [32].

FAQ 3: How do I set an appropriate reference point for hypervolume calculation?

The reference point should be set to a value that is slightly worse than the lower bound of the acceptable objective values for each objective. It can be set using domain knowledge or a dynamic selection strategy. An improperly chosen reference point can bias the optimization; it acts as a lower bound for the hypervolume calculation and influences the distribution of solutions on the Pareto front [32] [31].
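
When no domain knowledge is available, one simple dynamic strategy is to place the reference point slightly below the worst value seen on the current Pareto front. The sketch below assumes maximization; the 10% margin and the function name are hypothetical choices, not values prescribed by [32] or [31].

```python
from typing import List, Sequence, Tuple

def infer_reference_point(pareto_points: List[Sequence[float]],
                          margin: float = 0.1) -> Tuple[float, ...]:
    """Worst observed value per objective, pushed down by a fraction of the observed range."""
    n_obj = len(pareto_points[0])
    ref = []
    for k in range(n_obj):
        values = [row[k] for row in pareto_points]
        lo, hi = min(values), max(values)
        span = (hi - lo) or 1.0   # fall back to a unit span if all values coincide
        ref.append(lo - margin * span)
    return tuple(ref)

front = [(0.9, 0.2), (0.7, 0.7), (0.3, 0.9)]
ref_point = infer_reference_point(front)
```

Because the margin is strictly positive, the extreme points of the front still contribute to the hypervolume; with a reference point placed exactly at the worst values they would contribute nothing.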

FAQ 4: My MOBO experiment is stalling. How can I overcome search stagnation?

Conventional hypervolume improvement can create zero-gradient plateaus that stall optimization. A novel approach is to use a Negative Hypervolume Improvement (NHVI) infill criterion. NHVI assigns negative gradients to dominated regions, transforming these plateaus into searchable landscapes that actively drive optimization momentum. This can lead to a significant increase in Pareto solution density and faster convergence [33].

FAQ 5: What are the key advantages of MOBO over scalarization methods in analytical chemistry applications?

Unlike scalarization, which combines objectives into a single function and requires pre-defined weights, Pareto-based MOBO does not need prior knowledge of the relative importance of each objective. This reveals the complete set of trade-offs between objectives, making it more robust for discovery tasks, such as finding molecules that optimally balance multiple properties like potency, solubility, and synthetic cost [34].

Troubleshooting Guides

Issue 1: Poor Pareto Front Distribution

Symptoms

- Clustered solutions that do not cover the full range of trade-offs.

- Gaps in the Pareto front.

Possible Causes and Solutions

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inadequate exploration | Check the acquisition function's balance; over-exploitation can cause clustering. | Use qNParEGO with random Chebyshev scalarizations to promote diversity [32] [35]. |

| Incorrect reference point | The reference point is too close to or far from the Pareto front. | Dynamically adjust the reference point based on observed data to ensure it properly bounds the objectives [32]. |

| High observation noise | Noise can obscure the true Pareto front. | Use qNEHVI, which integrates over noise, or increase the number of bootstrap iterations in your surrogate model for better uncertainty quantification [32] [36]. |

Issue 2: Prohibitively Long Computation Time for EHVI

Symptoms

- Slow optimization loops, especially as the number of objectives or data points grows.

Possible Causes and Solutions

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| High-dimensional objectives | Computation time scales poorly beyond 3-4 objectives with exact methods. | For many objectives (>3), use a faster exact hypervolume algorithm such as WFG, or approximate the hypervolume with Monte Carlo methods or neural approximators like HV-Net [31]. |

| Large number of Pareto points | The partitioning step becomes slow. | Enable prune_baseline=True (in qNEHVI) to remove points with near-zero probability of being on the Pareto front [32]. |

| Inefficient computation | Algorithm is running on CPU. | qEHVI and qNEHVI aggressively exploit parallel hardware. Run experiments on a GPU for significant speed-ups [32]. |

Issue 3: Model Fitting Failures or Poor Surrogate Performance

Symptoms

- The Gaussian Process (GP) model fails to converge or provides poor predictions.

- Optimization recommendations are erratic.

Possible Causes and Solutions

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect likelihood specification | Check if the noise level is correctly set for your data. | For problems with known, heteroskedastic (varying) noise, pass the observation variances to the `SingleTaskGP` model through its `train_Yvar` argument. If noise is unknown, the GP will infer homoskedastic noise [32]. |

| Insufficient or poor initial data | The model is initialized with too few data points. | Increase the number of initial quasi-random samples (e.g., Sobol sequences). A common heuristic is to use 2*(d+1) initial points, where d is the input dimension [32]. |

| Inappropriate surrogate model | The model cannot capture the complexity of the response surface. | Use automatic model selection or try alternative models. For complex, nonlinear relationships, GradientBoosting can be more effective than a Gaussian Process [36]. |

Experimental Protocol: Implementing a MOBO Loop with EHVI

This protocol provides a step-by-step methodology for setting up and running a MOBO experiment using the BoTorch framework, tailored for a dual-objective problem in analytical chemistry.

Problem Setup and Initial Data Collection

Objective: Define the search space and collect an initial dataset to fit the surrogate model.

- Define the Problem: Formulate your multi-objective problem. In BoTorch, all objectives are assumed to be maximized. Minimization problems must be negated [32].

- Specify Bounds: Define the bounds of your search space (e.g., bounds = torch.tensor([[0., 0.], [1., 1.]])).

- Generate Initial Data: Use space-filling designs like Sobol sequences to generate initial training data.
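The setup steps above can be sketched in plain Python. This is a minimal illustration and not BoTorch code: it uses a Halton sequence as a simple stand-in for the Sobol sequence named in the text, and the helper names (halton, initial_design, to_maximization) are hypothetical. The default of 2*(d+1) points follows the heuristic quoted earlier in this guide.

```python
# Illustrative sketch only: Halton points stand in for Sobol points,
# and all function names here are assumptions, not a library API.
PRIMES = [2, 3, 5, 7, 11, 13]


def halton(index, base):
    """Return the index-th (1-based) van der Corput value in the given base."""
    result, fraction = 0.0, 1.0
    while index > 0:
        fraction /= base
        result += fraction * (index % base)
        index //= base
    return result


def initial_design(lower, upper, n_points=None):
    """Quasi-random points inside the box [lower, upper]."""
    d = len(lower)
    if d > len(PRIMES):
        raise ValueError("this sketch supports at most 6 input dimensions")
    if n_points is None:
        n_points = 2 * (d + 1)  # heuristic quoted in the troubleshooting table
    points = []
    for i in range(1, n_points + 1):
        unit = [halton(i, PRIMES[j]) for j in range(d)]
        points.append([lo + u * (hi - lo) for lo, hi, u in zip(lower, upper, unit)])
    return points


def to_maximization(values, minimize):
    """Negate the objectives flagged as 'minimize' so that all are maximized."""
    return [-v if is_min else v for v, is_min in zip(values, minimize)]


design = initial_design([0.0, 0.0], [1.0, 1.0])
# Example: maximize yield (0.9), minimize cost (12.0).
objectives = to_maximization([0.9, 12.0], minimize=[False, True])
```

In a real experiment the rows of design would be the conditions run in the laboratory, and the negated objective values would form the training targets for the surrogate model.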

Model Initialization

Objective: Construct a probabilistic surrogate model of the objective functions.

- Model Choice: Use a ModelListGP comprising independent SingleTaskGP models, one for each objective. This is flexible and allows for different noise levels per objective.

- Likelihood Variance: If the noise standard deviations (NOISE_SE) for each objective are known from experimental replication, square them and provide the resulting variances via the train_Yvar argument. Otherwise, the GP will infer the noise level [32].

Acquisition Function Configuration and Optimization

Objective: Set up the EHVI acquisition function and optimize it to find the next candidate point(s) for evaluation.

- Reference Point: Set a reference point ref_point that is slightly worse than the worst acceptable value for each objective.

- Partitioning: For qEHVI, partition the non-dominated space using FastNondominatedPartitioning.

- Optimize Acquisition Function: Use optimize_acqf with sequential greedy optimization for batches (q > 1).
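To make the role of the reference point concrete, the following sketch computes the dominated hypervolume of a two-objective set and the improvement a single candidate would bring. It is a deterministic, pure-Python illustration under the maximization convention. EHVI takes the expectation of this improvement under the surrogate posterior, which is not shown here, and the function names are hypothetical rather than BoTorch's API.

```python
# Illustrative sketch: exact 2D hypervolume under maximization.
def pareto_front_2d(points):
    """Return the non-dominated points (both objectives maximized)."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and (q[0] > p[0] or q[1] > p[1])
            for q in points
        )
        if not dominated and p not in front:
            front.append(p)
    return front


def hypervolume_2d(points, ref_point):
    """Area dominated by the points and bounded below by ref_point."""
    # Points that do not beat the reference point contribute nothing.
    better = [p for p in points if p[0] > ref_point[0] and p[1] > ref_point[1]]
    front = sorted(pareto_front_2d(better), key=lambda p: p[0], reverse=True)
    area, previous_y = 0.0, ref_point[1]
    for x, y in front:
        # Sweeping from large x to small x, y increases along the front,
        # so each point adds one horizontal strip.
        area += (x - ref_point[0]) * (y - previous_y)
        previous_y = y
    return area


def hypervolume_improvement(candidate, points, ref_point):
    """Gain in hypervolume if the candidate were added to the set."""
    return hypervolume_2d(points + [candidate], ref_point) - hypervolume_2d(points, ref_point)


observed = [(3.0, 1.0), (2.0, 2.0), (1.0, 3.0)]
ref_point = (0.0, 0.0)
current_hv = hypervolume_2d(observed, ref_point)
gain = hypervolume_improvement((2.5, 2.5), observed, ref_point)
```

A candidate that is dominated by the current front yields zero improvement, which is why a reference point set too far from the front can dilute the signal from genuinely useful candidates.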

Evaluation and Iteration

Objective: Evaluate the selected candidates and update the model in a closed loop.

- Observe New Values: Evaluate the expensive objective functions at the candidate points (new_x).

- Update Dataset: Append the new data (new_x, new_obj) to the training dataset.

- Update Model: Re-fit the surrogate model with the expanded dataset.

- Repeat: Continue the loop for a predetermined number of iterations or until a convergence criterion is met (e.g., minimal hypervolume improvement).
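One possible form of the convergence criterion mentioned in the last step is sketched here. The rule, the function name should_stop, and the default thresholds (rel_tol, patience) are assumptions chosen for illustration; they are not prescribed by BoTorch or by the cited references.

```python
# Illustrative stopping rule: stop when the hypervolume has not grown
# by more than rel_tol (relative) for `patience` consecutive iterations.
def should_stop(hv_history, rel_tol=1e-3, patience=3):
    """Return True if recent hypervolume gains are all negligible."""
    if len(hv_history) <= patience:
        return False  # not enough iterations to judge
    recent = hv_history[-(patience + 1):]
    for previous, current in zip(recent, recent[1:]):
        relative_gain = (current - previous) / max(abs(previous), 1e-12)
        if relative_gain > rel_tol:
            return False
    return True


still_improving = should_stop([1.0, 2.0, 3.0, 4.0])
stalled = should_stop([1.0, 2.0, 2.5, 2.5, 2.5, 2.5])
```

In practice the tolerance should reflect the measurement noise of the experiment, since noisy objectives can make the observed hypervolume fluctuate even after the front has effectively converged.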

Workflow Visualization

Diagram: MOBO-EHVI Experimental Workflow

Diagram: MOBO Acquisition Function Selection

Performance Metrics and Comparison

Quantitative Comparison of MOBO Acquisition Functions

| Acquisition Function | Key Principle | Best for Number of Objectives | Handles Noise? | Supports Batch? |

|---|---|---|---|---|

| EHVI [35] [31] | Expected increase in dominated hypervolume | 2-3 (analytic) | No (without extension) | No (without extension) |

| qEHVI [32] | Parallel batch version of EHVI | 2-4 | No | Yes |

| qNEHVI [32] [35] | Integrates over posterior at in-sample points | 2+ | Yes | Yes |

| qNParEGO [32] [35] | Random Chebyshev scalarizations | 2+ | Yes | Yes |

| NHVI [33] | Uses negative gradients in dominated regions to avoid stagnation | 2-6 | Yes | Yes |

| Test Problem | Number of Objectives | Conventional Method (Pareto Solutions) | NHVI Method (Pareto Solutions) | Improvement |

|---|---|---|---|---|

| ZDT1 | 2 | Baseline | ~3x density | ~200% increase |

| DTLZ5 | 3 | Baseline | ~2.5x density | ~150% increase |

| Aerodynamic Airfoil | 2 | 7 | 41 | 485% increase |

| DTLZ7 | 4 | Baseline | Faster convergence | Statistically significant |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Algorithm | Function / Purpose | Key Considerations |

|---|---|---|

| BoTorch [32] [35] | A flexible framework for Bayesian optimization research and implementation, providing built-in MOBO components. | Provides implementations of qEHVI, qNEHVI, and qNParEGO. Recommended for full customization. |

| Ax [32] | A user-friendly platform for adaptive experimentation, built on BoTorch. | Simplifies setup; automatically selects NEHVI for multi-objective problems. Ideal for rapid deployment. |

| MultiBgolearn [36] | A Python package tailored for multi-objective materials design and discovery. | Offers EHVI, PI, and UCB methods with automatic surrogate model selection. |

| Gaussian Process (GP) | The core surrogate model for modeling unknown objective functions. | Use SingleTaskGP for independent modeling of each objective. ModelListGP combines them. |

| Reference Point [32] [31] | A crucial parameter for hypervolume calculation that bounds the region of interest. | Should be set using domain knowledge to be slightly worse than the worst acceptable objective values. |

| Sobol Sequences | A quasi-random method for generating initial space-filling design points before optimization begins. | A common heuristic is to use 2*(d+1) initial points, where d is the input dimension. |

Frequently Asked Questions (FAQs)

Q1: When should I choose the Chebyshev scalarizing function over the Weighted Sum method?

The choice depends primarily on the characteristics of your Pareto front. Use the Weighted Sum method for problems where you know or suspect the Pareto front is convex. It is computationally simpler and efficient for such cases. In contrast, the Chebyshev scalarizing function is more versatile; it can obtain Pareto optimal solutions for both convex and non-convex Pareto fronts, making it a safer choice for complex or unknown problem geometries [37] [38].

Q2: Why is my algorithm using the Chebyshev function only finding weakly Pareto optimal solutions?

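A solution obtained with the Chebyshev scalarizing function is guaranteed to be at least weakly Pareto optimal [37], which means no other solution is strictly better in every objective, although one may still be better in some objectives and equal in the rest. To obtain solutions that are Pareto optimal rather than merely weakly Pareto optimal, you need to modify the formulation. Common troubleshooting strategies include: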

- Using the Lexicographic Method: Among all solutions optimal for the Chebyshev problem, select the one that is also optimal for a secondary optimization, such as minimizing the sum of objectives [39].

- Using an Augmented Function: Add a small, weighted term of the L1-norm (the weighted sum) to the Chebyshev function. This small augmentation penalizes solutions that are only weakly Pareto optimal [39].
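The effect of the augmentation can be seen in a small example. The sketch assumes minimization, an ideal point at the origin, and a hypothetical augmentation coefficient rho; the two candidate points are made up for illustration.

```python
# Illustrative sketch: the augmented Chebyshev function breaks ties that the
# plain Chebyshev function leaves between weakly and fully Pareto optimal points.
def chebyshev(z, ideal, weights):
    """Plain weighted Chebyshev value: max_j w_j * (z_j - ideal_j)."""
    return max(w * (zj - ij) for w, zj, ij in zip(weights, z, ideal))


def augmented_chebyshev(z, ideal, weights, rho=1e-3):
    """Chebyshev value plus a small weighted-sum (L1) term scaled by rho."""
    l1_term = sum(w * (zj - ij) for w, zj, ij in zip(weights, z, ideal))
    return chebyshev(z, ideal, weights) + rho * l1_term


ideal = (0.0, 0.0)
weights = (1.0, 1.0)
weak = (1.0, 1.0)    # only weakly Pareto optimal: 'strong' matches f1 and beats f2
strong = (1.0, 0.5)  # Pareto optimal among these two candidates

tie = chebyshev(weak, ideal, weights) == chebyshev(strong, ideal, weights)
chosen = min([weak, strong], key=lambda z: augmented_chebyshev(z, ideal, weights))
```

The plain function assigns both points the same value, so a solver may return either. With the augmentation, the dominated point receives a slightly larger value and is no longer selected. The coefficient should stay small so that the ranking of clearly different solutions is unaffected.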

Q3: My decomposition-based algorithm performs poorly on a problem with an irregular Pareto front. What components can I adjust?

Poor performance on irregular Pareto fronts (e.g., disconnected, degenerate, or highly nonlinear) is a known challenge [40]. You can improve performance by adjusting these key design elements:

- Adaptive Weight Vectors: Instead of using a fixed set of uniform weight vectors, implement a strategy that adapts the weights during the evolutionary process. This involves removing weight vectors in crowded regions of the objective space and adding new ones in sparse regions [40].

- Scalarizing Function Parameters: For the Penalty-Based Boundary Intersection (PBI) function, carefully tune the penalty parameter. As an alternative to the Chebyshev function, which corresponds to the L∞ case, you can also experiment with other Lp-norm scalarizations [40].

- Reference Point Strategy: Some advanced algorithms use multiple reference points instead of a single ideal point to guide the search, which can prevent the population from converging to a single region of the Pareto front [40].

Q4: Does the performance of decomposition-based methods degrade as the number of objectives increases?

All multi-objective algorithms face challenges with a high number of objectives, a field often called "many-objective optimization." While decomposition-based methods like MOEA/D are often cited as being more robust than Pareto-dominance methods for many objectives, they are not immune [37] [41]. The primary issue is that the number of subproblems needed to approximate the Pareto front well grows rapidly. However, under mild conditions, the Chebyshev scalarizing function has been shown to have an effect almost identical to Pareto-dominance relations, suggesting that the main issue may be the algorithm's ability to follow a balanced trajectory rather than the scalarization itself [37].
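As a rough illustration of how quickly the number of subproblems grows, assume the weight vectors come from a simplex-lattice design with H divisions per objective. The number of vectors for M objectives is then the binomial coefficient C(H + M - 1, M - 1). The function name and the choice H = 12 are assumptions made for this sketch.

```python
from math import comb


def n_weight_vectors(n_objectives, divisions):
    """Number of simplex-lattice weight vectors for the given resolution."""
    return comb(divisions + n_objectives - 1, n_objectives - 1)


# Same resolution (12 divisions), increasing number of objectives.
counts = {m: n_weight_vectors(m, divisions=12) for m in (2, 3, 5, 10)}
for m, count in counts.items():
    print(f"{m} objectives: {count} weight vectors")
```

At this resolution, two objectives need 13 weight vectors while ten objectives need 293930, which is why many-objective implementations typically reduce the resolution or use layered designs.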

Troubleshooting Guides

Issue: Inability to Find Certain Pareto Optimal Solutions (Weighted Sum)

- Problem Description: The algorithm converges to a limited set of solutions, missing large portions of the Pareto front, particularly in concave regions.

- Root Cause: The Weighted Sum method can only find solutions that lie on the convex hull of the Pareto front. Any solution in a non-convex (concave) region will be inaccessible, regardless of the weights used [39].

- Solution:

- Switch Scalarizing Function: Replace the Weighted Sum with the Chebyshev scalarizing function, which can obtain all Pareto optimal solutions for a suitable choice of weights, even for non-convex problems [37] [39].

- Verify with a Simple Test: Test your algorithm on a benchmark problem with a known concave Pareto front to confirm the limitation.
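A simple test of this kind can be sketched without any optimizer by scanning a sampled concave front. The example assumes two minimized objectives, a front of the form f2 = 1 - f1^2, and an ideal point at the origin; all names are illustrative.

```python
# Illustrative check: which points of a concave front can each scalarization reach?
front = [(i / 100, 1.0 - (i / 100) ** 2) for i in range(101)]
ideal = (0.0, 0.0)
weight_vectors = [(k / 10, 1.0 - k / 10) for k in range(11)]


def weighted_sum(z, w):
    """Weighted sum of the objective values."""
    return sum(wj * zj for wj, zj in zip(w, z))


def chebyshev(z, w, z_star):
    """Weighted Chebyshev distance to the ideal point z_star."""
    return max(wj * (zj - sj) for wj, zj, sj in zip(w, z, z_star))


# Best front point for every weight vector, collected as a set of distinct solutions.
weighted_sum_solutions = {
    min(front, key=lambda z: weighted_sum(z, w)) for w in weight_vectors
}
chebyshev_solutions = {
    min(front, key=lambda z: chebyshev(z, w, ideal)) for w in weight_vectors
}

print(len(weighted_sum_solutions), "distinct solutions with the weighted sum")
print(len(chebyshev_solutions), "distinct solutions with Chebyshev")
```

On this front the weighted sum only ever returns the two end points, whatever the weights, while the Chebyshev function reaches interior trade-offs as the weights change.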

Issue: Poor Diversity in the Final Solution Set

- Problem Description: The obtained solutions are clustered in some regions of the Pareto front, while other regions are unexplored.

- Root Cause: The distribution of solutions is highly dependent on the distribution of weight vectors and the shape of the Pareto front. Using a fixed set of uniform weights does not guarantee a uniform distribution of solutions if the Pareto front is irregular [40].

- Solution:

- Implement Weight Adaptation: Introduce a periodic weight vector adjustment mechanism.

- Monitor Solution Density: Identify crowded and sparse regions in the objective space.

- Reallocate Resources: Remove weight vectors associated with crowded solutions and generate new weight vectors to target sparse areas [40].

Issue: Algorithm Convergence is Slow or Stagnates

- Problem Description: The algorithm's progress toward the Pareto front is slower than expected or halts prematurely.

- Root Cause: The selection pressure toward better solutions may be insufficient. In decomposition-based algorithms, how parents are selected for recombination is a critical design element that significantly impacts convergence [38].

- Solution:

- Revisit Parent Selection Mechanism: Compared to the selection of weight vectors, the parent selection strategy has a higher influence on performance [38]. Ensure your selection mechanism provides sufficient selective pressure.

- Hybridize with Local Search: Incorporate a local search operator that optimizes the scalarizing function to refine solutions and accelerate convergence.

Comparative Analysis of Scalarizing Functions

The following table summarizes the core properties of the Weighted Sum and Chebyshev functions to aid in selection and troubleshooting.

Table 1: Comparison of Key Scalarizing Functions

| Feature | Weighted Sum | Chebyshev |

|---|---|---|

| Basic Formulation | \( s_1(z, \lambda) = \sum_{j=1}^{J} \lambda_j z_j \) [38] | \( s_\infty(z, z^*, \lambda) = \max_j [ \lambda_j (z_j - z^*_j) ] \) [38] |

| Pareto Front Geometry | Only finds solutions on convex hull (supported solutions) [39] | Can find solutions on both convex and non-convex regions [37] |

| Guarantee on Solutions | Produces supported efficient solutions | Produces at least weakly efficient solutions; modifications needed for strictly efficient solutions [37] [39] |

| Parameter Sensitivity | Performance sensitive to weight vector distribution | Performance sensitive to weight vectors and reference point \( z^* \) selection |

| Computational Complexity | Generally lower | Generally higher due to the max function |

Experimental Protocols

Protocol: Benchmarking Scalarizing Function Performance

This protocol outlines how to compare the performance of different scalarizing functions within an evolutionary algorithm framework.

- Algorithm Framework: Select a decomposition-based evolutionary algorithm framework like MOEA/D [40] [38].

- Variable Component: Implement different scalarizing functions (e.g., Weighted Sum, Chebyshev, Augmented Chebyshev) as interchangeable components.

- Benchmark Problems: Choose a diverse set of benchmark problems with known Pareto front geometries (e.g., ZDT, CEC 2009 test suites), including both convex and non-convex fronts [42].

- Performance Metrics: Evaluate results using standard metrics:

- Statistical Analysis: Perform multiple independent runs and use statistical tests (e.g., Wilcoxon signed-rank test) to determine the significance of performance differences.
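For the benchmark step, the ZDT suite already provides a convex and a non-convex pair. The sketch gives the standard definitions of ZDT1 (convex front) and ZDT2 (non-convex front), both minimized, with decision variables in [0, 1]. It only evaluates the test functions; the algorithm framework and metrics are left to the chosen library.

```python
import math


def zdt1(x):
    """ZDT1: convex Pareto front f2 = 1 - sqrt(f1), reached when x[1:] are all 0."""
    f1 = x[0]
    g = 1.0 + 9.0 * sum(x[1:]) / (len(x) - 1)
    f2 = g * (1.0 - math.sqrt(f1 / g))
    return f1, f2


def zdt2(x):
    """ZDT2: non-convex Pareto front f2 = 1 - f1**2, reached when x[1:] are all 0."""
    f1 = x[0]
    g = 1.0 + 9.0 * sum(x[1:]) / (len(x) - 1)
    f2 = g * (1.0 - (f1 / g) ** 2)
    return f1, f2


on_front_convex = zdt1([0.25, 0.0, 0.0])
on_front_concave = zdt2([0.5, 0.0, 0.0])
off_front = zdt1([0.0, 1.0])
```

Running the same algorithm with each scalarizing function on both problems makes it easy to see whether a performance gap is tied to the front geometry.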

Protocol: Adaptive Adjustment of Weight Vectors

This protocol describes a method for dynamically adjusting weight vectors to improve solution diversity on irregular Pareto fronts [40].

- Initialization: Generate an initial set of evenly distributed weight vectors using a method like Simplex Lattice Design [40].

- Optimization Cycle: Run the evolutionary algorithm for a predetermined number of generations.

- Population Assessment: Periodically (e.g., every 50 generations), analyze the current population of solutions. Cluster solutions in the objective space to identify crowded and sparse regions.

- Weight Adjustment:

- Delete: Identify weight vectors associated with a cluster of solutions in a crowded region and remove a portion of them.

- Add: Generate new weight vectors that point toward the sparse regions of the Pareto front.

- Continuation: Continue the optimization process with the updated set of weight vectors.
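The protocol above can be sketched for the bi-objective case as follows. The simplex-lattice generator is standard. The adjustment rule is a deliberately simplified assumption: it uses neighbour gaps instead of clustering, removes only one weight vector, and places the new vector at the midpoint of the two weights that bracket the largest gap. The function names are hypothetical and the method in [40] may differ.

```python
import math
from itertools import combinations


def simplex_lattice(n_objectives, divisions):
    """Evenly spaced weight vectors with components k/divisions that sum to 1."""
    vectors = []
    slots = divisions + n_objectives - 1
    for bars in combinations(range(slots), n_objectives - 1):
        counts, previous = [], -1
        for bar in bars:
            counts.append(bar - previous - 1)
            previous = bar
        counts.append(slots - 1 - previous)
        vectors.append(tuple(c / divisions for c in counts))
    return vectors


def adapt_weights(weights, objectives):
    """One delete/add step; objectives[i] is the solution found for weights[i]."""
    order = sorted(range(len(objectives)), key=lambda i: objectives[i][0])
    gaps = [math.dist(objectives[a], objectives[b]) for a, b in zip(order, order[1:])]
    # Delete: interior solution with the smallest combined gap to its neighbours.
    crowded = min(range(1, len(order) - 1), key=lambda k: gaps[k - 1] + gaps[k])
    # Add: midpoint of the weights on either side of the largest gap.
    sparse = max(range(len(gaps)), key=lambda k: gaps[k])
    a, b = order[sparse], order[sparse + 1]
    new_weight = tuple((wa + wb) / 2 for wa, wb in zip(weights[a], weights[b]))
    kept = [w for i, w in enumerate(weights) if i != order[crowded]]
    return kept + [new_weight]


weights = simplex_lattice(2, 4)
# Hypothetical current solutions: crowded near f1 = 0, sparse in the middle.
objectives = [(0.0, 1.0), (0.05, 0.95), (0.1, 0.9), (0.6, 0.4), (1.0, 0.0)]
updated = adapt_weights(weights, objectives)
```

The population size stays constant because one vector is removed for each vector added, which keeps the computational budget per generation unchanged.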

Diagram 1: Adaptive Weight Vector Adjustment Workflow

The Scientist's Toolkit

Table 2: Key Reagents and Computational Tools for Decomposition-Based Optimization

| Reagent / Tool | Function / Purpose | Example / Note |

|---|---|---|