Navigating ICH Q2(R2): A Complete Guide to Analytical Method Validation Parameters for 2025



Abstract

This article provides a comprehensive overview of the modernized ICH Q2(R2) guideline for analytical method validation, tailored for researchers, scientists, and drug development professionals. It explores the fundamental shift from a one-time validation approach to a science- and risk-based lifecycle management model, covering core validation parameters, practical application strategies, troubleshooting common challenges, and comparative analysis with previous standards. The content synthesizes the latest regulatory expectations, including the integration with ICH Q14 for analytical procedure development, to equip professionals with actionable knowledge for ensuring regulatory compliance and data integrity in pharmaceutical analysis.

Understanding ICH Q2(R2): The New Foundation for Analytical Method Validation

The International Council for Harmonisation (ICH) Q2(R2) guideline, titled "Validation of Analytical Procedures," provides a harmonized international framework for validating analytical methods used in the pharmaceutical industry [1]. The revised guideline, which reached Step 4 of the ICH process in November 2023 and took effect in the EU in June 2024, is the first major update in nearly three decades and replaces ICH Q2(R1), whose text originated in 1994 [2] [3]. The update addresses significant advances in analytical technologies and the increasing complexity of modern drug substances and products, particularly biological and biotechnological materials [2] [4].

The primary regulatory significance of ICH Q2(R2) lies in its role as a foundational document for submissions to regulatory authorities in major international markets, including the European Medicines Agency (EMA), the U.S. Food and Drug Administration (FDA), and other ICH member authorities [1] [5]. Compliance with the guideline demonstrates that analytical procedures are scientifically sound and "fit for purpose," supporting the quality, safety, and efficacy of pharmaceutical products throughout their lifecycle [6] [7]. The guideline applies specifically to analytical procedures used for release and stability testing of commercial drug substances and products, though it can also be applied to other procedures within a risk-based control strategy [1].

Key Evolution from ICH Q2(R1) to Q2(R2)

The transition from ICH Q2(R1) to Q2(R2) reflects the substantial evolution in pharmaceutical development and analytical science since the 1990s. While the original guideline was primarily designed around traditional small molecule drugs, the revised version addresses the unique challenges posed by complex biologics and modern analytical technologies [2]. This evolution incorporates principles from subsequent ICH guidelines (Q8, Q9, Q10) that did not exist when the original was drafted, creating a more comprehensive framework for analytical validation [3] [7].

One of the most significant conceptual shifts in Q2(R2) is the introduction of a lifecycle approach to analytical procedures [2]. This perspective moves beyond treating validation as a one-time event and instead advocates for continuous validation and assessment throughout the method's operational use [2]. The revised guideline also works in concert with the new ICH Q14 guideline on "Analytical Procedure Development," creating a cohesive framework where development and validation activities are interconnected throughout the analytical procedure lifecycle [3] [7].

Figure 1: Evolution from ICH Q2(R1) to ICH Q2(R2)

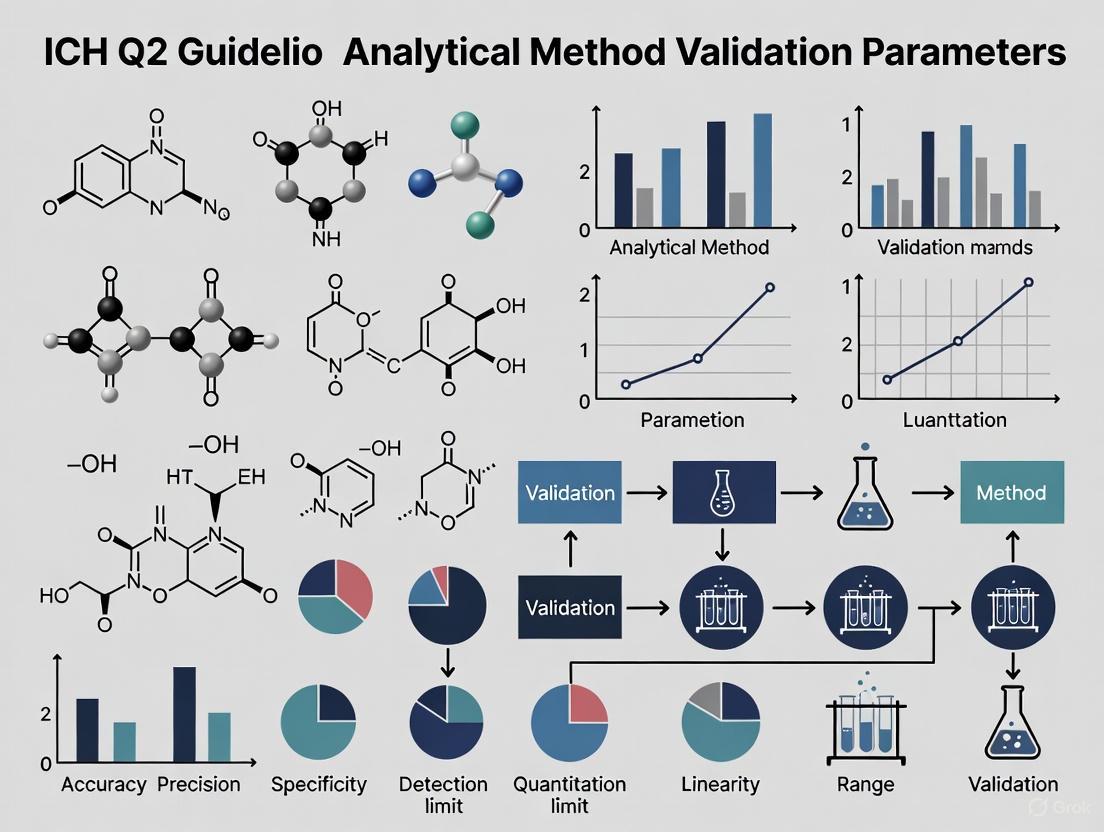

Core Validation Parameters and Requirements

ICH Q2(R2) maintains the fundamental validation characteristics established in Q2(R1) but provides enhanced guidance for their application to modern analytical techniques [4]. The guideline outlines specific experimental methodologies and acceptance criteria for each parameter to ensure analytical methods consistently produce reliable results suitable for their intended purpose [6] [4].

Structured Validation Parameters

The table below summarizes the core validation parameters and their technical requirements as outlined in ICH Q2(R2):

Table 1: Core Validation Parameters and Requirements under ICH Q2(R2)

| Parameter | Experimental Methodology | Acceptance Criteria Examples | Technical Requirements |
|---|---|---|---|
| Accuracy [6] [4] | Recovery studies using spiked samples with known analyte concentrations across specified range; minimum 9 determinations over 3 concentration levels [6]. | Drug substances: 98-102% recovery [6]. Drug products: 95-105% recovery [6]. | Results should be close to true value; demonstrated with reference materials [4]. |
| Precision [6] [4] | Repeatability: Multiple measurements under identical conditions [4]. Intermediate precision: Different analysts, instruments, or days [4]. Reproducibility: Between laboratories [2]. | Repeatability: RSD ≤2.0% for assays; ≤5.0% for impurities [6]. | Measured as % relative standard deviation (%RSD) [4]. |
| Specificity/Selectivity [4] [7] | Demonstrate ability to measure analyte unequivocally in presence of potential interferents (impurities, degradation products, matrix components) [4]. | No interference from blank; baseline separation from known interferents [4]. | Critical for stability-indicating methods; updated guidance in Q2(R2) [7]. |
| Linearity [4] | Prepare analyte solutions at minimum 5 concentration levels across specified range; measure response [4]. | Correlation coefficient (r) >0.998; visual inspection of plot for linear relationship [4]. | Direct correlation between analyte concentration and signal response [4]. |
| Range [2] [4] | Establish by confirming linearity, accuracy, and precision across specified interval from upper to lower concentration levels [4]. | Dependent on intended application; typically 80-120% of test concentration for assay [4]. | Must be linked to Analytical Target Profile (ATP) [2]. |
| Robustness [2] [4] | Deliberate variations in method parameters (mobile phase pH, column temperature, flow rate); measure impact on results [4]. | System suitability criteria met despite variations; identifies critical parameters for control [4]. | Now compulsory in Q2(R2); tied to lifecycle management [2]. |
| LOD/LOQ [4] | Signal-to-noise ratio (typically 3:1 for LOD, 10:1 for LOQ) or statistical methods based on standard deviation of response and slope [4]. | Appropriate for intended use; LOQ must demonstrate acceptable accuracy and precision [4]. | Defines lowest amount detectable (LOD) or quantifiable (LOQ) [4]. |
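
To make the accuracy and repeatability rows of Table 1 concrete, the following minimal sketch computes percent recovery and %RSD for a 3-level, 9-determination spiking design and compares them with the example criteria quoted in the table. The data values and the function names `percent_recovery` and `percent_rsd` are hypothetical, chosen only for illustration. Pooling all nine recoveries into a single %RSD is a simplification, and the limits used are the table's examples rather than fixed regulatory thresholds.

```python
from statistics import mean, stdev

def percent_recovery(measured, nominal):
    """Recovery (%) of one spiked sample: measured amount relative to the amount added."""
    return 100.0 * measured / nominal

def percent_rsd(values):
    """Relative standard deviation (%) based on the sample standard deviation (n - 1)."""
    return 100.0 * stdev(values) / mean(values)

# Hypothetical data: 3 spike levels (80, 100, 120 % of target) x 3 preparations each,
# giving the minimum of 9 determinations mentioned in Table 1.
spiked = {
    80.0: [79.6, 80.3, 79.9],
    100.0: [99.5, 100.4, 100.1],
    120.0: [119.2, 120.5, 120.1],
}

recoveries = [
    percent_recovery(measured, nominal)
    for nominal, results in spiked.items()
    for measured in results
]
mean_recovery = mean(recoveries)
rsd = percent_rsd(recoveries)

# Example acceptance criteria from Table 1 (drug substance assay); assumed, not prescribed.
accuracy_ok = 98.0 <= mean_recovery <= 102.0
precision_ok = rsd <= 2.0

print(f"mean recovery = {mean_recovery:.2f} %, RSD = {rsd:.2f} %")
print(f"accuracy ok: {accuracy_ok}, precision ok: {precision_ok}")
```

In practice the acceptance limits would come from the validation protocol and should be justified against the intended use of the procedure.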

Expanded Scope for Modern Technologies

ICH Q2(R2) significantly expands its scope to address analytical technologies that have emerged since Q2(R1) was published [6]. The guideline now includes specific validation approaches for:

- Multivariate analytical procedures used for real-time release testing (RTRT) and process analytical technology (PAT) [3] [6]

- Advanced spectroscopic techniques including NIR, Raman, Mass Spectrometry, and NMR, with specific validation considerations for chemometric models [6]

- Biomarker detection technologies including digital PCR, next-generation sequencing, and mass spectrometry-based proteomics [6]

- Bioanalytical methods that were not comprehensively covered in the previous version [6]

Analytical Procedure Lifecycle and Q2(R2) Implementation

The lifecycle approach introduced in ICH Q2(R2) transforms analytical validation from a one-time event into a continuous process integrated with routine quality control [2]. This approach requires ongoing method performance monitoring and periodic assessment to ensure methods remain valid throughout their operational use [2].

Implementation Strategy

Successful implementation of ICH Q2(R2) requires a structured approach across pharmaceutical organizations:

Table 2: Strategic Implementation of ICH Q2(R2)

| Strategy Component | Key Activities | Expected Outcomes |
|---|---|---|
| Education & Training [2] | Train staff on changes between Q2(R1) and Q2(R2); education on ICH Q14 principles [2]. | Smooth transition to updated standards; improved regulatory compliance. |
| Process Reevaluation [2] | Gap analysis of existing methods; identify areas for improvement [2] [6]. | Alignment with new guidelines; enhanced method robustness. |
| Risk-Based Method Development [2] | Implement proactive risk management; use FMEA tools; define Analytical Target Profile (ATP) early [2]. | More robust methods; reduced failures; efficient troubleshooting. |
| Enhanced Documentation [2] | Thorough records of development, validation, and changes; improved data integrity systems [2]. | Easier regulatory audits; better traceability; streamlined submissions. |
| Lifecycle Management [2] [6] | Continuous method monitoring; periodic reviews; change control procedures [2] [6]. | Sustained method effectiveness; adaptation to new technologies/requirements. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The implementation of ICH Q2(R2)-compliant validation requires specific materials and reagents to ensure accurate and reproducible results:

Table 3: Essential Research Reagent Solutions for ICH Q2(R2) Compliance

| Reagent/Material | Function in Validation | Critical Quality Attributes |
|---|---|---|
| Certified Reference Standards [4] | Establish accuracy through recovery studies; calibrate analytical instruments [4]. | Certified purity and stability; traceability to primary standards. |
| System Suitability Test Mixtures [4] | Verify chromatographic system performance before validation experiments [4]. | Reproducible retention times and peak responses. |
| Forced Degradation Samples [7] | Demonstrate specificity/stability-indicating capabilities [7]. | Controlled degradation profiles; well-characterized degradants. |
| Matrix-Matched Calibrators | Account for matrix effects in biological sample analysis; establish true method linearity. | Commutability with real samples; minimal matrix interferences. |
| Quality Control Materials [4] | Monitor precision across multiple runs; establish intermediate precision [4]. | Homogeneous and stable; concentrations at critical levels. |

Regulatory Impact and Global Harmonization

The implementation of ICH Q2(R2) has profound implications for regulatory compliance and global market access. By harmonizing validation requirements across international regulatory bodies, the guideline facilitates simultaneous submissions to multiple authorities without country-specific revalidation [6]. This standardization reduces redundant testing, lowers development costs, and accelerates time-to-market for new pharmaceutical products [6].

Regulatory agencies including the FDA and EMA now expect a science- and risk-based approach to method validation, with thorough documentation and clear justification for all acceptance criteria [4]. The enhanced focus on data integrity and robust quality systems throughout the product lifecycle represents a significant shift in regulatory expectations compared to the Q2(R1) era [4]. Modern regulatory inspections increasingly focus on complete validation lifecycles rather than mere documentation compliance, using systematic reviews of validation protocols, data, and deviations to ensure scientific soundness [6].

The harmonization achieved through ICH Q2(R2) ultimately transforms analytical validation from a regulatory obligation into a competitive advantage, enabling pharmaceutical companies to operate more efficiently in the global marketplace while maintaining the highest standards of product quality and patient safety [6].

Key Differences Between ICH Q2(R1) and ICH Q2(R2)

The evolution from ICH Q2(R1) to ICH Q2(R2) represents a fundamental shift in the philosophy and practice of analytical procedure validation within the pharmaceutical industry. Originally published in 1994, ICH Q2(R1) established a foundational framework for validating analytical methods with respect to parameters such as specificity, accuracy, precision, detection limit, quantitation limit, linearity, and range [2]. For decades, this guideline served as the primary standard for analytical methods used in the release and stability testing of commercial drug substances and products. However, significant advancements in pharmaceutical development—particularly the increasing complexity of biopharmaceutical products and analytical technologies—revealed limitations in the original guideline, which was primarily designed around the needs of traditional small molecule drugs [2].

The revised ICH Q2(R2) guideline, finalized in November 2023 and implemented by regulatory agencies including the FDA and EMA in 2024, introduces substantial changes that align analytical validation with modern scientific principles [5] [8]. Developed in conjunction with the new ICH Q14 guideline on analytical procedure development, Q2(R2) moves beyond the traditional "one-time event" validation approach toward a comprehensive lifecycle management perspective [2] [9]. This transformation addresses critical gaps in the original guideline, particularly for biologics and complex analytical techniques, while promoting more flexible, science-based approaches to ensure the robustness, reliability, and reproducibility of analytical methods throughout their operational use [2]. The updated guideline provides enhanced guidance for validating a wider range of analytical procedures, including those employing spectroscopic techniques such as NIR, Raman, NMR, or MS, which often require multivariate statistical analyses [10].

Fundamental Conceptual Shifts: From Static Validation to Dynamic Lifecycle Management

The Lifecycle Approach

The most significant philosophical shift between ICH Q2(R1) and Q2(R2) is the transition from treating validation as a discrete event to managing it as an ongoing process. Under Q2(R1), validation was typically performed as a one-time demonstration of acceptable method performance against predetermined acceptance criteria, after which the method was considered "validated" until circumstances forced revalidation [9]. This approach created what critics have termed "compliance theater"—a performance of rigor that may not reflect the method's actual capability to generate reliable results under routine operating conditions [9].

ICH Q2(R2) embraces a dynamic lifecycle perspective that integrates with ICH Q14's principles for analytical procedure development [2] [9]. This approach advocates for continuous validation and assessment throughout the method's operational use, from initial development through retirement [2]. The lifecycle management framework consists of three interconnected stages: Stage 1 (Procedure Design) focuses on developing thorough method understanding and defining an Analytical Target Profile (ATP); Stage 2 (Procedure Performance Qualification) corresponds to the traditional validation exercise; and Stage 3 (Continued Procedure Performance Verification) involves ongoing monitoring to ensure the method remains fit for purpose throughout its operational life [9]. This continuous validation model treats method capability as dynamic rather than static, requiring systems for ongoing method evaluation and improvement that integrate quality control and method optimization as perpetual activities [2].
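
As an illustration of what Stage 3 monitoring can look like in practice, the sketch below derives Shewhart-style control limits (mean ± 3 standard deviations) from baseline QC results and flags later routine results that fall outside them. This is one common monitoring technique, not something the guideline prescribes; the data, the choice of `k=3.0`, and the function names are assumptions made for the example.

```python
from statistics import mean, stdev

def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits as mean +/- k sample standard deviations."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center, center + k * spread

def out_of_control(results, limits):
    """Return (index, value) pairs for results outside the control limits."""
    lower, _, upper = limits
    return [(i, x) for i, x in enumerate(results) if x < lower or x > upper]

# Hypothetical QC sample results (% label claim) collected during and shortly after validation.
baseline = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9, 100.0, 100.3, 99.6, 100.0]
limits = control_limits(baseline)

# Hypothetical QC results from later routine runs.
routine = [100.1, 99.8, 101.5, 100.2]
flags = out_of_control(routine, limits)

print(f"limits: {limits[0]:.2f} to {limits[2]:.2f} (center {limits[1]:.2f})")
print(f"flagged results: {flags}")
```

A flagged point would typically trigger an investigation rather than an automatic conclusion that the method is no longer valid; trend rules and review frequency are left to the organization's own procedures.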

Analytical Target Profile (ATP) and "Fitness for Purpose"

ICH Q2(R2) introduces the concept of "fitness for purpose" as an organizing principle for validation strategy, moving beyond the checkbox mentality that sometimes characterized Q2(R1) compliance [9]. This principle requires explicit articulation of how analytical results will be used and what performance characteristics are necessary to support those decisions. The guideline aligns with the Analytical Target Profile (ATP) from ICH Q14, which specifies the required quality of the reportable result—the final analytical value used for quality decisions—before method development begins [2] [9].

The ATP defines the measurement quality objective, linking method performance directly to its intended use and creating a foundation for science-based validation protocols [2]. This represents a shift from the traditional category-based approach (for example, the Categories I-IV scheme of USP General Chapter 1225) that prescribed specific validation parameters based primarily on method type rather than method purpose [9]. Under Q2(R2), validation strategies are expected to be risk-based, matching validation effort to analytical criticality and complexity, with the ATP stating the performance the method must deliver across its specified reportable range [2] [9].
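
One way to picture an ATP is as a small, predefined record of requirements that validation results are later checked against. The sketch below is purely illustrative: the class `AnalyticalTargetProfile`, its field names, and the numeric limits are hypothetical and are not terminology or values taken from ICH Q14 or Q2(R2).

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical ATP record; field names are illustrative, not ICH terminology."""
    attribute: str            # quality attribute being measured
    range_low: float          # lower end of the required reportable range
    range_high: float         # upper end of the required reportable range
    max_abs_bias_pct: float   # largest acceptable bias of the reportable result (%)
    max_rsd_pct: float        # largest acceptable precision of the reportable result (%RSD)

    def is_met(self, bias_pct: float, rsd_pct: float) -> bool:
        """True if observed bias and precision satisfy the predefined requirements."""
        return abs(bias_pct) <= self.max_abs_bias_pct and rsd_pct <= self.max_rsd_pct

# The requirements are fixed before method development starts (values are assumed).
atp = AnalyticalTargetProfile("assay (% label claim)", 80.0, 120.0, 2.0, 2.0)

# Validation results are compared with the ATP afterwards.
print(atp.is_met(bias_pct=-0.4, rsd_pct=0.9))
print(atp.is_met(bias_pct=-0.4, rsd_pct=2.6))
```

Making the record immutable (`frozen=True`) mirrors the idea that performance requirements are defined up front and not adjusted to fit the data.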

Knowledge Management and Use of Prior Knowledge

ICH Q2(R2) formally recognizes the importance of knowledge management in analytical validation, explicitly allowing the use of data from development studies and prior knowledge to support validation activities [10]. This represents a significant departure from the Q2(R1) paradigm, which often treated validation as an isolated exercise separate from method development.

The revised guideline encourages manufacturers to leverage platform knowledge from similar methods, experience with related products, and data generated during method development as legitimate inputs to validation strategy [9] [10]. This approach can justify reduced validation for established procedures where sufficient prior knowledge exists, potentially eliminating redundant studies while maintaining scientific rigor [10] [8]. By integrating knowledge management into the validation framework, Q2(R2) supports more efficient, scientifically justified validation protocols that build upon existing understanding rather than repeating non-value-added studies [8].

Changes to Validation Parameters and Statistical Requirements

Comprehensive Comparison of Validation Parameters

The following table summarizes the key differences in validation parameters between ICH Q2(R1) and ICH Q2(R2):

| Validation Parameter | ICH Q2(R1) Approach | ICH Q2(R2) Enhancements | Practical Implications |
|---|---|---|---|
| Accuracy | Evaluated through recovery studies or comparison to reference | More comprehensive requirements including intra- and inter-laboratory studies [2] | Demonstrates method reproducibility across different settings |
| Precision | Includes repeatability and intermediate precision | Enhanced focus on the precision of the reportable result [9] | Validates the actual value used for quality decisions, not just individual measurements |
| Linearity & Range | Established through linearity studies with specified range | Streamlined requirements but stronger link to ATP; range directly tied to intended use [2] | More scientifically justified range setting based on actual analytical needs |
| Detection & Quantitation Limits | Determined by visual, signal-to-noise, or standard deviation methods | Refined determination approaches with clarified statistical basis [2] | More consistent and defensible limit determinations |
| Specificity | Demonstrated through forced degradation studies | Enhanced guidance for complex modalities and multivariate methods [10] | Better suited for biologics and advanced analytical techniques |
| Robustness | Often studied pre-validation | Now compulsory and integrated with lifecycle management [2] | Ongoing evaluation of method stability against operational variation |
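
For the linearity and detection/quantitation limit rows, a short worked example may help. The sketch below fits an ordinary least-squares line to five hypothetical calibration levels and estimates the limits with the standard-deviation-and-slope approach, LOD = 3.3σ/S and LOQ = 10σ/S. Using the residual standard deviation of the regression as σ is an assumption of this example; other estimates of σ, such as the standard deviation of blank responses, are also used. The data and the function name `linear_fit` are illustrative.

```python
from math import sqrt

def linear_fit(x, y):
    """Ordinary least-squares fit y = intercept + slope * x.

    Returns slope, intercept, correlation coefficient r, and residual standard deviation.
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / sqrt(sxx * syy)
    residual_ss = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    residual_sd = sqrt(residual_ss / (n - 2))  # n - 2 degrees of freedom for a line
    return slope, intercept, r, residual_sd

# Hypothetical calibration data: 5 concentration levels (ug/mL) and detector responses.
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
resp = [10.3, 20.1, 40.6, 79.8, 160.4]

slope, intercept, r, sd = linear_fit(conc, resp)

# Standard-deviation-and-slope approach; sigma is taken as the residual SD (an assumption).
lod = 3.3 * sd / slope
loq = 10.0 * sd / slope

print(f"slope = {slope:.3f}, intercept = {intercept:.3f}, r = {r:.5f}")
print(f"LOD = {lod:.3f} ug/mL, LOQ = {loq:.3f} ug/mL")
```

A high correlation coefficient alone does not prove linearity, which is why the residuals should still be inspected, and an estimated LOQ should be confirmed experimentally for accuracy and precision.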

Statistical Rigor and Confidence Intervals

ICH Q2(R2) introduces significantly enhanced statistical requirements compared to its predecessor. While Q2(R1) stated that confidence intervals around reported recovery/mean "should be reported," Q2(R2) expands this to require that "an appropriate 100(1-α)% confidence interval (or justified alternative statistical interval) should be reported" and that "the observed interval should be compatible with the corresponding acceptance criteria, unless otherwise justified" [8].

This enhanced focus on statistical intervals addresses a fundamental limitation of traditional validation by providing a more meaningful assessment of measurement uncertainty. However, industry surveys indicate that 76% of professionals have concerns about this new requirement, primarily due to limited replicate samples making confidence intervals potentially meaningless, insufficient experience setting appropriate acceptance criteria, and lack of internal statistical expertise [8]. One respondent to an industry survey noted concerns about "the increased risk of failures during validation against a criterion that we don't understand well enough to set meaningfully at this point" [8].
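
The following sketch shows the kind of calculation involved: a two-sided 95% confidence interval for mean recovery based on the Student t distribution, checked for compatibility with acceptance limits. The recovery values and the 98-102% limits are hypothetical, the small table `T_95` holds standard t critical values so that the example needs no external packages, and the function name `mean_ci_95` is an assumption of this example. The second calculation repeats the interval with only three replicates to show why small data sets are a concern.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95 % Student t critical values, indexed by degrees of freedom (n - 1).
T_95 = {2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571, 6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262}

def mean_ci_95(values):
    """Two-sided 95 % confidence interval for the mean of a small sample."""
    n = len(values)
    m = mean(values)
    half_width = T_95[n - 1] * stdev(values) / sqrt(n)
    return m - half_width, m + half_width

# Hypothetical recoveries (%) from 9 determinations.
recoveries = [99.5, 100.4, 99.9, 99.5, 100.4, 100.1, 99.3, 100.4, 100.1]
low, high = mean_ci_95(recoveries)

# The whole interval, not just the mean, is compared with the (assumed) limits.
compatible = 98.0 <= low and high <= 102.0
print(f"n = 9: 95 % CI = ({low:.2f}, {high:.2f}), compatible: {compatible}")

# Same calculation with only the first 3 replicates: the interval becomes much wider.
low3, high3 = mean_ci_95(recoveries[:3])
print(f"n = 3: 95 % CI = ({low3:.2f}, {high3:.2f})")
```

With few replicates the t critical value grows and the standard error shrinks more slowly, so the interval widens even when the underlying method performance is unchanged.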

Combined Accuracy and Precision

A significant technical advancement in ICH Q2(R2) is the allowance for combined evaluation of accuracy and precision using statistical intervals that account for both bias (accuracy) and variability (precision) simultaneously [9] [8]. Traditional validation treated these as separate performance characteristics evaluated through different experiments, potentially missing important interactions between them.

The combined approach recognizes that what ultimately matters for reportable results is the total error combining both bias and variability [9]. A highly precise method with moderate bias might generate reportable results within acceptable ranges, while a method with excellent accuracy but poor precision might not. According to recent industry surveys, 58% of companies are already using or planning to use combined approaches, while 40% continue with conventional separate evaluations [8]. Some organizations report challenges with health authority acceptance of combined approaches, particularly for highly variable methods such as those used for cell and gene therapies [8].
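
The contrast between a precise-but-biased method and an accurate-but-imprecise one can be sketched with a single interval that reflects both bias and variability. The example below uses a 95% prediction interval for one future result, mean ± t·s·√(1 + 1/n), as the combined criterion. This is only one of several possible interval types (tolerance intervals are another), and the data, the 95-105% limits, and the function names are assumptions made for illustration.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95 % Student t critical values, indexed by degrees of freedom (n - 1).
T_95 = {4: 2.776, 5: 2.571, 6: 2.447}

def prediction_interval_95(recoveries):
    """95 % prediction interval for a single future result, covering bias and variability."""
    n = len(recoveries)
    m, s = mean(recoveries), stdev(recoveries)
    half_width = T_95[n - 1] * s * sqrt(1 + 1 / n)
    return m - half_width, m + half_width

def within(interval, limits=(95.0, 105.0)):
    """True if the whole interval lies inside the acceptance limits (assumed values)."""
    return limits[0] <= interval[0] and interval[1] <= limits[1]

# Hypothetical recoveries (%): one method is tight but biased, the other unbiased but scattered.
precise_but_biased = [101.9, 102.1, 102.0, 101.8, 102.2, 102.0]
accurate_but_noisy = [96.0, 104.5, 99.0, 103.5, 95.5, 101.5]

for name, data in [("precise/biased", precise_but_biased), ("accurate/noisy", accurate_but_noisy)]:
    interval = prediction_interval_95(data)
    print(f"{name}: ({interval[0]:.2f}, {interval[1]:.2f}) acceptable: {within(interval)}")
```

Evaluated separately, the second data set would pass a mean-recovery check, but the combined interval shows that individual results could plausibly fall outside the limits.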

Figure 1: Comparison of Traditional (Q2R1) and Combined (Q2R2) Approaches to Accuracy and Precision Evaluation

New Concepts and Expanded Scope

Reportable Result and Replication Strategy

ICH Q2(R2) introduces the crucial concept of reportable result—defined as the final analytical result that will be reported and used for quality decisions, not individual sample preparations or replicate injections [9]. This distinction is fundamentally important because validation historically focused on demonstrating acceptable performance of individual measurements without always considering how those measurements would be combined to generate reportable values.

The reportable result concept forces validation to focus on what will actually be used for quality decisions. If a standard operating procedure specifies reporting the mean of duplicate sample preparations, each injected in triplicate, then validation should evaluate the precision and accuracy of that mean value, not just the repeatability of individual injections [9]. This aligns with the ATP's focus on defining required performance characteristics for the reportable result, creating an outcome-focused validation framework rather than an activity-focused one [9].

Closely related is the replication strategy concept, which addresses the disconnect between how validation experiments are conducted and how methods are actually used routinely. Validation studies often use simplified replication schemes optimized for experimental efficiency rather than reflecting the full procedural reality of routine testing [9]. Q2(R2) emphasizes that validation should employ the same replication strategy that will be used for routine sample analysis to generate reportable results, ensuring that validation captures the same sources of variability that will be encountered during actual use [9].
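
The effect of a replication strategy on the precision of the reportable result can be estimated from variance components. The sketch below assumes a simple nested model with independent preparation-to-preparation and injection-to-injection variability, so that the variance of a mean over `n_prep` preparations with `n_inj` injections each is σ²_prep/n_prep + σ²_inj/(n_prep·n_inj). The component values and the function name `reportable_sd` are hypothetical.

```python
from math import sqrt

def reportable_sd(sd_prep, sd_inj, n_prep, n_inj):
    """SD of a reportable result defined as the mean over n_prep preparations
    with n_inj injections each, assuming independent nested variance components."""
    variance = sd_prep ** 2 / n_prep + sd_inj ** 2 / (n_prep * n_inj)
    return sqrt(variance)

# Hypothetical variance components, expressed as SD in % of label claim.
sd_prep, sd_inj = 0.8, 0.4

single = reportable_sd(sd_prep, sd_inj, n_prep=1, n_inj=1)   # one preparation, one injection
routine = reportable_sd(sd_prep, sd_inj, n_prep=2, n_inj=3)  # e.g. duplicate preps, triplicate injections

print(f"single measurement SD  = {single:.3f}")
print(f"reportable result SD   = {routine:.3f}")
```

Because preparation variability is divided only by the number of preparations, adding injections has limited benefit when preparation is the dominant source of variation, which is one reason the validated replication scheme should match routine use.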

Platform Analytical Procedures

For the first time, ICH Q2(R2) formally recognizes the concept of platform analytical procedures for molecules that are sufficiently similar with respect to the attributes the procedure is intended to measure [8]. This approach is particularly valuable for companies developing related biological products or complex modalities where the analytical techniques remain consistent across multiple molecules.

Platform approaches allow manufacturers to leverage extensive validation data from one application to support abbreviated validation for similar applications, significantly improving efficiency [8]. According to industry surveys, over 50% of respondents have used platform analytical procedures in clinical development, but only slightly more than 10% have secured regulatory approval of platform procedures with abbreviated validation for commercial registration [8]. This gap suggests that while the scientific concept is established, regulatory acceptance for commercial products is still evolving. Even so, the share of respondents planning to implement platform procedures for future commercial registrations rose to 45%, reflecting growing confidence in this approach [8].

Multivariate Analytical Procedures

ICH Q2(R2) provides significantly enhanced guidance for multivariate analytical procedures, addressing a critical gap in the original guideline [10] [8]. The updated guideline includes validation principles that cover analytical techniques employing spectroscopic data (e.g., NIR, Raman, NMR, or MS) that often require multivariate statistical analyses [10].

This expansion acknowledges the increasing use of multivariate calibration models and advanced analytical technologies in modern pharmaceutical analysis, particularly for complex biologics and continuous manufacturing applications [8]. The guideline provides clarity on applying validation concepts to these sophisticated techniques, though industry surveys indicate challenges remain in developing initial models and meeting expectations for multivariate calibration [8]. The inclusion of these techniques in the formal guideline represents an important modernization of the validation framework, ensuring it remains relevant to contemporary analytical technologies.

Implementation Challenges and Industry Readiness

Regulatory Implementation Timeline

The implementation timeline for ICH Q2(R2) and the complementary ICH Q14 guideline has been progressive across regulatory jurisdictions. The guidelines reached Step 4 of the ICH process on 1 November 2023, after which regulatory members are expected to implement them within their respective countries or regions [8]. As of 2024, the guidelines have been implemented by the European Commission (EC), US Food and Drug Administration (FDA), the Swiss Agency for Therapeutic Products (Swissmedic), the Egyptian Drug Authority, and the National Medical Products Administration (NMPA) in China [8]. Some non-ICH regions have also begun adopting these guidelines, though with varying timelines and approaches.

The following table outlines the key implementation milestones and current status across major regulatory jurisdictions:

| Regulatory Body | Implementation Status | Effective Date | Key Implementation Features |
|---|---|---|---|
| European Medicines Agency (EMA) | Implemented | 14 June 2024 [10] | Published revised ICH Q2 guideline in January 2024 |
| US Food and Drug Administration (FDA) | Implemented | March 2024 [5] | Issued final guidance document (FDA-2022-D-1503) |
| Swissmedic | Implemented | 2024 [8] | Adopted along with other ICH regions |
| China NMPA | Implemented | 2024 [8] | Implemented through national regulatory process |
| Argentina | Partial Implementation | 2024 [8] | Implemented Q14 but not yet Q2(R2) |
| Saudi Arabia | Partial Implementation | 2024 [8] | Implemented Q2 but not yet Q14 |

Identified Industry Challenges

Industry readiness surveys conducted in 2024 identified several significant challenges in implementing ICH Q2(R2):

Statistical Expertise Gaps: 76% of survey respondents expressed concerns about implementing confidence interval requirements, with 16% explicitly stating their organizations lack internal expertise to implement these statistical approaches effectively [8].

Regulatory Acceptance Uncertainties: Companies report uncertainty about whether regulatory authorities will accept data leveraged from prior validations or platform analytical procedures, particularly for commercial applications [8]. There is also lack of clarity on the extent of data that can be leveraged from development studies.

Legacy Product Application: Unclear expectations exist for applying the revised concepts to already-approved (legacy) products, including timing for global compliance with new requirements [8].

Global Harmonization Concerns: While ICH guidelines aim to harmonize requirements globally, companies worry that different regulatory agencies may implement or interpret the guidelines differently, potentially creating new divergence rather than uniformity [8].

Strategic Implementation Recommendations

To successfully navigate the transition from ICH Q2(R1) to Q2(R2), organizations should consider the following strategic approaches:

Education and Training: Invest in comprehensive training programs to familiarize staff with the new guidelines, particularly the enhanced statistical requirements and lifecycle approach [2]. Training should cover both the changes between ICH Q2(R1) and Q2(R2) and the complementary guidance in ICH Q14.

Process Reevaluation: Conduct gap analyses of existing analytical methods and validation processes to identify areas requiring improvement to align with the new guidelines [2] [8]. This should include assessing current replication strategies, statistical approaches, and documentation practices.

Risk-Based Method Development: Adopt proactive risk management strategies as recommended by ICH Q14, conducting thorough risk assessments during early method development stages to identify potential challenges [2].

Enhanced Documentation Systems: Implement robust documentation practices that maintain detailed records of method performance over time and the rationale behind methodological adjustments [2]. This includes upgrading electronic record-keeping systems where necessary to ensure compliance with enhanced traceability requirements.

Figure 2: Strategic Implementation Roadmap for Transitioning from ICH Q2(R1) to Q2(R2)

Key Research Reagent Solutions and Statistical Tools

Successful implementation of ICH Q2(R2) requires access to appropriate materials, statistical tools, and reference standards. The following table outlines essential resources for navigating the updated validation requirements:

| Tool/Resource Category | Specific Examples | Function in Q2(R2) Implementation |
|---|---|---|
| Reference Standards | USP/EP/BP CRS, Certified Reference Materials | Establish accuracy and traceability for method validation |
| Statistical Software | JMP, Minitab, R, SAS, Python with scipy/statsmodels | Calculate confidence intervals, combined accuracy/precision, multivariate analysis |
| DOE Software | Design-Expert, Modde, STATISTICA DOE | Plan robustness studies and method optimization experiments |
| Data Management Systems | CDS, LIMS, Electronic Lab Notebooks | Maintain data integrity and traceability throughout method lifecycle |
| Multivariate Analysis Tools | SIMCA, Unscrambler, PLS_Toolbox | Develop and validate multivariate calibration models |
| Quality Control Materials | Stable, well-characterized QC samples with known values | Ongoing performance verification and lifecycle management |

The ICH has developed comprehensive training materials to support consistent global implementation of Q2(R2) and Q14. Released in July 2025, these modules include fundamental principles, practical applications, case studies, and specific guidance on challenging topics like multivariate analytical procedures [11]. The training materials illustrate both minimal and enhanced approaches to analytical development and validation, providing valuable implementation guidance for professionals across the industry [11].

Organizations should also invest in enhanced documentation templates that incorporate the new Q2(R2) requirements, including:

- Validation protocols that explicitly link to the Analytical Target Profile

- Statistical analysis plans for confidence interval calculations

- Replication strategy documentation aligned with routine testing procedures

- Lifecycle management plans for ongoing performance verification

The transition from ICH Q2(R1) to ICH Q2(R2) represents much more than an incremental update to technical requirements—it constitutes a fundamental philosophical shift in how the pharmaceutical industry conceptualizes, implements, and maintains analytical method validation. By moving from a static, one-time validation event to a dynamic, knowledge-driven lifecycle approach, Q2(R2) addresses critical limitations of the previous guideline while accommodating the increasing complexity of modern pharmaceutical products and analytical technologies.

The enhanced focus on statistical rigor, particularly through confidence intervals and combined accuracy/precision evaluation, provides a more scientifically sound foundation for demonstrating method capability. The introduction of concepts such as reportable result, fitness for purpose, and replication strategy creates crucial alignment between validation studies and routine analytical practice. Furthermore, the formal recognition of platform approaches and multivariate methods ensures the guideline remains relevant to contemporary analytical challenges.

While implementation presents significant challenges, particularly regarding statistical expertise and global regulatory alignment, the successful adoption of ICH Q2(R2) principles will ultimately enhance analytical reliability, facilitate more science-based regulatory evaluations, and strengthen the quality foundation of pharmaceutical products worldwide. By embracing these changes proactively rather than reactively, organizations can transform their analytical validation practices from compliance exercises into genuine quality-enhancing activities that support the development and manufacture of safer, more effective medicines.

The International Council for Harmonisation (ICH) has fundamentally transformed the paradigm for analytical procedures in the pharmaceutical industry with the simultaneous introduction of Q2(R2) on validation and Q14 on development. This shift moves the industry from a discrete, one-time validation event toward an integrated, holistic Analytical Procedure Lifecycle approach. This technical guide examines the integration of these two guidelines, detailing how their synergistic implementation fosters more robust, reliable, and scientifically sound analytical methods. Designed for researchers, scientists, and drug development professionals, this document provides a comprehensive framework for navigating this significant regulatory and scientific evolution, which is crucial for maintaining data integrity and product quality throughout a method's operational life [12] [2].

The original ICH Q2(R1) guideline, established in 1994, provided a foundational framework for analytical method validation for decades. However, significant advancements in analytical technologies and the increasing complexity of biopharmaceutical products, particularly biologics, revealed limitations in the traditional approach. The prior model often treated method development, validation, and ongoing use as disconnected phases, with development receiving scant regulatory attention and documentation requirements [12] [2]. This could lead to the transfer of poorly understood methods to quality control (QC) laboratories, resulting in operational difficulties, variable results, and costly out-of-specification investigations [12].

The new framework, finalized in 2023 and now being implemented globally, addresses these shortcomings [5] [1]. ICH Q14 provides, for the first time, comprehensive harmonized guidance on Analytical Procedure Development, advocating for structured, science-based practices. The revised ICH Q2(R2) updates the validation principles to align with this lifecycle concept. Together, they form an interconnected system that emphasizes enhanced understanding and control over the entire lifespan of an analytical procedure [13] [2]. This paradigm shift is further reinforced by the United States Pharmacopeia (USP) through general chapter <1220> on the Analytical Procedure Lifecycle and the revision of <1225> on Validation of Compendial Procedures [12] [9].

Core Concepts: Q2(R2) and Q14 Explained

ICH Q14 - Analytical Procedure Development

ICH Q14 introduces a structured framework for developing analytical methods, moving away from empirical, unrecorded experimentation toward a systematic, knowledge-driven process.

- Analytical Target Profile (ATP): The ATP is a foundational element of Q14 and the entire lifecycle approach. It is a predefined objective that outlines the required quality of the reportable result produced by an analytical procedure. Essentially, the ATP is the procedure's "specification," defining the levels of accuracy, precision, and other performance characteristics necessary for its intended use, long before the method is developed [12] [9].

- Minimal vs. Enhanced Approaches: Q14 offers two approaches to development. The Minimal Approach aligns with traditional, empirical practices but within a more structured context. The Enhanced Approach is a more rigorous path that incorporates Quality by Design (QbD) principles, systematic risk assessment, and defined analytical procedure control strategies. This enhanced path facilitates a more scientific justification for post-approval changes [2].

- Risk Assessment and Robustness: A core tenet of Q14 is the use of risk assessment tools early in development to identify critical method attributes and parameters that require controlled ranges. This understanding directly informs robustness testing and ensures the method remains reliable despite minor, inevitable variations in execution [2].

- Knowledge Management: The guideline emphasizes that knowledge generated during development is a valuable asset. This knowledge, which includes understanding the relationship between method parameters and performance, must be documented and managed effectively, as it forms the basis for the control strategy and any future changes [9].

ICH Q2(R2) - Validation of Analytical Procedures

ICH Q2(R2) builds upon its predecessor by refining classical validation parameters and better aligning them with the principles introduced in Q14.

- Lifecycle Integration: While maintaining the core validation parameters (accuracy, precision, specificity, etc.), Q2(R2) positions method validation within the broader analytical procedure lifecycle, where it corresponds to the stage that USP <1220> terms "Stage 2: Procedure Performance Qualification" [12] [2].

- Link to the ATP: The validation studies conducted under Q2(R2) are designed to demonstrate that the analytical procedure, as developed, meets the criteria predefined in the ATP. The method's range, for example, must be directly linked to the ATP's requirements [2] [9].

- Enhanced Validation Practices: The guideline updates many validation parameters. It expects a more explicit statistical evaluation, such as reporting confidence intervals, and in some cases recommends a combined evaluation of accuracy and precision to understand total error. Robustness is integrated into the lifecycle management approach: it is typically studied during development under Q14, and the resulting knowledge carries into validation and routine use [2] [9].

- Focus on the Reportable Result: A critical conceptual advancement in Q2(R2) is the focus on validating the "reportable result"—the final value used for quality decisions—rather than just individual measurements or instrument outputs. This ensures that the entire analytical procedure, including sample preparation, replication strategy, and data calculation, is fit for purpose [9].

Table 1: Comparison of Traditional and Lifecycle-Focused Approaches to Analytical Procedures

| Aspect | Traditional Approach (Q2(R1)-based) | Lifecycle Approach (Q2(R2)/Q14) |

|---|---|---|

| Philosophy | One-time validation event | Continuous lifecycle management |

| Development | Often empirical, minimally documented | Structured, QbD-driven, knowledge-rich |

| Starting Point | Method parameters | Analytical Target Profile (ATP) |

| Validation Focus | Checking boxes for listed parameters | Demonstrating fitness for purpose |

| Ongoing Monitoring | Primarily via system suitability | Ongoing performance verification |

| Change Management | Often reactive and burdensome | Proactive, facilitated by enhanced knowledge |

The Integrated Lifecycle in Practice

The power of Q2(R2) and Q14 is realized through their integration into a seamless, three-stage lifecycle, as visualized below. This model replaces the linear "develop-validate-use" sequence with a dynamic system featuring feedback loops for continuous improvement.

Stage 1: Procedure Design and Development (Governed by ICH Q14)

The lifecycle begins with the Analytical Target Profile (ATP), a quality prospectus for the analytical method [12]. The ATP defines what the method needs to achieve, specifying the required quality of the reportable result for its intended use. With the ATP defined, systematic development begins, employing risk assessment tools to identify critical parameters. Experiments are designed to explore the method's operational space, establishing a robust working range for each critical parameter. The output of this stage is a well-understood method accompanied by a control strategy to ensure it remains in a state of control [2].

Stage 2: Procedure Performance Qualification (Governed by ICH Q2(R2))

This stage corresponds to the traditional "method validation" but is performed with a deeper scientific basis. The validation protocol is derived directly from the ATP, and experiments are designed to prove the method meets its pre-defined quality standards. A key concept is validating the "reportable result"—the final value used for quality decisions—which requires the replication strategy in validation to mirror that of routine use [9]. The outcome of this stage is formal confirmation that the procedure is fit for its intended purpose.
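
Why the replication strategy matters can be illustrated with a short calculation. The sketch below assumes a simple two-component variance model (between-run and repeatability) in which the reportable result is the mean of all replicates; the function name and the standard deviations are hypothetical values chosen for illustration, not figures from the guideline.

```python
import math

def reportable_result_sd(sd_between_run, sd_repeatability, n_runs, n_replicates):
    """SD of a reportable result defined as the mean over runs and replicates.

    Assumes independent between-run and repeatability components:
    var = sd_between^2 / n_runs + sd_repeat^2 / (n_runs * n_replicates)
    """
    variance = (sd_between_run ** 2) / n_runs \
        + (sd_repeatability ** 2) / (n_runs * n_replicates)
    return math.sqrt(variance)

# Hypothetical variance components, in % of label claim
SD_BETWEEN, SD_REPEAT = 0.6, 0.8

single = reportable_result_sd(SD_BETWEEN, SD_REPEAT, n_runs=1, n_replicates=1)
triplicate = reportable_result_sd(SD_BETWEEN, SD_REPEAT, n_runs=1, n_replicates=3)
two_runs = reportable_result_sd(SD_BETWEEN, SD_REPEAT, n_runs=2, n_replicates=3)

for label, sd in [("1 run x 1 rep", single),
                  ("1 run x 3 reps", triplicate),
                  ("2 runs x 3 reps", two_runs)]:
    print(f"{label}: SD of reportable result = {sd:.3f}")
```

Under these assumptions, a precision estimate obtained with one replication scheme does not describe a reportable result produced with another, which is why the validation design should mirror routine use.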

Stage 3: Ongoing Performance Verification

Acknowledging that a procedure's performance can drift over time, this stage involves continuous monitoring to ensure it remains in a state of control. This goes beyond system suitability testing (SST) to include trending of quality control (QC) data and other performance indicators from routine use. The data collected feeds back into the lifecycle, triggering method improvement, re-validation, or even re-development if a negative trend is detected, thus closing the loop on continuous improvement [12] [9].
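
Trending of QC data is often done with a control chart. The following is a minimal sketch of a Shewhart individuals chart, assuming limits are set from a baseline period using the average moving range; the QC values and function names are hypothetical, and real monitoring plans usually add further run rules.

```python
import statistics

def individuals_limits(baseline):
    """Return (LCL, center, UCL) for an individuals chart from baseline QC data."""
    center = statistics.mean(baseline)
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    # 1.128 is the d2 constant for moving ranges of two consecutive points
    sigma = statistics.mean(moving_ranges) / 1.128
    return center - 3 * sigma, center, center + 3 * sigma

def out_of_control(values, lcl, ucl):
    """Return the indices of results that fall outside the control limits."""
    return [i for i, v in enumerate(values) if not lcl <= v <= ucl]

# Hypothetical QC sample results (% of nominal) from a baseline period
baseline = [100.1, 99.8, 100.3, 99.9, 100.2, 99.7, 100.0, 100.4, 99.9, 100.1]
lcl, center, ucl = individuals_limits(baseline)

# Hypothetical results from later routine use
routine = [100.2, 99.6, 100.5, 101.3, 100.0]
flagged = out_of_control(routine, lcl, ucl)

print(f"LCL={lcl:.2f}  center={center:.2f}  UCL={ucl:.2f}")
print("Out-of-control indices:", flagged)
```

A flagged point would be a trigger for investigation rather than an automatic conclusion that the procedure has failed.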

Implementation Strategies and the Scientist's Toolkit

Successfully adopting the Q2(R2)/Q14 paradigm requires strategic planning and the application of specific technical and quality tools. The following workflow outlines the key experimental and decision points in the enhanced method development path.

Strategic Recommendations for Implementation

For researchers and organizations transitioning to the new guidelines, the following actions are critical:

- Education and Cross-Functional Training: Invest in comprehensive training programs to familiarize scientists, analysts, and quality personnel with the principles of Q2(R2), Q14, and the lifecycle model. This fosters a shared understanding and language across development and QC units [2].

- Process Reevaluation and Gap Analysis: Conduct a thorough review of existing analytical methods, validation protocols, and SOPs against the new guidelines. Identify gaps where current practices do not align with the lifecycle approach, such as a lack of formal ATPs or insufficient development documentation [2].

- Adopt a Risk-Based Method Development: Implement proactive risk management strategies, such as Failure Mode and Effects Analysis (FMEA), during the early development stages. This systematically identifies and mitigates potential failure points, leading to more robust methods [2].

- Strengthen Knowledge and Documentation Management: Enhance documentation practices to capture the wealth of knowledge generated during method development and validation. Robust documentation is not only a regulatory requirement but also the foundation for sound science and justified post-approval changes [2] [9].
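
As an illustration of the risk-based recommendation above, an FMEA ranking can be sketched in a few lines. The example assumes the common risk priority number convention (severity × occurrence × detection, each scored 1 to 10); the parameters, scores, and action threshold are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class FailureMode:
    parameter: str
    severity: int    # 1 (negligible impact) .. 10 (critical impact)
    occurrence: int  # 1 (rare) .. 10 (frequent)
    detection: int   # 1 (always detected) .. 10 (unlikely to be detected)

    @property
    def rpn(self) -> int:
        """Risk priority number: product of the three scores."""
        return self.severity * self.occurrence * self.detection

# Hypothetical failure modes for an HPLC assay
modes = [
    FailureMode("mobile phase pH", 7, 5, 4),
    FailureMode("column temperature", 4, 3, 3),
    FailureMode("sample extraction time", 8, 4, 6),
    FailureMode("injection volume", 3, 2, 2),
]

RPN_THRESHOLD = 100  # hypothetical cut-off for "study further in development"

ranked = sorted(modes, key=lambda m: m.rpn, reverse=True)
critical = [m.parameter for m in ranked if m.rpn >= RPN_THRESHOLD]

for m in ranked:
    print(f"{m.parameter:<24} RPN={m.rpn}")
print("Prioritized for robustness studies:", critical)
```

The parameters that score highest would be natural candidates for the robustness and DoE studies described elsewhere in this guide.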

The Scientist's Toolkit: Essential Elements for Lifecycle Implementation

Table 2: Key Tools and Materials for Implementing the Q2(R2)/Q14 Lifecycle

| Tool / Material | Function in the Lifecycle Approach |

|---|---|

| Analytical Target Profile (ATP) | The quality foundation; defines the required performance characteristics of the reportable result. |

| Risk Assessment Tools (e.g., FMEA) | Systematically identifies critical method parameters and potential failure modes to guide development. |

| Design of Experiments (DoE) | An efficient statistical methodology for understanding the relationship and interactions between multiple method parameters. |

| Protocol for Combined Accuracy & Precision | Experimental design to evaluate total error, ensuring the reportable result is fit for purpose [9]. |

| Replication Strategy Document | Defines how replicates will be used in validation and routine testing to ensure the validation reflects real-world variability [9]. |

| Ongoing Performance Verification Plan | A systematic plan for monitoring method performance during routine use (Stage 3), using control charts and trend analysis. |

| Knowledge Management System | An electronic or structured system for capturing, storing, and retrieving method development and lifecycle data. |
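
To make the DoE entry in the table concrete, the sketch below builds a two-level full factorial design for three method parameters and estimates main effects. The factor names, levels, and response values are hypothetical; dedicated DoE software would normally add randomization, center points, and interaction terms.

```python
from itertools import product
from statistics import mean

# Hypothetical factors with (low, high) levels around the nominal conditions
factors = {
    "pH": (2.8, 3.2),
    "column_temp_C": (28, 32),
    "flow_mL_min": (0.9, 1.1),
}

# Full factorial design: every combination of low/high levels (2^3 = 8 runs)
runs = [dict(zip(factors, levels)) for levels in product(*factors.values())]

# Hypothetical measured response (e.g. resolution) for each run, in run order
responses = [2.10, 2.05, 2.00, 1.95, 2.40, 2.35, 2.30, 2.25]

def main_effect(factor):
    """Mean response at the high level minus mean response at the low level."""
    low, high = factors[factor]
    high_vals = [y for run, y in zip(runs, responses) if run[factor] == high]
    low_vals = [y for run, y in zip(runs, responses) if run[factor] == low]
    return mean(high_vals) - mean(low_vals)

effects = {name: main_effect(name) for name in factors}
for name, effect in effects.items():
    print(f"{name}: main effect = {effect:+.3f}")
```

In this made-up data set pH has the largest effect on the response, which would suggest a tighter controlled range for that parameter.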

The integration of ICH Q2(R2) and ICH Q14 represents a pivotal advancement in the pharmaceutical sciences, moving the industry toward a more holistic, scientific, and robust framework for analytical procedures. This shift from a discrete validation event to a continuous Analytical Procedure Lifecycle—anchored by the Analytical Target Profile and sustained by ongoing performance verification—promises to yield methods that are better understood, more reliable, and easier to manage throughout their lifespan. For drug development professionals, embracing this integrated approach is not merely a regulatory necessity but a significant opportunity to enhance product quality, streamline post-approval changes, and ultimately, ensure the safety and efficacy of pharmaceuticals for patients worldwide. The journey requires a cultural and procedural shift, but the payoff in scientific rigor and operational excellence is substantial.

Defining the Analytical Target Profile (ATP) as a Foundational Concept

In the framework of modern pharmaceutical development, the Analytical Target Profile (ATP) is a foundational concept that establishes a prospective summary of the performance requirements for an analytical procedure [14]. It defines the criteria that a method must consistently meet to be considered "fit for purpose," ensuring the reliability and quality of the reportable values it generates throughout the entire analytical procedure lifecycle [14]. The ATP shifts the paradigm from a reactive, "check-the-box" approach to validation toward a proactive, science- and risk-based lifecycle management model [15]. This strategic document is central to the harmonized guidelines outlined in ICH Q14 on Analytical Procedure Development and the revised ICH Q2(R2) on Validation of Analytical Procedures, providing a unified objective that guides development, validation, and ongoing performance verification [14] [16].

ATP Definitions and Regulatory Context

Core Definitions from ICH and USP

The ATP is authoritatively defined by major regulatory and compendial bodies, which align on its core purpose as a prospective planning tool.

- ICH Q14 Definition: The ICH Q14 guideline defines the ATP as "A prospective summary of the performance characteristics describing the intended purpose and the anticipated performance criteria of an analytical measurement." It further states that the ATP includes a description of the procedure's intended purpose, details on the product attributes to be measured, and relevant performance characteristics with associated criteria [14].

- USP <1220> Definition: The United States Pharmacopeia (USP) general chapter <1220> reinforces this concept, describing the ATP as a tool that "defines the required quality of the reportable value and is a description of the criteria for the procedure performance characteristics that are linked to the intended analytical application and the quality attribute to be measured" [14].

The ATP within the ICH Q2(R2) and Q14 Framework

The ATP serves as a critical bridge between ICH Q14 (Analytical Procedure Development) and ICH Q2(R2) (Validation of Analytical Procedures) [15] [16]. ICH Q14 provides the framework for systematically developing a procedure based on the ATP, while ICH Q2(R2) provides guidance on how to validate that the procedure's performance characteristics meet the pre-defined criteria outlined in the ATP [4] [16]. This integrated approach ensures that validation is not a one-time event but a confirmation that the procedure, developed with a clear objective, is suitable for its intended use throughout its commercial lifecycle [15].

Key Components and Structure of an ATP

A well-constructed ATP is a multi-faceted document that precisely communicates the requirements for an analytical procedure.

Core Elements of an ATP

The table below outlines the essential components that should be included in a comprehensive ATP.

Table 1: Essential Components of an Analytical Target Profile (ATP)

| ATP Component | Description | Example |

|---|---|---|

| Intended Purpose | A clear statement of what the analytical procedure is designed to measure and its role in the control strategy [14]. | "To quantify the main active ingredient in a tablet formulation for batch release." |

| Analyte/Quality Attribute | The specific substance or attribute to be measured (e.g., assay, impurity, identity) [14]. | "Active Pharmaceutical Ingredient (API) X." |

| Performance Characteristics | The specific metrics used to evaluate the procedure's performance, derived from its intended purpose [14] [15]. | Accuracy, Precision, Specificity. |

| Performance Criteria | The predefined, justified limits for each performance characteristic that must be met [14]. | "Accuracy: 98.0-102.0%; Precision (RSD): ≤ 2.0%." |

| Reportable Value | The format and quality of the final result produced by the procedure [14]. | "A single value expressing the percentage (w/w) of the API in the sample." |
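
One way to keep the ATP usable across development and validation is to record it in a structured form. The sketch below is only an illustration: the class, field names, and data are hypothetical, and the criteria reuse the example values from the table (mean recovery 98.0-102.0%, RSD ≤ 2.0%).

```python
from dataclasses import dataclass
import statistics

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    intended_purpose: str
    analyte: str
    accuracy_range: tuple[float, float]  # acceptable mean recovery, %
    max_rsd: float                       # acceptable relative SD, %

    def evaluate(self, recoveries):
        """Compare observed recoveries (%) against the ATP criteria."""
        mean_recovery = statistics.mean(recoveries)
        rsd = 100 * statistics.stdev(recoveries) / mean_recovery
        low, high = self.accuracy_range
        return {
            "mean_recovery": mean_recovery,
            "rsd": rsd,
            "accuracy_ok": low <= mean_recovery <= high,
            "precision_ok": rsd <= self.max_rsd,
        }

atp = AnalyticalTargetProfile(
    intended_purpose="Quantify the active ingredient in tablets for batch release",
    analyte="API X",
    accuracy_range=(98.0, 102.0),
    max_rsd=2.0,
)

# Hypothetical validation recoveries (%)
result = atp.evaluate([99.5, 100.2, 100.8, 99.9, 100.1, 100.4])
print(result)
```

Because the criteria live in one object, the same definition can be reused for validation and for later performance verification.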

Relationship Between ATP, Development, and Validation

The ATP establishes the "what" and "why" for an analytical procedure, which in turn drives the "how" of development and provides the standards for the "how well" of validation [14]. This logical flow ensures that all subsequent activities are aligned with the initial quality objective.

Implementing the ATP: A Practical Guide for Scientists

A Roadmap for ATP-Driven Development and Validation

Translating the ATP from a theoretical concept into a practical tool requires a structured workflow. The following steps provide an actionable roadmap for implementation.

Step 1: Define the ATP: Before any development begins, clearly define the purpose of the method and its required performance characteristics. Key questions include: What is the analyte? What is the expected concentration range? What level of accuracy and precision is required for decision-making? [15]. The ATP should be defined in a way that is, ideally, independent of the specific measurement technology to maintain focus on the scientific objective [14].

Step 2: Conduct Risk Assessments: Using principles from ICH Q9 (Quality Risk Management), conduct a risk assessment to identify potential sources of variability that could impact the method's ability to meet the ATP criteria. This assessment directly informs the design of development studies and the robustness testing strategy [15].

Step 3: Select Analytical Technology and Develop Procedure: With the ATP as the goal, select an appropriate analytical technology capable of meeting the stated performance criteria [14]. Procedure development then becomes an exercise in designing and optimizing the method to control the risks identified and reliably achieve the ATP standards.

Step 4: Develop a Validation Protocol: Based on the ATP and the preceding risk assessment, create a detailed validation protocol. This protocol outlines the specific validation parameters (e.g., accuracy, precision) to be tested, the experimental design, and the predefined acceptance criteria that are directly linked to the ATP [15] [4].

Step 5: Manage the Method Lifecycle: Once validated and implemented, the procedure enters the lifecycle stage. The ATP continues to serve as the reference point for ongoing performance verification and for justifying post-approval changes through a robust change management system [15] [16].

Minimal vs. Enhanced Approaches to Development

ICH Q14 describes two complementary approaches to analytical procedure development, and the role of the ATP is pivotal in the enhanced, more systematic path.

Table 2: Comparison of Minimal and Enhanced Analytical Procedure Development Approaches

| Aspect | Minimal Approach | Enhanced Approach |

|---|---|---|

| Core Philosophy | Traditional, empirical; relies on univariate experimentation and fixed procedures [16]. | Systematic, science- and risk-based; incorporates Quality by Design (QbD) principles [16]. |

| Role of the ATP | The ATP may be informal or not explicitly documented. | The ATP is a fundamental, prospectively defined component that guides all development activities [16]. |

| Development Basis | Identifying the attributes to be tested and selecting a suitable technology [16]. | Driven by the ATP, followed by risk assessment and knowledge management [16]. |

| Control Strategy | Typically based on fixed procedure instructions and system suitability tests [16]. | A holistic strategy may include controlled method parameters with defined ranges, in addition to system suitability [16]. |

| Change Management | Changes often require regulatory submission prior to implementation [16]. | Provides more flexibility for post-approval changes within the established design space [16]. |

Experimental Protocols and Control Strategies

Key Reagents and Materials for Analytical Development

The execution of analytical procedures and validation studies relies on a foundation of high-quality, well-characterized materials. The following table details essential research reagent solutions.

Table 3: Essential Research Reagent Solutions for Analytical Development and Validation

| Reagent/Material | Function in Development & Validation | Critical Quality Attributes |

|---|---|---|

| Reference Standards | Serves as the benchmark for quantifying the analyte and determining method accuracy and linearity [4]. | Certified purity and potency, stability, proper storage conditions. |

| Placebo/Matrix Formulation | Used in specificity and accuracy studies to demonstrate that the method can measure the analyte without interference from other sample components [15]. | Represents the final drug product composition without the active ingredient. |

| System Suitability Solutions | Used to verify that the entire analytical system (instrument, reagents, columns) is performing adequately before or during a run [4]. | Well-defined retention time, peak symmetry, and resolution characteristics. |

| Reagents and Solvents | Form the mobile phase, sample diluent, and other solutions required for the analytical procedure. | Grade (e.g., HPLC-grade), purity, consistency, and compatibility. |

Designing a Control Strategy Anchored by the ATP

The analytical procedure control strategy is the sum of all elements that ensure the procedure performs as expected during routine use, and it is directly derived from the knowledge generated during ATP-driven development [16]. Key elements of a control strategy include:

- Established Conditions (ECs): These are the legally binding, validated method parameters described in regulatory submissions. The enhanced approach to development provides a deeper understanding to justify these ECs [16].

- System Suitability Tests (SSTs): These are critical checks to ensure the analytical system is functioning correctly at the time of testing. SSTs are a minimum requirement for any control strategy [4].

- Procedure Robustness Data: Knowledge of the method's robustness—its capacity to remain unaffected by small, deliberate variations in method parameters—informs the setting of appropriate control limits and helps troubleshoot performance issues [15] [16].

- Ongoing Performance Monitoring: Data collected from routine use of the procedure (e.g., from quality control testing) should be periodically reviewed against the ATP criteria to ensure the method remains in a state of control throughout its lifecycle [14].

The Analytical Target Profile is far more than a regulatory recommendation; it is the cornerstone of a modern, robust, and scientifically sound approach to analytical sciences in pharmaceutical development. By prospectively defining what is required from an analytical procedure, the ATP provides a clear and constant target that aligns development, validation, and lifecycle management activities [14] [15]. Framing analytical methods within the context of the ATP and the integrated ICH Q14 and Q2(R2) guidelines empowers researchers, scientists, and drug development professionals to build quality and reliability into their methods from the very beginning, ultimately enhancing patient safety and accelerating the delivery of high-quality medicines.

Scope and Application for Drug Substances and Products

The ICH Q2(R2) guideline, titled "Validation of Analytical Procedures," provides a harmonized framework for the validation of analytical procedures used in the pharmaceutical industry. This guideline presents essential elements for consideration during the validation of analytical procedures submitted as part of registration applications within ICH member regulatory authorities [1].

The primary objective of ICH Q2(R2) is to ensure that analytical procedures are validated to demonstrate they are suitable for their intended purpose. The guideline offers detailed recommendations on how to derive and evaluate various validation tests for each analytical procedure and serves as a comprehensive collection of terms and their definitions [1]. This revision represents a significant evolution from the previous ICH Q2(R1) standard, addressing advancements in analytical technologies and the increasing complexity of modern pharmaceuticals, particularly biological products [2].

Detailed Scope of Application

Regulatory and Product Scope

ICH Q2(R2) applies to a broad spectrum of pharmaceutical materials and regulatory submissions, as detailed in the table below.

Table 1: Regulatory and Product Scope of ICH Q2(R2)

| Scope Category | Specific Applications | Key Considerations |

|---|---|---|

| Product Types | Commercial drug substances (chemical and biological/biotechnological) [1] | Applies to both small molecules and complex biologics [2] |

| | Commercial drug products (chemical and biological/biotechnological) [1] | Includes cell/gene therapies, vaccines, and combination products [8] |

| Testing Applications | Release testing [1] | Quality control before product distribution |

| | Stability testing [1] | Monitoring quality over the product's shelf life |

| | Other analytical procedures used as part of the control strategy [1] | Implemented following a risk-based approach |

| Procedure Status | New analytical procedures [1] | Full validation required |

| | Revised analytical procedures [1] | Partial or full revalidation depending on nature of changes |

Analytical Procedure Purposes

The guideline addresses the most common purposes of analytical procedures employed in pharmaceutical analysis [1]:

- Assay/Potency: Quantitative measurement of the active ingredient's strength or biological activity.

- Purity: Determination of the main component relative to impurities or related substances.

- Impurities: Detection and quantitation of organic, inorganic, or residual solvent impurities.

- Identity: Confirmation of the substance's identity through specific characteristics.

- Other Measurements: Both quantitative and qualitative analytical determinations.

The scope extends beyond traditional small molecule drugs to address the unique challenges posed by biologics, which have driven the need for updated guidance [2]. For biological products, the guideline acknowledges the need for more flexible, science-based approaches to method validation that can accommodate their inherent complexity and variability [2].

Analytical Procedure Validation: Core Concepts and Methodologies

Validation Parameters and Terminology

ICH Q2(R2) establishes clear definitions and methodologies for key validation parameters. The guideline provides a harmonized vocabulary that ensures consistent interpretation and implementation across the global pharmaceutical industry.

Table 2: Core Validation Parameters and Their Applications

| Validation Parameter | Definition and Purpose | Typical Experimental Methodology |

|---|---|---|

| Accuracy | Closeness of agreement between accepted reference value and value found [1] | Measure recovery of known amounts of analyte added across specified range; use minimum 9 determinations over minimum 3 concentration levels |

| Precision | Closeness of agreement between series of measurements [1] | Includes repeatability (same conditions), intermediate precision (different days, analysts, equipment), and reproducibility (between laboratories) |

| Specificity | Ability to assess the analyte unequivocally in the presence of components that may be expected to be present [1] | Demonstrate no interference from blank, placebo, impurities, degradation products; use chromatographic peak purity tools or orthogonal methods |

| Detection Limit (LOD) | Lowest amount of analyte that can be detected, but not necessarily quantified [1] | Visual evaluation, signal-to-noise ratio (typically 3:1), or standard deviation of response and slope |

| Quantitation Limit (LOQ) | Lowest amount of analyte that can be quantitatively determined with suitable precision and accuracy [1] | Signal-to-noise ratio (typically 10:1), or standard deviation of response and slope; demonstrate acceptable accuracy and precision at LOQ |

| Linearity | Ability to obtain results proportional to analyte concentration [1] | Prepare minimum 5 concentration levels across specified range; evaluate by plotting response vs concentration; calculate correlation coefficient, y-intercept, slope |

| Range | Interval between upper and lower concentration with suitable precision, accuracy, linearity [1] | Derived from linearity studies; must encompass all intended application concentrations (e.g., 80-120% of test concentration for assay) |

| Robustness | Capacity to remain unaffected by small, deliberate variations [1] | Systematically vary method parameters (pH, temperature, flow rate, mobile phase composition); measure impact on results |

Implementation of a Lifecycle Approach

A fundamental evolution in ICH Q2(R2) is the introduction of a lifecycle approach to analytical procedures, which is further reinforced through its integration with ICH Q14 on Analytical Procedure Development [9] [2]. This approach moves away from treating validation as a one-time event toward a continuous process that spans the entire operational life of an analytical procedure.

The lifecycle approach consists of three interconnected stages:

- Stage 1: Procedure Design - Developing the analytical procedure based on enhanced understanding using Quality by Design principles and defining an Analytical Target Profile (ATP) [9].

- Stage 2: Procedure Performance Qualification - Traditionally known as validation, this stage confirms the procedure performs as intended under specified conditions [9].

- Stage 3: Ongoing Procedure Performance Verification - Continuous monitoring to ensure the procedure remains in a state of control throughout its operational life [9].

This paradigm shift is further reinforced by the United States Pharmacopeia's revision of General Chapter <1225> to align with ICH Q2(R2) and Q14, creating a cohesive framework for analytical procedure lifecycle management [9].

Enhanced Statistical Approaches

ICH Q2(R2) introduces more sophisticated statistical methodologies for evaluating validation data, particularly regarding confidence intervals and combined approaches:

Confidence Interval Implementation: The guideline mandates reporting "an appropriate 100(1-α)% confidence interval (or justified alternative statistical interval)" for accuracy and precision measurements, with the requirement that "the observed interval should be compatible with the corresponding acceptance criteria, unless otherwise justified" [8]. According to a recent industry survey, implementation challenges include:

- 40% of respondents expressed concern that limited replicates may render confidence intervals meaningless [8]

- 21% reported insufficient experience to set appropriate acceptance criteria [8]

- 16% acknowledged lacking internal statistical expertise [8]
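To make the confidence interval requirement concrete, the sketch below computes a two-sided 95% interval for the mean recovery from nine determinations and checks whether the whole interval lies inside a set of acceptance limits. Several things here are assumptions for illustration only: the recovery values are hypothetical, the 98.0 to 102.0% limits are a typical assay criterion rather than a guideline requirement, "compatible" is interpreted as the interval falling entirely within the limits, and the Student's t critical values are hard-coded for small sample sizes to keep the example free of third-party dependencies.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided 95% Student's t critical values, keyed by degrees of freedom.
T_CRIT_95 = {2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571, 6: 2.447,
             7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228}


def mean_recovery_ci95(recoveries):
    """Return (lower, upper) of the 95% confidence interval of the mean."""
    n = len(recoveries)
    t = T_CRIT_95[n - 1]  # raises KeyError outside 3 <= n <= 11
    half_width = t * stdev(recoveries) / sqrt(n)
    centre = mean(recoveries)
    return centre - half_width, centre + half_width


def within_limits(interval, low, high):
    """True if the whole interval lies inside [low, high]."""
    return low <= interval[0] and interval[1] <= high


# Hypothetical % recovery results: 3 levels x 3 replicates.
recoveries = [99.4, 98.6, 100.2, 99.1, 98.0, 100.2, 99.4, 98.5, 100.3]

ci = mean_recovery_ci95(recoveries)
print(f"95% CI of mean recovery: {ci[0]:.2f}% to {ci[1]:.2f}%")
print("Compatible with 98.0-102.0%:", within_limits(ci, 98.0, 102.0))
```

The half-width scales with 1/√n, which illustrates the survey concern above: with only a handful of replicates the interval can become too wide to be informative.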

Combined Accuracy and Precision Evaluation: The guideline allows for combined approaches that simultaneously evaluate accuracy and precision, recognizing that these characteristics interact in determining total measurement error [8] [9]. Survey data indicates that 58% of companies are already using or planning to use these combined approaches, while 40% continue with conventional separate evaluations [8].
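One way such a combined evaluation is sometimes set up, shown here only as an illustrative sketch and not as the guideline's prescribed method, is a prediction interval for a single future result. It uses the mean recovery $\bar{x}$, the standard deviation $s$ of $n$ determinations, and the Student's t quantile with $n-1$ degrees of freedom:

$$
\bar{x} \;\pm\; t_{1-\alpha/2,\;n-1}\; s\,\sqrt{1 + \frac{1}{n}}
$$

For hypothetical values $\bar{x} = 99.3\%$, $s = 0.829\%$, $n = 9$ and $\alpha = 0.05$ (so $t_{0.975,\,8} = 2.306$):

$$
99.3 \;\pm\; 2.306 \times 0.829 \times \sqrt{1 + \tfrac{1}{9}} \;\approx\; 99.3 \pm 2.02
\quad\Rightarrow\quad 97.3\% \text{ to } 101.3\%
$$

Because bias (through $\bar{x}$) and variability (through $s$) both enter the same interval, a single comparison against the acceptance limits covers the two characteristics at once. More elaborate designs that separate repeatability from intermediate precision would need a correspondingly more detailed variance model.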

Advanced Applications and Implementation Considerations

Platform Analytical Procedures

ICH Q2(R2) formally recognizes the concept of platform analytical procedures for the first time in ICH guidance, documenting their use for molecules that are sufficiently similar with respect to the attributes the procedure is intended to measure [8]. This approach enables increased efficiency and harmonization, particularly for complex biological products.

Current implementation data reveals:

- Over 50% of respondents have utilized platform analytical procedures in clinical development [8]

- Only slightly more than 10% have successfully secured approval of platform analytical procedures with abbreviated validation for commercial registration [8]

- Willingness to implement for future commercial registrations increased significantly to 45% [8]

The primary challenge cited for limited commercial implementation is that the concept is "not implemented across entire agencies and for all modalities," with one respondent noting a "lack of understanding in health authorities and knowing what they are looking for" [8].

Application to Complex Modalities

The revised guideline explicitly addresses the needs of modern pharmaceutical development, including complex modalities that were not adequately covered in Q2(R1):

- Biological Products: The guideline provides specific considerations for the validation of analytical procedures for biologics, acknowledging their inherent complexity and variability [2] [17].

- Multivariate Analytical Procedures: Q2(R2) offers clarity on the application of advanced techniques such as ICP-MS, FT-NIR, and NMR, which are particularly relevant for biological characterization [8].

- Cell and Gene Therapies: The guideline recognizes the unique challenges posed by these advanced therapies, particularly regarding their inherent variability that may complicate combined accuracy and precision approaches [8].

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of ICH Q2(R2) requires appropriate materials and reagents tailored to the specific analytical procedure and product type.

Table 3: Essential Research Reagent Solutions for Analytical Validation

| Reagent/Material Category | Specific Examples | Function in Validation |

|---|---|---|

| Reference Standards | Certified reference materials (CRMs), USP compendial standards, in-house working standards | Establish accuracy and method calibration; serve as materials of known quality for comparison |

| System Suitability Reagents | Chromatographic test mixtures, resolution mixtures, tailing factor solutions | Verify system performance before and during validation experiments |

| Specificity Challenge Materials | Placebo mixtures, forced degradation samples (acid/base, thermal, oxidative, photolytic), spiked impurities | Demonstrate method selectivity and ability to measure analyte despite potential interferents |

| Accuracy/Precision Materials | Samples spiked with known analyte quantities at multiple concentration levels (minimum 3 levels, 9 determinations) | Establish recovery, repeatability, and intermediate precision |

| Matrix Components | Blank excipients, formulation components, process-related impurities | Evaluate potential matrix effects and ensure accurate analyte measurement in presence of sample components |

Regulatory Implementation Status

ICH Q2(R2) reached Step 4 of the ICH process on 1 November 2023, and ICH regulatory members are expected to implement the guideline within their respective countries or regions [8]. Current implementation status includes:

- Implemented by: European Commission (EC), US Food and Drug Administration (FDA), Swiss Agency for Therapeutic Products (Swissmedic), Egyptian Drug Authority, and China's National Medical Products Administration (NMPA) [8]

- Regional variations: Argentina and Turkey have implemented Q14, while Saudi Arabia has implemented Q2 [8]

The FDA issued the final guidance document in March 2024, confirming its adoption and providing the docket number FDA-2022-D-1503 for industry comments [5]. This implementation timeline necessitates that pharmaceutical companies actively transition their validation practices to align with the updated requirements.

ICH Q2(R2) represents a significant evolution in the validation of analytical procedures for drug substances and products. Its scope encompasses both chemical and biological pharmaceuticals, applying to release, stability, and other analytical procedures within the control strategy. The guideline introduces enhanced statistical approaches, formalizes platform analytical procedures, and embraces a lifecycle management perspective through alignment with ICH Q14.

Successful implementation requires pharmaceutical companies to adopt more sophisticated statistical methodologies, embrace platform approaches where scientifically justified, and transition from a one-time validation mindset to continuous analytical procedure lifecycle management. As regulatory authorities globally adopt this revised guideline, industry professionals must ensure their validation practices and documentation meet these updated standards to maintain regulatory compliance and ensure the quality, safety, and efficacy of pharmaceutical products.

Implementing Core Validation Parameters: A Practical Guide to ICH Q2(R2)

Accuracy is a fundamental validation parameter defined as the closeness of agreement between a measured value and a true value [1] [15]. Within the framework of ICH Q2(R2) guidelines for analytical method validation, demonstrating accuracy provides critical evidence that an analytical procedure consistently yields results that faithfully reflect the quality attribute being measured, thereby ensuring drug safety, efficacy, and quality [1] [18]. Under the modernized guideline, accuracy shifts from a one-time validation checkbox to an integral component of the analytical procedure lifecycle, supported by the science- and risk-based approaches outlined in ICH Q2(R2) and its complementary guideline, ICH Q14 [15] [4].

[Figure: Typical workflow for planning and executing an accuracy study, integrating key concepts from ICH Q2(R2)]

Core Principles and Regulatory Framework

Definition and Significance

In analytical method validation, accuracy quantifies the systematic error of a measurement procedure. It confirms that an analytical method produces results that are unbiased and centered around the true value of the analyte [15] [19]. Establishing accuracy is not merely a regulatory formality but a scientific necessity to ensure that data used for batch release, stability studies, and shelf-life determinations are trustworthy and scientifically sound [4].

ICH Q2(R2) in the Analytical Procedure Lifecycle

The updated ICH Q2(R2) guideline, effective from June 2024, modernizes the validation approach by explicitly integrating analytical procedures into a holistic lifecycle management model [20] [18]. This revision, developed alongside ICH Q14 (Analytical Procedure Development), emphasizes that validation is not a one-time event but a continuous process that begins with a clear definition of the method's intended purpose through an Analytical Target Profile (ATP) [15] [4]. The ATP prospectively defines the performance requirements an analytical procedure must meet, ensuring that accuracy acceptance criteria are directly linked to the method's use for controlling a specific Critical Quality Attribute (CQA) [20] [18].

Experimental Strategies for Determining Accuracy

ICH Q2(R2) describes two primary experimental approaches for demonstrating accuracy, chosen based on the nature of the sample and analytical technique [15] [19].

Comparison to a Reference Standard or Procedure

This strategy involves analyzing a sample of known concentration (e.g., a certified reference material) and comparing the result to its accepted true value [19]. It is the preferred method when a highly characterized and pure reference standard is available.

Spiked Placebo Recovery

Used for drug products where the analyte must be quantified within a complex matrix, this method involves spiking the placebo (all non-active ingredients) with a known quantity of the analyte [15] [19]. Accuracy is then calculated as the percentage of the analyte recovered from the sample matrix.

The standard experimental design for accuracy, as per ICH Q2(R2), requires a minimum of nine determinations across a specified range, typically performed at three concentration levels (e.g., 80%, 100%, 120%) with three replicates each [21] [19]. This design provides a statistical basis for assessing accuracy across the procedure's reportable range.

Methodologies and Protocols

Protocol for Assaying a Drug Substance via Comparison to a Reference Standard

This protocol is suitable for quantifying the active pharmaceutical ingredient (API) itself.

- Preparation of Standard Solutions: Accurately weigh and prepare a stock solution of a certified reference standard of the API. From this, prepare dilutions to achieve concentrations at, for example, 80%, 100%, and 120% of the test concentration.

- Analysis: Inject or analyze each concentration level in triplicate using the analytical procedure (e.g., HPLC) under validated conditions.

- Calculation: For each determination, calculate the accuracy as percent recovery: % Recovery = (Measured Concentration / True Concentration) × 100

- Data Evaluation: Report the individual recoveries, the mean recovery at each level, and the overall mean recovery across all nine determinations [15] [21].
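The calculation and data evaluation steps above can be sketched as follows. The measured values are hypothetical, and the function and variable names are chosen for illustration only; the example simply applies the percent recovery formula to a three-level, triplicate design and reports the individual recoveries, the mean per level, and the overall mean.

```python
from statistics import mean


def percent_recovery(measured, true):
    """% Recovery = (measured concentration / true concentration) x 100."""
    return measured / true * 100.0


def summarize_recovery(results):
    """results maps each true concentration to its replicate measurements.

    Returns the individual recoveries per level, the mean recovery per
    level, and the overall mean across all determinations.
    """
    per_level = {
        true_conc: [percent_recovery(m, true_conc) for m in measured]
        for true_conc, measured in results.items()
    }
    level_means = {lvl: mean(recs) for lvl, recs in per_level.items()}
    all_recoveries = [r for recs in per_level.values() for r in recs]
    return per_level, level_means, mean(all_recoveries)


# Hypothetical results (ug/mL) at 80%, 100% and 120% of a 100 ug/mL target.
results = {
    80.0: [79.6, 80.3, 79.9],
    100.0: [99.5, 100.4, 99.8],
    120.0: [119.2, 120.5, 119.9],
}

per_level, level_means, overall = summarize_recovery(results)
for level, recs in per_level.items():
    formatted = ", ".join(f"{r:.1f}" for r in recs)
    print(f"{level:>6.1f} ug/mL: {formatted}  (mean {level_means[level]:.1f}%)")
print(f"Overall mean recovery (n=9): {overall:.1f}%")
```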

Protocol for a Drug Product via Spiked Placebo Recovery

This protocol is designed to demonstrate accuracy in the presence of excipients.

- Spiking Procedure: Accurately weigh a fixed amount of placebo (all excipients without the API). Spike it with known amounts of the API to yield concentrations corresponding to the target levels (e.g., 80%, 100%, 120% of the label claim). Prepare each level in triplicate.

- Sample Preparation: Process the spiked samples according to the analytical method's sample preparation protocol.

- Analysis and Calculation: Analyze the prepared samples and calculate the recovery as: % Recovery = (Measured Content of API / Added Content of API) × 100

- Data Evaluation: Assess the recovery at each level to ensure the method is accurate despite potential matrix interferences [4] [19].

Data Presentation and Acceptance Criteria

The results from accuracy studies should be summarized clearly. The following tables provide templates for data presentation and list common acceptance criteria derived from regulatory guidelines and industry practice [4] [19].

Table 1: Example Data Table for Accuracy (Spiked Placebo Recovery)

| Spiking Level (%) | Theoretical Concentration (µg/mL) | Measured Concentration, Mean ± SD (µg/mL) | % Recovery, Mean ± SD | %RSD |

|---|---|---|---|---|

| 80 | 80.0 | 79.5 ± 0.8 | 99.4 ± 1.0 | 1.01 |

| 100 | 100.0 | 99.1 ± 1.1 | 99.1 ± 1.1 | 1.11 |

| 120 | 120.0 | 119.3 ± 1.3 | 99.4 ± 1.1 | 1.11 |

| Overall | | | 99.3 ± 1.0 | 1.01 |
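For readers reproducing the last column of Table 1, the relative standard deviation is the standard deviation $s$ expressed as a percentage of the mean $\bar{x}$:

$$
\%\mathrm{RSD} = \frac{s}{\bar{x}} \times 100
$$

Applied to the example values for the 80% and 100% levels:

$$
\frac{0.8}{79.5} \times 100 \approx 1.01\%, \qquad \frac{1.1}{99.1} \times 100 \approx 1.11\%
$$

The same ratio is obtained whether it is computed from the measured concentrations or from the corresponding recoveries, since both are scaled by the same theoretical concentration.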

Table 2: Typical Acceptance Criteria for Accuracy [4] [19]

| Analytical Procedure Type | Typical Acceptance Criteria for % Recovery |

|---|---|

| Assay of Drug Substance / Product | 98.0 - 102.0% per level and overall mean |

| Impurity Quantitation | 80 - 120% at the specification level (e.g., 0.1%) |
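A simple check of mean recoveries against the limits in Table 2 might look like the sketch below. The dictionary of limits mirrors the typical values in the table and would be replaced by the criteria justified for a given procedure; the function name and the procedure-type keys are hypothetical.

```python
# Typical limits from Table 2 (% recovery); procedure-specific criteria
# should be substituted where they differ.
ACCEPTANCE_LIMITS = {
    "assay": (98.0, 102.0),
    "impurity": (80.0, 120.0),
}


def check_recovery(level_means, procedure_type):
    """Compare mean recovery at each level against the acceptance limits.

    Returns (passed, failures), where failures maps each failing level
    to its mean recovery.
    """
    low, high = ACCEPTANCE_LIMITS[procedure_type]
    failures = {
        level: value
        for level, value in level_means.items()
        if not low <= value <= high
    }
    return not failures, failures


# Mean recoveries per spiking level, taken from the example in Table 1.
table1_means = {80: 99.4, 100: 99.1, 120: 99.4}

passed, failures = check_recovery(table1_means, "assay")
print("Assay accuracy criteria met:", passed)
```

Returning the failing levels alongside the pass/fail flag makes it easier to document which concentration drove a failure when investigating a result.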

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials required for conducting robust accuracy studies.

Table 3: Key Research Reagent Solutions for Accuracy Studies

| Item | Function and Critical Attributes |

|---|---|

| Certified Reference Standard | A highly pure, well-characterized substance used as the primary benchmark for determining accuracy via the comparison method. Its purity must be traceable and certified. |