Optimizing Chemical Reactions: A Practical Guide to the Simplex Method for Biomedical Research

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on applying the Simplex method to optimize complex chemical reactions and experimental conditions. It covers the algorithm's foundational principles, drawn from its proven history in logistics and resource allocation, and translates them for practical use in chemical and pharmaceutical domains. The content explores step-by-step methodologies, addresses common troubleshooting scenarios, and presents a comparative analysis with modern optimization techniques like evolutionary algorithms and Bayesian methods. By synthesizing recent research and real-world applications, this guide serves as a strategic resource for enhancing efficiency, reliability, and outcomes in experimental optimization for biomedical and clinical research.

The Simplex Method Explained: From Linear Programming to Reaction Optimization

Within the context of reaction optimization research, the simplex method stands as a cornerstone computational technique for solving complex linear programming problems. Invented by George Dantzig in 1947, this algorithm provides a systematic approach for determining the optimal allocation of limited resources, a common challenge in pharmaceutical development and chemical synthesis planning [1] [2]. The power of the simplex method lies not merely in its computational procedure but in its elegant geometric interpretation, which frames optimization as navigation through a multidimensional geometric structure called the feasible region or polytope. For researchers designing chemical reactions, this geometric perspective offers intuitive insights into how the algorithm efficiently explores possible combinations of reactants, catalysts, and conditions to identify optimal yield or purity while respecting constraints like material availability, safety limits, and stoichiometric balances.

Theoretical Foundation: The Geometry of Linear Programs

From Chemical Constraints to Geometric Shapes

In reaction optimization, a typical linear program seeks to maximize or minimize an objective function (e.g., reaction yield, purity, or cost) subject to linear constraints (e.g., material balances, safety limits, stoichiometry). Mathematically, this is expressed in canonical form as [1]:

- Maximize: ( \mathbf{c^{T}} \mathbf{x} )

- Subject to: ( A\mathbf{x} \leq \mathbf{b} ) and ( \mathbf{x} \geq \mathbf{0} )

Here, ( \mathbf{x} ) represents the decision variables (e.g., concentrations, temperatures, flow rates), ( A\mathbf{x} \leq \mathbf{b} ) defines the linear constraints, and ( \mathbf{c^{T}} \mathbf{x} ) is the linear objective function [1]. The feasible region formed by these constraints constitutes a convex polyhedron in n-dimensional space, where 'n' corresponds to the number of independent variables in the optimization problem [3].

Fundamental Geometric Principles

The geometry of feasible regions follows several fundamental principles critical to understanding optimization behavior:

Extreme Point Optimality: If an optimal solution exists for a reaction optimization problem, at least one extreme point (vertex) of the polytope will be optimal [1]. This crucial insight reduces the optimization problem from searching an infinite continuum to evaluating a finite set of candidate points.

Edge-Wise Improvement: The simplex method operates by moving along the edges of the polytope from one vertex to an adjacent vertex, with each step improving the objective function value [1] [3]. This systematic traversal ensures continuous improvement toward the optimum.

Termination Conditions: The algorithm terminates when no adjacent vertex offers improvement in the objective function (indicating an optimum has been found) or when an unbounded edge is encountered (indicating the objective can improve indefinitely, often revealing an error in problem formulation) [1].
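The extreme-point principle can be checked by brute force on a small problem. The sketch below is an illustration only and is not the simplex method itself: it intersects every pair of constraint boundaries of a hypothetical two-variable program (all coefficients are assumptions chosen for the example), keeps the feasible intersections as vertices, and evaluates the objective at each one.

```python
from fractions import Fraction
from itertools import combinations

# Hypothetical LP (illustrative numbers): maximize 3x + 2y
# subject to x + y <= 4, x + 2y <= 6, x <= 3, x >= 0, y >= 0.
# Each constraint is stored as (a1, a2, b), meaning a1*x + a2*y <= b.
constraints = [
    (1, 1, 4),
    (1, 2, 6),
    (1, 0, 3),
    (-1, 0, 0),   # x >= 0 written as -x <= 0
    (0, -1, 0),   # y >= 0 written as -y <= 0
]
objective = (3, 2)

def intersect(c1, c2):
    """Intersection of two constraint boundaries (Cramer's rule), or None if parallel."""
    (a, b, e), (c, d, f) = c1, c2
    det = a * d - b * c
    if det == 0:
        return None
    return (Fraction(e * d - b * f, det), Fraction(a * f - e * c, det))

def is_feasible(point):
    """True when the point satisfies every constraint."""
    x, y = point
    return all(a * x + b * y <= rhs for a, b, rhs in constraints)

def value(point):
    """Objective value at a point."""
    return objective[0] * point[0] + objective[1] * point[1]

# Vertices are the feasible intersections of pairs of boundaries.
vertices = set()
for c1, c2 in combinations(constraints, 2):
    p = intersect(c1, c2)
    if p is not None and is_feasible(p):
        vertices.add(p)

best_vertex = max(vertices, key=value)
best_value = value(best_vertex)
print(sorted(vertices), best_vertex, best_value)
```

Enumerating all vertices becomes impractical as the number of variables and constraints grows, which is why the simplex method visits only a path of adjacent, improving vertices.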

Table 1: Key Geometric Properties of Feasible Regions in Optimization

| Property | Geometric Interpretation | Optimization Significance |

|---|---|---|

| Vertices | Extreme points of the polytope | Candidate solutions for optimization |

| Edges | One-dimensional connections between vertices | Possible paths for solution improvement |

| Faces | Flat boundaries of the polytope | Representations of active constraints |

| Dimensionality | Number of decision variables | Computational complexity of the problem |

| Boundedness | Closed, finite region | Guarantees that an optimal solution exists whenever the region is non-empty |

The Simplex Method: A Geometric Algorithm

Algorithmic Framework

The simplex method implements the geometric principles of vertex-hopping through an algebraic procedure that operates on a tableau representation of the linear program [1]. The algorithm proceeds through two fundamental phases:

Phase I: Feasibility Search: Identifies an initial extreme point within the feasible region or determines that no such point exists (infeasible problem) [1]. For reaction optimization, this establishes a viable starting point that satisfies all experimental constraints.

Phase II: Optimality Search: Moves from the initial feasible vertex to adjacent vertices, always following edges that improve the objective function until an optimum is reached [1]. This systematic exploration mirrors an efficient experimental design strategy.

Geometric Interpretation of Pivoting

The algebraic pivot operation corresponds precisely to moving from one vertex to an adjacent vertex along an edge of the polytope [3]. Each pivot:

- Enters a new variable into the basis (moves along a new dimension)

- Exits a variable from the basis (maintains feasibility within constraints)

- Improves the objective function value (ensures monotonic progress)

Recent theoretical advances have explained why this method performs efficiently in practice despite worst-case exponential complexity. Research by Huiberts and Bach has demonstrated that with appropriate randomization and tolerance handling—techniques already employed in commercial optimization software—the simplex method achieves polynomial-time performance [2] [4].

Experimental Protocols: Implementation Methodology

Protocol 1: Problem Formulation and Standardization

Purpose: To transform a reaction optimization problem into standard form suitable for simplex implementation.

Procedure:

- Identify Decision Variables: Define all experimentally controllable factors (e.g., reactant concentrations, temperature settings, reaction times) as variables ( x_1, x_2, \ldots, x_n \geq 0 ) [1].

- Formulate Objective Function: Define the optimization target as a linear function of decision variables (e.g., maximize yield = ( c_1 x_1 + c_2 x_2 + \cdots + c_n x_n )) [1].

- Express Constraints: Translate all experimental limitations into linear inequalities (e.g., total volume ≤ 100 mL, temperature ≤ 80°C, catalyst amount ≥ 0.5 mol%) [1].

- Convert to Standard Form: Add a slack variable to each "≤" constraint and subtract a surplus variable from each "≥" constraint so that every constraint becomes an equation in non-negative variables.

- Verify Feasibility: Confirm the origin (( \mathbf{x} = \mathbf{0} )) satisfies all constraints or apply Phase I procedures [3].

Validation: Verify dimensional consistency across all equations and confirm all experimental constraints are properly represented.
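The conversion step can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function name `to_standard_form`, the constraint encoding, and the three-variable example (volumes of two reagents in mL plus catalyst loading in mol%) are hypothetical and not taken from the cited sources.

```python
def to_standard_form(A, b, senses):
    """Append one slack (+1) or surplus (-1) column per inequality row.

    A: list of coefficient rows; b: right-hand sides;
    senses: "<=", ">=", or "=" for each row.
    Returns (A_eq, b) where every row reads A_eq[i] . x_extended = b[i].
    """
    n_extra = sum(1 for s in senses if s != "=")
    A_eq = []
    k = 0  # index of the next slack/surplus column
    for row, sense in zip(A, senses):
        extra = [0] * n_extra
        if sense == "<=":
            extra[k] = 1       # slack: unused amount of the resource
            k += 1
        elif sense == ">=":
            extra[k] = -1      # surplus: excess over the required minimum
            k += 1
        elif sense != "=":
            raise ValueError(f"unknown constraint sense: {sense}")
        A_eq.append(list(row) + extra)
    return A_eq, list(b)

# Hypothetical variables: x1, x2 = reagent volumes (mL), x3 = catalyst (mol%).
# Constraints: x1 + x2 <= 100 (total volume) and x3 >= 0.5 (minimum catalyst).
A_eq, b_eq = to_standard_form(
    A=[[1, 1, 0], [0, 0, 1]],
    b=[100, 0.5],
    senses=["<=", ">="],
)
print(A_eq, b_eq)
```

Note that a surplus column has coefficient -1, so the origin is not feasible for a "≥" row with a positive right-hand side; that is the situation in which a Phase I procedure is needed.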

Protocol 2: Tableau Initialization and Pivot Selection

Purpose: To construct the initial simplex tableau and implement the pivot selection mechanism.

Procedure:

- Construct Initial Tableau: Create the matrix representation of the problem [3], in which ( c ) contains the objective coefficients, ( A ) the constraint coefficients, and ( b ) the constraint bounds.

- Identify Entering Variable: Select the first negative coefficient in the top row (ignoring the first column) to determine the entering variable [3].

- Identify Leaving Variable: For the selected pivot column ( j ), compute the ratios ( -D_{i,0}/D_{i,j} ) over the rows with negative entries ( D_{i,j} ), where ( D ) denotes the tableau, and select the row that minimizes this ratio [3].

- Apply Bland's Rule: If multiple choices exist at any selection step, choose the variable with the smallest index to prevent cycling [3].

- Perform Pivot Operation: Exchange the entering and leaving variables and update the tableau by row operations.

- Check Termination: Continue pivoting until no negative coefficients remain in the top row (indicating optimality) or an unbounded condition is detected [3].

Validation: After each pivot, verify that the objective value has not worsened (a degenerate pivot can leave it unchanged) and that all constraints remain satisfied.
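The selection steps above can be sketched as a single function. The layout used here is an assumption and may differ from the tableau of [3]: the objective row is passed separately, each constraint row ends with its right-hand side, and a negative objective-row coefficient marks a variable that can improve a maximization problem. The name `bland_pivot` is hypothetical.

```python
from fractions import Fraction

def bland_pivot(z_row, rows, basis):
    """Choose a pivot (row, column) with Bland's smallest-index rule.

    z_row: objective-row coefficients (no right-hand side);
    rows:  constraint rows, each ending with its right-hand side;
    basis: index of the basic variable for each constraint row.
    Returns None at optimality; raises ValueError if the problem is unbounded.
    """
    # Entering variable: the lowest-index column with a negative coefficient.
    col = next((j for j, c in enumerate(z_row) if c < 0), None)
    if col is None:
        return None
    # Leaving variable: minimum ratio, ties broken by the smallest basic index.
    candidates = [
        (Fraction(r[-1]) / r[col], basis[i], i)
        for i, r in enumerate(rows)
        if r[col] > 0
    ]
    if not candidates:
        raise ValueError("objective is unbounded along this edge")
    _, _, row = min(candidates)
    return row, col

# Two-constraint example with slack variables 2 and 3 in the basis.
first = bland_pivot([-5, -3, 0, 0], [[2, 1, 1, 0, 10], [0, 1, 0, 1, 4]], [2, 3])
# A tie in the ratio test (4 and 4): the row whose basic index is smaller wins.
tied = bland_pivot([-1, 0, 0, 0], [[1, 0, 1, 0, 4], [1, 1, 0, 1, 4]], [3, 2])
print(first, tied)
```

Breaking ties by index can cost extra pivots compared with the most-negative-coefficient rule, but it rules out cycling at degenerate vertices.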

Protocol 3: Interpretation and Experimental Validation

Purpose: To translate mathematical results back into experimental parameters and validate findings.

Procedure:

- Extract Solution: From the final tableau, read the values of basic variables at the optimum [1].

- Verify Constraints: Confirm the solution satisfies all original experimental constraints within practical tolerances [4].

- Perform Sensitivity Analysis: Assess how small changes in constraint parameters affect the optimal solution [1].

- Design Verification Experiment: Translate the mathematical optimum into practical experimental conditions.

- Execute Validation: Conduct actual reactions using the optimized parameters to confirm predicted performance.

Validation: Compare mathematical predictions with experimental results, with discrepancies triggering re-examination of problem formulation.
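The constraint check in this protocol can be sketched as follows. This is a minimal example under assumptions: the helper `check_solution` and the two-constraint system are hypothetical, and the default tolerance of 1e-6 mirrors the typical solver feasibility tolerance mentioned in this guide.

```python
def check_solution(A, b, x, tol=1e-6):
    """Return (slacks, feasible) for the system A x <= b, x >= 0.

    slacks[i] = b[i] - A[i] . x; a slack within tol of zero marks a binding constraint.
    """
    slacks = [bi - sum(a * xj for a, xj in zip(row, x)) for row, bi in zip(A, b)]
    feasible = all(s >= -tol for s in slacks) and all(xj >= -tol for xj in x)
    return slacks, feasible

# Hypothetical constraints: 2*x1 + x2 <= 10 and x2 <= 4.
A = [[2, 1], [0, 1]]
b = [10, 4]

slacks, ok = check_solution(A, b, [3, 4])
binding = [i for i, s in enumerate(slacks) if abs(s) <= 1e-6]
print(slacks, ok, binding)

# A setting that overshoots the first constraint is flagged as infeasible.
print(check_solution(A, b, [3.1, 4])[1])
```

Reporting the slacks rather than a bare pass/fail result shows which experimental limits are binding at the proposed optimum, which is a natural starting point for sensitivity analysis.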

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Optimization Research

| Research Tool | Function/Purpose | Implementation Notes |

|---|---|---|

| Linear Programming Solver | Core computational engine for simplex method | Commercial (CPLEX, Gurobi) or open-source (HiGHS) options; includes feasibility tolerances (typically ( 10^{-6} )) [4] |

| Problem Scaling Utilities | Pre-processor to normalize variable magnitudes | Ensures all non-zero input values are of order 1; improves numerical stability [4] |

| Sensitivity Analysis Tools | Post-solution analysis of constraint variations | Quantifies robustness of optimal solution to parameter uncertainties |

| Visualization Software | Geometric representation of feasible regions | Provides intuitive understanding of solution space (e.g., 2D/3D polytope plotting) |

| Randomization Modules | Adds small perturbations to constraint bounds | Introduces random uniform variations (( \varepsilon \in [0, 10^{-6}] )) to improve theoretical performance [4] |

Geometric Visualization of Optimization Pathways

Feasible Region Geometry and Solution Path

Feasible Region Geometry and Solution Path: This diagram illustrates the simplex method's traversal through adjacent vertices of the feasible region polytope, with each pivot operation moving toward improved objective values until reaching the optimal vertex or detecting an unbounded edge.

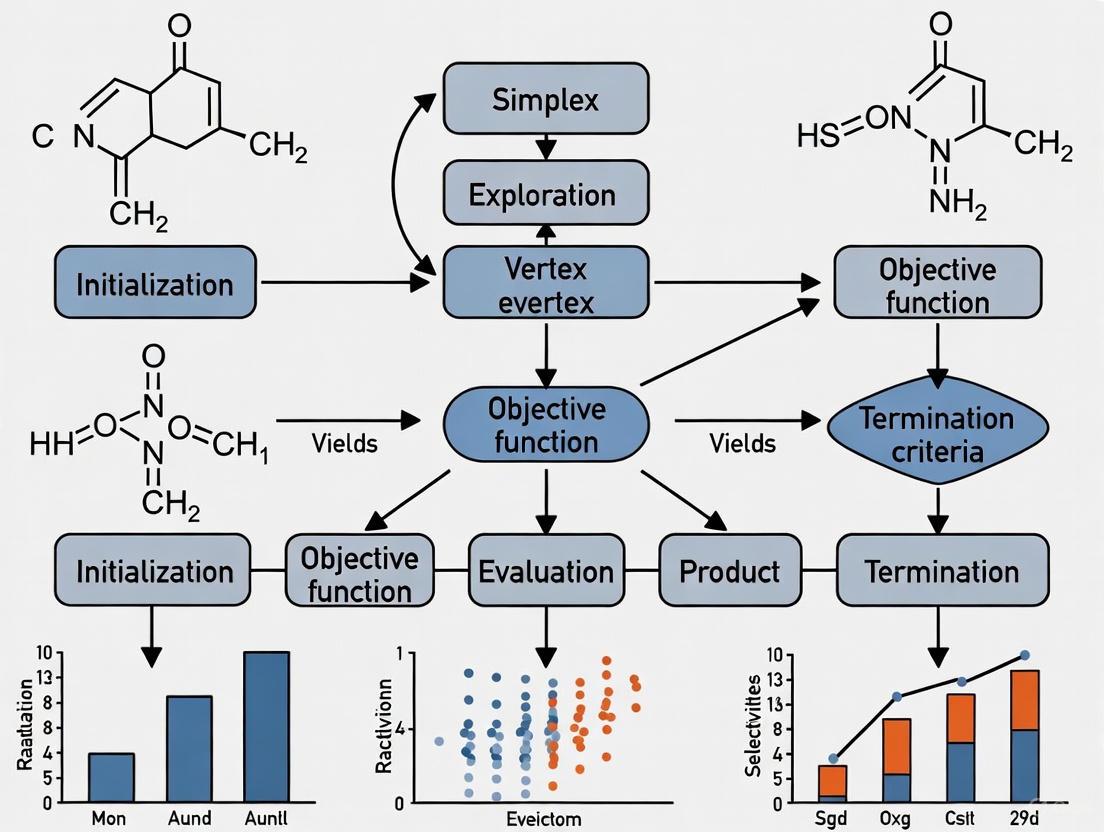

Simplex Algorithm Workflow

Simplex Algorithm Workflow: This workflow diagram outlines the complete simplex method procedure from problem formulation through feasibility search (Phase I), optimality search (Phase II), and iterative pivoting until verification of the final solution.

The geometric interpretation of the simplex method provides researchers with a powerful conceptual framework for understanding optimization processes in reaction development and pharmaceutical research. By visualizing the feasible region as a multidimensional polytope and recognizing optimization as systematic traversal between vertices, scientists can develop more intuitive approaches to experimental design and process optimization. The integration of theoretical geometric principles with practical implementation protocols creates a robust methodology for addressing complex resource allocation challenges throughout drug development pipelines. Recent theoretical advances explaining the algorithm's practical efficiency further strengthen its foundation as a preferred method for linear optimization in scientific research, ensuring its continued relevance for reaction optimization in both academic and industrial settings.

The simplex algorithm, pioneered by George Dantzig in 1947, represents a cornerstone of mathematical optimization [1]. Originally developed for linear programming problems, this method provides a systematic approach for maximizing or minimizing a linear objective function subject to linear equality and inequality constraints. Dantzig's core insight was that the optimum value of such a function, if it exists, must occur at one of the vertices (extreme points) of the feasible region defined by the constraints [1]. The algorithm operates by traversing along the edges of this polyhedral region from one vertex to an adjacent vertex with an improved objective value, continuing until no further improvement is possible [1].

In the context of chemical reaction optimization, researchers face multidimensional challenges where numerous parameters—including temperature, concentration, residence time, and catalyst selection—simultaneously influence critical outcomes such as yield, selectivity, and cost [5] [6]. The transition from traditional one-variable-at-a-time (OVAT) approaches to multivariate optimization has revolutionized process development in pharmaceutical and specialty chemical industries [5] [6]. This article traces the historical development of Dantzig's simplex algorithm and its evolutionary adaptations that now empower modern chemical applications.

Mathematical Framework: From Linear to Nonlinear Optimization

The Standard Simplex Algorithm for Linear Programming

The standard simplex algorithm addresses linear programs in canonical form:

- Maximize ( \mathbf{c^T} \mathbf{x} )

- Subject to ( A\mathbf{x} \leq \mathbf{b} ) and ( \mathbf{x} \geq 0 )

where ( \mathbf{c} ) represents the coefficients of the linear objective function, ( \mathbf{x} ) is the vector of variables, ( A ) is the coefficient matrix, and ( \mathbf{b} ) is the constraint vector [1]. The algorithm employs a tableau representation that enables systematic pivot operations to navigate from one basic feasible solution to an improved adjacent solution until optimality is achieved [1].

Adaptation for Nonlinear Chemical Systems

While Dantzig's original method excels at linear programming, chemical optimization typically involves nonlinear response surfaces. The Nelder-Mead method (often called the modified or sequential simplex method) addresses this setting. Despite the shared name, it is a different algorithm: rather than pivoting between vertices of a feasible polytope, it moves a geometric simplex of n+1 trial points directly through the experimental space, without requiring a predefined mathematical model [6]. This derivative-free approach makes it particularly valuable for optimizing complex chemical systems where the precise relationship between variables and outcomes is unknown or computationally prohibitive to model.

Table 1: Key Developments in Simplex Optimization

| Year | Development | Key Innovator | Application Domain |

|---|---|---|---|

| 1947 | Simplex Algorithm for Linear Programming | George Dantzig [1] | Operations Research |

| 1965 | Nelder-Mead (Modified Simplex) | Nelder and Mead [6] | Nonlinear Experimental Optimization |

| 1980s | Sequential Simplex in Chromatography | Multiple groups [7] | Analytical Chemistry Method Development |

| 2020 | Self-optimizing Reactors with Simplex | Fath et al. [6] | Continuous Flow Organic Synthesis |

Experimental Protocol: Simplex Optimization of Imine Synthesis in Continuous Flow

The following protocol details the application of the modified simplex algorithm for optimizing imine synthesis from benzaldehyde and benzylamine in a continuous flow microreactor system [6].

Equipment and Reagents

Table 2: Essential Research Reagent Solutions

| Item | Specification | Function |

|---|---|---|

| Benzaldehyde | ReagentPlus, ≥99% | Substrate [6] |

| Benzylamine | ReagentPlus, ≥99% | Substrate [6] |

| Methanol | For synthesis, >99% | Reaction solvent [6] |

| Syringe Pumps | SyrDos2 or equivalent | Precise reagent delivery [6] |

| Microreactor | 1/16" stainless steel capillaries, 1.87 mL total volume | Reaction environment with controlled residence time [6] |

| FT-IR Spectrometer | Bruker ALPHA with ATR diamond crystal | Real-time reaction monitoring [6] |

| Automation System | MATLAB-controlled with OPC interface | Strategy execution and data acquisition [6] |

Step-by-Step Procedure

Reactor Setup and Calibration

- Assemble the microreactor system using 5 m (0.5 mm ID) and 2 m (0.75 mm ID) stainless steel capillaries connected in series.

- Calibrate the FT-IR spectrometer using standard solutions to establish quantitative relationships between IR band intensities (1680-1720 cm⁻¹ for benzaldehyde; 1620-1660 cm⁻¹ for imine product) and concentration.

- Program the automation system to control syringe pumps, thermostat, and collect analytical data via OPC interface.
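The capillary dimensions above can be cross-checked against the stated reactor volume, and the same figure links residence time to pump flow rate. The sketch below assumes ideal cylindrical capillaries, neglects connector volumes, and takes residence time as volume divided by total flow rate; the function names are hypothetical.

```python
import math

def capillary_volume_ml(length_m, inner_diameter_mm):
    """Internal volume of a cylindrical capillary in millilitres."""
    radius_cm = inner_diameter_mm / 10 / 2
    length_cm = length_m * 100
    return math.pi * radius_cm ** 2 * length_cm   # 1 cm^3 equals 1 mL

# 5 m of 0.5 mm ID capillary in series with 2 m of 0.75 mm ID capillary.
reactor_volume_ml = capillary_volume_ml(5, 0.5) + capillary_volume_ml(2, 0.75)

def total_flow_rate_ml_min(residence_time_min, volume_ml=reactor_volume_ml):
    """Combined pump flow rate that gives the requested mean residence time."""
    return volume_ml / residence_time_min

print(round(reactor_volume_ml, 2))             # agrees with the 1.87 mL quoted above
print(round(total_flow_rate_ml_min(0.5), 2))   # flow rate for the shortest residence time
print(round(total_flow_rate_ml_min(6.0), 2))   # flow rate for the longest residence time
```

The two flow rates bracket the range the syringe pumps must deliver for the residence times explored in the optimization.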

Initial Simplex Design

- Define the experimental variables to optimize: temperature (20-80°C) and residence time (0.5-6 minutes).

- Construct the initial simplex with n+1 vertices (where n is the number of variables). For two variables, this forms a triangle in the experimental space.

- Set the objective function to maximize imine yield calculated from the FT-IR data.

Sequential Optimization Cycle

- Conduct experiments at each vertex of the current simplex, measuring the objective function (yield) for each condition.

- Apply the Nelder-Mead operations: reflection, expansion, contraction, or shrinkage based on relative performance of vertices.

- Replace the worst-performing vertex with a new point according to simplex rules.

- Iterate until convergence criteria are met (typically when the standard deviation of responses in the simplex falls below a threshold or after a predetermined number of iterations).
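The optimization cycle can be sketched in plain Python. Everything specific here is an assumption made for illustration: `simulated_yield` is a hypothetical smooth response surface standing in for the FT-IR measurement, the coefficients (1, 2, 0.5, 0.5) are the commonly used Nelder-Mead defaults, both variables are scaled to the 0..1 range, and convergence is judged by the spread between the best and worst response rather than a standard deviation. Bounds are not enforced, which a real implementation would need to add.

```python
T_RANGE = (20.0, 80.0)    # temperature bounds in °C, from the protocol
TAU_RANGE = (0.5, 6.0)    # residence-time bounds in minutes, from the protocol

def to_physical(u):
    """Map scaled coordinates (0..1 on each axis) to (temperature, residence time)."""
    return (T_RANGE[0] + u[0] * (T_RANGE[1] - T_RANGE[0]),
            TAU_RANGE[0] + u[1] * (TAU_RANGE[1] - TAU_RANGE[0]))

def simulated_yield(u):
    """Hypothetical response surface standing in for the measured yield."""
    temp, tau = to_physical(u)
    return 90.0 - 0.01 * (temp - 60.0) ** 2 - 1.5 * (tau - 4.0) ** 2

def nelder_mead_max(f, start, max_iter=200, tol=1e-6,
                    alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5):
    """Maximize f with the Nelder-Mead simplex; start is a list of n+1 points."""
    pts = [(f(p), list(p)) for p in start]
    for _ in range(max_iter):
        pts.sort(key=lambda t: t[0], reverse=True)     # best first, worst last
        if pts[0][0] - pts[-1][0] < tol:               # responses have converged
            break
        n = len(pts) - 1
        worst_val, worst = pts[-1]
        centroid = [sum(p[1][i] for p in pts[:-1]) / n for i in range(n)]

        def along(t):
            # Point on the line from the worst vertex through the centroid:
            # t = -1 is the worst vertex, 0 the centroid, 1 its mirror image.
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        refl = along(alpha)
        f_refl = f(refl)
        if f_refl > pts[0][0]:                         # new best: try expansion
            exp = along(gamma)
            f_exp = f(exp)
            pts[-1] = (f_exp, exp) if f_exp > f_refl else (f_refl, refl)
        elif f_refl > pts[-2][0]:                      # beats second worst: accept
            pts[-1] = (f_refl, refl)
        else:                                          # contract outside or inside
            con = along(rho if f_refl > worst_val else -rho)
            f_con = f(con)
            if f_con > max(f_refl, worst_val):
                pts[-1] = (f_con, con)
            else:                                      # shrink toward the best vertex
                best = pts[0][1]
                shrunk = [[b + sigma * (x - b) for b, x in zip(best, p[1])]
                          for p in pts[1:]]
                pts = [pts[0]] + [(f(q), q) for q in shrunk]
    pts.sort(key=lambda t: t[0], reverse=True)
    return pts[0][1], pts[0][0]

start = [(0.1, 0.1), (0.3, 0.1), (0.1, 0.3)]   # initial triangle in scaled units
best_u, best_yield = nelder_mead_max(simulated_yield, start)
best_temp, best_tau = to_physical(best_u)
print(f"{best_temp:.1f} °C, {best_tau:.2f} min, yield {best_yield:.2f}")
```

In an automated flow setup each call to `f` would correspond to one experiment, so the sketch stores every measured response alongside its vertex and never re-evaluates a retained point.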

Real-Time Disturbance Response (Advanced Implementation)

- Introduce deliberate disturbances to reactant concentration (10-20% variation) to test system robustness.

- Observe how the simplex algorithm automatically adjusts operating conditions to compensate and return to optimal performance.

- Document the new optimal conditions identified by the algorithm.

Diagram Title: Simplex Optimization Workflow

Applications in Chemical Research

Chromatographic Method Development

Sequential simplex optimization has been used extensively to optimize reversed-phase liquid chromatographic separations [7]. The approach typically employs a chromatographic response function that balances resolution against analysis time, with factors including mobile phase composition, temperature, and flow rate. For complex separations of isomeric octanes, simplex methods have been used to optimize column oven temperature and carrier gas flow rate simultaneously, outperforming traditional univariate approaches [7].

Table 3: Representative Chemical Applications of Simplex Optimization

| Application Domain | Key Variables Optimized | Objective Function | Reported Performance |

|---|---|---|---|

| Imine Synthesis [6] | Temperature, Residence time | Imine yield | Rapid convergence to optimum in <20 iterations |

| HPLC Method Development [7] | Mobile phase composition, Flow rate, Temperature | Resolution and analysis time | Efficient navigation of complex response surfaces |

| Nanomaterial Synthesis [5] | Precursor concentration, Temperature, Reaction time | Particle size and yield | Effective handling of multiple objectives when combined with multi-objective Bayesian optimization (MOBO) |

Comparison with Contemporary Optimization Methods

Modern chemical optimization increasingly employs machine learning approaches like Bayesian optimization (BO), which utilizes probabilistic surrogate models to balance exploration and exploitation [5]. While BO often demonstrates superior sample efficiency, simplex methods remain valuable for their computational simplicity, transparency, and minimal data requirements. Hybrid approaches that combine simplex with model-based methods show particular promise for complex, resource-intensive optimization challenges [5].

Advanced Implementation: Multi-Objective Considerations

Chemical optimization frequently involves competing objectives, such as maximizing yield while minimizing cost, energy consumption, or environmental impact [5]. While the basic simplex method addresses single-objective problems, researchers have extended its principles to multi-objective scenarios through several strategies:

- Pareto Optimization: Identifying a set of non-dominated solutions representing optimal trade-offs between competing objectives.

- Weighted Sum Approach: Combining multiple objectives into a single scalar function using predetermined weighting factors.

- Hybrid Frameworks: Integrating simplex with multi-objective Bayesian optimization (MOBO) or evolutionary algorithms like NSGA-II to leverage the strengths of different methodologies [5].
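The notion of a non-dominated (Pareto-optimal) set can be made concrete with a short filter. This is a minimal sketch: the function name `pareto_front` and the five (yield, negative cost) results are hypothetical, and every objective is assumed to be expressed so that larger is better.

```python
def pareto_front(points):
    """Return the non-dominated points when every objective is to be maximized."""
    def dominates(a, b):
        # a dominates b if it is at least as good everywhere and better somewhere.
        return (all(x >= y for x, y in zip(a, b))
                and any(x > y for x, y in zip(a, b)))
    return [p for p in points if not any(dominates(q, p) for q in points)]

# Hypothetical results from five runs: (yield in %, negative cost).
runs = [(62, -10), (75, -14), (75, -18), (81, -25), (58, -12)]
front = pareto_front(runs)
print(front)
```

Each point that survives the filter represents a different trade-off; choosing among them is a decision for the researcher rather than the algorithm.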

The sequential simplex method continues to evolve, maintaining relevance in the era of artificial intelligence and autonomous experimentation through its computational efficiency, conceptual transparency, and proven effectiveness across diverse chemical applications.

In the field of reaction optimization research, particularly within drug discovery and development, achieving the best possible outcome—whether maximizing yield, minimizing cost, or optimizing purity—is a fundamental challenge. The simplex method provides a powerful algorithmic framework for systematically navigating complex experimental landscapes to find this optimal solution. This document details the core mathematical concepts of the simplex method—objective functions, constraints, and basic feasible solutions—and frames them within the context of practical experimental optimization for researchers and scientists. By treating a reaction optimization problem as a Linear Programming (LP) problem, we can apply this robust algorithm to efficiently determine the best combination of reaction parameters [8].

Key Terminology and Definitions

The simplex method operates on a standardized form of a linear programming problem. Understanding its core components is essential for applying it effectively. The following table defines and contextualizes the fundamental terminology.

Table 1: Core Terminology of the Simplex Method for Reaction Optimization

| Term | Mathematical Definition | Role in the Simplex Algorithm | Research Context Example |

|---|---|---|---|

| Objective Function [8] | A linear function, ( Z = c_1 x_1 + c_2 x_2 + \cdots + c_n x_n ), to be maximized or minimized. | Defines the goal of the optimization; the algorithm iteratively improves its value. | A function representing reaction yield (%) or purity (AU) to be maximized, or a function representing impurity level (mg/L) or process cost ($) to be minimized. |

| Decision Variables [8] | The variables ( x_1, x_2, \ldots, x_n ) in the objective function and constraints. | Quantities that are adjusted by the algorithm to find the optimum. | Controllable reaction parameters such as temperature (°C), pressure (atm), reactant concentration (mol/L), catalyst loading (mol%), or reaction time (hr). |

| Constraints [8] | Linear inequalities or equations that the decision variables must satisfy (e.g., ( a_1 x_1 + a_2 x_2 \leq b )). | Define the "feasible region" of all possible solutions that do not violate experimental or physical limits. | Limitations based on reagent availability (e.g., total catalyst ≤ 5 mg), safety thresholds (e.g., reaction temperature ≤ 150 °C), or equipment operating ranges. |

| Feasible Region [8] | The set of all points that satisfy all constraints simultaneously. | The "search space" of the algorithm. It is a convex geometric shape (a polyhedron). | The entire multidimensional combination of reaction parameters that is experimentally possible and safe. |

| Basic Feasible Solution (BFS) [8] | A solution at a vertex (corner point) of the feasible region. | The simplex method moves from one BFS to an adjacent one, improving the objective function at each step. | A specific, discrete experimental condition defined by the limits of the constraints (e.g., a trial run at the maximum safe temperature and maximum available catalyst). |

| Standard Form [9] | An LP problem where the objective is to be maximized, all constraints are equations, and all variables are non-negative. | Required format for initiating the simplex algorithm. | An optimization problem that has been algebraically manipulated to have equality constraints, for example, by adding slack variables. |

| Slack Variable [9] [10] | A variable added to a "less than or equal to" constraint to convert it into an equation. | Represents unused resources and can be a basic variable in the initial BFS. | The amount of a reagent that remains unused in a reaction trial. For example, if a constraint limits catalyst to 5 mg and a trial uses 4 mg, the slack is 1 mg. |

Experimental Protocol: Implementing the Simplex Method for Reaction Optimization

This protocol provides a step-by-step methodology for applying the simplex method to a reaction optimization problem, using the maximization of reaction yield as a representative scenario.

Problem Formulation and Modeling

- Define the Objective: Clearly state the primary goal of the optimization. In this case, the Objective Function is to maximize the reaction yield, which is a function of the decision variables.

- Identify Decision Variables: Determine the key controllable reaction parameters. For this example:

- ( x_1 ): Concentration of Reactant A (mol/L)

- ( x_2 ): Catalyst Loading (mol%)

- Establish Constraints: Define the practical limits within which the optimization must operate, based on experimental feasibility, cost, or safety.

- Constraint 1 (Reagent Availability): The total amount of Reactant A is limited. For instance, ( 2x_1 + x_2 \leq 10 ).

- Constraint 2 (Safety Limit): The catalyst loading must not exceed a certain threshold. For instance, ( x_2 \leq 4 ).

- Non-negativity Constraints: All decision variables must be positive or zero. ( x_1 \geq 0, x_2 \geq 0 ).

- Formulate the Linear Program:

- Maximize: ( Z = 5x_1 + 3x_2 ) (This represents the yield function, where coefficients 5 and 3 represent the contribution of each variable to the yield)

- Subject to:

- ( 2x_1 + x_2 \leq 10 )

- ( x_2 \leq 4 )

- ( x_1, x_2 \geq 0 )

Algorithm Execution: The Tabular Simplex Method

- Convert to Standard Form: Introduce slack variables (( s_1 ) and ( s_2 )) to convert inequality constraints to equalities [9].

- Maximize: ( Z - 5x_1 - 3x_2 = 0 )

- Subject to:

- ( 2x_1 + x_2 + s_1 = 10 )

- ( x_2 + s_2 = 4 )

- ( x_1, x_2, s_1, s_2 \geq 0 )

- Initial Simplex Tableau Setup: Construct the initial tableau. The slack variables form the initial basic feasible solution (BFS), meaning ( s_1 ) and ( s_2 ) are the basic variables and ( x_1, x_2 ) are non-basic (set to zero). This corresponds to the origin in the feasible region [11] [8].

Table 2: Initial Simplex Tableau

| Basic Var | ( x_1 ) | ( x_2 ) | ( s_1 ) | ( s_2 ) | Solution |

|---|---|---|---|---|---|

| ( s_1 ) | 2 | 1 | 1 | 0 | 10 |

| ( s_2 ) | 0 | 1 | 0 | 1 | 4 |

| Z | -5 | -3 | 0 | 0 | 0 |

Iteration 1:

- Optimality Check: The Z-row has negative coefficients (-5, -3). The solution is not optimal.

- Pivot Column Selection: The most negative coefficient in the Z-row is -5, so ( x_1 ) is the entering variable.

- Pivot Row Selection (Minimum Ratio Test): Calculate the ratio of the Solution column to the pivot column for each row with a positive pivot-column entry.

- For ( s_1 )-row: ( 10 / 2 = 5 )

- For ( s_2 )-row: the pivot-column entry is 0, so the ratio ( 4 / 0 ) is undefined and the row is skipped

- The smallest non-negative ratio is 5, so the ( s_1 )-row is the pivot row. ( s_1 ) is the leaving variable.

- Pivot Operation: Perform Gauss-Jordan row operations to make the pivot element 1 and all other elements in the pivot column 0 [10].

- New ( x_1 )-row = Old ( s_1 )-row / 2: (1, 1/2, 1/2, 0, 5)

- New ( s_2 )-row = Old ( s_2 )-row - (0) × New ( x_1 )-row: (0, 1, 0, 1, 4)

- New Z-row = Old Z-row - (-5) × New ( x_1 )-row: (0, -0.5, 2.5, 0, 25)
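The row operations of this pivot can be reproduced with exact fractions. The sketch below is a minimal illustration; the helper name `pivot` and the row ordering (the two constraint rows first, then the Z-row) are choices made for the example.

```python
from fractions import Fraction as F

def pivot(tableau, row, col):
    """Gauss-Jordan pivot: scale the pivot row to 1, then clear the column elsewhere."""
    p = tableau[row][col]
    tableau[row] = [v / p for v in tableau[row]]
    for i, r in enumerate(tableau):
        if i != row and r[col] != 0:
            factor = r[col]
            tableau[i] = [v - factor * pv for v, pv in zip(r, tableau[row])]
    return tableau

# Rows: s1, s2, Z. Columns: x1, x2, s1, s2, Solution (the initial tableau above).
tableau = [
    [F(2), F(1), F(1), F(0), F(10)],
    [F(0), F(1), F(0), F(1), F(4)],
    [F(-5), F(-3), F(0), F(0), F(0)],
]
pivot(tableau, row=0, col=0)   # x1 enters, s1 leaves
for r in tableau:
    print([str(v) for v in r])
```

Working in `Fraction` rather than floating point keeps entries such as 1/2 exact, which makes hand-checking against the tableau straightforward.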

Table 3: Simplex Tableau After Iteration 1

| Basic Var | ( x_1 ) | ( x_2 ) | ( s_1 ) | ( s_2 ) | Solution |

|---|---|---|---|---|---|

| ( x_1 ) | 1 | 1/2 | 1/2 | 0 | 5 |

| ( s_2 ) | 0 | 1 | 0 | 1 | 4 |

| Z | 0 | -0.5 | 2.5 | 0 | 25 |

Current BFS Interpretation: ( x_1 = 5, x_2 = 0, s_1 = 0, s_2 = 4, Z = 25 ). This represents an experimental condition with high concentration of A but no catalyst.

Iteration 2:

- Optimality Check: The Z-row still has a negative coefficient (-0.5). The solution is not optimal.

- Pivot Column Selection: The most negative coefficient is -0.5, so ( x_2 ) is the entering variable.

- Pivot Row Selection:

- For ( x_1 )-row: ( 5 / (1/2) = 10 )

- For ( s_2 )-row: ( 4 / 1 = 4 )

- The smallest ratio is 4, so the ( s_2 )-row is the pivot row. ( s_2 ) is the leaving variable.

- Pivot Operation:

- New ( x_2 )-row = Old ( s_2 )-row / 1: (0, 1, 0, 1, 4)

- New ( x_1 )-row = Old ( x_1 )-row - (1/2) × New ( x_2 )-row: (1, 0, 1/2, -1/2, 3)

- New Z-row = Old Z-row - (-0.5) × New ( x_2 )-row: (0, 0, 2.5, 0.5, 27)

Table 4: Optimal Simplex Tableau After Iteration 2

| Basic Var | ( x_1 ) | ( x_2 ) | ( s_1 ) | ( s_2 ) | Solution |

|---|---|---|---|---|---|

| ( x_1 ) | 1 | 0 | 1/2 | -1/2 | 3 |

| ( x_2 ) | 0 | 1 | 0 | 1 | 4 |

| Z | 0 | 0 | 2.5 | 0.5 | 27 |

Termination: All coefficients in the Z-row are non-negative. The optimality condition is satisfied. The algorithm terminates [8].

Interpretation of Results

The final tableau provides the optimal solution for the reaction optimization:

- Optimal Decision Variables: ( x_1 = 3 ), ( x_2 = 4 )

- Maximum Yield: ( Z = 27 )

- Slack Variables: ( s_1 = 0 ), ( s_2 = 0 )

Research Interpretation: To achieve the maximum predicted yield of 27 units, the experiment should be run with a Reactant A concentration of 3 mol/L and a catalyst loading of 4 mol%. Both constraints (Reagent Availability and Safety Limit) are binding, meaning all available resources are fully utilized.
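The complete tabular procedure can be condensed into a short routine and checked against the worked example. This is a minimal sketch under stated assumptions: it handles only problems of the form maximize ( \mathbf{c}^T \mathbf{x} ) subject to ( A\mathbf{x} \leq \mathbf{b} ), ( \mathbf{x} \geq 0 ) with non-negative right-hand sides (so no Phase I is needed), it uses the most-negative-coefficient rule from the example, and it includes no anti-cycling safeguard. The function name `simplex_max` is hypothetical.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Tableau simplex for: maximize c.x subject to A x <= b, x >= 0, with b >= 0.

    Returns (x, z) as exact fractions.
    """
    m, n = len(A), len(c)
    # Constraint rows: [A | I | b]; objective row: [-c | 0 | 0].
    rows = [[Fraction(v) for v in A[i]]
            + [Fraction(int(i == j)) for j in range(m)]
            + [Fraction(b[i])]
            for i in range(m)]
    z = [Fraction(-v) for v in c] + [Fraction(0)] * (m + 1)
    basis = [n + i for i in range(m)]        # slack variables start in the basis
    while True:
        col = min(range(n + m), key=lambda j: z[j])
        if z[col] >= 0:
            break                             # no negative coefficient: optimal
        # Minimum ratio test over rows with a positive pivot-column entry.
        ratios = [(rows[i][-1] / rows[i][col], i)
                  for i in range(m) if rows[i][col] > 0]
        if not ratios:
            raise ValueError("problem is unbounded")
        _, r = min(ratios)
        # Gauss-Jordan pivot on (r, col).
        p = rows[r][col]
        rows[r] = [v / p for v in rows[r]]
        for i in range(m):
            if i != r:
                f = rows[i][col]
                rows[i] = [v - f * pv for v, pv in zip(rows[i], rows[r])]
        f = z[col]
        z = [v - f * pv for v, pv in zip(z, rows[r])]
        basis[r] = col
    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = rows[i][-1]
    return x, z[-1]

# The worked example: maximize 5*x1 + 3*x2 with 2*x1 + x2 <= 10 and x2 <= 4.
x_opt, z_opt = simplex_max(c=[5, 3], A=[[2, 1], [0, 1]], b=[10, 4])
print([str(v) for v in x_opt], z_opt)
```

For real problems a maintained solver is the safer choice; a hand-written routine like this is mainly useful for checking small examples and understanding each pivot.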

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and mathematical "reagents" essential for implementing the simplex method in an experimental research context.

Table 5: Essential Research Reagent Solutions for Simplex-Based Optimization

| Item | Function in Optimization | Example/Note |

|---|---|---|

| Slack Variable [9] | Converts a "≤" resource constraint into an equation, representing unused resources. | If a budget constraint is ( \text{Cost} ≤ \$100 ), the slack variable is the unspent money. |

| Surplus Variable | Converts a "≥" requirement constraint into an equation, representing an excess over the minimum. | If a product purity must be ( ≥ 95\% ), the surplus is the purity percentage above 95%. |

| Artificial Variable | Provides an initial basic feasible solution for problems where slack variables are insufficient (used in the Two-Phase method) [8]. | A computational tool to start the algorithm; must be driven to zero for feasibility. |

| Pivot Column Selector | Identifies the entering variable based on the most negative coefficient in the Z-row (for maximization) to most improve the objective [8]. | The core mechanism for determining the direction of improvement in the feasible region. |

| Minimum Ratio Test | Identifies the leaving variable to maintain solution feasibility by ensuring no variable becomes negative [8]. | Prevents the suggestion of experimentally impossible conditions (e.g., negative concentration). |

Advanced Applications: Multi-Objective Optimization in Drug Development

A single objective, such as maximizing yield, is often an oversimplification. In drug development, multiple, often competing, objectives are common (e.g., maximize efficacy while minimizing toxicity and cost) [12]. The simplex method can be extended to handle such scenarios through two primary techniques:

Weighted Sum Method: The multiple objectives are combined into a single objective function by assigning a weight to each, reflecting its relative importance to the researcher [13].

- Protocol: For objectives ( Z_1 ) (efficacy) and ( Z_2 ) (negative cost, so that maximizing it minimizes cost while keeping the objective linear), create a new objective: ( Z = w_1 Z_1 + w_2 Z_2 ), where ( w_1 + w_2 = 1 ). The simplex method is then run on this composite objective.

- Considerations: This method is straightforward but requires careful selection of weights, as different weights can lead to different optimal solutions.
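The weighted-sum protocol can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the coefficient values are hypothetical, the function name `weighted_sum` is introduced here, and cost is represented as negative cost (an assumption) so that both objectives are linear and larger-is-better.

```python
def weighted_sum(objectives, weights):
    """Combine several linear objectives (coefficient lists) into one composite."""
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    n = len(objectives[0])
    return [sum(w * obj[j] for w, obj in zip(weights, objectives)) for j in range(n)]

# Hypothetical per-unit coefficients for two decision variables (x1, x2).
efficacy = [4.0, 1.0]     # Z1: larger is better
neg_cost = [-2.0, -0.5]   # Z2: negative cost, so larger is still better

# Composite objective coefficients for three different weightings.
composites = {
    w1: weighted_sum([efficacy, neg_cost], [w1, 1 - w1])
    for w1 in (0.2, 0.5, 0.8)
}
for w1, coeffs in composites.items():
    print(w1, coeffs)
```

With these made-up numbers, a cost-heavy weighting (w1 = 0.2) makes every composite coefficient negative, so the optimum sits at the lower bounds, while an efficacy-heavy weighting (w1 = 0.8) makes them positive and pushes the optimum toward the upper limits. This is the weight sensitivity noted above.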

Lexicographic Method: Objectives are ranked in strict order of priority (e.g., Safety > Efficacy > Cost). The simplex method is applied sequentially [13].

- Protocol:

- Step 1: Optimize the highest-priority objective (e.g., minimize toxicity) to find its optimal value ( T^* ).

- Step 2: Add a new constraint that the first objective must equal its optimal value (e.g., ( \text{Toxicity} = T^* )).

- Step 3: Optimize the second-priority objective (e.g., maximize efficacy) subject to all original constraints plus the new one.

- Considerations: This method guarantees the best possible solution for the primary objective before considering secondary ones.
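The sequential logic can be illustrated on a small set of candidate vertices. The sketch below is an assumption-laden simplification: instead of re-solving an LP with an added equality constraint, it filters a finite list of feasible vertices, which mirrors the same priority ordering. The vertex list and the three linear scoring functions are hypothetical.

```python
def lexicographic_best(candidates, objectives, tol=1e-9):
    """Filter candidates objective by objective, in strict priority order.

    objectives: list of (score_function, sense) pairs, sense is "min" or "max".
    """
    remaining = list(candidates)
    for score, sense in objectives:
        values = [score(c) for c in remaining]
        target = min(values) if sense == "min" else max(values)
        # Plays the role of the added constraint "objective = optimal value".
        remaining = [c for c, v in zip(remaining, values) if abs(v - target) <= tol]
    return remaining

# Hypothetical linear scores over two decision variables.
def toxicity(v):
    return v[0] + v[1]

def efficacy(v):
    return 3 * v[0] + v[1]

def cost(v):
    return 2 * v[0] + 5 * v[1]

# Hypothetical feasible vertices.
vertices = [(4, 0), (0, 4), (2, 2), (4, 4)]
result = lexicographic_best(
    vertices, [(toxicity, "min"), (efficacy, "max"), (cost, "min")]
)
print(result)  # [(4, 0)]
```

Three vertices tie on toxicity, so efficacy decides among them; cost is consulted only among whatever survives the first two stages.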


Workflow and Signaling Pathways

The following diagram visualizes the logical flow and decision-making pathway of the simplex algorithm as applied to a reaction optimization problem.

Diagram Title: Simplex Algorithm Workflow for Reaction Optimization

The simplex method offers a rigorous and systematic mathematical framework for tackling complex optimization challenges in research and development. By precisely defining the objective function, constraints, and navigating through basic feasible solutions, it efficiently converges to an optimal set of experimental parameters. Its extension to multi-objective problems makes it particularly valuable for modern drug discovery, where balancing efficacy, safety, and cost is paramount. Integrating this computational protocol into the experimental design workflow can significantly accelerate the optimization cycle, reduce resource consumption, and lead to more robust and well-understood processes.

The simplex method, a cornerstone of linear programming, has revolutionized optimization across fields from logistics to chemical engineering. For researchers in drug development and synthetic chemistry, its power is uniquely unlocked when applied to linear or linearly-approximatable systems. This application note details how the inherent properties of linear models—convexity, predictability, and a single, globally optimal solution—make the simplex method an exceptionally robust and efficient tool for reaction parameter modeling. We frame this within a broader thesis on simplex-based reaction optimization, providing the protocols and data interpretation frameworks necessary for practical implementation in a research environment.

Theoretical Foundations: Simplex Method and Linearity

Core Principles of the Simplex Algorithm

The simplex method, invented by George Dantzig, is an algorithm designed to solve Linear Programming (LP) problems [2] [1]. An LP problem typically involves maximizing or minimizing a linear objective function subject to a set of linear inequality or equality constraints [14]. The standard form for a maximization problem is:

- Maximize: ( \mathbf{c^T} \mathbf{x} )

- Subject to: ( A\mathbf{x} \leq \mathbf{b} ) and ( \mathbf{x} \geq \mathbf{0} )

Here, ( \mathbf{x} ) is the vector of decision variables (e.g., reaction parameters), ( \mathbf{c^T} ) defines the linear objective function (e.g., yield, purity), ( A ) is a matrix of coefficients for the linear constraints, and ( \mathbf{b} ) is a vector representing resource limits or parameter boundaries [1] [14].

Geometrically, the linear constraints define a convex polyhedron known as the feasible region [1] [14]. A fundamental insight is that the optimal value of the objective function, if it exists, is always found at a vertex (corner point) of this polyhedron [1] [14]. The simplex method operates by navigating from one vertex of the polyhedron to an adjacent one, following the edges, and improving the objective function value at each step until no further improvement is possible, indicating the optimum has been reached [1] [14].

The Critical Role of Linearity

Linearity is the critical enabler for the simplex method's efficiency and reliability. Several key properties arise from linearity:

- Predictable Vertex-to-Vertex Navigation: The algorithm's strategy of moving along edges is efficient because the linearity of both the objective function and constraints guarantees that the optimum lies at a vertex.

- Convex Feasible Region: The set of points defined by linear inequalities is always convex, eliminating the risk of becoming trapped in local optima that are not global optima—a common challenge in nonlinear optimization.

- Deterministic and Interpretable Solutions: The solution is typically a single, well-defined point (or set of points), providing clear and actionable optimal conditions.

When reaction modeling data can be framed within a linear context, these properties ensure that the simplex method will find the best possible solution reliably and efficiently.

Current Applications in Research and Industry

Recent research demonstrates the adaptability of simplex-based approaches to complex, modern optimization challenges in chemical synthesis and related fields. The following table summarizes key contemporary applications.

Table 1: Current Applications of Simplex-Based Optimization in Research

| Application Area | Specific Use-Case | Key Innovation / Advantage | Source |

|---|---|---|---|

| Microwave Circuit Design | Globalized EM-driven optimization of passive microwave circuits. | Use of simplex-based regressors to model circuit operating parameters instead of full frequency responses, smoothing the objective function. | [15] |

| Organic Synthesis in Flow | Self-optimization of an imine synthesis in a microreactor system. | A modified simplex algorithm (Nelder-Mead) used for real-time, multi-variate, multi-objective optimization with inline analytics. | [6] |

| Theoretical Algorithm Development | Improving the theoretical worst-case runtime of the simplex algorithm. | Incorporation of randomness to guarantee polynomial runtime, reassuring users of the method's practical efficiency. | [2] |

These applications highlight a crucial trend: the simplex method's core principles are being enhanced with modern strategies like surrogate modeling and real-time analytics to tackle highly nonlinear systems by focusing on linear subspaces or linear approximations of key performance indicators.

Experimental Protocols

Protocol 1: Real-Time Self-Optimization of a Chemical Reaction using a Modified Simplex Algorithm

This protocol is adapted from research on the self-optimization of an imine synthesis in a continuous-flow microreactor system [6].

1. Research Reagent Solutions & Essential Materials

Table 2: Key Materials for the Self-Optimization Experiment

| Item | Function / Specification | Example / Note |

|---|---|---|

| Microreactor Setup | Continuous flow reaction vessel; provides controlled residence time and efficient mixing. | Coiled stainless steel capillaries (total volume 1.87 mL). [6] |

| Syringe Pumps | Precise dosage of starting material solutions. | Continuously working pumps (e.g., SyrDos2). [6] |

| Inline FT-IR Spectrometer | Real-time, non-destructive monitoring of reaction conversion and yield. | Tracks characteristic IR bands for reactant decrease and product increase. [6] |

| Automation & Control System | Coordinates pumps, thermostat, and spectrometer; executes optimization algorithm. | Laboratory automation system (e.g., HiTec Zang) coupled with MATLAB for control. [6] |

| Chemicals | Reaction substrates and solvent. | Benzaldehyde, benzylamine, and methanol. [6] |

2. Workflow Diagram

The following diagram illustrates the automated, closed-loop optimization process.

3. Detailed Methodology

- Step 1: System Setup & Objective Definition. Configure the automated microreactor system, ensuring all hardware (pumps, reactor, FT-IR) is connected to the control software. Prepare solutions of starting materials. Define the objective function (e.g., Maximize Yield = f(Temperature, Residence Time, Stoichiometry)).

- Step 2: Algorithm Initialization. The modified simplex algorithm (e.g., Nelder-Mead) is initialized by defining a starting simplex in the parameter space. This requires n+1 sets of initial reaction parameters for an n-dimensional problem (e.g., for 2 parameters, 3 initial experiments are needed).

- Step 3: Automated Experimental Loop. For each vertex of the simplex:

- The control system automatically sets the parameters (e.g., flow rates, temperature).

- The reaction is executed, and the stream is analyzed by the inline FT-IR.

- The IR spectrum is processed in real-time to calculate the objective function value (e.g., yield, conversion).

- Step 4: Simplex Evolution. The algorithm (running in MATLAB) compares the objective function values at all vertices and applies a transformation (e.g., reflection, expansion, contraction) to generate a new, promising set of reaction parameters, moving the simplex towards the optimum.

- Step 5: Convergence Check. The loop (Steps 3-4) continues until the simplex converges, meaning the variance in objective values between vertices falls below a predefined threshold or a maximum number of iterations is reached.
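Steps 2 to 5 can be sketched in plain Python. This is a simplified Nelder-Mead variant (reflection, expansion, inside contraction, shrink), not the MATLAB code used in [6]. The function `simulated_yield` is a hypothetical stand-in for one automated experiment plus FT-IR evaluation, its coefficients are invented, and convergence is checked on the spread between best and worst vertex rather than on the variance.

```python
def nelder_mead(f, simplex, max_iter=500, tol=1e-8):
    """Minimize f starting from n+1 vertices (each a list of n parameter values)."""
    pts = [list(p) for p in simplex]
    vals = [f(p) for p in pts]          # Step 3: one "experiment" per vertex
    n = len(pts) - 1
    for _ in range(max_iter):
        order = sorted(range(n + 1), key=lambda i: vals[i])
        pts = [pts[i] for i in order]
        vals = [vals[i] for i in order]
        if vals[-1] - vals[0] < tol:    # Step 5: convergence check
            break
        worst = pts[-1]
        centroid = [sum(p[j] for p in pts[:-1]) / n for j in range(n)]

        def along(t):
            # Point on the line from the worst vertex through the centroid.
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        reflected = along(1.0)          # Step 4: reflection
        f_r = f(reflected)
        if f_r < vals[0]:
            expanded = along(2.0)       # expansion
            f_e = f(expanded)
            pts[-1], vals[-1] = (expanded, f_e) if f_e < f_r else (reflected, f_r)
        elif f_r < vals[-2]:
            pts[-1], vals[-1] = reflected, f_r
        else:
            contracted = along(-0.5)    # inside contraction
            f_c = f(contracted)
            if f_c < vals[-1]:
                pts[-1], vals[-1] = contracted, f_c
            else:                       # shrink all vertices toward the best one
                best = pts[0]
                pts = [best] + [[b + 0.5 * (v - b) for b, v in zip(best, p)]
                                for p in pts[1:]]
                vals = [vals[0]] + [f(p) for p in pts[1:]]
    i_best = min(range(n + 1), key=lambda i: vals[i])
    return pts[i_best], vals[i_best]

def simulated_yield(params):
    """Hypothetical smooth yield surface with a maximum at 70 degC, 5 min."""
    temperature, residence_time = params
    return 90.0 - 0.05 * (temperature - 70.0) ** 2 - (residence_time - 5.0) ** 2

# Maximizing yield is done by minimizing its negative.
start = [[40.0, 2.0], [50.0, 2.0], [40.0, 3.0]]   # n+1 = 3 vertices for n = 2
best_params, neg_yield = nelder_mead(lambda p: -simulated_yield(p), start)
print(best_params, -neg_yield)
```

In a real closed-loop setup, each call to `f` would trigger a pump and thermostat change followed by an inline measurement, so the number of function evaluations equals the number of experiments consumed.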

Protocol 2: Simplex Optimization using a Surrogate Model

This protocol is inspired by a machine learning approach for microwave optimization that uses simplex-based surrogates, which is highly transferable to reaction modeling [15].

1. Workflow Diagram: Dual-Resolution Surrogate Approach

2. Detailed Methodology

- Step 1: Problem Formulation & Data Collection. Identify key performance "operating parameters" of the reaction (e.g., conversion at a specific time, final yield, byproduct ratio) that can be inferred from raw data. Conduct a limited set of initial experiments using a low-resolution, computationally cheaper model (e.g., a low-fidelity simulation or a coarse experimental design) to sample the parameter space [15].

- Step 2: Surrogate Model Construction. Instead of modeling the entire, potentially complex reaction profile, construct simple, linear regression models (simplex-based surrogates) that directly predict the operating parameters from the input variables (e.g., temperature, concentration) [15]. This "regularizes" the problem, making it more linear and tractable.

- Step 3: Global Optimization on the Surrogate. Use a simplex method to rapidly and efficiently find the parameter set that optimizes the objective function on the surrogate model. Because the surrogate is cheap to evaluate, this global search can be performed extensively [15].

- Step 4: High-Fidelity Validation and Tuning. Take the best candidate(s) from the surrogate-based optimization and perform a limited number of high-resolution, high-fidelity experiments (or detailed simulations) to confirm the result and perform final, precise tuning [15]. This step ensures reliability and accuracy.
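Steps 2 and 3 can be illustrated with a small, dependency-free sketch. It assumes a purely linear surrogate fitted by ordinary least squares and box bounds on the inputs. The five data points are hypothetical coarse experiments (temperature in degrees C, concentration in mol/L), and the helper names are introduced here for illustration. Step 4, the high-fidelity validation, still has to be done experimentally.

```python
def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_linear(X, y):
    """Least-squares fit of y ~ b0 + b1*x1 + ... via the normal equations."""
    rows = [[1.0] + list(x) for x in X]
    k = len(rows[0])
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    aty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(ata, aty)

def best_corner(beta, bounds):
    """Maximize a linear model over box bounds: each input goes to the bound
    favoured by the sign of its coefficient (the optimum is a vertex)."""
    return [hi if b > 0 else lo for b, (lo, hi) in zip(beta[1:], bounds)]

# Hypothetical low-resolution data: (temperature, concentration) -> yield.
X = [(40, 0.1), (40, 0.5), (60, 0.1), (60, 0.5), (50, 0.3)]
y = [39.0, 35.0, 49.0, 45.0, 42.0]

beta = fit_linear(X, y)                       # Step 2: surrogate construction
x_best = best_corner(beta, [(40, 60), (0.1, 0.5)])   # Step 3: optimize surrogate
predicted = beta[0] + sum(b * v for b, v in zip(beta[1:], x_best))
print(x_best, round(predicted, 3))
```

Because the surrogate is linear, its optimum over a box always lies at a corner, which is the same vertex property the simplex method exploits for general linear constraints.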

The Scientist's Toolkit: Key Optimization Algorithms

Understanding the landscape of optimization algorithms is crucial for selecting the right tool. The table below compares the Simplex Method with other common techniques.

Table 3: A Comparison of Optimization Algorithms for Reaction Modeling

| Algorithm | Class | Key Principle | Best-Suited Problem Type | Advantages | Disadvantages |

|---|---|---|---|---|---|

| Simplex (Dantzig) | Linear Programming | Moves along edges of a convex polyhedron to find an optimal vertex. [1] [14] | Linear objective functions with linear constraints. | Proven, efficient, and interpretable. Optimal solution is guaranteed if it exists. [1] | Limited to linear systems. Performance can degrade for pathological cases. [2] |

| Interior Point Methods | Linear/Nonlinear Programming | Moves through the interior of the feasible region towards the optimum. [14] | Large-scale linear and convex nonlinear problems. | Polynomial-time complexity. Often faster for very large, sparse problems. [16] [14] | Can be less intuitive than Simplex. The solution path is not along vertices. |

| Nelder-Mead (Modified Simplex) | Nonlinear Heuristic | A simplex of points evolves in parameter space via reflection, expansion, and contraction. [6] | Experimental, black-box optimization where derivatives are unavailable. | Model-free, easy to implement, and effective for a small number of parameters. [6] | No convergence guarantees, can get stuck in local optima for complex problems. |

| Population-Based Metaheuristics (e.g., PSO, GA) | Nonlinear Heuristic | A population of candidate solutions evolves based on principles of natural selection or social behavior. [15] | Highly nonlinear, multi-modal, or discontinuous problems. | Strong global search capabilities, can handle complex, non-convex spaces. [15] | Computationally very expensive, often requiring thousands of evaluations. [15] |

The simplex method remains a powerful and highly relevant tool for reaction parameter modeling when the problem exhibits or can be effectively approximated by linear relationships. Its theoretical robustness, driven by the convexity and vertex-property of linear systems, provides a guarantee of finding a global optimum that many heuristic methods lack. As demonstrated by cutting-edge applications in chemical synthesis and materials science, the fusion of the classic simplex algorithm with modern techniques like surrogate modeling and real-time analytics creates a formidable framework for research optimization. For scientists and drug development professionals, mastering the application of the simplex method to linear reaction models provides a dependable, efficient, and interpretable pathway to accelerating development cycles and improving product yields.

The simplex method, developed by George Dantzig in 1947, represents a cornerstone algorithm in the field of linear programming (LP) and remains indispensable for solving complex optimization problems across numerous scientific domains [2] [1]. Within pharmaceutical research and reaction optimization, researchers constantly face the challenge of maximizing desired product yield or minimizing resource consumption while navigating multiple constraints related to reactants, conditions, energy inputs, and time [1]. The simplex method provides a structured mathematical framework for addressing these challenges by systematically identifying the optimal combination of variables within defined limitations.

At its core, the simplex method solves linear programming problems by moving from one vertex of the feasible region, defined by the problem constraints, to an adjacent vertex with an improved objective function value, continuing this process until no further improvement is possible [1] [17]. This iterative vertex-to-vertex navigation ensures that each step brings the solution closer to the optimum, making it particularly valuable for reaction optimization where experimental resources are precious and costly. The algorithm's geometrical interpretation transforms constraint inequalities into a multidimensional polyhedron (polytope), where the optimal solution resides at one of the extreme points [2] [1]. For drug development professionals, this mathematical approach translates to a reliable methodology for optimizing complex reaction parameters in a systematic, predictable manner.

Mathematical Foundation and Standard Form

Standard Maximization Form Transformation

To apply the simplex method, reaction optimization problems must first be converted into standard maximization form. This crucial step ensures uniform treatment of constraints and objective functions within the algorithmic framework. The standard form requires [1] [17]:

- An objective function to be maximized

- All constraints expressed as equations (rather than inequalities)

- All variables to be non-negative

For constraints initially expressed as inequalities, transformation involves introducing slack or surplus variables to convert them to equalities. In reaction optimization contexts, these slack variables often represent unused resources, excess capacity, or safety margins in experimental parameters.

Table 1: Variable Transformation for Standard Form

| Constraint Type | Transformation Process | Chemical Reaction Interpretation |

|---|---|---|

| ≤ constraints | Add slack variable: (x + y \leq c) becomes (x + y + s = c) | Unused reactant or remaining resource capacity |

| ≥ constraints | Subtract surplus variable: (x + y \geq c) becomes (x + y - s = c) | Excess beyond minimum requirement or safety buffer |

| Unrestricted variables | Replace with difference of two non-negative variables: (z = z^+ - z^-) | Experimental parameters that can vary in either direction |
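The first two rows of the table can be sketched as a small helper that appends one slack or surplus column per inequality. The function name `to_equalities` and the tuple-based constraint format are assumptions made for this illustration.

```python
def to_equalities(constraints):
    """Convert constraints to equations by adding slack/surplus columns.

    constraints: list of (coefficients, sense, rhs), sense in {"<=", ">=", "="}.
    Returns (rows, rhs) where each row includes the extra columns.
    """
    n_extra = sum(1 for _, sense, _ in constraints if sense in ("<=", ">="))
    rows, rhs = [], []
    k = 0  # index of the next slack/surplus column
    for coeffs, sense, b in constraints:
        extra = [0] * n_extra
        if sense == "<=":
            extra[k] = 1      # slack: x + y + s = c
            k += 1
        elif sense == ">=":
            extra[k] = -1     # surplus: x + y - s = c
            k += 1
        elif sense != "=":
            raise ValueError(f"unknown constraint sense: {sense}")
        rows.append(list(coeffs) + extra)
        rhs.append(b)
    return rows, rhs

# x + y <= 10 (resource limit) and x + y >= 4 (minimum requirement)
rows, rhs = to_equalities([([1, 1], "<=", 10), ([1, 1], ">=", 4)])
print(rows)  # [[1, 1, 1, 0], [1, 1, 0, -1]]
print(rhs)   # [10, 4]
```

Note that a surplus column alone does not give a starting basic feasible solution; that is the situation in which artificial variables are introduced.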

Linear Programming Formulation

The canonical form for a linear programming problem using the simplex method is expressed as [1]:

- Maximize: ( \mathbf{c^T} \mathbf{x} )

- Subject to: ( A\mathbf{x} \leq \mathbf{b} ) and ( \mathbf{x} \geq 0 )

Where ( \mathbf{c} ) represents the coefficients of the objective function (e.g., yield, efficiency, or profit), ( \mathbf{x} ) represents the decision variables (e.g., reactant concentrations, temperature settings, time parameters), ( A ) is the matrix of constraint coefficients, and ( \mathbf{b} ) represents the right-hand-side constraint values [1].

In pharmaceutical reaction optimization, this mathematical framework allows researchers to systematically balance multiple competing factors. For instance, maximizing product yield while respecting constraints on reactant availability, energy consumption, reaction time, and impurity thresholds becomes a tractable computational problem through this formulation.

The Simplex Tableau and Computational Framework

Initial Tableau Setup

The simplex tableau serves as the organizational structure that tracks all essential information throughout the optimization process. This tabular representation includes the objective function coefficients, constraint coefficients, right-hand-side values, and the current objective function value [1] [17].

The initial simplex tableau is structured as follows [1]:

( \begin{bmatrix} 1 & -\mathbf{c^T} & 0 \\ \mathbf{0} & A & \mathbf{b} \end{bmatrix} )

Here, the first row holds the negated coefficients of the objective function, and the remaining rows hold the constraint coefficients and right-hand-side constants. For reaction optimization problems, this tableau efficiently organizes all relevant experimental parameters and their relationships.

Algorithm Workflow and Process Navigation

The simplex method follows a systematic iterative process to navigate from initial to optimal solutions. The diagram below illustrates this workflow:

Diagram 1: Simplex Algorithm Iterative Workflow

Experimental Protocol: Reaction Optimization Case Study

Problem Formulation Protocol

Consider a pharmaceutical reaction optimization scenario where researchers aim to maximize yield of an active pharmaceutical ingredient (API) while constrained by reactant availability, processing time, and energy consumption.

PROTOCOL: Problem Formulation for Reaction Optimization

- Define Decision Variables: Identify key controllable reaction parameters (e.g., reactant concentrations, catalyst amounts, temperature, pressure, time).

- Formulate Objective Function: Establish mathematical relationship between decision variables and optimization target (e.g., yield, purity, efficiency).

- Identify Constraints: Determine all limitations (resource availability, safety thresholds, equipment capabilities, time constraints).

- Quantify Parameters: Assign numerical values to all coefficients based on experimental data or theoretical calculations.

- Validate Model: Verify that all relationships are linear and constraints properly represent the experimental system.

Simplex Implementation Protocol

PROTOCOL: Tableau Setup and Iteration

- Transform to Standard Form

- Convert all inequality constraints to equations using slack/surplus variables

- Ensure all variables are non-negative

- Express objective function as maximization

- Construct Initial Tableau

- Organize objective function coefficients in first row

- Arrange constraint coefficients in subsequent rows

- Include right-hand-side values in final column

- Add identity matrix columns for slack variables

- Execute Iterative Optimization

- Identify Pivot Column: Select the most negative entry in the objective row [17] [18]

- Identify Pivot Row: Divide each RHS value by the corresponding pivot-column coefficient, considering only rows where that coefficient is positive; select the row with the smallest quotient [17] [18]

- Perform Pivot Operations: Use Gauss-Jordan elimination to convert pivot element to 1 and all other pivot column entries to 0 [1] [18]

- Check Optimality: If no negative entries remain in objective row, solution is optimal; otherwise repeat process [17]

Chemical Reaction Optimization Example

Consider optimizing a reaction where two intermediates (X and Y) combine to form API, with constraints on processing time and catalyst availability:

Maximize: ( P = 30x + 40y ) (Total API yield)

Subject to:

- ( 2x + y \leq 8 ) (Catalyst A constraint, mg)

- ( x + 2y \leq 10 ) (Catalyst B constraint, mg)

- ( x + 3y \leq 12 ) (Processing time constraint, hours)

- ( x, y \geq 0 ) (Non-negativity)

Table 2: Initial Simplex Tableau for Reaction Optimization

| Basic Var | x | y | s1 | s2 | s3 | RHS |

|---|---|---|---|---|---|---|

| s1 | 2 | 1 | 1 | 0 | 0 | 8 |

| s2 | 1 | 2 | 0 | 1 | 0 | 10 |

| s3 | 1 | 3 | 0 | 0 | 1 | 12 |

| P | -30 | -40 | 0 | 0 | 0 | 0 |

Following the simplex protocol, we identify y as the entering variable (most negative in objective row) and s3 as the leaving variable (smallest quotient: 12/3=4). After pivot operations, we obtain:

Table 3: Intermediate Tableau After First Iteration

| Basic Var | x | y | s1 | s2 | s3 | RHS |

|---|---|---|---|---|---|---|

| s1 | 5/3 | 0 | 1 | 0 | -1/3 | 4 |

| s2 | 1/3 | 0 | 0 | 1 | -2/3 | 2 |

| y | 1/3 | 1 | 0 | 0 | 1/3 | 4 |

| P | -50/3 | 0 | 0 | 0 | 40/3 | 160 |

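The process continues with x entering and s1 leaving (smallest quotient: 4 ÷ 5/3 = 12/5), which yields the optimal solution: x = 2.4, y = 3.2, P = 200 [18]. This indicates a maximum API yield of 200 units with 2.4 units of intermediate X and 3.2 units of intermediate Y. Both the Catalyst A and processing-time constraints are binding at this point, while 1.2 mg of Catalyst B remains unused.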
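The pivots in this example can be checked with a compact tableau implementation. The sketch below follows the protocol above (most negative objective entry, minimum ratio test, Gauss-Jordan pivot) and uses exact fractions. It assumes all constraints are of the "≤" type with non-negative right-hand sides, and it includes no anti-cycling rule, so it is a teaching aid rather than a production solver. The function name `simplex_max` is introduced here.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Constraint rows [A | I | b], then the objective row [-c | 0 | 0] last.
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == j)) for j in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]          # slack variables start in the basis
    while True:
        obj = T[-1][:-1]
        col = min(range(n + m), key=lambda j: obj[j])   # pivot column
        if obj[col] >= 0:
            break                                       # optimal: no negative entry
        ratios = [(T[i][-1] / T[i][col], i) for i in range(m) if T[i][col] > 0]
        if not ratios:
            raise ValueError("problem is unbounded")
        _, row = min(ratios)                            # minimum ratio test
        pivot = T[row][col]
        T[row] = [v / pivot for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                factor = T[i][col]
                T[i] = [v - factor * p for v, p in zip(T[i], T[row])]
        basis[row] = col
    x = [Fraction(0)] * n
    for i, j in enumerate(basis):
        if j < n:
            x[j] = T[i][-1]
    return x, T[-1][-1]

# The API example: maximize 30x + 40y under the three resource constraints.
x_opt, p_opt = simplex_max([30, 40], [[2, 1], [1, 2], [1, 3]], [8, 10, 12])
print([float(v) for v in x_opt], float(p_opt))  # [2.4, 3.2] 200.0
```

Running it on the example gives x = 12/5, y = 16/5 and P = 200 after two pivots, a point that satisfies all three constraints (2x + y = 8, x + 2y = 8.8, x + 3y = 12).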

Geometric Interpretation in High-Dimensional Spaces

The navigation process of the simplex algorithm can be visualized geometrically as movement along the edges of a feasible region polyhedron. In reaction optimization, this polyhedron represents all possible combinations of reaction parameters that satisfy the constraints.

Diagram 2: Geometric Navigation Through Solution Space

Each vertex of the polyhedron represents a basic feasible solution where a certain number of variables are at their bounds (typically zero) [2] [1]. The simplex algorithm's iterative process moves from one vertex to an adjacent one along edges that improve the objective function, continuing until no adjacent vertex offers improvement, indicating the optimal solution has been found. This geometric navigation explains why the method efficiently homes in on optimal reaction conditions without exhaustively evaluating all possible parameter combinations.
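For a two-variable problem, the vertex picture can be made concrete by brute force: intersect every pair of constraint boundaries, keep the feasible intersections, and evaluate the objective at each. The sketch below does this for the two-intermediate API example from the case study. It is only practical in very low dimensions, which is exactly the exhaustive search the simplex method avoids, and the helper name `feasible_vertices` is an assumption.

```python
from fractions import Fraction
from itertools import combinations

def feasible_vertices(halfplanes):
    """halfplanes: list of (a1, a2, b) meaning a1*x + a2*y <= b (integer data).

    Returns the set of feasible intersection points of boundary-line pairs.
    """
    points = set()
    for (a1, a2, b1), (c1, c2, b2) in combinations(halfplanes, 2):
        det = a1 * c2 - a2 * c1
        if det == 0:
            continue  # parallel boundaries never intersect
        # Cramer's rule for the 2x2 system.
        x = Fraction(b1 * c2 - a2 * b2, det)
        y = Fraction(a1 * b2 - b1 * c1, det)
        if all(p * x + q * y <= r for p, q, r in halfplanes):
            points.add((x, y))
    return points

constraints = [
    (2, 1, 8),    # Catalyst A
    (1, 2, 10),   # Catalyst B
    (1, 3, 12),   # Processing time
    (-1, 0, 0),   # x >= 0
    (0, -1, 0),   # y >= 0
]
vertices = feasible_vertices(constraints)
best_vertex = max(vertices, key=lambda v: 30 * v[0] + 40 * v[1])
for vx, vy in sorted(vertices):
    print(float(vx), float(vy), float(30 * vx + 40 * vy))
```

The feasible region has four vertices, (0, 0), (4, 0), (0, 4) and (2.4, 3.2), with objective values 0, 120, 160 and 200. The simplex path in the worked example visits (0, 0), then (0, 4), then (2.4, 3.2).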

Research Reagent Solutions and Computational Tools

Table 4: Essential Research Reagents and Computational Tools for Simplex-Based Optimization

| Item/Category | Function in Optimization | Application Example |

|---|---|---|

| Linear Programming Solvers (e.g., CPLEX, Gurobi) | Implement simplex algorithm efficiently for large-scale problems | Optimizing complex reaction pathways with 100+ variables |

| Open-Source LP Libraries (Python, R) | Provide accessible simplex implementation for research prototyping | Academic research and preliminary reaction screening |

| Slack/Surplus Variables | Represent unused resources or constraint buffers | Quantifying excess catalyst or unused reaction time |

| Tableau Management Systems | Organize and track iteration progress | Manual verification of automated solver results |

| Sensitivity Analysis Tools | Evaluate solution robustness to parameter changes | Assessing impact of reactant purity variations on optimal conditions |

| Matrix Operation Libraries | Perform pivot operations efficiently | Handling large constraint matrices in metabolic pathway optimization |

Advanced Considerations for Research Applications

Computational Efficiency and Recent Advances

While the simplex method has demonstrated remarkable practical efficiency since its development, theoretical computer science has revealed important insights about its computational complexity. In 1972, mathematicians proved that the simplex method could, in worst-case scenarios, require exponential time relative to the number of constraints [2]. However, these worst-case scenarios rarely manifest in practical reaction optimization problems.

Groundbreaking work by Spielman and Teng in 2001 demonstrated that with minimal randomization, the simplex method operates in polynomial time, providing theoretical justification for its observed efficiency [2]. Recent research by Huiberts and Bach has further refined our understanding, establishing that "our traditional tools for studying algorithms don't work" for analyzing simplex method performance, and providing stronger mathematical support for its efficiency in practical applications [2].

For pharmaceutical researchers, these advances validate relying on simplex-based optimization for complex reaction development, as exponential complexity is unlikely to impact real-world applications. Modern implementations typically complete optimization in time proportional to a polynomial function of the problem size, making them suitable for even large-scale reaction optimization problems with hundreds of variables and constraints.

Application to Reaction Optimization Research

In pharmaceutical development, the simplex method's iterative navigation from initial to optimal solutions provides a systematic framework for:

- Multi-parameter reaction optimization simultaneously adjusting temperature, concentration, pH, and time variables

- Resource-constrained experimental design maximizing information gain within budget and material limitations

- Scale-up parameter identification transitioning from laboratory to production scale while maintaining yield and purity

- Robustness testing through sensitivity analysis of the optimal solution to parameter variations

The method's step-by-step improvement process mirrors the scientific method itself, making it particularly intuitive for researchers to implement and interpret. Each iteration represents a logical, measurable improvement toward the optimal reaction conditions, with clear indicators when no further improvement is possible.

Implementing Simplex for Reaction Optimization: A Step-by-Step Methodology

The systematic optimization of chemical reactions is a cornerstone of efficient research and development in synthetic organic chemistry. Properly defining the optimization problem is a critical first step that enables scientists to use computational methods, including the simplex method, to achieve goals such as increased yield, reduced waste, and more efficient resource utilization [19]. A well-formulated problem provides a clear roadmap for the optimization campaign, ensuring that the experimental effort is focused and productive.

This guide provides a structured framework for formulating objective functions and constraints tailored to chemical reaction optimization. By accurately translating a chemical challenge into a mathematical problem, researchers can effectively navigate the high-dimensional parameter spaces typical of synthetic chemistry and identify optimal reaction conditions.

Core Components of an Optimization Problem

Every optimization problem consists of three fundamental components: design variables, an objective function, and constraints. When combined, they create a complete optimization formulation [20].

Table 1: Core Components of an Optimization Problem

| Component | Mathematical Representation | Chemical Reaction Example |

|---|---|---|

| Design Variables | ( x ) | Temperature, catalyst amount, reagent equivalents |

| Objective Function | ( \min f(x) ) or ( \max f(x) ) | Maximize reaction yield (%) |

| Constraints | ( g(x) \leq 0.0 ), ( h(x) = 0.0 ) | Impurity level ≤ 2.0%, Total cost ≤ $50 |

Design Variables

Design variables are the parameters controlled by the optimizer to find the best solution. In chemical reaction optimization, these typically include both continuous and categorical parameters [19] [21].

- Continuous Variables: Can take any value within defined bounds. Examples include: temperature (°C), concentration (mol/L), reaction time (hours), and reagent equivalents.

- Categorical Variables: Represent distinct choices rather than numerical values. Examples include: solvent identity (DMSO, THF, EtOH), catalyst type (Pd, Ni, Cu), and base selection (KOH, NaOH, Et₃N).

Best Practice: Begin with the smallest number of design variables that still represents an interesting problem. This simplifies the initial optimization and helps identify issues before scaling up complexity [21].

Objective Functions

The objective function is the measure you are trying to minimize or maximize. In chemical reactions, this is typically a performance or cost metric quantified as a singular scalar value [21].

Common Objective Functions in Chemical Reaction Optimization:

- Maximize: Reaction yield, selectivity, or purity

- Minimize: Cost of goods, waste production, or reaction time

Technical Note: Many optimization frameworks, including some simplex-based solvers, are designed for minimization. To maximize a function like yield in such a framework, multiply it by a negative scaling factor (e.g., -1) to convert it to a minimization problem [21].

Constraints

Constraints limit the output values of a model to ensure practical, feasible solutions. They define the boundaries of acceptable performance [20] [21].

- Inequality Constraints: Specify that a value must be greater than or less than a constraint value (e.g., impurity level ≤ 2.0%).

- Equality Constraints: Require a value to match exactly a desired value (e.g., final pH = 7.0).

A design satisfying all constraints is feasible, while one violating any constraint is infeasible. An active constraint is one that is exactly on its bound at the solution [20].

Workflow for Chemical Reaction Optimization

Chemical reaction optimization is an iterative process where scientists cycle through analysis, decision-making, and experimentation. The workflow below illustrates this process, highlighting where problem formulation guides experimental planning.

Diagram 1: Iterative Reaction Optimization Workflow. This flowchart shows the cyclic process of chemical reaction optimization, beginning with problem formulation and continuing through experimental design and analysis until an optimal solution is found.

Practical Formulation for Chemical Reactions

Defining the Parameter Space

The parameter space consists of all possible combinations of parameter values being optimized. For chemical reactions, this space grows exponentially with each additional parameter, creating a fundamental challenge known as the "curse of dimensionality" [19].

Example: Optimizing temperature (5 values), base (5 choices), and solvent (5 options) creates 5 × 5 × 5 = 125 possible experiments. Adding 10 different reagents expands this to 1,250 experiments.
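The arithmetic behind this example is a simple product over the number of levels per parameter, as the following sketch shows. The dictionary keys are just labels chosen for this illustration.

```python
from itertools import product
from math import prod

levels = {"temperature": 5, "base": 5, "solvent": 5}
n_initial = prod(levels.values())
print(n_initial)  # 125

# Enumerating the full grid gives the same count.
grid = list(product(*(range(k) for k in levels.values())))
assert len(grid) == n_initial

# Adding a fourth parameter with 10 options multiplies the total by 10.
levels["reagent"] = 10
n_expanded = prod(levels.values())
print(n_expanded)  # 1250
```

Each added parameter multiplies the total, which is why exhaustive screening becomes impractical after only a handful of variables.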

Table 2: Example Parameter Space for a Catalytic Coupling Reaction

| Parameter Type | Parameter Name | Values or Range | Variable Type |

|---|---|---|---|

| Continuous | Temperature | 25°C to 100°C | Continuous |

| Continuous | Catalyst Loading | 0.5 mol% to 5.0 mol% | Continuous |

| Continuous | Reaction Time | 1 to 24 hours | Continuous |

| Categorical | Solvent | DMF, THF, Toluene, DMSO | Categorical |

| Categorical | Base | K₂CO₃, Et₃N, NaOH | Categorical |

Formulating Objectives and Constraints

A well-formulated optimization problem clearly distinguishes between objectives (what you want to optimize) and constraints (what conditions must be satisfied).

Example: Amidation Reaction Optimization

Objective: Maximize reaction yield

- Mathematical form: maximize Yield (%)

- Implementation: minimize −Yield (for minimization-based optimizers)

Constraints:

- Product Purity ≥ 95%

- Total Impurities ≤ 3%

- Reaction Time ≤ 8 hours

- Cost of Materials ≤ $100 per mole
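One way the amidation formulation might be encoded is sketched below. The `RunResult` record, its field names, and the two sample runs are hypothetical; the thresholds are the ones listed above.

```python
from dataclasses import dataclass

@dataclass
class RunResult:
    """Measured outcome of one experiment (field names are illustrative)."""
    yield_pct: float
    purity_pct: float
    impurities_pct: float
    time_h: float
    cost_per_mol: float

def objective(run):
    """Negated yield, so that a minimizing optimizer maximizes yield."""
    return -run.yield_pct

def violated_constraints(run):
    """Return the names of the constraints this run fails to satisfy."""
    checks = {
        "purity >= 95%": run.purity_pct >= 95.0,
        "impurities <= 3%": run.impurities_pct <= 3.0,
        "time <= 8 h": run.time_h <= 8.0,
        "cost <= $100/mol": run.cost_per_mol <= 100.0,
    }
    return [name for name, ok in checks.items() if not ok]

def is_feasible(run):
    """A run is feasible only if it violates no constraint."""
    return not violated_constraints(run)

# Hypothetical results, invented purely to exercise the functions.
run_a = RunResult(82.0, 96.5, 2.1, 6.0, 85.0)
run_b = RunResult(91.0, 93.0, 4.0, 6.0, 85.0)

print(is_feasible(run_a), objective(run_a))
print(is_feasible(run_b), violated_constraints(run_b))
```

Here `run_b` has the higher yield but is infeasible, which illustrates why objectives and constraints are kept separate: a better objective value does not rescue a run that breaks a constraint.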

Common Pitfall: Avoid linearly dependent variables that control the same physical aspect of the reaction. For example, do not treat "catalyst loading" and "catalyst concentration" as separate variables when they represent the same underlying factor [21].
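A quick screen for the simplest form of this pitfall, two design columns that are exact multiples of each other, could look like the sketch below. The helper name `are_proportional`, the fixed substrate concentration, and the sample values are assumptions for illustration; a general linear-dependence check would need a rank test on the whole design matrix.

```python
import math

def are_proportional(col_a, col_b, rel_tol=1e-9):
    """True if col_b is a constant multiple of col_a (col_a must be nonzero)."""
    ratios = [b / a for a, b in zip(col_a, col_b)]
    return all(math.isclose(r, ratios[0], rel_tol=rel_tol) for r in ratios)

# Assumed: substrate concentration is held fixed, so catalyst concentration
# is fully determined by catalyst loading.
substrate_conc_M = 0.5
catalyst_loading_mol_pct = [0.5, 1.0, 2.5, 5.0]
catalyst_conc_M = [x / 100 * substrate_conc_M for x in catalyst_loading_mol_pct]
temperature_C = [25, 60, 80, 100]  # an independent factor, for contrast

print(are_proportional(catalyst_loading_mol_pct, catalyst_conc_M))  # True
print(are_proportional(catalyst_loading_mol_pct, temperature_C))    # False
```

When the check returns True for a pair of columns, one of the two variables can be dropped from the formulation without losing information.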

Experimental Protocol for Initial Optimization

Protocol: Initial Parameter Space Exploration

Purpose: To systematically explore the reaction parameter space and collect initial data for optimization.

Materials:

- Research Reagent Solutions:

- Catalyst Stock Solutions (0.1 M in appropriate solvent): Pre-dissolved for accurate dispensing

- Substrate Solutions (0.5 M): Ensures consistent concentration across experiments

- Base Solutions (1.0 M): Aqueous or organic depending on compatibility

- Solvent Systems: Multiple options as defined in parameter space

Procedure:

- Design Experimental Matrix: Using the defined parameter space (Table 2), select an initial set of 8-12 experiments that broadly sample the range of conditions.

- Preparation: In a controlled environment, label reaction vessels and add substrates according to the experimental design.

- Reaction Execution:

- Add specified solvent volume to each reaction vessel

- Introduce catalyst solution at designated loading

- Add base solution at specified equivalents

- Initiate reactions simultaneously using precise temperature control

- Monitoring: Track reaction progress by:

- Sampling at predetermined timepoints (1, 2, 4, 8, 24 hours)

- Immediate quenching of samples and dilution for analysis

- Analysis:

- Quantify yield and conversion using calibrated HPLC or GC methods

- Calculate selectivity and impurity profiles

- Record all observations (precipitation, color changes, etc.)

Data Recording: Document all parameters, observations, and results in a structured format. Include both the intended design values and any measured deviations.
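As a rough sketch of the first procedure step and of the recording format, the snippet below draws 10 conditions at random from the Table 2 ranges and prepares one structured record per run. Plain random sampling is an assumption made here for brevity (a factorial or space-filling design could be substituted), and the record layout and function name are hypothetical.

```python
import random

# Parameter space from Table 2: tuples are continuous ranges, lists are choices.
parameter_space = {
    "temperature_C": (25.0, 100.0),
    "catalyst_loading_mol_pct": (0.5, 5.0),
    "reaction_time_h": (1.0, 24.0),
    "solvent": ["DMF", "THF", "Toluene", "DMSO"],
    "base": ["K2CO3", "Et3N", "NaOH"],
}

def sample_design(space, n_runs, seed=0):
    """Draw n_runs random conditions and wrap each in a record template."""
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    design = []
    for run_id in range(1, n_runs + 1):
        intended = {}
        for name, spec in space.items():
            if isinstance(spec, tuple):
                low, high = spec
                intended[name] = round(rng.uniform(low, high), 1)
            else:
                intended[name] = rng.choice(spec)
        design.append({
            "run_id": run_id,
            "intended": intended,  # values specified by the design
            "measured": {},        # filled in at the bench (actual values)
            "observations": "",    # precipitation, color changes, etc.
        })
    return design

design = sample_design(parameter_space, n_runs=10)
for record in design[:3]:
    print(record["run_id"], record["intended"])
```

Keeping `intended` and `measured` side by side makes deviations from the plan visible when the data are analyzed later.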

Data Analysis and Iteration

After completing the initial experiments:

- Analyze results to identify trends and promising regions of the parameter space

- Refine the optimization problem formulation if necessary

- Design the next set of experiments focusing on promising regions

- Continue the iterative process until convergence to an optimum

Visualization of High-Dimensional Parameter Space

Understanding complex, high-dimensional parameter spaces is challenging. Parallel coordinate plots provide an effective method to visualize how different parameters affect the objective function.

Diagram 2: Multi-Dimensional Parameter Space Visualization. This diagram illustrates how multiple reaction parameters (temperature, catalyst loading, solvent type, and time) collectively influence the reaction yield output. High-yield conditions (green) follow distinct pathways through the parameter space compared to low-yield conditions (red).
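The data preparation behind a parallel coordinate plot can be sketched without any plotting library: every axis is rescaled to a common 0 to 1 height so that each run becomes one polyline across the axes. The three runs below are invented for illustration, and spacing categorical levels evenly in alphabetical order is an arbitrary choice.

```python
def min_max_scale(values):
    """Scale numbers linearly onto [0, 1]; a constant axis maps to 0.5."""
    low, high = min(values), max(values)
    if high == low:
        return [0.5] * len(values)
    return [(v - low) / (high - low) for v in values]

def encode_categories(labels):
    """Place each category level at an evenly spaced height on [0, 1]."""
    levels = sorted(set(labels))
    if len(levels) == 1:
        return [0.5] * len(labels)
    return [levels.index(label) / (len(levels) - 1) for label in labels]

# Hypothetical runs, invented purely to exercise the functions.
runs = [
    {"temperature_C": 25, "catalyst_mol_pct": 0.5, "solvent": "THF",
     "time_h": 24, "yield_pct": 31},
    {"temperature_C": 60, "catalyst_mol_pct": 2.5, "solvent": "DMF",
     "time_h": 8, "yield_pct": 78},
    {"temperature_C": 100, "catalyst_mol_pct": 5.0, "solvent": "Toluene",
     "time_h": 1, "yield_pct": 55},
]

axes = ["temperature_C", "catalyst_mol_pct", "solvent", "time_h", "yield_pct"]
scaled = {}
for axis in axes:
    column = [run[axis] for run in runs]
    if isinstance(column[0], str):
        scaled[axis] = encode_categories(column)
    else:
        scaled[axis] = min_max_scale(column)

# One polyline per run: its height on each axis, in axis order.
polylines = [[scaled[axis][i] for axis in axes] for i in range(len(runs))]
for line in polylines:
    print([round(h, 2) for h in line])
```

Coloring each polyline by its final (yield) height would give the high-yield versus low-yield contrast described in the caption.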

Essential Materials for Reaction Optimization

Table 3: Research Reagent Solutions for Optimization Experiments

| Reagent Category | Specific Examples | Function in Reaction | Solution Concentration |

|---|---|---|---|

| Catalyst Stocks | Pd(PPh₃)₄, NiCl₂·glyme, CuI | Facilitate bond formation, lower activation energy | 0.01-0.1 M in appropriate solvent |

| Substrate Solutions | Aryl halides, Boronic acids, Amines | Core reactants for the desired transformation | 0.1-0.5 M in reaction solvent |

| Base Solutions | K₂CO₃, Cs₂CO₃, Et₃N, DBU | Neutralize byproducts, facilitate catalysis | 0.5-1.0 M (aqueous or organic) |

| Solvent Systems | DMF, THF, 1,4-Dioxane, Toluene | Medium for reaction, can influence mechanism and rate | Neat, various polarities |

| Additives | Ligands (BINAP, dppf), Salts | Modify catalyst activity, selectivity, and stability | 0.01-0.05 M in toluene or THF |

Advanced Considerations

Formulation for Simplex Method Implementation

When applying the simplex method to chemical reaction optimization, specific formulation considerations apply:

- Linear Assumption: The simplex method assumes linearity of the objective function and constraints. For chemical systems that often exhibit nonlinear behavior, this may require linear approximation or transformation of variables.

- Vertex Solutions: The method converges to solutions at the vertices of the feasible region, which may correspond to boundary conditions in chemical parameter spaces.

- Sequential Application: In practice, the simplex method may be applied sequentially to refined regions of the parameter space as understanding of the reaction behavior improves.

Troubleshooting Poor Formulation

Common issues in optimization problem formulation and their solutions:

Problem: Optimizer fails to converge or produces nonsensical results.

- Solution: Simplify the problem by reducing the number of design variables and verify the model produces reasonable outputs across the design space [21].

Problem: Optimizer consistently violates constraints.

- Solution: Review constraint definitions for appropriateness and consider whether some constraints should be implemented as hard boundaries in the experimental design rather than optimization constraints.

Problem: Optimization results don't match chemical intuition.

- Solution: Examine whether critical parameters or constraints have been omitted from the formulation, and run diagnostic experiments to verify model predictions.

Proper formulation of objective functions and constraints is the critical foundation for successful chemical reaction optimization. By clearly defining design variables, articulating a precise objective, and establishing meaningful constraints, researchers can effectively navigate complex parameter spaces and accelerate reaction development. The structured approach outlined in this guide provides a framework for translating chemical challenges into well-posed optimization problems suitable for methods including the simplex approach, ultimately leading to more efficient, sustainable, and cost-effective chemical processes.

Within reaction optimization research, achieving the best possible yield, purity, or efficiency often depends on finding the optimal combination of multiple factors, such as temperature, reactant concentrations, and catalyst amount. The simplex method, developed by George Dantzig, is a powerful linear programming algorithm designed for exactly this type of multi-variable optimization problem [2] [17]. It uses a systematic approach to navigate the "feasible region" defined by the constraints of an experiment, moving from one potential solution to an adjacent, better one until the optimal condition is identified [17] [1]. This protocol details the practical workflow for transforming experimental reaction data into a simplex model tableau, providing researchers and drug development professionals with a structured method to optimize chemical processes.

The following workflow outlines the entire process, from experimental design to the interpretation of results.

Diagram 1: Overall Simplex Optimization Workflow for Reaction Research.

Experimental Planning and Data Collection

Defining the Optimization Problem

The first step is to formally define the linear programming problem based on the reaction optimization goal [17].

- Objective Function: This is the single metric to be optimized (e.g., reaction yield, product purity, or space-time yield). For a maximization problem, the objective is expressed as ( Z = c_1x_1 + c_2x_2 + \dots + c_nx_n ), where ( c_i ) are coefficients representing the contribution of each factor ( x_i ) (e.g., concentration, temperature) to the objective [22] [1].

- Decision Variables: These are the key reaction parameters the researcher can control. In a drug development context, these often include concentrations, temperature, pressure, and reaction time.

- Constraints: These are the limitations within which the reaction must operate. They are derived from experimental boundaries, safety limits, and material availability. Examples include maximum allowable temperature for a sensitive reagent or a limited supply of an expensive catalyst [2].
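Taken together, the three components can be written as one compact linear program. The symbols ( a_{ki} ) (the amount of resource ( k ) consumed per unit of factor ( x_i )), ( b_k ) (the available amount of resource ( k )), and ( m ) (the number of constraints) are notation introduced here for illustration and do not appear elsewhere in this protocol.

```latex
\begin{aligned}
\text{maximize}\quad   & Z = \sum_{i=1}^{n} c_i x_i \\
\text{subject to}\quad & \sum_{i=1}^{n} a_{ki} x_i \le b_k, \qquad k = 1, \dots, m \\
                       & x_i \ge 0, \qquad i = 1, \dots, n
\end{aligned}
```

Every term is linear in the decision variables, which is the assumption the simplex method relies on.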

Table 1: Example Components of a Reaction Optimization Problem

| Component | Description | Example from Catalytic Reaction Optimization |

|---|---|---|

| Objective Function | Mathematical expression of the goal. | Maximize Yield = ( 3A + 2B + C ) |

| Decision Variables | Controllable reaction parameters. | ( A ): Catalyst Loading (mol%), ( B ): Temperature (°C), ( C ): Reaction Time (h) |

| Constraints | Physical and experimental limitations. | Total reagent use ≤ 50 mmol, ( A ) ≤ 20 mol%, ( C ) ≤ 24 h [2] |

Data Collection Protocol

- Design of Experiments (DoE): Establish an experimental design plan that systematically varies the decision variables within the predefined constraint boundaries.

- High-Throughput Experimentation: For complex systems with many variables, employ automated platforms or parallel reactors to efficiently generate the required data matrix.

- Analytical Quantification: For each experimental condition, use calibrated analytical techniques (e.g., HPLC, GC, NMR) to accurately measure the response defined in the objective function (e.g., yield).

- Data Curation: Compile the results into a structured dataset, clearly linking each set of input variables to its corresponding output response.

Model Formulation and Standardization

The geometric interpretation of the simplex method reveals that the optimal solution lies at a vertex (corner point) of the feasible region defined by the constraints [2] [22]. The algorithm works by moving from one vertex to an adjacent one along the edges of this polyhedron, improving the objective function at each step until the optimum is found [1].
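The vertex property can be checked by brute force on a small two-variable problem. The sketch below assumes the illustrative LP "maximize 3·x1 + 5·x2 subject to x1 ≤ 4, 2·x2 ≤ 12, 3·x1 + 2·x2 ≤ 18, x1, x2 ≥ 0" (the same coefficients as the example tableau later in this protocol), intersects every pair of constraint boundaries, and keeps the feasible intersection points. This is plain enumeration, not the simplex algorithm itself, and it only scales to tiny problems.

```python
from fractions import Fraction
from itertools import combinations

# Each constraint (a1, a2, r) means a1*x1 + a2*x2 <= r.
constraints = [
    (1, 0, 4),    # x1 <= 4
    (0, 2, 12),   # 2*x2 <= 12
    (3, 2, 18),   # 3*x1 + 2*x2 <= 18
    (-1, 0, 0),   # x1 >= 0
    (0, -1, 0),   # x2 >= 0
]
objective = (3, 5)  # maximize 3*x1 + 5*x2

def intersection(c1, c2):
    """Intersect two boundary lines by Cramer's rule; None if parallel."""
    a1, b1, r1 = c1
    a2, b2, r2 = c2
    det = a1 * b2 - a2 * b1
    if det == 0:
        return None
    return (Fraction(r1 * b2 - r2 * b1, det), Fraction(a1 * r2 - a2 * r1, det))

def satisfies_all(point):
    """True if the point violates none of the constraints."""
    x1, x2 = point
    return all(a1 * x1 + a2 * x2 <= r for a1, a2, r in constraints)

vertices = set()
for c1, c2 in combinations(constraints, 2):
    point = intersection(c1, c2)
    if point is not None and satisfies_all(point):
        vertices.add(point)

def value(point):
    return objective[0] * point[0] + objective[1] * point[1]

best_vertex = max(vertices, key=value)
best_value = value(best_vertex)
print(sorted((int(x1), int(x2)) for x1, x2 in vertices))
print((int(best_vertex[0]), int(best_vertex[1])), int(best_value))
```

The feasible region has five corner points, and the best objective value (36) occurs at the corner x1 = 2, x2 = 6. The simplex method reaches such a corner by walking along edges instead of listing every vertex.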

Converting to Standard Form

The simplex algorithm requires all constraints to be equations (equalities) rather than inequalities [22] [17]. This is achieved by introducing slack variables, which represent the unused resources within a constraint.

- For a ( \leq ) constraint: Add a slack variable.

- Original: ( 2x_1 + 3x_2 \leq 10 )

- Standard Form: ( 2x_1 + 3x_2 + s_1 = 10 ), where ( s_1 \geq 0 ) [22]

- For a ( \geq ) constraint: Subtract a surplus variable and add an artificial variable (requiring the Two-Phase or Big M method) [22].

- For an ( = ) constraint: Add an artificial variable directly [22].
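The three conversion rules can be sketched as a small helper. The function name, the tuple layout for constraints, and the column labels are assumptions made for illustration; in particular, surplus columns are labelled `e` here only to keep them distinct from slack columns in the output. The sketch also assumes every right-hand side is already non-negative.

```python
def to_standard_form(constraints, n_vars):
    """Turn (coeffs, sense, rhs) constraints into equality rows.

    "<=" adds a slack column (+1); ">=" adds a surplus column (-1) and an
    artificial column (+1); "=" adds an artificial column (+1).
    Returns (column_names, rows) where each row is (coefficients, rhs).
    """
    counts = {"s": 0, "e": 0, "a": 0}
    extra = []  # (column name, owning row index, coefficient in that row)

    def new_column(prefix, row_index, coefficient):
        counts[prefix] += 1
        extra.append((f"{prefix}{counts[prefix]}", row_index, coefficient))

    for i, (_, sense, _) in enumerate(constraints):
        if sense == "<=":
            new_column("s", i, 1)
        elif sense == ">=":
            new_column("e", i, -1)
            new_column("a", i, 1)
        elif sense == "=":
            new_column("a", i, 1)
        else:
            raise ValueError(f"unknown constraint sense: {sense!r}")

    names = [f"x{j + 1}" for j in range(n_vars)] + [name for name, _, _ in extra]
    rows = []
    for i, (coeffs, _, rhs) in enumerate(constraints):
        added = [coef if owner == i else 0 for _, owner, coef in extra]
        rows.append((list(coeffs) + added, rhs))
    return names, rows

# The "<=" row is the example from the text; the other two are hypothetical.
names, rows = to_standard_form(
    [([2, 3], "<=", 10), ([1, 1], ">=", 2), ([1, -1], "=", 0)],
    n_vars=2,
)
print(names)
for coefficients, rhs in rows:
    print(coefficients, "=", rhs)
```

Any artificial column in the output signals that the Two-Phase or Big M method is needed before the ordinary simplex iterations can start.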

Table 2: Variable Transformation for Standard Form

| Variable Type | Symbol | Role in the Model | Interpretation in Reaction Context |

|---|---|---|---|

| Decision Variable | ( x_1, x_2, \dots ) | Represents a controllable factor. | Catalyst loading, temperature. |

| Slack Variable | ( s_1, s_2, \dots ) | Converts "≤" constraint to equality. | Unused amount of a limiting reagent. |

| Surplus Variable | ( s_1, s_2, \dots ) | Converts "≥" constraint to equality. | Excess beyond a minimum required safety threshold. |

| Artificial Variable | ( a_1, a_2, \dots ) | Provides an initial basis for "≥" and "=" constraints. | A computational tool with no physical meaning [22]. |

Workflow for Model Formulation

The logical process for building the model is shown below.

Diagram 2: Logic for Converting a Model to Standard Form.

Simplex Tableau Construction and Optimization

Constructing the Initial Tableau

The simplex tableau is a matrix representation that organizes all information needed for the algorithm: the objective function, constraints, current solution, and objective value [23].

Tableau Structure:

- The first row (z-row or index row) contains the negated coefficients of the objective function and is used for the optimality test [17] [23].

- The subsequent rows represent the constraint equations.

- The right-hand side (RHS) column contains the constant terms from the constraints and the current value of the objective function.

- The identity matrix columns initially correspond to the slack and artificial variables, which form the initial basic feasible solution (BFS) [23].

Table 3: Structure of the Initial Simplex Tableau

| Basic | ( x_1 ) | ( x_2 ) | ( s_1 ) | ( s_2 ) | ( s_3 ) | RHS | Ratio |

|---|---|---|---|---|---|---|---|

| ( z ) | -3 | -5 | 0 | 0 | 0 | 0 | --- |

| ( s_1 ) | 1 | 0 | 1 | 0 | 0 | 4 | --- |

| ( s_2 ) | 0 | 2 | 0 | 1 | 0 | 12 | --- |

| ( s_3 ) | 3 | 2 | 0 | 0 | 1 | 18 | --- |

In this example BFS, the non-basic variables ( x_1 ) and ( x_2 ) (the decision variables) are 0, and the basic variables ( s_1, s_2, s_3 ) (the slack variables) are 4, 12, and 18, respectively. The objective function value ( z ) is 0 [22].
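To make the tableau mechanics concrete, the sketch below builds the tableau of Table 3 and pivots until no negative entry remains in the z-row. It is a minimal teaching version under stated assumptions: maximization with only "≤" constraints and non-negative right-hand sides, the most-negative-coefficient entering rule, and no safeguard against cycling. The function names are illustrative.

```python
from fractions import Fraction

def build_tableau(c, A, b):
    """Initial tableau for: maximize c.x subject to A x <= b, x >= 0."""
    m = len(A)
    z_row = [Fraction(-cj) for cj in c] + [Fraction(0)] * (m + 1)
    rows = [z_row]
    for i in range(m):
        slack = [Fraction(int(i == k)) for k in range(m)]  # identity block
        rows.append([Fraction(a) for a in A[i]] + slack + [Fraction(b[i])])
    return rows

def pivot(tableau, pivot_row, pivot_col):
    """Scale the pivot row to 1, then clear the pivot column elsewhere."""
    pivot_value = tableau[pivot_row][pivot_col]
    tableau[pivot_row] = [v / pivot_value for v in tableau[pivot_row]]
    for r in range(len(tableau)):
        factor = tableau[r][pivot_col]
        if r != pivot_row and factor != 0:
            tableau[r] = [v - factor * p
                          for v, p in zip(tableau[r], tableau[pivot_row])]

def simplex(c, A, b):
    n, m = len(c), len(A)
    tableau = build_tableau(c, A, b)
    basis = list(range(n, n + m))  # slack columns form the initial basis
    while True:
        z_row = tableau[0][:-1]
        col = min(range(len(z_row)), key=lambda j: z_row[j])
        if z_row[col] >= 0:
            break  # optimality test passed: no negative z-row entry
        # Minimum ratio test over rows with a positive pivot-column entry.
        ratios = [(tableau[i][-1] / tableau[i][col], i)
                  for i in range(1, m + 1) if tableau[i][col] > 0]
        if not ratios:
            raise ValueError("problem is unbounded")
        _, row = min(ratios)
        pivot(tableau, row, col)
        basis[row - 1] = col
    x = [Fraction(0)] * n
    for row, col in enumerate(basis, start=1):
        if col < n:
            x[col] = tableau[row][-1]
    return x, tableau[0][-1]

# Coefficients read off Table 3: z-row (-3, -5) corresponds to c = (3, 5).
solution, z_value = simplex([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print([int(v) for v in solution], int(z_value))  # [2, 6] 36
```

Two pivots are needed for this tableau: ( x_2 ) enters first (replacing ( s_2 )), then ( x_1 ) enters (replacing ( s_3 )), giving ( x_1 = 2 ), ( x_2 = 6 ), and ( z = 36 ). Exact fractions are used so that the ratio test is not affected by rounding.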

The Scientist's Toolkit: Key Reagent Solutions

Table 4: Essential Computational "Reagents" for Simplex Optimization

| Reagent / Tool | Function / Purpose | Notes for Implementation |

|---|---|---|