Optimizing Method Comparison Studies: A Framework for Robust Experimental Design in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on designing, executing, and validating robust method-comparison studies. It covers foundational principles, from defining accuracy and precision to establishing causality, and explores advanced methodological applications, including true experimental, quasi-experimental, and repeated-measures designs. The guide also addresses critical troubleshooting areas such as controlling for bias and confounding variables, and details rigorous validation techniques like Bland-Altman analysis and establishing limits of agreement. By synthesizing these elements, this framework aims to enhance the reliability and interpretability of comparative data in clinical measurement and technology assessment.

Laying the Groundwork: Core Principles of Method Comparison and Causal Inference

Frequently Asked Questions

What is the fundamental purpose of a method-comparison study? The fundamental purpose is to determine if a new measurement method (test method) can be used interchangeably with an established method (comparative method) without affecting clinical decisions or patient outcomes. It answers the clinical question of substitution: "Can one measure a given parameter with either Method A or Method B and get the same results?" [1] [2].

What is the key difference between a method comparison and a procedure comparison? This is a critical distinction. A method comparison assesses the analytical difference between two measurement devices or techniques using the same sample (e.g., analyzers placed side-by-side). A procedure comparison evaluates the total difference observed when the methods are used in their intended locations, which includes not only the analytical difference but also differences from sample handling, storage, transport, and physiological variation from different sampling sites (e.g., a point-of-care analyzer vs. a central lab analyzer) [3]. Confusing these two can lead to erroneous conclusions about a method's performance.

Why are correlation analysis and t-tests considered inadequate for method-comparison studies? These common statistical tools are inappropriate for assessing agreement [2]:

- Correlation measures the strength of a linear relationship between two methods, not their agreement. A high correlation can exist even when one method consistently gives results that are much higher than the other [2].

- The t-test primarily detects whether the average values from two methods are statistically different. With a small sample size, it may fail to detect a clinically important difference. With a very large sample size, it may indicate a statistically significant difference that is not clinically meaningful [2].
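
The gap between correlation and agreement can be illustrated with a minimal sketch. The glucose values and the fixed 20 mg/dL offset below are hypothetical, chosen only to show that a perfect correlation can coexist with a large, consistent bias.

```python
import math
import statistics


def pearson_r(x, y):
    """Pearson correlation coefficient computed from first principles."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical results (mg/dL) from the comparative method.
comparative = [70, 85, 100, 120, 150, 200, 250, 300]
# Hypothetical test method that always reads 20 mg/dL higher.
test = [value + 20 for value in comparative]

r = pearson_r(comparative, test)
bias = statistics.mean([t - c for t, c in zip(test, comparative)])

print(f"Pearson r = {r:.3f}")
print(f"Mean difference (bias) = {bias:.1f} mg/dL")
```

The correlation is essentially perfect even though every test result is 20 mg/dL too high, which is why agreement has to be judged from the differences rather than from r.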

What is an acceptable sample size for a method-comparison study? A minimum of 40 different patient specimens is often recommended, but 100 or more is preferable to identify unexpected errors and ensure the data covers the entire clinically meaningful measurement range [2] [4]. The samples should be analyzed over multiple days (at least 5) to account for routine performance variations [2] [4].

How do I handle a discrepant result or a suspected outlier in my data? The best practice is to re-analyze the specimen while it is still fresh and available [4]. If the discrepancy is confirmed, it may indicate an interference specific to that patient's sample matrix or another pre-analytical error. Investigating such discrepancies can reveal important limitations in a method's specificity [4].

Our Bland-Altman plot shows that the difference between methods increases as the average value increases. What does this mean? This pattern suggests the presence of a proportional systematic error. This means the disagreement between the two methods is not a fixed amount (constant error) but is proportional to the concentration of the analyte being measured. This is a specific type of bias that regression analysis can help quantify [4].

Troubleshooting Common Experimental Issues

| Problem | Potential Cause | Solution |

|---|---|---|

| High scatter in the difference plot | Poor repeatability (precision) of one or both methods [1]. | Check the precision of each method individually using a replication experiment before comparing them. |

| A clear, consistent bias across all measurements | Constant systematic error (inaccuracy) in the test method [1] [4]. | Verify calibration of the test method. Investigate potential constant interferences. |

| Bias that increases with analyte concentration | Proportional systematic error in the test method [4]. | Use regression statistics (e.g., Deming, Passing-Bablok) to characterize the slope. Check for nonlinearity or issues with reagent formulation. |

| One or two points are extreme outliers | Sample-specific interferences, transcription errors, or sample mix-ups [4]. | Re-analyze the outlier specimens if possible. If the discrepancy is confirmed, it may indicate a specificity problem with the new method. |

| Data points cluster in a narrow range | The selected patient samples do not cover the full clinical reportable range [2]. | Intentionally procure and analyze additional samples with low, medium, and high values to adequately assess the method's performance across its entire range. |
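
The table above suggests Deming regression for characterizing proportional error. The following is a minimal sketch of the closed-form Deming fit, not a validated implementation. The function name `deming_regression`, the default error-variance ratio of 1.0 (both methods assumed equally imprecise), and the data are assumptions made for illustration.

```python
import math
import statistics


def deming_regression(x, y, error_variance_ratio=1.0):
    """Return (slope, intercept) of a Deming regression fit.

    error_variance_ratio is var(errors in y) / var(errors in x).
    The default of 1.0 is an assumption (equal imprecision in both methods).
    """
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    d = error_variance_ratio
    slope = (syy - d * sxx + math.sqrt((syy - d * sxx) ** 2 + 4 * d * sxy ** 2)) / (2 * sxy)
    intercept = my - slope * mx
    return slope, intercept


# Hypothetical data built with a 10% proportional error and a +2 constant error.
comparative = [50, 100, 150, 200, 250, 300]
test = [1.1 * value + 2 for value in comparative]

slope, intercept = deming_regression(comparative, test)
print(f"slope = {slope:.3f} (proportional error), intercept = {intercept:.3f} (constant error)")
```

A slope away from 1 points to proportional error and an intercept away from 0 points to constant error. In practice the error-variance ratio would be estimated from replicate measurements rather than assumed.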

Key Concepts and Quantitative Data

TABLE 1: Essential Terminology in Method-Comparison Studies [1]

| Term | Definition |

|---|---|

| Bias | The mean (overall) difference in values obtained with two different methods of measurement (test method value minus comparative method value). |

| Precision | The degree to which the same method produces the same results on repeated measurements (repeatability). |

| Limits of Agreement (LOA) | The range within which 95% of the differences between the two methods are expected to fall. Calculated as Bias ± 1.96 SD (where SD is the standard deviation of the differences). |

| Confidence Limit | A range that expresses the uncertainty in the estimate of the bias and limits of agreement. |

TABLE 2: Recommended Experimental Design Specifications [2] [4]

| Design Factor | Recommendation |

|---|---|

| Number of Samples | Minimum of 40; 100 or more is preferable. |

| Sample Concentration Range | Should cover the entire clinically meaningful range. |

| Number of Measurements | Duplicate measurements are recommended to minimize random variation. |

| Time Period & Analysis Runs | Conduct over a minimum of 5 different days to capture routine variability. |

| Sample Stability | Analyze paired samples within 2 hours of each other, or within a stability period defined for the analyte. |

Standard Experimental Protocol for a Method-Comparison Study

Objective: To estimate the systematic error (bias) between a new test method and an established comparative method and determine if the two methods can be used interchangeably.

Step 1: Study Design and Planning

- Define Acceptance Criteria: Before starting, define the clinically acceptable bias based on biological variation, clinical outcomes, or state-of-the-art performance [2].

- Select Samples: Obtain a minimum of 40 unique patient samples that span the full clinical reporting range of the assay [2] [4].

- Plan the Analysis: Analyze samples over multiple days (≥5 days). If possible, perform measurements in duplicate and randomize the order of analysis between the two methods to avoid carry-over and time-related biases [2].
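
The planning step can be sketched as a small scheduling helper. It is only an illustration: `build_schedule`, the specimen IDs, and the fixed seed are hypothetical. The sketch spreads 40 specimens evenly over 5 days and randomizes which method measures each specimen first.

```python
import random


def build_schedule(specimen_ids, n_days=5, seed=2024):
    """Assign specimens to days and randomize the method order per specimen."""
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    ids = list(specimen_ids)
    rng.shuffle(ids)  # random allocation of specimens to days
    schedule = []
    for position, specimen in enumerate(ids):
        order = ["test", "comparative"]
        rng.shuffle(order)  # random order of analysis between the two methods
        schedule.append({
            "day": position % n_days + 1,
            "specimen": specimen,
            "first": order[0],
            "second": order[1],
        })
    return sorted(schedule, key=lambda row: row["day"])


schedule = build_schedule([f"S{i:02d}" for i in range(1, 41)])
for row in schedule[:3]:
    print(row)
```

Fixing the seed lets the plan be regenerated and audited later. A real study would also need to record replicate numbers and the actual analysis times.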

Step 2: Sample Analysis and Data Collection

- Simultaneous Measurement: Analyze paired samples as close in time as possible (ideally within 2 hours) to prevent specimen degradation from affecting results [1] [4].

- Data Recording: Record all results in a structured format, noting the method, sample ID, date, and replicate number.

Step 3: Data Analysis and Interpretation

- Visual Data Inspection: Create a Bland-Altman plot (difference vs. average plot) to visually assess the agreement, identify outliers, and spot trends like proportional error [1] [2].

- Calculate Bias and Agreement:

  - Calculate the mean difference (bias) and the standard deviation (SD) of the differences.

  - Calculate the Limits of Agreement: Bias + 1.96 SD (Upper LOA) and Bias - 1.96 SD (Lower LOA) [1].

- Use Regression Analysis: For data covering a wide range, use Deming or Passing-Bablok regression to characterize the relationship (slope and intercept) between the methods and estimate systematic error at critical medical decision levels [2] [4].

- Compare to Criteria: Compare the estimated bias and LOA to the pre-defined clinically acceptable criteria to make a decision on method interchangeability.
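
The bias and limits-of-agreement calculation from Step 3 can be sketched as follows. The paired results and the ±15 acceptance limit are hypothetical. The function `bland_altman` is an illustrative helper, not part of any particular statistics package.

```python
import statistics


def bland_altman(test, comparative):
    """Return bias, SD of differences, limits of agreement, and plot points."""
    diffs = [t - c for t, c in zip(test, comparative)]
    means = [(t + c) / 2 for t, c in zip(test, comparative)]
    bias = statistics.mean(diffs)
    sd = statistics.stdev(diffs)  # sample SD of the differences
    return {
        "bias": bias,
        "sd": sd,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
        "points": list(zip(means, diffs)),  # (average, difference) pairs to plot
    }


# Hypothetical paired results from the two methods (same units).
test_values = [102, 98, 151, 205, 249, 310, 72, 88]
comparative_values = [100, 100, 148, 200, 252, 300, 70, 90]

result = bland_altman(test_values, comparative_values)
acceptable_limit = 15.0  # hypothetical pre-defined clinical criterion
interchangeable = (
    result["lower_loa"] >= -acceptable_limit
    and result["upper_loa"] <= acceptable_limit
)

print(f"Bias: {result['bias']:.2f}")
print(f"LOA: {result['lower_loa']:.2f} to {result['upper_loa']:.2f}")
print(f"Within acceptance criterion: {interchangeable}")
```

The `points` list holds the coordinates for the difference-versus-average plot. A full analysis would also report confidence limits for the bias and for each limit of agreement.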

The Scientist's Toolkit: Essential Research Reagents and Materials

TABLE 3: Key Materials for a Method-Comparison Study

| Item | Function in the Experiment |

|---|---|

| Patient Specimens | The core "reagent." Provides the matrix and biological variation necessary to assess method performance under real-world conditions [2] [4]. |

| Comparative Method | The established measurement procedure used as the benchmark for comparison. Ideally, this is a reference method, but often it is the current routine laboratory method [4]. |

| Test Method | The new measurement method or instrument whose performance is being evaluated [4]. |

| Quality Control (QC) Materials | Used to verify that both the test and comparative methods are operating within predefined performance specifications on the days of the study. |

| Calibrators | Used to establish the analytical calibration curve for the test method, ensuring its scale is accurate. |

| Statistical Software | Essential for performing complex calculations like Deming regression, Bland-Altman analysis, and generating plots (e.g., MedCalc, R, Python with SciPy/StatsModels) [1] [2]. |

Core Definitions and Troubleshooting FAQs

What is the difference between Accuracy and Bias?

- Accuracy refers to how close a measurement is to the true or reference value. It is a qualitative term: a result is described as more or less accurate rather than assigned an accuracy value [5].

- Bias is a quantitative measure of the systematic difference between the average of many measurements and the true value [5]. An inaccurate experiment is often described as biased, indicating a consistent deviation in a single direction (e.g., always overestimating) [6].

What is the difference between Precision and Repeatability?

- Precision is a measure of the variability or scatter of measurements. It indicates how close repeated measurements are to each other, regardless of their relation to the true value [6] [7]. It is about the consistency of results under specified conditions [8].

- Repeatability is the variation observed when the same operator measures the same item multiple times with the same tool or gauge under the same conditions over a short period [8] [7]. It sets a practical limit for how precise a measurement process can be [8].

How can I tell if my experiment has a bias problem?

Bias can be identified through several methods, including calibrating standards with a reference laboratory, using check standards in control charts, or participating in interlaboratory comparisons where reference materials are circulated and measured [5].

My measurements are precise but not accurate. What should I do?

When you have high precision but low accuracy, your results are consistently but incorrectly grouped. Acquiring more data will not resolve the underlying issue. You must investigate and correct the root cause of the bias, which may involve instrument recalibration [6] [7].

My measurements are accurate but not precise. What should I do?

When you have high accuracy but low precision, the average of your measurements is correct, but individual results are highly variable. In this case, you can improve the reliability of your results by performing more tests, which will improve the precision of the average. You should also investigate ways to decrease the underlying variability in your measurement process [6].
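
The contrast between the two situations above can be made concrete with the standard error of the mean, SD / sqrt(n). The sketch assumes independent repeated measurements, and the SD and bias values are hypothetical. Averaging more results shrinks the random scatter of the mean but leaves a systematic offset untouched.

```python
import math


def standard_error_of_mean(sd, n):
    """Standard error of the average of n independent measurements."""
    return sd / math.sqrt(n)


measurement_sd = 4.0  # hypothetical random variability of a single result
method_bias = 3.0     # hypothetical systematic offset from the true value

for n in (1, 4, 16, 64):
    sem = standard_error_of_mean(measurement_sd, n)
    # The expected offset of the average is still the full bias, whatever n is.
    print(f"n={n:>2}: SEM = {sem:.2f}, expected offset from truth = {method_bias:.2f}")
```

Each fourfold increase in replicates halves the standard error, while the bias term stays fixed until the root cause (for example, calibration) is corrected.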

Experimental Protocols for Method Comparison

Protocol: Comparison of Methods Experiment

Purpose: To estimate the systematic error (inaccuracy or bias) between a new test method and a comparative method using real patient specimens [4].

Experimental Design:

- Number of Specimens: A minimum of 40 different patient specimens is recommended. Specimens should be carefully selected to cover the entire working range of the method and represent the expected spectrum of diseases. Quality and range of concentrations are more critical than a large number of specimens [4].

- Replication: Analyze each specimen singly by both test and comparative methods. However, performing duplicate measurements (on different sample cups in different analytical runs) is advantageous for verifying measurement validity and identifying errors [4].

- Time Period: Conduct the experiment over a minimum of 5 days, and ideally up to 20 days, incorporating several different analytical runs to minimize systematic errors from a single run. Analyze 2-5 patient specimens per day [4].

- Specimen Handling: Analyze test and comparative methods within two hours of each other to maintain specimen stability. Define and systematize specimen handling procedures (e.g., refrigeration, freezing) prior to the study to prevent handling-induced differences [4].

Data Analysis:

- Graphical Analysis: Create a difference plot (test result minus comparative result vs. comparative result) or a comparison plot (test result vs. comparative result). Visually inspect for patterns and outliers [4].

- Statistical Calculations:

  - For a wide analytical range: Use linear regression to obtain the slope (b) and y-intercept (a) of the line of best fit. Calculate the systematic error (SE) at a critical medical decision concentration (Xc) as `Yc = a + b*Xc`, followed by `SE = Yc - Xc` [4].

  - For a narrow analytical range: Calculate the average difference (bias) between the two methods using a paired t-test [4].
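
The regression-based estimate of systematic error at a decision level can be sketched as follows. The data, the decision concentration of 126, and the helper names `ols_fit` and `systematic_error` are hypothetical. Ordinary least squares is used here because the protocol refers to linear regression.

```python
import statistics


def ols_fit(x, y):
    """Return (intercept a, slope b) of the least-squares line y = a + b*x."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    b = sxy / sxx
    a = my - b * mx
    return a, b


def systematic_error(a, b, xc):
    """SE = Yc - Xc, where Yc = a + b*Xc is the predicted test-method value."""
    yc = a + b * xc
    return yc - xc


# Hypothetical comparative (x) and test (y) results over a wide range.
comparative = [50, 100, 150, 200, 250, 300]
test = [1.05 * value + 3 for value in comparative]

a, b = ols_fit(comparative, test)
se_at_decision = systematic_error(a, b, xc=126)
print(f"a = {a:.2f}, b = {b:.3f}, SE at Xc=126: {se_at_decision:.2f}")
```

With an intercept of 3 and a slope of 1.05, the predicted test value at 126 is 135.3, so the estimated systematic error at that decision level is about 9.3.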

Visualizing the Relationships

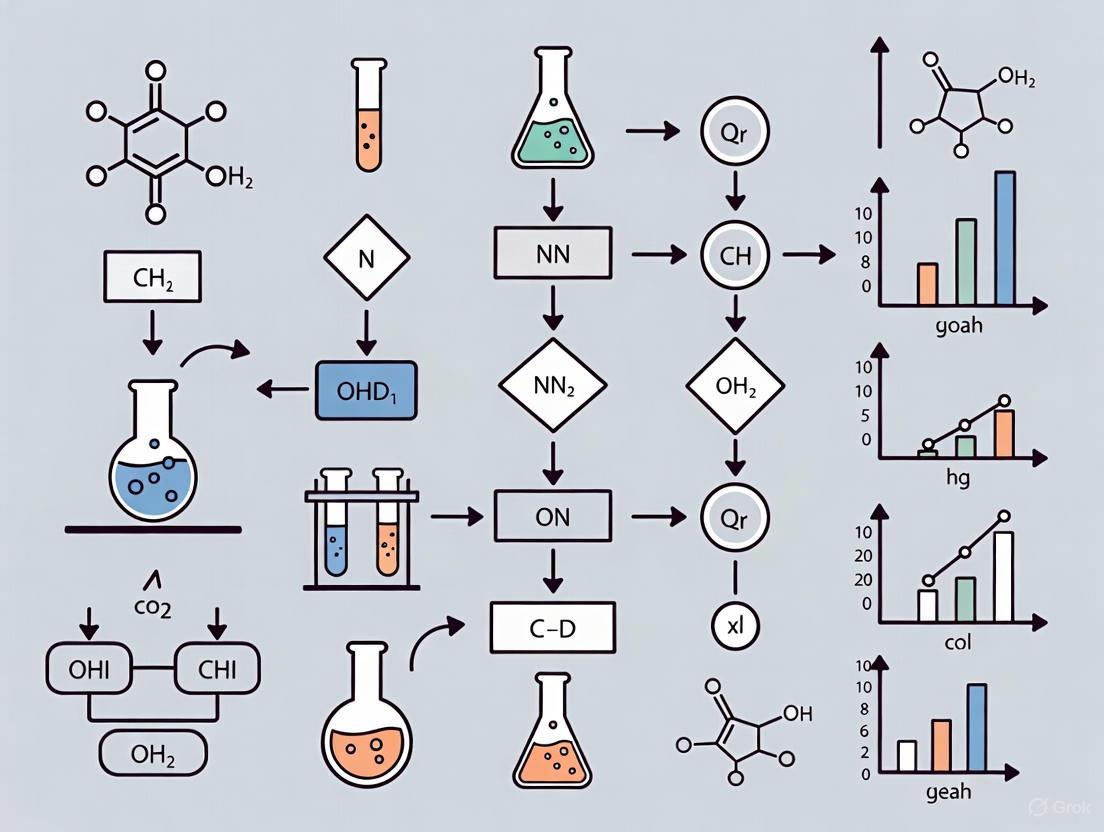

Diagram 1: Relationship of Accuracy and Precision

Diagram 2: Relationship of Precision and Repeatability

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and concepts used in method validation and comparison studies.

| Item or Concept | Function & Explanation |

|---|---|

| Reference Material | An artifact with a property value established by a reference laboratory, used to calibrate standards and identify bias via a traceable chain of comparisons [5]. |

| Check Standard | A stable material measured regularly over time and plotted on a control chart. Violations of control limits suggest that re-calibration is needed to control bias [5]. |

| Patient Specimens | In a comparison of methods experiment, 40+ patient samples covering the analytical range are tested by both the new and comparative methods to estimate systematic error using real-world matrixes [4]. |

| Linear Regression | A statistical calculation used in method comparison to model the relationship between two methods. It provides slope and intercept to estimate proportional and constant systematic error [4]. |

| Control Chart | A graphical tool used to monitor the stability of a measurement process over time by plotting results from a check standard, helping to identify when bias may be introduced [5]. |
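
The check-standard and control-chart entries above can be sketched in a few lines. The baseline readings are hypothetical, and the limits of mean ± 3 SD are a conventional choice assumed here, not a value taken from the source.

```python
import statistics


def control_limits(baseline, k=3.0):
    """Return (lower, center, upper) limits from baseline check-standard data."""
    center = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return center - k * sd, center, center + k * sd


def out_of_control(values, lower, upper):
    """Return (index, value) pairs that fall outside the control limits."""
    return [(i, v) for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical check-standard readings collected while the process was stable.
baseline = [100.1, 99.8, 100.3, 99.9, 100.0, 100.2, 99.7, 100.0]
lower, center, upper = control_limits(baseline)

# Hypothetical new readings to monitor.
new_readings = [100.1, 99.9, 100.9, 100.2]
flagged = out_of_control(new_readings, lower, upper)

print(f"Limits: {lower:.2f} to {upper:.2f} (center {center:.2f})")
print(f"Out-of-control points: {flagged}")
```

A flagged point is a prompt to investigate and possibly recalibrate. It is not proof of bias on its own.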

| Term | Definition | Relationship to Other Terms |

|---|---|---|

| Accuracy | Proximity of a measurement to the true value [5]. | A measurement can be accurate but not precise, or precise but not accurate [6]. |

| Bias | Quantitative, systematic difference between the average measurement and the true value [5]. | Bias is a cause of inaccuracy [6]. |

| Precision | The variability or scatter of repeated measurements [6]. | Independent of accuracy; a process can be precise but biased [6] [7]. |

| Repeatability | Variation under conditions of same operator, tool, and short time period [8]. | A component of precision; sets a limit for how precise a process can be [8]. |

Table 2: Troubleshooting Guide for Measurement Issues

| Observed Problem | Likely Cause | Recommended Action |

|---|---|---|

| Low Accuracy, Low Precision | The measurement process is both biased and highly variable [6]. | Investigate and correct the cause of the bias, and then work to reduce variability [6]. |

| Low Accuracy, High Precision | The measurement process is biased but has low variability (precise but wrong) [6]. | Investigate and correct the cause of the bias. Do not simply collect more data, as this will only give a more precise estimate of the wrong value [6] [7]. |

| High Accuracy, Low Precision | The measurement process is unbiased on average, but individual measurements are highly variable [6]. | Perform more tests to improve the precision of the average result, and/or investigate ways to decrease measurement variability [6]. |

| Unrepeatable Measurements | High variation when the same operator measures the same item. Could be due to equipment issues or subjective judgment criteria [8]. | Check equipment and ensure the operator is following procedure correctly. Create objective, non-subjective criteria for measurement [8]. |

In scientific and drug development research, not all evidence is created equal. The "Hierarchy of Evidence" is a core principle of evidence-based practice that ranks study designs based on their potential to minimize bias and provide reliable answers to clinical and research questions. Understanding this hierarchy is fundamental to optimizing method comparison and experimental design research, as it guides researchers toward the most robust methodologies and enables proper interpretation of existing literature. This framework helps researchers distinguish between correlational observations that merely suggest relationships and controlled experiments that can demonstrate causal effects, a critical distinction when making decisions about drug efficacy and patient care.

The concept of levels of evidence was first formally described in 1979 by the Canadian Task Force on the Periodic Health Examination to develop recommendations based on medical literature [9]. This system was further refined by Sackett in 1989, with both systems placing randomized controlled trials (RCTs) at the highest level and case series or expert opinions at the lowest level [9]. These hierarchies rank studies according to their probability of bias, with RCTs considered highest because they are designed to be unbiased through random allocation of subjects, which also randomizes confounding factors that might otherwise influence results [9].

Understanding Levels of Evidence

Major Evidence Classification Systems

Different organizations have developed variations of evidence classification systems tailored to their specific needs. While all follow similar principles of prioritizing studies with less bias, the exact classifications may vary. The three most prominent systems are compared in the table below.

Table 1: Comparison of Major Evidence Classification Systems

| Johns Hopkins System | American Association of Critical-Care Nurses (AACN) | Melnyk & Fineout-Overholt System |

|---|---|---|

| Level I: RCTs, systematic reviews of RCTs | Level A: Meta-analysis of multiple controlled studies | Level 1: Systematic review or meta-analysis of all relevant RCTs |

| Level II: Quasi-experimental studies | Level B: Well-designed controlled studies | Level 2: Well-designed RCT (e.g., large multi-site) |

| Level III: Non-experimental, qualitative studies | Level C: Qualitative, descriptive, or correlational studies | Level 3: Controlled trials without randomization |

| Level IV: Opinion of respected authorities | Level D: Peer-reviewed professional standards | Level 4: Case-control or cohort studies |

| Level V: Experiential, non-research evidence | Level E: Expert opinion, multiple case reports | Level 5: Systematic reviews of descriptive/qualitative studies |

| | Level M: Manufacturers' recommendations | Level 6: Single descriptive or qualitative study |

| | | Level 7: Opinion of authorities, expert committees |

Study Designs in the Evidence Hierarchy

The hierarchy of evidence exists because different study designs have varying capabilities to control for bias and confounding variables. The following diagram illustrates the key questions researchers ask to determine a study's design and, consequently, its position in the evidence hierarchy.

The evidence hierarchy prioritizes study designs that minimize bias through methodological rigor. Systematic reviews and meta-analyses of RCTs sit at the pinnacle because they combine results from multiple high-quality studies, providing the most reliable evidence [10] [11]. Individual RCTs follow, as randomization distributes confounding factors equally between groups, isolating the true effect of the intervention [9]. Quasi-experimental studies lack random assignment but maintain structured interventions, while observational studies (cohort, case-control, cross-sectional) observe relationships without intervention [10]. Case series and expert opinions reside at the base, as they are most susceptible to bias and confounding [9].

Specialized Evidence Frameworks

Different research questions require specialized evidence frameworks. For example, when investigating prognosis (what happens if we do nothing), the highest evidence comes from cohort studies rather than RCTs, as prognosis questions don't involve comparing treatments [9]. Similarly, diagnostic test efficacy requires different study designs than treatment effectiveness. The American Society of Plastic Surgeons and Centre for Evidence Based Medicine have developed modified levels to address these specialized needs [9].

The Researcher's Toolkit: Essential Experimental Components

Key Research Reagent Solutions

Table 2: Essential Research Reagents and Their Functions

| Reagent/Component | Primary Function | Application Examples |

|---|---|---|

| Taq DNA Polymerase | Enzyme that synthesizes new DNA strands in PCR | Amplifying specific DNA sequences; gene expression analysis |

| MgCl₂ | Cofactor for DNA polymerase; affects reaction specificity | Optimizing PCR conditions for efficiency and fidelity |

| dNTPs | Building blocks (nucleotides) for DNA synthesis | PCR, cDNA synthesis, and other enzymatic DNA reactions |

| Primers | Short DNA sequences that define amplification targets | Specifying which DNA region to amplify in PCR |

| Antibodies (Primary) | Bind specifically to target proteins of interest | Detecting protein localization (IHC) or levels (Western blot) |

| Antibodies (Secondary) | Bind to primary antibodies with conjugated detection tags | Fluorescent or enzymatic detection of primary antibody binding |

| Competent Cells | Bacterial cells made permeable for DNA uptake | Plasmid propagation, molecular cloning, protein expression |

| Selection Antibiotics | Eliminate non-transformed cells; maintain selective pressure | Ensuring only successfully transformed cells grow |

Fundamental Statistical Concepts for Experimental Design

Table 3: Essential Statistical Concepts for Interpreting Experimental Results

| Statistical Term | Definition | Interpretation in Research |

|---|---|---|

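| Confidence Interval (CI) | A range of values, computed from the sample, that is expected to contain the true population value (such as a mean or a difference between means) at a stated confidence level | If a 95% CI for a difference between treatments includes 0, the difference is not statistically significant at the 0.05 level [10] |

| p-value | The probability of observing a difference at least as large as the one measured if there were truly no difference between the groups | p < 0.05 is conventionally treated as a significant difference; p ≥ 0.05 means the data do not show a significant difference, not that the groups are proven equal [10] |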

| Statistical Power | The probability that a test will detect an effect when there truly is one | Insufficient power makes it tough to detect real effects; adequate sample size is crucial [12] |

| Confounding Variables | External factors that can influence the outcome variable | If left unchecked, they can make it hard to tell if a treatment had any real effect [12] |
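
The link between statistical power and sample size can be sketched with the usual normal-approximation formula for a paired comparison, n = ((z_alpha + z_beta) * SD / delta)^2. The function `required_pairs` and the input values are hypothetical. An exact calculation would use the t-distribution and would give a slightly larger n.

```python
import math
from statistics import NormalDist


def required_pairs(sd_diff, min_difference, alpha=0.05, power=0.80):
    """Approximate number of paired samples needed to detect min_difference.

    Uses a two-sided normal approximation; treat the result as a lower bound.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n = ((z_alpha + z_beta) * sd_diff / min_difference) ** 2
    return math.ceil(n)


# Hypothetical inputs: SD of paired differences = 10, smallest difference worth detecting = 5.
n_80 = required_pairs(sd_diff=10, min_difference=5, power=0.80)
n_90 = required_pairs(sd_diff=10, min_difference=5, power=0.90)
print(f"Pairs needed for 80% power: {n_80}")
print(f"Pairs needed for 90% power: {n_90}")
```

Halving the difference to be detected roughly quadruples the required sample size, which is why the target effect should be fixed before data collection.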

Technical Support Center: Troubleshooting Guides & FAQs

Systematic Troubleshooting Methodology

When experiments yield unexpected results, a systematic troubleshooting approach is far more efficient than random guessing. The following diagram outlines a proven methodology for diagnosing experimental problems.

This systematic approach can be applied to virtually any experimental problem. For example, when facing "No PCR Product Detected," researchers should list all possible causes including each PCR component (Taq polymerase, MgCl₂, buffer, dNTPs, primers, DNA template), equipment, and procedural errors [13]. Similarly, when encountering "No Clones Growing on Agar Plates," possible explanations include issues with the plasmid, antibiotic, or transformation procedure [13]. The key is moving systematically from easiest to most complex explanations rather than jumping to conclusions.

Frequently Asked Questions (FAQs)

Q1: My negative control is showing a positive result. Where should I start troubleshooting?

Begin by verifying all reagents and equipment. Check whether reagents have been stored properly and haven't expired [14]. Ensure all solutions were prepared correctly and there's no contamination in your buffers or water supply. Review your procedure step-by-step to identify any potential for cross-contamination between samples. Implement more stringent negative controls to isolate the source of the signal [15].

Q2: I'm getting extremely high variance in my experimental results. How can I reduce this variability?

High variance often stems from inconsistent technique or environmental factors. First, repeat the experiment to rule out simple human error [14]. Standardize all procedures across repetitions and users. Examine whether your sample size is adequate to detect the effect you're studying [12]. Check for equipment calibration issues and ensure environmental conditions (temperature, humidity) are controlled. In cell-based assays, pay particular attention to consistent handling during critical steps like washing, as improper aspiration can dramatically increase variability [15].

Q3: How can I determine if an unexpected result is a true finding or an experimental artifact?

Systematically evaluate your controls. A positive control confirms your experimental system is working, while a negative control helps identify false positives [16]. Consider whether there's a biologically plausible explanation for the unexpected result by reviewing the literature [14]. If possible, try an alternative method to measure the same outcome. Consult with colleagues who may have experienced similar issues—often, what seems novel may be a known artifact with a specific technique [15].

Q4: What are the most common pitfalls in experimental design that I should avoid?

The most frequent pitfalls include inadequate sample size (insufficient statistical power), lack of appropriate controls, failure to account for confounding variables, and not blinding measurements to prevent bias [12]. Other common issues include poorly defined hypotheses, inconsistent data collection methods, and not planning statistical analysis before conducting experiments [17]. Always consult with a statistician during the design phase rather than after data collection [17].

Q5: My experiment worked perfectly until I changed one reagent. Now I can't get it to work. What's wrong?

When problems follow a specific change, that change is likely the source. First, verify the new reagent's specifications, storage requirements, and preparation method [13]. Check whether concentration or formulation differs from your previous reagent. Test the old and new reagents side-by-side if possible. Contact the manufacturer—they may have changed the formulation or encountered a bad batch. Also consider whether the new reagent might interact differently with other components in your system [14].

Q6: How do I decide which variable to test first when troubleshooting a complex multi-step protocol?

Start with the easiest and fastest variables to test, then move to more time-consuming ones [14]. Begin with equipment settings and simple procedural checks before moving to reagent concentrations or incubation times. Focus on steps most likely to cause the specific problem based on literature and experience. For example, in immunohistochemistry with dim signals, first check microscope settings, then secondary antibody concentration, before examining fixation time [14]. Always change only one variable at a time to clearly identify the causative factor.

Q7: What's the role of positive and negative controls in troubleshooting?

Controls are essential for isolating the source of problems. Positive controls confirm your system can work under ideal conditions, while negative controls identify contamination or non-specific effects [16]. When troubleshooting, well-designed controls can quickly tell you whether the problem is with your samples, reagents, or procedures. For example, if both positive and negative controls show unexpected results, the issue is likely with your core reagents or methods rather than your specific experimental samples [15].

Implementing Robust Experimental Design

Avoiding Common Experimental Design Pitfalls

Even with perfect troubleshooting skills, preventing problems through robust experimental design is far more efficient. Common pitfalls include inadequate sample size, which leaves studies underpowered to detect real effects [12] [17]. Without a clear hypothesis, researchers risk collecting data without a focused analytical plan [12]. Control groups are essential—attempting experiments without them is like "trying to measure progress without a starting point" [12]. Confounding variables represent another frequent pitfall; if left uncontrolled, they can completely obscure true treatment effects [12].

Data quality issues often undermine otherwise well-designed experiments. Inconsistent data collection methods can introduce bias and errors [12]. Skipping data validation is "like driving with your eyes closed"—errors go unnoticed and compromise analysis [12]. Statistical pitfalls include peeking at interim results, which inflates false positives, and misusing statistical tests, which leads to invalid conclusions [12]. The multiple comparisons problem increases the chance of false discoveries unless appropriate corrections are applied [12].
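
The multiple comparisons problem mentioned above can be quantified with a short sketch. It assumes independent tests and uses the Bonferroni correction as one simple example of an "appropriate correction". The function names are illustrative.

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_threshold(alpha, n_tests):
    """Per-test significance threshold that keeps the familywise rate <= alpha."""
    return alpha / n_tests


alpha = 0.05
for n_tests in (1, 5, 10, 20):
    fwer = familywise_error_rate(alpha, n_tests)
    threshold = bonferroni_threshold(alpha, n_tests)
    print(f"{n_tests:>2} tests: familywise error = {fwer:.3f}, "
          f"Bonferroni per-test threshold = {threshold:.4f}")
```

With ten uncorrected tests at 0.05, the chance of at least one false positive is already about 40%. Bonferroni is conservative, and other corrections may suit studies with many correlated endpoints better.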

Organizational Strategies for Success

Beyond technical considerations, organizational factors significantly impact experimental success. Lack of leadership buy-in often starves experimentation programs of necessary resources [12]. Biased assumptions and cognitive dissonance can cause teams to ignore surprising findings that might be scientifically important [12]. Poor cross-team collaboration leads to disjointed experimentation efforts that may work at cross-purposes [12]. Establishing a culture that values methodological rigor, statistical planning, and systematic troubleshooting is essential for producing reliable, high-quality research.

Formal training in troubleshooting skills remains uncommon in graduate education, despite being "an essential skill for any researcher" [15]. Initiatives like "Pipettes and Problem Solving" at the University of Texas at Austin provide structured approaches to developing these competencies through scenario-based learning [15]. Such programs help researchers acquire the systematic thinking needed to diagnose experimental problems efficiently rather than relying on trial-and-error approaches.

FAQs on Correlation and Causation

Q1: What does "correlation does not imply causation" mean? It means that just because two variables are observed to move together (correlation), this does not mean that one variable is responsible for causing the change in the other (causation). The observed relationship could be a coincidence or, more commonly, be explained by a third factor [18] [19].

Q2: What are some common reasons why correlated variables are not causal? The most common scenarios where correlation does not equal causation are [18] [19]:

- Confounding Variables (Third Factor): A third, unmeasured variable (often called a confounder or lurking variable) causes both the observed variables to change.

- Example: A correlation exists between ice cream sales and drowning deaths. The confounder here is the hot weather (summer season), which causes both more ice cream consumption and more swimming, leading to more drownings [18].

- Reverse Causation: The assumed effect is actually the cause, and vice versa.

- Example: Observing that low cholesterol is correlated with higher mortality might lead to the conclusion that low cholesterol causes death. However, the direction may be reversed: serious diseases such as cancer, which raise the risk of death, can themselves cause weight loss and lower cholesterol [18].

- Coincidence: The relationship occurs purely by random chance.
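The confounding scenario can be illustrated with a small simulation. This is a minimal sketch on invented data: temperature drives both simulated ice cream sales and simulated drownings, and neither affects the other. All coefficients, noise levels, and variable names are assumptions chosen for illustration.

```python
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


rng = random.Random(0)
temperature = [rng.uniform(5, 35) for _ in range(5000)]        # the confounder
ice_cream = [2.0 * t + rng.gauss(0, 10) for t in temperature]  # depends only on temperature
drownings = [0.3 * t + rng.gauss(0, 2) for t in temperature]   # depends only on temperature

r_all = pearson(ice_cream, drownings)

# Hold the confounder roughly constant: keep only days between 20 and 22 degrees.
band = [i for i, t in enumerate(temperature) if 20 <= t < 22]
r_band = pearson([ice_cream[i] for i in band], [drownings[i] for i in band])

print(f"correlation across all days:       {r_all:.2f}")
print(f"correlation at similar temperature: {r_band:.2f}")
```

Across all days the two series are clearly correlated, but the association largely disappears once temperature is held within a narrow band, which is the signature of a confounder.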

Q3: How can I start to investigate a causal relationship? Begin by creating a causal diagram (or Directed Acyclic Graph, DAG). This is a graphical representation of your hypothesized data-generating process [20].

- Identify Variables: List your treatment (potential cause) and outcome (effect).

- Map Relationships: Draw arrows from known or suspected causes to their effects.

- Identify Paths: List all paths connecting your treatment and outcome. These can be:

- Causal Paths: The direct path from cause to effect (e.g., Treatment → Outcome).

- Non-Causal Paths: Other paths that provide an "alternative explanation" for the relationship (e.g., Treatment ← Confounder → Outcome) [20].

This process helps you visually identify which other variables need to be accounted for to isolate the true causal effect.
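The path-listing step can be sketched in code. The example below stores a hypothetical three-variable diagram as a list of (cause, effect) arrows, enumerates every path between treatment and outcome while ignoring arrow direction, and then labels each path as causal or non-causal. The graph and the helper names `all_paths` and `describe` are assumptions made for illustration.

```python
# Each tuple is one arrow in the diagram: (cause, effect).
edges = [
    ("Confounder", "Treatment"),
    ("Confounder", "Outcome"),
    ("Treatment", "Outcome"),
]


def all_paths(edges, start, end):
    """List every simple path between start and end, ignoring arrow direction."""
    neighbours = {}
    for cause, effect in edges:
        neighbours.setdefault(cause, set()).add(effect)
        neighbours.setdefault(effect, set()).add(cause)
    paths, stack = [], [[start]]
    while stack:
        path = stack.pop()
        for nxt in sorted(neighbours.get(path[-1], ())):
            if nxt in path:
                continue  # never revisit a variable on the same path
            if nxt == end:
                paths.append(path + [nxt])
            else:
                stack.append(path + [nxt])
    return paths


def describe(path, edges):
    """Render a path with arrows; it is causal only if every arrow points forward."""
    edge_set = set(edges)
    parts, causal = [path[0]], True
    for a, b in zip(path, path[1:]):
        if (a, b) in edge_set:
            parts += ["→", b]
        else:
            parts += ["←", b]
            causal = False
    return " ".join(parts), causal


results = [describe(p, edges) for p in all_paths(edges, "Treatment", "Outcome")]
for text, causal in results:
    print(("causal:     " if causal else "non-causal: ") + text)
```

For larger diagrams a dedicated graph library would be more practical; the point here is only that each path can be classified mechanically once the arrows are written down.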

Q4: What are the gold-standard methods for establishing causality? The most robust method is a randomized controlled experiment [19].

- Methodology: Participants are randomly assigned to either a treatment group (which receives the intervention) or a control group (which does not).

- Why it Works: Randomization ensures that, on average, all other factors—both known and unknown confounders—are balanced across the groups. Any systematic difference in the outcome can then be attributed to the treatment itself [19].

For situations where controlled experiments are unethical or impossible (e.g., studying the health effects of smoking), advanced statistical methods for causal inference, such as instrumental variables or regression discontinuity, may be employed.

Troubleshooting Guides

Problem: My analysis shows a strong correlation, but I suspect a confounder.

Solution: Use your causal diagram to decide which variables to control for in your analysis to "block" non-causal paths [20].

- Step 1: Draw your causal diagram. For example, to study the effect of wet soil on flower bloom, you might identify several confounding paths [20]:

Wet soil ← Sunlight hours → Flower bloom

Wet soil ← Geographic info (Rain/Temperature) → Flower bloom

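- Step 2: Apply the rules for blocking paths. A path is blocked if you control for a variable on it that is a confounder (common cause) or a mediator. A path that passes through a collider is already blocked unless you control for the collider.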

- Step 3: Adjust your analysis. To isolate the direct effect of wet soil, you would statistically control for (adjust for) the confounders: sunlight hours and geographic info. This closes the backdoors and allows you to estimate the causal effect [20].

What to Avoid: Do not control for a collider variable. A collider is a variable that is affected by both the treatment and the outcome (e.g., Treatment → Collider ← Outcome). Controlling for a collider introduces "collider bias" (or endogenous selection bias) by creating a spurious association between the treatment and outcome [20].

- Example: If rain and sprinklers both cause a road to be wet, studying only wet roads will make it seem like rain and sprinkler use are correlated, even if they are not [20].
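A short simulation shows how conditioning on a collider manufactures an association. In this sketch, rain and sprinkler use are generated independently, and the road is wet if either occurs. The probabilities and variable names are illustrative assumptions.

```python
import random

rng = random.Random(1)
# Each day is a (rain, sprinkler) pair; the two events are generated independently.
days = [(rng.random() < 0.3, rng.random() < 0.3) for _ in range(20000)]


def p_sprinkler(rows):
    """Share of days in `rows` on which the sprinkler ran."""
    return sum(sprinkler for _, sprinkler in rows) / len(rows)


# Unconditional comparison: sprinkler use on rainy versus dry days.
rainy = [d for d in days if d[0]]
dry = [d for d in days if not d[0]]
diff_all = p_sprinkler(rainy) - p_sprinkler(dry)

# Conditioning on the collider: keep only days on which the road was wet.
wet = [d for d in days if d[0] or d[1]]
wet_rainy = [d for d in wet if d[0]]
wet_dry = [d for d in wet if not d[0]]
diff_wet = p_sprinkler(wet_rainy) - p_sprinkler(wet_dry)

print(f"difference in sprinkler use, all days:      {diff_all:+.2f}")
print(f"difference in sprinkler use, wet days only: {diff_wet:+.2f}")
```

Across all days the difference is close to zero, as expected for independent events. Among wet days it becomes strongly negative, because a wet road without rain can only be explained by the sprinkler.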

Problem: I cannot run a controlled experiment. How can I provide causal evidence?

Solution: Use a combination of observational data analysis and logical reasoning to build a case for causality.

- Method 1: Establish Temporality. Ensure that the cause unequivocally occurs before the effect. This can sometimes be achieved with longitudinal data collection [18].

- Method 2: Look for a Dose-Response Relationship. See if increasing levels of the suspected cause lead to a correspondingly stronger effect. This pattern strengthens the case for a causal mechanism.

- Method 3: Use Statistical Controls. As in the troubleshooting guide above, use regression or matching methods to control for observable confounders you have measured.

- Method 4: Seek Consistency. See if the correlation has been found repeatedly across different populations and study designs.

Key Causal Relationships and Scenarios

The table below summarizes classic examples and explanations for why correlation does not equal causation.

| Observed Correlation | Plausible Explanation | Type of Problem |

|---|---|---|

| More ice cream sales and more drowning deaths [18] | Hot summer weather causes both. | Confounding Variable |

| Low cholesterol and higher mortality [18] | Serious illness (e.g., cancer) causes low cholesterol and increases death risk. | Reverse Causation |

| Children watching more TV and more violent behavior [18] | Children already prone to violence may be more inclined to watch TV, rather than TV causing the violence. | Reverse Causation / Bidirectional |

| Sleeping with shoes on and waking with a headache [18] | Both are caused by a third factor, such as going to bed intoxicated. | Confounding Variable |

| Higher exercise levels and higher skin cancer rates [19] | People who live in sunnier climates exercise outdoors more and have greater sun exposure. | Confounding Variable |

Experimental Protocols for Establishing Causality

Protocol 1: Randomized Controlled Trial (RCT)

Objective: To determine the causal effect of a treatment or intervention by eliminating confounding through random assignment [19].

- Recruitment: Identify and recruit a pool of eligible participants.

- Randomization: Randomly assign each participant to either the Treatment Group or the Control Group. Use computer-generated random numbers to ensure true randomness.

- Blinding (if possible): Implement single-blind (participants do not know their group) or double-blind (neither participants nor researchers know the group) procedures to prevent bias.

- Intervention: Administer the treatment to the Treatment Group and a placebo or standard care to the Control Group.

- Data Collection: Measure the outcome variable(s) of interest in both groups after the intervention period.

- Analysis: Compare the average outcome between the Treatment and Control groups. A statistically significant difference is evidence of a causal effect.
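The randomization and analysis steps of this protocol can be sketched as follows. This is a minimal illustration, not a validated trial tool: the participant IDs, outcome values, and function names are hypothetical, and a permutation test is used here as one simple way to compare group means.

```python
import random
from statistics import mean


def randomize(participant_ids, seed):
    """Shuffle participants and split them into two equally sized arms."""
    rng = random.Random(seed)
    ids = list(participant_ids)
    rng.shuffle(ids)
    half = len(ids) // 2
    return {"treatment": sorted(ids[:half]), "control": sorted(ids[half:])}


def permutation_p_value(treated, control, n_perm=2000, seed=0):
    """Two-sided permutation test for a difference in group means."""
    rng = random.Random(seed)
    observed = abs(mean(treated) - mean(control))
    pooled = list(treated) + list(control)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # re-label outcomes as if assignment were arbitrary
        diff = abs(mean(pooled[:len(treated)]) - mean(pooled[len(treated):]))
        hits += diff >= observed
    return (hits + 1) / (n_perm + 1)


arms = randomize(range(1, 21), seed=42)

# Hypothetical outcome measurements collected after the intervention period.
treated_outcomes = [12, 14, 15, 13, 16, 15, 14, 13, 15, 14]
control_outcomes = [10, 11, 9, 10, 12, 11, 10, 9, 11, 10]

effect = mean(treated_outcomes) - mean(control_outcomes)
p_value = permutation_p_value(treated_outcomes, control_outcomes)
print(f"estimated effect: {effect:.1f}, permutation p-value: {p_value:.4f}")
```

Fixing the seed makes the allocation reproducible for auditing; in a real trial the sequence would be generated and held by someone who is not enrolling participants.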

Protocol 2: Causal Analysis via Path Adjustment

Objective: To estimate a causal effect from observational data by identifying and adjusting for confounders using a causal diagram [20].

- Define the Research Question: Clearly state the hypothesized cause (Treatment, T) and effect (Outcome, Y).

- Build a Causal Diagram (DAG): Based on subject-matter knowledge, draft a graph with nodes (variables) and arrows (causal directions). Include all common causes of T and Y [20].

- Identify All Paths: List all open paths between T and Y, distinguishing between causal paths (T → Y) and non-causal, "backdoor" paths (e.g., T ← C → Y) [20].

- Select Adjustment Set: Identify a set of variables (e.g., confounders) that, when controlled for, will block all non-causal paths while leaving causal paths open [20].

- Statistical Analysis: Perform a regression analysis (or similar) that includes the treatment and the selected adjustment set as independent variables.

- Interpretation: The coefficient for the treatment variable in this model represents the estimated causal effect, assuming the causal diagram is correct and all relevant confounders were measured and adjusted for.
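The effect of adjusting for a confounder can be demonstrated on simulated data where the true answer is known. In this sketch the true effect of T on Y is set to 1.0 and a confounder C influences both. The adjusted coefficient is obtained by residualization (regressing both T and Y on C, then regressing the residuals on each other), which gives the same treatment coefficient as a multiple regression of Y on T and C. All names and numbers are illustrative assumptions.

```python
import random


def slope(x, y):
    """Ordinary least-squares slope of y on x (model includes an intercept)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def residuals(x, y):
    """What is left of y after removing its linear dependence on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    b = slope(x, y)
    return [(yi - my) - b * (xi - mx) for xi, yi in zip(x, y)]


rng = random.Random(7)
n = 5000
c = [rng.gauss(0, 1) for _ in range(n)]              # confounder C
t = [0.8 * ci + rng.gauss(0, 1) for ci in c]         # treatment T depends on C
y = [1.0 * ti + 2.0 * ci + rng.gauss(0, 1)           # true effect of T on Y is 1.0
     for ti, ci in zip(t, c)]

naive = slope(t, y)                                  # backdoor path T <- C -> Y left open
adjusted = slope(residuals(c, t), residuals(c, y))   # backdoor path blocked by adjusting for C

print(f"naive estimate:    {naive:.2f}")
print(f"adjusted estimate: {adjusted:.2f}")
```

The naive slope is roughly twice the true effect, while the adjusted estimate recovers a value close to 1.0. This only works because C was measured and the simulated diagram is correct by construction.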

Visualization of Causal Concepts

Causal Diagram: Confounding

This diagram illustrates a classic confounding scenario (T ← C → Y), where a third variable (Confounder, C) is a common cause of both the treatment (T) and the outcome (Y), creating a spurious correlation between them [18] [20].

Causal Diagram: Mediator

This diagram shows a mediator variable (M), which is part of the causal pathway between the treatment (T) and the outcome (Y): T → M → Y. The effect of T on Y is transmitted through M [20].

Causal Diagram: Collider

This diagram depicts a collider variable (C), which is a common effect of both the treatment (T) and the outcome (Y): T → C ← Y. Conditioning on (or controlling for) a collider creates a spurious association between T and Y, which is a common source of bias [20].

The Scientist's Toolkit: Key Concepts & Methods

The table below details essential methodological concepts and tools for causal inference research.

| Tool / Concept | Function / Definition | Key Consideration |

|---|---|---|

| Randomized Controlled Trial (RCT) | The gold-standard experiment where random assignment balances confounders across groups, allowing for causal conclusions [19]. | Can be expensive, time-consuming, and sometimes unethical or impractical for certain research questions. |

| Causal Diagram (DAG) | A visual map of the assumed data-generating process, showing causal relationships between variables. Used to identify confounders and sources of bias [20]. | The accuracy of the causal conclusion is entirely dependent on the correctness of the diagram. |

| Confounder | A variable that is a common cause of both the treatment and the outcome, creating a spurious association between them. It must be controlled for to isolate the causal effect [18] [20]. | Not all confounders may be known or measurable, leading to "unmeasured confounding" bias. |

| Mediator | A variable on the causal pathway between the treatment and the outcome (Treatment → Mediator → Outcome). It explains the mechanism of the effect [20]. | Controlling for a mediator blocks part of the treatment's effect and should generally not be done if the total effect is of interest. |

| Collider | A variable that is caused by both the treatment and the outcome (Treatment → Collider ← Outcome). Controlling for it induces bias [20]. | A critical source of selection bias. Can be unintentionally controlled for by study design (e.g., only studying a specific sub-population). |

| Granger Causality Test | A statistical hypothesis test for determining whether one time series is useful in forecasting another, providing evidence for "predictive causality" [18]. | Does not establish true causality, as the relationship could still be driven by a third factor that influences both series. |

Frequently Asked Questions

Q1: What is the fundamental goal of a method comparison study? The primary goal is to determine if two measurement methods can be used interchangeably without affecting results or subsequent decisions. This is done by estimating the bias (systematic difference) between the methods and checking if it is small enough to be clinically or analytically acceptable [2].

Q2: Why are correlation coefficient (r) and paired t-test inadequate for method comparison?

- Correlation measures the strength of a linear relationship, not agreement. Methods can be perfectly correlated but have large, consistent differences [2].

- Paired t-test determines if the average difference between methods is statistically significant. With a large sample, it may flag trivial differences as significant. With a small sample, it may miss large, clinically important differences [21] [2].
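A toy example makes the limitation of correlation explicit. The paired values below are invented so that method B always reads 10% higher than method A plus a fixed offset of 5 units. The two methods are then perfectly correlated yet never agree.

```python
def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


# Hypothetical paired results: B = 1.1 * A + 5 for every sample.
method_a = [50, 60, 70, 80, 90, 100]
method_b = [1.1 * a + 5 for a in method_a]

r = pearson(method_a, method_b)
differences = [b - a for a, b in zip(method_a, method_b)]

print(f"correlation r = {r:.3f}")
print("differences (B - A):", [round(d, 1) for d in differences])
```

The correlation is essentially 1, while every difference is at least 10 units and grows with concentration, which is exactly the kind of disagreement a difference plot is designed to reveal.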

Q3: What is the minimum recommended sample size for a method comparison study? A minimum of 40 samples is recommended, with 100 or more being preferable. A larger sample size helps identify unexpected errors and provides a more reliable estimate of bias [2].

Q4: How should samples be selected for the comparison? Samples should cover the entire clinically meaningful measurement range. They should be analyzed in a randomized sequence over multiple days (at least 5) and multiple runs to mimic real-world conditions [2].

Q5: What are the initial steps in analyzing method comparison data? The first steps are graphical analyses: creating a scatter plot to visualize the relationship and a difference plot (like a Bland-Altman plot) to assess agreement across the measurement range [2].
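The numbers behind a difference plot are straightforward to compute. The sketch below calculates the bias (mean difference) and the conventional 95% limits of agreement (bias ± 1.96 standard deviations of the differences) for a small set of invented paired measurements. The data and the function name `bland_altman` are assumptions; plotting is left to whichever graphics tool is in use.

```python
from statistics import mean, stdev


def bland_altman(method_a, method_b):
    """Return the quantities needed to draw a Bland-Altman difference plot."""
    differences = [a - b for a, b in zip(method_a, method_b)]
    averages = [(a + b) / 2 for a, b in zip(method_a, method_b)]
    bias = mean(differences)
    sd = stdev(differences)  # sample standard deviation of the differences
    return {
        "averages": averages,        # x-axis values
        "differences": differences,  # y-axis values
        "bias": bias,
        "lower_loa": bias - 1.96 * sd,
        "upper_loa": bias + 1.96 * sd,
    }


# Hypothetical paired measurements of the same six samples.
method_a = [10.2, 12.1, 15.3, 18.0, 20.4, 25.1]
method_b = [10.0, 12.5, 15.0, 17.6, 20.9, 24.8]

result = bland_altman(method_a, method_b)
print(f"bias: {result['bias']:.2f}")
print(f"limits of agreement: {result['lower_loa']:.2f} to {result['upper_loa']:.2f}")
```

Whether these limits are acceptable is a clinical judgment made against pre-defined criteria, not a statistical output. Six samples are used only to keep the example readable.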

Troubleshooting Guides

Issue 1: Poor Agreement Between Methods

Problem: The analysis shows a significant bias or large discrepancies between the two methods.

| Investigation Step | Action & Interpretation |

|---|---|

| Check for Outliers | Examine scatter and difference plots for data points that fall far from the main cluster. These can disproportionately influence statistics [2]. |

| Review Sample Matrix | Assess if the bias is consistent or varies with concentration. Differences may be caused by matrix effects or interferences in specific sample types [2]. |

| Verify Procedure | Ensure the protocol was followed exactly for both methods, including sample preparation, calibration, and environmental conditions [22]. |

Issue 2: Inconclusive Results from Statistical Tests

Problem: The statistical analysis does not clearly show whether the methods are equivalent.

| Potential Cause | Solution |

|---|---|

| Sample Size Too Small | Increase the number of samples to at least 40, preferably 100, to improve the power of the analysis [2]. |

| Measurement Range Too Narrow | Select new samples to ensure the entire clinically relevant range is represented, providing a more comprehensive comparison [2]. |

| Pre-defined Acceptance Criteria Missing | Define an acceptable bias before the experiment based on clinical outcomes, biological variation, or state-of-the-art performance [2]. |

Experimental Protocol for Method Comparison

A robust protocol operationalizes the research design into a detailed, step-by-step plan to ensure consistency, ethics, and reproducibility [22].

1. Define the Research Question and Acceptance Criteria

- Clearly state the two methods being compared.

- Pre-specify the amount of bias that would be considered acceptable, based on clinical requirements or established performance specifications [2].

2. Participant/Sample Selection and Preparation

- Inclusion/Exclusion: Define clear criteria for the samples (e.g., disease state, analyte concentration) [22].

- Sample Size: Collect a minimum of 40 unique patient samples [2].

- Measurement Range: Ensure samples span the entire clinically meaningful range [2].

3. Data Collection Procedures

- Randomization: Analyze samples in a randomized sequence to avoid carry-over effects and systematic errors [2].

- Replication: Perform duplicate measurements for both methods to minimize the impact of random variation [2].

- Timing: Analyze each sample by both methods within its stability period (e.g., within 2 hours of sampling), and spread the study over multiple days and runs to account for routine variability [2].
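A randomized run schedule of the kind described in these data collection steps can be generated in a few lines. This sketch assumes 40 samples spread evenly over 5 days; the sample IDs, the seed, and the function name `run_schedule` are hypothetical.

```python
import random


def run_schedule(sample_ids, n_days=5, seed=2024):
    """Shuffle samples, then deal them across days so that both the day
    and the within-day run order are random."""
    rng = random.Random(seed)
    ids = list(sample_ids)
    rng.shuffle(ids)
    schedule = []
    for day in range(n_days):
        batch = ids[day::n_days]  # every n_days-th shuffled sample goes to this day
        for position, sample in enumerate(batch, start=1):
            schedule.append({"day": day + 1, "position": position, "sample": sample})
    return schedule


schedule = run_schedule(range(1, 41))
for row in schedule[:3]:
    print(row)
```

Recording the seed alongside the protocol lets the same schedule be regenerated later if the run order needs to be audited.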

4. Data Analysis Plan

- Graphical Analysis: Generate a scatter plot and a Bland-Altman difference plot [2].

- Statistical Analysis: Use regression methods designed for method comparison, such as Deming regression or Passing-Bablok regression, which account for errors in both methods [2].

The following workflow summarizes the key stages of a method comparison study:

Statistical Analysis and Data Presentation

Essential Statistical Methods

The table below summarizes the key statistical approaches for comparing methods, moving from basic to advanced.

| Method | Primary Use | Key Interpretation | Note |

|---|---|---|---|

| Scatter Plot | Visual assessment of the relationship and distribution of paired measurements [2]. | Points along the line of equality suggest good agreement. | First step to identify outliers and data gaps [2]. |

| Bland-Altman Plot (Difference Plot) | Visualizing agreement and bias across the average value of both methods [2]. | Plots the difference between methods (A-B) against their average ((A+B)/2). | Reveals if bias is constant or changes with concentration [2]. |

| Deming Regression | Estimating constant and proportional bias when both methods have measurement error [2]. | Intercept indicates constant bias; slope indicates proportional bias. | More appropriate than ordinary least squares regression [2]. |

| Passing-Bablok Regression | A non-parametric method for comparing two methods; robust against outliers [2]. | Intercept and slope indicate constant and proportional bias. | Makes no assumptions about the distribution of the data [2]. |
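As an illustration of the regression row in the table above, the following sketch implements the closed-form Deming estimator. It assumes the ratio of error variances, `delta` (error variance of the y-method divided by that of the x-method), is known; `delta=1.0` corresponds to orthogonal regression. The data are invented, and confidence intervals, which a real analysis would need, are omitted.

```python
def deming(x, y, delta=1.0):
    """Deming regression slope and intercept.

    delta is the assumed ratio of measurement-error variances (y-method / x-method).
    """
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x) / (n - 1)
    syy = sum((b - my) ** 2 for b in y) / (n - 1)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y)) / (n - 1)
    gap = syy - delta * sxx
    slope = (gap + (gap ** 2 + 4 * delta * sxy ** 2) ** 0.5) / (2 * sxy)
    intercept = my - slope * mx
    return slope, intercept


# Hypothetical comparison in which the new method reads 10% high plus 5 units.
reference = [50, 60, 70, 80, 90, 100]
new_method = [1.1 * v + 5 for v in reference]

slope, intercept = deming(reference, new_method)
print(f"slope (proportional bias): {slope:.3f}")
print(f"intercept (constant bias): {intercept:.3f}")
```

A slope different from 1 points to proportional bias and an intercept different from 0 points to constant bias. With noise-free toy data the estimator recovers the generating values exactly.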

Visualizing Data Analysis Logic

The following diagram outlines the logical flow for analyzing and interpreting method comparison data:

The Scientist's Toolkit: Key Research Reagents & Materials

The table below lists essential components for a method comparison study, framed within the context of clinical laboratory science.

| Item | Function in the Experiment |

|---|---|

| Patient Samples | A panel of 40-100 unique samples representing the full clinical measurement range. Serves as the foundational material for the comparison [2]. |

| Reference Method | The established, currently used measurement procedure. Serves as the benchmark against which the new method is evaluated [2]. |

| New Method | The novel measurement procedure (instrument, assay, etc.) whose performance and interchangeability are being assessed [2]. |

| Quality Control Materials | Materials with known analyte concentrations. Used to verify that both methods are operating within specified performance limits during the study [2]. |

| Data Analysis Software | Software capable of generating scatter plots, Bland-Altman plots, and performing specialized regression analyses (Deming, Passing-Bablok) [2]. |

| Study Protocol Document | A detailed, step-by-step document outlining sample processing, measurement order, calibration procedures, and data recording to ensure consistency and reproducibility [22]. |

A Practical Guide to Experimental Designs for Method Comparison

Core Concepts and Methodological Framework

Randomized Controlled Trials (RCTs) represent the gold standard for evaluating interventions in clinical research and other scientific fields. They are prospective studies that measure the effectiveness of a new intervention or treatment by randomly assigning participants to either an experimental group that receives the intervention or a control group that receives an alternative treatment, placebo, or standard care [23] [24]. This random allocation is the defining characteristic that minimizes selection bias and balances both known and unknown participant characteristics between groups, allowing researchers to attribute differences in outcomes to the study intervention rather than confounding factors [23] [24].

The theoretical foundation of RCTs rests on the principle of causation – being able to demonstrate that an independent variable (the intervention) directly causes changes in the dependent variable (the outcome) [25]. Although no single study can definitively prove causality, RCTs provide the most rigorous tool for examining cause-effect relationships because randomization reduces bias more effectively than any other study design [23]. The null hypothesis in an RCT typically states that no relationship exists between the intervention and outcome, while the research hypothesis proposes that such a relationship does exist [25].

RCTs can be classified according to several dimensions. Based on study design, they include parallel-group, crossover, cluster, and factorial designs [24]. Regarding their hypothesis framework, they may be structured as superiority, noninferiority, or equivalence trials [24]. Additionally, RCTs exist on a spectrum from explanatory (testing efficacy under ideal conditions) to pragmatic (testing effectiveness in real-world settings) [26].

Advantages and Disadvantages of RCTs

Table 1: Key advantages and disadvantages of randomized controlled trials

| Advantages | Disadvantages |

|---|---|

| Minimizes bias through random allocation [23] [24] | High cost in terms of time and money [23] [25] |

| Balances both observed and unobserved confounding factors [23] [27] | Volunteer bias may limit generalizability [23] [25] |

| Enables blinding of participants and researchers [25] | Loss to follow-up attributed to treatment [25] |

| Provides rigorous assessment of causality [23] | May not be feasible or ethical for all research questions [28] [27] |

| Results can be analyzed with well-known statistical tools [25] | Strictly controlled conditions may limit real-world applicability [26] |

Essential Methodological Protocols

Core RCT Workflow

The following diagram illustrates the standard workflow for designing and conducting a randomized controlled trial:

Randomization and Blinding Techniques

Randomization constitutes the cornerstone of RCT methodology. Any of a number of mechanisms can be used to assign participants into different groups with the expectation that these groups will not differ in any significant way other than treatment and outcome [25]. Effective randomization requires concealment of allocation – ensuring that at the time of recruitment, there is no knowledge of which group the participant will be allocated to, typically accomplished through automated randomization systems such as computer-generated sequences [23].
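One commonly used mechanism is permuted-block randomization, which keeps the arms balanced throughout recruitment. The sketch below is an illustrative assumption about how such a sequence might be generated; the block size, seed, and function name are hypothetical, and in practice the sequence would be held by a central system so that allocation stays concealed.

```python
import random


def block_randomize(n_participants, block_size=4, seed=11):
    """Generate an allocation sequence in shuffled blocks with equal arms per block."""
    if block_size % 2 != 0:
        raise ValueError("block_size must be even for two equally sized arms")
    rng = random.Random(seed)
    sequence = []
    while len(sequence) < n_participants:
        block = ["treatment"] * (block_size // 2) + ["control"] * (block_size // 2)
        rng.shuffle(block)  # order within each block is random
        sequence.extend(block)
    return sequence[:n_participants]


allocation = block_randomize(20)
print(allocation[:8])
print("treatment:", allocation.count("treatment"), "control:", allocation.count("control"))
```

Small fixed blocks can make the next assignment predictable once a block is nearly full, which is one reason varying block sizes are sometimes preferred.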

Blinding (or masking) refers to the practice of preventing participants and/or researchers from knowing which treatment each participant is receiving [23] [25]. Different levels of blinding include:

- Single-blind: Participants do not know their treatment assignment

- Double-blind: Both participants and researchers (doctors, nurses, data collectors) are unaware of treatment assignments

- Triple-blind: Participants, researchers, and data analysts are all unaware of treatment assignments

Blinding experimentally isolates the physiological effects of treatments from various psychological sources of bias and is particularly important for subjective outcomes [24].

Analytical Approaches

RCTs can be analyzed according to different principles, each with distinct implications for interpreting results:

Intention-to-Treat (ITT) Analysis: Subjects are analyzed in the groups to which they were randomized, regardless of whether they actually received or completed the treatment [23]. This approach preserves the benefits of randomization and is often regarded as the least biased analytical method as it reflects real-world conditions where non-adherence occurs.

Per Protocol Analysis: Only participants who completed the treatment originally allocated are analyzed [23]. This method provides information about the efficacy of the treatment under ideal conditions but may introduce bias if the reasons for non-completion are related to the treatment or outcome.
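The difference between the two principles is easiest to see on a small worked example. The records below are invented: each holds the randomized arm, whether the participant completed the allocated treatment, and an outcome score.

```python
from statistics import mean

# Hypothetical trial records: (assigned_arm, completed_treatment, outcome_score)
participants = [
    ("treatment", True, 8), ("treatment", True, 9),
    ("treatment", False, 4), ("treatment", False, 3),
    ("control", True, 5), ("control", True, 6),
    ("control", True, 4), ("control", False, 5),
]


def arm_mean(rows, arm):
    """Mean outcome of everyone in `rows` who was randomized to `arm`."""
    return mean(score for assigned, _, score in rows if assigned == arm)


# Intention-to-treat: everyone is analyzed in the arm they were randomized to.
itt_effect = arm_mean(participants, "treatment") - arm_mean(participants, "control")

# Per protocol: only participants who completed their allocated treatment.
completers = [row for row in participants if row[1]]
pp_effect = arm_mean(completers, "treatment") - arm_mean(completers, "control")

print(f"ITT effect:          {itt_effect}")
print(f"per-protocol effect: {pp_effect}")
```

In this toy dataset the per-protocol estimate (3.5) is much larger than the ITT estimate (1), because the participants who dropped out of the treatment arm also had the poorest outcomes.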

All RCTs should have pre-specified primary outcomes, should be registered with a clinical trials database, and should have appropriate ethical approvals before commencement [23].

Troubleshooting Guide: Frequently Encountered Methodological Challenges

Table 2: Common RCT implementation challenges and solutions

| Challenge | Potential Impact | Recommended Solutions |

|---|---|---|

| Selection Bias [25] | Threatens internal validity; limits generalizability | Implement proper randomization with allocation concealment; use computer-generated random sequences [23] |

| Loss to Follow-up [23] [25] | Introduces attrition bias; reduces statistical power | Implement rigorous tracking procedures; collect baseline data to characterize dropouts; use statistical methods like multiple imputation |

| Protocol Deviations | Compromises treatment fidelity; introduces variability | Use standardized protocols; train staff thoroughly; implement monitoring systems; consider per-protocol analysis as supplementary |

| Unblinding | Introduces performance and detection bias | Use placebos that match active treatment; separate outcome assessors from treatment team; assess blinding success |

| Insufficient Sample Size [28] | Low statistical power; unreliable results | Conduct a priori sample size calculation; consider collaborative multi-center trials; use adaptive designs [27] |

FAQs: Addressing Methodological Questions

Q1: What is the difference between efficacy and effectiveness in the context of RCTs?

Efficacy refers to how well an intervention works under ideal, controlled conditions of an explanatory RCT, while effectiveness describes how well it works in real-world settings of a pragmatic RCT [26]. Pragmatic clinical trials (PCTs) are often conducted to evaluate whether a therapy is effective in the routine conditions of its proposed use, with the goal of improving practice and policy [26].

Q2: When might an RCT not be the appropriate study design?

RCTs may not be appropriate, ethical, or feasible for all research questions [28] [27]. Nearly 60% of surgical research questions cannot be answered by RCTs due to ethical concerns, prohibitive costs, or unrealistically large sample size requirements [28]. In these situations, well-designed observational studies may provide the best available evidence, though conclusions must be interpreted with caution [28].

Q3: How can we address the issue of generalizability in RCTs?

Generalizability can be improved by using less restrictive eligibility criteria that better represent the target population, conducting trials in diverse real-world settings (pragmatic trials), and using cluster randomization when appropriate [26]. Large simple trials with streamlined protocols and minimal exclusion criteria can also enhance external validity while maintaining internal validity [26].

Q4: What are the ethical considerations specific to RCTs?

Key ethical considerations include ensuring clinical equipoise (genuine uncertainty within the expert medical community about the preferred treatment), obtaining truly informed consent that addresses therapeutic misconception, and determining when placebo controls are appropriate [24]. The principle of equipoise is common to clinical trials but may be difficult to ascertain in practice [24].

Q5: How are emerging technologies influencing RCT methodologies?

Electronic health records (EHRs) are facilitating RCTs conducted within real-world settings by enabling efficient patient recruitment and outcome assessment [27]. Adaptive trial designs that allow for predetermined modifications based on accumulating data are increasing flexibility and efficiency [27]. Model-Informed Drug Development (MIDD) approaches use quantitative modeling and simulation to optimize trial designs and support regulatory decision-making [29].

The Researcher's Toolkit: Essential Methodological Components

Table 3: Key methodological components for rigorous RCT implementation

| Component | Function | Implementation Considerations |

|---|---|---|

| Randomization Scheme | Eliminates selection bias; balances known and unknown confounders [23] [24] | Computer-generated sequences; block randomization; stratification for key prognostic factors |

| Blinding Procedures | Reduces performance and detection bias [23] [25] | Matching placebos; separate personnel for treatment and assessment; blinding success assessment |

| Sample Size Calculation | Ensures adequate statistical power to detect clinically important effects [23] | A priori calculation based on primary outcome; account for anticipated attrition; consider minimal clinically important difference |

| Allocation Concealment | Prevents selection bias by concealing group assignment until enrollment [23] | Central telephone/computer system; sequentially numbered opaque sealed envelopes |

| Data Safety Monitoring Board | Protects participant safety and trial integrity | Independent experts; predefined stopping rules; interim analysis plans |

| Trial Registration | Reduces publication bias; promotes transparency [24] | Register before enrollment begins on platforms like ClinicalTrials.gov; publish protocols |

| Standardized Operating Procedures | Ensures consistency and protocol adherence across sites and personnel | Detailed manuals for all procedures; training certification; ongoing quality control |
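The sample size calculation listed in Table 3 can be sketched for the simplest case: comparing the means of two equally sized groups with a two-sided test and a normal approximation. The formula used is n per group = 2 × ((z for 1 − α/2) + (z for power))² × SD² / δ², inflated for anticipated attrition. The input values are hypothetical, and other designs or outcome types require different formulas.

```python
import math
from statistics import NormalDist


def n_per_group(delta, sd, alpha=0.05, power=0.80, attrition=0.0):
    """Participants needed per arm to detect a mean difference `delta`.

    delta: minimal clinically important difference
    sd: assumed common standard deviation of the outcome
    attrition: anticipated proportion lost to follow-up (0 to <1)
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_beta) * sd / delta) ** 2
    return math.ceil(n / (1 - attrition))


print(n_per_group(delta=5, sd=10))                  # no attrition
print(n_per_group(delta=5, sd=10, attrition=0.10))  # allowing for 10% dropout
```

With these assumed inputs the calculation gives 63 participants per arm, or 70 once 10% attrition is allowed for.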

Innovations and Future Directions in RCT Methodology

Recent methodological innovations are expanding the capabilities and applications of RCTs:

Adaptive Trial Designs: These include scheduled interim looks at the data during the trial, leading to predetermined changes based on accumulating data while maintaining trial validity and integrity [27]. This approach can make trials more flexible, efficient, and ethical.

Platform Trials: These focus on an entire disease or syndrome to compare multiple interventions and add or drop interventions over time [27]. This is particularly valuable for rapidly evolving treatment landscapes.

Sequential Trials: In this approach, subjects are serially recruited and study results are continuously analyzed, allowing the trial to stop once sufficient data regarding treatment effectiveness has been collected [27].

Integration with Real-World Data: The development of EHRs and access to routinely collected clinical data has enabled RCTs that leverage these resources for patient recruitment and outcome assessment with minimal patient contact [27].

These innovations represent a paradigm shift in how RCTs are planned and conducted, offering opportunities to increase efficiency while maintaining scientific rigor. As these methodologies continue to evolve, researchers must stay informed about emerging best practices to optimize their experimental designs.

Troubleshooting Guide: FAQs on Pre-Post and Non-Equivalent Groups Designs

FAQ 1: My pre-post study with a single group showed a significant effect, but my colleagues are concerned about confounding variables. What are the main threats to validity I should consider?

The one-group pretest-posttest design has significant limitations that may render the results difficult to interpret [30]. Key threats to internal validity include:

- History: External events between pretest and posttest can influence outcomes [30]. For example, in a study on high-intensity training for weight loss, participants might simultaneously use a new dietary supplement promoted on social media, confounding the results [30].

- Maturation: Natural changes in participants over time can affect results [30]. In the weight loss example, high-intensity training may increase muscle mass and body weight independently of fat loss, potentially skewing results if not properly accounted for [30].

- Regression to the mean: Participants with extreme initial measurements often naturally move toward average values in subsequent measurements [30]. If your initial sample had unusually high or low pretest scores, observed changes might reflect this statistical phenomenon rather than true intervention effects [30].

- Instrumentation: Changes in measurement tools or procedures between pre- and post-testing can introduce bias [31].
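Regression to the mean in particular is easy to underestimate, so a simulation may help. In this sketch there is no intervention at all: each simulated participant has a stable true level, and pretest and posttest are two noisy measurements of it. Selecting the participants with the highest pretest scores still produces an apparent improvement. All distributions and numbers are illustrative assumptions.

```python
import random
from statistics import mean

rng = random.Random(3)
true_level = [rng.gauss(100, 10) for _ in range(5000)]
pretest = [t + rng.gauss(0, 10) for t in true_level]   # noisy measurement 1
posttest = [t + rng.gauss(0, 10) for t in true_level]  # noisy measurement 2, no treatment

# Enroll only the participants in the top 10% of pretest scores.
cutoff = sorted(pretest)[int(0.9 * len(pretest))]
selected = [i for i, score in enumerate(pretest) if score >= cutoff]

pre_mean = mean(pretest[i] for i in selected)
post_mean = mean(posttest[i] for i in selected)

print(f"selected group pretest mean:  {pre_mean:.1f}")
print(f"selected group posttest mean: {post_mean:.1f}")
```

The selected group's mean drops substantially toward the population average at posttest even though nothing was done to them, which is why a single-group pre-post change cannot be attributed to the intervention without a comparison group.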

FAQ 2: When using non-equivalent control groups, what steps can I take to strengthen causal inferences about my intervention's effect?

When random assignment isn't feasible, several strategies can enhance your design:

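- Pretest similarity: Ensure groups have similar mean scores on pretests and comparable demographic characteristics [30]. A non-significant difference on statistical tests (p-value > .05) is consistent with this similarity [30], although it does not by itself establish that the groups are equivalent, particularly when samples are small.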

- Multiple control groups: Using several control groups helps rule out specific confounding variables [31].

- Switching replication: Implement the treatment with the control group after initial testing while removing it from the original treatment group [31]. Demonstrating a treatment effect in two groups staggered over time provides stronger evidence for causality [31].

- Statistical adjustment: Use ANCOVA to adjust for baseline differences between groups, which typically provides more precise effect estimates than simple change scores [32].

FAQ 3: What statistical approaches are most appropriate for analyzing pre-post data with non-equivalent groups?

Several statistical methods can be employed, each with different strengths:

- ANCOVA-POST: Models the post-treatment score as the outcome while adjusting for pre-treatment measurements [32]. This approach generally provides the most precise estimates when pre-treatment measures are similar between groups [32].

- ANCOVA-CHANGE: Models the change score as the outcome while still adjusting for baseline values [32]. This method allows assessment of whether change occurred in individual treatment groups [32].

- Generalized Synthetic Control Method (GSC): When data for multiple time points and control groups are available, this data-adaptive method can account for rich forms of unobserved confounding and relax the parallel trend assumption [33].
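
To make the relationship between ANCOVA-POST and ANCOVA-CHANGE concrete, the following sketch fits both models by ordinary least squares on simulated data. The data-generating values (baseline gap of 3, true effect of 4, slope of 0.8) and the helper `ols` are hypothetical and exist only for illustration. Because the change score is simply post minus pre and the pretest is a covariate in both models, the estimated treatment effect is algebraically identical; only the baseline slope shifts by exactly 1.

```python
import random

def ols(X, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    p = len(X[0])
    # Build the augmented matrix [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(p)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(p)]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(p):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]

rng = random.Random(42)
rows, post, change = [], [], []
for i in range(200):
    group = i % 2                            # 0 = control, 1 = treatment
    pre = rng.gauss(50 + 3 * group, 10)      # groups differ at baseline
    y = 10 + 0.8 * pre + 4 * group + rng.gauss(0, 5)
    rows.append([1.0, float(group), pre])    # intercept, group, pretest
    post.append(y)
    change.append(y - pre)

b_post = ols(rows, post)      # ANCOVA-POST: outcome is the posttest score
b_change = ols(rows, change)  # ANCOVA-CHANGE: outcome is the change score
print(f"ANCOVA-POST   effect: {b_post[1]:.3f}, baseline slope: {b_post[2]:.3f}")
print(f"ANCOVA-CHANGE effect: {b_change[1]:.3f}, baseline slope: {b_change[2]:.3f}")
```

In practice, a statistics package would also report standard errors and diagnostics; the point of the sketch is only that the choice between the two ANCOVA forms affects interpretation, not the adjusted treatment estimate.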

FAQ 4: How can I troubleshoot unexpected results or method failures in my quasi-experimental study?

Method failures that require troubleshooting are a natural part of scientific inquiry [34]. Effective troubleshooting involves the following steps:

- Clearly define the problem: Articulate what was expected versus what was observed, examine collected data for patterns, and validate your experimental design against established literature [34].

- Analyze the design: Assess whether appropriate control groups were included, whether the sample size was sufficient, whether randomization (where used) was properly implemented, and whether data collection methods were appropriate [34].

- Consider external variables: Environmental conditions, timing of experiments, and biological variability can all contribute to unexpected results [34].

- Implement changes systematically: Develop detailed standard operating procedures, strengthen control measures, increase sample sizes if needed, and improve data collection techniques [34].

Comparison of Quasi-Experimental Designs

Table 1: Features of Different Quasi-Experimental Designs

| Design Type | Data Requirements | Key Strengths | Key Limitations | Appropriate Statistical Methods |
|---|---|---|---|---|
| Pre-Post (Single Group) | Two time periods (before & after intervention) [33] | Simple implementation; requires minimal resources [35] | Vulnerable to history, maturation, regression to mean [30] | Paired t-test; McNemar's test [32] |
| Interrupted Time Series (ITS) | Multiple measurements before & after intervention [33] | Controls for stable baseline trends; models temporal patterns [33] | Requires many time points; vulnerable to coincidental interventions [33] | Segmented regression; autoregressive models [33] |
| Posttest Only with Nonequivalent Groups | One post-intervention measurement from treatment & control groups [31] | Provides comparison group when pretest not feasible [30] | Cannot verify group similarity; vulnerable to selection bias [30] | Independent t-test; ANOVA-POST [32] |
| Pretest-Posttest with Nonequivalent Groups | Pre & post measurements from both treatment & control groups [31] | Checks group similarity at baseline; controls for some confounding [30] | Groups may differ on unmeasured variables; differential history threat [31] | ANCOVA-POST; ANCOVA-CHANGE [32] |
| Interrupted Time Series with Nonequivalent Groups | Multiple measurements before & after in both groups [31] | Strong causal inference; controls for secular trends & many threats [33] | Data intensive; requires comparable control group with similar measurements [33] | Controlled interrupted time series; difference-in-differences [33] |
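
As a worked illustration of the difference-in-differences estimator listed in the table, the sketch below subtracts the control group's pre-to-post change from the treatment group's change. The weights are hypothetical numbers chosen for easy arithmetic, and the function name is an assumption made for this example.

```python
from statistics import mean

def difference_in_differences(treat_pre, treat_post, ctrl_pre, ctrl_post):
    """Treatment-group change minus control-group change."""
    treat_change = mean(treat_post) - mean(treat_pre)
    ctrl_change = mean(ctrl_post) - mean(ctrl_pre)
    return treat_change - ctrl_change

# Hypothetical body weights (kg) before and after the study period.
treat_pre, treat_post = [82, 85, 88, 90], [76, 80, 83, 84]
ctrl_pre, ctrl_post = [80, 84, 86, 89], [79, 82, 85, 87]

did = difference_in_differences(treat_pre, treat_post, ctrl_pre, ctrl_post)
print(f"Difference-in-differences estimate: {did:.2f} kg")  # prints -4.00 kg
```

Here the treatment group changed by -5.5 kg and the control group by -1.5 kg, so the estimate is -4.0 kg. The estimate is interpretable as a treatment effect only if the two groups would have followed parallel trends without the intervention.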

Table 2: Performance Comparison of Analytical Methods for Pre-Post Data

| Analytical Method | Variance of Treatment Effect | Appropriate Applications | Advantages | Limitations |
|---|---|---|---|---|
| ANOVA-POST | Higher variance as it doesn't adjust for baseline [32] | When pre-post correlation is low; randomized studies with minimal baseline imbalance [32] | Simple to implement and interpret [32] | Less precise; sensitive to baseline imbalance [32] |
| ANOVA-CHANGE | Moderate variance [32] | When interest is specifically in change scores; preliminary analysis [32] | Directly addresses change; intuitive interpretation [32] | Less efficient than ANCOVA; can be biased with baseline imbalance [32] |
| ANCOVA-POST | Lower variance due to adjustment for baseline [32] | Most applications, especially when pre-post correlation is moderate to high [32] | Maximizes power and precision; unbiased with proper randomization [32] | Can be biased with substantial baseline imbalance (Lord's Paradox) [32] |
| ANCOVA-CHANGE | Similar variance to ANCOVA-POST [32] | When assessing group-specific change patterns is important [32] | Combines benefits of change scores with covariate adjustment [32] | Less commonly used; limited software implementation [32] |
| Generalized SCM | Minimal bias in multiple-group, multiple-time-point settings [33] | When data for multiple control groups and time points are available [33] | Accounts for unobserved confounding; relaxes parallel trends assumption [33] | Complex implementation; requires specialized software [33] |
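
The variance ordering in the table can be illustrated with the standard large-sample approximations for a randomized two-group comparison. The sketch assumes equal group sizes, equal pretest and posttest variances, and a pre-post correlation `rho`; these simplifying assumptions are introduced for this example and are not stated in the source. Under them, the variance relative to ANOVA-POST is 2(1 - rho) for the change-score analysis and 1 - rho² for ANCOVA.

```python
def relative_variance(rho):
    """Variance of each treatment-effect estimator relative to ANOVA-POST.

    Assumes equal pre/post variances, equal group sizes, and a large sample.
    """
    return {
        "ANOVA-POST": 1.0,
        "ANOVA-CHANGE": 2.0 * (1.0 - rho),
        "ANCOVA": 1.0 - rho ** 2,
    }

for rho in (0.2, 0.5, 0.8):
    rounded = {name: round(v, 2) for name, v in relative_variance(rho).items()}
    print(f"rho={rho}: {rounded}")
```

Under these assumptions, the change-score analysis is more precise than ANOVA-POST only when the pre-post correlation exceeds 0.5, whereas ANCOVA is never less precise than either alternative.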

The Scientist's Toolkit: Key Research Design Solutions

Table 3: Essential Methodological Approaches for Quasi-Experimental Designs

| Methodological Approach | Function | Application Context |
|---|---|---|
| Matched-Pairs Design | Controls extraneous variables by matching participants on key variables before assignment to intervention/control [35] | Randomized controlled trials where specific confounding variables are known [35] |
| Repeated-Measures Design | Measures same participants multiple times to control for between-subject variability [35] | When participant matching on all relevant variables isn't feasible [35] |
| Crossover Design | Participants act as their own controls by receiving both intervention and control in different periods [35] | When carryover effects can be minimized or accounted for [35] |
| Switching Replication | Provides built-in replication by staggering intervention across groups [31] | When ethical to delay treatment for some participants; strengthens causal inference [31] |
| Fallback Strategies | Alternative approaches when primary analytical method fails [36] | When encountering convergence problems or other methodological failures [36] |

Experimental Workflows and Methodologies

[Figure: Quasi-Experimental Design Selection Workflow]

[Figure: Experimental Design Troubleshooting Process]

FAQs: Core Concepts and Design Selection

Q1: What is the fundamental difference between a Factorial and a SMART design?

The table below summarizes the core differences in their purpose, structure, and application.

Table 1: Comparison of Factorial and SMART Designs

| Feature | Factorial Design | SMART Design |
|---|---|---|
| Primary Goal | To evaluate the individual and interactive effects of multiple intervention components simultaneously. [37] | To build an optimal adaptive intervention: a sequence of decision rules that guides how to alter treatment over time. [38] [39] |
| Structure | Participants are randomized to one of several combinations of factors (e.g., A+B+, A+B-, A-B+, A-B-). | Participants are randomized at two or more decision points. Later randomizations can depend on the patient's response to prior treatment. [38] |
| Key Question | "What is the effect of each component, and do they interact?" | "What is the best treatment to start with, and what is the best next-step for responders/non-responders?" [38] |

Q2: When should a researcher choose a SMART design over a more traditional trial?

A SMART design is the appropriate choice when your research goal involves optimizing a sequence of treatment decisions, especially when there is expected heterogeneity in how individuals respond to an initial treatment. [38] [39] This is common in managing chronic conditions like obesity, substance use, or depression, where a single, static treatment is insufficient for all patients. [39] If your question is simply about the efficacy of a single treatment or the combined effect of multiple components at one point in time, a factorial or standard multi-arm trial may be more suitable. [37]

Q3: Can SMART designs be used in cluster-randomized trials (cRCTs)?

Yes, recent research indicates that adaptive designs like SMART can be applied to cluster-randomized controlled trials (cRCTs), even those with a limited number of clusters. [37] However, feasibility is influenced by the intra-cluster correlation coefficient (ICC). A high ICC can increase the risk of incorrect interim decisions (e.g., dropping the most effective arm) due to reduced effective sample size and power. [37] Bayesian hierarchical models are often used to analyze these trials because they can provide valuable insights with fewer participants and clusters. [37]
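
The effect of the ICC on effective sample size can be approximated with the standard design-effect formula for equal-sized clusters, DE = 1 + (m - 1) × ICC, where m is the cluster size. The sketch below uses hypothetical trial dimensions (10 clusters of 30 participants) that are assumptions for illustration only.

```python
def design_effect(cluster_size, icc):
    """Variance inflation from clustering, assuming equal cluster sizes."""
    return 1.0 + (cluster_size - 1) * icc

def effective_sample_size(n_clusters, cluster_size, icc):
    """Number of independent observations the clustered sample is worth."""
    return n_clusters * cluster_size / design_effect(cluster_size, icc)

for icc in (0.01, 0.05, 0.20):
    ess = effective_sample_size(n_clusters=10, cluster_size=30, icc=icc)
    print(f"ICC={icc:.2f}: effective sample size is about {ess:.0f} of 300")
```

Even a modest ICC sharply reduces the information available at an interim analysis, which is one reason interim decisions in small cRCTs can be unreliable.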

Q4: Does conducting a SMART eliminate the need for a subsequent definitive randomized controlled trial (RCT)?

Generally, no. A common misconception is that a SMART provides definitive evidence of an adaptive intervention's effectiveness. The primary objective of a SMART is to construct a high-quality adaptive intervention. Following a SMART, researchers often decide to evaluate the resulting adaptive intervention in a confirmatory, randomized trial. [38]

Troubleshooting Guides: Implementation Challenges and Solutions

Challenge 1: Low Power or Incorrect Interim Decisions in Cluster-Randomized SMARTs

Problem: Interim analyses in an adaptive cRCT with few clusters incorrectly drop a promising intervention arm, or the trial lacks the power to detect meaningful effects. [37]

Solutions:

- Simulation Study: Before the trial, conduct a simulation study to assess operating characteristics (power, Type I error) and the risk of incorrect interim decisions under various scenarios (e.g., different ICCs, cluster sizes). [37]

- Bayesian Methods: Use Bayesian hierarchical models for interim and final analyses, as they can perform better than frequentist methods when the number of clusters is small. [37]

- Design Parameter Adjustment: Consider adjusting design parameters. For example, the timing of the interim analysis can be calibrated to ensure sufficient data from clusters is available for reliable decision-making. [37]
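
A pre-trial simulation of the kind described above can be sketched in a few lines. This is a deliberately simplified illustration, not the approach of the cited work: it assumes two arms, a total outcome variance of 1, a naive interim rule that drops whichever arm has the lower observed mean, and hypothetical values for the effect size and cluster counts. A real design study would simulate the planned Bayesian or frequentist decision rule instead.

```python
import random

def prob_best_arm_dropped(icc, n_clusters_per_arm=5, cluster_size=20,
                          true_effect=0.3, n_sims=2000, seed=1):
    """Estimate how often the truly better arm has the lower interim mean."""
    rng = random.Random(seed)
    # Total variance is fixed at 1, so between-cluster variance equals the ICC
    # and within-cluster variance equals 1 - ICC.
    sd_cluster_mean = (icc + (1.0 - icc) / cluster_size) ** 0.5
    dropped = 0
    for _ in range(n_sims):
        control = [rng.gauss(0.0, sd_cluster_mean)
                   for _ in range(n_clusters_per_arm)]
        better = [rng.gauss(true_effect, sd_cluster_mean)
                  for _ in range(n_clusters_per_arm)]
        # Naive interim rule: the arm with the lower observed mean is dropped.
        if sum(better) < sum(control):
            dropped += 1
    return dropped / n_sims

for icc in (0.01, 0.05, 0.20):
    print(f"ICC={icc:.2f}: P(best arm dropped) is about "
          f"{prob_best_arm_dropped(icc):.3f}")
```

Running the same scenario across a grid of ICCs, cluster sizes, and interim timings gives a rough picture of where the design becomes too risky before any participants are enrolled.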

Challenge 2: Defining Tailoring Variables and Response Status

Problem: Poorly defined tailoring variables (the measures used to guide treatment adaptation) lead to ambiguous or suboptimal treatment decisions. [39]

Solutions:

- Distinguish from Research Assessments: Tailoring variables should generally be assessments feasible for use in routine clinical practice, not just comprehensive research batteries. [38]

- Use Evidence-Based Cut-Points: Base definitions of "response" and "non-response" on prior empirical research and clinical expertise. For instance, in a weight loss study, non-response could be defined as losing less than 5 pounds after 5 weeks, a cutoff supported by previous studies. [39]

- Consider Baseline and Intermediate Variables: Tailoring can use both baseline tailoring variables (e.g., patient comorbidities) collected before the first stage and intermediate tailoring variables (e.g., early adherence or symptom change) collected during a stage. [39]

Challenge 3: Interpreting Results from a SMART

Problem: Misinterpreting the outcome of a SMART as a direct test of an adaptive intervention's effectiveness, rather than as a tool for constructing one.

Solutions:

- Analyze Embedded Adaptive Interventions: The primary analysis should compare the "embedded adaptive interventions" (i.e., the complete sequences of decision rules) that are represented within the SMART design. [38] For example, a prototypical SMART with two initial treatments and two secondary treatments for non-responders embeds four distinct adaptive interventions. [38]

- Avoid Causal Bias: Use appropriate statistical methods that account for the effects of interventions across multiple stages to avoid causal bias. [38]
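
The count of four embedded adaptive interventions in the prototypical design follows from crossing the two first-stage options with the two second-stage options for non-responders. The sketch below enumerates them; the treatment labels are hypothetical placeholders, and it assumes responders simply continue their first-stage treatment.

```python
from itertools import product

first_stage = ["Treatment A", "Treatment B"]        # hypothetical labels
second_stage_nonresponders = ["Augment", "Switch"]  # hypothetical labels

# Each embedded adaptive intervention is one complete set of decision rules.
embedded = [
    {"start_with": first,
     "if_responder": "continue",
     "if_non_responder": second}
    for first, second in product(first_stage, second_stage_nonresponders)
]

for number, rule in enumerate(embedded, start=1):
    print(number, rule)
```

Each participant's data are consistent with more than one of these embedded interventions (for example, a responder to Treatment A is consistent with both rules that start with Treatment A), which is why the analysis needs methods designed for multi-stage data rather than a simple comparison of final treatment cells.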

Experimental Protocols

Protocol 1: Prototypical Two-Stage SMART for a Behavioral Intervention

This protocol outlines a standard SMART design for building an adaptive behavioral intervention, such as for weight loss. [39]

Objective: To construct an adaptive intervention for weight loss that begins with Individual Behavioral Therapy (IBT) and adapts for early non-responders.

Stage 1 (Months 1-2):

- Randomization: All participants are randomized to receive either Short-duration IBT (5 weekly sessions) or Long-duration IBT (10 weekly sessions).

- Intervention: Deliver the assigned IBT program.

- Assessment: At the end of Stage 1, assess the primary tailoring variable: weight change from baseline.

Decision Point:

- Responder: A participant who loses ≥5 lbs. [39]

- Non-responder: A participant who loses <5 lbs.
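
The decision rule above can be written as a small function so that response status is assigned identically for every participant. The function and parameter names are hypothetical; only the 5 lb threshold comes from the protocol.

```python
def classify_response(baseline_weight_lb, stage1_weight_lb, threshold_lb=5.0):
    """Classify a participant at the end of Stage 1 by weight lost (lb)."""
    weight_lost = baseline_weight_lb - stage1_weight_lb
    return "responder" if weight_lost >= threshold_lb else "non-responder"

print(classify_response(200, 194))  # lost 6 lb
print(classify_response(200, 197))  # lost 3 lb
```

Fixing the rule in code before enrolment also documents how boundary cases are handled: a loss of exactly 5 lb counts as a response, and weight gain counts as non-response.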

Stage 2 (Months 3-6):

- Responders: Continue with their assigned IBT program.