Quantitative Bias Analysis: A Practical Guide to Strengthening Observational Studies and Real-World Evidence



Abstract

This article provides a comprehensive guide to Quantitative Bias Analysis (QBA) for researchers and drug development professionals who use observational studies and real-world evidence. It covers foundational concepts, explaining why QBA is needed to quantify systematic error rather than merely identify it. It details the core methodological approaches, from simple deterministic to probabilistic analyses, and their practical application in real-world scenarios such as constructing external control arms. It also offers strategies for troubleshooting common challenges such as missing data and unmeasured confounding, and guidance on validating QBA findings and comparing them against traditional methods. By synthesizing current methodologies, software tools, and regulatory perspectives, this article serves as a primer for integrating QBA to enhance the rigor, transparency, and credibility of non-randomized study designs.

Beyond the Limitation: Why Quantitative Bias Analysis is Essential for Robust Observational Research

In scientific research, systematic error, often referred to as bias, is a consistent or proportional difference between observed values and the true values of what is being measured [1]. Unlike random error, which introduces unpredictable variability and affects precision, systematic error skews data in a specific direction, thereby compromising the accuracy of measurements and leading to flawed conclusions about relationships between variables [1] [2]. This distortion can cause both false positive (Type I) and false negative (Type II) conclusions, making systematic error a more critical threat to research validity than random error [1]. Within the context of observational studies and method comparison experiments, systematic error manifests primarily through confounding, selection bias, and information bias, each representing a distinct mechanism that can invalidate study findings if not properly identified and addressed [3] [4] [2].

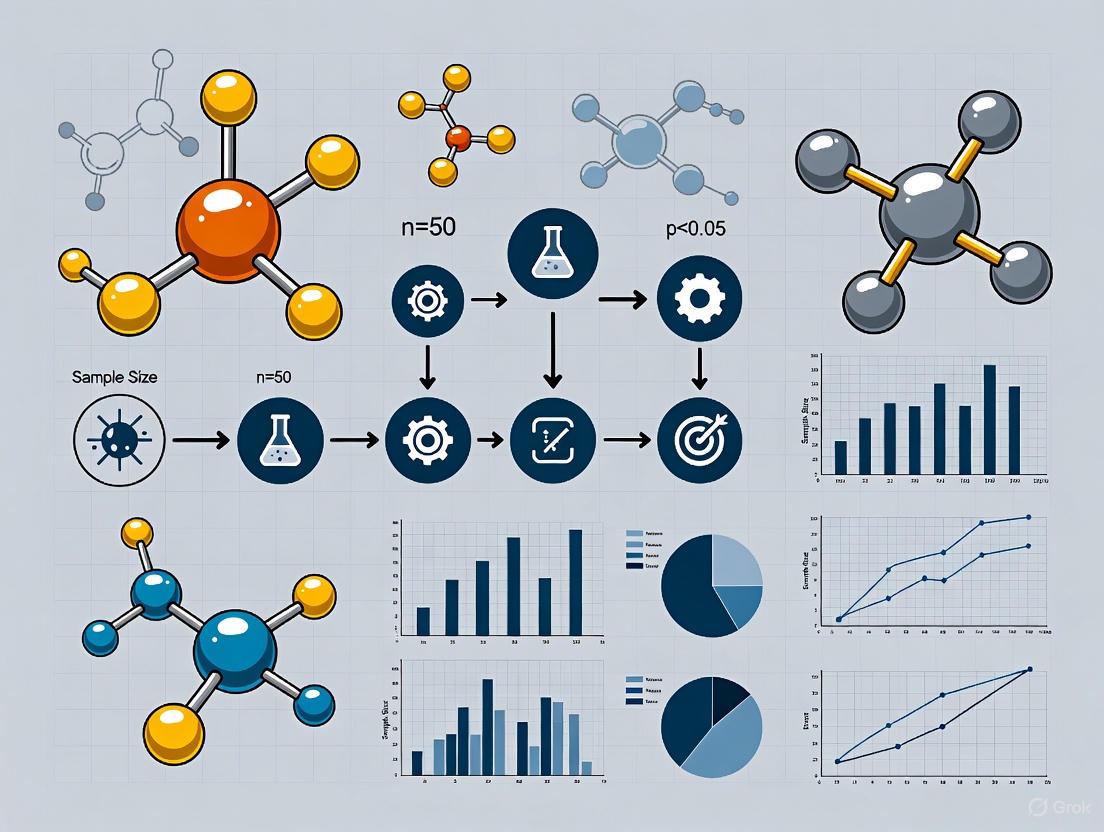

The following diagram illustrates the fundamental relationship between these core concepts of systematic error and the modern approach to addressing them.

Defining the Core Types of Systematic Error

Confounding

Confounding occurs when the observed association between an exposure and an outcome is distorted, either exaggerated or masked, by the presence of an extraneous variable, known as a confounder [2]. For a variable to be a confounder, it must meet three specific criteria, as detailed in the table below [2].

Table 1: Criteria and Mechanisms of Confounding

| Criterion | Description | Example |

|---|---|---|

| Risk Factor for Disease | The variable must be an independent risk factor for the outcome. | In a study on alcohol and heart disease, smoking must cause heart disease. |

| Associated with Exposure | The variable must be statistically associated with the primary exposure. | Smoking must be more common among drinkers than non-drinkers. |

| Not a Causal Intermediate | The variable must not lie on the causal pathway from exposure to outcome. | Smoking must not be a consequence of drinking through which alcohol affects heart disease; it must be a separate factor. |

Confounding can produce either positive confounding, which biases the observed association away from the null, making an effect appear larger than it truly is, or negative confounding, which biases the association toward the null, obscuring a real effect [2]. The direction and magnitude of this bias depend on the uneven distribution of the confounder between the study groups being compared [3].

Selection Bias

Selection bias is a systematic error that arises from the procedures used to select or retain participants in a study, leading to a sample that is not representative of the target population [4] [2]. This bias introduces a systematic difference between the participants who are included in the study and those who are not, which can distort the estimated association between exposure and outcome [3] [4]. Selection bias can affect the generalizability of results (external validity) and the comparability of study groups (internal validity) [3]. It is a particular risk in case-control studies where controls are not representative of the population that produced the cases, and in cohort studies where there is differential loss to follow-up [3] [2].

Table 2: Common Types of Selection Bias

| Type of Bias | Common in Study Designs | Mechanism |

|---|---|---|

| Sampling Bias | Cross-sectional studies, cohort studies [4] | Selecting a non-representative sample of the source population, often due to low response rates [4]. |

| Confounding by Indication | Non-randomized intervention studies [4] | A physician's treatment decision is based on patient prognosis, introducing unmeasured confounders [4]. |

| Incidence-Prevalence Bias (Survivor Bias) | Cross-sectional studies, cohort using prevalent cases [4] | Over-representation of long-term survivors in a study, who may have different characteristics [4]. |

| Attrition Bias | Randomized controlled trials (RCTs), prospective cohorts [4] | Differential loss of participants from study groups, related to both exposure and outcome [3] [4]. |

| Healthy Worker Effect | Occupational cohort studies [3] | Employed populations are generally healthier than the external comparison population, which includes those unfit to work [3]. |

Information Bias

Information bias, also known as misclassification bias, is a systematic error that occurs during data collection due to inaccurate measurement or classification of disease, exposure, or other variables [4] [2]. This bias can affect the observed association between exposure and outcome by misrepresenting the true status of participants. A key distinction in information bias is whether the misclassification is differential or non-differential [5] [2].

- Non-differential misclassification occurs when the probability of misclassification is the same across all study groups (e.g., for both cases and controls, or exposed and unexposed) [2]. This type of error tends to bias the measure of association toward the null, making a real effect harder to detect [5].

- Differential misclassification occurs when the probability of misclassification differs between study groups [2]. This can bias the effect estimate either away from the null or toward the null, and is generally considered a more serious flaw [5].

Table 3: Types and Sources of Information Bias

| Type of Bias | Mechanism | Prevention Strategies |

|---|---|---|

| Recall Bias [3] [4] | Cases and controls recall past exposures with differential accuracy [3] [4]. | Use of medical records; blinding participants to hypothesis [3]. |

| Interviewer/Observer Bias [3] [4] | The interviewer or observer influences data collection based on knowledge of participant's status or the hypothesis [3] [4]. | Blinding of interviewers/observers; standardized protocols and calibrated instruments [3]. |

| Social Desirability/ Reporting Bias [3] | Participants over-report positive or under-report undesirable behaviors [3]. | Anonymous data collection; careful questionnaire design. |

| Instrument Bias [3] | An inadequately calibrated instrument systematically over/underestimates measurements [3]. | Regular calibration of instruments [3] [1]. |

Quantitative Bias Analysis: A Modern Framework

Quantitative Bias Analysis (QBA) is a suite of statistical methods designed to quantify the potential impact of biases, such as confounding, selection bias, and information bias, on a study's results [6]. Instead of merely acknowledging bias as a limitation, QBA allows researchers to assess how sensitive their conclusions are to potential systematic errors [7] [8] [6]. The core principle involves using bias parameters—values that represent assumptions about the bias—to model and adjust the observed effect estimate [6].

QBA methods can be broadly classified into two categories, each with a specific purpose and workflow.

- Deterministic QBA: This approach uses one or more fixed values for each bias parameter. Simple bias analysis uses a single value for each parameter to produce one bias-adjusted estimate. Multidimensional bias analysis uses multiple values for each parameter to see how the adjusted estimate varies across a range of scenarios. A particularly useful form is the tipping point analysis, which identifies how severe a bias would need to be to change a study's conclusions (e.g., from significant to non-significant) [6].

- Probabilistic QBA: This more advanced approach assigns probability distributions to the bias parameters, which allows researchers to account for uncertainty about the exact values of these parameters. Monte Carlo bias analysis involves repeatedly sampling values from these distributions and calculating a bias-adjusted estimate for each sample. Bayesian bias analysis formally combines prior distributions for the bias parameters with the observed data. Both methods result in a distribution of bias-adjusted estimates that can be summarized with an uncertainty interval [6].
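
The deterministic workflow above can be sketched for one bias, an unmeasured binary confounder. This is a minimal illustration, not the method of any cited study: it assumes the effect measure is a risk ratio, that the confounder-outcome risk ratio is the same in exposed and unexposed groups, and that the standard external-adjustment bias factor applies. The function names and all numeric values are hypothetical.

```python
def confounding_bias_factor(rr_cd, p_exposed, p_unexposed):
    """Bias factor from one unmeasured binary confounder (risk-ratio scale).

    rr_cd       : assumed confounder-outcome risk ratio
    p_exposed   : assumed confounder prevalence among the exposed
    p_unexposed : assumed confounder prevalence among the unexposed
    """
    return (rr_cd * p_exposed + 1 - p_exposed) / (rr_cd * p_unexposed + 1 - p_unexposed)


def adjust_rr(rr_obs, rr_cd, p_exposed, p_unexposed):
    """Bias-adjusted risk ratio: observed estimate divided by the bias factor."""
    return rr_obs / confounding_bias_factor(rr_cd, p_exposed, p_unexposed)


def tipping_point_rr_cd(rr_obs, p_exposed, p_unexposed):
    """Confounder-outcome risk ratio at which the adjusted RR equals 1.

    Returns None when no positive, finite value moves the estimate
    to the null for the given prevalences.
    """
    denominator = p_exposed - rr_obs * p_unexposed
    if denominator <= 0:
        return None
    value = (rr_obs * (1 - p_unexposed) - (1 - p_exposed)) / denominator
    return value if value > 0 else None


rr_obs = 1.5  # hypothetical observed risk ratio

# Simple bias analysis: one fixed value per bias parameter.
simple = adjust_rr(rr_obs, rr_cd=2.0, p_exposed=0.4, p_unexposed=0.2)

# Multidimensional bias analysis: a grid of plausible parameter values.
grid = {
    (rr_cd, p1): adjust_rr(rr_obs, rr_cd, p1, 0.2)
    for rr_cd in (1.5, 2.0, 3.0)
    for p1 in (0.3, 0.4, 0.5)
}

# Tipping point: how strong the confounder must be to explain away the effect.
tipping = tipping_point_rr_cd(rr_obs, p_exposed=0.4, p_unexposed=0.2)

print(f"simple bias-adjusted RR: {simple:.3f}")
for (rr_cd, p1), rr_adj in sorted(grid.items()):
    print(f"RR_CD={rr_cd:.1f}, p_exposed={p1:.1f} -> adjusted RR={rr_adj:.3f}")
print(f"tipping-point RR_CD: {tipping:.2f}")
```

Under these assumed prevalences, the confounder would need a risk ratio of about 6 with the outcome to move the estimate to the null, which is the kind of statement a tipping point analysis is meant to support.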

The application of QBA is demonstrated in real-world research. A 2025 study on inhaled corticosteroids and COVID-19 outcomes in COPD patients used QBA to quantify potential selection bias from unobserved patients who died outside the hospital. The analysis tested four scenarios with different assumed death rates in non-hospitalized groups, finding that the odds ratio remained statistically unchanged, suggesting the conclusions were robust to this potential bias [8]. Another 2025 study emulating single-arm trials with external control arms for lung cancer treatments used QBA to adjust for unmeasured confounding. The difference between the log hazard ratios from the original trial and the emulation was reduced from 0.247 to 0.098 after QBA, highlighting its utility in improving the validity of non-randomized comparisons [7].

Experimental Protocols for Bias Assessment

The Comparison of Methods Experiment

The comparison of methods experiment is a critical study design used to estimate the systematic error, or inaccuracy, of a new (test) method against a comparative method [9]. The purpose is to quantify systematic differences using real patient specimens, providing data that can be analyzed to judge the method's acceptability [9].

- Comparative Method Selection: An ideal comparative method is a reference method with documented correctness. When using a routine method, differences must be interpreted with caution, as they may reflect errors in either method [9].

- Specimen Requirements: A minimum of 40 patient specimens is recommended, selected to cover the entire working range of the method. The quality and range of specimens are more important than a large number. To assess specificity, 100-200 specimens may be needed [9].

- Experimental Procedure: Specimens should be analyzed by both methods within a short time frame (e.g., two hours) to ensure stability. The experiment should be conducted over several different analytical runs, with a minimum of 5 days recommended, to minimize systematic errors from a single run. Duplicate measurements are advised to identify mistakes or outliers [9].

- Data Analysis Protocol:

- Graph the Data: Create a difference plot (test result minus comparative result vs. comparative result) or a comparison plot (test result vs. comparative result) to visually inspect for patterns, outliers, and systematic errors [9].

- Calculate Statistics: For data covering a wide analytical range, use linear regression to obtain the slope (b) and y-intercept (a) of the line of best fit. The systematic error (SE) at a critical medical decision concentration (Xc) is calculated as:

$$Y_c = a + b X_c, \qquad SE = Y_c - X_c$$

where $Y_c$ is the test-method result predicted by the regression line at $X_c$ [9].

- Interpret Results: The calculated systematic error at decision points should be compared to medically acceptable limits to judge the method's suitability [9].
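
The regression-based calculation in the data analysis protocol can be sketched as follows. This is an illustrative example only: the paired results are invented, the helper names `fit_line` and `systematic_error` are hypothetical, and only eight specimens are shown for brevity, whereas the protocol recommends at least 40.

```python
from statistics import mean


def fit_line(x, y):
    """Ordinary least-squares intercept a and slope b for y = a + b * x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = y_bar - b * x_bar
    return a, b


def systematic_error(a, b, xc):
    """Systematic error at decision concentration xc: SE = Yc - Xc, Yc = a + b * Xc."""
    yc = a + b * xc
    return yc - xc


# Hypothetical paired results (comparative method on x, test method on y).
comparative = [52.0, 75.0, 98.0, 121.0, 150.0, 183.0, 210.0, 248.0]
test_method = [54.1, 77.9, 101.2, 125.3, 154.8, 189.5, 216.9, 256.0]

a, b = fit_line(comparative, test_method)
print(f"intercept a = {a:.3f}, slope b = {b:.4f}")

# Evaluate the systematic error at two hypothetical medical decision levels.
for xc in (100.0, 200.0):
    print(f"Xc = {xc:.0f}: SE = {systematic_error(a, b, xc):.2f}")
```

The resulting values would then be compared against the medically acceptable limits at each decision level.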

Protocol for Controlling Confounding in Study Design

Addressing confounding begins at the design stage of a study through several key techniques [2].

- Randomization: Randomly assigning participants to exposure groups, as in Randomized Controlled Trials (RCTs), is the most effective method. It balances both known and unknown confounders across groups, making the groups comparable [4] [2].

- Restriction: The study can be restricted to only include participants of a certain category of the confounder (e.g., only non-smokers). This eliminates variability in that confounder but can reduce the generalizability of the results and limits the ability to study that factor [2].

- Matching: In case-control studies, controls can be selected to match the cases on the distribution of the confounder (e.g., age, sex). This ensures comparability between cases and controls for the matched variables [2].

Table 4: Key Tools and Resources for Bias Analysis

| Tool / Resource | Function / Description | Example Use Case |

|---|---|---|

| Calibrated Instruments [3] | Measurement tools that are regularly calibrated against a known standard to reduce instrument bias. | Using a calibrated sphygmomanometer to ensure accurate blood pressure readings across study groups [3]. |

| Standardized Protocols & Questionnaires [3] | Pre-defined, consistent procedures and data collection instruments to minimize observer and interviewer bias. | Ensuring all interviewers ask the same questions in the same order and tone to prevent leading participants [3]. |

| Blinding (Masking) [3] [1] | A procedure where investigators, participants, and/or outcome assessors are kept unaware of group assignments or the study hypothesis. | In an RCT, blinding outcome assessors to whether a participant received the drug or placebo to prevent detection bias [3]. |

| Software for QBA [6] | Specialized statistical software packages that implement quantitative bias analysis methods. | Using R or Stata tools to perform a probabilistic bias analysis for misclassification of a binary exposure [6]. |

| Validation Data [6] | Ancillary data from internal or external validation studies used to inform bias parameters in QBA. | Using a sub-study with gold-standard exposure measurements to estimate sensitivity and specificity for a main study using a proxy measure [6]. |

Systematic error in its primary forms—confounding, selection bias, and information bias—represents a fundamental challenge to the validity of observational research and method comparison studies. Confounding distorts associations through extraneous variables, selection bias arises from non-representative participant selection, and information bias stems from inaccurate measurement. While robust study design remains the first line of defense, the framework of Quantitative Bias Analysis provides a powerful, quantitative approach to move beyond qualitative caveats. By formally modeling the potential impact of these biases, QBA allows researchers and drug development professionals to assess the robustness of their findings and present a more transparent and complete picture of a study's uncertainty, ultimately leading to more reliable scientific evidence.

In observational research, the traditional approach to addressing systematic error has largely been qualitative. Researchers typically identify potential biases—such as confounding, selection bias, and information bias—in their study's limitations section, with a narrative description of how these biases might influence results [10]. While acknowledging biases is crucial, this qualitative approach fails to answer critical questions: How much could a specific bias alter the effect estimate? Would it change the study's conclusions? This limitation is particularly critical in drug development and comparative effectiveness research, where decisions about therapeutic safety and efficacy demand precise understanding of uncertainty [11] [12].

Quantitative Bias Analysis (QBA) represents a paradigm shift, moving from merely identifying biases to formally quantifying their potential magnitude and direction. QBA provides quantitative estimates of how systematic errors might affect observed associations, offering a more rigorous framework for interpreting observational study findings [10]. This shift is especially valuable for regulatory science and pharmaceutical research, where observational studies using real-world data increasingly inform decisions when randomized trials are impractical, unethical, or insufficient [13].

The Methodology of Quantitative Bias Analysis

Core Concepts and Definitions

QBA addresses systematic error, which, unlike random error, does not decrease with increasing study size [10]. The most common sources include:

- Confounding: Bias from the mixing of exposure-outcome effects with other outcome-affecting factors.

- Selection Bias: Bias from selection procedures, participation factors, or differential loss to follow-up.

- Information Bias: Systematic errors in measuring exposures, outcomes, or confounders [10].

Hierarchical Approaches to QBA

QBA methods exist on a spectrum of sophistication, allowing researchers to select approaches matching their analytical needs and available data.

Table 1: Hierarchy of Quantitative Bias Analysis Methods

| Method Type | Parameter Specification | Output | Key Applications |

|---|---|---|---|

| Simple Bias Analysis | Single values for bias parameters | Single bias-adjusted estimate | Initial, straightforward assessment of a single bias source [10] |

| Multidimensional Bias Analysis | Multiple sets of bias parameters | Set of bias-adjusted estimates | Contexts with uncertainty about parameter values [10] |

| Probabilistic Bias Analysis | Probability distributions around bias parameters | Frequency distribution of revised estimates | Incorporating maximum uncertainty; modeling combined effects of multiple biases [10] |

Experimental Protocols and Implementation

Step-by-Step QBA Implementation Guide

Implementing QBA requires a structured approach to ensure valid and interpretable results:

- Determine the Need for QBA: QBA is particularly valuable when study results contradict established literature, when causal inference is an explicit goal, or when concerns about specific systematic errors exist despite rigorous design [10]. Directed Acyclic Graphs (DAGs) can help identify and communicate hypothesized bias structures [10].

- Select Biases to Address: Prioritization should align with study goals, whether to broadly assess all potential biases or to conduct an in-depth evaluation of the most concerning ones [10].

- Select a Modeling Method: Choose an approach balancing computational complexity with the potential impact of bias, considering whether summary-level or individual-level data are available [10].

- Identify Sources for Bias Parameters: Bias parameters (e.g., sensitivity/specificity for measurement error, participation rates for selection bias) are never known with certainty and must be estimated from validation studies, external literature, or expert opinion [10].

Applied Example: Misclassification of Obesity Status

A 2024 study demonstrated QBA implementation using the web tool Apisensr to correct for exposure misclassification in the relationship between obesity and diabetes [14].

Experimental Protocol:

- Data Source: National Health and Nutrition Examination Survey (NHANES) data on adults aged 18-79 years.

- Measures: Compared obesity defined by self-reported BMI versus measured BMI, calculating bias parameters (sensitivity, specificity) across demographic strata.

- QBA Application: Used Apisensr to adjust prevalence odds ratios for diabetes, inputting bias parameters to correct misclassification [14].

Key Findings: The relationship between obesity and diabetes was consistently underestimated using self-reported BMI across all demographic groups. For instance, in non-Hispanic White men aged 40-59 years, the prevalence odds ratio increased from 3.06 (95% CI: 1.78, 5.30) using self-report to 4.11 (95% CI: 2.56, 6.75) after QBA adjustment [14].
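
The kind of correction applied in this example can be sketched with the standard back-calculation for a misclassified binary exposure. This is not the Apisensr implementation and does not use the NHANES data: the 2x2 counts, the sensitivity and specificity values, and the function names are hypothetical, and misclassification is assumed to be non-differential.

```python
def odds_ratio(a, b, c, d):
    """Odds ratio for a 2x2 table.

    a, b: exposed and unexposed among those with the outcome
    c, d: exposed and unexposed among those without the outcome
    """
    return (a * d) / (b * c)


def correct_exposure_misclassification(a, b, c, d, se, sp):
    """Back-calculate expected true cell counts from observed counts.

    Assumes the same sensitivity (se) and specificity (sp) of exposure
    classification in both outcome groups (non-differential).
    """
    if se + sp <= 1:
        raise ValueError("se + sp must exceed 1 for the correction to be defined")
    corrected = []
    for exposed, unexposed in ((a, b), (c, d)):
        total = exposed + unexposed
        true_exposed = (exposed - (1 - sp) * total) / (se + sp - 1)
        true_unexposed = total - true_exposed
        if true_exposed <= 0 or true_unexposed <= 0:
            raise ValueError("bias parameters are incompatible with the observed data")
        corrected.extend([true_exposed, true_unexposed])
    return tuple(corrected)


# Hypothetical observed counts: exposure classified from self-report.
observed = (200, 300, 300, 700)
adjusted = correct_exposure_misclassification(*observed, se=0.80, sp=0.95)

print(f"observed OR:      {odds_ratio(*observed):.3f}")
print(f"bias-adjusted OR: {odds_ratio(*adjusted):.3f}")
```

With these assumed values the adjusted odds ratio is farther from the null than the observed one, the same direction of correction reported in the study above.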

The Researcher's Toolkit: Essential Tools for QBA

Table 2: Key Tools for Quantitative Bias Analysis

| Tool Name | Type/Platform | Primary Function | Key Features |

|---|---|---|---|

| Apisensr | Web-based Shiny app | QBA for misclassification, selection bias, unmeasured confounding | No programming or statistical software required; slider bars to explore parameter values; sample data for training [14] |

| R package episensr | R statistical package | Comprehensive QBA implementation | Equivalent to Stata's episens; full flexibility for custom analyses [14] |

| Bayesian Data Augmentation | Statistical methodology | Multiple imputation of unmeasured confounders | Flexible handling of non-proportional hazards data; valid under proportional hazards violation [15] |

| ROBINS-E Tool | Quality assessment tool | Risk Of Bias in Non-randomized Studies - of Exposures | Standardized framework for assessing bias in systematic reviews [16] |

Advanced Applications in Pharmaceutical Research

Addressing Unmeasured Confounding in Indirect Treatment Comparisons

A 2025 study highlighted QBA for unmeasured confounding in Indirect Treatment Comparisons (ITCs), which are increasingly used to demonstrate relative efficacy of novel therapies when head-to-head randomized trials are unavailable [15].

Experimental Protocol:

- Challenge: Standard QBA methods require proportional hazards assumptions often violated in immunotherapy studies.

- Novel Method: Simulation-based QBA using Bayesian data augmentation to impute unmeasured confounders with user-specified characteristics.

- Outcome Measure: Focused on difference in Restricted Mean Survival Time (dRMST), valid under non-proportional hazards.

- Implementation: Multiple imputation of unmeasured confounder followed by weighted analysis using imputed values [15].

This approach enables "tipping point" analyses—identifying characteristics of an unmeasured confounder that would nullify study conclusions—providing crucial sensitivity analyses for regulatory decision-making [15].

Quantitative Selection Bias Analysis in COVID-19 Studies

A 2025 pharmacoepidemiologic study quantified selection bias in COVID-19 treatment studies using QBA [8].

Experimental Protocol:

- Research Question: Effect of inhaled corticosteroids on COVID-19 death in COPD patients, restricted to hospitalized cohorts.

- Bias Concern: Potential selection bias from excluding severe COVID-19 patients who died outside hospital.

- QBA Method: Implemented multiple scenarios varying assumed death rates among non-hospitalized patients.

- Analysis: Calculated bias-adjusted odds ratios across different selection probability scenarios [8].

Findings: Quantitative bias analysis revealed that death rates in non-hospitalized patients would need to be substantially different between treatment groups to change study conclusions, providing valuable context for interpreting the null findings [8].
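
A scenario-based selection bias analysis of this general kind can be sketched with the standard selection-probability adjustment for an odds ratio. This is an illustrative assumption, not the cited study's exact procedure: the observed odds ratio, the scenario names, and every selection probability below are hypothetical.

```python
def selection_adjusted_or(or_obs, s_case_exp, s_case_unexp, s_ctrl_exp, s_ctrl_unexp):
    """Adjust an observed odds ratio for selection bias.

    Each s_* is the assumed probability of being included in the study for
    one exposure-by-outcome combination. The observed OR equals the true OR
    multiplied by the selection bias factor, so dividing it out recovers
    the bias-adjusted estimate.
    """
    bias_factor = (s_case_exp * s_ctrl_unexp) / (s_case_unexp * s_ctrl_exp)
    return or_obs / bias_factor


or_obs = 0.90  # hypothetical observed odds ratio

# Hypothetical scenarios: order is (case/exposed, case/unexposed,
# non-case/exposed, non-case/unexposed) selection probabilities.
scenarios = {
    "no differential selection": (0.70, 0.70, 0.70, 0.70),
    "exposed deaths slightly under-captured": (0.65, 0.70, 0.70, 0.70),
    "exposed deaths moderately under-captured": (0.55, 0.70, 0.70, 0.70),
    "unexposed deaths under-captured": (0.70, 0.55, 0.70, 0.70),
}

results = {name: selection_adjusted_or(or_obs, *probs) for name, probs in scenarios.items()}
for name, value in results.items():
    print(f"{name}: bias-adjusted OR = {value:.3f}")
```

Comparing the adjusted estimates across scenarios shows how different the selection probabilities would have to be between groups before the conclusion changes.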

Comparative Analysis: Traditional vs. Quantitative Approaches

Visualization of the QBA Workflow

The following diagram illustrates the structured process of implementing quantitative bias analysis, from initial assessment to interpretation of adjusted results:

Impact Assessment Across Methodologies

Table 3: Qualitative Versus Quantitative Bias Assessment Comparison

| Assessment Aspect | Traditional Qualitative Approach | Quantitative Bias Analysis |

|---|---|---|

| Bias Description | Narrative discussion of potential direction and magnitude | Mathematical modeling of bias parameters and their effects |

| Output | Qualitative statements about possible influence | Quantitative, bias-adjusted effect estimates with uncertainty intervals |

| Interpretation | Subjective judgment of bias impact | Objective assessment of whether biases would change study conclusions |

| Regulatory Utility | Limited for decision-making | Provides evidence of robustness to systematic error |

| Tool Support | Limited to checklists (e.g., ROBINS-E) [16] | Specialized software (Apisensr, episensr) and statistical packages [14] |

| Application in Drug Development | Typically satisfies minimal requirements for discussion | Can provide supporting evidence for regulatory submissions [13] |

The critical shift from identifying to quantifying bias represents maturing methodological standards in observational research. While QBA methods have existed for decades, recent developments in accessible tools like Apisensr and sophisticated methods for complex scenarios are accelerating adoption [14] [15]. For drug development professionals and regulatory scientists, QBA offers a more rigorous framework for assessing the robustness of observational study findings—particularly important as real-world evidence plays an expanding role in therapeutic evaluation [13].

The fundamental advantage of QBA lies in transforming speculative discussions about bias into transparent, quantifiable assessments of its potential impact. As regulatory science advances, with projects like the FDA's development of QBA decision trees [13], the research community's adoption of these methods will be crucial for generating reliable evidence from observational studies and strengthening causal inference in the absence of randomization.

Quantitative Bias Analysis (QBA) is a methodological approach in observational research that provides structured tools to quantify the direction and magnitude of systematic errors and the uncertainty they introduce [10]. Unlike random error, which decreases with increasing study size, systematic error constitutes a fundamental threat to validity that persists regardless of sample size [10]. QBA methods allow researchers to move beyond speculative discussions of limitations by quantitatively assessing how biases might affect study findings, thereby strengthening causal inference in non-randomized studies [17].

The application of QBA is particularly valuable in drug development and epidemiological research, where observational studies using external control arms and real-world evidence are increasingly submitted to regulatory and health technology assessment agencies [18]. These analyses are vulnerable to systematic errors, and QBA provides a framework to evaluate their potential impact quantitatively rather than merely acknowledging them as qualitative limitations [19].

Classification of QBA Methods

QBA methods can be classified into several distinct approaches, each with different requirements for bias parameter specification and output characteristics [20]. These methods form a hierarchy of increasing sophistication in how they handle parameter uncertainty and multiple biases.

Table 1: Classification of Quantitative Bias Analysis Methods

| Classification | Assignment of Bias Parameters | Number of Biases Accounted For | Primary Output |

|---|---|---|---|

| Simple Sensitivity Analysis | One fixed value assigned to each bias parameter | One at a time | Single bias-adjusted effect estimate |

| Multidimensional Analysis | More than one value assigned to each bias parameter | One at a time | Range of bias-adjusted effect estimates |

| Probabilistic Analysis | Probability distributions assigned to each bias parameter | One at a time | Frequency distribution of bias-adjusted effect estimates |

| Bayesian Analysis | Probability distributions assigned to each bias parameter | Multiple biases simultaneously | Distribution of bias-adjusted effect estimates |

| Multiple Bias Modeling | Probability distributions assigned to each bias parameter | Multiple biases simultaneously | Frequency distribution of bias-adjusted effect estimates |

A recent systematic review of summary-level QBA methods found that of 57 identified methods, 51% addressed unmeasured confounding, 33% addressed misclassification bias, and 11% addressed selection bias, while 5% addressed multiple biases simultaneously [20]. This distribution reflects the relative methodological challenges and prevalence of different bias types in observational research.
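
The probabilistic row of the classification above can be illustrated with a small Monte Carlo sketch for exposure misclassification. Everything here is an assumption made for illustration: the 2x2 counts, the Beta distributions for sensitivity and specificity, and the function names are hypothetical. The sketch propagates only uncertainty in the bias parameters; a full analysis would typically also incorporate random error.

```python
import random
import statistics


def corrected_or(a, b, c, d, se, sp):
    """Bias-adjusted OR under non-differential exposure misclassification.

    Returns None when the drawn parameters imply impossible cell counts.
    """
    if se + sp <= 1:
        return None
    cells = []
    for exposed, unexposed in ((a, b), (c, d)):
        total = exposed + unexposed
        true_exposed = (exposed - (1 - sp) * total) / (se + sp - 1)
        true_unexposed = total - true_exposed
        if true_exposed <= 0 or true_unexposed <= 0:
            return None
        cells.extend([true_exposed, true_unexposed])
    return (cells[0] * cells[3]) / (cells[1] * cells[2])


def probabilistic_bias_analysis(a, b, c, d, se_beta, sp_beta, n_draws=5000, seed=1):
    """Sample bias parameters, adjust the OR for each draw, and summarize.

    se_beta and sp_beta are (alpha, beta) pairs for Beta distributions.
    Returns the median and a 95% simulation interval of adjusted ORs.
    """
    rng = random.Random(seed)
    draws = []
    for _ in range(n_draws):
        se = rng.betavariate(*se_beta)
        sp = rng.betavariate(*sp_beta)
        estimate = corrected_or(a, b, c, d, se, sp)
        if estimate is not None:  # discard draws incompatible with the data
            draws.append(estimate)
    if not draws:
        raise ValueError("no valid draws; revisit the bias parameter distributions")
    draws.sort()
    lower = draws[int(0.025 * len(draws))]
    upper = draws[max(int(0.975 * len(draws)) - 1, 0)]
    return statistics.median(draws), lower, upper


# Hypothetical observed table and priors: Se ~ Beta(80, 20), Sp ~ Beta(95, 5).
median, lower, upper = probabilistic_bias_analysis(
    200, 300, 300, 700, se_beta=(80, 20), sp_beta=(95, 5)
)
print(f"median bias-adjusted OR: {median:.2f} (95% simulation interval {lower:.2f} to {upper:.2f})")
```

Replacing the fixed values of a simple analysis with distributions yields an interval of adjusted estimates rather than a single number, which is the distinguishing output of the probabilistic approaches in the table.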

Bias Parameters: The Core Components of QBA

Definition and Purpose of Bias Parameters

Bias parameters are quantitative estimates that characterize the features of systematic error operating in a study [10]. These parameters cannot be estimated from the primary study data alone and must be informed by external sources such as validation studies, published literature, expert elicitation, or theoretical constraints [6]. They serve as the fundamental building blocks of all QBA methods, bridging the gap between observed data and the underlying truth by mathematically representing the bias structure.

Types of Bias Parameters by Source of Systematic Error

The specific bias parameters required for a QBA depend on the type of systematic error being addressed. Different bias sources necessitate distinct parameter sets to model their effects accurately.

Table 2: Bias Parameters by Source of Systematic Error

| Source of Bias | Key Bias Parameters | Additional Considerations |

|---|---|---|

| Unmeasured Confounding | Prevalence of unmeasured confounder among exposed and unexposed; Strength of association between confounder and outcome | Parameters often expressed as risk ratios or hazard ratios; Benchmarking against measured confounders is recommended |

| Misclassification/Information Bias | Sensitivity and specificity of key analytic variables (exposure, outcome, confounders); Determination of differential vs. nondifferential measurement error | Positive and negative predictive values may be used as alternatives; Pattern of misclassification must be specified |

| Selection Bias | Estimates of participation rates from target population within all levels of exposure and outcome | Selection probabilities are often difficult to estimate without external data |

For unmeasured confounding, a typical approach requires specifying both the association between the unmeasured confounder and the exposure and the association between the unmeasured confounder and the outcome [21]. For misclassification, the essential parameters are sensitivity (probability that a true case is correctly classified) and specificity (probability that a true non-case is correctly classified) of the measurement instrument [6].

Implementation Workflow for QBA

Implementing QBA requires a systematic approach to ensure appropriate methodology selection and valid interpretation. The following workflow outlines the key decision points in conducting a comprehensive bias analysis.

Step-by-Step Implementation Guide

Step 1: Determine the Need for QBA - Researchers should first consider whether QBA is warranted based on study context. QBA is particularly valuable when results contradict established literature, when concerns about systematic error exist, or when the explicit goal is causal inference [10]. Studies with minimized random error (large studies or meta-analyses) also benefit substantially from QBA, because systematic error then accounts for most of the remaining uncertainty.

Step 2: Select Biases to Address - Using directed acyclic graphs (DAGs) can help identify and communicate hypothesized bias structures [10]. The selection should be informed by the study's specific limitations and research goals, whether aiming for a comprehensive assessment of all potential biases or an in-depth evaluation of one primary concern.

Step 3: Select QBA Methodology - Method selection involves balancing computational complexity with realistic assessment needs. Simple bias analysis is straightforward to implement but does not incorporate parameter uncertainty [10]. Multidimensional analysis accounts for some uncertainty while remaining relatively simple to implement. Probabilistic bias analysis incorporates more uncertainty and can model the combined effects of multiple bias sources [10].

Step 4: Identify Sources for Bias Parameters - Credible bias parameter values can be obtained from internal validation studies (preferable when available), external validation studies, published literature, or expert elicitation [10]. The choice among these sources depends on availability, quality, and relevance to the study population.

Step 5: Conduct Analysis and Interpret Results - Implementation involves applying the selected QBA method with the specified bias parameters. Interpretation should focus on both the direction and magnitude of bias adjustment and the tipping point at which study conclusions would change [21]. Results should be contextualized within the broader evidence base.
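
A tipping point can sometimes be solved for directly. The sketch below assumes a single binary unmeasured confounder, no effect modification, and the usual simple bias factor for a risk ratio, (p1(RR_CD - 1) + 1) / (p0(RR_CD - 1) + 1), where p1 and p0 are the confounder prevalences among exposed and unexposed and RR_CD is the confounder-outcome risk ratio. It returns the prevalence among the exposed at which the adjusted risk ratio reaches 1. The function name and the inputs are hypothetical.

```python
def tipping_point_prevalence(rr_observed, p_unexposed, rr_confounder_outcome):
    """Confounder prevalence among the exposed that would move the adjusted RR to 1.

    Solves rr_observed / bias = 1 for p_exposed, where
    bias = (p_exposed * (RR_CD - 1) + 1) / (p_unexposed * (RR_CD - 1) + 1).
    Returns None when the required prevalence exceeds 1, meaning no prevalence
    can nullify the estimate under these parameter values.
    """
    if rr_observed <= 1 or rr_confounder_outcome <= 1:
        raise ValueError("this sketch assumes rr_observed > 1 and rr_confounder_outcome > 1")
    excess = rr_confounder_outcome - 1.0
    p_tip = (rr_observed * (p_unexposed * excess + 1.0) - 1.0) / excess
    return p_tip if p_tip <= 1.0 else None


# Hypothetical inputs: observed RR of 1.5, 20% prevalence in the unexposed,
# and a confounder that doubles the risk of the outcome.
tip = tipping_point_prevalence(1.5, 0.20, 2.0)
print(f"prevalence among exposed needed to nullify: {tip:.2f}")
```

Comparing the returned prevalence with what is clinically plausible is one way to judge whether the tipping point is a realistic threat to the study conclusion.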

Software Tools for Implementing QBA

Multiple software tools have been developed to facilitate QBA implementation across different research contexts. A recent review identified 17 publicly available software tools accessible via R, Stata, and online web interfaces [6]. These tools cover various analysis types including regression, contingency tables, mediation analysis, longitudinal analysis, survival analysis, and instrumental variable analysis.

Table 3: Selected Software Tools for Quantitative Bias Analysis

| Software Tool | Platform | Primary Analysis Type | Key Features |

|---|---|---|---|

| EValue | R Package | General observational studies | Continuous outcome support; Benchmarking features |

| sensemakr | R Package | Linear regression | Detailed confounding assessment; Multiple unmeasured confounders |

| treatSens | R Package | Various regression models | Sensitivity for continuous/binary outcomes |

| causalsens | R Package | Linear regression | Sensitivity analysis for causal inference |

| konfound | R Package | Various models | Tipping point analysis capabilities |

Despite these available tools, challenges remain in their widespread adoption, including requirements for specialist knowledge and gaps in tools for specific scenarios like misclassification of categorical variables [6]. However, recent developments have increased accessibility, with 62% of identified software tools created after 2016 [21].

Case Study Applications in Health Research

External Control Arms in Oncology

A recent demonstration study (Q-BASEL project) applied QBA to external control arms in advanced non-small cell lung cancer research [18]. The study emulated 15 treatment comparisons using experimental arms from randomized trials and external control arms from observational data. After adjustment for measured confounders, QBA addressed potential bias from known unmeasured and mismeasured confounders using synthesized external evidence.

The results demonstrated QBA's feasibility and value in this setting. The mean difference in log hazard ratio estimates between original trials and external control analyses was reduced from 0.247 (unadjusted) to 0.139 (measured confounder adjustment) to 0.098 after adding external adjustment for unmeasured confounders [18]. This progressive reduction highlights QBA's potential to mitigate residual confounding in single-arm trials with external controls.

Matching-Adjusted Indirect Treatment Comparisons

In a study comparing elranatamab with real-world controls in multiple myeloma, researchers used QBA to assess robustness despite missing data and unmeasured confounding [19]. For an unmeasured confounder (high-risk cytogenetics), they tested a range of clinically plausible percentages (20-40%) to identify tipping points.

The QBA revealed that results remained statistically significant across all plausible scenarios. For overall survival, the tipping point required an implausibly high (58%) prevalence of the unmeasured confounder to nullify conclusions [19]. This application demonstrates how QBA can quantitatively assess the severity of evidence gaps and enhance decision-maker confidence in comparative effectiveness research.

Successful implementation of QBA requires both methodological knowledge and practical resources. Key references include:

- Textbooks: Applying Quantitative Bias Analysis to Epidemiologic Data (Fox et al., 2021) provides comprehensive guidance on QBA implementation [17]

- Practice Guidelines: Good practices for quantitative bias analysis (Lash et al., 2014) offers accessible recommendations for beginners [17]

- Software Documentation: CRAN repositories and Stata manuals provide tool-specific implementation guidance [6]

- Educational Initiatives: Organizations like the International Society for Environmental Epidemiology offer webinars and workshops on QBA fundamentals [22]

These resources collectively support proper application of QBA methods, from initial planning through interpretation and reporting of results.

Quantitative Bias Analysis represents a powerful approach to strengthen observational research by moving from qualitative speculation to quantitative assessment of systematic errors. The core principles of QBA involve identifying relevant biases, selecting appropriate methods, specifying evidence-informed bias parameters, and interpreting results in the context of study conclusions.

As observational studies continue to play crucial roles in drug development and health policy, the adoption of QBA methods will enhance the credibility and transparency of epidemiological evidence. Future developments should focus on expanding software accessibility, creating comprehensive guidelines, and educational initiatives to make QBA a standard component of observational research practice.

When is QBA Needed? Aligning Analysis with Study Goals and Existing Literature

Observational studies are indispensable for investigating clinical questions where randomized controlled trials (RCTs) are not feasible, ethical, or generalizable [20]. However, their susceptibility to systematic error poses a significant threat to the validity of their findings [10]. Quantitative Bias Analysis (QBA) provides a suite of methodological techniques to quantitatively estimate the direction, magnitude, and uncertainty introduced by these biases, moving beyond qualitative speculation to a numeric assessment of their potential impact [10] [20]. Determining when to implement QBA is a critical decision that should be guided by a study's results in the context of existing literature, its design, and its overarching goals.

The Role of QBA in Observational Research

Understanding Systematic Error

Systematic error, or bias, distorts the observed association between an exposure and an outcome and, unlike random error, does not decrease with increasing study size [10]. The primary sources of systematic error in observational studies are:

- Confounding: Bias resulting from the mixing of the exposure-outcome effect with the effects of other factors that also influence the outcome [10].

- Information Bias: Bias arising from systematic errors in the measurement of analytic variables (e.g., exposures, outcomes, confounders) [10]. A common form is misclassification.

- Selection Bias: Bias introduced by selection procedures, factors influencing study participation, or differential loss to follow-up [10].

The Value Proposition of QBA

QBA shifts the discussion of limitations from a qualitative description to a quantitative evaluation. When applied, it allows researchers to:

- Quantify how far a biased effect estimate might be from the true value.

- Assess whether plausible biases could explain away an observed association.

- Provide a more realistic uncertainty interval that incorporates both random and systematic error.

- Inform decision-makers, such as health technology assessment (HTA) bodies, about the robustness of real-world evidence (RWE) used in drug development and coverage decisions [23].

Key Scenarios Necessitating Quantitative Bias Analysis

The decision to employ QBA should be deliberate. The following scenarios signal that a QBA is not just beneficial, but often necessary.

Inconsistency with Existing Literature

When the findings of an observational study are not aligned with prior research—either from previous observational studies or RCTs—the potential for systematic error should be rigorously investigated [10]. QBA can test whether specific biases, if present, could reconcile the discrepant findings.

Causal Inference as an Explicit Goal

In studies where the explicit aim is to draw causal inferences from observational data, a detailed assessment of systematic error is paramount [10]. QBA provides a framework to test the robustness of causal claims against unmeasured confounding and other biases.
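
One widely used summary for this purpose is the E-value of VanderWeele and Ding: the minimum strength of association, on the risk ratio scale, that an unmeasured confounder would need to have with both the exposure and the outcome to fully explain away an observed association. The sketch below is a minimal Python rendering of the closed-form expression for a risk ratio; the function names are illustrative and do not come from any package.

```python
import math


def e_value(rr):
    """E-value for a risk ratio point estimate: RR + sqrt(RR * (RR - 1)).

    Protective estimates (RR < 1) are inverted first so the formula applies.
    """
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1.0 / rr
    return rr + math.sqrt(rr * (rr - 1.0))


def e_value_for_ci(rr, lower, upper):
    """E-value for the confidence limit closest to the null.

    Returns 1.0 when the interval already includes the null value of 1.
    """
    if lower <= 1.0 <= upper:
        return 1.0
    limit = lower if rr > 1.0 else upper
    return e_value(limit)


# Hypothetical estimate: RR = 2.0 with 95% CI 1.4 to 2.9.
print(f"E-value (point estimate): {e_value(2.0):.2f}")
print(f"E-value (CI limit):       {e_value_for_ci(2.0, 1.4, 2.9):.2f}")
```

A large E-value suggests that only a strong unmeasured confounder could account for the result, although judging plausibility still requires subject-matter knowledge.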

Studies with Minimized Random Error

Large studies or meta-analyses often produce precise effect estimates with narrow confidence intervals due to minimal random error. In these contexts, systematic error becomes the dominant source of uncertainty, and QBA is essential to evaluate its potential impact on the overly precise findings [10].

Use of Real-World Data for Regulatory and HTA Submissions

As RWE is increasingly used to support regulatory submissions and inform HTA, assessing the impact of biases inherent in real-world data (RWD) is crucial. QBA offers a principled approach to quantify these effects, thereby increasing the trustworthiness of data-driven decision-making [23]. Applications include supporting contextual RWE in FDA diversity plans and evaluating comparative effectiveness from synthetic control arms [23].

Table 1: Decision Guide for When to Implement QBA

| Scenario | Key Question | Recommended QBA Approach |

|---|---|---|

| Findings contradict established literature | Could unmeasured confounding or selection bias explain this discrepancy? | Probabilistic analysis to model multiple bias parameters [10]. |

| Study aims to support a causal claim | How strong would an unmeasured confounder need to be to nullify the observed effect? | Simple or multidimensional sensitivity analysis for unmeasured confounding [10] [20]. |

| Large study or meta-analysis with a very precise estimate | Is the observed association robust to plausible levels of misclassification or selection bias? | Probabilistic bias analysis to incorporate uncertainty from systematic error [10]. |

| Research using electronic health records or claims data | How does outcome misclassification, measured by PPV, bias the exposure-outcome association? | Predictive value-based QBA [24]. |

| Planning for RWE use in regulatory/HTA submissions | Can we quantify and present the impact of potential biases to decision-makers? | Multiple bias modeling addressing confounding, selection bias, and measurement error [23]. |

A Structured Workflow for Implementing QBA

Implementing QBA involves a series of logical steps, from initial assessment to method selection and interpretation. The following workflow diagram outlines this process.

Figure: QBA Implementation Workflow

A Guide to QBA Method Selection

Selecting an appropriate QBA method requires balancing computational complexity with the need for a realistic assessment. Available methods, which can be applied to summary-level data from published studies, fall along a spectrum of sophistication [20].

Table 2: Classification of Quantitative Bias Analysis Methods

| Method Classification | Assignment of Bias Parameters | Biases Accounted For | Primary Output | Typical Use Case |

|---|---|---|---|---|

| Simple Sensitivity Analysis | One fixed value per parameter [20]. | One at a time [20]. | Single bias-adjusted effect estimate [20]. | Initial, simple assessment of a single bias. |

| Multidimensional Analysis | Multiple values per parameter [20]. | One at a time [20]. | Range of bias-adjusted estimates [20]. | Exploring uncertainty from a limited set of parameter values. |

| Probabilistic Analysis | Probability distributions for each parameter [10] [20]. | One at a time [20]. | Frequency distribution of bias-adjusted estimates [10] [20]. | Incorporating full uncertainty about a single bias source. |

| Bayesian Analysis | Probability distributions for each parameter [20]. | Multiple simultaneously [20]. | Distribution of bias-adjusted estimates [20]. | Complex analyses adjusting for multiple biases at once. |

| Multiple Bias Modeling | Probability distributions for each parameter [20]. | Multiple simultaneously [20]. | Frequency distribution of bias-adjusted estimates [20]. | Comprehensive analysis for studies with several major bias concerns. |

Experimental Protocols and Applied Examples

Protocol 1: QBA for Unmeasured Confounding

This protocol is designed to assess the sensitivity of an observed association to an unmeasured confounder.

- Objective: To determine the strength an unmeasured confounder would need to have to explain away the observed exposure-outcome association.

- Method: Apply simple sensitivity analysis formulas for unmeasured confounding [20].

- Required Parameters: The prevalence of the unmeasured confounder among the exposed and unexposed groups, and the estimated strength of the association between the confounder and the outcome [10].

- Procedure:

- Specify a plausible range of values for the confounder's prevalence in exposed and unexposed groups.

- Specify a plausible range for the relative risk between the unmeasured confounder and the outcome.

- Input these parameters into a sensitivity analysis formula (e.g., as presented in Schneeweiss 2019) to calculate the bias-adjusted risk ratio.

- Observe how the adjusted estimate changes across the specified parameter ranges. Determine if the association remains statistically significant after adjustment.
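
The procedure above can be sketched as a small grid calculation. The code assumes a single binary unmeasured confounder and no effect modification, and uses the standard simple bias factor for a risk ratio; it is not necessarily the exact formula in the cited reference. All names and input values are hypothetical.

```python
from itertools import product


def bias_adjusted_rr(rr_observed, p_exposed, p_unexposed, rr_confounder_outcome):
    """Divide the observed RR by the bias factor for a binary unmeasured confounder."""
    excess = rr_confounder_outcome - 1.0
    bias = (p_exposed * excess + 1.0) / (p_unexposed * excess + 1.0)
    return rr_observed / bias


def sensitivity_grid(rr_observed, p_exposed_values, p_unexposed_values, rr_cd_values):
    """Return one row per combination of bias parameters with its adjusted RR."""
    rows = []
    for p1, p0, rr_cd in product(p_exposed_values, p_unexposed_values, rr_cd_values):
        rows.append((p1, p0, rr_cd, bias_adjusted_rr(rr_observed, p1, p0, rr_cd)))
    return rows


# Hypothetical ranges for an observed RR of 1.5.
grid = sensitivity_grid(
    rr_observed=1.5,
    p_exposed_values=[0.3, 0.4, 0.5],
    p_unexposed_values=[0.2],
    rr_cd_values=[1.5, 2.0, 3.0],
)
for p1, p0, rr_cd, rr_adj in grid:
    print(f"p1={p1:.1f} p0={p0:.1f} RR_CD={rr_cd:.1f} -> adjusted RR={rr_adj:.2f}")
```

Scanning the printed rows shows which parameter combinations, if any, bring the adjusted estimate to the null.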

Protocol 2: QBA for Outcome Misclassification using Positive Predictive Values (PPVs)

This protocol is particularly relevant for studies reusing electronic health data, where PPVs are a commonly reported validity measure [24].

- Objective: To correct an observed odds ratio for bias introduced by outcome misclassification, using PPVs.

- Method: Predictive value-based quantitative bias analysis [24].

- Required Parameters: Outcome PPVs stratified by exposure group. If stratified PPVs are unavailable, overall PPVs can be used, but this may mask differential misclassification [24].

- Procedure:

- From the study's 2x2 table (exposure vs. observed outcome), calculate the observed odds ratio (OR_observed).

- Obtain or assume PPV_exposed and PPV_unexposed (the proportion of observed outcomes in each exposure group that are true outcomes).

- Estimate the number of true outcomes in each exposure group: A_true,exposed = A_observed,exposed × PPV_exposed; A_true,unexposed = A_observed,unexposed × PPV_unexposed.

- Recalculate the 2x2 table using these estimated true outcomes.

- Calculate the bias-adjusted odds ratio (OR_adjusted) from the corrected table.

- Compare OR_adjusted to OR_observed to quantify the impact of outcome misclassification.
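
A minimal sketch of these steps follows. It makes two assumptions that go beyond the protocol text and should be checked against the data at hand: observed outcomes that are not true outcomes are moved to the non-outcome cell so that group totals stay fixed, and there are no false negatives (negative predictive value of 1). The function name and the counts are hypothetical.

```python
def ppv_adjusted_odds_ratio(a_obs, n_exposed, b_obs, n_unexposed, ppv_exposed, ppv_unexposed):
    """Correct a 2x2 table for false-positive outcomes using exposure-specific PPVs.

    a_obs, b_obs: observed outcome counts among exposed and unexposed.
    n_exposed, n_unexposed: group totals (assumed unaffected by misclassification).
    """
    a_true = a_obs * ppv_exposed        # estimated true outcomes, exposed
    b_true = b_obs * ppv_unexposed      # estimated true outcomes, unexposed
    c_true = n_exposed - a_true         # non-outcomes, exposed (includes reassigned false positives)
    d_true = n_unexposed - b_true       # non-outcomes, unexposed
    return (a_true * d_true) / (b_true * c_true)


# Hypothetical table: 200/1000 exposed and 100/1000 unexposed with an observed outcome.
or_observed = (200 * 900) / (100 * 800)
or_adjusted = ppv_adjusted_odds_ratio(200, 1000, 100, 1000, ppv_exposed=0.90, ppv_unexposed=0.80)
print(f"OR_observed = {or_observed:.2f}, OR_adjusted = {or_adjusted:.2f}")
```

Because the two PPVs differ in this example, the misclassification is differential and the adjusted odds ratio can move in either direction relative to the observed one.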

The Researcher's Toolkit: Essential Components for QBA

Successfully implementing QBA requires more than just statistical formulas. Researchers should assemble the following "reagents" for their analysis.

Table 3: Essential Components for Conducting QBA

| Toolkit Component | Function & Importance | Examples & Sources |

|---|---|---|

| Directed Acyclic Graph (DAG) | A visual tool to identify and communicate hypothesized structures of bias, including confounding, selection bias, and measurement error [10]. | Software like DAGitty; used in Step 2 of the workflow to select which biases to address. |

| Bias Parameters | Quantitative estimates that characterize the bias. These are the essential inputs for any QBA model [10]. | Information bias: Sensitivity, specificity, PPV, NPV [10] [24]. Selection bias: Participation rates by exposure/outcome [10]. Unmeasured confounding: Confounder prevalence and strength [10]. |

| Validation Studies | The optimal source for informing bias parameters. Internal validation substudies are preferred, but external literature can be used [10] [24]. | A substudy within your cohort that manually validates a sample of outcome cases to calculate PPVs and NPVs. |

| Software & Code | To implement probabilistic or complex multi-bias models. Availability of code and tools lowers the barrier to application [20]. | Statistical software (R, SAS, Stata) with custom scripts; some published methods provide online tools or code [20]. |

| Expert Knowledge & Assumptions | Used to define plausible ranges for bias parameters when validation data are limited. Critical for multidimensional and probabilistic analyses [10]. | Eliciting from clinical experts the plausible minimum, maximum, and most likely values for an unmeasured confounder's prevalence. |

Quantitative Bias Analysis is a powerful but underutilized methodology that should be a key component of the observational researcher's toolkit. Its need is most acute when study findings are unexpected, when causal claims are advanced, when random error is small, and when real-world data inform high-stakes decisions. By systematically aligning the choice of QBA method with specific study goals and the nature of potential biases, researchers can move from merely listing limitations to rigorously quantifying their impact. This practice fosters a more nuanced interpretation of results and builds greater confidence in the evidence generated from observational research, ultimately leading to more reliable scientific conclusions and better-informed policy and clinical decisions.

For researchers and drug development professionals, demonstrating the validity of evidence derived from observational data is a critical challenge. Quantitative Bias Analysis (QBA) provides a set of methodological techniques to quantitatively estimate the potential direction and magnitude of systematic error (bias) in observed associations [10]. The application of QBA is increasingly crucial for meeting the evidence standards required by regulatory and health technology assessment (HTA) bodies, which are now implementing more dynamic, lifecycle-oriented evaluation frameworks [25] [26].

A critical first step is clarifying terminology. Across regulatory documents, the acronym "QBA" can be ambiguous, referring either to the methodological approach of Quantitative Bias Analysis or to specific product classifications. The following table distinguishes these uses to ensure clear communication with agencies.

Table 1: Clarifying the "QBA" Acronym in Regulatory Contexts

| Acronym Expansion | Context/Meaning | Relevant Authority | Primary Application |

|---|---|---|---|

| Quantitative Bias Analysis | A set of methods to quantify the potential impact of systematic error (bias) on observed study results [10]. | Methodological best practice; increasingly relevant for FDA and HTA submissions. | Strengthening observational study evidence in regulatory submissions and HTA dossiers. |

| Product Code "QBA" | A specific FDA product classification code for a "normothermic machine perfusion system for the preservation of standard criteria donor lungs prior to transplantation" [27]. | U.S. Food and Drug Administration (FDA) | Device classification and premarket review processes. |

This guide focuses on the methodological perspective of Quantitative Bias Analysis, which is essential for producing robust evidence for regulatory decision-making.

The Methodology of Quantitative Bias Analysis (QBA)

Core Concepts and Definitions

QBA moves beyond qualitative discussions of study limitations by providing a quantitative assessment of how systematic errors might affect observed results [10]. Key concepts include:

- Systematic Error: Bias in observed effect estimates due to issues in measurement or study design, which does not decrease with increasing study size. Primary sources are confounding, selection bias, and information bias [10].

- Random Error: Error caused by chance or random variation, which decreases with increasing study size and is addressed through conventional statistics like confidence intervals [10].

- Bias Parameters: Quantitative estimates that characterize the bias, such as sensitivity/specificity of measurements (information bias), participation rates (selection bias), or prevalence and strength of an unmeasured confounder [10].
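
To make the selection bias parameters concrete, the sketch below reweights each cell of a 2x2 table by the inverse of its assumed selection (participation) probability and recomputes the odds ratio. This is a simple deterministic adjustment under the assumption that the four probabilities are known; the function name, counts, and probabilities are hypothetical.

```python
def selection_adjusted_or(a, b, c, d, s_a, s_b, s_c, s_d):
    """Odds ratio after dividing each observed cell by its selection probability.

    a: exposed cases, b: unexposed cases, c: exposed non-cases, d: unexposed non-cases.
    s_a..s_d: probability that a member of the corresponding cell was selected.
    """
    for s in (s_a, s_b, s_c, s_d):
        if not 0 < s <= 1:
            raise ValueError("selection probabilities must lie in (0, 1]")
    a_full, b_full = a / s_a, b / s_b
    c_full, d_full = c / s_c, d / s_d
    return (a_full * d_full) / (b_full * c_full)


# Hypothetical study in which exposed cases participated more often than everyone else.
or_observed = (100 * 800) / (100 * 400)
or_adjusted = selection_adjusted_or(100, 100, 400, 800, s_a=0.9, s_b=0.6, s_c=0.6, s_d=0.6)
print(f"observed OR = {or_observed:.2f}, selection-adjusted OR = {or_adjusted:.2f}")
```

When all four probabilities are equal the adjustment cancels, which reflects the fact that selection distorts the odds ratio only when participation depends jointly on exposure and outcome.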

A Step-by-Step QBA Implementation Guide

Implementing QBA involves a structured process to ensure a thorough and defensible analysis [10]:

- Step 1: Determine the Need for QBA → QBA is particularly important when study findings are inconsistent with prior literature or when causal inference is a goal. Using directed acyclic graphs (DAGs) can help identify and communicate potential bias structures.

- Step 2: Select the Biases to Be Addressed → Prioritize biases based on their potential impact on the results, informed by the DAG and simple initial assessments.

- Step 3: Select a Modeling Method → Choose a technique that balances computational complexity with the need to realistically capture uncertainty in bias parameters.

- Step 4: Identify Sources for Bias Parameters → Gather estimates for the required bias parameters from internal validation studies, external literature, or expert opinion.

- Step 5: Implement and Report the QBA → Conduct the analysis and transparently report all inputs, assumptions, and adjusted results, including a clear interpretation of the findings.

Hierarchical Techniques for QBA

Researchers can select from a hierarchy of QBA techniques based on the analysis goals and available information [10].

Figure 1: A flowchart of QBA techniques from simple to probabilistically complex.

Table 2: Hierarchy of Quantitative Bias Analysis Techniques

| Method | Key Principle | Data Input Required | Output Delivered | Best Use Case Scenario |

|---|---|---|---|---|

| Simple Bias Analysis | Uses single, fixed values for bias parameters to adjust an effect estimate [10]. | Summary-level data (e.g., a 2x2 table) [10]. | A single bias-adjusted estimate. | Initial, rapid assessment of a single bias's potential impact. |

| Multidimensional Bias Analysis | Conducts multiple simple bias analyses using different sets of bias parameters to account for uncertainty in their values [10]. | Summary-level data [10]. | A set of bias-adjusted estimates showing a range of possible outcomes. | When parameter values are uncertain and no validation data exists. |

| Probabilistic Bias Analysis | Specifies probability distributions for bias parameters. Values are randomly sampled over many simulations to create a distribution of bias-adjusted estimates [10]. | Individual-level or summary-level data [10]. | A frequency distribution of bias-adjusted estimates, allowing for the creation of simulation intervals [10]. | Most rigorous approach; incorporates full uncertainty and models multiple simultaneous biases. |
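
As a hedged illustration of the probabilistic approach, the sketch below draws sensitivity and specificity from Beta distributions, corrects a cohort 2x2 table for nondifferential outcome misclassification on each draw, and summarizes the resulting odds ratios. The Beta parameters, counts, and function names are assumptions chosen for illustration. The sketch propagates only uncertainty in the bias parameters; a full analysis would typically also add random error before forming a simulation interval.

```python
import random
import statistics


def corrected_or(a, n1, b, n0, se, sp):
    """Bias-adjusted odds ratio for one draw of (se, sp); None if the draw is incompatible."""
    denom = se + sp - 1.0
    if denom <= 0:
        return None
    a_true = (a - (1.0 - sp) * n1) / denom
    b_true = (b - (1.0 - sp) * n0) / denom
    if not (0 < a_true < n1 and 0 < b_true < n0):
        return None
    return (a_true / (n1 - a_true)) / (b_true / (n0 - b_true))


def probabilistic_bias_analysis(a, n1, b, n0, se_beta, sp_beta, n_sims=5000, seed=2024):
    """Sample bias parameters repeatedly and summarize the adjusted estimates."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    estimates = []
    for _ in range(n_sims):
        se = rng.betavariate(*se_beta)
        sp = rng.betavariate(*sp_beta)
        est = corrected_or(a, n1, b, n0, se, sp)
        if est is not None:
            estimates.append(est)
    if not estimates:
        raise ValueError("no draws were compatible with the observed data")
    estimates.sort()

    def percentile(q):
        return estimates[int(q * (len(estimates) - 1))]

    return {
        "median": statistics.median(estimates),
        "lower": percentile(0.025),
        "upper": percentile(0.975),
        "n_valid": len(estimates),
    }


# Hypothetical data and priors: Se ~ Beta(90, 10), Sp ~ Beta(950, 50).
observed_or = (150 / 850) / (100 / 900)
result = probabilistic_bias_analysis(150, 1000, 100, 1000, se_beta=(90, 10), sp_beta=(950, 50))
print(f"observed OR = {observed_or:.2f}")
print(f"median = {result['median']:.2f}, 95% simulation limits = "
      f"{result['lower']:.2f} to {result['upper']:.2f}")
```

Draws that imply impossible cell counts are discarded here; the number of valid draws is returned so that heavy discarding, which signals poorly chosen distributions, is visible.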

The Regulatory and HTA Perspective on Evidence Quality

Evolving Frameworks at the FDA

The FDA is actively developing frameworks to ensure the credibility of complex analytical approaches, including artificial intelligence (AI) models used in drug development. While not exclusively about QBA, the principles align closely [28] [29].

- Risk-Based Credibility Assessment: The FDA's draft guidance for AI in drug development proposes a risk-based framework where the rigor of validation should be commensurate with the model's risk and its "Context of Use" (COU) [28] [29]. This principle can be extended to QBA, where the depth of the bias analysis should match the study's importance for regulatory decisions.

- Early Engagement: The FDA strongly encourages sponsors to engage with the agency early to set expectations regarding the appropriate assessment activities for a proposed model, including AI models used in drug development [28]. This is sound advice for researchers planning to use novel QBA methods in their submissions.

Lifecycle Approaches in Health Technology Assessment (HTA)

HTA bodies are increasingly adopting "lifecycle approaches," which involve assessing a technology at multiple points from pre-market to disinvestment [25] [26]. This creates ongoing opportunities and requirements for evidence generation, including the use of QBA to strengthen real-world evidence.

- Early HTA: Activities like Early Value Assessment (EVA) at the UK's NICE evaluate promising technologies that may still have evidence gaps [26]. In this context, a well-executed QBA can demonstrate a sponsor's thorough understanding of evidence limitations and strengthen the plausibility of the technology's value claim.

- Joint Clinical Assessments (JCAs) in the EU: Starting in 2025, the EU HTA Regulation will mandate JCAs for new oncology medicines and advanced therapies, creating a unified clinical assessment across member states [30] [31]. The process emphasizes robust and comparative clinical evidence. Proactively addressing potential biases through QBA in the JCA dossier can preempt questions from national HTA bodies and lead to more efficient market access.

Core Reagent Solutions for the QBA Researcher

Successfully implementing QBA requires a toolkit of methodological reagents. The following table details essential components for designing and executing a robust QBA.

Table 3: Research Reagent Solutions for Quantitative Bias Analysis

| Research Reagent | Function in QBA | Application Example |

|---|---|---|

| Directed Acyclic Graph (DAG) | A visual tool to identify and communicate hypothesized causal structures and sources of bias (e.g., confounding) before conducting QBA [10]. | Mapping relationships between an exposure, outcome, unmeasured confounder, and measurement error to select which biases to quantify. |

| Bias Parameters | Quantitative estimates that characterize the features of a specific bias, serving as inputs for bias adjustment models [10]. | Using values for sensitivity (0.90) and specificity (0.95) of outcome measurement to correct for information bias. |

| Validation Study Data | A high-quality sub-study or external data source used to empirically estimate bias parameters like sensitivity, specificity, or participation probabilities [10]. | Using an internal validation study where exposure was measured with a "gold standard" method to estimate misclassification parameters for the main study. |

| Probabilistic Distributions | Representations (e.g., Beta distributions) used in probabilistic bias analysis to incorporate uncertainty about the true values of bias parameters [10]. | Specifying a Beta distribution for an unmeasured confounder's prevalence instead of a single value to account for estimation uncertainty. |

The regulatory and HTA landscape is shifting towards continuous, holistic evidence assessment throughout a product's lifecycle [25] [26]. In this environment, proactively addressing evidence limitations is not just a best practice but a strategic imperative. Quantitative Bias Analysis provides a powerful, quantitative framework to meet this demand. By formally quantifying the potential impact of systematic error, researchers can generate more robust and defensible evidence for FDA submissions, EU Joint Clinical Assessments, and national HTA decisions, ultimately accelerating patient access to safe and effective technologies.

The QBA Toolkit: From E-Values to Probabilistic Analysis in Action

Quantitative bias analysis (QBA) represents a critical methodology for assessing the impact of systematic errors in observational research, where randomized controlled trials are not feasible. Deterministic QBA techniques provide researchers with structured approaches to quantify how biases such as unmeasured confounding, measurement error, and selection bias might influence study results. These methods are particularly valuable in drug development and epidemiological research, where observational studies increasingly inform regulatory decisions but remain vulnerable to systematic errors that cannot be addressed through conventional statistical adjustment alone [32] [33].

Deterministic QBA encompasses a spectrum of approaches classified by their handling of bias parameters. These methods enable researchers to move beyond qualitative discussions of limitations by providing quantitative estimates of bias direction and magnitude. The fundamental characteristic of deterministic approaches is their use of fixed values for bias parameters, as opposed to probabilistic methods that assign probability distributions to these parameters [34] [6]. This article focuses on two primary deterministic techniques: simple sensitivity analysis and multidimensional bias analysis, comparing their methodologies, applications, and implementation considerations for researchers working with observational data.

Classification and Key Characteristics of Deterministic QBA Methods

Deterministic QBA methods are systematically categorized based on their approach to handling bias parameters and their output structures. The table below summarizes the fundamental classification of QBA methods, highlighting where deterministic techniques fit within the broader QBA landscape:

Table 1: Classification of Quantitative Bias Analysis Methods

| Classification | Assignment of Bias Parameters | Number of Biases Accounted For | Output |

|---|---|---|---|

| Simple Sensitivity Analysis | One fixed value assigned to each bias parameter | One at a time | Single bias-adjusted effect estimate |

| Multidimensional Analysis | More than one value assigned to each bias parameter | One at a time | Range of bias-adjusted effect estimates |

| Probabilistic Analysis | Probability distributions assigned to each bias parameter | One at a time | Frequency distribution of bias-adjusted effect estimates |

| Bayesian Analysis | Probability distributions assigned to each bias parameter | Multiple biases at a time | Distribution of bias-adjusted effect estimates |

| Multiple Bias Modeling | Probability distributions assigned to each bias parameter | Multiple biases at a time | Frequency distribution of bias-adjusted effect estimates |

As illustrated in Table 1, deterministic methods encompass both simple and multidimensional approaches, distinguished by their use of fixed parameter values rather than probability distributions [20]. This fundamental distinction makes deterministic methods more accessible for researchers with standard statistical backgrounds, as they require less computational complexity while still providing valuable insights into potential bias effects.

The key characteristics of the two primary deterministic QBA approaches are:

Simple Sensitivity Analysis: This approach assigns a single fixed value to each bias parameter, addressing one bias at a time and producing a single bias-adjusted effect estimate. It serves as an introductory method that can be implemented with basic statistical knowledge [10].

Multidimensional Bias Analysis: This technique specifies multiple values for each bias parameter, still addressing one bias type at a time but generating a range of bias-adjusted effect estimates. It provides more comprehensive sensitivity testing while remaining within the deterministic framework [34] [6].

Deterministic QBA methods are particularly valuable for initial assessments of bias impact and for situations where limited information is available to inform bias parameter distributions. They provide transparent, easily interpretable results that facilitate decision-making regarding the robustness of study findings [10].

Comparative Analysis: Simple versus Multidimensional Bias Analysis

When selecting an appropriate deterministic QBA method, researchers must consider the specific requirements of their analysis context. The following table provides a detailed comparison of the two primary deterministic approaches:

Table 2: Comparison of Simple and Multidimensional Deterministic Bias Analysis Techniques

| Characteristic | Simple Sensitivity Analysis | Multidimensional Bias Analysis |
|---|---|---|
| Parameter Specification | Single fixed value for each bias parameter | Multiple values for each bias parameter, examining combinations |
| Output Generated | Single bias-adjusted effect estimate | Range of bias-adjusted effect estimates |
| Computational Complexity | Low | Moderate |
| Uncertainty Handling | Does not incorporate uncertainty around bias parameters | Accounts for some uncertainty by testing multiple parameter values |
| Implementation Requirements | Summary-level data (e.g., 2×2 tables, effect estimates) | Summary-level data |
| Interpretation Ease | Straightforward, single result | Requires interpretation of result patterns across scenarios |
| Best Use Cases | Initial bias assessment, limited computational resources | When uncertainty exists about parameter values, no validation data available |
| Common Applications | Rapid sensitivity checking, educational contexts | Comprehensive sensitivity assessment, pre-probabilistic analysis |

Simple sensitivity analysis provides a straightforward approach that is particularly valuable for initial assessments or when computational resources are limited. For example, in a study examining the relationship between preconception periodontitis and time to pregnancy, researchers might apply a simple bias analysis to quickly assess whether unmeasured confounding could plausibly explain their observed results [10]. This method generates a single adjusted effect estimate, offering a clear "what-if" scenario that is easily interpretable for stakeholders.
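A simple bias analysis for an unmeasured binary confounder can be sketched in a few lines. The example below is illustrative only: it assumes a risk ratio scale, no effect modification by the confounder, and the standard external-adjustment bias factor. The function names and all numeric inputs are hypothetical and are not taken from the periodontitis study.

```python
def confounding_bias_factor(rr_cd: float, p1: float, p0: float) -> float:
    """Bias factor for a binary unmeasured confounder.

    rr_cd: assumed confounder-outcome risk ratio
    p1:    assumed confounder prevalence among the exposed
    p0:    assumed confounder prevalence among the unexposed
    """
    if rr_cd <= 0:
        raise ValueError("rr_cd must be positive")
    for p in (p1, p0):
        if not 0.0 <= p <= 1.0:
            raise ValueError("prevalences must lie in [0, 1]")
    return (rr_cd * p1 + (1 - p1)) / (rr_cd * p0 + (1 - p0))


def adjust_rr(rr_obs: float, rr_cd: float, p1: float, p0: float) -> float:
    """Divide the observed risk ratio by the bias factor."""
    return rr_obs / confounding_bias_factor(rr_cd, p1, p0)


# Hypothetical inputs: observed RR of 1.5, confounder doubles the risk,
# and is present in 40% of exposed versus 20% of unexposed participants.
rr_adj = adjust_rr(rr_obs=1.5, rr_cd=2.0, p1=0.4, p0=0.2)
print(round(rr_adj, 3))  # 1.286
```

Under these assumed values the single "what-if" answer is that the association would be attenuated but not eliminated. A different set of fixed values would give a different single answer, which is the limitation multidimensional analysis addresses.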

Multidimensional bias analysis offers greater analytical depth by testing multiple values for each bias parameter, effectively conducting a series of simple bias analyses across a range of plausible parameter combinations. This approach is particularly valuable when researchers have uncertainty about the appropriate values to assign to bias parameters and when no validation data are available to inform these choices [10]. For instance, in assessing misclassification of a binary variable, a researcher might examine different pairs of sensitivity and specificity values to understand how the bias-adjusted estimate varies across these combinations [34] [6].
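The misclassification example above can be sketched as a grid search over sensitivity and specificity pairs. This is a minimal illustration assuming nondifferential exposure misclassification in a case-control style 2×2 table and the usual back-calculation of expected true counts. The observed counts, the grid values, and the function names are hypothetical.

```python
from itertools import product


def corrected_exposed(observed_exposed: float, total: float,
                      se: float, sp: float) -> float:
    """Expected true number exposed, given observed count and Se/Sp."""
    if se + sp <= 1:
        raise ValueError("se + sp must exceed 1 for the correction to be defined")
    return (observed_exposed - (1 - sp) * total) / (se + sp - 1)


def adjusted_or(a, b, c, d, se, sp):
    """Bias-adjusted odds ratio under nondifferential exposure misclassification.

    Observed counts: a exposed cases, b unexposed cases,
                     c exposed controls, d unexposed controls.
    Returns None when the assumed Se/Sp imply a non-positive cell count.
    """
    A = corrected_exposed(a, a + b, se, sp)
    C = corrected_exposed(c, c + d, se, sp)
    B = (a + b) - A
    D = (c + d) - C
    if min(A, B, C, D) <= 0:
        return None  # parameter pair is incompatible with the observed data
    return (A * D) / (B * C)


# Hypothetical observed table and grid of plausible bias parameters.
a, b, c, d = 45, 94, 257, 945
grid = {
    (se, sp): adjusted_or(a, b, c, d, se, sp)
    for se, sp in product((0.80, 0.90, 1.00), (0.90, 0.95, 1.00))
}
for (se, sp), or_adj in sorted(grid.items()):
    shown = "implausible" if or_adj is None else f"{or_adj:.2f}"
    print(f"Se={se:.2f} Sp={sp:.2f} -> adjusted OR {shown}")
```

Reading the printed grid as a whole, rather than picking one cell, is the point of the exercise: it shows which parameter combinations leave the conclusion intact and which do not.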

A particularly valuable application of both simple and multidimensional deterministic QBA is the "tipping point" analysis, which identifies the strength of bias needed to change a study's conclusions. For example, researchers might determine how strongly an unmeasured confounder would need to be associated with both the exposure and outcome to explain away a statistically significant result [35] [21]. This approach frames QBA not only as a method for estimating bias magnitude but also as a tool for assessing the robustness of study conclusions.
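One widely used tipping-point summary for unmeasured confounding is the E-value, which is named here as an example rather than as the only approach the cited sources use. The sketch below assumes a risk ratio scale and applies the closed-form expression RR + sqrt(RR × (RR − 1)); the function names are hypothetical.

```python
import math


def e_value(rr: float) -> float:
    """E-value for a risk ratio: the minimum strength of association, on the
    risk ratio scale, that an unmeasured confounder would need with both
    exposure and outcome to fully explain away the estimate."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1 / rr  # protective estimates are inverted first
    return rr + math.sqrt(rr * (rr - 1))


def e_value_for_ci(rr: float, lower: float, upper: float) -> float:
    """E-value for the confidence limit closest to the null.

    Returns 1.0 when the interval already includes the null value of 1.
    """
    if lower <= 1 <= upper:
        return 1.0
    return e_value(lower) if rr > 1 else e_value(upper)


# Hypothetical estimate: RR 2.0 with 95% CI 1.3 to 3.1.
print(round(e_value(2.0), 2))                   # 3.41
print(round(e_value_for_ci(2.0, 1.3, 3.1), 2))  # 1.92
```

The second number is usually the more relevant one for robustness: it indicates how strong confounding would need to be to move the interval, not just the point estimate, to the null.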

Bias Parameters and Data Requirements Across Bias Types

Successful implementation of deterministic QBA requires appropriate specification of bias parameters, which vary according to the type of bias being addressed. The table below outlines key parameters for major bias categories:

Table 3: Bias Parameters and Data Requirements for Different Bias Types

| Bias Type | Key Bias Parameters | Data Sources | Common Applications |
|---|---|---|---|
| Unmeasured Confounding | Prevalence of unmeasured confounder among exposed and unexposed; strength of association between confounder and outcome | External literature, validation studies, expert elicitation | Pharmacoepidemiologic studies, health technology assessment |
| Misclassification (Information Bias) | Sensitivity, specificity, positive/negative predictive values | Validation studies, prior research, theoretical constraints | Diagnostic accuracy studies, exposure measurement error assessment |
| Selection Bias | Participation rates from target population across exposure and outcome levels | Non-participant surveys, population registries | Cohort studies with differential follow-up, survey research |

The parameters identified in Table 3 form the foundation for implementing deterministic QBA across various research contexts. For unmeasured confounding, bias parameters typically specify the prevalence of the unmeasured confounder among exposed and unexposed groups, along with the strength of association between the confounder and the outcome [35] [10]. For misclassification (a form of information bias), parameters include sensitivity, specificity, and predictive values, which may be differential or non-differential with respect to other variables [34] [10]. For selection bias, researchers must estimate participation rates from the target population within all levels of exposure and outcome in the analytic sample [10].
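For selection bias, the four participation rates translate directly into a correction of the observed odds ratio. The sketch below is a minimal illustration under the assumption that selection depends only on exposure and outcome. The argument names and all numeric values are hypothetical.

```python
def selection_adjusted_or(or_obs: float,
                          s_exp_case: float, s_exp_noncase: float,
                          s_unexp_case: float, s_unexp_noncase: float) -> float:
    """Adjust an observed odds ratio for differential selection.

    Each s_* argument is the assumed probability that a member of the
    target population in that exposure-outcome cell enters the analytic
    sample. Observed cell counts equal true counts times these
    probabilities, so the observed OR is multiplied by the inverse of
    the selection odds ratio.
    """
    probs = (s_exp_case, s_exp_noncase, s_unexp_case, s_unexp_noncase)
    if any(not 0.0 < s <= 1.0 for s in probs):
        raise ValueError("selection probabilities must lie in (0, 1]")
    return or_obs * (s_unexp_case * s_exp_noncase) / (s_exp_case * s_unexp_noncase)


# Hypothetical scenario: exposed cases are over-represented in the sample.
or_adj = selection_adjusted_or(
    or_obs=2.0,
    s_exp_case=0.9, s_exp_noncase=0.6,
    s_unexp_case=0.7, s_unexp_noncase=0.6,
)
print(round(or_adj, 2))  # 1.56
```

When all four probabilities are equal, the correction factor is 1 and the observed odds ratio is returned unchanged, which matches the intuition that non-differential participation does not bias the odds ratio.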

The information to inform these bias parameters typically comes from external sources such as validation studies, prior research, expert opinion, or theoretical constraints [35]. In some cases, benchmarking or calibration approaches can be used, where strengths of associations of measured covariates with exposure and outcome are used as benchmarks for the bias parameters [21].

Implementation Workflow for Deterministic QBA

The implementation of deterministic QBA follows a structured process that ensures appropriate method selection and interpretation. The following diagram illustrates the key decision points and workflow:

[Figure: Deterministic QBA Implementation Workflow]

Step-by-Step Implementation Protocol

The workflow illustrated above translates into a concrete implementation protocol:

Step 1: Determine the Need for QBA. Researchers should first assess whether QBA is warranted based on study context. QBA is particularly valuable when results contradict prior literature, when prior studies raise concerns about systematic error, or when studies aim to draw causal inferences from observational data [10]. Directed Acyclic Graphs (DAGs) provide a valuable tool for identifying and communicating hypothesized bias structures at this stage [10].

Step 2: Select Biases to Address. The selection of which biases to address should align with the ultimate goals of the QBA. Researchers may choose to conduct an in-depth evaluation of one primary bias source or a broader assessment of multiple potential biases. Simple bias analysis can initially assess the potential influence of different error sources, informing decisions about which to include in more comprehensive analyses [10].

Step 3: Select QBA Method. Method selection involves balancing computational complexity against a realistic assessment of bias impact. Simple bias analysis is easier to implement but does not incorporate uncertainty around bias parameters. Multidimensional analysis requires more parameter estimates but can account for some uncertainty while remaining relatively straightforward to implement [10].

Step 4: Identify Sources for Bias Parameter Estimates. Appropriate sources for bias parameters are crucial for valid QBA. Internal validation studies are preferable, but external validation studies, published literature, or expert elicitation can also inform parameter estimates [35] [10]. For unmeasured confounding, parameters include the prevalence of the confounder among exposed and unexposed groups and the confounder-outcome association strength [10].

Step 5: Implement Analysis. Implementation requires applying the selected QBA method using the identified bias parameters. For summary-level data, this typically involves applying bias parameters to summary 2×2 tables or effect estimates from the study [20] [10]. Numerous software tools are available to support implementation, ranging from specialized R packages to Stata modules and web-based tools [35] [21].

Step 6: Interpret Results. Interpretation should consider the clinical or practical significance of bias-adjusted estimates, not just statistical significance. For multidimensional analyses, patterns across multiple scenarios should be evaluated rather than focusing on individual results. Tipping point analyses are particularly valuable for assessing how much bias would be needed to change study conclusions [35] [21].

Successful implementation of deterministic QBA requires both conceptual understanding and practical tools. The following table outlines key resources available to researchers:

Table 4: Key Resources for Deterministic QBA Implementation

| Resource Category | Specific Tools/Approaches | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Conceptual Frameworks | Directed Acyclic Graphs (DAGs) | Identify and communicate hypothesized bias structures | Ensure all relevant variables and relationships are represented |
| Bias Parameter Sources | Internal validation studies, external literature, expert elicitation | Provide values for bias parameters needed for analysis | Prioritize internal validation data when available |
| Software Solutions | R packages (sensemakr, EValue), Stata modules, online web tools | Implement bias analysis calculations | Select tools matching analytical complexity and researcher expertise |
| Reporting Guidelines | Structured templates for documenting assumptions and parameters | Ensure transparent reporting of QBA methods and findings | Clearly document all bias parameters and their sources |

The resources identified in Table 4 represent essential components for implementing deterministic QBA in practice. Directed Acyclic Graphs (DAGs) provide a visual framework for identifying potential bias structures and guiding the selection of appropriate bias parameters [10]. Software tools have become increasingly accessible, with multiple R packages (including sensemakr, EValue, and tipr) and Stata modules available to implement various deterministic QBA methods [35] [21]. These tools help overcome computational barriers that have historically limited QBA adoption.

For parameter specification, researchers should prioritize internal validation data when available, but can also draw from external validation studies, published literature, or expert elicitation when necessary [35] [10]. Structured reporting templates ensure transparent documentation of all assumptions, parameter values, and analytical decisions, facilitating appropriate interpretation and critique of QBA results.

Deterministic quantitative bias analysis provides researchers with essential tools for quantifying the potential impact of systematic errors in observational studies. Simple and multidimensional bias analysis techniques offer complementary approaches that balance analytical rigor with practical implementability, making them valuable for assessing sensitivity to unmeasured confounding, misclassification, and selection bias. As observational research continues to inform drug development and health technology assessment, the thoughtful application of these methods will enhance the interpretation and appropriate utilization of real-world evidence. By following structured implementation workflows and leveraging available software tools, researchers can strengthen the validity and credibility of observational research across diverse scientific contexts.

Quantitative Bias Analysis (QBA) represents a crucial methodological framework for addressing systematic errors in observational health research, where randomized controlled trials are often infeasible. While deterministic QBA methods utilize fixed values for bias parameters, probabilistic QBA advances this approach by formally incorporating uncertainty through probability distributions. This sophisticated methodology enables researchers to quantify how measurement error, misclassification, and unmeasured confounding might impact study conclusions, moving beyond simple sensitivity analyses to provide more comprehensive uncertainty quantification [34] [6].

The application of QBA remains surprisingly limited in epidemiological practice. A recent review of measurement error in medical literature found that while 44% of studies mentioned measurement error as a limitation, only 7% undertook any formal investigation or correction [34] [6]. This implementation gap persists despite the potential for erroneous findings to influence government policies, health interventions, and scientific evidence bases [34]. Probabilistic QBA methods, particularly Monte Carlo and Bayesian approaches, offer powerful solutions to these challenges by enabling researchers to propagate uncertainty through their analyses systematically, thus providing more realistic assessments of how biases might affect their conclusions.

Theoretical Foundations of Probabilistic QBA

Core Principles and Terminology

Probabilistic QBA operates through a structured framework that incorporates uncertainty directly into bias adjustment. The foundation begins with a bias model that mathematically represents the relationship between observed data and measurement errors [34] [6]. This model contains bias parameters (also called sensitivity parameters) that cannot be estimated from the observed data alone and must be informed by external evidence, expert elicitation, or theoretical constraints [34]. Examples include sensitivity and specificity for misclassification analysis, reliability ratios for continuous measurement error, or strength-of-confounding parameters for unmeasured confounding scenarios.

The key distinction between probabilistic and deterministic QBA lies in how these bias parameters are handled. While deterministic methods assign fixed values, probabilistic QBA assigns probability distributions to bias parameters, allowing researchers to specify plausible value ranges, most likely values, and their uncertainty about these specifications [34] [6]. This approach generates a distribution of bias-adjusted effect estimates that more accurately reflects total uncertainty, combining random error with systematic error from potential biases.
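The contrast with deterministic analysis can be made concrete with a small Monte Carlo sketch. The example below is an illustration under stated assumptions, not a reference implementation: it reuses the binary unmeasured confounder bias factor on the risk ratio scale, draws the bias parameters from hypothetical log-normal and beta distributions, samples them independently, and adds random error by resampling the observed log risk ratio from a normal distribution. All distribution choices, values, and names are assumptions made for illustration.

```python
import math
import random


def probabilistic_confounding_qba(rr_obs: float, se_log_rr: float,
                                  n_draws: int = 20000, seed: int = 1) -> dict:
    """Monte Carlo bias analysis for a binary unmeasured confounder.

    Returns the median and a 95% simulation interval of the
    bias-adjusted risk ratio.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    draws = []
    for _ in range(n_draws):
        # Bias parameter distributions (hypothetical choices):
        rr_cd = math.exp(rng.gauss(math.log(2.0), 0.2))  # confounder-outcome RR
        p1 = rng.betavariate(40, 60)  # prevalence among exposed, mean 0.4
        p0 = rng.betavariate(20, 80)  # prevalence among unexposed, mean 0.2
        bias = (rr_cd * p1 + (1 - p1)) / (rr_cd * p0 + (1 - p0))

        # Random error: resample the observed estimate on the log scale.
        log_rr = rng.gauss(math.log(rr_obs), se_log_rr)
        draws.append(math.exp(log_rr) / bias)

    draws.sort()

    def percentile(q: float) -> float:
        return draws[int(q * (len(draws) - 1))]

    return {
        "median": percentile(0.5),
        "lower": percentile(0.025),
        "upper": percentile(0.975),
    }


# Hypothetical observed estimate: RR 1.5 with a standard error of 0.15 on the log scale.
result = probabilistic_confounding_qba(rr_obs=1.5, se_log_rr=0.15)
print({k: round(v, 2) for k, v in result.items()})
```

The resulting interval reflects both sources of uncertainty at once. Setting `se_log_rr` to 0 in this sketch isolates the contribution of the bias parameters alone, which can help show how much of the interval width comes from systematic rather than random error.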

Classification of QBA Methods