Sequential Simplex Optimization: A Foundational Guide for Scientific and Drug Development Applications


Abstract

This article provides a comprehensive exploration of the Sequential Simplex Method, a cornerstone algorithm for function minimization and optimization. Tailored for researchers, scientists, and drug development professionals, we cover the method's foundational principles, from its geometric interpretation to its evolution into the Nelder-Mead algorithm. The content delves into practical, step-by-step methodologies and real-world applications, including addressing numerical challenges akin to those in heat exchanger optimization. We further offer troubleshooting guidance for common pitfalls, a comparative analysis with modern techniques like Interior Point Methods, and an examination of its role amidst contemporary AI-driven approaches in fields like cheminformatics and active learning.

The Building Blocks of Sequential Simplex: From Geometry to Core Concepts

In mathematical optimization, the "simplex" refers to a fundamental geometric concept that forms the basis of powerful algorithms for solving complex resource-allocation problems. The simplex algorithm, invented by George Dantzig in 1947, provides a systematic method for navigating the vertices of a geometric object called a polytope to find the optimal solution to a linear programming problem [1] [2]. This geometric approach transforms abstract mathematical problems into tangible spatial navigation challenges, where an optimal solution is found by moving along the edges of a multi-dimensional shape from one vertex to another.

Within the broader context of sequential simplex optimization research, this geometric foundation enables efficient problem-solving across diverse fields. Dantzig's algorithm works sequentially: it moves from one corner point to an adjacent one, improving (or at least not worsening) the objective function at each step until the optimal solution is found [3]. This vertex-to-vertex procedure should be distinguished from the sequential simplex methods of experimental optimization, which move a simplex of trial points through a factor space. For researchers, scientists, and drug development professionals, understanding this geometric foundation is crucial for applying these methods to complex optimization challenges in fields ranging from industrial manufacturing to pharmaceutical development.

Mathematical Foundations of the Simplex Method

Standard Form and Problem Formulation

The simplex algorithm operates on linear programs in the canonical form [1]:

- Maximize $c^Tx$

- Subject to $Ax ≤ b$ and $x ≥ 0$

where $c = (c_1, \ldots, c_n)$ represents the coefficients of the objective function, $x = (x_1, \ldots, x_n)$ represents the decision variables, $A$ is a matrix of constraint coefficients, and $b = (b_1, \ldots, b_p)$ represents the constraint bounds.

The feasible region defined by all values of $x$ satisfying $Ax ≤ b$ and $∀i, x_i ≥ 0$ forms a convex polyhedron [1]. In this geometric structure, if the objective function has a maximum value on the feasible region, then it has this value on at least one of the extreme points [1]. This crucial insight reduces what could be an infinite computation to a finite one, as there is a finite number of extreme points.
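To make the extreme-point property concrete, the sketch below enumerates the corner points of a small two-variable problem by intersecting pairs of constraint boundaries, discards infeasible intersections, and evaluates the objective at each remaining corner. The example problem and the function name `vertices_2d` are illustrative assumptions. Brute-force enumeration only scales to tiny problems, which is why the simplex method walks between adjacent vertices instead of listing them all.

```python
from itertools import combinations

def vertices_2d(constraints, tol=1e-9):
    """Feasible corner points of {(x, y) : a1*x + a2*y <= b for every (a1, a2, b)}.

    Nonnegativity must be listed explicitly as (-1, 0, 0) and (0, -1, 0).
    """
    corners = []
    for (a1, a2, b), (c1, c2, d) in combinations(constraints, 2):
        det = a1 * c2 - a2 * c1
        if abs(det) < 1e-12:          # parallel boundaries never meet in one point
            continue
        x = (b * c2 - a2 * d) / det   # Cramer's rule for the 2x2 boundary system
        y = (a1 * d - b * c1) / det
        if all(p * x + q * y <= r + tol for p, q, r in constraints):
            corners.append((x, y))    # keep only intersections inside the region
    return corners

# Hypothetical example: maximize 3x + 5y subject to x <= 4, 2y <= 12, 3x + 2y <= 18, x, y >= 0
cons = [(1, 0, 4), (0, 2, 12), (3, 2, 18), (-1, 0, 0), (0, -1, 0)]
verts = vertices_2d(cons)
best = max(verts, key=lambda v: 3 * v[0] + 5 * v[1])
print(best, 3 * best[0] + 5 * best[1])  # (2.0, 6.0) 36.0
```

The region has five corners, and the maximum lies at one of them, as the extreme-point argument predicts.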

The Simplex Tableau

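The simplex method uses a tableau representation to organize and manipulate the linear program algebraically [1]. A linear program in standard form, with the constraints written as equalities $Ax = b$ (after slack variables are added) and $x ≥ 0$, can be represented as a tableau:

$$\begin{bmatrix} 1 & -c^T & 0 \\ 0 & A & b \end{bmatrix}$$

The first row defines the objective function, while the remaining rows specify the constraints. Many textbook presentations, including the procedure below, place the objective row at the bottom of the tableau instead; the two layouts are equivalent. Through a series of pivot operations (the algebraic equivalent of moving from one vertex to an adjacent one), the tableau is transformed until no further improvements can be made to the objective function, indicating that an optimal solution has been found.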

Algorithm Execution

The execution of the simplex algorithm follows these key steps [3]:

- Set up the problem: Write the objective function and inequality constraints

- Convert inequalities to equations: Add slack variables for each inequality

- Construct initial simplex tableau: Write the objective function, with its coefficients negated, as the bottom row

- Identify pivot column: Select the most negative entry in the bottom row

- Calculate quotients: Divide each entry of the rightmost column by the corresponding positive entry of the pivot column; the row with the smallest quotient is the pivot row

- Perform pivoting: Make the pivot element 1 and all other elements in its column 0, then return to the pivot-column step

- Termination check: When no negative entries remain in the bottom row, the solution is optimal
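The steps above can be sketched as a small tableau solver. This is a minimal illustration assuming a maximization problem with every $b_i ≥ 0$, so that the slack variables supply a feasible starting basis. It uses the most-negative-entry rule from the list and has no anti-cycling safeguard (such as Bland's rule), so it is not a production solver. The function name `simplex_max` and the example problem are hypothetical.

```python
def simplex_max(c, A, b, eps=1e-12):
    """Maximize c^T x subject to A x <= b, x >= 0, assuming every b_i >= 0."""
    m, n = len(A), len(c)
    # Constraint rows: [A | identity block for the slack variables | b]
    T = [[float(a) for a in A[i]] + [1.0 if k == i else 0.0 for k in range(m)] + [float(b[i])]
         for i in range(m)]
    # Bottom row: negated objective coefficients, zeros for slacks and for the value cell
    T.append([-float(cj) for cj in c] + [0.0] * (m + 1))
    basis = list(range(n, n + m))  # the slack variables form the starting basis

    while True:
        bottom = T[-1]
        # Pivot column: most negative entry of the bottom row
        col = min(range(n + m), key=lambda j: bottom[j])
        if bottom[col] >= -eps:
            break  # no negative entries remain: the tableau is optimal
        # Ratio test: rightmost column / pivot column, over positive pivot-column entries only
        ratios = [(T[i][-1] / T[i][col], i) for i in range(m) if T[i][col] > eps]
        if not ratios:
            raise ValueError("objective is unbounded")
        _, row = min(ratios)
        # Pivot: make the pivot element 1, then zero out the rest of its column
        pivot = T[row][col]
        T[row] = [v / pivot for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0.0:
                factor = T[i][col]
                T[i] = [vi - factor * vr for vi, vr in zip(T[i], T[row])]
        basis[row] = col

    x = [0.0] * n
    for i, j in enumerate(basis):
        if j < n:  # basic decision variables read their value from the rightmost column
            x[j] = T[i][-1]
    return x, T[-1][-1]  # bottom-right cell holds the optimal objective value

x, z = simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print(x, z)  # [2.0, 6.0] 36.0
```

Because the objective is entered with negated coefficients, a negative bottom-row entry marks a variable whose increase would still raise the objective, which is why the loop stops once none remain.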

Table 1: Key Components of Linear Programming for Simplex Method

| Component | Mathematical Representation | Role in Optimization |
|---|---|---|
| Decision Variables | $x = (x_1, \ldots, x_n)$ | Represent quantities to be determined in the optimization problem |
| Objective Function | $c^Tx = c_1x_1 + \cdots + c_nx_n$ | Function to be maximized or minimized |
| Constraints | $Ax ≤ b$, $x ≥ 0$ | Define the feasible region of solutions |
| Slack Variables | $s_i$ for the $i$-th constraint | Convert inequality constraints to equalities |

Sequential Simplex Optimization in Research

Evolution from Traditional Simplex Methods

Sequential simplex optimization represents a significant evolution from traditional simplex methods, particularly in its application to experimental optimization. While the classical simplex algorithm navigates a fixed polyhedron defined by linear constraints, sequential simplex methods used in experimental optimization employ a moving simplex approach that adapts based on experimental results.

This approach was developed as an efficient strategy for rapidly optimizing processes by moving through a factor space via a relatively simple geometric algorithm [4]. Unlike the "one-factor-at-a-time" strategy, which ignores possible interactions between variables and requires a large number of experiments, sequential simplex optimization changes all factor levels simultaneously, accommodating factor interactions within its scheme [4].

The Sequential Simplex Workflow

The fundamental geometric principle of sequential simplex optimization involves creating a simplex—a geometric figure with n+1 vertices in n-dimensional space—that moves through the experimental space based on objective function evaluations at each vertex. The method iteratively reflects the worst-performing vertex through the centroid of the opposite face, creating a new simplex that progressively moves toward optimal conditions.

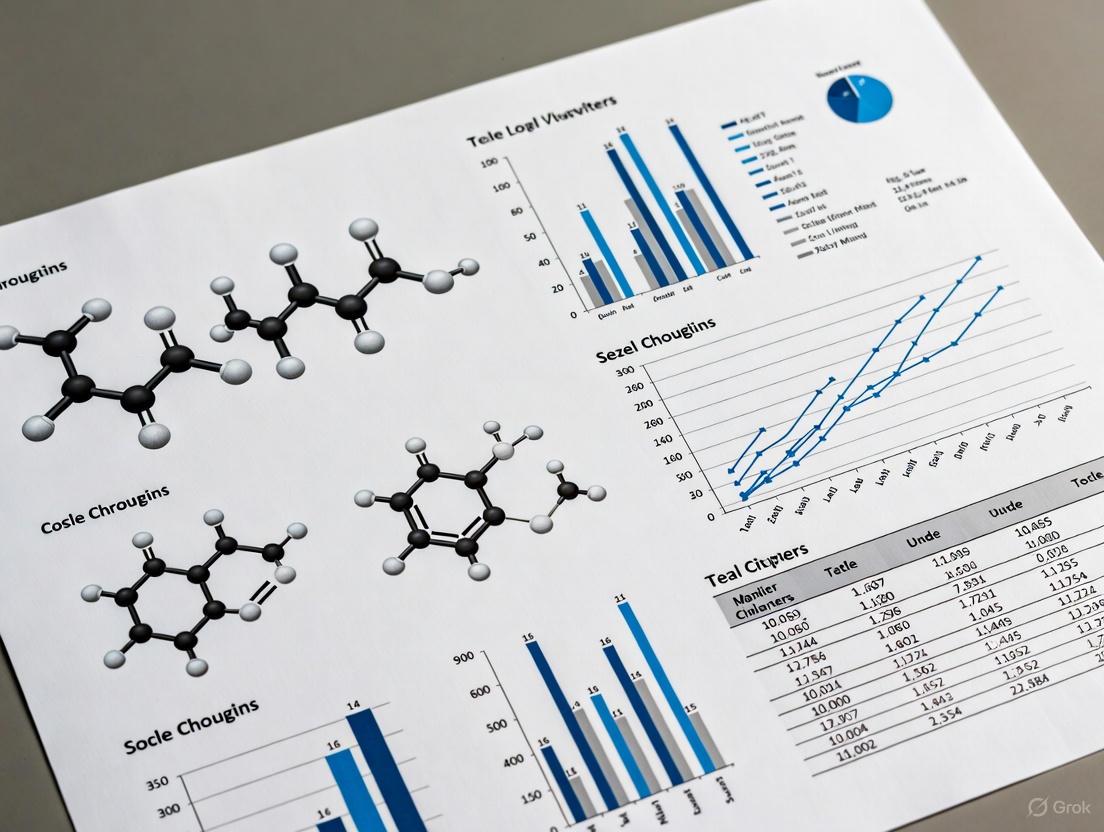

Diagram 1: Sequential Simplex Workflow

Applications in Drug Development and Biotechnology

Optimizing Recombinant Protein Production

Sequential simplex optimization has demonstrated significant utility in pharmaceutical development, particularly in optimizing bioprocess conditions. A notable application appears in the optimization of recombinant biotinylated survivin production by Escherichia coli using mineral supplementation [4]. Survivin, an apoptosis inhibitor that plays a role in cell cycle regulation, has implications for cancer research and diagnostics.

In this study, researchers applied sequential simplex methodology to optimize five experimental parameters [4]:

- Concentration of zinc sulphate

- Concentration of IPTG (isopropyl-beta-d-thiogalactopyranoside)

- pH level

- Temperature

- Agitation rate

The study notes that in the survivin structure, Zn²⁺ ions are tetrahedrally coordinated by Cys 57, Cys 60, His 77 and Cys 84, bridging the core beta-sheet and the alpha helices, which made zinc concentration a critical factor in protein production optimization [4].

Table 2: Sequential Simplex Optimization of Recombinant Biotinylated Survivin Production

| Experimental Parameter | Initial Value or Range | Optimized Value | Impact on Production |
|---|---|---|---|
| Zinc Sulphate Concentration | Up to 200 μM | 190 μM | Significant enhancement of biotinylated SVV-BCCP production |
| IPTG Concentration | 100 μM | 246 μM | Induced optimal protein expression |
| pH Level | Not specified | 7.0 | Optimal for E. coli growth and protein stability |
| Temperature | 25°C | 23.5°C | Improved protein folding and yield |
| Agitation Rate | 180 rpm | 345 rpm | Enhanced oxygen transfer for aerobic metabolism |

Advantages Over Traditional Experimental Approaches

The sequential simplex method offered distinct advantages for this biotechnological application over traditional experimental approaches. By accommodating interactions between variables and requiring fewer experiments than factorial designs, the method efficiently identified optimal conditions for recombinant protein production [4]. The optimized conditions resulted in enhanced production of biotinylated SVV-BCCP, which has important implications for cancer diagnosis and therapeutic development.

Recent Theoretical Advances and Computational Enhancements

Addressing Theoretical Complexities

Despite its proven practical efficiency, the simplex method has long been shadowed by a theoretical concern: in 1972, Klee and Minty constructed problems on which the number of pivot steps, and hence the time needed to solve the problem, rises exponentially with the number of constraints [2]. This exponential worst-case complexity persisted as a theoretical limitation despite the algorithm's strong performance in practical applications.

Later theoretical work explained this gap. In 2001, Spielman and Teng proved that if the input data are perturbed by a tiny amount of random noise, the expected running time is bounded by a polynomial in the problem size (for example, the number of constraints raised to a fixed power) rather than an exponential, a framework now known as smoothed analysis [2]. This result helps explain why the exponential runtimes long feared do not materialize in practice.

Enhanced Sequential Designs for Second-Order Models

Recent research has also produced more sophisticated sequential experimental designs. A 2025 paper introduced a model-driven approach to sequential Latin hypercube designs (SLHDs) tailored for second-order models [5]. Unlike traditional model-free SLHDs, this method optimizes a conditional A-criterion to improve efficiency, particularly in higher dimensions.

This approach maintains space-filling properties while allowing greater flexibility for model-specific optimization. Using Sobol sequences, the algorithm iteratively selects optimal points, enhancing conditional A-efficiency compared to distance minimization methods [5]. For pharmaceutical researchers, these advances translate to more efficient experimental designs when optimizing complex biological systems with multiple interacting factors.

Diagram 2: Efficient Sequential Design Construction

Essential Research Tools and Reagent Solutions

The practical implementation of sequential simplex optimization in experimental sciences requires specific research tools and reagents. The following table details key materials used in the referenced survivin optimization study, providing researchers with a foundation for similar applications.

Table 3: Research Reagent Solutions for Sequential Simplex Optimization

| Reagent/Material | Specification/Concentration | Function in Optimization |
|---|---|---|
| E. coli Origami B Strain | pAK400cb-SVV expression vector | Host organism for recombinant protein expression |
| Modified Super Broth Medium | 30 g/L tryptone, 15 g/L yeast extract, 10 g/L MOPS | Culture medium for bacterial growth and protein production |
| Zinc Sulphate | 190 μM (optimized) | Mineral supplementation to enhance protein structure and yield |
| IPTG | 246 μM (optimized) | Inducer for recombinant protein expression |
| d-biotin | 4 μM | Essential cofactor for biotinylation system |
| Antibiotics | Tetracycline (10 μg/mL), Chloramphenicol (25 μg/mL), Kanamycin (15 μg/mL) | Selective pressure to maintain plasmid stability |
| Buffer Components | MOPS, pH 7.0 | Maintain optimal pH for protein stability and activity |

The geometric foundation of optimization provided by the simplex concept continues to evolve, with ongoing research addressing both theoretical and practical challenges. Recent work by Bach and Huiberts has further refined our understanding of simplex performance, proving smoothed-analysis runtime bounds significantly lower than previously established ones [2]. This research provides stronger mathematical support for the practical efficiency observed in simplex-based applications.

For the linear-programming simplex method, the "North Star" of this line of research is a variant whose running time scales linearly with the number of constraints [2]. As these methodological advances continue to emerge, sequential simplex optimization will remain an essential tool for tackling complex optimization challenges in pharmaceutical development, bioprocess engineering, and beyond, enabling more efficient and effective research outcomes across the scientific spectrum.

Sequential simplex optimization represents a cornerstone of experimental design and numerical optimization, particularly in fields requiring robust methods for navigating complex, multi-dimensional response surfaces without reliance on derivative information. The foundational work of William Spendley, G. Richard Hext, and Frank R. Himsworth in 1962 established the core principles of simplex-based direct search methods, creating a versatile framework for experimental optimization [6] [7]. Their pioneering paper, "Sequential Application of Simplex Designs in Optimisation and Evolutionary Operation," introduced a systematic approach for optimizing processes and products through iterative simplex transformations, laying the groundwork for what would become one of the most enduring algorithms in computational optimization [7].

This technical guide examines the Spendley, Hext, and Himsworth (SHH) method within the broader context of sequential simplex research, detailing its theoretical foundations, methodological framework, and practical implementations. Unlike the better-known Nelder-Mead algorithm, which it directly inspired, the SHH method maintains a constant-shape simplex throughout the optimization process, employing only reflection and shrinkage operations to navigate the response surface [6]. This characteristic makes it particularly valuable for applications requiring consistent step sizes and directional stability, including industrial process optimization, pharmaceutical development, and statistical experimental design where factor interactions present complex optimization challenges.

Historical Context and Theoretical Foundations

Predecessors and Influences

The development of simplex methods occurred alongside significant advances in optimization theory and experimental design during the mid-20th century. While George Dantzig's simplex method for linear programming emerged in 1947, it addressed fundamentally different problems involving linearly constrained optimization [2]. The SHH method, in contrast, was conceived for nonlinear, unconstrained optimization problems where derivative information is unavailable or unreliable.

A crucial conceptual influence was the emerging understanding of concentration-response surfaces in statistical experimental design. As noted in the work of John Biggers, traditional approaches of varying one component at a time proved inefficient for detecting interactions between multiple factors in mixture optimization problems [8]. This recognition that the totality of responses to a mixture of compounds could be represented as a multi-dimensional surface necessitated more sophisticated optimization strategies capable of navigating this complex terrain.

The Simplex Concept

In the context of sequential optimization, a simplex is defined as the geometric figure formed by a set of n+1 points (vertices) in n-dimensional space [6] [7]. For example:

- In 2 dimensions, a simplex is a triangle

- In 3 dimensions, a simplex is a tetrahedron

- In n dimensions, a simplex is the simplest possible polytope spanning the space

The SHH method specifically employs a regular simplex in which all edges have equal length, maintaining this regular shape throughout the optimization process through symmetrical transformations [6]. This contrasts with later approaches that allowed the simplex to adapt its shape to the local response surface topography.

Table: Simplex Properties by Dimensionality

| Dimension (n) | Vertices | Edges | Shape | Visualization |
|---|---|---|---|---|
| 1 | 2 | 1 | Line segment | Two points on a line |
| 2 | 3 | 3 | Equilateral triangle | Triangle in a plane |
| 3 | 4 | 6 | Regular tetrahedron | Pyramid with triangular base |
| n | n+1 | n(n+1)/2 | Regular n-simplex | Generalized beyond 3D visualization |
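A regular simplex of any dimension can be built from a single starting vertex with a standard construction used in the sequential simplex literature: vertex $j$ is the starting point shifted by $p$ along axis $j$ and by $q$ along every other axis, with $p = \frac{a}{n\sqrt{2}}(\sqrt{n+1} + n - 1)$ and $q = \frac{a}{n\sqrt{2}}(\sqrt{n+1} - 1)$ for edge length $a$. The sketch below (the function name `regular_simplex` is hypothetical) checks the vertex and edge counts from the table for $n = 3$.

```python
import math

def regular_simplex(x0, a=1.0):
    """Vertices of a regular simplex with edge length a, one vertex at x0."""
    n = len(x0)
    scale = a / (n * math.sqrt(2))
    p = scale * (math.sqrt(n + 1) + n - 1)  # shift along the vertex's own axis
    q = scale * (math.sqrt(n + 1) - 1)      # shift along every other axis
    vertices = [[float(v) for v in x0]]
    for j in range(n):
        vertices.append([x0[k] + (p if k == j else q) for k in range(n)])
    return vertices

simplex = regular_simplex([0.0, 0.0, 0.0], a=2.0)
edges = [math.dist(u, v) for i, u in enumerate(simplex) for v in simplex[i + 1:]]
print(len(simplex), len(edges))  # 4 6
```

All six edges come out with length 2.0 up to rounding, since $p^2 + (n-1)q^2 = a^2$ and $2(p-q)^2 = a^2$.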

The Spendley, Hext, and Himsworth Algorithm

Core Principles

The SHH method operates through a sequential process of evaluating objective function values at each vertex of the current simplex, identifying the least favorable response, and generating a new simplex by reflecting the worst vertex through the centroid of the remaining vertices [6]. This reflection operation is followed by shrinkage steps when reflections fail to improve the response, creating a systematic traversal of the response surface.

Key characteristics of the original SHH method include:

- Constant shape maintenance: The simplex retains its regular geometry throughout the optimization process, unlike adaptive approaches that allow shape deformation [6]

- Fixed step size: The edge length remains constant except during shrinkage operations

- Minimal function evaluations: Typically only one function evaluation per iteration is required

- Derivative-free operation: The method relies solely on function values without gradient information

Mathematical Formulation

For an n-dimensional optimization problem, the SHH method maintains a simplex with vertices $x_0, x_1, \ldots, x_n \in \mathbb{R}^n$. At each iteration:

Ordering: Determine the indices of the worst ($h$) and best ($l$) vertices:

$$f_h = \max_j f(x_j), \quad f_l = \min_j f(x_j)$$

Centroid calculation: Compute the centroid $c$ of the face opposite the worst vertex:

$$c = \frac{1}{n} \sum_{j \neq h} x_j$$

Reflection: Generate a new vertex $x_r$ by reflecting the worst vertex through the centroid:

$$x_r = c + (c - x_h)$$

Shrinkage: If the reflected vertex does not yield improvement, shrink the entire simplex toward the best vertex

The reflection coefficient in the original SHH method is fixed at 1.0, maintaining the regular simplex geometry throughout the optimization process [6].
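The iteration above can be sketched in a few lines. This is a simplified reading of the steps as described in this section (reflect with coefficient 1; if that fails, halve the simplex toward the best vertex), not a transcription of the original 1962 rule set. The function name `shh_search`, the halving factor and the stopping tolerance are assumptions.

```python
import math

def shh_search(f, simplex, tol=1e-8, max_iter=10_000):
    """Fixed-shape simplex search: reflection (coefficient 1) or halving toward the best vertex.

    `simplex` is a list of n + 1 vertices in n dimensions.
    """
    simplex = [[float(v) for v in vertex] for vertex in simplex]
    n = len(simplex) - 1
    values = [f(v) for v in simplex]
    for _ in range(max_iter):
        h = max(range(n + 1), key=values.__getitem__)   # worst vertex (index h in the text)
        lo = min(range(n + 1), key=values.__getitem__)  # best vertex (index l in the text)
        if max(math.dist(v, simplex[lo]) for v in simplex) < tol:
            break  # simplex has collapsed onto the best vertex
        # Centroid of the face opposite the worst vertex
        c = [sum(simplex[j][k] for j in range(n + 1) if j != h) / n for k in range(n)]
        # Reflection through the centroid: x_r = c + (c - x_h)
        xr = [2.0 * ck - hk for ck, hk in zip(c, simplex[h])]
        fr = f(xr)
        if fr < values[h]:
            simplex[h], values[h] = xr, fr  # accept: size and shape unchanged
        else:
            # No improvement: shrink every vertex halfway toward the best vertex
            best = simplex[lo]
            simplex = [[bk + 0.5 * (vk - bk) for vk, bk in zip(v, best)] for v in simplex]
            values = [values[i] if i == lo else f(v) for i, v in enumerate(simplex)]
    lo = min(range(n + 1), key=values.__getitem__)
    return simplex[lo], values[lo]

def quadratic(x):
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2

x_best, f_best = shh_search(quadratic, [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(x_best, f_best)
```

If a freshly reflected vertex immediately becomes the worst one, reflecting it again simply returns the previous vertex, which is no improvement, so the sketch shrinks instead of oscillating.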

Algorithm Workflow

The following diagram illustrates the complete sequential simplex method workflow as established by Spendley, Hext, and Himsworth:

Figure 1: Sequential Simplex Method Workflow

Comparative Analysis: SHH vs. Nelder-Mead

The Nelder-Mead Enhancements

In 1965, John Nelder and Roger Mead introduced significant modifications to the SHH method, creating what would become the more widely known Nelder-Mead simplex algorithm [6]. Their key innovation was allowing the simplex to adapt both size and shape to the local response surface topography through additional transformation operations:

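- Expansion: When the reflected point is better than the current best vertex, test a point further along the same direction

- Contraction: When the reflected point is no better than the second-worst vertex, test a point between the centroid and either the reflected point (outside contraction) or the worst vertex (inside contraction)

- Shrinkage: Retained from the original SHH method and applied when contraction fails to produce an acceptable point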

With these operations the simplex adapts itself to the local landscape, "elongating down long inclined planes, changing direction on encountering a valley at an angle, and contracting in the neighbourhood of a minimum" [6]. The Nelder-Mead method typically requires only one or two function evaluations per iteration (a shrink step, which re-evaluates n vertices, is the exception), maintaining the efficiency of the original approach while significantly improving performance across diverse response surfaces.

Methodological Comparison

Table: Comparison of SHH and Nelder-Mead Simplex Methods

| Characteristic | Spendley-Hext-Himsworth | Nelder-Mead |
|---|---|---|
| Publication Year | 1962 [7] | 1965 [6] |
| Simplex Geometry | Regular (constant shape) | Adaptive (changes shape) |
| Transformations | Reflection, shrinkage | Reflection, expansion, contraction, shrinkage |
| Transformation Parameters | Fixed reflection (α=1) | Adjustable parameters (α, β, γ, δ) |
| Convergence Behavior | Methodical, predictable | Adaptive, landscape-responsive |
| Performance | Robust on symmetric surfaces | Superior on anisotropic surfaces |
| Implementation Complexity | Simpler | More complex parameter tuning |
| Modern Usage | Less common | Widespread (e.g., MATLAB fminsearch) |

The mathematical representation of these transformations highlights their operational differences. For the Nelder-Mead method, the test points lie on the line defined by $x_h$ and $c$:

$$x(\alpha) = (1 + \alpha)c - \alpha x_h$$

The specific points are:

- Reflection point: $x_r = x(1)$

- Expansion point: $x_e = x(2)$

- Outside contraction: $x_{oc} = x(0.5)$

- Inside contraction: $x_{ic} = x(-0.5)$

This flexible approach contrasts with the single reflection operation in the SHH method [6].
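All four Nelder-Mead test points come from the single line parametrization $x(\alpha)$. In the short sketch below, the helper name `nm_test_point` and the example centroid and worst vertex are hypothetical; it simply shows where each point falls relative to $c$ and $x_h$.

```python
def nm_test_point(c, x_h, alpha):
    """Point x(alpha) = (1 + alpha) * c - alpha * x_h on the line through x_h and c."""
    return [(1.0 + alpha) * ck - alpha * hk for ck, hk in zip(c, x_h)]

c, x_h = [1.0, 1.0], [0.0, 0.0]
points = {name: nm_test_point(c, x_h, a)
          for name, a in [("reflection", 1.0), ("expansion", 2.0),
                          ("outside contraction", 0.5), ("inside contraction", -0.5)]}
print(points)
# reflection [2.0, 2.0], expansion [3.0, 3.0],
# outside contraction [1.5, 1.5], inside contraction [0.5, 0.5]
```

Positive values of $\alpha$ place the point beyond the centroid, away from the worst vertex; the negative value places it between $x_h$ and $c$.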

Practical Implementation and Research Applications

Experimental Design Protocol

For researchers implementing the SHH method in experimental optimization, the following protocol provides a structured approach:

Initial Simplex Construction:

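- Define the initial vertex $x_0$ based on prior knowledge

- Generate the remaining vertices as $x_j = x_0 + \lambda e_j$ for $j = 1, \ldots, n$, where $e_j$ is the $j$-th coordinate unit vector and $\lambda$ is the step size (this coordinate-aligned simplex is not regular, unlike the original SHH starting design)

- Maintain a consistent step size $\lambda$ in each coordinate direction

- Verify non-degeneracy: the $n+1$ vertices must not all lie in one hyperplane, which is equivalent to the edge vectors $x_j - x_0$ being linearly independent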

Iteration Procedure:

- Evaluate objective function at all vertices

- Identify worst (highest function value) and best (lowest) vertices

- Calculate centroid of remaining vertices after excluding worst

- Compute reflection point and evaluate

- Accept reflection if improvement occurs

- Implement shrinkage toward best vertex if no improvement

Termination Criteria:

- Simplex size below tolerance threshold

- Function value differences sufficiently small

- Maximum iteration count reached
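The construction and stopping rules in this protocol can be sketched as plain helper functions. This is a minimal sketch under stated assumptions: the function names are hypothetical, the non-degeneracy check runs Gaussian elimination on the edge vectors $x_j - x_0$ with an absolute tolerance, and `should_stop` stops as soon as any one of the three listed criteria holds (a more conservative variant would require both the size and the function-value criteria).

```python
import math

def coordinate_simplex(x0, step):
    """x_j = x_0 + step_j * e_j for j = 1..n; `step` may be one number or one value per factor."""
    n = len(x0)
    steps = list(step) if isinstance(step, (list, tuple)) else [step] * n
    simplex = [[float(v) for v in x0]]
    for j in range(n):
        v = [float(t) for t in x0]
        v[j] += steps[j]
        simplex.append(v)
    return simplex

def is_nondegenerate(simplex, eps=1e-12):
    """True if the edge vectors x_j - x_0 are linearly independent."""
    x0 = simplex[0]
    M = [[a - b for a, b in zip(v, x0)] for v in simplex[1:]]
    n = len(M)
    for col in range(n):
        # Partial pivoting: bring the largest remaining entry of this column up
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[piv][col]) < eps:
            return False  # no usable pivot: the edge vectors are linearly dependent
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            M[r] = [a - factor * b for a, b in zip(M[r], M[col])]
    return True

def should_stop(simplex, values, iteration, size_tol=1e-6, f_tol=1e-8, max_iter=500):
    """Stop when any listed criterion holds: small simplex, small value spread, or iteration cap."""
    best = simplex[min(range(len(values)), key=values.__getitem__)]
    size = max(math.dist(v, best) for v in simplex)
    return size < size_tol or max(values) - min(values) < f_tol or iteration >= max_iter

simplex = coordinate_simplex([1.0, 2.0], step=0.5)
print(simplex)                                                 # [[1.0, 2.0], [1.5, 2.0], [1.0, 2.5]]
print(is_nondegenerate(simplex))                               # True
print(is_nondegenerate([[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]))  # False (collinear vertices)
```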

Research Reagent Solutions Toolkit

Table: Essential Components for Sequential Simplex Implementation

| Component | Function | Implementation Example |
|---|---|---|
| Initial Vertex Selection | Starting point for optimization | Based on literature values or preliminary experiments |
| Step Size Parameters | Controls initial simplex size | Typically 10-20% of parameter range |
| Objective Function | Quantifies response to optimize | Yield, purity, efficiency, or cost metric |
| Convergence Threshold | Determines stopping point | Based on practical significance or measurement precision |
| Transformation Rules | Defines simplex manipulation | Reflection (α=1.0) and shrinkage (δ=0.5) operations |
| Experimental Replicates | Addresses response variability | 2-3 replicates per vertex for noisy systems |
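Two rows of this table translate directly into code. The sketch below, with hypothetical helper names, sets each initial step to a fixed fraction of the factor's range (15%, an assumed midpoint of the 10-20% guideline) and scores a vertex by the mean of repeated measurements. Here `measure` stands for whatever experiment or simulation produces one noisy response; the factor ranges in the usage line are illustrative.

```python
import statistics

def initial_steps(lower, upper, fraction=0.15):
    """Per-factor step sizes as a fixed fraction of each factor's range."""
    return [fraction * (hi - lo) for lo, hi in zip(lower, upper)]

def replicated_objective(measure, replicates=3):
    """Wrap a noisy measurement so each vertex is scored by the mean of its replicates."""
    def objective(x):
        return statistics.fmean(measure(x) for _ in range(replicates))
    return objective

# Illustrative factors: temperature from 20 to 40 degrees C and pH from 6 to 8
steps = initial_steps([20.0, 6.0], [40.0, 8.0])
print(steps)  # approximately [3.0, 0.3]
```

Averaging replicates reduces the chance that measurement noise alone decides which vertex is treated as worst, at the cost of proportionally more experiments per vertex.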

Contemporary Relevance and Research Directions

Modern Theoretical Understanding

Recent advances in optimization theory have shed new light on simplex methods, with researchers continuing to explore their theoretical properties six decades after their introduction. Key areas of investigation include:

- Convergence behavior: Studies have identified various convergence modes, including convergence of function values to a common limit, convergence of vertices to a single point, or convergence to a non-stationary point [9]

- Matrix representations: Modern analyses represent simplex transformations as matrix operations, facilitating theoretical analysis of algorithm properties [9]

- Stochastic variants: Recent work has incorporated randomness to improve performance guarantees, drawing inspiration from advances in linear programming simplex methods [2]

Applications in Pharmaceutical Research

The SHH method and its descendants remain particularly valuable in pharmaceutical development and biological research, where:

- Culture media optimization: Sequential simplex methods have been extensively applied to optimize complex nutrient mixtures for cell culture and embryo development [8]

- Process parameter optimization: Reaction conditions, purification parameters, and formulation components can be efficiently optimized with minimal experimental resources

- Drug response surface mapping: Characterization of multi-factor drug interactions benefits from efficient experimental designs

The microdroplet method developed in John Biggers' laboratory, employing simplex-optimized media formulations in miniaturized experiments under oil, exemplifies the powerful synergy between sequential simplex optimization and experimental biology [8]. This approach enables high-throughput screening of complex mixture effects while conserving valuable reagents.

The Spendley, Hext, and Himsworth method established the fundamental principles of simplex-based direct search optimization, creating a versatile framework that continues to influence computational and experimental optimization six decades after its introduction. While largely superseded by the more flexible Nelder-Mead algorithm in general practice, the SHH approach remains relevant for applications requiring consistent experimental step sizes and methodological stability.

The sequential simplex paradigm represents a cornerstone of derivative-free optimization, particularly valuable in scientific and industrial contexts where objective functions are noisy, discontinuous, or computationally expensive to evaluate. Its enduring legacy persists not only in continuous optimization algorithms but also in experimental design methodologies across chemical, pharmaceutical, and biological disciplines. As theoretical understanding of these methods continues to evolve, their practical utility ensures ongoing relevance in an increasingly data-driven scientific landscape.

The Nelder-Mead simplex algorithm, introduced in 1965, represents a cornerstone in numerical optimization, particularly valued in chemical, medical, and statistical applications where derivative information is unavailable or unreliable [6]. This direct search method uses a simplex (a geometric shape with n+1 vertices in n-dimensional space) that adapts itself to the objective function's landscape through a series of transformations [10] [6]. While its simplicity and low computational requirements fueled widespread adoption, the algorithm suffers from well-documented limitations including susceptibility to stagnation and sensitivity to initial conditions [11] [12]. Recent enhancements, particularly the integration of Direct Inversion in Iterative Subspace (DIIS) methodology, have been reported to mitigate these deficiencies [12], a notable development in sequential simplex optimization research with implications for computational drug development and scientific computing.

Sequential simplex methods represent a family of direct search optimization algorithms that evolved from the original work of Spendley, Hext, and Himsworth in 1962, which utilized a regular simplex maintaining constant angles between edges [6]. Nelder and Mead's seminal 1965 modification introduced critical adaptations by allowing the simplex to change both size and shape, dramatically improving performance across diverse optimization landscapes [6]. As Nelder and Mead themselves described, their enhanced simplex "adapts itself to the local landscape, elongating down long inclined planes, changing direction on encountering a valley at an angle, and contracting in the neighbourhood of a minimum" [6].

This evolutionary step established the foundation for decades of sequential simplex research. Despite the similar terminology, the Nelder-Mead method is distinct from George Dantzig's simplex method for linear programming [13] [2]. It specifically targets multidimensional unconstrained optimization without derivatives, making it particularly valuable for problems with non-smooth functions, discontinuous regions, or where function evaluations are uncertain or subject to noise [6]. These characteristics frequently occur in drug development applications, including parameter estimation for pharmacokinetic models, quantitative structure-activity relationship (QSAR) studies, and experimental optimization of reaction conditions.

Core Algorithmic Framework

Fundamental Operations

The Nelder-Mead algorithm maintains a working simplex at each iteration, performing transformations based on function evaluations at the vertices. The standard algorithm parameters include reflection coefficient (α = 1), expansion coefficient (γ = 2), contraction coefficient (ρ = 0.5), and shrinkage coefficient (σ = 0.5) [13] [6]. Each iteration follows a systematic process:

- Ordering: Determine indices h, s, l of the worst, second worst, and best vertices based on function values [6]

- Centroid Calculation: Compute centroid c of the best side (opposite the worst vertex xₕ) [6]

- Transformation: Generate new test points through reflection, expansion, contraction, or shrinkage operations [10] [6]

Figure 1: Nelder-Mead algorithm workflow showing the logical sequence of simplex transformations and decision points

Termination Criteria

The algorithm typically terminates when the working simplex becomes sufficiently small or when function values at the vertices are close enough, indicating potential convergence [6]. Alternative implementations may use maximum iteration counts or track improvements over successive iterations [14].
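The operations and stopping rule above fit in a compact implementation with the standard coefficients. The sketch below follows the usual textbook decision rules for accepting a reflection, an expansion, an outside or inside contraction, or a shrink. The coordinate-step initial simplex, the tolerances and the function name `nelder_mead` are assumptions, and it is not tuned for noisy objectives.

```python
import math

def nelder_mead(f, x0, step=0.5, alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5,
                size_tol=1e-8, f_tol=1e-12, max_iter=5000):
    """Minimize f from x0 with the standard Nelder-Mead transformations."""
    n = len(x0)
    # Initial simplex: x0 plus one step along each coordinate axis
    simplex = [[float(v) for v in x0]]
    for j in range(n):
        v = [float(t) for t in x0]
        v[j] += step
        simplex.append(v)
    values = [f(v) for v in simplex]

    for _ in range(max_iter):
        # Ordering: best vertex first, worst vertex (x_h) last
        order = sorted(range(n + 1), key=values.__getitem__)
        simplex = [simplex[i] for i in order]
        values = [values[i] for i in order]
        # Termination: simplex small and vertex values close together
        size = max(math.dist(v, simplex[0]) for v in simplex[1:])
        if size < size_tol and values[-1] - values[0] < f_tol:
            break

        worst = simplex[-1]
        # Centroid of the best side (every vertex except the worst)
        c = [sum(v[k] for v in simplex[:-1]) / n for k in range(n)]

        def point(t):
            # Test point c + t * (c - x_h), i.e. x(t) = (1 + t) c - t x_h
            return [ck + t * (ck - wk) for ck, wk in zip(c, worst)]

        xr = point(alpha)
        fr = f(xr)
        if values[0] <= fr < values[-2]:      # reflection accepted
            simplex[-1], values[-1] = xr, fr
            continue
        if fr < values[0]:                     # new best: try expansion
            xe = point(alpha * gamma)
            fe = f(xe)
            simplex[-1], values[-1] = (xe, fe) if fe < fr else (xr, fr)
            continue
        if fr < values[-1]:                    # outside contraction
            xc = point(alpha * rho)
            fc = f(xc)
            if fc <= fr:
                simplex[-1], values[-1] = xc, fc
                continue
        else:                                  # inside contraction
            xc = point(-rho)
            fc = f(xc)
            if fc < values[-1]:
                simplex[-1], values[-1] = xc, fc
                continue
        # Contraction failed: shrink every vertex toward the best one
        best = simplex[0]
        simplex = [best] + [[bk + sigma * (vk - bk) for vk, bk in zip(v, best)]
                            for v in simplex[1:]]
        values = [values[0]] + [f(v) for v in simplex[1:]]

    i = min(range(n + 1), key=values.__getitem__)
    return simplex[i], values[i]

def quadratic(x):
    return (x[0] - 1.0) ** 2 + 10.0 * (x[1] + 2.0) ** 2

x_best, f_best = nelder_mead(quadratic, [0.0, 0.0])
print(x_best, f_best)
```

With $\alpha = 1$, $\gamma = 2$ and $\rho = 0.5$, the calls `point(1)`, `point(2)`, `point(0.5)` and `point(-0.5)` reproduce the reflection, expansion, outside and inside contraction points $x(1)$, $x(2)$, $x(0.5)$ and $x(-0.5)$.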

Methodological Enhancements and Experimental Protocols

DIIS Acceleration Framework

The integration of Direct Inversion in Iterative Subspace (DIIS) with Nelder-Mead represents a significant methodological advancement. DIIS accelerates optimization by extrapolating better intermediate solutions from linear combinations of previously evaluated points [12]. The NM-DIIS protocol follows this experimental methodology:

- Initialization: Generate initial simplex with n+1 vertices around starting point x₀ [10] [6]

- Standard NM Steps: Perform conventional Nelder-Mead iterations, storing vertices and function values

- DIIS Extrapolation: Periodically apply DIIS to generate accelerated trial points

- Acceptance Testing: Evaluate candidate points, replacing worst vertex if improvement occurs

- Termination Check: Monitor simplex size and function value convergence [12]
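DIIS in its classic (Pulay) form picks coefficients $c_i$ that minimize $\lVert \sum_i c_i e_i \rVert^2$ subject to $\sum_i c_i = 1$, where $e_i$ are error vectors attached to stored points $x_i$, and proposes $\sum_i c_i x_i$ as the extrapolated point. The sketch below implements only that generic step through the bordered linear system. How [12] defines the error vectors and how often it triggers extrapolation are not stated here, so `errors` is left to the caller, and all names are hypothetical.

```python
def solve_linear(M, rhs):
    """Solve a small dense system M y = rhs by Gaussian elimination with partial pivoting."""
    n = len(M)
    A = [[float(a) for a in row] + [float(r)] for row, r in zip(M, rhs)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[piv][col]) < 1e-14:
            raise ValueError("singular DIIS system")
        A[col], A[piv] = A[piv], A[col]
        for r in range(col + 1, n):
            factor = A[r][col] / A[col][col]
            A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    y = [0.0] * n
    for i in reversed(range(n)):
        y[i] = (A[i][n] - sum(A[i][k] * y[k] for k in range(i + 1, n))) / A[i][i]
    return y

def diis_extrapolate(points, errors):
    """Pulay-style DIIS: coefficients minimizing |sum c_i e_i|^2 with sum c_i = 1."""
    m = len(points)
    # Overlap matrix B_ij = e_i . e_j
    B = [[sum(a * b for a, b in zip(ei, ej)) for ej in errors] for ei in errors]
    # Bordered system [[B, 1], [1^T, 0]] [c; mu] = [0; 1], with mu a Lagrange multiplier
    M = [row + [1.0] for row in B] + [[1.0] * m + [0.0]]
    coeffs = solve_linear(M, [0.0] * m + [1.0])[:m]
    # Extrapolated point: the same linear combination of the stored points
    x_new = [sum(ci * p[k] for ci, p in zip(coeffs, points)) for k in range(len(points[0]))]
    return x_new, coeffs

x_new, coeffs = diis_extrapolate([[0.0, 0.0], [2.0, 2.0]], [[1.0, 0.0], [-1.0, 0.0]])
print(x_new, coeffs)  # [1.0, 1.0] [0.5, 0.5]
```

In the example the two error vectors cancel exactly with equal weights, so DIIS proposes the midpoint of the two stored points.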

Figure 2: NM-DIIS enhanced framework integrating traditional Nelder-Mead steps with DIIS extrapolation

Adaptive Parameter Control

Gao and Han developed an adaptive-parameter implementation in which the transformation coefficients depend on the problem dimension n instead of being fixed, addressing the stagnation and slow progress of classical implementations in higher dimensions [11]. In this approach the standard fixed parameters (α, β, γ, δ) are replaced by dimension-dependent values.
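Gao and Han's rule sets $\alpha = 1$, $\beta = 1 + 2/n$, $\gamma = 0.75 - 1/(2n)$ and $\delta = 1 - 1/n$. In this $(\alpha, \beta, \gamma, \delta)$ notation, $\beta$ is expansion, $\gamma$ contraction and $\delta$ shrinkage (the $\gamma$, $\rho$, $\sigma$ of the standard parameter list earlier). The sketch below only computes the coefficients; the function name is hypothetical, and rejecting $n = 1$ (where the shrink coefficient would be 0) is a design choice of this sketch.

```python
def adaptive_nm_parameters(n):
    """Dimension-dependent Nelder-Mead coefficients following Gao and Han's rule.

    Returns (alpha, beta, gamma, delta): reflection, expansion, contraction, shrink.
    """
    if n < 2:
        raise ValueError("rule intended for n >= 2 (the shrink coefficient is 0 at n = 1)")
    return 1.0, 1.0 + 2.0 / n, 0.75 - 1.0 / (2.0 * n), 1.0 - 1.0 / n

print(adaptive_nm_parameters(2))   # (1.0, 2.0, 0.5, 0.5), the standard values
print(adaptive_nm_parameters(10))  # approximately (1.0, 1.2, 0.7, 0.9)
```

For n = 2 the rule reproduces the standard coefficients. As n grows, the expansion coefficient falls toward 1 while the contraction and shrink coefficients rise toward 0.75 and 1, making those steps less aggressive in high dimensions.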

Performance Analysis and Comparative Results

Benchmarking Methodology

Experimental evaluation of enhancement efficacy typically employs standard test functions from optimization literature, including:

- Rosenbrock function: A classic unimodal test function with a curved valley [11]

- Sphere function: A simple convex function serving as baseline [11]

- Rastrigin function: A multimodal function with many local minima [11]

- Ackley function: A multimodal function with moderate complexity [11]

Performance metrics include convergence speed (iterations and function evaluations), success rate in locating global minima, and computational time [11] [12].
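The four benchmark functions can be written in their common textbook forms, as sketched below. The constants ($A = 10$ for Rastrigin; $a = 20$, $b = 0.2$, $c = 2\pi$ for Ackley) are the usual defaults and are assumed here, because the exact variants used in [11] and [12] are not given in this text. Every function has global minimum value 0, at the origin, or at $(1, \ldots, 1)$ for Rosenbrock.

```python
import math

def sphere(x):
    """Sum of squares; global minimum 0 at the origin."""
    return sum(xi * xi for xi in x)

def rosenbrock(x):
    """Curved-valley function; global minimum 0 at (1, ..., 1)."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))

def rastrigin(x, A=10.0):
    """Highly multimodal; global minimum 0 at the origin."""
    return A * len(x) + sum(xi * xi - A * math.cos(2.0 * math.pi * xi) for xi in x)

def ackley(x, a=20.0, b=0.2, c=2.0 * math.pi):
    """Multimodal with a nearly flat outer region; global minimum 0 at the origin."""
    n = len(x)
    term1 = -a * math.exp(-b * math.sqrt(sum(xi * xi for xi in x) / n))
    term2 = -math.exp(sum(math.cos(c * xi) for xi in x) / n)
    return term1 + term2 + a + math.e

print(rosenbrock([-1.2, 1.0]))  # approximately 24.2, the classic starting point
```

Counting calls to these functions (for example by wrapping them) gives the function-evaluation metric directly, independently of which optimizer variant is being compared.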

Quantitative Performance Comparison

Table 1: Comparative performance of Nelder-Mead variants on benchmark functions

| Method | Problem Dimension | Average Runtime (s) | Success Rate (%) | Function Evaluations | Key Improvement |
|---|---|---|---|---|---|
| Standard NM | 10 | 12.7 | 85 | 1,250 | Baseline |
| NM-DIIS | 10 | 9.3 | 92 | 890 | 27% faster convergence [12] |
| Standard NM | 30 | 45.2 | 65 | 3,850 | Baseline |
| NM-DIIS | 30 | 29.8 | 83 | 2,420 | 34% faster convergence [12] |
| Standard NM | 50 | 128.5 | 52 | 8,960 | Baseline |
| NM-DIIS | 50 | 79.3 | 76 | 5,310 | 38% faster convergence [12] |
| Adaptive NM | 30 | 32.1 | 88 | 2,650 | Reduced stagnation [11] |

Table 2: Performance characteristics across problem types

| Problem Type | Standard NM Limitations | Enhanced NM Improvements | Recommended Variant |
|---|---|---|---|
| High-dimension unimodal | Slow convergence, excessive evaluations | 30-40% faster runtime, elimination of long tails in runtime distribution [12] | NM-DIIS |
| Noisy functions | Sensitivity to function noise | Improved stability through extrapolation | NM-DIIS with averaging |
| Ill-conditioned | Stagnation in valleys | Adaptive parameters prevent premature collapse [11] | Adaptive NM |
| Multimodal | Convergence to local minima | DIIS helps escape shallow minima [12] | Hybrid NM-DIIS |

The NM-DIIS method demonstrates particularly strong performance for high-dimensional problems, where it eliminates the long tails in runtime distribution observed in standard Nelder-Mead implementations [12]. This enhancement provides more predictable and reliable optimization performance, especially valuable in drug development applications where computational time directly impacts research cycles.

Implementation Guidelines for Research Applications

Research Reagent Solutions

Table 3: Essential computational components for Nelder-Mead implementation

| Component | Function | Implementation Notes |
|---|---|---|
| Objective Function Wrapper | Encapsulates target function evaluation | Should handle noisy evaluations and validation checks |
| Simplex Initialization Module | Generates initial simplex from starting point | Critical for performance; coordinate or regular simplex [6] |
| Transformation Controller | Manages reflection, expansion, contraction operations | Implement standard parameters (α=1, γ=2, ρ=0.5, σ=0.5) [13] |
| Convergence Monitor | Tracks termination criteria | Monitor simplex size and function value differences [14] |
| DIIS Extrapolator | Accelerates convergence through linear combinations | Store previous points; implement regularization for stability [12] |
| Adaptive Parameter Manager | Dynamically adjusts coefficients | Based on problem dimensionality and progress [11] |

Practical Implementation Considerations

For drug development applications, several implementation factors require careful attention:

- Initial Simplex Design: Proper initialization is crucial—a right-angled simplex along coordinate axes or a regular simplex with appropriate scale improves performance [6]

- Constraint Handling: For constrained optimization problems, barrier functions can transform constrained problems into unconstrained ones compatible with NM [14]

- Noise Tolerance: Experimental measurements in drug development often contain noise; function value comparisons should include appropriate tolerances [6]

- Hybrid Approaches: Combining NM with global search methods (e.g., particle swarm optimization) can improve performance on multimodal problems [12]
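The constraint-handling and noise-tolerance points can be combined in a small objective wrapper. This is a minimal sketch that assumes inequality constraints are written as g(x) < 0 and uses a logarithmic barrier with weight `mu`. `barrier_objective`, `mu`, and `n_repeats` are hypothetical names. Averaging repeated evaluations is only one simple way to damp measurement noise. Returning infinity outside the feasible region works with Nelder-Mead because the method only compares function values.

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]

def barrier_objective(f: Callable[[Vector], float],
                      constraints: Sequence[Callable[[Vector], float]],
                      mu: float = 1e-3,
                      n_repeats: int = 1) -> Callable[[Vector], float]:
    """Wrap f with a logarithmic barrier for constraints g(x) < 0.

    Infeasible points get +inf, so a comparison-based method such as
    Nelder-Mead simply rejects them. For noisy objectives, f is evaluated
    n_repeats times and the results are averaged.
    """
    def wrapped(x: Vector) -> float:
        barrier = 0.0
        for g in constraints:
            gx = g(x)
            if gx >= 0.0:               # on or outside the boundary
                return math.inf
            barrier -= math.log(-gx)    # grows without bound near the boundary
        value = sum(f(x) for _ in range(n_repeats)) / n_repeats
        return value + mu * barrier
    return wrapped

# Example: minimize x0^2 + x1^2 subject to x0 < 1
obj = barrier_objective(lambda x: x[0] ** 2 + x[1] ** 2, [lambda x: x[0] - 1.0])
print(obj([0.5, 0.0]), obj([2.0, 0.0]))
```

In barrier methods, `mu` is typically reduced over successive runs so that the barrier term distorts the objective less and less near the constraint boundary.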

Enhancing the Nelder-Mead algorithm with DIIS is a notable development in sequential simplex optimization research. By addressing fundamental limitations in convergence reliability while retaining the method's derivative-free advantage, NM-DIIS and related adaptive approaches significantly expand the applicability of simplex methods to contemporary optimization challenges in drug development and scientific computing.

Future research directions include further refinement of DIIS extrapolation techniques, development of problem-specific parameter adaptation strategies, and hybrid approaches that combine simplex methods with machine learning for initialization and convergence prediction. As optimization challenges in pharmaceutical research continue to grow in dimensionality and complexity, these enhanced simplex methods are likely to play an increasingly important role in accelerating discovery and development pipelines.

The continued evolution of Nelder-Mead algorithms demonstrates how classical optimization methods can be revitalized through strategic enhancements, maintaining their relevance for contemporary scientific challenges while preserving the conceptual simplicity that established their original utility.

Sequential simplex optimization is a class of direct search methods used for finding a local minimum or maximum of an objective function in a multidimensional space, particularly valuable for problems where derivatives are unknown or the function is non-differentiable [13] [15]. Unlike the Simplex method for linear programming, the Nelder-Mead simplex method operates by evolving a geometric figure called a simplex—comprising n+1 points in an n-dimensional space—through a series of geometric transformations [16] [13]. This methodology represents a hill-climbing approach where the final optimum depends strongly on the specified starting point, making it a fundamental technique in the broader context of numerical optimization research [16].

Core Components of the Simplex Method

The Simplex Structure

In n-dimensional space, a simplex is a special polytope defined by n+1 vertices [13]. For example:

- A line segment in one-dimensional space

- A triangle in two-dimensional space

- A tetrahedron in three-dimensional space

Each vertex of the simplex represents a single set of parameter values, and the entire structure serves as the exploratory framework for the optimization process [16]. The method systematically compares the values of the objective function at these n+1 points and moves the simplex gradually toward the optimum through an iterative process [16].
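Constructing the n+1 starting vertices can be done in two common ways, matching the coordinate (right-angled) and regular simplex options mentioned in the implementation guidelines. This is a sketch with hypothetical function names. The `step` and `edge` scales are assumptions and should be matched to the natural scale of each parameter. The regular-simplex formula is a standard construction in which every edge has the requested length.

```python
import numpy as np

def coordinate_simplex(x0, step=0.1):
    """Right-angled simplex: x0 plus one step along each coordinate axis."""
    x0 = np.asarray(x0, dtype=float)
    return np.vstack([x0, x0 + step * np.eye(x0.size)])

def regular_simplex(x0, edge=0.1):
    """Regular simplex (all n+1 vertices equidistant) with one vertex at x0."""
    x0 = np.asarray(x0, dtype=float)
    n = x0.size
    p = edge / (n * np.sqrt(2)) * (np.sqrt(n + 1) + n - 1)
    q = edge / (n * np.sqrt(2)) * (np.sqrt(n + 1) - 1)
    # Vertex i (i = 1..n): add q to every coordinate and p to coordinate i
    offsets = q * np.ones((n, n)) + (p - q) * np.eye(n)
    return np.vstack([x0, x0 + offsets])

print(coordinate_simplex([1.0, 2.0]))
print(regular_simplex([0.0, 0.0], edge=1.0))
```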

Algorithm Parameters and Coefficients

The Nelder-Mead algorithm utilizes four primary coefficients to control its geometric transformations, with the following standard values and functions [13]:

Table 1: Nelder-Mead Algorithm Coefficients

| Coefficient | Symbol | Standard Value | Operation Controlled |
|---|---|---|---|
| Reflection | α | 1.0 | Reflection through centroid |
| Expansion | γ | 2.0 | Expansion along promising direction |
| Contraction | ρ | 0.5 | Contraction away from poor point |
| Shrinkage | σ | 0.5 | Uniform simplex shrinkage |

These parameters govern the behavior of the algorithm, influencing both convergence speed and solution quality [13]. The values listed in Table 1 are the defaults used in standard implementations [13].

Fundamental Operations of the Nelder-Mead Algorithm

Ordering and Initialization

The algorithm begins by ordering the vertices of the simplex according to their objective function values [13]:

\[ f(x_1) \le f(x_2) \le \cdots \le f(x_{n+1}) \]

where:

- x₁ = Best point (lowest function value)

- xₙ = Second worst point

- xₙ₊₁ = Worst point (highest function value)

The initial simplex configuration is crucial for algorithm performance, as a poorly chosen simplex can lead to convergence to non-stationary points or excessive iterations [13]. The centroid xₒ of all points except the worst point (xₙ₊₁) is calculated as the basis for subsequent operations [13].

Reflection Operation

Reflection is the primary operation that drives the simplex away from unfavorable regions [16]. The reflected point xᵣ is computed as:

\[ x_r = x_o + \alpha (x_o - x_{n+1}) \]

where α > 0 is the reflection coefficient [13]. If the reflected point is better than the second-worst point but not better than the best (f(x₁) ≤ f(xᵣ) < f(xₙ)), the worst point xₙ₊₁ is replaced with xᵣ, forming a new simplex [13]. With α = 1, the reflected simplex has the same volume as the original, so this step moves the simplex in a favorable direction without changing its size [13].

Expansion Operation

When reflection produces a new best point (f(xᵣ) < f(x₁)), expansion is used to explore this promising direction further [16]. The expanded point xₑ is calculated as:

\[ x_e = x_o + \gamma (x_r - x_o) \]

where γ > 1 is the expansion coefficient [13]. If the expanded point improves on the reflected point (f(xₑ) < f(xᵣ)), the worst point is replaced with xₑ; otherwise, it is replaced with xᵣ [16] [13]. This allows the simplex to take larger steps in productive directions.

Contraction Operations

When reflection fails to produce a satisfactory improvement, contraction operations are employed to refine the search.

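Outside Contraction: If the reflected point is worse than the second-worst point but better than the worst point (f(xₙ) ≤ f(xᵣ) < f(xₙ₊₁)), compute:

\[ x_c = x_o + \rho (x_r - x_o) \]

where 0 < ρ ≤ 0.5 [13]. If the contracted point is better than the reflected point (\( f(x_c) < f(x_r) \)), replace xₙ₊₁ with \( x_c \); otherwise, perform a shrink [13].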

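Inside Contraction: If the reflected point is no better than the worst point (f(xᵣ) ≥ f(xₙ₊₁)), compute:

\[ x_c = x_o + \rho (x_{n+1} - x_o) \]

If the contracted point is better than the worst point (\( f(x_c) < f(x_{n+1}) \)), it replaces xₙ₊₁ [13]; otherwise, perform a shrink.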

Shrink Operation

If contraction fails to yield improvement, the simplex shrinks uniformly toward the best point [13]:

\[ x_i = x_1 + \sigma (x_i - x_1) \]

for all i = 2, …, n+1, where 0 < σ < 1 is the shrinkage coefficient [13]. This is the most conservative move: every vertex except the best is pulled toward x₁, so the search concentrates around the best point found so far rather than abandoning that region prematurely.
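Combining the ordering, centroid, reflection, expansion, contraction, and shrink steps produces the loop below. This is a minimal sketch under stated assumptions, not a production implementation. `nelder_mead` is a hypothetical name, and the initial simplex uses the right-angled (coordinate) construction with step `step`. Termination combines a simplex-size test (`xtol`) with a function-value-spread test (`ftol`), in the spirit of the criteria listed in Table 2. Library routines such as SciPy's `scipy.optimize.minimize(method="Nelder-Mead")` add further safeguards.

```python
import numpy as np

def nelder_mead(f, x0, step=0.1, alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5,
                max_iter=5000, xtol=1e-8, ftol=1e-10):
    """Minimize f starting from x0 using the standard Nelder-Mead moves."""
    x0 = np.asarray(x0, dtype=float)
    n = x0.size
    # Right-angled initial simplex: x0 plus one step along each coordinate axis
    simplex = [x0] + [x0 + step * e for e in np.eye(n)]
    values = [f(x) for x in simplex]
    for _ in range(max_iter):
        # Order vertices so that f(x_1) <= f(x_2) <= ... <= f(x_{n+1})
        order = np.argsort(values)
        simplex = [simplex[i] for i in order]
        values = [values[i] for i in order]
        # Stop when the simplex is small and its function values agree
        size = max(np.max(np.abs(x - simplex[0])) for x in simplex[1:])
        if size < xtol and values[-1] - values[0] < ftol:
            break
        worst = simplex[-1]
        xo = np.mean(simplex[:-1], axis=0)     # centroid of all but the worst
        xr = xo + alpha * (xo - worst)         # reflection
        fr = f(xr)
        if values[0] <= fr < values[-2]:       # between best and second worst
            simplex[-1], values[-1] = xr, fr
            continue
        if fr < values[0]:                     # new best: try expanding
            xe = xo + gamma * (xr - xo)
            fe = f(xe)
            if fe < fr:
                simplex[-1], values[-1] = xe, fe
            else:
                simplex[-1], values[-1] = xr, fr
            continue
        if fr < values[-1]:                    # outside contraction
            xc = xo + rho * (xr - xo)
            fc = f(xc)
            if fc < fr:
                simplex[-1], values[-1] = xc, fc
                continue
        else:                                  # inside contraction
            xc = xo + rho * (worst - xo)
            fc = f(xc)
            if fc < values[-1]:
                simplex[-1], values[-1] = xc, fc
                continue
        # Contraction failed: shrink every vertex toward the best one
        best = simplex[0]
        simplex = [best] + [best + sigma * (x - best) for x in simplex[1:]]
        values = [values[0]] + [f(x) for x in simplex[1:]]
    i = int(np.argmin(values))
    return simplex[i], values[i]

rosen = lambda v: 100.0 * (v[1] - v[0] ** 2) ** 2 + (1.0 - v[0]) ** 2
x_best, f_best = nelder_mead(rosen, [-1.2, 1.0])
print(x_best, f_best)
```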

Workflow and Logical Relationships

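The logical flow of the Nelder-Mead algorithm shows how these operations interact systematically. After ordering the vertices and computing the centroid, the algorithm always tries reflection first. It expands if the reflected point is a new best, and accepts the reflected point if it lies between the best and second-worst values. Otherwise it contracts (outside or inside), and it shrinks only if contraction fails.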

Experimental Protocol and Implementation

Termination Criteria

The algorithm terminates when any of the following conditions are met [16]:

Table 2: Nelder-Mead Termination Criteria

| Criterion | Typical Value | Description |
|---|---|---|
| Maximum iterations | 1000 | Upper limit on algorithm cycles |
| Simplex base size | 0.001 | Minimum size of simplex base |
| Standard deviation | 0.0001 | Minimal variation between vertices |
| Goal achievement | User-defined | Optimization target reached |
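The criteria in Table 2 can be checked with a small helper that returns the first criterion met. This is a sketch: `should_terminate` and its parameter names are hypothetical, and the defaults are the typical values from the table. Measuring the "simplex base size" as the largest distance from the best vertex to any other vertex is an assumption, since implementations define simplex size in several ways.

```python
import numpy as np

def should_terminate(simplex, values, iteration, max_iter=1000,
                     size_tol=1e-3, std_tol=1e-4, goal=None):
    """Return the name of the first termination criterion met, else None."""
    simplex = np.asarray(simplex, dtype=float)
    values = np.asarray(values, dtype=float)
    best = int(np.argmin(values))
    if iteration >= max_iter:
        return "max_iterations"
    # Simplex size (assumed): largest distance from the best vertex
    size = np.max(np.linalg.norm(simplex - simplex[best], axis=1))
    if size < size_tol:
        return "simplex_size"
    # Spread of objective values across the n+1 vertices
    if np.std(values) < std_tol:
        return "std_deviation"
    # Optional user-defined target for the objective
    if goal is not None and values[best] <= goal:
        return "goal_reached"
    return None

triangle = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
print(should_terminate(triangle, [0.0, 1.0, 2.0], iteration=5))
```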

Detailed Methodological Steps

For researchers implementing the Nelder-Mead algorithm, the following experimental protocol ensures proper application:

Initialization Phase

- Define the objective function f(x) for x ∈ Rⁿ

- Construct initial simplex with n+1 vertices

- Set algorithm parameters (α, γ, ρ, σ) or use defaults

- Establish termination criteria thresholds

Iteration Phase

- Evaluate objective function at each vertex

- Order vertices by performance: f(x₁) ≤ f(x₂) ≤ ... ≤ f(xₙ₊₁)

- Calculate centroid xₒ of best n points

- Execute reflection operation and evaluate

- Based on outcome, perform expansion, contraction, or shrinkage

- Form new simplex by replacing appropriate vertex

Validation Phase

- Verify convergence to stationary point

- Check solution against alternative methods

- Perform sensitivity analysis on parameters

Research Reagent Solutions

Table 3: Essential Components for Simplex Optimization Research

| Component | Function | Implementation Example |
|---|---|---|
| Objective Function | Defines optimization target | Pharmaceutical yield function |
| Initial Simplex | Starting configuration | n+1 carefully chosen parameter sets |
| Reflection Coefficient (α) | Controls reflection step size | Standard value: 1.0 [13] |
| Expansion Coefficient (γ) | Controls expansion magnitude | Standard value: 2.0 [13] |
| Contraction Coefficient (ρ) | Controls contraction step size | Standard value: 0.5 [13] |
| Shrinkage Coefficient (σ) | Controls simplex reduction | Standard value: 0.5 [13] |
| Termination Criteria | Determines stopping point | Standard deviation threshold [16] |

Algorithm Visualization

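The geometric transformations of the simplex are easiest to visualize in two dimensions, where the simplex is a triangle. Reflection, expansion, and both contractions move the worst vertex along the line through the centroid of the opposite edge. Shrinkage instead pulls the two non-best vertices halfway (for σ = 0.5) toward the best one.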
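To make the geometry concrete, the helper below computes every candidate point for the triangle with best vertex (0, 0), second vertex (1, 0), and worst vertex (0, 1), using the standard coefficients. `nm_candidates_2d` is a hypothetical name. For this triangle, the reflection lands at (1, -1), the expansion at (1.5, -2), the outside contraction at (0.75, -0.5), and the inside contraction at (0.25, 0.5). Plotting these points (for example, with matplotlib) reproduces the usual two-dimensional picture.

```python
import numpy as np

def nm_candidates_2d(best, second, worst, alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5):
    """Candidate points produced by each Nelder-Mead move for a triangle."""
    best, second, worst = (np.asarray(v, dtype=float) for v in (best, second, worst))
    xo = (best + second) / 2.0              # centroid of the edge opposite the worst vertex
    xr = xo + alpha * (xo - worst)          # reflection
    return {
        "centroid": xo,
        "reflection": xr,
        "expansion": xo + gamma * (xr - xo),
        "outside_contraction": xo + rho * (xr - xo),
        "inside_contraction": xo + rho * (worst - xo),
        # Shrink keeps the best vertex and pulls the others toward it
        "shrink": [best, best + sigma * (second - best), best + sigma * (worst - best)],
    }

pts = nm_candidates_2d(best=(0, 0), second=(1, 0), worst=(0, 1))
for name, value in pts.items():
    print(name, value)
```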

Applications in Scientific Research

The Nelder-Mead simplex algorithm has found significant application in pharmaceutical research and drug development, particularly in areas where:

- Process Optimization: Optimizing reaction conditions, purification parameters, and formulation components where derivative information is unavailable [15]

- Parameter Estimation: Fitting complex pharmacokinetic models to experimental data [15]

- Experimental Design: Determining optimal experimental conditions with multiple interacting variables

The algorithm's robustness with non-differentiable functions makes it particularly valuable for real-world optimization problems in drug development where analytical gradients are often impractical or impossible to compute [15]. Furthermore, its hybrid use with other optimization methods (e.g., particle swarm optimization) demonstrates its ongoing relevance in contemporary research methodologies [15].

Hill-descent methods, of which gradient-based optimization is the most widely used family, form the cornerstone of modern computational optimization in scientific and industrial applications. The core principle is simple: iteratively move in the direction of steepest descent of a function to locate its minimum value. This approach, known as gradient descent, was first proposed by Augustin-Louis Cauchy in 1847 and has since become indispensable in fields ranging from drug development to machine learning [17]. Within the broader context of sequential simplex optimization research, hill-descent methods embody the foundational philosophy of iterative improvement. Simplex methods extend this philosophy into multi-directional search strategies that adaptively reshape their search pattern based on landscape geometry.

The mathematical foundation of gradient descent begins with a simple update rule. For a multivariable function \( f(\mathbf{x}) \), the algorithm generates a sequence of points \( \mathbf{x}_0, \mathbf{x}_1, \mathbf{x}_2, \ldots \) using the formula:

\[ \mathbf{x}_{n+1} = \mathbf{x}_n - \eta \nabla f(\mathbf{x}_n) \]

where \( \eta \) represents the learning rate (step size) and \( \nabla f(\mathbf{x}_n) \) is the gradient of the function at the current point [17]. For a smooth function and a sufficiently small step size, this process produces a monotonically non-increasing sequence of function values \( f(\mathbf{x}_0) \geq f(\mathbf{x}_1) \geq f(\mathbf{x}_2) \geq \cdots \), giving progressive improvement toward a local minimum.
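A direct transcription of the update rule is shown below. This is a sketch on an assumed example function, \( f(x, y) = x^2 + 10y^2 \), and `gradient_descent` is a hypothetical name. Because the function is quadratic, each step multiplies x by \( 1 - 2\eta \) and y by \( 1 - 20\eta \). With \( \eta = 0.04 \), those factors are 0.92 and 0.2, so both coordinates shrink and the function values decrease monotonically.

```python
def gradient_descent(grad, x0, eta, n_steps):
    """Plain gradient descent: x_{n+1} = x_n - eta * grad f(x_n)."""
    x = list(x0)
    path = [tuple(x)]
    for _ in range(n_steps):
        g = grad(x)
        x = [xi - eta * gi for xi, gi in zip(x, g)]
        path.append(tuple(x))
    return path

# Example: f(x, y) = x^2 + 10 y^2, with gradient (2x, 20y)
f = lambda p: p[0] ** 2 + 10 * p[1] ** 2
grad_f = lambda p: [2 * p[0], 20 * p[1]]
path = gradient_descent(grad_f, [1.0, 1.0], eta=0.04, n_steps=200)
values = [f(p) for p in path]
print(path[-1], values[-1])
```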

Fundamental Mechanisms of Gradient Descent

Core Algorithm and Mathematical Foundation

The gradient descent algorithm operates on a straightforward principle: at each point in the parameter space, compute the gradient of the objective function and take a proportional step in the opposite direction. The gradient \( \nabla f \) points in the direction of steepest ascent, so moving against it represents the path of steepest descent [17]. This seemingly simple concept requires careful implementation to balance convergence speed with stability.

The algorithm can be understood through a natural analogy: imagine being lost in mountainous terrain shrouded in heavy fog. Without visibility of the full landscape, you would feel the ground around your feet to determine the steepest downward slope and take a step in that direction. Repeating this process would eventually lead you to a valley, though not necessarily the lowest valley in the entire region [17]. This mirrors the local optimization nature of gradient descent, which can converge to local minima rather than the global minimum depending on initial conditions.

Critical Parameters and Convergence

The performance and convergence of gradient descent hinge on several key parameters, the most critical of which is the learning rate \( \eta \), which determines the size of each step taken during iteration [18]. As illustrated in Table 1, this parameter must be carefully balanced: values that are too small lead to impractically slow convergence, while excessively large values cause overshooting and potential divergence.

Table 1: Effect of Learning Rate on Gradient Descent Performance

| Learning Rate | Convergence Behavior | Efficiency | Risk of Non-Convergence |
|---|---|---|---|
| Too small | Slow, guaranteed convergence | Low | None |
| Optimal | Steady, monotonic improvement | High | Low |
| Too large | Oscillations around minimum | Medium | Medium |
| Very large | Divergence, increasing error | None | High |
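The regimes in Table 1 can be reproduced exactly on the one-dimensional function \( f(x) = x^2 \), chosen here as an illustrative assumption. Its gradient is \( 2x \), so each step multiplies x by \( 1 - 2\eta \). With \( \eta = 0.1 \), the factor is 0.8 and convergence is steady and monotonic. With \( \eta = 0.9 \), the factor is -0.8, so x alternates in sign around the minimum while shrinking. With \( \eta = 1.1 \), the factor is -1.2 and the iterates diverge; any \( \eta > 1 \) diverges for this function, and \( \eta = 0.5 \) reaches the minimum in one step. `run_gd_1d` is a hypothetical name.

```python
def run_gd_1d(eta, x0=1.0, n_steps=50):
    """Gradient descent on f(x) = x^2 (gradient 2x).

    Each step multiplies x by (1 - 2*eta).
    """
    x = x0
    for _ in range(n_steps):
        x = x - eta * 2 * x
    return x

for eta in (0.1, 0.5, 0.9, 1.1):
    print(f"eta={eta}: x after 50 steps = {run_gd_1d(eta):.3g}")
```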

Beyond learning rate selection, convergence depends on the objective function's properties. For smooth convex functions with a suitably chosen step size, gradient descent converges to the global minimum. For non-convex functions, which are common in complex scientific applications, it may converge to local minima instead [17]. Stopping criteria typically involve reaching a maximum number of iterations, achieving a gradient magnitude below a specified threshold, or observing minimal improvement between successive iterations.

Gradient Descent Variants and Methodologies

Algorithmic Flavors and Their Characteristics

Gradient descent implementations vary primarily in how much data they use to compute each gradient update, creating a spectrum of approaches with different computational trade-offs. The three primary variants—batch, stochastic, and mini-batch—each offer distinct advantages for different problem contexts and dataset characteristics [18] [19].

Table 2: Comparison of Gradient Descent Variants

| Variant | Data Usage per Update | Convergence Stability | Computational Efficiency | Typical Applications |
|---|---|---|---|---|
| Batch Gradient Descent | Entire dataset | Smooth, stable | Low for large datasets | Small datasets, convex functions |
| Stochastic Gradient Descent (SGD) | Single random example | Noisy, can escape local minima | High | Online learning, large datasets |
| Mini-Batch Gradient Descent | Subset of examples (e.g., 32-256 samples) | Balanced stability and efficiency | Very High | Deep learning, most practical scenarios |

Batch gradient descent computes the gradient using the entire dataset, providing a stable convergence path but becoming computationally prohibitive for large-scale problems. Stochastic gradient descent (SGD) updates parameters for each training example, introducing noise that can help escape local minima but causing oscillatory convergence behavior. Mini-batch gradient descent strikes a practical balance, using small random data subsets to leverage optimized matrix operations while maintaining reasonable convergence stability [19].
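Mini-batch gradient descent is illustrated below by fitting a straight line. This is a minimal sketch under stated assumptions: the data are synthetic and noise-free (y = 2x + 1), the loss is half the mean squared error, and `minibatch_sgd` and its defaults are hypothetical. Reshuffling the indices each epoch produces new random batches. Setting `batch_size=len(xs)` recovers batch gradient descent, and `batch_size=1` recovers SGD.

```python
import random

def minibatch_sgd(xs, ys, batch_size=10, eta=0.5, epochs=200, seed=0):
    """Mini-batch gradient descent for y ~ w*x + b with squared-error loss."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    idx = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(idx)                       # new random batches each epoch
        for start in range(0, len(idx), batch_size):
            batch = idx[start:start + batch_size]
            # Gradient of 0.5 * mean((w*x + b - y)^2) over the batch
            errs = [w * xs[i] + b - ys[i] for i in batch]
            gw = sum(e * xs[i] for e, i in zip(errs, batch)) / len(batch)
            gb = sum(errs) / len(batch)
            w -= eta * gw
            b -= eta * gb
    return w, b

data_rng = random.Random(42)
xs = [data_rng.random() for _ in range(100)]
ys = [2.0 * x + 1.0 for x in xs]     # noise-free synthetic data: w = 2, b = 1
w, b = minibatch_sgd(xs, ys)
print(w, b)
```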

Advanced Optimization Algorithms

Building upon vanilla gradient descent, several enhanced algorithms have been developed to address specific optimization challenges. Momentum optimization accelerates convergence in relevant directions by accumulating a velocity vector from past gradients, effectively damping oscillations in ravines and steep valleys [19]. The update rule with momentum becomes:

\[ \begin{aligned} v_t &= \gamma v_{t-1} + \eta \nabla_\theta J(\theta) \\ \theta &= \theta - v_t \end{aligned} \]

where \( \gamma \) represents the momentum term, typically set to 0.9 or similar values [19].

Nesterov Accelerated Gradient (NAG) further refines momentum by first making a step based on accumulated velocity before computing the gradient, creating a "look-ahead" mechanism that improves responsiveness to changes in the optimization landscape [19]. Additional adaptive learning rate methods like Adagrad, Adadelta, and Adam automatically adjust learning rates for each parameter based on historical gradient information, proving particularly valuable for sparse data and non-stationary objectives common in scientific applications.
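The momentum update and its Nesterov variant differ only in where the gradient is evaluated, as the sketch below shows. It uses the update rule above for momentum and the common "look-ahead" form for NAG, \( v_t = \gamma v_{t-1} + \eta \nabla_\theta J(\theta - \gamma v_{t-1}) \). The test problem is an assumed ill-conditioned quadratic ravine, \( J(\theta) = \tfrac{1}{2}(\theta_1^2 + 50\,\theta_2^2) \), and `momentum_descent` is a hypothetical name.

```python
def momentum_descent(grad, theta0, eta=0.01, gamma=0.9, n_steps=500, nesterov=False):
    """Momentum (heavy-ball) or Nesterov accelerated gradient descent.

    Momentum: v_t = gamma * v_{t-1} + eta * grad(theta)
    NAG:      v_t = gamma * v_{t-1} + eta * grad(theta - gamma * v_{t-1})
    Both then update theta <- theta - v_t.
    """
    theta = list(theta0)
    v = [0.0] * len(theta)
    for _ in range(n_steps):
        if nesterov:
            # Look-ahead: evaluate the gradient where momentum is about to carry us
            point = [t - gamma * vi for t, vi in zip(theta, v)]
        else:
            point = theta
        g = grad(point)
        v = [gamma * vi + eta * gi for vi, gi in zip(v, g)]
        theta = [t - vi for t, vi in zip(theta, v)]
    return theta

# Ill-conditioned ravine: J(theta) = 0.5 * (theta1^2 + 50 * theta2^2)
grad_J = lambda th: [th[0], 50.0 * th[1]]
print(momentum_descent(grad_J, [1.0, 1.0]))
print(momentum_descent(grad_J, [1.0, 1.0], nesterov=True))
```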

Experimental Protocol for Gradient Descent Implementation

Computational Framework and Setup

Implementing gradient descent for scientific optimization requires a structured approach encompassing problem formulation, parameter initialization, iterative updating, and convergence monitoring. The following protocol outlines a standardized methodology for applying gradient descent to minimization problems, with particular emphasis on pharmaceutical and chemical optimization contexts.

Problem Formalization: Begin by defining the objective function \( J(\theta) \) parameterized by variables \( \theta \in \mathbb{R}^d \). In drug development, this might represent a quantitative structure-activity relationship (QSAR) model, a molecular docking energy function, or a kinetic parameter estimation problem. The function must be differentiable with respect to all parameters, with gradients obtained either analytically or through numerical approximation [18].

Parameter Initialization: Initialize parameters \( \theta \) using domain knowledge where available or strategic sampling methods. Common approaches include random initialization within biologically plausible ranges, grid-based sampling of parameter space, or leveraging prior experimental results. Simultaneously, set algorithmic hyperparameters including learning rate \( \eta \), momentum coefficient \( \gamma \) (if applicable), batch size (for mini-batch variants), and stopping criteria [18].

Iterative Optimization Cycle:

- Gradient Computation: Calculate \( \nabla_\theta J(\theta) \) using automatic differentiation, analytical derivatives, or finite difference methods based on the problem structure and available computational resources.

- Parameter Update: Apply the gradient descent update rule \( \theta = \theta - \eta \nabla_\theta J(\theta) \) or its variant (e.g., with momentum or adaptive learning rates).

- Convergence Monitoring: Track objective function values and parameter changes across iterations, recording trajectory information for subsequent analysis.

- Termination Check: Evaluate stopping conditions including maximum iterations, gradient magnitude thresholds (\( \|\nabla J(\theta)\| < \epsilon \)), or minimal improvement between epochs (\( |J(\theta_{t+1}) - J(\theta_t)| < \delta \)).
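The iterative cycle above can be sketched end to end with a finite-difference gradient and the three stopping conditions. This sketch makes several assumptions: `central_diff_grad` and `gd_with_stopping` are hypothetical names, the step `h`, the thresholds (playing the roles of \( \epsilon \) and \( \delta \)), and the test objective \( J(\theta) = (\theta_1 - 1)^2 + 2(\theta_2 + 2)^2 \) are illustrative choices. Automatic differentiation would replace the finite-difference step when available.

```python
import math

def central_diff_grad(f, theta, h=1e-6):
    """Central finite-difference approximation of the gradient of f at theta."""
    grad = []
    for i in range(len(theta)):
        up = list(theta)
        dn = list(theta)
        up[i] += h
        dn[i] -= h
        grad.append((f(up) - f(dn)) / (2 * h))
    return grad

def gd_with_stopping(f, theta0, eta=0.1, max_iter=10_000, grad_tol=1e-8, f_tol=1e-15):
    """Gradient descent that stops on gradient norm, small improvement, or iteration cap."""
    theta = list(theta0)
    f_prev = f(theta)
    for it in range(1, max_iter + 1):
        g = central_diff_grad(f, theta)
        if math.sqrt(sum(gi * gi for gi in g)) < grad_tol:   # ||grad J|| < epsilon
            return theta, it, "gradient_norm"
        theta = [t - eta * gi for t, gi in zip(theta, g)]
        f_new = f(theta)
        if abs(f_new - f_prev) < f_tol:                      # |J_{t+1} - J_t| < delta
            return theta, it, "small_improvement"
        f_prev = f_new
    return theta, max_iter, "max_iterations"

J = lambda th: (th[0] - 1.0) ** 2 + 2.0 * (th[1] + 2.0) ** 2
theta, iters, reason = gd_with_stopping(J, [0.0, 0.0])
print(theta, iters, reason)
```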

Validation and Analysis Methods

Convergence Validation: Execute multiple runs from different initial conditions to assess consistency of solutions and identify potential local minima issues. For stochastic variants, perform statistical analysis of convergence behavior across different random seeds [18].

Sensitivity Analysis: Systematically vary hyperparameters (especially learning rate and batch size) to quantify their impact on convergence speed and solution quality. This analysis helps establish robust parameter settings for specific problem classes in scientific domains.

Benchmarking: Compare gradient descent performance against alternative optimization approaches relevant to the research domain, such as simplex methods, genetic algorithms, or Bayesian optimization, using standardized performance metrics including convergence speed, solution quality, and computational efficiency.

Connection to Sequential Simplex Optimization

Philosophical and Methodological Relationships

Gradient descent and sequential simplex optimization share fundamental similarities as iterative local search methods but diverge significantly in how they navigate the objective landscape. Gradient descent relies explicitly on gradient information to determine the search direction. The simplex method, by contrast, is a derivative-free direct search that takes a geometric approach, in which a simplex (an n-dimensional polytope with n+1 vertices) evolves through reflection, expansion, and contraction operations [20] [9].

The original Nelder-Mead simplex algorithm, developed in 1965, creates a sequence of simplices that adaptively reshape themselves to navigate the objective function topography without requiring gradient calculations [9]. This property makes it particularly valuable for problems where objective functions are noisy, discontinuous, or computationally expensive to differentiate—common scenarios in experimental drug development and complex biological system modeling.

Table 3: Gradient Descent vs. Simplex Method Characteristics

| Feature | Gradient Descent | Simplex Method |
|---|---|---|
| Information Used | First-order derivatives | Function values only |
| Convergence Rate | Linear near minima | Generally slower |
| Memory Requirements | Low (\( O(n) \)) | Higher (\( O(n^2) \)) |
| Noise Sensitivity | High | Moderate |
| Theoretical Foundation | Strong | Weaker |
| Implementation Complexity | Low | Moderate |

Hybrid Approaches and Modern Extensions

Contemporary optimization research increasingly explores hybrid approaches that leverage the strengths of both gradient-based and simplex methods. For problems where gradient computation is possible but expensive, gradient-assisted simplex methods can accelerate convergence while maintaining robustness to noise. In pharmaceutical applications, this might involve using gradient information to guide initial search directions followed by simplex refinement for fine-tuning parameters.

Recent theoretical advances have also improved our understanding of the linear-programming simplex algorithm. Analyses that introduce randomization show that it runs in polynomial time in the smoothed sense, addressing long-standing concerns about its exponential worst-case performance [2]. Although these results concern Dantzig's method rather than Nelder-Mead, they strengthen the position of simplex-type methods as valuable complements to gradient-based approaches in the scientific optimization toolkit.

Visualization of Optimization Landscapes and Algorithm Behavior

Figure 1: Gradient Descent Algorithm Workflow

Figure 2: Simplex Method Algorithm Workflow

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Computational Components for Optimization Experiments

| Component | Function | Implementation Considerations |
|---|---|---|
| Automatic Differentiation | Precisely computes gradients without numerical approximation | Use built-in frameworks (e.g., PyTorch, TensorFlow, JAX) for reliable backpropagation |
| Learning Rate Scheduler | Dynamically adjusts step size during optimization | Implement reduce-on-plateau or cosine annealing strategies for adaptive control |
| Gradient Clipping | Prevents exploding gradients in unstable landscapes | Particularly valuable for RNNs and physically-constrained optimization |
| Parallelization Framework | Distributes computation across processing units | Essential for large parameter spaces or population-based approaches |
| Convergence Diagnostics | Monitors optimization progress and identifies stalls | Combine multiple metrics (value, gradient, parameter changes) for robustness |
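Two of the components in Table 4, cosine-annealing learning-rate scheduling and gradient clipping by norm, are simple enough to sketch directly. The function names and defaults below are hypothetical. Deep-learning frameworks provide built-in equivalents, for example PyTorch's `torch.optim.lr_scheduler.CosineAnnealingLR` and `torch.nn.utils.clip_grad_norm_`. The schedule decays from `eta_max` to `eta_min` along half a cosine period. Clipping rescales the gradient, preserving its direction, whenever its Euclidean norm exceeds `max_norm`.

```python
import math

def cosine_annealing(step, total_steps, eta_max=0.1, eta_min=0.0):
    """Learning rate decaying from eta_max to eta_min along half a cosine."""
    frac = min(step, total_steps) / total_steps
    return eta_min + 0.5 * (eta_max - eta_min) * (1.0 + math.cos(math.pi * frac))

def clip_by_norm(grad, max_norm):
    """Rescale grad so its Euclidean norm does not exceed max_norm (> 0)."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    scale = max_norm / norm          # keep the direction, shorten the step
    return [g * scale for g in grad]

print([round(cosine_annealing(t, 100), 4) for t in (0, 25, 50, 75, 100)])
print(clip_by_norm([3.0, 4.0], 1.0))
```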

The computational toolkit for effective optimization extends beyond algorithmic components to include specialized diagnostic and visualization packages. Objective landscape visualization tools help researchers understand problem difficulty and algorithm behavior, while statistical comparison frameworks enable rigorous performance evaluation across multiple optimization strategies and problem instances. For scientific applications, incorporating domain-specific constraints directly into the optimization framework is essential, whether through penalty functions, projection methods, or feasible-set parameterizations.

Implementing the Simplex Method: A Step-by-Step Guide with Scientific Use Cases

The initialization of the simplex method is a critical first step in sequential optimization, a field dedicated to developing iterative algorithms for finding the optimal values of objective functions subject to constraints. For researchers in fields like drug development, where processes are often modeled by complex, multi-variable linear programs, the initial simplex establishes the starting point from which an efficient search of the feasible region proceeds [1]. A properly constructed initial simplex ensures the algorithm begins at a feasible point, thereby reducing computational overhead and accelerating convergence to the optimal solution, such as a maximized yield or minimized impurity [2] [3]. This guide details the methodologies for constructing this initial setup within the broader context of modern simplex research, which seeks to reconcile the algorithm's proven practical efficiency with its complex theoretical worst-case behavior [2].

Theoretical Foundations: From Geometric Intuition to Algebraic Formulation

The Simplex Algorithm in Brief

The simplex algorithm, developed by George Dantzig, is a cornerstone of mathematical optimization for solving linear programming problems [1] [2]. The algorithm operates on the fundamental geometric principle that the optimum value of a linear objective function, if it exists, is attained at a vertex (or extreme point) of the feasible region, which is a convex polyhedron [1]. The algorithm works by walking along the edges of this polyhedron from one vertex to an adjacent vertex in such a way that the objective function improves with each move. The process continues until no improving adjacent vertex exists, signifying that an optimum has been found [1].

The Criticality of the Initial Simplex

The choice of the initial simplex, or more precisely the initial basic feasible solution, is paramount. In the geometric view of the algorithm, this starting vertex dictates the path taken through the feasible region [1]. An unfortunate initial choice can, in certain pathological cases, lead to a long path that visits a large number of vertices before finding the optimum. Smoothed analysis, pioneered by Spielman and Teng, shows that such worst-case instances are fragile under small random perturbations of the problem data, which helps explain the method's efficiency in practice [2]. The initialization phase (often called Phase I) is dedicated solely to finding this starting point. If no basic feasible solution can be found, the problem is deemed infeasible [1].

Methodologies: A Dual Approach to Initialization

The term "simplex" can refer to two distinct concepts in optimization. This guide focuses on initializing the simplex algorithm for linear programming (LP). It is crucial to distinguish this from the Nelder-Mead simplex method, which is a popular direct search algorithm for non-linear optimization [1] [9]. The initialization procedures for these two methods are fundamentally different.

Table 1: Comparison of Simplex Method Types

| Feature | Dantzig's Simplex Algorithm (for LP) | Nelder-Mead Method (for Non-Linear Problems) |
|---|---|---|
| Primary Use | Linear Programming | Non-linear, derivative-free optimization |
| Problem Formulation | Maximize cᵀx subject to Ax ≤ b, x ≥ 0 | Minimize a function f: Rⁿ → R |
| "Simplex" Meaning | A geometric polytope defining the feasible region | An operational geometric shape of n+1 points that evolves through reflection, expansion, and contraction |
| Initialization Goal | Find an initial basic feasible solution (a vertex of the polytope) | Construct an initial simplex of n+1 vertices in n-dimensional space |
| Key Reference | Dantzig (1947) [1] | Nelder and Mead (1965) [9] |

Initialization for Dantzig's Simplex Algorithm (Linear Programming)

The goal is to find an initial basic feasible solution from which the canonical simplex algorithm can begin its iterations.

Standard Form Transformation

The algorithm requires the problem to be in standard form [1]:

- Maximize: \( \mathbf{c}^T \mathbf{x} \)

- Subject to: \( A\mathbf{x} = \mathbf{b} \) and \( \mathbf{x} \geq \mathbf{0} \), with \( \mathbf{b} \geq \mathbf{0} \).

This transformation involves:

- Converting Inequalities to Equalities: Add slack variables to "≤" constraints and subtract surplus variables from "≥" constraints [1] [3]. For example, the constraint \( x_2 + 2x_3 \leq 3 \) becomes \( x_2 + 2x_3 + s_1 = 3 \), with \( s_1 \geq 0 \).

- Handling Unrestricted Variables: Replace each free variable with the difference of two non-negative variables [1].
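The inequality-to-equality conversion can be sketched as follows. This sketch makes several assumptions: `to_standard_form` is a hypothetical name, rows carry a sense of "<=" or ">=", and the free-variable substitution described above is not handled. Rows with a negative right-hand side are negated and their sense flipped so that b ≥ 0. The slack columns of "≤" rows form an identity block and so give an immediate starting basis. Surplus columns enter with coefficient -1 and do not, which is why artificial variables are needed in Phase I.

```python
def to_standard_form(A, b, senses):
    """Turn A x (<= or >=) b, x >= 0 into equalities with b >= 0.

    Appends one slack (+1) or surplus (-1) column per row and returns
    (A_eq, b_eq, added_columns).
    """
    rows = [[float(a) for a in row] for row in A]
    rhs = [float(v) for v in b]
    senses = list(senses)
    n = len(rows[0])
    for i, s in enumerate(senses):
        if s not in ("<=", ">="):
            raise ValueError(f"unsupported constraint sense: {s!r}")
        if rhs[i] < 0:                      # negate the row and flip its sense
            rows[i] = [-a for a in rows[i]]
            rhs[i] = -rhs[i]
            senses[i] = ">=" if s == "<=" else "<="
    added = []
    for i, s in enumerate(senses):
        sign = 1.0 if s == "<=" else -1.0   # slack for <=, surplus for >=
        for k, row in enumerate(rows):
            row.append(sign if k == i else 0.0)
        added.append(n + i)
    return rows, rhs, added

# x2 + 2 x3 <= 3 and x1 + x2 >= 1, with variables (x1, x2, x3)
print(to_standard_form([[0, 1, 2], [1, 1, 0]], [3, 1], ["<=", ">="]))
```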

The Two-Phase Method

When the initial standard form does not yield an obvious basic feasible solution (i.e., the constraint matrix A does not contain an identity matrix), the Two-Phase Method is used [1].

- Phase I: Construct an auxiliary linear program where the objective is to minimize the sum of artificial variables. These variables are added to each constraint that lacks a slack variable. The initial basic feasible solution for this auxiliary problem is composed of the slack and artificial variables. If the optimum of this auxiliary problem is zero (all artificial variables are driven to zero), a basic feasible solution to the original problem has been found.

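- Phase II: The basic feasible solution found in Phase I is used as the starting point for the original objective function. The artificial variables are removed (after pivoting out any that remain basic at value zero), the Phase I objective row is replaced by the original objective, and the standard algorithm proceeds [1] [3].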
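The Phase I construction can be illustrated with SciPy. This sketch only builds the auxiliary problem and hands it to `scipy.optimize.linprog` with the HiGHS backend; it is not a textbook two-phase tableau implementation, and because HiGHS is not guaranteed to return a vertex here, the sketch reports a feasible point rather than a basic one. The helper name `phase_one` and the tolerance are assumptions.

```python
import numpy as np
from scipy.optimize import linprog


def phase_one(A_eq, b_eq, tol=1e-8):
    """Phase I sketch: look for x >= 0 with A_eq @ x = b_eq.

    Solves the auxiliary LP  min sum(a)  s.t.  A_eq @ x + a = b_eq,  x >= 0, a >= 0.
    Returns (feasible, x): feasible is True when the optimal sum of artificials is ~0.
    """
    A = np.array(A_eq, dtype=float)
    b = np.array(b_eq, dtype=float)
    neg = b < 0
    A[neg] *= -1  # flip rows so that b >= 0; then x = 0, a = b starts the auxiliary problem
    b[neg] *= -1
    m, n = A.shape
    c_aux = np.concatenate([np.zeros(n), np.ones(m)])  # cost only on the artificial variables
    A_aux = np.hstack([A, np.eye(m)])                   # artificial columns form an identity block
    res = linprog(c_aux, A_eq=A_aux, b_eq=b, bounds=[(0, None)] * (n + m), method="highs")
    if res.status != 0:
        raise RuntimeError(res.message)
    return res.fun <= tol, res.x[:n]


# x1 + x2 = 4 and x1 - x2 - s = 1 (a ">=" row with surplus s): variables (x1, x2, s).
feasible, x = phase_one([[1, 1, 0], [1, -1, -1]], [4, 1])
print(feasible)  # True
# x1 + x2 = -1 has no solution with x >= 0, so the artificial sum stays positive.
print(phase_one([[1, 1]], [-1])[0])  # False
```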

Table 2: Phase I Initialization Protocol

| Step | Action | Purpose | Expected Outcome |
|---|---|---|---|
| 1 | Add Artificial Variables | To form an obvious starting basis (the identity matrix) | Enables the start of the simplex algorithm on an auxiliary problem. |
| 2 | Form Auxiliary Objective Function | To minimize the sum of the artificial variables | Driving this sum to zero verifies feasibility of the original problem. |
| 3 | Solve Auxiliary Problem | To find a basis where all artificial variables are non-basic (value = 0) | Provides the initial basic feasible solution for Phase II. |
| 4 | Proceed to Phase II | Initialize the simplex tableau with the original objective function and the basis from Phase I | Begins the optimization of the actual problem from a feasible vertex. |

Workflow for Simplex Initialization and Execution

The following diagram illustrates the logical sequence from problem formulation to the initiation of the iterative simplex process.

The Scientist's Toolkit: Essential Research Reagents for Optimization

Table 3: Key Reagent Solutions for Simplex-Based Experimental Optimization

| Reagent / Resource | Function in Optimization Protocol |
|---|---|
| Linear Programming Solver Software (e.g., CPLEX, Gurobi) | Implements the simplex algorithm (and its variants) efficiently, handling the computational algebra and pivot operations [2]. |
| Two-Phase Method | The core procedural "reagent" for initializing the simplex algorithm when a starting feasible solution is not readily apparent [1]. |
| Slack and Surplus Variables | Algebraic constructs that transform inequality constraints into equalities, enabling the problem to be written in standard form [1] [3]. |
| Artificial Variables | Auxiliary variables added to constraints during Phase I to create an identity matrix and an obvious initial basis. Their minimization confirms feasibility [1]. |
| Simplex Tableau | A tabular arrangement of the linear program's coefficients that organizes the data for systematic pivot operations [1] [3]. |
| Randomized Pivot Rule | A modern "reagent" inspired by smoothed analysis; introduces randomness into the choice of pivot to avoid worst-case exponential-time paths [2]. |
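The tableau and pivot-rule entries can be made concrete with a small dense implementation. This is a teaching sketch, assuming a problem of the form maximize \( \mathbf{c}^T\mathbf{x} \) subject to \( A\mathbf{x} \leq \mathbf{b} \), \( \mathbf{x} \geq \mathbf{0} \) with \( \mathbf{b} \geq \mathbf{0} \), so the slack basis is feasible and Phase I is unnecessary. The function name `simplex_max` is hypothetical, and its `"random"` rule (pick any improving column uniformly at random) is only a simple illustration of randomized pivoting, not the Spielman-Teng or Bach-Huiberts algorithm; commercial solvers such as CPLEX and Gurobi use far more sophisticated, factorization-based implementations.

```python
import numpy as np


def simplex_max(c, A, b, rule="dantzig", seed=0, max_iter=1000):
    """Dense tableau simplex for: maximize c @ x  s.t.  A @ x <= b,  x >= 0,  with b >= 0.

    rule: "dantzig" (most negative reduced cost), "bland" (lowest index), or
          "random" (uniformly random improving column; an illustrative randomized rule).
    Returns (x, objective value, number of pivots).
    """
    A, b, c = (np.asarray(v, dtype=float) for v in (A, b, c))
    m, n = A.shape
    # Rows 0..m-1 hold [A | I | b]; the last row holds [-c | 0 | 0] (reduced costs, objective).
    T = np.zeros((m + 1, n + m + 1))
    T[:m, :n], T[:m, n:n + m], T[:m, -1] = A, np.eye(m), b
    T[-1, :n] = -c
    basis = list(range(n, n + m))              # start from the all-slack basis
    rng = np.random.default_rng(seed)
    for pivots in range(max_iter):
        improving = np.flatnonzero(T[-1, :-1] < -1e-12)
        if improving.size == 0:                # no improving column: current vertex is optimal
            x = np.zeros(n + m)
            x[basis] = T[:m, -1]
            return x[:n], T[-1, -1], pivots
        if rule == "dantzig":
            j = improving[np.argmin(T[-1, improving])]
        elif rule == "bland":
            j = improving[0]
        else:
            j = rng.choice(improving)
        col = T[:m, j]
        rows = np.flatnonzero(col > 1e-12)
        if rows.size == 0:
            raise ValueError("objective is unbounded")
        ratios = T[rows, -1] / col[rows]       # minimum-ratio test keeps the basis feasible
        ties = rows[np.isclose(ratios, ratios.min())]
        i = min(ties, key=lambda r: basis[r])  # tie-break on smallest basic index
        T[i] /= T[i, j]                        # pivot: normalize the pivot row ...
        for r in range(m + 1):
            if r != i:
                T[r] -= T[r, j] * T[i]         # ... and eliminate column j from every other row
        basis[i] = j
    raise RuntimeError("iteration limit reached")


c, A, b = [3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18]
for rule in ("dantzig", "bland", "random"):
    x, z, k = simplex_max(c, A, b, rule=rule)
    print(rule, x, z, k)  # every rule should reach x = (2, 6) with objective 36
```

Comparing the returned pivot counts across rules on the same problem shows that the pivot rule changes the path across the polytope, not the optimal vertex (here the optimum is unique).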

Current Research & Open Questions in Sequential Simplex Optimization

The simplex method remains a vibrant area of research nearly 80 years after its invention. A significant breakthrough was the 2001 work of Spielman and Teng, which used smoothed analysis to show that the method's expected running time is polynomial once the input data are subjected to small random perturbations; this helps explain why it is efficient in practice despite known exponential worst-case instances [2]. This line of inquiry continues, with a 2024 paper by Bach and Huiberts presenting a faster, more randomized algorithm with stronger theoretical guarantees for its performance [2]. The "North Star" for this research is to develop a variant whose runtime scales linearly with the number of constraints.

For the Nelder-Mead simplex, open questions persist regarding convergence. Even when the function values at the vertices converge, the vertices themselves may fail to converge to a single point, or they may converge to a non-stationary point [9]. Research continues to determine conditions under which the sequence of simplices converges to a minimizer.

Sequential Simplex Optimization is an evolutionary operation (EVOP) technique that utilizes experimental results to navigate towards an optimum without requiring a complex mathematical model of the system [21]. This powerful approach is characterized by its iterative nature, following a continuous cycle of ranking, reflecting, expanding, and contracting to systematically improve solutions. In the demanding field of drug development, where processes are expensive, time-consuming, and fraught with high technical risk, efficient optimization methodologies are not merely beneficial—they are essential for success [22].

This technical guide examines the core iterative cycle of sequential simplex optimization, framing it within contemporary drug discovery and development challenges. We provide detailed methodologies, quantitative comparisons, and practical visualizations to equip researchers and scientists with the tools to implement these techniques effectively in their optimization workflows, from initial compound screening to late-stage development analytics.

The Core Iterative Cycle: Fundamental Operations

The sequential simplex method operates by iteratively transforming a geometric figure called a simplex—a polytope with n+1 vertices in n-dimensional space. Each iteration involves evaluating the performance of the current vertices and generating a new point through one of three fundamental operations: reflection, expansion, or contraction. The algorithm's power stems from its balanced approach to exploring the parameter space (expansion) while refining promising areas (contraction), all guided by a continuous ranking of solution quality.

Table 1: Fundamental Operations in the Sequential Simplex Cycle

| Operation | Mathematical Expression | Purpose | Typical Coefficient |
|---|---|---|---|
| Reflection | \( x_r = x_o + \alpha(x_o - x_w) \) | Move away from the worst-performing point | \( \alpha = 1 \) |
| Expansion | \( x_e = x_o + \gamma(x_r - x_o) \) | Accelerate in a promising direction | \( \gamma = 2 \) |
| Contraction | \( x_c = x_o + \beta(x_w - x_o) \) | Take a shorter, more conservative step when reflection fails | \( \beta = 0.5 \) |

Where: \( x_o \) = centroid of all points except the worst, \( x_w \) = worst point, \( x_r \) = reflected point, \( x_e \) = expanded point, \( x_c \) = contracted point.
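A worked example of the three operations, assuming minimization and the coefficients in Table 1. The expansion uses the form \( x_e = x_o + \gamma(x_r - x_o) \), which is how Nelder and Mead define it; with \( \alpha = 1 \) and \( \gamma = 2 \) the expanded point lies twice as far from the centroid as the reflected point. The function `simplex_moves` and the objective \( f(x, y) = (x-3)^2 + (y-2)^2 \) are hypothetical.

```python
import numpy as np


def simplex_moves(vertices, values, alpha=1.0, gamma=2.0, beta=0.5):
    """Return the reflected, expanded, and contracted trial points (minimization)."""
    vertices = np.asarray(vertices, dtype=float)
    order = np.argsort(values)                # rank vertices: best (lowest f) first
    x_w = vertices[order[-1]]                 # worst vertex
    x_o = vertices[order[:-1]].mean(axis=0)   # centroid of all vertices except the worst
    x_r = x_o + alpha * (x_o - x_w)           # reflection
    x_e = x_o + gamma * (x_r - x_o)           # expansion
    x_c = x_o + beta * (x_w - x_o)            # (inside) contraction
    return x_r, x_e, x_c


def f(p):
    return (p[0] - 3) ** 2 + (p[1] - 2) ** 2  # hypothetical objective, minimum at (3, 2)


V = [[0, 0], [1, 0], [0, 1]]                  # f = 13, 8, 10, so (0, 0) is the worst vertex
x_r, x_e, x_c = simplex_moves(V, [f(v) for v in V])
print(x_r, f(x_r))  # [1. 1.] 5.0 -> better than the best vertex (8), so try expansion
print(x_e, f(x_e))  # [1.5 1.5] 2.5 -> better still, so the expanded point replaces (0, 0)
print(x_c)          # [0.25 0.25] (not needed in this step)
```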

The algorithm begins by ranking all vertices of the current simplex from best (\( x_b \)) to worst (\( x_w \)) based on their objective function values. This ranking determines the subsequent operation. Reflection generates a new point by moving from the worst point through the centroid of the remaining points. If the reflected point yields better performance than the current best, expansion explores further in this promising direction, and the better of the expanded and reflected points replaces the worst vertex. If the reflected point is better than the second-worst point but not better than the best, it simply replaces the worst vertex. If the reflected point is worse than the second-worst point, contraction refines the search more conservatively; if even the contracted point fails to improve on the worst vertex, the Nelder-Mead variant shrinks the whole simplex toward the best vertex. The cycle repeats until convergence criteria are met, systematically driving the simplex toward the optimum region [21].
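The full cycle described above fits in a short function. This is a minimal sketch, not a reference implementation: it assumes minimization, builds the initial simplex by stepping along each coordinate axis, and uses only the inside contraction from Table 1 (Nelder and Mead's original method also has an outside contraction toward the reflected point). The function name `nelder_mead`, the shrink coefficient `sigma`, and the tolerance defaults are assumptions; for production work, `scipy.optimize.minimize(..., method="Nelder-Mead")` provides a maintained implementation.

```python
import numpy as np


def nelder_mead(f, x0, step=0.5, alpha=1.0, gamma=2.0, beta=0.5, sigma=0.5,
                ftol=1e-10, xtol=1e-8, max_iter=5000):
    """Minimize f with the rank / reflect / expand / contract / shrink cycle."""
    x0 = np.asarray(x0, dtype=float)
    n = x0.size
    # Initial simplex: x0 plus one step along each coordinate axis (n + 1 vertices).
    simplex = np.vstack([x0] + [x0 + step * e for e in np.eye(n)])
    fvals = np.array([f(v) for v in simplex])
    for _ in range(max_iter):
        order = np.argsort(fvals)                  # rank: best vertex first, worst last
        simplex, fvals = simplex[order], fvals[order]
        if (fvals[-1] - fvals[0] <= ftol
                and np.max(np.abs(simplex[1:] - simplex[0])) <= xtol):
            break                                  # simplex is small and flat: converged
        x_o = simplex[:-1].mean(axis=0)            # centroid of all but the worst
        x_w = simplex[-1].copy()
        x_r = x_o + alpha * (x_o - x_w)            # reflect the worst vertex
        f_r = f(x_r)
        if f_r < fvals[0]:                         # better than the best: try expanding
            x_e = x_o + gamma * (x_r - x_o)
            f_e = f(x_e)
            simplex[-1], fvals[-1] = (x_e, f_e) if f_e < f_r else (x_r, f_r)
        elif f_r < fvals[-2]:                      # better than the second-worst: accept
            simplex[-1], fvals[-1] = x_r, f_r
        else:                                      # reflection failed: contract inside
            x_c = x_o + beta * (x_w - x_o)
            f_c = f(x_c)
            if f_c < fvals[-1]:
                simplex[-1], fvals[-1] = x_c, f_c
            else:                                  # contraction failed too: shrink toward best
                simplex[1:] = simplex[0] + sigma * (simplex[1:] - simplex[0])
                fvals[1:] = [f(v) for v in simplex[1:]]
    best = np.argmin(fvals)
    return simplex[best], fvals[best]


def quadratic(p):
    # Hypothetical test objective with minimum 0 at (3, -1).
    return (p[0] - 3) ** 2 + 2 * (p[1] + 1) ** 2 + (p[0] - 3) * (p[1] + 1)


x_best, f_best = nelder_mead(quadratic, [0.0, 0.0])
print(x_best, f_best)  # close to [3, -1] and 0
```

Sorting the whole simplex on every iteration is not the cheapest option, but it keeps the ranking step explicit, mirroring the description in the text.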

Implementation in Pharmaceutical Development

The sequential simplex methodology finds particularly valuable applications in pharmaceutical development, where it helps decompose complexity phase by phase under conditions of high risk and uncertainty [22]. In drug discovery, optimization challenges are ubiquitous and multidimensional, involving numerous factors that must be simultaneously balanced to achieve optimal outcomes.

Application to Clinical Trial Sequencing

A prime application of iterative optimization in pharmaceutical research involves indication sequencing—determining the optimal order to conduct clinical trials for different diseases a single compound may treat. This decision significantly influences a company's direction and future success [22]. A decision tree analysis of this problem exemplifies the "ranking, reflecting, expanding, and contracting" cycle in action:

- Ranking: Evaluating multiple strategic pathways (asthma first, IBD first, or LE first) based on their risk-adjusted Net Present Value (eNPV)

- Reflecting: Analyzing why one pathway (IBD first with eNPV of $552M) dominates others (asthma first: $348M; LE first: $346M)

- Expanding: Conducting sensitivity analysis to determine breakpoints (how low IBD POC probability of success can drop before decision changes)

- Contracting: Using Bayesian Revision to refine probability estimates and determine maximum investment for a Proof-of-Concept study ($72M in the case study) [22]
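The "contracting" step, Bayesian revision used to cap spending on a proof-of-concept study, rests on the expected value of information: a study is worth at most the amount by which it raises the expected value of the subsequent go/no-go decision. The sketch below uses purely hypothetical inputs (prior probability of success, study sensitivity and specificity, and payoffs in $M); they are not the case study's figures, and the $72M result from [22] is not reproduced. The functions `posterior` and `value_of_study` are hypothetical helpers.

```python
def posterior(prior, sens, spec, positive):
    """Bayes' rule: P(compound truly works | POC result)."""
    if positive:
        num = sens * prior
        den = sens * prior + (1 - spec) * (1 - prior)
    else:
        num = (1 - sens) * prior
        den = (1 - sens) * prior + spec * (1 - prior)
    return num / den


def value_of_study(prior, sens, spec, v_success, v_failure, v_stop=0.0):
    """Expected value of imperfect information from a POC study (same units as the payoffs).

    v_success / v_failure: value of proceeding if the compound works / does not work.
    v_stop: value of abandoning the program. Study cost and delay are ignored here.
    """
    def best_decision(p):
        return max(p * v_success + (1 - p) * v_failure, v_stop)

    p_positive = sens * prior + (1 - spec) * (1 - prior)
    with_study = (p_positive * best_decision(posterior(prior, sens, spec, True))
                  + (1 - p_positive) * best_decision(posterior(prior, sens, spec, False)))
    return with_study - best_decision(prior)


# Hypothetical inputs ($M): 30% prior, 90% sensitivity, 80% specificity.
evi = value_of_study(prior=0.3, sens=0.9, spec=0.8, v_success=1000, v_failure=-300)
print(round(evi, 1))  # 138.0 -> paying more than about $138M for this study would not be worthwhile
```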

Hybrid Optimization Approaches

Recent research has demonstrated that hybrid approaches combining sequential simplex methods with other optimization techniques can yield superior results. The Genetic and Nelder-Mead Algorithm (GANMA) represents one such advanced implementation, integrating the global search capabilities of Genetic Algorithms (GA) with the local refinement strength of the Nelder-Mead Simplex Algorithm (NM) [23].

This hybrid approach directly maps to our core cycle:

- Ranking: Genetic Algorithm ranks population of potential solutions based on fitness

- Reflecting: Selection and crossover operations reflect promising solution characteristics

- Expanding: Global exploration expands search across diverse regions of parameter space

- Contracting: Nelder-Mead simplex contracts to refine solutions locally near promising candidates

GANMA has shown exceptional performance across various benchmark functions and real-world parameter estimation tasks, particularly in complex landscapes with high dimensionality and multimodality frequently encountered in pharmaceutical applications [23].

Table 2: Performance Comparison of Optimization Algorithms in Pharmaceutical Applications

| Algorithm | Exploration Strength | Exploitation Strength | Convergence Speed | Solution Quality |
|---|---|---|---|---|
| Pure Sequential Simplex | Moderate | High | Fast locally | Good for smooth functions |
| Genetic Algorithm (GA) | High | Moderate | Slow | Good for global optimum |
| GA-Simulated Annealing | High | Moderate-High | Moderate | Very good |
| GA-Particle Swarm | High | High | Moderate | Excellent |
| GANMA (Hybrid) | High | High | Fast | Superior |

Experimental Protocols and Methodologies

Protocol: Sensitivity Analysis for Indication Sequencing

Objective: Determine robustness of dominant indication sequencing strategy and identify breakpoints where alternative strategies become preferable.

Materials:

- Decision tree model with probability and value parameters

- PrecisionTree software or equivalent decision analysis platform

- Historical clinical success rates for relevant therapeutic areas

Methodology:

- Model Construction: Develop decision tree with three primary pathways (asthma first, IBD first, LE first) incorporating phase-specific probabilities of success and costs [22]

- Baseline Analysis: Calculate risk-adjusted eNPV for each pathway to identify dominant strategy

- One-Way Sensitivity: Systematically vary judgmental probability of success for dominant pathway's Proof-of-Concept phase from 0% to 100%

- Breakpoint Identification: Determine threshold probability where dominant strategy changes

- Two-Way Sensitivity: Analyze interaction between two key probabilities (e.g., POC success and Phase III success)

- Bayesian Revision: Incorporate prior information to update probability estimates using conditional probability calculations

Expected Outcomes: Identification of strategy regions, maximum investment thresholds for preliminary studies, and understanding of key value drivers in the development sequence [22].
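The baseline, one-way, breakpoint, and two-way steps of this protocol can be prototyped without dedicated decision-analysis software. The sketch below assumes a simplified, undiscounted decision tree in which each phase cost is paid only if all earlier phases succeed; every cost, probability, and launch value is hypothetical (in $M) and unrelated to the case study in [22]. The helper names are likewise hypothetical; `scipy.optimize.brentq` locates the breakpoint.

```python
import numpy as np
from scipy.optimize import brentq


def enpv(phases, launch_value):
    """Undiscounted expected NPV of a sequential development path.

    phases: list of (cost, probability_of_success); a phase's cost is paid only if
    every earlier phase succeeded. launch_value is realized only if all phases succeed.
    """
    value, p_reach = 0.0, 1.0
    for cost, p in phases:
        value -= p_reach * cost  # pay for this phase only if we reach it
        p_reach *= p             # probability of also passing this phase
    return value + p_reach * launch_value


def strategy_a(p_poc, p_ph3=0.6):
    # Hypothetical path whose POC probability is varied: POC -> Phase II -> Phase III -> launch.
    return enpv([(20, p_poc), (60, 0.5), (200, p_ph3)], 1500)


def strategy_b():
    # Hypothetical alternative path with fixed probabilities.
    return enpv([(20, 0.5), (60, 0.4), (150, 0.6)], 1000)


def poc_breakpoint(p_ph3=0.6):
    """POC probability at which A and B tie (None if no crossing in [0, 1])."""
    def gap(p):
        return strategy_a(p, p_ph3) - strategy_b()
    if gap(0.0) * gap(1.0) > 0:
        return None
    return brentq(gap, 0.0, 1.0)


# One-way sensitivity: sweep the POC probability from 0% to 100%.
for p in np.linspace(0.0, 1.0, 6):
    print(f"p_poc={p:.1f}  eNPV_A={strategy_a(p):7.1f}  eNPV_B={strategy_b():.1f}")
print("breakpoint:", round(poc_breakpoint(), 4))  # 0.2069
# Two-way view: how the breakpoint moves with the Phase III probability.
for p3 in (0.2, 0.4, 0.6, 0.8):
    print(p3, poc_breakpoint(p3))
```

In this simple tree eNPV is linear in any single phase probability, so the one-way breakpoint could also be solved in closed form; the root finder keeps the sketch unchanged if the model becomes nonlinear in the swept parameter.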

Protocol: GANMA Hybrid Optimization

Objective: Efficiently optimize complex, multimodal functions representing pharmaceutical challenges such as molecular design or process optimization.

Materials:

- Parameterized objective function representing system to optimize

- Computational resources for population-based optimization

- Benchmark functions for validation (Sphere, Rastrigin, Ackley, etc.)
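The benchmark functions named above have standard closed forms. A NumPy sketch, using the common default constants (\( A = 10 \) for Rastrigin; \( a = 20 \), \( b = 0.2 \), \( c = 2\pi \) for Ackley), follows; all three have a global minimum of 0 at the origin, which makes them convenient sanity checks for an optimizer.

```python
import numpy as np


def sphere(x):
    x = np.asarray(x, dtype=float)
    return np.sum(x ** 2)  # smooth, unimodal


def rastrigin(x, A=10.0):
    x = np.asarray(x, dtype=float)
    return A * x.size + np.sum(x ** 2 - A * np.cos(2 * np.pi * x))  # highly multimodal


def ackley(x, a=20.0, b=0.2, c=2 * np.pi):
    x = np.asarray(x, dtype=float)
    d = x.size
    term1 = -a * np.exp(-b * np.sqrt(np.sum(x ** 2) / d))
    term2 = -np.exp(np.sum(np.cos(c * x)) / d)
    return term1 + term2 + a + np.e  # nearly flat outer region, deep central funnel


origin = np.zeros(5)
print(sphere(origin), rastrigin(origin), ackley(origin))  # all 0 up to floating-point rounding
```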

Methodology:

- Initialization: Generate initial population of candidate solutions randomly distributed across parameter space

- Genetic Operations:

  - Selection: Rank parents based on fitness (tournament or roulette selection)

  - Crossover: Recombine parent solutions using simulated binary crossover

  - Mutation: Introduce random perturbations with specified probability

- Nelder-Mead Refinement: Apply simplex operations to best-performing solutions:

  - Ranking: Order simplex vertices by performance

  - Reflection: Calculate reflection point \( x_r = x_o + \alpha(x_o - x_w) \)

  - Expansion: If the reflected point is better than the current best vertex, calculate expansion point \( x_e = x_o + \gamma(x_r - x_o) \)

  - Contraction: If the reflected point is worse than the second-worst vertex, calculate contraction point \( x_c = x_o + \beta(x_w - x_o) \)

- Termination Check: Evaluate convergence criteria (function tolerance, parameter tolerance, maximum iterations)

Expected Outcomes: Superior convergence speed and solution quality compared to standalone algorithms, particularly for high-dimensional, multimodal problems common in pharmaceutical research [23].
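A compact way to see how the protocol's pieces fit together is the following hybrid sketch. It is not the published GANMA algorithm from [23]: it is a generic genetic algorithm (tournament selection, simulated binary crossover, Gaussian mutation, and elitism) whose single best individual is then refined with SciPy's Nelder-Mead implementation. The function names, population size, generation count, mutation settings, and SBX distribution index `eta` are all assumptions chosen for illustration.

```python
import numpy as np
from scipy.optimize import minimize


def rastrigin(x):
    x = np.asarray(x, dtype=float)
    return 10.0 * x.size + np.sum(x ** 2 - 10.0 * np.cos(2 * np.pi * x))


def tournament(pop, fit, rng, k=3):
    """Tournament selection: return the fittest of k randomly drawn individuals."""
    idx = rng.integers(0, len(pop), size=k)
    return pop[idx[np.argmin(fit[idx])]]


def sbx(p1, p2, rng, eta=15.0):
    """Simulated binary crossover (SBX) on real-valued parents; returns two children."""
    u = rng.random(p1.shape)  # u in [0, 1), so 1 - u > 0 below
    beta = np.where(u <= 0.5, (2 * u) ** (1 / (eta + 1)),
                    (1 / (2 * (1 - u))) ** (1 / (eta + 1)))
    c1 = 0.5 * ((1 + beta) * p1 + (1 - beta) * p2)
    c2 = 0.5 * ((1 - beta) * p1 + (1 + beta) * p2)
    return c1, c2


def ga_nelder_mead(f, bounds, pop_size=40, generations=60, p_mut=0.1, seed=0):
    """Hypothetical GA + Nelder-Mead hybrid: global GA search, then local simplex refinement."""
    rng = np.random.default_rng(seed)
    lo, hi = np.array(bounds, dtype=float).T
    pop = rng.uniform(lo, hi, size=(pop_size, lo.size))
    fit = np.array([f(x) for x in pop])
    for _ in range(generations):
        children = [pop[np.argmin(fit)].copy()]  # elitism: carry the current best forward
        while len(children) < pop_size:
            c1, c2 = sbx(tournament(pop, fit, rng), tournament(pop, fit, rng), rng)
            children.extend([c1, c2])
        pop = np.array(children[:pop_size])
        mutate = rng.random(pop.shape) < p_mut
        mutate[0] = False                          # never mutate the elite individual
        noise = rng.normal(0.0, 0.1 * (hi - lo), size=pop.shape)
        pop = np.clip(np.where(mutate, pop + noise, pop), lo, hi)
        fit = np.array([f(x) for x in pop])
    # Nelder-Mead refinement starting from the best GA individual.
    best = pop[np.argmin(fit)]
    res = minimize(f, best, method="Nelder-Mead", options={"xatol": 1e-8, "fatol": 1e-8})
    return (res.x, res.fun) if res.fun < fit.min() else (best, fit.min())


x_best, f_best = ga_nelder_mead(rastrigin, [(-5.12, 5.12)] * 2)
print(x_best, f_best)
```

The division of labor mirrors the protocol: the GA supplies a starting point in a promising basin, and the simplex does the fine local convergence that a GA alone performs slowly. Refining several top individuals instead of one is a straightforward extension.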

Visualization of Workflows and Relationships

Sequential Simplex Optimization Workflow

GANMA Hybrid Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Optimization Experiments

| Reagent/Resource | Function | Application Example |
|---|---|---|
| PrecisionTree Software | Creates multi-phase decision trees with sensitivity analysis | Indication sequencing optimization [22] |
| DecisionTools Suite | Integrated platform for risk and decision analysis | Portfolio evaluation of multi-phase projects [22] |