Sequential Simplex Optimization in Analytical Chemistry: A Practical Guide for Method Development

Abstract

This article provides a comprehensive guide to Sequential Simplex Optimization, a powerful and efficient chemometric tool for method development in analytical chemistry and pharmaceutical research. Tailored for researchers and scientists, the content explores the foundational principles of the simplex method, contrasting it with traditional one-variable-at-a-time approaches. It details the core algorithms, including the basic and modified simplex methods, and illustrates their practical application through real-world case studies, such as the optimization of High-Performance Liquid Chromatography (HPLC) parameters. The guide further addresses advanced strategies for overcoming common challenges like local optima and provides a critical comparison with alternative optimization techniques. The goal is to equip professionals with the knowledge to implement this methodology for achieving superior analytical performance, including enhanced sensitivity, accuracy, and cost-effectiveness in their experimental workflows.

What is Sequential Simplex Optimization? Core Principles and Advantages for Chemists

Defining Sequential Simplex Optimization and its role in the R&D workflow

Sequential Simplex Optimization (SSO) is an evolutionary operation (EVOP) technique used to find the optimal combination of factor levels that produces the best possible system response without requiring a detailed mathematical model. It is a highly efficient experimental design strategy that enables researchers to optimize a relatively large number of factors in a small number of experiments. In research and development workflows, SSO provides a systematic approach for improving quality and productivity by logically guiding experimental sequences toward optimal conditions based on measured outcomes rather than theoretical predictions. This makes it particularly valuable in chemical research, pharmaceutical development, and manufacturing processes where multiple variables interact to influence final results [1] [2].

The fundamental principle of SSO involves iteratively moving through factor space by conducting experiments, evaluating responses, and making logical decisions about which new experimental conditions to test next. Unlike classical optimization approaches that begin with screening experiments and modeling, SSO reverses this sequence by first finding the optimum combination of factor levels, then modeling the system in the region of the optimum, and finally determining which factors are most important in this optimal region. This alternative strategy has proven particularly efficient for optimizing chemical systems where experiments can be conducted relatively quickly and factors are continuously variable [1].

Key Concepts and Definitions

Sequential Simplex Optimization: An evolutionary operation method that uses a geometric pattern (simplex) to guide experimentation toward optimal conditions. The simplex evolves toward better responses by reflecting away from poor performance points, requiring no complex statistical analysis between experiments [1] [3].

Factor: An independent variable or experimental parameter that can be adjusted to influence the system response. Examples include temperature, reaction time, pH, concentration, and instrument settings [1].

Response: The measurable outcome or dependent variable that indicates system performance. The goal of optimization is to find factor levels that maximize, minimize, or achieve a target value for this response [1].

Simplex: A geometric figure with one more vertex than the number of factors being optimized. For two factors, the simplex is a triangle; for three factors, it forms a tetrahedron [3].

EVOP (Evolutionary Operation): A family of techniques for process improvement that make gradual, incremental changes to factor levels while the process operates. SSO is a member of this family [1].

Sequential Simplex Optimization versus Classical Experimental Design

The classical approach to research and development follows a sequential path of screening important factors, modeling how these factors affect the system, and then determining optimum factor levels. While this approach has proven successful, it presents significant limitations when screening experiments are based on first-order models that assume no interactions between factors. If interactions do exist, factors that truly have a significant effect on the system might be incorrectly discarded during screening. Additionally, classical modeling becomes impractical when investigating more than a few factors due to the exponentially increasing number of experiments required [1].

Sequential Simplex Optimization reverses this traditional sequence by first finding the optimum combination of factor levels, then modeling the system in the region of this optimum, and finally determining which factors are most important. This approach proves particularly efficient when the primary R&D goal is optimization rather than complete system characterization [1].

Table 1: Comparison of Classical versus Sequential Simplex Optimization Approaches

| Characteristic | Classical Approach | Sequential Simplex Optimization |
|---|---|---|
| Sequence | Screening → Modeling → Optimization | Optimization → Modeling → Screening |
| Experimental Efficiency | Less efficient for multiple factors | Highly efficient, even with multiple factors |
| Mathematical Requirements | Requires statistical analysis | No complex math between experiments |
| Model Dependency | Relies on fitted models | Model-independent approach |
| Best Application | System characterization | Finding optimal conditions quickly |

The Sequential Simplex Method: Core Algorithm

The fundamental simplex algorithm begins with an initial set of experiments representing the vertices of the simplex. For k factors, this initial simplex has k+1 vertices. The basic procedure then follows these steps:

- Evaluate Response: Conduct experiments at each vertex and measure the response.

- Identify Vertices: Determine which vertex gives the worst response (W) and which gives the best response (B).

- Reflect Worst Point: Calculate the reflected point (R) of the worst vertex through the centroid of the remaining vertices.

- Evaluate New Point: Conduct experiment at the reflected point and measure its response.

- Make Decisions: Based on the response at R, decide whether to expand, contract, or continue with reflection.

- Iterate: Form a new simplex by replacing W with R (or expanded/contracted point) and repeat the process.

The algorithm continues until the simplex surrounds the optimum and begins to oscillate or contract around it, at which point termination criteria are applied [3].
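The reflection step above can be sketched in a few lines of Python. This is a minimal illustration for the basic (fixed-size) simplex, assuming a response that is to be maximized; the function names and the two-factor example values (pH and percent organic modifier) are hypothetical and not taken from the cited studies.

```python
def centroid(points):
    """Coordinate-wise mean of a list of equal-length points."""
    n = len(points)
    return [sum(coords) / n for coords in zip(*points)]


def reflect_worst(vertices, responses, maximize=True):
    """Return (index of worst vertex W, reflected point R = C + (C - W)).

    C is the centroid of all vertices except W.
    """
    pick = min if maximize else max
    worst = pick(range(len(vertices)), key=lambda i: responses[i])
    others = [v for i, v in enumerate(vertices) if i != worst]
    c = centroid(others)
    reflected = [2 * ci - wi for ci, wi in zip(c, vertices[worst])]
    return worst, reflected


# Hypothetical two-factor simplex: (pH, % organic modifier) with measured responses.
vertices = [[3.0, 30.0], [4.0, 30.0], [3.5, 40.0]]
responses = [1.2, 1.8, 1.5]
worst_index, next_experiment = reflect_worst(vertices, responses)
```

The returned point is the next experiment to run; its measured response then replaces the worst vertex or triggers one of the modified moves described below.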

Modifications and Enhancements to the Basic Algorithm

Several modifications to the basic simplex method have been developed to improve performance. The Nelder-Mead simplex introduced variable step sizes that allow the simplex to expand in favorable directions and contract away from unfavorable ones. Modified simplex methods can handle constraints by applying penalty functions to responses that violate experimental constraints. Super-modified simplex methods incorporate regression techniques to fit a local model to the vertices of the simplex, enabling more intelligent movement toward the optimum [3].

Application Notes: SSO in Analytical Chemistry

Sequential Simplex Optimization has found extensive application in analytical chemistry, particularly in techniques where multiple instrument parameters interact to influence analytical performance. The following applications demonstrate its versatility across different analytical techniques.

Chromatographic Method Optimization

In chromatography, SSO has proven valuable for optimizing separation conditions. Krupčík et al. demonstrated the optimization of initial temperature (T₀), hold time (t₀), and rate of temperature change (r) in linear temperature programmed capillary gas chromatographic analysis (LTPCGC) of multicomponent samples. They proposed a novel optimization criterion (Cp) that balanced separation quality with analysis time:

[ C_p = N_r + \frac{t_{R,n} - t_{max}}{t_{max}} ]

where N_r represents the number of peaks detected and the second term relates analysis time (t_{R,n}) to maximum acceptable time (t_{max}) [4].

Atomic Absorption Spectroscopy

SSO significantly improved efficiency in hydride generation atomic absorption spectroscopy (HGAAS) for trace metal analysis. A 1989 study demonstrated that SSO required only 10-20 experiments to identify optimal conditions for acid concentration, reaction time, carrier gas flow rate, and sodium borohydride amount. In contrast, traditional univariate optimization needed 30-50 experiments to achieve the same goal, representing a 50-70% reduction in experimental workload [5].

Pharmaceutical Analysis

SSO has been applied to pharmaceutical analysis for optimizing chromatographic separation of drugs and excipients. Examples include the determination of nabumetone in pharmaceutical preparations by micellar-stabilized room temperature phosphorescence and the separation of vitamins E and A in multivitamin syrup using micellar liquid chromatography. In these applications, SSO efficiently identified optimal mobile phase composition, pH, and detection parameters that would have been laborious to discover using one-variable-at-a-time approaches [3].

Table 2: Sequential Simplex Optimization Applications in Analytical Chemistry

| Analytical Technique | Optimized Factors | Response Variable | Experimental Efficiency |
|---|---|---|---|
| Temperature Programmed GC | Initial temperature, hold time, heating rate | Peak resolution, analysis time | Not specified |
| Hydride Generation AAS | Acid concentration, reaction time, gas flow rate, reagent amount | Absorbance signal | 10-20 experiments vs. 30-50 for univariate |
| Micellar Liquid Chromatography | Mobile phase composition, pH, flow rate | Peak resolution, sensitivity | Not specified |
| Flow Injection Analysis | Reagent concentration, flow rate, mixing time | Detection signal, reproducibility | Not specified |

Experimental Protocol: Sequential Simplex Optimization for Analytical Method Development

Define the Optimization Problem

- Identify Critical Factors: Select the factors (independent variables) to be optimized based on prior knowledge or preliminary experiments. For chromatographic methods, this typically includes mobile phase composition, pH, temperature, and flow rate.

- Define the Response: Establish a quantifiable response (dependent variable) that measures performance. For chromatography, this could be resolution between critical peak pairs, analysis time, peak symmetry, or a composite response function combining multiple criteria.

- Set Factor Ranges: Determine feasible ranges for each factor based on instrumental limitations, chemical stability, or practical constraints.

Establish Initial Simplex

- Determine Number of Vertices: For k factors, establish k+1 initial experiments forming the simplex vertices.

- Select Initial Factor Levels: Choose factor levels for each vertex. The initial simplex can be constructed using a standard matrix or based on prior knowledge of promising experimental conditions.

- Define Step Size: Establish appropriate step sizes for each factor, considering the sensitivity of the response to factor changes.

Execute Sequential Experiments

- Run Experiments: Conduct experiments at each vertex of the initial simplex in random order to minimize effects of extraneous variables.

- Measure Responses: Quantify the response for each experiment.

- Identify Worst Vertex: Determine which vertex gives the least desirable response.

- Calculate Reflection: Compute the centroid of all vertices except the worst, then reflect the worst vertex through this centroid.

- Conduct New Experiment: Perform experiment at the reflected point.

Make Movement Decisions

Based on the response at the reflected point (R):

- If R is better than the current best: Expand further in the same direction.

- If R is better than the worst but not the best: Accept reflection and form new simplex.

- If R is worse than the worst: Contract away from this direction.

- If R violates constraints: Apply penalty function to response or contract.
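The decision rules above map naturally onto a small function. The sketch below is one possible encoding, assuming that a response exactly equal to the worst is treated as a contraction and that an infeasible point is handled by contracting rather than by a penalty; both choices, and the function name `choose_move`, are illustrative assumptions.

```python
def choose_move(r_response, best, worst, feasible=True, maximize=True):
    """Classify the next simplex move from the response at the reflected point R.

    best and worst are the best and worst responses in the current simplex.
    """
    if not feasible:
        # Assumption: a constraint violation is handled by contracting.
        return "contract"
    if not maximize:
        # Flip signs so that "larger is better" holds in the comparisons below.
        r_response, best, worst = -r_response, -best, -worst
    if r_response > best:
        return "expand"
    if r_response > worst:
        return "reflect"
    return "contract"


# Hypothetical responses (e.g. resolution of a critical peak pair).
move = choose_move(r_response=2.0, best=1.8, worst=1.2)
```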

Terminate Optimization

Establish termination criteria before beginning optimization:

- Simplex Size: When the simplex becomes sufficiently small (vertices close together).

- Response Improvement: When improvement in response falls below a threshold.

- Cycling: When the simplex begins to oscillate between the same points.

- Experimental Budget: When reaching a predetermined number of experiments.

Research Reagent Solutions

The following table details essential materials and reagents commonly used in analytical chemistry applications where Sequential Simplex Optimization is applied.

Table 3: Essential Research Reagents and Materials for SSO Applications

| Reagent/Material | Function/Application | Example Use Case |
|---|---|---|
| Mobile Phase Components | Chromatographic separation | HPLC method development |
| Buffer Solutions | pH control in aqueous systems | Optimization of separation pH |
| Derivatization Reagents | Enhancing detection sensitivity | GC or HPLC analysis of non-UV absorbing compounds |
| Atomic Absorption Standards | Calibration and method validation | Trace metal analysis by AAS |
| Hydride Generation Reagents | Volatile hydride formation | Determination of As, Se, Sb by HGAAS |
| Column Stationary Phases | Molecular separation | Chromatographic optimization |

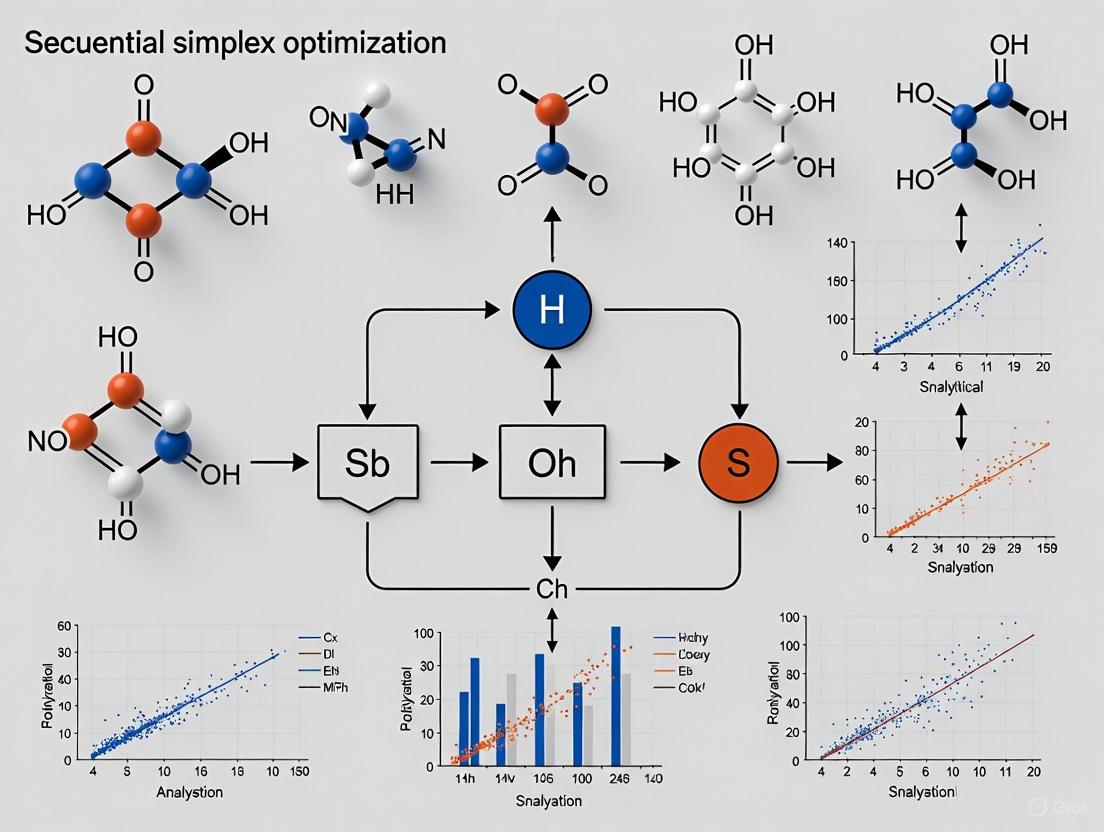

Workflow Visualization

The following diagram illustrates the logical sequence of Sequential Simplex Optimization in the research and development workflow:

SSO Logical Workflow

The movement of a sequential simplex through factor space follows a distinct pattern as it approaches the optimum. The following diagram illustrates the reflection, expansion, and contraction operations:

Simplex Movement Operations

Integration in R&D Workflow

Sequential Simplex Optimization serves as a powerful tool within the broader R&D workflow, particularly when employed in conjunction with other optimization strategies. For systems suspected of having multiple local optima (such as chromatographic separations), a hybrid approach often proves most effective. The classical "window diagram" technique can first identify the general region of the global optimum, after which SSO provides fine-tuning of the system parameters [1].

In the pharmaceutical industry, this approach accelerates method development for quality control, formulation optimization, and process development. The efficiency of SSO enables rapid adaptation of analytical methods to new drug compounds or excipient systems. For drug development professionals facing time and resource constraints, SSO offers a systematic approach to method optimization that minimizes experimental workload while ensuring robust, transferable methods [2] [3].

The implementation of SSO within quality by design (QbD) frameworks provides a structured approach to understanding method capabilities and limitations. By efficiently mapping the response surface around the optimum, SSO helps define the method operable design region (MODR), which is critical for regulatory submissions and method validation [3].

The evolution of simplex-based optimization methods represents a pivotal chapter in the history of computational optimization, particularly within analytical chemistry and drug development. The journey from the fixed-size simplex approach of Spendley, Hext, and Himsworth to the adaptive Nelder-Mead algorithm marks a significant advancement in direct search optimization techniques that remain relevant in modern scientific computing. These methods have proven indispensable for parameter estimation, instrument calibration, and process optimization where derivative information is unavailable or unreliable, offering robust solutions to complex experimental optimization challenges faced by researchers [6].

This development history demonstrates how algorithmic improvements directly address practical experimental needs. The transition between these two optimization approaches illustrates the critical balance between mathematical elegance and practical utility in scientific computing—a consideration that remains paramount when selecting optimization techniques for contemporary analytical chemistry research.

Historical Context and Evolutionary Trajectory

The 1950s and early 1960s witnessed the emergence of direct search methods alongside the growing accessibility of digital computers for scientific computation. The term "direct search" was formally introduced by Hooke and Jeeves in 1961, establishing a classification for optimization methods that rely solely on function evaluations without requiring derivative information [6]. This period represented a paradigm shift in experimental optimization, as scientists could now employ computational approaches to tackle complex multidimensional optimization problems that were previously intractable through manual experimentation.

The first simplex-based direct search method was published in 1962 by Spendley, Hext, and Himsworth. Their approach utilized a regular simplex (all edges having equal length) that moved through the parameter space using two fundamental operations: reflection away from the worst vertex and shrinkage toward the best vertex [6]. A key characteristic of this early simplex method was that the working simplex maintained a constant shape throughout the optimization process—it could change size but not shape due to the fixed angles between edges. While mathematically elegant, this rigidity limited the algorithm's efficiency across diverse optimization landscapes commonly encountered in analytical chemistry applications.

In 1965, Nelder and Mead introduced their modified simplex method, publishing what would become one of the most influential papers in computational optimization. Their key innovation was expanding the transformation rules to include expansion and contraction operations, allowing the working simplex to adapt both size and shape to the local topography of the response surface [6]. This adaptive capability represented a significant advancement, as Nelder and Mead poetically described: "In the method to be described the simplex adapts itself to the local landscape, elongating down long inclined planes, changing direction on encountering a valley at an angle, and contracting in the neighbourhood of a minimum" [6].

Table 1: Key Historical Milestones in Simplex Optimization Development

| Year | Development | Key Innovators | Primary Advancement |
|---|---|---|---|
| 1961 | Term "Direct Search" Introduced | Hooke and Jeeves | Formal classification of derivative-free optimization methods |
| 1962 | First Simplex Method | Spendley, Hext, and Himsworth | Fixed-shape simplex using reflection and shrinkage operations |
| 1965 | Adaptive Simplex Method | Nelder and Mead | Shape-adapting simplex with expansion and contraction operations |
| 1970s | Software Library Implementation | Various | Integration into major numerical software libraries |
| 1980s | "Amoeba Algorithm" in Numerical Recipes | Press et al. | Popularization through influential scientific computing handbook |
| 1998 | Convergence Analysis | Lagarias et al. | Rigorous mathematical examination of method properties |
| 2000s | Widespread Adoption in Scientific Software | MATLAB, Others | Implementation as "fminsearch" in MATLAB and other platforms |

Theoretical Foundation and Algorithmic Differences

Spendley, Hext, and Himsworth Simplex Method

The original simplex method of Spendley, Hext, and Himsworth was designed for unconstrained optimization problems of minimizing a nonlinear function (f : \mathbb{R}^n \to \mathbb{R}) without using derivative information. The algorithm operates by constructing a regular simplex in (n)-dimensional space—a geometric figure with (n+1) vertices that generalizes the triangle (2D) and tetrahedron (3D) to higher dimensions [6]. At each iteration, the algorithm:

- Ordering: Identifies the worst vertex ((x_h)) with the highest function value

- Reflection: Reflects the worst vertex through the centroid of the opposite face

- Shrinkage: If reflection fails to improve the function value, shrinks the entire simplex toward the best vertex

The method's limitation stemmed from maintaining a regular simplex throughout the optimization process. While this ensured numerical stability, it constrained the algorithm's ability to adapt to the function's topography, resulting in slower convergence on anisotropic or ill-conditioned problems frequently encountered in analytical chemistry applications such as chromatography optimization or spectroscopic calibration.

Nelder-Mead Adaptive Simplex Algorithm

Nelder and Mead enhanced the original approach by introducing a more flexible simplex that could adapt its shape based on local landscape characteristics. Their algorithm incorporates four transformation operations controlled by specific parameters [6]:

- Reflection ((\alpha > 0)): Projects the worst vertex through the centroid of the opposing face

- Expansion ((\gamma > 1)): Extends the reflection further if it identifies a promising direction

- Contraction ((0 < \beta < 1)): Reduces the simplex size when reflection offers limited improvement

- Shrinkage ((0 < \delta < 1)): Systematically reduces the simplex toward the best vertex

The standard parameter values are (\alpha = 1), (\beta = 0.5), (\gamma = 2), and (\delta = 0.5), which have proven effective across diverse optimization scenarios in pharmaceutical and analytical applications [6].

The Nelder-Mead method typically requires only one or two function evaluations per iteration, making it computationally efficient compared to other direct search methods that may need (n) or more evaluations [6]. This characteristic has made it particularly valuable in chemical applications where function evaluations correspond to expensive experimental measurements or computationally intensive simulations.

Figure 1: Nelder-Mead simplex algorithm decision pathway and workflow

Comparative Analysis of Methodological Approaches

Table 2: Algorithmic Comparison: Spendley et al. vs. Nelder-Mead Simplex Methods

| Characteristic | Spendley, Hext, & Himsworth (1962) | Nelder & Mead (1965) |
|---|---|---|
| Simplex Geometry | Regular simplex (fixed shape) | Adaptive simplex (variable shape) |
| Transformation Operations | Reflection, shrinkage | Reflection, expansion, contraction, shrinkage |
| Parameter Count | 2 operations | 4 controlled parameters (α, β, γ, δ) |
| Adaptation Capability | Size adaptation only | Size and shape adaptation |
| Convergence Behavior | Methodical but slower | Faster on anisotropic functions |
| Implementation Complexity | Simpler structure | More complex decision logic |
| Practical Efficiency | Limited on ill-conditioned problems | Superior performance across diverse landscapes |
| Modern Usage | Largely historical | Widespread current application |

The fundamental difference between these approaches lies in their adaptability. The Spendley-Hext-Himsworth algorithm maintains a constant simplex shape, restricting its ability to navigate complex response surfaces efficiently. In contrast, the Nelder-Mead simplex can elongate down inclined planes, change direction when encountering valleys, and contract near minima [6]. This adaptive capability is particularly valuable in analytical chemistry applications where response surfaces often exhibit complex topography with ridges, valleys, and multiple local minima.

Recent convergence studies have identified distinct behaviors between the original Nelder-Mead approach and the ordered variant proposed by Lagarias et al. While both versions generally converge to a common function value under standard conditions, examples exist where simplex vertices may converge to different limit points or to a non-stationary point [7]. These theoretical insights help researchers understand the method's limitations when applying it to challenging optimization problems in pharmaceutical development.

Experimental Protocols and Implementation Guidelines

Nelder-Mead Algorithm Implementation Protocol

Objective: Minimize a continuous multidimensional function (f(x)) where (x \in \mathbb{R}^n) without using derivative information.

Initialization Phase:

- Define initial point (x_0) representing best prior knowledge of optimum location

- Construct initial simplex using one of two standard approaches:

  - Coordinate-axis approach: Generate (n) additional vertices: (x_j = x_0 + h_j e_j) for (j = 1, \ldots, n) where (e_j) are coordinate unit vectors and (h_j) are step sizes

  - Regular simplex approach: Generate (n+1) vertices forming a regular simplex with specified edge length

- Evaluate objective function at all (n+1) vertices
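Both initialization approaches can be sketched as follows. The coordinate-axis version follows the formula given above directly. The regular-simplex version uses one common textbook construction (offsets p and q chosen so that every edge has the requested length), which is an assumption here rather than something specified by the source; the function names are hypothetical.

```python
import math


def axis_simplex(x0, steps):
    """Coordinate-axis approach: x_j = x_0 + h_j * e_j for each factor j."""
    vertices = [list(x0)]
    for j, h in enumerate(steps):
        v = list(x0)
        v[j] += h
        vertices.append(v)
    return vertices


def regular_simplex(x0, edge):
    """Regular simplex with all edges of length `edge`, anchored at x0.

    Vertex j adds p to coordinate j and q to every other coordinate.
    """
    n = len(x0)
    p = edge * (math.sqrt(n + 1) + n - 1) / (n * math.sqrt(2))
    q = edge * (math.sqrt(n + 1) - 1) / (n * math.sqrt(2))
    vertices = [list(x0)]
    for j in range(n):
        vertices.append([x0[i] + (p if i == j else q) for i in range(n)])
    return vertices


# Hypothetical starting point with three factors and a chosen edge length.
start = regular_simplex([0.0, 0.0, 0.0], edge=1.0)
```

The coordinate-axis form lets each factor have its own step size, which is convenient when factors are on very different scales; the regular form treats all directions equally and therefore assumes the factors have already been scaled to comparable units.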

Iteration Phase:

- Ordering: Identify indices of worst ((h)), second worst ((s)), and best ((l)) vertices based on function values

- Centroid Calculation: Compute centroid (c) of the best side (opposite worst vertex): (c = \frac{1}{n} \sum_{j \neq h} x_j)

- Transformation Step:

  - Reflection: Compute (x_r = c + \alpha(c - x_h)) with (\alpha = 1)

    - If (f_l \leq f_r < f_s): Accept (x_r) and proceed to convergence check

  - Expansion: If (f_r < f_l), compute (x_e = c + \gamma(x_r - c)) with (\gamma = 2)

    - If (f_e < f_r): Accept (x_e)

    - Otherwise: Accept (x_r)

  - Contraction: If (f_r \geq f_s)

    - Outside contraction: If (f_r < f_h), compute (x_{oc} = c + \beta(x_r - c)) with (\beta = 0.5)

      - If (f_{oc} \leq f_r): Accept (x_{oc})

    - Inside contraction: If (f_r \geq f_h), compute (x_{ic} = c + \beta(x_h - c))

      - If (f_{ic} < f_h): Accept (x_{ic})

  - Shrinkage: If contraction fails, shrink simplex toward best vertex: (x_i = x_l + \delta(x_i - x_l)) for all (i \neq l) with (\delta = 0.5)
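The iteration phase can be written as a single function that performs one transformation and reports which move was taken. The following is a minimal pure-Python sketch of the rules listed above with the standard coefficients, not a reference implementation; the function name, the in-place update style, and the quadratic test function are assumptions made for illustration.

```python
def nelder_mead_step(simplex, fvals, f, alpha=1.0, beta=0.5, gamma=2.0, delta=0.5):
    """Perform one Nelder-Mead iteration in place and return the move taken."""
    n = len(simplex) - 1
    order = sorted(range(n + 1), key=lambda i: fvals[i])
    l, s, h = order[0], order[-2], order[-1]  # best, second worst, worst
    # Centroid of all vertices except the worst.
    c = [sum(simplex[i][k] for i in range(n + 1) if i != h) / n for k in range(n)]

    def toward(target, t):
        """Point c + t * (target - c)."""
        return [ck + t * (tk - ck) for ck, tk in zip(c, target)]

    xr = toward(simplex[h], -alpha)  # x_r = c + alpha * (c - x_h)
    fr = f(xr)
    if fvals[l] <= fr < fvals[s]:
        simplex[h], fvals[h] = xr, fr
        return "reflect"
    if fr < fvals[l]:
        xe = toward(xr, gamma)  # x_e = c + gamma * (x_r - c)
        fe = f(xe)
        if fe < fr:
            simplex[h], fvals[h] = xe, fe
            return "expand"
        simplex[h], fvals[h] = xr, fr
        return "reflect"
    # Here f_r >= f_s, so try a contraction.
    if fr < fvals[h]:
        xc = toward(xr, beta)  # outside contraction
        fc = f(xc)
        if fc <= fr:
            simplex[h], fvals[h] = xc, fc
            return "contract_outside"
    else:
        xc = toward(simplex[h], beta)  # inside contraction
        fc = f(xc)
        if fc < fvals[h]:
            simplex[h], fvals[h] = xc, fc
            return "contract_inside"
    # Contraction failed: shrink every vertex toward the best one.
    best = simplex[l]
    for i in range(n + 1):
        if i != l:
            simplex[i] = [bk + delta * (xk - bk) for bk, xk in zip(best, simplex[i])]
            fvals[i] = f(simplex[i])
    return "shrink"


def quadratic(x):
    """Hypothetical smooth test function with its minimum at (1, -2)."""
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2


simplex = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
fvals = [quadratic(p) for p in simplex]
moves = [nelder_mead_step(simplex, fvals, quadratic) for _ in range(100)]
```

In an experimental setting, `f` would stand for running one experiment and returning its (sign-adjusted) response, so every call inside the step corresponds to a real measurement. Note that only the shrink move requires more than two evaluations per iteration.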

Termination Criteria:

- Maximum iterations reached

- Simplex size reduced below tolerance: (\max_i \|x_i - x_l\| < \varepsilon_x)

- Function value differences below tolerance: (\max_i f(x_i) - \min_i f(x_i) < \varepsilon_f)
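The three termination criteria can be combined into one check that is called after each iteration. This is a sketch only; the default tolerances and the function name `should_stop` are illustrative assumptions and would need to be chosen relative to the measurement noise of the actual method.

```python
import math


def should_stop(simplex, fvals, iteration, max_iter=200, eps_x=1e-4, eps_f=1e-6):
    """Return the name of the first satisfied criterion, or None to continue."""
    if iteration >= max_iter:
        return "max_iterations"
    best = min(range(len(simplex)), key=lambda i: fvals[i])
    # Largest distance from any vertex to the best vertex x_l.
    size = max(math.dist(x, simplex[best]) for x in simplex)
    if size < eps_x:
        return "simplex_size"
    # Spread between the worst and best function values.
    if max(fvals) - min(fvals) < eps_f:
        return "function_spread"
    return None


# Hypothetical simplex that has collapsed around a point.
reason = should_stop([[1.0, 1.0], [1.00001, 1.0], [1.0, 1.00001]], [0.5, 0.6, 0.7], iteration=3)
```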

Analytical Chemistry Application Protocol: HPLC Method Development

Application Context: Optimization of mobile phase composition in reversed-phase HPLC separation of pharmaceutical compounds.

Experimental Setup:

- Objective Function: Chromatographic resolution factor or peak purity index

- Variables: 2-3 component mobile phase ratios, pH, temperature

- Constraints: Total flow rate fixed, acceptable pressure range

Implementation Steps:

- Initial Simplex Design:

- 3-variable case: 4 initial experimental conditions spanning design space

- Define practical ranges based on physicochemical constraints

- Parallel Experimentation:

- Execute all simplex vertex conditions in randomized order

- Incorporate system suitability standards for quality control

- Response Evaluation:

- Measure chromatographic resolution between critical pairs

- Calculate objective function value for each vertex

- Iterative Optimization:

- Apply Nelder-Mead decision rules to determine next experimental condition

- Continue until convergence or practical significance achieved

- Validation:

- Confirm optimal conditions with replicated experiments

- Verify robustness through deliberate small perturbations
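Because the Nelder-Mead protocol above minimizes its objective while chromatographic resolution is something to maximize, the response has to be sign-flipped, and conditions outside the practical ranges need some form of penalty. The sketch below shows one way to wrap a measurement in such an objective. Everything in it is hypothetical: `simulated_resolution` merely stands in for a real chromatographic run, and the factor names, bounds, and penalty size are illustrative assumptions.

```python
def make_objective(measure_resolution, bounds, penalty=1e3):
    """Wrap a 'larger is better' response as a function to minimize.

    bounds is a list of (low, high) pairs, one per factor. Points outside
    the bounds receive a large penalty and no experiment is run for them.
    """
    def objective(x):
        violation = sum(max(lo - xi, 0.0, xi - hi) for xi, (lo, hi) in zip(x, bounds))
        if violation > 0:
            return penalty * (1.0 + violation)
        return -measure_resolution(x)
    return objective


def simulated_resolution(x):
    """Stand-in for an experiment: resolution peaks at 35 % organic, pH 3.0."""
    organic, ph = x
    return 2.0 - ((organic - 35.0) / 10.0) ** 2 - (ph - 3.0) ** 2


# Hypothetical practical ranges: 10-60 % organic modifier, pH 2-8.
objective = make_objective(simulated_resolution, bounds=[(10.0, 60.0), (2.0, 8.0)])
value_at_optimum = objective([35.0, 3.0])
value_out_of_range = objective([90.0, 3.0])
```

Returning the penalty without calling the measurement function keeps infeasible conditions from consuming instrument time, at the cost of a discontinuity in the objective at the boundary.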

Table 3: Research Reagent Solutions for Simplex Optimization in Analytical Chemistry

| Reagent/Material | Specification | Function in Optimization | Application Context |
|---|---|---|---|
| Mobile Phase Components | HPLC grade, < 0.1% impurities | Manipulate separation selectivity | Chromatographic method development |
| Buffer Systems | pKa ± 0.5 of target pH, aqueous | Control ionization state of analytes | pH-sensitive separations |
| Standard Reference Materials | Certified, > 99% purity | System performance qualification | Objective function calculation |
| Stationary Phases | Defined ligand density, particle size | Provide separation mechanism | Column screening studies |
| Detection Systems | Appropriate sensitivity and linearity | Response measurement | Quantitative analysis |
| Chemical Modifiers | Additive controls specific interactions | Fine-tune separation parameters | Secondary mechanism optimization |

Contemporary Relevance and Research Applications

Despite being nearly sixty years old, the Nelder-Mead method remains widely used in scientific computing and continues to be actively studied. Modern research has extended our understanding of its convergence properties, with recent results indicating that the ordered variant proposed by Lagarias et al. exhibits superior convergence characteristics compared to the original formulation [7]. These theoretical advances help explain the algorithm's practical success and guide its appropriate application in scientific domains.

The method's longevity stems from several advantageous characteristics: minimal storage requirements, computational efficiency (typically 1-2 function evaluations per iteration), and robustness to noisy or discontinuous functions [6]. These attributes make it particularly valuable for experimental optimization in analytical chemistry, where function evaluations correspond to physical experiments that may exhibit stochastic variation.

Recent research continues to demonstrate the value of simplex methods in modern computational chemistry. The integration of Nelder-Mead operations into contemporary metaheuristic algorithms exemplifies its ongoing relevance. For instance, the Simplex Method-enhanced Cuttlefish Optimization (SMCFO) algorithm successfully incorporates Nelder-Mead operations to improve local search capability and solution quality in data clustering applications [8]. This hybrid approach demonstrates how classical optimization strategies can enhance modern computational intelligence methods.

Current research addresses fundamental questions about the algorithm's convergence behavior, including whether function values at all vertices necessarily converge to the same value, whether all vertices converge to the same point, and characterization of failure modes [7]. Understanding these theoretical properties informs practical implementation decisions and helps researchers select appropriate termination criteria for specific application domains.

The historical evolution from the Spendley-Hext-Himsworth fixed simplex to the adaptive Nelder-Mead algorithm represents significant progress in direct search optimization methodology. The enhanced adaptability of the Nelder-Mead approach, achieved through expansion and contraction operations, has secured its position as a fundamental tool in scientific computing, particularly in analytical chemistry and pharmaceutical development where experimental optimization is paramount.

The continued scientific interest in the Nelder-Mead method, evidenced by recent convergence studies and novel hybrid implementations, underscores its enduring value to the research community. As optimization challenges in analytical chemistry grow increasingly complex with high-dimensional parameter spaces and computationally expensive evaluations, the principles embedded in simplex methods provide a foundation for developing next-generation optimization strategies that balance theoretical rigor with practical utility.

In geometry, a simplex (plural: simplexes or simplices) is a fundamental concept that generalizes the notion of a triangle or tetrahedron to arbitrary dimensions. It represents the simplest possible polytope in any given dimension and serves as a crucial mathematical foundation for optimization techniques in analytical chemistry. The term "simplex" originates from the Latin word simplicissimus meaning "simplest," reflecting its minimal structural properties [9]. In the context of sequential optimization, a simplex is a geometric figure defined by a number of points or vertices equal to one more than the number of factors examined. For optimizing f factors, f + 1 points define the simplex in that factor space, with the dimension of the simplex equaling the number of factors [10].

A k-simplex is formally defined as a k-dimensional polytope that is the convex hull of its k + 1 vertices. More specifically, given k + 1 points ( u_0,\dots,u_k ) that are affinely independent (meaning the vectors ( u_1-u_0,\dots,u_k-u_0 ) are linearly independent), the simplex determined by them is the set of points ( C = \left\{\theta_0 u_0+\dots+\theta_k u_k ~\Bigg|~ \sum_{i=0}^{k}\theta_i=1 \mbox{ and } \theta_i\geq 0 \mbox{ for } i=0,\dots,k\right\} ) [9]. This mathematical structure provides the theoretical basis for simplex optimization algorithms used in method development across various analytical techniques.
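As a minimal sketch of this definition, the Python below checks affine independence of a candidate vertex set and forms a convex combination of the vertices. The helper names (`rank`, `is_valid_simplex`, `convex_combination`) are illustrative assumptions and do not come from the cited sources.

```python
from fractions import Fraction


def rank(rows):
    """Rank of a small matrix via Gaussian elimination with exact fractions."""
    m = [[Fraction(x) for x in row] for row in rows]
    r = 0
    ncols = len(m[0]) if m else 0
    for c in range(ncols):
        # Find a row at or below r with a non-zero entry in column c.
        pivot = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        for i in range(r + 1, len(m)):
            factor = m[i][c] / m[r][c]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r


def is_valid_simplex(vertices):
    """True if the k+1 vertices are affinely independent."""
    u0 = vertices[0]
    edges = [[a - b for a, b in zip(u, u0)] for u in vertices[1:]]
    return rank(edges) == len(vertices) - 1


def convex_combination(vertices, weights, tol=1e-9):
    """Point sum(theta_i * u_i); weights must be non-negative and sum to 1."""
    if any(t < -tol for t in weights) or abs(sum(weights) - 1.0) > tol:
        raise ValueError("weights must be non-negative and sum to 1")
    dim = len(vertices[0])
    return tuple(sum(t * u[i] for t, u in zip(weights, vertices)) for i in range(dim))
```

Three collinear points such as (0, 0), (1, 1), (2, 2) fail the check, which is why they cannot serve as a two-factor simplex: every reflection would stay on the same line.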

Geometric Foundation of Simplices

Basic Properties and Dimensionality

The simplex possesses distinctive geometric properties that make it invaluable for optimization strategies. In one dimension, a simplex is a line segment; in two dimensions, a triangle (equilateral in the regular case); in three dimensions, a tetrahedron; and in higher dimensions, it generalizes to hypertetrahedra [9] [11]. Each n-simplex is the convex hull of its n+1 vertices, and its dimension is equal to the number of factors being optimized. The boundary of an n-simplex consists of elements of lower dimensionality: 0-faces (vertices), 1-faces (edges), and so on up to (n-1)-faces, with the number of m-faces given by the binomial coefficient ( \binom{n+1}{m+1} ) [9].

An n-simplex is the polytope with the fewest vertices that requires n dimensions, illustrating the fundamental relationship between dimensionality and vertex count. This property becomes particularly important when dealing with multi-factor optimization problems in analytical chemistry, where each dimension represents an experimental factor, and the vertices correspond to specific experimental conditions [9] [10].

Table 1: Elements of n-Simplexes

| Simplex Type | Vertices | Edges | Faces | Cells | 4-faces | 5-faces | Total Elements |
|---|---|---|---|---|---|---|---|
| 0-simplex (point) | 1 | 0 | 0 | 0 | 0 | 0 | 1 |
| 1-simplex (line segment) | 2 | 1 | 0 | 0 | 0 | 0 | 3 |
| 2-simplex (triangle) | 3 | 3 | 1 | 0 | 0 | 0 | 7 |
| 3-simplex (tetrahedron) | 4 | 6 | 4 | 1 | 0 | 0 | 15 |
| 4-simplex (5-cell) | 5 | 10 | 10 | 5 | 1 | 0 | 31 |
| 5-simplex | 6 | 15 | 20 | 15 | 6 | 1 | 63 |
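The entries of Table 1 follow directly from the binomial coefficient given above. The short sketch below regenerates one row; the function name `face_counts` is an illustrative choice.

```python
from math import comb


def face_counts(n):
    """Number of m-faces of an n-simplex for m = 0..n (the n-face is the simplex itself)."""
    return [comb(n + 1, m + 1) for m in range(n + 1)]


def total_elements(n):
    """Sum of all face counts, which equals 2**(n + 1) - 1."""
    return sum(face_counts(n))
```

For a tetrahedron, `face_counts(3)` gives 4 vertices, 6 edges, 4 triangular faces, and the single cell.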

The Standard Simplex

A particularly important variant in optimization contexts is the standard simplex or probability simplex, defined as the k-dimensional simplex whose vertices are the k+1 standard unit vectors in ( \mathbf{R}^{k+1} ). This can be expressed as ( \left\{\vec{x}\in \mathbf{R}^{k+1} : x_0+\dots+x_k=1,\ x_i\geq 0 \text{ for } i=0,\dots,k\right\} ) [9]. The standard simplex finds applications in mixture designs and experimental domains where factors represent proportions that must sum to unity, commonly encountered in pharmaceutical formulation development and chromatographic mobile phase optimization.
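A small sketch of the mixture constraint: component amounts are converted to proportions and checked against the standard-simplex definition. The function names and the three-solvent example are assumptions made for illustration only.

```python
def to_proportions(amounts):
    """Convert non-negative component amounts (e.g. mL) to fractions summing to 1."""
    total = sum(amounts)
    if total <= 0 or any(a < 0 for a in amounts):
        raise ValueError("amounts must be non-negative with a positive total")
    return [a / total for a in amounts]


def on_standard_simplex(x, tol=1e-9):
    """True if every x_i >= 0 and the x_i sum to 1 (within tol)."""
    return all(xi >= -tol for xi in x) and abs(sum(x) - 1.0) <= tol


# Hypothetical ternary mobile phase: 60 mL water, 30 mL methanol, 10 mL acetonitrile.
mobile_phase = to_proportions([60, 30, 10])
```

Because the proportions are tied together by the sum constraint, a three-component mixture has only two independent factors.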

Simplex Movement in Experimental Optimization

The Sequential Simplex Optimization Principle

In analytical chemistry, simplex optimization refers to a sequential procedure where a simplex moves through the experimental domain based on specific rules. The movement is directed by the results of previous experiments, with each vertex of the simplex corresponding to a set of experimental conditions. The simplex sequentially moves toward optimal regions of the response surface by reflecting away from points with undesirable responses [10]. This approach enables efficient navigation through multi-dimensional factor spaces with minimal experimental effort.

Two primary variants of simplex optimization exist: the basic simplex method proposed by Spendley et al., and the modified simplex method by Nelder and Mead. In the basic simplex method, only reflection operations are performed, maintaining a constant simplex size throughout the procedure. The modified simplex method incorporates reflection, expansion, and contraction steps, allowing the simplex to adapt its size and accelerate convergence toward optimal conditions [10].

Rules Governing Simplex Movement

The sequential simplex procedure follows four fundamental rules that dictate its movement through experimental space. These rules ensure systematic progression toward optimal conditions while avoiding stagnation or oscillation [10]:

1. Reflection Rule: The new simplex is formed by keeping the two vertices from the preceding simplex with the best results and replacing the worst vertex with its mirror image across the line defined by the two remaining vertices. Mathematically, if w is the vector representing the worst vertex and p is the centroid of the remaining vertices, the reflected vertex r is calculated as r = p + (p - w) = 2p - w.

2. Second-Worst Rule: When the newly reflected vertex yields the worst response in the new simplex, the vertex with the second-worst response is reflected instead. This prevents oscillation and facilitates direction change, particularly important in regions near the optimum.

3. Retention Rule: If a vertex is retained in f + 1 successive simplexes (where f is the number of factors), the response at this vertex should be re-evaluated. If it consistently demonstrates the best performance, it is considered the provisional optimum.

4. Boundary Rule: If a vertex falls outside feasible experimental boundaries, it is assigned an artificially worst response, forcing the simplex back into the permissible domain.
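The reflection, second-worst, and boundary rules can be sketched as a single move of the fixed-size simplex. This is a minimal illustration that assumes the response is to be maximized; `measure` stands in for running the experiment, `bounds` is a list of (low, high) pairs per factor, and the retention rule (re-measuring long-lived vertices) is left to the analyst. All names are hypothetical.

```python
def centroid(points):
    """Coordinate-wise mean of a list of vertices."""
    return tuple(sum(c) / len(points) for c in zip(*points))


def basic_simplex_step(simplex, responses, measure, bounds, last_added=None):
    """One move of the basic simplex; returns (simplex, responses, replaced_index)."""
    order = sorted(range(len(simplex)), key=lambda i: responses[i])  # worst first
    reject = order[0]
    # Second-worst rule: never reflect the vertex that was just added.
    if last_added is not None and reject == last_added:
        reject = order[1]
    others = [v for i, v in enumerate(simplex) if i != reject]
    p = centroid(others)
    # Reflection rule: r = 2p - w.
    new_vertex = tuple(2 * pi - wi for pi, wi in zip(p, simplex[reject]))
    # Boundary rule: infeasible vertices get an artificially worst response.
    inside = all(lo <= x <= hi for x, (lo, hi) in zip(new_vertex, bounds))
    new_response = measure(new_vertex) if inside else float("-inf")
    new_simplex = list(simplex)
    new_responses = list(responses)
    new_simplex[reject] = new_vertex
    new_responses[reject] = new_response
    return new_simplex, new_responses, reject
```

Passing the returned index back as `last_added` on the next call is what lets the second-worst rule break a two-simplex oscillation.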

Table 2: Vertex Operations in Modified Simplex Method

| Operation | Mathematical Expression | Application Condition | Effect on Simplex |
|---|---|---|---|
| Reflection | ( r = p + (p - w) ) | Always tried first; R is retained when its response is better than next-to-worst (N) but not better than best (B) | Moves simplex away from worst region |
| Expansion | ( e = p + \gamma(p - w) ), ( \gamma > 1 ) | Response at R better than current best (B) | Accelerates movement in promising direction |
| Contraction | ( c = p \pm \beta(p - w) ), ( 0 < \beta < 1 ) | Response at R worse than next-to-worst (N): plus sign if R is still better than W, minus sign if R is worse than W | Reduces step size to locate optimum precisely |
| Shrinkage | All vertices except best move toward best | Contraction also fails to improve on W | Resizes simplex around best point |

Application in Analytical Chemistry

Response Surface Optimization

In analytical chemistry, simplex optimization is employed to navigate complex response surfaces where the system's response (e.g., absorbance, resolution, sensitivity) depends on multiple factors. These response surfaces represent the relationship between factor levels and the analytical response, which can be visualized as three-dimensional surfaces or contour plots for two-factor systems [12]. For higher-dimensional factor spaces, the response surface becomes a hyper-surface that cannot be easily visualized but can be efficiently navigated using simplex algorithms.

A key advantage of simplex optimization is its ability to locate optimal conditions without requiring prior knowledge of the response surface model. This makes it particularly valuable for optimizing analytical methods where the relationship between factors and responses may be complex or unknown. The sequential nature of the procedure allows for continuous improvement of method performance based on experimental feedback [12] [10].

Case Study: Vanadium Determination Method

An exemplary application of simplex optimization appears in the development of a spectrophotometric method for vanadium determination. In this system, vanadium forms a reddish-brown compound (VO)₂(SO₄)₃ in the presence of H₂O₂ and H₂SO₄, with absorbance measured at 450 nm for quantification. The color intensity depends critically on the concentrations of both H₂O₂ and H₂SO₄, with excess H₂O₂ decreasing absorbance as the color shifts from reddish-brown to yellowish [12].

This two-factor optimization problem represents an ideal scenario for the sequential simplex approach. The initial simplex consists of three experiments (vertices) testing different combinations of H₂O₂ and H₂SO₄ concentrations. Based on the absorbance responses, the simplex sequentially moves through the experimental domain, reflecting away from poor conditions and toward the concentration combination that maximizes absorbance at 450 nm [12].

Case Study: Chromatographic Separation Optimization

Simplex optimization has been successfully applied to the basic temperature-program parameters in linear temperature programmed capillary gas chromatographic (LTPCGC) analysis of multicomponent samples. Researchers optimized initial temperature (T₀), hold time (t₀), and rate of temperature change (r) using a sequential simplex procedure [4].

The optimization employed a novel criterion (Cₚ) incorporating both separation quality and analysis time: ( C_p = N_r + \frac{t_{R,n} - t_{max}}{t_{max}} ), where Nᵣ represents the number of peaks detected, t_R,n is the retention time of the last peak, and t_max is the maximum acceptable analysis time. This case demonstrates how simplex optimization can balance multiple, potentially competing objectives in analytical method development [4].

Case Study: Flow Injection Analysis for Osmium

A modified simplex method was applied to the multivariable optimization of a new flow injection-kinetic system for the spectrophotometric determination of osmium(IV) with m-acetylchlorophosphonazo. The optimization involved six variables simultaneously, with an orthogonal array design used to establish the initial simplex. The modified simplex method required only 25 experiments to locate optimal conditions for this complex six-factor system, demonstrating the efficiency of the approach for high-dimensional optimization problems [13].

Experimental Protocols

Basic Simplex Optimization Protocol for Two Factors

Purpose: To optimize two factors (X₁, X₂) to maximize or minimize a response variable using the basic simplex method.

Materials and Equipment:

- Standard laboratory equipment for analytical measurements

- Appropriate instrumentation for response measurement (e.g., spectrophotometer, chromatograph)

- Reagents and samples specific to the analytical method

Procedure:

1. Define Factor Boundaries: Establish feasible ranges for both factors based on practical constraints or preliminary experiments.

2. Construct Initial Simplex:

- Select three initial experimental conditions (vertices) that form an equilateral triangle in the factor space.

- Vertex 1: (X₁₁, X₂₁)

- Vertex 2: (X₁₂, X₂₂)

- Vertex 3: (X₁₃, X₂₃)

3. Perform Initial Experiments:

- Conduct experiments at each vertex in randomized order.

- Measure the response for each vertex.

4. Rank Vertices:

- Order vertices from best (B) to worst (W) based on response values, with N representing the next-to-worst vertex.

5. Calculate Reflection:

- Compute centroid P of the face opposite W: ( P = \frac{(B + N)}{2} )

- Calculate coordinates of reflected vertex R: ( R = P + (P - W) )

6. Perform Experiment at Reflected Vertex:

- Conduct experiment at vertex R and measure response.

7. Iterate:

- Form new simplex by replacing W with R while retaining B and N.

- Repeat steps 4-6 until no significant improvement occurs or oscillation is observed.

8. Verify Optimum:

- If a vertex is retained in three successive simplexes, re-evaluate the response at this vertex to confirm optimal performance.

Troubleshooting:

- If reflection falls outside feasible boundaries, assign artificially worst response and apply Rule 4.

- If oscillation occurs between two simplexes, apply Rule 2 to reflect the second-worst vertex.

- If convergence is slow, consider increasing simplex size; if optimum is overshot, decrease simplex size.
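For the initial-simplex step of this protocol, one common construction places the first vertex at a chosen starting condition and derives the other two from a step size per factor. The sketch below assumes that construction; `start` and `step` are hypothetical inputs chosen by the analyst, and the factor sqrt(3)/2 (about 0.87) makes the triangle equilateral when each axis is scaled by its own step size.

```python
import math


def initial_simplex_2d(start, step):
    """Three vertices forming an equilateral triangle in step-scaled coordinates."""
    a, b = start
    sa, sb = step
    return [
        (a, b),
        (a + sa, b),
        (a + 0.5 * sa, b + math.sqrt(3) / 2 * sb),
    ]


def scaled_side_lengths(vertices, step):
    """Side lengths after dividing each coordinate by its step size."""
    pairs = [(0, 1), (1, 2), (0, 2)]
    return [
        math.hypot(
            (vertices[i][0] - vertices[j][0]) / step[0],
            (vertices[i][1] - vertices[j][1]) / step[1],
        )
        for i, j in pairs
    ]
```

A larger `step` covers the factor space faster but locates the optimum less precisely, which is the trade-off noted in the troubleshooting list above.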

Modified Simplex Optimization Protocol

Purpose: To optimize multiple factors using the modified simplex method with expansion and contraction capabilities for faster convergence.

Procedure:

Initial Steps: Follow steps 1-6 of the basic simplex protocol.

Evaluate Reflection:

- After calculating and testing R, compare its response to existing vertices.

- If R is better than current best B: Calculate expansion vertex E = P + 2(P - W), test E, and retain the better of R and E.

- If R is better than N but not better than B: Retain R without further testing.

- If R is worse than N but better than W: Calculate contraction vertex C = P + 0.5(P - W) and test C.

- If R is worse than W: Calculate contraction vertex C = P - 0.5(P - W) and test C.

Iterate:

- Form new simplex based on the outcomes of reflection, expansion, or contraction.

- Continue until simplex size falls below predefined threshold or optimal response is consistently obtained.
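The decision logic of this protocol can be sketched as a short loop. This is an illustrative implementation under stated assumptions: the response is maximized, `measure` is a stand-in for running one experiment, the coefficients 2 and 0.5 are those given in the steps above, the contraction vertex is always accepted, and no shrink step is included. It is not a reproduction of any cited implementation.

```python
def modified_simplex(measure, simplex, max_runs=60, tol=1e-3):
    """Maximize measure(vertex) using reflection, expansion and contraction."""
    verts = [tuple(v) for v in simplex]
    resp = [measure(v) for v in verts]
    runs = len(verts)
    while runs < max_runs:
        # Rank vertices: best first, worst last.
        order = sorted(range(len(verts)), key=lambda i: resp[i], reverse=True)
        verts = [verts[i] for i in order]
        resp = [resp[i] for i in order]
        # Stop when every vertex lies within tol of the best one.
        if max(abs(a - b) for v in verts[1:] for a, b in zip(v, verts[0])) < tol:
            break
        w, y_w = verts[-1], resp[-1]
        y_b, y_n = resp[0], resp[-2]
        p = tuple(sum(c) / (len(verts) - 1) for c in zip(*verts[:-1]))

        def along(t):
            """Point P + t * (P - W) on the line through W and the centroid."""
            return tuple(pi + t * (pi - wi) for pi, wi in zip(p, w))

        r = along(1.0)
        y_r = measure(r)
        runs += 1
        if y_r > y_b:  # better than best: try expansion
            e = along(2.0)
            y_e = measure(e)
            runs += 1
            new, y_new = (e, y_e) if y_e > y_r else (r, y_r)
        elif y_r >= y_n:  # between N and B: keep the reflection
            new, y_new = r, y_r
        else:  # worse than N: contract on the R side or the W side
            c = along(0.5) if y_r > y_w else along(-0.5)
            new, y_new = c, measure(c)
            runs += 1
        verts[-1], resp[-1] = new, y_new
    best = max(range(len(verts)), key=lambda i: resp[i])
    return verts[best], resp[best], runs
```

In laboratory use, each call to `measure` is one experiment, so `runs` is the quantity to watch; the loop may exceed `max_runs` by one run when a reflection is followed by an expansion or contraction.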

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Simplex-Optimized Analytical Methods

| Reagent/Material | Function in Optimization | Application Example | Considerations |
|---|---|---|---|
| m-Acetylchlorophosphonazo | Chromogenic reagent for metal ion detection | Spectrophotometric determination of Os(IV) [13] | Concentration typically optimized via simplex |
| Hydrogen Peroxide (H₂O₂) | Oxidizing agent for color development | Vanadium determination as (VO)₂(SO₄)₃ [12] | Excess amounts can decrease response; optimal concentration critical |
| Sulfuric Acid (H₂SO₄) | Provides acidic medium for reaction | Vanadium determination method [12] | Concentration affects both reaction rate and equilibrium |
| Vanadium Standard Solution | Target analyte for method development | Optimization of spectrophotometric method [12] | Purity and stability essential for reproducible results |
| Osmium(IV) Solution | Target analyte for FIA system | Optimization of flow injection analysis [13] | Handling precautions due to toxicity |
| Mobile Phase Components | Chromatographic separation | LTPCGC analysis of multicomponent samples [4] | Proportion optimization via simplex for optimal resolution |

Simplex geometry provides a powerful foundation for efficient experimental optimization in analytical chemistry and pharmaceutical research. The sequential movement of simplexes through multi-dimensional factor spaces enables researchers to locate optimal conditions with minimal experimental effort, making it particularly valuable for method development where response surfaces are complex or unknown. The integration of basic simplex methods with modified approaches incorporating expansion and contraction operations creates a robust framework for navigating diverse optimization landscapes. As analytical challenges grow increasingly complex, the fundamental principles of simplex geometry continue to offer a structured, mathematically sound approach to experimental optimization that balances efficiency with practical implementation.

Why use Simplex? Contrasting multivariate optimization with univariate (one-variable-at-a-time) methods

In analytical chemistry and drug development, optimization is a fundamental process for systematically selecting input values to maximize or minimize a real function, thereby obtaining the best solution for a given problem [14]. The choice of optimization strategy significantly impacts the efficiency, cost, and success of method development. Two predominant approaches exist: univariate optimization (one-variable-at-a-time) and multivariate optimization (simultaneous multiple variables). Univariate optimization involves finding an optimal value for a single-variable problem within a specified range, where the method iteratively evaluates different values of that single variable until an optimum is reached [15]. This approach is characterized by its simplicity and computational efficiency but overlooks potential interactions between parameters. In contrast, multivariate optimization tackles complex challenges where multiple interacting variables collectively influence the final outcome, providing a more comprehensive analysis by considering all relevant variables and their interactions simultaneously [15].

The sequential simplex method represents a particularly efficient multivariate optimization technique that has gained significant traction in analytical chemistry. Originally developed by Spendley, Hext, and Himsworth and later refined by Nelder and Mead, this method uses a geometric figure called a simplex—comprising n + 1 points for n variables—to navigate the experimental space [16]. In two dimensions, this simplex manifests as a triangle, while in three dimensions, it forms a tetrahedron, with higher-dimensional analogs for more complex problems. The fundamental principle of the downhill simplex method for minimizing n-dimensional functions relies on the geometric object's ability to move one vertex at a time toward descending function values, effectively "walking" toward the optimum solution [16].

Fundamental Differences Between Univariate and Multivariate Approaches

Conceptual Framework and Implementation

Table 1: Key Differences Between Univariate and Multivariate Optimization

| Parameter | Univariate Optimization | Multivariate Optimization |
|---|---|---|
| Variables considered | One variable at a time | Multiple variables simultaneously |
| Complexity of implementation | Simple to understand and implement | Complex to understand and implement |
| Computational resources | Minimal requirements | Significant requirements |
| Interpretability of results | Straightforward and intuitive | Challenging due to intricate relationships |
| Objective function | Single objective function | Multiple objective functions |
| Type of problem | Suitable for simple tasks | Addresses complex real-world problems |
| Constraint handling | Typically no constraints | May include equality/inequality constraints |

Univariate optimization excels in scenarios with limited interdependencies among factors, where adjusting one parameter independently does not significantly affect others. The methodology involves systematically altering one variable while holding all others constant, evaluating the objective function at each step until identifying the optimum value for that parameter [15]. This process repeats for each variable sequentially. The primary advantages of this approach include its conceptual simplicity, computational efficiency, and ease of interpretation, as results directly illustrate how adjusting the single variable affects the outcome [15]. However, this method suffers from limited scope and potential oversimplification when applied to complex systems where interdependencies exist among variables [15].
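The effect of factor interdependence on a one-variable-at-a-time search can be illustrated on a made-up response surface. Everything in this sketch is hypothetical: the response function has a diagonal ridge (a strong x-y interaction) with its maximum at (5, 5), and each univariate step is a simple grid search over 0 to 10.

```python
def response(x, y):
    """Hypothetical response with a strong x-y interaction (diagonal ridge)."""
    return -(x - y) ** 2 - 0.1 * (x + y - 10) ** 2


GRID = [i / 100 for i in range(1001)]  # candidate levels 0.00 ... 10.00


def one_variable_at_a_time(x, y, passes=1):
    """Optimize x with y fixed, then y with x fixed, for the given number of passes."""
    for _ in range(passes):
        x = max(GRID, key=lambda v: response(v, y))
        y = max(GRID, key=lambda v: response(x, v))
    return x, y


x1, y1 = one_variable_at_a_time(0.0, 0.0, passes=1)
```

Starting from (0, 0), a single pass stops well short of the true optimum because the best level of x depends on the current level of y. Additional passes creep along the ridge in small steps, which is the inefficiency that multivariate procedures aim to avoid.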

Multivariate optimization methods, including the sequential simplex procedure, consider the simultaneous interaction of multiple variables and therefore give a more realistic picture of real-world systems [15]. This comprehensive approach often leads to more accurate predictions and robust solutions, though at the cost of increased complexity and computational demands. The mathematical foundation differs significantly between approaches: for a minimum, univariate optimization relies on the first-order necessary condition f'(x) = 0 and second-order sufficiency condition f''(x) > 0, while multivariate optimization requires a zero gradient (∇f(x̄) = 0) and a positive definite Hessian matrix (∇²f(x̄) > 0) for unconstrained cases [14].
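These conditions can be checked numerically for a two-variable function. The sketch below uses central finite differences for the gradient and Hessian and Sylvester's criterion (positive leading principal minors) for positive definiteness of a 2×2 matrix. The test function and all helper names are assumptions chosen for illustration.

```python
def gradient(f, x, h=1e-5):
    """Central-difference estimate of the gradient of f at x."""
    g = []
    for i in range(len(x)):
        up, dn = list(x), list(x)
        up[i] += h
        dn[i] -= h
        g.append((f(up) - f(dn)) / (2 * h))
    return g


def hessian(f, x, h=1e-4):
    """Central-difference estimate of the Hessian of f at x."""
    n = len(x)
    H = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            pp, pm, mp, mm = list(x), list(x), list(x), list(x)
            pp[i] += h; pp[j] += h
            pm[i] += h; pm[j] -= h
            mp[i] -= h; mp[j] += h
            mm[i] -= h; mm[j] -= h
            H[i][j] = (f(pp) - f(pm) - f(mp) + f(mm)) / (4 * h * h)
    return H


def is_local_minimum_2d(f, x, tol=1e-6):
    """Zero gradient and positive definite 2x2 Hessian (Sylvester's criterion)."""
    g = gradient(f, x)
    H = hessian(f, x)
    det = H[0][0] * H[1][1] - H[0][1] * H[1][0]
    return all(abs(gi) < tol for gi in g) and H[0][0] > 0 and det > 0


def f(x):
    """Illustrative quadratic with an interaction term; stationary point at (1, 2)."""
    return (x[0] - 1) ** 2 + (x[0] - 1) * (x[1] - 2) + (x[1] - 2) ** 2
```

Simplex procedures never evaluate these derivatives; they rely only on ranked responses, which is why they remain usable when no model of the response surface is available.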

Mathematical Formulations

The fundamental mathematical representation for a univariate optimization problem is: min f(x) with respect to x, where x ∈ R [14]. This formulation highlights the singular focus on one decision variable within the real number space.

In contrast, multivariate optimization problems are expressed as: min f(x₁, x₂, …, xₙ) [14]. Here, multiple decision variables interact within the objective function, creating a more complex but more representative model of real systems.

The Sequential Simplex Method: Theory and Mechanism

Core Principles and Algorithm

The sequential simplex method is a direct search procedure that works only with measured responses. It should not be confused with Dantzig's simplex algorithm for linear programming, which solves a series of systems of linear equations and uses a greedy strategy to jump from one feasible vertex to an adjacent one until it terminates at an optimal solution [17]. The sequential simplex algorithm begins by establishing an initial simplex, a geometric figure formed by n+1 points in n-dimensional space. For regular simplices, these points are equidistant, creating triangles in 2D, tetrahedra in 3D, and their higher-dimensional analogs [16].

The procedure involves systematic transformations of this simplex through reflection, expansion, and contraction operations, effectively "walking" the simplex toward the optimum by iteratively moving away from the point with the worst response. The method evaluates the objective function at each vertex of the simplex, identifies the worst-performing vertex, and replaces it with a new point reflected through the centroid of the remaining points [16]. This process continues iteratively until the simplex converges on the optimal solution, with termination criteria typically based on the simplex size becoming smaller than a specified tolerance or when function values show negligible improvement.

Table 2: Sequential Simplex Operations

| Operation | Mathematical Expression | Purpose | When Applied |
|---|---|---|---|
| Reflection | x_r = x̄ + α(x̄ - x_w) | Move away from worst point | Standard step |
| Expansion | x_e = x̄ + γ(x_r - x̄) | Accelerate progress | When reflection gives best point |
| Contraction | x_c = x̄ + β(x_w - x̄) | Refine search area | When reflection gives poor point |

Workflow Visualization

Figure 1: Sequential Simplex Optimization Workflow. This flowchart illustrates the iterative decision process of the sequential simplex method, showing reflection, expansion, and contraction operations.

Experimental Protocol: Sequential Simplex Optimization in Chromatography

Case Study: Gas Chromatographic Analysis Optimization

The sequential simplex procedure has demonstrated particular utility in optimizing separation parameters for gas chromatographic analysis of multicomponent samples [4]. The following protocol outlines a specific application for optimizing initial temperature (T₀), hold time (t₀), and rate of temperature change (r) in linear temperature programmed capillary gas chromatographic (LTPCGC) analysis.

Research Reagent Solutions and Materials

Table 3: Essential Materials for Chromatography Optimization

| Material/Reagent | Specification | Function in Experiment |
|---|---|---|
| Gas Chromatograph | Capillary column with flame ionization detector | Separation and detection system |
| Reference Standards | Multicomponent mixture of known compounds | Test mixture for optimization |
| Data Acquisition System | Chromatography data software | Records retention times and peak areas |
| Mobile Phase | High-purity carrier gas (He, N₂, or H₂) | Transport medium through column |
| Syringe | Precision microsyringe (0.5-1.0 µL) | Sample introduction |

Optimization Criterion Definition

For chromatography optimization, a well-defined criterion (Cₚ) is essential. The proposed optimization criterion incorporates both separation quality and analysis time efficiency [4]:

Cₚ = Nᵣ + (t_R,n - t_max)/t_max

Where:

- Nᵣ represents the number of peaks detected by the integrator (main component)

- t_R,n is the retention time of the last peak

- t_max denotes the maximum acceptable analysis time

This composite criterion balances the competing objectives of maximum peak resolution (through Nᵣ) and minimum analysis time, with the secondary term penalizing analyses that exceed practical time constraints.
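A direct transcription of the criterion, exactly as printed above, is sketched below. The function name and the example numbers are hypothetical, and the sign convention of the time term should be checked against the original work [4] before the function is used to rank real chromatograms.

```python
def criterion_cp(n_peaks, t_last, t_max):
    """C_p = N_r + (t_R,n - t_max) / t_max, transcribed as printed in the text.

    n_peaks: number of peaks reported by the integrator (N_r)
    t_last:  retention time of the last peak (t_R,n), same units as t_max
    t_max:   maximum acceptable analysis time
    """
    if t_max <= 0:
        raise ValueError("t_max must be positive")
    return n_peaks + (t_last - t_max) / t_max


# Hypothetical run: 12 peaks, last peak at 18 min, 20 min allowed.
example = criterion_cp(12, 18.0, 20.0)
```

Since the time term stays between -1 and 0 whenever the last peak elutes within the allowed time, the peak count dominates the ranking and the time term only separates runs that resolve the same number of peaks.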

Step-by-Step Experimental Procedure

Define Variable Space: Establish the feasible ranges for each parameter:

- Initial temperature (T₀): 50-100°C

- Hold time (t₀): 1-5 minutes

- Temperature rate (r): 5-20°C/min

Construct Initial Simplex: Create an initial simplex with 4 points (n+1 for n=3 variables) using a tilted first design matrix, which has demonstrated superior performance compared to cornered approaches [18].

Execute Experimental Runs:

- For each vertex of the simplex, prepare the chromatographic system according to the parameter combinations.

- Inject the standardized multicomponent sample mixture.

- Record the chromatogram, noting retention times and peak areas.

- Calculate the optimization criterion Cₚ for each run.

Apply Simplex Algorithm:

- Rank vertices based on Cₚ values (higher values indicate better performance).

- Reflect the worst vertex through the centroid of the remaining points.

- Based on the response at the reflected point, decide whether to reflect, expand, or contract according to the standard simplex rules.

Iterate to Convergence: Continue the simplex transformations until no significant improvement in Cₚ occurs or the simplex size reduces below a practical threshold (typically 1-2% of parameter ranges).

Validate Optimum: Conduct triplicate runs at the predicted optimum conditions to verify reproducibility and performance.
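The size-based stopping rule in the convergence step can be sketched as follows, using the parameter ranges listed in the first step of this procedure. The 2% threshold is the upper end of the range quoted in the text; the dictionary keys, function names, and the example simplex are illustrative assumptions.

```python
# Feasible ranges from the procedure: T0 in deg C, hold time in min, rate in deg C/min.
RANGES = {"T0": (50.0, 100.0), "t0": (1.0, 5.0), "rate": (5.0, 20.0)}


def relative_spans(simplex, ranges):
    """Spread of the simplex along each factor as a fraction of that factor's range."""
    spans = {}
    for i, (name, (lo, hi)) in enumerate(ranges.items()):
        values = [vertex[i] for vertex in simplex]
        spans[name] = (max(values) - min(values)) / (hi - lo)
    return spans


def has_converged(simplex, ranges, threshold=0.02):
    """True when the simplex spans no more than `threshold` of every factor range."""
    return all(s <= threshold for s in relative_spans(simplex, ranges).values())


# Hypothetical late-stage simplex: four vertices (T0, t0, rate).
late_simplex = [
    (72.0, 2.50, 11.00),
    (72.5, 2.52, 11.10),
    (72.2, 2.55, 11.20),
    (72.4, 2.51, 11.05),
]
```

Expressing the spread relative to each factor's range keeps the test meaningful when factors have very different units, as temperature and hold time do here.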

Importance of First Design Matrix

The initial configuration of the simplex, known as the first design matrix, significantly influences the speed and efficiency of convergence. Research indicates that under simulated experimental conditions including noise and interaction effects, an optimally oriented first simplex demonstrates superior performance compared to classical tilted or cornered approaches [18]. The first design matrix determines the starting orientation of the simplex in the experimental space, affecting how quickly the algorithm can locate promising regions. For chemical applications with significant factor interactions and experimental noise, careful consideration of the initial simplex configuration can reduce the number of experimental runs required by 15-30% [18].

Comparative Analysis: Univariate vs. Simplex Performance

Efficiency Metrics in Experimental Optimization

Table 4: Performance Comparison of Optimization Methods

| Performance Metric | Univariate Approach | Sequential Simplex Method |
|---|---|---|
| Number of experiments required | High (exponential with variables) | Moderate (linear with variables) |
| Handling of factor interactions | Poor (ignores interactions) | Excellent (explicitly accounts for interactions) |
| Convergence speed | Slow for multiple variables | Rapid direct path to optimum |
| Robustness to noise | Moderate | High (with proper adaptation) |
| Risk of suboptimal solutions | High (may miss global optimum) | Lower (better global exploration) |
| Implementation complexity | Low | Moderate to high |

The sequential simplex method demonstrates particular advantages in scenarios with significant factor interactions, which are common in analytical chemistry applications. For instance, in chromatography, parameters like temperature, flow rate, and mobile phase composition often interact non-linearly, creating a complex response surface with potential local optima [4]. Univariate approaches typically fail to capture these interactions, potentially converging on suboptimal conditions. In contrast, the simplex method's multivariate nature enables it to navigate these complex response surfaces more effectively.

Case study data from gas chromatography optimization reveals that the sequential simplex method typically achieves optimum conditions within 15-20 experimental runs for a three-parameter system, whereas univariate optimization may require 30-40 runs to reach a frequently inferior solution [4]. This efficiency advantage becomes more pronounced as the number of variables increases, making simplex methods particularly valuable for complex optimization problems in drug development and analytical method validation.

Application Scope in Analytical Chemistry

Figure 2: Optimization Method Applications in Analytical Chemistry. This diagram classifies optimization approaches and their typical applications in analytical chemistry and pharmaceutical research.

The sequential simplex method represents a powerful multivariate optimization technique that offers significant advantages over traditional univariate approaches for complex problems in analytical chemistry and drug development. By simultaneously evaluating multiple parameters and explicitly accounting for factor interactions, simplex optimization more effectively navigates complex response surfaces, leading to superior solutions with fewer experimental iterations. While univariate methods retain value for simple systems with minimal factor interdependencies, the simplex approach provides a more efficient and comprehensive optimization strategy for most real-world applications encountered in analytical research.

The implementation of sequential simplex optimization in analytical method development—particularly in chromatography, extraction processes, and formulation development—can significantly reduce method development time while improving method performance. The incorporation of proper experimental design principles, including careful consideration of the first design matrix and appropriate optimization criteria, further enhances the efficiency and reliability of this multivariate approach. As analytical challenges grow increasingly complex in pharmaceutical research, multivariate optimization methods like the sequential simplex will continue to provide essential tools for developing robust, efficient, and transferable analytical methods.

Sequential simplex optimization represents a powerful, practical chemometric tool for systematically improving the performance of analytical methods and pharmaceutical formulations. As a multivariate optimization strategy, it enables researchers to efficiently navigate complex experimental landscapes involving multiple interacting variables by moving a geometric figure (a "simplex") toward optimal conditions [19]. Unlike traditional univariate approaches that modify one factor at a time, simplex methodologies simultaneously adjust all variables, offering significant advantages in experimental efficiency, particularly when factor interactions are significant [19].

Within analytical chemistry research, simplex optimization provides a methodological framework for achieving robust methods with desirable analytical characteristics without requiring excessively complex mathematical-statistical expertise [19]. The technique's sequential nature—where each experimental result informs the next condition—makes it exceptionally valuable for resource-constrained environments where rapid optimization is essential.

Fundamental Principles and Methodologies

Core Simplex Variants

Two primary simplex variants dominate practical applications in analytical and pharmaceutical research, each with distinct characteristics and advantages:

Basic Simplex (Fixed-Size): The original approach employs a regular geometric figure that maintains constant size throughout the optimization process. For k variables, the simplex consists of k+1 vertices [20]. The method proceeds by reflecting the vertex with the worst response across the opposite face, systematically moving toward more favorable regions [19] [20]. The fixed-size characteristic makes initial simplex dimension selection crucial, requiring substantial researcher intuition about the system [19].

Modified Simplex (Variable-Size): Also known as the Nelder-Mead method, this enhanced approach permits the simplex to expand or contract based on response quality, dramatically improving convergence efficiency [19] [20]. This flexibility allows the algorithm to accelerate toward optima and contract for refined localization [20]. The variable-size capability makes this variant particularly valuable for systems where the optimal region's characteristics are poorly understood a priori.

Operational Mechanics

The modified simplex method employs four fundamental operations to navigate the experimental space [20]:

- Reflection (R): Moving away from the worst-performing vertex.

- Expansion (E): Accelerating movement in promising directions.

- Contraction (C): Refining the search near suspected optima.

- Size Adjustment: Adapting the simplex dimensions to response topography.

These operations enable the simplex to traverse complex response surfaces efficiently while balancing exploration and refinement. The algorithm terminates when the simplex encircles the optimum region, indicated by oscillation around a central point with superior response characteristics [20].

Workflow Visualization

The following diagram illustrates the complete sequential simplex optimization workflow, integrating both basic and modified simplex operations:

Diagram 1: Sequential Simplex Optimization Workflow. The algorithm dynamically selects operations based on response quality at reflection points.

Ideal Application Scenarios in Analytical Chemistry

Sequential simplex optimization demonstrates particular utility in specific analytical chemistry contexts where conventional optimization approaches prove suboptimal. The methodology excels when experimental factors exhibit complex interactions, when the response surface characteristics are unknown, and when analytical systems require balancing multiple competing objectives.

Instrument Parameter Optimization

Analytical instrumentation with multiple interdependent parameters represents an ideal application domain for simplex optimization. The technique has successfully optimized systems including:

- Chromatographic separations: Efficiently optimizing mobile phase composition, column temperature, and flow rate to resolve complex mixtures [21] [19]. For example, simplex has been applied to optimize the separation of nimodipine and its impurities by investigating factors like organic modifier concentration, column temperature, and mobile phase flow rate [21].

- Spectroscopic methods: Simultaneously adjusting multiple instrument parameters to maximize sensitivity and signal-to-noise ratios [19].

- Flow injection analysis: Optimizing timing, flow rates, and reagent volumes in automated analytical systems [19].

The sequential nature of simplex optimization makes it particularly valuable for instrumental techniques where each experimental measurement requires substantial time or resources, as it minimizes the total number of experiments needed to reach optimal conditions [19].

Method Development with Multiple Responses

Many analytical methods require balancing competing responses, creating challenging optimization landscapes. Simplex optimization facilitates navigation of these complex surfaces:

- Multi-criteria decision making: Simultaneously optimizing sensitivity, resolution, and analysis time [21].

- Robustness enhancement: Identifying operational regions where method performance remains acceptable despite minor parameter variations.

- Specificity optimization: Maximizing target analyte response while minimizing interference effects.
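
A simplex search ranks vertices by a single number, so competing responses have to be combined first. One common option is a Derringer-type desirability function. The sketch below is an assumption about how that could be done, not the procedure used in the cited work; the limits and response values are hypothetical.

```python
import math


def desirability_larger(y, low, high, weight=1.0):
    """Larger-is-better: 0 at or below `low`, 1 at or above `high`."""
    if y <= low:
        return 0.0
    if y >= high:
        return 1.0
    return ((y - low) / (high - low)) ** weight


def desirability_smaller(y, low, high, weight=1.0):
    """Smaller-is-better: 1 at or below `low`, 0 at or above `high`."""
    if y <= low:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - low)) ** weight


def overall_desirability(values):
    """Geometric mean; any unacceptable response (d = 0) gives D = 0."""
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))


# Hypothetical HPLC vertex: resolution 1.8, analysis time 12 min
d_resolution = desirability_larger(1.8, low=1.0, high=2.0)
d_time = desirability_smaller(12.0, low=5.0, high=15.0)
D = overall_desirability([d_resolution, d_time])
print(round(D, 3))
```

Using the geometric mean rather than a weighted sum means a vertex that fails one criterion outright cannot be rescued by excellent values on the others.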

Table 1: Simplex Applications in Analytical Chemistry

| Application Area | Key Variables Optimized | Response Criteria | References |
|---|---|---|---|
| HPLC Method Development | Mobile phase composition, temperature, flow rate | Resolution, peak symmetry, analysis time | [21] [19] |
| Atomic Spectroscopy | Fuel flow rate, observation height, nebulizer pressure | Signal intensity, signal-to-noise ratio | [19] |
| Solid-Phase Microextraction | Extraction time, temperature, desorption conditions | Extraction efficiency, reproducibility | [19] |
| Flow Injection Analysis | Reagent volumes, flow rates, reaction times | Sensitivity, sample throughput | [19] |

Ideal Application Scenarios in Pharmaceutical Development

Pharmaceutical formulation and process development present numerous multidimensional optimization challenges where simplex methodologies deliver significant advantages. The approach efficiently navigates complex excipient and process parameter interactions to identify robust formulations with desired performance characteristics.

Formulation Optimization

Pharmaceutical formulation development requires balancing multiple critical quality attributes, creating ideal conditions for simplex application:

- Optimizing capsule formulations using dissolution rate and compaction as target responses while varying levels of drug, disintegrant, lubricant, and fill weight [21].

- Developing sustained-release matrix tablets by optimizing polymer blends and excipient ratios to achieve target release profiles [22]. For example, a simplex centroid design (a mixture design, distinct from the sequential simplex search itself) has been applied to optimize carboxymethyl-xyloglucan-based tramadol tablets using polymer ratios and diluent concentration as independent variables [22].

- Nanoparticle engineering by simultaneously optimizing multiple composition and process parameters to achieve target particle characteristics [23].

The simplex approach is particularly valuable in early formulation development where the relationship between composition and performance is complex and poorly understood.

Nanoparticle Formulation Case Study

Lipid-based nanoparticle development for paclitaxel delivery demonstrates the power of combined experimental design strategies. Researchers utilized Taguchi array screening followed by sequential simplex optimization to identify optimal formulations with desired characteristics [23].

The optimization targeted specific final product attributes: paclitaxel entrapment efficiency >80%, final concentration ≥150 μg/mL, particle size <200 nm, and slow release profiles while maintaining cytotoxicity equivalent to commercial formulations [23]. Sequential simplex efficiently identified two optimized nanoparticle systems meeting all criteria [23].

Table 2: Pharmaceutical Formulation Case Studies Using Simplex Optimization

| Formulation Type | Independent Variables | Dependent Responses | Optimization Outcome | References |
|---|---|---|---|---|
| Paclitaxel Nanoparticles | Lipid composition, surfactant ratios, process parameters | Particle size, entrapment efficiency, drug loading, release rate | Two optimized nanoparticles with <200 nm size, >85% entrapment, sustained release | [23] |
| Tramadol Sustained-Release Tablets | Carboxymethyl-xyloglucan, HPMC K100M, dicalcium phosphate | Drug release at 2h and 8h | Regulated complete release over 8-10 hours, controlled burst effect | [22] |
| Capsule Formulations | Drug, disintegrant, lubricant levels, fill weight | Dissolution rate, compaction | Optimized formulation with polynomial model for response surface | [21] |

Experimental Protocols

Standard Operating Procedure: Modified Simplex Optimization

Purpose: To systematically optimize analytical methods or pharmaceutical formulations using the modified simplex algorithm.

Materials:

- Experimental system capable of measuring target responses

- Data recording system

- Computational tool for simplex calculations (spreadsheet or specialized software)

Procedure:

Define Optimization Objectives

- Identify all independent variables to be optimized and their feasible ranges.

- Define quantitative response measurement procedures.

- Establish convergence criteria (e.g., minimal improvement threshold, maximum iterations).

Construct Initial Simplex

- Design k+1 initial experiments, where k equals the number of variables.

- For two variables, create a triangle; for three variables, a tetrahedron.

- Ensure initial vertices span a substantial portion of the feasible region.
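
One simple way to build the k+1 starting experiments is to take a baseline condition and step each factor once. The sketch below assumes this "corner" construction, which gives a right-angled rather than a regular simplex; the factor names, step sizes, and bounds are hypothetical HPLC values chosen only for illustration.

```python
def initial_simplex(start, steps, bounds):
    """Build k + 1 vertices: the start point plus one step along each factor.

    Raises ValueError if any vertex leaves the feasible range.
    """
    vertices = [list(start)]
    for i in range(len(start)):
        vertex = list(start)
        vertex[i] += steps[i]
        vertices.append(vertex)
    for vertex in vertices:
        for value, (low, high) in zip(vertex, bounds):
            if not low <= value <= high:
                raise ValueError(f"vertex {vertex} is outside the feasible region")
    return vertices


# Hypothetical factors: % organic modifier, column temperature (deg C), flow (mL/min)
start = [30.0, 35.0, 1.0]
steps = [10.0, 5.0, 0.25]
bounds = [(20.0, 60.0), (25.0, 50.0), (0.5, 1.5)]
for vertex in initial_simplex(start, steps, bounds):
    print(vertex)
```

Larger steps cover more of the feasible region at the start, at the cost of coarser moves until the simplex contracts.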

Execute Sequential Optimization

- Conduct experiments and measure responses for all initial vertices.

- Rank responses: Best (B), Next-to-worst (N), Worst (W).

- Calculate reflection point (R) using the formula: R = P + α(P - W), where P is the centroid of all vertices except W, and α is the reflection coefficient (typically 1.0).

- Evaluate response at R and apply decision rules (stated here for a response that is to be maximized):

- If R > B: Calculate expansion point (E = P + γ(P - W), γ > 1, typically 2.0) and evaluate; accept E if E > B, otherwise accept R.

- If B ≥ R ≥ N: Accept R.

- If N > R > W: Calculate exterior contraction (C = P + β(P - W), 0 < β < 1, typically 0.5) and evaluate; accept if better than W.

- If R ≤ W: Calculate interior contraction (C = P - β(P - W)) and evaluate; accept if better than W.

- Replace W with the accepted new vertex.
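
A single iteration of the variable-size simplex can be sketched as follows. This is an illustrative implementation, not the procedure from the cited references. It assumes the response is maximized, takes N to be the next-to-worst vertex, and uses α = 1, γ = 2, β = 0.5. It accepts R when R is at least as good as N, contracts on the reflection side when R falls between W and N, and contracts on the W side otherwise. As a simplification it always keeps the contraction vertex; a complete implementation needs a fallback (for example shrinking toward B) when the contraction fails to improve on W. `simulated_response` is a hypothetical stand-in for running an experiment.

```python
def centroid(points):
    k = len(points[0])
    return [sum(p[j] for p in points) / len(points) for j in range(k)]


def move(P, W, factor):
    """Return P + factor * (P - W)."""
    return [p + factor * (p - w) for p, w in zip(P, W)]


def modified_simplex_step(vertices, responses, measure,
                          alpha=1.0, gamma=2.0, beta=0.5):
    """Replace the worst vertex once; `measure` runs one experiment."""
    order = sorted(range(len(vertices)), key=lambda i: responses[i])
    w, n, b = order[0], order[1], order[-1]   # worst, next-to-worst, best
    W = vertices[w]
    P = centroid([v for i, v in enumerate(vertices) if i != w])
    R = move(P, W, alpha)
    y_r = measure(R)
    if y_r > responses[b]:                    # R beats B: try expansion
        E = move(P, W, gamma)
        y_e = measure(E)
        new, y_new = (E, y_e) if y_e > responses[b] else (R, y_r)
    elif y_r >= responses[n]:                 # B >= R >= N: keep R
        new, y_new = R, y_r
    elif y_r > responses[w]:                  # N > R > W: exterior contraction
        new = move(P, W, beta)
        y_new = measure(new)
    else:                                     # R <= W: interior contraction
        new = move(P, W, -beta)
        y_new = measure(new)
    vertices = [list(v) for v in vertices]
    responses = list(responses)
    vertices[w], responses[w] = new, y_new
    return vertices, responses


def simulated_response(x):
    # Hypothetical smooth response surface with its maximum (100) at (3, 2)
    return 100.0 - (x[0] - 3.0) ** 2 - 2.0 * (x[1] - 2.0) ** 2


simplex = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
values = [simulated_response(v) for v in simplex]
for _ in range(30):
    simplex, values = modified_simplex_step(simplex, values, simulated_response)
best = simplex[values.index(max(values))]
print(best, max(values))
```

Each call costs one experiment, or two when an expansion or contraction is evaluated. In a laboratory setting `measure` would be replaced by recording the measured response at the proposed conditions.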

Monitor Convergence

- Continue iterations until the simplex oscillates within a small region or response improvement falls below threshold.

- Verify optimality by conducting confirmation experiments.
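
The two stopping conditions above can be expressed as a small check. The tolerances below are placeholders, and using the spread of responses across the current vertices as a proxy for "improvement below threshold" is an assumption of this sketch. Factor spreads are scaled by each factor's feasible range so that variables with different units are comparable.

```python
def has_converged(vertices, responses, ranges,
                  size_tol=0.01, response_tol=0.5):
    """True when the simplex is small or its responses are nearly equal.

    ranges: width of the feasible interval for each factor.
    size_tol: largest allowed relative spread in any factor.
    response_tol: largest allowed difference between best and worst response.
    """
    k = len(vertices[0])
    relative_spread = max(
        (max(v[j] for v in vertices) - min(v[j] for v in vertices)) / ranges[j]
        for j in range(k)
    )
    response_spread = max(responses) - min(responses)
    return relative_spread <= size_tol or response_spread <= response_tol


# Hypothetical two-factor examples (feasible widths 40 and 25 units)
tight = [[30.0, 35.0], [30.1, 35.0], [30.0, 35.1]]
wide = [[20.0, 25.0], [40.0, 25.0], [20.0, 40.0]]
print(has_converged(tight, [98.2, 98.3, 98.1], ranges=[40.0, 25.0]))  # True
print(has_converged(wide, [60.0, 75.0, 90.0], ranges=[40.0, 25.0]))   # False
```

With noisy measurements, `response_tol` is usually set no smaller than the replicate standard deviation of the method; otherwise the check reacts to noise rather than to real differences.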

Protocol for Tablet Formulation Optimization

Purpose: To optimize sustained-release tablet formulations using simplex centroid design.

Materials:

- API (e.g., tramadol HCl)

- Release-retarding polymers (e.g., carboxymethyl xyloglucan, HPMC K100M)

- Excipients: diluents (dicalcium phosphate), binders (PVP K-30), lubricants (magnesium stearate), glidants (talc)

- Tablet compression equipment

- Dissolution apparatus, UV spectrophotometer

Procedure:

Experimental Design

- Select independent variables (polymer ratios, diluent concentration).

- Define response variables (e.g., drug release at specific time points).

- Create simplex centroid design with appropriate constraint boundaries.
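
For q mixture components, a simplex centroid design contains 2^q - 1 blends: each pure component, every equal-parts binary blend, and so on up to the overall centroid. The sketch below generates those points and maps them into a constrained region using lower-bound pseudo-components. The lower bounds shown are hypothetical and are not the constraints used in [22].

```python
from itertools import combinations


def simplex_centroid(q):
    """Return the 2**q - 1 design points as lists of proportions summing to 1."""
    points = []
    for size in range(1, q + 1):
        for subset in combinations(range(q), size):
            points.append([1.0 / size if i in subset else 0.0 for i in range(q)])
    return points


def to_actual(point, lower):
    """Map pseudo-component proportions to actual fractions.

    x_i = L_i + (1 - sum(L)) * z_i, where L_i are the lower bounds.
    """
    free = 1.0 - sum(lower)
    return [l + free * z for l, z in zip(lower, point)]


design = simplex_centroid(3)
print(len(design))  # 7

# Hypothetical minimum fractions for two polymers and a diluent
lower = [0.10, 0.10, 0.20]
for z in design:
    print([round(x, 3) for x in to_actual(z, lower)])
```

Working in pseudo-components keeps the design geometry intact while guaranteeing that every blend respects the minimum level of each ingredient.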

Formulation Preparation

- Weigh and mix powders according to experimental design.

- Granulate using appropriate binding solution.

- Dry granules, blend with lubricant and glidant.

- Compress tablets using standardized equipment and settings.

Response Evaluation

- Conduct dissolution testing using USP apparatus.

- Sample at predetermined time points and analyze drug concentration.

- Calculate cumulative drug release profiles.

- Determine key response metrics (e.g., cumulative release at 2 h and 8 h, Q2h and Q8h, and the similarity factor f2 where a reference profile is available).
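
The cumulative release calculation can be sketched as below. It assumes that each withdrawn sample is replaced with an equal volume of fresh medium, so drug removed in earlier samples must be added back to the running total. The concentrations, vessel volume, sample volume, and dose are hypothetical.

```python
def cumulative_release(conc_mg_per_ml, vessel_ml, sample_ml, dose_mg):
    """Percent of dose released at each time point, corrected for sampling.

    Assumes the sampled volume is replaced with fresh medium each time.
    """
    released_percent = []
    removed_mg = 0.0                      # drug withdrawn in earlier samples
    for c in conc_mg_per_ml:
        amount_mg = c * vessel_ml + removed_mg
        released_percent.append(100.0 * amount_mg / dose_mg)
        removed_mg += c * sample_ml
    return released_percent


# Hypothetical run: 900 mL medium, 5 mL samples, 100 mg dose
profile = cumulative_release([0.02, 0.05, 0.10],
                             vessel_ml=900.0, sample_ml=5.0, dose_mg=100.0)
print([round(p, 2) for p in profile])  # [18.0, 45.1, 90.35]
```

Values such as Q2h and Q8h are then read from this profile at the corresponding sampling times.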

Data Analysis and Optimization

- Fit response data to mathematical models.

- Generate response surface plots.

- Identify optimum using desirability functions.

- Confirm optimal formulation with verification experiments.
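
For a three-component simplex centroid design, the Scheffé special cubic model has seven coefficients and seven design points, so the coefficients follow directly from the measured responses. The sketch below uses the standard closed-form expressions. The response values are hypothetical, and because the fit is saturated it leaves no degrees of freedom for judging lack of fit, so additional check blends or replicates are assumed to be run separately.

```python
def fit_special_cubic(y):
    """Fit y = sum(b_i x_i) + sum(b_ij x_i x_j) + b_123 x1 x2 x3.

    y maps design-point labels to responses: "1", "2", "3" are pure
    components, "12", "13", "23" are 50:50 blends, "123" is the centroid.
    """
    b = {i: y[i] for i in ("1", "2", "3")}
    for pair in ("12", "13", "23"):
        i, j = pair
        b[pair] = 4.0 * y[pair] - 2.0 * (y[i] + y[j])
    b["123"] = (27.0 * y["123"]
                - 12.0 * (y["12"] + y["13"] + y["23"])
                + 3.0 * (y["1"] + y["2"] + y["3"]))
    return b


def predict(b, x1, x2, x3):
    """Predicted response for proportions x1 + x2 + x3 = 1."""
    return (b["1"] * x1 + b["2"] * x2 + b["3"] * x3
            + b["12"] * x1 * x2 + b["13"] * x1 * x3 + b["23"] * x2 * x3
            + b["123"] * x1 * x2 * x3)


# Hypothetical percent released at 8 h for the seven blends
measured = {"1": 60.0, "2": 90.0, "3": 75.0,
            "12": 80.0, "13": 70.0, "23": 85.0, "123": 78.0}
coeffs = fit_special_cubic(measured)
print(coeffs["12"], coeffs["123"])            # 20.0 -39.0
print(round(predict(coeffs, 0.2, 0.5, 0.3), 2))
```

A positive binary coefficient such as `coeffs["12"]` indicates that the blend releases more than a linear mixing rule would predict. The fitted model can be evaluated over a grid of proportions and combined with desirability scores to locate the optimum.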

The Scientist's Toolkit: Essential Materials

Successful implementation of simplex optimization requires specific materials and reagents tailored to the application domain. The following table summarizes key components for pharmaceutical formulation development.

Table 3: Essential Research Reagents and Materials for Simplex Optimization Studies

| Material Category | Specific Examples | Function in Optimization | Application Context |
|---|---|---|---|
| Matrix Polymers | Carboxymethyl xyloglucan, HPMC K100M, Eudragit | Control drug release rate, provide matrix structure | Sustained-release formulations [22] |
| Lipid Components | Glyceryl tridodecanoate, Miglyol 812, emulsifying wax | Form lipid matrix for drug encapsulation, control release | Lipid nanoparticle systems [23] |
| Surfactants | Brij 78, TPGS, Poloxamers | Stabilize formulations, enhance drug solubility | Nanoparticles, self-emulsifying systems [23] |
| Analytical Reagents | HPLC solvents, pH modifiers, derivatization agents | Enable method performance quantification | Analytical method development [21] [19] |
| Diluents & Fillers | Dicalcium phosphate, microcrystalline cellulose, lactose | Adjust tablet properties, improve flow and compaction | Solid dosage form optimization [22] |

Strategic Implementation Framework

When to Select Simplex Optimization

Sequential simplex optimization provides maximum value in specific research scenarios. The following diagram illustrates the decision pathway for selecting simplex methodology versus alternative optimization approaches:

Diagram 2: Optimization Methodology Selection Guide. Simplex excels when interactions exist, the response surface is unknown, resources are limited, and rapid progress is needed.

Integration with Broader Research Strategies

Sequential simplex optimization functions most effectively as part of an integrated experimental strategy:

- Hybrid approaches: Combining simplex with other optimization methodologies, such as initial screening with Taguchi arrays followed by simplex refinement [23].

- Complementary techniques: Using simplex for initial optimization followed by response surface methodology for detailed characterization near the optimum.

- Multi-stage applications: Applying simplex at multiple development stages, from initial formulation screening to final parameter refinement.

This integrated approach leverages the respective strengths of different optimization methodologies while mitigating their individual limitations, providing a comprehensive framework for efficient research and development.

Implementing the Simplex Method: A Step-by-Step Guide and Real-World Applications