Signal-to-Noise vs. Calibration Curve: A Strategic Guide to LOD Determination for Researchers

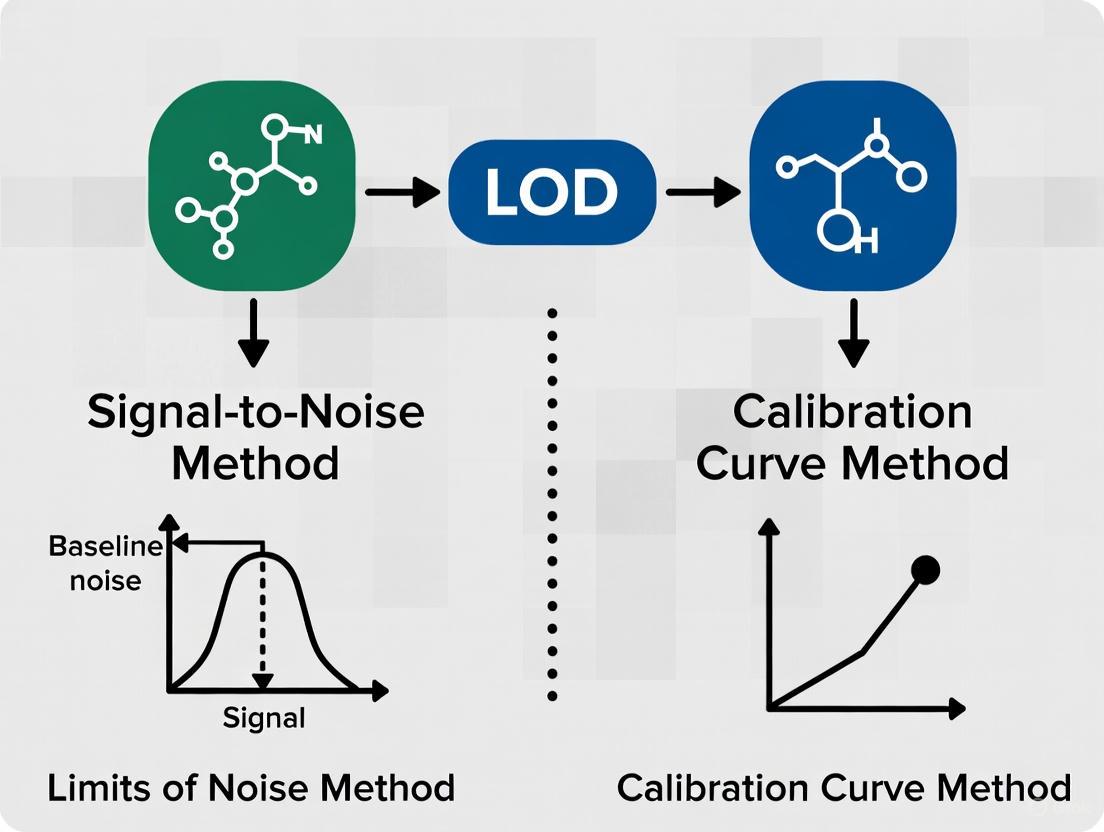


Abstract

This article provides a comprehensive comparison of the signal-to-noise (S/N) and calibration curve methods for determining the Limit of Detection (LOD), a critical parameter in analytical method validation. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, practical applications, and common pitfalls of each approach. By synthesizing current guidelines from IUPAC, ICH, and ACS, this review offers a clear framework for method selection, troubleshooting, and validation to ensure analytical procedures are fit-for-purpose in biomedical and clinical research.

Understanding LOD: Core Concepts and Regulatory Definitions

Defining LOD, LOQ, and the Critical Decision Limit (LC)

In analytical chemistry and bioanalysis, defining the lowest measurable concentrations of an analyte is crucial for method validation and reliable data interpretation. The Critical Decision Limit (LC), Limit of Detection (LOD), and Limit of Quantification (LOQ) represent progressively more stringent thresholds that characterize a method's capability at low concentrations. LOD represents the lowest concentration at which an analyte can be detected but not necessarily quantified, while LOQ is the lowest concentration that can be quantitatively determined with acceptable precision and accuracy [1] [2]. The Critical Decision Limit (LC), often termed the "limit of blank" in some guidelines, represents the threshold value above which a measured result is considered significantly different from the blank with a defined statistical confidence [3]. Understanding these parameters and the methodologies for their determination is essential for researchers, scientists, and drug development professionals validating analytical methods in regulated environments.

Comparative Analysis of Key Sensitivity Parameters

The table below summarizes the defining characteristics, statistical foundations, and practical applications of LOD, LOQ, and LC.

| Parameter | Definition | Statistical Basis | Primary Application |
|---|---|---|---|
| LC (Critical Decision Limit/Limit of Blank) | Highest apparent analyte concentration expected when replicates of a blank sample are tested [3] | LC = Mean(blank) + 1.645 × SD(blank) [3] | Determines if a signal is significantly different from the blank; defines the threshold for detection decisions |
| LOD (Limit of Detection) | Lowest analyte concentration reliably distinguished from the LC and at which detection is feasible [1] [3] | LOD = LC + 1.645 × SD(low-concentration sample) or LOD = 3.3 × σ/S [3] [4] | Confirms analyte presence without precise quantification; used for qualitative detection limits |
| LOQ (Limit of Quantification) | Lowest concentration quantified with acceptable precision and accuracy [1] [2] | LOQ = 10 × σ/S [2] [4] | Quantitative measurements at low concentrations; requires demonstration of precision and accuracy |
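The blank-based formulas in the table can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the function names and the replicate values are hypothetical, results are assumed to be already expressed in concentration units, and the 1.645 multiplier assumes normally distributed results with α = β = 0.05.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results):
    """LC = mean of the blanks + 1.645 * SD of the blanks."""
    return mean(blank_results) + 1.645 * stdev(blank_results)

def limit_of_detection(lc, low_sample_results):
    """LOD = LC + 1.645 * SD of a low-concentration sample."""
    return lc + 1.645 * stdev(low_sample_results)

# Hypothetical replicate results, already converted to concentration units.
blanks = [0.02, 0.05, 0.01, 0.04, 0.03, 0.06, 0.02, 0.03]
low_sample = [0.21, 0.18, 0.25, 0.22, 0.19, 0.24, 0.20, 0.23]

lc = limit_of_blank(blanks)
lod = limit_of_detection(lc, low_sample)
print(f"LC = {lc:.3f}, LOD = {lod:.3f}")
```

Because the low-concentration sample contributes its own standard deviation, the LOD always sits above the LC whenever that sample shows any scatter.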

Methodological Approaches for Determination

Signal-to-Noise Ratio Method

The signal-to-noise (S/N) ratio method is a practical approach commonly applied in chromatographic and spectroscopic techniques where baseline noise is measurable [2] [5]. This method directly compares the magnitude of the analyte signal to the background noise of the measurement system.

Experimental Protocol:

- Baseline Noise Measurement: Inject a blank sample and record a chromatogram. Select a peak-free region and measure the vertical distance between the maximum and minimum baseline deviation over a specified time window [6].

- Analyte Signal Measurement: Inject a sample containing a low concentration of analyte and measure the peak height from the middle of the baseline noise to the peak apex [6].

- Calculation: Compute the S/N ratio by dividing the analyte signal height by the baseline noise magnitude [6].

- Threshold Application: For LOD, an S/N ratio of 3:1 is generally acceptable, while LOQ requires an S/N ratio of 10:1 [2] [5]. The ICH Q2(R2) draft explicitly states that a S/N of 3:1 is acceptable for LOD estimation, moving away from the previously accepted 2:1 ratio [5].

Data Interpretation Considerations: The S/N method translates directly to expected method precision. The relationship %RSD = 50/(S/N) predicts approximately 17% RSD at S/N=3 (LOD) and 5% RSD at S/N=10 (LOQ) [6]. This approach is particularly valuable for its simplicity but may be subject to operator bias in manual measurements [6].
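The measurement steps above can be sketched as follows. The detector readings and the function names are hypothetical, and the noise is taken as the peak-to-peak range of a peak-free baseline segment, which is one convention among several.

```python
def signal_to_noise(peak_apex, baseline):
    """Peak height (from the middle of the noise band to the apex)
    divided by the peak-to-peak baseline noise."""
    noise = max(baseline) - min(baseline)
    if noise == 0:
        raise ValueError("baseline noise is zero; S/N is undefined")
    baseline_mid = (max(baseline) + min(baseline)) / 2
    return (peak_apex - baseline_mid) / noise

def predicted_rsd_percent(sn):
    """Rule of thumb quoted in the text: %RSD = 50 / (S/N)."""
    return 50.0 / sn

# Hypothetical readings (mAU) from a peak-free region of a blank injection.
baseline = [0.10, 0.14, 0.08, 0.12, 0.06, 0.11, 0.13, 0.09]
apex = 0.34  # hypothetical apex reading of the low-level analyte peak

sn = signal_to_noise(apex, baseline)
print(f"S/N = {sn:.1f}, predicted RSD = {predicted_rsd_percent(sn):.1f}%")
```

The explicit zero-noise check matters in practice: a heavily smoothed or thresholded baseline would otherwise produce a meaningless, arbitrarily large ratio.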

Calibration Curve Method

The calibration curve method, endorsed by ICH Q2(R1), utilizes statistical properties of the calibration model to determine LOD and LOQ [7] [4]. This approach provides a more rigorous, statistically grounded alternative to the S/N method.

Experimental Protocol:

- Calibration Design: Prepare a calibration curve with standards in the range of the suspected LOD/LOQ. The highest concentration should not exceed 10 times the presumed detection limit to avoid skewing the regression [7].

- Regression Analysis: Perform linear regression on the calibration data to obtain the slope (S) and standard deviation (σ). The standard deviation can be derived as either the residual standard deviation of the regression line or the standard deviation of the y-intercepts of multiple regression lines [7] [2].

- Calculation: Apply the ICH formulas: LOD = 3.3 × σ/S and LOQ = 10 × σ/S [4].

- Experimental Verification: Analyze replicate samples (typically n=6) at the calculated LOD and LOQ concentrations to verify that they meet the required performance characteristics [4].

Data Interpretation Considerations: This approach assumes linearity in the low concentration range, normal distribution of response values, and variance homogeneity [7]. The calibration curve method is considered more scientifically rigorous than the S/N method as it incorporates the entire calibration performance rather than a single measurement point [4].
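A minimal sketch of the regression and calculation steps, using only the Python standard library. The calibration data are hypothetical, σ is taken as the residual standard deviation of the regression (one of the two options named in the protocol), and unweighted ordinary least squares is assumed.

```python
from math import sqrt

def linear_fit(x, y):
    """Ordinary least-squares line; returns slope, intercept and
    residual standard deviation (n - 2 degrees of freedom)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    return slope, intercept, sqrt(ss_res / (n - 2))

def lod_loq(slope, sigma):
    """ICH formulas: LOD = 3.3 * sigma / S and LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10 * sigma / slope

# Hypothetical low-range calibration data (concentration, detector response).
conc = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
resp = [10.4, 19.7, 30.5, 39.8, 50.6, 59.6]

slope, intercept, s_res = linear_fit(conc, resp)
lod, loq = lod_loq(slope, s_res)
print(f"slope = {slope:.2f}, s_res = {s_res:.3f}, LOD = {lod:.3f}, LOQ = {loq:.3f}")
```

The values returned are estimates only; as the protocol notes, they still need experimental verification with replicate samples at the calculated concentrations.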

Method Comparison and Workflow Integration

[Diagram: conceptual relationship and decision workflow between LC, LOD, and LOQ in analytical method validation]

Comparative Assessment of Determination Methods

Research comparing these determination approaches reveals significant methodological differences. A study published in Scientific Reports found that the classical statistical strategy based on calibration curve parameters underestimated LOD and LOQ compared to graphical validation approaches such as uncertainty profiles [8]. The uncertainty profile method, based on tolerance intervals and measurement uncertainty, provided more realistic assessments and precise uncertainty estimates [8].

The signal-to-noise and calibration curve methods may yield different results for the same analytical method [7] [8]. The S/N approach is more susceptible to operator interpretation, particularly with manual baseline measurements, while the calibration curve method depends heavily on proper experimental design in the low concentration range [7] [6]. From a regulatory perspective, justification for the chosen method is not required, but scientific justification remains important for method robustness [7].

Essential Research Reagents and Materials

The table below outlines key reagents and materials essential for conducting LOD, LOQ, and LC determinations in analytical method validation.

| Reagent/Material | Function in Analysis | Critical Specifications |
|---|---|---|
| Primary Reference Standard | Calibration curve preparation; known purity material for accurate concentration assignment | Certified purity, stability, proper storage conditions |
| Blank Matrix | Determination of LC (limit of blank); assessment of background interference | Commutable with patient specimens, analyte-free confirmation |
| Low Concentration QC Materials | Empirical determination of LOD and LOQ; verification of calculated limits | Commutability, concentration near expected limits, stability |
| Internal Standard (where applicable) | Normalization of analytical response; improvement of precision | Isotopically labeled (MS methods) or structurally similar analog |
| Mobile Phase Components | Chromatographic separation; signal optimization | HPLC/MS grade purity, low UV cutoff (for UV detection), freshly prepared |

The determination of LOD, LOQ, and LC represents a critical component of analytical method validation, providing essential information about method capability at low analyte concentrations. The signal-to-noise method offers practical simplicity and direct instrument assessment, while the calibration curve approach provides statistical rigor through regression analysis. Emerging methodologies like uncertainty profiles present promising alternatives that incorporate tolerance intervals and measurement uncertainty for more realistic assessments. For researchers and drug development professionals, method selection should consider regulatory context, analytical requirements, and the need for statistical defensibility. As methodological comparisons demonstrate, these approaches are not always equivalent, underscoring the importance of appropriate experimental design and verification in establishing reliable limits for quantitative analytical methods.

In analytical chemistry, particularly during method validation, the limit of detection (LOD) represents the lowest concentration of an analyte that can be reliably detected by an analytical method [9]. The determination of this critical value is intrinsically linked to statistical error theory, specifically the concepts of false positives (Type I errors) and false negatives (Type II errors) [9] [10]. These errors represent the fundamental trade-off between sensitivity and specificity in analytical measurements, directly impacting the reliability of detection capability claims.

When analyzing samples with concentrations near the detection limit, analysts face a decision: declare an analyte "detected" or "not detected" based on the measured signal. This binary decision creates inherent risk. If we analyze many blank samples (containing no analyte), the results will form a distribution around zero with a certain standard deviation (σ₀) due to experimental error [9]. Setting a critical level (Lc) as a decision threshold establishes a boundary: signals above Lc indicate detection, while those below indicate non-detection [9]. This threshold directly controls the probability of statistical errors, forming the foundation for LOD determination methodologies across scientific disciplines.

Theoretical Foundation: Type I and Type II Errors

Defining False Positives and False Negatives

Type I error (false positive) occurs when a true null hypothesis is incorrectly rejected [10]. In detection limit context, this means concluding an analyte is present when it is actually absent [9] [11]. The probability of committing a Type I error (α) represents the risk of false positives, typically set at 5% (α = 0.05) in analytical applications [9]. Visually, this corresponds to the portion of the blank distribution that exceeds the critical level Lc [9].

Conversely, Type II error (false negative) occurs when a false null hypothesis is not rejected [10]. For detection limits, this means failing to detect an analyte that is actually present [9] [11]. The probability of committing a Type II error is denoted by β [10]. The modern definition of LOD incorporates this probability, defining LOD as the true net concentration that will lead to the conclusion that the analyte is present with probability (1-β) [9]. This statistical framework ensures the LOD accounts for both types of potential misclassification.
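The two error probabilities can be computed directly for a given decision threshold. The sketch below assumes normally distributed signals with the same standard deviation for blanks and low-level samples, works in units of the blank standard deviation, and uses hypothetical function names.

```python
from statistics import NormalDist

def false_positive_rate(lc, sigma0):
    """alpha: probability that a blank (true net signal 0) exceeds LC."""
    return 1 - NormalDist(0, sigma0).cdf(lc)

def false_negative_rate(true_net_signal, lc, sigma):
    """beta: probability that a sample with the given true net signal
    produces a result below LC."""
    return NormalDist(true_net_signal, sigma).cdf(lc)

sigma0 = 1.0                              # blank SD, arbitrary signal units
z_95 = NormalDist().inv_cdf(0.95)         # one-sided 95% quantile
lc = z_95 * sigma0                        # critical level for alpha = 0.05

alpha = false_positive_rate(lc, sigma0)
beta_at_lc = false_negative_rate(lc, lc, sigma0)      # analyte exactly at LC
beta_at_ld = false_negative_rate(2 * lc, lc, sigma0)  # analyte at 2 * LC

print(f"alpha = {alpha:.3f}")
print(f"beta at LC = {beta_at_lc:.3f}, beta at 2*LC = {beta_at_ld:.3f}")
```

Under these assumptions a sample whose true level equals LC is missed half of the time, which is why the detection limit has to sit above the critical level to keep β small.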

The Error Relationship Visualization

The relationship between these errors, critical level, and detection limit can be visualized through their probability distributions:

Statistical Decision Framework for Detection Limits - This diagram illustrates the relationship between blank and analyte distributions, critical level (Lc), LOD, and associated error probabilities that form the statistical basis of detection capability.

The critical level Lc is calculated as Lc = z₁₋α × σ₀, where z₁₋α is the critical value from the standardized normal distribution at the chosen significance level α [9]. When α and β are both set to 0.05, and assuming constant standard deviation between blank and LOD concentrations, the detection limit can be expressed as LD = 3.3 × σ₀ [9]. This statistical foundation explains the origin of the factor 3.3 in the ICH-recommended LOD formula LOD = 3.3 × σ/S, where S is the slope of the calibration curve [4] [2].
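The origin of the factor 3.3 can be written out step by step. The derivation below is a sketch under the assumptions stated in the text (normally distributed signals, equal standard deviation at the blank and at the detection limit, α = β = 0.05); σ_D denotes the standard deviation at the detection limit, and the last line converts from the signal domain to concentration by dividing by the calibration slope S.

```latex
\begin{align}
L_C &= z_{1-\alpha}\,\sigma_0 \\
L_D &= L_C + z_{1-\beta}\,\sigma_D \\
    &= \left(z_{1-\alpha} + z_{1-\beta}\right)\sigma_0
       && \text{if } \sigma_D = \sigma_0 \\
    &\approx (1.645 + 1.645)\,\sigma_0 \approx 3.3\,\sigma_0
       && \text{if } \alpha = \beta = 0.05 \\
\mathrm{LOD} &= \frac{L_D}{S} \approx \frac{3.3\,\sigma}{S}
\end{align}
```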

LOD Determination Methods: Comparative Analysis

Methodological Approaches and Their Statistical Foundations

The International Council for Harmonisation (ICH) Q2(R1) guideline recognizes three primary approaches for determining LOD: visual evaluation, signal-to-noise ratio, and the calibration curve method [4] [2]. Each method implicitly or explicitly addresses the statistical error trade-offs between false positives and false negatives.

Table 1: Comparison of LOD Determination Methods

| Method | Statistical Basis | Type I Error Control | Type II Error Control | Typical Application Context |
|---|---|---|---|---|
| Signal-to-Noise Ratio [2] | Direct comparison of analyte signal to background noise variability | Fixed by S/N = 3:1 ratio convention | Implicitly controlled through the fixed ratio | Chromatographic methods with measurable baseline noise |
| Calibration Curve [4] | Based on standard deviation of response and slope of calibration curve | α ≈ 0.05 through 3.3 factor in LOD = 3.3σ/S | β ≈ 0.05 through statistical derivation | Instrumental methods where calibration curve can be obtained near LOD |
| Visual Evaluation [2] | Empirical determination by analyst observation | Variable, depends on analyst stringency | Variable, depends on analyst sensitivity | Non-instrumental methods (e.g., microbial inhibition) |

The signal-to-noise approach is commonly applied in chromatographic methods, where the LOD is defined as the concentration that yields a signal-to-noise ratio of 3:1 [2]. This empirical approach implicitly controls error probabilities by establishing a fixed threshold that significantly exceeds typical background fluctuations, thereby reducing false positives while maintaining reasonable detection capability.

The calibration curve method, mathematically expressed as LOD = 3.3 × σ/S, directly incorporates the statistical error framework into its derivation [4] [7]. The factor 3.3 specifically corresponds to the sum of z-values for α = β = 0.05 (approximately 1.645 + 1.645 = 3.29, rounded to 3.3) when standard deviations at zero concentration and at LOD are assumed equal [9]. This method uses the standard error from regression analysis as an estimate of measurement variability, providing a statistically robust approach that explicitly accounts for both Type I and Type II error probabilities.

Experimental Protocols for LOD Determination

Calibration Curve Method Protocol

For the calibration curve method, ICH Q2(R1) recommends using "a specific calibration curve studied using samples containing an analyte in the range of LOD" [7]. The experimental protocol involves:

Sample Preparation: Prepare a minimum of 5 standard solutions at concentrations in the range of the suspected LOD, typically with the highest concentration not exceeding 10 times the presumed LOD [7].

Analysis: Analyze each concentration with a minimum of 3 replicates following the complete analytical procedure [7].

Regression Analysis: Perform linear regression analysis on the concentration-response data. From the regression output, obtain the slope (S) and the standard deviation (σ), which can be either the residual standard deviation or the standard deviation of the y-intercept [4] [7].

Calculation: Apply the formula LOD = 3.3 × σ/S, ensuring all parameters are in consistent units [4]. The LOQ is similarly calculated as LOQ = 10 × σ/S [4] [2].

Validation: The calculated values must be experimentally verified by analyzing a suitable number of samples (typically n=6) prepared at the estimated LOD concentration to demonstrate reliable detection [4].

Signal-to-Noise Method Protocol

For the signal-to-noise method, the experimental approach involves:

Sample Preparation: Prepare standard solutions with decreasing concentrations in the suspected LOD region [2].

Chromatographic Analysis: Inject samples and measure the signal response at each concentration [9].

Noise Measurement: Measure the baseline noise in a blank injection, typically over a region equivalent to 20 times the peak width at half height [9].

Ratio Determination: Calculate the signal-to-noise ratio (S/N) for each concentration, where S is the analyte signal and N is the background noise [9] [2].

LOD Determination: Identify the concentration where S/N ≈ 3:1 as the LOD [2].
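The final step, locating the concentration where S/N reaches about 3, can be sketched as a linear interpolation between the two standards that bracket the target. The dilution series is hypothetical, the function name is an illustration only, and the approach assumes S/N rises monotonically with concentration across the series.

```python
def concentration_at_target_sn(concentrations, sn_values, target=3.0):
    """Linearly interpolate the concentration at which S/N equals the target,
    using the two adjacent standards that bracket it."""
    pairs = sorted(zip(concentrations, sn_values))
    for (c_lo, sn_lo), (c_hi, sn_hi) in zip(pairs, pairs[1:]):
        if sn_lo <= target <= sn_hi:
            if sn_hi == sn_lo:
                return c_lo
            fraction = (target - sn_lo) / (sn_hi - sn_lo)
            return c_lo + fraction * (c_hi - c_lo)
    raise ValueError("target S/N is not bracketed by the measured standards")

# Hypothetical dilution series: concentration (ng/mL) and measured S/N.
conc = [0.05, 0.10, 0.20, 0.50]
sn = [1.4, 2.6, 5.1, 12.8]

lod_estimate = concentration_at_target_sn(conc, sn, target=3.0)
print(f"Estimated LOD at S/N = 3: {lod_estimate:.3f} ng/mL")
```

Raising an error when the target is not bracketed is a deliberate choice here: extrapolating beyond the measured standards would hide the need to prepare a lower or higher dilution.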

Comparative Method Performance and Research Context

Statistical Reliability and Error Control

Recent research comparing LOD determination methods reveals significant differences in their statistical performance and practical reliability. A 2025 study published in Scientific Reports compared approaches for assessing detection and quantitation limits in bioanalytical methods and found that "the classical strategy based on statistical concepts provides underestimated values of LOD and LOQ" compared to graphical validation approaches like uncertainty profiles [8].

The calibration curve method generally provides more statistically defensible LOD estimates because it explicitly incorporates variability through the standard deviation term and accounts for the sensitivity of the method through the slope parameter [4] [7]. This approach directly addresses both Type I and Type II error probabilities in its derivation, making it scientifically more rigorous than the signal-to-noise method, which relies on fixed, arbitrary ratios [4].

Table 2: Error Trade-offs in LOD Determination Methods

| Methodological Consideration | Impact on False Positives | Impact on False Negatives | Practical Implications |
|---|---|---|---|
| Sample Replication [9] | Reduces both error types through better variance estimation | Reduces both error types through better variance estimation | Increased analysis time and cost |
| Calibration Range Selection [7] | Critical for accurate σ estimation | Critical for accurate slope estimation | Requires preliminary LOD estimate |
| Assumption of Variance Homogeneity [7] | Underestimated if variance increases near LOD | Overestimated if variance increases near LOD | Can lead to inaccurate error control |
| Analyst Stringency in Visual Evaluation [2] | Highly variable between analysts | Highly variable between analysts | Poor reproducibility between laboratories |

The fundamental challenge in LOD determination remains the inherent trade-off between Type I and Type II errors. As noted in chromatographic literature, "defining LC in such a way that the risk is limited to, for instance, 5% (α = 0.05) seems a more logical decision in most situations" for false positives, but this necessarily increases the LOD to control false negatives [9]. This statistical reality means that, for a given analyte concentration and measurement variability, no choice of decision threshold can reduce both error probabilities at once: raising the threshold lowers the false positive rate but raises the false negative rate, and vice versa. Analysts must therefore balance these risks based on their specific application requirements [9] [11].

Research Reagent Solutions for LOD Studies

Table 3: Essential Research Materials for LOD Determination Studies

| Reagent/Material | Function in LOD Studies | Critical Quality Attributes |
|---|---|---|
| Certified Reference Standards [7] | Provides known analyte concentrations for calibration curves | Purity, stability, traceability to reference materials |
| Appropriate Matrix Blanks [12] | Distinguishes analyte signal from matrix background | Represents actual sample matrix without target analyte |
| Chromatographic Solvents [4] | Mobile phase preparation and sample reconstitution | Low UV cutoff, HPLC grade, minimal impurity profile |
| Sample Preparation Materials [7] | Extraction, purification, and concentration of analytes | Low analyte background, consistent recovery performance |

The determination of detection limits in analytical chemistry remains fundamentally grounded in statistical error theory, specifically the balanced control of false positives (Type I errors) and false negatives (Type II errors). While multiple methodological approaches exist for LOD determination, the calibration curve method provides the most direct connection to statistical principles through its explicit incorporation of both α and β error probabilities in the derivation of the 3.3 factor. The signal-to-noise method, while practically convenient, relies on conventional ratios that only indirectly address underlying statistical error trade-offs.

Contemporary research continues to refine LOD determination methodologies, with emerging approaches like uncertainty profiles offering promising alternatives to classical methods [8]. Regardless of the specific technique employed, analysts must recognize that the statistical framework of hypothesis testing forms the foundation of all detection capability assessments. Effective method validation therefore requires not only technical competence in executing analytical procedures but also a thorough understanding of the statistical principles governing error probabilities in detection decisions, enabling informed trade-offs between false positive and false negative risks based on the specific requirements of each analytical application.

The Limit of Detection (LOD) represents a fundamental figure of merit in analytical chemistry, defined as the lowest quantity or concentration of a component that can be reliably detected with a given analytical method. Its determination remains one of the most controversial subjects in analytical chemistry, with multiple definitions and calculation methods contributing to ongoing scientific debate. International organizations including IUPAC, ICH, EPA, and ACS have attempted to establish consensus definitions and estimation guidelines, yet the topic continues to evolve with new methodologies and applications. Understanding the similarities and differences between these guidelines is essential for researchers, scientists, and drug development professionals who must select appropriate LOD determination methods based on their specific analytical requirements, regulatory constraints, and methodological considerations.

The fundamental concept of detection relies on the ability to discriminate between a true analyte signal and background noise or blank measurements. This discrimination inherently involves statistical risks: the probability of false positives (Type I error, α) where an analyte is falsely reported as present, and the probability of false negatives (Type II error, β) where an analyte is present but not detected. Modern LOD definitions incorporate both error types, establishing a performance characteristic that informs analysts about the minimum analyte level a method can detect with a specified degree of confidence. This article compares the perspectives of major international guidelines, providing a structured framework for selecting and implementing LOD determination methods in pharmaceutical and environmental analysis.

Comparative Analysis of International Guidelines

The following sections provide a detailed examination of how different international organizations approach LOD determination, highlighting their statistical foundations, methodological requirements, and appropriate applications.

International Union of Pure and Applied Chemistry (IUPAC)

Statistical Foundation and Definitions: IUPAC provides one of the most statistically rigorous frameworks for LOD determination. The organization defines LOD as "the smallest concentration or absolute amount of analyte that has a signal significantly larger than the signal from a suitable blank." This definition emphasizes the need for statistical significance in distinguishing analyte signals from blank measurements. According to IUPAC, the critical level (LC) represents the value at which the decision is made whether the analyte is detected, calculated as LC = z₁₋α × σ₀, where z₁₋α is the critical value from the standardized normal distribution for the chosen significance level α (typically 5%), and σ₀ is the standard deviation of the blank measurements [9].

The IUPAC approach further defines the detection limit (LD) as the true net concentration that will lead to the conclusion that the analyte concentration is greater than that of the blank with probability (1-β). The formula expands to LD = LC + (z₁₋β × σD), where z₁₋β relates to the acceptable false negative rate β, and σD is the standard deviation at the detection limit. When α and β are both set at 0.05 and assuming constant variance, this simplifies to LD = 3.3 × σ₀ [9] [13]. For situations where standard deviation must be estimated from a limited number of replicates, IUPAC recommends replacing z-values with t-values from the Student's t-distribution, resulting in LD = (t₁₋α + t₁₋β) × s₀ when α = β [9].
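When the standard deviation comes from a small number of replicates, the t-based form LD = (t₁₋α + t₁₋β) × s₀ can be sketched as below. The replicate data and function name are hypothetical, α = β = 0.05 is assumed, and the one-sided t-values are hard-coded from standard tables for a few degrees of freedom rather than computed.

```python
from statistics import stdev

# One-sided 95% Student's t critical values, keyed by degrees of freedom
# (standard table values; only a few entries are included in this sketch).
T_ONE_SIDED_95 = {6: 1.943, 7: 1.895, 8: 1.860, 9: 1.833, 10: 1.812,
                  14: 1.761, 19: 1.729}

def detection_limit_from_replicates(replicates):
    """LD = (t_{1-alpha} + t_{1-beta}) * s0 with alpha = beta = 0.05."""
    dof = len(replicates) - 1
    if dof not in T_ONE_SIDED_95:
        raise ValueError(f"no tabulated t-value for {dof} degrees of freedom")
    t = T_ONE_SIDED_95[dof]
    return (t + t) * stdev(replicates)

# Hypothetical results (concentration units) for 10 portions of a sample
# near the expected detection limit.
replicates = [0.52, 0.47, 0.55, 0.49, 0.51, 0.44, 0.53, 0.50, 0.48, 0.56]

ld = detection_limit_from_replicates(replicates)
print(f"s0 = {stdev(replicates):.4f}, LD = {ld:.4f}")
```

With 10 replicates the combined multiplier is about 3.67 rather than 3.3, reflecting the extra uncertainty in a standard deviation estimated from few measurements.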

Practical Implementation: The recommended procedure for estimating LOD according to IUPAC guidelines involves analyzing a minimum of 10 portions of a test sample with concentration near the expected detection limit following the complete analytical procedure. The responses are converted to concentrations, and the standard deviation is calculated. The LOD is then computed using the appropriate statistical formula based on the number of replicates and desired confidence levels [9].

International Council for Harmonisation (ICH)

Framework for Pharmaceutical Analysis: The ICH Q2(R1) guideline provides validation parameters for analytical procedures in pharmaceutical development and manufacturing. For LOD determination, the approaches ICH describes include visual evaluation and signal-to-noise ratio. The visual method involves analyzing samples with known concentrations of analyte and establishing the minimum level at which detection is feasible. The signal-to-noise approach compares measured signals from samples with known low concentrations of analyte with those of blank samples, establishing the minimum concentration at which the analyte can be reliably detected [14].

Signal-to-Noise Requirements: ICH typically accepts a signal-to-noise ratio of 3:1 for declaring detection capability. This approach is particularly applicable to chromatographic methods and other techniques that display baseline noise. The guideline acknowledges that LOD can also be determined from the standard deviation of the response and the slope of the calibration curve, using the formula LOD = 3.3σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve [14]. The ICH approach is specifically designed for pharmaceutical applications and aligns with requirements for method validation in drug development and quality control.

United States Environmental Protection Agency (EPA)

Method Detection Limit (MDL) Procedure: The EPA approach focuses on the Method Detection Limit (MDL), defined as "the minimum measured concentration of a substance that can be reported with 99% confidence that the measured concentration is distinguishable from method blank results." The current procedure (Revision 2, 2016) represents a significant evolution from previous versions, incorporating both spiked samples (MDLS) and method blanks (MDLb) in the calculation [15].

Implementation Requirements: The EPA procedure requires analysis of at least seven spiked samples and seven method blanks, ideally analyzed throughout the year to represent laboratory performance under varying conditions rather than a single optimal performance period. The MDL is calculated as the higher of the two values (MDLS or MDLb), reflecting the EPA's recognition that background contamination can be as significant as instrumental sensitivity in determining practical detection limits. For the spiked samples, the MDL is derived from the product of the standard deviation of the replicate measurements and the appropriate Student's t-value for the 99% confidence level with n-1 degrees of freedom [15]. This approach emphasizes real-world performance over ideal conditions, capturing instrument drift and variations in equipment conditions throughout the year.
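The calculation described above can be sketched as follows. The spiked-sample formula (t × s) comes from the text; the blank-based formula used here (mean blank, floored at zero, plus t × s of the blanks) is an assumption about how MDLb is computed when every blank gives a numerical result, and should be checked against the procedure itself. Data, function names, and the small t-table are illustrative only.

```python
from statistics import mean, stdev

# One-sided 99% Student's t critical values, keyed by degrees of freedom
# (standard table values; only a few entries are included in this sketch).
T_ONE_SIDED_99 = {6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821, 10: 2.764, 15: 2.602}

def mdl_spiked(spiked_results):
    """MDL_S = t(n-1, 99%) * SD of the spiked replicates."""
    return T_ONE_SIDED_99[len(spiked_results) - 1] * stdev(spiked_results)

def mdl_blank(blank_results):
    """Assumed form: MDL_b = max(mean blank, 0) + t(n-1, 99%) * SD of blanks."""
    t = T_ONE_SIDED_99[len(blank_results) - 1]
    return max(mean(blank_results), 0.0) + t * stdev(blank_results)

def method_detection_limit(spiked_results, blank_results):
    """The reported MDL is the higher of MDL_S and MDL_b."""
    return max(mdl_spiked(spiked_results), mdl_blank(blank_results))

# Hypothetical results (ug/L): seven spiked samples and seven method blanks.
spiked = [1.05, 0.92, 1.10, 0.98, 1.01, 0.89, 1.07]
method_blanks = [0.03, 0.00, 0.05, 0.02, 0.04, 0.01, 0.02]

mdl = method_detection_limit(spiked, method_blanks)
print(f"MDL_S = {mdl_spiked(spiked):.3f}, "
      f"MDL_b = {mdl_blank(method_blanks):.3f}, MDL = {mdl:.3f}")
```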

American Chemical Society (ACS)

Environmental Applications Focus: The ACS Committee on Environmental Improvement provides specific guidance for environmental analysis. The approach defines LOD as the value at which the sample value is significantly different from the value at the zero-concentration sample at a given confidence level of 95%. The ACS formula is expressed as LOD = 4.6σ, where σ represents the standard deviation of blank replicates measured more than 20 times [13].

Statistical Basis: The ACS approach employs a higher multiplier (4.6 versus IUPAC's 3.3) to achieve the 95% confidence level for detection, reflecting the stringent requirements for environmental monitoring where false positives and negatives can have significant regulatory implications. This method requires extensive replication to establish reliable estimates of blank variability, making it more resource-intensive but statistically robust for its intended applications [13].

Table 1: Comparison of LOD Definitions and Statistical Foundations

| Organization | Definition | Statistical Formula | Confidence Level | Primary Application |
|---|---|---|---|---|
| IUPAC | Smallest concentration with signal significantly larger than blank | LOD = 3.3 × σ₀ (for α=β=0.05) | ~95% for α=β=0.05 | Fundamental analytical chemistry |
| ICH | Lowest amount detectable with acceptable S/N | Visual or S/N = 3:1 or LOD = 3.3σ/S | Not specified | Pharmaceutical analysis |
| EPA | Minimum concentration distinguishable from method blank with 99% confidence | MDL = t₉₉ × s (n=7-16 replicates) | 99% | Environmental monitoring |
| ACS | Value significantly different from zero-concentration sample | LOD = 4.6 × σ | 95% | Environmental analysis |

Methodological Approaches: Signal-to-Noise vs. Calibration Curve

The determination of LOD primarily follows two methodological pathways: the signal-to-noise approach and the calibration curve method. Each approach has distinct advantages, limitations, and appropriate applications within analytical chemistry.

Signal-to-Noise Ratio Approach

Fundamental Principles: The signal-to-noise (S/N) approach represents one of the most widely practiced methods for LOD determination, particularly in chromatographic analysis. This method calculates LOD as the concentration providing a signal-to-noise ratio of three. The procedure involves measuring standard solutions with decreasing concentrations until a peak is found whose height is three times the maximum height of the baseline noise measured adjacent to the chromatographic peak [9]. The International Council for Harmonisation, United States Pharmacopeia (USP), and European Pharmacopoeia (EP) all describe variations of this approach, though with differing implementation details [9] [16].

Regulatory Variations and Requirements: The European Pharmacopoeia defines signal-to-noise ratio as S/N = 2H/h, where H is the height of the peak corresponding to the component in the chromatogram obtained with the prescribed reference solution, measured from the maximum of the peak to the extrapolated baseline of the signal observed over a distance equal to 20 times the width at half-height. The parameter h represents the range of the background noise in a chromatogram obtained after injection of a blank, observed over the same interval around the time where the peak would be found [9]. In contrast, the USP defines S/N = 2h/hₙ, where h is the height of the peak and hₙ is the difference between the largest and smallest noise values over a distance at least five times the peak width at half-height [16].
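The pharmacopoeial form of the ratio can be sketched as below. The function names, readings, and sampling rate are hypothetical; the `multiple` parameter stands for the noise-window length in units of the peak width at half-height (20 for the EP wording quoted above, at least 5 for the USP wording).

```python
def pharmacopoeial_sn(peak_height, blank_noise_window):
    """S/N = 2H/h, where h is the peak-to-peak range of the blank baseline
    over the chosen noise window."""
    h = max(blank_noise_window) - min(blank_noise_window)
    if h == 0:
        raise ValueError("noise range is zero; S/N is undefined")
    return 2 * peak_height / h

def noise_window_points(width_half_height_s, sampling_rate_hz, multiple=20):
    """Number of data points spanning `multiple` peak widths at half-height."""
    return round(multiple * width_half_height_s * sampling_rate_hz)

# Hypothetical values: 6 s peak width at half-height, 5 Hz data rate.
n_ep = noise_window_points(6.0, 5.0, multiple=20)
n_usp_min = noise_window_points(6.0, 5.0, multiple=5)

# Hypothetical blank readings (mAU) and peak height above the baseline.
blank_window = [0.10, 0.14, 0.08, 0.12, 0.06, 0.11, 0.13, 0.09]
sn = pharmacopoeial_sn(0.24, blank_window)
print(f"EP window: {n_ep} points, USP minimum window: {n_usp_min} points, "
      f"S/N = {sn:.1f}")
```

Because of the factor of 2, this ratio is twice the plain peak-height-to-peak-to-peak-noise ratio for the same chromatogram, so any reported S/N should state which convention was used.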

Limitations and Considerations While widely used, the S/N approach faces several significant limitations. First, it completely ignores sampling and sample preparation as sources of variability, estimating LOD only for the instrumental step. When determined from a single chromatogram, it fails to account for variability in the injection process [17]. Additionally, in certain instruments like ion-traps used in MRM mode, noise can approach zero, resulting in infinite S/N ratios regardless of peak size or shape [17]. The method also depends heavily on how and where noise is measured, with different guidelines specifying varying time windows for noise assessment [17] [16].

Calibration Curve Method

Statistical Foundation The calibration curve method, endorsed by IUPAC and consistent with other statistically based approaches, determines LOD from the standard deviation of the response and the slope of the calibration curve. The fundamental formula is LOD = 3.3 × σ/S, where σ is the standard deviation of the response (residual standard deviation of the regression line or standard deviation of y-intercepts) and S is the slope of the calibration curve [18] [14]. This approach accounts for both the sensitivity of the method (through the slope) and its variability (through the standard deviation), providing a more comprehensive statistical foundation.

Implementation Protocols To implement this approach, analysts prepare a calibration curve with a minimum of five concentrations, ideally in triplicate, spanning the expected range from blank to levels slightly above the anticipated LOD. The standard deviation can be determined through multiple approaches: from the standard deviation of blank measurements, from the residual standard deviation of the calibration curve regression, or from the standard deviation of y-intercepts of regression lines [9] [17]. The calibration curve should be constructed using the same sample preparation and analysis procedures as actual samples, ensuring that the estimated LOD reflects all sources of method variability.

Advantages Over S/N Approach The calibration curve method offers several distinct advantages. It incorporates method precision through the standard deviation estimate, accounts for method sensitivity through the calibration slope, and when properly designed, reflects all sources of variability including sample preparation and matrix effects. Furthermore, this approach aligns with the propagation of errors method, which includes terms for experimental uncertainty in both the slope and y-intercept of the calibration curve, addressing limitations of the simple IUPAC method [19]. As noted in chromatographic forums, this approach makes sense because "limits of detection are directly related to probabilities, and limits of quantification are directly related to percentage errors on the measurements" [17].

Table 2: Comparison of LOD Determination Methodologies

| Aspect | Signal-to-Noise Approach | Calibration Curve Approach |

|---|---|---|

| Fundamental Basis | Ratio of peak height to baseline noise | Statistical parameters from calibration data |

| Key Parameters | Peak height, baseline noise | Standard deviation of response, calibration slope |

| Regulatory Acceptance | ICH, USP, EP | IUPAC, EPA, ACS |

| Sources of Variability | Primarily instrumental noise | Includes sample prep, matrix effects, and instrumental variability |

| Implementation Complexity | Simple, direct measurement | Requires multiple standard concentrations |

| Applicability | Chromatography, spectroscopy | All quantitative analytical methods |

| Limitations | Ignores sample prep variability, injection variability | Requires careful experimental design, more resources |

Experimental Protocols and Workflows

Implementing appropriate experimental protocols is essential for accurate LOD determination. The following sections provide detailed methodologies for both primary approaches.

Signal-to-Noise Protocol for Chromatographic Methods

Sample Preparation

- Prepare a blank sample containing all components except the analyte.

- Prepare a reference solution at a concentration expected to yield a signal-to-noise ratio between 3:1 and 10:1.

- Ensure both solutions undergo identical sample preparation procedures.

Instrumental Analysis

- Inject the blank sample and record the chromatogram.

- Measure the baseline noise over a region free from chromatographic peaks. According to EP guidelines, this region should span a distance equal to 20 times the peak width at half-height [9] [16].

- Inject the reference solution and record the chromatogram.

- Measure the height of the analyte peak from the maximum response to the extrapolated baseline.

Calculation

- Calculate signal-to-noise ratio using the appropriate formula (EP: S/N = 2H/h; USP: S/N = 2h/hₙ).

- If the ratio is approximately 3, the concentration is the LOD.

- If the ratio differs significantly from 3, prepare additional reference solutions at adjusted concentrations and repeat until the 3:1 ratio is achieved.
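When the measured ratio is far from 3, the next concentration to prepare can be guessed by simple proportional scaling. The sketch below assumes a linear response through the origin and unchanged noise, which is an assumption of this example rather than part of any guideline. The result is only a starting point and still has to be confirmed by injection.

```python
def concentration_for_target_sn(conc, measured_sn, target_sn=3.0):
    """Scale a tested concentration to the level expected to give target_sn.

    Assumes signal is proportional to concentration and noise is constant.
    """
    if measured_sn <= 0:
        raise ValueError("measured S/N must be positive")
    return conc * target_sn / measured_sn


# Hypothetical case: a 0.50 ug/mL reference solution gave S/N = 7.5.
next_conc = concentration_for_target_sn(0.50, 7.5)
print(f"Next solution to prepare: {next_conc:.2f} ug/mL")
```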

Calibration Curve Protocol for LOD Determination

Experimental Design

- Prepare a minimum of five standard solutions spanning concentrations from blank to slightly above the expected LOD.

- Include a minimum of three replicates at each concentration level.

- Process all standards through the complete analytical procedure, including any sample preparation steps.

Data Collection and Analysis

- Analyze all standards in random order to avoid systematic bias.

- Record the instrument response for each standard and replicate.

- Construct a calibration curve with concentration on the x-axis and response on the y-axis.

- Perform linear regression analysis to determine the slope (S) and the standard deviation of the residuals (sᵧ/ₓ) or the standard deviation of blank measurements.

LOD Calculation

- Calculate LOD using the formula: LOD = 3.3 × sᵧ/ₓ / S

- For increased robustness, particularly with limited replicates, use the formula: LOD = (t₁₋α + t₁₋β) × s₀ / S, where t-values correspond to the appropriate degrees of freedom and confidence levels.
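The regression-based calculation above can be sketched with the standard library alone. The data are invented, and a single reading per level is used for brevity where the protocol calls for replicates. The `factor` argument defaults to 3.3 and can be replaced by another multiplier if one is justified.

```python
from math import sqrt


def calibration_lod(conc, resp, factor=3.3):
    """Fit resp = intercept + slope * conc by least squares and return
    (slope, intercept, residual SD, LOD = factor * residual SD / slope)."""
    n = len(conc)
    if n < 3:
        raise ValueError("need at least three points for a residual SD")
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    s_yx = sqrt(ss_res / (n - 2))  # residual standard deviation
    return slope, intercept, s_yx, factor * s_yx / slope


# Hypothetical low-range standards (ug/mL) and detector responses.
conc = [0.0, 0.5, 1.0, 1.5, 2.0]
resp = [0.1, 1.1, 1.9, 3.2, 3.9]

slope, intercept, s_yx, lod = calibration_lod(conc, resp)
print(f"slope={slope:.3f}, s_yx={s_yx:.3f}, LOD={lod:.3f} ug/mL")
```

Dividing by n - 2 reflects the two fitted parameters (slope and intercept), so sparse designs give a larger and more honest residual standard deviation.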

The following workflow diagram illustrates the decision process for selecting and implementing the appropriate LOD determination method:

Diagram Title: LOD Determination Method Selection Workflow

Essential Research Reagent Solutions and Materials

Implementing robust LOD determination requires specific materials and reagents tailored to the analytical method and sample matrix. The following table details essential research solutions for LOD studies.

Table 3: Essential Research Reagent Solutions for LOD Determination

| Reagent/Material | Function | Specification Requirements | Application Notes |

|---|---|---|---|

| High-Purity Analytical Standards | Calibration reference | Certified reference materials with documented purity ≥95% | Prepare fresh stock solutions; verify stability |

| Matrix-Matched Blank Samples | Blank measurement | Representative matrix without target analytes | Should contain all matrix components except analyte |

| High-Purity Solvents | Standard preparation and extraction | HPLC or GC grade with low background interference | Test for interference in target analyte regions |

| Internal Standards | Correction for variability | Stable isotopically labeled analogs of target analytes | Use for mass spectrometry methods |

| Derivatization Reagents | Analyte detection enhancement | High purity with minimal side reactions | Optimize for specific analyte detection |

| Solid Phase Extraction Cartridges | Sample cleanup and concentration | Appropriate sorbent for target analytes | Minimize background interference |

| Mobile Phase Additives | Chromatographic separation | MS-grade for mass spectrometry | Reduce chemical noise in detection |

The comparison of international guidelines reveals both convergence and divergence in LOD determination methodologies. While all guidelines seek to establish the lowest reliably detectable analyte concentration, their statistical approaches, implementation requirements, and application domains differ significantly. The selection between signal-to-noise and calibration curve approaches should be guided by regulatory requirements, methodological considerations, and practical constraints.

For pharmaceutical applications under ICH guidelines, the signal-to-noise approach offers simplicity and direct applicability to chromatographic methods commonly used in drug analysis. For environmental monitoring following EPA protocols, the Method Detection Limit procedure provides comprehensive assessment incorporating real-world variability. For fundamental research and method development, the IUPAC calibration curve approach offers statistical rigor and comprehensive variability assessment.

The ongoing development of tools like the Red Analytical Performance Index (RAPI), which consolidates multiple performance criteria including LOD into a unified scoring system, represents the future of analytical method assessment [20]. Regardless of the selected approach, transparent reporting of methodology, complete documentation of experimental parameters, and appropriate validation are essential for credible LOD determination in research and regulatory contexts.

In pharmaceutical analysis and drug development, accurately determining the lowest concentrations of an analyte is paramount. Two critical performance parameters form the foundation of this capability: the Limit of Detection (LOD) and the Limit of Quantification (LOQ). The LOD is defined as the lowest concentration of an analyte that can be reliably detected—but not necessarily quantified—under stated experimental conditions, answering the question "Is it there?" [21] [2]. In contrast, the LOQ represents the lowest concentration that can be quantitatively determined with acceptable precision and accuracy, answering the question "How much is there?" [8] [2]. This distinction is not merely semantic; it represents a fundamental difference in the confidence level of the analytical result, with the LOQ requiring a significantly higher degree of certainty for reliable measurement.

The international guideline ICH Q2(R1) recognizes multiple approaches for determining these limits, primarily the signal-to-noise ratio (S/N) and the calibration curve method [5] [2] [7]. Both are accepted in academic and regulatory practice, but they operate on different principles and can yield significantly different results, which can lead to confusion or misinterpretation of analytical data. This guide provides an objective comparison of these methodologies, supported by experimental data, to inform researchers and scientists in selecting the most appropriate approach for their specific analytical challenges.

Methodological Foundations: S/N Ratio vs. Calibration Curve

The Signal-to-Noise (S/N) Ratio Approach

The S/N method is one of the most visually intuitive techniques for determining LOD and LOQ, particularly for chromatographic methods that exhibit baseline noise [5] [2]. This approach compares the measured signal from a sample containing a low concentration of analyte with that of blank samples to establish the minimum concentration at which the analyte can be reliably detected or quantified.

- Fundamental Principle: The method is based on the direct comparison of the analyte signal height (or amplitude) to the peak-to-peak noise of the baseline in a blank sample [9] [5].

- Standard Acceptance Criteria: According to ICH Q2(R1), a signal-to-noise ratio between 2:1 and 3:1 is generally considered acceptable for estimating the detection limit, while a ratio of 10:1 is typical for the quantitation limit [5] [2]. The ICH Q2(R2) revision narrows this to an S/N of 3:1 for LOD, eliminating the 2:1 option [5].

- Practical Implementation: In practice, the baseline noise is measured from a peak-free section of the chromatogram, either from the current run or a previous blank run. The LOD is then defined as the concentration that yields a peak height three times the noise level, while the LOQ yields a peak height ten times the noise level [5].

The Calibration Curve Approach

The calibration curve method offers a more statistically rigorous approach to determining LOD and LOQ, relying on the standard deviation of the response and the slope of the calibration curve [21] [7].

- Fundamental Principle: This method utilizes the variability in response at low analyte concentrations to estimate the limits of detection and quantification. The standard deviation (σ) can be derived from different statistical parameters, most commonly the residual standard deviation of the regression line or the standard deviation of the y-intercepts of regression lines [2] [7].

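- Calculation Methodology: The LOD and LOQ are calculated using the formulas LOD = 3.3 × σ/S and LOQ = 10 × σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve.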

- Experimental Considerations: A specific calibration curve should be studied using samples containing the analyte in the range of the LOD, not the entire working range of the method. This is crucial because using a "normal" calibration line with higher values would shift the center to a higher value, resulting in an overestimated detection limit [7].

Experimental Workflow Comparison

The fundamental differences in how the S/N ratio and calibration curve methods approach LOD/LOQ determination can be visualized in their experimental workflows.

Comparative Experimental Data Across Analytical Fields

Pharmaceutical Analysis: Carbamazepine and Phenytoin

A 2024 study compared different approaches for calculating LOD and LOQ in an HPLC-UV method for analyzing the drugs carbamazepine and phenytoin, revealing significant variability in results depending on the method used [22].

Table 1: Comparison of LOD and LOQ Values for Pharmaceutical Compounds Using Different Calculation Methods

| Analytical Method | Compound | S/N Method LOD | Calibration Curve LOD | S/N Method LOQ | Calibration Curve LOQ |

|---|---|---|---|---|---|

| HPLC-UV | Carbamazepine | Lowest value | Highest value | Lowest value | Highest value |

| HPLC-UV | Phenytoin | Lowest value | Highest value | Lowest value | Highest value |

The study concluded that the signal-to-noise ratio method provided the lowest LOD and LOQ values for both drugs, while the standard deviation of the response and slope method resulted in the highest values [22]. This highlights the substantial variability in sensitivity parameters depending on the calculation method chosen.

Food Safety: Aflatoxin in Hazelnuts

A 2015 study examining aflatoxin analysis in hazelnuts using AOAC Method 991.31 compared visual evaluation, signal-to-noise, and calibration curve approaches for determining LOD and LOQ [21].

Table 2: Method Comparison for Aflatoxin Analysis in Hazelnuts

| Calculation Method | Key Findings | Advantages | Limitations |

|---|---|---|---|

| Visual Evaluation | Provided much more realistic LOD and LOQ values | Based on actual detection capability | Subjective element in peak identification |

| Signal-to-Noise | Standard approach with defined ratios | Simple, instrument-friendly | Does not account for sample prep variability |

| Calibration Curve | Calculated using residual standard deviation | Statistical robustness | Requires multiple calibration curves |

The study concluded that the visual evaluation method provided much more realistic LOD and LOQ values compared to the other approaches [21].

Bioanalytical Methods: Sotalol in Plasma

A 2025 study in Scientific Reports compared classical statistical approaches with graphical tools (uncertainty profile and accuracy profile) for assessing LOD and LOQ in an HPLC method for determining sotalol in plasma [8]. The research found that the classical strategy based on statistical concepts provided underestimated values of LOD and LOQ, while the graphical tools offered a more relevant and realistic assessment [8]. The values found by uncertainty and accuracy profiles were in the same order of magnitude, with the uncertainty profile method providing a precise estimate of the measurement uncertainty [8].

Statistical and Regulatory Considerations

Understanding False Positives and Negatives

The statistical foundation of LOD and LOQ determination involves managing the risks of false positives (Type I error, α) and false negatives (Type II error, β) [9]. When analyzing blank samples, the results distribute around zero with a given standard deviation (σ₀). Establishing a critical level (LC) allows analysts to decide whether an analyte is present, but this decision always carries a statistical risk [9].

- False Positive Risk: Setting LC too low increases the probability (α) of concluding an analyte is present when it is not [9].

- False Negative Risk: Setting LOD at the critical level would result in approximately 50% of low-concentration samples being incorrectly reported as not detected [9].

- Modern LOD Definition: The International Organization for Standardization (ISO) defines LOD as the true net concentration that will lead, with probability (1-β), to the conclusion that the concentration of the component in the material analyzed is greater than that of a blank sample [9].
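The link between these error rates and the familiar multiplier of about 3.3 can be checked numerically. The sketch below assumes normally distributed results, a known blank standard deviation σ₀ that stays constant up to the LOD, and α = β = 0.05. All names are illustrative, and the results are in signal units (dividing by the calibration slope converts them to concentration).

```python
from statistics import NormalDist


def detection_limits(sigma0, alpha=0.05, beta=0.05):
    """Return (critical level LC, detection limit LOD) in signal units."""
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha)  # controls false positives
    z_beta = standard_normal.inv_cdf(1 - beta)    # controls false negatives
    critical_level = z_alpha * sigma0
    lod = critical_level + z_beta * sigma0
    return critical_level, lod


lc, lod = detection_limits(sigma0=1.0)

# A sample whose true value sits exactly at LC falls below LC half the time.
miss_rate_at_lc = NormalDist(mu=lc, sigma=1.0).cdf(lc)

print(f"LC = {lc:.3f} sigma0, LOD = {lod:.3f} sigma0")
print(f"False-negative rate if LOD were set at LC: {miss_rate_at_lc:.2f}")
```

With both error rates at 5%, the two z-values add up to roughly 3.3, which is where the multiplier in LOD = 3.3 × σ/S comes from.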

Regulatory Perspectives and Guidelines

Various international regulatory bodies provide guidelines for LOD and LOQ determination, with some variations in acceptable approaches.

- ICH Q2(R1): Recognizes visual evaluation, signal-to-noise ratio, and standard deviation of the response (calibration curve) as acceptable methods [5] [2].

- ICH Q2(R2): Applies stricter criteria, specifying an S/N of 3:1 for LOD estimation rather than the 2:1-3:1 range of Q2(R1) [5].

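- CLSI EP17 Guideline: Provides a standardized approach for determining LoB (Limit of Blank), LoD, and LoQ in clinical laboratory settings. In its commonly cited parametric form, LoB = mean(blank) + 1.645 × SD(blank) and LoD = LoB + 1.645 × SD(low-concentration sample); LoQ is the lowest concentration that meets predefined goals for bias and imprecision.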
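A minimal sketch of the CLSI-style calculation, using the simplified parametric multipliers of 1.645 that are commonly quoted for EP17. The replicate values and function names are hypothetical, and a real study would use far more replicates and may need the guideline's non-parametric option.

```python
from statistics import mean, stdev


def limit_of_blank(blank_results, z=1.645):
    """LoB = mean(blank) + z * SD(blank)."""
    return mean(blank_results) + z * stdev(blank_results)


def limit_of_detection(blank_results, low_results, z=1.645):
    """LoD = LoB + z * SD(low-concentration sample)."""
    return limit_of_blank(blank_results, z) + z * stdev(low_results)


# Hypothetical replicate results in concentration units.
blanks = [0.0, 0.1, -0.1, 0.05, -0.05, 0.0]
low = [0.40, 0.55, 0.45, 0.60, 0.50, 0.50]

lob = limit_of_blank(blanks)
lod = limit_of_detection(blanks, low)
print(f"LoB = {lob:.3f}, LoD = {lod:.3f}")
```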

Essential Research Reagent Solutions for LOD/LOQ Studies

Table 3: Key Reagents and Materials for LOD/LOQ Determination Experiments

| Reagent/Material | Function in Analysis | Application Examples |

|---|---|---|

| Immunoaffinity Columns (IAC) | Cleanup and isolation of extracted analytes | AflaTest-P columns for aflatoxin analysis [21] |

| HPLC-Grade Solvents | Mobile phase preparation | Methanol, acetonitrile for HPLC analysis [21] |

| Certified Reference Materials | Calibration and quality control | Aflatoxin standards for hazelnut analysis [21] |

| Chromatography Columns | Analytical separation | ODS-2 RP-HPLC columns [21] |

| Internal Standards | Correction for analytical variability | Atenolol for sotalol determination in plasma [8] |

The comparative analysis of signal-to-noise versus calibration curve methods for LOD and LOQ determination reveals a complex landscape with no universal "best" approach. Each method has distinct advantages and limitations that make it more or less suitable for specific applications.

For routine quality control in regulated environments where simplicity and compliance are paramount, the signal-to-noise method offers straightforward implementation and alignment with ICH guidelines [5] [2]. However, analysts should be aware of its limitations, particularly its failure to account for sample preparation variability and its potential for over-optimistic results [17].

For method development and research applications where statistical robustness and a comprehensive understanding of method capabilities are required, the calibration curve approach provides a more rigorous foundation [21] [7]. While more labor-intensive, it accounts for variability across the entire analytical process and generates more realistic performance estimates.

The most defensible approach, particularly for method validation, may involve using multiple determination techniques to establish a consensus value. As demonstrated across numerous studies, the methodological choice significantly impacts the reported sensitivity parameters, potentially influencing decisions in drug development, regulatory submissions, and scientific conclusions. Researchers should clearly document their selected methodology and justify its appropriateness for their specific analytical challenge.

A Practical Guide to Implementing S/N and Calibration Curve Methods

In analytical chemistry, the Limit of Detection (LOD) represents the lowest concentration of an analyte that can be reliably distinguished from the absence of that analyte. Among the various approaches for determining LOD, the Signal-to-Noise (S/N) method remains one of the most widely used techniques, particularly in chromatographic and spectroscopic analyses. This method offers a practical balance between empirical assessment and mathematical calculation, providing analysts with a relatively straightforward means of establishing method detection capabilities. The S/N approach is formally recognized in major validation guidelines, including the International Conference on Harmonisation (ICH) Q2(R1), which states that "a signal-to-noise ratio between 3:1 or 2:1 is generally considered acceptable for estimating the detection limit", and its Q2(R2) revision [21] [23].

The fundamental principle underlying the S/N method is that an analyte signal must be sufficiently distinguishable from the ever-present background noise of the analytical system. As ICH Q2(R2) now states more definitively, "A signal-to-noise ratio of 3:1 is generally considered acceptable for estimating the detection limit" [5]. This ratio provides a statistical basis for detection, ensuring that the probability of false positives (Type I errors) remains acceptably low. For quantitative purposes, the Limit of Quantification (LOQ) is typically set at a higher S/N ratio of 10:1, providing sufficient signal confidence for reliable quantification with acceptable precision and accuracy [21] [23] [5].

This guide provides a comprehensive comparison of the S/N method against alternative approaches, particularly the calibration curve method, with supporting experimental data from published studies. By examining the protocols, calculations, and practical implementations of these techniques, analysts can make informed decisions about the most appropriate methodology for their specific analytical challenges.

Theoretical Foundation of the S/N Method

Fundamental Principles and Definitions

The Signal-to-Noise method operates on a straightforward premise: for an analyte to be reliably detected, its signal must be statistically distinguishable from the background noise of the measurement system. The signal refers to the analytical response attributable to the analyte, typically measured as peak height in chromatographic systems or absorption intensity in spectroscopic techniques. The noise represents the random fluctuations in the analytical signal when no analyte is present, arising from various sources including electronic instability, detector limitations, and environmental interference [5].

The mathematical foundation of the S/N method is deceptively simple, with the ratio calculated as:

S/N = Signal Height / Noise Amplitude

Despite this apparent simplicity, practical implementation requires careful consideration of noise measurement methodologies. Two primary approaches exist for quantifying noise:

- Peak-to-peak noise: The difference between the maximum and minimum baseline values over a specified region

- Root mean square (RMS) noise: A statistical measure of the magnitude of varying quantity, providing a more robust estimate of noise power [23]

The relationship between S/N ratios and detection capabilities follows statistical principles. If the noise amplitude is taken as one standard deviation of a normally distributed baseline, an S/N ratio of 3:1 corresponds to a confidence level of approximately 99.7% (the three-sigma rule) that a measured signal represents a true analyte detection rather than a random noise fluctuation [5]. When noise is instead measured peak-to-peak, which spans several standard deviations, the same ratio is a stricter criterion. This statistical foundation makes the S/N method both practically accessible and scientifically defensible for establishing detection limits.
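The two noise definitions listed above give different S/N values for the same trace, which a short sketch makes concrete. The baseline readings and signal height are invented, and RMS noise is computed here about the baseline mean, which is one common convention rather than a universal rule.

```python
from math import sqrt


def peak_to_peak_noise(baseline):
    """Difference between the largest and smallest baseline reading."""
    return max(baseline) - min(baseline)


def rms_noise(baseline):
    """Root-mean-square deviation of the baseline about its own mean."""
    centre = sum(baseline) / len(baseline)
    return sqrt(sum((v - centre) ** 2 for v in baseline) / len(baseline))


# Hypothetical blank baseline (mAU) and analyte signal height (mAU).
baseline = [0.02, -0.01, 0.03, -0.02, 0.01, 0.00, -0.03, 0.02]
signal = 0.09

sn_pp = signal / peak_to_peak_noise(baseline)
sn_rms = signal / rms_noise(baseline)
print(f"S/N (peak-to-peak) = {sn_pp:.2f}")
print(f"S/N (RMS)          = {sn_rms:.2f}")
```

A reported S/N is therefore only interpretable when the noise definition is stated alongside it.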

Regulatory Acceptance and Guidelines

The S/N method enjoys broad acceptance across regulatory frameworks, though specific implementation details may vary. The ICH Q2(R1) guideline recognizes S/N as one of three acceptable methods for determining LOD and LOQ, alongside visual evaluation and standard deviation-based approaches [23]. The ICH Q2(R2) revision further clarifies that an S/N ratio of 3:1 is expected for LOD estimation, eliminating the previous acceptance of 2:1 ratios [5].

Other regulatory bodies, including the United States Pharmacopeia (USP) and European Pharmacopoeia (EP), also acknowledge the S/N approach, though analysts should note potential differences in calculation methodologies between these organizations [23]. This regulatory acceptance makes the S/N method particularly valuable in pharmaceutical analysis and other highly regulated fields where method validation requirements are stringent.

Step-by-Step Experimental Protocol

Sample Preparation and Instrumentation

The S/N method requires careful preparation of samples designed to produce signals near the expected detection limit. The following protocol outlines a standardized approach:

Prepare a blank sample containing all matrix components except the analyte of interest. This sample should be representative of the actual test samples to ensure matrix effects are properly accounted for [5].

Prepare a low-concentration standard at a concentration expected to yield a signal approximately 3-5 times the baseline noise. This may require preliminary experiments to establish the appropriate concentration range [21] [5].

Analyze the blank sample using the complete analytical method, recording the chromatogram or spectrum in the region where the analyte signal is expected. The analysis should be performed under identical conditions to those used for actual samples [5].

Analyze the low-concentration standard using the same instrumental conditions, ensuring sufficient replication to account for normal method variability (typically n ≥ 6) [21].

Maintain consistent instrumental parameters throughout the analysis, as detector settings (e.g., time constant in UV detectors, slit width) can significantly impact both signal and noise measurements [5].

Measurement and Calculation Procedures

Once samples have been analyzed, the S/N ratio calculation proceeds as follows:

Measure the signal height from the low-concentration standard chromatogram or spectrum. The measurement should be from the baseline to the maximum point of the analyte peak [23] [5].

Measure the noise amplitude from the blank chromatogram or spectrum. Select a representative region free from interferences, typically immediately adjacent to the analyte retention time. The noise should be measured over a sufficient time window (typically 10-20 times the peak width at baseline) to ensure statistical significance [23].

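Calculate the S/N ratio by dividing the signal height by the noise amplitude: S/N = Signal Height / Noise Amplitude.

Verify the LOD and LOQ: the LOD corresponds to the concentration giving an S/N of approximately 3:1, and the LOQ to the concentration giving an S/N of approximately 10:1.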

Confirm by independent injection of samples at the calculated LOD and LOQ concentrations to ensure consistency between calculated and observed S/N ratios [24].

Figure 1: Step-by-Step Workflow for S/N Method Implementation. This diagram illustrates the complete experimental protocol for determining LOD and LOQ using the signal-to-noise approach, from sample preparation to final verification.

Comparative Experimental Data: S/N vs. Alternative Methods

Direct Method Comparison Studies

Multiple studies have directly compared the S/N method with alternative approaches for LOD determination, revealing significant differences in results and practical implementation. The following table summarizes key findings from these comparative investigations:

Table 1: Experimental Comparison of LOD Determination Methods Across Different Studies

| Study Context | S/N Method LOD | Calibration Curve LOD | Visual Evaluation LOD | Key Findings | Reference |

|---|---|---|---|---|---|

| Aflatoxin in Hazelnuts (HPLC) | Not specified | Varied based on SD type | 1 μg/kg total aflatoxin | Visual method provided more realistic values; calibration curve results depended on using residual SD or y-intercept SD | [21] |

| Monoclonal Antibody Purity (cIEF) | 0.09% (relative concentration) | 0.07% (relative concentration) | Not reported | Different techniques produced substantially different results; S/N and calibration curve showed reasonable agreement | [25] |

| General HPLC Applications | 3:1 S/N ratio | Based on SD of response and slope | Subjective assessment | S/N and visual methods can be arbitrary; calibration curve provides more statistical rigor | [23] |

| Sotalol in Plasma (HPLC) | Not specified | Classical strategy provided underestimated values | Uncertainty profile provided precise estimate | Graphical strategies (uncertainty profile) more reliable than classical statistical approaches | [8] |

The data reveal that method selection can substantially change the determined LOD, although some comparisons, such as the cIEF study (0.09% vs. 0.07%), show reasonable agreement between the S/N and calibration curve methods. This variability underscores the importance of both method selection and transparent reporting of the specific methodology used.

Advantages and Limitations of Each Method

Each LOD determination method presents distinct advantages and limitations that analysts must consider when selecting an appropriate approach:

Table 2: Comparative Analysis of LOD Determination Methodologies

| Method | Advantages | Limitations | Ideal Application Context |

|---|---|---|---|

| Signal-to-Noise (S/N) | Simple, quick implementation; direct instrumental measurement; broad regulatory acceptance; intuitively understandable | Sensitive to measurement conditions; noise measurement subjectivity; dependent on data processing parameters; limited statistical foundation | Routine chromatographic analysis; methods with stable baselines; screening methods where speed is prioritized |

| Calibration Curve | Strong statistical foundation; accounts for method precision; less operator-dependent; utilizes existing validation data | Requires multiple concentration levels; assumes linearity near LOD; sensitive to outlier points; more computationally intensive | Regulated pharmaceutical methods; methods requiring robust statistical support; research applications |

| Visual Evaluation | Practical, intuitive approach; direct assessment of chromatograms; no complex calculations | Highly subjective; poor reproducibility between analysts; difficult to validate and document; limited regulatory acceptance | Preliminary method development; quick assessments during optimization; supporting data for other methods |

The comparative analysis indicates that while the S/N method offers practical advantages for routine applications, methods based on calibration curves may provide greater statistical rigor for regulated environments where demonstration of robust validation is required.

Critical Implementation Considerations

Impact of Data Processing Parameters

The determined S/N ratio is highly dependent on data processing techniques and instrumental parameters, creating significant potential for variability between laboratories and analysts. Several factors critically influence S/N calculations:

Time constant/filter settings: Electronic filters can reduce apparent noise but may also distort or suppress legitimate analyte signals, particularly near detection limits. Over-use of smoothing filters can artificially improve S/N ratios while actually reducing detection capability [5].

Noise measurement methodology: The distinction between peak-to-peak noise and RMS noise measurements can yield significantly different S/N values from the same data set. One study noted that "the traditional signal-divided-by noise method gives a value that is half of the one used by the USP and EP" [23].

Integration parameters: Automated integration algorithms may fail to properly identify peaks near the detection limit, requiring manual intervention that introduces subjectivity [23] [5].

Baseline selection: The region selected for noise measurement significantly impacts calculated ratios. Noise should be measured "in the current chromatogram or from a previous blank run" in a "peak-free section" [5].

These dependencies highlight the importance of standardizing and thoroughly documenting data processing parameters when using the S/N method for formal method validation.
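The effect of the noise definition can be illustrated with a minimal sketch. It assumes a synthetic trace, a hypothetical helper named `signal_to_noise`, and arbitrarily chosen peak and noise windows; none of these come from the cited studies. The pharmacopoeial form is taken here as 2H/h, with H the peak height and h the peak-to-peak noise, which is why it is exactly twice the traditional H/h value.

```python
import numpy as np

def signal_to_noise(trace, peak_window, noise_window):
    """Return S/N computed three ways from the same detector trace.

    trace        : 1-D array of detector response
    peak_window  : (start, stop) indices containing the analyte peak
    noise_window : (start, stop) indices of a peak-free baseline region
    """
    trace = np.asarray(trace, dtype=float)
    noise = trace[noise_window[0]:noise_window[1]]
    baseline = noise.mean()
    # H: peak height above the mean baseline
    height = trace[peak_window[0]:peak_window[1]].max() - baseline

    peak_to_peak = noise.max() - noise.min()   # h: peak-to-peak noise
    rms = noise.std(ddof=1)                    # RMS noise about the baseline

    return {
        "traditional_H_over_h": height / peak_to_peak,
        "pharmacopoeial_2H_over_h": 2.0 * height / peak_to_peak,
        "H_over_rms": height / rms,
    }

# Synthetic example (assumed data): unit Gaussian noise plus one small peak.
rng = np.random.default_rng(0)
t = np.arange(600)
trace = rng.normal(0.0, 1.0, t.size) + 12.0 * np.exp(-0.5 * ((t - 400) / 6.0) ** 2)

result = signal_to_noise(trace, peak_window=(370, 430), noise_window=(0, 300))
for name, value in result.items():
    print(f"{name}: {value:.1f}")
```

The same trace yields three different ratios, so a reported S/N is only interpretable when the noise definition and the noise window are stated alongside it.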

Regulatory and Practical Recommendations

Based on comparative studies and regulatory guidelines, the following recommendations emerge for implementing S/N methods in regulated environments:

Use S/N as a confirmatory approach: Given its limitations, the S/N method "should be used primarily for confirmation of less arbitrary calculations" rather than as the sole basis for LOD determination [23].

Apply realistic S/N thresholds: While ICH specifies a 3:1 ratio for LOD, "in reality with real-life samples and analytical conditions" more conservative values of "SNR between 3:1 and 10:1 for LOD" and "SNR from 10:1 to 20:1 for LOQ" are often appropriate [5].

Standardize noise measurement protocols: Implement consistent approaches for noise measurement, including defined regions for assessment and standardized data processing parameters, to improve inter-laboratory reproducibility.

Corroborate with alternative methods: "A quick look at two publications shows that the results differ depending on which method is used to determine the LOD and LOQ" [7]. Using multiple determination approaches provides more robust validation.

Document all parameters thoroughly: Given the potential for variability, complete documentation of instrumental settings, data processing parameters, and calculation methodologies is essential for method validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of LOD determination methods requires appropriate laboratory materials and reagents. The following table outlines essential components for conducting S/N-based detection limit studies:

Table 3: Essential Research Materials for LOD Determination Studies

| Category | Specific Items | Function/Purpose | Critical Considerations |
|---|---|---|---|
| Reference Standards | Certified analyte standard; isotopically labeled internal standards | Preparation of calibration solutions; method accuracy verification | Purity certification; stability data; appropriate storage conditions |
| Matrix Materials | Blank matrix samples; artificial matrix formulations | Assessment of matrix effects; preparation of fortified samples | Commutability with real samples; stability and homogeneity; representative composition |
| Chromatographic Supplies | HPLC/UHPLC columns; mobile phase components; sample filtration units | Separation and detection of analytes; sample preparation and cleanup | Column selectivity and efficiency; chemical purity; compatibility with detection system |
| Instrumentation | High-sensitivity detectors (e.g., DAD, FLD, MS); precision injection systems; data acquisition software | Signal generation and measurement; data processing and calculation | Detection specificity; injection precision; data processing capabilities |

The Signal-to-Noise method represents a practically accessible approach for LOD determination with broad regulatory acceptance, particularly in chromatographic applications. Its intuitive foundation and straightforward implementation make it valuable for routine analytical applications and initial method development. However, comparative studies consistently demonstrate that the S/N method can yield significantly different results than alternative approaches such as calibration curve methods, visual evaluation, or emerging techniques like uncertainty profiles.

The choice between LOD determination methods should be guided by the specific application context, the regulatory requirements, and the level of rigor required. For screening methods where speed and simplicity are prioritized, the S/N method generally provides sufficient reliability. For regulated methods requiring robust statistical support and minimal subjectivity, calibration curve-based approaches offer greater scientific defensibility. In many cases, a combination of methods, using S/N for initial estimation and calibration curves for validation, represents the most comprehensive approach.

Regardless of the selected methodology, transparent reporting of the specific protocol, including detailed documentation of calculation parameters and experimental conditions, remains essential for generating comparable and reliable detection limit data. This practice ensures analytical methods are truly "fit for purpose" and capable of generating defensible data at the limits of detection.

Theoretical Foundations of LOD Determination

In the validation of analytical and bioanalytical methods, the Limit of Detection (LOD) and Limit of Quantification (LOQ) are two crucial parameters that define the lowest concentrations of an analyte that can be reliably detected and quantified, respectively [8] [2]. The accurate determination of these limits is fundamental to ensuring that an analytical procedure is "fit for purpose," particularly in pharmaceutical development and other regulated fields where measuring very low concentrations of impurities or active compounds is required [3]. The International Conference on Harmonisation (ICH) Q2(R1) guideline outlines several accepted approaches for determining these limits, primarily the visual evaluation, the signal-to-noise ratio, and the method based on the standard deviation of the response and the slope of the calibration curve [4] [2].

The calibration curve method, expressed by the formula LOD = 3.3 σ / S, is grounded in robust statistical principles [4]. In this equation, 'σ' represents the standard deviation of the response, and 'S' is the slope of the calibration curve [7] [4]. The factor of 3.3 arises from statistical theory, accounting for a risk of 5% for both false positive and false negative detection events, providing a 95% confidence level for the detection [2]. The underlying assumption is that there is a linear relationship in the region of the suspected LOD, and that the response values are normally distributed and exhibit homogeneous variance across the calibration range [7]. This method shifts the determination of detection limits from potentially subjective assessments to a reproducible, data-driven calculation based on the fundamental performance characteristics of the analytical method itself—its sensitivity (slope) and its variability (standard deviation) at low concentrations.
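The origin of the factor 3.3 can be sketched as follows. This is a simplified derivation under stated assumptions: responses are normally distributed, the standard deviation σ is known and is the same for the blank and for a sample at the LOD, and the one-sided error rates are α = β = 0.05. The symbols y_blank, y_C (decision threshold on the response scale), and z (standard normal quantile) are introduced here for illustration only.

```latex
\begin{align*}
y_C - y_{\text{blank}} &= z_{1-\alpha}\,\sigma = 1.645\,\sigma
  && \text{(false positive rate } \alpha = 0.05\text{)} \\
y_{\text{LOD}} - y_C &= z_{1-\beta}\,\sigma = 1.645\,\sigma
  && \text{(false negative rate } \beta = 0.05\text{)} \\
y_{\text{LOD}} - y_{\text{blank}} &= (z_{1-\alpha} + z_{1-\beta})\,\sigma = 3.29\,\sigma \\
\text{LOD} &= \frac{y_{\text{LOD}} - y_{\text{blank}}}{S} = \frac{3.29\,\sigma}{S} \approx \frac{3.3\,\sigma}{S}
\end{align*}
```

Dividing the net response by the slope S converts it from the signal scale to the concentration scale, which is why the sensitivity of the method appears in the denominator.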

Experimental Protocols for the Calibration Curve Method

Step-by-Step Procedure

Implementing the calibration curve method for LOD and LOQ determination requires a meticulous experimental setup to ensure accurate and reliable results. The following protocol, synthesizing best practices from multiple sources, provides a detailed roadmap:

Preparation of Calibration Standards: The cornerstone of this method is the construction of a specific calibration curve using samples with analyte concentrations in the range of the presumed LOD and LOQ [7]. It is critical not to use the standard working calibration curve that spans a much wider range, as its center is shifted to a higher value, which can lead to an overestimation of the LOD [7]. A recommended practice is to prepare the highest concentration for this specific curve at no more than 10 times the presumed detection limit [7]. A minimum of five concentration levels is advisable to establish a reliable regression line.

Analysis and Data Acquisition: Each calibration standard should be analyzed in replicate, typically three times (n=3), using the complete analytical procedure, including sample preparation [7]. This helps capture the method variability. The instrument response (e.g., peak area in HPLC) for each standard is recorded.

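Linear Regression Analysis: The concentration (x-axis) and the corresponding instrument response (y-axis) data are subjected to linear regression analysis. This can be performed using standard software like Microsoft Excel, which provides a regression output containing the necessary statistical parameters [4]. The key values to extract from this analysis are the slope of the regression line (S) and an estimate of the standard deviation of the response (σ), taken either as the standard deviation of the y-intercept or as the residual standard deviation of the regression line [7] [4].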

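Calculation of LOD and LOQ: Using the parameters derived from the regression analysis, the LOD and LOQ are calculated as LOD = 3.3 σ / S and LOQ = 10 σ / S, where σ is the chosen standard deviation estimate and S is the slope of the calibration curve [4].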

Experimental Verification: The ICH guideline mandates that the calculated LOD and LOQ values are verified through experiment [4]. This involves preparing and analyzing a suitable number of samples (e.g., n=6) at the calculated LOD and LOQ concentrations. The LOD should consistently demonstrate a detectable peak, while the LOQ should demonstrate both acceptable accuracy (e.g., within ±15% of the true value) and acceptable precision (e.g., a relative standard deviation of no more than 15%) [4]. This empirical confirmation is essential for validating the statistically derived limits.
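The regression, calculation, and verification steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a validated implementation: the function names `lod_loq_from_calibration` and `verify_loq` are hypothetical, the calibration data are simulated, and the 15% acceptance limits are the example values quoted in the protocol. The sketch reports LOD and LOQ for both σ estimates (residual standard deviation and standard deviation of the y-intercept).

```python
import numpy as np

def lod_loq_from_calibration(conc, response):
    """Fit response = S * conc + b and return LOD/LOQ for two sigma estimates."""
    x = np.asarray(conc, dtype=float)
    y = np.asarray(response, dtype=float)
    n = x.size
    slope, intercept = np.polyfit(x, y, 1)              # ordinary least squares
    residuals = y - (slope * x + intercept)
    s_res = np.sqrt(np.sum(residuals ** 2) / (n - 2))   # residual standard deviation
    sxx = np.sum((x - x.mean()) ** 2)
    s_int = s_res * np.sqrt(np.sum(x ** 2) / (n * sxx)) # SD of the y-intercept
    out = {"slope": slope, "intercept": intercept}
    for label, sigma in (("residual_sd", s_res), ("intercept_sd", s_int)):
        out[f"lod_{label}"] = 3.3 * sigma / slope
        out[f"loq_{label}"] = 10.0 * sigma / slope
    return out

def verify_loq(measured, nominal, max_bias_pct=15.0, max_rsd_pct=15.0):
    """Check accuracy (bias) and precision (RSD) of replicates at the LOQ."""
    m = np.asarray(measured, dtype=float)
    bias_pct = 100.0 * (m.mean() - nominal) / nominal
    rsd_pct = 100.0 * m.std(ddof=1) / m.mean()
    passed = abs(bias_pct) <= max_bias_pct and rsd_pct <= max_rsd_pct
    return passed, bias_pct, rsd_pct

# Simulated low-level curve (assumed data): five levels, three replicates each.
rng = np.random.default_rng(1)
conc = np.repeat([0.5, 1.0, 2.0, 3.0, 5.0], 3)
response = 10.0 * conc + 2.0 + rng.normal(0.0, 1.0, conc.size)

fit = lod_loq_from_calibration(conc, response)
for key, value in fit.items():
    print(f"{key}: {value:.3f}")

# Hypothetical back-calculated concentrations for six samples spiked at 1.0.
passed, bias_pct, rsd_pct = verify_loq([0.95, 1.05, 1.00, 0.98, 1.02, 1.00], nominal=1.0)
print(f"LOQ check passed: {passed} (bias {bias_pct:.1f}%, RSD {rsd_pct:.1f}%)")
```

Reporting both σ estimates side by side makes it explicit which one was used, since the two generally give different limits from the same data.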

Visualizing the Workflow

The following diagram illustrates the logical sequence and key decision points in the protocol for determining LOD and LOQ via the calibration curve method.

Comparative Performance Analysis

Quantitative Data Comparison

A direct comparison of the different LOD determination methods reveals significant differences in their underlying principles, computational approaches, and the resulting values.

Table 1: Objective Comparison of LOD Determination Methods

| Method | Fundamental Principle | Calculation Basis | Typical LOD Result | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Calibration Curve | Statistical model of response at low concentration | Slope (S) and standard deviation (σ) of regression: LOD = 3.3σ/S [4] | Can be more realistic and lower [7] | Robust statistical foundation; less arbitrary; uses full calibration data [4] | Requires specific low-level curve; results vary with σ estimate (y-intercept vs. residuals) [7] [8] |
| Signal-to-Noise (S/N) | Instrumental baseline noise | Ratio of analyte signal to background noise: LOD at S/N ≥ 2:1 or 3:1 [21] [2] | Can be arbitrary and higher [21] | Simple, fast, instrument-agnostic [4] | Subjective; ignores sample prep variability; less suitable for non-instrumental methods [4] |
| Visual Evaluation | Empirical observation | Lowest concentration producing a detectable peak [21] [2] | Considered more realistic in some studies [21] | Intuitive; direct assessment | Highly subjective; dependent on analyst experience; not statistically robust [4] |

The performance of these methods is not merely theoretical. A practical example from a study on aflatoxin analysis in hazelnuts using HPLC demonstrated that the visual evaluation method provided much more realistic LOD and LOQ values compared to other approaches [21]. Furthermore, a 2025 study in Scientific Reports comparing methods for sotalol in plasma found that the classical strategy based on statistical concepts (like the calibration curve method) provided underestimated values of LOD and LOQ compared to more modern graphical tools like the uncertainty profile [8]. This indicates that while the calibration curve method is scientifically robust, its results can vary and should be interpreted with an understanding of its potential to underestimate detection limits in certain contexts.

Critical Assumptions and Potential Pitfalls

The calibration curve method, while powerful, relies on several critical assumptions that, if violated, can lead to significant inaccuracies. A primary requirement is that the analytical response must demonstrate linearity in the immediate region of the presumed LOD [7]. If the dose-response relationship deviates from linearity at these low concentrations, the fundamental formula LOD = 3.3σ/S becomes invalid. Furthermore, the method assumes homogeneity of variance (homoscedasticity) across the low-concentration range used to build the curve [7]. If the variance of the instrument response increases or decreases with concentration, the standard deviation (σ) used in the calculation will not be representative.
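A quick screen for the homoscedasticity assumption is to compare the replicate variances across the calibration levels. The sketch below is an informal check, not a formal statistical test: the helper name `replicate_variance_ratio` and the example responses are hypothetical, and what counts as an acceptable ratio is left to the analyst.

```python
import numpy as np

def replicate_variance_ratio(conc, response):
    """Return per-level replicate variances and the largest/smallest ratio."""
    x = np.asarray(conc, dtype=float)
    y = np.asarray(response, dtype=float)
    variances = {}
    for level in np.unique(x):
        reps = y[x == level]
        if reps.size >= 2:                      # variance needs at least two replicates
            variances[float(level)] = reps.var(ddof=1)
    ratio = max(variances.values()) / min(variances.values())
    return variances, ratio

# Hypothetical responses whose spread grows with concentration.
conc = [1, 1, 1, 2, 2, 2, 5, 5, 5]
response = [10.0, 10.2, 9.8, 20.0, 20.5, 19.5, 50.0, 52.0, 48.0]

variances, ratio = replicate_variance_ratio(conc, response)
for level, var in variances.items():
    print(f"level {level}: variance {var:.3f}")
print(f"largest/smallest variance ratio: {ratio:.0f}")
```

A ratio far above 1, as in this constructed example, suggests that a single pooled σ does not represent the variability near the LOD, so the curve range or the σ estimate may need to be reconsidered.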

Another notable pitfall is the variability in results depending on the chosen estimate for the standard deviation (σ). As illustrated in a practical example, calculating the LOD using the standard deviation of the y-intercept versus the residual standard deviation of the regression line can yield different results [7]. For instance, in one experiment, the LOD calculated from the y-intercept SD was 0.61 µg/mL, while the residual SD gave a value of 0.72 µg/mL [7]. This highlights the importance of consistently applying the same approach for σ estimation when comparing methods or performing longitudinal studies. Finally, the calculated LOD and LOQ are only estimates and must be empirically verified by analyzing multiple samples at those concentrations to confirm that they meet the required performance criteria for detection and quantification, a step that is mandated by the ICH guideline [4].

The Scientist's Toolkit: Essential Research Reagents and Materials