Simplex Optimization Efficiency: From Algorithmic Breakthroughs to Cutting-Edge Drug Development

This article provides a comprehensive analysis of simplex optimization efficiency, bridging recent theoretical breakthroughs with practical applications in pharmaceutical research.

Abstract

This article provides a comprehensive analysis of simplex optimization efficiency, bridging recent theoretical breakthroughs with practical applications in pharmaceutical research. It explores the foundational mathematics of the simplex method, details its methodological applications in optimizing analytical procedures and ADME properties, and presents new research resolving long-standing efficiency concerns. Through comparative analysis and real-world case studies, we demonstrate how enhanced simplex algorithms deliver robust, computationally efficient solutions for complex optimization challenges in drug discovery, enabling faster development of safer, more effective therapeutics.

The Simplex Method: Unraveling 80 Years of Optimization Theory and Geometric Principles

The quest for optimal decision-making amidst constraints found a transformative solution in 1947 with George Dantzig's formulation of the simplex algorithm [1] [2]. This groundbreaking mathematical procedure provided a systematic method for solving linear programming problems, enabling the maximization or minimization of a linear objective function subject to linear equality and inequality constraints. Dantzig's work, initiated to address complex planning and resource allocation challenges for the U.S. Air Force, has since evolved into a cornerstone of operations research, influencing diverse fields from supply chain logistics to pharmaceutical development [3] [2]. The algorithm's enduring relevance stems from its elegant geometrical interpretation, where it navigates the vertices of a multidimensional polyhedron (the feasible region) to find the optimal solution [2]. This article traces the historical development of the simplex method, compares its performance against significant algorithmic alternatives, and details its modern implementations, with a special focus on applications in scientific and drug development research.

George Dantzig and the Birth of the Simplex Algorithm

The Foundational Breakthrough

George Bernard Dantzig, born in 1914, entered mathematical lore through an episode from his student years, well before he formulated the simplex algorithm [1]. As a doctoral candidate at the University of California, Berkeley, he arrived late to a statistics class taught by Professor Jerzy Neyman and, mistaking two problems on the blackboard for homework, copied them down. After solving them, he learned that they were not homework assignments but previously unsolved statistical problems [1] [3]. This fortuitous event laid the groundwork for his future work on linear programming. By 1947, while working as a mathematical adviser to the U.S. Air Force, Dantzig formalized his simplex method to address the military's pressing need to solve resource allocation problems involving hundreds or thousands of variables [3] [2]. His key insight was that most practical planning "ground rules" could be translated into a linear objective function to be maximized, and that the optimal solution, if it existed, would be found at a vertex of the feasible region [2].

Algorithmic Mechanics and Geometrical Interpretation

The simplex algorithm operates on linear programs in canonical form, seeking to maximize an objective function \( \mathbf{c}^T \mathbf{x} \) subject to constraints \( A\mathbf{x} \leq \mathbf{b} \) and \( \mathbf{x} \geq 0 \) [2]. Geometrically, the algorithm navigates along the edges of a convex polyhedron from one vertex to an adjacent vertex with an improved objective value, continuing until no further improvement is possible. This process is implemented through pivot operations that systematically exchange basic and nonbasic variables within a simplex tableau, a tabular representation that organizes the coefficients of the linear program [2]. The algorithm proceeds in two phases: Phase I finds an initial basic feasible solution, while Phase II iteratively improves this solution until optimality is achieved or unboundedness is detected [2].
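The pivot mechanics described above can be sketched in a few lines. The following is a minimal illustration, not a production solver: it assumes every right-hand side in b is non-negative, so the slack variables supply the starting vertex and Phase I can be skipped. The function name `simplex_max` and the small example problem are hypothetical, chosen only for illustration; exact rational arithmetic is used to sidestep rounding issues.

```python
from fractions import Fraction

def simplex_max(c, A, b):
    """Maximize c^T x subject to A x <= b, x >= 0, assuming every b[i] >= 0.

    With b >= 0 the slack variables form a feasible starting basis (x = 0),
    so only Phase II is implemented here. Returns (optimal value, x).
    """
    m, n = len(A), len(c)
    # Tableau rows: [A | I | b]; the last row holds the negated objective.
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == k)) for k in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = list(range(n, n + m))  # slack variables start in the basis

    while True:
        # Dantzig rule: the entering column has the most negative reduced cost.
        col = min(range(n + m), key=lambda j: T[-1][j])
        if T[-1][col] >= 0:
            break  # no improving edge remains: the current vertex is optimal
        # Ratio test: choose the leaving row so the next vertex stays feasible.
        rows = [i for i in range(m) if T[i][col] > 0]
        if not rows:
            raise ValueError("objective is unbounded")
        row = min(rows, key=lambda i: T[i][-1] / T[i][col])
        # Pivot: normalize the pivot row, then eliminate the column elsewhere.
        piv = T[row][col]
        T[row] = [v / piv for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                f = T[i][col]
                T[i] = [vi - f * vr for vi, vr in zip(T[i], T[row])]
        basis[row] = col

    x = [Fraction(0)] * n
    for i, j in enumerate(basis):
        if j < n:
            x[j] = T[i][-1]
    return T[-1][-1], x

# Hypothetical example: maximize 3x + 2y with x + y <= 4, x + 3y <= 9, x <= 3.
value, x = simplex_max(c=[3, 2], A=[[1, 1], [1, 3], [1, 0]], b=[4, 9, 3])
print(value, x)
```

On this example the walk goes from the origin to (3, 0) and then to (3, 1), where the objective equals 11 and no reduced cost is negative.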

Table: Key Historical Milestones in the Simplex Algorithm Development

| Year | Development | Key Figure/Institution | Significance |
|---|---|---|---|
| 1947 | Simplex Algorithm Formulated | George Dantzig (US Air Force) | Provided first practical method for solving linear programming problems [3] [2] |
| 1965 | Nelder-Mead Simplex Published | Nelder & Mead | Introduced direct search variant for experimental optimization [4] |
| 1972 | Worst-Case Analysis | Klee & Minty | Demonstrated exponential worst-case complexity [3] |
| 1984 | Karmarkar's Algorithm | Narendra Karmarkar (Bell Labs) | Introduced interior-point method with polynomial complexity [5] |
| 2001 | Smoothed Complexity Analysis | Spielman & Teng | Showed simplex has polynomial smoothed complexity [3] |
| 2025 | Hardware Accelerator | Fraunhofer Institute IIS | Developed energy-efficient hardware implementation [6] |

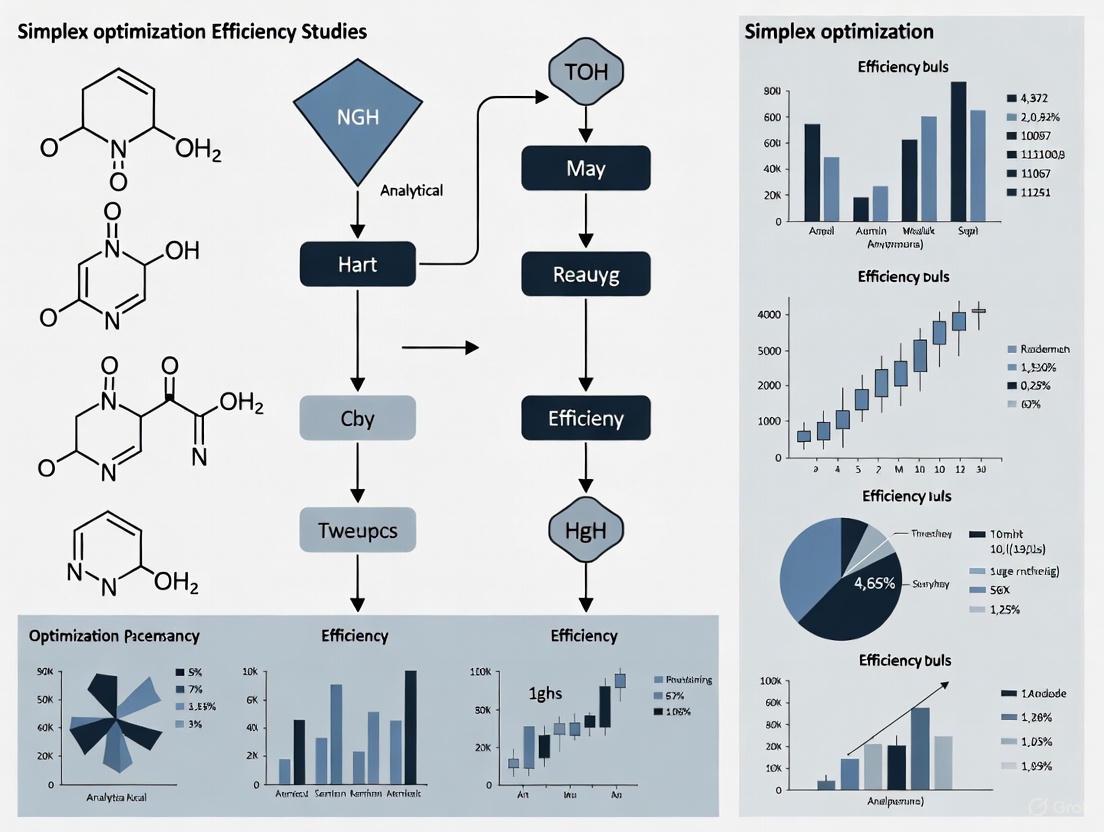

Figure: Simplex Algorithm Iterative Process

Theoretical and Algorithmic Evolution

Addressing Theoretical Limitations

Despite its remarkable practical performance, the simplex algorithm faced theoretical challenges. In 1972, Klee and Minty established that in worst-case scenarios, the time required for the algorithm to complete could grow exponentially with the number of constraints [3]. However efficiently the algorithm performed in practice, there existed pathological cases where its performance would deteriorate dramatically. This theoretical limitation prompted decades of research into understanding the algorithm's behavior and developing improved variants. A landmark theoretical advance came in 2001 when Daniel Spielman and Shang-Hua Teng introduced the concept of smoothed analysis, demonstrating that when the input data are subjected to small random perturbations, the simplex method's expected running time becomes polynomial in the number of constraints [3]. Their work provided a compelling explanation for why the exponential worst-case scenarios rarely manifested in practical applications.

Recent Theoretical Advances

The most recent theoretical breakthroughs come from Sophie Huiberts and Eleon Bach, who in 2025 significantly improved the understanding of the simplex method's performance guarantees [3]. Building upon Spielman and Teng's framework, they incorporated additional randomness into the algorithm to demonstrate that runtimes are substantially lower than previously established. Their work also established that the approach pioneered by Spielman and Teng cannot proceed faster than the value they obtained, essentially providing a complete understanding of this particular model of the simplex method [3]. According to Heiko Röglin, a computer scientist at the University of Bonn, this research "marks a major advance in our understanding of the simplex algorithm, offering the first really convincing explanation for the method's practical efficiency" [3]. Despite this progress, achieving linear scaling with the number of constraints remains the "North Star" for ongoing research efforts [3].

Comparative Analysis of Simplex Algorithm Variants

Performance Metrics and Experimental Protocols

Evaluating the efficiency of simplex algorithm variants requires standardized performance metrics and experimental protocols. Key metrics include: (1) Iteration count - the number of pivot operations required to reach optimality; (2) Computational time - actual processor time required for solution; (3) Memory usage - RAM consumption during execution; and (4) Numerical stability - resistance to rounding errors in finite-precision arithmetic. Experimental protocols typically involve testing algorithms against standardized benchmark problem sets such as NETLIB, MIPLIB, or randomly generated instances with controlled characteristics. For experimental optimization applications (common in analytical chemistry and bioprocessing), performance is measured by the number of experiments required to reach the optimum and the robustness to experimental noise [7] [4].

Table: Comparison of Simplex Algorithm Variants and Alternatives

| Algorithm/Variant | Theoretical Complexity | Practical Performance | Key Applications | Key Advantages |
|---|---|---|---|---|
| Dantzig's Simplex (1947) | Exponential (worst-case) | Excellent for most practical problems [3] | General linear programming [2] | Robust, well-understood, efficient in practice [3] |
| Super-Modified Simplex | Not specified | Faster convergence than modified simplex [8] | Chemical analysis optimization [8] | Adapts size and orientation to response surface [8] |
| Statistical Simplex | Not specified | Effective with noisy data [9] | Experimental process optimization [9] | Uses correlation-based ranking for robustness [9] |
| Karmarkar's Interior-Point (1984) | Polynomial | Excellent for very large problems [5] | Large-scale resource allocation [5] | Polynomial guarantee, good for dense problems [5] |
| Bach-Huiberts Approach (2025) | Polynomial (smoothed) | Theoretical improvement [3] | Theoretical foundation [3] | Better theoretical bounds, explains practical efficiency [3] |

Key Algorithmic Variants and Their Performance

The original simplex algorithm has spawned numerous variants that differ primarily in their pivot selection rules. The Dantzig rule selects the variable with the most negative reduced cost (greatest potential improvement), while the Steepest Edge rule chooses the direction that provides the largest improvement per unit of distance traveled. Experimental studies consistently show that while different pivot rules exhibit similar worst-case performance, their practical efficiency varies significantly. The steepest edge rule generally outperforms other rules in computation time despite requiring more overhead per iteration. In analytical chemistry, the Modified Simplex (Nelder-Mead) and Super-Modified Simplex methods have demonstrated particular effectiveness, with the latter showing increased speed and accuracy by adapting its size and orientation to fit the response surface through second-order estimation [8] [4].
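To make the difference between the two pivot rules concrete, the sketch below selects an entering variable under each rule. It assumes a minimization-style convention in which a negative reduced cost marks an improving direction, and it assumes the caller supplies the tableau column of each candidate variable (the `columns` argument is a hypothetical input layout, not taken from any particular solver). The edge length used for the steepest edge rule is sqrt(1 + ||column||²), which is the length of the move in the full variable space when the entering variable increases by one unit.

```python
import math

def dantzig_rule(reduced_costs):
    """Return the index with the most negative reduced cost, or None if optimal."""
    j = min(range(len(reduced_costs)), key=lambda k: reduced_costs[k])
    return j if reduced_costs[j] < 0 else None

def steepest_edge_rule(reduced_costs, columns):
    """Return the index with the best improvement per unit of distance traveled.

    columns[j] is the tableau column of variable j (assumed input layout).
    """
    best, best_rate = None, 0.0
    for j, d in enumerate(reduced_costs):
        if d < 0:
            edge_length = math.sqrt(1.0 + sum(v * v for v in columns[j]))
            rate = d / edge_length
            if rate < best_rate:
                best, best_rate = j, rate
    return best

# Hypothetical data: variable 0 has the larger reduced cost but a much longer edge.
reduced = [-4.0, -3.0, 1.0]
cols = [[10.0, 10.0], [0.5, 0.5], [1.0, 1.0]]
print(dantzig_rule(reduced), steepest_edge_rule(reduced, cols))
```

Here the Dantzig rule picks variable 0, while the steepest edge rule prefers variable 1 because its improvement per unit length is larger. The extra norm computation is the per-iteration overhead mentioned above.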

Modern Implementations and Hardware Accelerations

Fraunhofer Institute's Hardware Breakthrough

A groundbreaking development in simplex implementation emerged in 2025 from the Fraunhofer Institute for Integrated Circuits IIS, where researchers successfully developed a novel hardware accelerator specifically designed to reduce the computational burden of the expensive pricing step in the simplex algorithm [6]. This innovation represents a significant departure from traditional software-based solvers by implementing key algorithmic components directly in hardware. The accelerator uses an optimized architecture specifically tailored for the simplex method, offering substantial improvements in both speed and energy efficiency compared to general-purpose processors and even GPUs [6]. According to Dr.-Ing. Marcus Bednara, the leading scientist on the project, "Application-specific accelerators for embedded systems have many advantages over GPUs in terms of energy consumption, size and computing power" [6].

Application Domains and Performance Gains

The Fraunhofer hardware accelerator targets edge applications where computational resources and power are constrained, including robot control, production planning, routing, and supply chain optimization [6]. By bridging the gap between hardware development and mathematical optimization research, this implementation demonstrates how domain-specific hardware can revitalize classical algorithms for modern applications. The co-design approach explored how much of the simplex algorithm could be effectively offloaded to hardware to enhance performance while reducing energy consumption [6]. Future development directions include extending the accelerator to further operations within the simplex algorithm and moving toward more realistic solver applications that deliver results faster [6].

Figure: Hardware-Software Co-Design for Simplex

Experimental Applications in Scientific Research

Bioprocess Development and Drug Manufacturing

Simplex-based optimization has demonstrated remarkable effectiveness in addressing challenging bioprocess development problems, particularly in downstream processing. A 2016 study published in Biotechnology Progress applied a simplex variant to polishing chromatography and protein refolding operations [7]. The experimental protocol involved comparing the simplex-based method against conventional regression-based Design of Experiments (DoE) approaches for three experimental systems. The simplex variant proved more effective in identifying superior operating conditions, reaching the global optimum in most cases involving multiple optima, while the regression-based method often failed and frequently converged to poor operating conditions [7]. Additionally, the simplex method demonstrated robustness in dealing with noisy experimental data and required fewer experiments than regression-based methods to reach favorable operating conditions, making it ideally suited to rapid optimization in early-phase process development.

Analytical Chemistry and Method Development

In analytical chemistry, simplex optimization has become established as a practical and reliable method for developing analytical procedures without requiring complex mathematical-statistical expertise [4]. The methodology involves the sequential displacement of a geometric figure with k+1 vertices (where k equals the number of variables) in an experimental field toward an optimal region. Applications span numerous domains: optimization of inductively coupled plasma spectrometer parameters, determination of polycyclic aromatic hydrocarbons in water samples, flow-injection analysis systems for tartaric acid determination in wines, and separation of vitamins in pharmaceutical products [4]. The robustness, easy programmability, and rapid convergence of simplex methods have led to hybrid optimization schemes combining simplex with other techniques like genetic algorithms and artificial neural networks for enhanced performance [4].
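The sequential displacement of a figure with k+1 vertices can be sketched as follows. This is a simplified Nelder-Mead style search using the commonly quoted reflection, expansion, contraction, and shrink steps; it is an illustrative variant, not the procedure of any cited study. The `response` function is a hypothetical stand-in for a measured experimental response, and all names are assumptions made for the example.

```python
def along(centroid, worst, t):
    """Point on the line from `worst` through `centroid`; t = 1 mirrors `worst`."""
    return [c + t * (c - w) for c, w in zip(centroid, worst)]

def simplex_search(f, start, step=1.0, iters=200):
    """Minimize f over k variables by moving a simplex with k + 1 vertices."""
    k = len(start)
    # Initial simplex: the start point plus one step along each axis.
    simplex = [list(start)] + [
        [v + (step if i == j else 0.0) for j, v in enumerate(start)]
        for i in range(k)
    ]
    for _ in range(iters):
        simplex.sort(key=f)  # best vertex first, worst vertex last
        best, second_worst, worst = simplex[0], simplex[-2], simplex[-1]
        # Centroid of every vertex except the worst one.
        centroid = [sum(p[i] for p in simplex[:-1]) / k for i in range(k)]
        r = along(centroid, worst, 1.0)  # reflect the worst vertex
        if f(r) < f(best):
            e = along(centroid, worst, 2.0)  # try stretching further
            simplex[-1] = e if f(e) < f(r) else r
        elif f(r) < f(second_worst):
            simplex[-1] = r  # accept the plain reflection
        else:
            c = along(centroid, worst, -0.5)  # contract toward the centroid
            if f(c) < f(worst):
                simplex[-1] = c
            else:  # shrink every vertex halfway toward the best one
                simplex = [best] + [
                    [(a + b) / 2 for a, b in zip(best, p)] for p in simplex[1:]
                ]
    return min(simplex, key=f)

def response(p):
    """Hypothetical response surface with its minimum at (1, 2)."""
    return (p[0] - 1.0) ** 2 + (p[1] - 2.0) ** 2

optimum = simplex_search(response, start=[0.0, 0.0])
print(optimum)
```

In a laboratory setting each call to `f` would correspond to one experiment, which is why the number of function evaluations is the natural cost measure for this family of methods.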

Table: Research Reagent Solutions for Simplex-Based Experimental Optimization

| Reagent/Resource | Function in Optimization | Application Context |
|---|---|---|
| Inductively Coupled Plasma Spectrometer | Response measurement instrument [4] | Analytical method optimization [4] |
| Chromatography Columns | Separation system for bioprocess optimization [7] | Downstream bioprocessing [7] |
| Protein Refolding Buffers | Experimental system for optimization [7] | Biopharmaceutical development [7] |
| Polycyclic Aromatic Hydrocarbon Standards | Target analytes for method development [4] | Environmental analysis optimization [4] |
| Sequential Injection Analysis System | Automated analytical platform [4] | Method development and optimization [4] |

From its serendipitous origins in 1947 to its contemporary hardware implementations, the simplex algorithm has demonstrated remarkable resilience and adaptability. George Dantzig's foundational breakthrough established not merely a mathematical procedure but an entire paradigm for rational decision-making under constraints. The algorithm's enduring relevance stems from its proven practical efficiency despite theoretical limitations, its conceptual clarity grounded in geometric intuition, and its remarkable adaptability to diverse application domains. Current research frontiers include the pursuit of linear scaling with problem size, further refinement of hardware accelerators for edge computing applications, and development of hybrid approaches that combine the strengths of simplex with other optimization paradigms. For researchers in drug development and scientific fields, simplex-based optimization continues to offer robust, efficient methodologies for navigating complex experimental landscapes, underscoring the enduring impact of Dantzig's visionary work on modern scientific and industrial practice.

In the realm of mathematical optimization, the geometry of polyhedra forms the fundamental landscape upon which algorithms navigate to find optimal solutions. In three dimensions, a polyhedron is a solid bounded by flat polygonal faces, straight edges, and sharp corners or vertices [10] [11]; linear programming uses the same notion in higher dimensions, with one dimension per decision variable. These structures provide the conceptual framework for understanding the solution spaces of linear programming problems, where constraints form half-spaces whose intersection creates a convex polyhedral feasible region. Within this context, edge-walking algorithms, most notably the simplex method, traverse along the edges of these polyhedra from one vertex to an adjacent one, continually improving the objective function value until reaching an optimal solution.

The efficiency of these algorithms is intimately connected to the combinatorial properties of polyhedra, particularly the relationship between their fundamental components: vertices (potential solutions), edges (paths between solutions), and faces (regions where constraints are active). For researchers in drug development, understanding these principles is crucial when employing optimization techniques for molecular design, dose-response modeling, or resource allocation in clinical trials, where the computational efficiency of these methods can significantly impact research timelines and outcomes.

Mathematical Foundations: Polyhedra and Their Properties

Defining Characteristics and Components

A polyhedron is mathematically defined by its basic geometric components: faces (two-dimensional polygons that form its boundary), edges (line segments where two faces meet), and vertices (points where three or more edges converge) [10] [11]. In optimization contexts, we primarily concern ourselves with convex polyhedra, where any line segment connecting two points within the polyhedron lies entirely within it. For a linear objective, this convexity property ensures that any local optimum is also a global optimum, a crucial characteristic for optimization reliability.

The classification of polyhedra depends on several factors. A polyhedron is considered regular (a Platonic solid) when all its faces are identical regular polygons, with the same number of faces meeting at each vertex [12] [11]. Only five such regular convex polyhedra exist: the tetrahedron (4 faces), cube (6 faces), octahedron (8 faces), dodecahedron (12 faces), and icosahedron (20 faces). In contrast, irregular polyhedra such as prisms and pyramids have faces that are not all congruent or do not meet the strict symmetry requirements of Platonic solids [11].

Euler's Characteristic Formula

A fundamental relationship governing polyhedral structures is Euler's polyhedron formula, which states that for any convex polyhedron, the number of vertices (V), edges (E), and faces (F) satisfies the equation:

V - E + F = 2 [12]

This topological invariant provides a powerful tool for verifying the validity of polyhedral structures and understanding their combinatorial properties. For instance, a cube with 8 vertices, 12 edges, and 6 faces satisfies Euler's formula: 8 - 12 + 6 = 2. Similarly, an icosahedron with 12 vertices, 30 edges, and 20 faces also satisfies the formula: 12 - 30 + 20 = 2 [12]. This relationship becomes particularly valuable when analyzing the complexity of optimization problems, as it helps establish bounds on the number of potential extreme points that might need to be visited during algorithm execution.

Table 1: Euler's Formula Verification for Common Polyhedra

| Polyhedron | Vertices (V) | Edges (E) | Faces (F) | V - E + F |
|---|---|---|---|---|
| Tetrahedron | 4 | 6 | 4 | 2 |
| Cube | 8 | 12 | 6 | 2 |
| Octahedron | 6 | 12 | 8 | 2 |
| Dodecahedron | 20 | 30 | 12 | 2 |
| Icosahedron | 12 | 30 | 20 | 2 |
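The arithmetic in Table 1 can be checked mechanically. The short sketch below recomputes V - E + F for the five Platonic solids using the counts listed in the table; the dictionary and function names are illustrative only.

```python
# (vertices, edges, faces) for the five Platonic solids, as listed in Table 1.
PLATONIC_SOLIDS = {
    "tetrahedron": (4, 6, 4),
    "cube": (8, 12, 6),
    "octahedron": (6, 12, 8),
    "dodecahedron": (20, 30, 12),
    "icosahedron": (12, 30, 20),
}

def euler_characteristic(vertices, edges, faces):
    """Return V - E + F, which equals 2 for every convex polyhedron."""
    return vertices - edges + faces

for name, (v, e, f) in PLATONIC_SOLIDS.items():
    print(f"{name:<13} V={v:>2} E={e:>2} F={f:>2}  V-E+F={euler_characteristic(v, e, f)}")
```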

Edge-Walking Algorithms and the Simplex Method

Fundamental Principles of Vertex Traversal

Edge-walking algorithms operate on the principle that for linear optimization problems with convex feasible regions, an optimal solution, when one exists, occurs at an extreme point (vertex) of the polyhedron [13]. The simplex method, developed by George Dantzig, is the most prominent example of this class of algorithms. It proceeds by moving from one vertex to an adjacent vertex along the edges of the polyhedron, at each step choosing an edge along which the objective function value improves.

This process involves two key phases: Phase I, which finds an initial basic feasible solution (vertex), and Phase II, which iteratively moves to improving adjacent vertices until an optimal solution is found [13]. Each move between vertices is called a pivot step, and the number of pivot steps serves as a primary measure of the algorithm's efficiency. The choice of which adjacent vertex to move to is governed by a pivot rule, with common examples including the most negative reduced cost rule, the steepest edge rule, and the shadow vertex rule [13].

Computational Complexity and Performance

The theoretical worst-case performance of the simplex method is known to be exponential for certain pathological problem instances and pivot rules [13]. However, in practice, the algorithm consistently exhibits polynomial-time behavior, typically requiring a number of pivot steps that is linear or nearly linear in the number of constraints [13]. This discrepancy between worst-case theory and practical performance has motivated extensive research into explaining the efficiency of edge-walking algorithms.

Figure: Workflow of a typical simplex edge-walking process

Experimental Frameworks for Algorithm Analysis

Methodologies for Performance Evaluation

Analyzing the performance of edge-walking algorithms requires carefully designed experimental frameworks that capture both theoretical complexity and practical efficiency. Current research employs several complementary approaches:

Smoothed Analysis: This framework, introduced by Spielman and Teng, bridges the gap between worst-case and average-case analysis by assuming that input data are subject to small random perturbations [13]. The analysis provides bounds on expected performance over these perturbations, offering insights into why the simplex method performs well on typical instances. Recent developments in this area have established bounds of \( O(\sigma^{-1/2} d^{11/4} \log(n)^{7/4}) \) on the number of pivot steps for specific pivot rules, where \( \sigma \) represents the perturbation magnitude, \( d \) the dimension, and \( n \) the number of constraints [13].

By-the-Book Analysis: This emerging framework addresses limitations of smoothed analysis by incorporating observations from algorithm implementations, input modeling best practices, and measurements on practical benchmark instances [13]. It models not only input data but also the algorithm itself, accounting for implementation details such as feasibility tolerances and numerical precision considerations that significantly impact real-world performance.

Large-Scale Benchmarking: The creation of comprehensive datasets like LOOPerSet, containing millions of labeled data points from synthetically generated polyhedral programs, enables rigorous empirical evaluation of optimization algorithms [14]. These datasets facilitate the training and benchmarking of learned cost models and provide standardized testing grounds for comparing algorithm performance across diverse problem instances.
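The perturbation model behind smoothed analysis, described above, is easy to illustrate. The sketch below adds independent Gaussian noise of standard deviation sigma to every entry of a constraint matrix and right-hand side. It is a simplified illustration: formal treatments typically also normalize the unperturbed constraint rows, which is omitted here, and the function name and example data are hypothetical.

```python
import random

def perturb(A, b, sigma, seed=0):
    """Return copies of A and b with independent N(0, sigma^2) noise added."""
    rng = random.Random(seed)  # seeded so that experiments are repeatable
    A_noisy = [[a + rng.gauss(0.0, sigma) for a in row] for row in A]
    b_noisy = [v + rng.gauss(0.0, sigma) for v in b]
    return A_noisy, b_noisy

# Hypothetical constraint data for a two-variable problem.
A = [[1.0, 1.0], [1.0, 3.0], [1.0, 0.0]]
b = [4.0, 9.0, 3.0]
A_noisy, b_noisy = perturb(A, b, sigma=0.01)
print(A_noisy, b_noisy)
```

A smoothed-analysis experiment would solve many such perturbed copies of one instance and report the average pivot count as a function of sigma.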

Comparative Performance Metrics

When comparing edge-walking algorithms with alternative approaches, researchers typically evaluate several key performance dimensions:

Table 2: Performance Comparison of Optimization Methodologies

| Methodology | Theoretical Guarantees | Practical Efficiency | Implementation Complexity | Problem Scope |
|---|---|---|---|---|
| Simplex (Edge-Walking) | Exponential worst-case [13] | Excellent, O(n) pivots typical [13] | Moderate | Linear programming |
| Interior Point Methods | Polynomial worst-case | Very good for large, dense problems | High | Linear and convex programming |
| Nature-Inspired Algorithms | No guarantees | Variable, often requires hundreds to thousands of evaluations [15] | Low to moderate | General non-convex problems |
| Surrogate-Assisted Optimization | No general guarantees | Good for expensive function evaluations | High | Computationally expensive problems |

Research Reagents: Computational Tools for Polyhedral Optimization

The experimental analysis of polyhedral algorithms relies on specialized computational tools and methodological approaches that serve as essential "research reagents" in this domain.

Table 3: Essential Research Tools for Polyhedral Optimization Studies

| Research Tool | Function | Application Context |
|---|---|---|
| Synthetic Program Generators | Creates diverse polyhedral programs for benchmarking [14] | Generating test problems with controlled characteristics |
| Transformation Space Samplers | Explores sequences of polyhedral transformations [14] | Studying optimization paths and algorithm behavior |
| Performance Profilers | Measures execution time and pivot counts [13] [14] | Empirical algorithm analysis and comparison |
| Perturbation Models | Introduces controlled randomness to inputs [13] | Smoothed analysis and robustness testing |
| Multi-resolution Simulators | Provides variable-fidelity function evaluations [15] | Reducing computational cost in initial search phases |

Implications for Drug Development Research

For researchers in pharmaceutical development, the principles of polyhedral optimization find application in multiple domains. In medicinal chemistry, they facilitate molecular design optimization by navigating high-dimensional chemical space to identify compounds with desired properties. In clinical trial design, they enable efficient resource allocation and patient cohort optimization. In pharmacokinetic modeling, they help parameterize complex biological systems from experimental data.

The computational efficiency of edge-walking algorithms makes them particularly valuable in these applications, where evaluation of candidate solutions often requires computationally expensive simulations or access to limited experimental resources. The recent advances in analysis frameworks that better explain and predict algorithm performance in practical scenarios provide greater confidence in applying these methods to critical path research problems in drug development.

The integration of simplex-based search strategies with multi-resolution modeling approaches, as demonstrated in antenna design [15], suggests promising directions for pharmaceutical applications. By employing coarse-grained models for initial global exploration followed by refined models for final optimization, researchers can achieve effective trade-offs between computational expense and solution quality—a crucial consideration when dealing with complex biological systems and expensive experimental verification.

The simplex method, a cornerstone algorithm for solving linear programming problems, presents a fascinating paradox in computational mathematics: it exhibits consistent, high-speed performance in practical applications across industries like logistics and pharmaceutical development, despite a theoretical worst-case running time that is exponential. For decades, this disconnect between observed efficiency and pessimistic theory puzzled researchers. Recent work by Huiberts and Bach has provided the most robust theoretical explanation to date for this phenomenon, demonstrating that under the smoothed-analysis model the algorithm's runtime is guaranteed to be polynomial, with bounds significantly lower than previously established, thereby largely resolving the long-standing fear of exponential slowdowns in real-world scenarios [3] [16]. This guide compares the theoretical and practical performance profiles of the simplex method and details the experimental protocols used to benchmark its efficiency.

Understanding Computational Complexity: Polynomial vs. Exponential

To grasp the efficiency paradox, one must first understand the fundamental difference between polynomial and exponential complexity, which represent vastly different growth rates of an algorithm's runtime as the problem size increases [17].

- Polynomial Complexity (O(n^k)) describes a manageable growth rate where the runtime increases as a polynomial function of the input size n. Algorithms with this complexity are generally considered efficient and feasible for large inputs. Examples include linear search (O(n)) and bubble sort (O(n²)) [18] [17].

- Exponential Complexity (O(c^n)) describes an explosive growth rate where the runtime increases as an exponential function of n. Algorithms with this complexity quickly become intractable and impractical, even for moderately sized inputs. Classic examples include brute-force algorithms for the subset sum and traveling salesman problems [17].

The table below outlines the critical differences.

| Aspect | Polynomial Complexity | Exponential Complexity |
|---|---|---|
| Definition | O(n^k) for some constant k | O(c^n) for some constant c>1 |
| Growth Rate | Manageable and predictable | Rapid and unmanageable |
| Feasibility | Feasible for large inputs | Quickly becomes infeasible |
| Scalability | Highly scalable | Poor scalability |
| Example Algorithms | Merge Sort, Simplex Method (in practice) | Algorithms for NP-hard problems |
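The gap between the two growth rates is easiest to appreciate numerically. The sketch below compares a cubic step count with a base-2 exponential one for a few input sizes; the exponent k = 3 and base c = 2 are arbitrary illustrative choices, not values taken from the analysis of any particular algorithm.

```python
def polynomial_steps(n, k=3):
    """Step count for a hypothetical O(n^k) algorithm."""
    return n ** k

def exponential_steps(n, c=2):
    """Step count for a hypothetical O(c^n) algorithm."""
    return c ** n

for n in (10, 20, 40, 80):
    print(f"n={n:>2}  n^3={polynomial_steps(n):>8,}  2^n={exponential_steps(n):,}")
```

At n = 10 the two counts are nearly equal (1,000 versus 1,024), but each doubling of n multiplies the cubic count by 8 while it squares the exponential one.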

The Simplex Method: A Tale of Two Performances

The simplex method, developed by George Dantzig in 1947, is a powerful algorithm for solving linear optimization problems, such as maximizing profits or minimizing costs under specific constraints [3] [19]. Its performance, however, must be analyzed from two distinct angles.

Worst-Case Exponential Complexity

In 1972, Klee and Minty proved that for the simplex method, the worst-case scenario could require an exponential number of steps relative to the number of constraints [3]. Geometrically, the algorithm navigates the vertices of a multi-dimensional shape (a polyhedron) defined by the problem's constraints. On an unlucky path, it could traverse nearly every vertex before finding the optimal solution, leading to this exponential worst-case time [3].
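The worst-case behavior can be reproduced on a small scale. The sketch below builds one common textbook scaling of the Klee-Minty cube (maximize 4x₁ + 2x₂ + x₃ subject to x₁ ≤ 5, 4x₁ + x₂ ≤ 25, 8x₁ + 4x₂ + x₃ ≤ 125 in three dimensions) and counts the pivots taken by a tableau simplex using the most-negative-reduced-cost rule. The scaling, the function names, and the minimal solver are assumptions made for illustration; other formulations and pivot rules behave differently.

```python
from fractions import Fraction

def klee_minty(n):
    """Return (c, A, b) for an n-dimensional Klee-Minty cube (one common scaling)."""
    c = [2 ** (n - 1 - j) for j in range(n)]
    A = [[2 ** (i - j + 1) if j < i else int(j == i) for j in range(n)]
         for i in range(n)]
    b = [5 ** (i + 1) for i in range(n)]
    return c, A, b

def count_dantzig_pivots(c, A, b):
    """Maximize c^T x s.t. A x <= b, x >= 0 (b >= 0); return (pivots, optimum).

    Assumes the problem is bounded, which holds for the Klee-Minty cube.
    """
    m, n = len(A), len(c)
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == k)) for k in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    pivots = 0
    while True:
        # Entering variable: most negative entry in the objective row.
        col = min(range(n + m), key=lambda j: T[-1][j])
        if T[-1][col] >= 0:
            return pivots, T[-1][-1]
        # Leaving row: minimum ratio among rows with a positive pivot entry.
        row = min((i for i in range(m) if T[i][col] > 0),
                  key=lambda i: T[i][-1] / T[i][col])
        piv = T[row][col]
        T[row] = [v / piv for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                f = T[i][col]
                T[i] = [vi - f * vr for vi, vr in zip(T[i], T[row])]
        pivots += 1

for n in range(1, 6):
    pivots, optimum = count_dantzig_pivots(*klee_minty(n))
    print(f"n={n}: {pivots} pivots, optimum {optimum}")
```

For n = 3 the walk takes 7 pivots to reach the optimum of 125, visiting all 8 vertices of the deformed cube, and the count roughly doubles with each added dimension.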

Practical Polynomial Efficiency

Despite the daunting theory, the simplex method has been a workhorse of industry for decades. As researcher Sophie Huiberts noted, "It has always run fast, and nobody’s seen it not be fast" [3] [16]. In virtually all practical applications, from supply chain management to resource allocation, the algorithm finishes in a time that scales polynomially with the problem size.

Experimental Benchmarking of Algorithm Performance

To resolve the paradox between the simplex method's worst-case theory and its practical efficacy, researchers rely on rigorous experimental benchmarking. This process involves comparing algorithmic performance using well-characterized datasets and quantitative metrics [20].

Core Principles of Benchmarking

Essential guidelines for a high-quality benchmarking study include [20]:

- Defining Purpose and Scope: Clearly stating whether the study is a neutral comparison or for demonstrating a new method's merits.

- Comprehensive Method Selection: Including all relevant algorithms or a representative subset to avoid bias.

- Diverse Datasets: Using a variety of real and simulated datasets to evaluate performance under different conditions.

- Key Quantitative Metrics: Selecting objective, relevant performance metrics like runtime and solution accuracy.
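A benchmarking study built on these guidelines needs at least a small timing harness. The sketch below runs each candidate method over each dataset several times and records the best observed wall-clock time per dataset, then summarizes with the median. It is a minimal illustration under stated assumptions: the solver callables and instances are hypothetical placeholders (Python's built-in sorting stands in for real optimization routines), and a real study would also record solution accuracy.

```python
import statistics
import time

def benchmark(solvers, instances, repeats=3):
    """Return {solver name: median over instances of the best-of-repeats runtime}."""
    results = {}
    for name, solve in solvers.items():
        per_instance = []
        for instance in instances:
            runs = []
            for _ in range(repeats):
                start = time.perf_counter()
                solve(instance)
                runs.append(time.perf_counter() - start)
            per_instance.append(min(runs))  # best-of-repeats damps timing noise
        results[name] = statistics.median(per_instance)
    return results

# Placeholder "solvers" and "datasets" used only to exercise the harness.
solvers = {
    "builtin_sorted": sorted,
    "sort_reversed": lambda data: sorted(data, reverse=True),
}
instances = [list(range(size, 0, -1)) for size in (100, 1_000, 10_000)]
timings = benchmark(solvers, instances)
for name, seconds in timings.items():
    print(f"{name}: {seconds:.6f} s")
```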

The Role of Smoothed Analysis

A key breakthrough in understanding the simplex method's performance came from smoothed analysis, pioneered by Daniel Spielman and Shang-Hua Teng in 2001 [3] [21]. This analytical framework bridges the gap between worst-case and average-case analysis by considering small, random perturbations to the input data. Spielman and Teng proved that with this tiny injection of randomness, the running time of the simplex method becomes polynomial, bounded by a function like O(n³⁰) [3]. This provided a powerful argument for why the worst-case exponential scenarios are exceptionally rare in practice.

Recent Theoretical Breakthrough and Performance Data

Building on smoothed analysis, recent research by Huiberts and Bach has further optimized the simplex algorithm and provided a stronger theoretical guarantee of its polynomial-time performance [3] [16].

Performance Comparison: Theoretical vs. Practical

The following table synthesizes the performance characteristics of the simplex method, highlighting the resolution of its efficiency paradox.

| Performance Aspect | Theoretical Worst-Case (Pre-2001) | Practical & Smoothed Analysis (Post-2001) | Bach & Huiberts (2025) |

|---|---|---|---|

| Time Complexity | Exponential (O(2^n)) | Polynomial (e.g., O(n³⁰)) | A lower, refined polynomial bound |

| Feasibility | Intractable for large n | Tractable and efficient in practice | Stronger guarantee of tractability |

| Theoretical Basis | Pure worst-case analysis | Smoothed analysis | Enhanced smoothed analysis |

| Expert Consensus | "Could be impractically slow" | "Efficient in practice, with theory to explain why" | "Fully understand this model of the simplex method" [3] |

Their work demonstrates that the runtimes are "guaranteed to be significantly lower than what had previously been established" and shows that this approach "cannot go any faster than the value they obtained" [3]. This offers a more complete theoretical explanation for the algorithm's observed speed and helps reassure those who feared exponential complexity [3].

Experimental Workflow for Benchmarking

The diagram below illustrates the standard experimental protocol for benchmarking the performance of optimization algorithms like the simplex method, as derived from established guidelines [20].

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological components and "research reagents" essential for conducting rigorous benchmarking studies in computational optimization [3] [20].

| Research Reagent / Component | Function & Description |

|---|---|

| Reference Datasets | Well-characterized real or simulated datasets used as a common ground truth for comparing algorithm performance and accuracy [20]. |

| Performance Metrics | Quantitative measures (e.g., runtime, memory usage, solution optimality gap) used to objectively evaluate and rank different algorithms [20]. |

| Simplex Method Software | Implementations of the simplex algorithm (e.g., in solvers like CPLEX, Gurobi) that are tested and configured for optimal performance [3]. |

| Smoothed Analysis Framework | The theoretical tool that introduces small random perturbations to inputs, explaining the simplex method's practical efficiency and guiding robust algorithm design [3]. |

| Benchmarking Pipeline | Automated software workflows that execute multiple algorithms on various datasets, collect results, and compute performance metrics to ensure reproducible comparisons [20]. |

The efficiency paradox of the simplex method—its theoretical exponential worst-case versus its practical polynomial speed—is no longer a deep mystery. Through the lens of smoothed analysis and reinforced by recent research, we now have a robust theoretical understanding that aligns with empirical observation. For researchers and practitioners in fields like drug development, where optimization problems are paramount, this means that the simplex method remains a reliably efficient and powerful tool. The continued refinement of its theoretical bounds assures us that the feared exponential scenarios are not a concern in practical applications, allowing for confident deployment in critical, large-scale optimization tasks.

Linear programming is a fundamental mathematical technique for optimizing a linear objective function subject to linear equality and inequality constraints. The simplex method, developed by George Dantzig in 1947, remains one of the most widely used algorithms for solving linear programming problems, particularly in resource allocation and transportation problems [3] [19]. The method operates by systematically moving from one corner point of the feasible region to an adjacent one, improving the objective function value at each step until an optimal solution is found [19].

To apply the simplex method effectively, linear programming problems must first be converted into standard maximization form, which requires all constraints to be equations (rather than inequalities) and all variables to be non-negative [22]. This transformation enables the construction of a simplex tableau, which serves as the computational foundation for the algorithm. The standard form provides a structured approach to organizing and analyzing complex systems, allowing for efficient problem-solving and decision-making across various domains, including pharmaceutical development and resource optimization [19] [22].

Standard Form Transformation Process

Conversion to Standard Form

The transformation of a linear programming problem to standard form involves several systematic steps to ensure compatibility with the simplex method. The standard form requires that all constraints are equations, all variables are non-negative, and the objective function is in either maximization or minimization form (with maximization being more common for simplex implementation) [22].

The conversion process involves the following key operations:

Objective Function Standardization: Convert maximization to minimization or vice versa by multiplying the objective function by -1. For example, a minimization problem can be converted to a maximization problem by maximizing the negative of the original objective function [22].

Inequality Constraint Transformation: Transform inequality constraints to equality constraints through the introduction of additional variables:

- For "≤" constraints: Add a slack variable (x + s = b)

- For "≥" constraints: Subtract a surplus variable and add an artificial variable (x - s + a = b) [22]

Variable Non-negativity Enforcement: Ensure all variables are non-negative by splitting free variables (variables that can take any value) into positive and negative components (x = x⁺ - x⁻) [22].

The resulting standard form exhibits these characteristics: all constraints are equations, all variables are non-negative, and the objective function is in the desired maximization or minimization form [22].
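The conversion steps above can be sketched as a small helper. This is a simplified illustration: the function name `to_standard_form` and its argument layout are assumptions made for this example, right-hand sides are assumed to be non-negative already, and the artificial variables needed to start the algorithm on "≥" constraints are left out.

```python
def to_standard_form(c, constraints, sense="max", free=()):
    """Convert an LP to: maximize c_std.x subject to A x = b, x >= 0.

    constraints: list of (coeffs, op, rhs) with op in {"<=", ">=", "="}.
    free: indices of variables that may take any sign.
    """
    # Minimization becomes maximization of the negated objective.
    c = [-v for v in c] if sense == "min" else list(c)
    rows = [list(coeffs) for coeffs, _, _ in constraints]
    # Split each free variable x_j into x_j_plus - x_j_minus (one new column each).
    for j in free:
        c.append(-c[j])
        for row in rows:
            row.append(-row[j])
    # One extra column per inequality: +1 slack for "<=", -1 surplus for ">=".
    extras = [(i, 1 if op == "<=" else -1)
              for i, (_, op, _) in enumerate(constraints) if op in ("<=", ">=")]
    for i, sign in extras:
        c.append(0)
        for k, row in enumerate(rows):
            row.append(sign if k == i else 0)
    b = [rhs for _, _, rhs in constraints]
    return c, rows, b

# minimize 2*x1 - 3*x2  s.t.  x1 + x2 <= 4,  x1 - x2 >= 1,  x1 >= 0,  x2 free
c_std, A_std, b_std = to_standard_form(
    [2, -3], [([1, 1], "<=", 4), ([1, -1], ">=", 1)], sense="min", free=(1,))
print(c_std)   # [-2, 3, -3, 0, 0]
print(A_std)   # [[1, 1, -1, 1, 0], [1, -1, 1, 0, -1]]
```

The column order in the result is: original variables, negative parts of free variables, then one slack or surplus column per inequality.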

Role of Slack Variables

Slack variables play a crucial role in the standard form transformation process and the subsequent simplex algorithm operations. These variables are introduced specifically for "≤" constraints to convert inequalities to equations [22].

Key functions of slack variables include:

Constraint Transformation: Each slack variable represents the unused resources in a constraint, effectively converting inequality constraints to equality constraints [22].

Initial Basic Feasible Solution: Slack variables provide an initial basic feasible solution for the simplex algorithm to begin its iterative process [22].

Solution Interpretation: The values of slack variables at any solution indicate the amount of unused resources, providing valuable insights for decision-making (e.g., s = 5 means 5 units of a resource remain unused) [22].

In the simplex method, slack variables are non-negative and have coefficients of +1 in their respective constraints. They are included in the objective function with coefficients of zero, indicating they do not directly contribute to the objective value [22].
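As a small worked example of the interpretation above, the slack in each "≤" constraint is simply the right-hand side minus the resources consumed. The helper name `slack_values` and the numbers are made up for illustration.

```python
def slack_values(A, b, x):
    """Slack s_i = b_i - (row i of A) . x for constraints of the form A x <= b."""
    return [bi - sum(a * xj for a, xj in zip(row, x)) for row, bi in zip(A, b)]

# Two resources with capacities 10 and 15, and a production plan x = (3, 2).
print(slack_values([[1, 1], [2, 1]], [10, 15], [3, 2]))  # [5, 7]
```

Here 5 units of the first resource and 7 units of the second remain unused; a slack of zero would mean the constraint is binding.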

Tableau Construction and Components

Initial Simplex Tableau Setup

The simplex tableau provides a tabular representation of the linear programming problem in standard form and serves as the computational framework for the simplex algorithm. The initial tableau organizes all problem elements systematically to facilitate the iterative optimization process [22].

The construction of the initial simplex tableau involves these steps:

Objective Function Row (z-row): The top row contains the coefficients of all decision variables and slack/surplus variables from the objective function, typically with signs reversed for maximization problems [22].

Constraint Rows: Subsequent rows list the coefficients of decision variables and slack/surplus variables for each constraint equation [22].

Right-Hand Side (RHS) Column: This column displays the constant values from the constraint equations [22].

Basic Variable Column: This column identifies the current basic variables, which initially are typically the slack variables [22].

Objective Function Value Cell: Located where the z-row meets the RHS column, this cell displays the current value of the objective function [22].

The initial tableau also includes an identity matrix for the slack variables, with 1's in their corresponding rows and 0's elsewhere, establishing the initial basic feasible solution [22].
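The construction steps can be sketched as follows for a problem that has only "≤" constraints with non-negative right-hand sides. The function name `initial_tableau` and the example problem are illustrative, and the z-row is placed first to match the layout described above.

```python
def initial_tableau(c, A, b):
    """Initial tableau for: maximize c.x subject to A x <= b, x >= 0, with b >= 0.

    Row 0 is the z-row; each later row is [A_i | identity column | b_i].
    Returns (tableau, basis), where basis lists the column index of each basic variable.
    """
    m = len(A)
    z_row = [-cj for cj in c] + [0] * m + [0]
    rows = [list(A[i]) + [1 if k == i else 0 for k in range(m)] + [b[i]]
            for i in range(m)]
    basis = [len(c) + i for i in range(m)]  # the slack variables start in the basis
    return [z_row] + rows, basis

# maximize 3*x1 + 5*x2  s.t.  x1 <= 4,  2*x2 <= 12,  3*x1 + 2*x2 <= 18
tableau, basis = initial_tableau([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
for row in tableau:
    print(row)
```

The three slack columns form the identity matrix, so the starting basic feasible solution sets every decision variable to zero and every slack to its right-hand side.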

Components of Simplex Tableaus

A complete simplex tableau consists of several interconnected components that collectively represent the current solution state and facilitate the iterative improvement process [22].

Table 1: Components of a Simplex Tableau

| Component | Description | Purpose |

|---|---|---|

| Objective Function Row (z-row) | Contains coefficients of all variables in the objective function | Guides the optimization direction and identifies entering variables |

| Constraint Rows | Lists coefficients of variables for each constraint | Defines the solution space and relationships between variables |

| Right-Hand Side (RHS) Column | Shows constant values from constraints | Indicates current resource availability and solution values |

| Basic Variable Column | Identifies current basic variables | Tracks which variables form the current basis |

| Objective Function Value Cell | Located at the intersection of the z-row and the RHS column | Displays current value of the objective function |

The systematic arrangement of these components enables the simplex method to efficiently navigate the feasible region by performing pivoting operations that exchange basic and non-basic variables while maintaining feasibility [19] [22].
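A single pivoting operation, and the loop that repeats it, might look like the sketch below. It assumes a tableau whose first row is the z-row, uses the most-negative-coefficient rule to pick the entering variable, and includes no safeguard against cycling; the names `pivot` and `simplex_step` are illustrative.

```python
from fractions import Fraction

def pivot(T, row, col):
    """Gauss-Jordan pivot on T[row][col]; returns a new tableau."""
    p = T[row][col]
    new_row = [v / p for v in T[row]]
    return [new_row if i == row else [a - r[col] * n for a, n in zip(r, new_row)]
            for i, r in enumerate(T)]

def simplex_step(T, basis):
    """Perform one iteration; returns (tableau, basis, improved)."""
    z = T[0][:-1]
    col = min(range(len(z)), key=lambda j: z[j])   # entering variable
    if z[col] >= 0:
        return T, basis, False                     # optimality condition met
    ratios = [(T[i][-1] / T[i][col], i) for i in range(1, len(T)) if T[i][col] > 0]
    if not ratios:
        raise ValueError("LP is unbounded")
    _, row = min(ratios)                           # leaving variable (minimum ratio)
    new_basis = basis[:]
    new_basis[row - 1] = col
    return pivot(T, row, col), new_basis, True

# maximize 3*x1 + 5*x2  s.t.  x1 <= 4,  2*x2 <= 12,  3*x1 + 2*x2 <= 18
T = [[Fraction(v) for v in row] for row in [
    [-3, -5, 0, 0, 0, 0],
    [1, 0, 1, 0, 0, 4],
    [0, 2, 0, 1, 0, 12],
    [3, 2, 0, 0, 1, 18],
]]
basis = [2, 3, 4]
improved = True
while improved:
    T, basis, improved = simplex_step(T, basis)
print(T[0][-1], basis)  # 36 [2, 1, 0]
```

Two pivots suffice here: x2 enters first, then x1, giving x1 = 2, x2 = 6 and an objective value of 36. Exact fractions are used so that the ratio test is not disturbed by rounding.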

Experimental Comparison of Simplex-Based Methods

Methodology for Efficiency Assessment

To evaluate the efficiency of simplex-based optimization methods in practical applications, we examine experimental data from recent implementations across different domains. The assessment focuses on computational efficiency, solution quality, and convergence behavior compared to alternative optimization approaches [23] [24].

The experimental protocols for evaluating simplex method efficiency typically include:

Benchmark Problems: Application to standardized test problems with known optimal solutions to verify correctness and measure solution accuracy [23] [24].

Performance Metrics: Tracking of iteration counts, computational time, objective function evaluations, and memory usage across different problem sizes and complexities [23].

Comparison Framework: Parallel implementation of multiple algorithms on identical hardware and software platforms to ensure fair comparison [24].

Statistical Validation: Repeated runs with different initial conditions to account for variability, followed by statistical significance testing of results [24].

In the context of microwave design optimization, researchers have employed dual-fidelity electromagnetic (EM) simulations, where low-resolution models (Rc) are used for initial sampling and global search, while high-resolution models (Rf) are reserved for final parameter tuning to ensure reliability while maintaining computational efficiency [23].
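The two-stage idea can be illustrated with a toy one-dimensional problem. This sketch is not the procedure of [23]: the two model functions are invented stand-ins for the low-resolution (Rc) and high-resolution (Rf) simulations, and the local refinement is a plain step-halving search chosen for brevity.

```python
def fine_model(x):
    """Stand-in for the expensive, accurate model (Rf). Hypothetical."""
    return (x - 1.3) ** 2

def coarse_model(x):
    """Stand-in for the cheap, slightly biased model (Rc). Hypothetical."""
    return (x - 1.0) ** 2 + 0.1

def dual_fidelity_minimize(coarse, fine, lo, hi, samples=21, tol=1e-6):
    """Global sampling with the coarse model, then local tuning with the fine model."""
    step = (hi - lo) / (samples - 1)
    # Stage 1: pick the best grid point using only cheap evaluations.
    x = min((lo + i * step for i in range(samples)), key=coarse)
    # Stage 2: refine locally with the expensive model, halving the step on failure.
    fx, fine_evals, h = fine(x), 1, step
    while h > tol:
        for cand in (x - h, x + h):
            fc = fine(cand)
            fine_evals += 1
            if fc < fx:
                x, fx = cand, fc
                break
        else:
            h /= 2
    return x, fx, fine_evals

x_best, f_best, n_fine = dual_fidelity_minimize(coarse_model, fine_model, -1.0, 3.0)
print(round(x_best, 4), n_fine)
```

The coarse model steers the search to the right neighborhood, so the expensive model is only called during the final tuning stage.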

Performance Data and Comparative Analysis

Recent experimental studies demonstrate the continued relevance and efficiency of simplex-based methods, particularly when enhanced with modern computational techniques. The following table summarizes comparative performance data from recent implementations:

Table 2: Performance Comparison of Optimization Algorithms

| Algorithm | Application Domain | Problem Size (Variables) | Computational Cost | Solution Quality | Convergence Speed |

|---|---|---|---|---|---|

| Simplex Method | General LP Problems | Small to Medium (≤1000) | Low to Moderate | High (Exact Solutions) | Fast for Well-Behaved Problems |

| SMCFO (Simplex-Enhanced CFO) | Data Clustering | 14 UCI Datasets | ~45 EM Analyses | Superior Accuracy | Faster Convergence |

| Dual-Fidelity Simplex | Microwave Design | 5-10 Parameters | 50-100 EM Simulations | Competitive Quality | Remarkable Efficiency |

| PSO | Data Clustering | 14 UCI Datasets | Higher than SMCFO | Lower than SMCFO | Slower than SMCFO |

| SSO | Data Clustering | 14 UCI Datasets | Higher than SMCFO | Lower than SMCFO | Slower than SMCFO |

The SMCFO algorithm, which incorporates the Nelder-Mead simplex method into the Cuttlefish Optimization algorithm, demonstrates particularly impressive performance. In comprehensive evaluations using 14 datasets from the UCI Machine Learning Repository, SMCFO consistently outperformed established clustering algorithms including CFO, PSO, SSO, and SMSHO, achieving higher clustering accuracy, faster convergence, and improved stability [24]. The robustness of these outcomes was further confirmed through nonparametric statistical tests, which demonstrated that SMCFO's performance improvements were statistically significant [24].
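A nonparametric comparison of two algorithms over paired datasets can be sketched with an exact sign test. This is one simple choice made for illustration, not necessarily the test used in [24], and the accuracy figures below are invented placeholders rather than reported results.

```python
from math import comb

def sign_test(a, b):
    """Two-sided exact sign test p-value for paired samples a and b (ties dropped)."""
    diffs = [x - y for x, y in zip(a, b) if x != y]
    n = len(diffs)
    k = sum(d > 0 for d in diffs)
    # Probability of a split at least this lopsided under a fair coin, one tail.
    tail = sum(comb(n, i) for i in range(min(k, n - k) + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Hypothetical per-dataset accuracies for two methods (illustrative only).
method_a = [0.91, 0.88, 0.95, 0.80, 0.77, 0.93, 0.85, 0.90, 0.89, 0.92]
method_b = [0.89, 0.85, 0.94, 0.78, 0.75, 0.90, 0.84, 0.88, 0.86, 0.91]
print(sign_test(method_a, method_b))  # 0.001953125
```

With ten wins out of ten, the p-value is 2/1024, so the null hypothesis of equal performance would be rejected at conventional significance levels.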

Research Reagent Solutions for Optimization Studies

The experimental investigation of optimization algorithms requires specific computational tools and frameworks. The following table outlines essential "research reagents" - key software and computational resources used in contemporary optimization studies:

Table 3: Essential Research Reagents for Optimization Experiments

| Research Reagent | Function | Application in Optimization Studies |

|---|---|---|

| Dual-Fidelity EM Simulators | Provide multi-resolution circuit analysis | Enable efficient pre-screening and reliable final tuning in microwave design optimization [23] |

| Simplex-Based Regressors | Model circuit operating parameters | Facilitate objective function regularization and optimum design identification [23] |

| UCI Machine Learning Repository | Source of standardized datasets | Provide benchmark problems for algorithm validation and comparison [24] |

| Statistical Testing Frameworks | Non-parametric significance tests | Validate performance improvements and algorithm robustness [24] |

| Sensitivity Update Algorithms | Restricted updating based on principal directions | Accelerate final parameter tuning stage in optimization workflows [23] |

These research reagents enable the implementation, testing, and validation of simplex-based optimization methods across various domains, from electronic design to data clustering. The dual-fidelity simulation approach is particularly valuable for computational efficiency, allowing researchers to use faster, lower-resolution models for exploratory phases while reserving high-resolution analysis for final verification [23].

Workflow and Signaling Pathways in Simplex Optimization

The simplex method follows a systematic workflow that can be conceptualized as a "signaling pathway" for mathematical optimization. The following diagram illustrates the logical relationships and procedural flow in standard form transformation and tableau-based optimization:

The simplex workflow begins with problem formulation and standard form conversion, proceeds through iterative tableau improvement via pivot operations, and terminates when optimality conditions are satisfied. This systematic process ensures reliable convergence to optimal solutions for linear programming problems [19] [22].

Recent enhancements to traditional simplex methods have incorporated additional computational techniques to improve efficiency. The following diagram illustrates a modern simplex-based optimization framework integrating multiple acceleration strategies:

This enhanced framework demonstrates how traditional simplex concepts have been integrated with modern computational techniques, including surrogate modeling and variable-fidelity simulations, to address complex optimization challenges more efficiently [23].

The transformation of linear programming problems to standard form through the introduction of slack variables and the construction of simplex tableaus remains a fundamental process in mathematical optimization. While the core simplex method developed by George Dantzig continues to be widely used, contemporary research has enhanced its efficiency through integration with other optimization paradigms and computational techniques [3] [23] [24].

Experimental comparisons demonstrate that simplex-based methods, particularly when enhanced with strategies like dual-fidelity simulations and restricted sensitivity updates, maintain competitive performance against alternative optimization approaches. The SMCFO algorithm's superior performance in data clustering applications highlights the continued potential of simplex-based approaches in computational science and operations research [24].

The structured methodology of standard form transformation and tableau construction provides a robust foundation for solving complex optimization problems across diverse domains, from pharmaceutical development to electronic design. As optimization challenges continue to evolve in scale and complexity, the principles of simplex optimization remain essential knowledge for researchers and practitioners engaged in computational problem-solving.

The long-standing debate over polynomial-time complexity, particularly concerning the practical efficiency of fundamental algorithms, has seen a major theoretical breakthrough. New research has successfully closed a key gap in the understanding of the simplex method, a cornerstone algorithm for linear optimization. This guide compares this theoretical progress against the established landscape of computational complexity.

Understanding the Complexity Debate

The P vs NP problem, a fundamental question in computer science, asks whether every problem whose solution can be quickly verified (NP) can also be quickly solved (P) [25]. This has profound implications for fields like cryptography and optimization. While this problem remains open, a parallel debate has existed for decades around the simplex method, a classic algorithm for solving linear programming problems.

- The Simplex Paradox: Despite being exceptionally fast in practice for real-world problems, the simplex method has long been shadowed by its theoretical worst-case complexity, which is exponential [3]. This meant that for specially crafted problems, the time it takes to find a solution could grow exponentially with the problem size, creating a disconnect between theory and practice [3].

- The Smoothed Analysis Bridge: In 2001, Spielman and Teng introduced smoothed analysis to resolve this paradox [26]. They showed that by adding tiny amounts of random noise to the worst-case inputs, the expected runtime of the simplex method becomes polynomial [3]. This provided a compelling explanation for its real-world efficiency, though the specific polynomial bound was high (e.g., to the power of 30) [3].

A Landmark Theoretical Advance

In 2025, researchers Eleon Bach and Sophie Huiberts announced a definitive advancement in this area. They established the optimal smoothed complexity for the simplex method, proving that a specific variant runs in \(O(\sigma^{-1/2} d^{11/4} \log(n)^{7/4})\) pivot steps, where \(d\) is the number of variables, \(n\) is the number of constraints, and \(\sigma\) is the standard deviation of the introduced noise [26]. Crucially, they also proved a matching lower bound, demonstrating that this result cannot be substantially improved and that their algorithm has optimal noise dependence [26].

The following workflow illustrates the evolution of analysis that led to this result and its theoretical implications.

Figure 1: The research trajectory from identifying the simplex paradox to resolving it with optimal smoothed analysis.

Comparative Performance Analysis

The table below quantitatively situates the performance of different algorithmic analyses, highlighting the significance of the 2025 advance.

| Analysis Framework | Theoretical Runtime Bound | Practical Relevance | Key Limitation |

|---|---|---|---|

| Worst-Case Analysis | Exponential time [3] | Low; does not reflect real-world performance | Overly pessimistic for practical scenarios |

| Spielman & Teng (2001) | Polynomial time, high exponent (e.g., ~n³⁰) [3] [26] | High; explains practical efficiency | Bound not tight; high exponent implies long runtime for large n |

| Huiberts, Lee & Zhang (2023) | \(O(\sigma^{-3/2} d^{13/4} \log(n)^{7/4})\) [26] | High; improved theoretical guarantee | Not proven to be the best possible |

| Bach & Huiberts (2025) | \(O(\sigma^{-1/2} d^{11/4} \log(n)^{7/4})\) with matching lower bound [26] | Highest; defines the best possible performance | Theoretical model; direct practical speed-ups may be limited |

This advance is primarily theoretical, providing a complete understanding of the simplex method's performance under smoothing rather than a new, directly usable software tool. It confirms that the existing family of simplex algorithms is fundamentally efficient for typical problems.
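To get a feel for the size of the improvement, the two most recent bounds in the table can be evaluated side by side. The sketch below drops the hidden constants in the O-notation, so only the ratio between the two expressions is meaningful; the function names and the sample values of sigma, d, and n are arbitrary choices for illustration.

```python
import math

def bound_2023(sigma, d, n):
    """sigma^(-3/2) * d^(13/4) * log(n)^(7/4), constants omitted."""
    return sigma ** -1.5 * d ** 3.25 * math.log(n) ** 1.75

def bound_2025(sigma, d, n):
    """sigma^(-1/2) * d^(11/4) * log(n)^(7/4), constants omitted."""
    return sigma ** -0.5 * d ** 2.75 * math.log(n) ** 1.75

sigma, d, n = 0.01, 100, 1000
ratio = bound_2023(sigma, d, n) / bound_2025(sigma, d, n)
print(ratio)
```

The logarithmic factors cancel, leaving a ratio of d^(1/2) / sigma. For sigma = 0.01 and d = 100 that is roughly a thousandfold reduction in the guaranteed number of pivot steps.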

Experimental & Theoretical Protocols

The methodology behind this result is deeply mathematical, relying on a sophisticated analysis of high-dimensional geometry and probability.

- Core Methodology: Smoothed Analysis. This framework blends worst-case and average-case analysis. It considers an adversary who chooses a worst-case linear program, after which a small amount of random noise (e.g., Gaussian with standard deviation \(\sigma\)) is added to the constraint data. The analyzed runtime is the expected runtime over this noise distribution [3] [26].

- Theoretical Workflow:

  - Model Selection: Focus on a specific pivot rule (e.g., the "shadow vertex" rule) for traversing the problem's polyhedron.

  - Geometric Transformation: Analyze the algorithm's path through the polyhedron's vertices as a function of the perturbed constraints.

  - Probabilistic Bounding: Use techniques from probability and combinatorics to establish an upper bound on the expected number of steps (pivots) the algorithm takes.

  - Lower Bound Construction: Design a family of instances that forces any simplex method to take at least a number of steps proportional to the upper bound, proving optimality.

- Validation: The proof itself, subject to peer review, is the primary validation. The result aligns with and strengthens the long-standing empirical observation of the simplex method's efficiency [3].

The Scientist's Toolkit: Research Reagent Solutions

For researchers working in computational optimization and algorithm analysis, the following "reagents" are essential.

| Research Tool / Concept | Function in Analysis |

|---|---|

| Linear Programming (LP) | The core problem class being solved; a model for resource allocation under constraints. |

| Simplex Method | The iterative algorithm that moves along the edges of the feasible polyhedron to find the optimal solution. |

| Worst-Case Analysis | Provides a performance guarantee for any possible input, establishing a problem's complexity class. |

| Smoothed Analysis | Explains an algorithm's performance on real-world, noisy data, bridging worst-case and average-case. |

| Kolmogorov Complexity | Measures the information content of a string; related frameworks can pinpoint hard problem instances [27]. |

| Polynomial vs. Exponential Time | The fundamental dichotomy in complexity theory; exponential time scales poorly with input size [25]. |

Interpretation for Drug Development Professionals

For professionals in drug discovery, these theoretical advances reinforce the reliability of optimization tools used in critical processes.

- Rationale for Efficiency: Many logistics, scheduling, and resource allocation problems in clinical trials and manufacturing can be modeled as Linear Programs. This research provides a stronger theoretical assurance that the simplex solvers used to tackle these problems are robust and efficient on real-world data, not just on paper.

- Beyond Classical Limits: The concept of "queasy instances"—problems that are easy for quantum computers but hard for classical ones—highlights a future pathway [27]. As quantum computing matures, it may open new avenues for solving complex optimization problems in molecular simulation and compound optimization that are currently intractable.

Implementing Simplex Optimization: From Analytical Chemistry to ADME Profiling

In mathematical optimization, Dantzig's simplex algorithm represents a fundamental method for solving linear programming (LP) problems by systematically examining vertices of the feasible region defined by constraints [2]. The algorithm operates on linear programs in canonical form, maximizing or minimizing a linear objective function subject to linear equality and inequality constraints [2]. In analytical chemistry and pharmaceutical development, simplex optimization has emerged as a practical methodology for improving the performance of systems, processes, and products by investigating multiple variables and their respective levels simultaneously [4]. Unlike univariate optimization, which changes one factor at a time while holding others constant, simplex methods can assess interaction effects between variables, providing significant advantages in experimental efficiency [4].

The core geometrical principle underlying simplex algorithms involves moving a geometric figure with k + 1 vertexes through an experimental field toward an optimal region, where k equals the number of variables in a k-dimensional domain [4]. In one dimension, this simplex is represented by a line; in two dimensions, by a triangle; in three dimensions, by a tetrahedron; and in higher dimensions, by hyperpolyhedrons [4]. This article examines the fundamental characteristics, performance differences, and practical applications of basic and modified simplex algorithms, providing researchers with evidence-based guidance for selecting the appropriate approach within efficiency studies.
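A starting figure with k + 1 vertices can be generated from a single initial point, as in the sketch below. It builds a right-angled simplex by offsetting the start point along each axis in turn, which is one common and simple construction rather than the regular figure some references prescribe; the function name `initial_simplex` and the example values are illustrative.

```python
def initial_simplex(start, step):
    """Return k + 1 vertices: the start point plus one vertex offset along each axis."""
    vertices = [list(start)]
    for i in range(len(start)):
        vertex = [v + (step if j == i else 0) for j, v in enumerate(start)]
        vertices.append(vertex)
    return vertices

# Two variables (k = 2) give a triangle with three vertices.
print(initial_simplex([1.0, 2.0], 0.5))  # [[1.0, 2.0], [1.5, 2.0], [1.0, 2.5]]
```

Each vertex corresponds to one experiment, so k variables require k + 1 initial experiments before the first move can be made.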

Fundamental Principles and Historical Context

Historical Development

The simplex algorithm was developed by George Dantzig in 1947 while he worked on planning methods for the US Army Air Force [2] [3]. His fundamental insight was recognizing that most planning "ground rules" could be translated into a linear objective function requiring maximization [2]. The algorithm's name derives from the concept of a simplex, suggested by T. S. Motzkin, though the method actually operates on simplicial cones that become proper simplices with additional constraints [2]. Nearly 80 years after its development, the simplex method remains among the most widely used tools for logistical and supply-chain decisions under complex constraints [3].

The Basic Simplex Method

The basic simplex algorithm, also known as the fixed-size simplex, employs a regular geometric figure that maintains constant size during displacement toward optimum conditions [4]. Because the figure never changes size, the initial simplex size is a crucial determinant of optimization efficiency, and choosing it requires prior experience with the system under investigation [4]. Dantzig's linear programming algorithm, which shares the name but works on a tableau rather than on experimental vertices, first transforms the problem to standard form by: (1) introducing new variables for lower bounds other than zero, (2) adding slack/surplus variables to convert inequality constraints to equalities, and (3) eliminating unrestricted variables [2].
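For the fixed-size experimental simplex described above, the basic move is to discard the vertex with the worst response and replace it with its mirror image through the centroid of the remaining vertices. The sketch below assumes the response is being maximized; the function name `reflect_worst` and the sample responses are illustrative.

```python
def reflect_worst(simplex, responses):
    """Return (index of worst vertex, reflected vertex) for a maximization problem."""
    worst = min(range(len(simplex)), key=lambda i: responses[i])
    others = [v for i, v in enumerate(simplex) if i != worst]
    # Centroid of all vertices except the worst one.
    centroid = [sum(col) / len(others) for col in zip(*others)]
    # Mirror the worst vertex through that centroid.
    reflected = [2 * c - w for c, w in zip(centroid, simplex[worst])]
    return worst, reflected

# Triangle in two variables with measured responses 1, 3 and 2.
print(reflect_worst([[0, 0], [1, 0], [0, 1]], [1, 3, 2]))  # (0, [1.0, 1.0])
```

The reflected point defines the next experiment. Because the reflection preserves edge lengths, the figure keeps its size from move to move.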

Dantzig's method operates through pivot operations that move from one basic feasible solution to an adjacent one by selecting nonzero pivot elements in nonbasic columns, effectively walking along edges of the polytope to extreme points with improving objective values [2]. This process continues until it reaches the maximum value or identifies an unbounded edge, which indicates that the objective has no finite optimum [2]. Because the polytope has a finite number of vertices [2], the algorithm terminates provided that cycling at degenerate vertices is prevented, for example by an anti-cycling pivot rule such as Bland's rule.

The Modified Simplex Approach

Nelder-Mead Enhancements

In 1965, Nelder and Mead proposed significant modifications to the basic simplex algorithm to enable additional movements better suited for locating optimum points with sufficient accuracy and speed [4]. Unlike the fixed-size basic simplex, the modified approach allows constant changes to the figure size through expansion and contraction of reflected vertices [4]. These modifications include several major moves: reflection away from the worst vertex, expansion in the reflection direction if response improves, contraction when reflection fails to improve results, and reduction toward the best vertex when no improvement occurs [4].
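The four moves can be sketched in a compact, dependency-free routine. This version minimizes a function (maximizing a response is the same as minimizing its negative), uses the commonly quoted coefficients of 1, 2, 0.5 and 0.5 for reflection, expansion, contraction and reduction, and runs for a fixed number of iterations instead of testing for convergence. It is a teaching sketch under those assumptions, not a production implementation.

```python
def nelder_mead(f, simplex, iters=200, alpha=1.0, gamma=2.0, rho=0.5, sigma=0.5):
    """Minimize f starting from a list of k + 1 vertices in k dimensions."""
    pts = [list(p) for p in simplex]
    for _ in range(iters):
        pts.sort(key=f)
        best, worst = pts[0], pts[-1]
        k = len(pts) - 1
        centroid = [sum(p[j] for p in pts[:-1]) / k for j in range(k)]

        def along(t):
            # Point at centroid + t * (centroid - worst).
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        refl = along(alpha)                           # reflection
        if f(refl) < f(best):
            exp = along(gamma)                        # expansion
            pts[-1] = exp if f(exp) < f(refl) else refl
        elif f(refl) < f(pts[-2]):
            pts[-1] = refl
        else:
            # Contraction: outside if the reflection beat the worst vertex, else inside.
            con = along(rho) if f(refl) < f(worst) else along(-rho)
            if f(con) < min(f(refl), f(worst)):
                pts[-1] = con
            else:
                # Reduction: shrink every vertex toward the best one.
                pts = [best] + [[b + sigma * (x - b) for b, x in zip(best, p)]
                                for p in pts[1:]]
    return min(pts, key=f)

def bowl(p):
    """Simple test surface with its minimum at (1, -2)."""
    return (p[0] - 1) ** 2 + (p[1] + 2) ** 2

x_opt = nelder_mead(bowl, [[0, 0], [1, 0], [0, 1]])
print([round(v, 3) for v in x_opt])
```

In laboratory use each call to `f` is an experiment, so the iteration count would be replaced by a stopping rule based on the spread of responses across the vertices.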

The modified simplex method introduces adaptive geometrical operations that dynamically resize and reshape the simplex based on response surface characteristics. This flexibility allows the algorithm to accelerate toward promising regions while contracting in areas of poor performance, providing more efficient navigation through complex experimental domains.

Advanced Computational Implementations

Recent research has developed sophisticated variants like the Funplex algorithm, which modifies the primal simplex approach to efficiently explore near-optimal spaces by reducing computational redundancy [28]. This implementation leverages the fact that solvers walk along feasible space boundaries, tracking intermediary solutions to gain additional information about near-optimal regions [28]. Funplex uses a multi-objective Simplex tableau to store and track multiple objectives simultaneously, maintaining each objective's values and relative cost vectors throughout the optimization process [28].

Comparative Performance Analysis

Theoretical Efficiency Considerations

The simplex method has demonstrated remarkable practical efficiency since its inception, though theoretical analyses have identified potential exponential worst-case scenarios for certain pivot rules [3]. In 1972, Klee and Minty proved that completion time could rise exponentially with the number of constraints under Dantzig's original pivot rule, and similar exponential examples were later constructed for other deterministic rules such as Bland's rule and the Largest Increase rule [3] [29]. This exponential behavior occurs when algorithms make suboptimal edge selections at each vertex, potentially following the longest possible path to the solution [3].

Recent theoretical breakthroughs have substantially improved our understanding of simplex efficiency. In 2001, Spielman and Teng demonstrated that introducing minimal randomness prevents worst-case scenarios, reducing complexity to polynomial time [3]. More recently, Huiberts and Bach established even faster guaranteed runtimes with enhanced randomness, providing mathematical justification for the method's observed practical efficiency [3].

Experimental Performance Data

Experimental comparisons in analytical and pharmaceutical contexts demonstrate significant performance differences between basic and modified simplex approaches:

Table 1: Performance Comparison in Pharmaceutical Formulation Optimization [30]

| Metric | Basic Simplex | Modified Simplex |

|---|---|---|

| Experiments to optimum | 12-15 | 8-10 |

| Disintegration time (sec) | 15-18 | <10 |

| Tablet hardness | Adequate | Optimal |

| Formulation robustness | Moderate | High |

| Interaction detection | Limited | Comprehensive |

Table 2: Computational Efficiency in Linear Programming [31] [28]

| Characteristic | Basic Simplex | Modified Simplex |

|---|---|---|

| Worst-case complexity | Exponential [29] | Polynomial [3] |

| Memory requirements | Moderate | Higher (tableau storage) |

| Convergence guarantee | Yes (finite) | Yes |

| Near-optimal space exploration | Limited | Comprehensive [28] |

| Computational speed | Variable | 5x faster in case studies [28] |

Experimental Protocols and Methodologies

Pharmaceutical Formulation Optimization

In developing fast-dissolving clozapine tablets, researchers employed a sequential simplex methodology to optimize direct compression formulations [30]. Microcrystalline cellulose and polyplasdone were selected as independent variables, with formulations evaluated for disintegration time, hardness, and friability responses [30]. The optimization success was evaluated using a total response equation generated according to response parameter priorities [30]. Based on response rankings, the experimental sequence continued through reflection, expansion, or contraction operations until achieving the desirable disintegration time of less than 10 seconds with adequate hardness [30].

Analytical Chemistry Applications

In trace heavy metal determination using square-wave anodic stripping voltammetry, researchers applied simplex optimization to enhance in-situ film electrode performance [32]. A fractional factorial design initially evaluated five factors: mass concentrations of Bi(III), Sn(II), and Sb(III); accumulation potential; and accumulation time [32]. Subsequent simplex optimization determined optimum conditions for these factors, significantly improving analytical performance compared to initial experiments and pure in-situ film electrodes [32]. This systematic approach simultaneously considered multiple analytical parameters including quantification limits, linear concentration range, sensitivity, accuracy, and precision [32].

Table 3: Essential Research Reagents and Materials

| Reagent/Material | Function/Application | Field |
|---|---|---|
| Microcrystalline cellulose | Direct compression excipient | Pharmaceutical formulation [30] |
| Polyplasdone | Disintegrant in tablet formulation | Pharmaceutical formulation [30] |
| Bi(III) solution | In-situ bismuth-film electrode formation | Electroanalytical chemistry [32] |
| Sb(III) solution | In-situ antimony-film electrode formation | Electroanalytical chemistry [32] |
| Sn(II) solution | In-situ tin-film electrode formation | Electroanalytical chemistry [32] |
| Acetate buffer (pH 4.5) | Supporting electrolyte | Electroanalytical chemistry [32] |

Selection Guidelines for Research Applications

Algorithm Selection Framework

Choosing between basic and modified simplex approaches requires careful consideration of research objectives, system characteristics, and computational resources:

Basic simplex is recommended for simpler systems with expected smooth response surfaces, limited computational resources, and when researcher experience provides good initial simplex size estimation [4].

Modified simplex is preferable for complex, multimodal response surfaces, when acceleration toward optimum is desired, and when adaptive navigation around constraints is necessary [4] [30].

Specialized variants like Funplex are optimal for exploring near-optimal spaces in energy systems modeling and capacity planning where identifying multiple comparable alternatives benefits decision-making [28].

Emerging Trends and Future Directions

Recent research developments indicate several promising directions for simplex optimization in scientific applications. Multi-objective simplex optimization and hybridization with other optimization methods represent growing areas of investigation [4]. The integration of fuzzy and intuitionistic fuzzy extensions with simplex methods enables decision-making under uncertainty conditions common in real-world applications [33]. Additionally, randomized pivot rules have demonstrated improved average performance compared to classical deterministic approaches, potentially overcoming exponential worst-case behaviors [29].

For drug development professionals, these advances suggest increasingly sophisticated optimization capabilities for complex formulation challenges involving multiple competing objectives and uncertain parameter spaces.

Basic and modified simplex algorithms offer complementary strengths for optimization challenges in scientific research and pharmaceutical development. The basic simplex provides a robust, easily implementable approach for well-behaved systems with predictable characteristics. In contrast, the modified simplex delivers enhanced efficiency and adaptability for complex, multi-dimensional optimization landscapes. Recent computational advances incorporating randomization and multi-objective capabilities further extend the method's applicability to contemporary research challenges. By understanding the fundamental characteristics, performance trade-offs, and implementation requirements of each approach, researchers can make informed selections aligned with their specific experimental objectives and system constraints.

Experimental Design Optimization (EDO) represents a paradigm shift in scientific research, moving beyond traditional heuristic approaches to a systematic methodology that maximizes information gain while minimizing resource expenditure. This approach is particularly crucial in fields like drug development, where resource constraints and ethical considerations demand maximum efficiency from every experiment. By applying mathematical optimization principles to experimental planning, researchers can significantly enhance sensitivity—the ability to detect true effects—while substantially reducing operational costs, from materials and personnel time to data collection expenses [34].

The fundamental challenge EDO addresses is the inherent trade-off in experimental science: using sufficient resources to achieve statistically valid results without wasteful redundancy. Traditional approaches often rely on balanced designs and standardized protocols that may be suboptimal for specific research questions. In contrast, optimized experimental design tailors the approach to the precise scientific inquiry, leveraging statistical principles and computational methods to identify the most efficient path to conclusive results [35]. This methodology has evolved from early statistical foundations to incorporate sophisticated algorithms, including simplex-based methods that provide efficient navigation through complex experimental parameter spaces [3] [24].

Theoretical Foundations of Sensitivity and Efficiency

Statistical Sensitivity in Experimental Design

Statistical sensitivity, typically measured through power analysis, represents the probability that an experiment will correctly reject a false null hypothesis. Traditional approaches to sensitivity enhancement often focus solely on increasing sample sizes, which directly escalates costs. However, optimized experimental design achieves sensitivity improvements through more sophisticated means, including strategic allocation of resources and refined measurement protocols [35].

The relationship between sensitivity and experimental setup can be demonstrated through the statistical power function for a two-sided test:

\[ \text{Power} = 1 - \beta = P\left(|t| > t_{1-\alpha/2} \mid \delta, \sigma, n_1, n_2\right) \]

Where δ represents the true difference between groups, σ the standard deviation, n₁ and n₂ the sample sizes, and α the significance level. Optimized design manipulates these variables strategically rather than simply increasing n [35].
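To make the dependence on δ, σ, n₁, n₂, and α concrete, the sketch below evaluates a normal approximation to this power function. It is an illustration under stated assumptions rather than the exact calculation: the t distribution is replaced by the standard normal (reasonable for moderate sample sizes), σ is taken as known and common to both groups, and the function name `two_sample_power` is hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_sample_power(delta, sigma, n1, n2, alpha=0.05):
    """Approximate power of a two-sided, two-sample test (normal approximation)."""
    z = NormalDist()
    se = sigma * sqrt(1 / n1 + 1 / n2)   # standard error of the mean difference
    z_crit = z.inv_cdf(1 - alpha / 2)    # two-sided critical value
    shift = delta / se                   # standardized true effect
    # Probability of landing in either rejection tail.
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Same total of 20 subjects, two different allocations (illustrative numbers).
print(two_sample_power(delta=1.0, sigma=1.0, n1=10, n2=10))
print(two_sample_power(delta=1.0, sigma=1.0, n1=16, n2=4))
```

For a single two-group comparison with a fixed total, the balanced split has the smaller standard error and therefore the higher power, which shows that allocation alone changes sensitivity without adding subjects.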

The Cost-Sensitivity Tradeoff Framework

The fundamental challenge in experimental design lies in balancing sensitivity against practical constraints. This tradeoff can be conceptualized through an efficiency frontier curve, where each point represents a different experimental configuration. Optimal designs reside along this frontier, providing maximum sensitivity for a given resource investment [36].
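One way to picture the efficiency frontier is as the set of candidate designs that no other design beats on both cost and sensitivity. The sketch below filters a list of candidates down to that set. The design names, costs, and power values are invented for illustration, and `efficiency_frontier` is a hypothetical helper, not a method taken from the cited work.

```python
def efficiency_frontier(designs):
    """Return the designs not dominated in (cost, power).

    `designs` is a list of (name, cost, power) tuples. A design is dropped
    when another one costs no more and is at least as powerful.
    """
    frontier = []
    best_power = float("-inf")
    # Sort by cost ascending, then power descending, and sweep once.
    for name, cost, power in sorted(designs, key=lambda d: (d[1], -d[2])):
        if power > best_power:
            frontier.append((name, cost, power))
            best_power = power
    return frontier


# Hypothetical candidate designs: (name, cost in arbitrary units, power).
candidates = [
    ("A", 10, 0.60),
    ("B", 12, 0.55),
    ("C", 15, 0.80),
    ("D", 20, 0.78),
    ("E", 25, 0.90),
]
for name, cost, power in efficiency_frontier(candidates):
    print(name, cost, power)
```

In this made-up example, designs B and D are excluded because a cheaper design already achieves higher power; the remaining designs trace out the frontier from which a researcher would choose according to budget.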

Table: Key Factors in the Cost-Sensitivity Tradeoff

| Factor | Impact on Sensitivity | Impact on Cost | Optimization Approach |
|---|---|---|---|
| Sample Size | Directly increases power | Linear increase | Strategic allocation rather than blanket increases [35] |
| Control Group Allocation | Critical for comparison precision | Fixed component | Optimal ratio to treatment groups [35] |
| Measurement Precision | Reduces variability | Often exponential cost | Balance between replication and precision [37] |
| Design Complexity | Captures interactions | Increases analysis requirements | Focus on most influential variables [36] |

Methodological Approaches to Experimental Optimization

The Simplex Optimization Framework

The simplex method represents a cornerstone of experimental optimization, providing a computationally efficient approach to navigating complex parameter spaces. Originally developed by George Dantzig for military logistics problems, simplex optimization has since been adapted for scientific experimental design [3]. The algorithm operates by constructing a polyhedral feasible region defined by experimental constraints, then systematically navigating from vertex to vertex to identify optimal conditions [3].

In practical terms, simplex methods transform experimental optimization into a geometric problem. Each experimental parameter combination represents a point in multidimensional space, with constraints forming boundaries. In the sequential simplex procedure used for experiments, which is distinct from the vertex-to-vertex pivoting of Dantzig's linear programming method, the algorithm explores this space by evaluating points on an evolving geometric figure (the simplex) that moves toward optimal regions through reflection, expansion, and contraction operations [24]. This approach has proven particularly valuable in high-dimensional optimization problems common in drug development, where numerous factors simultaneously influence outcomes [23].

Comparative Analysis of Optimization Methodologies

Table: Experimental Optimization Method Comparison

| Method | Mechanism | Best Application Context | Sensitivity Advantages | Cost/Limitations |
|---|---|---|---|---|
| Simplex Methods [3] [24] | Geometric navigation of parameter space | Continuous parameter optimization | High efficiency in constrained spaces | Moderate computational requirements |
| Population-based Metaheuristics [23] [24] | Parallel exploration with candidate solutions | Multimodal problems, global search | Robustness to local optima | High computational cost (1000s of evaluations) |
| Adaptive Design Optimization [34] | Bayesian updating with sequential designs | Cognitive science, psychophysics | Maximum information per trial | Complex implementation, computational intensity |
| Traditional Balanced Designs [35] | Equal allocation across groups | Preliminary exploration | Conceptual simplicity | Statistical inefficiency, higher resource use |
| Surrogate-assisted Optimization [23] | Hybrid approach with simplified models | Computationally expensive simulations | Enables global search with EM simulations | Model construction overhead |

Implementing Simplex Optimization in Experimental Design

The Simplex Optimization Workflow

The implementation of simplex optimization follows a structured workflow that balances systematic exploration with efficient convergence. This methodology is particularly valuable in experimental contexts where each evaluation represents substantial resource investment, such as in vitro assays or animal studies [24].

Key Operations in Simplex Optimization

The simplex method employs four fundamental operations to navigate the experimental parameter space efficiently:

- Reflection: Moving the worst-performing experimental condition through the centroid of the remaining points to the opposite side of the simplex. This operation preserves the simplex volume while exploring promising directions [24].

- Expansion: If reflection identifies a significantly improved experimental condition, further exploration in this direction through expansion can accelerate progress toward the optimum [24].

- Contraction: When reflection fails to yield improvement, contraction moves the worst point closer to the centroid, refining the search in a more promising region [24].

- Shrinkage: If the simplex becomes stuck, for example when contraction also fails to improve on the worst point, shrinkage pulls every other vertex toward the best-performing experimental condition, resetting the search scale [24].

These operations create a dynamic balance between exploration of new parameter combinations and exploitation of known promising regions, making simplex methods particularly efficient for experimental optimization where evaluation costs are substantial [24].
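The four operations can be sketched in a few lines. The code below is a simplified Nelder-Mead-style minimizer, assuming the commonly used coefficients (reflection 1, expansion 2, contraction 0.5, shrinkage 0.5) and using only an inside contraction; it is not the exact procedure of any cited study. The function name `nelder_mead` and the quadratic test response are illustrative. Note that the sketch re-evaluates `f` freely, whereas a real experimental campaign would record each measured response once and reuse it.

```python
def nelder_mead(f, simplex, iterations=200):
    """Minimize f using reflection, expansion, contraction, and shrinkage.

    `simplex` is a list of n + 1 starting points, each a list of n floats.
    """
    pts = [list(p) for p in simplex]
    n = len(pts[0])
    for _ in range(iterations):
        pts.sort(key=f)                      # best first, worst last
        best, worst = pts[0], pts[-1]
        # Centroid of every point except the worst.
        centroid = [sum(p[i] for p in pts[:-1]) / n for i in range(n)]

        def along(t):
            # Point at centroid + t * (centroid - worst).
            return [c + t * (c - w) for c, w in zip(centroid, worst)]

        reflected = along(1.0)
        if f(reflected) < f(best):
            # Reflection found a new best point: try going further.
            expanded = along(2.0)
            pts[-1] = expanded if f(expanded) < f(reflected) else reflected
        elif f(reflected) < f(pts[-2]):
            pts[-1] = reflected              # plain reflection is good enough
        else:
            contracted = along(-0.5)         # pull the worst point toward the centroid
            if f(contracted) < f(worst):
                pts[-1] = contracted
            else:
                # Shrink every other vertex halfway toward the best one.
                pts = [best] + [[b + 0.5 * (x - b) for b, x in zip(best, p)]
                                for p in pts[1:]]
    return min(pts, key=f)


def response(x):
    # Hypothetical smooth response with its minimum at (1, -2).
    return (x[0] - 1.0) ** 2 + (x[1] + 2.0) ** 2


optimum = nelder_mead(response, [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
print(optimum)
```

To maximize a response such as tablet hardness, the same routine can be applied to the negated response.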

Applications in Pharmaceutical Research and Development

Experimental Design in Preclinical Studies

In pharmaceutical development, simplex optimization has demonstrated particular value in preclinical studies, where ethical considerations under the 3Rs framework (Replacement, Reduction, Refinement) mandate maximum information from minimal animal use [35]. A critical application lies in optimizing the allocation of subjects between control and treatment groups.

Research has demonstrated that traditional balanced designs, with equal subjects in all groups, are statistically inefficient for studies comparing multiple treatments to a single control. For such planned comparisons, mathematical analysis reveals that optimal sensitivity is achieved when the control group is √k times as large as each treatment group, where k represents the number of treatment arms [35]. This approach can reduce total animal requirements by 15-30% while maintaining equivalent statistical power, directly addressing both ethical and cost concerns.
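A short calculation illustrates why the √k rule helps. The sketch below compares the variance of a single treatment-versus-control difference under a balanced design and under the √k allocation, for the same total number of subjects. The study size (60 subjects, 4 treatment arms) is a hypothetical example, equal variance σ² across groups is assumed, and the helper names are illustrative; real studies would round group sizes to whole subjects.

```python
from math import sqrt


def contrast_variance(n_control, n_treatment, sigma=1.0):
    """Variance of one treatment-minus-control mean difference."""
    return sigma ** 2 * (1 / n_control + 1 / n_treatment)


def sqrt_k_allocation(total, k):
    """Split `total` subjects so that n_control = sqrt(k) * n_treatment."""
    n_treatment = total / (k + sqrt(k))
    return sqrt(k) * n_treatment, n_treatment


k, total = 4, 60                              # hypothetical study size
balanced = contrast_variance(total / (k + 1), total / (k + 1))
n_c, n_t = sqrt_k_allocation(total, k)        # 20 controls, 10 per treatment arm
optimized = contrast_variance(n_c, n_t)
print(f"balanced: {balanced:.4f}, sqrt(k) rule: {optimized:.4f}")
# prints "balanced: 0.1667, sqrt(k) rule: 0.1500"
```

In this example the √k allocation lowers the variance of each comparison by 10% at the same total sample size, which can equivalently be spent as a reduction in subjects at fixed precision.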

High-Throughput Screening Optimization