Simplex Optimization of Experimental Parameters: A Practical Guide for Biomedical Researchers

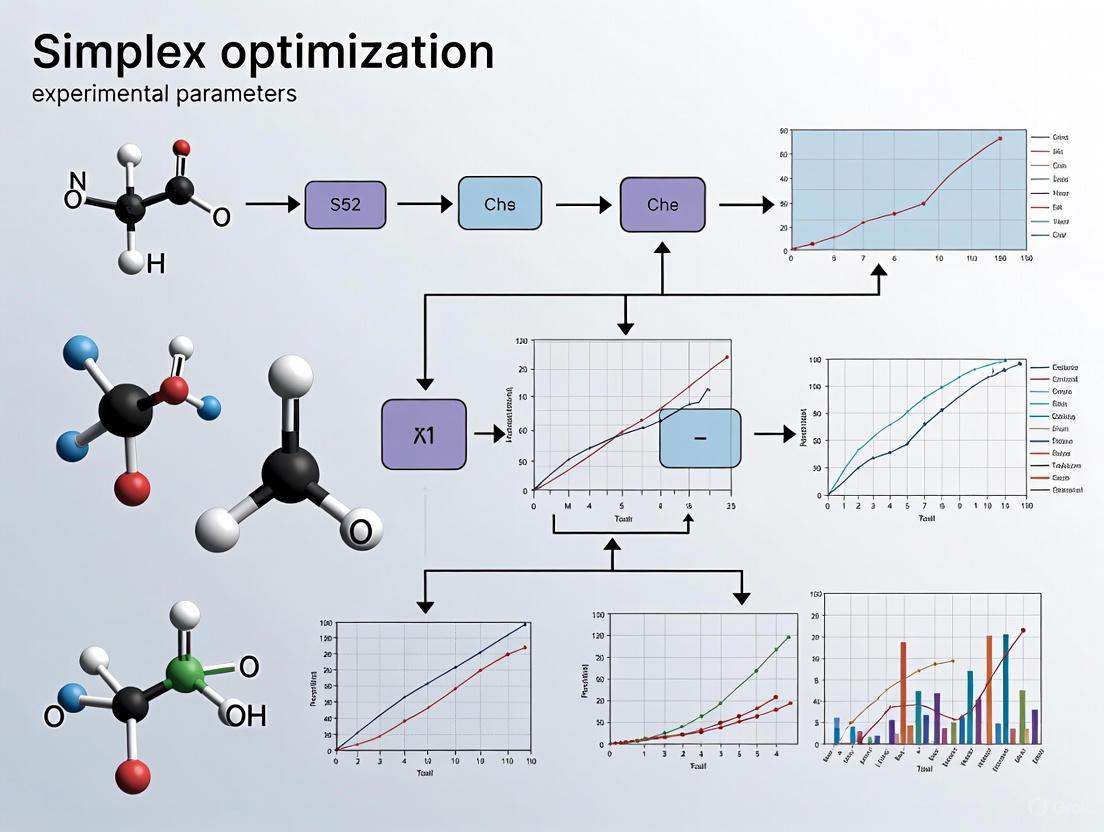


Abstract

This comprehensive guide explores simplex optimization, a powerful chemometric tool for systematically improving experimental parameters in biomedical and analytical research. Covering foundational principles to advanced applications, it demonstrates how simplex methods outperform traditional one-variable-at-a-time approaches by efficiently handling multiple interacting factors. The article provides practical methodologies for implementing basic and modified simplex algorithms, troubleshooting common optimization challenges, and validating results against alternative techniques. Special emphasis is placed on applications relevant to drug development professionals, including analytical method validation, instrumental parameter optimization, and formulation development, with insights into recent theoretical advances and future directions for clinical research optimization.

Understanding Simplex Optimization: Core Principles and Historical Context

What is Simplex Optimization? Defining the Geometric Approach to Multivariate Problems

Simplex optimization refers to a family of mathematical algorithms designed for solving multivariate optimization problems. In the context of linear programming (LP), the Simplex Method, pioneered by George Dantzig in 1947, is a foundational algorithm for optimizing a linear objective function subject to linear equality and inequality constraints [1] [2]. The method's name derives from the geometric concept of a simplex, a generalization of a triangle or tetrahedron to higher dimensions [1] [3]. The feasible region defined by the constraints is, in general, a convex polytope rather than a simplex. The algorithm operates by systematically moving along the edges of this polytope from one vertex to an adjacent vertex, never worsening the objective function value, until the optimum is reached [4] [2].

A distinct, yet related algorithm is the Nelder-Mead simplex method, developed for optimizing non-linear problems where derivatives are unavailable [5] [6]. Unlike Dantzig's method for linear problems, Nelder-Mead is a heuristic search technique that uses a simplex (a geometric shape with n+1 vertices in n dimensions) which evolves through operations of reflection, expansion, and contraction to converge toward an optimum [6]. This application note focuses primarily on the linear programming Simplex Method due to its foundational role in operational research and drug development, while acknowledging Nelder-Mead's utility in non-linear experimental parameter optimization.

Geometric and Algebraic Foundations

Geometric Interpretation

The Simplex Method's power stems from its elegant geometric interpretation. Each linear constraint defines a half-space in n-dimensional space, and the intersection of these half-spaces forms a convex polytope known as the feasible region [3]. The fundamental theorem of linear programming states that if an optimal solution exists, at least one optimal solution occurs at a vertex of this polytope [4] [2]. The algorithm navigates this structure by moving from vertex to adjacent vertex along the edges of the polytope, at each step choosing an edge along which the objective function improves; which improving edge is taken depends on the pivot rule [1] [7].

This geometric operation corresponds to algebraically swapping basic and non-basic variables through pivot operations [8] [4]. The algorithm begins at a feasible vertex (typically the origin, if feasible) and iteratively identifies an improving direction. If no improving direction exists, the current vertex is optimal [7].
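The vertex property can be checked by brute force on a tiny problem. The sketch below is illustrative only: the two-variable LP and the helper name `enumerate_vertices` are assumptions, not taken from the cited sources. It intersects every pair of constraint boundaries, keeps the feasible intersection points, and picks the best one.

```python
from itertools import combinations
import numpy as np

# Hypothetical LP: maximize 3x + 2y
# subject to x + y <= 4, x <= 3, y <= 2, x >= 0, y >= 0.
# Every constraint is written as a row of A @ p <= b.
c = np.array([3.0, 2.0])
A = np.array([[1, 1], [1, 0], [0, 1], [-1, 0], [0, -1]], dtype=float)
b = np.array([4, 3, 2, 0, 0], dtype=float)

def enumerate_vertices(A, b, tol=1e-9):
    """Return all feasible intersections of two constraint boundaries (2-D only)."""
    vertices = []
    for i, j in combinations(range(len(b)), 2):
        M = A[[i, j]]
        if abs(np.linalg.det(M)) < tol:
            continue  # parallel boundaries never intersect
        p = np.linalg.solve(M, b[[i, j]])
        if np.all(A @ p <= b + tol):
            vertices.append(p)
    return vertices

vertices = enumerate_vertices(A, b)
best_vertex = max(vertices, key=lambda p: float(c @ p))
best_value = float(c @ best_vertex)
print(len(vertices), best_vertex, best_value)
```

Enumeration grows combinatorially with problem size, which is why the Simplex Method visits only a path of adjacent vertices instead of all of them.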

Algorithm Steps and Standard Form

To apply the Simplex Method, the problem must first be converted to standard form:

- Maximization of the objective function

- All constraints (except non-negativity) expressed as equalities

- All variables non-negative [1] [8]

Conversion involves:

- Slack variables: Convert inequalities (≤) to equalities by adding non-negative slack variables [1] [4]

- Surplus variables: Convert inequalities (≥) to equalities by subtracting non-negative surplus variables

- Unrestricted variables: Replace variables without sign restrictions with the difference of two non-negative variables [1]
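As a minimal sketch of the slack-variable step, assuming a problem that contains only ≤ constraints (the function name `to_standard_form` and the example numbers are hypothetical), one identity column is appended per constraint so that A x + s = b with s ≥ 0:

```python
import numpy as np

def to_standard_form(c, A_le, b_le):
    """Append one non-negative slack variable per <= constraint."""
    m, n = A_le.shape
    A_std = np.hstack([A_le, np.eye(m)])       # [A | I]
    c_std = np.concatenate([c, np.zeros(m)])   # slacks do not affect the objective
    return c_std, A_std, b_le.copy()

# Hypothetical data: maximize 3x + 2y with x + y <= 4, x <= 3, y <= 2.
c = np.array([3.0, 2.0])
A_le = np.array([[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]])
b_le = np.array([4.0, 3.0, 2.0])

c_std, A_std, b_std = to_standard_form(c, A_le, b_le)

# For any feasible point, the slacks are the unused amounts b - A x.
x = np.array([3.0, 1.0])
slack = b_le - A_le @ x
z = np.concatenate([x, slack])
print(A_std.shape, slack)
```

A ≥ constraint would be handled the same way with a column of -1 (a surplus variable) instead of +1.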

The algorithm proceeds through two phases:

- Phase I: Finds an initial basic feasible solution (if one exists)

- Phase II: Moves from the initial feasible solution to the optimal solution through a sequence of pivot operations [1]

Table 1: Simplex Method Terminology

| Term | Definition | Geometric Meaning |
|---|---|---|
| Basic Feasible Solution | A solution where some variables (non-basic) are zero, and the system of constraints can be solved for the remaining (basic) variables [4] | Vertex of the feasible polytope |
| Pivot Operation | The process of exchanging a basic variable with a non-basic variable [1] [8] | Movement from one vertex to an adjacent vertex along an edge |
| Reduced Cost | The coefficient of a variable in the objective row of the simplex tableau [1] | Rate of improvement in the objective function when that variable is increased |
| Entering Variable | The non-basic variable selected to become basic in the next iteration [4] | The direction of movement along an edge |
| Leaving Variable | The basic variable that will become non-basic in the next iteration [4] | The constraint that will become active at the new vertex |

Comparative Analysis of Optimization Methods

Simplex Method vs. Interior Point Methods

While the Simplex Method traverses the boundary of the feasible region, Interior Point Methods (IPMs) approach the optimum from the interior of the feasible region [9] [2]. Developed after Karmarkar's seminal 1984 paper, IPMs offer polynomial-time complexity compared to the Simplex Method's exponential worst-case complexity [9]. However, in practice, the Simplex Method often performs efficiently, typically requiring a number of iterations that scales linearly with the number of constraints [7].

Table 2: Simplex vs. Interior Point Methods

| Characteristic | Simplex Method | Interior Point Methods |
|---|---|---|
| Solution Path | Follows edges of the polytope (vertex-to-vertex) [2] [3] | Traverses through the interior of the feasible region [9] |
| Theoretical Complexity | Exponential in worst case [7] | Polynomial time [9] |
| Practical Performance | Often efficient in practice, especially for sparse problems [7] [2] | Excellent for large, dense problems [9] |
| Solution Type | Basic feasible solutions (vertices) [4] | Intermediate solutions become feasible only at convergence |
| Implementation in Solvers | Widely available; often preferred for discrete optimization decompositions [9] | Standard in modern solvers; excellent for continuous LPs |

Recent Theoretical Advances

For decades, the Simplex Method's exponential worst-case complexity, established in 1972, stood in contrast to its speed in practice [7]. Recent work by Bach and Huiberts (2025) has provided theoretical justification for the observed efficiency. Their research demonstrates that with appropriate randomization, the Simplex Method's runtime is guaranteed to be significantly lower than previously established bounds, confirming that "the exponential runtimes that have long been feared do not materialize in practice" [7]. This work builds on the landmark 2001 result by Spielman and Teng, which showed that adding slight randomness makes the algorithm run in polynomial time [7].

Applications in Drug Development and Experimental Design

Resource Allocation and Process Optimization

In pharmaceutical research, the Simplex Method provides powerful solutions for multiple challenges:

- Resource Allocation: Optimizing limited resources (budget, equipment, personnel) across multiple drug development projects to maximize portfolio value or accelerate timelines [2]

- Manufacturing Optimization: Determining optimal production levels for various drug formulations given constraints on raw materials, production capacity, and storage [2]

- Clinical Trial Design: Optimizing patient allocation across trial arms or determining optimal sampling schedules while respecting ethical and operational constraints

- Supply Chain Management: Designing efficient distribution networks for pharmaceuticals, minimizing costs while ensuring availability [2]

Experimental Parameter Optimization

The Nelder-Mead simplex method is particularly valuable for optimizing experimental parameters in drug development, especially when working with complex, non-linear systems where analytical gradients are unavailable [5] [6]. Applications include:

- Analytical Method Development: Optimizing HPLC/UPLC method parameters (mobile phase composition, pH, temperature, gradient profile) to achieve optimal separation

- Formulation Optimization: Determining optimal ratios of excipients and API to achieve desired release profiles and stability

- Process Parameter Optimization: Optimizing bioreactor conditions (temperature, pH, nutrient feed rates) for maximum yield in biopharmaceutical production

Experimental Protocols

Protocol 1: Linear Resource Optimization Using Simplex Method

Objective: Optimize resource allocation across multiple drug development projects to maximize expected return.

Materials and Software:

- Linear programming solver (e.g., CPLEX, Gurobi, Google OR-Tools, or open-source alternatives)

- Computational environment (Python with scipy.optimize.linprog or equivalent)

Procedure:

- Problem Formulation:
  - Define decision variables (e.g., budget allocation to each project)
  - Formulate objective function (e.g., maximize net present value of portfolio)
  - Define constraints (total budget, personnel capacity, timeline constraints)

- Standard Form Conversion:
  - Convert all constraints to equality constraints using slack variables
  - Ensure all variables have non-negativity restrictions

- Tableau Setup:
  - Construct initial simplex tableau
  - For problems without obvious initial feasible solution, use Phase I method

- Iteration:
  - Identify entering variable (most negative reduced cost for maximization)
  - Compute ratios to determine leaving variable (minimum ratio test)
  - Perform pivot operation
  - Update tableau

- Termination:
  - Continue iterations until no further improvement possible (all reduced costs non-negative for maximization)
  - Extract optimal solution values from final tableau

- Validation:
  - Verify solution satisfies all original constraints
  - Perform sensitivity analysis to understand shadow prices and constraint binding
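In practice the tableau steps are usually delegated to a solver. The following is a minimal sketch using `scipy.optimize.linprog`, assuming SciPy 1.6 or later so that the HiGHS dual simplex backend (`method="highs-ds"`) is available. The two-project portfolio, its returns, and its limits are invented purely for illustration.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical portfolio: expected return per unit of budget for projects A and B.
returns = np.array([3.0, 2.0])
A_ub = np.array([[1.0, 1.0],    # total budget limit
                 [1.0, 0.0],    # capacity limit for project A
                 [0.0, 1.0]])   # capacity limit for project B
b_ub = np.array([4.0, 3.0, 2.0])

# linprog minimizes, so the objective is negated to maximize expected return.
res = linprog(-returns, A_ub=A_ub, b_ub=b_ub,
              bounds=[(0, None), (0, None)], method="highs-ds")

allocation = res.x
expected_return = -res.fun
# A constraint with zero slack is binding at the optimum.
binding = np.isclose(res.slack, 0.0)
print(res.success, allocation, expected_return, binding)
```

The `binding` mask supports the validation step: only binding constraints can carry a non-zero shadow price, so they are the ones worth examining in a sensitivity analysis.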

Protocol 2: Non-linear Parameter Optimization Using Nelder-Mead

Objective: Optimize experimental parameters for drug formulation to maximize desired performance metric.

Materials:

- Experimental apparatus for formulation testing

- Analytical instruments for response measurement

- Computational software implementing Nelder-Mead (e.g., MATLAB fminsearch, Python scipy.optimize.minimize)

Procedure:

- Initial Simplex Construction:
  - Identify n critical parameters to optimize
  - Construct initial simplex with n+1 points in parameter space
  - Evaluate objective function at each vertex

- Iteration Cycle:
  - Order vertices by objective function value
  - Calculate centroid of best n points
  - Reflect worst point through centroid
  - If reflection is better than second-worst but not best: Replace worst point with reflection
  - If reflection is better than best point: Expand in reflection direction
  - If reflection is worse than second-worst: Contract toward centroid
  - If contraction fails: Shrink entire simplex toward best point

- Termination:
  - Continue until simplex size falls below tolerance or maximum iterations reached
  - Take best vertex as optimal parameter set

- Validation:
  - Confirm optimal parameters through confirmatory experiments
  - Evaluate robustness of optimum through small parameter variations
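The protocol can be rehearsed in software before committing laboratory time. The sketch below uses `scipy.optimize.minimize` with `method="Nelder-Mead"` and an explicit `initial_simplex`. The function `measured_response` is a hypothetical stand-in for a real measurement, and its coefficients, the two parameters, and the starting vertices are all assumptions chosen for illustration.

```python
import numpy as np
from scipy.optimize import minimize

def measured_response(params):
    """Hypothetical stand-in for a lab measurement (e.g., percent yield)."""
    temperature, ph = params
    dt, dp = temperature - 37.0, ph - 7.0
    # Quadratic surface with a temperature-pH interaction term; peak of 90 at (37, 7).
    return 90.0 - 0.5 * dt**2 - 20.0 * dp**2 - 2.0 * dt * dp

# n = 2 parameters, so the initial simplex has n + 1 = 3 vertices.
initial_simplex = np.array([[30.0, 6.0],
                            [33.0, 6.0],
                            [30.0, 6.5]])

# SciPy minimizes, so the response is negated in order to maximize it.
res = minimize(lambda p: -measured_response(p),
               x0=initial_simplex[0],
               method="Nelder-Mead",
               options={"initial_simplex": initial_simplex,
                        "xatol": 1e-4, "fatol": 1e-4, "maxiter": 500})

best_params = res.x
best_response = -res.fun
print(best_params, best_response, res.nfev)
```

In a real study each function evaluation is one experiment, so `res.nfev` is the quantity to budget for, and `maxiter` acts as the cap on bench work.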

Research Reagent Solutions

Table 3: Essential Computational Tools for Simplex Optimization

| Tool/Resource | Function | Application Context |
|---|---|---|
| Commercial Solvers (CPLEX, Gurobi) | High-performance mathematical optimization software | Large-scale linear programming problems in resource allocation and production planning |
| Open-Source Alternatives (SCIP, GLPK) | Free alternatives to commercial solvers | Academic research and prototyping optimization models |
| Python Scientific Stack (NumPy, SciPy) | Libraries providing simplex and Nelder-Mead implementations | Algorithm prototyping, educational use, and moderate-scale problems |
| R Optimization Packages (lpSolve, optim) | Statistical programming environment with optimization capabilities | Optimization integrated with statistical analysis of results |
| MATLAB Optimization Toolbox | Comprehensive optimization environment | Engineering design and parameter optimization in experimental settings |
| Custom Implementation | Purpose-coded simplex algorithm | Educational understanding and specialized problem requirements |

Simplex optimization provides a powerful framework for addressing multivariate optimization problems across drug development and pharmaceutical manufacturing. The geometric foundation of these algorithms offers both computational efficiency and intuitive interpretation of results. Recent theoretical advances have strengthened our understanding of why the Simplex Method performs so well in practice, alleviating long-standing concerns about its worst-case complexity [7].

For linear programming problems, the Simplex Method remains a cornerstone of operations research, while the Nelder-Mead method offers valuable capabilities for non-linear experimental parameter optimization. The continued development of hybrid approaches, such as those combining simplex concepts with other optimization paradigms [10] [6], promises further enhancements to our ability to solve complex multivariate problems in pharmaceutical research and development.

The Simplex Method, developed by George Dantzig in 1947, represents a cornerstone of mathematical optimization and has fundamentally shaped operational research and scientific computing [1] [7]. This algorithm for solving linear programming problems emerged from military planning requirements during the post-World War II era, specifically from Dantzig's work with the U.S. Army Air Force under Project SCOOP (Scientific Computation of Optimum Programs) [7] [11]. The method's core insight involves navigating along the edges of a polyhedral feasible region from one vertex to an adjacent one, systematically improving the objective function value until reaching an optimal solution [1] [12]. Despite the discovery of worst-case exponential time complexity in 1972, the algorithm's remarkable practical efficiency has sustained its relevance across diverse fields including logistics, economics, engineering, and drug development [7] [12]. This application note examines the historical evolution, theoretical underpinnings, and contemporary implementations of the Simplex Method, with particular emphasis on experimental parameter optimization in scientific research.

Historical Context and Algorithm Fundamentals

Origins and Military Applications

George Dantzig's pioneering work emerged from his position as a mathematical adviser to the newly formed U.S. Air Force following World War II [7]. The global scale of the war demonstrated the critical importance of optimal resource allocation, prompting military interest in solving complex optimization problems involving hundreds or thousands of variables [7]. Dantzig's "core insight was to realize that most such ground rules can be translated into a linear objective function that needs to be maximized" [1]. The algorithm was conceived in mid-1947 when Dantzig, drawing upon his earlier doctoral work on the Neyman-Pearson Lemma, applied a "column geometry" approach to linear programming, which he described as "climbing the bean pole" [11]. By August 1947, this conceptual framework had evolved into the formal Simplex Method [11].

Mathematical Foundation

The Simplex Method operates on linear programs in canonical form [1]:

- Maximize cᵀx

- Subject to Ax ≤ b and x ≥ 0

Where c = (c₁, ..., cₙ) represents the coefficients of the linear objective function, x = (x₁, ..., xₙ) is the vector of decision variables, A is the constraint coefficient matrix, and b = (b₁, ..., bₚ) is the right-hand-side constraint vector [1].

The algorithm transforms inequality constraints into equalities by introducing slack variables, converting the problem to the standard form [1]:

- Maximize cᵀx

- Subject to Ax = b and x ≥ 0
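Written out, the slack-variable step looks as follows. The tilde symbols and the slack vector s are notation introduced here for illustration; they make explicit that the A, c, and x of the standard form are augmented versions of those in the canonical form, with one slack variable per constraint (p in total, matching the length of b).

```latex
\begin{aligned}
\text{maximize}\quad   & c^{\mathsf T} x \\
\text{subject to}\quad & A x + s = b, \\
                       & x \ge 0,\; s \ge 0
\end{aligned}
\qquad\Longleftrightarrow\qquad
\begin{aligned}
\text{maximize}\quad   & \tilde c^{\mathsf T} \tilde x \\
\text{subject to}\quad & \tilde A \tilde x = b,\; \tilde x \ge 0
\end{aligned}
\qquad\text{with}\quad
\tilde x = \begin{pmatrix} x \\ s \end{pmatrix},\;
\tilde A = \begin{pmatrix} A & I_p \end{pmatrix},\;
\tilde c = \begin{pmatrix} c \\ 0 \end{pmatrix}.
```

Each slack component equals the unused amount of the corresponding constraint, so a slack of zero marks a constraint that is active at the current point.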

The fundamental theorem underlying the Simplex Method states that if a linear program has an optimal solution, then it possesses an optimal basic feasible solution corresponding to a vertex of the feasible region [1]. The algorithm proceeds through the following phases:

- Phase I: Identifies an initial basic feasible solution or determines that the feasible region is empty

- Phase II: Iteratively moves from one basic feasible solution to an adjacent one with improved objective function value until reaching optimality or determining unboundedness [1]

Table 1: Key Historical Milestones in Simplex Method Development

| Year | Development | Key Contributors |
|---|---|---|
| 1947 | Original Simplex Algorithm | George Dantzig |
| 1948 | First public presentation at UCLA symposium | George Dantzig |
| 1951 | First published description | George Dantzig |
| 1972 | Exponential worst-case complexity discovery | Klee & Minty |
| 1984 | Polynomial-time Interior Point Method | Narendra Karmarkar |
| 2001 | Smoothed Analysis Framework | Spielman & Teng |
| 2025 | "By the Book" Analysis Framework | Bach & Huiberts |

Theoretical Advances and Performance Analysis

Complexity and Efficiency Explanations

The 1972 discovery that the Simplex Method could require exponential time under certain pivot rules created a significant theoretical paradox, given its consistently efficient performance in practice [7] [13]. This discrepancy between worst-case complexity and observed efficiency motivated decades of research into explaining the algorithm's practical performance.

In 2001, Spielman and Teng introduced smoothed analysis, demonstrating that with slight random perturbations to constraint coefficients, the Simplex Method's expected running time becomes polynomial [7] [13]. Their work showed that "the tiniest bit of randomness" could prevent the pathological cases that cause exponential behavior, providing a compelling explanation for the algorithm's practical efficiency [7].

Recent research has further advanced this theoretical understanding. In 2025, Bach and Huiberts introduced a "by the book" analysis framework that models not only input data but also the algorithm itself, incorporating implementation details such as feasibility tolerances and input scaling assumptions [13]. This approach addresses limitations of smoothed analysis, particularly regarding the handling of sparse linear programs commonly encountered in practice [13].

Comparison of Analysis Frameworks

Table 2: Theoretical Frameworks for Analyzing Simplex Method Performance

| Framework | Key Principle | Complexity Bound | Limitations |
|---|---|---|---|
| Worst-Case Analysis | Considers most unfavorable input instance | Exponential [7] | Overly pessimistic for practical use |
| Average-Case Analysis | Assumes inputs follow probability distribution | Polynomial [13] | Structural mismatch with practical LPs |
| Smoothed Analysis | Adds slight random perturbations to adversarial inputs | Polynomial in expectation [7] [13] | Does not preserve sparsity of practical LPs |
| "By the Book" Analysis | Models algorithm implementation details and input scaling | Polynomial under practical assumptions [13] | New framework requiring further validation |

Modern Implementations and Applications

Algorithmic Variations and Extensions

Contemporary implementations of the Simplex Method have evolved significantly from Dantzig's original formulation. Key developments include:

- Dual Simplex Algorithm: Particularly effective in mixed-integer programming and sensitivity analysis

- Revised Simplex Method: Reduces computational burden by maintaining and updating only essential information

- Interior Point Methods (IPMs): Polynomial-time alternatives that complement rather than replace simplex algorithms in modern optimization software [9]

The Downhill Simplex Method (Nelder-Mead algorithm), while sharing nomenclature, represents a distinct derivative-free optimization technique for nonlinear problems [14]. Recent enhancements to this method include degeneracy correction through volume maximization and reevaluation strategies to address noise-induced spurious minima, extending its applicability to high-dimensional experimental optimization [14].

Implementation Protocols

Protocol 1: Basic Simplex Implementation for Experimental Parameter Optimization

Purpose: To provide researchers with a foundational protocol for implementing the Simplex Algorithm to optimize experimental parameters in drug development and scientific research.

Materials and Software Requirements:

- Linear programming solver (GLPK, CPLEX, Gurobi, or custom implementation)

- Programming environment (C++, Python, MATLAB, or similar)

- Numerical linear algebra libraries

Procedure:

- Problem Formulation:
  - Define decision variables corresponding to experimental parameters
  - Formulate objective function (e.g., maximizing yield, minimizing cost)
  - Specify linear constraints representing experimental limitations

- Standard Form Conversion:
  - Convert inequalities to equalities using slack variables
  - Replace unrestricted variables with difference of non-negative variables
  - Ensure right-hand-side coefficients are non-negative

- Initial Tableau Construction:
  - Construct the initial simplex tableau
  - Identify initial basic feasible solution using Phase I method if needed

- Iterative Optimization:
  - Entering Variable Selection: Identify non-basic variable with most negative reduced cost (maximization)
  - Leaving Variable Selection: Apply minimum ratio test to maintain feasibility
  - Pivot Operation: Perform elementary row operations to update tableau
  - Termination Check: Repeat until no negative reduced costs remain

- Solution Extraction:
  - Extract optimal values for decision variables
  - Verify feasibility and optimality conditions

Troubleshooting:

- Cycling: Implement Bland's rule or perturbation techniques

- Numerical Instability: Use LU factorization for basis updates

- Infeasibility: Analyze Phase I results to identify conflicting constraints
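For teaching purposes, the tableau steps above can be sketched in a few dozen lines. This is a dense, illustrative implementation under stated assumptions, not a production solver. It handles only problems of the form maximize cᵀx subject to Ax ≤ b, x ≥ 0 with b ≥ 0, so the slack variables give a starting basis and Phase I is skipped. It uses Bland's rule (lowest index) for both the entering and the leaving variable rather than the most-negative-reduced-cost rule. The function name `simplex_max` and the example data are hypothetical.

```python
import numpy as np

def simplex_max(c, A, b, tol=1e-9, max_iter=1000):
    """Maximize c @ x subject to A @ x <= b, x >= 0, assuming b >= 0."""
    m, n = A.shape
    # Tableau layout: constraint rows [A | I | b], objective row [-c | 0 | 0].
    T = np.zeros((m + 1, n + m + 1))
    T[:m, :n] = A
    T[:m, n:n + m] = np.eye(m)
    T[:m, -1] = b
    T[-1, :n] = -c
    basis = list(range(n, n + m))  # slack variables form the initial basis

    for _ in range(max_iter):
        negative = np.where(T[-1, :-1] < -tol)[0]
        if negative.size == 0:
            break  # no negative objective-row entry: current vertex is optimal
        col = int(negative[0])  # Bland's rule: lowest-index entering variable
        rows = np.where(T[:m, col] > tol)[0]
        if rows.size == 0:
            raise ValueError("LP is unbounded")
        ratios = T[rows, -1] / T[rows, col]  # minimum ratio test
        tied = rows[np.isclose(ratios, ratios.min())]
        row = int(min(tied, key=lambda r: basis[r]))  # Bland tie-break
        # Pivot: scale the pivot row, then eliminate the column elsewhere.
        T[row] /= T[row, col]
        for r in range(m + 1):
            if r != row:
                T[r] -= T[r, col] * T[row]
        basis[row] = col
    else:
        raise RuntimeError("iteration limit reached")

    x = np.zeros(n + m)
    x[basis] = T[:m, -1]
    return x[:n], float(T[-1, -1])

# Hypothetical example: maximize 3x + 2y with x + y <= 4, x <= 3, y <= 2.
x_opt, value = simplex_max(np.array([3.0, 2.0]),
                           np.array([[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]]),
                           np.array([4.0, 3.0, 2.0]))
print(x_opt, value)
```

Bland's rule is chosen here because it rules out cycling; it typically takes more pivots than the most-negative rule, which is one reason production codes prefer other safeguards such as perturbation.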

Protocol 2: AI-Assisted Implementation for Complex Experimental Design

Purpose: To leverage modern AI coding assistants for efficient implementation of Simplex-based optimization in complex experimental parameter spaces.

Materials:

- AI-assisted development environment (e.g., Amazon Q Developer, GitHub Copilot)

- Optimization test suites for validation

- Benchmark problem instances

Procedure:

- Algorithm Specification:
  - Provide pseudo-code or mathematical description to AI assistant
  - Specify programming language and numerical precision requirements

- Iterative Code Development:
  - Generate initial implementation with core pivot operations
  - Refine data structures for sparse constraint matrices
  - Implement numerical safeguards for ill-conditioned problems

- Validation and Testing:
  - Verify implementation against benchmark problems
  - Test degenerate cases and special structures
  - Perform comparative analysis with established solvers

- Integration with Experimental Frameworks:
  - Develop interfaces with experimental data systems
  - Implement parameter transformation routines
  - Create visualization modules for optimization trajectories

Applications in Drug Development:

- Optimal resource allocation in high-throughput screening

- Experimental parameter optimization for reaction conditions

- Blending problems in pharmaceutical formulation

- Portfolio optimization in research project selection

Research Reagent Solutions

Table 3: Essential Computational Tools for Simplex-Based Experimental Optimization

| Tool/Category | Function | Example Implementations |
|---|---|---|
| Linear Programming Solvers | Core optimization engines | Gurobi, CPLEX, GLPK, SCIP |
| Numerical Computation Libraries | Matrix operations and linear algebra | NumPy, LAPACK, Eigen |
| AI-Assisted Development Environments | Algorithm implementation acceleration | Amazon Q Developer, GitHub Copilot |
| Simplex Variant Implementations | Specialized solution algorithms | Dual Simplex, Revised Simplex, Network Simplex |
| Benchmarking and Testing Suites | Algorithm validation and performance analysis | NETLIB LP Test Set, MIPLIB |
| Visualization Tools | Optimization trajectory analysis | MATLAB, Python matplotlib, Graphviz |

Visual Representations

[Figure: Simplex Method Algorithm Workflow]

[Figure: Historical Development Timeline]

The Simplex Method has demonstrated remarkable resilience and adaptability since its inception in 1947, maintaining its relevance despite the discovery of theoretically superior algorithms. Its continued utility stems from proven practical efficiency, conceptual clarity, and robust implementations in commercial and open-source optimization software. For researchers in drug development and experimental science, mastery of both the theoretical foundations and practical implementations of the Simplex Method provides powerful capabilities for optimizing experimental parameters, resource allocation, and research portfolio management. The ongoing theoretical developments in understanding its performance, particularly through frameworks like "by the book" analysis, continue to enhance our confidence in applying this classical algorithm to contemporary research challenges.

In the development of analytical methods and pharmaceutical processes, investigators must find the proper experimental conditions to achieve the best possible responses, such as superior accuracy, higher sensitivity, and lower quantification limits. Traditionally, this optimization has been performed using univariate optimization, where the influence of one variable is monitored at a time while keeping all other factors constant. Although straightforward, this technique possesses a critical limitation: it cannot assess the effects of interactions between variables [15].

In contrast, simplex optimization is a multivariate approach that optimizes several factors simultaneously without requiring complex mathematical or statistical expertise. By varying multiple factors concurrently, simplex methods can efficiently navigate the experimental response surface, accounting for factor interactions to locate optimal conditions more effectively and with fewer experimental runs [15]. This application note details the practical advantages of simplex optimization, with specific emphasis on its capacity to handle factor interactions, and provides detailed protocols for implementation in research and development settings.

Theoretical Foundation: Simplex Optimization and Factor Interactions

Fundamental Principles of Simplex Optimization

Simplex optimization is performed by the displacement of a geometric figure with k + 1 vertices in an experimental field toward an optimal region, where k equals the number of variables in a k-dimensional domain. In practical terms, a simplex in one dimension is represented by a line segment, in two dimensions by a triangle, in three dimensions by a tetrahedron, and in higher dimensions by hyperpolyhedra [15]. The method operates through a series of logical rules that dictate the movement of this geometric figure across the experimental landscape:

- Initialization: The process begins by establishing an initial simplex with k + 1 experiments, where k represents the number of variables to be optimized.

- Evaluation and Reflection: Each vertex of the simplex represents a specific combination of factor levels. The system evaluates the response at each vertex, rejects the worst-performing vertex, and replaces it with a new point reflected through the centroid of the remaining points.

- Progression: Through iterative reflection, expansion, and contraction operations, the simplex moves toward regions of more favorable response, ultimately locating the optimum conditions [15] [16].
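The reflection rule reduces to one line of arithmetic: the new vertex is 2 × centroid − worst vertex, where the centroid is taken over the retained vertices. The sketch below illustrates a single move for k = 2 factors when the response is being maximized. The factor values, the responses, and the helper name `reflect_worst` are hypothetical.

```python
import numpy as np

def reflect_worst(vertices, responses):
    """One basic-simplex move (maximization): reflect the worst vertex
    through the centroid of the remaining vertices."""
    worst = int(np.argmin(responses))
    others = np.delete(vertices, worst, axis=0)
    centroid = others.mean(axis=0)
    reflected = 2.0 * centroid - vertices[worst]
    return worst, centroid, reflected

# Hypothetical simplex for k = 2 factors (three vertices) and measured responses.
vertices = np.array([[10.0, 1.0],
                     [20.0, 1.0],
                     [15.0, 2.0]])
responses = np.array([40.0, 55.0, 60.0])

worst, centroid, reflected = reflect_worst(vertices, responses)
print(worst, centroid, reflected)
```

The reflected point defines the next experiment to run; its measured response then decides whether the move is kept, expanded, or contracted.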

This systematic movement through the factor space enables the simplex method to navigate response surfaces where factors interact, meaning the effect of one variable depends on the level of another variable.

The Critical Limitation of Univariate Approaches

Univariate optimization (one-factor-at-a-time approach) suffers from fundamental methodological constraints when dealing with interacting factors. As highlighted in studies of dynamic headspace (DHS) extractions coupled to gas chromatography, this classical approach is "not capable of evaluating interactions among the variables and their combined effects on the process" [17]. Consequently, the optimal conditions identified through a series of single-factor experiments may represent merely local optima rather than globally optimal conditions for the system [17].
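A small simulation makes the limitation concrete. The sketch below is an illustration under assumed conditions: the response function is a synthetic surface with a strong interaction between two factors (a diagonal ridge), and the grid, starting point, and coefficients are invented. It compares one pass of one-factor-at-a-time optimization with a Nelder-Mead simplex search from the same starting conditions.

```python
import numpy as np
from scipy.optimize import minimize

def response(x, y):
    """Hypothetical response surface with a strong x-y interaction; peak of 100 at (5, 5)."""
    return 100.0 - 10.0 * (x - y) ** 2 - 0.5 * (x + y - 10.0) ** 2

grid = np.linspace(0.0, 10.0, 101)   # candidate levels for each factor
x0, y0 = 1.0, 1.0                    # shared starting conditions

# One-factor-at-a-time: optimize x with y fixed, then y with x fixed.
x_ofat = grid[np.argmax(response(grid, y0))]
y_ofat = grid[np.argmax(response(x_ofat, grid))]
ofat_best = response(x_ofat, y_ofat)

# Simplex search (SciPy's Nelder-Mead minimizes, so the response is negated).
result = minimize(lambda p: -response(p[0], p[1]), x0=[x0, y0],
                  method="Nelder-Mead")
simplex_best = -result.fun

print((x_ofat, y_ofat), ofat_best)
print(result.x, simplex_best)
```

Because the best level of each factor depends on the other, the single univariate pass stalls far below the peak, while the simplex moves along the ridge. Repeating the univariate cycle many times would creep toward the peak, but at the cost of many more runs.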

Table 1: Comparative Characteristics of Optimization Methods

| Feature | Univariate Approach | Simplex Optimization |
|---|---|---|
| Factor Interaction Assessment | Cannot evaluate interactions | Accounts for interactions by varying factors simultaneously |
| Number of Experiments Required | Often excessive | Minimized through systematic approach |
| Identification of Global Optimum | Unlikely, may find local optimum | More likely, although convergence to a local optimum remains possible |
| Computational Complexity | Low | Moderate, but does not require complex statistical expertise |
| Practical Implementation | Simple but inefficient | Methodical and efficient |

Quantitative Comparison: Experimental Efficiency

The efficiency of simplex optimization becomes particularly evident when examining the number of experimental runs required to locate optimal conditions. Research demonstrates that simplex methods can achieve comparable or superior optimization with significantly fewer experiments than univariate approaches [15].

In a representative case study optimizing dynamic headspace extractions for volatile organic compound analysis, a multivariate design using Design of Experiments (DoE) principles required only 15 experiments with three replicates at the center point to thoroughly investigate three critical factors and their interactions [17]. A comparable univariate investigation would have necessitated substantially more experimental runs while still failing to characterize the interaction effects between parameters such as incubation temperature, purge flow rate, and purge volume [17].

Table 2: Experimental Requirements for Investigating Three Factors

| Optimization Approach | Minimum Experiments | Interaction Assessment |
|---|---|---|
| Univariate (One-Factor-at-a-Time) | 15-20+ (estimated) | Not possible |
| Basic Simplex | Approximately 10-15 | Built into methodology |
| Modified Simplex (Nelder-Mead) | Variable, typically fewer than basic simplex | Built into methodology with adaptive size |

The modified simplex algorithm, introduced by Nelder and Mead in 1965, further enhances optimization efficiency by allowing the simplex to change size through expansion and contraction operations, accelerating convergence toward the optimum region while maintaining sensitivity to factor interactions [15].

Practical Implementation: Protocols for Simplex Optimization

Protocol 1: Establishing the Initial Simplex for Method Development

This protocol outlines the steps for implementing a modified simplex optimization to develop an analytical method, using the optimization of instrumental parameters for inductively coupled plasma optical emission spectrometry (ICP OES) as a representative example [15].

Research Reagent Solutions and Materials

| Item | Function in Optimization |

|---|---|

| Analytical Standard Solutions | Provide consistent response measurement across experiments |

| Mobile Phase Components | Factors for optimization (e.g., composition, pH, buffer strength) |

| Chromatographic Column | Fixed system component for separation performance assessment |

| Detection System | Provides quantitative response measurement |

| Data Acquisition Software | Records and processes response data for decision making |

Procedure

- Define the Optimization Goal: Clearly specify the objective function (e.g., maximize peak resolution, minimize analysis time, optimize signal-to-noise ratio).

- Select Critical Factors: Identify the key variables to be optimized (e.g., temperature, flow rate, gradient profile, injection volume).

- Establish Factor Ranges: Define feasible operating ranges for each factor based on instrument specifications and methodological constraints.

- Determine Initial Simplex Size: Calculate the step size for each factor, typically 10-20% of the factor range, depending on the expected complexity of the response surface.

- Construct Initial Simplex: Generate k + 1 experimental conditions (where k = number of factors) to form the initial simplex vertices.

- Execute Experiments: Perform experiments according to the vertex conditions in randomized order to minimize systematic error.

- Evaluate Responses: Quantify the performance at each vertex using the predefined objective function.

- Iterate the Simplex: Apply Nelder-Mead rules to reflect, expand, or contract the simplex away from the worst-performing vertex.

- Continue Until Convergence: Proceed with iterations until no significant improvement occurs or the simplex contracts below a predefined size threshold.
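The factor-range, step-size, and simplex-construction steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the procedure used in [15]: the function name `initial_simplex`, the 15% step fraction, the "one factor stepped per vertex" layout, and the ICP OES factor ranges are all hypothetical choices made for demonstration.

```python
def initial_simplex(ranges, start, step_fraction=0.15):
    """Return k + 1 vertices: the start point plus one vertex per factor,
    each offset along a single factor by step_fraction of that factor's range."""
    vertices = [dict(start)]
    for name, (low, high) in ranges.items():
        step = step_fraction * (high - low)
        vertex = dict(start)
        # Step upward unless that would leave the feasible range; then step downward
        if vertex[name] + step > high:
            step = -step
        vertex[name] = vertex[name] + step
        vertices.append(vertex)
    return vertices


# Hypothetical ICP OES factor ranges and starting point (illustrative values only)
ranges = {
    "rf_power_W": (1000.0, 1500.0),
    "nebulizer_flow_L_min": (0.5, 1.0),
    "viewing_height_mm": (5.0, 15.0),
}
start = {"rf_power_W": 1200.0, "nebulizer_flow_L_min": 0.7, "viewing_height_mm": 10.0}

simplex = initial_simplex(ranges, start)
for vertex in simplex:
    print(vertex)
```

With three factors the call returns four vertices, matching the k + 1 rule. Other layouts, such as a regular simplex centered on the starting point, are equally valid starting designs.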

Diagram 1: Simplex Optimization Workflow

Protocol 2: Simplex Optimization of Dynamic Headspace Extraction Parameters

This protocol adapts the generalized optimization procedure for dynamic headspace (DHS) extractions coupled to gas chromatography, utilizing simplex principles to efficiently optimize multiple interdependent parameters [17].

Research Reagent Solutions and Materials

| Item | Function in Optimization |

|---|---|

| Sorbent Tubes (Tenax TA) | Trap and concentrate volatile analytes |

| High-Purity Nitrogen Gas | Inert purging gas for volatile transfer |

| Sample Matrix (e.g., Sourdough) | Representative material for method development |

| Internal Standard Solutions | Quality control and response normalization |

| Thermal Desorption Unit | Introduces extracted volatiles to analytical system |

Procedure

- Factor Selection: Identify critical DHS parameters known to interact: incubation temperature, purge flow rate, and purge volume.

- Experimental Design: Establish initial simplex vertices covering the operational range for these three factors.

- Response Definition: Define the objective function incorporating both the total number of detected compounds and the summed peak area of analyte signals.

- Initial Experiments: Conduct DHS extractions at each vertex of the initial simplex.

- Chromatographic Analysis: Perform GC×GC–TOF-MS analysis on all extracts using consistent instrument parameters.

- Data Processing: Integrate chromatographic data to quantify total peak areas and number of detected compounds.

- Response Surface Modeling: Fit a predictive model to optimize the response based on the factor levels and their interactions.

- Simplex Progression: Apply modified simplex rules to navigate toward optimal conditions, giving particular attention to interaction effects between temperature and flow parameters.

- Verification: Confirm optimized conditions with replicate experiments to ensure robustness.
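The response definition step combines two quantities on very different scales (a compound count and a summed peak area). One possible way to merge them into a single number for ranking vertices is a weighted sum of scaled terms, sketched below. The function name, the equal weighting, the choice of reference values, and the example numbers are assumptions for illustration; the source [17] does not specify how the two terms are combined.

```python
def composite_response(n_compounds, total_peak_area,
                       ref_compounds, ref_peak_area,
                       weight_compounds=0.5):
    """Weighted sum of two scaled terms; larger is better.

    Each term is divided by a reference value (for example, the result at the
    starting conditions) so that counts and peak areas become comparable.
    """
    if not 0.0 <= weight_compounds <= 1.0:
        raise ValueError("weight_compounds must lie between 0 and 1")
    scaled_count = n_compounds / ref_compounds
    scaled_area = total_peak_area / ref_peak_area
    return weight_compounds * scaled_count + (1.0 - weight_compounds) * scaled_area


# Hypothetical results: (number of detected compounds, summed peak area)
baseline = (80, 2.0e8)
vertex = (100, 3.0e8)
score = composite_response(*vertex, ref_compounds=baseline[0], ref_peak_area=baseline[1])
print(score)
```

A score above 1.0 indicates that the vertex outperforms the reference conditions on the weighted combination. The weighting should be fixed before the optimization starts so that vertex rankings remain comparable across iterations.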

Visualization of Factor Interaction Handling

The following diagram illustrates how simplex optimization efficiently navigates factor interactions compared to univariate approaches, specifically highlighting the movement through a response surface where significant interactions exist between factors.

Diagram 2: Factor Interaction Handling Comparison

Applications in Pharmaceutical and Analytical Development

Simplex optimization has demonstrated particular utility in pharmaceutical and analytical method development where multiple interacting factors influence the final outcome. Published applications include:

- Optimization of Chromatographic Systems: Simplex methods have been successfully applied to optimize separation conditions in high-performance liquid chromatography (HPLC), where factors such as mobile phase composition, pH, temperature, and flow rate exhibit significant interactions that affect resolution and analysis time [15].

- Spectroscopic Method Development: In ICP OES optimization, simplex approaches have efficiently navigated the complex interactions between instrumental parameters including plasma power, nebulizer flow rate, and viewing height to maximize signal-to-noise ratios [15].

- Drug Formulation Development: The method has proven valuable in pharmaceutical formulation where excipient ratios, processing parameters, and active ingredient characteristics interact non-linearly to affect product performance.

The robustness of simplex optimization, combined with its relatively straightforward implementation, has established it as a powerful tool for method development in regulated environments where understanding factor interactions is critical for method validation and robustness testing.

Simplex optimization gives researchers a computationally accessible approach for navigating experimental landscapes where factor interactions strongly influence system behavior. Univariate methods cannot account for these interactions; a simplex search moves all factors together, so its path responds to them and typically reaches optimal conditions in fewer experimental runs, although convergence to the global optimum is not guaranteed. The protocols and visualizations above offer practical guidance for implementing this methodology in research and development settings, particularly in pharmaceutical and analytical applications where understanding and exploiting factor interactions is essential for developing robust, high-performing methods.

Within the broader thesis on simplex optimization for experimental parameters research, this document serves as a detailed protocol for applying simplex-based methods. Simplex optimization provides a structured, efficient framework for experimentalists, particularly researchers and drug development professionals, to navigate multi-parameter spaces and locate optimal conditions with a minimal number of experiments. This approach is invaluable in fields like analytical chemistry and pharmaceutical development, where resource efficiency is paramount. These notes detail the fundamental terminology and provide two core, actionable protocols: the Fixed-Size Simplex Optimization and the implementation of the Simplex Algorithm for Linear Programming [18] [19].

Fundamental Terminology

The following table defines the core terminology essential for understanding and applying simplex methods.

| Term | Definition | Context in Simplex Optimization |

|---|---|---|

| Variables | The independent factors or parameters being controlled in an experiment. | In the simplex procedure, these are the factors whose optimal levels are sought (e.g., pH, temperature, concentration). Also called "factors." [18] |

| Vertices | The specific sets of factor levels that define the corners of the current simplex. | Each vertex represents one experiment. In a two-factor optimization, a simplex is a triangle defined by three vertices. [18] |

| Responses | The measured outcome or result of an experiment. | This is the dependent variable to be optimized (e.g., yield, resolution, purity). The goal is to find the vertex that gives the best response. [18] |

| Experimental Domain | The multi-dimensional space defined by all possible combinations of the factors' levels. | The simplex moves through this domain. The domain can be bounded by practical constraints, leading to asymmetric, feasible regions. [19] |

| Simplex | A geometric figure used in optimization, defined by a number of points equal to the number of variables plus one. | For two factors, the simplex is a triangle; for three, it is a tetrahedron. The method proceeds by moving this figure across the response surface. [18] |

| Basis | The set of basic variables in a linear programming dictionary. | In the Simplex Algorithm, the basic variables are those that are non-zero at a given vertex (extreme point) of the feasible region. [20] |

| Feasible Region | The set of all points that satisfy all constraints of an optimization problem. | In linear programming, this region is a polyhedron. The Simplex Algorithm moves along the edges of this polyhedron from one vertex to another. [1] [21] |

Core Protocols

Protocol 1: Fixed-Size Simplex Optimization for Experimental Parameters

This sequential procedure is ideal for empirical optimization when a mathematical model of the system is not known a priori.

Workflow Visualization

The following diagram illustrates the logical workflow and decision process for a fixed-size simplex optimization.

Detailed Methodology

Initial Simplex Setup

- For k factors, the initial simplex is defined by k + 1 vertices.

- For two factors (e.g., Factor A and B), select a starting point (a, b).

- The remaining two vertices are placed at (a + s_a, b) and (a + 0.5s_a, b + 0.87s_b), where s_a and s_b are the step sizes for each factor [18].

- Execute the experiments defined by these initial vertices and record the responses.
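As a small worked example of the two-factor setup, the sketch below computes the three starting vertices from a starting point and two step sizes. The factor 0.87 approximates √3/2, which makes the triangle equilateral when each axis is measured in units of its own step size. The function name and the pH and methanol values are hypothetical.

```python
def initial_triangle(a, b, s_a, s_b):
    """Vertices (a, b), (a + s_a, b), and (a + 0.5*s_a, b + 0.87*s_b)."""
    return [(a, b), (a + s_a, b), (a + 0.5 * s_a, b + 0.87 * s_b)]


# Hypothetical example: Factor A = buffer pH, Factor B = % methanol
vertices = initial_triangle(a=3.0, b=40.0, s_a=0.5, s_b=5.0)
for vertex in vertices:
    print(vertex)
```

For these inputs the vertices are approximately (3.0, 40.0), (3.5, 40.0), and (3.25, 44.35), which define the first three experiments.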

Iteration and Movement Rules

- Rule 1: Rank and Reflect. Rank the vertices from best (v_b) to worst (v_w) response. Reject the worst vertex and generate a new vertex (v_n) by reflecting it through the midpoint (centroid) of the remaining vertices.

  - Calculation for Factor A: a_{v_n} = 2 * [(a_{v_b} + a_{v_s}) / 2] - a_{v_w} (where v_s is the other retained vertex).

  - Calculation for Factor B: b_{v_n} = 2 * [(b_{v_b} + b_{v_s}) / 2] - b_{v_w} [18].

- Rule 2: Handling Failure. If the new vertex v_n yields the worst response in the new simplex, do not return to the previous worst vertex. Instead, reject the second-worst vertex (v_s) and reflect it to generate the next new vertex.

- Rule 3: Boundary Control. If a new vertex exceeds a pre-defined boundary condition (e.g., a pH where the reagent degrades), assign it the worst possible response value and apply Rule 2.
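The three movement rules can be expressed as a single update step. The sketch below assumes the response is being maximized and that experiments are represented by a callable; the names `reflect`, `fixed_size_step`, `measure`, and `in_bounds`, and the synthetic response surface, are hypothetical stand-ins rather than part of the cited procedure [18].

```python
def reflect(simplex, reject_index):
    """Reflect the rejected vertex through the centroid of the retained vertices."""
    k = len(simplex) - 1
    retained = [v for i, v in enumerate(simplex) if i != reject_index]
    centroid = [sum(v[j] for v in retained) / k for j in range(k)]
    rejected = simplex[reject_index]
    return tuple(2 * c - r for c, r in zip(centroid, rejected))


def fixed_size_step(simplex, responses, measure, in_bounds, last_new=None):
    """Apply Rules 1-3 once. Returns (simplex, responses, index of the new vertex)."""
    order = sorted(range(len(simplex)), key=lambda i: responses[i])  # worst first
    reject = order[0]
    # Rule 2: if the worst vertex is the one just created, reject the second-worst
    if last_new is not None and reject == last_new:
        reject = order[1]
    new_vertex = reflect(simplex, reject)  # Rule 1
    # Rule 3: an out-of-bounds vertex gets the worst possible response (no experiment)
    new_response = measure(new_vertex) if in_bounds(new_vertex) else float("-inf")
    simplex = simplex[:reject] + [new_vertex] + simplex[reject + 1:]
    responses = responses[:reject] + [new_response] + responses[reject + 1:]
    return simplex, responses, reject


# Synthetic response with a maximum at (5, 5); it stands in for real experiments
def measure(v):
    return -((v[0] - 5.0) ** 2 + (v[1] - 5.0) ** 2)


def in_bounds(v):
    return 0.0 <= v[0] <= 10.0 and 0.0 <= v[1] <= 10.0


simplex = [(1.0, 1.0), (2.0, 1.0), (1.5, 1.87)]
responses = [measure(v) for v in simplex]
simplex, responses, last_new = fixed_size_step(simplex, responses, measure, in_bounds)
print(simplex[last_new])
```

In the example the worst vertex (1.0, 1.0) is replaced by its reflection at approximately (2.5, 1.87). Passing `last_new` into the next call lets Rule 2 detect a failed reflection, including one created by the boundary penalty of Rule 3.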

Termination

Protocol 2: Simplex Algorithm for Linear Programming

This algorithm is used for solving linear optimization problems with constraints, which can model various resource allocation problems in research and development.

Workflow Visualization

The following diagram outlines the systematic steps of the Simplex Algorithm for solving a linear program.

Detailed Methodology

Standard Form and Slack Variables

- Formulate the Linear Program (LP) in standard form: Maximize cᵀx, subject to Ax ≤ b and x ≥ 0 [1] [22].

- Introduce slack variables (s) to convert inequality constraints to equalities. A constraint Ax ≤ b becomes Ax + s = b, where s ≥ 0 [1] [20] [16].

- The objective function is also written as z - cᵀx = 0.
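The conversion to equalities can be made concrete by assembling the coefficients into a table. The sketch below is one possible layout, not necessarily the one used in the cited sources: it assumes b ≥ 0, places the objective row z - cᵀx = 0 first, and puts the right-hand side in the last column. The function name and the example problem are hypothetical, and exact fractions are used to avoid rounding.

```python
from fractions import Fraction


def initial_tableau(c, A, b):
    """Starting table for: maximize c^T x subject to A x <= b, x >= 0.

    Row 0 holds z - c^T x = 0; each following row holds one constraint with its
    own slack variable. The last column is the right-hand side.
    """
    m = len(A)
    # Objective row: -c for decision variables, 0 for slacks, 0 on the right
    tableau = [[Fraction(-cj) for cj in c] + [Fraction(0)] * (m + 1)]
    for i in range(m):
        slack = [Fraction(1) if j == i else Fraction(0) for j in range(m)]
        tableau.append([Fraction(a) for a in A[i]] + slack + [Fraction(b[i])])
    return tableau


# Hypothetical problem: maximize 3*x1 + 5*x2
# subject to x1 <= 4, 2*x2 <= 12, 3*x1 + 2*x2 <= 18
tableau = initial_tableau(c=[3, 5], A=[[1, 0], [0, 2], [3, 2]], b=[4, 12, 18])
for row in tableau:
    print([str(value) for value in row])
```

The slack columns form an identity matrix, so the slack variables make up the initial basis and the starting solution is x = 0 with z = 0.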

Initial Tableau Construction

Pivoting Procedure

- Optimality Check: If all entries in the objective row (the indicators) are non-negative, the current solution is optimal. If not, proceed [22] [16].

- Pivot Column Selection: Choose the column with the most negative value in the objective row. This "entering variable" will increase the objective value [22] [20].

- Pivot Row Selection: For each row, calculate the ratio of the right-hand side (b) to the corresponding positive coefficient in the pivot column. The row with the smallest non-negative ratio is the pivot row. This "leaving variable" ensures feasibility is maintained [22] [16]. Bland's Rule (choosing the variable with the smallest index in case of ties) can prevent cycling [8].

- Pivot Operation: Use row operations to make the pivot element 1 and all other elements in the pivot column 0, forming a new tableau [1] [20].

Solution Extraction

- Once optimal, the solution is read from the final tableau. Variables not in the basis (columns not part of the identity matrix) are zero. Basic variables (columns that are part of the identity matrix) have values equal to the corresponding entry in the right-hand side column. The optimal value of the objective function z is the right-hand-side entry of the objective row, which is the top-right corner of the tableau when the objective row is written first [22] [16].
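The optimality check, pivot selection, pivot operation, and solution extraction can be combined into a compact solver. The following is a teaching sketch under stated assumptions, not a production implementation: it assumes b ≥ 0, writes the objective row first, breaks ties in the ratio test by the lowest row index rather than implementing Bland's Rule in full, and uses a hypothetical example problem.

```python
from fractions import Fraction


def simplex_maximize(c, A, b):
    """Tableau simplex for: maximize c^T x subject to A x <= b, x >= 0 (b >= 0).
    Returns (optimal x, optimal z) as exact fractions."""
    m, n = len(A), len(c)
    # Row 0 is the objective row z - c^T x = 0; rows 1..m carry the slack variables
    T = [[Fraction(-cj) for cj in c] + [Fraction(0)] * (m + 1)]
    for i in range(m):
        slack = [Fraction(int(j == i)) for j in range(m)]
        T.append([Fraction(a) for a in A[i]] + slack + [Fraction(b[i])])
    basis = [n + i for i in range(m)]  # slack variables start in the basis

    while True:
        # Optimality check and pivot column: most negative indicator
        col = min(range(n + m), key=lambda j: T[0][j])
        if T[0][col] >= 0:
            break
        # Pivot row: smallest ratio of right-hand side to a positive coefficient
        ratios = [(T[i][-1] / T[i][col], i) for i in range(1, m + 1) if T[i][col] > 0]
        if not ratios:
            raise ValueError("objective is unbounded")
        _, row = min(ratios)
        # Pivot operation: scale the pivot row, then clear the column elsewhere
        pivot = T[row][col]
        T[row] = [value / pivot for value in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                factor = T[i][col]
                T[i] = [vi - factor * vr for vi, vr in zip(T[i], T[row])]
        basis[row - 1] = col

    # Solution extraction: non-basic variables are zero, basic ones equal the rhs
    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = T[i + 1][-1]
    return x, T[0][-1]


# Hypothetical problem: maximize 3*x1 + 5*x2
# subject to x1 <= 4, 2*x2 <= 12, 3*x1 + 2*x2 <= 18
x, z = simplex_maximize(c=[3, 5], A=[[1, 0], [0, 2], [3, 2]], b=[4, 12, 18])
print([str(v) for v in x], str(z))  # prints ['2', '6'] 36
```

The example needs two pivots: x2 enters first (most negative indicator, -5), then x1. For real problems a maintained solver is preferable; this sketch only mirrors the manual steps described above.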

The Scientist's Toolkit: Research Reagent Solutions

The following table lists key materials and computational tools used in simplex optimization experiments, particularly in chromatographic method development.

| Item | Function in Simplex Optimization |

|---|---|

| Mobile Phase Components (e.g., water, methanol, acetonitrile, buffer salts) | These are the factors/variables being optimized. Their proportions and pH directly influence the response (e.g., chromatographic resolution). In mixture designs, they are the core variables. [19] |

| Analytical Standard/Reference Material | Used to generate the response data (e.g., retention time, peak area, resolution) at each vertex of the simplex, allowing for the quantitative ranking of experimental conditions. [19] |

| Chromatographic System (HPLC/UHPLC) | The platform on which the experiments are run. It must provide precise control over factors like mobile phase composition, temperature, and flow rate. [19] |

| Simplex Optimization Software (e.g., custom Python scripts, MATLAB, dedicated chemometric packages) | Automates the calculation of new vertices after each iteration based on the reflection rules, streamlining the optimization process. [8] |

| Linear Programming Solver (e.g., online solvers, Python with scipy.optimize.linprog, R) | Used to implement the Simplex Algorithm for resource optimization problems, handling the tableau construction and pivoting operations efficiently. [16] |

Application in Drug Development: A Chromatographic Case Study

In pharmaceutical analysis, method development is crucial for separating a drug substance from its related compounds or impurities. The following diagram integrates simplex optimization into a comprehensive method development workflow.

- Problem Framing: The goal is to optimize chromatographic resolution (R_s) and analysis time. Key variables are the pH of the aqueous buffer and the percentage of organic modifier (e.g., methanol) in the mobile phase [19].

- Protocol Application:

  - Screening: A fractional factorial design might first be used to identify that pH and methanol percentage are the most influential factors.

  - Optimization: A fixed-size simplex optimization (Protocol 1) is then employed. The initial simplex consists of three different combinations of pH and methanol percentage. After running each experiment, the vertex giving the worst resolution (or a composite metric considering both resolution and time) is reflected to generate a new experimental condition.

- Result: The simplex efficiently moves toward the optimal combination of pH and methanol, maximizing resolution within an acceptable analysis time. The final method is then subjected to a robustness test, itself a small experimental design, to ensure it is reliable under minor, expected variations in operating conditions [19].

The table below summarizes core quantitative relationships and rules from the protocols.

| Concept | Mathematical Relation / Rule | Reference |

|---|---|---|

| Initial 2-Factor Simplex | Vertex 1: (a, b); Vertex 2: (a + s_a, b); Vertex 3: (a + 0.5s_a, b + 0.87s_b) | [18] |

| Reflection Rule | New Factor Level = 2 × (Average of retained vertices' levels) - (Worst vertex's level) | [18] |

| LP Standard Form | Maximize cᵀx, subject to Ax ≤ b, x ≥ 0 | [1] [22] |

| Slack Variable Introduction | Inequality a₁x₁ + ... + aₙxₙ ≤ b becomes a₁x₁ + ... + aₙxₙ + s = b, s ≥ 0 | [1] [20] |

| Optimality Criterion (LP) | All coefficients (indicators) in the objective row of the tableau are ≥ 0 | [22] [16] |

Simplex optimization represents a family of practical and efficient mathematical strategies for solving optimization problems where the goal is to find the best possible outcome given a set of constraints. In biomedical research, where experimental conditions must frequently be optimized for processes ranging from analytical chemistry to bioprocess development, simplex methods provide a structured approach to navigating complex experimental landscapes. The fundamental principle behind simplex optimization involves the sequential movement of a geometric figure (a simplex) through an experimental domain toward optimal conditions. For k variables, the simplex is a geometric shape with k+1 vertices in a k-dimensional space, which evolves based on experimental feedback to locate the region delivering the best performance [15].

The relevance of simplex optimization to biomedical research stems from its ability to efficiently handle multivariate optimization without requiring complex mathematical-statistical expertise. Unlike univariate approaches that change one variable at a time, simplex methods allow researchers to assess the effects of multiple variables and their interactions simultaneously, leading to more comprehensive optimization while reducing the number of experiments needed, thereby saving reagents, time, and costs [15]. This article examines the ideal scenarios for deploying simplex optimization and provides detailed protocols for its application in biomedical research contexts, framed within broader thesis research on experimental parameter optimization.

When to Choose Simplex Optimization: Key Scenarios and Comparative Advantages

Ideal Application Scenarios

Simplex optimization is particularly well-suited for specific scenarios commonly encountered in biomedical research. One prime application is the optimization of analytical methods where multiple variables influence the measured response. For instance, in chromatography, variables such as mobile phase composition, pH, temperature, and flow rate can be simultaneously optimized to achieve the best separation, peak shape, and detection sensitivity [15]. Similarly, in spectroscopy, simplex methods can optimize instrumental parameters like nebulizer gas flow, radiofrequency power, and viewing position in inductively coupled plasma optical emission spectrometry (ICP OES) to maximize signal-to-noise ratios [15].

Another key scenario involves bioprocess development and optimization. This includes optimizing chromatography conditions for protein purification, fermentation media composition, or reaction conditions in synthetic chemistry. A notable example is the application of a simplex variant combined with dummy variables to optimize chromatographic processes involving both numerical (e.g., pH, ionic strength) and categorical inputs (e.g., resin type, buffer composition) [23]. This approach successfully identified global optima in High Throughput (HT) chromatography case studies for monoclonal antibody purification and model protein separation, preventing the algorithm from becoming stranded at local optima [23].

Simplex optimization also excels in experimental domains where the mathematical relationship between variables and response is complex or not well-defined. When the response surface is unpredictable or contains multiple local optima, the semiglobal simplex (SGS) approach proves valuable. Although SGS does not guarantee finding the global minimum, it facilitates a more thorough exploration of local minima than traditional minimization methods [24]. This makes it suitable for problems such as determining the preferred solvation sites of proteins, where it located the same minimum free energy positions as an exhaustive multistart simplex search with less than one-tenth the number of minimizations [24].

Comparison with Other Optimization Methods

Understanding when simplex optimization is preferable requires comparing its characteristics against alternative methodologies. The table below summarizes key distinctions.

Table 1: Comparison of Optimization Methods in Biomedical Research

| Method | Key Principle | Best-Suited Scenarios | Advantages | Limitations |

|---|---|---|---|---|

| Simplex Optimization | Sequential movement of geometric figure toward optimum based on experimental feedback [15] | Multivariate optimization with limited theoretical model; Numerical and categorical inputs; Robustness prioritized over speed [15] [23] | Does not require derivatives; Handles numerical and categorical variables; Relatively simple to implement [15] [23] | Convergence can be slow near optimum; Does not guarantee global optimum [24] [15] |

| Univariate Optimization | One variable changed at a time while others held constant | Simple systems with no variable interactions; Preliminary screening | Simple to implement and interpret | Ignores variable interactions; Inefficient; Can miss true optimum [15] |

| Response Surface Methodology (RSM) | Statistical, theoretical modeling of response surface based on experimental design | Well-behaved systems where mathematical relationships can be modeled; When understanding precise factor effects is crucial [15] | Provides detailed model of system behavior; Can precisely locate and characterize optimum | Requires specific statistical expertise; Less efficient for complex or categorical variable spaces [15] |

| Interior Point Methods (IPMs) | Traverse through interior of feasible region toward optimum [9] | Large-scale linear programming problems; Problems requiring polynomial-time solutions [9] | Proven polynomial complexity for large problems; High accuracy for linear programs [9] | Primarily for linear programming; Less suitable for experimental optimization with categorical variables [9] |

Practical Advantages for Biomedical Research

For biomedical researchers, simplex optimization offers several practical benefits. Its computational efficiency makes it particularly valuable when function evaluation is computationally inexpensive and the search region is large [24]. The extreme simplicity of the method also lowers the barrier to implementation, as it doesn't require advanced mathematical-statistical tools [15]. Furthermore, certain simplex variants demonstrate robust performance with complex problems. While methods like the Convex Global Underestimator (CGU) deliver better success rates for simple problems, simplex methods become comparable as problem complexity increases, and they are generally faster [24].

The following diagram illustrates the decision-making process for selecting an optimization method in biomedical research:

Figure 1: Optimization Method Selection Guide for Biomedical Experiments

Applications in Biomedical Research: Case Studies and Experimental Parameters

Optimization of Analytical Chemistry Methods

Simplex optimization has been extensively applied to optimize analytical methods in biomedical research, particularly in chromatography and spectroscopy. These applications typically involve adjusting multiple continuous variables to achieve optimal analytical performance in terms of sensitivity, resolution, or throughput.

Table 2: Experimental Parameters in Analytical Chemistry Optimization

| Application Area | Key Variables Optimized | Response Metric | Simplex Variant Used | Reference |

|---|---|---|---|---|

| Micellar Liquid Chromatography | Surfactant concentration, organic modifier percentage, pH | Resolution of vitamins E and A, analysis time | Modified Simplex | [15] |

| Solid-Phase Microextraction-GC-MS | Extraction time, temperature, desorption time | Peak areas of PAHs, PCBs, phthalates | MultiSimplex | [15] |

| Flow Injection Analysis | Reagent concentration, flow rate, injection volume | Detection signal for tartaric acid | Modified Simplex | [15] |

| ICP OES | Nebulizer gas flow, RF power, viewing position | Signal-to-noise ratio for elemental analysis | Basic Simplex | [15] |

Bioprocess Development and Chromatography Optimization

In early bioprocess development, researchers frequently encounter optimization spaces comprising both numerical and categorical inputs. A grid-compatible Simplex variant combined with dummy variables has been successfully deployed for such scenarios, which are intractable by traditional Simplex methods [23]. The dummy variable methodology allows the concurrent optimization of numerical and categorical inputs, including multilevel and dichotomous factors.

In one case study involving the purification of a monoclonal antibody using filter-plate HT techniques, the Simplex-based method identified and characterized global optima while preventing stranding at local optima due to the arbitrary handling of categorical inputs [23]. Another study dealing with the separation of a binary system of model proteins using miniature columns (RoboColumns) demonstrated equivalent efficiency to Design of Experiments (DoE)-based approaches, specifically D-Optimal designs [23].

Table 3: Research Reagent Solutions for Bioprocess Optimization

| Reagent/Material | Function in Optimization | Application Context |

|---|---|---|

| Filter Plates | High-throughput screening of binding/elution conditions | Monoclonal antibody purification [23] |

| RoboColumns | Miniaturized column chromatography studies | Binary protein separation optimization [23] |

| Binding Buffers | Systematic variation of binding conditions | Identification of optimal binding pH and conductivity [23] |

| Elution Buffers | Examination of elution profiles under different conditions | Optimization of elution step in column chromatography [23] |

| Resin Types (Categorical Variable) | Evaluation of different separation chemistries | Selection of optimal chromatographic media [23] |

The following workflow diagram illustrates a typical simplex optimization process for chromatographic bioprocess development:

Figure 2: Simplex Optimization Workflow for Bioprocess Development

Biomolecular Structure and Solvation Studies

In structural biology and computational chemistry, simplex optimization has been applied to problems such as determining preferred solvation sites of proteins. The Semiglobal Simplex (SGS) algorithm performs a local minimization in each step of the simplex algorithm, carrying out the search on a surface spanned by local minima [24]. This approach has been used to locate the most preferred (minimum free energy) solvation sites on a streptavidin monomer, identifying the same lowest free energy positions as an exhaustive multistart Simplex search with significantly fewer minimizations [24].

Detailed Experimental Protocols

Protocol 1: Basic Simplex Optimization for Analytical Method Development

Purpose: To optimize an analytical method (e.g., chromatographic separation, spectroscopic detection) by identifying the best combination of continuous variables using the basic simplex algorithm.

Materials and Equipment:

- Analytical instrument (HPLC, GC, spectrometer, etc.)

- Standards and reagents

- Data acquisition and analysis software

- Simplex optimization software (commercial or custom-coded)

Procedure:

Define the System:

- Identify the response to be optimized (e.g., peak resolution, detection sensitivity, analysis time).

- Select the factors (variables) to be optimized and their reasonable ranges based on preliminary experiments or literature values.

Design the Initial Simplex:

- For k factors, design an initial simplex of k+1 experiments.

- The size of the initial simplex should be chosen based on researcher experience with the system, as this is crucial for optimization efficiency [15].

Run Experiments and Evaluate Responses:

- Execute the k+1 experiments in randomized order to avoid systematic error.

- Measure the response for each experiment.

Apply Simplex Rules:

- Identify the experiment with the worst response.

- Reject this vertex and replace it with a new one by reflecting the worst point through the centroid of the remaining points.

- Calculate the coordinates of the new vertex using the formula:

- New = Centroid + (Centroid - Worst)

- Maintain the size and shape of the simplex throughout the process [15].

Iterate Until Convergence:

- Continue the process of rejection and reflection.

- Termination occurs when the simplex begins to circle around the optimum or when the response no longer improves significantly.

Verify the Optimum:

- Conduct confirmation experiments at the predicted optimum conditions.

- Validate the method performance using the optimized parameters.

Protocol 2: Modified Simplex for Bioprocess Parameter Optimization

Purpose: To optimize bioprocess parameters (e.g., chromatography conditions, fermentation parameters) using the modified simplex method, which allows changes in simplex size for faster convergence.

Materials and Equipment:

- Bioprocess equipment (chromatography system, bioreactor, etc.)

- Relevant biological materials (proteins, cell cultures, etc.)

- Analytics for response measurement (HPLC, ELISA, activity assays)

- Software for simplex optimization

Procedure:

Initial Setup:

- Define the objective function to maximize or minimize (e.g., yield, purity, productivity).

- Identify controlled variables and their operational ranges.

- Design the initial simplex with k+1 experiments.

Experimental Execution:

- Run initial experiments and rank vertices from best to worst response.

Transformation Steps:

- Reflection: Calculate the reflection vertex (as in basic simplex).

- Expansion: If the reflected vertex gives a better response than the current best, calculate an expansion vertex further in the same direction:

- Expansion = Centroid + γ(Centroid - Worst), where γ > 1 (typically 2.0)

- Contraction: If the reflected vertex is no better than the second-worst vertex, contract the simplex, with 0 < β < 1 (typically 0.5):

  - If the reflected vertex is still better than the worst vertex, contract on the reflected side: Contraction = Centroid + β(Centroid - Worst)

  - If the reflected vertex is worse than the worst vertex, contract on the side of the worst vertex: Contraction = Centroid - β(Centroid - Worst)

  - If the contracted vertex is worse than the worst vertex, perform a reduction by moving all vertices toward the best vertex.

Iteration and Convergence:

- Replace the worst vertex with the new vertex (reflected, expanded, or contracted).

- Continue iterations until the simplex size becomes smaller than a predetermined threshold or the response improvement falls below a minimum acceptable level.

Process Validation:

- Validate the optimized conditions in a controlled bioprocess run.

- Assess performance metrics to confirm improvement over baseline conditions.
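The transformation steps of the modified simplex can be sketched as a loop that maximizes a response. The code below follows the standard Nelder-Mead scheme, in which contraction lands on the reflected side when the reflection beats the worst vertex and on the worst-vertex side otherwise. The function name, the stopping rule based on the spread of responses across vertices, the reduction factor of one half, and the synthetic response surface are assumptions for illustration; in practice each call to `measure` is a real experiment.

```python
def modified_simplex(measure, simplex, gamma=2.0, beta=0.5, tol=1e-8, max_iter=500):
    """Maximize `measure` using reflection, expansion, contraction, and reduction."""
    k = len(simplex) - 1
    points = [(measure(v), list(v)) for v in simplex]
    for _ in range(max_iter):
        points.sort(key=lambda p: p[0], reverse=True)  # best first, worst last
        best, second_worst, worst = points[0], points[-2], points[-1]
        if best[0] - worst[0] < tol:  # assumed stopping rule: response spread
            break
        centroid = [sum(p[1][j] for p in points[:-1]) / k for j in range(k)]
        direction = [c - w for c, w in zip(centroid, worst[1])]  # Centroid - Worst

        def trial(scale):
            vertex = [c + scale * d for c, d in zip(centroid, direction)]
            return measure(vertex), vertex

        reflected = trial(1.0)
        if reflected[0] > best[0]:
            expanded = trial(gamma)
            new = expanded if expanded[0] > reflected[0] else reflected
        elif reflected[0] > second_worst[0]:
            new = reflected
        else:
            # Contract on the reflected side if it beats the worst vertex, else inside
            new = trial(beta) if reflected[0] > worst[0] else trial(-beta)
            if new[0] <= worst[0]:
                # Reduction: move every other vertex halfway toward the best vertex
                shrunk = [best]
                for _, v in points[1:]:
                    moved = [(b + x) / 2 for b, x in zip(best[1], v)]
                    shrunk.append((measure(moved), moved))
                points = shrunk
                continue
        points[-1] = new
    points.sort(key=lambda p: p[0], reverse=True)
    return points[0][1], points[0][0]


# Synthetic response with a maximum at (5, 5); it stands in for real experiments
def measure(v):
    return -((v[0] - 5.0) ** 2 + (v[1] - 5.0) ** 2)


best_vertex, best_response = modified_simplex(
    measure, [(1.0, 1.0), (2.0, 1.0), (1.5, 1.87)]
)
print(best_vertex, best_response)
```

On this smooth test surface the search should settle close to (5, 5). With noisy experimental responses the tolerance would need to reflect measurement repeatability, and replicate measurements at the final vertices are advisable.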

Protocol 3: Handling Categorical Variables in Bioprocess Optimization

Purpose: To optimize bioprocess parameters that include both numerical and categorical variables using a simplex variant with dummy variables.

Materials and Equipment:

- High-throughput bioprocess screening platform (e.g., filter plates, RoboColumns)

- Different resin types, buffer systems, or other categorical factors

- Analytics for response measurement

- Simplex optimization software capable of handling dummy variables

Procedure:

Variable Identification:

- Identify numerical variables (e.g., pH, ionic strength, temperature) and categorical variables (e.g., resin type, buffer composition).

- For each categorical variable with m levels, assign m-1 dummy variables [23].

Experimental Design:

- Incorporate both numerical and dummy variables into the simplex design.

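- With k numerical variables and one categorical variable with m levels, the total dimensionality of the problem becomes k + (m-1); each additional categorical variable adds its own m-1 dummy dimensions.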

Grid-Compatible Simplex Execution:

- Execute the simplex algorithm as in Protocol 2, but when categorical variables change, ensure compatibility with the experimental grid.

- The dummy variables allow the simplex to handle categorical factors without becoming stranded at local optima [23].

Response Evaluation and Iteration:

- Evaluate responses for each experimental condition.

- Apply simplex transformation rules, treating dummy variables similarly to continuous variables.

Optimum Identification:

- Identify the optimal combination of both numerical and categorical factors.

- Verify the global optimum through confirmation experiments.
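
The dummy-variable coding in Protocol 3 can be illustrated with a short Python sketch. The source does not specify how a fractional dummy value produced by a simplex move is mapped back to a real level, so the nearest-level snapping shown here is an assumption, as are the function names and the resin labels.

```python
from typing import List, Sequence


def dummy_encode(level: str, levels: Sequence[str]) -> List[float]:
    """Encode one of m levels as m-1 dummy variables.

    The first level is the reference and is coded as all zeros.
    """
    if level not in levels:
        raise ValueError(f"unknown level: {level!r}")
    return [1.0 if level == other else 0.0 for other in levels[1:]]


def dummy_decode(dummies: Sequence[float], levels: Sequence[str]) -> str:
    """Snap a (possibly fractional) dummy vector to the nearest valid level.

    Assumption: squared Euclidean distance to each level's code is used.
    """
    def distance(level: str) -> float:
        code = dummy_encode(level, levels)
        return sum((d - c) ** 2 for d, c in zip(dummies, code))

    return min(levels, key=distance)


resins = ["resin_A", "resin_B", "resin_C"]  # hypothetical categorical factor, m = 3

# A vertex with two numerical factors (pH, ionic strength) plus m-1 = 2 dummies.
vertex = [7.0, 0.15] + dummy_encode("resin_B", resins)

# After a simplex move the dummies are generally fractional and must be
# mapped back to a resin that can actually be tested.
chosen = dummy_decode([0.2, 0.7], resins)
```

Here `vertex` has four coordinates (k = 2 numerical plus m-1 = 2 dummy), which matches the dimensionality rule given in the protocol.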

The following diagram illustrates the reflection, expansion, and contraction operations in the modified simplex method:

Figure 3: Simplex Transformation Operations (Reflection, Expansion, Contraction)

Simplex optimization represents a powerful, practical approach for addressing multivariate optimization challenges across biomedical research. Its particular strengths shine in scenarios involving mixed variable types (both numerical and categorical), when computational evaluation costs are low, and when robustness is prioritized over theoretical guarantees of global optimality. The method's simplicity of implementation, combined with its ability to thoroughly explore complex experimental spaces, makes it an invaluable tool for researchers developing analytical methods, optimizing bioprocesses, or studying biomolecular interactions.

As biomedical research continues to embrace high-throughput methodologies and complex experimental designs, simplex optimization—particularly in its enhanced forms such as the modified simplex and categorical variable-handling variants—will remain a relevant and efficient approach for navigating multidimensional optimization landscapes. Its successful application across diverse domains from analytical chemistry to structural biology underscores its versatility and practical utility in advancing biomedical research.

Comparison with Traditional One-Factor-at-a-Time Optimization Limitations

In experimental science, the pursuit of optimal conditions is paramount for developing efficient and robust analytical methods, chemical syntheses, and drug formulations. For decades, the One-Factor-at-a-Time (OFAT) approach has been a commonly used, traditional method for this purpose. However, OFAT possesses significant limitations, particularly its inability to detect interaction effects between factors, which frequently leads to the identification of local, rather than global, optima and results in suboptimal process performance [25] [26].

This application note, framed within a broader thesis on simplex optimization, contrasts the OFAT method with the more advanced simplex optimization algorithm. We provide a detailed, practical protocol for implementing the modified Nelder–Mead simplex method, demonstrated through a case study on optimizing an electrochemical sensor for heavy metals. The simplex method, a cornerstone of multivariate optimization, systematically explores the experimental parameter space by simultaneously varying all factors, thereby efficiently guiding the search toward the true optimum [27].

Comparative Analysis: OFAT vs. Simplex Optimization

The table below summarizes the fundamental differences in methodology and outcomes between the OFAT and Simplex optimization approaches.

Table 1: Fundamental Differences Between OFAT and Simplex Optimization

| Characteristic | One-Factor-at-a-Time (OFAT) | Simplex Optimization |
|---|---|---|
| Basic Principle | Varies one factor while holding all others constant [26] [28]. | Varies all factors simultaneously in a structured, iterative manner [27]. |
| Experimental Efficiency | Low; requires a large number of runs for the same precision [25] [26]. | High; typically locates an optimum in fewer experimental runs [29] [10]. |
| Handling of Interactions | Cannot estimate interaction effects between factors [25] [28]. | Inherently accounts for and exploits factor interactions to find better optima. |
| Risk of Missing the Optimum | High risk of missing the global optimum, finding only a local improvement [29] [26]. | Lower risk, because all factors move together; it remains a local search, so the global optimum is not guaranteed. |
| Underlying Assumption | Assumes factors are independent [28]. | Makes no assumption of independence; effective for dependent factors. |
| Path to Optimum | Path-dependent; efficiency relies on the order of factor optimization [28]. | Does not depend on a user-chosen factor order; the path follows from the algorithm's rules and the initial simplex. |

The core limitation of OFAT is its failure to account for factor interactions. When factors are independent (i.e., changing Factor A has the same effect regardless of Factor B's level), OFAT can find the optimum, although inefficiently. When factors are dependent, so that the effect of one factor changes with the level of another, the best setting found for each factor is only valid at the levels at which the others were held, and a single OFAT pass stops short of the optimum. This is visualized in the contour maps below, where the OFAT path gets trapped and requires multiple cycles to reach the optimum, unlike with independent factors [28].

Diagram 1: OFAT Path with Factor Interaction. The path shows multiple direction changes as factors are optimized sequentially, illustrating inefficiency when interactions exist.
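
The effect of an interaction on an OFAT pass can be reproduced numerically. The sketch below uses a hypothetical two-factor response surface with its maximum at (1, 1). The surface, the grid, and the starting point are illustrative assumptions, not data from the cited studies.

```python
from typing import List, Tuple


def response(a: float, b: float, interaction: float) -> float:
    """Hypothetical response surface with its maximum at (1, 1).

    interaction = 0 gives independent factors; a nonzero value couples them.
    """
    return -((a - 1) ** 2 + (b - 1) ** 2 + interaction * (a - 1) * (b - 1))


def ofat_cycle(a: float, b: float, interaction: float, grid: List[float]) -> Tuple[float, float]:
    """One OFAT cycle: scan factor a with b fixed, then scan b with the new a fixed."""
    a = max(grid, key=lambda x: response(x, b, interaction))
    b = max(grid, key=lambda y: response(a, y, interaction))
    return a, b


grid = [i / 100 for i in range(-100, 301)]  # settings from -1.00 to 3.00 in 0.01 steps

independent = ofat_cycle(0.0, 0.0, 0.0, grid)  # no interaction
dependent = ofat_cycle(0.0, 0.0, 1.6, grid)    # strong interaction
```

Without the interaction term, one cycle lands on the optimum. With it, the same cycle stops at roughly (1.8, 0.36), and further cycles are needed to approach (1, 1), which is the zig-zag behaviour described for Diagram 1.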

Detailed Experimental Protocol: Simplex Optimization of an In-Situ Film Electrode

This protocol details the application of simplex optimization to enhance the analytical performance of an in-situ film electrode (FE) for detecting trace heavy metals (Zn(II), Cd(II), Pb(II)) via square-wave anodic stripping voltammetry (SWASV) [29]. The goal is to simultaneously optimize multiple factors to achieve the best combination of low detection limits, high sensitivity, wide linear range, accuracy, and precision.

Research Reagent Solutions and Materials

Table 2: Essential Materials and Reagents for the SWASV Experiment

| Item Name | Function / Description | Specifics / Example |
|---|---|---|
| Glassy Carbon Electrode (GCE) | Working electrode substrate. | 3.0 mm diameter disc, sealed in Teflon [29]. |
| Ag/AgCl (sat'd KCl) | Reference electrode. | Provides a stable potential reference. |
| Platinum Wire | Counter electrode. | Completes the electrical circuit. |
| Bi(III), Sn(II), Sb(III) Standards | Film-forming ions for in-situ FE. | Aqueous standards, 1000 mg L⁻¹ [29]. |
| Zn(II), Cd(II), Pb(II) Standards | Target analytes. | Aqueous standards, 1000 mg L⁻¹. |
| Acetate Buffer | Supporting electrolyte. | 0.1 M, pH 4.5. |
| Polishing Supplies | Electrode surface preparation. | 0.05 μm Al₂O₃ slurry. |

Step-by-Step Workflow and Protocol

The following diagram and protocol outline the complete experimental workflow, from initial electrode preparation to the final simplex optimization cycle.

Diagram 2: Simplex Optimization Workflow. The cyclic process of measurement, evaluation, and new condition generation continues until convergence at the optimum.

Electrode Preparation

- Polish the Glassy Carbon Electrode (GCE) surface thoroughly using 0.05 μm Al₂O₃ slurry on a polishing cloth [29].

- Rinse the electrode extensively with ultrapure water to remove all alumina residues.

- Clean the electrode via sonication in ultrapure water for 1 minute.

- Immerse the GCE in 15 wt.% HCl for approximately 10 minutes for chemical cleaning.

- Validate the surface cleanliness using cyclic voltammetry in a hexacyanoferrate solution.

Solution Preparation and Experimental Setup

- Prepare a supporting electrolyte of 0.1 M acetate buffer at pH 4.5.

- To this buffer, add precise mass concentrations (γ) of film-forming ions: Bi(III), Sn(II), and Sb(III). The initial simplex will be constructed based on a range of concentrations for these three factors (e.g., 0–1 mg/L) [29].

- Introduce the target analytes (Zn(II), Cd(II), Pb(II)) at environmentally relevant concentrations.

- Transfer a 20.0 mL aliquot of the final solution to the electrochemical cell.

SWASV Measurement Parameters

Conduct Square-Wave Anodic Stripping Voltammetry (SWASV) using the following parameters [29]:

- Accumulation Potential (Eacc): A factor to be optimized (e.g., -1.4 V to -0.8 V).

- Accumulation Time (tacc): A factor to be optimized (e.g., 60–300 s).

- Amplitude: 50 mV.

- Potential Step: 4 mV.

- Frequency: 25 Hz.

- Equilibration Time: 15 s.

- Stirring: ~300 rpm during accumulation and cleaning steps.

Defining the Objective Function

A key advantage of this approach is the use of a composite objective function that balances multiple analytical performance criteria, rather than just maximizing a single peak current [29]. The objective function (OF) is calculated for each experimental vertex from these criteria, and the specific weighting factors can be adjusted based on the primary goal of the analysis.
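
The exact expression used in the cited study is not reproduced here. Purely as an illustration of how a weighted composite objective might be assembled, the following Python sketch scores each criterion relative to a baseline and sums the weighted ratios. The criteria names, the weights, the normalization, and all numerical values are hypothetical placeholders, not results from [29].

```python
from typing import Dict

# Hypothetical criteria: True means a larger value is better.
HIGHER_IS_BETTER = {
    "sensitivity": True,
    "linear_range": True,
    "lod": False,  # limit of detection: lower is better
    "rsd": False,  # relative standard deviation: lower is better
}


def composite_objective(
    metrics: Dict[str, float],
    baseline: Dict[str, float],
    weights: Dict[str, float],
) -> float:
    """Weighted sum of improvement ratios relative to a baseline.

    Each ratio equals 1.0 at the baseline and grows as the criterion improves.
    """
    total = 0.0
    for name, weight in weights.items():
        ratio = metrics[name] / baseline[name]
        if not HIGHER_IS_BETTER[name]:
            ratio = 1.0 / ratio  # invert so that improvement always raises the score
        total += weight * ratio
    return total


# Placeholder numbers for illustration only.
baseline = {"lod": 0.5, "sensitivity": 1.0, "linear_range": 100.0, "rsd": 5.0}
vertex_metrics = {"lod": 0.25, "sensitivity": 1.5, "linear_range": 150.0, "rsd": 4.0}
weights = {"lod": 0.25, "sensitivity": 0.25, "linear_range": 0.25, "rsd": 0.25}

score = composite_objective(vertex_metrics, baseline, weights)
```

Raising the weight on `lod`, for example, would steer the simplex toward conditions that favour low detection limits at the expense of the other criteria.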

Executing the Simplex Optimization

- Initial Simplex: Construct the initial simplex. For k factors, this requires k+1 initial experiments. In this case, with 5 factors (γBi(III), γSn(II), γSb(III), Eacc, tacc), 6 initial experiments are designed.

- Simplex Progression: Follow the standard Nelder–Mead algorithm rules [27]:

- a. Order: Evaluate and rank all vertices from worst (lowest OF) to best (highest OF).

- b. Reflect: Calculate the reflection of the worst vertex through the centroid of the remaining vertices. Evaluate the OF at this new vertex.

- c. Expand: If the reflected vertex is better than the current best, further expand in that direction.

- d. Contract: If the reflected vertex is worse than the second-worst, perform a contraction.

- e. Shrink: If contraction fails, shrink the entire simplex towards the best vertex.

- Termination: The optimization is concluded when the objective function no longer improves significantly, or the simplex vertices converge to a small region of the factor space.
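
The initial-simplex and termination rules above can be sketched as follows. The factor ranges are the example ranges quoted in this protocol. The starting point, the step sizes, the tolerances, and the function names are assumptions chosen only for illustration.

```python
from typing import List, Sequence, Tuple


def initial_simplex(start: Sequence[float], steps: Sequence[float]) -> List[List[float]]:
    """Build k+1 vertices: the start point plus one vertex per factor,
    each displaced along a single factor by that factor's step."""
    vertices = [list(start)]
    for i, step in enumerate(steps):
        vertex = list(start)
        vertex[i] += step
        vertices.append(vertex)
    return vertices


def has_converged(
    vertices: Sequence[Sequence[float]],
    responses: Sequence[float],
    ranges: Sequence[Tuple[float, float]],
    size_tol: float = 0.02,
    response_tol: float = 0.01,
) -> bool:
    """Stop when the simplex spans at most size_tol of every factor range,
    or when the responses differ by at most response_tol relative to the best."""
    small = all(
        (max(column) - min(column)) / (high - low) <= size_tol
        for column, (low, high) in zip(zip(*vertices), ranges)
    )
    spread = max(responses) - min(responses)
    flat = spread <= response_tol * max(abs(max(responses)), 1e-12)
    return small or flat


# Factors: Bi(III), Sn(II), Sb(III) in mg/L, Eacc in V, tacc in s.
ranges = [(0.0, 1.0), (0.0, 1.0), (0.0, 1.0), (-1.4, -0.8), (60.0, 300.0)]
start = [0.4, 0.4, 0.4, -1.2, 120.0]  # hypothetical starting conditions
steps = [0.2, 0.2, 0.2, 0.1, 60.0]    # hypothetical step sizes

simplex = initial_simplex(start, steps)  # 6 vertices for 5 factors
```

Scaling the size check by each factor's range keeps a factor measured in seconds from dominating one measured in volts.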

Critical Data Presentation

The improvement obtained with the simplex method relative to non-optimized conditions is summarized in the following table, based on the referenced heavy metal sensor study [29].

Table 3: Comparison of Analytical Performance Before and After Simplex Optimization

| Analytical Parameter | Performance Before Optimization (Typical Values) | Performance After Simplex Optimization |
|---|---|---|
| Limit of Detection (LOD) | Baseline (e.g., ~0.5 μg/L for Pb(II)) | Significantly Lower |
| Sensitivity (Slope) | Baseline | Markedly Higher |
| Linear Concentration Range | Baseline | Substantially Wider |
| Accuracy (Recovery) | Baseline (~95%) | Closer to 100% |
| Precision (RSD) | Baseline (~5%) | Improved (Lower RSD) |

Troubleshooting and Notes

- Initial Simplex Design: The choice of the initial vertices is critical. They should span a reasonable range of the experimental space believed to contain the optimum. Preliminary OFAT scans or literature data can inform this choice.

- Noise and Robustness: The simplex method is generally robust, but highly noisy systems (large experimental error) can impede its progress. Incorporating replication at the centroid or best vertex can help mitigate this.

- Dealing with Constraints: Practical experiments often have constraints (e.g., concentrations cannot be negative). The algorithm must be modified to reject new vertices that violate these constraints, for example, by assigning them a very poor objective function score.

- Real-Time Adaptation: A powerful application of this method, as demonstrated in organic synthesis within microreactors, is its ability to respond to process disturbances in real-time. If a disturbance shifts the optimum during operation, the simplex algorithm can automatically re-initiate to find the new optimal conditions [27].
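
The constraint-handling note above can be sketched as a thin wrapper around the measurement function. This is a minimal illustration that assumes the objective is being maximized, so an infeasible vertex is scored as negative infinity without running the experiment. The wrapper name and the example bounds are hypothetical.

```python
from typing import Callable, List, Sequence, Tuple


def with_constraints(
    measure: Callable[[Sequence[float]], float],
    bounds: Sequence[Tuple[float, float]],
    penalty: float = float("-inf"),
) -> Callable[[Sequence[float]], float]:
    """Wrap a measurement so that out-of-bounds vertices get a very poor score."""

    def guarded(vertex: Sequence[float]) -> float:
        for value, (low, high) in zip(vertex, bounds):
            if not low <= value <= high:
                return penalty  # rejected: no experiment is run
        return measure(vertex)

    return guarded


calls: List[List[float]] = []


def run_experiment(vertex: Sequence[float]) -> float:
    """Hypothetical stand-in for a real measurement; records each call."""
    calls.append(list(vertex))
    return 1.0


# Example bounds: a concentration in mg/L and an accumulation potential in V.
guarded = with_constraints(run_experiment, [(0.0, 1.0), (-1.4, -0.8)])

feasible_score = guarded([0.5, -1.0])     # inside the bounds: experiment runs
infeasible_score = guarded([-0.1, -1.0])  # negative concentration: rejected
```

Because the penalty is worse than any real response, the simplex rules treat the infeasible vertex as a failed move and contract back toward the feasible region.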

Implementing Simplex Methods: Practical Protocols for Experimental Optimization