Simplex vs Factorial Design: A Strategic Guide for Optimizing Biomedical Research and Drug Development

Abstract

This article provides a comprehensive comparison of simplex and factorial experimental designs, tailored for researchers and professionals in drug development and biomedical sciences. It covers the foundational principles of both optimization methods, explores their practical applications through case studies in analytical chemistry and virology, and offers strategic guidance for troubleshooting and selecting the appropriate design. By synthesizing current methodologies and validation techniques, this guide aims to empower scientists to enhance the efficiency, reliability, and cost-effectiveness of their experimental optimization processes.

Core Principles: Understanding Simplex and Factorial Design Fundamentals

In the pursuit of optimal conditions for complex processes, from drug formulation to industrial manufacturing, researchers require robust statistical tools. Among the most powerful of these is Response Surface Methodology (RSM), a collection of statistical and mathematical techniques used to develop, improve, and optimize processes where the response of interest is influenced by several variables [1] [2]. This guide explores the core concepts of RSM and objectively compares it with another prevalent optimization approach, the Taguchi method, providing a clear framework for selecting the appropriate tool for your research.

What is Response Surface Methodology (RSM)?

Response Surface Methodology (RSM) is a mid-twentieth-century statistical tool for optimizing processes and understanding complex relationships between variables [1]. Its primary goal is to efficiently explore the relationships between several explanatory variables and one or more response variables.

The core principle of RSM is to use a sequence of designed experiments to create an empirical model of the process. This model, often a second-order polynomial equation, describes how the input factors influence the output response [3]. Once a model is established, it can be used to:

- Navigate the experimental space to find factor settings that produce a maximum or minimum response.

- Understand the interaction effects between different factors.

- Visualize the relationship between factors and the response through 3D surface and 2D contour plots.
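For reference, the second-order polynomial mentioned above is commonly written in the following general form for k coded factors. The notation (x_i for coded factor levels, β for coefficients, ε for error) is the conventional one and is an assumption here, not a formula taken from a specific cited study.

```latex
y = \beta_0 + \sum_{i=1}^{k} \beta_i x_i + \sum_{i=1}^{k} \beta_{ii} x_i^2 + \sum_{i<j} \beta_{ij} x_i x_j + \varepsilon
```

The linear terms capture main effects, the squared terms capture curvature, and the cross-product terms capture two-factor interactions.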

The methodology was pioneered by statisticians George E. P. Box and K. B. Wilson and has its roots in the foundational work of Sir Ronald A. Fisher on experimental design and analysis of variance (ANOVA) [1]. Its development was driven by the industrial need to optimize complex processes efficiently without resorting to costly one-factor-at-a-time experiments.

Key Experimental Designs in RSM

Two of the most common experimental designs in RSM are the Central Composite Design (CCD) and the Box-Behnken Design (BBD).

- Central Composite Design (CCD): A CCD efficiently combines a two-level factorial design with axial (or "star") points and center points. This structure allows for the estimation of the curvature of the response surface, making it highly effective for fitting second-order models [1] [2].

- Box-Behnken Design (BBD): Developed by George E. P. Box and Donald Behnken, this design is a more resource-efficient alternative. BBD is a spherical design that is rotatable or nearly rotatable and does not include points at the extremes of the variable space (factorial corners). It typically requires fewer experimental runs than a CCD with the same number of factors while still providing accurate estimates of the response surface [1] [3].
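To make the CCD structure concrete, the following is a minimal sketch that builds the coded design points (factorial corners, axial points, center points). The function name `central_composite_design`, the default rotatable axial distance, and the choice of six center points are illustrative assumptions, not settings taken from the cited studies.

```python
from itertools import product


def central_composite_design(k, n_center=1, alpha=None):
    """Return coded CCD runs for k factors as a list of tuples (hypothetical helper)."""
    if alpha is None:
        # Rotatable choice: alpha = (number of factorial points) ** 0.25
        alpha = (2 ** k) ** 0.25
    # Two-level factorial corners at -1 / +1
    corners = [tuple(float(v) for v in pt) for pt in product((-1, 1), repeat=k)]
    # Axial ("star") points: one factor at +/- alpha, all others at 0
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            pt = [0.0] * k
            pt[i] = sign * alpha
            axial.append(tuple(pt))
    # Replicated center points at the origin
    centers = [tuple([0.0] * k)] * n_center
    return corners + axial + centers


design = central_composite_design(k=3, n_center=6)
print(len(design))  # 8 corners + 6 axial + 6 center = 20 runs
```

With three factors and six center points, this layout yields 20 runs; other center-point counts or axial distances are equally valid choices.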

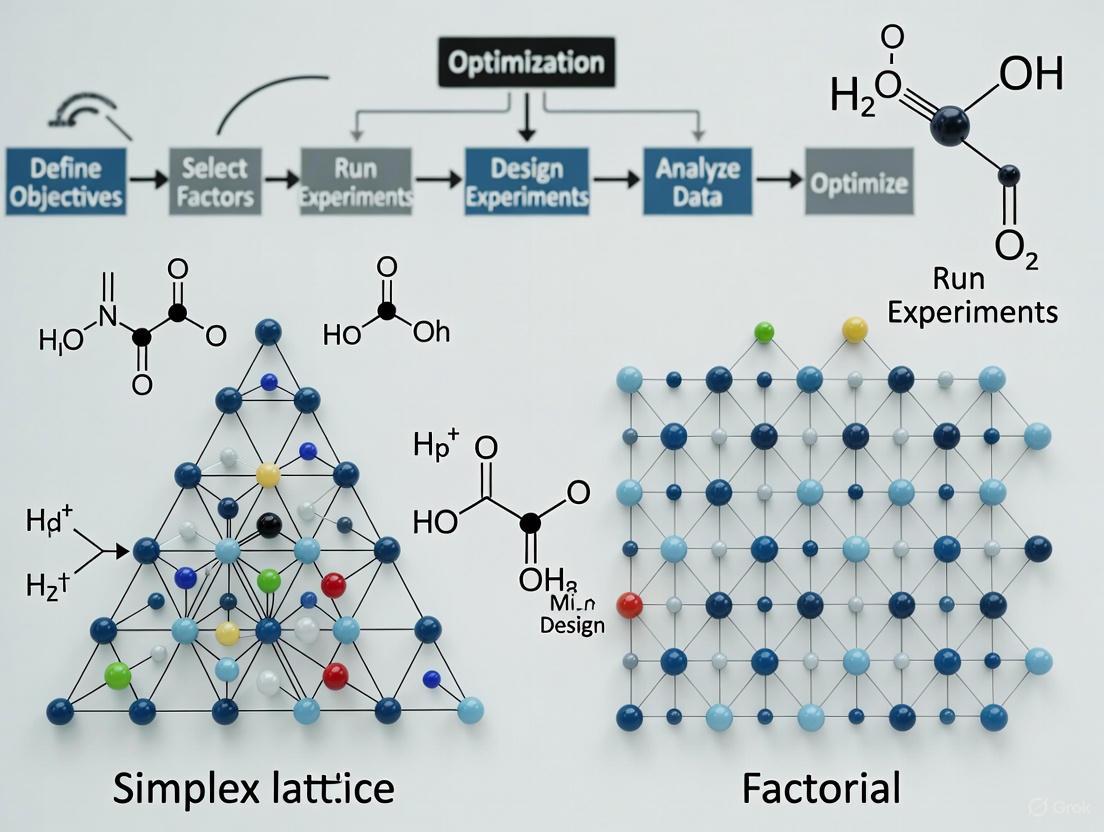

The following diagram illustrates a typical RSM workflow, from initial design to final optimization.

RSM vs. Taguchi Method: A Comparative Analysis

While RSM is a powerful tool, it is one of several approaches for process optimization. The Taguchi method, developed by Genichi Taguchi, is another widely used strategy. The table below summarizes the core differences between these two methodologies.

| Feature | Response Surface Methodology (RSM) | Taguchi Method |

|---|---|---|

| Primary Goal | Model and optimize a response within a continuous factor space [3]. | Determine robust factor levels that minimize performance variation [3]. |

| Experimental Design | Uses designs like CCD and BBD that require more runs to model curvature [4] [3]. | Uses orthogonal arrays to conduct a fraction of full-factorial experiments [3]. |

| Model Complexity | Employs complex second-order equations with interaction terms [4]. | Provides a more straightforward, often additive, model [4]. |

| Key Output | A predictive mathematical model and a map of the response surface. | An optimal factor-level combination and their percentage contribution. |

| Data Analysis | Regression Analysis and ANOVA to assess model and term significance [1]. | ANOVA and Signal-to-Noise (S/N) ratios to assess factor effects. |
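As an illustration of the Signal-to-Noise ratios listed in the table, here is a small sketch of the three commonly used Taguchi S/N formulas. The function names and the replicate values are hypothetical; only the standard formulas themselves are assumed.

```python
import math
import statistics


def sn_larger_is_better(values):
    """S/N = -10 * log10(mean(1 / y^2)); used when a larger response is desired."""
    return -10.0 * math.log10(sum(1.0 / (y * y) for y in values) / len(values))


def sn_smaller_is_better(values):
    """S/N = -10 * log10(mean(y^2)); used when a smaller response is desired."""
    return -10.0 * math.log10(sum(y * y for y in values) / len(values))


def sn_nominal_is_best(values):
    """S/N = 10 * log10(mean^2 / sample variance); used when a target value is desired."""
    mean = statistics.mean(values)
    return 10.0 * math.log10(mean * mean / statistics.variance(values))


# Hypothetical replicate measurements for one row of an orthogonal array
replicates = [9.0, 10.0, 11.0]
print(sn_larger_is_better(replicates))
print(sn_smaller_is_better(replicates))
print(sn_nominal_is_best(replicates))
```

In a Taguchi analysis, the S/N ratio is computed for each run and then averaged per factor level; the level with the highest average S/N is usually preferred.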

Quantitative Performance Comparison

A comparative analysis of RSM and the Taguchi method for optimizing a hydraulic ram pump's performance revealed distinct outcomes. RSM, which required 20 experimental runs, identified an optimal configuration with an input height of 3 m, input length of 12 m, and a vacuum tube length of 120 cm. In contrast, the Taguchi method, requiring only 9 experiments, found an optimum at an input height of 3 m, input length of 6 m, and a vacuum tube length of 120 cm [4].

Another study focusing on optimizing dyeing process parameters provided clear data on accuracy and efficiency, as shown in the following table.

| Method | Number of Experimental Runs | Reported Optimization Accuracy |

|---|---|---|

| Taguchi Method | 9 runs (for 4 factors at 3 levels) [3] | 92% [3] |

| Box-Behnken Design (BBD) | Not explicitly stated, but fewer than CCD [3] | 96% [3] |

| Central Composite Design (CCD) | More than BBD and Taguchi [3] | 98% [3] |

Supporting Experimental Data: In the dyeing process study, the most significant factor for color strength was dye concentration, with a 62.6% contribution. The analysis of variance (ANOVA) was used to evaluate the relationship between variables and their contributions, confirming the higher predictive accuracy of RSM designs (CCD and BBD) compared to the Taguchi method [3].

A Practical Example: Simplex Lattice Design with Process Variables

Beyond traditional RSM, other designs like Simplex Lattice Designs are used for mixture experiments, where the factors are components of a blend and their proportions sum to a constant, typically 100% (or 1.0) [5] [2].

Case Study: Optimizing Vinyl for Seat Covers

An experiment was set up to study three plasticizers (X1, X2, X3), whose total formulation contribution was 40%. The remaining components were fixed at 60%. A {3, 2} simplex lattice design was used for the mixture. Furthermore, two process variables, rate of extrusion (Z1) and drying temperature (Z2), were included using a two-level factorial design. This created a combined design in which the simplex mixture was tested under each of the four combinations of the process variables [5].

The measured response was vinyl thickness, with a target value of 10. After building a model and refining it by removing statistically insignificant terms, the optimization function identified several optimal solutions. One optimum solution was: X1 = 0.349, X2 = 0, X3 = 0.051, with process factors Rate of Extrusion = 10.000 and Temperature = 50.000. Under this setting, the predicted thickness was exactly 10.00 [5].
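The combined design described above can be sketched as follows. This is a minimal illustration that assumes no replicates or additional check points; the helper name `simplex_lattice` and the coded process levels (-1, +1) are assumptions made for the example.

```python
from fractions import Fraction
from itertools import product


def simplex_lattice(q, m):
    """All {q, m} lattice points: proportions in {0, 1/m, ..., 1} that sum to 1."""
    return [
        tuple(Fraction(c, m) for c in combo)
        for combo in product(range(m + 1), repeat=q)
        if sum(combo) == m
    ]


# The three plasticizers together make up 40% of the formulation
mixture_total = Fraction(40, 100)
blends = [
    tuple(float(p * mixture_total) for p in point)
    for point in simplex_lattice(3, 2)
]

# Two process variables (Z1, Z2) at two coded levels each
process_settings = list(product((-1, 1), repeat=2))

# Every blend is run under every process setting
runs = [(blend, z) for z in process_settings for blend in blends]
print(len(blends), len(process_settings), len(runs))  # 6 4 24
```

Each blend sums to 0.40, which matches the reported optimum (0.349 + 0 + 0.051 = 0.400).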

[Figure: Simplex-Process Hybrid Design Structure. The combined simplex and factorial design evaluates the mixture points under each combination of the process conditions.]

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and software solutions used in the experiments cited in this guide, which are also fundamental for researchers conducting similar optimization studies.

| Research Reagent / Solution | Function in the Experiment |

|---|---|

| Evercion Red EXL Dye [3] | The active coloring agent whose concentration was a key factor in optimizing fabric dyeing strength. |

| Maltodextrin [2] | A carrier agent used in spray drying processes to improve the yield and stability of fruit and vegetable juice powders. |

| Sodium Sulfate (Na₂SO₄) [3] | An electrolyte used in textile dyeing to promote the adsorption of dye onto the fabric. |

| Sodium Carbonate (Na₂CO₃) [3] | A fixing agent used in reactive dyeing to create a covalent bond between the dye and the cellulose fiber. |

| Statistical Software (e.g., R, ReliaSoft Weibull++) [5] [3] | Used for generating experimental designs, performing complex regression analysis, ANOVA, and numerical optimization. |

Key Takeaways for Researchers

Selecting the right optimization strategy is critical for efficient and effective research and development.

- Choose RSM (CCD or BBD) when your goal is to build a detailed predictive model of the process and you need to understand complex curvatures and interaction effects. This is ideal for fine-tuning processes where the precise location of the optimum is unknown, and a high accuracy (e.g., 96-98%) is worth the additional experimental effort [3] [2].

- Choose the Taguchi Method when the primary objective is to quickly identify a robust set of factor levels that reduce performance variation, especially when dealing with many factors and experimental cost is a major constraint. It provides a good initial optimization (e.g., 92% accuracy) with significantly fewer runs [4] [3].

- Consider Simplex Designs when your experiment involves formulating a mixture, and the factors are components that must sum to a constant total [5]. These can be effectively combined with factorial designs to also study process variables.

Ultimately, RSM provides a powerful framework for mapping the optimization landscape, offering researchers a "response surface" to guide their journey toward the peak of process performance.

Factorial design represents a fundamental methodology in experimental science for efficiently investigating the effects of multiple variables simultaneously. Unlike traditional one-factor-at-a-time (OFAT) approaches, factorial design systematically studies how multiple factors interact to influence a response variable. R.A. Fisher demonstrated that combining the study of multiple variables in the same factorial experiment provides significant advantages, including reduced experimental runs and the ability to detect interaction effects between factors [6].

In pharmaceutical development and other research fields, factorial designs offer substantial efficiency benefits over conventional randomized controlled trial (RCT) designs that compare a single treatment with a control. They permit evaluation of multiple intervention components with good statistical power and make it possible to detect interactions among intervention components [7]. This efficiency has led methodologists to advocate for their increased use in clinical intervention research, particularly within frameworks like the Multiphase Optimization Strategy (MOST) for treatment development and evaluation [7].

A full factorial experiment with k factors, each comprising two levels, contains 2^k unique combinations of factor levels [7]. In this structure, a "factor" represents a type or dimension of treatment that the investigator wishes to evaluate experimentally, while a "level" constitutes a value that a factor can assume. The complete crossing of factors ensures that every possible combination of factor levels is represented in the experimental design [7].

Fundamental Principles and Mathematical Foundation

Basic Structure and Notation

The 2^k factorial design notation specifies a factorial design where k represents the number of factors, each with exactly 2 levels, resulting in 2^k experimental runs [8]. The notation system commonly uses (-, +) or (-1, +1) to represent the two factor levels, which may correspond to "low/high," "absent/present," or other dichotomous conditions relevant to the experimental context [9] [8].

For quantitative factors, the two levels typically represent two different values of a continuous variable (e.g., temperatures or concentrations), while for qualitative factors, they might represent different types of catalysts or the presence/absence of an entity [8]. This coding system facilitates the development of general formulas and methods for analyzing factorial experiments, particularly in regression analysis and response surface methodology [9].
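The coded 2^k layout can be generated in a few lines. The sketch below is illustrative: the factor names and the temperature range used for decoding are hypothetical.

```python
from itertools import product


def full_factorial_2k(factor_names):
    """Return all 2^k runs as dicts mapping factor name -> coded level (-1 or +1)."""
    return [
        dict(zip(factor_names, levels))
        for levels in product((-1, 1), repeat=len(factor_names))
    ]


def decode(coded, low, high):
    """Map a coded level (-1 or +1) back to the actual value of a quantitative factor."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


design = full_factorial_2k(["A", "B", "C"])
for run in design:
    print(run)
print(len(design))  # 2**3 = 8

# Hypothetical example: factor A is a temperature between 20 and 40 degrees
print(decode(-1, 20, 40), decode(1, 20, 40))  # 20.0 40.0
```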

Calculating Main and Interaction Effects

The mathematical foundation of factorial designs relies on calculating main effects and interaction effects. The main effect of a factor represents the average change in response when that factor moves from its low to high level, averaged across all levels of other factors [9] [8]. Mathematically, the main effect of factor A is calculated as:

ME(A) = ȳ(A+) - ȳ(A-)

where ȳ(A+) is the average response at the high level of A and ȳ(A-) is the average response at the low level of A [8].

Interaction effects occur when the effect of one factor depends on the level of another factor. The two-factor interaction between A and B can be calculated as:

INT(A,B) = ½[ME(A|B+) - ME(A|B-)]

where ME(A|B+) is the effect of A when B is at its high level and ME(A|B-) is the effect of A when B is at its low level [8]. This calculation captures whether the effect of factor A remains consistent across different levels of factor B.
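A short worked example may help here. The sketch below applies the two formulas to a 2² experiment; the response values are made up for illustration and the function names are hypothetical.

```python
def main_effect(runs, factor):
    """ME = mean response at the high level minus mean response at the low level."""
    high = [r["y"] for r in runs if r[factor] == 1]
    low = [r["y"] for r in runs if r[factor] == -1]
    return sum(high) / len(high) - sum(low) / len(low)


def interaction_effect(runs, a, b):
    """INT(A,B) = 0.5 * [ME(A | B+) - ME(A | B-)]."""
    b_high = [r for r in runs if r[b] == 1]
    b_low = [r for r in runs if r[b] == -1]
    return 0.5 * (main_effect(b_high, a) - main_effect(b_low, a))


# Hypothetical responses for the four runs of a 2x2 design
runs = [
    {"A": -1, "B": -1, "y": 20.0},
    {"A": 1, "B": -1, "y": 30.0},
    {"A": -1, "B": 1, "y": 40.0},
    {"A": 1, "B": 1, "y": 52.0},
]

print(main_effect(runs, "A"))              # 11.0
print(main_effect(runs, "B"))              # 21.0
print(interaction_effect(runs, "A", "B"))  # 1.0
```

Here the effect of A is 10 when B is low and 12 when B is high, so the interaction is small relative to the main effects.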

Table 1: Comparison of Experimental Effects in Factorial Designs

| Effect Type | Calculation Method | Interpretation | Visualization Pattern |
|---|---|---|---|
| Main Effect | ȳ(A+) - ȳ(A-) | Average change in response when factor moves from low to high level | Consistent trend across factor levels |
| Interaction Effect | ½[ME(A\|B+) - ME(A\|B-)] | Degree to which effect of one factor depends on level of another | Non-parallel lines in interaction plot |
| Null Effect | No difference between level means | Factor does not influence the response | Flat line with no slope change |
| Strong Interaction | Large difference between conditional effects | Effect direction/magnitude changes substantially across factor levels | Crossing or widely diverging lines |

Mathematical Model and Regression Equations

For quantitative independent variables, an estimated regression equation can be developed from the calculated main effects and interaction effects. The full regression model with two factors (each with two levels) including interaction can be expressed as:

y = β₀ + β₁x₁ + β₂x₂ + β₁₂x₁x₂ + ε

where y is the response, β₀ is the intercept, β₁ and β₂ are coefficients for the main effects, β₁₂ is the interaction coefficient, x₁ and x₂ are coded factor levels (-1 or +1), and ε represents error [9].

The regression coefficients are calculated as one-half of the respective estimated effects, while the constant term is the average of all responses [9]. This model can be extended to accommodate more factors and higher-order interactions, providing a comprehensive mathematical representation of the factor-response relationships.
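The relationship between effects and regression coefficients can be checked numerically. The sketch below uses hypothetical 2² data (coded x₁, coded x₂, response) and computes each coefficient as the average of the response weighted by the corresponding coded column, which equals one-half of the estimated effect.

```python
# Hypothetical 2x2 data: (x1, x2, y) with coded levels -1 / +1
data = [(-1, -1, 20.0), (1, -1, 30.0), (-1, 1, 40.0), (1, 1, 52.0)]
n = len(data)

b0 = sum(y for _, _, y in data) / n                   # intercept = grand mean
b1 = sum(x1 * y for x1, _, y in data) / n             # half the main effect of x1
b2 = sum(x2 * y for _, x2, y in data) / n             # half the main effect of x2
b12 = sum(x1 * x2 * y for x1, x2, y in data) / n      # half the interaction effect


def predict(x1, x2):
    """Evaluate the fitted model y = b0 + b1*x1 + b2*x2 + b12*x1*x2."""
    return b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2


print(b0, b1, b2, b12)  # 35.5 5.5 10.5 0.5
print(predict(1, 1))    # 52.0
```

Because this model has four coefficients and four runs, it reproduces the observed responses exactly; replicates are needed to estimate error.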

Factorial vs. Simplex Optimization Approaches

Comparative Framework

In optimization research, factorial designs and simplex methods represent distinct strategies with different strengths and applications. While factorial designs systematically explore a defined experimental space, simplex optimization represents a sequential approach that moves toward optimal conditions through iterative adjustments based on previous results [10].

Table 2: Comparison of Factorial and Simplex Optimization Approaches

| Characteristic | Factorial Design | Simplex Method |

|---|---|---|

| Experimental Approach | Systematic exploration of all factor combinations | Sequential movement toward optimum based on previous results |

| Optimum Determination | Exact optimum can be determined through response surface methodology | Optimum is encircled through iterative adjustments |

| Information Yield | Comprehensive mapping of factor effects and interactions | Focused information on direction to optimum |

| Experimental Efficiency | High for screening multiple factors simultaneously | High for refining conditions near optimum |

| Model Development | Supports detailed empirical model building | Limited model development capabilities |

| Best Application Context | Initial factor screening and understanding interactions | Refining conditions after significant factors identified |

Strategic Implementation in Research Workflows

The choice between factorial and simplex approaches depends on the research stage and objectives. Factorial designs are particularly valuable in early research phases where multiple factors need evaluation, and interactions between factors are suspected [10] [7]. They provide comprehensive information about the experimental space, allowing researchers to identify significant factors and their interactions efficiently.

Simplex methods excel in later optimization stages when the general region of optimum performance has been identified and refined adjustment is needed [10]. The sequential nature of simplex optimization makes it efficient for homing in on precise optimal conditions without mapping the entire experimental space.

In practice, many research programs benefit from integrating both approaches: using factorial designs for initial factor screening followed by simplex optimization for fine-tuning [10]. This combined approach leverages the strengths of both methodologies while mitigating their individual limitations.

Experimental Protocol for Implementing Factorial Designs

Step-by-Step Methodology

Step 1: Define Experimental Objectives and Factors. Clearly articulate the research question and identify potential factors that may influence the response. Determine which factors are continuous versus discrete and define appropriate level settings for each factor [10]. This stage includes establishing the experimental domain, the "area" to be investigated through factor variation [10].

Step 2: Select Experimental Design. Choose an appropriate factorial structure based on the number of factors and available resources. For initial screening with many factors, a 2^k design provides an efficient starting point [8]. The number of experimental runs required is 2^k, so practical constraints often limit k to 4-5 factors in initial experiments [6].

Step 3: Randomize Run Order. Implement complete randomization of run order to minimize confounding from extraneous variables. This approach creates a completely randomized design (CRD), ensuring that all factor level combinations have equal probability of being assigned to any experimental unit [9].

Step 4: Execute Experiments and Collect Data. Conduct experiments according to the randomized sequence, measuring all relevant response variables for each run. Maintain consistent experimental conditions except for intentional factor variations [6].

Step 5: Calculate Effects and Perform Statistical Analysis. Compute main effects and interaction effects using the formulas given under "Calculating Main and Interaction Effects." Develop ANOVA tables to assess statistical significance; in a two-level design, the sum of squares for each effect is proportional to the square of the estimated effect (SS = n·2^(k-2)·effect² for n replicates of a 2^k design) [9]. Construct regression models to quantify factor-response relationships.

Step 6: Interpret Results and Visualize. Create main effects plots and interaction plots to visualize findings. Interpret significant main effects and interactions in the context of the research question [9] [8]. Use contour plots and response surfaces to represent the fitted models for continuous factors [9].
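The design-generation and randomization steps of this protocol can be sketched as below. The function name `randomized_run_sheet`, the factor names, and the fixed seed are illustrative assumptions; fixing the seed is only a convenience for reproducing the run sheet.

```python
import random
from itertools import product


def randomized_run_sheet(factor_names, replicates=1, seed=None):
    """Build a replicated 2^k design and return it in randomized run order."""
    runs = [
        dict(zip(factor_names, levels))
        for levels in product((-1, 1), repeat=len(factor_names))
        for _ in range(replicates)
    ]
    rng = random.Random(seed)  # local generator so the global random state is untouched
    rng.shuffle(runs)
    # Number the runs in the order they should be executed
    return [{"run": i + 1, **settings} for i, settings in enumerate(runs)]


sheet = randomized_run_sheet(["A", "B", "C"], replicates=2, seed=42)
for row in sheet:
    print(row)
```

Every factor-level combination appears exactly `replicates` times; only the execution order changes.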

Applications in Pharmaceutical and Nanotechnology Research

Case Study: Nanogel Formation Optimization

A recent study demonstrated the application of full factorial design for optimizing nanogel formation parameters [11]. Researchers employed factorial design to determine the optimal irradiation dosage and DMAEMA concentration for P(NIPAAM-PVP-PEGDA-DMAEMA) nanogels. The concentration of nanogels in solution was proportional to the intensity of photon scattering rates, with higher count rate values indicating preferable conditions [11].

The factorial approach enabled researchers to systematically classify and quantify cause-and-effect relationships between process variables and outputs, leading to discovery of settings and conditions under which the nanogel formation process became optimized [11]. This application highlights how factorial designs facilitate efficient process optimization in nanotechnology applications.

Clinical Intervention Development

Factorial designs have shown increasing utility in clinical intervention research, particularly for evaluating multiple intervention components efficiently. For example, a smoking cessation study implemented a 2^5 factorial design examining five different intervention components: medication duration, maintenance phone counseling, maintenance medication adherence counseling, automated phone adherence counseling, and electronic monitoring adherence feedback [7].

This design enabled researchers to evaluate all 32 possible combinations of intervention components using the same number of participants that would typically be required for a simple two-group RCT comparing one active treatment to control [7]. The efficiency of factorial designs makes them particularly valuable for complex intervention development where multiple components need evaluation.

Research Implementation Tools

Statistical Software and Visualization

Specialized statistical software facilitates implementation and analysis of factorial designs. JMP provides comprehensive DOE platforms including Custom Design, Screening Design, Full Factorial Design, and Response Surface Design modules [12]. These tools help researchers construct designs that accommodate various types of factors, constraints, and disallowed combinations.

For data visualization, SPSS syntax solutions enable creation of transparent graphs displaying raw data along with summary statistics for various factorial designs [13]. These visualization approaches enhance interpretation by revealing underlying data distributions and individual response patterns, which is particularly important for assessing interaction effects and individual response consistency.

Essential Research Reagents and Materials

Table 3: Essential Research Materials for Factorial Experiments

| Material/Resource | Function in Factorial Experiments | Application Context |

|---|---|---|

| Coded Factor Level Templates | Standardizes representation of factor levels (-1/+1) | All factorial experiments for consistent mathematical treatment |

| Randomization Tools | Ensures unbiased assignment of experimental runs | All experimental contexts to minimize confounding |

| Response Measurement Instruments | Quantifies outcomes of interest | Domain-specific (e.g., HPLC for chemistry, surveys for clinical) |

| Statistical Software with DOE Capabilities | Design construction, randomization, and analysis | All factorial experiments for design and analysis |

| Experimental Run Tracking System | Documents execution order and conditions | All experiments to maintain protocol integrity |

| Data Visualization Tools | Creates interaction plots and surface responses | All experiments for interpretation and communication |

Factorial designs represent a powerful methodology for efficiently screening significant factors across multiple research domains. Their ability to evaluate multiple factors simultaneously while detecting interaction effects provides substantial advantages over one-factor-at-a-time approaches. The structured mathematical foundation enables comprehensive analysis through main effects, interaction effects, and regression model development.

When positioned within the broader context of optimization strategies, factorial designs complement approaches like simplex optimization, with each method serving distinct phases of the research process. The implementation protocol outlined in this guide provides researchers with a systematic framework for deploying factorial designs in practical settings, from initial factor screening through final optimization.

As research questions grow increasingly complex, the efficient screening capabilities of factorial designs will continue to make them invaluable tools for researchers across scientific disciplines, particularly in pharmaceutical development and nanotechnology applications where multiple factors often interact to determine outcomes.

Defining the Optimization Paradigms

In the field of optimization, particularly within pharmaceutical development, the choice of experimental strategy profoundly influences the efficiency and outcome of research. Two fundamental methodologies employed are sequential simplex optimization and simultaneous factorial design. Simplex optimization is a sequential algorithm that navigates the experimental space by moving from one vertex to an adjacent, more promising vertex, continually refining the solution based on immediate previous results [14] [15]. In contrast, a full factorial design is a comprehensive approach that investigates all possible combinations of the levels of multiple factors simultaneously. This strategy provides a complete picture of individual factor effects and their interactions in a single, extensive experimental set-up [16] [17].

The core distinction lies in their search logic: the simplex method is a sequential, iterative procedure that converges toward an optimum through a series of guided steps, while factorial design is a parallel, single-shot experiment that maps the entire experimental domain at once. This article provides an objective comparison of these methodologies, framing them within the broader context of optimization research for drug development.

Comparative Analysis: Simplex vs. Factorial Design

The following tables summarize the core characteristics, advantages, and disadvantages of the simplex and factorial design optimization methods.

Table 1: Fundamental Characteristics of Simplex and Factorial Methods

| Feature | Simplex Optimization | Full Factorial Design |

|---|---|---|

| Basic Principle | Sequential search from an initial point towards the optimum [15] | Simultaneous study of all possible factor combinations [17] |

| Experimental Approach | Iterative; each experiment depends on the previous results [14] | Single, comprehensive set of experiments conducted in one block [16] |

| Nature of Search | Efficient path-following through the solution space [15] | Mapping of the entire experimental domain [17] |

| Primary Goal | Find an optimal solution with fewer experiments [15] | Understand main effects and all interaction effects [16] |

| Typical Use Case | Rapid process optimization and improvement | Screening factors and modeling complex response surfaces |

Table 2: Advantages and Limitations in a Research Context

| Aspect | Simplex Optimization | Full Factorial Design |

|---|---|---|

| Key Advantages | High efficiency for a small number of variables [15]; requires fewer experiments to reach an optimum [15]; well suited to hill-climbing in continuous spaces | Captures all interaction effects between factors [16] [17]; provides a comprehensive model of the system; conclusions are valid over the entire range studied [17] |

| Major Limitations | Can converge to a local, rather than global, optimum; may not fully model complex interactions | Number of experiments grows exponentially with the number of factors (curse of dimensionality) [16]; can be resource-intensive (cost, time, materials) [16] |

| Data Interpretation | Relatively straightforward, focused on the path of improvement | Requires sophisticated statistical analysis (e.g., ANOVA, regression) [16] |
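The exponential growth noted in the table is easy to quantify. The sketch below tabulates full factorial run counts against the k+1 experiments needed to start a simplex; the function name is hypothetical, and the simplex column counts only the initial vertices, since the total number of simplex experiments depends on how many iterations the search needs.

```python
def full_factorial_runs(levels, factors, replicates=1):
    """Number of runs in a full factorial design: replicates * levels ** factors."""
    return replicates * levels ** factors


print("k  2^k  3^k  initial simplex (k+1)")
for k in range(2, 9):
    print(k, full_factorial_runs(2, k), full_factorial_runs(3, k), k + 1)
```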

Experimental Protocols and Methodologies

Protocol for a Simplex Optimization Study

The simplex algorithm operates on a geometric structure (a simplex) defined by k+1 points in a k-dimensional factor space. The following workflow outlines its core procedure.

[Figure: Simplex Optimization Iterative Workflow]

Detailed Methodology:

- Initialization: Define the initial simplex. For k factors, this involves running k+1 initial experiments. For example, with two factors (e.g., Temperature (T) and Pressure (P)), the initial simplex would be a triangle defined by three experimental runs: (T1, P1), (T2, P2), (T3, P3) [15].

- Evaluation and Ranking: Run the experiments at each vertex of the simplex and rank them based on the objective function (e.g., yield, purity). Identify the worst-performing point (W), the best-performing point (B), and the next-best point (G) [15].

- Transformation Steps: The algorithm then iteratively moves the simplex away from the worst region.

  - Reflection: Calculate the reflection (R) of the worst point (W) through the centroid (average) of the remaining points.

  - Expansion: If the reflected point (R) is better than the current best (B), an expansion point (E) is calculated further in that direction.

  - Contraction: If the reflected point (R) is worse than the next-best point (G), a contraction point (C) is calculated.

  - Shrinkage: If contraction fails, the entire simplex shrinks towards the best point (B).

- Termination: The algorithm terminates when the simplex converges, meaning the differences in the objective function values between the vertices become smaller than a pre-defined threshold, or a maximum number of iterations is reached [14] [15].
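The procedure above can be sketched as a variable-size simplex search (Nelder-Mead style). This is a minimal illustration, not the implementation used in the cited work: the function name `simplex_maximize`, the coefficients (reflection 1, expansion 2, contraction 0.5, shrink 0.5), the axis-aligned initial simplex, and the test response are all assumptions. In a laboratory setting, each call to `f` would correspond to running one experiment.

```python
def simplex_maximize(f, start, step=1.0, tol=1e-8, max_iter=500):
    """Search for a maximum of f using reflection, expansion, contraction, shrinkage."""
    k = len(start)
    # Initial simplex: the start point plus one vertex offset along each axis (k + 1 points)
    simplex = [list(start)]
    for i in range(k):
        vertex = list(start)
        vertex[i] += step
        simplex.append(vertex)
    scores = [f(v) for v in simplex]

    def on_line(centroid, worst, t):
        # Point on the line from the worst vertex through the centroid
        # (t=1 reflection, t=2 expansion, t=0.5 outside and t=-0.5 inside contraction)
        return [c + t * (c - w) for c, w in zip(centroid, worst)]

    for _ in range(max_iter):
        # Rank vertices from best (highest response) to worst
        order = sorted(range(k + 1), key=lambda i: scores[i], reverse=True)
        simplex = [simplex[i] for i in order]
        scores = [scores[i] for i in order]
        if scores[0] - scores[-1] < tol:  # responses at all vertices nearly equal
            break
        worst, f_worst = simplex[-1], scores[-1]
        centroid = [sum(v[j] for v in simplex[:-1]) / k for j in range(k)]
        reflected = on_line(centroid, worst, 1.0)
        f_reflected = f(reflected)
        if f_reflected > scores[0]:
            # Reflection beat the best vertex: try going further (expansion)
            expanded = on_line(centroid, worst, 2.0)
            f_expanded = f(expanded)
            if f_expanded > f_reflected:
                simplex[-1], scores[-1] = expanded, f_expanded
            else:
                simplex[-1], scores[-1] = reflected, f_reflected
        elif f_reflected > scores[-2]:
            # Reflection is better than the next-worst vertex: accept it
            simplex[-1], scores[-1] = reflected, f_reflected
        else:
            # Contraction: outside if the reflection improved on the worst, else inside
            t = 0.5 if f_reflected > f_worst else -0.5
            contracted = on_line(centroid, worst, t)
            f_contracted = f(contracted)
            if f_contracted > max(f_reflected, f_worst):
                simplex[-1], scores[-1] = contracted, f_contracted
            else:
                # Shrink every vertex halfway toward the best one
                best = simplex[0]
                simplex = [best] + [
                    [b + 0.5 * (x - b) for b, x in zip(best, v)] for v in simplex[1:]
                ]
                scores = [scores[0]] + [f(v) for v in simplex[1:]]
    i_best = max(range(k + 1), key=lambda i: scores[i])
    return simplex[i_best], scores[i_best]


def response(x):
    # Hypothetical smooth response with a single maximum of 10 at (3, -1)
    return 10.0 - (x[0] - 3.0) ** 2 - (x[1] + 1.0) ** 2


best_point, best_value = simplex_maximize(response, start=[0.0, 0.0])
print([round(c, 2) for c in best_point], round(best_value, 4))
```

On this smooth test response the search should approach the maximum at (3, -1). On noisy or multimodal responses it may stop at a local optimum, as noted in the limitations above.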

Protocol for a Full Factorial Design Study

Factorial design investigates the effects of multiple factors and their interactions by testing all possible combinations of factor levels. The following workflow details its structure.

[Figure: Full Factorial Design Experimental Workflow]

Detailed Methodology (Using a Pharmaceutical Example): A study optimizing an HPLC method for Valsartan nanoparticles exemplifies a rigorous 3-factor, 3-level (3³) full factorial design [18].

Factor and Level Selection: The independent factors were Flow Rate (A), Wavelength (B), and pH of buffer (C), each at three levels (coded as -1, 0, +1). The response variables were Peak Area (R1), Tailing Factor (R2), and Number of Theoretical Plates (R3) [18].

Table 3: Experimental Factors and Levels from Valsartan Study [18]

| Independent Factor | Level (-1) | Level (0) | Level (+1) |
|---|---|---|---|
| A: Flow Rate (mL/min) | 0.8 | 1.0 | 1.2 |
| B: Wavelength (nm) | 248 | 250 | 252 |
| C: pH of Buffer | 2.8 | 3.0 | 3.2 |

Experimental Execution: The design required 27 experimental runs (3³). These runs were executed, and the responses for each combination were measured [18].

Data Analysis: The data was analyzed using Analysis of Variance (ANOVA) to determine the statistical significance of the main effects and interaction effects. For example, the analysis revealed that the quadratic effect of flow rate and wavelength was highly significant (p < 0.0001) on the peak area response [18].

Optimization: Based on the statistical model, the optimal factor settings were identified as a flow rate of 1.0 mL/min, a wavelength of 250 nm, and a pH of 3.0 [18].
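The 27-run layout of this 3³ design can be enumerated directly from the factor levels reported for the Valsartan study. The sketch below is illustrative; the dictionary keys are hypothetical names, and the run order shown is the systematic order, not the order in which the original experiments were performed.

```python
from itertools import product

# Coded level -> actual setting, using the levels reported for the Valsartan study
levels = {
    "flow_rate_mL_min": {-1: 0.8, 0: 1.0, 1: 1.2},
    "wavelength_nm": {-1: 248, 0: 250, 1: 252},
    "buffer_pH": {-1: 2.8, 0: 3.0, 1: 3.2},
}

# Every combination of the three coded levels across the three factors
coded_runs = list(product((-1, 0, 1), repeat=len(levels)))
runs = [
    {name: levels[name][code] for name, code in zip(levels, coded)}
    for coded in coded_runs
]

print(len(runs))  # 27
print(runs[13])   # center point: all factors at coded level 0
```

The center point (1.0 mL/min, 250 nm, pH 3.0) coincides with the optimal settings reported in the study.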

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and reagents commonly employed in optimization experiments, drawing from the cited pharmaceutical example.

Table 4: Key Research Reagent Solutions for Optimization Studies

| Reagent / Material | Function / Role in Experiment | Example from Literature |

|---|---|---|

| Ammonium Formate Buffer | A volatile buffer used in HPLC mobile phase preparation; reduces system backpressure and column precipitation [18]. | Used at 20 mM concentration, with pH adjusted to 3.0 using formic acid for the analysis of Valsartan [18]. |

| Acetonitrile (HPLC Grade) | An organic solvent with low viscosity used in reversed-phase HPLC mobile phases; improves separation efficiency [18]. | Used in a 57:43 ratio with ammonium formate buffer in the Valsartan method optimization [18]. |

| C18 Chromatography Column | A standard reversed-phase stationary phase for separating non-polar to moderately polar compounds. | A HyperClone C18 column (250 mm × 4.6 mm, 5 μm) was used for the separation [18]. |

| Formic Acid | A solvent and pH modifier; helps improve peak shape and characteristics in chromatographic analysis [18]. | Used to adjust the pH of the ammonium formate buffer to the desired level (2.8 - 3.2) [18]. |

| Statistical Software | Used for designing experiments and analyzing results via ANOVA and regression modeling to quantify factor effects [16]. | Essential for analyzing the 27-run factorial design and determining significant effects and interactions [18]. |

Simplex optimization and full factorial design represent two powerful but philosophically distinct approaches to experimentation. The sequential, path-following nature of the simplex method makes it highly efficient for climbing a known response gradient, making it ideal for late-stage process refinement. The comprehensive, parallel nature of full factorial design is indispensable for understanding complex systems, discovering critical factor interactions, and building robust predictive models, especially in early-stage development and formulation.

The choice between them is not a matter of which is superior, but of which is appropriate for the research question at hand. An effective optimization strategy in drug development may even leverage both: using factorial designs for initial screening and understanding, followed by simplex optimization for fine-tuning the final process conditions.

In computational research and development, two dominant strategies for problem-solving emerge: modeling and searching. While often viewed as competing approaches, they represent fundamentally different philosophies for tackling complex challenges. Modeling strategies, particularly in machine learning (ML), focus on creating data-driven predictive systems that learn from patterns in historical data [19]. In contrast, searching strategies employ systematic exploration of possible solutions to identify optimal outcomes within a defined search space [19]. This distinction is particularly crucial in optimization research, where the choice between simplex (focused on boundary solutions) and factorial (exploring factor combinations) design approaches mirrors the broader modeling-searching dichotomy. For researchers, scientists, and drug development professionals, understanding this strategic divide is essential for selecting appropriate methodologies for specific problem types, resource constraints, and desired outcomes.

The fundamental distinction lies in their core operational paradigms: modeling strategies excel at pattern recognition and prediction based on learned experience, while searching strategies specialize in systematic exploration and optimization across possible solution spaces [19]. This article provides a comprehensive comparison of these approaches, supported by experimental data and practical implementation frameworks tailored to scientific research applications.

Theoretical Foundations and Key Concepts

Modeling Strategies: The Predictive Approach

Machine learning modeling operates on the principle that algorithms can improve automatically through data exposure and experience [19]. These systems detect patterns in training data to make predictions or decisions without explicit programming for each specific case. Modeling approaches include:

- Supervised learning: The algorithm learns from example inputs and their desired outputs, essentially mapping inputs to outputs based on labeled training data [19].

- Unsupervised learning: The algorithm finds structure in unlabeled inputs, such as clusters or latent patterns, without being given desired outputs.

- Deep learning: This approach mimics human brain functions using neural networks to perform human-like tasks without direct human intervention, requiring substantial data and computational resources [19].

- Reinforcement learning: Programs learn to maximize cumulative reward signals through trial-and-error interactions with their environment [19].

The effectiveness of modeling strategies heavily depends on data quality and quantity, following the "Garbage In, Garbage Out" (GIGO) principle [19].

Searching Strategies: The Exploratory Approach

Searching strategies conceptualize problems through defined states and transitions [19]. The core framework includes:

- State: A potential solution to a problem

- Transition: The action of moving between states

- Start State: The initial point where search begins

- Goal State: The target state where searching stops

- Search Space: The collection of all possible solutions [19]

Searching navigates from a starting state to a goal state through intermediate states, typically represented as a "search tree" where nodes correspond to various state solutions [19]. Search strategies are categorized as:

- Informed search: Utilizes domain knowledge or heuristics to estimate distance to the goal state

- Uninformed search: Explores without knowledge of goal proximity

- Local search: Identifies optimal solutions when multiple goal states exist [19]

Economic and Platform Applications

Search-theoretic models have substantial applications in economics and platform operations, formalized in frameworks like the Diamond-Mortensen-Pissarides (DMP) model that explains why unemployment exists despite job openings due to search frictions and costs [20]. These models utilize concepts like Nash bargaining, where outcomes depend on each party's bargaining power and outside options [20]. Similarly, lending platforms like Upstart, LendingClub, and Prosper employ search-based matching mechanisms to connect borrowers with banks, facing challenges in demand forecasting, supply management, and matching mechanism design [20].

Experimental Comparison: Performance Metrics and Data

Methodology for Strategy Evaluation

A comprehensive controlled experiment compared five search strategies for Feature Location in Models (FLiM), analyzing 1,895 feature location problems extracted from 40 industrial Software Product Lines (SPLs) [21]. The study implemented these key methodologies:

Search Strategies Evaluated:

- EA (Evolutionary Algorithm): Population-based heuristic inspired by natural selection

- EHC (Evolutionary Hill Climbing): Hybrid approach combining EA with local search

- HC (Hill Climbing): Local search algorithm iteratively moving to better neighboring solutions

- ILS (Iterated Local Search): Metaheuristic performing repeated local search with perturbation

- RS (Random Search): Baseline strategy selecting solutions randomly from search space [21]
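
To make the difference between a local search and the random baseline concrete, here is a minimal sketch of hill climbing and random search on a toy one-dimensional problem. It is not the implementation used in the cited study, which operates on model fragments; the objective, the neighborhood, and all function names are hypothetical.

```python
import random


def hill_climb(score, start, neighbors, max_steps=1000):
    """Move to the best neighbor until no neighbor improves the score."""
    current = start
    for _ in range(max_steps):
        best = max(neighbors(current), key=score)
        if score(best) <= score(current):
            break  # local optimum: no neighbor is strictly better
        current = best
    return current


def random_search(score, sample, n_samples, rng):
    """Baseline: draw candidates at random and keep the best one seen."""
    return max((sample(rng) for _ in range(n_samples)), key=score)


# Hypothetical toy problem: maximize -(x - 7)^2 over the integers 0..20.
def toy_score(x):
    return -(x - 7) ** 2


def toy_neighbors(x):
    return [v for v in (x - 1, x + 1) if 0 <= v <= 20]


def toy_sample(rng):
    return rng.randint(0, 20)


hc_result = hill_climb(toy_score, start=0, neighbors=toy_neighbors)
rs_result = random_search(toy_score, toy_sample, n_samples=5, rng=random.Random(0))
print(hc_result, rs_result)
```

On a smooth, single-peaked landscape like this one, hill climbing reaches the optimum from any start; on multi-modal landscapes it can stall at a local optimum, which is the gap that ILS and the evolutionary strategies aim to close.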

Performance Metrics:

- Precision: Proportion of correctly identified elements among all retrieved elements

- Recall: Proportion of correctly identified elements among all relevant elements

- F-Measure: Harmonic mean of precision and recall

- MCC (Matthews Correlation Coefficient): Balanced measure considering true/false positives/negatives [21]
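
The four metrics can be computed from the counts of a confusion matrix. The sketch below uses the standard definitions; the function name and the example counts are made up for illustration and are not data from the study.

```python
import math


def classification_metrics(tp, fp, fn, tn):
    """Compute precision, recall, F-measure, and MCC from confusion counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall:
        f_measure = 2 * precision * recall / (precision + recall)
    else:
        f_measure = 0.0
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall,
            "f_measure": f_measure, "mcc": mcc}


# Hypothetical counts: 8 elements correctly retrieved, 2 retrieved in error,
# 2 relevant elements missed, 88 irrelevant elements correctly left out.
metrics = classification_metrics(tp=8, fp=2, fn=2, tn=88)
print(metrics)
```

Unlike precision and recall, MCC also uses the true negatives, which is why it is often reported when relevant elements are a small share of the search space.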

Problem Characteristics Measured:

- SS-Size: Search space size measured by number of model elements

- SS-Volume: Total elements in the search space

- MF-Density: Ratio of feature implementation elements to total elements in containing model

- MF-Multiplicity: Number of model elements implementing the feature

- MF-Dispersion: Distribution of feature elements across the model [21]

Quantitative Performance Results

Table 1: Overall Performance Comparison of Search Strategies

| Search Strategy | Precision | Recall | F-Measure | MCC |

|---|---|---|---|---|

| EHC (Hybrid) | 0.79 | 0.83 | 0.81 | 0.78 |

| EA | 0.75 | 0.80 | 0.77 | 0.74 |

| HC | 0.72 | 0.76 | 0.74 | 0.71 |

| ILS | 0.70 | 0.74 | 0.72 | 0.69 |

| RS | 0.45 | 0.48 | 0.46 | 0.43 |

Source: Adapted from Echeverría et al. [21]

Table 2: Performance by Problem Characteristic (Top Performing Strategy)

| Problem Characteristic | High Precision | High Recall | Best F-Measure |

|---|---|---|---|

| Small SS-Size | HC (0.85) | EA (0.87) | EA (0.86) |

| Large SS-Size | EHC (0.76) | EHC (0.80) | EHC (0.78) |

| High MF-Dispersion | EHC (0.74) | EHC (0.78) | EHC (0.76) |

| Low MF-Density | ILS (0.72) | EA (0.76) | EA (0.74) |

| High MF-Multiplicity | EHC (0.77) | EHC (0.82) | EHC (0.79) |

Source: Adapted from Echeverría et al. [21]

The experimental results demonstrate that the hybrid EHC strategy achieved superior overall performance across most metrics, particularly for complex problems with large search spaces, high dispersion, and high multiplicity [21]. The study found that problem characteristics significantly influence strategy effectiveness, enabling evidence-based selection according to specific problem constraints.

Decision Framework and Implementation Guidelines

Strategy Selection Protocol

Table 3: Decision Matrix for Strategy Selection

| Problem Characteristics | Recommended Strategy | Rationale |

|---|---|---|

| Large search space, limited domain knowledge | EHC (Hybrid) | Combines exploration diversity with local optimization |

| Small-medium search space, good heuristics | EA (Evolutionary) | Effective heuristic search with population diversity |

| Focused optimization, smooth solution landscape | HC (Hill Climbing) | Efficient local optimization without complex implementation |

| Multi-modal landscape, avoidance of local optima | ILS (Iterated Local) | Escape local optima through periodic perturbation |

| Baseline comparison, simple problems | RS (Random) | Benchmarking only - not recommended for production use |

Based on experimental evidence [21], researchers should:

- Characterize problem dimensions using SS-Size, SS-Volume, MF-Density, MF-Multiplicity, and MF-Dispersion metrics before strategy selection

- Prioritize EHC hybrid approaches for complex, poorly-understood problems with large search spaces

- Select specialized strategies when specific problem characteristics are dominant and well-defined

- Establish baseline performance with random search before implementing sophisticated approaches

Research Reagent Solutions: Computational Toolkit

Table 4: Essential Research Components for Strategy Implementation

| Component | Function | Implementation Example |

|---|---|---|

| Search Space Formulator | Defines possible solutions, constraints, and optimization criteria | Model elements, feature constraints, objective functions |

| State Transition Engine | Implements movement between potential solutions in the search space | Neighborhood operators, crossover/mutation mechanisms |

| Fitness Evaluator | Assesses solution quality against optimization objectives | Precision, recall, F-measure, or domain-specific metrics |

| Termination Condition | Determines when satisfactory solution is found or search should conclude | Max iterations, convergence thresholds, time limits |

| Hyperparameter Optimizer | Tunes strategy-specific parameters for optimal performance | Population size, mutation rates, temperature schedules |

Visualization of Strategic Relationships

Search Strategy Decision Pathway

Modeling vs. Search Conceptual Framework

The critical difference between modeling and searching strategies reveals a fundamental division in computational problem-solving approaches. Modeling strategies excel in environments with rich historical data where pattern recognition and prediction are paramount, while searching strategies dominate when systematic exploration of possible solutions is required [19]. The experimental evidence clearly demonstrates that hybrid approaches like EHC frequently achieve superior performance by leveraging the strengths of multiple paradigms [21].

For researchers, scientists, and drug development professionals, these findings suggest several strategic implications. First, problem characterization should precede strategy selection, with specific attention to search space size, solution dispersion, and available domain knowledge. Second, hybrid strategies warrant strong consideration for complex, poorly-understood problems where no single approach dominates. Finally, the modeling-searching dichotomy mirrors broader methodological divisions in experimental science, including the simplex-factorial design optimization continuum, suggesting opportunities for cross-disciplinary methodological exchange.

Future research directions should explore adaptive strategies that dynamically shift between modeling and searching approaches based on problem characteristics and intermediate results. Additionally, the integration of machine learning models to guide search processes represents a promising avenue for enhancing computational efficiency and solution quality in complex scientific domains, particularly pharmaceutical development and research optimization.

In the scientific and industrial pursuit of optimal conditions—whether for a chemical synthesis, a fermentation process, or a drug formulation—researchers frequently encounter complex, multi-variable systems. Navigating these intricate landscapes to find the best possible outcome requires systematic optimization strategies. Among the most established methodologies are Response Surface Methodology (RSM) and Simplex-based optimization, two approaches with fundamentally different philosophies and mechanisms [22] [23].

RSM is a collection of statistical and mathematical techniques for modeling and optimizing systems where multiple input variables influence a performance measure or response [24] [25]. It focuses on building a global, empirical model of the process, typically using designed experiments, to understand the shape of the response surface and locate the optimum [26] [27]. In contrast, Simplex optimization, like the related Evolutionary Operation (EVOP) method, is a sequential, heuristic procedure that uses small perturbations to gradually move the operating conditions toward an optimum without building an explicit global model [22]. It operates like a "walk" through the experimental domain, guided by local rules rather than a pre-constructed map.

This guide objectively compares these two methodologies, detailing their experimental protocols, visualizing their workflows, and presenting performance data to aid researchers, scientists, and drug development professionals in selecting the appropriate tool for their optimization challenges.

Fundamental Principles and Conceptual Frameworks

Response Surface Methodology (RSM)

RSM is a model-based approach that relies on fitting a mathematical function, often a first- or second-order polynomial, to experimental data. The core idea is to approximate the unknown true response function f, which describes how a response y depends on a set of input variables (x₁, x₂, ..., xₖ) [23]. The general form with statistical error ε is:

y = f(x₁, x₂, ..., xₖ) + ε

For optimization, a second-order model is frequently used because of its flexibility in representing surfaces like hills, valleys, and saddle points [23]. This model for two variables is:

η = β₀ + β₁x₁ + β₂x₂ + β₁₁x₁² + β₂₂x₂² + β₁₂x₁x₂

Where η is the predicted response, β₀ is the constant term, β₁ and β₂ are linear coefficients, β₁₁ and β₂₂ are quadratic coefficients, and β₁₂ is the interaction coefficient [28] [29]. Once this model is fitted and validated, it can be visualized as a 3D surface plot or a 2D contour plot, allowing researchers to identify the optimum conditions graphically [24] [25].
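
As a minimal sketch of how the two-variable second-order model can be fitted and used, the code below estimates the six coefficients by ordinary least squares with NumPy and solves for the stationary point, where both partial derivatives of η are zero. The coefficient values and the 3×3 grid of coded settings are synthetic assumptions chosen for illustration, not data from any cited study.

```python
import numpy as np


def quadratic_design_matrix(x1, x2):
    """Columns: 1, x1, x2, x1^2, x2^2, x1*x2 (same order as the model)."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])


def fit_second_order(x1, x2, y):
    """Least-squares estimates of (b0, b1, b2, b11, b22, b12)."""
    X = quadratic_design_matrix(x1, x2)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta


def stationary_point(beta):
    """Solve b + 2 B x = 0, where B holds the quadratic and interaction terms."""
    _, b1, b2, b11, b22, b12 = beta
    B = np.array([[b11, b12 / 2.0], [b12 / 2.0, b22]])
    b = np.array([b1, b2])
    return -0.5 * np.linalg.solve(B, b)


# Synthetic, noise-free example on a 3x3 grid in coded units (assumed values).
grid = np.array([(a, b) for a in (-1.0, 0.0, 1.0) for b in (-1.0, 0.0, 1.0)])
x1, x2 = grid[:, 0], grid[:, 1]
true_beta = np.array([50.0, 2.0, -1.0, -4.0, -3.0, 1.0])
y = quadratic_design_matrix(x1, x2) @ true_beta

beta_hat = fit_second_order(x1, x2, y)
x_star = stationary_point(beta_hat)
print(beta_hat)
print(x_star)
```

Whether the stationary point is a maximum, a minimum, or a saddle depends on the signs of the eigenvalues of B; with real, noisy data the fit should also be checked with ANOVA and residual diagnostics before the optimum is trusted.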

Simplex Evolution (Evolutionary Operation)

Simplex optimization, like the Evolutionary Operation (EVOP) method with which it is often grouped, is an improvement technique designed for online, full-scale process optimization [22]. Unlike RSM, it does not construct an explicit global model. Instead, it sequentially imposes small, carefully designed perturbations on the process to gain information about the direction toward the optimum [22]. The basic Simplex method requires the addition of only one new data point in each iteration or phase, making it computationally simple [22]. Its heuristic nature means it "evolves" toward the optimum by reflecting the worst-performing point in the simplex across the centroid of the remaining points, thus creating a new simplex closer to the optimal region. This makes it particularly suited for tracking drifting optima in processes affected by batch-to-batch variation or environmental changes [22].

Experimental Protocols and Workflows

A clear understanding of the step-by-step procedures for each method is crucial for their successful application.

The RSM Workflow

The following diagram illustrates the sequential, multi-stage process of a typical RSM study, which often employs the Method of Steepest Ascent for initial exploration.

Figure 1: The Sequential Workflow of Response Surface Methodology.

The key stages in this workflow are:

- Problem Identification and Screening: The process begins by clearly defining the optimization objective and identifying the input variables (factors) and the output (response). Preliminary screening designs, such as Plackett-Burman, are often used to identify the most influential factors [24] [23].

- Initial First-Order Experiment: A first-order design (e.g., a 2^k factorial design with center points) is conducted. A first-order model (e.g., y = β₀ + β₁x₁ + β₂x₂ + ε) is fitted to the data [28].

- Curvature Test and Steepest Ascent: The center points are used to test for curvature in the response. If curvature is not significant, it indicates the experiment is far from the optimum. The first-order model's coefficients then define the path of steepest ascent (for maximizing a response) or descent (for minimizing). The experimenter "marches" along this path, conducting experiments at each step until the response no longer improves [28].

- Second-Order Experiment: Once the vicinity of the optimum is reached (indicated by a significant curvature test or a decrease in response during the ascent), a more detailed second-order experiment is set up. Common designs include Central Composite Design (CCD) or Box-Behnken Design (BBD) [24] [27] [23].

- Model Fitting and Optimization: A second-order model is fitted to the new data. This model is then analyzed using ANOVA and residual diagnostics to ensure its adequacy [29]. Finally, the mathematical model is used to locate the optimal factor settings, either analytically or through graphical examination of contour plots [25].
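
The steepest-ascent stage in the workflow above can be sketched as follows: the fitted first-order coefficients give a direction in coded units, and candidate runs are placed at equal steps along it. The coefficient values, step size, and function name are assumptions made for illustration.

```python
import numpy as np


def steepest_ascent_path(coefficients, n_steps, step_size=1.0, center=None):
    """Return points along the direction of the first-order coefficients.

    coefficients: fitted linear coefficients (b1, ..., bk) in coded units.
    center: starting point in coded units (defaults to the design center, 0).
    """
    b = np.asarray(coefficients, dtype=float)
    norm = np.linalg.norm(b)
    if norm == 0:
        raise ValueError("All coefficients are zero; no ascent direction.")
    direction = b / norm
    origin = np.zeros_like(b) if center is None else np.asarray(center, dtype=float)
    return np.array([origin + i * step_size * direction
                     for i in range(1, n_steps + 1)])


# Hypothetical coefficients b1 = 3, b2 = 4 from a first-order fit.
path = steepest_ascent_path([3.0, 4.0], n_steps=5)
print(path)
```

For a response that should be minimized, the same path is followed with the sign of the coefficients reversed. Each point still has to be converted from coded to natural units before the run is carried out.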

The Simplex Evolution Workflow

The Simplex method follows a more iterative and self-directed procedure, as shown in the workflow below.

Figure 2: The Iterative Workflow of the Simplex Evolution Method.

The key stages in this workflow are:

- Initialization: The algorithm starts by defining an initial simplex in the k-dimensional factor space. This is a geometric figure with (k+1) vertices. For two factors, this is a triangle [22].

- Evaluation and Ranking: The response is evaluated at each vertex of the simplex. The points are ranked from best (e.g., highest yield) to worst (e.g., lowest yield).

- Iteration - Reflection: The core step is to reflect the worst point through the centroid of the remaining points, generating a new candidate point.

- Evaluation and Replacement: The response at this new point is evaluated. If it is better than the worst point, it replaces the worst point in the simplex, forming a new simplex closer to the optimum.

- Termination: This process repeats until the simplex converges at an optimum or a predetermined number of iterations is completed. Convergence is often determined when the differences in response between the vertices become very small [22].
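
The stages above can be sketched in a few lines. This is a simplified illustration, not the procedure from the cited study: the axis-aligned starting simplex, the rule of shrinking toward the best vertex when a reflection fails (the workflow above does not say what to do in that case), and the toy response function are all assumptions.

```python
import numpy as np


def simplex_maximize(response, start, step=0.5, max_iter=200, tol=1e-8):
    """Reflect the worst vertex through the centroid of the others."""
    start = np.asarray(start, dtype=float)
    k = start.size
    # Initialization: start point plus one step along each factor (k + 1 vertices).
    vertices = np.vstack([start] + [start + step * np.eye(k)[i] for i in range(k)])
    values = np.array([response(v) for v in vertices])
    for _ in range(max_iter):
        # Evaluation and ranking: best vertex first, worst vertex last.
        order = np.argsort(values)[::-1]
        vertices, values = vertices[order], values[order]
        # Termination: responses at all vertices are nearly equal.
        if values[0] - values[-1] < tol:
            break
        # Reflection of the worst point through the centroid of the rest.
        centroid = vertices[:-1].mean(axis=0)
        reflected = 2.0 * centroid - vertices[-1]
        reflected_value = response(reflected)
        if reflected_value > values[-1]:
            # Replacement: the new point is better than the worst point.
            vertices[-1], values[-1] = reflected, reflected_value
        else:
            # Assumption: shrink toward the best vertex when reflection fails.
            vertices[1:] = vertices[0] + 0.5 * (vertices[1:] - vertices[0])
            values[1:] = [response(v) for v in vertices[1:]]
    best = int(np.argmax(values))
    return vertices[best].copy(), float(values[best])


# Hypothetical noise-free response with its maximum at (1, 2).
def toy_response(x):
    return -(x[0] - 1.0) ** 2 - (x[1] - 2.0) ** 2


best_x, best_y = simplex_maximize(toy_response, start=[0.0, 0.0])
print(best_x, best_y)
```

With noisy measurements a single evaluation per vertex can mislead the ranking, which is the noise sensitivity discussed in the comparison that follows; replicating runs or using a larger step are common mitigations.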

Comparative Performance and Applications

The choice between RSM and Simplex depends heavily on the specific problem context, including the number of factors, the presence of noise, and the experimental goals.

Quantitative Performance Comparison

A simulation study comparing EVOP and the basic Simplex method, two sequential improvement procedures, provides valuable insights into their performance under different conditions [22]. The study varied key settings: the Signal-to-Noise Ratio (SNR), the step size (dxi), and the dimensionality (number of factors, k).

Table 1: Comparison of RSM and Simplex Characteristics Based on Simulation Studies [22].

| Aspect | Response Surface Methodology (RSM) | Simplex Evolution |

|---|---|---|

| Underlying Principle | Builds a global polynomial model of the process [24] [23] | Uses heuristic rules to sequentially move toward the optimum [22] |

| Experimental Perturbation | Can require larger perturbations for model building [22] | Designed for small perturbations to avoid non-conforming product [22] |

| Computational Load | Higher for model fitting and validation [30] | Very low computational requirements [22] |

| Noise Robustness | Model averaging in designs (e.g., center points) improves robustness [22] [29] | More prone to noise as it relies on single new measurements per step [22] |

| Dimensionality (k) | Becomes prohibitively expensive with many factors [22] | More efficient in higher dimensions (>4) for reaching optimum region [22] |

| Optimal Application Scope | Stationary processes, detailed process understanding, model validation [22] [25] | Non-stationary processes (drifting optima), online optimization, high-dimensional spaces [22] |

Key Experimental Tools and Reagents

The practical implementation of these optimization strategies relies on a suite of methodological "tools." The table below details key solutions and their functions in the context of experimental optimization.

Table 2: Research Reagent Solutions for Optimization Experiments.

| Research Reagent / Solution | Function in Optimization |

|---|---|

| Central Composite Design (CCD) | A widely used second-order experimental design that combines factorial, axial, and center points to efficiently fit quadratic models and model curvature [24] [23]. |

| Box-Behnken Design (BBD) | A spherical, rotatable second-order design that avoids extreme factor-level combinations. It requires fewer runs than CCD for 3-5 factors and is often preferred for practical and safety reasons [27] [23]. |

| Method of Steepest Ascent | A sequential procedure used with first-order models to rapidly move from a remote experimental region to the vicinity of the optimum [28]. |

| Coded Variables (x₁, x₂...) | Unitless transformations of natural factor levels (e.g., -1, 0, +1) that normalize factors of different units and magnitudes, making model coefficients comparable and improving numerical stability [28] [23]. |

| Desirability Functions | A multiple response optimization technique that transforms individual responses into a composite desirability score, allowing for the balanced optimization of several, potentially conflicting, goals [24] [29]. |

Both Response Surface Methodology and Simplex Evolution are powerful tools in the scientist's optimization toolkit, but they serve different primary purposes.

RSM is the preferred approach when the goal is to build a thorough understanding of the process. It provides a predictive model that can be visualized and analyzed to understand factor interactions and the shape of the response surface. It is ideal for stationary processes, detailed research and development, and when the number of critical factors is relatively low [22] [25]. Its requirement for a structured design before analysis makes it a more offline, planning-intensive methodology.

Simplex Evolution is the preferred approach for online optimization of full-scale processes, especially when the optimum is expected to drift over time due to factors like raw material variability or machine wear [22]. Its strengths are computational simplicity, adaptability, and efficiency in higher-dimensional spaces. It is a pragmatic choice for tracking a moving optimum or when a detailed empirical model is not required.

Ultimately, the choice is contextual. RSM provides a detailed map of the entire region of interest, while Simplex offers an efficient, step-by-step guide to the top of the hill, even if the hill itself is slowly moving.

Practical Implementation: Methodologies and Real-World Applications in Biomedicine

Executing a Fractional Factorial Design for High-Throughput Factor Screening

In the realm of experimental design for research and development, particularly within drug discovery and process optimization, fractional factorial designs (FFDs) serve as a powerful screening tool for efficiently identifying significant factors among a large set of potential variables. These designs strategically investigate a carefully chosen subset of all possible factor-level combinations, enabling researchers to screen numerous factors with a dramatically reduced number of experimental runs compared to full factorial designs [31] [32]. This approach is exceptionally valuable in high-throughput settings, such as early-stage drug development, where the goal is to rapidly identify the "vital few" factors influencing a biological response, process yield, or product quality from a large pool of candidates [33] [34].

Framed within the broader methodological debate of simplex vs. factorial design optimization, FFDs represent a model-dependent, often parallel, strategy ideal for situations with sufficient prior knowledge to define factors and levels. This contrasts with simplex methods, which are typically model-agnostic, sequential, and excel at navigating towards an optimum with very limited initial knowledge [35]. The core value proposition of a screening FFD is its resource efficiency, making large-scale experimentation feasible where resource constraints would otherwise prohibit investigation [36].

Core Concepts and Key Trade-offs

The Principle of Fractionation and Aliasing

The efficiency of FFDs is achieved through fractionation, which deliberately confounds, or "aliases," main effects and low-order interactions with higher-order interactions that are presumed negligible [31] [37]. This aliasing structure is set by a design generator (e.g., D = ABC, which gives the defining relation I = ABCD), a mathematical relationship that specifies which effects are indistinguishable from one another in the subsequent analysis [31]. While this leads to a loss of information, the underlying assumption is that system behavior is primarily driven by main effects and low-order interactions (e.g., two-factor interactions), a principle known as effect sparsity [34].

Understanding Design Resolution

Design resolution is a critical concept that classifies FFDs based on their aliasing structure and ability to separate effects, providing a direct measure of the design's clarity and the severity of its trade-offs [31] [33]. It is denoted by Roman numerals (III, IV, V, etc.), with higher numerals indicating a clearer separation of effects but requiring more experimental runs.

Table: Classification and Characteristics of Fractional Factorial Design Resolutions

| Resolution | Aliasing Structure | Primary Use Case | Interpretability |

|---|---|---|---|

| Resolution III | Main effects are confounded with two-factor interactions [31] [33]. | Initial screening of a large number of factors to identify the most critical ones [34]. | Limited; main effects cannot be clearly distinguished from two-factor interactions [31]. |

| Resolution IV | Main effects are not confounded with other main effects or two-factor interactions, but two-factor interactions are confounded with each other [31] [33]. | Screening when clear estimation of main effects is essential, and interactions are less likely [33]. | Good for main effects; limited for specific two-factor interactions [33]. |

| Resolution V | Main effects and two-factor interactions are not confounded with any other main effect or two-factor interaction. They are confounded with three-factor interactions [31] [33]. | Detailed analysis when understanding both main effects and two-factor interactions is crucial [33]. | High; provides a comprehensive view of the system's main effects and two-factor interactions [31]. |
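
A small worked example may make aliasing concrete. The sketch below builds a half fraction of a four-factor, two-level design (8 runs instead of 16) using the generator D = ABC, then checks that the AB and CD interaction columns are identical, which is the Resolution IV pattern in the table above. The function name is an illustrative choice.

```python
from itertools import product


def half_fraction_four_factors():
    """2^(4-1) design: full factorial in A, B, C with D set by D = ABC."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]


design = half_fraction_four_factors()

# Contrast columns for two two-factor interactions.
ab_column = [a * b for a, b, c, d in design]
cd_column = [c * d for a, b, c, d in design]

print(len(design))             # number of runs in the half fraction
print(ab_column == cd_column)  # identical columns mean AB and CD are aliased
```

Because the two columns are the same, the data cannot separate an AB effect from a CD effect; only a follow-up design, such as a foldover or the complementary fraction, can tell them apart.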

Experimental Protocol for a High-Throughput Screening DOE

Executing a robust screening DOE requires a disciplined, sequential approach to ensure reliable and interpretable results.

Step 1: Define Objective and Factors

Clearly articulate the goal of the experiment (e.g., "Identify factors critical for compound solubility"). Assemble a cross-functional team to list all potential factors that could influence the response. For each factor, define the two levels (e.g., high/low, present/absent) to be tested [36] [32].

Step 2: Select Design Type and Resolution

Choose an appropriate screening design based on the number of factors and the importance of interactions.

- Plackett-Burman Designs: Ideal for very quickly screening a large number of factors (e.g., 11 factors in 12 runs) but assume all interactions are negligible [34].

- 2-Level Fractional Factorial Designs: The most common choice, allowing for a balance between run economy and the ability to detect some interactions. The choice of resolution (III, IV, or V) is made here based on the trade-offs outlined in the resolution table above [31] [34].

- Definitive Screening Designs (DSDs): A modern alternative that can estimate main effects, quadratic effects, and two-way interactions with relatively few runs, though they require more runs than a Resolution III design [34].

Step 3: Create Experimental Plan (Runs)

Using statistical software (e.g., JMP, Minitab, R), generate the specific set of experimental runs. The software will create a run sheet that defines the exact factor-level combinations for each experiment, ensuring the design's orthogonality and desired resolution [31] [34].

Step 4: Randomize and Execute Runs

Randomize the order of all experimental runs. This is a critical step to protect against the influence of lurking variables and time-related effects, thereby ensuring the validity of the statistical conclusions [36]. Execute the experiments according to the randomized plan, controlling for known sources of noise to the greatest extent possible [32].
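
Randomization is usually handled by the design software, but the idea is simple enough to sketch with the Python standard library. The function name and the seed are arbitrary; fixing a seed only makes the run sheet reproducible for record keeping.

```python
import random


def randomized_run_order(n_runs, seed=None):
    """Return run numbers 1..n_runs in a random execution order."""
    order = list(range(1, n_runs + 1))
    random.Random(seed).shuffle(order)
    return order


run_order = randomized_run_order(8, seed=42)
print(run_order)
```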

Step 5: Analyze Data and Identify Key Factors

Input the response data into the statistical software to analyze the results.

- Calculate the estimated effects of each factor and interaction.

- Use half-normal plots or Pareto charts to visually identify factors whose effects are larger than expected from random noise.

- Perform analysis of variance (ANOVA) to statistically test the significance of the effects [34] [32].
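
For a two-level design, the estimated main effect of a factor is the mean response at its high level minus the mean response at its low level. The sketch below applies that definition to a synthetic 2³ example; the response values are generated from an assumed linear relationship, not taken from an experiment.

```python
from itertools import product


def main_effects(design, responses):
    """Mean response at +1 minus mean response at -1, for each factor."""
    n_factors = len(design[0])
    effects = []
    for j in range(n_factors):
        high = [y for run, y in zip(design, responses) if run[j] == 1]
        low = [y for run, y in zip(design, responses) if run[j] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects


# Synthetic 2^3 design in coded units; factor C is inert by construction.
design = list(product((-1, 1), repeat=3))
responses = [10 + 3 * a - 2 * b for a, b, c in design]

effects = main_effects(design, responses)
print(effects)
```

In a real screen the effects would be ranked on a half-normal plot or Pareto chart, since without replication or an error estimate the size of an effect alone does not show whether it exceeds the noise.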

Step 6: Plan Follow-up Experiments

A screening DOE is rarely the final step. Use the results to plan subsequent experiments, which may include:

- Foldover Designs: Adding a second, complementary fraction to a Resolution III design to de-alias specific main effects from two-factor interactions [33] [34].

- Full Factorial Designs: Conducting a full factorial experiment on the 2-4 critical factors identified by the screen to fully characterize all interactions and locate an optimum [36] [32].

- Response Surface Methodologies (RSM): Using designs like Central Composite Designs (CCD) to model curvature and find precise optimal conditions [35].

Comparison with Simplex Optimization

The choice between factorial and simplex approaches represents a fundamental strategic decision in experimental optimization, hinging on the level of prior knowledge and the specific goal of the investigation.

Table: Factorial vs. Simplex Experimental Optimization Approaches

| Feature | Fractional Factorial Design (FFD) | Simplex Optimization |

|---|---|---|

| Core Philosophy | Model-dependent; maps a defined experimental space to build a predictive model [35]. | Model-agnostic; uses geometric rules to sequentially navigate towards an optimum [35]. |

| Experimental Strategy | Typically parallel; all runs from the designed set are executed (often in randomized order) [35]. | Inherently sequential; each experiment's result dictates the conditions for the next run [35]. |

| Primary Goal | System Understanding & Screening: Identify influential factors and model their effects [34] [32]. | Direct Optimization: Rapidly find a local optimum with minimal prior knowledge [35]. |

| Best Application Context | Early-mid stages of investigation; many factors; need to understand factor influence and interactions [33] [36]. | Mid-late stages; few factors; goal is to quickly improve a response without building a full model [35]. |

| Key Advantage | Provides broad insight into the system, quantifying main and interaction effects [31]. | Highly efficient in terms of the number of runs needed to find an optimum [35]. |

| Key Limitation | Requires predefined factor levels and can be inefficient if only an optimum is sought [36]. | Provides limited system understanding; can get trapped in local optima [35]. |
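The "geometric rules" in the simplex column can be illustrated with a single move. The sketch below assumes a fixed-size reflection rule (replace the worst vertex by its mirror image through the centroid of the remaining vertices) and a maximization goal; the factor settings and responses are hypothetical, and practical variants add expansion and contraction steps that are not shown.

```python
def reflect_worst(vertices, responses):
    """Return the index of the worst vertex and its reflection through
    the centroid of the remaining vertices (maximization)."""
    worst = min(range(len(vertices)), key=lambda i: responses[i])
    others = [v for i, v in enumerate(vertices) if i != worst]
    dim = len(vertices[0])
    centroid = [sum(v[d] for v in others) / len(others) for d in range(dim)]
    reflected = tuple(2 * centroid[d] - vertices[worst][d] for d in range(dim))
    return worst, reflected

# Hypothetical two-factor example: (pH, temperature) settings and measured yields.
vertices = [(6.0, 30.0), (7.0, 30.0), (6.5, 35.0)]
responses = [52.0, 61.0, 66.0]

worst, next_run = reflect_worst(vertices, responses)
print(worst, next_run)  # 0 (7.5, 35.0)
```

The returned point is the next experiment to run; once its response is measured, it replaces the worst vertex and the rule is applied again, which is why the method is inherently sequential.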

The following diagram illustrates how these two methodologies can complement each other within a complete research program.

Essential Research Reagent Solutions

The successful execution of a high-throughput screening assay relies on a suite of reliable reagents and materials. The following table details key components for a generalized screening platform, adaptable to specific applications like the cited SLIT2/ROBO1 TR-FRET assay [38].

Table: Essential Research Reagents for High-Throughput Screening Assays

| Reagent / Material | Function in the Screening Workflow |

|---|---|

| Recombinant Target Proteins | Purified proteins (e.g., SLIT2, ROBO1) that serve as the primary molecular targets in the interaction assay [38]. |

| TR-FRET Donor/Acceptor Probes | Fluorescent labels (e.g., Eu3+-cryptate as donor, XL665 as acceptor) that enable time-resolved detection of molecular binding events via energy transfer [38]. |

| Assay Plates (e.g., 384-well) | Miniaturized, high-density microplates that facilitate the parallel testing of thousands of compound-condition combinations [38] [39]. |

| Chemical Library / Test Compounds | A curated collection of small molecules, inhibitors, or other chemical entities screened for their ability to modulate the target interaction [38]. |

| Automated Liquid Handling Systems | Robotic instrumentation that ensures precise, rapid, and reproducible dispensing of nanoliter-to-microliter volumes of reagents and compounds [39]. |

| Buffer & Stabilizing Agents | A defined biochemical environment (pH, salts, detergents, etc.) that maintains protein stability and ensures specific binding interactions [38]. |

Fractional factorial designs stand as an indispensable methodology in the researcher's toolkit, offering a structured and statistically rigorous path for efficiently navigating complex factor spaces in high-throughput environments. Their power lies in the deliberate trade-off of information for efficiency, enabling the rapid discrimination of significant factors from insignificant ones. When viewed within the broader paradigm of experimental optimization, FFDs are not in direct competition with simplex methods but are a complementary tool. The strategic integration of both approaches—using FFDs for initial system understanding and factor screening, followed by simplex or RSM for precise optimization—represents a powerful, holistic strategy for accelerating discovery and development cycles in research and drug development.

Step-by-Step Guide to Running a Simplex Optimization

Researchers and drug development professionals often face a critical choice: which strategy will most efficiently and reliably navigate the parameter space to find an optimal solution? Two prominent methodologies are the Simplex method for numerical optimization and Factorial Design for experimental optimization. The Simplex method discussed in this section is the iterative algorithm for solving linear programming problems, which is distinct from the sequential, experiment-driven simplex search compared with fractional factorial designs above; it is prized for its empirical efficiency, particularly on large-scale problems [40]. Factorial Design, a statistical approach, systematically investigates the effects of multiple factors and their interactions on a response variable [41]. This guide provides a step-by-step protocol for executing a Simplex optimization and compares its performance and application with Full Factorial Design, framing the discussion within the broader question of selecting an appropriate optimization strategy.

Understanding the Core Methodologies

The Simplex Method

The Simplex Method is a cornerstone algorithm in linear programming. It operates by traversing the edges of the feasible region polyhedron, moving from one vertex to an adjacent one in a direction that improves the objective function value, until no further improvement is possible and an optimum is found [40]. While its worst-case theoretical complexity is exponential, its practical performance is often remarkably efficient. Recent smoothed analysis has shown that a specific variant of the Simplex method can achieve a smoothed complexity of approximately O(σ^(-1/2) d^(11/4) log(n)^(7/4)) pivot steps [42]. This analysis helps bridge the gap between its theoretical worst-case and observed real-world performance.

Full Factorial Design

Full Factorial Design (FFD) is a systematic experimental approach used to study the effects of multiple factors, each at discrete levels. In this section FFD denotes the full factorial design, not the fractional factorial designs discussed earlier. In an FFD, experiments are conducted at every possible combination of the factor levels. For example, with k factors each at 2 levels, a total of 2^k experiments are required. The results are then analyzed, typically using Analysis of Variance (ANOVA), to determine the statistical significance of the main effects of each factor and the interaction effects between factors [41]. The "best" setting is identified from the tested combinations. Its strength lies in its ability to comprehensively explore a discrete experimental space.
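The run count of a full factorial follows directly from enumerating every combination of levels. The sketch below uses hypothetical factor names and levels to list a 2^3 design, and a small helper, `run_count`, introduced here only for illustration.

```python
import itertools

# Hypothetical factors and levels, for illustration only.
levels = {
    "temperature_C": [25, 37],
    "pH": [6.5, 7.5],
    "catalyst": ["X", "Y"],
}

# Every combination of levels is one experimental run.
runs = [dict(zip(levels, combo)) for combo in itertools.product(*levels.values())]
print(len(runs))  # 8, i.e. 2**3

def run_count(n_levels, k_factors):
    """Number of runs for k factors, each at the same number of levels."""
    return n_levels ** k_factors

print(run_count(3, 5))  # 243
```

The same helper shows how quickly the design grows: ten two-level factors already require `run_count(2, 10)` = 1024 runs.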

A Step-by-Step Protocol for a Simplex Optimization

The following section outlines a generalized workflow for conducting a Simplex optimization, synthesizing concepts from modern computational practices [43].

Prerequisite: Problem Formulation

The first and most critical step is to formulate your optimization problem as a Linear Program (LP).

- Define Decision Variables: Identify the quantities you can control (e.g., concentration of a reagent, processing time). Represent them as variables x1, x2, ..., xn.

- Formulate the Objective Function: Create a linear function Z = c1*x1 + c2*x2 + ... + cn*xn that you wish to maximize (e.g., yield, purity) or minimize (e.g., cost, impurities).

- Specify Constraints: Define the linear inequalities or equalities that represent the limitations of your system (e.g., total budget, resource availability, mandatory minimums).
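A formulated LP is just three pieces of data: the objective coefficients, the constraint matrix, and the right-hand sides. The sketch below encodes a small hypothetical two-variable problem (the numbers are invented for illustration) and checks candidate settings against the constraints before any solver is involved.

```python
# Hypothetical LP: maximize Z = 3*x1 + 5*x2
# subject to   x1          <= 4
#                    2*x2  <= 12
#              3*x1 + 2*x2 <= 18
#              x1, x2 >= 0
c = [3, 5]                      # objective coefficients c1, c2
A = [[1, 0], [0, 2], [3, 2]]    # one row per "<=" constraint
b = [4, 12, 18]                 # right-hand sides

def objective(x):
    """Value of Z = c1*x1 + ... + cn*xn at the point x."""
    return sum(ci * xi for ci, xi in zip(c, x))

def is_feasible(x):
    """True if x is non-negative and satisfies every constraint row."""
    if any(xi < 0 for xi in x):
        return False
    return all(
        sum(aij * xj for aij, xj in zip(row, x)) <= bi for row, bi in zip(A, b)
    )

print(is_feasible((2, 6)), objective((2, 6)))
print(is_feasible((4, 6)))
```

Checking a few hand-picked points this way is a cheap sanity test that the constraints were transcribed correctly.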

Workflow and Process

The following diagram illustrates the iterative workflow of a standard Simplex optimization.

Protocol Steps

Initialization:

- Convert the LP into standard form (equality constraints and non-negative variables).

- Identify an initial basic feasible solution (a starting vertex of the feasible region). This can be a non-trivial step for problems not originating from a standard resource-allocation context.

Check for Optimality:

- Calculate the reduced costs for all non-basic variables.

- If all reduced costs are non-negative (for a maximization problem, with the objective row of the tableau written as z_j - c_j), the current solution is optimal and the algorithm terminates. Otherwise, proceed.

Identify Entering Variable:

- Select a non-basic variable with a negative reduced cost (for maximization) to enter the basis. Common rules are the steepest-edge or most-negative reduced cost rules.

Identify Leaving Variable:

- Using the minimum ratio test, determine which basic variable will first become zero as the entering variable increases. This variable will leave the basis.

Pivot and Update:

- Perform the pivot operation. This is a Gaussian elimination step that makes the entering variable basic and the leaving variable non-basic, updating the entire tableau.

- Return to the Check for Optimality step and repeat until the optimality condition is met.
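The protocol steps above can be sketched as a compact tableau implementation. This is a teaching sketch under stated assumptions, not a production solver: it handles only maximization with "<=" constraints and non-negative right-hand sides (so the slack variables give an immediate starting vertex), uses the most-negative reduced cost rule, and has no anti-cycling safeguard beyond an iteration limit. The function name `simplex_max` and the example data are hypothetical.

```python
from fractions import Fraction

def simplex_max(c, A, b, max_iter=100):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Initialization: one slack variable per constraint; slacks form the first basis.
    tableau = [
        [Fraction(v) for v in A[i]]
        + [Fraction(int(i == j)) for j in range(m)]
        + [Fraction(b[i])]
        for i in range(m)
    ]
    # Objective row stores z_j - c_j, so negative entries signal possible improvement.
    z_row = [Fraction(-v) for v in c] + [Fraction(0)] * (m + 1)
    basis = list(range(n, n + m))

    for _ in range(max_iter):
        # Optimality check and entering variable (most-negative rule).
        entering = min(range(n + m), key=lambda j: z_row[j])
        if z_row[entering] >= 0:
            break
        # Leaving variable: minimum ratio test over rows with a positive entry.
        ratios = [
            (tableau[i][-1] / tableau[i][entering], i)
            for i in range(m)
            if tableau[i][entering] > 0
        ]
        if not ratios:
            raise ValueError("LP is unbounded")
        _, leaving = min(ratios)
        # Pivot: scale the pivot row, then eliminate the entering column elsewhere.
        pivot = tableau[leaving][entering]
        tableau[leaving] = [v / pivot for v in tableau[leaving]]
        for i in range(m):
            if i != leaving:
                factor = tableau[i][entering]
                tableau[i] = [
                    v - factor * p for v, p in zip(tableau[i], tableau[leaving])
                ]
        factor = z_row[entering]
        z_row = [v - factor * p for v, p in zip(z_row, tableau[leaving])]
        basis[leaving] = entering
    else:
        raise RuntimeError("iteration limit reached")

    # Read the solution: basic variables take the right-hand-side values.
    x = [Fraction(0)] * n
    for row, var in enumerate(basis):
        if var < n:
            x[var] = tableau[row][-1]
    return x, z_row[-1]

# Hypothetical LP: maximize 3*x1 + 5*x2 with three resource constraints.
x_opt, z_opt = simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])
print([float(v) for v in x_opt], float(z_opt))  # [2.0, 6.0] 36.0
```

Exact `Fraction` arithmetic is used so that the ratio test and optimality check are not affected by floating-point rounding; real solvers such as those listed later in this guide use floating-point arithmetic with tolerances and far more sophisticated pivoting.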

Performance Comparison: Simplex vs. Full Factorial Design

The choice between Simplex and Factorial Design is not a matter of which is universally better, but which is more appropriate for a given problem type. The table below summarizes their core characteristics.

Table 1: High-Level Comparison of Simplex Optimization and Full Factorial Design

| Feature | Simplex Optimization | Full Factorial Design (FFD) |

|---|---|---|

| Problem Domain | Mathematical, continuous Linear Programming [40]. | Physical experiments or simulations with discrete factors [41]. |

| Primary Goal | Find the exact optimal solution mathematically. | Identify significant factors and a high-performing discrete combination. |

| Nature of Solution | A single optimal vertex solution. | A "best" setting from a pre-defined set of tested combinations. |

| Handling Constraints | Directly and natively integrated into the algorithm. | Managed by not running experiments that violate constraints. |

| Scalability | Highly efficient in practice for large-scale problems [40]. | Suffers from combinatorial explosion; becomes infeasible with many factors/levels [41]. |

| Theoretical Basis | Linear Algebra & Pivoting; polynomial-time alternatives such as interior-point methods exist for the same problem class [40]. | Statistical Inference (ANOVA) [41]. |

To move beyond a theoretical comparison, we can analyze performance based on published experimental data and computational analyses.

Table 2: Performance and Application Analysis

| Aspect | Simplex Optimization | Full Factorial Design |

|---|---|---|

| Computational/Experimental Cost | Recent ML-enhanced Simplex surrogates reported costs of ~50 EM simulations for globalized search [43]. | An 11-experiment FFD was used to optimize a 3-factor membrane process [44]. Cost grows as n^k for k factors at n levels each. |

| Efficiency & Complexity | Optimal smoothed complexity: O(σ^(-1/2) d^(11/4) log(n)^(7/4)) pivot steps [42]. Highly efficient for high-dimensional continuous spaces. | Efficient for a low number of factors (e.g., 2-5). Efficiency plummets as factors/levels increase, e.g., 5 factors at 3 levels requires 3^5 = 243 experiments [41]. |

| Key Strength | Proven efficiency on large-scale problems. Its accuracy and reliability are "particularly appreciated when... applied to truly large scale problems which challenge any alternative approaches" [40]. | Captures interaction effects. In a chemical process, FFD identified that the interaction between Temperature and Catalyst (AC) was a significant factor for the outcome [41]. |

| Key Weakness | Performance can be sensitive to problem structure; applies only to problems with a linear objective and linear constraints. | Inability to guarantee a true optimum, as it only tests a pre-selected grid of points. |

The Scientist's Toolkit: Essential Reagents & Materials

This table details key resources for setting up and running the optimization experiments discussed in this guide.

Table 3: Research Reagent Solutions for Optimization Studies

| Item | Function / Description | Example in Context |

|---|---|---|

| Linear Programming (LP) Solver | Software library (e.g., CPLEX, Gurobi, open-source alternatives) that implements the Simplex and Interior Point algorithms. | Used to computationally solve the formulated LP model to find the optimal resource allocation or process parameters [40]. |

| Statistical Analysis Software | A software package (e.g., R, JMP, Minitab, Python with statsmodels) capable of performing ANOVA and regression analysis. | Essential for analyzing the data generated from a Full Factorial Experiment to determine factor significance and build regression models [41] [44]. |

| Process/Experimental Factors | The independent variables (continuous or discrete) that are adjusted during the optimization. | In a chemical process, this could be Temperature (A), Concentration (B), and Catalyst type (C) [41]. In membrane filtration, Trans-Membrane Pressure and Crossflow Velocity [44]. |

| Response Variable Metric | The measurable output that defines the objective or quality of the system. | In drug development, this could be % Yield, Purity, or Activity. In other fields, it is Permeate Flux or Sulfate Rejection [44]. |

| High-Fidelity Model (Rf(x)) | A detailed, computationally expensive simulation model of the system. | Used for final verification in a Simplex-based ML framework to ensure reliability (e.g., a high-resolution EM simulation) [43]. |

The following chart provides a logical pathway for researchers to select the most appropriate optimization method based on their problem's characteristics.