Simplex vs. Multidirectional Search (MDS): A Strategic Guide for Optimization in Drug Development

This article provides a comprehensive comparative analysis of the Simplex Method and Multidirectional Search (MDS) for researchers and professionals in drug development.

Abstract

This article provides a comprehensive comparative analysis of the Simplex Method and Multidirectional Search (MDS) for researchers and professionals in drug development. It explores the foundational principles of both algorithms, detailing their methodological applications in areas like pharmaceutical formulation and process optimization. The guide offers practical troubleshooting strategies for overcoming common pitfalls and presents a rigorous framework for validating and selecting the appropriate optimization technique based on specific project goals, constraints, and problem structures encountered in biomedical research.

Core Principles: Deconstructing the Simplex and MDS Algorithms

In computational optimization for drug discovery, two distinct algorithmic philosophies have emerged for navigating complex search spaces: the simplex method, a vertex-traversing approach for linear programming, and simplex-based direct search for nonlinear optimization without derivatives. This first part groups the latter under the multidirectional search (MDS) label and uses the Nelder-Mead method as its representative; Torczon's MDS algorithm proper is treated later in the guide. While both approaches rely on geometric simplex structures, their underlying mechanisms and application domains differ significantly. The simplex method, developed by George Dantzig in the 1940s, operates by systematically moving along the edges of a feasible region defined by linear constraints to find the optimal solution [1] [2]. In contrast, methods like Nelder-Mead work by iteratively transforming a simplex (a geometric figure with n+1 vertices in n dimensions) through reflection, expansion, and contraction operations to optimize nonlinear objective functions [3]. This comparison guide examines the fundamental differences, performance characteristics, and appropriate domains of application for these approaches within drug discovery research, providing experimental protocols and analytical frameworks for researchers navigating optimization challenges in pharmaceutical development.

Fundamental Principles and Mechanisms

The Simplex Method: Linear Programming with Theoretical Guarantees

The simplex method addresses linear programming problems typically formulated as maximizing or minimizing a linear objective function subject to linear equality or inequality constraints [2]. In canonical form, this is expressed as:

- Maximize ( \mathbf{c^T x} )

- Subject to ( A\mathbf{x} \leq \mathbf{b} ) and ( \mathbf{x} \geq 0 )

The algorithm transforms these constraints through the introduction of slack variables to convert inequalities to equalities, then navigates the convex polytope defined by these constraints [4] [2]. Geometrically, this polytope represents the feasible solution space, with the optimal solution residing at one of its extreme points or vertices [4]. The algorithm proceeds by moving from vertex to adjacent vertex along the edges of this polytope, at each step choosing the direction that most improves the objective function [2]. This systematic traversal ensures eventual convergence to the global optimum for linear problems.
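As a short worked statement of the slack-variable step described above (the symbol ( \mathbf{s} ) for the slack vector is an assumption introduced here, not notation from the cited sources), the canonical problem can be rewritten in equality form as:

```latex
\begin{aligned}
\text{maximize}\quad   & \mathbf{c}^{T}\mathbf{x} \\
\text{subject to}\quad & A\mathbf{x} + \mathbf{s} = \mathbf{b}, \\
                       & \mathbf{x} \geq 0,\quad \mathbf{s} \geq 0 .
\end{aligned}
```

Each component of ( \mathbf{s} ) measures the unused amount of one constraint. When ( \mathbf{b} \geq 0 ), setting ( \mathbf{x} = 0 ) and ( \mathbf{s} = \mathbf{b} ) gives a starting vertex of the polytope.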

Recent theoretical advances have solidified the simplex method's foundational status. In 2025, researchers demonstrated that the leading approach to the simplex method represents the pinnacle of efficiency, proving it theoretically unbeatable in worst-case efficiency for its core operations [5]. This optimality proof hinges on advanced concepts from convex geometry and complexity theory, showing that any attempt to accelerate the simplex algorithm would violate fundamental lower bounds on computational steps [5].

Multidirectional Search (Nelder-Mead): Nonlinear Heuristic Optimization

The Nelder-Mead algorithm, often called the "simplex method" for nonlinear optimization but more accurately described as a simplex-based direct search (grouped here with multidirectional search), addresses unconstrained nonlinear problems [3]. The method maintains a simplex of ( n+1 ) points in ( n )-dimensional space, iteratively transforming this simplex based on function evaluations at its vertices. Unlike the linear programming simplex method, Nelder-Mead is built for nonlinear objectives and uses no derivative information, making it suitable for problems with non-smooth functions or noisy evaluations [3].

The algorithm employs four principal operations:

- Reflection: Moving away from the worst-valued vertex

- Expansion: Extending further in promising directions

- Contraction: Shrinking around better-valued vertices

- Shrinkage: Reducing size toward the best vertex [3]

These transformations allow the working simplex to adapt its shape and size to the local landscape, elongating down inclined planes, changing direction when encountering valleys, and contracting near minima [3]. This flexibility makes MDS effective for various nonlinear problems but without the theoretical convergence guarantees of the linear programming simplex method.

Performance Comparison: Experimental Data and Quantitative Analysis

Theoretical and Empirical Performance Metrics

Table 1: Algorithmic Characteristics Comparison

| Characteristic | Simplex Method | Multidirectional Search (Nelder-Mead) |

|---|---|---|

| Problem Domain | Linear Programming | Nonlinear Unconstrained Optimization |

| Derivative Requirements | No explicit derivatives | No derivatives required |

| Theoretical Guarantees | Global optimum for linear problems [5] | No general guarantee of convergence, even to a local minimum [3] |

| Typical Applications | Supply chain optimization, resource allocation [1] | Parameter estimation, statistical modeling [3] |

| Computational Complexity | Exponential in the worst case for classical pivot rules; polynomial bounds under randomized (smoothed) analysis [1] | Varies by problem dimension and landscape |

| Key Transformations | Pivot operations [2] | Reflection, expansion, contraction [3] |

| Geometric Interpretation | Vertex-to-vertex traversal on polytope [4] | Simplex shape/size modification in search space [3] |

Experimental Performance in Drug Discovery Applications

Table 2: Performance in Drug Discovery Contexts

| Application Scenario | Simplex Method Performance | Multidirectional Search Performance |

|---|---|---|

| Multi-target Drug Optimization | Limited for nonlinear systems | Effective with DEL framework [6] |

| Chemical Space Exploration | Not directly applicable | Suitable for generative chemistry [6] |

| Binding Affinity Prediction | Linear approximations only | Direct optimization possible [6] |

| Molecular Property Optimization | Constrained linear properties | Multiple physicochemical properties [6] |

| Supply Chain Optimization | Highly effective [1] | Less efficient than specialized methods |

Recent experimental implementations in drug discovery demonstrate these differential performances. In one study, a graph-fragmentation molecular representation combined with deep evolutionary learning for multi-objective molecular optimization successfully employed MDS approaches to generate novel molecules with improved property values and binding affinities [6]. The method utilized protein-ligand binding affinity scores alongside other physicochemical properties as objectives, demonstrating MDS's flexibility for complex, nonlinear objective functions common in pharmaceutical applications [6].

For classical linear optimization problems in drug manufacturing and distribution, the simplex method remains unchallenged. As noted in recent proofs, "the simplex method, a 1940s algorithm for optimizing linear programming problems in logistics and finance, is theoretically unbeatable in worst-case efficiency" [5]. This theoretical foundation ensures continued dominance in applications like production planning, resource allocation, and logistics within the pharmaceutical industry.

Experimental Protocols and Methodologies

Simplex Method Implementation Protocol

The standard implementation protocol for the simplex method involves:

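Problem Formulation Phase:

- Express the objective as a linear function and the constraints as linear equalities or inequalities [2]

- Introduce slack variables to convert inequalities to equalities [4] [2]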

Algorithm Execution Phase:

- Phase I: Find an initial basic feasible solution

- Phase II: Iterate toward optimal solution through pivot operations [2]

- Select entering variables using chosen pivot rules (e.g., largest coefficient rule)

- Select leaving variables via the minimum ratio test

- Perform row operations to update the tableau

Termination and Validation:

- Terminate when all coefficients in the objective row are non-negative (maximization)

- Verify solution feasibility and optimality conditions [2]

The geometric interpretation involves traversing from vertex to vertex along the edges of the feasible region polytope, with each pivot operation corresponding to moving to an adjacent vertex [4]. Recent theoretical work has optimized pivot selection strategies, with researchers demonstrating that "runtimes are guaranteed to be significantly lower than what had previously been established" [1].
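The pivot steps in this protocol can be sketched in a few lines of Python. This is a minimal illustration, not a production solver. It assumes every right-hand side is non-negative so that the slack variables form the initial basic feasible solution (Phase I is skipped), it uses the largest-coefficient rule, and it has no safeguard against cycling on degenerate problems. The function name `simplex_max` and the tableau layout are choices made here for illustration.

```python
from fractions import Fraction


def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b and x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Tableau rows [A | I | b]: one slack column per constraint.
    T = [
        [Fraction(v) for v in A[i]]
        + [Fraction(int(i == k)) for k in range(m)]
        + [Fraction(b[i])]
        for i in range(m)
    ]
    # Objective row stores -c, so optimality means "no negative entries".
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]  # slack variables start in the basis

    while True:
        obj = T[-1][:-1]
        # Entering variable: most negative objective-row entry.
        col = min(range(n + m), key=lambda j: obj[j])
        if obj[col] >= 0:
            break  # all entries non-negative: current vertex is optimal
        # Leaving variable: minimum ratio test over rows with a positive entry.
        rows = [i for i in range(m) if T[i][col] > 0]
        if not rows:
            raise ValueError("problem is unbounded")
        row = min(rows, key=lambda i: T[i][-1] / T[i][col])
        # Row operations: scale the pivot row, then clear the pivot column.
        pivot = T[row][col]
        T[row] = [v / pivot for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                factor = T[i][col]
                T[i] = [vi - factor * vr for vi, vr in zip(T[i], T[row])]
        basis[row] = col

    x = [Fraction(0)] * n
    for i, j in enumerate(basis):
        if j < n:
            x[j] = T[i][-1]
    return x, T[-1][-1]
```

For example, `simplex_max([3, 5], [[1, 0], [0, 2], [3, 2]], [4, 12, 18])` solves a small two-variable textbook problem in two pivots. Exact `Fraction` arithmetic is used only to keep the toy example free of rounding issues; real solvers use floating point with careful numerical safeguards.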

Multidirectional Search Experimental Protocol

For implementing Nelder-Mead multidirectional search:

Initialization Phase:

- Define objective function ( f: \mathbb{R}^n \to \mathbb{R} )

- Construct initial simplex with ( n+1 ) vertices around starting point ( x_0 ) [3]

- Evaluate function at all vertices

Iteration Cycle:

- Ordering: Identify worst (( x_h )), second worst (( x_s )), and best (( x_l )) vertices

- Centroid: Calculate centroid ( c ) of the best side (opposite worst vertex)

- Transformation Sequence:

- Compute reflection point ( x_r = c + \alpha(c - x_h) )

- If ( f(x_r) < f(x_l) ), compute expansion point ( x_e = c + \gamma(x_r - c) )

- If ( f(x_r) \geq f(x_s) ), compute contraction point

- If contraction fails, implement shrinkage toward best vertex [3]

Termination Criteria:

- Simplex size becomes sufficiently small

- Function values at vertices become sufficiently close

- Maximum iteration count reached [3]
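The protocol above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions rather than a reference implementation. The coefficient values (alpha = 1, gamma = 2, beta = 0.5, delta = 0.5) are the commonly used defaults, the initial simplex is built by offsetting each coordinate by a hypothetical `step`, and the stopping rule requires both a small simplex and close function values. The variable names `x_l`, `x_s`, and `x_h` follow the ordering step above.

```python
def nelder_mead(f, x0, step=0.5, alpha=1.0, gamma=2.0, beta=0.5, delta=0.5,
                xtol=1e-8, ftol=1e-12, max_iter=2000):
    """Minimize f without derivatives; returns (best point, best value)."""
    n = len(x0)
    # Initial simplex: x0 plus one vertex offset along each coordinate axis.
    simplex = [list(x0)]
    for i in range(n):
        v = list(x0)
        v[i] += step
        simplex.append(v)
    fvals = [f(v) for v in simplex]

    for _ in range(max_iter):
        # Ordering: best vertex first, worst vertex last.
        order = sorted(range(n + 1), key=lambda i: fvals[i])
        simplex = [simplex[i] for i in order]
        fvals = [fvals[i] for i in order]
        x_l, x_h = simplex[0], simplex[-1]
        f_l, f_s, f_h = fvals[0], fvals[-2], fvals[-1]

        # Termination: simplex is small and vertex values are close.
        size = max(abs(v[j] - x_l[j]) for v in simplex for j in range(n))
        if size < xtol and f_h - f_l < ftol:
            break

        # Centroid of all vertices except the worst.
        c = [sum(v[j] for v in simplex[:-1]) / n for j in range(n)]
        x_r = [c[j] + alpha * (c[j] - x_h[j]) for j in range(n)]
        f_r = f(x_r)

        if f_r < f_l:
            # Reflection beat the best vertex: try expanding further.
            x_e = [c[j] + gamma * (x_r[j] - c[j]) for j in range(n)]
            f_e = f(x_e)
            simplex[-1], fvals[-1] = (x_e, f_e) if f_e < f_r else (x_r, f_r)
        elif f_r < f_s:
            simplex[-1], fvals[-1] = x_r, f_r
        else:
            # Contract toward whichever of x_r and x_h is better.
            toward = x_r if f_r < f_h else x_h
            x_c = [c[j] + beta * (toward[j] - c[j]) for j in range(n)]
            f_c = f(x_c)
            if f_c < min(f_r, f_h):
                simplex[-1], fvals[-1] = x_c, f_c
            else:
                # Contraction failed: shrink every vertex toward the best.
                for i in range(1, n + 1):
                    simplex[i] = [x_l[j] + delta * (simplex[i][j] - x_l[j])
                                  for j in range(n)]
                    fvals[i] = f(simplex[i])

    best = min(range(n + 1), key=lambda i: fvals[i])
    return simplex[best], fvals[best]
```

A call such as `nelder_mead(lambda p: (p[0] - 1) ** 2 + (p[1] + 2) ** 2, [0.0, 0.0])` should return a point close to (1, -2). In practice, library routines such as MATLAB's fminsearch or the Nelder-Mead option in scipy.optimize are preferable to hand-written code.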

Application in Drug Discovery: Case Studies and Implementation

Optimization Challenges in Pharmaceutical Research

Drug discovery presents diverse optimization challenges across the development pipeline, from initial compound design to manufacturing and distribution. The simplex method excels in structured, linear problems such as:

- Resource Allocation: Optimizing limited research budgets across competing projects

- Production Planning: Minimizing manufacturing costs while meeting demand constraints

- Supply Chain Optimization: Logistics for raw material sourcing and distribution [1]

Multidirectional search approaches address fundamentally different challenges characterized by nonlinearity and uncertainty:

- Molecular Optimization: Designing compounds with multiple desired physicochemical properties [6]

- Binding Affinity Prediction: Optimizing complex molecular interactions with protein targets [6]

- Pharmacokinetic Modeling: Parameter estimation for nonlinear pharmacodynamic models [3]

- Experimental Design: Optimizing assay conditions across multiple parameters [3]

Case Study: Multi-Objective Molecular Optimization

A recent implementation demonstrates the power of multidirectional search approaches in drug discovery. Researchers developed a deep evolutionary learning (DEL) framework integrating graph-fragmentation-based deep generative models with multi-objective optimization [6]. The methodology:

- Represented molecules using graph fragmentation via the Junction Tree Variational Autoencoder (JTVAE)

- Optimized multiple objectives including binding affinity and physicochemical properties

- Employed evolutionary algorithms with multidirectional search principles

- Generated novel compounds with improved property profiles compared to initial candidates [6]

This approach successfully navigated the complex trade-offs between often-competing molecular properties, demonstrating how MDS-type optimization can address the multi-criteria decision analysis inherent to modern drug discovery [6].

Research Reagent Solutions: Computational Tools for Optimization

Table 3: Essential Research Tools for Optimization Studies

| Tool Category | Specific Examples | Function in Research | Applicable Algorithm |

|---|---|---|---|

| Linear Programming Solvers | CPLEX, Gurobi, LINDO | Implement simplex method for large-scale problems [2] | Simplex Method |

| Nonlinear Optimization | fminsearch (MATLAB), scipy.optimize | Implement Nelder-Mead and related algorithms [3] | Multidirectional Search |

| Molecular Representation | JTVAE, FragVAE [6] | Graph-based molecular encoding for optimization | Multidirectional Search |

| Drug-Target Interaction | AutoDock Suite, Rosetta [6] | Binding affinity prediction for objective functions | Both Algorithms |

| Chemical Databases | ChEMBL, DrugBank, BindingDB [7] | Source of known interactions and properties | Both Algorithms |

| Multi-Criteria Decision | VIKOR, TOPSIS, AHP [8] | Ranking and selection from Pareto fronts | Multidirectional Search |

The simplex method and multidirectional search represent fundamentally different approaches to optimization, each with distinct strengths and application domains in pharmaceutical research. The simplex method provides mathematically rigorous solutions for linear optimization problems with theoretical guarantees, making it indispensable for resource allocation, production planning, and supply chain optimization [1] [5]. In contrast, multidirectional search algorithms like Nelder-Mead offer flexible approaches for nonlinear problems where derivative information is unavailable or unreliable, particularly valuable in molecular design, parameter estimation, and multi-objective optimization [6] [3].

The emerging research paradigm recognizes these methods as complementary rather than competitive. Hybrid approaches that leverage the strengths of both algorithms represent the future of optimization in drug discovery. As recent theoretical work has established the optimality of the simplex method for linear problems [5], and machine learning advances have enhanced multidirectional search for complex molecular optimization [6], researchers now have a robust toolkit for addressing the diverse optimization challenges throughout the drug development pipeline. Strategic algorithm selection based on problem structure, domain constraints, and objective function characteristics will continue to drive efficiency and innovation in pharmaceutical research.

In the realms of computational chemistry, drug development, and scientific research, optimization is a fundamental challenge. Researchers constantly strive to find the best possible outcomes, whether that is the ideal reaction conditions to synthesize a new compound, the optimal dosage for a drug therapy, or the best parameters for a material's properties. This process of navigating a complex multidimensional search space to find a maximum or minimum response is both crucial and computationally demanding. For decades, the Nelder-Mead Simplex algorithm has been a cornerstone method for such nonlinear optimization problems. Its sequential nature, however, presents significant limitations in an era where automated workstations enable parallel experimentation.

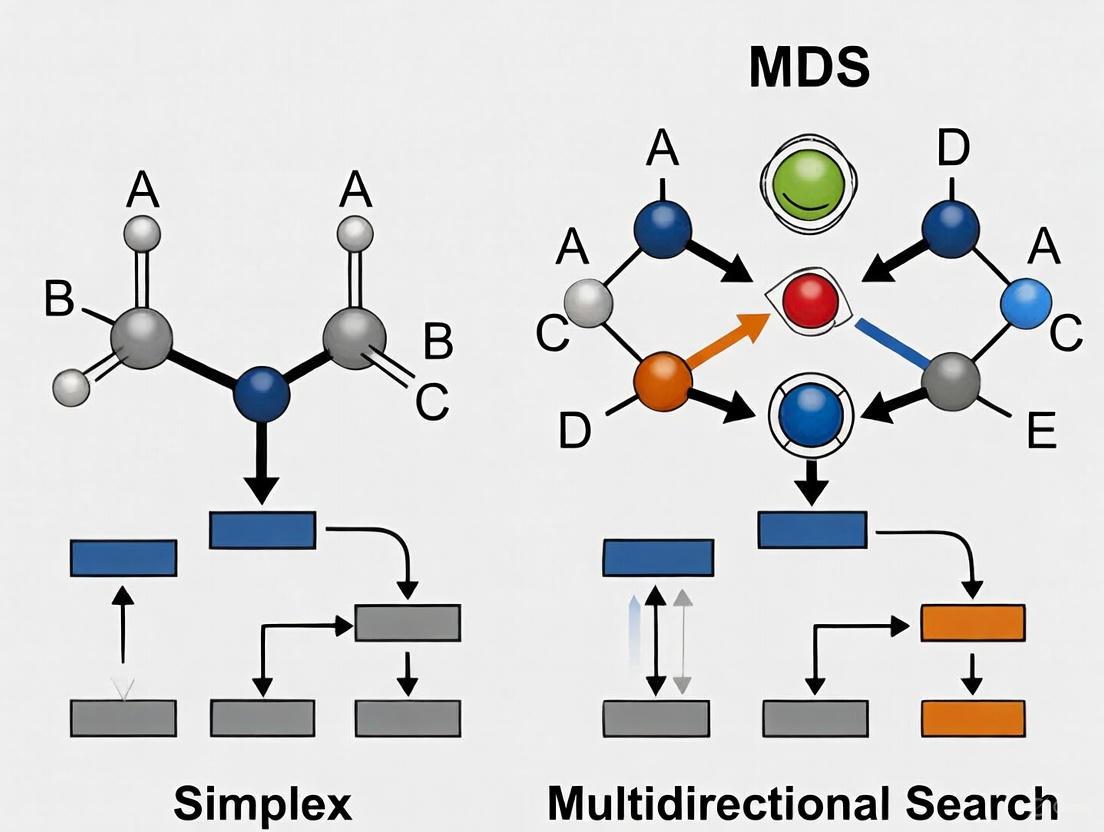

This article explores Multidirectional Search (MDS), a powerful pattern search method that operates on a simplex of n+1 vertices in n-dimensional space and is designed from the ground up for parallel implementation. Developed by Torczon, the MDS algorithm represents a significant evolution from traditional Simplex methods by combining the systematic exploration of factorial designs with the adaptive, evolutive nature of Simplex approaches [9]. By framing this comparison within the context of modern automated chemistry workstations and high-performance computing, we will demonstrate how MDS offers researchers a more efficient pathway to optimization, particularly in time-sensitive and resource-intensive fields like pharmaceutical development.

Core Algorithmic Principles: Simplex vs. MDS

The Traditional Simplex Approach

The Nelder-Mead Simplex method is a direct search algorithm for unconstrained nonlinear optimization. A simplex is an n-dimensional geometric figure with (n+1) vertices, where n is the number of experimental control variables [9]. In two dimensions, this forms a triangle; in three dimensions, a tetrahedron; and so forth. The algorithm proceeds through a series of geometric transformations—reflection, expansion, and contraction—that allow the simplex to move through the search space and adapt its shape to the function's landscape.

The process is fundamentally serial. After initializing the simplex, each iteration requires evaluating the objective function at a new point, comparing it to existing vertices, and then performing a transformation based on that single data point before proceeding to the next iteration. This one-at-a-time approach makes inefficient use of modern parallel computing resources and automated laboratory systems capable of running multiple experiments simultaneously [9].

The Multidirectional Search (MDS) Framework

The MDS algorithm shares the simplex structure of (n+1) vertices but revolutionizes how it explores the search space. Rather than evaluating one new point per iteration, MDS evaluates n new points simultaneously during each cycle [9]. This parallelism is achieved through a pattern of operations that maintains the simplex structure while exploring multiple directions at once.

The key distinction lies in what drives the search. While Simplex methods use a strict ranking of vertices to determine the next step, MDS utilizes simplex derivatives, which are approximations of the function's gradient computed from the simplex vertices [10]. This provides a more informed basis for movement decisions. The algorithm has been shown to be the most powerful member of a family of pattern search algorithms, combining the exploratory power of factorial designs with the focused convergence of evolutionary methods [9].
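For readers unfamiliar with the term, the simplex gradient mentioned above has a compact standard definition. The notation below (vertices ( x_0, \dots, x_n ), matrix ( S ), vector ( \delta ), and ( \nabla_s f )) is introduced here for illustration and is not taken from the cited sources.

```latex
S = \begin{bmatrix} x_1 - x_0 & x_2 - x_0 & \cdots & x_n - x_0 \end{bmatrix}
    \in \mathbb{R}^{n \times n},
\qquad
\delta = \begin{bmatrix} f(x_1) - f(x_0) \\ \vdots \\ f(x_n) - f(x_0) \end{bmatrix},
\qquad
\nabla_s f(x_0) = S^{-T}\,\delta .
```

Equivalently, ( \nabla_s f(x_0) ) is the vector ( g ) solving ( S^{T} g = \delta ). It is exact when ( f ) is affine, and it requires the simplex edges to be linearly independent so that ( S ) is invertible.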

Table: Fundamental Characteristics of Simplex vs. MDS Algorithms

| Feature | Traditional Simplex | Multidirectional Search (MDS) |

|---|---|---|

| Basic Structure | (n+1) vertices in n-dimensional space | (n+1) vertices in n-dimensional space |

| Experiments per Iteration | 1 (serial) | n (parallel) |

| Decision Basis | Vertex ranking | Simplex derivatives/pattern search |

| Resource Utilization | Low (sequential) | High (parallel) |

| Information Usage | Replaces only the worst vertex, generating one new point per step | Uses all vertices to generate multiple new points |

Operational Workflow and Implementation

MDS Algorithm Process

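On an automated system, MDS follows a structured workflow that enables parallel yet adaptive experimentation. In each cycle, the n vertices other than the current best are reflected through the best vertex and run as one batch. If the batch yields a new best response, an expanded batch is tried; otherwise the simplex is contracted toward the best vertex before the next cycle.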
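A minimal Python sketch of one way to code the basic MDS cycle follows. It reflects all non-best vertices through the best vertex, tries an expansion when the reflection finds an improvement, and contracts otherwise. It does not include the chemistry-specific modifications discussed in [9]. The expansion factor `mu = 2`, contraction factor `theta = 0.5`, and the `evaluate` hook (a hypothetical stand-in for a parallel map or a batch of workstation experiments) are assumptions made for illustration.

```python
def mds_minimize(f, x0, step=1.0, mu=2.0, theta=0.5, tol=1e-8,
                 max_iter=500, evaluate=None):
    """Multidirectional search sketch; returns (best point, best value)."""
    # `evaluate` takes a list of points and returns their responses.
    # The default runs serially; a parallel batch runner could be swapped in.
    if evaluate is None:
        evaluate = lambda pts: [f(p) for p in pts]
    n = len(x0)
    simplex = [list(x0)]
    for i in range(n):
        v = list(x0)
        v[i] += step
        simplex.append(v)
    fvals = evaluate(simplex)

    for _ in range(max_iter):
        # Move the best vertex to position 0.
        b = min(range(n + 1), key=lambda i: fvals[i])
        simplex[0], simplex[b] = simplex[b], simplex[0]
        fvals[0], fvals[b] = fvals[b], fvals[0]
        best, f_best = simplex[0], fvals[0]

        # Edges from the best vertex to every other vertex.
        edges = [[v[j] - best[j] for j in range(n)] for v in simplex[1:]]
        if max(abs(e) for edge in edges for e in edge) < tol:
            break

        def batch(scale):
            # n new points, one per edge, all independent of each other.
            return [[best[j] + scale * edge[j] for j in range(n)]
                    for edge in edges]

        reflected = batch(-1.0)
        f_reflected = evaluate(reflected)
        if min(f_reflected) < f_best:
            # Reflection found a new best: try a longer step.
            expanded = batch(-mu)
            f_expanded = evaluate(expanded)
            if min(f_expanded) < min(f_reflected):
                new, f_new = expanded, f_expanded
            else:
                new, f_new = reflected, f_reflected
        else:
            # No improvement: contract toward the best vertex.
            new = batch(theta)
            f_new = evaluate(new)
        simplex = [best] + new
        fvals = [f_best] + f_new

    b = min(range(n + 1), key=lambda i: fvals[i])
    return simplex[b], fvals[b]
```

Because every point in a batch is independent of the others, each call to `evaluate` maps naturally onto n reaction vessels or n worker processes, which is the property that distinguishes MDS from the one-point-at-a-time Nelder-Mead update.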

Implementation in Automated Chemistry Workstations

The power of MDS becomes particularly evident when implemented on automated chemistry workstations capable of parallel, adaptive experimentation [11]. These systems consist of robotic components for sample manipulation, multiple reaction vessels, and integrated analytical instruments, all coordinated by sophisticated experiment-management software.

For MDS implementation, each vertex of the simplex corresponds to one chemistry experiment in one reaction vessel, with its coordinates representing specific values for the n parameters under investigation (e.g., temperature, concentration, pH) [9]. The experiment-planning module for MDS studies incorporates features drawn from both factorial design and Simplex experimentation modules, including:

- Experimental Plan Editor: A menu-driven interface for composing experimental plans

- Template Conversion: Transforming plans into experimental templates

- Search Space Definition: Establishing parameter ranges and constraints

- Automated Scheduling: Optimizing the sequence of parallel operations to maximize throughput [11]

This implementation requires modifications to the original MDS algorithm for chemical application, particularly in movement selection, testing for parallelism, and resource analysis [9]. The closed-loop operation of these workstations enables the system to respond to collected data, focusing experimentation in pursuit of the scientific goals and eliminating futile lines of inquiry [11].

Comparative Performance Analysis

Experimental Data and Performance Metrics

Rigorous comparisons between optimization algorithms require examination of multiple performance dimensions. The following table summarizes key quantitative differences based on experimental implementations:

Table: Experimental Performance Comparison of Optimization Methods

| Performance Metric | Factorial Design | Simplex Method | MDS Algorithm |

|---|---|---|---|

| Total Experiments Required | High (exponential with dimensions) | Variable (depends on landscape) | Lower than Simplex in comparative studies |

| Time to Convergence | Fixed (all points predetermined) | Slow (serial evaluation) | Fast (parallel evaluation) |

| Parallelization Efficiency | High (all experiments can run simultaneously) | None (inherently serial) | High (n experiments per cycle) |

| Resource Utilization | Low (no adaptive focusing) | Medium (sequential adaptation) | High (parallel adaptation) |

| Resilience to Noise | Medium (averaging possible) | Low (single-point decisions) | High (pattern-based decisions) |

| Implementation Scenarios | 25 experiments in single batch [9] | 5-40 sequential steps [9] | 25 experiments in adaptive batches [9] |

The performance advantage of MDS is particularly evident in scenarios with larger batch capacities. Research shows that with a batch capacity of 25 experiments, MDS can converge on optimal conditions more rapidly and efficiently than sequential methods [9]. The adaptive nature of the workstation enables searches to be implemented with two levels of decision-making: algorithmically through the MDS method itself, and strategically through higher-level decision trees that can override the search based on chemical intuition or secondary criteria [9].

Application in Drug Development Context

Note that the acronym MDS also appears in clinical research with an unrelated meaning: in the SELECT-MDS-1 phase 3 study, it denotes higher-risk myelodysplastic syndromes (HR-MDS), not the search algorithm [12]. That trial ultimately did not meet its primary endpoint, but it illustrates how drug development requires multiple parameters, including dosage, scheduling, and patient selection criteria, to be optimized simultaneously.

In such high-stakes environments, computational optimization methods like MDS can significantly accelerate the identification of optimal conditions for drug synthesis, formulation, and administration protocols. The ability of MDS to efficiently navigate high-dimensional spaces makes it particularly valuable for these complex, multi-parameter optimization problems common in pharmaceutical research.

Successfully implementing Multidirectional Search requires both computational tools and experimental infrastructure. The following toolkit outlines essential components:

Table: Essential Research Reagents and Resources for MDS Implementation

| Resource Category | Specific Examples | Function in MDS Implementation |

|---|---|---|

| Computational Software | MATLAB, Python (SciPy), custom implementations | Provides algorithmic foundation and numerical computation capabilities [9] |

| Automated Chemistry Workstation | Robotic sample manipulators, multiple reaction vessels, syringe pumps | Enables parallel experimentation essential for MDS efficiency [11] |

| Experiment Management Software | Scheduler modules, resource management systems | Coordinates parallel operations and manages experimental resources [11] |

| Analytical Instruments | HPLC, spectrophotometers, real-time monitoring systems | Provides quantitative response measurements for each experimental condition |

| Mathematical Libraries | Linear algebra routines, optimization utilities | Supports calculation of simplex derivatives and pattern movements [10] |

The comparative analysis between Multidirectional Search and traditional Simplex methods reveals a significant evolution in optimization strategy for scientific research. MDS represents a paradigm shift from sequential trial-and-error to intelligent, parallel exploration of complex parameter spaces. Its ability to evaluate multiple directions simultaneously while maintaining the adaptive focus of direct search methods makes it particularly suited for modern automated laboratories and high-performance computing environments.

For researchers in drug development and related fields, where optimization problems are increasingly multidimensional and resource-intensive, MDS offers a compelling alternative to conventional approaches. The integration of simplex derivatives and pattern search principles enables more efficient navigation of complex response surfaces, potentially accelerating the discovery and development process. As automated experimentation platforms become more sophisticated and accessible, algorithms like MDS that can fully leverage parallel capabilities will become increasingly essential tools in the scientist's toolkit, enabling more aggressive and rapid assaults on fundamental scientific problems [11].

Historical Context and Evolution in Scientific and Optimization Fields

The pursuit of optimal solutions is a cornerstone of scientific and industrial progress, driving efficiency and innovation across fields from logistics to drug discovery. Within computational optimization, two strategies have played particularly significant roles: the Simplex Method, developed by George Dantzig in the 1940s, and Multidirectional Search (MDS) methods, a class of pattern search techniques. The Simplex Method was born from military logistics needs during World War II, where Dantzig tackled the challenge of "prudent allocation of limited resources" for the U.S. Air Force [1]. In contrast, MDS emerged as part of the broader family of direct-search pattern methods, designed for situations where derivative information is unavailable, unreliable, or impractical to obtain [13].

This guide provides a comparative analysis of these two influential algorithmic families, framing them within the context of modern research and application demands. While the Simplex Method operates by navigating the vertices of a feasible region defined by linear constraints, multidirectional search and its relatives, like the Compass Search method, perform exploratory moves based on pattern searches without using derivative information [13]. Understanding their distinct historical contexts, theoretical foundations, and performance characteristics is essential for researchers and practitioners selecting the appropriate tool for contemporary optimization challenges.

Historical Context and Development

The Simplex Method

The origin of the Simplex Method is a landmark story in computational mathematics. In 1946, George Dantzig, serving as a mathematical adviser to the U.S. Air Force, developed the method to solve complex logistical planning problems [1]. Its creation was influenced by his earlier work, begun in 1939, on solving two famous open problems in statistics that he had mistaken for homework [1]. The algorithm transforms resource allocation problems into a geometric framework. For example, maximizing a profit function like 3a + 2b + c (where a, b, and c represent product quantities) subject to linear constraints (like a + b + c ≤ 50) is equivalent to navigating the vertices of a multi-dimensional shape called a polyhedron to find the point that maximizes profit [1].
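As a small worked illustration of the vertex idea, assume the single constraint quoted above together with non-negativity is the whole problem (an assumption made here; realistic problems carry many more constraints). The feasible region is then a tetrahedron with four vertices, and evaluating the profit at each one gives:

```latex
\begin{array}{c|c}
(a,\, b,\, c) & 3a + 2b + c \\ \hline
(0,\, 0,\, 0)  & 0   \\
(50,\, 0,\, 0) & 150 \\
(0,\, 50,\, 0) & 100 \\
(0,\, 0,\, 50) & 50
\end{array}
```

The maximum profit of 150 is attained at the vertex ( (50, 0, 0) ). The simplex method reaches such a vertex by walking along edges rather than enumerating every vertex, which matters because the number of vertices grows very quickly with problem size.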

Despite its enduring popularity and widespread use in supply-chain and logistical software, a theoretical shadow long loomed over the method. In 1972, mathematicians proved its worst-case time complexity could be exponential, meaning solution time might skyrocket disproportionately to problem size [1]. However, this worst-case scenario rarely manifests in practice. As one researcher noted, "It has always run fast, and nobody's seen it not be fast" [1]. Recent theoretical work, incorporating elements of randomness, has begun to bridge this gap between practical efficiency and theoretical guarantees, further solidifying its foundational status [1].

Multidirectional Search (MDS) and Pattern Search

Multidirectional Search belongs to the category of direct search methods, which emerged as a practical alternative for optimization problems where the objective function is non-smooth, noisy, or whose derivatives are unavailable [13]. These methods are categorized into pattern search, simplex search, and methods with adaptive sets of directions [13]. Unlike the Simplex Method for linear programming, the "simplex search" used in derivative-free optimization refers to the Nelder-Mead algorithm, which uses a geometric simplex of n+1 points to explore the search space [14].

Methods like Compass Search (a type of pattern search) operate by making exploratory moves from a current point along a set of predefined directions or patterns [13]. If an improving point is found, the algorithm moves there and begins a new iteration. If no improvement is found, the step length is reduced, allowing for a finer, more localized search [13]. This characteristic makes them particularly robust and versatile for experimental and simulation-based optimization where the objective function landscape is complex or expensive to evaluate.
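The compass search loop described above is short enough to sketch directly. The version below is a minimal illustration that polls the positive and negative coordinate directions, moves to the first improving point it finds, and halves the step when no direction improves. The function name, the halving factor, and the stopping tolerance are choices made here for illustration.

```python
def compass_search(f, x0, step=1.0, tol=1e-6, max_iter=10000):
    """Derivative-free minimization by polling +/- coordinate directions."""
    x = list(x0)
    fx = f(x)
    n = len(x)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for j in range(n):
            for sign in (1.0, -1.0):
                trial = x[:]
                trial[j] += sign * step
                f_trial = f(trial)
                if f_trial < fx:
                    # Improving point found: move there and start a new poll.
                    x, fx = trial, f_trial
                    improved = True
                    break
            if improved:
                break
        if not improved:
            # No direction improved: reduce the step for a finer search.
            step *= 0.5
    return x, fx
```

For example, `compass_search(lambda p: abs(p[0] - 1) + abs(p[1] + 2), [0.0, 0.0])` minimizes a non-smooth objective that has no derivative at its minimizer. Compass search is not guaranteed to succeed on every non-smooth function, so this example should be read as an illustration of the mechanics rather than a general guarantee.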

Comparative Analysis: Simplex vs. Multidirectional Search

The following table summarizes the core characteristics of the Simplex Method and Multidirectional Search, highlighting their distinct strengths and optimal application domains.

Table 1: Core Characteristics Comparison

| Feature | Simplex Method | Multidirectional Search (Pattern Search) |

|---|---|---|

| Primary Domain | Linear Programming Problems | Derivative-Free Nonlinear Optimization |

| Theoretical Basis | Navigates vertices of a constraint-defined polyhedron [1] | Exploratory moves based on pattern searches without derivatives [13] |

| Historical Origin | 1940s, Military Logistics (George Dantzig) [1] | 1960s+, Numerical Analysis & Engineering |

| Key Strength | High efficiency for large-scale linear problems; proven track record [1] | Handles non-smooth, noisy, or simulation-based functions [13] |

| Derivative Requirement | Not applicable (works with constraint matrix) | No derivatives required [13] |

| Global Optimization | Returns the global optimum of a linear program, since any local optimum of a linear problem is also global | Can be integrated into broader global search strategies [13] |

Performance and Application in Scientific Research

In practical scientific applications, the choice between these algorithms hinges on the problem structure. The Simplex Method remains the gold standard for linear optimization problems prevalent in logistics, production planning, and resource allocation [1]. Its reliability in these domains is unmatched.

For experimental sciences and engineering, where models are often nonlinear and based on empirical data, derivative-free methods like Nelder-Mead simplex search and MDS are preferred [15]. A key recommendation from analytical chemistry literature, where "the simplex method" means the Nelder-Mead search rather than Dantzig's linear programming algorithm, states: "For functions with several variables and unobtainable partial derivatives, the simplex method is then the best option" [15]. This approach is valued for being a "fast and derivative free approach," making it "less computationally intensive compared to the steepest descent method" in many practical scenarios [14].

Recent research in antenna design demonstrates a novel hybrid approach, using simplex-based regression models to perform a globalized search in the space of the antenna's operating parameters [16] [17]. This technique leverages the regular relationship between antenna geometry and its performance figures, allowing for efficient optimization. The process is accelerated using variable-resolution simulations, where initial global search uses low-fidelity models, and final tuning employs high-fidelity models with gradient-based methods [16] [17]. This reflects a modern trend of combining the robustness of direct searches with local refinement for computational efficiency.

Table 2: Application-Oriented Comparison

| Aspect | Simplex Method | Multidirectional Search |

|---|---|---|

| Optimal Use Case | Large-scale linear resource allocation, supply chain planning [1] | Parameter tuning for experimental setups, simulation-based optimization [15] [13] |

| Computational Efficiency | Highly efficient for its target (linear) problems; practical performance often better than theoretical worst-case [1] | Efficient for problems with costly function evaluations; step reduction refines search efficiently [13] |

| Handling of Complexity | Formulates problem as a set of linear constraints [1] | Navigates complex, non-convex landscapes without derivative information [13] |

| Modern Hybridization | Inspired surrogate-assisted strategies (e.g., simplex regressors) for globalized search [16] [17] | Serves as a robust component in memetic algorithms or hybrid optimization frameworks [13] |

Experimental Protocols and Methodologies

Workflow for Simplex-Based Optimization

The following diagram illustrates a modern, computationally efficient workflow that incorporates a simplex-based global search, as applied in fields like antenna design.

This workflow, derived from cutting-edge engineering design protocols, begins with a global search phase using a fast, low-fidelity model (e.g., a coarse-discretization simulation) [16] [17]. A simplex-based regression predictor is used to model the relationship between design parameters and target performance figures (like an antenna's center frequency), which regularizes the objective function and guides the search [16] [17]. Once the algorithm finds a design that satisfies the target operating parameters at this low-fidelity level, it proceeds to a local tuning phase. This final phase uses a high-fidelity model for verification and employs gradient-based optimization. To reduce computational cost, sensitivity updates can be calculated only along principal directions that most significantly affect the output, rather than for all parameters [16]. This hybrid approach balances global exploration with efficient local exploitation.

Workflow for Multidirectional (Pattern) Search

The foundational workflow for a pattern search method such as Compass Search, the family of direct searches to which Multidirectional Search belongs, is outlined below.

The process initiates from a starting point and a given step length. The algorithm then evaluates the objective function at trial points generated by moving the current step length along each coordinate direction (the "pattern") [13]. If this exploratory move discovers a trial point that improves the objective function value, the algorithm relocates to this new point, and a new iteration begins [13]. If no improving point is found within the pattern, the iteration is deemed unsuccessful, and the step length is reduced to enable a finer, more localized search in subsequent iterations [13]. This loop continues until the step length falls below a predefined tolerance, indicating convergence to a local optimum. This method is simple, robust, and does not require gradient information, making it suitable for a wide range of black-box optimization problems.
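The loop described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `compass_search`, the halving factor for the step length, and the quadratic test objective are all assumptions introduced here for demonstration.

```python
def compass_search(f, x0, step=1.0, tol=1e-6, max_iter=10_000):
    """Minimize f by polling +/- step along each coordinate axis.

    Assumed conventions (not from the source): the first improving
    trial point is accepted, and the step is halved after a failed poll.
    """
    x = list(x0)
    fx = f(x)
    for _ in range(max_iter):
        if step < tol:              # converged: pattern is small enough
            break
        improved = False
        for i in range(len(x)):
            for sign in (1.0, -1.0):
                trial = list(x)
                trial[i] += sign * step
                f_trial = f(trial)
                if f_trial < fx:    # successful exploratory move
                    x, fx = trial, f_trial
                    improved = True
                    break
            if improved:
                break
        if not improved:            # unsuccessful iteration: refine the step
            step *= 0.5
    return x, fx, step


def quadratic(p):
    # Hypothetical smooth objective with its minimum at (1, -2).
    return (p[0] - 1.0) ** 2 + (p[1] + 2.0) ** 2


best_x, best_f, final_step = compass_search(quadratic, [0.0, 0.0])
```

Accepting the first improving point keeps the number of evaluations per iteration low; polling all 2n points and taking the best one is an equally valid variant when evaluations can run in parallel.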

The Scientist's Toolkit: Key Research Reagents

The following table lists essential conceptual "reagents" or components crucial for implementing and understanding the discussed optimization methods.

Table 3: Essential Components in Optimization Research

| Research Reagent | Function & Description |

|---|---|

| Objective Function | The function to be minimized or maximized. It quantifies the performance or cost of a system given a set of input parameters. |

| Constraints | Equations or inequalities that define feasible values for the parameters. In the Simplex Method, these form the geometric polyhedron [1]. |

| Gradient Vector | A vector of partial derivatives indicating the direction of the steepest ascent of the objective function. Central to gradient-based methods [15]. |

| Geometric Simplex | A convex geometric figure with n+1 vertices in n-dimensional space. Used as a regression model in modern global search [16] and in the Nelder-Mead algorithm. |

| Low/High-Fidelity Models | Computational models of varying accuracy and cost. Low-fidelity models enable efficient global exploration, while high-fidelity models ensure final design validity [16] [17]. |

| Principal Directions | A subset of directions in the parameter space along which the system's response is most sensitive. Updating sensitivities only along these directions reduces computational cost [16]. |

The historical evolution of the Simplex Method and Multidirectional Search algorithms demonstrates a consistent principle in optimization: tool selection is dictated by problem structure. The Simplex Method continues to be indispensable for linear programming problems, with its theoretical understanding still advancing, as recent work provides better explanations for its practical efficiency [1]. Multidirectional Search and other derivative-free pattern methods remain vital for the vast landscape of non-linear, simulation-heavy, and experimental optimization tasks where gradients are unavailable [13].

A prominent trend in modern scientific computing is hybridization. Researchers are increasingly combining the strengths of different paradigms, such as using a simplex-based global search to identify promising regions before applying a fast, gradient-based local optimizer with variable-fidelity models [16] [17]. This approach leverages the robustness of direct-search methods for global exploration and the precision of derivative-based methods for efficient convergence. For researchers in drug development and other data-intensive fields, this evolving toolkit offers powerful pathways to navigate complex optimization landscapes and accelerate scientific discovery.

In the field of derivative-free optimization, direct search methods provide powerful alternatives for problems where gradients are unavailable, unreliable, or impractical to compute. Among these methods, the Simplex algorithms (exemplified by the Nelder-Mead method) and the Multidirectional Search (MDS) algorithm represent two distinct yet fundamentally connected approaches to navigating complex parameter spaces [13]. These algorithms are particularly valuable in chemical reaction optimization and drug development, where experimental outcomes often depend on multiple interacting variables and objective functions may be noisy or non-differentiable [9] [18].

The core distinction between these approaches lies in their search philosophies: Simplex methods operate through an evolutive, serial process of geometric transformation, while MDS employs a parallel, pattern-oriented search that can evaluate multiple points simultaneously [9] [18]. This comparison guide examines their mathematical foundations, focusing on how each method formulates objective functions and handles constraints, with particular emphasis on applications in automated chemistry workstations and pharmaceutical development environments.

Mathematical Foundations and Algorithmic Structures

Simplex Search Methodology

The Simplex method, particularly the Nelder-Mead variant, operates using a geometric structure called a simplex: an n-dimensional polytope formed by n+1 vertices, where n represents the number of optimization parameters [9] [18]. For a two-dimensional problem, this simplex manifests as a triangle; for three dimensions, a tetrahedron; and so forth for higher-dimensional problems [14].

The algorithm progresses through a series of geometric transformations designed to navigate toward optimal regions of the parameter space:

- Reflection: Moving away from the worst-performing vertex

- Expansion: Extending further in promising directions

- Contraction: Pulling the worst vertex toward the centroid when reflection does not improve on it

- Shrinkage: Moving all vertices toward the best point when other operations fail [19]

These operations rely solely on direct objective function evaluations without gradient calculations, making the approach particularly suitable for experimental optimization where derivatives are unavailable [13] [19].

Table 1: Nelder-Mead Simplex Operations and Parameters

| Operation | Mathematical Formulation | Typical Parameter Value | Purpose |

|---|---|---|---|

| Reflection | \( x_r = x_m + \alpha(x_m - x_w) \) | \( \alpha = 1 \) | Move away from worst point |

| Expansion | \( x_e = x_m + \beta(x_m - x_w) \) | \( \beta = 2 \) | Explore promising direction |

| Outside Contraction | \( x_{oc} = x_m + \gamma(x_m - x_w) \) | \( \gamma = 0.5 \) | Moderate adjustment |

| Inside Contraction | \( x_{ic} = x_m - \gamma(x_m - x_w) \) | \( \gamma = 0.5 \) | Refine search area |
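The four formulas in the table can be evaluated directly. The sketch below assumes that x_m denotes the centroid of all vertices except the worst vertex x_w, which is the usual convention but is not stated in the table. The function name `nelder_mead_candidates` and the test objective are hypothetical.

```python
def nelder_mead_candidates(simplex, f, alpha=1.0, beta=2.0, gamma=0.5):
    """Return the candidate points from the table for one iteration.

    Assumption: x_m is the centroid of every vertex except the worst.
    """
    ordered = sorted(simplex, key=f)        # best first, worst last
    worst = ordered[-1]
    others = ordered[:-1]
    n = len(worst)
    centroid = [sum(v[i] for v in others) / len(others) for i in range(n)]
    direction = [centroid[i] - worst[i] for i in range(n)]   # x_m - x_w

    def along(t):
        # x_m + t * (x_m - x_w)
        return [centroid[i] + t * direction[i] for i in range(n)]

    return {
        "centroid": centroid,
        "reflection": along(alpha),
        "expansion": along(beta),
        "outside_contraction": along(gamma),
        "inside_contraction": along(-gamma),
    }


def target(p):
    # Hypothetical objective with its minimum at (3, 3).
    return (p[0] - 3.0) ** 2 + (p[1] - 3.0) ** 2


points = nelder_mead_candidates([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]], target)
# points["reflection"] is [1.0, 1.0]; points["expansion"] is [1.5, 1.5]
```

All four candidates lie on the line through the worst vertex and the centroid, which is why the method needs only one or two new evaluations per iteration.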

Multidirectional Search (MDS) Formulation

The Multidirectional Search algorithm represents a parallel evolution of simplex concepts, specifically designed to leverage multiple processing units or experimental stations simultaneously [9]. Unlike traditional simplex methods that replace one point per iteration, MDS retains only the single best point from the current simplex and generates an entirely new simplex around it at each iteration [18].

The MDS algorithm exhibits distinctive characteristics that differentiate it from traditional simplex approaches:

- Parallel Evaluation: All points in the new simplex can be evaluated simultaneously

- Fixed Pattern Search: Utilizes regular simplices moving through a grid of regularly spaced points

- Exploratory Flexibility: Can evaluate additional exploratory points based on available resources [18]

This parallel capability makes MDS particularly advantageous for implementation on automated chemistry workstations where multiple reaction vessels can process experiments concurrently [9].
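One MDS iteration can be sketched as follows. The code follows the commonly described reflect, expand, or contract scheme around the best vertex. The expansion factor `mu=2` and contraction factor `theta=0.5` are conventional choices assumed here, and the name `mds_step` is hypothetical. For brevity the sketch re-evaluates the objective freely; an experimental implementation would cache each measurement.

```python
def mds_step(simplex, f, mu=2.0, theta=0.5):
    """Perform one multidirectional search iteration (illustrative sketch).

    Every vertex except the best is moved together, so each trial set of
    n points could be evaluated in parallel.
    """
    simplex = sorted(simplex, key=f)
    best = simplex[0]
    f_best = f(best)

    def scaled(t):
        # best + t * (vertex - best) for every non-best vertex
        return [[b + t * (c - b) for b, c in zip(best, v)] for v in simplex[1:]]

    reflected = scaled(-1.0)                 # mirror through the best vertex
    if min(map(f, reflected)) < f_best:
        expanded = scaled(-mu)               # push further in the same directions
        if min(map(f, expanded)) < min(map(f, reflected)):
            return [best] + expanded, "expansion"
        return [best] + reflected, "reflection"
    return [best] + scaled(theta), "contraction"   # shrink toward the best vertex


def target(p):
    # Hypothetical objective with its minimum at (3, 3).
    return (p[0] - 3.0) ** 2 + (p[1] - 3.0) ** 2


new_simplex, move = mds_step([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]], target)
```

Because the best vertex is always carried into the next simplex, the best observed value can never get worse from one iteration to the next.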

Objective Function Formulations

Both simplex and MDS methods require careful formulation of objective functions to guide the optimization process effectively. In chemical reaction optimization, these functions typically quantify reaction yield, purity, or efficiency [9]. For drug development applications, objective functions might characterize binding affinity, selectivity, or pharmacokinetic properties.

A properly formulated objective function should:

- Quantitatively measure the quality of a solution

- Exhibit sensitivity to parameter changes

- Remain computationally feasible to evaluate repeatedly

- Capture the essential characteristics of the desired outcome [13]

In derivative-free optimization, the objective function must be designed to provide meaningful guidance without gradient information, often requiring careful balancing of multiple performance criteria through weighting schemes or Pareto-based approaches in multi-objective scenarios [13].
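A weighting scheme of the kind mentioned above might look like the following sketch. The response names, the weights, and the numerical values are purely illustrative assumptions, and the sign is flipped so that a minimizing search maximizes the weighted score.

```python
def weighted_objective(responses, weights):
    """Combine several 'larger is better' responses into one value to minimize.

    responses and weights are dicts keyed by response name (hypothetical names).
    """
    return -sum(weights[name] * responses[name] for name in weights)


# Illustrative numbers only: fractional yield and purity from one experiment.
score = weighted_objective(
    {"yield": 0.80, "purity": 0.95},
    {"yield": 0.6, "purity": 0.4},
)
```

The weights encode a judgment about relative importance, so it is worth rerunning an optimization with different weights to see how strongly the optimum depends on them.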

Constraint Handling Methodologies

Bound Constraints and Parameter Limitations

In experimental optimization, parameters typically have physical limitations—reaction temperatures cannot exceed solvent boiling points, concentrations must remain positive, and catalyst loadings have practical upper bounds [9]. Both simplex and MDS implementations employ similar strategies for handling these bound constraints:

- Parameter Transformation: Using mathematical transformations to convert constrained problems into unconstrained ones

- Projection Methods: Moving infeasible points to the nearest boundary of the feasible region

- Rejection Approaches: Discarding proposed points that violate constraints and generating alternatives [9] [13]

The MDS algorithm, with its parallel nature, can more efficiently explore constrained spaces by evaluating multiple boundary points simultaneously, potentially providing better characterization of constraint interfaces [9].
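The three bound-handling strategies listed above can each be written in a few lines. The sketch below is one possible realization: the logistic transform is an assumed choice among several, and the function names and the temperature and concentration bounds are hypothetical.

```python
import math


def project_to_bounds(x, lower, upper):
    """Projection: clip each coordinate to the nearest bound."""
    return [min(max(xi, lo), hi) for xi, lo, hi in zip(x, lower, upper)]


def to_bounded(u, lower, upper):
    """Transformation: map unconstrained u into (lower, upper).

    Uses the logistic function written via tanh to avoid overflow.
    """
    return [lo + (hi - lo) * 0.5 * (1.0 + math.tanh(ui / 2.0))
            for ui, lo, hi in zip(u, lower, upper)]


def is_feasible(x, lower, upper):
    """Rejection: report whether a proposed point respects all bounds."""
    return all(lo <= xi <= hi for xi, lo, hi in zip(x, lower, upper))


# Hypothetical bounds: temperature 20-80 (deg C) and concentration 0-1 (M).
lower, upper = [20.0, 0.0], [80.0, 1.0]
proposed = [120.0, -0.1]
projected = project_to_bounds(proposed, lower, upper)   # [80.0, 0.0]
midpoint = to_bounded([0.0, 0.0], lower, upper)         # [50.0, 0.5]
```

Projection keeps the search on the boundary, which can collapse a simplex onto a face; the transformation avoids that but distorts distances near the bounds.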

Nonlinear and Expensive Constraints

For constraints requiring experimental evaluation (e.g., purity thresholds, side product limits), direct search methods typically employ penalty functions that incorporate constraint violations directly into the objective function [13] [20]. This approach avoids separate constraint-handling procedures that would require additional experiments.
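A penalty formulation can be sketched as a wrapper around the objective. The quadratic penalty form, the weight of 1000, and the convention that a constraint function g is satisfied when g(x) <= 0 are assumptions made for this illustration.

```python
def penalized(objective, constraints, weight=1e3):
    """Wrap an objective so that constraint violations raise its value.

    constraints: functions g with g(x) <= 0 meaning 'satisfied' (assumed convention).
    """
    def wrapped(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return objective(x) + weight * violation
    return wrapped


# Hypothetical example: minimize x^2 subject to x >= 1, written as 1 - x <= 0.
cost = penalized(lambda x: x[0] ** 2, [lambda x: 1.0 - x[0]])
inside = cost([2.0])    # feasible: no penalty added
outside = cost([0.0])   # infeasible: penalty dominates
```

The wrapped function can be passed unchanged to any direct search routine, since the search only ever sees a single scalar value per point.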

The composite modified simplex (CMS) incorporates specific mechanisms to handle challenging constraint scenarios:

- Boundary avoidance to prevent oscillatory behavior near constraints

- Adaptive step adjustment when constraints are encountered

- Recovery procedures for when simplices become infeasible [18]

Comparative Experimental Analysis

Computational Efficiency and Resource Utilization

Experimental comparisons between simplex and MDS approaches reveal distinct performance characteristics suited to different optimization scenarios. The following table summarizes key performance metrics based on automated chemistry workstation implementations:

Table 2: Performance Comparison in Chemical Reaction Optimization

| Performance Metric | Composite Modified Simplex (CMS) | Multidirectional Search (MDS) | Parallel Simplex Search (PSS) |

|---|---|---|---|

| Experiments per Cycle | 1 (after initial simplex) | n new points + exploratory points | Multiple (depends on parallel capacity) |

| Chemical Resource Usage | Low | High | Moderate |

| Time Efficiency | Low (serial nature) | High (parallel implementation) | High (parallel implementation) |

| Risk of Local Optima | High | Moderate | Low (multiple searches) |

| Implementation Complexity | Low | Moderate | High |

The serial nature of traditional simplex methods makes them parsimonious in chemical consumption but inefficient in time, while MDS can rapidly consume resources but achieves significantly faster convergence [18]. The Parallel Simplex Search (PSS) method represents a hybrid approach, conducting multiple simplex searches concurrently to balance resource utilization and convergence reliability [18].

Convergence Behavior and Reliability

Convergence properties differ substantially between the approaches. Traditional simplex methods may become trapped in local optima, particularly on complex response surfaces with multiple peaks [18]. MDS, with its broader exploratory capability, exhibits reduced susceptibility to local optima but may require more function evaluations to refine solutions precisely [9].

Modified Nelder-Mead approaches address convergence issues by maintaining a fixed simplex structure and optimizing the reflection parameter α, rather than relying on fixed values [19]. This modification enhances convergence reliability while preserving the derivative-free nature of the algorithm.

Experimental Protocols and Methodologies

Automated Chemistry Workstation Implementation

For both simplex and MDS algorithms, implementation on automated chemistry workstations follows a systematic protocol:

- Experimental Plan Composition: Defining the search space and parameter linkages using an Experimental Plan Editor [9]

- Template Generation: Converting the plan to an experimental template that directs robotic systems

- Initial Design Selection: Choosing starting simplex configurations or initial patterns

- Iterative Experimentation: Conducting experiments, evaluating responses, and generating new experimental conditions

- Convergence Detection: Monitoring improvement rates and terminating when thresholds are met [9] [18]

The MDS implementation incorporates specific modifications for chemical applications, including movement selection criteria, tests for parallelism, and resource analysis to manage experimental expenditure [9].

Response Surface Characterization

In pharmaceutical applications, understanding the response surface topology is crucial for interpreting optimization results. Both simplex and MDS methods implicitly map response surfaces through their exploration patterns:

- Simplex Methods: Characterize local curvature through simplex shape transformations

- MDS: Explores broader regions through its parallel pattern search

- PSS: Provides multiple local characterizations through concurrent simplex searches [18]

This implicit mapping facilitates understanding of parameter interactions and response robustness, valuable information for quality-by-design approaches in drug development.

Visualization of Algorithmic Workflows

Simplex Search Process

[Figure not available: Simplex Search Decision Workflow]

Multidirectional Search Architecture

[Figure not available: Multidirectional Search Parallel Workflow]

Research Reagent Solutions and Experimental Materials

Successful implementation of simplex and MDS optimization in pharmaceutical and chemical development requires specific experimental infrastructure and computational resources:

Table 3: Essential Research Materials and Resources

| Resource Category | Specific Components | Function in Optimization |

|---|---|---|

| Automated Chemistry Workstation | Reaction vessels, robotic liquid handlers, automated sampling systems | Enables parallel experimentation with precise parameter control |

| Analytical Instrumentation | HPLC systems, GC-MS, NMR, UV-Vis spectroscopy | Provides quantitative objective function measurements |

| Computational Infrastructure | Experiment planning modules, response analysis software, convergence monitoring | Supports algorithm implementation and data interpretation |

| Chemical Reagents | Solvents, catalysts, substrates, reactants | Forms the experimental system being optimized |

| Parameter Control Systems | Temperature controllers, pH meters, pressure regulators | Manipulates independent variables during optimization |

The comparative analysis of simplex and multidirectional search algorithms reveals complementary strengths suited to different optimization scenarios in pharmaceutical research and chemical development.

For resource-constrained environments where experimental materials are limited or expensive, the composite modified simplex (CMS) offers a conservative approach that minimizes consumption while providing reliable local optimization. Its serial nature represents a limitation for time-sensitive projects, but its straightforward implementation makes it accessible for most laboratory settings.

When rapid optimization is prioritized and resources are adequate, multidirectional search (MDS) provides superior time efficiency through parallel experimentation. This approach is particularly valuable for reaction screening and initial process characterization where broad exploration of parameter spaces is necessary.

The emerging parallel simplex search (PSS) represents a promising middle ground, balancing resource utilization with convergence reliability through concurrent simplex operations. This approach may offer the most practical solution for many pharmaceutical development scenarios where both efficiency and robustness are valued.

Selection of the appropriate optimization strategy should consider specific project constraints, including material availability, time requirements, and the complexity of the response surface being investigated. Understanding the fundamental mathematical formulations of each approach enables researchers to make informed decisions about constraint handling, objective function design, and experimental implementation.

In the rigorous field of pharmaceutical research, optimization algorithms are fundamental tools for navigating complex decision-making processes. The Simplex Algorithm and Multidirectional Search (MDS) represent two distinct philosophical approaches to optimization: one traverses the vertices of a feasible region defined by linear constraints, while the other explores search directions through geometric pattern transformations. Within Model-Informed Drug Development (MIDD), these algorithms provide structured, quantitative frameworks that enhance drug development by accelerating hypothesis testing, improving candidate selection, and reducing costly late-stage failures [21]. The strategic selection between simplex-based linear programming and pattern search methods depends critically on the problem's mathematical structure—whether it involves linear relationships amenable to the simplex method or requires derivative-free optimization for complex, non-linear models. Understanding the geometric foundations of these algorithms empowers researchers to align their computational tools with key questions of interest and specific contexts of use, ultimately streamlining the path from discovery to clinical application [21].

Theoretical Foundations: Geometry of Search Algorithms

The Simplex Algorithm: Vertex-Hopping on a Polytope

The Simplex Algorithm, developed by George Dantzig, operates on a powerful geometric principle: if a linear program has an optimal solution, then at least one optimal solution lies at an extreme point (vertex) of the convex polytope defined by the constraints [2]. This polytope is the feasible region, the set of points that satisfy all constraints simultaneously. The algorithm navigates by moving from one vertex to an adjacent vertex along the edges of the polytope, with each step improving (or, in degenerate cases, at least not worsening) the objective function value until no further improvement is possible [2]. This process is implemented algebraically through pivot operations that exchange basic and nonbasic variables in the simplex tableau, effectively moving the solution to an improving adjacent vertex [22]. The algorithm's efficiency stems from this deliberate traversal along the polytope's edges rather than exhaustively enumerating all vertices, which would be computationally prohibitive for high-dimensional problems common in pharmaceutical applications like resource allocation or production optimization [2].
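The pivoting process can be illustrated with a compact tableau implementation. This is a teaching sketch under stated assumptions, not a production solver: it handles only problems of the form "maximize c·x subject to Ax ≤ b, x ≥ 0" with b ≥ 0 (so the origin is a feasible starting vertex), it uses Bland's rule to choose pivots, and the function name `simplex_max` and the toy problem are hypothetical.

```python
from fractions import Fraction


def simplex_max(c, A, b):
    """Maximize c.x subject to A x <= b, x >= 0, assuming every b[i] >= 0."""
    m, n = len(A), len(c)
    # Tableau rows: [A | I | b]; the last row holds [-c | 0 | 0].
    T = [[Fraction(v) for v in A[i]]
         + [Fraction(int(i == j)) for j in range(m)]
         + [Fraction(b[i])] for i in range(m)]
    T.append([Fraction(-v) for v in c] + [Fraction(0)] * (m + 1))
    basis = [n + i for i in range(m)]          # slack variables start in the basis
    while True:
        # Bland's rule: the lowest-index column with a negative reduced cost enters.
        col = next((j for j in range(n + m) if T[-1][j] < 0), None)
        if col is None:
            break                              # no improving adjacent vertex remains
        rows = [i for i in range(m) if T[i][col] > 0]
        if not rows:
            raise ValueError("problem is unbounded")
        # Ratio test picks the leaving row; ties are broken by basis index.
        row = min(rows, key=lambda i: (T[i][-1] / T[i][col], basis[i]))
        pivot = T[row][col]
        T[row] = [v / pivot for v in T[row]]
        for i in range(m + 1):
            if i != row and T[i][col] != 0:
                factor = T[i][col]
                T[i] = [a - factor * p for a, p in zip(T[i], T[row])]
        basis[row] = col                       # move to the adjacent vertex
    x = [Fraction(0)] * n
    for i, var in enumerate(basis):
        if var < n:
            x[var] = T[i][-1]
    return x, T[-1][-1]


# Toy problem: maximize 3x + 2y with x + y <= 4, x + 3y <= 6, x <= 3.
solution, value = simplex_max([3, 2], [[1, 1], [1, 3], [1, 0]], [4, 6, 3])
```

On this toy problem the method visits the vertices (0, 0), (3, 0), and (3, 1) in turn, stopping at (3, 1) with objective value 11. Exact rational arithmetic via `Fraction` sidesteps rounding issues that real solvers handle with numerical safeguards.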

Multidirectional Search: Geometric Pattern Transformation

In contrast to the vertex-hopping approach of simplex, multidirectional search operates through a different geometric metaphor. Rather than leveraging constraint-defined structures, MDS employs a simplex-shaped pattern of points in the search space (distinct from the linear programming simplex concept) that expands, contracts, and reflects based on function evaluations. This geometric pattern, typically an n-dimensional simplex with n+1 vertices, undergoes transformations that enable it to adapt to the function's topography. In the Nelder-Mead variant, the algorithm reflects the worst point through the opposite face of the simplex, expanding if improvement occurs or contracting if not, effectively "walking" the pattern across the optimization landscape; MDS proper instead reflects every vertex except the best through the best vertex. This derivative-free approach is particularly valuable in drug development for optimizing complex simulation models where objective functions may be noisy, non-differentiable, or computationally expensive to evaluate, such as in quantitative systems pharmacology models or clinical trial simulations [21].

Comparative Analysis: Simplex versus Multidirectional Search

Algorithmic Characteristics and Applicability

Table 1: Fundamental Characteristics of Simplex and Multidirectional Search Algorithms

| Characteristic | Simplex Algorithm | Multidirectional Search (MDS) |

|---|---|---|

| Problem Domain | Linear Programming | Nonlinear, Derivative-Free Optimization |

| Geometric Interpretation | Moves along edges of constraint polytope from vertex to vertex | Transforms a simplex pattern through reflection, expansion, and contraction |

| Optimality Criteria | Reaches optimum when no adjacent vertex improves objective function | Converges when simplex pattern becomes sufficiently small |

| Constraint Handling | Native through feasible region definition | Requires special transformations or penalty functions |

| Derivative Requirements | No function derivatives required | No function derivatives required |

| Primary Applications in Pharma | Resource allocation, blending problems, transportation logistics | Parameter estimation in QSP/PBPK models, clinical trial simulation optimization |

The Simplex Algorithm's strength lies in its deterministic nature and guaranteed convergence to a global optimum for linear problems, making it ideal for resource allocation in drug manufacturing or transportation logistics in pharmaceutical supply chains [22]. Its geometric progression along the feasible region's boundary ensures systematic improvement at each iteration. Multidirectional Search, particularly the Nelder-Mead variant, excels where derivatives are unavailable or unreliable, such as when calibrating complex physiologically-based pharmacokinetic (PBPK) models to experimental data [2] [21]. However, this flexibility comes with potential convergence to local optima in multimodal landscapes, requiring careful implementation and validation when used in critical path applications like first-in-human dose prediction [21].

Performance Metrics and Convergence Behavior

Table 2: Performance Comparison in Pharmaceutical Applications

| Performance Metric | Simplex Algorithm | Multidirectional Search (MDS) |

|---|---|---|

| Convergence Speed | Finite number of iterations (typically proportional to constraints) | Variable; depends on problem dimension and topology |

| Solution Guarantee | Global optimum for linear problems | Local convergence only; no global guarantees |

| Dimensional Scalability | Efficient for problems with many variables but structured constraints | Performance degrades with high dimension (>10 parameters) |

| Implementation Complexity | Moderate (tableau operations) | Low (function evaluations only) |

| Robustness to Noise | Low (assumes exact arithmetic) | Moderate (inherently heuristic) |

| Regulatory Acceptance | High for well-defined linear problems | Context-dependent; requires validation |

The Simplex Algorithm demonstrates polynomial-time performance for most practical problems despite its theoretical exponential worst-case complexity [2]. This efficiency makes it suitable for large-scale linear optimization in pharmaceutical applications like production planning and chemical composition optimization. Multidirectional Search typically requires more function evaluations, particularly in high-dimensional parameter spaces common in quantitative systems pharmacology models, but provides greater flexibility for problems where the objective function arises from complex simulations [21]. In regulatory contexts, simplex-derived solutions often face less scrutiny due to the algorithm's deterministic nature, while MDS applications require comprehensive sensitivity analysis and validation, particularly when supporting critical decisions in new drug applications [21].

Experimental Protocols and Methodologies

Standardized Testing Framework for Optimization Algorithms

To objectively compare algorithm performance, researchers implement a standardized testing protocol using benchmark problems with known optima. For simplex evaluation, linear programming problems from the NETLIB library provide validated test cases, while multidirectional search assessment employs nonlinear test functions with varied topography (convex, multimodal, ill-conditioned). The experimental workflow begins with problem formulation, proceeds through algorithm configuration and execution, and concludes with solution validation and performance metrics collection. Controlled experimentation measures both computational efficiency (iteration count, function evaluations, CPU time) and solution quality (objective value accuracy, constraint satisfaction, convergence precision).
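For the nonlinear side of such a protocol, the sketch below defines three widely used test functions, one for each topography named above, together with a wrapper that counts function evaluations. The choice of these particular functions and the `CountingFunction` helper are assumptions made for illustration; the source does not name specific benchmarks.

```python
import math


def sphere(p):
    """Convex test function; minimum 0 at the origin."""
    return sum(x * x for x in p)


def rosenbrock(p):
    """Ill-conditioned curved valley; minimum 0 at (1, ..., 1)."""
    return sum(100.0 * (p[i + 1] - p[i] ** 2) ** 2 + (1.0 - p[i]) ** 2
               for i in range(len(p) - 1))


def rastrigin(p):
    """Multimodal test function; global minimum 0 at the origin."""
    return 10.0 * len(p) + sum(x * x - 10.0 * math.cos(2.0 * math.pi * x) for x in p)


class CountingFunction:
    """Wrap an objective and count how many times it is evaluated."""

    def __init__(self, f):
        self.f = f
        self.calls = 0

    def __call__(self, p):
        self.calls += 1
        return self.f(p)


counted = CountingFunction(rosenbrock)
value_at_optimum = counted([1.0, 1.0])   # known optimum of the 2-D Rosenbrock function
```

Passing the wrapped function to an optimizer and reading `counted.calls` afterwards gives the evaluation count independently of how the optimizer reports its own statistics.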

Implementation in Drug Development Contexts

In pharmaceutical applications, algorithm testing incorporates domain-specific problems including dose optimization, clinical trial simulation, and chemical property prediction. For simplex methods, this involves formulating linear constraints representing biological boundaries (e.g., maximum tolerated dose, resource limitations) and linear objectives (e.g., efficacy maximization, cost minimization). For multidirectional search, testing focuses on parameter estimation in nonlinear pharmacodynamic models or optimization of trial design parameters. The experimental protocol requires multiple replicates with randomized initial conditions to account for the sensitivity of direct search methods to their starting point, with statistical analysis of results using appropriate tests (e.g., paired t-tests for performance comparisons). Implementation fidelity is verified through convergence diagnostics and constraint adherence monitoring, with special attention to numerical stability in finite-precision computation [21].
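For reference, the paired t-test mentioned above reduces to a short calculation. The notation below is introduced here as an assumption: \(d_k\) is the difference in a chosen metric (for example, function evaluations) between the two algorithms on replicate \(k\), and \(N\) is the number of replicates.

```latex
\[
\bar{d} = \frac{1}{N}\sum_{k=1}^{N} d_k,
\qquad
s_d = \sqrt{\frac{1}{N-1}\sum_{k=1}^{N}\left(d_k - \bar{d}\right)^2},
\qquad
t = \frac{\bar{d}}{s_d/\sqrt{N}}
\]
```

The statistic \(t\) is compared against Student's t distribution with \(N-1\) degrees of freedom. Pairing is appropriate only when both algorithms are run from the same starting point within each replicate.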

Applications in Pharmaceutical Research and Development

Model-Informed Drug Development (MIDD) Implementation

The Simplex Algorithm finds natural application in MIDD for resource-constrained optimization problems, such as determining optimal clinical trial site allocation or manufacturing process optimization [21]. Its deterministic nature and global convergence properties make it suitable for problems with clear linear relationships, such as balancing production costs against capacity constraints in active pharmaceutical ingredient manufacturing. Multidirectional Search, conversely, addresses challenges in computational pharmacology where researchers must estimate parameters for complex, nonlinear systems pharmacology models without explicit gradient information [21]. These applications include refining quantitative structure-activity relationship (QSAR) models and calibrating physiologically-based pharmacokinetic (PBPK) models to observed clinical data, where the objective function may involve complex simulations of drug disposition [21].

Regulatory Considerations and Validation Requirements

For optimization algorithms supporting regulatory submissions, validation and interpretability are paramount. The Simplex Algorithm's transparent operations and deterministic path to optimality facilitate regulatory review, particularly when the mathematical formulation directly represents physical constraints or resource limitations [21]. In contrast, applications of Multidirectional Search require comprehensive documentation of convergence behavior, sensitivity analysis, and robustness testing, as outlined in FDA fit-for-purpose modeling guidance [21]. Recent draft guidance on drug development for complex conditions like myelodysplastic syndromes emphasizes rigorous endpoint optimization and trial design, areas where both algorithms contribute but with different evidentiary requirements [23]. The evolving regulatory landscape for Model-Informed Drug Development, including ICH M15 guidance, promises greater standardization in algorithm application and validation across global regulatory jurisdictions [21].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Optimization Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| Linear Programming Solvers | Implement simplex algorithm with numerical stability enhancements | Large-scale resource allocation and production planning |

| Derivative-Free Optimization Libraries | Provide multidirectional search and pattern search implementations | Parameter estimation for complex biological models |

| PBPK/PD Platform Software | Integrate optimization algorithms for model calibration | Preclinical to clinical translation and dose optimization |

| Clinical Trial Simulation Environments | Enable optimization of trial design parameters | Adaptive trial design and endpoint optimization |

| Quantitative Systems Pharmacology Platforms | Incorporate optimization for systems model parameterization | Mechanism-based drug effect prediction |

| Statistical Analysis Packages | Provide convergence diagnostics and performance metrics | Algorithm validation and comparative performance assessment |

The research toolkit for optimization in pharmaceutical sciences increasingly incorporates both established and emerging methodologies. Traditional simplex-based linear programming solvers remain essential for structured problems with linear constraints, while modern derivative-free optimization libraries address challenges in complex biological systems modeling [21]. Specialized platforms for physiologically-based pharmacokinetic modeling and quantitative systems pharmacology incorporate these algorithms specifically for model calibration and simulation optimization [21]. With the growing role of artificial intelligence and machine learning in drug development, hybrid approaches that combine the geometric interpretation of traditional algorithms with adaptive learning represent the frontier of optimization research in pharmaceutical sciences [21].

Future Directions and Emerging Applications

The convergence of traditional optimization approaches with artificial intelligence methodologies presents promising avenues for enhanced decision support in drug development. Machine learning techniques may guide initial simplex formation or pattern direction selection, potentially accelerating convergence for complex problems [21]. As pharmaceutical research addresses increasingly complex therapeutic modalities, including gene therapies and personalized medicine approaches, the geometric interpretation of optimization landscapes will continue to inform algorithm selection and implementation. Future applications may include adaptive design optimization for basket trials, combination therapy dose optimization, and synthetic control arm creation—all areas where understanding the geometric properties of feasible regions and search paths enhances algorithmic efficiency and regulatory acceptance [21] [23]. The continued harmonization of regulatory guidance regarding model-informed drug development promises greater clarity in algorithm validation requirements, supporting more confident application of both simplex and multidirectional search methods across the drug development continuum [21].

Practical Implementation: Applying Simplex and MDS in Pharmaceutical Research

Simplex-Centroid and Simplex-Lattice Designs for Drug Formulation Optimization

In the realm of drug formulation development, researchers constantly seek efficient methodologies to optimize the composition of various ingredients, such as active pharmaceutical ingredients (APIs), excipients, binders, and disintegrants. Mixture experiments represent a specialized branch of Design of Experiments (DoE) that addresses the unique challenge of formulating these multi-component systems where the proportion of each component is the critical factor, and the combined total must equal a constant sum, typically 1 or 100% [24] [25]. Unlike traditional factorial designs where factors can be varied independently, mixture components are inherently interdependent; increasing the proportion of one component inevitably decreases the proportion of one or more other components [26] [25]. This constraint defines the experimental region as a geometric structure known as a simplex—a line for two components, an equilateral triangle for three, and a tetrahedron for four [24] [26].

Within this structured approach, two designs have emerged as fundamental tools: the Simplex-Lattice Design and the Simplex-Centroid Design. Both are used to systematically explore the simplex region and model the relationship between component proportions and critical quality responses, such as dissolution rate, tablet hardness, or bioavailability [27]. This guide provides an objective comparison of these two designs, detailing their theoretical foundations, experimental protocols, and applications within the broader context of optimization research, particularly in contrast to multidirectional search (MDS) algorithms.

Theoretical Foundations and a Comparative Framework

The Simplex-Lattice Design

A {q, m} Simplex-Lattice Design is constructed for q components where each component's proportion takes m+1 equally spaced values from 0 to 1 (i.e., 0, 1/m, 2/m, ..., 1) [28]. The total number of distinct design points is given by the combinatorial formula (q + m - 1)! / (m! (q - 1)!) [28]. This design systematically covers the simplex with points located on a grid, making it particularly suited for fitting canonical polynomial models of degree m [29] [28]. For example, a {3, 2} simplex-lattice includes points where each of the three components has proportions of 0, 0.5, or 1, resulting in 6 design runs that include all pure blends and binary blends in equal proportions [28].
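The construction described above can be checked with a few lines of code. The following is a minimal sketch in plain Python (not the mixexp function used later in this guide); the function name `simplex_lattice` is an illustrative assumption. It enumerates every combination of levels 0, 1/m, ..., 1 that sums to 1 and confirms that the point count agrees with the combinatorial formula.

```python
from fractions import Fraction
from itertools import product
from math import comb


def simplex_lattice(q, m):
    """Return the {q, m} simplex-lattice points as tuples of exact fractions."""
    points = []
    # Each component takes a level k/m with k in 0..m; keep only the
    # combinations whose proportions add up to 1 (i.e. whose k values sum to m).
    for counts in product(range(m + 1), repeat=q):
        if sum(counts) == m:
            points.append(tuple(Fraction(k, m) for k in counts))
    return points


lattice_3_2 = simplex_lattice(3, 2)
for point in lattice_3_2:
    print(", ".join(str(x) for x in point))

# The point count matches (q + m - 1)! / (m! (q - 1)!), i.e. C(q + m - 1, m).
assert len(lattice_3_2) == comb(3 + 2 - 1, 2)
```

Exact fractions are used instead of floats so that the sum-to-one check never fails through rounding, which matters for levels such as 1/3.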

The Simplex-Centroid Design

The Simplex-Centroid Design for q components consists of (2^q - 1) distinct points [29]. These points correspond to all non-empty subsets of the components, with the components in each subset blended in equal proportions. Specifically, it includes:

- q pure blends (where one component has a proportion of 1 and all others are 0).

- All binary mixtures (where two components have equal proportions of 1/2 and the rest are 0).

- All ternary mixtures (where three components have equal proportions of 1/3), and so on.

- The overall centroid point, where all q components are present in equal proportion (1/q) [29] [25].

This design intentionally includes the centroids of every combination of components. It therefore provides information about higher-order interactions with a more economical point distribution than a high-degree lattice.
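The subset-based definition above translates directly into code. This is a minimal sketch in plain Python, independent of any mixture-design package; the function name `simplex_centroid` is an illustrative assumption.

```python
from fractions import Fraction
from itertools import combinations


def simplex_centroid(q):
    """Return the 2^q - 1 simplex-centroid points as tuples of exact fractions."""
    points = []
    for size in range(1, q + 1):
        # Every non-empty subset of components is blended in equal proportions.
        for subset in combinations(range(q), size):
            points.append(tuple(
                Fraction(1, size) if i in subset else Fraction(0)
                for i in range(q)
            ))
    return points


centroid_3 = simplex_centroid(3)
for point in centroid_3:
    print(", ".join(str(x) for x in point))

assert len(centroid_3) == 2**3 - 1
```

For three components, the output lists the three pure blends, the three 50:50 binary blends, and the overall centroid, in that order.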

Table 1: Fundamental Characteristics of Simplex-Lattice and Simplex-Centroid Designs

| Characteristic | Simplex-Lattice Design | Simplex-Centroid Design |
|---|---|---|
| Primary Objective | Fitting a polynomial model of a specific degree (m) | Estimating all possible component interactions |
| Number of Points | (q + m - 1)! / (m! (q - 1)!) | 2^q - 1 |
| Point Distribution | Evenly spaced grid on the simplex | Includes vertices, edge centroids, and face centroids |
| Model Flexibility | Excellent for a pre-specified model degree (m) | Naturally captures binary and higher-order interactions |
| Example (q=3, m=2) | 6 points: (1,0,0), (0,1,0), (0,0,1), (0.5,0.5,0), (0.5,0,0.5), (0,0.5,0.5) | 7 points: All 6 from the lattice plus the overall centroid (1/3, 1/3, 1/3) |

Experimental Protocols and Methodologies

Design Generation and Implementation

The practical implementation of both designs follows a structured workflow. Software tools like R (with the mixexp package), Minitab, and JMP are commonly used to generate the design matrices and analyze the resulting data [29] [30] [31].

Protocol for Simplex-Lattice Design using R:

- Load the required library: `library(mixexp)`

- Generate the design: Use the `SLD(fac, lev)` function, where `fac` is the number of components (q) and `lev` is the number of levels besides 0 (which corresponds to m, the degree of the polynomial) [29]. For a {3, 2} design, `design_sld <- SLD(3, 2)` produces a design table with 6 runs.

- Export the design for laboratory execution: `write.csv(design_sld, file="design_sld.csv", row.names=FALSE)`

Protocol for Simplex-Centroid Design using R:

- Load the library: `library(mixexp)`

- Generate the design: Use the `SCD(fac)` function, where `fac` is the number of components (q) [29]. For a 3-component design, `design_scd <- SCD(3)` produces a design table with 7 runs.

- Export the design: `write.csv(design_scd, file="design_scd.csv", row.names=FALSE)`

The following workflow diagram generalizes this experimental process from design to optimization, applicable to both simplex-centroid and simplex-lattice approaches.

Model Fitting and Analysis

After conducting the experiments and recording the response(s) for each run, the next step is to fit a canonical polynomial model. These models lack an intercept due to the mixture constraint [29] [28].

Model Fitting with R:

- Using `lm()`: The linear model function can be used without an intercept (for example, by including `- 1` in the model formula).

- Using `MixModel()`: The specialized function from the `mixexp` package simplifies the process. In this function, `model = 4` often specifies a special cubic model [29].

Interpretation of Coefficients:

- The linear term βi represents the estimated response for the pure component i [28].

- The binary interaction term βij indicates synergistic (if positive) or antagonistic (if negative) blending effects between components i and j [28]. For instance, in a polymer blend study, a significant positive β12 of 19.0 indicated a synergistic effect on yarn elongation when the two components were mixed [28].

- The ternary interaction term βijk in special cubic models captures the effect of simultaneously blending three components.
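To make these interpretations concrete, the sketch below fits a quadratic canonical polynomial (no intercept) to a {3, 2} simplex-lattice in plain Python. The response values are hypothetical and invented purely for illustration, and the helper names `quadratic_terms` and `solve` are assumptions. The six runs determine the six coefficients exactly, so the example solves the linear system directly. With replicated runs, a least-squares fit (such as `lm()` in R) would take its place.

```python
from fractions import Fraction
from itertools import combinations


def quadratic_terms(x):
    """Quadratic canonical model terms: x1..xq, then each pairwise product (no intercept)."""
    return list(x) + [x[i] * x[j] for i, j in combinations(range(len(x)), 2)]


def solve(a, b):
    """Solve the square system a * beta = b by Gauss-Jordan elimination on exact fractions."""
    n = len(a)
    rows = [list(a[i]) + [b[i]] for i in range(n)]
    for col in range(n):
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pivot_value = rows[col][col]
        rows[col] = [v / pivot_value for v in rows[col]]
        for r in range(n):
            if r != col:
                factor = rows[r][col]
                rows[r] = [v - factor * p for v, p in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]


half = Fraction(1, 2)
design = [(1, 0, 0), (0, 1, 0), (0, 0, 1),
          (half, half, 0), (half, 0, half), (0, half, half)]
# Hypothetical responses (e.g. tablet hardness); NOT taken from any cited study.
response = [Fraction(v) for v in ("10.0", "8.0", "14.0", "12.0", "13.5", "9.0")]

matrix = [quadratic_terms([Fraction(v) for v in x]) for x in design]
beta = solve(matrix, response)

labels = ["b1", "b2", "b3", "b12", "b13", "b23"]
for label, value in zip(labels, beta):
    print(f"{label} = {float(value):.1f}")
```

In this invented data set, the linear coefficients equal the pure-blend responses, and each binary coefficient equals 4 times the 50:50 blend response minus 2 times the sum of the two pure-blend responses. A positive value such as b12 therefore means the blend outperforms the average of its two pure components, and a negative value such as b23 means it underperforms.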

Comparative Analysis and Practical Application Data

Performance Comparison in Formulation Optimization

The choice between a simplex-lattice and a simplex-centroid design depends on the research goals, resources, and desired model complexity. The table below summarizes key performance and applicability criteria.

Table 2: Design Performance and Application Comparison

| Criterion | Simplex-Lattice Design | Simplex-Centroid Design |
|---|---|---|
| Modeling Goal | Ideal for fitting a specific, pre-determined model order (linear, quadratic, cubic). | Ideal for screening interactions and building models with up to full interaction terms. |
| Experimental Runs | More runs required for higher-order models (e.g., {3,3} has 10 runs). | Fewer runs than a cubic lattice for the same number of components (e.g., 3 components require only 7 runs, versus 10 for {3,3}). |
| Information on Interactions | Requires a higher-degree design (m>1) to detect interactions. A {q, 2} design estimates all 2-factor interactions. | Inherently provides data on all 2-factor and higher-order interactions with its centroid points. |
| Prediction Accuracy | Excellent within the defined lattice structure for the intended model. | Often provides better interior prediction due to the presence of the overall centroid. |
| Handling Constraints | Can be challenging; often requires algorithmic (D-optimal) designs for constrained regions [32]. | Similarly challenging for highly constrained spaces; D-optimal designs are preferred. |
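The run-count trade-off in the table depends on both the number of components and the lattice degree. The short sketch below tabulates the two point-count formulas given earlier; the function names `lattice_runs` and `centroid_runs` are illustrative assumptions.

```python
from math import comb


def lattice_runs(q, m):
    """Number of points in a {q, m} simplex-lattice design."""
    return comb(q + m - 1, m)


def centroid_runs(q):
    """Number of points in a q-component simplex-centroid design."""
    return 2**q - 1


print("q  {q,2}  {q,3}  centroid")
for q in range(3, 7):
    print(f"{q}  {lattice_runs(q, 2):5d}  {lattice_runs(q, 3):5d}  {centroid_runs(q):8d}")
```

For three components, the centroid design (7 runs) sits between the {3, 2} lattice (6 runs) and the {3, 3} lattice (10 runs). Its size grows exponentially with q, so by six components its 63 runs exceed the 56 of a {6, 3} lattice. The run-count advantage is therefore best read as applying to small component counts.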

Case Study: Solvent System Optimization for Bioactive Extraction

A 2025 study optimizing the extraction of methylxanthines from cocoa bean shell provides a clear example of a Simplex-Centroid Design in action [33]. The goal was to find the optimal mixture of three solvents (ethanol, methanol, and water) to maximize the yield of theobromine and caffeine.

Experimental Data and Results: The design consisted of 7 experimental runs, and the total methylxanthine content (mg g⁻¹ dry matter) was the response [33].

Table 3: Experimental Matrix and Responses from Simplex-Centroid Solvent Optimization

| Run # | Ethanol (%) | Water (%) | Methanol (%) | Methylxanthines (mg g⁻¹ DM) |
|---|---|---|---|---|
| 1 | 50 | 50 | 0 | 25.3 |
| 2 | 50 | 0 | 50 | 23.7 |
| 3 | 0 | 0 | 100 | 24.5 |
| 4 | 0 | 100 | 0 | 22.7 |
| 5 | 100 | 0 | 0 | 20.6 |
| 6 | 33.33 | 33.33 | 33.33 | 25.1 |
| 7 | 0 | 50 | 50 | 23.6 |

Outcome: The data was fitted to a model, and analysis revealed that a binary mixture of water and ethanol in a 3:2 ratio provided the optimal extraction yield. This was followed by a subsequent optimization of process variables (temperature and time) using a Doehlert design, ultimately achieving a yield of 23.67 mg g⁻¹ of total methylxanthines [33]. This case demonstrates the effective use of a simplex-centroid design for screening and optimizing a ternary mixture system.
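As a rough cross-check on the reported outcome, the seven responses in the experimental matrix above can be fed into a special cubic model. The source does not state which model the authors fitted, so the saturated special cubic (7 coefficients from 7 runs) is an assumption here, and the variable names are illustrative. The sketch uses the closed-form coefficient expressions for a three-component simplex-centroid design and then locates the stationary point along the ethanol-water edge only.

```python
# Responses from the experimental matrix above (mg/g dry matter), keyed by blend.
y = {
    "E": 20.6, "W": 22.7, "M": 24.5,       # pure ethanol, water, methanol
    "EW": 25.3, "EM": 23.7, "WM": 23.6,    # 50:50 binary blends
    "EWM": 25.1,                           # overall centroid
}

# Assumed model: saturated special cubic, which interpolates the 7 runs exactly.
beta = {k: y[k] for k in "EWM"}            # linear terms equal pure-blend responses
for pair in ("EW", "EM", "WM"):
    a, b = pair
    beta[pair] = 4 * y[pair] - 2 * (y[a] + y[b])
beta["EWM"] = (27 * y["EWM"]
               - 12 * (y["EW"] + y["EM"] + y["WM"])
               + 3 * (y["E"] + y["W"] + y["M"]))


def predict_ethanol_water(x_e):
    """Predicted response on the ethanol-water edge (methanol fraction = 0)."""
    x_w = 1.0 - x_e
    return beta["E"] * x_e + beta["W"] * x_w + beta["EW"] * x_e * x_w


# Stationary point of the edge quadratic: (bE - bW) + bEW * (1 - 2 x) = 0.
x_e_opt = (beta["E"] - beta["W"] + beta["EW"]) / (2 * beta["EW"])

for name, value in beta.items():
    print(f"beta[{name}] = {value:.1f}")
print(f"ethanol fraction at edge optimum: {x_e_opt:.2f}")
print(f"predicted response there: {predict_ethanol_water(x_e_opt):.1f}")
```

Under this assumption, the ethanol-water interaction is the largest binary term and the edge optimum falls at an ethanol fraction of about 0.43. This is close to the 3:2 water-to-ethanol ratio reported in the study. Because the model has no residual degrees of freedom, this is an interpolation of the seven runs rather than a statistically tested fit.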

The Scientist's Toolkit: Essential Research Reagents and Solutions

The following table lists key materials and software tools commonly employed in mixture design studies for drug formulation.

Table 4: Essential Research Reagents and Software Solutions

| Item | Function / Application | Example Context |
|---|---|---|
| R with mixexp package | Open-source software for generating mixture designs (SLD, SCD, constrained) and analyzing the resulting data. | Used to create a {3,2} simplex-lattice design for a tablet formulation study [29]. |
| JMP DOE Platform | Commercial statistical software with dedicated modules for constructing and analyzing various mixture designs. | Employed to create an optimal mixture design for a constrained formulation space in pharmaceutical development [31]. |
| Design-Expert Software | Another commercial software package widely used for response surface methodology and mixture design. | Applied to optimize the solvent mixture for the extraction of methylxanthines [33]. |
| Canonical Polynomial Models | Specialized regression models (linear, quadratic, special cubic) that respect the mixture constraint ∑xᵢ=1. | Fitted to data from a {3,2} simplex-lattice to understand blending effects in a polymer fiber experiment [28]. |
| Pseudo-Components | A mathematical transformation used when components have lower and/or upper bound constraints, rescaling the proportions to a smaller, full simplex. | Allows the use of standard simplex designs and models when a component cannot be used at 0% or 100% [24]. |
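The pseudo-component transformation listed in the table can be illustrated for the lower-bound case. The sketch below uses the standard lower-bound ("L") pseudo-component rescaling, in which each proportion is shifted by its lower bound and divided by the remaining free range. The bounds shown are hypothetical, the function names are illustrative assumptions, and upper-bound constraints are not covered.

```python
from fractions import Fraction


def to_pseudo(x, lower):
    """Map actual proportions to L-pseudo-components: (x_i - L_i) / (1 - sum(L))."""
    scale = 1 - sum(lower)
    return tuple((xi - li) / scale for xi, li in zip(x, lower))


def from_pseudo(z, lower):
    """Map pseudo-component proportions back to actual proportions."""
    scale = 1 - sum(lower)
    return tuple(li + scale * zi for zi, li in zip(z, lower))


# Hypothetical lower bounds for (API, binder, filler); illustration only.
lower = (Fraction(1, 10), Fraction(1, 20), Fraction(1, 4))

# Points of a standard simplex design, expressed in pseudo-components.
pseudo_points = [(1, 0, 0), (0, 1, 0), (0, 0, 1),
                 (Fraction(1, 3), Fraction(1, 3), Fraction(1, 3))]

for z in pseudo_points:
    actual = from_pseudo(z, lower)
    print(", ".join(f"{float(v):.3f}" for v in actual))
    assert sum(actual) == 1               # still a valid mixture
    assert to_pseudo(actual, lower) == z  # the mapping round-trips
```

Each vertex of the pseudo-component simplex maps to a blend in which one ingredient takes all of the free range while the others sit at their lower bounds. This lets the standard lattice and centroid layouts be reused inside the constrained region.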

Simplex-lattice and simplex-centroid designs are powerful, yet distinct, tools for tackling the complex challenge of drug formulation optimization. The Simplex-Lattice Design offers a structured approach for fitting a specific polynomial model, making it suitable when the relationship between components and response is already somewhat characterized. In contrast, the Simplex-Centroid Design provides a more efficient screening tool that naturally elucidates interaction effects with fewer runs for the same number of components, which is highly valuable in early-stage formulation development.