Single-Group vs. Multiple-Group Quasi-Experimental Designs: A Strategic Guide for Clinical and Biomedical Research

Abstract

This article provides a comprehensive overview of single-group and multiple-group quasi-experimental designs, methodologies essential for clinical and biomedical research where randomized controlled trials are not feasible or ethical. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts, core methodologies, and practical applications of these designs. The content addresses common challenges and threats to validity, offering strategic guidance for selecting and optimizing the appropriate design based on research goals, context, and ethical considerations to ensure robust and interpretable results in real-world settings.

Core Concepts: Understanding the Spectrum of Quasi-Experimental Designs

Defining Quasi-Experimental Research and Its Niche in Biomedical Science

Quasi-experimental research represents a category of scientific inquiry that occupies a crucial methodological space between observational studies and true randomized experiments. These designs estimate the causal impact of an intervention when random assignment of participants to treatment and control groups is not feasible due to ethical, practical, or logistical constraints [1] [2]. In biomedical science, this methodology enables researchers to investigate cause-and-effect relationships in real-world settings where randomized controlled trials (RCTs) cannot be implemented, thus providing valuable evidence for clinical and public health interventions when gold-standard trials are impractical or unethical [3] [4].

The fundamental characteristic that distinguishes quasi-experiments from true experiments is the absence of random assignment [2]. While true experiments randomly assign participants to experimental and control conditions to ensure group equivalence, quasi-experiments utilize existing groups, natural occurrences, or predetermined criteria to form comparison groups [5]. This key difference introduces specific methodological challenges but maintains the capacity to support causal inference when designed and analyzed rigorously [6]. Quasi-experimental designs meet several requirements for establishing causality, including temporality (the cause precedes the effect), strength of association, and in some cases, dose-response relationships [4].

Core Concepts and Terminology

Fundamental Principles

Quasi-experimental designs share three core components with true experiments: experimental units (typically patients or populations), treatments or interventions (the independent variable), and outcome measures (the dependent variable) [2] [7]. What differentiates them is how participants are assigned to these conditions. Without randomization, researchers must employ alternative strategies to minimize confounding and strengthen causal inferences [8].

Internal validity—the degree to which observed changes can be correctly attributed to the intervention rather than external factors—is a primary concern in quasi-experimental research [1] [2]. Key threats to internal validity include selection bias, history effects, maturation, testing effects, instrumentation changes, regression to the mean, and attrition [3] [7]. Understanding these threats is essential for both designing robust quasi-experiments and interpreting their findings appropriately.

Advantages and Disadvantages in Biomedical Research

Table: Advantages and Disadvantages of Quasi-Experimental Designs in Biomedical Science

| Advantages | Disadvantages |
|---|---|
| Higher external validity in real-world settings [5] [4] | Lower internal validity due to confounding variables [5] |
| Practical and ethical applicability when RCTs are infeasible [1] [4] | Risk of selection bias from non-random assignment [8] [5] |
| Retrospective analysis of policy changes or natural events [4] | Incompletely measured or unknown confounders [8] |
| Includes patients often excluded from RCTs [4] | Requires large sample sizes for multivariable analyses [8] |

Quasi-Experimental Designs: Single-Group Approaches

Single-group designs represent the most basic form of quasi-experimental research, utilizing only one group of participants who receive the intervention. While practical and efficient, these designs have significant limitations for establishing causality.

One-Group Posttest Only Design

The one-group posttest only design exposes a single group to an intervention and measures the outcome afterward with no pretest or control group [9]. For example, researchers might implement an anti-drug education program in a school and measure students' attitudes toward illegal drugs immediately afterward [9].

Methodological Considerations: This design provides essentially no basis for causal inference as there is no comparison point to evaluate change [9]. It cannot account for pre-existing conditions or external influences. Results from such designs are frequently misinterpreted in media reports, where claims of effectiveness may be made without appropriate context [9].

One-Group Pretest-Posttest Design

This design improves upon the posttest-only approach by measuring the dependent variable both before (pretest) and after (posttest) the intervention [1] [9]. The effect is inferred from the difference between these measurements. For instance, researchers might measure participants' weight before implementing a high-intensity training program, then measure again after three months of the intervention [1].

Methodological Considerations: Despite including a pretest, this design faces multiple threats to internal validity [1]. History effects (external events during the study), maturation (natural changes over time), testing effects (familiarity with measures), instrumentation changes (shifts in measurement tools), and regression to the mean (statistical tendency for extreme scores to move toward average) can all confound results [1] [9]. In biomedical contexts, spontaneous remission presents a particular challenge, as many medical conditions naturally improve over time without intervention [9].
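Regression to the mean is easy to underestimate, so a small simulation can help. The sketch below is illustrative only: the function name, score distributions, and enrollment cutoff are assumptions, not taken from any cited study. It enrolls participants because of an extreme pretest score and re-measures them with no intervention at all.

```python
import random
import statistics

def simulate_regression_to_mean(n=10_000, cutoff=1.0, noise_sd=1.0, seed=0):
    """Select people with extreme pretest scores and re-measure them with NO intervention."""
    rng = random.Random(seed)
    # Each person has a stable "true" level; every measurement adds independent noise.
    true_levels = [rng.gauss(0.0, 1.0) for _ in range(n)]
    pretest = [t + rng.gauss(0.0, noise_sd) for t in true_levels]
    posttest = [t + rng.gauss(0.0, noise_sd) for t in true_levels]
    # Enroll only those whose pretest exceeds the cutoff (e.g. "high symptom" patients).
    selected = [i for i in range(n) if pretest[i] > cutoff]
    pre_mean = statistics.fmean(pretest[i] for i in selected)
    post_mean = statistics.fmean(posttest[i] for i in selected)
    return pre_mean, post_mean

pre_mean, post_mean = simulate_regression_to_mean()
print(f"selected group pretest mean:  {pre_mean:.2f}")
print(f"selected group posttest mean: {post_mean:.2f}")
```

The selected group's mean moves back toward the population average even though nothing was done to it, which is the pattern a one-group pretest-posttest study could mistake for a treatment effect.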

Interrupted Time-Series Design

The interrupted time-series design strengthens the pretest-posttest approach by collecting multiple measurements both before and after the intervention [6] [9] [4]. This design tracks outcomes at regular intervals over an extended period, with the intervention introduced at a specific point. For example, a hospital might measure medication error rates monthly for a year before and after implementing a new electronic health record system [4].

Methodological Considerations: The multiple data points allow researchers to identify underlying trends and distinguish intervention effects from normal variability [9]. This design is particularly valuable for evaluating policy changes, public health initiatives, and system-wide interventions in biomedical settings [6] [4]. Statistical techniques such as segmented regression analysis are typically used to analyze time-series data.

Quasi-Experimental Designs: Multiple-Group Approaches

Multiple-group designs incorporate comparison groups that do not receive the intervention or receive a different intervention, substantially strengthening causal inference.

Nonequivalent Control Group Design

The nonequivalent control group design includes both an experimental group and a control group, but participants are not randomly assigned to these conditions [1] [10]. Groups are typically formed based on pre-existing characteristics or natural groupings. For example, researchers might study the effect of an app-based memory game by implementing it at one senior center (treatment group) while using another similar senior center as a control that continues usual activities [1].

Methodological Considerations: This design controls for many threats to internal validity, including history, maturation, testing, and regression to the mean, provided these factors affect both groups similarly [1]. The primary limitation is selection bias—the groups may differ systematically at baseline in ways that influence outcomes [1] [3]. Statistical techniques like analysis of covariance (ANCOVA) can adjust for pretest differences, while propensity score matching can create more comparable groups [8] [6].
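To make the ANCOVA adjustment concrete, here is a minimal sketch using the textbook formula: the raw posttest difference minus the pooled within-group pretest slope times the pretest difference. The function name and the memory scores are hypothetical, and the sketch assumes the pretest-posttest slope is the same in both groups.

```python
from statistics import fmean

def ancova_adjusted_difference(pre_t, post_t, pre_c, post_c):
    """Return (raw, adjusted) treatment-minus-control posttest differences."""
    def sums(xs, ys):
        mx, my = fmean(xs), fmean(ys)
        sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
        sxx = sum((x - mx) ** 2 for x in xs)
        return mx, my, sxy, sxx

    mx_t, my_t, sxy_t, sxx_t = sums(pre_t, post_t)
    mx_c, my_c, sxy_c, sxx_c = sums(pre_c, post_c)
    # Pooled within-group slope of posttest on pretest.
    pooled_slope = (sxy_t + sxy_c) / (sxx_t + sxx_c)
    raw = my_t - my_c
    # Remove the part of the raw gap explained by the baseline gap.
    adjusted = raw - pooled_slope * (mx_t - mx_c)
    return raw, adjusted

# Hypothetical memory scores; the treated center starts with higher baseline scores.
pre_t = [20, 22, 24, 26, 28]
pre_c = [14, 16, 18, 20, 22]
post_t = [5 + 0.8 * x + 3 for x in pre_t]  # built-in treatment effect of 3
post_c = [5 + 0.8 * x for x in pre_c]

raw_diff, adjusted_diff = ancova_adjusted_difference(pre_t, post_t, pre_c, post_c)
print(f"raw difference: {raw_diff:.1f}, adjusted difference: {adjusted_diff:.1f}")
```

The raw gap mixes the treatment effect with the baseline advantage of the treated center; the adjusted figure separates the two, but only for the baseline variable that was measured.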

Regression Discontinuity Design

Regression discontinuity design assigns participants to treatment and control groups based on a cutoff score on a pre-intervention measure [2] [6]. For example, students scoring below a certain threshold on a standardized test might receive remedial tutoring, while those above the threshold do not [6]. This approach comes closest to experimental design in methodological rigor [2].

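Methodological Considerations: This design requires large sample sizes and precise modeling of the relationship between the assignment variable and outcome [2]. The key advantage is that it largely removes selection bias near the cutoff point, because assignment is determined solely by the predetermined threshold [6]. This holds only if participants cannot precisely manipulate their score on the assignment variable, and the resulting estimate applies most directly to individuals close to the cutoff.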

Difference-in-Differences Analysis

Difference-in-differences analysis compares changes in outcomes between treatment and control groups before and after an intervention [6]. This approach calculates the intervention effect as the difference in pre-post changes between groups. For example, this method was used to study the employment effects of minimum wage increases by comparing changes in employment between states that implemented increases and those that did not [6].

Methodological Considerations: This design controls for time-invariant confounders and selection bias related to fixed group differences [6]. It requires the parallel trends assumption—that in the absence of the intervention, both groups would have experienced similar changes over time.
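In its simplest two-group, two-period form, the estimate is just the treated group's change minus the comparison group's change. A minimal sketch, with a hypothetical function name and made-up outcome values:

```python
from statistics import fmean

def difference_in_differences(treat_pre, treat_post, control_pre, control_post):
    """Change in the treated group minus change in the comparison group."""
    treat_change = fmean(treat_post) - fmean(treat_pre)
    control_change = fmean(control_post) - fmean(control_pre)
    return treat_change - control_change

# Hypothetical outcomes for three units per group, before and after the intervention.
did = difference_in_differences(
    treat_pre=[10, 12, 11],
    treat_post=[15, 17, 16],
    control_pre=[9, 10, 11],
    control_post=[11, 12, 13],
)
print(f"difference-in-differences estimate: {did:.1f}")
```

The treated group improved by 5 and the comparison group by 2, so 3 units are attributed to the intervention. That attribution is only as credible as the parallel trends assumption.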

Comparative Analysis: Single-Group vs. Multiple-Group Designs

Table: Comparison of Single-Group and Multiple-Group Quasi-Experimental Designs

| Design Characteristic | Single-Group Designs | Multiple-Group Designs |
|---|---|---|
| Basic Structure | One group measured before/after intervention [9] | Two or more groups compared [1] |
| Control for History | No | Yes [1] |
| Control for Maturation | No | Yes [1] |
| Control for Testing Effects | No | Yes [1] |
| Control for Selection Bias | No | Partial [8] |
| Implementation Feasibility | High | Moderate |
| Causal Inference Strength | Weak | Moderate to Strong [1] [2] |
| Statistical Efficiency | Paired within-group comparisons remove between-person variability, so fewer participants may be needed | Between-group comparisons generally need larger samples for the same precision |
| Primary Threats | History, maturation, testing, instrumentation, regression [9] | Selection bias, interaction of selection with other threats [1] |

Applications in Biomedical Science

Healthcare Interventions and Policy Evaluation

Quasi-experimental designs are extensively used to evaluate healthcare interventions and policy changes where randomization is impractical or unethical [3] [4]. For example, researchers employed a quasi-experimental design to assess the effectiveness of a childhood obesity prevention program, finding that while the program reduced obesity risk, it was also expensive to implement [6]. Similarly, these designs have been used to evaluate the impact of electronic health record systems on medication errors, the effectiveness of hand hygiene interventions, and the outcomes of antimicrobial stewardship programs [3] [4].

Pharmaceutical and Clinical Research

In drug development and clinical research, quasi-experiments provide valuable evidence when RCTs cannot be conducted. For instance, comparing pregnancy outcomes in women who did versus did not receive antidepressant medication during pregnancy represents a classic quasi-experimental application in pharmacology, as random assignment would be unethical [8]. These designs are particularly valuable for studying rare diseases, special populations, or real-world medication effectiveness where traditional trials face recruitment challenges or ethical constraints.

Public Health and Epidemiology

Public health research frequently employs quasi-experimental designs to evaluate population-level interventions, such as the impact of public health policies, educational campaigns, or environmental changes [1] [4]. The interrupted time-series design has been used to study the effects of public smoking bans on cardiovascular events, the impact of vaccination programs on disease incidence, and the effectiveness of traffic safety laws on accident rates [6] [4].

Methodological Protocols and Statistical Considerations

Design and Implementation Protocols

Step 1: Design Selection

- Assess feasibility of random assignment [3]

- Identify available comparison groups or natural experiments [10]

- Determine appropriate pretest and posttest measures [1]

- Consider time-series approaches for longitudinal data [4]

Step 2: Sampling and Group Formation

- Implement matching techniques (individual, aggregate, or propensity score) to enhance group comparability [6] [10]

- Identify and measure potential confounding variables [8]

- Ensure adequate sample size through power analysis, accounting for anticipated effect sizes and potential clustering [6]
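As a rough sketch of the sample-size step, the function below uses the standard normal-approximation formula for comparing two group means and then inflates the result by the design effect 1 + (m - 1) × ICC to account for clustering. The function name, the default alpha and power, and the example effect size, cluster size, and intraclass correlation are all assumptions chosen for illustration.

```python
import math
from statistics import NormalDist

def n_per_group(effect_size, alpha=0.05, power=0.80, cluster_size=1, icc=0.0):
    """Approximate participants needed per group for a two-sided comparison of means.

    effect_size is the standardized mean difference (Cohen's d).
    cluster_size and icc describe clustering (e.g. patients within clinics).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    n_individual = 2 * ((z_alpha + z_power) / effect_size) ** 2
    # Design effect: variance inflation caused by correlated outcomes within clusters.
    design_effect = 1 + (cluster_size - 1) * icc
    return math.ceil(n_individual * design_effect)

print(n_per_group(0.5))                             # no clustering
print(n_per_group(0.5, cluster_size=20, icc=0.05))  # 20 patients per clinic, ICC 0.05
```

Even a small intraclass correlation nearly doubles the required sample here, which is why clustering should be considered before recruitment rather than after.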

Step 3: Data Collection

- Standardize measurement procedures across groups [1]

- Implement blinding when possible to reduce ascertainment bias [4]

- Collect multiple pre-intervention and post-intervention measurements in time-series designs [9] [4]

Analytical Approaches

Multivariable regression represents the foundational analytical approach for quasi-experimental data, allowing researchers to adjust for measured confounding variables [8]. Propensity score matching creates statistical equivalence between groups by matching participants based on their probability of receiving the treatment [8] [6]. Instrumental variable analysis addresses unmeasured confounding by identifying variables that affect treatment assignment but not outcomes directly [6]. Segmented regression analyzes interrupted time-series data by modeling level and trend changes following interventions [4].

Research Reagent Solutions: Methodological Tools

Table: Essential Methodological Tools for Quasi-Experimental Research

| Methodological Tool | Function | Application Context |
|---|---|---|
| Propensity Score Matching | Creates balanced treatment and control groups by matching on probability of treatment assignment [6] | Controls for selection bias when groups differ at baseline |
| Instrumental Variables | Addresses endogeneity (confounding) using variables that influence treatment but affect the outcome only through treatment [6] | Controls for unmeasured confounding when valid instruments are available |
| Difference-in-Differences Analysis | Compares changes over time between treatment and control groups [6] | Evaluates policy interventions with longitudinal data |
| Regression Discontinuity | Exploits arbitrary cutoff points for treatment assignment [2] [6] | Studies interventions with eligibility thresholds |
| Multivariable Regression | Adjusts for confounding variables statistically [8] | Standard approach for most quasi-experimental analyses |
| Interrupted Time Series Analysis | Models intervention effects using multiple pre/post observations [9] [4] | Evaluates effects when single pre/post measures are insufficient |

Quasi-experimental research designs occupy an essential niche in biomedical science, enabling causal inference when practical or ethical constraints preclude randomized experiments. While single-group designs offer implementation efficiency, multiple-group approaches provide substantially stronger evidence for causal relationships through comparison groups and advanced statistical adjustments. The rigorous application of these methodologies—including proper design selection, careful measurement, appropriate statistical analysis, and acknowledgment of limitations—allows biomedical researchers to generate valuable evidence for clinical practice, public health policy, and healthcare decision-making when traditional trials are not feasible. As methodological advancements continue to strengthen quasi-experimental approaches, their role in generating actionable evidence for complex biomedical questions will likely expand further.

Within the framework of a broader thesis on quasi-experimental research, understanding the distinction between single-group and multiple-group designs is paramount. This guide details the core methodological feature that separates these designs from true experiments: the absence of random assignment and the consequent challenges in establishing control [3]. In fields like drug development and public health, where randomized controlled trials (RCTs) are often impractical or unethical, quasi-experimental designs provide a critical alternative for evaluating causal relationships [1] [3]. These designs bridge the gap between observational studies and true experiments, allowing for investigation in real-world settings where researchers cannot control all influencing factors [1].

The following sections will dissect the role of randomization and control, compare specific quasi-experimental designs, and provide methodological guidance for applied researchers.

Fundamental Concepts: Randomization and Control

The Role of Randomization in Experimental Design

Randomization is the cornerstone of a true experiment. It refers to the process of randomly assigning study participants to either the treatment or control group [11] [12]. In the simplest case, each participant has an equal chance of being placed in any group, so both known and unknown confounding variables are balanced across groups on average, with chance imbalances shrinking as the sample grows [13] [12]. The primary advantage of randomization is that it removes systematic differences between groups at the outset of a study, allowing researchers to attribute post-intervention differences in outcomes to the treatment itself [12].

The Concept of Control in Causal Inference

Control in research design serves as a benchmark for comparison. In a true experiment, the control group does not receive the intervention whose effect is being studied [13]. This group is essential for isolating the impact of the independent variable. Because of random assignment, the control group should be virtually identical to the treatment group in all respects except for the receipt of the intervention. Any difference in outcomes between these groups can then be more confidently inferred as the causal effect of the treatment [12].

How Quasi-Experiments Differ

Quasi-experimental designs are characterized by the lack of random assignment to treatment and control groups [11] [2]. In their place, researchers often use a comparison group, which is similar to a control group but is not formed through randomization [14]. This group may consist of units that are matched based on specific criteria or that naturally occur in the environment, such as students from a different school or patients from a different hospital [1] [14].

The critical limitation of this approach is selection bias [6]. Without randomization, there is no guarantee that the treatment and comparison groups are equivalent at baseline. Any observed differences in outcomes could therefore be due to these pre-existing differences rather than the intervention [3]. Consequently, while quasi-experiments can show that an intervention and an outcome are associated, they are less able to rule out alternative explanations, which weakens the internal validity of the study [3] [15].

Table 1: Key Characteristics of True vs. Quasi-Experimental Designs

| Feature | True Experiment | Quasi-Experiment |
|---|---|---|
| Random Assignment | Yes [11] [12] | No [11] [2] |
| Control Group | Yes, formed via randomization [13] | Uses a non-randomly assigned comparison group [14] |
| Primary Strength | High internal validity; strong causal inference [12] | High external validity; feasibility in real-world settings [1] [2] |
| Primary Limitation | Can be impractical or unethical; may lack external validity [3] [12] | Lower internal validity due to potential confounding [3] [15] |
| Context | Controlled laboratory or field settings [13] | Natural, real-world environments [1] [2] |

Quasi-experimental designs can be broadly categorized into single-group and multiple-group designs, a distinction central to the overarching thesis of this research. This classification is based on whether the design incorporates an external group for comparison, which directly influences the strategy for establishing a counterfactual [16].

Single-Group Designs

Single-group designs are those in which all included units are exposed to the treatment [16]. The counterfactual is constructed using only data from the treated group itself, typically from time periods before the intervention.

One-Group Pretest-Posttest Design: This common design involves measuring the dependent variable in a single group both before (pretest) and after (posttest) an intervention [1] [6]. The change from the pretest to the posttest is inferred to be the effect of the intervention. However, this design is highly susceptible to threats to internal validity, including:

- History: External events occurring between the pretest and posttest that could affect the outcome [1] [3].

- Maturation: Natural changes within participants over time, such as growth or fatigue, that could be mistaken for a treatment effect [1] [3].

- Testing: The effect of taking the pretest on the scores of the posttest [3].

- Regression to the Mean: The statistical phenomenon where subjects selected for their extreme scores (e.g., very high or very low) naturally tend to score closer to the average on subsequent measurements [1] [3].

Interrupted Time-Series (ITS) Design: This design strengthens the one-group pretest-posttest by collecting multiple observations of the dependent variable both before and after the intervention [16] [15]. By analyzing the underlying trend before the intervention and seeing if the intervention "interrupts" this trend, researchers can make more robust causal claims. This design is particularly valuable for assessing the impact of policies or interventions at a population level [6].

Multiple-Group Designs

Multiple-group designs incorporate data from both a treated group and an untreated comparison group [16]. The use of a comparison group helps control for some of the threats to validity that plague single-group designs.

Nonequivalent Groups Design (Pretest-Posttest with a Control Group): This design mimics a true experiment but without random assignment. It involves a treatment group and a control group, both of which are measured before and after the intervention [1] [14]. Any difference in the change between the pretest and posttest for the two groups is attributed to the intervention. While stronger than single-group designs, the core threat remains selection bias, as the groups may not be comparable at baseline [1].

Difference-in-Differences (DID): This is a statistical technique used with nonequivalent group designs. It calculates the effect of an intervention by comparing the change in outcomes over time for the treatment group to the change in outcomes over time for the comparison group [6]. This method helps control for fixed differences between groups and for common trends over time [16].

Regression Discontinuity Design (RDD): This is considered one of the most methodologically rigorous quasi-experimental designs [2]. Participants are assigned to the treatment or control group based on a cutoff score on a continuous variable (e.g., students below a certain test score receive remedial tutoring) [6] [15]. By comparing outcomes of individuals just on either side of the cutoff, researchers can estimate the causal effect of the treatment with high internal validity, as assignment is based solely on the predetermined cutoff [2].

Table 2: Comparison of Single-Group and Multiple-Group Quasi-Experimental Designs

| Design Feature | Single-Group Designs | Multiple-Group Designs |
|---|---|---|
| Definition | All included units receive the treatment; no external control group [16]. | Includes both treated and untreated groups for comparison [16]. |
| Core Counterfactual | The group's own pre-intervention state [16]. | An external, untreated comparison group [16]. |
| Key Threats to Validity | History, Maturation, Testing, Instrumentation [1] [3]. | Selection Bias, Differential Attrition [1] [3]. |
| Data Requirements | Pre- and post-intervention data for the treated unit(s) [16]. | Pre- and post-intervention data for both treated and comparison units [16]. |
| Relative Strength | Useful when no comparable control group is available [16]. | Provides better control for external events (history) and maturation [1]. |
| Examples | One-Group Pretest-Posttest, Interrupted Time Series [1] [16]. | Nonequivalent Control Group, Difference-in-Differences, Regression Discontinuity [1] [6]. |

Methodological Protocols and Statistical Control

When random assignment is not possible, researchers must employ rigorous methodological protocols and statistical techniques to strengthen the validity of their quasi-experimental studies.

Design-Based Control Methods

Matching: This technique involves pairing each participant in the treatment group with one or more participants from a potential comparison pool who are similar on key pre-intervention characteristics (e.g., age, disease severity, socioeconomic status) [14]. This creates a comparison group that is more analogous to the treatment group at baseline.

- Propensity Score Matching (PSM): A popular matching method where a statistical model predicts the probability (propensity) of each unit receiving the treatment, given its observed covariates. Treatment and control units are then matched based on these scores, which approximates the covariate balance that randomization would produce, but only for the covariates included in the model [6].
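The matching idea can be sketched end to end on simulated data. Everything below is an assumption made for illustration: a single covariate (disease severity), a hand-written one-covariate logistic regression fitted by gradient ascent, and 1:1 nearest-neighbor matching with replacement. A real analysis would use an established statistics package, several covariates, and balance diagnostics.

```python
import math
import random
from statistics import fmean

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_propensity(xs, treated, lr=0.5, epochs=500):
    """One-covariate logistic regression by gradient ascent (illustrative only)."""
    b0 = b1 = 0.0
    n = len(xs)
    for _ in range(epochs):
        grad0 = grad1 = 0.0
        for x, t in zip(xs, treated):
            resid = t - sigmoid(b0 + b1 * x)
            grad0 += resid
            grad1 += resid * x
        b0 += lr * grad0 / n
        b1 += lr * grad1 / n
    return b0, b1

def matched_att(xs, treated, ys):
    """Average treated-minus-matched-control outcome difference."""
    b0, b1 = fit_propensity(xs, treated)
    scores = [sigmoid(b0 + b1 * x) for x in xs]
    controls = [i for i, t in enumerate(treated) if t == 0]
    diffs = []
    for i, t in enumerate(treated):
        if t == 1:
            # Nearest control on the propensity score, with replacement.
            j = min(controls, key=lambda c: abs(scores[c] - scores[i]))
            diffs.append(ys[i] - ys[j])
    return fmean(diffs)

# Simulated cohort: sicker patients are more likely to be treated; true effect is 1.0.
rng = random.Random(1)
severity = [rng.gauss(0, 1) for _ in range(600)]
treated = [1 if rng.random() < sigmoid(x) else 0 for x in severity]
outcome = [2.0 * x + 1.0 * t + rng.gauss(0, 0.5) for x, t in zip(severity, treated)]

naive = (fmean(y for y, t in zip(outcome, treated) if t == 1)
         - fmean(y for y, t in zip(outcome, treated) if t == 0))
matched = matched_att(severity, treated, outcome)
print(f"naive difference: {naive:.2f}, matched estimate: {matched:.2f}")
```

The naive comparison is inflated because severity drives both treatment and outcome; matching on the estimated propensity brings the estimate much closer to the simulated effect. It can only do so here because the confounder was measured.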

Instrumental Variables (IV): An instrumental variable is one that is correlated with the independent variable (the treatment) but affects the dependent variable only through its effect on the treatment [6]. If a valid instrument can be found, it can be used to isolate the variation in the treatment that is uncorrelated with the error term, thus addressing unmeasured confounding [6].

Analytical Workflows for Causal Inference

The following diagram illustrates a generalized analytical workflow for a quasi-experimental study, highlighting key decision points for mitigating bias.

Diagram 1: Quasi-Experimental Analysis Workflow

The Researcher's Toolkit: Essential Methodological Solutions

For researchers employing quasi-experimental designs, the "toolkit" consists not of physical reagents but of methodological and statistical solutions to address the inherent challenge of confounding.

Table 3: Essential Methodological Solutions for Quasi-Experimental Research

| Tool | Primary Function | Key Considerations |
|---|---|---|
| Propensity Score Matching | To create a comparison group that is statistically similar to the treatment group by matching on the probability of receiving treatment [6]. | Computationally complex; sensitive to the choice of matching algorithm; cannot control for unobserved confounding [6]. |
| Difference-in-Differences (DID) | To control for pre-existing, time-invariant differences between groups and common temporal trends by comparing the change in outcomes [16] [6]. | Relies on the "parallel trends" assumption; can be confounded by events that affect groups differently during the study period [16] [6]. |
| Instrumental Variables (IV) | To address unmeasured confounding by using a variable that influences the treatment but affects the outcome only through the treatment [6]. | Finding a valid instrument is very difficult; instruments must be strongly correlated with the treatment and satisfy exclusion restrictions [6]. |
| Regression Discontinuity | To estimate causal effects by comparing units on either side of a predetermined assignment cutoff [6] [2]. | Requires a large sample size near the cutoff; results are only directly generalizable to units close to the cutoff [2]. |
| Sensitivity Analysis | To test how robust the study's conclusions are to potential unmeasured confounding [3]. | Does not eliminate bias but quantifies how much hidden bias would be needed to alter the study's conclusions [3]. |
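One widely used way to quantify the hidden bias described in the last row is the E-value of VanderWeele and Ding, which the source does not name and is offered here only as an example. For an observed risk ratio RR above 1, it equals RR + sqrt(RR × (RR - 1)); protective ratios are inverted first. The function name below is hypothetical.

```python
import math

def e_value(risk_ratio):
    """Minimum confounder strength (risk-ratio scale) needed to explain away the estimate."""
    if risk_ratio <= 0:
        raise ValueError("risk_ratio must be positive")
    # Protective associations (RR < 1) are inverted so the formula applies.
    rr = risk_ratio if risk_ratio >= 1 else 1 / risk_ratio
    return rr + math.sqrt(rr * (rr - 1))

print(f"E-value for RR = 2.0: {e_value(2.0):.2f}")
print(f"E-value for RR = 0.5: {e_value(0.5):.2f}")
```

An observed risk ratio of 2.0 could be fully explained away only by an unmeasured confounder associated with both treatment and outcome by a risk ratio of about 3.41 each, which gives readers a concrete benchmark for judging robustness.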

Quasi-experimental designs are indispensable in the researcher's arsenal for situations where RCTs are not a viable option. The fundamental distinction from true experiments lies in the absence of randomization, which is replaced by various design and statistical methods to approximate a counterfactual. The choice between single-group and multiple-group designs, a central theme of this research, involves a direct trade-off between feasibility and validity. Single-group designs are applicable when no comparison group exists but are vulnerable to many threats. Multiple-group designs offer greater internal validity but require the identification of a suitable comparison group and rigorous methods like matching or DID to mitigate selection bias. By carefully selecting the appropriate design and applying robust methodological tools, researchers in drug development, public health, and policy can derive causally plausible and impactful evidence from real-world data.

In scientific research, particularly in fields where randomized controlled trials are not feasible for ethical or practical reasons, quasi-experimental designs provide a critical methodological foundation. These designs attempt to establish cause-and-effect relationships between an independent and dependent variable, but unlike true experiments, they do not rely on random assignment of subjects to groups [17]. Instead, participants are assigned to groups based on non-random criteria, such as existing characteristics, geographical location, or timing of an intervention [17]. This paper explores the spectrum of quasi-experimental approaches, focusing on the comparative strengths and limitations of single-group and multiple-group designs within the context of applied research settings, including drug development and public health evaluation.

Quasi-experimental designs occupy a middle ground on the control continuum—offering more rigor than pre-experimental designs but less than true experimental designs [18]. They are particularly valuable when researchers need to evaluate real-world interventions that cannot be administered in laboratory settings or when withholding treatment for control purposes would be unethical [1] [17]. For instance, studying the effects of a new health policy or the impact of a natural disaster on community health outcomes typically necessitates quasi-experimental approaches because random assignment is impossible [1] [10].

Core Concepts and Terminology

Understanding quasi-experimental research requires familiarity with several key concepts:

- Independent Variable (IV): The treatment, intervention, or condition manipulated or studied for its effects on outcomes [18]. In quasi-experiments, researchers often do not have direct control over this variable [17].

- Dependent Variable (DV): The outcome measure that is hypothesized to be influenced by the independent variable [18].

- Internal Validity: The degree to which a cause-and-effect relationship between variables can be established without interference from other factors [1]. Threats to internal validity are a primary concern in quasi-experimental designs.

- External Validity: The generalizability of research results beyond the study context [1].

- Control Group: A group that does not receive the experimental treatment, used for comparison purposes [17]. In quasi-experiments, this is often called a "comparison group" since assignment is not random [10].

- Confounding Variables: Extraneous factors that may inadvertently influence the dependent variable, creating misleading interpretations about cause-and-effect relationships [1].

Single-Group Quasi-Experimental Designs

Single-group designs involve studying one group of participants who receive an intervention or treatment. These approaches are generally considered weaker than multiple-group designs but are necessary when comparison groups are unavailable or unethical to implement.

One-Group Posttest Only Design

The one-group posttest only design represents the simplest quasi-experimental approach. Researchers implement a treatment and then measure the dependent variable once after the treatment is completed [9]. For example, a researcher might implement an anti-drug education program and then immediately measure students' attitudes toward illegal drugs [9].

Key Limitations: This is considered the weakest type of quasi-experimental design because it has neither a control group nor a pretest [9]. Without a comparison point, it is impossible to determine what participants' attitudes or behaviors would have been without the intervention. Results from such designs are frequently reported in the media and often misinterpreted by the general public [9].

One-Group Pretest-Posttest Design

In the one-group pretest-posttest design, researchers measure the dependent variable once before implementing the treatment and once after implementation [9] [1]. This approach is similar to a within-subjects experiment where each participant is tested under both control and treatment conditions, though without counterbalancing [9].

This design improves upon the posttest-only approach by providing a baseline measurement, but it remains vulnerable to several threats to internal validity [9] [1]:

- History: External events between pretest and posttest may influence outcomes.

- Maturation: Natural changes in participants over time may affect results.

- Testing: Exposure to the pretest may influence posttest performance.

- Instrumentation: Changes in measurement tools or procedures may create apparent effects.

- Regression to the Mean: Extreme scores on the pretest may naturally move toward average on the posttest.

- Spontaneous Remission: Natural improvement over time without treatment.

Table 1: Threats to Internal Validity in One-Group Pretest-Posttest Designs

| Threat Type | Description | Example |
|---|---|---|
| History | External events between pretest and posttest influence outcomes | A celebrity drug overdose occurs during an anti-drug program [9] |
| Maturation | Natural developmental changes affect results | Participants become less impulsive with age during a year-long program [9] |
| Testing | Taking the pretest influences posttest performance | Completing a drug attitude survey prompts reflection that changes attitudes [9] |
| Instrumentation | Changes in measurement tools or procedures affect scores | Observers become more skilled or fatigued over time [9] |
| Regression to the Mean | Extreme pretest scores naturally move toward average | Students with extremely high scores on a drug-attitude measure show lower scores at posttest without any program effect [9] |
| Spontaneous Remission | Natural improvement over time without treatment | Depression symptoms improve without therapeutic intervention [9] |
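Regression to the mean is often the least intuitive of these threats, so a small simulation may help. The sketch below is illustrative only: the score distribution, the noise level, and the function name `simulate_regression_to_mean` are assumptions, not taken from any cited study. It generates pretest and posttest scores with no treatment effect at all, selects the participants with the most extreme pretest scores, and shows that their posttest mean still drifts back toward the population average.

```python
import random
import statistics


def simulate_regression_to_mean(n=10_000, top_fraction=0.1, seed=0):
    """Simulate pretest/posttest scores with NO treatment effect.

    Each observed score is a stable true score plus independent
    measurement noise. Participants are selected because their pretest
    score falls in the top fraction, mimicking enrollment of extreme scorers.
    """
    rng = random.Random(seed)
    true_scores = [rng.gauss(50, 10) for _ in range(n)]
    pretest = [t + rng.gauss(0, 10) for t in true_scores]
    posttest = [t + rng.gauss(0, 10) for t in true_scores]

    # Pretest score that separates the top fraction from everyone else.
    cutoff = sorted(pretest)[int(n * (1 - top_fraction))]
    selected = [i for i in range(n) if pretest[i] >= cutoff]

    pre_mean = statistics.fmean(pretest[i] for i in selected)
    post_mean = statistics.fmean(posttest[i] for i in selected)
    return pre_mean, post_mean


pre_mean, post_mean = simulate_regression_to_mean()
print(f"selected group pretest mean:  {pre_mean:.1f}")
print(f"selected group posttest mean: {post_mean:.1f}")
```

Because nothing was done to the simulated participants, any drop between the two printed means is produced by selection on a noisy measure alone. A one-group pretest-posttest study that enrolls extreme scorers cannot separate this drop from a program effect.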

Interrupted Time Series Design

A more robust single-group approach is the interrupted time series design, which involves multiple measurements of the dependent variable both before and after an intervention [9] [10]. This design strengthens inference by establishing trends before the intervention and tracking persistence of effects afterward [9].

For example, a researcher might measure student absences per week for several weeks, implement an attendance-tracking intervention, then continue measuring absences for several more weeks [9]. If an immediate and sustained drop in absences follows the intervention, this provides stronger evidence for treatment effect than a simple pretest-posttest design [9]. The multiple measurement points help distinguish true treatment effects from normal variability [9].

Diagram 1: Interrupted Time Series Design
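As a rough illustration of why multiple measurement points matter, the sketch below compares the shift in the average level after an intervention with the ordinary week-to-week variability seen at baseline. The weekly absence counts are hypothetical, and `level_change_vs_noise` is an illustrative helper rather than an established procedure. It deliberately ignores trends and autocorrelation, which a full time-series analysis would need to address.

```python
import statistics


def level_change_vs_noise(pre, post):
    """Return the shift in mean level and that shift in baseline-SD units."""
    shift = statistics.fmean(post) - statistics.fmean(pre)
    baseline_sd = statistics.stdev(pre)  # week-to-week variability before the intervention
    return shift, shift / baseline_sd


# Hypothetical weekly absence counts (illustrative only).
pre_weeks = [22, 25, 23, 24, 26, 24]
post_weeks = [15, 14, 16, 15, 13, 15]

shift, ratio = level_change_vs_noise(pre_weeks, post_weeks)
print(f"shift in mean absences: {shift:.2f}")
print(f"shift relative to baseline SD: {ratio:.2f}")
```

With a single pretest and a single posttest there would be no baseline variability to compare against, so a drop of this size could not be distinguished from an ordinary fluctuation.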

Multiple-Group Quasi-Experimental Designs

Multiple-group designs provide stronger evidence for causal relationships by including comparison groups that do not receive the experimental treatment or receive different versions of it.

Nonequivalent Groups Design

The nonequivalent groups design is the most common multiple-group quasi-experimental approach [17]. Researchers select existing groups that appear similar, with only one group receiving the treatment [17] [10]. The critical limitation is that without random assignment, the groups may differ in important ways—they are "nonequivalent" [17] [10].

For example, a researcher might study the effect of an app-based memory game on cognitive function by recruiting older adults from two similar senior centers [1]. One center receives the game intervention, while the other continues with usual activities. Both groups complete memory tests before and after the intervention period [1]. Any differences in posttest scores between the groups, assuming similar pretest scores, might be attributed to the intervention [1].
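One common way to formalize this comparison is a difference-in-differences calculation, which subtracts the comparison group's change from the treatment group's change. The source does not name this technique, so the sketch below is offered as an assumption about how the senior-center example could be analyzed. All memory scores are hypothetical.

```python
import statistics


def difference_in_differences(treat_pre, treat_post, comp_pre, comp_post):
    """Change in the treatment group minus change in the comparison group."""
    treat_change = statistics.fmean(treat_post) - statistics.fmean(treat_pre)
    comp_change = statistics.fmean(comp_post) - statistics.fmean(comp_pre)
    return treat_change - comp_change


# Hypothetical memory test scores from two senior centers (illustrative only).
game_center_pre = [20, 22, 19, 21, 23]
game_center_post = [25, 27, 23, 26, 29]
usual_center_pre = [21, 20, 22, 19, 23]
usual_center_post = [22, 21, 23, 20, 24]

did = difference_in_differences(
    game_center_pre, game_center_post, usual_center_pre, usual_center_post
)
print(f"difference-in-differences estimate: {did:.1f}")
```

The estimate is only interpretable as an intervention effect if the two centers would have changed by the same amount without the game, an assumption the design itself cannot verify.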

Posttest-Only Design with Control Group

This design includes both an experimental group that receives an intervention and a control group that does not, with both groups measured only after the intervention [1]. For instance, researchers might implement a new hand hygiene intervention at one hospital but not at a similar hospital, then compare infection rates after three months [1].

Key Limitations: The absence of pretest measurements makes it impossible to determine if groups were equivalent before the intervention [1]. Observed differences in posttest measures could result from either the intervention or pre-existing differences between groups [1].

Pretest-Posttest Design with Control Group

This stronger multiple-group design includes pretest measurements for both treatment and control groups before the intervention, followed by posttest measurements after [1]. Similar pretest scores between groups increase confidence that any posttest differences result from the intervention [1].

Although this is one of the strongest quasi-experimental designs, it remains vulnerable to threats to internal validity, particularly selection bias and differential history effects [1]. Because participants are not randomized, unmeasured confounding variables might explain observed effects [1]. Additionally, external events might affect the treatment and control groups differently between pretest and posttest measurements [1].

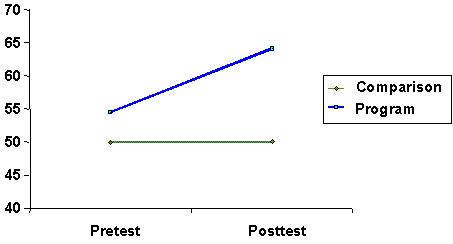

Diagram 2: Pretest-Posttest Design with Control Group

Comparative Analysis: Single-Group vs. Multiple-Group Designs

The choice between single-group and multiple-group designs involves trade-offs between practical feasibility and scientific rigor. The table below summarizes key comparative aspects:

Table 2: Comparison of Single-Group and Multiple-Group Quasi-Experimental Designs

| Design Characteristic | Single-Group Designs | Multiple-Group Designs |
|---|---|---|
| Control Requirements | Minimal control needed; suitable when only one group is accessible [9] | Requires access to multiple comparable groups [1] |
| Internal Validity | Lower; vulnerable to history, maturation, testing, instrumentation, regression to the mean [9] | Higher; controls for several threats through comparison groups [1] |
| External Validity | Potentially higher for the specific population studied [17] | May be limited if groups are not representative [1] |
| Implementation Practicality | Generally more practical and cost-effective [9] | More complex and resource-intensive [19] |
| Statistical Power | Limited without comparison group [9] | Enhanced through between-group comparisons [20] |
| Causal Inference Strength | Weak; cannot rule out many alternative explanations [9] | Moderate; can rule out some alternative explanations [1] |
| Common Applications | Preliminary studies, program evaluations with limited resources [9] | Policy evaluations, comparative effectiveness research [1] [17] |

Methodological Considerations and Best Practices

Enhancing Internal Validity in Quasi-Experimental Designs

Researchers can employ several strategies to strengthen quasi-experimental designs:

Matching Techniques: When random assignment is impossible, researchers can match participants between treatment and control groups based on key demographic or clinical variables [10]. Individual matching pairs participants with similar attributes, then splits the pair between groups [10]. Aggregate matching ensures the overall comparison group is similar to the treatment group on important variables [10].
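A minimal sketch of individual matching follows, assuming a single matching variable (age) and a greedy nearest-neighbor rule without replacement. The function name `greedy_match`, the optional `caliper` parameter, and the participant data are all hypothetical. Real studies usually match on several variables at once and often use more refined algorithms.

```python
def greedy_match(treated, pool, caliper=None):
    """Pair each treated unit with the closest unused comparison unit.

    treated, pool: lists of (unit_id, covariate_value) tuples.
    caliper: optional maximum covariate distance allowed for a pair.
    """
    available = list(pool)
    pairs = []
    for t_id, t_val in treated:
        if not available:
            break  # comparison pool exhausted
        best = min(available, key=lambda c: abs(c[1] - t_val))
        if caliper is not None and abs(best[1] - t_val) > caliper:
            continue  # no acceptable match; this treated unit stays unmatched
        pairs.append((t_id, best[0]))
        available.remove(best)  # match without replacement
    return pairs


# Hypothetical participants identified by ID and age (illustrative only).
treated = [("T1", 67), ("T2", 72), ("T3", 80)]
pool = [("C1", 66), ("C2", 90), ("C3", 73), ("C4", 70), ("C5", 81)]

pairs = greedy_match(treated, pool, caliper=3)
print(pairs)
```

Greedy matching depends on the order in which treated units are processed, so the pairs it returns are not guaranteed to minimize the total distance across all pairs.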

Statistical Control: Advanced statistical methods can adjust for pre-existing differences between groups, though this cannot completely compensate for lack of randomization [1].

Multiple Pretest Measurements: Collecting several baseline measurements helps establish trends and account for normal variability before intervention [9].

Careful Selection of Comparison Groups: Choosing comparison groups from similar settings (e.g., same hospital system, similar communities) reduces confounding [1] [10].

Analytical Approaches for Multiple-Group Designs

When analyzing data from multiple-group designs, researchers must select appropriate statistical methods:

Multiple Comparison Procedures: When comparing more than two groups, researchers must account for inflated Type I error rates by using specialized procedures such as the Tukey, Dunnett, and Games-Howell tests [20].

Handling Overlapping Group Membership: When participants belong to multiple groups, standard ANOVA becomes problematic, and pairwise comparison methods with appropriate corrections are recommended [21].
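Procedures such as Tukey or Games-Howell generally require a statistics package. As a simpler stand-in, the sketch below applies the Holm step-down correction to a set of pairwise p-values. Holm is a different technique from those cited in the source and is used here only to illustrate how a correction controls the family-wise error rate. The p-values are hypothetical.

```python
def holm_adjust(p_values):
    """Return Holm step-down adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])  # smallest p first
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Adjusted p-values must not decrease as raw p-values increase.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted


# Hypothetical raw p-values from three pairwise group comparisons.
raw_p = [0.01, 0.04, 0.03]
adjusted_p = holm_adjust(raw_p)
print(adjusted_p)
```

A comparison is declared significant when its adjusted p-value falls below the chosen alpha, so comparisons that look significant on their own may no longer be after correction.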

Applications in Drug Development and Public Health Research

Quasi-experimental designs have particular relevance in pharmaceutical and public health research where randomized trials may be impractical or unethical.

Natural Experiments in Health Policy Research

Natural experiments occur when comparable groups are created by real-world differences rather than researcher manipulation [17] [10]. The Oregon Health Study is a classic example: a lottery for Medicaid enrollment created treatment and control groups for studying the effects of health insurance [17]. Such approaches enable research on important policy questions for which researchers could not ethically assign participants to conditions themselves [17].

Interrupted Time Series in Medication Adherence Studies

Time series designs can evaluate the impact of new medications or adherence interventions by examining trends in health outcomes before and after implementation [9]. For instance, researchers might analyze hospital admission rates for heart failure patients before and after introducing a new medication management program, using multiple data points on each side of the intervention to support causal inference [9].

Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Quasi-Experimental Studies

| Research Component | Function/Application | Implementation Example |
|---|---|---|
| Validated Assessment Tools | Standardized measurement of dependent variables | Memory tests in cognitive intervention studies [1] |
| Data Collection Platforms | Efficient gathering of pretest/posttest data | Electronic survey systems for patient-reported outcomes [1] |
| Statistical Software with Multiple Comparison Capabilities | Appropriate analysis of group differences | Software implementing Tukey, Dunnett, and Games-Howell procedures [20] |
| TREND Statement Guidelines | Reporting standards for nonrandomized designs | 22-item checklist for transparent reporting of quasi-experimental studies [1] |
| Matching Algorithms | Creating comparable treatment and control groups | Procedures for individual or aggregate matching on prognostic variables [10] |

The spectrum from single-group to multiple-group quasi-experimental designs offers researchers a range of methodological options for studying causal relationships when randomized trials are not feasible. Single-group designs, including posttest-only, pretest-posttest, and interrupted time series approaches, provide practical solutions for preliminary investigations and for situations with limited resources, though they face significant threats to internal validity. Multiple-group designs, particularly nonequivalent groups designs with pretest and posttest measurements, offer stronger causal inference through comparison groups, though they require greater resources and remain vulnerable to selection bias.

The choice between these approaches should be guided by research questions, practical constraints, and ethical considerations. While multiple-group designs generally provide more rigorous evidence, well-executed single-group designs—particularly interrupted time series—can yield valuable insights when enhanced with multiple measurement points and careful attention to threats to internal validity. As quasi-experimental methodologies continue to evolve, they remain indispensable tools for researchers addressing critical questions in drug development, public health, and social policy.

Independent and Dependent Variables in Quasi-Experimental Contexts

Quasi-experimental designs are research methodologies used to estimate causal relationships when true experimental controls are not feasible [15]. These designs occupy a crucial space between observational studies and true experiments, sharing similarities with randomized controlled trials but specifically lacking random assignment to treatment or control groups [2]. Instead, assignment to treatment condition typically proceeds as it would naturally occur in the absence of an experiment [2]. The fundamental purpose of quasi-experimental research is to investigate cause-and-effect relationships between variables in real-world settings where researchers cannot employ traditional experimental methods due to ethical constraints, practical limitations, or resource restrictions [22].

In these designs, researchers actively study the effects of independent variables on dependent variables without full experimental control [22]. This approach is particularly valuable in social sciences, public health, education, and policy analysis where manipulating variables or randomly assigning participants could be unethical or impractical [2]. For example, studying the health effects of a natural disaster or evaluating the impact of a new public policy often necessitates quasi-experimental approaches because researchers cannot control who receives the "treatment" (e.g., who experiences the disaster or is affected by the policy) [1] [17].

Core Components: Independent and Dependent Variables

Independent Variables in Quasi-Experiments

In quasi-experimental research, the independent variable (IV) is the factor or condition that researchers aim to study, though they often cannot manipulate it directly as in true experiments [22]. Unlike controlled experiments where investigators deliberately manipulate the IV, quasi-experimental designs frequently deal with naturally occurring variables or pre-existing conditions [2] [22]. These are sometimes termed "quasi-independent" variables because they lack the controlled manipulation characteristic of true experiments [15].

Examples of independent variables in quasi-experimental contexts include:

- Naturally occurring events: Natural disasters, policy changes, or public health interventions [1] [17]

- Pre-existing group characteristics: Age, gender, diagnostic status, or institutional affiliation [2] [15]

- Program participation: Enrollment in an educational intervention, exposure to a training program, or receipt of a social benefit [10] [23]

A key characteristic of independent variables in quasi-experiments is that their "levels" or variations occur without researcher intervention. For instance, in studying the health impacts of a hurricane, the independent variable (hurricane exposure) occurs naturally, and researchers simply identify groups with different exposure levels [1].

Dependent Variables in Quasi-Experiments

The dependent variable (DV) represents the outcome or response that researchers measure to assess the effects of changes in the independent variable [22]. These variables capture the anticipated effects or consequences of the quasi-independent variable and are measured through quantitative data collection methods [15].

Examples of dependent variables in quasi-experimental research include:

- Behavioral measures: Healthcare utilization rates, academic performance, or employment outcomes [1] [24]

- Psychological indicators: Stress levels, attitudes, or cognitive test scores [1] [9]

- Physiological measures: Blood pressure, infection rates, or mortality statistics [1]

- System-level outcomes: Organizational productivity, public health statistics, or economic indicators [23]

The dependent variable must be precisely defined and reliably measured, as the validity of causal inferences depends on accurately detecting changes that might be attributed to the independent variable [1] [22].

Table 1: Characteristics of Variables in Quasi-Experimental Designs

| Variable Type | Definition | Researcher Control | Examples |
|---|---|---|---|
| Independent Variable | The presumed cause or intervention being studied | Limited or none; often naturally occurring or pre-existing | Policy changes, natural disasters, pre-existing group characteristics [1] [2] [22] |
| Dependent Variable | The outcome measured to assess intervention effects | Direct control over measurement but not the outcome itself | Test scores, health outcomes, behavioral observations [1] [22] |
| Quasi-Independent Variable | A specific type of independent variable using inherent characteristics | None; characteristics are inherent to participants | Eye color, diagnostic status, age, gender [15] |

Quasi-Experimental Design Typologies

Single-Group Quasi-Experimental Designs

Single-group designs involve studying one group of participants who receive an intervention or are exposed to a condition, with measurements taken to assess potential effects [9]. These designs are particularly useful when no comparable control group is available, though they present significant challenges for establishing causal inference [9] [24].

One-Group Posttest-Only Design is the simplest form of quasi-experimental design [9] [10]. In this approach, a single group is exposed to the independent variable, and data on the dependent variable is collected only after the intervention [9] [22]. For example, researchers might implement a new teaching method and then measure student performance immediately afterward [9]. The major limitation of this design is the absence of both a control group and a pretest, making it difficult to determine what outcomes would have occurred without the intervention [9] [10].

One-Group Pretest-Posttest Design extends the previous approach by including a measurement of the dependent variable before the intervention [1] [9] [10]. Participants are measured on the dependent variable (pretest), exposed to the independent variable, and then measured again (posttest) [1] [9]. The effect of the intervention is inferred from differences between pretest and posttest results [1]. For example, researchers might record the body weight of weight-loss program participants before and after a three-month high-intensity training intervention [1]. Despite the inclusion of a pretest, this design remains vulnerable to multiple threats to internal validity, including history effects, maturation, testing effects, instrumentation, and regression to the mean [1] [9] [23].

Interrupted Time-Series Design incorporates multiple observations of the dependent variable both before and after the implementation of the independent variable [9] [10] [24]. This approach involves collecting data at regular intervals before the intervention to establish a baseline trend, then continuing data collection after the intervention to observe any changes in that trend [9] [23]. For example, a manufacturing company might measure worker productivity weekly for a year, implement a change in work shift length, and continue measuring productivity to assess the intervention's impact [9] [23]. This design strengthens causal inference by demonstrating whether changes persist beyond normal variability [9] [23].

Multiple-Group Quasi-Experimental Designs

Multiple-group designs incorporate comparison groups that do not receive the experimental intervention, providing valuable reference points for interpreting results [1] [17] [10].

Nonequivalent Groups Design (also called the pretest-posttest design with a control group) involves selecting groups that appear similar, only one of which receives the treatment [1] [17] [23]. The researcher selects a group to receive the treatment and another with similar characteristics to serve as the control group [1] [10]. Both groups complete a pretest, the treatment group then receives the intervention, and finally both groups complete a posttest [1] [23]. For example, in a study examining memory improvement in older adults, participants from one senior center might use an app-based game while those from another similar center engage in usual activities, with both groups completing memory tests before and after the intervention period [1]. The primary limitation is that without random assignment, the groups may differ in important ways that affect the outcome [1] [17] [23].

Posttest-Only Design with Nonequivalent Groups uses two groups—an experimental group that receives an intervention and a control group that does not—with measurements taken only after the intervention [1] [25]. For example, researchers might compare infection rates between two similar hospitals after implementing a new hand hygiene protocol at only one facility [1]. This design does not include pretest measurements, making it difficult to determine if groups were comparable before the intervention [1] [25].

Regression Discontinuity Design assigns participants to treatment conditions based on a specific cutoff score on a predetermined measure [17] [22] [15]. Those just above and below the cutoff are considered comparable, allowing for causal inference about the treatment's effect [17] [15]. For example, students scoring just below a proficiency threshold might receive additional tutoring while those just above do not, with subsequent academic performance compared between groups [17] [22]. This design provides strong causal evidence when implemented properly [2].
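The tutoring example can be sketched as a local comparison at the cutoff. The code below fits a separate straight line on each side of the threshold, using only observations within a chosen bandwidth, and reports the gap between the two lines at the cutoff. The names `fit_line` and `rd_estimate`, the bandwidth, and the noise-free synthetic data are assumptions made for illustration. Applied analyses also need to justify the bandwidth and check for manipulation of the assignment score.

```python
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def rd_estimate(scores, outcomes, cutoff, bandwidth):
    """Estimate the outcome jump at the cutoff from two local lines.

    Units scoring below the cutoff are treated (e.g., receive tutoring).
    Scores are centered at the cutoff so each intercept is the fitted
    outcome exactly at the threshold.
    """
    below = [(s - cutoff, y) for s, y in zip(scores, outcomes)
             if cutoff - bandwidth <= s < cutoff]
    above = [(s - cutoff, y) for s, y in zip(scores, outcomes)
             if cutoff <= s <= cutoff + bandwidth]
    intercept_below, _ = fit_line(*zip(*below))
    intercept_above, _ = fit_line(*zip(*above))
    return intercept_below - intercept_above  # treated minus untreated


# Synthetic, noise-free data: tutoring adds 5 points below a cutoff of 60.
scores = list(range(40, 80))
outcomes = [0.5 * s + (5.0 if s < 60 else 0.0) for s in scores]

effect = rd_estimate(scores, outcomes, cutoff=60, bandwidth=10)
print(f"estimated effect at the cutoff: {effect:.2f}")
```

The estimate applies only to participants near the threshold, which is why the design's external validity is usually described as limited to the cutoff region.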

Methodological Considerations and Protocols

Implementation Protocols for Key Designs

Protocol for One-Group Pretest-Posttest Design

- Participant Selection: Identify participants based on convenience and suitability for the study [1]. Develop specific eligibility criteria related to the research question [1].

- Pretest Administration: Measure the dependent variable before implementing the intervention [1] [9]. Ensure measurement tools are valid and reliable for the construct being assessed [1].

- Intervention Implementation: Expose participants to the independent variable under controlled conditions when possible [1]. Document intervention details thoroughly for replication.

- Posttest Administration: Measure the dependent variable again after the intervention using the same protocol as the pretest [1] [9].

- Data Analysis: Compare pretest and posttest scores using appropriate statistical tests (e.g., paired t-tests) [1]. Calculate effect sizes to determine practical significance.
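The data analysis step can be sketched with the standard library alone. The example below computes the paired t statistic and a within-subject effect size (Cohen's d_z, the mean difference divided by the standard deviation of the differences) for hypothetical body weights. It does not compute a p-value, since that requires the t distribution, which a statistics package would supply. The function name `paired_summary` and the data are illustrative assumptions.

```python
import math
import statistics


def paired_summary(pre, post):
    """Paired t statistic and Cohen's d_z for pretest/posttest scores."""
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    mean_diff = statistics.fmean(diffs)
    sd_diff = statistics.stdev(diffs)
    return {
        "mean_diff": mean_diff,
        "t": mean_diff / (sd_diff / math.sqrt(n)),
        "df": n - 1,
        "dz": mean_diff / sd_diff,
    }


# Hypothetical body weights in kg before and after a program.
pre_kg = [82.0, 90.5, 77.3, 95.1, 88.4, 79.9]
post_kg = [80.1, 87.9, 76.8, 91.0, 86.2, 78.5]

result = paired_summary(pre_kg, post_kg)
print(result)
```

Even a large t statistic here only shows that scores changed. It cannot show that the intervention caused the change, for the reasons listed under threats to internal validity.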

Protocol for Nonequivalent Groups Design

- Group Selection: Identify existing groups that appear similar in relevant characteristics [1] [17] [23]. Select one group to receive the treatment and another to serve as the control [1].

- Pretest Administration: Measure the dependent variable in both groups before the intervention [1] [23]. Collect demographic and other potentially confounding variables to assess group similarity [1].

- Treatment Implementation: Implement the intervention in the treatment group only [1]. The control group continues with standard conditions or receives an alternative intervention [1] [17].

- Posttest Administration: Measure the dependent variable in both groups after the intervention period [1] [23].

- Data Analysis: Test for pretest similarity between groups [1]. Analyze posttest scores using statistical methods that control for pretest differences (e.g., ANCOVA) [1] [23]. Examine whether demographic variables need statistical control [1].
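For the analysis step, ANCOVA with one covariate and a common slope reduces to a simple adjustment: the raw posttest difference between groups minus the pooled within-group slope times the pretest difference. The sketch below implements that special case by hand under those assumptions. The function name and data are hypothetical, and the data are constructed so that the true adjusted effect is 3 points.

```python
import statistics


def ancova_adjusted_difference(treat_pre, treat_post, comp_pre, comp_post):
    """Posttest group difference adjusted for pretest (common-slope ANCOVA)."""

    def group_sums(xs, ys):
        x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
        sxx = sum((x - x_bar) ** 2 for x in xs)
        sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
        return x_bar, y_bar, sxx, sxy

    xt, yt, sxx_t, sxy_t = group_sums(treat_pre, treat_post)
    xc, yc, sxx_c, sxy_c = group_sums(comp_pre, comp_post)
    pooled_slope = (sxy_t + sxy_c) / (sxx_t + sxx_c)  # within-group slope
    return (yt - yc) - pooled_slope * (xt - xc)


# Hypothetical data: posttest = 2 + 0.8 * pretest, plus 3 if treated.
treat_pre = [10, 12, 14, 16]
comp_pre = [8, 10, 12, 14]
treat_post = [2 + 0.8 * x + 3 for x in treat_pre]
comp_post = [2 + 0.8 * x for x in comp_pre]

raw = statistics.fmean(treat_post) - statistics.fmean(comp_post)
adjusted = ancova_adjusted_difference(treat_pre, treat_post, comp_pre, comp_post)
print(f"raw posttest difference: {raw:.2f}")
print(f"pretest-adjusted difference: {adjusted:.2f}")
```

The raw difference overstates the effect here because the treated group started with higher pretest scores. The adjustment removes only differences captured by the pretest, not unmeasured ones.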

Protocol for Interrupted Time-Series Design

- Baseline Establishment: Collect multiple measurements of the dependent variable at regular intervals before the intervention [9] [23]. Ensure sufficient data points to establish a stable trend (typically at least 5-10 observations) [9].

- Intervention Implementation: Introduce the independent variable at a clearly defined point in time [9] [23].

- Post-Intervention Monitoring: Continue collecting measurements at the same intervals after the intervention [9] [23]. Maintain data collection long enough to detect sustained effects.

- Data Analysis: Use time-series analysis techniques to determine whether the intervention was followed by a change in the level or trend of the dependent variable beyond what would be expected from pre-intervention patterns [9] [23].
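One widely used form of this analysis is segmented regression, which estimates a change in level and a change in slope at the intervention point. The sketch below obtains both by fitting separate least-squares lines to the baseline and post-intervention segments, which gives the same point estimates as the four-parameter segmented model. It is a simplified illustration: the helper names and synthetic series are assumptions, and autocorrelation and seasonality are not modeled.

```python
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    x_bar, y_bar = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def segmented_fit(series, n_pre):
    """Level and slope change at the intervention.

    series: observations at equally spaced times 0, 1, 2, ...
    n_pre: number of baseline observations before the intervention.
    """
    times = list(range(len(series)))
    pre_a, pre_b = fit_line(times[:n_pre], series[:n_pre])
    post_a, post_b = fit_line(times[n_pre:], series[n_pre:])
    t0 = n_pre  # first post-intervention time point
    # Gap between the post line and the extrapolated baseline line at t0.
    level_change = (post_a + post_b * t0) - (pre_a + pre_b * t0)
    slope_change = post_b - pre_b
    return level_change, slope_change


# Synthetic series: rising baseline, then a drop of 10 and a steeper slope.
baseline = [100 + t for t in range(8)]
follow_up = [100 + t - 10 + 0.5 * (t - 8) for t in range(8, 16)]

level_change, slope_change = segmented_fit(baseline + follow_up, n_pre=8)
print(f"level change: {level_change:.2f}, slope change: {slope_change:.2f}")
```

Extrapolating the baseline line provides the counterfactual, which is why a stable, sufficiently long baseline matters so much in this design.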

Threats to Validity and Mitigation Strategies

Quasi-experimental designs face significant threats to internal validity—the approximate truth about inferences regarding cause-effect relationships [2] [22]. Understanding these threats is essential for designing robust studies and interpreting results appropriately.

Table 2: Threats to Internal Validity in Quasi-Experimental Designs

| Threat | Description | Most Vulnerable Designs | Mitigation Strategies |
|---|---|---|---|
| History | External events between pretest and posttest that influence outcomes [1] [9] [23] | One-group pretest-posttest [1] [9] | Include comparison groups; use time-series with multiple measurements [9] [10] |
| Maturation | Natural changes in participants over time that affect results [1] [9] [23] | One-group pretest-posttest [1] [9] | Include control groups; statistical controls [1] [23] |
| Selection Bias | Systematic differences between groups at baseline due to non-random assignment [1] [17] [22] | All multiple-group designs [1] [17] | Careful group matching; statistical controls; propensity score matching [10] [22] |
| Regression to Mean | Extreme scores tending toward average on retesting [1] [9] [23] | Designs selecting participants based on extreme scores [1] [9] | Use comparison groups; avoid selecting based on extreme scores [9] [23] |
| Testing Effects | Changes in scores due to familiarity with measures [9] | Pretest-posttest designs [9] | Use different but equivalent forms; include comparison groups [9] |
| Instrumentation | Changes in measurement tools or procedures over time [9] | Time-series; pretest-posttest [9] | Standardize measurement procedures; calibrate instruments [9] |

Table 3: Research Reagent Solutions for Quasi-Experimental Research

| Research Tool | Function | Application Context |
|---|---|---|
| TREND Guidelines | 22-item checklist for transparent reporting of nonrandomized designs [1] | Improving reporting quality and methodological rigor [1] |
| Propensity Score Matching | Statistical technique to create comparable treatment and control groups by matching on predicted probability of group membership [22] | Balancing groups on observed covariates in nonrandomized studies [22] |
| Statistical Control Methods | Techniques like regression analysis to statistically adjust for group differences [2] [25] | Accounting for confounding variables in analysis phase [2] |
| Standardized Measurement Instruments | Validated tools for assessing dependent variables [1] | Ensuring reliable and valid outcome measurement across groups and time [1] |
| Time-Series Analysis | Statistical methods for analyzing data collected at regular intervals over time [9] [23] | Identifying trends and intervention effects in time-series designs [9] [23] |

Comparative Analysis and Design Selection

Comparative Strengths and Limitations

The choice between single-group and multiple-group quasi-experimental designs involves trade-offs between practical feasibility and scientific rigor. Single-group designs are typically easier to implement, require fewer participants, and are more feasible when suitable comparison groups cannot be identified [9] [10]. However, they suffer from significant limitations in establishing causal relationships due to the inability to rule out many threats to internal validity [1] [9].

Multiple-group designs provide stronger evidence for causal inference by offering a reference point for comparing outcomes [1] [17]. The inclusion of comparison groups helps researchers account for external events, maturation effects, and other threats that might otherwise be attributed to the intervention [1] [23]. However, these designs require identifying appropriate comparison groups and managing potential selection biases [17] [23].

Table 4: Comparative Analysis of Quasi-Experimental Designs

| Design Type | Internal Validity | External Validity | Implementation Practicality | Causal Inference Strength |
|---|---|---|---|---|
| One-Group Posttest-Only | Very Low [9] [10] | Moderate [9] | High [9] | Very Weak [9] [10] |
| One-Group Pretest-Posttest | Low [1] [9] | Moderate [1] | High [1] | Weak [1] [9] |
| Interrupted Time-Series | Moderate-High [9] [23] | High [9] | Moderate [9] | Moderate [9] [23] |
| Nonequivalent Groups | Moderate [1] [17] | High [17] | Moderate [1] | Moderate [1] [17] |
| Regression Discontinuity | High [2] [15] | Limited to cutoff area [17] | Moderate [17] | Strong [2] [15] |

Design Selection Framework

Selecting an appropriate quasi-experimental design requires careful consideration of research questions, practical constraints, and ethical considerations. The following decision framework can guide researchers:

Assess Feasibility of Comparison Groups: If suitable comparison groups are available, multiple-group designs are generally preferred for their stronger causal inference capabilities [1] [17]. When comparison groups cannot be identified, single-group designs may be the only option, though researchers should strengthen them through multiple pretest and posttest measurements when possible [9] [10].

Consider Measurement Opportunities: When only post-intervention measurement is possible, posttest-only designs may be necessary despite their limitations [1] [9]. When both pre- and post-intervention measurements are feasible, pretest-posttest designs provide valuable baseline data [1] [9]. When resources allow for multiple measurements over time, time-series designs offer stronger causal evidence [9] [23].

Evaluate Assignment Mechanisms: When participants are naturally assigned to conditions based on a cutoff score, regression discontinuity designs provide particularly strong causal evidence [17] [2] [15]. When groups are formed through self-selection or administrative processes, nonequivalent group designs with careful matching are appropriate [17] [10].

Balance Practical and Scientific Considerations: While more complex designs generally offer stronger causal inference, they also require greater resources and methodological expertise [22]. Researchers must balance scientific ideals with practical constraints when selecting designs [17] [22].
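The framework above can be condensed into a small decision function. This is a deliberately simplified sketch: the function name, the four yes/no inputs, and the order in which they are checked are assumptions made for illustration. Real design selection also weighs ethics, resources, and expertise, which a lookup like this cannot capture.

```python
def suggest_design(has_comparison_group, cutoff_assignment,
                   can_pretest, repeated_measures):
    """Map four yes/no study constraints to a candidate design name."""
    if cutoff_assignment:
        # Assignment by a cutoff score on a predetermined measure.
        return "regression discontinuity"
    if has_comparison_group:
        if can_pretest:
            return "nonequivalent groups with pretest and posttest"
        return "posttest-only with nonequivalent groups"
    if repeated_measures:
        # Several observations are possible before and after the intervention.
        return "interrupted time series"
    if can_pretest:
        return "one-group pretest-posttest"
    return "one-group posttest-only"


# Hypothetical scenario: no comparison group, but weekly records exist.
choice = suggest_design(
    has_comparison_group=False,
    cutoff_assignment=False,
    can_pretest=True,
    repeated_measures=True,
)
print(choice)
```

The ordering reflects the preference stated in the framework for designs with stronger causal inference whenever the study context allows them.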

Quasi-experimental designs provide essential methodological approaches for investigating causal relationships when randomized experiments are not feasible or ethical [1] [17]. These designs span a continuum from single-group approaches, which offer practical advantages but limited causal inference, to multiple-group designs that provide stronger evidence for causal relationships while requiring more complex implementation [1] [9] [17].

The core components of any quasi-experimental study are the independent variable (the presumed cause or intervention) and the dependent variable (the measured outcome) [22]. The relationship between these variables is investigated under naturalistic conditions where researchers lack full control over assignment to treatment conditions [2] [22]. This fundamental characteristic distinguishes quasi-experiments from true experiments and introduces unique methodological challenges, particularly regarding internal validity [2] [22].

When implementing quasi-experimental research, careful design selection is crucial [17] [22]. Researchers must balance practical constraints with methodological rigor, selecting designs that maximize causal inference within the limitations of their research context [17] [22]. Additionally, comprehensive reporting using guidelines like TREND enhances transparency and allows consumers of research to properly evaluate findings [1]. Through thoughtful application of these principles, quasi-experimental designs continue to make valuable contributions to knowledge across diverse fields of inquiry [1] [15].

Quasi-experimental designs (QEDs) represent a critical class of research methodologies employed when randomized controlled trials (RCTs) are not feasible or ethical. These designs enable researchers to estimate causal effects in real-world settings where random assignment is impractical. This technical guide provides an in-depth examination of the ethical and practical rationales for selecting QEDs, framed as a comparison of single-group and multiple-group approaches. Aimed at researchers, scientists, and drug development professionals, the article synthesizes current methodological frameworks, presents structured comparisons of design features, and outlines detailed experimental protocols. Through standardized data presentation and visual workflows, we aim to equip practitioners with the necessary tools to implement rigorous quasi-experimental research in applied settings, particularly where ethical constraints or practical limitations preclude randomized designs.

Quasi-experimental design is a research methodology that occupies the strategic space between the rigorous control of true experimental designs and the observational nature of non-experimental studies [1]. These designs aim to establish cause-and-effect relationships but lack the random assignment to treatment and control groups that characterizes randomized controlled trials (RCTs) [2] [17]. The fundamental characteristic of QEDs is that assignment to treatment conditions occurs through non-random mechanisms, often through self-selection, administrative decisions, or natural circumstances [5]. This key difference makes QEDs particularly valuable for research questions where randomization is impossible or unethical, while still allowing for stronger causal inferences than purely observational approaches.

The conceptual foundation of QEDs rests on their ability to approximate the counterfactual logic of experimental design through methodological creativity rather than random assignment [26]. In a true experiment, random assignment ensures that, on average, treatment and control groups are equivalent in both observed and unobserved characteristics, allowing any post-intervention differences to be attributed to the treatment [2]. QEDs, by contrast, must address potential selection bias and confounding through design features such as pre-test measurements, multiple comparison groups, or statistical controls [1] [27]. This methodological approach enables researchers to draw reasonable causal inferences when practical or ethical constraints prevent randomization.

Within the research methodology hierarchy, QEDs are characterized by several key features: they manipulate an independent variable and measure a dependent variable but lack random assignment [17]. They typically employ comparison groups rather than true control groups, with these groups often being "nonequivalent" due to the absence of randomization [28]. The internal validity of QEDs (the confidence that observed effects are truly caused by the intervention) is generally lower than in true experiments, but their external validity (the generalizability of findings to real-world settings) is often higher [5] [17]. This tradeoff positions QEDs as particularly valuable for evaluating interventions in authentic contexts where perfect laboratory control is neither possible nor desirable.

Table 1: Fundamental Characteristics of Quasi-Experimental Designs

| Characteristic | True Experiments | Quasi-Experiments | Observational Studies |
|---|---|---|---|
| Assignment Mechanism | Random assignment | Non-random assignment | No assignment |
| Control Over Treatment | Researcher-controlled | Often studies pre-existing treatments | No researcher control |
| Control Groups | Required | Not required but commonly used | Not applicable |
| Internal Validity | High | Moderate | Low |
| External Validity | Often lower | Higher | Highest |
| Primary Use Case | Efficacy under controlled conditions | Effectiveness in real-world settings | Identifying associations |

Ethical and Practical Rationales for Selection

Ethical Rationales

Ethical considerations frequently necessitate the use of quasi-experimental designs when randomized assignment would be morally questionable or directly harmful. In healthcare research, it is often ethically impermissible to withhold standard treatment or proven interventions from patients solely for research purposes [27]. For instance, studying the effect of a new surgical technique alongside a base treatment would be unethical if researchers created a control group that received no treatment at all, as leaving patients without care violates fundamental medical ethics [27]. Similarly, in public health policy evaluation, randomly providing interventions such as health insurance to some while deliberately withholding it from others would be ethically problematic, as demonstrated by the Oregon Health Study, which instead leveraged a natural lottery system to study coverage effects [17].

Ethical constraints also emerge when studying vulnerable populations or sensitive behaviors where random assignment could cause harm or infringe upon rights [2]. Research on topics such as child discipline practices (e.g., spanking effects) cannot randomly assign parents to implement potentially harmful behaviors, making quasi-experimental approaches that leverage existing differences the only ethically viable option [2]. Likewise, in educational settings, deliberately withholding beneficial programs from students for research purposes typically violates ethical standards, leading researchers to use quasi-experimental comparisons between naturally occurring groups [1]. These ethical imperatives make QEDs not merely a methodological alternative but an ethical necessity across many research domains.

Practical Rationales

Practical constraints constitute the second major rationale for selecting quasi-experimental designs, arising when randomization is theoretically possible but infeasible in practice. Financial limitations often preclude true experiments, as RCTs typically require substantial funding for participant recruitment, implementation of interventions, and management of control conditions [17] [26]. QEDs can frequently leverage existing data sources and naturally occurring interventions, significantly reducing research costs [17]. Logistical challenges also favor quasi-experimental approaches, particularly when studying interventions at organizational, community, or policy levels where random assignment is administratively impossible [1]. For example, evaluating the impact of a new law or large-scale public health initiative typically requires quasi-experimental methods because researchers cannot randomly assign jurisdictions to implement different policies [26].

Practical feasibility issues also arise when researchers lack authority to control treatment assignment, such as when studying existing organizational practices, policy changes, or medical treatments determined by clinicians rather than researchers [17] [27]. In these situations, methodological flexibility becomes essential, with QEDs allowing researchers to study important questions that cannot be answered through true experiments. Additionally, timeline constraints often favor quasi-experimental approaches, as they can frequently be implemented more quickly than RCTs, which require extensive planning for randomization procedures and control condition management [5]. This practical advantage makes QEDs particularly valuable for rapidly evaluating emerging public health threats or policy responses, as evidenced by their extensive use during the COVID-19 pandemic to assess restriction effects [26].

Table 2: Ethical and Practical Rationales for Quasi-Experimental Designs

| Rationale Category | Specific Scenarios | Exemplary Research Contexts |
|---|---|---|
| Ethical Constraints | Withholding proven treatment is unethical | Medical procedures research [27] |
| Ethical Constraints | Random assignment to harmful conditions is unethical | Studies of harmful environments or practices [2] |
| Ethical Constraints | Equity concerns in resource distribution | Public health interventions [17] |
| Practical Constraints | Researcher lacks control over assignment | Policy evaluations, organizational changes [17] |
| Practical Constraints | Financial limitations | Studies using existing administrative data [26] |
| Practical Constraints | Timeline constraints | Rapid response to emerging public health issues [26] |
| Practical Constraints | Participant recruitment challenges | Studies of rare conditions or hard-to-reach populations |

Core Methodological Approaches: Single-Group Versus Multiple-Group Designs

Single-Group Quasi-Experimental Designs

Single-group designs represent the most fundamental category of quasi-experimental approaches, characterized by the absence of a separate comparison group. The one-group posttest-only design involves implementing a treatment and measuring the outcome once after implementation [9]. This approach provides no information about pre-intervention status and lacks any comparison, making it vulnerable to numerous validity threats [9]. For example, if researchers implement an anti-drug education program and then measure student attitudes, they cannot determine whether those attitudes resulted from the program or from pre-existing dispositions [9]. Despite these limitations, this design is frequently employed in preliminary investigations or when no other option is feasible.

The one-group pretest-posttest design enhances the basic posttest-only approach by incorporating a measurement before the intervention [1] [9]. This allows researchers to document change over time within the same group, providing some basis for inferring treatment effects. However, this design remains vulnerable to multiple threats to internal validity, including history (external events occurring between measurements), maturation (natural changes in participants over time), testing (effects of taking the pretest on posttest performance), instrumentation (changes in measurement tools or procedures), and regression to the mean (statistical tendency for extreme scores to become less extreme upon retesting) [1] [9]. For example, in a study examining high-intensity training for weight loss, participants might simultaneously begin using a new dietary supplement promoted on social media, creating a history threat to validity [1].
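Regression to the mean is the least intuitive of these threats, so a small simulation may help. The sketch below assumes a hypothetical cohort in which each observed score is a stable true score plus independent measurement noise, and in which no intervention occurs at all. The function name `simulate_regression_to_mean` and all distribution parameters are illustrative assumptions, not values from any cited study.

```python
import random
from statistics import mean


def simulate_regression_to_mean(n=5000, top_fraction=0.10, seed=0):
    """Return (pretest mean, posttest mean) for participants selected
    because their pretest score fell in the top `top_fraction`."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    # Stable underlying score for each participant (assumed distribution).
    true_scores = [rng.gauss(50, 10) for _ in range(n)]
    # Each observation adds independent measurement noise.
    pretest = [t + rng.gauss(0, 10) for t in true_scores]
    # No treatment effect is added: posttest differs from pretest by noise only.
    posttest = [t + rng.gauss(0, 10) for t in true_scores]
    # Enroll only participants with extreme (high) pretest scores.
    cutoff = sorted(pretest)[int(n * (1 - top_fraction))]
    selected = [i for i in range(n) if pretest[i] >= cutoff]
    pre_mean = mean(pretest[i] for i in selected)
    post_mean = mean(posttest[i] for i in selected)
    return pre_mean, post_mean


pre_mean, post_mean = simulate_regression_to_mean()
print(f"selected group pretest mean:  {pre_mean:.1f}")
print(f"selected group posttest mean: {post_mean:.1f}")
```

Under these assumptions the selected group's posttest mean falls noticeably below its pretest mean even though nothing was done to the participants, which is the pattern a one-group pretest-posttest study could mistake for a treatment effect.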

The interrupted time-series design strengthens the basic pretest-posttest approach by incorporating multiple measurements both before and after the intervention [9]. This design allows researchers to document trends prior to the intervention and determine whether the intervention alters these trends, providing stronger causal evidence than single pretest-posttest comparisons [9] [24]. For instance, researchers might measure worker productivity weekly for a year before and after implementing a reduced work shift, with a clear change in the trend following the intervention providing evidence of effectiveness [9]. The multiple data points help distinguish intervention effects from normal fluctuations and strengthen causal inferences [26].

Multiple-Group Quasi-Experimental Designs

Multiple-group designs incorporate comparison groups to strengthen causal inferences by providing approximations of what would have happened without the intervention. The nonequivalent groups design is the most common multiple-group approach, featuring both treatment and comparison groups that are not established through random assignment [17] [28]. Researchers typically select existing groups that appear similar, with one group receiving the treatment and the other serving as a comparison [17]. For example, in evaluating a new hand hygiene intervention, researchers might implement it in one hospital while using another similar hospital as a comparison, measuring infection rates at both locations after implementation [1]. The critical limitation is that the groups may differ in unknown ways that influence outcomes, potentially confounding results [1].
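When baseline measurements happen to be available for both groups, one way to reduce the influence of stable pre-existing differences is to compare changes rather than posttest levels, an approach usually called difference-in-differences. This is a technique brought in here for illustration rather than one the passage above prescribes, and it rests on the assumption that both groups would have followed parallel trends without the intervention. The function name and argument names below are hypothetical.

```python
from statistics import mean


def difference_in_differences(treated_pre, treated_post,
                              comparison_pre, comparison_post):
    """Change in the treated group minus change in the comparison group.

    Each argument is a sequence of outcome measurements (for example,
    monthly infection rates). A stable baseline gap between the groups
    cancels out; differences in trend do not.
    """
    treated_change = mean(treated_post) - mean(treated_pre)
    comparison_change = mean(comparison_post) - mean(comparison_pre)
    return treated_change - comparison_change
```

In the hand hygiene example, a negative result would indicate that infection rates fell more at the intervention hospital than at the comparison hospital, although unmeasured differences between the sites could still bias the estimate.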