Solving Recovery Issues in Accuracy Testing: A Strategic Guide for Robust Drug Development


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to address critical recovery issues in predictive model accuracy testing. Covering foundational principles, methodological application, troubleshooting, and advanced validation, it synthesizes current best practices to enhance the reliability and generalizability of models in biomedical research. Readers will learn to navigate common pitfalls like data leakage and overfitting, implement robust data splitting strategies, and leverage cross-validation to build models that deliver trustworthy, real-world performance, ultimately accelerating the path to successful clinical applications.

Why Recovery Accuracy is the Cornerstone of Reliable Predictive Models in Biomedicine

Troubleshooting Guide: Common Scenarios and Solutions

No Assay Window in TR-FRET or Z'-LYTE Assays

A complete lack of an assay window often stems from instrument setup issues or development reaction problems [1].

- Instrument Setup: For TR-FRET assays, the most common reason is incorrect emission filters. Verify your microplate reader's TR-FRET setup using the recommended filters for your specific instrument [1].

- Development Reaction Test: To isolate the problem, perform a control test using buffer in place of missing reagents. For Z'-LYTE assays, test a 100% phosphopeptide control (no development reagent) against a 0% phosphopeptide substrate (with 10-fold higher development reagent). A properly functioning system should show a 10-fold ratio difference [1].

Inconsistent Results Between Laboratories

When different labs obtain varying EC50/IC50 values for the same compound, the primary culprit is often stock solution preparation [1].

- Solution Preparation: Pay careful attention to 1 mM stock solution preparation methods, as minor differences can significantly impact results [1].

- Cellular vs. Biochemical Assays: If a compound is active in biochemical assays but inactive in cell-based assays, it may be unable to cross the cell membrane, be pumped out of cells, or be targeting an inactive kinase form [1].

Clinical Trial Rescue Indicators

When a clinical trial shows significant distress, these red flags indicate a potential "recovery failure" requiring immediate intervention [2]:

- Poor Enrollment: Stalled patient recruitment or delayed site activation [2] [3]

- Data Issues: Inconsistent data collection, protocol deviations, or data integrity concerns [2]

- Operational Breakdowns: Lack of oversight, misaligned CRO performance, or high dropout rates [2] [3]

Frequently Asked Questions (FAQs)

Q: What are the primary reasons for clinical drug development failure?

Approximately 90% of drug candidates that enter clinical development fail, with four main reasons identified from 2010-2017 trial data [4]:

- Lack of clinical efficacy (40-50%): The drug doesn't work as expected in human populations [4]

- Unmanageable toxicity (30%): Safety concerns outweigh potential benefits [4]

- Poor drug-like properties (10-15%): Suboptimal pharmacokinetics or bioavailability [4]

- Commercial/strategic issues (10%): Lack of market need or poor planning [4]

Q: How can I determine if my method's accuracy is acceptable?

Use interference and recovery experiments to estimate systematic error [5]:

- Interference Experiments: Test for constant systematic error caused by substances that may be present in patient specimens [5]

- Recovery Experiments: Estimate proportional systematic error by adding known amounts of analyte to patient samples [5]

- Acceptability Criteria: Compare observed error with allowable error based on clinical requirements (e.g., CLIA proficiency testing criteria) [5]

Q: What is the STAR approach and how can it improve drug optimization?

The Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) classifies drug candidates based on [4]:

- Potency/Selectivity: Drug's affinity and specificity for its target [4]

- Tissue Exposure/Selectivity: How the drug distributes between disease and normal tissues [4]

- Required Dose: Amount needed to balance clinical efficacy and toxicity [4]

STAR categorizes drugs into four classes to guide development decisions and improve success rates [4].

Table: STAR Drug Classification System

| Class | Specificity/Potency | Tissue Exposure/Selectivity | Clinical Outcome | Development Recommendation |
|---|---|---|---|---|
| I | High | High | Superior efficacy/safety with low dose | High success rate; advance |
| II | High | Low | Efficacy with high toxicity at high dose | Cautiously evaluate |
| III | Adequate | High | Efficacy with manageable toxicity at low dose | Often overlooked; promising |
| IV | Low | Low | Inadequate efficacy/safety | Terminate early |

Q: What are the core steps in rescuing a failing clinical trial?

A clinical trial rescue involves five key steps [2]:

- Root Cause Analysis: Diagnose fundamental issues using operational metrics and site feedback [2]

- Site Strategy Reboot: Close underperforming sites and activate high-recruiting ones, potentially in new regions [2]

- Retraining & Realignment: Provide rapid protocol refreshers to fix eligibility, compliance, and data-entry errors [2]

- Oversight Upgrade: Implement KPIs, dashboards, weekly reviews, and clear issue escalation processes [2]

- Regulatory & Operational Clean-up: Ensure protocol amendments, consent updates, and data re-validation with full auditability [2]

Experimental Protocols for Accuracy Testing

Recovery Experiment Methodology

Purpose: Estimate proportional systematic error whose magnitude increases with analyte concentration [5].

Procedure [5]:

- Sample Preparation: Prepare pairs of test samples

  - Add a small volume (≤10% of total) of standard analyte solution to patient specimen

  - Add equal volume of pure solvent to a second aliquot of the same specimen

- Analysis: Analyze both test samples by the method of interest

- Calculation:

  - Calculate the difference between results: Found concentration minus Base concentration

  - Calculate percent recovery: (Difference / Added concentration) × 100

- Acceptance Criteria: Compare observed recovery with clinically allowable error
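The recovery calculation above can be sketched in a few lines of Python. This is a minimal illustration, not a validated laboratory tool: the function names and all concentrations and volumes are hypothetical, and the added concentration is assumed to be the standard's concentration diluted into the final spiked volume.

```python
def added_concentration(std_conc: float, std_vol: float, specimen_vol: float) -> float:
    """Concentration contributed by the standard after dilution into the specimen."""
    return std_conc * std_vol / (std_vol + specimen_vol)


def percent_recovery(found: float, base: float, added: float) -> float:
    """Percent recovery = (found - base) / added * 100."""
    if added <= 0:
        raise ValueError("added concentration must be positive")
    return (found - base) / added * 100.0


# Hypothetical example: 0.1 mL of a 200 mg/dL standard spiked into 0.9 mL of specimen,
# so the spike volume is 10% of the total, as the procedure recommends.
added = added_concentration(std_conc=200.0, std_vol=0.1, specimen_vol=0.9)

# 'found' is the spiked sample result; 'base' is the solvent-diluted sample result.
recovery = percent_recovery(found=118.0, base=99.0, added=added)

# The shortfall from 100% is the estimated proportional systematic error.
proportional_error = 100.0 - recovery
print(f"added={added:.1f} mg/dL, recovery={recovery:.1f}%, error={proportional_error:.1f}%")
```

The proportional error would then be compared with the allowable error for the test at the relevant decision concentration.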

Interference Experiment Protocol

Purpose: Estimate constant systematic error caused by substances that may be present in patient specimens [5].

Procedure [5]:

- Sample Preparation:

  - Add suspected interfering material to a patient specimen

  - Dilute another aliquot of the same specimen with interference-free solvent

- Analysis: Analyze both samples by the method of interest

- Data Calculation:

  - Analyze multiple specimen pairs with replicates

  - Calculate average differences between paired samples

  - Compare observed interference with allowable error for the test
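As a rough sketch of the data calculation above, the snippet below averages replicates within each specimen pair, takes the paired differences, and compares the mean difference with an allowable error. The measurements and the allowable-error value are made up for illustration, and `interference_bias` is a hypothetical helper name.

```python
from statistics import mean


def interference_bias(pairs):
    """Mean paired difference (interferent sample minus control sample).

    pairs: list of (interferent_replicates, control_replicates) per specimen.
    """
    differences = [mean(test) - mean(control) for test, control in pairs]
    return mean(differences)


# Hypothetical duplicate measurements (mg/dL) for three patient specimens.
pairs = [
    ([110.0, 112.0], [98.0, 100.0]),
    ([160.0, 158.0], [150.0, 148.0]),
    ([205.0, 207.0], [196.0, 198.0]),
]

bias = interference_bias(pairs)          # estimated constant systematic error
allowable_error = 10.0                   # assumed limit, e.g. taken from a CLIA criterion
acceptable = abs(bias) <= allowable_error
print(f"bias={bias:.2f} mg/dL, acceptable={acceptable}")
```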

Table: Common Drug Development Failure Reasons (2013-2015)

| Phase | Primary Failure Reason | Percentage | Common Issues |
|---|---|---|---|
| Phase II | Lack of Efficacy | 52% | Poor target validation, inadequate tissue exposure [4] [6] |
| Phase II | Safety | 24% | Unmanageable toxicity, poor therapeutic index [4] [6] |
| Phase III | Lack of Efficacy | 57% | Insufficient clinical effect, strategic commercial decisions [6] |
| Phase III | Safety | 17% | Unacceptable risk-benefit profile [6] |

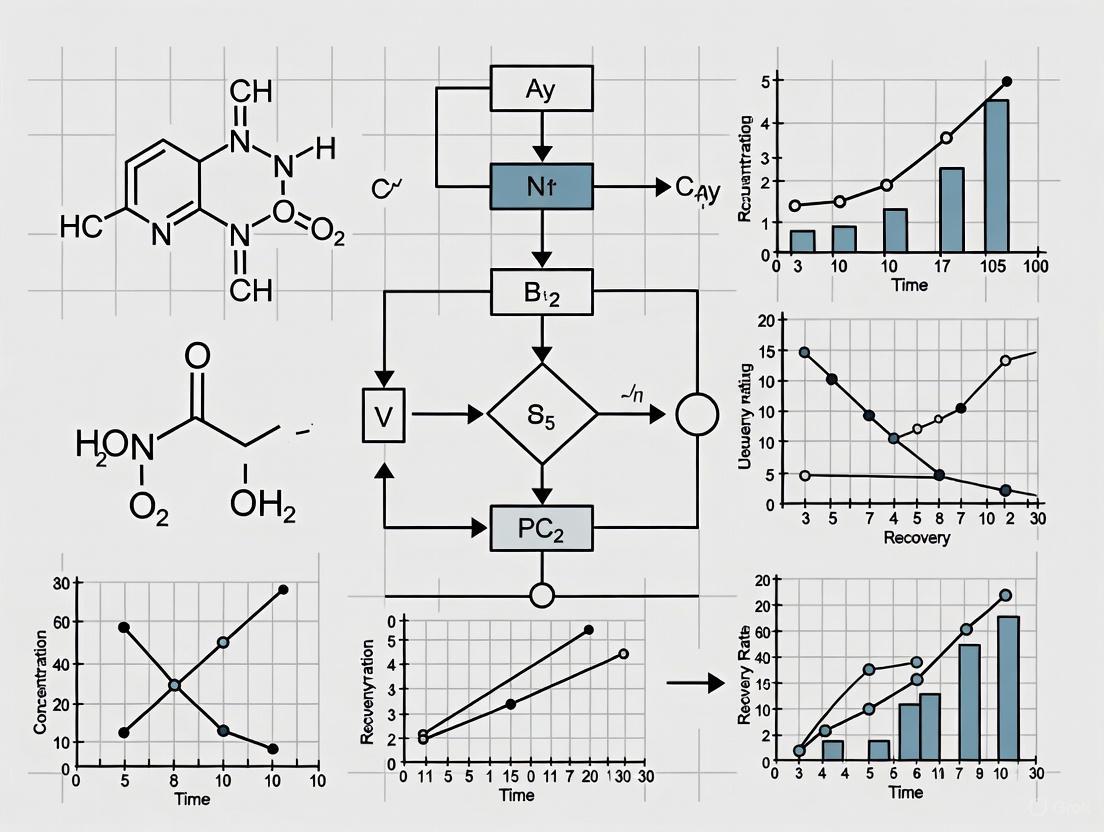

Workflow Diagrams

Clinical Trial Rescue Pathway

STAR-Based Drug Candidate Evaluation

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Recovery and Interference Experiments

| Item | Function | Application Notes |
|---|---|---|
| Certified Reference Materials (CRM) | Provide traceable accuracy control | Preferred when available; directly traceable to international standards [7] |
| Standard Solutions | Prepare known concentrations for recovery testing | Use high concentrations to minimize sample dilution [5] |
| Interferent Solutions | Test specific interference effects | Use soluble materials at clinically relevant concentrations [5] |
| Patient Specimens/Pools | Provide real-world matrix for testing | Conveniently available and contain substances found in real specimens [5] |
| Quality Control Materials | Monitor assay performance | Use for daily quality control and troubleshooting [1] |
| Lipemic/Hemolyzed Specimens | Test common interference sources | Use commercial emulsions or patient specimens before/after processing [5] |

In the pursuit of solving recovery issues in accuracy testing research, a foundational step is the rigorous validation of predictive models. A critical and often underestimated source of error stems from improper data handling during model development. This guide addresses the core concepts of data splitting—using training, validation, and test sets—to provide researchers and scientists in drug development with clear protocols to avoid common pitfalls, obtain unbiased performance estimates, and ensure their models generalize reliably to new, unseen data.

FAQs & Troubleshooting Guides

FAQ 1: What is the fundamental difference between training, validation, and test sets?

These three datasets serve distinct purposes in the machine learning pipeline to prevent overfitting and provide an honest assessment of a model's performance [8] [9].

- Training Set: This is the sample of data used to fit the model [10]. The model learns the underlying patterns and relationships from this data by adjusting its internal parameters (weights). It is the primary "learning material" for the algorithm [9].

- Validation Set: This is a separate sample of data used to provide an unbiased evaluation of a model fit on the training dataset while tuning the model's hyperparameters [10]. It acts as a checkpoint during development to assess how well the model is generalizing and to select the best performing model from multiple candidates. It is crucial for tasks like early stopping to halt training before overfitting occurs [8].

- Test Set: This is a separate sample of data used to provide a final, unbiased evaluation of a fully-specified classifier [10]. It is used only once, after the model development and hyperparameter tuning are completely finished, to estimate the model's real-world performance on truly unseen data [9].

The table below summarizes the key differences:

| Feature | Training Set | Validation Set | Test Set |
|---|---|---|---|
| Purpose | Model learning | Model tuning & hyperparameter optimization [11] [9] | Final model evaluation [8] |
| Used in Phase | Model training | Model validation | Final testing |
| Impact on Model | Directly used to learn parameters | Indirectly used to guide tuning [9] | Never used during training or tuning [9] |
| Common Pitfalls | Overfitting if too small or overused [9] | Overfitting if used excessively for tuning [11] | Data leakage if used before final evaluation [12] |

FAQ 2: Why is a separate test set necessary if I already have a validation set?

The validation set is used repeatedly during the model development cycle to tune hyperparameters and select the best model. Through this process, the model indirectly "learns" from the validation set, as you are making decisions based on its performance. Consequently, the model may become overfitted to the validation set, and its performance on that set becomes an overoptimistic estimate of its true generalization ability [13] [10].

The test set, kept completely untouched and unseen until the very end, acts as a simulation of real-world data. It provides a single, unbiased estimate of the model's skill, confirming that the model can perform well on genuinely new data and has not been over-optimized for the validation set [9] [12].

Troubleshooting Guide: My model performs well on the validation set but poorly on the test set. What went wrong?

This is a classic sign of overfitting. It usually means either that held-out information leaked into training or preprocessing, which inflates the validation score, or that the model was tuned too specifically to the validation set [13]. Work through the diagnoses and solutions below.

Diagnosis and Solutions:

- Problem: Data Leakage. Information from held-out data contaminated the training process [12] [14].

  - Solution: Ensure the test set is kept completely separate. All data preprocessing (e.g., normalization, imputation) and feature selection must be fit on the training data and then applied to the validation and test sets, not performed on the entire dataset before splitting [12].

- Problem: Overfitting to the Validation Set. The model's hyperparameters were tuned too aggressively based on the validation set performance [13].

  - Solution: Limit how many tuning rounds are scored against a single validation set, or tune with cross-validation (nested cross-validation for small datasets) so that decisions do not hinge on one fixed split.

- Problem: Non-representative Validation Set. The validation set is too small or does not follow the same probability distribution as the training and test data, leading to unreliable feedback during tuning [8] [13].

  - Solution: Use stratified splitting so class proportions match across partitions, and enlarge the validation set or switch to k-fold cross-validation when it is too small to give stable estimates.
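To make the leakage point concrete, here is a minimal sketch of fitting a preprocessing step on the training data only and then applying it to held-out data. It uses simple standardization written with the standard library; `fit_scaler` and `transform` are hypothetical helpers, and the numbers are illustrative.

```python
from statistics import mean, pstdev


def fit_scaler(train_values):
    """Learn mean and standard deviation from the TRAINING data only."""
    mu = mean(train_values)
    sigma = pstdev(train_values) or 1.0  # guard against zero variance
    return mu, sigma


def transform(values, scaler):
    """Apply previously learned statistics; nothing is re-estimated here."""
    mu, sigma = scaler
    return [(v - mu) / sigma for v in values]


train = [1.0, 2.0, 3.0, 4.0, 5.0]
test = [10.0, 12.0]

scaler = fit_scaler(train)               # statistics come from train alone
train_scaled = transform(train, scaler)
test_scaled = transform(test, scaler)    # test data never influences mu or sigma

# The leaky alternative would be fit_scaler(train + test), which lets held-out
# values shape the features the model is trained on.
print(scaler, test_scaled)
```

The same pattern applies to imputation and feature selection: estimate on the training partition, then apply unchanged to validation and test data.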

FAQ 3: How should I split my data between training, validation, and test sets?

There is no universally optimal split ratio; it depends on the total size and characteristics of your dataset [8]. The following table outlines common strategies and when to use them.

| Scenario | Recommended Split | Rationale & Protocols |
|---|---|---|
| Large Dataset (n > 10,000) | 70% Training / 15% Validation / 15% Test or 80/10/10 [11] [9] | With abundant data, even a small validation/test set is large enough to provide reliable performance estimates. More data for training generally leads to better models. |
| Medium Dataset (n ~ 1,000) | 60% Training / 20% Validation / 20% Test [9] | A balanced split ensures sufficient data for both training a robust model and obtaining reasonably stable evaluation metrics on the hold-out sets. |
| Small Dataset (n < 1,000) | Use Nested Cross-Validation (CV) [15] [17] | When data is limited, dedicating a fixed portion to a hold-out test set is inefficient and can lead to high variance in performance estimates. Nested CV uses all data for both training and testing in a structured way. |
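A 70/15/15 split like the one in the table could be sketched as follows. The example also stratifies by class label so that each partition keeps the same case/control ratio; the function name, the labels, and the dataset size are assumptions chosen for illustration.

```python
import random
from collections import defaultdict


def stratified_split(labels, train_frac=0.70, val_frac=0.15, seed=0):
    """Return (train, val, test) index lists that preserve class proportions."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)  # fixed seed so the split is reproducible
    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_train = round(len(indices) * train_frac)
        n_val = round(len(indices) * val_frac)
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]   # remainder goes to the test set
    return train, val, test


# Hypothetical imbalanced dataset: 10% cases, 90% controls.
labels = ["case"] * 2000 + ["control"] * 18000
train, val, test = stratified_split(labels)

for name, part in (("train", train), ("val", val), ("test", test)):
    share = sum(labels[i] == "case" for i in part) / len(part)
    print(f"{name}: n={len(part)}, case share={share:.2f}")
```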

Experimental Protocol: Nested Cross-Validation for Small Datasets

Nested cross-validation is a gold-standard method for obtaining an unbiased performance estimate when you also need to tune hyperparameters on a small dataset [15]. It consists of two layers of cross-validation:

- Outer Loop (Performance Estimation): The data is split into k folds (e.g., 5 or 10). Each fold takes a turn being the test set.

- Inner Loop (Hyperparameter Tuning): For each iteration of the outer loop, the remaining k-1 folds (the training set) are used to perform another k-fold cross-validation. This inner loop is used to tune the model's hyperparameters.

- Final Evaluation: A model is trained on all k-1 outer training folds using the best hyperparameters found in the inner loop and is then evaluated on the held-out outer test fold.

- The process is repeated for each of the k outer folds, resulting in k performance estimates, which are then averaged to produce a final, robust estimate of the model's generalization error [15].
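The two loops can be sketched with the standard library alone. This is an illustrative toy, not a recommended implementation: the model is a one-feature k-nearest-neighbour classifier, the data are synthetic, the inner loop uses 3 folds rather than matching the outer k, and every function name here is hypothetical.

```python
import random
from statistics import mean


def k_folds(indices, k):
    """Split a list of indices into k roughly equal folds."""
    return [indices[i::k] for i in range(k)]


def knn_predict(train, x, n_neighbors):
    """Majority vote among the closest training points (single feature)."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:n_neighbors]
    positives = sum(label for _, label in nearest)
    return 1 if 2 * positives > len(nearest) else 0


def accuracy(train, test, n_neighbors):
    hits = sum(knn_predict(train, x, n_neighbors) == y for x, y in test)
    return hits / len(test)


def cv_score(data, folds, n_neighbors):
    """Plain k-fold CV score for one hyperparameter value."""
    scores = []
    for i, held_out in enumerate(folds):
        fit_idx = [j for f in folds[:i] + folds[i + 1:] for j in f]
        scores.append(accuracy([data[j] for j in fit_idx],
                               [data[j] for j in held_out], n_neighbors))
    return mean(scores)


def nested_cv(data, candidates=(1, 3, 5, 7), outer_k=5, inner_k=3, seed=0):
    indices = list(range(len(data)))
    random.Random(seed).shuffle(indices)
    outer_folds = k_folds(indices, outer_k)
    outer_scores = []
    for i, test_idx in enumerate(outer_folds):
        train_idx = [j for f in outer_folds[:i] + outer_folds[i + 1:] for j in f]
        # Inner loop: tune the hyperparameter using outer-training data only.
        inner_folds = k_folds(train_idx, inner_k)
        best = max(candidates, key=lambda c: cv_score(data, inner_folds, c))
        # Refit on all outer-training data, then score once on the outer test fold.
        train = [data[j] for j in train_idx]
        test = [data[j] for j in test_idx]
        outer_scores.append(accuracy(train, test, best))
    return mean(outer_scores), outer_scores


# Synthetic two-class data: class 1 is shifted by two standard deviations.
rng = random.Random(42)
data = [(rng.gauss(label * 2.0, 1.0), label) for label in [0, 1] * 30]
estimate, fold_scores = nested_cv(data)
print(f"nested CV accuracy estimate: {estimate:.2f} from {len(fold_scores)} outer folds")
```

The key property is that the outer test fold is never seen while the hyperparameter is chosen, so the averaged outer score is not inflated by tuning.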

The Scientist's Toolkit: Essential Reagents & Materials

This table details key methodological "reagents" for robust model development and validation.

| Item | Function & Explanation |
|---|---|
| Stratified Splitting | A data splitting method that ensures the relative class frequencies (e.g., case vs. control) are preserved in the training, validation, and test sets. This is crucial for imbalanced datasets common in medical research [13] [9]. |
| k-Fold Cross-Validation | A resampling technique used for performance estimation and/or hyperparameter tuning. It divides the data into k subsets (folds). The model is trained on k-1 folds and validated on the remaining fold, repeating the process k times. The results are averaged to reduce the variance of the estimate [13] [14]. |
| Nested Cross-Validation | A protocol that combines two layers of cross-validation to rigorously separate hyperparameter tuning from model evaluation. It is the recommended method for obtaining an almost unbiased performance estimate when dealing with small datasets [13] [15]. |
| Learning Curves | A diagnostic plot with training set size on the x-axis and model performance (e.g., error) on the y-axis. It helps identify whether a model is suffering from high bias (underfitting) or high variance (overfitting), and can inform decisions about whether collecting more data would be beneficial [17]. |
| Subject-Wise Splitting | A critical splitting strategy for data with multiple records per subject (e.g., longitudinal studies). It ensures all records from a single subject are placed in the same partition (training, validation, or test) to prevent optimistic bias from the model "recognizing" a patient rather than learning a generalizable pattern [15]. |
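Subject-wise splitting is easy to get wrong when records are shuffled row by row, so a small sketch may help. The record schema (a `subject_id` key), the helper name, and the 20% test fraction are assumptions for illustration.

```python
import random


def subject_wise_split(records, test_frac=0.2, seed=0):
    """Split so that all records of a subject land in the same partition."""
    subjects = sorted({record["subject_id"] for record in records})
    random.Random(seed).shuffle(subjects)
    n_test = max(1, round(len(subjects) * test_frac))
    test_subjects = set(subjects[:n_test])

    train = [r for r in records if r["subject_id"] not in test_subjects]
    test = [r for r in records if r["subject_id"] in test_subjects]
    return train, test


# Hypothetical longitudinal data: 10 patients with 3 visits each.
records = [{"subject_id": f"P{p:02d}", "visit": v} for p in range(10) for v in range(3)]
train, test = subject_wise_split(records)

train_ids = {r["subject_id"] for r in train}
test_ids = {r["subject_id"] for r in test}
print(f"train records={len(train)}, test records={len(test)}, shared subjects={train_ids & test_ids}")
```

Splitting on the subject identifier rather than on rows is what prevents the same patient from appearing on both sides of the split.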

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Why does my AI model perform well in validation but fail to deliver measurable business value after deployment?

A: This common issue, often called the "deployment gap," typically stems from three root causes:

- Misaligned Success Metrics: The model's accuracy metric (e.g., 95% accuracy) may not be tied to a concrete business outcome (e.g., reducing customer churn by 15%) [18].

- Data Drift: The data the model was trained and validated on does not match the real-world, live data it encounters in production, leading to degraded performance [18].

- Lack of Actionability: A model can be highly accurate but useless if there is no business process in place to act on its predictions. For example, an accurate customer churn model provides no value if the marketing team has no plan to retain those customers [18].

Q2: What are the primary reasons clinical drug development fails after promising preclinical results?

A: About 90% of drug candidates that enter clinical trials fail to gain approval. The top reasons for this failure are summarized below [4] [19]:

Table: Primary Causes of Clinical Drug Development Failure

| Cause of Failure | Approximate Percentage of Failures | Description |
|---|---|---|
| Lack of Clinical Efficacy | 40-50% | The drug does not work effectively in human patients despite promising preclinical data [4]. |
| Unmanageable Toxicity | 30% | The drug exhibits safety issues or toxic side effects in humans that were not predicted by animal models [4]. |
| Poor Drug-Like Properties | 10-15% | Issues with pharmacokinetics, such as absorption, distribution, metabolism, or excretion (ADME) [4]. |
| Commercial/Strategic Factors | ~10% | Lack of commercial need or poor strategic planning [4]. |

Q3: How can AI assistance sometimes lead to worse performance than unaided human experts?

A: Studies in high-stakes fields like healthcare have found a double-edged sword effect. AI tools can create hidden vulnerabilities in human workflows [20]:

- When the AI's predictions are correct, human performance can improve by 53-67% compared to working alone.

- However, when the AI's predictions are misleading or wrong, human performance can degrade dramatically, becoming 96% to 120% worse than unaided performance [20].

- This occurs because AI assistance can unconsciously change how experts think and process information, making them susceptible to AI mistakes without realizing it [20].

Q4: Our AI project is underway but showing signs of trouble. What recovery strategies can we employ?

A: If your AI project is faltering, consider these evidence-based recovery tactics [18]:

- Re-evaluate and Re-scope: Break down a large, ambiguous goal into a smaller, measurable objective. For example, instead of "automate customer service," target "auto-resolve 40% of password reset requests." [18]

- Conduct a Full Data Audit: Poor data quality is a common failure point. Audit your data pipelines for accuracy, completeness, and potential biases [18].

- Implement MLOps Practices: Adopt Machine Learning Operations (MLOps) to automate model retraining, monitor for performance drift, and manage model versions [18].

- Roll Out in Phases: Deploy the AI to a small team or customer segment first. Use their feedback and performance metrics to refine the model before a full-scale rollout [18].

Troubleshooting Guides

Guide 1: Troubleshooting AI Model Performance Drift

Symptoms: Model accuracy in production is declining over time, or user complaints about irrelevant outputs are increasing.

Diagnostic Steps:

- Verify Data Integrity: Check for sudden changes or anomalies in the input data feed.

- Monitor for Drift: Implement statistical tests to continuously compare live input data with the model's original training data distribution.

- Re-evaluate Business KPIs: Confirm that the model's outputs are still aligned with the current business goals and processes.
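One statistical test that could serve the "monitor for drift" step is the two-sample Kolmogorov-Smirnov statistic, which measures the largest gap between the empirical distributions of a feature in training data and in live data. The sketch below is one possible choice, not a method prescribed by the cited source; the alert threshold and the data are hypothetical.

```python
from bisect import bisect_right


def ks_statistic(reference, live):
    """Largest absolute gap between the two empirical CDFs (0 = identical, 1 = disjoint)."""
    ref, cur = sorted(reference), sorted(live)
    gap = 0.0
    for x in ref + cur:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_cur = bisect_right(cur, x) / len(cur)
        gap = max(gap, abs(cdf_ref - cdf_cur))
    return gap


DRIFT_THRESHOLD = 0.2  # assumed alert level; tune per feature and sample size

training_feature = [float(i) for i in range(100)]   # values seen at training time
live_stable = [i + 0.5 for i in range(100)]          # nearly the same distribution
live_shifted = [i + 50.0 for i in range(100)]        # distribution moved upward

stable_gap = ks_statistic(training_feature, live_stable)
shifted_gap = ks_statistic(training_feature, live_shifted)
print(f"stable gap={stable_gap:.2f}, shifted gap={shifted_gap:.2f}")
print("drift alert:", shifted_gap > DRIFT_THRESHOLD)
```

In practice the check would run per feature on a schedule, with alerts feeding the retraining steps in the resolution protocol.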

Resolution Protocol:

- Retrain the Model: Feed the model new, representative data collected from the production environment.

- Fine-tune or Rebuild: If retraining is insufficient, the model may need fine-tuning or a complete rebuild using an updated dataset.

- Enhance Governance: Establish a continuous monitoring and retraining schedule to prevent future drift.

Guide 2: Troubleshooting Preclinical-to-Clinical Translation Failure

Symptoms: A drug candidate shows strong efficacy in animal models but fails in human clinical trials due to lack of efficacy or unexpected toxicity.

Diagnostic Steps:

- Inter-species Validation: Critically assess whether the animal model accurately recapitulates the human disease pathophysiology. Many models do not [19] [21].

- Analyze Tissue Exposure & Selectivity (STR): Review data on whether the drug accumulates in the intended human tissues at the required concentration. Over-reliance on potency (SAR) while ignoring tissue exposure is a major cause of failure [4].

- Audit Biomarker Relevance: Determine if the biomarkers used to predict efficacy in animals are relevant and predictive in humans.

Resolution Protocol:

- Adopt a STAR Framework: Use Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) for candidate selection. This classifies drugs based on both potency and tissue exposure, helping to balance clinical dose, efficacy, and toxicity [4].

- Incorporate Human-Relevant Models: Integrate human-based models like Induced Pluripotent Stem Cells (iPSCs) into the preclinical workflow to better predict human responses [21].

- Leverage AI and Machine Learning: Use AI platforms to gain deeper insights from complex cellular data and patient-based information, improving target identification and candidate selection [21].

Experimental Protocols & Methodologies

Protocol 1: Joint Activity Testing for Human-AI Collaboration

Purpose: To evaluate how an AI tool impacts human decision-making across a range of scenarios, especially in safety-critical settings. This method reveals hidden vulnerabilities that standard AI-only testing misses [20].

Methodology:

- Participant Selection: Recruit domain experts (e.g., nurses, engineers).

- Scenario Design: Create a set of historical or simulated cases that represent a spectrum of challenges, including scenarios where the AI is known to perform well, mediocrely, and poorly.

- Testing Conditions: Each participant reviews cases under different conditions:

  - Condition A: No AI assistance.

  - Condition B: With AI-generated predictions (e.g., a risk score).

  - Condition C: With AI-generated data annotations.

  - Condition D: With both predictions and annotations.

- Data Collection: Measure the accuracy and quality of the experts' decisions in each condition.

- Analysis: Analyze results separately for cases of strong, mediocre, and poor AI performance. Do not average results, as this can mask rare but catastrophic failures [20].
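The analysis step can be illustrated with a small tabulation that keeps results separated by testing condition and by AI performance level. The trial data below are invented solely to show how an overall average can hide a failure mode; the tuple layout and function name are assumptions.

```python
from collections import defaultdict


def accuracy_by_stratum(trials):
    """Expert accuracy per (condition, ai_quality) cell.

    trials: iterable of (condition, ai_quality, expert_was_correct) tuples.
    """
    tally = defaultdict(lambda: [0, 0])  # cell -> [correct, total]
    for condition, ai_quality, correct in trials:
        cell = tally[(condition, ai_quality)]
        cell[0] += int(correct)
        cell[1] += 1
    return {key: correct / total for key, (correct, total) in tally.items()}


# Invented results: with AI predictions, experts are perfect when the AI is
# strong and always wrong when the AI is poor.
trials = [
    ("A_no_ai", "strong", True), ("A_no_ai", "strong", False),
    ("A_no_ai", "poor", True), ("A_no_ai", "poor", False),
    ("B_prediction", "strong", True), ("B_prediction", "strong", True),
    ("B_prediction", "poor", False), ("B_prediction", "poor", False),
]

rates = accuracy_by_stratum(trials)
for key in sorted(rates):
    print(key, rates[key])
```

Averaged over all cases, conditions A and B both score 0.5 here, which is exactly the masking effect the protocol warns about.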

Protocol 2: Structure-Tissue Exposure/Selectivity-Activity Relationship (STAR) Analysis

Purpose: To improve drug candidate selection and balance clinical dose, efficacy, and toxicity by systematically evaluating both a compound's potency and its tissue exposure profile [4].

Methodology:

- Compound Potency & Specificity Assessment: Determine the drug's affinity (Ki or IC50) and selectivity for its intended molecular target using structure-activity relationship (SAR) studies [4].

- Tissue Exposure & Selectivity Profiling: Conduct quantitative whole-body autoradiography or mass spectrometry imaging to measure the drug's concentration in both disease-relevant tissues and vital normal tissues over time. This establishes its structure-tissue exposure/selectivity relationship (STR) [4].

- STAR Classification: Classify drug candidates into one of four categories based on the integrated data:

  - Class I: High potency/specificity & high tissue exposure/selectivity. (Ideal candidate, requires low dose).

  - Class II: High potency/specificity & low tissue exposure/selectivity. (High dose needed, high toxicity risk).

  - Class III: Adequate potency & high tissue exposure/selectivity. (Often overlooked; low dose, manageable toxicity).

  - Class IV: Low potency & low tissue exposure/selectivity. (Terminate early) [4].

- Candidate Selection: Prioritize Class I and III drugs for clinical development, as they are more likely to achieve a favorable efficacy-toxicity balance [4].
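The four-class rule above maps naturally onto a lookup table. The sketch below encodes only the combinations listed in the classification; how a measured Ki, IC50, or tissue concentration is binned into "high", "adequate", or "low" is not specified here and is left as an assumption outside the code. Names such as `classify_star` are hypothetical.

```python
# (potency/specificity, tissue exposure/selectivity) -> (class, recommendation)
STAR_CLASSES = {
    ("high", "high"): ("I", "ideal candidate; low dose; advance"),
    ("high", "low"): ("II", "high dose needed; high toxicity risk; evaluate cautiously"),
    ("adequate", "high"): ("III", "often overlooked; low dose; manageable toxicity"),
    ("low", "low"): ("IV", "terminate early"),
}


def classify_star(potency: str, tissue_exposure: str):
    """Return (class, recommendation), or 'unclassified' for unlisted combinations."""
    key = (potency.strip().lower(), tissue_exposure.strip().lower())
    return STAR_CLASSES.get(key, ("unclassified", "combination not covered by the four classes"))


def prioritized(candidates):
    """Keep candidates falling in Class I or III, as the protocol recommends."""
    return [name for name, potency, exposure in candidates
            if classify_star(potency, exposure)[0] in {"I", "III"}]


# Hypothetical candidate list.
candidates = [
    ("cmpd-1", "High", "High"),
    ("cmpd-2", "High", "Low"),
    ("cmpd-3", "Adequate", "High"),
    ("cmpd-4", "Low", "Low"),
]
print(prioritized(candidates))
```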

Data Summaries

Table: Quantitative Analysis of AI Project Failures and Recovery

| Metric | Statistic | Source / Context |
|---|---|---|
| AI Models Reaching Production | Only ~13% | Industry surveys indicate 87% of AI models never make it to production [18]. |
| Generating Measurable Value | Even fewer than 13% | Fewer than the models that reach production actually demonstrate clear business value [18]. |
| Primary Cause of AI Failure | Poor ROI calculation & unrealistic expectations | Failure is rarely due to bad technology, but more often flawed expectations and planning [18]. |
| Key Recovery Tactic Success | Phased Rollout | Piloting in a small department first allows for validation and refinement without major resource commitment [18]. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Recovery-Focused Research

| Tool / Technology | Function | Application in Recovery Research |
|---|---|---|
| Induced Pluripotent Stem Cells (iPSCs) | Human-derived cells differentiated into disease-relevant cell types. | Creates more human-relevant disease models for preclinical testing, helping to bridge the translation gap from animal models to human trials [21]. |
| AI-Driven Phenotypic Screening Platforms | Uses machine learning to analyze complex cellular behaviors and images. | Provides deeper insights into disease mechanisms and drug effects, improving target identification and predicting off-target effects [21]. |
| MLOps (Machine Learning Operations) Platforms | Automated pipelines for model training, deployment, monitoring, and retraining. | Establishes discipline in AI projects, enabling detection of performance drift and facilitating model recovery in production environments [18]. |
| Structure-Tissue Exposure/Selectivity Relationship (STR) Analysis | Quantitative imaging/mass spectrometry to measure drug concentration in tissues. | Critical for the STAR framework; helps select drug candidates with a higher likelihood of clinical success by optimizing tissue-specific delivery [4]. |

Visual Workflows and Diagrams

STAR Framework for Drug Candidate Selection

Three-Phase AI Recovery & Implementation

Definitions and Core Concepts

What is a Recovery Time Objective (RTO)?

The Recovery Time Objective (RTO) is the maximum acceptable amount of time that an application, system, or business process can be offline after a failure or disaster before the consequences become unacceptable [22] [23]. It answers the question: "How long can we afford to be down?"

RTO is a targeted duration for restoration, guiding the selection of disaster recovery technologies and strategies to resume normal business operations promptly [24]. It focuses on minimizing downtime and its associated operational and financial impacts.

What is a Recovery Point Objective (RPO)?

The Recovery Point Objective (RPO) is the maximum acceptable amount of data, measured in time, that an organization can tolerate losing after a disruptive event [25] [26] [27]. It answers the question: "How much data can we afford to lose?"

RPO determines the maximum age of files in backup storage needed for recovery and directly dictates the required frequency of data backups [25] [27]. It is concerned with data integrity and loss prevention.

The table below summarizes the key differences between these two critical metrics.

| Aspect | Recovery Time Objective (RTO) | Recovery Point Objective (RPO) |
|---|---|---|
| Primary Focus | Downtime duration & service availability [26] [27] | Data loss & data integrity [26] [27] |
| Core Question | "How long does it take to recover operations?" [22] | "How much data is lost during a recovery?" [25] [26] |
| Governs | Disaster recovery technologies & restoration speed [24] | Data backup frequency & strategy [25] [27] |
| Measured In | Time to restore systems and applications (e.g., minutes, hours) [22] | Time of data lost (e.g., minutes, hours of data) [25] |
| Key Driver | Business process criticality & downtime costs [28] | Data criticality & data change frequency [26] |

Diagram: The distinct but complementary timelines of RTO and RPO following a disruption.

Calculation and Methodology

Determining RTO and RPO

There is no single standard formula for calculating RTO and RPO, as they are unique to each organization and system [29]. The process is typically conducted during a Business Impact Analysis (BIA) and involves a systematic evaluation of operational and financial risks [29].

Key Steps for Calculation:

- Create a Comprehensive Inventory: Identify all systems, business-critical applications, and data [24].

- Evaluate Criticality and Impact: Assess the value and loss tolerance for each service, considering:

- Define Tolerances: Establish the maximum tolerable downtime (MTD) for RTO and the maximum tolerable data loss for RPO for each system [23].

- Align with Risk Appetite: Ensure the defined objectives align with the organization's overall risk tolerance [28].

A key consideration is that (RTO + RPO) < Maximum Tolerable Downtime (MTD). It is recommended to keep their sum at less than half or a third of the MTD to account for potential complications during recovery, such as a failed restore attempt [23].
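The relationship between RTO, RPO, and MTD can be checked mechanically once the three durations are agreed. The sketch below tests both the hard constraint and the recommended safety margin of one half (or one third) of the MTD; the function name and the example durations are hypothetical.

```python
from datetime import timedelta


def check_objectives(rto: timedelta, rpo: timedelta, mtd: timedelta, safety_divisor: int = 2):
    """Check (RTO + RPO) against the MTD and against MTD / safety_divisor."""
    combined = rto + rpo
    return {
        "combined": combined,
        "within_mtd": combined < mtd,                      # hard requirement
        "within_margin": combined < mtd / safety_divisor,  # recommended headroom
    }


# Hypothetical system: 4 h RTO, 1 h RPO, 8 h maximum tolerable downtime.
result = check_objectives(
    rto=timedelta(hours=4),
    rpo=timedelta(hours=1),
    mtd=timedelta(hours=8),
)
print(result)
```

Here the objectives satisfy the hard constraint (5 h < 8 h) but not the half-of-MTD margin (5 h is not below 4 h), which would leave little room for a failed restore attempt.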

Industry-Specific Tiers and Examples

RTO and RPO are not one-size-fits-all. Different systems within an organization will have different objectives based on their criticality. The following table provides common tier intervals and examples relevant to research and healthcare environments.

| Tier / RPO Interval | System Criticality | RPO & RTO Examples | Common Technologies |
|---|---|---|---|
| 0 - 1 Hour (Tier 0) | Mission-Critical | RPO: Near-zero for Electronic Health Records (EHR), payment transactions, clinical trial data capture systems [26] [24]. RTO: Near-zero for patient information systems, diagnostic imaging services [24]. | Continuous data replication, real-time backup, failover systems [22] [25] [27]. |
| 1 - 4 Hours (Tier 1) | Semi-Critical | RPO: 1-4 hours for file servers, CRM data, customer chat logs [26] [27]. RTO: ~4 hours for email applications, telemedicine platforms [28] [24]. | Frequent snapshots, near-continuous data protection [25]. |
| 4 - 12 Hours (Tier 2) | Important | RPO: 4-12 hours for marketing data, sales information [26] [27]. RTO: 1 day for CRMs, administrative systems [28] [24]. | Scheduled daily backups (incremental/differential) [30]. |
| 13 - 24+ Hours (Tier 3) | Low Priority | RPO: 13-24 hours for historical data, purchase orders, HR records [26] [27]. RTO: 1-2 days for finance systems, archival data [28]. | Tape archiving, less frequent cloud backups [30]. |

Diagram: Tiered approach to RPO and RTO based on system criticality. Fewer systems should be in the top, more costly tiers.

Troubleshooting and FAQs

Common Recovery Issues and Solutions

| Issue Scenario | Potential Cause | Corrective Action |

|---|---|---|

| Actual recovery time exceeds RTO. | Inadequate recovery strategy; insufficient testing; unexpected recovery complexity [23]. | Re-evaluate and upgrade disaster recovery technology (e.g., implement failover). Conduct regular disaster rehearsals to measure Recovery Time Actual (RTA) [22] [23] [29]. |

| Data loss after recovery exceeds RPO. | Backup frequency is too low; last backup was corrupted or incomplete [28]. | Increase backup frequency to match RPO. Implement backup integrity checks (e.g., automatic verification of backup recoverability) [30]. |

| Backup process is consuming excessive resources and impacting system performance. | Backups are too large or scheduled during peak operational hours. | Switch to incremental backups instead of full backups. Schedule backups during off-peak hours. Use source global deduplication to reduce resource load [22]. |

| Backup is corrupted and unusable for recovery. | Media degradation; software error; ransomware encryption. | Maintain multiple backup sets (3-2-1 rule: 3 copies, on 2 different media, 1 offsite). Use immutable storage to protect against ransomware. Regularly test restore procedures from different recovery points [30] [23]. |

Frequently Asked Questions (FAQs)

1. Can RTO and RPO be zero? Yes, this is known as "zero RTO/RPO," but it is very costly to achieve [22] [27]. It requires continuous data replication and instantaneous failover capabilities, which may only be justified for the most critical systems, such as those handling real-time financial transactions or directly supporting life-saving medical equipment [22] [27].

2. How do RTO and RPO relate to a Business Impact Analysis (BIA)? The BIA is the foundational process for determining RTO and RPO [29]. It identifies mission-critical business processes, predicts the consequences of disruption, and provides the operational and financial impact data needed to set realistic and business-aligned recovery objectives [29].

3. Why is it important to test RTO and RPO? Planned objectives (RTO/RPO) often differ from actual performance, measured as Recovery Time Actual (RTA) and Recovery Point Actual (RPA) [22] [25]. Only through regular testing, drills, and disaster rehearsals can you validate your recovery strategies, expose weaknesses, and ensure you can meet your targets during a real incident [23] [29].

4. How do compliance regulations affect RPO and RTO? Regulations like HIPAA, GDPR, and PCI DSS often have implicit or explicit requirements for data availability and loss prevention [26] [30] [27]. These requirements can dictate maximum acceptable RPOs and RTOs for protected data types, such as patient health information or payment card details, to ensure contingency plans are adequate [26] [27].

The Researcher's Toolkit: Essential Solutions for Data Recovery

For researchers and scientists, ensuring the integrity and availability of experimental data is paramount. The following table outlines key technologies and methodologies that form the foundation of a robust data recovery strategy.

| Tool / Solution | Primary Function | Relevance to Research Data |

|---|---|---|

| Backup Integrity Checks | Automatically verifies the recoverability and consistency of backup data [30]. | Crucial for validating that complex, irreplaceable datasets (e.g., genomic sequences, longitudinal study data) are not corrupted and can be restored accurately. |

| Immutable Storage | Creates a write-once-read-many (WORM) copy of data that cannot be altered or deleted for a set period [30]. | Protects primary research data and backups from tampering, accidental deletion, or ransomware encryption, which is critical for maintaining data integrity for publication and regulatory submissions. |

| Snapshot-Based Backups | Captures the state of a system, database, or file volume at a specific point in time [30]. | Allows for rapid recovery to a known good state, such as before a software error corrupted an analysis or a failed experiment affected a dataset. |

| Failover Systems | Automatically switches to a redundant or standby system upon the failure of the primary system [22] [27]. | Maintains the availability of critical research applications and data collection systems (e.g., laboratory equipment monitoring), supporting a near-zero RTO. |

| Continuous Data Protection (CDP) | Continuously captures and replicates every data change to a secondary location [25]. | Enables a near-zero RPO for high-velocity data generation systems, ensuring minimal data loss from continuously running instruments or sensors. |

| Cloud Archiving | Securely stores data in an offsite cloud environment for long-term retention [30]. | A cost-effective solution for archiving large volumes of historical research data that must be retained for decades to meet grant, publication, or regulatory requirements (e.g., FDA) [30]. |

Diagram: A continuous cycle for developing and maintaining an effective recovery strategy.

Proven Data Splitting and Validation Methodologies for Accurate Performance Estimation

FAQs on Data Splitting for Accuracy Testing Recovery

Q1: My model performs well during validation but fails on real-world data. What is the most likely cause?

The most probable cause is information leakage or an inappropriate data split that does not reflect the real-world data distribution [31] [32]. This creates an over-optimistic performance estimation during testing. For models intended for Out-of-Distribution (OOD) scenarios, a standard random split is insufficient as it tests the model on data that is too similar to the training set [31]. To recover accuracy:

- For OOD Applications: Use similarity-aware splitting tools like DataSAIL that minimize similarity between training and test sets, providing a more realistic performance estimate [31].

- For Medicinal Chemistry: Employ time-split validation. If the compound order is unknown, use algorithms like SIMPD to generate splits that mimic the temporal property shifts of a real drug discovery project [33].

- Always Use a Blind Test Set: Permanently set aside a portion of data as a final test set, only used once to evaluate the fully tuned model [16] [34].

Q2: With a very small dataset, how can I reliably estimate model performance without a large hold-out test set?

With small datasets, relying on a single hold-out set (for example, 10% of the data) is unreliable because the estimate rests on very few samples. You should use resampling techniques that maximize data usage for both training and evaluation [16] [35].

- K-Fold Cross-Validation: This is a robust standard. It divides data into k folds, using k-1 for training and one for validation, repeating the process k times. A value of k=5 or k=10 is common. It provides a good balance between bias and variance [36] [35] [37].

- Leave-One-Out Cross-Validation (LOO-CV): Useful for very small datasets, LOO-CV uses a single sample as the validation set and the rest for training. This is computationally expensive but maximizes training data [16] [35].

- Bootstrapping: This method creates multiple training sets by randomly sampling the original data with replacement. Samples not selected in a given round (out-of-bag samples) are used for validation. Bootstrapping is excellent for estimating the uncertainty of your performance metrics [16] [37]. Studies have shown that bootstrapping can work better than cross-validation in many cases, with out-of-bootstrap estimates having more bias but less variance than corresponding CV estimates [35] [37].
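As an illustration of the bootstrapping option, the sketch below estimates accuracy on out-of-bag samples and reports a percentile interval. It assumes scikit-learn and NumPy are available. The synthetic dataset, the logistic regression model, and the choice of 200 resamples are placeholders, not recommendations from the cited studies, and no bias correction (such as the .632 bootstrap) is applied.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression

# Small synthetic dataset standing in for a real one (assumption)
X, y = make_classification(n_samples=60, n_features=8, random_state=0)

rng = np.random.default_rng(0)
n = len(y)
oob_scores = []

for _ in range(200):
    boot_idx = rng.integers(0, n, size=n)   # draw n indices with replacement
    oob_mask = np.ones(n, dtype=bool)
    oob_mask[boot_idx] = False              # True only for samples never drawn
    # Skip degenerate resamples (no out-of-bag data, or only one class drawn)
    if oob_mask.sum() == 0 or len(np.unique(y[boot_idx])) < 2:
        continue
    model = LogisticRegression(max_iter=1000).fit(X[boot_idx], y[boot_idx])
    oob_scores.append(model.score(X[oob_mask], y[oob_mask]))

oob_scores = np.array(oob_scores)
low, high = np.percentile(oob_scores, [2.5, 97.5])
print(f"OOB accuracy: {oob_scores.mean():.3f} (95% interval {low:.3f} to {high:.3f})")
```

The width of the interval is the useful output here: it shows how much the metric moves when the training data composition changes.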

Q3: Why did my model's segmentation performance collapse when I switched from a file-based to a pixel-randomized data split?

This collapse is due to the destruction of data structure. In tasks like image segmentation, the spatial relationship between neighboring pixels is critical [38]. A randomized split scatters these related pixels across training, validation, and test sets. The model learns to classify individual pixels in isolation but fails to learn the contextual patterns necessary for coherent segmentation [38]. To recover accuracy:

- Use Structured Splitting: Split the data in a way that preserves inherent structures, such as keeping all pixels from one image in the same split or ensuring all classes are represented in every training epoch [38].

- Problem-Specific Methods: For drug-target prediction (2D data), use methods that account for similarities across both dimensions (e.g., drug and target) to avoid information leakage [31].
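A structured split of the kind described above can be approximated with a group-aware splitter, where every pixel carries the identifier of its source image. The sketch below uses scikit-learn's `GroupKFold`. The toy feature array, the random labels, and the `image_id` variable are assumptions made for illustration.

```python
import numpy as np
from sklearn.model_selection import GroupKFold

rng = np.random.default_rng(0)
n_images, pixels_per_image = 6, 50

# One group label per pixel: the image it came from (hypothetical layout)
image_id = np.repeat(np.arange(n_images), pixels_per_image)
X = rng.normal(size=(n_images * pixels_per_image, 3))   # toy per-pixel features
y = rng.integers(0, 2, size=len(image_id))              # toy per-pixel labels

splitter = GroupKFold(n_splits=3)
folds = list(splitter.split(X, y, groups=image_id))

for train_idx, val_idx in folds:
    train_images = {int(i) for i in image_id[train_idx]}
    val_images = {int(i) for i in image_id[val_idx]}
    # No image contributes pixels to both sides of the split
    assert train_images.isdisjoint(val_images)
    print("validation images:", sorted(val_images))
```

The same pattern applies to any grouping variable, such as patient, plate, or batch.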

Comparative Analysis of Data Splitting Methods

The table below summarizes the core characteristics of common data splitting methods to guide your selection.

| Method | Core Principle | Key Parameters | Best-Suited For | Advantages | Disadvantages / Cautions |

|---|---|---|---|---|---|

| Hold-Out (80/10/10) | Single random partition into training, validation, and test sets [36] [34]. | Split ratios (e.g., 80/10/10). | Large, balanced datasets; initial model prototyping [34]. | Computationally fast and simple to implement. | High variance in error estimate; risky with small datasets [36]. |

| K-Fold Cross-Validation | Data divided into k folds; each fold serves as a validation set once [36] [35]. | Number of folds (k). | Model selection and hyperparameter tuning with small to medium datasets [36] [35]. | Reduces variance of error estimate compared to hold-out; makes efficient use of data. | Can be computationally expensive; stratified folds are crucial for imbalanced data [34]. |

| Leave-One-Out CV | A special case of k-fold where k = number of samples [16] [35]. | None. | Very small datasets [35]. | Maximizes training data; almost unbiased estimate. | High computational cost; high variance as an estimator [35] [37]. |

| Bootstrapping | Creates multiple datasets by random sampling with replacement [16] [37]. | Number of bootstrap samples. | Estimating parameter uncertainty; ensemble methods (bagging) [37]. | Good for estimating uncertainty and stability of metrics. | Can produce over-optimistic estimates; requires bias correction (e.g., .632 bootstrap) for error estimation [16] [37]. |

| Time-Split / SIMPD | Splits data based on temporal order or simulated temporal property shifts [33]. | Date column; property objectives (for SIMPD). | Medicinal chemistry projects; any data with temporal drift. | Gold standard for prospective validation; mimics real-world use. | Requires timestamped data or project data for simulation [33]. |

| Similarity-Aware (DataSAIL) | Splits data to minimize similarity between training and test sets [31]. | Similarity measure; dimensionality (1D/2D). | Realistic OOD evaluation for biological data (proteins, molecules). | Provides realistic OOD performance estimates. | More complex to set up; may require domain-specific similarity metrics [31]. |

Experimental Protocols for Data Splitting

Protocol 1: Implementing k-Fold Cross-Validation with Stratification

This protocol is essential for obtaining a robust performance estimate on a classification dataset, especially when it is imbalanced [34].

- Data Preparation: Load and preprocess your data. Ensure features and labels are separated.

- Stratified K-Fold Initialization: Import StratifiedKFold from sklearn.model_selection. Initialize it with the number of splits/folds (n_splits=5 or 10), shuffle=True, and a random state for reproducibility (random_state only takes effect when shuffling is enabled) [36].

- Model Training & Validation Loop: Iterate over each fold generated by the StratifiedKFold split.

- In each iteration, the original data is split into training and validation indices, preserving the percentage of samples for each class.

- Use the training indices to subset your data and train the model.

- Use the validation indices to subset the data and validate the model, storing the performance metric (e.g., accuracy, F1-score).

- Performance Aggregation: Calculate the mean and standard deviation of all stored validation scores. The mean represents the model's expected performance, while the standard deviation indicates its stability [36].
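The protocol above can be sketched in a few lines with scikit-learn. The imbalanced synthetic dataset, the logistic regression model, and the F1 metric are stand-ins chosen for illustration; substitute your own data, estimator, and metric.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import StratifiedKFold

# Data preparation: features X and labels y, roughly 85/15 class imbalance
X, y = make_classification(
    n_samples=300, n_features=10, weights=[0.85, 0.15], random_state=0
)

# Stratified k-fold initialization (shuffle=True so random_state is used)
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

# Training and validation loop
scores = []
for train_idx, val_idx in skf.split(X, y):
    model = LogisticRegression(max_iter=1000)
    model.fit(X[train_idx], y[train_idx])
    scores.append(f1_score(y[val_idx], model.predict(X[val_idx])))

# Performance aggregation
print(f"F1: {np.mean(scores):.3f} +/- {np.std(scores):.3f}")
```

A fresh model is created inside the loop so that no fitted state carries over between folds.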

Protocol 2: Generating a Realistic Split for Medicinal Chemistry using SIMPD

This protocol is for validating models intended for use in a lead optimization project where temporal drift is a key concern [33].

- Data Curation: Assemble a project-specific bioactivity dataset (e.g., pAC50 values) with associated compound structures. Apply necessary filters for data quality and reliability [33].

- SIMPD Algorithm Configuration: Define the multi-objective genetic algorithm's goals based on known temporal shifts in project data. Objectives typically include maximizing the difference in mean potency and other key molecular properties between the early (training) and late (test) sets [33].

- Split Generation: Run the SIMPD algorithm to partition the data into an 80% training set and a 20% test set. The algorithm will optimize the split to meet the configured property shift objectives.

- Model Validation: Train your model on the generated training set and evaluate its performance on the test set. This performance is a more realistic indicator of its utility in a prospective project setting compared to a random split [33].

Workflow and Relationship Diagrams

The following diagram illustrates the logical decision process for selecting an appropriate data splitting strategy based on your data and problem context.

The diagram below visualizes the mechanics of K-Fold Cross-Validation and Bootstrapping, two fundamental resampling methods.

| Tool / Resource | Type | Primary Function | Reference |

|---|---|---|---|

| Scikit-learn | Software Library | Provides implementations for train_test_split, KFold, StratifiedKFold, cross_val_score, and hyperparameter tuning with GridSearchCV. | [36] |

| DataSAIL | Python Package | Specialized tool for generating similarity-aware data splits for 1D and 2D biomedical data to minimize information leakage and enable realistic OOD evaluation. | [31] |

| SIMPD Algorithm | Algorithm/Code | Generates training/test splits for public bioactivity data that mimic the property shifts observed in real-world medicinal chemistry projects. | [33] |

| MixSim Model | Simulation Tool | Generates multivariate datasets with a known probability of misclassification, providing a controlled ground truth for comparing data splitting and modeling approaches. | [16] |

| Stratified Splitting | Methodology | A splitting technique that preserves the percentage of samples for each class in the training and validation/test sets, crucial for working with imbalanced datasets. | [34] |

Troubleshooting Guide: Resolving Common Experimental Issues

This guide addresses specific challenges you might encounter when implementing k-Fold Cross-Validation and Bootstrapped Latin Partitions to solve recovery issues in accuracy testing for pharmaceutical research.

FAQ 1: My model performs well during validation but fails in real-world deployment. What is the issue and how can I fix it?

- Problem: This indicates overfitting and an overly optimistic validation error, often because your test data was used during model tuning, causing information "leakage."

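- Solution: Keep a final hold-out test set entirely separate from tuning, and tune only by cross-validation on the training set. This is the same separation that nested cross-validation enforces.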

- Step 1: Split your data into training and a final hold-out test set. Do not use this test set for any tuning.

- Step 2: On the training set, perform k-fold cross-validation only to tune hyperparameters or select models.

- Step 3: Once the model is finalized, perform a single, final evaluation on the untouched hold-out test set to estimate the true generalization error [36].

- Diagram: The workflow below illustrates this protective data splitting strategy.
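In code, the three steps above might look like the following sketch. It assumes scikit-learn. The SVC model, the small parameter grid, and the 80/20 split are illustrative choices only.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.svm import SVC

X, y = make_classification(n_samples=400, n_features=12, random_state=0)

# Step 1: set aside a hold-out test set that is never used for tuning
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Step 2: tune hyperparameters by k-fold cross-validation on the training set only
search = GridSearchCV(
    SVC(),
    param_grid={"C": [0.1, 1, 10], "gamma": ["scale", 0.01]},
    cv=5,
)
search.fit(X_train, y_train)

# Step 3: a single final evaluation on the untouched hold-out set
test_accuracy = search.score(X_test, y_test)
print("best parameters:", search.best_params_)
print(f"cross-validated accuracy: {search.best_score_:.3f}")
print(f"hold-out accuracy: {test_accuracy:.3f}")
```

The cross-validated score from Step 2 is usually somewhat optimistic because it was used to pick the parameters. The hold-out score from Step 3 is the number to report.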

FAQ 2: My performance metrics have high variance across different data splits. How can I get a more stable and reliable estimate?

- Problem: A single k-fold split can be unstable, and a simple train/test split can give a misleading result if the split is not representative [39].

- Solution: Use Bootstrapped Latin Partitions.

- This technique combines the thoroughness of k-fold (every data point is used for validation once per partition) with the statistical power of bootstrapping (multiple random samples with replacement) [40] [41].

- The process is repeated many times (e.g., 100-1000 bootstrap samples), and the average prediction error is reported with a measure of precision like the standard deviation of prediction error (SDEP). This SDEP quantifies the precision of your performance estimate with respect to variations in the training data composition [40].

- Diagram: The following workflow details the Bootstrapped Latin Partitions procedure.

FAQ 3: When separating my data, one set ended up with a different class distribution. How do I prevent this bias?

- Problem: Random splitting can accidentally create training and prediction sets with different proportions of classes, profoundly biasing the model [40].

- Solution: Use stratified splitting.

- Most modern libraries, like scikit-learn, offer StratifiedKFold. This ensures that each fold is a good representative of the whole by preserving the percentage of samples for each class [36].

- The Bootstrapped Latin Partition method inherently addresses this by design, as it requires "the relative proportions of the class distributions are maintained between training and prediction sets" [40].

Experimental Protocols for Robust Accuracy Testing

Protocol: Standard k-Fold Cross-Validation

This protocol provides a reliable estimate of model performance while mitigating overfitting [39] [36].

- Objective: To evaluate the generalization performance of a predictive model and detect overfitting.

- Procedure:

- Shuffle your dataset randomly to avoid order effects.

- Split the data into k (e.g., 5 or 10) mutually exclusive folds of approximately equal size.

- For each fold i:

  - Use fold i as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train your model on the training set.

  - Validate the model on the validation set and record performance metrics (e.g., Accuracy, R², MSE).

- Calculate the average and standard deviation of the k performance metrics.

- Key Considerations:

Protocol: Bootstrapped Latin Partitions for High-Variance Data

This protocol is ideal for obtaining a statistically robust performance estimate with a measure of precision, especially valuable with smaller or highly variable datasets common in drug development [40] [41].

- Objective: To obtain a precise and statistically consistent estimate of prediction error and model stability.

- Procedure:

- Set Parameters: Define the number of bootstrap samples (B, e.g., 100-1000) and the number of partitions (P, e.g., 2 for a 50:50 split).

- For each bootstrap iteration b from 1 to B:

  - Create a bootstrap sample by randomly sampling from the original dataset with replacement (same size as the original dataset).

  - Partition this bootstrap sample into P subsets (Latin partitions), ensuring the relative class distributions are maintained in each subset.

  - For each partition p from 1 to P:

    - Use partition p as the validation set.

    - Use the remaining P-1 partitions as the training set.

    - Train the model on the training set.

    - Validate the model on the validation set and record the performance metric.

- Pool all validation results from all B × P models.

- Calculate the average prediction error (e.g., RMSEP) and its precision, the Standard Deviation of Prediction Error (SDEP), from the pooled results [40].
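The procedure above can be sketched as follows, using the steps exactly as listed rather than any reference implementation. It assumes scikit-learn and NumPy. The dataset, model, and B = 50 are placeholders. The spread is computed as the standard deviation of the pooled error rate across bootstraps, which is one reading of the SDEP and may differ in detail from the definition in [40]. Because sampling is done with replacement, copies of the same original sample can land in both the training and the validation partition, so treat the numbers as a rough stability indicator.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

X, y = make_classification(n_samples=120, n_features=8, random_state=0)

rng = np.random.default_rng(0)
B, P = 50, 2          # bootstrap iterations and partitions (P=2 gives a 50:50 split)
errors = []           # one pooled classification error rate per bootstrap

for b in range(B):
    # Bootstrap sample of the same size as the original data, with replacement
    idx = rng.integers(0, len(y), size=len(y))
    Xb, yb = X[idx], y[idx]

    # Stratified partitioning keeps class proportions similar in each partition
    skf = StratifiedKFold(n_splits=P, shuffle=True, random_state=b)
    wrong = 0
    for train_idx, val_idx in skf.split(Xb, yb):
        model = LogisticRegression(max_iter=1000).fit(Xb[train_idx], yb[train_idx])
        wrong += int((model.predict(Xb[val_idx]) != yb[val_idx]).sum())

    # Every sample in the bootstrap is predicted exactly once across the P partitions
    errors.append(wrong / len(yb))

errors = np.array(errors)
print(f"mean error: {errors.mean():.3f}")
print(f"spread across bootstraps: {errors.std(ddof=1):.3f}")
```

To avoid duplicated samples crossing the partition boundary, one variation is to repeat the random stratified partitioning of the original indices instead of resampling them.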

The following tables summarize key quantitative data for easy comparison of the techniques.

Table 1: Comparison of k-Fold CV and Bootstrapped Latin Partitions

| Feature | k-Fold Cross-Validation | Bootstrapped Latin Partitions |

|---|---|---|

| Primary Goal | Reliable performance estimation | Precise performance estimation with stability measure |

| Data Usage | Every data point used once for validation per k-fold run | Multiple random samples with replacement |

| Key Output | Average performance ± standard deviation across k folds | Average performance ± SDEP across many bootstraps |

| Computational Cost | Moderate (k model trainings) | High (B × P model trainings) |

| Advantages | Simple, efficient, maximizes data use [39] | Provides a measure of precision for the error estimate, more robust [40] |

| Ideal Use Case | Standard model evaluation and hyperparameter tuning | Final model validation, reporting results in publications, high-stakes accuracy testing |

Table 2: Common Performance Metrics for Accuracy Testing

| Metric | Formula | Interpretation in Accuracy Testing Context |

|---|---|---|

| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correctness of the model. A recovery metric for classification tasks. |

| Root Mean Square Error (RMSE) | √[ Σ(Ŷᵢ - Yᵢ)² / n ] | Measures the average prediction error magnitude. Sensitive to large errors. Key for calibration models [42]. |

| R-squared (R²) | 1 - [ Σ(Ŷᵢ - Yᵢ)² / Σ(Ȳ - Yᵢ)² ] | Proportion of variance in the response variable that is explained by the model. A recovery metric for regression. |

| Standard Deviation of Prediction Error (SDEP) | Standard deviation of the prediction errors across all bootstrap samples [40] | Quantifies the precision and reliability of your performance estimate. A lower SDEP indicates a more stable model. |
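As a worked example of the formulas in Table 2, the sketch below computes accuracy, RMSE, and R² in plain Python. All numbers are made up for illustration.

```python
import math


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """(TP + TN) / (TP + TN + FP + FN)."""
    return (tp + tn) / (tp + tn + fp + fn)


def rmse(y_true, y_pred) -> float:
    """Square root of the mean squared difference between prediction and truth."""
    n = len(y_true)
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / n)


def r_squared(y_true, y_pred) -> float:
    """1 minus residual sum of squares over total sum of squares."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((p - t) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((mean_y - t) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Made-up confusion matrix: 40 TP, 45 TN, 5 FP, 10 FN
print(accuracy(40, 45, 5, 10))        # 85 correct out of 100

# Made-up regression data: squared residuals are 0.25, 0.25, 0, 1 (sum 1.5)
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.5, 7.0, 10.0]
print(rmse(y_true, y_pred))           # sqrt(1.5 / 4)
print(r_squared(y_true, y_pred))      # 1 - 1.5 / 20
```

The single residual of 1.0 contributes two thirds of the squared error, which illustrates why RMSE is sensitive to large errors.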

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Reagents for Model Validation

| Item | Function in Experiment | Example in Pharmaceutical Context |

|---|---|---|

| Stratified Splitting Algorithm | Ensures training and test sets have proportional representation of classes, preventing biased models. | Crucial for clinical trial data where patient subgroups (e.g., by disease severity) must be fairly represented in all splits [40] [36]. |

| Bootstrap Resampling Routine | Generates multiple simulated datasets by sampling with replacement to estimate the sampling distribution of a statistic. | Used in Bootstrapped Latin Partitions to calculate the SDEP, which quantifies the precision of the model accuracy estimate [40] [42]. |

| Multiple Metric Scorer | Evaluates model performance from different angles (e.g., precision, recall, RMSE) for a comprehensive view. | Essential for a holistic view; e.g., a diagnostic model must be evaluated for both sensitivity (recall) and specificity [36]. |

| Pipeline Constructor | Chains together data pre-processing (e.g., scaling) and model training to prevent data leakage during validation. | Ensures that any data transformation (like normalization of biomarker levels) is learned from the training fold and applied to the validation fold, mimicking real-world deployment [36]. |
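Several of the items in Table 3 can be combined in one short sketch: a pipeline that fits the scaler inside each training fold, stratified folds, and a multiple-metric scorer. It assumes scikit-learn. The dataset, the StandardScaler plus logistic regression pipeline, and the chosen metrics are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# Scaling is part of the estimator, so it is re-fitted on each training fold
# and only applied (never fitted) on the matching validation fold.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
results = cross_validate(
    pipeline, X, y, cv=cv, scoring=["accuracy", "recall", "precision"]
)

for metric in ("accuracy", "recall", "precision"):
    fold_scores = results[f"test_{metric}"]
    print(f"{metric}: {fold_scores.mean():.3f} +/- {fold_scores.std():.3f}")
```

Scaling the full dataset before splitting would let validation-fold statistics influence training, which is the leakage the pipeline prevents.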

Frequently Asked Questions

1. What is the core problem with using systematic sampling methods like K-S and SPXY for validation? The core problem is that these methods are designed to select the most representative samples for the training set. While this can be good for building a model, it means the remaining samples that form the validation set are often less representative of the overall dataset. When you test your model on this poor, unrepresentative validation set, you get an unreliable and often overly pessimistic estimate of how well your model will perform on new, unknown data [43].

2. I have a small dataset. Is K-S or SPXY a good choice? Research shows that the negative effects of having a non-representative validation set are more pronounced with smaller datasets [43]. In such cases, the performance gap between what you measure on the flawed validation set and the true performance on a blind test set can be significant. It is often better to use repeated cross-validation or bootstrap methods, which make more efficient use of limited data [43] [44].

3. Are there any scenarios where systematic sampling is recommended? Systematic sampling can be very effective for dividing a dataset when the goal is to create a representative calibration or training set, and the test set is either a separate, truly external dataset, or the validation of model performance is not the primary aim. However, for the specific task of estimating the generalization error of a model, our comparative studies show it performs poorly [43].

4. What are the best practices for model validation to avoid these pitfalls? To ensure a reliable estimate of your model's performance:

- Use Repeated Cross-Validation: Repeatedly split the data into training and validation folds multiple times to get a more stable performance estimate [44].

- Apply Bootstrap Methods: These methods can provide a good measure of model stability and performance [43].

- Always Use a Blind Test Set: Keep a completely separate, untouched set of data to finally assess the model's performance after the model selection and validation process is complete [43].

- Ensure Proper Nesting: Variable selection and parameter tuning must be performed within each cross-validation fold, not on the entire dataset before splitting, to avoid over-optimistic results [44].

Troubleshooting Guide

Use the following flowchart to diagnose and address issues related to misleading validation results in your research.

Observed Symptom: A large performance gap between training and validation sets.

- Potential Cause: The sampling method used to create the validation set (e.g., K-S, SPXY) has selected a set of samples that are not representative of the variation modeled in the training data [43].

- Solution:

- Re-partition your data using a random method like k-fold cross-validation.

- Repeat the cross-validation multiple times (e.g., 50-100x) to obtain a stable distribution of performance metrics and ensure your results are not dependent on a single, fortunate data split [44].

- Compare the new performance estimates with the original ones. A significant improvement in validation performance suggests the original validation set was indeed biased.

Observed Symptom: The model performs well during validation but fails on a truly blind test set.

- Potential Cause: This is a classic sign of over-optimism introduced during validation, which can occur even with random splits if model selection and tuning are not done correctly. However, with systematic sampling, the validation set is inherently poor for performance estimation [43].

- Solution:

- Implement a nested cross-validation protocol [44]. This involves an outer loop for performance estimation and an inner loop for model selection, rigorously separating the two processes.

- Ensure that all steps of model building (including variable selection and parameter tuning) are performed within each training fold of the validation process and never on the validation set itself [44].

Comparative Data on Sampling Methods

The table below summarizes key findings from a comparative study on data splitting methods, highlighting the performance of systematic sampling methods [43].

Table 1: Comparison of Data Splitting Methods for Model Validation

| Method Category | Example Methods | Key Finding | Recommendation for Validation |

|---|---|---|---|

| Systematic Sampling | Kennard-Stone (K-S), SPXY | Designed to select the most representative samples for training, leaving a poorly representative validation set. Leads to poor estimation of model performance [43]. | Not recommended for creating a validation set to estimate generalization error. |

| Cross-Validation | k-fold, Leave-One-Out (LOO) | Provides a better balance, but a single split can be unreliable. Repeating the process multiple times gives a more stable performance estimate [43] [44]. | Recommended. Use repeated k-fold cross-validation. |

| Bootstrap | Bootstrap, .632 Bootstrap | An effective alternative for measuring model stability and performance, especially useful with smaller datasets [43]. | Recommended. |

| Systematic Random | Classic Systematic Sampling | A form of probability sampling where every nth member is selected. It is simple but risks bias if a pattern in the data list aligns with the sampling interval [45] [46]. | Use with caution. Requires a randomly ordered list and awareness of potential hidden patterns. |

Experimental Protocols for Robust Validation

Protocol 1: Implementing Repeated Cross-Validation

This protocol is defined to minimize the variance in performance estimation that arises from a single, arbitrary split of the data [44].

- Define Dataset: Start with your full dataset D.

- Set Parameters: Choose the number of folds V (e.g., 5 or 10) and the number of repetitions N_exp (e.g., 50 or 100).

- Repeat Splitting: For each of the N_exp repetitions:

  - Pseudo-randomly shuffle the dataset D and split it into V folds of approximately equal size.

- Train and Validate: For each split:

  - For i = 1 to V:

    - Set the i-th fold aside as the validation set.

    - Use the remaining V-1 folds as the training set.

    - Train the model on the training set.

    - Test the trained model on the validation set and record the performance metric(s).

- Calculate Aggregate Performance: After all repetitions and folds, aggregate all the recorded performance metrics (e.g., calculate the mean and standard deviation of the accuracy or error rate). This distribution provides a robust estimate of model performance.
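A compact way to run this protocol is scikit-learn's `RepeatedKFold`, which handles the reshuffling across repetitions. The sketch below uses a synthetic regression dataset, a ridge model, and 20 repetitions to keep the run short. These are all assumptions, and the protocol itself suggests 50 to 100 repetitions.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import RepeatedKFold, cross_val_score

X, y = make_regression(n_samples=150, n_features=10, noise=10.0, random_state=0)

V, N_exp = 5, 20   # folds per repetition, number of repetitions
cv = RepeatedKFold(n_splits=V, n_repeats=N_exp, random_state=0)

# scikit-learn reports errors as negative scores so that higher is always better
scores = cross_val_score(
    Ridge(alpha=1.0), X, y, cv=cv, scoring="neg_root_mean_squared_error"
)
rmse_values = -scores

print(f"RMSE over {len(rmse_values)} fits: "
      f"{rmse_values.mean():.2f} +/- {rmse_values.std():.2f}")
```

For classification with imbalanced classes, `RepeatedStratifiedKFold` is the corresponding splitter.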

Protocol 2: Nested Cross-Validation for Model Selection and Assessment

This protocol provides an almost unbiased estimate of the true error of a model, especially when you need to perform both model selection (e.g., parameter tuning) and final performance assessment [44].

- Define Outer Loop: Split the dataset D into K folds (e.g., 5 or 10). These are the outer folds.

- Model Assessment Loop: For each outer fold i (this fold will serve as the test set for final assessment):

  - Let the data not in fold i be your temporary dataset D_temp.

  - Model Selection Loop (Inner CV): Perform a full cross-validation (e.g., repeated k-fold) on D_temp to find the best model parameters. This involves splitting D_temp into multiple training/validation sets to tune parameters without ever using the outer test set (fold i).

  - Train Final Model: Using the best parameters found in the inner loop, train a model on the entire D_temp dataset.

  - Assess Performance: Evaluate this final model on the held-out outer test set (fold i) and record the performance.

- Final Performance: The performance metrics collected from each of the K outer test sets provide the final, unbiased estimate of your model's generalization error.
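With scikit-learn, nested cross-validation can be expressed by passing a tuning object as the estimator of an outer cross-validation. The sketch below is a minimal illustration. The SVC model, the grid over C, and the 3-fold inner and 5-fold outer loops are assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score
from sklearn.svm import SVC

X, y = make_classification(n_samples=200, n_features=10, random_state=0)

inner_cv = StratifiedKFold(n_splits=3, shuffle=True, random_state=1)  # model selection
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=2)  # model assessment

# Inner loop: GridSearchCV tunes C on D_temp and refits on all of D_temp
tuned_model = GridSearchCV(SVC(), param_grid={"C": [0.1, 1, 10]}, cv=inner_cv)

# Outer loop: each outer fold is held out, the whole search runs on the rest,
# and the refitted model is scored once on the held-out fold
outer_scores = cross_val_score(tuned_model, X, y, cv=outer_cv)

print(f"nested CV accuracy: {outer_scores.mean():.3f} +/- {outer_scores.std():.3f}")
```

The selected parameters can differ between outer folds. The result estimates the performance of the whole tuning procedure, not of one fixed parameter setting.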

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Key Components for a Robust Validation Workflow

| Item / Solution | Function in Validation |

|---|---|

| Repeated Cross-Validation Script | A script (e.g., in R or Python) that automates the process of repeatedly splitting data, training models, and aggregating results to provide a stable performance estimate. |

| Nested CV Framework | A software framework that facilitates the correct implementation of nested cross-validation, ensuring a strict separation between the model selection and model assessment phases. |

| Stratified Sampling Code | Code that ensures that the relative proportions of different classes (in classification) are preserved in each training and validation fold, which can be important for model assessment [44]. |

| Performance Metric Aggregator | A tool to compute not just the mean, but also the variance, confidence intervals, and distribution of performance metrics across all validation repeats, highlighting the stability of the model. |

| True Blind Test Set | A dataset, collected separately or rigorously held out from the initial analysis, used for the final, one-time assessment of the selected model's real-world performance [43]. |

In the context of a broader thesis on solving recovery issues in accuracy testing research, this technical support center addresses the critical challenges researchers face when validating preclinical ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) prediction models. Poor ADMET properties remain a significant cause of drug development failure, contributing to high attrition rates in later stages [47] [48]. Machine learning (ML) models have emerged as transformative tools for early ADMET assessment, but their real-world implementation faces substantial validation hurdles including data quality issues, model interpretability challenges, and generalization limitations [47] [49] [50]. This guide provides targeted troubleshooting assistance to help researchers recover and maintain model accuracy throughout their experimental workflows.

Troubleshooting Guides

Issue 1: Poor Model Generalization to Novel Chemical Structures

Problem Description: Model performs well on training data but shows significantly degraded performance when applied to new compound libraries or external datasets.

Root Cause Analysis:

- Training data may lack diversity in chemical space representation

- Domain shift between training and application compounds

- Over-reliance on specific molecular descriptors that don't transfer well

Resolution Steps:

- Implement Scaffold-Based Splitting: Ensure your training/test splits separate compounds by molecular scaffolds rather than random splitting [50]

- Conformity Evaluation: Assess the similarity between your application compounds and training data using Tanimoto similarity or other distance metrics

- Feature Engineering: Combine multiple molecular representations including Mol2Vec embeddings, RDKit descriptors, and Mordred descriptors to capture broader chemical context [49] [50]

- Transfer Learning: Pre-train on larger general chemical datasets before fine-tuning on specific ADMET endpoints
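
The scaffold-based splitting step above can be sketched as follows. This is a minimal illustration that assumes each compound already carries a scaffold identifier (for example, a Bemis-Murcko scaffold computed with a cheminformatics toolkit); the function name `scaffold_split`, the `test_fraction` parameter, and the toy scaffold labels are assumptions made for this example.

```python
from collections import defaultdict


def scaffold_split(scaffolds, test_fraction=0.2):
    """Split sample indices so that no scaffold appears in both sets.

    scaffolds: one scaffold identifier per compound. How the identifiers are
    computed is outside the scope of this sketch.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)

    n_test = round(test_fraction * len(scaffolds))
    train_cutoff = len(scaffolds) - n_test

    # Fill the training set with the largest scaffold groups first, so the
    # test set is made up of the rarer (more "novel") scaffolds.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train_idx, test_idx = [], []
    for group in ordered:
        if len(train_idx) + len(group) <= train_cutoff:
            train_idx.extend(group)
        else:
            test_idx.extend(group)
    return train_idx, test_idx


# Toy scaffold labels (assumption): 10 compounds across 4 scaffolds.
scaffolds = ["benzene"] * 5 + ["pyridine"] * 3 + ["indole"] + ["quinoline"]
train_idx, test_idx = scaffold_split(scaffolds)
train_scaffolds = {scaffolds[i] for i in train_idx}
test_scaffolds = {scaffolds[i] for i in test_idx}
```

Whole scaffold groups are assigned to one side or the other, so the realized test fraction only approximates the requested one; that trade-off is what guarantees the two scaffold sets never overlap.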

Validation Protocol:

Issue 2: Inconsistent Performance Across Different ADMET Endpoints

Problem Description: Model shows strong predictive capability for some ADMET properties (e.g., solubility) but poor performance for others (e.g., toxicity).

Root Cause Analysis:

- Variable data quality and experimental consistency across different ADMET endpoints

- Inadequate feature representation for specific property types

- Class imbalance in categorical endpoints

Resolution Steps:

- Endpoint-Specific Feature Selection: Use statistical filtering to identify optimal molecular descriptors for each ADMET property [50]

- Multi-Task Learning Architecture: Implement shared feature extraction with task-specific heads to leverage correlations between endpoints [49]

- Data Quality Assessment: Apply rigorous data cleaning protocols including SMILES standardization, salt removal, and duplicate resolution [50]

- Ensemble Methods: Combine predictions from multiple model architectures specialized for different endpoint types

Validation Metrics Table:

| ADMET Endpoint | Recommended Algorithm | Key Features | Expected AUC-ROC | Critical Validation Step |

|---|---|---|---|---|

| Solubility | LightGBM with Mordred descriptors | Topological polar surface area, LogP | >0.85 | Temporal validation split |

| CYP450 Inhibition | Random Forest with ECFP6 | Molecular weight, H-bond acceptors | >0.80 | Scaffold split with novel chemotypes |

| hERG Toxicity | Graph Neural Networks | Molecular charge, aromatic ring count | >0.75 | External benchmark dataset |

| Bioavailability | Multi-task DNN | Mol2Vec + PhysChem properties | >0.82 | Cross-species consistency check |

Issue 3: Discrepancies Between Computational Predictions and Experimental Results

Problem Description: Significant differences observed between in silico predictions and subsequent in vitro or in vivo experimental validation.

Root Cause Analysis:

- Experimental condition variability not accounted for in training data

- Species-specific differences in metabolic pathways

- Inadequate representation of physiological complexity in models

Resolution Steps:

- Experimental Condition Mapping: Extract and standardize experimental conditions (buffer type, pH, cell lines) using LLM-based data mining approaches [51]

- Human-Specific Modeling: Prioritize human-specific ADMET endpoints and use human-derived experimental data when available [49]

- PBPK Integration: Incorporate physiologically-based pharmacokinetic modeling to bridge in vitro-in vivo gaps [52] [48]

- Uncertainty Quantification: Implement calibrated confidence estimates for predictions to flag low-reliability compounds
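
One simple way to approximate the uncertainty flagging in the last step is to measure how much the members of a model ensemble disagree. The sketch below assumes the per-model probabilities are already available; `ensemble_predict` and the `max_stdev` threshold are hypothetical, and ensemble spread is only a rough proxy for reliability, not a calibrated confidence estimate.

```python
import statistics


def ensemble_predict(member_predictions, max_stdev=0.15):
    """Combine per-model predictions and flag compounds with low agreement.

    member_predictions: one list of predicted probabilities per ensemble
    member, all in the same compound order.
    max_stdev: hypothetical disagreement threshold; it would need tuning on
    held-out data.
    """
    results = []
    for compound_preds in zip(*member_predictions):
        spread = statistics.pstdev(compound_preds)
        results.append({
            "mean": statistics.mean(compound_preds),
            "stdev": spread,
            "low_reliability": spread > max_stdev,
        })
    return results


# Toy predictions (assumption): three models scoring three compounds.
member_predictions = [
    [0.90, 0.20, 0.50],
    [0.85, 0.25, 0.10],
    [0.95, 0.15, 0.90],
]
results = ensemble_predict(member_predictions)
flags = [r["low_reliability"] for r in results]
```

In this toy case the models agree on the first two compounds and disagree strongly on the third, so only the third is flagged for experimental follow-up.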

Condition Standardization Workflow:

Frequently Asked Questions

Data Quality and Preparation

Q: How should we handle inconsistent measurements for the same compound across different datasets?

A: Implement a rigorous data cleaning pipeline that includes:

- SMILES standardization using tools like those described by Atkinson et al. [50]

- Salt stripping and parent compound extraction using truncated salt lists

- Deduplication with consistency checks: remove an entire compound group if its measurements are inconsistent

- Experimental condition harmonization using multi-agent LLM systems to identify and standardize buffer conditions, pH, and methodology [51]
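
The deduplication step in this pipeline can be sketched as below. The example assumes the SMILES strings have already been standardized and salt-stripped; `deduplicate`, the `max_range` tolerance, and the measurement values are illustrative assumptions, not real data or a prescribed threshold.

```python
import statistics
from collections import defaultdict


def deduplicate(records, max_range=0.5):
    """Merge duplicate compounds and drop groups with conflicting values.

    records: iterable of (canonical_smiles, measured_value) pairs.
    max_range: hypothetical tolerance, in the units of the measurement.
    """
    groups = defaultdict(list)
    for smiles, value in records:
        groups[smiles].append(value)

    kept, dropped = {}, []
    for smiles, values in groups.items():
        if max(values) - min(values) <= max_range:
            # Consistent (or single) measurements: keep their mean.
            kept[smiles] = statistics.mean(values)
        else:
            # Conflicting measurements: remove the whole compound group.
            dropped.append(smiles)
    return kept, dropped


# Toy records (assumption): values are made up for illustration only.
records = [
    ("CCO", -0.30), ("CCO", -0.10),        # consistent duplicates -> averaged
    ("c1ccccc1", -1.60),                   # single measurement -> kept
    ("CC(=O)O", 0.20), ("CC(=O)O", 1.90),  # conflicting -> group removed
]
kept, dropped = deduplicate(records)
```

Returning the dropped identifiers alongside the cleaned data makes it easy to audit how many compounds the consistency check removed.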

Q: What constitutes a "clean" ADMET dataset for model training?

A: A validated clean dataset should have:

- Consistent SMILES representations after canonicalization

- Removal of inorganic salts and organometallic compounds

- Resolved tautomers to consistent functional group representations

- No duplicate measurements with conflicting values

- Documented experimental conditions for proper context

Model Development and Validation

Q: What validation strategy best predicts real-world model performance?

A: Beyond simple train-test splits, implement:

- Scaffold splitting to assess performance on novel chemical classes [50]

- Temporal validation using time-separated data to simulate real deployment

- External dataset validation against completely independent sources [50]

- Statistical hypothesis testing with cross-validation to ensure significance of improvements [50]
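
The last item can be illustrated with a paired comparison of per-fold scores from two models evaluated on the same cross-validation folds. The sketch below uses an exact sign-flip permutation test, chosen here because it needs no external libraries; it is one possible test, not necessarily the one used in [50]. The function name and the fold scores are assumptions, and because cross-validation folds share training data, the resulting p-value should be read as approximate.

```python
from itertools import product


def paired_sign_flip_test(scores_a, scores_b):
    """Exact two-sided sign-flip permutation test on paired fold scores.

    Returns the observed mean difference (a - b) and the p-value.
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    observed = abs(sum(diffs)) / n
    extreme = 0
    total = 0
    # Under the null hypothesis, each paired difference is equally likely to
    # have either sign, so enumerate all 2**n sign assignments.
    for signs in product((1, -1), repeat=n):
        permuted = abs(sum(s * d for s, d in zip(signs, diffs))) / n
        if permuted >= observed - 1e-12:
            extreme += 1
        total += 1
    return sum(diffs) / n, extreme / total


# Toy per-fold AUC-ROC values (assumption) for two models on the same 10 folds.
scores_a = [0.82, 0.85, 0.81, 0.84, 0.83, 0.86, 0.82, 0.84, 0.85, 0.83]
scores_b = [0.80, 0.82, 0.80, 0.81, 0.80, 0.83, 0.81, 0.82, 0.82, 0.80]
mean_diff, p_value = paired_sign_flip_test(scores_a, scores_b)
```

With 10 folds there are 1,024 sign assignments, so the smallest attainable two-sided p-value is 2/1024; with only 5 folds it would be 2/32, which can never fall below 0.05.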

Q: How can we address the "black box" problem for regulatory acceptance?

A: Enhance model interpretability through:

- Feature importance analysis using SHAP or similar methods

- Multi-representation approaches that combine interpretable descriptors with deep learning embeddings [49]

- Decision consistency checks across related endpoints

- Comprehensive documentation of model limitations and domain of applicability
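
The domain of applicability in the last item is often approximated by the similarity of a query compound to its nearest neighbour in the training set. The sketch below uses Tanimoto similarity on fingerprints represented as sets of on-bit indices; the fingerprints shown are toy values, and `in_domain` and its 0.4 threshold are assumptions for illustration rather than recommended settings.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bits."""
    if not bits_a and not bits_b:
        return 0.0
    shared = len(bits_a & bits_b)
    return shared / (len(bits_a) + len(bits_b) - shared)


def in_domain(query_bits, training_fingerprints, threshold=0.4):
    """Return (nearest-neighbour similarity, inside-domain flag)."""
    nearest = max(tanimoto(query_bits, fp) for fp in training_fingerprints)
    return nearest, nearest >= threshold


# Toy fingerprints (assumption): real ones would come from a cheminformatics
# toolkit and contain many more bits.
training_fingerprints = [{1, 2, 3, 4}, {2, 3, 5, 8}]
similar_query = {1, 2, 3, 9}
novel_query = {10, 11, 12}

similar_result = in_domain(similar_query, training_fingerprints)
novel_result = in_domain(novel_query, training_fingerprints)
```

Predictions for compounds that fall outside the domain, such as `novel_query` here, can then be reported with an explicit warning instead of being presented with the same confidence as in-domain predictions.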

Implementation and Scaling

Q: What computational resources are typically required for robust ADMET model development?

A: Resource requirements vary by approach:

| Model Type | Training Data Size | Memory Requirements | Training Time | Inference Speed |

|---|---|---|---|---|

| Random Forest | 10,000-50,000 compounds | 16-64 GB RAM | Hours | Fast |

| Graph Neural Networks | 50,000-500,000 compounds | 32-128 GB RAM, GPU | Days | Moderate |

| Multi-task DNN | 100,000+ compounds | 64+ GB RAM, Multiple GPUs | Weeks | Fast after setup |

| Transformer-based | 1M+ compounds | 128+ GB RAM, High-end GPUs | Weeks | Variable |

The Scientist's Toolkit: Research Reagent Solutions

Essential Computational Tools and Databases

| Tool/Resource | Type | Primary Function | Application in ADMET Validation |

|---|---|---|---|

| PharmaBench [51] | Benchmark Dataset | Curated ADMET data with standardized conditions | Model benchmarking and transfer learning |

| RDKit [50] | Cheminformatics | Molecular descriptor calculation and manipulation | Feature engineering and data preprocessing |

| Chemprop [50] | Deep Learning | Message passing neural networks for molecules | State-of-the-art property prediction |

| ADMETlab [47] | Prediction Platform | Comprehensive ADMET endpoint prediction | Baseline model comparison |

| TDC [50] | Data Commons | Therapeutic data aggregation and benchmarking | Access to multiple standardized datasets |

| Mol2Vec [49] | Representation Learning | Molecular embedding generation | Alternative to traditional fingerprints |

| Mordred [49] | Descriptor Calculator | 2D/3D molecular descriptor computation | Comprehensive feature representation |

Experimental Assays and Platforms

| Assay/Platform | Measurement Type | Throughput | Key Validation Parameters |

|---|---|---|---|

| Caco-2 Permeability [48] | Intestinal Absorption | Medium | Transport efficiency, TEER values |

| Human Liver Microsomes [48] | Metabolic Stability | Medium | Intrinsic clearance, metabolite profiling |

| hERG Patch Clamp [49] | Cardiotoxicity | Low | IC50 values, channel inhibition |

| PAMPA [48] | Passive Permeability | High | Effective permeability coefficients |

| Hepatocyte Assays [49] | Clearance Prediction | Medium | Metabolic half-life, intrinsic clearance |

| Plasma Protein Binding [48] | Distribution | Medium | Fraction unbound, binding constants |

Advanced Validation Workflow

The complete validation strategy incorporates multiple verification stages:

Protocol: Cross-Source Dataset Validation

Purpose: To evaluate model performance consistency when applied to data from different experimental sources.

Materials:

- Internal curated dataset

- At least two external public datasets (e.g., from TDC, PharmaBench, or Biogen data) [50] [51]

- Standardized molecular featurization pipeline

- Multiple ML algorithms (Random Forest, LightGBM, GNN)

Procedure:

- Data Harmonization: Apply consistent cleaning protocols across all datasets

- Feature Alignment: Ensure identical feature representation across sources

- Model Training: Train separate models on each data source

- Cross-Testing: Evaluate each model on all other sources

- Performance Delta Analysis: Calculate performance differences between internal and external tests

- Consistency Thresholding: Flag models whose AUC-ROC drops by 0.15 or more between internal and external tests, consistent with the acceptance criteria below

Acceptance Criteria:

- AUC-ROC degradation < 0.15 between internal and external tests

- Consistent feature importance rankings across datasets

- Statistical significance in performance metrics (p < 0.05)
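
The cross-testing, delta analysis, and thresholding steps of this protocol can be sketched as a small report over a matrix of AUC-ROC values. The function name, the source labels, and all scores below are assumptions made for illustration; only the 0.15 threshold comes from the acceptance criteria above.

```python
def flag_cross_source_drops(auc, max_drop=0.15):
    """Compare each model's internal AUC-ROC with its external AUC-ROCs.

    auc[train_source][test_source] holds the AUC-ROC of the model trained on
    train_source and evaluated on test_source; the diagonal entry is the
    internal (same-source, held-out) score.
    """
    report = []
    for train_source, row in auc.items():
        internal = row[train_source]
        for test_source, external in row.items():
            if test_source == train_source:
                continue
            drop = internal - external
            # A drop of max_drop or more fails the acceptance criterion.
            report.append((train_source, test_source, round(drop, 3), drop >= max_drop))
    return report


# Toy AUC-ROC matrix (assumption): values are made up for illustration.
auc = {
    "internal": {"internal": 0.88, "tdc": 0.80, "pharmabench": 0.70},
    "tdc": {"tdc": 0.85, "internal": 0.79, "pharmabench": 0.76},
}
report = flag_cross_source_drops(auc)
failed = [(train, test) for train, test, _, flagged in report if flagged]
```

Here only the model trained on the internal dataset and tested on the second external source exceeds the allowed degradation, so it is the one to investigate for source-specific bias.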

Together, these troubleshooting guides, FAQs, and protocols give researchers a validation strategy for recovering and maintaining model accuracy throughout the ADMET prediction lifecycle, directly addressing the core challenges of accuracy testing in drug discovery.

Identifying and Solving Common Recovery Pitfalls: From Overfitting to Data Leakage

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Model Overfitting

Problem: Your model achieves high accuracy on training data but performs significantly worse on new, unseen validation or test data [53] [54].

Symptoms & Diagnosis:

- Primary Symptom: A large gap between performance metrics (e.g., accuracy, R²) on the training dataset versus the validation or test dataset [55] [56]. For instance, your model may achieve 98% accuracy on training data but only 65% on test data.

- Visual Inspection: In model loss curves, the validation loss stops decreasing and begins to increase, while the training loss continues to fall [54].

- Model Complexity: The model is highly complex (e.g., a deep decision tree or a neural network with many layers) and may be attempting to pass through every single data point in the training set, including outliers and noise [57] [58].

Solution Steps:

- Verify Data Splits: Ensure your data has been properly shuffled and split into training, validation, and test sets. The validation/test set must be statistically similar to the training set to serve as a reliable proxy for unseen data [54].

- Apply Regularization: Introduce penalty terms to your model's loss function to discourage complexity. L1 (Lasso) and L2 (Ridge) are common techniques that help prevent the model from relying too heavily on any single feature [55] [59].

- Implement Early Stopping: Monitor the validation loss during training. Halt training once the validation loss has failed to improve for a pre-defined number of consecutive epochs (the "patience"), and keep the model from the best epoch [53] [60].

- Simplify the Model: Reduce model complexity by using fewer parameters, shallower networks, or pruning decision trees [57] [59].

- Increase Training Data: If possible, collect more high-quality, representative training data. A larger dataset makes it harder for the model to memorize noise and forces it to learn generalizable patterns [55] [61].
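
Of the steps above, early stopping is the easiest to show in isolation. The sketch below applies a patience rule to a recorded sequence of validation losses rather than to a live training loop; `early_stopping_epoch`, `patience`, `min_delta`, and the loss values are assumptions introduced for this example.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the loss has not improved by more than min_delta for
    `patience` consecutive epochs. Epochs are 0-indexed.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Patience was never exhausted: training ran to the final epoch.
    return len(val_losses) - 1, best_epoch


# Toy validation losses (assumption): the loss bottoms out and then rises,
# the pattern described under "Visual Inspection".
val_losses = [0.90, 0.70, 0.60, 0.55, 0.57, 0.58, 0.61, 0.65]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
```

In a real training loop the same counter would run inside the epoch loop, and the weights saved at `best_epoch` would be restored after stopping.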

Guide 2: Addressing Convolutional Neural Network (CNN) Failures on Novel Image Data