Specificity vs Selectivity in Analytical Method Validation: Key Concepts for Reliable Pharmaceutical Analysis

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on distinguishing between specificity and selectivity in analytical method validation. It clarifies the foundational definitions as per ICH and other regulatory guidelines, explores practical methodologies for assessment, addresses common troubleshooting scenarios, and outlines the requirements for successful method validation. By synthesizing regulatory standards with practical applications, this resource aims to enhance the reliability, accuracy, and regulatory compliance of analytical data in pharmaceutical and bioanalytical workflows.

Demystifying the Definitions: Specificity and Selectivity in Regulatory Contexts

In analytical method validation, specificity is a fundamental parameter that underpins the reliability and accuracy of data generated in drug development and quality control. Any discussion of specificity versus selectivity has to start from precise, unambiguous definitions. The International Council for Harmonisation (ICH) guideline Q2(R1) defines the term as follows: "Specificity is the ability to assess unequivocally the analyte in the presence of components which may be expected to be present" [1] [2] [3].

The term "unequivocal" itself means unambiguous, clear, and having only one possible meaning or interpretation [4]. This definition underscores that a specific analytical method can accurately identify and quantify the target analyte amidst a sample matrix that typically contains other constituents, such as impurities, degradants, or excipients [1] [3]. It is the guarantee that the measured signal is derived solely from the analyte of interest, free from interference.

Specificity vs. Selectivity: A Critical Distinction

While often used interchangeably, specificity and selectivity represent distinct concepts in analytical chemistry.

- Specificity is the ideal term for methods that respond exclusively to one single analyte. It implies that the method can confirm the identity of a known analyte within a mixture without needing to identify the other components present [1]. Using a common analogy, if you have a bunch of keys, a specific method can identify the one key that opens the lock, without requiring knowledge of the other keys in the bunch [1].

- Selectivity, on the other hand, is a term more often applied to methods that can simultaneously differentiate and quantify multiple different analytes in a single sample [1]. Extending the analogy, a selective method would be able to identify all the keys in the bunch, not just the one that fits the lock [1]. IUPAC recommends "selectivity" as the general term for analytical methods [1].

For the purposes of this whitepaper, focused on the core definition, the discussion will center on specificity as defined by ICH.

Experimental Protocols for Demonstrating Specificity

Demonstrating specificity is a procedural cornerstone of method validation. The following detailed protocols outline the key experiments required to prove a method can assess the analyte unequivocally.

Protocol for Forced Degradation Studies

Forced degradation studies, also known as stress testing, are critical for demonstrating specificity by showing that the method can accurately measure the analyte even when decomposition products are present.

- Objective: To prove the method's stability-indicating property by separating the analyte from its degradation products.

- Materials:

- Purified analyte (drug substance)

- Relevant stress agents: Acid (e.g., 0.1M HCl), Base (e.g., 0.1M NaOH), Oxidizing agent (e.g., 3% H₂O₂), Thermal stress (e.g., oven at 70°C), Photolytic stress (e.g., UV light chamber)

- Appropriate analytical instrument (e.g., HPLC system with a DAD or MS detector)

- Methodology:

- Sample Preparation: Subject separate portions of the analyte to various stress conditions to induce approximately 5-20% degradation. Include an unstressed control sample.

- Acid/Base Hydrolysis: Treat the analyte with acid and base at elevated temperatures (e.g., 60°C) for a defined period (e.g., 1-24 hours). Neutralize before analysis.

- Oxidative Degradation: Expose the analyte to an oxidizing agent at room temperature for a set duration.

- Thermal Degradation: Heat the solid analyte in an oven at a specified temperature.

- Photolytic Degradation: Expose the analyte to UV and/or visible light as per ICH Q1B guidelines.

- Analysis: Inject the stressed samples and the control into the analytical system. The chromatogram of each stressed sample should show the analyte peak resolved from all degradation peaks; Rs ≥ 2.0 is a commonly used target [2], which leaves margin above the Rs ≈ 1.5 usually taken as baseline separation for peaks of similar size. The analyte peak should also be spectrally pure (e.g., confirmed by diode-array detection or mass spectrometry).
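The approximate 5-20% degradation target in the sample preparation step can be checked with simple arithmetic on peak areas. The following is a minimal sketch, assuming degradation is estimated as the loss of analyte peak area relative to the unstressed control; the function names and all peak areas are hypothetical and serve only as illustration.

```python
def percent_degradation(control_area: float, stressed_area: float) -> float:
    """Loss of analyte peak area relative to the unstressed control, in percent."""
    if control_area <= 0:
        raise ValueError("control_area must be positive")
    return (control_area - stressed_area) / control_area * 100.0


def within_target(degradation_pct: float, low: float = 5.0, high: float = 20.0) -> bool:
    """True when degradation falls inside the approximate 5-20% target window."""
    return low <= degradation_pct <= high


# Hypothetical analyte peak areas (arbitrary units), not measured data.
control = 1000.0
stressed = {"acid": 880.0, "base": 790.0, "oxidative": 985.0, "thermal": 930.0}

report = {name: percent_degradation(control, area) for name, area in stressed.items()}
for name, pct in report.items():
    status = "ok" if within_target(pct) else "adjust stress conditions"
    print(f"{name}: {pct:.1f}% degraded ({status})")
```

A condition that falls outside the window would normally prompt a change in stress duration, temperature, or reagent strength rather than a change to the analytical method.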

Protocol for Interference Testing with Sample Matrix

This protocol verifies that the sample matrix itself does not cause interference at the retention time of the analyte.

- Objective: To confirm that excipients, placebo, or biological matrix components do not co-elute with or obscure the analyte signal.

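- Materials:

- Blank matrix (placebo formulation or analyte-free biological matrix)

- Analyte reference standard

- Appropriate analytical instrument (e.g., HPLC system)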

- Methodology:

- Blank Matrix Analysis: Inject the blank matrix and analyze. The resulting chromatogram should show no peak at the retention time of the analyte [3].

- Standard Analysis: Inject a solution of the analyte standard to confirm its retention time and peak shape.

- Spiked Matrix Analysis: Inject the sample of the blank matrix that has been spiked with a known concentration of the analyte.

- Data Interpretation: The chromatogram from the spiked matrix should show a single, well-defined peak for the analyte. The recovery of the analyte from the spiked matrix should be within acceptable limits (e.g., 98-102%), confirming the matrix does not cause suppression or enhancement of the signal [2]. The resolution between the analyte peak and the closest eluting matrix peak should be sufficient, ideally Rs ≥ 1.7 [2].
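The recovery check in the data interpretation step reduces to a ratio of measured to nominal concentration. A minimal sketch follows, assuming replicate measurements of one spiked level; the helper names and the concentrations are hypothetical, and the 98-102% window is the example limit quoted above rather than a universal requirement.

```python
from statistics import mean


def percent_recovery(measured: float, nominal: float) -> float:
    """Measured concentration as a percentage of the spiked (nominal) concentration."""
    if nominal <= 0:
        raise ValueError("nominal must be positive")
    return measured / nominal * 100.0


def recovery_acceptable(recovery_pct: float, low: float = 98.0, high: float = 102.0) -> bool:
    """True when recovery lies within the chosen acceptance window."""
    return low <= recovery_pct <= high


# Hypothetical replicate results (µg/mL) for a blank matrix spiked at 50.0 µg/mL.
nominal_conc = 50.0
replicates = [49.6, 50.3, 49.9]

recovery = percent_recovery(mean(replicates), nominal_conc)
print(f"mean recovery: {recovery:.2f}% -> acceptable: {recovery_acceptable(recovery)}")
```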

Protocol for Critical Peak Separation (Chromatographic Methods)

For chromatographic techniques, specificity is quantitatively demonstrated by the resolution of critical peak pairs.

- Objective: To demonstrate the method's power to separate the analyte from the closest eluting potential interferent.

- Methodology:

- Identify Critical Pair: Prepare a mixture containing the analyte and the component expected to be the most challenging to separate from it (e.g., a structurally similar impurity or a known matrix component).

- Chromatographic Analysis: Inject the mixture and record the chromatogram.

- Calculate Resolution: Determine the resolution (Rs) between the two closest-eluting peaks. The ICH Q2(R1) guideline states that for critical separations, "specificity can be demonstrated by the resolution of the two components which elute closest to each other" [1]. A resolution of Rs ≥ 2.0 is often targeted for baseline separation [2]. The formula for resolution is: Rs = 2(t₂ - t₁) / (w₁ + w₂), where t₁ and t₂ are the retention times of the earlier and later eluting peaks and w₁ and w₂ are their peak widths at baseline, all expressed in the same time units.
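The resolution formula can be sketched in a few lines. The example below assumes baseline peak widths and retention times in the same units; the function name and the numeric values are hypothetical.

```python
def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Rs = 2 * (t2 - t1) / (w1 + w2), using peak widths measured at baseline."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    if t2 < t1:
        raise ValueError("t2 must belong to the later-eluting peak")
    return 2.0 * (t2 - t1) / (w1 + w2)


# Hypothetical critical pair: impurity at 4.20 min, analyte at 5.00 min.
rs = resolution(t1=4.20, t2=5.00, w1=0.35, w2=0.40)
print(f"Rs = {rs:.2f}, meets Rs >= 2.0 target: {rs >= 2.0}")
```

Because widths appear in the denominator, peak broadening lowers Rs even when retention times are unchanged, which is one reason resolution is commonly monitored as a system suitability parameter.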

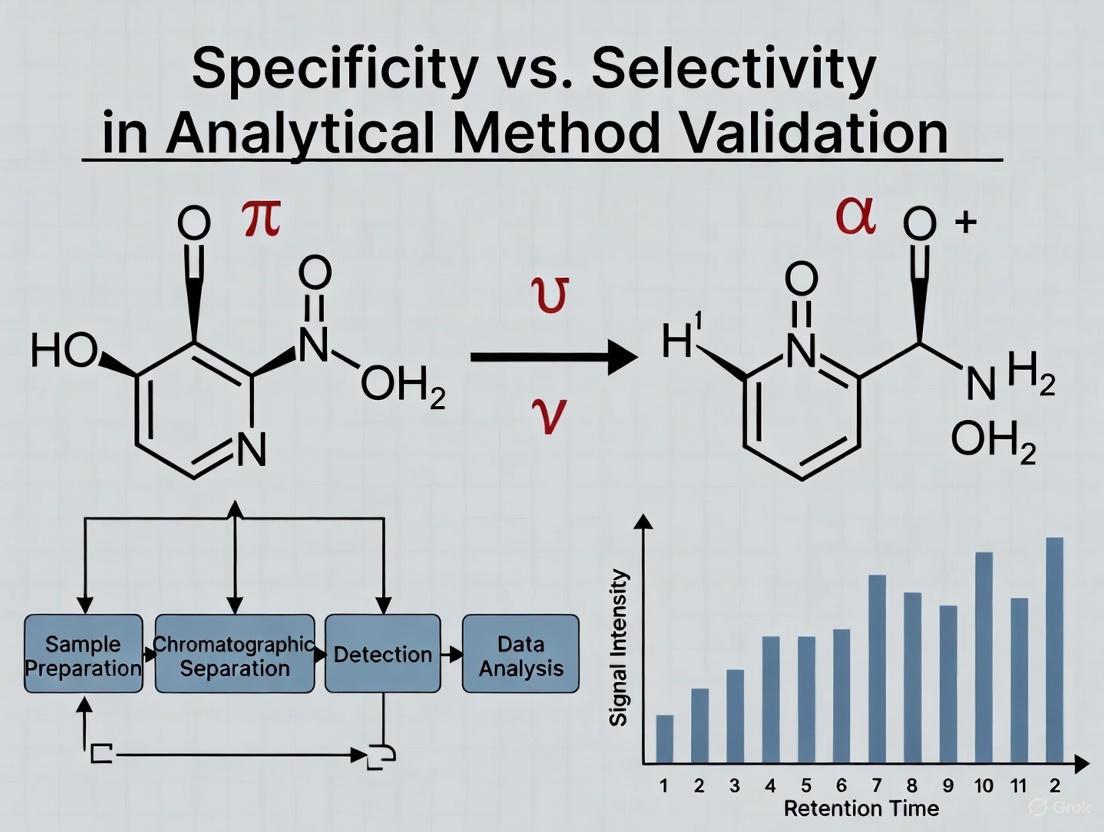

Visualizing Specificity in Analytical Method Validation

The following diagram illustrates the logical workflow and decision points for establishing method specificity, integrating the core protocols.

Specificity Validation Workflow

Quantitative Data and Acceptance Criteria

The demonstration of specificity yields quantitative data that must meet pre-defined acceptance criteria to confirm the method is fit-for-purpose. The table below summarizes the key parameters and their targets.

Table 1: Key Quantitative Parameters for Specificity Assessment

| Parameter | Experimental Approach | Acceptance Criterion | Rationale |
|---|---|---|---|
| Chromatographic Resolution (Rs) [1] [2] | Analysis of a mixture of the analyte and closest-eluting interferent. | Rs ≥ 2.0 (Baseline separation) [2] | Ensures complete separation for accurate integration of analyte and impurity peaks. |
| Peak Purity [2] | Diode-array detection (DAD) or mass spectrometry (MS) of the analyte peak in a stressed sample. | Purity match factor or MS spectrum confirms a single, homogeneous component. | Confirms the analyte peak is not co-eluting with another substance. |
| Analyte Recovery in Matrix [2] | Comparison of analyte response in spiked matrix vs. neat solution. | Typically 98-102% recovery. | Demonstrates the matrix does not suppress or enhance the analyte signal. |
| Blank Matrix Interference [2] [3] | Analysis of blank sample matrix (placebo, untreated plasma, etc.). | No peak at the retention time of the analyte. | Verifies the signal is from the analyte alone. |

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials essential for conducting rigorous specificity experiments.

Table 2: Essential Research Reagent Solutions for Specificity Testing

| Item / Reagent | Function in Specificity Assessment |
|---|---|
| Placebo Formulation / Blank Matrix [2] [3] | Serves as the negative control to test for interference from excipients, buffers, or endogenous components. |
| Forced Degradation Reagents (Acid, Base, Oxidant) [1] [3] | Used to intentionally degrade the analyte, generating impurity and degradation product profiles to challenge the method's separating power. |
| Structurally Related Impurities/Standards | Used to spike into the analyte sample to prove the method can resolve the analyte from known, similar compounds. |
| Chromatographic Column (HPLC/UPLC) | The stationary phase is critical for achieving the necessary separation; robustness testing often involves evaluating columns from different lots or manufacturers [2] [3]. |
| Mass Spectrometry (MS) Detector [2] | Provides definitive confirmation of peak identity and purity, orthogonal to chromatographic retention time. |

In analytical chemistry, selectivity is a fundamental parameter that refers to a method's capability to distinguish and quantify multiple target analytes in a complex mixture without interference from other components in the sample matrix [5] [6]. This concept is often incorrectly used interchangeably with specificity, though they represent distinct methodological attributes. According to IUPAC guidelines, specificity describes the ideal scenario where a method responds exclusively to a single analyte and is considered the ultimate expression of selectivity—a binary property that cannot be graded [5]. In contrast, selectivity is a gradable property that expresses the extent to which a method can determine particular analytes in complex matrices without interference from other components [5].

Within pharmaceutical research and environmental monitoring, establishing method selectivity is crucial for generating reliable data that supports regulatory submissions and ensures product safety [6] [7]. The distinction becomes particularly significant when analyzing complex samples where structurally similar compounds, isomers, impurities, degradants, or matrix components may coexist with the target analytes [8]. A highly selective method can accurately measure each analyte of interest despite these potential interferents, thereby preventing false positives or negatives that could compromise quality control decisions or environmental risk assessments [8].

Theoretical Foundation: The Specificity-Selectivity Distinction

The conceptual relationship between specificity and selectivity represents a critical foundation for understanding analytical method performance. As defined by IUPAC and other scientific organizations, specificity refers to the situation where a method is completely free from interferences and measures only the intended analyte [5]. This represents an absolute characteristic that cannot be graded—methods are either specific or not. In practical analytical chemistry, however, truly specific methods are rare, particularly when working with complex matrices such as biological fluids, environmental samples, or formulated pharmaceutical products [5].

Selectivity, conversely, represents a graduated capability of a method to determine particular analytes in mixtures or matrices without interference from other components [5]. This gradable nature means methods can demonstrate varying degrees of selectivity, from low to high, depending on their ability to distinguish between the target analyte and potential interferents. The relationship between these concepts is hierarchical: specificity represents the ultimate degree of selectivity, where cross-reactivity or interference is reduced to zero [5].

The distinction becomes particularly evident in techniques such as immunological methods, which are sometimes erroneously described as specific. As these methods often demonstrate cross-reactivity with structurally similar compounds, they are more accurately classified as selective rather than specific [5]. This precision in terminology ensures proper methodological characterization and prevents overstatement of analytical capabilities in scientific literature and regulatory submissions.
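The gradable nature of selectivity can be made concrete with a cross-reactivity calculation. The sketch below uses one common convention for competitive immunoassays, the ratio of IC50 values expressed as a percentage; this convention, the function name, and every number are assumptions for illustration and are not taken from the cited sources.

```python
def cross_reactivity_pct(ic50_target: float, ic50_interferent: float) -> float:
    """Percent cross-reactivity as IC50(target) / IC50(interferent) * 100."""
    if ic50_target <= 0 or ic50_interferent <= 0:
        raise ValueError("IC50 values must be positive")
    return ic50_target / ic50_interferent * 100.0


# Hypothetical IC50 values (ng/mL) from a competitive immunoassay.
ic50_of_target = 2.0
panel = {
    "metabolite M1": 8.0,
    "structural analogue": 40.0,
    "unrelated compound": 20000.0,
}

cross = {name: cross_reactivity_pct(ic50_of_target, ic50) for name, ic50 in panel.items()}
for name, pct in cross.items():
    print(f"{name}: {pct:.2f}% cross-reactivity")
```

A strictly specific method would show 0% for every other compound; any non-zero value places the method somewhere on the selectivity scale instead.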

Quantitative Assessment of Selectivity

Selectivity is evaluated through systematic challenge tests that determine a method's ability to produce accurate results for target analytes despite the presence of potential interferents [6]. The assessment involves demonstrating that measurements of the analytes of interest remain unaffected by other components that are likely to be present in the sample matrix, such as impurities, degradants, excipients, or structurally similar compounds [7].

Experimental Protocols for Selectivity Assessment

For Pharmaceutical Analysis:

- Sample Preparation: Prepare individual solutions of the target analyte, known impurities, degradation products (generated through forced degradation studies), and excipients at expected concentration levels [6] [7].

- Chromatographic Separation: Inject each solution separately into the analytical system (typically HPLC or UHPLC) to determine retention times and peak responses [7].

- Interference Testing: Prepare a mixture containing all components to demonstrate resolution between the analyte peaks and potential interferents. Critical peak pairs should show resolution greater than 1.5 [7].

- Forced Degradation: Subject the analyte to stress conditions (acid/base hydrolysis, oxidation, thermal degradation, photolysis) and demonstrate that degradation products do not interfere with the quantification of the target analyte [6].

For Environmental Analysis (e.g., Pharmaceutical Monitoring in Water):

- Matrix Spiking: Fortify blank water samples (representing different water types: surface water, wastewater, drinking water) with target analytes at relevant concentration levels [9].

- Interference Assessment: Analyze both fortified and unfortified samples to identify potential matrix interferences. In mass spectrometry, monitor for ion suppression or enhancement effects [9].

- Specificity Confirmation: For LC-MS/MS methods, use Multiple Reaction Monitoring (MRM) transitions to confirm analyte identity based on molecular mass and specific fragmentation patterns, ensuring they are distinct from co-eluting matrix components [9].

Table 1: Key Parameters for Selectivity Assessment in Chromatographic Methods

| Parameter | Assessment Method | Acceptance Criteria |
|---|---|---|
| Chromatographic Resolution | Measure separation between analyte and closest eluting potential interferent | Resolution ≥ 1.5 between critical pairs [7] |
| Peak Purity | Use diode array detection or mass spectrometry to evaluate peak homogeneity | Peak purity index ≥ 990 (indicating homogeneous peak) [7] |
| Matrix Effects | Compare analyte response in neat solution versus matrix | Signal suppression/enhancement ≤ 15% [9] |
| Retention Time Stability | Measure consistency of analyte retention times across different conditions | RSD ≤ 1% for retention times [6] |
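Two of the criteria in the table, matrix effects and retention time stability, are straightforward to compute. The following sketch assumes the matrix effect is expressed as the percentage change in response relative to neat solution (negative for suppression, positive for enhancement) and that retention time RSD uses the sample standard deviation; the names and values are hypothetical.

```python
from statistics import mean, stdev


def matrix_effect_pct(area_in_matrix: float, area_in_neat: float) -> float:
    """Signal change in matrix relative to neat solution; negative means suppression."""
    if area_in_neat <= 0:
        raise ValueError("area_in_neat must be positive")
    return (area_in_matrix / area_in_neat - 1.0) * 100.0


def rsd_pct(values: list[float]) -> float:
    """Relative standard deviation in percent (sample standard deviation / mean)."""
    return stdev(values) / mean(values) * 100.0


# Hypothetical peak areas and retention times (min), for illustration only.
effect = matrix_effect_pct(area_in_matrix=8900.0, area_in_neat=10000.0)
rt_rsd = rsd_pct([6.02, 6.04, 6.01, 6.03, 6.02])

print(f"matrix effect: {effect:+.1f}% (limit: within ±15%) -> {abs(effect) <= 15.0}")
print(f"retention time RSD: {rt_rsd:.2f}% (limit: 1%) -> {rt_rsd <= 1.0}")
```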

Methodological Approaches to Generate Selectivity

Different analytical techniques offer varying degrees of inherent selectivity, with methodological choices significantly impacting the ability to distinguish multiple analytes from interferences.

Chromatographic Separation Methods

Chromatographic techniques form the foundation for achieving selectivity in complex mixture analysis through differential partitioning of compounds between stationary and mobile phases [5]. The degree of selectivity depends on the specific interactions between analytes, stationary phase, and mobile phase composition. High-performance liquid chromatography (HPLC) and ultra-high-performance liquid chromatography (UHPLC) achieve selectivity by exploiting differences in analyte polarity, hydrophobicity, ion-exchange properties, or molecular size [7]. Gas chromatography (GC) provides selectivity based on volatility and polarity interactions with the stationary phase [5].

Hyphenated Techniques

The combination of separation techniques with sophisticated detection methods represents a powerful approach to enhance selectivity [5]. Hyphenated techniques such as gas chromatography-mass spectrometry (GC-MS) and liquid chromatography-tandem mass spectrometry (LC-MS/MS) provide orthogonal selectivity mechanisms by combining physical separation with spectral identification [5] [9]. In these systems, the separation technique resolves analytes in time, while the detection method adds another dimension of selectivity based on mass-to-charge ratios, fragmentation patterns, or spectral signatures [9].

Table 2: Selectivity Comparison Across Analytical Techniques

| Analytical Technique | Selectivity Mechanism | Typical Applications | Selectivity Level |
|---|---|---|---|
| Immunoassays | Antigen-antibody molecular recognition | Clinical diagnostics, biomarker detection | Moderate (subject to cross-reactivity) [5] |
| HPLC with UV Detection | Retention time + spectral information | Pharmaceutical analysis, impurity profiling | Moderate to High [7] |
| GC-MS | Volatility + retention time + mass spectrum | Environmental analysis, volatile organic compounds | High [5] |
| LC-MS/MS (MRM mode) | Retention time + precursor ion + product ion | Trace analysis in complex matrices (e.g., pharmaceuticals in water) | Very High [9] |
| Ion-Selective Electrodes | Molecular recognition at membrane interface | Ion concentration measurement | Low to Moderate (subject to interference) [5] |

Case Study: Selective Pharmaceutical Monitoring in Water

A recent implementation of selective analysis demonstrates the determination of carbamazepine, caffeine, and ibuprofen in water and wastewater using UHPLC-MS/MS [9]. This method exemplifies modern approaches to achieving high selectivity through:

- Chromatographic Separation: UHPLC provides initial selectivity by separating compounds based on hydrophobicity and column chemistry with high efficiency [9].

- Mass Spectrometric Detection: Tandem mass spectrometry in Multiple Reaction Monitoring (MRM) mode adds orthogonal selectivity by monitoring specific precursor-to-product ion transitions for each compound [9].

- Sample Preparation Optimization: Solid-phase extraction selectively concentrates target analytes while reducing matrix interferences without requiring solvent evaporation, aligning with green chemistry principles [9].

The reported validation covered specificity (as defined in ICH guidelines), linearity (correlation coefficients ≥0.999), precision (RSD <5.0%), and recovery (77-160% across the target analytes) [9]. The method illustrates how high selectivity can be achieved in a complex environmental matrix, although recoveries toward the upper end of that range are far wider than the 98-102% window typical of pharmaceutical assays.
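The orthogonal selectivity of MRM detection can be sketched as an identity check that combines retention time with the ratio of two monitored transitions. This is a simplified illustration, not the procedure of the cited study: the data class, the tolerances, and all numbers are hypothetical, and real acceptance limits come from the applicable guideline or laboratory procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MrmReference:
    """Reference behaviour of one analyte, established from a standard injection."""
    name: str
    retention_time_min: float
    ion_ratio: float  # qualifier transition area / quantifier transition area


def confirms_identity(
    ref: MrmReference,
    observed_rt_min: float,
    quantifier_area: float,
    qualifier_area: float,
    rt_tolerance_min: float = 0.1,     # hypothetical tolerance
    ratio_tolerance_rel: float = 0.2,  # hypothetical relative tolerance
) -> bool:
    """True when retention time and ion ratio both match the reference."""
    if quantifier_area <= 0:
        return False
    rt_ok = abs(observed_rt_min - ref.retention_time_min) <= rt_tolerance_min
    ratio = qualifier_area / quantifier_area
    ratio_ok = abs(ratio - ref.ion_ratio) <= ratio_tolerance_rel * ref.ion_ratio
    return rt_ok and ratio_ok


reference = MrmReference(name="analyte A", retention_time_min=3.50, ion_ratio=0.45)

genuine = confirms_identity(reference, 3.54, quantifier_area=10000.0, qualifier_area=4700.0)
interferent = confirms_identity(reference, 3.52, quantifier_area=10000.0, qualifier_area=1500.0)
print(f"genuine peak confirmed: {genuine}; co-eluting interferent confirmed: {interferent}")
```

The second peak elutes inside the retention time window yet fails the ion ratio test, which is the sense in which the mass spectrometric dimension adds selectivity beyond chromatography alone.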

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Selectivity Experiments

| Reagent/Material | Function in Selectivity Assessment | Application Examples |
|---|---|---|
| Chromatographic Columns | Differential separation of analytes based on chemical properties | C18, phenyl, cyano, HILIC, chiral stationary phases [7] |
| Mass Spectrometry Reference Standards | Method calibration and analyte identification | Certified reference materials for target analytes and internal standards [9] |
| Forced Degradation Reagents | Generation of potential degradants for interference studies | Acid (HCl), base (NaOH), oxidant (H₂O₂), thermal and photolytic stress conditions [6] |
| Sample Preparation Sorbents | Selective extraction and cleanup of target analytes | Solid-phase extraction cartridges (C18, mixed-mode, polymeric) [9] |
| Matrix Components | Challenge testing with potential interferents | Placebo formulations (excipients), biological fluids, environmental matrix samples [6] [7] |

Selectivity represents a fundamental gradable property of analytical methods that enables reliable measurement of multiple analytes in the presence of potential interferents. The precise distinction between selectivity and specificity is crucial for proper method characterization, with specificity representing the ultimate, non-gradable form of selectivity where no interferences occur [5]. Through strategic implementation of chromatographic separation, hyphenated techniques, and appropriate sample preparation, analytical scientists can achieve the necessary selectivity to address complex analytical challenges in pharmaceutical and environmental analysis [5] [9].

The experimental protocols and case studies presented provide a framework for systematically evaluating and demonstrating method selectivity, emphasizing the importance of challenge tests with potential interferents relevant to the sample matrix [6] [7]. As analytical challenges continue to evolve with increasing matrix complexity and lower detection limit requirements, the fundamental role of selectivity in ensuring data quality and reliability remains paramount in analytical method validation.

In the highly regulated world of pharmaceutical development, the validation of analytical methods is a critical prerequisite for ensuring drug safety, efficacy, and quality. Among the various validation parameters, the concepts of specificity and selectivity are fundamental, yet their distinction often creates confusion among even experienced scientists. The International Council for Harmonisation (ICH) guideline Q2(R1) provides definitions, but practical understanding requires clear, relatable illustrations [10]. Within this context, the "bunch of keys" analogy emerges as an exceptionally powerful tool for delineating the precise difference between these two parameters. This whitepaper explores this analogy in depth, framing it within the broader scope of analytical method validation research and providing the experimental protocols necessary for its practical demonstration in a regulatory-compliant laboratory setting.

The consistent mix-up between specificity and selectivity stems not from a lack of technical knowledge, but from the absence of a persistent mental model. Analogies bridge abstract regulatory concepts with tangible, everyday objects, thereby enhancing comprehension and retention. For researchers, scientists, and drug development professionals, a firm grasp of this distinction is not merely academic; it is essential for designing validation protocols, interpreting data correctly, and successfully navigating regulatory audits [1] [11].

Defining the Concepts: Specificity vs. Selectivity

Official Definitions and the Core Distinction

According to the ICH Q2(R1) guideline, specificity is defined as "the ability to assess unequivocally the analyte in the presence of components which may be expected to be present" [1]. In essence, a specific method can accurately identify and/or quantify the target analyte amidst a mixture of potentially interfering substances, such as impurities, degradation products, or sample matrix components. The European guideline on bioanalytical method validation further refines the concept of selectivity, defining it as the ability "to differentiate the analyte(s) of interest and IS from endogenous components in the matrix or other components in the sample" [1].

The fundamental distinction lies in the scope of analysis. A method is specific when it is concerned with a single analyte, ensuring that the measured response is due to that analyte alone. A method is selective when it can successfully identify and/or quantify multiple different analytes within the same sample, distinguishing each one from all others [1] [11]. The International Union of Pure and Applied Chemistry (IUPAC) notes that "specificity is the ultimate of selectivity" and often recommends the use of the term 'selectivity' in analytical chemistry, as very few methods respond to only one analyte [10].

The "Bunch of Keys" Analogy

The "bunch of keys" provides a perfect, intuitive model for understanding this distinction [1] [11].

- The Scenario: Imagine a bunch of keys, where each key is a different chemical entity in a sample mixture. The lock on a specific door represents the detector or the analytical method.

- Illustrating Specificity: In this context, specificity is the ability of the lock to be opened by one, and only one, correct key—the analyte of interest. The goal is not to identify what the other keys are, but simply to ensure that they do not open the lock. The method is specific if it responds only to the target key (analyte) and not to any others (potential interferents) [1].

- Illustrating Selectivity: Selectivity, however, requires the identification and differentiation of all keys in the bunch. It demands that the method can not only identify the one correct key for the lock but also recognize and distinguish all other keys present—be they for a car, a cabinet, or a safe. In analytical terms, a selective method can resolve, identify, and quantify all analytes of interest in a multi-component mixture [1] [11].

The following diagram visualizes this logical relationship, mapping the analogy to the technical parameters and their outcomes.

Regulatory and Experimental Framework

Validation Requirements Across Guidelines

The requirement to demonstrate either specificity or selectivity is mandated by all major regulatory bodies, though the terminology can vary. The following table summarizes the position of key international guidelines, highlighting that while ICH Q2(R1) focuses on "specificity," other frameworks acknowledge both terms or emphasize "selectivity" for multi-analyte methods [10].

Table 1: Regulatory Stance on Specificity and Selectivity in Method Validation

| Regulatory Guideline | Primary Terminology Used | Context and Requirements |
|---|---|---|
| ICH Q2(R1) | Specificity | Required for identification, impurity, and assay tests. For chromatography, critical separation is demonstrated by the resolution of the two closest-eluting components [1] [10]. |
| FDA | Specificity/Selectivity | Acknowledges both terms, requiring demonstration that the method can differentiate the analyte in the presence of other components [10]. |
| European Pharmacopoeia | Specificity | Follows ICH definitions, emphasizing the need to detect the analyte unequivocally among potential interferents [10]. |
| USP | Specificity | Validation parameter includes specificity, with emphasis on peak purity for chromatographic methods [10]. |

Experimental Protocols for Demonstration

Demonstrating specificity and selectivity involves a series of deliberate experiments designed to challenge the method with potential interferents. The specific protocols depend on the type of analytical procedure (e.g., identification, assay, or impurity test).

Protocol for Specificity (Assay and Purity Tests)

This protocol is designed to prove that the assay result for the active ingredient is unaffected by the presence of impurities, degradation products, or excipients [1] [10].

Sample Preparation:

- Pure Analyte (Reference): Prepare a sample of the pure drug substance (analyte) at the target concentration.

- Placebo/Matrix Blank: Prepare a sample containing all excipients or the full sample matrix without the analyte.

- Spiked Mixture: Spike the pure analyte with appropriate levels of all available impurities, degradation products, and excipients. For forced degradation studies, stress the drug product (e.g., with heat, light, acid, base, oxidant) to generate degradation products [1] [12].

Analysis and Acceptance Criteria:

- Analyze all samples using the method.

- The placebo/matrix blank should show no interference, meaning no peak or signal at the retention time/migration position of the analyte.

- The spiked mixture or stressed sample should demonstrate that the analyte peak is pure (e.g., as determined by a Diode Array Detector or Mass Spectrometer) and that the assay result for the analyte is equivalent (within predefined acceptance criteria) to the result obtained from the unspiked pure analyte reference [10].
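The two acceptance checks above, no interference in the blank and an equivalent assay result in the challenged sample, can be expressed numerically. The sketch below is illustrative only: the function names, the sample values, and both limits are hypothetical stand-ins for the predefined acceptance criteria of a real validation protocol.

```python
def blank_interference_pct(blank_area_at_rt: float, analyte_area: float) -> float:
    """Blank signal at the analyte retention time as a percentage of the analyte response."""
    if analyte_area <= 0:
        raise ValueError("analyte_area must be positive")
    return blank_area_at_rt / analyte_area * 100.0


def assay_difference_pct(reference_assay: float, challenged_assay: float) -> float:
    """Relative difference between the challenged sample and the pure reference, in percent."""
    if reference_assay <= 0:
        raise ValueError("reference_assay must be positive")
    return (challenged_assay - reference_assay) / reference_assay * 100.0


# Hypothetical limits and results, standing in for predefined acceptance criteria.
MAX_BLANK_PCT = 0.5
MAX_ASSAY_DIFF_PCT = 2.0

blank_pct = blank_interference_pct(blank_area_at_rt=0.0, analyte_area=15200.0)
diff_pct = assay_difference_pct(reference_assay=100.1, challenged_assay=99.4)

specificity_supported = blank_pct <= MAX_BLANK_PCT and abs(diff_pct) <= MAX_ASSAY_DIFF_PCT
print(f"blank: {blank_pct:.2f}%, assay difference: {diff_pct:+.2f}% -> {specificity_supported}")
```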

Protocol for Selectivity (Multi-Analyte Methods)

This protocol is used for methods that quantify multiple analytes simultaneously, such as impurity profiling or bioanalytical methods.

Sample Preparation:

- Individual Analyte Standards: Prepare separate solutions of each individual analyte of interest at the target concentration.

- Mixed Standard: Prepare a solution containing all analytes of interest at their target concentrations.

- Matrix Sample Spiked with Analytes: Spike a representative blank matrix (e.g., plasma, placebo) with the mixture of all analytes.

Analysis and Acceptance Criteria:

- Analyze all samples.

- The method must be able to resolve all analytes from each other in the mixed standard and the spiked matrix sample. For chromatographic methods, the resolution between the pair of analytes that elute closest to each other should be greater than a specified limit (e.g., Rs > 1.5) [1] [10].

- The peak purity of each analyte should be confirmed in the mixed standard.

- The quantitation of each analyte in the mixed standard and the spiked matrix sample should be accurate and precise when compared to the individual standard solutions.
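For a multi-analyte method, the resolution criterion applies to the closest-eluting pair, which has to be found first. A minimal sketch follows, assuming baseline peak widths and that the critical pair is among peaks adjacent in retention time; the peak names and values are hypothetical.

```python
from typing import NamedTuple


class Peak(NamedTuple):
    name: str
    rt: float     # retention time, min
    width: float  # peak width at baseline, min


def critical_pair(peaks: list[Peak]) -> tuple[Peak, Peak, float]:
    """Return the adjacent pair of peaks with the lowest resolution, and that resolution."""
    if len(peaks) < 2:
        raise ValueError("at least two peaks are required")
    ordered = sorted(peaks, key=lambda p: p.rt)
    pairs = [
        (a, b, 2.0 * (b.rt - a.rt) / (a.width + b.width))
        for a, b in zip(ordered, ordered[1:])
    ]
    return min(pairs, key=lambda item: item[2])


# Hypothetical mixed standard with one analyte and two impurities.
mixed_standard = [
    Peak("impurity B", 4.80, 0.36),
    Peak("analyte", 3.60, 0.32),
    Peak("impurity A", 3.10, 0.30),
]

first, second, rs_min = critical_pair(mixed_standard)
print(f"critical pair: {first.name} / {second.name}, Rs = {rs_min:.2f}, passes Rs > 1.5: {rs_min > 1.5}")
```

Running the same check on the mixed standard and on the spiked matrix sample shows whether matrix components shift or broaden peaks enough to change which pair is critical.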

The workflow for these experimental studies, from sample preparation to data interpretation, is outlined in the following diagram.

The Scientist's Toolkit: Essential Materials for Validation

Successfully conducting these experiments requires a set of well-characterized reagents and materials. The following table details the essential components of a "Research Reagent Solution" for specificity/selectivity studies.

Table 2: Key Research Reagents and Materials for Specificity/Selectivity Studies

| Reagent/Material | Function in Validation | Critical Quality Attributes |
|---|---|---|
| Drug Substance (Analyte) Reference Standard | Serves as the primary benchmark for identity, retention time, and response factor. | High purity (>98%), fully characterized structure, known impurities profile. |
| Known Impurity and Degradation Product Standards | Used to spike samples to demonstrate resolution from the main analyte and from each other. | Certified purity and concentration, structural confirmation. |
| Placebo Formulation (for Drug Product) | Represents the sample matrix without the active ingredient to test for interference from excipients. | Representative of the final drug product composition, batch-to-batch consistency. |
| Blank Matrix (e.g., Plasma, Serum) | For bioanalytical methods, used to test for interference from endogenous components. | Sourced from appropriate species, confirmed to be free of analytes. |
| Appropriate Solvents and Mobile Phases | Used for sample preparation, dilution, and as the eluent in chromatographic systems. | HPLC/GC grade, low in UV absorbance, free of particulates. |
| System Suitability Standards | A reference mixture used to verify that the total analytical system is performing adequately before and during the analysis. | Contains key analytes to confirm parameters like resolution, precision, and tailing factor. |

The 'bunch of keys' analogy transcends being a mere memory aid; it provides a robust conceptual framework that aligns perfectly with regulatory definitions and practical laboratory workflows. By internalizing this model, scientists can more effectively design, execute, and interpret the validation studies that are the bedrock of pharmaceutical quality control. As analytical techniques continue to evolve towards the simultaneous analysis of increasingly complex mixtures, the principle of selectivity—the ability to identify every key in the bunch—will only grow in importance. A deep and intuitive understanding of the distinction between specificity and selectivity, therefore, remains an indispensable asset for any professional committed to excellence in drug development and validation research.

Analytical method validation stands as a cornerstone of pharmaceutical development, ensuring the reliability, accuracy, and reproducibility of data supporting drug safety and efficacy. The comparative analysis of validation guidelines issued by major international regulatory bodies reveals a complex landscape of harmonized and divergent requirements. Understanding the nuances between the International Council for Harmonisation (ICH), the United States Food and Drug Administration (FDA), and the European Medicines Agency (EMA) is crucial for global drug development strategies. This technical examination frames these regulatory perspectives within a broader scientific investigation into specificity versus selectivity, fundamental analytical parameters that define a method's ability to measure the analyte accurately amidst interfering components [13].

The regulatory harmonization achieved through ICH provides a foundational framework, while regional implementations by FDA and EMA introduce critical distinctions in application and emphasis. For researchers and drug development professionals, navigating these aligned yet distinct pathways demands both technical precision and strategic regulatory insight. This guide provides a detailed comparison of these frameworks, emphasizing their practical implications for analytical method validation, particularly through the lens of specificity and selectivity requirements [13] [14].

Regulatory Frameworks and Governance

ICH: The Global Standard-Setter

The ICH Q2(R1) guideline, titled "Validation of Analytical Procedures: Text and Methodology," represents the internationally harmonized foundation for analytical method validation. Established through collaboration between regulatory authorities and pharmaceutical industries from the European Union, United States, Japan, and other regions, ICH Q2(R1) unifies principles previously contained in separate Q2A and Q2B documents. This guideline provides the core validation parameters and methodology for experimental data required for registration applications, creating a common scientific language for analytical procedure validation across most major markets [15].

FDA: The Prescriptive Regulator

The FDA's approach to method validation is characterized by a rule-based, prescriptive framework codified primarily in 21 CFR Parts 210 and 211. The FDA emphasizes strict adherence to predefined protocols with detailed requirements for validation data generation and documentation. The agency's current thinking reflects a lifecycle approach to validation, incorporating risk management principles and emphasizing method robustness throughout its application. FDA inspectors focus heavily on data integrity and ALCOA principles (Attributable, Legible, Contemporaneous, Original, Accurate) during audits, with particular attention to documentation traceability and raw data verification [13] [14].

EMA: The Principle-Based Coordinator

The EMA operates as a coordinating network across EU Member States rather than a centralized authority like the FDA. Its scientific guidelines, including those for method validation, are compiled in EudraLex Volume 4. The EMA's approach is principle-based and directive, expecting manufacturers to interpret guidelines within a comprehensive quality system framework. Unlike the FDA's prescriptive style, EMA emphasizes risk-based thinking and integrated quality management systems (QMS), requiring more extensive justification of scientific decisions rather than strict protocol adherence. The EMA has recently adopted the ICH M10 guideline for bioanalytical method validation, replacing its previous standalone guidance, demonstrating the ongoing harmonization efforts across regions [16] [14] [17].

Table 1: Fundamental Regulatory Structures and Approaches

| Aspect | ICH | FDA | EMA |

|---|---|---|---|

| Primary Guidance | Q2(R1) Validation of Analytical Procedures | 21 CFR Parts 210/211; Lifecycle Approach | ICH M10 (Bioanalytical); EudraLex Volume 4 |

| Regulatory Style | Scientifically harmonized | Prescriptive, rule-based | Principle-based, quality system focused |

| Geographical Scope | International (EU, US, Japan, etc.) | United States | European Union member states |

| Decision-Making | Consensus-based | Centralized federal authority | Network of national authorities |

| Key Emphasis | Analytical performance parameters | Data integrity, protocol adherence | Risk management, QMS integration |

Core Validation Parameters: Comparative Analysis

Specificity and Selectivity: Foundational Concepts

Within analytical method validation, specificity and selectivity represent complementary parameters addressing a method's ability to measure the analyte unequivocally in the presence of interfering components. While terminology differs slightly between guidelines, the fundamental requirement remains consistent: demonstration that the method can accurately quantify the target analyte despite potential interferents from impurities, degradation products, matrix components, or other analytes.

Specificity is often described as the ultimate expression of selectivity – the ability to measure accurately in the presence of all potentially interfering substances. In chromatographic methods, this typically requires demonstration of peak purity using diode array detection or mass spectrometry, while for spectroscopic methods, the absence of spectral overlaps must be verified. For bioanalytical methods, the EMA (through ICH M10) emphasizes matrix effect evaluation specifically, requiring assessment of ionization suppression or enhancement in mass spectrometry-based methods [16].

Comprehensive Parameter Comparison

The following table provides a detailed comparison of validation parameter requirements across the three regulatory frameworks, highlighting distinctions in emphasis and acceptance criteria that impact method development and validation strategies.

Table 2: Analytical Method Validation Parameters Comparison

| Validation Parameter | ICH Q2(R1) | FDA Approach | EMA/ICH M10 Approach |

|---|---|---|---|

| Specificity/Selectivity | Required; demonstrate unequivocal assessment | Required; forced degradation studies expected | Required; matrix effects assessment for bioanalytical |

| Accuracy | Required; recovery studies 80-120% typically | Required; protocol-specific criteria | Required; may emphasize patient population relevance |

| Precision | Required (repeatability, intermediate precision) | Required; includes system suitability | Required; may require additional ruggedness testing |

| Detection Limit (LOD) | Required for impurity methods | Required when applicable | Required when applicable |

| Quantitation Limit (LOQ) | Required for impurity methods | Required when applicable | Required when applicable |

| Linearity | Required; minimum 5 concentration points | Required; protocol-specific range | Required; may emphasize therapeutic range |

| Range | Required; established from linearity studies | Required; justified based on application | Required; may consider clinical relevance |

| Robustness | Recommended; often tested during development | Expected; system suitability controls | Required; quality by design approach encouraged |

Regulatory Emphasis and Documentation

The regulatory emphasis on certain validation parameters differs between agencies, reflecting their distinct philosophical approaches. The FDA's prescriptive nature manifests in detailed expectations for protocol pre-specification and strict adherence to predefined acceptance criteria. Any deviation triggers rigorous investigation and documentation. FDA submissions require comprehensive raw data presentation with explicit statistical analysis supporting validation conclusions [14].

In contrast, EMA's principle-based approach emphasizes scientific justification behind selected parameters and acceptance criteria. The EMA may place greater emphasis on the clinical relevance of validation results, particularly for bioanalytical methods supporting pharmacokinetic studies. Documentation for EMA submissions must demonstrate how validation parameters ensure patient safety and reliable results within the context of clinical use, with stronger integration into the overall Pharmaceutical Quality System [16] [14].

Experimental Protocols for Specificity and Selectivity Assessment

Chromatographic Method Protocol

For HPLC/UV-DAD methods, the following protocol provides comprehensive specificity/selectivity validation:

Materials and Equipment:

- HPLC system with diode array detector (DAD)

- Reference standard of analyte (highest available purity)

- Potentially interfering substances (impurities, degradation products, matrix components)

- Appropriate chromatographic column and mobile phase components

Experimental Procedure:

- System Preparation: Equilibrate HPLC system with mobile phase at specified flow rate and column temperature

- Individual Solutions: Prepare separate solutions of analyte and each potential interfering compound at expected concentration levels

- Forced Degradation Samples: Subject analyte to stress conditions (acid/base, oxidation, thermal, photolytic) and analyze degraded samples

- Resolution Solution: Prepare mixture containing analyte and all potential interferents at expected maximum concentrations

- Chromatographic Analysis: Inject individual solutions and mixture using validated method parameters

- Peak Purity Assessment: Use DAD to collect spectra across each peak and verify purity through spectral overlay and match factor calculations

- Resolution Calculation: Measure resolution between analyte peak and closest eluting interferent

Acceptance Criteria:

- Resolution between analyte and all interferents ≥ 2.0

- Peak purity index ≥ 990 (on scale of 0-1000)

- No co-elution observed in mixed standard injection

- Spectral homogeneity confirmed across entire peak
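The peak purity criterion can be illustrated with a simple spectral comparison. This is a minimal sketch, assuming a match factor defined as 1000 × r², where r is the Pearson correlation between two spectra recorded at the same wavelengths. Vendor software may compute its purity index differently, so the definition, the function names, and the synthetic spectra below are all illustrative assumptions.

```python
def match_factor(spec_a, spec_b):
    """Spectral match factor = 1000 * r^2 (r = Pearson correlation).

    Both spectra must be sampled at the same wavelengths.
    """
    if len(spec_a) != len(spec_b) or len(spec_a) < 2:
        raise ValueError("spectra must have equal length of at least 2 points")
    n = len(spec_a)
    mean_a = sum(spec_a) / n
    mean_b = sum(spec_b) / n
    cov = sum((a - mean_a) * (b - mean_b) for a, b in zip(spec_a, spec_b))
    var_a = sum((a - mean_a) ** 2 for a in spec_a)
    var_b = sum((b - mean_b) ** 2 for b in spec_b)
    if var_a == 0 or var_b == 0:
        raise ValueError("a flat spectrum has no defined correlation")
    return 1000.0 * cov * cov / (var_a * var_b)


def peak_is_pure(spectra, apex_index, threshold=990.0):
    """True if every spectrum across the peak matches the apex spectrum."""
    apex = spectra[apex_index]
    return all(match_factor(apex, s) >= threshold for s in spectra)


# Synthetic absorbance spectra (hypothetical values at five wavelengths)
apex = [0.10, 0.40, 0.90, 0.60, 0.20]
upslope = [0.5 * x for x in apex]          # same shape, lower intensity
tail_pure = [0.3 * x for x in apex]        # same shape on the tail
contaminant = [0.30, 0.25, 0.10, 0.05, 0.02]
tail_impure = [0.3 * x + c for x, c in zip(apex, contaminant)]  # co-eluting species

print("pure peak:  ", peak_is_pure([upslope, apex, tail_pure], apex_index=1))
print("impure peak:", peak_is_pure([upslope, apex, tail_impure], apex_index=1))
```

Because correlation ignores overall intensity, spectra taken on the upslope and tail of a pure peak still match the apex spectrum. A co-eluting compound changes the spectral shape and lowers the match factor.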

Ligand Binding Assay Protocol

For immunoassay methods requiring selectivity assessment:

Materials and Equipment:

- Reference standard and quality control samples

- Potentially cross-reacting structurally similar compounds

- Target population and normal control matrices

- Required reagents, plates, and detection equipment

Experimental Procedure:

- Preparation of Cross-reactivity Solutions: Spike potentially interfering compounds at 100x expected physiological concentration into analyte solutions at low, medium, and high QC levels

- Matrix Selection: Collect matrices from at least 10 individual sources of relevant population (disease state if applicable)

- Parallelism Assessment: Prepare serial dilutions of analyte in different matrices and compare dose-response curves to reference standard

- Recovery Assessment: Spike known analyte concentrations into different matrices and calculate percentage recovery

- Assay Performance: Analyze all samples in validated assay format

Acceptance Criteria:

- Cross-reactivity with structurally similar compounds < 5%

- Parallelism demonstrated with curves parallel to reference standard

- Mean recovery within 85-115% across all matrices

- No significant matrix effects observed across individual sources
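The recovery and cross-reactivity criteria above reduce to simple ratios. The sketch below assumes two common definitions. Recovery is the measured concentration divided by the nominal spiked concentration. Cross-reactivity is the apparent analyte concentration, read from the calibration curve for a sample containing only the interferent, divided by the interferent concentration. All concentrations and lot names are hypothetical.

```python
from statistics import mean


def percent_recovery(measured, nominal):
    """Recovery (%) = measured / nominal spiked concentration * 100."""
    if nominal <= 0:
        raise ValueError("nominal concentration must be positive")
    return 100.0 * measured / nominal


def percent_cross_reactivity(apparent_analyte_conc, interferent_conc):
    """Cross-reactivity (%) = apparent analyte conc. / interferent conc. * 100."""
    if interferent_conc <= 0:
        raise ValueError("interferent concentration must be positive")
    return 100.0 * apparent_analyte_conc / interferent_conc


# Hypothetical spiked-recovery results (ng/mL) in individual matrix lots
nominal = 10.0
measured_by_lot = {"lot 1": 9.4, "lot 2": 10.6, "lot 3": 8.9, "lot 4": 10.1}

recoveries = {lot: percent_recovery(m, nominal) for lot, m in measured_by_lot.items()}
mean_recovery = mean(list(recoveries.values()))
recovery_ok = 85.0 <= mean_recovery <= 115.0  # example limits from the list above

# Hypothetical interferent-only sample: 100 ng/mL interferent reads as 0.8 ng/mL analyte
cross = percent_cross_reactivity(apparent_analyte_conc=0.8, interferent_conc=100.0)
cross_ok = cross < 5.0  # example limit from the list above

print(f"Mean recovery: {mean_recovery:.1f}% (pass: {recovery_ok})")
print(f"Cross-reactivity: {cross:.2f}% (pass: {cross_ok})")
```

Reporting the per-lot recoveries alongside the mean makes it easier to spot a single matrix source that behaves differently from the rest.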

Visualization of Regulatory Relationships

Regulatory Guideline Relationships and Emphases

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Reagents for Analytical Method Validation

| Reagent/Material | Function in Validation | Specific Application |

|---|---|---|

| Reference Standard | Primary measurement standard | Quantification and method calibration |

| Forced Degradation Reagents | Specificity demonstration | Acid, base, oxidants, heat, light sources |

| Matrix Components | Selectivity assessment | Plasma, serum, tissue homogenates |

| System Suitability Mixtures | System performance verification | Resolution and precision testing |

| Stability Solutions | Method robustness evaluation | Short-term and long-term stability |

| Cross-reactivity Compounds | Specificity confirmation | Structurally similar molecules |

Strategic Implementation and Compliance

Alignment with Specificity vs Selectivity Research

The regulatory perspectives on specificity and selectivity reflect the ongoing scientific discourse around these fundamental analytical concepts. The FDA's emphasis on forced degradation studies aligns with a rigorous approach to specificity verification, demanding demonstration that methods can distinguish the analyte from all potential degradation products. The EMA's focus on matrix effects in bioanalytical methods through ICH M10 represents a selectivity-centered approach, ensuring accurate measurement despite biological matrix variations [16] [14].

Within a broader thesis on specificity versus selectivity, these regulatory distinctions highlight how theoretical concepts manifest in practical requirements. The harmonization through ICH establishes common definitions, while regional implementations reflect different risk tolerance and historical approaches to analytical validation. Understanding these nuances enables development of validation strategies that satisfy both specific technical requirements and overarching regulatory expectations [13] [15].

Strategic Compliance Recommendations

Successful navigation of the FDA and EMA regulatory landscapes requires both technical excellence and strategic planning:

- Adopt ICH Q2(R1) Foundation: Implement ICH Q2(R1) as the core validation framework, then build agency-specific elements upon this foundation

- Document Rationale: Maintain thorough documentation justifying validation approaches, particularly for parameters where guidelines differ or allow flexibility

- Implement Risk Assessment: Apply risk-based principles to validation designs, focusing resources on critical methods with greatest impact on product quality and patient safety

- Prepare Agency-Specific Documentation: Adapt validation summaries to emphasize elements each agency prioritizes – raw data and protocol adherence for FDA, scientific justification and QMS integration for EMA

- Leverage Harmonization: Utilize the convergence between EMA and FDA through adoption of ICH M10 for bioanalytical methods to streamline global development [16]

The evolving regulatory landscape continues to emphasize a lifecycle approach to method validation, with increasing harmonization through ICH initiatives. Maintaining awareness of guideline updates and their practical implementation remains essential for successful global regulatory strategy and efficient market access for pharmaceutical products.

In the rigorous world of analytical chemistry and bioanalytical method validation, the precise use of terminology is not merely academic; it forms the bedrock of reproducible science, regulatory compliance, and clear scientific communication. Among the most persistent sources of confusion is the distinction between specificity and selectivity. While often used interchangeably in casual laboratory parlance, these terms carry distinct technical meanings with significant implications for method validation protocols. This whitepaper examines the nuanced relationship between these two fundamental analytical concepts, with a particular focus on the International Union of Pure and Applied Chemistry (IUPAC) recommendations that frame specificity as the ultimate expression of selectivity. Within the context of analytical method validation research, understanding this hierarchy is essential for researchers, scientists, and drug development professionals who must design validation experiments that meet both scientific and regulatory standards.

The debate is not purely semantic; it strikes at the heart of how we characterize a method's ability to measure an analyte accurately within a complex matrix. As per IUPAC's recommendations, the term specificity should describe the ideal, but often theoretically unattainable, scenario where a method responds exclusively to one single analyte. In contrast, selectivity refers to the practical ability of a method to determine several analytes simultaneously in the presence of potential interferents [1] [18]. This paper will explore the technical definitions, practical applications, experimental protocols for demonstration, and the ongoing scientific discourse surrounding these pivotal analytical properties.

Defining the Concepts: IUPAC's Evolving Terminology

The Official Definitions and Historical Context

The IUPAC, as the international authority on chemical nomenclature and terminology, provides the foundational definitions for the analytical sciences [19] [20]. According to IUPAC recommendations, selectivity is defined as the "property of a measuring system, used with a specified measurement procedure, whereby it provides measured quantity values for one or more measurands such that the values of each measurand are independent of other measurands or other quantities in the phenomenon, body, or substance being investigated" [18]. In simpler terms, selectivity is the ability of a method to differentiate and quantify multiple analytes within a complex sample, ensuring that the measurement of each is not skewed by the presence of the others.

Specificity, within this framework, is considered the ultimate degree of selectivity [18]. It represents an ideal scenario where a method is capable of responding to one, and only one, analyte. The IUPAC Compendium of Terminology in Analytical Chemistry (the "Orange Book") serves as the authoritative resource for these definitions, with the latest edition published in 2023 reflecting the ongoing evolution in the field [19]. The historical development of this terminology reveals a gradual shift towards precision, moving away from the interchangeable usage that has long clouded the field.

The Practical Distinction: A Conceptual Analogy

A commonly used analogy effectively illustrates the practical distinction between these concepts:

- Specificity is akin to identifying a single, specific key in a bunch that can open a particular lock. The focus is solely on finding that one correct key; identifying the other keys on the ring is not required [1] [11].

- Selectivity, using the same analogy, requires the identification of all keys in the bunch, not just the one that opens the lock [1] [11].

This analogy clarifies that specificity concerns itself with a single target, while selectivity involves a broader analytical scope, characterizing multiple components within a mixture. In practical analytical chemistry, achieving true specificity is often considered nearly impossible because real-world samples may contain numerous chemicals that could potentially interfere [18]. Therefore, selectivity is the more commonly demonstrated and practical property for most analytical methods.

Regulatory Landscape and Guidelines

ICH vs. IUPAC: A Comparative Analysis

The regulatory landscape for analytical method validation features guidelines that sometimes diverge in their terminology, creating a source of ongoing debate. The International Council for Harmonisation (ICH) guideline Q2(R1), a cornerstone for pharmaceutical analysis, defines specificity as "the ability to assess unequivocally the analyte in the presence of components which may be expected to be present" [1]. Specificity, defined with a heavy focus on demonstrating a lack of interference, is the term the guideline uses for this validation parameter, and it is required for identification tests, impurity tests, and assays [1].

Notably, the term "selectivity" does not appear in ICH Q2(R1), highlighting a fundamental divergence from IUPAC's lexicon. In contrast, other guidelines, such as the European guideline on bioanalytical method validation, do employ the term "selectivity," defining it as the ability "to differentiate the analyte(s) of interest and IS from endogenous components in the matrix or other components in the sample" [1]. This regulatory patchwork means that professionals must be conversant with both sets of terminology, applying the appropriate terms based on the regulatory context of their work.

Table 1: Comparing Analytical Terminology Across Guidelines

| Term | IUPAC Recommendation | ICH Q2(R1) Guideline | Practical Implication |

|---|---|---|---|

| Selectivity | The primary, preferred term. The ability to measure multiple analytes without mutual interference. | Not explicitly mentioned or defined. | A practical, measurable property for multi-analyte methods. |

| Specificity | The ultimate degree of selectivity; an ideal where a method responds to only one analyte. | The key term used; defined as the ability to assess the analyte unequivocally in the presence of expected components. | Often treated as a synonym for selectivity in regulated pharma labs. |

| Philosophy | Views selectivity as a scalable property, with specificity being its absolute, ideal form. | Uses specificity as the catch-all term for a method's ability to distinguish the analyte. | Creates a disconnect between scientific and regulatory language. |

The Case for IUPAC's Preference

IUPAC's preference for "selectivity" as the overarching term is rooted in scientific pragmatism. Given that very few analytical techniques are truly specific to a single analyte in all possible scenarios, selectivity is a more honest and accurate descriptor [18]. It acknowledges that methods can possess varying degrees of ability to distinguish an analyte from interferents. This conceptualization allows for a more granular and quantitative assessment of a method's performance. The recommendation is that the term "specificity" should be reserved for those rare cases where absolute selectivity has been demonstrated, a situation that is more theoretical than practical [18]. This nuanced view encourages a more critical and evidence-based approach to method validation.

Experimental Protocols for Demonstrating Selectivity and Specificity

Core Methodological Principles

Demonstrating selectivity (or specificity, as per ICH) is a fundamental requirement in method validation. The core principle is to provide evidence that the analytical signal attributed to the analyte is unequivocally derived from that analyte and is not significantly influenced by other substances present in the sample [18]. This involves a series of experiments designed to challenge the method with potential interferents.

A method is considered selective when the analytical signal of the analyte can be separated from other signals, and where each signal depends on a specific property of the analyte to be measured [18]. The experimental design must be tailored to the type of method (e.g., chromatographic vs. ligand binding assay) and the nature of the sample matrix.

Detailed Experimental Workflows

The following workflows outline the key experiments required to demonstrate selectivity for different analytical purposes.

Diagram 1: General Selectivity Assessment Workflow

Protocol for Chromatographic Methods (e.g., HPLC, LC-MS)

- Analysis of Blank Matrix: Inject a sample of the blank matrix (e.g., plasma, formulation buffer) to confirm the absence of interfering peaks at the retention times of the analyte and internal standard [1].

- Analysis of Spiked Matrix: Inject a sample of the matrix spiked with the analyte at the target concentration to confirm the analyte's retention time and response.

- Interference Testing: Inject samples containing potential interferents individually. These interferents should include:

  - Excipients from the drug formulation.

  - Pharmacologically relevant co-administered drugs.

  - Known metabolites.

  - Degradation products generated from forced degradation studies [1].

- Co-injection: Inject a sample containing both the analyte and the potential interferents to demonstrate that the resolution between the analyte peak and the closest eluting interference meets predefined criteria (e.g., resolution factor Rs > 2.0) [1].

- Forced Degradation Studies: Subject the analyte to stress conditions (e.g., acid, base, oxidative, thermal, photolytic) to generate degradation products. Then, analyze the stressed sample to demonstrate that the analyte peak is pure and free from co-eluting degradants, and that the degradation products are resolved from the analyte and from each other [1].

Protocol for Ligand Binding Assays (LBAs)

- Assessment of Cross-Reactivity: Test the assay against structurally similar compounds, related substances, active forms, and degradation products [18]. The concentrations of these interfering substances should be similar to or higher than their expected physiological concentrations.

- Parallelism Testing: Demonstrate that the dilution-corrected concentration of the analyte remains consistent across serial dilutions of the sample, indicating a lack of matrix interference.

- Spiked Recovery in Different Matrices: Spike the analyte into different lots of the matrix (e.g., multiple lots of human plasma) and measure recovery. The results should be consistent across lots.
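Parallelism is often summarized by multiplying each measured concentration by its dilution factor and checking how much the corrected values scatter. The sketch below assumes that approach and uses the coefficient of variation (%CV) as the scatter metric. The 30% limit, the function names, and the data are illustrative assumptions rather than values taken from the guidelines cited here.

```python
from statistics import mean, stdev


def dilution_corrected(measured_concs, dilution_factors):
    """Multiply each measured concentration by its dilution factor."""
    if len(measured_concs) != len(dilution_factors):
        raise ValueError("need one dilution factor per measurement")
    return [c * d for c, d in zip(measured_concs, dilution_factors)]


def percent_cv(values):
    """Coefficient of variation (%) using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical serial dilution of one study sample (concentrations in ng/mL)
dilution_factors = [2, 4, 8, 16]
measured = [50.0, 24.0, 12.8, 6.1]

corrected = dilution_corrected(measured, dilution_factors)
cv = percent_cv(corrected)
cv_limit = 30.0  # assumed example limit; set per validation protocol

print("Dilution-corrected concentrations:", corrected)
print(f"%CV across dilutions: {cv:.1f}% (parallel: {cv <= cv_limit})")
```

A trend in the corrected values, such as a steady rise with increasing dilution, points to matrix interference even when the overall %CV is within the limit, so the individual values are worth inspecting as well.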

Table 2: Key Experiments for Demonstrating Selectivity in Method Validation

| Experiment Type | Purpose | Acceptance Criteria (Example) | Applicable Techniques |

|---|---|---|---|

| Blank Matrix Analysis | To verify the absence of endogenous interference. | No significant response (e.g., < 20% of analyte response at LLOQ) at the retention time of the analyte. | Chromatography, Spectrometry |

| Interference Spiking | To check for interference from known compounds (e.g., drugs, metabolites). | Resolution between analyte and closest interfering peak > 2.0. Change in analyte signal within ±5%. | Chromatography, LBAs |

| Forced Degradation | To demonstrate stability-indicating properties and resolution from degradants. | Peak purity of analyte confirmed; all degradants are baseline resolved. | Chromatography (primarily) |

| Cross-Reactivity | To ensure antibodies or receptors do not bind to similar molecules. | Cross-reactivity < 1% for all listed related compounds. | Ligand Binding Assays |
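The blank-matrix criterion in the table above can be evaluated per matrix source. The following is a minimal sketch, assuming that interference is expressed as the blank response at the analyte retention time, as a percentage of the mean analyte response at the LLOQ. The peak areas and source names are hypothetical.

```python
def blank_interference(blank_responses, lloq_response, limit_percent=20.0):
    """Return {source: (percent_of_lloq, passes)} for each blank matrix source.

    blank_responses: {source name: response at the analyte retention time}
    lloq_response:   mean analyte response at the LLOQ
    """
    if lloq_response <= 0:
        raise ValueError("LLOQ response must be positive")
    report = {}
    for source, response in blank_responses.items():
        pct = 100.0 * response / lloq_response
        report[source] = (pct, pct < limit_percent)
    return report


# Hypothetical peak areas from six individual blank plasma sources
blank_areas = {
    "source 1": 120.0,
    "source 2": 95.0,
    "source 3": 310.0,
    "source 4": 80.0,
    "source 5": 150.0,
    "source 6": 60.0,
}
lloq_area = 1250.0

report = blank_interference(blank_areas, lloq_area)
for source, (pct, passes) in report.items():
    print(f"{source}: {pct:.1f}% of LLOQ response -> {'pass' if passes else 'FAIL'}")

failed = [s for s, (_, passes) in report.items() if not passes]
print("Sources exceeding the limit:", failed)
```

Evaluating each source separately, rather than pooling the blanks, keeps an interfering component in one individual's matrix from being averaged away.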

The Scientist's Toolkit: Essential Reagents and Materials

The experimental protocols for establishing selectivity require carefully selected reagents and materials to generate reliable and defensible data.

Table 3: Essential Research Reagent Solutions for Selectivity/Specificity Studies

| Reagent / Material | Function in Selectivity Assessment | Critical Quality Attributes |

|---|---|---|

| High-Purity Analytical Reference Standard | Serves as the benchmark for the target analyte's behavior (retention time, signal). | Certified purity (>98%), proper identity confirmation (e.g., via NMR, MS). |

| Potential Interferent Standards | Used to challenge the method's ability to distinguish the analyte from similar compounds. | Should include known impurities, degradation products, metabolites, and common co-formulants. |

| Blank Matrix | The analyte-free biological fluid or sample material used to assess background interference. | Should be representative of the test samples; for bioanalysis, use matrix from at least 6 different sources. |

| Stressed Samples (Forced Degradation) | Generated by exposing the analyte to stress conditions to create potential degradants for interference testing. | Should typically produce 5-20% degradation; avoid excessive degradation (>30%) which may create secondary degradants. |

| Chromatographic Columns | The stationary phase for separation; critical for achieving resolution between analyte and interferents. | Multiple columns from different batches/lots should be evaluated during robustness testing. |

| Specific Antibodies (for LBAs) | The binding reagent that provides the basis for recognition and measurement in ligand binding assays. | High affinity and, crucially, low cross-reactivity against a panel of structurally similar molecules. |

Visualization of the Specificity-Selectivity Relationship

The conceptual relationship between selectivity and specificity, as defined by IUPAC, can be visualized as a spectrum or hierarchy of analytical discrimination.

Diagram 2: The Specificity-Selectivity Hierarchy

This diagram illustrates that selectivity is a scalable property. A method can have poor, moderate, or high selectivity. Specificity sits at the apex of this hierarchy as the theoretical ideal of perfect selectivity—a state where the method is affected by one and only one analyte. In practice, the goal of method development is to achieve sufficient selectivity for the intended purpose, acknowledging that absolute specificity may be an unattainable ideal for most techniques when faced with the infinite complexity of real-world samples [18].

The debate between specificity and selectivity is more than a matter of terminology; it reflects a fundamental understanding of the capabilities and limitations of analytical methods. IUPAC's stance—promoting selectivity as the preferred, scalable term and reserving specificity for the ultimate, ideal state—provides a scientifically rigorous framework. This perspective encourages a more nuanced and evidence-based approach to method validation, where scientists actively investigate and document a method's ability to distinguish an analyte from a defined panel of potential interferents, rather than simply claiming "specificity."

For the drug development professional, this means that validation protocols must be thoughtfully designed to challenge the method with all reasonably expected interferents. The experimental protocols outlined in this paper—from forced degradation studies to interference testing—provide a roadmap for this essential work. As analytical technologies continue to evolve, pushing the boundaries of sensitivity and resolution, the practical performance of our methods will increasingly approach the theoretical ideal of specificity. However, a clear understanding of the distinction, grounded in IUPAC recommendations, will remain vital for scientific accuracy, regulatory compliance, and the advancement of analytical science.

In the rigorous world of analytical science, particularly within pharmaceutical development, the terms "specificity" and "selectivity" are often used interchangeably. However, they describe distinct method characteristics whose proper identification is critical for method validation integrity. The International Council for Harmonisation (ICH) and regulatory bodies like the U.S. Food and Drug Administration (FDA) provide a framework for validation, defining fundamental performance characteristics that ensure a method is suitable for its intended purpose [21] [22]. Within this framework, understanding whether a method is specific or selective dictates the entire validation strategy, influencing experimental design, acceptance criteria, and ultimately, the degree of confidence in the generated data.

Specificity is the ability of a method to measure the analyte accurately and exclusively in the presence of other components that are expected to be present in the sample matrix. It is the highest expression of method discrimination, often described as "absolute selectivity" [22]. A specific method can unequivocally assess the analyte without interference from impurities, degradation products, or the sample matrix itself. In contrast, selectivity is the ability of the method to measure the analyte accurately in the presence of a defined, limited set of potential interfering substances. A selective method can distinguish the analyte from those other analytes or interferences, but may not be immune to every component in a complex matrix. This distinction is not merely semantic; it is foundational to demonstrating that an analytical procedure can generate reliable results that support critical decisions in drug development, manufacturing, and quality control [21].

Regulatory and Scientific Significance

From a regulatory perspective, the distinction between specificity and selectivity is embedded within modern analytical guidelines. The ICH Q2(R2) guideline on analytical procedure validation mandates the evaluation of specificity as a core parameter, requiring that it be demonstrated for identification tests, impurity tests, and assay methods [21] [22]. For identification, the method must be able to discriminate between compounds of closely related structure. For purity and assay methods, it must demonstrate a lack of interference from other components.

The adoption of a lifecycle approach to method validation, as emphasized in the modernized ICH Q2(R2) and ICH Q14 guidelines, further elevates the importance of this distinction [21]. Under this model, validation is not a one-time event but a continuous process beginning with method development. Defining a method's discriminatory power, as either specific or selective, at the Analytical Target Profile (ATP) stage ensures that the subsequent validation plan is scientifically sound and risk-based. A method intended for the release of a final drug product, where the sample matrix is well-defined but complex, requires a demonstration of specificity. A method used for in-process testing or for a biomarker in a complex biological matrix may be validated as selective, with a clear understanding of its limitations [23]. Mischaracterization at this stage can lead to a validation package that fails to adequately challenge the method, creating regulatory and product quality risks.

Table 1: Key Differences Between Specificity and Selectivity

| Feature | Specificity | Selectivity |
|---|---|---|
| Core Definition | Measures only the target analyte with no interference from other components. | Measures the target analyte in the presence of a limited number of potential interferences. |
| Scope | The highest degree of selectivity; "absolute" [22]. | A relative measure of discrimination; exists on a spectrum. |
| Interferences Considered | All components expected to be present (e.g., impurities, degradants, matrix) [22]. | A defined set of potential interfering substances. |
| Regulatory Emphasis | Explicitly required by ICH Q2(R2) for identification, assay, and impurity tests [21] [22]. | Often discussed as a broader concept; demonstrated when full specificity is not achievable. |
| Typical Application | Finished product release testing, stability-indicating methods. | In-process controls, biomarker assays in complex matrices [23]. |

Experimental Protocols for Demonstrating Specificity and Selectivity

The experimental design for proving a method's discriminatory power depends on its intended claim and the nature of the analyte and matrix. The following protocols outline the standard methodologies cited in industry practices and regulatory guidances.

Protocol for Specificity Testing

The objective is to prove the method's response is due solely to the target analyte, even when other components are present.

Materials and Reagents:

- Analyte of Interest (Drug Substance): High-purity reference standard.

- Forced Degradation Samples: Samples of the drug substance and product stressed under conditions of light, heat, acid, base, and oxidation.

- Sample Matrix Placebo: The formulation blank containing all excipients but no active ingredient.

- Known Impurities and Synthetic Intermediates: Authentic standards, where available.

Methodology:

- Chromatographic Peak Purity Assessment: For chromatographic methods (e.g., HPLC-UV/DAD, LC-MS), inject the following and compare the chromatograms:

  - Analyte Standard: A known concentration of the pure analyte.

  - Placebo/Blank Matrix: To confirm no interfering peaks co-elute with the analyte.

  - Forced Degradation Samples: To demonstrate that the analyte peak is pure and free from overlapping peaks from degradants. Peak purity is assessed using a diode array detector (DAD) or mass spectrometry (MS) to confirm a homogeneous peak.

  - Spiked Mixtures: The placebo or blank matrix spiked with known impurities and the analyte. This confirms baseline separation of the analyte peak from all potential interferents [22].

- Quantitative Recovery (for Assays): Compare the results for the analyte in the presence and absence of the other components. The recovery of the analyte should be within validated accuracy limits (e.g., 98-102%), demonstrating that the matrix or impurities do not suppress or enhance the analyte's signal.

- Detection and Quantification of Impurities: The method should be capable of detecting and quantifying known and unknown impurities at or below the reporting threshold, with clear resolution from the main analyte peak.
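The quantitative recovery check described above can be illustrated with a short Python sketch. It is only a minimal illustration: the function names, the sample labels, and the measured values are hypothetical, and the 98-102% window is the example limit quoted in the text rather than a universal acceptance criterion.

```python
def percent_recovery(measured: float, nominal: float) -> float:
    """Return recovery (%) of the analyte relative to the nominal (spiked) amount."""
    if nominal <= 0:
        raise ValueError("nominal amount must be positive")
    return 100.0 * measured / nominal


def within_limits(recovery: float, low: float = 98.0, high: float = 102.0) -> bool:
    """Check a recovery value against the accuracy window (example limits from the text)."""
    return low <= recovery <= high


# Hypothetical results: amount found (mg) for a nominal 100.0 mg spike.
nominal_mg = 100.0
found_mg = {
    "analyte standard alone": 100.2,
    "placebo + analyte": 99.4,
    "placebo + analyte + impurities": 100.9,
    "acid-stressed sample": 97.1,
}

for label, measured in found_mg.items():
    rec = percent_recovery(measured, nominal_mg)
    verdict = "within limits" if within_limits(rec) else "investigate interference"
    print(f"{label}: {rec:.1f}% ({verdict})")
```

A recovery outside the window does not by itself prove interference; it flags a sample for which the chromatographic evidence (peak purity, resolution) should be re-examined.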

Protocol for Selectivity Testing

The objective is to prove the method can distinguish and quantify the analyte in the presence of a defined set of other analytes or potential interferences.

Materials and Reagents:

- Target Analyte(s): Reference standard.

- Potential Interferents: A defined list of compounds that are structurally similar, metabolically related, or known to be present in the sample type (e.g., concomitant medications, key endogenous compounds for biomarker assays).

- Representative Sample Matrix: A pool of biological fluid (e.g., plasma, serum) or other complex matrix.

Methodology:

- Resolution of Analyte Mixtures: Prepare a mixture containing the target analyte and all defined potential interferents at their expected maximum concentrations. Analyze the mixture and demonstrate that the method provides baseline resolution (resolution factor, Rs > 1.5) between the analyte peak and each interferent peak.

- Interference Check in Matrix: Analyze at least six independent sources of the blank sample matrix (e.g., plasma from six different donors).

  - Ensure that at the retention time of the analyte, the response from the blank matrix is less than 20% of the lower limit of quantitation (LLOQ) for the analyte.

  - Ensure that no endogenous compounds co-elute with the analyte or any of the defined interferents.

- Cross-Reactivity Assessment (for Ligand Binding Assays - LBAs): Test the method's response against the panel of potential interferents. A significant response (e.g., >5% of the signal at the LLOQ) indicates cross-reactivity and a limitation in the method's selectivity, which must be reported and justified for the Context of Use [23].
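The blank-matrix interference check above lends itself to a simple calculation. The following Python sketch assumes peak responses (for example, peak areas) have already been obtained at the analyte retention time; the function name, the donor labels, and the numbers are hypothetical, while the six-lot minimum and the 20% limit are taken from the protocol text.

```python
from typing import Dict, Tuple

MIN_LOTS = 6           # minimum number of independent blank sources (from the text)
LIMIT_PERCENT = 20.0   # blank response must be below this % of the LLOQ response


def evaluate_blank_lots(
    blank_responses: Dict[str, float],
    lloq_response: float,
    limit_percent: float = LIMIT_PERCENT,
) -> Dict[str, Tuple[float, bool]]:
    """Return {lot: (blank response as % of LLOQ response, passes)} for each blank lot."""
    if lloq_response <= 0:
        raise ValueError("LLOQ response must be positive")
    if len(blank_responses) < MIN_LOTS:
        raise ValueError(f"at least {MIN_LOTS} independent blank lots are required")
    results = {}
    for lot, response in blank_responses.items():
        percent = 100.0 * response / lloq_response
        results[lot] = (percent, percent < limit_percent)
    return results


# Hypothetical peak areas at the analyte retention time.
lloq_area = 1000.0
blank_areas = {
    "donor_1": 50.0,
    "donor_2": 120.0,
    "donor_3": 0.0,
    "donor_4": 180.0,
    "donor_5": 250.0,
    "donor_6": 90.0,
}

for lot, (percent, ok) in evaluate_blank_lots(blank_areas, lloq_area).items():
    print(f"{lot}: {percent:.1f}% of LLOQ ({'pass' if ok else 'fail'})")
```

The same structure could be reused for the cross-reactivity assessment by replacing the blank responses with the responses of each potential interferent and adjusting the limit accordingly.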

Table 2: Key Reagents and Their Functions in Specificity/Selectivity Testing

| Reagent / Material | Function in Validation |
|---|---|
| Reference Standard | Serves as the benchmark for the pure analyte's properties (retention time, spectral profile). |
| Placebo / Blank Matrix | Identifies interference from the sample matrix or formulation excipients. |
| Forced Degradation Samples | Challenges the method's ability to distinguish the analyte from its degradation products. |
| Authentic Impurity Standards | Used to verify resolution and confirm the method can detect and quantify known impurities. |
| Independent Matrix Lots | Assesses variability in endogenous components that could affect method selectivity. |

A Framework for Decision-Making

The following workflow diagrams the logical process for determining and validating a method's discriminatory power, integrating the concepts of risk and Context of Use.

Implications for Different Analytical Fields

The specificity/selectivity distinction has varying implications across analytical applications.

Pharmacokinetic (PK) Assays

For pharmacokinetic (PK) assays, which measure drug concentration, the analyte is a fully characterized reference standard (the drug itself). The matrix, while complex, is consistent (e.g., human plasma). The goal is to achieve specificity by demonstrating no interference from the matrix or metabolites. The ICH M10 framework provides a prescriptive path for this, often using spike-recovery experiments [23].

Biomarker Assay Validation

This area highlights the critical nature of the distinction. Biomarker assays measure endogenous molecules for which a pristine reference standard identical to the analyte may not exist [23]. The sample matrix is highly variable. Achieving absolute specificity is often impossible. Therefore, a "fit-for-purpose" approach is used, and methods are validated for selectivity [23]. The validation must demonstrate that the method can reliably measure the biomarker in the presence of known, variable interferents. Key experiments include parallelism assessment (to show the calibrator behaves like the endogenous analyte) and testing in many individual matrix lots to establish the range of selectivity [23]. The 2025 FDA BMVB guidance explicitly recognizes these differences and discourages the blind application of the ICH M10 PK framework to biomarker assays [23].
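One common way to summarize a parallelism experiment is to dilute a sample containing the endogenous biomarker serially, read each dilution against the calibration curve, multiply by the dilution factor, and look at the spread of the dilution-corrected concentrations. The Python sketch below illustrates that arithmetic only. The function names and data are hypothetical, and the 30% CV limit is an assumed placeholder, not a value taken from the guidance cited here; the appropriate criterion depends on the Context of Use.

```python
from statistics import mean, stdev
from typing import List, Sequence


def dilution_corrected(measured: Sequence[float], dilution_factors: Sequence[float]) -> List[float]:
    """Multiply each back-calculated concentration by its dilution factor."""
    if len(measured) != len(dilution_factors):
        raise ValueError("measured and dilution_factors must have the same length")
    return [m * d for m, d in zip(measured, dilution_factors)]


def percent_cv(values: Sequence[float]) -> float:
    """Coefficient of variation (%) using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("at least two values are needed")
    return 100.0 * stdev(values) / mean(values)


# Hypothetical serial dilution of one endogenous sample (concentrations in ng/mL).
factors = [2, 4, 8]
measured = [50.0, 24.0, 13.0]
ASSUMED_CV_LIMIT = 30.0  # placeholder acceptance limit, an assumption for illustration

corrected = dilution_corrected(measured, factors)
cv = percent_cv(corrected)
print("dilution-corrected concentrations:", corrected)
print(f"CV = {cv:.1f}% ({'parallel' if cv <= ASSUMED_CV_LIMIT else 'non-parallel'} under the assumed limit)")
```

Consistent dilution-corrected values suggest the calibrator and the endogenous analyte respond alike across the dilution range; a systematic trend with dilution would point to a selectivity limitation.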

The integrity of an analytical method is inextricably linked to a scientifically rigorous and honest assessment of its discriminatory capabilities. The distinction between specificity and selectivity is not a pedantic exercise but a fundamental principle of method validation. Correctly characterizing a method forces a deeper understanding of the analyte, the matrix, and the method's technical limitations. As the regulatory landscape evolves towards a more holistic, lifecycle-based approach grounded in Science and Risk-Based Planning [21], this clarity becomes paramount. By meticulously defining and demonstrating whether a method is specific or selective, scientists provide the transparency and robust evidence that regulators demand, ensuring that analytical data is trustworthy and fit-for-purpose in the journey to deliver safe and effective medicines.

From Theory to Practice: Assessing Specificity and Selectivity in Analytical Methods

Experimental Designs for Demonstrating Specificity in Assay and Impurity Methods

In analytical method validation, the concepts of specificity and selectivity are fundamental, yet they are often used interchangeably despite having distinct meanings. Specificity refers to the ability of a method to assess unequivocally the analyte in the presence of components that may be expected to be present, such as impurities, degradants, or matrix components [1]. It is the ability to measure accurately and specifically the analyte of interest despite these potential interferents [24]. Selectivity, meanwhile, describes the ability of the method to differentiate and quantify multiple analytes in a mixture, requiring the identification of all components rather than just the target analyte [1] [11].

This distinction frames a critical challenge in pharmaceutical development: designing experimental approaches that adequately demonstrate a method's capacity to measure the intended analyte without interference from closely related substances. This technical guide explores advanced experimental designs for establishing method specificity, particularly for potency assays and impurity methods, providing researchers with structured approaches for generating defensible validation data.

Conceptual Framework: Specificity Versus Selectivity

Regulatory Definitions and Distinctions

According to ICH guidelines, specificity is the ability to assess unequivocally the analyte in the presence of components that may be expected to be present [1]. A commonly used analogy describes specificity as identifying "the correct key for the lock" among a bunch of keys, without needing to identify all other keys [1] [11]. Selectivity, while similar, requires the identification of all components in a mixture [11]. The International Union of Pure and Applied Chemistry (IUPAC) actually recommends the term "selectivity" over "specificity" in analytical chemistry, recognizing that few methods respond to only a single analyte [1].

For impurity methods, specificity requires demonstrating that the method can separate and quantify individual impurities from each other and from the main analyte, often through resolution measurements between closely eluting peaks [24]. For assay methods, specificity must demonstrate that the measured response is due solely to the target analyte, achieved through studies showing no interference from blank matrices, placebos, impurities, or degradation products [25] [24].
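Resolution between closely eluting peaks is usually computed from retention times and peak widths. The Python sketch below uses the standard baseline-width formula, Rs = 2(t2 - t1) / (w1 + w2), and reports the critical (least resolved) adjacent pair. The function names, peak names, and numerical values are hypothetical, and the 1.5 threshold is the common baseline-resolution criterion rather than a requirement stated for any particular method.

```python
from typing import List, Tuple

Peak = Tuple[str, float, float]  # (name, retention time in min, baseline peak width in min)


def resolution(t_r1: float, t_r2: float, w1: float, w2: float) -> float:
    """Rs = 2 * |t_r2 - t_r1| / (w1 + w2), using baseline (tangent) peak widths."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


def critical_pair(peaks: List[Peak]) -> Tuple[str, str, float]:
    """Return (peak_a, peak_b, Rs) for the adjacent pair with the lowest resolution."""
    if len(peaks) < 2:
        raise ValueError("at least two peaks are needed")
    ordered = sorted(peaks, key=lambda p: p[1])  # sort by retention time
    pairs = [
        (a[0], b[0], resolution(a[1], b[1], a[2], b[2]))
        for a, b in zip(ordered, ordered[1:])
    ]
    return min(pairs, key=lambda p: p[2])


# Hypothetical chromatogram of a spiked mixture.
peaks = [
    ("impurity A", 4.2, 0.30),
    ("analyte", 5.0, 0.30),
    ("impurity B", 5.6, 0.34),
]

name_a, name_b, rs = critical_pair(peaks)
status = "baseline resolved" if rs > 1.5 else "not baseline resolved"
print(f"critical pair: {name_a} / {name_b}, Rs = {rs:.2f} ({status})")
```

Reporting the critical pair rather than every pair keeps the specificity claim focused on the separation most likely to fail as the column ages or conditions drift.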

Analytical Significance in Pharmaceutical Development