Standard Operating Procedure for Bias Estimation: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a definitive guide to bias estimation for researchers, scientists, and drug development professionals. It covers the foundational principles of systematic error, detailing robust methodological frameworks for conducting measurement procedure comparisons in accordance with CLSI guidelines like EP09 and EP15. The content extends to advanced troubleshooting techniques, optimization strategies for complex data, and rigorous validation procedures to ensure results meet pre-defined acceptability criteria. By integrating theoretical concepts with practical applications, this SOP empowers professionals to enhance data reliability, improve methodological transparency, and strengthen the evidentiary value of biomedical research.

Understanding Bias: Core Concepts and Definitions for Robust Research

In scientific research and drug development, accurate measurement forms the foundation of reliable data and valid conclusions. Understanding and quantifying measurement error is therefore paramount in establishing robust standard operating procedures. This document outlines the critical distinction between systematic error (bias) and random error, focusing on their definitions, estimation methodologies, and implications for research integrity. Within the context of bias estimation research, trueness refers to the closeness of agreement between the average of an infinite series of measurement results and a reference value, primarily affected by systematic error or bias. In contrast, precision refers to the closeness of agreement between independent measurement results obtained under specified conditions, affected by random error [1]. Properly distinguishing and estimating these error components enables researchers to improve methodological rigor, refine experimental protocols, and enhance the reliability of scientific findings in drug development and other research domains.

Theoretical Framework: Error Components in Measurement

Systematic Error (Bias)

Systematic error, or bias, represents a consistent, directional deviation of measurements from the true value. Unlike random errors, biases do not cancel out with repeated measurements and can lead to inaccurate conclusions if uncorrected. In the context of self-reported data, response bias occurs when individuals offer systematically biased estimates of self-assessed behavior, potentially due to factors such as social-desirability bias, where respondents want to present themselves favorably in a survey, even under anonymous conditions [1]. A specific and problematic form of this is response-shift bias, which occurs when a respondent's frame of reference changes between measurement points, particularly when this change is caused by the treatment or intervention itself. This shift can confound the true treatment effect with a recalibration of the response metric, potentially leading to underestimates of program effects [1].

Random Error

Random error manifests as unpredictable, non-directional variations in measurement results that occur due to chance. These fluctuations affect the precision of measurements rather than their average accuracy. In statistical modeling, this is often represented by a random error term, typically assumed to follow a normal distribution with a mean of zero [1]. While random errors cannot be eliminated completely, their impact can be reduced through increased sample sizes, improved measurement instrument sensitivity, and standardized experimental protocols.

Trueness and Precision in Quantitative Analysis

The concepts of trueness and precision provide the framework for understanding measurement quality. Trueness reflects the absence of systematic error, while precision reflects the absence of random error. A measurement system can be precise but not true (consistent but systematically biased), true but not precise (accurate on average but with high variability), both, or neither. The distinction becomes crucial when interpreting quantitative data analysis, where descriptive statistics (mean, median, mode, standard deviation) help characterize both central tendency and variability in data sets [2] [3].
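As a minimal sketch of this distinction, the following Python snippet summarizes replicate measurements against a reference value. The function name `summarize_replicates` and both data sets are hypothetical and serve only to illustrate the "precise but not true" and "true but not precise" cases.

```python
from statistics import mean, stdev

def summarize_replicates(values, reference):
    """Return (bias, sd) for a set of replicate measurements.

    bias estimates systematic error (trueness);
    sd estimates random error (precision).
    """
    bias = mean(values) - reference  # mean deviation from the reference value
    sd = stdev(values)               # sample standard deviation of the replicates
    return bias, sd

# Hypothetical replicates of a sample whose reference value is 100.0
precise_but_biased = [104.9, 105.1, 105.0, 104.8, 105.2]
true_but_imprecise = [92.0, 108.0, 97.0, 103.0, 100.0]

bias_a, sd_a = summarize_replicates(precise_but_biased, 100.0)
bias_b, sd_b = summarize_replicates(true_but_imprecise, 100.0)

print(f"precise but biased: bias={bias_a:.2f}, sd={sd_a:.2f}")
print(f"true but imprecise: bias={bias_b:.2f}, sd={sd_b:.2f}")
```

The first data set has a small spread around a shifted mean, while the second is centered on the reference value but scattered widely.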

Table 1: Key Characteristics of Systematic vs. Random Error

| Characteristic | Systematic Error (Bias) | Random Error |
|---|---|---|
| Direction | Consistent and directional | Non-directional and unpredictable |
| Effect on Results | Affects trueness (accuracy) | Affects precision (reliability) |
| Reduction Methods | Calibration, method optimization, bias estimation techniques | Increased sample size, improved instrumentation |
| Statistical Representation | Component of measurement model (e.g., truncated-normal distribution in SFE) [1] | Random noise component (e.g., normally distributed error term) [1] |
| Detection | Comparison with reference standards, specialized statistical tests | Repeated measurements, analysis of variance |

Quantitative Methodologies for Bias Estimation

Stochastic Frontier Estimation (SFE) for Response Bias

Stochastic Frontier Estimation (SFE) provides a powerful econometric approach for measuring response bias and its covariates in self-reported data. Originally developed for economic and operational research, SFE has been adapted to identify bias in behavioral and healthcare research where objective measures are unavailable [1]. The SFE framework models the observed self-reported outcome ($Y_{it}$) as a combination of the true outcome ($Y^{*}_{it}$) and a response bias component ($Y^{R}_{it}$):

$Y_{it} = Y^{*}_{it} + Y^{R}_{it}$ [1]

The true outcome is modeled as: $Y^{*}_{it} = T\beta_0 + X_{it}\beta_t + \varepsilon_{it}$ [1]

where $T$ represents treatment or intervention, $X_{it}$ represents other explanatory variables, and $\varepsilon_{it}$ is the random error term. The bias component $Y^{R}_{it}$ follows a truncated-normal distribution and can be expressed as: $Y^{R}_{it} = u_{it} \cdot (u_{it} > 0)$ [1]

where $u_{it}$ is a random variable accounting for response shift away from a subjective norm response level, distributed independently of $\varepsilon_{it}$ with a mean $\mu_{it}$ that can be modeled as: $\mu_{it} = T\delta_0 + z_{it}\delta_t$ [1]

This formulation allows researchers to separately identify treatment effects (β0) from changes in response bias (δ0), enabling more accurate estimates of true intervention effects while accounting for systematic measurement biases.
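To make the decomposition concrete, the sketch below simulates data from a simplified version of this model and shows how a treatment-induced shift in response bias distorts a naive comparison of group means. It is a simulation only, not an SFE estimator: covariates are omitted, both error standard deviations are set to 1, and the parameter values `BETA0` and `DELTA0` are hypothetical. Actual estimation would use maximum likelihood in dedicated software.

```python
import random
from statistics import mean

random.seed(0)

BETA0 = 1.0    # true treatment effect (hypothetical value)
DELTA0 = -0.8  # treatment-induced shift in the mean of u (hypothetical value)

def simulate(treated):
    """Draw one (observed, true) outcome pair for a treated (1) or control (0) unit."""
    y_true = treated * BETA0 + random.gauss(0.0, 1.0)  # Y* = T*beta0 + eps (no covariates)
    u = random.gauss(treated * DELTA0, 1.0)            # u has mean mu = T*delta0
    y_bias = max(u, 0.0)                               # Y^R = u * (u > 0)
    return y_true + y_bias, y_true                     # Y = Y* + Y^R

treated = [simulate(1) for _ in range(20000)]
control = [simulate(0) for _ in range(20000)]

# Naive effect: difference in mean self-reported outcomes
naive_effect = mean(y for y, _ in treated) - mean(y for y, _ in control)
# Benchmark: difference in mean true outcomes (unobservable in practice)
true_effect = mean(t for _, t in treated) - mean(t for _, t in control)

print(f"naive estimate: {naive_effect:.2f}, true effect: {true_effect:.2f}")
```

With a negative `DELTA0`, treated respondents inflate their answers less than controls do, so the naive difference understates the true effect, which is the pattern described for response-shift bias.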

Sensor Network Approaches for Bias Estimation

In physical measurement systems, sensor networks provide another framework for bias estimation. Research in this domain has established that for nonbipartite graphs, biases can be determined even when all sensors are corrupted, whereas for bipartite graphs, more than half of the sensors must be unbiased to ensure correct bias estimation [4]. When biases are heterogeneous, the number of required unbiased sensors can be reduced to two. These topological considerations inform the design of robust measurement systems where direct calibration against standards may be impractical.

Table 2: Comparison of Bias Estimation Methodologies

| Methodology | Application Context | Data Requirements | Key Advantages |
|---|---|---|---|
| Stochastic Frontier Estimation (SFE) | Self-reported data in behavioral and healthcare research [1] | Single or multiple temporal observations; can work with single measures | Identifies individual-level bias covariates; less data intensive than SEM approaches |
| Structural Equation Modeling (SEM) | Psychometrics, social sciences | Multiple temporal observations and multiple measures per construct | Established framework for latent variable modeling |
| Sensor Network Algorithms | Physical measurement systems, sensor arrays [4] | Network topology information; relative state measurements | Can estimate biases without direct reference standards; adaptable to network topology |

Experimental Protocols for Bias Assessment

Protocol for SFE-Based Bias Assessment in Intervention Studies

Purpose: To identify and quantify response bias and response-shift bias in self-reported outcomes before and after an intervention.

Materials:

- Validated self-report measures for constructs of interest

- Demographic and covariate assessment tools

- Statistical software with SFE capability (e.g., Stata, or R with the frontier package)

- Data collection platform (paper-based or electronic)

Procedure:

Pre-intervention Assessment:

- Administer self-report measures to participants prior to intervention

- Collect relevant demographic and covariate data (e.g., age, gender, prior experience)

- Establish baseline measurements for all variables of interest

Implementation of Intervention:

- Apply standardized intervention protocol to treatment group

- Maintain control group without intervention or with placebo condition

- Ensure consistent implementation across all participants

Post-intervention Assessment:

- Re-administer self-report measures following intervention completion

- Maintain identical measurement conditions to pre-assessment

- Document any contextual factors that may influence responses

Data Analysis:

- Specify SFE model with appropriate distributional assumptions for error terms

- Test truncation assumption statistically

- Estimate model parameters including treatment effect (β0) and bias components (δ0)

- Conduct robustness checks with heteroscedastic error models

- Interpret treatment effects after accounting for estimated response biases

Interpretation: A statistically significant δ0 coefficient indicates that the intervention affected response bias, suggesting response-shift bias. The adjusted treatment effect (β0) provides a more accurate estimate of the true intervention effect after accounting for measurement biases [1].

General Experimental Design Principles for Bias Minimization

Proper experimental design provides the foundation for controlling both systematic and random errors. The key steps in designing experiments that minimize bias include:

Variable Definition: Clearly define independent, dependent, and potential confounding variables. Create diagrams showing possible relationships between variables, including expected direction of effects [5].

Hypothesis Formulation: Develop specific, testable hypotheses including both null and alternative hypotheses that clearly specify expected relationships [5].

Treatment Design: Determine appropriate variations in independent variables that balance experimental control with external validity. Decide how widely and finely to vary independent variables to optimize inference from results [5].

Group Assignment: Implement random assignment to treatment groups using completely randomized or randomized block designs. For within-subjects designs, employ counterbalancing to control for order effects [5].

Measurement Planning: Select reliable and valid measurement approaches for dependent variables. Choose measurement precision appropriate for planned statistical analyses [5].
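The group-assignment step above can be sketched as follows. This is one simple way to implement completely randomized and randomized block assignment with balanced arms; the function names, participant identifiers, and blocking factor are hypothetical.

```python
import random

def completely_randomized(ids, arms=("treatment", "control"), seed=None):
    """Shuffle all participants, then deal them to arms in turn (balanced sizes)."""
    rng = random.Random(seed)
    shuffled = list(ids)
    rng.shuffle(shuffled)
    return {pid: arms[i % len(arms)] for i, pid in enumerate(shuffled)}

def randomized_block(blocks, arms=("treatment", "control"), seed=None):
    """Randomize separately within each block (e.g., study site)."""
    rng = random.Random(seed)
    assignment = {}
    for block_ids in blocks.values():
        shuffled = list(block_ids)
        rng.shuffle(shuffled)
        for i, pid in enumerate(shuffled):
            assignment[pid] = arms[i % len(arms)]
    return assignment

# Hypothetical participants grouped by a blocking factor
blocks = {
    "site_A": ["A1", "A2", "A3", "A4"],
    "site_B": ["B1", "B2", "B3", "B4"],
}
block_plan = randomized_block(blocks, seed=42)
simple_plan = completely_randomized(["P1", "P2", "P3", "P4", "P5", "P6"], seed=42)
```

Fixing the seed makes the allocation reproducible for audit purposes; in a blinded study the seed and allocation list would be held by someone independent of outcome assessment.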

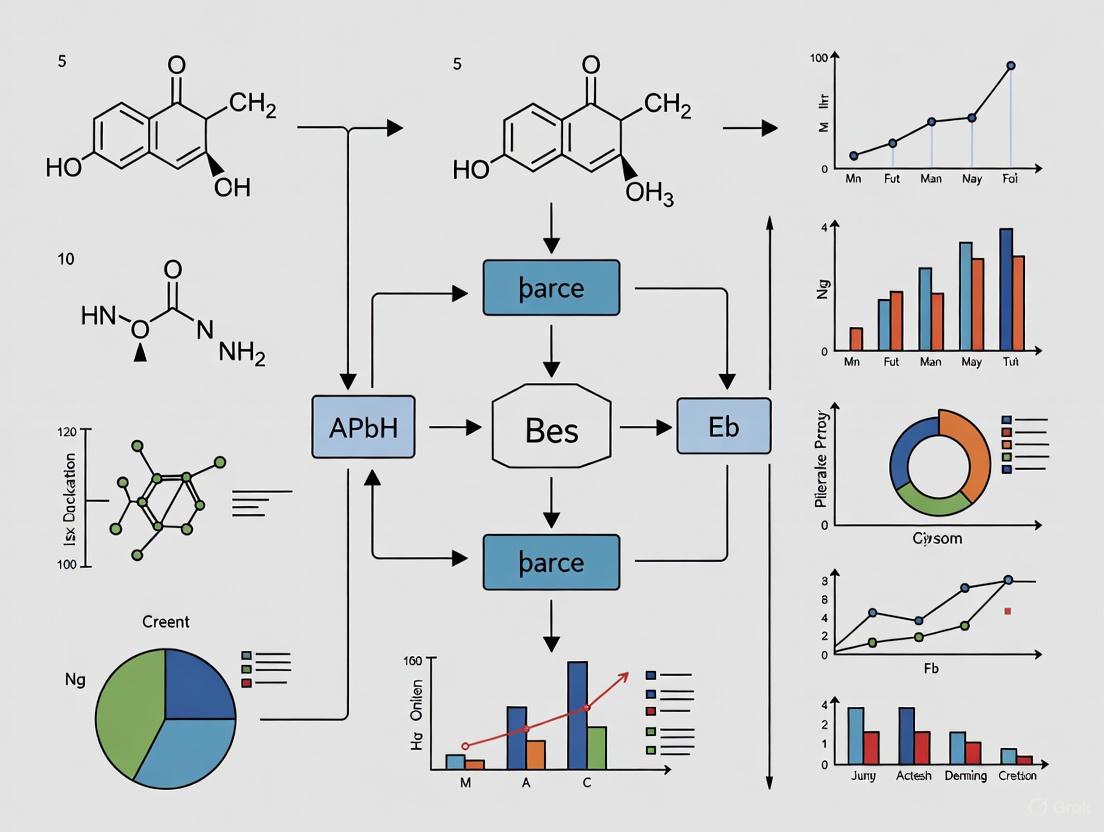

Diagram 1: Experimental Design Workflow for Bias Control. This workflow outlines key stages in designing experiments to minimize and assess measurement bias, aligning with established experimental design principles [5].

Research Reagent Solutions for Bias Estimation Studies

Table 3: Essential Research Materials for Bias Estimation Research

| Research Reagent | Specification / Purpose | Application Context |
|---|---|---|
| Validated Self-Report Measures | Standardized instruments with established psychometric properties | Assessment of subjective outcomes in clinical and behavioral research |
| Stochastic Frontier Analysis Software | Statistical packages implementing SFE (e.g., Stata frontier command, R frontier package) | Estimation of response bias and response-shift bias in self-reported data [1] |
| Sensor Network Platforms | Configurable sensor arrays with known topological properties | Physical measurement systems requiring bias estimation without reference standards [4] |
| Reference Standards | Certified reference materials with traceable values | Establishment of ground truth for method validation and bias quantification |
| Data Collection Platforms | Electronic data capture systems with audit trail capabilities | Standardized administration of measures and documentation of contextual factors |

Data Presentation and Analysis Framework

Effective presentation of quantitative data analysis requires clear tabular organization that highlights both central tendency and variability measures. Standard quantitative papers typically include descriptive statistics tables with columns for each type of statistic (mean, median, mode, standard deviation, etc.) and rows for each variable [2]. Appropriate presentation formats vary by analysis type:

- Univariate Analysis: Descriptive statistics presented through graphs (line graphs, histograms) and charts (pie charts, descriptive tables) [3]

- Bivariate Analysis: T-tests, ANOVA, Chi-square results presented in summary tables or contingency tables [3]

- Multivariate Analysis: ANOVA, MANOVA, regression results in structured summary tables [3]

For bias estimation studies specifically, results should include:

- Parameter estimates for both the substantive model (treatment effects) and bias components

- Goodness-of-fit statistics for the specified SFE model

- Comparisons between naive models (ignoring bias) and adjusted models

- Tests of distributional assumptions for error terms

Diagram 2: Components of Measurement Error. This diagram illustrates how systematic error (bias) and random error contribute to the difference between observed measurements and true values, forming the conceptual basis for bias estimation methodologies.

Proper distinction between systematic and random errors, coupled with rigorous estimation methodologies, forms an essential component of standard operating procedures for bias estimation research. The frameworks presented here, particularly Stochastic Frontier Estimation for self-reported data and sensor network approaches for physical measurements, provide researchers with practical tools for quantifying and adjusting for systematic measurement biases. By implementing these protocols and analytical approaches, drug development professionals and researchers can enhance the trueness of their measurements, leading to more accurate estimates of treatment effects and more reliable scientific conclusions. Future methodological developments should focus on integrating these approaches across measurement contexts and developing standardized reporting guidelines for bias assessment in experimental research.

The Critical Role of Bias Estimation in Method Validation and Compliance

In the highly regulated pharmaceutical and biopharma sectors, analytical method validation is a cornerstone for ensuring the quality, safety, and efficacy of drug products. Bias estimation, a core component of method accuracy, provides documented evidence that an analytical procedure delivers results that are close to the true value. Establishing a Standard Operating Procedure (SOP) for bias estimation is therefore not merely a technical formality but a regulatory requirement essential for compliance with FDA, ICH, and USP guidelines [6]. In an era increasingly reliant on AI and machine learning in drug development, the principles of bias assessment have expanded to include algorithmic fairness, making rigorous bias estimation protocols more critical than ever [7] [8]. This document outlines detailed protocols and application notes for integrating robust bias estimation into analytical method validation, ensuring both scientific integrity and regulatory adherence.

The Regulatory and Scientific Framework for Bias Estimation

Key Definitions and Regulatory Mandates

Regulatory bodies mandate that all analytical methods used for decision-making on product quality must be validated. Method validation is defined as "the process of demonstrating that an analytical procedure is suitable for its intended purpose" [6]. Within this framework, accuracy embodies the concept of bias, defined as "the agreement between value found and an expected reference value" [6].

The reliability of analytical results hinges on using accurate standards or certified reference materials. Without proper calibration, analytical results will be systematically wrong, regardless of analyst skill or equipment sophistication [6]. The recent integration of AI/ML tools in GxP-impacting processes, such as drug discovery and clinical trials, further emphasizes the need for bias control. Regulatory guidance now requires sponsors to ensure that "all algorithms, models, datasets, and data pipelines… meet legal, ethical, technical, scientific and regulatory standards," which includes demonstrating a lack of harmful algorithmic bias [7].

Consequences of Inadequate Bias Control

Failure to adequately estimate and control bias during method validation can lead to severe consequences, including:

- Regulatory Actions: FDA inspectional observations, Warning Letter violations, and rejection of regulatory submissions (NDA, ANDA) [6].

- Compromised Product Quality: Reliance on inaccurate test results can lead to the release of substandard products, posing significant patient risks.

- Algorithmic Discrimination: In the context of AI-driven tools, biased models can lead to discriminatory outcomes, potentially violating emerging AI-specific laws like Colorado’s SB 24‑205, which mandates reasonable care against algorithmic discrimination [7].

Quantitative Framework for Bias Estimation

Bias is quantitatively estimated by analyzing the difference between measured values and a known reference value across a specified range. The following data exemplifies a typical bias recovery study for an assay method.

Table 1: Exemplary Data for Bias (Accuracy) Recovery Assessment

| Nominal Concentration (µg/mL) | Mean Measured Concentration (µg/mL) | Standard Deviation | % Recovery | Bias (%) |
|---|---|---|---|---|
| 50 (QL) | 49.1 | 1.8 | 98.2 | -1.8 |
| 100 (Low) | 102.5 | 2.1 | 102.5 | +2.5 |
| 500 (Medium) | 497.8 | 5.6 | 99.6 | -0.4 |
| 1500 (High) | 1515.3 | 12.4 | 101.0 | +1.0 |

Acceptance criteria for bias are typically set based on the method's intended use. For assay methods, a recovery of 98.0% to 102.0% is often acceptable, with tighter criteria for impurities. Statistical analysis, such as a t-test against the nominal value or the use of confidence intervals, is employed to determine if the observed bias is statistically significant.
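The recovery and bias columns of the exemplary table can be reproduced with a few lines of Python. The sketch below uses the nominal and mean measured concentrations from the table and applies the 98.0% to 102.0% range mentioned above as an example acceptance window; the function and variable names are illustrative.

```python
# (level, nominal concentration, mean measured concentration) in µg/mL, from the table
rows = [
    ("QL", 50.0, 49.1),
    ("Low", 100.0, 102.5),
    ("Medium", 500.0, 497.8),
    ("High", 1500.0, 1515.3),
]

def recovery_and_bias(nominal, measured):
    """Return (% recovery, % bias), with bias defined as recovery minus 100."""
    recovery = measured / nominal * 100.0
    return recovery, recovery - 100.0

results = {level: recovery_and_bias(nominal, measured) for level, nominal, measured in rows}

LOW_LIMIT, HIGH_LIMIT = 98.0, 102.0  # example assay acceptance range
within = {level: LOW_LIMIT <= rec <= HIGH_LIMIT for level, (rec, _) in results.items()}

for level, (rec, bias) in results.items():
    print(f"{level}: recovery={rec:.1f}%, bias={bias:+.1f}%, within limits={within[level]}")
```

Under this example window, the Low level (102.5% recovery) falls outside the range while the other three levels pass, which illustrates why criteria must be fixed before the data are evaluated.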

The principles of bias estimation also apply to data-driven algorithms. The 2025 algorithmic frameworks in Medicare audits, for instance, require "bias monitoring and correction procedures... as prudent risk management" [8]. This highlights the universal applicability of bias assessment across traditional and modern analytical techniques.

Experimental Protocols for Bias Estimation

This section provides a detailed, step-by-step SOP for conducting a bias estimation study.

Protocol 1: Bias Estimation Using a Certified Reference Material (CRM)

1.0 Purpose

To establish a standard procedure for estimating the bias of an analytical method by analyzing a Certified Reference Material (CRM) with a known certified value and acceptable uncertainty.

2.0 Scope

This protocol applies to the validation of quantitative analytical methods for drug substance and drug product testing.

3.0 Materials and Reagents

- Certified Reference Material (CRM) of the analyte.

- Appropriate solvent(s) of known purity and grade.

- Volumetric glassware and pipettes, calibrated.

4.0 Procedure

- Sample Preparation: Accurately prepare a minimum of six independent samples of the CRM at 100% of the test concentration as per the analytical method.

- Instrumental Analysis: Analyze all prepared samples in a single sequence or across multiple sequences to assess intermediate precision, as required.

- Data Collection: Record the individual measured values for each preparation.

5.0 Calculation and Acceptance Criteria

- Calculate the mean of the measured values.

- Calculate the percentage recovery (Accuracy) using the formula: % Recovery = (Mean Measured Concentration / Certified Value) x 100

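- Calculate the bias as: Bias (%) = % Recovery - 100% (a recovery below 100% therefore gives a negative bias, consistent with the exemplary recovery table)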

- Acceptance Criteria: The mean % recovery should fall within the pre-defined range (e.g., 98.0% - 102.0%). A statistical t-test (at a significance level of α=0.05) should show no significant difference between the mean measured value and the certified value.
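The calculation steps above can be sketched as follows for six CRM preparations. The measured values and certified value are hypothetical, bias is reported as recovery minus 100% (the sign convention used in the exemplary recovery table), and the critical t value is hard-coded for five degrees of freedom, so it must be changed if a different number of preparations is used.

```python
from math import sqrt
from statistics import mean, stdev

# Two-sided critical t value for alpha = 0.05 and 5 degrees of freedom (n = 6)
T_CRIT_DF5 = 2.571

def crm_bias_check(measured, certified, low=98.0, high=102.0, t_crit=T_CRIT_DF5):
    """Evaluate recovery, bias, and a one-sample t-test against the certified value."""
    n = len(measured)
    avg = mean(measured)
    recovery = avg / certified * 100.0
    bias_pct = recovery - 100.0
    t_stat = (avg - certified) / (stdev(measured) / sqrt(n))  # one-sample t statistic
    return {
        "recovery": recovery,
        "bias_pct": bias_pct,
        "t_stat": t_stat,
        "recovery_ok": low <= recovery <= high,
        "t_test_ok": abs(t_stat) < t_crit,  # no significant difference from certified value
    }

# Hypothetical results for six independent CRM preparations (certified value 100.0)
measured = [99.2, 100.4, 99.8, 100.9, 99.5, 100.3]
result = crm_bias_check(measured, certified=100.0)
result["passes"] = result["recovery_ok"] and result["t_test_ok"]
print(result)
```

Reporting the two criteria separately is a deliberate choice: a very precise method can fail the t-test while its recovery is well within the acceptance range, and that case calls for scientific judgment rather than automatic rejection.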

Protocol 2: Bias Estimation by Standard Addition (Spike/Recovery)

1.0 Purpose

To estimate method bias in a complex matrix (e.g., drug product) where a CRM for the matrix is unavailable, using a standard addition technique.

2.0 Scope

This protocol is suitable for drug product assay and impurity methods.

3.0 Materials and Reagents

- Placebo sample (matrix without the active analyte).

- Certified Reference Material (CRM) of the active analyte.

- All other reagents and equipment as listed in the analytical method.

4.0 Procedure

- Sample Preparation:

- Weigh a placebo sample equivalent to the test preparation.

- Accurately spike (add) a known amount of the analyte CRM to the placebo to achieve the target concentration (e.g., 100%). Prepare a minimum of six such samples.

- Prepare the samples according to the analytical procedure.

- Analysis: Analyze all spiked samples.

- Data Collection: Record the individual measured values for the analyte in the spiked samples.

5.0 Calculation and Acceptance Criteria

- Calculate the % recovery for each spiked sample: % Recovery = (Measured Concentration in Spiked Sample / Theoretical Spiked Concentration) x 100

- Calculate the mean recovery, standard deviation, and relative standard deviation (RSD) of the recoveries.

- Acceptance Criteria: The mean % recovery and the RSD should meet pre-defined criteria suitable for the method (e.g., mean recovery of 98-102% with an RSD ≤ 2.0%).
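A minimal sketch of the spike/recovery calculation, assuming six spiked placebo preparations. The concentrations are hypothetical, and the limits (98 to 102% mean recovery, RSD of at most 2.0%) are the example criteria quoted above.

```python
from statistics import mean, stdev

def spike_recovery_summary(measured, theoretical):
    """Return (mean recovery %, SD of recoveries, RSD %) for spiked samples."""
    recoveries = [m / t * 100.0 for m, t in zip(measured, theoretical)]
    avg = mean(recoveries)
    sd = stdev(recoveries)
    rsd = sd / avg * 100.0  # relative standard deviation of the recoveries
    return avg, sd, rsd

# Hypothetical measured and theoretical spiked concentrations (mg/mL)
measured = [0.497, 0.503, 0.499, 0.508, 0.495, 0.501]
theoretical = [0.500] * 6

avg, sd, rsd = spike_recovery_summary(measured, theoretical)
acceptable = 98.0 <= avg <= 102.0 and rsd <= 2.0
print(f"mean recovery={avg:.1f}%, SD={sd:.2f}, RSD={rsd:.2f}%, acceptable={acceptable}")
```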

Workflow Visualization of Method Validation with Integrated Bias Estimation

The following diagram illustrates the integrated workflow for analytical method validation, highlighting the critical decision points for bias estimation.

Figure 1: Workflow for method validation with integrated bias estimation. This process ensures that bias is assessed early, and the method is optimized before proceeding to other validation parameters, thereby ensuring efficiency and compliance.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and tools required for conducting robust bias estimation studies.

Table 2: Essential Reagents and Tools for Bias Estimation Research

| Item Name | Function / Purpose | Critical Quality Attributes |
|---|---|---|
| Certified Reference Material (CRM) | Provides a ground truth value with known uncertainty against which method bias is estimated. | Certified purity and assigned uncertainty from a certified supplier (e.g., NIST, USP). Stability and appropriate storage conditions. |
| Ultra-Pure Solvents | Used for sample and standard preparation to prevent interference and contamination. | Grade appropriate for the technique (e.g., HPLC, GC-MS). Low UV absorbance, low particle count, and minimal volatile impurities. |
| Calibrated Volumetric Glassware | Ensures accurate and precise measurement of volumes during sample preparation, which is critical for calculating theoretical concentrations. | Class A tolerance, with valid calibration certificate. Traceable to national/international standards. |
| Professional Statistical Software | Used for statistical evaluation of bias data (e.g., t-tests, confidence intervals, regression analysis). | Validated software, GMP/GLP compliant features (e.g., audit trail), ability to perform appropriate statistical tests. |
| Stable Placebo Formulation | Essential for spike/recovery studies to mimic the sample matrix without the active analyte, allowing for accurate bias estimation in drug products. | Represents the final drug product formulation exactly, without the active ingredient. Must be stable and homogenous. |
| Data Integrity and Management System | Ensures the integrity, traceability, and long-term storage of raw data and results from bias studies, as required by FDA 21 CFR Part 11 and EU GMP rules [7] [6]. | System validation, access controls, audit trails, and electronic signature capabilities. |

Integrating a rigorous, well-documented SOP for bias estimation is a non-negotiable element of analytical method validation. It forms the bedrock of data integrity, ensures patient safety, and is a fundamental requirement for regulatory compliance across global jurisdictions. As the industry evolves with the adoption of AI/ML, the principles of bias estimation must be adapted to address algorithmic models, ensuring they are fair, transparent, and validated. The protocols and frameworks provided herein offer a concrete pathway for researchers and drug development professionals to embed robust bias estimation into their quality systems, thereby upholding the highest standards of scientific excellence and regulatory diligence.

In quantitative research and method comparison studies, systematic bias (also known as fixed or constant bias) and proportional bias represent two fundamental forms of measurement error that can compromise data integrity [9]. Understanding their distinct characteristics, detection methods, and implications is crucial for developing robust standard operating procedures in bias estimation research, particularly in drug development and clinical studies.

Systematic bias occurs when one measurement method consistently yields values that are higher or lower than those from another method by a constant amount, regardless of the concentration or level of the measured variable [9] [10]. This type of bias affects all measurements uniformly and can often be corrected through calibration.

Proportional bias manifests when the difference between methods is dependent on the magnitude of the measured variable [9] [10]. Unlike fixed bias, proportional bias increases or decreases proportionally with the concentration level, meaning measurements diverge more significantly at higher or lower values.

The following workflow outlines the standardized process for bias assessment discussed in this document:

Key Characteristics and Statistical Definitions

Comparative Analysis of Bias Types

Table 1: Fundamental characteristics of systematic and proportional bias

| Characteristic | Systematic (Fixed) Bias | Proportional Bias |
|---|---|---|
| Definition | Constant difference between methods across all values | Difference between methods proportional to measurement magnitude |
| Mathematical Representation | y = x + b (where b ≠ 0) | y = mx (where m ≠ 1) |
| Primary Detection Method | Confidence interval for intercept does not encompass zero | Confidence interval for slope does not encompass one |
| Visual Pattern on Regression Plot | Parallel shift from line of identity | Diverging pattern from line of identity |
| Typical Correction Approach | Single adjustment factor | Slope-dependent correction factor |
| Impact on Clinical Decisions | Consistent across all values, potentially affecting classification | Magnitude-dependent, may only affect extreme values |

Statistical Formulations

In method comparison studies, the relationship between a new method (Y) and reference method (X) can be expressed as Y = mX + b, where m represents the slope and b represents the intercept [10].

Systematic bias is statistically identified when the confidence interval for the intercept (b) does not encompass zero, indicating a consistent over-estimation or under-estimation across the measuring range [10].

Proportional bias is identified when the confidence interval for the slope (m) does not encompass one, indicating that the difference between methods changes with concentration levels [10].

In some cases, both types of bias may coexist, resulting in a relationship where both the intercept and slope differ significantly from their ideal values (Y = mX + b, where m ≠ 1 and b ≠ 0) [10].

Experimental Protocols for Bias Detection

Method Comparison Study Design

Sample Requirements and Measurement Protocol

- Select 40-100 samples covering the entire measuring range of clinical interest [9]

- Ensure sample matrix matches clinical specimens (serum, plasma, etc.)

- Analyze all samples in duplicate using both test and reference methods

- Randomize measurement order to avoid systematic sequence effects

- Complete all measurements within analyte stability period

Data Collection Standards

- Record raw data without transformation initially

- Document all experimental conditions (instrument lot, reagent batch, operator)

- Flag any technical errors or outliers for potential exclusion

- Maintain blinding of operators to method identity where possible

Statistical Analysis Protocol

Regression Analysis Methodology

- Use Model II regression (Least Products regression) rather than ordinary least squares [9]

- Calculate slope and intercept with 95% confidence intervals

- Perform residual analysis to check for pattern violations

- Assess agreement using Bland-Altman plots as supplementary evidence
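The numerical summary behind a Bland-Altman plot can be sketched as below: the mean of the paired differences estimates the average bias, and the mean plus or minus 1.96 standard deviations gives the conventional 95% limits of agreement. The paired results are hypothetical, and plotting (differences against pairwise means) is left out to keep the example dependency-free.

```python
from statistics import mean, stdev

def bland_altman(test, reference):
    """Return (mean difference, lower limit, upper limit) for paired results."""
    diffs = [t - r for t, r in zip(test, reference)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical paired results from the reference and test methods
reference = [10.0, 20.0, 30.0, 40.0, 50.0]
test = [12.1, 21.8, 32.3, 41.9, 52.4]

bias, lower, upper = bland_altman(test, reference)
print(f"mean difference={bias:.2f}, limits of agreement=({lower:.2f}, {upper:.2f})")
```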

Bias Detection Decision Rules

- For proportional bias: If slope confidence interval excludes 1, significant proportional bias exists

- For systematic bias: If intercept confidence interval excludes 0, significant systematic bias exists

- Document magnitude and direction of all detected biases
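The decision rules above can be sketched in Python as follows. The slope of a least products (geometric mean) regression is the ratio of the standard deviations of y and x, signed by their correlation. The confidence intervals here come from a percentile bootstrap, which is an assumption of this sketch rather than a method prescribed by the source; the simulated data (a 10% proportional bias with no constant offset) and all function names are likewise hypothetical.

```python
import random
from statistics import mean, stdev

def least_products_fit(x, y):
    """Least products regression: slope = sign(r) * sd(y) / sd(x)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sign = 1.0 if sxy >= 0 else -1.0
    slope = sign * stdev(y) / stdev(x)
    return slope, my - slope * mx

def bootstrap_ci(x, y, n_boot=2000, alpha=0.05, seed=1):
    """Percentile bootstrap CIs for slope and intercept (resampling sample pairs)."""
    rng = random.Random(seed)
    n = len(x)
    slopes, intercepts = [], []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        s, i = least_products_fit([x[k] for k in idx], [y[k] for k in idx])
        slopes.append(s)
        intercepts.append(i)

    def percentile_interval(values):
        ordered = sorted(values)
        return ordered[int(alpha / 2 * n_boot)], ordered[int((1 - alpha / 2) * n_boot) - 1]

    return percentile_interval(slopes), percentile_interval(intercepts)

# Simulated comparison: 40 samples, test method reads 10% high plus random noise
rng = random.Random(7)
x = [10.0 + 5.0 * k for k in range(40)]
y = [1.10 * xi + rng.gauss(0.0, 2.0) for xi in x]

slope, intercept = least_products_fit(x, y)
slope_ci, intercept_ci = bootstrap_ci(x, y)

proportional_bias = not (slope_ci[0] <= 1.0 <= slope_ci[1])        # slope CI excludes 1
systematic_bias = not (intercept_ci[0] <= 0.0 <= intercept_ci[1])  # intercept CI excludes 0
print(f"slope={slope:.3f} CI={slope_ci}, proportional bias: {proportional_bias}")
print(f"intercept={intercept:.3f} CI={intercept_ci}, systematic bias: {systematic_bias}")
```

Analytical confidence-interval formulas exist for least products regression and may be preferred in a validated workflow; the bootstrap is used here only because it is short and makes few distributional assumptions.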

Validation Criteria

- Establish predefined acceptability limits based on clinical requirements

- Compare estimated biases to total allowable error specifications

- Determine if bias magnitude necessitates method modification or recalibration
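One way to apply these criteria is to evaluate the predicted bias at each medical decision level from the fitted line Y = mX + b, where the difference between methods at concentration X is (m - 1)X + b, and compare it with a predefined allowable limit. The slope, intercept, decision levels, and the 2.2% limit below are hypothetical values chosen for illustration.

```python
def bias_at_level(slope, intercept, level):
    """Predicted difference (test minus reference) at a given concentration."""
    return (slope - 1.0) * level + intercept

def assess_bias(slope, intercept, levels, allowable_pct):
    """Return {level: (bias, bias %, acceptable)} for each decision level."""
    report = {}
    for level in levels:
        bias = bias_at_level(slope, intercept, level)
        bias_pct = bias / level * 100.0
        report[level] = (bias, bias_pct, abs(bias_pct) <= allowable_pct)
    return report

# Hypothetical regression estimates and decision levels
report = assess_bias(slope=1.03, intercept=-0.5, levels=[10.0, 100.0], allowable_pct=2.2)
for level, (bias, pct, ok) in report.items():
    print(f"level {level}: bias={bias:+.2f} ({pct:+.1f}%), acceptable={ok}")
```

In this example the combined fixed and proportional bias is acceptable at the low level but exceeds the limit at the high level, so acceptability has to be judged at each decision level and not from the slope or intercept alone.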

Research Reagents and Materials

Table 2: Essential research reagents and materials for bias estimation studies

| Reagent/Material | Specification Requirements | Primary Function in Bias Research |
|---|---|---|
| Reference Standard | Certified reference material with documented traceability | Provides accuracy baseline for method comparison |
| Quality Control Materials | At least three levels covering low, medium, and high values | Monitors assay performance and precision |
| Clinical Samples | Appropriately characterized and stored specimens | Represents actual patient matrix for validation |
| Calibrators | Traceable to higher-order reference methods | Establishes measurement scale and accuracy |
| Statistical Software | Capable of Model II regression and confidence interval calculation | Enables proper statistical analysis of bias |

Visualization and Interpretation of Bias

The following diagnostic plot illustrates how to differentiate between various types of bias in method comparison studies:

Implications for Research and Development

Impact on Data Integrity

The presence of undetected or unaddressed bias has profound implications for research validity and decision-making:

Systematic bias affects all measurements equally, potentially leading to consistent overestimation or underestimation of treatment effects [9]. In drug development, this could impact dosage determinations or efficacy assessments across all study subjects.

Proportional bias has differential effects depending on measurement magnitude [10]. This is particularly problematic when measuring analytes across a wide concentration range, as it may disproportionately affect subsets of patients with extreme values.

Regulatory and Compliance Considerations

Proper bias assessment is essential for regulatory submissions and method validation:

- Documentation of bias estimation must be included in all method validation reports

- Established tools like the Cochrane Risk of Bias (RoB) tool provide structured assessment frameworks [11]

- Statistical methods such as Inverse Probability of Censoring Weighting (IPCW) address bias in clinical trial data [12]

- Bias-aware protocols should be incorporated into Good Clinical Practice (GCP) and Good Laboratory Practice (GLP) guidelines

Standard Operating Procedure Framework

Protocol for Bias Assessment

Pre-Analysis Phase

- Define acceptable bias limits based on clinical requirements

- Establish sample size using statistical power calculations

- Validate reference method performance characteristics

- Document acceptance criteria for bias assessment

Analysis Phase

- Perform measurements across clinically relevant range

- Apply appropriate regression analysis (Model II/Least Products)

- Calculate confidence intervals for slope and intercept parameters

- Compare results to predefined acceptability limits

Decision Phase

- Classify bias type(s) present using statistical evidence

- Determine clinical significance of detected biases

- Implement correction protocols if biases exceed limits

- Document all findings in standardized reporting format

Documentation and Reporting Standards

Comprehensive documentation is essential for bias estimation research:

- Report both statistical significance and clinical relevance of findings

- Include graphical representations of method comparisons

- Document all methodological decisions and statistical approaches

- Maintain raw data for potential regulatory audit or reanalysis

- Update standard operating procedures based on methodological advances

Bias in laboratory measurement is defined as the systematic deviation of measured results from the true value of an analyte [13]. Unlike random error, which varies unpredictably, bias represents a consistent directional difference that can compromise the validity of clinical data, research findings, and patient care decisions [13] [14]. In quantitative terms, bias is expressed as the difference between the average of repeated measurements and a reference quantity value [13] [15].

The distinction between bias and inaccuracy is crucial in laboratory medicine. Inaccuracy refers to how closely a single measurement agrees with the true value and includes contributions from both systematic and random error. Bias, however, relates specifically to how the average of a series of measurements agrees with the true value, with imprecision minimized through averaging [14]. This systematic deviation can lead to misdiagnosis, inappropriate treatments, and erroneous research conclusions, with documented cases showing significant patient harm and healthcare costs [13].

Fundamental Bias Types

Bias in laboratory measurements manifests in several distinct forms, each with different characteristics and implications for data integrity:

Constant Bias: A fixed difference between measured and true values that remains consistent regardless of analyte concentration [13] [14]. This type of bias affects all measurements equally across the analytical range.

Proportional Bias: A difference between measured and true values that changes in proportion to the analyte concentration [13] [14]. This type of bias becomes more pronounced at higher concentrations and can be particularly problematic when using fixed clinical decision limits.
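To make the distinction concrete, the following minimal sketch assumes a simple linear error model, measured = true + constant + proportional × true. The function name, the model form, and all numeric values are illustrative assumptions and are not taken from the cited sources.

```python
def apply_bias(true_value: float, constant: float = 0.0, proportional: float = 0.0) -> float:
    """Return the measured value under an assumed linear bias model.

    measured = true_value + constant + proportional * true_value
    """
    return true_value + constant + proportional * true_value


# Hypothetical concentrations (arbitrary units) at the low and high end of a range.
for true_value in (2.0, 20.0):
    constant_error = apply_bias(true_value, constant=0.5) - true_value
    proportional_error = apply_bias(true_value, proportional=0.05) - true_value
    print(
        f"true={true_value:5.1f}  "
        f"constant-bias error={constant_error:+.2f}  "
        f"proportional-bias error={proportional_error:+.2f}"
    )
```

Under this assumed model the constant term contributes the same absolute error at both concentrations, while the proportional term contributes an error that scales with the concentration.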

Measurement Condition Bias: Bias can be evaluated under different measurement conditions that affect its detection and significance [13]:

- Repeatability Conditions: Same procedure, instrument, operator, and location over a short period

- Intermediate Precision Conditions: Variations within a single laboratory over time with different instruments, operators, and reagents

- Reproducibility Conditions: Variations across different laboratories, instruments, and methods

Multiple factors throughout the testing process can introduce bias into laboratory results:

Methodological Differences: Varying analytical methods for the same analyte can produce significantly different results. For example, bromocresol green methods for serum albumin measurement may overestimate concentrations by 1.5-13.9% compared to immunoassays, potentially affecting clinical decisions regarding anticoagulation therapy in nephrotic syndrome patients [16].

Lack of Harmonization: When laboratory tests produce different results depending on the instrument platform or method used, aggregation of data becomes problematic. This is particularly concerning for artificial intelligence and machine learning algorithms that require large healthcare datasets for training [16].

Instrument and Reagent Variations: Differences between instruments, even of the same model, and variations between reagent lots can introduce bias that affects result comparability [17].

Pre-analytical Factors: Sample collection methods, transportation conditions, and interference substances can systematically alter measured values before analysis begins [13].

Reference Value Assignment: Imperfections in reference materials, calibration protocols, and the traceability chain can introduce bias at the fundamental level of measurement standardization [17].

Population-Specific Factors: Inadequate representation of diverse populations in clinical trials and inaccurate race attribution in datasets may perpetuate erroneous conclusions in healthcare research [16].

Bias Estimation Methodologies

Fundamental Protocol for Bias Estimation

Bias estimation requires comparison of measured values against a reference quantity with known accuracy. The following protocol outlines the core approach:

Table 1: Core Components for Bias Estimation

| Component | Description | Examples |

|---|---|---|

| Reference Material | Substance with one or more properties sufficiently homogeneous and well-established to be used for calibration or measurement verification | Certified Reference Materials (CRMs), proficiency testing materials, reference laboratory samples [13] |

| Measurement Replication | Repeated measurements of the same sample to establish a reliable mean value | Duplicate or triplicate measurements under specified conditions [14] |

| Statistical Analysis | Methods to compare measured values against reference values and determine significance | t-tests, confidence interval analysis, regression methods [13] [18] |

| Acceptance Criteria | Pre-defined limits for allowable bias based on clinical requirements | Biological variation data, regulatory guidelines, clinical decision limits [14] |

The basic equation for bias calculation is: Bias(A) = O(A) - E(A) where O(A) is the observed (measured) value of analyte A and E(A) is the expected (reference) value [13].
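As a minimal sketch of this equation, the snippet below takes the observed value O(A) to be the mean of replicate measurements and also reports the bias as a percentage of the reference value. The function name and the replicate values are hypothetical and serve only as an illustration.

```python
from statistics import mean


def estimate_bias(measurements, reference_value):
    """Return (absolute bias, percent bias) of the replicate mean vs. the reference.

    Bias(A) = O(A) - E(A), with O(A) taken as the mean of the replicates.
    """
    if not measurements:
        raise ValueError("at least one measurement is required")
    if reference_value == 0:
        raise ValueError("percent bias is undefined for a zero reference value")
    observed = mean(measurements)
    bias = observed - reference_value
    return bias, 100.0 * bias / reference_value


# Hypothetical triplicate measurements of a material with an assigned value of 5.0.
bias, percent_bias = estimate_bias([5.1, 5.3, 5.2], reference_value=5.0)
print(f"bias = {bias:+.3f}, percent bias = {percent_bias:+.1f}%")
```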

Experimental Protocol: Method Comparison for Bias Assessment

This protocol describes the procedure for evaluating bias between a new candidate method and an established comparative method using patient samples.

Purpose: To estimate the systematic difference between measurement methods and characterize the nature (constant or proportional) of any observed bias.

Materials and Reagents:

- Test specimens (n=20-40), ideally fresh patient samples spanning the clinical reportable range

- Reference materials or proficiency testing samples with assigned values (optional but recommended)

- All reagents, calibrators, and controls for both measurement methods

- Laboratory information system or data collection template

Procedure:

Sample Selection: Collect 20-40 patient samples covering the analytical measurement range. Include at least 5 samples near clinically important decision points [14].

Experimental Design: Analyze samples in multiple small batches over several days rather than in a single run to account for between-day variation. Run both methods in parallel within the same analytical batch when possible [14].

Measurement Protocol: Perform at least duplicate measurements for each sample on both methods. Maintain standard operating procedures for sample handling and analysis.

Data Collection: Record all results with appropriate identifiers, including sample information, measurement values, and date/time of analysis.

Statistical Analysis:

- Create a scatter plot with the comparative method on the x-axis and the candidate method on the y-axis

- Perform appropriate regression analysis based on data characteristics

- Construct a difference plot (Bland-Altman plot) to visualize agreement between methods

Interpretation: Evaluate the presence of constant bias (y-intercept significantly different from zero) and proportional bias (slope significantly different from 1) [14].
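The difference-plot step of the statistical analysis can be sketched as follows. The code computes the quantities that a Bland-Altman plot displays (pairwise averages, differences, mean difference, and 95% limits of agreement) without drawing the plot. The function name and sample values are hypothetical, and the limits assume approximately normally distributed differences.

```python
from statistics import mean, stdev


def bland_altman(comparative, candidate):
    """Return the summary quantities behind a Bland-Altman difference plot.

    Differences are computed as candidate minus comparative, so a positive
    mean difference indicates that the candidate method reads higher.
    """
    if len(comparative) != len(candidate):
        raise ValueError("both methods must have the same number of results")
    if len(comparative) < 2:
        raise ValueError("at least two paired results are required")
    differences = [y - x for x, y in zip(comparative, candidate)]
    averages = [(x + y) / 2.0 for x, y in zip(comparative, candidate)]
    mean_difference = mean(differences)
    sd_difference = stdev(differences)
    return {
        "averages": averages,          # x-axis of the difference plot
        "differences": differences,    # y-axis of the difference plot
        "mean_difference": mean_difference,
        "lower_limit": mean_difference - 1.96 * sd_difference,
        "upper_limit": mean_difference + 1.96 * sd_difference,
    }


# Hypothetical paired results (comparative method, candidate method).
result = bland_altman([1.0, 2.0, 3.0, 4.0], [1.0, 2.2, 3.0, 4.2])
print(result["mean_difference"], result["lower_limit"], result["upper_limit"])
```

Plotting `differences` against `averages` and checking for a trend is one way to spot concentration-dependent bias before committing to a regression model.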

Method Comparison Workflow for Bias Assessment

Experimental Protocol: Reference Material-Based Bias Estimation

This protocol describes the procedure for estimating bias using materials with known assigned values, following CLSI EP15-A3 guidelines [18].

Purpose: To estimate measurement bias relative to a reference value and determine if the bias is statistically significant.

Materials and Reagents:

- Certified reference materials or proficiency testing materials with assigned values

- Documentation of uncertainty for assigned values

- All necessary reagents, calibrators, and controls for the test method

- Data analysis software capable of statistical significance testing

Procedure:

Material Preparation: Reconstitute or prepare reference materials according to manufacturer instructions. Include multiple concentration levels if possible.

Measurement Replication: Analyze each reference material level in replicate (typically 3-5 repetitions) under repeatability conditions.

Data Collection: Record all measurement values along with the assigned value and its uncertainty for each material.

Statistical Analysis:

- Calculate the mean of replicate measurements for each level

- Compute bias as: Mean(measured) - Assigned value

- Perform hypothesis testing (t-test) to determine if bias is statistically significantly different from zero

- Apply familywise error rate correction when testing multiple levels [18]

Interpretation: Compare both statistical significance and magnitude of bias against predefined acceptance criteria based on clinical requirements.
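The statistical analysis steps above can be sketched as a one-sample t-test per concentration level with a Bonferroni adjustment across levels. This is a simplified illustration: it ignores the uncertainty of the assigned value, uses Bonferroni as one possible familywise correction, and expects the caller to supply the critical t value from a table. All names and data are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def bias_t_statistic(replicates, assigned_value):
    """Return (bias, t statistic, degrees of freedom) for one material level."""
    n = len(replicates)
    if n < 2:
        raise ValueError("at least two replicates are required")
    sd = stdev(replicates)
    if sd == 0:
        raise ValueError("replicates are identical; the t statistic is undefined")
    bias = mean(replicates) - assigned_value
    standard_error = sd / sqrt(n)
    return bias, bias / standard_error, n - 1


def bonferroni_alpha(alpha, n_levels):
    """Per-level significance threshold that keeps the familywise error rate at alpha."""
    if n_levels < 1:
        raise ValueError("n_levels must be at least 1")
    return alpha / n_levels


def is_significant(t_statistic, t_critical):
    """Two-sided decision given a critical t value looked up by the caller."""
    return abs(t_statistic) > t_critical


# Hypothetical data: five replicates of a material with an assigned value of 10.0.
bias, t_stat, df = bias_t_statistic([10.2, 10.4, 10.3, 10.5, 10.1], 10.0)
# 2.776 is the two-sided 5% critical t value for 4 degrees of freedom.
print(bias, t_stat, df, is_significant(t_stat, t_critical=2.776))
print("per-level alpha for three levels:", bonferroni_alpha(0.05, 3))
```

When several levels are tested, the critical value should be looked up at the adjusted per-level alpha rather than at 5%.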

Statistical Analysis of Bias

Determining Statistical Significance

The significance of calculated bias should be evaluated before drawing conclusions about method acceptability [13]. This can be accomplished through:

Hypothesis Testing: Using a t-test to evaluate whether the measured bias is statistically different from zero, typically at a 5% significance level [13] [18].

Confidence Interval Approach: Examining whether the 95% confidence interval of the mean of the measurements contains the reference value. If the reference value lies outside the interval, the bias is statistically significant [13].

The evaluation must consider that the significance of bias is affected by the imprecision of the measurement method - highly imprecise methods may mask statistically significant bias [13].
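The effect of imprecision on significance can be illustrated with the confidence interval approach. In this sketch two hypothetical data sets have the same mean, and therefore the same estimated bias, but different spread. The critical t value is supplied by the caller, and all names and numbers are assumptions made for the example.

```python
from math import sqrt
from statistics import mean, stdev


def mean_confidence_interval(replicates, t_critical):
    """Return the (lower, upper) confidence interval of the replicate mean."""
    n = len(replicates)
    if n < 2:
        raise ValueError("at least two replicates are required")
    centre = mean(replicates)
    half_width = t_critical * stdev(replicates) / sqrt(n)
    return centre - half_width, centre + half_width


def bias_detected(replicates, reference_value, t_critical):
    """True if the reference value lies outside the confidence interval of the mean."""
    lower, upper = mean_confidence_interval(replicates, t_critical)
    return not (lower <= reference_value <= upper)


T_CRIT_DF4 = 2.776  # two-sided 95% critical t value for 4 degrees of freedom

# Both hypothetical data sets have a mean of 10.3 against a reference value of 10.0.
precise_method = [10.2, 10.4, 10.3, 10.5, 10.1]
imprecise_method = [9.3, 11.3, 10.3, 11.8, 8.8]

for label, data in (("precise", precise_method), ("imprecise", imprecise_method)):
    print(label, mean_confidence_interval(data, T_CRIT_DF4),
          bias_detected(data, 10.0, T_CRIT_DF4))
```

The wider interval of the imprecise data set contains the reference value, so the same 0.3-unit bias goes undetected there.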

Regression Methods for Bias Characterization

Several statistical approaches can characterize the relationship between methods and identify bias patterns:

Passing-Bablok Regression: A non-parametric method that estimates the slope as the (shifted) median of the slopes of all lines connecting pairs of data points. This approach is robust to outliers and does not assume normally distributed errors [13] [14].

Deming Regression: Accounts for measurement error in both methods compared, making it more appropriate than ordinary least squares regression for method comparison studies [14].

Difference Plots (Bland-Altman): Visualize the agreement between methods by plotting the differences between paired measurements against their averages. This approach helps identify concentration-dependent bias patterns [14].
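A minimal sketch of the simple (unweighted) Deming regression described above is shown below. It assumes a known ratio of the error variances of the two methods (candidate over comparative), defaulting to 1, and returns point estimates only; confidence intervals for slope and intercept, which are needed to judge significance, are not computed here. The function and parameter names are hypothetical.

```python
from math import sqrt
from statistics import mean


def deming_regression(x, y, error_variance_ratio=1.0):
    """Return (intercept, slope) of a simple Deming regression of y on x.

    x: comparative-method results, y: candidate-method results.
    error_variance_ratio: assumed var(error in y) / var(error in x).
    """
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    n = len(x)
    if n < 3:
        raise ValueError("at least three paired results are required")
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x) / (n - 1)
    syy = sum((yi - y_bar) ** 2 for yi in y) / (n - 1)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / (n - 1)
    if sxy == 0:
        raise ValueError("zero covariance; the slope is undefined")
    d = error_variance_ratio
    slope = (syy - d * sxx + sqrt((syy - d * sxx) ** 2 + 4 * d * sxy ** 2)) / (2 * sxy)
    intercept = y_bar - slope * x_bar
    return intercept, slope


# Hypothetical paired results that lie exactly on y = 2 + 1.1 x.
x_values = [1.0, 2.0, 3.0, 4.0, 5.0]
y_values = [2 + 1.1 * v for v in x_values]
print(deming_regression(x_values, y_values))
```

An intercept away from 0 points to constant bias and a slope away from 1 points to proportional bias, subject to the confidence intervals that this sketch omits.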

Establishing Acceptance Criteria for Bias

Determining whether estimated bias is clinically acceptable requires predefined performance goals based on clinical requirements:

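Biological Variation-Based Criteria: Desirable bias should generally not exceed 25% of the combined within-subject and between-subject biological variation for a particular analyte, that is, 0.25 × √(CVI² + CVG²). This limits the proportion of results from a healthy population falling outside the reference interval to no more than about 5.8%, compared with the nominal 5% [14].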

Clinical Decision Limits: For tests with specific clinical cutpoints (e.g., glucose for diabetes diagnosis), deviation at these critical concentrations is more important than average bias across the entire range [14].

Regulatory and Proficiency Testing Criteria: Performance specifications from regulatory bodies or proficiency testing programs provide practical acceptance limits for bias [17].
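For the biological variation-based criterion, a minimal sketch is given below. It assumes the commonly used formulation in which the desirable bias limit is 0.25 times the combined within-subject (CVI) and between-subject (CVG) coefficients of variation, all expressed in percent. The function names and the CV values are hypothetical and not taken from a biological variation database.

```python
from math import sqrt


def desirable_bias_limit(cv_within, cv_between, factor=0.25):
    """Return the allowable bias (%) as factor * sqrt(CVI^2 + CVG^2)."""
    if cv_within < 0 or cv_between < 0:
        raise ValueError("coefficients of variation must be non-negative")
    return factor * sqrt(cv_within ** 2 + cv_between ** 2)


def bias_is_acceptable(percent_bias, cv_within, cv_between, factor=0.25):
    """True if the absolute percent bias does not exceed the allowable limit."""
    return abs(percent_bias) <= desirable_bias_limit(cv_within, cv_between, factor)


# Hypothetical analyte with CVI = 6.0% and CVG = 8.0%.
limit = desirable_bias_limit(6.0, 8.0)
print(f"allowable bias: {limit:.2f}%")
print("bias of 1.8% acceptable:", bias_is_acceptable(1.8, 6.0, 8.0))
print("bias of -3.1% acceptable:", bias_is_acceptable(-3.1, 6.0, 8.0))
```

The `factor` argument is exposed so that a laboratory can substitute a different performance tier if its quality policy defines one.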

Table 2: Bias Assessment Decision Framework

| Assessment Step | Key Considerations | Acceptance Indicators |

|---|---|---|

| Statistical Significance | Is bias statistically different from zero? | p-value > 0.05 or 95% CI of the bias includes zero [13] |

| Magnitude Evaluation | How large is the bias in clinical context? | Bias < desirable specification based on biological variation [14] |

| Pattern Analysis | Is bias constant or proportional? | Consistent pattern across concentration range [13] |

| Clinical Impact | How does bias affect patient care? | No impact on clinical decisions at critical values [14] |

Research Reagent Solutions for Bias Assessment

Table 3: Essential Materials for Bias Estimation Studies

| Reagent/Material | Function in Bias Assessment | Application Notes |

|---|---|---|

| Certified Reference Materials (CRMs) | Provides reference quantity values with metrological traceability for fundamental bias estimation [13] | Select commutable materials that behave like fresh patient samples; verify expiration dates and storage requirements |

| Proficiency Testing Materials | Allows bias estimation relative to peer group or reference method performance [17] | Use as secondary validation; be aware of potential matrix effects in processed materials |

| Fresh Patient Samples | Serves as commutable test material for method comparison studies [13] [14] | Ideal for assessing real-world performance; ensure adequate sample volume and stability |

| Calibrators and Controls | Establishes measurement traceability chain and monitors ongoing performance [17] | Use multiple concentration levels; verify commutability with patient samples |

| Statistical Software | Performs regression analysis, significance testing, and data visualization [18] [14] | Utilize specialized method validation modules; ensure proper implementation of statistical models |

Bias Assessment Ecosystem Relationships

Impact of Bias on Artificial Intelligence and Advanced Applications

The integration of laboratory data into artificial intelligence (AI) and machine learning (ML) models introduces new dimensions of concern regarding measurement bias:

Algorithmic Bias Propagation: Biased laboratory results can strongly influence AI/ML algorithms that require large healthcare datasets for training, potentially perpetuating and exacerbating health disparities [16].

Generalizability Limitations: Models trained on data from specific measurement platforms may perform poorly when applied to data from different methods or instruments due to lack of harmonization [16].

Interoperability Challenges: Limited interoperability of laboratory results at technical, syntactic, semantic, and organizational levels represents a source of embedded bias that affects algorithm accuracy [16].

Mitigation strategies include increased transparency about measurement methods used in clinical trials, adoption of standardized data formats and ontologies, and local validation of models using site-specific data to assess and correct for bias [16].

Effective management of bias in laboratory measurements requires a systematic approach incorporating appropriate experimental designs, statistical methodologies, and clinical relevance assessments. By implementing standardized protocols for bias estimation and establishing clinically relevant acceptance criteria, laboratories can ensure the reliability of data used for patient care, clinical research, and advanced analytical applications. Regular monitoring and correction of significant bias remains essential for maintaining measurement quality and supporting accurate healthcare decisions.

The Clinical and Laboratory Standards Institute (CLSI) develops international standards and guidelines to ensure the quality and reliability of clinical laboratory testing [19]. Among its extensive portfolio, the EP09 and EP15 guidelines provide structured approaches for evaluating measurement procedures, with particular importance for bias estimation in quantitative laboratory medicine [20] [21].

CLSI EP09, titled "Measurement Procedure Comparison and Bias Estimation Using Patient Samples," provides comprehensive guidance for determining the bias between two measurement procedures [20]. This guideline is designed for both laboratorians and manufacturers, outlining procedures for experimental design and data analysis when comparing measurement methods using patient samples [22]. The third edition, published in 2018, incorporates significant enhancements including improved visualization techniques, expanded regression methods, and more robust statistical approaches for bias estimation [20].

CLSI EP15, "User Verification of Precision and Estimation of Bias," offers a practical protocol for laboratories to verify manufacturers' precision claims and estimate bias relative to materials with known concentrations [21] [23]. The third edition of EP15, reaffirmed in 2019, enables laboratories to complete both precision verification and bias estimation in a single experiment lasting as few as five days [21]. This guideline strikes a balance between statistical rigor and operational feasibility, making it suitable for laboratories of varying sizes and complexities [23].

Together, these guidelines form a critical component of method validation and verification protocols, serving different but complementary purposes in the ecosystem of laboratory quality assurance. Their proper implementation helps ensure that laboratory results are accurate, reliable, and comparable across different measurement platforms and locations [22] [24].

Theoretical Framework and Key Concepts

Understanding Measurement Bias

Measurement bias, or systematic error, refers to the consistent difference between results obtained from a candidate measurement procedure and an accepted reference value [20] [22]. In clinical laboratory science, quantifying bias is essential because it directly impacts medical decision-making at specific clinical concentrations [20]. Bias can arise from various sources including instrument calibration, reagent lots, operator technique, and methodological differences between measurement procedures [24].

The theoretical foundation for bias estimation rests on statistical principles of comparison between measurement methods. Both EP09 and EP15 provide frameworks for quantifying this error, though they approach the problem from different perspectives and with different statistical methodologies [20] [21]. Understanding the nature and magnitude of bias allows laboratories to determine whether a measurement procedure meets required performance specifications before implementing it for patient testing [23].

Relationship Between EP09 and EP15

While both guidelines address bias estimation, they serve distinct purposes within the laboratory quality framework:

EP09 focuses on comprehensive method comparison using patient samples, typically involving 40-100 samples analyzed by both candidate and comparative methods [20] [24]. It provides detailed protocols for characterizing the relationship between two measurement procedures across their measuring intervals [22].

EP15 offers an efficient protocol for verifying manufacturer claims regarding precision and bias, requiring fewer samples and measurements collected over a shorter timeframe [21] [23]. It is designed for situations where the performance of a procedure has been previously established through more extensive studies [21].

The following diagram illustrates the decision-making process for selecting the appropriate guideline based on research objectives:

Regulatory Recognition and Importance

Both EP09 and EP15 have been evaluated and recognized by the U.S. Food and Drug Administration (FDA) as consensus standards for satisfying regulatory requirements [20] [21]. This recognition underscores their importance in the regulatory landscape for in vitro diagnostic devices and laboratory-developed tests.

For drug development professionals and researchers, understanding these guidelines is essential for ensuring that laboratory measurements used in clinical trials meet necessary quality standards. The principles outlined in these documents support data integrity and reliability throughout the drug development process, from preclinical studies to post-marketing surveillance [20] [21].

CLSI EP09: Detailed Methodology

Experimental Design and Sample Requirements

The EP09 guideline outlines specific requirements for designing a method comparison experiment using patient samples [20]. The recommended experiment involves:

Sample Size: Typically 40-100 patient samples distributed across the measuring interval [20] [24]. In a practical application evaluating three biochemistry analyzers, researchers used 40 samples for comparing 40 different analytes [24].

Sample Characteristics: Samples should cover the clinically relevant range, with particular attention to medical decision points [20]. The samples should be stable and representative of the patient population for which the test will be used [22].

Replication: Depending on the precision of the methods being compared, duplicate or triplicate measurements may be recommended to account for random variation [20].

Comparator Method: The ideal comparator is a reference method with established accuracy. Alternatively, a currently used routine method with well-characterized performance may serve as the comparator [22].

Statistical Analysis and Data Interpretation

EP09 provides comprehensive guidance on statistical approaches for analyzing method comparison data:

Visual Data Exploration: The guideline recommends creating scatter plots and difference plots (Bland-Altman plots) for initial visual assessment of the relationship between methods and identification of potential outliers, nonlinear relationships, or concentration-dependent biases [20].

Regression Techniques: For quantifying the relationship between methods, EP09 describes several regression approaches, including Deming regression and Passing-Bablok regression [20].

Bias Estimation: The relationship established through regression analysis allows for estimation of bias at specific medical decision concentrations [20]. Statistical significance of bias is assessed through confidence intervals [20].

Outlier Detection: The guideline recommends using the extreme studentized deviate method for objective identification of outliers that might unduly influence the statistical analysis [20].
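Once a regression line has been fitted, the bias at a medical decision concentration follows directly from the intercept and slope. The sketch below assumes a linear relationship candidate = intercept + slope × comparative. The function name and the regression coefficients are hypothetical, and the confidence interval that EP09 uses to judge significance is not computed.

```python
def bias_at_decision_level(intercept, slope, decision_level):
    """Return (absolute bias, percent bias) predicted at a decision concentration.

    The candidate result predicted at the decision level is
    intercept + slope * decision_level; the bias is that prediction
    minus the decision level itself.
    """
    if decision_level == 0:
        raise ValueError("percent bias is undefined at a zero decision level")
    predicted = intercept + slope * decision_level
    bias = predicted - decision_level
    return bias, 100.0 * bias / decision_level


# Hypothetical regression result evaluated at a hypothetical decision level of 7.0.
bias, percent_bias = bias_at_decision_level(intercept=0.2, slope=1.03, decision_level=7.0)
print(f"bias = {bias:+.3f} ({percent_bias:+.2f}%)")
```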

The following workflow diagram illustrates the key steps in implementing the CLSI EP09 protocol:

Practical Application Example

A research study demonstrated the practical application of EP09 by comparing three distinct biochemistry analytical systems in one clinical laboratory [24]. The researchers followed EP09-A2 (the previous edition) to evaluate 40 different analytes across Vitros 5600, Hitachi 7170, and Cobas 8000 analyzers [24]. Their findings revealed that while most analytes showed good correlation (R² > 0.95 for 36 of 40 analytes between Hitachi 7170 and Cobas 8000), several analytes exhibited significant bias exceeding acceptable criteria [24]. Particularly noteworthy was their observation that bias between dry chemical and conventional wet chemical analyzers could reach 30% for some analytes, highlighting the importance of rigorous method comparison studies [24].

CLSI EP15: Detailed Methodology

Experimental Design for Verification Studies

EP15 provides a streamlined approach for verifying manufacturer claims regarding precision and bias [21] [23]. The experimental design includes:

Duration: As few as five days, making it efficient for laboratory implementation [21].

Sample Materials: Two or more materials at different medical decision concentrations, which can include patient samples, reference materials, proficiency testing samples, or control materials [23].

Replication: Five measurements per run for five to seven runs, generating at least 25 replicates for each sample material [23]. This design allows estimation of both within-run and between-run components of imprecision.

Materials with Assigned Values: For bias estimation, materials with known target concentrations are essential [21]. The quality of the bias estimate depends on the uncertainty of the assigned values [23].
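The replication design above (several runs with several replicates each) allows the within-run and between-run components of imprecision to be separated with a one-way analysis of variance. The following sketch assumes a balanced design and a single material. It illustrates the variance-component arithmetic only and does not reproduce the verification limits defined in the guideline. The function name and data are hypothetical.

```python
from math import sqrt
from statistics import mean


def precision_components(runs):
    """Return (repeatability SD, within-laboratory SD) from a balanced design.

    runs: list of runs, each a list with the same number of replicate results.
    """
    k = len(runs)
    if k < 2:
        raise ValueError("at least two runs are required")
    n = len(runs[0])
    if n < 2 or any(len(run) != n for run in runs):
        raise ValueError("each run needs the same number (>= 2) of replicates")
    run_means = [mean(run) for run in runs]
    grand_mean = mean(run_means)
    ss_within = sum((v - m) ** 2 for run, m in zip(runs, run_means) for v in run)
    ss_between = n * sum((m - grand_mean) ** 2 for m in run_means)
    ms_within = ss_within / (k * (n - 1))
    ms_between = ss_between / (k - 1)
    # A negative between-run variance estimate is truncated to zero.
    var_between = max(0.0, (ms_between - ms_within) / n)
    s_repeatability = sqrt(ms_within)
    s_within_lab = sqrt(ms_within + var_between)
    return s_repeatability, s_within_lab


# Hypothetical results: two runs with two replicates each.
s_r, s_wl = precision_components([[10.0, 12.0], [14.0, 16.0]])
print(f"repeatability SD = {s_r:.3f}, within-laboratory SD = {s_wl:.3f}")
```

A five-run, five-replicate study would be passed in the same way, as a list of five lists with five results each.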

Data Analysis and Interpretation

The EP15 protocol employs specific statistical approaches for data analysis:

Precision Verification: Analysis of variance (ANOVA) is used to calculate repeatability (within-run) and within-laboratory (total) standard deviations [23]. These calculated values are compared to manufacturer claims using verification limits that account for statistical variability in the estimation process [23].

Bias Estimation: The mean of all measurements for each material is compared to the assigned value [23]. A verification interval is calculated around the target value, considering both the uncertainty of the target value and the standard error of the mean from the experiment [23].

Decision Rules: If the mean measured value falls within the verification interval, there is no statistically significant bias [23]. If it falls outside this interval, statistically significant bias exists, and the user must compare the estimated bias to allowable bias based on clinical requirements [23].
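The decision rules above can be sketched as follows. This is a simplified illustration: the verification interval is taken as the assigned value plus or minus a coverage factor times the combined standard error, and the coverage factor is a placeholder supplied by the caller rather than the multiplier that the guideline derives. All names, return strings, and numbers are hypothetical.

```python
from math import sqrt


def verification_interval(assigned_value, se_mean, u_assigned, coverage_factor=2.0):
    """Return (lower, upper) limits around the assigned value.

    se_mean: standard error of the mean from the experiment.
    u_assigned: standard uncertainty of the assigned value.
    coverage_factor: assumed multiplier (placeholder, not the EP15 value).
    """
    combined = sqrt(se_mean ** 2 + u_assigned ** 2)
    half_width = coverage_factor * combined
    return assigned_value - half_width, assigned_value + half_width


def assess_bias(mean_measured, assigned_value, se_mean, u_assigned,
                allowable_bias, coverage_factor=2.0):
    """Apply the two-step decision: statistical significance, then allowable bias."""
    lower, upper = verification_interval(
        assigned_value, se_mean, u_assigned, coverage_factor
    )
    if lower <= mean_measured <= upper:
        return "no statistically significant bias"
    if abs(mean_measured - assigned_value) <= allowable_bias:
        return "significant bias within allowable limit"
    return "significant bias exceeding allowable limit"


# Hypothetical material with an assigned value of 100 and an allowable bias of 3.
for measured_mean in (101.0, 102.0, 105.0):
    print(measured_mean, assess_bias(measured_mean, 100.0, 0.5, 0.5, allowable_bias=3.0))
```

Separating the interval calculation from the decision keeps the statistical test and the clinical acceptability judgment visible as two distinct steps.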

Comparison with Previous Versions

The third edition of EP15 introduced significant changes from previous versions:

Combined Experiment: Creation of a single experiment for verifying both precision and bias, improving efficiency [23].

Elimination of Patient Comparison: Removal of the small patient sample comparison experiment (previously 20 samples) that was included in earlier versions [23]. The committee determined that this approach had limited value and recommended that laboratories needing patient comparisons should use EP09 instead [23].

Simplified Calculations: Incorporation of tables to simplify complex statistical calculations, making the protocol more accessible to laboratories without advanced statistical expertise [21] [23].

Comparative Analysis: EP09 vs. EP15

Application Scopes and Limitations

Understanding the distinct applications and limitations of each guideline is crucial for appropriate implementation:

EP09 is designed for comprehensive characterization of the relationship between two measurement procedures, making it suitable for method validation, comparison of different instruments or platforms, and thorough bias assessment across the measuring interval [20] [22]. However, it requires more resources, time, and a larger number of patient samples [24].

EP15 is optimized for verification of manufacturer claims in routine laboratory practice, offering a streamlined approach that can be completed quickly with fewer resources [21] [23]. Its limitations include less statistical power for rejecting precision claims and dependence on the quality of assigned values for bias estimation [21].

Statistical Approaches and Outputs

The guidelines employ different statistical methodologies suited to their respective purposes:

Table: Comparison of Statistical Approaches in EP09 and EP15

| Aspect | CLSI EP09 | CLSI EP15 |

|---|---|---|

| Primary Statistical Methods | Deming regression, Passing-Bablok regression, difference plots | Analysis of Variance (ANOVA), verification limits, verification intervals |

| Sample Size Requirements | 40-100 patient samples [20] [24] | 25+ measurements per material (5 days × 5 replicates) [23] |

| Bias Estimation Approach | Regression-based estimation at any concentration, particularly clinical decision points [20] | Comparison to assigned values of reference materials [21] |

| Precision Assessment | Not the primary focus (see EP05 for comprehensive precision evaluation) [20] | Integrated precision verification using ANOVA components [23] |

| Outputs | Regression equations, bias estimates with confidence intervals, difference plots | Verification of precision claims, bias estimates relative to assigned values |

Selection Criteria for Research Applications

Choosing between EP09 and EP15 depends on the specific research objectives:

Use EP09 when:

- Establishing the performance of a new measurement procedure

- Comparing two measurement procedures to determine equivalence

- Comprehensive characterization of bias across the measuring interval is needed

- Research requires estimation of bias at specific clinical decision points

Use EP15 when:

- Verifying manufacturer claims for precision and bias

- Resources or time are limited

- Implementing a previously validated method in a new laboratory setting

- Patient samples are difficult to obtain for a comprehensive comparison [23]

Implementation in Standard Operating Procedures

Integration into Quality Management Systems

Both EP09 and EP15 protocols should be formally incorporated into laboratory Standard Operating Procedures (SOPs) to ensure consistency and compliance with quality standards [25]. Well-conceived SOP templates assure completeness and comprehension, with CLSI guideline QMS02-A6 recommending a comprehensive structure including purpose, scope, reagents, equipment, safety precautions, sample requirements, quality control, procedural steps, calculations, interpretation, and references [25].

When developing SOPs for bias estimation research, several key elements should be addressed:

Clear Statement of Purpose: Define whether the procedure is for comprehensive method comparison (EP09) or verification of performance claims (EP15) [25]

Detailed Experimental Protocols: Specify sample requirements, replication schemes, and acceptance criteria based on the selected guideline [20] [21]

Statistical Analysis Plans: Document specific statistical methods, software tools, and decision rules for data interpretation [23]

Roles and Responsibilities: Identify qualified personnel responsible for executing the protocol and interpreting results [25]

Essential Materials and Reagent Solutions

Implementing EP09 and EP15 protocols requires specific materials and reagents tailored to the research context:

Table: Essential Research Reagents and Materials for Bias Estimation Studies

| Item | Function in EP09 | Function in EP15 |

|---|---|---|

| Patient Samples | Primary test material for method comparison; should cover measuring interval and clinical decision points [20] | Optional material; may be used if demonstrating commutability is essential |

| Certified Reference Materials | Validation of comparator method accuracy; establishing traceability [22] | Primary material for bias estimation; provides target values with known uncertainty [23] |

| Quality Control Materials | Monitoring stability of measurement procedures during comparison | Precision verification across multiple runs [23] |

| Calibrators | Ensuring proper calibration of both candidate and comparator methods | Ensuring proper calibration of candidate method |

| Reagent Kits | Consistent reagent lots recommended throughout study | Consistent reagent lots recommended throughout study |

Practical Implementation Considerations

Successful implementation of EP09 and EP15 protocols requires attention to several practical aspects:

Timeline Planning: EP09 typically requires several weeks to complete due to sample collection and analysis requirements, while EP15 can be completed in approximately one week [20] [23].

Resource Allocation: EP09 demands more extensive resources including significant analyst time, reagent consumption, and data analysis effort compared to the more streamlined EP15 approach [20] [21].

Data Management: Both protocols generate substantial data requiring careful organization, appropriate statistical analysis, and comprehensive documentation for regulatory compliance [20] [21].

Personnel Training: Technicians should receive proper training on the specific protocols, statistical methods, and acceptance criteria to ensure consistent implementation and accurate interpretation of results [25].

CLSI guidelines EP09 and EP15 provide robust, statistically sound frameworks for bias estimation in clinical laboratory measurement procedures. While EP09 offers a comprehensive approach for method comparison using patient samples, EP15 provides an efficient protocol for verification of manufacturer claims. Understanding the distinct applications, methodological approaches, and implementation requirements of these guidelines enables researchers and laboratory professionals to select the appropriate framework based on their specific research objectives and resource constraints.

Proper implementation of these guidelines through well-designed standard operating procedures ensures the reliability and accuracy of quantitative measurement procedures, ultimately supporting quality patient care and valid research outcomes. As the field of laboratory medicine continues to evolve, these guidelines remain essential tools for maintaining and verifying the quality of laboratory measurements in both research and clinical practice.

Executing a Bias Estimation Study: Experimental Design and Statistical Analysis

This document establishes a standard operating procedure for the design of experiments within bias estimation research. A rigorous approach to sample size determination, participant selection, and stability assessment is fundamental to producing reliable, reproducible, and interpretable results. This protocol provides detailed methodologies and actionable frameworks to minimize systematic errors and enhance the validity of scientific findings in drug development and related fields.

Sample Size Determination Protocols

Selecting an appropriate sample size is a critical step that balances statistical power, practical constraints, and the risk of bias. The following section outlines validated methodologies.

Table 1: Sample Size Calculation Methods for Common Experimental Designs

| Experimental Design | Key Formula / Method | Parameters Required | Application Context |
|---|---|---|---|
| Discrete Choice Experiments (DCE) | Regression-based method or new rule of thumb [26] | Desired power, significance level, number of choice sets, alternatives per set | Estimating patient or healthcare professional preferences for treatment attributes. |
| Neyman-Pearson Inference | Power Analysis [27] | Effect size (from lowest available estimate), significance level (α), power (≥0.95) [27] | Hypothesis-driven research, such as comparing the efficacy of two drug formulations. |
| Bayesian Hypothesis Testing | Bayes Factor (BF) calculation [27] | Specified distribution for theory's predictions, target BF (e.g., ≥10), maximum feasible sample size [27] | Sequential analysis or when incorporating prior knowledge into trial design. |
| Bias Estimation Studies | Familywise error rate control [18] | Significance level (e.g., 5% familywise), number of comparison levels, assigned value uncertainty [18] | Method validation and verification studies to estimate systematic error relative to a reference. |

Protocol: A Priori Power Analysis for Hypothesis-Driven Research

This protocol ensures the sample size is sufficient to detect a predefined effect size with high probability, minimizing false negatives.

- Materials and Reagents: Statistical computing software (e.g., R, PASS, G*Power).

- Procedure:

  - Define the Effect Size: Justify the smallest effect size of scientific interest. To counteract publication bias, base this on the lowest available or meaningful estimate from prior literature, not the largest or most optimistic [27].

  - Set Error Rates: Fix the Type I error rate (α) at 0.05 and the Type II error rate (β) at 0.05 or lower, corresponding to a power (1-β) of 0.95 or higher [27].

  - Select Statistical Test: Identify the planned test for the primary endpoint (e.g., two-sample t-test, ANOVA, chi-square).

  - Calculate Sample Size: Using statistical software, input the effect size, α, power, and test-specific parameters (e.g., group allocation ratio) to compute the required sample size per group.

- Validation: The calculation is validated by its adherence to the pre-specified parameters. No data collection may commence until this calculation is documented in the study protocol [27].
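As a rough illustration of the calculation step, the sketch below computes a per-group sample size for a two-sided comparison of two means with equal allocation. It uses the normal approximation rather than an exact t-based calculation, so dedicated software such as G*Power or PASS will typically return a slightly larger n. The function name `per_group_sample_size` and the example effect size of 0.5 are illustrative assumptions, not values from the protocol.

```python
import math
from statistics import NormalDist


def per_group_sample_size(effect_size_d, alpha=0.05, power=0.95):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation with equal allocation:
        n = 2 * ((z_{1 - alpha/2} + z_{power}) / d) ** 2
    effect_size_d is the standardized mean difference (hypothetical input).
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile corresponding to 1 - beta
    return math.ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Illustrative values only: d = 0.5, alpha = 0.05, power = 0.95
n_per_group = per_group_sample_size(effect_size_d=0.5)
print(n_per_group)  # 104 under the normal approximation
```

Halving the assumed effect size roughly quadruples the required n, which is why the choice of the smallest effect of interest dominates the calculation.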

Protocol: Bayesian Sample Size Determination for Sequential Analysis

This protocol is suitable for studies in which data are evaluated as they are collected, so that collection can stop early under a pre-specified rule.

- Materials and Reagents: Bayesian statistical software (e.g., R, Stan).

- Procedure:

  - Specify Predictions: Define the predictions of the theory by choosing a distribution (e.g., half-normal for a likely effect size) and its parameters [27].

  - Set Stopping Rules:

    - Primary Rule: Data collection continues until the Bayes Factor in favor of the experimental hypothesis over the null hypothesis (or vice versa) reaches a pre-defined threshold, typically at least 10 [27].

    - Contingency Rule: If resource limitations exist, specify a maximum feasible sample size. At this point, data collection ceases regardless of the Bayes factor, acknowledging that an inconclusive result is still informative [27].

  - Interim Analysis Plan: Pre-specify the data inspection points and the method for calculating the Bayes factor at each interval.

- Validation: Adherence to the pre-registered stopping rules and analysis plan is critical. Any deviation may invalidate the study's conclusions [27].
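The stopping rules above can be sketched in code under some simplifying assumptions. The example below assumes the observed effect is summarized by an estimate and its standard error with an approximately normal likelihood, models the theory's predictions as a half-normal distribution, and integrates numerically with the trapezoid rule. The function names, the threshold of 10, and the input values are hypothetical; a real analysis would follow whatever Bayes factor method is pre-registered.

```python
import math


def normal_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def bayes_factor_half_normal(obs_effect, obs_se, prior_sd, steps=4000):
    """Bayes factor for H1 (half-normal prior on the effect) over a point null H0."""
    upper = max(6 * prior_sd, obs_effect + 6 * obs_se)  # integration limit
    h = upper / steps
    total = 0.0
    for i in range(steps + 1):
        theta = i * h
        weight = 0.5 if i in (0, steps) else 1.0        # trapezoid end weights
        prior = 2 * normal_pdf(theta, 0.0, prior_sd)    # half-normal density, theta >= 0
        total += weight * prior * normal_pdf(obs_effect, theta, obs_se)
    marginal_h1 = total * h
    marginal_h0 = normal_pdf(obs_effect, 0.0, obs_se)
    return marginal_h1 / marginal_h0


def stopping_decision(bf, n_collected, max_n, threshold=10.0):
    """Apply the primary rule first, then the contingency rule."""
    if bf >= threshold:
        return "stop: evidence for H1"
    if bf <= 1 / threshold:
        return "stop: evidence for H0"
    if n_collected >= max_n:
        return "stop: maximum sample size reached (inconclusive)"
    return "continue"


# Illustrative interim look with hypothetical numbers
bf = bayes_factor_half_normal(obs_effect=3.0, obs_se=1.0, prior_sd=2.0)
print(stopping_decision(bf, n_collected=40, max_n=120))
```

Checking the evidence thresholds before the sample size cap means a conclusive result at the final look is still reported as conclusive.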

Sample Selection and Stability Assessment

Protocol: Defining Sample Characteristics and Exclusion Criteria

A clear definition of the sample population and rules for exclusion is essential to prevent selection bias.

- Procedure:

  - Inclusion Criteria: Define all characteristics a subject must possess to be included in the study (e.g., disease stage, demographic range, treatment-naïve status).

  - Exclusion Criteria: Objectively define all conditions that would lead to a subject's exclusion prior to data analysis. This includes criteria related to technical errors, safety, or protocol adherence [27].

  - Data Replacement Policy: Specify under what conditions, if any, excluded data points will be replaced. The procedure for replacement must be objective and documented [27].

Protocol: Estimating and Testing for Bias (Trueness)

This protocol follows CLSI EP15-A3 guidelines to estimate the bias of a measurement procedure against reference materials with known assigned values [18].

- Materials and Reagents:

  - Samples with known assigned values (e.g., reference standards, proficiency testing materials).

  - Documentation of the standard uncertainty for each assigned value.

- Procedure:

  - Measure Assigned Samples: Analyze each reference sample according to the standard operating procedure of the method under evaluation.

  - Record Values: Document the observed value for each sample.

  - Calculate Bias: For each level, compute the difference between the observed value (the mean, when replicates are run) and the assigned value, taken as observed minus assigned. This is the bias [18].

  - Statistical Testing: Perform a hypothesis test for each level to determine if the bias is statistically significantly different from zero. To control the familywise error rate across multiple comparisons, use a per-level significance level of 5%/n (a Bonferroni adjustment), where n is the number of levels tested [18].

- Data Analysis:

  - A significant test (p-value < adjusted significance level) indicates evidence that the bias is not zero.

  - The bias estimate should be compared to a user-specified allowable limit to determine if it is clinically or analytically acceptable [18].
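The bias calculation and the 5%/n adjustment can be illustrated with a short sketch. It assumes replicate results at each level, combines the standard error of the mean with the standard uncertainty of the assigned value, and uses a z-approximation for the p-value. A full EP15-A3 analysis would instead use t-quantiles with appropriate degrees of freedom, so treat this as a simplified stand-in. The data, field names, and allowable bias are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def bias_by_level(levels, allowable_bias, familywise_alpha=0.05):
    """Estimate and test bias at each level with a Bonferroni-adjusted alpha."""
    alpha_per_level = familywise_alpha / len(levels)  # 5%/n when alpha is 0.05
    results = []
    for level in levels:
        observed = level["observed"]
        bias = mean(observed) - level["assigned"]     # observed minus assigned
        se_mean = stdev(observed) / math.sqrt(len(observed))
        # Combine measurement and assigned-value uncertainty in quadrature
        se_bias = math.sqrt(se_mean ** 2 + level["u_assigned"] ** 2)
        z = bias / se_bias
        p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # z-approximation (assumption)
        results.append({
            "name": level["name"],
            "bias": bias,
            "p_value": p_value,
            "significant": p_value < alpha_per_level,
            "within_allowable": abs(bias) <= allowable_bias,
        })
    return alpha_per_level, results


# Hypothetical two-level example
levels = [
    {"name": "high", "assigned": 100.0, "u_assigned": 0.2,
     "observed": [104.8, 105.2, 105.0, 104.9, 105.1]},
    {"name": "low", "assigned": 50.0, "u_assigned": 0.5,
     "observed": [50.1, 49.9, 50.2, 49.8, 50.0]},
]
alpha_per_level, results = bias_by_level(levels, allowable_bias=2.0)
for r in results:
    print(r["name"], round(r["bias"], 3), r["significant"], r["within_allowable"])
```

Statistical significance and acceptability are reported separately because a bias can be detectable yet still fall inside the allowable limit, or the reverse when replicates are few.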

Experimental Workflow for Bias-Resistant Experimental Design

The following diagram summarizes the integrated workflow for designing an experiment that incorporates the protocols for sample size, selection, and stability.

Diagram 1: Integrated workflow for robust experimental design.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Bias Estimation and Experimental Research

| Item | Function & Importance in Bias Mitigation |
|---|---|
| Reference Materials | Substances with one or more properties that are sufficiently homogeneous and well-established to be used for the calibration of an apparatus or the verification of a measurement method. Critical for estimating trueness (bias) [18]. |
| Statistical Software | Tools for performing a priori power analysis, Bayesian analysis, and complex statistical modeling. Essential for objective, pre-registered sample size determination and data analysis [27] [26]. |
| Laboratory Log | A detailed, chronological record of all experimental procedures, deviations, and environmental conditions. Provides traceability and is a key tool for troubleshooting failed experiments and identifying sources of instability [27]. |
| Proficiency Testing (PT) Materials | Samples distributed by an external provider to multiple laboratories for comparative analysis. Used to assess a laboratory's testing performance and identify potential bias against a consensus value [18]. |
| Unique Resource Identifiers | Persistent, unique identifiers for key biological resources (e.g., antibodies, cell lines, plasmids). Ensures precise reporting and enables accurate replication of experiments, reducing variability and ambiguity [28]. |

In the establishment of a standard operating procedure for bias estimation research, the selection of an appropriate comparative method is a foundational decision. This choice directly influences the validity and interpretation of the systematic error, or bias, identified in a new measurement procedure [29]. Bias is quantitatively defined as the average deviation from a true value, representing the component of measurement error that remains constant in replicate measurements on the same item [14]. Within clinical and laboratory sciences, the process of estimating this bias is typically conducted through a method comparison experiment, where a set of specimens is assayed by both an existing method and a new candidate method [30] [14]. The central distinction in this process lies in whether the comparison is made against a reference method or a routine comparative method; this distinction determines whether observed discrepancies are definitively attributed to the new method or must be interpreted as relative differences between two imperfect techniques [29] [30].

Defining Reference and Routine Comparative Methods

Reference Methods

A reference method carries a specific, technical meaning implying a high-quality method whose results are known to be correct. This correctness is established through comparative studies with an accurate "definitive method" and/or through the traceability of standard reference materials [30]. When a test method is compared to a reference method, any observed differences are conclusively assigned as bias of the test method, because the correctness of the reference is well-documented and accepted [29]. The average bias estimated from such a comparison is, therefore, a direct measure of the trueness of the new method [29] [21].

Routine Comparative Methods

A routine comparative method (or comparative method) is a more general term for any existing laboratory method used for comparison. It does not carry the implication that its correctness has been rigorously documented. Most methods used in daily laboratory operation fall into this category [30]. When a new method is compared against a routine method, observed differences must be interpreted with caution. If the differences are small and medically acceptable, the two methods can be said to have the same relative accuracy. However, if differences are large, additional investigations—such as recovery and interference experiments—are necessary to identify which method is the source of the inaccuracy [30].
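As a minimal sketch of how a comparison against a routine method might be summarized, the example below computes the mean and standard deviation of paired differences (candidate minus comparator) and checks the mean difference against an allowable limit. The specimen values, the limit, and the function name are hypothetical, and a mean difference alone does not reveal concentration-dependent error, which regression or difference plots would address.

```python
from statistics import mean, stdev


def paired_difference_summary(candidate, comparator, allowable_difference):
    """Summarize candidate-minus-comparator differences for paired specimens."""
    if len(candidate) != len(comparator):
        raise ValueError("each specimen needs a result from both methods")
    diffs = [c - r for c, r in zip(candidate, comparator)]
    mean_diff = mean(diffs)
    return {
        "mean_difference": mean_diff,
        "sd_difference": stdev(diffs),
        # Relative agreement only: the comparator is not assumed to be correct
        "acceptable": abs(mean_diff) <= allowable_difference,
    }


# Hypothetical paired results on four specimens
summary = paired_difference_summary(
    candidate=[5.1, 7.2, 9.0, 11.3],
    comparator=[5.0, 7.0, 9.1, 11.0],
    allowable_difference=0.2,
)
print(summary)
```

If `acceptable` comes back false, the summary cannot say which method is at fault, which is why recovery and interference experiments are the next step.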

The table below summarizes the core differences between these two types of comparative methods.

Table 1: Key Characteristics of Reference and Routine Comparative Methods

| Characteristic | Reference Method | Routine Comparative Method |

|---|---|---|

| Definition | A method with documented correctness via definitive methods or traceable standards [30]. | A method used for routine laboratory analysis without verified reference-level status [30]. |

| Purpose in Comparison | To definitively measure the trueness/bias of a new candidate method [29]. | To assess the relative agreement between the new method and the current operational method [29]. |

| Interpretation of Differences | All differences are attributed to the bias of the candidate (test) method [29] [30]. | Differences must be interpreted carefully; the source of error (old or new method) is not known a priori [30]. |