Strategies to Lower LOD and LOQ: A Comprehensive Guide for Enhanced Trace Analysis in Biomedical Research


Abstract

This article provides a comprehensive guide for researchers and drug development professionals seeking to improve the sensitivity and reliability of their analytical methods. It covers the fundamental definitions of Limit of Detection (LOD) and Limit of Quantification (LOQ), explores practical methodologies for their enhancement across various techniques, addresses common troubleshooting scenarios, and outlines rigorous validation frameworks. By integrating foundational knowledge with advanced optimization strategies, this resource aims to equip scientists with the tools necessary to achieve robust, low-level detection capabilities critical for advancing biomedical and clinical research.

LOD and LOQ Demystified: Core Concepts and Calculation Methods

Core Definitions and Distinctions

In analytical chemistry, characterizing a method's capability at low concentrations is crucial. The Limit of Blank (LoB), Limit of Detection (LOD), and Limit of Quantitation (LOQ) are hierarchical parameters that describe this capability, each with a distinct purpose [1].

The following table summarizes the core features of each parameter:

| Parameter | Definition | Typical Statistical Basis | Primary Question Answered |
|---|---|---|---|
| LoB | The highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested [1]. | LoB = mean~blank~ + 1.645(SD~blank~) [1] | What is the upper limit of the background noise? |
| LOD | The lowest analyte concentration that can be reliably distinguished from the LoB. Detection is feasible, but quantification may be unreliable [1] [2]. | LOD = LoB + 1.645(SD~low concentration sample~) OR LOD = 3.3 × σ / S [1] [2] | Is the analyte present or absent? |
| LOQ | The lowest concentration at which the analyte can be quantified with acceptable precision and accuracy, as defined by pre-set goals [1] [3]. | LOQ = 10 × σ / S [2] [3] | How much of the analyte is present? |

The conceptual relationship between LoB, LOD, and LOQ is hierarchical, with each representing a higher, more reliable level of measurement certainty. This relationship can be visualized as a progression from measuring noise to reliable quantification.

Detailed Experimental Protocols

Determining Limit of Blank (LoB)

The LoB is established by repeatedly measuring a blank sample to characterize the background signal of the method [1].

- Sample Type: A blank sample containing no analyte, but with a matrix commutable with real patient or test specimens (e.g., a zero-level calibrator) [1].

- Replicates: For a robust establishment, 60 replicate measurements are recommended. For verification of a manufacturer's claim, 20 replicates may suffice [1].

- Procedure:

  - Analyze the 60 blank replicates using the full analytical method.

  - Calculate the mean (mean~blank~) and standard deviation (SD~blank~) of the results.

  - Calculate the LoB using the formula: LoB = mean~blank~ + 1.645(SD~blank~) [1].

- Statistical Note: The factor 1.645 is derived from a one-sided confidence interval, assuming 95% of the blank measurements will fall below this value if the data follows a Gaussian distribution [1].
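The LoB procedure above can be sketched in a few lines of Python. This is a minimal illustration, assuming Gaussian blank results; the function name `limit_of_blank` and the blank readings are hypothetical, and the example uses far fewer replicates than the 60 recommended for establishment.

```python
from statistics import mean, stdev

def limit_of_blank(blank_results, z=1.645):
    """Parametric LoB = mean_blank + z * SD_blank (assumes Gaussian blanks)."""
    return mean(blank_results) + z * stdev(blank_results)

# Hypothetical blank readings in concentration units (illustration only)
blanks = [0.0, 0.2, 0.1, 0.3, 0.1, 0.2, 0.0, 0.1, 0.2, 0.3]

lob = limit_of_blank(blanks)
print(f"LoB = {lob:.3f}")
```

`stdev` is the sample standard deviation (n − 1 denominator), which is the usual choice when the SD is estimated from replicates.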

Determining Limit of Detection (LOD)

The LOD is determined using both the previously measured LoB and a sample with a low concentration of analyte [1].

- Sample Type: A sample containing a low concentration of analyte, such as a dilution of the lowest non-negative calibrator [1].

- Replicates: Again, 60 replicates are recommended for establishment, and 20 for verification [1].

- Procedure:

  - Analyze the 60 low-concentration replicates.

  - Calculate the mean and standard deviation (SD~low concentration sample~) of the results.

  - Calculate the LOD using the formula: LOD = LoB + 1.645(SD~low concentration sample~) [1].

- Alternative Approach (Calibration Curve): The LOD can also be determined from a calibration curve using the formula: LOD = 3.3 × σ / S, where 'σ' is the standard deviation of the response (e.g., from the blank or the residuals of the regression) and 'S' is the slope of the calibration curve [2]. The factor 3.3 is approximately 2 × 1.645, which for Gaussian data with similar variability at the blank and at the LOD corresponds to about a 5% risk of a false positive and a 5% risk of a false negative [2] [4].
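Both LOD routes described above can be sketched as follows. The function names and all numeric values are hypothetical. The calibration route here takes σ as the residual standard deviation of an ordinary least-squares straight line, which is only one of the options mentioned (the blank SD is another).

```python
from math import sqrt
from statistics import mean, stdev

def lod_ep17(lob, low_results, z=1.645):
    """LOD = LoB + z * SD of the low-concentration sample."""
    return lob + z * stdev(low_results)

def lod_from_calibration(conc, resp, factor=3.3):
    """LOD = factor * sigma / S, with sigma = residual SD of a straight-line
    least-squares fit and S = slope of that fit."""
    x_bar, y_bar = mean(conc), mean(resp)
    sxx = sum((x - x_bar) ** 2 for x in conc)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(conc, resp)) / sxx
    intercept = y_bar - slope * x_bar
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    sigma = sqrt(ss_res / (len(conc) - 2))  # two fitted parameters
    return factor * sigma / slope

# Hypothetical data for illustration only
low_sample = [0.5, 0.7, 0.6, 0.8, 0.6, 0.5, 0.7, 0.6]
lod_a = lod_ep17(lob=0.33, low_results=low_sample)

conc = [1, 2, 3, 4, 5]
resp = [2.1, 3.9, 6.2, 7.8, 10.0]
lod_b = lod_from_calibration(conc, resp)

print(f"EP17-style LOD = {lod_a:.3f}, calibration-curve LOD = {lod_b:.3f}")
```

The two routes answer slightly different questions and need not agree; which one applies depends on the guideline the method is validated against.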

Determining Limit of Quantitation (LOQ)

The LOQ is the lowest concentration that meets predefined goals for bias and imprecision (e.g., a relative standard deviation of 10% or 20%) [1] [3].

- Sample Type: Samples with concentrations at or just above the estimated LOD [1].

- Procedure:

  - Analyze multiple replicates (e.g., n=20) of a sample with a concentration near the expected LOQ.

  - Calculate the precision (% CV) and accuracy (bias) of the measured results.

  - If the precision and accuracy meet the predefined goals (e.g., ≤20% CV and ≤20% bias for bioanalytical methods [3]), that concentration is the LOQ.

  - If the goals are not met, repeat the process with a slightly higher concentration until a concentration is found that satisfies the criteria [1].

- Alternative Approach (Signal-to-Noise): For chromatographic methods, an LOQ is often estimated as a signal-to-noise ratio of 10:1 [2] [3].

- Alternative Approach (Calibration Curve): Similar to the LOD, the formula LOQ = 10 × σ / S can be used, where 'σ' is the standard deviation and 'S' is the slope of the calibration curve [2].
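The empirical LOQ procedure (test a candidate level, check %CV and bias, move up if it fails) can be sketched as below. The helper names, the 20% goals used as defaults, and the replicate values are illustrative assumptions, not prescribed values.

```python
from statistics import mean, stdev

def meets_loq_goals(results, nominal, max_cv=20.0, max_bias=20.0):
    """Return (passed, %CV, %bias) for replicates at one nominal concentration."""
    avg = mean(results)
    cv = 100 * stdev(results) / avg
    bias = 100 * (avg - nominal) / nominal
    return (cv <= max_cv and abs(bias) <= max_bias), cv, bias

def find_loq(candidates):
    """Return the lowest nominal concentration whose replicates pass, else None."""
    for nominal in sorted(candidates):
        passed, _, _ = meets_loq_goals(candidates[nominal], nominal)
        if passed:
            return nominal
    return None

# Hypothetical replicate results keyed by nominal concentration
candidates = {
    0.5: [0.3, 0.7, 0.5, 0.8, 0.4],
    1.0: [0.9, 1.1, 1.0, 1.05, 0.95],
}
loq = find_loq(candidates)
print("LOQ =", loq)
```

In this made-up data set the 0.5 level fails on precision, so the next level up becomes the LOQ.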

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My analyte signal falls between the LOD and LOQ. What does this mean, and what should I do?

A: A signal between the LOD and LOQ indicates that the analyte is highly likely to be present, but its concentration cannot be determined with the required precision and accuracy [5]. For reporting, you may use "< LOQ" or "detected but not quantifiable." To obtain a quantitative result, consider:

- Repeating the analysis with more replicates to reduce variability [5].

- Increasing sample concentration via techniques like solid-phase extraction or evaporation [5] [6].

- Using a more sensitive analytical technique (e.g., LC-MS/MS instead of UV detection) [5].

- Optimizing instrument parameters or the sample preparation process to enhance the signal and improve the signal-to-noise ratio [5] [6].
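The reporting rule described in this answer can be written as a small helper. This is a sketch with hypothetical names and thresholds; the exact reporting strings are a laboratory convention, not a requirement.

```python
def classify_result(value, lod, loq):
    """Map a measured concentration onto a reporting category."""
    if value < lod:
        return "not detected (< LOD)"
    if value < loq:
        return "detected but not quantifiable (< LOQ)"
    return f"{value:g}"

# Hypothetical limits in the same concentration units as the results
for measured in (0.2, 0.7, 1.4):
    print(measured, "->", classify_result(measured, lod=0.5, loq=1.0))
```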

Q2: What are the most effective strategies to lower the LOD and LOQ of my analytical method?

A: Lowering LOD and LOQ is fundamentally about increasing the signal-to-noise ratio. Strategies can be categorized as follows:

| Strategy Category | Specific Examples | Brief Rationale |
|---|---|---|
| Increase Signal | Increase injection volume (if possible) [6]; use a detector with higher inherent sensitivity for the analyte (e.g., MS vs. UV) [5]; use on-column concentration (for weak solvents) [6]. | Puts more analyte mass into the system, leading to a larger signal. |
| Reduce Noise | Improve sample cleanup to reduce matrix interference [6]; use cleaner reagents and solvents; ensure proper instrument maintenance. | Reduces the baseline variability, making the signal easier to distinguish and measure. |
| Improve Chromatography | Use a column with smaller internal diameter [6]; use a column with smaller particle size [6]; optimize the mobile phase composition. | Sharpens the peak, increasing peak height (signal) relative to baseline noise. |

Q3: Can the LOQ ever be the same as the LOD?

A: Theoretically, if the bias and imprecision at the LOD concentration already meet the predefined goals for quantification, then the LOQ can be set equal to the LOD [1]. However, in practice, the LOQ is almost always found at a higher concentration because the imprecision is too large (e.g., >20% CV) at the LOD to allow for reliable quantification [1] [7].

Q4: Why are there different formulas and factors (e.g., 1.645, 3.3, 10) for calculating LOD and LOQ?

A: The different factors reflect different statistical confidence levels and approaches. The factor 1.645 is used in the EP17 protocol for a 95% one-sided confidence level, assuming a Gaussian distribution [1]. The factors 3.3 and 10 are commonly used with the standard deviation and slope of the calibration curve: 3.3 (approximately 2 × 1.645) limits both the false-positive and the false-negative risk to about 5% for detection, and 10 corresponds to roughly 10% RSD for quantification [2] [4]. The specific factor and formula used depend on the guiding regulatory or standards body (e.g., CLSI, ICH).
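The origin of these factors can be checked numerically. The sketch below assumes Gaussian data with the same standard deviation at the blank and at the LOD; the variable names are illustrative.

```python
from statistics import NormalDist

# One-sided 95% quantile of the standard normal distribution
z_95 = NormalDist().inv_cdf(0.95)

# Allowing 5% false positives and 5% false negatives places the LOD
# about 2 * z_95 standard deviations above the blank mean
lod_factor = 2 * z_95

# A net signal of 10 * sigma has a relative SD of sigma / (10 * sigma)
rsd_at_loq_percent = 100 / 10

print(f"z_95 = {z_95:.3f}")
print(f"2 * z_95 = {lod_factor:.2f}")
print(f"RSD at 10 * sigma = {rsd_at_loq_percent:.0f}%")
```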

Essential Research Reagent Solutions

The following materials are critical for properly establishing and validating LoB, LOD, and LOQ.

| Material / Solution | Critical Function |
|---|---|
| Blank Matrix | A sample with the same matrix as the unknown samples (e.g., plasma, water, buffer) but without the analyte. It is essential for determining the LoB and characterizing background noise [1]. |
| Certified Reference Materials (CRMs) | Samples with a known and traceable concentration of the analyte. Crucial for preparing accurate low-concentration standards to empirically determine LOD and LOQ [1]. |
| Matrix-Matched Standards | Calibration standards prepared in the same blank matrix as the unknown samples. This corrects for matrix effects that can suppress or enhance the analyte signal, leading to more accurate LoB, LOD, and LOQ estimates [5]. |
| Solid-Phase Extraction (SPE) Cartridges | Used for sample cleanup and preconcentration. Removing interfering matrix components reduces noise, while preconcentration increases the analyte signal, both of which can help lower the practical LOD and LOQ [5] [6]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between LOD and LOQ?

The Limit of Detection (LOD) is the lowest concentration of an analyte that can be reliably distinguished from a blank sample (containing no analyte), although at that level it cannot necessarily be quantified precisely. In contrast, the Limit of Quantitation (LOQ) is the lowest concentration at which the analyte can not only be reliably detected but also measured with acceptable precision and bias (accuracy) [1] [8]. Think of LOD as the point where you know something is there, and LOQ as the point where you can confidently say how much is there.

Q2: When should I use the signal-to-noise ratio method versus the CLSI EP17 protocol?

The Signal-to-Noise Ratio (S/N) method is most suitable for chromatographic and spectroscopic techniques that exhibit a consistent baseline noise [9] [10]. It is a direct and quick approach, ideal for system suitability tests or early method development. The CLSI EP17 protocol provides a more rigorous statistical foundation and is particularly critical for clinical laboratory methods, immunoassays, or when a full validation is required to satisfy regulatory requirements. EP17 is essential when you need to comprehensively understand the overlap in distributions between blank and low-concentration samples [11] [1] [12].

Q3: My calculated LOD seems too high for my assay's intended use. What are the most effective ways to lower it?

Lowering the LOD requires either increasing the analyte signal, reducing the background noise, or both [13]. Key strategies include:

- Optimizing Detector Settings: Adjusting the detector wavelength to the analyte's maximum absorbance or using a more selective detector (like fluorescence or MS) can significantly boost the signal [13].

- Improving Sample Cleanup: Reducing sample matrix interference through solid-phase extraction or other purification techniques lowers baseline noise [13].

- Enhancing Signal Strength: When sample volume allows, injecting a larger mass of analyte can directly increase the signal. For immunoassays, minimizing non-specific binding is crucial to reduce the background (Limit of Blank) [8].

Q4: How many replicates are necessary to properly determine LOD and LOQ according to CLSI EP17?

The CLSI EP17 guideline recommends a robust experimental design to capture expected instrument and reagent variability. For a manufacturer to establish these parameters, it is recommended to use at least 60 replicates for both blank and low-concentration samples. For a laboratory verifying a manufacturer's claims, a minimum of 20 replicates is typically sufficient [1].

Troubleshooting Guides

Issue 1: High Baseline Noise Leading to Poor LOD

Problem: The chromatographic or spectroscopic baseline is noisy, obscuring low-level analyte peaks and resulting in an unacceptably high LOD.

Solution:

- Check and Stabilize Temperature: Ensure the column and detector cell are properly thermostatted and protected from drafts, as temperature fluctuations are a common source of noise [13].

- Use High-Purity Solvents and Reagents: Always use HPLC-grade solvents and high-purity reagents to minimize chemical background noise [13].

- Optimize Data System Settings: Adjust the detector time constant (or response time) and data sampling rate. A general rule is to set the time constant to about one-tenth the width of your narrowest peak of interest. Over-smoothing can distort or hide small peaks [10] [13].

- Improve Mobile Phase Mixing: For isocratic methods, adding a pulse damper or manually pre-mixing solvents can create a quieter baseline. For gradient methods, ensure the mixer is functioning correctly [13].

- Implement Column Flushing: Regularly flush the column with a strong solvent to elute strongly retained compounds that can contribute to background noise [13].

Issue 2: Inconsistent LOD/LOQ Values During Method Verification

Problem: When verifying a manufacturer's claims, your calculated LOD and LOQ values are inconsistent and do not fall within the expected range.

Solution:

- Verify Sample Preparation: Meticulously confirm the preparation of your blank and low-concentration samples. Use the appropriate matrix, and ensure the low-concentration sample is commutable with patient specimens [1].

- Adhere to the Replication Plan: Strictly follow the CLSI EP17-recommended replication (e.g., 20 measurements for verification) and perform the tests over multiple days to capture inter-assay variation [1] [14].

- Check Instrument Calibration and Performance: Ensure the instrument is properly calibrated and that key performance parameters (e.g., lamp energy, detector sensitivity) are within specification before starting the verification study.

- Use Non-Parametric Methods if Needed: If the data from your replicates does not follow a normal (Gaussian) distribution, the CLSI EP17 protocol allows for the use of non-parametric statistical techniques to calculate LoB and LoD more accurately [1].
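For the non-parametric option, a rank-based LoB can be sketched as below. The rank-position rule used here (position = 0.5 + N × 0.95, with linear interpolation between neighbouring ranks) is an assumption about the ranking convention; the guideline itself should be consulted for the exact procedure. The function name and data are hypothetical.

```python
def lob_nonparametric(blank_results, pct=95.0):
    """Rank-based LoB: the value at 1-based rank position 0.5 + N * pct / 100,
    interpolating linearly between adjacent ranked results."""
    ranked = sorted(blank_results)
    n = len(ranked)
    position = n * pct / 100 + 0.5
    lower = int(position)          # rank just below the target position
    fraction = position - lower
    if lower < 1:
        return ranked[0]
    if lower >= n:
        return ranked[-1]
    return ranked[lower - 1] + fraction * (ranked[lower] - ranked[lower - 1])

# Hypothetical skewed blank results (20 replicates)
blanks = [0.0, 0.0, 0.1, 0.1, 0.1, 0.1, 0.2, 0.2, 0.2, 0.2,
          0.2, 0.3, 0.3, 0.3, 0.4, 0.4, 0.5, 0.6, 0.8, 1.2]
print("Non-parametric LoB =", lob_nonparametric(blanks))
```

Because it relies only on ranks, this estimate does not assume a Gaussian shape, but it needs enough replicates for the upper ranks to be meaningful.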

Core Calculation Methods and Protocols

The following table summarizes the primary standard methods for determining LOD and LOQ.

Table 1: Summary of Standard Calculation Methods for LOD and LOQ

| Method | Principle | Typical Application | Key Formulas / Criteria | Experimental Protocol |
|---|---|---|---|---|
| Signal-to-Noise (S/N) [9] [10] | Compares the height of the analyte signal to the amplitude of the background noise. | Chromatographic methods (HPLC, UHPLC), spectroscopic techniques. | LOD: S/N ≥ 2:1 or 3:1. LOQ: S/N ≥ 10:1. | 1. Inject a blank and a low-concentration sample. 2. Measure peak-to-peak noise in a blank region. 3. Measure analyte peak height. 4. Calculate S/N = (Analyte Signal) / (Baseline Noise). |
| Standard Deviation of the Blank and Slope [9] | Uses the variability of the blank and the sensitivity (slope) of the calibration curve. | General analytical procedures, often referenced in ICH Q2 guidelines. | LOD = 3.3 × σ / S; LOQ = 10 × σ / S (σ = std dev of response; S = slope of calibration curve) | 1. Analyze multiple (n≥10) blank samples. 2. Construct a calibration curve at low concentrations. 3. Determine the standard deviation of the blank (or the residual std dev of the regression) and the slope. |
| CLSI EP17 Protocol [11] [1] | Statistically distinguishes the distribution of blank samples from low-concentration samples. | Clinical laboratory measurement procedures, immunoassays, IVDs. | LoB = mean(blank) + 1.645 × SD(blank); LoD = LoB + 1.645 × SD(low concentration sample) | 1. Test ≥60 (establish) or ≥20 (verify) replicates of blank samples. 2. Test the same number of replicates of a low-concentration sample. 3. Calculate LoB and LoD using the formulas, confirming ≤5% of low-concentration results fall below the LoB. |
| Visual Evaluation [9] | Determines the concentration at which an analyte is visually detected by an analyst or instrument. | Qualitative or semi-quantitative assays, gel electrophoresis, particle analysis. | LOD/LOQ set at a predefined probability of detection (e.g., LOD at 99%). | 1. Prepare samples at 5-7 known low concentrations. 2. Perform 6-10 determinations per concentration. 3. Use logistic regression to model the probability of detection vs. concentration. |
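As an illustration of the signal-to-noise row in the table, the sketch below computes S/N as peak height divided by peak-to-peak baseline noise. The trace values and function name are hypothetical, and some pharmacopoeial definitions use a different convention (for example 2H/h), so the result should only be compared against thresholds defined the same way.

```python
def signal_to_noise(peak_height, blank_trace):
    """S/N = analyte peak height / peak-to-peak noise of a blank baseline region."""
    noise = max(blank_trace) - min(blank_trace)
    return peak_height / noise

# Hypothetical detector readings from an analyte-free baseline region
blank_region = [0.10, -0.10, 0.05, -0.05, 0.00, 0.08, -0.07]
sn = signal_to_noise(peak_height=3.0, blank_trace=blank_region)

print(f"S/N = {sn:.1f}")
print("meets LOD criterion (>= 3):", sn >= 3)
print("meets LOQ criterion (>= 10):", sn >= 10)
```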

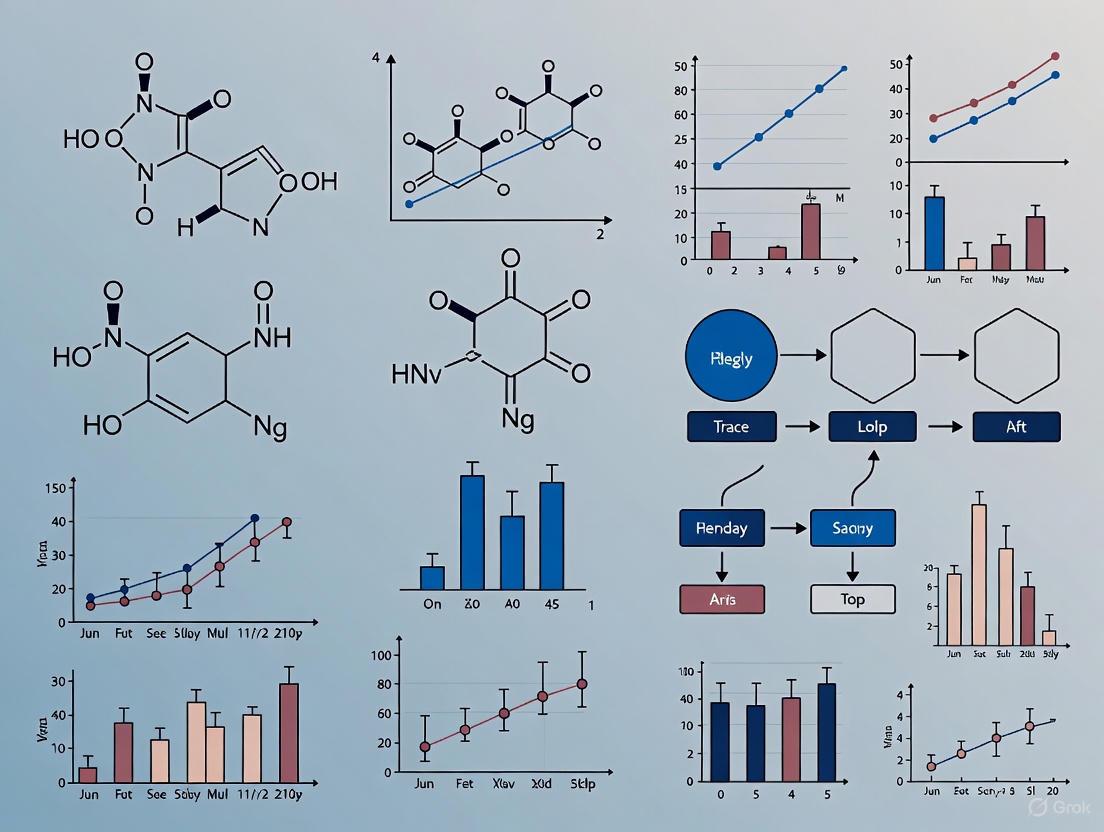

Experimental Workflow and Relationships

The following diagram illustrates the logical relationship between key concepts in detection capability and the primary pathways for its determination.

Essential Research Reagent Solutions

This table outlines key materials and their functions when characterizing detection capability, particularly for immunoassays.

Table 2: Key Reagents and Materials for Detection Capability Experiments

| Item | Function in Experiment | Critical Consideration |
|---|---|---|
| Blank (Zero) Matrix | A sample containing no analyte, used to determine the LoB and background signal. | Must be commutable with real patient samples (e.g., stripped serum, artificial urine) to reflect true assay background [1] [8]. |
| Low-Level Quality Control (QC) Material | A sample with a known, low concentration of analyte, used to determine LoD and LoQ. | Should be close to the expected LoD and prepared in the same matrix as the blank to ensure a fair comparison of distributions [1]. |
| High-Purity Analytical Standards | Used to prepare precise calibrators and the low-level QC material. | Purity must be certified to ensure accurate assignment of target concentrations for LoQ bias assessment. |
| Matrix-Specific Buffers & Blockers | Reagents used to minimize non-specific binding in immunoassays and other binding assays. | Critical for achieving a low LoB, which directly enables a lower LoD. Optimization is required for each assay [8]. |

Definitions and Core Concepts: Understanding LOD, LOQ, and LoB

What are LOD and LOQ, and how do they differ?

In drug development, the Limit of Detection (LOD) and Limit of Quantification (LOQ) are fundamental parameters that describe the sensitivity of an analytical method. According to ICH guidelines, the LOD is the lowest amount of an analyte that can be detected, but not necessarily quantified as an exact value. In contrast, the LOQ is the lowest amount of an analyte that can be quantitatively determined with suitable precision and accuracy [15] [9].

A third related term is the Limit of Blank (LoB). The LoB is defined as the highest apparent analyte concentration expected to be found when replicates of a blank sample (containing no analyte) are tested. It represents the measurement result at the threshold for a false positive [1].

Why are low LOD and LOQ values critical in pharmaceutical development?

Achieving low LOD and LOQ values is paramount for several reasons:

- Impurity and Degradant Profiling: They enable the detection and quantification of low-level impurities and degradation products, ensuring product safety and stability [16].

- Metabolite Identification: In bioanalysis, sensitive methods are required to track and quantify drug metabolites in complex biological matrices like plasma [17] [3].

- Adherence to Regulatory Standards: Global regulatory bodies like the FDA and EMA continuously lower acceptable limits for contaminants, requiring increasingly sensitive methods for compliance [18].

- Biomarker and Therapeutic Drug Monitoring: Low LOQs allow for the precise measurement of biomarkers and drug concentrations at low levels, which is essential for early disease detection and ensuring patient safety through therapeutic drug monitoring [18] [3].

Determination Methods and Calculations: A Practical Guide

What are the standard methods for determining LOD and LOQ?

There are multiple accepted approaches for determining LOD and LOQ, as outlined in guidelines from ICH, IUPAC, and CLSI. The choice of method depends on the nature of the analytical technique [9] [19]. The table below summarizes the most common methodologies.

| Method | Basis of Calculation | Typical LOD | Typical LOQ | Best Suited For |
|---|---|---|---|---|
| Signal-to-Noise Ratio [9] [20] | Comparison of analyte signal to background noise. | S/N ≥ 2 or 3 | S/N ≥ 10 | Chromatographic methods (HPLC, UHPLC). |
| Standard Deviation of the Blank [1] [9] | Mean and standard deviation (SD) of blank sample measurements. | LoB + 1.645(SD~low concentration sample~) | mean~blank~ + 10(SD~blank~) | Methods where a true blank matrix is available. |
| Standard Deviation and Slope of Calibration Curve [9] [19] | Uses the standard error of the regression and the calibration curve's slope. | 3.3σ / Slope | 10σ / Slope | Quantitative assays without significant background noise. |
| Visual Evaluation [9] | Analysis of samples with known concentrations to determine the minimum level for reliable detection. | Determined by analyst/instrument | Determined by analyst/instrument | Non-instrumental methods (e.g., visual color change). |

How do I calculate LOD and LOQ using the standard deviation of the blank?

The CLSI EP17 guideline provides a robust statistical framework [1]:

- LoB: Analyze a minimum of 20 (for verification) to 60 (for establishment) replicates of a blank sample.

  - Calculate the mean (mean~blank~) and standard deviation (SD~blank~).

  - LoB = mean~blank~ + 1.645(SD~blank~) (This one-sided calculation assumes a 95% probability that a blank measurement will be below this limit).

- LOD: Analyze a low-concentration sample (near the expected detection limit) with a minimum of 20 replicates.

  - Calculate the standard deviation (SD~low concentration sample~).

  - LOD = LoB + 1.645(SD~low concentration sample~) (This accounts for both the blank variability and the imprecision at a low analyte level, ensuring a low probability of false negatives) [1].
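One way to check the false-negative behaviour of a candidate LOD is to count how many results from a sample at that level fall below the LoB. The sketch below uses a 5% limit, matching the 1.645 factor; the function name and data are hypothetical.

```python
def fraction_below_lob(low_results, lob):
    """Share of low-concentration results that would be read as 'blank'."""
    return sum(result < lob for result in low_results) / len(low_results)

# Hypothetical: 20 replicates of a sample at the claimed LOD, LoB = 0.33
low_sample = [0.30] + [0.50] * 19
observed = fraction_below_lob(low_sample, lob=0.33)

print(f"{observed:.0%} of results fall below the LoB")
print("claim supported:", observed <= 0.05)
```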

What is the graphical "Uncertainty Profile" approach?

The Uncertainty Profile is a modern, graphical validation tool that combines tolerance intervals and measurement uncertainty to define the LOQ. A method is considered valid when the uncertainty limits are fully contained within pre-defined acceptability limits. The LOQ is determined as the lowest concentration where this condition is met, providing a realistic and reliable assessment of the method's quantitative capability [17].

The following diagram illustrates the workflow for determining LOD and LOQ using the standard deviation of the blank, as per CLSI EP17 guidelines:

Troubleshooting Common Scenarios and FAQs

My analyte signal falls between the LOD and LOQ. What should I do?

A signal between the LOD and LOQ indicates the analyte is detected but not quantifiable with confidence [5]. To resolve this:

- Pre-concentrate the Sample: Use techniques like solid-phase extraction (SPE), liquid-liquid extraction, or evaporation to increase the analyte concentration [5] [18].

- Optimize Instrument Parameters: Adjust detector settings, increase injection volume, or extend signal integration time to enhance the signal-to-noise ratio [5].

- Switch to a More Sensitive Technique: If possible, use a more sensitive detection system (e.g., LC-MS/MS instead of UV detection) which can offer significantly lower LOD/LOQ values, potentially down to the pg/mL range [5] [18].

- Repeat the Analysis: Perform multiple replicates to check for consistency and reduce the impact of random error [5].

What are the common technical barriers to achieving low LOD/LOQ in HPLC?

Achieving low LOD/LOQ in HPLC is challenged by several factors [18]:

- High Instrumental Noise: Baseline fluctuations, pump pulsations, and electronic detector noise establish a "noise floor" that can mask weak analyte signals.

- Matrix Effects: In complex samples (e.g., plasma), co-eluting compounds can cause ion suppression (in MS) or interfere with optical detection, dramatically reducing sensitivity.

- Carryover: The transfer of analyte from a previous sample can create false positives and elevate the baseline. Modern autosamplers aim to keep this below 0.1%.

- Limited Detector Sensitivity: Conventional UV-Vis detectors often struggle with concentrations below 10⁻⁸ M.

- Sample Loss: Inefficient sample preparation can lead to the loss of trace analytes, directly impacting the achievable LOD/LOQ.

How can I reduce LOD/LOQ in my HPLC method?

Key methodologies for lowering LOD/LOQ in HPLC include [18]:

- Advanced Sample Preparation: Implement efficient pre-concentration and clean-up techniques like solid-phase extraction (SPE) to enrich the analyte and remove interfering matrix components.

- Enhanced Detection Systems: Couple HPLC to mass spectrometry (MS), which provides superior sensitivity and selectivity compared to UV detection.

- Instrument Optimization: Use microfluidic or nano-LC systems that handle smaller volumes and flow rates, improving mass sensitivity. Also, optimize mobile phase composition, column temperature, and gradient programs for sharper peaks.

- Signal Processing: Apply advanced data algorithms for baseline noise reduction and signal smoothing.
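As a simple illustration of the signal-processing point, the sketch below applies a centered moving average to simulated white baseline noise and compares the noise standard deviation before and after. The simulated data, seed, and window size are arbitrary assumptions; real baselines are rarely pure white noise, and a window that is wide relative to the peak will also flatten the peak.

```python
import random
from statistics import stdev

def moving_average(trace, window=9):
    """Centered moving average (odd window); drops window // 2 points at each end."""
    half = window // 2
    return [sum(trace[i - half:i + half + 1]) / window
            for i in range(half, len(trace) - half)]

rng = random.Random(0)  # fixed seed so the illustration is repeatable
baseline = [rng.gauss(0.0, 1.0) for _ in range(500)]  # simulated white noise
smoothed = moving_average(baseline, window=9)

print(f"noise SD raw = {stdev(baseline):.2f}, smoothed = {stdev(smoothed):.2f}")
```

For uncorrelated noise, averaging over n points reduces the noise SD by roughly the square root of n, which is why the smoothed baseline is quieter.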

How do regulatory guidelines like ICH Q2(R2) impact LOD/LOQ determination?

The recent ICH Q2(R2) and ICH Q14 guidelines modernize the approach to analytical method validation [15]. They emphasize:

- A Lifecycle Management Model: Validation is not a one-time event but continues throughout the method's use, including post-approval changes.

- Science- and Risk-Based Approach: Encourages a deeper understanding of the method through the Analytical Target Profile (ATP), which prospectively defines the required performance criteria (including LOD/LOQ) based on the method's intended use.

- Inclusion of Modern Technologies: The guidelines have been expanded to provide clearer guidance for advanced techniques like chromatography-mass spectrometry.

The Scientist's Toolkit: Essential Reagents and Materials

This table lists key materials used in developing sensitive methods for trace analysis in drug development.

| Tool / Reagent | Function in Low LOD/LOQ Analysis |
|---|---|
| Mass Spectrometry Detector | Provides highly sensitive and specific detection, often lowering LOD/LOQ by orders of magnitude compared to optical detectors [18]. |
| Solid-Phase Extraction (SPE) Cartridges | Used for sample clean-up and pre-concentration of analytes from complex matrices, directly improving the effective concentration reaching the instrument [18]. |
| UHPLC Columns (Sub-2µm Particles) | Provides higher chromatographic efficiency and sharper peaks, which improves the signal-to-noise ratio and lowers detection limits [18]. |
| High-Purity Solvents and Reagents | Minimize background noise and interference from impurities in the mobile phase or solvents, which is critical for low-level detection [19]. |
| Stable Isotope-Labeled Internal Standards | Corrects for analyte loss during sample preparation and matrix effects in LC-MS, significantly improving the accuracy and precision at low concentrations near the LOQ [3]. |

Troubleshooting Guides

Guide 1: Resolving Issues with Blank Samples

Problem: High background noise or false positives in blank samples. This indicates that the signal from the blank is too high, which will artificially raise your method's Limit of Detection (LOD) and Limit of Quantification (LOQ) [5] [21].

Investigation and Resolution Steps:

- Step 1: Verify Reagent Purity. Ensure all solvents, acids, and water used are of high-grade purity suitable for trace analysis. Contaminated reagents are a primary source of blank interference [5].

- Step 2: Inspect Labware Cleanliness. Implement a rigorous labware cleaning protocol (e.g., using acid baths for glassware) to prevent carryover contamination from previous uses or the environment [5].

- Step 3: Assess Instrument Contamination. Analyze a pure solvent blank to check if the instrument itself (e.g., HPLC autosampler, spectrometer source) is introducing contamination. Flush the system thoroughly if needed [5].

- Step 4: Control for Environmental Contamination. Perform blank preparations in a clean laboratory environment, such as a fume hood or laminar flow cabinet, to minimize atmospheric contamination [5].

Guide 2: Addressing Signal Instability at Low Concentrations

Problem: Inconsistent or non-reproducible signals for samples with concentrations near the LOD. This leads to an unreliable LOQ and poor precision, a key figure of merit in the Red Analytical Performance Index (RAPI) [21].

Investigation and Resolution Steps:

- Step 1: Evaluate Instrument Stability. Allow the instrument to warm up sufficiently and ensure critical components (e.g., lamp in a spectrophotometer) are not failing. Check for fluctuations in baseline noise [5].

- Step 2: Increase Replicate Measurements. Perform a higher number of replicate analyses (n≥7) of the low-concentration sample and the blank. This provides a more robust estimate of the standard deviation used in LOD/LOQ calculations [5].

- Step 3: Optimize Sample Preparation. Ensure the sample preparation method is robust and reproducible. For very low concentrations, employ pre-concentration techniques like solid-phase extraction or liquid-liquid extraction to increase the analyte concentration above the LOQ [5].

- Step 4: Check for Matrix Effects. If the sample is in a complex matrix (e.g., blood, wastewater), use matrix-matched standards to identify if background components are causing signal suppression or enhancement [5] [21].
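One common way to put a number on Step 4 is to compare the calibration slope obtained in matrix with the slope obtained in pure solvent. The formula below is a widely used convention rather than something prescribed by the steps above, and the function name and slopes are hypothetical.

```python
def matrix_effect_percent(slope_matrix, slope_solvent):
    """Relative change in calibration slope caused by the matrix.
    Negative values indicate suppression, positive values enhancement."""
    return 100 * (slope_matrix / slope_solvent - 1)

# Hypothetical slopes from matrix-matched and solvent-based calibration curves
effect = matrix_effect_percent(slope_matrix=0.8, slope_solvent=1.0)
print(f"matrix effect = {effect:.0f}%")
```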

Guide 3: Handling Samples with Concentrations Between LOD and LOQ

Problem: A sample produces a detectable signal (above LOD) but the concentration cannot be quantified with precision (below LOQ). This is a common challenge where the presence of the analyte is confirmed, but its exact amount remains uncertain [5].

Investigation and Resolution Steps:

- Step 1: Confirm Detection. Since the signal is above the LOD, the analyte is likely present. Report the result as "detected but not quantifiable" or "< LOQ" [5].

- Step 2: Improve Quantification. To accurately quantify the analyte, you can:

  - Pre-concentrate the Sample: Use techniques like evaporation or extraction to increase the analyte concentration above the LOQ [5].

  - Use a More Sensitive Technique: Switch to an instrument with lower inherent noise and better detection capabilities (e.g., GC-MS/MS instead of GC-FID, or ICP-MS instead of AAS) [5].

  - Optimize Instrument Parameters: Adjust detector settings, integration times, or injection volumes to enhance the signal-to-noise ratio [5].

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between LOD and LOQ?

The Limit of Detection (LOD) is the lowest concentration at which the analyte can be reliably detected but not necessarily quantified. It represents the threshold for distinguishing the analyte's signal from background noise. The Limit of Quantification (LOQ) is the lowest concentration that can be measured with acceptable precision and accuracy [5]. It is the threshold for performing reliable quantitative analysis.

FAQ 2: How are LOD and LOQ practically calculated?

A common and practical method uses the signal-to-noise ratio and the standard deviation of the blank.

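- LOD Calculation: Typically defined as the concentration that gives a signal 3 times the standard deviation of the blank (σ). Formula (in signal units): LOD = 3 × σ; dividing by the calibration slope converts this to a concentration [5] [22].

- LOQ Calculation: Typically defined as the concentration that gives a signal 10 times the standard deviation of the blank (σ). Formula (in signal units): LOQ = 10 × σ; dividing by the calibration slope converts this to a concentration [5].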

FAQ 3: Our method validation shows poor intermediate precision. How does this affect LOQ?

Poor intermediate precision (high variation between days, analysts, or instruments) directly increases the standard deviation used in LOQ calculations. A higher standard deviation leads to a higher LOQ, meaning your method becomes less capable of reliably quantifying low concentrations. Improving the method's robustness is essential to lowering the LOQ [21].

FAQ 4: What should I do if my sample matrix is too complex and interferes with the analysis?

Complex matrices (e.g., soil, plasma) are a major challenge. To minimize interference:

- Use Matrix-Matched Standards: Prepare your calibration standards in a solution that mimics the sample matrix.

- Apply Sample Cleanup: Techniques like solid-phase extraction (SPE) can remove interfering compounds.

- Employ Background Correction: Use instrumental techniques like baseline subtraction or derivative spectroscopy to correct for matrix background [5].

FAQ 5: What is the Red Analytical Performance Index (RAPI) and how is it relevant?

The Red Analytical Performance Index (RAPI) is a modern, standardized scoring system (0-100) that consolidates key analytical performance parameters—including LOD, LOQ, precision, and robustness—into a single, comparable score. It helps objectively evaluate and compare methods, ensuring that the "red dimension" (analytical performance) is rigorously assessed when developing new low-level methods [21].

Table 1: Key Performance Parameters for Method Validation

This table outlines critical figures of merit and typical target values for a robust analytical method, as emphasized in validation guidelines like ICH Q2(R2) [21]. Actual acceptance criteria depend on the method and its intended use.

| Parameter | Description | Target Value / Calculation |

|---|---|---|

| LOD | Lowest detectable concentration. | 3 × σ (std. dev. of blank) [5] |

| LOQ | Lowest quantifiable concentration. | 10 × σ (std. dev. of blank) [5] |

| Precision (Repeatability) | Closeness of repeated measurements under same conditions. | RSD < 2-3% [21] |

| Intermediate Precision | Variation under changed conditions (e.g., different days). | RSD < 5% (method dependent) [21] |

| Trueness | Closeness to a true or reference value. | Bias within ±5-10% [21] |

| Linearity | Proportionality of signal to analyte concentration. | R² ≥ 0.995 [21] |

| Working Range | Interval between LOQ and upper quantification limit. | Must encompass intended sample concentrations [21] |
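
Several of the figures of merit in the table reduce to short calculations. The sketch below shows one way to compute RSD, relative bias, and R² with the Python standard library. The function names and the replicate values are hypothetical, and the targets in the table still have to be set per method.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (%), using the sample standard deviation."""
    return 100.0 * stdev(values) / mean(values)


def bias_percent(values, reference):
    """Relative bias (%) of the mean result against a reference value."""
    return 100.0 * (mean(values) - reference) / reference


def r_squared(x, y):
    """Coefficient of determination for a straight-line fit of y on x."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy ** 2 / (sxx * syy)


if __name__ == "__main__":
    replicates = [98.0, 100.0, 102.0]  # hypothetical repeatability data
    print(f"RSD  = {rsd_percent(replicates):.2f} %")
    print(f"Bias = {bias_percent(replicates, reference=100.0):.2f} %")
    print(f"R^2  = {r_squared([1, 2, 3, 4], [2.0, 4.1, 5.9, 8.0]):.4f}")
```

Running the same functions on data pooled across days or analysts gives an estimate of intermediate precision rather than repeatability.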

Table 2: Research Reagent Solutions for Trace Analysis

Essential materials and their functions for reliable low-concentration analysis.

| Item | Function in Analysis |

|---|---|

| High-Purity Solvents | To minimize background signal and contamination from impurities in reagents [5]. |

| Certified Reference Materials (CRMs) | To establish method accuracy (trueness) and for calibration at trace levels [21]. |

| Solid-Phase Extraction (SPE) Cartridges | To clean up complex samples and pre-concentrate analytes to levels above the LOQ [5]. |

| Matrix-Matched Standards | To compensate for matrix effects that can suppress or enhance the analyte signal [5] [21]. |

Experimental Protocols & Workflows

Workflow 1: Standard Method for Determining LOD and LOQ

This protocol describes a standard approach for determining LOD and LOQ from the standard deviation of blank measurements and the response of a low-concentration standard [5].

Materials: Analytical instrument (e.g., HPLC, spectrophotometer), high-purity blank solution, standard solution of analyte at a low concentration.

Procedure:

- Analyze the Blank: Perform multiple measurements (n≥10) of the blank solution containing no analyte.

- Calculate Noise: Determine the standard deviation (σ) of the response (e.g., peak area, absorbance) of the blank measurements.

- Analyze Low-Concentration Standard: Measure a standard with a low concentration of the analyte to obtain an average signal (S).

- Calculate LOD and LOQ:

- LOD = 3 × (σ / S) × Concentration of Standard

- LOQ = 10 × (σ / S) × Concentration of Standard
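
The procedure above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the function name `lod_loq_from_blank` and the example readings are hypothetical, and the standard's signal is blank-corrected before use, which the protocol does not state explicitly.

```python
from statistics import mean, stdev


def lod_loq_from_blank(blank_responses, standard_responses, standard_conc):
    """Estimate LOD and LOQ (in the unit of `standard_conc`).

    sigma is the sample standard deviation of the blank responses;
    S is the mean standard response minus the mean blank response
    (blank correction is an assumption of this sketch).
    """
    sigma = stdev(blank_responses)
    net_signal = mean(standard_responses) - mean(blank_responses)
    if net_signal <= 0:
        raise ValueError("standard response does not exceed the blank")
    lod = 3 * (sigma / net_signal) * standard_conc
    loq = 10 * (sigma / net_signal) * standard_conc
    return lod, loq


if __name__ == "__main__":
    # Hypothetical peak areas: ten blanks and three injections of a 2.0 ng/mL standard.
    blanks = [0.9, 1.1, 1.0, 1.2, 0.8, 1.0, 1.1, 0.9, 1.0, 1.0]
    standard = [21.0, 20.0, 22.0]
    lod, loq = lod_loq_from_blank(blanks, standard, standard_conc=2.0)
    print(f"LOD = {lod:.3f} ng/mL, LOQ = {loq:.3f} ng/mL")
```

Because the estimate rests on a single standard, it is only as good as the assumption that the response is linear between the blank and that standard.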

Workflow 2: Strategy for Analyzing Samples with Concentrations Between LOD and LOQ

This decision tree outlines the steps to take when an analyte is detected but not quantifiable [5].

Workflow 3: Holistic Method Assessment using RAPI

The Red Analytical Performance Index (RAPI) provides a comprehensive framework for scoring a method's performance, encouraging improvements across all key parameters [21].

Practical Strategies for Enhanced Sensitivity: Sample and Instrument Optimization

In trace analysis research, the goal of achieving lower Limits of Detection (LOD) and Limits of Quantification (LOQ) is fundamentally dependent on effective sample preparation. Solid-phase extraction (SPE) serves as a powerful technique for purifying, concentrating, and isolating target analytes from complex sample matrices, directly addressing challenges in sensitivity and reliability. By selectively retaining analytes and removing interfering matrix components, SPE significantly reduces background noise and enhances signal response in subsequent chromatographic analyses [23]. This process is indispensable for accurate quantification at trace levels, as it effectively preconcentrates target compounds while eliminating matrix effects that can compromise data accuracy and instrument performance [24]. Within the framework of modern analytical chemistry, optimizing SPE protocols represents a critical pathway toward achieving the stringent detection limits required in pharmaceutical development, environmental monitoring, and clinical research.

Standard SPE Workflow

The following diagram illustrates the standard, multi-step protocol for Solid-Phase Extraction. Adherence to this procedure is fundamental to achieving high analyte recovery and effective matrix cleanup.

Diagram Title: Standard Solid Phase Extraction Workflow

This standardized five-step process—condition, equilibrate, load, wash, and elute—forms the foundation of effective SPE [25]. Proper execution of each stage ensures optimal interaction between the analytes and the sorbent, maximizing recovery and the effectiveness of the matrix cleanup, which is a direct contributor to lowered LOD and LOQ [23].

Troubleshooting Common SPE Challenges

Even with a standardized workflow, analysts may encounter issues. The following table diagnoses common SPE problems, their root causes, and practical solutions to improve recovery and reproducibility.

Table 1: Troubleshooting Guide for Common Solid-Phase Extraction Challenges

| Problem & Symptom | Root Cause | Solution for Improvement |

|---|---|---|

| Poor Recovery [23]: Analyte is not adequately recovered from the sample. | - Insufficient binding: Analyte has greater affinity for sample solvent than sorbent [26]. - Column overload: Sample volume or concentration exceeds sorbent capacity [23]. - Incomplete elution: Elution solvent is too weak or volume is insufficient [26]. | - Adjust sample pH to increase analyte affinity for sorbent [26] [23]. - Use a sorbent with higher selectivity or capacity [26]. - Increase elution solvent strength or volume; elute in two aliquots [26] [23]. |

| Lack of Reproducibility [23]: High variation in extraction results between samples. | - Inconsistent flow rates. - Improper column conditioning. - Variable sorbent drying times after wash step [23]. | - Use a controlled, slow flow rate (~1 mL/min) for loading and elution [23]. - Follow recommended conditioning protocol; do not let sorbent dry before loading [26] [23]. - Ensure consistent and complete drying of the sorbent bed after washing, especially for aqueous samples [23]. |

| Impure Extractions [23]: Interfering compounds co-elute with the target analyte. | - Wash solvent is too weak to remove impurities. - Co-extraction of matrix components like phospholipids or proteins [24]. | - Optimize wash solvent strength to remove impurities without displacing the analyte [23]. - Use selective sorbents designed for enhanced matrix removal (e.g., Strata-X PRO) [24]. - Pre-treat sample (e.g., protein precipitation, filtration) before SPE [23]. |

| Slow Flow Rates [23]: Sample passes through the sorbent bed too slowly or gets blocked. | - Particulate matter in the sample clogs the frits. - Sample is too viscous. - Inadequate vacuum or pressure [23]. | - Filter or centrifuge the sample to remove particulates [26]. - Dilute the sample with a weak solvent [23]. - Check vacuum manifold or positive pressure system for proper function [23]. |

Frequently Asked Questions (FAQs) on SPE for Trace Analysis

Q1: How does Solid-Phase Extraction directly contribute to lower LOD and LOQ? SPE lowers LOD and LOQ through two primary mechanisms: preconcentration and matrix cleanup [23]. Preconcentration increases the absolute amount of analyte entering the analytical instrument, thereby enhancing the signal. Simultaneously, matrix cleanup removes interfering compounds that contribute to background noise and signal suppression [24]. Since the LOD is commonly taken as the concentration giving a signal-to-noise ratio (S/N) of about 3 and the LOQ as the concentration giving an S/N of about 10, reducing noise and boosting the signal directly lowers both limits [5].
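
The preconcentration effect can be put into numbers with a simple enrichment-factor estimate. The sketch below assumes that the instrument noise is unchanged by the extraction and that recovery is known; the function names and example volumes are hypothetical.

```python
def enrichment_factor(sample_volume_ml, final_volume_ml, recovery=1.0):
    """Concentration gain from loading a large volume and eluting into a small one."""
    if not 0 < recovery <= 1:
        raise ValueError("recovery must be a fraction between 0 and 1")
    return (sample_volume_ml / final_volume_ml) * recovery


def method_limit(instrument_limit, factor):
    """Limit (LOD or LOQ) referred back to the original sample.

    Assumes the extract is no noisier than a clean standard.
    """
    return instrument_limit / factor


if __name__ == "__main__":
    # Hypothetical case: 100 mL of sample eluted and reconstituted in 1 mL, 90 % recovery.
    ef = enrichment_factor(100.0, 1.0, recovery=0.90)
    print(f"Enrichment factor: {ef:.0f}")
    print(f"Method LOD: {method_limit(0.5, ef):.4f} ng/mL (instrument LOD 0.5 ng/mL)")
```

In practice co-extracted matrix is concentrated as well, so the real gain is usually smaller than this idealized figure unless the wash step is effective.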

Q2: What can I do if my analyte concentration falls between the LOD and LOQ? When an analyte is detected (above LOD) but cannot be accurately quantified (below LOQ), several strategies can be employed:

- Preconcentration: Increase the sample load volume or use techniques like evaporation or solid-phase extraction to concentrate the analyte above the LOQ [5].

- Method Optimization: Adjust instrument parameters (e.g., detector settings, injection volume) to enhance sensitivity [5].

- Alternative Techniques: Switch to a more sensitive analytical method, such as using LC-MS/MS instead of HPLC-UV, or employing a sorbent with higher affinity for your analyte [5] [27].

Q3: What are "matrix effects" and how can SPE mitigate them? Matrix effects refer to the suppression or enhancement of an analyte's signal caused by co-eluting components from the sample matrix [24]. These effects are a major source of inaccuracy, particularly in mass spectrometry. SPE mitigates matrix effects by selectively isolating the target analyte and removing interfering matrix components—such as phospholipids, salts, and proteins—resulting in a cleaner extract and a more reliable signal [24].

Q4: My analyte recovery is low. Where should I start troubleshooting? Begin by collecting and analyzing the fractions from each step of the SPE process (load, wash, elute) [23]. This will pinpoint where the analyte is being lost:

- Lost in Load Fraction: The analyte is not binding. Check conditioning, adjust sample pH/solvent, or try a stronger sorbent [23].

- Lost in Wash Fraction: The wash solvent is too strong. Reduce its strength or volume [23].

- Not Eluted: The elution solvent is too weak. Increase its strength or volume, or use a less retentive sorbent [26] [23].
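
The fraction-collection approach amounts to a mass balance across the SPE steps. The following sketch assumes a known spiked amount and analyte amounts measured in each collected fraction; the function name `diagnose_spe_loss` and the 10 % flagging threshold are arbitrary choices for illustration.

```python
def diagnose_spe_loss(spiked_ng, load_ng, wash_ng, elute_ng, threshold=0.10):
    """Return the fraction of analyte found in each step and a list of hints."""
    if spiked_ng <= 0:
        raise ValueError("spiked amount must be positive")
    fractions = {
        "load": load_ng / spiked_ng,
        "wash": wash_ng / spiked_ng,
        "elute": elute_ng / spiked_ng,
    }
    # Whatever is not found in any fraction is presumed to remain on the sorbent
    # (it could also reflect adsorption to labware or degradation).
    fractions["unaccounted"] = 1.0 - sum(fractions.values())
    hints = {
        "load": "Lost in load fraction: check conditioning, sample pH/solvent, or sorbent.",
        "wash": "Lost in wash fraction: reduce wash solvent strength or volume.",
        "unaccounted": "Not eluted: increase elution solvent strength or volume.",
    }
    findings = [hints[key] for key in hints if fractions[key] > threshold]
    return fractions, findings


if __name__ == "__main__":
    fractions, findings = diagnose_spe_loss(100.0, load_ng=30.0, wash_ng=5.0, elute_ng=60.0)
    print({key: round(value, 2) for key, value in fractions.items()})
    for line in findings:
        print(line)
```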

Advanced Strategies: Sorbents and Automation

The field of SPE is continuously evolving, with new sorbent technologies and automated platforms offering significant advantages for trace analysis.

Table 2: Research Reagent Solutions - Advanced SPE Sorbents

| Sorbent / Technology | Function & Mechanism | Application in Trace Analysis |

|---|---|---|

| Polymeric Sorbents (e.g., Strata-X) [25] | Hydrophilic-Lipophilic Balanced (HLB) copolymers retain a wide spectrum of analytes (polar, non-polar, acidic, basic) through multiple interactions. | Ideal for multi-class, multi-residue analysis of emerging contaminants in environmental water samples, improving recovery of diverse compounds [28]. |

| Mixed-Mode Sorbents [27] | Combine reversed-phase (e.g., C8, C18) and ion-exchange functionalities. Retention is based on both hydrophobicity and ionic charge. | Excellent for selective extraction of ionizable analytes (e.g., drugs, metabolites) from complex biological matrices like plasma, enabling superior cleanup [27]. |

| Molecularly Imprinted Polymers (MIPs) [27] | "Smart polymers" with pre-designed cavities complementary to a specific target molecule, offering antibody-like specificity. | Provide highly selective sample clean-up for target compounds in complex samples (e.g., biological fluids), drastically reducing interferences and lowering LOQ [27]. |

| Stimuli-Responsive Polymers (SRPs) [27] | Engineered sorbents that change properties (e.g., release analyte) in response to stimuli like pH, temperature, or magnetic fields. | Simplify the elution process and make it greener. Magnetic SPE (MSPE) uses a magnet for phase separation, eliminating the need for centrifugation or vacuum [27]. |

Simplified and Automated SPE Modes: Recent developments focus on simplifying and miniaturizing SPE to save time and solvents. In Dispersive Micro-SPE (DMSPE), a small amount of sorbent is added directly to the sample, which shortens the procedure and is regarded as a quick, green alternative [28]. Furthermore, on-line SPE automates the extraction by coupling the SPE cartridge directly to the LC system via a valve, enhancing repeatability, sensitivity, and throughput [28].

Experimental Protocol: Preconcentration for Trace Metal Analysis

The following diagram and protocol outline a specific methodology for the preconcentration of trace metals, such as Mercury, from challenging matrices like foliage, demonstrating the application of SPE principles to achieve low LOD/LOQ in ultratrace analysis.

Diagram Title: Hg Preconcentration for Isotopic Analysis

Objective: To preconcentrate trace levels of Mercury from foliar samples for reliable isotopic analysis using MC-ICP-MS, overcoming challenges of low natural concentrations [29].

Materials:

- Sample: Foliage (up to 2 g) [29].

- Reagents: Concentrated HNO₃ (65% Suprapur), HCl (37% Suprapur), H₂O₂ (30% Suprapur), SnCl₂, High-purity inverse aqua regia (3:1 HNO₃:HCl) [29].

- Equipment: Microwave digestion system (e.g., Milestone ETHOS 1), impinger setup, Hg-free N₂ gas supply, MC-ICP-MS [29].

Step-by-Step Methodology:

- Pre-digestion: Place the foliar sample in a microwave digestion vessel. Add 10 mL HNO₃, 1 mL HCl, and 1 mL H₂O₂. Allow the mixture to react initially with the vessel open or loosely capped to release gaseous fumes and prevent pressure build-up [29].

- Microwave Digestion: Securely seal the vessels and digest using the controlled microwave program. This ensures complete decomposition of the organic matrix and liberation of Hg into the solution [29].

- Transfer and Reduction: Quantitatively transfer the digested sample to an impinger. Add SnCl₂ to reduce ionic Hg (Hg²⁺) to volatile elemental Hg (Hg⁰) [29].

- Purging and Trapping: Purge the solution with Hg-free N₂ gas for an optimized duration of 30 minutes. The volatile Hg⁰ is carried over and trapped in a small volume (e.g., 2.25 mL) of concentrated inverse aqua regia [29].

- Analysis: The resulting solution is now preconcentrated, has an optimal acid matrix, and is suitable for high-precision Hg isotopic analysis via MC-ICP-MS [29].

Key Consideration for Low LOD/LOQ: This protocol is designed to process larger sample masses (up to 2 g) than conventional methods, effectively preconcentrating the analyte. The optimized purging time and efficient trapping ensure high recovery (studies show ~99%) and minimize isotopic fractionation, which is critical for accurate trace-level analysis [29].
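
To see what the larger sample mass means for the solution that reaches the MC-ICP-MS, the expected Hg concentration in the trap can be estimated from the quantities given in the protocol. The foliar concentration of 20 ng/g used below is a hypothetical value chosen only for illustration, as is the function name.

```python
def trap_concentration(sample_mass_g, hg_ng_per_g, trap_volume_ml, recovery=0.99):
    """Expected Hg concentration (ng/mL) in the trapping solution."""
    if trap_volume_ml <= 0:
        raise ValueError("trap volume must be positive")
    return sample_mass_g * hg_ng_per_g * recovery / trap_volume_ml


if __name__ == "__main__":
    # 2 g of foliage, 2.25 mL trap and ~99 % recovery come from the protocol;
    # 20 ng/g is an assumed foliar Hg concentration.
    conc = trap_concentration(2.0, 20.0, 2.25)
    print(f"Hg in trap solution: {conc:.1f} ng/mL")
```

Halving the sample mass halves the final concentration, which is why the ability to digest up to 2 g matters for low-Hg material.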

FAQs: Fundamental Concepts and Troubleshooting

Q1: What are LOD and LOQ, and why are they critical for trace analysis? The Limit of Detection (LOD) is the lowest concentration of an analyte that can be reliably distinguished from the background noise. The Limit of Quantification (LOQ) is the lowest concentration that can be measured with acceptable precision and accuracy [30].

- Calculations: LOD is typically calculated as 3.3σ/slope of the calibration curve, while LOQ is 10σ/slope, where σ is the standard deviation of the response [30].

- Signal-to-Noise Ratio: In practice, LOD is often defined by a signal-to-noise ratio (S/N) of 3:1, and LOQ by a ratio of 10:1 [30] [31] [5]. These parameters are foundational for validating methods in pharmaceutical testing, environmental monitoring, and food safety, where detecting and quantifying trace levels is essential [30].
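
The calibration-curve formulas can be applied with an ordinary least-squares fit. In the sketch below σ is taken as the residual standard deviation of the regression, which is one accepted choice (the standard deviation of blank responses or of the y-intercept are alternatives). The function name and the calibration data are hypothetical.

```python
from math import sqrt


def calibration_lod_loq(conc, response):
    """Fit response = intercept + slope * conc and return (slope, intercept, LOD, LOQ)."""
    n = len(conc)
    if n < 3 or n != len(response):
        raise ValueError("need at least three paired calibration points")
    mean_x = sum(conc) / n
    mean_y = sum(response) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, response))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual standard deviation with n - 2 degrees of freedom.
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, response))
    sigma = sqrt(ss_res / (n - 2))
    return slope, intercept, 3.3 * sigma / slope, 10 * sigma / slope


if __name__ == "__main__":
    conc = [1.0, 2.0, 3.0, 4.0, 5.0]       # hypothetical standards, ng/mL
    area = [2.1, 3.9, 6.2, 7.8, 10.0]      # hypothetical peak areas
    slope, intercept, lod, loq = calibration_lod_loq(conc, area)
    print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
    print(f"LOD = {lod:.2f} ng/mL, LOQ = {loq:.2f} ng/mL")
```

The calibration levels should sit close to the expected limits; a curve built from much higher concentrations tends to inflate σ and therefore the estimates.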

Q2: My HPLC baseline is noisy. What are the common causes and fixes? A noisy baseline can stem from various sources. The table below outlines common culprits and solutions [32] [33].

| Cause | Symptom | Solution |

|---|---|---|

| Air Bubbles | Jagged, irregular baseline noise. | Degas mobile phases thoroughly. Purge the system. |

| Contaminated Detector Cell | Sustained high-frequency noise. | Clean the flow cell with a strong organic solvent. |

| Detector Lamp Failure | Increased noise across wavelengths. | Replace the UV lamp. |

| Mobile Phase Contamination | Ghost peaks or baseline shifts. | Prepare fresh, high-quality mobile phases. |

| Leaks | Unstable baseline and pressure. | Check and tighten all fittings; replace damaged seals. |

Q3: I have observed a sudden drop in MS sensitivity. What should I investigate? Signal loss in LC-MS can be due to ion suppression or instrumental issues [34] [35].

- Ion Suppression: Caused by co-eluting matrix components that interfere with analyte ionization. To diagnose, post-infuse analyte into the MS detector while injecting a blank sample extract; a dip in the signal at the analyte's retention time indicates suppression [35]. Mitigation strategies include improving sample clean-up, modifying the chromatography to separate the interferent, or using an appropriate internal standard [34] [35].

- Instrumental Issues: Check for a clogged nebulizer or sampling orifice, contaminated ion source, or incorrect gas flow settings. Routine maintenance and cleaning are essential [34].

Q4: My chromatographic peaks are tailing. How can I resolve this? Peak tailing often indicates unwanted interactions or void volumes within the flow path [32] [33].

- Active Sites in Column: For basic analytes, secondary interactions with acidic silanols on the stationary phase can cause tailing. Solutions include using a mobile phase buffer at an appropriate pH, selecting a column designed for basic compounds, or adding a competing amine to the mobile phase.

- Void Volume or Inadequate Fittings: A poorly cut tubing end or a poorly installed fitting at the head of the column can create a mixing chamber, leading to tailing and loss of efficiency. Ensure all connections are tight and properly made [32].

Q5: What are the key parameters to optimize in an ESI-MS source for better S/N? Electrospray Ionization (ESI) efficiency is crucial for sensitivity. Key parameters to optimize include [34]:

- Nebulizing and Drying Gas Flow/Temperature: Adequate settings are critical for stable spray formation and efficient desolvation of droplets to release gas-phase ions. Higher temperatures can help but may degrade thermally labile compounds.

- Capillary Voltage: This voltage stabilizes the electrospray. Incorrect settings can lead to poor reproducibility and signal instability.

- Capillary Tip Position: The distance of the tip from the orifice affects ion transmission. At slower flow rates, placing the tip closer can increase ion plume density and improve signal [34].

Troubleshooting Guides

GC/MS Troubleshooting for Enhanced S/N

A low signal-to-noise ratio in GC/MS can compromise detection limits. The following guide addresses common issues.

| Problem | Potential Cause | Solution |

|---|---|---|

| High Chemical Noise | Contaminated inlet liner, column, or ion source. | Replace or clean the inlet liner, cut the first 10-15 cm of the column, and perform routine ion source cleaning. |

| Low Signal Intensity | Inactive liner causing analyte degradation or poor injection technique. | Use a deactivated liner or one with glass wool, ensure proper syringe handling, and check injector temperature. |

| Broad Peaks | Column degradation or incorrect carrier gas flow. | Condition or replace the column and optimize the carrier gas linear velocity. |

| Poor Peak Shape (Tailing) | Active sites in the liner or column. | Use a deactivated liner, ensure the column is properly cut and installed, and consider column trimming. |

HPLC Signal and Noise Optimization

This guide helps diagnose and resolve common HPLC issues that affect the signal-to-noise ratio [32] [33].

| Problem | Investigation | Resolution |

|---|---|---|

| Loss of Sensitivity | Check injection volume and needle for blockages. Inspect detector time constant and mobile phase. | Increase injection volume if linear. Flush or replace needle. Decrease detector time constant. Prepare fresh mobile phase [32] [33]. |

| Broad Peaks | Verify mobile phase composition and flow rate. Check for column contamination or overloading. Check for extra-column volume. | Prepare fresh mobile phase/buffer. Increase flow rate (if within pressure limits). Replace guard/analytical column. Reduce injection volume or sample concentration. Use shortest, narrowest ID tubing possible between injector and detector [32] [33]. |

| Retention Time Drift | Monitor column temperature. Confirm mobile phase composition and pump performance. | Use a thermostatted column oven. Prepare fresh mobile phase. Check for faulty pump check valves or leaks [32] [33]. |

MS System Tuning and Calibration

Regular tuning and calibration are fundamental for maintaining optimal MS performance and low LOD/LOQ.

| Component | Tuning Action | Impact on S/N |

|---|---|---|

| Calibration | Use certified calibration solutions to ensure mass accuracy and resolution. | Proper calibration ensures the detector is measuring the correct analyte mass, reducing chemical noise. |

| Ion Source Parameters | Optimize source temperatures, gas flows, and voltages for your specific analyte and LC flow rate [34]. | Maximizes the production and transmission of gas-phase ions, directly boosting the signal. |

| Mass Analyzer | Tune lens voltages, collision energy (for MS/MS), and detector voltage. | Optimizes transmission of ions through the analyzer to the detector, maximizing signal intensity and specificity. |

Workflows and Protocols

Systematic Instrument Optimization Workflow

The following diagram outlines a logical sequence for holistically optimizing your instrumental analysis to achieve the best signal-to-noise ratio.

Experimental Protocol: Determining LOD and LOQ via Signal-to-Noise

This protocol provides a detailed methodology for experimentally determining LOD and LOQ using the signal-to-noise ratio approach, a common and practical technique [30] [5].

1. Equipment and Reagent Setup:

- Calibrated HPLC, GC, or MS system.

- High-purity analytical grade solvents and reagents.

- Stock solution of the target analyte.

- Appropriate blank matrix (e.g., pure solvent for standards, or processed sample matrix for real-world analysis).

2. Experimental Procedure:

- Step 1: Prepare a low-concentration standard. Dilute the stock solution to a concentration expected to be near the anticipated detection limit.

- Step 2: Analyze the blank. Inject the blank matrix (e.g., mobile phase) multiple times (at least 5, ideally 10 or more). This establishes the baseline noise level.

- Step 3: Analyze the low-concentration standard. Inject the prepared low-concentration standard. This provides the analyte signal.

3. Data Analysis and Calculation:

- Step 4: Measure the noise (N). From the blank chromatogram, measure the peak-to-peak noise over a region close to the analyte's retention time.

- Step 5: Measure the signal (S). From the standard chromatogram, measure the height of the analyte peak.

- Step 6: Calculate the Signal-to-Noise Ratio (S/N). \( S/N = \frac{\text{Analyte Peak Height (S)}}{\text{Baseline Noise (N)}} \)

- Step 7: Determine LOD and LOQ.

- \( LOD = \text{Concentration of Standard} \times \frac{3}{S/N} \)

- \( LOQ = \text{Concentration of Standard} \times \frac{10}{S/N} \)

Note: This calculation assumes a linear response and that the standard concentration is in the linear range. Verify these calculated limits with experimental measurements [30] [5].
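
Steps 4 to 7 can be sketched as follows. The sketch assumes the blank baseline is available as a list of detector readings around the analyte's retention time and that the peak height has already been measured from the baseline; the function name and the numbers are hypothetical.

```python
def sn_lod_loq(blank_trace, peak_height, standard_conc):
    """Return (S/N, LOD, LOQ) from peak-to-peak noise and a low-level standard."""
    noise = max(blank_trace) - min(blank_trace)  # peak-to-peak baseline noise
    if noise <= 0:
        raise ValueError("blank trace shows no measurable noise")
    sn = peak_height / noise
    lod = standard_conc * 3 / sn
    loq = standard_conc * 10 / sn
    return sn, lod, loq


if __name__ == "__main__":
    # Hypothetical baseline segment (mAU) and a 5.0 ng/mL standard with a 2.0 mAU peak.
    baseline = [0.10, -0.05, 0.02, -0.10, 0.08]
    sn, lod, loq = sn_lod_loq(baseline, peak_height=2.0, standard_conc=5.0)
    print(f"S/N = {sn:.1f}, LOD = {lod:.2f} ng/mL, LOQ = {loq:.2f} ng/mL")
```

Noise conventions differ between software packages and pharmacopoeias, so the same chromatogram can yield different S/N values; record which definition was used alongside the result.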

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials used in optimizing methods for trace analysis, along with their specific functions.

| Item | Function & Purpose in Optimization |

|---|---|

| HPLC/MS Grade Solvents | High-purity solvents (water, acetonitrile, methanol) minimize baseline noise and prevent source contamination in MS [34]. |

| Volatile Buffers | Ammonium formate and ammonium acetate are MS-compatible buffers that help control mobile phase pH without causing ion suppression [34] [35]. |

| Solid Phase Extraction (SPE) Cartridges | Used for sample clean-up and pre-concentration to remove matrix interferents and increase analyte concentration, thereby improving S/N and lowering LOQ [34] [35]. |

| Guard Columns | A small cartridge placed before the analytical column to trap particulates and contaminants, protecting the more expensive analytical column and maintaining peak shape [32] [33]. |

| Certified Reference Materials | Standards with known purity and concentration used for instrument calibration, method development, and validation to ensure accuracy [30]. |

| Matrix-Matched Standards | Calibration standards prepared in the same blank matrix as the sample. This is critical for compensating for matrix effects in LC-MS, leading to more accurate quantification [35]. |

In trace analysis, the Limit of Detection (LOD) is the lowest concentration of an analyte that can be reliably detected from a blank, though not necessarily quantified with precision. The Limit of Quantitation (LOQ), a higher concentration, is the lowest level at which an analyte can be quantified with acceptable accuracy and precision [1] [2]. For researchers and scientists in drug development, optimizing these parameters is crucial for accurately measuring trace-level impurities, degradation products, or low-abundance metabolites, ensuring product safety and efficacy.

This technical resource provides a structured guide to enhancing method sensitivity through strategic column selection and method parameter optimization.

FAQs: Core Principles for Lowering Detection Limits

Q1: What is the fundamental relationship between signal, noise, and detection limits?

The LOD and LOQ are fundamentally governed by the signal-to-noise ratio (S/N). The signal is the analytical response from the analyte, while the noise is the fluctuation of the baseline [2]. A ratio of 3:1 is generally accepted for estimating the LOD, whereas a 10:1 ratio is required for the LOQ [2]. Therefore, the primary strategies for lowering detection limits are to increase the analyte signal and reduce the system noise.

Q2: How does stationary phase chemistry influence detection limits for different compound classes?

The choice of stationary phase directly affects the separation factor (α), which has the greatest impact on resolution [36]. Selecting a phase with appropriate polarity and selectivity for your target analytes enhances retention and separation, leading to sharper, more resolved peaks. This improved peak shape translates to a higher signal (taller, narrower peaks) and reduces the chance of co-elution, which can contribute to baseline noise. For example, a trifluoropropylmethyl polysiloxane phase (e.g., Rtx-200) is highly selective for analytes containing lone pair electrons, such as halogen, nitrogen, or carbonyl groups [36].

Q3: What physical column parameters most significantly affect peak height and sensitivity?

Three key column parameters dramatically influence peak shape and sensitivity:

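- Inner Diameter (ID): Reducing the column ID significantly increases peak height and sensitivity, because the same injected mass elutes in a smaller volume. For example, comparing two columns of equal length, reducing the diameter from 4.6 mm to 3.0 mm is reported to increase the peak height by up to 5 times [37]. As a rule of thumb, at equal injected mass and efficiency the gain scales with the square of the ID ratio: about 2.4-fold for 4.6 mm to 3.0 mm and about 4.8-fold for 4.6 mm to 2.1 mm.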

- Particle Size: Smaller particles (e.g., moving from 5 μm to sub-2 μm or 3 μm) provide higher efficiency, leading to narrower, sharper peaks and improved resolution [37].

- Particle Technology: Core-shell particles (also known as fused-core) consist of an impermeable core surrounded by a porous shell. This design reduces eddy diffusion and longitudinal diffusion, resulting in narrower peaks and often shorter retention times compared to fully porous particles of the same size [37].
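
The inner-diameter effect described above can be estimated with a simple scaling rule. The sketch assumes equal column length and efficiency, the same injected mass, and a flow rate scaled to keep the linear velocity constant; under those assumptions the peak concentration rises with the square of the ID ratio. The function name is hypothetical.

```python
def peak_height_gain(id_from_mm, id_to_mm):
    """Theoretical peak-height gain when moving to a narrower column."""
    if id_from_mm <= 0 or id_to_mm <= 0:
        raise ValueError("column diameters must be positive")
    return (id_from_mm / id_to_mm) ** 2


if __name__ == "__main__":
    for narrow in (3.0, 2.1):
        gain = peak_height_gain(4.6, narrow)
        print(f"4.6 mm -> {narrow} mm: about {gain:.1f}x")
```

Extra-column volume in the instrument erodes this gain on narrow columns, so measured improvements are often smaller than the estimate.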

Q4: How can a method be optimized from isocratic to gradient elution to improve LOD/LOQ?

Switching from an isocratic to a gradient elution is a powerful technique for "peak sharpening." In an isocratic run, peaks tend to broaden over time, especially for later-eluting compounds. A gradient elution, where the mobile phase strength is increased over time, compresses the analyte bands as they travel through the column, resulting in narrower and taller peaks [37]. This increase in peak height directly improves the signal-to-noise ratio, thereby lowering the LOD and LOQ.

Troubleshooting Guides: Resolving Common Sensitivity Issues

Symptom: Poor Signal-to-Noise Ratio in HPLC

A poor S/N ratio manifests as small, broad analyte peaks on a noisy, fluctuating baseline.

| Possible Cause | Investigation & Verification | Corrective Action |

|---|---|---|

| Sub-optimal Detection Wavelength | Check the analyte's UV spectrum to confirm detection is at or near λmax. | Optimize the detection wavelength for the target analyte(s) [38]. |

| High Baseline Noise | Observe the baseline for excessive short-term fluctuation. | Use UV-transparent solvents (e.g., acetonitrile over acetone); ensure mobile phase additives are pure and do not contribute to absorbance [38]. |

| Broad, Flat Peaks | Compare peak width and height to a known good chromatogram. | Switch from isocratic to a sharper gradient program [37]; consider a column with smaller particles or a smaller inner diameter [37]. |

| Peak Tailing | Calculate the asymmetry factor for target peaks. | For amines, use 0.1% formic acid; if not using LC-MS, 0.1% TFA can improve peak shape [38]. |

Symptom: Inadequate Separation Leading to Poor Quantitation

This occurs when analyte peaks are not baseline-resolved, making integration and accurate quantification difficult.

| Possible Cause | Investigation & Verification | Corrective Action |

|---|---|---|

| Incorrect Stationary Phase | Check if the phase polarity matches the analyte. A different chemical class may co-elute. | Select a stationary phase with a selectivity that exploits differences in analyte intermolecular forces (e.g., hydrogen bonding, dipole-dipole) [36]. |

| Column Degradation | Run a manufacturer's test chromatogram and compare plate numbers. | Replace the column if efficiency has dropped significantly [39]. |

| Non-ideal Mobile Phase pH | Check if retention times have shifted and peak shape has degraded. | Prepare a fresh batch of mobile phase with the correct pH and additives [39]. |

| Blocked In-line Filter/Column Frit | Check system pressure against the normal operating pressure. | Replace the in-line filter or guard column frit. If the analytical column frit is blocked, reverse the column if allowed or replace it [39]. |
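
The column-degradation check in the table relies on comparing plate numbers. One common way to compute the plate number uses the peak width at half height, N = 5.54 × (tR / w½)². The sketch below applies that formula; the function names and the example values are hypothetical.

```python
def plate_number(retention_time, width_half_height):
    """Plate number from retention time and peak width at half height (same units)."""
    if width_half_height <= 0:
        raise ValueError("peak width must be positive")
    return 5.54 * (retention_time / width_half_height) ** 2


def efficiency_loss_percent(reference_plates, current_plates):
    """Percentage drop in plate number relative to a reference chromatogram."""
    return 100.0 * (1.0 - current_plates / reference_plates)


if __name__ == "__main__":
    reference = plate_number(5.0, 0.10)  # e.g. from the manufacturer's test run
    current = plate_number(5.0, 0.124)   # same test mix on the aged column
    print(f"Reference N = {reference:.0f}, current N = {current:.0f}")
    print(f"Efficiency loss: {efficiency_loss_percent(reference, current):.0f} %")
```

What counts as a significant drop is method specific, so the replacement threshold is best set during validation.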

Essential Methodologies & Workflows

A Systematic Workflow for GC Column Selection

This diagram outlines the decision process for selecting a GC column to optimize separations, a key step in method development.

Strategic Pathway to Lower LOD/LOQ in HPLC

This flowchart illustrates the primary strategies for enhancing sensitivity in HPLC methods by targeting the signal-to-noise ratio.

Experimental Protocol: Determining LOD and LOQ via Signal-to-Noise

This protocol is suitable for chromatographic methods where a baseline noise can be measured [2].

- Preparation: Prepare a standard solution of the analyte at a concentration that produces a signal approximately 3 to 10 times the baseline noise.

- Chromatographic Analysis: Inject the standard and record the chromatogram under the optimized method conditions.

- Noise Measurement: Measure the peak-to-peak noise (N) on the baseline in a blank chromatogram over a range equivalent to about 10 times the width of the analyte peak.

- Signal Measurement: Measure the height of the analyte peak (H) from the baseline.

- Calculation:

  - Calculate the signal-to-noise ratio: S/N = H / N.

  - The LOD is the concentration that yields S/N ≥ 3.

  - The LOQ is the concentration that yields S/N ≥ 10.

- Verification: Independently prepare and analyze samples at the calculated LOD and LOQ concentrations to confirm the S/N criteria are met.
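The calculation steps of this protocol can be sketched as follows. This is a minimal example, not a validated implementation: the baseline values, peak height, and function names are hypothetical, and the extrapolation to S/N = 3 and S/N = 10 assumes the response is linear and passes through zero near the limit, which is why the verification step above is still required.

```python
def peak_to_peak_noise(blank_baseline: list[float]) -> float:
    """Peak-to-peak noise N: max minus min of the blank baseline segment."""
    return max(blank_baseline) - min(blank_baseline)


def signal_to_noise(peak_height: float, noise: float) -> float:
    """S/N = H / N, as defined in the protocol."""
    return peak_height / noise


def estimate_limits(standard_conc: float, s_n: float) -> tuple[float, float]:
    """Scale the standard's concentration to S/N = 3 (LOD) and S/N = 10 (LOQ).

    Assumption: signal is proportional to concentration in this range.
    """
    conc_per_unit_sn = standard_conc / s_n
    return 3.0 * conc_per_unit_sn, 10.0 * conc_per_unit_sn


# Hypothetical blank baseline segment (mAU) spanning ~10 peak widths.
blank = [0.02, -0.03, 0.01, 0.04, -0.02, 0.00, -0.04, 0.03]
noise = peak_to_peak_noise(blank)

# Hypothetical low-level standard: 1.0 ng/mL giving a 0.48 mAU peak.
s_n = signal_to_noise(peak_height=0.48, noise=noise)
lod, loq = estimate_limits(standard_conc=1.0, s_n=s_n)

print(f"N = {noise:.2f} mAU, S/N = {s_n:.1f}")
print(f"Estimated LOD = {lod:.2f} ng/mL, LOQ = {loq:.2f} ng/mL")
```

The estimates are starting points only; the independently prepared samples at these concentrations decide whether the S/N criteria are actually met.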

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key materials and their functions in developing sensitive chromatographic methods.

| Item Category | Specific Examples | Function & Rationale |

|---|---|---|

| GC Stationary Phases | Rxi-1ms (100% Dimethyl polysiloxane), Rxi-17 (50% Diphenyl/50% dimethyl polysiloxane), Rtx-200 (Trifluoropropyl methyl polysiloxane) | Provides selectivity for different compound classes through varied intermolecular interactions (dispersion, dipole-dipole, π-π, etc.) [36]. |

| HPLC Column Technologies | Columns with smaller IDs (e.g., 2.1 mm), smaller particles (e.g., 3 μm, sub-2 μm), and core-shell particles. | Increases peak height and efficiency, directly improving signal-to-noise ratio and lowering LOD/LOQ [37]. |

| Mobile Phase Additives | 0.1% Formic Acid, 0.1% Trifluoroacetic Acid (TFA) | Improves peak shape for ionizable compounds (e.g., reduces tailing for amines), leading to taller, sharper peaks and better detection limits [38]. |

| Signal Enhancement Phases | Diamond Hydride Column (for Aqueous Normal Phase) | Particularly effective for retaining and separating hydrophilic analytes, often providing superior peak shape and signal intensity compared to standard reversed-phase [38]. |

Frequently Asked Questions (FAQs)

What is the primary function of a deuterated internal standard? A deuterated internal standard is one type of stable isotope-labeled internal standard (SIL-IS): a known quantity of a reference compound in which atoms of the target analyte are replaced with stable isotopes (²H in deuterated standards; ¹³C or ¹⁵N in other SIL-IS). Its primary function is to correct for analyte loss and signal variability during sample preparation and analysis. It does this by tracking fluctuations caused by incomplete extraction, matrix effects, and instrumental instability, which allows the target analyte's signal to be normalized and significantly improves the accuracy and precision of quantification [40].

How do deuterated analogs help in lowering LOD and LOQ? Variable analyte losses and matrix effects add scatter and instability to the measured signal. By correcting for them, deuterated internal standards improve the precision of the normalized (analyte/IS) response and the reliability of measurements at low concentrations. This enhanced reliability allows a method to confidently detect and quantify analytes at lower levels, thereby reducing the method's Limit of Detection (LOD) and Limit of Quantification (LOQ) [40].

When should the deuterated internal standard be added to the sample? For the most effective correction of analyte losses throughout the entire analytical process, the internal standard should be added as early as possible, typically pre-extraction [40]. This ensures it undergoes the same sample preparation steps (like extraction, dilution, and reconstitution) as the native analyte, allowing it to accurately track and correct for losses at every stage.
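A short numerical sketch shows why early addition works. It assumes an idealized case in which the analyte and the internal standard lose exactly the same fraction during preparation; the peak areas, recovery values, and function name are hypothetical.

```python
def area_ratio(analyte_area: float, is_area: float) -> float:
    """Response used for quantification: analyte peak area / IS peak area."""
    return analyte_area / is_area


# Hypothetical peak areas that would be observed at 100% recovery.
ANALYTE_AREA_FULL = 10_000.0
IS_AREA_FULL = 50_000.0

# Idealized assumption: the IS is added before extraction, so each sample's
# recovery factor applies equally to the analyte and to the IS.
recoveries = [1.00, 0.80, 0.55]
raw_areas = [ANALYTE_AREA_FULL * r for r in recoveries]
ratios = [area_ratio(ANALYTE_AREA_FULL * r, IS_AREA_FULL * r) for r in recoveries]

for r, raw, ratio in zip(recoveries, raw_areas, ratios):
    print(f"recovery {r:.0%}: analyte area {raw:8.0f}, analyte/IS ratio {ratio:.3f}")
```

The raw analyte area drops with recovery while the ratio stays constant. If the IS were added after extraction, the IS area would not shrink with the analyte and the ratio would no longer compensate for the loss.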

What are the key considerations when selecting a deuterated analog?

- Mass Difference: Ideally, the SIL-IS should have a mass difference of 4–5 Da from the native analyte to minimize mass spectrometric cross-talk [40].

- Isotope Label: ²H (deuterium)-labeled standards may undergo deuterium-hydrogen exchange and can exhibit slight retention time shifts in chromatography. Standards labeled with ¹³C or ¹⁵N are generally preferred as they do not have these issues [40].

- Purity: The isotopic purity of the standard must be high to avoid interference with the signal of the target analyte [40].

What is a typical Signal-to-Noise (S/N) ratio for calculating LOD and LOQ? A common approach, particularly in chromatographic analysis, is to use an S/N ratio of 3:1 for the LOD and 10:1 for the LOQ [30] [20]. The LOD is the lowest concentration that can be reliably distinguished from background noise, while the LOQ is the lowest concentration that can be quantified with acceptable accuracy and precision [5] [30].

What should I do if the calculated concentration of my analyte falls between the LOD and LOQ? A result between the LOD and LOQ indicates the analyte is likely present but cannot be quantified with high confidence. To improve accuracy, you can [5]:

- Repeat the analysis with multiple replicates to check for consistency.

- Concentrate the sample using techniques like solid-phase extraction or evaporation.

- Use a more sensitive instrumental technique (e.g., LC-MS/MS instead of HPLC-UV).

- Optimize instrument parameters to enhance sensitivity and reduce noise.
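The decision logic behind this answer can be written as a small helper. The function name and the reporting labels below are illustrative assumptions; laboratories typically define their own reporting conventions.

```python
def classify_result(conc: float, lod: float, loq: float) -> str:
    """Classify a measured concentration against the method's LOD and LOQ."""
    if lod >= loq:
        raise ValueError("LOD must be lower than LOQ")
    if conc < lod:
        return "not detected (< LOD)"
    if conc < loq:
        return "detected, not quantifiable (between LOD and LOQ)"
    return "quantifiable (>= LOQ)"


# Hypothetical method limits and results, all in ng/mL.
for value in (0.2, 0.9, 2.5):
    print(value, "->", classify_result(value, lod=0.5, loq=1.5))
```

Only results in the middle category call for the follow-up actions listed above, such as replicate analysis or sample concentration.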

Troubleshooting Guides

Problem: Inconsistent or Poor Recovery of the Deuterated Standard

| Symptom | Potential Cause | Resolution Steps |

|---|---|---|

| Low and variable IS response across all samples. | Systematic error (e.g., autosampler injection issue, blocked needle). | 1. Check the autosampler for obstructions [40]. 2. Verify the mobile phase and instrument performance. 3. Ensure the IS stock solution is stable and properly prepared. |

| Low IS recovery in specific sample matrices. | Strong matrix effects or adsorption to container surfaces. | 1. Use a different container type (e.g., low-binding plastic or silanized glass) [40]. 2. Increase the concentration of the internal standard to compete for binding sites [40]. 3. Modify the sample preparation to include a better clean-up step. |

| Abnormally high IS response in a few samples. | Human error in standard addition (e.g., accidental double-spiking) [40]. | 1. Review the sample preparation logs to confirm the error. 2. Treat the data from these specific samples as potentially compromised and re-prepare the affected samples. |

Problem: Signal Suppression or Enhancement (Matrix Effects)

| Symptom | Potential Cause | Resolution Steps |

|---|---|---|

| Reduced response for both analyte and deuterated standard in complex samples. | Ion suppression in the MS source due to co-eluting matrix components. | 1. Improve chromatographic separation to shift the analyte/IS retention time away from the suppression zone [40]. 2. Optimize sample clean-up (e.g., solid-phase extraction) to remove interfering compounds. 3. Ensure the deuterated standard co-elutes with the analyte for optimal correction [40]. |
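One common way to put a number on suppression or enhancement is the matrix factor: the response of a post-extraction spiked matrix sample divided by the response of the same amount in neat solution. The sketch below applies that widely used definition, which is an addition here and is not taken from the cited source; the peak areas and function name are hypothetical.

```python
def matrix_factor(area_in_matrix: float, area_in_neat: float) -> float:
    """MF = response in post-extraction spiked matrix / response in neat solution.

    MF < 1 suggests ion suppression; MF > 1 suggests ion enhancement.
    """
    return area_in_matrix / area_in_neat


# Hypothetical peak areas at the same spiked amount.
mf_analyte = matrix_factor(area_in_matrix=6_500.0, area_in_neat=10_000.0)
mf_is = matrix_factor(area_in_matrix=33_000.0, area_in_neat=50_000.0)

# The IS-normalized matrix factor shows how well the IS tracks the analyte.
is_normalized_mf = mf_analyte / mf_is

print(f"MF analyte       = {mf_analyte:.2f}")
print(f"MF IS            = {mf_is:.2f}")
print(f"IS-normalized MF = {is_normalized_mf:.2f}")
```

In this example both compounds are suppressed by about a third, yet the IS-normalized value is close to 1, which is the situation co-elution of analyte and standard is meant to achieve.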

Problem: Aberrant Chromatography (Retention Time Shifts or Peak Shape)

| Symptom | Potential Cause | Resolution Steps |

|---|---|---|

| The deuterated standard does not perfectly co-elute with the native analyte. | Deuterium isotope effect, where the ²H-labeled standard is slightly less retained than the ¹H-analyte [40]. | 1. This is a known limitation of deuterated standards. Use a ¹³C- or ¹⁵N-labeled standard for a better match [40]. 2. If using a ²H-standard, ensure the chromatographic method is robust enough that the small shift does not cause differential matrix effects. |

| Poor peak shape for both analyte and standard. | Column degradation or non-optimal mobile phase. | 1. Replace or rejuvenate the chromatographic column. 2. Re-optimize the mobile phase pH or organic solvent composition. |

Problem: High Background or Elevated Noise Affecting LOD/LOQ

| Symptom | Potential Cause | Resolution Steps |

|---|---|---|

| High baseline noise in the mass spectrometer, obscuring low-level signals. | Contaminated instrument source or mobile phase. | 1. Perform thorough cleaning and maintenance of the ion source. 2. Prepare fresh, high-purity mobile phases and solvents. 3. Use a longer signal integration time or adjust detector settings to improve the signal-to-noise ratio [5]. |

Quantitative Data for Method Validation

The following parameters are often calculated and used to validate an analytical method that employs internal standards.

Table 1: Key Method Validation Parameters (LOD & LOQ)

| Parameter | Typical Calculation Method | Acceptable Threshold / Value |

|---|---|---|

| LOD (Limit of Detection) | 3.3 × (σ / S), where σ = standard deviation of the blank's response and S = slope of the calibration curve; alternatively, Signal-to-Noise Ratio (S/N) = 3 [30] [20] | The lowest concentration that can be detected, but not necessarily quantified [30]. |

| LOQ (Limit of Quantification) | 10 × (σ / S), with σ and S as defined for the LOD; alternatively, Signal-to-Noise Ratio (S/N) = 10 [30] [20] | The lowest concentration that can be quantified with acceptable precision and accuracy [30]. |
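The standard-deviation approach in Table 1 can be sketched with the Python standard library. The blank responses and calibration points are hypothetical, the helper name is an assumption, and the slope comes from an ordinary unweighted least-squares fit chosen here for simplicity.

```python
from statistics import mean, stdev


def least_squares_slope(x: list[float], y: list[float]) -> float:
    """Slope S of an ordinary least-squares line through (x, y)."""
    mx, my = mean(x), mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx


# Hypothetical replicate blank responses (e.g., analyte/IS area ratio).
blank_responses = [0.011, 0.009, 0.012, 0.010, 0.008, 0.010]
sigma = stdev(blank_responses)  # sample standard deviation of the blank

# Hypothetical calibration data: concentration (ng/mL) vs. response.
conc = [1.0, 2.0, 5.0, 10.0]
resp = [0.05, 0.10, 0.25, 0.50]
slope = least_squares_slope(conc, resp)

lod = 3.3 * sigma / slope
loq = 10.0 * sigma / slope
print(f"sigma = {sigma:.5f}, S = {slope:.3f}")
print(f"LOD = {lod:.3f} ng/mL, LOQ = {loq:.3f} ng/mL")
```

Because both limits scale with σ / S, lowering blank variability or increasing the calibration slope (sensitivity) lowers the LOD and LOQ by the same factor.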

Table 2: Guidelines for Internal Standard Concentration

| Factor | Consideration | Recommendation |

|---|---|---|

| Cross-Interference | Contribution of IS signal to the analyte channel, and vice-versa. | IS concentration should be set to ensure interference is ≤20% of LLOQ for IS-to-analyte, and ≤5% of IS response for analyte-to-IS [40]. |

| Matrix Effects | To ensure the IS response is within a relevant range for correction. | Set IS concentration to be in the range of 1/3 to 1/2 of the Upper Limit of Quantification (ULOQ) concentration [40]. |

| Sensitivity | The IS must produce a reliable signal. | The concentration should be high enough to achieve an adequate signal-to-noise (S/N) ratio to minimize the impact of random noise [40]. |
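The numeric recommendations in Table 2 can be turned into simple acceptance checks. This is a sketch under stated assumptions: the function names, argument names, and example responses are hypothetical, and only the thresholds (1/3 to 1/2 of ULOQ, ≤20% of the LLOQ response, ≤5% of the IS response) come from the table.

```python
def is_concentration_in_range(is_conc: float, uloq: float) -> bool:
    """True if the IS concentration lies between 1/3 and 1/2 of the ULOQ."""
    return uloq / 3.0 <= is_conc <= uloq / 2.0


def cross_interference_ok(
    is_signal_in_analyte_channel: float,
    analyte_response_at_lloq: float,
    analyte_signal_in_is_channel: float,
    is_response: float,
) -> bool:
    """Check both cross-interference limits from Table 2."""
    is_to_analyte = is_signal_in_analyte_channel / analyte_response_at_lloq
    analyte_to_is = analyte_signal_in_is_channel / is_response
    return is_to_analyte <= 0.20 and analyte_to_is <= 0.05


# Hypothetical method: ULOQ = 1000 ng/mL, IS spiked at 400 ng/mL.
conc_ok = is_concentration_in_range(is_conc=400.0, uloq=1000.0)
interference_ok = cross_interference_ok(
    is_signal_in_analyte_channel=15.0,     # from an IS-only (zero) sample
    analyte_response_at_lloq=100.0,
    analyte_signal_in_is_channel=2_000.0,  # from a ULOQ sample without IS
    is_response=50_000.0,
)
print("IS concentration within 1/3 to 1/2 of ULOQ:", conc_ok)
print("Cross-interference within limits:", interference_ok)
```

If either check fails, the IS concentration is the first parameter to adjust, since raising it increases IS-to-analyte interference while lowering it increases the relative analyte-to-IS contribution.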

Experimental Protocol: Using Deuterated Standards to Lower LOD/LOQ

A Detailed Methodology for Trace Analysis in Biological Matrices

1. Goal: To develop and validate a sensitive LC-MS/MS method for quantifying a target drug molecule in plasma, using a deuterated internal standard to achieve a low LOD and LOQ.

2. Materials and Reagents:

- Analyte: Target drug molecule.

- Internal Standard: Deuterated analog of the drug (e.g., [²H₇]-analyte).

- Biological Matrix: Control plasma.

- Solvents: High-purity methanol, acetonitrile, and water.

- Equipment: LC-MS/MS system, solid-phase extraction (SPE) kit, calibrated pipettes.

3. Procedure:

- Step 1: Sample Preparation

- Aliquot 100 µL of plasma samples (blanks, calibrators, and unknowns) into microcentrifuge tubes.

- Add the deuterated internal standard at this initial stage to correct for all subsequent preparation losses. The concentration should be set as per the guidelines in Table 2 [40].

- Add a precipitation solvent (e.g., 300 µL of cold acetonitrile) to precipitate proteins. Vortex mix and centrifuge.

- Transfer the clean supernatant for further analysis or SPE clean-up if needed.

- Step 2: LC-MS/MS Analysis

- Chromatography: Inject the processed sample onto the LC system. Use a C18 column and a gradient elution with water and methanol (both with 0.1% formic acid) to achieve good separation of the analyte from matrix interferences.

- Mass Spectrometry: Operate the MS in Multiple Reaction Monitoring (MRM) mode. Use unique mass transitions for the native analyte and its deuterated standard to avoid cross-talk.

- Step 3: Data Analysis and Calculation

- Plot a calibration curve using the peak area ratio (Analyte/IS) against the nominal concentration of the calibrators.

- Use linear regression with 1/x weighting to generate the curve.

- Calculate the LOD and LOQ by analyzing multiple blank samples and low-level standards, using the S/N method (3:1 for LOD, 10:1 for LOQ) or the standard deviation method [5] [30].
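The calibration part of Step 3 can be sketched as a 1/x-weighted least-squares fit of the peak area ratio against concentration, followed by back-calculation of an unknown. The calibrator values are hypothetical and deliberately noise-free so the fit is easy to follow; the function names are assumptions, and the closed-form weighted regression is written out rather than taken from any particular software package.

```python
def weighted_linear_fit(x: list[float], y: list[float]) -> tuple[float, float]:
    """Fit y = slope * x + intercept by least squares with weights w = 1/x."""
    w = [1.0 / xi for xi in x]
    sw = sum(w)
    swx = sum(wi * xi for wi, xi in zip(w, x))
    swy = sum(wi * yi for wi, yi in zip(w, y))
    swxx = sum(wi * xi * xi for wi, xi in zip(w, x))
    swxy = sum(wi * xi * yi for wi, xi, yi in zip(w, x, y))
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return slope, intercept


def back_calculate(area_ratio: float, slope: float, intercept: float) -> float:
    """Concentration of an unknown from its analyte/IS peak area ratio."""
    return (area_ratio - intercept) / slope


# Hypothetical calibrators: nominal concentration (ng/mL) and analyte/IS ratio.
nominal = [1.0, 2.0, 5.0, 10.0, 50.0, 100.0]
ratios = [0.02 * c + 0.001 for c in nominal]

slope, intercept = weighted_linear_fit(nominal, ratios)
unknown_conc = back_calculate(0.401, slope, intercept)

print(f"slope = {slope:.4f}, intercept = {intercept:.4f}")
print(f"unknown = {unknown_conc:.2f} ng/mL")
```

The 1/x weights give the low calibrators more influence on the fit than they would have in an unweighted regression, which matters most for accuracy near the LOQ.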

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Internal Standardization with Deuterated Analogs

| Item | Function / Explanation |

|---|---|

| Stable Isotope-Labeled Internal Standard (SIL-IS) | A compound with atoms replaced by stable isotopes (e.g., ²H, ¹³C). It has nearly identical chemical properties to the analyte but a different mass, allowing for accurate mass spectrometric differentiation and loss correction [40]. |

| Matrix-Matched Calibration Standards | Calibration standards prepared in the same biological or sample matrix (e.g., plasma, urine) as the unknown samples. This helps account for matrix effects during quantification [41]. |

| High-Purity Solvents and Water | Essential for minimizing background noise and chemical interference in chromatographic separation and mass spectrometric detection, which is critical for achieving low LOD/LOQ. |

| Solid-Phase Extraction (SPE) Plates/Cartridges | Used for sample clean-up and pre-concentration of analytes, which helps remove interfering matrix components and can lower the overall LOQ by increasing the effective concentration of the analyte [5]. |

Workflow and Conceptual Diagrams

Diagram 1: Experimental workflow for using a deuterated internal standard, showing early addition to track the entire process.

Diagram 2: Logical relationship showing how the deuterated internal standard corrects for different sources of variability and loss.