Strategies to Minimize Offset Error in Instruments: A Guide for Biomedical Researchers

This article provides a comprehensive guide for researchers and scientists in biomedical and clinical fields on understanding, identifying, and correcting offset errors in instrumentation.

Abstract

This article provides a comprehensive guide for researchers and scientists in biomedical and clinical fields on understanding, identifying, and correcting offset errors in instrumentation. Covering foundational concepts of measurement uncertainty, practical calibration methodologies, advanced troubleshooting techniques, and validation protocols, the content synthesizes current best practices from metrology and engineering to enhance data reliability and reproducibility in critical applications such as diagnostic imaging, drug development, and electrochemical analysis.

Understanding Offset Error: Foundations in Measurement Science

Defining Offset and Steady-State Error in Measurement Systems

Troubleshooting Guides

What is offset error and how can I identify it in my experimental setup?

Offset error is a systematic inaccuracy where your entire measurement is shifted by a constant value from the true value, independent of the measurement magnitude. This means even a zero input will produce a non-zero output [1].

Identification Protocol:

- Step 1: Apply a known zero-input condition to your measurement system.

- Step 2: Record the system's output reading over a stabilized period.

- Step 3: If the output consistently deviates from zero by a fixed value, you have identified an offset error.

- Practical Example: A bathroom scale that reads 5 pounds when nothing is placed on it exhibits classic offset error, consistently adding this 5-pound bias to all weight measurements [1].
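The identification protocol above can be sketched in a few lines of Python. This is a minimal illustration, not a validated procedure: the function name `estimate_offset`, the sample readings, and the 3-sigma significance threshold are all assumptions made for the example.

```python
import math
import statistics

def estimate_offset(zero_readings, threshold_sigma=3.0):
    """Estimate offset from readings taken at a known zero input.

    Returns (offset, standard_error, is_significant). The 3-sigma
    threshold is an illustrative assumption, not a standard.
    """
    n = len(zero_readings)
    if n < 2:
        raise ValueError("need at least two readings")
    offset = statistics.mean(zero_readings)
    std_error = statistics.stdev(zero_readings) / math.sqrt(n)
    # Flag an offset when the mean differs from zero by more than
    # threshold_sigma standard errors.
    is_significant = abs(offset) > threshold_sigma * std_error
    return offset, std_error, is_significant

# Hypothetical scale readings (pounds) with nothing on the platform.
readings = [5.1, 4.9, 5.0, 5.2, 4.8, 5.0]
offset, std_error, significant = estimate_offset(readings)
print(f"offset = {offset:.2f} lb, significant = {significant}")
```

Comparing the mean against its standard error, rather than against zero directly, helps separate a fixed bias from ordinary noise in the zero-input readings.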

Why does my control system maintain a small permanent error even after stabilizing?

This permanent residual error is known as steady-state error (e_ss). It is the difference between the desired setpoint and the actual process variable after all transient responses have decayed [2] [3].

Troubleshooting Steps:

- Check System Type: Determine whether your system is Type 0, Type 1, or Type 2, because the system type determines which inputs can be tracked with zero steady-state error [3].

- Verify Input Signal Type: Steady-state error varies significantly with different input types (step, ramp, parabolic) [4].

- Evaluate Controller Gain: For proportional controllers, increasing the controller gain (K_P) typically reduces offset error [2].

How can I distinguish between offset error and other measurement inaccuracies?

Understanding error types is crucial for effective troubleshooting. The table below compares key measurement errors:

Table: Comparison of Measurement System Errors

| Error Type | Definition | Effect on Response | Correction Method |

|---|---|---|---|

| Offset Error | Constant shift across entire measurement range [1] | Shifts entire response curve vertically | Add/subtract constant correction value [1] |

| Gain Error | Proportional error that increases with input magnitude [1] | Alters slope of response curve | Multiply by correction factor [1] |

| Linearity Error | Non-uniform deviation across measurement range [1] | Creates curved rather than straight-line response | Apply complex correction algorithms [1] |

Frequently Asked Questions (FAQs)

What are the common sources of offset error?

Offset error typically originates from:

- Component Mismatch: Manufacturing variations in differential circuits like operational amplifiers [1]

- Temperature Effects: Thermal drift causing component expansion/contraction at different rates [1]

- Calibration Issues: Incorrect zero-point reference during instrument setup [5]

- Aging Components: Gradual degradation of electronic components over time [1]

How does steady-state error relate to different types of control system inputs?

Steady-state error varies dramatically with both input type and system type. The following table summarizes these relationships:

Table: Steady-State Error Based on Input and System Type

| System Type | Step Input | Ramp Input | Parabolic Input |

|---|---|---|---|

| Type 0 | A/(1+K) | ∞ | ∞ |

| Type 1 | 0 | A/K | ∞ |

| Type 2 | 0 | 0 | A/K |

Where A is the input amplitude and K is the system's static error constant for that input (the position, velocity, or acceleration constant) [3].
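The table above can be expressed as a small lookup function. The sketch below assumes a unity-feedback loop and a stable closed-loop system; the function name `steady_state_error` and its argument names are illustrative, not taken from the cited sources.

```python
import math

def steady_state_error(system_type, input_kind, amplitude, gain):
    """Steady-state error of a unity-feedback loop per the table above.

    system_type: number of open-loop integrators (0, 1, or 2).
    input_kind: "step", "ramp", or "parabolic".
    gain: the static error constant K for that input.
    """
    order = {"step": 0, "ramp": 1, "parabolic": 2}[input_kind]
    if system_type > order:
        return 0.0                       # enough integrators: error vanishes
    if system_type < order:
        return math.inf                  # too few integrators: error grows unbounded
    if order == 0:
        return amplitude / (1.0 + gain)  # Type 0 with a step input
    return amplitude / gain              # Type 1 ramp or Type 2 parabolic

# Hypothetical Type 0 loop with K = 9 and a unit step input.
e_ss = steady_state_error(0, "step", amplitude=1.0, gain=9.0)
```

The comparison between the system type and the input order reproduces the triangular pattern of the table: zeros below the diagonal, infinite error above it, and a finite error on it.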

What practical methods can minimize or eliminate offset error in measurement systems?

Hardware Compensation:

- Use trimming potentiometers for manual zero adjustment [1]

- Implement laser trimming of integrated circuit resistors [1]

- Utilize chopper-stabilized operational amplifiers that actively compensate offset in real-time [1]

Software Compensation:

- Perform zeroing procedures by measuring output at known zero input [1]

- Store offset value in memory and subtract digitally from all measurements [1]

- Implement periodic recalibration routines to account for thermal drift and aging [1]
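The software compensation steps above (zero, store, subtract) might look like the following sketch. The class name `OffsetCompensator` and the sample values are hypothetical; a real system would also need to re-run the zeroing step periodically to track drift.

```python
import statistics

class OffsetCompensator:
    """Stores a zero-point offset and subtracts it from later readings."""

    def __init__(self):
        self.offset = 0.0

    def zero(self, zero_input_readings):
        """Record the mean output observed at a known zero input."""
        self.offset = statistics.mean(zero_input_readings)
        return self.offset

    def correct(self, raw_value):
        """Return the reading with the stored offset removed."""
        return raw_value - self.offset

comp = OffsetCompensator()
comp.zero([0.52, 0.48, 0.50])      # hypothetical zero-input samples
corrected = comp.correct(10.50)    # raw reading that includes the offset
```

Averaging several zero-input samples before storing the offset keeps random noise in a single reading from being baked into every later correction.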

Advanced Techniques:

- Apply dynamic face offset compensation utilizing coordinate measuring machine data [6]

- Implement robust nonlinear model predictive control with symbolic regression models [7]

Experimental Protocols for Error Characterization

Protocol 1: Quantitative Offset Error Measurement in ADC Systems

This methodology characterizes offset and gain errors in analog-to-digital converters (ADCs), common in digital measurement systems [8].

Materials Required:

Table: Research Reagent Solutions for ADC Error Characterization

| Item | Function |

|---|---|

| Precision Voltage Source | Generates known reference signals |

| Data Acquisition System | Captures digital output codes |

| Thermal Chamber | Controls environmental temperature |

| MATLAB/Simulink Software | Implements error calculation algorithms |

Procedure:

- Apply a precision ramp signal spanning the ADC's full dynamic range [8]

- Record the digital codes output by the converter for each known analog input

- Identify the center of the least significant code for offset error calculation [8]

- Determine the center of the most significant code for gain error calculation [8]

- Calculate errors in LSB (Least Significant Bit) units using:

Error(LSB) = Error(Volts) / LSB_Size [8]
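As a worked illustration of the conversion above, the sketch below assumes the common convention that one LSB equals the full-scale range divided by 2^N for an N-bit converter. The function names and the 12-bit, 4.096 V example are assumptions chosen so that one LSB is about 1 mV.

```python
def lsb_size(full_scale_range_volts, n_bits):
    """Width of one code in volts for an ideal n-bit converter."""
    return full_scale_range_volts / (2 ** n_bits)

def error_in_lsb(error_volts, full_scale_range_volts, n_bits):
    """Express a voltage error in LSB units."""
    return error_volts / lsb_size(full_scale_range_volts, n_bits)

# Hypothetical 12-bit ADC with a 4.096 V range: 1 LSB is about 1 mV.
offset_lsb = error_in_lsb(0.0025, 4.096, 12)   # a 2.5 mV offset
```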

Protocol 2: Steady-State Error Analysis for Control Systems

This procedure evaluates steady-state error for different test inputs using the final value theorem.

Procedure:

- Determine your system's transfer function G(s) [4]

- Apply standard test signals (step, ramp, parabolic) to the system [3]

- Measure the steady-state difference between input and output

- Calculate error constants: K_p = lim_{s→0} G(s), K_v = lim_{s→0} s·G(s), K_a = lim_{s→0} s²·G(s)

- Apply final value theorem:

e_ss = lim_{s→0} s·E(s) [4]
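A short worked example may help connect the final value theorem to the Type 0 step-input entry A/(1+K). It assumes a unity-feedback loop, a stable closed-loop system (so the theorem applies), and an illustrative first-order plant with gain K and time constant τ; none of these specifics come from the cited sources.

```latex
E(s) = \frac{R(s)}{1 + G(s)}, \qquad R(s) = \frac{A}{s}, \qquad G(s) = \frac{K}{\tau s + 1}

e_{ss} = \lim_{s \to 0} s \cdot \frac{A/s}{1 + \dfrac{K}{\tau s + 1}} = \frac{A}{1 + K}
```

Because this plant has no integrator, the position constant is simply K_p = G(0) = K, and the residual error shrinks as K grows but never reaches zero.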

Visualization of Error Relationships

Figure: Error Types in Measurement and Control Systems

Research Reagent Solutions

Table: Essential Materials for Offset Error Investigation

| Item | Function | Application Context |

|---|---|---|

| Trimming Potentiometers | Manual offset nulling | Circuit-level compensation [1] |

| Chopper-Stabilized Op-Amps | Real-time offset cancellation | Precision analog designs [1] |

| Thermal Chambers | Environmental testing | Characterizing temperature drift [1] |

| Coordinate Measuring Machines | Dimensional metrology | Machine tool compensation [6] |

| Symbolic Regression Software | Interpretable surrogate modeling | Robust nonlinear MPC [7] |

Figure: Offset Error Compensation Workflow

Distinguishing Between Accuracy, Precision, and Uncertainty

Frequently Asked Questions

1. What is the core difference between accuracy and precision? Accuracy indicates how close a measurement is to a true or accepted value. Precision, in contrast, describes the repeatability of measurements—how close repeated measurements are to each other, regardless of whether they are near the true value [9] [10]. A common analogy is target shooting: accurate shots cluster around the bullseye, while precise shots cluster tightly in one spot, which may not be the bullseye [9].

2. How does uncertainty differ from error? Error is the difference between a measured value and the true value. However, since a true value is often unknowable, the concept of measurement uncertainty is used. Uncertainty is a quantified parameter that characterizes the dispersion of values that could reasonably be attributed to the measurand. It is an admission that no measurement can be perfect and provides a range within which the true value is expected to lie [11] [9].

3. Why is it vital to distinguish these terms in instrument research? In instrument research and development, understanding these distinctions is fundamental to correctly diagnosing performance issues and implementing effective strategies to minimize offset errors (a type of inaccuracy). For instance, poor precision points to random variability in the measurement process, while poor accuracy suggests a systematic offset. Each problem requires a different troubleshooting approach [12] [9].

4. What are common sources of offset error (inaccuracy) in instruments? Common sources include incorrect instrument calibration, systematic biases in the measurement method, environmental factors that consistently influence the reading (e.g., temperature effects), and matrix effects in analytical samples that interfere with the measurement [13] [9].

5. How can I quantify precision and accuracy in my data?

- Precision can be quantified statistically as the standard deviation or range of repeated measurements [10].

- Accuracy can be estimated using percent error, which compares an experimental value to an accepted reference value [10]. The formula is:

\( \text{Percent Error} = \frac{|\text{Accepted Value} - \text{Experimental Value}|}{|\text{Accepted Value}|} \times 100\% \)

6. What is the relationship between uncertainty and significant figures? The uncertainty in a measurement determines the number of significant figures that are meaningful. The last digit reported in a measured value is considered uncertain. For example, reporting a length as \( 1.50 \text{ m} \pm 0.01 \text{ m} \) implies the value is known to three significant figures, with the '0' being the uncertain digit [10].
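The precision and accuracy metrics from question 5 can be computed as in the sketch below. The measurement values and the 10.00 g reference are invented for illustration, and `percent_error` is a helper name introduced here.

```python
import statistics

def percent_error(experimental, accepted):
    """Percent error relative to an accepted reference value."""
    if accepted == 0:
        raise ValueError("accepted value must be non-zero")
    return abs(accepted - experimental) / abs(accepted) * 100.0

# Hypothetical repeated measurements of a 10.00 g reference mass.
measurements = [10.21, 10.19, 10.20, 10.22, 10.18]
mean_value = statistics.mean(measurements)
precision_sd = statistics.stdev(measurements)      # spread of the repeats
accuracy_pct = percent_error(mean_value, 10.00)    # bias versus the reference
```

In this invented data set the repeats agree closely with each other (good precision) while the mean sits about 2% above the reference, which is the pattern expected from an offset.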

Troubleshooting Guides

Guide 1: Diagnosing Poor Precision (High Random Variability)

Symptoms: Large scatter in repeated measurements of the same quantity; high standard deviation; inconsistent results.

| Possible Cause | Investigation Steps | Corrective Action |

|---|---|---|

| Environmental Fluctuations | Monitor and log lab conditions (temperature, humidity, vibrations) during measurement. | Use environmental controls (e.g., air tables, temperature-stable rooms). Allow instrument to equilibrate. |

| Operator Technique | Have multiple trained operators perform the same measurement. | Standardize and document the measurement procedure. Provide additional training. |

| Instrument Instability | Run repeated measurements on a stable reference standard over time. | Service or maintain the instrument. Ensure proper power supply and grounding. |

| Sample Inhomogeneity | Take multiple measurements from different parts of the same sample. | Improve sample preparation protocol. Ensure sample is representative and homogeneous. |

Guide 2: Diagnosing Poor Accuracy (Offset Error)

Symptoms: Measurements are consistently biased away from the reference value; high percent error, though precision may still be high.

| Possible Cause | Investigation Steps | Corrective Action |

|---|---|---|

| Incorrect Calibration | Measure a traceable certified reference material (CRM). | Recalibrate the instrument using the appropriate CRMs. Verify calibration regularly. |

| Systematic Method Bias | Compare results from your method against a standard reference method. | Identify and correct for the bias (e.g., use a correction factor). Validate the method. |

| Matrix Interference | Perform a spike-and-recovery study on the sample matrix. | Modify the method to remove interferences (e.g., sample purification). Use standard addition. |

| Instrument Wear or Damage | Check for physical damage to critical components. Review service history. | Service or replace faulty components. Perform preventative maintenance. |

Experimental Protocols for Assessment and Minimization

Protocol 1: Gauge Repeatability and Reproducibility (GR&R) Study

This protocol helps quantify the precision of your measurement system.

- Select representative samples that cover the expected measurement range.

- Have multiple (e.g., 3) operators measure each sample multiple (e.g., 10) times in a randomized order.

- Ensure operators do not know which sample they are measuring to prevent bias.

- Calculate the standard deviation for each operator (repeatability) and the variation between operators (reproducibility).

- Analyze the data: A high repeatability standard deviation indicates issues with the instrument or procedure. High variation between operators points to a need for better training and standardization [14].
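The calculation step above can be sketched as follows. This is a deliberately simplified estimate, not a full ANOVA-based Gage R&R: repeatability is taken as the pooled within-operator standard deviation and reproducibility as the standard deviation of the operator means. The function name `grr_summary` and the data are hypothetical.

```python
import math
import statistics

def grr_summary(data):
    """Simplified repeatability/reproducibility estimate.

    data maps operator name -> repeated readings of the same sample.
    Repeatability: pooled within-operator standard deviation.
    Reproducibility: standard deviation of the operator means.
    """
    variances = [statistics.variance(r) for r in data.values()]
    dofs = [len(r) - 1 for r in data.values()]
    pooled_var = sum(v * d for v, d in zip(variances, dofs)) / sum(dofs)
    operator_means = [statistics.mean(r) for r in data.values()]
    return {
        "repeatability_sd": math.sqrt(pooled_var),
        "reproducibility_sd": statistics.stdev(operator_means),
    }

# Hypothetical readings from three operators on one sample.
study = {
    "A": [10.0, 10.1, 9.9],
    "B": [10.3, 10.4, 10.2],
    "C": [10.0, 9.9, 10.1],
}
result = grr_summary(study)
```

Here operator B reads consistently higher than A and C, so the between-operator spread exceeds the within-operator spread, which would point toward training or standardization rather than the instrument.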

Protocol 2: Method Validation for Accuracy and Uncertainty Estimation

This protocol, based on Quality by Design (QbD) principles, ensures your method is fit for purpose and characterizes its uncertainty [13].

- Define the objective and quality attributes of the measurement.

- Test specificity: Confirm the method can distinguish the target analyte from interferences.

- Establish a calibration curve using certified standards to evaluate linearity and the working range.

- Determine accuracy by analyzing a CRM and calculating the percent recovery or bias.

- Assess precision by performing repeatability (same day, same operator) and intermediate precision (different days, different operators) tests.

- Calculate uncertainty: Combine all significant uncertainty sources (e.g., from calibration, precision, bias) to estimate the overall measurement uncertainty [13] [11].
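The final step, combining uncertainty sources, is commonly done by root-sum-of-squares when the components are uncorrelated standard uncertainties expressed in the same units. The sketch below assumes exactly that; the budget values and function names are illustrative.

```python
import math

def combined_standard_uncertainty(components):
    """Root-sum-of-squares of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components.values()))

def expanded_uncertainty(components, coverage_factor=2.0):
    """Expanded uncertainty U = k * u_c (k = 2 gives roughly 95% coverage)."""
    return coverage_factor * combined_standard_uncertainty(components)

# Hypothetical budget, all in the same units as the result.
budget = {"calibration": 0.02, "precision": 0.03, "bias": 0.06}
u_c = combined_standard_uncertainty(budget)
U = expanded_uncertainty(budget)
```

Because the terms add in quadrature, the largest component dominates: in this example the bias term alone accounts for most of the combined value, so it is the one worth reducing first.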

The Scientist's Toolkit: Key Reagents & Materials

| Item | Function in Experiment |

|---|---|

| Certified Reference Materials (CRMs) | Provides a traceable, known value for instrument calibration and method validation, directly addressing accuracy and offset error [13]. |

| Quality Control (QC) Materials | A stable material run alongside patient or test samples to monitor the precision and accuracy of the analytical process in real-time [14]. |

| Standard Operating Procedures (SOPs) | Documents the exact measurement protocol to minimize operator-dependent variability, thereby improving precision [14]. |

| Statistical Software | Used for calculating standard deviation, percent error, performing Gage R&R studies, and estimating measurement uncertainty [13]. |

Conceptual Relationships and Workflow

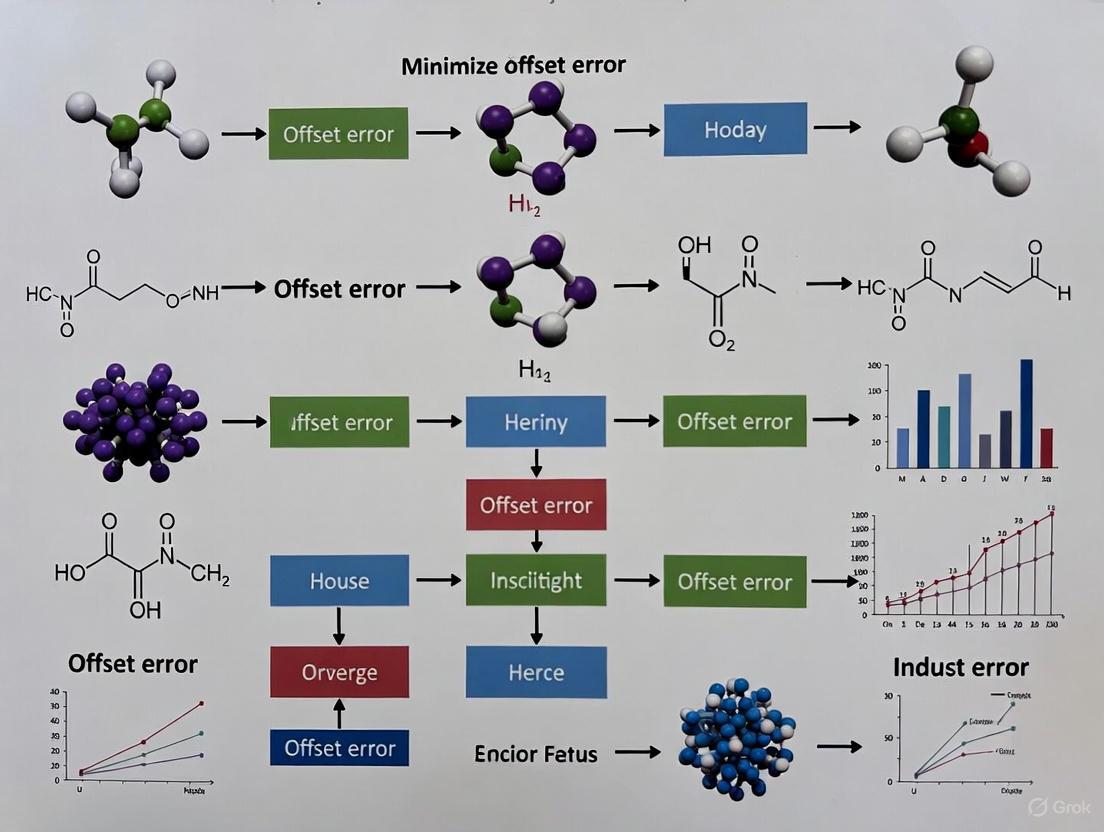

The following diagram illustrates the logical relationship between the core concepts and the general workflow for addressing measurement issues in instrument research.

Figure 1: A logical workflow for diagnosing and addressing measurement issues related to accuracy, precision, and uncertainty.

FAQs

What is the fundamental difference between a systematic error and a random error?

The fundamental difference lies in their predictability and impact on your data.

- Systematic Error (Bias): This is a consistent, predictable error that occurs in the same direction for every measurement. It skews your data away from the true value, affecting accuracy. For example, a miscalibrated scale that always reads 0.5 grams heavy introduces a systematic error [15] [16].

- Random Error: This is an unpredictable, chance-based error that causes measurements to vary randomly above and below the true value. It does not affect the average accuracy but impacts the precision (reproducibility) of your measurements [15] [17].

Which type of error is more problematic for my research?

In most research contexts, systematic error is considered more problematic [15] [18] [16]. Because it is consistent, it leads to biased conclusions and incorrect relationships between variables. Averaging multiple measurements does not reduce systematic error [16]. Random error, while it reduces precision, tends to cancel out when many measurements are averaged, and its impact can be reduced with large sample sizes [15].

How can I identify a systematic offset error in my instruments?

You can identify an offset error, a type of systematic error where the instrument does not read zero when the quantity to be measured is zero, through these methods [18] [19]:

- Calibration Check: Measure a known standard or a "blank" sample with a known value (often zero). A consistent difference between the instrument's reading and the known value indicates an offset error [15] [20].

- Comparison with a Reference Method: Use a different, highly accurate method or instrument to measure the same quantity. A consistent discrepancy points to a systematic error in your primary instrument [20].

- Instrument Zeroing: Before taking a measurement, ensure the instrument is properly zeroed. Failure to do so is a common cause of offset error [18].

What are the most effective strategies to minimize random error?

Random error can be minimized by increasing the number of observations and controlling experimental conditions [15] [17] [16].

- Take Repeated Measurements: Measure the same quantity multiple times and use the average value. This allows the positive and negative random errors to cancel each other out [15] [16].

- Increase Your Sample Size: Collecting data from a larger sample improves precision and statistical power, as random variations have less overall impact [15].

- Control Environmental Variables: Conduct experiments in controlled settings (e.g., stable temperature, humidity) to reduce unpredictable fluctuations [15].
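A small simulation can illustrate why repeated measurements help with random error but leave a systematic offset untouched. Everything here is synthetic: the true value, bias, noise level, and seed are arbitrary choices for the demonstration.

```python
import random
import statistics

def simulate_readings(true_value, bias, noise_sd, n, seed=0):
    """Simulate n readings with a fixed bias plus Gaussian noise."""
    rng = random.Random(seed)
    return [true_value + bias + rng.gauss(0.0, noise_sd) for _ in range(n)]

true_value, bias = 100.0, 0.5          # hypothetical values
few = simulate_readings(true_value, bias, noise_sd=1.0, n=5)
many = simulate_readings(true_value, bias, noise_sd=1.0, n=10000)

# Averaging shrinks the random scatter of the mean, but the mean
# converges to true_value + bias, not to true_value.
mean_many = statistics.mean(many)
residual_bias = mean_many - true_value
```

With 10,000 readings the mean settles close to 100.5 rather than 100.0, so the remaining discrepancy is almost entirely the bias, which only calibration can remove.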

Troubleshooting Guides

Issue: Consistent Inaccuracy in Measurements (Suspected Systematic Error)

Problem: Your measurements are consistently skewed in one direction away from the known or expected value.

Solution: Follow this systematic error hunting protocol.

Experimental Protocol:

- Calibrate Instrument: Compare your instrument's readings against a traceable standard across its measurement range. Note any consistent deviation (offset or scale factor) [15] [18].

- Review Methodology: Check for procedural biases. Are you using the instrument correctly? Could environmental factors (e.g., temperature) be consistently influencing the result? Is there observer bias in reading analog scales? [18] [21].

- Triangulate with Alternative Method: Measure the same quantity using a different instrument or a completely different experimental technique. If the discrepancy disappears, you have isolated the systematic error to your original setup [15] [16].

- Apply Correction: If a consistent bias is found and quantified, apply a correction factor to all future measurements. Remember to include the uncertainty of this correction in your overall uncertainty budget [20].
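The calibrate-then-correct steps above can be sketched with an ordinary least-squares line fit, which separates the offset (intercept) from the scale factor (slope). The calibration points below are invented, and `fit_line` and `correct` are helper names introduced for this example.

```python
def fit_line(reference, measured):
    """Least-squares fit of measured = gain * reference + offset."""
    n = len(reference)
    mean_x = sum(reference) / n
    mean_y = sum(measured) / n
    sxx = sum((x - mean_x) ** 2 for x in reference)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(reference, measured))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset

def correct(reading, gain, offset):
    """Invert the fitted response to recover the reference-scale value."""
    return (reading - offset) / gain

# Hypothetical calibration: instrument reads 2% high plus a 0.5 offset.
reference = [0.0, 10.0, 20.0, 30.0]
measured = [0.5, 10.7, 20.9, 31.1]
gain, offset = fit_line(reference, measured)
restored = correct(20.9, gain, offset)
```

Fitting across the whole range, rather than at zero only, is what allows an offset to be distinguished from a scale factor error.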

Issue: High Variability in Repeated Measurements (Suspected Random Error)

Problem: Repeated measurements of the same sample yield different results, showing low precision and poor reproducibility.

Solution: Implement procedures to enhance measurement stability and consistency.

Experimental Protocol:

- Increase Sample Size or Repeats: For a single sample, take a large number of repeated measurements (e.g., n≥10). For an experiment, use a sample size large enough to provide adequate statistical power. Calculate the mean and standard deviation to quantify the precision [15] [16].

- Stabilize Experimental Conditions: Identify and control for environmental fluctuations (drafts, temperature, voltage supply). Use stricter protocols to minimize human variability, such as using jigs for positioning or defining clear criteria for observational data [15] [17].

- Upgrade Equipment: If possible, use instruments with better resolution and lower inherent noise. Ensure equipment is maintained and used within its specified operating range [16].

Data Presentation

Comparison of Error Types

| Parameter | Systematic Error | Random Error |

|---|---|---|

| Definition | Consistent, predictable deviation from the true value [15] | Unpredictable, chance-based fluctuation around the true value [15] |

| Also Known As | Bias [15] | Noise, uncertainty [15] [16] |

| Impact on | Accuracy (closeness to true value) [15] [16] | Precision (reproducibility) [15] [16] |

| Direction | Consistently in one direction (always high or always low) [18] [17] | Equally likely in both directions (high and low) [17] [16] |

| Cause | Miscalibrated instrument, faulty method, observer bias [15] [18] | Environmental fluctuations, instrument sensitivity, human reading errors [15] [17] |

| Elimination | Cannot be eliminated by averaging; requires calibration or method change [15] [16] | Reduced by averaging repeated measurements and increasing sample size [15] [17] |

| Error Type | Source | Example |

|---|---|---|

| Systematic | Offset Error | A scale that does not return to zero, adding a fixed amount to every measurement [18] [19]. |

| Systematic | Scale Factor Error | A thermometer that consistently reads temperatures 5% too high due to a calibration drift [18] [19]. |

| Systematic | Researcher Bias | A researcher consistently misinterpreting a faint line on a measurement scale due to parallax error [18]. |

| Random | Environmental Noise | Slight variations in voltage supply causing fluctuations in an electronic balance's reading [19] [17]. |

| Random | Instrument Limitations | The inherent limitation of a tape measure only being accurate to the nearest millimeter, causing rounding variations [15]. |

| Random | Sampling Variability | Measuring the height of a small, non-representative group of plants to estimate the average height of the entire population [21]. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Error Reduction |

|---|---|

| Certified Reference Materials (CRMs) | Provides a known, traceable standard with certified properties essential for calibrating instruments and quantifying systematic offset errors [20]. |

| Calibration Weight Set | Used to verify the accuracy and linearity of analytical balances, directly identifying and helping correct for offset and scale factor errors [18]. |

| Data Logging Software | Automates data collection from instruments, minimizing human transcription errors (a source of random error) and improving reproducibility [22]. |

| Environmental Control Chamber | Creates a stable, controlled environment (temperature, humidity) to minimize random errors caused by external fluctuations [15]. |

| Standard Operating Procedure (SOP) | A detailed, written protocol ensures all researchers follow the same methods, reducing both systematic procedural biases and random operational variations [22]. |

The Clinical and Research Impact of Uncorrected Offset

Frequently Asked Questions (FAQs)

1. What is an offset error, and why is it a critical concern in research instruments? An offset error occurs when a measurement instrument reports a non-zero value despite a zero input signal. This is critical because it introduces a constant bias into all measurements, compromising data integrity and leading to incorrect conclusions in sensitive applications like drug development and clinical diagnostics [23] [24].

2. What is the difference between a zero offset error and a span error?

- Zero Offset Error: A constant deviation across all measurement levels. The instrument provides a non-zero output even when the input is zero [24].

- Span Error: A proportional inaccuracy relative to the input. The instrument's output signal does not change correctly with changes in the input (pressure, in the case of a pressure transducer), affecting sensitivity across the entire measurement range [24].

3. My data acquisition (DAQ) system shows consistent offset; how can I troubleshoot it? Begin by verifying the input signal with a calibrated digital multimeter to isolate the DAQ device as the source. Then, run a self-calibration of the device in its configuration software (e.g., NI-MAX). Ensure that any hardware jumper settings on the device match the software configuration [25].

4. Are offset errors a known issue in clinical imaging like Cardiovascular Magnetic Resonance (CMR)? Yes. In CMR phase-contrast flow imaging, phase offset errors are a significant source of inaccuracy. They can lead to miscalculation of net blood flow, incorrect assessment of valvular regurgitation severity, and errors in shunt quantification. These errors vary substantially between different CMR scanners [26] [27] [28].

5. I have a faulty pH analyzer that fails calibration. How should I proceed? As detailed in a real-world case, first connect the pH probe to a known-good analyzer. If the readings are then acceptable, the probe is sound and the fault lies with the original analyzer or its wiring. In that case, check for corroded or damaged connecting cables, because increased resistance can severely affect the low-voltage pH signal. Replacing the cable often resolves the issue [29].

Troubleshooting Guides

Guide 1: Troubleshooting Offset in Data Acquisition (DAQ) Systems

Applicability: NI and other multifunction DAQ devices.

| Troubleshooting Step | Key Actions | Reference / Rationale |

|---|---|---|

| 1. Signal Verification | Verify the signal at the DAQ input terminals using a calibrated digital multimeter or oscilloscope. | Isolates the DAQ device as the source of error [25]. |

| 2. Device Calibration | Perform a self-calibration via the driver software (e.g., NI-MAX). | Corrects offsets caused by an analog-to-digital (A/D) converter that needs re-calibration [25]. |

| 3. Configuration Check | Ensure software settings for analog input mode match the physical hardware jumper settings. | Mismatched settings cause LabVIEW to incorrectly convert raw measurements [25]. |

| 4. Environmental Check | Use shielded cables; avoid long wires (>15 ft); ensure correct analog input mode. | Mitigates environmental noise that can cause bad readings [25]. |

| 5. Custom Scale | For persistent DC offset, configure a Custom Scale in NI-DAQmx. | Programmatically corrects for a consistent DC offset in software [25]. |

Guide 2: Correcting Phase Offset Errors in CMR Flow Quantification

Applicability: Cardiovascular Magnetic Resonance (CMR) for blood flow measurement.

Background: CMR phase-contrast (PC) flow measurements are compromised by phase offset errors caused by eddy currents and concomitant gradients. These errors can significantly impact net flow quantification and regurgitation assessment [26] [28].

Comparative Table: Phase Offset Correction Methods in CMR

| Correction Method | Principle | Pros | Cons / Clinical Impact |

|---|---|---|---|

| Uncorrected | No correction for phase offset is applied. | Least clinically significant differences in net flow and regurgitation classification in one multi-scanner study [26]. | Underlying offset error remains, potentially causing significant inaccuracies on some scanners [27]. |

| Stationary Tissue Correction | Estimates offset using velocity in stationary tissue near the vessel. | Does not require additional phantom scans; available in commercial software [26]. | Can worsen accuracy vs. no correction; led to net flow differences >10% in 19-30% of measurements [26] [28]. |

| Phantom Correction | Scans a stationary phantom with identical parameters to measure offset directly. | Considered a reliable reference method; directly measures error at vessel location [26]. | Requires extra acquisition time; assumes temporal stability of phase offset errors [26]. |

Experimental Protocol: Phantom-Based Correction for CMR Flow [26]

- Patient Scan: Perform the clinical through-plane 2D PC flow acquisition (e.g., of the aorta or pulmonary artery).

- Phantom Preparation: Use a stationary gel phantom (e.g., 10 L paraben gelatin gel with 50 mL Gadovist).

- Phantom Scan: Directly after the patient scan, with the patient still connected to the ECG, position the phantom at the identical location of the heart on the scanner table.

- Data Acquisition: Use the exact same PC sequence parameters (FOV, slice thickness, VENC, etc.) to scan the static phantom.

- Analysis: In the analysis software, subtract the velocity map generated from the phantom acquisition from the patient's PC data to correct for the phase offset error.
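The subtraction in the analysis step amounts to a pixel-wise difference between two velocity maps acquired with identical parameters. The sketch below uses plain nested lists and invented values purely to show the arithmetic; clinical analysis is done in dedicated software on full image series, and `subtract_phantom` is a hypothetical helper.

```python
def subtract_phantom(patient_map, phantom_map):
    """Pixel-wise subtraction of the phantom velocity map (cm/s)."""
    if len(patient_map) != len(phantom_map):
        raise ValueError("maps must have the same number of rows")
    corrected = []
    for p_row, ph_row in zip(patient_map, phantom_map):
        if len(p_row) != len(ph_row):
            raise ValueError("maps must have the same number of columns")
        corrected.append([p - ph for p, ph in zip(p_row, ph_row)])
    return corrected

# Hypothetical 2x2 velocity maps in cm/s for one cardiac phase.
patient = [[81.5, 80.5], [2.0, 1.5]]   # top row: vessel, bottom row: static tissue
phantom = [[1.5, 0.5], [2.0, 1.5]]     # apparent velocity in the static phantom
corrected = subtract_phantom(patient, phantom)
```

After subtraction the static-tissue pixels in this example read zero, which is the sanity check one would expect if the phantom captured the same offset as the patient scan.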

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Materials for Offset Error Investigation and Correction

| Item | Function in Experiment |

|---|---|

| Static Gel Phantom | A stationary object made of gelatin and gadolinium contrast, used in CMR to directly measure phase offset errors when scanned with identical patient parameters [26]. |

| Calibrated Digital Multimeter (DMM) | A reference instrument used to verify the true electrical signal at the input terminals of a DAQ device, helping to isolate the source of an offset [25]. |

| Shielded Cables | Cables designed with a protective shield to minimize the pickup of environmental electrical noise, which can cause offset and noisy readings in sensitive measurements [25]. |

| Pre-calibrated Pressure Transducer | A sensor that undergoes extensive factory temperature compensation and calibration to minimize inherent zero and span offsets, providing plug-and-play accuracy [23]. |

| Buffer Solutions (pH 4.01, 7.00, 10.01) | Standardized solutions with known pH values, used to calibrate and troubleshoot pH measurement systems like analyzers and probes by identifying offset and linearity errors [29]. |

Gain Error, Integral Nonlinearity (INL), and Relative Uncertainty

A technical resource for researchers refining instrument precision in drug development.

FAQs: Core Concepts and Troubleshooting

Q1: What is the relationship between Gain Error, Offset Error, and Integral Nonlinearity (INL) in a data converter?

Gain Error, Offset Error, and INL are distinct but related specifications that describe different aspects of a data converter's performance.

- Gain Error indicates how well the slope of the converter's actual transfer function matches the slope of the ideal transfer function. It is typically expressed in LSB or as a percent of the full-scale range (FSR) and can be calibrated out in hardware or software [30].

- Offset Error is the vertical (DC) shift in the transfer function, often represented as the error in the 'b' term (y-intercept) in the line equation y = mx + b [31].

- Integral Nonlinearity (INL) is a measure of the deviation between the actual output and the ideal output value at any given code after the offset and gain errors have been compensated. It is a measure of the converter's linearity [32] [33].

The relationship between these specifications can be summarized as: Gain Error = Full-Scale Error - Offset Error [30].

Q2: How is INL measured, and what is the difference between the "end-point" and "best-fit" methods?

INL is the maximum deviation of the actual transfer function from the ideal straight line. Two common methods define this "ideal" line [32] [33]:

- End-Point Method: The ideal line is drawn directly between the first and last points of the converter's measured transfer function. The maximum deviation from this line is the INL.

- Best-Fit Method: The ideal line is a straight line that minimizes the sum of squared deviations across all codes. The maximum deviation from this "best-fit" line is the INL. This method typically provides a better representation of overall linearity.
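The two INL definitions above can be compared on the same data. The sketch below uses a small invented transfer curve with a bow-shaped nonlinearity, expressed in LSB; the function names are illustrative and the code makes no claim about how any particular vendor computes INL.

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return slope, my - slope * mx

def inl_end_point(codes, outputs):
    """Max |deviation| from the line through the first and last points."""
    slope = (outputs[-1] - outputs[0]) / (codes[-1] - codes[0])
    return max(abs(y - (outputs[0] + slope * (x - codes[0])))
               for x, y in zip(codes, outputs))

def inl_best_fit(codes, outputs):
    """Max |deviation| from the least-squares line."""
    slope, intercept = fit_line(codes, outputs)
    return max(abs(y - (slope * x + intercept)) for x, y in zip(codes, outputs))

# Hypothetical transfer data in LSB with a bow-shaped nonlinearity.
codes = [0, 1, 2, 3, 4]
outputs = [0.0, 1.3, 2.4, 3.3, 4.0]
inl_ep = inl_end_point(codes, outputs)
inl_bf = inl_best_fit(codes, outputs)
```

For this curve the best-fit figure comes out at roughly half the end-point figure, because the least-squares line splits the bow instead of pinning both ends. This is worth keeping in mind when comparing datasheets that use different methods.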

Q3: When and why should I use Relative Uncertainty instead of Absolute Uncertainty?

Relative uncertainty provides a normalized view of measurement quality, making it invaluable for comparison and application.

- Purpose: It simplifies the understanding and application of measurement uncertainty, especially for complex functions. It is also common practice in many technical fields, including chemical, electrical, and thermodynamic measurements [34].

- When to Use: It is particularly useful when you need to apply an uncertainty budget to a value different from the one it was calculated on (e.g., applying a percent uncertainty across the range of an instrument). However, caution is needed, as relative uncertainties based on input quantities might overstate or understate the final expanded uncertainty without proper sensitivity coefficients [34].
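Converting between relative and absolute uncertainty is simple arithmetic, sketched below for a relative uncertainty applied across an instrument's range. The 0.5% figure and the helper names are assumptions for the example, and the sketch ignores the sensitivity-coefficient caveat mentioned above.

```python
def absolute_from_relative(value, relative_uncertainty):
    """Convert a relative uncertainty (fraction) to absolute units."""
    return abs(value) * relative_uncertainty

def relative_from_absolute(value, absolute_uncertainty):
    """Convert an absolute uncertainty to a fraction of the value."""
    if value == 0:
        raise ValueError("relative uncertainty is undefined at zero")
    return absolute_uncertainty / abs(value)

# Hypothetical instrument with a 0.5% relative uncertainty applied
# at several points across its range.
points = [10.0, 50.0, 100.0]
absolute = [absolute_from_relative(v, 0.005) for v in points]
```

The guard against a zero value reflects a practical limit of relative uncertainty: near the bottom of the range a fixed offset dominates, and an absolute term is usually needed alongside the percentage.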

Q4: My measurement system has a significant Gain Error. What are the first steps to minimize its impact on my experimental results?

A significant gain error introduces a scaling inaccuracy across your measurements.

- Characterize: Precisely measure the gain error by applying a known, high-precision input near the full-scale range and comparing the actual output to the ideal output.

- Calibrate: Implement a software calibration routine that applies a multiplicative correction factor to all readings. This factor is the ratio of the ideal gain to the actual measured gain. For example, if a DAQ system has a +1% gain error, you would multiply all readings by a factor of 1/1.01.

- Validate: After applying the correction, re-measure the gain error with a different set of known inputs to verify the effectiveness of the calibration.
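The characterize-and-calibrate steps above can be sketched as follows. This is a minimal example assuming a single calibration point near full scale and an offset that has already been measured (zero here); the function names are hypothetical.

```python
def gain_correction_factor(ideal_output, measured_output, offset=0.0):
    """Ratio of ideal gain to actual gain, from one near-full-scale point."""
    return ideal_output / (measured_output - offset)


def correct_reading(raw, factor, offset=0.0):
    """Remove the offset first, then rescale by the correction factor."""
    return (raw - offset) * factor


# A +1% gain error: a 10.000 V input reads 10.100 V
factor = gain_correction_factor(10.0, 10.1)

# A mid-scale reading of 5.05 V is scaled back toward 5.0 V
corrected = correct_reading(5.05, factor)
```

The factor equals 1/1.01, matching the DAQ example in the text.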

Troubleshooting Guide: Error Identification and Mitigation

| Symptom | Potential Cause | Diagnostic Steps | Corrective Actions |

|---|---|---|---|

| Consistent scaling inaccuracy across the measurement range. | Gain Error [30]. | Measure output at zero and full-scale input. Calculate slope deviation from ideal. | Apply a multiplicative correction factor in software to adjust the slope of the transfer function [30]. |

| Non-proportional output; deviation changes at different input levels. | Integral Nonlinearity (INL) [32]. | Perform a full-scale sweep of inputs after compensating for offset and gain errors. Plot the deviations. | Implement an INL lookup table to correct each specific code or use a best-fit linearization algorithm [32] [33]. |

| Measurement results are inconsistent or lack confidence intervals. | Unaccounted Relative Uncertainty. | Calculate the relative uncertainty of key components and the final result [34]. | Report final results with their expanded uncertainty (e.g., Value ± U, where U is calculated from the relative uncertainty budget with a coverage factor k=2). |

| DC shift in all measurements, even at zero input. | Offset Error [31]. | Apply a zero input and measure the output deviation. | Apply an additive correction (offset nulling) in hardware or software to bring the zero point to the ideal value [31]. |

Experimental Protocol: Minimizing Offset Error in Instrumentation

This protocol outlines a systematic approach to characterize and minimize offset error, a critical step in improving overall data acquisition accuracy for precision research.

1. Objective: To quantify the offset error of a data acquisition channel and implement a corrective measure to minimize its impact on experimental data.

2. Materials and Reagent Solutions:

- Device Under Test (DUT): The instrument or data converter (ADC or DAC) being characterized.

- Precision Voltage Reference: A stable, low-noise source to establish a known "zero" input condition.

- Calibrated Digital Multimeter (DMM): Used to verify the voltage reference and instrument output with traceable accuracy.

- Data Analysis Software: (e.g., Python, MATLAB, or LabVIEW) for recording data and performing calculations.

- Temperature-Controlled Environment: To minimize thermal drift during characterization.

3. Methodology:

- Step 1: System Setup. Place the DUT and reference sources in a temperature-stable environment. Allow all equipment to power on and stabilize for the manufacturer's recommended time.

- Step 2: Zero Input Application. Connect the precision voltage reference set to 0V (or the defined zero-scale point) to the input of the DUT.

- Step 3: Data Acquisition. Record a large number of output codes from the DUT (e.g., 10,000 samples) to get a statistically significant dataset.

- Step 4: Error Calculation. Average the sampled data and convert this average output code to a voltage using the instrument's ideal transfer function. This measured output voltage at a zero-input condition is the Offset Error.

- Step 5: Software Correction. Program the instrument's firmware or data processing software to subtract the calculated offset error value from all subsequent measurements.

4. Data Interpretation: The quantified offset error should be documented in the instrument's calibration record. Post-correction, the protocol should be repeated to verify that the residual offset error is now within an acceptable limit for the specific application, such as high-sensitivity analyte detection.
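The error-calculation and software-correction steps of this protocol can be sketched in a few lines. The example below is illustrative only: it assumes a unipolar 16-bit converter with a 5 V reference and an ideal transfer function of code times LSB, and it replaces the real acquisition with simulated zero-input samples. All names and values are hypothetical.

```python
import random
from statistics import mean


def code_to_volts(code, v_ref=5.0, n_bits=16):
    """Ideal unipolar transfer function (assumed): volts = code * LSB."""
    return code * v_ref / (2 ** n_bits)


def estimate_offset(zero_input_codes):
    """Average the zero-input codes and convert the mean to volts."""
    return code_to_volts(mean(zero_input_codes))


def apply_offset_correction(code, offset_volts):
    """Subtract the stored offset from a converted reading."""
    return code_to_volts(code) - offset_volts


# Simulated acquisition: 10,000 zero-input samples with a 12-code offset and noise
rng = random.Random(0)
samples = [round(rng.gauss(12.0, 2.0)) for _ in range(10_000)]

offset = estimate_offset(samples)
residual = mean([apply_offset_correction(c, offset) for c in samples])
```

Repeating the measurement after correction, as the protocol recommends, should give a residual close to zero on real hardware only if the offset is stable over the measurement interval.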

The following table summarizes the core definitions, measurement techniques, and common units for the three key terms.

| Terminology | Core Definition | Primary Measurement Method | Common Units of Measure |

|---|---|---|---|

| Gain Error [30] | Deviation in the slope of the actual transfer function from the ideal slope. | Measure output at full-scale input after offset removal. | LSB, % of Full-Scale Range (FSR), ppm. |

| Integral Nonlinearity (INL) [32] [33] | Maximum deviation of the actual transfer function from the ideal line after offset and gain error compensation. | End-point method or Best-fit line method across all codes. | LSB, % of FSR, Volts. |

| Relative Uncertainty [34] | The ratio of the absolute measurement uncertainty to the absolute value of the measured quantity. | (Absolute Uncertainty / Measured Quantity Value) multiplied by a scale factor. | %, ppm, micro-units per unit (e.g., µV/V). |

Calibration and Correction Methodologies in Practice

Digital Domain Correction refers to a suite of techniques used to minimize errors, particularly offset errors, in instrumentation systems for research and drug development. By applying mathematical corrections either through computed algorithms (Mathematical Models) or pre-computed arrays (Lookup Tables), these methods enhance the accuracy and reliability of experimental data. In the context of high-precision fields like mass spectrometry and liquid chromatography, such corrections are not merely beneficial—they are fundamental to ensuring data integrity.

The core premise is to replace or supplement potentially noisy or biased physical measurements with digitally processed values. Mathematical models achieve this by continuously calculating corrections based on a functional understanding of the system's error sources. Lookup tables (LUTs), by contrast, offer a simpler, often faster, alternative by storing pre-calculated output values for a given set of inputs, replacing runtime computation with a straightforward array indexing operation [35]. The strategic application of these techniques directly supports the broader thesis of implementing robust strategies to minimize offset error in instrument research.

Core Concepts and Definitions

What is a Lookup Table (LUT)?

A Lookup Table (LUT) is an array that replaces the runtime computation of a mathematical function with a simpler array indexing operation, a process known as direct addressing [35]. The savings in processing time can be significant because retrieving a value from memory is often faster than carrying out a computationally expensive calculation [35].

- Operation: In a LUT, to retrieve a value `v` with a key `k`, the value `v` is stored at the `k`-th entry in the table. The key is used directly as the memory address or index [35].

- Comparison with Hash Tables: LUTs differ from hash tables in that they use the key `k` directly as the index, whereas hash tables use a hash function `h(k)` to compute the index, which introduces complexity and potential for collisions [35].

- Applications: In image processing, LUTs (sometimes called 3DLUTs) are used to transform input data, such as applying a colormap to a grayscale image to emphasize differences [35]. They are also fundamental in digital logic design, where they can model combinatorial logic defined by a truth table [36].
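Direct addressing can be illustrated with a small sketch. It assumes an 8-bit sensor code and a made-up nonlinear correction function standing in for an expensive calculation; neither comes from the cited sources.

```python
import math


def expensive_correction(code: int) -> float:
    """Hypothetical nonlinear correction for an 8-bit sensor code."""
    return 100.0 * math.sqrt(code / 255.0)


# Populate the table once; afterwards the code itself is the index.
CORRECTION_LUT = [expensive_correction(c) for c in range(256)]


def corrected_value(code: int) -> float:
    """Direct addressing: no hashing, no runtime math, just an index."""
    return CORRECTION_LUT[code]
```

The table costs 256 stored values, which shows the memory-for-speed trade: a 16-bit input domain would need 65,536 entries for the same approach.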

What is a Mathematical Model?

A Mathematical Model in this context is an equation or set of equations that describes the relationship between a system's inputs and its outputs, including the characterization of inherent errors. Unlike LUTs, models perform real-time calculations to derive a corrected output. The process of creating these models often involves system identification, where the model's parameters are tuned using calibration data to accurately reflect the system's behavior, including its offset and gain errors.
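For a system whose error is dominated by gain and offset, the simplest such model is a straight line fitted to calibration data. The sketch below assumes the raw reading follows y_raw = gain * x_true + offset and uses an ordinary least-squares fit; the function names and data are hypothetical.

```python
from statistics import mean


def fit_linear_model(x_true, y_raw):
    """Least-squares fit of y_raw = gain * x_true + offset."""
    x_bar, y_bar = mean(x_true), mean(y_raw)
    sxx = sum((x - x_bar) ** 2 for x in x_true)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(x_true, y_raw))
    gain = sxy / sxx
    offset = y_bar - gain * x_bar
    return gain, offset


def correct(y, gain, offset):
    """Invert the fitted model to recover an estimate of the true input."""
    return (y - offset) / gain


# Hypothetical calibration data: 2% gain error and a 0.05 offset
x_true = [0.0, 1.0, 2.0, 3.0, 4.0]
y_raw = [1.02 * x + 0.05 for x in x_true]

gain, offset = fit_linear_model(x_true, y_raw)
x_corrected = correct(y_raw[3], gain, offset)
```

Only two parameters need to be stored, and re-tuning after drift means refitting them rather than regenerating a table.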

Troubleshooting Guides & FAQs

FAQ 1: When should I use a lookup table instead of a mathematical model for correction?

Answer: The choice hinges on the specific constraints of your application, particularly regarding computational resources, memory, required precision, and the nature of the function you are implementing.

The following table outlines the key considerations for choosing between a Lookup Table and a Mathematical Model:

| Factor | Lookup Table (LUT) | Mathematical Model |

|---|---|---|

| Computational Speed | Very fast (O(1) complexity). Ideal for functions that are "expensive" to compute [35]. | Speed depends on the complexity of the model's equation. Can be slower for intricate functions. |

| Memory Usage | Can be high, especially for high-resolution input domains. The table size grows with the number of inputs and their precision [35]. | Typically very low, as only the model parameters (e.g., coefficients) need to be stored. |

| Accuracy & Resolution | Accuracy is limited by the table's resolution and size. Interpolation between points can improve this [35]. | Can provide continuous, high-resolution output, but accuracy depends on the model's fidelity to the real system. |

| Flexibility | Inflexible; the correction is fixed once the table is populated. Changing the correction requires regenerating the entire table. | Highly flexible; the correction can be easily adjusted by updating the model's parameters. |

| Best Use Cases | Correcting highly complex, non-analytic functions; applications where speed is critical and memory is plentiful [35]. | Correcting well-understood, smooth functions; systems where parameters may drift and require periodic re-tuning; memory-constrained environments. |

FAQ 2: My instrument's calibrated output still has a consistent offset after applying a LUT. What could be wrong?

Answer: A consistent offset often points to an error in the calibration process or a bias in the source data used to populate the lookup table. Follow this systematic troubleshooting guide:

- Verify Calibration Standards: Ensure the calibrants used to generate the LUT data are appropriate for your analyte and mass range. For instance, using clusters or polymers like CsI or PEG may cause issues due to memory effects or signal interference [37].

- Check for Signal Interference: Overlapping signals from contaminants or improperly resolved peaks can cause shifts that manifest as offsets. Ensure your instrument and solutions are free from contamination [37].

- Inspect LUT Population Methodology:

- Was the LUT generated using a sufficient number of data points?

- If interpolation is used, is the method (e.g., linear, cubic) appropriate for the function's behavior?

- Confirm the LUT's Default Value (the output for an input not found in the table) is set correctly and is not introducing a bias [36].

- Review the Data Collection Environment: In electronic systems, verify that the power supply is stable and that there is no significant ground loop or EMI noise that could be introducing a DC offset during the LUT data acquisition phase.

- Re-calibrate with Internal Standard: For analytical instruments like mass spectrometers, the highest accuracies are obtained with internal calibration, especially with calibrants that bracket the analyte's mass. This can correct for drift that an external LUT might not capture [37] [38].

FAQ 3: How do I validate that my digital correction is working effectively?

Answer: Validation requires testing the system with known reference points that were not used in the creation of the correction model or LUT.

- Use a Validation Set: Reserve a portion of your calibration data (e.g., 20%) solely for validation. Do not use it to build the model or LUT.

- Quantify Performance Metrics:

- Offset/Accuracy: Calculate the mean error between the corrected output and the known true value. This should be close to zero.

- Precision: Calculate the standard deviation of the error. This indicates the reproducibility of the correction.

- Overall Error: Metrics like Root Mean Square Error (RMSE) combine both bias and precision into a single value.

- Test Across Operating Range: Ensure validation is performed across the entire intended operational range (e.g., different masses, concentrations, temperatures) to verify robustness.

- Instrument Performance Check: For mass spectrometers, evaluate both accuracy and precision before measuring unknowns. This may involve checking parameters like resolution and sensitivity, as these can affect ion statistics and, consequently, precision [37].
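The three performance metrics listed above can be computed from a held-out validation set as in this sketch. The function name and the four-point data set are hypothetical.

```python
import math
from statistics import mean, stdev


def validation_metrics(corrected, truth):
    """Return (bias, precision, rmse) of the corrected values against truth."""
    errors = [c - t for c, t in zip(corrected, truth)]
    bias = mean(errors)                                   # residual offset
    precision = stdev(errors)                             # sample standard deviation
    rmse = math.sqrt(mean([e * e for e in errors]))       # combines both
    return bias, precision, rmse


# Hypothetical held-out reference points and the corrected instrument output
truth = [1.0, 2.0, 3.0, 4.0]
corrected = [1.1, 1.9, 3.1, 3.9]

bias, precision, rmse = validation_metrics(corrected, truth)
```

In this example the errors alternate in sign, so the bias is essentially zero while the RMSE is not, which is why both should be reported.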

Experimental Protocols for Minimizing Offset Error

Protocol: Implementing a Lookup Table for Instrument Linearization

Aim: To create and implement a lookup table that corrects for non-linearity and offset in a sensor's output.

Materials:

- Device Under Test (DUT): e.g., a sensor, transducer, or instrument channel.

- High-accuracy reference standard (e.g., calibrated mass, voltage source, or chemical calibrant).

- Data acquisition system.

- Software environment (e.g., Python, MATLAB, C++) for LUT generation and application.

Methodology:

Characterization:

- Apply a series of known, highly accurate input stimuli (`X_true`) from the reference standard, covering the entire operational range of the DUT.

- Record the corresponding raw output values (`Y_raw`) from the DUT.

- Ensure environmental conditions (temperature, humidity) are stable and recorded, as they may influence the offset.

LUT Population:

- The LUT will be structured with `Y_raw` (or a quantized version of it) as the input index and the corresponding `X_true` as the output value. This architecture directly corrects the raw reading to a true value.

- For `Y_raw` values that fall between the characterized indices, plan for an interpolation method. Linear interpolation is often a sufficient and efficient starting point [35].

Implementation:

- In the instrument's firmware or software, program the correction routine.

- For each new `Y_raw` measurement from the DUT, the routine will:

  - Find the two closest indices in the LUT that bracket `Y_raw`.

  - Perform interpolation (e.g., linear) between the two corresponding `X_true` output values.

  - Return the interpolated `X_corrected` as the final result.

Validation:

- Using the reserved validation dataset, apply the LUT to correct new `Y_raw` values and compare the `X_corrected` outputs to the known `X_true` values.

- Calculate the mean error (offset) and standard deviation (precision) to quantify performance.
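The population and implementation steps of this protocol can be sketched as follows. The example assumes the DUT response is monotonic so that each raw reading maps to one true value, and it clamps readings that fall outside the characterized range; both are design assumptions rather than requirements from the cited sources. The function names and calibration data are hypothetical.

```python
from bisect import bisect_right


def build_lut(y_raw, x_true):
    """Sort calibration pairs by raw reading so they can be searched."""
    pairs = sorted(zip(y_raw, x_true))
    return [p[0] for p in pairs], [p[1] for p in pairs]


def lut_correct(y, lut_y, lut_x):
    """Linearly interpolate between the two entries that bracket y."""
    if y <= lut_y[0]:
        return lut_x[0]           # clamp below the characterized range
    if y >= lut_y[-1]:
        return lut_x[-1]          # clamp above the characterized range
    i = bisect_right(lut_y, y)    # lut_y[i - 1] <= y < lut_y[i]
    y0, y1 = lut_y[i - 1], lut_y[i]
    x0, x1 = lut_x[i - 1], lut_x[i]
    return x0 + (x1 - x0) * (y - y0) / (y1 - y0)


# Hypothetical characterization data: offset plus mild nonlinearity
x_true = [0.0, 10.0, 20.0, 30.0]
y_raw = [0.5, 10.2, 20.9, 32.0]

lut_y, lut_x = build_lut(y_raw, x_true)
x_corrected = lut_correct(15.55, lut_y, lut_x)
```

A binary search keeps the lookup fast even when the table is not uniformly spaced; a uniformly quantized table could use direct indexing instead.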


Protocol: Tuning and Calibration for Mass Spectrometry (LC-MS)

Aim: To perform tuning and mass axis calibration of a liquid chromatography-mass spectrometry (LC-MS) system to ensure accurate mass assignment and minimize measurement offset.

Materials:

- LC-MS system.

- Proprietary tuning solution appropriate for the instrument and mass range (e.g., a mixture of compounds from the manufacturer) [38].

- Suitable syringe pump for direct infusion.

Methodology:

System Preparation:

- Ensure the instrument is properly serviced and the ion source is clean. Contamination is a primary source of performance degradation [38].

- Set up the LC-MS for direct infusion of the tuning solution, bypassing the chromatography column.

Ion Source Tuning:

- Infuse the tuning solution and initiate the automated tuning procedure.

- The software will adjust voltages applied to ion source components (e.g., ESI needle, cone) to optimize ion transmission and achieve target ion abundances for the various masses in the calibrant [38]. This process ensures optimal sensitivity and a predictable response.

Mass Axis Calibration:

- The instrument software will acquire a spectrum of the tuning compound, which contains ions of known mass-to-charge (m/z) ratios.

- It will then construct a calibration curve by tuning the mass axis to a set of pre-programmed tune masses. This is done by altering the electronic configuration of the mass analyzer (e.g., mass gain and offset for a quadrupole) so that the measured peaks align with their known m/z values [38].

- This step is critical for eliminating mass assignment offset.

Peak Shape and Abundance Adjustment:

- Parameters for the mass analyzer and detector are adjusted to achieve the specified resolution (peak width) and to standardize the abundance versus mass response, correcting for any mass discrimination inherent in the analyzer [38].

Validation:

- Verify that the measured m/z values for the calibrant ions are within the required mass accuracy tolerance (e.g., within 0.1 Da for a quadrupole, or ppm for a high-resolution instrument).

- Confirm that the relative abundances of the fragment ions match the expected standard to ensure quantitative reliability [38].
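The mass accuracy check can be expressed as a short calculation. This sketch uses the conventional definition of mass error in parts per million; the function names, the example m/z values, and the tolerances are hypothetical and not taken from any vendor software.

```python
def mass_error_da(measured_mz, reference_mz):
    """Absolute mass error in daltons (signed)."""
    return measured_mz - reference_mz


def mass_error_ppm(measured_mz, reference_mz):
    """Relative mass error in parts per million (signed)."""
    return (measured_mz - reference_mz) / reference_mz * 1e6


def within_ppm(measured_mz, reference_mz, tol_ppm):
    """True if the measured peak lies within the ppm tolerance."""
    return abs(mass_error_ppm(measured_mz, reference_mz)) <= tol_ppm


# Hypothetical calibrant ion: known m/z 500.0000, measured at 500.0025
error_ppm = mass_error_ppm(500.0025, 500.0)
passes_10ppm = within_ppm(500.0025, 500.0, tol_ppm=10.0)
passes_1ppm = within_ppm(500.0025, 500.0, tol_ppm=1.0)
```

A signed error is kept deliberately: if all calibrant ions show errors of the same sign, that points to a residual mass axis offset rather than random scatter.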

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential materials used in calibration and tuning experiments, particularly within the field of mass spectrometry.

| Item Name | Function / Application | Key Considerations |

|---|---|---|

| Proprietary Tuning Solutions (Vendor-specific) | Used for automated tuning and mass calibration of LC-MS systems. Contains a mixture of compounds with known m/z fragments [38]. | Ensures consistency and instrument-to-instrument reproducibility. Follow manufacturer's recommendations for use. |

| Cesium Iodide (CsI) | Forms clusters for high m/z calibration points [37]. | Suitable for calibrating the high mass range. May not be ideal for all mass analyzers (e.g., unsuitable for ion traps) [37]. |

| Polyethylene Glycol (PEG) / Polypropylene Glycol (PPG) | Polymers that provide closely spaced m/z signals over a limited mass range, useful for calibration [37] [38]. | Caution: Prone to "memory effects" as they are sticky and can contaminate the ion source for extended periods [37] [38]. |

| Protein & Peptide Standards (e.g., Bovine Ubiquitin, Lysozyme) [38] | Used for calibration in proteomics and high-molecular-weight analysis. | Offer high customization for specific analyses. Their use is often preferred for analyzing similar molecules [37]. |

| Internal Standard Compounds | A known amount of a non-interfering compound added to both calibration and unknown samples. | Corrects for analyte loss during preparation and instrument drift. Essential for high-accuracy quantitation [37]. |

| Loop Calibrator | A handheld instrument used to simulate and measure the 4-20 mA signals in analog loops from sensors/transmitters. | Crucial for troubleshooting and verifying the accuracy of the input signal to a data acquisition system before digital correction is applied. |

Frequently Asked Questions

1. What is the primary advantage of performing error correction in the analog domain versus the digital domain?

The key advantage of analog domain correction is that it does not introduce Integral Nonlinearity (INL) error, a penalty often incurred when using digital calibration methods. Analog calibration adjusts the hardware's actual operating parameters, ensuring the inherent signal path is accurate. Digital correction, while often easier to implement, typically works by applying a mathematical function or lookup table to the digital output, which can add up to ±0.5 LSB of INL error [39].

2. How does autocalibration in a data acquisition system maintain accuracy over time and temperature?

Advanced autocalibration circuits use an ultra-stable +5V reference voltage IC as a calibration source. The system periodically measures this reference and calibrates both the Analog-to-Digital (A/D) and Digital-to-Analog (D/A) circuits by adjusting 8-bit "TrimDACs" that control the offset and gain settings of the analog circuits. The calibration values are stored in EEPROM and are automatically loaded on power-up, ensuring consistent accuracy regardless of environmental drift [40].

3. Why is it necessary to have separate calibration settings for different analog input ranges?

Amplifier circuits with high accuracy (e.g., 16-bit) exhibit gain and offset errors that vary with the gain setting. Calibration settings that are perfect for one range, such as ±5V, may be insufficient for another, like ±10V, potentially introducing errors larger than the system's resolution. A robust autocalibration system stores a separate set of calibration coefficients for each input range in the EEPROM and loads the appropriate set when the range is changed [40].
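The idea of one coefficient set per input range can be illustrated in software. The sketch below is only an analogy to the EEPROM-backed hardware mechanism described in [40]: the data structure, the range labels, and the coefficient values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangeCal:
    """Calibration coefficients for one analog input range."""
    gain: float
    offset: float


# Hypothetical coefficients; a real board would load these from non-volatile memory
CAL_TABLE = {
    "+/-5V": RangeCal(gain=1.0002, offset=-0.0003),
    "+/-10V": RangeCal(gain=0.9997, offset=0.0011),
}


def apply_cal(raw_volts, input_range):
    """Select the coefficients for the active range, then correct the reading."""
    cal = CAL_TABLE[input_range]
    return (raw_volts - cal.offset) * cal.gain
```

Keying the table by range makes it hard to apply one range's coefficients to another by accident, since an unknown range raises a `KeyError` instead of silently returning a miscorrected value.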

4. My system has multiple analog sensors, and the readings on several of them seem unreasonable. What is a likely cause and a basic troubleshooting step?

In systems where multiple analog sensors share a common ground, a short-circuit in the cable or one sensor can disrupt the signal for all of them. A fundamental troubleshooting step is to unplug each analog sensor one at a time, waiting up to 30 seconds after each disconnection, and observe if the other sensor readings return to reasonable values. The sensor which, when unplugged, causes the other readings to normalize is likely the source of the problem [41].

Troubleshooting Guide: Common Analog Offset and Gain Errors

| Problem | Possible Causes | Diagnostic Steps | Solution |

|---|---|---|---|

| DC Offset Error | Component aging, temperature drift, imperfect initial calibration [39]. | Measure output at zero-scale input code; observe deviation from ideal (e.g., 0V) [39]. | Adjust offset TrimDAC or apply a compensating voltage in the analog signal path [40] [39]. |

| Gain/Scaling Error | External voltage reference drift, resistor tolerance in amplifier stages [39]. | Measure output at full-scale input code; compare to ideal value (e.g., VREF) [39]. | Adjust gain TrimDAC to correct the slope of the input-output characteristic [40] [39]. |

| Inaccurate Readings Across Multiple Sensors | Short-circuit in one sensor or its cable, faulty common ground [41]. | Systematically unplug each sensor; monitor if other readings become reasonable [41]. | Identify and replace the faulty sensor or repair the damaged cable [41]. |

| Loss of Calibration After Power Cycle | Corrupted or uncommitted EEPROM data, faulty "boot range" setting [40]. | Verify calibration values were stored correctly post-autocalibration. | Re-run autocalibration and ensure new TrimDAC values are saved to EEPROM. Confirm the correct input range is set as the "boot range" [40]. |

Experimental Protocol: Autocalibration of a Data Acquisition System

The following protocol details the procedure for performing a full autocalibration of a data acquisition system's analog circuits, as described in the Helios hardware manual [40].

1. Objective: To calibrate the offset and gain errors of all A/D and D/A conversion circuits across all analog input ranges, ensuring maximum accuracy and minimizing instrumental offset error.

2. Materials and Equipment

- Data acquisition board with autocalibration capability (e.g., Helios).

- Host computer with Universal Driver software installed.

- Ultra-stable voltage reference (internal to the board).

3. Procedure

- Step 1: Initialization. Ensure the board is powered on and correctly recognized by the Universal Driver software.

- Step 2: Function Call. Trigger the autocalibration process using the single function call provided within the driver software API.

- Step 3: A/D Calibration. The internal calibration circuit will automatically route the stable +5V reference to the A/D converter. The system will then measure this reference and iteratively adjust the offset and gain TrimDACs for each analog input range until the measurement error is minimized.

- Step 4: D/A Calibration. Upon A/D calibration completion, the system will route the D/A outputs back into the now-calibrated A/D converter. It will then adjust the D/A TrimDACs so that the output voltages precisely match the commanded digital codes.

- Step 5: Data Storage. The newly calculated TrimDAC values for all ranges and circuits are stored in the non-volatile EEPROM on the board.

4. Timing and Notes

- The complete process for all A/D ranges takes approximately 10-20 seconds. The D/A calibration takes a similar amount of time.

- One analog input range is designated as the "boot range." Its calibration values are the default loaded on power-up. Set this to the range most frequently used in your application [40].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Explanation |

|---|---|

| TrimDACs | Digital-to-Analog Converters dedicated to calibration. They adjust the offset and gain settings of the main analog circuits by injecting small correction voltages or currents, based on values stored in EEPROM [40]. |

| Ultra-Stable Voltage Reference IC | Provides the precision benchmark voltage against which all other analog measurements and calibrations are compared. Its stability over time and temperature is critical for long-term accuracy [40]. |

| Calibration EEPROM | Non-volatile memory that stores a unique set of calibration coefficients for each analog input range. This allows the system to recall and apply the precise corrections needed when the gain setting is changed [40]. |

| Precision DAC with Integrated Registers | Integrated circuits (e.g., MAX5774) that contain dedicated gain and offset calibration registers for each channel. This allows for digital calibration of analog errors without external hardware, simplifying system design [39]. |

Workflow and Signaling Diagrams

[Diagram: Analog Autocalibration Workflow]

[Diagram: Precision DAC Error Correction Pathways]

Integral Action in PID Controllers for Eliminating Steady-State Error

Frequently Asked Questions (FAQs)

What is steady-state error or "offset" in a control system?

Offset, or steady-state error, is the persistent difference between the desired setpoint (SP) and the actual process variable (PV) once the system has settled. It is the residual error that remains after all transient effects have died down [42]. In a temperature control system, for example, this would be the consistent few degrees by which the actual temperature misses the target.

How does the Integral term in a PID controller eliminate this offset?

The Integral (I) term in a PID controller eliminates offset by accounting for the accumulated history of the error. While the Proportional term only considers the present error, the Integral term sums (integrates) the error over time. This means that even a very small, persistent error will cause the Integral output to grow continuously until it is large enough to push the process variable to the setpoint, thereby driving the steady-state error to zero [43] [44] [45].
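A small simulation makes the effect concrete. The sketch below assumes a first-order process with unit gain and a one-second time constant, integrated with a simple Euler step; the process model, gains, and function name are illustrative choices, not values from the cited sources.

```python
def simulate(kp, ki, setpoint=1.0, steps=5000, dt=0.01, tau=1.0, gain=1.0):
    """Return the final process variable of a first-order process under P/PI control."""
    y = 0.0          # process variable
    integral = 0.0   # accumulated error (integral state)
    for _ in range(steps):
        error = setpoint - y
        integral += error * dt
        u = kp * error + ki * integral        # controller output
        y += dt * (-y + gain * u) / tau       # Euler step of tau*dy/dt = -y + gain*u
    return y


# P-only control settles short of the setpoint; adding integral action closes the gap
y_p_only = simulate(kp=2.0, ki=0.0)
y_pi = simulate(kp=2.0, ki=1.0)
```

With proportional control alone, this process settles at Kp/(1 + Kp) of the setpoint (two thirds here), because a non-zero error is needed to sustain the controller output. The integral state supplies that output instead, so the error can decay to zero.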

What are the trade-offs when using Integral action?

The primary trade-off for eliminating offset is the potential for reduced stability and slower system response. An overly aggressive integral gain (\(K_i\)) can lead to:

- Increased Oscillations: The system may overshoot the setpoint and oscillate before settling [46].

- Integral Windup: If a large error persists for a long period (e.g., during system startup or when an actuator is saturated), the integral term can accumulate a very large value ("winds up"). When the setpoint is finally reached, this large stored value can cause significant overshoot as it unwinds [44].
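One common way to limit windup is conditional integration: the integral state is frozen while the output is saturated and the error would push it further into saturation. The sketch below shows that single technique under assumed output limits of 0 to 100%; the class and parameter names are hypothetical, and other anti-windup schemes (such as back-calculation) exist.

```python
class PIController:
    """PI controller with output clamping and conditional integration."""

    def __init__(self, kp, ki, out_min=0.0, out_max=100.0):
        self.kp = kp
        self.ki = ki
        self.out_min = out_min
        self.out_max = out_max
        self.integral = 0.0

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        candidate = self.integral + error * dt
        unclamped = self.kp * error + self.ki * candidate
        output = min(max(unclamped, self.out_min), self.out_max)

        saturated_high = unclamped > self.out_max
        saturated_low = unclamped < self.out_min
        # Accept the new integral only if the output is not saturated, or if
        # the error is already driving the output back toward the valid range.
        if not (saturated_high and error > 0) and not (saturated_low and error < 0):
            self.integral = candidate
        return output


# A large, persistent error saturates the actuator; the integral does not grow
controller = PIController(kp=1.0, ki=1.0)
outputs = [controller.update(1000.0, 0.0, dt=1.0) for _ in range(50)]
```

Without the conditional check, the integral state in this example would reach 50,000 after 50 steps and would have to unwind before the output could leave saturation.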

When should I use a PI controller versus a PID controller?

- PI Controller: This is the most common configuration and is ideal for most processes where derivative action would be sensitive to measurement noise. It provides the crucial benefit of eliminating steady-state error without the complexity of the derivative term [43] [46].

- PID Controller: The addition of the Derivative (D) term is beneficial for slower processes where it can help predict future error trends, dampen oscillations, and reduce overshoot. However, it should be used cautiously as it can amplify high-frequency sensor noise [46] [45].

Troubleshooting Guide

Problem 1: Persistent Offset After Setpoint Change

Symptoms: The process variable stabilizes at a value consistently above or below the setpoint and does not correct itself over time.

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Integral Gain Too Low | Check controller tuning. If the system responds slowly and never reaches setpoint, the integral action is likely too weak. | Increase the integral gain (\(K_i\)) gradually. Follow a structured tuning method like Ziegler-Nichols to find an optimal value [44] [46]. |

| Integral Term Disabled | Verify the controller configuration. The controller may be in P-Only or PD mode. | Ensure the controller is in PI or PID mode to activate the integral action [43] [45]. |

| Controller Saturation & Windup | Observe if the controller output is maxed out (e.g., at 100% or 0%) for an extended period while the error remains. | Implement anti-windup strategies [44]. This can involve limiting the integral term's growth when the output is saturated or using advanced controller features designed to prevent windup. |

Problem 2: Slow System Response or "Sluggish" Control

Symptoms: The system takes a very long time to reach the setpoint after a change, even though it eventually eliminates offset.

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Overly Conservative Tuning | The integral time (\(T_i\)) may be too long, meaning the integral acts too slowly. | Decrease the integral time (\(T_i\)) to make the integral action more aggressive. Be careful, as making it too small can lead to oscillations [43] [46]. |

| Excessive Process Dead Time | A delay between the controller's action and its effect on the process can limit the performance of any feedback controller. | Evaluate the process model. Consider advanced control strategies like Smith Predictors or model-based tuning that explicitly account for dead time [46]. |

Problem 3: Sustained Oscillations

Symptoms: The process variable continuously cycles above and below the setpoint without settling.

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Excessively High Integral Gain | Oscillations with a long period are a classic sign of an over-aggressive integral term. | Reduce the integral gain (\(K_i\)). To diagnose, place the controller in manual mode; if oscillations stop, the controller tuning is the likely cause [47]. |

| External Oscillatory Disturbance | Another loop or a cyclic process in the system could be causing the oscillation. | Isolate the system. If oscillations persist with the controller in manual, the source is an external load disturbance, not the controller tuning [47]. |

Experimental Protocols for Minimizing Offset

Protocol 1: Empirical PI Controller Tuning (Trial and Error)

This method is a practical approach for initial tuning of a new system [44] [45].

- Initial Setup: Start with the controller in P-Only mode. Set the integral and derivative gains to zero.

- Proportional Tuning: Increase the proportional gain (\(K_p\)) until the system responds quickly to a setpoint change but begins to exhibit a small, consistent oscillation. Note the steady-state offset.

- Introducing Integral Action: Introduce a small integral gain (\(K_i\)). Apply a step change to the setpoint.

- Observe and Adjust: Observe the response.

  - If the offset is eliminated slowly, gradually increase \(K_i\).

  - If the system begins to oscillate, reduce \(K_i\).

- Iterate: Fine-tune \(K_p\) and \(K_i\) together until you achieve a fast response that eliminates offset with minimal overshoot and oscillation.
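The effect of these tuning steps can be illustrated with a minimal simulation sketch. It assumes a hypothetical first-order process (gain 2, time constant 5 s) integrated with forward Euler; the plant model, the gain values, and the function name `simulate_loop` are illustrative assumptions and do not come from the cited sources.

```python
def simulate_loop(kp, ki, setpoint=1.0, gain=2.0, tau=5.0, dt=0.01, t_end=60.0):
    """Simulate a PI loop on an assumed first-order plant.

    Plant model (hypothetical): tau * dy/dt = -y + gain * u
    Returns the process variable at the end of the simulation.
    """
    y = 0.0          # process variable
    integral = 0.0   # accumulated error for the integral term
    for _ in range(int(t_end / dt)):
        error = setpoint - y
        integral += error * dt
        u = kp * error + ki * integral      # PI control law
        y += dt * (-y + gain * u) / tau     # forward Euler step of the plant
    return y


# P-only control: integral gain set to zero (step 1 of the protocol).
p_only_final = simulate_loop(kp=2.0, ki=0.0)

# PI control: a small integral gain is introduced (step 3 of the protocol).
pi_final = simulate_loop(kp=2.0, ki=0.5)

p_only_offset = 1.0 - p_only_final
pi_offset = 1.0 - pi_final
```

With these assumed values, P-only control settles near 0.8 for a setpoint of 1.0, leaving a steady-state offset of about 0.2, while the added integral term drives the output to the setpoint.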

Protocol 2: The Ziegler-Nichols Tuning Method

This is a classic, systematic method for determining PID parameters [44].

- Establish P-Only Control: Set the controller to P-Only mode (\(K_i = 0\), \(K_d = 0\)).

- Find the Ultimate Gain: Increase the proportional gain (\(K_p\)) until the system exhibits sustained, constant oscillations for the first time. This gain value is the Ultimate Gain, \(K_u\).

- Measure the Oscillation Period: Measure the time period of these constant oscillations. This is the Ultimate Period, \(P_u\).

- Calculate PI Parameters: Use the following table to calculate the initial tuning parameters:

| Control Type | \(K_p\) | \(T_i\) | \(T_d\) |
|---|---|---|---|
| P-Only | \(0.5 \cdot K_u\) | - | - |
| PI | \(\mathbf{0.45 \cdot K_u}\) | \(\mathbf{P_u / 1.2}\) | - |
| PID | \(0.60 \cdot K_u\) | \(0.5 \cdot P_u\) | \(P_u / 8\) |
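As a worked illustration, the table can be expressed as a small helper. This is a sketch only: the function name `ziegler_nichols` and the example values of the ultimate gain and period are hypothetical, and the conversion from time constants to gains assumes the ideal (non-interacting) PID form, in which the integral gain equals the proportional gain divided by the integral time. Controllers that use a different form need a different conversion.

```python
def ziegler_nichols(ku, pu, control_type="PI"):
    """Return initial tuning parameters from the ultimate gain and period."""
    # Factors taken from the table: (Kp / Ku, Ti / Pu, Td / Pu).
    rules = {
        "P": (0.5, None, None),
        "PI": (0.45, 1.0 / 1.2, None),
        "PID": (0.6, 0.5, 1.0 / 8.0),
    }
    kp_factor, ti_factor, td_factor = rules[control_type]
    kp = kp_factor * ku
    ti = ti_factor * pu if ti_factor is not None else None
    td = td_factor * pu if td_factor is not None else None
    # Assumption: ideal PID form, so Ki = Kp / Ti and Kd = Kp * Td.
    ki = kp / ti if ti is not None else 0.0
    kd = kp * td if td is not None else 0.0
    return {"Kp": kp, "Ti": ti, "Td": td, "Ki": ki, "Kd": kd}


# Hypothetical measurements: ultimate gain 4.0, ultimate period 6.0 s.
pi_params = ziegler_nichols(ku=4.0, pu=6.0, control_type="PI")
```

For these example inputs the PI row gives a proportional gain of about 1.8 and an integral time of about 5 s. These values are a starting point for the fine-tuning described in Protocol 1, not a final setting.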

Quantitative Performance Data from Optimized Controllers

The following table summarizes performance improvements achievable with optimized controllers, as demonstrated in research. These metrics provide a benchmark for what is possible when advanced tuning is applied to minimize offset and improve response [48].

| Controller Type | Optimization Method | Rise Time Improvement | Settling Time Improvement | Overshoot Reduction |
|---|---|---|---|---|
| FOPID (Fractional-order PID) | Jellyfish Search Optimizer (JSO) | 25% reduction vs. PID | 30% improvement vs. PID | 20% decrease vs. PID |
| PI | Particle Swarm Optimization (PSO) | Not Specified | Improved frequency response & overshoot | Minimized overshoot |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Control Experiments |
|---|---|
| PID Autotuning Software | Advanced software tools (e.g., in LabVIEW [44] or dedicated packages like LOOP-PRO [46]) can automatically perturb the process and calculate optimal PID parameters, minimizing manual effort and subjective judgment. |
| Data Acquisition (DAQ) System | High-accuracy hardware for reading sensor data (Process Variable) and outputting control signals. Essential for implementing digital PID controllers and must be properly calibrated to avoid introducing offset [25]. |
| First Order Plus Dead Time (FOPDT) Model | A mathematical model that simplifies process dynamics into key parameters: gain, time constant, and dead time. It forms the backbone of many modern, model-based tuning methods [46]. |
| Custom Scale Configuration | A software function (e.g., in NI-DAQmx [25]) used to correct for a constant DC offset in sensor measurements, ensuring the controller receives an accurate Process Variable reading. |
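To make the FOPDT entry concrete, the sketch below evaluates the standard step response of a first-order-plus-dead-time process. The function name and the parameter values are hypothetical and chosen only for illustration.

```python
import math


def fopdt_step_response(t, gain, time_constant, dead_time, step_size=1.0):
    """Change in process output at time t after a step in the controller output.

    The output does not move until the dead time has elapsed, then rises
    exponentially toward gain * step_size with the given time constant.
    """
    if t < dead_time:
        return 0.0
    elapsed = t - dead_time
    return gain * step_size * (1.0 - math.exp(-elapsed / time_constant))


# Hypothetical process: gain 2.0, time constant 5.0 s, dead time 2.0 s.
before_dead_time = fopdt_step_response(1.0, 2.0, 5.0, 2.0)
one_time_constant_later = fopdt_step_response(7.0, 2.0, 5.0, 2.0)
```

One time constant after the dead time has elapsed, the response reaches about 63% of its final value, which is the usual way the time constant is read off a recorded step test.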

Diagrams of Control System Operation

[Diagram: PID Control Loop]

[Diagram: Integral Action Eliminating Offset]

Phantom-Based Calibration for Medical Imaging Systems

Phantom-based calibration is a foundational practice in medical imaging research, providing a controlled and reproducible method to quantify and minimize offset errors in imaging systems. These errors, if unaddressed, can manifest as inaccuracies in tumor targeting, quantitative measurements, and diagnostic interpretations. This section provides researchers with practical guidance and troubleshooting protocols for implementing robust calibration methodologies, ensuring the precision and reliability of their experimental data.

Troubleshooting Guides

Guide 1: Addressing Low Calibration Accuracy and High Residual Offset Errors

Problem: After calibration, validation tests show high residual errors in spatial targeting or quantitative measurements. Solution: Implement a multi-faceted calibration and validation approach.

Action 1: Verify Phantom Selection and Configuration

- Ensure the phantom's material properties (e.g., x-ray attenuation, acoustic properties) are appropriate for your imaging modality (e.g., CT, ultrasound) [49] [50].

- For spatial targeting calibration, use a phantom with well-defined, high-contrast internal features. An agar-based phantom with alternating layers has been shown to be effective for visualizing mechanical disintegration and measuring targeting offsets [51].

- Confirm that the phantom's geometry and fiducial markers adequately cover the system's entire field of view to avoid extrapolation errors [52].

Action 2: Optimize the Calibration Data Collection Strategy

- Increase Sampling: Do not rely on a single data point. Creating and analyzing four adjacent bubble clouds (or other fiducials) together has been demonstrated to produce more accurate and reproducible 3D offset measurements than analyzing individual clouds [51].

- Control Acquisition Parameters: For imaging phantoms, a treatment or exposure duration that is too short may not produce a sufficient signal. In the histotripsy calibration study, a 20-second treatment duration was associated with the greatest change in CBCT intensity for bubble cloud detection [51]. Adhere to established protocols for parameters like matrix size and count acquisition in nuclear medicine [53].

Action 3: Validate with an Independent Method

- After calibration, test the system's accuracy against a known ground truth that was not used in the calibration process. This confirms the generalizability of the calibration correction.

Guide 2: Managing System Performance Drift and Inconsistent Results

Problem: Calibration results are not stable over time, leading to inconsistent performance in longitudinal studies. Solution: Establish a rigorous quality assurance (QA) program.

Action 1: Implement a Scheduled Re-calibration Routine

- Define a re-calibration schedule based on manufacturer recommendations and the criticality of your research application. For systems under heavy use, this may be more frequent.

Action 2: Use a Stable, Dedicated QA Phantom

- For routine performance checks, use a durable, commercial phantom designed for quality control [49] [54].

- Avoid Degrading Materials: Be aware that phantom materials like gelatine or agar can degrade over time, affecting reproducibility [50]. For long-term studies, consider more stable materials like polyvinyl alcohol cryogel (PVA-c), which maintains its acoustic properties and resists dehydration [50].

Action 3: Monitor Key Performance Metrics

- Track metrics like uniformity, spatial resolution, and contrast over time. The American College of Radiology (ACR) provides specific scoring criteria for these metrics in nuclear medicine, which can be adapted for other modalities [53].

- Document any deviations and trigger a full re-calibration if metrics fall outside acceptable tolerances.
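The monitoring rule in Action 3 can be sketched as a simple tolerance check. The metric names, baseline values, and tolerances below are hypothetical placeholders; they are not ACR scoring criteria and should be replaced with the limits defined in your own QA program.

```python
def out_of_tolerance_metrics(baseline, current, tolerances):
    """Return the metrics whose absolute deviation from baseline exceeds tolerance."""
    flagged = {}
    for name, tolerance in tolerances.items():
        deviation = abs(current[name] - baseline[name])
        if deviation > tolerance:
            flagged[name] = deviation
    return flagged


# Hypothetical values for illustration only (not ACR criteria).
baseline = {"uniformity_pct": 3.0, "resolution_mm": 1.5, "contrast": 0.80}
current = {"uniformity_pct": 3.4, "resolution_mm": 2.1, "contrast": 0.78}
tolerances = {"uniformity_pct": 1.0, "resolution_mm": 0.5, "contrast": 0.05}

flagged = out_of_tolerance_metrics(baseline, current, tolerances)

# Any flagged metric triggers a full re-calibration.
recalibrate = bool(flagged)
```

In this example only the spatial resolution has drifted beyond its tolerance, so the check would document that deviation and trigger a re-calibration.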

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary types of phantoms used in calibration, and how do I choose? Phantoms are broadly classified as synthetic (standard or anthropomorphic), biological (animal or plant tissue), or mixed [49] [54]. Your choice depends on the trade-off between realism and reproducibility.

- Choose synthetic phantoms (commercial or 3D-printed) for high reproducibility, system performance comparison, and quality assurance [49] [54].

- Consider biological or anthropomorphic phantoms when anatomical realism is critical for validating a procedure, such as testing a new surgical navigation technique [49] [50].

FAQ 2: How can I design an effective calibration phantom for a custom imaging system? Key considerations for phantom design include:

- Fiducial Markers: Incorporate a sufficient number of non-coplanar markers (at least six) that span the imaging field of view to accurately compute projection matrices [52].

- Material Properties: Select materials that mimic the acoustic, x-ray attenuation, or magnetic properties of the tissue being studied. For example, a mixture of Polyvinyl Alcohol (PVA) and Silicon Carbide (SiC) can effectively replicate the acoustic properties of soft tissues for ultrasound imaging [50].

- Workflow Integration: The phantom should be easy to integrate into the existing experimental workflow without requiring extensive additional steps [51].

FAQ 3: Our calibrated system is producing ring artifacts in CT reconstructions. What is the likely cause and solution?

- Cause: Ring artifacts are typically caused by pixel-wise nonuniformity in the detector response [55].

- Solution: Standard flat-field correction may be insufficient, especially for multi-material imaging in photon-counting CT (PCCT). Implement a more advanced calibration framework like the Signal-to-Uniformity Error Polynomial Calibration (STEPC), which uses multi-material slab phantoms (e.g., PMMA, aluminum, iodinated contrast) to model and correct for nonuniformity errors across different energy bins [55].

FAQ 4: What are the common pitfalls in the experimental design of a phantom study? Common pitfalls include [49] [54]:

- Unclear objectives: Not having a precise, measurable scientific question.

- Inadequate phantom selection: Using a phantom that does not appropriately represent the clinical or research scenario.

- Poorly described methods: Failing to document phantom fabrication, imaging protocols, and analysis steps in sufficient detail for others to reproduce.

- Ignoring clinical relevance: Focusing solely on technical metrics without considering the ultimate clinical or biological application.

Experimental Protocols & Data

Protocol 1: Calibration Correction for CBCT-Guided Histotripsy Targeting

This protocol details a method to correct the offset between a planned treatment location and the actual delivered location [51].

1. Phantom Preparation:

- Fabricate an agar-based phantom with approximately 11 layers of alternating high and low x-ray attenuation, spaced about 3 mm apart.

2. Bubble Cloud Creation:

- Using the histotripsy system, create a bubble cloud treatment in the phantom. A treatment duration of 20 seconds is recommended for optimal CBCT visualization.

- Repeat this to create four adjacent bubble clouds for improved accuracy [51].

3. Imaging and Analysis:

- Acquire CBCT images of the phantom before and after treatment.

- Use an automated algorithm to localize the bubble cloud treatments by minimizing a cost function based on the intensity difference within the treatment region on the pre- and post-treatment CBCT.

- The algorithm compares the actual treatment location to the theoretical focal point to calculate a 3D offset (X, Y, Z).

4. Application of Correction:

- The calculated 3D offset is applied as a calibration correction between the therapeutic bubble cloud location and the histotripsy robot arm.
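The offset calculation and its application can be sketched as follows. This is a simplification under stated assumptions: the cited method localizes the four bubble clouds together by minimizing an image-based cost function, whereas this sketch simply averages per-cloud offsets that are assumed to be already localized. The coordinates, the function names, and the sign convention (subtracting the offset from the planned target) are all hypothetical.

```python
def mean_offset(planned, measured):
    """Average per-axis (measured - planned) offset across bubble clouds, in mm."""
    count = len(planned)
    return tuple(
        sum(m[axis] - p[axis] for p, m in zip(planned, measured)) / count
        for axis in range(3)
    )


def apply_correction(target, offset):
    """Shift a planned target by the negative of the measured offset."""
    return tuple(t - o for t, o in zip(target, offset))


# Hypothetical planned focal points and localized bubble cloud centers (mm).
planned = [(0.0, 0.0, 0.0), (5.0, 0.0, 0.0), (0.0, 5.0, 0.0), (5.0, 5.0, 0.0)]
measured = [(0.4, -1.1, 1.6), (5.6, -0.9, 1.4), (0.5, 4.0, 1.5), (5.5, 4.0, 1.5)]

offset = mean_offset(planned, measured)

# Assumed convention: command the robot to the target minus the offset.
corrected = apply_correction((10.0, 10.0, 10.0), offset)
```

Pooling several clouds reduces the influence of any single noisy localization, which is in line with the improved reproducibility reported for the four-cloud analysis [51].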

Table 1: Quantitative Results of Multi-Bubble Cloud Calibration vs. Single Cloud [51]

| Offset Axis | Single Bubble Cloud Mean Absolute Deviation (MAD) | Four Adjacent Bubble Clouds MAD | Improvement |
|---|---|---|---|
| X | 0.2 mm | 0.1 mm | 50% |
| Y | 1.1 mm | 0.0 mm | ~100% |
| Z | 1.2 mm | 0.2 mm | 83% |
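The Improvement column is consistent with the relative reduction in MAD, that is, the single-cloud MAD minus the four-cloud MAD, divided by the single-cloud MAD. The short check below reproduces the column from the tabulated values; the function name is hypothetical, and the formula is inferred from the numbers rather than stated in the source.

```python
def mad_improvement_percent(mad_single, mad_four):
    """Relative reduction in mean absolute deviation, in percent."""
    return 100.0 * (mad_single - mad_four) / mad_single


# (single-cloud MAD, four-cloud MAD) in mm, taken from Table 1.
table_1 = {"X": (0.2, 0.1), "Y": (1.1, 0.0), "Z": (1.2, 0.2)}

improvements = {
    axis: round(mad_improvement_percent(single, four))
    for axis, (single, four) in table_1.items()
}
```

The Y-axis value is marked as approximate in the table, presumably because the reported 0.0 mm is itself a rounded figure.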

Protocol 2: Phantom-Based Geometric Calibration for Digital Tomosynthesis

This protocol reduces reconstruction artifacts caused by geometric misalignments in a tomosynthesis system [52].

1. Phantom Design:

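- Use a calibration phantom with at least ten fiducial markers distributed non-coplanarly to cover the imaging field. This exceeds the six-marker minimum needed to solve for a projection matrix and adds redundancy against marker detection errors. A design with two parallel panels, each with five circular apertures, has been successfully used [52].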

2. Projection Matrix Extraction:

- Acquire projection images of the calibration phantom from all view angles.

- For each projection view, use the known 3D coordinates of the fiducial markers and their 2D projections in the image to calculate a 3×4 projection matrix (\(M_{3 \times 4}\)) using a singular value decomposition (SVD) algorithm. This matrix defines the mapping from object space to image coordinates for that specific view [52].

3. Calibrated Reconstruction:

- During reconstruction of an actual object, use the stored projection matrices to accurately map the backprojection rays for each view, correcting for geometric misalignments.

- A reciprocal-cosine weighted compensation can be added to correct for axial intensity fall-off [52].
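The projection matrix extraction in step 2 can be sketched with NumPy. The source states only that an SVD algorithm is used; this example assumes the standard direct linear transformation (DLT) formulation, which may differ in detail from the cited implementation. The marker coordinates and the ground-truth geometry are hypothetical and serve only to generate noise-free test projections.

```python
import numpy as np


def estimate_projection_matrix(points_3d, points_2d):
    """Estimate a 3x4 projection matrix from at least six non-coplanar markers."""
    rows = []
    for (x, y, z), (u, v) in zip(points_3d, points_2d):
        # Each 3D-2D correspondence contributes two linear equations.
        rows.append([x, y, z, 1, 0, 0, 0, 0, -u * x, -u * y, -u * z, -u])
        rows.append([0, 0, 0, 0, x, y, z, 1, -v * x, -v * y, -v * z, -v])
    system = np.asarray(rows, dtype=float)
    # The solution (up to scale) is the right singular vector that
    # corresponds to the smallest singular value.
    _, _, vt = np.linalg.svd(system)
    return vt[-1].reshape(3, 4)


def project(matrix, points_3d):
    """Map 3D object coordinates to 2D image coordinates."""
    pts = np.asarray(points_3d, dtype=float)
    homogeneous = np.hstack([pts, np.ones((len(pts), 1))])
    uvw = homogeneous @ matrix.T
    return uvw[:, :2] / uvw[:, 2:3]


# Hypothetical phantom: two parallel panels (z = 0 and z = 50 mm), five markers each.
markers = [(-20, -15, 0), (18, -12, 0), (22, 17, 0), (-17, 20, 0), (3, -2, 0),
           (-18, -22, 50), (21, -19, 50), (16, 23, 50), (-23, 14, 50), (-4, 6, 50)]

# Hypothetical ground-truth geometry, used only to synthesize projections.
true_matrix = np.array([[1000.0, 0.0, 256.0, 128000.0],
                        [0.0, 1000.0, 256.0, 128000.0],
                        [0.0, 0.0, 1.0, 500.0]])

observed = project(true_matrix, markers)
estimated = estimate_projection_matrix(markers, observed)

# Largest reprojection error (pixels) over all markers.
reprojection_error = np.abs(project(estimated, markers) - observed).max()
```

The estimated matrix is defined only up to a scale factor, so it is compared with the ground truth through reprojection error rather than element by element. With real, noisy marker detections, normalizing the coordinates before solving is a common way to improve numerical conditioning.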

Workflow Visualization

[Diagram: Phantom-Based Calibration Workflow]

[Diagram: Phantom Selection Guide]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Phantom-Based Calibration Research

| Material / Reagent | Primary Function in Calibration | Example Application / Notes |

|---|---|---|

| Agar-based Phantom | Provides a tissue-mimicking medium with creatable internal structures for visualizing and measuring targeting errors [51]. | Used in histotripsy to create and localize bubble cloud treatments for robot arm calibration [51]. |