System Suitability Testing: A Foundational Guide for Reliable Analytical Results

Abstract

This article provides a comprehensive guide to System Suitability Testing (SST) for researchers, scientists, and drug development professionals. It covers the foundational principles of SST as a critical quality control check to verify that the entire analytical system—instrument, column, reagents, and operator—is performing adequately before sample analysis. The scope extends from defining key chromatographic parameters and their acceptance criteria, through the practical implementation of protocols, to troubleshooting common failures and understanding regulatory requirements. By synthesizing methodological applications with validation principles, this guide aims to equip professionals with the knowledge to ensure data integrity, maintain regulatory compliance, and uphold the quality of biomedical and clinical research.

What is System Suitability Testing? The Bedrock of Data Integrity

System Suitability Testing (SST) serves as a critical quality assurance checkpoint in analytical chemistry, ensuring that instruments and methods perform adequately before sample analysis. This technical guide explores SST's fundamental role within pharmaceutical research and drug development, framing it as an indispensable gatekeeper for data integrity. We examine core SST parameters, regulatory requirements, and implementation protocols that collectively provide scientists with verified confidence in their analytical results. Within a broader research context, SST represents the operational bridge between validated methods and reliable daily performance, establishing that the analytical system remains "fit-for-purpose" for each specific use [1] [2].

System Suitability Testing comprises a set of verification procedures performed to confirm that an analytical system operates appropriately for its intended application before sample analysis begins [2]. Unlike method validation—which comprehensively establishes a method's reliability through parameters like accuracy, precision, and specificity—SST provides ongoing verification that the analytical system (including instruments, reagents, columns, and operators) functions properly for each specific test session [2]. This distinction positions SST as the final operational gatekeeper before analytical data generation, ensuring that previously validated methods continue to perform as expected during routine laboratory use [1].

The strategic importance of SST emerges from its role in safeguarding irreplaceable biological samples and ensuring the integrity of resulting data. As noted in metabolomics research, clinical samples require "careful planning, recruitment, financial support, and investment of time," making it "imperative that actions are put in place to minimise the loss of potentially irreplaceable biological samples" [1]. System suitability samples and blank samples "designed to test analytical performance metrics... qualify the instrument as 'fit for purpose' before biological test samples are analysed" [1]. This pre-analytical verification represents the ultimate quality control checkpoint before commitment to sample analysis.

Core Parameters and Acceptance Criteria

System suitability testing evaluates specific chromatographic performance characteristics against predefined acceptance criteria. These parameters collectively verify that the separation, detection, and measurement capabilities of the system meet the requirements for reliable analysis [3].

Critical Chromatographic Parameters

Table 1: Essential System Suitability Parameters and Acceptance Criteria

| Parameter | Definition | Calculation | Typical Acceptance Criteria | Purpose |
|---|---|---|---|---|
| Resolution (Rₛ) | Measure of separation between two peaks | \( R_s = \frac{t_{R,B} - t_{R,A}}{0.5\,(W_A + W_B)} \) [3] | ≥2.0 for baseline separation [2] | Ensures complete separation of analytes |
| Tailing Factor (T) | Measure of peak symmetry | \( T = \frac{a + b}{2a} \) (at 5% peak height) [3] | ≤2.0 [3] | Indicates proper column condition and appropriate mobile phase |
| Theoretical Plates (N) | Measure of column efficiency | \( N = 16\left(\frac{t_R}{W}\right)^2 \) or \( N = 5.54\left(\frac{t_R}{W_{1/2}}\right)^2 \) [3] | ≥2000 [3] | Quantifies chromatographic column performance |
| Precision (RSD) | Measure of injection reproducibility | \( RSD = \frac{\text{Standard Deviation}}{\text{Mean}} \times 100\% \) | ≤2.0% for 5-6 replicate injections [3] [2] | Verifies system precision and injection volume accuracy |
| Signal-to-Noise Ratio (S/N) | Measure of detection sensitivity | \( S/N = \frac{\text{Peak Height}}{\text{Baseline Noise}} \) | ≥10:1 for quantitation; ≥3:1 for detection [2] | Ensures adequate detection capability |
| Retention Factor (k') | Measure of compound retention | \( k' = \frac{t_R - t_M}{t_M} \) [3] | >2.0 [3] | Confirms appropriate retention and separation |

These parameters must be evaluated collectively, as they provide complementary information about system performance. For example, adequate resolution between peaks is fundamental for accurate quantification, while appropriate tailing factors indicate proper column condition and suitable mobile phase composition [3]. Precision across replicate injections demonstrates system stability, and theoretical plates quantify overall column efficiency [3].
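The formulas in Table 1 can be sketched as small Python functions. This is a minimal illustration rather than a validated implementation: the function names and the example numbers are invented for this sketch, widths are assumed to be in the same time units as retention times, and the sample standard deviation (n - 1) is assumed for the RSD.

```python
import statistics


def resolution(t_a, t_b, w_a, w_b):
    """Rs from retention times and baseline peak widths of two adjacent peaks."""
    return (t_b - t_a) / (0.5 * (w_a + w_b))


def tailing_factor(a, b):
    """T = (a + b) / (2a); a and b are the front and back half-widths at 5% peak height."""
    return (a + b) / (2 * a)


def plates_from_base_width(t_r, w):
    """N = 16 (tR / W)^2 using the baseline peak width."""
    return 16 * (t_r / w) ** 2


def plates_from_half_width(t_r, w_half):
    """N = 5.54 (tR / W1/2)^2 using the width at half height."""
    return 5.54 * (t_r / w_half) ** 2


def percent_rsd(values):
    """Relative standard deviation in percent (sample standard deviation assumed)."""
    return statistics.stdev(values) / statistics.mean(values) * 100


def retention_factor(t_r, t_m):
    """k' = (tR - tM) / tM, where tM is the column dead time."""
    return (t_r - t_m) / t_m


# Illustrative values only (minutes for times and widths, arbitrary units for areas).
rs = resolution(t_a=4.0, t_b=5.0, w_a=0.4, w_b=0.4)
t = tailing_factor(a=0.10, b=0.14)
n = plates_from_base_width(t_r=5.0, w=0.4)
k = retention_factor(t_r=5.0, t_m=1.0)
rsd = percent_rsd([1000, 1010, 990, 1005, 995])

print(f"Rs={rs:.2f}  T={t:.2f}  N={n:.0f}  k'={k:.1f}  RSD={rsd:.2f}%")
```

With these inputs every parameter falls inside the typical criteria listed in the table, which is the situation in which sample analysis would proceed.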

Regulatory Framework and Guidelines

System suitability testing requirements are established across multiple regulatory frameworks, with slight variations in emphasis between organizations. The Food and Drug Administration (FDA) emphasizes data integrity, typically requiring five replicate injections for precision verification [2]. The United States Pharmacopeia (USP) provides detailed procedural instructions for chromatographic methods, focusing particularly on resolution and tailing factor criteria [2]. The International Council for Harmonisation (ICH) guidelines emphasize method reproducibility, with specific attention to retention time stability [2].

The European Directorate for the Quality of Medicines (EDQM) clarifies that in monograph assays that cross-reference purity tests, "the system suitability test (SST) is part of the analytical procedure" and must be included even when not explicitly stated [4]. Recent revisions to pharmacopeial standards aim to enhance clarity, with new and revised monographs explicitly stating "which of the SST criteria described in the purity test need to be checked" [4].

Table 2: Regulatory Requirements for System Suitability Testing

| Regulatory Authority | Primary Focus | Key Requirements | Documentation Emphasis |
|---|---|---|---|
| FDA | Data integrity | 5 replicate injections, precision verification | Comprehensive audit trails, instrument IDs, analyst information |
| USP | Method performance | Resolution, tailing factor, theoretical plates | Detailed system suitability parameters and acceptance criteria |
| ICH | Method reproducibility | Retention time stability, intermediate precision | Validation parameters, cross-laboratory consistency |
| EDQM | Monograph compliance | Selectivity testing, resolution or peak-to-valley ratio | Explicit reference solutions, justified exceptions |

Regulatory inspections rigorously review system suitability documentation, including "instrument IDs, software versions, analyst names, and timestamps" to ensure complete traceability [2]. Any deviations from established parameters "must be thoroughly investigated and documented with appropriate justifications" [2].

Experimental Protocols and Methodologies

Implementing effective system suitability testing requires standardized protocols for sample preparation, system qualification, and data assessment. The following methodologies represent best practices for establishing robust SST protocols.

System Suitability Sample Preparation

System suitability samples typically contain "a small number of authentic chemical standards (typically five to ten analytes) dissolved in a chromatographically suitable diluent" [1]. These analytes should be "distributed as fully as possible across the m/z range and the retention time range so to assess the full analysis window" [1]. This composition allows comprehensive assessment of system performance across the entire analytical scope.

Blank Sample Analysis

The SST protocol should commence with "a 'blank' gradient with no sample as this will reveal problems due to impurities in the solvents or contamination of the separations system including the LC/GC/CE column" [1]. Only after verifying a clean blank should the system suitability sample be analyzed.

Assessment Criteria and Corrective Actions

Acceptance criteria should be established before analysis. Example criteria include: "(i) m/z error of 5 ppm compared to theoretical mass, (ii) retention time error of < 2% compared to the defined retention time, (iii) peak area equal to a predefined acceptable peak area ± 10% and (iv) symmetrical peak shape with no evidence of peak splitting" [1]. When acceptance criteria are not met, "corrective maintenance of the analytical platform should be performed and the system suitability check solution reanalysed" [1].
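The first three example criteria quoted above are numeric and can be checked programmatically; the fourth (peak shape) usually needs visual or software-assisted review and is left out here. The following is a minimal sketch: the function names, the default limits as keyword arguments, and the example analyte values are all hypothetical.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million relative to the theoretical m/z."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def check_analyte(measured_mz, theoretical_mz, rt, expected_rt, area, expected_area,
                  max_ppm=5.0, max_rt_pct=2.0, max_area_pct=10.0):
    """Return a pass/fail flag for each numeric criterion of one SST analyte."""
    return {
        # (i) m/z error within 5 ppm of the theoretical mass
        "mz": abs(ppm_error(measured_mz, theoretical_mz)) <= max_ppm,
        # (ii) retention time error below 2% of the defined retention time
        "rt": abs(rt - expected_rt) / expected_rt * 100 < max_rt_pct,
        # (iii) peak area within +/- 10% of the predefined acceptable area
        "area": abs(area - expected_area) / expected_area * 100 <= max_area_pct,
    }


# Hypothetical analyte: small mass error, 1% retention shift, 12% area loss.
result = check_analyte(
    measured_mz=180.0640, theoretical_mz=180.0634,
    rt=5.05, expected_rt=5.00,
    area=88_000, expected_area=100_000,
)
print(result)
```

In this example the area check fails, so under the quoted guidance corrective maintenance and reanalysis of the system suitability solution would follow.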

Testing Frequency and Placement

System suitability testing should be performed "at the beginning of each batch analysis, after significant instrument maintenance, or when questionable results appear" [2]. For extended analytical sequences, "a system suitability sample can be analysed at the end of each batch to act as a rapid indicator of intermediate system level quality failure" [1]. This strategic placement ensures continuous system monitoring throughout analytical sequences.
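One way to picture this placement is as an injection sequence: a blank first, then the system suitability sample, then each batch of samples followed by a closing system suitability injection. The sketch below assumes that layout; the function name, the `"BLANK"` and `"SST"` labels, and the batch size are illustrative choices, not part of the cited guidance.

```python
def build_sequence(samples, batch_size):
    """Return an injection order: blank, opening SST, then batches each closed by an SST."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    sequence = ["BLANK", "SST"]
    for start in range(0, len(samples), batch_size):
        sequence.extend(samples[start:start + batch_size])
        sequence.append("SST")  # end-of-batch check for intermediate failures
    return sequence


order = build_sequence(["S1", "S2", "S3"], batch_size=2)
print(order)
```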

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for System Suitability Testing

| Item | Function | Application Notes |
|---|---|---|
| Authentic Chemical Standards | System suitability test compounds | Select 5-10 analytes distributed across retention time and m/z range [1] |
| Chromatographically Suitable Diluent | Solvent for SST samples | Must not interfere with analysis; typically matched to initial mobile phase conditions |
| Isotopically-Labelled Internal Standards | System stability assessment | Added to each sample to monitor system performance during analysis [1] |
| Pooled QC Samples | Intra-study reproducibility | Used to condition analytical platform and perform reproducibility measurements [1] |
| Standard Reference Materials (SRMs) | Inter-laboratory standardization | Certified reference materials for cross-laboratory data comparison [1] |
| Long-Term Reference (LTR) QC Samples | Longitudinal performance monitoring | Track system performance over extended periods [1] |
| Blank Samples | Contamination assessment | Analyze without injection to identify system contaminants [1] |

Troubleshooting Common SST Failures

Despite careful method development, system suitability testing may reveal performance issues requiring intervention. The following troubleshooting strategies address common SST failure modes.

Resolution Challenges

When chromatographic resolution falls below acceptance criteria (typically Rs ≥ 2.0), corrective actions may include: "Change of mobile phase polarity, increase of column length, [or] reducing particle size of stationary phase" [3]. Method adjustments should systematically address the underlying separation mechanism while maintaining other critical parameters.
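The reason these three actions help can be seen from the standard textbook resolution equation (often called the Purnell equation). It is not taken from [3] and is given here only as a supporting sketch; \(\alpha\) denotes the selectivity factor between the two peaks and \(k\) the retention factor of the later-eluting peak.

```latex
\[
R_s = \frac{\sqrt{N}}{4}\cdot\frac{\alpha - 1}{\alpha}\cdot\frac{k}{1 + k}
\]
```

Changing mobile phase polarity acts on \(\alpha\) and \(k\), while a longer column or smaller particles raise the plate count \(N\). Because \(R_s\) scales with \(\sqrt{N}\), doubling the plate count improves resolution by only about 41%, so selectivity changes are often the more effective lever.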

Precision Deviations

When relative standard deviation (RSD) exceeds acceptance criteria (typically ≤2.0%), investigators should "check sample preparation techniques, verify instrument performance, and examine autosampler stability" [2]. Precision failures often indicate inconsistent injection volumes, sample degradation, or instrumental drift.

Peak Tailing Issues

Peak tailing (tailing factor exceeding limits, typically ≤2.0) can be addressed by "cleaning or replacing columns, adjusting pH of mobile phase, or reducing sample concentration to minimize overloading effects" [2]. Peak tailing often indicates active sites in the chromatographic system or inappropriate mobile phase conditions.

Integration in Analytical Method Lifecycle

System suitability testing represents a critical component throughout the analytical method lifecycle rather than a standalone activity. During method development, system suitability tests establish baseline acceptance criteria reflecting the method's critical quality attributes [2]. Through method validation and transfer, SST parameters provide objective evidence of consistent performance across instruments and operators [2]. During routine use, "continuous monitoring during routine testing detect[s] subtle changes in system performance before they affect results" [2].

This integrated approach "ensures your analytical methods remain fit for purpose throughout their lifecycle, supporting effective quality control and regulatory compliance" [2]. The strategic application of SST data trends informs lifecycle management decisions, "including when to initiate method improvements or revalidation" [2].
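Trending of SST data can be sketched with a simple control band. The source does not prescribe a trending method, so the approach below (a Shewhart-style band of mean ± 3 standard deviations computed from historical values) is an assumption, as are the function names and the plate-count history.

```python
import statistics


def control_limits(history, k=3.0):
    """Lower and upper limits as mean -/+ k sample standard deviations."""
    mean = statistics.mean(history)
    sd = statistics.stdev(history)
    return mean - k * sd, mean + k * sd


def out_of_trend(history, new_value, k=3.0):
    """True when a new SST value falls outside the historical control band."""
    low, high = control_limits(history, k)
    return not (low <= new_value <= high)


# Hypothetical plate counts from previous passing SST runs.
plate_history = [5200, 5150, 5250, 5180, 5220]

print(out_of_trend(plate_history, 5190))  # within the band
print(out_of_trend(plate_history, 4800))  # well below the band
```

A value such as 4800 could still pass a fixed criterion of N ≥ 2000 while signalling column degradation relative to history, which is the kind of subtle change that trending is meant to surface.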

System Suitability Testing stands as the final gatekeeper of data quality, providing scientifically sound verification that analytical systems perform appropriately before sample analysis. Through standardized assessment of critical parameters including resolution, precision, and peak symmetry, SST ensures the integrity of analytical results supporting pharmaceutical research and drug development. When implemented within a robust regulatory framework and integrated throughout the method lifecycle, system suitability testing provides researchers with verified confidence in their data, ultimately supporting the development of safe and effective medicines. As analytical technologies advance, the fundamental principles of SST remain essential for maintaining scientific rigor in chemical measurement.

In pharmaceutical analysis, ensuring the reliability of data is paramount. Two fundamental processes that underpin data quality are method validation and system suitability testing (SST). Though often discussed together, they serve distinct and complementary roles in the analytical method lifecycle. Method validation is a comprehensive, one-time process that establishes a method's performance characteristics, proving it is fit-for-purpose [5] [6]. In contrast, system suitability testing is an ongoing, routine verification that the entire analytical system—instrument, column, reagents, and operator—functions correctly on the specific day of analysis [7] [2].

Understanding this distinction is critical for researchers, scientists, and drug development professionals. It ensures not only regulatory compliance but also the scientific integrity of the data generated throughout the drug development pipeline. This guide explores the unique purposes, protocols, and regulatory contexts of each process, framing them as the essential, interconnected pillars of quality in analytical science.

Core Conceptual Frameworks and Definitions

Method Validation: Establishing Fitness for Purpose

Method validation is the documented process of proving that an analytical procedure is suitable for its intended use [5]. It provides comprehensive evidence that the method consistently generates accurate, precise, and reliable data across its defined operational range. Validation is typically performed when developing a new method, significantly altering an existing one, or applying a method to a new product or matrix [5] [6].

The core objective is to characterize and quantify the method's performance by evaluating key parameters such as accuracy, precision, and specificity. This process creates a validated state for the method, which serves as the performance baseline for its entire operational lifetime [5].

System Suitability Testing (SST): The Point-of-Use Quality Gate

System suitability testing is a formal, prescribed test conducted prior to sample analysis to verify that the complete analytical system performs according to the criteria established during method validation [7] [2]. While method validation proves the procedure works in theory, SST proves the specific instrument, on that specific day, is capable of delivering the validated performance [7].

SST acts as the final quality gatekeeper before sample analysis begins. It is not a substitute for method validation but rather a complementary process that ensures the validated method performs as expected under actual conditions of use [2]. SST confirms real-time system functionality and helps prevent the costly re-analysis of samples due to system malfunctions [7].

Table: Core Definitions and Objectives

| Aspect | Method Validation | System Suitability Testing (SST) |
|---|---|---|
| Primary Objective | Establish method performance characteristics | Verify analytical system performance at time of use |
| Timing & Frequency | One-time (at method development, transfer, or major change) | Ongoing (before each analytical run or batch) |
| Scope | Comprehensive evaluation of the analytical procedure | Targeted check of the instrument-system combination |
| Regulatory Basis | ICH Q2(R2), USP <1225> [5] | USP <621>, FDA guidance [7] [8] [9] |

Comprehensive Comparison: Purpose, Timing, and Scope

The distinction between method validation and SST extends beyond their definitions into their fundamental purposes, timing within the method lifecycle, and scope of assessment. Method validation is a forward-looking, comprehensive investigation that defines a method's capabilities and limitations before it is deployed for routine use. It answers the question, "Is this method capable of consistently producing reliable data for its intended application?" [5] [6]. This requires a substantial investment of time and resources, often taking weeks or months to complete, and is a regulatory requirement for new method submissions [6].

In contrast, SST is a focused, point-of-use verification conducted with each use of the method. It answers the question, "Is my system working properly today?" [7] [2]. SST is a day-to-day operational check designed to catch performance drift or failure caused by factors such as column degradation, mobile phase decomposition, minor instrument malfunctions, or environmental fluctuations. Its scope is narrower, focusing only on the critical parameters needed to confirm the system's readiness for the immediate analysis [7].

This complementary relationship creates a robust quality framework: validation sets the performance standards, and SST ensures those standards are met every time the method is executed.

Table: Detailed Comparative Analysis of Method Validation vs. SST

| Comparison Factor | Method Validation | System Suitability Testing (SST) |
|---|---|---|
| Purpose & Philosophy | Establishes method reliability and fitness for purpose [5] | Verifies instrument-system functionality for a specific run [7] [2] |
| Timing in Lifecycle | Pre-implementation; at development, transfer, or major change [5] [6] | Pre-analysis; at the start of each batch or analytical run [7] |
| Scope of Assessment | Comprehensive (Accuracy, Precision, Specificity, Linearity, Range, Robustness, LOD/LOQ) [5] [6] | Targeted (Resolution, Precision, Tailing Factor, Plate Count, S/N) [7] [2] [9] |
| Regulatory Guidance | ICH Q2(R2), USP <1225> [5] | USP <621>, FDA guidance, ICH [7] [8] [9] |
| Resource Intensity | High (time, cost, materials) [6] | Relatively low (quick to perform) [6] |
| Outcome | Validated method and documented performance characteristics [5] | Pass/Fail decision for the analytical run [7] |

Experimental Protocols and Key Parameters

Method Validation Protocols and Parameters

Method validation involves a series of structured experiments to define the operational boundaries and reliability of an analytical procedure. The key parameters assessed are defined by guidelines such as ICH Q2(R2) and USP <1225> [5]. A critical component for assessing the method's real-world performance with actual samples is the Comparison of Methods experiment, which estimates systematic error or inaccuracy [10].

Protocol for Comparison of Methods Experiment:

- Purpose: To estimate inaccuracy or systematic error by comparing results from a test method against a comparative method using patient samples [10].

- Comparative Method: Ideally, a well-characterized reference method. If a routine method is used, discrepancies may require additional experiments (e.g., recovery, interference) to identify the source of error [10].

- Specimens: A minimum of 40 patient specimens is recommended, selected to cover the entire working range of the method and represent the expected spectrum of sample matrices [10].

- Experimental Design: Specimens should be analyzed by both methods within a short timeframe (e.g., 2 hours) to maintain stability. The study should span multiple days (minimum of 5 days) to account for run-to-run variability [10].

- Data Analysis: Data should be graphed (e.g., difference plot, comparison plot) for visual inspection to identify outliers or trends. Statistical analysis, such as linear regression (for wide analytical ranges) or a paired t-test (for narrow ranges), is used to quantify systematic error at medically relevant decision concentrations [10].
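The regression step of the data analysis can be sketched as follows. This is a minimal illustration assuming ordinary least squares, with the comparative method on the x-axis and the test method on the y-axis; the function names and the five data points are hypothetical (the protocol above recommends at least 40 specimens, and five are used only to keep the example short).

```python
def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def systematic_error(slope, intercept, decision_conc):
    """Estimated bias of the test method at a medical decision concentration."""
    return (intercept + slope * decision_conc) - decision_conc


# Hypothetical paired results (same units for both methods).
comparative = [50, 100, 150, 200, 250]
test_method = [53, 104, 155, 206, 257]

slope, intercept = linear_fit(comparative, test_method)
bias_at_200 = systematic_error(slope, intercept, decision_conc=200)
print(f"slope={slope:.3f}  intercept={intercept:.2f}  bias at 200 = {bias_at_200:.2f}")
```

Here the slope of 1.02 reflects a proportional error and the intercept of 2 a constant error; together they give an estimated bias of about 6 units at a decision level of 200.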

System Suitability Testing Protocols and Parameters

SST is performed by injecting a reference standard or mixture and measuring key chromatographic parameters against predefined acceptance criteria derived from method validation [7] [2]. A robust SST protocol is critical for ensuring daily data quality.

Protocol for System Suitability Testing:

- Develop SST Protocol: During method validation, define the specific parameters, acceptance criteria, and testing frequency (e.g., start of run, every 24 hours) [7].

- Prepare SST Solution: Use a reference standard at a concentration representative of a typical sample [7].

- Perform the Test: Conduct replicate injections (typically 5-6) to assess reproducibility [7].

- Evaluate Results: The software calculates SST parameters and compares them against criteria.

- Act on Outcome: Pass allows sample analysis to proceed. Fail requires immediate run stoppage, troubleshooting, and re-running SST after corrective actions [7].

Key SST Parameters and Acceptance Criteria:

- Resolution (Rs): Measures separation between adjacent peaks. A value of ≥ 2.0 is typically required for baseline separation [7] [2] [9].

- Tailing Factor (T): Measures peak symmetry. Acceptance criteria are typically between 0.8 and 1.5, indicating a symmetrical peak [7] [9].

- Theoretical Plates (N): Indicates column efficiency. A minimum plate count is required to ensure the column is performing adequately [7].

- Relative Standard Deviation (%RSD): Measures the reproducibility of replicate injections. Acceptance is typically ≤ 1.0% or 2.0% for peak areas or retention times [7] [9].

- Signal-to-Noise Ratio (S/N): Assesses detector sensitivity, especially for impurities. A minimum S/N of 10:1 is standard for quantitation [7] [8] [2].
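The evaluate and act steps of the protocol amount to comparing each measured parameter with its limits and returning a pass/fail decision. The sketch below uses the typical limits listed above; the minimum plate count of 2000 is an assumed placeholder, because the text states only that a method-specific minimum applies, and the dictionary keys and function name are hypothetical.

```python
# (lower limit, upper limit); None means that side is unbounded.
CRITERIA = {
    "resolution": (2.0, None),
    "tailing_factor": (0.8, 1.5),
    "plate_count": (2000, None),   # assumed placeholder; set per method
    "rsd_percent": (None, 2.0),
    "signal_to_noise": (10.0, None),
}


def evaluate_sst(measured, criteria=CRITERIA):
    """Compare measured SST parameters with their limits and list any failures."""
    failures = []
    for name, (low, high) in criteria.items():
        value = measured[name]
        too_low = low is not None and value < low
        too_high = high is not None and value > high
        if too_low or too_high:
            failures.append(name)
    return {"passed": not failures, "failures": failures}


# Hypothetical results from replicate injections of the SST solution.
outcome = evaluate_sst({
    "resolution": 2.4,
    "tailing_factor": 1.7,
    "plate_count": 5200,
    "rsd_percent": 0.8,
    "signal_to_noise": 45.0,
})
print(outcome)
```

A failing outcome such as this one (tailing factor above 1.5) would stop the run until troubleshooting is complete and SST has been repeated.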

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing robust method validation and SST requires high-quality materials and reagents. The following table details key solutions and their critical functions in ensuring data integrity.

Table: Key Research Reagent Solutions and Their Functions

| Reagent/Material | Function in Analytical Quality Control |
|---|---|
| Certified Reference Standards | Provide traceable, high-purity materials for accurate quantification and system suitability testing. Essential for preparing SST solutions and for method validation experiments [7]. |
| Chromatography Columns | The stationary phase responsible for analyte separation. Column performance directly impacts critical SST parameters like resolution, tailing factor, and plate count [7]. |
| Qualified Mobile Phase Solvents | High-purity solvents and buffers form the mobile phase. Their quality and consistent preparation are vital for maintaining retention time reproducibility and baseline stability [7]. |
| System Suitability Test Mixes | Proprietary or custom-blended solutions containing specific analytes designed to challenge the system and verify performance against all SST parameters in a single injection [7]. |

Regulatory Landscape and Compliance Framework

The regulatory framework governing method validation and SST is well-established, with guidelines from international bodies providing clear expectations.

- Method Validation: Governed primarily by ICH Q2(R2) - "Validation of Analytical Procedures" and USP <1225> - "Validation of Compendial Procedures" [5]. These guidelines outline the specific performance characteristics that must be validated for a new method, depending on its category (e.g., identification, assay, impurity test) [5].

- System Suitability Testing: Primarily covered by USP <621> - "Chromatography," a mandatory general chapter [8] [9]. This chapter details the principles and allowable adjustments for chromatographic methods and underscores that SST is a prerequisite for analysis. Recent updates to USP <621>, effective May 2025, include refined definitions for "System Sensitivity" (signal-to-noise) and "Peak Symmetry," emphasizing their role in impurity methods [8]. Regulatory agencies like the FDA require documented evidence that SST was passed before sample analysis and mandate investigation of any failures [7] [9].

It is critical to distinguish these processes from Analytical Instrument Qualification (AIQ). As defined in USP <1058>, AIQ ensures the instrument itself is fit-for-purpose, independent of any specific method. SST, in contrast, is method-specific and verifies the entire system's performance on the day of analysis [9]. Together, AIQ, method validation, and SST form a comprehensive data quality triangle [9].

Method validation and System Suitability Testing are not interchangeable but are fundamentally interdependent components of a robust quality system in pharmaceutical analysis. Method validation provides the foundational proof that a procedure is capable of producing reliable data, while SST offers ongoing assurance that the validated performance is delivered with every use.

A deep understanding of their distinct roles—from purpose and timing to specific parameters and protocols—empowers scientists to design more reliable methods, operate more predictable analytical systems, and generate data of the highest integrity. As regulatory frameworks evolve, such as the recent updates to USP <621> and the move toward an Analytical Procedure Lifecycle approach, this foundational knowledge remains the cornerstone of scientific excellence in drug development.

System Suitability Testing (SST) serves as an indispensable gatekeeper in the analytical laboratory, providing the final verification that an entire chromatographic system is fit-for-purpose before valuable samples are analyzed [7]. In the high-stakes environment of pharmaceutical development, where a single analytical run can represent a significant investment of time and resources, SST acts as a critical quality assurance measure to prevent wasted effort and uphold the integrity of every data point generated [7]. This guide explores the core principles, parameters, and practical implementation of SST, framing it within the broader context of analytical research and drug development fundamentals.

System Suitability Testing is a formal, prescribed test of an analytical system's performance, conducted by injecting a reference standard and measuring the response against predefined acceptance criteria [7]. It is crucial to distinguish SST from method validation: while method validation proves a method is reliable in theory, SST confirms that the specific instrument, on a specific day, is capable of generating high-quality data according to the validated method's requirements [7]. This real-time verification is a proactive measure that protects against subtle performance shifts caused by a failing column, minor temperature fluctuations, or mobile phase degradation, issues that could otherwise quietly compromise an entire analytical run [7].

In regulated environments, SST is not merely a best practice but a mandatory requirement. The United States Pharmacopeia (USP) general chapter <621> provides the foundational framework for chromatographic testing, with recent updates to system suitability requirements becoming effective May 1, 2025 [8]. These updates, which include refined definitions for system sensitivity and peak symmetry, underscore the living nature of regulatory standards and the need for laboratories to maintain current awareness [8].

Core SST Parameters and Quantitative Acceptance Criteria

The parameters evaluated during SST are carefully chosen to reflect the most critical aspects of chromatographic separation. These metrics collectively quantify separation quality, column efficiency, and instrument reproducibility. The following table summarizes the key SST parameters and their critical roles in ensuring data integrity.

Table 1: Essential System Suitability Test Parameters and Purposes

| Parameter | Abbreviation | Purpose | Typical Acceptance Criteria |
|---|---|---|---|
| Resolution | Rs | Measures how well two adjacent peaks are separated; critical in complex matrices [7]. | Set during method validation; ensures separation meets standard [7]. |
| Tailing Factor | T | Measures peak symmetry; ideal peak has factor of 1.0 [7]. | Factor >1.0 indicates tailing, which can cause inaccurate integration [7]. |
| Plate Count | N | Also called column efficiency; measures number of theoretical plates in a column [7]. | Must be above minimum required count; decreases as column degrades [7]. |
| Relative Standard Deviation | %RSD | Measures instrument reproducibility from multiple injections of the same standard [7]. | Typically <1.0% or 2.0% for replicate injections [7]. |
| Signal-to-Noise Ratio | S/N | Assesses detector performance, especially for trace-level analysis [7]. | Minimum set to ensure sufficient method sensitivity [7]. |

The updated USP <621> guidelines provide specific directives for measuring two key parameters. For system sensitivity, the requirement applies when determining impurities, and the signal-to-noise (S/N) ratio must be measured using the pharmacopoeial reference standard, not a sample [8]. Furthermore, the S/N measurement must demonstrate that the limit of quantification (LOQ) meets the monograph requirement, which is typically based on an S/N of 10 [8]. For peak symmetry, the new definition provides a more precise calculation, emphasizing the need for consistent measurement at a specified percentage of the peak height (e.g., 5% or 10%) to ensure reliable and comparable results [8].
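For orientation, the pharmacopoeial expressions commonly given for these two quantities can be written as below. They are stated from the general chromatography chapters as generally understood, not quoted from [8], and the symbol definitions should be checked against the current official text before use.

```latex
\[
S/N = \frac{2H}{h}
\qquad\qquad
A_s = \frac{W_{0.05}}{2f}
\]
```

Here \(H\) is the height of the peak in the reference solution chromatogram, \(h\) is the peak-to-peak range of the baseline noise, \(W_{0.05}\) is the peak width at 5% of peak height, and \(f\) is the distance from the leading edge of the peak to the perpendicular dropped from the peak maximum, also at 5% height. The factor of 2 arises because \(h\) is a peak-to-peak noise range, so this definition is not numerically identical to a simple peak height over noise amplitude ratio. With \(W_{0.05} = a + b\) and \(f = a\), the symmetry expression matches the tailing factor form \(T = (a + b)/(2a)\).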

The SST Workflow: From Test to Action

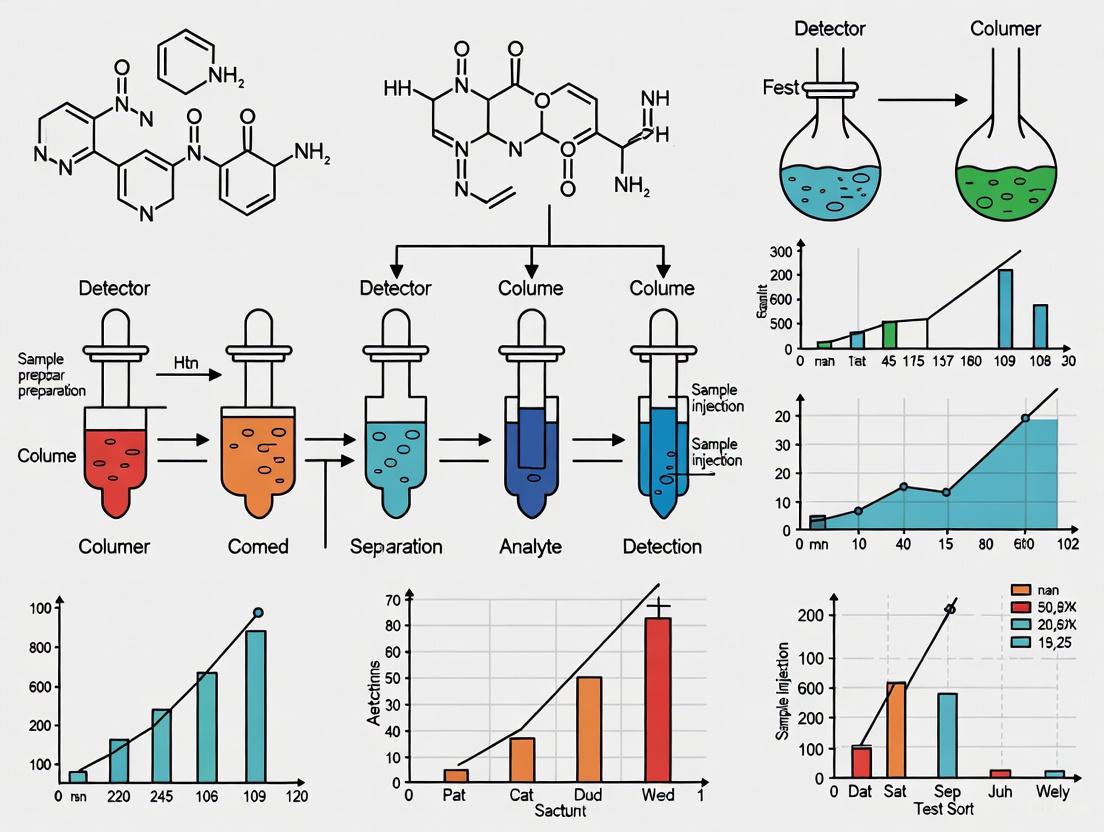

A successful SST protocol requires a clear, actionable plan for execution and response. The following diagram illustrates the logical workflow for implementing SST, from initial preparation through to the critical decision points that determine the fate of an analytical run.

Diagram 1: System Suitability Testing Workflow

This workflow transforms SST from a passive check into an active quality control mechanism. Adherence to this process ensures that every reported result is generated on a system that was demonstrably suitable for the task, thereby building an unassailable foundation of confidence in the data [7].
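The pass/fail loop at the heart of this workflow (pass: proceed to samples; fail: stop, troubleshoot, re-run SST) can be sketched in a few lines. The function name, the callback arguments, and the cap of three attempts are assumptions made for illustration; a laboratory's own procedure would define how many repeats are allowed and how failures are documented.

```python
def run_sst_workflow(run_sst, troubleshoot, max_attempts=3):
    """Repeat SST until it passes or attempts run out, troubleshooting between failures.

    run_sst: callable returning True when all acceptance criteria are met.
    troubleshoot: callable performing (and recording) a corrective action.
    """
    for attempt in range(1, max_attempts + 1):
        if run_sst():
            return {"proceed": True, "attempts": attempt}
        if attempt < max_attempts:
            troubleshoot()
    return {"proceed": False, "attempts": max_attempts}


# Simulated run: the first SST fails, the repeat after corrective action passes.
outcomes = iter([False, True])
actions = []
result = run_sst_workflow(
    run_sst=lambda: next(outcomes),
    troubleshoot=lambda: actions.append("corrective action"),
)
print(result, actions)
```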

Essential Research Reagents and Materials

The execution of robust SST relies on specific, high-quality materials. The following table details key research reagent solutions and their critical functions in the SST process.

Table 2: Essential Research Reagent Solutions for SST

| Reagent/Material | Function in SST | Critical Specifications |

|---|---|---|

| SST Reference Standard | Injected to generate system response; serves as the benchmark for performance [7]. | Certified Reference Material (CRM) or pharmacopoeial reference standard; concentration representative of sample [7] [8]. |

| HPLC-Grade Mobile Phase | Carries the analyte through the chromatographic system; its consistency is vital [7]. | Prepared accurately to method specification; degassed to prevent air bubbles [7]. |

| Qualified Chromatographic Column | Stationary phase where chemical separation occurs [7]. | Meets method specifications for chemistry, dimensions, and particle size; performance verified by plate count [7]. |

The Broader Context: SST's Role in Drug Development and Regulatory Compliance

The critical purpose of SST becomes even more apparent when viewed within the extensive and costly framework of drug development. The process from target identification to regulatory approval often exceeds 12 years with an average cost of about $2.6 billion [11]. In this context, the failure of a single analytical run due to an unsuitable system can cause significant delays and increase costs. SST serves as a vital checkpoint to "fail fast, fail early," preventing the propagation of unreliable data that could lead to costly late-stage failures or the need to repeat entire batches of samples [7] [11].

SST is a cornerstone of the modern quality landscape that includes Analytical Instrument Qualification (AIQ) and Computer Software Assurance (CSA). A proposed update to USP <1058> reframes it as Analytical Instrument and System Qualification (AISQ), introducing a three-phase integrated lifecycle approach: Specification and Selection, Installation and Qualification, and Ongoing Performance Verification (OPV) [12]. In this model, SST functions as a primary tool for OPV, providing ongoing, day-to-day evidence that the system remains in a state of control [12]. Furthermore, the FDA's recent final guidance on Computer Software Assurance promotes a risk-based approach to software validation, aligning with the SST principle of focusing assurance activities where they are most needed to ensure data integrity and patient safety [13].

System Suitability Testing is far more than a regulatory checkbox. It is a fundamental practice that directly supports the integrity of scientific data and the efficiency of research and development. By preventing wasted effort on compromised analytical runs and guaranteeing that every result is generated by a system proven to be fit-for-purpose, SST provides a definitive return on investment. As analytical technologies and regulatory frameworks evolve, the core purpose of SST remains constant: to act as the final, critical gatekeeper, ensuring that every data point reported can be met with qualified confidence.

System Suitability Testing (SST) is a critical quality control measure that verifies the performance of an analytical system at the time of analysis. It ensures that the entire system—comprising the instrument, reagents, analytical method, and operator—is fit for its intended purpose before sample analysis commences. SST is not merely a regulatory formality but a fundamental scientific requirement that provides documented evidence of data integrity and reliability. Within the pharmaceutical industry, SST serves as the practical bridge between validated analytical procedures and the daily operation of analytical equipment, guaranteeing that the results generated are accurate, precise, and defensible [7] [14].

The regulatory framework for system suitability is underpinned by a harmonized approach from major international bodies, including the U.S. Food and Drug Administration (FDA), the United States Pharmacopeia (USP), and the International Council for Harmonisation (ICH). These organizations provide the guidelines and enforceable standards that govern the implementation and acceptance criteria for SST. For instance, the FDA emphasizes that if an assay fails system suitability, the entire run is discarded, and no results are reported other than the failure itself, highlighting the critical role of SST in decision-making [14]. The ongoing collaboration between these bodies is evidenced by workshops aimed at increasing stakeholder awareness of USP standards, which are essential for regulatory predictability throughout the drug product lifecycle [15].

The Regulatory Framework and Its Key Players

United States Pharmacopeia (USP)

The USP publishes legally recognized standards for medicines in the United States, including comprehensive chapters detailing SST requirements. USP Chapter <621> specifically addresses chromatography and provides detailed procedures and acceptance criteria for system suitability parameters. USP Chapter <1058> on Analytical Instrument Qualification (AIQ) clarifies the distinct but complementary roles of AIQ and SST, establishing that SST is a method-specific test to verify performance at the time of use, while AIQ ensures the instrument itself is qualified [16] [14]. The USP's stance is unequivocal: SST is an integral part of chromatographic methods, and its failure invalidates the entire analytical run [14].

U.S. Food and Drug Administration (FDA)

The FDA enforces the use of compendial standards, including those in the USP, as required by the Federal Food, Drug, and Cosmetic Act [16]. Through its guidance documents and inspection activities, the FDA mandates that laboratories implement robust SST protocols. The agency has issued warning letters to firms for incorrect practices related to SST, underscoring its importance in current Good Manufacturing Practices (cGMP) [14]. The FDA's participation in the USP standards development process further solidifies the integral role of SST in the regulatory landscape for ensuring drug quality and safety [15].

International Council for Harmonisation (ICH)

The ICH provides internationally harmonized guidelines that form the foundation for method validation. While ICH Q2(R1) focuses on the validation of analytical procedures, it establishes the principles for accuracy, precision, and specificity that SST later monitors during routine use [17]. The robustness of an analytical method, a key validation parameter defined in ICH guidelines, is directly linked to system suitability testing. As stated in ICH guidelines, one consequence of robustness testing should be the establishment of a series of system suitability parameters to ensure the validity of the analytical procedure is maintained whenever used [18].

The following diagram illustrates the interconnected roles of these regulatory bodies and foundational processes in establishing a compliant system suitability testing regimen.

Core System Suitability Parameters and Acceptance Criteria

System suitability testing evaluates specific, predefined parameters that collectively demonstrate the chromatographic system's performance. These parameters are established during method validation and are checked against strict acceptance criteria before any sample analysis. The following table summarizes the key parameters, their definitions, and typical acceptance criteria as guided by USP, FDA, and ICH.

Table 1: Key System Suitability Parameters and Acceptance Criteria for Chromatographic Methods

| Parameter | Definition & Measurement | Regulatory Basis & Typical Acceptance Criteria |

|---|---|---|

| Resolution (Rs) | Measures separation of two adjacent peaks. Formula: Rs = 2 * (tR2 - tR1) / (W1 + W2) where tR is retention time and W is peak width at baseline [17]. | Critical for accurate quantification. USP/ICH typically require Rs > 1.5 between the analyte and the closest eluting potential interferent [17]. |

| Tailing Factor (T) | Assesses peak symmetry. Formula: T = (b + c) / 2a, where 'a' is the distance from peak front to the peak maximum at 5% height, and b+c is the total peak width at 5% height [17]. | A perfectly symmetrical peak has T = 1.0. USP typically specifies T ≤ 2.0 to ensure accurate integration and quantification [17] [7]. |

| Theoretical Plates (N) | Measures column efficiency. Calculated from the retention time and peak width [17]. | A higher 'N' indicates a more efficient column. A minimum value is set during method validation based on the performance of a new column. |

| Precision/Repeatability (%RSD) | Measures instrumental precision via the Relative Standard Deviation of peak areas or retention times from multiple replicate injections of a standard [7] [14]. | USP <621> provides calculations; for assays, %RSD ≤ 1.0% for 5-6 replicates is common. Criteria are stricter for impurities or narrower specifications [14]. |

| Signal-to-Noise Ratio (S/N) | Assesses detector sensitivity and the ability to distinguish the analyte signal from background noise [7] [14]. | Essential for trace analysis. A minimum S/N ≥ 10 is often required for quantification, and S/N ≥ 3 for detection limits [7]. |

Establishing System Suitability: Protocols and Methodologies

Experimental Workflow for SST in HPLC

A standardized protocol is essential for executing a reliable System Suitability Test. The following workflow, applicable to a High-Performance Liquid Chromatography (HPLC) method for related substance analysis, details the key experimental steps.

Table 2: Experimental Protocol for SST in an HPLC Related Substance Method

| Step | Activity | Details & Specification |

|---|---|---|

| 1. Preparation | Prepare System Suitability Solution | A mixture containing the main analyte and critical known impurities at specified concentrations. For Docetaxel analysis, a solution with ~2 mg in 2 mL diluent was used [17]. |

| 2. Chromatographic Conditions | Set Operating Parameters | As defined in the validated method. Example: Column: Zorbax XDB C18 (150 mm x 4.6 mm, 5µm); Flow Rate: 1.5 mL/min; Detection: 230 nm; Column Oven: 30°C [17]. |

| 3. System Equilibration | Condition the System | Flush the column with mobile phase until a stable baseline is achieved, indicating system readiness. |

| 4. Injection Sequence | Run SST and Check Precision | Inject the sequence: (1) Blank, (2) System Suitability Solution (to check resolution), (3) Five or six replicates of the Standard Solution (to check precision) [17] [14]. |

| 5. Data Analysis | Calculate SST Parameters | From the chromatograms, software automatically calculates Resolution, Tailing Factor, Theoretical Plates, and %RSD. |

| 6. Acceptance Check | Compare to Pre-set Criteria | Compare calculated parameters against the method's acceptance criteria (e.g., Resolution ≥ 3.0, Tailing Factor ≈ 1.0, %RSD ≤ 0.6%) [17]. Proceed only if all criteria are met. |

The Scientist's Toolkit: Essential Research Reagents and Materials

The integrity of SST relies on the quality of materials used. The following table lists essential reagents and their functions in conducting a robust SST.

Table 3: Essential Research Reagent Solutions for System Suitability Testing

| Reagent / Material | Function & Importance in SST |

|---|---|

| System Suitability Test Solution | A reference standard or mixture of standards used to challenge the analytical system. It verifies that key performance parameters (e.g., resolution, peak shape) are met [7]. |

| High-Purity Reference Standards | Certified reference materials of known identity and purity. The FDA expects the use of highly pure primary or secondary reference standards, qualified against a former standard and not from the same batch as test samples [14]. |

| Chromatography Column | The stationary phase specified in the method. Its performance is critical for achieving the required separation, efficiency (theoretical plates), and peak symmetry [17] [7]. |

| HPLC-Grade Solvents & Mobile Phase | High-purity solvents and mobile phase components are essential to minimize background noise, maintain detector stability, and prevent column contamination that could degrade performance [1]. |

| SST for Non-Chromatographic Methods (e.g., ELISA, SDS-PAGE) | For ELISA, verifying that control standards fall within the manufacturer's specification acts as an SST [14]. For SDS-PAGE, a well-separated molecular weight marker serves as the SST [14]. |

Determining SST Limits via Robustness Testing

A scientifically rigorous approach to setting SST limits involves robustness testing, as defined by ICH. Robustness evaluates the capacity of an analytical procedure to remain unaffected by small, deliberate variations in method parameters (e.g., mobile phase pH, flow rate, column temperature) [18]. The experimental design for robustness often uses fractional factorial or Plackett-Burman designs to efficiently examine multiple factors simultaneously. The system suitability limits can then be defined based on the "worst-case" results from the robustness test. This ensures that the SST criteria are wide enough to accommodate normal operational variations but tight enough to detect meaningful performance degradation, thereby guaranteeing the method remains valid throughout its use [18].
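The "worst-case" idea can be illustrated with a short sketch. It assumes that each SST parameter was recorded for every robustness run and that the limit is simply the least favourable value observed. The function name and all numbers are hypothetical, and a laboratory may apply an additional margin or statistical treatment that is not shown here.

```python
from typing import Dict, List


def worst_case_limit(values: List[float], higher_is_better: bool) -> float:
    """Return the least favourable value observed across robustness runs."""
    if not values:
        raise ValueError("at least one robustness result is required")
    return min(values) if higher_is_better else max(values)


# Hypothetical results from eight robustness runs (not taken from the source).
robustness_results: Dict[str, List[float]] = {
    "resolution": [2.4, 2.1, 2.6, 1.9, 2.3, 2.2, 2.5, 2.0],
    "tailing_factor": [1.1, 1.3, 1.2, 1.4, 1.2, 1.1, 1.3, 1.2],
}

proposed_limits = {
    # Resolution should stay high, so its limit is the lowest value seen.
    "resolution_min": worst_case_limit(robustness_results["resolution"], higher_is_better=True),
    # Tailing should stay low, so its limit is the highest value seen.
    "tailing_factor_max": worst_case_limit(robustness_results["tailing_factor"], higher_is_better=False),
}
print(proposed_limits)  # {'resolution_min': 1.9, 'tailing_factor_max': 1.4}
```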

System Suitability Testing is a non-negotiable pillar of pharmaceutical analysis, firmly embedded in the regulatory requirements of the FDA, USP, and ICH. It is the final, practical checkpoint that ensures an analytical method, executed on a specific instrument on a specific day, produces data that is reliable, accurate, and fit for its intended purpose—whether for releasing a drug product, supporting its stability, or ensuring patient safety. By understanding the regulatory landscape, meticulously implementing the core parameters and experimental protocols, and leveraging robustness testing to set scientifically justified limits, researchers and drug development professionals can build an unassailable foundation of data integrity and regulatory compliance.

Implementing SST: Key Parameters, Protocols, and Best Practices

System Suitability Testing (SST) is a critical pharmacopeial requirement that verifies the performance of the chromatographic system at the time of analysis. According to the United States Pharmacopeia (USP), system suitability tests are an integral part of chromatographic methods, used to verify that the resolution and reproducibility of the chromatographic system are adequate for the intended analysis [16]. These tests are based on the concept that the equipment, electronics, analytical operations, and samples constitute an integral system that can be evaluated as a whole [16]. SST serves as a final checkpoint confirming that the entire analytical system—comprising the instrument, column, mobile phase, and software—is functioning properly for a specific method on the day of analysis [9]. This testing is distinct from Analytical Instrument Qualification (AIQ), which ensures instruments are fit for purpose independent of any specific method [9].

The core principle of SST is to detect any inadequacies in the chromatographic system before valuable samples are analyzed, thereby providing assurance that the system will yield reliable and reproducible results. Regulatory authorities, including the FDA, emphasize that if SST results fall outside acceptance criteria, the analytical run may be considered invalid [9]. This underscores the critical role of SST in maintaining data integrity and regulatory compliance in pharmaceutical analysis and drug development.

Core SST Parameters and Acceptance Criteria

System suitability testing evaluates several key chromatographic parameters to ensure optimal system performance. The four core parameters—resolution, precision, tailing factor, and theoretical plates (plate count)—provide a comprehensive assessment of separation efficiency, analytical precision, peak morphology, and column efficiency.

Table 1: Core System Suitability Parameters and Their Acceptance Criteria

| Parameter | Definition | Acceptance Criteria | Scientific Basis |

|---|---|---|---|

| Resolution (Rₛ) | Measure of separation between two adjacent peaks [19]. | ≥ 1.5 [19] [3] [9] | Ensures baseline separation for accurate quantitative analysis. |

| Precision | Measure of repeatability for replicate injections of a standard [9]. | %RSD ≤ 2.0% for peak areas and retention times (typically from 5-6 injections) [3] [9] | Demonstrates the system's reliability and analytical sensitivity [20]. |

| Tailing Factor (T) | Measure of peak symmetry [19] [3]. | ≤ 2.0 [3] [9] | Indicates well-behaved chromatography and a healthy column. |

| Theoretical Plates (N) | Measure of column efficiency [19] [3]. | > 2000 is generally recommended [3] | A higher number indicates a more efficient column. |

These parameters are interdependent. For instance, a column with a sufficient number of theoretical plates contributes to achieving the required resolution and acceptable peak symmetry. The acceptance criteria are derived from pharmacopeial standards and are designed to be the minimum performance requirements before any sample analysis can proceed.

Experimental Protocols for SST

General Workflow for System Suitability Testing

A standardized workflow ensures that system suitability testing is performed consistently and correctly. The process begins with preparation and ends with a data-driven decision on whether the system is fit for use.

Detailed Methodology

The following protocols detail how to execute the tests for each core SST parameter.

1. Resolution (Rₛ)

- Procedure: Separately inject standard solutions of the two components of interest. Alternatively, use a system suitability reference solution containing a pair of closely eluting peaks that are critical to the separation. From the resulting chromatogram, measure the retention time of the second peak (tᵣ₂) and the first peak (tᵣ₁), and the baseline peak widths of the first peak (W₁) and the second peak (W₂).

- Calculation: Apply the USP formula to calculate resolution [19] [3]: \[ R_s = \frac{2(t_{R2} - t_{R1})}{W_1 + W_2} \] where W₁ and W₂ are the peak widths at the baseline.

- Troubleshooting: If resolution is below 1.5, consider adjusting the mobile phase composition (polarity, pH), increasing the column length, or using a stationary phase with a smaller particle size [3].
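The resolution calculation above translates directly into code. This is a minimal sketch with hypothetical retention times and widths; the function name is illustrative, and all inputs must share the same time unit.

```python
def resolution(t_r1: float, t_r2: float, w1: float, w2: float) -> float:
    """Resolution from retention times and baseline peak widths.

    t_r1, t_r2: retention times of the first and second peak.
    w1, w2: baseline widths of the first and second peak.
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * (t_r2 - t_r1) / (w1 + w2)


# Hypothetical critical pair: peaks at 4.0 and 5.0 min, each 0.5 min wide.
rs = resolution(t_r1=4.0, t_r2=5.0, w1=0.5, w2=0.5)
print(rs, rs >= 1.5)  # 2.0 True
```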

2. Precision (Repeatability)

- Procedure: Prepare a standard solution as specified in the method. Inject this solution multiple times (typically n=5 or 6) using the same chromatographic conditions [3].

- Calculation: For the peak areas (and/or retention times) from the replicate injections, calculate the Relative Standard Deviation (RSD) [9]: \[ \%RSD = \frac{\text{Standard Deviation}}{\text{Mean}} \times 100\% \] The %RSD for both area and retention time should typically be Not More Than (NMT) 2.0% [21] [9].
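As a worked illustration of the precision calculation, the sketch below computes %RSD for a set of replicate peak areas. It assumes the sample standard deviation (n − 1 denominator), which is the usual choice for a small number of replicates; the peak areas and the function name are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def percent_rsd(values: Sequence[float]) -> float:
    """Relative standard deviation in percent, using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("at least two replicate values are required")
    average = mean(values)
    if average == 0:
        raise ValueError("mean of the replicates must be non-zero")
    return stdev(values) / average * 100.0


# Hypothetical peak areas from five replicate injections of the standard.
areas = [100.0, 101.0, 99.0, 100.0, 100.0]
rsd = percent_rsd(areas)
print(f"{rsd:.2f}% -> {'pass' if rsd <= 2.0 else 'fail'}")  # 0.71% -> pass
```

The same function can be applied to the retention times of the replicates.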

3. Tailing Factor (T)

- Procedure: Inject a standard solution and obtain a chromatogram with a well-defined peak. Measure the peak widths as per the USP definition.

- Calculation: Calculate the tailing factor using the formula [3]: \[ T = \frac{a + b}{2a} \] where, at 5% of the peak height, 'a' is the distance from the leading edge to the peak maximum and 'b' is the distance from the peak maximum to the tailing edge. A value of 1 indicates a perfectly symmetrical peak. Values NMT 2.0 are generally required [9]. Peak tailing often results from multiple analyte retention mechanisms and can be reduced by optimizing mobile phase pH or using end-capped stationary phases [3].
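The tailing factor formula can be checked with a short sketch. The half-widths below are hypothetical values measured at 5% of the peak height, and the function name is illustrative.

```python
def tailing_factor(a: float, b: float) -> float:
    """Tailing factor T = (a + b) / (2a), with both widths measured at 5% peak height.

    a: width of the leading (front) half of the peak.
    b: width of the tailing (rear) half of the peak.
    """
    if a <= 0 or b <= 0:
        raise ValueError("half-widths must be positive")
    return (a + b) / (2.0 * a)


# Hypothetical peak: front half-width 3.0 s, rear half-width 4.5 s.
t = tailing_factor(a=3.0, b=4.5)
print(t, t <= 2.0)  # 1.25 True
```

A symmetrical peak (a equal to b) returns exactly 1.0, which matches the statement above.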

4. Theoretical Plates (Plate Count, N)

- Procedure: Inject a standard solution and obtain a chromatogram. Measure the retention time (tᵣ) and the peak width at half height (W₁/₂) or at the baseline (W).

- Calculation: Calculate the column efficiency using one of the following formulas [19] [3]: \[ N = 16 \left( \frac{t_R}{W} \right)^2 \quad \text{or} \quad N = 5.54 \left( \frac{t_R}{W_{1/2}} \right)^2 \] While the acceptance criterion can be method-specific, the number of theoretical plates should generally be Not Less Than (NLT) 2000 for the peak of interest [3].
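Both plate-count formulas are easy to express in code. The sketch below uses hypothetical retention times and widths; the function names are illustrative, and the retention time and width must be in the same unit.

```python
def plates_from_baseline_width(t_r: float, w: float) -> float:
    """Plate count from the baseline peak width: N = 16 * (tR / W)^2."""
    if w <= 0:
        raise ValueError("peak width must be positive")
    return 16.0 * (t_r / w) ** 2


def plates_from_half_height_width(t_r: float, w_half: float) -> float:
    """Plate count from the width at half height: N = 5.54 * (tR / W_half)^2."""
    if w_half <= 0:
        raise ValueError("peak width must be positive")
    return 5.54 * (t_r / w_half) ** 2


# Hypothetical peak: retention time 10.0 min, baseline width 0.5 min.
n = plates_from_baseline_width(t_r=10.0, w=0.5)
print(n, n >= 2000)  # 6400.0 True
```

The two formulas agree only for an ideal Gaussian peak, so the method should state which width is used.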

The Scientist's Toolkit: Essential Reagents and Materials

Successful and reproducible system suitability testing requires the use of high-quality, standardized materials.

Table 2: Essential Research Reagent Solutions and Materials for SST

| Item | Function & Importance |

|---|---|

| Chromatographically Pure Water | Used for preparing mobile phases and standards. Critical for minimizing baseline noise and ghost peaks, especially in gradient elution [1]. |

| HPLC-Grade Solvents | High-purity solvents (e.g., Methanol, Acetonitrile) for the mobile phase. Impurities can affect detection (UV) and degrade column performance [22]. |

| Certified Reference Standards | Well-characterized compounds of known purity and identity used to prepare system suitability test solutions. They are essential for generating accurate and reproducible SST results [1] [9]. |

| Characterized HPLC Column | A column that has been tested and demonstrated to provide the required efficiency (theoretical plates), selectivity, and peak shape for the specific method [20]. |

| System Suitability Test Solution | A solution containing a small number (e.g., five to ten) of authentic chemical standards that are distributed across the retention time and mass range to assess the full analytical window of the system prior to sample analysis [1]. |

Regulatory and Practical Considerations

System suitability testing is a mandatory requirement in regulated laboratories. The FDA has stated that if SST results fall outside acceptance criteria, the analytical run may be invalidated [9]. It is crucial to understand that SSTs are method-specific and are not a substitute for initial Analytical Instrument Qualification (AIQ) or Analytical Method Validation [9] [23].

The USP chapter <621> provides a degree of flexibility, permitting certain adjustments to methods (e.g., changes to column dimensions, flow rate, or mobile phase pH) without full re-validation, provided that all system suitability requirements are still met [9]. This allows laboratories to adapt methods for optimization while maintaining regulatory compliance.

For robust method development, SST parameters should be challenged during validation through robustness studies, where deliberate, small variations are made to operating parameters (e.g., mobile phase composition ±2%, column temperature ±5°C, flow rate ±0.1 mL/min) to ensure the method remains reliable under normal operational fluctuations [20].
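To make the idea of deliberate variations concrete, the sketch below enumerates the low and high level of each factor. It uses a full two-level factorial for simplicity; screening designs with fewer runs are common in practice and are not shown. The nominal conditions are hypothetical, the ±2% on mobile phase composition is read as ±2 percentage points of organic modifier (an assumption), and the function name is illustrative.

```python
from itertools import product
from typing import Dict, List, Tuple

# (nominal value, +/- delta). Nominal values are hypothetical;
# the deltas follow the example ranges quoted in the text.
FACTORS: Dict[str, Tuple[float, float]] = {
    "organic_percent": (40.0, 2.0),   # % organic modifier, assumed absolute +/- 2
    "column_temp_c": (30.0, 5.0),     # column temperature in degrees C
    "flow_ml_min": (1.5, 0.1),        # flow rate in mL/min
}


def two_level_full_factorial(factors: Dict[str, Tuple[float, float]]) -> List[Dict[str, float]]:
    """Return every combination of the low and high level of each factor."""
    names = list(factors)
    levels = [(round(nominal - delta, 3), round(nominal + delta, 3))
              for nominal, delta in factors.values()]
    return [dict(zip(names, combo)) for combo in product(*levels)]


runs = two_level_full_factorial(FACTORS)
print(len(runs))  # 8
print(runs[0])    # {'organic_percent': 38.0, 'column_temp_c': 25.0, 'flow_ml_min': 1.4}
```

Each run would then be executed and its SST parameters recorded, giving the data from which limits can be set.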

The four core system suitability parameters—Resolution, Precision, Tailing Factor, and Theoretical Plate Count—form the foundation of reliable chromatographic analysis in drug development. They provide a standardized, quantitative means to ensure an analytical system is performing adequately and is "fit-for-purpose" at the moment of use. Adherence to the established acceptance criteria is not merely a regulatory formality but a fundamental scientific practice that guarantees the integrity, accuracy, and reproducibility of generated data. As such, a thorough understanding and rigorous application of SST are indispensable for every researcher and scientist working in pharmaceutical analysis.

System Suitability Testing (SST) serves as a critical verification step to ensure that an analytical system operates as intended for its specific application at the time of analysis [14]. It confirms the fitness-for-purpose of the entire chromatographic system—comprising the instrument, column, software, and reagents—immediately before a batch of samples is analyzed [7]. The foundation of a reliable SST lies in the careful selection of analytes and the precise preparation of reference standards used in the SST solution. This guide details the technical considerations and methodologies for these fundamental processes, providing a foundation for robust system suitability testing protocols in pharmaceutical research and development.

Core Principles and Purpose of SST

System Suitability Testing is a mandatory quality control measure in regulated laboratories, required by pharmacopoeias such as the United States Pharmacopeia (USP) and the European Pharmacopoeia (Ph. Eur.) [14]. It is a method-specific check performed each time an analysis is conducted, distinguishing it from the broader Analytical Instrument Qualification (AIQ) [14]. The primary purpose of SST is to detect subtle performance shifts that can compromise data integrity, such as column degradation, mobile phase issues, or instrumental drift, thereby preventing the costly analysis of samples on an unsuitable system [7]. If an SST fails, the entire analytical run must be discarded, and no sample results can be reported [14].

Selection of Analytes for SST Solutions

The choice of analytes incorporated into the system suitability test solution is a deliberate process that directly impacts the test's effectiveness.

Ideal Characteristics of SST Analytes

An analyte selected for an SST solution should possess the following characteristics [24]:

- Easily available and cost-effective

- Chemically stable under normal storage and analytical conditions

- Good UV absorbance or detector response for the intended technique

- Good solubility in common solvents used in the method

- Relevance to the analytical process

Strategies for Composition of the SST Solution

The composition of the SST solution can be approached in several ways, depending on the analytical method's goals.

- SST Marker: A common approach involves using a "System Suitability Test marker" or "SST marker," which is a mixture containing all critical components upon which the SST acceptance criteria are decided [24]. This provides a comprehensive check of the system's performance for all relevant analytes in a single injection.

- Retention Time (RT) Marker: For methods with impurities of varying polarities, a Retention Time (RT) marker is recommended as the SST standard. This cost-effective approach helps monitor and control for variations in analyte retention times [24].

- Analyte-Specific Spiking: In mass spectrometry (LC-MS/MS), the SST material is typically specific to the assay and often includes the target analyte(s) and their corresponding internal standard(s) [25]. For assays detecting low-abundance species, it can be beneficial to spike known concentrations of impurity or variant peptides into a standard digest to explicitly define the method's sensitivity and detection limits [26].

Selecting the Number of SST Parameters

A robust SST should evaluate a sufficient number of chromatographic parameters to ensure overall system performance. It is recommended to include at least two chromatographic parameters in the SST, with 2 to 5 parameters being typical [24]. The specific parameters chosen should be based on the method's critical attributes, such as the presence of closely eluting peaks that require resolution checks [24].

Preparation of Reference Standards

The preparation of the reference standard solution used for SST is critical for obtaining consistent and reliable results.

Solution Preparation Guidelines

During establishment of the SST, several practical points should be considered to ensure the solution's integrity [14]:

- The sample and reference standard should be dissolved in the mobile phase, or in a diluent with a similar proportion of organic solvent, whenever possible.

- The concentration of the sample and reference standard should be comparable to ensure representative performance.

- If filtration is necessary, one must account for the potential adhesion of the analyte to the filter membrane, which can be particularly significant at lower analyte concentrations.

Concentration Selection for SST Solutions

The concentration of the SST solution should be chosen based on the analytical method's specific challenges and requirements [25].

- For methods with a challenging Lower Limit of Quantitation (LLoQ), setting the SST concentration at 1x or 1.2x the LLoQ is advisable.

- If an assay is prone to carry-over, an SST concentration at the Upper Limit of Quantitation can help assess this issue.

- A general recommendation for initial setup is to use a concentration of 1.5x or 2x the LLoQ, which provides a confident signal to assess sensitivity and distinguish it from a complete loss of signal [25].
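The three rules of thumb above can be captured in a small helper. This is only a sketch of the selection logic as described: the function name and the `concern` labels are hypothetical, and the returned range is a starting point for method development, not a validated setting.

```python
from typing import Tuple


def suggest_sst_concentration(lloq: float, uloq: float,
                              concern: str = "general") -> Tuple[float, float]:
    """Return a (low, high) SST concentration range for the stated concern.

    lloq, uloq: lower and upper limits of quantitation, in the same units.
    concern: "sensitivity" (challenging LLoQ), "carry_over", or "general".
    """
    if not 0 < lloq < uloq:
        raise ValueError("expected 0 < lloq < uloq")
    if concern == "sensitivity":
        return (1.0 * lloq, 1.2 * lloq)
    if concern == "carry_over":
        return (uloq, uloq)
    if concern == "general":
        return (1.5 * lloq, 2.0 * lloq)
    raise ValueError(f"unknown concern: {concern!r}")


# Hypothetical assay range: LLoQ 2.0 ng/mL, ULoQ 1000.0 ng/mL.
print(suggest_sst_concentration(lloq=2.0, uloq=1000.0))  # (3.0, 4.0)
```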

Regulatory and Best Practice Considerations

Regulatory agencies expect that SST criteria are established scientifically, supported by trend data from multiple lots [24]. The U.S. Food and Drug Administration (FDA) has clarified that high-purity primary or secondary reference standards should be used for SST in chromatographic methods. These standards must be qualified against a former reference standard and cannot originate from the same batch as the test samples, ensuring independence and objectivity [14].

SST Parameters and Acceptance Criteria

The performance of the SST solution is evaluated against predefined acceptance criteria for key chromatographic parameters. These criteria are typically established during method validation and are derived from the method's performance characteristics and robustness testing [27].

Table 1: Key Chromatographic Parameters for System Suitability Testing

| Parameter | Description | Typical Acceptance Criteria | Rationale |

|---|---|---|---|

| Precision (%RSD) | Measure of injection repeatability from multiple replicates [14] [7]. | RSD ≤ 2.0% for 5-6 replicates is common [14]. | Ensures the instrument provides consistent, reproducible results essential for accurate quantification [7]. |

| Resolution (Rs) | Measure of separation between two adjacent peaks [14] [7]. | Rs ≥ 1.5 between critical pairs [24]. | Verifies that the method can cleanly separate compounds of interest from potential interferences [14]. |

| Tailing Factor (T) | Measure of peak symmetry [14] [7]. | Typically between 0.8 and 1.8 [24]. | Asymmetric peaks (tailing or fronting) can lead to inaccurate integration and quantification [7]. |

| Theoretical Plates (N) | Measure of column efficiency [7] [24]. | Method-specific, e.g., N ≥ 4000 [24]. | A higher plate count indicates a more efficient column, which is vital for achieving good separations [7]. |

| Signal-to-Noise (S/N) | Ratio of analyte signal to background noise [7] [24]. | S/N ≥ 10 for quantitation limit solutions [24]. | Assesses detector performance and confirms the method's sensitivity is adequate, particularly for trace analysis [7]. |

| Capacity Factor (k') | Measure of a peak's retention relative to the void volume [14] [24]. | Method-specific; ensures peaks are well-retained and free from the void volume [14]. | Confirms that the analyte interacts with the stationary phase, which is necessary for a reliable separation [14]. |
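The table gives no formula for the capacity factor, so the sketch below assumes the standard chromatographic definition k' = (tR − t0) / t0, where t0 is the retention time of an unretained compound (the void time). The function name and the numeric values are hypothetical.

```python
def capacity_factor(t_r: float, t_0: float) -> float:
    """Capacity (retention) factor k' = (tR - t0) / t0.

    t_r: retention time of the analyte peak.
    t_0: retention time of an unretained compound (void time), same units.
    """
    if t_0 <= 0:
        raise ValueError("void time must be positive")
    if t_r < t_0:
        raise ValueError("retention time cannot be shorter than the void time")
    return (t_r - t_0) / t_0


# Hypothetical run: analyte elutes at 6.0 min, void time 1.5 min.
k_prime = capacity_factor(t_r=6.0, t_0=1.5)
print(k_prime)  # 3.0
```

A value near zero means the peak elutes with the void volume, which is the situation the acceptance criterion is meant to exclude.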

Establishing Scientifically Sound Criteria

SST limits should not be arbitrary. A robust strategy involves deriving SST limits from a robustness test [27]. In a robustness test, method parameters (e.g., pH, flow rate, mobile phase composition) are deliberately varied within a realistic range. The performance data generated across these variations provide an empirical basis for setting realistic and suitable SST limits that the system should be able to meet, even with the minor changes expected during method transfer between laboratories or instruments [27].

Experimental Protocol: Preparation and Execution of an SST

The following workflow outlines the key steps for the preparation of an SST solution and its use in an analytical run.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Materials for SST Solution Preparation and Analysis

| Item | Function & Importance |
|---|---|
| High-Purity Reference Standard | A primary standard, qualified against a pharmacopoeial standard, used to prepare the SST solution. It must not originate from the same batch as the test samples [14]. |
| SST Marker / RT Marker | A ready-made mixture of critical analytes used to verify that the chromatographic system performs as expected for all key separations and retentions [24]. |
| Internal Standards (IS) | Isotopically labeled analogs of the target analyte(s), particularly critical for mass spectrometry. They correct for variability in sample preparation and instrument response [26] [25]. |
| Mobile Phase Solvents | High-purity solvents and buffers used to dissolve the SST standards and as the eluent. Their quality and composition are critical for reproducible retention times and peak shapes [14]. |
| Vials and Caps | Chemically inert containers for holding the SST solution. Proper sealing prevents evaporation and contamination, ensuring solution stability during the analytical run. |

The selection of appropriate analytes and the meticulous preparation of reference standards form the bedrock of a scientifically sound and regulatory-compliant System Suitability Test. By adhering to the principles outlined in this guide—selecting stable, relevant analytes, preparing standards with precision, and establishing criteria based on robustness data—researchers and drug development professionals can ensure their chromatographic systems consistently generate accurate, precise, and defensible data. A well-designed SST solution acts as the final gatekeeper, safeguarding data integrity and ultimately contributing to the quality and safety of pharmaceutical products.

System Suitability Testing (SST) serves as the final gatekeeper of data quality in analytical chemistry, providing documented evidence that an entire analytical system—comprising instrument, column, reagents, and software—operates within pre-established performance limits immediately before sample analysis [7]. Unlike method validation, which proves a method is reliable in theory, SST demonstrates that a specific instrument on a specific day generates high-quality data according to validated method requirements [7]. This verification is particularly crucial in pharmaceutical analysis and regulated environments, where failure to implement proper SST protocols can result in regulatory actions [14].

The fundamental purpose of SST is to prevent wasted effort and maintain data integrity by confirming instrument performance, assessing method stability, and guaranteeing that all analytical results derive from a system verified as fit-for-purpose [7]. This protocol outlines the comprehensive, step-by-step process from initial test injection to final run acceptance, providing researchers and drug development professionals with a standardized framework for implementing SST across chromatographic applications.

Prerequisites and Preparation

Materials and Reagents

Before initiating system suitability testing, ensure all necessary materials and reagents are available and properly documented. The selection of appropriate reference standards is critical for obtaining meaningful SST results.

Table 1: Essential Research Reagent Solutions for System Suitability Testing

| Reagent/Material | Specification | Function in SST Protocol |
|---|---|---|
| Reference Standard | High-purity primary or secondary standard, qualified against former reference standard [14] | Serves as test analyte for system performance verification |
| Mobile Phase | Prepared according to method specifications, filtered and degassed | Carries analyte through chromatographic system |
| System Suitability Test Solution | Reference standard dissolved in mobile phase or similar organic solvent [14] | Injected to generate chromatographic data for evaluation |
| Blank Solution | Mobile phase or sample diluent [1] | Identifies system contamination prior to sample analysis |

Instrument and Method Verification

Prior to SST execution, verify that the analytical instrument has undergone appropriate Analytical Instrument Qualification (AIQ). SST should not be confused with or substitute for AIQ [14]. While AIQ proves the instrument operates as intended by the manufacturer across defined operating ranges, SST demonstrates that the specific method works correctly on the qualified instrument at the time of analysis [14].

Confirm that the chromatographic method parameters are correctly configured, including:

- Mobile phase composition and flow rate

- Column temperature (if applicable)

- Detection wavelengths or mass spectrometry parameters

- Injection volume

- Data acquisition settings

Step-by-Step SST Protocol

Pre-Analytical System Checks

Step 1: System Purification and Equilibration. Begin with a blank gradient containing no sample to reveal potential impurities in solvents or contamination within the separation system [1]. Monitor the baseline for unusual noise, drift, or ghost peaks that might indicate contamination. Following the blank injection, equilibrate the system with the initial mobile phase composition until a stable baseline is achieved, typically requiring 5-10 column volumes.
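To translate "5-10 column volumes" into pumping time, the column's liquid volume can be estimated from its dimensions. The sketch below assumes a cylindrical column and a total porosity of 0.7, which is a rough placeholder rather than a value from the source. The column dimensions and flow rate are likewise hypothetical.

```python
import math


def column_volume_ml(length_mm, inner_diameter_mm, porosity=0.7):
    """Estimate the liquid volume of a packed column in mL.

    Geometric cylinder volume multiplied by an assumed total porosity
    (0.7 is a placeholder; use the value appropriate for the packing).
    """
    radius_cm = inner_diameter_mm / 2 / 10
    length_cm = length_mm / 10
    return math.pi * radius_cm**2 * length_cm * porosity  # cm^3 equals mL


def equilibration_minutes(column_volumes, volume_ml, flow_ml_min):
    """Time needed to pump the requested number of column volumes."""
    return column_volumes * volume_ml / flow_ml_min


# Hypothetical 150 mm x 4.6 mm column run at 1.0 mL/min.
v_col = column_volume_ml(length_mm=150, inner_diameter_mm=4.6)
for n in (5, 10):
    print(f"{n} column volumes: {equilibration_minutes(n, v_col, 1.0):.1f} min")
```

The estimate is only a starting point. A stable baseline remains the actual criterion for equilibration.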

Step 2: Preparation of SST Reference Solution. Prepare the system suitability test solution by dissolving a reference standard in mobile phase or in a diluent containing a similar proportion of organic solvent [14]. The concentration should be representative of typical sample concentrations. For chromatographic methods, filter the solution if necessary, recognizing that analyte adhesion to filters may occur, particularly at lower concentrations [14].

Test Injection and Parameter Measurement

Step 3: Execute Test Injections. Perform 5-6 replicate injections of the SST reference solution to assess system reproducibility [7] [14]. These replicates allow for statistical evaluation of critical parameters, particularly injection repeatability expressed as Relative Standard Deviation (RSD).
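Injection repeatability for a set of replicates can be computed in a few lines. The sketch below uses the sample standard deviation (n - 1 denominator), which is an assumption about the convention in use. The peak areas are invented for illustration.

```python
import statistics


def percent_rsd(values):
    """Relative standard deviation (%) using the sample standard deviation."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    return statistics.stdev(values) / statistics.mean(values) * 100


# Hypothetical peak areas from six replicate injections.
peak_areas = [10250.0, 10310.0, 10195.0, 10280.0, 10240.0, 10265.0]
rsd = percent_rsd(peak_areas)
print(f"%RSD = {rsd:.2f}")
```

The same function applies to retention times or peak heights if the method specifies repeatability limits for those as well.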

Step 4: Measure Critical SST Parameters. For each injection, measure the following parameters, which represent the core metrics for evaluating system performance in chromatographic methods:

Table 2: System Suitability Test Parameters and Acceptance Criteria

| Parameter | Calculation Method | Typical Acceptance Criteria | Purpose |
|---|---|---|---|
| Resolution (Rs) | Rs = (tR2 – tR1)/(0.5(wb1 + wb2)) [28] | Monograph-specific, typically >1.5 [7] | Measures separation between adjacent peaks |
| Tailing Factor (T) | T = W0.05/2f [7] | Typically 0.9-1.8 [7] | Assesses peak symmetry and column health |
| Plate Count (N) | N = 16(tR/wb)² [28] | Method-specific, monitors column efficiency [28] | Evaluates column performance and efficiency |
| Relative Standard Deviation (%RSD) | %RSD = (Standard Deviation/Mean) × 100 [7] | <1.0-2.0% for replicate injections [7] [14] | Measures injection precision and repeatability |
| Signal-to-Noise Ratio (S/N) | S/N = Peak Height/Background Noise [8] | Typically ≥10 for quantification [8] | Assesses detector sensitivity and method detection limits |
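The formulas in Table 2 translate directly into code. The following sketch implements them as written, with W0.05 taken as the peak width at 5% of peak height and f as the distance from the leading edge to the peak maximum at that height. Function names and all input values are hypothetical.

```python
def resolution(t_r1, t_r2, w_b1, w_b2):
    """Rs = (tR2 - tR1) / (0.5 * (wb1 + wb2)), using baseline peak widths."""
    return (t_r2 - t_r1) / (0.5 * (w_b1 + w_b2))


def tailing_factor(w_005, f):
    """T = W0.05 / (2 f): width at 5% height over twice the front half-width."""
    return w_005 / (2 * f)


def plate_count(t_r, w_b):
    """N = 16 (tR / wb)^2, using the baseline peak width."""
    return 16 * (t_r / w_b) ** 2


def signal_to_noise(peak_height, noise):
    """S/N as the simple height-to-noise ratio given in the table."""
    return peak_height / noise


# Hypothetical measurements (minutes for times and widths, mAU for heights).
print(f"Rs  = {resolution(5.20, 6.10, 0.40, 0.44):.2f}")
print(f"T   = {tailing_factor(0.24, 0.10):.2f}")
print(f"N   = {plate_count(6.10, 0.44):.0f}")
print(f"S/N = {signal_to_noise(125.0, 2.5):.0f}")
```

Retention times and widths must share the same units within each formula. The exact noise measurement window for S/N is method- or pharmacopoeia-specific and is not modeled here.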

Step 5: Calculate and Document Results. The data system should calculate the SST parameters and compare them against predefined acceptance criteria derived from method validation [7]. Modern chromatography data systems typically include SST modules that perform these calculations automatically and generate compliance reports.

Acceptance Decision and Action

Step 6: Evaluate System Suitability. Review all calculated parameters against the predefined acceptance criteria. The system is considered suitable only if all parameters meet their respective criteria. As specified in USP chapter <1034>, "If an assay (or a run) fails system suitability, the entire assay (or run) is discarded and no results are reported other than that the assay (or run) failed" [14].

Step 7: Proceed with Analysis or Investigate Failure. If the system passes SST, proceed with the analysis of unknown samples. If the system fails, immediately halt the run and initiate troubleshooting procedures [7]. Document the failure and all subsequent investigations according to quality system requirements.
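The all-or-nothing decision in Steps 6 and 7 can be sketched as a simple check in which a single failed or missing parameter fails the whole system. The criteria dictionary below borrows the typical values quoted earlier purely as placeholders. Real limits come from the validated method, and the parameter names are hypothetical.

```python
# Hypothetical acceptance criteria; real limits come from the validated method.
CRITERIA = {
    "resolution": lambda v: v > 1.5,
    "tailing_factor": lambda v: 0.9 <= v <= 1.8,
    "percent_rsd": lambda v: v <= 2.0,
    "signal_to_noise": lambda v: v >= 10,
}


def evaluate_sst(results):
    """Return (passed, failures); the system passes only if every criterion is met."""
    failures = []
    for name, check in CRITERIA.items():
        if name not in results:
            failures.append(f"{name}: not measured")
        elif not check(results[name]):
            failures.append(f"{name}: {results[name]} outside limit")
    return not failures, failures


measured = {
    "resolution": 2.1,
    "tailing_factor": 1.9,
    "percent_rsd": 0.4,
    "signal_to_noise": 50,
}
passed, failures = evaluate_sst(measured)
print("PASS: proceed with samples" if passed else f"FAIL: halt run -> {failures}")
```

Treating an unmeasured parameter as a failure is a deliberate choice in this sketch, so that an incomplete SST cannot pass by omission.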

Advanced SST Considerations

Specialized Application Protocols

Liquid Chromatography-Mass Spectrometry (LC-MS) Applications. For LC-MS characterization of protein therapeutics, traditional SST parameters may be insufficient. Additional metrics should include sequence coverage, mass accuracy, and detection limits for spiked peptides [26]. A recommended approach incorporates a protein digest standard (e.g., BSA) spiked with synthetic peptides at known concentrations (0.1% to 100% of BSA digest peptide concentration) to simulate detection of low-abundance species [26].
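Sequence coverage is commonly expressed as the percentage of residues in the protein sequence that fall within at least one identified peptide. The sketch below implements that definition under the assumption of exact string matching. The sequence and peptides are invented and do not represent a real BSA digest.

```python
def sequence_coverage(protein, peptides):
    """Percent of residues covered by at least one identified peptide."""
    covered = [False] * len(protein)
    for peptide in peptides:
        start = protein.find(peptide)
        while start != -1:  # mark every occurrence of the peptide
            for i in range(start, start + len(peptide)):
                covered[i] = True
            start = protein.find(peptide, start + 1)
    return 100 * sum(covered) / len(protein)


# Toy 24-residue sequence and two "identified" peptides (illustrative only).
protein = "ACDEFGHIKLMNPQRSTVWYACDK"
identified = ["ACDEFGHIK", "STVWYACDK"]
print(f"Sequence coverage: {sequence_coverage(protein, identified):.1f}%")
```

In practice the peptide list would come from the search engine output, and a minimum coverage threshold would be set from historical performance of the digest standard.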

Untargeted Metabolomics Applications. System suitability in untargeted metabolomics requires assessment of mass-to-charge (m/z) ratio accuracy (<5 ppm error), retention time stability (<2% error), peak area reproducibility (±10% of predefined area), and peak symmetry [1]. A system suitability sample containing 5-10 authentic chemical standards distributed across the m/z and retention time ranges provides comprehensive system assessment [1].

Regulatory and Harmonization Updates

Recent updates to pharmacopeial standards have enhanced SST requirements. The harmonized USP <621> chapter, with updates effective May 1, 2025, includes new requirements for system sensitivity (signal-to-noise ratio) and peak symmetry [8]. Similarly, the European Pharmacopoeia has clarified that SST requirements referenced in monograph assays must be followed even when using cross-referenced procedures [4].

These regulatory updates emphasize that system suitability testing remains an evolving discipline, with increasing emphasis on detection capability and separation quality to ensure the reliability of analytical data in pharmaceutical development and quality control.

This step-by-step protocol provides a comprehensive framework for implementing robust system suitability testing from test injection to run acceptance. By adhering to this structured approach—encompassing systematic preparation, parameter measurement against validated criteria, and definitive acceptance decisions—researchers and drug development professionals can ensure the generation of reliable, defensible analytical data. Proper implementation of SST serves not merely as a regulatory requirement but as a fundamental scientific practice that underpins data integrity throughout the analytical workflow.

Best Practices for Effective SST Implementation and Documentation

System Suitability Testing (SST) is a critical quality assurance process in analytical chemistry, ensuring that an analytical system operates within predetermined specifications at the time of sample analysis. SST provides the foundational confidence that generated data are reliable, accurate, and precise. For researchers and drug development professionals, robust SST protocols are indispensable for acquiring high-quality data in any high-throughput analytical laboratory, particularly in regulated environments like pharmaceutical development [1].

The concepts of Quality Assurance (QA) and Quality Control (QC) are integral to SST success. QA encompasses all planned and systematic activities implemented before sample collection to provide confidence that the analytical process will fulfill predetermined quality requirements. In contrast, QC involves the operational techniques and activities used to measure and report these quality requirements during and after data acquisition [1]. This distinction is crucial for designing effective SST protocols where system suitability samples assess instrument performance prior to biological sample analysis, while QC samples monitor performance throughout the analytical run.

Core Components of a System Suitability Testing Framework

System Suitability Samples and Blanks

System suitability samples are specifically designed to assess the operational readiness and lack of contamination of an analytical platform before sample analysis commences. The simplest approach involves running a "blank" gradient with no sample to reveal impurities in solvents or contamination of the separation system [1].

A more comprehensive check involves analyzing a solution containing a small number of authentic chemical standards (typically five to ten analytes) dissolved in a chromatographically suitable diluent. These analytes should be distributed as fully as possible across the mass-to-charge (m/z) and retention time ranges to assess the complete analytical window [1]. The resulting data is assessed against pre-defined acceptance criteria covering key chromatographic parameters.

Table 1: System Suitability Test Acceptance Criteria

| Parameter | Acceptance Criteria | Purpose |
|---|---|---|
| Mass-to-Charge (m/z) Accuracy | ≤ 5 ppm error compared to theoretical mass | Verifies mass spectrometer calibration |
| Retention Time Stability | < 2% deviation from defined retention time | Confirms chromatographic system stability |
| Peak Area Response | Predefined acceptable peak area ± 10% | Ensures detection sensitivity stability |
| Peak Shape | Symmetrical peak with no evidence of splitting | Indicates proper column performance and injection technique |
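The three numeric criteria in Table 1 can be checked per standard with a short routine. This is a sketch only: the dictionary keys and example values are hypothetical, and peak shape is left out because the table states it qualitatively.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Mass error in parts per million relative to the theoretical m/z."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def percent_deviation(measured, expected):
    """Signed deviation from the expected value, in percent."""
    return (measured - expected) / expected * 100


def check_standard(std):
    """Apply the three numeric criteria from the table to one standard."""
    return {
        "mz_ok": abs(ppm_error(std["mz"], std["mz_theory"])) <= 5,
        "rt_ok": abs(percent_deviation(std["rt"], std["rt_expected"])) < 2,
        "area_ok": abs(percent_deviation(std["area"], std["area_expected"])) <= 10,
    }


# Illustrative standard: m/z, retention time (min), and peak area are examples.
standard = {
    "mz": 195.0882, "mz_theory": 195.0877,
    "rt": 4.05, "rt_expected": 4.00,
    "area": 9.4e5, "area_expected": 1.0e6,
}
print(check_standard(standard))
```

Running the check over all five to ten standards in the system suitability sample, and requiring every flag to be true, gives an overall go or no-go decision for the analytical window.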

Quality Control Samples in the Analytical Workflow

Complementary to system suitability samples, various QC samples serve different functions throughout the analytical process:

- Isotopically-labelled Internal Standards: Added to each sample to assess system stability for individual analyses [1]

- Pooled QC Samples: Created by combining small aliquots of all study samples; used to condition the analytical platform, perform intra-study reproducibility measurements, and mathematically correct for systematic errors [1]

- Standard Reference Materials (SRMs) and Long-Term Reference (LTR) QC Samples: Applied for inter-study and inter-laboratory assessment of data quality and comparability [1]

Implementation Workflow and Experimental Protocols

SST Implementation Workflow

The following diagram illustrates the complete SST implementation workflow, from initial system checks through to data acquisition and reporting:

Detailed Experimental Protocol for System Suitability Testing