Systematic Bias in Analytical Instruments: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a foundational understanding of systematic bias in analytical instruments, a critical challenge in biomedical research and drug development. It explores the core concepts and real-world impact of bias, from skewed clinical trial data to flawed diagnostic tools. The content details advanced methodological approaches for detecting and quantifying bias, including statistical models and error frameworks. Practical troubleshooting and optimization strategies for bias mitigation, such as recalibration and improved study design, are presented. Finally, the article establishes rigorous validation and comparative frameworks to assess instrument performance and ensure data integrity, equipping scientists with the knowledge to enhance the reliability and equity of their research outcomes.

What is Systematic Bias? Foundational Concepts and Real-World Impact in Biomedicine

In scientific research, particularly in drug development and analytical instrumentation, measurement error is defined as the difference between an observed value and the true value of a quantity [1]. Properly characterizing and mitigating these errors is fundamental to research integrity, as uncorrected errors can lead to research biases, invalid conclusions, and compromised decision-making [1]. This guide provides a technical framework for understanding, identifying, and correcting the two primary classes of measurement error: systematic error (bias) and random error (noise) [1] [2].

The distinction is not merely academic; it dictates the very strategies researchers must employ to ensure data quality. Systematic error skews measurements in a consistent, predictable direction, affecting accuracy, while random error causes unpredictable fluctuations around the true value, impairing precision [1] [3]. For drug development professionals, this is critical when comparing patient outcomes across clinical trials and real-world data, where differences in assessment protocols can introduce systematic measurement error that must be corrected to avoid biased estimates of treatment efficacy [4].

Core Theoretical Foundations

Defining Systematic Error (Bias)

Systematic error, often termed "bias," is a consistent or proportional difference between the observed and true values of something [1]. Unlike random fluctuations, its behavior is reproducible and non-compensating. If a measurement process contains systematic error, repeating the measurement under the same conditions will yield values that are consistently displaced from the true value in a specific direction [3].

Systematic errors are generally considered a more significant problem than random errors in research because they can systematically lead to false positive or false negative conclusions about the relationship between variables [1].

- Offset Error (Additive Error): This occurs when a scale is not calibrated to a correct zero point, causing a fixed amount to be added to or subtracted from every measurement [1]. For example, a weighing scale that always reads 0.5 grams heavy exhibits an offset error.

- Scale Factor Error (Multiplicative Error): This occurs when measurements consistently differ from the true value by a proportional amount (e.g., by 10%) across the instrument's range [1]. An example is a sensor whose readings are precisely 2% above the real values throughout its operational scale [3].
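The two error types above behave differently across an instrument's range, which a few lines of code can make concrete. The following is a minimal sketch, not a model of any particular instrument: the function names are hypothetical, and the 0.5 g offset and 2% scale factor are taken from the examples in the text.

```python
def apply_offset_error(true_value: float, offset: float) -> float:
    """Additive error: the same fixed amount is added to every reading."""
    return true_value + offset


def apply_scale_factor_error(true_value: float, relative_error: float) -> float:
    """Multiplicative error: every reading is off by the same proportion."""
    return true_value * (1.0 + relative_error)


# Illustrative true masses in grams (assumed values, not from the source).
for true_mass in (10.0, 100.0, 1000.0):
    offset_dev = apply_offset_error(true_mass, 0.5) - true_mass
    scale_dev = apply_scale_factor_error(true_mass, 0.02) - true_mass
    print(f"true={true_mass:7.1f} g  offset deviation={offset_dev:5.2f} g  "
          f"scale deviation={scale_dev:5.2f} g")
```

The offset deviation stays at 0.5 g for every mass, while the scale factor deviation grows in proportion to the quantity being measured.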

Distinguishing Random Error (Noise)

Random error is a chance difference between the observed and true values that varies unpredictably from one measurement to the next [1]. It does not consistently push measurements in one direction but creates a spread of values around the true value, thereby affecting the precision or reproducibility of the data [1] [2].

In an ideal scenario with only random error, multiple measurements of the same quantity will form a distribution that clusters around the true value. When averaged, these measurements will converge toward the true value, as the errors in different directions cancel each other out, especially in large samples [1]. This makes random error less problematic than systematic error for large-sample studies.
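The cancellation described above can be illustrated with a small simulation. This is a sketch under stated assumptions: the noise is Gaussian, and the true value (50.0), noise standard deviation (2.0), and sample sizes are arbitrary illustrative choices. Under those assumptions, the spread of the sample mean should shrink roughly as the noise standard deviation divided by the square root of n.

```python
import random
import statistics


def spread_of_mean(n, repeats, rng, true_value=50.0, noise_sd=2.0):
    """Standard deviation of the sample mean across repeated experiments."""
    means = []
    for _ in range(repeats):
        readings = [true_value + rng.gauss(0.0, noise_sd) for _ in range(n)]
        means.append(statistics.fmean(readings))
    return statistics.stdev(means)


rng = random.Random(42)  # fixed seed so the sketch is repeatable
for n in (4, 64, 1024):
    observed = spread_of_mean(n, repeats=200, rng=rng)
    theory = 2.0 / n ** 0.5  # noise_sd / sqrt(n)
    print(f"n={n:5d}  observed spread={observed:.3f}  theory={theory:.3f}")
```

A constant bias added to every reading would shift all of these means by the same amount and would not shrink as n grows, which is why averaging helps with random error but not with systematic error.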

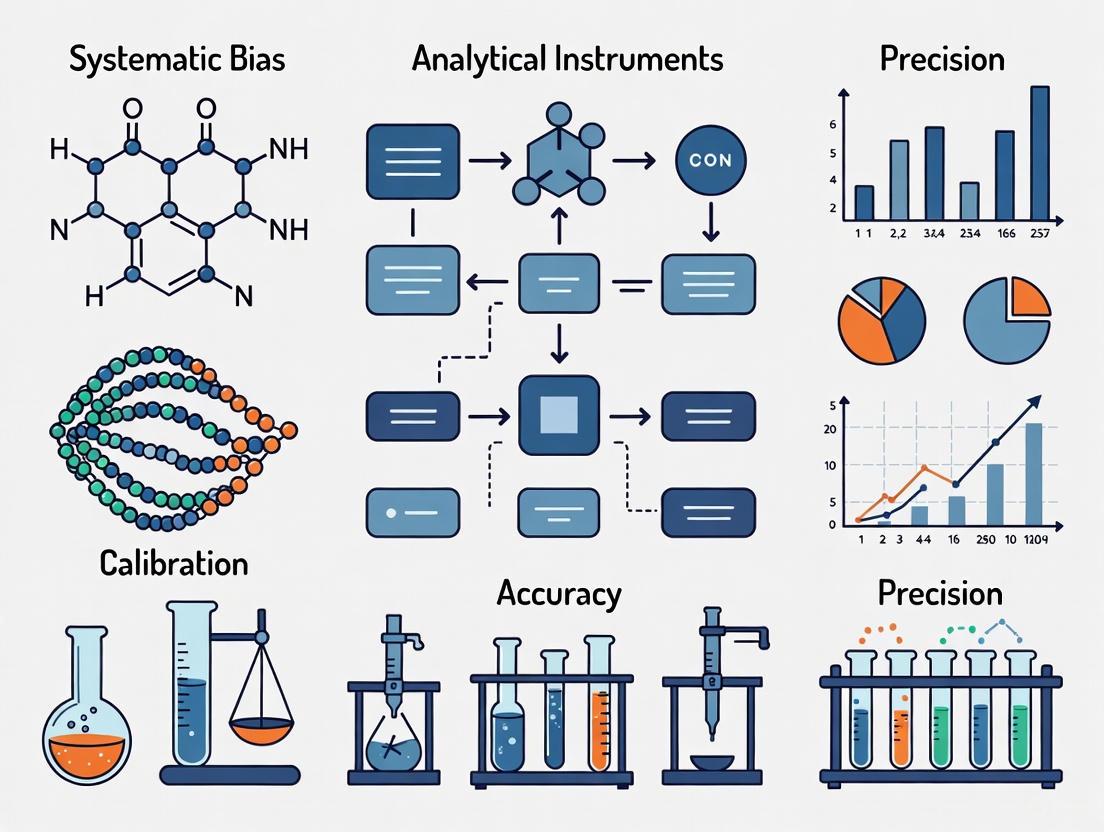

Accuracy vs. Precision: A Visual Metaphor

The concepts of accuracy and precision are effectively illustrated by the analogy of a dartboard [1]:

- High Accuracy, Low Precision: The darts are scattered widely but their average position is near the bullseye (high random error, low systematic error).

- Low Accuracy, High Precision: The darts are clustered tightly together but far from the bullseye (low random error, high systematic error).

- High Accuracy, High Precision: The darts are clustered tightly around the bullseye (low random error, low systematic error).

- Low Accuracy, Low Precision: The darts are scattered widely and nowhere near the bullseye (high random error, high systematic error).

Table 1: Comparative Analysis of Systematic and Random Error

| Feature | Systematic Error (Bias) | Random Error (Noise) |
|---|---|---|
| Definition | Consistent, predictable deviation from true value [1] | Unpredictable, chance-based fluctuation [1] |
| Impact on | Accuracy (closeness to true value) [1] | Precision (reproducibility of measurement) [1] |
| Direction | Unidirectional (always high or always low) [3] | Equally likely to be high or low [1] |
| Reducible by | Improved methods, calibration, blinding [1] | Averaging, increasing sample size [1] |
| Source Examples | Miscalibrated instrument, observer bias [1] [2] | Environmental fluctuations, electronic noise [1] [2] |

Advanced Error Models and Contemporary Challenges

A Refined Model: Constant vs. Variable Systematic Error

Emerging research proposes a more nuanced model that distinguishes between two components of systematic error, challenging the traditional view that it is always constant [5]:

- Constant Component of Systematic Error (CCSE): This is a stable, correctable bias. It can be quantified and removed from measurements through calibration against a known standard [5].

- Variable Component of Systematic Error (VCSE(t)): This behaves as a time-dependent function that cannot be efficiently corrected. It manifests as a slow drift or unpredictable shift in the measurement system's baseline performance over time, even under seemingly stable conditions [5].

This model explains why long-term quality control data in clinical laboratories are often not normally distributed and why standard deviations calculated from such data include contributions from both random error and this variable bias component [5]. This complexity necessitates ongoing quality control and monitoring.

Error and Bias in Modern AI Systems

In the context of artificial intelligence (AI) and large language models (LLMs) in healthcare, the concept of bias shares a conceptual foundation with systematic error. Algorithmic bias is defined as any systematic and unfair difference in how predictions are generated for different patient populations, which could lead to disparate care delivery [6]. This bias can originate from human biases (implicit, systemic, confirmation), algorithm development processes, or deployment settings, and it must be mitigated throughout the entire AI model lifecycle [6].

Frameworks for auditing these models are being developed, emphasizing stakeholder engagement, model calibration to specific patient populations, and rigorous testing through clinically relevant scenarios to identify and correct for these systematic skews [7].

Practical Methodologies for Error Identification and Mitigation

Experimental Protocol for Error Assessment

A robust protocol for characterizing error in an analytical instrument involves a structured repeated-measures design. The following workflow provides a methodology to quantify both random and systematic error components.

Title: Experimental Workflow for Error Assessment

Procedure:

- Select a Certified Reference Material (CRM): Obtain a sample with a known and traceable true value (μ_true). This serves as the ground truth for the assessment.

- Conduct Repeated Measurements: Using the analytical instrument under evaluation, perform a sequence of measurements (n > 30 is recommended for statistical power) on the CRM. Ensure conditions (e.g., operator, environment, instrument settings) are held as constant as possible to isolate the error components.

- Data Analysis:

- Calculate the mean (x̄) of the observed measurements.

- Systematic Error (Bias): Compute the difference Bias = x̄ - μ_true. This quantifies the average displacement from the true value, representing accuracy.

- Random Error (Precision): Calculate the standard deviation (s) of the observed measurements. This quantifies the spread or dispersion of the data, representing precision.

- Characterization: The measurement system's total error profile is now characterized by its bias (systematic error) and standard deviation (random error).
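The data-analysis step of this protocol reduces to two summary statistics. The sketch below shows one way to compute them. The function name `assess_error` and the example readings are hypothetical, and the short list is for illustration only (the protocol recommends n > 30).

```python
import statistics


def assess_error(measurements, mu_true):
    """Summarize repeated CRM measurements as bias and standard deviation."""
    x_bar = statistics.fmean(measurements)
    return {
        "n": len(measurements),
        "mean": x_bar,
        "bias": x_bar - mu_true,               # systematic error estimate
        "sd": statistics.stdev(measurements),  # random error estimate (sample SD)
    }


# Hypothetical readings of a CRM whose certified value is 10.0 units.
readings = [10.1, 10.3, 10.2, 10.2, 10.4, 10.0, 10.2, 10.3]
profile = assess_error(readings, mu_true=10.0)
print(f"bias = {profile['bias']:+.3f}, sd = {profile['sd']:.3f}, n = {profile['n']}")
```

A positive bias with a small standard deviation corresponds to the "low accuracy, high precision" case in the dartboard analogy.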

Strategies for Reducing Systematic Error

Mitigating bias requires targeted strategies that address its root causes [1] [3].

- Regular Calibration: Compare instrument readings against a reference standard of higher accuracy and adjust the instrument accordingly. For example, a 2022 study on industrial pressure sensors showed periodic calibration cut measurement inaccuracies from ±5% to ±1.2% [3]. Automation can further reduce human error in this process by up to 15% [3].

- Method Triangulation: Measure the same quantity using multiple, fundamentally different instruments or techniques. If all methods converge on a similar result, confidence in the absence of significant systematic error is high [1].

- Blinding (Masking): In experiments involving human assessment, hide the condition assignment from both participants and researchers to prevent subconscious influences on measurements (e.g., experimenter expectancies, demand characteristics) [1].

- Randomization: Use random sampling to ensure the study sample does not systematically differ from the population. In experiments, use random assignment to place participants into different treatment conditions, which helps balance unmeasured confounding variables across groups [1].
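For the calibration strategy listed above, a two-point linear calibration is one simple way to remove both offset and scale factor errors at once. The sketch below assumes the instrument response is linear. The function names are hypothetical, and the simulated instrument (2% gain error plus a 0.5 unit offset) is an illustrative assumption.

```python
def fit_two_point_calibration(reading_low, reading_high, ref_low, ref_high):
    """Return (gain, offset) such that corrected = gain * reading + offset."""
    if reading_high == reading_low:
        raise ValueError("the two calibration readings must differ")
    gain = (ref_high - ref_low) / (reading_high - reading_low)
    offset = ref_low - gain * reading_low
    return gain, offset


def correct(reading, gain, offset):
    """Apply the fitted calibration to a raw instrument reading."""
    return gain * reading + offset


def biased_instrument(true_value):
    """Hypothetical instrument: reads 2% high and adds a 0.5 unit offset."""
    return 1.02 * true_value + 0.5


# Calibrate against two reference standards with known values 10 and 100.
gain, offset = fit_two_point_calibration(
    biased_instrument(10.0), biased_instrument(100.0), 10.0, 100.0
)
raw = biased_instrument(50.0)
print(f"raw reading = {raw:.2f}, corrected = {correct(raw, gain, offset):.2f}")
```

Two points are only enough if the response really is linear. In practice, more calibration levels are typically used so that nonlinearity can be detected.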

Strategies for Reducing Random Error

Reducing noise increases the signal-to-noise ratio and improves the detectability of true effects.

- Increase Sample Size: Collecting data from a large sample is one of the most effective ways to reduce the impact of random error. The errors in different directions cancel each other out more efficiently, leading to a more precise estimate of the population mean [1].

- Take Repeated Measurements: For a given sample or subject, taking multiple readings and using their average brings the final value closer to the true value by averaging out the random fluctuations [1].

- Control Experimental Variables: Carefully control extraneous variables that could impact measurements, such as temperature, humidity, and vibration, for all participants or samples to remove key sources of random noise [1].

Table 2: Key Research Reagent Solutions for Error Mitigation

| Reagent/Material | Function in Error Control |
|---|---|
| Certified Reference Materials (CRMs) | Provides a ground truth with known property values for instrument calibration and trueness assessment, directly combating systematic error [3]. |
| Quality Control (QC) Materials | Stable, characterized materials run at regular intervals to monitor the stability of the measurement system over time, detecting both variable systematic error and increases in random error [5]. |
| Calibration Standards | A set of reference materials used to establish the relationship between instrument response and analyte concentration, correcting for offset and scale factor errors [1] [3]. |
| Stable Environmental Chambers | Controls ambient conditions (temperature, humidity) to minimize environmentally induced random error and systematic drift in sensitive instruments [2]. |

Error Analysis in Practice: A Case Study in Clinical Oncology

The challenge of measurement error is acutely present in oncology drug development. There is growing interest in using Real-World Data (RWD), such as electronic health records from routine clinical care, to augment or construct external control arms for clinical trials. However, disease assessments in RWD are often less standardized and less frequent than in rigorous trials, introducing systematic measurement error when comparing endpoints like progression-free survival [4].

Experimental Protocol: Survival Regression Calibration (SRC)

To mitigate this bias, a novel statistical method called Survival Regression Calibration (SRC) has been developed [4]:

- Validation Sample: Obtain a sample of patients for whom both the "true" trial-like outcome measures and the "mismeasured" real-world-like outcome measures are available.

- Model Fitting: Fit separate Weibull regression models to the true and mismeasured outcome measures in this validation sample.

- Bias Estimation: Quantify the systematic bias by comparing the parameters (e.g., shape, scale) of the two models.

- Calibration: Apply this estimated bias to calibrate the parameter estimates in the full RWD study population. The SRC method is specifically designed for time-to-event outcomes and has been shown to yield greater reduction in measurement error bias than standard regression calibration methods [4].
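The four steps above can be sketched in a heavily simplified form. This is an illustration of the general idea only, not the published SRC algorithm: it assumes fully observed (uncensored) event times, measures the bias as a difference in log shape and log scale, and uses simulated data in which less frequent assessment inflates observed times by a fixed 25%. All names and numbers are hypothetical.

```python
import math
import random


def fit_weibull(times, iterations=60):
    """Maximum-likelihood Weibull fit for uncensored times: (shape, scale).

    The shape solves the profile score equation, which is increasing in the
    shape parameter, so simple bisection is sufficient.
    """
    logs = [math.log(t) for t in times]
    mean_log = sum(logs) / len(logs)

    def score(k):
        powered = [t ** k for t in times]
        weighted = sum(p * lg for p, lg in zip(powered, logs))
        return weighted / sum(powered) - 1.0 / k - mean_log

    lo, hi = 0.01, 50.0
    for _ in range(iterations):
        mid = (lo + hi) / 2.0
        if score(mid) > 0:
            hi = mid
        else:
            lo = mid
    shape = (lo + hi) / 2.0
    scale = (sum(t ** shape for t in times) / len(times)) ** (1.0 / shape)
    return shape, scale


def src_calibrate(validation_true, validation_mismeasured, rwd_times):
    """Estimate bias in the validation sample, then remove it from the RWD fit."""
    k_true, lam_true = fit_weibull(validation_true)
    k_mis, lam_mis = fit_weibull(validation_mismeasured)
    d_log_shape = math.log(k_mis) - math.log(k_true)   # bias in log shape
    d_log_scale = math.log(lam_mis) - math.log(lam_true)  # bias in log scale
    k_rwd, lam_rwd = fit_weibull(rwd_times)
    return k_rwd * math.exp(-d_log_shape), lam_rwd * math.exp(-d_log_scale)


# Simulated example (assumed values): true shape 1.5, true scale 12 months.
rng = random.Random(2024)
TRUE_SHAPE, TRUE_SCALE, DELAY_FACTOR = 1.5, 12.0, 1.25
validation_true = [rng.weibullvariate(TRUE_SCALE, TRUE_SHAPE) for _ in range(500)]
validation_mis = [t * DELAY_FACTOR for t in validation_true]
rwd_times = [rng.weibullvariate(TRUE_SCALE, TRUE_SHAPE) * DELAY_FACTOR
             for _ in range(2000)]

naive_shape, naive_scale = fit_weibull(rwd_times)
cal_shape, cal_scale = src_calibrate(validation_true, validation_mis, rwd_times)
print(f"naive scale = {naive_scale:.2f}, calibrated scale = {cal_scale:.2f}")
```

Real time-to-event data are usually censored, and the measurement error is rarely a clean multiplicative factor, so a production analysis would need a censoring-aware likelihood and the exact calibration procedure described in [4].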

This case demonstrates how understanding the nature of systematic error enables the development of sophisticated tools to correct for it, thereby strengthening evidence of treatment efficacy derived from real-world sources.

The rigorous distinction between systematic and random error is not a mere taxonomic exercise but a foundational element of robust scientific research. Systematic error (bias) poses a greater threat to the validity of research conclusions by consistently skewing results away from the truth, while random error (noise) obscures precision but can be managed through replication and large sample sizes. For researchers and drug development professionals, a disciplined approach involving regular calibration, methodological triangulation, controlled experimental design, and advanced statistical correction methods is essential for recognizing, quantifying, and mitigating these errors. As analytical technologies and data sources, including AI and RWD, continue to evolve, so too must the frameworks for ensuring the accuracy and reliability of the measurements upon which critical health decisions depend.

Traditional error models in clinical metrology often conflate distinct components of systematic error, leading to miscalculations of total error and measurement uncertainty. This whitepaper presents a novel error model that distinguishes between constant and variable components of systematic error (bias), challenging conventional approaches to quality control and measurement uncertainty estimation. Through mathematical deduction and simulation, we demonstrate that standard deviation derived from long-term quality control (QC) data includes both random error and the variable bias component, rendering it inappropriate as a sole estimator of random error. This refined model defines the constant component of systematic error (CCSE) as a correctable term, while the variable component (VCSE(t)) behaves as a time-dependent function that resists efficient correction. Implementation of this model enables clinical laboratories to enhance decision-making accuracy, improve measurement error estimation, and advance patient safety through more reliable diagnostic results.

Systematic bias represents a fundamental challenge in analytical metrology, particularly in clinical laboratory medicine where measurement inaccuracies can directly impact patient diagnosis, treatment monitoring, and therapeutic outcomes. According to the International Vocabulary of Metrology (VIM3), measurement bias is defined as the "estimate of a systematic measurement error" [8]. This systematic deviation of laboratory test results from actual values can cause misdiagnosis or misestimation of disease prognosis, ultimately increasing healthcare costs [8].

Traditional metrological approaches, developed alongside the concept of the normal distribution, were originally created to describe measurements in stable, non-biological systems. However, clinical laboratory measurements involve biological materials and complex systems exhibiting inherent variability that complicates the application of these traditional models [5]. A significant limitation of conventional approaches is the treatment of systematic error as a monolithic entity, despite evidence that its behavior varies substantially under different measurement conditions.

This whitepaper introduces a paradigm-shifting error model that distinguishes between constant and variable components of systematic error, addressing critical gaps in current clinical laboratory quality control practices. By examining bias through this novel framework, researchers and laboratory professionals can develop more sophisticated approaches to measurement uncertainty that reflect the complex reality of diagnostic testing environments.

Theoretical Foundations: Deconstructing Systematic Error

The Novel Error Model: Constant vs. Variable Bias Components

The proposed error model fundamentally redefines systematic error by separating it into two distinct components: the Constant Component of Systematic Error (CCSE) and the Variable Component of Systematic Error (VCSE(t)) [5]. This distinction represents a significant advancement over traditional models that treat systematic error as a single, monolithic entity.

The CCSE manifests as a stable, correctable offset between measured values and true reference values. This component remains relatively constant over time and can be effectively addressed through calibration against certified reference materials or reference methods [5] [8]. In contrast, the VCSE(t) behaves as a time-dependent function that fluctuates unpredictably and cannot be efficiently corrected through standard calibration procedures [5]. This variable component arises from multiple sources including reagent lot variations, environmental fluctuations, instrument aging, and operator differences.

The separation of these components challenges conventional approaches to total error calculation, which typically express total measurement error (TE) as the sum of systematic error (SE) and random error (RE). According to the novel model, what has traditionally been classified as "random error" in long-term quality control data actually contains both true random error and the variable component of systematic error [5].

Mathematical Formulation

The relationship between different error components can be mathematically represented as follows:

- Traditional Error Model: TE = SE + RE

- Novel Error Model: TE = CCSE + VCSE(t) + RE

Where:

- TE = Total Error

- CCSE = Constant Component of Systematic Error

- VCSE(t) = Variable Component of Systematic Error (time-dependent)

- RE = Random Error

This reformulation has profound implications for how clinical laboratories estimate measurement uncertainty and establish quality control limits [5].
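A short simulation can illustrate why the extra term matters for QC statistics. The sketch below is built on assumptions: the sinusoidal drift is a purely hypothetical stand-in for VCSE(t) (the source describes the real component as unpredictable), and the constants are arbitrary. It compares the standard deviation seen at a single time point with the standard deviation of data collected over a long period.

```python
import math
import random
import statistics

TRUE_VALUE = 100.0
CCSE = 1.5             # constant bias component (assumed)
RE_SD = 1.0            # SD of the true random error (assumed)
DRIFT_AMPLITUDE = 2.0  # size of the hypothetical slow drift


def vcse(day):
    """Hypothetical slow drift standing in for VCSE(t)."""
    return DRIFT_AMPLITUDE * math.sin(2.0 * math.pi * day / 30.0)


def measure(day, rng):
    """One reading: true value + CCSE + VCSE(t) + RE."""
    return TRUE_VALUE + CCSE + vcse(day) + rng.gauss(0.0, RE_SD)


rng = random.Random(7)
short_term = [measure(0, rng) for _ in range(2000)]       # one time point
long_term = [measure(day, rng) for day in range(3000)]    # many time points

s_r = statistics.stdev(short_term)
s_rw = statistics.stdev(long_term)
expected_s_rw = math.sqrt(RE_SD ** 2 + DRIFT_AMPLITUDE ** 2 / 2.0)
print(f"short-term SD = {s_r:.2f} (random error only)")
print(f"long-term SD  = {s_rw:.2f} (expected about {expected_s_rw:.2f})")
```

In this toy setup the long-term standard deviation is inflated by the drift term, so treating it as a pure estimate of random error would overstate RE.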

Metrological Principles Underpinning the Model

The novel error model rests on four quintessential principles valid across all fields of metrology [5]:

- QP1: A parameter must be determined under the same conditions under which it is used in calculations and predictions.

- QP2: When applying a law or using an equation, we assume that all conditions of applicability are fulfilled.

- QP3: A corrective action cannot efficiently correct an error if the average error introduced by the corrective action is larger than the original error.

- QP4: Adding or multiplying by a constant does not reduce the natural variation present in measurements.

These principles highlight why traditional calibration approaches effectively address CCSE but fail to correct VCSE(t), as corrective factors applied to highly variable systematic errors may introduce more uncertainty than they resolve [5].

Types of Bias in Clinical Laboratory Measurements

Constant vs. Proportional Bias

In addition to the temporal distinction between constant and variable bias, systematic errors in laboratory medicine can be categorized based on their relationship to analyte concentration [8] [9]:

Table 1: Types of Measurement Bias in Clinical Laboratories

| Bias Type | Mathematical Representation | Characteristics | Detection Method |
|---|---|---|---|
| Constant Bias | Difference between target and measured values is constant across concentrations | Consistent offset regardless of analyte level; intercept (b) ≠ 0 in regression analysis | Evaluate if 95% confidence interval of intercept excludes 0 |
| Proportional Bias | Difference between target and measured values changes with analyte concentration | Bias magnitude proportional to measurand concentration; slope (a) ≠ 1 in regression analysis | Evaluate if 95% confidence interval of slope excludes 1 |
| Variable Bias (VCSE(t)) | Fluctuates unpredictably over time | Time-dependent; not correctable through standard calibration; affected by multiple factors | Analysis of long-term quality control data trends |

These bias types can occur independently or in combination. For example, a method might exhibit both constant and proportional bias simultaneously, where the regression equation shows both intercept (b) significantly different from 0 and slope (a) significantly different from 1 [9].
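The confidence-interval checks in the table can be sketched with an ordinary least-squares fit of measured against reference values. Two simplifications are assumed here and should be stated plainly: ordinary least squares is swapped in for methods such as Passing-Bablok, and a normal approximation replaces Student's t quantiles, which is only reasonable for larger samples. The function name and the example data are hypothetical.

```python
import math
import statistics


def regression_bias_check(reference, measured, confidence=0.95):
    """Fit measured = a * reference + b and test a against 1 and b against 0."""
    n = len(reference)
    x_bar = statistics.fmean(reference)
    y_bar = statistics.fmean(measured)
    sxx = sum((x - x_bar) ** 2 for x in reference)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(reference, measured))
    a = sxy / sxx              # slope
    b = y_bar - a * x_bar      # intercept
    residual_var = sum((y - (a * x + b)) ** 2
                       for x, y in zip(reference, measured)) / (n - 2)
    se_a = math.sqrt(residual_var / sxx)
    se_b = math.sqrt(residual_var * (1.0 / n + x_bar ** 2 / sxx))
    # Normal approximation to the t quantile (an assumption of this sketch).
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2.0)
    slope_ci = (a - z * se_a, a + z * se_a)
    intercept_ci = (b - z * se_b, b + z * se_b)
    return {
        "slope": a,
        "intercept": b,
        "slope_ci": slope_ci,
        "intercept_ci": intercept_ci,
        "proportional_bias": not (slope_ci[0] <= 1.0 <= slope_ci[1]),
        "constant_bias": not (intercept_ci[0] <= 0.0 <= intercept_ci[1]),
    }


# Hypothetical comparison data: 5% proportional bias plus an offset of 2 units.
reference = [10.0 * i for i in range(1, 11)]
noise = [0.1 if i % 2 == 0 else -0.1 for i in range(10)]
measured = [1.05 * x + 2.0 + e for x, e in zip(reference, noise)]
result = regression_bias_check(reference, measured)
print(f"slope = {result['slope']:.3f}, intercept = {result['intercept']:.3f}")
print(f"proportional bias: {result['proportional_bias']}, "
      f"constant bias: {result['constant_bias']}")
```

With the simulated data both flags are raised, matching the combined case described above where the slope differs from 1 and the intercept differs from 0.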

Measurement Conditions and Their Impact on Bias Detection

The conditions under which measurements are performed significantly influence the observed bias and its components. VIM3 defines three primary measurement conditions [5] [8]:

- Repeatability Conditions: Same measuring procedure, operators, system, location, and operating conditions over a short period.

- Intermediate Precision Conditions: Conditions including different instruments, operators, reagents, and calibrators over an extended period within a single laboratory.

- Reproducibility Conditions: Conditions including different laboratories, procedures, locations, and operators.

The variable component of systematic error (VCSE(t)) becomes increasingly pronounced under intermediate precision and reproducibility conditions, whereas repeatability conditions primarily reveal constant bias and random error [5].

Methodologies for Bias Assessment and Quantification

Experimental Protocol for Distinguishing Bias Components

Objective: To separate and quantify constant and variable components of systematic error in clinical laboratory measurements.

Materials and Reagents:

- Certified reference materials (CRMs) with known target values

- Stable control materials spanning clinically relevant concentrations

- Patient samples for commutability studies

- Calibrators traceable to reference methods

- Appropriate instrumentation and reagents

Procedure:

- Short-term Repeatability Study: Perform 20 consecutive measurements of CRMs and control materials within a single run under identical conditions. Calculate mean, standard deviation (s_r), and bias for each level.

- Long-term Intermediate Precision Study: Analyze control materials once daily for 20-30 days under routine laboratory conditions. Record results along with documentation of reagent lot changes, calibration events, maintenance, and operator shifts.

- Data Analysis:

- Calculate overall mean and standard deviation (s_RW) for each control level from long-term data.

- The difference between the overall mean and CRM target value represents the total systematic error.

- The difference between the short-term mean and CRM target value estimates the constant component (CCSE).

- The difference between total systematic error and CCSE provides an estimate of the variable component (VCSE(t)).

- Statistical Evaluation:

- Perform significance testing on bias estimates using t-tests or confidence interval analysis.

- Use regression analysis (e.g., Passing-Bablok) to identify constant and proportional bias components.

- Analyze temporal patterns in control data to characterize the behavior of VCSE(t).
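The arithmetic in the data-analysis step can be written down directly. The sketch below follows the subtraction scheme described in the procedure. The function name and the example values are hypothetical, and the result is a single average estimate of the variable component over the observation window rather than a description of how VCSE(t) evolves in time.

```python
import statistics


def separate_bias_components(short_term, long_term, target):
    """Split total systematic error into constant and variable estimates."""
    ccse = statistics.fmean(short_term) - target
    total_systematic_error = statistics.fmean(long_term) - target
    return {
        "total_systematic_error": total_systematic_error,
        "ccse": ccse,
        "vcse_estimate": total_systematic_error - ccse,
        "s_r": statistics.stdev(short_term),   # repeatability SD
        "s_RW": statistics.stdev(long_term),   # long-term (within-lab) SD
    }


# Hypothetical data for a material with a target value of 100.0 units.
short_run = [101.8, 102.1, 102.0, 101.9, 102.2]
daily_qc = [102.5, 103.9, 104.4, 103.1, 105.0, 104.2, 103.6]
components = separate_bias_components(short_run, daily_qc, target=100.0)
for name, value in components.items():
    print(f"{name:24s} {value:7.3f}")
```

Characterizing the time course of the variable component still requires looking at the temporal pattern of the long-term data, as the last step of the procedure indicates.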

Establishing Significance of Bias

The clinical and statistical significance of estimated bias should be evaluated before implementation of corrections. The significance of bias can be determined using several approaches [8]:

Statistical Significance Testing: Perform a one-sample t-test comparing measured values to target reference values. A p-value < 0.05 suggests statistically significant bias.

Confidence Interval Analysis: Calculate the 95% confidence interval of the mean of repeated measurements. If the interval does not include the target value, bias is considered statistically significant.

Medical Relevance Assessment: Evaluate whether the observed bias magnitude exceeds acceptable limits based on biological variation, clinical guidelines, or regulatory requirements.
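The three checks above can be combined in one small routine. This is a minimal sketch under stated assumptions: the function name, the example measurements, and the allowable bias limit are all hypothetical, and the caller supplies the Student's t critical value for the sample size in use (2.093 is the two-sided 95% value for 19 degrees of freedom, that is, n = 20).

```python
import math
import statistics

T_CRIT_95_DF19 = 2.093  # two-sided 95% Student's t critical value, 19 df


def evaluate_bias(measurements, target, allowable_bias, t_crit):
    """Return bias, t statistic, 95% CI of the mean, and significance flags."""
    n = len(measurements)
    mean = statistics.fmean(measurements)
    sem = statistics.stdev(measurements) / math.sqrt(n)
    bias = mean - target
    ci = (mean - t_crit * sem, mean + t_crit * sem)
    return {
        "bias": bias,
        "t_statistic": bias / sem,
        "ci_95": ci,
        # Statistically significant if the CI of the mean excludes the target.
        "statistically_significant": not (ci[0] <= target <= ci[1]),
        # Medically relevant if the bias exceeds the allowable limit.
        "medically_relevant": abs(bias) > allowable_bias,
    }


# Hypothetical example: 20 measurements of a material with target 5.0 units
# and an assumed allowable bias of 0.5 units.
values = [5.2] * 10 + [5.4] * 10
report = evaluate_bias(values, target=5.0, allowable_bias=0.5,
                       t_crit=T_CRIT_95_DF19)
print(f"bias = {report['bias']:+.2f}, t = {report['t_statistic']:.1f}")
print(f"statistically significant: {report['statistically_significant']}, "
      f"medically relevant: {report['medically_relevant']}")
```

The example shows a bias that is statistically significant but smaller than the assumed allowable limit, which is why statistical and medical relevance are assessed separately.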

Table 2: Key Reagents and Materials for Bias Assessment Experiments

| Material/Reagent | Specification Requirements | Function in Experiment |
|---|---|---|
| Certified Reference Materials (CRMs) | Commutable with patient samples; value assigned by reference method | Provides true target value for bias calculation; traceability to higher-order standards |
| Stable Control Materials | Multiple concentration levels covering measuring interval; well-characterized stability | Monitoring long-term performance; detecting variable bias component |
| Calibrators | Traceable to reference method; matrix-matched to patient samples | Establishing measurement traceability; correcting constant bias |
| Patient Samples | Fresh samples representing typical clinical cases | Commutability assessment; verifying performance with real samples |

Visualization of the Novel Error Model

[Figures not reproduced: Component Relationship Diagram; Bias Detection Workflow]

Implications for Laboratory Practice and Quality Control

Quality Control Strategy Reform

The distinction between constant and variable bias components necessitates a fundamental reassessment of quality control practices in clinical laboratories. Traditional QC approaches that rely solely on standard deviation calculated from long-term data (s_RW) inherently overestimate random error because this parameter includes both random error and the variable component of systematic error [5].

This overestimation explains the frequently observed phenomenon in laboratory practice where theoretically predicted error rates based on normal distribution assumptions do not align with actual experience. Laboratories often observe "impossible" QC graphs with fewer rule violations than would be statistically expected, suggesting that decision limits based on s_RW are inappropriately wide [5].

Measurement Uncertainty Estimation

The novel error model provides a more sophisticated framework for estimating measurement uncertainty in clinical laboratories. By separately quantifying constant and variable bias components, laboratories can develop uncertainty budgets that more accurately reflect the true sources of variation in their measurement systems.

This approach acknowledges that while the constant component of systematic error can be corrected through calibration, the variable component contributes directly to measurement uncertainty and must be accounted for in uncertainty estimates [5].

Method Validation and Verification

When implementing new analytical methods or instruments, laboratories should specifically assess both constant and variable bias components during validation studies. This requires designing experiments that separately evaluate performance under repeatability conditions and intermediate precision conditions, allowing for discrimination between these different error sources.

The presence of significant variable bias may necessitate more frequent calibration, enhanced environmental controls, or modified QC rules to ensure result quality remains within acceptable limits.

Future Directions and Research Applications

Integration with Advanced Technologies

The growing adoption of artificial intelligence (AI) and machine learning in clinical laboratories presents opportunities for more sophisticated management of variable bias components [10] [11]. AI algorithms could potentially model and predict VCSE(t) behavior based on multiple laboratory parameters, enabling proactive correction approaches rather than reactive responses to QC failures.

Digital solutions and enhanced connectivity between instruments through the Internet of Medical Things (IoMT) may facilitate real-time monitoring of systematic error components, allowing for more dynamic quality management systems [10].

Implications for Diagnostic Research and Drug Development

In pharmaceutical research and diagnostic development, proper characterization of analytical bias components is essential for generating reliable data. The novel error model provides a framework for more accurate assessment of biomarker performance, potentially identifying previously overlooked sources of variation that could impact clinical trial results or diagnostic accuracy claims.

For researchers developing novel assays, understanding the distinction between constant and variable bias can inform more robust assay design, potentially reducing the impact of VCSE(t) through improved reagent formulations, more stable instrumentation, or optimized calibration strategies.

The distinction between constant and variable components of systematic error represents a paradigm shift in how clinical laboratories should conceptualize and manage measurement bias. This novel error model challenges long-standing assumptions in metrology and provides a more accurate framework for understanding the behavior of analytical systems under real-world conditions.

By recognizing that what has traditionally been classified as "random error" in long-term quality control data actually contains both true random error and variable systematic error, laboratories can develop more appropriate quality control strategies, more accurate measurement uncertainty estimates, and ultimately, more reliable patient results.

Implementation of this refined error model requires changes to method validation approaches, quality control practices, and data analysis techniques. However, the potential benefits in improved patient safety, reduced laboratory errors, and more efficient resource utilization justify this evolution in laboratory metrology practice.

Systematic bias represents a fundamental threat to the integrity of clinical research and drug development. Unlike random error, which averages out over multiple measurements, systematic bias introduces predictable, non-random distortions that can compromise the validity of study results and lead to erroneous conclusions. In the high-stakes environment of pharmaceutical development, where decisions affect both patient safety and billions of dollars in investment, understanding and mitigating these biases is not merely academic—it is a scientific and ethical imperative. Systematic bias in clinical trials can manifest through flawed participant selection, unrepresentative demographics, biased outcome measurements, and selective reporting of results. These distortions subsequently propagate through the entire drug development pipeline, potentially resulting in treatments that are less effective or even harmful for populations inadequately represented during research phases [12].

The contemporary drug development landscape operates at the intersection of immense scientific innovation and staggering financial risk. The traditional path from discovery to market approval spans 10 to 15 years with capitalized costs averaging $2.6 billion per approved drug [13]. This lengthy, expensive process is characterized by high attrition rates, with approximately 90% of drugs that enter human testing ultimately failing to receive regulatory approval [13]. Within this vulnerable ecosystem, systematic bias acts as an invisible tax, distorting critical go/no-go decisions and potentially allowing ineffective or unsafe compounds to advance while overlooking promising therapies. As artificial intelligence and machine learning become increasingly integrated into drug discovery and clinical trial design, new forms of algorithmic bias emerge that can amplify and scale existing healthcare disparities at an unprecedented rate [14].

Quantitative Evidence of Systematic Bias in Clinical Trials

Documented Demographic Skews

A quantitative meta-analysis of 690 clinical decision instruments (CDIs) provides compelling evidence of systematic bias in clinical research development. The analysis revealed significant demographic imbalances in participant populations that undermine the generalizability of research findings [15]:

Table 1: Demographic Skews in Clinical Decision Instrument Development

| Demographic Factor | Representation in Studies | Implication for Generalizability |
|---|---|---|
| Racial Composition | 73% White participants | Underrepresentation of minority groups despite often having higher disease burdens |
| Gender Distribution | 55% Male participants | Insufficient enrollment of female participants despite known sex-based differences in drug metabolism |
| Geographic Distribution | 52% in North America, 31% in Europe | Limited data from Asian, African, and South American populations |

These demographic skews are particularly concerning given that differences in medical product safety and effectiveness can emerge based on factors such as age, ethnicity, sex, and race [16]. Without adequate representation of the populations most affected by a disease, clinical trial data risks being biased, potentially resulting in treatments that are less effective—or even harmful—for underrepresented groups [16].

Beyond demographic imbalances, the same meta-analysis identified several methodological factors that introduce systematic bias into clinical research [15]:

Variable Selection Bias: 13 CDIs explicitly used race and ethnicity as predictor variables, potentially encoding societal biases directly into clinical algorithms.

Outcome Definition Bias: 28% of CDIs involved follow-up procedures, which may disproportionately skew outcome representation based on socioeconomic status, as patients with greater resources are more likely to complete extended follow-up requirements.

Geographic Concentration: With 52% of studies conducted in North America and 31% in Europe, the research fails to capture the genetic, environmental, and healthcare system diversity of global populations.

The documented underrepresentation is especially pronounced for specific disease areas. For example, a 2022 study in JAMA Oncology found that fewer than 5% of participants in U.S. cancer clinical trials were Black, despite Black Americans making up approximately 13% of the population [17]. This disparity persists despite evidence that diverse trial populations lead to more generalizable results, improved safety data, and better public trust [17].
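The size of such a gap can be expressed as a simple representation ratio: a group's share of trial enrollment divided by its share of the reference population. The sketch below is illustrative only. The function name `representation_ratio` is hypothetical, and it uses the general-population share quoted above as the denominator, whereas a disease-specific population share would usually be the more appropriate reference.

```python
def representation_ratio(trial_share: float, population_share: float) -> float:
    """Group's share of trial enrollment divided by its share of the reference population.

    A value of 1.0 means proportional representation; values below 1.0
    indicate underrepresentation.
    """
    if not 0 < population_share <= 1:
        raise ValueError("population_share must be in (0, 1]")
    if not 0 <= trial_share <= 1:
        raise ValueError("trial_share must be in [0, 1]")
    return trial_share / population_share


# Figures quoted in the text: fewer than 5% of participants vs. about 13% of the population.
# Because 5% is an upper bound on enrollment, the result is an upper bound on the ratio.
ratio_upper_bound = representation_ratio(0.05, 0.13)
print(f"Representation ratio is at most {ratio_upper_bound:.2f}")
```

A ratio this far below 1.0 means the group is enrolled at well under half the rate that proportional representation would imply.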

Root Causes and Mechanisms of Systematic Bias

Data-Related Origins

The foundational principle of "bias in, bias out" is particularly relevant to clinical research and algorithm development. Historical medical data itself is profoundly biased due to decades of clinical research that systematically excluded or underrepresented women and ethnic minorities, focusing primarily on white males [14]. When artificial intelligence models are trained on these skewed datasets, they inevitably learn a distorted view of medicine that perpetuates existing disparities. For example, an algorithm trained on cardiovascular data from men may fail to recognize a heart attack in a woman, whose symptoms often present differently, leading to misdiagnosis and poorer outcomes [14].

This problem of unrepresentative data is compounded by several factors:

Geographic and Socioeconomic Skews: Most training data is sourced from a few large, urban academic medical centers, failing to capture the health realities of rural, lower-income, or geographically diverse populations [14].

Missing Metadata: Crucial information on race, ethnicity, and social determinants of health is often not collected or associated with patient records, making it impossible for developers to test for demographic bias, let alone correct it [14].

The Proxy Trap: Algorithm designers sometimes use easily measured variables (proxies) that correlate with the true variable of interest but introduce bias. A landmark study published in Science analyzed a widely used algorithm for identifying patients who would benefit from high-risk care management programs. The algorithm used patients' past healthcare costs as a proxy for their current health needs [14]. Because historically less money has been spent on Black patients than on white patients with the same level of illness, the algorithm falsely concluded that Black patients were healthier and was therefore less likely to flag them for the additional care they needed [14].
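The proxy mechanism can be reproduced with a small simulation. The sketch below uses entirely synthetic data and hypothetical parameters (a 30% spending gap, a top-20% flagging rule); it is not a reconstruction of the algorithm studied in [14]. Both groups are given the same illness distribution, so any difference in flagging comes from the cost proxy alone.

```python
import random

random.seed(0)
GROUP_SIZE = 5000


def simulate_patients(n, cost_per_unit_illness):
    """Return (illness, cost) pairs; cost is proportional to illness plus noise."""
    patients = []
    for _ in range(n):
        illness = random.gauss(5.0, 2.0)  # identical illness distribution in both groups
        cost = cost_per_unit_illness * illness + random.gauss(0.0, 0.5)
        patients.append((illness, cost))
    return patients


# Hypothetical assumption: group B incurs 30% less spending than group A
# at the same level of illness.
group_a = [("A", ill, cost) for ill, cost in simulate_patients(GROUP_SIZE, 1.0)]
group_b = [("B", ill, cost) for ill, cost in simulate_patients(GROUP_SIZE, 0.7)]
everyone = group_a + group_b

# Flag the top 20% of patients by cost, the proxy for health need.
cutoff = sorted(p[2] for p in everyone)[int(0.8 * len(everyone))]
flagged = [p for p in everyone if p[2] >= cutoff]


def flag_rate(group):
    """Fraction of a group that the cost-based rule flags for extra care."""
    return sum(1 for p in flagged if p[0] == group) / GROUP_SIZE


def mean_illness_of_flagged(group):
    """Average true illness among flagged members of a group."""
    values = [p[1] for p in flagged if p[0] == group]
    return sum(values) / len(values)


for g in ("A", "B"):
    print(g, f"flag rate={flag_rate(g):.3f}",
          f"mean illness of flagged={mean_illness_of_flagged(g):.2f}")
```

Under these assumptions, group B is flagged far less often, and its flagged members are sicker on average than flagged members of group A: they must be more ill to reach the same cost cutoff.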

Human and Institutional Factors

Systematic bias in clinical research is not solely a technical problem—it is fundamentally a human and institutional one. The teams building healthcare algorithms often lack the racial, gender, and socioeconomic diversity of the patient populations the tools are meant to serve [14]. This homogeneity can lead to blind spots, where developers fail to consider the unique needs and contexts of different groups.

Additional human factors include:

Subjective Data Labeling: For many AI models, humans must first label the training data (e.g., identifying a tumor in an image). This process is subjective and can introduce the annotators' own biases and stereotypes into the "ground truth" from which the AI learns [14].

Problem Formulation: Bias can be introduced at the very genesis of a research project. A developer's choice of which problem to solve, what data to use, and which performance metrics to prioritize is a value judgment that can have discriminatory downstream effects [14].

Operational Barriers: Practical challenges such as transportation, time off work, and childcare responsibilities disproportionately affect participation among lower-income and minority groups, while lack of awareness about clinical trial opportunities and historical mistrust of the medical research community further exacerbate representation gaps [18] [17].

Assessment Methodologies and Experimental Protocols

Risk of Bias Assessment Tools

Researchers have developed standardized tools to systematically evaluate potential biases in clinical studies. The most widely adopted instruments include:

Table 2: Risk of Bias Assessment Tools for Clinical Research

| Assessment Tool | Study Type | Key Domains Evaluated | Interpretation |
|---|---|---|---|
| Cochrane RoB Tool | Randomized Controlled Trials (RCTs) | Sequence generation, allocation concealment, blinding, incomplete outcome data, selective reporting | Low risk, high risk, or unclear risk in each domain |
| RoB 2 | RCTs | Randomization process, deviations from interventions, missing outcome data, outcome measurement, selection of reported results | Low risk of bias, some concerns, or high risk of bias |
| ROBINS-I | Non-randomized studies | Confounding, participant selection, intervention classification, deviations, missing data, outcome measurement, result selection | Categorizes bias risk across seven domains |
| Newcastle-Ottawa Scale (NOS) | Cohort and case-control studies | Selection, comparability, outcome/exposure | Quality assessment using a star system |

These tools enable systematic critical appraisal of research methodology rather than relying on potentially subjective judgments of study quality [12]. For the assessment of bias, the protocol typically requires two independent reviewers to perform the risk of bias assessment for all studies that fulfil the inclusion criteria, with a third reviewer adjudicating any discrepancies [12].
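The two-reviewer protocol with third-party adjudication can be sketched in a few lines. The study identifiers and judgments below are hypothetical. Cohen's kappa is not part of the protocol described in [12]; it is included here as a commonly reported summary of agreement between two reviewers.

```python
from collections import Counter


def cohen_kappa(ratings_1, ratings_2):
    """Chance-corrected agreement between two reviewers' categorical judgments."""
    if len(ratings_1) != len(ratings_2) or not ratings_1:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(ratings_1)
    observed = sum(a == b for a, b in zip(ratings_1, ratings_2)) / n
    counts_1, counts_2 = Counter(ratings_1), Counter(ratings_2)
    expected = sum(counts_1[k] * counts_2[k] for k in counts_1) / (n * n)
    if expected == 1.0:  # both reviewers used a single identical category
        return 1.0
    return (observed - expected) / (1 - expected)


def reconcile(reviewer_1, reviewer_2, adjudicator):
    """Keep judgments the reviewers agree on; defer to the adjudicator otherwise."""
    final = {}
    for study, judgment in reviewer_1.items():
        other = reviewer_2[study]
        final[study] = judgment if judgment == other else adjudicator[study]
    return final


# Hypothetical judgments for one risk-of-bias domain across six studies.
reviewer_1 = {"S1": "low", "S2": "high", "S3": "unclear", "S4": "low", "S5": "high", "S6": "low"}
reviewer_2 = {"S1": "low", "S2": "high", "S3": "low", "S4": "low", "S5": "unclear", "S6": "low"}
adjudicator = {"S3": "unclear", "S5": "high"}  # consulted only for disagreements

studies = sorted(reviewer_1)
kappa = cohen_kappa([reviewer_1[s] for s in studies], [reviewer_2[s] for s in studies])
final = reconcile(reviewer_1, reviewer_2, adjudicator)
print(f"kappa = {kappa:.3f}")
print(final)
```

Reporting agreement alongside the final judgments makes it easier for readers to see how much of the assessment depended on adjudication.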

Bias Evaluation in AI Systems

As artificial intelligence becomes increasingly integrated into drug development and clinical decision-making, specialized evaluation methodologies have emerged to assess algorithmic bias. Recent studies have employed multi-phase experimental designs to evaluate AI system performance across different demographic groups and clinical scenarios [19].

A representative evaluation protocol for assessing bias in medical AI systems includes:

[Figure: AI Bias Assessment Workflow]

This experimental workflow was implemented in a recent study evaluating GPT-4o on the Chilean anesthesiology exam, which employed 30 independent simulation runs with systematic variation of the model's temperature parameter to gauge the balance between deterministic and creative responses [19]. The generated responses underwent qualitative error analysis using a refined taxonomy that categorized errors such as "Unsupported Medical Claim," "Hallucination of Information," and "Incorrect or Vague Conclusion" [19].

Table 3: Research Reagent Solutions for Bias Assessment

| Tool/Resource | Function | Application Context |
|---|---|---|
| Cochrane RoB Tool (RevMan) | Generates traffic light plots and summary plots of bias assessment | Systematic reviews of randomized controlled trials |
| ROBVIS Web Application | Visualizes risk-of-bias assessments using traffic light and weighted bar plots | Creating publication-quality bias assessment graphics |
| Jadad Scale | Assesses methodological quality of clinical trials using 8 criteria | Quick quality assessment of randomized controlled trials |
| Newcastle-Ottawa Scale (NOS) | Evaluates quality of non-randomized studies across three domains | Observational studies, cohort studies, and case-control studies |
| QUADAS-2 | Assesses risk of bias in diagnostic accuracy studies | Studies evaluating diagnostic tests or biomarkers |
| Real-World Data (RWD) Platforms | Provides real-world patient data to assess representativeness | Setting enrollment targets, identifying recruitment barriers |

These tools enable researchers to implement comprehensive bias assessment protocols throughout the drug development lifecycle. The integration of real-world data is particularly valuable for understanding how well clinical trial populations represent the intended treatment populations in actual practice [20]. By comparing the demographic, clinical, and socioeconomic characteristics of trial participants with those of real-world patient populations, researchers can identify specific representation gaps and develop targeted strategies to address them [20].

Impact on Drug Development Processes and Outcomes

Consequences for Safety and Efficacy

The downstream effects of systematic bias in clinical research manifest in multiple concerning ways throughout the drug development pipeline:

Reduced Generalizability: When clinical trials fail to adequately represent the demographic and clinical diversity of real-world patient populations, the applicability of trial results to clinical practice becomes limited. This is particularly problematic for chronic conditions that manifest differently across populations, such as cardiovascular disease, diabetes, and many cancers [16] [17].

Limited Detection of Subgroup Effects: Homogeneous trial populations decrease the likelihood of identifying differential treatment effects across demographic groups. Without sufficient representation of diverse populations, potentially important variations in drug metabolism, efficacy, or adverse event profiles may remain undetected until after market approval, when the drug is exposed to a much larger and more diverse patient population [16].

Perpetuation of Health Disparities: Systematic bias in clinical research can exacerbate existing health inequities. For example, a University of Florida study found that the accuracy of an AI tool for diagnosing bacterial vaginosis was highest for white women and lowest for Asian women, with Hispanic women receiving the most false positives [14]. Similarly, numerous skin cancer detection algorithms have been trained predominantly on images of light-skinned individuals, resulting in significantly lower diagnostic accuracy for patients with darker skin—a critical failure, given that Black patients already have the highest mortality rate for melanoma [14].

Financial and Regulatory Implications

Systematic bias introduces significant financial and operational risks into the drug development process:

Late-Stage Failures: Biases in early research phases can propagate through the development pipeline, leading to costly late-stage failures when efficacy or safety issues emerge in broader, more diverse populations. Phase II clinical trials represent the single largest hurdle in drug development, with a success rate of only 29% to 40%, and between 40% and 50% of all clinical failures are due to a lack of clinical efficacy discovered at this stage [13].

Regulatory Scrutiny: Regulatory bodies are increasingly emphasizing the importance of diverse clinical trial populations. The FDA's diversity action plan requirements for Phase III clinical trials, set to take effect in mid-2025, reflect this heightened focus on representative research populations [16]. Similar initiatives are underway globally, with both the European Medicines Agency and the World Health Organization issuing guidance on improving enrollment of diverse populations in clinical trials [18].

Market Limitations: Drugs developed on narrow evidence bases may face market restrictions or require additional post-market studies, limiting their commercial potential. Furthermore, as regulatory requirements for representative evidence continue to evolve, drugs developed using predominantly homogeneous populations may face challenges in obtaining or maintaining market authorization across different jurisdictions [18].

Mitigation Strategies and Best Practices

Methodological Interventions

Addressing systematic bias requires intentional strategies throughout the research lifecycle:

Structured Bias Assessment Protocols: Implementing standardized risk of bias assessment using validated tools like Cochrane RoB 2.0 or ROBINS-I at the study design phase helps identify potential sources of bias before data collection begins. This proactive approach allows researchers to implement safeguards against common biases in randomization, allocation concealment, blinding, outcome assessment, and data analysis [12].

Comprehensive Reporting Standards: Adhering to established reporting guidelines such as CONSORT for randomized trials, STROBE for observational studies, and TRIPOD for prediction model studies improves transparency and enables critical appraisal of potential biases. Pre-registering study protocols and analysis plans reduces selective reporting bias and publication bias [12].

Diversity-by-Design Framework: Representativeness should be treated as a core design consideration rather than an afterthought. This includes using real-world data to understand the epidemiologic characteristics of the target disease population and setting enrollment goals that reflect this diversity [20]. The diversity dimension framework encompasses demographic, clinical, treatment environment, and social determinants of health elements [20].

Operational and Engagement Approaches

Successful mitigation of systematic bias extends beyond methodology to encompass operational and community engagement strategies:

Inclusive Site Selection: Placing trial sites in communities with historically underserved populations increases accessibility and relevance. Additionally, establishing satellite locations, mobile health units, and community-based participatory research centers can reduce geographic and socioeconomic barriers to participation [17].

Reduced Participant Burden: Flexible protocol designs can account for logistical barriers such as transportation, work conflicts, and caregiving responsibilities. Options include virtual visits, after-hours appointments, transportation assistance, and decentralized trial components [17].

Community Partnership: Sponsors and investigators should build authentic, long-term relationships with community organizations, healthcare providers, and community leaders from underrepresented populations. These partnerships should be established early in the research process and maintained throughout, with clear mechanisms for community input and benefit-sharing [18] [17].

[Figure: Bias Mitigation Framework]

Regulatory and Policy Initiatives

The evolving regulatory landscape is creating additional impetus for addressing systematic bias in clinical research:

Diversity Action Plans: The FDA's guidance recommending that sponsors submit Diversity Action Plans to outline how they intend to enroll participants from underrepresented populations represents a significant step toward institutionalizing diversity in clinical research [17]. Though implementation has faced challenges, the concept continues to evolve, with some experts proposing reframing them as "Inclusive Research Action Plans" to preserve intent while navigating political landscapes [17].

Transparency Requirements: Regulatory agencies are increasingly expecting detailed reporting of participant demographics and analyses of treatment effects across demographic subgroups. These requirements help identify when safety or efficacy profiles may differ across populations and inform personalized treatment approaches [16] [18].

Real-World Evidence Integration: Regulatory acceptance of real-world evidence to supplement traditional clinical trial data creates opportunities to enhance understanding of how treatments perform in diverse patient populations encountered in actual clinical practice [20]. This is particularly valuable for understanding treatment effects in populations typically excluded from or underrepresented in traditional clinical trials.

Systematic bias in clinical trials is not merely a methodological concern—it represents a fundamental challenge to the scientific validity, ethical foundation, and economic sustainability of drug development. The quantitative evidence demonstrates persistent demographic skews, with clinical trial populations frequently failing to represent the diversity of real-world patient populations who will ultimately use the treatments being studied. These representational gaps, combined with methodological biases in study design, implementation, and analysis, undermine the generalizability of research findings and can perpetuate health disparities.

Addressing these challenges requires a multifaceted approach that integrates methodological rigor, operational innovation, and regulatory alignment. The implementation of structured bias assessment protocols, diversity-by-design frameworks, and inclusive trial operational strategies can significantly reduce systematic bias throughout the drug development lifecycle. Furthermore, the growing regulatory emphasis on representative research populations, exemplified by the FDA's diversity action plan requirements, creates both imperative and opportunity for meaningful change.

For researchers, scientists, and drug development professionals, the mandate is clear: systematic bias must be recognized as a critical threat to research integrity and patient safety rather than a secondary consideration. By adopting the assessment tools, methodological approaches, and mitigation strategies outlined in this technical guide, the research community can generate more reliable, generalizable, and equitable evidence, ultimately leading to better treatments and outcomes for all patient populations.

Clinical Decision Instruments (CDIs) are data-driven tools designed to standardize and improve patient care by assisting healthcare providers in predicting, diagnosing, and managing diseases. Ranging from simple flowcharts to complex machine learning algorithms, these instruments promise to enhance diagnostic accuracy and treatment efficacy [21]. However, this very standardization risks perpetuating and amplifying pre-existing societal and healthcare disparities if systemic biases are embedded within their development framework [15]. This case study documents the quantitative evidence of racial and gender bias in CDI development and provides a technical guide for researchers to identify, assess, and mitigate these biases, framing the issue within the broader context of systematic bias in analytical instruments research.

The pursuit of equity in CDIs presents a fundamental dilemma. On one hand, they can reduce subjective variations in care. On the other, when developed from biased data or flawed methodologies, they risk codifying discrimination into clinical practice, often under a misleading veneer of objectivity [21]. This analysis synthesizes findings from a quantitative meta-analysis of 690 CDIs, historical reviews, and contemporary data science research to provide a comprehensive examination of this critical issue [15] [21].

Quantitative Evidence of Systematic Bias

A recent large-scale meta-analysis of 690 clinical decision instruments provides stark evidence of systematic biases in their development lifecycle [15]. The findings reveal significant skews in multiple dimensions, from participant demographics to geographical representation, which collectively threaten the generalizability and fairness of these tools.

Table 1: Documented Biases in CDI Development from Meta-Analysis of 690 Instruments [15]

| Bias Dimension | Metric | Finding | Implied Risk |
|---|---|---|---|
| Participant Demographics | Racial Composition | 73% of participants identified as White | Underrepresentation of racial/ethnic minorities limits validation across populations |
| Participant Demographics | Gender Composition | 55% of participants identified as Male | Underrepresentation of women and gender-diverse individuals |
| Geographical Skew | Investigator Location | 52% of studies in North America, 31% in Europe | Limited validation in diverse global healthcare settings |
| Predictor Variables | Use of Race/Ethnicity | 13 CDIs explicitly used Race and Ethnicity as variables | Potential reinforcement of biological race concepts without proven basis |
| Outcome Definition | Follow-up Requirements | 28% of CDIs involved follow-up for outcome determination | Potential skew based on socioeconomic status affecting follow-up capacity |

The over-reliance on predominantly White and male participant cohorts means that CDIs may perform suboptimally for women, gender-diverse individuals, and racial minorities [15] [22]. Furthermore, the explicit use of race as a biological variable in 13 identified instruments is particularly problematic, as it often lacks a robust scientific basis and may instead serve as a proxy for unmeasured social determinants of health [21].

Historical Context and Origins of Bias

The Legacy of Race Correction

The practice of race correction in clinical algorithms has deep historical roots, many originating in now-debunked scientific theories. A seminal example can be traced to the mid-19th century with the invention of the spirometer by Dr. John Hutchinson [21]. This device was subsequently co-opted by American physician Samuel Cartwright, who used it to compare lung function between enslaved Black Americans and free White Americans, incorrectly attributing the observed 20% lower lung function in Black individuals to innate biological inferiority rather than environmental factors and living conditions [21].

This flawed premise persisted for more than a century and was formally encoded into medical devices and software in the 1970s, when a study in The International Journal of Epidemiology reported a 13% difference in lung function between Black and White asbestos workers without adequately accounting for social and environmental confounders [21]. These race-based adjustments became standard worldwide. They artificially elevated the measured lung function of people identified as Black or Asian, which raised the threshold for diagnosing lung disease and potentially led to systematic underdiagnosis in these populations [21].

Gender Bias in Biomedical Research

Gender bias similarly stems from long-standing historical practices in biomedical research. The field has traditionally relied on the male body as the default model, often treating women as "smaller men" [22]. This approach has created significant knowledge gaps in sex- and gender-specific health responses and outcomes. A well-documented manifestation of this bias is in cardiovascular disease, where women have historically been offered fewer diagnostic tests and medications than men, contributing to poorer healthcare outcomes [22].

The conceptualization of gender bias itself lacks clarity in healthcare literature, with outdated definitions often failing to consider modern gender constructs and intersectionality [22]. This definitional ambiguity complicates efforts to systematically identify and address such biases in CDI development.

Modern Data Science and the Reproduction of Structural Bias

Contemporary data science practices often perpetuate these historical biases under the guise of technological neutrality. This phenomenon has been powerfully critiqued as "The New Jim Code," a term describing how seemingly progressive technologies can reinforce racial hierarchies [21]. In clinical algorithms, it manifests in models that exclude race as an explicit variable but still encode racial bias through proxies such as ZIP codes, insurance status, or comorbidity patterns that correlate with racially segregated neighborhoods or disparities in healthcare access [21].

Clinical datasets themselves are not neutral; they encode historical disparities in healthcare access, diagnosis, and treatment. When these datasets train machine learning models without critical scrutiny, they risk automating and amplifying existing inequities [23]. This is particularly problematic with proprietary algorithms and opaque AI systems where lack of transparency limits scrutiny and accountability [21] [23].

Table 2: Sources of Bias in Clinical Machine Learning Models [23]

| Bias Source | Stage of ML Development | Description | Example in Clinical Context |
|---|---|---|---|
| Historical Bias | Data Collection | Reflects pre-existing societal and healthcare disparities | Underdiagnosis of certain conditions in specific demographic groups creates biased training data |
| Representation Bias | Data Selection | Underrepresentation of certain populations in datasets | Lack of diverse racial groups in medical imaging datasets |
| Measurement Bias | Data Collection | Unequal measurement quality across groups | Pulse oximeters less accurate on darker skin tones |
| Algorithmic Bias | Model Training | Model optimization favors majority groups | Model overfits to well-represented demographics at expense of minority groups |
| Evaluation Bias | Model Validation | Test sets lack diversity | Model performs well on White male cohort but poorly on other groups |
| Deployment Bias | Model Implementation | Context mismatch between development and use settings | Model trained on academic medical center data deployed in community clinics |

The following diagram illustrates how bias propagates through the clinical algorithm development lifecycle:

[Figure: Bias Propagation in Clinical Algorithm Development]

Experimental Protocols for Bias Assessment

Risk of Bias Assessment Tools

Systematic assessment of potential biases in clinical instruments and the studies that validate them requires standardized methodologies. Several validated tools exist for this purpose, each with specific applications and domains:

- Cochrane Risk of Bias (RoB) Tool: Assesses selection, performance, detection, attrition, reporting, and other potential bias sources in randomized controlled trials (RCTs) [12].

- ROBINS-I (Risk of Bias In Non-randomised Studies of Interventions): Evaluates bias in non-randomized intervention studies across confounding, participant selection, intervention classification, deviations from intended interventions, missing data, outcome measurement, and selective reporting [12].

- ROBINS-E (Risk Of Bias In Non-randomised Studies - of Exposures): Specifically designed for observational epidemiological studies, evaluating confounding, selection, classification, departures, missing data, measurement, and reporting biases [12].

- Newcastle-Ottawa Scale (NOS): An eight-item instrument for assessing the quality of non-randomized studies (cohort and case-control designs) across three categories: selection, comparability, and exposure or outcome [12].

Algorithmic Fairness Metrics

For clinical machine learning models, specific fairness metrics are necessary to quantify potential racial and gender bias:

- Equal Opportunity Difference: Measures the difference in true positive rates between protected and unprotected groups [23].

- Disparate Impact: Calculates the ratio of the rate of favorable outcomes for the protected group versus the unprotected group [23].

- Average Odds Difference: Averages the between-group differences in true positive rate and false positive rate [23].

- Calibration: Assesses how well the model's predicted probabilities match actual outcome rates across different groups [23].

These metrics should be applied consistently during model development and validation phases, with particular attention to intersectional analyses that consider the compounded effects of multiple protected attributes (e.g., Black women) [22] [23].
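The first three metrics above reduce to simple arithmetic on per-group confusion-matrix rates. The sketch below is a minimal illustration on hypothetical labels and predictions. The function names are introduced here and do not correspond to any particular library's API, and the sign convention (protected group minus reference group) is an assumption. Calibration is omitted because it requires predicted probabilities rather than binary predictions.

```python
def confusion_rates(y_true, y_pred):
    """Return (true positive rate, false positive rate, selection rate) for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    if tp + fn == 0 or fp + tn == 0:
        raise ValueError("each group needs at least one positive and one negative case")
    return tp / (tp + fn), fp / (fp + tn), (tp + fp) / len(pairs)


def fairness_metrics(protected, reference):
    """Each argument is a (y_true, y_pred) pair of lists for one group."""
    tpr_p, fpr_p, sel_p = confusion_rates(*protected)
    tpr_r, fpr_r, sel_r = confusion_rates(*reference)
    if sel_r == 0:
        raise ValueError("reference group has no favorable predictions")
    return {
        # Difference in true positive rates (0 means equal opportunity).
        "equal_opportunity_difference": tpr_p - tpr_r,
        # Ratio of favorable-outcome rates (1 means parity).
        "disparate_impact": sel_p / sel_r,
        # Mean of the FPR difference and the TPR difference (0 means equal odds).
        "average_odds_difference": 0.5 * ((fpr_p - fpr_r) + (tpr_p - tpr_r)),
    }


# Hypothetical data: 1 = condition present / flagged, 0 = absent / not flagged.
protected_group = ([1, 1, 1, 1, 0, 0, 0, 0], [1, 1, 0, 0, 1, 0, 0, 0])
reference_group = ([1, 1, 1, 1, 0, 0, 0, 0], [1, 1, 1, 0, 1, 1, 0, 0])

metrics = fairness_metrics(protected_group, reference_group)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

For intersectional analysis, the same functions can be applied to subgroups defined by combinations of attributes, provided each subgroup contains enough positive and negative cases for the rates to be meaningful.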

Systematic Bias Correction Model

Advanced statistical approaches can help identify and correct for systematic bias in research data. A nonlinear B-spline mixed-effects model provides one such methodology for detecting and correcting systematic sample bias in timecourse data, such as longitudinal clinical studies or metabolomic analyses [24].

The model formulation accounts for the concentration of each metabolite (or clinical measurement) at time point i as:

y_ij = S_i × f_j(t_i) + ε_ij

Where:

- y_ij = measured concentration of metabolite j at time i

- S_i = scaling term representing systematic bias across all metabolites in sample i

- f_j(t_i) = bias-free B-spline curve for each metabolite j

- ε_ij = random error term, assumed to be normally distributed [24]

The systematic bias term S_i is estimated as a nonlinear random effect, assumed to be normally distributed with an expected value of 1 (representing no bias). For typical data, this approach can reduce systematic biases of 3-10% to within 0.5% on average [24].
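To make the role of S_i concrete, a simplified conditional estimate can be derived by treating the curves f_j as known. This is an illustrative sketch rather than the estimation procedure of [24], which fits the curves and the random effects jointly. The variance symbols σ² (random error) and σ_S² (spread of the scaling term) are notation introduced here.

```latex
% Assumptions introduced for this sketch:
%   eps_ij ~ N(0, sigma^2),  S_i ~ N(1, sigma_S^2),  f_j(t_i) treated as known.
\begin{align}
\hat{S}_i &= \arg\min_{S}\;
  \frac{1}{\sigma^2}\sum_{j}\bigl(y_{ij} - S\, f_j(t_i)\bigr)^2
  + \frac{(S - 1)^2}{\sigma_S^2} \\
0 &= -\frac{2}{\sigma^2}\sum_{j} f_j(t_i)\bigl(y_{ij} - \hat{S}_i f_j(t_i)\bigr)
  + \frac{2\,(\hat{S}_i - 1)}{\sigma_S^2} \\
\hat{S}_i &= \frac{\sigma^{-2}\sum_{j} y_{ij}\, f_j(t_i) + \sigma_S^{-2}}
                  {\sigma^{-2}\sum_{j} f_j(t_i)^2 + \sigma_S^{-2}} \\
\tilde{y}_{ij} &= \frac{y_{ij}}{\hat{S}_i}
\end{align}
```

The estimate pools information across all metabolites in sample i, which is why a bias shared by every metabolite can be separated from metabolite-specific noise. When σ_S² is large, the estimate approaches the ordinary least-squares ratio; when it is small, the estimate shrinks toward 1, so samples are corrected only when the evidence for a shared deviation is strong.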

The following workflow diagram illustrates the implementation of this bias detection and correction method:

[Figure: Systematic Bias Detection and Correction Workflow]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Bias Assessment and Mitigation in Clinical Research

| Tool/Resource | Function | Application Context |
|---|---|---|
| Cochrane RoB Tool | Assesses risk of bias in randomized controlled trials | Systematic reviews of clinical studies [12] |
| ROBINS-I | Evaluates bias in non-randomized intervention studies | Observational studies of treatment effects [12] |
| ROBINS-E | Assesses bias in observational epidemiological studies | Environmental exposure and health outcome studies [12] |
| robvis | Visualizes risk-of-bias assessments | Creating traffic light plots and summary plots for publications [12] |
| Nonlinear B-spline Mixed-Effects Model | Detects and corrects systematic sample bias | Timecourse metabolomics and longitudinal clinical data [24] |
| AI Fairness 360 (AIF360) | Comprehensive suite of fairness metrics and algorithms | Evaluating and mitigating bias in machine learning models [23] |
| Diverse Population Datasets | Representative data across racial, gender, and socioeconomic groups | Training and validating clinical algorithms [15] [25] |
| Community Engagement Frameworks | Participatory research methodologies | Ensuring inclusive CDI development, particularly for marginalized groups [25] |

Bias Mitigation Strategies

Preprocessing, In-Processing, and Postprocessing Techniques

Effective mitigation of racial and gender bias in clinical algorithms requires interventions throughout the development pipeline:

- Preprocessing Methods: Techniques applied to training data before model development, including resampling underrepresented groups, generating synthetic data for minority populations, and adjusting data labels to remove biased correlations [23].

- In-Processing Methods: Modifications to the model training process itself, such as incorporating fairness constraints into the objective function, using adversarial debiasing to remove protected attribute information from representations, and employing regularization techniques that penalize disparate outcomes [23].

- Postprocessing Methods: Adjustments to model outputs after training, including group-specific threshold tuning to ensure equalized odds or demographic parity, and calibration of predicted probabilities across different demographic groups [23].
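Of the three families above, postprocessing is the easiest to illustrate in a few lines. The sketch below shows group-specific threshold tuning on hypothetical risk scores (the scores, group names, and helper functions are illustrative assumptions). It equalizes only the true positive rate, which is one component of equalized odds; a full equalized-odds adjustment would also need to match false positive rates.

```python
def tpr(scores, labels, threshold):
    """True positive rate when predicting positive for score >= threshold."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    return sum(s >= threshold for s in positives) / len(positives)

def threshold_for_tpr(scores, labels, target_tpr):
    """Highest observed score that, used as a threshold, reaches target_tpr."""
    for t in sorted(set(scores), reverse=True):
        if tpr(scores, labels, t) >= target_tpr:
            return t
    return min(scores)

# Hypothetical scores: the model systematically under-scores group B.
groups = {
    "A": {"scores": [0.9, 0.8, 0.7, 0.6, 0.4, 0.3], "labels": [1, 1, 1, 1, 0, 0]},
    "B": {"scores": [0.6, 0.5, 0.4, 0.3, 0.2, 0.1], "labels": [1, 1, 1, 1, 0, 0]},
}

# A single shared threshold yields unequal sensitivity across groups.
shared = {g: tpr(d["scores"], d["labels"], 0.5) for g, d in groups.items()}

# Group-specific thresholds chosen to reach the same target sensitivity.
target = 0.75
tuned_thresholds = {
    g: threshold_for_tpr(d["scores"], d["labels"], target) for g, d in groups.items()
}
tuned = {
    g: tpr(d["scores"], d["labels"], tuned_thresholds[g]) for g, d in groups.items()
}
print(shared, tuned_thresholds, tuned)
```

Because thresholds are tuned on the same data they are evaluated on here, a real application would select them on a validation set and confirm the result on held-out data.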

Transitioning to Race-Neutral Equations

Several medical specialties have begun transitioning from race-based to race-neutral clinical algorithms with promising results. In pulmonary function testing, the European Respiratory Society (ERS) and American Thoracic Society (ATS) have endorsed new Global Lung Function Initiative (GLI) equations that entirely remove race as a factor [21]. Similar transitions have occurred in nephrology, where race-based adjustments in estimated glomerular filtration rate (eGFR) equations have been removed, eliminating artificial elevation of kidney function values for Black patients that previously delayed disease diagnosis and transplant eligibility [21].

These transitions require careful consideration of the clinical context and potential unintended consequences, but generally demonstrate improved equity without compromising diagnostic accuracy [21].
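As a purely arithmetic illustration of how a multiplicative race coefficient can delay eligibility, the sketch below applies the 1.159 multiplier used for Black patients in the 2009 CKD-EPI creatinine equation. Naming that equation is an assumption, since the text above does not specify one. The 20 mL/min/1.73 m² cut-off, the patient value, and the function name are likewise illustrative. Note that published race-neutral equations were refit rather than produced by simply dropping the multiplier, so this is a simplification.

```python
RACE_COEFFICIENT = 1.159     # multiplier from the 2009 CKD-EPI creatinine equation (assumed here)
ELIGIBILITY_CUTOFF = 20.0    # mL/min/1.73 m^2; illustrative eligibility threshold

def reported_egfr(unadjusted_estimate, apply_race_coefficient):
    """Return the eGFR a lab would report, with or without the race multiplier."""
    if apply_race_coefficient:
        return unadjusted_estimate * RACE_COEFFICIENT
    return unadjusted_estimate

# Hypothetical patient whose unadjusted estimate is already below the cut-off.
unadjusted = 18.5
with_adjustment = reported_egfr(unadjusted, apply_race_coefficient=True)
without_adjustment = reported_egfr(unadjusted, apply_race_coefficient=False)

for label, value in [("race-adjusted", with_adjustment), ("unadjusted", without_adjustment)]:
    eligible = value <= ELIGIBILITY_CUTOFF
    print(f"{label}: eGFR={value:.1f}, meets cut-off: {eligible}")
```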

Inclusive Data Collection and Community Engagement

Fundamental to bias mitigation is addressing the root cause: unrepresentative data and exclusionary development processes. Researchers should prioritize:

- Comprehensive Demographic Data Collection: Gathering robust self-reported racial, ethnic, and gender identity data, while ensuring appropriate informed consent and privacy protections [22] [26].

- Intentional Representation: Oversampling historically underrepresented groups to ensure sufficient statistical power for subgroup analyses [15].

- Community-Engaged Research: Adopting participatory methodologies that actively involve marginalized communities throughout the research process, such as the communicative methodology which emphasizes egalitarian dialogue and recognizes cultural intelligence [25].

Documenting and addressing racial and gender bias in clinical decision instrument development is both an ethical imperative and a scientific necessity. The quantitative evidence reveals systematic skews in participant demographics, geographical representation, and outcome definitions that threaten the validity and equity of these tools [15]. Historical practices of race correction and gender exclusion continue to influence modern algorithms, often reproduced through seemingly neutral data science practices [21].

Moving forward, researchers and drug development professionals must implement comprehensive bias assessment protocols throughout the CDI development lifecycle, from initial data collection through model deployment. This includes utilizing standardized risk of bias tools, applying appropriate fairness metrics for machine learning models, employing statistical methods for bias detection and correction, and adopting community-engaged approaches that center equity [12] [25] [24]. Only through such rigorous, transparent, and inclusive methodologies can clinical decision instruments fulfill their promise of improving patient care for all populations, without perpetuating the very disparities they aim to reduce.

In the pursuit of scientific truth, analytical instruments are our most trusted tools. Yet, these very instruments can harbor hidden systematic biases that distort measurements, compromise data integrity, and ultimately perpetuate health inequities. Systematic bias, or method bias, refers to a consistent, directional deviation from the true value, often arising from flaws in instrument design, calibration, or data processing algorithms [27]. Unlike random error, which averages out over repeated measurements, systematic bias skews results in a predictable direction, making its effects particularly insidious and difficult to detect without rigorous validation.

In the context of health research, these are not merely technical problems; addressing them is an ethical imperative. When biases in analytical instruments remain unaddressed, they can systematically disadvantage specific population groups, reinforcing existing disparities in diagnosis, treatment, and drug development [28] [29]. This paper provides a technical examination of instrument bias, exploring its mechanisms, its role in exacerbating health inequities, and the experimental methodologies researchers can employ to identify and correct for it, thereby fostering more equitable health outcomes.

Conceptual Framework: Defining Instrument Bias

A Metrological Foundation

At its core, the bias of a measurement result is understood through a fundamental model:

x̂ = x + δ + ε

Here, the true value of a measurand, x, is estimated by x̂, which differs from it by a systematic component (bias, δ) and a random component (ε). The random error is typically assumed to be normally distributed with an expectation of zero, so the average of repeated measurements converges on (x + δ), not on the true value x [27]. This systematic component, δ, is the instrument bias.
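The practical consequence of this model can be checked with a short simulation. The sketch below uses hypothetical values for x, δ, and the noise standard deviation, and assumes a reference material with a known true value is available for estimating the bias. It shows that averaging many readings removes the random component but leaves δ untouched.

```python
import random
from statistics import mean

random.seed(0)

x_true = 100.0   # hypothetical true value of the measurand
delta = 2.0      # hypothetical systematic bias of the instrument
sigma = 1.0      # hypothetical standard deviation of the random error

def measure(n):
    """Simulate n readings following x_hat = x + delta + epsilon."""
    return [x_true + delta + random.gauss(0.0, sigma) for _ in range(n)]

readings = measure(10_000)

# Averaging shrinks the random error, but the mean settles near x + delta.
average = mean(readings)

# With a reference material of known value, the bias can be estimated and removed.
delta_hat = average - x_true
corrected = [r - delta_hat for r in readings]

print(f"mean of readings: {average:.3f}")
print(f"estimated bias:   {delta_hat:.3f}")
print(f"corrected mean:   {mean(corrected):.3f}")
```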

Typology of Instrument Biases in Health Research

Instrument bias in health research manifests in several key forms, each with distinct implications for equity:

- Technical Bias: Arises from physical instrument limitations, improper calibration, or reagent variability. For example, a biochemical analyzer uncalibrated for certain analyte levels may consistently under-measure concentrations in samples from individuals with specific physiological conditions [27].

- Algorithmic Bias: Embedded in the software and statistical models used to process raw data. This is prevalent in AI-driven diagnostic tools and genomic analyzers. If a model is trained on non-representative data (e.g., predominantly from one ethnic group), it will perform poorly on underrepresented groups, creating a ripple effect of misdiagnosis [30].

- Interpretive Bias: Stemming from the analytical concepts and risk proxies built into an instrument's output. For instance, a risk-prediction tool might use a proxy like "prior healthcare contacts," which is not a direct measure of health but is correlated with factors like poverty and systemic lack of access to preventative care. This can unfairly label certain communities as "high-risk" [29].

Table 1: A Typology of Instrument Biases in Health Research

| Bias Type | Source | Example in Health Research | Potential Equity Impact |
|---|---|---|---|
| Technical | Instrument calibration, reagent variability | Pulse oximeters providing inaccurately high oxygen saturation readings for patients with darker skin [31]. | Delayed or withheld treatment for patients from specific racial/ethnic groups. |
| Algorithmic | Unrepresentative training data, flawed model assumptions | An AI skin cancer detector trained primarily on images of light skin, reducing accuracy for darker skin tones [30]. | Lower diagnostic accuracy and poorer health outcomes for underrepresented populations. |
| Interpretive | Use of biased risk proxies or interpretive concepts | Child protection risk assessment tools using "parental prior arrests" as a risk proxy, disproportionately impacting communities subject to over-policing [29]. | Reinforcement of structural inequities and over-surveillance of marginalized groups. |

The Ripple Effect: From Measurement Error to Health Inequity

Case Studies in Diagnostic and Clinical Research

The path from a biased instrument to a health disparity is often direct. Quantitative findings demonstrate the scale of the problem:

- Pain Management and Pulse Oximetry: Research has shown that Black and Hispanic patients are significantly less likely than White patients to receive pain medication for acute injuries like bone fractures. When they do receive analgesics, they are prescribed lower dosages despite reporting higher pain scores [28]. This disparity, driven by implicit bias, can be exacerbated by diagnostic tools that are less accurate for certain skin tones, such as pulse oximeters that report inaccurately high oxygen saturation for patients with darker skin [31].

- Predictive Policing in Public Health: So-called "predictive policing" tools used in some criminal justice systems often rely on historical arrest data. Because this data reflects existing patterns of racial profiling, the algorithms reinforce and amplify these disparities, leading to the disproportionate targeting of minority communities. The application of similar logic to public health surveillance (e.g., predicting "hotspots" of disease or neglect) risks creating a vicious cycle of over-surveillance and inequitable resource allocation [30].

- Generative AI in Medicine: A 2023 analysis of over 5,000 images generated by Stable Diffusion found that the tool simultaneously amplified gender and racial stereotypes. When generating images of workers, it associated high-paying jobs with men and particular ethnicities, while low-paying jobs and crime-related images were linked to other groups. If such biased AI is used in medical education or patient communication materials, it can perpetuate harmful stereotypes that affect clinical judgment [30].

Table 2: Quantitative Evidence of Bias in Healthcare and Technology

| Domain | Findings | Source |
|---|---|---|
| Pain Management | Black and Hispanic patients are significantly less likely to receive pain medication for acute fractures. When treated, they receive lower dosages despite higher pain scores. | [28] |
| Generative AI (Stable Diffusion) | An analysis of >5,000 images found the tool amplifies gender and racial stereotypes, misrepresenting professions and crime-related categories. | [30] |
| Māori Health Outcomes (NZ) | Māori people have 7.3 years lower life expectancy and experience less access to investigations, interventions, and medicine prescriptions. | [28] |
| Child Protection (NZ) | Children in the most deprived decile were 21x more likely to be substantiated for abuse and 9.4x more likely to be in care than those in the least deprived. | [29] |

Mechanisms of Inequity: How Instrument Bias Propagates

Instrument bias creates a ripple effect through several interconnected mechanisms, as illustrated below.

Diagram 1: The Ripple Effect of Instrument Bias. This workflow shows how an initial instrument bias propagates through the research and healthcare system, creating a self-reinforcing cycle of inequity.

Experimental Protocols for Bias Identification and Mitigation

A Nonlinear B-Spline Mixed-Effects Model for Correcting Systematic Sample Bias

In metabolomics and other fields analyzing multiple metabolites or biomarkers simultaneously, systematic sample bias (e.g., from dilution, extraction, or normalization variability) can affect all measurements within a sample. The following protocol outlines a method to identify and correct for this bias.

1. Experimental Objective: To estimate and correct for sample-specific systematic bias in time-course metabolomic data, where bias influences all metabolites within a sample in a similar fashion.

2. Model Formulation:

The concentration of each metabolite j at time point i, y_ij, is expressed as:

y_ij = S_i * f_j(t_i) + ε_ij

where:

- S_i is a scaling term representing the systematic bias for all metabolites in sample i.

- f_j(t_i) is a bias-free B-spline curve for metabolite j at time t_i.

- ε_ij is the remaining random error, assumed to be normally distributed: ε_ij ~ N(0, σ_j²).

The random effect S_i is assumed to be normally distributed with an expected value of 1 (signifying no error): S_i ~ N(1, τ²) [24].
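To make the formulation above concrete, the following sketch simulates data from it and recovers the scaling terms with a deliberately simplified estimator. It is not the nonlinear mixed-effects fit of [24]: a quadratic polynomial per metabolite stands in for the B-spline curve, and the scaling term is taken as the median ratio of observed to fitted values across metabolites. All numeric settings (30 time points, 8 metabolites, τ = 0.05, 1% noise) and the function name `estimate_scaling` are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_times, n_metabolites = 30, 8
t = np.linspace(0.0, 1.0, n_times)

# Bias-free curves f_j(t): smooth, strictly positive trends (hypothetical shapes).
level = rng.uniform(5.0, 50.0, n_metabolites)
slope = rng.uniform(-0.5, 0.5, n_metabolites)
curve = rng.uniform(-0.3, 0.3, n_metabolites)
F = level * (1.0 + np.outer(t, slope) + np.outer(t**2, curve))

# S_i ~ N(1, tau^2): one scaling term per sample, shared by all metabolites.
S = rng.normal(1.0, 0.05, n_times)

# eps_ij ~ N(0, sigma_j^2): metabolite-specific random error.
noise = rng.normal(0.0, 0.01 * level, (n_times, n_metabolites))

Y = S[:, None] * F + noise   # y_ij = S_i * f_j(t_i) + eps_ij

def estimate_scaling(t, Y, degree=2):
    """Median ratio of observed to smoothed values across metabolites."""
    fitted = np.empty_like(Y)
    for j in range(Y.shape[1]):
        coeffs = np.polyfit(t, Y[:, j], degree)   # smooth stand-in for f_j
        fitted[:, j] = np.polyval(coeffs, t)
    return np.median(Y / fitted, axis=1)

S_hat = estimate_scaling(t, Y)
Y_corrected = Y / S_hat[:, None]

# Mean absolute relative error against the known bias-free curves.
error_before = np.mean(np.abs(Y / F - 1.0))
error_after = np.mean(np.abs(Y_corrected / F - 1.0))
print(f"before: {error_before:.4f}  after: {error_after:.4f}")
```

One limitation worth noting is that the smooth fit absorbs any slowly varying part of the scaling terms, so this simple estimator can only recover sample-to-sample deviations from the fitted trend.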

3. Protocol Steps:

- Step 1: Data Collection. Collect time-course metabolomic data using standard analytical instruments (e.g., NMR, MS). Ensure the data set includes multiple time points and multiple detected metabolites.

- Step 2: Initial Estimation. For each time point i, calculate an initial estimate of the bias S_i by ranking points according to the median relative deviation across all metabolites, following the process outlined in Sokolenko and Aucoin (2015) [24].

- Step 3: Threshold Application. Apply a threshold to determine which time points require a scaling term. The default model threshold is 50% of the estimated median average relative standard deviation of the measurement noise. This avoids spurious corrections where bias is minimal.

- Step 4: Ensure Unique Solution. Address the collinearity in the product